Virtual Screening of Natural Product Scaffold Libraries: A Strategic Guide for Modern Drug Discovery


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to the virtual screening of natural product scaffold libraries. It explores the foundational importance of natural product scaffolds in drug discovery, details advanced computational methodologies including machine learning and structure-based approaches, addresses common challenges and optimization strategies in screening workflows, and discusses critical validation and comparative analysis techniques. By integrating the latest research and case studies, the article aims to bridge computational predictions with experimental success, offering practical insights for leveraging nature's chemical diversity in the search for novel therapeutics.

Unlocking Nature's Chemical Blueprint: Foundations of Natural Product Scaffolds and Virtual Screening

Natural products (NPs) and their derivatives constitute a foundational pillar of modern pharmacotherapy, accounting for over one-third of all new chemical entities approved as drugs in the past four decades [1]. Despite their historical dominance, NP-based discovery programs faced significant challenges, including technical barriers to screening and isolation. The current renaissance in NP research is fueled by advanced computational technologies, particularly virtual screening and artificial intelligence, which are overcoming these obstacles. By enabling the efficient exploration of NP chemical space, in silico methods have revitalized interest in NPs, especially for urgent needs like antimicrobial resistance and oncology. This article, framed within a broader thesis on virtual screening of NP scaffold libraries, provides detailed application notes and protocols for researchers. It highlights integrated workflows that combine computational prediction with experimental validation, demonstrating a powerful paradigm for identifying novel therapeutic agents from nature's chemical treasury [1] [2] [3].

Natural products have an unparalleled historical track record as sources of therapeutic agents. From ancient concoctions to modern, purified drugs, they have treated a vast array of human ailments. Historically, the discovery of bioactive NPs relied heavily on ethnobotanical knowledge, followed by bioactivity-guided fractionation—a process that is time-consuming, resource-intensive, and often yields low quantities of target compounds [2] [4].

In modern pharmaceutical pipelines, NPs have been particularly dominant in the fields of oncology and infectious diseases. Notable examples include the anticancer agents paclitaxel and vinblastine, and the antimalarial drugs quinine and artemisinin [1] [4]. The inherent biological relevance of NPs stems from their evolutionary roles as signaling molecules or chemical defense agents, making them inherently predisposed to interact with biological targets. Chemically, NPs exhibit greater structural complexity, a higher number of chiral centers, and a richer proportion of oxygen atoms compared to typical synthetic libraries, occupying a distinct and valuable region of chemical space [2] [4].

However, from the 1990s onward, major pharmaceutical companies de-prioritized NPs in favor of combinatorial chemistry and high-throughput screening (HTS) of synthetic libraries. This shift was driven by several perceived challenges associated with NPs: the complexity of isolating pure compounds, difficulties in synthesizing analogs, incompatibility with robotic HTS due to interference compounds like tannins, and concerns regarding sustainable supply and intellectual property [5] [1].

Today, the field is experiencing a robust resurgence. This revival is powered not by abandoning modern technology, but by leveraging it to solve traditional NP challenges. The integration of virtual screening, machine learning, and sophisticated analytical chemistry has created a new, rational paradigm for NP-based drug discovery. This paradigm allows researchers to prioritize the most promising candidates from vast digital libraries before committing to labor-intensive laboratory work, thereby increasing efficiency and success rates [1] [6] [3]. The following sections detail the methodologies and protocols underpinning this modern approach.

Table 1: The Impact and Characteristics of Natural Products in Drug Discovery

| Metric | Data | Source/Notes |
|---|---|---|
| FDA-Approved Drugs (1981-2019) | >50% are derived or inspired by natural products [2]. | Includes unaltered NPs, derivatives, and synthetic compounds with NP pharmacophores. |
| Plant-Based FDA Drugs | Approximately one-quarter are plant-based [4]. | Examples: morphine, paclitaxel, digoxin. |
| Chemical Space Distinctiveness | Higher structural complexity, more sp³-hybridized carbons, oxygen atoms, and chiral centers vs. synthetic libraries [2]. | Leads to unique, biologically relevant molecular shapes. |
| Primary Therapeutic Areas | Cancer, infectious diseases, cardiovascular & metabolic disorders [1] [4]. | Historically and currently the most productive areas. |

Foundational Methods and Strategies

Modern virtual screening (VS) of NP libraries employs a hierarchical, multi-filter strategy to manage the enormous chemical and structural diversity of NP collections. This process systematically narrows millions of compounds to a handful of experimentally testable candidates [2].

2.1. Library Preparation and Curation

The initial and critical step is constructing a high-quality, digitally accessible NP library. Sources include public databases like COCONUT, ZINC Natural Products, NPASS, and commercial collections [3] [7]. The library must be "cleaned" by removing duplicates, salts, and metals, and standardizing structures (e.g., generating canonical SMILES). 3D conformer generation is essential for structure-based methods, while calculating molecular descriptors and fingerprints enables ligand-based screening and machine learning [2].
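The cleaning step can be illustrated with a dependency-free sketch. It assumes the input SMILES are already canonical (in practice a cheminformatics toolkit would generate them), and it uses a deliberately crude salt heuristic: drop listed counter-ions, then keep the longest remaining dot-separated fragment. The counter-ion list, record layout, and function names are illustrative assumptions, not part of any cited protocol.

```python
# Hypothetical counter-ion/solvent fragments; a real pipeline would use a curated salt list.
COMMON_COUNTERIONS = {"[Na+]", "[K+]", "[Cl-]", "[Br-]", "Cl", "Br", "O"}


def strip_salts(smiles: str) -> str:
    """Keep the longest dot-separated fragment that is not a listed counter-ion."""
    fragments = [f for f in smiles.split(".") if f not in COMMON_COUNTERIONS]
    if not fragments:  # every fragment was a counter-ion: return the input unchanged
        return smiles
    return max(fragments, key=len)  # string length is only a rough proxy for fragment size


def curate(records):
    """Strip salts and drop duplicate parent structures, keeping the first occurrence."""
    seen = set()
    curated = []
    for name, smiles in records:
        parent = strip_salts(smiles.strip())
        if parent and parent not in seen:
            seen.add(parent)
            curated.append((name, parent))
    return curated


raw_library = [
    ("caffeine", "CN1C=NC2=C1C(=O)N(C(=O)N2C)C"),
    ("caffeine_dup", "CN1C=NC2=C1C(=O)N(C(=O)N2C)C"),
    ("berberine_chloride", "COc1ccc2cc3[n+](cc2c1OC)CCc1cc2OCOc2cc13.[Cl-]"),
]
library = curate(raw_library)
```

Here the duplicate caffeine entry is dropped and the chloride counter-ion is removed from the berberine record, leaving two parent structures.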

2.2. Core Virtual Screening Approaches

Two primary computational philosophies are employed, often in tandem:

Ligand-Based Virtual Screening: Used when the 3D structure of the target is unknown but known active ligands exist. Methods include:

- Pharmacophore Modeling: Identifies compounds that match the essential spatial arrangement of chemical features (hydrogen bond donor/acceptor, hydrophobic region, etc.) required for bioactivity [5].

- Quantitative Structure-Activity Relationship (QSAR): Uses statistical or machine learning models to correlate calculated molecular descriptors with biological activity, predicting activity for new compounds [8].

- Similarity Searching: Ranks compounds based on molecular fingerprint similarity to known actives.
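As a minimal sketch of similarity searching, the Tanimoto coefficient can be computed directly on fingerprints represented as sets of "on" bit indices. The bit sets and compound IDs below are toy values chosen for illustration; a real workflow would generate fingerprints with a cheminformatics toolkit.

```python
def tanimoto(fp_a: set, fp_b: set) -> float:
    """Tanimoto coefficient |A & B| / |A | B| for fingerprints stored as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def rank_by_similarity(query_fp, library_fps):
    """Return (compound_id, similarity) pairs sorted from most to least similar."""
    scored = [(cid, tanimoto(query_fp, fp)) for cid, fp in library_fps.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)


# Toy on-bit sets standing in for real fingerprints (hypothetical values).
known_active = {1, 4, 7, 9, 15}
np_library = {
    "NP-001": {1, 4, 7, 9, 15, 22},
    "NP-002": {2, 3, 5},
    "NP-003": {1, 4, 9, 30},
}
ranking = rank_by_similarity(known_active, np_library)
```

With these toy inputs, NP-001 ranks first (5 shared bits out of 6 in the union), NP-003 second, and NP-002 last with no shared bits.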

Structure-Based Virtual Screening: Used when a 3D protein structure (from X-ray crystallography or homology modeling) is available.

- Molecular Docking: Computationally "docks" small molecules into the target's binding site, scoring and ranking them based on predicted binding affinity and interaction geometry. This is the most common VS method [2] [7].

- Molecular Dynamics (MD) Simulations: Used on top-ranked docking hits to assess the stability of the protein-ligand complex over time and calculate more accurate binding free energies (e.g., via MM/GBSA) [7] [8].

2.3. Integration of Artificial Intelligence

AI and machine learning are transforming VS. Graph Neural Networks (GNNs) can directly learn from molecular graph structures (atoms as nodes, bonds as edges) to predict bioactivity or binding affinity with high accuracy [6] [9]. Deep learning models can also be used for de novo design of NP-inspired compounds or to prioritize NPs from complex metabolomics datasets [6].

2.4. ADME/Tox and Drug-Likeness Prediction

Computational filters predict Absorption, Distribution, Metabolism, Excretion, and Toxicity properties. Tools like QikProp or SwissADME assess compliance with rules like Lipinski's Rule of Five and predict parameters such as intestinal permeability, blood-brain barrier penetration, and potential hERG channel inhibition [7]. This ensures that hits have a viable path to becoming oral drugs.
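The Rule of Five check itself is simple enough to sketch from precomputed descriptors. The function names, the tolerance of one violation, and the descriptor values below are illustrative assumptions; this is not how QikProp or SwissADME are invoked.

```python
def lipinski_violations(mol_weight, logp, h_bond_donors, h_bond_acceptors):
    """Count Rule of Five violations from precomputed descriptors."""
    checks = [
        mol_weight > 500,       # molecular weight above 500 Da
        logp > 5,               # calculated logP above 5
        h_bond_donors > 5,      # more than 5 hydrogen-bond donors
        h_bond_acceptors > 10,  # more than 10 hydrogen-bond acceptors
    ]
    return sum(checks)


def passes_rule_of_five(descriptors, max_violations=1):
    """Accept compounds with at most max_violations violations (assumed tolerance)."""
    return lipinski_violations(**descriptors) <= max_violations


# Hypothetical descriptor values for two natural-product-like compounds.
small_glycoside = {"mol_weight": 418.4, "logp": 0.4, "h_bond_donors": 5, "h_bond_acceptors": 9}
macrolide_like = {"mol_weight": 733.9, "logp": 2.8, "h_bond_donors": 5, "h_bond_acceptors": 14}

results = {
    "small_glycoside": passes_rule_of_five(small_glycoside),
    "macrolide_like": passes_rule_of_five(macrolide_like),
}
```

Many bioactive NPs, macrocycles in particular, fall outside these limits, so such a filter is often better used as a flag for review than as a hard cutoff.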

2.5. Experimental Validation

Experimental validation is the critical final step. In silico hits must be validated through in vitro assays (e.g., enzymatic inhibition, cell-based viability assays) and, for the most promising candidates, in vivo studies. As noted in a special issue on VS, "experimental validation of in silico results is mandatory" [3].

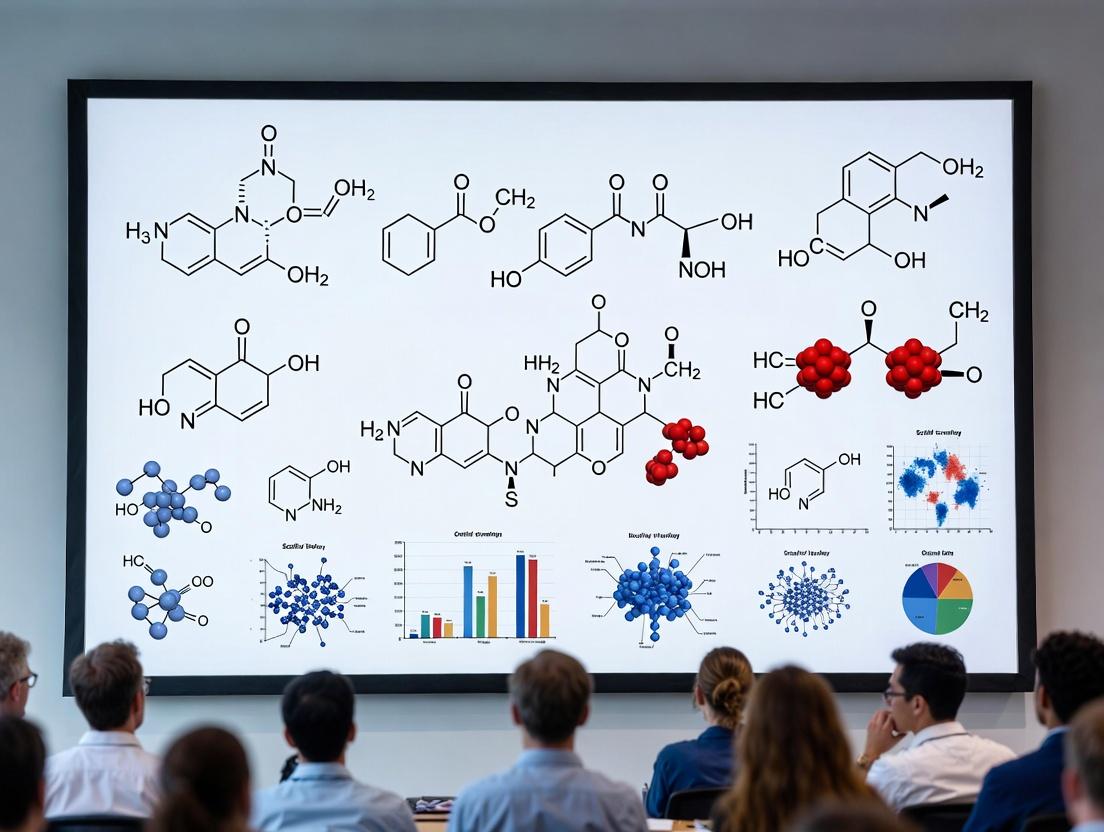

Diagram 1: Hierarchical Virtual Screening Workflow for NPs. This flowchart illustrates the multi-stage, integrated process for discovering bioactive natural products, from digital library preparation to experimental validation.

Application Notes & Protocols: Case Studies

Protocol 1: HER2 Inhibitor Discovery

Objective: To identify novel natural product inhibitors of HER2 tyrosine kinase for breast cancer therapy using a tiered structure-based virtual screening workflow.

Thesis Context: This protocol exemplifies a high-throughput, structure-based VS pipeline applied to a large, diverse NP library (~639,000 compounds), demonstrating efficient hit identification for a well-defined oncology target.

Materials & Software:

- NP Library: Compiled from 9 databases (COCONUT, ZINC NP, SANCDB, etc.).

- Protein Structure: HER2 kinase domain (PDB ID: 3RCD).

- Software Suite: Schrödinger Suite (Maestro, LigPrep, Protein Preparation Wizard, Glide).

- Validation Set: 18 known HER2 inhibitors (actives) + decoy molecules.

Procedure:

Library and Target Preparation:

- Prepare the NP library using LigPrep: generate 3D structures, possible ionization states at pH 7.0 ± 2.0, and stereoisomers.

- Prepare the HER2 protein structure: remove water molecules, add hydrogens, optimize H-bonds, and perform restrained minimization.

- Generate a receptor grid box (20x20x20 Å) centered on the co-crystallized ligand (TAK-285).

Docking Protocol Validation:

- Use the GLIDE enrichment calculator. Dock the validation set (actives + decoys) into the prepared HER2 grid.

- Calculate enrichment metrics (e.g., ROC-AUC, EF at 1% and 5%). A robust protocol should significantly enrich known actives in the top-ranked positions.
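The two enrichment metrics named above can be computed from a ranked validation set in a few lines. This sketch assumes that more negative docking scores are better, uses the pairwise (Mann-Whitney) form of ROC-AUC, and runs on a ten-compound toy set, so the enrichment factor is taken at the top 20% rather than at 1% or 5%. All scores and labels are invented for illustration.

```python
def roc_auc(scores, labels):
    """ROC-AUC as the fraction of (active, decoy) pairs in which the active scores better.

    Lower (more negative) scores are treated as better; ties count as half a win.
    """
    actives = [s for s, y in zip(scores, labels) if y == 1]
    decoys = [s for s, y in zip(scores, labels) if y == 0]
    wins = 0.0
    for a in actives:
        for d in decoys:
            if a < d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(actives) * len(decoys))


def enrichment_factor(scores, labels, fraction):
    """EF = (active rate in the top-ranked fraction) / (active rate in the whole set)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0])
    n_top = max(1, round(len(ranked) * fraction))
    hits_top = sum(y for _, y in ranked[:n_top])
    return (hits_top / n_top) / (sum(labels) / len(labels))


# Toy validation set: 3 actives (label 1) and 7 decoys (label 0), hypothetical scores.
scores = [-9.1, -8.7, -8.2, -7.9, -7.5, -7.0, -6.4, -6.1, -5.8, -5.2]
labels = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

auc = roc_auc(scores, labels)
ef_top20 = enrichment_factor(scores, labels, 0.20)
```

An AUC near 0.5 or an EF near 1 would indicate that the docking protocol ranks actives no better than random selection.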

Three-Tiered Virtual Screening:

- Tier 1 - High-Throughput Virtual Screening (HTVS): Dock the entire NP library (~639,000 compounds) using the fast HTVS mode in Glide.

- Tier 2 - Standard Precision (SP) Docking: Select the top 10,000 compounds from HTVS (docking score ≤ -6.00 kcal/mol, since more negative scores indicate more favorable predicted binding) and re-dock using the more accurate SP mode.

- Tier 3 - Extra Precision (XP) Docking: Select the top 500 compounds from SP docking for final, rigorous XP docking.
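The funnel logic of a tiered screen can be sketched independently of any docking engine. In the example below the "scorers" simply look up precomputed toy scores, more negative scores are treated as better, and the cutoffs and tier sizes are scaled down to a four-compound library. The function name and data layout are hypothetical and do not reflect the Glide interface.

```python
def tiered_screen(compounds, tiers):
    """Pass compounds through successive (scorer, cutoff, keep_top) tiers.

    Each tier rescores the survivors, drops compounds scoring worse than the
    cutoff (if one is given), and keeps the keep_top best-scoring compounds.
    """
    survivors = list(compounds)
    for scorer, cutoff, keep_top in tiers:
        scored = [(scorer(c), c) for c in survivors]
        scored = [(s, c) for s, c in scored if cutoff is None or s <= cutoff]
        scored.sort(key=lambda pair: pair[0])  # most negative (best) first
        survivors = [c for _, c in scored[:keep_top]]
    return survivors


# Toy library with precomputed scores standing in for HTVS, SP, and XP docking runs.
toy_library = [
    {"id": "NP-A", "htvs": -7.2, "sp": -8.1, "xp": -9.4},
    {"id": "NP-B", "htvs": -6.5, "sp": -6.9, "xp": -7.0},
    {"id": "NP-C", "htvs": -5.1, "sp": -7.5, "xp": -8.8},  # fails the HTVS cutoff
    {"id": "NP-D", "htvs": -6.1, "sp": -7.8, "xp": -8.2},
]
tiers = [
    (lambda c: c["htvs"], -6.0, 3),  # tier 1: fast scoring with a score cutoff
    (lambda c: c["sp"], None, 2),    # tier 2: keep the two best SP scores
    (lambda c: c["xp"], None, 1),    # tier 3: keep the single best XP score
]
hits = tiered_screen(toy_library, tiers)
```

Note that NP-C would have scored well at the later tiers but never reaches them, which is the usual trade-off of a speed-first funnel.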

Hit Selection and Analysis:

- Rank compounds by Glide XP docking score (GScore) and visual inspection of binding poses.

- Prioritize compounds based on commercial availability, structural novelty, and favorable interactions with key HER2 residues (e.g., the hinge-region residue Met801).

Post-Docking Analysis & Experimental Triaging:

- Perform induced-fit docking (IFD) on top hits to account for side-chain flexibility.

- Predict ADME properties for selected hits using QikProp.

- Subject top-ranked, commercially available hits (e.g., liquiritin, oroxin B) to in vitro HER2 kinase inhibition and cell proliferation assays.

Key Outcomes: This protocol identified liquiritin as a potent HER2 inhibitor (nanomolar biochemical activity, selective anti-proliferative effect in HER2+ cells), validated through in vitro assays, demonstrating the pipeline's effectiveness [7].

Protocol 2: NDM-1 Inhibitor Discovery

Objective: To identify natural product inhibitors of New Delhi Metallo-β-Lactamase-1 (NDM-1) to combat antibiotic-resistant bacteria.

Thesis Context: This protocol showcases the integration of machine learning-based activity prediction (QSAR) with molecular docking and dynamics, creating a focused, knowledge-guided screening funnel for a challenging antimicrobial target.

Materials & Software:

- NP Library: 4,561 compounds from a focused natural product-based library (ChemDiv).

- Protein Structure: NDM-1 (PDB ID: 4EYL).

- Software/Tools: RDKit (descriptor calculation), Scikit-learn (ML models), AutoDock Vina (docking), GROMACS (MD), MMPBSA.py (binding energy).

Procedure:

Develop ML-Based QSAR Model:

- Data Curation: From ChEMBL, extract compounds with reported MIC (Minimum Inhibitory Concentration) values against NDM-1. Preprocess by removing duplicates and normalizing activity values (e.g., pMIC).

- Descriptor Calculation: Calculate molecular descriptors (e.g., MACCS keys, Morgan fingerprints) for all compounds using RDKit.

- Model Training & Selection: Split data into training/test sets (70/30). Train multiple regression models (Random Forest, Gradient Boosting, SVM, etc.). Select the best model based on the coefficient of determination (R²) on the test set.
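The bookkeeping around the QSAR model (activity normalization, the 70/30 split, and the R² criterion) can be sketched without a machine learning library. This example assumes MIC values are available in µM, so that pMIC = -log10(MIC in mol/L); the helper names and the toy MIC series are illustrative, and the regression models themselves would come from a library such as Scikit-learn.

```python
import math
import random


def to_pmic(mic_micromolar):
    """Convert a MIC given in µM to pMIC = -log10(MIC in mol/L)."""
    return -math.log10(mic_micromolar * 1e-6)


def train_test_split(rows, test_fraction=0.3, seed=42):
    """Shuffle reproducibly and split into (train, test) lists."""
    shuffled = rows[:]
    random.Random(seed).shuffle(shuffled)
    n_test = round(len(shuffled) * test_fraction)
    return shuffled[n_test:], shuffled[:n_test]


def r_squared(observed, predicted):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for o, p in zip(observed, predicted))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot


# Hypothetical MIC series (µM) standing in for curated ChEMBL records.
mic_values = [0.5, 1, 2, 4, 8, 16, 32, 64, 128, 256]
dataset = [(f"cmpd_{i}", to_pmic(mic)) for i, mic in enumerate(mic_values)]
train, test = train_test_split(dataset)
```

A model that always predicts the mean of the observed values gives R² = 0 under this definition, which is a useful baseline when comparing candidate regressors on the test set.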

Predictive Screening of NP Library:

- Calculate the same descriptors for the 4,561 NP library compounds.

- Use the trained QSAR model to predict the inhibitory activity (pMIC) for each NP.

- Filter and select all NPs predicted to be more active than a control inhibitor (e.g., meropenem).

Structure-Based Virtual Screening:

- Prepare the NDM-1 protein (remove water, add hydrogens) and define the binding site grid around the catalytic zinc ions.

- Prepare the 3D structures of the QSAR-filtered NPs (energy minimization).

- Perform molecular docking of the filtered set against NDM-1 using AutoDock Vina (exhaustiveness=10). Retain compounds with better docking scores than the control.

Clustering and Pose Analysis:

- Cluster the top-scoring hits based on Tanimoto similarity of their fingerprints to ensure chemical diversity.

- Select representative compounds from major clusters for further study (e.g., S904-0022).
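One simple way to pick diverse representatives is leader-style clustering: visit hits from best to worst docking score and start a new cluster whenever a compound is not similar enough to any existing cluster representative. This is a stand-in technique chosen for brevity, not necessarily the clustering method used in the cited study; the 0.6 threshold, the compound IDs, and the on-bit fingerprints are assumptions.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for fingerprints stored as sets of on-bits."""
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def leader_cluster(hits, threshold=0.6):
    """Cluster (compound_id, docking_score, fingerprint) tuples.

    Hits are visited from best (most negative) to worst score, so each
    cluster's representative is its best-scoring member.
    """
    clusters = []
    for cid, score, fp in sorted(hits, key=lambda h: h[1]):
        for cluster in clusters:
            if tanimoto(fp, cluster["representative"][2]) >= threshold:
                cluster["members"].append(cid)
                break
        else:  # no existing representative is similar enough
            clusters.append({"representative": (cid, score, fp), "members": [cid]})
    return clusters


# Toy docking hits with hypothetical scores and fingerprints.
docking_hits = [
    ("H3", -8.5, {10, 11, 12}),
    ("H1", -9.2, {1, 2, 3, 4, 5}),
    ("H4", -8.1, {10, 11, 12, 13}),
    ("H2", -8.9, {1, 2, 3, 4, 6}),
]
clusters = leader_cluster(docking_hits)
representatives = [c["representative"][0] for c in clusters]
```

With these inputs the four hits collapse into two chemotypes, represented by H1 and H3.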

Validation via Molecular Dynamics (MD):

- Solvate the protein-ligand complex in a water box, add ions, and minimize.

- Run a 300 ns MD simulation for the top hits and the control.

- Analyze stability (Root Mean Square Deviation - RMSD), interactions, and calculate binding free energy using the MM/GBSA method.
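The stability metric used above, RMSD, reduces to a short calculation once coordinates are available. The sketch below assumes each frame has already been superimposed on the reference structure and that atoms are listed in the same order; in practice the MD package's own analysis tools would handle fitting and trajectory I/O. The coordinates are toy values.

```python
import math


def rmsd(coords_a, coords_b):
    """RMSD between two equally sized lists of (x, y, z) coordinates.

    Assumes the structures are already superimposed and atoms are in matching order.
    """
    if len(coords_a) != len(coords_b):
        raise ValueError("coordinate sets must contain the same number of atoms")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))


def rmsd_trajectory(reference, frames):
    """RMSD of every frame relative to the reference structure."""
    return [rmsd(reference, frame) for frame in frames]


# Two-atom toy system: the second frame is shifted by 1 unit along z.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frames = [
    [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)],
    [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)],
]
profile = rmsd_trajectory(reference, frames)
```

A ligand RMSD profile that plateaus over the simulation is usually read as a stable binding pose, while a steadily rising profile suggests the docked pose is not maintained.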

Key Outcomes: This integrated protocol identified compound S904-0022 as a stable binder of NDM-1 with a predicted binding free energy (-35.77 kcal/mol) significantly more favorable than the control, marking it as a promising candidate for experimental validation [8].

Table 2: Comparison of Featured Virtual Screening Protocols

| Aspect | Protocol 1: HER2 Inhibitor Discovery [7] | Protocol 2: NDM-1 Inhibitor Discovery [8] |
|---|---|---|
| Primary VS Strategy | Structure-Based (Tiered Docking: HTVS > SP > XP) | Hybrid (Ligand-Based ML-QSAR + Structure-Based Docking) |
| Library Size | ~639,000 compounds | 4,561 pre-filtered compounds |
| Key Computational Tools | Schrödinger Glide, QikProp | RDKit, Scikit-learn, AutoDock Vina, GROMACS |
| Pre-Filtering Method | Docking score cut-offs at each tier | Machine Learning QSAR model for activity prediction |
| Post-Docking Validation | Induced-Fit Docking, ADME prediction, in vitro assays | Molecular Dynamics (300 ns), MM/GBSA binding energy calculation |
| Key Identified Hit | Liquiritin | S904-0022 |
| Experimental Validation | In vitro kinase assay & cell proliferation | In silico MD & binding energy (awaiting biochemical assay) |

Diagram 2: Integrated ML-QSAR & Docking Workflow. This diagram details the hybrid ligand- and structure-based protocol used for target-focused screening, such as in the discovery of NDM-1 inhibitors.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Computational Tools & Resources for NP Virtual Screening

| Tool/Resource Name | Category | Primary Function in NP Research | Application Example |
|---|---|---|---|
| Schrödinger Suite (Maestro, Glide, QikProp) [7] | Integrated Drug Discovery Platform | End-to-end workflow: protein prep, molecular docking (HTVS/SP/XP), ADME prediction, and visualization. | Tiered docking and analysis of NP libraries against kinase targets (e.g., HER2). |
| AutoDock Vina / AutoDockTools [8] | Docking Software | Performing flexible ligand docking with a fast scoring function; defining protein grid boxes. | Structure-based screening of NPs against enzyme targets like NDM-1. |
| RDKit [9] [8] | Cheminformatics Toolkit | Handles molecular I/O, descriptor/fingerprint calculation, substructure searching, and molecule manipulation. | Preparing NP libraries, generating descriptors for QSAR, and clustering compounds. |
| PyTorch Geometric [9] | Deep Learning Library | Building and training Graph Neural Network (GNN) models directly on molecular graph data. | Creating AI models to predict NP bioactivity from structural graphs. |
| VirtuDockDL [9] | AI-Powered Pipeline | An integrated web platform using GNNs for activity prediction and docking for virtual screening. | High-throughput, automated screening of large compound libraries against viral or cancer targets. |
| GROMACS [8] | Molecular Dynamics Engine | Simulating the physical movements of atoms and molecules over time to assess complex stability. | Running 300 ns MD simulations on NP-protein complexes to validate docking poses and calculate free energy. |
| Open Babel | Chemical File Tool | Converting between numerous chemical file formats, essential for library curation. | Standardizing NP library files from different databases into a common format (e.g., SDF to MOL2). |
| COCONUT / ZINC Natural Products [3] [7] | NP Databases | Publicly accessible, curated collections of 2D/3D structures of natural products. | Source compounds for building in-house virtual screening libraries. |

The future of NP-driven drug discovery is inextricably linked to continued technological advancement. Artificial intelligence will move beyond prediction to generative design, proposing novel NP-inspired scaffolds with optimized properties [6]. Multi-omics integration—linking genomics, metabolomics, and bioactivity data—will enable the targeted discovery of NPs from previously unculturable or overlooked sources (e.g., marine microbiomes) [1] [10]. Furthermore, the application of quantum computing and more sophisticated free energy calculations promises to dramatically increase the accuracy of binding affinity predictions, reducing the false positive rate [4].

However, challenges persist. These include the need for larger, high-quality bioactivity datasets for training AI models, the development of better computational methods to handle NP stereochemistry and conformational flexibility, and navigating evolving international regulations like the Nagoya Protocol which governs access to genetic resources [1] [6].

In conclusion, natural products remain an indispensable source of molecular inspiration for modern therapeutics. The integration of virtual screening and computational technologies has not merely revived this field but has transformed it into a more rational, efficient, and powerful discovery engine. By employing the detailed protocols and strategies outlined here—from hierarchical docking to AI-integrated workflows—researchers can effectively harness the vast, untapped potential of nature's chemical repertoire to address pressing human diseases. The enduring role of NPs is now secured by the enduring innovation of computational science.

Core Concepts and Relevance to NP Libraries

Privileged scaffolds are molecular frameworks capable of providing biologically active, drug-like ligands for diverse protein targets through appropriate decoration with functional groups [11] [12]. The concept, first coined by Evans in the late 1980s, originated from the observation that the benzodiazepine nucleus could yield ligands for different receptor classes [11] [13]. These scaffolds possess an inherent "bioactive fitness," often due to their ability to mimic secondary protein structures like beta-turns, facilitating interactions with multiple biological targets [11].

In the context of virtual screening for natural product (NP) discovery, privileged scaffolds are indispensable. Natural products are a premier source of chemically novel, bioactive therapeutics, with approximately 30% of FDA-approved drugs (1981-2019) originating from NPs or their derivatives [14] [15]. Their complex, evolutionarily optimized scaffolds exhibit high levels of saturation, multiple chiral centers, and diverse ring systems (fused, spiro, bridged) [16], which are under-represented in traditional synthetic libraries. By defining and utilizing these NP-derived privileged scaffolds, researchers can construct focused, high-quality virtual libraries. This strategy significantly enhances the probability of identifying hits during virtual screening campaigns compared to screening vast, unfiltered chemical spaces [11] [12]. The process transforms NPs from singular active compounds into generative platforms for discovering novel, drug-like molecules.

Characteristics and Classifications

Privileged scaffolds are not defined by a single universal structure but by a set of common characteristics that confer their utility in drug discovery. Key features include:

- Target Promiscuity with Selectivity Potential: The bare scaffold shows an inherent propensity to bind to multiple, often related, biological targets. Crucially, this promiscuity can be tuned into high selectivity for a single target through strategic functional group modifications [11] [13].

- Favorable Drug-like Properties: These scaffolds typically exhibit good pharmacokinetic and physicochemical profiles, such as solubility and metabolic stability. When a new molecule is built upon such a scaffold, it is more likely to possess drug-like properties [13].

- Structural Mimicry: Many privileged scaffolds, like benzodiazepines or pyrrolinones, are considered privileged because their three-dimensional geometry effectively mimics common peptide secondary structures (e.g., β-turns, α-helices), allowing them to interfere with protein-protein interactions [11].

- Chemical Tractability: The scaffold must be synthetically accessible and allow for efficient, modular decoration at multiple points to generate large, diverse libraries for screening [11] [12].

It is critical to distinguish true privileged scaffolds from Pan-Assay Interference Compounds (PAINS). PAINS are molecules that produce false-positive assay results through non-specific, non-drug-like mechanisms like redox cycling or colloidal aggregation [13]. While a PAINS scaffold might show apparent activity across many assays, its utility as a lead for drug development is low. True privileged scaffolds interact with targets via specific, desirable molecular interactions [13].

Table: Exemplary Privileged Scaffolds and Their Natural Product Connections

| Privileged Scaffold (Class) | Exemplary Natural Product Source / Inspiration | Representative Biological Targets | Key Characteristic |
|---|---|---|---|
| Benzodiazepine | Designed scaffold mimicking NP β-turns [11] | GPCRs (e.g., CCK receptor), mitochondrial proteins [11] | Classic example; effective β-turn mimic. |
| Indole / 2-Arylindole | Tryptophan, serotonin, complex alkaloids [11] | Serotonin receptors, GPCRs [11] | Ubiquitous in nature; key biosynthetic precursor. |
| Purine | Fundamental nucleobase (ATP, GTP) [11] | Kinases (CDKs), ATP-binding enzymes [11] | Core of endogenous nucleotides and cofactors. |
| Diaryl Ether | Found in various NP antibiotics and drugs [13] | HIV reverse transcriptase, HCV RNA polymerase [13] | Confers metabolic stability and membrane permeability. |
| Tetrahydroisoquinoline | Numerous plant alkaloids (e.g., emetine) [17] | Mono-ADP-ribosyltransferases (PARPs) [17] | Rigid, polycyclic framework common in bioactive NPs. |
| Macrocycle | Cyclic peptides, depsipeptides, erythromycin [18] [16] | Protein-protein interfaces, membrane targets [16] | Ability to target large, flat binding surfaces. |

Application Notes: Virtual Screening of NP Scaffold Libraries

The integration of privileged scaffolds into virtual screening workflows for NP discovery addresses a major bottleneck: efficiently navigating the vast, complex chemical space of natural products and their analogs to find viable drug leads.

3.1 The Strategic Advantage

Traditional high-throughput screening (HTS) of random compound collections often suffers from low hit rates due to poor library design [11] [12]. In contrast, virtual screening of libraries built around NP-derived privileged scaffolds leverages pre-validated bioactivity. This approach focuses computational and experimental resources on regions of chemical space with a higher prior probability of success. For instance, a "superscaffold" derived from reliable click chemistry (e.g., SuFEx) can be used to generate ultra-large virtual libraries of over 100 million compounds, which are then virtually screened against a target structure to identify novel, potent ligands [19].

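3.2 From NP Scaffold to Screening Library: The Workflow

A modern, AI-enhanced workflow for virtual screening of NP-inspired libraries involves several key stages; the detailed experimental protocols later in this article walk through library construction, receptor model preparation and benchmarking, and AI-guided scaffold functionalization.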

3.3 AI-Driven Structural Modification Strategies

AI-driven drug design (AIDD), particularly molecular generative models, has become transformative for modifying NP scaffolds. These models optimize NPs for druggability by enhancing potency, selectivity, and ADMET properties, moving beyond traditional trial-and-error [20] [15]. The choice of strategy depends on the availability of target information.

Table: Summary of AI Molecular Generation Models for NP Modification

| Model Category | Key Examples | Primary Strategy | Application in NP Context | Key Challenge |
|---|---|---|---|---|
| Target-Interaction-Driven | DeepFrag [15], FREED [15], FRAME [15] | Fragment splicing/growth guided by 3D target structure. | Optimize NP scaffold for a known target protein (e.g., viral protease). | Requires high-quality protein-ligand complex data; limited generalization. |
| Activity-Data-Driven | ScaffoldGVAE [20], SyntaLinker [20] | Scaffold hopping & decoration based on SAR data. | Improve NP properties (potency, solubility) when target is unknown. | Susceptible to dataset bias; lacks mechanistic interpretability. |
| 3D Diffusion Models | D3FG [15], AutoFragDiff [15] | Generate 3D structures conditioned on pocket or pharmacophore. | Design novel NP analogs with optimal 3D pose for binding. | Very high computational cost; synthetic feasibility not guaranteed. |

Detailed Experimental Protocols

Protocol 1: Construction of an Ultra-Large Virtual Library from a "Superscaffold"

- Objective: To enumerate a synthetically accessible virtual library of >100 million compounds using SuFEx (Sulfur Fluoride Exchange) click chemistry on a privileged sulfonyl fluoride heterocycle core [19].

- Materials: ICM-Pro molecular modeling software; access to building block servers (Enamine, ChemDiv, Life Chemicals, ZINC15); predefined reaction protocols for synthesizing sulfonamide-functionalized triazoles and isoxazoles [19].

- Procedure:

- Reaction Definition: Define the two-step SuFEx reaction sequence in the combinatorial chemistry module. The first step involves regioselective coupling of bromosulfonyl fluoride (Br-ESF) with an azide or nitrile oxide to form the core heterocycle. The second step involves the substitution of the fluorine atom with an amine building block [19].

- Building Block Curation: Download or access lists of commercially available primary and secondary amines, azides, and nitrile oxides from vendor databases. Apply filters for molecular weight (<350 Da), reactivity, and absence of undesirable functional groups.

- Combinatorial Enumeration: Use the software to combinatorially combine the approved building blocks according to the defined reaction rules. This will generate two separate libraries (triazole and isoxazole).

- Library Merging and Formatting: Combine the enumerated libraries. Generate standard molecular descriptor files (e.g., SMILES, 3D SDF) for the final virtual library. Store metadata linking each virtual compound to its constituent building blocks for rapid "on-demand" synthesis planning.
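The combinatorial bookkeeping behind such an enumeration can be sketched with the standard library. This example only tracks which building blocks are paired and to which core library each product belongs (azides leading to the triazole library, nitrile oxides to the isoxazole library); it does not apply any reaction transformation to structures, which in the described protocol is done by the modeling software. The building-block IDs and record fields are hypothetical.

```python
from itertools import product


def enumerate_library(core_partners, amines, core_label):
    """Yield one record per (core-forming partner, amine) pair with its provenance."""
    for partner, amine in product(core_partners, amines):
        yield {
            "compound_id": f"{core_label}-{partner}-{amine}",
            "core": core_label,
            "building_blocks": (partner, amine),  # kept for on-demand synthesis planning
        }


# Hypothetical building-block identifiers standing in for curated vendor lists.
azides = ["AZ001", "AZ002", "AZ003"]
nitrile_oxides = ["NO001", "NO002"]
amines = ["AM001", "AM002", "AM003", "AM004"]

triazoles = list(enumerate_library(azides, amines, "triazole"))
isoxazoles = list(enumerate_library(nitrile_oxides, amines, "isoxazole"))
virtual_library = triazoles + isoxazoles
```

Library size grows as the product of the building-block list lengths, so a few thousand core partners combined with tens of thousands of amines is enough to exceed 100 million virtual compounds; at that scale a generator would be streamed to disk rather than materialized as a list.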

Protocol 2: Benchmarking and Preparing a Receptor Model for Virtual Screening

- Objective: To generate and validate a flexible 4D receptor model (multiple conformations) for a GPCR target to improve docking accuracy [19].

- Materials: High-resolution crystal structure of the target receptor (e.g., CB2 with an antagonist, PDB ID); software for molecular docking and side-chain optimization (e.g., ICM); known sets of active ligands and decoy compounds [19].

- Procedure:

- Initial Structure Preparation: Process the crystal structure: add hydrogen atoms, assign protonation states, and optimize side-chain orientations of unresolved residues.

- Binding Site Optimization: Use a ligand-guided optimization algorithm (e.g., in ICM) to refine the sidechains within an 8Å radius of the co-crystallized ligand. Generate multiple conformer models seeded with different sets of known agonists and antagonists [19].

- Model Benchmarking: Dock a benchmark set (known actives + decoys) into each generated receptor model and the original crystal structure. Calculate the Receiver Operating Characteristic (ROC) curve and the Area Under the Curve (AUC) for each model.

- 4D Model Assembly: Select the top-performing models (e.g., best agonist-bound and antagonist-bound states). Combine them into a single 4D screening model where compounds are docked against all conformations, and the best score is retained [19].
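The "best score is retained" rule of the 4D model assembly step amounts to a per-compound minimum over receptor conformations. The sketch below assumes more negative docking scores are better; the conformer names, compound IDs, and scores are invented, and the data layout is an assumption rather than the format produced by ICM.

```python
def best_score_across_conformers(scores_by_conformer):
    """Return {compound_id: (best_score, conformer)} over all receptor conformations.

    scores_by_conformer maps conformer name -> {compound_id: docking_score};
    lower (more negative) scores are treated as better.
    """
    best = {}
    for conformer, scores in scores_by_conformer.items():
        for cid, score in scores.items():
            if cid not in best or score < best[cid][0]:
                best[cid] = (score, conformer)
    return best


# Hypothetical docking scores against two receptor conformations.
scores_by_conformer = {
    "antagonist_bound": {"L1": -32.5, "L2": -18.0, "L3": -25.1},
    "agonist_bound": {"L1": -20.3, "L2": -27.4, "L3": -25.0},
}
best_scores = best_score_across_conformers(scores_by_conformer)
ranked_ids = sorted(best_scores, key=lambda cid: best_scores[cid][0])
```

Recording which conformation produced the best score is useful later, since it hints at whether a hit is more likely to behave as an agonist or an antagonist.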

Protocol 3: AI-Guided Functionalization of a Natural Product Scaffold

- Objective: To use a target-interaction-driven AI model (DeepFrag) to suggest specific R-group modifications on a NP scaffold to improve binding affinity [15].

- Materials: A 3D structure of the target protein with the NP lead docked or co-crystallized; the DeepFrag software environment; a fragment library [15].

- Procedure:

- Complex Preparation: Prepare the protein-NP complex structure file. Define the NP scaffold as the core and identify a specific bond or atom where modification is desired (the "leaving group").

- Fragment Removal and Query: Using DeepFrag, digitally remove a small fragment (e.g., a -CH3 group) from the specified site on the bound ligand. The model uses the resulting context—the protein pocket plus the remainder of the ligand—as a query.

- Model Inference: The DeepFrag model, trained to predict optimal fragments in a given binding context, processes the query. It outputs a ranked list of suggested fragments (from its library) to replace the removed group.

- Analysis and Proposal: Review the top suggestions. Propose the synthesis of the NP analog decorated with the highest-ranked fragment(s) predicted to form favorable interactions with the target pocket.

Case Studies in Drug Discovery

Case Study 1: Discovery of CB2 Antagonists from a 140M-Member Virtual Library

A 2024 study demonstrated the power of combining a privileged "superscaffold" with ultra-large virtual screening. Researchers constructed a library of 140 million sulfonamide-functionalized triazoles and isoxazoles using SuFEx click chemistry [19]. This virtual library was screened against a 4D model of the Cannabinoid Type 2 (CB2) receptor. From the top 500 virtual hits, 11 compounds were synthesized on-demand. Experimental testing yielded a 55% hit rate, with 6 compounds showing antagonist potency (Ki < 10 µM), two in the sub-micromolar range [19]. This case validates the strategy of using a reliable, privileged scaffold to generate vast, diverse, and synthetically tractable libraries for highly successful virtual screening.

Case Study 2: Diaryl Ether Scaffold in Antiviral Drug Discovery

The diaryl ether (DE) scaffold is a classic privileged structure found in multiple FDA-approved drugs [13]. In antiviral research, it forms the core of non-nucleoside reverse transcriptase inhibitors (NNRTIs) for HIV, such as Etravirine and Doravirine [13]. The DE moiety typically interacts via π-π stacking with tyrosine residues (e.g., Y181, Y188) in the HIV-1 reverse transcriptase pocket, providing a key anchor for inhibition [13]. This scaffold's hydrophobicity improves cell membrane penetration, while its chemical stability is advantageous for drug development. The case highlights how a single privileged scaffold can be optimized through iterative structure-based design to yield multiple clinical drugs against a challenging target.

Case Study 3: Scaffold-Based Discovery of Selective Mono-ART Inhibitors

A 2023 review on inhibitors of mono-ADP-ribosyltransferases (mono-ARTs) identified four recurring privileged scaffolds from the limited set of high-quality chemical probes: quinazolinedione, isoquinoline, phenanthridinone, and tetrahydroisoquinoline [17]. For example, potent and selective inhibitors of PARP10 and PARP14 were derived from the tetrahydroisoquinoline scaffold [17]. This demonstrates how, even for an emerging target family, focused exploration of a few privileged, often NP-inspired, scaffolds can rapidly yield selective tool compounds and drug candidates, guiding future medicinal chemistry campaigns.

Table: Key Resources for Privileged Scaffold-Based Virtual Screening

| Resource Category | Specific Item / Example | Function & Rationale |

|---|---|---|

| Commercial Screening Libraries | BioDesign Library [16], Signature Libraries [16] | Provide physical compounds based on NP-inspired, high Fsp3, chiral scaffolds for experimental validation of virtual hits. |

| Building Blocks | REAL Space Building Blocks (Enamine, etc.) [19], ASINEX Building Blocks [16] | High-quality, diverse chemical reagents for the on-demand synthesis of virtual hit compounds. Essential for "library to lab" workflow. |

| Fragment Libraries | Covalent Inhibitor Set [16], Glycomimetics Set [16] | Specialized fragment collections for targeting specific mechanisms (e.g., cysteine trapping) or mimicking bioactive motifs. |

| Computational Tools | ICM-Pro [19], Molecular Docking Software (AutoDock, Glide) | Software for library enumeration, receptor modeling, and high-throughput virtual screening. |

| AI/Generative Models | DeepFrag [15], ScaffoldGVAE [20] (Open-source) | AI models for target-driven or activity-driven optimization of NP scaffolds via fragment suggestion or scaffold hopping. |

| Specialized Databases | Natural Product Databases, DNA-Encoded Library Building Blocks [16] | Source of inspiration for new privileged scaffolds and for constructing next-generation chemically diverse libraries. |

Theoretical Foundations and Strategic Integration

Virtual screening (VS) has become an indispensable computational methodology in modern drug discovery, dramatically accelerating the identification of bioactive compounds from vast chemical libraries. Within the specialized context of natural product (NP) scaffold libraries, VS strategies must adapt to harness their unique structural diversity, complexity, and inherent "biological pre-validation." The core paradigm integrates two complementary philosophies: ligand-based screening, which exploits knowledge of known active compounds, and structure-based screening, which utilizes the three-dimensional structure of a biological target. For NPs, this integration is critical, as ligand-based methods can efficiently navigate broad chemical space to find structurally novel yet functionally similar scaffolds, while structure-based docking provides atomic-level rationalization of binding and selectivity [21] [22].

The process typically follows a multi-tiered workflow to manage computational load and improve enrichment. An initial ultra-high-throughput virtual screening step, often using fast ligand-based similarity searches or machine learning models, rapidly filters a multi-million compound library to a manageable subset (e.g., 50,000-100,000 compounds). This subset then undergoes more computationally intensive structure-based docking for precise pose prediction and affinity estimation. Top-ranking hits are finally subjected to rigorous molecular dynamics (MD) simulations and free energy calculations to assess binding stability and affinity [21] [8]. The ultimate goal within NP research is scaffold hopping—identifying novel core structures that retain or improve desired biological activity while offering new avenues for optimization regarding synthetic accessibility, pharmacokinetics, or intellectual property [22].

Ligand-Based Virtual Screening: Application Notes and Protocols

Ligand-based virtual screening (LBVS) operates without target structure information, relying on the principle that structurally similar molecules are likely to have similar biological activities. Its primary applications in NP screening include hit identification from massive libraries, activity prediction for new analogs, and scaffold hopping to discover novel chemotypes with conserved bioactivity [22].

Core Protocol 1: Similarity-Based Screening Using Molecular Fingerprints

- Reference Compound & Library Preparation: Select one or more known active ligands as reference(s). Prepare the NP library in a standardized format (e.g., SMILES, SDF). Generate 2D molecular fingerprints for all reference and library compounds. Common choices include ECFP4 (Extended-Connectivity Fingerprints) for broad similarity or MACCS keys for substructure patterns [23] [22].

- Similarity Calculation: Compute pairwise similarity between each library compound and the reference set(s). The Tanimoto coefficient (Tc) is the standard metric, calculated as Tc = (c)/(a + b - c), where 'a' and 'b' are the number of features in molecules A and B, and 'c' is the number of common features.

- Ranking & Selection: Rank all library compounds by their similarity score (e.g., highest Tc). Apply a threshold (e.g., Tc > 0.6) to select candidates for further evaluation [8].
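The similarity protocol above can be sketched in a few lines. This is a minimal illustration, assuming each fingerprint is represented as a Python set of "on" bit indices; in practice the bits would come from a cheminformatics toolkit such as RDKit. The names `tanimoto`, `rank_by_similarity`, and the toy fingerprints are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tc = c / (a + b - c) for two sets of 'on' fingerprint bits."""
    c = len(fp_a & fp_b)                      # features common to A and B
    denom = len(fp_a) + len(fp_b) - c         # a + b - c
    return c / denom if denom else 0.0


def rank_by_similarity(reference_fp, library_fps, threshold=0.6):
    """Return (name, Tc) pairs above the threshold, most similar first."""
    scored = [(name, tanimoto(reference_fp, fp)) for name, fp in library_fps.items()]
    hits = [(name, tc) for name, tc in scored if tc > threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


# Toy fingerprints (illustrative bit indices, not real ECFP4 output).
reference_fp = {1, 4, 7, 9, 12}
library_fps = {
    "np_001": {1, 4, 7, 9, 15},    # shares 4 of 6 distinct bits with the reference
    "np_002": {2, 3, 5},           # shares nothing
    "np_003": {1, 4, 7, 9, 12},    # identical to the reference
}
hits = rank_by_similarity(reference_fp, library_fps)
```

With several reference ligands, one common choice is to keep the maximum Tc per library compound before ranking; that is a design decision rather than something the protocol prescribes.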

Core Protocol 2: Shape-Based and Pharmacophore Screening

- Pharmacophore Model Generation: From a set of aligned active compounds, identify and encode essential steric and electronic features necessary for biological activity (e.g., hydrogen bond donor/acceptor, hydrophobic region, aromatic ring, positive/negative ionizable site).

- Conformational Sampling: Generate multiple low-energy 3D conformers for each compound in the NP library to account for flexibility.

- Database Screening: Search the conformational library for molecules that can spatially align with the pharmacophore model. Use scoring functions to rank hits based on the fit and overlap of features.

- Post-Processing: Visually inspect top-ranked hits to validate feature mapping and chemical novelty.

Core Protocol 3: Machine Learning QSAR Model Development & Deployment

Recent advances emphasize using imbalanced datasets to train models optimized for high Positive Predictive Value (PPV) over balanced accuracy, as this maximizes the hit rate in the experimentally testable batch of top-ranked compounds [24].

- Data Curation: Assemble a dataset of active and (many more) inactive compounds from public databases like ChEMBL. Use bioactivity thresholds (e.g., IC50 < 10 µM for actives) to define classes [8].

- Descriptor Calculation & Model Training: Compute molecular descriptors or fingerprints. Train a classification model (e.g., Random Forest, Gradient Boosting) on the imbalanced dataset. Optimize hyperparameters to maximize PPV for the top N predictions (e.g., top 128, corresponding to a screening plate) [24] [23].

- External Validation & Screening: Validate the model on a held-out test set. Apply the finalized model to the entire NP library to predict probability scores for activity. Select the top-ranked compounds for experimental testing or further computational analysis.
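To make the PPV-at-top-N objective concrete, the sketch below computes it from predicted scores and known labels on a held-out set. The function name `ppv_at_top_n` and the example numbers are illustrative assumptions, not values from the cited studies.

```python
def ppv_at_top_n(scores, labels, n):
    """Fraction of true actives among the n highest-scoring compounds.

    scores: predicted probability of activity per compound
    labels: 1 for experimentally active, 0 for inactive
    """
    if n <= 0 or len(scores) != len(labels):
        raise ValueError("n must be positive and inputs must have equal length")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    top = ranked[:n]
    return sum(label for _, label in top) / len(top)


# Made-up held-out predictions: 3 actives among 8 compounds.
scores = [0.95, 0.90, 0.80, 0.40, 0.30, 0.20, 0.10, 0.05]
labels = [1, 0, 1, 0, 0, 1, 0, 0]
ppv_top4 = ppv_at_top_n(scores, labels, 4)   # 2 actives in the top 4
```

In a real campaign, n would match the number of compounds that can be tested (for example, the 128 mentioned above), and the metric would be tracked during hyperparameter optimization.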

Table 1: Key Molecular Representations for Ligand-Based Screening [23] [22]

| Representation Type | Examples | Key Advantages | Primary Use in NP Screening |

|---|---|---|---|

| 2D Fingerprints | ECFP4, MACCS keys, PubChem 2D | Fast computation, excellent for similarity and ML models | Initial high-throughput triage, scaffold hopping based on substructure |

| 3D Descriptors | Pharmacophore features, Shape overlays | Captures steric and electronic complementarity | Identifying NPs with similar bioactivity but different 2D structure |

| AI-Driven Embeddings | Graph Neural Network (GNN) embeddings, Transformer-based (SMILES) embeddings | Learns complex structure-activity relationships directly from data | Navigating ultra-large chemical spaces, generating novel NP-like scaffolds |

Figure: Ligand-Based VS Workflow for NP Libraries

Structure-Based Virtual Screening: Application Notes and Protocols

Structure-based virtual screening (SBVS) predicts the binding mode and affinity of small molecules within a target's binding site. When a crystal or cryo-EM structure of the target is available, SBVS is a powerful means of understanding binding mechanisms, predicting selectivity, and guiding structure-based optimization of complex NP scaffolds [21] [25].

Core Protocol 1: Molecular Docking Workflow

Target Preparation:

- Obtain the 3D protein structure (PDB format). Remove water molecules and non-relevant cofactors.

- Add missing hydrogen atoms and assign protonation states (e.g., using PROPKA) for key residues (His, Asp, Glu) at the desired pH.

- Perform energy minimization to relieve steric clashes.

Binding Site Definition & Grid Generation:

- Define the docking search space. Use the centroid of a co-crystallized ligand or known active site residues.

- Generate a 3D grid box encompassing the site. A typical size is 20x20x20 Å³ with a 1.0 Å grid spacing [25]. For flexible side chains, consider specifying critical residues as flexible.
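As an illustration of the box definition, the following sketch derives a box center and size from the atom coordinates of a co-crystallized ligand and formats them as `key = value` lines in the style of an AutoDock Vina configuration file. The 8 Å padding, the 20 Å minimum edge, and the helper names are assumptions chosen for the example.

```python
def docking_box(ligand_coords, padding=8.0, min_size=20.0):
    """Return (center, size) in Å for a box around a reference ligand.

    center: unweighted centroid of the ligand atoms
    size:   ligand extent plus padding on both sides, at least min_size per axis
    """
    xs, ys, zs = zip(*ligand_coords)
    center = tuple(sum(axis) / len(axis) for axis in (xs, ys, zs))
    size = tuple(max(max(axis) - min(axis) + 2 * padding, min_size)
                 for axis in (xs, ys, zs))
    return center, size


def vina_config_text(center, size, exhaustiveness=8, num_modes=10):
    """Format the box and search settings as Vina-style config lines."""
    lines = []
    for axis, c, s in zip("xyz", center, size):
        lines.append(f"center_{axis} = {c:.3f}")
        lines.append(f"size_{axis} = {s:.3f}")
    lines.append(f"exhaustiveness = {exhaustiveness}")
    lines.append(f"num_modes = {num_modes}")
    return "\n".join(lines)


# Made-up heavy-atom coordinates of a co-crystallized ligand.
ligand_atoms = [(10.0, 12.0, 8.0), (12.0, 14.0, 9.0), (14.0, 10.0, 10.0)]
center, size = docking_box(ligand_atoms)
config = vina_config_text(center, size)
```

Boxes that are much larger than the site slow the search and can reduce pose accuracy, so the padding is worth tuning per target.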

Ligand Library Preparation:

- Convert NP library compounds to 3D structures.

- Perform conformational search and geometry optimization using force fields (e.g., MMFF94, GAFF) to generate low-energy 3D conformers [8].

- Assign appropriate bond orders and protonation states (likely states at physiological pH, e.g., using LigPrep).

Docking Execution:

- Use docking software (e.g., AutoDock Vina, Glide, GOLD). For Vina, key parameters include an exhaustiveness value of 8-24 for accuracy and generating 10-20 poses per ligand [8].

- Execute docking in parallel to screen thousands of compounds.

Post-Docking Analysis:

- Rank compounds by docking score (estimated binding affinity in kcal/mol).

- Visually inspect top poses for key interactions (H-bonds, pi-stacking, salt bridges) with critical binding site residues.

- Cluster similar poses and scaffolds to prioritize chemical diversity.

Core Protocol 2: Binding Affinity Refinement with MM-GBSA/PBSA

- Complex Preparation: Extract the top docking pose for each hit compound. Solvate the protein-ligand complex in a water box and add ions to neutralize the system.

- Molecular Dynamics Equilibration: Run a short MD simulation (e.g., 2-5 ns) to equilibrate the solvent and relieve any minor steric clashes from docking.

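- Free Energy Calculation: Use the MM-GBSA or MM-PBSA method. Extract multiple snapshots from the equilibrated MD trajectory. For each snapshot, calculate the binding free energy (ΔGbind) using the formula: ΔGbind = Gcomplex - (Gprotein + Gligand), then average over snapshots. As a rough, system-dependent heuristic, an average ΔGbind more negative than -40 kcal/mol is often taken to indicate a strong binder [21] [8]. Because MM-GBSA/PBSA values are not absolute affinities, they are most informative when compared against a reference ligand computed with the same protocol.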
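The averaging step of the free energy calculation can be sketched as follows. The per-snapshot energies are made-up numbers for illustration; in practice they would be produced by an MM-GBSA/PBSA tool, and the function names are hypothetical.

```python
from statistics import mean, stdev


def binding_free_energy(g_complex, g_protein, g_ligand):
    """ΔGbind = Gcomplex - (Gprotein + Gligand), all in kcal/mol."""
    return g_complex - (g_protein + g_ligand)


# (Gcomplex, Gprotein, Gligand) per MD snapshot; illustrative values only.
snapshots = [
    (-5230.4, -4890.1, -302.2),
    (-5228.9, -4888.0, -301.9),
    (-5231.7, -4891.5, -303.0),
]

dg_per_snapshot = [binding_free_energy(*s) for s in snapshots]
dg_mean = mean(dg_per_snapshot)      # average ΔGbind over the trajectory
dg_sd = stdev(dg_per_snapshot)       # spread across snapshots
```

Reporting the standard deviation alongside the mean helps judge whether two ligands are actually distinguishable by this estimate.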

Core Protocol 3: Validation with Molecular Dynamics Simulations

- System Setup: Build simulation systems for 2-3 top-ranked complexes and the apo protein (control) using tools like tLEaP. Use an explicit solvent model (e.g., TIP3P water) and physiological ion concentration.

- Simulation Run: Perform unrestrained MD simulations for a sufficient duration (typically 100-500 ns) to observe stability and conformational changes [21] [8].

- Trajectory Analysis:

- Root Mean Square Deviation (RMSD): Calculate for the protein backbone and ligand heavy atoms. A stable plateau indicates a stable complex.

- Root Mean Square Fluctuation (RMSF): Identify flexible regions; low fluctuation in binding site residues is favorable.

- Interaction Fractions: Quantify the persistence of key hydrogen bonds and hydrophobic contacts throughout the simulation.
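Two of the trajectory metrics above are simple enough to sketch directly. The example assumes that frames have already been superposed onto the reference structure (no fitting is done here) and that a hydrogen bond counts as present when the donor-acceptor distance is at most 3.5 Å; both the cutoff and the function names are assumptions for illustration.

```python
import math


def rmsd(coords_a, coords_b):
    """Root mean square deviation between two equally sized, pre-aligned coordinate sets."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate sets must be non-empty and of equal length")
    squared = sum((xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
                  for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b))
    return math.sqrt(squared / len(coords_a))


def interaction_fraction(distances, cutoff=3.5):
    """Fraction of frames in which a contact distance (Å) is within the cutoff."""
    return sum(d <= cutoff for d in distances) / len(distances)


# Toy data: every atom displaced by 1 Å along z relative to the reference.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frame = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
ligand_rmsd = rmsd(reference, frame)

# Donor-acceptor distance of one hydrogen bond across five frames.
hbond_occupancy = interaction_fraction([2.9, 3.1, 3.6, 4.2, 3.0])
```

Dedicated analysis packages add the superposition step and geometric angle criteria that this sketch leaves out.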

Table 2: Key Metrics from Recent NP Virtual Screening Studies [21] [8] [25]

| Study Target | NP Library & Size | VS Strategy | Key Computational Metrics | Experimental Validation Outcome |

|---|---|---|---|---|

| GLP-1 Receptor [21] | COCONUT & CMNPD (>700k) | Shape similarity → Docking (Vina) → 500ns MD | Docking Score: ≤ -10 kcal/mol; MM-GBSA ΔG: -102.78 kcal/mol (best hit); Stable RMSD over 500ns | 20 final hits identified for in vitro testing |

| NDM-1 Enzyme [8] | ChemDiv NP Library (4,561) | ML-QSAR → Docking → 300ns MD | ML-predicted activity; Docking Score: ≤ -9 kcal/mol; MM-GBSA ΔG: -35.77 kcal/mol (vs. -18.9 for control) | Compound S904-0022 identified as a potent prospective inhibitor |

| COX-2 Receptor [25] | 300 Phytochemicals | Multi-target cross-docking → 100ns MD | Docking Score: ≤ -9.0 kcal/mol (Apigenin: -9.9 kcal/mol); Stable RMSD/Rg in MD | Apigenin, Kaempferol, Quercetin prioritized as multi-target analgesics |

| 50S Ribosome (C. acnes) [23] | ZINC NPs (186,659) | Consensus ML-QSAR → Docking → Clustering | Consensus pMIC ≥6; Docking Score ≤ -9 kcal/mol; Cluster analysis for diversity | 6 compounds tested in vitro; Tripterin MIC = 0.5–2 μg/mL |

Figure: Structure-Based Docking & Validation Workflow

Integrated Strategies for Natural Product Scaffold Library Screening

Screening NP libraries demands integrated workflows that leverage the strengths of both LBVS and SBVS to manage complexity and maximize the discovery of novel scaffolds [21] [23].

Application Note: Tandem LBVS → SBVS Workflow

- Stage 1 - Broad Triage: Apply a fast LBVS method (e.g., ECFP4 similarity search or a pre-trained ML model) to reduce a multi-million compound NP library (e.g., ZINC, COCONUT) to a focused subset of ~50,000-100,000 compounds. This step enriches for compounds with a baseline potential for activity [23].

- Stage 2 - Focused Docking: Subject the LBVS-pre-filtered library to rigorous structure-based docking. This step evaluates the geometric and chemical complementarity of the NPs with the target binding site.

- Stage 3 - Deep Learning & Diversity Analysis: Apply AI-based activity prediction or advanced scoring functions to the docked poses. Perform clustering analysis (e.g., using Butina clustering or k-means on fingerprints) on the top 1-2% of compounds to select a final, chemically diverse set of 50-100 hits for purchase and experimental testing [8] [23].

- Stage 4 - Advanced Validation: For the very top candidates, perform MD simulations and MM-GBSA calculations to confirm binding stability and estimate free energy, providing high-confidence predictions for costly experimental follow-up [21].
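The diversity selection in Stage 3 can be illustrated with a simplified, Butina-style clustering on fingerprint similarity. This sketch recounts neighbors among unassigned compounds at each step, which differs slightly from the original Taylor-Butina procedure, and it represents fingerprints as sets of bit indices; all names and data are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for two sets of 'on' bits."""
    c = len(fp_a & fp_b)
    denom = len(fp_a) + len(fp_b) - c
    return c / denom if denom else 0.0


def butina_style_clusters(fps, sim_cutoff=0.6):
    """Greedy clustering: the compound with the most unassigned neighbors seeds each cluster."""
    names = sorted(fps)
    neighbors = {
        n: {m for m in names if m != n and tanimoto(fps[n], fps[m]) >= sim_cutoff}
        for n in names
    }
    unassigned = set(names)
    clusters = []
    while unassigned:
        # Ties are broken alphabetically so the result is deterministic.
        centroid = max(sorted(unassigned), key=lambda n: len(neighbors[n] & unassigned))
        members = [centroid] + sorted(neighbors[centroid] & unassigned)
        clusters.append(members)
        unassigned.difference_update(members)
    return clusters


# Toy fingerprints for five docked hits.
hit_fps = {
    "a": {1, 2, 3, 4},
    "b": {1, 2, 3, 4, 6},
    "c": {1, 2, 3, 4, 5},
    "d": {10, 11, 12},
    "e": {10, 11, 13},
}
clusters = butina_style_clusters(hit_fps)
representatives = [cluster[0] for cluster in clusters]   # one compound per cluster
```

Picking the best-scoring member of each cluster, rather than the centroid, is an equally reasonable way to choose representatives for purchase.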

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Computational Tools for NP Virtual Screening

| Tool / Resource | Type | Primary Function in NP Screening | Key Application |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Molecule I/O, fingerprint generation (ECFP, MACCS), descriptor calculation, clustering. | Preparing NP libraries, performing similarity searches, and analyzing chemical space [8]. |

| AutoDock Vina / AutoDock-GPU | Docking Software | Fast, efficient molecular docking and scoring. | Performing structure-based screening of large NP subsets [8]. |

| Schrödinger Suite (Glide, Maestro) | Commercial Modeling Suite | High-accuracy docking (Glide), protein & ligand preparation, MM-GBSA calculations. | Refined docking, binding pose analysis, and free energy estimation for top NP hits. |

| GROMACS / AMBER | Molecular Dynamics Software | Running all-atom MD simulations for protein-ligand complexes. | Validating binding stability and dynamics of NP hits over time [21] [8]. |

| ChEMBL / PubChem | Bioactivity Databases | Source of active/inactive compounds for training ML-QSAR models. | Building predictive models to triage NP libraries based on bioactivity [8] [23]. |

| COCONUT / ZINC Natural Products | NP-Specific Chemical Databases | Source of structurally diverse, often unique, natural product compounds for screening. | The primary chemical libraries for discovery of novel bioactive scaffolds [21] [23]. |

Figure: Integrated Multi-Stage Screening for NP Libraries

Natural products (NPs) and their derivatives constitute a historically unparalleled source of bioactive compounds and approved drugs [26]. However, their inherent structural complexity presents unique challenges for integration into modern, high-throughput drug discovery pipelines [3]. This document outlines detailed Application Notes and Protocols for constructing and analyzing comprehensive NP libraries, specifically framed within a research thesis focused on virtual screening of natural product scaffold libraries.

The strategic value lies in creating well-curated, chemically diverse libraries that are optimized for computational screening. Moving from traditional crude extracts to pre-fractionated libraries and intelligently designed virtual expansions significantly increases the success rate in virtual screening campaigns by reducing nuisance compounds, concentrating actives, and exploring novel chemical space [27] [28].

A comprehensive library begins with sourcing diverse biological material. This involves strategic collection, adherence to legal frameworks, and leveraging existing repositories.

Key Considerations for Novel Collection

- Ethical and Legal Compliance: All collection must follow the Convention on Biological Diversity (CBD) and Nagoya Protocol on Access and Benefit Sharing (ABS). Agreements must be established with source countries for equitable benefit sharing [27].

- Geographical and Taxonomic Diversity: Target biodiverse regions and under-explored organisms (e.g., marine microbes, extremophiles) to maximize chemical novelty [27].

- Metadata Documentation: For each sample, record essential voucher information: taxonomy, collector, GPS coordinates, date, and ecological notes. This is critical for reproducibility and database management [27].

Researchers can access pre-existing libraries to bypass the collection and initial extraction phases. The table below summarizes key resources.

Table 1: Selected Major Natural Product Libraries for Research Screening [29] [27] [30]

| Library Name / Provider | Type of Material Available | Approximate Scale | Key Features / Notes |

|---|---|---|---|

| NCI Natural Products Repository (Developmental Therapeutics Program, NIH) | Crude extracts, purified compounds, Traditional Chinese Medicine extracts [29]. | >230,000 crude extracts; >400 purified compounds [29]. | One of the world's largest collections; available at no cost (shipping only) in HTS-ready formats [29] [27]. |

| MEDINA Foundation | Microbial-derived extracts and fractions [29]. | >200,000 extracts [29]. | One of the largest microbial product libraries; available for screening at their facility or externally. |

| Axxam/AXXSense | Pure compounds, fractions, extracts, microbial strains [29]. | 11,500 pure compounds; 63,000 fractions; 40,000 strains [29]. | Comprehensive access to nature’s chemical diversity from plant and microbial sources. |

| AnalytiCon Discovery | Pure natural compounds, fractions, extracts [29] [30]. | ~5,000 pure compounds (library constantly growing) [30]. | High level of purity and structural novelty; strong focus on microbial and edible plant sources. |

| NatureBank (Griffith University) | Lead-like enhanced extracts, fractions, pure compounds [29]. | >18,000 extracts; >90,000 fractions; >100 pure compounds [29]. | Focuses on Australian biodiversity; samples processed into lead-like libraries for bioactive discovery. |

| Greenpharma Natural Compound Library | Pure compounds [29]. | Information not specified. | Provides calculated physico-chemical descriptors with structures. |

Figure: Library Sourcing and Acquisition Workflow

Detailed Protocols for Library Curation & Preparation

This section provides standardized protocols for transforming raw biological material into screening-ready libraries.

Protocol 1: Generation of a Pre-fractionated Natural Product Library

Objective: To convert crude natural product extracts into a partially purified (pre-fractionated) library in HTS-compatible formats, reducing complexity and enriching minor metabolites [27].

Materials:

- Crude natural product extracts (lyophilized or in solvent).

- HPLC system with fraction collector (or equivalent MPLC system).

- Solid Phase Extraction (SPE) cartridges (C18 or similar).

- 384-well microplates (polypropylene, low binding).

- Dimethyl sulfoxide (DMSO), LC-MS grade solvents (H₂O, MeCN, MeOH).

Procedure:

- Primary Fractionation (SPE):

- Reconstitute lyophilized crude extract in a suitable solvent (e.g., 10% DMSO in MeOH).

- Load onto a pre-conditioned C18 SPE cartridge.

- Elute with a step-gradient of increasing organic solvent (e.g., 20%, 40%, 60%, 80%, 100% MeOH in H₂O). Collect each step as a separate fraction.

- Evaporate solvents under reduced pressure or centrifugal vacuum.

- Secondary Fractionation (HPLC/MPLC):

- Reconstitute each SPE fraction for further separation.

- Inject onto a reverse-phase C18 HPLC column.

- Use a broad linear gradient (e.g., 5% to 95% MeCN in H₂O over 20-40 minutes).

- Employ UV-based time-slicing: Collect fractions at fixed time intervals (e.g., every 12-24 seconds) rather than by peak, to ensure consistent, reproducible well-to-well volumes across all samples [27].

- Evaporate collected fractions.

- Plating and Storage:

- Reconstitute each dried fraction in 100% DMSO to a standard concentration (e.g., 2 mg/mL relative to original crude extract weight).

- Using a liquid handler, transfer aliquots into 384-well polypropylene microplates.

- Seal plates with pierceable foil and store at -20°C or -80°C.

- Maintain a detailed plate map linking each well to the source organism, extract, and fractionation step.
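The bookkeeping behind time-slicing and plate mapping can be sketched as below. It assumes row-major filling of a 384-well plate (rows A-P, columns 1-24) and uses made-up sample identifiers; the helper names are hypothetical.

```python
import string

ROWS = string.ascii_uppercase[:16]   # rows A-P of a 384-well plate
N_COLS = 24


def well_id(index):
    """Map a 0-based position to a well label, filling row by row."""
    if not 0 <= index < len(ROWS) * N_COLS:
        raise ValueError("index outside a 384-well plate")
    row, col = divmod(index, N_COLS)
    return f"{ROWS[row]}{col + 1:02d}"


def n_time_slices(gradient_min, slice_s):
    """Number of fixed-interval fractions collected over one gradient."""
    return int(gradient_min * 60 // slice_s)


def plate_map(organism, extract_id, spe_fraction, n_slices):
    """Link each well to its source organism, extract, and fractionation step."""
    return [
        {"well": well_id(i), "organism": organism, "extract": extract_id,
         "spe_fraction": spe_fraction, "hplc_slice": i + 1}
        for i in range(n_slices)
    ]


slices = n_time_slices(gradient_min=30, slice_s=18)          # 30 min at 18 s per slice
records = plate_map("Streptomyces sp.", "EX-0001", "60% MeOH", slices)
```

Storing these records in a database keyed by plate barcode and well keeps every screening result traceable to its source material.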

Protocol 2: Creation of a Focused Virtual NP Subset

Objective: To generate a manageable, maximally diverse subset from a large virtual NP database (e.g., COCONUT, UNPD) for focused computational screening [31].

Materials:

- Access to a large NP database (e.g., UNPD ~200,000 compounds).

- Cheminformatics software (e.g., RDKit, KNIME).

- Computational hardware.

Procedure:

- Data Curation: Download SMILES strings and standardize structures using a pipeline (e.g., the ChEMBL structure curation pipeline) to remove salts, neutralize charges, and generate canonical representations [28].

- Descriptor Calculation: Calculate molecular descriptors (e.g., molecular weight, logP, topological polar surface area, number of rotatable bonds) and/or generate molecular fingerprints (e.g., ECFP4) [31].

- Diversity Selection: Apply the MaxMin algorithm:

- Randomly select the first compound and add it to the subset.

- Iteratively select the next compound that has the maximum minimum distance (e.g., Tanimoto distance based on fingerprints) to all compounds already in the subset.

- Continue until the desired subset size (e.g., 5,000-15,000 compounds) is reached [31].

- Subset Characterization: Analyze the final subset's coverage of chemical space (e.g., via PCA or t-SNE) and its property distribution to ensure it represents the diversity of the parent library [31].
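The MaxMin selection described above can be sketched in pure Python. Fingerprints are represented as sets of bit indices and distance is 1 minus the Tanimoto coefficient; the `first` argument is an assumption added so the example is reproducible, and the toy data are illustrative.

```python
import random


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for two sets of 'on' bits."""
    c = len(fp_a & fp_b)
    denom = len(fp_a) + len(fp_b) - c
    return c / denom if denom else 0.0


def maxmin_select(fps, k, seed=0, first=None):
    """Pick k compounds, each maximizing its minimum distance to those already picked."""
    names = sorted(fps)
    if not 0 < k <= len(names):
        raise ValueError("k must be between 1 and the library size")
    start = first if first is not None else random.Random(seed).choice(names)
    selected = [start]
    # Minimum distance from every remaining compound to the current subset.
    min_dist = {n: 1.0 - tanimoto(fps[n], fps[start]) for n in names if n != start}
    while len(selected) < k:
        nxt = max(sorted(min_dist), key=min_dist.get)
        selected.append(nxt)
        del min_dist[nxt]
        for n in min_dist:
            min_dist[n] = min(min_dist[n], 1.0 - tanimoto(fps[n], fps[nxt]))
    return selected


library = {
    "a": {1, 2, 3, 4},
    "b": {1, 2, 3, 5},
    "c": {7, 8, 9},
    "d": {1, 2, 8, 9},
}
subset = maxmin_select(library, k=3, first="a")
```

Keeping a running minimum distance per compound avoids recomputing all pairwise distances on every iteration, which matters for libraries of hundreds of thousands of structures.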

Chemical Diversity Analysis & Expansion

Quantifying and expanding chemical diversity is central to maximizing a library's value for discovering novel scaffolds.

Analytical Metrics for Diversity

- Physicochemical Descriptors: Analyze distributions of molecular weight, logP, hydrogen bond donors/acceptors, rotatable bonds, and fraction of sp³ carbons. NPs often occupy distinct, more three-dimensional regions of chemical space compared to synthetic libraries [26] [31].

- Structural Scaffolds: Classify compounds by core scaffolds (e.g., using NPClassifier for biosynthetic pathways: polyketide, terpenoid, alkaloid, etc.) [28]. Assess scaffold diversity and frequency.

- Natural Product-Likeness: Calculate scores like NP Score, a Bayesian model that estimates how "natural product-like" a molecule is based on substructure fragments [28].

- Visualization: Use dimensionality reduction (e.g., t-SNE, TMAP) on fingerprint descriptors to create visual maps of chemical space, showing coverage and clustering of library compounds [28] [31].

Protocol 3: Generative Expansion of NP-like Chemical Space

Objective: To use deep learning models to generate novel, synthetically accessible compounds that occupy the chemical space of natural products [28].

Materials: Pre-processed NP structure database (e.g., from COCONUT), software for model training (e.g., Python, TensorFlow/PyTorch).

Procedure (Based on SMILES-based RNN):

- Data Preparation: Curate a set of canonical SMILES strings (≥300,000) from an NP database. Tokenize the SMILES strings for model input [28].

- Model Training: Train a Recurrent Neural Network (RNN) with Long Short-Term Memory (LSTM) units to learn the probability of the next character in a SMILES string. This teaches the model the underlying "grammar" of NP structures [28].

- Sampling: Generate new SMILES strings by sampling from the trained model.

- Validation & Filtering:

- Use RDKit to validate chemical correctness of generated SMILES.

- Remove duplicates and undesirable structures (e.g., reactive functional groups).

- Filter for "NP-likeness" using the NP Score and for drug-like properties (e.g., Lipinski's Rule of Five).

- This process can generate tens of millions of novel, NP-like virtual compounds, dramatically expanding the searchable space [28].
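The tokenization mentioned in the data preparation step can be sketched with a regular expression that keeps bracket atoms and two-letter halogens together. The pattern below is a commonly used SMILES tokenization regex rather than the one from the cited work, and the special tokens in the vocabulary are assumptions for illustration.

```python
import re

# Bracket atoms, Br/Cl, organic-subset atoms, bonds, branches, and ring closures.
SMILES_TOKEN = re.compile(
    r"(\[[^\]]+\]|Br?|Cl?|N|O|S|P|F|I|b|c|n|o|s|p|\(|\)|\.|=|#|-|\+|\\|/|:|~|@|\?|>|\*|\$|%[0-9]{2}|[0-9])"
)


def tokenize(smiles):
    """Split a SMILES string into model tokens; fail if any character is not covered."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"untokenizable characters in {smiles!r}")
    return tokens


def build_vocab(smiles_list):
    """Assign an integer id to each token, after padding/start/end markers."""
    vocab = {"<pad>": 0, "<bos>": 1, "<eos>": 2}
    for smi in smiles_list:
        for tok in tokenize(smi):
            vocab.setdefault(tok, len(vocab))
    return vocab


tokens = tokenize("ClC[C@@H](Br)N")
vocab = build_vocab(["CCO", "c1ccccc1"])
```

Token-level splitting, as opposed to character-level, prevents the model from having to learn that "C" followed by "l" means chlorine.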

Figure: Chemical Diversity Analysis and Virtual Expansion Workflow

Integration with Virtual Screening Pipelines

The curated physical and virtual NP libraries must be integrated into robust computational screening workflows.

Protocol 4: Structure-Based Virtual Screening of an NP Library

Objective: To computationally dock a library of NP structures (physical or virtual) into a target protein's binding site to identify potential hits [32] [33].

Materials:

- Target protein structure (PDB format).

- Prepared NP library in appropriate format (e.g., SDF, PDBQT).

- Docking software (e.g., AutoDock Vina, QuickVina, RosettaVS).

- High-Performance Computing (HPC) resources.

Procedure (Modular Script-Based Pipeline):

- Receptor Preparation:

- Use a script (e.g., jamreceptor) [32] or tool to prepare the protein PDB file: add hydrogens, assign charges, and convert to PDBQT format.

- Define the docking grid box coordinates centered on the binding site of interest.

- Ligand Library Preparation:

- For virtual compounds, ensure energy minimization and conversion to 3D conformers.

- Convert all ligand structures to the required input format (e.g., PDBQT for Vina) using batch processing [32].

- High-Throughput Docking Execution:

- Use a scalable docking script (e.g., jamqvina) [32] or platform (e.g., OpenVS) [33] to distribute docking jobs across multiple CPU/GPU cores on an HPC cluster.

- For ultra-large libraries (billions), employ an active learning strategy where a machine learning model is trained on-the-fly to prioritize docking of promising compounds, drastically reducing computation time [33].

- Post-Docking Analysis:

- Consolidate results from all jobs.

- Rank compounds by docking score (estimated binding affinity).

- Visually inspect top-scoring poses for sensible binding interactions.

- Apply further filters (e.g., interaction with key residues, drug-likeness).

Table 2: Performance Comparison of Virtual Screening Tools for NP Libraries [32] [33]

| Tool / Platform | Key Features | Typical Use Case | Benchmark Performance (Example) |

|---|---|---|---|

| AutoDock Vina/QuickVina | Fast, free, open-source, command-line friendly [32]. | Screening libraries of low-to-medium size (up to millions). | Widely used baseline; good balance of speed and accuracy. |

| RosettaVS (OpenVS Platform) | High accuracy with receptor flexibility, active learning for ultra-large screens, open-source [33]. | Screening ultra-large virtual libraries (billions of compounds). | Top performer in CASF-2016 benchmark (EF1% = 16.72) [33]. |

| Commercial Suites (e.g., Glide, GOLD) | Highly optimized, user-friendly GUI, extensive support. | Industrial-scale screening where budget permits. | Often show high performance in independent benchmarks. |
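The enrichment factor quoted in the table (EF1%) compares the active rate in the top-ranked fraction with the active rate in the whole library. A minimal sketch of the calculation follows, assuming lower docking scores are better; the function name and the toy benchmark are illustrative.

```python
def enrichment_factor(scores, is_active, fraction=0.01, lower_is_better=True):
    """EF = (actives in top fraction / size of top fraction) / (total actives / library size)."""
    n = len(scores)
    total_actives = sum(is_active)
    if n == 0 or total_actives == 0:
        raise ValueError("need a non-empty library with at least one active")
    n_top = max(1, round(n * fraction))
    order = sorted(range(n), key=lambda i: scores[i], reverse=not lower_is_better)
    top_actives = sum(is_active[i] for i in order[:n_top])
    return (top_actives * n) / (n_top * total_actives)


# Toy benchmark: 100 compounds ranked by score, 10 actives, 5 of them in the top 10.
scores = [float(i) for i in range(100)]            # index 0 has the best (lowest) score
active_idx = {0, 2, 4, 6, 8, 50, 60, 70, 80, 90}
is_active = [1 if i in active_idx else 0 for i in range(100)]
ef10 = enrichment_factor(scores, is_active, fraction=0.10)
```

An EF of 1 corresponds to random selection, and the maximum achievable value depends on the active ratio of the benchmark set.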

Post-Screening Triaging

Virtual hits must be triaged for experimental validation.

- Dereplication: Check virtual hit structures against databases of known bioactive NPs to avoid rediscovery.

- Purchasing/Synthesis: For virtual compounds, assess commercial availability or plan synthesis. For physical library hits, locate the source well for re-testing.

- Experimental Validation: Subject top-priority virtual hits to in vitro binding or activity assays (e.g., fluorescence polarization, enzyme inhibition, cell-based phenotypic assays) [27] [3]. Co-crystallization of the protein with a confirmed hit validates the predicted binding pose [33].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents, Software, and Resources for NP Library Research

| Category | Item | Function / Purpose |

|---|---|---|

| Physical Library Construction | C18 Solid Phase Extraction (SPE) Cartridges | Initial fractionation of crude extracts based on polarity [27]. |

| | Reverse-Phase HPLC/MPLC Columns | High-resolution separation of SPE fractions into time-sliced subfractions [27]. |

| | 384-Well Polypropylene Microplates & Sealing Foils | HTS-compatible storage of library fractions in DMSO [27]. |

| Computational & Cheminformatics | RDKit (Open-Source) | Core cheminformatics toolkit for structure manipulation, descriptor calculation, fingerprint generation, and filtering [28] [31]. |

| | NP Score | Bayesian model to quantify how closely a molecule resembles known natural products [28]. |

| | NPClassifier | Deep learning tool to classify NPs into biosynthetic pathways (e.g., polyketide, alkaloid) [28]. |

| | AutoDock Vina/QuickVina | Free, widely-used docking software for structure-based virtual screening [32]. |

| | OpenVS/RosettaVS Platform | Open-source, AI-accelerated platform for high-accuracy, ultra-large library virtual screening [33]. |

| Data Sources | COCONUT (COlleCtion of Open Natural ProdUcTs) | Largest open-access database of unique NP structures for building virtual libraries [28] [31]. |

| | ZINC Database | Public resource of commercially available compounds, often used for control screens or purchasing virtual hits [32]. |

| | CASF, DUD/DUD-E Benchmarks | Standard datasets for validating and benchmarking docking protocols and scoring functions [33]. |

Computational Arsenal: Advanced Methodologies and Real-World Applications in Virtual Screening

This protocol forms a core computational pillar of a broader thesis investigating virtual screening of natural product (NP) scaffold libraries. Given the complex, often novel chemotypes of NPs, ligand-based approaches are indispensable when 3D target structures are unavailable. These methods leverage known bioactive molecules to identify novel NP-derived hits by mapping essential features (pharmacophores), encoding structural patterns (fingerprints), and quantifying molecular resemblance (similarity metrics). This document provides application notes and detailed protocols for implementing these techniques in an NP screening pipeline.

Key Concepts and Quantitative Comparisons

Table 1: Common Molecular Fingerprint Types and Their Parameters

| Fingerprint Type | Bit Length (Typical) | Encoding Method | Key Advantage for NP Screening |
|---|---|---|---|
| ECFP4 (Extended Connectivity) | 2048 | Circular substructures (radius=2) | Captures local topology, ideal for scaffold hopping. |
| MACCS Keys | 166 | Predefined structural fragments | Simple, interpretable, fast for preliminary filtering. |
| Path-Based (RDKit) | 2048 | All linear paths up to 7 bonds | Good for larger, flexible NP molecules. |
| Pharmacophore Fingerprint | Variable (e.g., 210) | 3D features & distances | Encodes bio-relevant feature pairs, less sensitive to scaffold. |

Table 2: Popular Similarity Metrics and Their Characteristics

| Similarity Metric | Formula | Range | Characteristics |
|---|---|---|---|
| Tanimoto (Jaccard) | \( T = \frac{c}{a+b-c} \) | 0-1 | Balanced, most common for binary fingerprints. |
| Dice (Sørensen-Dice) | \( D = \frac{2c}{a+b} \) | 0-1 | Gives more weight to common bits. |
| Cosine | \( C = \frac{\sum_i x_i y_i}{\sqrt{\sum_i x_i^2}\sqrt{\sum_i y_i^2}} \) | 0-1 (non-negative vectors) | Suitable for count-based or continuous vectors. |
| Euclidean Distance | \( E = \sqrt{\sum_i (x_i - y_i)^2} \) | 0 → ∞ | Direct distance measure; often converted to similarity. |

Here \( a \) and \( b \) are the numbers of on-bits in the two fingerprints, \( c \) is the number of on-bits they share, and \( x_i \), \( y_i \) are the i-th components of the two descriptor vectors.
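The four metrics in Table 2 can be sketched in a few lines of standard-library Python. This is a minimal illustration, not a replacement for a cheminformatics toolkit: binary fingerprints are represented here as sets of on-bit indices (an assumption made for readability), and the example bit sets are made up.

```python
from __future__ import annotations

import math


def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto (Jaccard): c / (a + b - c) on sets of on-bit indices."""
    c = len(fp_a & fp_b)
    denom = len(fp_a) + len(fp_b) - c
    return c / denom if denom else 0.0


def dice(fp_a: set[int], fp_b: set[int]) -> float:
    """Dice (Sorensen-Dice): 2c / (a + b) on sets of on-bit indices."""
    c = len(fp_a & fp_b)
    denom = len(fp_a) + len(fp_b)
    return 2 * c / denom if denom else 0.0


def cosine(x: list[float], y: list[float]) -> float:
    """Cosine similarity for count-based or continuous vectors."""
    dot = sum(xi * yi for xi, yi in zip(x, y))
    norm_x = math.sqrt(sum(xi * xi for xi in x))
    norm_y = math.sqrt(sum(yi * yi for yi in y))
    return dot / (norm_x * norm_y) if norm_x and norm_y else 0.0


def euclidean(x: list[float], y: list[float]) -> float:
    """Euclidean distance; smaller means more similar."""
    return math.sqrt(sum((xi - yi) ** 2 for xi, yi in zip(x, y)))


# Illustrative (made-up) fingerprints: a = 4 on-bits, b = 3 on-bits, c = 2 shared.
ref_bits = {1, 5, 9, 12}
query_bits = {1, 5, 7}
print(tanimoto(ref_bits, query_bits))  # 2 / (4 + 3 - 2) = 0.4
print(dice(ref_bits, query_bits))      # 4 / 7
```

For the same pair of fingerprints Dice is never lower than Tanimoto, which is why similarity thresholds are not interchangeable between the two metrics.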

Experimental Protocols

Protocol 1: Pharmacophore Model Generation from a Known Active (LigandScout)

Objective: To create a quantitative pharmacophore hypothesis for screening an NP library.

- Input Preparation: Prepare a 3D molecular structure of a known high-affinity ligand (e.g., a reference NP) in a low-energy conformation. Multiple aligned active conformers improve model quality.

- Feature Identification: Load the ligand into LigandScout. Use the "Create Pharmacophore from Ligand" function. Automatically identify key features: Hydrogen Bond Donor (HBD), Hydrogen Bond Acceptor (HBA), Hydrophobic (H), Aromatic (AR), Positive/Negative Ionizable (PI/NI).

- Model Definition & Refinement: Manually adjust feature tolerances (default ~1.2 Å) based on known SAR. If a co-crystal structure is available, add exclusion volume spheres (radius ~1.0 Å) derived from the receptor binding site to penalize steric clashes.

- Validation: Validate the model by screening a small validation set of known actives mixed with decoys (presumed inactives). Calculate enrichment factors (EF) and use ROC curves to assess performance before full library screening.
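To make the roles of feature tolerances and exclusion volumes concrete, the following sketch checks a single ligand conformer against a pharmacophore model. It is a simplified illustration and not how LigandScout works internally: it assumes the conformer is already aligned to the model's coordinate frame, and the `Feature` class, the function name, and the coordinates are hypothetical.

```python
from __future__ import annotations

import math
from dataclasses import dataclass


@dataclass(frozen=True)
class Feature:
    kind: str                         # e.g. "HBD", "HBA", "H", "AR", "PI", "NI"
    xyz: tuple[float, float, float]   # position in the shared frame (Å)
    tolerance: float = 1.2            # Å; value quoted in the protocol text


def matches_pharmacophore(model, ligand_features, ligand_atoms,
                          exclusion_centres=(), exclusion_radius=1.0):
    """Return True if a pre-aligned conformer satisfies the model.

    Simplifications (assumptions of this sketch): no alignment search,
    and one ligand feature may satisfy more than one model feature.
    """
    # Reject the pose if any ligand atom sits inside an exclusion sphere.
    for centre in exclusion_centres:
        if any(math.dist(centre, atom) < exclusion_radius for atom in ligand_atoms):
            return False
    # Every model feature needs a same-kind ligand feature within tolerance.
    for feat in model:
        if not any(lf.kind == feat.kind
                   and math.dist(lf.xyz, feat.xyz) <= feat.tolerance
                   for lf in ligand_features):
            return False
    return True


# Made-up two-feature model and one candidate conformer.
model = [Feature("HBD", (0.0, 0.0, 0.0)), Feature("AR", (4.0, 0.0, 0.0))]
ligand = [Feature("HBD", (0.5, 0.0, 0.0)), Feature("AR", (4.0, 1.0, 0.0))]
atoms = [(0.5, 0.0, 0.0), (4.0, 1.0, 0.0)]
print(matches_pharmacophore(model, ligand, atoms))
```

Tightening the tolerances makes the model more selective but risks missing true actives, which is why the validation step above is run before the full library is screened.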

Protocol 2: Similarity-Based Screening using Fingerprints (RDKit/Python)

Objective: To rank an NP library based on similarity to one or more reference active compounds.

- Library & Reference Standardization: Standardize all NP library and reference molecule structures using RDKit: sanitize, remove salts, and normalize tautomers. Generate 3D conformations (`EmbedMolecule`) where 3D descriptors such as pharmacophore fingerprints are needed; 2D fingerprints such as ECFP4 do not require them.

- Fingerprint Generation: Encode molecules. For ECFP4: `AllChem.GetMorganFingerprintAsBitVect(mol, radius=2, nBits=2048)`. For pharmacophore fingerprints: use `rdMolDescriptors.GetHashedAtomPairFingerprint` with pharmacophore invariants.

- Similarity Calculation: Compute pairwise similarity. For a single reference: `DataStructs.TanimotoSimilarity(ref_fp, query_fp)`. For multiple references, use average similarity or maximum similarity.

- Ranking & Hit Selection: Rank the entire NP library in descending order of similarity. Apply a threshold (e.g., Tanimoto ≥ 0.5 for ECFP4) to select candidate hits for further biological evaluation.
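The similarity calculation and ranking steps of Protocol 2 can be sketched without any toolkit dependency. In this illustration the fingerprints are plain sets of on-bit indices standing in for ECFP4 bit vectors, and `rank_library`, the `fusion` argument, and the compound names are hypothetical; in practice the fingerprints would come from the RDKit calls named in the protocol.

```python
from __future__ import annotations


def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto similarity on sets of on-bit indices."""
    shared = len(fp_a & fp_b)
    denom = len(fp_a) + len(fp_b) - shared
    return shared / denom if denom else 0.0


def rank_library(library: dict[str, set[int]],
                 references: list[set[int]],
                 fusion: str = "max",
                 threshold: float = 0.5) -> list[tuple[str, float]]:
    """Score each library compound against all references, fuse the
    similarities ("max" or "mean"), keep those at or above the threshold,
    and return them in descending order of fused similarity."""
    if not references:
        raise ValueError("at least one reference fingerprint is required")
    if fusion not in ("max", "mean"):
        raise ValueError("fusion must be 'max' or 'mean'")
    hits = []
    for name, fp in library.items():
        sims = [tanimoto(fp, ref) for ref in references]
        score = max(sims) if fusion == "max" else sum(sims) / len(sims)
        if score >= threshold:
            hits.append((name, score))
    return sorted(hits, key=lambda item: item[1], reverse=True)


# Made-up fingerprints for three library members and one reference active.
references = [{1, 2, 3, 4}]
library = {"np_a": {1, 2, 3, 4}, "np_b": {1, 2, 3, 9}, "np_c": {7, 8}}
for name, score in rank_library(library, references):
    print(name, round(score, 2))
```

Maximum fusion favours compounds that closely resemble any single reference, whereas mean fusion favours compounds that resemble the reference set as a whole; which is preferable depends on how chemically diverse the references are.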

Visualization of Workflows

Diagram: Dual Workflows for Ligand-Based NP Screening

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software/Tools for Implementation

| Item | Function & Application in NP Screening |
|---|---|
| RDKit (Open-Source) | Core cheminformatics toolkit for fingerprint generation, similarity calculations, and basic pharmacophore features. |
| LigandScout/Phase (Schrödinger) | Advanced software for creating, visualizing, and screening with 3D pharmacophore models. |
| KNIME Analytics Platform | Visual workflow environment to integrate fingerprinting, similarity searches, and data processing without extensive coding. |
| ChEMBL/PubMed | Public databases to source known active ligands for building reference sets and validating methods. |
| NP Library Database | Curated in-house or commercial database (e.g., AnalytiCon, SPECS) of natural product scaffolds in standardized format (SDF). |
| Python/R Scripting | Custom scripts for batch processing, calculating enrichment metrics (EF, ROC AUC), and visualizing results. |

The discovery of new therapeutic agents from nature is being revolutionized by computational methods. Natural products (NPs) offer unparalleled structural diversity and bioactivity but pose challenges for traditional screening due to complexity, availability, and characterization difficulties [3]. Structure-based virtual screening (SBVS) serves as a powerful filter, enabling the efficient prioritization of NP candidates from vast digital libraries for experimental validation [34] [3]. This approach is critical within a research thesis focused on NP scaffold libraries, as it provides a rational, cost-effective strategy to navigate complex chemical space and identify novel scaffolds with high potential for specific therapeutic targets [7] [8].

Core Concepts and Components

2.1 Molecular Docking: Principles and Software

Molecular docking predicts the preferred orientation (pose) and binding affinity of a small molecule (ligand) within a protein's target site. The process involves two key steps: a search algorithm that explores possible conformations and orientations, and a scoring function that ranks them [35]. Docking methodologies vary in their treatment of flexibility:

- Rigid Docking: Treats both receptor and ligand as rigid bodies; fastest but least accurate.

- Semi-Flexible Docking: Allows ligand flexibility while keeping the receptor rigid; the standard for most VS campaigns [34].

- Flexible Docking: Allows flexibility in both ligand and receptor sidechains; most accurate but computationally expensive [34].

A wide array of software implements these algorithms, each with specific strengths suitable for different stages of screening [34] [35].

Table 1: Common Molecular Docking Software for Virtual Screening

| Software | Type | Key Algorithm/Feature | Typical Use Case |
|---|---|---|---|
| AutoDock Vina | Free, Open-Source | Hybrid scoring function; iterated local search optimizer. | General-purpose docking, HTVS pre-screening [34] [8]. |
| Glide (Schrödinger) | Commercial | Hierarchical filters with systematic search; SP/XP precision modes. | High-accuracy docking, lead optimization [35] [7]. |
| GOLD | Commercial | Genetic algorithm; handles full ligand flexibility. | Binding mode prediction, scaffold hopping [34]. |
| rDock | Free, Open-Source | Fast stochastic search; good for high-throughput workflows [34]. | Large-scale virtual screening. |
| DOCK | Free, Academic | Anchor-and-grow algorithm; footprint similarity scoring. | Teaching, foundational VS protocols [35] [36]. |

2.2 High-Throughput Virtual Screening (HTVS)

HTVS is the automated application of docking to screen libraries containing thousands to millions of compounds [34]. The goal is not absolute accuracy but the efficient enrichment of active molecules by rapidly filtering out obvious non-binders. Successful HTVS depends on balancing computational speed with reasonable predictive power, often using faster, less precise docking settings initially [7] [37]. Key metrics to evaluate HTVS performance include Enrichment Factor (EF), which measures the concentration of true actives in the top-ranked subset, and the area under the Receiver Operating Characteristic (ROC) curve (AUC-ROC), which assesses the model's overall ability to discriminate actives from inactives [7] [38].
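Both metrics are straightforward to compute from a ranked list with known labels. The sketch below uses the common definitions (EF as the hit rate in the top fraction divided by the hit rate in the whole set; AUC-ROC as the probability that a randomly chosen active outranks a randomly chosen inactive). The function names and example data are illustrative, and scores are assumed to be oriented so that higher is better, so docking energies would need to be negated first.

```python
from __future__ import annotations


def enrichment_factor(scores: list[float], labels: list[int],
                      fraction: float = 0.01) -> float:
    """EF at the given top fraction; labels are 1 (active) or 0 (inactive)."""
    n_total = len(scores)
    n_actives = sum(labels)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need a non-empty set containing at least one active")
    n_top = max(1, int(round(n_total * fraction)))
    order = sorted(range(n_total), key=lambda i: scores[i], reverse=True)
    actives_top = sum(labels[i] for i in order[:n_top])
    return (actives_top / n_top) / (n_actives / n_total)


def roc_auc(scores: list[float], labels: list[int]) -> float:
    """AUC-ROC via pairwise comparison of actives and inactives (ties = 0.5)."""
    actives = [s for s, lab in zip(scores, labels) if lab]
    inactives = [s for s, lab in zip(scores, labels) if not lab]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive")
    wins = sum((a > d) + 0.5 * (a == d) for a in actives for d in inactives)
    return wins / (len(actives) * len(inactives))


# Made-up benchmark: 10 compounds, 3 actives, higher score = better.
scores = [9, 8, 7, 6, 5, 4, 3, 2, 1, 0]
labels = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]
print(enrichment_factor(scores, labels, fraction=0.2))
print(roc_auc(scores, labels))
```

An EF of 1 corresponds to random selection, and an AUC-ROC of 0.5 corresponds to no discrimination. The pairwise AUC shown here is quadratic in set size and is meant for small validation sets.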

2.3 Binding Mode Analysis and Pose Validation

Post-docking analysis is critical to translate numerical scores into mechanistic understanding and to avoid false positives. This involves:

- Visual Inspection: Assessing key interactions (H-bonds, pi-stacking, hydrophobic contacts) with known active site residues.

- Interaction Fingerprints: Quantifying and comparing interaction patterns between poses (e.g., using Protein-Ligand Interaction Fingerprints - PLIFs) [38].

- Consensus Scoring: Using multiple scoring functions to rank poses; compounds consistently ranked high are more reliable.

- Root Mean Square Deviation (RMSD): Calculating the deviation (in Ångströms) of a predicted pose from an experimentally determined co-crystal structure. An RMSD < 2.0 Å is generally considered a successful prediction [35].
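The RMSD criterion can be illustrated with a short function. This is a minimal sketch under stated assumptions: both poses are given as heavy-atom coordinates in the same receptor frame with identical atom ordering, no superposition is applied (as is usual for redocking checks), and symmetry-equivalent atoms are not handled. The function names and coordinates are illustrative.

```python
from __future__ import annotations

import math

Coord = tuple  # (x, y, z) in Å


def pose_rmsd(predicted: list[Coord], reference: list[Coord]) -> float:
    """Heavy-atom RMSD between two poses with matching atom order."""
    if not predicted or len(predicted) != len(reference):
        raise ValueError("poses must be non-empty and contain the same atoms")
    squared = sum(
        (px - rx) ** 2 + (py - ry) ** 2 + (pz - rz) ** 2
        for (px, py, pz), (rx, ry, rz) in zip(predicted, reference)
    )
    return math.sqrt(squared / len(predicted))


def is_successful_pose(predicted: list[Coord], reference: list[Coord],
                       cutoff: float = 2.0) -> bool:
    """Apply the conventional RMSD < 2.0 Å success criterion."""
    return pose_rmsd(predicted, reference) < cutoff


# Made-up two-atom example: the docked pose is shifted by 1 Å along z.
crystal = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
docked = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(pose_rmsd(docked, crystal))          # 1.0
print(is_successful_pose(docked, crystal))  # True
```

For ligands with symmetric groups (for example a para-substituted phenyl ring), a symmetry-corrected RMSD from a cheminformatics toolkit is the safer choice, because naive atom matching can overestimate the deviation.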

Detailed Application Notes and Protocols

3.1 Protocol 1: Hierarchical HTVS for Novel Kinase Inhibitors from NP Libraries

This protocol is adapted from a study identifying HER2 kinase inhibitors from a ~639,000 compound NP library [7].

Objective: To identify novel natural product inhibitors against a kinase target (e.g., HER2) through a multi-tiered docking funnel.

Materials & Software: Schrödinger Suite (Maestro, Protein Prep Wizard, LigPrep, Glide), high-performance computing (HPC) cluster, NP library databases (e.g., COCONUT, ZINC NP) [7].

Procedure:

- Target Preparation:

  - Retrieve a high-resolution crystal structure of the target kinase in complex with an inhibitor (e.g., PDB: 3RCD for HER2).

  - Process the protein using the Protein Preparation Wizard: add hydrogens, assign bond orders, fill missing side chains, optimize H-bond networks, and perform restrained minimization (RMSD cutoff 0.3 Å) [7].

  - Define a receptor grid box (e.g., 20x20x20 Å) centered on the co-crystallized ligand's centroid [7].

- Ligand Library Preparation:

  - Compile a library of NP structures from curated databases. Remove duplicates and salts.

  - Prepare ligands using LigPrep: generate possible ionization states at pH 7.0 ± 2.0, generate stereoisomers, and perform energy minimization with the OPLS4 force field [7].

- Hierarchical Docking Workflow:

  - Stage 1 - HTVS: Dock the entire library using Glide's HTVS mode. Retain the top 10,000 compounds based on docking score (e.g., ≤ -6.00 kcal/mol) [7].

  - Stage 2 - Standard Precision (SP): Redock the 10,000 hits using the more rigorous SP mode. Retain the top 500-1000 compounds.

  - Stage 3 - Extra Precision (XP): Dock the final set using the most stringent XP mode to refine poses and scoring. Select the top 20-50 compounds for visual inspection and binding mode analysis.

- Post-Processing & Selection:

  - Visually inspect top XP poses for conserved key interactions (e.g., hinge region H-bond in kinases).

  - Cluster compounds by scaffold and select representatives for purchase or further computational analysis (e.g., ADMET prediction, molecular dynamics).
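The funnel logic of the hierarchical docking workflow (score, sort, keep the best N, pass survivors to a more expensive stage) can be sketched independently of any docking engine. In the illustration below the scoring functions are hypothetical stand-ins backed by lookup tables; in a real campaign each would wrap a call to the docking program at the corresponding precision level. The names `run_funnel` and `Stage` and all scores are made up.

```python
from typing import Callable, Dict, List, Sequence, Tuple

# (stage name, scoring function returning a docking score, number to keep)
Stage = Tuple[str, Callable[[str], float], int]


def run_funnel(ligands: Sequence[str],
               stages: Sequence[Stage]) -> Tuple[List[str], Dict[str, List[str]]]:
    """Pass ligands through successive stages, keeping the best-scoring
    `keep` compounds at each one. More negative scores are treated as
    better, following the usual docking-score convention."""
    survivors = list(ligands)
    history: Dict[str, List[str]] = {}
    for name, score_fn, keep in stages:
        ranked = sorted(survivors, key=score_fn)  # ascending: best first
        survivors = ranked[:keep]
        history[name] = survivors
    return survivors, history


# Hypothetical scores standing in for HTVS, SP, and XP docking runs.
htvs = {"np1": -7.2, "np2": -6.1, "np3": -8.0, "np4": -5.0}
sp = {"np1": -8.1, "np2": -6.0, "np3": -7.5, "np4": -4.0}
xp = {"np1": -9.0, "np2": -5.0, "np3": -7.0, "np4": -3.0}

stages = [
    ("HTVS", htvs.__getitem__, 3),
    ("SP", sp.__getitem__, 2),
    ("XP", xp.__getitem__, 1),
]
final, history = run_funnel(list(htvs), stages)
print(final)
print(history)
```

Because each stage only scores the survivors of the previous one, the expensive scoring function is called on a small fraction of the library, which is the main source of the funnel's efficiency.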

Diagram: Hierarchical HTVS Workflow for Natural Products

3.2 Protocol 2: Integrating QSAR Pre-Filtering with Docking for Antimicrobial Discovery

This protocol is adapted from a study identifying NDM-1 metallo-β-lactamase inhibitors [8].

Objective: To enhance HTVS efficiency by using a Machine Learning (ML) QSAR model to pre-filter a NP library for likely activity before docking.

Materials & Software: Python/R for ML, RDKit/OpenBabel for cheminformatics, AutoDock Vina, NP library (e.g., ChemDiv NP-based library) [8].

Procedure:

- Build a QSAR Model:

  - Collect a dataset of known active and inactive compounds against the target from ChEMBL. Use descriptors (e.g., MACCS keys, Morgan fingerprints) to represent molecular structures.

  - Train a regression model (e.g., Random Forest, Gradient Boosting) to predict activity (e.g., pIC50). Validate using cross-validation.

  - Apply the trained model to predict the activity of all compounds in the NP library [8].

- Library Pre-Filtering:

  - Rank the NP library based on the predicted activity from the QSAR model.

  - Select the top-performing fraction (e.g., top 20%) for subsequent molecular docking, dramatically reducing the docking workload [8].

- Molecular Docking & Clustering:

  - Prepare the target protein and the pre-filtered ligand set for docking with AutoDock Vina.

  - Perform docking with appropriate exhaustiveness. Retain compounds with binding energy better than a control (e.g., a known inhibitor or substrate).

  - Cluster the docking hits based on structural similarity (e.g., Tanimoto similarity on fingerprints) to ensure diversity in the final selection [8].
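The pre-filtering and clustering steps can be sketched as two small functions. The source does not state which clustering algorithm the original study used, so the greedy leader clustering shown here is an assumption chosen for simplicity; the predicted activities, fingerprints (sets of on-bit indices), function names, and cutoff values are all illustrative.

```python
from __future__ import annotations


def tanimoto(fp_a: set[int], fp_b: set[int]) -> float:
    """Tanimoto similarity on sets of on-bit indices."""
    shared = len(fp_a & fp_b)
    denom = len(fp_a) + len(fp_b) - shared
    return shared / denom if denom else 0.0


def top_fraction(predicted: dict[str, float], fraction: float = 0.2) -> list[str]:
    """Return the names with the highest predicted activity (e.g. pIC50)."""
    n_keep = max(1, int(len(predicted) * fraction))
    ranked = sorted(predicted, key=predicted.__getitem__, reverse=True)
    return ranked[:n_keep]


def leader_cluster(fps: dict[str, set[int]], order: list[str],
                   cutoff: float = 0.7) -> dict[str, list[str]]:
    """Greedy leader clustering: walk the hits in the given order (best
    docking score first); a hit joins the first cluster whose leader is
    at least `cutoff` similar, otherwise it starts a new cluster."""
    clusters: dict[str, list[str]] = {}
    for name in order:
        for leader in clusters:
            if tanimoto(fps[name], fps[leader]) >= cutoff:
                clusters[leader].append(name)
                break
        else:
            clusters[name] = [name]
    return clusters


# Made-up QSAR predictions (pIC50) for a five-compound library.
predicted = {"a": 7.5, "b": 6.0, "c": 8.1, "d": 5.2, "e": 4.9}
print(top_fraction(predicted, fraction=0.4))

# Made-up fingerprints for three docking hits, ordered best score first.
fps = {"c": {1, 2, 3, 4}, "a": {1, 2, 3, 5}, "b": {8, 9}}
print(leader_cluster(fps, order=["c", "a", "b"], cutoff=0.5))
```

Selecting one representative per cluster (for example the leader, which is the best-scoring member when hits are ordered by docking score) gives a structurally diverse shortlist for purchase or testing.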

3.3 Protocol 3: Binding Mode Analysis and Pose Validation

Objective: To critically assess and validate docking poses before selecting compounds for experimental testing.

Procedure:

- Interaction Profiling:

  - Generate a 2D diagram of ligand-protein interactions for each top pose, detailing H-bonds, hydrophobic contacts, salt bridges, and pi-interactions.

  - Compare the interaction fingerprint of novel NP hits to that of a known co-crystallized inhibitor. Identify conserved key interactions essential for binding.

- Consensus Assessment:

  - Redock the top hits using a second, independent docking program (e.g., if Glide was used first, try AutoDock Vina or GOLD).

  - Check for consensus in the predicted binding mode (low RMSD between poses from different software) and ranking. Consensus hits are higher confidence.

- Energy Decomposition:

Advanced Workflows and Case Studies