Untargeted Metabolomics for Natural Product Discovery: A Comprehensive Guide from Exploration to Clinical Translation

Abstract

Untargeted metabolomics has emerged as a powerful, unbiased approach for discovering novel bioactive compounds from natural sources, directly linking metabolic profiles to phenotypic effects. This article provides researchers and drug development professionals with a comprehensive framework covering foundational principles, advanced methodological applications using UPLC-MS/MS and FT-ICR-MS, strategies for overcoming analytical challenges like isomer separation and data complexity, and validation approaches through pathway analysis and biomarker identification. By integrating the latest technological advancements, including artificial intelligence and ion mobility spectrometry, we demonstrate how untargeted metabolomics accelerates natural product research from initial discovery through preclinical validation, offering transformative potential for drug development and precision medicine.

Foundations of Untargeted Metabolomics in Natural Product Research

Untargeted metabolomics has rapidly emerged as a pivotal profiling method in biological research, enabling the comprehensive analysis of small molecules within a biological system. Unlike genomics and proteomics, metabolomics directly surveys biochemical phenotypes, providing unique insights into health, disease, and natural product discovery [1]. This technical guide details the core principles, methodologies, and applications of untargeted metabolomics, with a specific focus on its utility in uncovering novel natural products. We present detailed experimental protocols, data analysis workflows, and visualization strategies essential for researchers and drug development professionals seeking to implement these techniques in their discovery pipelines.

Metabolomics is the quantitative study of endogenous and exogenous small molecules in a biological system [1]. Untargeted metabolomics aims to measure the entire complement of metabolites, providing a global, unbiased survey of biochemical activity. This approach is particularly valuable for hypothesis generation and biomarker discovery, as it can reveal unexpected metabolic alterations in response to disease, drug treatments, or environmental changes [1] [2]. In the context of natural product discovery, untargeted metabolomics serves as a powerful tool for characterizing the complex metabolic fingerprints of natural sources and identifying novel bioactive compounds with potential therapeutic applications.

The metabolome represents the downstream output of the genome, transcriptome, and proteome, making it the most proximal reflection of biological phenotype. Metabolites, typically defined as small molecules with molecular weights below 1,500 Da, include diverse classes such as amino acids, sugars, lipids, organic acids, and steroids [3]. Their comprehensive analysis can reveal disturbances in key metabolic pathways relevant to mitochondrial biology, cancer, diabetes, and other diseases, providing crucial insights for drug discovery [1] [3].

Core Principles and Analytical Platforms

Fundamental Principles

Untargeted metabolomics operates on several key principles that distinguish it from targeted approaches. First, it strives for comprehensive coverage of the metabolome, despite the profound physicochemical diversity of metabolites that makes complete coverage challenging in a single analytical run [1]. Second, it is a discovery-oriented approach, ideally suited for identifying novel metabolites and unexpected metabolic changes without prior hypothesis. Third, it requires high analytical sensitivity and resolution to detect and resolve thousands of metabolites across a wide dynamic range of concentrations [2].

There is an inherent tradeoff in metabolomics between molecular coverage and method optimization for specific compounds. While targeted methods excel at quantifying predefined metabolite sets, untargeted approaches sacrifice some precision for breadth of detection, making them ideal for exploratory research in natural product discovery [1].

Analytical Platform Selection

The two primary analytical platforms for untargeted metabolomics are mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy, each with distinct advantages and limitations [3].

MS-based platforms are the most widely used for untargeted metabolomics due to their high sensitivity and ability to detect thousands of metabolites without chemical derivatization [1]. MS is typically coupled with separation techniques such as liquid chromatography (LC-MS) or gas chromatography (GC-MS) to reduce sample complexity [3]. LC-MS is particularly versatile, suitable for detecting moderately polar to highly polar compounds including fatty acids, lipids, nucleotides, polyphenols, and flavonoids [3]. The Orbitrap mass spectrometer provides high-resolution accurate mass (HRAM) capability, essential for separating isobaric species and performing structural elucidation [1] [2].

NMR spectroscopy offers advantages as a non-destructive technique with high reproducibility that requires minimal sample preparation [3]. It provides detailed structural information but has lower sensitivity compared to MS, potentially missing lower abundance metabolites [3]. NMR applications extend to intact tissue samples using high-resolution magic angle spinning (HR-MAS) technology [3].

Table 1: Comparison of Major Analytical Platforms in Untargeted Metabolomics

| Platform | Key Advantages | Limitations | Ideal Applications |
|---|---|---|---|
| LC-MS | High sensitivity; broad metabolite coverage; no derivatization required for most compounds | High instrument cost; requires sample separation | Detection of moderately to highly polar compounds; natural product profiling |
| GC-MS | High separation efficiency; well-established libraries | Limited to volatile compounds or those that can be derivatized | Analysis of amino acids, organic acids, sugars, and fatty acids |
| NMR | Non-destructive; highly reproducible; provides structural information | Lower sensitivity; limited dynamic range | Intact tissue analysis; absolute quantification; structural elucidation |

Experimental Workflow and Methodologies

Sample Preparation and Extraction

Proper sample preparation is critical for success in untargeted metabolomics. For biofluids such as plasma, urine, and cerebrospinal fluid, protein precipitation using organic solvents is the standard approach. A typical extraction solvent formulation for hydrophilic polar metabolites is acetonitrile:methanol:formic acid (74.9:24.9:0.2, v/v/v) [1].

Quality control (QC) is incorporated through stable isotope-labeled internal standards. Commonly used compounds include l-Phenylalanine-d8 and l-Valine-d8 added to the extraction solvent at specific concentrations (e.g., 0.1 μg/mL and 0.2 μg/mL, respectively) to monitor extraction efficiency and instrument performance [1]. These internal standards help account for technical variability during sample processing and analysis.
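The solvent ratio and internal standard concentrations above translate into simple batch arithmetic. The sketch below is only an illustration: the function names and the 100 mL batch size are assumptions, not part of the cited protocol, and volume contraction on mixing is ignored.

```python
def solvent_volumes(total_ml, ratio=(74.9, 24.9, 0.2)):
    """Split a total volume into component volumes for a v/v/v ratio."""
    parts = sum(ratio)
    return tuple(total_ml * r / parts for r in ratio)


def spike_mass_ug(total_ml, conc_ug_per_ml):
    """Mass of internal standard needed to reach a target concentration."""
    return total_ml * conc_ug_per_ml


batch_ml = 100.0  # hypothetical batch size
acn_ml, meoh_ml, fa_ml = solvent_volumes(batch_ml)
phe_d8_ug = spike_mass_ug(batch_ml, 0.1)  # l-Phenylalanine-d8 at 0.1 ug/mL
val_d8_ug = spike_mass_ug(batch_ml, 0.2)  # l-Valine-d8 at 0.2 ug/mL

print(f"acetonitrile {acn_ml:.1f} mL, methanol {meoh_ml:.1f} mL, formic acid {fa_ml:.2f} mL")
print(f"Phe-d8 {phe_d8_ug:.1f} ug, Val-d8 {val_d8_ug:.1f} ug")
```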

Chromatographic Separation

Chromatographic separation prior to MS analysis reduces ion suppression and increases metabolite detection. Hydrophilic interaction liquid chromatography (HILIC) is often applied to assess energy pathways associated with mitochondrial metabolism, as it effectively retains polar metabolites [1]. The Waters Atlantis HILIC Silica column provides excellent separation for a wide range of polar compounds.

Mobile phase preparation follows strict protocols to ensure reproducibility. Mobile phase A typically consists of 0.1% formic acid and 10 mM ammonium formate in water, while mobile phase B is 0.1% formic acid in acetonitrile [1]. These solutions should be replaced with freshly prepared batches approximately once a month to maintain optimal performance.
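As a worked example of the mobile phase A recipe, the following sketch computes the reagent amounts for a given volume. It assumes that the 0.1% formic acid is a volume fraction (the source does not say) and uses a molar mass of 63.06 g/mol for ammonium formate; the function name is hypothetical.

```python
AMMONIUM_FORMATE_MW = 63.06  # g/mol for NH4HCO2


def mobile_phase_a(volume_l, formate_mm=10.0, formic_acid_pct=0.1):
    """Return (grams of ammonium formate, mL of formic acid) for a batch."""
    salt_g = formate_mm / 1000.0 * volume_l * AMMONIUM_FORMATE_MW
    acid_ml = formic_acid_pct / 100.0 * volume_l * 1000.0  # assumes % v/v
    return salt_g, acid_ml


salt_g, acid_ml = mobile_phase_a(1.0)
print(f"per litre: {salt_g:.3f} g ammonium formate, {acid_ml:.1f} mL formic acid")
```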

Mass Spectrometry Analysis

High-resolution mass spectrometers such as Orbitrap instruments are preferred for untargeted metabolomics due to their high mass accuracy and resolution [1] [2]. Key instrumental capabilities required include:

- Large dynamic range to analyze metabolites of varying abundances

- High sensitivity to detect low-abundance metabolites

- High resolution accurate mass (HRAM) capability to separate isobaric species

- MS^n capability for compound identification and structural elucidation [2]

Data acquisition typically involves full-scan MS analysis in both positive and negative ionization modes to maximize metabolite coverage. The workflow produces large, complex data files that require sophisticated bioinformatics tools for processing and interpretation [1].

Data Processing and Statistical Analysis

Data Preprocessing Workflow

Raw data from untargeted metabolomics experiments undergo extensive preprocessing before statistical analysis. The preprocessing pipeline includes noise reduction, retention time correction, peak detection and integration, and chromatographic alignment [3]. Several software platforms are available for these tasks, including XCMS, MAVEN, and MZmine3 [3].

Quality control samples are essential throughout the analysis. Pooled QC samples are used to balance analytical platform bias and correct for signal noise. Data from QC samples determine the variance of metabolite features, and features with excessively high variance are removed from subsequent analysis [3]. Data normalization is then applied to reduce systematic bias or technical variation, with methods ranging from total ion intensity normalization to probabilistic quotient normalization.
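The QC filtering and normalization steps described above can be sketched in a few lines. This is a simplified illustration rather than the procedure of any particular software package: the 30% relative standard deviation cutoff, the feature names, and the intensities are assumptions chosen for the example.

```python
from statistics import mean, median, stdev


def rsd(values):
    """Relative standard deviation (coefficient of variation)."""
    m = mean(values)
    return stdev(values) / m if m else float("inf")


def filter_by_qc_rsd(qc, max_rsd=0.30):
    """Keep features whose intensity varies little across pooled QC injections."""
    return [f for f, vals in qc.items() if rsd(vals) <= max_rsd]


def tic_normalize(sample):
    """Total ion intensity normalization: scale a sample so intensities sum to 1."""
    total = sum(sample.values())
    return {f: v / total for f, v in sample.items()}


def pqn_normalize(sample, reference):
    """Probabilistic quotient normalization against a reference profile."""
    quotients = [sample[f] / reference[f] for f in sample if reference[f] > 0]
    factor = median(quotients)  # most probable dilution factor
    return {f: v / factor for f, v in sample.items()}


# Hypothetical pooled-QC intensities for three features
qc = {"f1": [100, 105, 98], "f2": [50, 120, 10], "f3": [200, 210, 190]}
kept = filter_by_qc_rsd(qc)

reference = {f: median(qc[f]) for f in kept}
sample = {f: 2.0 * reference[f] for f in kept}  # a sample twice as concentrated
tic = tic_normalize(sample)
pqn = pqn_normalize(sample, reference)
print(kept, pqn)
```

In this toy case the unstable feature `f2` is dropped, and PQN recovers the reference profile from the twofold-concentrated sample.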

Statistical Analysis Methods

Untargeted metabolomics employs both univariate and multivariate statistical methods to identify significant metabolic differences between sample groups.

Univariate methods analyze metabolite features independently and include:

- Fold change analysis to determine magnitude of differences

- Student's t-test for comparing two groups

- ANOVA for comparing multiple groups

- Volcano plots to visualize both statistical significance and magnitude of change [4] [5]
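The two quantities plotted on a volcano plot can be computed per feature as follows. This is a minimal sketch with made-up intensities: it returns the log2 fold change and Welch's t statistic with its approximate degrees of freedom, and leaves the p-value to a statistics library (for example `scipy.stats.ttest_ind` with `equal_var=False`).

```python
from math import log2, sqrt
from statistics import mean, variance


def log2_fold_change(treated, control):
    """log2 ratio of group means; positive values mean higher in treated."""
    return log2(mean(treated) / mean(control))


def welch_t(a, b):
    """Welch's t statistic and Welch-Satterthwaite degrees of freedom."""
    va, vb = variance(a) / len(a), variance(b) / len(b)
    t = (mean(a) - mean(b)) / sqrt(va + vb)
    df = (va + vb) ** 2 / (va ** 2 / (len(a) - 1) + vb ** 2 / (len(b) - 1))
    return t, df


# Hypothetical intensities of one feature in two groups
control = [100.0, 110.0, 90.0, 105.0, 95.0]
treated = [400.0, 420.0, 380.0, 410.0, 390.0]

lfc = log2_fold_change(treated, control)
t_stat, df = welch_t(treated, control)
print(f"log2FC = {lfc:.2f}, t = {t_stat:.1f}, df = {df:.1f}")
```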

Multivariate methods analyze multiple metabolite features simultaneously and include:

- Principal Component Analysis (PCA) for unsupervised pattern recognition and data quality assessment

- Partial Least Squares-Discriminant Analysis (PLS-DA) for supervised classification and biomarker discovery

- Orthogonal PLS-DA (OPLS-DA) to separate predictive from non-predictive variation [4] [5]

These statistical approaches help uncover meaningful biological patterns in the complex, high-dimensional data generated by untargeted metabolomics.
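To make the PCA step concrete, the sketch below autoscales a small, invented intensity matrix and estimates the first principal component by power iteration. It is a teaching illustration only; real analyses would normally use an established implementation such as `sklearn.decomposition.PCA`, and the data and function names here are assumptions.

```python
from math import sqrt


def autoscale(matrix):
    """Mean-center each feature (column) and scale it to unit variance."""
    n = len(matrix)
    cols = list(zip(*matrix))
    means = [sum(c) / n for c in cols]
    sds = [sqrt(sum((x - m) ** 2 for x in c) / (n - 1)) for c, m in zip(cols, means)]
    return [[(x - m) / s for x, m, s in zip(row, means, sds)] for row in matrix]


def first_pc(scaled, iterations=200):
    """Estimate PC1 loadings and scores by power iteration on X^T X."""
    p = len(scaled[0])
    w = [1.0 / sqrt(p)] * p
    for _ in range(iterations):
        scores = [sum(x * wi for x, wi in zip(row, w)) for row in scaled]
        w = [sum(s * row[j] for s, row in zip(scores, scaled)) for j in range(p)]
        norm = sqrt(sum(v * v for v in w))
        w = [v / norm for v in w]
    scores = [sum(x * wi for x, wi in zip(row, w)) for row in scaled]
    return w, scores


# Rows are samples (3 per group), columns are features; values are invented
data = [
    [10, 20, 5], [11, 19, 6], [9, 21, 4],     # group A
    [20, 40, 5], [21, 39, 4], [19, 41, 6],    # group B
]
loadings, scores = first_pc(autoscale(data))
print([round(v, 3) for v in loadings])
print([round(s, 2) for s in scores])
```

The first two features, which differ between groups, dominate the loadings, and the scores separate the two groups along PC1.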

Compound Identification and Annotation

Metabolite identification follows a tiered system established by the Metabolomics Standards Initiative (MSI), which defines four levels of confidence:

- Level 1: Identified metabolites - confirmed using authentic standards

- Level 2: Putatively annotated compounds - based on spectral similarity to libraries

- Level 3: Putatively characterized compound classes - based on chemical class characteristics

- Level 4: Unknown compounds - detectable but unidentifiable features [3]

For LC-MS and IC-MS workflows, high-resolution accurate mass features are searched against MS databases or MS/MS spectral libraries such as mzCloud, METLIN, and HMDB [2]. GC-MS workflows utilize electron ionization (EI) fragment patterns matched against NIST and Wiley libraries [2].
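Accurate-mass searching reduces to comparing an observed m/z with library values within a parts-per-million tolerance. The sketch below assumes a tiny in-memory library of protonated ions (values rounded, for illustration only) and a 5 ppm tolerance; it does not reproduce the search logic of mzCloud, METLIN, or HMDB. A hit of this kind is only a putative annotation, since level 1 identification requires an authentic standard.

```python
def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def match_feature(observed_mz, library, tol_ppm=5.0):
    """Return (name, ppm error) hits within tolerance, best match first."""
    hits = [
        (name, ppm_error(observed_mz, mz))
        for name, mz in library.items()
        if abs(ppm_error(observed_mz, mz)) <= tol_ppm
    ]
    return sorted(hits, key=lambda h: abs(h[1]))


# Illustrative [M+H]+ values; a real library would hold many thousands of entries
library = {
    "valine [M+H]+": 118.08626,
    "phenylalanine [M+H]+": 166.08626,
    "caffeine [M+H]+": 195.08765,
}

hits = match_feature(166.0866, library)
misses = match_feature(166.0900, library)
print(hits, misses)
```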

Table 2: Key Bioinformatics Tools for Untargeted Metabolomics Data Analysis

| Tool Category | Software/Platform | Primary Function | Application Context |
|---|---|---|---|
| Spectral Processing | XCMS, MZmine3, MS-DIAL | Peak detection, alignment, normalization | Raw data processing from LC-MS/GC-MS |
| Statistical Analysis | MetaboAnalyst, sklearn | Univariate and multivariate statistics | Pattern recognition, biomarker discovery |
| Metabolite Identification | mzCloud, METLIN, HMDB | Compound annotation using spectral libraries | Structural elucidation and identity confirmation |
| Pathway Analysis | KEGG, MetaCyc, MSEA | Biological interpretation and pathway mapping | Functional analysis of metabolic alterations |

Applications in Natural Product Discovery

Untargeted metabolomics provides powerful capabilities for natural product discovery research by enabling comprehensive characterization of complex metabolite mixtures without prior knowledge of their composition. This approach is particularly valuable for:

- Metabolic profiling of medicinal plants and microbial sources

- Identification of novel bioactive compounds with potential therapeutic applications

- Dereplication to quickly identify known compounds and focus resources on novel discoveries

- Biosynthetic pathway elucidation for engineered production of valuable natural products

In natural product research, untargeted metabolomics can reveal subtle metabolic changes in response to environmental factors, growth conditions, or genetic modifications, guiding the discovery of new drug leads from natural sources.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for Untargeted Metabolomics

| Item | Specification | Function/Purpose |
|---|---|---|
| Extraction Solvent | Acetonitrile:methanol:formic acid (74.9:24.9:0.2, v/v/v) | Protein precipitation and metabolite extraction from biofluids, cells, or tissues |
| Internal Standards | Stable isotope-labeled compounds (e.g., l-Phenylalanine-d8, l-Valine-d8) | Quality control; monitoring extraction efficiency and instrument performance |
| HILIC Column | Waters Atlantis HILIC Silica column | Chromatographic separation of polar metabolites prior to mass spectrometry analysis |
| Mobile Phase A | 0.1% formic acid, 10 mM ammonium formate in water | Aqueous mobile phase for HILIC chromatography; enhances ionization in positive mode |
| Mobile Phase B | 0.1% formic acid in acetonitrile | Organic mobile phase for HILIC chromatography; sample loading and initial separation |
| Quality Control Pool | Pooled sample from all experimental groups | Monitoring instrument stability and performance throughout the analytical sequence |

Untargeted metabolomics represents a powerful approach for capturing comprehensive metabolic fingerprints that reflect the functional state of biological systems. The methodology provides unique insights into metabolic pathways relevant to disease mechanisms and natural product discovery. While technically challenging due to the complexity of the metabolome and the analytical demands, following established protocols for sample preparation, chromatographic separation, mass spectrometry analysis, and data processing enables robust characterization of metabolic alterations. As analytical technologies continue to advance and bioinformatics tools become more sophisticated, untargeted metabolomics will play an increasingly important role in drug discovery and natural product research, offering unprecedented opportunities to identify novel therapeutic compounds and understand their mechanisms of action.

The search for new bioactive molecules is a fundamental challenge that limits the development of new therapeutics and chemical probes for studying biological processes. Chemical space—the theoretical domain encompassing all possible organic molecules—is estimated to contain approximately 10³³ drug-like compounds, rendering exhaustive exploration through chemical synthesis alone completely unfeasible [6]. Historically, chemists have explored this space unevenly, often relying on a limited palette of established chemical transformations and focusing on target-oriented synthesis of specific complex molecules [6]. This approach has inadvertently left biologically relevant regions of chemical space largely unexplored, creating a critical bottleneck in molecular discovery.

Natural products (NPs) represent privileged starting points for navigating this vast chemical space. These molecules, evolved over millennia through biological selection processes, possess inherent biological relevance as they have evolved to interact with specific macromolecular targets and modulate biochemical pathways [6]. Their structural complexity, characterized by high sp³ carbon count, diverse stereochemistry, and molecular scaffolds optimized through evolution, makes them ideal guiding structures for exploring bioactive regions of chemical space. Within this context, untargeted metabolomics has emerged as an essential technological paradigm, enabling the comprehensive detection and characterization of natural products without prior knowledge of their chemical structures, thus providing an unbiased portal into nature's chemical repertoire.

Theoretical Frameworks for Natural Product-Informed Exploration

Several systematic frameworks have been developed to leverage natural products as guides for exploring biologically relevant chemical space. These approaches bridge the gap between the structural diversity of natural products and the practical constraints of synthetic exploration.

Biology-Oriented Synthesis (BIOS)

Biology-Oriented Synthesis (BIOS) utilizes computational approaches to systematically simplify complex natural product scaffolds into synthetically accessible core structures that retain biological relevance [6]. The strategy employs the SCONP algorithm (Structural Classification of Natural Products) to deconstruct natural products into hierarchical scaffold trees, identifying simplified yet biologically pertinent molecular architectures [6]. This approach effectively identifies gaps in chemical space coverage by existing natural product libraries and focuses synthetic efforts on these unexplored regions.

Notable Success Cases of BIOS:

- Wnt Pathway Modulators: Inspired by the natural product sodwanone S, researchers designed a bicyclic oxepane scaffold library, leading to the discovery of Wntepane—a novel modulator of the Wnt signaling pathway that acts through binding to Vangl1, a protein previously lacking small-molecule ligands [6].

- Hedgehog Pathway Inhibitors: Simplification of the natural product sominone yielded novel chemotypes that inhibit the Hedgehog pathway through modulation of Smoothened, with potential applications in treating associated birth defects and cancers [6].

- Anti-Tuberculosis Agents: BIOS-guided simplification of yohimbine led to tetracyclic indoloquinolizidine scaffolds exhibiting selective inhibition of MptpB, a key virulence factor in Mycobacterium tuberculosis, without affecting mammalian phosphatases [6].

Complexity-to-Diversity (CtD)

In contrast to BIOS, the Complexity-to-Diversity (CtD) approach utilizes natural products themselves as synthetic starting materials for generating diverse compound libraries through strategic structural diversification [6]. This methodology employs chemoselective reactions—including ring cleavage, expansion, fusion, and rearrangement—to dramatically transform natural product cores into unprecedented scaffolds while potentially retaining their biological relevance.

Exemplary CtD Implementations:

- Diterpene Diversification: Gibberellic acid, a readily available diterpene, has been transformed through ring rearrangement and cleavage reactions into novel scaffolds with enhanced three-dimensionality [6].

- Alkaloid Transformation: Yohimbine and quinine have served as platforms for generating structurally diverse libraries through strategic ring system modifications, with resulting compounds exhibiting diverse bioactivities in phenotypic screens [6].

Table 1: Comparative Analysis of Natural Product-Informed Exploration Strategies

| Approach | Core Principle | Key Advantages | Exemplary Output |
|---|---|---|---|
| Biology-Oriented Synthesis (BIOS) | Systematic simplification of NP scaffolds | Retains biological relevance while improving synthetic accessibility | Wntepane (Vangl1 modulator) [6] |
| Complexity-to-Diversity (CtD) | Direct structural diversification of NP cores | Leverages inherent NP complexity while generating unprecedented diversity | Novel anti-inflammatory compounds from yohimbine [6] |
| Untargeted Metabolomics | Comprehensive detection of NP repertoire without prior targeting | Unbiased discovery of novel chemotypes directly from biological systems | Putative terpenes from Suillus fungi [7] |

The Untargeted Metabolomics Revolution in Natural Product Discovery

Untargeted metabolomics represents a paradigm shift in natural product discovery, enabling comprehensive, data-driven exploration of chemical space without the constraints of hypothesis-driven or targeted approaches. This methodology has become particularly powerful with advances in liquid chromatography-high-resolution mass spectrometry (LC-HRMS), which provides the sensitive, broad-spectrum chemical coverage necessary for detecting novel natural products [8].

Core Technological Foundations

The untargeted metabolomics workflow rests on several key technological pillars:

High-Resolution Mass Spectrometry: Modern LC-HRMS platforms, particularly those based on Orbitrap technology, provide the mass accuracy and resolution necessary to distinguish between thousands of metabolic features in complex biological extracts [7]. A typical configuration for natural product discovery couples ultra-high-pressure liquid chromatography to a Q-Exactive Plus mass spectrometer operated at a resolution setting of 70,000 at m/z 200, with mass accuracy within 5 ppm [7].
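To put these figures in context, the sketch below converts a resolution of 70,000 at m/z 200 into a peak width and a 5 ppm tolerance into an absolute mass window. The function names are hypothetical, and the extrapolation to other m/z values assumes the usual Orbitrap behaviour in which resolving power falls off with the square root of m/z.

```python
from math import sqrt

REF_RESOLUTION = 70_000  # resolution setting quoted in the text
REF_MZ = 200.0           # m/z at which that resolution is specified


def fwhm_at_reference():
    """Peak width (full width at half maximum) implied by R = m / delta_m."""
    return REF_MZ / REF_RESOLUTION


def orbitrap_resolution_at(mz):
    """Approximate resolving power at another m/z, assuming R ~ 1/sqrt(m/z)."""
    return REF_RESOLUTION * sqrt(REF_MZ / mz)


def ppm_window_da(mz, ppm=5.0):
    """Half-width of a ppm tolerance window expressed in m/z units."""
    return mz * ppm / 1e6


print(f"FWHM at m/z 200: {fwhm_at_reference():.5f}")
print(f"Resolving power at m/z 800: {orbitrap_resolution_at(800.0):.0f}")
print(f"5 ppm at m/z 200: +/- {ppm_window_da(200.0):.4f}")
```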

Chromatographic Separation: Reversed-phase C18 chromatography using nanospray columns (e.g., 75 μm × 150 mm packed with 1.7-μm C18 Kinetex resin) enables separation of complex natural product mixtures with high resolution [7]. The typical mobile phase employs a gradient from aqueous to organic solvents (e.g., 5% acetonitrile to 100% organic solvent over 30 minutes) to resolve metabolites across a wide polarity range.

Bioprospecting and Induction Strategies: Silent biosynthetic gene clusters (BGCs) often require specific induction conditions for activation. The OSMAC (One Strain Many Compounds) approach systematically varies cultivation parameters to trigger secondary metabolite production [7]. More specifically, co-culture techniques have proven particularly effective, mimicking ecological interactions and activating BGCs that remain silent in axenic cultures [7].

Data Mining and Annotation Strategies

The complexity of untargeted LC-HRMS datasets demands sophisticated data mining approaches:

Isotopic Signature Enrichment (ISE): This strategy filters features based on valid carbon isotope patterns, significantly reducing dataset complexity—demonstrated to achieve a six-fold reduction in features while retaining chemically relevant metabolites [8].
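The idea of filtering on carbon isotope patterns can be illustrated with a deliberately simplified check. The sketch below is not the published ISE algorithm: it assumes singly charged ions, looks only for an M+1 peak about 1.003355 Da above each feature, and accepts it if the intensity ratio implies a plausible carbon count at roughly 1.1% per carbon. The peak list and tolerances are invented.

```python
C13_DELTA = 1.003355       # mass difference between 13C and 12C, in Da
C13_PER_CARBON = 0.011     # approximate M+1 contribution per carbon atom


def has_carbon_isotope(peak, peaks, tol_da=0.002):
    """True if the peak has an M+1 partner with a plausible 13C intensity ratio."""
    mz, intensity = peak
    max_carbons = max(1, int(mz / 12))  # upper bound: an ion made only of carbon
    for other_mz, other_intensity in peaks:
        if abs(other_mz - (mz + C13_DELTA)) <= tol_da:
            estimated_carbons = (other_intensity / intensity) / C13_PER_CARBON
            if 1 <= estimated_carbons <= max_carbons:
                return True
    return False


# Hypothetical (m/z, intensity) features from one spectrum
peaks = [
    (301.1410, 1.0e6),  # monoisotopic peak with a matching M+1 below
    (302.1444, 1.9e5),  # its 13C isotope peak
    (455.2000, 5.0e4),  # no isotope partner: likely noise
    (456.9000, 4.0e4),
]

filtered = [p for p in peaks if has_carbon_isotope(p, peaks)]
print(filtered)
```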

Mass Defect Analysis: Plotting Kendrick mass defects enables identification of homologous series and specific chemical classes, such as halogenated compounds or terpene families [8].
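A Kendrick mass defect calculation for the CH2 base unit can be sketched as follows. The sign convention used here (nominal Kendrick mass minus Kendrick mass) is one of several in use, and the grouping tolerance is an assumption. The example masses are the neutral monoisotopic masses of palmitic, stearic, and oleic acid.

```python
CH2_EXACT = 14.01565  # exact mass of CH2; its Kendrick mass is defined as 14


def kendrick_mass(mass):
    """Rescale a mass so that each CH2 unit contributes exactly 14."""
    return mass * 14.0 / CH2_EXACT


def kendrick_mass_defect(mass):
    km = kendrick_mass(mass)
    return round(km) - km


def group_homologs(masses, tol=0.001):
    """Group masses whose Kendrick mass defects agree within a tolerance."""
    groups = []
    for mass in sorted(masses):
        kmd = kendrick_mass_defect(mass)
        for group in groups:
            if abs(group["kmd"] - kmd) <= tol:
                group["members"].append(mass)
                break
        else:
            groups.append({"kmd": kmd, "members": [mass]})
    return groups


# Palmitic (C16:0), oleic (C18:1), and stearic (C18:0) acid
groups = group_homologs([256.24023, 282.25588, 284.27153])
for g in groups:
    print(f"KMD {g['kmd']:.4f}: {g['members']}")
```

Palmitic and stearic acid differ by two CH2 units and therefore share a defect, while oleic acid, with one double bond, falls into a separate group.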

Biotransformation-Informed Feature Selection: This approach identifies putative metabolites by searching for expected biotransformation products (e.g., phase I/II modifications), facilitating discovery of biologically relevant metabolic pathways [8].
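One way to picture this selection step is a pairwise search for characteristic mass differences. The sketch below uses a short, hand-picked table of common shifts and a fixed tolerance, and it assumes both features carry the same adduct and charge. It is an illustration of the principle, not the method of the cited work, and the feature list is invented.

```python
# Monoisotopic mass shifts of a few common biotransformations, in Da
BIOTRANSFORMATIONS = {
    "hydroxylation (+O)": 15.9949,
    "methylation (+CH2)": 14.0157,
    "sulfation (+SO3)": 79.9568,
    "glucuronidation (+C6H8O6)": 176.0321,
}


def find_biotransformation_pairs(mzs, tol_da=0.002):
    """Return (parent, product, transformation) for matching mass differences."""
    pairs = []
    ordered = sorted(mzs)
    for i, parent in enumerate(ordered):
        for product in ordered[i + 1:]:
            delta = product - parent
            for name, shift in BIOTRANSFORMATIONS.items():
                if abs(delta - shift) <= tol_da:
                    pairs.append((parent, product, name))
    return pairs


# Hypothetical feature list containing a parent and two putative metabolites
features = [301.1410, 317.1359, 350.2000, 477.1731]
pairs = find_biotransformation_pairs(features)
for parent, product, name in pairs:
    print(f"{parent:.4f} -> {product:.4f}: {name}")
```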

Integrative Approaches: Case Studies in Fungal Natural Product Discovery

The combination of genomics and metabolomics has emerged as a particularly powerful paradigm for natural product discovery. A recent study on Suillus fungi—ectomycorrhizal symbionts of pine trees—exemplifies this integrative approach [7].

Genomic Foundations

Genome mining of three Suillus species (S. hirtellus EM16, S. decipiens EM49, and S. cothurnatus VC1858) using antiSMASH revealed a remarkable richness of biosynthetic gene clusters, with 62 unique terpene BGCs predicted across the three species [7]. This genomic potential suggested an extensive, largely unexplored chemical repertoire.

Metabolomic Activation and Detection

To activate these silent BGCs, researchers employed a co-culture strategy, growing the fungi in all pairwise combinations for 28 days on solid media [7]. Metabolomic analysis of the interaction zones revealed:

- 41 putative prenol lipids (including 37 terpenes) were detected across the three species [7].

- Significant upregulation in co-culture conditions was observed for specific terpenes, including metabolites matching isomers of isopimaric acid, sandaracopimaric acid, and abietic acid—compounds typically associated with host defense mechanisms in pine trees [7].

- The chemical diversity detected through metabolomics corresponded well with the genomic potential predicted by antiSMASH analysis, validating the integrated approach [7].

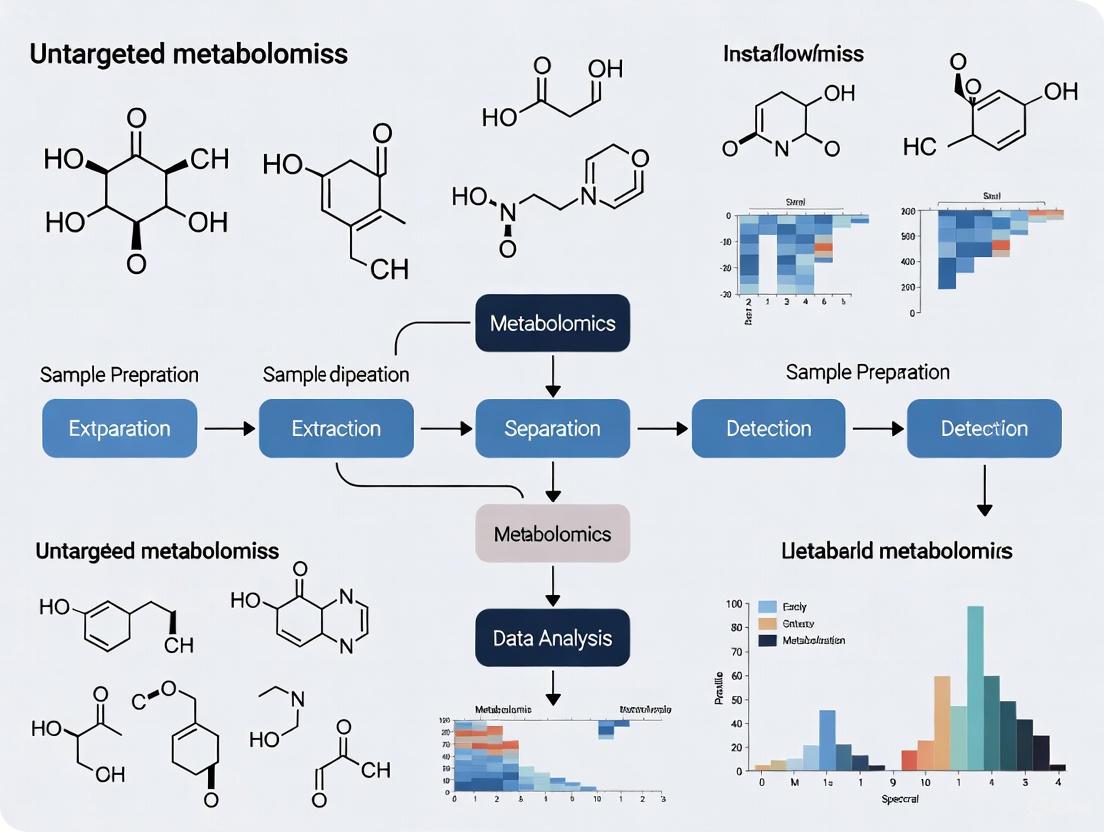

Figure 1: Integrated Genomics-Metabolomics Workflow for NP Discovery

Experimental Methodologies: Detailed Protocols for Untargeted Discovery

Fungal Co-culture and Metabolite Induction Protocol

Materials:

- Fungal strains: Suillus species (e.g., S. hirtellus EM16, S. decipiens EM49, S. cothurnatus VC1858)

- Growth medium: Solid high carbon Pachlewski's media in 100-mm Petri dishes

- Inoculation tools: Sterile brass core borer (4-mm diameter)

Procedure:

- Inoculate each Petri dish with two 4-mm fungal plugs placed exactly 2 cm apart, equidistant from a diameter line intersecting the plate.

- For co-culture treatments, use all pairwise combinations of species (n=5 biological replicates per combination).

- Include single-species controls inoculated with two plugs from the same species (n=5 biological replicates).

- Incubate cultures for 28 days in darkness at room temperature.

- Measure colony area twice weekly beginning at 7 days post-inoculation using background illumination and ImageJ analysis.

- After 28 days, collect three agar plugs along the interaction zone using a sterile brass borer, pool them, and immediately freeze in liquid nitrogen.

- Store samples at -80°C until processing [7].
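The plate layout implied by this design can be enumerated programmatically, which helps when labelling plates or building a sample sheet. The sketch below is an assumption about how one might organize it; the dictionary keys are hypothetical.

```python
from itertools import combinations

SPECIES = ["S. hirtellus EM16", "S. decipiens EM49", "S. cothurnatus VC1858"]
REPLICATES = 5  # biological replicates per combination


def plate_layout(strains, replicates=REPLICATES):
    """List every plate: all pairwise co-cultures plus single-species controls."""
    plates = []
    for a, b in combinations(strains, 2):
        for rep in range(1, replicates + 1):
            plates.append({"plug_1": a, "plug_2": b,
                           "treatment": "co-culture", "replicate": rep})
    for s in strains:
        for rep in range(1, replicates + 1):
            plates.append({"plug_1": s, "plug_2": s,
                           "treatment": "control", "replicate": rep})
    return plates


plates = plate_layout(SPECIES)
n_coculture = sum(p["treatment"] == "co-culture" for p in plates)
n_control = sum(p["treatment"] == "control" for p in plates)
print(f"{len(plates)} plates: {n_coculture} co-culture, {n_control} control")
```

Three species give three pairwise combinations, so five replicates of each pairing plus five replicates of each control come to 30 plates.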

Metabolite Extraction and LC-HRMS Analysis

Reagents and Equipment:

- Extraction solvents: LC-MS grade water, hydrated ethyl acetate

- Chromatography: Nanospray analytical column (75 μm × 150 mm) packed with 1.7-μm C18 Kinetex resin

- Mass spectrometer: ThermoFisher Q-Exactive Plus with Xcalibur software (v4.3)

Extraction Protocol:

- Lyophilize frozen agar plugs completely using a freeze dryer.

- Perform biphasic extraction by adding 0.5 mL cold LC-MS grade water and 0.5 mL cold hydrated ethyl acetate to dried samples.

- Vortex for 1 minute, then maintain at 4°C overnight for extraction.

- Separate ethyl acetate and aqueous fractions by aspiration.

- Filter aqueous fraction using 10-kDa filters (Sartorius Vivaspin) by centrifugation at 4,500 × g.

- Freeze-dry aqueous extract and resuspend in 5% acetonitrile, 0.1% formic acid.

- Air-dry ethyl acetate extract in a fume hood and resuspend in 70% acetonitrile, 0.1% formic acid.

- Store extracts at 4°C until LC-MS analysis [7].

LC-HRMS Parameters:

- Injection volume: 10 μL

- Flow rate: 250 nL/min

- Gradient: 30-minute linear gradient from 5% to 100% organic solvent

- MS resolution: 70,000 at m/z 200

- Mass range: 135-2,000 m/z

- Fragmentation: Stepped higher-energy C-trap dissociation (10, 20, 40 eV) [7]
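The gradient and flow parameters above can be turned into a small helper for checking solvent composition at a given time and the volume delivered per run. This sketch assumes a strictly linear ramp with no hold or re-equilibration steps, which the listed parameters do not specify.

```python
def percent_organic(t_min, start=5.0, end=100.0, duration=30.0):
    """Organic solvent percentage at time t for a linear gradient."""
    t = min(max(t_min, 0.0), duration)  # clamp to the gradient window
    return start + (end - start) * t / duration


def delivered_volume_ul(flow_nl_per_min=250.0, duration_min=30.0):
    """Total mobile phase volume delivered during the gradient, in microlitres."""
    return flow_nl_per_min * duration_min / 1000.0


for t in (0, 15, 30):
    print(f"t = {t:2d} min: {percent_organic(t):.1f}% organic")
print(f"volume per run: {delivered_volume_ul():.1f} uL")
```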

Table 2: Essential Research Reagents and Platforms for Untargeted NP Discovery

| Category/Item | Specific Example/Platform | Function in NP Discovery |
|---|---|---|
| Chromatography | Nanospray C18 column (75 μm × 150 mm, 1.7-μm) | High-resolution separation of complex metabolite mixtures |
| Mass Spectrometry | ThermoFisher Q-Exactive Plus Orbitrap | High-resolution mass analysis for accurate metabolite identification |
| Genome Mining | antiSMASH v6.0.1 with fungal parameters | Prediction of biosynthetic gene clusters from genomic data |
| Bioinformatics | BiG-SCAPE, Scaffold Hunter | Analysis of BGC evolution and natural product scaffold relationships |
| Culture Induction | Co-culture on Pachlewski's medium | Activation of silent biosynthetic gene clusters through ecological interactions |
| Data Processing | Isotopic Signature Enrichment (ISE) algorithms | Reduction of feature complexity by filtering for valid isotopic patterns |

Figure 2: Untargeted Metabolomics Workflow for NP Discovery

The integration of natural product-informed exploration strategies with untargeted metabolomics technologies represents a transformative approach for navigating biologically relevant chemical space. Where traditional methods have provided uneven coverage of this space, the synergistic combination of BIOS and CtD frameworks with sensitive analytical platforms enables systematic identification of novel bioactive regions. The demonstrated success of these approaches—from discovering modulators of developmental signaling pathways to identifying novel chemical entities from fungal co-cultures—underscores their potential to revolutionize natural product discovery.

Looking forward, the continued advancement of untargeted metabolomics platforms, coupled with increasingly sophisticated data mining algorithms, promises to accelerate the exploration of nature's chemical repertoire. As these technologies become more accessible and integrated with synthetic methodologies, they will undoubtedly yield distinctive functional molecules that serve both as chemical probes for deciphering biological mechanisms and as starting points for therapeutic development. This systematic, data-driven approach to natural product discovery ultimately bridges the gap between the vastness of chemical space and our ability to explore it, unlocking nature's evolved chemical wisdom for fundamental biological insight and therapeutic innovation.

Untargeted metabolomics aims to comprehensively profile the complete set of small molecule metabolites (<1500 Da) within biological systems, providing critical insights into cellular metabolism, disease mechanisms, and biomarker discovery [9] [10]. This approach is particularly valuable for natural product discovery, where researchers seek to identify novel bioactive compounds from complex biological sources such as plants, microbes, and marine organisms [11] [12]. The field has gained significant traction in drug discovery workflows, with natural products comprising a substantial portion of our modern pharmacopeia due to their diverse biological relevance and structural complexity [11].

The analytical challenge in untargeted metabolomics lies in the vast chemical diversity and dynamic concentration range of metabolites present in biological samples. No single analytical platform can comprehensively cover the entire metabolome, making platform selection a critical consideration for research design [13]. Ultra-High Performance Liquid Chromatography-High Resolution Mass Spectrometry (UHPLC-HRMS) has emerged as one of the fastest-growing mass spectrometry methods in scientific fields including metabolomics, while Fourier Transform Ion Cyclotron Resonance Mass Spectrometry (FT-ICR-MS) offers unparalleled mass accuracy and resolving power, and Gas Chromatography-Mass Spectrometry (GC-MS) provides robust, reproducible analyses with extensive spectral libraries [14] [9] [13].

The fundamental goal in natural product discovery is to enhance the likelihood and improve the efficiency of discovering compounds with pharmaceutical potential while strategically harnessing data to reduce rediscovery and methodological redundancy [11]. This technical guide examines the comparative strengths of these three core analytical platforms within the context of untargeted metabolomics for natural product research, providing researchers with the information needed to select appropriate instrumentation for their specific investigations.

Platform Fundamentals and Technical Specifications

UHPLC-HRMS Platform

Ultra-High Performance Liquid Chromatography-High Resolution Mass Spectrometry (UHPLC-HRMS) couples advanced chromatographic separation with high-resolution mass detection, making it particularly suitable for analyzing semi-volatile and non-volatile compounds [14] [15]. The UHPLC component provides superior separation efficiency with sub-2μm particles operating at high pressures, resulting in sharper peaks, increased resolution, and shorter run times compared to conventional HPLC. When coupled with HRMS detectors such as Orbitrap or Q-TOF instruments, this platform delivers high mass accuracy (<5 ppm) and resolving power (typically 25,000-140,000 FWHM), enabling precise elemental composition determination [15].
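
To make the mass accuracy and resolving power figures concrete, the following minimal sketch converts between a ppm tolerance, an absolute m/z window, and the peak width implied by a given resolving power (R = m/Δm at FWHM). The function names and the example reading are illustrative assumptions, not part of any vendor software.

```python
from typing import Tuple


def ppm_error(measured_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million (ppm)."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def mz_window(theoretical_mz: float, tol_ppm: float) -> Tuple[float, float]:
    """Lower and upper m/z bounds for a symmetric ppm tolerance."""
    delta = theoretical_mz * tol_ppm / 1e6
    return theoretical_mz - delta, theoretical_mz + delta


def fwhm_width(mz: float, resolving_power: float) -> float:
    """Peak width at half maximum implied by R = m / delta_m."""
    return mz / resolving_power


if __name__ == "__main__":
    theoretical = 195.0877  # approximate [M+H]+ of caffeine, illustration only
    measured = 195.0881     # hypothetical instrument reading
    print(f"mass error: {ppm_error(measured, theoretical):.2f} ppm")
    print("5 ppm window:", mz_window(theoretical, 5.0))
    print(f"FWHM at R = 70,000: {fwhm_width(theoretical, 70_000):.5f} m/z")
```

A candidate formula would typically be accepted only if the absolute ppm error falls inside the instrument's specified tolerance, here the <5 ppm quoted above.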

The typical workflow involves liquid extraction of metabolites followed by UHPLC separation using reverse-phase or HILIC columns, with electrospray ionization (ESI) being the most common ionization technique. ESI efficiently ionizes a broad range of compounds, making it well-suited for diverse natural product analyses [15]. The major strengths of UHPLC-HRMS include its broad metabolome coverage, sensitivity for low-abundance metabolites, and ability to provide structural information through tandem MS experiments. These capabilities have made it a cornerstone technique in modern metabolomics research for natural product discovery [11] [14].

FT-ICR-MS Platform

Fourier Transform Ion Cyclotron Resonance Mass Spectrometry (FT-ICR-MS) represents the highest tier of mass analyzer in terms of resolution and mass accuracy [9]. This platform traps ions in a Penning trap under the influence of a strong magnetic field (typically 7T-15T for commercial instruments, up to 21T for research systems), where they undergo cyclotron motion at frequencies inversely proportional to their mass-to-charge ratios [9]. The detection system measures the free induction decay (FID) signal resulting from this ion motion, which is then Fourier transformed to produce a mass spectrum with unparalleled resolving power (10⁵-10⁶) and mass accuracy in the parts per billion (ppb) range [9].
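
The inverse relationship between cyclotron frequency and m/z can be illustrated with the unperturbed cyclotron equation, f = eB / (2π · m/z). The sketch below ignores trapping-field and space-charge corrections that real instruments account for during calibration; the function name is an assumption made for illustration.

```python
import math

ELEMENTARY_CHARGE = 1.602176634e-19   # coulombs (exact SI value)
ATOMIC_MASS_UNIT = 1.66053906660e-27  # kilograms per dalton


def cyclotron_frequency_hz(mz: float, field_tesla: float) -> float:
    """Unperturbed cyclotron frequency for an ion of the given m/z (Da per charge)."""
    mass_per_charge = mz * ATOMIC_MASS_UNIT / ELEMENTARY_CHARGE  # kg per coulomb
    return field_tesla / (2 * math.pi * mass_per_charge)


if __name__ == "__main__":
    for field in (7.0, 15.0, 21.0):
        f_khz = cyclotron_frequency_hz(500.0, field) / 1e3
        print(f"m/z 500 at {field:.0f} T: {f_khz:.1f} kHz")
```

Because frequency scales linearly with field strength, higher-field magnets spread ions over a wider frequency range, which is one reason resolving power improves with field.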

The exceptional resolving power of FT-ICR-MS separates isobaric species (ions with the same nominal mass but different elemental compositions) that would be indistinguishable on lower-resolution instruments. Isomers, which share an identical exact mass, cannot be resolved by mass alone and still require chromatography, ion mobility, or tandem MS. Additionally, the platform provides isotopic fine structure (IFS) analysis, which reveals the unique isotopic patterns of elements, allowing researchers to determine the exact number of atoms of specific elements (e.g., sulfur, oxygen) in unknown compounds [9]. This level of detailed molecular information is invaluable for characterizing novel natural products. The main limitations include longer acquisition times, higher instrumentation costs, complex data sets, and limited access, primarily through national mass spectrometry facilities [9].
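
As a rough illustration of why ultra-high resolution matters, the sketch below estimates the resolving power needed to separate two compositions that differ by replacing C3 with SH4 (about 3.4 mDa). The two formulas are hypothetical examples chosen for the arithmetic, and R = m/Δm is only a lower bound; baseline separation in practice needs more.

```python
# Monoisotopic masses of the most abundant isotopes (Da)
MONOISOTOPIC = {
    "C": 12.0,
    "H": 1.00782503,
    "N": 14.0030740,
    "O": 15.9949146,
    "S": 31.9720707,
}


def monoisotopic_mass(formula: dict) -> float:
    """Sum of monoisotopic masses for a formula given as {element: count}."""
    return sum(MONOISOTOPIC[element] * count for element, count in formula.items())


def required_resolving_power(mass_a: float, mass_b: float) -> float:
    """Minimum R = m / delta_m needed to distinguish two masses."""
    delta = abs(mass_a - mass_b)
    if delta == 0:
        # Identical exact masses (e.g. isomers) cannot be separated by m/z at all
        raise ValueError("masses are identical; resolution by m/z is impossible")
    return ((mass_a + mass_b) / 2) / delta


if __name__ == "__main__":
    a = monoisotopic_mass({"C": 18, "H": 26, "O": 8})          # hypothetical formula
    b = monoisotopic_mass({"C": 15, "H": 30, "O": 8, "S": 1})  # C3 replaced by SH4
    print(f"delta m = {abs(a - b) * 1000:.2f} mDa")
    print(f"required R ~ {required_resolving_power(a, b):,.0f}")
```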

GC-MS Platform

Gas Chromatography-Mass Spectrometry (GC-MS) has been a workhorse technique in metabolomics for decades, particularly for the analysis of volatile and thermally stable compounds [13]. The platform separates metabolites based on their volatility and interaction with the stationary phase in the GC column, followed by electron ionization (EI) which produces highly reproducible, characteristic fragmentation patterns [13]. The major advantage of GC-MS lies in its robust nature, with highly reproducible retention times and the availability of extensive spectral libraries such as the NIST Mass Spectral Library (containing over 250,000 spectra) and the Wiley Registry (over 700,000 spectra) [13].

For non-volatile metabolites, chemical derivatization (typically methoximation followed by silylation) is required to increase volatility and thermal stability [13]. While this adds an extra step to sample preparation, it standardizes the analytical behavior of diverse metabolites and enhances detection sensitivity. Recent advancements include the introduction of Orbitrap GC-MS systems, which combine the separation power of GC with high-resolution mass detection, though computational tools for leveraging high-resolution GC-MS data remain underdeveloped compared to LC-MS platforms [13].

Comparative Performance Analysis

Table 1: Technical Specifications and Performance Metrics of Major Mass Analyzers

| Analyzer | Mass Accuracy | Resolution | m/z Range | Scan Speed | Key Strengths | Key Limitations |
|---|---|---|---|---|---|---|
| FT-ICR-MS | 100 ppb | 10⁵-10⁶ | 10,000 | 1-10 s | Highest accuracy and resolution; Isotopic fine structure analysis | Expensive; Large footprint; Complex data; Limited access |
| Orbitrap | 1-5 ppm | 10⁵-10⁶ | 10,000 | 1 s | High resolution and accuracy; Good sensitivity | Slower than TOF for some applications |
| Q-TOF | 5-10 ppm | 25,000-70,000 | >300,000 | ms | Fast acquisition; Good mass accuracy | Lower resolution than FT-ICR and Orbitrap |
| Quadrupole | 100 ppm | 4,000 | 4,000 | 1 s | Low cost; Robust; Quantitative capability | Unit mass resolution only |
| Ion Trap | 100 ppm | 4,000 | 1,000 | 1 s | MS^n capability; Good sensitivity | Limited resolution; Low mass accuracy |

Table 2: Analytical Performance Across Platforms in Metabolomics Applications

| Parameter | UHPLC-HRMS | FT-ICR-MS | GC-MS (Orbitrap) | GC-MS (Single Quad) |
|---|---|---|---|---|
| Typical Metabolic Coverage | 1,000-3,000 features | 3,000-5,000+ features | 300-500 compounds | 100-200 compounds |
| Mass Accuracy | 1-5 ppm | 100 ppb-1 ppm | 1-5 ppm | 100-500 ppm |
| Resolving Power | 25,000-140,000 | 100,000-1,000,000 | 60,000-120,000 | Unit resolution |
| Detection Sensitivity | fM-pM | fM-pM | pM-nM | nM-μM |
| Reproducibility | Moderate (retention time shift) | High | High (reproducible retention times) | High |
| Structural Elucidation | MS², MSⁿ capability | Isotopic fine structure; Ultra-high resolution | EI fragmentation libraries | EI fragmentation libraries |

Table 3: Application-Based Platform Selection Guide

| Application Need | Recommended Platform | Rationale | Example Use Cases |
|---|---|---|---|
| Comprehensive Metabolite Profiling | UHPLC-HRMS | Broad coverage of semi-polar metabolites; Good sensitivity and speed | Biomarker discovery; Metabolic pathway analysis [15] |
| Unknown Compound Characterization | FT-ICR-MS | Unparalleled resolution and mass accuracy for elemental composition | Natural product discovery; Metabolite identification [9] |
| Targeted Volatile Analysis | GC-MS (Quadrupole) | Robust quantification; Extensive libraries | Clinical diagnostics; Environmental analysis [13] |
| High-Throughput Screening | UHPLC-HRMS | Balance of speed, sensitivity, and information content | Drug discovery; Large cohort studies [11] [15] |
| Maximizing Metabolite Coverage | Multi-platform approach | Complementary coverage of different metabolite classes | Comprehensive metabolomics; Biomarker validation [13] |

The performance comparison reveals that each platform offers distinct advantages for specific applications in untargeted metabolomics. UHPLC-HRMS provides the best balance of metabolome coverage, sensitivity, and analytical throughput, making it suitable for most untargeted profiling studies [14] [15]. In a comparative study of critically ill patients, UHPLC-HRMS identified 13 metabolites predicting invasive mechanical ventilation and 8 associated with mortality, demonstrating its utility in biomarker discovery [16].

FT-ICR-MS delivers the highest quality data for structural elucidation, with sufficient resolution to separate isobaric compounds and perform isotopic fine structure analysis [9]. This capability is particularly valuable for natural product discovery, where researchers often encounter novel compounds not present in existing databases. The main constraint is practical accessibility, as these instruments are primarily available through core facilities and require significant expertise to operate and interpret data.

GC-MS platforms provide robust, reproducible analyses with the advantage of extensive spectral libraries [13]. The recent introduction of high-resolution Orbitrap GC-MS systems has improved metabolic coverage and sensitivity, with one study reporting 339 detected compounds compared to 114 with single-quadrupole systems using the same samples [13]. However, the requirement for derivatization limits the range of metabolites amenable to GC-MS analysis, particularly for unstable or non-volatile compounds.

Experimental Protocols and Methodologies

UHPLC-HRMS Protocol for Cell Metabolomics

The following protocol has been successfully applied to study the effects of anlotinib on glioma C6 cells using UHPLC-HRMS-based metabolomics and lipidomics [15]:

Sample Preparation:

- Cell Culture and Treatment: Seed C6 cells in 6-well plates at a density of 1 × 10⁷ cells/well. At 80% confluency, treat cells with the compound of interest (e.g., anlotinib) based on cell viability results for 24 hours. Include PBS-treated controls. Prepare six replicates per group.

- Metabolite Extraction: Wash cells twice with pre-chilled normal saline, trypsinize, and wash again with PBS. Count approximately 1 × 10⁷ cells per sample and centrifuge at 1500 rpm for 5 minutes. Remove supernatant and add five sample volumes of methanol/dichloromethane/water (3:3:2, v/v/v) previously stored at -40°C.

- Cell Disruption: Subject samples to three freeze-thaw cycles (-80°C/room temperature), then disrupt them with an ultrasonic cell disruptor in an ice bath. Vortex for 30 seconds, equilibrate for 10 minutes, and centrifuge at 13,000 rpm for 10 minutes.

- Sample Reconstitution: Collect the two-phase solutions separately and dry under N₂ gas. Reconstitute upper extracts in 80% methanol for metabolomic analysis and lower extracts in isopropanol for lipidomic analysis.

UHPLC Conditions:

- Column: ACQUITY UPLC BEH C18 (1.7 μm, 2.1 mm × 50 mm)

- Mobile Phase: A) water with 0.1% formic acid; B) acetonitrile

- Gradient: 0-2 min (5% B), 2-15 min (5-100% B), 15-18 min (100% B)

- Flow Rate: 0.3 mL/min

- Injection Volume: 5 μL

- Column Temperature: 40°C
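
The gradient above is a piecewise-linear program, so the mobile phase composition at any time point can be obtained by linear interpolation between breakpoints. The sketch below encodes the listed segments; the function name and the breakpoint representation are illustrative choices, not taken from any instrument control software.

```python
from bisect import bisect_right

# (time in min, %B) breakpoints from the protocol:
# 0-2 min at 5% B, 2-15 min ramp to 100% B, 15-18 min hold at 100% B
GRADIENT = [(0.0, 5.0), (2.0, 5.0), (15.0, 100.0), (18.0, 100.0)]


def percent_b(t_min: float, program=GRADIENT) -> float:
    """Linearly interpolate %B at time t_min within a gradient program."""
    if t_min <= program[0][0]:
        return program[0][1]
    if t_min >= program[-1][0]:
        return program[-1][1]
    times = [t for t, _ in program]
    i = bisect_right(times, t_min)  # index of the first breakpoint after t_min
    (t0, b0), (t1, b1) = program[i - 1], program[i]
    return b0 + (b1 - b0) * (t_min - t0) / (t1 - t0)


if __name__ == "__main__":
    for t in (1.0, 8.5, 16.0):
        print(f"t = {t:4.1f} min -> {percent_b(t):5.1f} % B")
```

Knowing the approximate %B at a feature's retention time is a quick sanity check on its polarity relative to neighboring peaks.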

HRMS Parameters (Q-Exactive Orbitrap):

- Ionization: ESI positive and negative modes

- Full MS Parameters: Resolution 70,000 FWHM; AGC target 3e6 ions; maximum IT 100 ms

- dd-MS² Parameters: Resolution 17,500 FWHM; AGC target 1e5 ions; loop count 5

- Mass Range: 80-1200 m/z

FT-ICR-MS Metabolomics Protocol

Sample Preparation for FT-ICR-MS:

- Extraction: Use appropriate extraction methods based on sample type (e.g., Bligh-Dyer for lipids, methanol/water for polar metabolites).

- Cleanup: Employ solid-phase extraction if necessary to remove salts and matrix interferents.

- Dilution: Optimize sample concentration to avoid space-charge effects in the ICR cell (typically 0.1-1 μg/μL).

FT-ICR-MS Data Acquisition:

- Calibration: Perform external calibration with a certified reference mixture or internal calibration using known ubiquitous compounds.

- Data Collection: Acquire data in broadband mode with sufficient transients to achieve desired signal-to-noise ratio (typically 64-256 scans).

- Ion Accumulation: Optimize ion accumulation time to maximize signal while avoiding overfilling the ICR cell.

Data Processing:

- Peak Picking: Use software with sophisticated algorithms to identify peaks with very high mass accuracy.

- Formula Assignment: Assign molecular formulas using the exact mass information with constraints such as element limits (e.g., C₀-₁₀₀, H₀-₂₀₀, O₀-₅₀, N₀-₁₀).

- Data Interpretation: Utilize visualization tools such as van Krevelen diagrams and Kendrick mass defect plots for compound classification.
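
The formula assignment and interpretation steps can be sketched as follows. This is a brute-force CHNO enumeration under the element limits quoted above, plus the H/C and O/C ratios used in van Krevelen diagrams and a CH2-based Kendrick mass defect. It omits the chemical plausibility filters (valence rules, isotope pattern checks) that dedicated software applies, and the sign convention chosen for the Kendrick mass defect is one of several in use.

```python
MASS = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048, "O": 15.99491461956}
CH2_EXACT = MASS["C"] + 2 * MASS["H"]


def assign_formulas(neutral_mass, tol_ppm=1.0, max_c=100, max_h=200, max_o=50, max_n=10):
    """Enumerate (C, H, N, O, ppm) tuples whose monoisotopic mass lies within tol_ppm."""
    tol = neutral_mass * tol_ppm / 1e6
    limit = neutral_mass + tol
    hits = []
    for c in range(max_c + 1):
        m_c = c * MASS["C"]
        if m_c > limit:
            break
        for n in range(max_n + 1):
            m_cn = m_c + n * MASS["N"]
            if m_cn > limit:
                break
            for o in range(max_o + 1):
                m_cno = m_cn + o * MASS["O"]
                if m_cno > limit:
                    break
                # Whatever mass remains must be made up of hydrogen atoms
                h = round((neutral_mass - m_cno) / MASS["H"])
                if not 0 <= h <= max_h:
                    continue
                mass = m_cno + h * MASS["H"]
                if abs(mass - neutral_mass) <= tol:
                    ppm = (mass - neutral_mass) / neutral_mass * 1e6
                    hits.append((c, h, n, o, ppm))
    return hits


def van_krevelen(c: int, h: int, o: int):
    """Return (H/C, O/C), the two axes of a van Krevelen diagram."""
    if c == 0:
        raise ValueError("ratios are undefined for carbon-free formulas")
    return h / c, o / c


def kendrick_mass_defect(mass: float) -> float:
    """CH2-based Kendrick mass defect: nominal Kendrick mass minus Kendrick mass."""
    kendrick_mass = mass * 14.0 / CH2_EXACT
    return round(kendrick_mass) - kendrick_mass


if __name__ == "__main__":
    # 180.06339 Da is the approximate neutral monoisotopic mass of a hexose
    for c, h, n, o, ppm in assign_formulas(180.06339, tol_ppm=1.0):
        print(f"C{c}H{h}N{n}O{o}  ({ppm:+.2f} ppm)")
    print(van_krevelen(6, 12, 6))
```

Members of a CH2 homologous series share the same Kendrick mass defect, which is why they line up horizontally in a Kendrick plot.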

GC-MS Metabolomics Protocol

Sample Derivatization:

- Methoximation: Add 20 μL of methoxyamine hydrochloride (20 mg/mL in pyridine) to the dried sample and incubate at 30°C for 90 minutes.

- Silylation: Add 80 μL of MSTFA (N-Methyl-N-(trimethylsilyl)trifluoroacetamide) and incubate at 37°C for 30 minutes.

GC-MS Conditions:

- Column: DB-5MS or similar (30 m × 0.25 mm ID, 0.25 μm film thickness)

- Inlet Temperature: 250°C

- Oven Program: 60°C (1 min), then 10°C/min to 325°C, hold 10 min

- Carrier Gas: Helium, constant flow 1.0 mL/min

- Injection Volume: 1 μL (split or splitless mode)

- Transfer Line Temperature: 280°C
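
For sequence planning, the run time implied by the oven program can be computed directly from the hold times and ramp rate. The helper below is a small sketch with a hypothetical function name; it excludes oven cool-down and equilibration between injections.

```python
def oven_run_time(start_c: float, initial_hold_min: float, ramps) -> float:
    """Total oven program time in minutes.

    ramps is a list of (rate_c_per_min, target_c, hold_min) segments
    applied in order, starting from start_c.
    """
    total = initial_hold_min
    current = start_c
    for rate, target, hold in ramps:
        total += abs(target - current) / rate + hold
        current = target
    return total


if __name__ == "__main__":
    # 60°C (1 min), then 10°C/min to 325°C, hold 10 min
    minutes = oven_run_time(60.0, 1.0, [(10.0, 325.0, 10.0)])
    print(f"run time per injection: {minutes} min")
```

For the program listed above this gives 37.5 minutes per injection.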

MS Detection:

- Ionization: Electron ionization (70 eV)

- Ion Source Temperature: 230°C

- Scan Range: 50-600 m/z

Data Analysis and Bioinformatics

UHPLC-HRMS Data Processing Tools

The analysis of UHPLC-HRMS data requires sophisticated software tools for feature extraction, alignment, and annotation. A comprehensive evaluation of six advanced UHPLC-HRMS data analysis tools revealed significant differences in their feature detection capabilities [14] [17]. The study compared MS-DIAL, XCMS, MZmine, AntDAS, Progenesis QI, and Compound Discoverer using both targeted and untargeted plant datasets [14].

The results indicated that AntDAS provided the most acceptable feature extraction, compound identification, and quantification results in targeted compound analysis [14] [17]. For complex plant datasets, both MS-DIAL and AntDAS delivered more reliable results than the other tools [14]. The study also suggested that employing multiple data analysis tools may improve the quality of data analysis results, as different algorithms can complement each other in feature detection [14].

Advanced Annotation Strategies

Metabolite annotation remains a major challenge in untargeted metabolomics due to the vast chemical diversity of metabolites [10]. Traditional library-based matching is limited to known metabolites with available reference spectra. To address this limitation, novel computational approaches have emerged:

Two-Layer Interactive Networking: This approach integrates data-driven and knowledge-driven networks to enhance metabolite annotation [10]. The method involves:

- Curating a comprehensive metabolic reaction network using graph neural network-based prediction

- Pre-mapping experimental data onto this network via sequential MS1 matching

- Applying reaction relationship mapping and MS2 similarity constraints

- Enabling interactive annotation propagation with improved computational efficiency

This strategy has been reported to annotate over 1,600 seed metabolites using chemical standards and to putatively annotate more than 12,000 additional metabolites through network-based propagation [10]. Notably, it has led to the discovery of two previously uncharacterized endogenous metabolites absent from human metabolome databases [10].

Molecular Networking: This data-driven approach groups metabolites based on MS2 spectral similarity, allowing for the annotation of unknown compounds based on their structural relationship to known metabolites [10]. Molecular networking has proven particularly valuable in natural product discovery, where many compounds may be structurally related but not present in standard libraries.
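
The MS2 similarity underlying molecular networking is commonly a cosine score between fragment spectra. The sketch below implements a plain cosine with greedy one-to-one peak matching inside an m/z tolerance. It is a simplified stand-in: networking tools often use a modified cosine that also matches peaks shifted by the precursor mass difference, and the 0.01 m/z tolerance here is an assumed value.

```python
import math


def cosine_similarity(spec_a, spec_b, tol: float = 0.01) -> float:
    """Cosine score between two spectra given as lists of (mz, intensity)."""
    # Collect every pair of peaks that agree within the m/z tolerance
    candidates = []
    for i, (mz_a, int_a) in enumerate(spec_a):
        for j, (mz_b, int_b) in enumerate(spec_b):
            if abs(mz_a - mz_b) <= tol:
                candidates.append((int_a * int_b, i, j))

    # Greedily keep the strongest pairs, using each peak at most once
    candidates.sort(reverse=True)
    used_a, used_b = set(), set()
    dot = 0.0
    for product, i, j in candidates:
        if i in used_a or j in used_b:
            continue
        used_a.add(i)
        used_b.add(j)
        dot += product

    norm_a = math.sqrt(sum(intensity ** 2 for _, intensity in spec_a))
    norm_b = math.sqrt(sum(intensity ** 2 for _, intensity in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


if __name__ == "__main__":
    known = [(91.05, 40.0), (119.05, 100.0), (147.04, 60.0)]    # hypothetical spectrum
    unknown = [(91.05, 35.0), (119.05, 100.0), (163.04, 55.0)]  # hypothetical spectrum
    print(f"cosine = {cosine_similarity(known, unknown):.3f}")
```

In a network, two features would be connected by an edge when their score exceeds a chosen threshold, so unknowns cluster around annotated neighbors.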

Research Reagent Solutions and Materials

Table 4: Essential Research Reagents and Materials for Metabolomics

| Reagent/Material | Function | Application Notes |
|---|---|---|
| Methanol/Dichloromethane/Water (3:3:2) | Comprehensive metabolite extraction | Modified Bligh-Dyer-type biphasic extraction; extracts both polar and non-polar metabolites [15] |
| Methoxyamine Hydrochloride | Methoximation of carbonyl groups | Stabilizes aldehydes and ketones for GC-MS analysis; reduces ring formation in sugars [13] |
| MSTFA | Silylation derivatizing agent | Adds trimethylsilyl groups to polar functional groups (-OH, -COOH, -NH) for GC-MS [13] |
| Retention Index Markers | Retention time standardization | Enables comparison of retention times across different GC-MS systems [13] |
| Internal Standards | Quality control and quantification | Correct for variations in extraction and analysis; use isotopically labeled analogs when possible |
| R2A/R2B Medium | Bacterial endophyte culture | Specific for maintaining bacterial endophytes for co-culture experiments [18] |
| Gamborg B5 Medium | Plant cell suspension culture | Used for maintaining Alkanna tinctoria cell suspensions [18] |

Applications in Natural Product Discovery

Plant-Microbe Interaction Studies

UHPLC-HRMS has proven invaluable for investigating plant-microbe interactions and their impact on secondary metabolite production. In a study examining the effects of bacterial endophytes on Alkanna tinctoria cell suspensions, UHPLC-HRMS-based untargeted metabolomics revealed significant modifications in secondary metabolite regulation patterns [18]. The approach led to the identification of 32 stimulated compounds in A. tinctoria cell suspensions, with four compounds putatively identified for the first time [18]. This research demonstrates how selected microbial inoculants under controlled conditions can effectively enhance or stimulate the production of specific high-value metabolites.

The experimental design involved co-culture experiments using cell suspensions of the medicinal plant A. tinctoria with eight of its bacterial endophytes [18]. Either bacterial homogenate (BaH) or bacterial endophyte culture supernatant (ECM) was inoculated into A. tinctoria cell suspensions, with metabolite extraction performed using a methanol/dichloromethane/water system [18]. The UHPLC-HRMS analysis employed a C18 column with water-formic acid and acetonitrile mobile phases, detecting metabolites across a mass range of 80-1200 m/z [18].

Drug Mechanism Elucidation

UHPLC-HRMS-based metabolomics and lipidomics have been successfully applied to investigate the mechanisms of action of potential therapeutic compounds. In a study of anlotinib, a multi-target tyrosine kinase inhibitor, in glioma C6 cells, the technique identified 24 disturbed metabolites in cells and 23 in cell culture medium responsible for the intervention effects [15]. Additionally, 17 differential lipids in cells were identified between anlotinib-exposed and untreated groups [15].

Pathway analysis revealed that anlotinib modulated several key metabolic pathways, including amino acid metabolism, energy metabolism, ceramide metabolism, and glycerophospholipid metabolism [15]. These findings provided insights into the anti-glioma mechanism of anlotinib from the perspective of metabolic reprogramming, suggesting that these affected pathways represent key molecular events in cells treated with this compound [15].

Integrated Workflow and Future Perspectives

Diagram 1: Integrated Workflow for Natural Product Discovery Using Multiple Analytical Platforms

The field of untargeted metabolomics continues to evolve with several emerging trends shaping future research directions. Open data initiatives are streamlining discovery workflows and facilitating data sharing across research groups [11]. Multi-platform approaches that combine the complementary strengths of UHPLC-HRMS, FT-ICR-MS, and GC-MS are increasingly being employed to maximize metabolome coverage [13]. Advanced computational tools that leverage artificial intelligence and machine learning are enhancing metabolite annotation and reducing reliance on spectral libraries [10].

For natural product discovery, the integration of metabolomics with other omics technologies (genomics, transcriptomics, proteomics) provides a more comprehensive understanding of biosynthetic pathways and regulation [12]. This systems biology approach is particularly powerful for studying microbiomes, where secondary metabolites mediate complex microbial interactions and impact host physiology [12]. As these technologies continue to advance and become more accessible, they will undoubtedly accelerate the discovery and development of novel natural products with therapeutic potential.

UHPLC-HRMS, FT-ICR-MS, and GC-MS each offer distinct strengths for untargeted metabolomics in natural product discovery research. UHPLC-HRMS provides the best balance of coverage, sensitivity, and throughput for most applications. FT-ICR-MS delivers unparalleled resolution and mass accuracy for characterizing novel compounds. GC-MS offers robust, reproducible analyses with extensive spectral libraries for volatile compounds. The choice of platform depends on specific research goals, sample types, and available resources. For comprehensive natural product discovery, a multi-platform approach that leverages the complementary strengths of these techniques often yields the most complete picture of the metabolome, ultimately enhancing the efficiency of discovering natural products with pharmaceutical potential.

In the field of natural product discovery, untargeted metabolomics serves as a powerful hypothesis-generating tool, capable of revealing the vast chemical diversity produced by biological systems. Unlike targeted analyses, exploratory studies aim to comprehensively profile small molecules without prior knowledge of the metabolome's composition. This unbiased approach is particularly valuable for discovering novel bioactive compounds from complex natural sources like plants, fungi, and marine organisms. However, the reliability of these discoveries hinges on rigorous experimental design, meticulous sample preparation, and robust quality control (QC) protocols. These foundational steps are critical for minimizing technical variability and ensuring that observed biological differences are genuine, thereby providing a solid foundation for downstream drug development pipelines.

The untargeted metabolomics workflow for natural products is a multi-stage process designed to transform raw biological samples into meaningful biochemical insights. Effective data visualization is crucial at every stage, serving not only for final presentation but also for real-time data inspection, evaluation, and sharing during analysis [19]. The overarching workflow runs from sample collection through data acquisition and processing to functional interpretation.

Detailed Methodologies and Protocols

Sample Preparation for Natural Products

Proper sample preparation is the first critical step to ensure a comprehensive and unbiased extraction of metabolites. The protocol varies significantly based on the sample matrix.

3.1.1 Sample Collection and Storage

- Tissues and Microbial Cells: Snap-freeze in liquid nitrogen immediately after collection to quench metabolic activity. Store at -80°C until extraction.

- Plant Materials: Lyophilize (freeze-dry) tissues and homogenize to a fine powder using a ball mill or mortar and pestle under liquid nitrogen.

- Biofluids (e.g., fermentation broths): Centrifuge to remove cell debris. Aliquot supernatant and store at -80°C. Avoid repeated freeze-thaw cycles.

3.1.2 Metabolite Extraction

A dual-phase extraction protocol is often recommended for natural products to capture both hydrophilic and lipophilic metabolites [1].

Materials:

- Extraction solvent: Acetonitrile:Methanol:Formic Acid (74.9:24.9:0.2, v/v/v) [1]

- Internal Standard Extraction Solution: Prepared in the extraction solvent (e.g., 0.1 μg/mL l-Phenylalanine-d8 and 0.2 μg/mL l-Valine-d8) [1]

- LC/MS-grade water

- Phosphate Buffered Saline (PBS)

Procedure:

- Weigh approximately 10 mg of lyophilized powder or 50 μL of liquid sample into a pre-chilled 2 mL microcentrifuge tube.

- Add 1 mL of the ice-cold Internal Standard Extraction Solution.

- Vortex vigorously for 30 seconds.

- Sonicate in an ice-water bath for 10 minutes.

- Incubate at -20°C for 1 hour to precipitate proteins.

- Centrifuge at 16,000 × g for 15 minutes at 4°C.

- Transfer the supernatant (the metabolite-containing extract) to a new LC/MS vial.

- Evaporate the extract to dryness under a gentle stream of nitrogen gas.

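- Reconstitute the dried extract in 100 μL of a solvent compatible with the chosen LC method. For HILIC, use a high-organic mixture that matches the initial mobile phase (e.g., 90% acetonitrile, 10% water), because a highly aqueous injection solvent distorts peak shape. Vortex thoroughly.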

- Centrifuge again at 16,000 × g for 10 minutes before transferring the supernatant to an LC vial with insert for analysis.

Liquid Chromatography-Mass Spectrometry Data Collection

Liquid Chromatography tandem Mass Spectrometry (LC-MS/MS) is the cornerstone analytical platform for untargeted metabolomics due to its high sensitivity and ability to detect a wide range of compounds [20].

3.2.1 Liquid Chromatography (LC)

Chromatography separates compounds to reduce sample complexity and ion suppression.

- Recommended Technique: Hydrophilic Interaction Liquid Chromatography (HILIC) is well-suited for separating polar metabolites central to energy pathways and primary metabolism [1].

- Column: Waters Atlantis HILIC Silica column (3 μm, 2.1 mm × 150 mm) or equivalent [1].

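- Mobile Phase: A) 0.1% formic acid and 10 mM ammonium formate in LC/MS-grade water; B) 0.1% formic acid in LC/MS-grade acetonitrile [1].

- Gradient: Start at 85% B, ramp to 20% B over 20 minutes, hold at 20% B for 5 minutes, then re-equilibrate at 85% B for 10 minutes.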

- Flow Rate: 0.25 mL/min.

- Column Temperature: 40°C.

- Injection Volume: 5-10 μL.

3.2.2 Mass Spectrometry (MS)

High-resolution accurate mass spectrometry is essential for determining elemental compositions.

- Recommended Instrumentation: Quadrupole Time-of-Flight (Q-TOF) or Orbitrap mass spectrometer [1] [20].

- Ionization Mode: Electrospray Ionization (ESI), in both positive and negative polarities.

- Scanning Mode: MS1 in full-scan mode (e.g., m/z 50-2000) for metabolite profiling; MS2 by Data-Dependent Acquisition (DDA) to fragment the most intense ions from the MS1 scan for compound identification.
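
The logic of data-dependent acquisition can be sketched as a top-N selection: after each MS1 scan, the most intense ions that are not on an exclusion list are chosen for fragmentation. The function below is a simplified illustration with hypothetical parameter names; real instruments add time-limited dynamic exclusion, charge-state and isotope filters, and intensity thresholds configured in vendor software.

```python
def select_precursors(ms1_peaks, top_n=5, excluded=(), tol=0.01, min_intensity=0.0):
    """Pick the top_n most intense MS1 ions for MS2, skipping excluded m/z values.

    ms1_peaks is a list of (mz, intensity); excluded holds m/z values that
    were fragmented recently and should not be selected again.
    """
    eligible = [
        (mz, intensity)
        for mz, intensity in ms1_peaks
        if intensity >= min_intensity
        and all(abs(mz - ex) > tol for ex in excluded)
    ]
    eligible.sort(key=lambda peak: peak[1], reverse=True)
    return [mz for mz, _ in eligible[:top_n]]


if __name__ == "__main__":
    scan = [(181.07, 2.0e5), (255.23, 9.0e6), (303.05, 4.5e6), (611.16, 8.0e5)]
    first = select_precursors(scan, top_n=2)
    print("first cycle:", first)
    # Excluding the ions just fragmented lets lower-abundance ions be sampled
    print("next cycle: ", select_precursors(scan, top_n=2, excluded=first))
```

The second call shows why exclusion matters for natural product extracts: without it, the same dominant ions would be fragmented in every cycle and minor constituents would never receive an MS2 spectrum.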

Comprehensive Quality Control Strategy

A multi-layered QC system is non-negotiable for generating high-quality, reliable data. The relationships and purposes of different QC elements are outlined below.

Table 1: Essential Research Reagent Solutions for Quality Control

| Reagent / Solution | Function / Purpose | Technical Specification / Preparation |
|---|---|---|
| Internal Standard Stock | Monitors extraction efficiency, instrument performance, and data quality [1]. | l-Phenylalanine-d8 and l-Valine-d8 at 1000 μg/mL in water:methanol [1]. |
| Internal Standard Extraction Solution | Incorporated into every sample for batch-normalization [1]. | Extraction solvent with 0.1 μg/mL l-Phenylalanine-d8 and 0.2 μg/mL l-Valine-d8 [1]. |
| Pooled QC Sample | Assesses analytical stability across the entire batch sequence. | A small aliquot of every experimental sample combined into a single, homogeneous pool. |
| Process Blank | Identifies background signals and contaminants from solvents and plasticware. | Extraction solvent processed identically to biological samples but without any biological material. |
| LC Mobile Phase A | Aqueous mobile phase for HILIC separation [1]. | 0.1% formic acid, 10 mM ammonium formate in LC/MS-grade water [1]. |
| LC Mobile Phase B | Organic mobile phase for HILIC separation [1]. | 0.1% formic acid in LC/MS-grade acetonitrile [1]. |
| Extraction Solvent | Precipitates proteins and extracts a broad range of metabolites [1]. | Acetonitrile:Methanol:Formic Acid (74.9:24.9:0.2, v/v/v) [1]. |
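
One way the pooled QC samples and process blanks are typically put to use is feature filtering: features that vary too much across repeated QC injections, or that are not clearly above the blank, are discarded. The sketch below assumes a 30% RSD cutoff and a 3-fold QC-to-blank ratio; both thresholds are common choices rather than values given in this guide, and the data layout is hypothetical.

```python
from statistics import mean, stdev


def rsd_percent(values) -> float:
    """Relative standard deviation (%) of a list of intensities."""
    average = mean(values)
    if average == 0:
        return float("inf")
    return stdev(values) / average * 100


def filter_features(qc, blanks, max_rsd=30.0, min_blank_ratio=3.0):
    """Return names of features that are stable in pooled QCs and above blank.

    qc and blanks map feature name -> list of intensities; each QC list
    needs at least two injections so that a standard deviation exists.
    """
    kept = []
    for feature, qc_values in qc.items():
        blank_mean = mean(blanks.get(feature, [0.0]))
        stable = rsd_percent(qc_values) <= max_rsd
        above_blank = blank_mean == 0 or mean(qc_values) / blank_mean >= min_blank_ratio
        if stable and above_blank:
            kept.append(feature)
    return kept


if __name__ == "__main__":
    qc = {
        "F1": [100.0, 105.0, 98.0, 102.0],  # stable, low blank
        "F2": [100.0, 200.0, 50.0, 150.0],  # unstable across QC injections
        "F3": [100.0, 101.0, 99.0, 100.0],  # stable but mostly background
    }
    blanks = {"F1": [5.0], "F2": [2.0], "F3": [80.0]}
    print(filter_features(qc, blanks))
```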

Data Processing and Functional Analysis

Following data acquisition, raw LC-MS/MS files require sophisticated processing to extract biological insights.

4.1 Data Preprocessing and Statistical Analysis

- Software Tools: Utilize platforms like MZmine or the LC-MS Spectral Processing module in MetaboAnalyst for peak picking, alignment, and normalization [20] [4].

- Multivariate Statistics: Apply Principal Component Analysis (PCA) to visualize overall data structure and detect outliers. Use Orthogonal Projections to Latent Structures-Discriminant Analysis (OPLS-DA) to maximize separation between predefined groups and identify biomarker candidates [4].

- Univariate Statistics: Perform t-tests or ANOVA, corrected for multiple testing (e.g., False Discovery Rate), and generate volcano plots to visualize significantly altered metabolites [4] [19].
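
The multiple-testing correction and volcano plot coordinates mentioned above can be sketched with the Benjamini-Hochberg procedure. The code assumes that raw p-values have already been computed by a t-test or ANOVA elsewhere; the function names are illustrative.

```python
import math


def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted p-values (q-values) in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonic q-values
    for rank in range(m, 0, -1):
        index = order[rank - 1]
        running_min = min(running_min, p_values[index] * m / rank)
        adjusted[index] = running_min
    return adjusted


def volcano_point(mean_treated: float, mean_control: float, q_value: float):
    """Return (log2 fold change, -log10 q) for one feature."""
    return math.log2(mean_treated / mean_control), -math.log10(q_value)


if __name__ == "__main__":
    raw = [0.01, 0.04, 0.03, 0.005]  # hypothetical p-values for four features
    q = benjamini_hochberg(raw)
    print([round(value, 3) for value in q])
    print(volcano_point(200.0, 100.0, q[0]))
```

Features are then usually flagged when both coordinates pass chosen cutoffs, for example an absolute log2 fold change above 1 and a q-value below 0.05.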

4.2 Compound Annotation and Functional Interpretation

Confidently identifying unknown natural products remains a key challenge.

- Annotation Confidence Levels:

- Level 1: Confident identification by matching both the retention time (RT) and the MS/MS spectrum of an authentic reference standard.

- Level 2: Probable structure based on MS/MS spectral similarity to libraries.

- Level 3: Putative characterization by compound class.

- Pathway Analysis: Input annotated metabolite lists into tools like MetaboAnalyst's "Pathway Analysis" or "Enrichment Analysis" modules to identify biologically relevant pathways (e.g., phenylpropanoid biosynthesis, terpenoid backbone biosynthesis) that are perturbed in the experimental condition [4].
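
Pathway over-representation is commonly assessed with a hypergeometric test, which asks how likely it is to see at least the observed number of pathway members among the significant metabolites by chance. The sketch below shows that calculation in isolation; whether a particular tool uses exactly this formulation, and which background set it assumes, should be checked in that tool's documentation.

```python
from math import comb


def enrichment_p_value(hits_in_pathway: int, pathway_size: int,
                       hits_total: int, background_size: int) -> float:
    """Upper-tail hypergeometric probability P(X >= hits_in_pathway).

    background_size: all annotated metabolites considered
    pathway_size:    background metabolites that belong to the pathway
    hits_total:      significant metabolites submitted for analysis
    hits_in_pathway: significant metabolites that fall in the pathway
    """
    upper = min(pathway_size, hits_total)
    total = comb(background_size, hits_total)
    tail = sum(
        comb(pathway_size, k) * comb(background_size - pathway_size, hits_total - k)
        for k in range(hits_in_pathway, upper + 1)
    )
    return tail / total


if __name__ == "__main__":
    # Hypothetical numbers: 8 of 40 significant metabolites map to a 30-member
    # pathway, out of a background of 1,000 annotated metabolites
    p = enrichment_p_value(8, 30, 40, 1000)
    print(f"enrichment p-value: {p:.2e}")
```

Because many pathways are tested at once, the resulting p-values should themselves be corrected for multiple testing before interpretation.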

A rigorously designed experimental framework for sample preparation and quality control is the bedrock of successful untargeted metabolomics in natural product discovery. By implementing the detailed protocols for sample extraction, chromatographic separation, and the multi-faceted QC strategy outlined in this guide, researchers can significantly enhance the reliability and biological relevance of their findings. This disciplined approach ensures that the novel chemical entities and biochemical insights generated are a true reflection of the biological system under study, thereby de-risking the subsequent stages of drug development and accelerating the translation of natural product discovery from the laboratory to the clinic.

Sanghuangporus spp. are medicinal macrofungi, traditionally referred to as "forest gold" in East Asia for their diverse pharmacological properties, which include the prevention and treatment of cancer, diabetes, and inflammatory diseases [21]. The significant therapeutic value of these fungi is primarily attributed to bioactive constituents such as polysaccharides, terpenoids, and flavonoids [21] [22]. However, taxonomic ambiguity and frequent market adulteration, stemming from historical reliance on morphological traits for identification, have hindered their standardized utilization [21] [22]. This case study employs untargeted metabolomics to systematically analyze the metabolic profiles of different Sanghuangporus species, providing a scientific basis for species authentication and quality control while demonstrating the power of metabolomics in natural product discovery [21] [11].

Methodology: Untargeted Metabolomics Workflow

Sample Collection and Preparation

The study analyzed three representative species: Sanghuangporus sanghuang (SS), Sanghuangporus vaninii (SV), and Sanghuangporus baumii (SB), with six biological replicates each [21].

- Collection: Wild fruiting bodies were collected from specific host trees in Tibet, China [21].

- Preparation: Samples were vacuum freeze-dried, ground to powder, and extracted with 70% aqueous methanol containing internal standards, pre-cooled to -20°C. The extracts were vortexed, centrifuged, and filtered through a 0.22 μm microporous membrane for UPLC-MS/MS analysis [21].

UPLC-Q-TOF-MS Analysis

Chromatographic separation was performed using the following parameters [21]:

- Column: Waters ACQUITY UPLC HSS T3 (1.8 μm, 2.1 × 100 mm) at 40°C.

- Mobile Phase: Water with 0.1% formic acid (A) and acetonitrile with 0.1% formic acid (B).

- Gradient: 95% A to 35% A in 5 min, then to 1% A in 1 min, held for 1.5 min, returned to initial conditions in 0.1 min, and re-equilibrated for 2.4 min.

- Flow Rate and Injection Volume: 0.4 mL/min and 4 μL, respectively.

- Mass Spectrometry: Analysis was performed in both positive and negative electrospray ionization modes, with a TOF MS scan range of 50–1250 Da [21].

Data Processing and Metabolite Identification

- Raw Data Conversion: Raw data were converted to mzML format using ProteoWizard [21].

- Peak Processing: Peak extraction, alignment, and retention time correction were performed with the XCMS package in R. Peaks with a missing rate >50% were removed, and missing values were imputed [21].

- Metabolite Annotation: Processed peaks were annotated by MS/MS spectral matching against an integrated library (including in-house MVDB, HMDB, KEGG, MoNA, and MassBank) with a mass accuracy threshold of ≤25 ppm [21].

- Statistical Analysis: Data were normalized and subjected to multivariate statistical analysis, including Principal Component Analysis (PCA) and Orthogonal Projections to Latent Structures-Discriminant Analysis (OPLS-DA) [21].
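
The missing-value step described above (removing peaks with a missing rate above 50%, then imputing the rest) can be sketched as follows. The study does not state which imputation method was used, so the half-minimum rule here is an assumption chosen for illustration, as is the dictionary-based data layout.

```python
def filter_and_impute(table, max_missing_rate=0.5):
    """Drop features with too many missing values and impute the remainder.

    table maps feature name -> list of intensities, with None for missing.
    Missing values are replaced by half of the feature's minimum observed
    intensity (an assumed rule; other imputation methods are also common).
    """
    cleaned = {}
    for feature, values in table.items():
        observed = [v for v in values if v is not None]
        missing_rate = 1 - len(observed) / len(values)
        if missing_rate > max_missing_rate or not observed:
            continue
        fill = min(observed) / 2
        cleaned[feature] = [fill if v is None else v for v in values]
    return cleaned


if __name__ == "__main__":
    peaks = {
        "A": [10.0, None, 20.0, 30.0],  # 25% missing: kept and imputed
        "B": [None, None, None, 5.0],   # 75% missing: removed
        "C": [None, None, 4.0, 6.0],    # exactly 50% missing: kept
    }
    print(filter_and_impute(peaks))
```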

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 1: Key research reagents, instruments, and software used in untargeted metabolomics of Sanghuangporus spp.

| Item Name | Function/Application | Specific Examples / Parameters |
|---|---|---|
| UPLC-Q-TOF-MS System | High-resolution separation and detection of metabolites. | Shimadzu LC-30A coupled with LCMS-8050 [21]. |
| Chromatography Column | Separation of metabolite compounds. | Waters ACQUITY UPLC HSS T3 (1.8 μm, 2.1 × 100 mm) [21]. |
| Extraction Solvent | Extraction of metabolites from fungal material. | 70% aqueous methanol containing internal standards [21]. |
| Mobile Phase | Liquid chromatography solvent system. | Water + 0.1% formic acid (A); Acetonitrile + 0.1% formic acid (B) [21]. |
| Data Processing Software | Raw data conversion, peak picking, alignment. | ProteoWizard, XCMS package in R [21]. |
| Metabolite Databases | Annotation and identification of metabolites. | HMDB, KEGG, MassBank, in-house MVDB [21]. |

Results and Discussion

Metabolic Profile and Differential Analysis

A total of 788 metabolites were identified and classified into 16 categories [21]. Among these, 97 common differential metabolites were identified, including key bioactive compounds such as flavonoids, polysaccharides, and terpenoids [21]. Multivariate statistical analyses revealed distinct clustering and metabolic patterns among the three species, confirming substantial interspecies differences [21].

Table 2: Key differential bioactive compounds identified in Sanghuangporus species.

| Metabolite Name | Class | Significance / Bioactivity | Relative Abundance (SS/SV/SB) |
|---|---|---|---|
| Apigenin | Flavonoid | Anti-inflammatory, anticancer properties [22]. | Significantly higher in SV and SB vs. SS [21]. |
| D-glucuronolactone | Carbohydrate derivative (sugar acid lactone) | Immunomodulatory, detoxification [22]. | Significantly higher in SV and SB vs. SS [21]. |
| Hispidin | Polyphenol | Antioxidant, anticancer, antiviral activities [22]. | Not reported. |
| Morin | Flavonoid | Cartilage protection, anti-inflammatory [22]. | Not reported. |

Pathway Analysis and Biological Interpretation

KEGG pathway enrichment analysis showed that the differential metabolites were predominantly involved in flavonoid and isoflavonoid biosynthesis [21]. This highlights the central role of these pathways in defining the pharmacological potential of Sanghuangporus species.
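Pathway enrichment of this kind is commonly scored with an over-representation (hypergeometric) test. The source does not state which test was applied, so the sketch below is an assumption about the general approach rather than a reproduction of the study's analysis. The background size (788 identified metabolites) and the selected set (97 differential metabolites) come from the text; the pathway size and hit count are hypothetical placeholders.

```python
from math import comb


def enrichment_p_value(n_background, n_pathway, n_selected, n_hits):
    """Upper-tail hypergeometric probability P(X >= n_hits).

    n_background: annotated metabolites in the background set
    n_pathway:    background metabolites that belong to the pathway
    n_selected:   differential metabolites
    n_hits:       differential metabolites that belong to the pathway
    """
    upper = min(n_pathway, n_selected)
    total = comb(n_background, n_selected)
    tail = sum(
        comb(n_pathway, k) * comb(n_background - n_pathway, n_selected - k)
        for k in range(n_hits, upper + 1)
    )
    return tail / total


if __name__ == "__main__":
    # 788 and 97 are from the study; 40 and 12 are HYPOTHETICAL values
    p = enrichment_p_value(n_background=788, n_pathway=40, n_selected=97, n_hits=12)
    print(f"Over-representation p-value: {p:.3g}")
```

In a real analysis the p-values for all tested pathways would also be corrected for multiple testing before pathways are reported as enriched.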

Implications for Natural Product Drug Discovery

This study exemplifies how untargeted metabolomics guides natural product discovery [11]. By rapidly characterizing metabolic profiles and identifying species-specific biomarkers, this approach efficiently prioritizes candidates like SV and SB for further pharmaceutical development. The methodology reduces the rediscovery of known compounds and helps link specific metabolites to biological activity, thereby streamlining the drug discovery pipeline from traditional medicinal sources [11] [12].

This untargeted metabolomics case study successfully delineated the distinct metabolic profiles of three Sanghuangporus species. The findings confirm significant interspecies differences in bioactive compound levels, with S. vaninii and S. baumii exhibiting higher abundances of key therapeutic metabolites. This work provides a robust scientific foundation for the authentication, quality control, and medicinal development of Sanghuangporus, while firmly establishing the value of untargeted metabolomics as a powerful tool in modern natural product drug discovery research.

Advanced Methodologies and Real-World Applications in Natural Product Analysis

Fourier Transform Ion Cyclotron Resonance Mass Spectrometry (FT-ICR-MS) represents the pinnacle of mass resolution and accuracy in analytical chemistry, providing unprecedented capabilities for untargeted metabolomics and natural product discovery. This technical guide explores the core principles, advanced methodologies, and practical applications of FT-ICR-MS, framing them within the context of natural product research. We detail how its exceptional performance characteristics—including ultra-high mass resolution, parts-per-billion mass accuracy, and isotopic fine structure resolution—enable researchers to decipher complex metabolic mixtures, identify novel bioactive compounds, and expand the known chemical space of natural products. Through comprehensive protocols, data analysis workflows, and case studies, this whitepaper serves as an essential resource for scientists pursuing drug discovery from natural sources.

Untargeted metabolomics aims to provide a comprehensive, unbiased profile of all metabolites within a biological system, capturing dynamic biochemical processes that reflect physiological states and environmental influences [23]. For natural product discovery, this approach is invaluable for identifying novel bioactive compounds and understanding complex metabolic pathways in organisms. FT-ICR-MS has emerged as the highest-performance mass spectrometry technology for this application, capable of simultaneously detecting thousands of compounds in a single analysis with mass resolution and accuracy unmatched by other mass spectrometers [23] [24].

The technology's unparalleled capabilities make it particularly suited for natural product research, where researchers often encounter complex mixtures of unknown compounds with subtle structural differences. FT-ICR-MS enables precise identification and differentiation of metabolites within complex biological samples, providing highly accurate molecular formulas based on exact mass and isotopic distribution [23]. This technical guide explores the fundamental principles, methodologies, and applications of FT-ICR-MS, with specific emphasis on its transformative role in advancing natural product discovery through untargeted metabolomics.

Fundamental Advantages of FT-ICR-MS in Metabolomics

Unmatched Analytical Performance

FT-ICR-MS provides several key advantages that make it particularly valuable for untargeted metabolomics and natural product discovery:

Extreme Mass Resolution and Accuracy: FT-ICR-MS offers the highest resolving power (10⁵–10⁶) and mass accuracy (<1 ppm) among all mass analyzers, enabling separation of isobaric compounds and precise elemental composition determination [23] [24]. This allows researchers to distinguish between metabolites whose masses differ by only a few millidaltons or less, differences that lower-resolution instruments cannot resolve.

Isotopic Fine Structure (IFS) Analysis: The exceptional resolution enables observation of isotopic fine structure, providing direct insight into the elemental composition of metabolites by resolving individual isotopic peaks [24]. For example, IFS can separate the ¹³C and ¹⁵N contributions to the M+1 peak, which differ by only about 6.3 mDa, offering an additional dimension for molecular formula assignment.

High Dynamic Range: The technology enables simultaneous detection of both abundant and trace metabolites, providing a more comprehensive profile of the metabolome, which is crucial for identifying low-abundance bioactive natural products [23].
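To make the resolution requirement for isotopic fine structure concrete, the resolving power needed to separate two peaks can be estimated as R = m/Δm. The Python sketch below applies this to two M+1 isotopologue pairs. It assumes singly charged ions and uses the simple m/Δm definition, so the values are lower bounds; clean separation of peaks with very different intensities typically needs more. The function names are hypothetical.

```python
# Monoisotopic masses of selected isotopes in Da
ISOTOPE_MASS = {
    "1H": 1.00782503,
    "2H": 2.01410178,
    "12C": 12.0,
    "13C": 13.00335484,
    "14N": 14.00307400,
    "15N": 15.00010890,
}


def substitution_shift(light, heavy):
    """Mass increase when one light isotope is replaced by its heavy counterpart."""
    return ISOTOPE_MASS[heavy] - ISOTOPE_MASS[light]


def required_resolving_power(mz, delta_m):
    """Minimum resolving power R = m / delta_m to separate two peaks delta_m apart."""
    return mz / abs(delta_m)


if __name__ == "__main__":
    shift_13c = substitution_shift("12C", "13C")
    shift_15n = substitution_shift("14N", "15N")
    shift_2h = substitution_shift("1H", "2H")

    for label, delta in [
        ("13C vs 15N", shift_13c - shift_15n),
        ("13C vs 2H", shift_2h - shift_13c),
    ]:
        r = required_resolving_power(400.0, delta)
        print(f"{label}: delta = {delta * 1000:.2f} mDa, R at m/z 400 >= {r:,.0f}")
```

Because R scales linearly with m/z for a fixed mass difference, the same isotopologue pair becomes harder to resolve for larger natural products, which is where the higher field strengths in the table below become relevant.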

Comparative Technical Specifications

Table 1: Comparison of FT-ICR-MS with Other High-Resolution Mass Spectrometry Platforms

| Mass Analyzer | Mass Accuracy (ppm) | Resolving Power | Isotopic Fine Structure | Isobar Separation |
|---|---|---|---|---|
| FT-ICR-MS | <1 | 10⁵–10⁶ | Yes | Excellent |
| Orbitrap | 1–5 | 10⁴–5×10⁴ | Limited | Good |
| Q-TOF | 2–5 | 10⁴–6×10⁴ | No | Moderate |
| Magnetic Sector | 1–10 | 10⁴–10⁵ | Limited | Good |

Table 2: Key Performance Metrics of FT-ICR-MS Instruments by Magnetic Field Strength

| Magnetic Field (Tesla) | Typical Resolving Power | Mass Accuracy (ppb) | Transient Acquisition Time (s) |
|---|---|---|---|
| 7 T | 500,000 | 100–500 | 0.5–1 |
| 12 T | 1,000,000 | 50–100 | 1–2 |
| 15 T | 1,500,000 | <50 | 1–3 |
| 21 T (custom) | >2,000,000 | <10 | 2–4 |

Experimental Workflows and Methodologies

Comprehensive FT-ICR-MS Workflow for Natural Product Discovery

[Figure: Integrated workflow for natural product discovery using FT-ICR-MS.]

Sample Preparation and Extraction Protocols

Solid-Phase Extraction (SPE) Protocol for Natural Products:

- Sample Collection: Collect biological material (microbial cultures, plant tissues, marine organisms) and immediately freeze in liquid nitrogen to preserve metabolic profiles [25].

- Extraction: Homogenize material in appropriate solvents (methanol, ethanol, or dichloromethane-methanol mixtures) using a 10:1 solvent-to-biomass ratio [25] [26].

- Cleanup: Employ solid-phase extraction using PPL cartridges (1 g Varian Bond Elut PPL) preconditioned with methanol followed by pH 2 Milli-Q water [26].

- Elution: Load acidified samples (pH 2 with HCl) onto cartridges, rinse with acidified Milli-Q water, and elute with 20 mL methanol [26].

- Concentration: Evaporate extracts under nitrogen gas and store at -20°C in pre-combusted glass vials until analysis [26].

Critical Considerations:

- For diverse natural product classes, implement sequential extraction with solvents of increasing polarity [25].

- For labile compounds, maintain low temperatures and minimize light exposure throughout the process.

- For complex environmental samples, additional purification steps may be necessary to remove interfering compounds [27].

Ionization Techniques for Diverse Natural Products

Table 3: Ionization Methods for Different Classes of Natural Products

| Ionization Technique | Mechanism | Optimal Compound Classes | Key Applications in Natural Products |
|---|---|---|---|
| Electrospray Ionization (ESI) | Proton transfer in solution | Polar compounds, acids, bases | Alkaloids, glycosides, polar secondary metabolites |
| Atmospheric Pressure Photoionization (APPI) | Gas-phase photon absorption | Non-polar, aromatic compounds | Terpenoids, polyketides, non-polar aromatics |
| Atmospheric Pressure Chemical Ionization (APCI) | Gas-phase chemical ionization | Medium polarity compounds | Lipids, fatty acids, medium polarity metabolites |
| Matrix-Assisted Laser Desorption/Ionization (MALDI) | Laser desorption with matrix | Broad range, imaging | Spatial metabolomics, tissue imaging |

Methodology Note: For comprehensive coverage, analyze samples in both positive and negative ionization modes, and consider combining data from multiple ionization techniques to overcome ionization suppression effects and achieve broader metabolome coverage [23].
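One simple way to combine positive- and negative-mode data is to convert each feature to a neutral monoisotopic mass and match across modes within a ppm tolerance. The Python sketch below illustrates the idea under strong simplifying assumptions: only singly charged [M+H]+ and [M-H]- ions are considered, other adducts and retention time are ignored, and the 5 ppm tolerance, function names, and example m/z values are hypothetical.

```python
PROTON_MASS = 1.007276  # Da


def neutral_mass(mz, mode):
    """Neutral mass from a singly charged [M+H]+ ("pos") or [M-H]- ("neg") ion."""
    if mode == "pos":
        return mz - PROTON_MASS
    if mode == "neg":
        return mz + PROTON_MASS
    raise ValueError("mode must be 'pos' or 'neg'")


def merge_modes(pos_mz, neg_mz, tol_ppm=5.0):
    """Match features across modes by neutral mass.

    Returns (shared, pos_only, neg_only) as lists of neutral masses.
    """
    neg_neutral = sorted(neutral_mass(m, "neg") for m in neg_mz)
    used = set()
    shared, pos_only = [], []
    for mz in pos_mz:
        mass = neutral_mass(mz, "pos")
        hit = None
        for j, candidate in enumerate(neg_neutral):
            if j not in used and abs(candidate - mass) / mass * 1e6 <= tol_ppm:
                hit = j
                break
        if hit is None:
            pos_only.append(mass)
        else:
            used.add(hit)
            shared.append((mass + neg_neutral[hit]) / 2.0)
    neg_only = [m for j, m in enumerate(neg_neutral) if j not in used]
    return shared, pos_only, neg_only


if __name__ == "__main__":
    # Hypothetical feature lists (m/z) from the two ionization modes
    shared, pos_only, neg_only = merge_modes(
        pos_mz=[271.0601, 303.0499],
        neg_mz=[269.0455, 191.0197],
    )
    print("Detected in both modes:", shared)
    print("Positive mode only:", pos_only)
    print("Negative mode only:", neg_only)
```

Features found in only one mode are the ones that dual-polarity acquisition adds to the coverage; features found in both gain confidence because two independent ion species point to the same neutral mass.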

Advanced Tandem MS Approaches

CASI-CID MS/MS Protocol for Structural Elucidation:

- Continuous Accumulation of Selected Ions (CASI): Implement m/z-selective accumulation to enhance sensitivity and dynamic range for targeted mass windows [26].

- Collision-Induced Dissociation (CID): Fragment selected precursors using optimized collision energies (typically 15-35 eV for natural products) [26].

- Data Acquisition: Collect both precursor and fragment ions across a defined m/z range (e.g., m/z 261–477) [26].

- Structural Family Analysis: Identify connected precursors based on neutral mass loss patterns (P_{n-1} + F_{1:n} + C) across the 2D MS/MS space [26].

This approach has been shown to identify over 1900 structural families of compounds in complex natural organic matter samples, revealing a high degree of isomeric content not detectable through precursor ion analysis alone [26].
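The neutral-loss step at the core of this analysis can be sketched in a few lines of Python. This is a loose illustration of matching precursor-fragment mass differences against known neutral losses, not the published structural-family algorithm of [26]. It assumes singly charged ions; the loss table is a small illustrative subset, and the tolerance, function name, and example m/z values are hypothetical.

```python
# Monoisotopic masses (Da) of a few common neutral losses
NEUTRAL_LOSSES = {
    "H2O": 18.010565,
    "CO": 27.994915,
    "CH4O": 32.026215,
    "CO2": 43.989829,
}


def annotate_neutral_losses(precursor_mz, fragment_mzs, tol_da=0.001):
    """Label fragments whose mass difference to the precursor matches a known loss.

    Returns a list of (fragment_mz, loss_name, error_da) tuples.
    """
    hits = []
    for fragment in fragment_mzs:
        delta = precursor_mz - fragment
        for name, mass in NEUTRAL_LOSSES.items():
            error = delta - mass
            if abs(error) <= tol_da:
                hits.append((fragment, name, round(error, 6)))
    return hits


if __name__ == "__main__":
    # Hypothetical precursor and fragment m/z values
    for hit in annotate_neutral_losses(301.0354, [283.0248, 257.0455, 229.0506]):
        print(hit)
```

Precursors that share the same sequence of annotated losses can then be grouped as candidate members of one structural family, which is the intuition behind linking precursors across the 2D MS/MS space.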

Data Processing and Molecular Formula Assignment

Computational Tools for FT-ICR-MS Data

Table 4: Software Tools for FT-ICR-MS Data Analysis in Natural Product Research

| Software Tool | Platform | Key Features | Natural Product Applications |
|---|---|---|---|
| MetaboDirect | Command-line, Python | Biochemical transformation networks, van Krevelen diagrams, statistical analysis | Microbial natural products, environmental metabolomics |
| ftmsRanalysis | R package | Statistical comparisons, interactive visualizations, group comparisons | Complex mixture analysis, metabolic profiling |
| CoreMS | Python framework | Molecular formula assignment, isotopic pattern analysis | General natural product discovery |
| FREDA | Web-based | Formula assignment, basic visualization | Rapid screening applications |
| PyKrev | Python | Van Krevelen diagrams, elemental ratios | Chemical space analysis |

Molecular Formula Assignment Workflow

The precise assignment of molecular formulas from FT-ICR-MS data involves a multi-step process:

Peak Picking and Calibration:

- Internal calibration using known homologous series or standard compounds

- Achieve mass accuracy < 0.1 ppm for reliable formula assignment [27]

Elemental Composition Constraints:

Isotopic Pattern Verification:

- Compare theoretical and observed isotopic distributions

- Utilize isotopic fine structure when resolution permits [24]

Chemical Intelligence Filtering:

- Apply rules for hydrogen deficiency, element ratios, and nitrogen rule

- Calculate aromaticity index (AI) and double bond equivalent (DBE) [27]

Database Matching:

- Query natural product databases (e.g., NPASS, COCONUT, MarinLit)

- Implement mass difference networks to identify related compounds
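The workflow above can be condensed into a small brute-force sketch in Python. It enumerates CHNO formulas for a neutral monoisotopic mass within a ppm window, then applies a parity check (equivalent to the nitrogen rule for CHNO compounds), a non-negative DBE requirement, and element-ratio limits before reporting DBE and the aromaticity index. The element limits, ratio thresholds, and function names are illustrative assumptions rather than values from the cited references, and the AI expression follows the form with N and P included in the numerator. Dedicated tools such as those in Table 4 handle more elements, isotopic patterns, and calibration.

```python
MONO = {"C": 12.0, "H": 1.00782503, "N": 14.00307400, "O": 15.99491462}


def dbe(c, h, n):
    """Double bond equivalents of a CHNO formula (oxygen does not contribute)."""
    return 1 + c - h / 2 + n / 2


def aromaticity_index(c, h, n, o, s=0, p=0):
    """Aromaticity index; returns 0.0 when numerator or denominator is not positive."""
    numerator = 1 + c - o - s - 0.5 * (h + n + p)
    denominator = c - o - s - n - p
    if numerator <= 0 or denominator <= 0:
        return 0.0
    return numerator / denominator


def assign_formulas(neutral_mass, tol_ppm=1.0, max_n=4, max_o=20):
    """Enumerate CHNO formulas within tol_ppm of a neutral monoisotopic mass."""
    tol = neutral_mass * tol_ppm * 1e-6
    hits = []
    for c in range(1, int(neutral_mass // MONO["C"]) + 1):
        for n in range(max_n + 1):
            for o in range(max_o + 1):
                rest = neutral_mass - c * MONO["C"] - n * MONO["N"] - o * MONO["O"]
                if rest < -tol:
                    break  # adding more oxygen only overshoots further
                h = round(rest / MONO["H"])
                mass = c * MONO["C"] + h * MONO["H"] + n * MONO["N"] + o * MONO["O"]
                if abs(mass - neutral_mass) > tol:
                    continue
                if (h + n) % 2 != 0:
                    continue  # non-integer DBE; violates the nitrogen rule
                if dbe(c, h, n) < 0:
                    continue  # more hydrogens than valence allows
                # ASSUMPTION: illustrative element-ratio limits
                if not 0.2 <= h / c <= 3.1 or o / c > 1.2:
                    continue
                hits.append({
                    "counts": (c, h, n, o),  # (C, H, N, O)
                    "ppm_error": (mass - neutral_mass) / neutral_mass * 1e6,
                    "dbe": dbe(c, h, n),
                    "ai": aromaticity_index(c, h, n, o),
                    "h_to_c": h / c,
                    "o_to_c": o / c,
                })
    return sorted(hits, key=lambda hit: abs(hit["ppm_error"]))


if __name__ == "__main__":
    # 270.052823 Da is the monoisotopic mass of C15H10O5 (e.g., apigenin)
    for hit in assign_formulas(270.052823, tol_ppm=1.0):
        print(hit)
```

The `h_to_c` and `o_to_c` values are the coordinates used in van Krevelen diagrams, so the same output can feed the chemical space visualizations mentioned in Table 4. Surviving candidates would then be checked against isotopic patterns and natural product databases as described above.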

Advanced Structural Analysis Techniques