Unlocking the Black Box: A 2025 Guide to Interpretable AI in Pharmacology

Abstract

As artificial intelligence reshapes drug discovery and development, the 'black box' nature of complex models has emerged as a critical bottleneck for regulatory approval, clinical translation, and scientific trust. This article provides a comprehensive roadmap for researchers and drug development professionals on achieving model interpretability in AI pharmacology. We first establish why interpretability is a non-negotiable requirement for patient safety and scientific validity. We then explore cutting-edge methodological frameworks, from post-hoc explanation tools to inherently interpretable models, and address practical challenges in implementation, such as data quality and performance trade-offs. Finally, we discuss validation paradigms and comparative metrics essential for benchmarking interpretability solutions. The synthesis offers a strategic path forward for building transparent, reliable, and clinically actionable AI systems in biomedicine.

Why Interpretability is Non-Negotiable: The Pillars of Trust in AI-Driven Drug Discovery

The integration of Artificial Intelligence (AI) into drug discovery has transitioned from a promising technical curiosity to a core component of modern pharmacology. However, the "black box" nature of many advanced AI models—where inputs and outputs are visible, but the internal decision-making process is opaque—presents a critical challenge [1]. In high-stakes fields like drug development, where decisions impact patient safety and therapeutic efficacy, this lack of transparency is not merely an academic concern but a clinical imperative [2]. The inability to understand why an AI model recommends a specific drug target or predicts a particular toxicity undermines trust, complicates regulatory approval, and limits the full potential of AI to revolutionize R&D [3]. This technical support center is designed within the context of a broader thesis on improving model interpretability, providing researchers and drug development professionals with practical tools, troubleshooting guides, and validated methodologies to open the black box and build transparent, trustworthy AI systems for pharmacology.

Technical Support Center: Troubleshooting Guides & FAQs

This section addresses common technical and methodological challenges encountered when developing and deploying interpretable AI models in pharmacological research.

FAQ 1: My deep learning model for toxicity prediction has high accuracy but is rejected by regulatory reviewers for being a "black box." How can I improve its interpretability?

Answer: Regulatory agencies are increasingly emphasizing model transparency [4]. To address this, integrate Explainable AI (XAI) techniques post-hoc to elucidate your model's predictions.

- Recommended Action: Apply model-agnostic interpretation tools such as SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) [5]. These methods quantify the contribution of each input feature (e.g., molecular descriptor, protein sequence feature) to individual predictions.

- Protocol:

- Model Training: Train and finalize your deep learning model as usual.

- Interpretation Setup: Select a representative subset of your validation data (both correct and incorrect predictions).

- SHAP Analysis: Use the SHAP library to calculate Shapley values for each prediction in the subset. This reveals how much each feature moved the model's output from a base value.

- Visualization & Documentation: Generate summary plots (e.g., feature importance bar plots, dependence plots) and include them in your regulatory submission. Document for reviewers which molecular features the model consistently associates with higher predicted toxicity.

- Expected Outcome: You provide clear, auditable evidence of the model's decision-making logic, moving it from an opaque system to a substantiated tool, thereby addressing key regulatory concerns about effectiveness and safety [5] [4].
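
To make the "base value plus feature contributions" idea concrete, the following minimal sketch computes exact Shapley values by brute force for a toy scoring function. Everything in it is an illustrative assumption: `toy_toxicity_model`, the three binary feature names, and the all-zeros baseline are hypothetical, and the enumeration is not how the SHAP library works internally (it relies on faster approximations and model-specific algorithms).

```python
from itertools import combinations
from math import factorial


def toy_toxicity_model(features):
    """Hypothetical scoring function standing in for a trained model."""
    score = 0.1
    score += 0.5 * features["nitro_group"]
    score += 0.2 * features["logp_high"]
    # Interaction term: the aromatic ring only matters when a nitro group is present.
    score += 0.1 * features["nitro_group"] * features["aromatic_ring"]
    return score


def exact_shapley(model, instance, baseline):
    """Enumerate every feature coalition; feasible only for a handful of features."""
    names = list(instance)
    n = len(names)

    def value(subset):
        # Features outside the coalition are replaced by their baseline value.
        mixed = {k: (instance[k] if k in subset else baseline[k]) for k in names}
        return model(mixed)

    phi = {}
    for name in names:
        others = [k for k in names if k != name]
        total = 0.0
        for size in range(n):
            for subset in combinations(others, size):
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                total += weight * (value(set(subset) | {name}) - value(set(subset)))
        phi[name] = total
    return phi


instance = {"nitro_group": 1, "logp_high": 1, "aromatic_ring": 1}
baseline = {"nitro_group": 0, "logp_high": 0, "aromatic_ring": 0}

phi = exact_shapley(toy_toxicity_model, instance, baseline)
base_value = toy_toxicity_model(baseline)
prediction = toy_toxicity_model(instance)

for name, contribution in phi.items():
    print(f"{name}: {contribution:+.3f}")
print(f"base value {base_value:.2f} + contributions = {prediction:.2f}")
```

The contributions sum to the difference between the prediction and the base value, which is the additivity property that makes Shapley-based summaries auditable.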

FAQ 2: During the validation of our AI-predicted drug target, wet-lab experiments fail to show the expected biological activity. How do I troubleshoot this disconnect?

Answer: A failure in experimental validation often stems from issues in training data or model generalization, not just the experiment itself.

- Troubleshooting Checklist:

- Interrogate Training Data: Was the model trained on data relevant to your specific cellular context or disease model? Check for data bias or lack of diversity in the source databases (e.g., ChEMBL, PubChem) [6].

- Analyze False Positive Rates: Re-examine your model's performance metrics. A high validation accuracy might mask a high false positive rate for the specific class of targets you are testing.

- Employ Interpretability Methods: Use XAI techniques (see FAQ 1) on the failed prediction. This may reveal that the model's prediction was driven by spurious correlations or features not biologically relevant to your experimental setup.

- Review the Biological Hypothesis: Use AI-driven network pharmacology (AI-NP) to map the predicted target within a broader signaling network. The target might require a specific cellular state or co-factor not present in your assay [7].

- Next Steps: Based on this analysis, you may need to retrain your model with more context-specific data, refine the experimental protocol, or reject the target as a model artifact—saving valuable R&D resources.
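
The checklist item on false positive rates can be sketched as follows. This is a minimal illustration under stated assumptions: the record layout `(target_class, predicted_active, confirmed_active)`, the class labels, and the counts are all hypothetical and do not come from any real dataset.

```python
from collections import defaultdict


def false_positive_rate_by_class(records):
    """Return FP / (FP + TN) for each target class.

    records: iterable of (target_class, predicted_active, confirmed_active).
    """
    counts = defaultdict(lambda: {"fp": 0, "tn": 0})
    for target_class, predicted, confirmed in records:
        if not confirmed:  # only experimentally inactive targets enter the FPR
            counts[target_class]["fp" if predicted else "tn"] += 1
    return {cls: c["fp"] / (c["fp"] + c["tn"]) for cls, c in counts.items()}


def overall_accuracy(records):
    """Fraction of records where prediction and experiment agree."""
    records = list(records)
    correct = sum(1 for _, predicted, confirmed in records if predicted == confirmed)
    return correct / len(records)


# Hypothetical validation results, invented for illustration only.
records = (
    [("kinase", True, True)] * 5
    + [("kinase", False, False)] * 5
    + [("gpcr", True, True)] * 1
    + [("gpcr", False, False)] * 1
    + [("gpcr", True, False)] * 3
)

accuracy = overall_accuracy(records)
fpr = false_positive_rate_by_class(records)
print(f"overall accuracy: {accuracy:.2f}")
for cls, rate in fpr.items():
    print(f"{cls}: false positive rate {rate:.2f}")
```

In this invented example the aggregate accuracy looks acceptable while one target class carries most of the false positives, which is the pattern the checklist asks you to look for.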

FAQ 3: Our graph neural network (GNN) for predicting drug-polypharmacy side effects is overfitting despite using dropout. What strategies can improve generalization?

Answer: Overfitting in GNNs, especially with complex biomedical graph data, is common. A multi-faceted approach is required.

- Strategic Solutions:

- Graph Augmentation: Introduce noise to your training graphs through techniques like random edge dropping, node feature masking, or subgraph sampling. This creates a more diverse training set and improves robustness [7].

- Simpler Architectures: Counterintuitively, reducing the number of GNN layers can prevent over-smoothing where node features become indistinguishable. Start with 2-3 layers and increase only if performance plateaus.

- Explainability-Guided Pruning: Use GNN explainers (e.g., GNNExplainer) to identify which parts of the molecular interaction graph are most influential for predictions. If the model is relying on a very small, non-generalizable subgraph, you can adjust the architecture or training to encourage broader feature utilization [7].

- Leverage Pre-trained Models: Utilize foundation models pre-trained on large-scale biological graphs (e.g., of protein-protein interactions). Fine-tuning such a model on your specific task often requires less data and generalizes better than training from scratch [6].

- Validation: Implement rigorous k-fold cross-validation at the graph level (ensuring molecules from the same scaffold are not split across training and test sets) to get a true estimate of generalization error.
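
A scaffold-aware split, as described in the validation step, might look like the sketch below. It assumes each molecule already carries a scaffold identifier (for example, a Murcko scaffold string computed with a cheminformatics toolkit); the scaffold labels and the greedy fold assignment are illustrative choices, not a prescribed method. Where scikit-learn is available, its `GroupKFold` class serves the same purpose.

```python
from collections import defaultdict


def scaffold_group_kfold(scaffold_ids, n_splits):
    """Yield (train_indices, test_indices) with no scaffold shared across the two."""
    groups = defaultdict(list)
    for index, scaffold in enumerate(scaffold_ids):
        groups[scaffold].append(index)

    # Greedy balancing: place the largest scaffold groups first,
    # always into the fold that currently holds the fewest molecules.
    folds = [[] for _ in range(n_splits)]
    for scaffold in sorted(groups, key=lambda s: (-len(groups[s]), s)):
        smallest = min(range(n_splits), key=lambda i: len(folds[i]))
        folds[smallest].extend(groups[scaffold])

    for i in range(n_splits):
        test = sorted(folds[i])
        train = sorted(j for k, fold in enumerate(folds) if k != i for j in fold)
        yield train, test


# Hypothetical scaffold labels for eight molecules.
scaffold_ids = [
    "benzene", "benzene", "indole", "indole",
    "indole", "pyridine", "quinoline", "quinoline",
]

splits = list(scaffold_group_kfold(scaffold_ids, n_splits=3))
for train, test in splits:
    print("train:", train, "test:", test)
```

Because whole scaffold groups move together, fold sizes can be uneven; that is usually an acceptable price for an honest estimate of generalization to unseen chemotypes.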

Experimental Protocols for Interpretable AI Research

Protocol 1: Bibliometric Analysis of XAI Trends in Drug Research

This methodology, adapted from recent literature, allows for the systematic mapping of the evolving XAI field [5].

- Data Source & Search Strategy: Query the Web of Science Core Collection using a Boolean search string: `TS=(AI OR "Artificial Intelligence" OR "machine learning") AND TS=(interpretable OR explainable OR SHAP OR LIME) AND TS=(drug OR pharma*)`. Set a timeframe (e.g., 2002-2024) and filter for articles/reviews.

- Screening & Eligibility: Two independent reviewers screen titles/abstracts, then full texts, for relevance to drug research employing interpretable models. Disagreements are resolved by a third reviewer.

- Data Extraction & Analysis: Use tools like Microsoft Excel and VOSviewer for basic statistics and network visualization. Extract: publication year, country, institution, journal, authors, citations, and keywords.

- Trend Synthesis: Analyze publication growth, identify leading countries/institutions, map collaboration networks, and perform keyword co-occurrence analysis to spot research hotspots (e.g., "SHAP," "toxicity prediction," "network pharmacology").
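
The keyword co-occurrence step can be prototyped before loading data into a dedicated tool. The sketch below is a minimal illustration that assumes each exported record has already been reduced to a list of author keywords; the three sample records are invented for demonstration.

```python
from collections import Counter
from itertools import combinations


def keyword_cooccurrence(records):
    """Count how often each unordered keyword pair appears in the same record."""
    pair_counts = Counter()
    for keywords in records:
        # Normalize case and whitespace so "SHAP" and "shap" count as one keyword.
        unique = sorted({k.strip().lower() for k in keywords if k.strip()})
        pair_counts.update(combinations(unique, 2))
    return pair_counts


# Hypothetical keyword lists, one per publication.
records = [
    ["SHAP", "toxicity prediction", "machine learning"],
    ["shap", "Toxicity Prediction"],
    ["network pharmacology", "machine learning"],
]

pairs = keyword_cooccurrence(records)
for (first, second), count in pairs.most_common(3):
    print(f"{first} + {second}: {count}")
```

The resulting pair counts are the edge weights of a co-occurrence network, which is the kind of structure bibliometric software then lays out and clusters.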

Protocol 2: Constructing an AI-Driven Network Pharmacology (AI-NP) Pipeline for Multi-Scale Mechanism Elucidation

This protocol outlines steps to use AI for revealing the "multi-component, multi-target, multi-pathway" mechanisms of complex therapeutics like Traditional Chinese Medicine [7].

- Multi-Source Data Integration: Compile heterogeneous data: active compounds from TCMSP, protein targets from UniProt, disease genes from GeneCards, and pathways from KEGG. Represent this as a heterogeneous network graph.

- Target Prediction with Interpretable ML: Train a model (e.g., a Graph Neural Network or Random Forest with SHAP) on known compound-target interactions. Input features for a new compound to predict its potential targets. Use SHAP values to explain which chemical substructures contribute to each target prediction.

- Network Construction & Analysis: Integrate predicted and known targets into a protein-protein interaction (PPI) network. Use community detection algorithms to identify functional modules. Enrich these modules for GO biological processes and KEGG pathways.

- Multi-Scale Validation: Design experiments to validate predictions across scales: molecular (binding assays), cellular (knockdown/overexpression of key targets), and in vivo (disease model phenotypes). The AI-generated explanations (e.g., key substructures, critical network nodes) should guide the design of these validation experiments.
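
The enrichment step in the network analysis is commonly framed as a one-sided hypergeometric test. The sketch below shows that calculation under simplifying assumptions: gene identifiers are placeholders, the module and the pathway are both assumed to be subsets of a background of known size, and no multiple-testing correction is applied.

```python
from math import comb


def enrichment_p_value(module_genes, pathway_genes, background_size):
    """Return (overlap, P[X >= overlap]) under the hypergeometric null."""
    module = set(module_genes)
    pathway = set(pathway_genes)
    overlap = len(module & pathway)
    n, K, N = len(module), len(pathway), background_size

    # Sum the upper tail: probability of drawing at least `overlap`
    # pathway genes when sampling n genes from the background.
    tail = sum(
        comb(K, k) * comb(N - K, n - k)
        for k in range(overlap, min(n, K) + 1)
    )
    return overlap, tail / comb(N, n)


# Placeholder identifiers, not real genes.
pathway = [f"G{i}" for i in range(1, 11)]   # 10 genes annotated to a pathway
module = ["G1", "G2", "G3", "G50", "G51"]   # 5 genes in a detected module

overlap, p_value = enrichment_p_value(module, pathway, background_size=100)
print(f"overlap = {overlap}, p = {p_value:.4f}")
```

When many pathways are tested against many modules, the raw p-values should be adjusted (for example with a false discovery rate procedure) before any module is labeled as enriched.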

Research Data and Reagent Solutions

This table summarizes quantitative trends in the field, highlighting its rapid evolution and geographic distribution.

| Year | Annual Publications (TP) | Cumulative Publications | Average Citations per Paper (TC/TP) | Key Developmental Phase |
|---|---|---|---|---|
| 2017 | < 5 | < 20 | Low | Early Exploration |
| 2019-2021 | ~36.3 | Rapid Growth | > 10 | Rapid Growth & High Quality |
| 2022-2024 | > 100 | > 500 | Remains High | Steady Development & Scaling |

This table compares the volume and impact of research output by country, identifying key contributors.

| Rank | Country | Total Publications (TP) | Total Citations (TC) | Citations per Paper (TC/TP) | Notable Research Focus |
|---|---|---|---|---|---|
| 1 | China | 212 | 2,949 | 13.91 | Broad applications in chemical and biological drug discovery |
| 2 | USA | 145 | 2,920 | 20.14 | AI-driven target identification and clinical trial optimization |
| 3 | Germany | 48 | 1,491 | 31.06 | Multi-target compounds, drug response prediction |
| 4 | Switzerland | 19 | 645 | 33.95 | Molecular property prediction, drug safety |

The Scientist's Toolkit: Key Research Reagent Solutions

This table lists essential digital reagents and platforms for conducting interpretable AI pharmacology research.

| Item Name | Type | Primary Function in Interpretable AI Research |
|---|---|---|
| SHAP Library | Software Library | Quantifies the contribution of each input feature to a model's prediction, providing local and global interpretability. |
| LIME | Software Framework | Approximates complex black-box models with locally interpretable models (e.g., linear regression) for individual predictions. |
| ChEMBL Database | Chemical Database | Provides curated bioactivity data for training and validating predictive models with structured, high-quality labels. |
| TCMSP Database | Traditional Medicine Database | Offers curated information on herbal compounds, targets, and diseases for network pharmacology studies. |
| VOSviewer / CiteSpace | Bibliometric Software | Enables visualization and analysis of scientific literature networks to identify research trends and collaborations. |
| Graph Neural Network (GNN) Frameworks (e.g., PyTorch Geometric) | ML Framework | Models molecular structures and biological networks as graphs, capturing relational data crucial for pharmacology. |
| AlphaFold / ESM-2 | Foundation Model | Provides highly accurate protein structure predictions, enabling structure-based interpretable target analysis. |

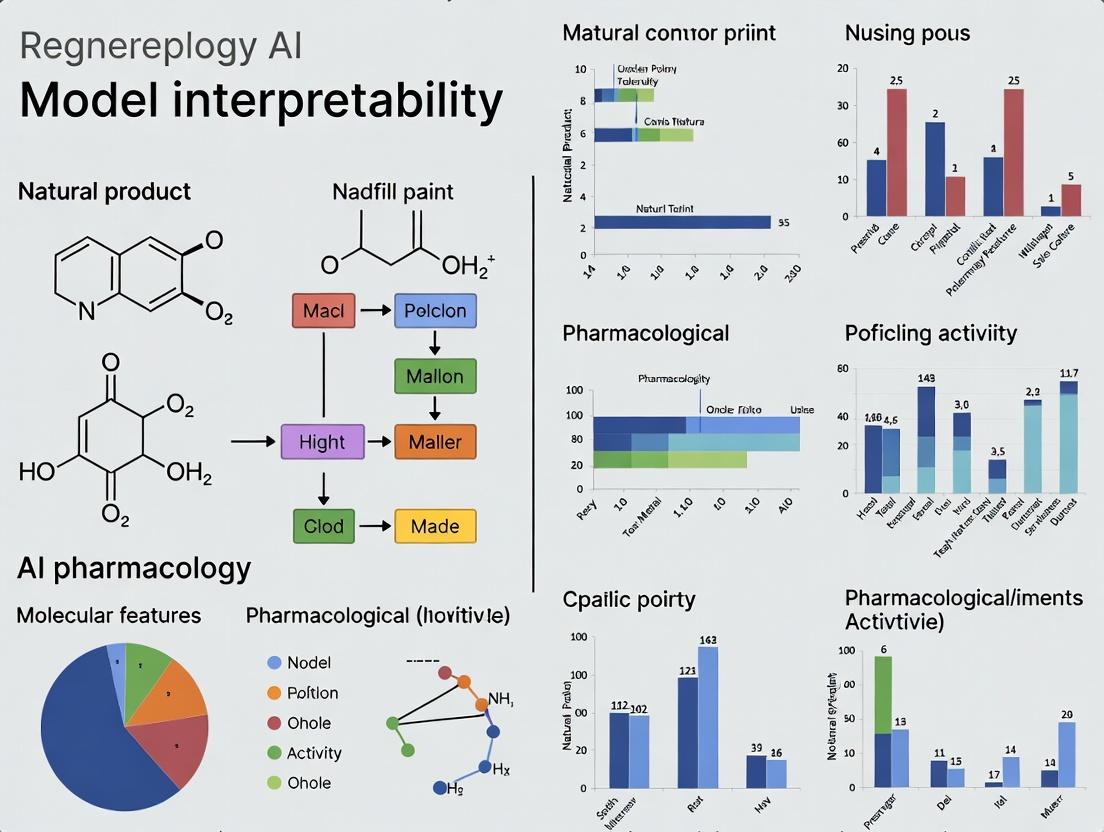

Visualizing Workflows and Relationships

[Diagram: Interpretable AI Workflow in Pharmacology]

[Diagram: From Black Box to Clinical Insight with XAI]

Interpretability as a Core Objective of AI Alignment (The RICE Framework)

Welcome to the AI Pharmacology Interpretability Support Center

This support center is designed for researchers, scientists, and drug development professionals integrating artificial intelligence into pharmacology workflows. A core thesis of modern AI-driven drug discovery is that improving model interpretability is not just a technical enhancement but a fundamental requirement for aligning complex systems with human values and scientific rigor [8]. This center operates within the RICE framework for AI alignment—encompassing Robustness, Interpretability, Controllability, and Ethicality—focusing specifically on providing actionable solutions for interpretability challenges [8].

The following guides address common technical issues, provide step-by-step protocols, and answer critical questions to ensure your AI models are transparent, trustworthy, and aligned with the critical safety standards required for biomedical research and development.

Diagnostics & System Status Check

Before beginning deep troubleshooting, use this quick diagnostic table to identify potential areas of concern in your AI pharmacology pipeline.

| Diagnostic Area | Common Symptoms & Warnings | Likely Associated RICE Component |
|---|---|---|
| Model Predictions | Unexplained drastic changes in output with minor input variations; inability to articulate why a compound was flagged as active/toxic. | Robustness, Interpretability [8] |
| Data Pipeline | Model performance degrades sharply on new demographic cohorts or real-world data vs. trial data; alerts for potential bias. | Robustness, Ethicality [8] [9] |
| Stakeholder Trust | Clinicians or regulatory reviewers reject model conclusions due to "black box" opacity; difficulty in peer review. | Interpretability, Controllability [10] [11] |
| Validation & Compliance | Struggling to meet documentation requirements for regulatory submissions (e.g., FDA draft guidance on AI). | Interpretability, Controllability, Ethicality [11] [9] |

Support Context: Traditional drug development is a high-stakes endeavor, with an average cost approaching $2.6 billion and a timeline of about 10 years, yet success rates from trial phases to market are often below 10% [8]. AI promises to transform this landscape but introduces new risks. Uninterpretable models can misguide research, lead to resource waste, and potentially allow unsafe candidates to progress [8]. Implementing interpretability is therefore a core objective for achieving AI alignment in this sensitive field.

Troubleshooting Guides

Guide 1: Problem – "My Deep Learning Model is a Black Box; I Cannot Explain Its Predictions to My Pharmacology Team."

Root Cause: Many high-performance models (e.g., deep neural networks) are inherently complex. The lack of transparency reduces trust and hinders scientific validation, which is critical for regulatory pathways and clinical adoption [10] [11].

Step-by-Step Solution: Implement a Model-Agnostic Interpretation Layer. This protocol adds explainability without retraining your core model.

Select an Explanation Tool: Integrate one of the following post-hoc explanation frameworks into your workflow:

- SHAP (SHapley Additive exPlanations): Ideal for quantifying the contribution of each input feature (e.g., molecular descriptor, gene expression level) to a single prediction. Use it to generate force plots or summary plots [11] [5].

- LIME (Local Interpretable Model-agnostic Explanations): Best for creating local, interpretable surrogate models (like linear models) to approximate the black-box model's predictions for a specific instance [10] [11].

- DeepLIFT (Deep Learning Important FeaTures): Specifically designed for deep neural networks, it assigns contribution scores by comparing the activation of each neuron to a reference activation [10].

Generate and Visualize Explanations: Run your model's prediction on a compound of interest through the chosen framework. For a toxicity prediction, the output should clearly list which chemical substructures or functional groups most strongly drove the "toxic" classification.

Expert-in-the-Loop Validation: Present the explanation (e.g., "This ester linkage and aromatic ring contributed 70% to the high toxicity score") to your team's medicinal chemists or pharmacologists. Their domain expertise is crucial for validating whether the model's reasoning aligns with established scientific knowledge [11].

Document for Compliance: Archive the explanation reports alongside the model predictions. This creates an audit trail that supports FDA guidance requirements for AI transparency and explainability in regulatory submissions [9].
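
One possible shape for such an audit record is sketched below. It is an assumption-laden illustration, not a regulatory template: the field names, the compound identifier, the model version string, and the attribution values are all hypothetical, and the percentage shares are simply each feature's absolute attribution divided by the sum of absolute attributions.

```python
import hashlib
import json


def build_explanation_record(compound_id, prediction, attributions, model_version):
    """Bundle a prediction with its feature attributions and a content hash."""
    total = sum(abs(v) for v in attributions.values())
    shares = {
        name: round(100 * abs(value) / total, 1) if total else 0.0
        for name, value in attributions.items()
    }
    record = {
        "compound_id": compound_id,
        "model_version": model_version,
        "prediction": prediction,
        "attributions": attributions,
        "percent_share": shares,
    }
    # Hash the canonical JSON so later edits to the archived record are detectable.
    payload = json.dumps(record, sort_keys=True).encode("utf-8")
    record["sha256"] = hashlib.sha256(payload).hexdigest()
    return record


def verify_record(record):
    """Recompute the hash over everything except the stored digest."""
    body = {k: v for k, v in record.items() if k != "sha256"}
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest() == record["sha256"]


# Hypothetical attribution scores for one compound.
record = build_explanation_record(
    compound_id="CMPD-0001",
    prediction={"label": "toxic", "score": 0.91},
    attributions={"ester_linkage": 0.28, "aromatic_ring": 0.42, "hydroxyl_group": -0.30},
    model_version="tox-model-demo",
)
print(json.dumps(record, indent=2))
print("intact:", verify_record(record))
```

Storing the model version and a digest next to each explanation lets a reviewer confirm which model produced a given rationale and that the archived report has not been altered.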

Guide 2: Problem – "My Model Performed Well in Validation but Fails Badly on New, Real-World Patient Data."

Root Cause: Distributional Shift and Lack of Robustness. The model has likely overfitted to the training data's specific distribution and fails to generalize to data from different sources, populations, or experimental conditions [9].

Step-by-Step Solution: Enhance Robustness Through Data-Centric and Architectural Strategies.

Audit Training Data for Bias and Coverage: Analyze the demographic, genetic, and clinical characteristics of your training set. Use statistics to identify under-represented subgroups. This addresses both Robustness (improving generalization) and Ethicality (mitigating bias) [8] [9].

Employ Advanced Modeling Techniques: Consider implementing more robust model architectures or paradigms:

- Causal Inference Models: Move beyond correlation to model cause-effect relationships, which are more stable across different environments. Frameworks for estimating Conditional Average Treatment Effects (CATE) can personalize treatment effect predictions [12].

- Symbolic Regression & ODE Discovery: For dynamical systems (e.g., pharmacokinetic/pharmacodynamic modeling), use methods like the Data-Driven Discovery (D3) framework or Deep Generative Symbolic Regression to discover interpretable, closed-form equations (e.g., ordinary differential equations) from data. These are inherently more interpretable and generalizable than black-box neural networks for such tasks [12].

Implement Continuous Monitoring: Deploy systems to detect Out-Of-Distribution (OOD) data in real-time before making predictions. Flag any input data that falls outside the model's validated domain to prevent unreliable predictions [9].
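
A very simple form of out-of-distribution flagging is a per-feature z-score check against the training data, sketched below. The feature columns (molecular weight and logP), the training rows, and the threshold of 3 standard deviations are illustrative assumptions; production systems typically use richer applicability-domain or density-based methods.

```python
from statistics import mean, stdev


def fit_domain(training_rows):
    """Record the mean and sample standard deviation of every feature column."""
    columns = list(zip(*training_rows))
    return [(mean(column), stdev(column)) for column in columns]


def out_of_domain_features(row, domain, z_threshold=3.0):
    """Return the indices of features lying far outside the training range."""
    flagged = []
    for index, (value, (mu, sigma)) in enumerate(zip(row, domain)):
        if sigma == 0:
            # A constant training feature: any deviation is out of domain.
            if value != mu:
                flagged.append(index)
        elif abs(value - mu) / sigma > z_threshold:
            flagged.append(index)
    return flagged


# Hypothetical training data: [molecular_weight, logP].
training_rows = [[300.0, 2.1], [320.0, 2.5], [310.0, 1.9], [330.0, 2.3]]
domain = fit_domain(training_rows)

typical = [318.0, 2.2]
unusual = [900.0, 2.2]
print("typical compound flags:", out_of_domain_features(typical, domain))
print("unusual compound flags:", out_of_domain_features(unusual, domain))
```

Inputs that raise any flag can be routed to manual review rather than scored, so the model is not asked to extrapolate silently.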

Frequently Asked Questions (FAQs)

Q1: We're a small biotech lab. Are these interpretability tools feasible for us without a large AI team?

A: Yes. Many leading explainability tools like SHAP and LIME are open-source and have accessible Python libraries (e.g., shap, lime). Start by applying them to your most critical models, such as toxicity predictors or patient stratification algorithms. Focus on one tool at a time and leverage online tutorials and communities for support [11].

Q2: What's the practical difference between interpretability and explainability in a drug discovery context?

A: In practice, these terms are often used interchangeably. However, a useful distinction is:

- Interpretability: Refers to designing a model that is inherently simple enough to be understood from its structure (e.g., a short decision tree, a linear model with few coefficients).

- Explainability: Refers to using external methods to post-hoc explain a complex model's predictions (e.g., using SHAP to explain a deep neural network).

In AI pharmacology, you often need explainability to unlock the power of complex models while meeting the need for interpretability demanded by science and regulation [10].

Q3: How do I balance model accuracy with interpretability? Sometimes the most accurate model is the least interpretable.

A: This is a key trade-off. The strategy is not to abandon complex models but to implement a tiered system:

- Use interpretable models (like logistic regression, decision trees) for initial screening and to build foundational trust.

- Deploy high-accuracy, complex models (like deep learning) for specific, high-value tasks.

- Pair every complex-model prediction with an explanation from a tool like SHAP or LIME, and treat this as a mandatory step.

- Define performance thresholds where the accuracy gain of a "black box" justifies the additional validation effort required for its explanations [11].

Q4: How is the regulatory landscape adapting to AI, and what does this mean for interpretability?

A: Regulatory bodies are actively developing frameworks. The FDA's 2025 draft guidance is a key example, establishing a risk-based assessment for AI in clinical trials [9]. It emphasizes:

- Transparency and Explainability: Requiring that AI outputs be interpretable so clinicians can understand and validate them.

- Documentation: Demanding thorough validation, including details on training data and model performance across subgroups.

- Risk Management: Categorizing AI tools by their potential impact on patient safety. For high-risk applications (e.g., direct dosing recommendations), interpretability standards will be most stringent [9].

Proactively building interpretability into your workflow is essential for future regulatory compliance.

Experimental Protocols & Methodologies

Protocol: Implementing SHAP for Molecular Property Prediction

Objective: To explain the prediction of a machine learning model that classifies small molecules as "active" or "inactive" against a target protein.

Materials: Trained classifier model (e.g., Random Forest, GNN), dataset of molecular structures (e.g., SMILES strings), shap Python library.

Method:

- Feature Representation: Convert molecular structures into a numerical feature vector (e.g., using RDKit descriptors, Morgan fingerprints).

- Initialize SHAP Explainer: Choose an appropriate explainer. For tree-based models, use `shap.TreeExplainer()`. For neural networks, use `shap.KernelExplainer()` or `shap.DeepExplainer()`.

- Calculate SHAP Values: Run the explainer on a subset of your validation data (`shap_values = explainer.shap_values(X_val)`). These values quantify each feature's contribution to the prediction for every sample.

- Visualization & Analysis:

  - Use `shap.summary_plot(shap_values, X_val)` to see the global feature importance.

  - Use `shap.force_plot()` on individual molecules to visualize how features pushed the prediction from the base value to the final output.

  - Correlate high-impact features with known pharmacophores or toxicophores from pharmacological literature.

Interpretation: This protocol provides both a global view of what the model considers important and a local explanation for any single compound, bridging the gap between data science and medicinal chemistry [11] [5].

The Scientist's Toolkit: Key Research Reagent Solutions

The following table lists essential software and frameworks for implementing interpretability in AI pharmacology research.

| Tool / Framework Name | Primary Function | Key Application in Pharmacology | Reference / Source |
|---|---|---|---|
| SHAP (SHapley Additive exPlanations) | Quantifies the contribution of each input feature to a model's prediction. | Explaining predictions of toxicity, binding affinity, or patient response; identifying critical molecular descriptors. | [11] [5] |
| LIME (Local Interpretable Model-agnostic Explanations) | Creates a local, interpretable surrogate model to approximate a black-box model's prediction for a specific instance. | Explaining "why" a specific compound was classified as a hit or why a particular patient was stratified into a high-risk group. | [10] [11] |
| DeepLIFT | Assigns contribution scores to input features for deep neural networks by comparing neuron activations to a reference. | Interpreting deep learning models used for image-based histopathology analysis or complex biomarker identification. | [10] |
| Data-Driven Discovery (D3) Framework | Uses LLMs to iteratively discover and refine interpretable models (e.g., ODEs) of dynamical systems from data. | Discovering novel pharmacokinetic/pharmacodynamic models for precision dosing and understanding disease progression. | [12] |
| Symbolic Regression / ODE Discovery Methods | Discovers closed-form mathematical equations (e.g., ODEs) that underlie observed data. | Modeling drug concentration-time profiles, enzyme kinetics, and longitudinal treatment effects with interpretable equations. | [12] |

Visual Reference: Workflows & Frameworks

Diagram 1: The RICE Framework & Interpretability Workflow. This diagram illustrates how Interpretability (a core pillar of the RICE framework for AI Alignment) is operationally integrated into a pharmacology AI pipeline. The workflow shows how data flows through an AI model to an Explainable AI (XAI) tool, and must be validated by a human expert to produce aligned, trustworthy outputs [8] [11].

Diagram 2: Strategic Path for Implementing XAI. This flowchart provides a pragmatic, step-by-step path for integrating Explainable AI (XAI) into drug research and development processes, leading to key outcomes like stakeholder trust and regulatory readiness [11] [9].

Performance Data & Validation Benchmarks

Table: Measurable Impact of AI and Interpretability in Drug Development. Data synthesized from current research and market analyses [8] [9] [5].

| Metric | Traditional Benchmark | AI-Enhanced Benchmark with Interpretability | Notes & Source |
|---|---|---|---|
| Development Timeline | ~10 years from concept to market [8] | Potentially reduced by 30-50% with AI acceleration [13]. | Interpretability is key for avoiding delays due to regulatory or validation questions. |
| Clinical Trial Patient Screening | Manual review, time-consuming. | AI screening can reduce time by ~42.6% with 87.3% accuracy in matching criteria [9]. | XAI explains why patients are matched, enabling audit and bias checking. |
| Market Growth (AI in Clinical Trials) | N/A | Market size grew from $7.73B (2024) to $9.17B (2025), projected to reach $21.79B by 2030 [9]. | Growth indicates sustained investment and confidence in the field. |
| Research Publication Volume (XAI in Pharma) | Minimal before 2018. | Annual publications exceeded 100 by 2022, with a 19.5% annual growth rate (2019-2024) [5]. | Reflects explosive academic and industrial focus on solving interpretability. |
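
For readers who want to sanity-check the market figures in the table, the short sketch below derives the compound annual growth rate they imply. The helper name `cagr` is introduced here for illustration; the three dollar figures are the ones quoted in the table, and the derived rates are arithmetic consequences of those figures rather than values reported by the cited source.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years`."""
    return (end_value / start_value) ** (1 / years) - 1


# Figures in billions of USD, as quoted in the table above.
growth_2024_2025 = cagr(7.73, 9.17, years=1)
implied_2025_2030 = cagr(9.17, 21.79, years=5)

print(f"2024 to 2025 growth: {growth_2024_2025:.1%}")
print(f"implied 2025 to 2030 CAGR: {implied_2025_2030:.1%}")
```

Both rates come out at a little under 19% per year, so the 2030 projection amounts to assuming that the most recent year's growth continues unchanged.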

The integration of Artificial Intelligence (AI) into drug discovery and development represents a transformative shift, enhancing efficiency, accuracy, and success rates across the pharmaceutical pipeline [14]. However, the advancement from powerful predictive models to trusted clinical tools is hindered by a central challenge: the "black box" problem. For AI to fulfill its potential in critical, life-sciences applications, it must earn the trust of three key stakeholder groups—Regulators, Clinicians, and Scientists—each with distinct but overlapping needs for model interpretability [15].

This technical support center is designed to address this gap. Framed within the broader thesis that robust model interpretability is the cornerstone of stakeholder trust, it provides researchers and drug development professionals with practical resources. The following troubleshooting guides and FAQs are crafted to help you diagnose, understand, and resolve common interpretability challenges, ensuring your AI models are not only accurate but also transparent, reliable, and ready for real-world application [16].

Troubleshooting Guide: Common Interpretability Issues in AI Pharmacology

This guide follows a systematic approach to identify, diagnose, and resolve frequent interpretability challenges that can undermine stakeholder confidence [17] [18].

| Problem Area | Common Symptoms | Potential Root Cause | Recommended Diagnostic Action | Solution & Fix |
|---|---|---|---|---|
| 1. Low Clinician Adoption | Clinicians ignore model predictions; feedback cites a lack of understandable rationale [16]. | Model provides only a final prediction (e.g., "high risk") without patient-specific feature contributions. | Review model output format with a clinical partner. Is the reasoning clear? | Implement local explainability methods (e.g., SHAP force plots, LIME) to show how specific patient variables (e.g., age, biomarker X) drove the prediction [16]. |
| 2. Regulatory Submission Hurdles | Regulatory queries focus on model generalizability, bias, and validation across sub-populations. | Insufficient documentation of model development, including bias audits and out-of-distribution (OOD) testing [15]. | Conduct a gap analysis of your model dossier against emerging FDA/EMA discussion papers on AI/ML. | Integrate a robust model card detailing performance across demographics. Implement and document OOD detection frameworks to identify non-generalizable data [15]. |
| 3. Scientist Skepticism of "Black Box" Models | Discrepancy between model-identified biomarkers and known biological pathways; difficulty in forming a testable hypothesis. | Complex deep learning models lack global interpretability, obscuring overall feature importance. | Perform a feature importance analysis (e.g., permutation importance, SHAP summary plots) and compare results to established domain knowledge [15]. | Use global explainability techniques to identify top predictive features. Combine with hybrid modeling (e.g., integrating known PK/PD equations) to ground predictions in mechanistic science [15]. |
| 4. Model Performance Degradation in Real-World Data | High accuracy during internal validation plummets upon deployment with new hospital EHR data. | Covariate shift or data drift: the real-world data distribution differs from the training set. | Use statistical tests (e.g., Kolmogorov-Smirnov) to compare distributions of key input features between training and new data batches. | Establish a continuous monitoring pipeline for data drift. Retrain models periodically with updated, curated data. Employ domain adaptation techniques [15]. |
| 5. Inconsistent Explanations | Slightly different input data for the same patient yields vastly different explanations, damaging trust. | Instability in post-hoc explanation methods (common with some implementations of LIME). | Generate multiple explanations for several similar input profiles and assess variance. | Switch to more stable explanation methods like SHAP or use intrinsically interpretable models (e.g., decision trees, linear models) where high accuracy permits [16]. |
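
The drift diagnostic in row 4 can be illustrated with a hand-rolled two-sample Kolmogorov-Smirnov statistic. This is a minimal sketch: the patient-age samples are invented, only the statistic (not a p-value) is computed, and any alerting threshold would be a project-specific assumption. Where SciPy is available, `scipy.stats.ks_2samp` provides the full test.

```python
from bisect import bisect_right


def ks_statistic(sample_a, sample_b):
    """Largest gap between the empirical CDFs of two samples (0 = identical, 1 = disjoint)."""
    a = sorted(sample_a)
    b = sorted(sample_b)
    largest_gap = 0.0
    # The empirical CDFs only change at observed values, so checking those is enough.
    for x in a + b:
        cdf_a = bisect_right(a, x) / len(a)
        cdf_b = bisect_right(b, x) / len(b)
        largest_gap = max(largest_gap, abs(cdf_a - cdf_b))
    return largest_gap


# Hypothetical patient ages in the training cohort and in a new hospital batch.
training_age = [34, 41, 45, 52, 58, 60]
new_batch_age = [71, 75, 78, 80, 83, 88]

drift = ks_statistic(training_age, new_batch_age)
no_drift = ks_statistic(training_age, training_age)
print(f"KS statistic vs. new batch: {drift:.2f}")
print(f"KS statistic vs. itself:    {no_drift:.2f}")
```

Running this check per feature on every incoming batch gives an early, interpretable signal of covariate shift before accuracy metrics degrade.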

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between "interpretability" and "explainability," and why does it matter for regulators? A: While often used interchangeably, a distinction exists. Interpretability refers to the ability to understand a model's mechanics intuitively (more inherent to simpler models). Explainability involves post-hoc techniques to articulate a complex model's behavior. For regulators, both are crucial. They require an explainable audit trail of a model's decisions and evidence that its interpretation aligns with known biological and clinical principles, ensuring safety and efficacy [15] [14].

Q2: We have a high-performing deep learning model for toxicity prediction. How can we make its predictions credible to our internal discovery scientists? A: Bridge the gap between correlation and causation by using explainable AI (XAI) outputs to generate testable biological hypotheses. For example, if your model highlights a specific molecular substructure as predictive of toxicity, use SHAP dependence plots to visualize this relationship. Scientists can then design wet-lab experiments to validate if that substructure causes the toxic effect, turning model output into a catalyst for traditional research [15] [16].

Q3: What are the minimum interpretability deliverables needed before discussing an AI-based diagnostic tool with clinical trial investigators? A: Clinicians need concise, actionable insights. Prepare: 1) Local Explanations: A clear display showing the top 3-5 patient factors contributing to the individual prediction. 2) Contextual Performance: Accuracy, sensitivity, and specificity metrics relevant to the intended clinical use case. 3) Failure Mode Analysis: Examples of cases where the model is less confident or likely to err, demonstrating awareness of its limitations [16].

Q4: Our model for patient stratification in a clinical trial protocol was rejected by an ethics committee. What interpretability-related issues might be the cause? A: Ethics committees focus on fairness and bias. The rejection likely stemmed from insufficient analysis of the model's performance across protected subgroups (e.g., age, race, gender). You must provide a bias audit report using tools like AI Fairness 360, showing equitable performance. Furthermore, you need a clear plan for how patients and physicians will be informed about the AI's role in stratification and its limitations [15].

Q5: Which is better for interpretability in pharmacology: a simpler, inherently interpretable model or a complex "black box" model with post-hoc explanations? A: There is a trade-off, often called the "accuracy-interpretability trade-off." The best choice depends on the stakes and the stakeholder. For high-stakes, regulatory-facing decisions (e.g., dose optimization), a simpler, interpretable model (e.g., pharmacometric model enhanced with ML) may be preferable. For early-stage discovery tasks where patterns are subtle (e.g., novel biomarker identification), a complex model with rigorous, validated post-hoc explanations may be necessary. The key is to use the simplest model that achieves the required performance for the specific task [15] [16].

Core Workflow for Developing Interpretable AI Pharmacology Models

The following diagram outlines a systematic workflow for building AI models that integrate interpretability at every stage, directly addressing stakeholder needs.

AI Model Interpretability Development Workflow

Quantitative Landscape of AI in Pharmacology

The table below summarizes key quantitative data from recent research, highlighting the performance and applications of AI models where interpretability is critical for translation [15].

Table 1: Performance and Applications of AI Models in Drug Discovery & Development

| Application Area | Model/Task Description | Reported Performance | Key Interpretability Need |

|---|---|---|---|

| Preclinical PK Prediction | ML model predicting rat pharmacokinetics from chemical structure [15]. | Comparable accuracy to traditional PBPK modeling [15]. | Scientists need to understand which molecular descriptors drive PK to guide lead optimization. |

| Clinical Trial Optimization | Gradient boosting model to predict placebo response in Major Depressive Disorder trials [15]. | Improved prediction over linear models [15]. | Regulators and clinicians need to see factors driving placebo response to design cleaner, more efficient trials. |

| Toxicity & Safety Prediction | Interpretable ML model predicting cisplatin-induced acute kidney injury from EMR data [15]. | Improved clinical trust through interpretability [15]. | Clinicians require patient-specific risk factors to guide monitoring and intervention. |

| Personalized Health Monitoring | PersonalCareNet (CNN with attention) for health risk prediction [16]. | 97.86% accuracy on MIMIC-III dataset [16]. | Clinicians need local explanations (e.g., SHAP force plots) to trust and act on individual predictions [16]. |

| Target Discovery | AI pipeline identifying NAMPT as a therapeutic target in neuroendocrine prostate cancer [15]. | Validated computationally and experimentally [15]. | Scientists need to understand the biological pathways and evidence linking target to disease. |

Experimental Protocol: Implementing SHAP for Model Explanation

This protocol details a standard method for applying SHapley Additive exPlanations (SHAP) to explain a machine learning model's predictions, a common requirement for clinician and scientist stakeholders [16].

Objective: To generate both global and local explanations for a trained binary classifier (e.g., predicting drug response or adverse event risk) using the SHAP framework.

Materials:

- Trained machine learning model (e.g., XGBoost, Random Forest, or Neural Network).

- Test dataset (X_test).

- Python environment with the shap, numpy, pandas, and matplotlib libraries installed.

Procedure:

- Explainer Initialization:

  - Select an appropriate SHAP explainer based on your model.

  - For tree-based models (XGBoost, Random Forest), use shap.TreeExplainer(model).

  - For neural networks or other models, use shap.KernelExplainer(model.predict, X_train_summary) or shap.DeepExplainer for deep learning.

  - Pass a background dataset (often a sample from the training set) to set a baseline for feature contribution calculation.

- Calculate SHAP Values:

  - Compute SHAP values for the instances you wish to explain: shap_values = explainer.shap_values(X_test).

  - This generates a matrix of SHAP values equal in shape to X_test, where each value represents the contribution of that feature to the prediction for that instance. For some classifiers the explainer returns one such matrix per class; in that case, select the class of interest before plotting.

- Global Interpretability (For Scientists/Regulators):

  - Summary Plot: Execute shap.summary_plot(shap_values, X_test) to visualize the global feature importance and the distribution of each feature's impact across the dataset.

  - Bar Plot: Execute shap.summary_plot(shap_values, X_test, plot_type="bar") to get a simple bar chart of mean absolute SHAP values, showing overall feature importance.

- Local Interpretability (For Clinicians):

  - Force Plot: For a single prediction (e.g., patient i), execute shap.force_plot(explainer.expected_value, shap_values[i,:], X_test.iloc[i,:]). This shows how features pushed the model's output from the base value to the final prediction.

  - Decision Plot: For a clearer view of cumulative feature contributions for multiple instances, use shap.decision_plot(explainer.expected_value, shap_values[sample_indices], X_test.iloc[sample_indices]).

Validation & Documentation:

- Correlate high-importance features identified by SHAP with known biological or clinical domain knowledge to ensure plausibility.

- Document the explainer type, background data, and visualizations generated as part of the model's technical dossier for regulatory review.
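To make concrete what a SHAP value represents, the following minimal sketch computes exact Shapley values for a three-feature toy model in plain Python. It is not the shap library's implementation: the model (toy_risk_model), the patient and baseline values, and the use of a single baseline row instead of a background dataset are all illustrative assumptions. It shows the additivity property that force plots rely on: the base value plus the sum of the contributions equals the prediction.

```python
from itertools import combinations
from math import factorial

def toy_risk_model(features):
    """Hypothetical risk score: linear terms plus a dose x renal-function interaction."""
    dose, age, egfr = features
    return 0.02 * dose + 0.01 * age + 0.5 * (dose > 50) * (egfr < 60)

def exact_shapley(model, x, baseline):
    """Exact Shapley values; features outside a coalition take the baseline value."""
    n = len(x)

    def value(coalition):
        mixed = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(mixed)

    contributions = []
    for i in range(n):
        others = [j for j in range(n) if j != i]
        total = 0.0
        for size in range(n):
            for subset in combinations(others, size):
                # Shapley weight for a coalition of this size
                weight = factorial(size) * factorial(n - size - 1) / factorial(n)
                total += weight * (value(set(subset) | {i}) - value(set(subset)))
        contributions.append(total)
    return contributions

patient = [80.0, 70.0, 45.0]    # dose, age, eGFR (illustrative values)
baseline = [40.0, 50.0, 90.0]   # single reference row (assumption)

phi = exact_shapley(toy_risk_model, patient, baseline)
base_value = toy_risk_model(baseline)
prediction = toy_risk_model(patient)

for name, contribution in zip(["dose", "age", "egfr"], phi):
    print(f"{name}: {contribution:+.3f}")
print(f"base value {base_value:.3f} + sum {sum(phi):.3f} = prediction {prediction:.3f}")
```

The loop over all coalitions grows exponentially with the number of features, which is why practical work relies on the explainers listed in the procedure above rather than on brute-force enumeration.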

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for Interpretable AI Pharmacology Research

| Item / Resource | Category | Function & Relevance to Interpretability |

|---|---|---|

| SHAP (Shapley Additive exPlanations) Library | Software Library | A unified framework for interpreting model predictions by attributing the output to each input feature based on game theory. Critical for generating local and global explanations [16]. |

| LIME (Local Interpretable Model-agnostic Explanations) | Software Library | Explains individual predictions by approximating the complex model locally with an interpretable one (e.g., linear model). Useful for creating intuitive, case-by-case explanations [16]. |

| What-If Tool (WIT) | Visualization Tool | An interactive visual interface for probing model behavior, investigating datasets, and analyzing model performance across subgroups—key for bias detection [15]. |

| AI Fairness 360 (AIF360) | Software Toolkit | An extensible open-source library containing metrics and algorithms to check and mitigate unwanted bias in datasets and ML models, addressing regulator and ethics concerns [15]. |

| Model Cards Toolkit | Documentation Framework | Facilitates the creation of "model cards"—short documents providing context, performance metrics, and ethical considerations for a trained ML model. Essential for transparent reporting [15]. |

| Integrated Gradients | Method/Algorithm | An attribution method for deep networks that assigns importance to input features by integrating gradients along the path from a baseline to the input. Provides high-fidelity explanations for complex models [16]. |

| PBPK/PD Simulation Software (e.g., GastroPlus, Simcyp) | Domain-Specific Tool | Pharmacokinetic/pharmacodynamic simulation platforms. Integrating ML with these mechanistic models creates hybrid, interpretable frameworks that are more readily trusted by scientists and regulators [15]. |

Visualizing the Stakeholder-Interpretability Feedback Loop

Trust is not a one-time achievement but a cycle of continuous feedback. The following diagram illustrates how interpretability outputs directly address specific stakeholder needs, which in turn generate feedback that improves the model and its explanations.

Stakeholder-Specific Trust Feedback Cycle

Technical Support Center: AI Pharmacology Research Hub

Welcome to the AI Pharmacology Research Support Center. This hub is designed to assist researchers, scientists, and drug development professionals in diagnosing, troubleshooting, and resolving critical issues related to the interpretability and reliability of artificial intelligence (AI) and machine learning (ML) models in drug discovery and development. Unexplainable "black-box" models create significant barriers to clinical translation and regulatory approval by obscuring the reasoning behind predictions, hiding model biases, and preventing the validation of biological plausibility [7] [19]. The guidance below is framed within the broader thesis that improving model interpretability is not merely a technical enhancement but a fundamental prerequisite for credible, translatable, and compliant AI-driven pharmacology research.

Core Troubleshooting Guides

This section addresses the most common and critical failure points in AI pharmacology workflows.

Troubleshooting Guide 1: Model Performance Degradation Upon External Validation

Problem: Your model demonstrated excellent performance (e.g., high AUC, accuracy) on internal test sets but suffers a severe, unexpected drop in performance when applied to data from a new clinical center, a different patient population, or a novel chemical library.

Diagnosis & Solution: This is a classic symptom of data shift and overfitting to spurious correlations in the training data [20]. The model has learned patterns specific to your training set's limited environment that do not generalize.

- Step 1 – Detect Data Shift with Explainability Tools: Use explainable AI (XAI) techniques post-hoc to analyze predictions on the failing external data.

- Action: Apply SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to a sample of failed predictions [19]. Analyze the top contributing features for these cases.

- Interpretation: If the explanations highlight features known to be institution-specific (e.g., scanner type in imaging, a specific lab assay protocol) or irrelevant (e.g., image background markings), this confirms a data shift problem [20].

- Step 2 – Perform Bias Auditing: Systematically check for performance disparities across patient subpopulations defined by sex, ethnicity, age, or disease subtype.

- Action: Stratify your external validation results by key demographic and clinical variables. Calculate performance metrics for each subgroup.

- Interpretation: Significant performance gaps between groups indicate algorithmic bias, often stemming from non-representative training data [21]. This is a major red flag for regulators.

- Step 3 – Mitigation Strategy: Retrain with intentional diversification and invariance.

- Action: Incorporate data from multiple sources during training. Employ techniques like domain adversarial training or invariant risk minimization to force the model to learn features that are robust across domains [20].

- Protocol: Use a hold-out "external test set" from a completely independent source for final validation only. Do not use it for model selection.
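The subgroup check in Step 2 can be sketched in a few lines of plain Python. The helper name stratified_metrics, the labels, and the tiny example arrays are hypothetical; a real audit would use the external validation set and report confidence intervals alongside the point estimates.

```python
from collections import defaultdict

def stratified_metrics(y_true, y_pred, groups):
    """Accuracy, sensitivity, and specificity per subgroup for binary labels (0/1)."""
    counts = defaultdict(lambda: {"tp": 0, "tn": 0, "fp": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            key = "tp" if pred == 1 else "fn"
        else:
            key = "tn" if pred == 0 else "fp"
        counts[group][key] += 1

    report = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        negatives = c["tn"] + c["fp"]
        report[group] = {
            "n": positives + negatives,
            "accuracy": (c["tp"] + c["tn"]) / (positives + negatives),
            # None marks a metric that is undefined for this subgroup
            "sensitivity": c["tp"] / positives if positives else None,
            "specificity": c["tn"] / negatives if negatives else None,
        }
    return report

# Illustrative external-validation results (made-up values)
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 0, 1, 1, 0]
sex = ["F", "F", "F", "F", "M", "M", "M", "M"]

report = stratified_metrics(y_true, y_pred, sex)
accuracy_gap = abs(report["F"]["accuracy"] - report["M"]["accuracy"])
for group, metrics in sorted(report.items()):
    print(group, metrics)
print("accuracy gap between subgroups:", accuracy_gap)
```

Which gap counts as "significant" is a study-specific decision; the sketch only produces the per-stratum numbers that the interpretation step needs.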

Troubleshooting Guide 2: Failure in Experimental Validation of AI-Predicted Targets

Problem: AI/network pharmacology models predict a novel drug-target or disease-gene association, but subsequent in vitro or in vivo experiments (e.g., binding assays, knockout models) fail to confirm the prediction.

Diagnosis & Solution: The failure likely stems from the opacity of the model's mechanistic reasoning. The prediction may be statistically valid within the training data but based on indirect or biologically implausible correlations.

- Step 1 – Interrogate the Model's Logic Path: Move beyond simple feature importance. Demand a causal, mechanistic explanation for the specific prediction.

- Action: For graph neural networks (GNNs) used in network pharmacology, use graph attention mechanisms or GNN explainers (e.g., GNNExplainer) [7]. These tools can identify which nodes (e.g., genes, proteins) and edges (e.g., interactions) in the biological network were most critical for the prediction.

- Interpretation: If the explanation highlights a path through proteins with no known direct biological interaction, or relies heavily on low-confidence database entries, the prediction is high-risk.

- Step 2 – Decouple Correlation from Causation: The model may have learned a confounding factor.

- Action: Conduct counterfactual analysis. Ask: "What is the minimal change to the input data that would flip this prediction?" [19]

- Protocol: Using the trained model, systematically perturb the input features (e.g., gene expression values) of the failed case. Identify which single feature change most easily reverses the prediction. This can pinpoint a fragile dependence on a potentially confounded variable.

- Step 3 – Validate the Assay, Not Just the Target: Ensure the experimental protocol is optimized to detect the predicted effect.

- Action: Review the Z'-factor of your high-throughput screening assay. A Z'-factor > 0.5 is considered excellent for screening; a low score indicates high noise that can obscure true signals [22].

- Protocol Reagent Check: For binding assays, confirm the use of the active form of the kinase/protein, as AI predictions often assume functional activity. Inactive forms will not bind as predicted [22].
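The perturbation protocol in Step 2 can be illustrated with a small single-feature counterfactual search. This is a sketch under stated assumptions: toy_classifier stands in for the trained model, the gene names and coefficients are invented, and inputs are assumed to be standardized so that negative values are meaningful.

```python
def toy_classifier(features):
    """Hypothetical stand-in for a trained model; returns a score compared to a threshold."""
    return (0.5 * features["gene_a"]
            + 0.02 * features["gene_b"]
            - 0.3 * features["gene_c"])

def smallest_single_feature_flips(model, x, threshold=0.5, step=0.1, max_steps=40):
    """For each feature, find the smallest +/- change (in multiples of step) that flips the class."""
    original_class = model(x) >= threshold
    flips = {}
    for name in x:
        for k in range(1, max_steps + 1):
            flipped_delta = None
            for delta in (k * step, -k * step):
                perturbed = dict(x)
                perturbed[name] = x[name] + delta
                if (model(perturbed) >= threshold) != original_class:
                    flipped_delta = delta
                    break
            if flipped_delta is not None:
                flips[name] = flipped_delta
                break
    return flips

# Failed case to interrogate (illustrative, standardized expression values)
case = {"gene_a": 1.0, "gene_b": 0.5, "gene_c": 0.15}

flips = smallest_single_feature_flips(toy_classifier, case)
most_fragile = min(flips, key=lambda name: abs(flips[name]))
for name, delta in sorted(flips.items(), key=lambda item: abs(item[1])):
    print(f"{name}: change of {delta:+.1f} flips the prediction")
print("most fragile dependence:", most_fragile)
```

A feature that flips the prediction with a very small change is a candidate for closer scrutiny as a possible confounder; the search itself says nothing about causality.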

Troubleshooting Guide 3: Regulatory or Peer-Review Scrutiny on Model "Black Box" Nature

Problem: Regulatory bodies (e.g., FDA, EMA) or journal reviewers reject your submission due to insufficient transparency, undocumented training data, or inability to explain model decisions, citing guidelines like GDPR's "right to explanation" or the FDA's SaMD principles [19] [21].

Diagnosis & Solution: The documentation lacks the necessary components of public transparency required for trustworthy AI in healthcare [21].

- Step 1 – Conduct a Transparency Self-Audit: Use a checklist based on trustworthy AI guidelines.

- Action: Score your public documentation (manuscript, supplement, whitepaper) against key categories [21].

- Step 2 – Address Critical Documentation Gaps:

- Action for Data: Document training data sources, demographics, inclusion/exclusion criteria, and annotation processes. Explicitly state the population for which the model is and is NOT validated [21].

- Action for Ethics: Describe steps taken to identify and mitigate bias, ensure fairness, and secure data (e.g., GDPR compliance, ethics board review) [21].

- Action for Limits: Clearly list the model's limitations, failure modes, and clinical scenarios where it should not be used.

- Step 3 – Implement a Layered Explanation System:

The following diagram illustrates the integrated workflow for validating and explaining an AI pharmacology model to overcome these translational barriers.

Diagram 1: Integrated Workflow for Explainable & Translatable AI Model Validation

Frequently Asked Questions (FAQs)

Q1: Our deep learning model for toxicity prediction is highly accurate but completely opaque. Do we need to sacrifice performance for interpretability to get regulatory approval? A: Not necessarily. The key is to augment your high-performance model with post-hoc explainability techniques. Regulators do not mandate intrinsically interpretable models for all cases but require that you can explain the model's decisions [19]. Use techniques like Integrated Gradients (for neural networks) or SHAP to provide feature attributions for individual predictions [19]. Document the limitations of these explanation methods (e.g., they approximate but do not reveal the model's true inner workings) but demonstrate their consistency. The goal is to show you can audit, debug, and trust the model's outputs.

Q2: What are the most practical explainability (XAI) methods for complex models in drug discovery, and what are their weaknesses? A: The choice depends on your model and question. Below is a comparison of key methods.

Table: Comparison of Key Explainable AI (XAI) Techniques for Pharmacology

| Method | Best For | Key Principle | Primary Weaknesses |

|---|---|---|---|

| SHAP (SHapley Additive exPlanations) [19] | Local & global explanation for any model. | Assigns each feature an importance value for a prediction based on game theory. | Computationally expensive. Can be misleading with highly correlated features [24]. |

| LIME (Local Interpretable Model-agnostic Explanations) [19] | Simple, local explanations for single predictions. | Creates a simple, interpretable model (like linear regression) to approximate the complex model locally around a prediction. | Explanations can be unstable; small input changes may lead to very different explanations. |

| Integrated Gradients [19] | Explaining deep neural networks (e.g., for molecular structures). | Computes the gradient of the prediction relative to the input along a path from a baseline. | Requires a meaningful baseline; explanations can be complex in high-dimensional spaces [24]. |

| Attention Mechanisms (in GNNs/Transformers) [7] | Understanding what the model "pays attention to" (e.g., which atoms in a molecule). | The model learns to weight different parts of the input (attention scores) during processing. | High attention weight does not always equal causal importance; it can be a shortcut. |

| Counterfactual Explanations [19] | Understanding what would change a model's decision. | Finds the minimal change to the input (e.g., "if molecular property X increased by 10%") to alter the prediction. | There may be multiple valid counterfactuals; finding the most "realistic" one is challenging. |
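As an illustration of the Integrated Gradients row, the sketch below applies the method to a tiny logistic model whose gradient is known analytically. The weights, input, all-zero baseline, and the midpoint Riemann sum with 200 steps are assumptions chosen for the example; deep learning libraries provide their own implementations for real networks. The final check shows the completeness property: attributions sum to the difference between the prediction at the input and at the baseline.

```python
import math

WEIGHTS = [1.2, -0.7, 0.4]   # hypothetical model parameters
BIAS = -0.3

def predict(x):
    """Logistic model: sigmoid of a weighted sum."""
    z = sum(w * xi for w, xi in zip(WEIGHTS, x)) + BIAS
    return 1.0 / (1.0 + math.exp(-z))

def gradient(x):
    """Analytic gradient of the logistic output with respect to each input."""
    p = predict(x)
    return [p * (1.0 - p) * w for w in WEIGHTS]

def integrated_gradients(x, baseline, steps=200):
    """Average the gradient along the straight path from baseline to x (midpoint rule)."""
    avg_grad = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [b + alpha * (xi - b) for xi, b in zip(x, baseline)]
        for i, g in enumerate(gradient(point)):
            avg_grad[i] += g / steps
    return [(xi - b) * g for xi, b, g in zip(x, baseline, avg_grad)]

x = [2.0, 1.0, 0.5]
baseline = [0.0, 0.0, 0.0]   # the choice of baseline is an assumption

attributions = integrated_gradients(x, baseline)
completeness_gap = abs(sum(attributions) - (predict(x) - predict(baseline)))
print("attributions:", [round(a, 4) for a in attributions])
print("completeness gap:", completeness_gap)
```

Changing the baseline changes the attributions, which is the practical meaning of the "requires a meaningful baseline" weakness noted in the table.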

Q3: We used a public dataset to train our model. Why would regulators have a problem with that? A: Public datasets often contain hidden biases and lack demographic and clinical diversity. A model trained on such data will inherit these biases and may fail or cause harm when deployed in broader populations [20] [21]. Regulators will ask: 1) Is your training data representative of the intended-use population? 2) Have you performed and documented rigorous bias testing? You must characterize the demographics (age, sex, ethnicity) and clinical settings of your training data and explicitly test for performance disparities across subgroups [21].

Q4: How do we convincingly demonstrate "biological plausibility" for an AI model's novel mechanism prediction to skeptical reviewers? A: Combine computational evidence with a tiered experimental plan.

- Computational Evidence: Use XAI to show the prediction relies on a sub-network of genes/proteins with known biological relationships (e.g., a coherent pathway from your GNN explanation) [7]. Perform enrichment analysis on the top features from a SHAP summary plot.

- Prior Literature Link: Use NLP-based literature mining (AI-powered tools can help) to find indirect supporting evidence in published studies.

- Propose a Crucial Experiment: Design a clean, focused in vitro experiment (not just a phenotypic screen) that directly tests the hypothesized mechanism (e.g., a binding assay for the predicted target, a CRISPR knockout of the central gene in the explained pathway). A well-designed, mechanism-based test is more convincing than a correlative one.

Detailed Experimental Protocols for Validation

Protocol: Validating AI Model Robustness Against Data Shift

Objective: To proactively assess and document an AI model's susceptibility to performance degradation due to changes in data distribution (data shift) [20].

Materials:

- Trained AI/ML model.

- Internal validation set (IVS).

- At least two external validation sets (EVS1, EVS2) from independent sources (different institutions, patient cohorts, or chemical vendors).

- Computing environment with XAI libraries (SHAP, Captum, etc.).

Procedure:

- Baseline Performance: Calculate standard performance metrics (AUC, accuracy, etc.) on the IVS.

- External Performance Test: Run the model on EVS1 and EVS2. Record metrics.

- XAI-Driven Discrepancy Analysis: a. For predictions where the model is highly confident but incorrect on the external sets, compute SHAP values. b. Identify the top 3 features contributing to these erroneous predictions. c. Statistically compare the distributions of these top features between the IVS and the external sets (e.g., using Kolmogorov-Smirnov test).

- Bias Audit: Stratify performance on all datasets by key demographic variables (e.g., sex, age group). Calculate metrics per stratum.

- Documentation: Create a validation report containing:

- Performance tables for all datasets.

- Visualization of SHAP summary plots comparing IVS and external sets.

- Results of the feature distribution comparison and bias audit.

- A clear statement on the model's validated and non-validated use populations based on the data available.
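The feature distribution comparison in the discrepancy analysis can be sketched with a two-sample Kolmogorov-Smirnov statistic written in plain Python. The simulated creatinine-like values, the sample sizes, and the large-sample 5% critical value (coefficient 1.358) are assumptions for illustration; in practice a statistics library routine that also returns a p-value would normally be used.

```python
import math
import random

def ks_statistic(sample_a, sample_b):
    """Maximum distance between the two empirical cumulative distribution functions."""
    a, b = sorted(sample_a), sorted(sample_b)
    i = j = 0
    distance = 0.0
    while i < len(a) and j < len(b):
        x = min(a[i], b[j])
        while i < len(a) and a[i] <= x:
            i += 1
        while j < len(b) and b[j] <= x:
            j += 1
        distance = max(distance, abs(i / len(a) - j / len(b)))
    return distance

def ks_critical_value(n, m, c_alpha=1.358):
    """Large-sample critical value; c_alpha=1.358 corresponds to alpha = 0.05."""
    return c_alpha * math.sqrt((n + m) / (n * m))

rng = random.Random(0)
# Simulated values of one top feature (illustrative only)
internal = [rng.gauss(1.0, 0.2) for _ in range(500)]   # IVS
external = [rng.gauss(1.3, 0.2) for _ in range(500)]   # EVS1 with a shifted mean

d_stat = ks_statistic(internal, external)
critical = ks_critical_value(len(internal), len(external))
print(f"KS statistic = {d_stat:.3f}, 5% critical value = {critical:.3f}")
print("distribution shift flagged:", d_stat > critical)
```

Running the comparison for each of the top features from the SHAP analysis yields the table of shifted features that the validation report calls for.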

Protocol: Experimental Validation of an AI-Predicted Drug-Target Interaction

Objective: To biochemically confirm a novel drug-target interaction predicted by an AI/network pharmacology model.

Materials:

- Purified, active form of the target protein [22].

- Compound of interest (predicted binder) and a structurally similar negative control compound.

- Appropriate binding assay kit (e.g., LanthaScreen TR-FRET kinase binding assay) [22].

- Microplate reader with correctly configured filters for TR-FRET [22].

Procedure:

- Assay Optimization: Before testing the AI prediction, optimize the assay using a known binder (positive control). a. Perform a titration of the development reagent to establish the optimal concentration [22]. b. Calculate the Z'-factor for the assay plate using positive and negative controls. Ensure Z' > 0.5 [22].

- Dose-Response Experiment: Set up a dose-response curve for the AI-predicted compound and the negative control.

- Data Analysis: a. Use ratiometric data analysis (acceptor signal / donor signal) to minimize noise from pipetting or reagent variability [22]. b. Plot the normalized response ratio vs. log(compound concentration). c. Determine the IC50 (concentration for 50% inhibition of binding).

- Interpretation: A sigmoidal dose-response curve with a potent IC50 for the predicted compound, and no activity for the negative control, provides strong validation. The lack of a curve suggests the AI prediction may be based on indirect correlations, necessitating a re-evaluation of the model's explanation for that prediction.
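The assay-quality check in the optimization step can be computed directly from control wells. The sketch below uses the standard Z'-factor definition, 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|, applied to acceptor/donor emission ratios; the raw counts are invented for illustration and the function names are hypothetical.

```python
from statistics import mean, stdev

def emission_ratio(acceptor, donor):
    """Ratiometric readout: acceptor signal divided by donor signal, well by well."""
    return [a / d for a, d in zip(acceptor, donor)]

def z_prime(positive_controls, negative_controls):
    """Z'-factor from the spread and separation of the two control groups."""
    spread = 3.0 * (stdev(positive_controls) + stdev(negative_controls))
    window = abs(mean(positive_controls) - mean(negative_controls))
    return 1.0 - spread / window

# Illustrative raw counts from four wells per control (made-up numbers)
pos_ratio = emission_ratio([2000, 2100, 1900, 2000], [10000, 10000, 10000, 10000])
neg_ratio = emission_ratio([8000, 8200, 7800, 8000], [10000, 10000, 10000, 10000])

z = z_prime(pos_ratio, neg_ratio)
print(f"Z'-factor = {z:.3f}")
print("assay suitable for testing the prediction:", z > 0.5)
```

Computing Z' on the ratio rather than on the raw acceptor signal keeps the quality metric consistent with the ratiometric analysis used for the dose-response data.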

The Scientist's Toolkit: Research Reagent Solutions

This table catalogs essential computational and experimental resources for building explainable, translatable AI pharmacology models.

Table: Essential Research Reagents & Tools for Interpretable AI Pharmacology

| Category | Item/Technique | Function & Role in Interpretability | Key Considerations |

|---|---|---|---|

| Computational Tools | SHAP/LIME Libraries [19] | Provide post-hoc explanations for any model's predictions, crucial for debugging and validation. | SHAP is computationally intensive but theoretically sound; LIME is faster but less stable. |

| Computational Tools | Graph Neural Network (GNN) Frameworks [7] | Model complex "drug-target-disease" networks directly, capturing multi-scale relationships. | Use GNN explainers (e.g., GNNExplainer) to identify influential nodes/edges in the biological network. |

| Computational Tools | Domain Adaptation/Generalization Algorithms [20] | Mitigate data shift by learning features invariant across different data sources (labs, cohorts). | Critical for improving model robustness and real-world generalizability. |

| Experimental Assays | TR-FRET Binding Assays (e.g., LanthaScreen) [22] | Gold-standard for validating predicted biochemical interactions (e.g., kinase inhibition). | Must use the active form of the target. Ratiometric analysis (acceptor/donor) is essential [22]. |

| Experimental Assays | High-Content Screening (HCS) Assays | Validate phenotypic predictions (e.g., cytotoxicity, morphological changes) in cells. | Couple with image-based deep learning models for interpretable phenotype analysis. |

| Data & Standards | Structured Electronic Lab Notebooks (ELN) | Ensure reproducible, well-documented training data provenance and experimental results. | Foundation for regulatory-grade transparency documentation [21]. |

| Data & Standards | Bias Auditing Frameworks | Software toolkits to statistically evaluate model performance fairness across subgroups. | Non-negotiable for ethical AI and required for regulatory submissions [21]. |

| Reporting Guidelines | TRIPOD+AI, MINIMAR | Checklists for reporting predictive model studies and their clinical validations. | Using these frameworks significantly improves manuscript and regulatory submission quality. |

The process of generating and interrogating a SHAP explanation for a single prediction is visualized below, highlighting how it deconstructs a model's output into contributive factors.

Diagram 2: SHAP Explanation Process for a Single Prediction

Finally, the following diagram provides a logical flowchart for diagnosing and resolving the most common issue in biochemical assay validation: a poor or absent assay window.

Diagram 3: Troubleshooting Flowchart for Biochemical Assay Failures

From Theory to Therapy: Technical Frameworks for Explainable AI in Pharmacology

Technical Support Center for AI Pharmacology Research

This technical support center provides troubleshooting guidance and best practices for implementing post-hoc explanation tools in AI-driven pharmacology research. The content is framed within a thesis focused on improving model interpretability to advance drug discovery, mechanism elucidation, and safety prediction [7] [25].

Tool Comparison and Selection Guide

The following tables summarize the core characteristics, strengths, and limitations of major post-hoc explanation tools to aid in appropriate selection.

Table 1: Core Methodological Comparison of Post-Hoc XAI Tools

| Feature | SHAP (SHapley Additive exPlanations) | LIME (Local Interpretable Model-agnostic Explanations) | Saliency Maps (Gradient-based) |

|---|---|---|---|

| Core Principle | Game theory: Fairly distributes prediction output among input features based on marginal contributions [26]. | Local surrogate: Approximates complex model locally with an interpretable model (e.g., linear) [27]. | Calculus: Computes gradients of output relative to input to estimate feature importance [28]. |

| Explanation Scope | Local & Global: Can explain single predictions and overall model behavior [26] [29]. | Strictly Local: Explains predictions for a single instance or a small region [27] [30]. | Primarily Local: Typically applied to explain individual predictions, especially in image/time-series models [31]. |

| Model Agnosticism | High (KernelSHAP). Lower for model-specific approximations (TreeSHAP, DeepSHAP). | High: Can explain any black-box model by perturbing inputs [30]. | Low: Typically integrated into specific model architectures (e.g., CNNs, RNNs). |

| Key Output | Shapley values: A consistent, additive measure of each feature's contribution [26]. | Feature weights for the local surrogate model. | A heatmap highlighting influential input regions (pixels, time points) [31]. |

| Primary Pharmacology Use Case | Identifying key molecular descriptors, patient features, or biomarkers driving ADMET or efficacy predictions [25] [26]. | Interpreting individual drug-target interaction predictions or patient-specific prognosis [30]. | Visualizing critical regions in spectral data (e.g., Raman) or temporal patterns in physiological time-series data [27] [31]. |

Table 2: Quantitative Performance and Resource Considerations

| Consideration | SHAP | LIME | Saliency Maps |

|---|---|---|---|

| Computational Cost | High for exact calculation (O(2^F) for F features); requires approximations (Sampling, Kernel) for high-dimensional data [27]. | Moderate: Depends on the number of perturbations used to create the local surrogate [27]. | Low: Typically requires a single forward and backward pass. |

| Stability/Robustness | High theoretical foundation with guarantees. Approximations can vary [27]. | Can be unstable; explanations may vary significantly with different perturbation samples [27]. | Can be noisy; susceptible to gradient saturation and vanishing issues [28]. |

| Human Interpretability | Scores are intuitive but summary plots require training. May need clinical translation for end-users [29]. | Simple if linear surrogate is used. Direct but limited to local context. | Visually intuitive for structured data (images, spectra), less so for tabular data [31]. |

| Key Limitation | Computationally expensive; feature independence assumption; may produce unrealistic perturbations [27]. | Local fidelity may not reflect global model; sensitive to perturbation parameters [27]. | Explanations are heuristic, lack theoretical guarantees like SHAP; can highlight irrelevant features [28]. |

Detailed Experimental Protocols

Protocol 1: Implementing SHAP for Global Model Interpretation in a QSAR Pipeline

Objective: To identify the most influential molecular descriptors across a trained model predicting cytochrome P450 2D6 (CYP2D6) inhibition [25] [26].

Procedure:

- Model & Data: Train a gradient boosting machine (e.g., XGBoost) model on a dataset of molecules with known CYP2D6 inhibition, using RDKit-derived molecular descriptors.

- SHAP Explainer: Instantiate the TreeExplainer from the shap Python library on the trained model.

- Value Calculation: Calculate SHAP values for all molecules in the validation set using explainer.shap_values(X_valid).

- Global Analysis: Generate a beeswarm plot (shap.summary_plot(shap_values, X_valid)) to visualize the distribution of impact for the top descriptors.

- Result Interpretation: Identify descriptors (e.g., NumHDonors, MolLogP) with the highest mean absolute SHAP values. Correlate high positive SHAP values for NumHDonors with an increased probability of inhibition, suggesting a potential structural alert [26].

Protocol 2: Applying LIME for Local Prediction Explanation in Medical Imaging Analysis

Objective: To explain an AI model's prediction of pathological tissue in a histological image slice [30].

Procedure:

- Model & Instance: Use a pre-trained Convolutional Neural Network (CNN) for classification. Select a specific image (instance_x) where the model predicted "carcinoma."

- LIME Explainer: Create a LimeImageExplainer from the lime_image module of the lime Python library.

- Perturbation & Explanation: Call explain_instance with num_samples=1000 to generate perturbed versions of instance_x. LIME fits a local interpretable model (e.g., a ridge regression) to these samples.

- Visualization: Use explanation.get_image_and_mask() to obtain the super-pixels (contiguous image regions) most positively weighted toward the "carcinoma" prediction, and display them as an overlay on the original image.

- Validation: A pathologist reviews the highlighted regions to assess if they align with known cytological features of carcinoma, thereby validating the model's focus [30].

Protocol 3: Generating Saliency Maps for Time-Series Model in Pharmacodynamic Analysis

Objective: To interpret a deep learning model predicting blood glucose response from continuous multi-sensor patient data [31].

Procedure:

- Model: Use a 1D Temporal Convolutional Network (TCN) or LSTM model trained on the time-series data.

- Gradient Calculation: For a specific patient's time-series input `x`, perform a forward pass to get prediction `y`. Calculate the gradient of the output `y` with respect to the input `x`: `saliency = abs(∂y/∂x)`.

- Map Generation: Aggregate the gradient magnitudes across all input channels (e.g., heart rate, skin temperature) to produce a time-aligned saliency map.

- Visualization & Analysis: Plot the original time-series signals with the saliency scores overlaid as a color intensity. Identify temporal windows (e.g., 30 minutes post-meal) where saliency is consistently high, indicating the model's primary focus for its prediction [31].
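The gradient and aggregation steps can be illustrated with a dependency-free sketch. Here central finite differences stand in for the automatic differentiation a deep learning framework would provide, and `toy_glucose_model` is a hypothetical function with no physiological meaning. The input is assumed to be a list of time steps, each holding one value per channel.

```python
def finite_difference_saliency(model_fn, x, eps=1e-4):
    """Approximate |dy/dx| for every (time step, channel) entry of x."""
    saliency = []
    for t in range(len(x)):
        row = []
        for c in range(len(x[t])):
            plus = [step[:] for step in x]
            minus = [step[:] for step in x]
            plus[t][c] += eps
            minus[t][c] -= eps
            grad = (model_fn(plus) - model_fn(minus)) / (2 * eps)
            row.append(abs(grad))
        saliency.append(row)
    return saliency

def aggregate_over_channels(saliency):
    """Sum gradient magnitudes across channels: one score per time step."""
    return [sum(row) for row in saliency]

def toy_glucose_model(x):
    """Hypothetical model: depends only on time steps 2 and 3."""
    return 0.5 * x[2][0] + 0.1 * x[3][0] * x[3][1]

# Five time steps, two channels: [heart rate, skin temperature].
x = [[70.0, 33.0], [72.0, 33.1], [90.0, 33.5], [95.0, 34.0], [80.0, 33.2]]

time_saliency = aggregate_over_channels(
    finite_difference_saliency(toy_glucose_model, x)
)
most_salient_step = max(range(len(x)), key=lambda t: time_saliency[t])
print(most_salient_step, [round(s, 2) for s in time_saliency])
```

Summing raw gradient magnitudes favours channels with small numeric ranges, so in practice the channels are usually standardised before saliency scores are compared across them.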

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My SHAP analysis on a Random Forest model is extremely slow. How can I speed it up?

A: Exact SHAP value calculation scales exponentially with the number of features [27]. For tree-based models, always use TreeSHAP (e.g., `shap.TreeExplainer`), which is a fast, exact algorithm designed for trees. Avoid using the slower, model-agnostic `KernelExplainer` for these models [26].

Q2: The LIME explanations for the same data point change every time I run the algorithm. Is this a bug?

A: No, this is a known characteristic. LIME uses random sampling to create perturbations around the instance [27]. To improve stability, increase the num_samples parameter (e.g., from 1000 to 5000) to ensure the local surrogate is fitted on a more representative set. You can also set a random seed for reproducibility during explanation.

Q3: The saliency map for my CNN model highlights seemingly random background pixels in a cell image, not the cell structure. What's wrong?

A: This is a common issue with basic gradient-based saliency. The model may be relying on superficial background noise (bias) rather than biological features. Troubleshooting steps:

- Check for Data Bias: Ensure your training data does not have a spurious correlation between the label and background artifacts.

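- Use Advanced Techniques: Switch to methods such as Grad-CAM, which leverages internal feature maps and often provides more coherent visualizations, or Guided Backpropagation [28]. Visual coherence alone does not prove faithfulness (Guided Backpropagation in particular has been reported to change little when model weights are randomized), so confirm any method with the sanity checks in the next step.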

- Perform Sanity Checks: Apply randomization tests: if explanations do not change significantly when the model weights are randomized, the saliency method may be unreliable [28].
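One way to quantify the randomization test is to compare attribution maps from the trained and the re-initialised model with a rank correlation. The sketch below is illustrative only. It uses a linear toy model whose gradient saliency is `|w|`, a hard-coded weight vector standing in for randomized weights, and a deliberately flawed "input-only" attribution that ignores the model. All names and numbers are hypothetical.

```python
def ranks(values):
    """Average ranks (1-based), with ties sharing their mean rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    result = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            result[order[k]] = average_rank
        i = j + 1
    return result

def pearson(a, b):
    mean_a, mean_b = sum(a) / len(a), sum(b) / len(b)
    cov = sum((p - mean_a) * (q - mean_b) for p, q in zip(a, b))
    var_a = sum((p - mean_a) ** 2 for p in a)
    var_b = sum((q - mean_b) ** 2 for q in b)
    return cov / (var_a * var_b) ** 0.5

def spearman(a, b):
    """Spearman rank correlation between two attribution vectors."""
    return pearson(ranks(a), ranks(b))

def gradient_saliency(weights, x):
    """For a linear model y = w . x, the gradient magnitude is |w|."""
    return [abs(w) for w in weights]

def input_only_attribution(weights, x):
    """Flawed method: looks only at the input, never at the model."""
    return [abs(v) for v in x]

x = [1.0, 2.0, 0.5, 3.0, 1.5]
trained_w = [0.9, 0.1, 0.05, 0.7, 0.02]
randomized_w = [0.03, 0.8, 0.6, 0.01, 0.4]  # stand-in for re-initialised weights

rho_gradient = spearman(gradient_saliency(trained_w, x),
                        gradient_saliency(randomized_w, x))
rho_input_only = spearman(input_only_attribution(trained_w, x),
                          input_only_attribution(randomized_w, x))
print(rho_gradient, rho_input_only)
```

A correlation close to 1 after randomization, as for the input-only method here, indicates that the explanation does not depend on what the model has learned.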

Q4: Clinicians on my team find the SHAP summary plots confusing and don't trust them. How can I bridge this gap?

A: This is a critical human-factor challenge. A 2025 study found that SHAP plots alone (RS condition) were significantly less effective for clinician acceptance than when paired with a clinical explanation (RSC condition) [29].

- Actionable Solution: Never present a SHAP plot in isolation. Always accompany it with a concise, domain-specific narrative. For example: "The model suggests Patient A has a high risk of bleeding. The SHAP plot indicates the three largest contributing factors are: 1) low platelet count (known risk factor), 2) high dosage of anticoagulant X (per drug guideline), and 3) a genetic variant in enzyme Y (emerging evidence). This aligns with the clinical picture." This fusion of data-driven insight and clinical context is essential for adoption [29].

Q5: For spectral data (e.g., Raman), my feature-wise SHAP values show contradictory positive/negative contributions on adjacent wavenumbers. Is the model faulty?

A: Not necessarily. This is a key limitation of individual feature perturbation in spectral data, as it ignores the natural correlation within spectral peaks [27]. Recommended Solution: Implement a spectral zone-based SHAP/LIME approach [27].

- Define Zones: Group adjacent wavenumbers into biologically/physically meaningful spectral zones (e.g., a full peak) using domain knowledge or peak detection algorithms.

- Perturb by Zone: Modify the SHAP/LIME algorithm to perturb entire zones together instead of single wavenumbers.

- Re-calculate: This yields zone-level importance scores, which are more realistic, less noisy, and more interpretable to chemists [27].
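The zone-level perturbation step can be illustrated with a simple occlusion scheme. This sketch is a stand-in for the idea and does not implement the published spectral zone-based SHAP/LIME algorithm: each zone is replaced as a whole by a baseline value and the change in the prediction is recorded. The zone boundaries, the toy model, and the spectrum are hypothetical.

```python
def zone_importance(predict_fn, spectrum, zones, baseline=0.0):
    """Prediction change when each whole zone is replaced by a baseline."""
    reference = predict_fn(spectrum)
    scores = {}
    for name, (start, stop) in zones.items():
        perturbed = spectrum[:]
        # Perturb every wavenumber in the zone together.
        perturbed[start:stop] = [baseline] * (stop - start)
        scores[name] = reference - predict_fn(perturbed)
    return scores

def toy_spectral_model(spectrum):
    """Hypothetical model with alternating signs across one peak."""
    return 1.0 * spectrum[5] - 0.8 * spectrum[6] + 1.0 * spectrum[7]

# Eight intensity values; indices play the role of wavenumber bins.
spectrum = [0.1, 0.2, 0.1, 0.0, 0.0, 0.5, 1.0, 0.5]
zones = {
    "peak_A": (0, 3),
    "flat_region": (3, 5),
    "peak_B": (5, 8),
}

scores = zone_importance(toy_spectral_model, spectrum, zones)
print({name: round(value, 3) for name, value in scores.items()})
```

Feature-wise attribution on this toy model would assign opposite signs to neighbouring bins inside `peak_B`, whereas the zone-level score reports a single net contribution for the peak.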

Q6: How do I validate if my post-hoc explanations are correct?

A: Direct "ground truth" for explanations is rare, but you can assess their plausibility and consistency:

- Ablation Test: Sequentially remove or mask the top features identified as important. A sharp drop in model performance supports the explanation's validity.

- Randomization Test: Compare your explanation to one generated from a model with the same architecture but trained on randomized labels. A good explanation method should yield qualitatively different results for the trained vs. randomized model.

- Domain Expert Review: The ultimate test is having a pharmacologist or biologist assess whether the highlighted features (genes, pathways, chemical substructures) make mechanistic sense in the context of the disease or drug effect [7] [32].
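The ablation test can be sketched as a deletion curve: features are masked one at a time in the order given by the explanation, and model accuracy is recorded after each step. The example is a toy under stated assumptions. Masking is done by mean imputation, and the classifier, dataset, and feature rankings are hypothetical.

```python
from statistics import mean

def accuracy(predict_fn, X, y):
    return sum(predict_fn(row) == label for row, label in zip(X, y)) / len(y)

def ablation_curve(predict_fn, X, y, ranked_features):
    """Accuracy after cumulatively masking features in the given order."""
    # Assumed masking strategy: replace a feature by its column mean.
    fill = [mean(row[j] for row in X) for j in range(len(X[0]))]
    curve = [accuracy(predict_fn, X, y)]
    masked = set()
    for feature in ranked_features:
        masked.add(feature)
        X_masked = [
            [fill[j] if j in masked else value for j, value in enumerate(row)]
            for row in X
        ]
        curve.append(accuracy(predict_fn, X_masked, y))
    return curve

def toy_classifier(row):
    """Hypothetical model that relies on feature 0 only."""
    return 1 if row[0] > 0.5 else 0

X = [[0.9, 0.1, 0.3], [0.8, 0.7, 0.2], [0.2, 0.4, 0.9], [0.1, 0.9, 0.5]]
y = [1, 1, 0, 0]

# Order claimed by the explanation (most important first) vs. the reverse.
curve_top_first = ablation_curve(toy_classifier, X, y, [0, 2, 1])
curve_bottom_first = ablation_curve(toy_classifier, X, y, [1, 2, 0])
print(curve_top_first, curve_bottom_first)
```

An explanation is supported when masking its top-ranked features degrades performance much faster than masking its lowest-ranked features.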

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software Libraries and Resources for XAI in Pharmacology

| Tool / Resource Name | Primary Function | Key Application in AI Pharmacology | Access/Reference |
|---|---|---|---|
| SHAP (shap) Python Library | Unified framework for calculating and visualizing SHAP values for various model types [26]. | Explaining feature importance in QSAR, patient stratification, and biomarker discovery models [25] [26]. | https://github.com/shap/shap |
| LIME (lime) Python Library | Generating local, model-agnostic explanations via perturbed samples and surrogate models [30]. | Interpreting individual predictions in drug-target interaction or diagnostic image analysis models [30]. | https://github.com/marcotcr/lime |
| Captum (for PyTorch) | A comprehensive library for model interpretability, including gradient, saliency, and integrated gradients methods. | Generating saliency maps for deep learning models analyzing omics data, time-series sensor data, or molecular graphs [28]. | https://github.com/pytorch/captum |
| AI-NP Integrative Framework | A conceptual and computational framework combining AI with Network Pharmacology [7]. | Elucidating the "multi-component, multi-target, multi-pathway" mechanisms of complex therapeutics (e.g., herbal medicines) across molecular, cellular, and patient scales [7]. | Described in AI-driven network pharmacology reviews [7]. |
| Spectral Zone Definition Algorithm | Algorithm to group correlated spectral features (wavenumbers) into contiguous zones for group-wise perturbation [27]. | Improving the realism and interpretability of SHAP/LIME explanations for vibrational spectroscopy data (Raman, IR) used in drug formulation or biomarker analysis [27]. | Methodology described in spectral zones-based SHAP/LIME literature [27]. |

Visual Workflows for XAI in Pharmacology

The following diagrams, defined in DOT language, illustrate standard and advanced workflows for implementing post-hoc explanations in pharmacological research.

[Diagram: Standard Workflow for Applying XAI in Pharmacology]

[Diagram: Advanced Spectral Zone-Based XAI for Spectroscopy Data]

This Technical Support Center is designed for researchers, scientists, and drug development professionals working at the intersection of artificial intelligence (AI) and pharmacology. As the field moves toward inherently interpretable, explainable-by-design architectures, new challenges and questions arise during model development, validation, and application [5] [32]. This resource provides targeted troubleshooting guides and detailed FAQs to support your experiments, framed within the critical thesis that improving model interpretability is foundational to advancing ethical, reliable, and regulatorily acceptable AI in drug research [33].

Troubleshooting Guide: Common Issues with Interpretable Model Development

Effective troubleshooting follows a structured process: understanding the problem, isolating the issue, and finding a fix or workaround [34]. The following guide applies this methodology to common technical problems in AI pharmacology research.

Phase 1: Understanding the Problem

- Ask Good Questions: When a model underperforms, define the specific symptom. Is it low predictive accuracy on validation data, poor mechanistic plausibility of the explanations generated, or failure to meet regulatory submission criteria? [35]

- Gather Information: Collect all relevant metadata: the model's architecture (e.g., self-explaining neural network, explainable boosting machine), the data source and preprocessing steps, the specific interpretability method used (e.g., SHAP, LIME), and the quantitative performance metrics [36].

- Reproduce the Issue: Attempt to replicate the problematic output using a controlled subset of your data and a documented script. This confirms whether the issue is consistent or sporadic [34].

Phase 2: Isolating the Issue

Simplify the problem to identify its root cause [34].

- Check Data Integrity: Problems often originate from the input data. For bioactivity or omics datasets, verify annotations, check for batch effects, and confirm that missing data has been handled appropriately. Compare model performance on a pristine, gold-standard benchmark dataset.

- Interrogate the Interpretability Method: Distinguish between a poorly performing model and a poor explanation of a good model. Test if a simple, inherently interpretable model (like a linear model with few features) can achieve reasonable performance on the same task. If it cannot, the problem may lie with the data or the task definition, not the complex model [33].

- Examine Feature Space: For models explaining predictions via feature importance, validate that the top-ranked features (e.g., specific genes, molecular descriptors) have established biological relevance to the endpoint (e.g., disease pathogenesis, drug response). A lack of alignment may indicate data leakage or spurious correlations [37] [36].

Phase 3: Finding a Fix or Workaround

- Solution 1 – Implement a Hybrid Approach: If a complex "black-box" model (like a deep neural network) has high accuracy but poor explainability, use a surrogate model. Train a simpler, interpretable model (like a decision tree or linear model) to approximate the predictions of the complex model locally or globally. This can provide actionable insights while retaining performance [33] [32].

- Solution 2 – Adopt Explainable-by-Design Architectures: For new projects, select inherently interpretable architectures from the start. Models like Explainable Boosting Machines (EBMs) or attention-based transformers provide transparency alongside prediction. In molecular modeling, use graph neural networks that can attribute importance to specific atoms or bonds within a compound [5] [32].

- Solution 3 – Enhance with Domain Knowledge: Integrate pharmacological and biological knowledge graphs directly into the model architecture as constraints or priors. This guides the learning process toward mechanistically plausible pathways, improving both the trustworthiness and the interpretability of the model's predictions for drug target discovery [37].
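The surrogate idea in Solution 1 can be illustrated with the simplest possible interpretable model, a one-split decision stump fitted to the black-box model's own predictions. This is a minimal sketch: `black_box` is a hypothetical stand-in for a complex model, and fidelity is measured as the fraction of inputs on which the stump agrees with it.

```python
def black_box(row):
    """Hypothetical opaque model (stand-in for a deep network)."""
    return 1 if 0.8 * row[0] + 0.1 * row[1] > 0.55 else 0

def fit_stump(X, labels):
    """Exhaustively search for the single split that best matches labels."""
    best = None
    for feature in range(len(X[0])):
        for threshold in sorted({row[feature] for row in X}):
            for label_above in (0, 1):
                preds = [
                    label_above if row[feature] > threshold else 1 - label_above
                    for row in X
                ]
                fidelity = sum(p == t for p, t in zip(preds, labels)) / len(labels)
                if best is None or fidelity > best[0]:
                    best = (fidelity, feature, threshold, label_above)
    return best

# Grid of inputs on which the surrogate is asked to mimic the black box.
X = [[a / 4, b / 4] for a in range(5) for b in range(5)]
black_box_labels = [black_box(row) for row in X]

fidelity, feature, threshold, label_above = fit_stump(X, black_box_labels)
print(f"feature {feature} > {threshold} -> class {label_above} "
      f"(fidelity {fidelity:.2f})")
```

The surrogate is trained on the black box's outputs, not on the true labels, so its fidelity describes how well it mimics the model, not how accurate either model is.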

Table 1: Common Technical Issues & Recommended Actions

| Problem Symptom | Potential Root Cause | Recommended Diagnostic Action | Possible Solution |
|---|---|---|---|
| Low predictive accuracy on external validation set | Overfitting to training data; dataset shift | Perform dimensionality reduction; check distribution of key features between sets | Implement stronger regularization; use domain adaptation techniques |
| Model explanation lacks biological plausibility | Spurious correlation in data; model learning artifacts | Conduct ablation study by removing top features; consult domain expert for face validation | Integrate biological pathway knowledge as model constraints [37] |
| High variance in feature importance scores | Unstable model; highly correlated features | Use bootstrap sampling to calculate confidence intervals for importance scores | Switch to a model with inherent stability (e.g., EBMs); group correlated features |
| Inability to meet regulatory documentation standards | Lack of standardized explanation output; "black-box" core | Audit model against criteria like "right to explanation" | Implement a surrogate explainability layer with documented, validated methodology [35] [33] |
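The bootstrap diagnostic listed in Table 1 for unstable importance scores can be sketched as follows. This is one possible reading of that action, under stated assumptions: per-sample attribution values (for example SHAP values) are already available as one row per sample, rows are resampled with replacement, and a percentile interval is reported for each feature's mean absolute attribution. The function name and data are hypothetical.

```python
import random
from statistics import mean

def bootstrap_importance_ci(attributions, n_boot=500, alpha=0.05, seed=0):
    """Percentile confidence interval for mean |attribution| per feature."""
    rng = random.Random(seed)
    n_rows, n_features = len(attributions), len(attributions[0])
    boot_means = [[] for _ in range(n_features)]
    for _ in range(n_boot):
        # Resample rows (samples) with replacement.
        sample = [attributions[rng.randrange(n_rows)] for _ in range(n_rows)]
        for j in range(n_features):
            boot_means[j].append(mean(abs(row[j]) for row in sample))
    intervals = []
    for j in range(n_features):
        ordered = sorted(boot_means[j])
        lower = ordered[int((alpha / 2) * (n_boot - 1))]
        upper = ordered[int((1 - alpha / 2) * (n_boot - 1))]
        intervals.append((lower, upper))
    return intervals

# Hypothetical attribution matrix: feature 0 varies, feature 1 is constant.
attributions = [
    [0.10, 0.30],
    [-0.90, 0.30],
    [0.40, -0.30],
    [-0.05, 0.30],
    [0.70, -0.30],
]

intervals = bootstrap_importance_ci(attributions)
for j, (lower, upper) in enumerate(intervals):
    print(f"feature {j}: [{lower:.3f}, {upper:.3f}]")
```

Wide or overlapping intervals suggest that the ranking of the affected features should not be over-interpreted. This sketch resamples only the explained samples and does not capture variability from retraining the model.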

Frequently Asked Questions (FAQs)