Unlocking Nature's Pharmacy: How Deep Learning is Revolutionizing Virtual Screening for Natural Product Drug Discovery

Abstract

This article provides a comprehensive exploration of the transformative role of deep learning (DL) in the virtual screening of natural products for drug discovery. Aimed at researchers and drug development professionals, it begins by establishing the unique value of natural products as drug sources and the paradigm shift enabled by artificial intelligence [citation:1][citation:6]. It then details cutting-edge methodological frameworks, including specialized foundation models [citation:5], multi-stage screening platforms [citation:2], and novel efficiency-focused architectures [citation:9]. The discussion critically addresses persistent challenges such as data limitations, model generalization, and interpretability, offering practical optimization strategies [citation:1][citation:3]. Finally, the article presents a comparative analysis of performance benchmarks and validation protocols, equipping scientists with the knowledge to evaluate and implement these advanced tools. The synthesis concludes with key takeaways and future directions for integrating DL-powered virtual screening into robust, accelerated biomedical research pipelines.

From Forest to Lab: Why Natural Products Are a Drug Discovery Goldmine and How AI Opens the Vault

The Enduring Legacy of Natural Products in Modern Medicine

Natural products (NPs)—chemical substances produced by living organisms—have served as humanity’s primary source of medicine for millennia and continue to underpin modern drug discovery [1]. Their enduring legacy is quantified by the fact that approximately 41% of all new drug approvals between 1981 and 2014 were natural products or direct derivatives thereof [1]. This success stems from their unparalleled structural diversity and evolutionary optimization for biological interaction, granting them a superior coverage of pharmacological space compared to synthetic compound libraries [2]. Despite a late-20th century shift toward combinatorial chemistry and high-throughput screening of synthetic libraries, the slowing pace of new drug approvals has refocused attention on NPs as a crucial resource for addressing complex diseases [1].

The integration of advanced computational methods, particularly deep learning (DL), is revolutionizing how researchers exploit this resource. Traditional NP discovery, reliant on bioactivity-guided fractionation, is labor-intensive and low-throughput. Contemporary in silico strategies now enable the systematic virtual screening of vast NP databases against therapeutic targets, efficiently identifying lead compounds with validated mechanisms of action [3] [4]. This document provides detailed application notes and protocols for leveraging deep learning in NP research, framing them within the essential experimental and cheminformatic workflows required for modern drug development.

Quantitative Landscape of Natural Products in Drug Discovery

The therapeutic utility of NPs spans broad chemical classes and disease areas. The following tables summarize their key contributions and characteristics, providing a quantitative foundation for research planning.

Table 1: Major Natural Product Classes in Therapeutics. Key classes of natural products, their sources, notable examples, and primary therapeutic uses.

| NP Class | Primary Source(s) | Representative Drug(s) | Key Therapeutic Areas | Unique Structural Traits |
|---|---|---|---|---|
| Phytochemicals | Plants (primary & secondary metabolites) | Paclitaxel, Digoxin, Aspirin [1] | Oncology, Cardiology, Analgesia | Phenolic acids, stilbenes, flavonoids; often compliant with "Rule of Five" [1]. |
| Fungal Metabolites | Fungi | Lovastatin, Ciclosporin [1] | Hypercholesterolemia, Immunosuppression | Diverse macrocyclic structures; prolific source of antibiotics. |
| Toxins & Venoms | Snakes, Cone snails, etc. | Captopril (derived from snake venom) [1] | Hypertension, Pain | Peptides and small proteins with high target specificity and potency. |
| Marine NPs | Sponges, Tunicates, etc. | Cytarabine (Ara-C) [1] | Oncology, Virology | Halogenated, sulfur-rich, and complex polycyclic structures. |

Table 2: Performance Metrics of a DL Model for NP Virtual Screening (Representative Study). Architecture, hyperparameters, and performance outcomes of a deep learning model applied to virtual screening of NPs against TNF-α [4].

| Model Aspect | Specification / Result |
|---|---|
| Target Protein | Tumor Necrosis Factor-alpha (TNF-α), PDB: 2AZ5 (refined) [4] |
| Training Data | 953 compounds with pIC50 values from ChEMBL (ID:1825) [4] |
| Input Features | 342 PubChem binary fingerprints (from 881 initial descriptors) [4] |
| Model Architecture | 5 hidden layers (Neurons: 600, 560, 300, 420, 700) [4] |
| Key Performance Metrics | MSE: 0.6, MAPE: 10%, MAE: 0.5 [4] |
| Virtual Screening Library | 2563 compounds from Selleckchem database [4] |
| Top Candidates Identified | Imperialine, Veratramine, Gelsemine [4] |

Application Notes & Protocols

This section outlines core methodologies for integrating deep learning-based virtual screening into natural product research pipelines.

3.1 Protocol: Deep Learning Workflow for Target-Based Virtual Screening of NPs

This protocol details the process of developing and deploying a DL model to predict the bioactivity of natural compounds against a specific protein target, as exemplified in a study targeting TNF-α for rheumatoid arthritis [4].

3.1.1 Data Curation and Preparation

- Target Selection and Preparation: Identify a high-resolution 3D structure of the target protein (e.g., from the Protein Data Bank). Assess and refine the structure for completeness using homology modeling tools like SWISS-MODEL if necessary [4].

- Bioactivity Data Collection: Retrieve a robust set of known active and inactive compounds for the target. Public databases like ChEMBL are primary sources. For the TNF-α example, 953 compounds with reported IC50 values were obtained [4].

- Data Standardization:

  - Convert concentration values (e.g., IC50) to a uniform negative logarithmic scale (pIC50).

  - Standardize compound structures using canonical SMILES representations [4].

- Molecular Featurization: Generate molecular descriptors or fingerprints. The PubChem fingerprint (881 binary bits) is a common choice. Use software like PaDEL to calculate fingerprints from SMILES strings [4].

- Feature Selection: Apply variance thresholding to remove non-informative, low-variance descriptors, significantly reducing dimensionality (e.g., from 881 to 342 descriptors) [4].
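
The standardization and feature-selection steps above can be illustrated with a minimal sketch. It assumes IC50 values are reported in nanomolar and that fingerprints are already available as lists of 0/1 bits; the function names and the toy fingerprint matrix are hypothetical and are not taken from the referenced study, which used PaDEL for fingerprint generation.

```python
import math


def ic50_nm_to_pic50(ic50_nm: float) -> float:
    """Convert an IC50 in nanomolar to pIC50 = -log10(IC50 in mol/L)."""
    if ic50_nm <= 0:
        raise ValueError("IC50 must be positive")
    # 1 nM = 1e-9 mol/L, so -log10(x * 1e-9) = 9 - log10(x)
    return 9.0 - math.log10(ic50_nm)


def variance_threshold(rows, threshold=0.0):
    """Return indices of columns whose population variance exceeds threshold."""
    n = len(rows)
    kept = []
    for j in range(len(rows[0])):
        column = [row[j] for row in rows]
        mean = sum(column) / n
        variance = sum((x - mean) ** 2 for x in column) / n
        if variance > threshold:
            kept.append(j)
    return kept


# Toy data: 4 compounds x 5 fingerprint bits (illustrative values only).
fingerprints = [
    [1, 0, 1, 0, 1],
    [1, 0, 0, 1, 1],
    [1, 0, 1, 1, 1],
    [1, 0, 0, 0, 0],
]
kept_bits = variance_threshold(fingerprints, threshold=0.0)
reduced = [[row[j] for j in kept_bits] for row in fingerprints]

print(ic50_nm_to_pic50(1000.0))  # an IC50 of 1 µM
print(kept_bits)                 # constant bits 0 and 1 are dropped
```

The list of retained bit indices should be stored alongside the model, because the screening library has to be reduced to exactly the same columns later.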

3.1.2 Deep Learning Model Development

- Model Architecture Design: Construct a sequential neural network. The example model employed five hidden layers with varying neuron counts (600, 560, 300, 420, 700) and different activation functions (tanh, relu, elu) to capture complex structure-activity relationships [4].

- Hyperparameter Optimization: Utilize automated search methods like RandomizedSearchCV to optimize hyperparameters (e.g., number of layers, neurons per layer, activation functions, dropout rates, initializers). The final architecture for the TNF-α model is specified in Table 2 [4].

- Model Training & Validation: Split the curated dataset into training, validation, and test sets (e.g., 80/10/10). Train the model using the training set, monitor performance on the validation set to prevent overfitting, and finally evaluate on the held-out test set. Standard regression metrics (MSE, MAE, R²) should be reported.
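
As a rough illustration of the split and the reported metrics, the sketch below partitions 953 compound indices 80/10/10 and computes MSE, MAE, and R² for a set of predictions. The helper names, the fixed random seed, and the rounding of split sizes are assumptions made for this example; the referenced study does not specify them.

```python
import random


def split_indices(n, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle indices 0..n-1 and cut them into train/validation/test parts."""
    indices = list(range(n))
    random.Random(seed).shuffle(indices)
    n_train = int(n * fractions[0])
    n_val = int(n * fractions[1])
    train = indices[:n_train]
    val = indices[n_train:n_train + n_val]
    test = indices[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


def regression_metrics(y_true, y_pred):
    """Return MSE, MAE and R^2 for paired true/predicted pIC50 values."""
    n = len(y_true)
    errors = [p - t for t, p in zip(y_true, y_pred)]
    ss_res = sum(e * e for e in errors)
    mean_true = sum(y_true) / n
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return {
        "mse": ss_res / n,
        "mae": sum(abs(e) for e in errors) / n,
        "r2": 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan"),
    }


train, val, test = split_indices(953)
print(len(train), len(val), len(test))

# Illustrative values only, not results from the study.
print(regression_metrics([5.0, 6.0, 7.0], [5.5, 6.0, 6.5]))
```

Only the test-set metrics should be quoted as final performance; the validation set is used up by model selection.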

3.1.3 Virtual Screening and Hit Identification

- Library Preparation: Prepare a database of natural product structures for screening (e.g., the Selleckchem library of 2563 NPs). Apply the exact same featurization and feature selection pipeline used for the training data [4].

- Deployment & Prediction: Use the trained DL model to predict the bioactivity (pIC50) for every compound in the screening library.

- Hit Prioritization: Rank all compounds by their predicted activity. Select the top fraction (e.g., top 5%) for subsequent analysis. In the referenced study, the top 128 compounds were selected from 2563 [4].
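
The prioritization step reduces to a sort and a cut-off. The sketch below assumes predictions are held in a dictionary mapping a compound identifier to a predicted pIC50; the identifiers and scores are synthetic placeholders generated for the example. With a 5% cut on 2563 compounds, truncating to a whole number gives 128, which matches the count reported in the referenced study.

```python
def top_fraction(predictions, fraction=0.05):
    """Rank compounds by predicted pIC50 (highest first) and keep the top fraction."""
    ranked = sorted(predictions.items(), key=lambda item: item[1], reverse=True)
    n_keep = max(1, int(len(ranked) * fraction))
    return ranked[:n_keep]


# Placeholder library: 2563 hypothetical IDs with synthetic scores.
scores = {f"NP{i:04d}": 4.0 + (i * 37 % 101) / 25.0 for i in range(2563)}

hits = top_fraction(scores, fraction=0.05)
print(len(hits))
print(hits[0])
```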

3.2 Protocol: Post-Screening Validation Workflow

Computational hits require rigorous validation through established cheminformatic and biophysical methods.

- Drug-Likeness and ADMET Filtering: Filter top-ranked hits using rules (e.g., Lipinski's Rule of Five) and predictive models for Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET). This removes compounds with poor pharmacokinetic profiles early [4].

- Molecular Docking: Perform molecular docking of the filtered hits against the refined 3D structure of the target protein. Use software like AutoDock Vina or Glide. Set a stringent binding affinity threshold (e.g., ≤ -8.7 kcal/mol) to identify promising leads [4].

- Molecular Dynamics (MD) Simulation: Subject the best protein-ligand complexes from docking to all-atom MD simulations (e.g., for 200 ns) to assess binding stability and conformational dynamics. Key analyses include:

  - Root Mean Square Deviation (RMSD) of the protein-ligand complex.

  - Root Mean Square Fluctuation (RMSF) of protein residues.

  - Radius of Gyration (Rg) and Solvent Accessible Surface Area (SASA).

  - Hydrogen bond analysis [4].

- Binding Free Energy Calculation: Use end-point methods like MM/GBSA (Molecular Mechanics/Generalized Born Surface Area) on MD trajectories to calculate the final binding free energy, providing a quantitative estimate of binding strength [4].
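
The first two filters in this workflow can be sketched as a simple funnel, assuming the physicochemical properties and docking scores have already been computed by external tools. The `Hit` record, the compound names, and all property values below are invented for illustration. The tolerance of one Rule of Five violation follows the common reading of Lipinski's rule, and the -8.7 kcal/mol cut-off is the example threshold quoted above.

```python
from dataclasses import dataclass


@dataclass
class Hit:
    name: str
    mol_weight: float     # Da
    logp: float           # octanol-water partition coefficient
    h_donors: int         # hydrogen-bond donors
    h_acceptors: int      # hydrogen-bond acceptors
    docking_score: float  # kcal/mol; more negative means stronger predicted binding


def lipinski_violations(hit: Hit) -> int:
    """Count how many of the four Rule of Five criteria are violated."""
    return sum([
        hit.mol_weight > 500,
        hit.logp > 5,
        hit.h_donors > 5,
        hit.h_acceptors > 10,
    ])


def filter_hits(hits, max_violations=1, docking_cutoff=-8.7):
    """Keep hits that are drug-like and dock at or below the cut-off."""
    return [
        h for h in hits
        if lipinski_violations(h) <= max_violations
        and h.docking_score <= docking_cutoff
    ]


# Hypothetical candidates with made-up values.
candidates = [
    Hit("cmpd_A", 429.6, 3.1, 2, 4, -9.2),
    Hit("cmpd_B", 612.0, 6.2, 3, 8, -9.5),  # two violations
    Hit("cmpd_C", 350.0, 2.0, 1, 3, -7.9),  # docking score too weak
]
print([h.name for h in filter_hits(candidates)])
```

Natural products frequently fall outside Rule of Five space, so the number of tolerated violations is a parameter worth revisiting for a given project.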

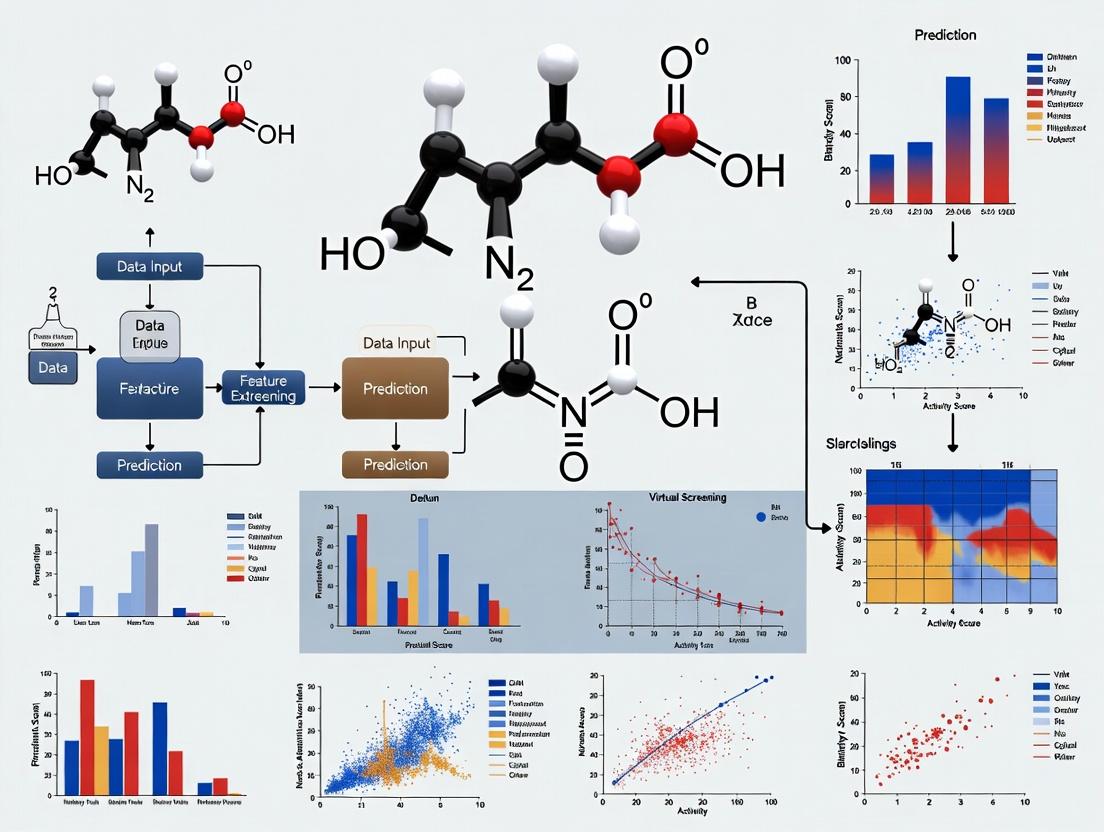

Visual Workflows and Pathways

The following diagrams illustrate the integrated computational-experimental pipeline for deep learning-driven NP discovery.

Deep learning workflow for virtual screening of natural products.

Integrated drug discovery pipeline from computation to experiment.

Target pathway for NP-based therapy in rheumatoid arthritis.

The Scientist's Toolkit: Research Reagent Solutions

A successful NP discovery program relies on integrated computational and experimental resources.

Table 3: Essential Research Resources for NP-Based Drug Discovery. Key databases, software tools, and laboratory materials required for computational and experimental research on natural products.

| Category | Resource Name | Primary Function & Utility in NP Research |
|---|---|---|
| Public Databases | PubChem [3], ChEMBL [3] | Source of chemical structures, bioactivity data, and pathways for model training and validation. |
| Specialized NP Libraries | Selleckchem Natural Product Library [4] | Curated, commercially available collections of purified NPs for virtual and experimental screening. |
| Cheminformatics Tools | PaDEL-Descriptor [4], RDKit | Generate molecular fingerprints and descriptors from compound structures for machine learning. |
| Deep Learning Frameworks | TensorFlow, PyTorch, Scikit-learn | Develop, train, and deploy custom predictive models for virtual screening. |
| Molecular Modeling Software | AutoDock Vina, GROMACS, AMBER | Perform molecular docking, molecular dynamics simulations, and binding free energy calculations. |
| Laboratory Reagents (for Validation) | Recombinant Target Proteins (e.g., TNF-α) | For in vitro binding affinity assays (SPR, ELISA) and enzymatic activity inhibition studies. |
| Laboratory Reagents (for Validation) | Cell-based Assay Kits (e.g., NF-κB reporter) | For functional cellular validation of anti-inflammatory or other target-specific activity. |
| Laboratory Reagents (for Validation) | Analytical Standards (Pure NP Compounds) | For hit verification by LC-MS/MS and for use as benchmarks in biological assays. |

The discovery of new therapeutics from natural products represents a frontier of immense promise and formidable challenge. These compounds, derived from plants, microbes, and marine organisms, possess unparalleled chemical diversity and a proven historical track record in drug discovery. However, the very attributes that make them valuable—structural complexity, multi-target pharmacology, and intricate biosynthesis—also render them exceptionally difficult to study using conventional paradigms [5]. The modern drug discovery pipeline, already strained by high attrition rates and escalating costs, meets its match in natural product research. Traditional high-throughput screening (HTS) methods, while successful for synthetic compound libraries, are poorly suited to the unique demands of natural extracts and complex metabolites. The process is bottlenecked by the need for large quantities of rare biological material, the labor-intensive isolation of active principles, and the challenge of deconvoluting complex mixtures [6]. Consequently, the translation of nature's chemical wealth into viable drug candidates remains inefficient and prohibitively expensive.

This article posits that deep learning (DL) for virtual screening is not merely an incremental improvement but a necessary paradigm shift for revitalizing natural product-based drug discovery. By reframing the problems of molecular complexity as data patterns and scarcity as a challenge for generative models, artificial intelligence (AI) provides a coherent framework to overcome these historic barriers [7]. The following sections will dissect the core challenges of data scarcity and cost, detail contemporary AI-driven solutions and protocols, and provide a roadmap for integrating these technologies into a robust research workflow.

The Core Impediments: Scarcity, Cost, and Complexity

The Data Scarcity Dilemma in AI Model Training

The performance of deep learning models is fundamentally gated by the availability of large, high-quality, and well-annotated datasets. Natural product research suffers from an acute shortage of such data, creating a significant bottleneck for AI applications.

- Limited Annotated Bioactivity Data: Public repositories contain activity data for only a fraction of known natural products, and the data is often sparse, noisy, and generated from heterogeneous assay conditions, complicating model training [5].

- Scarcity of Negative Data: A critical and often overlooked issue is the profound lack of reliably confirmed inactive compounds. Publication bias favors positive results, meaning most datasets are heavily imbalanced. This lack of "negative data" severely limits the ability of models to learn what distinguishes an active compound from an inactive one, leading to over-optimistic predictions and poor real-world performance [8].

- The InertDB Solution: To address the negative data gap, resources like InertDB have been developed. It provides a curated set of experimentally confirmed inactive compounds and uses generative AI to expand this chemical space, offering a more robust dataset for training predictive models that require both positive and negative examples [8].

The High Cost of Traditional Genomic and Screening Workflows

The experimental foundation of modern natural product research—including genome sequencing for biosynthetic gene cluster discovery and HTS for bioactivity—requires substantial capital and operational investment.

- Sequencing and Library Preparation Costs: Next-generation sequencing (NGS) is essential for identifying the genetic potential of natural source organisms. The library preparation step, which converts DNA/RNA into a format readable by sequencers, is a major cost component. The market for NGS library preparation automation is growing rapidly (expected to reach USD 4.32 billion by 2032) [9], driven by the need for efficiency but indicative of high upfront technology costs. Specialized kits for challenging samples (e.g., degraded or low-input) carry a significant premium [10].

- Traditional Screening Economics: Conventional HTS involves physically testing thousands to millions of compounds against a biological target. The costs encompass reagent kits, specialized robotics, laboratory space, and personnel. The attrition rate is staggering, with approximately one million screened compounds yielding a single marketable drug [11]. For natural products, costs are further amplified by the need for extract preparation, compound isolation, and the frequent re-screening of fractions.

Table 1: Market and Cost Overview for Key Experimental Components

| Component | Market Size & Growth | Key Cost Drivers & Challenges |
|---|---|---|
| DNA Library Prep Kits | Global market valued at USD 1.87B (2024), projected CAGR of ~9-13% [10] [12]. | High cost of specialized kits (e.g., for low-input, single-cell); requirement for skilled personnel; instrument costs [10]. |
| NGS Library Prep Automation | Market growing from USD 2.34B (2025) to USD 4.32B (2032) at 9.1% CAGR [9]. | Capital investment in automated workstations; integration with existing workflows; reagent consumption. |
| Traditional HTS | Not a discrete market, but a pervasive cost center in drug discovery. | Compound/library acquisition, robotics maintenance, assay reagents, and high compound attrition rate leading to low return on investment [11]. |

AI-Enabled Solutions: Deep Learning for Virtual Screening

Deep learning offers a suite of tools to directly address the challenges of cost and data scarcity by enabling intelligent, in silico prioritization before any wet-lab experiment begins.

Virtual Screening as a Cost-Efficiency Multiplier

Virtual screening uses computational models to rank compounds by their predicted likelihood of activity, dramatically reducing the number of physical tests required. By filtering vast virtual libraries down to a manageable subset of high-probability hits, DL-powered virtual screening acts as a force multiplier for laboratory efficiency and budget [11] [7].

- Performance Advantage: In reported benchmarks, modern DL pipelines can substantially outperform older computational methods. For example, the VirtuDockDL platform achieved 99% accuracy and an AUC of 0.99 on a HER2 inhibitor benchmark dataset, compared with 82% accuracy for AutoDock Vina and 89% for DeepChem [11]. Higher predictive accuracy of this kind is expected to raise hit rates in subsequent experimental validation, although benchmark results do not guarantee comparable performance on new targets.

Table 2: Performance Comparison of Screening Methods

| Method | Typical Accuracy / Hit Rate | Key Advantage | Primary Limitation |
|---|---|---|---|
| Traditional HTS | Very low (0.01-0.1% hit rate); high absolute number of hits due to massive scale. | Experimental, empirical data. | Extremely high cost, low efficiency, massive resource consumption [11]. |
| Traditional VS (e.g., AutoDock Vina) | Moderate (varies widely; ~82% in benchmark [11]). | Low cost per compound screened; structure-based insights. | Computational intensity for large libraries; accuracy limited by scoring functions [13]. |
| DL-Powered VS (e.g., VirtuDockDL) | High (e.g., 99% accuracy on benchmark datasets) [11]. | Superior accuracy and speed; learns complex structure-activity relationships; ideal for large libraries. | Dependent on quality/quantity of training data; model interpretability can be low [5] [7]. |

Overcoming Data Scarcity with Advanced DL Architectures

Innovative DL model designs help mitigate the problem of small datasets.

- Graph Neural Networks (GNNs): GNNs are uniquely suited for natural products as they operate directly on molecular graphs, treating atoms as nodes and bonds as edges. This allows the model to inherently learn the structural and topological features critical to a compound's activity, making efficient use of available data [11] [7].

- Generative AI and Data Augmentation: As exemplified by InertDB, generative models can create novel, synthetically accessible compounds or expand datasets of inactive molecules. This "data augmentation" helps balance training sets and explores broader chemical spaces, partially alleviating the scarcity of real experimental data [8].

- Transfer Learning: Models pre-trained on large, general chemical databases (e.g., PubChem) can be fine-tuned on smaller, specialized natural product datasets. This allows the model to bring learned chemical knowledge from a data-rich domain to a data-poor one, improving performance with limited task-specific examples [5].
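
To make the "atoms as nodes, bonds as edges" idea concrete, the following sketch builds a tiny molecular graph by hand and runs a single round of neighbor aggregation, which is the basic operation GNN layers repeat. It is a simplified illustration in plain Python rather than any particular GNN library; the three-letter atom vocabulary, the heavy-atom-only ethanol graph, and the sum aggregation are assumptions chosen for brevity.

```python
ATOM_TYPES = ["C", "N", "O"]  # toy vocabulary; real models use many more features


def one_hot(symbol):
    """Encode an atom symbol as a one-hot feature vector."""
    return [1.0 if symbol == t else 0.0 for t in ATOM_TYPES]


def build_graph(atoms, bonds):
    """Return per-atom feature vectors and an adjacency list."""
    features = [one_hot(a) for a in atoms]
    neighbors = {i: [] for i in range(len(atoms))}
    for i, j in bonds:  # bonds are undirected
        neighbors[i].append(j)
        neighbors[j].append(i)
    return features, neighbors


def message_pass(features, neighbors):
    """One round: new vector = own vector + sum of neighbor vectors."""
    updated = []
    for i, own in enumerate(features):
        aggregated = list(own)
        for j in neighbors[i]:
            aggregated = [a + b for a, b in zip(aggregated, features[j])]
        updated.append(aggregated)
    return updated


# Ethanol heavy atoms: C-C-O (hydrogens omitted).
atoms = ["C", "C", "O"]
bonds = [(0, 1), (1, 2)]
features, neighbors = build_graph(atoms, bonds)
updated = message_pass(features, neighbors)
print(updated)
```

After one round, each atom's vector already encodes its immediate chemical environment; stacking rounds widens that neighborhood.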

Diagram 1: AI-Enhanced vs. Traditional Virtual Screening Workflow

Application Notes & Experimental Protocols

Protocol: Implementing a GNN-Based Virtual Screening Pipeline (VirtuDockDL)

This protocol outlines the steps to deploy a deep learning virtual screening pipeline for identifying potential natural product-derived hits against a target of interest [11].

1. Objective: To computationally screen a library of natural product structures (in SMILES format) against a defined protein target to prioritize compounds for experimental validation.

2. Materials & Computational Environment:

- Software: Python (3.8+), PyTorch, PyTorch Geometric, RDKit, VirtuDockDL GitHub repository.

- Hardware: GPU-enabled system (e.g., NVIDIA CUDA) recommended for model training.

- Data:

  - Target protein structure (PDB format).

  - Curated library of natural product SMILES strings.

  - Active/inactive training data for the target (if available for fine-tuning).

3. Procedure:

Step 1: Data Preparation and Molecular Representation.

- Convert all natural product SMILES strings into molecular graph objects using RDKit. Each atom becomes a node (with features like atom type, hybridization), and each bond becomes an edge (with features like bond type) [11].

- Calculate additional molecular descriptors (e.g., molecular weight, logP, topological polar surface area) using RDKit to be used as complementary features.

- Split data into training/validation/test sets if model training is required.

Step 2: Graph Neural Network Model Setup.

- Load or construct the GNN architecture. The core layers perform graph convolution operations: transforming node features, applying batch normalization and ReLU activation, and using residual connections to maintain gradient flow [11].

- The model aggregates information from atomic neighbors and combines the graph-level representation with the calculated molecular descriptors in a fully connected layer to produce a final prediction (e.g., binding affinity score or active/inactive probability).
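
A stripped-down version of this forward pass is sketched below in plain Python. It is not the VirtuDockDL implementation: batch normalization is omitted, the weights are fixed toy values rather than learned parameters, and mean aggregation and mean pooling are assumed choices. It only shows how a residual graph convolution, a graph-level readout, and extra molecular descriptors can be combined into one prediction.

```python
import math


def relu(vector):
    return [max(0.0, x) for x in vector]


def matvec(weights, vector):
    """Multiply a square weight matrix (list of rows) by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in weights]


def graph_conv(features, neighbors, weights):
    """Residual layer: h_i' = h_i + ReLU(W * mean of h over atom i and its neighbors)."""
    output = []
    for i, h in enumerate(features):
        group = [h] + [features[j] for j in neighbors[i]]
        mean = [sum(col) / len(group) for col in zip(*group)]
        output.append([a + b for a, b in zip(h, relu(matvec(weights, mean)))])
    return output


def predict(features, neighbors, descriptors, weights, w_out, bias=0.0):
    """Pool atom vectors, append descriptors, apply a linear layer and a sigmoid."""
    hidden = graph_conv(features, neighbors, weights)
    graph_vector = [sum(col) / len(hidden) for col in zip(*hidden)]  # mean pooling
    combined = graph_vector + descriptors
    logit = sum(w * x for w, x in zip(w_out, combined)) + bias
    return 1.0 / (1.0 + math.exp(-logit))


# Toy two-atom graph with 2-dimensional atom features and one scaled descriptor.
features = [[1.0, 0.0], [0.0, 1.0]]
neighbors = {0: [1], 1: [0]}
identity = [[1.0, 0.0], [0.0, 1.0]]
probability = predict(features, neighbors, [0.3], identity, w_out=[0.1, -0.2, 1.0])
print(probability)
```

The residual term means a layer with all-zero weights simply passes atom features through unchanged, which is what keeps gradients flowing in deeper stacks.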

Step 3: Model Training (If Fine-Tuning).

- Train the model using labeled data (e.g., known actives and inactives from InertDB [8]).

- Use a binary cross-entropy loss function and an Adam optimizer.

- Monitor performance on the validation set to prevent overfitting.
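
The loss and the validation monitoring mentioned in this step can be written out explicitly. The sketch below computes binary cross-entropy for a batch of predicted probabilities and applies a simple patience-based early-stopping rule to a list of per-epoch validation losses. The patience value and the example loss curve are hypothetical, and the Adam update itself is left to the deep learning framework.

```python
import math


def binary_cross_entropy(y_true, y_prob, eps=1e-12):
    """Mean of -[y*log(p) + (1-y)*log(1-p)] over all examples."""
    total = 0.0
    for y, p in zip(y_true, y_prob):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        total += -(y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(y_true)


def early_stopping_epoch(val_losses, patience=3):
    """Return the epoch with the best validation loss, stopping the scan once
    the loss has failed to improve for `patience` consecutive epochs."""
    best_loss, best_epoch = float("inf"), 0
    for epoch, loss in enumerate(val_losses):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch
        elif epoch - best_epoch >= patience:
            break
    return best_epoch


print(binary_cross_entropy([1, 0], [0.5, 0.5]))

# Illustrative validation curve: improves, then starts to overfit.
val_losses = [0.90, 0.70, 0.60, 0.65, 0.66, 0.70, 0.80]
print(early_stopping_epoch(val_losses, patience=3))
```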

Step 4: Virtual Screening Execution.

- Process the entire natural product library through the trained GNN model to generate prediction scores for each compound.

- Rank all compounds based on the predicted scores.

Step 5: Post-Screening Analysis & Prioritization.

- Apply chemical property filters (e.g., Lipinski's Rule of Five, solubility predictions) to the top-ranked hits to ensure drug-likeness.

- Cluster the top hits based on molecular fingerprints to select chemically diverse leads.

- Visually inspect the predicted binding poses or interaction patterns for the most promising candidates.
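
One simple way to pick chemically diverse leads from a ranked list is a greedy pass that keeps a compound only if it is not too similar to anything already kept. The sketch below assumes each fingerprint is represented as a set of "on" bit positions and uses Tanimoto similarity with a 0.6 cut-off; the hit names, bit sets, and threshold are illustrative, and other clustering schemes would serve equally well.

```python
def tanimoto(bits_a: set, bits_b: set) -> float:
    """Tanimoto similarity: shared on-bits divided by total distinct on-bits."""
    if not bits_a and not bits_b:
        return 0.0
    return len(bits_a & bits_b) / len(bits_a | bits_b)


def pick_diverse(ranked_hits, max_similarity=0.6):
    """Greedy selection over hits ordered best-first.

    A hit is kept only if its similarity to every previously kept hit
    is strictly below max_similarity.
    """
    selected = []
    for name, bits in ranked_hits:
        if all(tanimoto(bits, kept_bits) < max_similarity for _, kept_bits in selected):
            selected.append((name, bits))
    return [name for name, _ in selected]


# Hypothetical hits, already sorted by predicted score.
ranked_hits = [
    ("hit_1", {1, 2, 3, 4}),
    ("hit_2", {1, 2, 3, 5}),  # close analog of hit_1 (similarity 0.6)
    ("hit_3", {7, 8, 9}),
]
print(pick_diverse(ranked_hits))
```

Because the list is processed best-first, the representative kept for each group of analogs is always the one with the highest predicted score.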

4. Validation:

- In silico: Perform molecular docking (using a complementary tool like AutoDock Vina or Glide) on the top AI-prioritized hits to assess predicted binding modes and complement the GNN predictions [13].

- Experimental: The final, shortlisted compounds (typically 10-50, instead of thousands) proceed to in vitro biological assay testing for experimental confirmation.

Protocol: Integrating Negative Data from InertDB for Robust Model Training

1. Objective: To improve the accuracy and reliability of a DL activity prediction model by training it on a balanced dataset containing both active compounds and confirmed inactive compounds.

2. Procedure [8]:

- Access the InertDB database (publicly available resource).

- Download the Curated Inactive Compounds (CIC) list, which contains molecules rigorously verified to show minimal activity across diverse bioassays.

- Select a subset of CICs that are chemically matched or diverse relative to your active compound set for the target of interest.

- Combine your known active compounds with the selected inactive compounds from InertDB to create a balanced training dataset.

- Train your classification model (e.g., a GNN or random forest) on this balanced dataset. The model will learn the discriminatory features between active and inactive states more effectively than from an active-only or artificially balanced dataset.

- Evaluate model performance using standard metrics (AUC-ROC, precision-recall) on a held-out test set.
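
The balancing and evaluation steps might look like the sketch below. It assumes actives and inactives are available as lists of identifiers and uses plain random sampling to equalize the classes, which is a simplification of the chemically matched selection described above. The AUC-ROC is computed from its pairwise definition so that no external library is required; all names and scores are placeholders.

```python
import random


def build_balanced_set(actives, inactives, seed=0):
    """Sample equal numbers of actives (label 1) and inactives (label 0)."""
    rng = random.Random(seed)
    n = min(len(actives), len(inactives))
    data = [(mol, 1) for mol in rng.sample(actives, n)]
    data += [(mol, 0) for mol in rng.sample(inactives, n)]
    rng.shuffle(data)
    return data


def roc_auc(labels, scores):
    """Probability that a random active outscores a random inactive (ties count 0.5)."""
    positives = [s for l, s in zip(labels, scores) if l == 1]
    negatives = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in positives
        for n in negatives
    )
    return wins / (len(positives) * len(negatives))


# Placeholder identifiers, not real database entries.
actives = ["act_1", "act_2", "act_3"]
inactives = [f"cic_{i}" for i in range(10)]
dataset = build_balanced_set(actives, inactives)
print(dataset)

# Illustrative held-out labels and model scores.
print(roc_auc([1, 1, 0, 0], [0.9, 0.4, 0.5, 0.1]))
```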

Diagram 2: Architecture of a GNN Model for Molecular Property Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Kits for Supporting AI-Driven Workflows

| Item | Function in Workflow | Relevance to AI/VS Research |
|---|---|---|
| Tn5 Transposase-Based DNA Library Prep Kits | Streamlines NGS library preparation via "tagmentation," combining fragmentation and adapter ligation [10]. | Enables rapid, cost-effective whole-genome sequencing of natural product-producing organisms to identify biosynthetic gene clusters, generating data for genomic mining AI tools. |
| Automated NGS Library Preparation Workstations | Integrated systems for hands-off, reproducible library construction (e.g., Agilent Magnis) [14] [9]. | Reduces manual labor and variability in generating high-quality sequencing data, ensuring the reliable genomic data needed to train and validate AI models. |
| Specialized Kits for Low-Input/Degraded Samples | Kits optimized for challenging samples (e.g., FFPE tissues, single cells) [10]. | Allows sequencing of rare or difficult-to-culture organisms, expanding the diversity of genomic data available for AI-powered discovery pipelines. |
| Targeted Sequencing Panels (e.g., for CYP450s) | Focuses sequencing on specific gene families related to drug metabolism or biosynthesis [12]. | Generates deep, targeted datasets ideal for training specialized AI models to predict enzyme substrate specificity or metabolic fate. |

The unique challenges of natural product research—chemical complexity, biological data scarcity, and the exorbitant cost of traditional screening—are formidable but not insurmountable. Deep learning for virtual screening offers a coherent and powerful framework to navigate this complex landscape. By leveraging GNNs to understand molecular structure, generative models to overcome data limitations, and robust pipelines like VirtuDockDL for accurate prediction, researchers can invert the traditional discovery model. Instead of "screen first, analyze later," the paradigm becomes "predict intelligently, validate precisely." This approach dramatically concentrates financial and laboratory resources on the most promising leads, mitigating cost and accelerating timelines. The integration of curated negative data resources and automated experimental platforms further strengthens this AI-centric pipeline. As these technologies mature and become more accessible, they democratize the ability to explore nature's chemical treasury, promising a new era of efficient, data-driven natural product drug discovery.

Abstract

The integration of advanced computational techniques into cheminformatics represents a fundamental paradigm shift in natural product-based drug discovery. This article delineates the hierarchical relationship between artificial intelligence (AI), machine learning (ML), and deep learning (DL) within this domain, framing them as a continuum of increasing specificity and capability. We posit that while AI provides the overarching goal of simulating intelligent behavior in drug screening, ML offers the statistical framework for learning from chemical data, and DL delivers the architectural power for modeling complex, high-dimensional structure-activity relationships. In the context of a thesis on deep learning for virtual screening (VS) of natural products, this work provides detailed application notes and protocols for implementing a DL-accelerated VS pipeline. We present quantitative benchmarks for state-of-the-art methods, a stepwise protocol for a scalable AI-VS platform integrating active learning, and a novel method for validating model interpretability using synthetically generated data. Supporting materials include standardized workflow diagrams, a reagent toolkit, and performance tables, equipping researchers with the practical frameworks necessary to leverage this technological shift for uncovering bioactive natural compounds.

1. Introduction: The Hierarchical Shift in Cheminformatics

The discovery of lead compounds from natural products presents unique challenges, including structural complexity, scaffold diversity, and sparse activity data [15]. Traditional computational methods often struggle with these dimensions. The emergence of AI, ML, and DL offers a transformative, hierarchical approach [16].

In the cheminformatics context, Artificial Intelligence (AI) is the broadest paradigm, encompassing any computational system that performs tasks typically requiring human intelligence, such as predicting bioactivity or planning a synthetic route for a natural product derivative [15] [16]. Machine Learning (ML) is a subset of AI focused on developing algorithms that can learn patterns and make predictions from data without explicit, rule-based programming. In VS, ML models use features (e.g., molecular fingerprints, physicochemical descriptors) to predict binding affinity [17]. Deep Learning (DL), a further subset of ML, utilizes artificial neural networks with multiple layers (deep architectures) to automatically learn hierarchical representations from raw or minimally processed data, such as 3D molecular structures or graph representations [18] [17]. This paradigm shift enables the direct modeling of intricate interactions between a natural product and its protein target, moving beyond handcrafted features to data-driven discovery.

2. Hierarchical Definitions in the Virtual Screening Context

Table 1: Definition and Application of AI, ML, and DL in Cheminformatics.

| Term | Core Definition in Context | Primary Role in Virtual Screening | Typical Application in Natural Product Research |
|---|---|---|---|
| Artificial Intelligence (AI) | The overarching science of creating systems capable of performing complex, intelligent tasks in drug discovery. | Orchestrating the entire VS pipeline, from target analysis to hit prioritization, often integrating multiple sub-systems. | Designing an end-to-end platform that integrates genomic data for biosynthetic gene cluster identification with subsequent VS of predicted metabolites [15]. |
| Machine Learning (ML) | A suite of algorithms that identify statistical patterns in data to make predictions or decisions, based on feature input. | Classifying compounds as active/inactive or regressing binding affinity scores using curated molecular feature sets. | Building a random forest model to predict the antibacterial activity of flavonoid analogs based on topological fingerprints [17]. |
| Deep Learning (DL) | A class of ML algorithms using multi-layered neural networks to learn high-level abstractions and representations directly from complex data. | Processing raw 3D structural data (e.g., protein-ligand complexes) to predict binding poses and affinities with high spatial awareness. | Using an equivariant graph neural network (e.g., PointVS) to screen a database of 3D-conformer natural products against a flexible binding pocket [18] [17]. |

3. Application Notes & Protocols for Deep Learning-Augmented VS This section provides actionable methodologies for implementing a DL-accelerated VS workflow, a core component of the broader thesis on natural product discovery.

3.1. Application Note: Performance Benchmarks for Physics-Informed DL VS

A critical application is enhancing physics-based docking with DL for speed and accuracy. The RosettaVS AI-accelerated platform exemplifies this, combining a physics-based force field (RosettaGenFF-VS) with an active learning (AL) framework [18]. Its performance on standard benchmarks and real-world targets underscores the paradigm's value.

Table 2: Performance Metrics of the RosettaVS AI-Accelerated Platform. [18]

| Benchmark / Target | Key Metric | RosettaVS Performance | Comparative Context |

|---|---|---|---|

| CASF-2016 (Docking Power) | Success in identifying near-native poses | Top-performing method | Outperformed other physics-based scoring functions. |

| CASF-2016 (Screening Power) | Enrichment Factor at 1% (EF1%) | EF1% = 16.72 | Significantly higher than 2nd best method (EF1% = 11.9). |

| DUD Dataset | AUC & ROC Enrichment | State-of-the-art | Superior virtual screening accuracy across 40 targets. |

| Real-World Target: KLHDC2 | Experimental Hit Rate | 7 hits (14% hit rate) | From a focused library; single-digit µM affinity. |

| Real-World Target: NaV1.7 | Experimental Hit Rate | 4 hits (44% hit rate) | From initial screen; single-digit µM affinity. |

| Computational Speed | Screen Time for Billion-Compound Library | < 7 days | Using 3000 CPUs + 1 GPU per target. |
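
The enrichment factor reported in Table 2 can be made concrete with a short calculation. The sketch below assumes the common definition (hit rate among the top-ranked fraction divided by the hit rate of the whole library); the function name, the toy scores, and the toy labels are illustrative and are not taken from RosettaVS or CASF-2016.

```python
def enrichment_factor(scores, labels, fraction=0.01, higher_is_better=True):
    """EF at a given fraction: hit rate in the top slice / hit rate overall."""
    if len(scores) != len(labels) or not scores:
        raise ValueError("scores and labels must be non-empty and equal in length")
    n_total = len(scores)
    n_actives = sum(labels)
    if n_actives == 0:
        raise ValueError("at least one active compound is required")
    # Rank compounds from best to worst score.
    order = sorted(range(n_total), key=lambda i: scores[i], reverse=higher_is_better)
    n_top = max(1, int(round(n_total * fraction)))
    actives_in_top = sum(labels[i] for i in order[:n_top])
    return (actives_in_top / n_top) / (n_actives / n_total)


# Toy library (hypothetical): 1000 compounds, 10 actives,
# 5 of which receive the best scores.
scores = [float(i) for i in range(1000)]
labels = [0] * 1000
for idx in (999, 998, 997, 996, 995, 500, 400, 300, 200, 100):
    labels[idx] = 1

ef1 = enrichment_factor(scores, labels, fraction=0.01)
print(f"EF1% = {ef1:.1f}")
```

For docking scores, where lower values are better, pass `higher_is_better=False`.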

3.2. Protocol 1: Implementing an Active Learning-Enhanced VS Workflow

This protocol details the steps for screening ultra-large libraries using the OpenVS platform architecture [18].

Objective: To efficiently identify hit compounds from a multi-billion compound library (e.g., ZINC20) against a defined protein target with a known binding site.

Materials: Prepared target protein structure (PDB format), prepared chemical library (e.g., in SDF format), high-performance computing (HPC) cluster with CPU nodes and GPU nodes, OpenVS software suite.

Procedure:

- Target and Library Preparation:

- Prepare the target protein structure (e.g., remove water, add hydrogens, optimize side chains).

- Pre-process the chemical library: standardize formats, generate credible 3D conformers, and filter using basic physicochemical rules.

- Initial Seed Docking:

- Randomly select a small subset (e.g., 0.1%) of the library.

- Dock this subset using the VSX (Virtual Screening Express) mode of RosettaVS, which uses a rigid receptor and a simplified scoring function for speed [18].

- Active Learning Loop:

- Train DL Model: Use the docking scores and compound features from the seed set to train a target-specific surrogate neural network model.

- Predict & Prioritize: Use the trained DL model to predict the docking scores for the entire remaining unscreened library.

- Select Batch: Choose the next batch of compounds (e.g., top 0.1% predicted by the DL model, plus a random exploration fraction).

- High-Precision Docking: Dock the selected batch using the VSH (Virtual Screening High-precision) mode, which includes full receptor side-chain flexibility [18].

- Update Training Set: Add the new VSH results to the training data.

- Iterate: Repeat the five preceding steps (train, predict, select, dock, update) until a predefined stopping criterion is met (e.g., number of compounds screened, convergence of top-scoring compounds).

- Hit Identification & Validation:

- Cluster the top-ranked compounds from the final VSH results and select representatives for in vitro testing.

- Validate predictions, as exemplified by the high-resolution X-ray crystallographic structure that confirmed the KLHDC2 ligand pose predicted by RosettaVS [18].
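
The control flow of the active learning loop above can be sketched in a few lines. This is a toy illustration, not the OpenVS implementation: `toy_dock` is a hypothetical stand-in for a docking run, a k-nearest-neighbour average replaces the surrogate neural network, compounds are 2D feature tuples, and a single scoring function is used where the protocol distinguishes VSX and VSH modes.

```python
import random


def toy_dock(features):
    """Hypothetical stand-in for an expensive docking run (lower = better)."""
    x, y = features
    return -(2.0 * x + y)


def knn_predict(query, docked, k=5):
    """Surrogate model: mean score of the k nearest already-docked compounds."""
    def sq_dist(item):
        (x, y), _ = item
        return (x - query[0]) ** 2 + (y - query[1]) ** 2
    nearest = sorted(docked, key=sq_dist)[:k]
    return sum(score for _, score in nearest) / len(nearest)


def active_learning_screen(library, rng, seed_size=50, batch_size=50,
                           rounds=4, explore_frac=0.2):
    remaining = list(range(len(library)))
    docked = {}  # compound index -> docking score

    def dock(indices):
        for idx in indices:
            docked[idx] = toy_dock(library[idx])
            remaining.remove(idx)

    # Initial seed docking on a random subset.
    dock(rng.sample(remaining, seed_size))

    for _ in range(rounds):
        # "Training" here is just collecting the docked set for the k-NN surrogate.
        train = [(library[i], s) for i, s in docked.items()]
        # Predict and prioritize: rank undocked compounds by predicted score.
        ranked = sorted(remaining, key=lambda i: knn_predict(library[i], train))
        # Select batch: top predictions plus a random exploration fraction.
        n_explore = int(batch_size * explore_frac)
        exploit = ranked[:batch_size - n_explore]
        explore = rng.sample(ranked[batch_size - n_explore:], n_explore)
        # Dock the batch; results are added to the training data.
        dock(exploit + explore)
    return docked


rng = random.Random(42)
library = [(rng.random(), rng.random()) for _ in range(1000)]
docked = active_learning_screen(library, rng)
best_idx = min(docked, key=docked.get)
print(len(docked), "compounds docked; best score:", round(docked[best_idx], 3))
```

Only a quarter of the toy library is ever docked, yet the docked set is biased toward low (favourable) scores, which is the efficiency argument behind the protocol.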

3.3. Protocol 2: Synthetic Data Generation for Validating Model Interpretability

A major challenge in DL-based VS is ensuring models learn genuine biophysical interactions rather than dataset biases [17]. This protocol, adapted from synthetic benchmark studies, tests a model's ability to identify critical functional groups.

Objective: To generate a synthetic dataset with known ground-truth "binding" rules to evaluate if a DL VS model correctly attributes importance to key ligand atoms [17].

Materials: A set of diverse ligand molecules (e.g., from natural product libraries), Python environment with rdkit and numpy.

Procedure:

- Define a Synthetic Protein Pocket:

- For each ligand in its 3D conformation, define a bounding box extended 5 Å beyond its atomic coordinates.

- Within this box, generate a random point cloud of "synthetic residues." Each residue has 3D coordinates and a randomly assigned pharmacophore type (e.g., hydrogen bond donor, acceptor, aromatic).

- Filter points to be at least 2 Å from any ligand atom and 3 Å from each other.

- Apply a Deterministic Binding Rule:

- Define a simple rule for "activity." For example: a ligand is labeled "active" if it contains at least one hydrogen bond donor atom within 2.5 Å of a synthetic acceptor residue AND one hydrophobic atom within 3.0 Å of a synthetic hydrophobic residue.

- Apply this rule to each ligand/synthetic-protein pair to generate a clear binary label (1/0).

- Model Training & Attribution Analysis:

- Train a DL-based VS model (e.g., a graph neural network) on this synthetic dataset.

- Use an attribution method (e.g., Integrated Gradients) on the trained model to calculate the importance of each atom in the ligand for the prediction.

- Validation: Compare the model-attributed importance scores against the ground-truth atoms defined by the binding rule. A model that has learned the correct spatial interaction will assign high importance to the key donor and hydrophobic atoms involved in the rule.

- Introduce Bias and Re-test: Degrade the dataset by adding a strong correlation between an irrelevant molecular feature (e.g., presence of a specific substructure) and the active label. Retraining a model on this biased data will show high predictive accuracy but poor attribution to the true binding rule, highlighting the risk of shortcut learning [17].
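
The first two procedure steps (pocket generation and rule-based labeling) can be sketched with the standard library alone. The atom dictionaries, the pharmacophore type names, and the function names below are assumptions made for illustration; in practice, ligand coordinates and atom types would come from RDKit conformers and feature definitions, and this is not the code of the cited framework.

```python
import math
import random

PHARMACOPHORES = ("donor", "acceptor", "hydrophobic", "aromatic")


def make_synthetic_pocket(ligand_atoms, n_points=200, pad=5.0,
                          min_lig=2.0, min_res=3.0, rng=None):
    """Random 'synthetic residues' in a box extended `pad` Å around the ligand."""
    rng = rng or random.Random(0)
    coords = [a["xyz"] for a in ligand_atoms]
    lo = [min(c[i] for c in coords) - pad for i in range(3)]
    hi = [max(c[i] for c in coords) + pad for i in range(3)]
    residues = []
    for _ in range(n_points):
        p = tuple(rng.uniform(lo[i], hi[i]) for i in range(3))
        # Reject points too close to the ligand or to an accepted residue.
        if any(math.dist(p, c) < min_lig for c in coords):
            continue
        if any(math.dist(p, r["xyz"]) < min_res for r in residues):
            continue
        residues.append({"xyz": p, "type": rng.choice(PHARMACOPHORES)})
    return residues


def label_active(ligand_atoms, residues, hb_cut=2.5, hyd_cut=3.0):
    """Deterministic rule: donor near an acceptor AND hydrophobic near hydrophobic."""
    def close(atom_type, res_type, cutoff):
        return any(
            a["type"] == atom_type and r["type"] == res_type
            and math.dist(a["xyz"], r["xyz"]) <= cutoff
            for a in ligand_atoms for r in residues
        )
    return int(close("donor", "acceptor", hb_cut)
               and close("hydrophobic", "hydrophobic", hyd_cut))


# Hypothetical two-atom "ligand" used only to exercise the functions.
ligand = [
    {"xyz": (0.0, 0.0, 0.0), "type": "donor"},
    {"xyz": (4.0, 0.0, 0.0), "type": "hydrophobic"},
]
pocket = make_synthetic_pocket(ligand, rng=random.Random(1))
print(len(pocket), "synthetic residues; label =", label_active(ligand, pocket))
```

Because the label depends only on the two atoms that satisfy the rule, those atoms are the ground truth against which attribution scores can later be compared.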

4. Visualizing the Paradigm Shift: Workflows and Relationships

Diagram: Hierarchical Relationship from AI to DL Applications

Diagram: Active Learning VS Workflow for Billion-Compound Libraries [18]

5. The Scientist's Toolkit: Essential Reagents & Resources

Table 3: Key Research Reagents and Computational Tools for DL-VS.

| Item Name | Type | Primary Function in Protocol | Reference/Resource |

|---|---|---|---|

| RosettaVS Software Suite | Software (Physics+AI) | Provides the core VSX (fast) and VSH (accurate) docking protocols, integrated with the RosettaGenFF-VS force field. | [18] |

| OpenVS Platform | Software Framework | An open-source, scalable platform implementing the active learning loop to coordinate docking and DL model training. | [18] |

| Synthetic Data Generation Framework (synthVS) | Software/Code | Python-based protocol for creating synthetic protein-ligand complexes with defined binding rules to test model interpretability. | [17] |

| Equivariant Graph Neural Network (e.g., PointVS) | DL Algorithm | A deep learning model architecture capable of learning from 3D molecular structures for direct affinity prediction or surrogate modeling. | [17] |

| Ultra-Large Chemical Library (e.g., ZINC, Enamine REAL) | Data | Provides the source pool of billions of purchasable compounds for virtual screening campaigns. | [18] |

| High-Performance Computing (HPC) Cluster | Hardware | Essential for performing billions of docking calculations and training large DL models within a practical timeframe (days). | [18] |

6. Conclusion

The delineation of AI, ML, and DL within cheminformatics is more than semantic; it maps a strategic pathway for advancing natural product research. This paradigm shift, characterized by DL's ability to directly process complex chemical data, enables accurate and fast virtual screening platforms, as evidenced by experimental hit rates of 14% (KLHDC2) and 44% (NaV1.7) from screens completed in under seven days [18]. However, the power of these "black box" models necessitates rigorous validation of their interpretability, using protocols such as synthetic data generation to ensure they learn true chemistry rather than artifacts [17]. For the thesis on deep learning in natural product screening, this framework clarifies that the core investigative power lies in DL's architectural depth. The provided protocols offer a concrete foundation for employing AI-accelerated platforms and for critically evaluating the learned models, ultimately guiding the field toward more rational, efficient, and insightful discovery of bioactive natural compounds.

The integration of deep learning (DL) into natural product (NP) research marks a paradigm shift from traditional, labor-intensive discovery to a data-driven, predictive science. Natural products, with their unparalleled chemical diversity and proven therapeutic history, are potent sources for novel drug leads. However, their development is hampered by challenges such as structural complexity, low abundance in source material, and multifaceted pharmacology [5] [19]. Deep learning directly addresses these bottlenecks by enabling the virtual screening of ultra-large chemical libraries, predicting bioactive compounds from complex mixtures, and inferring mechanisms of action, thereby accelerating hit identification and derisking the early development pathway [5] [6].

This application note frames these advancements within a broader thesis on deep learning for virtual screening in NP research. It provides detailed methodologies, validated protocols, and a curated toolkit to empower researchers to implement these transformative approaches, moving from AI-driven predictions to experimentally validated, de-risked leads.

Quantitative Performance & Impact Analysis

The application of DL models in NP discovery is validated by significant improvements in key screening metrics compared to traditional methods. The following tables summarize the quantitative impact on virtual screening efficiency and model performance.

Table 1: Performance Metrics of AI/ML Models in Natural Product Virtual Screening

| Model Type | Primary Application in NP Research | Key Performance Advantage | Reported Impact/Example |

|---|---|---|---|

| Graph Neural Networks (GNNs) | Molecular property prediction, activity classification | Captures complex structure-activity relationships | Enables direct learning from molecular graph representations of complex NPs [5]. |

| Convolutional Neural Networks (CNNs) | Image-based spectral analysis (NMR, MS), structure elucidation | High accuracy in pattern recognition from spectral data | Used in tools like DP4-AI for automated NMR analysis and structure determination [5]. |

| Large Language Models (LLMs) | Standardizing herbal prescription data, literature mining | Processes unstructured text from ethnopharmacology | Extracts chemical and pharmacological data from historical texts and patents [5] [19]. |

| Imbalanced Dataset Classifiers | Virtual screening of ultra-large libraries | Optimizes for Positive Predictive Value (PPV) | Achieves ≥30% higher hit rates in top candidate lists compared to models trained on balanced datasets [20]. |

Table 2: Impact of Deep Learning on Key Drug Discovery Risk and Efficiency Parameters

| Parameter | Traditional NP Discovery | DL-Augmented NP Discovery | Risk Reduction/Efficiency Gain |

|---|---|---|---|

| Hit Identification Rate | Low (fraction of a percent in HTS) | Significantly Enhanced | Focused experimental testing on top AI-ranked candidates improves success rate [20] [21]. |

| Early Attrition Due to ADMET | Late-stage experimental failure | Early in silico prediction | DL models predict absorption, distribution, metabolism, excretion, and toxicity (ADMET) properties upfront [19]. |

| Mechanistic De-risking | Post-hoc, target-centric assays | Integrated network pharmacology | Models construct herb–ingredient–target–pathway graphs to propose and validate polypharmacology in silico [5]. |

| Chemical Space Explored | Limited by physical library size (~10^5 to 10^6 compounds) | Ultra-large virtual libraries (~10^9 to 10^12 compounds) | Access to vastly larger, more diverse chemical space, including make-on-demand compounds [21]. |

Detailed Experimental Protocols & Workflows

Protocol: Building a High-PPV Virtual Screening Model for Natural Product Libraries

Objective: To construct a binary classification DL model optimized for high Positive Predictive Value (PPV) to identify novel bioactive NPs from an ultra-large virtual library.

Rationale: For hit identification, where only a small subset of top-ranked compounds (e.g., 128 for a screening plate) can be tested, a high PPV ensures maximal true actives in that subset. Recent evidence shows that models trained on inherently imbalanced datasets (typical of bioactivity data) outperform balanced models for this task [20].

Materials: See "The Scientist's Toolkit: Research Reagent Solutions" (Table 3).

Procedure:

Data Curation & Imbalanced Training Set Preparation:

- Source bioactivity data from ChEMBL or other repositories for your target of interest [22].

- Do not balance the dataset. Retain the natural imbalance (typically a high ratio of inactive to active compounds). Define "active" using a relevant threshold (e.g., IC50 < 10 µM) [20].

- Represent molecules as feature vectors. For NPs, use extended connectivity fingerprints (ECFPs) or learned representations from a pre-trained GNN to capture complex ring systems and stereochemistry.

Model Training & PPV-Centric Validation:

- Implement a deep neural network (DNN) or GNN classifier. Use a weighted loss function (e.g., weighted binary cross-entropy) to slightly counterbalance class imbalance without resampling.

- Primary Validation Metric: Optimize and select models based on PPV calculated for the top N predictions, where N equals your practical testing capacity (e.g., 128, 256). This simulates the real-world virtual screening output [20].

- Secondary Metrics: Monitor area under the receiver operating characteristic curve (AUROC) and recall to ensure model robustness.

Virtual Screening & Hit List Generation:

- Prepare an ultra-large NP-inspired virtual library (e.g., from ZINC or REAL Space) [21].

- Use the trained model to score all library compounds.

- Rank compounds by predicted probability of activity and select the top N candidates with the highest scores for the experimental assay.

Experimental Validation & Model Refinement:

- Test the top N candidates in a primary in vitro assay.

- Use the experimental results (confirmed actives/inactives) as an external test set to calculate the realized PPV.

- Feed results back to augment the training data for model refinement in an iterative cycle.
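
The two quantities at the centre of this protocol, PPV among the top N predictions and a class-weighted binary cross-entropy, are simple to compute. The sketch below uses plain Python and made-up probabilities; the function names and the `pos_weight` parameter are illustrative choices, not part of any cited tool.

```python
import math


def ppv_at_top_n(probabilities, labels, n):
    """Fraction of true actives among the n highest-scoring compounds."""
    order = sorted(range(len(probabilities)),
                   key=lambda i: probabilities[i], reverse=True)
    top = order[:n]
    return sum(labels[i] for i in top) / len(top)


def weighted_bce(probabilities, labels, pos_weight=1.0, eps=1e-12):
    """Binary cross-entropy with the active class up-weighted by pos_weight."""
    total = 0.0
    for p, y in zip(probabilities, labels):
        p = min(max(p, eps), 1.0 - eps)  # avoid log(0)
        total += -(pos_weight * y * math.log(p) + (1 - y) * math.log(1.0 - p))
    return total / len(labels)


# Hypothetical model outputs for five compounds.
probs = [0.9, 0.8, 0.7, 0.2, 0.1]
truth = [1, 0, 1, 0, 1]
print("PPV@3:", round(ppv_at_top_n(probs, truth, 3), 3))
print("weighted BCE:", round(weighted_bce(probs, truth, pos_weight=3.0), 3))
```

Setting `n` to the plate capacity (e.g., 128) makes the validation metric mirror what will actually be tested experimentally.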

Protocol: Integrated Multi-Omics Validation of AI-Predicted Natural Product Hits

Objective: To experimentally validate AI-predicted NP hits and elucidate their mechanism of action using a multi-omics gating strategy, thereby de-risking downstream development.

Rationale: AI predictions require rigorous validation. An integrated workflow using transcriptomics, proteomics, and metabolomics can confirm bioactivity, assess target engagement, and identify potential off-target effects early [5].

Materials: Cell line relevant to disease target, AI-predicted NP compounds, vehicle control, omics analysis platforms (RNA-Seq, LC-MS/MS for proteomics and metabolomics).

Procedure:

Transcriptomic Signature Reversal Assay:

- Treat disease-model cells with the AI-predicted NP hit at its IC50 concentration.

- Extract RNA and perform RNA-Sequencing. Compare the gene expression profile to both vehicle-treated diseased cells and healthy control cells.

- Success Criterion: The NP treatment significantly reverses the disease-associated gene expression signature toward the healthy state [5].

Proteome-Scale Target Engagement Check:

- Use a chemical proteomics approach (e.g., affinity-based protein profiling) with a functionalized derivative of the NP hit.

- Enrich and identify proteins that bind directly to the compound from a native cell lysate via mass spectrometry.

- Success Criterion: The primary target(s) are identified and include the intended protein. Minimal off-target binding to proteins associated with toxicity is observed [5] [22].

Mechanistic Confirmation via Untargeted Metabolomics:

- Analyze metabolic profiles of cells treated with the NP hit vs. control using LC-MS.

- Integrate data with feature-based molecular networking to identify altered metabolic pathways.

- Success Criterion: Observed metabolic changes align with the expected mechanism of action of the compound and with the engaged target identified in the proteome-scale target engagement check [5].

Diagram: AI-Driven Natural Product Discovery and Validation Workflow

Integration with Structure-Based Methods & Data Requirements

A robust DL workflow for NPs integrates with and enhances structure-based methods. While physics-based methods (e.g., molecular docking) are powerful, they can be computationally prohibitive for ultra-large libraries. A synergistic protocol is recommended:

- Initial Ultra-Fast DL Filter: Apply a high-PPV DL model to screen a multi-billion compound library, reducing it to a manageable subset (e.g., 100,000 compounds) with high probability of activity [21].

- Structure-Based Refinement: Subject the DL-filtered subset to more computationally intensive, high-accuracy physics-based docking or free-energy perturbation calculations using the target protein structure (from PDB or AlphaFold2) [22] [21].

- Final Prioritization: Integrate DL-based ADMET predictions (e.g., solubility, metabolic stability) with the refined activity scores to generate the final, synthetically accessible hit list for experimental testing [19].

Critical Data Considerations:

- Quality over Quantity: Use high-confidence bioactivity data (e.g., ChEMBL entries with high confidence scores) [22].

- Stratified Splits: For model validation, split data by structural clusters of proteins to test generalization to novel targets, not by random compound splits [22].

- NP-Specific Representations: Employ molecular representations that effectively capture the high stereochemical complexity and scaffold diversity of natural products.
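
A cluster-aware split, as recommended above, keeps every member of a cluster on the same side of the train/test boundary. The sketch below is a minimal standard-library version; the record layout and the `target_cluster` key are hypothetical, and cluster assignment itself (e.g., by protein structural similarity) is assumed to have been done beforehand.

```python
import random
from collections import defaultdict


def group_split(records, group_key, test_fraction=0.2, seed=0):
    """Assign whole clusters to either train or test, never both."""
    groups = defaultdict(list)
    for rec in records:
        groups[rec[group_key]].append(rec)
    cluster_ids = sorted(groups)
    random.Random(seed).shuffle(cluster_ids)

    n_test_target = test_fraction * len(records)
    train, test = [], []
    for cid in cluster_ids:
        # Fill the test set cluster by cluster until it reaches the target size.
        (test if len(test) < n_test_target else train).extend(groups[cid])
    return train, test


# Hypothetical bioactivity records tagged with a precomputed target cluster.
records = [{"compound_id": i, "target_cluster": i % 5} for i in range(50)]
train, test = group_split(records, "target_cluster", test_fraction=0.2)
print(len(train), "train records;", len(test), "test records")
```

The same function works for scaffold-based compound splits by passing a scaffold identifier as `group_key`.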

Diagram: Synergy of AI & Physics-Based Methods in Screening

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Databases for DL-Driven NP Research

| Tool/Resource Category | Specific Name / Example | Function in Workflow | Key Considerations for NPs |

|---|---|---|---|

| Public Bioactivity Databases | ChEMBL [22], PubChem BioAssay [20] | Source of labeled data for model training. | Data is often imbalanced and may contain noise; apply confidence filters. |

| Ultra-Large Compound Libraries | ZINC20 [21], Enamine REAL Space [21] | Source of "make-on-demand" compounds for virtual screening. | Contains NP-inspired scaffolds; check for synthetic feasibility of complex NPs. |

| Cheminformatics & DL Libraries | RDKit, DeepChem, PyTorch/TensorFlow | For molecule featurization, model building, and training. | Ensure molecular representations (e.g., graphs) can handle NP complexity. |

| Structure Databases & Tools | PDB [22], AlphaFold Protein Structure Database [21] | Source of target structures for integrative SBVS. | For modeled structures (AlphaFold), assess local accuracy at the binding site. |

| Multi-Omics Data Portals | GEO (Transcriptomics), PRIDE (Proteomics), MetaboLights | Data for validation and network pharmacology models. | Crucial for constructing and validating herb–target–pathway networks [5]. |

| Specialized NP Databases | LOTUS, NPASS, COCONUT | Curated sources of NP structures and activities. | Smaller but highly relevant datasets for fine-tuning models. |

Inside the AI Toolbox: Architectures and Workflows for Screening Nature's Compounds

The discovery of bioactive natural products (NPs) is a cornerstone of drug development but is challenged by the immense structural diversity and complexity of NP space. Traditional computational methods, often adapted from synthetic molecule research, struggle to capture the unique biosynthetic and evolutionary patterns inherent to NPs [23]. The NaFM (Natural Products Foundation Model) framework addresses this by introducing a purpose-built pre-training strategy that learns generalizable molecular representations directly from large, unlabeled NP datasets [24]. This approach shifts the paradigm from training individual, task-specific models to leveraging a single, powerful foundation model that can be efficiently fine-tuned for diverse downstream applications in virtual screening and NP research [23].

NaFM's architecture is based on a Graph Neural Network (GNN) that processes molecules as graphs with atoms as nodes and bonds as edges. Its core innovation lies in a dual pre-training strategy combining Masked Graph Learning and Scaffold-Informed Contrastive Learning [24].

- Enhanced Masked Graph Learning: Unlike standard approaches that mask only atom or bond features, NaFM's strategy also masks the connectivity within subgraphs. This forces the model to reconstruct missing topological relationships, requiring a deeper, more global understanding of molecular structure that is critical for complex NPs [24].

- Scaffold-Informed Contrastive Learning: This component teaches the model to recognize fundamental NP similarities. It uses molecular scaffolds—core structural frameworks often conserved across biosynthetic pathways—as a basis for comparison. The learning objective incorporates scaffold similarity as a soft weight, allowing the model to distinguish between strong and weak negative examples and effectively integrate information from variable side-chain modifications [24].
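
One way to picture "scaffold similarity as a soft weight" is an InfoNCE-style loss in which each negative example is down-weighted by its scaffold similarity to the anchor. The form below is an assumption chosen for illustration; the exact NaFM objective is not given in this article, and the embeddings and similarity values are made up.

```python
import math


def cosine(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def scaffold_weighted_contrastive_loss(anchor, positive, negatives,
                                       scaffold_sims, temperature=0.1):
    """InfoNCE-style loss; negatives with similar scaffolds are penalized less.

    scaffold_sims[i] in [0, 1] is the scaffold similarity between the anchor
    and negatives[i]; weight (1 - similarity) makes it a 'weak' negative.
    """
    pos = math.exp(cosine(anchor, positive) / temperature)
    neg = sum(
        (1.0 - s) * math.exp(cosine(anchor, n) / temperature)
        for n, s in zip(negatives, scaffold_sims)
    )
    return -math.log(pos / (pos + neg))


# Hypothetical 2D embeddings.
anchor = [1.0, 0.0]
positive = [0.9, 0.1]
negatives = [[0.8, 0.2], [0.0, 1.0]]

hard = scaffold_weighted_contrastive_loss(anchor, positive, negatives, [0.0, 0.0])
soft = scaffold_weighted_contrastive_loss(anchor, positive, negatives, [0.9, 0.0])
print("all strong negatives:", round(hard, 3), "| first negative shares scaffold:", round(soft, 3))
```

With the soft weight, a molecule that shares the anchor's scaffold but differs in side chains is not pushed away as forcefully as an unrelated molecule.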

This tailored pre-training enables NaFM to internalize the fundamental relationships between NP source organisms, their conserved biosynthetic scaffolds, and resulting bioactivities. It achieves state-of-the-art performance across key tasks, including taxonomic classification, bioactivity prediction, and biosynthetic gene cluster association, providing a powerful base model for accelerating virtual screening pipelines [23] [24].

Table 1: NaFM Model Specifications and Pre-training Data

| Component | Specification | Description |

|---|---|---|

| Base Architecture | Graph Neural Network (GNN) | Processes molecular graphs of atoms (nodes) and bonds (edges). |

| Core Pre-training Strategies | 1. Enhanced Masked Graph Learning; 2. Scaffold-Informed Contrastive Learning | Dual strategy for learning structural and evolutionary relationships [24]. |

| Primary Pre-training Data Source | COCONUT database | Source of ~400,000 NP structures for self-supervised pre-training [25]. |

| Key Downstream Evaluation Tasks | Taxonomy Classification, Bioactivity Prediction, BGC Mining, Virtual Screening | Tasks used to validate the model's generalizability and utility [24]. |

Application Notes and Experimental Protocols

NaFM’s pre-trained representations serve as a versatile starting point for various downstream tasks critical to NP drug discovery. The following protocols detail the fine-tuning and application process for three key use cases.

Protocol 1: Fine-tuning NaFM for NP Taxonomy Classification

Objective: To predict the biological origin (e.g., plant genus, fungal family) of a natural product based on its molecular structure.

Background: Taxonomic classification aids in dereplication, sourcing, and understanding biosynthetic origins [24].

Data Preparation:

- Obtain labeled data linking NP structures (as SMILES) to taxonomic classes. A standard benchmark is the refreshed NPClassifier dataset [25].

- Split data into training, validation, and test sets (e.g., 80/10/10). Ensure no data leakage between splits.

- Use the NaFMTokenizer (or equivalent) to convert SMILES strings into graph representations compatible with the pre-trained NaFM GNN.

Model Setup:

- Load the pre-trained NaFM weights.

- Attach a classification head (typically a multi-layer perceptron) on top of the NaFM graph encoder. The input to this head is the global graph representation vector produced by NaFM for each molecule.

- Initialize the classification head with random weights while the NaFM encoder weights start from the pre-trained state.

Fine-tuning:

- Use a cross-entropy loss function for multi-class classification.

- Employ a low initial learning rate (e.g., 1e-5 to 1e-4) and a scheduler (e.g., ReduceLROnPlateau) to avoid catastrophic forgetting of pre-trained knowledge.

- Monitor accuracy on the validation set. Early stopping is recommended to prevent overfitting.

- Key Consideration: Compared to training from scratch or using generic molecular models, fine-tuning NaFM should converge faster and achieve higher accuracy, especially on out-of-distribution or rare taxonomic classes, due to its NP-specific prior knowledge [24].
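
The interplay of a plateau-based learning-rate schedule and early stopping can be shown without a deep learning framework. The sketch below is a simplified, framework-free illustration driven by a list of validation losses; in a real PyTorch run, `torch.optim.lr_scheduler.ReduceLROnPlateau` would handle the schedule, and its exact patience semantics differ slightly from this toy. All parameter values are arbitrary.

```python
def fine_tune_schedule(val_losses, lr=1e-4, factor=0.5,
                       lr_patience=2, stop_patience=4):
    """Track best validation loss, halve lr on plateaus, stop when stalled."""
    best = float("inf")
    epochs_since_best = 0
    history = []  # (epoch, learning rate used after this epoch)
    for epoch, loss in enumerate(val_losses):
        if loss < best:
            best, epochs_since_best = loss, 0
        else:
            epochs_since_best += 1
            # Reduce the learning rate every `lr_patience` stalled epochs.
            if epochs_since_best % lr_patience == 0:
                lr *= factor
        history.append((epoch, lr))
        # Early stopping once validation loss has stalled for too long.
        if epochs_since_best >= stop_patience:
            break
    return best, history


# Hypothetical validation-loss curve from a fine-tuning run.
val_losses = [1.0, 0.8, 0.7, 0.72, 0.71, 0.73, 0.74, 0.75]
best, history = fine_tune_schedule(val_losses)
print("best validation loss:", best, "| epochs run:", len(history),
      "| final lr:", history[-1][1])
```

Starting from a small learning rate and shrinking it further on plateaus limits how far the encoder weights drift from their pre-trained state.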

Protocol 2: Integrating NaFM into a Virtual Screening Pipeline

Objective: To rank a library of natural products by predicted activity against a specific protein target.

Background: Virtual screening prioritizes compounds for experimental testing, dramatically reducing cost and time [11] [4].

Library and Target Preparation:

- Prepare the target protein structure (e.g., from PDB) by removing water, adding hydrogens, and assigning charges (e.g., using UCSF Chimera, AutoDock Tools).

- Prepare the NP screening library as SMILES. Clean and standardize structures (e.g., using RDKit).

Generating NP Representations:

- Pass the entire library of NP SMILES through the pre-trained NaFM encoder without fine-tuning.

- Extract the global graph representation (a fixed-size numerical vector) for each compound. These representations encode rich structural and implicit bioactivity information.

Activity Prediction & Screening:

- Option A (Similarity-based): If known active compounds are available, compute the similarity (e.g., cosine similarity) between their NaFM embeddings and those of the library compounds. Rank the library by similarity score.

- Option B (Predictive Model): If a dataset of compounds with measured activity (e.g., pIC50) against the target is available, train a simple regressor (e.g., Random Forest, Gradient Boosting) or a shallow neural network using the NaFM embeddings as input features. Use this model to score the NP library.

- Select the top-ranked compounds (e.g., top 100-500) for subsequent molecular docking analysis (e.g., with AutoDock Vina or Glide) to assess binding poses and affinity [11].
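
Option A (similarity-based ranking) reduces to a cosine-similarity search over embedding vectors. The sketch below uses short made-up vectors in place of real NaFM embeddings, and the compound names are hypothetical; each library compound is scored by its similarity to the closest known active.

```python
import math


def cosine_similarity(u, v):
    """Cosine similarity between two non-zero vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    return dot / (math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v)))


def rank_by_similarity(library_embeddings, active_embeddings, top_k=3):
    """Score each library compound by its best similarity to any known active."""
    scored = []
    for name, emb in library_embeddings.items():
        best = max(cosine_similarity(emb, act) for act in active_embeddings)
        scored.append((name, best))
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_k]


# Hypothetical 3D stand-ins for fixed-size NaFM embedding vectors.
library_embeddings = {
    "np_a": [1.0, 0.0, 0.0],
    "np_b": [0.9, 0.1, 0.0],
    "np_c": [0.0, 1.0, 0.0],
    "np_d": [-1.0, 0.0, 0.0],
}
known_actives = [[1.0, 0.0, 0.0]]

top_hits = rank_by_similarity(library_embeddings, known_actives, top_k=2)
for name, score in top_hits:
    print(name, round(score, 3))
```

For Option B, the same embedding vectors would instead serve as input features to a regressor trained on measured activities.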

Protocol 3: Evolutionary Mining of Biosynthetic Gene Clusters (BGCs)

Objective: To associate NP structures with their putative biosynthetic gene clusters or enzyme families.

Background: Linking molecules to genes enables genome mining and metabolic engineering [24].

Data Curation:

- Assemble a dataset of known NP-structure-to-BGC pairs. Public resources like MIBiG (Minimum Information about a Biosynthetic Gene Cluster) are essential starting points [25].

- Represent each BGC by features such as the presence/absence of Pfam enzyme domains.

Model Training for Association:

- Use the NaFM embeddings of NP structures as the input feature vector (X).

- Use the BGC feature vector (e.g., a binary vector of Pfam domains) as the target (Y).

- Train a multi-label prediction model (e.g., a neural network with sigmoid output activation) to predict BGC features from the NP structure embedding.

- This model learns the latent relationship between chemical structure and biosynthetic machinery [24].

Prospective Mining:

- For a novel NP of interest, generate its embedding using NaFM.

- Pass the embedding through the trained association model to predict the most likely Pfam domains or BGC types involved in its biosynthesis.

- These predictions can guide the search for the corresponding BGC in a sequenced genome or metagenome.
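
The association model amounts to multi-label classification with one sigmoid output per BGC feature. As a minimal sketch, the code below trains independent logistic units by stochastic gradient descent on made-up 2D "embeddings" and two unnamed domain labels; a real model would take NaFM embeddings as input and predict a much longer Pfam presence/absence vector.

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_multilabel(X, Y, epochs=500, lr=0.5):
    """One logistic unit per label, trained with plain SGD on cross-entropy."""
    n_features, n_labels = len(X[0]), len(Y[0])
    W = [[0.0] * n_features for _ in range(n_labels)]
    b = [0.0] * n_labels
    for _ in range(epochs):
        for x, y in zip(X, Y):
            for k in range(n_labels):
                p = sigmoid(sum(w * xi for w, xi in zip(W[k], x)) + b[k])
                grad = p - y[k]  # gradient of cross-entropy w.r.t. the logit
                W[k] = [w - lr * grad * xi for w, xi in zip(W[k], x)]
                b[k] -= lr * grad
    return W, b


def predict_domains(W, b, x, threshold=0.5):
    """Binary presence/absence prediction for each label."""
    return [
        int(sigmoid(sum(w * xi for w, xi in zip(W[k], x)) + b[k]) >= threshold)
        for k in range(len(b))
    ]


# Hypothetical embeddings (X) and binary domain vectors (Y).
X = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]
Y = [[1, 0], [1, 0], [0, 1], [0, 1]]
W, b = train_multilabel(X, Y)
print(predict_domains(W, b, [0.95, 0.05]))
```

Because each output is an independent sigmoid, a structure can be assigned several domains at once, which matches how BGCs combine multiple enzyme families.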

Table 2: Performance Benchmarks of NaFM on Core Downstream Tasks

| Downstream Task | Dataset | Evaluation Metric | NaFM Performance | Key Comparative Baseline |

|---|---|---|---|---|

| Taxonomy Classification | Refreshed NPClassifier [25] | Macro F1-Score | Outperforms NPClassifier (supervised tool) and generic molecular MLMs [24]. | NPClassifier [24] |

| Bioactivity Prediction | NPASS database [25] | RMSE (Regression) | Lower error in predicting pChEMBL values for targets like AChE, 5-HT2A compared to baseline GNNs [24]. | AttentiveFP [24] |

| BGC & Enzyme Family Prediction | MIBiG / Pfam [25] | Average Precision | Effectively associates structures with biosynthetic genes; captures evolutionary information [24]. | Molecular Transformer [24] |

Visualization of Workflows and Model Architecture

Diagram 1: NaFM Framework: From Pre-training to Application

Table 3: Key Databases and Software for NP Research with NaFM

| Resource Name | Type | Primary Function in NP Research | Relevance to NaFM Workflow |

|---|---|---|---|

| COCONUT | Database | A comprehensive open-source collection of natural product structures [25]. | Primary source of unlabeled data for pre-training the foundation model [25]. |

| NPASS | Database | Provides detailed natural product activity data against protein targets [25]. | Source for labeled data to fine-tune and evaluate NaFM for bioactivity prediction and virtual screening [24] [25]. |

| LOTUS | Database | Links NP structures to their biological source organisms [25]. | Provides data for pre-training and evaluating taxonomy classification and evolutionary mining tasks [24] [25]. |

| MIBiG | Database | A curated repository of known Biosynthetic Gene Clusters (BGCs) and their metabolites [25]. | Essential for creating datasets to train and test NaFM's ability to link chemical structures to genetic origins [24] [25]. |

| RDKit | Software | Open-source cheminformatics toolkit for working with molecular data [11]. | Used for standardizing SMILES, generating molecular descriptors, and converting structures to graph format for model input. |

| PyTorch Geometric | Software | A library for deep learning on graphs, built on PyTorch [11]. | Provides the core GNN layer implementations and data handling utilities for building and training models like NaFM. |

| AutoDock Vina | Software | A widely used program for molecular docking [11]. | Used in the virtual screening protocol to perform binding pose prediction and affinity estimation on compounds prioritized by NaFM. |

The discovery of new therapeutic agents from natural products (NPs) has historically been a cornerstone of pharmacology, particularly for complex diseases like cancer and infectious diseases [26]. NPs possess privileged chemical scaffolds, evolved over millennia to interact with biological systems, offering high structural diversity and potent bioactivity [27] [28]. However, NP-based drug discovery faces significant challenges, including labor-intensive isolation processes, structural complexity, and difficulties in achieving sustainable resupply [26] [27]. These hurdles have, in the past, led to a decline in industry interest in favor of high-throughput screening (HTS) of synthetic libraries.

The central thesis of modern computational pharmacology posits that deep learning (DL) can revitalize NP research by overcoming these historical bottlenecks. DL provides the tools to virtually screen vast, chemically diverse NP libraries with unprecedented speed and accuracy, predicting bioactive compounds before costly wet-lab experiments begin [29] [30]. This article explores the embodiment of this thesis in next-generation, multi-stage virtual screening (VS) pipelines. Platforms like HelixVS and VirtuDockDL represent a paradigm shift, moving beyond single-method docking to integrated workflows that synergistically combine classical physics-based methods with data-driven DL models [31] [11]. By dramatically improving enrichment factors (EF) and screening throughput, these pipelines are making the systematic exploration of massive NP libraries for novel drug leads a practical and cost-effective reality [31] [32].

Quantitative Performance Benchmarks: HelixVS and VirtuDockDL

Benchmarking against established tools quantifies the advantage of multi-stage DL pipelines. The following tables summarize key performance metrics for HelixVS and VirtuDockDL.

Table 1: Virtual Screening Performance on the DUD-E Benchmark Dataset [31] [32]

| Method | EF at 0.1% (EF₀.₁%) | EF at 1% (EF₁%) | Screening Speed (Molecules/Day/Core) |
|---|---|---|---|
| AutoDock Vina | 17.065 | 10.022 | ~300 |
| Glide SP | 25.968 (approx.) | Not specified | Lower than Vina |
| HelixVS | 44.205 | 26.968 | ~4,000 |
| Performance Gain (HelixVS vs. Vina) | ~2.6x higher | ~2.7x higher | >13x faster |
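To make the EF columns in Table 1 concrete, the following is a minimal sketch of how an enrichment factor is commonly computed: the fraction of actives in the top-ranked slice divided by the fraction of actives in the whole library. The function name, the toy library, and the convention that a higher score means a better rank are illustrative assumptions, not part of HelixVS or the DUD-E tooling. The last lines simply recompute the fold gains from the Table 1 values.

```python
def enrichment_factor(scores, labels, fraction):
    """EF at `fraction`: active rate in the top-ranked slice divided by the
    active rate in the whole library. Assumes higher score = better rank."""
    n_total = len(scores)
    n_actives = sum(labels)
    if n_total == 0 or n_actives == 0 or not 0 < fraction <= 1:
        raise ValueError("need a non-empty library, >=1 active, 0 < fraction <= 1")
    n_top = max(1, round(n_total * fraction))
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    actives_in_top = sum(label for _, label in ranked[:n_top])
    return (actives_in_top / n_top) / (n_actives / n_total)


# Hypothetical toy library: 1,000 compounds, 20 actives, 5 of them in the top 10.
active_ranks = {0, 2, 4, 6, 8} | set(range(100, 850, 50))
toy_scores = [1000 - rank for rank in range(1000)]
toy_labels = [1 if rank in active_ranks else 0 for rank in range(1000)]
ef_1pct = enrichment_factor(toy_scores, toy_labels, 0.01)
print(f"Toy EF at 1% = {ef_1pct:.1f}")

# Fold gains implied by the Table 1 values (HelixVS vs. AutoDock Vina).
gain_ef_01 = 44.205 / 17.065
gain_ef_1 = 26.968 / 10.022
print(f"EF0.1% gain = {gain_ef_01:.2f}x, EF1% gain = {gain_ef_1:.2f}x")
```

An EF of 1 corresponds to random selection, so the values in Table 1 can be read as "times better than random" at the stated cutoff.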

Table 2: Validation Metrics for VirtuDockDL on Specific Target Datasets [11]

| Target / Dataset | Metric | VirtuDockDL Performance | Comparative Tool Performance |
|---|---|---|---|
| HER2 (Cancer) | Accuracy | 99% | DeepChem (89%), AutoDock Vina (82%) |
| HER2 (Cancer) | F1-Score | 0.992 | Not specified for others |
| HER2 (Cancer) | AUC | 0.99 | Not specified for others |
| VP35 (Marburg Virus) | Experimental Hit Identification | Successfully identified non-covalent inhibitors | Outperformed RosettaVS, MzDOCK, PyRMD |

Table 3: Experimental Hit Rates from HelixVS-Driven Drug Discovery Campaigns [31] [32]

| Therapeutic Target | Library Size Screened | Key Experimental Outcome | Wet-Lab Hit Rate |
|---|---|---|---|
| CDK4/6 (Cancer) | 7.8 million | 6 of top 100 compounds showed >20% inhibition in BiFC assay. | 6% from selected subset |
| TLR4/MD-2 (Inflammation) | 200,000 | 2 compounds exhibited nanomolar (nM) activity in SEAP assay. | 2 nM hits among >100 progressed candidates |
| cGAS (Immunology) | 30,000 | 17 active compounds identified, with potencies <10 µM (one in nM range). | ~0.06% actives from screened library |
| Aggregate across pipelines | >18 million | Over 10% of molecules selected for testing demonstrated µM to nM activity. | >10% from prioritized hits |
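The hit rates in Table 3 use two different denominators: compounds selected for wet-lab testing and compounds in the screened library. The short sketch below recomputes two of the reported rates to show the difference. The helper name is hypothetical and the inputs are taken directly from the table.

```python
def hit_rate_percent(n_actives, n_denominator):
    """Hit rate as a percentage of the chosen denominator."""
    if n_denominator <= 0:
        raise ValueError("denominator must be positive")
    return 100.0 * n_actives / n_denominator


# CDK4/6: 6 actives among the top 100 compounds that were tested.
cdk46_rate = hit_rate_percent(6, 100)
# cGAS: 17 actives relative to the 30,000 molecules that were screened.
cgas_rate = hit_rate_percent(17, 30_000)

print(f"CDK4/6: {cdk46_rate:.1f}% of tested compounds")
print(f"cGAS:   {cgas_rate:.3f}% of the screened library")
```

Rates per tested compound and rates per screened compound are not directly comparable, which is worth keeping in mind when reading the last column.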

Detailed Experimental Protocols

Protocol for HelixVS Multi-Stage Screening

Application Note: This protocol is designed for structure-based virtual screening (SBVS) against a defined protein target, utilizing the HelixVS platform to efficiently prioritize high-affinity ligands from ultra-large libraries (millions to billions of compounds) [31] [32].

Stage 1: High-Throughput Pose Generation with Classical Docking

- Input Preparation: Prepare the target protein structure (e.g., from PDB) by adding hydrogen atoms, assigning protonation states, and defining the binding pocket coordinates. Prepare the ligand library in a suitable format (e.g., SDF, SMILES). HelixVS automates protein pre-processing and supports both built-in and custom compound libraries [31].

- Docking Execution: Using the integrated QuickVina 2 engine (an accelerated variant of AutoDock Vina), dock each ligand in the library into the defined binding site [31].

- Pose Retention: Retain multiple (e.g., 5-10) top-scoring binding conformations and their empirical scores (estimated ΔG) for each ligand. Critical Step: Preserving conformational diversity at this stage is essential for downstream DL scoring [31].
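The pose-retention step can be sketched as a per-ligand top-k selection. This is an illustrative example only: the tuple layout, the function name, and the convention that a more negative docking score (estimated ΔG in kcal/mol) is better are assumptions, and the actual HelixVS data structures are not described in the source.

```python
import heapq
from collections import defaultdict


def retain_top_poses(poses, k=5):
    """Keep the k best-scoring poses per ligand.

    poses: iterable of (ligand_id, pose_id, docking_score), where a lower
    (more negative) docking score is assumed to be better.
    """
    by_ligand = defaultdict(list)
    for ligand_id, pose_id, score in poses:
        by_ligand[ligand_id].append((score, pose_id))
    return {lig: heapq.nsmallest(k, entries) for lig, entries in by_ligand.items()}


# Hypothetical docking output for two ligands.
docked = [
    ("lig_A", 0, -9.1), ("lig_A", 1, -8.7), ("lig_A", 2, -7.9),
    ("lig_B", 0, -6.5), ("lig_B", 1, -6.4),
]
kept = retain_top_poses(docked, k=2)
print(kept)
```

Keeping several poses per ligand, rather than only the single best one, gives the downstream scoring model a chance to prefer a pose that the empirical function ranked second or third.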

Stage 2: Deep Learning-Based Affinity Re-scoring

- Model Input: Feed the ensemble of docking poses from Stage 1 into the DL-based affinity scoring model. This model is built upon the RTMscore architecture, augmented with extensive co-crystal structure data from the PDB [31] [32].

- Affinity Prediction: The DL model evaluates each pose, generating a more accurate predicted binding affinity score that captures complex interaction patterns often missed by classical scoring functions.

- Re-ranking: Re-rank all ligands based on their best DL score, effectively filtering out false positives favored by the empirical docking score.
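The re-ranking step reduces to taking each ligand's best DL score across its retained poses and sorting on that value. The sketch below assumes that a higher DL score means stronger predicted binding. The scores and ligand names are invented for illustration and do not come from the RTMscore-based model.

```python
def rerank_by_best_dl_score(pose_scores):
    """Rank ligands by their best per-pose DL score.

    pose_scores: iterable of (ligand_id, dl_score), one entry per pose.
    Assumes higher dl_score = stronger predicted binding.
    """
    best = {}
    for ligand_id, dl_score in pose_scores:
        if ligand_id not in best or dl_score > best[ligand_id]:
            best[ligand_id] = dl_score
    return sorted(best.items(), key=lambda item: item[1], reverse=True)


# Hypothetical DL scores: lig_A docked well but scores poorly after re-scoring.
dl_scores = [
    ("lig_A", 0.31), ("lig_A", 0.42),
    ("lig_B", 0.88), ("lig_B", 0.79),
    ("lig_C", 0.65),
]
ranking = rerank_by_best_dl_score(dl_scores)
print(ranking)
```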

Stage 3: Binding Mode Filtering & Clustering (Optional)

- Interaction Filtering: Apply rule-based or pharmacophore filters to select poses forming specific, desired interactions (e.g., a hydrogen bond with a key catalytic residue). This step enforces a user-defined binding mode hypothesis [31] [32].

- Diversity Selection: Cluster the top-ranked compounds based on molecular similarity (e.g., Tanimoto coefficient on fingerprints). Select a representative subset of compounds from each cluster to ensure chemical diversity in the final output for experimental testing [31].
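Diversity selection can be approximated with a greedy "leader" pass over the ranked list: a compound is kept only if its Tanimoto similarity to every compound already kept is below a threshold. This is a simplified stand-in, not the clustering method HelixVS uses. Fingerprints are represented as sets of on-bit indices, and the 0.6 threshold and compound names are assumptions.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for fingerprints stored as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def pick_diverse(ranked_ids, fingerprints, threshold=0.6):
    """Walk the ranking from best to worst and keep a compound only if it is
    less similar than `threshold` to every compound already kept."""
    leaders = []
    for cid in ranked_ids:
        if all(tanimoto(fingerprints[cid], fingerprints[kept]) < threshold
               for kept in leaders):
            leaders.append(cid)
    return leaders


# Hypothetical fingerprints: c2 resembles c1, and c4 resembles c3.
fps = {
    "c1": {1, 2, 3, 4},
    "c2": {1, 2, 3, 5},
    "c3": {10, 11, 12},
    "c4": {10, 11, 12, 13},
}
selected = pick_diverse(["c1", "c2", "c3", "c4"], fps)
print(selected)
```

Because the list is traversed in rank order, each cluster is represented by its highest-ranked member.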

Validation: Benchmark performance using the DUD-E dataset. Platform validation is confirmed by wet-lab testing, consistently yielding >10% active compounds from prioritized hits [31] [32].

Protocol for VirtuDockDL’s GNN-Driven Screening

Application Note: This protocol outlines the use of VirtuDockDL for ligand-based and structure-based screening, leveraging a Graph Neural Network (GNN) to predict bioactive compounds from a library, followed by molecular docking [11].

Phase 1: Data Preparation and GNN Model Training

- Dataset Curation: Assemble a labeled dataset of active ("hit") and inactive ("non-hit") compounds for the biological target of interest. For example, for β-microtubule inhibitors, a hit dataset of 637 known inhibitors and a non-hit dataset of 2932 diverse molecules were used [30].

- Molecular Featurization: Convert SMILES strings of all compounds into molecular graphs using RDKit. Nodes represent atoms (featurized with atomic number, degree, etc.), and edges represent bonds [11].

- Model Training: Train a Directed Message Passing Neural Network (DMPNN) or a similar GNN architecture using PyTorch Geometric. The model learns to aggregate information from a compound's graph structure to predict its activity class [11] [30].

- Performance Evaluation: Validate the trained model on a held-out test set. A well-trained model for β-microtubule screening achieved an AUC of 0.9962 and accuracy of 96% [30].
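For the evaluation step, the sketch below computes ROC AUC (via pairwise comparison of active and inactive scores) and thresholded accuracy in plain Python. It also computes the accuracy of a trivial "always non-hit" classifier on the 637 / 2932 split quoted above, which is a useful baseline when reading accuracy figures on imbalanced data. The function names, the toy predictions, and the 0.5 threshold are illustrative assumptions.

```python
def roc_auc(labels, scores):
    """Probability that a random active outscores a random inactive (ties count 0.5)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one active and one inactive")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def accuracy(labels, scores, threshold=0.5):
    """Fraction of compounds classified correctly at the given score threshold."""
    preds = [1 if s >= threshold else 0 for s in scores]
    return sum(p == y for p, y in zip(preds, labels)) / len(labels)


# Hypothetical held-out predictions (1 = hit, 0 = non-hit).
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
y_score = [0.9, 0.8, 0.4, 0.6, 0.3, 0.2, 0.1, 0.05]
auc = roc_auc(y_true, y_score)
acc = accuracy(y_true, y_score)

# Baseline: predicting "non-hit" for every compound in the 637 / 2932 dataset.
majority_baseline = 2932 / (637 + 2932)
print(f"AUC = {auc:.3f}, accuracy = {acc:.2f}, baseline = {majority_baseline:.3f}")
```

On this class ratio, the majority baseline alone reaches roughly 82% accuracy, so AUC is the more informative of the two reported metrics.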

Phase 2: Virtual Screening and Docking

- Library Screening: Process the target NP or compound library through the trained GNN model to obtain a prediction score (hit probability) for each molecule.

- Compound Prioritization: Rank all library compounds by their predicted score. Apply filters such as Lipinski's Rule of Five and a similarity threshold to known actives (e.g., Tanimoto <0.7) to prioritize novel, drug-like leads [30].

- Structure-Based Validation: Perform molecular docking (e.g., using AutoDock Vina) for the top-ranked virtual hits against the 3D structure of the target protein to assess binding modes and approximate affinity.

- Experimental Triaging: Select the final candidates for in vitro validation based on a consensus of high GNN score, favorable docking pose, and desirable physicochemical properties.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Software, Libraries, and Resources for Implementing DL-VS Pipelines

| Tool/Resource Name | Type/Category | Function in DL-VS Pipeline | Primary Source/Reference |
|---|---|---|---|
| HelixVS Platform | Integrated Web Platform | End-to-end multi-stage VS service combining QuickVina2 docking, DL re-scoring (RTMscore-based), and binding-mode filtering. | Public service: paddlehelix.baidu.com [31] |
| VirtuDockDL | Python-based Pipeline | Automated DL screening via GNN models followed by docking. Integrates RDKit, PyTorch Geometric. | GitHub repository [11] |
| AutoDock QuickVina 2 | Docking Software | Fast, classical molecular docking engine used for initial pose generation in Stage 1 of HelixVS. | Alhossary et al., 2015 [31] |
| RTMscore | Deep Learning Model | Architecture foundation for the affinity prediction model in HelixVS; trained on PDB co-crystal data. | Shen et al., 2022 [31] |
| RDKit | Cheminformatics Library | Open-source toolkit for molecule manipulation, descriptor calculation, and SMILES-to-graph conversion. Used in VirtuDockDL [11]. | rdkit.org |
| PyTorch Geometric | Deep Learning Library | Library for building and training GNNs on irregular graph data (molecules). Core to VirtuDockDL's model [11]. | pytorch-geometric.readthedocs.io |
| DUD-E Dataset | Benchmark Dataset | Directory of Useful Decoys: Enhanced; standard benchmark for evaluating VS method performance. | Mysinger et al., 2012 [31] |
| ZINC / NP Libraries | Compound Databases | Sources of commercially available and natural product compounds for screening (e.g., ZINC15, COCONUT, NPASS). | Sterling & Irwin, 2015; Various [31] [30] |

Workflow Visualization: Architectural Diagrams of Multi-Stage Pipelines

Figure: HelixVS Multi-Stage Virtual Screening Pipeline

Figure: VirtuDockDL GNN-Based Screening and Docking Workflow

Applications in Natural Product Research: Case Studies

The integration of these advanced pipelines into NP research is already yielding promising results, validating the core thesis.

Case Study 1: Discovery of Novel β-Microtubule Inhibitors

A dedicated DL screening pipeline was employed to discover new microtubule-stabilizing agents from NPs, inspired by the success of paclitaxel [30]. Researchers trained a DMPNN model on 637 known β-tubulin inhibitors and 2932 non-hit molecules. This model was used to screen a library of 4247 natural products. The virtual hits were filtered for drug-likeness and novelty, leading to the experimental validation of Bruceine D and Phorbol 12-myristate 13-acetate (PMA) as new β-microtubule inhibitors. Both compounds demonstrated potent anti-proliferative activity (IC₅₀ ~10.7 µM) in MDA-MB-231 cells, induced cell cycle arrest, and promoted apoptosis [30]. This study exemplifies a successful ligand-based DL screen directly applied to an NP library.

Case Study 2: HelixVS for Challenging Protein-Protein Interaction (PPI) Targets

NP-inspired scaffolds are often sought for "undruggable" PPI targets. HelixVS has been applied to several such challenging campaigns [31] [32]:

- Targeting cGAS: Screening 30,000 molecules against the ATP-binding pocket of cyclic GMP-AMP synthase (cGAS) identified 17 active compounds, with one exhibiting nanomolar potency in a cell-based luciferase assay.

- Targeting TLR4/MD-2: From 200,000 screened molecules, over 100 candidates were progressed, with two showing nanomolar inhibitory activity.

These applications demonstrate that multi-stage pipelines like HelixVS can effectively screen for hits against complex binding sites, a task where NP diversity is particularly valuable.

The evolution of structure-based screening into multi-stage, DL-integrated pipelines represents a transformative advancement for drug discovery, with particular resonance for the field of natural products. Platforms like HelixVS and VirtuDockDL address the critical need for both high accuracy (2-3x improvement in enrichment) and high throughput (screening millions of compounds daily) that is essential for navigating the vast chemical space of NP libraries [31] [11] [32].

By framing this technological progress within the broader thesis of deep learning for NP research, it becomes clear that these tools are directly countering historical attrition points: they reduce reliance on slow, material-intensive bioactivity-guided fractionation and enable the in silico prioritization of the most promising NP leads. As these pipelines become more accessible and integrated with growing digitized NP databases [28], they pave the way for a sustainable and efficient renaissance in natural product-based drug discovery, accelerating the translation of nature's chemical ingenuity into novel therapeutics for global health challenges.

The integration of deep learning (DL) into virtual screening (VS) represents a paradigm shift in computational drug discovery, particularly for exploring the vast and structurally diverse chemical space of natural products (NPs). NPs are a prolific source of novel therapeutics, but their complex scaffolds pose significant challenges for conventional screening methods [4]. Within the broader thesis of employing DL for NP-based drug discovery, a critical challenge emerges: balancing the high predictive accuracy of state-of-the-art DL models with the computational efficiency required to screen ultra-large libraries [33] [34].

This application note focuses on Boltzina, a novel hybrid framework that directly addresses this efficiency-accuracy trade-off [35]. Boltzina strategically fuses rapid, classical molecular docking with the high-fidelity scoring power of a cutting-edge DL model, Boltz-2 [33]. By omitting Boltz-2's rate-limiting 3D structure prediction module and instead using poses generated by AutoDock Vina, Boltzina achieves a significant speedup while retaining superior screening performance over traditional docking [34]. This protocol details the implementation, optimization, and application of Boltzina-like hybrid workflows, positioning them as essential tools for accelerating the virtual screening of natural product libraries within a modern DL-driven research thesis.

Core Methodologies and Experimental Protocols

Architectural Framework of the Boltzina Hybrid Pipeline

The Boltzina framework is built upon a strategic decomposition of the Boltz-2 architecture. Boltz-2 itself comprises three core modules: a Trunk Module for extracting latent protein-ligand interaction features, a Structure Module that performs a diffusion-based prediction of the 3D complex coordinates, and an Affinity Module that predicts binding likelihood and affinity [35] [34].