Taxonomic Dereplication vs. Structure-Based Design: Choosing the Right Strategy for Modern Drug Discovery

Abstract

This article provides a comprehensive comparative analysis of two dominant strategies in natural product and drug discovery: taxonomic-focused dereplication and structure-based approaches. For researchers and drug development professionals, we explore the foundational principles, core methodologies, and practical applications of each paradigm. We detail how taxonomic dereplication, powered by molecular networking and mass spectrometry, enables rapid known-compound filtering to prioritize novelty[citation:2][citation:8]. In contrast, we examine structure-based methods, including virtual screening and molecular docking, which leverage target protein architecture to rationally design or discover bioactive leads[citation:1][citation:6]. The article directly compares their strengths in troubleshooting common pitfalls like rediscovery and off-target effects, and validates their performance through real-world applications in identifying anticancer agents and novel microbial metabolites[citation:1][citation:3]. Finally, we synthesize key decision-making criteria for project-specific strategy selection and outline the future of integrated, AI-enhanced workflows that promise to bridge these complementary philosophies[citation:4][citation:6][citation:7].

Core Philosophies: Defining Taxonomic Prioritization and Structure-Based Rational Design

In the search for new therapeutic agents and the understanding of complex biological systems, researchers are fundamentally guided by a central dichotomy: the identification of the known and the discovery of the unknown. This dichotomy is operationalized through two complementary methodological paradigms: dereplication and de novo discovery. Dereplication is the efficient process of identifying known compounds or taxa within a complex sample to avoid redundant rediscovery, thereby streamlining resource allocation [1] [2]. In contrast, de novo discovery aims to isolate, characterize, and identify entirely novel entities—be they chemical structures, microbial species, or genetic pathways—that are absent from existing databases [3] [4].

These approaches are framed within two distinct but increasingly convergent research strategies: taxonomic-focused analysis and structure-based approaches. Taxonomic-focused dereplication, prevalent in microbiome research and natural product discovery from biological sources, classifies entities based on evolutionary relationships and marker genes [3] [5]. Structure-based approaches, central to modern drug design, prioritize the three-dimensional architecture and physico-chemical properties of molecular targets and their ligands [6] [7]. This guide provides a comparative analysis of these methodologies, supported by experimental data and protocols, to inform strategic decisions in research and development.

Comparative Analysis: Dereplication vs. De Novo Discovery

In Natural Products & Microbiome Research

This domain leverages analytical chemistry and genomics to navigate the complexity of biological extracts and microbial communities.

Dereplication is a critical first pass to filter out known compounds. As demonstrated in the analysis of a polyherbal liquid formulation, an LC-MS/MS dereplication strategy successfully identified 70 compounds, with 44 uniquely attributed to specific plant species [2]. This process prevents the costly and time-consuming isolation of common metabolites. Similarly, in microbiology, alignment-based (AL) methods map sequencing reads to reference databases (e.g., GTDB, CHOCOPhlAn) to rapidly profile the known taxonomic composition of a sample [3].

De novo discovery targets the uncharted fraction. In soil microbiome research, the use of microbial diffusion chambers enabled the cultivation of previously "uncultivable" bacteria, yielding 1,218 isolates where 16% showed antibiotic activity [4]. This is the experimental counterpart to de novo (DN) bioinformatic approaches, which assemble genomes from sequence data without reference bias, enabling the discovery of novel microbial taxa and gene clusters [3]. An integrated pipeline combining cultivation, bioassay, mass spectrometry (MS) dereplication, and genome mining is optimal for novel natural product discovery [4].

Table 1: Comparison of Approaches in Natural Products & Microbiome Research

| Aspect | Dereplication (Known-First) | De Novo Discovery (Novelty-First) |
|---|---|---|
| Primary Goal | Rapid identification of known entities to avoid rediscovery. | Discovery and characterization of novel entities. |
| Core Methodology | LC-MS/MS spectral matching [1] [2]; Alignment to reference genomic databases [3]. | Bioassay-guided fractionation; Cultivation innovations (e.g., diffusion chambers) [4]; De novo genome assembly & binning [3]. |
| Key Tool/Platform | In-house or public spectral libraries (e.g., GNPS, MassBank) [1]; MetaPhlAn, HUMAnN [3]. | Global Natural Products Social Molecular Networking (GNPS) [8]; Metagenome-Assembled Genome (MAG) reconstruction pipelines. |
| Typical Output | List of annotated compounds or taxa with relative abundances. | Novel chemical structures; Novel microbial genomes & biosynthetic gene clusters (BGCs). |
| Strengths | High speed, efficiency, and reproducibility. Essential for quality control and standardization [2]. | Accesses untapped chemical and biological diversity. Potential for high-impact discovery. |
| Limitations | Limited by scope and quality of reference databases. Blind to novelty. | Resource-intensive, time-consuming, and often low-throughput. |

In Computational Structure-Based Drug Discovery (SBDD)

Here, the dichotomy manifests in the use of known structural information to predict new interactions or to generate novel molecular entities.

Dereplication in SBDD involves screening virtual or chemical libraries against a target to identify known binders or chemotypes. It relies heavily on knowledge-based methods (e.g., machine learning models trained on known protein-ligand complexes) that excel at interpolating within existing chemical space but struggle to generalize to novel scaffolds [6]. The goal is to quickly prioritize compounds with a higher probability of activity based on historical data.

De novo discovery in SBDD refers to the ab initio design or identification of novel molecular scaffolds that optimally fit a target binding site. This is the domain of physics-based methods like molecular docking, free energy perturbation (FEP) calculations, and de novo ligand design algorithms [6] [9]. These methods use principles of molecular mechanics and thermodynamics to evaluate interactions, potentially generating innovative solutions not present in training data.
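
As a worked illustration of the thermodynamic quantities these physics-based methods estimate, the standard relation between binding free energy and the dissociation constant can be written out. This is textbook thermodynamics rather than a formula taken from the cited studies; the temperature (298.15 K) and the example affinity (1 nM) are assumptions chosen only for the arithmetic.

$$
\Delta G^{\circ}_{\text{bind}} = RT \ln K_d
$$

$$
RT \approx 0.593\ \text{kcal/mol at } 298.15\ \text{K}, \qquad K_d = 10^{-9}\ \text{M} \;\Rightarrow\; \Delta G^{\circ}_{\text{bind}} \approx 0.593 \times (-20.72) \approx -12.3\ \text{kcal/mol}
$$

$$
\Delta\Delta G_{A \to B} = \Delta G^{\circ}_{B} - \Delta G^{\circ}_{A} = RT \ln \frac{K_{d,B}}{K_{d,A}}
$$

Under these assumptions, a tenfold change in affinity corresponds to roughly 1.4 kcal/mol, which gives a sense of the accuracy that relative free energy methods such as FEP need in order to rank close analogues.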

Table 2: Comparison of Approaches in Computational Structure-Based Drug Discovery

| Aspect | Knowledge-Based (Dereplication-Oriented) | Physics-Based (De Novo-Oriented) |
|---|---|---|
| Primary Goal | Predict activity/affinity by learning from known data. | Predict binding pose and affinity from first physical principles. |
| Core Methodology | Machine Learning (ML) / Deep Learning on structural and bioactivity databases (e.g., PDBbind, ChEMBL) [6]. | Molecular Docking, Molecular Dynamics (MD), Free Energy Perturbation (FEP) [6] [9]. |
| Data Dependency | High; requires large, high-quality training datasets. Performance degrades for novel targets or chemotypes [6]. | Low in principle; but accuracy depends on force-field quality and sampling. Requires a high-resolution target structure. |
| Strength | Extremely fast screening of ultra-large libraries. Excellent for targets rich in data [6]. | Can handle novel scaffolds and make predictions where no ligand data exists. Provides mechanistic insight. |
| Limitation | Risk of overfitting; limited generalizability "outside the box" of training data [6]. | Computationally expensive; can be prone to scoring function inaccuracies; sensitive to input structure quality [6]. |
| Ideal Use Case | Early-stage virtual screening to filter known chemotypes. Lead optimization for data-rich targets. | Hit identification for novel targets. Scaffold hopping and lead optimization for precise affinity prediction. |

Experimental Protocols

The first protocol, LC-MS/MS dereplication, is designed for the rapid identification of known bioactive compounds in complex plant extracts.

- Sample Preparation & Pooling: Prepare standard solutions of reference compounds. Use a log P-based pooling strategy to minimize co-elution and isomer interference. For crude extracts, employ Solid-Phase Extraction (SPE) with C-18 cartridges to remove sugars and interfering matrix components [2].

- LC-MS/MS Analysis: Analyze pools or samples using Reversed-Phase Liquid Chromatography coupled to high-resolution tandem Mass Spectrometry (LC-HRMS/MS). Optimize chromatography for compound separation.

- Data Acquisition: Acquire MS/MS spectra in data-dependent acquisition (DDA) mode. For each compound, collect fragmentation data at multiple collision energies (e.g., 10, 20, 30, 40 eV) and for different adducts ([M+H]⁺, [M+Na]⁺).

- Library Construction & Searching: Construct an in-house spectral library by cataloguing the retention time (RT), precursor m/z, and fragmentation patterns of reference standards. Search experimental MS/MS data against this library and public databases (e.g., GNPS, MassBank). Use a mass error tolerance of <5 ppm for confident annotation [1].

- Validation: Confirm identifications by comparing RT and MS/MS spectra with authentic standards analyzed under identical conditions.
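
The adduct and mass-tolerance steps above can be sketched in a few lines of Python. This is a minimal illustration, not the cited studies' software: the function names are hypothetical, the example neutral mass is an arbitrary illustrative value, and only the two adducts named in the protocol are handled.

```python
# Minimal sketch: adduct m/z calculation and ppm mass-error check.
# All names here are illustrative assumptions, not part of any cited pipeline.

PROTON_SHIFT = 1.007276   # Da added for [M+H]+ (mass of a proton)
SODIUM_SHIFT = 22.989218  # Da added for [M+Na]+ (Na atom minus one electron)

ADDUCT_SHIFTS = {"[M+H]+": PROTON_SHIFT, "[M+Na]+": SODIUM_SHIFT}


def adduct_mz(neutral_mass, adduct):
    """Theoretical m/z of a singly charged adduct of a neutral molecule."""
    return neutral_mass + ADDUCT_SHIFTS[adduct]


def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def within_tolerance(observed_mz, theoretical_mz, tol_ppm=5.0):
    """True when the absolute mass error is below the tolerance (<5 ppm by default)."""
    return abs(ppm_error(observed_mz, theoretical_mz)) < tol_ppm


# Example with an assumed neutral monoisotopic mass of 302.04265 Da.
theoretical = adduct_mz(302.04265, "[M+H]+")
for observed in (303.0510, 303.0520):
    print(observed, round(ppm_error(observed, theoretical), 2),
          within_tolerance(observed, theoretical))
```

A precursor match within tolerance only narrows the candidates; as the validation step notes, retention time and MS/MS agreement with an authentic standard are still needed for a confident identification.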

The second protocol, de novo antibiotic discovery, is a multi-omic workflow that aims to discover novel antibiotics from uncultivated soil bacteria.

- In Situ Cultivation: Construct microbial diffusion chambers with 0.03 µm semi-permeable membranes. Inoculate chambers with a diluted soil slurry in low-nutrient agar and incubate them buried in the source soil for 2-4 weeks to allow growth of uncultivable bacteria.

- Strain Recovery & Screening: Retrieve agar plugs, domesticate isolates on R2A agar, and cryopreserve pure cultures. Screen isolates for bioactivity against target pathogens (e.g., S. aureus, E. coli) using agar overlay assays.

- MS-Based Dereplication: Culture bioactive strains and extract secondary metabolites. Analyze extracts via LC-HRMS/MS. Process data through the GNPS platform for molecular networking and dereplication against spectral libraries to identify known antibiotics.

- Genomic Analysis: Sequence the genome of prioritized bioactive strains. Perform genome mining using tools like antiSMASH to identify Biosynthetic Gene Clusters (BGCs) responsible for secondary metabolite production. This step can reveal the potential for novel compounds even if MS dereplication was unsuccessful.

- Targeted Isolation & Characterization: For strains with novel BGCs or ambiguous MS IDs, scale up fermentation, and use bioassay-guided fractionation to isolate the active compound(s). Elucidate structure using NMR and HRMS.

Visualizing Workflows and Relationships

Integrated De Novo Discovery Workflow

Structure-Based Drug Design Pathways

The Researcher's Toolkit

Table 3: Essential Reagents and Materials for Featured Experiments

| Item | Function / Application | Relevant Protocol |
|---|---|---|
| 0.03 µm Polycarbonate Membrane | Forms the semi-permeable barrier of diffusion chambers, allowing nutrient exchange while containing microorganisms [4]. | De Novo Antibiotic Discovery |
| R2A Agar / SMS Agar | Low-nutrient cultivation media used to recover and grow oligotrophic soil bacteria that fail to grow on rich media [4]. | De Novo Antibiotic Discovery |
| C-18 Solid Phase Extraction (SPE) Cartridge | Removes polar interfering substances (e.g., sugars, salts) from complex herbal extracts, reducing matrix effects and improving LC-MS signal clarity [2]. | LC-MS/MS Dereplication |
| LC-MS Grade Solvents (MeOH, H₂O with Formic Acid) | High-purity mobile phase for liquid chromatography to ensure reproducible retention times and prevent ion source contamination in MS [1] [2]. | Both Protocols |
| Authentic Chemical Standards | Reference compounds used to build in-house spectral libraries for definitive identification by matching retention time and MS/MS spectrum [1]. | LC-MS/MS Dereplication |
| Syto9 / DAPI Nucleic Acid Stains | Fluorescent dyes used to count microbial cells in soil slurries for standardized inoculation of diffusion chambers [4]. | De Novo Antibiotic Discovery |
| Global Natural Products Social (GNPS) Platform | A public online platform for sharing and analyzing mass spectrometry data, enabling spectral library matching and molecular networking [4] [8]. | Both Protocols |

The dichotomy between dereplication and de novo discovery is not a barrier but a strategic framework. The most effective research pipelines in both natural products and computational drug discovery are those that sequentially integrate both paradigms. The future lies in hybrid approaches: using dereplication to efficiently clear the known landscape, thereby focusing costly de novo efforts on the most promising unexplored territories [3] [4]. Similarly, in SBDD, combining the speed of knowledge-based methods with the rigorous, generative potential of physics-based simulations represents the state of the art [6] [7]. Whether focused on taxonomy or molecular structure, the ultimate goal remains the same: to navigate the vast universe of the unknown by first intelligently managing the known.

The discovery of new therapeutic agents has undergone a fundamental paradigm shift, evolving from observation-driven natural product isolation to prediction-enabled molecular design. This transition represents more than a mere technological upgrade; it signifies a profound change in the philosophical approach to interrogating biological systems and chemical space. Historically, bioactivity-guided fractionation served as the cornerstone of drug discovery, relying on systematic biological screening of complex natural extracts to isolate active compounds, often informed by traditional medicinal knowledge [10]. In parallel, the natural products field developed taxonomy-focused dereplication—a strategy to efficiently identify known compounds from biological sources based on taxonomic relationships and spectroscopic data, thereby avoiding redundant rediscovery [11].

Conversely, the rise of computational first principles and structure-based approaches has introduced a target-centric, rational framework. Enabled by advances in structural biology, high-performance computing, and machine learning, this paradigm uses the three-dimensional structure of therapeutic targets to design or discover ligands with precision [12] [13]. This guide provides an objective comparison of these foundational methodologies, examining their performance, experimental requirements, and ideal applications within modern drug development. The analysis is framed by the broader research thesis that contrasts the organism- and chemistry-centric viewpoint of taxonomy-focused dereplication with the target- and structure-centric viewpoint of computational design, highlighting how their integration is shaping the future of the field.

Performance Comparison: Key Metrics and Outcomes

The following tables compare the core characteristics, outputs, and practical performance of taxonomy-focused dereplication and structure-based computational approaches. Table 1 is qualitative; Table 2 summarizes reported quantitative metrics.

Table 1: Foundational Characteristics and Strategic Focus

| Comparison Aspect | Taxonomy-Focused Dereplication & Bioactivity-Guided Fractionation | Computational First Principles & Structure-Based Design |
|---|---|---|
| Primary Objective | Identify novel bioactive compounds from nature; avoid re-isolation of knowns [11] [10]. | Design or discover novel ligands for a defined macromolecular target [12] [13]. |
| Starting Point | Biological material (plant, microbial extract) with observed bioactivity or taxonomic lineage [10]. | 3D structure of a target protein (experimental or predicted) [12]. |
| Core Principle | Leverage evolutionary conservation of biosynthetic pathways within taxa for targeted discovery [11]. | Apply principles of molecular recognition, thermodynamics, and docking physics [13] [6]. |
| Key Data Inputs | Taxonomic classification; LC-MS/MS, NMR spectroscopic data [11] [14]. | Protein atomic coordinates; chemical libraries; force field parameters [12] [6]. |
| Typical Output | Isolated and characterized novel natural product(s) with confirmed biological activity [10]. | Predicted high-affinity small molecule binders with a proposed binding pose [13]. |

Table 2: Quantitative Performance and Practical Metrics

| Performance Metric | Taxonomy-Focused/Bioactivity-Guided Approach | Computational/Structure-Based Approach | Supporting Data & Context |
|---|---|---|---|
| Historical Success (FDA-Approved Drugs) | A major source: ~35% of modern medicines are natural products or direct derivatives [10]. | Significant contributor: SBDD estimated to have contributed to >200 approved drugs; FBDD directly led to 4 [15]. | Natural products dominate in anti-infectives and oncology [10]. SBDD is versatile across target classes [12]. |
| Development Timeline (Early Stage) | Can be lengthy due to slow extraction, fractionation, and structure elucidation steps [10]. | Rapid virtual screening of ultra-large libraries (billions of compounds) is possible in weeks [13]. | Computational speed is offset by later synthetic and experimental validation requirements. |
| Cost Implications | High costs associated with large-scale biomass collection, purification, and wet-lab screening [10]. | Can reduce early discovery costs significantly; CADD estimated to cut discovery costs by up to 50% [13]. | Major costs in the natural product pipeline are front-loaded; computational costs are largely in infrastructure/software. |
| Hit Rate Efficiency | Low hit rate in random screening; greatly enhanced by ethnopharmacological or taxonomic focus [10]. | Typical experimental hit rates from structure-based virtual screening range from 10% to 40% [13]. | Hit rate for computational methods depends heavily on target "druggability" and library quality [6]. |
| Chemical Space Coverage | Explores biologically pre-validated, structurally complex, and often "drug-like" chemical space [14]. | Can theoretically access vast synthetic chemical space (e.g., >6.7 billion in REAL database) [13]. | Natural products cover regions of chemical space often inaccessible to synthetic libraries [14]. |
| Major Challenge | Supply, re-isolation of knowns, slow dereplication, complex structure determination [11] [10]. | Accurate scoring and affinity prediction, handling protein flexibility, synthetic accessibility of hits [13] [6]. | Both fields are developing solutions: genomic mining for NPs; better force fields & AI for SBDD [6] [14]. |

Experimental Protocols: Representative Methodologies

Protocol for Taxonomy-Focused Dereplication Using 13C NMR

This protocol, based on the CNMR_Predict pipeline, creates a targeted database for efficient dereplication of natural products from a specific organism [11].

1. Taxon Definition and Raw Data Acquisition:

- Select the organism of interest (e.g., Brassica rapa subsp. rapa L.).

- Query the LOTUS database (or similar taxonomy-aware NP database) using the species name as a keyword.

- Download all associated chemical structures in a standard file format (e.g., SDF V3000).

2. Data Curation and Standardization:

- Remove duplicate structures based on canonical identifiers (e.g., InChI keys).

- Apply tautomer normalization to ensure all structures are in a consistent, predictable form (e.g., converting iminol forms to amides).

- Adjust atomic valence representations to ensure compatibility with downstream prediction software.

3. Spectral Data Prediction and Database Creation:

- Import the curated structure list into specialized 13C NMR prediction software (e.g., ACD/Labs CNMR Predictor).

- Execute batch prediction of chemical shifts for all carbon atoms in all compounds.

- Export the combined dataset (structures + predicted spectra) into a searchable, taxon-specific database.

4. Experimental Dereplication:

- Obtain a 13C NMR spectrum (or a 1D 1H spectrum with 13C projections) of the purified unknown compound or complex mixture.

- Search the experimental chemical shifts against the created taxon-specific database.

- Evaluate matches based on chemical shift tolerance (e.g., ± 0.5-1.0 ppm) and the number of matching signals. A high-confidence match indicates a known compound, halting further costly isolation efforts.
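
The matching logic in step 4 can be sketched as follows. This is a simplified illustration under stated assumptions, not the CNMR_Predict pipeline or the ACD/Labs search algorithm: the greedy one-to-one matching, the scoring formula, and the toy database entries are all hypothetical.

```python
# Minimal sketch: rank database compounds by how many experimental 13C shifts
# fall within a tolerance of their predicted shifts. Names and data are hypothetical.

def count_matches(experimental, predicted, tol_ppm=1.0):
    """Greedy one-to-one matching: each predicted shift can be used only once."""
    remaining = sorted(predicted)
    matched = 0
    for shift in sorted(experimental):
        best_index = None
        for i, candidate in enumerate(remaining):
            delta = abs(candidate - shift)
            if delta <= tol_ppm and (
                best_index is None or delta < abs(remaining[best_index] - shift)
            ):
                best_index = i
        if best_index is not None:
            remaining.pop(best_index)
            matched += 1
    return matched


def rank_candidates(experimental, database, tol_ppm=1.0):
    """Return (name, matched_signals, score) tuples, best score first."""
    results = []
    for name, predicted in database.items():
        matched = count_matches(experimental, predicted, tol_ppm)
        # Penalize both unmatched experimental and unmatched predicted signals.
        score = matched / max(len(predicted), len(experimental))
        results.append((name, matched, score))
    return sorted(results, key=lambda item: item[2], reverse=True)


# Toy taxon-specific database of predicted shifts (ppm); values are invented.
toy_database = {
    "compound_A": [170.1, 128.5, 55.2],
    "compound_B": [200.0, 30.0, 14.1],
}
print(rank_candidates([170.5, 128.0, 55.0], toy_database))
```

In practice the tolerance would be tuned to the accuracy of the prediction software, and a top-ranked candidate would still be checked against the full spectrum before isolation work is halted.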

Protocol for High-Throughput X-ray Crystallography Fragment Screening (FBDD)

This protocol outlines a primary fragment screening approach using high-throughput X-ray crystallography, as implemented at facilities like XChem [15].

1. Target and Library Preparation:

- Protein Target: Produce and purify a stable, crystallizable target protein (>10 mg, >95% pure). Engineer constructs if necessary for crystallization.

- Fragment Library: Obtain a curated library of 500-1500 fragments compliant with the "Rule of Three" (MW <300, cLogP ≤3, ≤3 H-bond donors/acceptors, etc.) [15].

- Co-crystallization or Soaking: Prepare high-quality, reproducible protein crystals. For screening, use a fragment soaking method:

  - Group fragments into cocktails of 4-8 compounds, each at high concentration (e.g., 200 mM in DMSO).

  - Briefly soak crystals in mother liquor containing the fragment cocktail.

  - Alternatively, perform co-crystallization with individual fragments or cocktails.

2. High-Throughput Data Collection and Processing:

- Harvest and cryo-cool soaked crystals.

- Collect X-ray diffraction data for hundreds to thousands of crystals in an automated, high-throughput beamline setup.

- Process data automatically: integrate diffraction images, scale intensities, and solve structures by molecular replacement using the native protein model.

3. Hit Identification and Analysis:

- Use automated electron density analysis software (e.g., PanDDA) to detect bound fragments in the difference electron density maps, even for weak binders [15].

- Manually validate hits: inspect electron density for clear fragment shape, assess fit, and model the fragment into the density.

- Output: A list of confirmed fragment hits with precise, experimentally determined binding modes, locations (orthosteric/allosteric), and protein-ligand interaction maps.

4. Hit-to-Lead Progression:

- Prioritize hits based on binding pose, ligand efficiency, and chemical tractability.

- Initiate fragment growing, linking, or optimization using the detailed structural information to design more potent lead compounds [15].
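
The library-preparation step of this protocol (Rule of Three filtering, then grouping into soaking cocktails) can be illustrated with a short sketch. It assumes the descriptors have already been computed elsewhere; the `Fragment` record, the function names, and the example values are hypothetical, and only the thresholds quoted above (MW <300, cLogP ≤3, ≤3 H-bond donors and acceptors) are applied.

```python
# Minimal sketch: Rule of Three filter plus cocktail grouping.
# Descriptor values are assumed to be precomputed; all names are illustrative.
from dataclasses import dataclass


@dataclass
class Fragment:
    name: str
    mol_weight: float   # Da
    clogp: float
    h_donors: int
    h_acceptors: int


def passes_rule_of_three(fragment):
    """Apply the thresholds quoted in the protocol text."""
    return (
        fragment.mol_weight < 300
        and fragment.clogp <= 3
        and fragment.h_donors <= 3
        and fragment.h_acceptors <= 3
    )


def make_cocktails(fragments, size=4):
    """Split the filtered library into consecutive cocktails of `size` fragments."""
    if not 4 <= size <= 8:
        raise ValueError("the protocol uses cocktails of 4-8 compounds")
    return [fragments[i:i + size] for i in range(0, len(fragments), size)]


# Invented example library of ten fragments; two fail the filter.
library = [Fragment(f"frag_{i}", 150.0 + 10 * i, 1.5, 1, 2) for i in range(8)]
library.append(Fragment("too_heavy", 350.0, 2.0, 1, 2))
library.append(Fragment("too_greasy", 250.0, 4.2, 0, 1))

filtered = [f for f in library if passes_rule_of_three(f)]
cocktails = make_cocktails(filtered, size=4)
print(len(filtered), [len(c) for c in cocktails])
```

Real cocktail design usually also considers shape diversity and solubility within each pool; consecutive chunking is used here only to keep the sketch short.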

Workflow and Relationship Diagrams

Diagram 1: Bioactivity-Guided Fractionation with Dereplication Workflow.

Diagram 2: Computational First Principles Drug Discovery Workflow.

Table 3: Key Reagents and Resources for Dereplication and Structure-Based Research

| Tool/Resource Name | Category | Primary Function | Relevant Paradigm |
|---|---|---|---|
| LOTUS Database (lotus.naturalproducts.net) | Database | Provides rigorously curated links between natural product structures and their taxonomic sources for targeted queries [11]. | Taxonomy-Focused Dereplication |
| ACD/Labs CNMR Predictor and DB | Software | Predicts 13C NMR chemical shifts from molecular structure to create searchable spectral databases for dereplication [11]. | Taxonomy-Focused Dereplication |
| GNPS (Global Natural Products Social Molecular Networking) | Data Platform | Enables community-wide sharing and curation of MS/MS spectral data for annotation and dereplication of natural products [14]. | Bioactivity-Guided Fractionation |
| Rule of Three (Ro3) Fragment Library | Chemical Library | A curated collection of small, simple molecules (MW <300) used to probe protein binding sites and identify weak but efficient starting points for drug design [15]. | Fragment-Based Drug Discovery (FBDD) |
| XChem/High-Throughput X-ray Crystallography Platform | Experimental Platform | Enables primary screening of fragment libraries by obtaining protein-ligand co-crystal structures at scale, providing direct structural data on binding [15]. | FBDD / Structure-Based Design |
| AlphaFold Protein Structure Database | Database/Algorithm | Provides highly accurate predicted protein 3D models for targets with no experimental structure, massively expanding the scope of SBDD [13]. | Computational First Principles |
| Enamine REAL (REadily AccessibLe) Database | Chemical Library | An ultra-large, commercially available virtual library of synthesizable compounds (>6.7 billion) for virtual screening [13]. | Structure-Based Virtual Screening |
| Relaxed Complex Method | Computational Method | Uses conformational ensembles from Molecular Dynamics simulations for docking, accounting for protein flexibility and cryptic pockets [13]. | Dynamics-Based Drug Discovery |

Discussion and Concluding Comparison

The contrast between taxonomy-focused dereplication and computational first-principles design encapsulates a broader evolution in drug discovery: from observation and exploitation of nature's chemical bounty to prediction and engineering of molecular interactions. The former approach, rooted in biology and chemistry, excels at delivering structurally novel, biologically pre-validated scaffolds that have historically been a major source of drugs, especially in challenging areas like oncology and infection [10] [14]. Its strengths lie in its connection to biologically relevant chemical space and its clear path to a bioactive compound. Its primary weaknesses are throughput, scalability, and the inherent uncertainty of the discovery process.

The latter approach, rooted in physics and computer science, offers speed, scalability, and rational design. It can rapidly explore vast synthetic chemical spaces, provide atomic-level insight into mechanism, and systematically optimize compounds. Its success is evident in the hundreds of approved drugs it has contributed to [15] [12]. Its critical limitations revolve around the accuracy of scoring functions, the challenges of modeling flexible biological systems, and the ultimate need to synthesize and test predicted compounds in the lab [13] [6].

The forward-looking thesis of modern drug discovery is not the supremacy of one paradigm over the other, but their strategic integration. Computational methods are revolutionizing natural product research through genome mining, spectral prediction, and database dereplication [11] [14]. Conversely, natural product-derived scaffolds are inspiring the design of focused libraries for virtual screening [10]. The most powerful future workflows will likely be hybrid: using taxonomic and genomic intelligence to guide the selection of natural sources, applying advanced analytics and dereplication to quickly identify novel chemotypes, and then using structure-based design to optimize these natural scaffolds into potent, drug-like candidates. This synthesis of biological wisdom and computational power represents the next chapter in the historical context of therapeutic discovery.

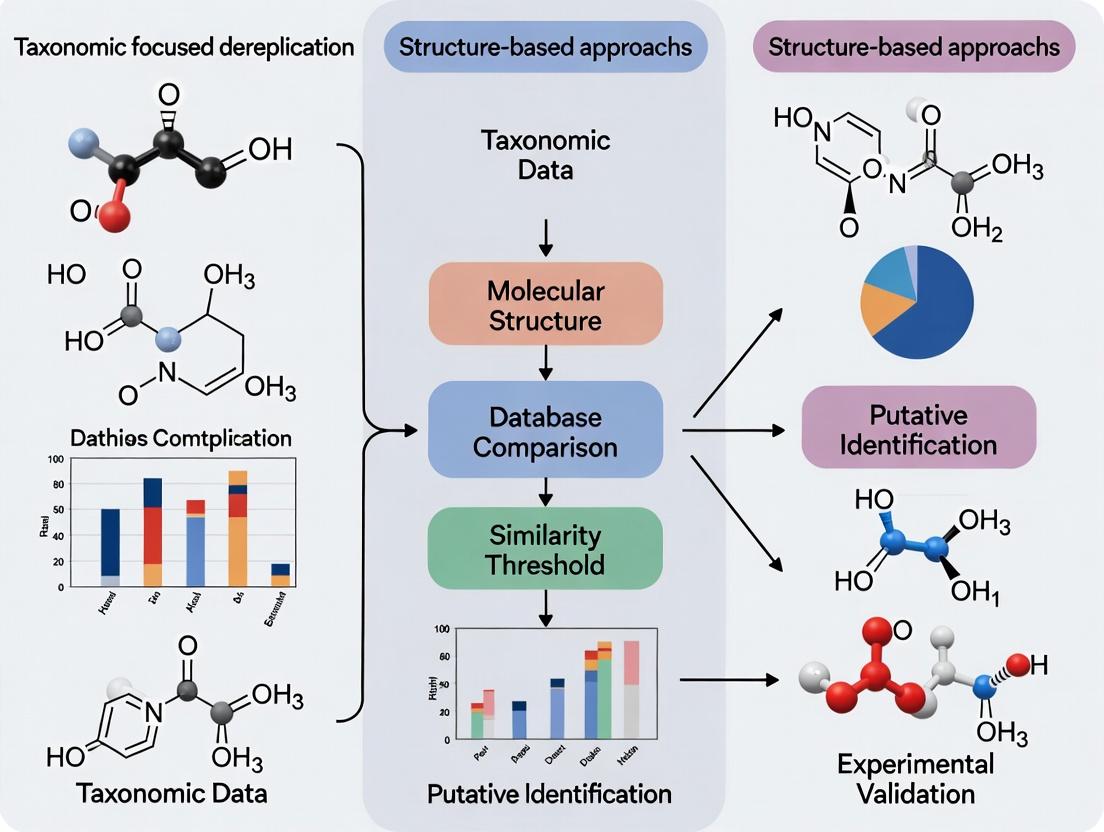

Dereplication, the rapid identification of known compounds in complex mixtures so that novel entities can be prioritized, addresses a critical bottleneck in natural product discovery and microbiome analysis. Traditionally, approaches have been bifurcated into structure-based methods, which prioritize chemical features, and taxonomic-focused methods, which leverage the evolutionary relationships of the source organism [16] [11]. This guide objectively compares the performance of taxonomic-focused dereplication, which integrates phylogeny and spectral libraries, against alternative structure-based and similarity-based approaches.

The core thesis posits that taxonomic-focused dereplication provides a more efficient framework for annotating known compounds and predicting novel chemical space by constraining identification within evolutionary boundaries. This approach is particularly powerful when coupled with public spectral libraries and phylogenetic placement algorithms, enabling researchers to bypass the re-isolation of known compounds and accelerate the discovery pipeline [17] [18].

Core Concepts and Comparative Frameworks

Taxonomic-focused dereplication operates on the principle that biosynthetic pathways are often conserved within taxonomic groups (e.g., genera, families). By knowing the source organism's phylogeny, the search space for compound identification is significantly reduced. This method commonly utilizes phylogenetic placement of marker genes or whole genomes, alongside targeted or non-targeted mass spectrometry, to identify compounds [19] [18].

In contrast, structure-based dereplication is agnostic to taxonomy. It relies on direct comparison of analytical data—such as mass spectra, NMR shifts, or fragmentation patterns—against comprehensive libraries of pure compound data. The identification is based purely on spectral similarity, often using computational tools like molecular networking [17] [16].
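
To make "spectral similarity" concrete, the sketch below scores two MS/MS peak lists with a plain binned cosine similarity. This is an assumption-laden simplification: production molecular networking tools typically use more elaborate scoring (for example, variants that account for precursor mass differences), and the bin width, function names, and peak lists here are purely illustrative.

```python
# Minimal sketch: binned cosine similarity between two MS/MS spectra.
# Each spectrum is a list of (m/z, intensity) pairs; all values are invented.
import math


def bin_spectrum(peaks, bin_width=0.1):
    """Sum intensities of peaks that fall into the same m/z bin."""
    binned = {}
    for mz, intensity in peaks:
        key = round(mz / bin_width)
        binned[key] = binned.get(key, 0.0) + intensity
    return binned


def cosine_similarity(peaks_a, peaks_b, bin_width=0.1):
    """Cosine of the angle between the two binned intensity vectors (0 to 1)."""
    a = bin_spectrum(peaks_a, bin_width)
    b = bin_spectrum(peaks_b, bin_width)
    dot = sum(value * b.get(key, 0.0) for key, value in a.items())
    norm_a = math.sqrt(sum(value * value for value in a.values()))
    norm_b = math.sqrt(sum(value * value for value in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


query = [(100.0, 1.0), (200.0, 1.0)]
library_hit = [(100.0, 1.0), (300.0, 1.0)]
print(cosine_similarity(query, library_hit))
```

A taxonomic-focused workflow would apply the same kind of score, but only against library entries reported from the source organism's taxon, which is how the search space is constrained.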

A third paradigm, prominent in metagenomics, is the alignment-based (AL) vs. de novo (DN) approach. AL methods map sequencing reads to reference databases for rapid profiling of known taxa, while DN methods assemble reads without references to discover novel genomic elements [20] [21]. The choice between these strategies presents a trade-off between speed, reliance on existing knowledge, and the ability to discover novelty.

Table 1: Core Conceptual Comparison of Dereplication Strategies

| Strategy | Primary Data Input | Key Mechanism | Main Advantage | Primary Limitation |
|---|---|---|---|---|
| Taxonomic-Focused | Genetic material (DNA/RNA) & Spectral Data | Phylogenetic placement & taxonomic spectral filtering | Constrains search space, predicts novel related compounds | Requires phylogenetic knowledge; limited by reference databases |
| Structure-Based | Spectroscopic data (MS, NMR) | Direct spectral matching & molecular networking | Taxonomy-agnostic; direct compound-level identification | Prone to ambiguous matches for isomers; overlooks taxon-specific novelty |
| Alignment-Based (AL) | Sequencing reads (e.g., shotgun) | Mapping to reference genomes/marker genes | Fast, efficient for profiling known communities | Biased against novel taxa not in reference databases [20] |
| De Novo (DN) | Sequencing reads (e.g., shotgun) | De novo assembly and binning | Discovers novel taxa and genes; reference-independent | Computationally intensive; higher data sparsity [20] [21] |

Performance Comparison: Experimental Data and Outcomes

Spectral Library Development and Application

The development of specialized, public spectral libraries exemplifies the power of focused resources. The Pyrrolizidine Alkaloid Spectral Library (PASL) contains 165 MS/MS spectra from 102 compounds (84 standards, 18 from crude extracts). When applied to dereplicate compounds in plant extracts, this taxonomy-focused library (targeting Asteraceae, Boraginaceae, Fabaceae) enabled rapid annotation without pure standards. In a comparative sense, a broader, untargeted search of generic MS/MS libraries would yield higher rates of false annotations due to the structural diversity across all plant taxa [17].

Metagenomic Profiling: Alignment vs. De Novo

A direct comparison of AL and DN methods on the same gut microbiome dataset (346 samples) reveals a clear trade-off [20]:

- AL Methods (e.g., MetaPhlAn) produced taxonomic profiles with lower sparsity and identified a greater number of differentially abundant taxa associated with host BMI. They explained a greater proportion of variance (~8.7%).

- DN Methods (MAG reconstruction) captured more novel microbial diversity (e.g., higher relative abundance of Archaea) but produced sparser matrices, identifying only a subset of the significant taxa found by AL. However, DN enabled functional insights, such as identifying a novel 2,5-diketo-D-gluconate reductase A enzyme in Alistipes onderdonkii MAGs [20].
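The "sparsity" contrasted above is usually understood as the fraction of zero entries in a sample-by-taxon abundance matrix. A minimal sketch under that assumption follows; the function name and the toy matrices are hypothetical and are not data from the cited study.

```python
def sparsity(matrix):
    """Fraction of zero entries in a sample-by-taxon abundance matrix (list of rows)."""
    total = sum(len(row) for row in matrix)
    zeros = sum(1 for row in matrix for value in row if value == 0)
    return zeros / total if total else 0.0

# Toy abundance tables: rows are samples, columns are taxa.
al_profile = [[12, 0, 5, 3], [8, 2, 0, 1]]
dn_profile = [[0, 0, 7, 0], [4, 0, 0, 0]]
print(sparsity(al_profile), sparsity(dn_profile))
```

A sparser matrix leaves fewer non-zero observations per taxon, which reduces statistical power when testing for differential abundance.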

An integrative tool such as the Read Annotation Tool (RAT), which combines signals from MAGs, contigs, and reads, demonstrates the gain from a hybrid strategy. In benchmark tests on CAMI2 data, RAT achieved higher precision and sensitivity than state-of-the-art profilers by first inheriting reliable taxonomy from assembled sequences before annotating the remaining reads [21].

Table 2: Quantitative Performance Comparison from Key Studies

| Study / Tool | Approach | Key Performance Metric | Result | Implication for Dereplication |

|---|---|---|---|---|

| Pyrrolizidine Alkaloid Library (PASL) [17] | Taxonomy-focused spectral matching | Library scope & application | 102 PAs, 165 MS/MS spectra; successful dereplication in plant extracts | Focused libraries reduce false positives in targeted taxon groups. |

| AL vs. DN Microbiome Analysis [20] | Alignment-based vs. De novo assembly | Number of significant taxa & novelty discovery | AL identified more diff. abundant taxa; DN found novel enzyme genes. | AL is efficient for known communities; DN is essential for functional novelty. |

| RAT (Read Annotation Tool) [21] | Integrative (MAGs + contigs + reads) | Precision & sensitivity on CAMI2 data | Outperformed state-of-the-art profilers by integrating multi-level signals. | Leveraging assembled data significantly improves read annotation accuracy. |

| Phylogenetic Placement [18] | Phylogeny-based sequence placement | Taxonomic assignment accuracy | Provides evolutionary context, increasing accuracy over simple similarity. | Essential for placing novel sequences from poorly characterized taxa. |

Phylogenetic Placement for Taxonomic Assignment

Phylogenetic placement, a cornerstone of taxonomic-focused analysis, directly addresses the limitations of similarity-based (BLAST) searches. By placing query sequences within a fixed reference tree, it considers evolutionary history and branch lengths, leading to more accurate taxonomic assignment, especially for novel or divergent sequences [18]. A review of the first decade of these methods confirms they eliminate the requirement for exact database matches and reduce misidentification, providing a robust framework for analyzing metabarcoding data from diverse environments [18].

Detailed Experimental Protocols

This protocol details the creation of the Pyrrolizidine Alkaloid Spectral Library (PASL).

- Sample Preparation: Dilute pure analytical standards to ~500 μg/L in 10% methanol. For crude extracts, homogenize 10 mg of freeze-dried plant material in 1 mL of 0.2% formic acid, filter (0.45 µm), and analyze directly.

- LC-HRMS/MS Analysis:

- System: UHPLC (e.g., Thermo Vanquish) coupled to an Orbitrap mass spectrometer (e.g., Q Exactive).

- Chromatography: C18 column (2.1 x 150 mm, 1.7 µm). Gradient: 10 mM ammonium carbonate buffer (pH 9) and acetonitrile over 18.5 minutes.

- MS Acquisition: Full-scan MS1 (resolution: 120,000 FWHM) followed by data-dependent MS/MS (resolution: 15,000 FWHM) using stepped collision energies (e.g., 15, 30, 45 eV).

- Data Processing & Library Curation:

- Convert raw files to the open .mzML format.

- Use workflows (e.g., MSMSChooser on GNPS) to extract clean, representative MS/MS spectra for each compound.

- Annotate compounds with validated structures and isomeric SMILES, correcting for database errors in stereochemistry.

- Validate the library by performing molecular networking against public GNPS libraries and applying it to dereplicate known PAs in test plant extracts.
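The curation step of extracting a clean, representative MS/MS spectrum per compound can be pictured as noise filtering followed by selection of the most intense scan. The sketch below is one plausible heuristic, not the MSMSChooser algorithm; the 1% relative-intensity cutoff, the function names, and the scan values are assumptions.

```python
def clean_spectrum(peaks, min_rel_intensity=0.01):
    """Drop peaks below a fraction of the base peak intensity."""
    if not peaks:
        return []
    base = max(intensity for _, intensity in peaks)
    return [(mz, i) for mz, i in peaks if i >= min_rel_intensity * base]

def pick_representative(scans, min_rel_intensity=0.01):
    """Clean every scan, then return the one with the highest summed intensity.

    Raises ValueError if `scans` is empty.
    """
    cleaned = [clean_spectrum(scan, min_rel_intensity) for scan in scans]
    return max(cleaned, key=lambda scan: sum(i for _, i in scan))

# Two invented MS/MS scans of the same precursor.
scans = [
    [(100.0, 10.0), (150.0, 0.05)],
    [(100.0, 50.0), (150.0, 20.0), (151.0, 0.1)],
]
print(pick_representative(scans))
```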

This protocol uses assembly-derived signals to improve read annotation.

- Assembly and Binning: Perform de novo assembly of quality-filtered metagenomic reads using a tool like MEGAHIT or metaSPAdes. Bin contigs into Metagenome-Assembled Genomes (MAGs) using tools like MetaBAT2.

- Annotation of Long Sequences:

- Annotate contigs using the Contig Annotation Tool (CAT).

- Annotate MAGs using the Bin Annotation Tool (BAT). Both CAT and BAT predict Open Reading Frames (ORFs), query them against a protein database (e.g., NCBI nr or GTDB) via DIAMOND, and assign taxonomy based on consensus.

- Read Mapping and Inheritance: Map all quality-filtered reads back to the contigs using BWA-MEM. A read inherits the taxonomy of the contig (or MAG) it maps to.

- Direct Annotation of Unmapped Reads: Annotate reads that do not map to contigs, and contigs not annotated by CAT, by directly querying them against the protein database using DIAMOND blastx.

- Profile Integration: Combine the inherited annotations (high reliability) with the direct read annotations (higher coverage) to produce a comprehensive, high-fidelity taxonomic profile.
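The inheritance logic in the steps above can be sketched as a simple priority lookup. This is an illustrative simplification, not the CAT/BAT/RAT implementation: the function name, the input dictionaries, and the MAG-before-contig-before-read priority order are assumptions made for the example.

```python
def annotate_reads(read_to_contig, contig_to_bin, bin_taxonomy,
                   contig_taxonomy, direct_taxonomy):
    """Assign each read a (taxon, source) pair.

    Priority (an assumption of this sketch): MAG annotation, then contig
    annotation, then the read's own direct protein-level hit.
    """
    profile = {}
    for read, contig in read_to_contig.items():
        taxon, source = None, None
        if contig is not None:
            bin_id = contig_to_bin.get(contig)
            if bin_id in bin_taxonomy:
                taxon, source = bin_taxonomy[bin_id], "MAG"
            elif contig in contig_taxonomy:
                taxon, source = contig_taxonomy[contig], "contig"
        # Fall back to direct annotation for unmapped or still-unannotated reads.
        if taxon is None and read in direct_taxonomy:
            taxon, source = direct_taxonomy[read], "read"
        profile[read] = (taxon, source) if taxon is not None else ("unclassified", None)
    return profile

# Hypothetical inputs: r3 and r4 did not map to any contig.
profile = annotate_reads(
    read_to_contig={"r1": "c1", "r2": "c2", "r3": None, "r4": None},
    contig_to_bin={"c1": "bin1"},
    bin_taxonomy={"bin1": "Alistipes"},
    contig_taxonomy={"c1": "Bacteroidales", "c2": "Bacteroides"},
    direct_taxonomy={"r3": "Archaea"},
)
print(profile)
```

Recording the source alongside the taxon keeps the reliability tier of each assignment visible when the profile is summarized.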

This protocol outlines creating a custom 13C NMR database for a specific taxon.

- Compound Retrieval: Query the LOTUS database (or other NP database) using the target taxon name (e.g., Brassica rapa) to download associated chemical structures in SDF format.

- Structure Curation: Process the SDF file with scripts to remove duplicates, correct tautomeric forms (e.g., iminol to amide), and standardize valence descriptions for compatibility with prediction software.

- Spectral Prediction: Import the curated SDF file into spectral prediction software (e.g., ACD/Labs CNMR Predictor). Calculate and predict 13C NMR chemical shifts for all structures.

- Database Deployment: Export the combined structural and predicted NMR data into a searchable format. This custom database can then be used to dereplicate mixtures from the target taxon by matching observed 13C NMR chemical shifts.
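Matching observed shifts against such a database can be illustrated with a greedy tolerance-based score. The sketch below is hypothetical: it is not the search algorithm of ACD/Labs or of the cited workflow, and the 1.5 ppm tolerance, the function names, and the shift values are assumptions.

```python
def match_fraction(predicted, observed, tol=1.5):
    """Fraction of predicted 13C shifts paired with an unused observed shift within tol ppm."""
    remaining = sorted(observed)
    hits = 0
    for shift in sorted(predicted):
        best = min(remaining, key=lambda o: abs(o - shift), default=None)
        if best is not None and abs(best - shift) <= tol:
            hits += 1
            remaining.remove(best)  # each observed signal is used at most once
    return hits / len(predicted) if predicted else 0.0

def rank_candidates(database, observed, tol=1.5):
    """Rank database compounds by how completely the mixture spectrum explains them."""
    scores = {name: match_fraction(shifts, observed, tol) for name, shifts in database.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Invented predicted shifts (ppm) and an invented mixture peak list.
database = {"compound_A": [20.0, 55.0, 170.0], "compound_B": [30.0, 80.0, 120.0]}
observed = [20.4, 54.2, 80.5, 169.1, 128.0]
print(rank_candidates(database, observed))
```

Scoring by the fraction of a candidate's carbons that are explained suits mixtures, where the observed list contains signals from several compounds at once.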

Visualization of Workflows and Logical Frameworks

Integrated Dereplication Workflow for Natural Products and Microbiomes

Taxonomic vs. Structure-Based Dereplication Strategy Comparison

Table 3: Key Reagents, Tools, and Databases for Taxonomic-Focused Dereplication

| Category | Item / Resource | Specific Example / Vendor | Primary Function in Dereplication |

|---|---|---|---|

| Spectral Libraries | Public MS/MS Libraries | GNPS Mass Spectrometry Libraries [17] | Repository for experimental spectra for spectral matching and networking. |

| Spectral Libraries | Taxon-Focused MS Library | Pyrrolizidine Alkaloid Spectral Library (PASL) [17] | Targeted library for rapid, accurate dereplication within a toxin class. |

| Spectral Libraries | NMR Prediction Software | ACD/Labs CNMR Predictor [11] | Predicts 13C NMR shifts to build custom taxon-focused databases. |

| Phylogenetic & Genomic Tools | Phylogenetic Placement Engine | EPA-ng, pplacer [18] | Places query sequences on a reference tree for taxonomy and evolution insight. |

| Phylogenetic & Genomic Tools | Profiling & Assembly Tools | MetaPhlAn4 (AL), MEGAHIT (DN) [20] | AL: Rapid taxonomic profiling. DN: De novo metagenomic assembly. |

| Phylogenetic & Genomic Tools | Integrative Annotation Pipeline | CAT/BAT/RAT Pack [21] | Annotates contigs, bins, and reads for comprehensive, accurate profiles. |

| Databases | Natural Product Database | LOTUS [11] | Links NP structures to taxonomic origin for taxon-focused queries. |

| Databases | Taxonomic Reference Database | Genome Taxonomy Database (GTDB) [21] | Standardized microbial taxonomy for robust classification. |

| Databases | General Protein Database | NCBI non-redundant (nr) database [21] | Reference for homology searches in annotation pipelines. |

| Experimental Materials | Chromatography Column | Waters Acquity UPLC BEH C18 (1.7µm) [17] | High-resolution separation of complex extracts prior to MS analysis. |

| Experimental Materials | Internal Standard (for quant.) | Deuterated or analog compounds (e.g., Heliotrine) [17] | Ensures quantitative reliability and reproducibility in LC-MS. |

The pursuit of new therapeutic agents stands at a crossroads between two fundamental philosophies: the taxonomy-focused dereplication of known chemical entities and the de novo structure-based design of novel compounds [11] [22]. Dereplication efficiently identifies known compounds within complex natural extracts, preventing redundant research by leveraging databases linked to biological taxonomy and spectroscopic data [11]. In contrast, structure-based drug discovery (SBDD) utilizes the three-dimensional architecture of a biological target to rationally design or discover novel ligands that modulate its function [6] [23].

This guide focuses on the computational core of SBDD, charting the evolution from rapid, static docking methods to sophisticated, dynamic simulations. Molecular docking provides a crucial first pass, predicting how a small molecule might fit into a protein's binding site [24] [25]. However, this static snapshot often fails to capture the dynamic reality of biomolecular recognition. Molecular dynamics (MD) simulations address this by modeling the physical movements of atoms over time, offering insights into conformational changes, binding pathways, and binding stability [24] [25]. The field is now being revolutionized by artificial intelligence and deep learning models that predict ligand-specific protein conformational changes, heralding a new era of "dynamic docking" [26] [27].

The integration of these computational tiers—from fast screening to high-fidelity simulation—creates a powerful pipeline. This pipeline is increasingly augmented by experimental structural biology techniques like cryo-EM and solution-state NMR, which provide critical high-resolution data and validate computational predictions [6] [28]. This guide will objectively compare the performance, data requirements, and optimal applications of these approaches, providing researchers with a framework for method selection within the broader drug discovery landscape.

Comparative Performance Analysis: Static Docking vs. Dynamic Simulations

The choice between static docking and dynamic simulation is governed by a trade-off between computational speed and biological fidelity. The table below summarizes their core performance characteristics, supported by benchmark data and practical applications.

Table 1: Performance Comparison of Static Docking and Dynamic Simulation Approaches

| Aspect | Static Molecular Docking | Molecular Dynamics (MD) Simulation | AI-Driven Dynamic Docking (e.g., DynamicBind) [27] |

|---|---|---|---|

| Primary Objective | Predict optimal binding pose and rank ligands by affinity [24] [25]. | Model time-dependent behavior, stability, and conformational changes of the complex [24] [25]. | Predict ligand-specific protein conformations and poses from apo structures [27]. |

| Timescale | Seconds to minutes per ligand [24]. | Nanoseconds to microseconds, requiring days to weeks of compute time [24]. | Minutes to hours per ligand on GPU hardware [27]. |

| Treatment of Flexibility | Limited; typically fully flexible ligand with a rigid or semi-flexible (side-chains only) receptor [25]. | Full atomic flexibility for both ligand and receptor, including solvent [24] [25]. | Models large-scale backbone and side-chain conformational changes driven by the ligand [27]. |

| Key Output Metrics | Docking score (kcal/mol), predicted binding pose, interaction maps [24]. | Trajectory files, RMSD/RMSF, hydrogen bond occupancy, free energy of binding (ΔG) [24]. | Predicted ligand pose RMSD, protein pocket RMSD (vs. holo structure), clash scores [27]. |

| Typical Application | High-throughput virtual screening of 1,000 - 10^6 compounds [24] [25]. | Detailed mechanistic study, binding stability validation, and lead optimization for a few candidates [24] [29]. | Pose prediction and virtual screening where large receptor flexibility or cryptic pockets are involved [27]. |

| Pose Prediction Accuracy (RMSD < 2Å) | Varies widely (20-70%); highly dependent on target and software, and degrades with receptor flexibility [25]. | High for stable binding modes sampled from a correct starting pose; not used for primary screening. | Reported 33-39% success on challenging benchmarks using only AlphaFold-predicted apo structures [27]. |

| Success in Virtual Screening (Enrichment) | Moderate; limited by scoring function accuracy and rigid receptor approximation [6] [25]. | Not directly applicable due to prohibitive cost. | Demonstrates state-of-the-art performance in virtual screening benchmarks [27]. |

| Computational Cost | Very Low | Very High | Moderate |

Supporting Experimental Data: A 2024 study on antiviral discovery provides a direct comparison. Molecular docking screened 200 natural metabolites against viral RNA polymerase, identifying leads like cytochalasin Z8 (docking score: -8.9 kcal/mol). Subsequent 200-ns MD simulations on the top candidates confirmed complex stability, with root-mean-square deviation (RMSD) profiles plateauing, validating the docking-predicted poses [29]. This two-tiered approach is a standard validation protocol.

AI-driven dynamic docking, as exemplified by DynamicBind, addresses a key weakness of static docking. Benchmarking on the PDBbind and Major Drug Target sets showed DynamicBind successfully predicted ligand poses within 2Å RMSD in 33-39% of cases using only AlphaFold-predicted apo structures, outperforming traditional docking tools like GNINA and GLIDE [27]. Its ability to sample large conformational changes (e.g., DFG-in/out transitions in kinases) is particularly notable where static methods fail [27].
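The success metric quoted above is the fraction of predicted poses whose ligand RMSD to the reference structure falls below 2 Å. A minimal sketch of that calculation follows; the function name and the RMSD values are invented for illustration and are not benchmark data.

```python
def success_rate(rmsds, cutoff=2.0):
    """Fraction of predicted poses with ligand RMSD strictly below the cutoff (in angstroms)."""
    if not rmsds:
        return 0.0
    return sum(1 for value in rmsds if value < cutoff) / len(rmsds)

# Hypothetical per-complex ligand RMSDs (angstroms).
pose_rmsds = [0.8, 1.9, 2.0, 3.5, 6.2]
print(success_rate(pose_rmsds))
```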

Experimental Protocols: From Virtual Screening to Simulation

A robust structure-based workflow typically progresses from broad screening to focused, high-fidelity analysis. Below are detailed protocols for a standard docking-MD validation pipeline and an emerging AI-based dynamic docking approach.

Protocol 1: Integrated Docking and MD Simulation for Lead Validation [29]

Target Preparation:

- Obtain the 3D structure of the target protein from the Protein Data Bank (PDB) or via homology modeling.

- Process the structure: remove water molecules and heteroatoms (except crucial cofactors), add hydrogen atoms, assign protonation states, and repair missing residues/loops.

- For docking, define the binding site using a grid box centered on known catalytic residues or a reference ligand.
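Defining the grid box from a reference ligand or a set of catalytic residues amounts to taking the centroid of their coordinates and padding the bounding box. The sketch below assumes coordinates have already been extracted from the structure file; the function name and the 5 Å padding are assumptions, not recommended values.

```python
def grid_box(coords, padding=5.0):
    """Return (center, size) of a box enclosing the given (x, y, z) coordinates.

    The center is the centroid of the points; each edge is the coordinate
    span plus `padding` angstroms on both sides.
    """
    xs, ys, zs = zip(*coords)
    center = tuple(sum(axis) / len(axis) for axis in (xs, ys, zs))
    size = tuple(max(axis) - min(axis) + 2 * padding for axis in (xs, ys, zs))
    return center, size

# Invented heavy-atom coordinates of a reference ligand (angstroms).
reference_atoms = [(10.0, 4.0, -2.0), (12.0, 6.0, 0.0), (14.0, 5.0, 1.0)]
center, size = grid_box(reference_atoms)
print(center, size)
```

The resulting values correspond to the kind of input AutoDock Vina expects in its `center_x`/`center_y`/`center_z` and `size_x`/`size_y`/`size_z` settings.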

Ligand Library Preparation:

- Compile 2D structures (e.g., SMILES) of compounds from chemical databases (e.g., PubChem, ZINC).

- Generate 3D conformations, minimize energy, and assign appropriate charges and rotatable bonds.

- Convert both protein and ligand files to the required format (e.g., PDBQT for AutoDock).

Molecular Docking Execution:

- Perform docking using software like AutoDock Vina or Glide.

- Set exhaustiveness/search parameters appropriately. Run the docking simulation to generate multiple poses per ligand.

- Rank compounds based on docking score (estimated binding affinity in kcal/mol).

Post-Docking Analysis & Selection:

- Visually inspect top-ranked poses for sensible interaction patterns (hydrogen bonds, hydrophobic contacts).

- Apply drug-likeness filters (e.g., Lipinski's Rule of Five).

- Select the top 1-5 compounds for further dynamic analysis.
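The filtering and selection steps above can be sketched as a Rule of Five check followed by ranking on docking score. The example assumes molecular descriptors were computed beforehand (for instance with a cheminformatics toolkit); the dictionary keys, the one-violation allowance, and the ligand values are hypothetical.

```python
def lipinski_violations(props):
    """Count Rule of Five violations from precomputed descriptors."""
    checks = [
        props["mw"] > 500,    # molecular weight in Da
        props["logp"] > 5,    # octanol-water partition coefficient
        props["hbd"] > 5,     # hydrogen-bond donors
        props["hba"] > 10,    # hydrogen-bond acceptors
    ]
    return sum(checks)

def shortlist(ligands, max_violations=1, top_n=5):
    """Keep drug-like ligands and return the top_n with the most negative docking score."""
    passing = [lig for lig in ligands if lipinski_violations(lig) <= max_violations]
    return sorted(passing, key=lambda lig: lig["score"])[:top_n]

# Invented docking results (score in kcal/mol) with invented descriptors.
ligands = [
    {"name": "lig_a", "score": -8.9, "mw": 450.0, "logp": 3.2, "hbd": 2, "hba": 6},
    {"name": "lig_b", "score": -9.5, "mw": 720.0, "logp": 6.1, "hbd": 6, "hba": 12},
    {"name": "lig_c", "score": -7.4, "mw": 310.0, "logp": 1.8, "hbd": 1, "hba": 4},
]
print([lig["name"] for lig in shortlist(ligands)])
```

Filtering before ranking prevents a high-scoring but poorly drug-like compound, such as `lig_b` here, from consuming simulation time.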

Molecular Dynamics Simulation:

- Solvate the top docked complex in an explicit water box (e.g., TIP3P model). Add ions to neutralize the system.

- Energy minimize the system to remove steric clashes.

- Gradually heat the system to 310 K under constant volume (NVT ensemble), then equilibrate at constant pressure (NPT ensemble, 1 atm).

- Run the production MD simulation for a defined time (e.g., 50-200 ns). Use a 2-fs integration time step.

- Analyze trajectories: calculate RMSD (complex stability), RMSF (residue flexibility), hydrogen bond occupancy, and MM/PBSA-based binding free energy.
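The RMSD analysis in the final step can be illustrated in plain Python. The sketch assumes every frame has already been superimposed on the reference structure, so no fitting is performed; the plateau test (comparing the means of the last two windows) and its thresholds are a hypothetical heuristic, not a standard criterion.

```python
import math

def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two equally sized lists of (x, y, z) points."""
    if len(coords_a) != len(coords_b):
        raise ValueError("coordinate sets must have the same number of atoms")
    squared = sum(
        (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
        for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b)
    )
    return math.sqrt(squared / len(coords_a))

def has_plateaued(series, window=50, max_drift=0.5):
    """True if the mean RMSD of the last window differs from the previous window by <= max_drift."""
    if len(series) < 2 * window:
        return False
    last = series[-window:]
    previous = series[-2 * window:-window]
    return abs(sum(last) / window - sum(previous) / window) <= max_drift

# Toy RMSD time series (angstroms): a rise followed by a flat region.
trace = [0.1 * i for i in range(20)] + [2.0] * 40
print(rmsd([(0.0, 0.0, 0.0)], [(3.0, 4.0, 0.0)]), has_plateaued(trace, window=20))
```

Dedicated trajectory tools perform the superposition and RMSD calculation directly on trajectory files; the point here is only what the reported number measures.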

Protocol 2: AI-Based Dynamic Docking with DynamicBind [27]

Input Preparation:

- Protein Input: Use an apo (unbound) protein structure, preferably an AlphaFold2-predicted model in PDB format.

- Ligand Input: Provide the small molecule in a standard format (SMILES or SDF). The model uses RDKit to generate an initial 3D conformation.

Model Inference:

- The ligand is initially placed randomly around the protein.

- The DynamicBind model, an SE(3)-equivariant geometric diffusion network, executes a series of iterative updates (e.g., 20 steps).

- Initially, it adjusts only the ligand's conformation and placement. Subsequently, it jointly optimizes the ligand pose and the protein side-chain (and, in part, backbone) conformations to reach a complementary, low-energy complex.

Output and Selection:

- The model generates an ensemble of predicted complex structures.

- A built-in scoring module (contact-LDDT or cLDDT) ranks the predictions based on estimated accuracy.

- The top-ranked structure is selected as the final predicted holo-complex, which can be used for virtual screening prioritization or detailed interaction analysis.

Visualization of Workflows and Relationships

Diagram: Parallel Workflows of Dereplication and Structure-Based Design

Diagram: Method Spectrum in Structure-Based Approaches

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Data Resources for Structure-Based Research

| Category | Item Name | Function & Application | Key Characteristics |

|---|---|---|---|

| Docking & Screening | AutoDock Vina [24] [25] | Open-source software for molecular docking and virtual screening. | Uses a gradient-optimized scoring function; fast and widely used in academia. |

| Docking & Screening | Glide (Schrödinger) [25] [26] | High-accuracy docking software for pose prediction and virtual screening. | Employs systematic search and empirical scoring; a commercial industry standard. |

| Molecular Dynamics | GROMACS [24] | Open-source, high-performance MD simulation package. | Extremely fast for biomolecular systems; runs on CPUs and GPUs. |

| Molecular Dynamics | AMBER [24] | Suite of MD simulation programs with specialized force fields. | Includes sophisticated tools for free energy calculation (MM/PBSA/GBSA). |

| AI & Advanced Modeling | DynamicBind [27] | Deep learning model for dynamic docking and ligand-induced conformational prediction. | Predicts holo-like complexes from apo structures; handles large conformational changes. |

| AI & Advanced Modeling | AlphaFold2 [6] [27] | Deep learning system for highly accurate protein structure prediction. | Provides reliable apo-structure models for targets without experimental structures. |

| Data Resources | Protein Data Bank (PDB) [6] | Repository for 3D structural data of proteins and nucleic acids. | Primary source of experimental structures for target preparation and benchmarking. |

| Data Resources | PDBbind [6] [27] | Curated database of protein-ligand complexes with binding affinity data. | Provides a core set for training and benchmarking scoring functions and AI models. |

| Data Resources | ChEMBL [6] | Large-scale database of bioactive molecules with drug-like properties. | Source of ligand structures and bioactivity data for model training and validation. |

| Specialized Analysis | PyMOL / ChimeraX | Molecular visualization system for analyzing structures, poses, and trajectories. | Indispensable for visual inspection of docking results and MD simulation frames. |

| RDKit [27] | Open-source cheminformatics toolkit. | Used for ligand preparation, descriptor calculation, and file format manipulation. |

The landscape of structure-based approaches is defined by a strategic continuum from speed to accuracy. Static molecular docking remains an indispensable tool for the initial exploration of vast chemical space, efficiently prioritizing candidates for more resource-intensive study [24] [23]. Molecular dynamics simulations provide the necessary biophysical depth to validate these candidates, offering unparalleled insights into stability, dynamics, and the thermodynamics of binding [25] [29].

The emerging paradigm, powerfully demonstrated by AI models like DynamicBind, is the fusion of these concepts: achieving dynamic insights at near-docking speeds [27]. This capability to predict ligand-specific protein conformations directly addresses the historical "static receptor" limitation and is particularly promising for targeting cryptic pockets and highly flexible proteins.

Ultimately, the most effective discovery pipeline is not reliant on a single method but on their intelligent integration. This computational cascade should be further informed by and validated with complementary experimental techniques. NMR-driven SBDD, for instance, can provide atomic-level details on dynamics and weak interactions in solution, informing and refining computational models [28]. Furthermore, the dereplication paradigm serves as a crucial checkpoint to ensure that novel structural predictions are translated into genuinely novel chemical matter, avoiding redundant rediscovery [11] [22]. The future of rational drug discovery lies in this synergistic, multi-faceted approach, leveraging the unique strengths of each tool to navigate the complex journey from target structure to viable drug candidate.

This guide compares two fundamental strategies in natural product (NP) research: taxonomy-focused dereplication, which prioritizes the efficient identification of known compounds to avoid rediscovery, and structure-based approaches, which aim to predict bioactivity and mechanism of action (MoA) to discover novel therapeutic leads [30] [22]. Framed within the broader thesis of cataloging known chemistry versus discovering new biology, this analysis provides researchers with a clear comparison of objectives, experimental protocols, performance, and applications.

Core Philosophical and Methodological Comparison

The divergence between these approaches originates from their primary objectives. Taxonomy-focused dereplication is a defensive, efficiency-driven strategy designed to filter out known compounds early in the discovery pipeline. Its core thesis is that focusing on the known chemical space of a specific taxon (species, genus, family) accelerates research by preventing redundant work [11] [31]. In contrast, structure-based discovery is an offensive, novelty-driven strategy. It uses analytical data to prioritize unknown or novel chemical scaffolds, with the explicit goal of uncovering new bioactivities and MoAs, accepting that this may sometimes lead to compounds with no immediate known biological function [22].

The methodological pathways reflect this philosophical split. Dereplication typically begins with a taxonomically defined biological sample, using tools like the LOTUS database to create a focused library of known compounds from related organisms [11]. Structure-based discovery often starts with broad analytical profiling (e.g., LC-MS) of extracts or engineered systems, using computational tools to flag spectral features that do not match known compounds in universal databases [30] [22].

Quantitative Performance Comparison

The following table summarizes the key performance characteristics and outcomes of the two approaches.

| Performance Metric | Taxonomy-Focused Dereplication | Structure-Based Bioactivity Prediction |

|---|---|---|

| Primary Objective | Avoid redundant isolation and characterization of known compounds [11] [31]. | Discover novel chemical scaffolds and predict their biological activity [30] [22]. |

| Typical Success Rate | High identification rate for known compounds within a well-studied taxon [11]. | Lower hit rate for novel bioactive compounds, but higher scaffold novelty [22]. |

| Key Analytical Tools | ¹³C NMR, LC-MS, taxon-specific spectral databases [11] [31]. | HR-MS/MS, Molecular Networking (e.g., GNPS), in silico docking, QSAR models [30]. |

| Time to Initial Result | Rapid (hours to days) for known compound identification [11]. | Longer (days to weeks) for novel compound prioritization and bioassay [30]. |

| Data Integration | Relies on the "Three Pillars": Taxonomy, Molecular Structure, and Spectroscopy [31]. | Integrates genomics, metabolomics, chemoinformatics, and phenotypic screening data [30]. |

| Main Output | Confirmed identity of a known natural product. | Prioritized list of unknown features for isolation & a predicted bioactivity/MoA hypothesis [22]. |

Detailed Experimental Protocols

Protocol 1: Taxonomy-Focused Dereplication via ¹³C NMR Prediction

This protocol, exemplified by the CNMR_Predict workflow for Brassica rapa, details the creation and use of a taxon-specific database for dereplication [11].

Step 1: Taxon-Specific Compound Library Creation

- Query: Perform a search for a target organism (e.g., Brassica rapa) in the comprehensive LOTUS database (https://lotus.naturalproducts.net/).

- Export: Download all associated chemical structures in a standard format (e.g., SDF V3000).

- Clean & Standardize: Use cheminformatics scripts (e.g., RDKit) to remove duplicates, correct tautomeric forms (e.g., converting iminols to amides), and standardize valency representations to ensure compatibility with prediction software [11].

Step 2: Spectral Data Augmentation

- Prediction: Import the cleaned structure library into spectroscopic prediction software (e.g., ACD/Labs CNMR Predictor).

- Calculation: Generate predicted ¹³C NMR chemical shifts for every compound in the library. ¹³C NMR is favored for its wide spectral dispersion and accurate predictability [11].

- Database Compilation: Merge the structural data, taxonomic origin, and predicted NMR shifts into a searchable, taxon-focused database (e.g., a .NMRUDB file).

Step 3: Experimental Sample Analysis & Matching

- Acquisition: Analyze a crude or fractionated extract of the target organism using ¹³C NMR spectroscopy.

- Search: Query the experimental chemical shift list against the custom-built, taxon-focused database.

- Identification: Match the experimental spectrum to a predicted spectrum in the database to confidently identify the known compound, thereby halting further costly isolation efforts [11].

Protocol 2: Structure-Based Workflow for Novel Bioactivity Prediction

This protocol integrates metabolomics and bioinformatics to prioritize novel compounds and suggest their MoA [30] [22].

Step 1: Untargeted Metabolic Profiling

- Analysis: Subject microbial or plant extracts to high-resolution LC-MS/MS analysis.

- Processing: Convert raw data to identify chromatographic peaks (features) with associated m/z and MS/MS fragmentation patterns.

Step 2: Molecular Networking & Novelty Prioritization

- Networking: Upload MS/MS data to the Global Natural Products Social Molecular Networking (GNPS) platform. This clusters compounds with similar fragmentation spectra, visually grouping related molecules [30].

- Dereplication: Annotate nodes (clusters) by matching spectra against public spectral libraries to flag known compounds.

- Prioritization: Target for isolation clusters that are not linked to known compounds or that originate from high-priority biological sources (e.g., uncultured microbes, engineered strains) [22].
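The networking and prioritization steps above can be sketched as building connected components from pairwise spectral similarities and then keeping only the components without a library hit. This illustrates the concept and is not the GNPS implementation; the 0.7 similarity threshold, the function names, and the feature identifiers are assumptions.

```python
def cluster_features(nodes, similarities, threshold=0.7):
    """Group features into connected components using edges with score >= threshold."""
    parent = {node: node for node in nodes}

    def find(node):
        # Union-find root lookup with path compression.
        while parent[node] != node:
            parent[node] = parent[parent[node]]
            node = parent[node]
        return node

    for (a, b), score in similarities.items():
        if score >= threshold:
            parent[find(a)] = find(b)

    clusters = {}
    for node in nodes:
        clusters.setdefault(find(node), set()).add(node)
    return list(clusters.values())

def unannotated_clusters(clusters, library_hits):
    """Clusters in which no member matched a spectral library entry."""
    return [cluster for cluster in clusters if not (cluster & library_hits)]

# Hypothetical features, pairwise cosine scores, and library annotations.
features = ["f1", "f2", "f3", "f4", "f5"]
scores = {("f1", "f2"): 0.85, ("f2", "f3"): 0.75, ("f3", "f4"): 0.30, ("f4", "f5"): 0.90}
clusters = cluster_features(features, scores)
print(unannotated_clusters(clusters, library_hits={"f1"}))
```

Here `f1` to `f3` form a family anchored by a known compound, while the `f4`/`f5` cluster has no annotation and would be the isolation priority.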

Step 3: Bioactivity Prediction & Testing

- In-silico Prediction: For prioritized unknown features, use computational tools to:

- Predict molecular structure from MS/MS fragments or NMR data.

- Perform in-silico docking to suggest potential protein targets.

- Apply Quantitative Structure-Activity Relationship (QSAR) models to forecast biological activity [30].

- Hypothesis-Driven Assay: Design and execute targeted biological screens (e.g., against a specific enzyme or cellular phenotype) based on the computational predictions to validate bioactivity.

Visualizing the Workflows

The following diagrams illustrate the logical flow and key decision points for each methodological approach.

Taxonomy-Focused Dereplication Workflow

Structure-Based Discovery & Bioactivity Prediction

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful implementation of either strategy requires specific tools and resources. The table below details essential solutions for each approach.

| Item | Function in Taxonomy-Focused Dereplication | Function in Structure-Based/Bioactivity Prediction |

|---|---|---|

| LOTUS Database | Primary source for retrieving NP structures linked to a specific taxonomic lineage [11]. | Used for background dereplication within molecular networking to filter known compounds [30]. |

| ACD/Labs CNMR Predictor | Software for generating predicted ¹³C NMR chemical shifts to populate taxon-specific databases [11]. | Less central; may be used later in the pipeline for structural verification of isolated novel compounds. |

| GNPS (Global Natural Products Social) Platform | Can be used to cross-check MS/MS data, but secondary to NMR-based methods. | Core platform for MS/MS data analysis, molecular networking, and community-wide dereplication [30]. |

| RDKit Cheminformatics Toolkit | Used for scripting the cleanup, standardization, and format conversion of structure libraries [11]. | Used for chemical structure manipulation, fingerprint generation, and supporting QSAR/modeling efforts. |

| KnapsackSearch / CNMR_Predict Scripts | Tools for automating the generation of taxon-focused, NMR-augmented databases [11] [31]. | Not typically used. |

| High-Resolution Mass Spectrometer (HR-MS/MS) | Supports identification via exact mass and formula. | Essential for untargeted profiling, generating MS/MS data for networking, and determining molecular formulas [22]. |

| Bioassay Kits & Reagents | Used for general activity screening, but not the primary driver. | Core component. Includes enzyme substrates, cell lines, fluorescent dyes, and reporter systems for HTS and MoA studies [30]. |

| In-silico Docking Software (e.g., AutoDock) | Rarely used. | Predicts the interaction between a putative novel compound and a protein target to hypothesize MoA [30]. |

The choice between taxonomy-focused dereplication and structure-based bioactivity prediction is not mutually exclusive but rather strategic. Taxonomy-focused dereplication excels in efficiency, systematically mapping the chemistry of taxonomic groups and conserving resources [11] [31]. Structure-based approaches excel in novelty discovery, leveraging modern analytics and computation to venture into unknown chemical space with a guided hypothesis for bioactivity [30] [22].

The most effective modern NP discovery programs integrate both philosophies into a single pipeline. Initial rapid dereplication against focused and global databases removes known compounds, while subsequent molecular networking and bioinformatics prioritize the remaining unknown features for isolation. The isolated novel compounds can then be directed toward targeted bioassays based on in-silico MoA predictions. This synergistic strategy, leveraging the strengths of both primary objectives, maximizes the probability of efficiently discovering truly novel and biologically active natural products.

Workflow Deep Dive: From Molecular Networking to Virtual Screening Pipelines

The systematic discovery of novel natural products (NPs) is fundamentally hindered by the challenge of dereplication—the rapid identification of known compounds to avoid redundant rediscovery. Modern dereplication strategies have crystallized into two complementary paradigms: taxonomic-focused and structure-based approaches [22].

The taxonomic-focused approach is historically rooted. It begins with the biological or ecological selection of source material (e.g., a novel microbial species from a unique environment like mangrove sediments [32]), followed by bioactivity-guided fractionation. Chemical analysis is typically performed late in the pipeline, primarily to confirm the structure of an already-isolated bioactive compound. This method risks rediscovery but is driven by specific biological hypotheses.

In contrast, the structure-based approach inverts this workflow. It employs analytical techniques like liquid chromatography-tandem mass spectrometry (LC-MS/MS) at the very beginning to profile the chemical content of a crude extract [22]. The goal is to prioritize unknown chemical entities for isolation before bioactivity testing. This paradigm is powered by three core technologies:

- LC-MS/MS: Provides the sensitive detection and fragmentation data for compounds.

- Molecular Networking via GNPS: Visualizes and clusters MS/MS data to identify novel chemical families and annotate known ones.

- Genome Mining: Predicts the biosynthetic potential of an organism by identifying Biosynthetic Gene Clusters (BGCs) in its genome.

The integration of these tools creates a powerful, hypothesis-driven toolkit for NP discovery. This guide compares the performance, experimental protocols, and synergistic application of these core technologies within the modern structure-based dereplication framework.

Technology Comparison: Core Tools for Dereplication

LC-MS/MS: The Foundational Analytical Engine

LC-MS/MS serves as the indispensable analytical core, generating the primary data upon which molecular networking and its integration with genome mining depend.

- Principle: Separates compounds chromatographically (LC) and then analyzes them via mass spectrometry. The first mass stage (MS1) provides the intact mass-to-charge ratio (m/z) and intensity. The second stage (MS2) fragments selected ions, producing a characteristic spectrum that serves as a structural fingerprint [33].

- Role in Dereplication: Enables the rapid profiling of complex extracts. High-resolution MS1 allows for precise formula prediction, while MS2 spectra are used for database searching and similarity comparisons in molecular networking [22] [34].
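The formula-prediction step mentioned above rests on a simple calculation: the mass error, in parts per million, between an observed m/z and the theoretical m/z of a candidate formula. The following is a minimal sketch of that arithmetic, not the method of any particular software package. The function names, the 5 ppm tolerance, the restriction to [M+H]+ ions, and the short element table are assumptions made for illustration.

```python
import re

# Monoisotopic masses (Da) of the lightest stable isotopes for a few elements.
# Only the elements needed for this illustration are listed (assumption).
MONOISOTOPIC = {
    "C": 12.0,
    "H": 1.00782503207,
    "N": 14.0030740048,
    "O": 15.99491461956,
    "S": 31.97207100,
}
PROTON = 1.007276466812  # proton mass (Da), used to form [M+H]+


def monoisotopic_mass(formula: str) -> float:
    """Sum isotope masses for a simple formula such as 'C8H10N4O2'."""
    mass = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        mass += MONOISOTOPIC[element] * (int(count) if count else 1)
    return mass


def ppm_error(observed_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def candidates_within(observed_mz, formulas, tol_ppm=5.0):
    """Keep candidate formulas whose [M+H]+ lies within tol_ppm of the observation."""
    hits = []
    for formula in formulas:
        theoretical = monoisotopic_mass(formula) + PROTON
        error = ppm_error(observed_mz, theoretical)
        if abs(error) <= tol_ppm:
            hits.append((formula, round(theoretical, 4), round(error, 2)))
    return hits


# Two formulas with the same nominal mass (194 Da); only high-resolution
# MS1 data can tell them apart.
print(candidates_within(195.0880, ["C8H10N4O2", "C10H14N2O2"]))
```

In this example the two candidates differ by roughly 0.025 Da, which is invisible at unit resolution but far outside a 5 ppm window at m/z 195.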

Table: Comparison of Key Detection Technologies in Dereplication

| Detection Method | Key Advantages | Primary Limitations | Typical Role in Pipeline |
|---|---|---|---|
| LC-MS/MS | High sensitivity (ng level), provides molecular formula & structural fingerprints, high-throughput compatible [22]. | Ionization bias, requires spectral libraries for confident identification, destructive analysis [22]. | Frontline analysis. Profiling crude extracts, generating data for GNPS and database dereplication. |
| NMR Spectroscopy | Unmatched structural detail, non-destructive, universal for all compounds [22]. | Low sensitivity (mg-µg required), expensive, low-throughput, requires pure compounds [22]. | Late-stage confirmation. Definitive structural elucidation of purified compounds. |
| UV/Vis Spectroscopy | Inexpensive, non-destructive, easily coupled online [22]. | Provides minimal structural information, requires chromophores [22]. | Supplementary detection. Often used in-line with LC for initial profiling. |

Molecular Networking (GNPS): Visualizing Chemical Relationships

Molecular Networking (MN), particularly via the Global Natural Products Social Molecular Networking (GNPS) platform, is a computational-visual tool for organizing and interpreting MS/MS data [34].

- Principle: It operates on the core hypothesis that structurally similar molecules yield similar MS/MS spectra. GNPS calculates spectral similarity (e.g., modified cosine score) between all MS/MS spectra in a dataset and visualizes them as a network where nodes represent spectra and connecting edges represent significant similarity [34] [33]. This clusters analogs, knowns, and unknowns into molecular families.

- Evolution: Classical MN has evolved into more advanced workflows like Feature-Based Molecular Networking (FBMN), which integrates chromatographic information (retention time, peak area) to distinguish isomers and enable quantitative analysis [34] [35].

- Performance: LC-MS/MS workflows analyzed through GNPS have reported limits of detection for specific compounds in complex matrices as low as 0.1–1 ng/g [33]. Molecular networking also significantly increases annotation rates in untargeted studies and has guided the isolation of over 40 known and novel compounds in single studies [34] [33].
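The principle described above, scoring spectral similarity and drawing an edge when the score is high enough, can be sketched in a few lines. This is a simplified illustration and not the GNPS implementation. It matches peaks either directly or after shifting by the precursor mass difference (the idea behind the modified cosine score), and it uses a greedy one-to-one peak assignment. The 0.02 Da tolerance, the 0.7 edge threshold, and all function names are assumptions chosen for the example.

```python
import math


def modified_cosine(peaks_a, peaks_b, precursor_a, precursor_b, tol=0.02):
    """Simplified modified cosine between two MS/MS spectra.

    peaks_*: list of (mz, intensity) tuples; precursor_*: precursor m/z.
    A peak pair counts as matched if the m/z values agree directly or
    agree after accounting for the precursor mass difference.
    """
    norm_a = math.sqrt(sum(i * i for _, i in peaks_a))
    norm_b = math.sqrt(sum(i * i for _, i in peaks_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    shift = precursor_a - precursor_b
    pairs = []
    for ia, (mz_a, int_a) in enumerate(peaks_a):
        for ib, (mz_b, int_b) in enumerate(peaks_b):
            diff = mz_a - mz_b
            if abs(diff) <= tol or abs(diff - shift) <= tol:
                pairs.append((int_a * int_b, ia, ib))
    # Greedy assignment: take the largest intensity products first and
    # use each peak at most once (an approximation of optimal matching).
    pairs.sort(reverse=True)
    used_a, used_b, dot = set(), set(), 0.0
    for product, ia, ib in pairs:
        if ia not in used_a and ib not in used_b:
            used_a.add(ia)
            used_b.add(ib)
            dot += product
    return dot / (norm_a * norm_b)


def network_edges(spectra, min_score=0.7):
    """spectra: dict name -> (precursor_mz, peaks). Returns (a, b, score) edges."""
    names = sorted(spectra)
    edges = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            score = modified_cosine(spectra[a][1], spectra[b][1],
                                    spectra[a][0], spectra[b][0])
            if score >= min_score:
                edges.append((a, b, round(score, 3)))
    return edges


# Hypothetical spectra: "analog" is "parent" plus 14.0157 Da (one CH2 unit),
# with two of its three fragments carrying the same shift.
spectra = {
    "parent": (300.0, [(100.0, 50.0), (150.0, 100.0), (200.0, 30.0)]),
    "analog": (314.0157, [(100.0, 50.0), (164.0157, 100.0), (214.0157, 30.0)]),
    "unrelated": (120.0, [(55.0, 100.0), (77.0, 80.0)]),
}
print(network_edges(spectra))
```

The hypothetical parent and analog share only one unshifted fragment, so a plain cosine would score them low. Allowing the precursor-shifted matches is what links the two into one molecular family.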

Table: Key Molecular Networking Tools and Their Functions

| Tool Name | Type | Primary Function | Key Advantage |
|---|---|---|---|
| Classical MN | Clustering | Groups MS/MS spectra by similarity [34]. | Visualizes chemical relationships, identifies novel clusters. |
| Feature-Based MN (FBMN) | Enhanced Clustering | Integrates LC-MS1 feature data (RT, abundance) [34] [35]. | Handles isomers, links to quantitative data, reduces redundancy. |
| Ion Identity MN (IIMN) | Annotation | Groups different ion forms (adducts, dimers) of the same molecule [34]. | Deconvolutes complex MS1 signals, simplifies networks. |
| Network Annotation Propagation (NAP) | Annotation | Propagates annotations within a network based on structural similarity [34]. | Annotates unknown molecules based on known neighbors. |

Diagram 1: A Generalized Molecular Networking Workflow via GNPS. The process begins with LC-MS/MS analysis of a sample, followed by data conversion and upload to the GNPS platform. Core workflows include molecular network construction and library matching, culminating in an annotated network used to prioritize unknown compounds for isolation.

Genome Mining: Predicting Biosynthetic Potential

Genome mining shifts the discovery focus from the expressed metabolite to the genetic potential encoded in an organism's DNA.

- Principle: It involves sequencing an organism's genome and using bioinformatic tools (e.g., antiSMASH) to scan for Biosynthetic Gene Clusters (BGCs)—groups of genes that encode the enzymes for NP biosynthesis [32] [36].

- Role in Dereplication: Identifies strains with high novelty potential (e.g., many unknown BGCs) and provides a genetic hypothesis for the structures one might find (e.g., non-ribosomal peptides, polyketides) [32]. Advanced tools like antiSMASH now include specialized detection modules; for example, its metallophore prediction algorithm achieves 97% precision and 78% recall [36].

- Taxonomic vs. Genomic Selection: While taxonomy can guide strain selection, genome mining is far more predictive. In a study on Streptomyces sp. B1866, taxonomy alone indicated only that the strain was novel, whereas genome mining revealed 42 BGCs, over half with low similarity to known clusters. This prediction of chemical novelty was later confirmed by isolation [32].

Table: Genome Mining Tools and Comparative Genomics Approaches

| Tool / Approach | Primary Target | Key Metric | Utility in Dereplication |
|---|---|---|---|
| antiSMASH | BGC Identification | BGC count, novelty, class [32] [36]. | Priority ranking. Identifies strains with high/novel biosynthetic potential. |
| skDER / CiDDER | Genomic Dereplication | Average Nucleotide Identity (ANI), protein cluster saturation [37]. | Strain selection. Reduces redundancy in strain collections for sequencing. |
| Alignment-based (AL) Metagenomics | Taxonomic/Functional Profiling | Relative abundance of known taxa/genes [3]. | Community context. Useful for microbiome studies to profile known functions. |
| De novo (DN) Metagenomics | Novel Genome Assembly | Metagenome-Assembled Genomes (MAGs), novel gene discovery [3]. | Novelty discovery. Uncovers novel BGCs from uncultured organisms. |
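To make the ANI-based genomic dereplication row concrete, the sketch below shows one generic way such a selection can work: visit genomes from best to worst quality and keep a genome only if it is not nearly identical to a representative already chosen. This is an illustrative greedy procedure, not the algorithm implemented by skDER or CiDDER. The 99% ANI threshold, the quality scores, and the genome names are hypothetical.

```python
def greedy_dereplicate(quality, ani, threshold=99.0):
    """Pick non-redundant representative genomes.

    quality: dict genome name -> quality score (higher is better).
    ani: dict frozenset({a, b}) -> pairwise ANI in percent; missing
         pairs are treated as unrelated (assumption).
    Returns representative names in the order they were selected.
    """
    representatives = []
    # Best-quality genomes are considered first; ties broken by name.
    for name in sorted(quality, key=lambda g: (-quality[g], g)):
        redundant = any(
            ani.get(frozenset((name, rep)), 0.0) >= threshold
            for rep in representatives
        )
        if not redundant:
            representatives.append(name)
    return representatives


# Hypothetical collection: isolates A and B are near-identical,
# C is a distinct lineage.
quality = {"isolate_A": 10.0, "isolate_B": 8.0, "isolate_C": 5.0}
ani = {
    frozenset(("isolate_A", "isolate_B")): 99.5,
    frozenset(("isolate_A", "isolate_C")): 85.0,
    frozenset(("isolate_B", "isolate_C")): 86.0,
}
print(greedy_dereplicate(quality, ani))
```

Lowering the threshold collapses more of the collection into fewer representatives, which trades strain-level resolution for a smaller sequencing and analysis burden.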

Integrated Protocol: A Synergistic Workflow

The true power of the modern toolkit is realized through integration. The following detailed protocol, exemplified by the discovery of streptoxazole A from Streptomyces sp. B1866, outlines this synergistic workflow [32].

Stage 1: Strain Selection & Genomic Prioritization

- Strain Isolation & Taxonomic Assessment: Isolate a strain from a unique biotope (e.g., mangrove sediments). Perform 16S rRNA sequencing for preliminary taxonomic placement. For B1866, 16S showed <97% similarity to known species, suggesting a novel Streptomyces sp. [32].

- Whole Genome Sequencing & Mining: Sequence the genome. Use antiSMASH to identify and categorize BGCs. Key Decision Point: Prioritize strains with a high number of BGCs, especially those with low similarity (<70%) to known clusters in databases. Strain B1866 was prioritized because its genome contained 42 BGCs, 21 of which were involved in polyketide (PKS), non-ribosomal peptide (NRPS), or hybrid biosynthesis [32].
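The key decision point in Stage 1 can be expressed as a small ranking routine. The sketch below assumes that BGC predictions have already been exported into simple records. It does not parse real antiSMASH output, and the `BGC` record, its field names, the class labels, and the example strains are all hypothetical. Only the 70% similarity cutoff comes from the protocol above.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class BGC:
    region: str             # hypothetical region identifier
    bgc_class: str          # e.g. "PKS", "NRPS", "hybrid", "terpene"
    best_similarity: float  # % similarity to the closest known cluster


# Classes highlighted in the protocol as especially interesting.
PRIORITY_CLASSES = {"PKS", "NRPS", "hybrid"}


def summarize_strain(bgcs, similarity_cutoff=70.0):
    """Count BGCs overall, those below the similarity cutoff, and priority classes."""
    low = [b for b in bgcs if b.best_similarity < similarity_cutoff]
    return {
        "total_bgcs": len(bgcs),
        "low_similarity": len(low),
        "low_similarity_fraction": len(low) / len(bgcs) if bgcs else 0.0,
        "priority_class": sum(b.bgc_class in PRIORITY_CLASSES for b in bgcs),
    }


def rank_strains(strains, similarity_cutoff=70.0):
    """Order strains by number of low-similarity BGCs, then by total BGC count."""
    def sort_key(item):
        name, bgcs = item
        summary = summarize_strain(bgcs, similarity_cutoff)
        return (-summary["low_similarity"], -summary["total_bgcs"], name)

    return [name for name, _ in sorted(strains.items(), key=sort_key)]


# Hypothetical strain collection.
strains = {
    "strain_A": [BGC("r1", "NRPS", 12.0), BGC("r2", "PKS", 45.0),
                 BGC("r3", "terpene", 100.0)],
    "strain_B": [BGC("r1", "terpene", 100.0), BGC("r2", "siderophore", 83.0)],
}
for name in rank_strains(strains):
    print(name, summarize_strain(strains[name]))
```

Ranking on the count of low-similarity clusters rather than the raw BGC total reflects the protocol's emphasis on novelty. A strain with many well-characterized clusters would otherwise outrank a smaller but more unusual genome.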

Stage 2: Metabolomic Analysis & Network-Driven Dereplication

- Cultivation & Extraction: Culture the strain under appropriate conditions (e.g., fermentation). Extract secondary metabolites using organic solvents (e.g., ethyl acetate).

- LC-MS/MS Data Acquisition: Analyze the crude extract using UPLC-MS/MS in data-dependent acquisition (DDA) mode to collect both MS1 and MS2 spectra [32].

- Molecular Networking & Dereplication:

  - Process raw data (conversion to .mzML format) and upload to GNPS.

  - Perform FBMN analysis and a spectral library search against public repositories.

  - Interpretation: Nodes (metabolites) that match library spectra are annotated as known compounds. Nodes that form clusters but have no library match represent novel or rare compound families and are prioritized for isolation [32] [34].
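The interpretation rule in Stage 2 amounts to a triage over the network graph. The following sketch assumes the network has already been exported as a node list, an edge list, and a table of library hits. These inputs, the three labels, and the function name are illustrative assumptions and not part of GNPS. Treating unmatched singletons as a separate, lower-priority group is also an assumption of this sketch, since the protocol only addresses nodes that form clusters.

```python
from collections import defaultdict


def triage_nodes(nodes, edges, library_hits):
    """Label each network node as 'known', 'prioritize', or 'singleton'.

    nodes: iterable of node ids.
    edges: iterable of (node_a, node_b) pairs from the molecular network.
    library_hits: dict node id -> matched compound name.
    """
    neighbors = defaultdict(set)
    for a, b in edges:
        neighbors[a].add(b)
        neighbors[b].add(a)

    labels, seen = {}, set()
    for start in nodes:
        if start in seen:
            continue
        # Collect the connected component (molecular family) containing start.
        component, stack = [], [start]
        seen.add(start)
        while stack:
            node = stack.pop()
            component.append(node)
            for other in neighbors[node]:
                if other not in seen:
                    seen.add(other)
                    stack.append(other)
        for node in component:
            if node in library_hits:
                labels[node] = "known"        # dereplicated by library match
            elif len(component) > 1:
                labels[node] = "prioritize"   # clustered, no library match
            else:
                labels[node] = "singleton"    # unmatched and unclustered
    return labels


# Hypothetical network: one family with a known member, one family with
# no annotation at all, and one isolated node.
nodes = [1, 2, 3, 4, 5, 6]
edges = [(1, 2), (2, 3), (4, 5)]
library_hits = {1: "known compound X"}
print(triage_nodes(nodes, edges, library_hits))
```

Nodes labeled `prioritize` give the precursor m/z values that Stage 3 targets during purification.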

Stage 3: Targeted Isolation & Structure Elucidation

- Guided Isolation: Use the molecular network as a map. Target the precursor ions (m/z) corresponding to prioritized nodes in the network for purification using preparative chromatography.

- Structure Elucidation: Purity is assessed by LC-MS. The structure of the isolated compound is determined using spectroscopic techniques: HRESIMS for molecular formula, and 1D/2D NMR for full structural assignment. For streptoxazole A, this confirmed a novel benzoxazole structure [32].

- Bioactivity Testing: Test purified compounds for biological activity. Streptoxazole A exhibited anti-inflammatory activity with an IC₅₀ of 38.4 μM against LPS-induced NO production [32].

Diagram 2: The Integrated Discovery Workflow. This synergistic protocol begins with genomic assessment to prioritize a strain, uses metabolomics to visualize its chemical output and pinpoint novelty, and culminates in the isolation and characterization of new compounds.

Table: Key Research Reagents and Computational Tools for Integrated Dereplication

| Item / Resource | Category | Function / Purpose | Example / Note |
|---|---|---|---|
| antiSMASH | Software | Identifies and annotates biosynthetic gene clusters in genomic data [32] [36]. | Central tool for genome mining; now includes specialized detectors (e.g., for metallophores [36]). |
| GNPS Platform | Web Platform | Performs molecular networking, library searches, and collaborative annotation of MS/MS data [38] [34]. | Core infrastructure for structure-based metabolomics. |
| UPLC-HRMS System | Instrumentation | Provides high-resolution MS1 and MS2 data for metabolomic profiling and dereplication. | Systems like Q-Exactive Orbitrap are commonly used [32] [35]. |
| Fermentation Media | Reagent | Supports the growth and secondary metabolite production of microbial strains. | Composition is varied (OSMAC approach) to elicit BGC expression [22]. |
| NMR Solvents | Reagent | Required for the final structural elucidation of purified compounds. | Deuterated solvents (e.g., CDCl₃, DMSO-d₆) are essential for 1D/2D NMR experiments [32]. |
| Public Spectral Libraries | Database | Enables dereplication by matching experimental MS/MS spectra to known compounds. | Libraries within GNPS (e.g., MassBank, ReSpect) are critical for annotation [34]. |
| skDER / CiDDER | Software | Performs genomic dereplication to select a non-redundant set of representative genomes for analysis [37]. | Reduces computational burden and bias in comparative genomics. |

The contemporary toolkit for dereplication—LC-MS/MS, GNPS-based molecular networking, and genome mining—transcends the old dichotomy of taxonomic versus structure-based approaches. It forges a unified, iterative framework where each technology informs and validates the others.

Genome mining provides a genetic hypothesis for an organism's chemical capacity, allowing researchers to prioritize the most promising strains before any cultivation. LC-MS/MS and molecular networking then deliver the chemical reality, offering a rapid snapshot of the actual metabolome, dereplicating knowns, and visually highlighting clusters of unknown metabolites for targeted isolation. This integrated workflow, as demonstrated by the discovery of streptoxazole A [32], efficiently bridges an organism's genetic potential with its chemical expression.

The future of NP discovery lies in deepening this integration through automated annotation tools (e.g., DEREPLICATOR+, SIRIUS [34]), large-scale genomic censuses [36], and the application of machine learning to predict molecular properties directly from MS/MS spectra or BGC sequences. For researchers and drug development professionals, mastering this interconnected toolkit is no longer optional but essential for the efficient and targeted discovery of the next generation of natural product-based therapeutics.

The discovery of novel bioactive compounds, such as antibiotics, relies on two primary strategic paradigms: taxonomic-focused dereplication and structure-based drug design (SBDD). These approaches operate on fundamentally different principles and inform distinct stages of the discovery pipeline [4] [11].