Target-Based vs. Phenotypic Assays in Natural Product Discovery: 2025 Strategic Guide

Abstract

This article provides a comprehensive analysis for researchers navigating the evolving landscape of natural product-based drug discovery. It explores the foundational principles, comparative strengths, and inherent challenges of target-based and phenotypic screening paradigms. The discussion is grounded in current methodological advances, including AI-integrated phenotypic profiling and cutting-edge target deconvolution techniques. A critical evaluation of validation strategies and performance metrics is presented, synthesizing recent evidence to offer actionable insights for optimizing assay selection and workflow design. The conclusion posits that a synergistic, integrated approach, leveraging the biological relevance of phenotypic screens and the mechanistic clarity of target-based methods, represents the most promising path forward for translating the therapeutic potential of natural products into clinical successes.

Decoding the Paradigms: Core Principles and Evolution of Assay Strategies in NP Discovery

The discovery of new therapeutics from natural products (NPs) operates at the intersection of two dominant screening philosophies: the hypothesis-driven target-based approach and the observation-driven phenotypic approach. The former begins with a known disease-associated molecular target, while the latter starts with a desired change in cellular or organismal biology, agnostic to the specific mechanism [1] [2]. For NP research, characterized by structurally complex compounds with potentially polypharmacological effects, this philosophical divide has profound implications. Phenotypic screening offers an unbiased path to discover novel biology from NPs, but leaves the challenging task of target deconvolution [3] [4]. Target-based screening accelerates optimization but may overlook the multifaceted, systems-level activities that make many NPs therapeutically valuable [5] [6]. This guide objectively compares the performance, experimental frameworks, and technological integrations of both strategies within contemporary NP-based drug discovery.

Foundational Philosophies and Strategic Objectives

The core distinction between the two paradigms lies in their starting point and primary objective.

Target-Based Screening is a deductive, hypothesis-driven process. It commences with the selection and validation of a specific protein, nucleic acid, or pathway believed to be critically involved in a disease pathology. The primary objective is to identify molecules that potently and selectively modulate the activity of this predefined target. This approach is built on a deep understanding of disease biology and allows for rational drug design. Its success is exemplified by drugs like imatinib (targeting BCR-Abl kinase) and HIV integrase inhibitors [2].

Phenotypic Screening is an inductive, observation-driven process. It begins by defining a clinically relevant phenotypic endpoint—such as inhibition of pathogen growth, reduction of a toxic protein aggregate, or restoration of normal cell morphology—in a biologically complex system (cell, organoid, or whole organism). The primary objective is to discover compounds that elicit this beneficial phenotype without any prior assumption about the molecular mechanism involved. This approach is particularly powerful for diseases with complex or poorly understood etiologies and has been instrumental in discovering first-in-class medicines, including the antimalarial artemisinin [1] [2].

Performance and Output Comparison

Historical and contemporary data reveal distinct success patterns for each strategy, particularly in the context of first-in-class drug discovery. A seminal analysis of first-in-class new molecular entities approved between 1999 and 2008 provides a clear quantitative comparison [1].

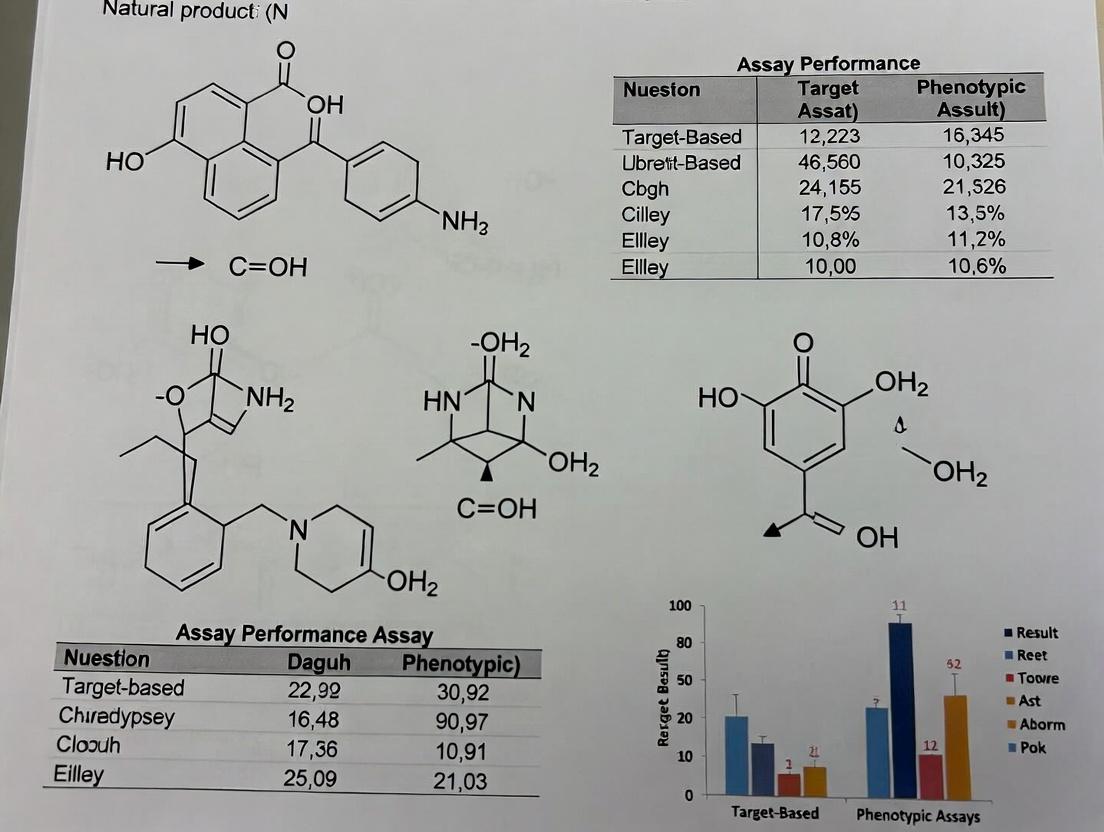

Table 1: Comparative Analysis of Screening Strategies for First-in-Class Medicines (1999-2008)

| Metric | Phenotypic Screening | Target-Based Screening | Implications for NP Research |
|---|---|---|---|
| Number of First-in-Class Small-Molecule Drugs Discovered | 28 | 17 | Phenotypic approaches have been more successful at discovering novel therapeutic mechanisms [1]. |
| Share of the 45 Drugs Attributed to Either Strategy (Share of All 50) | 62.2% (56%) | 37.8% (34%) | Highlights the value of unbiased discovery for novel disease biology [1]. |
| Typical Molecular Mechanism | Often novel, previously unknown | Known, hypothesis-derived | Phenotypic screening of NPs is a key source of novel target discovery [1] [4]. |
| Key Challenge | Target identification/deconvolution | Target validation & relevance | For NPs, the "target ID" challenge is significant but is being addressed by new technologies [3] [5]. |

The resurgence of phenotypic screening is supported by technological advances. High-resolution phenotypic profiling, which uses multiplexed imaging to generate cytological "fingerprints" of compound effects, can both identify bioactive NPs and predict their mechanism of action by comparing their profiles to those of compounds with known targets [4].

Experimental Protocols and Methodologies

Target-Based Screening Protocol

A standard biochemical target-based screen for an enzyme inhibitor involves:

- Target & Assay Development: A purified, recombinant target protein (e.g., a kinase) is prepared. A biochemical assay is configured to measure its activity, often using a change in fluorescence, luminescence, or absorbance. Assay robustness for high-throughput screening (HTS) is validated with the Z'-factor, typically requiring Z' > 0.5 [7].

- Library Screening: A diverse compound library, which can include purified natural products or NP-inspired synthetic derivatives, is dispensed into microplates (384- or 1536-well format). The target and substrates are added robotically, and the reaction is allowed to proceed [7].

- Primary Hit Detection: Plate readers measure the assay signal. Compounds causing a significant deviation from control activity (e.g., >50% inhibition) are flagged as "hits."

- Counter-Screening & Selectivity: Hits are tested against unrelated enzymes to rule out non-specific interference, such as pan-assay interference compounds (PAINS) and aggregation-based artifacts. They may also be screened against related protein family members to assess initial selectivity [7].

- Secondary Validation: Confirmed hits are titrated to generate dose-response curves (IC50 values). Their binding and mechanism are further validated using orthogonal techniques like surface plasmon resonance (SPR) or isothermal titration calorimetry (ITC) [7].
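The plate-level arithmetic behind the assay validation and primary hit-detection steps above can be sketched as follows. This is a minimal illustration, assuming a signal-decrease format in which DMSO wells give the uninhibited (high) signal and a reference inhibitor gives the fully inhibited (low) signal; the function names, plate values, and the 50% cutoff are illustrative assumptions rather than any specific platform's implementation.

```python
from statistics import mean, stdev


def z_prime(pos_controls, neg_controls):
    """Z'-factor = 1 - 3 * (SD_pos + SD_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(pos_controls) - mean(neg_controls))
    return 1 - 3 * (stdev(pos_controls) + stdev(neg_controls)) / separation


def percent_inhibition(signal, neg_mean, pos_mean):
    """Scale a well so the uninhibited (DMSO) control = 0% and full inhibition = 100%."""
    return 100 * (neg_mean - signal) / (neg_mean - pos_mean)


def call_hits(wells, pos_controls, neg_controls, cutoff=50.0):
    """Return {well_id: % inhibition} for wells at or above the cutoff."""
    neg_mean, pos_mean = mean(neg_controls), mean(pos_controls)
    hits = {}
    for well_id, signal in wells.items():
        inhibition = percent_inhibition(signal, neg_mean, pos_mean)
        if inhibition >= cutoff:
            hits[well_id] = round(inhibition, 1)
    return hits


if __name__ == "__main__":
    # Hypothetical plate: high signal = uninhibited enzyme (DMSO), low = fully inhibited.
    dmso = [1000, 980, 1020, 1005, 995]
    inhibited = [100, 110, 95, 105, 90]
    print(f"Z' = {z_prime(inhibited, dmso):.2f}")
    samples = {"A01": 950, "A02": 420, "A03": 150, "A04": 610}
    print(call_hits(samples, inhibited, dmso))
```

With these hypothetical values the plate clears the Z' > 0.5 threshold comfortably, and only A02 and A03 pass the 50% inhibition cutoff.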

Phenotypic Screening Protocol (Imaging-Based High-Content Screening)

A phenotypic screen for compounds that alter specific cellular structures or pathways involves:

- Model System & Assay Development: A disease-relevant cell line is selected or engineered. A panel of fluorescent dyes or antibodies is chosen to stain key cellular components (e.g., nuclei, cytoskeleton, lysosomes, specific phosphorylated proteins) [4].

- Cell Seeding & Compound Treatment: Cells are seeded into multi-well imaging plates. After adherence, they are treated with the NP library for a defined period.

- Fixation, Staining, and Imaging: Cells are fixed, permeabilized, and stained with the fluorescent panel. Automated high-content microscopes capture high-resolution images from multiple channels in each well [4].

- Image & Data Analysis: Software algorithms segment individual cells and quantify hundreds of morphological and intensity features (e.g., nuclear size, lysosomal count, filament density). These features are normalized to control treatments to generate a cytological profile for each NP [4].

- Hit Identification & MoA Prediction: Compounds inducing the desired phenotype (e.g., reduced pathogenic protein aggregation) are identified. Their multidimensional cytological profiles can be computationally compared to a reference database of profiles for compounds with known mechanisms to generate testable hypotheses about the NP's molecular target(s) [4].
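The normalization and profile-matching logic in the last two steps can be sketched as follows. This minimal version assumes per-well feature values have already been extracted by image-analysis software; it converts each feature to a robust z-score against DMSO wells (median and scaled median absolute deviation) and ranks annotated reference compounds by Pearson correlation to propose MoA hypotheses. The feature names, reference profiles, and MoA labels are hypothetical, and published pipelines may use other normalizations or similarity metrics.

```python
from math import sqrt
from statistics import mean, median


def pearson(x, y):
    """Pearson correlation between two equal-length feature vectors."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = sqrt(sum((a - mx) ** 2 for a in x))
    sy = sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy)


def robust_z(profile, dmso_wells):
    """Robust z-score of each feature relative to vehicle (DMSO) wells."""
    scores = []
    for i, value in enumerate(profile):
        control = [well[i] for well in dmso_wells]
        center = median(control)
        # Median absolute deviation, scaled by 1.4826 to approximate a standard deviation.
        mad = 1.4826 * median([abs(c - center) for c in control])
        scores.append((value - center) / mad if mad > 0 else 0.0)
    return scores


def rank_references(query_z, references):
    """Rank annotated reference compounds by correlation with the query profile."""
    scored = [(name, moa, pearson(query_z, ref_z)) for name, (moa, ref_z) in references.items()]
    return sorted(scored, key=lambda item: item[2], reverse=True)


if __name__ == "__main__":
    # Hypothetical features: nuclear area, lysosome count, tubulin intensity, actin texture.
    dmso = [[100, 20, 50, 10], [102, 22, 48, 11], [98, 18, 52, 9], [101, 21, 49, 10]]
    references = {
        "reference_1": ("hypothetical MoA class 1", [0.5, 1.0, -6.0, 4.0]),
        "reference_2": ("hypothetical MoA class 2", [5.0, 0.5, 0.2, -0.3]),
    }
    query = robust_z([101, 23, 40, 13], dmso)
    for name, moa, r in rank_references(query, references):
        print(f"{name} ({moa}): r = {r:.2f}")
```

The top-ranked reference is only a hypothesis about mechanism; it still has to be confirmed by target deconvolution.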

Diagram 1: Foundational Workflow of Target-Based vs. Phenotypic Screening

The Critical Challenge: Target Identification for Phenotypic Hits

The major bottleneck in phenotypic NP discovery is target deconvolution—identifying the specific biomolecule(s) through which a hit compound exerts its effect. Modern "target fishing" strategies are mitigating this challenge [3] [5].

1. Affinity-Based Proteomics: A bioactive NP is chemically modified with a linker to create a "pull-down" probe without destroying its activity. This probe is incubated with a cell lysate or live cells, allowing it to bind its protein targets. The probe-protein complexes are then immobilized on beads, purified, and the bound proteins are identified using mass spectrometry [3] [5].

2. Label-Free Techniques:
   - Drug Affinity Responsive Target Stability (DARTS): Exploits the principle that a protein's susceptibility to proteolysis often decreases when bound to a ligand. Treated and untreated lysates are digested with a protease; proteins protected in the treated sample are identified by proteomics [3].
   - Cellular Thermal Shift Assay (CETSA): Measures the thermal stabilization of a target protein upon ligand binding in a cellular context. Protein melting curves are generated with and without compound, and stabilized proteins are identified [3].

3. Computational & Integrative Approaches: Emerging strategies such as the NP-VIP (Natural Product Virtual screening-Interaction-Phenotype) framework combine virtual screening, chemical proteomics, and phenotypic metabolomics to triangulate high-confidence targets. For example, this approach identified PARP1 and STAT3 as key targets for Salvia miltiorrhiza in treating ischemic stroke [8].

Diagram 2: Target Deconvolution Pathways for Phenotypic Natural Product Hits

The Scientist's Toolkit: Essential Research Reagents and Platforms

The execution of both screening paradigms relies on specialized tools and reagents.

Table 2: Key Research Reagent Solutions for Screening and Target ID

| Tool/Reagent | Primary Function | Typical Application | Considerations for NP Research |
|---|---|---|---|
| HTS Biochemical Assay Kits (e.g., Transcreener) | Universal, homogeneous assays to measure enzyme activity (kinase, ATPase, etc.) via fluorescence polarization (FP) or TR-FRET [7]. | Target-based primary screening and hit validation. | Must ensure NP autofluorescence or other interference does not create false signals. |
| Fluorescent Cell Staining Dyes & Antibodies | Label specific cellular compartments (nuclei, lysosomes) or post-translational modifications (phospho-proteins) [4]. | Generating multiparametric cytological profiles in phenotypic HCS. | Intrinsic NP autofluorescence must be controlled for, e.g., with appropriate filter sets. |
| Activity-Based NP Probes | Chemically modified NPs with linkers (biotin, alkyne) for immobilization or click chemistry [3] [5]. | Affinity purification pull-down experiments for target fishing. | Synthetic modification must not abolish the NP's biological activity. |
| CRISPR-Cas9 Libraries | Enable genome-wide knockout or activation screens to identify genes essential for a phenotype or compound sensitivity [9]. | Functional validation of putative targets from deconvolution. | Can confirm if a hypothesized target is genetically required for the NP's effect. |
| AI-Powered Target Prediction Servers | Use QSAR, pharmacophore modeling, and deep learning to predict protein targets based on compound structure [5] [8]. | Generating initial target hypotheses for computational triage. | Accuracy is highly dependent on training data; novel NP scaffolds may be challenging. |
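Because the FP readouts mentioned in the first row are fluorescence-based, NP autofluorescence or quenching can shift the signal in ways that mimic or mask inhibition. A minimal sketch of the standard polarization calculation, mP = 1000 × (I∥ − G·I⊥)/(I∥ + G·I⊥), alongside a total-intensity check (I∥ + 2G·I⊥) that flags wells whose brightness departs from controls. The G-factor of 1.0, the 20% tolerance, the function names, and the intensities are illustrative assumptions, not kit or instrument guidance.

```python
def polarization_mp(i_par, i_perp, g=1.0):
    """Fluorescence polarization in millipolarization units (mP)."""
    return 1000 * (i_par - g * i_perp) / (i_par + g * i_perp)


def total_intensity(i_par, i_perp, g=1.0):
    """Total emitted intensity, I_par + 2 * G * I_perp."""
    return i_par + 2 * g * i_perp


def flag_interference(wells, control_total, tolerance=0.2, g=1.0):
    """Return IDs of wells whose total intensity differs from the control mean by more
    than `tolerance` (as a fraction), a hint of NP autofluorescence or quenching."""
    flagged = []
    for well_id, (i_par, i_perp) in wells.items():
        deviation = abs(total_intensity(i_par, i_perp, g) - control_total) / control_total
        if deviation > tolerance:
            flagged.append(well_id)
    return flagged


if __name__ == "__main__":
    control_total = total_intensity(30000, 20000)  # hypothetical mean of control wells
    print(polarization_mp(30000, 20000))
    wells = {
        "B01": (31000, 19000),  # close to controls
        "B02": (60000, 45000),  # much brighter: possible autofluorescent NP
        "B03": (12000, 8000),   # much dimmer: possible quencher
    }
    print(flag_interference(wells, control_total))
```

Flagged wells are not necessarily false positives, but their mP values deserve an orthogonal readout before they are treated as hits.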

Synthesis and Strategic Integration

The dichotomy between target-based and phenotypic screening is not absolute, and the most effective modern NP research integrates both. A combined targeted-phenotypic approach is increasingly common, where a cellular assay is designed to report on a specific pathway or target activity within its native physiological context [9]. Furthermore, phenotypic hits can be reverse-engineered via target deconvolution to fuel new target-based discovery campaigns. Conversely, NP structures identified in target-based screens can be subjected to broad phenotypic profiling to uncover additional, potentially therapeutic off-target activities or to predict toxicity [4] [6].

The choice of strategy depends on the research goal: phenotypic screening for novel biology and first-in-class mechanisms, and target-based screening for optimizing selectivity and developing best-in-class drugs against validated targets [1] [9]. For the unique challenges and opportunities presented by natural products, leveraging both philosophies in a complementary cycle represents the most robust path from complex mixtures to novel therapeutics.

The dominant paradigm in drug discovery has cycled between phenotypic and target-based approaches. Historically, most medicines, including natural products (NPs), were discovered by observing their effects on whole organisms or tissues—a phenotypic approach [10] [11]. The late 20th century saw a decisive shift toward target-based drug discovery (TDD), driven by advances in genomics and molecular biology that promised rational design and high-throughput efficiency [11] [9]. However, analyses revealing that a majority of first-in-class drugs (1999-2008) originated from phenotypic drug discovery (PDD) have fueled a significant resurgence of this approach over the past decade [10] [11]. This resurgence is particularly pronounced in natural products research, where the complex chemistry and polypharmacology of NPs often defy reductionist target-based screening. Modern PDD is now characterized by high-resolution profiling technologies, advanced disease models, and sophisticated target deconvolution methods, creating a synergistic interplay with target-based strategies [4] [12] [6]. This guide compares the performance of contemporary phenotypic and target-based assay paradigms within NP research, supported by experimental data and protocols.

Historical Context and Performance Comparison

The shift between paradigms is rooted in their fundamental strategies. PDD identifies compounds based on their modulation of a disease-relevant phenotype in a cellular or organismal system, without preconceived notions of the molecular target [10]. TDD, in contrast, begins with a hypothesized protein target implicated in a disease and screens for compounds that modulate its activity in a purified or engineered system [11] [9].

A landmark analysis by Swinney and Anthony (2011) demonstrated that between 1999 and 2008, 28 of 50 (56%) first-in-class small-molecule drugs were discovered through phenotypic screening, compared to 17 (34%) through target-based approaches [10] [11]. This disproportionate contribution of PDD to innovative therapeutics is attributed to its target-agnostic nature, which can reveal novel biology and unexpected mechanisms of action (MOA), such as modulators of protein folding, splicing, or multi-protein complexes [10]. Notable NP-derived examples include the immunosuppressant rapamycin (sirolimus), whose target (mTOR) was identified years after its phenotypic discovery, and the anti-malarial artemisinin [11] [6].

The following table compares the core characteristics and outputs of the two paradigms, particularly in the context of NP research:

Table: Comparative Analysis of Phenotypic vs. Target-Based Drug Discovery for Natural Products

| Aspect | Phenotypic Drug Discovery (PDD) | Target-Based Drug Discovery (TDD) |
|---|---|---|
| Starting Point | Disease phenotype in a biologically complex system (cell, tissue, organism) [10]. | Hypothesis about a specific protein target's role in disease [11] [9]. |
| Primary Screening Readout | Holistic measurement of phenotype reversal (e.g., cell viability, morphology, functional recovery) [4] [10]. | Biochemical activity on an isolated target (e.g., enzyme inhibition, receptor binding) [9]. |
| Advantages in NP Research | Unbiased discovery of novel targets/MOAs; captures polypharmacology and systems-level effects; suitable for NPs with unknown targets [10] [6]. | Straightforward structure-activity relationship (SAR) and hit optimization; high throughput; clear mechanistic hypothesis [11] [9]. |
| Key Challenges | Target deconvolution can be difficult; assays may be lower throughput and more complex; hit chemistry may be challenging [10] [13]. | May fail due to poor target validation or lack of cellular activity; misses complex, multi-target mechanisms common to NPs [10] [11]. |
| Contribution to First-in-Class Drugs (1999-2008) | 56% (28 of 50 drugs) [10] [11]. | 34% (17 of 50 drugs) [10] [11]. |
| Target Identification Necessity | Required after hit discovery (downstream) [13] [14]. | Defined before screening (upstream) [9]. |

The Modern Phenotypic Assay Toolkit

The resurgence of PDD is powered by technological advances that address its historical limitations. Modern phenotypic screening employs high-resolution, multi-parameter profiling to generate rich data far beyond simple viability readouts.

High-Content Imaging and Cytological Profiling: As demonstrated by a 2017 study, high-content screening (HCS) can profile NP-induced effects using a panel of 14 fluorescent markers targeting major organelles and pathways [4]. This generates cytological profiles (CPs)—unique phenotypic fingerprints—for each compound. Testing 124 NPs revealed that small structural changes could cause profound phenotypic shifts, enabling cell-based structure-activity relationship studies and prediction of MOA by comparing NP profiles to a library of reference compounds with known targets [4].

Cell Painting and Predictive Profiling: The Cell Painting assay, a standardized morphological profiling technique, stains eight cellular components to create a high-dimensional phenotypic profile [15]. A 2023 large-scale study evaluated the power of chemical structure (CS), gene expression (GE from L1000), and morphological profiles (MO from Cell Painting) to predict bioactivity in 270 unrelated assays. Morphological profiling alone predicted the highest number of assays (28) with high accuracy (AUROC > 0.9). Critically, combining morphological profiles with chemical structure data nearly doubled the number of predictable assays compared to chemical structure alone (31 vs. 16), demonstrating powerful complementarity [15].
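The AUROC > 0.9 criterion used in that study can be made concrete with a short sketch. AUROC is the probability that a randomly chosen active compound is scored above a randomly chosen inactive one, which can be computed by direct pairwise comparison (equivalent to the Mann-Whitney U statistic divided by the number of active-inactive pairs). The scores, labels, assay names, and function names below are hypothetical, and this does not reproduce the study's models or cross-validation scheme.

```python
def auroc(scores, labels):
    """AUROC as P(score of an active > score of an inactive); ties count as 0.5."""
    actives = [s for s, y in zip(scores, labels) if y == 1]
    inactives = [s for s, y in zip(scores, labels) if y == 0]
    if not actives or not inactives:
        raise ValueError("need at least one active and one inactive compound")
    wins = 0.0
    for a in actives:
        for i in inactives:
            if a > i:
                wins += 1.0
            elif a == i:
                wins += 0.5
    return wins / (len(actives) * len(inactives))


def count_predictable(assay_aurocs, threshold=0.9):
    """Number of assays whose held-out AUROC exceeds the threshold."""
    return sum(1 for value in assay_aurocs.values() if value > threshold)


if __name__ == "__main__":
    # Hypothetical model scores for six compounds in one assay (1 = active).
    scores = [0.9, 0.8, 0.7, 0.6, 0.4, 0.3]
    labels = [1, 1, 0, 1, 0, 0]
    print(f"AUROC = {auroc(scores, labels):.3f}")
    print(count_predictable({"assay_1": 0.95, "assay_2": 0.88, "assay_3": 0.91}))
```

Tallying assays above the threshold per profile type is the kind of count behind statements such as "28 of 270 assays", although the study's exact evaluation procedure may differ.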

Table: Key Phenotypic Profiling Technologies and Performance Data

| Technology | Key Metrics/Output | Experimental Findings in NP/Compound Screening | Reference |
|---|---|---|---|
| High-Content Cytological Profiling | 134 cellular features distilled to 20 core features; profiles 14 cellular markers [4]. | Screened 124 NPs; identified sub-classes (e.g., topoisomerase inhibitors) via profile matching; enabled SAR for 17 podophyllotoxin derivatives [4]. | [4] |
| Cell Painting (Morphological Profiling) | 5-channel fluorescence imaging capturing ~1,500 morphological features [15]. | MO profiles predicted 28/270 assays (AUROC>0.9); combined with chemical structure (CS+MO), predicted 31 assays, showing strong synergy [15]. | [15] |
| Gene Expression Profiling (L1000) | Measures expression of 978 landmark genes; infers whole transcriptome [15]. | GE profiles predicted 19/270 assays (AUROC>0.9); provided complementary information to MO and CS [15]. | [15] |

Target Identification and Validation for Phenotypic Hits

A major challenge in PDD is identifying the molecular target(s) underlying an observed phenotype, a process known as target deconvolution. For NPs with complex structures, traditional chemical proteomics methods requiring compound modification can be prohibitively difficult [13] [14]. This has driven the development and adoption of label-free target identification methods.

Key Label-Free Methodologies:

- Cellular Thermal Shift Assay (CETSA): This method detects target engagement by measuring ligand-induced changes in a protein's thermal stability within cells or lysates. When a drug binds, the protein typically becomes more stable and resists heat-induced unfolding and aggregation. The proportion of remaining soluble protein is quantified at different temperatures (melting curve) or at a single temperature (isothermal dose-response), often using mass spectrometry [13] [16]. CETSA confirms binding in a physiologically relevant cellular context.

- Drug Affinity Responsive Target Stability (DARTS): DARTS exploits the principle that a protein is less susceptible to proteolysis when bound to a ligand. Cell lysates treated with or without the compound are subjected to limited proteolysis, and the amount of intact protein remaining in each sample is compared. Proteins protected from degradation in the compound-treated sample are potential targets [13] [14].

- Stability of Proteins from Rates of Oxidation (SPROX): SPROX measures the rate of methionine oxidation in proteins as a function of chemical denaturant concentration. Ligand binding can alter a protein's thermodynamic stability, shifting its denaturation curve, which is detected via mass spectrometry [13].
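The DARTS comparison can be reduced to a protection ratio per protein. A minimal sketch, assuming intensities are available for each protein from digested and undigested aliquots of compound- and vehicle-treated lysate (normalizing to the undigested aliquot corrects for loading differences); the function name, two-fold cutoff, pseudocount, and protein names are illustrative assumptions rather than a published scoring scheme.

```python
from math import log2


def darts_protection(intensities, min_log2_ratio=1.0, pseudocount=1e-6):
    """Flag proteins that survive limited proteolysis better with compound present.

    intensities maps protein -> (treated_digested, treated_undigested,
                                 vehicle_digested, vehicle_undigested).
    Returns {protein: log2(treated surviving fraction / vehicle surviving fraction)}
    for proteins at or above min_log2_ratio (1.0 = two-fold protection).
    """
    protected = {}
    for protein, (t_dig, t_undig, v_dig, v_undig) in intensities.items():
        treated_fraction = t_dig / t_undig + pseudocount
        vehicle_fraction = v_dig / v_undig + pseudocount
        ratio = log2(treated_fraction / vehicle_fraction)
        if ratio >= min_log2_ratio:
            protected[protein] = round(ratio, 2)
    return protected


if __name__ == "__main__":
    # Hypothetical intensities (e.g., band densitometry or MS abundance).
    data = {
        "protein_X": (800, 1000, 200, 1000),  # strongly protected by the compound
        "protein_Y": (500, 1000, 450, 1000),  # essentially unchanged
    }
    print(darts_protection(data))
```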

Integrated Multi-Omics Strategy (NP-VIP): A 2024 study on Salvia miltiorrhiza introduced a Natural Product Virtual screening-Interaction-Phenotype (NP-VIP) strategy that synergistically combines target-based and phenotypic concepts [12]. The workflow involves: 1) Virtual Screening (VS) of NP constituents against protein databases to predict potential targets; 2) CETSA to experimentally validate direct protein binding in cells; and 3) Metabolomics to observe phenotypic changes in cellular metabolism and identify functionally relevant pathways [12]. Applying this to Salvia miltiorrhiza extract identified 29, 100, and 78 potential targets from VS, CETSA, and metabolomics, respectively. Integration pinpointed five high-confidence targets (e.g., PARP1, STAT3), demonstrating how multi-modal integration overcomes the limitations of any single approach [12].

Experimental Protocols for Key Assays

High-Content Cytological Profiling Protocol

Objective: To generate high-resolution cytological profiles (CPs) of NP-induced effects for MOA prediction and SAR analysis. Workflow:

- Cell Culture and Treatment: Seed U2OS cells in 384-well plates. Treat with a library of NPs (e.g., 124 compounds) and reference pharmacologically active compounds across a range of concentrations (e.g., 0.1-30 µM) for 24 hours. Include DMSO vehicle controls.

- Multiplexed Staining: Load live-cell dyes such as LysoTracker before fixation (their accumulation depends on the acidic pH of intact lysosomes), then fix cells and stain with the remaining fluorescent dyes and antibodies, targeting:
  - Nucleus: DNA (Hoechst).
  - Nucleolus: RNA (SYTO RNASelect).
  - Endoplasmic Reticulum: Concanavalin A.
  - Golgi Apparatus: BODIPY TR ceramide.
  - Lysosomes: LysoTracker Deep Red.
  - Actin & Microtubules: Phalloidin and anti-α-tubulin antibody.
  - Additional Markers: For cell health, plasma membrane, NF-κB translocation, etc.

- Image Acquisition: Acquire images using a high-content imaging system (e.g., ImageXpress Micro) with a 20x objective. Capture 4-9 fields per well to analyze 500-1000 cells per condition.

- Image and Data Analysis: Use image analysis software (e.g., MetaXpress) to segment cells and extract ~134 morphological and intensity-based features (e.g., organelle count, size, intensity, texture). Normalize data to vehicle control.

- Profile Generation and Analysis: Reduce features to a core set of 20 descriptors. Generate CPs as heatmaps. Use hierarchical clustering to compare NP profiles to reference compound libraries for MOA prediction. Perform principal component analysis to visualize compound clustering and identify SAR trends within chemical series.
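The clustering step can be illustrated with a small average-linkage routine that uses 1 − Pearson r as the distance between cytological profiles. The five-descriptor profiles, compound names, function names, and 0.5 cut-off are hypothetical, and the original study's clustering settings are not reproduced; in practice a library routine such as SciPy's `scipy.cluster.hierarchy.linkage` would normally be used instead of this dependency-free sketch.

```python
from itertools import combinations
from math import sqrt
from statistics import mean


def pearson(x, y):
    """Pearson correlation between two equal-length profiles."""
    mx, my = mean(x), mean(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return cov / (sqrt(sum((a - mx) ** 2 for a in x)) * sqrt(sum((b - my) ** 2 for b in y)))


def average_linkage(profiles, max_distance=0.5):
    """Agglomerative clustering with average linkage and distance = 1 - Pearson r.

    Clusters are merged until the closest pair is farther apart than max_distance.
    """
    dist = {
        frozenset((a, b)): 1 - pearson(profiles[a], profiles[b])
        for a, b in combinations(profiles, 2)
    }
    clusters = [[name] for name in profiles]
    while len(clusters) > 1:
        best = None
        for i, j in combinations(range(len(clusters)), 2):
            d = mean(dist[frozenset((a, b))] for a in clusters[i] for b in clusters[j])
            if best is None or d < best[0]:
                best = (d, i, j)
        d, i, j = best
        if d > max_distance:
            break
        clusters[i] = clusters[i] + clusters.pop(j)  # j > i, so index i is unaffected
    return clusters


if __name__ == "__main__":
    # Hypothetical normalized profiles (5 of the core descriptors).
    profiles = {
        "NP_1": [2.0, -1.0, 0.5, 3.0, -2.0],
        "NP_2": [1.8, -0.9, 0.7, 2.7, -1.8],
        "reference_topo": [-1.0, 2.5, 3.0, -0.5, 0.2],
        "NP_3": [-0.8, 2.2, 2.6, -0.4, 0.5],
    }
    print(average_linkage(profiles))
```

Here NP_3 would co-cluster with the hypothetical reference compound, which is the pattern used to suggest a shared mechanism.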

MS-Based CETSA Protocol

Objective: To detect direct binding of an NP to its cellular protein targets by measuring thermal stabilization. Workflow (MS-based CETSA):

- Compound Treatment: Treat intact cells (e.g., HeLa, 1-2 million per sample) with the NP of interest or vehicle control for a predetermined time (e.g., 1 hour).

- Heat Challenge: Aliquot cell suspensions into PCR tubes. Heat each aliquot at a distinct temperature across a gradient (e.g., 37°C to 67°C) for 3 minutes using a thermal cycler.

- Cell Lysis and Clarification: Lyse heated cells (e.g., by repeated freeze-thaw cycles) and centrifuge at high speed (e.g., 20,000 x g) to separate soluble protein from aggregated precipitates.

- Protein Digestion and TMT Labeling: Quantify protein in supernatants. Digest proteins with trypsin. Label peptides from different temperature points with tandem mass tag (TMT) reagents.

- LC-MS/MS Analysis and Data Processing: Pool labeled samples and analyze by liquid chromatography-tandem mass spectrometry (LC-MS/MS). Identify and quantify proteins.

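- Melting Curve Analysis: For each protein, normalize the soluble amount at each temperature to the lowest-temperature sample and plot it against temperature for compound- and vehicle-treated cells, typically fitting a sigmoidal melting curve. A rightward shift (increased Tm) in the compound-treated curve indicates thermal stabilization consistent with direct target engagement. Per-temperature log2(compound/control) ratios provide a complementary view of the same data.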
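The melting-curve comparison can be sketched in a dependency-free way. Rather than fitting a sigmoid (the usual approach, typically by nonlinear least squares), this minimal version normalizes a protein's soluble abundance to the lowest temperature, linearly interpolates the temperature at which half remains soluble, and reports ΔTm as treated minus vehicle. The temperature points, intensities, and function names are hypothetical, and interpolation is a simplification chosen for brevity.

```python
def apparent_tm(temperatures, abundances):
    """Temperature where the soluble fraction (relative to the lowest temperature)
    first falls to 0.5, by linear interpolation between bracketing points."""
    fractions = [a / abundances[0] for a in abundances]
    points = list(zip(temperatures, fractions))
    for (t1, f1), (t2, f2) in zip(points, points[1:]):
        if f1 >= 0.5 >= f2 and f1 != f2:
            return t1 + (f1 - 0.5) * (t2 - t1) / (f1 - f2)
    return None  # the curve never crosses 0.5 in the tested range


def delta_tm(temperatures, vehicle, treated):
    """Tm(compound) - Tm(vehicle); positive values indicate thermal stabilization."""
    tm_vehicle = apparent_tm(temperatures, vehicle)
    tm_treated = apparent_tm(temperatures, treated)
    if tm_vehicle is None or tm_treated is None:
        return None
    return tm_treated - tm_vehicle


if __name__ == "__main__":
    temps = [37, 41, 45, 49, 53, 57, 61, 65]  # deg C, within the 37-67 deg C gradient
    vehicle = [100, 98, 90, 60, 30, 10, 5, 2]  # hypothetical TMT reporter intensities
    treated = [100, 99, 95, 85, 65, 35, 12, 4]
    print(f"Tm (vehicle) = {apparent_tm(temps, vehicle):.1f} deg C")
    print(f"delta Tm = {delta_tm(temps, vehicle, treated):.1f} deg C")
```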

NP-VIP Target Identification Protocol

Objective: To identify high-confidence target ensembles for complex natural product extracts. Workflow:

- Virtual Screening (Interaction Prediction):
  - Establish the chemical profile of the NP extract using UPLC-MS/MS.
  - Dock identified constituent molecules against human protein targets using software such as LeDock.
  - Prioritize predicted targets based on docking scores and literature association with the disease of interest.

- CETSA (Interaction Validation):
  - Perform MS-based CETSA, following the workflow described above, on cells treated with the whole NP extract.
  - Identify proteins with significantly altered thermal stability (Tm shifts) upon extract treatment.

- Metabolomics (Phenotype Analysis):
  - Treat cells with the NP extract and perform untargeted metabolomics via LC-MS.
  - Identify significantly altered metabolites and map them to affected biochemical pathways using KEGG or MetaboAnalyst.
  - Infer the proteins/enzymes regulating those pathways as phenotypically relevant targets.

- Data Integration:
  - Intersect the target lists from VS, CETSA, and metabolomics analyses.
  - Proteins appearing in multiple datasets constitute a high-confidence target ensemble for the NP extract.
  - Validate key targets through orthogonal methods such as western blot, siRNA knockdown, or functional assays.
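The data-integration step amounts to counting how many independent evidence streams support each protein. A minimal sketch, assuming each analysis has already produced a list of protein identifiers; the source names, placeholder identifiers, function name, and the two-source rule are illustrative assumptions rather than the published NP-VIP scoring scheme.

```python
from collections import Counter


def integrate_targets(evidence, min_sources=2):
    """Keep proteins supported by at least min_sources independent analyses.

    evidence maps a source name (e.g., "virtual_screening") to protein identifiers.
    Returns {protein: sorted list of supporting sources}, strongest support first.
    """
    support = Counter()
    for proteins in evidence.values():
        support.update(set(proteins))  # count each protein once per source
    kept = sorted(
        (p for p, n in support.items() if n >= min_sources),
        key=lambda p: (-support[p], p),
    )
    return {p: sorted(src for src, proteins in evidence.items() if p in proteins) for p in kept}


if __name__ == "__main__":
    # Hypothetical target lists; identifiers are placeholders, not study results.
    evidence = {
        "virtual_screening": ["protein_A", "protein_B", "protein_C"],
        "cetsa": ["protein_A", "protein_B", "protein_D"],
        "metabolomics": ["protein_A", "protein_E"],
    }
    for protein, sources in integrate_targets(evidence).items():
        print(protein, sources)
```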

The Scientist's Toolkit: Key Research Reagent Solutions

Table: Essential Reagents and Materials for Featured Assays

| Item | Function/Description | Typical Application |
|---|---|---|
| Fluorescent Dye Panel (Hoechst, LysoTracker, ConA, etc.) | A multiplexed set of dyes for staining specific organelles and cellular structures to create cytological profiles [4]. | High-content phenotypic screening (HCS). |
| LOPAC1280 or Similar Library | A library of pharmacologically active compounds with known mechanisms of action, used as a reference for phenotypic profile matching [4]. | MOA prediction and annotation in phenotypic screens. |
| Tandem Mass Tag (TMT) Reagents | Isobaric chemical labels for multiplexed quantitative proteomics. Allows simultaneous quantification of proteins from multiple samples (e.g., different temperatures in CETSA) [12]. | MS-based CETSA and other quantitative proteomics workflows. |
| Chemical Denaturants (Urea, GdmCl) | Chaotropic agents that disrupt protein non-covalent structure, used to measure protein folding stability [13]. | SPROX, Pulse Proteolysis, CPP experiments. |
| Non-ionic Detergent (e.g., NP-40) | Used in cell lysis buffers to solubilize membranes while maintaining protein-protein interactions and complex integrity [13]. | Preparation of cell lysates for DARTS, CETSA (lysate mode). |
| Thermostable Protease (e.g., Pronase) | A broad-spectrum protease used for limited proteolysis in DARTS experiments [13]. | DARTS target identification. |
| Silica Gel for Column Chromatography | Stationary phase for fractionating complex natural product extracts based on polarity [12]. | Pre-fractionation of NP extracts prior to screening or analysis. |
| CETSA-Compatible Cell Line | A robust, adherent cell line (e.g., HeLa, U2OS) suitable for the heating and processing steps of CETSA [16] [12]. | CETSA target engagement studies. |

The discovery and development of therapeutics from natural products present a unique paradox. These compounds, derived from plants, microbes, and marine organisms, have been the source of numerous first-in-class medicines and possess inherent bioactivity and structural complexity often unmatched by synthetic libraries [3]. However, their very advantages constitute the core challenges for modern, mechanism-driven drug development. This article frames these challenges within the long-standing strategic dichotomy in pharmaceutical research: target-based versus phenotypic screening approaches [17].

Target-based discovery begins with a well-characterized molecular target, leveraging structural biology and rational design to develop highly specific inhibitors or modulators [17]. In contrast, phenotypic discovery identifies compounds based on a measurable biological response in cells or whole organisms, often without prior knowledge of the specific molecular target [17]. For natural products, this dichotomy is critical. Their frequent polypharmacology (action on multiple targets) and unknown mechanisms of action (MoAs) align more naturally with the holistic view of phenotypic screening. Yet, the demand for mechanistic understanding and safety validation pushes research toward target deconvolution, a process that remains notoriously difficult [18]. This guide objectively compares the performance of research strategies for natural products, focusing on their ability to navigate complexity, elucidate polypharmacology, and reveal unknown MoAs, supported by experimental data and protocols.

Comparative Analysis: Phenotypic vs. Target-Based Screening for Natural Products

The choice between phenotypic and target-based screening paradigms significantly impacts the trajectory of natural product research. The table below summarizes the performance of each approach against key criteria relevant to natural products' unique challenges.

Table 1: Performance Comparison of Phenotypic vs. Target-Based Screening for Natural Product Research

| Evaluation Criterion | Phenotypic Screening Approach | Target-Based Screening Approach | Supporting Data & Evidence |
|---|---|---|---|
| Ability to Discover Novel Mechanisms | High. Unbiased by prior target hypotheses; historically responsible for most first-in-class drugs [17]. | Low. Constrained by pre-selected, known targets; cannot identify novel biology outside the target hypothesis. | Discovery of immunomodulatory drugs like thalidomide and its analogs (lenalidomide) via phenotypic effects on TNF-α inhibition, with target (cereblon) identified years later [17]. |
| Handling of Polypharmacology | High. Captures net functional outcome of multi-target interactions; polypharmacology is an inherent advantage. | Low. Designed for single-target specificity; polypharmacology is typically seen as an off-target liability to be eliminated. | Artemisinin's antimalarial action may involve multiple mechanisms (alkylation, oxidative stress); a phenotypic screen identified its activity while target identification remains complex [18]. |
| Target Identification / MoA Deconvolution | Major Challenge. Requires extensive, often difficult follow-up work (affinity purification, chemoproteomics). | Not Applicable. Target is known from the outset, though full MoA may still require elaboration. | A 2025 review notes that target ID for natural products is a "significant challenge," driving innovation in chemical proteomics methods [3]. |
| Hit Rate for Bioactive Natural Products | Moderate to High. Filters for compounds that can penetrate cells and induce a relevant biological effect. | Very Low. Requires the natural product to be a potent, specific ligand for a single, pre-chosen protein target. | Phenotypic screens of natural product libraries consistently yield bioactive hits affecting complex processes like immune cell activation or cancer cell death [17]. |
| Optimization & Medicinal Chemistry | Complex. Requires iterative cycling between phenotypic optimization and target identification. Can be guided by structure-activity relationships (SAR). | Straightforward. SAR is directly informed by the structure of the target binding site, enabling rational design. | Optimization of thalidomide to lenalidomide was guided by phenotypic SAR (increased potency for TNF-α downregulation, reduced sedation) [17]. |
| Risk of Clinical Attrition | Potentially Lower. Compounds have demonstrated efficacy in a complex, disease-relevant system early on. | Potentially Higher. High target specificity may not translate to clinical efficacy if the target hypothesis is flawed or compensatory pathways exist [17]. | Analysis shows targeted approaches often fail due to lack of clinical efficacy stemming from incomplete disease biology understanding [17]. |

Experimental Protocols for Mode of Action Deconvolution

Overcoming the "unknown MoA" challenge requires a suite of sophisticated experimental techniques. Below are detailed protocols for two cornerstone methodologies.

Protocol 1: Affinity Purification (Target Fishing) with Quantitative Proteomics

This classic strategy remains a mainstay for identifying direct protein binders of a natural product [3].

1. Probe Design & Synthesis:

- Procedure: A functionalized derivative of the natural product is synthesized. This typically involves chemically adding a linker (like a polyethylene glycol chain) terminated with a handle (e.g., an alkyne for "click chemistry," or biotin for strong affinity capture). Critical Control: A structurally similar but inactive analog should be modified identically to serve as a negative control for non-specific binding.

- Validation: The modified probe must be validated in the original phenotypic assay to ensure it retains bioactivity comparable to the parent natural product.

2. Cell Lysis and Probe Incubation:

- Procedure: Prepare whole-cell lysates from relevant cell lines or primary tissues under non-denaturing conditions to preserve native protein structures. Incubate the lysates with the bioactive probe (experimental) or the inactive control probe. Competition experiments can be performed by co-incubating with an excess of unmodified natural product to identify binding that is specifically displaced.

- Materials: Lysis buffer (e.g., 50 mM Tris-HCl pH 7.5, 150 mM NaCl, 1% NP-40, protease inhibitors), rotator for gentle mixing.

3. Affinity Capture & Wash:

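- Procedure: If using a biotinylated probe, incubate the lysate-probe mixture with streptavidin-conjugated beads. For alkyne-tagged "click chemistry" probes, perform a copper-catalyzed azide-alkyne cycloaddition (CuAAC) to conjugate the probe-bound proteins to azide-functionalized beads (or to biotin-azide, followed by streptavidin capture). Cyclooctyne-functionalized beads are instead used for copper-free, strain-promoted click chemistry with azide-tagged probes.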

- Wash: Wash the beads stringently with lysis buffer followed by high-salt buffers (e.g., 1 M NaCl) and possibly mild detergent buffers to remove non-specifically adsorbed proteins.

4. Protein Elution & Identification:

- Procedure: Elute bound proteins using Laemmli buffer for western blot analysis, or by on-bead trypsin digestion for mass spectrometry (MS).

- MS Analysis: Subject digested peptides to liquid chromatography-tandem MS (LC-MS/MS). Identify and quantify proteins in the experimental sample relative to the negative control.

- Key Reagents: Streptavidin/NeutrAvidin beads, CuAAC kit reagents (if needed), sequencing-grade trypsin, LC-MS/MS system.

5. Hit Validation:

- Procedure: Candidate target proteins require orthogonal validation. Techniques include Cellular Thermal Shift Assay (CETSA) to demonstrate ligand-induced thermal stabilization of the target protein in intact cells, surface plasmon resonance (SPR) to measure binding kinetics in vitro, or gene knockdown/knockout to test whether loss of the candidate phenocopies the natural product's effect or changes cellular sensitivity to it [3] [18].
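The comparisons set up in steps 2 and 4 (active probe vs. inactive analog, and probe alone vs. probe plus excess unmodified natural product) can be combined into a simple specificity filter. This is a sketch under stated assumptions: the function `classify_binders`, the log2 thresholds, the pseudo-count, and all intensity values are hypothetical, and real studies apply replicate-based statistics rather than single ratios.

```python
import math


def log2_ratio(a: float, b: float, pseudo: float = 1.0) -> float:
    # Pseudo-count avoids division by zero for proteins absent from one sample.
    return math.log2((a + pseudo) / (b + pseudo))


def classify_binders(intensities, min_enrichment=2.0, min_competition=1.0):
    """intensities maps protein -> (active probe, inactive probe, probe + free NP).

    Thresholds are illustrative log2 values, not validated cut-offs.
    """
    hits = {}
    for protein, (probe, inactive, competed) in intensities.items():
        enrichment = log2_ratio(probe, inactive)   # active vs. inactive analog probe
        competition = log2_ratio(probe, competed)  # signal lost when free NP competes
        if enrichment >= min_enrichment and competition >= min_competition:
            hits[protein] = (round(enrichment, 2), round(competition, 2))
    return hits


# Made-up single-run intensities for illustration only.
demo = {
    "TargetX": (8000.0, 400.0, 900.0),          # enriched and competed: specific
    "StickyProtein": (6000.0, 5500.0, 5800.0),  # binds both probes: non-specific
    "LinkerBinder": (3000.0, 200.0, 2900.0),    # enriched but not displaced: may bind the linker/tag
}
print(classify_binders(demo))
```

Requiring both criteria is the point of the design: enrichment alone cannot distinguish a true target from a protein that binds the linker or tag, while competition by the unmodified natural product ties the signal to the parent scaffold.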

Protocol 2: Photoaffinity Labeling (PAL) for Capturing Transient Interactions

PAL is crucial for identifying low-abundance or transient protein-ligand interactions by covalently "capturing" the binding event upon UV irradiation [3].

1. Photoaffinity Probe Design:

- Procedure: Synthesize a derivative of the natural product incorporating a photoactivatable group (e.g., diazirine, benzophenone) and a reporter tag (biotin or alkyne). The photoactivatable group is inert until exposed to UV light of a specific wavelength, generating a highly reactive carbene or radical that forms a covalent bond with nearby proteins.

- Key Consideration: The linker and photoaffinity group must be positioned to minimize interference with the compound's bioactivity.

2. Live-Cell or Lysate Labeling:

- Procedure (Live-Cell): Incubate live cells with the photoaffinity probe. Wash cells to remove excess probe. Irradiate the cell culture with UV light (e.g., 365 nm for diazirines) to activate crosslinking. Harvest and lyse cells.

- Procedure (Lysate): Incubate cell lysates with the probe, then irradiate the mixture.

- Materials: UV crosslinker with appropriate wavelength control.

3. Capture and Analysis:

- Procedure: Following crosslinking, proceed with affinity capture using the reporter tag (e.g., streptavidin pull-down for biotin) as described in Protocol 1. The covalent bond formed during PAL allows for even more stringent washing conditions, reducing background.

- Analysis: Elute and identify proteins by MS. The covalent labeling also allows for mapping the binding site by digesting the captured protein and identifying the specific peptide(s) carrying the probe modification via MS.

4. Data Analysis:

- Procedure: Use bioinformatics software to compare protein abundance in probe-labeled samples vs. negative control samples (no UV, no probe, or inactive probe). Proteins significantly enriched in the experimental sample are high-confidence candidate targets [3].
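The "significantly enriched" criterion in step 4 is usually a combination of fold change and a statistical test across replicates. A minimal stdlib sketch, assuming triplicate label-free intensities and using Welch's t statistic; the thresholds, protein names, and values are hypothetical, and a full analysis would also compute p-values (for example with `scipy.stats.ttest_ind(..., equal_var=False)`) and correct for multiple testing.

```python
import math
from statistics import mean, variance


def welch_t(x, y):
    """Welch's t statistic: difference of means over the unpooled standard error."""
    se = math.sqrt(variance(x) / len(x) + variance(y) / len(y))
    return (mean(x) - mean(y)) / se


def rank_candidates(data, min_log2fc=1.0, min_t=3.0):
    """data maps protein -> (probe-labeled replicates, control replicates).

    Thresholds are illustrative, not validated cut-offs.
    """
    results = []
    for protein, (probe, control) in data.items():
        lp = [math.log2(v) for v in probe]  # log-transform intensities before testing
        lc = [math.log2(v) for v in control]
        log2fc = mean(lp) - mean(lc)
        t = welch_t(lp, lc)
        if log2fc >= min_log2fc and t >= min_t:
            results.append((protein, round(log2fc, 2), round(t, 1)))
    # Strongest enrichment first.
    return sorted(results, key=lambda r: r[1], reverse=True)


# Invented triplicate intensities (probe + UV vs. no-UV control).
data = {
    "TargetX": ([9000, 9500, 8700], [600, 550, 700]),
    "Tubulin": ([20000, 21000, 19500], [19000, 20500, 20000]),
}
print(rank_candidates(data))
```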

Diagram 1: Target Deconvolution Workflow for Natural Products

Navigating Polypharmacology: From Challenge to Therapeutic Strategy

Polypharmacology—the action of a single compound on multiple molecular targets—is not a bug but a fundamental feature of many effective natural products [19]. This multi-target action can be harnessed for superior efficacy, especially in complex diseases like cancer and autoimmune disorders, but requires precise characterization to avoid unwanted side effects.

Table 2: Documented Polypharmacology Mechanisms of Natural Products & Drugs

| Compound | Primary Known Target/Pathway | Additional Identified Targets/Effects | Therapeutic Consequence | Identification Method |
|---|---|---|---|---|
| Thalidomide / Lenalidomide | Cereblon (CRL4 E3 ubiquitin ligase substrate receptor), leading to degradation of Ikaros (IKZF1) and Aiolos (IKZF3) [17]. | Binds cereblon to induce degradation of multiple other "neosubstrates" (e.g., CK1α, SALL4). Also modulates TNF-α production and COX-2 expression [17]. | Efficacy in multiple myeloma and myelodysplastic syndromes; also causes teratogenicity (via SALL4 degradation) and other side effects. | Biochemical purification, phenotypic screening, and later structural biology [17]. |
| Artemisinin | Heme activation leading to alkylation and oxidative stress in malaria parasite [18]. | Shown to bind to multiple human proteins in a chemoproteomic screen, suggesting potential host-directed effects. Also has reported anti-cancer and anti-viral activity [18]. | Potent, rapid antimalarial action; potential for drug repurposing. | Reverse chemical proteomics, phenotypic screening in other diseases [18]. |
| Curcumin | Pleiotropic effects, but identified targets include KEAP1 (NRF2 pathway), IKK (NF-κB pathway), and various enzymes [3]. | Interacts with a wide network of signaling proteins, transcription factors, and enzymes (e.g., amyloid-β, STAT3). Poor bioavailability complicates analysis. | Broad anti-inflammatory and antioxidant effects claimed; but clinical efficacy is debated due to pharmacokinetics. | Affinity purification, chemoproteomics, computational docking [3]. |
| Kinase Inhibitors (e.g., Staurosporine - natural product origin) | Originally identified as a potent inhibitor of Protein Kinase C (PKC). | Profiling shows potent inhibition of a broad spectrum of kinases (e.g., PKA, CAMK, CK1) [19]. | Excellent research tool, but too promiscuous for clinical use as an anticancer drug. Led to development of more selective analogs. | Kinase activity profiling panels, chemoproteomics [19]. |
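Promiscuity in kinase profiling panels, as in the staurosporine row above, is often summarized with a selectivity score S(x): the fraction of tested kinases bound below a potency threshold x. A minimal sketch, assuming a 3 µM threshold; the kinase lists and Kd values are invented for illustration and are not measured values for staurosporine or any real compound.

```python
def selectivity_score(kd_nM, threshold_nM=3000.0):
    """Fraction of tested kinases bound with Kd below the threshold (lower = more selective)."""
    bound = sum(1 for kd in kd_nM.values() if kd < threshold_nM)
    return bound / len(kd_nM)


# Hypothetical Kd values (nM) across the same eight-kinase panel.
promiscuous = {"PKCa": 2, "PKA": 15, "CAMK2A": 20, "CK1D": 900,
               "EGFR": 400, "ABL1": 50, "CDK2": 7, "MAPK1": 5000}
selective = {"PKCa": 2, "PKA": 8000, "CAMK2A": 10000, "CK1D": 10000,
             "EGFR": 10000, "ABL1": 10000, "CDK2": 6000, "MAPK1": 10000}
print(selectivity_score(promiscuous), selectivity_score(selective))  # -> 0.875 0.125
```

A score near 1 flags a broadly promiscuous compound; tracking it across analog series is one way to quantify the move toward "more selective analogs".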

Diagram 2: Polypharmacology Network of a Natural Product

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for Natural Product MoA Studies

| Tool/Reagent | Function in Experiment | Key Consideration for Natural Products |
|---|---|---|
| Functionalized Natural Product Probes (Biotin-, Alkyne-, Photoaffinity-tagged) | Serve as molecular bait to fish out target proteins from complex biological mixtures for affinity purification or photoaffinity labeling [3]. | Synthetic derivatization must not abolish bioactivity. An inactive analog probe is a critical negative control. |
| Streptavidin/Avidin Beads | High-affinity capture matrix for biotinylated probes and their bound protein complexes. | High binding capacity is needed for low-abundance targets. Non-specific binding can be high; requires stringent wash optimization. |
| "Click Chemistry" Kits (CuAAC or Copper-free) | Enable bioorthogonal conjugation of an alkyne-bearing probe to an azide-functionalized bead or reporter (or vice versa) after the binding event occurs in cells [3]. | Useful for probes where direct biotin conjugation harms activity. The copper(I) used in CuAAC is cytotoxic and can damage proteins, so CuAAC is usually run after lysis; copper-free, strain-promoted variants (cyclooctyne reagents with azide-tagged probes) avoid this. |
| Photoaffinity Groups (Diazirine, Benzophenone) | Upon UV irradiation, form highly reactive intermediates that covalently crosslink the probe to its binding site on the target protein [3]. | Allows capture of transient or low-affinity interactions. Placement on the natural product scaffold is critical for successful labeling. |
| Cell Lysate/Membrane Protein Prep Kits | Generate functional protein extracts from cells or tissues under native conditions for in vitro binding assays. | Natural products often target membrane proteins or multiprotein complexes; lysis conditions must preserve these structures. |
| Thermal Shift Assay Dyes (e.g., SYPRO Orange) | Report ligand-induced thermal stabilization of a purified protein in differential scanning fluorimetry (DSF), indicating direct binding. | An orthogonal, in vitro validation method that requires purified recombinant protein. The cell-based counterpart, CETSA, does not use these dyes: it heats intact cells or lysates and quantifies the remaining soluble target by western blot or MS. |
| CRISPR/Cas9 Knockout Libraries or siRNA Pools | Enable genome-wide or targeted gene knockdown to identify genes essential for the natural product's phenotypic effect (genetic MoA studies). | Complementary to biochemical methods. Can reveal synthetic lethal interactions or pathway dependencies, even if not a direct target. |
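Thermal-shift and CETSA results are commonly summarized as a shift in apparent melting temperature (ΔTm) between vehicle- and compound-treated samples. A minimal sketch, assuming soluble fractions already normalized to the lowest temperature; it estimates Tm by linear interpolation at the 50% point, whereas published analyses typically fit a sigmoidal curve. The function name and all data are hypothetical.

```python
def apparent_tm(temps, soluble_fraction, level=0.5):
    """Temperature at which the soluble fraction first drops below `level`.

    Assumes ascending temperatures and fractions normalized so the lowest
    temperature is 1.0. Uses linear interpolation, not a sigmoid fit.
    """
    points = list(zip(temps, soluble_fraction))
    for (t1, f1), (t2, f2) in zip(points, points[1:]):
        if f1 >= level > f2:
            return t1 + (f1 - level) * (t2 - t1) / (f1 - f2)
    raise ValueError("soluble fraction never crosses the requested level")


temps = [40, 44, 48, 52, 56, 60, 64]                 # degrees C
vehicle = [1.00, 0.95, 0.80, 0.45, 0.15, 0.05, 0.02]
treated = [1.00, 0.98, 0.92, 0.75, 0.40, 0.12, 0.04]  # invented stabilized curve

delta_tm = apparent_tm(temps, treated) - apparent_tm(temps, vehicle)
print(f"delta Tm = {delta_tm:.1f} C")
```

A positive ΔTm is consistent with ligand-induced stabilization, but on its own it does not prove direct binding; it is one line of orthogonal evidence alongside the pull-down data.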

The unique challenges posed by natural products—structural complexity, polypharmacology, and unknown MoAs—necessitate a move beyond the rigid dichotomy of phenotypic versus target-based screening. The future lies in integrated hybrid approaches [17]. This involves initiating discovery with complex phenotypic screens to leverage the holistic bioactivity of natural products, followed by rapid target deconvolution using the advanced chemical proteomics and computational methods outlined here. The resulting multi-target profiles must then be understood not as a list of off-target effects, but as polypharmacology networks that can be mapped and optimized. By systematically applying this comparative framework, researchers can transform the inherent challenges of natural products into a structured strategy for discovering novel, effective, and mechanistically rich therapeutics.

Natural products (NPs) have been a cornerstone of drug discovery, historically providing a significant percentage of new therapeutics. Their structural complexity and evolutionary optimization allow them to engage with challenging biological targets—such as protein-protein interactions, nucleic acid complexes, and spliceosomes—that often remain intractable to conventional synthetic, "drug-like" libraries [20]. However, the path from a bioactive natural extract to a characterized therapeutic candidate is fraught with complexity. This journey is navigated using two primary, and often philosophically opposed, methodological roadmaps: target-based assays and phenotypic assays.

Target-based screening operates on a reductionist principle. It involves testing compounds against a purified protein or a well-defined molecular target in a controlled biochemical environment. Success is measured by the compound's ability to modulate the specific target's activity [21]. In contrast, phenotypic screening adopts a holistic approach. It assesses the effects of compounds on whole cells or organisms, measuring complex, multifaceted outputs like cell morphology, viability, or reporter gene expression without pre-supposing the mechanism of action (MOA) [22] [4]. The resulting "phenotypic fingerprint" can reveal bioactivity against any biomolecular component within the living system.

The central thesis of modern NP research is that an exclusive commitment to either paradigm is suboptimal. The "imperative for integration" arises from the need to leverage the precision of target-based methods with the biological relevance and de novo discovery power of phenotypic approaches. This guide provides a comparative analysis of these strategies, supported by experimental data and protocols, to inform researchers and drug development professionals on building a synergistic discovery pipeline.

Methodological Comparison: Core Principles, Applications, and Data

The following tables provide a structured comparison of the two assay paradigms, summarizing their defining characteristics, strengths, limitations, and representative experimental outcomes.

Table 1: Foundational Comparison of Target-Based vs. Phenotypic Assay Paradigms

| Aspect | Target-Based Assays | Phenotypic Assays |
|---|---|---|
| Core Principle | Tests interaction with or modulation of a predefined, isolated molecular target (e.g., enzyme, receptor) [21]. | Measures observable change in cell or organism phenotype without assumption of a specific target [22] [4]. |
| Typical Readout | Biochemical signal (e.g., fluorescence, luminescence, binding affinity). | Multidimensional imaging features, cell viability, morphological changes, gene expression profiles [22] [4]. |
| Primary Strength | High precision, mechanistic clarity, amenable to high-throughput screening (HTS), direct structure-activity relationship (SAR) studies. | Biologically relevant context, discovers novel targets/pathways, identifies polypharmacology, captures complex phenotypes like mitotic arrest [22]. |
| Key Limitation | May not translate to cellular activity; misses off-target effects; requires a prior, validated target hypothesis. | Mechanism of action (MOA) is initially unknown; can be lower throughput; data analysis is complex; may identify cytotoxic compounds nonspecifically [22]. |
| Ideal Application | Optimizing leads for a known target, fragment-based screening, selectivity profiling. | De novo drug discovery, investigating complex diseases, natural product MOA exploration, toxicology assessment [4]. |
| Target Identification | Built into the assay design (the target is known). | Requires follow-up techniques (e.g., chemical proteomics, genetic screens) for deconvolution [8] [3]. |

Table 2: Comparative Performance Data from Representative Studies

| Study Focus | Target-Based Approach (Data) | Phenotypic Approach (Data) | Comparative Insight |
|---|---|---|---|
| Hit Discovery Rate | In screens for challenging targets (e.g., protein-protein interactions), hit rates from synthetic libraries can be very low [20]. | Screening 5,304 microbial extracts via cytological profiling identified 41 discrete bioactivity clusters, including a specific antimitotic cluster [22]. | Phenotypic screening of NP libraries can yield richer, more diverse hit clusters for complex biology, as NPs sample broader chemical space [20] [22]. |
| Mechanism Elucidation | Affinity chromatography (e.g., Cell Membrane Chromatography) directly isolates receptor ligands from complex mixtures [21]. | Cytological profiles of extracts clustered with known microtubule poisons; subsequent isolation confirmed diketopiperazine XR334 as the antimitotic agent [22]. | Target-based methods directly link compound to target. Phenotypic methods predict MOA by profile matching, requiring confirmation but enabling discovery of unexpected mechanisms. |
| Complexity & Throughput | Affinity selection mass spectrometry (ASMS) can screen complex mixtures against a single target in a semi-high-throughput manner [21]. | High-content screening (HCS) of 124 NPs generated 134-dimensional phenotypic profiles per compound, but requires sophisticated image analysis [4]. | Target-based assays are generally higher in throughput. Phenotypic assays generate vastly more information per well but at a slower rate and with greater computational demand. |
| Data Output | Quantitative potency and binding parameters (IC50, Kd), enzyme kinetic parameters. | Quantitative multiparametric "fingerprints" (e.g., nuclear size, lysosomal count, tubulin intensity) that can be clustered [4]. | Target-based data is unidimensional and direct. Phenotypic data is multidimensional and integrative, revealing system-wide effects. |

Experimental Protocols

Protocol for Phenotypic Screening: Cytological Profiling (CP)

This protocol, adapted from high-content phenotypic screening studies, is used to generate multiparametric fingerprints of natural product effects [22] [4].

- Cell Preparation & Plating: Seed adherent cells (e.g., HeLa, U2OS) into multi-well imaging plates at a density ensuring ~70% confluence at the time of assay. Allow cells to adhere overnight in standard culture conditions.

- Compound Treatment: Treat cells with natural product extracts or pure compounds at a range of concentrations. Include positive controls (e.g., paclitaxel, a microtubule stabilizer, and nocodazole, a microtubule destabilizer, both of which induce mitotic arrest) and vehicle (DMSO) controls. Incubate for a defined period (typically 6-24 hours).

- Fixation and Staining: Fix cells with paraformaldehyde (e.g., 4% in PBS). Permeabilize with Triton X-100. Block with a suitable protein (e.g., BSA). Apply a multiplexed fluorescent stain panel to mark key cellular components. A typical panel includes:

- DAPI/Hoechst: Nuclear DNA (cell count, nuclear morphology).

- Phalloidin: Actin cytoskeleton.

- Anti-α-tubulin antibody: Microtubule network.

- LysoTracker (loaded into live cells before fixation) or anti-LAMP1 antibody: Lysosomes.

- Antibodies for markers like phosphorylated histone H3 (pHH3, mitosis), cleaved caspase-3 (apoptosis), or NF-κB localization.

- High-Content Imaging: Image each well using an automated high-content microscope with appropriate excitation/emission filter sets for each fluorescence channel. Acquire multiple fields per well to capture a statistically robust cell population (e.g., 500-1,000 cells per well).

- Image & Data Analysis:

- Use image analysis software (e.g., CellProfiler, IN Carta) to segment cells and identify subcellular compartments.

- Extract quantitative features for each cell: intensities, textures, morphological measurements (size, shape), and counts for each channel.

- Calculate population means for each feature per well, normalized to vehicle controls.

- Reduce dimensionality (e.g., to 20 core features) and perform hierarchical clustering. Compare compound profiles to a reference database of profiles from compounds with known MOAs to predict biological activity [22] [4].
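The normalization and profile-matching steps above can be sketched in a few lines. This assumes per-well feature means are already computed; each feature is z-scored against vehicle (DMSO) wells, and the extract is assigned the reference MOA with the highest Pearson correlation. Feature names, values, and reference profiles are hypothetical, and real pipelines use many more features and usually cluster hierarchically as well.

```python
import math
from statistics import mean, stdev


def zscore_vs_vehicle(well, vehicle_wells):
    """Standardize each feature of one well against the vehicle (DMSO) wells."""
    columns = list(zip(*vehicle_wells))
    return [(x - mean(c)) / stdev(c) for x, c in zip(well, columns)]


def pearson(x, y):
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def predict_moa(profile, references):
    """Return the reference MOA whose profile correlates best with `profile`."""
    scores = {moa: pearson(profile, ref) for moa, ref in references.items()}
    best = max(scores, key=scores.get)
    return best, round(scores[best], 3)


# Hypothetical features: nuclear area, pHH3 intensity, tubulin intensity, lysosome count.
vehicle = [[100, 10, 50, 20], [104, 11, 52, 21], [98, 9, 49, 19], [102, 10, 51, 22]]
extract_well = [140, 30, 70, 21]
references = {  # made-up reference z-score profiles
    "microtubule poison": [3.0, 8.0, 4.0, 0.2],
    "lysosomotropic agent": [0.5, 0.1, 0.0, 6.0],
}
profile = zscore_vs_vehicle(extract_well, vehicle)
print(predict_moa(profile, references))
```

Correlation rather than Euclidean distance is used here so that profiles with the same shape but different magnitude (for example, one extract at two doses) still match the same reference.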

Protocol for Target-Based Screening: Cell Membrane Chromatography (CMC) with MS

This protocol describes an online, affinity-based method to fish out active components from complex NP mixtures targeting specific membrane receptors [21].

- Preparation of Cell Membrane Stationary Phase (CMSP): Harvest cells expressing the target receptor of interest. Isolate cell membranes via homogenization and differential centrifugation. Immobilize the membrane fragments onto activated silica particles using a phospholipid-assisted method. Pack the CMSP into a liquid chromatography column.

- On-line Screening Setup: Connect the CMC column as the first dimension in an LC system. Connect a reverse-phase analytical column coupled to a mass spectrometer as the second dimension via a multi-port switching valve.

- Screening Run: Inject the crude natural product extract onto the CMC column. Use a mild mobile phase (e.g., isotonic PBS) to maintain receptor integrity. Compounds with no affinity for the receptor elute in the void volume and are diverted to waste.

- Target Compound Capture & Identification: Switch the valve to trap the retained fraction (containing receptor-bound ligands) on a trapping column. Elute the bound ligands from the CMC column onto the trapping column using a denaturing organic solvent. Then, switch the valve to forward the trapped ligands to the second-dimension RP column for separation. Detect and identify the individual ligands using tandem mass spectrometry (MS/MS).

- Validation: Synthesize or purify the identified compound and validate its activity and binding affinity for the target receptor in a secondary biochemical or cellular assay.
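Deciding which peaks were "retained" beyond the void volume can be expressed with the standard chromatographic retention factor k = (tR − t0) / t0, where t0 is the void time. A minimal sketch, assuming t0 was measured with an unretained marker; the k cut-off and peak list are hypothetical, and in practice retention should ideally be checked against a control column prepared from cells that do not express the target.

```python
def retention_factor(t_r: float, t_0: float) -> float:
    """k = (tR - t0) / t0: how long a compound is held relative to the void time."""
    return (t_r - t_0) / t_0


def retained_peaks(peaks, t_0, min_k=1.0):
    """peaks maps peak name -> retention time (min); keep peaks with k >= min_k."""
    retained = {}
    for name, t_r in peaks.items():
        k = retention_factor(t_r, t_0)
        if k >= min_k:
            retained[name] = round(k, 2)
    return retained


t0 = 2.0  # hypothetical void time (min)
peaks = {"peak_A": 2.1, "peak_B": 5.5, "peak_C": 9.0}
print(retained_peaks(peaks, t0))  # -> {'peak_B': 1.75, 'peak_C': 3.5}
```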

Visualizing the Pathways and Workflows

Diagram 1: Dual Pathways in Natural Product Drug Discovery

Diagram 2: Target Deconvolution Strategies Post-Phenotypic Screen

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents and Materials for Integrated NP Screening

| Reagent/Material | Primary Function | Application Context |
|---|---|---|
| Prefractionated NP Libraries | Chemically simplified extracts that reduce complexity while preserving natural chemical diversity. | Primary screening input for both phenotypic and target-based assays to improve hit resolution and deconvolution [22]. |
| Multiplex Fluorescent Stain Kits (e.g., DAPI, Phalloidin, LysoTracker, Antibody Panels) | Simultaneously label multiple organelles and cellular states for high-content imaging. | Generating multiparametric cytological profiles in phenotypic screening [22] [4]. |
| Cell Lines with Engineered Reporters | Express fluorescent or luminescent proteins under pathway-specific control (e.g., NF-κB response element). | Enabling targeted phenotypic readouts or reporter-gene assays within a cellular context. |
| Immobilized Target Proteins / CMSP Columns | Purified protein or cell membrane fragments fixed to a solid support for affinity capture. | Target-based screening via affinity chromatography, SPR, or ASMS to "fish out" ligands from mixtures [21]. |
| Biotin or Photoaffinity Tags (e.g., Diazirine, Benzophenone) | Chemical handles for conjugating a small molecule to a solid support or enabling UV-induced covalent crosslinking. | Creating chemical probes for affinity-based pull-down and target identification (chemoproteomics) [3] [23]. |
| Streptavidin-Coated Magnetic Beads | High-affinity solid support for capturing biotin-tagged chemical probes and their bound target proteins. | Isolating protein-compound complexes from lysates after affinity pull-down experiments [23]. |
| LC-MS/MS Systems | High-sensitivity analytical instrumentation for separating compounds and determining their structure or identifying proteins. | NP Research: Dereplication, compound identification. Target ID: Protein identification from pull-downs [21]. |
| Bioinformatic Software (e.g., CellProfiler, MetaboAnalyst, Clustering Algorithms) | Automated image analysis, multivariate statistical analysis, and pattern recognition for complex datasets. | Extracting quantitative features from HCS images and clustering phenotypic or metabolomic profiles to predict MOA [22] [4]. |

The dichotomy between target-based and phenotypic screening is a false crossroads. As the data and protocols illustrate, each has irreplaceable strengths and inherent blind spots. The modern imperative is for integration.

A forward-looking strategy begins with phenotypic screening of diverse NP libraries to identify extracts that produce a desirable, biologically relevant phenotype. Advanced cytological profiling can then prioritize hits and suggest a MOA [22] [4]. Following this, target deconvolution techniques—leveraging affinity pull-downs with chemical probes [3] [23] or label-free methods like thermal proteome profiling—are employed to identify the molecular target(s). Finally, target-based assays are used to characterize the compound-target interaction with precision, enabling medicinal chemistry optimization.

This synergistic cycle, leveraging phenotypic assays for discovery and target-based methods for mechanistic elucidation and optimization, bridges the gap between biological complexity and molecular precision. It represents the most robust path forward for unlocking the full therapeutic potential of natural products in the development of novel drugs for challenging diseases.

Modern Toolkits: Deploying Phenotypic Profiling and Target Engagement Assays for NPs

The discovery of bioactive molecules from natural sources is undergoing a transformative shift, moving beyond single-target assays toward system-level phenotypic profiling. This evolution addresses a core challenge in natural products research: the frequent mismatch between a compound's in vitro target affinity and its in vivo efficacy or unexpected toxicity [24] [25]. Traditional target-based screening, while precise, operates within a predefined biological understanding, potentially missing novel mechanisms and polypharmacology—a hallmark of many natural products [4]. Phenotypic screening, in contrast, begins with a measurable cellular or organismal change, agnostic to the specific molecular target, making it exceptionally powerful for discovering first-in-class therapies and novel biology [24] [26].

High-content phenotypic profiling technologies, such as Cell Painting and L1000, have matured to bridge this gap. They offer a middle ground, providing deep, multi-parametric data on compound effects that is richer than a single readout but more tractable than whole-organism studies [27] [28]. Cell Painting captures hundreds of morphological features from microscopy images, creating a visual "fingerprint" of cellular state [27]. The L1000 assay quantifies the expression of 978 "landmark" genes, from which the majority of the transcriptome can be computationally inferred, offering a complementary molecular signature of perturbation [28]. The integration of AI-driven analysis is now unlocking the full potential of these rich datasets, enabling the prediction of mechanism of action (MOA), toxicity, and the identification of promising candidates from complex natural product libraries [29] [30]. This guide provides a detailed, data-driven comparison of these two pivotal profiling platforms within the context of modern, systems-level natural products discovery.

Platform Deep Dive: Mechanisms and Methodologies

Cell Painting: Morphological Profiling at Single-Cell Resolution

Cell Painting is a high-content, image-based assay designed to provide an unbiased, comprehensive view of cellular morphology. Its protocol involves staining cultured cells with a cocktail of six fluorescent dyes to highlight eight major cellular components or organelles, which are then imaged across five fluorescence channels [27].

- Core Staining Panel: The assay uses:

- Hoechst 33342 / DAPI: Labels DNA (nucleus).

- Concanavalin A, Alexa Fluor 488 conjugate: Labels the endoplasmic reticulum.

- Wheat Germ Agglutinin, Alexa Fluor 555 conjugate: Labels the plasma membrane and Golgi.

- Phalloidin, Alexa Fluor 568 conjugate: Labels polymerized actin (cytoskeleton).

- SYTO 14: Labels nucleoli and cytoplasmic RNA.

- MitoTracker Deep Red: Labels mitochondria [27].

- Feature Extraction: Automated image analysis software (e.g., CellProfiler) identifies individual cells and measures approximately 1,500 morphological features per cell. These features include size, shape, texture, intensity, and correlations between channels, capturing subtle phenotypic changes [27] [31].

- Key Advantage: It provides single-cell resolution, allowing researchers to detect heterogeneity in cell populations and identify phenotypic effects in specific subpopulations, which is critical for understanding complex natural product effects [27] [4].
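Why single-cell resolution matters can be shown with a toy well: a strongly affected subpopulation can leave the well median unchanged. A minimal sketch, assuming one feature (e.g., nuclear area) per cell and a cut-off at roughly the 95th percentile of control cells; the function `well_summary`, the threshold choice, and all values are hypothetical.

```python
from statistics import median, quantiles


def well_summary(cell_values, control_cell_values):
    """Return (well median, fraction of cells above ~95th percentile of control cells)."""
    cutoff = quantiles(control_cell_values, n=20)[-1]  # last of 19 cut points ~ 95th pct
    frac_high = sum(v > cutoff for v in cell_values) / len(cell_values)
    return median(cell_values), frac_high


control_cells = list(range(90, 110))     # hypothetical nuclear areas of DMSO cells
treated_cells = [100] * 7 + [160] * 3    # 30% of cells strongly enlarged
print(well_summary(treated_cells, control_cells))  # -> (100.0, 0.3)
```

The well median alone would suggest no effect, while the subpopulation fraction reveals that almost a third of the cells responded.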

L1000: A High-Throughput Gene Expression Profiling Platform

The L1000 platform, developed as part of the NIH LINCS Consortium, is a cost-effective, high-throughput method for gene expression profiling. It is based on a "reduced representation" strategy that measures a carefully selected set of 978 informative "landmark" transcripts, from which the expression levels of ~81% of non-measured transcripts can be accurately inferred using computational models [28].

- Assay Mechanism: The technology uses ligation-mediated amplification (LMA) followed by detection on fluorescently addressed microspheres (beads). Briefly, mRNA is captured and reverse-transcribed into cDNA; barcoded, gene-specific probe pairs are annealed and ligated; and the ligation products are amplified with universal biotinylated primers. The amplicons hybridize to color-coded beads via their barcodes, and expression is quantified from a streptavidin-phycoerythrin signal [28].

- Scale and Cost: This bead-based approach enables massive scale; the published Connectivity Map (CMap) contains over 1.3 million L1000 profiles. The reagent cost is remarkably low, at approximately $2 per sample, making large-scale screening of natural product libraries feasible [28].

- Key Advantage: It delivers a direct molecular readout of cellular response (transcriptional changes) at a very high throughput and low cost, facilitating the creation of massive reference databases for connectivity analysis [32] [28].
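The reduced-representation idea can be illustrated with a toy regression: learn a linear map from measured landmark genes to one non-measured gene on reference profiles, then apply it to a new sample. This is a sketch on synthetic data and is not the published L1000 inference model; the sizes, weights, and noise level are assumptions, and it requires NumPy.

```python
import numpy as np

rng = np.random.default_rng(0)
n_train, n_landmarks = 200, 5  # toy sizes; the real assay measures 978 landmarks

# Synthetic reference compendium: landmark expression and one dependent gene.
landmarks = rng.normal(size=(n_train, n_landmarks))
true_weights = np.array([0.8, -0.5, 0.0, 0.3, 0.0])
target = landmarks @ true_weights + 0.1 + rng.normal(scale=0.05, size=n_train)

# Ordinary least squares with an intercept column.
X = np.column_stack([np.ones(n_train), landmarks])
coef, *_ = np.linalg.lstsq(X, target, rcond=None)

# Infer the non-measured gene for a new sample from its landmarks only.
new_sample = rng.normal(size=n_landmarks)
predicted = coef[0] + new_sample @ coef[1:]
print(np.round(coef, 2), round(float(predicted), 2))
```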

The AI-Driven Analysis Layer

AI and machine learning are not standalone assays but a critical analytical layer that maximizes the value of data from both Cell Painting and L1000. Modern approaches move beyond simple clustering to predictive and generative models.

- For Image-Based Data: Advanced workflows use deep learning (e.g., convolutional autoencoders) to learn compact, informative representations from raw Cell Painting images or extracted features. These representations improve performance in key tasks like Mechanism of Action (MOA) classification and batch effect correction [26] [30]. Self-supervised anomaly detection models, trained on control well data, can identify subtle, unexpected phenotypes that might be missed by standard analysis [30].

- For Transcriptomic Data: AI models integrate L1000 data with other biological networks. For example, the Pathway and Transcriptome-Driven Drug Efficacy Predictor (PTD-DEP) uses a dual-modality architecture combining pathway prediction with graph convolutional networks (GCNs) on L1000 transcriptomic profiles to identify multi-target therapeutics, as demonstrated in the discovery of melatonin's dual anti-aging and anti-Alzheimer's activity [29].

- Integrated Analysis: The most powerful applications involve multi-modal AI that jointly learns from both morphological and gene expression profiles, along with chemical structure data, to build more robust predictors of bioactivity, toxicity, and MOA [32] [29].
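The control-trained anomaly detection described above can be approximated, for illustration, by a much simpler swapped-in technique: score each well by its root-mean-square z-score against control wells, and calibrate the alert threshold on the controls themselves (leave-one-out). This is not an autoencoder and not any published model; the function names and data are hypothetical.

```python
import math
from statistics import mean, stdev


def rms_z(well, ref_wells):
    """Root-mean-square of per-feature z-scores relative to reference (control) wells."""
    cols = list(zip(*ref_wells))
    zs = [(x - mean(c)) / stdev(c) for x, c in zip(well, cols)]
    return math.sqrt(sum(z * z for z in zs) / len(zs))


def control_threshold(controls):
    """Max leave-one-out score among controls, so a control never scores against itself."""
    scores = [rms_z(w, controls[:i] + controls[i + 1:]) for i, w in enumerate(controls)]
    return max(scores)


# Hypothetical three-feature profiles for five control wells.
controls = [[1.0, 2.0, 3.0], [1.1, 2.1, 2.9], [0.9, 1.9, 3.1],
            [1.0, 2.2, 3.0], [1.05, 1.95, 2.95]]
threshold = control_threshold(controls)
query = [1.0, 2.0, 4.5]  # hypothetical well shifted in one feature
print(rms_z(query, controls) > threshold)  # -> True
```

Like the self-supervised models it stands in for, this flags anything unlike the controls without needing labeled examples of the phenotype in advance.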

Comparative Performance Analysis

A systematic, head-to-head comparison of Cell Painting and L1000 reveals distinct strengths, guiding platform selection for specific research goals in natural products discovery [32].

Technical and Performance Metrics

Table 1: Core Technical Specifications and Performance Metrics [27] [32] [28]

| Feature | Cell Painting Assay | L1000 Assay |
|---|---|---|
| Primary Readout | Cellular morphology (image-based) | Gene expression (bead-based fluorescence) |
| Profiling Dimension | ~1,500 morphological features per cell | 978 directly measured landmark transcripts |
| Resolution | Single-cell | Population-averaged |
| Key Advantage | Detects spatial/organelle-level phenotypes & heterogeneity | Direct molecular signature; massive scalability |
| Typical Assay Cost | Low (dye-based) | Very Low (~$2/sample) |
| Throughput | High (384-well plate) | Very High (optimized for 384-well) |
| Data Reproducibility | Higher (by median pairwise replicate correlation) | High, but slightly lower than Cell Painting |
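Reproducibility figures like the one above are typically based on how well replicate profiles of the same compound correlate with one another. The following is a minimal sketch, assuming a well-by-feature matrix and one compound label per well. It computes each compound's median pairwise Pearson correlation and then the percentage of compounds whose value beats the 95th percentile of a null built from random groups of wells drawn from different compounds. The null construction, group size, and 95th-percentile cutoff are illustrative choices, not the exact procedure of [32].

```python
import numpy as np
from itertools import combinations


def median_replicate_corr(profiles: np.ndarray, labels) -> dict:
    """Median pairwise Pearson correlation among replicate wells of each compound."""
    labels = np.asarray(labels)
    corr = np.corrcoef(profiles)  # well-by-well correlation matrix (rows = wells)
    result = {}
    for compound in np.unique(labels):
        idx = np.flatnonzero(labels == compound)
        if len(idx) < 2:
            continue  # reproducibility is undefined for a single well
        result[compound] = float(np.median([corr[i, j] for i, j in combinations(idx, 2)]))
    return result


def percent_replicating(profiles: np.ndarray, labels, n_null: int = 1000, seed: int = 0) -> float:
    """Percent of compounds whose replicate correlation beats the 95th percentile of a null
    built from random groups of wells that all come from different compounds."""
    rng = np.random.default_rng(seed)
    labels = np.asarray(labels)
    corr = np.corrcoef(profiles)
    observed = median_replicate_corr(profiles, labels)
    _, counts = np.unique(labels, return_counts=True)
    k = int(np.median(counts))  # null groups mimic the typical replicate count
    if k < 2 or len(counts) < k:
        raise ValueError("need >= 2 replicates and at least k distinct compounds")
    null = []
    while len(null) < n_null:
        idx = rng.choice(len(labels), size=k, replace=False)
        if len(set(labels[idx])) < k:
            continue  # resample until every well in the group is a different compound
        null.append(np.median([corr[i, j] for i, j in combinations(idx, 2)]))
    threshold = np.percentile(null, 95)
    return 100.0 * float(np.mean([v > threshold for v in observed.values()]))
```

Running the same functions on Cell Painting and L1000 profiles of the same compounds gives a like-for-like comparison of the two assays' reproducibility.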

Information Content and Predictive Utility

A landmark study profiling 1,327 compounds from the Drug Repurposing Hub in A549 cells with both assays provided quantitative insights into their information content and utility for drug discovery tasks [32].

Table 2: Comparative Information Content & Predictive Performance (Based on 1,327 Compound Study) [32]

| Metric | Cell Painting | L1000 | Interpretation for Natural Products Research |
|---|---|---|---|
| Profile Reproducibility | Higher | High | Cell Painting profiles are more consistent across replicates, which is crucial for reliable phenotyping of complex extracts. |
| Signal Diversity | Higher | High | Cell Painting captures a wider variety of distinct phenotypic states, which is better for novel MOA discovery. |
| Number of Independent Feature Groups | Lower | Higher | L1000 measures more orthogonal biological axes and potentially captures more distinct pathways. |
| MOA Classification Accuracy | Complementary | Complementary | Each assay excels for different MOA classes; combined use yields the best overall prediction. |
| Sensitivity to Batch/Position Effects | Higher (requires correction) | Lower | Cell Painting requires careful normalization (e.g., spherize transform) to correct plate-position and batch effects [32]. |
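The spherize transform mentioned in the table is a whitening step whose parameters come from control wells. The following sketch assumes ZCA whitening fitted on DMSO-well profiles. Treated wells are then centered on the control mean and multiplied by a matrix that makes the control distribution uncorrelated with unit variance. The `epsilon` regularizer and the clipping of tiny negative eigenvalues are assumptions added for numerical stability. They are not parameters taken from [32] or [31].

```python
import numpy as np


def fit_spherize(controls: np.ndarray, epsilon: float = 1e-6):
    """Fit a ZCA whitening ("spherize") transform on control-well profiles (rows = wells)."""
    mu = controls.mean(axis=0)
    cov = np.cov(controls, rowvar=False)
    eigvals, eigvecs = np.linalg.eigh(cov)  # covariance is symmetric, so eigh is appropriate
    eigvals = np.clip(eigvals, 0.0, None)  # remove tiny negative values from round-off
    # W = U diag(1 / sqrt(lambda + eps)) U^T; epsilon guards against near-zero eigenvalues
    W = eigvecs @ np.diag(1.0 / np.sqrt(eigvals + epsilon)) @ eigvecs.T
    return mu, W


def apply_spherize(profiles: np.ndarray, mu: np.ndarray, W: np.ndarray) -> np.ndarray:
    """Center on the control mean and decorrelate with the control-derived whitening matrix."""
    return (profiles - mu) @ W
```

In use, `fit_spherize` would be called on the DMSO wells of a plate or batch, and `apply_spherize` on every well from that same plate or batch. Technical structure shared with the controls is removed, while compound-induced deviations are expressed in control-variance units.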

Application to Natural Products Research

Phenotypic profiling is particularly suited to natural products, which often have complex, unknown, or multiple mechanisms of action [4]. A broad-spectrum cytological profiling platform using 14 cellular markers successfully classified natural products, predicted MOA (e.g., identifying topoisomerase inhibitors), and elucidated structure-activity relationships (SAR) by clustering compounds with similar phenotypic fingerprints [4]. This approach moves beyond simple cytotoxicity to a multi-parameter assessment of physiological impact, distinguishing between selective agents and broadly toxic compounds [4].
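Grouping compounds "by similar phenotypic fingerprints" can be approximated with hierarchical clustering. The source does not name the algorithm used in [4]. The sketch below therefore assumes average-linkage clustering on correlation distance (1 − Pearson r). This choice groups compounds by the shape of their marker profile rather than its overall magnitude. Each row is a hypothetical compound profile across 14 marker readouts.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import pdist


def cluster_profiles(profiles: np.ndarray, n_clusters: int) -> np.ndarray:
    """Assign each compound (row) to one of n_clusters phenotypic clusters."""
    # Correlation distance ignores overall magnitude, so a weaker dose of the
    # same mechanism can still cluster with a stronger one.
    dist = pdist(profiles, metric="correlation")
    tree = linkage(dist, method="average")
    return fcluster(tree, t=n_clusters, criterion="maxclust")


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    mech_a, mech_b = rng.normal(size=14), rng.normal(size=14)  # two hypothetical MOAs
    profiles = np.vstack([mech_a + 0.1 * rng.normal(size=(4, 14)),
                          mech_b + 0.1 * rng.normal(size=(4, 14))])
    print(cluster_profiles(profiles, n_clusters=2))
```

When an uncharacterized natural product falls into the same cluster as annotated reference compounds, this suggests a MOA hypothesis for it. Close analogs that fall into different clusters point to structural features that change activity.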

Experimental Protocols and Data Analysis

Cell Painting Protocol

- Cell Seeding & Perturbation: Plate cells in 384-well plates. Treat with natural product compounds/extracts (typically for 48 hours).

- Staining & Fixation: Label mitochondria in live cells with MitoTracker, then fix, permeabilize, and stain with the remaining dyes of the six-dye cocktail as described in Section 2.1.

- Image Acquisition: Automatically image plates using a high-throughput microscope with 5 fluorescence channels.

- Image Analysis & Feature Extraction: Use CellProfiler software to identify cells and measure ~1,500 features/cell.

- Data Normalization: Apply correction for plate positional effects (e.g., spherize transform using DMSO control wells) [32] [31].

- Profiling & Analysis: Generate median profiles per well, then use similarity metrics (e.g., correlation) for clustering, MOA prediction, or anomaly detection via AI models.

L1000 Protocol

- Cell Perturbation: Treat cells in 384-well plates with compounds (typically for 24 hours).

- Lysate Preparation: Lyse cells and capture mRNA on oligo-dT-coated plates.

- cDNA Synthesis & Amplification: Synthesize cDNA and perform Ligation-Mediated Amplification (LMA) with barcoded, gene-specific probes.

- Bead-Based Detection: Hybridize amplified products to fluorescently color-coded beads. Quantify expression via phycoerythrin signal on a Luminex bead reader.

- Data Processing: Normalize data and infer the expression of ~81% of the transcriptome from the 978 landmark genes using a pre-trained model.

- Signature Generation & Connectivity Analysis: Generate differential expression signatures and compare them to the CMap database to identify similar profiles and hypothesize MOA.
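To show how signature comparison works, the sketch below implements the unweighted, Kolmogorov-Smirnov-style connectivity score of the original Connectivity Map. It is a simplified stand-in: current CMap/L1000 tools use a weighted score. A query signature is represented by its sets of up- and down-regulated genes. A reference profile is represented by all genes ranked from most up- to most down-regulated. The gene names are hypothetical, and the score is left unnormalized (range −2 to 2).

```python
import numpy as np


def ks_score(ranked_genes, gene_set) -> float:
    """KS-style enrichment of gene_set within ranked_genes (index 0 = most up-regulated).
    Positive = set concentrated near the top; negative = near the bottom."""
    n = len(ranked_genes)
    position = {g: i + 1 for i, g in enumerate(ranked_genes)}  # 1-based ranks
    v = np.sort([position[g] for g in gene_set if g in position])
    t = len(v)
    if t == 0:
        return 0.0
    j = np.arange(1, t + 1)
    a = np.max(j / t - v / n)
    b = np.max(v / n - (j - 1) / t)
    return float(a) if a > b else float(-b)


def connectivity(ranked_genes, up_set, down_set) -> float:
    """Positive: reference mimics the query; negative: reference reverses it."""
    ks_up = ks_score(ranked_genes, up_set)
    ks_down = ks_score(ranked_genes, down_set)
    # The score is non-zero only when the up and down sets move in opposite directions.
    if np.sign(ks_up) == np.sign(ks_down):
        return 0.0
    return ks_up - ks_down


if __name__ == "__main__":
    reference = [f"g{i}" for i in range(10)]  # hypothetical ranked reference profile
    print(connectivity(reference, up_set=["g0", "g1"], down_set=["g8", "g9"]))
```

Scoring a natural product's signature against every reference profile and sorting by this value yields the ranked list of "similar" and "opposite" perturbagens used to form MOA hypotheses.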

Visualizing Workflows and Integration

Diagram 1: Cell Painting generates morphological profiles from images for AI-driven analysis.

Diagram 2: Integrated AI models combine multimodal data for enhanced prediction.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Phenotypic Profiling

| Item | Primary Function | Example/Note |
|---|---|---|
| Cell Painting Dye Cocktail | Multiplexed staining of organelles for morphological profiling. | Hoechst 33342, Concanavalin A-AF488, WGA-AF555, Phalloidin-AF568, SYTO 14, MitoTracker Deep Red [27]. |
| L1000 Detection Beads & Primers | Bead-based detection of 978 landmark transcripts. | Color-coded Luminex beads coupled to barcode-specific oligonucleotides [28]. |
| Reference Compound Libraries | Assay controls & training data for AI models. | Drug Repurposing Hub, LOPAC library for MOA annotation [32] [4]. |
| Normalization Controls | Corrects technical variation (plate, batch effects). | DMSO controls distributed across plates for spherize transform [32] [31]. |
| Validated Chemical Probes | Establishes disease relevance & pathway modulation in phenotypic assays [25]. | Used for assay validation and connecting targets to phenotypes. |
| AI/ML Software Platforms | Analyzes high-dimensional data for prediction & clustering. | Includes tools for deep learning on images (TensorFlow, PyTorch) and transcriptomic analysis (CMap tools) [29] [30]. |

Cell Painting and L1000 are not competing technologies but complementary, orthogonal pillars of modern phenotypic profiling. For natural products research, Cell Painting offers direct, single-cell insight into morphology and heterogeneity, while L1000 provides a cost-effective, scalable window into the transcriptional landscape. The choice depends on the primary research question: phenotypic characterization and novel MOA discovery favor Cell Painting, whereas large-scale library screening and connectivity mapping leverage L1000's strengths.

The future lies in their strategic integration, powered by AI-driven analysis. Combining morphological, transcriptomic, and chemical data within multi-modal AI frameworks can yield predictors of bioactivity, toxicity, and MOA that outperform any single modality [32] [29]. This integrated approach is well suited to decoding the complex mechanisms of natural products. It can help move hits toward validated leads that have both a known phenotypic signature and a deconvoluted mechanism, enriching the pipeline for safer and more effective therapeutics.

The landscape of drug discovery has undergone a significant strategic evolution, marked by a renaissance in phenotypic screening approaches that demand sophisticated deconvolution methodologies. Historically, the pharmaceutical industry heavily favored target-based screening, where compounds were tested against isolated, purified proteins with known disease relevance. While this approach benefits from straightforward chemistry optimization and clear intellectual property pathways, analyses reveal its limitations in generating first-in-class medicines [1]. The fundamental challenge lies in the reductionist nature of target-based methods, which often fail to capture the complex pathophysiology of disease as it manifests in living systems.

In contrast, phenotypic screening observes compound effects in cells, tissues, or whole organisms, producing hits that modulate a disease-relevant phenotype without prior bias toward a specific molecular target [33]. This approach operates within a physiologically relevant context, accounting for compound permeability, metabolism, and off-target effects early in discovery. For natural products research—where compounds often possess complex structures and unknown mechanisms—phenotypic screening is particularly valuable as it allows biological activity to guide discovery without requiring target hypotheses [34].

However, the major challenge following a phenotypic hit is target deconvolution: identifying the specific biomolecule(s) through which the compound exerts its effect. This process transforms a phenotypic observation into mechanistic understanding, enabling medicinal chemistry optimization, predictive toxicology, and intellectual property protection [35]. The "deconvolution revolution" refers to the expanding toolkit of chemical, proteomic, genetic, and computational strategies that have matured to address this critical bottleneck, making phenotypic screening a more powerful and reliable discovery engine [36].

Core Deconvolution Strategies: A Comparative Framework

Target deconvolution strategies can be broadly categorized into affinity-based methods, which rely on the direct physical interaction between compound and target, and functional inference methods, which deduce targets through analysis of downstream biological effects [34]. The choice of strategy depends on compound properties, available instrumentation, and the biological system.

Table 1: Comparison of Major Target Deconvolution Strategies

| Method | Core Principle | Typical Timeframe | Key Advantages | Major Limitations | Best Suited For |
|---|---|---|---|---|---|
| Affinity Chromatography [34] | Immobilized compound pulls down binding proteins from lysate. | 2-4 weeks | Direct, conceptually simple; can detect weak binders with cross-linking. | Requires compound derivatization, which may alter activity/selectivity; high background is common. | Stable, potent compounds with a known site for linker attachment. |
| Activity-Based Protein Profiling (ABPP) [34] | Reactive probe labels active-site nucleophiles of enzyme families. | 1-3 weeks | Reports on enzyme activity (not just abundance); can profile entire enzyme families. | Limited to enzymes with a reactive active-site nucleophile (e.g., serine hydrolases, cysteine proteases); requires probe design. | Covalent inhibitors or modulators of specific enzyme classes (proteases, lipases). |
| Photoaffinity Labeling (PAL) [36] | Photoreactive compound crosslinks to proximal proteins upon UV irradiation. | 3-6 weeks | Captures transient, low-affinity interactions in live cells; can map binding sites. | Synthesis of bifunctional (photoreactive + handle) probes is challenging; potential for non-specific labeling. | Compounds whose binding site tolerates modification; studies of membrane proteins. |
| Cellular Thermal Shift Assay (CETSA) | Compound binding stabilizes the target protein, measured as a shift in its thermal stability. | 1-2 weeks | Label-free; works in cells and tissues; can monitor target engagement. | Antibody-based readout tests only pre-specified candidates; discovering unknown targets requires a proteome-wide MS readout (thermal proteome profiling). | Validation of suspected targets and engagement studies. |
| Genomic Profiling (CRISPR, RNAi) [36] | Identification of genetic alterations that confer resistance or sensitivity to the compound. | 4-8 weeks | Unbiased, genome-wide; can identify pathways, not just single proteins. | Labor-intensive; hits may be indirect; resistance mutations can be rare. | Compounds with a strong, selective phenotype in proliferating cells. |
| Transcriptomic/Proteomic Profiling [35] | Comparison of gene or protein expression signatures to reference databases. | 2-3 weeks | Label-free; provides MoA context and pathway information. | Identifies downstream consequences, not direct binders; requires a robust signature. | Elucidating pathway-level mechanism of action (MoA). |
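For the engagement studies listed under CETSA, the readout is the soluble fraction of a protein at each heating temperature. Stabilization appears as a rightward shift of the melting curve. The sketch below fits a four-parameter sigmoid to vehicle and compound-treated curves and reports the apparent melting-temperature shift (ΔTm). The functional form, starting values, and temperature grid are assumptions for illustration. The code does not reproduce any specific CETSA analysis package.

```python
import numpy as np
from scipy.optimize import curve_fit


def melt_curve(T, Tm, slope, top, bottom):
    """Fraction of protein remaining soluble at temperature T (decreasing sigmoid)."""
    return bottom + (top - bottom) / (1.0 + np.exp((T - Tm) / slope))


def fit_tm(temps, soluble_fraction) -> float:
    """Fit the sigmoid and return the apparent melting temperature Tm."""
    p0 = [float(np.median(temps)), 2.0, 1.0, 0.0]  # initial guesses: Tm, slope (deg C), plateaus
    params, _ = curve_fit(melt_curve, temps, soluble_fraction, p0=p0, maxfev=10000)
    return float(params[0])


def delta_tm(temps, vehicle, treated) -> float:
    """Positive values indicate compound-induced thermal stabilization."""
    return fit_tm(temps, treated) - fit_tm(temps, vehicle)


if __name__ == "__main__":
    temps = np.arange(37.0, 68.0, 3.0)  # hypothetical heating gradient, deg C
    vehicle = melt_curve(temps, 50.0, 2.0, 1.0, 0.0)
    treated = melt_curve(temps, 54.0, 2.0, 1.0, 0.0)
    print(round(delta_tm(temps, vehicle, treated), 2))
```

Replicate curves and a dose series at a single temperature (isothermal dose-response) are usually needed before a shift is interpreted as target engagement. This sketch only covers the curve-fitting step.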

Detailed Experimental Protocols for Key Methods

Photoaffinity Labeling Combined with Affinity Enrichment

This protocol merges affinity purification with photoaffinity labeling to capture lower-affinity interactions.

- Probe Synthesis: Derivatize the hit compound to incorporate both a photoreactive group (e.g., diazirine, benzophenone) and a bio-orthogonal handle (e.g., alkyne). The modification site is informed by structure-activity relationship (SAR) data to minimize activity loss.

- Cell Treatment and Crosslinking: Treat live cells or cell lysates with the probe (1-10 µM). For live cells, incubate to allow cellular uptake (1-6 hours). Irradiate the sample with UV light (~365 nm for diazirine) to induce crosslinking.

- Click Chemistry Conjugation: If live cells were used, lyse them. React the alkyne on the crosslinked probe with an azide-bearing affinity tag (e.g., azide-biotin) via copper-catalyzed azide-alkyne cycloaddition (CuAAC).

- Affinity Enrichment: Incubate the lysate with streptavidin-coated magnetic beads to capture biotinylated protein complexes. Wash stringently to remove non-specific binders.

- Elution and Identification: Elute proteins via boiling in SDS-PAGE buffer or competitive biotin elution. Resolve by gel electrophoresis, stain, and excise bands for in-gel tryptic digestion. Analyze resulting peptides by liquid chromatography-tandem mass spectrometry (LC-MS/MS).

Multiplexed Proteomic Profiling Using Tandem Mass Tag (TMT) Labeling

This isobaric-labeling quantitative proteomics protocol identifies proteins whose abundance or state changes in response to compound treatment.

- Sample Preparation: Treat multiple cell populations (e.g., vehicle, hit compound, inactive analog) in biological triplicate. Harvest cells and lyse.

- Protein Digestion and Labeling: Reduce, alkylate, and digest lysates with trypsin. Label the resulting peptides from each sample with a unique isobaric TMT reagent.

- Pooling and Fractionation: Combine all TMT-labeled samples into one tube. Fractionate the pooled sample via high-pH reverse-phase chromatography to reduce complexity.

- LC-MS/MS Analysis: Analyze each fraction by nano-flow LC-MS/MS on an Orbitrap mass spectrometer. Quantify peptide abundance by measuring the intensity of reporter ions released during MS2 fragmentation.
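After reporter ions are quantified, each protein has one intensity per TMT channel. The comparison of interest is compound versus vehicle, with the inactive analog serving as a further control. The sketch below assumes a protein-by-channel intensity matrix. It applies equal-total-intensity normalization across channels, then computes per-protein log2 fold changes and Welch's t-test p-values. The normalization scheme, channel layout, and choice of test are illustrative assumptions rather than steps prescribed by this protocol. Dedicated pipelines typically add peptide-to-protein rollup and multiple-testing correction.

```python
import numpy as np
from scipy import stats


def normalize_channels(reporter: np.ndarray) -> np.ndarray:
    """Scale each TMT channel (column) so that all channels share the same total intensity."""
    totals = reporter.sum(axis=0)
    return reporter * (totals.mean() / totals)


def differential_abundance(reporter: np.ndarray, vehicle_cols, treated_cols):
    """Per-protein (row) log2 fold change and Welch's t-test p-value, treated vs. vehicle.
    Assumes all reporter intensities are strictly positive."""
    log_norm = np.log2(normalize_channels(reporter))
    veh, trt = log_norm[:, vehicle_cols], log_norm[:, treated_cols]
    log2_fc = trt.mean(axis=1) - veh.mean(axis=1)
    # equal_var=False gives Welch's test, which does not assume equal group variances
    _, p_values = stats.ttest_ind(trt, veh, axis=1, equal_var=False)
    return log2_fc, p_values


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    # Hypothetical layout: channels 0-2 vehicle, 3-5 hit compound, 6-8 inactive analog
    base = rng.uniform(1000, 2000, size=(100, 1))
    reporter = base * rng.lognormal(0.0, 0.02, size=(100, 9))
    reporter[0, 3:6] *= 4.0  # one protein up-regulated by the hit compound
    fc, p = differential_abundance(reporter, [0, 1, 2], [3, 4, 5])
    print(round(fc[0], 2), p[0])
```

A change that appears with the hit compound but not with the inactive analog (channels 6-8 here) is a stronger candidate for a mechanism-linked effect than one seen with both.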