Synergy in Spectrometry: A Comprehensive Guide to LC-HRMS and NMR Data Fusion for Advanced Food Classification

Abstract

This article explores the powerful combination of Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy through data fusion strategies for food classification and authentication. Aimed at researchers, scientists, and drug development professionals, it covers the foundational principles of these complementary analytical techniques, delves into multi-level data fusion methodologies (low-, mid-, and high-level), and presents cutting-edge applications from wine and nut traceability to pharmaceutical quality control. The content further addresses critical troubleshooting for data integration challenges, provides frameworks for model validation and performance comparison, and discusses the translational potential of these robust analytical frameworks for biomedical and clinical research, including metabolomic profiling and quality attribute prediction.

Understanding the Core Synergy: Why LC-HRMS and NMR are a Powerful Combination for Food Profiling

The Inherent Strengths and Weaknesses of LC-HRMS and NMR Spectroscopy

In the evolving landscape of analytical chemistry, Liquid Chromatography-High-Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) Spectroscopy have emerged as two pivotal techniques for metabolomics and food classification research. While each method possesses distinct capabilities, their integration through data fusion strategies presents a powerful approach for comprehensive sample characterization. This section outlines the inherent strengths and limitations of both platforms, provides detailed experimental protocols, and places their application in the context of food authentication research. The complementary nature of LC-HRMS and NMR lets researchers combine the high sensitivity of the former with the quantitative robustness and structural elucidation power of the latter, creating a synergistic workflow that surpasses the capabilities of either technique used independently [1] [2].

The growing need for food authenticity verification, particularly for high-value products like coffee, wine, and honey, has driven the development of sophisticated analytical methodologies that can detect adulteration and verify geographical origin. Within this framework, understanding the technical advantages and constraints of LC-HRMS and NMR becomes imperative for designing effective classification models that integrate data from both platforms [3] [4].

Technical Comparison: LC-HRMS versus NMR Spectroscopy

The selection between LC-HRMS and NMR spectroscopy requires careful consideration of their fundamental operational principles and performance characteristics. The following section provides a detailed technical comparison to guide researchers in selecting the appropriate technology for their specific application needs.

Table 1: Comprehensive Comparison of Technical Specifications between LC-HRMS and NMR Spectroscopy

| Parameter | LC-HRMS | NMR Spectroscopy |
|---|---|---|
| Sensitivity | Very high (can detect compounds at ng/mL or lower levels) [5] | Moderate to low; limited when analyte concentrations are low [6] [2] |
| Sample Preparation | Requires extraction, often complex; protein precipitation for serum [5] | Minimal; typically just dissolution in deuterated solvent [6] [7] |
| Destructive Nature | Destructive technique | Non-destructive; sample can be recovered [6] [7] |
| Quantitation | Requires standards; susceptible to matrix effects | Inherently quantitative; no compound-specific calibration curves needed [2] |
| Structural Elucidation | Molecular formula via exact mass; substructure information from fragmentation patterns | Detailed atomic-level structural information, including stereochemistry [6] |
| Throughput | Moderate (chromatographic separation required) | Rapid once sample is loaded |
| Reproducibility | Subject to retention time shifts, requiring alignment algorithms [3] | Excellent; highly reproducible across instruments and laboratories [3] |
| Molecular Size Limitation | Suitable for a wide range, but can be challenged by very large molecules | Difficulty with higher molecular weight molecules due to spectral complexity [6] |
| Key Detectable Nuclei | Not applicable (mass-based) | 1H, 13C, 15N, 31P, 23Na, 19F [6] |
| Operational Costs | High (instrumentation, maintenance) | Very high (cryogenic liquids, powerful magnets) [6] |

In-Depth Analysis of Strengths and Weaknesses

LC-HRMS excels in sensitivity and specificity, capable of detecting thousands of metabolite features in a single analysis through untargeted acquisition [5] [4]. This technique provides specific identifications based on monoisotopic mass, retention time, isotopic patterns, and fragmentation spectra, enabling the creation of extensive shared spectral libraries [8]. However, limitations persist, particularly for low-concentration compounds or low-abundance ion fragments, where obtaining sufficient fragmentation for complete identification becomes challenging [8]. Additionally, LC-HRMS generates massive datasets that require sophisticated software tools and impose significant demands on data storage and processing infrastructure [8] [5]. A notable technical challenge is the lack of long-term robustness, where variations in column age and instrument contamination can lead to retention time shifts, complicating data comparison across different batches and laboratories [3].

NMR spectroscopy offers distinct advantages in structural elucidation at the atomic level, providing comprehensive information about molecular structure, dynamics, and interactions under near-native solution conditions while preserving sample integrity [6]. As a non-destructive technique, NMR allows sample recovery for subsequent analyses, and because signal area is directly proportional to the number of contributing nuclei, it enables precise concentration measurements without compound-specific calibration curves; a single internal reference of known concentration is sufficient [2] [7]. The exceptional reproducibility of NMR data facilitates direct comparison across different instruments and time periods, a crucial advantage for long-term studies [3]. Primary limitations include relatively low sensitivity compared to mass spectrometry and high instrumentation and maintenance costs due to requirements for powerful superconducting magnets and cryogenic cooling systems [6] [2]. Furthermore, NMR faces challenges in analyzing high-molecular-weight compounds due to increased spectral complexity and is restricted to nuclei with non-zero spin (that is, a nuclear magnetic moment) [6].

Experimental Protocols for Food Classification Research

Protocol: LC-HRMS Analysis for Coffee Authentication

This protocol is adapted from a published methodology for the classification of Arabica and Robusta coffee samples from different geographical origins [4].

1. Sample Preparation:

- Materials: Green or roasted coffee beans, methanol, acetonitrile, formic acid, ultrapure water.

- Procedure:

- Grind coffee beans to a consistent particle size using a laboratory mill.

- Weigh 0.5 g of ground coffee into a conical flask.

- Add 50 mL of hot water (92-96°C) and brew for 4-6 minutes, mimicking standard preparation.

- Cool the brew to room temperature and filter through a 0.22 μm PVDF syringe filter.

- Transfer 100 μL of filtrate to an LC vial for analysis.

2. LC-HRMS Analysis:

- Instrumentation: UHPLC system coupled to Q-Exactive Orbitrap or similar high-resolution mass spectrometer.

- Chromatographic Conditions:

- Column: C18 reversed-phase column (e.g., 100 × 2.1 mm, 1.8 μm)

- Mobile Phase A: Water with 0.1% formic acid

- Mobile Phase B: Methanol with 0.1% formic acid

- Gradient: 5% B to 95% B over 25 minutes, hold for 5 minutes

- Flow Rate: 0.3 mL/min

- Column Temperature: 40°C

- Injection Volume: 5 μL

- Mass Spectrometric Conditions:

- Ionization: Electrospray Ionization (ESI) in both positive and negative modes

- Scan Range: m/z 100-1500

- Resolution: >70,000 (at m/z 200)

- Capillary Temperature: 320°C

- Sheath Gas Flow: 40 arbitrary units

- Aux Gas Flow: 15 arbitrary units

3. Data Processing:

- Convert raw files to mzML format using MSConvert.

- Process data using XCMS or similar software for peak detection, retention time alignment, and feature quantification.

- Perform statistical analysis using Principal Component Analysis (PCA) and Partial Least Squares-Discriminant Analysis (PLS-DA) to identify discriminant features.

Protocol: NMR Analysis for Wine Metabolomic Fingerprinting

This protocol is adapted from a study on the classification of Amarone wines based on grape withering time and yeast strain using 1H NMR [1].

1. Sample Preparation:

- Materials: Wine samples, deuterated water (D2O), sodium azide, phosphate buffer, 3-(trimethylsilyl)propionic acid-d4 sodium salt (TSP).

- Procedure:

- Mix 300 μL of wine with 300 μL of phosphate buffer (0.1 M, pD 7.4) in D2O.

- Add 0.25 mM TSP as an internal chemical shift reference and 0.05% sodium azide to inhibit microbial growth.

- Centrifuge at 13,000 rpm for 10 minutes to remove any particulate matter.

- Transfer 550 μL of the supernatant to a 5 mm NMR tube.

2. NMR Spectroscopy:

- Instrumentation: 600 MHz NMR spectrometer with a cryoprobe for enhanced sensitivity.

- Acquisition Parameters:

- Experiment Type: 1D NOESY-presat (noesygppr1d) for water suppression

- Temperature: 298 K

- Spectral Width: 20 ppm

- Relaxation Delay: 4 seconds

- Mixing Time: 10 ms

- Number of Scans: 64

- Acquisition Time: 3 seconds

3. Data Processing:

- Process Free Induction Decays (FIDs) by applying exponential multiplication (line broadening of 0.3 Hz) followed by Fourier transformation.

- Manually phase and baseline correct all spectra.

- Calibrate spectra to TSP signal at δ 0.0 ppm.

- Reduce data by segmenting spectra into regions (bucketing) of equal width (δ 0.04 ppm), excluding the water region (δ 4.7-5.0 ppm).

- Normalize the integrated bucket regions to total intensity for multivariate statistical analysis.
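The bucketing and normalization steps can be sketched with NumPy. The sketch assumes the processed spectrum is already phased, baseline-corrected, and referenced to TSP; the 0.5–10.5 ppm bucketing range, the synthetic Lorentzian spectrum, and the function names are illustrative choices, while the 0.04 ppm width and the δ 4.7–5.0 ppm water exclusion follow the protocol.

```python
import numpy as np


def bucket_spectrum(ppm, intensity, width=0.04, ppm_range=(0.5, 10.5), exclude=((4.7, 5.0),)):
    """Sum intensities into equal-width buckets, dropping buckets that overlap excluded regions."""
    n_buckets = int(round((ppm_range[1] - ppm_range[0]) / width))
    edges = ppm_range[0] + width * np.arange(n_buckets + 1)
    sums, _ = np.histogram(ppm, bins=edges, weights=intensity)
    lo, hi = edges[:-1], edges[1:]
    keep = np.ones(n_buckets, dtype=bool)
    for start, stop in exclude:
        keep &= (hi <= start) | (lo >= stop)   # keep only buckets entirely outside the region
    return ((lo + hi) / 2)[keep], sums[keep]


def normalize_total(buckets):
    """Total-intensity normalization: the buckets of each spectrum sum to 1."""
    buckets = np.asarray(buckets, dtype=float)
    return buckets / buckets.sum(axis=-1, keepdims=True)


def lorentzian(x, center, height, hwhm=0.002):
    return height * hwhm ** 2 / ((x - center) ** 2 + hwhm ** 2)


# Synthetic spectrum: residual water at 4.8 ppm, two metabolite signals, TSP at 0 ppm.
ppm = np.linspace(-0.5, 10.5, 32768)
spectrum = (lorentzian(ppm, 4.8, 100.0) + lorentzian(ppm, 1.33, 10.0)
            + lorentzian(ppm, 3.6, 4.0) + lorentzian(ppm, 0.0, 5.0))
centers, buckets = bucket_spectrum(ppm, spectrum)
normalized = normalize_total(buckets)
```

For a set of spectra, stacking the per-sample bucket vectors row-wise gives the samples × buckets matrix used for multivariate analysis; `normalize_total` works row-wise on such a matrix.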

Data Fusion Strategy for Enhanced Classification

Integrating data from LC-HRMS and NMR platforms enhances classification accuracy by capturing complementary aspects of the sample metabolome. The following workflow outlines a standardized procedure for mid-level data fusion, which has demonstrated superior performance in food classification tasks [1] [9].

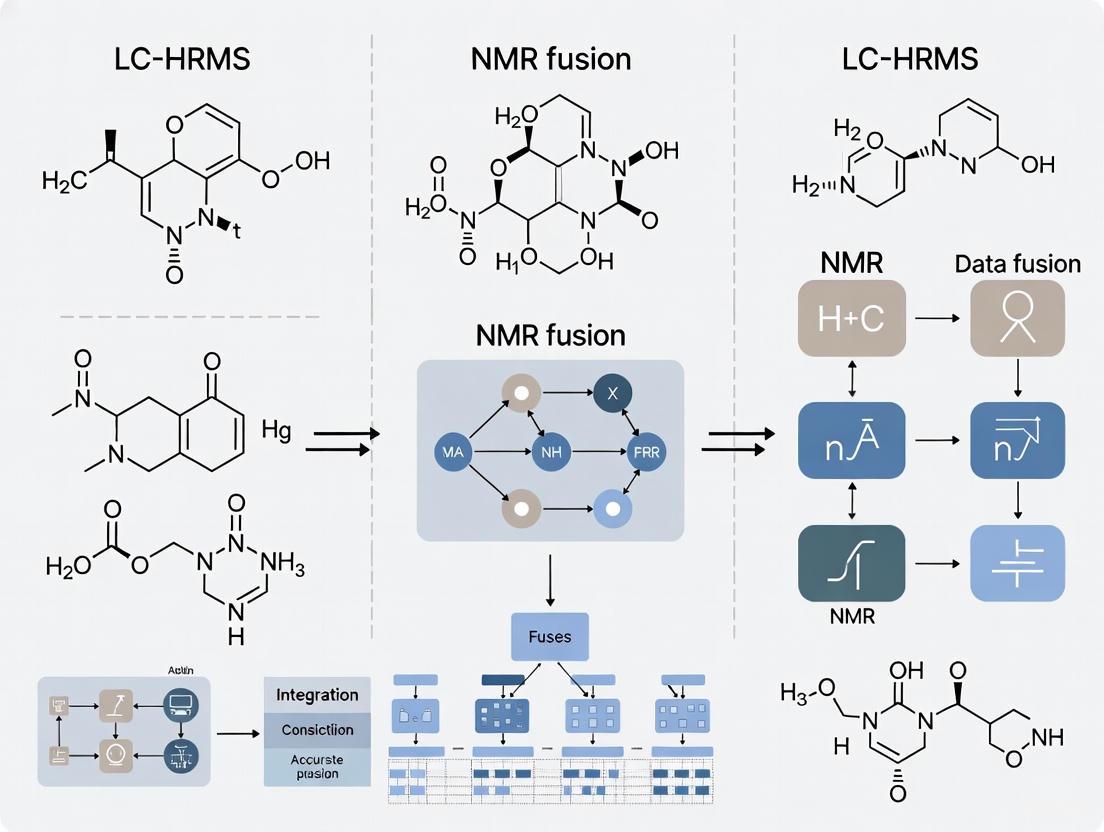

Diagram 1: Data fusion workflow for LC-HRMS and NMR integration in food classification.
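The mid-level strategy described above can be sketched as follows, assuming each platform has already been reduced to an aligned samples × features table: each block is autoscaled and compressed to a few PCA scores, the scores are concatenated, and a linear discriminant analysis (LDA) classifier is trained on the fused scores. The choice of five components per block, LDA as the classifier, and the synthetic data are assumptions; published studies may use other feature-extraction steps (for example PLS-DA latent variables or selected discriminant features).

```python
import numpy as np
from sklearn.compose import ColumnTransformer
from sklearn.decomposition import PCA
from sklearn.discriminant_analysis import LinearDiscriminantAnalysis
from sklearn.model_selection import cross_val_score
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler


def mid_level_fusion_model(n_lcms, n_nmr, n_components=5):
    """Per-block autoscaling + PCA, concatenation of block scores, then LDA."""
    blocks = ColumnTransformer([
        ("lcms", make_pipeline(StandardScaler(), PCA(n_components=n_components)),
         slice(0, n_lcms)),
        ("nmr", make_pipeline(StandardScaler(), PCA(n_components=n_components)),
         slice(n_lcms, n_lcms + n_nmr)),
    ])
    return make_pipeline(blocks, LinearDiscriminantAnalysis())


rng = np.random.default_rng(1)
y = np.repeat([0, 1], 30)
X_lcms = rng.normal(size=(60, 120))   # stand-in for an aligned LC-HRMS feature table
X_nmr = rng.normal(size=(60, 100))    # stand-in for bucketed 1H NMR spectra
X_lcms[y == 1, :20] += 3.0
X_nmr[y == 1, :15] += 3.0

model = mid_level_fusion_model(X_lcms.shape[1], X_nmr.shape[1])
cv_acc = cross_val_score(model, np.hstack([X_lcms, X_nmr]), y, cv=5).mean()
print(f"5-fold CV accuracy: {cv_acc:.2f}")
```

Wrapping the per-block PCA inside the pipeline means it is refitted within each cross-validation fold, which avoids leaking test-set information into the extracted features.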

Research Reagent Solutions and Essential Materials

Successful implementation of LC-HRMS and NMR methodologies requires specific reagents and materials optimized for each platform. The following table catalogues essential items for researchers establishing these techniques in their laboratories.

Table 2: Essential Research Reagents and Materials for LC-HRMS and NMR Experiments

| Item | Function/Application | Technical Specifications | Example Use Case |
|---|---|---|---|
| Deuterated Solvents (e.g., CD3OD, D2O, CDCl3) | NMR solvent; provides deuterium lock signal | 99.8% deuterium enrichment; NMR tubes (5 mm) | Dissolving samples for NMR analysis without interfering proton signals [7] |
| Chemical Shift Reference (e.g., TSP) | Internal chemical shift standard for NMR | δ 0.0 ppm for ¹H NMR; soluble in water | Referencing NMR spectra in aqueous solutions [7] |
| C18 LC Columns | Reversed-phase separation for LC-HRMS | 100-150 mm length; 2.1 mm ID; 1.7-1.8 μm particle size | Separating complex metabolite mixtures in coffee, wine [4] |
| Mass Calibration Solution | Daily mass accuracy calibration for HRMS | Covers broad m/z range; compatible with ionization mode | Ensuring sub-ppm mass accuracy during untargeted screening |
| Deuterated Mobile Phase Additives | LC-NMR hyphenation; minimal interference | Deutero-acetonitrile, deutero-methanol, D2O with buffers | Online LC-NMR applications for structural ID [10] |
| Solid Phase Extraction (SPE) Cartridges | Sample clean-up and metabolite concentration | C18, HILIC, or mixed-mode chemistries; 30-100 mg bed weight | Pre-concentrating dilute food samples prior to LC-HRMS |

Decision Framework for Technique Selection

The choice between LC-HRMS and NMR, or the decision to implement both, depends on specific research goals, sample characteristics, and available resources. The following diagram provides a systematic approach for technique selection based on key experimental requirements.

Diagram 2: Decision framework for selecting between LC-HRMS and NMR based on research requirements.

LC-HRMS and NMR spectroscopy represent complementary analytical pillars within modern food classification research. LC-HRMS delivers exceptional sensitivity and broad metabolome coverage, while NMR provides detailed structural elucidation capabilities and quantitative robustness. The strategic integration of these platforms through data fusion approaches, as demonstrated in wine and coffee authentication studies, creates a synergistic analytical framework that significantly enhances classification accuracy and metabolic insight. As food authentication challenges grow increasingly complex, leveraging the combined strengths of LC-HRMS and NMR will be essential for developing robust classification models that protect consumers and ensure product integrity within global food supply chains.

Data fusion is the process of integrating multiple data sources to produce more consistent, accurate, and useful information than that provided by any individual data source [11]. In scientific research, particularly in fields requiring high-precision classification such as food authenticity testing, data fusion provides a powerful framework for combining complementary analytical techniques. The core principle involves merging diverse data streams—such as Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy—to create a unified, comprehensive profile that surpasses the capabilities of any single method [3] [12].

The fundamental model for understanding data fusion processes is the JDL/DFIG model, which categorizes fusion into increasingly refined levels from Source Preprocessing (Level 0) to Mission Refinement (Level 6) [11]. For analytical chemistry applications, this translates to a workflow that progresses from raw instrumental data acquisition through feature extraction, multidimensional integration, and finally to classification and decision-making, enabling researchers to transform disconnected data points into actionable knowledge about geographical origin, adulteration, and quality of food products.

Data Fusion in Food Classification Research

The application of data fusion in food classification addresses significant challenges in modern food authentication. As global trade expands, so does the scope for food fraud, creating an urgent need for analytical methods that can detect increasingly sophisticated adulteration practices [3]. Traditional targeted analysis approaches, which focus on specific known markers, struggle to identify novel adulterants as fraudsters continuously adapt their methods. Data fusion enables a comprehensive untargeted screening approach that can detect deviations from authentic profiles even before specific adulteration methods are defined.

For complex classification problems such as determining the geographical origin of honey, data fusion is particularly valuable. Samples within a single country can be highly diverse due to different varieties, regional variations, and changing weather conditions between harvest years [3]. By combining complementary analytical techniques—typically LC-HRMS for sensitive detection of numerous compounds and NMR for robust, reproducible fingerprinting—researchers can build more accurate and robust classification models that capture the multifaceted nature of food authenticity.

Table 1: Analytical Techniques in Food Classification Data Fusion

| Technique | Key Advantages | Role in Data Fusion | Typical Data Output |
|---|---|---|---|
| LC-HRMS | High sensitivity; detects numerous compounds | Provides detailed compositional data | Chromatographic peaks with mass/charge ratios and intensities |
| NMR | High robustness and reproducibility; quantitative | Creates stable spectral fingerprint | Spectral bins with intensity values |
| Sensory Analysis | Direct quality assessment | Adds consumer-relevant attributes | Numerical scores from trained panels |
| Stable Isotope Analysis | Geographic discrimination | Provides origin traceability | Isotopic ratio values |

Experimental Protocols for LC-HRMS and NMR Data Fusion

LC-HRMS Analysis Protocol

Sample Preparation:

- Honey Extraction: Dilute 1 g of honey with 10 mL of acetonitrile:water (50:50, v/v) mixture.

- Centrifugation: Centrifuge at 10,000 × g for 10 minutes to remove particulate matter.

- Filtration: Pass supernatant through a 0.22 μm nylon membrane filter prior to analysis.

Instrumental Parameters:

- Chromatography: Utilize two complementary LC approaches: Hydrophilic Interaction Liquid Chromatography (HILIC, Accucore-150-Amide-HILIC 150 × 2.1 mm) in negative ion mode for polar compounds, and Reverse Phase (RP, Hypersil Gold C18 150 × 2.1 mm) in positive ion mode for non-polar compounds.

- Mobile Phase: For both methods, use water and acetonitrile with acetic acid as modifier with gradient elution.

- Mass Spectrometry: Employ electrospray ionization (ESI) and acquire data in profile mode using variable Data-Independent Acquisition (vDIA) with a full scan range of m/z 100–1500 (MS1) and six fragmentation windows (MS2) [3].

Quality Control:

- Include procedural blanks to identify contamination.

- Use quality control samples (pooled from all samples) every 10 injections to monitor instrument stability.

- Add internal standards (e.g., sorbic acid in acetonitrile-water mixture) to support signal normalization.
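The injection scheme above (procedural blanks, pooled QC every 10 injections) can be generated with a small helper. The function below is hypothetical; randomizing the sample order and placing a blank at both ends of the run are assumptions rather than steps stated in the protocol.

```python
import random


def build_sequence(sample_ids, qc_every=10, seed=42):
    """Hypothetical helper: blanks + randomized samples with a pooled QC every `qc_every` injections."""
    order = list(sample_ids)
    random.Random(seed).shuffle(order)   # randomized run order (assumption)
    seq = ["BLANK", "QC"]                # procedural blank, then a QC before the first sample
    for i, sample in enumerate(order, start=1):
        seq.append(sample)
        if i % qc_every == 0:
            seq.append("QC")             # pooled QC after every `qc_every` sample injections
    if seq[-1] != "QC":
        seq.append("QC")                 # close the run with a QC
    seq.append("BLANK")
    return seq


samples = [f"honey_{i:02d}" for i in range(1, 26)]
sequence = build_sequence(samples)
print(sequence)
```

Bracketing every block of samples with QC injections lets drift be detected (and, if needed, corrected) between any two QCs rather than only at the end of the run.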

NMR Analysis Protocol

Sample Preparation:

- Buffer Preparation: Prepare 0.2 M sodium phosphate buffer in D₂O (pH 6.0) containing 0.025% trimethylsilylpropanoic acid (TSP) as chemical shift reference.

- Honey Solution: Mix 40 mg of honey with 600 μL of NMR buffer.

- Centrifugation: Centrifuge at 12,000 × g for 10 minutes to remove any insoluble material.

- Transfer: Pipette 550 μL of supernatant into 5 mm NMR tubes.

Data Acquisition:

- Instrument: 600 MHz NMR spectrometer with cryoprobe.

- Temperature: Maintain at 298 K.

- Standard Protocol: Utilize a one-dimensional NOESY-presat sequence for water suppression with 256 scans, a 4 s relaxation delay, and a 100 ms mixing time.

- Spectral Parameters: Acquire 64k data points with spectral width of 20 ppm.

Processing Parameters:

- Apply exponential line broadening of 0.3 Hz before Fourier transformation.

- Manually correct phase and baseline.

- Calibrate spectrum to TSP at 0.0 ppm.

- Perform binning (bucketing) into 0.04 ppm regions for multivariate analysis.
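Exponential multiplication and Fourier transformation can be illustrated on a synthetic free induction decay (FID) with NumPy. The sketch assumes a complex FID and omits phase and baseline correction; multiplying the FID by exp(-π·LB·t) broadens each Lorentzian line by LB Hz (full width at half maximum). The 600 MHz frequency, 20 ppm spectral width, 64k points, and 0.3 Hz line broadening follow the protocol; the 300 Hz test signal and its 0.5 s decay constant are made up.

```python
import numpy as np


def process_fid(fid, dwell_time_s, lb_hz=0.3):
    """Exponential multiplication (line broadening) followed by Fourier transformation."""
    t = np.arange(fid.size) * dwell_time_s
    apodized = fid * np.exp(-np.pi * lb_hz * t)          # adds lb_hz to each line's FWHM
    spectrum = np.fft.fftshift(np.fft.fft(apodized))
    freqs_hz = np.fft.fftshift(np.fft.fftfreq(fid.size, d=dwell_time_s))  # offset from carrier
    return freqs_hz, spectrum


spectrometer_mhz = 600.0
sweep_hz = 20.0 * spectrometer_mhz          # 20 ppm spectral width = 12,000 Hz
n_points = 65536                            # 64k complex points
dwell = 1.0 / sweep_hz
t = np.arange(n_points) * dwell
fid = np.exp(2j * np.pi * 300.0 * t) * np.exp(-t / 0.5)   # synthetic line 300 Hz off carrier

freqs, spec = process_fid(fid, dwell)
peak_hz = freqs[np.argmax(spec.real)]
peak_ppm_offset = peak_hz / spectrometer_mhz  # Hz / MHz = ppm relative to the carrier
```

Larger line-broadening values improve signal-to-noise at the cost of resolution; 0.3 Hz is a mild setting that mainly suppresses truncation artifacts and noise.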

Data Fusion and Analysis Protocol

The BOULS (Bucketing of Untargeted LCMS Spectra) approach provides a specialized workflow for fusing LC-HRMS data from different devices and timepoints, addressing the critical challenge of combining disparate datasets in routine analysis [3].

Data Preprocessing:

- LC-HRMS Processing: Convert raw files to mzML format using MSConvert with peak picking filter to centroid profile mode data. Import into R using Bioconductor package mzR.

- NMR Processing: Process NMR data with established protocols including phase correction, baseline correction, and reference alignment.

- Retention Time Alignment: For LC-HRMS data, align retention times using a centrally maintained reference spectrum to enable comparison across different analytical batches.

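- Three-Dimensional Bucketing: Divide the LC-HRMS data into buckets along the retention time and mass-to-charge ratio (m/z) dimensions and sum the feature intensities within each bucket, yielding a three-dimensional (retention time × m/z × intensity) representation. For NMR data, employ traditional spectral binning (typically 0.04 ppm regions).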
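The bucketing idea can be sketched as follows: centroided peaks are assigned to fixed-width retention time and m/z bins, intensities falling into the same bin are summed, and the per-sample results are aligned into one matrix. The bin widths, origins, and helper names are hypothetical placeholders rather than the parameters of the BOULS approach described in [3], and retention time alignment is assumed to have been applied already.

```python
import numpy as np


def bucket_lcms(rt_min, mz, intensity, rt_width=0.1, mz_width=0.005,
                rt_origin=0.0, mz_origin=100.0):
    """Sum centroided peak intensities into (RT, m/z) buckets of fixed width.

    Returns a sparse dict {(rt_bucket, mz_bucket): summed intensity}.
    """
    i = np.floor((np.asarray(rt_min) - rt_origin) / rt_width).astype(np.int64)
    j = np.floor((np.asarray(mz) - mz_origin) / mz_width).astype(np.int64)
    keys, inverse = np.unique(np.column_stack([i, j]), axis=0, return_inverse=True)
    sums = np.bincount(inverse.ravel(), weights=intensity, minlength=len(keys))
    return {(int(a), int(b)): float(s) for (a, b), s in zip(keys, sums)}


def to_matrix(bucketed_samples):
    """Align per-sample bucket dicts into one samples x buckets matrix (absent = 0)."""
    columns = sorted(set().union(*bucketed_samples))
    index = {key: c for c, key in enumerate(columns)}
    M = np.zeros((len(bucketed_samples), len(columns)))
    for r, sample in enumerate(bucketed_samples):
        for key, value in sample.items():
            M[r, index[key]] = value
    return columns, M


# Two toy samples (retention time in min, m/z, intensity).
s1 = bucket_lcms([5.02, 5.04, 12.34], [301.0712, 301.0714, 449.1078], [1e6, 2e5, 5e5])
s2 = bucket_lcms([5.03, 20.11], [301.0713, 609.1461], [8e5, 3e5])
columns, M = to_matrix([s1, s2])
```

A sparse representation is used because a dense grid over a full chromatogram and m/z 100–1500 at fine m/z resolution would contain mostly empty buckets.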

Feature Integration and Model Building:

- Data Fusion: Combine LC-HRMS buckets with NMR bins to create integrated feature matrix.

- Normalization: Apply probabilistic quotient normalization to account for overall concentration differences.

- Multivariate Analysis: Utilize Random Forest algorithm with 1000 trees for classification modeling, using out-of-bag error estimation for internal validation.

- Model Validation: Employ independent test set validation (typically 70:30 training:test split) and cross-validation to assess model performance.
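The normalization and modeling steps can be sketched with NumPy and scikit-learn, using the 1000 trees, out-of-bag estimate, and 70:30 split listed in the protocol. Two assumptions differ from the literal order of the list: probabilistic quotient normalization (integral normalization, then division by the median quotient against a median reference spectrum) is applied to each platform block separately before concatenation, and the reference spectrum is computed from training samples only. The synthetic intensities, class effects, and dilution factors are made up for illustration.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import train_test_split


def _integral_normalize(X):
    X = np.asarray(X, dtype=float)
    return X / X.sum(axis=1, keepdims=True)


def pqn_reference(X_train):
    """Median spectrum of integral-normalized training samples."""
    return np.median(_integral_normalize(X_train), axis=0)


def pqn(X, reference):
    """Probabilistic quotient normalization: divide each sample by its median quotient."""
    Xn = _integral_normalize(X)
    mask = reference > 0
    quotients = Xn[:, mask] / reference[mask]
    return Xn / np.median(quotients, axis=1, keepdims=True)


# Synthetic positive intensities with class effects and per-sample dilution.
rng = np.random.default_rng(2)
y = np.repeat([0, 1], 30)
dilution = rng.uniform(0.5, 2.0, size=(60, 1))
lcms = rng.lognormal(size=(60, 80))
lcms[y == 1, :10] *= 5.0
nmr = rng.lognormal(size=(60, 50))
nmr[y == 1, :8] *= 3.0
lcms, nmr = lcms * dilution, nmr * dilution

train, test = train_test_split(np.arange(60), test_size=0.3, stratify=y, random_state=0)

# Normalize each block against its own training reference, then concatenate (fusion).
blocks = []
for block in (lcms, nmr):
    ref = pqn_reference(block[train])
    blocks.append(pqn(block, ref))
fused = np.hstack(blocks)

rf = RandomForestClassifier(n_estimators=1000, oob_score=True, random_state=0)
rf.fit(fused[train], y[train])
test_acc = np.mean(rf.predict(fused[test]) == y[test])
print(f"OOB accuracy: {rf.oob_score_:.2f}, test accuracy: {test_acc:.2f}")
```

Normalizing per block keeps the very different intensity scales of LC-HRMS and NMR data from interfering with each other's median quotients.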

Data Fusion Workflow for Food Authentication

Mathematical Foundations of Data Fusion

Data fusion methodologies are underpinned by sophisticated mathematical frameworks that enable the integration of heterogeneous data sources. Two prominent approaches for fusing diverse datasets are Collective Matrix Factorization (CMF) and Coupled Matrix and Tensor Factorizations (CMTF) [12].

Collective Matrix Factorization (CMF)

CMF is a powerful data fusion technique based on joint matrix decomposition that simultaneously analyzes multiple datasets from diverse sources. The core concept involves factorizing multiple relation matrices that share one or more common modes, thereby revealing hidden or latent associations that might not be apparent when analyzing individual datasets separately [12].

Given two matrices \( X \in \mathbb{R}^{I \times J} \) and \( Y \in \mathbb{R}^{I \times K} \) that are coupled through a common mode, the CMF can be formally represented as:

\[ \min_{A,B,C} f(A,B,C) = \| X - AB^T \|_F^2 + \| Y - AC^T \|_F^2 \]

Where:

- \( A \in \mathbb{R}^{I \times R} \) is the shared factor matrix of both \( X \) and \( Y \)

- \( B \in \mathbb{R}^{J \times R} \) and \( C \in \mathbb{R}^{K \times R} \) are factor matrices specific to \( X \) and \( Y \), respectively

- \( \| \cdot \|_F \) denotes the Frobenius norm

- \( R \) represents the number of latent components

This formulation transfers information between the matrices through their common mode, and fusing multiple data sources in this way has been reported to achieve higher accuracy than using a single data source [12].
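One way to fit the CMF objective is alternating least squares (ALS): with \( B \) and \( C \) fixed the problem is linear in \( A \), and vice versa. The NumPy sketch below is an illustrative solver, not the algorithm used in [12]. Without weights or regularization the two terms share \( A \), so the objective is equivalent to factorizing the concatenated matrix \( [X \; Y] \approx A [B^T \; C^T] \); weighted or regularized CMF variants break this equivalence.

```python
import numpy as np


def cmf_als(X, Y, rank, n_iter=50, seed=0):
    """Alternating least squares for min ||X - A B^T||_F^2 + ||Y - A C^T||_F^2."""
    rng = np.random.default_rng(seed)
    B = rng.normal(size=(X.shape[1], rank))
    C = rng.normal(size=(Y.shape[1], rank))
    XY = np.hstack([X, Y])                    # both blocks share the row (sample) mode
    for _ in range(n_iter):
        # A-step: solve [B; C] A^T = [X Y]^T in the least-squares sense.
        A = np.linalg.lstsq(np.vstack([B, C]), XY.T, rcond=None)[0].T
        # B- and C-steps: solve A B^T = X and A C^T = Y with A fixed.
        B = np.linalg.lstsq(A, X, rcond=None)[0].T
        C = np.linalg.lstsq(A, Y, rcond=None)[0].T
    loss = np.linalg.norm(X - A @ B.T) ** 2 + np.linalg.norm(Y - A @ C.T) ** 2
    return A, B, C, loss


# Demo: two blocks generated from the same 3 latent sample profiles.
rng = np.random.default_rng(5)
A_true = rng.normal(size=(30, 3))
X = A_true @ rng.normal(size=(40, 3)).T     # e.g. 30 samples x 40 LC-HRMS features
Y = A_true @ rng.normal(size=(25, 3)).T     # e.g. 30 samples x 25 NMR bins
A, B, C, loss = cmf_als(X, Y, rank=3)
rel_error = loss / (np.linalg.norm(X) ** 2 + np.linalg.norm(Y) ** 2)
print(f"relative residual: {rel_error:.2e}")
```

The rows of \( A \) act as fused sample scores that can be passed to a classifier, while \( B \) and \( C \) show how each latent component loads on the LC-HRMS and NMR variables.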

Multi-Kernel Learning for Heterogeneous Data Fusion

For heterogeneous data types that cannot be directly combined, multi-kernel learning schemes provide an effective fusion approach by transforming disparate data into a homogeneous kernel space where similarities can be meaningfully compared and combined [13].

The kernel transformation of information from each modality \( \phi_m \) results in a corresponding kernel Gram matrix \( K_m \). These may then be combined in a weighted manner as:

\[ \tilde{K}(i,j) = \sum_{m=1}^{M} \gamma_m K_m(i,j) \]

Where \( \gamma_m \) represents the weight assigned to modality \( m \), which can be optimized based on its relative importance or discriminative power; keeping every \( \gamma_m \geq 0 \) ensures that \( \tilde{K} \) remains a valid (positive semidefinite) kernel. This kernel combination approach, particularly the Semi-Supervised Multi-Kernel (SeSMiK) method, has demonstrated superior performance in integrating imaging and non-imaging data for biomedical applications, and may also prove useful for food authentication challenges [13].
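A fixed-weight version of this kernel combination can be sketched with scikit-learn: one radial basis function (RBF) Gram matrix per modality, summed with non-negative weights \( \gamma_m \), then passed to a support vector machine through `kernel="precomputed"`. The RBF kernel, its width of 1/n_features, the equal weights, and the synthetic data are assumptions; SeSMiK learns its combination in a semi-supervised way, which is not reproduced here.

```python
import numpy as np
from sklearn.metrics.pairwise import rbf_kernel
from sklearn.model_selection import train_test_split
from sklearn.svm import SVC


def combined_kernel(blocks_a, blocks_b, weights):
    """Weighted sum of per-modality RBF Gram matrices, K~ = sum_m gamma_m K_m.

    `weights` plays the role of gamma_m and must be non-negative. The RBF
    width (sklearn's own `gamma` argument) is set to 1 / n_features per block.
    """
    K = np.zeros((blocks_a[0].shape[0], blocks_b[0].shape[0]))
    for Xa, Xb, w in zip(blocks_a, blocks_b, weights):
        K += w * rbf_kernel(Xa, Xb, gamma=1.0 / Xa.shape[1])
    return K


rng = np.random.default_rng(3)
y = np.repeat([0, 1], 40)
spectral = rng.normal(size=(80, 30))          # stand-in for NMR bins
chromatographic = rng.normal(size=(80, 20))   # stand-in for LC-HRMS features
spectral[y == 1, :5] += 3.0
chromatographic[y == 1, :5] += 3.0

tr, te = train_test_split(np.arange(80), test_size=0.25, stratify=y, random_state=0)
weights = [0.5, 0.5]                          # fixed here; SeSMiK would learn these
train_blocks = [spectral[tr], chromatographic[tr]]
test_blocks = [spectral[te], chromatographic[te]]
K_train = combined_kernel(train_blocks, train_blocks, weights)
K_test = combined_kernel(test_blocks, train_blocks, weights)   # rows: test, cols: train

svm = SVC(kernel="precomputed").fit(K_train, y[tr])
accuracy = np.mean(svm.predict(K_test) == y[te])
print(f"test accuracy: {accuracy:.2f}")
```

Because each modality is compared only within its own kernel, blocks with very different numbers of variables or units can be combined without concatenating raw features.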

Table 2: Data Fusion Methodologies and Applications

| Fusion Method | Mathematical Basis | Advantages | Application in Food Analysis |
|---|---|---|---|
| Collective Matrix Factorization (CMF) | Joint matrix decomposition of coupled matrices | Information transfer through common modes; reveals latent associations | Integrating LC-HRMS data with sensory evaluation scores |
| Coupled Matrix Tensor Factorization (CMTF) | Joint analysis of matrices and tensors | Handles heterogeneous data orders; natural extension of CMF | Fusing multi-dimensional NMR data with compositional tables |
| Multi-Kernel Learning | Kernel space projections and combinations | Handles diverse data representations; optimal weighting | Combining spectral data with chromatographic fingerprints |
| Consensus Embedding | Ensemble of embeddings from feature subsets | Robust to noise and parameter selection | Geographic origin classification using multiple analytical techniques |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful implementation of data fusion strategies for food classification requires carefully selected reagents, materials, and computational tools. The following table details essential components for LC-HRMS and NMR data fusion experiments.

Table 3: Research Reagent Solutions for LC-HRMS NMR Data Fusion

| Item | Specification/Type | Function in Protocol |
|---|---|---|
| Chromatography Columns | HILIC (Accucore-150-Amide-HILIC) and RP (Hypersil Gold C18) | Separation of polar and non-polar compounds respectively |
| LC-MS Grade Solvents | Acetonitrile, Water, Methanol; acid modifier (e.g., formic or acetic acid) | Mobile phase preparation; sample extraction |
| Internal Standards | Sorbic Acid, TSP (Trimethylsilylpropanoic acid) | Retention time alignment (LC-MS); chemical shift reference (NMR) |
| NMR Buffer | 0.2 M Sodium Phosphate Buffer in D₂O, pH 6.0 | Provides consistent pH and deuterium lock for NMR |
| Quality Control Materials | Certified Reference Materials (CRMs), Pooled Quality Control Samples | Monitoring instrument performance; batch-to-batch normalization |
| Data Processing Software | R packages (xcms, mzR), Python (scikit-learn), MATLAB | Data preprocessing, feature extraction, and model building |

Data Fusion Method Classification

Data fusion represents a paradigm shift in analytical chemistry for food classification, moving beyond single-technique approaches to integrated methodologies that leverage the complementary strengths of multiple analytical platforms. The fusion of LC-HRMS and NMR data, supported by robust mathematical frameworks such as collective matrix factorization and multi-kernel learning, creates synergistic effects that enhance classification accuracy, enable detection of novel adulteration patterns, and provide comprehensive product authentication.

For researchers and drug development professionals, implementing the protocols and methodologies outlined in this article requires careful attention to experimental design, data preprocessing consistency, and appropriate selection of fusion algorithms based on the specific characteristics of the data being integrated. As the field advances, the development of standardized data fusion workflows and validation frameworks will be crucial for widespread adoption in regulatory and quality control environments, ultimately strengthening global food supply chains and protecting consumer interests through more sophisticated authentication capabilities.

Untargeted metabolomics has emerged as a powerful analytical strategy for comprehensive food fingerprinting, enabling the simultaneous analysis of a wide range of small-molecule metabolites to verify authenticity, ensure quality, and detect adulteration [14]. This approach provides a snapshot of the metabolic activity in food products, reflecting factors such as geographical origin, raw material composition, and processing techniques [14] [15]. In food authentication, untargeted metabolomics supports consumer protection by underpinning the inspection and enforcement of food labeling and by detecting deliberate adulteration that compromises food quality and safety [14].

The core principle of untargeted metabolomics lies in its ability to perform global analysis of all detectable analytes in a sample without prior knowledge of which metabolites will be detected [14]. This breadth makes it particularly valuable for uncovering emerging fraudulent practices in the food industry, as it can reveal unexpected compositional differences without targeting specific compounds [14]. When integrated with chemometric techniques, untargeted metabolomics can identify subtle patterns in complex data that serve as characteristic fingerprints for authentic products [3] [15].

Core Analytical Techniques and Instrumentation

Fundamental Analytical Platforms

The application of untargeted metabolomics in food fingerprinting primarily relies on two analytical platforms: mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy. Each platform offers distinct advantages that contribute complementary information to food authentication studies [16].

Liquid Chromatography-Mass Spectrometry (LC-MS), particularly high-resolution mass spectrometry (HRMS), provides exceptional sensitivity and a wide dynamic range for detecting metabolites at various concentration levels [3] [16]. The coupling with chromatography (liquid or gas) enables the separation of complex matrices, facilitating the detection and quantification of trace metabolites in food samples [16]. Common configurations include reverse-phase (RP) chromatography for non-polar compounds and hydrophilic interaction liquid chromatography (HILIC) for polar compounds, often analyzed in positive and negative ion modes respectively to maximize metabolite coverage [3].

NMR spectroscopy, while less sensitive than MS, offers significant advantages as a non-destructive technique that provides valuable structural elucidation and enables precise metabolite quantification without extensive sample preparation [16]. Proton NMR (1H-NMR) is particularly valuable for profiling major metabolites in food samples and generates highly reproducible data that can be compared across different instruments and laboratories over time [3].

Data Fusion Strategies for Enhanced Classification

The integration of data from multiple analytical platforms through data fusion (DF) strategies significantly enhances the classification power of untargeted metabolomics for food fingerprinting [1] [16]. Data fusion methodologies combine the complementary strengths of different techniques, such as LC-HRMS and 1H-NMR, to provide a more comprehensive view of the food metabolome than any single technique can achieve alone [16].

Table 1: Data Fusion Strategies in Untargeted Metabolomics

| Fusion Level | Description | Methodologies | Advantages | Limitations |
|---|---|---|---|---|
| Low-Level | Direct concatenation of raw or pre-processed data matrices | PCA, PLS | Preserves all original information | High dimensionality; Requires careful data scaling |
| Mid-Level | Concatenation of features extracted from individual datasets | PCA, PARAFAC, MCR-ALS | Reduces dimensionality; Highlights relevant features | Potential loss of information during feature extraction |
| High-Level | Combination of model outputs or decisions | Bayesian consensus, majority voting | Flexible; Can integrate heterogeneous models | Complex interpretation; May not exploit variable interactions |
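The low- and high-level strategies in the table can be contrasted in a short sketch. For low-level fusion, each block is autoscaled and then divided by the square root of its number of variables (block scaling), so a 150-variable LC-HRMS block and a 60-variable NMR block each contribute equal total variance. For high-level fusion, one classifier is trained per block and their class probabilities are averaged (soft voting); with only two blocks, plain majority voting can tie, so averaging probabilities is used here instead. The logistic regression classifiers, block sizes, and synthetic data are assumptions.

```python
import numpy as np
from sklearn.linear_model import LogisticRegression
from sklearn.preprocessing import StandardScaler


def low_level_fusion(blocks):
    """Block scaling: autoscale each block, divide by sqrt(n_variables), concatenate."""
    return np.hstack([StandardScaler().fit_transform(B) / np.sqrt(B.shape[1]) for B in blocks])


def high_level_fusion(models, blocks):
    """Soft voting: average per-block class probabilities, return the most probable class."""
    proba = np.mean([m.predict_proba(B) for m, B in zip(models, blocks)], axis=0)
    return models[0].classes_[np.argmax(proba, axis=1)]


rng = np.random.default_rng(4)
y = np.repeat([0, 1], 40)
lcms = rng.normal(size=(80, 150))
nmr = rng.normal(size=(80, 60))
lcms[y == 1, :10] += 2.0
nmr[y == 1, :5] += 2.0

# Low level: one fused matrix, to be modeled by a single classifier.
# (In a real study, fit the scalers on training samples only.)
fused = low_level_fusion([lcms, nmr])

# High level: one model per block on a 75:25 interleaved split, then soft voting.
train = np.arange(80) % 4 != 0
models = [LogisticRegression(max_iter=1000).fit(B[train], y[train]) for B in (lcms, nmr)]
y_hat = high_level_fusion(models, [lcms[~train], nmr[~train]])
accuracy = np.mean(y_hat == y[~train])
print(f"high-level fusion test accuracy: {accuracy:.2f}")
```

Without block scaling, the block with more variables would dominate any variance-based method applied to the concatenated matrix, which is the "careful data scaling" limitation noted in the table.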

Research indicates that data fusion approaches can substantially improve predictive accuracy in food classification. In a study classifying Amarone wines, fusing LC-HRMS and 1H NMR data yielded a lower classification error rate (7.52%) than either technique alone and revealed notable variations in amino acids, monosaccharides, and polyphenolic compounds during the withering process [1]. The limited correlation between the two datasets (RV-score = 16.4%) highlighted their complementarity, confirming the value of multi-platform approaches [1].
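The RV coefficient quoted above measures the similarity of two data blocks recorded on the same samples through their sample-by-sample cross-product matrices: RV(X, Y) = tr(XXᵀYYᵀ) / sqrt(tr((XXᵀ)²) · tr((YYᵀ)²)) for column-centered X and Y, so an RV-score of 16.4% corresponds to a value of 0.164. The NumPy sketch below is illustrative, with synthetic blocks; note that when variables far outnumber samples, the plain RV coefficient is biased upward even for unrelated blocks, which is why adjusted variants exist.

```python
import numpy as np


def rv_coefficient(X, Y):
    """RV coefficient of two blocks sharing the same samples (rows)."""
    Xc = X - X.mean(axis=0)
    Yc = Y - Y.mean(axis=0)
    Sx, Sy = Xc @ Xc.T, Yc @ Yc.T            # sample x sample cross-product matrices
    # tr(Sx Sy) equals the elementwise sum of Sx * Sy because both are symmetric.
    return np.sum(Sx * Sy) / np.sqrt(np.sum(Sx * Sx) * np.sum(Sy * Sy))


rng = np.random.default_rng(6)
X = rng.normal(size=(30, 200))   # e.g. LC-HRMS block
Z = rng.normal(size=(30, 120))   # unrelated block measured on the same 30 samples
print(f"RV(X, X) = {rv_coefficient(X, X):.3f}")
print(f"RV(X, Z) = {rv_coefficient(X, Z):.3f}")
```

Because RV is invariant to shifting and to a global rescaling of either block, it compares the sample configurations seen by the two platforms rather than their raw intensity scales.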

Experimental Protocols for Food Fingerprinting

Sample Preparation and Analysis

Proper sample preparation is critical for obtaining reliable metabolomic data. While specific protocols vary depending on the food matrix and analytical platform, the following general procedures apply to most food authentication studies:

Sample Extraction and Metabolite Isolation:

- Homogenization of food samples to ensure representative sampling

- Metabolite extraction using appropriate solvent systems (e.g., methanol-water or chloroform-methanol mixtures) to cover diverse metabolite classes

- Centrifugation and filtration to remove particulate matter and macromolecules

- Concentration or dilution to appropriate levels for analytical detection

LC-HRMS Analysis:

- Chromatographic separation: Use both reversed-phase (RP) and hydrophilic interaction liquid chromatography (HILIC) methods to cover non-polar and polar metabolites, respectively [3]

- Mass spectrometric detection: Employ high-resolution instruments such as Orbitrap or Q-TOF mass analyzers for accurate mass measurements [3] [15]

- Data acquisition: Use both full-scan MS and data-dependent MS/MS acquisition to enable compound identification [3]

- Quality control: Inject a pooled quality control (QC) sample, prepared by combining aliquots of all study samples, at regular intervals throughout the sequence to monitor instrument stability
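Pooled QC injections are commonly used to filter out unreliable features before modelling. A minimal sketch, assuming a samples × features intensity matrix, a boolean mask marking the QC injections, and a 30% relative standard deviation (RSD) cut-off; the threshold is a common convention rather than a value taken from the cited studies, and the data are hypothetical.

```python
import numpy as np


def qc_rsd_filter(intensities: np.ndarray, is_qc: np.ndarray, max_rsd: float = 30.0) -> np.ndarray:
    """Boolean mask of features whose RSD (%) across pooled QC injections is <= max_rsd."""
    qc = intensities[np.asarray(is_qc, dtype=bool)]
    mean = qc.mean(axis=0)
    sd = qc.std(axis=0, ddof=1)
    # Features absent from every QC (mean 0) get an infinite RSD and are therefore removed.
    with np.errstate(divide="ignore", invalid="ignore"):
        rsd = np.where(mean > 0, 100.0 * sd / mean, np.inf)
    return rsd <= max_rsd


# Hypothetical run of 10 injections x 3 features; injections 0, 3, 6 and 9 are pooled QCs.
is_qc = np.arange(10) % 3 == 0
data = np.ones((10, 3)) * np.array([1.0e6, 2.0e5, 0.0])
data[is_qc, 0] = [1.00e6, 1.02e6, 0.98e6, 1.01e6]  # stable feature (~1.7% RSD)
data[is_qc, 1] = [2.0e5, 0.5e5, 3.5e5, 1.0e5]      # unstable feature (~76% RSD)
keep = qc_rsd_filter(data, is_qc)
print(keep)  # True marks features that pass the QC filter
```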

1H-NMR Analysis:

- Sample preparation: Mix food extracts with deuterated solvent (e.g., D₂O or CD₃OD) containing a reference standard (e.g., TSP or DSS) for chemical shift calibration [16]

- Data acquisition: Acquire spectra with sufficient scans to achieve adequate signal-to-noise ratio, using standard pulse sequences (e.g., NOESY-presat for water suppression) [16]

- Temperature control: Maintain constant temperature during analysis to ensure chemical shift reproducibility [16]

Data Processing Workflows

Raw data from analytical instruments require extensive processing to extract meaningful biological information. The workflow typically involves multiple steps:

Table 2: Key Data Preprocessing Steps in Untargeted Metabolomics

| Processing Step | Description | Common Tools/Approaches |
|---|---|---|
| Feature Detection | Identification of chromatographic peaks and spectral features | XCMS, MS-DIAL, Progenesis QI |
| Retention Time Alignment | Correction of retention time shifts between samples | XCMS, MZmine |
| Missing Value Imputation | Handling of missing data points | K-nearest neighbors, minimum value replacement |
| Data Pretreatment | Scaling and transformation to enhance biological information | Pareto scaling, autoscaling, log transformation [17] |
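The last two rows of the table can be illustrated with a minimal NumPy sketch, assuming zeros or NaN mark missing LC-HRMS values and that each feature has at least one positive value. Missing entries are replaced by half of the feature's minimum (one common variant of minimum value replacement), then the data are log10-transformed and Pareto scaled, i.e. mean-centred and divided by the square root of each feature's standard deviation. The data are hypothetical.

```python
import numpy as np


def half_min_impute(x) -> np.ndarray:
    """Replace zero/NaN entries with half of the smallest positive value of the same feature."""
    x = np.array(x, dtype=float)           # work on a copy
    x[~(x > 0)] = np.nan                   # treat zeros, negatives and NaN as missing
    half_min = np.nanmin(x, axis=0) / 2.0  # assumes every feature has >= 1 positive value
    rows, cols = np.where(np.isnan(x))
    x[rows, cols] = half_min[cols]
    return x


def log_pareto(x: np.ndarray) -> np.ndarray:
    """log10-transform, mean-centre each feature, then divide by the square root of its SD."""
    logx = np.log10(x)
    centred = logx - logx.mean(axis=0)
    return centred / np.sqrt(logx.std(axis=0, ddof=1))


raw = np.array([
    [1200.0, 0.0, 5.0e4],
    [1500.0, 300.0, 4.0e4],
    [np.nan, 450.0, 6.0e4],
    [1100.0, 500.0, 5.5e4],
])  # hypothetical 4 samples x 3 LC-HRMS features
imputed = half_min_impute(raw)
scaled = log_pareto(imputed)
print(imputed)
print(scaled.round(3))
```

Pareto scaling sits between no scaling and autoscaling: large peaks still weigh more than small ones, but far less than in the raw data.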

For LC-HRMS data, the BOULS (Bucketing of Untargeted LCMS Spectra) approach enables analysis of data obtained from different devices and times without reprocessing entire datasets [3]. This method uses a central spectrum for retention time alignment and implements three-dimensional bucketing (retention time, m/z, and intensity), allowing newly acquired spectra to be classified and added to training datasets efficiently [3].
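To illustrate only the idea of fixed-grid bucketing, the sketch below sums feature intensities into fixed-width retention time × m/z buckets, with intensity as the bucket value, and projects a newly acquired sample onto an existing bucket list without reprocessing the training set. It is not the BOULS implementation: it omits the central-spectrum retention time alignment, and the bucket widths (0.2 min, 0.005 m/z), function names, and features are hypothetical.

```python
from collections import defaultdict

import numpy as np


def bucket_sample(rt_min, mz, intensity, rt_width=0.2, mz_width=0.005):
    """Sum feature intensities into fixed-width (RT, m/z) buckets: {(rt_bin, mz_bin): intensity}."""
    rt_bin = np.floor(np.asarray(rt_min) / rt_width).astype(int)
    mz_bin = np.floor(np.asarray(mz) / mz_width).astype(int)
    buckets = defaultdict(float)
    for r, m, value in zip(rt_bin, mz_bin, intensity):
        buckets[(int(r), int(m))] += float(value)
    return dict(buckets)


def build_matrix(samples):
    """Samples x buckets matrix over the union of all bucket keys seen in the training samples."""
    keys = sorted(set().union(*samples))
    column = {key: j for j, key in enumerate(keys)}
    matrix = np.zeros((len(samples), len(keys)))
    for i, sample in enumerate(samples):
        for key, value in sample.items():
            matrix[i, column[key]] = value
    return matrix, keys


def project(sample, keys):
    """Express a newly acquired sample on an existing bucket list; unseen buckets are dropped."""
    return np.array([sample.get(key, 0.0) for key in keys])


# Hypothetical features: (retention time in min, m/z, intensity).
s1 = bucket_sample([5.01, 5.05, 12.30], [301.0712, 301.0714, 455.2901], [1e5, 2e4, 3e4])
s2 = bucket_sample([5.08, 12.33], [301.0713, 455.2903], [9e4, 4e4])
X_train, keys = build_matrix([s1, s2])
new_row = project(bucket_sample([5.02, 20.0], [301.0711, 600.0], [8e4, 1e4]), keys)
print(X_train)
print(new_row)
```

Fixed bucket boundaries can split a peak that sits near an edge, so retention time alignment before bucketing matters in practice.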

Statistical Analysis and Model Building

Chemometric analysis is essential for interpreting complex metabolomic data and building classification models:

- Unsupervised methods such as Principal Component Analysis (PCA) explore natural clustering patterns without prior knowledge of sample classes [15]

- Supervised methods including Partial Least Squares-Discriminant Analysis (PLS-DA) and Random Forest (RF) build predictive models using known class information to maximize separation between groups [3] [15]

Random Forest is particularly effective for food authentication, as it handles high-dimensional data well and provides variable importance measures for identifying discriminatory metabolites [3]. In honey authentication studies, RF models based on LC-HRMS data achieved 94% classification accuracy for 126 test samples from six different countries [3].

Experimental Workflow and Data Fusion Strategy

The following diagrams illustrate the core workflows and relationships in untargeted metabolomics for food fingerprinting.

Untargeted Metabolomics Workflow for Food Fingerprinting

Data Fusion Strategies for Enhanced Classification

Essential Research Reagents and Materials

Successful implementation of untargeted metabolomics for food fingerprinting requires specific reagents and analytical materials. The following table outlines key solutions and their functions:

Table 3: Essential Research Reagent Solutions for Untargeted Metabolomics

| Reagent/Material | Function | Application Notes |
|---|---|---|
| LC-MS Grade Solvents (acetonitrile, methanol, water) | Mobile phase preparation; Sample extraction | High purity minimizes background interference and ion suppression |
| Deuterated NMR Solvents (D₂O, CD₃OD) | NMR sample preparation; Field-frequency locking | Provides deuterium signal for instrument locking; minimizes solvent background |
| Internal Standards (stable isotope-labeled compounds) | Quality control; Quantification | Corrects for instrument variation; enables semi-quantitative analysis |
| Chemical Shift References (TSP, DSS) | NMR chemical shift calibration | Provides reference point (0 ppm) for spectral alignment |
| Ionization Additives (formic acid, ammonium acetate) | LC-MS mobile phase modifiers | Enhances ionization efficiency in positive/negative MS modes |
| Metabolite Extraction Solvents (chloroform, methanol, water) | Comprehensive metabolite extraction | Biphasic system extracts both polar and non-polar metabolites |

Applications in Food Authentication

Untargeted metabolomics has demonstrated significant utility across various food authentication applications:

Geographical Origin Verification

The geographical authentication of traditional foods represents a major application of untargeted metabolomics. Studies on products such as Pempek (traditional Indonesian fish cake) have successfully identified region-specific metabolite markers using HRMS-based approaches [15]. Similarly, research on honey authentication achieved high classification accuracy (94%) for geographical origin using LC-HRMS profiling combined with machine learning [3]. These approaches detect subtle variations in metabolic profiles resulting from differences in raw materials, soil composition, climate, and traditional production methods unique to each region [15].

Adulteration Detection

Untargeted metabolomics effectively identifies food adulteration through unexpected metabolic patterns. Common issues detected include:

- Undeclared addition of water, sugar, acid, pulp wash, or peel extracts to fruit juices [14]

- Oil blending without declaration, particularly addition of lower-quality oils to extra virgin olive oil [14]

- Species substitution in meat and fish products [14]

- Mislabelling of conventional products as organic [14]

The non-targeted nature of this approach is particularly valuable for detecting novel adulterants that may not be identified through targeted methods [3].

Processing Method Verification

Metabolomic fingerprints can distinguish processing techniques such as:

- Withering time in Amarone wine production [1]

- Yeast strain differentiation in fermentation processes [1]

- Processing treatments such as irradiation, freezing, and microwave heating [14]

- Cheese production methods (raw vs. heat-treated milk) [14]

Untargeted metabolomics represents a powerful framework for comprehensive food fingerprinting, offering a broad capability to verify authenticity, detect adulteration, and monitor food quality. The integration of multiple analytical platforms through data fusion strategies significantly enhances classification power beyond the capabilities of individual techniques [1] [16]. As food fraud methods evolve, the untargeted nature of this approach provides a critical advantage in identifying emerging fraudulent practices without prior knowledge of specific adulterants [3].

The successful implementation of untargeted metabolomics requires careful attention to experimental design, sample preparation, data processing, and statistical modeling to generate robust classification models. With proper validation, these approaches can achieve high classification accuracy exceeding 90% for complex authentication challenges such as geographical origin determination [3]. As databases of authentic food fingerprints expand and analytical technologies advance, untargeted metabolomics is poised to play an increasingly vital role in global food authentication systems.

Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy are two cornerstone analytical techniques in modern foodomics. Their integration through data fusion strategies provides a powerful framework for addressing complex challenges in food authenticity, origin traceability, and quality control [1] [18] [19]. LC-HRMS offers exceptional sensitivity, enabling the detection and identification of numerous metabolites at low concentration levels, while NMR provides highly reproducible, quantitative data and unparalleled structural elucidation capabilities, distinguishing between isomers that are often indistinguishable by MS alone [18] [3]. The synergy created by fusing datasets from these platforms delivers a more comprehensive metabolic fingerprint of a food product than any single technique can achieve, significantly enhancing the accuracy of classification models [1] [20] [19].

Table 1: Comparison of LC-HRMS and NMR Spectroscopy in Food Metabolomics

| Feature | LC-HRMS | ¹H NMR |
|---|---|---|
| Sensitivity | High (femtomole level) [18] | Low (microgram level) [18] |
| Selectivity | High | Moderate |
| Structural Information | Molecular formula, fragmentation patterns [18] | Direct information on functional groups and connectivity [18] |
| Quantitation | Semi-quantitative, suffers from matrix effects [18] | Inherently quantitative [18] |
| Sample Throughput | Moderate | High |
| Robustness & Reproducibility | Requires careful standardization [3] | Highly robust and reproducible [3] |
| Key Metabolites | Polyphenols, lipids, semi-polar compounds [1] | Amino acids, sugars, organic acids, polar metabolites [1] |

Application Note 1: Classification of Amarone Wine

Experimental Protocol

Objective: To classify Amarone wine samples based on grape withering time and yeast strain using a multi-omics data fusion approach [1].

Sample Preparation:

- Wine Samples: Analyze 80 Amarone wine samples representing different withering times and yeast strains [1].

- LC-HRMS Analysis:

  - Instrumentation: Use a UHPLC system coupled to a Q-Exactive Orbitrap mass spectrometer or equivalent.

  - Chromatography: Employ a reversed-phase C18 column (e.g., 2.1 × 100 mm, 1.7 µm). The mobile phase consists of (A) water and (B) acetonitrile, both with 0.1% formic acid.

  - Gradient: Use a linear gradient from 5% to 95% B over 25 minutes.

  - MS Parameters: Acquire data in both positive and negative ionization modes with a mass resolution of >70,000 full width at half maximum (FWHM) [3].

- NMR Analysis:

  - Sample Preparation: Mix 300 µL of wine with 300 µL of phosphate buffer (pH 7.4) in D₂O containing 0.1% TSP (sodium 3-(trimethylsilyl)propionate) as an internal chemical shift reference [19].

  - Instrumentation: Conduct 1D ¹H NMR experiments on a 600 MHz spectrometer equipped with a cryoprobe.

  - Acquisition: Collect spectra at 25°C with a sufficient number of scans to achieve a high signal-to-noise ratio [1].

Data Processing and Fusion:

- LC-HRMS Data: Pre-process raw data using software like XCMS for peak picking, alignment, and integration. Perform compound annotation using accurate mass and MS/MS spectra against databases [3].

- NMR Data: Process free induction decays (FIDs) by applying Fourier transformation, phase correction, and baseline correction. Align spectra to TSP (δ 0.0 ppm) and bin the data (e.g., δ 0.04 ppm buckets) to reduce dimensionality [19].

- Mid-Level Data Fusion: Export the normalized and scaled peak tables from LC-HRMS and NMR as separate data blocks. Fuse the datasets by concatenating the significant features (Variable Importance in Projection, VIP > 1.0) identified from a preliminary multivariate analysis of each block [1] [21].
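The VIP > 1.0 selection step can be sketched as follows, assuming two classes coded 0/1 so that a single-response PLS (PLS1, fitted here with the NIPALS algorithm) suffices; the study itself used (s)PLS-DA models and its exact preprocessing may differ. VIP for variable j is computed as sqrt(p × Σₐ SSₐ wⱼₐ² / Σₐ SSₐ), where p is the number of variables, SSₐ is the y sum of squares explained by component a, and wₐ is the unit-length weight vector; as a result the squared VIP scores of a block always sum to p. The data and function names are hypothetical.

```python
import numpy as np


def pls1_vip(x: np.ndarray, y: np.ndarray, n_components: int = 2) -> np.ndarray:
    """VIP scores from a PLS1 model fitted by NIPALS on autoscaled x and mean-centred y."""
    x = (x - x.mean(axis=0)) / x.std(axis=0, ddof=1)
    y = np.asarray(y, dtype=float) - np.mean(y)
    n_vars = x.shape[1]
    weights, ss = [], []
    for _ in range(n_components):
        w = x.T @ y
        w /= np.linalg.norm(w)        # unit-length weight vector
        t = x @ w                     # component scores
        tt = t @ t
        p = x.T @ t / tt              # x-loadings
        q = (y @ t) / tt              # y-loading
        x = x - np.outer(t, p)        # deflate x
        y = y - q * t                 # deflate y
        weights.append(w)
        ss.append(q ** 2 * tt)        # y sum of squares explained by this component
    w_mat = np.column_stack(weights)  # (n_vars, n_components)
    ss = np.asarray(ss)
    return np.sqrt(n_vars * (w_mat ** 2 @ ss) / ss.sum())


def select_by_vip(block, y, n_components=2, threshold=1.0):
    """Keep the columns of one data block whose VIP exceeds the threshold."""
    vip = pls1_vip(block, y, n_components)
    return block[:, vip > threshold], vip


rng = np.random.default_rng(42)
y = np.repeat([0, 1], 20)             # two hypothetical classes
lc = rng.normal(size=(40, 60))
lc[:, :5] += 2.0 * y[:, None]         # 5 informative LC-HRMS features
nmr = rng.normal(size=(40, 40))
nmr[:, :3] += 2.0 * y[:, None]        # 3 informative NMR buckets
lc_sel, vip_lc = select_by_vip(lc, y)
nmr_sel, vip_nmr = select_by_vip(nmr, y)
fused = np.hstack([lc_sel, nmr_sel])  # mid-level fused data block
print(lc_sel.shape, nmr_sel.shape, fused.shape)
```

In a real study the VIP selection should be repeated inside each cross-validation fold; selecting features on the full dataset and then cross-validating the fused model gives optimistically biased error rates.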

Multivariate Data Analysis:

- Unsupervised Analysis: Perform Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS) on the fused data matrix to explore natural clustering without a priori sample knowledge [1].

- Supervised Analysis: Develop a classification model using Sparse Partial Least Squares-Discriminant Analysis (sPLS-DA) on the fused dataset to discriminate samples based on withering time and yeast strain. Validate the model using cross-validation and permutation tests [1].
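The logic of a permutation test can be sketched without committing to a particular classifier. The example below is a stand-in, not the sPLS-DA model of the study: it uses a nearest-centroid classifier with leave-one-out cross-validation, recomputes the cross-validated accuracy after randomly permuting the class labels, and reports the fraction of permutations that do at least as well as the real labels. The synthetic data, class names, and function names are hypothetical.

```python
import numpy as np


def loo_nearest_centroid_accuracy(x: np.ndarray, y: np.ndarray) -> float:
    """Leave-one-out accuracy of a Euclidean nearest-centroid classifier."""
    classes = np.unique(y)
    correct = 0
    for i in range(len(y)):
        train = np.arange(len(y)) != i
        centroids = np.array([x[train & (y == c)].mean(axis=0) for c in classes])
        predicted = classes[np.argmin(np.linalg.norm(centroids - x[i], axis=1))]
        correct += int(predicted == y[i])
    return correct / len(y)


def permutation_test(x, y, n_perm=199, seed=0):
    """Compare the real cross-validated accuracy with accuracies obtained on permuted labels."""
    rng = np.random.default_rng(seed)
    observed = loo_nearest_centroid_accuracy(x, y)
    null = np.array([loo_nearest_centroid_accuracy(x, rng.permutation(y)) for _ in range(n_perm)])
    # Add one to numerator and denominator so the p-value can never be exactly zero.
    p_value = (np.sum(null >= observed) + 1) / (n_perm + 1)
    return observed, null, p_value


rng = np.random.default_rng(7)
y = np.repeat(["short_withering", "long_withering"], 15)  # hypothetical classes
x = rng.normal(size=(30, 20))                             # hypothetical fused features
x[y == "long_withering", :4] += 2.0
accuracy, null, p = permutation_test(x, y)
print(f"LOO accuracy = {accuracy:.2f}, permutation p = {p:.3f}")
```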

Key Findings and Significance

The data fusion approach successfully classified Amarone wines with a lower classification error rate (7.52%) compared to models built with individual techniques [1]. The multi-omics pseudo-eigenvalue space revealed a limited correlation between the LC-HRMS and NMR datasets (RV-score = 16.4%), underscoring their complementarity [1]. Significant variations in amino acids, monosaccharides, and polyphenolic compounds were identified as key discriminators for the withering time, providing a broader characterization of the wine metabolome [1].

Figure 1: Experimental workflow for the multi-omics analysis of Amarone wine, from sample preparation to data analysis [1] [19].

Application Note 2: Geographical Origin Authentication of Salmon

Experimental Protocol

Objective: To determine the geographical origin and production method (wild vs. farmed) of salmon using a mid-level data fusion strategy [20].

Sample Preparation:

- Salmon Samples: Collect muscle tissue from 522 salmon samples of known provenance (e.g., Alaska, Norway, Iceland, Scotland) and production method [20].

- REIMS (Rapid Evaporative Ionization Mass Spectrometry) Analysis (Lipidomics):

  - Instrumentation: Use a REIMS source coupled to a high-resolution mass spectrometer.

  - Procedure: Apply a bipolar radiofrequency current to the salmon tissue, typically with an electrosurgical knife, to generate an aerosol containing charged ions for rapid analysis.

  - MS Parameters: Acquire mass spectra in negative ion mode over a mass range of m/z 150–1500 [20].

- ICP-MS Analysis (Elemental Profile):

  - Sample Digestion: Accurately weigh ~0.2 g of dried salmon tissue. Digest with 5 mL of concentrated nitric acid using a closed-vessel microwave system.

  - Instrumentation: Analyze the digestate using an ICP-MS instrument.

  - Parameters: Monitor relevant isotopes (e.g., ⁵⁵Mn, ⁷⁵As, ¹¹¹Cd, ²⁰⁸Pb) and use internal standards (e.g., Sc, Ge, Rh) for quantification [20].

Data Processing and Fusion:

- REIMS Data: Normalize the spectral data to the total ion count. Perform peak picking and alignment.

- ICP-MS Data: Normalize the elemental concentration data. Log-transform the data if necessary to achieve a normal distribution.

- Mid-Level Data Fusion: From the REIMS data, select the top 18 significant lipid features (e.g., fatty acids, phospholipids). From the ICP-MS data, select the top 9 significant elemental markers. Combine these selected features into a single fused data matrix for subsequent modeling [20].
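The three steps above (TIC normalization of REIMS spectra, log transformation of elemental data, and selection of a fixed number of features per block before concatenation) can be sketched in NumPy. This assumes that features are ranked by a one-way ANOVA F statistic, which is an illustrative choice rather than the selection criterion reported in the salmon study; the data are synthetic and the function names hypothetical.

```python
import numpy as np


def tic_normalise(spectra: np.ndarray) -> np.ndarray:
    """Divide every spectrum (row) by its total ion count."""
    return spectra / spectra.sum(axis=1, keepdims=True)


def anova_f(x: np.ndarray, labels: np.ndarray) -> np.ndarray:
    """One-way ANOVA F statistic of every column of x against the class labels."""
    classes = np.unique(labels)
    grand_mean = x.mean(axis=0)
    ss_between = sum((labels == c).sum() * (x[labels == c].mean(axis=0) - grand_mean) ** 2
                     for c in classes)
    ss_within = sum(((x[labels == c] - x[labels == c].mean(axis=0)) ** 2).sum(axis=0)
                    for c in classes)
    df_between, df_within = len(classes) - 1, len(labels) - len(classes)
    return (ss_between / df_between) / (ss_within / df_within)


def top_k(x, labels, k):
    """Columns of x with the k largest F statistics, plus their indices."""
    idx = np.argsort(anova_f(x, labels))[::-1][:k]
    return x[:, idx], idx


rng = np.random.default_rng(3)
origin = np.repeat(["Alaska", "Iceland", "Norway", "Scotland"], 10)
codes = np.unique(origin, return_inverse=True)[1]  # 0..3, one code per origin
reims = rng.lognormal(mean=2.0, sigma=0.2, size=(40, 300))
reims[:, :18] *= (1.0 + codes)[:, None]            # hypothetical origin-dependent lipids
icp = rng.lognormal(mean=0.0, sigma=0.4, size=(40, 20))
icp[:, :9] *= np.exp(0.8 * codes)[:, None]         # hypothetical origin-dependent elements
lipids, lipid_idx = top_k(tic_normalise(reims), origin, k=18)
elements, element_idx = top_k(np.log10(icp), origin, k=9)
fused = np.hstack([lipids, elements])              # 18 + 9 = 27 fused variables
print(fused.shape)
```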

Multivariate Data Analysis:

- Exploratory Analysis: Use Principal Component Analysis (PCA) on both individual and fused datasets to visualize natural clustering and identify outliers.

- Supervised Modeling: Construct an Orthogonal Partial Least Squares-Discriminant Analysis (OPLS-DA) model on the fused dataset to classify salmon origin. Validate the model with cross-validation and a separate test set (e.g., n=17) [20].

Table 2: Key Metabolite and Elemental Markers for Salmon Authenticity

| Analytical Platform | Marker Class | Example Compounds/Markers | Role in Discrimination |
|---|---|---|---|
| REIMS (Lipidomics) | Unsaturated Fatty Acids [20] | C7H12O2 (m/z 127.0759), C15H28O2 (m/z 239.2011) | Differentiate regional diets & metabolism |
| REIMS (Lipidomics) | Diacylglycerophosphocholines [20] | GP0101 species | Indicate farming conditions & species |
| REIMS (Lipidomics) | Triacylglycerols [20] | GL0301 species | Reflect energy storage & nutritional status |
| ICP-MS (Elemental) | Trace Elements [22] [20] | Mn, As, Cd, Pb | Fingerprint of water & sediment geology |

Key Findings and Significance

The mid-level data fusion of REIMS and ICP-MS data achieved a cross-validation classification accuracy of 100% for salmon origin, a performance not attainable with single-platform methods [20]. All independent test samples (n=17) were correctly assigned to their geographical origin. The study identified 18 robust lipid markers and 9 elemental markers that provided strong evidence for the provenance of the salmon, demonstrating the power of this fused omics approach for high-stakes authenticity control in complex food supply chains [20].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents and Materials for LC-HRMS and NMR Foodomics

| Item | Function/Application | Example/Note |
|---|---|---|
| LC-MS Grade Solvents | Mobile phase preparation; minimizes background noise & ion suppression [18] | Acetonitrile, Methanol, Water (all LC-MS grade) |
| Deuterated NMR Solvents | Provide the deuterium field-frequency lock signal and minimize interfering ¹H solvent signals; crucial for quantitative analysis [18] | D₂O, Deuterated Chloroform (CDCl₃), Deuterated Methanol (CD₃OD) |
| Internal Standards | Data normalization; correction for instrumental drift [18] [3] | Sorbic acid (for LC-HRMS) [3], TSP (for NMR) [18] |
| Solid Phase Extraction (SPE) Cartridges | Sample clean-up; metabolite enrichment/purification prior to analysis | C18, HLB, Ion Exchange phases |
| Chemical Reference Standards | Metabolite identification and confirmation; required for definitive annotation [19] | Forsythiaside A, Phillyrin [21] |

Standardized Protocol for LC-HRMS/NMR Data Fusion

Sample Preparation Workflow

- Homogenization: For solid food matrices, homogenize the sample to a fine powder under liquid nitrogen to preserve metabolite integrity.

- Metabolite Extraction:

  - Weigh an aliquot (e.g., 100 mg) of the homogenized sample.

  - Add a mixture of a polar solvent (e.g., methanol:water, 4:1 v/v) and a non-polar solvent (e.g., chloroform) for comprehensive metabolite extraction. Include appropriate internal standards at this stage.

  - Vortex vigorously, sonicate in an ice bath, and centrifuge to separate phases.

  - Collect the polar (upper) phase for LC-HRMS and NMR analysis, and the non-polar (lower) phase for lipidomics [19].

- Preparation for LC-HRMS: Dilute the polar extract with LC-MS grade water, if necessary, and filter through a 0.22 µm membrane into an LC vial [3].

- Preparation for NMR: Mix an aliquot of the polar extract with a phosphate buffer in D₂O (pH 7.4) containing TSP. Transfer to a standard 5 mm NMR tube [19].

Data Integration and Analysis Workflow

The logical flow for processing and integrating data from the two analytical platforms is outlined below. This workflow ensures that the complementary data are effectively combined for a robust classification model.

Figure 2: Generic data processing and fusion workflow for food classification using LC-HRMS and NMR data [1] [19] [21].

Critical Steps and Troubleshooting

- Mobile Phase Compatibility: For online LC-NMR-MS, the use of deuterated mobile phases is ideal but costly. A practical compromise is using deuterated water (D₂O) in the aqueous mobile phase to reduce solvent interference in NMR, while accepting a slight retention time shift compared to all-protonated LC-MS methods [18].

- Sensitivity Balance: The inherent sensitivity difference between LC-HRMS and NMR is a major challenge. To mitigate it, consider a cryoprobe-equipped NMR spectrometer or microcoil probes, or off-line fraction collection followed by concentration of the LC eluent for NMR analysis of low-abundance metabolites [18].

- Data Quality Control: For LC-HRMS, analyze a pooled Quality Control (QC) sample throughout the run to monitor instrument stability and for data normalization [3]. For NMR, ensure consistent sample pH and temperature during acquisition to prevent chemical shift drift [19].

From Theory to Practice: Implementing Data Fusion Strategies and Analytical Workflows

In the field of food authenticity and metabolomics, no single analytical technique can comprehensively capture the full complexity of a sample's chemical composition. Data fusion has emerged as a powerful multidisciplinary strategy that integrates datasets obtained from various independent analytical techniques to provide insights that surpass those achievable from any single approach [16] [23]. This integrated approach is particularly valuable in food classification research, where combining complementary data from techniques such as Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy provides a more holistic characterization of food samples [1] [24].

The fundamental principle behind data fusion is that different analytical techniques offer unique yet complementary information. For instance, LC-HRMS provides high sensitivity for detecting trace metabolites, while NMR offers robust structural elucidation and precise quantification capabilities [16]. When combined, these techniques enable researchers to build more robust classification models for determining geographical origin, production methods, and authenticity of various food products [1] [25] [26]. Studies have demonstrated that data fusion significantly enhances classification accuracy, with one review noting positive effects in 81% of food authenticity applications [23] [27].

Table 1: Comparison of Data Fusion Levels in Analytical Chemistry

| Fusion Level | Data Handling Approach | Key Advantages | Common Algorithms |
|---|---|---|---|
| Low-Level | Direct concatenation of raw or pre-processed data matrices | Preserves all original information; simple implementation | PCA, PLS [16] [23] |
| Mid-Level | Integration of extracted features from each dataset | Reduces dimensionality; removes noise | PCA, PLS, PARAFAC, MCR-ALS [16] [23] |
| High-Level | Combination of model outputs or decisions | Handles heterogeneous data well; reduces uncertainty | Bayesian methods, voting schemes, fuzzy aggregation [16] [23] |

The Three-Level Hierarchy of Data Fusion

Low-Level Data Fusion (LLDF)

Low-Level Data Fusion, also referred to as block concatenation, represents the most straightforward approach to data integration [16]. This method involves the direct concatenation of two or more data matrices originating from different analytical sources into a single, combined matrix for subsequent analysis [23] [27]. The fusion occurs at the most basic level of data representation, typically using raw or minimally pre-processed data from each technique.

The implementation of LLDF requires careful pre-processing to ensure meaningful integration, which can be divided into three critical stages: (1) correction of signal acquisition artefacts for each individual analytical platform; (2) equalization of contributions from different data blocks using methods such as mean centering or unit variance scaling; and (3) adjustment of weights assigned to each data block to account for differences in variance and dimensionality [16]. Without proper inter-block equalization, the analysis tends to be dominated by the data block with the greatest variance, potentially obscuring valuable information from other sources [16].
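Stages (2) and (3) can be sketched as block scaling. This minimal version assumes that each block is first autoscaled and then divided by the square root of its number of variables, so that every block contributes the same total variance regardless of how many columns it has; optional weights implement stage (3). Stage (1) is platform-specific and is omitted. Function names and data are hypothetical, and dividing by the square root of the column count is one common choice among several.

```python
import numpy as np


def autoscale(block: np.ndarray) -> np.ndarray:
    """Mean-centre each variable and scale it to unit variance."""
    return (block - block.mean(axis=0)) / block.std(axis=0, ddof=1)


def low_level_fuse(blocks, weights=None) -> np.ndarray:
    """Autoscale, block-scale and optionally weight each block, then concatenate the columns."""
    weights = [1.0] * len(blocks) if weights is None else weights
    scaled = []
    for block, weight in zip(blocks, weights):
        z = autoscale(block)
        # An autoscaled block has total variance equal to its number of columns;
        # dividing by sqrt(n_columns) sets every block's total variance to 1 (before weighting).
        scaled.append(weight * z / np.sqrt(block.shape[1]))
    return np.hstack(scaled)


rng = np.random.default_rng(5)
lc_hrms = rng.lognormal(mean=10.0, sigma=1.0, size=(25, 3000))  # many large MS intensities
nmr = rng.normal(loc=1.0, scale=0.1, size=(25, 250))            # fewer, small NMR buckets
fused = low_level_fuse([lc_hrms, nmr])
print(fused.shape)
print(fused[:, :3000].var(axis=0, ddof=1).sum(), fused[:, 3000:].var(axis=0, ddof=1).sum())
```

Without the division by the square root of the column count, the 3000-column LC-HRMS block would carry twelve times the total variance of the 250-bucket NMR block even after autoscaling. A weight w scales a block's total variance by w².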

The primary advantage of LLDF is its simplicity and preservation of all original data, making it particularly useful when the relationships between variables from different sources are important to maintain. However, this approach faces significant challenges, especially when dealing with high-dimensional data. The concatenated matrix often contains a vast number of variables, frequently exceeding the number of observations, which can lead to computational inefficiencies and model overfitting [16] [23]. Additionally, LLDF can amplify noise and include redundant information, potentially diluting the relevant chemical signals [23] [27].

Mid-Level Data Fusion (MLDF)

Mid-Level Data Fusion addresses several limitations of low-level fusion by incorporating a feature extraction step before data integration [16] [23]. This two-stage methodology first reduces the dimensionality of each individual data matrix to extract the most meaningful features, then concatenates these selected features into a single matrix for subsequent analysis [16]. This approach significantly decreases data complexity while preserving the most relevant information from each analytical technique.

The feature extraction process typically employs dimensionality reduction techniques such as Principal Component Analysis (PCA), which transforms the original variables into a smaller set of principal components that capture the maximum variance in the data [16] [23]. For higher-order data structures, alternative factorization methods may be employed, including Parallel Factor Analysis (PARAFAC), Multivariate Curve Resolution-Alternating Least Squares (MCR-ALS), or more recently developed approaches like Multimodal Multitask Matrix Factorization [16]. These techniques effectively distill the essential information from each data block while filtering out noise and irrelevant variables.
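A minimal sketch of PCA-based mid-level fusion, assuming each block is autoscaled, the number of retained components per block has already been chosen (e.g., from a scree plot or cross-validation), and scores are obtained from a singular value decomposition. The data and component counts are hypothetical.

```python
import numpy as np


def pca_scores(block: np.ndarray, n_components: int) -> np.ndarray:
    """Scores of the first principal components of an autoscaled block, via SVD (T = U S)."""
    z = (block - block.mean(axis=0)) / block.std(axis=0, ddof=1)
    u, s, _ = np.linalg.svd(z, full_matrices=False)
    return u[:, :n_components] * s[:n_components]


def mid_level_fuse(blocks, n_components) -> np.ndarray:
    """Replace each block by its PCA scores and concatenate the score matrices."""
    return np.hstack([pca_scores(b, k) for b, k in zip(blocks, n_components)])


rng = np.random.default_rng(11)
lc_hrms = rng.normal(size=(30, 500))  # hypothetical LC-HRMS features
nmr = rng.normal(size=(30, 120))      # hypothetical NMR buckets
fused = mid_level_fuse([lc_hrms, nmr], n_components=[3, 2])
print(fused.shape)
```

Because score magnitudes differ between blocks, the fused score matrix is usually scaled again before the final classification model is built.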

The advantages of MLDF are particularly evident in food classification applications. For example, in a study distinguishing green and ripe Forsythiae Fructus, mid-level fusion of LC-MS and HS-GC-MS data produced an OPLS-DA model with superior performance (R²Y = 0.986, Q² = 0.974) compared to models built from either technique alone [21]. Similarly, research on salmon authenticity demonstrated that mid-level fusion of REIMS and ICP-MS data achieved 100% classification accuracy for geographical origin and production method, a feat not possible with single-platform methods [25] [28]. This fusion approach also identified 18 lipid markers and 9 elemental markers that provided robust evidence of salmon provenance [25].

High-Level Data Fusion (HLDF)

High-Level Data Fusion, also known as decision-level fusion, represents the most complex approach in the data fusion hierarchy [16] [23]. Rather than integrating raw data or extracted features, HLDF combines the outputs or decisions from multiple models built on individual data blocks [23] [27]. This approach operates at the highest level of abstraction, aggregating predictions, classifications, or statistical measures from separate analyses to produce a consensus outcome with reduced uncertainty [16].

The implementation of HLDF involves building independent classification or regression models for each analytical technique and then combining their outputs using aggregation strategies. These may include heuristic rules, Bayesian consensus methods, fuzzy aggregation strategies, or majority voting schemes [16] [23]. A relevant example in food authenticity is the multiblock DD-SIMCA method, which combines full distances from individual models into a single cumulative metric known as the Cumulative Analytical Signal [16]. This strategy preserves interpretability while allowing the contribution of each data block to be traced in the final classification.
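Two of the simplest aggregation rules, hard majority voting on predicted labels and weighted averaging of class probabilities (soft voting), can be sketched as follows. This is not the multiblock DD-SIMCA procedure; the per-block predictions are hypothetical placeholders for the outputs of independently trained models.

```python
import numpy as np


def majority_vote(predictions) -> np.ndarray:
    """predictions: (n_models, n_samples) labels; ties go to the first label in sorted order."""
    predictions = np.asarray(predictions)
    classes = np.unique(predictions)
    counts = np.array([(predictions == c).sum(axis=0) for c in classes])  # (n_classes, n_samples)
    return classes[np.argmax(counts, axis=0)]


def soft_vote(probabilities, weights=None) -> np.ndarray:
    """probabilities: (n_models, n_samples, n_classes); returns weighted mean probabilities."""
    probabilities = np.asarray(probabilities, dtype=float)
    weights = np.ones(len(probabilities)) if weights is None else np.asarray(weights, dtype=float)
    return np.tensordot(weights / weights.sum(), probabilities, axes=1)


labels = np.array([
    ["origin_A", "origin_B", "origin_A"],  # hypothetical LC-HRMS model, 3 samples
    ["origin_A", "origin_A", "origin_B"],  # hypothetical NMR model
    ["origin_B", "origin_A", "origin_B"],  # hypothetical third block
])
fused_labels = majority_vote(labels)
probs = np.array([
    [[0.9, 0.1], [0.4, 0.6]],  # LC-HRMS model: P(class 0), P(class 1) for 2 samples
    [[0.6, 0.4], [0.2, 0.8]],  # NMR model
])
consensus = soft_vote(probs, weights=[0.5, 0.5])
print(fused_labels, consensus, consensus.argmax(axis=1))
```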

The primary advantage of HLDF is its ability to effectively handle highly heterogeneous data from disparate analytical platforms that may have different dimensionality, scale, and pre-processing requirements [16]. Since each data block is modeled separately, platform-specific characteristics can be optimally addressed without compromising the integrity of individual analyses. Additionally, HLDF typically requires less computational resources for the final fusion step compared to low and mid-level approaches [23]. However, this method may not fully exploit potential interactions between variables from different sources, and the interpretability of the final fused model can be more challenging [16].

Table 2: Data Fusion Applications in Food Authenticity Research

| Application Area | Analytical Techniques | Fusion Level | Key Findings | Reference |
|---|---|---|---|---|
| Amarone Wine Classification | LC-HRMS, ¹H NMR | Multi-omics integration | Improved predictive accuracy of wine fingerprint; identified amino acids, monosaccharides, polyphenolics | [1] |
| Salmon Origin Authentication | REIMS, ICP-MS | Mid-level | 100% classification accuracy; identified 18 lipid and 9 elemental markers | [25] [28] |
| Hazelnut Geographical Origin | ¹H NMR, LC-HRMS, BSIA | Supervised multivariate (DIABLO) | Minimum error rate for origin and cultivar classification | [26] |
| Forsythiae Fructus Maturity | LC-MS, HS-GC-MS | Mid-level | Superior model performance (R²Y=0.986, Q²=0.974) vs single techniques | [21] |

Experimental Protocols for LC-HRMS and NMR Data Fusion

Sample Preparation Protocol

Materials and Reagents:

- Methanol (HPLC grade)

- Chloroform (HPLC grade)

- Deuterated solvent (e.g., D₂O, CD₃OD)

- Reference standard compounds (e.g., forsythiaside A, phillyrin)

- NMR reference compound (e.g., TSP, DSS)

Sample Extraction Procedure:

- Homogenization: Grind food samples (e.g., hazelnuts, salmon, grapes) to a fine powder using a laboratory mill [26].

- Dual Extraction: Weigh 100 mg of homogenized material and perform parallel extractions:

  - For LC-HRMS: Extract with 1 mL methanol:water (80:20, v/v) by vortexing for 30 seconds, followed by sonication for 15 minutes at room temperature [26] [21].

  - For NMR: Prepare a separate aliquot extracted with 1 mL deuterated methanol:deuterated water buffer (80:20, v/v) containing 0.01% TSP as internal reference [1].

- Centrifugation: Centrifuge both extracts at 14,000 × g for 10 minutes at 4°C to pellet insoluble material.

- Storage: Transfer supernatants to clean vials and store at -80°C until analysis.

Instrumental Analysis Parameters

LC-HRMS Analysis:

- Instrument Configuration: UPLC system coupled to Q/Orbitrap mass spectrometer [21]

- Chromatography Column: C18 column (100 × 2.1 mm, 1.7 μm)

- Mobile Phase:

  - A: 0.1% formic acid in water

  - B: 0.1% formic acid in acetonitrile

- Gradient Program: 5% B to 95% B over 25 minutes, hold 5 minutes, re-equilibrate [21]

- Mass Spectrometry:

  - Ionization Mode: Electrospray ionization (ESI) in positive and negative modes

  - Resolution: 70,000 full width at half maximum (FWHM)

  - Mass Range: m/z 100-1500

  - Collision Energies: Stepped energy (20, 40, 60 eV) for MS/MS fragmentation

NMR Spectroscopy:

- Instrument Configuration: 600 MHz NMR spectrometer with cryoprobe [1]

- Temperature: 298 K

- Pulse Sequence: NOESYPR1D with water presaturation [1]

- Spectral Width: 12 ppm

- Relaxation Delay: 4 seconds

- Acquisition Time: 2.5 seconds per scan

- Number of Scans: 64-128 depending on sample concentration
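As a worked check on the parameters above, assuming a ¹H frequency of 600 MHz (so 1 ppm = 600 Hz) and ignoring dummy scans, the NOESY mixing time, and other short delays:

```latex
\begin{aligned}
\mathrm{SW}_{\mathrm{Hz}} &= 12\ \mathrm{ppm} \times 600\ \mathrm{Hz\,ppm^{-1}} = 7200\ \mathrm{Hz} \\
\Delta\nu_{\mathrm{dig}} &= 1/\mathrm{AQ} = 1/(2.5\ \mathrm{s}) = 0.4\ \mathrm{Hz} \quad \text{(before zero filling)} \\
t_{\mathrm{scan}} &\approx \mathrm{RD} + \mathrm{AQ} = 4\ \mathrm{s} + 2.5\ \mathrm{s} = 6.5\ \mathrm{s} \\
t_{\mathrm{total}} &\approx N_{\mathrm{scans}} \, t_{\mathrm{scan}}:\quad 64 \times 6.5\ \mathrm{s} = 416\ \mathrm{s} \approx 6.9\ \mathrm{min}, \qquad 128 \times 6.5\ \mathrm{s} = 832\ \mathrm{s} \approx 13.9\ \mathrm{min}
\end{aligned}
```

The 0.3 Hz exponential line broadening used during processing is therefore comparable to the 0.4 Hz digital resolution of the raw FID.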

Data Pre-processing Workflow

LC-HRMS Data Processing:

- Peak Detection and Alignment: Use software (e.g., XCMS, Progenesis QI) for peak picking, alignment, and integration [21].

- Compound Identification: Match accurate mass and fragmentation patterns against databases (e.g., HMDB, LipidMaps) with Tier 1-4 confidence levels [25] [16].

- Data Matrix Construction: Create a matrix with samples as rows and normalized peak intensities as columns.
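Accurate-mass matching reduces to a ppm-error calculation. The sketch below computes monoisotopic masses from elemental formulas, subtracts the proton mass to obtain [M-H]⁻ ions (assuming deprotonated ions, as expected in negative-mode REIMS), and keeps candidates within an assumed 5 ppm tolerance. The two m/z values are taken from the salmon marker table earlier in this document; the decoy formula C6H8O3 and the function names are hypothetical, and the simple formula parser handles only C, H, N and O.

```python
import re

MONOISOTOPIC_MASS = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048, "O": 15.99491461956}
PROTON_MASS = 1.007276467


def neutral_mass(formula: str) -> float:
    """Monoisotopic mass of a simple neutral formula such as 'C7H12O2' (C, H, N, O only)."""
    mass = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        mass += MONOISOTOPIC_MASS[element] * (int(count) if count else 1)
    return mass


def ppm_error(measured: float, theoretical: float) -> float:
    """Signed mass error in parts per million."""
    return (measured - theoretical) / theoretical * 1e6


def match_deprotonated(measured_mz: float, candidates, tol_ppm: float = 5.0):
    """Candidate formulas whose [M-H]- ion lies within tol_ppm of the measured m/z."""
    hits = []
    for formula in candidates:
        error = ppm_error(measured_mz, neutral_mass(formula) - PROTON_MASS)
        if abs(error) <= tol_ppm:
            hits.append((formula, round(error, 2)))
    return hits


candidates = ["C7H12O2", "C15H28O2", "C6H8O3"]
for mz in (127.0759, 239.2011):
    print(mz, match_deprotonated(mz, candidates))
```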

NMR Data Processing:

- Fourier Transformation: Process Free Induction Decay (FID) signals with exponential line broadening (0.3 Hz).

- Phase and Baseline Correction: Apply manual phase correction and automated baseline correction.

- Spectral Alignment: Reference spectra to internal standard (TSP at 0.0 ppm).

- Bucketing: Divide spectra into regions (0.04 ppm buckets) and integrate area [1].

- Data Matrix Construction: Create a matrix with samples as rows and bucket intensities as columns.
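The referencing and bucketing steps can be sketched in NumPy for a single processed spectrum. This assumes a real, phased, baseline-corrected spectrum on a uniformly sampled ppm axis, takes the tallest point within ±0.2 ppm as the TSP signal, sums the points within each 0.04 ppm bucket (proportional to the bucket area on a uniform axis), and excludes a residual water region of 4.7 to 4.9 ppm. The peak positions, referencing offset, bucketed range, and excluded region are hypothetical.

```python
import numpy as np


def reference_to_tsp(ppm: np.ndarray, intensity: np.ndarray, window=(-0.2, 0.2)) -> np.ndarray:
    """Shift the ppm axis so that the tallest point inside `window` (taken as TSP) is at 0.0 ppm."""
    in_window = (ppm >= window[0]) & (ppm <= window[1])
    tsp_position = ppm[in_window][np.argmax(intensity[in_window])]
    return ppm - tsp_position


def bucket(ppm, intensity, width=0.04, lo=0.5, hi=9.5, exclude=((4.7, 4.9),)):
    """Sum intensities in fixed-width ppm buckets, dropping buckets centred in excluded regions."""
    n_buckets = int(round((hi - lo) / width))
    edges = lo + width * np.arange(n_buckets + 1)
    sums, _ = np.histogram(ppm, bins=edges, weights=intensity)
    centres = edges[:-1] + width / 2
    keep = np.ones(n_buckets, dtype=bool)
    for start, stop in exclude:
        keep &= ~((centres >= start) & (centres <= stop))
    return sums[keep], centres[keep]


def gaussian(axis, centre, height, sd=0.003):
    return height * np.exp(-0.5 * ((axis - centre) / sd) ** 2)


ppm = np.linspace(10.5, -0.5, 22001)  # descending axis, 0.0005 ppm per point
offset = 0.013                        # hypothetical referencing error
spectrum = (gaussian(ppm, 0.0 + offset, 10.0)      # TSP
            + gaussian(ppm, 1.48 + offset, 3.0)    # hypothetical metabolite signal
            + gaussian(ppm, 3.25 + offset, 2.0)    # hypothetical metabolite signal
            + gaussian(ppm, 4.79 + offset, 50.0))  # residual water
ppm_ref = reference_to_tsp(ppm, spectrum)
buckets, centres = bucket(ppm_ref, spectrum)
print(len(buckets), centres[np.argmax(buckets)])
```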

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Materials for LC-HRMS/NMR Data Fusion

| Category | Item | Specification | Function in Protocol |
|---|---|---|---|
| Chromatography | C18 UPLC Column | 100 × 2.1 mm, 1.7 μm particle size | Separation of complex metabolite mixtures prior to MS detection |
| MS Calibration | Reference Mass Solution | ESI-L Low Concentration Tuning Mix | Daily mass accuracy calibration of HRMS instrument |
| NMR Standards | Deuterated Solvents | D₂O, CD₃OD (99.9% deuterated) | Provides locking signal for NMR spectrometer; dissolution medium |
| NMR Reference | TSP (Trimethylsilylpropanoic acid) | Sodium salt, 98% purity | Chemical shift reference (0.0 ppm) and quantification standard |
| Extraction Solvents | Methanol, Chloroform | HPLC grade, ≥99.9% purity | Extraction of broad range of metabolites (polar to non-polar) |
| Mobile Phase | Formic Acid | LC-MS grade, ≥99.8% purity | Modifier for mobile phase to enhance ionization in ESI-MS |
| Quality Control | Reference Compounds | Forsythiaside A, phillyrin (>95% purity) | System suitability testing and quality control of analyses |

The hierarchical framework of data fusion, comprising low-level, mid-level, and high-level approaches, gives food scientists a systematic way to integrate complementary data from LC-HRMS and NMR platforms. Data fusion has been applied to food authenticity questions such as wine classification [1], salmon origin verification [25], and hazelnut geographical tracing [26]. In these studies, it improved classification accuracy and gave a more comprehensive chemical characterization than single-technique approaches. The appropriate fusion level depends on data dimensionality, available computational resources, and the specific research objectives. As the field matures, data fusion strategies are likely to play a growing role in food authenticity, quality control, and metabolomics research.

This application note provides a detailed protocol for generating and processing Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) data for food classification studies. Integrating the two data streams is a strategy known as data fusion. It broadens coverage of the food metabolome and improves the accuracy with which samples are classified by attributes such as geographical origin, production method, or processing technique [1] [25]. The protocol is framed around the authentication of Amarone wine, but its principles apply to a wide range of food commodities [1].

The workflow is structured into three critical phases: sample preparation, data acquisition, and data pre-processing. Standardized procedures in each phase are crucial for ensuring data quality, reproducibility, and the successful integration of the two complementary data streams. LC-HRMS offers high sensitivity for detecting a wide array of metabolites, while ¹H NMR provides a highly reproducible and quantitative overview of the main components [1]. Their fusion creates a powerful tool for food authenticity analysis.

Sample Preparation Protocols

Consistent and correct sample preparation is the foundation for obtaining high-quality analytical data. The following protocols are tailored for wine analysis but can be adapted for other liquid food matrices.

General Sample Handling

- Initial Handling: For wine samples, ensure they are stored at 4°C prior to analysis. Allow samples to reach room temperature and mix by inversion to ensure homogeneity.

- Filtration: Filter all samples through a 0.22 µm syringe filter (e.g., nylon or PVDF) to remove any particulate matter that could damage instrumentation or cause spectral distortions [29] [30].

LC-HRMS Sample Preparation

The goal for LC-HRMS is to remove non-volatile salts and proteins while concentrating metabolites.

- Solid Phase Extraction (SPE):

- Use reversed-phase SPE cartridges (e.g., C18, 100 mg/3 mL bed volume).

- Conditioning: Sequentially pass 3 mL of methanol and 3 mL of acidified water (0.1% formic acid) through the cartridge.

- Loading: Load 1 mL of the filtered wine sample.

- Washing: Wash with 3 mL of acidified water (0.1% formic acid) to remove sugars, acids, and salts.

- Elution: Elute metabolites using 2 mL of a methanol/acetonitrile (80:20, v/v) solution.

- Post-Extraction Processing:

- Evaporate the eluent to dryness under a gentle stream of nitrogen gas at 40°C.

- Reconstitute the dry residue in 100 µL of a methanol/water (50:50, v/v) solution.

- Transfer to a low-volume LC vial with insert for analysis [1].
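The volumes above determine how concentrated the LC-HRMS extract is. The back-of-the-envelope sketch below combines three values:

- the 1 mL load;
- the 100 µL reconstitution volume;
- the 5 µL injection volume given under Data Acquisition Parameters.

`spe_enrichment` is a hypothetical helper. It assumes quantitative SPE recovery unless a lower value is supplied; real recoveries vary from compound to compound.

```python
def spe_enrichment(load_ul, reconstitution_ul, injection_ul, recovery=1.0):
    """Nominal SPE enrichment factor and wine-equivalent volume per injection.

    recovery is the assumed fractional SPE recovery (1.0 = quantitative).
    """
    factor = load_ul / reconstitution_ul * recovery
    wine_equivalent_ul = injection_ul * factor
    return factor, wine_equivalent_ul


factor, equiv = spe_enrichment(load_ul=1000, reconstitution_ul=100, injection_ul=5)
print(f"{factor:.0f}-fold enrichment; each injection ~ {equiv:.0f} uL of wine")
# prints: 10-fold enrichment; each injection ~ 50 uL of wine
```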

¹H NMR Sample Preparation

The goal for NMR is to obtain a clear, particulate-free solution in a deuterated solvent, loaded into a high-quality NMR tube.

- Sample Preparation:

- NMR Tube Preparation:

- Use high-quality 5 mm NMR tubes (e.g., from Wilmad or Norell) rated for at least 400 MHz, and preferably for the 600 MHz field recommended for acquisition in this protocol. Avoid disposable or scratched tubes [29] [30].

- Transfer the 600 µL sample solution to the NMR tube using a Pasteur pipette, ensuring the filling height is approximately 4 cm.

- Cap the tube securely and label it with a permanent marker directly on the tube or cap.
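A quick geometric check links the 600 µL sample volume to the ~4 cm filling height. The sketch assumes a perfectly cylindrical tube with a 4.2 mm inner diameter; check the manufacturer's specification for the tubes actually used. `fill_height_cm` is a hypothetical helper.

```python
import math


def fill_height_cm(volume_ul: float, inner_diameter_mm: float = 4.2) -> float:
    """Liquid column height in a cylindrical NMR tube (1 uL = 1 mm^3).

    inner_diameter_mm = 4.2 is an assumed typical value for 5 mm tubes;
    the rounded tube bottom is ignored.
    """
    radius_mm = inner_diameter_mm / 2
    height_mm = volume_ul / (math.pi * radius_mm ** 2)
    return height_mm / 10  # mm -> cm


print(f"{fill_height_cm(600):.1f} cm")  # prints: 4.3 cm
```

About 4.3 cm is consistent with the ~4 cm target. Tubes with thicker walls (smaller inner diameter) need less volume to reach the same height.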

Table 1: Key Research Reagent Solutions for LC-HRMS/NMR Workflow

| Item | Function/Brief Explanation |
|---|---|
| C18 SPE Cartridges | To isolate and concentrate semi-polar and non-polar metabolites from the aqueous wine matrix for LC-HRMS analysis. |
| Deuterated Solvent (e.g., D₂O) | Provides a signal for the spectrometer's lock system and shimming, and creates an "invisible" background for ¹H NMR. |
| Internal Standard (TSP) | Provides a precise internal reference point (0.0 ppm) for chemical shift calibration in ¹H NMR spectra of aqueous solutions. |
| Deuterated Buffer (pH 7.4) | Maintains a constant pH for all samples, ensuring reproducibility of chemical shifts in NMR spectra. |
| High-Quality NMR Tubes | Precision tubes ensure magnetic field homogeneity, which is critical for achieving high-resolution NMR spectra. |

Data Acquisition Parameters

Standardized acquisition methods are vital for generating consistent and comparable datasets.

LC-HRMS Data Acquisition

- Chromatography:

- Column: Reversed-phase C18 column (e.g., 150 × 2.1 mm, 1.7 µm).

- Mobile Phase A: Water with 0.1% formic acid.

- Mobile Phase B: Acetonitrile with 0.1% formic acid.

- Gradient: Use a linear gradient from 2% to 98% B over 25 minutes.

- Flow Rate: 0.3 mL/min.

- Column Temperature: 40°C.

- Injection Volume: 5 µL.

- Mass Spectrometry:

- Ionization: Electrospray Ionization (ESI) in both positive and negative modes.

- Mass Resolution: > 50,000 Full Width at Half Maximum (FWHM).

- Mass Range: m/z 50-1200.

- Collision Energy: Use data-dependent acquisition (DDA) with a stepped collision energy (e.g., 10, 30, 50 eV) to generate MS/MS spectra for metabolite annotation [1] [31].
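The linear gradient can be expressed as a timetable and interpolated to give the mobile-phase composition at any time. This helps when relating a feature's retention time to its elution conditions. The sketch uses a hypothetical `percent_b` helper. Only the 2-98% B ramp over 25 min comes from the protocol. A real method also needs wash and re-equilibration steps, which are not specified here and are left out.

```python
import numpy as np

# Timetable rows: (time in min, % mobile phase B). Only the 2 -> 98 % B ramp
# over 25 min comes from the protocol; wash and re-equilibration rows
# would be appended here.
GRADIENT = [(0.0, 2.0), (25.0, 98.0)]
FLOW_ML_PER_MIN = 0.3


def percent_b(t_min):
    """Mobile-phase B composition at time t_min, by linear interpolation."""
    times, composition = zip(*GRADIENT)
    return np.interp(t_min, times, composition)  # clamps outside the timetable


ramp_rate = (98.0 - 2.0) / 25.0  # % B per minute
print(f"ramp: {ramp_rate:.2f} % B/min, {FLOW_ML_PER_MIN * 25.0:.1f} mL over the ramp")
for t in (0.0, 5.0, 12.5, 25.0):
    print(f"t = {t:4.1f} min -> {float(percent_b(t)):.1f} % B")
```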

¹H NMR Data Acquisition

- Spectrometer: A 600 MHz NMR spectrometer is recommended for optimal spectral dispersion and sensitivity.

- Probe: A triple-resonance inverse detection cryoprobe for enhanced sensitivity.

- Key Acquisition Parameters:

- Pulse Sequence: 1D NOESY-presat for water suppression.

- Spectral Width: 14 ppm.

- Relaxation Delay (D1): 4 seconds.

- Number of Scans: 128.

- Temperature: 298 K (25°C) [1].
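Chemical shifts are field-independent, but spectral widths and bucket sizes correspond to different frequency spans at different field strengths. The sketch below uses a hypothetical `ppm_to_hz` helper and nominal spectrometer frequencies; the true ¹H frequency of a "600 MHz" magnet differs slightly.

```python
def ppm_to_hz(delta_ppm: float, spectrometer_mhz: float) -> float:
    """Convert a chemical-shift span from ppm to Hz.

    1 ppm equals the spectrometer frequency in MHz expressed as Hz,
    e.g. 600 Hz on a 600 MHz instrument.
    """
    return delta_ppm * spectrometer_mhz


for mhz in (400.0, 600.0):
    sw = ppm_to_hz(14.0, mhz)       # spectral width used above
    bucket = ppm_to_hz(0.04, mhz)   # bucket width used in pre-processing
    print(f"{mhz:.0f} MHz: 14 ppm = {sw:.0f} Hz, 0.04 ppm bucket = {bucket:.0f} Hz")
```

Scalar couplings are constant in Hz, so at higher field a multiplet occupies a narrower ppm range. It is therefore more likely to fall within a single 0.04 ppm bucket.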

Data Pre-processing Workflows

Pre-processing converts raw instrumental data into a structured data matrix suitable for statistical analysis and data fusion.

LC-HRMS Data Pre-processing

LC-HRMS data processing aims to detect metabolic features, each defined by an m/z value and a retention time (RT), and to align them across all samples.

- Software: Tools like XCMS (in R), MS-DIAL, or MZmine 2 can be used [31].

- Key Steps:

- Format Conversion: Convert raw data files to an open format like .mzML.

- Peak Picking: Identify chromatographic peaks with a signal-to-noise ratio above a set threshold.

- Retention Time Alignment: Correct for minor RT shifts between runs (e.g., using Obiwarp or LOESS).

- Grouping: Group corresponding peaks across samples.

- Gap Filling: Re-integrate the raw signal at the expected m/z and RT in samples where a feature was not initially detected.

- Annotation: Use in-house or public databases (e.g., HMDB, LipidMaps) to annotate features based on exact mass and MS/MS spectra [31].

- Output: A peak intensity table (data matrix) where rows are samples, columns are metabolic features (m/z/RT pairs), and values are peak intensities.
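The grouping step, which turns per-sample peak lists into a samples × features table, can be illustrated with a simple greedy matcher. This is not how XCMS, MS-DIAL, or MZmine implement grouping; XCMS, for example, offers a peak-density method. The 5 ppm and 0.1 min tolerances are assumptions, and `group_features` is a hypothetical helper. Missing entries are left as NaN; in the real workflow, gap filling would re-integrate raw signal at those positions.

```python
import numpy as np


def group_features(peak_lists, ppm_tol=5.0, rt_tol=0.1):
    """Greedy cross-sample grouping of detected peaks into features.

    peak_lists: one list of (mz, rt_min, intensity) tuples per sample.
    Returns (features, matrix): features is a list of (mean m/z, mean RT);
    matrix is samples x features, with NaN where a feature was not detected.
    """
    # Pool all peaks, tagged with their sample index, and sort by m/z
    pooled = sorted(
        (mz, rt, intensity, s)
        for s, peaks in enumerate(peak_lists)
        for mz, rt, intensity in peaks
    )
    groups = []
    for mz, rt, intensity, s in pooled:
        for g in groups:
            g_mz, g_rt = np.mean(g["mz"]), np.mean(g["rt"])
            if (abs(mz - g_mz) / g_mz * 1e6 <= ppm_tol
                    and abs(rt - g_rt) <= rt_tol
                    and s not in g["intensity"]):  # one peak per sample per feature
                g["mz"].append(mz)
                g["rt"].append(rt)
                g["intensity"][s] = intensity
                break
        else:  # no compatible group found: start a new feature
            groups.append({"mz": [mz], "rt": [rt], "intensity": {s: intensity}})

    features = [(float(np.mean(g["mz"])), float(np.mean(g["rt"]))) for g in groups]
    matrix = np.full((len(peak_lists), len(groups)), np.nan)
    for j, g in enumerate(groups):
        for s, intensity in g["intensity"].items():
            matrix[s, j] = intensity
    return features, matrix


samples = [
    [(227.0712, 8.10, 1.0e5), (301.0354, 10.50, 5.0e4)],
    [(227.0714, 8.12, 1.2e5)],
    [(227.0711, 8.09, 9.0e4), (301.0355, 10.52, 4.0e4), (289.0718, 6.00, 2.0e4)],
]
features, matrix = group_features(samples)
print(len(features), int(np.isnan(matrix).sum()))  # prints: 3 3
```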

¹H NMR Data Pre-processing

NMR data processing focuses on extracting quantitative spectral information.

- Software: TopSpin (Bruker), Chenomx NMR Suite, or in-house scripts in MATLAB/R.

- Key Steps:

- Fourier Transformation: Convert the raw time-domain (FID) data to a frequency-domain spectrum.

- Phasing & Baseline Correction: Ensure a flat baseline and purely absorptive peaks.

- Referencing: Calibrate the chemical shift scale to the TSP peak at 0.0 ppm.

- Spectral Bucketing (Binning): Reduce the complexity of the data by integrating the spectral intensity into consecutive, small regions (e.g., 0.04 ppm buckets). This minimizes the effects of small pH-induced shifts.

- Normalization: Apply probabilistic quotient normalization (PQN) to correct for overall concentration differences between samples [1].

- Output: A data matrix where rows are samples, columns are spectral buckets (chemical shift regions), and values are the integrated spectral intensities.
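A common PQN variant can be written in a few lines of NumPy: integral normalization first, then each sample's dilution factor is taken as the median of its quotients against a median reference spectrum. This is a sketch. `pqn_normalize` is a hypothetical helper, and using the median spectrum as the reference is an assumption; a pooled QC spectrum is another frequent choice.

```python
import numpy as np


def pqn_normalize(X):
    """Probabilistic quotient normalization of a samples x buckets matrix.

    1. Total-area (integral) normalization of each spectrum.
    2. Reference spectrum = median across samples.
    3. Dilution factor = median of the sample/reference quotients.
    4. Divide each spectrum by its dilution factor.
    """
    X = np.asarray(X, dtype=float)
    X = X / X.sum(axis=1, keepdims=True)
    reference = np.median(X, axis=0)
    mask = reference > 0  # skip empty buckets to avoid division by zero
    quotients = X[:, mask] / reference[mask]
    factors = np.median(quotients, axis=1)
    return X / factors[:, None], factors


# Toy data: sample 1 is a two-fold concentrated copy of sample 0;
# sample 2 matches sample 0 except for one very large signal in bucket 0.
X = np.ones((3, 10))
X[1] *= 2.0
X[2, 0] = 11.0
X_norm, factors = pqn_normalize(X)
print(np.round(factors, 3))
```

In this toy case, total-area normalization alone would halve the unaffected buckets of sample 2 because of its single large signal. The median quotient ignores that outlier and restores them to the level of sample 0.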

Table 2: Summary of Pre-processing Steps and Objectives for LC-HRMS and ¹H NMR

| Technique | Key Pre-processing Steps | Primary Objective |
|---|---|---|
| LC-HRMS | Peak picking, RT alignment, gap filling, annotation. | Generate a comprehensive table of metabolite features (m/z, RT) and their relative abundances across all samples. |
| ¹H NMR | Phasing, baseline correction, chemical shift referencing, bucketing, normalization. | Generate a quantitative profile of the main components, resolved by chemical shift, that is comparable across all samples. |

Integrated Data Fusion and Analysis Workflow

The power of this approach lies in the fusion of the two distinct but complementary data blocks.

- Data Fusion Strategy: A mid-level data fusion approach is recommended. In this strategy, the pre-processed data from LC-HRMS (peak table) and NMR (bucketed spectra) are first subjected to feature selection or extraction (e.g., using Multiblock Partial Least Squares - Discriminant Analysis, MB-PLS-DA). The most discriminant variables from each block are then concatenated into a single, fused data matrix [1] [25].

- Multivariate Analysis: The fused matrix is analyzed using supervised methods like sparse PLS-DA (sPLS-DA) to build a classification model that predicts the class of unknown samples (e.g., short vs. long withering time for grapes). This approach has been shown to provide a broader characterization of the food metabolome and a lower classification error rate compared to using either technique alone [1].
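Mid-level fusion can be sketched in four steps:

1. Autoscale each block.
2. Select variables within each block.
3. Weight the blocks.
4. Concatenate them.

This sketch makes several substitutions and assumptions:

- A two-class Welch t-statistic serves as a simple selection criterion in place of the MB-PLS-DA step described above.
- Each block is divided by √k so that both blocks contribute equal total variance. This is a common block-scaling choice, assumed here.
- All function names are hypothetical.

In practice, selection should be repeated inside each cross-validation fold. Otherwise the error rate of the downstream sPLS-DA model will be optimistically biased.

```python
import numpy as np


def autoscale(X):
    """Mean-center each column and scale it to unit variance."""
    X = np.asarray(X, dtype=float)
    sd = X.std(axis=0, ddof=1)
    sd[sd == 0] = 1.0  # constant columns stay at zero after centering
    return (X - X.mean(axis=0)) / sd


def top_k_by_t(X, y, k):
    """Indices of the k columns with the largest |Welch t| between classes 0 and 1."""
    a, b = X[y == 0], X[y == 1]
    se = np.sqrt(a.var(axis=0, ddof=1) / len(a) + b.var(axis=0, ddof=1) / len(b))
    t = (a.mean(axis=0) - b.mean(axis=0)) / np.where(se > 0, se, np.inf)
    return np.argsort(-np.abs(t))[:k]


def mid_level_fusion(blocks, y, k_per_block):
    """Select k variables per block, autoscale, weight blocks equally, concatenate."""
    fused, kept = [], []
    for X, k in zip(blocks, k_per_block):
        Z = autoscale(X)
        idx = top_k_by_t(Z, y, k)
        # Dividing by sqrt(k) gives every block a total variance of 1
        fused.append(Z[:, idx] / np.sqrt(k))
        kept.append(idx)
    return np.hstack(fused), kept


rng = np.random.default_rng(0)
y = np.array([0] * 10 + [1] * 10)       # e.g. short vs. long withering
ms_block = rng.normal(size=(20, 50))     # stand-in LC-HRMS peak table
nmr_block = rng.normal(size=(20, 30))    # stand-in NMR bucket table
ms_block[y == 1, 3] += 5.0               # one discriminant MS feature
nmr_block[y == 1, 7] += 5.0              # one discriminant NMR bucket
fused, kept = mid_level_fusion([ms_block, nmr_block], y, k_per_block=[5, 3])
print(fused.shape)  # prints: (20, 8)
```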

Figure: Workflow for LC-HRMS/NMR food analysis.

Figure: Mid-level data fusion concept.

Chemometric and Machine Learning Models for Integrated Data (e.g., sPLS-DA, OPLS-DA, RF, DIABLO)

Food authentication and quality control increasingly rely on advanced analytical techniques such as LC-HRMS and NMR spectroscopy. These platforms generate complementary data profiles that, when integrated, provide a more comprehensive view of the food metabolome. LC-HRMS offers high sensitivity and can detect thousands of metabolite features in complex matrices. NMR has lower sensitivity but provides structural elucidation and precise quantification [16] [31]. This complementarity opens new opportunities for food classification research, particularly when the data are analyzed with chemometric and machine learning models designed for multi-platform datasets.

The challenge of integrating these diverse data streams has catalyzed the development of specialized computational approaches. Data fusion strategies, which systematically combine information from multiple analytical sources, have emerged as a powerful framework for leveraging the complementary strengths of LC-HRMS and NMR [16]. These strategies operate at different levels of abstraction—from raw data concatenation to model-level integration—each with distinct advantages for specific research contexts. Simultaneously, machine learning algorithms ranging from traditional partial least squares discriminant analysis to more advanced ensemble and deep learning methods have been adapted to handle the high-dimensional, multi-block data structures characteristic of integrated food metabolomics studies [32] [33]. This application note provides a comprehensive overview of these methodologies, with detailed protocols for implementing integrated data analysis pipelines in food classification research.

Analytical Platforms and Data Characteristics

LC-HRMS and NMR: Complementary Platforms