Supervised Machine Learning for Sample Classification in Drug Discovery: A 2025 Guide for Researchers

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on applying supervised machine learning for sample classification. It covers foundational principles, key algorithms like Random Forests and SVMs, and their specific applications in biomedical contexts such as patient stratification, toxicity prediction, and disease diagnosis. The content addresses common challenges including data imbalance and overfitting, explores validation techniques and comparisons with self-supervised methods, and offers practical insights for implementing robust, interpretable models to accelerate drug discovery and development.

Understanding Supervised Learning: Core Concepts and Its Vital Role in Biomedical Sample Classification

What is Supervised Learning? Defining Labeled Data and the Learning Process

Supervised learning is a fundamental paradigm in machine learning where an algorithm learns to map input data to specific outputs based on example input-output pairs [1] [2]. This process involves training a statistical model using labeled data, meaning each piece of input data is provided with the correct output or answer [3] [4]. The primary goal is to create a model that can generalize from the training examples and accurately predict outputs for new, unseen data [1] [2]. In the context of sample classification research, such as classifying cell types or disease states, supervised learning provides a framework for building predictive models from known examples, enabling researchers to classify new, uncharacterized samples based on learned patterns [5] [6].

The "supervision" in this learning approach comes from the labeled datasets used to train models, which provide a ground truth that explicitly teaches the model to identify relationships between input features and output labels [1]. These labeled datasets consist of sample data points along with their correct outputs, allowing the algorithm to adjust its parameters until the model has been fitted appropriately to minimize prediction errors [1] [7]. For drug development professionals and researchers, supervised learning offers a methodical approach to transform experimental data into predictive models that can inform decision-making processes in areas such as patient stratification, drug response prediction, and diagnostic classification [5] [8].

Core Concepts and Definitions

Labeled Data

Labeled data represents the foundational element of supervised learning, consisting of raw data that has been assigned meaningful labels to provide context and enable model training [3]. In a supervised learning context, each data point in a labeled dataset contains both input features and a corresponding target label [7] [4]. The features are the descriptive attributes or measurements that characterize each example, while the label represents the "answer" or value that the model needs to predict [7]. For example, in a medical diagnosis scenario, the features might include patient vital signs, lab results, and demographic information, while the label would indicate the presence or absence of a specific condition [5].

Labeled data provides the "supervision" that guides the learning process by establishing a ground truth against which the model can compare its predictions and adjust its parameters accordingly [1]. This ground truth data is typically verified against real-world outcomes, often through human annotation or measurement, and serves as the ideal outputs for any given input data during training [1]. The quality, size, and diversity of labeled datasets significantly impact model performance, with larger and more diverse datasets generally leading to models that can better generalize to new data [7].

The Learning Process

The supervised learning process follows a systematic workflow that transforms labeled data into a predictive model:

Data Collection and Preparation: Gathering a representative dataset containing input features and corresponding target labels [4] [6]. This step may involve data cleaning, handling missing values, and transforming raw data into a suitable format for analysis [4].

Model Selection: Choosing an appropriate algorithm based on the problem type (classification or regression), data characteristics, and performance requirements [2] [6].

Training: Feeding the training data into the chosen algorithm, allowing the model to learn patterns and relationships between inputs and outputs [7] [4]. During this phase, the model iteratively adjusts its parameters to minimize the difference between its predictions and the actual labels [7].

Evaluation: Assessing model performance using a separate test dataset not seen during training [7] [5]. This step measures how well the model generalizes to new data.

Deployment and Inference: Using the trained model to make predictions on new, unlabeled examples in real-world applications [7] [5].

This structured approach enables researchers to develop models that can classify samples, predict continuous outcomes, or identify patterns in complex biological data [5] [6].
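The five steps above can be sketched in a few lines. This is a minimal illustration, not a recommended pipeline: it assumes scikit-learn is available, and the synthetic dataset from `make_classification` is a stand-in for real labeled samples.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# 1. Data collection: synthetic stand-in for a labeled sample dataset (assumption)
X, y = make_classification(n_samples=300, n_features=20, n_informative=5, random_state=0)

# Hold out a test set that the model never sees during training
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# 2-3. Model selection and training: a simple, interpretable baseline
model = LogisticRegression(max_iter=1000)
model.fit(X_train, y_train)

# 4. Evaluation on the held-out test set
test_accuracy = accuracy_score(y_test, model.predict(X_test))

# 5. Inference on a new, unlabeled sample (here, one row reused for illustration)
new_sample = X_test[:1]
predicted_label = model.predict(new_sample)[0]

print(f"test accuracy: {test_accuracy:.3f}, predicted label: {predicted_label}")
```

Starting from a simple baseline makes it easier to judge whether a more complex model later in the project is earning its extra complexity.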

Types of Supervised Learning

Classification

Classification represents a fundamental category of supervised learning where the goal is to assign input data to predefined categories or classes [1] [5]. In classification tasks, the target labels are discrete values representing different classes or groups [4]. This approach is particularly relevant to sample classification research, where the objective is to categorize samples into distinct groups based on their features [5] [6].

Classification problems can be further divided based on the number of classes involved:

- Binary Classification: Involves distinguishing between exactly two classes, such as classifying medical samples as "diseased" or "healthy," or predicting whether a patient will respond to a particular treatment [1] [4].

- Multiclass Classification: Involves categorizing data into more than two classes, such as classifying cell types into multiple categories or identifying different stages of disease progression [4].

Classification algorithms learn decision boundaries that separate different classes in the feature space, enabling them to assign appropriate labels to new, unclassified samples [1] [5]. In biomedical research, classification models support various applications including disease diagnosis, cancer cell classification, and patient stratification [5].

Regression

Regression constitutes the second major category of supervised learning, focusing on predicting continuous numerical values rather than discrete categories [1] [5]. In regression tasks, the target variable represents a quantifiable measure that exists on a continuous scale [1] [8]. This approach is essential when the research objective involves estimating numerical values rather than assigning class labels.

Regression analysis models the relationship between input features and a continuous output variable, enabling prediction of numerical outcomes [1] [6]. In pharmaceutical and biomedical research, regression techniques facilitate various applications:

- Predicting patient survival times or disease progression rates [6]

- Estimating drug dosage responses or compound potency [5]

- Forecasting biomarker expression levels [6]

- Modeling the relationship between genetic variants and quantitative traits [5]

While both classification and regression utilize labeled training data, they address fundamentally different types of prediction problems—categorical versus continuous—requiring different algorithmic approaches and evaluation metrics [5] [6].

Supervised Learning Workflow: A Protocol for Researchers

Protocol: End-to-End Model Development

Objective: To provide a standardized protocol for developing supervised learning models for sample classification in research settings.

Pre-requisites: Labeled dataset with known input-output pairs, computational environment with machine learning capabilities.

Table 1: Data Preparation Protocol

| Step | Procedure | Considerations |
|---|---|---|
| Data Collection | Gather representative labeled data with input features and corresponding target labels. | Ensure data quality and relevance to research question [2]. |
| Feature Representation | Transform raw input data into descriptive features. | Feature selection critically impacts model accuracy [2]. |
| Data Splitting | Partition dataset into training (~70-80%) and testing (~20-30%) subsets. | Maintain class distribution balance across splits [5]. |
| Data Preprocessing | Handle missing values, normalize features, address class imbalance. | Preprocessing reduces noise and improves model stability [4] [6]. |
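The splitting and preprocessing rows of Table 1 might look like the sketch below. It assumes scikit-learn and NumPy; the data, the 5% missingness rate, and the median imputation strategy are illustrative choices, not prescriptions from the protocol. The preprocessing steps are fitted on the training split only and then applied to the test split, so that no information from the test set leaks into training.

```python
import numpy as np
from sklearn.impute import SimpleImputer
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

rng = np.random.default_rng(0)
X = rng.normal(size=(200, 5))           # hypothetical feature matrix
X[rng.random(X.shape) < 0.05] = np.nan  # simulate ~5% missing values
y = np.array([1] * 40 + [0] * 160)      # imbalanced labels: 20% positive

# Stratified split keeps the class proportions similar in both subsets
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Fit imputation and scaling on the training data only, then reuse on the test data
preprocess = make_pipeline(SimpleImputer(strategy="median"), StandardScaler())
X_train_prepared = preprocess.fit_transform(X_train)
X_test_prepared = preprocess.transform(X_test)

train_pos_frac = y_train.mean()
test_pos_frac = y_test.mean()
print(f"positive fraction: train={train_pos_frac:.2f}, test={test_pos_frac:.2f}")
```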

Table 2: Model Training and Evaluation Protocol

| Step | Procedure | Considerations |
|---|---|---|
| Algorithm Selection | Choose appropriate algorithm based on problem type and data characteristics. | Consider bias-variance tradeoff and model interpretability needs [2] [6]. |
| Model Training | Feed training data to algorithm to learn input-output relationships. | Monitor learning curves to detect overfitting/underfitting [7]. |
| Hyperparameter Tuning | Optimize model parameters using validation set or cross-validation. | Systematic tuning improves model performance [5]. |
| Model Evaluation | Assess performance on held-out test set using appropriate metrics. | Use metrics aligned with research objectives [7] [6]. |
| Model Interpretation | Analyze feature importance and decision boundaries. | Critical for scientific validation and insight generation [6]. |

Protocol: Model Validation and Selection

Objective: To establish rigorous validation procedures for selecting optimal supervised learning models.

Table 3: Model Validation Protocol

| Step | Procedure | Purpose |
|---|---|---|
| Cross-Validation | Partition training data into k folds; train on k-1 folds, validate on held-out fold. | Maximize use of limited data while reducing overfitting [1] [6]. |
| Performance Metrics | Calculate classification accuracy, precision, recall, F1-score, ROC-AUC, or regression metrics (MSE, R²). | Quantify model performance using multiple perspectives [4] [6]. |
| Statistical Testing | Perform significance tests to compare different models or against baseline. | Ensure performance differences are statistically significant [6]. |
| Error Analysis | Examine examples where model predictions are incorrect. | Identify systematic weaknesses and guide improvements [2]. |
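The cross-validation row of Table 3 can be illustrated as follows. This is a sketch assuming scikit-learn, with synthetic data and an arbitrary choice of k = 5 and ROC-AUC as the metric.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic stand-in for a labeled training set (assumption)
X, y = make_classification(n_samples=200, n_features=15, n_informative=5, random_state=0)

# k = 5 stratified folds: each fold serves once as the validation set
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
model = RandomForestClassifier(n_estimators=100, random_state=0)

# One ROC-AUC score per fold; the spread indicates how stable the estimate is
scores = cross_val_score(model, X, y, cv=cv, scoring="roc_auc")
print(f"ROC-AUC per fold: {scores.round(3)}, mean={scores.mean():.3f}, std={scores.std():.3f}")
```

Reporting the per-fold spread alongside the mean gives a rough sense of whether a difference between two models is larger than fold-to-fold noise.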

Essential Algorithms for Sample Classification

Algorithm Selection Guide

Selecting appropriate algorithms is critical for successful sample classification research. Different algorithms offer varying strengths in terms of accuracy, interpretability, computational efficiency, and ability to handle different data types [6].

Table 4: Supervised Learning Algorithms for Classification

| Algorithm | Best Suited For | Advantages | Limitations | Research Applications |
|---|---|---|---|---|
| Logistic Regression [1] [5] | Binary classification problems with linear relationships | Highly interpretable, fast training, probabilistic outputs | Limited capacity for complex nonlinear patterns | Initial feasibility studies, biomarker identification |
| Support Vector Machines (SVM) [1] [5] | High-dimensional data, clear margin of separation | Effective in high dimensions, memory efficient | Performance depends on kernel choice | Gene expression analysis, medical diagnosis |
| Decision Trees [5] [6] | Complex nonlinear relationships, interpretable models | Intuitive visualization, handles mixed data types | Prone to overfitting, unstable to small variations | Clinical decision rules, patient stratification |
| Random Forests [1] [5] | Large datasets with complex interactions | Reduces overfitting, handles missing data, feature importance | Less interpretable than single trees | Drug response prediction, multi-omics integration |
| K-Nearest Neighbors (KNN) [1] [5] | Small to medium datasets with meaningful distance metrics | Simple implementation, no training phase | Computationally intensive for large datasets | Cell type classification, pattern recognition |

Advanced Ensemble Methods

Ensemble methods combine multiple models to improve predictive performance and robustness beyond what can be achieved with individual algorithms [1]. These approaches are particularly valuable in research settings where prediction accuracy is paramount and sufficient computational resources are available.

Random Forests: Construct multiple decision trees during training and output the mode of classes (classification) or mean prediction (regression) of the individual trees [1] [5]. This approach reduces overfitting compared to single decision trees and provides natural feature importance measures [5].

Gradient Boosting: Builds models sequentially, with each new model correcting the errors of its predecessors [5]. This approach often achieves high performance but requires careful hyperparameter tuning and can be computationally demanding [5].

Ensemble methods are particularly effective for complex classification tasks in biomedical research, such as integrating multi-omics data for patient stratification or predicting treatment outcomes from heterogeneous clinical data sources [5].
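As a rough illustration of the two ensemble families described above, the following sketch compares a Random Forest and a Gradient Boosting classifier by cross-validated accuracy. It assumes scikit-learn; the synthetic data and the hyperparameter values are placeholders, and the relative ranking of the two models on real data may differ.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import GradientBoostingClassifier, RandomForestClassifier
from sklearn.model_selection import cross_val_score

# Synthetic stand-in for a labeled sample dataset (assumption)
X, y = make_classification(n_samples=300, n_features=25, n_informative=8, random_state=0)

models = {
    # Bagging-style ensemble: many trees trained independently, predictions voted
    "random_forest": RandomForestClassifier(n_estimators=200, random_state=0),
    # Boosting-style ensemble: trees added sequentially to correct earlier errors
    "gradient_boosting": GradientBoostingClassifier(
        n_estimators=100, learning_rate=0.1, random_state=0
    ),
}

cv_accuracy = {
    name: cross_val_score(model, X, y, cv=5, scoring="accuracy").mean()
    for name, model in models.items()
}

# Impurity-based feature importances from the fitted forest (they sum to 1)
importances = models["random_forest"].fit(X, y).feature_importances_

for name, acc in cv_accuracy.items():
    print(f"{name}: mean CV accuracy = {acc:.3f}")
```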

Research Reagent Solutions

Table 5: Essential Resources for Supervised Learning Research

| Resource Category | Specific Tools/Solutions | Function in Research |
|---|---|---|
| Data Labeling Platforms | Crowdsourcing platforms, expert annotation tools [9] | Generate high-quality labeled datasets for model training |
| Machine Learning Libraries | Scikit-learn, TensorFlow, PyTorch, MATLAB Statistics and ML Toolbox [6] | Provide implementations of algorithms and utilities |
| Model Evaluation Frameworks | Cross-validation utilities, metric calculation libraries [6] | Standardized assessment of model performance |
| Hyperparameter Optimization | Grid search, random search, Bayesian optimization tools [5] | Systematic tuning of model parameters |
| Feature Selection Tools | Filter methods, wrapper methods, embedded methods [2] | Identify most relevant variables for prediction |

Evaluation and Interpretation

Performance Metrics

Rigorous evaluation is essential for validating supervised learning models in research contexts. The choice of evaluation metrics should align with the specific research objectives and the consequences of different types of prediction errors [6].

Table 6: Model Evaluation Metrics

| Metric | Formula | Interpretation | When to Use |
|---|---|---|---|
| Accuracy | (TP+TN)/(TP+TN+FP+FN) | Overall correctness of predictions | Balanced class distributions |
| Precision | TP/(TP+FP) | Ability to avoid false positives | When false positives are costly |
| Recall (Sensitivity) | TP/(TP+FN) | Ability to identify all positives | When false negatives are dangerous |
| F1-Score | 2×(Precision×Recall)/(Precision+Recall) | Harmonic mean of precision and recall | Balanced view of both false positives and negatives |
| ROC-AUC | Area under ROC curve | Overall performance across classification thresholds | Compare models regardless of threshold |
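The formulas in Table 6 can be checked with a small worked example. The function below is a plain-Python sketch, and the confusion-matrix counts are invented for illustration: a rare positive class (60 of 1,000 samples) where accuracy looks strong even though a third of the positives are missed.

```python
def classification_metrics(tp, fp, fn, tn):
    """Compute the threshold-dependent metrics of Table 6 from confusion counts."""
    total = tp + fp + fn + tn
    accuracy = (tp + tn) / total
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}


# Hypothetical counts for an imbalanced screen: 60 true positives among 1,000 samples
metrics = classification_metrics(tp=40, fp=10, fn=20, tn=930)
for name, value in metrics.items():
    print(f"{name}: {value:.3f}")
```

With these counts, accuracy is 0.97 while recall is only about 0.67, which is why the table recommends accuracy mainly for balanced class distributions.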

Addressing Common Challenges

Several challenges frequently arise in supervised learning projects for sample classification:

Overfitting: When a model learns patterns specific to the training data that do not generalize to new data [2] [5]. Mitigation strategies include collecting more training data, applying regularization techniques, simplifying the model, using ensemble methods, and employing cross-validation [2] [5].

Data Bias: Models may learn and amplify biases present in training data [5]. Address through balanced sampling, collecting representative data, and auditing model predictions across subgroups [5].

Class Imbalance: When some classes are underrepresented in the training data [6]. Mitigation approaches include resampling techniques, class weighting, and anomaly detection methods [6].

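Curse of Dimensionality: When the number of features is large relative to the number of samples, the data become sparse in feature space, distance measures lose discriminating power, and models overfit more easily [2]. Address through feature selection, dimensionality reduction techniques, and regularization [2].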
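For the class imbalance item above, class weighting is often the simplest mitigation to try first. The sketch below computes inverse-frequency weights using the heuristic n_samples / (n_classes × class_count), which is the same formula scikit-learn documents for `class_weight="balanced"`. The helper name and the label counts are hypothetical.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Inverse-frequency weights: n_samples / (n_classes * count of each class)."""
    counts = Counter(labels)
    n_samples = len(labels)
    n_classes = len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Hypothetical screening result: 10 active compounds among 100
labels = ["inactive"] * 90 + ["active"] * 10
weights = balanced_class_weights(labels)
print(weights)
```

Each error on the rare class then counts proportionally more in the training loss, so the model is not rewarded for simply predicting the majority class.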

Advanced Applications in Research

Biomedical Implementation Scenarios

Supervised learning enables numerous advanced applications in biomedical research and drug development:

Drug Discovery and Repurposing: Predicting drug-target interactions, classifying compounds by mechanism of action, and identifying novel therapeutic applications for existing drugs [5] [8].

Personalized Medicine: Developing classifiers that predict individual patient responses to specific treatments based on genetic, clinical, and lifestyle factors [5] [8].

Diagnostic and Prognostic Models: Creating systems that classify medical images, identify disease subtypes from molecular data, or predict disease progression and patient outcomes [5] [6].

Biomarker Discovery: Identifying molecular signatures or clinical features that robustly classify disease states or treatment responses [5] [6].

These applications demonstrate how supervised learning transforms complex biomedical data into actionable models that can accelerate research and improve healthcare decisions.

Future Directions and Emerging Trends

The field of supervised learning continues to evolve with several emerging trends particularly relevant to sample classification research:

Automated Machine Learning (AutoML): Systems that automate the process of algorithm selection, hyperparameter tuning, and feature engineering [4].

Explainable AI (XAI): Methods that enhance model interpretability through feature importance measures, attention mechanisms, and model-agnostic explanation techniques [6].

Integration with Domain Knowledge: Approaches that incorporate existing biological knowledge and constraints into machine learning models [4].

Federated Learning: Frameworks that enable model training across multiple institutions without sharing sensitive data [4].

These advancements are making supervised learning more accessible, interpretable, and applicable to challenging research problems while addressing important considerations around reproducibility and data privacy.

Supervised learning provides a methodological framework for building predictive models from labeled data, offering powerful approaches for sample classification across biomedical research domains. The structured workflow encompassing data preparation, model selection, training, validation, and interpretation enables researchers to transform complex data into actionable insights. As the field advances with improved algorithms, visualization tools, and interpretation methods, supervised learning continues to grow in its capacity to address challenging classification problems in drug development and biomedical science. By adhering to rigorous protocols and maintaining focus on biological relevance, researchers can leverage these approaches to advance scientific discovery and translational applications.

In sample classification research within biomedical and drug development, selecting the appropriate supervised learning approach is a critical first step in building predictive models from empirical data. Supervised learning algorithms learn to map input data (your sample features) to specific outputs based on example input-output pairs, forming the foundation for most predictive tasks in scientific research [2]. The nature of the question you are asking of your data fundamentally determines whether your problem is one of classification or regression—a distinction that dictates everything from algorithm choice to evaluation methodology [10] [11].

This guide provides a structured framework for researchers to correctly identify their problem type and implement the corresponding analytical protocols, ensuring that predictive models for sample analysis are both statistically sound and biologically meaningful.

Core Concepts: Classification and Regression

Regression: Predicting Continuous Outcomes

Regression analysis is used when the target variable—the outcome you wish to predict—is a continuous numerical value [10] [12]. It models the relationship between independent variables (features) and a continuous dependent variable (target) to make quantitative predictions [11].

- Objective: To predict a quantity—answering "how much?" or "how many?" [10].

- Example Research Questions:

- What is the predicted IC50 value of a compound based on its chemical descriptors?

- How will varying a formulation parameter affect drug dissolution rate?

- What is the relationship between gene expression levels and patient survival time?

Classification: Predicting Categorical Labels

Classification is used when the target variable is categorical or discrete, meaning it can take on a limited set of values representing different classes or groups [10] [12].

- Objective: To assign a category—answering "which type?" or "what class?" [10].

- Example Research Questions:

- Does this tissue sample indicate a malignant or benign tumor? [11]

- Based on its structural fingerprint, does this molecule belong to a class of kinase inhibitors?

- Will this patient respond to a specific therapy based on their biomarker profile?

The table below summarizes the fundamental differences between regression and classification tasks to guide initial task selection.

Table 1: Fundamental Differences Between Regression and Classification Tasks

| Feature | Regression | Classification |
|---|---|---|
| Output Type | Continuous numerical value (e.g., concentration, EC50, binding affinity) [12] | Categorical label (e.g., 'Toxic'/'Non-Toxic', 'Responder'/'Non-Responder') [12] |
| Primary Goal | Fit a line or curve that minimizes prediction error (e.g., least squares) [12] [11] | Learn a decision boundary that separates classes and minimizes misclassification [12] |
| Common Algorithms | Linear Regression, Polynomial Regression, Ridge/Lasso, SVR [10] | Logistic Regression, SVM, Decision Trees, Random Forest, k-NN [10] [1] |
| Model Output | A specific numerical value on a continuous scale. | A probability of class membership or a direct class label assignment [11]. |
| Primary Evaluation Metrics | Root Mean Squared Error (RMSE), Mean Absolute Error (MAE), R-squared [10] | Accuracy, Precision, Recall, F1-Score, AUC-ROC [10] [12] |

A Decision Framework for Researchers

Choosing the correct task requires a systematic examination of your research objective and data. The following protocol provides a step-by-step methodology.

Protocol: Task Selection and Model Setup

Objective: To provide a standardized procedure for determining the appropriate supervised learning task (classification or regression) and initiating model development.

Materials:

- Dataset with feature matrix (X) and target variable (y)

- Computational environment (e.g., Python with scikit-learn, R)

- Domain knowledge regarding the biological or chemical context

Procedure:

Define the Research Objective Formally:

- Phrase your goal as a specific question.

- Regression Trigger Words: "Predict the value of...", "Forecast the magnitude...", "Model the relationship between X and level of Y".

- Classification Trigger Words: "Identify whether...", "Categorize into group A or B...", "Diagnose the presence of...".

Analyze the Target Variable (Critical Step):

- Inspect the data type and nature of your target variable (y).

- Continuous Target: If the target is numerical and the intervals between values are meaningful (e.g., IC50 = 1.2 µM, 2.5 µM, 5.1 µM), proceed to regression [10].

- Categorical Target: If the target is a label, class, or status (e.g., "High", "Medium", "Low"; or "Active", "Inactive"), proceed to classification [10].

- Note: Ordinal categories (e.g., toxicity severity scores of 1, 2, 3) can sometimes be approached with either method, depending on the goal, but are often best handled by specialized ordinal classification techniques.

Select an Appropriate Algorithm:

- Based on the target-variable analysis in the previous step, select a suitable starting algorithm from Table 1.

- For Regression: Begin with Linear Regression for linear relationships or Random Forest Regression for complex, non-linear relationships.

- For Classification: Begin with Logistic Regression for binary outcomes or Random Forest Classification for multi-class problems and complex decision boundaries [10].

Define the Evaluation Metric a Priori:

- For Regression: Pre-specify an error metric like RMSE or MAE. The choice depends on whether you need to penalize large errors heavily (RMSE) or not (MAE) [12].

- For Classification: Pre-specify metrics based on the business/research cost of errors. For imbalanced datasets (e.g., rare event detection), prioritize Precision, Recall, and F1-Score over raw Accuracy [10].

Implement and Validate the Model:

- Split data into training, validation, and test sets.

- Train the model on the training set.

- Tune hyperparameters using the validation set.

- Report final performance only on the held-out test set to obtain an unbiased estimate of generalization error [2].
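The final step of the procedure (split, train, tune on validation, report once on test) might be sketched as follows. It assumes scikit-learn; the 70/15/15 proportions, the candidate `max_depth` values, and the F1 metric are illustrative choices, and the data are synthetic.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import f1_score
from sklearn.model_selection import train_test_split

X, y = make_classification(n_samples=400, n_features=20, n_informative=6, random_state=0)

# First carve off the test set (15%), then split the remainder into train/validation
X_trainval, X_test, y_trainval, y_test = train_test_split(
    X, y, test_size=0.15, stratify=y, random_state=0
)
X_train, X_val, y_train, y_val = train_test_split(
    X_trainval, y_trainval, test_size=0.15 / 0.85, stratify=y_trainval, random_state=0
)

# Tune one hyperparameter using the validation set only
best_depth, best_val_f1 = None, -1.0
for depth in (2, 4, 8):
    candidate = RandomForestClassifier(n_estimators=100, max_depth=depth, random_state=0)
    candidate.fit(X_train, y_train)
    val_f1 = f1_score(y_val, candidate.predict(X_val))
    if val_f1 > best_val_f1:
        best_depth, best_val_f1 = depth, val_f1

# Refit with the chosen setting and touch the test set exactly once
final_model = RandomForestClassifier(
    n_estimators=100, max_depth=best_depth, random_state=0
).fit(X_train, y_train)
test_f1 = f1_score(y_test, final_model.predict(X_test))
print(f"best max_depth={best_depth}, validation F1={best_val_f1:.3f}, test F1={test_f1:.3f}")
```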

Application Notes and Experimental Protocols

Application Note 1: Predicting Compound Potency (Regression)

Research Context: In early drug discovery, predicting the continuous potency (e.g., IC50, Ki) of novel chemical compounds based on molecular descriptors saves significant synthetic and screening resources.

Protocol: Regression Analysis for IC50 Prediction

Data Preparation:

- Input Features (X): Calculate molecular descriptors (e.g., molecular weight, logP, polar surface area, number of rotatable bonds) or use fingerprints for a library of compounds.

- Target Variable (y): Collect experimentally determined pIC50 values (pIC50 = -log10 of the IC50 expressed in mol/L) for a training set of compounds. The logarithmic transformation often improves model performance by compressing the wide, skewed range of raw IC50 values.

- Data Cleaning: Handle missing values (e.g., imputation or removal). Scale features to a similar range (e.g., StandardScaler in scikit-learn) as many algorithms are sensitive to feature magnitudes [2].
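The pIC50 transformation is a common source of unit errors, so a small worked sketch may help. It uses the standard definition pIC50 = -log10(IC50 in mol/L); the helper name and the unit table are illustrative, not part of any library.

```python
import math

# Conversion factors to mol/L (hypothetical helper table for illustration)
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9}


def pic50_from_ic50(ic50_value, unit="uM"):
    """Convert an IC50 measurement to pIC50 = -log10(IC50 in mol/L)."""
    if ic50_value <= 0:
        raise ValueError("IC50 must be positive")
    return -math.log10(ic50_value * UNIT_TO_MOLAR[unit])


# Example IC50 values in micromolar
for ic50_um in (1.2, 2.5, 5.1):
    print(f"IC50 = {ic50_um} uM -> pIC50 = {pic50_from_ic50(ic50_um, 'uM'):.2f}")
```

On this scale a more potent compound has a higher value, and a difference of 1.0 corresponds to a tenfold change in IC50.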

Model Training:

- Split the data (e.g., 70% training, 15% validation, 15% test).

- Train a Random Forest Regressor as a robust, non-linear starting model.

- Use the validation set to tune hyperparameters such as n_estimators (number of trees) and max_depth (tree depth) to prevent overfitting.

Model Evaluation:

- Predict pIC50 values for the test set compounds.

- Calculate RMSE and R-squared.

- Interpretation: An RMSE of 0.5 in pIC50 units implies the model's predictions are typically within ~0.5 log units of the true value. An R-squared of 0.7 indicates that 70% of the variance in pIC50 is explained by the model.
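The regression protocol above could be prototyped as shown below. This is a sketch under stated assumptions: scikit-learn and NumPy are available, the descriptor matrix is random noise rather than real molecular descriptors, and the pIC50-like target is generated from an invented non-linear relationship. The hyperparameter values are fixed here for brevity rather than tuned on a validation set.

```python
import numpy as np
from sklearn.ensemble import RandomForestRegressor
from sklearn.metrics import mean_squared_error, r2_score
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
X = rng.normal(size=(500, 8))  # hypothetical descriptor matrix (8 descriptors)
# Invented non-linear structure-activity relationship plus measurement noise
y = 6.0 + 0.8 * X[:, 0] - 0.5 * X[:, 1] ** 2 + rng.normal(scale=0.3, size=500)

X_train, X_test, y_train, y_test = train_test_split(X, y, test_size=0.15, random_state=0)

model = RandomForestRegressor(n_estimators=200, max_depth=8, random_state=0)
model.fit(X_train, y_train)
y_pred = model.predict(X_test)

# RMSE is in pIC50 (log) units; R-squared is the fraction of variance explained
rmse = float(np.sqrt(mean_squared_error(y_test, y_pred)))
r2 = r2_score(y_test, y_pred)
print(f"RMSE = {rmse:.2f} log units, R-squared = {r2:.2f}")
```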

Application Note 2: Classifying Compound Mechanism of Action (Classification)

Research Context: Accurately categorizing compounds by their putative Mechanism of Action (MoA) enables target deconvolution and understanding of polypharmacology.

Protocol: Multi-Class Classification for MoA Categorization

Data Preparation:

- Input Features (X): Use high-dimensional data such as gene expression profiles from cell lines treated with compounds (e.g., L1000 data) or high-content imaging features.

- Target Variable (y): Assign categorical MoA labels (e.g., "HDAC inhibitor", "Kinase inhibitor", "DNA damager") based on prior knowledge. Ensure a sufficient number of examples per class.

- Data Preprocessing: Perform dimensionality reduction (e.g., PCA) or feature selection to mitigate the "curse of dimensionality" [2]. Standardize features.

Model Training:

- Implement a Support Vector Machine (SVM) classifier with a non-linear kernel (e.g., RBF) to handle complex class boundaries.

- Use the validation set to optimize the regularization parameter C and kernel parameters.

Model Evaluation:

- Predict MoA labels for the test set.

- Generate a confusion matrix to visualize per-class performance.

- Report Precision and Recall for each MoA class, as overall accuracy can be misleading if class distribution is imbalanced. The F1-Score provides a single balanced metric per class.
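A minimal sketch of the multi-class protocol above, assuming scikit-learn. The three synthetic classes stand in for MoA labels, and 100 synthetic features stand in for expression or imaging profiles. Features are standardized before PCA here, a common ordering because PCA is sensitive to feature scale. The values of `n_components`, `C`, and `gamma` are placeholders that would normally be tuned.

```python
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.metrics import confusion_matrix, precision_recall_fscore_support
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in: 3 classes play the role of MoA labels (assumption)
X, y = make_classification(
    n_samples=450, n_features=100, n_informative=10, n_classes=3, random_state=0
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Standardize, reduce dimensionality, then fit an RBF-kernel SVM
clf = make_pipeline(
    StandardScaler(), PCA(n_components=20), SVC(kernel="rbf", C=1.0, gamma="scale")
)
clf.fit(X_train, y_train)
y_pred = clf.predict(X_test)

# Rows are true classes, columns are predicted classes
cm = confusion_matrix(y_test, y_pred, labels=[0, 1, 2])
precision, recall, f1, support = precision_recall_fscore_support(
    y_test, y_pred, labels=[0, 1, 2], zero_division=0
)
print(cm)
for cls in range(3):
    print(f"class {cls}: precision={precision[cls]:.2f}, recall={recall[cls]:.2f}, f1={f1[cls]:.2f}")
```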

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagent Solutions for Supervised Modelling in Sample Research

| Item | Function & Application Notes |
|---|---|
| scikit-learn (Python) | A comprehensive open-source library providing robust implementations of both classification (e.g., LogisticRegression, SVC) and regression (e.g., LinearRegression, RandomForestRegressor) algorithms [10]. |
| Labeled Training Dataset | A curated set of sample data (e.g., compounds, tissue samples) where each instance is paired with a known, validated output (the label). This is the ground truth essential for model training [1] [2]. |
| Molecular Descriptors / Feature Vectors | Quantitative representations of samples (e.g., chemical structures, biomarker panels). These form the input feature matrix (X) for the model. Quality and relevance of features are paramount. |
| Data Standardization Tool (e.g., StandardScaler) | A preprocessing module used to transform features to have a mean of 0 and standard deviation of 1. This is critical for algorithms like SVMs and those reliant on gradient descent [2]. |
| Cross-Validation Module (e.g., GridSearchCV) | A utility for automated hyperparameter tuning and model validation. It helps in finding the optimal model parameters while providing a robust estimate of model performance without overfitting the test set. |
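The last two rows of the table are typically used together. The sketch below wraps StandardScaler and SVC in a pipeline and searches over it with GridSearchCV, so that scaling is re-fitted inside each cross-validation fold. It assumes scikit-learn; the data are synthetic and the parameter grid is an arbitrary small example.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

X, y = make_classification(n_samples=300, n_features=30, n_informative=8, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# make_pipeline names each step after its lowercased class ("standardscaler", "svc")
pipeline = make_pipeline(StandardScaler(), SVC(kernel="rbf"))
param_grid = {"svc__C": [0.1, 1, 10], "svc__gamma": ["scale", 0.01]}

# 5-fold cross-validated grid search on the training data only
search = GridSearchCV(pipeline, param_grid, cv=5, scoring="f1_macro")
search.fit(X_train, y_train)

# The best estimator is refitted on all training data; score it once on the test set
test_score = search.score(X_test, y_test)
print(f"best params: {search.best_params_}")
print(f"CV f1_macro: {search.best_score_:.3f}, test f1_macro: {test_score:.3f}")
```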

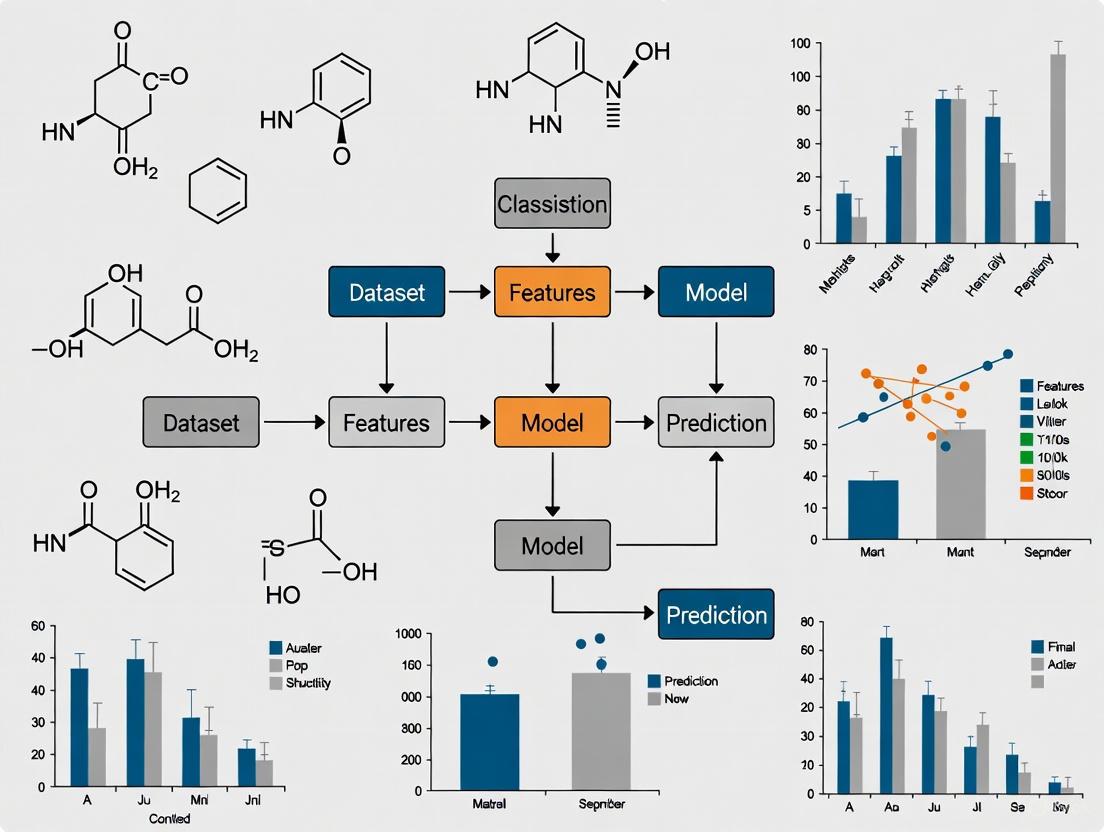

Workflow Visualization for a Research Project

The following diagram outlines the end-to-end logical workflow for a typical supervised learning project in a research setting, incorporating the decision point between classification and regression.

Within the framework of supervised modelling for sample classification research, the selection of an appropriate algorithm is paramount to the success of drug development and biomedical studies. This document provides detailed application notes and experimental protocols for four cornerstone classification algorithms: Logistic Regression, Support Vector Machines (SVM), Random Forest, and Neural Networks. Each method offers a distinct balance of interpretability, flexibility, and predictive power, making them suitable for various stages of the research pipeline, from initial exploratory data analysis to final predictive model deployment. The following sections synthesize their theoretical bases, performance characteristics, and practical implementation workflows to guide researchers and scientists in their application.

Algorithm Performance Comparison

The choice of algorithm is often dictated by the dataset size, nature of the classification problem, and the need for interpretability versus pure predictive accuracy. The following table summarizes key performance metrics and characteristics to guide algorithm selection.

Table 1: Comparative Analysis of Classification Algorithms for Research Applications

| Algorithm | Ideal Dataset Size | Key Strengths | Key Limitations | Interpretability | Sample Performance Metrics |
|---|---|---|---|---|---|
| Logistic Regression | Very small (<100 samples) to moderate [13] | Probabilistic outputs, high speed, low computational cost, resilience to overfitting with regularization [14] [13] | Assumes linear relationship between features and log-odds; struggles with complex, non-linear patterns [13] | High (Provides feature coefficients) [14] [13] | Accuracy: Up to 94.58%; AUC: 0.85 on complex image data [14] |
| Support Vector Machine (SVM) | Small to moderate [13] | Effective in high-dimensional spaces; handles non-linear data via kernel trick; strong theoretical foundations [15] [13] [16] | Computationally intensive for large datasets; sensitive to hyperparameter tuning; less interpretable [13] [16] | Moderate to Low (Decision boundary defined by support vectors) [13] | High accuracy reported in image classification (e.g., 95% for skin lesions) [17] |
| Random Forest | Moderate (500+ samples) to large [13] | Handles non-linearity; robust to outliers and missing data; provides feature importance scores [18] [13] [19] | Computationally expensive; "black box" model; can overfit on very small datasets [18] [13] [19] | Moderate (Via feature importance) [19] | Outperforms logistic regression in ~69% of 243 real-world datasets [14] |
| Neural Networks | Large [20] | High accuracy; automatically learns feature hierarchies; models highly complex, non-linear patterns [21] [20] | High computational cost; requires large amounts of data; highly complex and opaque [21] [20] | Low (Complex "black box") [21] | Superior performance on complex tasks like image and speech recognition [21] |

Detailed Algorithmic Workflows

Logistic Regression

Logistic regression is a linear model that predicts the probability of a sample belonging to a particular class. It transforms a linear combination of input features using a sigmoid function to output a value between 0 and 1 [14] [13].
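Written out, the model described above takes the following form. The notation is an assumption made for illustration: $x_j$ are the $p$ input features, $\beta_0$ is an intercept, and $\beta_j$ are the feature coefficients.

```latex
\begin{align}
z &= \beta_0 + \sum_{j=1}^{p} \beta_j x_j \\
P(y = 1 \mid \mathbf{x}) &= \sigma(z) = \frac{1}{1 + e^{-z}} \\
\log \frac{P(y = 1 \mid \mathbf{x})}{1 - P(y = 1 \mid \mathbf{x})} &= z
\end{align}
```

The last line shows why the model is called linear: the log-odds, not the probability itself, is a linear function of the features, so each coefficient $\beta_j$ can be read as the change in log-odds per unit change in $x_j$.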

Figure 1: Logistic regression workflow for sample classification.

Experimental Protocol: Binary Classification for Medical Imaging

- Objective: To classify medical images (e.g., X-rays) as showing signs of disease (1) or not (0).

- Data Preprocessing: Standardize pixel intensity values to have a mean of 0 and a standard deviation of 1. Split data into training, validation, and test sets (e.g., 70/15/15).

- Model Training: Use Maximum Likelihood Estimation (MLE), often implemented via Iteratively Reweighted Least Squares (IRLS), to find the optimal coefficients (β) that minimize the binary cross-entropy loss function [14] [20].

- Regularization: Apply L1 (Lasso) or L2 (Ridge) regularization to prevent overfitting, especially with high-dimensional data [13].

- Evaluation: Assess model performance on the held-out test set using Area Under the ROC Curve (AUC), accuracy, and F1-score [14].
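The protocol above can be sketched with scikit-learn as follows. This is a minimal illustration under stated assumptions, not a reference implementation: the data are synthetic stand-ins for flattened image features, the split sizes and `C=1.0` are arbitrary, and scikit-learn's default solver is L-BFGS rather than IRLS.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import accuracy_score, f1_score, roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for image-derived features (assumption: real data would be loaded here).
X, y = make_classification(n_samples=600, n_features=50, n_informative=10, random_state=0)

# 70/15/15 split: hold out 30%, then halve it into validation and test sets.
X_train, X_tmp, y_train, y_tmp = train_test_split(
    X, y, test_size=0.30, stratify=y, random_state=0
)
X_val, X_test, y_val, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.50, stratify=y_tmp, random_state=0
)

# The scaler sits inside the pipeline so its mean and standard deviation
# are estimated from the training set only. L2 is the default penalty;
# smaller C means stronger regularization.
model = make_pipeline(StandardScaler(), LogisticRegression(C=1.0, max_iter=1000))
model.fit(X_train, y_train)

# Final evaluation on the held-out test set.
test_proba = model.predict_proba(X_test)[:, 1]
test_pred = model.predict(X_test)
test_auc = roc_auc_score(y_test, test_proba)
test_accuracy = accuracy_score(y_test, test_pred)
test_f1 = f1_score(y_test, test_pred)
print(f"AUC={test_auc:.3f} accuracy={test_accuracy:.3f} F1={test_f1:.3f}")
```

The validation set is left unused here; in practice it would guide the choice of `C` before the test set is touched.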

Support Vector Machines (SVM)

SVMs classify data by finding the optimal hyperplane that maximizes the margin between classes in a high-dimensional space. The samples closest to the hyperplane are the "support vectors" that define the classifier [15] [16].

Figure 2: SVM finds the hyperplane that maximizes the margin between two classes.

Experimental Protocol: Protein Classification using SVM

- Objective: Classify protein sequences into functional families based on their features.

- Feature Extraction: Generate features from protein sequences (e.g., amino acid composition, physicochemical properties).

- Kernel Selection: For non-linearly separable data, use the kernel trick. The Radial Basis Function (RBF) kernel is a common default choice [17] [16].

- Hyperparameter Tuning: Use grid search with cross-validation to find the optimal values for:

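- C (Regularization): Controls the trade-off between a wide margin and a low training error. A small C tolerates more misclassified training samples in exchange for a wider margin (stronger regularization); a large C fits the training data more tightly and increases the risk of overfitting.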

- Gamma (RBF kernel): Defines how far the influence of a single training example reaches [17].

- Model Evaluation: Report precision, recall, and accuracy on an independent test set. The model's decision function can be used to plot an ROC curve.
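A minimal sketch of the tuning and evaluation steps above, assuming scikit-learn. The three-class synthetic dataset stands in for protein feature vectors, and the grid values for `C` and `gamma` are illustrative choices rather than recommended defaults.

```python
from sklearn.datasets import make_classification
from sklearn.metrics import accuracy_score, precision_score, recall_score
from sklearn.model_selection import GridSearchCV, train_test_split
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in for per-protein feature vectors with three functional families.
X, y = make_classification(
    n_samples=400, n_features=20, n_informative=8,
    n_classes=3, n_clusters_per_class=1, random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# RBF-kernel SVMs are scale-sensitive, so scaling is part of the pipeline
# and is re-fitted within each cross-validation fold.
pipeline = Pipeline([("scale", StandardScaler()), ("svc", SVC(kernel="rbf"))])

# Illustrative grid (assumption): three values each for C and gamma.
param_grid = {"svc__C": [0.1, 1, 10], "svc__gamma": [0.01, 0.1, "scale"]}
search = GridSearchCV(pipeline, param_grid, cv=5, scoring="accuracy")
search.fit(X_train, y_train)

# GridSearchCV refits the best configuration on the full training set,
# so `search` can be used directly for prediction.
y_pred = search.predict(X_test)
test_accuracy = accuracy_score(y_test, y_pred)
test_precision = precision_score(y_test, y_pred, average="macro")
test_recall = recall_score(y_test, y_pred, average="macro")
print(search.best_params_, round(test_accuracy, 3))
```

Macro averaging weights each family equally; with strongly unequal family sizes, per-class reports may be more informative.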

Random Forest

Random Forest is an ensemble method that constructs a multitude of decision trees at training time. The final classification is the mode of the classes output by individual trees, which reduces overfitting and improves generalization [18] [19].

Figure 3: Random Forest uses multiple decorrelated trees and aggregates their results.

Experimental Protocol: Drug Sensitivity Prediction

- Objective: Predict patient sensitivity to a drug based on genomic and clinical features.

- Data Preparation: Handle missing values (Random Forest can work with missing data, but imputation may still be beneficial). Ensure categorical variables are encoded.

- Model Training:

- Feature Importance: After training, extract the Gini importance or mean decrease in impurity to identify the genomic markers most predictive of drug response [18].

- Evaluation: Use accuracy and AUC. Perform cross-validation to obtain robust estimates of model performance and to mitigate overfitting.
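The feature importance and cross-validation steps could look roughly like this in scikit-learn. The data are synthetic, the `gene_i` feature names are hypothetical, and `n_estimators=200` is an assumed setting, since the protocol does not specify training parameters.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, cross_val_score

# Synthetic stand-in for genomic/clinical features; with shuffle=False the
# five informative features occupy the first five columns.
X, y = make_classification(
    n_samples=500, n_features=30, n_informative=5, n_redundant=0,
    shuffle=False, random_state=0,
)
feature_names = [f"gene_{i}" for i in range(X.shape[1])]  # hypothetical names

forest = RandomForestClassifier(n_estimators=200, random_state=0)

# 5-fold stratified cross-validation gives a spread of AUC estimates
# rather than a single, possibly optimistic, number.
cv = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
auc_scores = cross_val_score(forest, X, y, cv=cv, scoring="roc_auc")
print(f"AUC: {auc_scores.mean():.3f} +/- {auc_scores.std():.3f}")

# Fit once on all data to read off impurity-based (Gini) importances.
forest.fit(X, y)
importances = forest.feature_importances_
top_idx = np.argsort(importances)[::-1][:5]
for idx in top_idx:
    print(f"{feature_names[idx]}: {importances[idx]:.3f}")
```

Impurity-based importances tend to favor high-cardinality features, so permutation importance on held-out data is a common cross-check.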

Neural Networks

Neural networks consist of interconnected layers of artificial neurons that learn hierarchical representations of data. They are particularly powerful for complex patterns in high-dimensional data like images or genetic sequences [21] [20].

Figure 4: A simple feedforward neural network with multiple hidden layers.

Experimental Protocol: Image-Based Cell Phenotype Classification using CNN

- Objective: Classify microscope images of cells into different phenotypic categories.

- Architecture Selection: Use a Convolutional Neural Network (CNN), which is designed for image data [21].

- Training with Backpropagation:

- Optimizer: Use Adaptive Moment Estimation (Adam) for efficient convergence [20].

- Loss Function: For multi-class classification, use Categorical Cross-Entropy [20].

- Activation Function: Use ReLU in hidden layers to mitigate vanishing gradients and Softmax in the output layer to obtain class probabilities [20].

- Regularization: Employ techniques like Dropout and early stopping to prevent overfitting.

- Evaluation: Use a confusion matrix and top-1 accuracy on a test set of images not seen during training.
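To make the activation and loss choices above concrete, the sketch below runs a single forward pass through a tiny fully connected network in NumPy. It is deliberately not a CNN and does not train anything; the layer sizes, random weights, and random inputs are assumptions chosen only to show how ReLU, Softmax, and categorical cross-entropy fit together.

```python
import numpy as np


def relu(z):
    """Element-wise ReLU: negative pre-activations become zero."""
    return np.maximum(z, 0.0)


def softmax(logits):
    """Row-wise softmax, shifted by the row maximum for numerical stability."""
    shifted = logits - logits.max(axis=1, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=1, keepdims=True)


def categorical_cross_entropy(probs, labels):
    """Mean negative log-probability assigned to the true class."""
    n = labels.shape[0]
    return -np.mean(np.log(probs[np.arange(n), labels] + 1e-12))


rng = np.random.default_rng(0)
X = rng.normal(size=(8, 16))          # 8 samples, 16 hypothetical image features
labels = rng.integers(0, 3, size=8)   # 3 hypothetical phenotype classes

# Randomly initialized weights for one hidden layer and one output layer.
W1, b1 = rng.normal(scale=0.1, size=(16, 32)), np.zeros(32)
W2, b2 = rng.normal(scale=0.1, size=(32, 3)), np.zeros(3)

hidden = relu(X @ W1 + b1)            # hidden representation
probs = softmax(hidden @ W2 + b2)     # class probabilities per sample
loss = categorical_cross_entropy(probs, labels)
print(f"loss before any training: {loss:.3f}")
```

In a real project a deep learning framework would compute the gradients of this loss by backpropagation and an optimizer such as Adam would update the weights.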

The Scientist's Toolkit: Essential Research Reagents & Software

The following table lists key software and libraries required for implementing the described classification algorithms in a research environment.

Table 2: Key Research Reagents and Software Solutions for Algorithm Implementation

| Item Name | Function / Application | Example Use Case |

|---|---|---|

| scikit-learn | A comprehensive open-source machine learning library for Python. | Provides efficient and easy-to-use implementations for Logistic Regression, SVM, and Random Forest [18] [19]. |

| PyTorch / TensorFlow | Open-source libraries for deep learning and numerical computation. | Used for building and training complex Neural Networks, including CNNs and RNNs [21] [22]. |

| R e1071 / kernlab | R packages for statistics and machine learning. | Contain functions for fitting Support Vector Machines with various kernels [15] [16]. |

| Weka | A Java-based workbench for machine learning and data mining. | Offers a GUI and API for applying a collection of classification algorithms, including Random Forest, without programming [16]. |

| Imbalanced Learn (sklearn-contrib) | A Python package providing techniques for handling imbalanced datasets. | Used for oversampling (SMOTE) or undersampling when one class is underrepresented, a common issue in medical datasets [17]. |

Supervised learning (SL), a foundational machine learning paradigm, has transitioned from an experimental tool to a core component of modern pharmaceutical research and development [23]. This methodology employs algorithms to learn from labeled datasets, where each example is paired with a known outcome, enabling the model to discern complex patterns and make predictions on new, unseen data [24]. In the context of drug discovery, these labels can represent a vast array of critical information, including a compound's biological activity, binding affinity for a target, toxicity profile, or a patient's likely response to a therapy [25] [26]. The ability to predict such outcomes from molecular or clinical data is fundamentally reducing the reliance on serendipity and labor-intensive trial-and-error approaches that have long characterized the field.

The transformative impact of SL stems from how directly it addresses the pharmaceutical industry's most pressing challenges: escalating costs, lengthy timelines, and high attrition rates [25] [27]. Traditional drug discovery can require over a decade and investments exceeding $2.5 billion per approved drug, with nearly 90% of candidates failing during clinical trials [27]. By providing data-driven predictions, SL enhances decision-making, prioritizes the most promising candidates, and de-risks the development process. Its application spans the entire drug discovery and development pipeline, compressing timelines that once took years into months and substantially lowering associated costs [28] [25]. As of 2024, SL was the dominant algorithmic type in the machine learning drug discovery market, holding a 40% revenue share, a testament to its widespread adoption and proven utility [29].

Core Supervised Learning Algorithms and Their Pharmaceutical Applications

The power of supervised learning is realized through a suite of algorithms, each with distinct strengths suited to specific tasks in the drug discovery workflow. These models are broadly categorized based on their prediction target: classification for categorical outcomes and regression for continuous values [24].

Table 1: Key Supervised Learning Algorithms in Drug Discovery

| Algorithm | Learning Type | Primary Drug Discovery Applications | Brief Rationale |

|---|---|---|---|

| Random Forests | Classification, Regression | Virtual screening, toxicity prediction, patient stratification [27] [30] [29] | Robust, handles high-dimensional data, reduces overfitting via ensemble learning [24] [30]. |

| Support Vector Machines (SVM) | Classification, Regression | Compound classification, bioactivity prediction, image analysis (e.g., histology) [27] [26] [30] | Effective in high-dimensional spaces, finds optimal separation boundaries between classes [27] [30]. |

| Neural Networks/Deep Learning | Classification, Regression | De novo molecular design, ADMET prediction, advanced image recognition [27] [26] [29] | Captures highly complex, non-linear relationships in large, intricate datasets [26]. |

| Logistic Regression | Classification | Binary outcome prediction (e.g., active/inactive, toxic/non-toxic) [27] [30] | Provides a simple, interpretable baseline model for probabilistic classification [24] [30]. |

| Gradient Boosting (XGBoost, etc.) | Classification, Regression | Quantitative Structure-Activity Relationship (QSAR) modeling, predictive toxicology [24] [23] | State-of-the-art performance on structured data; builds models sequentially to correct errors [24]. |

The selection of an algorithm depends on the specific problem, data type, and dataset size. For instance, Random Forest and Gradient Boosting are frequently top performers for structured data from chemical assays, while Deep Neural Networks excel in tasks involving raw, complex data like molecular structures or medical images [24] [26]. The trend is moving towards increasingly sophisticated models, with the deep learning segment projected to be the fastest-growing in the coming years due to its power in structure-based predictions and generative design [29].

Application Notes: Supervised Learning Across the Drug Discovery Pipeline

Target Identification and Validation

The initial stage of drug discovery involves pinpointing a biological target (e.g., a protein) implicated in a disease. SL models are trained on diverse multi-omics data (genomics, proteomics) and vast scientific literature to identify and prioritize novel targets [25] [23]. For example, algorithms can be trained on labeled data linking specific gene mutations to disease phenotypes, enabling them to predict the causal role of new genes. A notable application is the identification of NAMPT as a therapeutic target in neuroendocrine prostate cancer through a computational drug discovery pipeline [23]. By analyzing complex biological data, these models can uncover previously unknown therapeutic targets, expanding the universe of treatable diseases.

Compound Screening and Lead Optimization

Once a target is identified, the search for a molecule that can effectively and safely modulate it begins. This phase has been revolutionized by SL.

- Virtual Screening: SL models can rapidly predict the binding affinity and biological activity of millions of compounds from virtual libraries, a process far more efficient than traditional high-throughput screening [25] [26]. Companies like Atomwise use convolutional neural networks to predict molecular interactions, having identified two drug candidates for Ebola in less than a day [25].

- Lead Optimization: This critical stage involves refining a "hit" compound into a "lead" candidate with optimal drug-like properties. It is also where ML sees its heaviest commercial use: the lead optimization segment held nearly 30% of the ML in drug discovery market in 2024 [29]. Models are trained on historical data to predict key parameters such as potency, selectivity, and ADMET properties (Absorption, Distribution, Metabolism, Excretion, Toxicity) [26] [29]. Exscientia reports using such models to design drug candidates with 70% faster cycle times and requiring 10-fold fewer synthesized compounds than industry norms [28].

Clinical Trial Design and Patient Stratification

Clinical trials represent one of the most costly and high-attrition phases of drug development. SL is introducing much-needed efficiency and precision [25] [23].

- Patient Recruitment and Stratification: SL models analyze Electronic Health Records (EHRs), genetic data, and clinical notes to identify eligible patients for trials, significantly accelerating enrollment [25]. More importantly, they can stratify patients into subgroups based on their predicted response to therapy, enabling more targeted and powerful trials. For instance, ML models have been developed to predict metastasis in early-stage lung cancer or cognitive progression in Parkinson's patients, which can be used to enrich trial populations [23].

- Trial Outcome Prediction: SL helps design more efficient trials by predicting potential outcomes. This includes forecasting placebo response in major depressive disorder trials and predicting the risk of adverse events, such as edema in patients treated with tepotinib [23]. These insights allow for better trial design and patient monitoring, increasing the probability of success.

Table 2: Quantitative Impact of Supervised Learning in Drug Discovery

| Application Area | Exemplary Achievement | Impact Metric |

|---|---|---|

| Discovery Speed | Insilico Medicine's idiopathic pulmonary fibrosis drug candidate [28] [25] | Target discovery to Phase I trials achieved in 18 months (versus ~5 years traditionally) [28]. |

| Compound Efficiency | Exscientia's AI-designed compounds [28] | 70% faster design cycles and 10x fewer compounds synthesized than industry standards [28]. |

| Virtual Screening | Atomwise's screening for Ebola [25] | Two drug candidates identified in less than a day [25]. |

| Market Impact | Lead Optimization Segment [29] | Held ~30% share of the ML in drug discovery market in 2024 [29]. |

Experimental Protocols

Protocol 1: Building a QSAR Model for Activity Prediction

This protocol details the use of supervised learning to create a Quantitative Structure-Activity Relationship (QSAR) model that predicts a compound's biological activity from its chemical structure.

1. Problem Formulation & Data Collection

- Objective: To classify compounds as "active" or "inactive" against a specific protein target.

- Data Source: Public repositories like ChEMBL provide large, labeled datasets of chemical structures and their associated bioactivity measurements (e.g., IC50, Ki) [26].

- Label Definition: Compounds with potency (e.g., IC50) stronger than a defined threshold (e.g., < 1 µM) are labeled "active" (1); weaker compounds are labeled "inactive" (0).
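The label definition above amounts to a simple threshold on potency. A minimal sketch, assuming IC50 values are stored in nanomolar and using made-up measurements:

```python
import numpy as np

ACTIVE_THRESHOLD_NM = 1000.0  # 1 µM expressed in nM

# Made-up IC50 measurements in nM for five hypothetical compounds.
ic50_nm = np.array([12.0, 850.0, 1000.0, 4300.0, 25000.0])

# Lower IC50 means higher potency, so "active" is strictly below the threshold.
labels = (ic50_nm < ACTIVE_THRESHOLD_NM).astype(int)

# pIC50 = -log10(IC50 in mol/L); for values in nM this is 9 - log10(IC50_nM),
# so the 1 µM threshold corresponds to pIC50 = 6.
pic50 = 9.0 - np.log10(ic50_nm)

print(labels.tolist())  # [1, 1, 0, 0, 0]
```

Compounds sitting exactly at the threshold are labeled inactive here; whichever convention is chosen should be fixed before any modeling starts, and unit consistency across assay records checked first.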

2. Data Preparation and Featurization

- Featurization: Convert chemical structures (e.g., SMILES strings) into numerical descriptors that the model can process. Common features include:

- Molecular descriptors: Molecular weight, logP, number of hydrogen bond donors/acceptors.

- Fingerprints: Binary vectors indicating the presence or absence of specific chemical substructures.

- Data Splitting: Randomly split the dataset into a training set (~70-80%) to train the model, a validation set (~10-15%) for tuning hyperparameters, and a hold-out test set (~10-15%) for the final unbiased evaluation [24].

3. Model Training and Validation

- Algorithm Selection: Start with a robust, baseline algorithm like Random Forest [24].

- Training: The model learns the relationship between the input features (molecular descriptors) and the output labels (active/inactive) on the training set.

- Hyperparameter Tuning: Use the validation set to optimize model parameters (e.g., the number of trees in the forest) via techniques like grid search or random search.

4. Model Evaluation

- Metrics: Evaluate the final model on the untouched test set using classification metrics [24]:

- Accuracy: Overall correctness.

- Precision: Proportion of true actives among all predicted actives.

- Recall (Sensitivity): Proportion of true actives correctly identified.

- F1-Score: Harmonic mean of precision and recall.

- Confusion Matrix: A table visualizing true vs. predicted labels to understand the nature of errors.
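Steps 2 to 4 of this protocol can be sketched end to end as follows. Everything about the data is an assumption for illustration: the fingerprints are random bits rather than real substructure fingerprints, and activity is generated by a made-up rule on three bits with 5% label noise. Real work would featurize SMILES strings with a cheminformatics toolkit.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import (accuracy_score, confusion_matrix, f1_score,
                             precision_score, recall_score)
from sklearn.model_selection import train_test_split

rng = np.random.default_rng(0)
n_compounds, n_bits = 1000, 128

# Random binary "fingerprints" (assumption: stand-in for real substructure bits).
fingerprints = rng.integers(0, 2, size=(n_compounds, n_bits))

# Made-up activity rule: active if at least two of the first three bits are set,
# with 5% of labels flipped to mimic assay noise.
y = (fingerprints[:, :3].sum(axis=1) >= 2).astype(int)
flip = rng.random(n_compounds) < 0.05
y = np.where(flip, 1 - y, y)

# 80/10/10 split into training, validation, and test sets.
X_train, X_tmp, y_train, y_tmp = train_test_split(
    fingerprints, y, test_size=0.20, stratify=y, random_state=0
)
X_val, X_test, y_val, y_test = train_test_split(
    X_tmp, y_tmp, test_size=0.50, stratify=y_tmp, random_state=0
)

# Tune the number of trees on the validation set only.
best_n, best_f1 = None, -1.0
for n_trees in (50, 200):
    candidate = RandomForestClassifier(n_estimators=n_trees, random_state=0)
    candidate.fit(X_train, y_train)
    score = f1_score(y_val, candidate.predict(X_val))
    if score > best_f1:
        best_n, best_f1 = n_trees, score

# Final model, evaluated once on the untouched test set.
model = RandomForestClassifier(n_estimators=best_n, random_state=0)
model.fit(X_train, y_train)
y_pred = model.predict(X_test)

accuracy = accuracy_score(y_test, y_pred)
precision = precision_score(y_test, y_pred)
recall = recall_score(y_test, y_pred)
f1 = f1_score(y_test, y_pred)
cm = confusion_matrix(y_test, y_pred)  # rows: true class, columns: predicted class
print(cm)
```

A random split is used to match the protocol; for QSAR work, splitting by chemical scaffold is a common stricter alternative because it tests generalization to unseen chemotypes.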

The workflow for this QSAR modeling protocol is standardized and can be visualized as follows:

Protocol 2: Predicting Patient-Specific Toxicity from EHR Data

This protocol uses SL to predict a patient's risk of a specific adverse drug reaction (e.g., cisplatin-induced acute kidney injury) using clinical data [23].

1. Problem Formulation & Data Extraction

- Objective: To predict a binary outcome: whether a patient will experience a specific toxicity (e.g., Acute Kidney Injury) within a defined timeframe after treatment initiation.

- Data Source: Electronic Health Records (EHRs). Extract structured data (lab values, vital signs, demographics, medications) and/or unstructured clinical notes [23].

- Label Definition: Patients who developed the toxicity according to clinical criteria (e.g., KDIGO guidelines for AKI) are labeled "1" (case), and matched controls who did not are labeled "0".

2. Data Preprocessing and Feature Engineering

- Handling Missing Data: Impute missing lab values (e.g., using mean/median) or exclude variables with excessive missingness.

- Feature Engineering: Create predictive features from raw data:

- Data Splitting: Split patient-level data into training, validation, and test sets, ensuring all records from a single patient reside in only one set to prevent data leakage.
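The patient-level splitting rule can be enforced with scikit-learn's `GroupShuffleSplit`, which assigns whole groups to one side of a split. The sketch below assumes a hypothetical table with several records per patient; the patient IDs, features, and the 70/15/15 proportions are illustrative.

```python
import numpy as np
from sklearn.model_selection import GroupShuffleSplit

rng = np.random.default_rng(0)
n_records = 300

# Hypothetical EHR extract: roughly five records for each of 60 patients.
patient_ids = rng.integers(0, 60, size=n_records)
X = rng.normal(size=(n_records, 4))
y = rng.integers(0, 2, size=n_records)

# First split: ~70% of patients for training, ~30% held back.
outer = GroupShuffleSplit(n_splits=1, test_size=0.30, random_state=0)
train_idx, tmp_idx = next(outer.split(X, y, groups=patient_ids))

# Second split: divide the held-back patients evenly into validation and test.
inner = GroupShuffleSplit(n_splits=1, test_size=0.50, random_state=0)
val_rel, test_rel = next(
    inner.split(X[tmp_idx], y[tmp_idx], groups=patient_ids[tmp_idx])
)
val_idx, test_idx = tmp_idx[val_rel], tmp_idx[test_rel]

# Leakage check: no patient may appear in more than one set.
train_patients = set(patient_ids[train_idx])
val_patients = set(patient_ids[val_idx])
test_patients = set(patient_ids[test_idx])
assert train_patients.isdisjoint(val_patients)
assert train_patients.isdisjoint(test_patients)
assert val_patients.isdisjoint(test_patients)
```

Because the proportions apply to patients rather than records, the record counts in each set will only approximately match 70/15/15.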

3. Model Training with Interpretability

- Algorithm Selection: Use an inherently interpretable model such as Logistic Regression, or a Gradient Boosting model (XGBoost) paired with SHAP analysis for explainability [23].

- Training: Train the model on the training set to find the relationship between clinical features and toxicity risk.

- Interpretability: Apply SHAP (SHapley Additive exPlanations) analysis to understand which features (e.g., baseline creatinine, age) most heavily influenced the model's prediction, fostering clinical trust [23].

4. Model Validation and Performance Assessment

- Metrics: Evaluate performance on the test set. Key metrics include AUC-ROC (Area Under the Receiver Operating Characteristic curve) to assess overall ranking capability, and Precision-Recall curves, which are informative for imbalanced datasets [24].

- Clinical Validation: The model's predictions should be reviewed by clinical experts to ensure they are medically plausible before deployment.
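The two metrics named above can be computed as in the following sketch. The dataset is synthetic with an assumed 10% event rate, and logistic regression with `class_weight="balanced"` is an illustrative choice, not the method used in the cited work.

```python
from sklearn.datasets import make_classification
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import (average_precision_score, precision_recall_curve,
                             roc_auc_score)
from sklearn.model_selection import train_test_split

# Synthetic cohort in which roughly 10% of patients experience the toxicity.
X, y = make_classification(
    n_samples=2000, n_features=15, n_informative=6,
    weights=[0.9, 0.1], random_state=0,
)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, stratify=y, random_state=0
)

# class_weight="balanced" reweights the rare class during fitting (assumed choice).
clf = LogisticRegression(max_iter=1000, class_weight="balanced")
clf.fit(X_train, y_train)
risk = clf.predict_proba(X_test)[:, 1]

# AUC-ROC measures ranking quality; 0.5 is the chance level regardless of prevalence.
auc_roc = roc_auc_score(y_test, risk)

# Average precision summarizes the precision-recall curve; its chance level
# equals the event prevalence, which makes it stricter for rare outcomes.
avg_precision = average_precision_score(y_test, risk)
prevalence = y_test.mean()

precision, recall, thresholds = precision_recall_curve(y_test, risk)
print(f"AUC-ROC={auc_roc:.3f} AP={avg_precision:.3f} prevalence={prevalence:.3f}")
```

Comparing average precision against the prevalence, rather than against 0.5, gives a fairer sense of how much the model adds for a rare adverse event.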

The process for developing this clinical prediction model is outlined below:

The Scientist's Toolkit: Essential Research Reagent Solutions

The effective application of supervised learning requires a suite of computational "reagents" and data resources.

Table 3: Essential Research Reagent Solutions for Supervised Learning

| Tool/Resource Name | Type | Primary Function in SL Drug Discovery |

|---|---|---|

| ChEMBL [26] | Public Database | A manually curated database of bioactive molecules with drug-like properties, providing the labeled data essential for training models on bioactivity and binding affinity. |

| AlphaFold Protein Structure Database [25] [26] | Public Database | Provides highly accurate protein structure predictions, which serve as critical input features for structure-based SL models in virtual screening and target validation. |

| Amazon Web Services (AWS) / Google Cloud [28] [23] | Cloud Computing Platform | Offers scalable computational power and storage for training complex SL models on large datasets, with cloud-based deployment holding a ~70% market share in 2024 [29]. |

| SHAP (SHapley Additive exPlanations) [23] | Software Library | Provides post-hoc interpretability for "black box" models like neural networks and random forests, explaining which features drove a prediction to build trust with scientists and regulators. |

| Scikit-learn [24] | Software Library | A core Python library providing robust, efficient, and easy-to-use implementations of a wide variety of SL algorithms, from logistic regression to random forests. |

| TensorFlow/PyTorch [26] | Software Library | Open-source libraries for building and training deep neural networks, enabling complex tasks like de novo molecular design and advanced image-based phenotyping. |

| Electronic Health Records (EHRs) [25] [23] | Data Resource | A source of real-world patient data that, when properly curated and labeled, is used to train SL models for clinical trial recruitment, outcome prediction, and toxicity risk forecasting. |

Challenges and Future Outlook

Despite its promise, the implementation of supervised learning in drug discovery is not without hurdles. A primary challenge is the requirement for large, high-quality labeled datasets, which can be expensive and time-consuming to generate, particularly in domains like preclinical toxicology where data is scarce [30]. Furthermore, the issue of model interpretability remains significant; complex models like deep neural networks often function as "black boxes," making it difficult for researchers to understand the rationale behind a prediction, which can hinder trust and adoption in a highly regulated environment [30] [23]. Data bias and the potential for models to make unreliable predictions when faced with out-of-distribution data also pose substantial risks that must be managed [23].

The future of SL in drug discovery is bright and evolving. Key trends include the rise of Explainable AI (XAI) to demystify model decisions and the integration of SL with other AI paradigms [30] [23]. For instance, semi-supervised learning techniques are being developed to make better use of the vast amounts of unlabeled data available, mitigating the data-scarcity problem [31]. There is also a growing emphasis on creating AI-augmented workflows, where SL models do not replace scientists but rather empower them as "centaur chemists," providing data-driven insights to guide human intuition and experimentation [28]. As these technologies mature and overcome existing challenges, supervised learning is poised to become an even more deeply embedded infrastructure, accelerating the delivery of novel therapeutics to patients.

Supervised learning is a cornerstone of machine learning (ML) in scientific research, where models learn from labeled datasets to perform classification or prediction tasks [24]. For sample classification research—a critical component in fields like drug development and biomedical sciences—this involves training algorithms to categorize data into predefined classes based on input features [30]. The process enables enhanced decision-making by learning patterns from known examples where the "right answer" is provided, then applying these patterns to new, unlabeled data [24] [32].

The complete workflow extends far beyond initial model training, encompassing a structured, iterative pathway from problem definition through to continuous monitoring in production environments. This end-to-end process is essential for developing reliable, accurate, and generalizable models that can withstand the challenges of real-world application [24] [33]. In domains like healthcare research, robust supervised machine learning models (SMLMs) offer the potential to support complex prediction and classification tasks with speed and precision, thereby augmenting researcher capabilities and informing strategic decisions [32].

A successful supervised learning project for sample classification follows a structured, iterative workflow comprising five core stages [24]. This pathway ensures the development of a reliable and impactful model, from initial problem scoping to operational deployment and maintenance.

- Stage 1: Import and Frame the Data → This initial phase focuses on defining the research problem and gathering corresponding data. It involves framing a specific, measurable question and identifying what labeled data is needed to answer it [24].

- Stage 2: Data Preparation → The collected data must be cleaned, encoded, and scaled. New features are engineered, and the data is split into training, validation, and test sets to prevent bias in subsequent evaluation [24] [32].

- Stage 3: Choose and Train the Model → An appropriate algorithm is selected based on the problem type (e.g., classification). It is trained on the prepared data, often starting with a simple baseline model before progressing to more complex architectures [24].

- Stage 4: Evaluate and Validate → The model's performance is assessed on unseen test data using relevant metrics. This determines if its predictions are accurate and generalizable, or if further refinement is needed [24] [32].

- Stage 5: Deploy and Monitor → The validated model is put into a real-world production environment. Its performance is continuously tracked to detect any degradation over time, triggering retraining if necessary [24] [33].

This workflow is not strictly linear; it often requires iterating on previous steps based on findings from later stages [24]. For instance, performance issues detected during monitoring (Stage 5) may necessitate additional data preparation (Stage 2) or model retraining (Stage 3).

Phase 1: Data Framing and Preparation

Data Acquisition and Feature Selection

The foundation of any robust supervised learning model is high-quality, relevant data. The initial step involves acquiring a labeled dataset where each sample is associated with a known class or outcome [32]. For sample classification in research, features (independent variables) must be predictive of the target label (dependent variable). Domain knowledge is critical here for identifying meaningful features and designing informative data collection tools [32].

Redundant or irrelevant features increase model complexity and can reduce generalizability. Techniques like statistical tests, feature importance scores from tree-based models, and clinical domain expertise can help select the most predictive features [32].

Data Cleansing and Preprocessing Protocols

Raw data is often unsuitable for immediate model training and requires rigorous preparation. Key steps in this protocol include:

- Handling Missing Data: Common approaches include deletion of records or features with excessive missingness, or imputation using the mean, median, mode, or more advanced methods like K-nearest neighbors or multiple imputation by chained equations (MICE) [32].

- Data Encoding: ML models require numerical inputs. Categorical features must be encoded using techniques like one-hot encoding or label encoding [32].

- Feature Scaling: Variables on different scales can bias certain algorithms. Scaling values to a comparable range (e.g., [0, 1] or [-1, 1]) through normalization or standardization is often necessary [32].
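The three preprocessing steps above are often combined into one reusable object so that the same transformations are applied at training and prediction time. A minimal sketch with scikit-learn and pandas, using a made-up four-row table whose column names and values are purely illustrative:

```python
import numpy as np
import pandas as pd
from sklearn.compose import ColumnTransformer
from sklearn.impute import SimpleImputer
from sklearn.pipeline import Pipeline
from sklearn.preprocessing import OneHotEncoder, StandardScaler

# Made-up sample table with missing values in every column.
samples = pd.DataFrame({
    "creatinine": [0.9, np.nan, 1.4, 1.1],
    "age": [54, 61, np.nan, 47],
    "tissue": pd.Series(["liver", "kidney", "liver", np.nan], dtype=object),
})
numeric_cols = ["creatinine", "age"]
categorical_cols = ["tissue"]

# Numeric columns: median imputation, then standardization.
numeric = Pipeline([
    ("impute", SimpleImputer(strategy="median")),
    ("scale", StandardScaler()),
])

# Categorical columns: most-frequent imputation, then one-hot encoding.
categorical = Pipeline([
    ("impute", SimpleImputer(strategy="most_frequent")),
    ("encode", OneHotEncoder(handle_unknown="ignore")),
])

# sparse_threshold=0.0 forces a dense array, which is easier to inspect.
preprocess = ColumnTransformer(
    [("num", numeric, numeric_cols), ("cat", categorical, categorical_cols)],
    sparse_threshold=0.0,
)

features = preprocess.fit_transform(samples)
print(features.shape)  # (4, 4): two scaled numeric columns + two one-hot columns
```

In a full workflow `fit_transform` would be called on the training set only, and `transform` on validation and test data, so that imputation values and scaling statistics do not leak from held-out samples.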

Data Splitting Strategy

Prepared data must be partitioned into distinct sets to properly train and evaluate a model. A common practice is to allocate a larger portion (e.g., 70-80%) to the training set and the remainder to the testing set [32]. The training set is used to teach the model parameters, while the held-out test set provides an unbiased estimate of its performance on unseen data.

Phase 2: Model Selection and Training

Algorithm Selection for Sample Classification

The choice of algorithm depends on the problem nature, data size, and desired model interpretability. For sample classification research, several core algorithms are commonly employed [24] [30].

Table 1: Core Classification Algorithms for Sample Classification Research

| Algorithm | Primary Purpose | Key Applications in Research | Considerations |

|---|---|---|---|

| Logistic Regression [24] [30] | Models probability of a binary outcome. | Baseline modeling, medical diagnosis [30]. | Simple, fast, highly interpretable. |

| Decision Trees & Random Forests [24] [30] | Makes classification via a series of rules. Random Forest combines many trees. | Credit scoring, customer churn prediction, robust performance on structured data [24] [30]. | Random Forest is robust and often a strong performer. |

| Gradient Boosting (XGBoost, LightGBM) [24] | Sequentially builds models to correct errors of previous ones. | State-of-the-art performance on structured data [24]. | Powerful, but can be more complex to tune. |

| Support Vector Machines (SVM) [30] | Finds optimal boundary to separate classes in high-dimensional space. | Text categorization, image recognition, bioinformatics [30]. | Effective in high-dimensional spaces. |

| Naive Bayes [30] | Probabilistic classifier based on Bayes' theorem. | Text classification, sentiment analysis, spam detection [30]. | Performs well despite its simplifying assumptions. |

| Neural Networks [30] [32] | Captures complex, non-linear patterns through interconnected layers. | Image and speech recognition, complex pattern recognition [30] [32]. | Requires large data, less interpretable ("black box"). |

Model Training and Hyperparameter Tuning Protocol

Training involves using an algorithm to learn the relationship between features and labels from the training dataset. The algorithm iteratively adjusts its internal parameters to minimize prediction error [32]. A critical step in this phase is hyperparameter tuning. Hyperparameters are configuration settings external to the model itself (e.g., the depth of a tree, the learning rate of a neural network) that are not learned from the data and must be set before training [32].

A standard protocol for optimization is Grid Search with Cross-Validation (CV):

- Define a Hyperparameter Grid: Specify a set of possible values for each hyperparameter you wish to tune.

- Perform K-Fold Cross-Validation: For each combination of hyperparameters in the grid, the training data is split into k folds (e.g., k=5 or 10). The model is trained on k-1 folds and validated on the remaining fold, repeated k times so each fold serves as the validation set once.

- Select Optimal Configuration: The hyperparameter combination that yields the best average performance across all k validation folds is selected.

- Final Training: The model is retrained on the entire training set using these optimal hyperparameters [32].
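The four steps above are written out explicitly in the sketch below so that each one is visible; scikit-learn's `GridSearchCV` wraps the same loop. The synthetic data, the Random Forest model, and the grid values are assumptions chosen to keep the example fast.

```python
from itertools import product

import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.model_selection import StratifiedKFold, train_test_split

X, y = make_classification(n_samples=300, n_features=12, n_informative=5, random_state=0)
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.2, stratify=y, random_state=0
)

# Step 1: define the hyperparameter grid (illustrative values).
grid = {"max_depth": [3, None], "n_estimators": [25, 50]}

# Step 2: k-fold cross-validation (k=5) for every combination in the grid.
folds = StratifiedKFold(n_splits=5, shuffle=True, random_state=0)
results = {}
for max_depth, n_estimators in product(grid["max_depth"], grid["n_estimators"]):
    fold_scores = []
    for fit_idx, val_idx in folds.split(X_train, y_train):
        model = RandomForestClassifier(
            max_depth=max_depth, n_estimators=n_estimators, random_state=0
        )
        model.fit(X_train[fit_idx], y_train[fit_idx])
        fold_scores.append(model.score(X_train[val_idx], y_train[val_idx]))
    results[(max_depth, n_estimators)] = float(np.mean(fold_scores))

# Step 3: select the combination with the best mean validation accuracy.
best_depth, best_trees = max(results, key=results.get)

# Step 4: retrain on the entire training set with the selected hyperparameters.
final_model = RandomForestClassifier(
    max_depth=best_depth, n_estimators=best_trees, random_state=0
)
final_model.fit(X_train, y_train)
test_accuracy = final_model.score(X_test, y_test)
print(results, test_accuracy)
```

The test set plays no part in the selection; it is scored once at the end so that the reported accuracy is not biased by the tuning process.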

Phase 3: Model Evaluation and Validation

Performance Metrics for Classification

Evaluating a model requires metrics that accurately reflect its performance on unseen data (the test set). Relying on a single metric can be misleading; a suite of metrics provides a comprehensive view [24].

Table 2: Key Evaluation Metrics for Classification Models

| Metric | Definition | Interpretation & Use Case |
|---|---|---|
| Accuracy [24] | Proportion of total correct predictions (both positive and negative). | Best when classes are balanced. Misleading with class imbalance. |
| Precision [24] | Proportion of positive predictions that were actually correct. | Critical when the cost of false positives is high (e.g., in spam detection). |
| Recall (Sensitivity) [24] | Proportion of actual positive cases that were successfully identified. | Critical when the cost of false negatives is high (e.g., in disease screening). |
| F1-Score [24] | Harmonic mean of Precision and Recall. | Provides a single score that balances both concerns. |
| Confusion Matrix [24] | A table showing true vs. predicted labels (True Positives, False Positives, True Negatives, False Negatives). | Gives a detailed breakdown of where the model is making errors. |
| Area Under the Receiver Operating Characteristic Curve (AUC) [33] | Measures the model's ability to distinguish between classes across all classification thresholds. | A value of 1.0 indicates perfect separation, 0.5 indicates no discriminative power. |
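To make the definitions in Table 2 concrete, the sketch below computes the threshold-based metrics from confusion-matrix counts and the AUC from raw scores. The function names and the counts are hypothetical, chosen to show how accuracy can look strong on an imbalanced dataset while recall stays modest.

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute accuracy, precision, recall and F1 from confusion-matrix counts."""
    total = tp + fp + tn + fn
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    denom = precision + recall
    return {
        "accuracy": (tp + tn) / total,
        "precision": precision,
        "recall": recall,
        "f1": 2 * precision * recall / denom if denom else 0.0,
    }


def auc_from_scores(y_true, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    pos = [s for s, y in zip(scores, y_true) if y == 1]
    neg = [s for s, y in zip(scores, y_true) if y == 0]
    # Ties count as half a win; this pairwise form is O(n_pos * n_neg).
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical imbalanced screen: 1000 samples, only 50 true positives.
m = classification_metrics(tp=30, fp=10, tn=940, fn=20)
for name, value in m.items():
    print(f"{name}: {value:.3f}")

print("AUC:", auc_from_scores([0, 0, 1, 1], [0.1, 0.4, 0.35, 0.8]))
```

In this example accuracy is 0.97 although 20 of the 50 positives are missed (recall 0.60), which is why the table recommends reading several metrics together.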

Validation in Practice: A Clinical Case Study

The Vent.io model, developed to predict the need for mechanical ventilation in ICU patients, demonstrates rigorous validation. The model was first trained and tested internally on data from one health system. It was then prospectively deployed in a "silent mode" where it made predictions in a real clinical environment without directing care, allowing for real-world validation which showed an AUC of 0.908 [33].

To test generalizability, the model was also validated on the external MIMIC-IV dataset. Here, its performance dropped to an AUC of 0.73, highlighting a common challenge: model performance can deteriorate when applied to data from different sources or populations [33]. This triggered a model fine-tuning process per a pre-defined plan, which successfully improved the AUC to 0.873 on the external dataset [33].

Phase 4: Model Deployment and Monitoring

Deployment Strategies and the Predetermined Change Control Plan (PCCP)

Deployment is the process of integrating a trained and validated model into a real-world environment to make predictions on new data. This can be done as a batch process or, more commonly, via a real-time API [24]. In regulated fields like healthcare, a Predetermined Change Control Plan (PCCP) is a critical component of deployment. A PCCP is a proactive strategy that outlines planned modifications to a model, the protocol for implementing them, and how to assess their impact [33].

The PCCP for the Vent.io model systematically tracked the model's AUC in production. It pre-specified an AUC threshold of 0.85; performance dropping below this level would automatically trigger model fine-tuning. This provides a structured, regulatory-compliant framework for maintaining model performance and safety over time [33].
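The threshold rule can be expressed in a few lines. The sketch below is not the Vent.io implementation; the function name and action labels are hypothetical. It applies the pre-specified 0.85 threshold to the AUC values reported in the case study.

```python
AUC_THRESHOLD = 0.85  # pre-specified in the PCCP described above


def pccp_action(observed_auc, threshold=AUC_THRESHOLD):
    """Return a (hypothetical) action label for an observed production AUC."""
    if observed_auc < threshold:
        return "trigger_fine_tuning"
    return "continue_monitoring"


# AUC values reported for the Vent.io model in the case study [33].
checkpoints = [
    ("prospective silent-mode deployment", 0.908),
    ("external MIMIC-IV validation", 0.73),
    ("external dataset after fine-tuning", 0.873),
]

log = [(name, auc, pccp_action(auc)) for name, auc in checkpoints]
for name, auc, action in log:
    print(f"{name}: AUC={auc:.3f} -> {action}")
```

Because the threshold and the response are fixed before deployment, the decision to fine-tune follows from the monitored metric rather than from an ad hoc judgment.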

Continuous Model Monitoring Framework

Once deployed, models are susceptible to performance decay due to changes in the underlying data environment. Continuous monitoring is essential to detect these issues [34].

Table 3: Key Metrics and Challenges in Production Model Monitoring

| Aspect to Monitor | Description | Common Challenges |
|---|---|---|
| Model Quality (Accuracy, Precision, etc.) [34] | Track performance metrics on new, labeled data as it becomes available. | Lack of Ground Truth: Labels for new data are often delayed, making real-time quality assessment impossible. Proxy metrics must be used [34]. |
| Data Drift [34] | Change in the statistical properties of the model's input features over time. | Requires comparing the distribution of live data to a reference (training) distribution, which is computationally intensive [34]. |
| Concept Drift [34] | Change in the relationship between the input features and the target variable. | Can be gradual (e.g., evolving user preferences) or sudden (e.g., a global pandemic), making it difficult to detect and attribute [34]. |
| Data Quality [34] | Issues with the incoming data, such as missing values, incorrect data types, or values outside expected ranges. | Bugs in upstream data pipelines can silently corrupt the model's inputs, leading to unreliable outputs without obvious system failures [34]. |
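One common way to compare a live feature distribution against its training reference is the Population Stability Index (PSI). PSI is a swapped-in illustration here, not a method named in the source. The sketch below is a plain-Python version under stated assumptions: the binning scheme, the synthetic Gaussian data, and the function name are all chosen for the example.

```python
import bisect
import math
import random


def population_stability_index(reference, live, n_bins=10, eps=1e-6):
    """PSI between a reference sample and a live sample of one numeric feature."""
    ref_sorted = sorted(reference)
    # Interior bin edges sit at reference quantiles, so each bin holds
    # roughly 1/n_bins of the reference data.
    edges = [ref_sorted[len(ref_sorted) * i // n_bins] for i in range(1, n_bins)]

    def bin_proportions(values):
        counts = [0] * n_bins
        for v in values:
            counts[bisect.bisect_right(edges, v)] += 1
        # eps keeps empty bins from producing log(0) or a division by zero.
        return [max(c / len(values), eps) for c in counts]

    p_ref = bin_proportions(reference)
    p_live = bin_proportions(live)
    return sum((l - r) * math.log(l / r) for r, l in zip(p_ref, p_live))


rng = random.Random(0)
reference = [rng.gauss(0.0, 1.0) for _ in range(5000)]      # training-time feature
live_stable = [rng.gauss(0.0, 1.0) for _ in range(5000)]    # same distribution
live_shifted = [rng.gauss(1.0, 1.0) for _ in range(5000)]   # mean has drifted

psi_stable = population_stability_index(reference, live_stable)
psi_shifted = population_stability_index(reference, live_shifted)
print(f"PSI, stable feature:  {psi_stable:.4f}")
print(f"PSI, shifted feature: {psi_shifted:.4f}")
```

A PSI near zero suggests the live data still resembles the reference, while larger values flag a shift worth investigating. Any alert cut-off should be treated as a project-specific choice and documented alongside the monitoring plan.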

Silent failures are a key challenge in ML monitoring. Unlike traditional software that may crash, an ML model with corrupted input data will still produce a prediction, albeit a potentially low-quality one, without raising an alarm [34]. Monitoring must therefore be designed to detect these non-obvious errors.
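A lightweight guard against such silent failures is to validate each incoming record before it reaches the model. The sketch below assumes a hypothetical two-feature schema; the feature names, types, and ranges are placeholders, not values from the source.

```python
import math

# Hypothetical schema: expected type and plausible range for each input feature.
SCHEMA = {
    "age_years": (float, 0.0, 120.0),
    "heart_rate_bpm": (float, 20.0, 300.0),
}


def validate_record(record, schema=SCHEMA):
    """Return a list of problems; an empty list means the record passed."""
    problems = []
    for name, (expected_type, low, high) in schema.items():
        value = record.get(name)
        if value is None or (isinstance(value, float) and math.isnan(value)):
            problems.append(f"{name}: missing")
        elif not isinstance(value, expected_type):
            problems.append(
                f"{name}: expected {expected_type.__name__}, "
                f"got {type(value).__name__}")
        elif not low <= value <= high:
            problems.append(f"{name}: {value} outside [{low}, {high}]")
    return problems


good = {"age_years": 54.0, "heart_rate_bpm": 72.0}
wrong_type = {"age_years": 54.0, "heart_rate_bpm": "72"}  # string from a broken parser
out_of_range = {"age_years": -3.0}                        # bad value plus a missing field

for record in (good, wrong_type, out_of_range):
    print(validate_record(record) or "ok")
```

Records that fail validation can be logged and counted, turning an otherwise invisible upstream bug into a monitored signal.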

The Scientist's Toolkit: Research Reagent Solutions

This table details key software and methodological "reagents" essential for implementing the supervised learning workflow for sample classification.

Table 4: Essential Research Reagents for Supervised Sample Classification

| Tool / Reagent | Type / Category | Primary Function in the Workflow |
|---|---|---|
| scikit-learn [24] | Python Library | Provides a unified interface for a wide array of ML algorithms (classification, regression, clustering) and essential utilities for model evaluation (metrics, train-test splits) and preprocessing (scalers, encoders). |
| XGBoost / LightGBM [24] | Algorithm Library | Offers high-performance, scalable implementations of gradient boosting frameworks, which are often top performers in classification tasks on structured data. |
| TensorFlow / PyTorch [33] [32] | Deep Learning Framework | Provides the foundation for building and training complex neural network models, from simple feedforward networks to advanced architectures for image or text data. |
| Evidently AI [34] | ML Monitoring Library | An open-source Python library specifically designed to calculate and track data and model quality metrics, detect drift, and visualize performance in production environments. |
| Pandas & NumPy [24] | Python Libraries | The fundamental packages for data manipulation and numerical computation. Used for loading, cleaning, transforming, and exploring datasets at all stages of the workflow. |
| Cross-Validation [32] | Methodology | A resampling procedure used to robustly assess model generalizability and tune hyperparameters when data is limited, by maximizing the use of available data for both training and validation. |
| Predetermined Change Control Plan (PCCP) [33] | Regulatory & Process Framework | A formal plan for managing post-deployment model changes, used for AI-enabled software as a medical device (SaMD) and critical for maintaining compliance and performance in regulated research. |

Integrated View: Connecting Workflow Phases

The following diagram synthesizes the core components of the supervised learning workflow, highlighting the critical processes, outputs, and feedback loops that connect data preparation to sustained performance in production.

This integrated view illustrates that model deployment is not an endpoint. The Monitoring System continuously validates the Deployed Model's Predictions, creating essential feedback loops. Alerts on data quality can trace back to the source Raw Data, while performance decay below a threshold, as governed by a PCCP, triggers model retraining. This closed-loop system is vital for maintaining a reliable and effective sample classification model in a dynamic research or clinical environment [24] [33] [34].

From Theory to Practice: Implementing Classification Models in Drug Development Pipelines

In the field of sample classification research, particularly within biological sciences and drug development, the selection of an appropriate supervised machine learning algorithm is a critical determinant of experimental success. This process must carefully balance model performance with interpretability, a consideration of paramount importance when research outcomes inform high-stakes decisions in areas like diagnostic marker identification or patient stratification. The core challenge for scientists lies in aligning the algorithmic choice with the specific characteristics of their dataset and the overarching goals of their classification task [35].

This guide provides a structured framework for this selection process, focusing on the interplay between data size, problem complexity, and analytical task. It moves beyond a theoretical discussion to offer application notes and detailed experimental protocols, giving researchers a practical toolkit to systematically develop, evaluate, and deploy robust classification models. The principles outlined are broadly applicable, but they are framed here within the context of supervised modelling for sample classification to keep them directly relevant to scientific research.

Core Principles of Algorithm Selection

Selecting a classification algorithm is not a one-size-fits-all process; it is a strategic decision based on a clear understanding of both the data and the project's objectives. The following principles provide a foundation for a reasoned and effective selection strategy.

Understand the Problem and Data Structure: The first step is to precisely define the classification problem, including the number of classes and the nature of the input features. A thorough exploratory data analysis is essential to understand data distribution, the presence of missing values, and potential outliers [24]. This phase should also characterize the dataset's scale, as this directly influences which algorithms are computationally feasible [36].

Evaluate the Need for Interpretability: In scientific research, the ability to interpret a model's predictions is often as important as its accuracy. For instance, understanding which genes or proteins a model uses for classification can yield novel biological insights. Linear models and decision trees offer high interpretability, whereas complex ensemble methods or neural networks are often "black boxes," though techniques like feature importance analysis can provide some post-hoc explanation [37] [35].
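The contrast between directly readable coefficients and post-hoc feature importance can be illustrated with scikit-learn. The sketch uses synthetic data in which only one feature drives the label. The feature names are hypothetical, and impurity-based importances are only one of several post-hoc techniques.

```python
import numpy as np
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression

rng = np.random.default_rng(0)
feature_names = ["gene_A", "gene_B", "gene_C", "gene_D"]  # hypothetical markers

# Synthetic expression matrix: only gene_A carries signal; the rest are noise.
X = rng.normal(size=(400, 4))
y = (X[:, 0] + 0.3 * rng.normal(size=400) > 0).astype(int)

# Linear model: each coefficient gives the direction and strength of an effect.
linear = LogisticRegression().fit(X, y)

# Ensemble: impurity-based importances give a post-hoc ranking, without direction.
forest = RandomForestClassifier(n_estimators=200, random_state=0).fit(X, y)

for name, coef, importance in zip(
        feature_names, linear.coef_[0], forest.feature_importances_):
    print(f"{name}: coefficient={coef:+.2f}, forest importance={importance:.2f}")
```

Both views should rank `gene_A` first here, but only the coefficient shows that higher values push samples toward the positive class.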

Prioritize Scalability and Computational Efficiency: The resource consumption of an algorithm—in terms of time and memory—must be considered, especially with large-scale omics data. Algorithmic complexity theory provides a framework for predicting how resource requirements grow with input size [36]. An algorithm that is efficient on a small, pilot dataset may become prohibitively slow or memory-intensive when applied to a full dataset, a common pitfall known as confusing "small-n performance with scalability" [36].

Adopt an Iterative Approach to Model Selection: Algorithm selection is rarely linear. It is best practice to start with a simple, interpretable model as a baseline (e.g., Logistic Regression) [24] [38]. The performance of this baseline can then be used to benchmark more complex models. This iterative process involves training multiple candidates, evaluating them rigorously using hold-out validation sets, and fine-tuning the most promising ones [24] [35].
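A baseline-first comparison on a hold-out validation set might look like the following sketch. The synthetic dataset, the two candidate models, and the use of AUC as the comparison metric are assumptions made for illustration.

```python
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier
from sklearn.linear_model import LogisticRegression
from sklearn.metrics import roc_auc_score
from sklearn.model_selection import train_test_split
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler

# Synthetic stand-in for a labeled sample dataset (assumption).
X, y = make_classification(n_samples=400, n_features=30, n_informative=6,
                           random_state=1)
X_train, X_val, y_train, y_val = train_test_split(
    X, y, test_size=0.3, stratify=y, random_state=1)

candidates = {
    # Simple, interpretable baseline; scaling helps the solver converge.
    "logistic_regression_baseline": make_pipeline(
        StandardScaler(), LogisticRegression(max_iter=1000)),
    # More complex candidate to benchmark against the baseline.
    "random_forest": RandomForestClassifier(n_estimators=200, random_state=1),
}

results = {}
for name, model in candidates.items():
    model.fit(X_train, y_train)
    results[name] = roc_auc_score(y_val, model.predict_proba(X_val)[:, 1])

baseline_auc = results["logistic_regression_baseline"]
for name, auc in results.items():
    print(f"{name}: validation AUC={auc:.3f} "
          f"(difference vs baseline {auc - baseline_auc:+.3f})")
```

If the more complex model does not clearly beat the baseline on the validation set, the simpler and more interpretable model is usually the safer choice to carry forward.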

A Structured Selection Framework

To operationalize the core principles, researchers can use the following decision framework, which matches algorithm families to common data and problem scenarios in sample classification. The table below gives a qualitative, side-by-side summary for easy comparison.

Algorithm Selection Guide

| Algorithm Family | Typical Data Size | Handled Complexity | Primary Classification Task | Key Strengths | Key Weaknesses |
|---|---|---|---|---|---|
| Logistic Regression [38] | Small to Large | Linear | Binary, Multinomial | Highly interpretable, efficient, stable baseline [35] | Limited to linear decision boundaries |
| Decision Trees [39] | Small to Medium | Non-linear | Binary, Multinomial | Intuitive, handles mixed data, no strict scaling need [38] | Prone to overfitting, high variance |
| Random Forest [24] | Medium to Large | High, Non-linear | Binary, Multinomial | Robust, handles non-linearity, reduces overfitting [39] | Less interpretable, memory-intensive |
| Gradient Boosting (XGBoost, etc.) [24] | Medium to Large | High, Non-linear | Binary, Multinomial | State-of-the-art accuracy on structured data [24] | Requires careful tuning, computationally heavy |
| Support Vector Machine (SVM) [38] | Small to Medium | High, Non-linear (with kernel) | Binary | Effective in high-dimensional spaces (e.g., genomics) [38] | Poor scalability, slow on very large datasets |
| Naive Bayes [38] | Small to Large | Linear | Binary, Multinomial | Very fast, works well with high-dimensional data | Relies on strong feature independence assumption |
| K-Nearest Neighbor (KNN) [38] | Small | Instance-based | Binary, Multinomial | Simple, no training phase, naturally handles multi-class | Slow prediction, sensitive to irrelevant features |
| Neural Networks [40] [35] | Very Large | Very High, Non-linear | Binary, Multinomial | Superior for complex patterns (e.g., imaging) [40] | "Black box," needs massive data, computationally expensive [35] |

The workflow for navigating this framework begins with assessing the dataset size. For small to medium-sized datasets, a wide range of algorithms, from Logistic Regression to SVMs, is suitable. For very large datasets, efficient algorithms such as Logistic Regression, Naive Bayes, or tree-based ensembles are preferable, and Neural Networks become a viable option only when the data is truly massive and sufficient computational resources are available [36] [35].

Next, the complexity of the underlying problem must be considered. If the relationship between features and the class label is presumed to be simple and linear, Logistic Regression is an excellent starting point. For capturing complex, non-linear interactions, Decision Trees, Random Forests, Gradient Boosting, or Neural Networks are necessary [38] [35].
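The two-step workflow (size first, then complexity) can be captured as a simple lookup over the Algorithm Selection Guide. The sketch below transcribes the table's size and complexity columns into a dictionary. The three-level size scale, the function name, and the decision to group the instance-based KNN with the non-linear options are simplifying assumptions. The output is a shortlist of candidates to benchmark, not a final recommendation.

```python
SIZES = ["small", "medium", "large"]

# (smallest size, largest size, complexity label), transcribed from the guide.
ALGORITHMS = {
    "Logistic Regression": ("small", "large", "linear"),
    "Decision Trees": ("small", "medium", "non-linear"),
    "Random Forest": ("medium", "large", "non-linear"),
    "Gradient Boosting": ("medium", "large", "non-linear"),
    "Support Vector Machine": ("small", "medium", "non-linear"),
    "Naive Bayes": ("small", "large", "linear"),
    "K-Nearest Neighbor": ("small", "small", "instance-based"),
    # The guide lists "Very Large"; mapped to the top of this coarser scale.
    "Neural Networks": ("large", "large", "non-linear"),
}


def shortlist(data_size, needs_nonlinear):
    """Return candidate algorithm families for a dataset size and problem type."""
    rank = SIZES.index(data_size)
    picks = []
    for name, (smallest, largest, complexity) in ALGORITHMS.items():
        fits_size = SIZES.index(smallest) <= rank <= SIZES.index(largest)
        if needs_nonlinear:
            fits_complexity = complexity != "linear"
        else:
            fits_complexity = complexity == "linear"
        if fits_size and fits_complexity:
            picks.append(name)
    return picks


print(shortlist("small", needs_nonlinear=False))
print(shortlist("small", needs_nonlinear=True))
print(shortlist("large", needs_nonlinear=True))
```

Encoding the guide as data keeps the selection logic transparent and easy to revise as new algorithm families or local constraints, such as interpretability requirements, are added.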