Structural Similarity, Shared Action: Decoding the Mechanisms of Related Natural Compounds for Drug Discovery


Abstract

This article provides a comprehensive analysis of modern strategies for comparing the mechanisms of action (MOA) of structurally similar natural compounds, a critical task for researchers and drug development professionals. It explores the foundational principle that shared molecular scaffolds often predict common biological targets and pathways. The article details contemporary methodological frameworks that integrate computational tools, such as large-scale molecular docking and transcriptomics, with experimental validation. It further addresses key challenges in the field, including data variability and the complexity of multi-component mixtures, while reviewing advanced solutions involving artificial intelligence and systems pharmacology. Finally, it establishes a framework for the rigorous comparative validation of MOA hypotheses, synthesizing insights to guide the rational design of natural product-based therapies and the identification of novel drug leads [1] [2] [10].

The Scaffold Hypothesis: Why Similar Natural Compounds Often Share Mechanisms of Action

The Historical and Modern Significance of Natural Products in Drug Discovery

Historical Foundations: From Folklore to Formal Pharmacology

The use of natural products (NPs) as therapeutics is a practice deeply rooted in human history, forming the original foundation of pharmacology [1]. Ancient civilizations systematically documented the medicinal properties of plants, fungi, and other natural sources. The earliest records, such as Mesopotamian clay tablets (c. 2600 B.C.), describe oils from Cupressus sempervirens (cypress) and Commiphora species (myrrh) for treating coughs and inflammation, remedies whose derivatives are still in use today [1]. Similarly, the Egyptian Ebers Papyrus (c. 1550 B.C.) catalogs over 700 plant-based drugs, while ancient Chinese texts like the Shennong Herbal (c. 100 B.C.) document hundreds of medicinal substances [1].

This traditional knowledge was not limited to plants. Folklore applications extended to fungi and marine organisms. For instance, the birch fungus Piptoporus betulinus was used as an antiseptic and to staunch bleeding, and red algae like Chondrus crispus were prepared as remedies for colds and respiratory infections [1]. These practices were based on empirical observation and trial-and-error over centuries, effectively conducting early-phase clinical testing through community use [2].

The critical transition from crude extracts to defined active agents marked the birth of modern chemistry-driven drug discovery. This is exemplified by the isolation of morphine from opium poppy (Papaver somniferum) in the early 1800s, and the derivation of acetylsalicylic acid (aspirin) from salicin in willow bark (Salix alba) [1] [2]. These successes established the paradigm of identifying, isolating, and characterizing the bioactive chemical entities within natural remedies.

Table 1: Comparison of Historical and Modern Approaches to Natural Product Drug Discovery

| Aspect | Historical/Traditional Approach | Modern/Technology-Driven Approach |
|---|---|---|
| Source of Knowledge | Empirical observation, ethnobotany, folklore, and traditional medical systems (e.g., TCM, Ayurveda) [1] [2]. | Systematic screening, genomics, metabolomics, and database mining [3] [4]. |
| Lead Identification | Based on observed physiological effects in humans or animals [1]. | High-throughput screening (HTS) of compound libraries, target-based assays, and virtual screening [3]. |
| Compound Characterization | Use of crude extracts or partially purified mixtures [2]. | Advanced analytical chemistry (LC-MS, NMR), precise structure elucidation [3] [4]. |
| Mechanism of Action | Inferred from traditional use or observed outcomes; largely unknown [5]. | Investigated via molecular docking, transcriptomics, proteomics, and network pharmacology [5] [4]. |
| Scale & Supply | Limited to natural harvest, leading to sustainability and variability issues [3]. | Synthetic biology, total chemical synthesis, and cultivation optimization [3]. |
| Key Limitation | Unreliable efficacy, undefined composition, potential toxicity [1]. | Technical complexity of screening NPs, dereplication challenges, supply chain issues [3]. |

Figure: Evolution of Natural Product Drug Discovery Paradigms

Modern Revival: Technological Advances Overcoming Historical Hurdles

After a period of decline in the late 20th century due to the rise of combinatorial chemistry and technical challenges in screening natural extracts, NP drug discovery is experiencing a significant revival [3]. This resurgence is fueled by technological innovations that address long-standing bottlenecks such as dereplication (the rapid identification of known compounds), supply sustainability, and mechanistic elucidation.

Modern approaches leverage multi-omics strategies. Genomics and metagenomics allow researchers to mine the biosynthetic gene clusters of microbes and plants for novel compounds without traditional cultivation [3]. Metabolomics, particularly via LC-MS (Liquid Chromatography-Mass Spectrometry), enables the rapid profiling of complex natural extracts, annotating known molecules and highlighting novel ones for isolation [3] [4]. This is complemented by advanced nuclear magnetic resonance (NMR) techniques for definitive structure elucidation [3].

A pivotal modern shift is from a single-target "magic bullet" model to a multi-component, multi-target understanding of NP action, which aligns more closely with the holistic nature of traditional remedies [5]. Network pharmacology and systems biology approaches are essential for this, mapping the complex interactions between multiple compounds in an extract and their collective impact on biological pathways [5] [6]. Furthermore, large-scale molecular docking allows for the virtual screening of thousands of NP structures against protein targets to predict potential mechanisms of action (MOA) [5].

Table 2: Core Experimental Technologies in Modern NP Research

| Technology | Primary Function in NP Discovery | Key Advantage |
|---|---|---|
| Next-Generation Sequencing (NGS) & Genomics | Mining biosynthetic gene clusters from unculturable organisms; identifying enzymes for synthesis [3]. | Accesses vast untapped chemical diversity from environmental DNA. |
| High-Resolution LC-MS / MS-MS | Rapid metabolomic profiling of extracts; dereplication; tentative identification of novel compounds [3] [4]. | High sensitivity and throughput; generates data for molecular networking. |
| Advanced NMR Spectroscopy | Definitive structural elucidation and stereochemistry determination of isolated compounds [3]. | Provides atomic-level structural information non-destructively. |
| High-Content Screening (HCS) | Phenotypic screening using automated microscopy to capture multi-parameter cellular responses to extracts [4]. | Reveals complex biological activity beyond single-target assays. |
| Molecular Docking & AI/ML | Predicting binding affinities and interactions of NPs with protein targets; virtual screening [5]. | Prioritizes compounds for testing; proposes mechanistic hypotheses. |
| Heterologous Biosynthesis | Expressing NP biosynthetic pathways in engineered host organisms (e.g., yeast, E. coli) [3]. | Solves supply issues for complex molecules; enables engineering. |

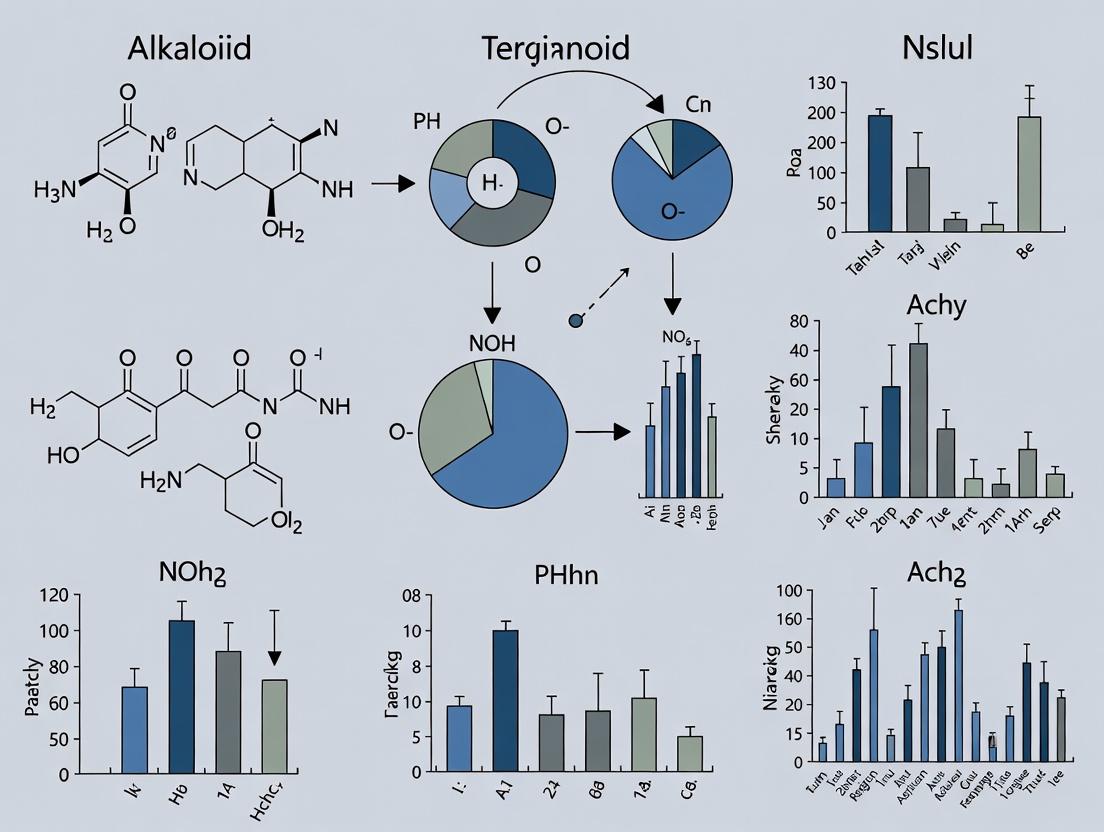

Comparative Analysis of Mechanism of Action: A Case Study on Similar Compounds

A central thesis in modern NP research is that structurally similar compounds often share similar mechanisms of action, yet subtle differences can lead to significant variations in efficacy and biological impact [5]. This is critical for understanding complex botanical medicines where multiple analogs coexist. A 2023 study provides a seminal experimental protocol for this comparative analysis, using the triterpenoids oleanolic acid (OA) and hederagenin (HG) as a model [5] [7].

Experimental Protocol for Comparing MOA of Similar NPs

The following stepwise methodology was employed to systematically compare OA and HG [5]:

Physicochemical Descriptor Calculation & Similarity Assessment:

- Source: 2D/3D structures of OA and HG were sourced from PubChem.

- Analysis: 1,116 molecular descriptors (e.g., molecular weight, logP, topological indices) were calculated using software like the Mordred library in Python.

- Similarity Metrics: Structural similarity was quantified using Euclidean, Cosine, and Tanimoto distances based on the descriptor arrays. This confirmed OA and HG are highly similar.
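The three distance measures named in this step can be sketched in a few lines of Python. This is a minimal illustration rather than the study's code: the vectors `oa` and `hg` are made-up, pre-scaled placeholder values, and the Tanimoto form shown is the continuous (real-valued) variant, which is an assumption because the source does not state which variant was applied.

```python
import math

def euclidean_distance(x, y):
    """Straight-line distance between two descriptor vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(x, y)))

def cosine_distance(x, y):
    """1 - cosine similarity; 0 means the vectors point the same way."""
    dot = sum(a * b for a, b in zip(x, y))
    norm_x = math.sqrt(sum(a * a for a in x))
    norm_y = math.sqrt(sum(b * b for b in y))
    return 1.0 - dot / (norm_x * norm_y)

def tanimoto_distance(x, y):
    """1 - continuous Tanimoto coefficient for real-valued vectors."""
    dot = sum(a * b for a, b in zip(x, y))
    sq_x = sum(a * a for a in x)
    sq_y = sum(b * b for b in y)
    return 1.0 - dot / (sq_x + sq_y - dot)

# Hypothetical, already-scaled descriptor values (NOT real OA/HG data).
oa = [0.82, 0.40, 0.95, 0.10]
hg = [0.85, 0.38, 0.90, 0.20]

for name, fn in [("euclidean", euclidean_distance),
                 ("cosine", cosine_distance),
                 ("tanimoto", tanimoto_distance)]:
    print(f"{name:10s} distance: {fn(oa, hg):.4f}")
```

In practice the descriptor columns would need to be scaled to comparable ranges first, since otherwise large-valued descriptors such as molecular weight dominate the Euclidean distance.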

In Silico Systems Pharmacology & Target Prediction:

- Platform: The BATMAN-TCM platform was used for initial drug-target interaction (DTI) prediction.

- Process: Inputting OA and HG yielded predicted protein targets with a DTI score. Targets with scores ≥10 were selected as "druggable targets."

- Network Construction: A compound-target-pathway network was built in Cytoscape software. Over-representation analysis (ORA) of the target sets identified significantly enriched KEGG pathways and Gene Ontology (GO) terms for each compound.
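The score cutoff and the over-representation analysis described in this step can be illustrated as follows. This is a sketch under stated assumptions: the function names and example scores are hypothetical, and the enrichment test is written as a one-sided hypergeometric test, a common choice for ORA that the source does not explicitly confirm.

```python
from math import comb

def select_targets(dti_scores, cutoff=10.0):
    """Keep targets whose predicted DTI score meets the cutoff."""
    return {target for target, score in dti_scores.items() if score >= cutoff}

def ora_p_value(n_background, n_pathway, n_targets, n_overlap):
    """Upper-tail hypergeometric probability P(X >= n_overlap).

    n_background: genes in the background universe
    n_pathway:    background genes annotated to the pathway
    n_targets:    genes in the compound's target set
    n_overlap:    target genes that fall in the pathway
    """
    total = comb(n_background, n_targets)
    upper = min(n_pathway, n_targets)
    favourable = sum(
        comb(n_pathway, k) * comb(n_background - n_pathway, n_targets - k)
        for k in range(n_overlap, upper + 1)
    )
    return favourable / total

# Hypothetical scores for illustration only.
example_scores = {"target_A": 12.4, "target_B": 9.9, "target_C": 10.0}
print(select_targets(example_scores))
print(ora_p_value(n_background=4, n_pathway=2, n_targets=2, n_overlap=2))
```

A real analysis would also correct the resulting p-values for multiple testing across all pathways and GO terms examined.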

Large-Scale Molecular Docking for Target Validation:

- Scope: Docking was performed against a druggable proteome (~150-200 proteins) rather than a single target.

- Software & Parameters: Docking software (e.g., AutoDock Vina) was used to simulate binding. The binding affinity (kcal/mol) and precise binding pose (orientation in the protein pocket) were analyzed for both compounds across all targets.

- Key Comparison: The study confirmed that OA and HG not only bound to the same subset of proteins but also occupied identical binding sites on those proteins, strongly suggesting a shared primary MOA.
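One way to make the "same proteins, same sites" comparison concrete is to compare the sets of strong binders and the sets of contacting residues for each compound. The sketch below assumes docking results are already available as dictionaries; the affinity threshold of -7.0 kcal/mol, the 0.5 residue-overlap cutoff, and all example values are hypothetical and are not taken from the study.

```python
def strong_binders(affinities, threshold=-7.0):
    """Targets with predicted affinity (kcal/mol) at or below the threshold.

    More negative values indicate stronger predicted binding.
    """
    return {target for target, score in affinities.items() if score <= threshold}

def jaccard(a, b):
    """Jaccard index of two sets (1.0 when both are empty)."""
    union = a | b
    return len(a & b) / len(union) if union else 1.0

def same_site(residues_a, residues_b, min_overlap=0.5):
    """Treat two poses as sharing a site if their contact residues overlap enough."""
    return jaccard(set(residues_a), set(residues_b)) >= min_overlap

# Hypothetical docking output for two compounds (illustrative values only).
compound_1 = {"protein_A": -9.1, "protein_B": -8.4, "protein_C": -6.2}
compound_2 = {"protein_A": -8.8, "protein_B": -8.1, "protein_C": -7.3}

shared = strong_binders(compound_1) & strong_binders(compound_2)
print("shared strong binders:", sorted(shared))
print("target-set Jaccard:", jaccard(strong_binders(compound_1), strong_binders(compound_2)))
print("same site:", same_site(["ARG120", "TYR355", "SER530"], ["ARG120", "TYR355", "VAL523"]))
```

Residue-level overlap is only a proxy for pose identity; a stricter comparison would use the RMSD between superimposed ligand poses.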

Transcriptomic Validation via RNA-Seq:

- Experiment: Cell lines were treated with OA, HG, and a combination of both (OA+HG).

- Analysis: RNA sequencing (RNA-seq) was performed on treated vs. control cells. Differentially expressed genes (DEGs) were identified.

- Comparison: The gene expression profiles induced by OA and HG were highly correlated. Crucially, the profile from the OA+HG combination was not additive but highly similar to each compound alone, confirming their functional mechanism is conserved and not synergistic in this context.
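The profile comparison in this step can be sketched with per-gene log2 fold changes. The example below is illustrative only: the gene names and values are invented, Pearson correlation stands in for whatever statistic the study used, and the additive-versus-single check is a simple heuristic introduced here (it assumes that additivity would show up as summed log fold changes).

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)

def profile_correlation(lfc_a, lfc_b):
    """Correlate two log2 fold-change profiles over their shared genes."""
    genes = sorted(set(lfc_a) & set(lfc_b))
    return pearson([lfc_a[g] for g in genes], [lfc_b[g] for g in genes])

def closer_to_single_than_additive(lfc_a, lfc_b, lfc_combo):
    """True if the combination resembles the mean single-agent profile
    more than the sum of the two single-agent profiles."""
    genes = sorted(set(lfc_a) & set(lfc_b) & set(lfc_combo))
    to_single = sum(abs(lfc_combo[g] - (lfc_a[g] + lfc_b[g]) / 2) for g in genes)
    to_additive = sum(abs(lfc_combo[g] - (lfc_a[g] + lfc_b[g])) for g in genes)
    return to_single < to_additive

# Hypothetical log2 fold changes (not data from the study).
lfc_oa = {"gene1": 2.0, "gene2": -1.0, "gene3": 0.5}
lfc_hg = {"gene1": 1.8, "gene2": -1.2, "gene3": 0.4}
lfc_combo = {"gene1": 2.1, "gene2": -1.1, "gene3": 0.5}

print(f"r(OA, HG) = {profile_correlation(lfc_oa, lfc_hg):.3f}")
print("combo resembles single agents:",
      closer_to_single_than_additive(lfc_oa, lfc_hg, lfc_combo))
```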

Results and Significance of the Comparative Study

The integrated analysis indicated that OA and HG, owing to their shared core scaffold, interact with an overlapping set of protein targets at the same binding sites, leading to highly concordant changes in gene expression [5]. This work provides an experimentally validated framework for comparing similar NPs. It supports scaffold-based grouping of NPs as a strategy for predicting MOA and suggests that combining such similar compounds may not yield synergistic effects but rather reinforce the same biological networks [5] [7]. These findings have implications for standardizing botanical drugs and designing combination therapies.

Table 3: Comparative Analysis of Oleanolic Acid (OA) and Hederagenin (HG) [5]

| Analysis Method | Oleanolic Acid (OA) | Hederagenin (HG) | Interpretation & Conclusion |
|---|---|---|---|
| Structural Similarity (Descriptor Distance) | Used as reference compound. | Showed minimal Euclidean/Cosine/Tanimoto distance from OA. | High structural similarity confirmed, implying potential functional similarity. |
| Predicted Targets (BATMAN-TCM) | 87 high-score (DTI≥10) protein targets identified. | 79 high-score protein targets identified. | High degree of target overlap observed. Shared targets involved in cancer, lipid metabolism, and inflammatory pathways. |
| Molecular Docking (Proteome-wide) | Bound to a specific subset of proteins with high affinity. | Bound to the same protein subset as OA with comparable affinity and identical binding site poses. | Confirms shared mechanism at the molecular interaction level. Similar scaffold leads to identical target engagement. |
| Transcriptome Response (RNA-seq) | Induced a specific profile of differentially expressed genes (DEGs). | Induced a DEG profile highly correlated (R² > 0.9) with OA's profile. | Consistent downstream biological activity. The compounds perturb the same gene networks. |
| Combination Treatment (OA+HG) | N/A | N/A | The DEG profile of the combination closely matched the individual treatments rather than an additive or novel profile, suggesting the combination acts via the same, non-synergistic mechanism. |

Figure: Workflow for Comparative Mechanism of Action (MOA) Studies

Integrated Platforms: The Future of NP Discovery and Development

The future lies in integrating the aforementioned technologies into cohesive platforms. An exemplar is the TCMs-Compounds Functional Annotation (TCMs-CFA) platform [4]. This platform systematically integrates:

- Knowledge Base Screening: Selecting herbs from a database of 100,000 TCM formulas for a desired indication (e.g., myocardial protection).

- Chemome Profiling: Analyzing a library of herb extracts using LC-MS to obtain all mass signals (potential compounds).

- Cytological Profiling: Screening the same extract library using high-content imaging to capture multi-parametric cell phenotypes.

- Data Integration & Correlation: Using algorithms to correlate specific mass signals (compounds) with specific phenotypic outcomes, thereby rapidly pinpointing bioactive lead compounds and proposing their mechanisms without isolating every single constituent first [4].
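The data integration step can be pictured as ranking mass signals by how strongly their intensity tracks a phenotypic readout across the extract library. The sketch below is a simplified stand-in, not the TCMs-CFA algorithm: it uses plain Pearson correlation, and the feature identifiers, intensities, and phenotype scores are hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation; returns 0.0 if either input has no variance."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return 0.0
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    return cov / (sd_x * sd_y)

def rank_features(intensities, phenotype):
    """Rank mass signals by |correlation| with the phenotype score.

    intensities: {feature_id: [intensity in extract 1, extract 2, ...]}
    phenotype:   [phenotype score for extract 1, extract 2, ...]
    """
    scored = [(fid, pearson(vals, phenotype)) for fid, vals in intensities.items()]
    return sorted(scored, key=lambda pair: abs(pair[1]), reverse=True)

# Hypothetical LC-MS feature intensities across four extracts.
intensities = {
    "mz_301.1": [1, 2, 3, 4],
    "mz_455.3": [4, 1, 3, 2],
    "mz_122.0": [5, 5, 5, 5],
}
phenotype = [0.1, 0.2, 0.3, 0.4]  # hypothetical protective-effect scores

ranked = rank_features(intensities, phenotype)
for feature_id, r in ranked:
    print(f"{feature_id}: r = {r:+.2f}")
```

Correlation across extracts only prioritizes candidates; co-occurring compounds can correlate with a phenotype without causing it, so isolation and direct testing of top-ranked signals remain necessary.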

This "smart screening" approach, championed by agencies like the U.S. National Center for Complementary and Integrative Health (NCCIH), dramatically increases efficiency and directly links chemistry to biology [6] [4]. NCCIH's strategic priorities emphasize developing such methods, studying multi-component interactions, and investigating the complex pharmacokinetics and microbiome interactions of NPs [6].

Table 4: Key Research Reagent Solutions and Resources

| Category | Resource/Solution | Function & Description | Example/Source |
|---|---|---|---|
| Chemical Databases | PubChem | Central repository for chemical structures, properties, and bioactivity data of pure NPs and extracts. | https://pubchem.ncbi.nlm.nih.gov/ |
| Chemical Databases | NP-MRD (Natural Products Magnetic Resonance Database) | Open-access, FAIR-compliant database for NMR spectra and structural data of NPs, crucial for dereplication [6]. | https://np-mrd.org/ |
| Bioinformatics & Pharmacology Platforms | BATMAN-TCM | Specialized platform for predicting drug-target interactions and network pharmacology analysis for TCM/herbal compounds [5]. | http://bionet.ncpsb.org/batman-tcm/ |
| Bioinformatics & Pharmacology Platforms | GNPS (Global Natural Products Social Molecular Networking) | Community-contributed platform for sharing and analyzing MS/MS data to identify known compounds and discover new analogs within molecular families [3]. | https://gnps.ucsd.edu/ |
| Specialized Research Centers | NaPDI Center (Natural Product Drug Interaction Center) | NIH/NCCIH-funded center developing best practices and conducting clinical research on NP-drug interactions [6]. | University of Washington. |
| Analytical Standards | Certified Reference Materials (CRMs) for Botanicals | Highly characterized, stable extracts or purified compounds essential for assay development, method validation, and product quality control. | Commercial suppliers (e.g., NIST, Phytolab). |
| Software & Libraries | Mordred Descriptor Calculator | Python library for calculating a comprehensive set of molecular descriptors from chemical structures, used for similarity analysis [5]. | https://github.com/mordred-descriptor/mordred |
| Software & Libraries | Cytoscape | Open-source software platform for visualizing and analyzing complex molecular interaction networks [5]. | https://cytoscape.org/ |
| Biological Resources | Gene Expression Omnibus (GEO) / ArrayExpress | Public repositories for functional genomics data, including RNA-seq datasets from NP treatments, useful for validation and meta-analysis. | NCBI / EBI archives. |

The Molecular Similarity Triad: A Framework for Comparative Analysis

In the quest to understand the mechanisms of action of natural compounds, researchers are often confronted with molecules of intricate and diverse structures. Accurately defining their similarity is not a single task but a multi-faceted challenge, central to which are three complementary approaches: scaffold analysis, functional group identification, and physicochemical descriptor profiling. Scaffold-based methods reduce molecules to their core ring systems and linkers, providing a top-level view of structural kinship that is invaluable for classifying compound families and understanding broad structure-activity relationships (SAR) [8] [9]. Functional group analysis focuses on the reactive and interactive moieties attached to these scaffolds, which are often directly responsible for binding to biological targets and triggering a pharmacological response [10] [11]. Finally, physicochemical descriptors translate molecular structure into numerical representations of properties like polarity, hydrogen-bonding capacity, and volume, enabling quantitative similarity searches and predictive modeling of behavior in biological systems [12] [13].

This triad forms a hierarchical framework for comparative research. While a shared scaffold suggests a common evolutionary or synthetic origin and a similar overall shape, the decoration with specific functional groups fine-tunes target selectivity and potency. Underpinning both are the physicochemical properties that ultimately determine a molecule's bioavailability, distribution, and complementarity to a protein binding site. For natural products, which are characterized by complex scaffolds and unique functional group combinations optimized by evolution, this integrated view is particularly critical for deciphering their polypharmacology and for targeted genome mining [14] [15]. The following sections provide a detailed comparison of the tools, methods, and applications defining each vertex of this molecular similarity triad.

Figure: Workflow for Defining Molecular Similarity in Natural Products Research

Scaffold-Centric Approaches: From Core Identification to Hierarchical Networks

The scaffold, or molecular framework, serves as the foundational skeleton for classifying compounds. The Bemis-Murcko scaffold—defined as the union of all ring systems and the linker atoms connecting them—remains the standard for extracting a molecule's core [8]. This method effectively groups derivatives and analogs, enabling large-scale analysis of drug and bioactive compound collections. Studies have used this approach to reveal that many approved drugs contain scaffolds not found in common bioactive compound libraries, highlighting the unique chemical space occupied by drug molecules [8]. However, a single, rigid scaffold definition can be limiting, often collapsing diverse molecules into a single overpopulated cluster (like benzene) or failing to capture meaningful relationships between scaffolds that differ by a single ring [9].
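The core idea of framework extraction, keeping ring systems and the linkers between them while discarding side chains, can be illustrated on a bare molecular graph by repeatedly deleting terminal atoms. This is a simplified sketch, not a toolkit implementation: it works on a hydrogen-suppressed list of atom-index pairs, ignores atom and bond types, and the function name is hypothetical.

```python
def murcko_scaffold_atoms(bonds):
    """Return the atom indices left after iteratively pruning terminal atoms.

    bonds: iterable of (i, j) atom-index pairs of a hydrogen-suppressed graph.
    Atoms with at most one neighbour are removed until none remain, which
    leaves ring systems plus the linker atoms connecting them.
    """
    neighbors = {}
    for i, j in bonds:
        neighbors.setdefault(i, set()).add(j)
        neighbors.setdefault(j, set()).add(i)

    changed = True
    while changed:
        changed = False
        terminal = [atom for atom, nbrs in neighbors.items() if len(nbrs) <= 1]
        for atom in terminal:
            for nbr in neighbors.pop(atom):
                neighbors[nbr].discard(atom)
            changed = True
    return set(neighbors)

# Toluene: six-membered ring (atoms 0-5) with a methyl group (atom 6).
toluene = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0), (0, 6)]
print(sorted(murcko_scaffold_atoms(toluene)))   # ring atoms only

# Two rings joined by a one-atom linker (6), plus a methyl (13) on the second ring.
two_ring = [(0, 1), (1, 2), (2, 3), (3, 4), (4, 5), (5, 0), (5, 6), (6, 7),
            (7, 8), (8, 9), (9, 10), (10, 11), (11, 12), (12, 7), (12, 13)]
print(sorted(murcko_scaffold_atoms(two_ring)))  # both rings and the linker
```

An acyclic molecule prunes away completely under this procedure, which matches the expectation that it has no ring-based framework.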

To overcome these limitations, advanced hierarchical and multi-representation methods have been developed. The "Molecular Anatomy" (MA) framework introduces a multi-dimensional approach by defining nine levels of scaffold abstraction [9]. These range from the most concrete (the full Bemis-Murcko scaffold with atom and bond types) to the most abstract (a cyclic skeleton where all atoms are carbons and all bonds are single). This allows relationships to be established not just between molecules with identical cores, but also between those with topological or shape similarity. For instance, a pyridine and a benzene ring would be distinct in a Bemis-Murcko analysis but would converge at a higher abstraction level in MA, allowing researchers to identify potential bioisosteres or shape-based mimics [9].
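The pyridine and benzene example can be made concrete with a toy version of the most abstract level only: every atom becomes carbon and every bond becomes single. The sketch below is an illustration of that single abstraction step, not the Molecular Anatomy implementation; it assumes both molecules are supplied with matching atom numbering, so that a plain equality check suffices instead of a graph isomorphism test.

```python
def abstract_to_cyclic_skeleton(atoms, bonds):
    """Replace every atom with carbon and every bond with a single bond.

    atoms: list of element symbols
    bonds: list of (i, j, order) tuples
    """
    skeleton_atoms = ["C"] * len(atoms)
    skeleton_bonds = sorted((min(i, j), max(i, j), 1) for i, j, _order in bonds)
    return skeleton_atoms, skeleton_bonds

# Kekule-style ring bonds shared by both six-membered aromatic rings.
ring_bonds = [(0, 1, 2), (1, 2, 1), (2, 3, 2), (3, 4, 1), (4, 5, 2), (5, 0, 1)]

benzene = (["C", "C", "C", "C", "C", "C"], ring_bonds)
pyridine = (["N", "C", "C", "C", "C", "C"], ring_bonds)

print("identical at concrete level:", benzene == pyridine)
print("identical at abstract level:",
      abstract_to_cyclic_skeleton(*benzene) == abstract_to_cyclic_skeleton(*pyridine))
```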

Tools like Scaffold Hunter and network-based visualizations leverage these hierarchical relationships to map chemical space. The core application is in Structure-Activity Relationship (SAR) analysis and library design. After a high-throughput screen, clustering actives by their scaffold can immediately highlight privileged chemotypes. Furthermore, by organizing scaffolds in a tree or network based on structural relationships (e.g., matched molecular pairs, substructure links), researchers can systematically explore analog series and plan chemical exploration around the most promising cores [8] [9].

Figure: The Multi-Dimensional Molecular Anatomy Framework

Table 1: Comparison of Scaffold Representation and Analysis Methods

| Method | Core Definition | Key Advantages | Primary Applications | Tools/Examples |
|---|---|---|---|---|
| Bemis-Murcko | Ring systems + connecting linker atoms [8]. | Simple, standardized, widely adopted. Facilitates frequency analysis. | Identifying most common cores in drugs/actives; coarse-grained clustering. | Fundamental algorithm in RDKit, OpenEye. |
| Matched Molecular Pairs (MMP) | Pairs differing at a single site (R-group) [8]. | Quantifies effect of specific structural changes on activity/property. | SAR analysis, lead optimization, property prediction. | In-house algorithms, OpenEye toolkits [8]. |
| Molecular Anatomy (MA) | Nine hierarchical levels from concrete to abstract [9]. | Flexible, captures shape & topological similarity beyond exact structure. Unbiased. | Detailed SAR, linking diverse chemotypes, library diversity analysis. | MA web interface [9]. |
| Scaffold Tree/Network | Hierarchical deconstruction of scaffold via rule-based pruning [9]. | Visualizes relationships between scaffolds; organizes chemical space. | Navigating scaffold space, identifying analog series, scaffold-hopping. | Scaffold Hunter, in-house networks. |

Functional Group Analysis: Identifying Key Pharmacophoric Elements

Functional groups (FGs) are the pharmacophoric elements that dictate a molecule's chemical reactivity and its specific interactions with biological targets (e.g., hydrogen bonding, ionic interactions). Traditional analysis relies on searching for a predefined list of substructures (e.g., carboxylic acid, amine, guanidine). This approach is implemented in tools like Checkmol and ClassyFire, which can classify molecules into hundreds of chemical classes based on curated FG lists [11]. While useful, this method is inherently limited to known, pre-coded patterns and may miss novel or complex combinations.

A more comprehensive approach is offered by algorithmic FG identification, which automatically identifies all FGs in a molecule through an iterative atom-marking process [11]. The algorithm marks heteroatoms, multiply-bonded carbons, and acetal centers, then merges connected marked atoms into a group. This method can identify thousands of unique FGs, as demonstrated in an analysis of the ChEMBL database that revealed 3080 distinct groups [11]. The most common FGs in bioactive molecules were amides (41.8%), esters (37.8%), and tertiary amines (25.4%) [11]. This data-driven method is essential for comparing the functional group landscape of different compound collections, such as natural product databases versus synthetic libraries.

The power of FG analysis is showcased in diversity studies of natural product (NP) databases. An analysis of the Mexican NP database BIOFACQUIM using algorithmic FG identification found that over 15% of its compounds and 11% of its scaffolds were unique compared to large reference databases like ChEMBL and a comprehensive NP collection [10]. This highlights how focused NP databases can expand biologically relevant chemical space. FG analysis is crucial for mechanism of action (MOA) studies because similar target profiles often correlate with specific FG patterns. Furthermore, FG frequency is a key descriptor in target prediction tools like CTAPred, which uses similarity in FG fingerprints (like PubChem FP) to suggest protein targets for uncharacterized natural products [15].

Figure: Workflow for Functional Group Analysis of Compound Databases

Table 2: Approaches to Functional Group Analysis

| Approach | Methodology | Strengths | Weaknesses | Use Case Example |
|---|---|---|---|---|
| Predefined Substructure Search | Uses a curated library of SMARTS patterns (e.g., 200-500+ groups) [11]. | Fast, chemically intuitive, easy to implement. | Limited to known patterns; cannot identify novel/unusual FGs. | Toxicity filtering (PAINS), chemical classification (ClassyFire). |
| Algorithmic Identification [11] | Iterative atom marking based on connectivity and bond order. | Exhaustive, discovers all FGs without a priori knowledge. Identifies rare/unique groups. | May require post-processing to merge chemically equivalent forms. | Profiling FG diversity of novel NP databases (e.g., BIOFACQUIM) [10]. |
| Fingerprint-Based | Uses molecular fingerprints (e.g., PubChem, MACCS) that encode FG presence. | Computationally efficient, integrated into similarity search. | Not an explicit FG list; more opaque interpretation. | Similarity-based target prediction (CTAPred) [15]. |
| Consensus Diversity Plots | Combines multiple fingerprint & descriptor views to assess chemical space [10]. | Holistic view of diversity, reduces bias of any single method. | Complex to interpret; requires multiple computational tools. | Comparing chemical space of NP DB vs. drug-like DB (e.g., BIOFACQUIM vs. ChEMBL) [10]. |

Physicochemical Descriptors: Quantifying Molecular Properties for Predictive Modeling

Physicochemical descriptors translate structural information into numerical values that encode molecular properties, enabling quantitative similarity assessment and predictive modeling. These descriptors range from simple one-dimensional properties (e.g., molecular weight, logP) to complex topological indices and solvation parameter models.

The Abraham solvation parameter model is a particularly powerful framework that uses six descriptors to characterize a compound's capability for intermolecular interactions: excess molar refraction (E), dipolarity/polarizability (S), overall hydrogen-bond acidity (A) and basicity (B), McGowan's characteristic volume (V), and the gas-hexadecane partition coefficient (L) [12]. These descriptors are experimentally determined from chromatographic retention data and are used in Quantitative Structure-Property Relationship (QSPR) models to predict a wide range of pharmacokinetic, environmental, and chromatographic behaviors. The WSU-2025 database is a curated collection of these descriptors for 387 compounds, offering improved precision over its predecessor for property prediction [12].

For more specialized or rapid predictions, topological descriptors offer a computational alternative. These are calculated directly from the molecular graph (atoms as vertices, bonds as edges). K-Banhatti indices are a recent example used to model the physicochemical properties (e.g., enthalpy, molar refractivity) of anti-pneumonia drugs via linear and polynomial regression [13]. While such graph-based descriptors are easy to compute, their chemical interpretability can be lower than that of experimentally grounded descriptors like Abraham's.
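To show what "calculated directly from the molecular graph" means in practice, the sketch below computes two classical topological indices, the Wiener index (sum of shortest-path distances over all atom pairs) and the first Zagreb index (sum of squared vertex degrees), for hydrogen-suppressed graphs. These are chosen as well-known examples; the K-Banhatti indices discussed above are not implemented here, and the function names are illustrative.

```python
from collections import deque

def build_adjacency(n_atoms, bonds):
    """Adjacency lists for a hydrogen-suppressed molecular graph."""
    adjacency = [[] for _ in range(n_atoms)]
    for i, j in bonds:
        adjacency[i].append(j)
        adjacency[j].append(i)
    return adjacency

def first_zagreb_index(n_atoms, bonds):
    """Sum of squared vertex degrees."""
    return sum(len(nbrs) ** 2 for nbrs in build_adjacency(n_atoms, bonds))

def wiener_index(n_atoms, bonds):
    """Sum of shortest-path lengths over all unordered atom pairs (BFS)."""
    adjacency = build_adjacency(n_atoms, bonds)
    total = 0
    for source in range(n_atoms):
        distance = {source: 0}
        queue = deque([source])
        while queue:
            atom = queue.popleft()
            for nbr in adjacency[atom]:
                if nbr not in distance:
                    distance[nbr] = distance[atom] + 1
                    queue.append(nbr)
        total += sum(distance.values())
    return total // 2  # every pair was counted from both ends

n_butane = [(0, 1), (1, 2), (2, 3)]    # C-C-C-C chain
isobutane = [(0, 1), (0, 2), (0, 3)]   # central carbon with three methyls

print("n-butane :", wiener_index(4, n_butane), first_zagreb_index(4, n_butane))
print("isobutane:", wiener_index(4, isobutane), first_zagreb_index(4, isobutane))
```

The two isomers share a molecular formula but give different index values, which is what lets such descriptors discriminate branching patterns in regression models.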

In the realm of natural products, choosing the right descriptor for similarity searching is critical. A comparative study using the LEMONS algorithm to enumerate hypothetical modular natural products (like non-ribosomal peptides and polyketides) evaluated 17 different fingerprint methods [14]. The study found that circular fingerprints (ECFP/FCFP) generally performed well across different NP classes. Notably, for structures where rule-based retrobiosynthesis could be applied (using tools like GRAPE/GARLIC), this retrobiosynthetic alignment approach outperformed conventional 2D fingerprints, as it directly incorporates biosynthetic logic into the similarity metric [14]. This is a key insight for genome mining, where the goal is to connect a predicted biosynthetic gene cluster to a known natural product family.

Table 3: Comparison of Key Physicochemical Descriptor Methods

| Descriptor Type | Representative Examples | Origin/Calculation | Key Applications | Performance Notes |
|---|---|---|---|---|
| Solvation Parameters | Abraham descriptors (E, S, A, B, V, L) [12]. | Experimentally derived from chromatographic retention factors. | Predicting log P, solubility, blood-brain barrier penetration, environmental distribution. | High predictive accuracy for free-energy related properties; requires experimental data or reliable models. |
| Topological Indices | K-Banhatti indices, Wiener index, Zagreb indices [13]. | Calculated from the hydrogen-suppressed molecular graph. | QSPR/QSAR modeling of boiling point, molar refractivity, biological activity. | Fast to compute; interpretability can vary; performance depends on the modeled property. |
| 2D Molecular Fingerprints | ECFP4, FCFP4, MACCS, PubChem fingerprints [14]. | Encoded structural patterns (substructures, atom environments). | Similarity search, virtual screening, clustering, machine learning. | ECFP4 circular fingerprints show strong all-around performance for NPs [14]. |
| 3D & Shape-Based Descriptors | Rapid Overlay of Chemical Structures (ROCS), Electroshape. | Based on 3D conformation and molecular volume/shape. | Scaffold hopping, identifying bioisosteres, target prediction where shape is key. | Computationally intensive; performance can be sensitive to conformation generation. |
| Retrobiosynthetic Alignments | GRAPE/GARLIC algorithm [14]. | Decomposes NPs into biosynthetic building blocks (e.g., amino acids, acyl units). | Similarity search within NP classes (e.g., peptides, polyketides); genome mining. | Can outperform 2D fingerprints for modular NPs when biosynthetic rules apply [14]. |

Experimental Protocols for Data Generation

Reliable molecular similarity analysis depends on high-quality underlying data. This section details standardized protocols for generating key descriptor sets.

Protocol 1: Assigning Abraham Solvation Parameter Descriptors (for the WSU Database) [12]: This experimental method assigns the descriptors (E, S, A, B, V, L) for a neutral compound.

- Compound Preparation: Purify the target compound to >95% homogeneity. For liquids, measure the refractive index (η) at 20 °C at the sodium D-line.

- Chromatographic Measurements: Obtain retention factors (log k) for the compound on a minimum of 6-8 calibrated chromatographic systems with known system constants (e, s, a, b, l, v). Systems typically include gas chromatography (GC) on 2-3 stationary phases, reversed-phase liquid chromatography (RPLC) with 3-4 different mobile phase compositions, and optionally micellar electrokinetic chromatography (MEKC).

- Descriptor Calculation via Solver Method: Use the Solver optimization algorithm (e.g., in Microsoft Excel) to find the set of descriptors that minimizes the difference between the experimentally measured log k values and those predicted by the solvation parameter equations (log SP = c + eE + sS + aA + bB + lL for GC; log SP = c + eE + sS + aA + bB + vV for condensed phases).

- Validation: The derived descriptors should predict retention in additional, orthogonal chromatographic systems within an acceptable error margin (typically <0.05-0.08 log units).
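The descriptor calculation step amounts to a least-squares fit of the condensed-phase equation log SP = c + eE + sS + aA + bB + vV across several calibrated systems. The sketch below replaces the spreadsheet Solver with a small pure-Python linear solve, purely as an illustration. It assumes that E and V are already known and held fixed so that only S, A, and B are fitted; the system constants and descriptor values are invented for the example and are not WSU database entries.

```python
def solve_linear(matrix, rhs):
    """Solve a small dense linear system by Gaussian elimination with pivoting."""
    n = len(rhs)
    m = [row[:] + [value] for row, value in zip(matrix, rhs)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def fit_sab(systems, log_k, E, V):
    """Least-squares estimate of (S, A, B) with E and V held fixed.

    systems: list of dicts holding system constants c, e, s, a, b, v
    log_k:   measured retention factors, one per system
    """
    rows = [[sy["s"], sy["a"], sy["b"]] for sy in systems]
    # Move the known terms (c, eE, vV) to the left-hand side.
    target = [y - sy["c"] - sy["e"] * E - sy["v"] * V for sy, y in zip(systems, log_k)]
    # Normal equations: (X^T X) beta = X^T y
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(3)] for i in range(3)]
    xty = [sum(r[i] * y for r, y in zip(rows, target)) for i in range(3)]
    return tuple(solve_linear(xtx, xty))

# Hypothetical system constants for four chromatographic systems.
systems = [
    {"c": 0.1, "e": 0.20, "s": -0.5, "a": -0.3, "b": -1.8, "v": 1.5},
    {"c": -0.2, "e": 0.10, "s": -0.8, "a": -0.1, "b": -2.5, "v": 2.0},
    {"c": 0.3, "e": 0.30, "s": -0.2, "a": -0.9, "b": -0.7, "v": 1.0},
    {"c": 0.0, "e": 0.15, "s": -1.1, "a": -0.5, "b": -3.0, "v": 2.4},
]
E, V = 0.8, 1.2                 # assumed known for this compound
true_sab = (0.9, 0.3, 0.5)      # used only to simulate "measured" data
log_k = [sy["c"] + sy["e"] * E + sy["s"] * true_sab[0] + sy["a"] * true_sab[1]
         + sy["b"] * true_sab[2] + sy["v"] * V for sy in systems]

fitted = fit_sab(systems, log_k, E, V)
print("fitted S, A, B:", [round(v, 4) for v in fitted])
```

With real measurements the fit would not be exact, and the residuals per system give a direct check against the validation tolerance quoted in the protocol.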

Protocol 2: Evaluating Similarity Methods with the LEMONS Algorithm [14]: This in silico protocol benchmarks fingerprint performance for natural product-like space.

- Library Generation: Use LEMONS to generate a library of 100+ hypothetical "original" modular natural products (e.g., linear nonribosomal peptides of length 8-12) by specifying monomers (e.g., 20 proteinogenic amino acids) and optional starter units.

- Creation of Modified Structures: Systematically modify each original structure by substituting one monomer, adding/removing a tailoring reaction (e.g., glycosylation, N-methylation), or inducing macrocyclization to create a "modified" structure.

- Similarity Search: For each modified structure, calculate its similarity (using Tanimoto coefficient) to every original structure in the library using the fingerprint method under evaluation (e.g., ECFP4, MACCS).

- Performance Scoring: A "correct match" is scored if the modified structure's highest similarity is to its parent original structure. The performance metric is the percentage of correct matches across all modified structures.

- Parameter Investigation: Repeat the four steps above while varying biosynthetic parameters (e.g., size, cyclization, monomer set) to assess the fingerprint's robustness across NP chemical space.
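The similarity-search and scoring steps of Protocol 2 can be sketched as follows. This is a toy illustration, not the LEMONS implementation: fingerprints are represented as plain Python sets of "on" bit indices, and the structure names and bit sets are invented. In practice the fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def percent_correct_matches(originals, modified):
    """Share of modified structures whose nearest original is their own parent.

    originals: {name: fingerprint}
    modified:  list of (parent_name, fingerprint) pairs
    """
    correct = 0
    for parent, fp in modified:
        best = max(originals, key=lambda name: tanimoto(fp, originals[name]))
        correct += best == parent
    return 100.0 * correct / len(modified)


# Invented toy library: three "original" structures and one modification of each.
originals = {
    "NRP-1": {1, 2, 3, 4, 5, 6},
    "NRP-2": {4, 5, 6, 7, 8, 9},
    "NRP-3": {10, 11, 12, 13},
}
modified = [
    ("NRP-1", {1, 2, 3, 4, 5, 20}),    # one monomer swapped
    ("NRP-2", {5, 6, 7, 8, 9, 21}),    # one tailoring reaction added
    ("NRP-3", {4, 5, 6, 7, 10, 11}),   # heavy modification, drifts toward NRP-2
]
score = percent_correct_matches(originals, modified)
```

In this toy library the third modified structure is closer to NRP-2 than to its parent, so it counts as a miss; this is the kind of failure the benchmark is designed to expose.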

Protocol 3: Algorithmic Functional Group Identification [11]:

- Atom Marking: Parse the molecular structure. Mark all heteroatoms (any atom other than C or H), including halogens.

- Carbon Marking: Additionally mark the following carbon atoms: (a) those connected by a non-aromatic double/triple bond to any heteroatom; (b) those in non-aromatic carbon-carbon double/triple bonds; (c) acetal-type sp3 carbons connected to two or more O, N, or S atoms (where these heteroatoms have only single bonds); (d) all atoms in small, strained rings (oxirane, aziridine, thiirane).

- Group Formation: Merge all connected marked atoms to form a single functional group.

- Environment Capture: Extract the identified functional group along with its immediate unmarked carbon neighbors (to retain aliphatic/aromatic context).

- Generalization (Optional): For frequency analysis, generalize groups by replacing peripheral carbon substituents with a generic "R" symbol, while preserving critical distinctions (e.g., keeping the -OH hydrogen, distinguishing aldehydes from ketones).
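The marking, merging, and environment-capture steps of Protocol 3 can be sketched on a hand-written molecular graph. This is a simplified illustration under stated assumptions: hydrogens are implicit, only carbon rules (a) and (b) are implemented (acetal carbons and three-membered heterocycles are omitted), and the input format and function name are hypothetical. A real implementation would parse structures with a cheminformatics toolkit.

```python
def find_functional_groups(elements, bonds):
    """Identify functional groups on a simple molecular graph.

    elements: list of element symbols, indexed by atom number
    bonds:    list of (atom_i, atom_j, bond_order, is_aromatic) tuples
    """
    # Atom marking: every heteroatom (anything other than C or H).
    marked = {i for i, el in enumerate(elements) if el not in ("C", "H")}

    # Carbon marking, rules (a) and (b): carbons in non-aromatic multiple bonds.
    for i, j, order, aromatic in bonds:
        if order > 1 and not aromatic:
            marked.update(atom for atom in (i, j) if elements[atom] == "C")

    neighbours = {i: set() for i in range(len(elements))}
    for i, j, _order, _aromatic in bonds:
        neighbours[i].add(j)
        neighbours[j].add(i)

    # Group formation: merge connected marked atoms (depth-first search).
    groups, seen = [], set()
    for start in sorted(marked):
        if start in seen:
            continue
        group, stack = set(), [start]
        while stack:
            atom = stack.pop()
            if atom in group:
                continue
            group.add(atom)
            stack.extend(n for n in neighbours[atom] if n in marked and n not in group)
        seen |= group
        groups.append(sorted(group))

    # Environment capture: unmarked carbon neighbours of each group.
    result = []
    for group in groups:
        env = sorted(
            n for n in {n for atom in group for n in neighbours[atom]}
            if n not in marked and elements[n] == "C"
        )
        result.append({"atoms": group, "environment": env})
    return result


# Allyl acetate, CH3-C(=O)-O-CH2-CH=CH2, with atoms numbered left to right.
elements = ["C", "C", "O", "O", "C", "C", "C"]
bonds = [
    (0, 1, 1, False), (1, 2, 2, False), (1, 3, 1, False),
    (3, 4, 1, False), (4, 5, 1, False), (5, 6, 2, False),
]
groups = find_functional_groups(elements, bonds)
```

For this molecule the sketch returns two groups: the ester (atoms 1, 2, 3, with carbons 0 and 4 as environment) and the alkene (atoms 5 and 6, with carbon 4 as environment).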

Table 4: Key Research Reagent Solutions for Molecular Similarity Analysis

| Item / Resource | Type | Function / Purpose | Example in Research Context |
|---|---|---|---|
| ChEMBL Database [8] [15] | Bioactivity Database | Source of standardized bioactive compounds with target annotations. | Reference set for scaffold/FG frequency analysis; source of known actives for target prediction models. |
| RDKit or OpenEye Toolkits [8] [14] | Cheminformatics Software | Open-source/commercial libraries for chemical informatics. Core functionality for structure manipulation, fingerprint generation, and descriptor calculation. | Used to implement MMP analysis [8], generate fingerprints for LEMONS [14], and standardize structures. |
| WSU-2025 Descriptor Database [12] | Curated Physicochemical Data | Provides experimentally derived Abraham solvation parameters for 387 varied compounds. | Used as a training set or benchmark for developing and validating predictive QSPR models for pharmacokinetic properties. |
| BIOFACQUIM & COCONUT [10] | Natural Product Databases | Curated collections of natural product structures (regional and global). | Primary data for analyzing the unique scaffold and FG diversity of NPs compared to synthetic libraries [10]. |
| Checkmol / ClassyFire [11] | Functional Group Classifier | Software for identifying predefined functional groups and chemical classes. | Rapid chemical taxonomy assignment and filtering based on functional group presence. |
| CTAPred Tool [15] | Target Prediction Software | Open-source, command-line tool for similarity-based target prediction optimized for natural products. | Generating testable MoA hypotheses for uncharacterized NPs by finding similar compounds with known targets. |
| LEMONS Algorithm [14] | In Silico Enumeration Software | Generates hypothetical modular NP structures for benchmarking similarity methods. | Systematically testing which fingerprint (e.g., ECFP4 vs. GRAPE) best recovers biosynthetically related NP pairs. |
| Solvation Parameter Model System Constants | Calibrated Chromatographic Data | Pre-determined (e, s, a, b, l, v) constants for specific GC, LC, or MEKC systems [12]. | Essential for the experimental determination of Abraham descriptors for new compounds (Protocol 1). |

Defining molecular similarity requires a strategic choice of perspective—scaffold, functional group, or physicochemical profile—each illuminating different aspects of a compound's identity and potential bioactivity. For natural products research, an integrated approach is paramount: a shared scaffold may point to a common biosynthetic origin, distinct functional groups can explain divergent target selectivity, and the overall physicochemical profile dictates bioavailability. The experimental and computational protocols detailed here provide a roadmap for generating robust data to fuel these analyses.

Future directions point towards increased integration and prediction. The development of bioactivity descriptors, as seen in the Chemical Checker and its "signaturizer" neural networks, aims to infer a molecule's biological profile (target, cell response, clinical effect) directly from structure, creating a powerful new similarity metric for MoA prediction [16]. Furthermore, the success of retrobiosynthetic alignment tools like GRAPE for NP similarity suggests a promising path: incorporating biosynthetic logic directly into cheminformatic algorithms will enhance genome mining and the discovery of new members of valuable NP families [14]. As databases grow and machine learning models become more sophisticated, the definition of molecular similarity will evolve from a static comparison of structure to a dynamic prediction of biological function, accelerating the unraveling of natural products' complex mechanisms of action.

Figure: Integrated Workflow for the WSU-2025 Solvation Descriptor Database

The Evolution of the Mechanistic Paradigm

For over a century, drug discovery was dominated by the “magic bullet” paradigm, a concept pioneered by Paul Ehrlich which envisioned a single, selective drug acting on a single, well-defined target to treat a disease [17] [18]. This reductionist approach, focused on achieving high affinity and selectivity, has been the cornerstone of modern pharmacology, leading to numerous successful therapies [19] [17]. However, its limitations became starkly apparent when addressing complex, multifactorial diseases like cancer, neurodegeneration, and metabolic syndromes, where clinical efficacy was often insufficient or accompanied by drug resistance and adverse effects [19].

Natural products (NPs), with their millennia of empirical use in traditional medicine, have long presented a challenge to this single-target model. They are inherently multi-component, multi-target agents, whose therapeutic effects arise from the synergistic modulation of biological networks rather than the inhibition of a single protein [5] [19]. This inherent polypharmacology was initially an obstacle to their standardization and development within the conventional drug discovery pipeline [5]. The paradigm has now decisively shifted. Driven by the understanding of disease complexity and enabled by advances in systems biology and computational power, research has moved towards a multi-target paradigm [19] [17]. The new goal is to identify “master key” compounds that favorably interact with multiple targets to produce a coordinated, clinically beneficial effect with reduced toxicity [17]. This guide compares the contemporary methodological frameworks used to elucidate these complex mechanisms of action (MOA) for natural products and similar compounds.

Comparative Analysis of Methodological Approaches

The elucidation of multi-target MOA requires a suite of complementary methodologies, moving beyond simple target identification to understanding systems-level effects. The table below summarizes the core approaches.

Table 1: Comparison of Core Methodological Approaches for Natural Product MOA Elucidation

| Methodology | Primary Objective | Key Advantage | Primary Limitation | Example Output/Data |
|---|---|---|---|---|
| Systems Pharmacology & Network Analysis | To construct and analyze compound-target-pathway-disease networks from existing knowledge bases [5] [20]. | Provides a holistic, hypothesis-generating view of potential polypharmacology. | Relies on prior knowledge; does not confirm novel interactions or functional activity. | Network graphs; enriched pathway lists (e.g., KEGG, GO) [5]. |
| Large-Scale Molecular Docking | To computationally predict binding affinities and poses of a compound (or library) against a large panel of protein structures [5]. | Can screen thousands of potential targets in silico; identifies potential binding sites for similar compounds. | Accuracy depends on protein structure quality; predicts binding, not functional outcome. | Docking scores; predicted binding poses and target lists [5]. |
| Transcriptomics & Connectivity Mapping | To compare the gene expression signature induced by a compound to signatures of drugs with known MOA [5] [21]. | Captures the functional, systems-level cellular response; enables MOA inference by similarity. | Results are cell-context dependent; changes may be indirect downstream effects. | Differential gene expression profiles; similarity scores to reference drugs [5] [20]. |
| Integrated Functional Genomics (e.g., DeepTarget) | To correlate drug sensitivity profiles with genetic dependency (e.g., CRISPR knockout) data across many cell lines [22]. | Identifies context-specific primary and secondary targets directly linked to cell killing/viability. | Requires large, matched multi-omics datasets; computationally intensive. | Drug-Knockout Similarity (DKS) scores; predicted primary and secondary targets [22]. |

The performance and utility of these methods vary significantly. A benchmark study evaluating target prediction tools on eight high-confidence cancer drug-target datasets found that an integrated functional genomic method (DeepTarget) achieved a mean AUC of 0.73, outperforming state-of-the-art structure-based prediction tools such as RoseTTAFold All-Atom (AUC 0.58) in capturing clinically relevant, context-specific mechanisms [22]. This highlights the strength of methods that incorporate functional cellular response data over purely structural or knowledge-based approaches.

Detailed Experimental Protocols for MOA Comparison

To objectively compare the MOA of similar natural compounds, researchers employ integrated workflows. The following protocols detail key methodologies cited in recent literature.

Protocol 1: Integrated In Silico Workflow for Comparing Similar Compounds

This protocol, adapted from a 2023 study, is designed to test the hypothesis that compounds with identical molecular scaffolds share similar MOAs [5].

- Compound Selection & Descriptor Calculation: Select compounds of interest (e.g., oleanolic acid (OA) and hederagenin (HG)). Retrieve their structures (e.g., SMILES) from PubChem. Calculate a comprehensive set of molecular descriptors (e.g., using the Mordred library) to quantify physicochemical properties [5].

- Similarity Quantification: Compute molecular similarity between compounds using multiple distance metrics (Euclidean, Cosine, Tanimoto) based on the calculated descriptors. This establishes a baseline for structural and property similarity [5].

- Network Pharmacology Analysis: Use a platform like BATMAN-TCM to predict druggable protein targets for each compound. Select high-confidence targets (e.g., Drug-Target Interaction score ≥10). Perform Over-Representation Analysis (ORA) on the target sets using databases like KEGG and Gene Ontology to identify significantly enriched pathways. Construct and visualize a compound-target-pathway network using software like Cytoscape [5].

- Large-Scale Molecular Docking: Prepare a library of protein structures representing the human “druggable proteome.” Perform automated molecular docking of each compound against the entire library. Analyze results to identify shared high-affinity targets and, critically, to determine if similar compounds bind in the same protein binding site, which strongly suggests a shared mechanism [5].

- Transcriptomic Validation (RNA-seq): Treat a relevant cell line with individual compounds and their combination. Perform RNA-sequencing. Analyze differentially expressed genes (DEGs). Compare the gene expression signatures: similar compounds should induce correlated transcriptional responses, and the signature of the combination should resemble that of the individual components, confirming mechanistic consistency [5].
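The similarity-quantification step of this protocol can be sketched with three small distance functions. This is an illustrative sketch only: it assumes the descriptor vectors have already been scaled to a common range, it uses the continuous (real-valued) form of the Tanimoto coefficient, which the source does not specify, and the three short vectors are invented stand-ins for full descriptor sets.

```python
import math


def euclidean_distance(u, v):
    """Straight-line distance between two descriptor vectors."""
    return math.sqrt(sum((a - b) ** 2 for a, b in zip(u, v)))


def cosine_distance(u, v):
    """One minus the cosine of the angle between two descriptor vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / (norm_u * norm_v)


def tanimoto_distance(u, v):
    """One minus the continuous Tanimoto coefficient (assumed form)."""
    dot = sum(a * b for a, b in zip(u, v))
    denom = sum(a * a for a in u) + sum(b * b for b in v) - dot
    return 1.0 - dot / denom


# Invented, pre-scaled descriptor vectors for three hypothetical compounds:
# A and B share a scaffold, C is structurally unrelated.
compound_a = [0.9, 0.8, 0.1, 0.7]
compound_b = [0.9, 0.7, 0.2, 0.7]
compound_c = [0.1, 0.2, 0.9, 0.1]

for name, metric in [
    ("euclidean", euclidean_distance),
    ("cosine", cosine_distance),
    ("tanimoto", tanimoto_distance),
]:
    print(name, round(metric(compound_a, compound_b), 3),
          round(metric(compound_a, compound_c), 3))
```

Reporting several metrics side by side, as the protocol does, guards against a conclusion that depends on one particular definition of distance.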

Protocol 2: Pathway Fingerprint Similarity via Heterogeneous Networks

This approach uses a “drug-target-pathway” heterogeneous network to compare a natural product’s MOA to that of approved reference drugs [20].

- Network Construction:

  - Target Prediction: Compile potential targets for the natural product (e.g., Xiyanping injection, XYPI) from multiple sources: literature mining, bioassay databases (PubChem), and prediction tools (BATMAN-TCM, STITCH). Calculate a combined confidence score for each drug-target interaction [20].

  - Pathway Association: Link the compiled targets to biological pathways using annotation databases (Gene Ontology Biological Process, Reactome, KEGG) [20].

  - Build Heterogeneous Network: Create a network with three node types (drugs, targets, pathways) and two edge types (drug-target and target-pathway associations) [20].

- Similarity Calculation: Use a meta-path-based similarity algorithm (e.g., PathSim) to compute the pathway fingerprint similarity between the natural product and reference drugs (e.g., NSAIDs and glucocorticoids) within the network. This measures how similar their target-pathway footprints are [20].

- Experimental Validation: In a disease-relevant model (e.g., LPS-activated macrophages), treat cells with the natural product and reference drugs. Perform transcriptomic analysis. Compare the gene expression patterns to validate whether the natural product’s profile aligns with the reference drug predicted to be most similar by the network analysis [20].
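The similarity-calculation step can be sketched with the standard PathSim formula for a symmetric meta-path, here Drug-Target-Pathway-Target-Drug: s(x, y) = 2·M(x, y) / (M(x, x) + M(y, y)), where M counts meta-path instances between two drugs. The sketch below is unweighted (it ignores the confidence scores mentioned above), and the drug, target, and pathway labels are invented placeholders.

```python
def drug_pathway_counts(drug_targets, target_pathways):
    """Count drug -> target -> pathway path instances for each drug."""
    counts = {}
    for drug, targets in drug_targets.items():
        row = {}
        for target in targets:
            for pathway in target_pathways.get(target, ()):
                row[pathway] = row.get(pathway, 0) + 1
        counts[drug] = row
    return counts


def pathsim(counts, x, y):
    """PathSim between drugs x and y along Drug-Target-Pathway-Target-Drug."""
    def paths(a, b):
        # Number of meta-path instances linking a and b through shared pathways.
        return sum(n * counts[b].get(p, 0) for p, n in counts[a].items())

    denom = paths(x, x) + paths(y, y)
    return 2 * paths(x, y) / denom if denom else 0.0


# Invented toy network with placeholder target (T*) and pathway (P*) labels.
drug_targets = {
    "natural_product": ["T1", "T2", "T3"],
    "reference_A": ["T1", "T4"],
    "reference_B": ["T2", "T3", "T5"],
}
target_pathways = {
    "T1": ["P1", "P2"],
    "T2": ["P2", "P3"],
    "T3": ["P2", "P3"],
    "T4": ["P1"],
    "T5": ["P3", "P4"],
}
counts = drug_pathway_counts(drug_targets, target_pathways)
sim_a = pathsim(counts, "natural_product", "reference_A")
sim_b = pathsim(counts, "natural_product", "reference_B")
```

In this toy network the natural product is closer to reference_B than to reference_A, so reference_B would be the comparator carried forward to the transcriptomic validation step.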

Protocol 3: Context-Specific MOA Prediction Using Functional Genomics

This protocol leverages large-scale public datasets to predict primary, secondary, and context-dependent targets [22].

- Data Curation: Obtain matched datasets for a panel of cancer cell lines: drug sensitivity profiles (e.g., AUC or IC50 from DepMap), genetic dependency profiles (Chronos-processed CRISPR knockout viability scores), and omics data (gene expression, mutation) [22].

- Primary Target Prediction (DKS Score): For a given drug, calculate the Drug-Knockout Similarity (DKS) score for every gene. This involves computing the Pearson correlation between the drug’s viability effect profile across all cell lines and the viability effect profile of knocking out that gene. High DKS scores indicate genes whose knockout phenocopies the drug’s effect, suggesting they are direct or proximal targets [22].

- Secondary Target & Context-Specific Analysis:

  - Identify secondary mechanisms by performing de novo decomposition of the drug response profile in cell subsets, or by calculating DKS scores specifically in cell lines where the primary target is not expressed or mutated [22].

  - Determine mutant vs. wild-type targeting preference by comparing the DKS score for a target in mutant versus wild-type cell lines. A positive mutant-specificity score indicates the drug is more effective against the mutant form [22].

- Validation: Benchmark predictions against gold-standard drug-target pairs. Validate novel predictions experimentally, for example, by showing that a predicted drug (e.g., pyrimethamine) modulates its predicted pathway (e.g., oxidative phosphorylation) using relevant functional assays [22].
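The primary-target step reduces to a Pearson correlation between two viability profiles measured over the same cell lines. The sketch below shows only that core calculation, not the DeepTarget pipeline; the gene names and viability values are invented, and the function names are hypothetical.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length profiles."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y)


def dks_scores(drug_response, knockout_effects):
    """Correlate a drug's viability profile with each gene's knockout profile."""
    return {
        gene: pearson(drug_response, profile)
        for gene, profile in knockout_effects.items()
    }


def rank_targets(scores):
    """Genes ordered from most to least drug-like knockout effect."""
    return sorted(scores, key=scores.get, reverse=True)


# Invented viability effects across five hypothetical cell lines
# (more negative = stronger loss of viability).
drug_response = [-1.2, -0.3, -0.9, -0.1, -0.6]
knockout_effects = {
    "GENE_A": [-1.0, -0.2, -0.8, 0.0, -0.5],   # knockout phenocopies the drug
    "GENE_B": [-0.1, -0.9, -0.2, -1.0, -0.4],  # opposite sensitivity pattern
    "GENE_C": [-0.5, -0.5, -0.4, -0.6, -0.5],  # nearly flat across lines
}
scores = dks_scores(drug_response, knockout_effects)
ranking = rank_targets(scores)
```

Restricting the cell lines passed in (for example, to those lacking the primary target, or to mutant versus wild-type lines) gives the context-specific variants described in the secondary-target step.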

Visualizing Workflows and Pathways

Diagram: Integrative Workflow for Multi-Target MOA Elucidation (multi-method MOA elucidation workflow)

Diagram: Heterogeneous Network for MOA Similarity Inference ("drug-target-pathway" heterogeneous network logic)

The Scientist's Toolkit: Key Reagents & Research Solutions

Successful MOA research relies on specific reagents, databases, and software tools. The following table details essential components for the featured methodologies.

Table 2: Essential Research Toolkit for Multi-Target MOA Studies

| Tool/Reagent Category | Specific Example(s) | Function & Role in MOA Research |
|---|---|---|
| Chemical Structure & Property Databases | PubChem, ChEBI, TCM Database [5] [20] [17] | Provide canonical structures (SMILES), physicochemical properties, and chemical ontology for natural compounds, essential for similarity analysis and descriptor calculation. |
| Molecular Descriptor & Docking Software | Mordred Python Library, AutoDock Vina, Glide [5] | Enable quantitative characterization of molecular properties and high-throughput prediction of compound binding to protein targets. |
| Target Prediction & Network Platforms | BATMAN-TCM, STITCH, SwissTargetPrediction [5] [20] | Predict potential protein targets based on chemical similarity, bioassay data, and literature mining, forming the basis for network pharmacology. |
| Pathway & Functional Annotation Databases | KEGG, Gene Ontology (GO), Reactome, WikiPathways [5] [20] | Provide curated knowledge on gene-pathway and protein-function relationships, required for over-representation analysis and pathway fingerprinting. |
| Transcriptomics & Functional Genomics Data Portals | DepMap, GEO, LINCS [21] [22] | Host large-scale, public drug response, gene expression, and genetic dependency datasets crucial for connectivity mapping and integrated analyses like DeepTarget. |
| Network Visualization & Analysis Suites | Cytoscape [5] | Allow for the construction, visualization, and topological analysis of complex compound-target-pathway-disease networks. |
| Specialized Computational Tools | DeepTarget [22], PathSim algorithm [20] | Perform specific advanced analyses: integrating multi-omics data for target prediction or computing similarity within heterogeneous networks. |

The field has conclusively moved from seeking a single “magic bullet” to mapping the multi-target “master key” properties of natural products [17]. This paradigm shift is supported by a robust and growing toolkit of complementary methodologies. As evidenced, the most powerful insights arise from integrating multiple approaches—combining in silico predictions from network pharmacology and docking with functional validation from transcriptomics and genetic screens [5] [22].

Future progress hinges on several key developments: First, the creation of larger, more standardized, and publicly accessible multi-omics datasets for natural product treatments will fuel more accurate computational models [21] [22]. Second, artificial intelligence and machine learning will play an increasing role in integrating these disparate data layers to generate testable MOA hypotheses and even design multi-targeted natural product derivatives [23] [19]. Finally, advanced experimental models, such as 3D organoids and sophisticated co-culture systems, will provide more physiologically relevant contexts in which to validate the complex, systems-level mechanisms predicted by these integrated workflows [24]. By embracing this multi-target paradigm and its associated technologies, researchers can fully decipher the therapeutic language of natural products, accelerating the development of effective, safe, and complex-disease-modifying therapies.

Understanding the mechanism of action (MOA) of bioactive compounds, particularly multi-component natural products, remains a central challenge in pharmacology and drug discovery [5]. The complexity arises from the polypharmacology inherent to many natural compounds, which engage multiple targets simultaneously. A promising paradigm for deconvoluting this complexity is the systematic comparison of structurally similar compounds [5].

The core hypothesis of this guide is that structural congruence, defined as shared molecular scaffolds and physicochemical profiles, predicts congruent target engagement and downstream pathway modulation. This hypothesis is grounded in the principle that a compound's three-dimensional structure dictates its complementary binding interactions with biological targets [5]. Consequently, compounds with high structural similarity are likely to interact with overlapping sets of proteins, leading to activation or inhibition of convergent signaling pathways and biological processes.

This guide objectively evaluates this hypothesis by comparing experimental approaches and data for assessing structural congruence and its biological implications. The focus is on methodologies that bridge chemoinformatics, systems biology, and cellular pharmacology to move beyond single-target analysis towards a holistic understanding of compound action [5] [25]. The broader context is the effort to establish reliable frameworks for comparing the MOA of similar natural compounds, which is essential for their standardization, therapeutic application, and development as novel drug leads [5] [26].

Defining and Measuring Structural Congruence

The first step in testing the core hypothesis is to quantitatively define and measure "structural congruence." Research employs a multi-descriptor approach, moving beyond simple visual similarity to capture nuanced physicochemical properties that influence binding.

Computational Analysis of Molecular Descriptors: A foundational method involves calculating a wide array of molecular descriptors. One study compared the triterpenes oleanolic acid (OA) and hederagenin (HG), which share a pentacyclic scaffold, against the structurally distinct gallic acid (GA) [5]. Using the Mordred library, 1,116 molecular descriptors were computed for each compound. The similarity between paired compounds was then measured using Euclidean, Cosine, and Tanimoto distances. As shown in Table 1, OA and HG were markedly closer to each other than either was to GA on all three distance metrics [5].

Table 1: Quantitative Measures of Structural Similarity Between Natural Compounds [5]

| Compound Pair | Euclidean Distance | Cosine Distance | Tanimoto Distance | Interpretation |
|---|---|---|---|---|
| OA vs. HG | 0.138 | 0.013 | 0.165 | High similarity |
| OA vs. GA | 1.000 | 0.419 | 0.877 | Low similarity |
| HG vs. GA | 0.999 | 0.412 | 0.878 | Low similarity |

Temporal Evolution of Structural Properties: A macro-level analysis comparing Natural Products (NPs) and Synthetic Compounds (SCs) over time reveals that NPs have evolved to become larger, more complex, and more hydrophobic [26]. Despite this evolution, NPs maintain a broader and more diverse chemical space than SCs, which are constrained by synthetic feasibility and "drug-like" rules [26]. This historical divergence underscores that NPs offer unique structural templates, and comparing compounds within this NP space requires specialized metrics sensitive to their complex, often hydroxyl-rich, architectures.

Experimental Validation: From In Silico Prediction to Cellular Engagement

Testing the hypothesis requires moving from computational prediction to experimental validation of target and pathway engagement. The following sections compare key methodologies and their findings.

Network Pharmacology and Pathway Analysis

Protocol: Systems pharmacology platforms like BATMAN-TCM predict drug-target interactions (DTI) by integrating chemical structure, side effects, gene expression, and protein network data [5]. For compounds like OA and HG, potential targets are identified (DTI score ≥ 10). Over-representation analysis (ORA) is then performed on these target sets using databases like KEGG to identify significantly enriched pathways (adjusted p-value < 0.05) [5].
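The over-representation step can be sketched as a one-sided hypergeometric test followed by a multiple-testing correction. The source does not state which test or correction was used, so both choices here (hypergeometric tail probability, Benjamini-Hochberg adjustment) are assumptions based on common practice, and the function names and example numbers are illustrative only.

```python
from math import comb


def ora_p_value(universe_size, pathway_size, n_targets, n_overlap):
    """P(overlap >= n_overlap) when n_targets genes are drawn at random.

    universe_size: number of background genes
    pathway_size:  number of background genes annotated to the pathway
    n_targets:     number of predicted targets for the compound
    n_overlap:     number of predicted targets that fall in the pathway
    """
    upper = min(pathway_size, n_targets)
    total = comb(universe_size, n_targets)
    tail = sum(
        comb(pathway_size, k) * comb(universe_size - pathway_size, n_targets - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total


def benjamini_hochberg(p_values):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Illustrative numbers: 40 predicted targets, three pathways of different sizes
# in a 20,000-gene background, with 8, 3, and 1 overlapping targets.
raw = [
    ora_p_value(20000, 150, 40, 8),
    ora_p_value(20000, 300, 40, 3),
    ora_p_value(20000, 500, 40, 1),
]
adjusted = benjamini_hochberg(raw)
enriched = [p < 0.05 for p in adjusted]
```

Comparing the sets of pathways flagged as enriched for two compounds is then a simple set-overlap question, which is how the shared-pathway findings below are framed.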

Comparative Data: Research shows that structurally similar compounds enrich highly similar biological pathways. OA and HG shared significantly enriched pathways related to lipid metabolism, atherosclerosis, and endocrine resistance [5]. In contrast, the pathways enriched by the structurally dissimilar GA were distinct, primarily involving chemical carcinogenesis and viral infection [5]. This supports the hypothesis that structural congruence leads to congruent pathway-level effects.

Diagram 1: Network Pharmacology Workflow for MOA Comparison [5]

Large-Scale Molecular Docking

Protocol: To confirm shared target engagement at an atomic level, large-scale molecular docking simulations are performed. This involves calculating the binding affinity and binding pose of a compound (e.g., OA, HG) against a library of human protein targets (the "druggable proteome") [5]. Congruent MOA is suggested when similar compounds dock into the same binding site of a target protein with comparable affinity.

Findings: The cited study reports that compounds with identical molecular scaffolds dock to identical locations on target proteins [5]. This provides computational evidence that structural congruence predicts specific, shared biophysical interactions with target proteins, forming the physical basis for the observed overlap in pathways.

Cellular Target Engagement and Phenotypic Linking (CeTEAM)

Protocol: The Cellular Target Engagement by Accumulation of Mutant (CeTEAM) platform provides experimental validation in live cells [27]. It utilizes engineered, destabilized variants of target proteins (e.g., PARP1-L713F) that are rapidly degraded. When a drug binds, it stabilizes the mutant, causing its accumulation, which is quantified via a fluorescent tag (e.g., GFP). Crucially, this readout can be measured concurrently with downstream phenotypic assays in the same cells.

Comparative Insight: CeTEAM directly tests the link between target binding (engagement) and biological effect. For example, it can dissect how different inhibitors engaging the same target (PARP1) result in divergent cellular outcomes, such as differing degrees of PARP1 trapping on DNA [27]. This demonstrates that while structural congruence predicts target engagement, the final phenotypic output may be modified by other factors, such as the compound's specific binding kinetics or effects on protein conformation.

Diagram 2: CeTEAM for Concurrent Target & Phenotype Measurement [27]

Pharmacogenomic Network Analysis (B-Index)

Protocol: A pharmacogenomic approach analyzes transcriptomic and drug sensitivity data across cell lines (e.g., NCI-60 panel) to infer drug-gene relationships [25]. A novel similarity metric, the B-index, was developed to compare drugs based on their shared inferred gene targets. The B-index is calculated as: B(x,y) = (1/2) * |x ∩ y| * (1/|x| + 1/|y|), where x and y are sets of gene targets for two drugs. It is less penalized by asymmetric set sizes than traditional indices [25].
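The B-index formula above is straightforward to compute from two target sets. The sketch below implements it alongside the Jaccard index for comparison; the gene labels are invented placeholders, and the example is chosen to show the behavior with asymmetric set sizes that the text describes.

```python
def b_index(x, y):
    """B(x, y) = (1/2) * |x ∩ y| * (1/|x| + 1/|y|) for two target sets."""
    x, y = set(x), set(y)
    if not x or not y:
        return 0.0
    shared = len(x & y)
    return 0.5 * shared * (1 / len(x) + 1 / len(y))


def jaccard(x, y):
    """Jaccard index |x ∩ y| / |x ∪ y|, shown for comparison."""
    x, y = set(x), set(y)
    union = x | y
    return len(x & y) / len(union) if union else 0.0


# Invented example: a drug with 2 inferred targets, both of which are also
# among the 10 inferred targets of a second, more promiscuous drug.
small_set = {"G1", "G2"}
large_set = {"G1", "G2", "G3", "G4", "G5", "G6", "G7", "G8", "G9", "G10"}

b_value = b_index(small_set, large_set)   # 0.5 * 2 * (1/2 + 1/10)
j_value = jaccard(small_set, large_set)   # 2 / 10
```

Because the B-index averages the two coverage fractions (here 100% of the small set and 20% of the large set), full containment of a small target set still yields a moderate score, whereas the Jaccard index is pulled down by the size of the union.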

Comparative Data: This method validates that structurally similar drugs have highly overlapping target profiles. For instance, the antimetabolites cytarabine and gemcitabine show both high B-index similarity (0.86) and high chemical structural similarity (Tanimoto: 0.75) [25]. This correlation between structural congruence and target-set congruence provides strong network-based evidence for the core hypothesis.

Table 2: Comparison of Drug Pairs by Target-Based (B-Index) and Structural Similarity [25]

| Drug Pair | Therapeutic Class | B-Index (Target Similarity) | Tanimoto (Structural Similarity) | Shared Target Example |
|---|---|---|---|---|
| Cytarabine & Gemcitabine | Antimetabolites | 0.86 | 0.75 | DNA Polymerase, RRM1, RRM2 |
| Afatinib & Neratinib | EGFR Tyrosine Kinase Inhibitors | 0.82 | 0.73 | EGFR, ERBB2, ERBB4 |
| Methotrexate & Pemetrexed | Antifolates | 0.78 | 0.51 | DHFR, TYMS, ATIC |
| Doxorubicin & Daunorubicin | Anthracyclines | 0.91 | 0.89 | TOP2A, TOP2B, PRKDC |

Integrated Analysis: Convergence of Evidence and Key Insights

The multi-method comparisons converge to support the core hypothesis but also reveal important nuances and limitations.

Strong Predictive Relationship: Evidence consistently shows that structural congruence is a powerful predictor of overlapping target engagement and pathway modulation. This holds true across computational (docking, network pharmacology), cellular (CeTEAM), and pharmacogenomic (B-index) levels of analysis [5] [25].

The Scaffold as a Key Unit: The shared molecular scaffold (core framework) appears to be a primary determinant of target selection. Natural products containing compounds derived from the same scaffold via biotransformation (e.g., OA and HG) are highly likely to share an MOA [5].

Divergence in Downstream Effects: While target engagement may be similar, final phenotypic outcomes can diverge. Factors such as binding affinity, kinetics, off-target effects, and cell-specific context can modulate the downstream pharmacology, as illustrated by CeTEAM's ability to uncouple binding from phenotype [27]. Therefore, structural congruence is a strong predictor of the initial pharmacological interaction, but not always the final therapeutic effect.

Utility in Drug Discovery: This paradigm is highly useful for drug repurposing and understanding combination therapies. The B-index can identify drugs with similar target profiles but different structures for repurposing [25]. Conversely, understanding shared pathways can help predict synergy or antagonism when combining structurally related natural compounds [5].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents, Platforms, and Materials for Comparative MOA Studies

| Item Name | Type | Primary Function in Research | Example/Supplier |
|---|---|---|---|
| BATMAN-TCM Platform | Bioinformatics Database & Tool | Predicts drug-target interactions (DTI) and constructs compound-target-pathway networks for natural products [5]. | Publicly available web platform |
| Destabilized Mutant Biosensors (e.g., PARP1-L713F-GFP) | Cellular Reagent | Engineered protein variant used in CeTEAM to quantitatively measure cellular target engagement of a compound in live cells [27]. | Can be engineered in-house or sourced |
| NCI-60 Cancer Cell Line Panel & Data | Biological Model & Dataset | Provides standardized transcriptomic and drug sensitivity data for pharmacogenomic analysis and drug-gene correlation studies [25]. | NCI Developmental Therapeutics Program |
| EnrichR Platform | Bioinformatics Tool | Performs over-representation analysis (ORA) to identify KEGG pathways, GO terms, or diseases significantly linked to a target gene set [5]. | Publicly available web platform |
| Mordred Molecular Descriptor Calculator | Cheminformatics Software | Calculates a comprehensive set of 1,826+ molecular descriptors from chemical structure for quantitative similarity analysis [5]. | Python library |
| Druggable Proteome Library | Computational Database | A curated library of human protein structures used for large-scale, parallel molecular docking simulations to predict potential targets [5]. | Various public and commercial sources |

Key Compound Classes and Case Studies (e.g., Triterpenes like Oleanolic Acid and Hederagenin)

Within the broad field of natural product drug discovery, a critical and informative approach involves the direct comparison of structurally and biosynthetically related compounds. This guide employs such a framework, focusing on oleanolic acid (OA) and hederagenin (HG), two prototypical oleanane-type pentacyclic triterpenoids [28]. Although the two compounds share a core 30-carbon skeleton, subtle differences in their functionalization (specifically, HG possesses an additional hydroxyl group at the C-23 position) lead to significant divergences in their biological activity profiles, pharmacokinetic properties, and optimization strategies [29]. This comparison guide objectively analyzes their performance, supported by experimental data, to elucidate structure-activity relationships (SAR) and inform the rational development of triterpenoid-based therapeutics for researchers and drug development professionals.

Comparative Analysis of Biological Performance

Cytotoxicity and Anticancer Activity Profiles

The baseline cytotoxicity of OA and HG provides a foundation for comparing their anticancer potential. Studies across various cell lines reveal distinct potency ranges, which can be significantly enhanced through targeted structural modifications.

Table 1: Comparative Cytotoxicity of Oleanolic Acid, Hederagenin, and Select Derivatives

| Compound | Core Structure | Typical IC₅₀ Range (Parent Compound) | Example Potent Derivative & IC₅₀ | Key Cancer Cell Lines Tested | Primary Mechanism (Example) |

|---|---|---|---|---|---|

| Oleanolic Acid (OA) | C30H48O3 [30] | ~10 - 100 µM [31] | CDDO-Im (20c): < 0.1 µM [29] | HepG2, A549, MCF-7 [31] | Apoptosis induction, Nrf2 activation [32] [31] |

| Hederagenin (HG) | C30H48O4 [33] | ~20 - 80 µM [34] | C-28 Pyrazine Deriv. (Cpd 9): 3.45 µM [33] | A549, A2780, KBV [33] [35] | Apoptosis, cell cycle arrest (G2/M), P-gp inhibition [33] [34] |

| HG Derivative (Compound 15) | Modified HG [35] | N/A (Synthetic derivative) | Reported as highly active [35] | KBV (Multidrug-resistant) | Non-substrate P-glycoprotein inhibition [35] |

Key Findings:

- Baseline Activity: Both parent compounds exhibit moderate, micromolar-range cytotoxicity against a broad spectrum of cancer cell lines [34] [31]. HG often shows slightly greater potency in direct comparisons, which may be attributable to its additional hydrophilic hydroxyl group influencing target engagement [29].

- Derivative Potential: Both scaffolds are highly amenable to synthetic modification, leading to dramatic increases in potency. For OA, synthetic derivatives like the CDDO-series (e.g., CDDO-Im) achieve nanomolar IC₅₀ values [29]. For HG, modifications at the C-28 position (e.g., with pyrazine or pyrrolidinyl amide groups) have produced derivatives with IC₅₀/EC₅₀ values in the low micromolar to sub-micromolar range, representing a 25- to 30-fold gain in potency over the parent compound [33].

- Unique Application of HG: A standout advantage of the HG scaffold is its successful development into derivatives that reverse multidrug resistance (MDR). Compound 15, for instance, was identified as a non-substrate inhibitor of P-glycoprotein (P-gp) [35]. It binds to P-gp without being effluxed, effectively increasing the intracellular concentration of co-administered chemotherapeutics like paclitaxel and demonstrating significant tumor growth inhibition (63.71%) in vivo [35].

Pharmacokinetic and Bioavailability Challenges

A major translational challenge common to both OA and HG is poor drug-like properties, though the strategies to overcome these barriers differ in focus.

Table 2: Pharmacokinetic Properties and Optimization Strategies

| Parameter | Oleanolic Acid (OA) | Hederagenin (HG) | Common Optimization Strategies |

|---|---|---|---|

| Solubility | Very low water solubility [31]. | Very low water solubility [33]. | Chemical Derivatization: Glycosylation, PEGylation, salt formation [32] [29]. Formulation: Nanoparticles, liposomes, micelles, nanoemulsions [32] [33]. |

| Bioavailability | Low oral bioavailability due to poor solubility and extensive metabolism [31]. | Low oral bioavailability [33]. | Advanced delivery systems (see above) to enhance absorption and stability. |

| Primary PK Limitation | Extensive first-pass metabolism [31]. | Short half-life, rapid clearance [33]. | Structural modification to block metabolic soft spots; controlled-release formulations. |

| Key Optimization Focus | Enhancing systemic exposure for chronic diseases (e.g., cancer, metabolic disorders) [32] [31]. | Improving solubility and target engagement for potent cytotoxic/chemo-sensitizing agents [33] [35]. | |

| Example Tech. | Oleanolic acid-loaded nanoparticles for sustained release [32]. | HG derivative Compound 15 designed as non-substrate P-gp inhibitor to evade efflux [35]. | |

Experimental Protocols for Key Studies

Protocol: In Vivo Evaluation of Oleanolic Acid for Psoriasis

This protocol, based on a 2025 study, details the assessment of OA's therapeutic efficacy in an immune-mediated disease model [30].

- 1. Animal Model Induction:

- Animals: Female BALB/c mice (6-8 weeks old).

- Psoriasis Model: The psoriasis-like lesion is induced by daily topical application of Imiquimod (IMQ) cream (62.5 mg/day) on the shaved back skin for 7 consecutive days [30].

- 2. Treatment Groups & Intervention:

- Mice are randomly divided into groups (n=10): Control (cream base), IMQ-only, positive control (e.g., hydrocortisone butyrate), and OA treatment groups.

- Treatment: Two hours after IMQ application, mice receive topical application of OA formulated in a cream base at varying concentrations (e.g., 1%, 5%, 10% w/w) [30].

- 3. Efficacy Assessment:

- Clinical Scoring: From day 0, skin severity is scored daily using a modified Psoriasis Area and Severity Index (PASI), evaluating erythema, scaling, and infiltration on a scale of 0-4 [30].

- Histopathological Analysis: On day 7, skin biopsies are processed for H&E staining. Epidermal thickness is measured, and a Baker score is assigned to grade histological features [30].

- Biomarker Analysis: Serum levels of inflammatory cytokines (e.g., IL-17, IL-23, TNF-α) are quantified using Enzyme-Linked Immunosorbent Assay (ELISA) kits [30].

- 4. Mechanistic Analysis:

- Network Pharmacology & Molecular Docking: Potential protein targets of OA are predicted using SwissTargetPrediction and SuperPred. Molecular docking (e.g., with AutoDock Vina) is performed to evaluate binding affinity between OA and key targets like STAT3 or MAPK3 [30].
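
The clinical scoring step in the protocol above can be sketched as follows. This assumes the convention, common in IMQ-model studies, that the three 0-4 sub-scores are summed into a cumulative score of 0-12; the protocol as described here does not state how the sub-scores are combined. The function name, group labels, and all scores are hypothetical.

```python
from statistics import mean

VALID_SCORES = range(0, 5)  # each sub-score is an integer from 0 to 4


def cumulative_pasi(erythema, scaling, infiltration):
    """Sum the three sub-scores into a cumulative score (0-12).

    Assumption: sub-scores are combined by simple addition.
    """
    for score in (erythema, scaling, infiltration):
        if score not in VALID_SCORES:
            raise ValueError(f"sub-score out of range 0-4: {score}")
    return erythema + scaling + infiltration


# Hypothetical day-7 sub-scores (erythema, scaling, infiltration) per mouse.
day7_scores = {
    "IMQ-only": [(3, 3, 4), (4, 3, 3)],
    "OA-treated (hypothetical)": [(2, 1, 2), (1, 2, 2)],
}

group_means = {
    group: mean(cumulative_pasi(*mouse) for mouse in mice)
    for group, mice in day7_scores.items()
}

baseline = group_means["IMQ-only"]
treated = group_means["OA-treated (hypothetical)"]
percent_reduction = 100 * (baseline - treated) / baseline

print(group_means)
print(f"Reduction vs. IMQ-only: {percent_reduction:.1f}%")
```

A real analysis would use all animals per group and a suitable statistical test rather than a comparison of means alone.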

Protocol: In Vitro Assessment of Hederagenin Derivatives as P-gp Inhibitors

This protocol outlines the evaluation of HG derivatives for overcoming multidrug resistance, a critical oncology challenge [35].

- 1. Cell Culture & Model:

- Cell Line: Use KBV cells, a multidrug-resistant subline of human oral epidermoid carcinoma KB cells that overexpress P-glycoprotein (P-gp) [35].

- Cytotoxicity Assay (MTT): Seed KBV cells in 96-well plates. Treat with a range of concentrations of the HG derivative alone and in combination with a chemotherapeutic agent (e.g., paclitaxel). After incubation, measure cell viability using MTT to determine IC₅₀ values and reversal fold (RF) [35].

- 2. P-gp Functional Assay:

- Rhodamine 123 (Rh123) Efflux Assay: Load KBV cells with Rh123, a fluorescent P-gp substrate. Treat cells with the HG derivative and incubate. Measure intracellular fluorescence via flow cytometry. Inhibitors of P-gp function will increase intracellular Rh123 retention [35].

- 3. Mechanism of Inhibition Studies:

- ATPase Activity Assay: Use a P-gp ATPase activity assay kit to determine if the compound stimulates or inhibits P-gp's ATP hydrolytic activity, indicating interaction with the transporter [35].

- Molecular Docking: Perform docking simulations of the HG derivative against a cryo-EM structure of human P-gp to predict its binding site (e.g., transmembrane drug-binding pockets vs. nucleotide-binding domains) [35].

- Cellular Accumulation & Efflux: Confirm the derivative is not a P-gp substrate by comparing its intracellular concentration in P-gp overexpressing vs. sensitive cells with or without a P-gp inhibitor [35].
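
The IC₅₀ and reversal fold (RF) readouts from the MTT step can be illustrated with a short calculation. This is a minimal sketch under two assumptions: IC₅₀ is estimated by log-linear interpolation between the two concentrations that bracket 50% viability (a four-parameter logistic fit is the more usual choice), and RF is taken as the IC₅₀ of the chemotherapeutic alone divided by its IC₅₀ in the presence of the modulator, which is a common definition. All function names and viability values are hypothetical.

```python
import math


def ic50_log_interp(concentrations, viabilities):
    """Estimate IC50 by linear interpolation on log10(concentration).

    viabilities are percentages of untreated control; the 50% level must
    be bracketed by two adjacent tested concentrations.
    """
    points = sorted(zip(concentrations, viabilities))
    for (c_lo, v_lo), (c_hi, v_hi) in zip(points, points[1:]):
        if v_lo >= 50.0 >= v_hi and v_lo != v_hi:
            fraction = (v_lo - 50.0) / (v_lo - v_hi)
            log_ic50 = math.log10(c_lo) + fraction * (
                math.log10(c_hi) - math.log10(c_lo)
            )
            return 10 ** log_ic50
    raise ValueError("50% viability is not bracketed by the tested concentrations")


def reversal_fold(ic50_alone, ic50_with_modulator):
    """RF = IC50(chemotherapeutic alone) / IC50(chemotherapeutic + modulator)."""
    return ic50_alone / ic50_with_modulator


# Hypothetical paclitaxel dose-response in resistant cells (nM, % viability).
ic50_alone = ic50_log_interp([10, 100, 1000, 10000], [95, 80, 60, 30])
ic50_combo = ic50_log_interp([1, 10, 100, 1000], [90, 60, 30, 10])
rf = reversal_fold(ic50_alone, ic50_combo)

print(f"IC50 alone: {ic50_alone:.0f} nM")
print(f"IC50 with modulator: {ic50_combo:.1f} nM")
print(f"Reversal fold: {rf:.0f}")
```

An RF well above 1 indicates that the modulator restores sensitivity to the chemotherapeutic; the Rh123 retention and ATPase assays are then needed to attribute that effect to P-gp.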

Comparative Mechanistic Pathways and Molecular Targets

OA and HG modulate overlapping yet distinct cellular signaling networks. OA frequently exhibits antioxidant and anti-inflammatory activity, often acting as an Nrf2 activator and NF-κB inhibitor [32]. In contrast, HG and its derivatives can display a context-dependent pro-oxidant effect in cancer cells, partly through inhibiting the Nrf2-ARE pathway, while also strongly targeting P-glycoprotein (P-gp) to reverse multidrug resistance [33] [35].

Figure: Comparative Signaling Pathways of OA and HG

Experimental Workflow for Comparative Mechanism of Action Study

A systematic workflow for comparing the mechanism of action (MOA) of OA and HG derivatives integrates computational, in vitro, and in vivo approaches.

Figure: Workflow for Comparative Mechanism of Action Study

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagent Solutions for Triterpenoid Studies

| Reagent / Material | Function in Research | Application Example in OA/HG Studies |

|---|---|---|

| Imiquimod (IMQ) Cream | Disease Model Inducer. Topically applied to induce psoriasis-like skin inflammation and hyperplasia in mice [30]. | In vivo evaluation of OA's anti-psoriatic efficacy [30]. |

| KBV Cell Line | Multidrug Resistance Model. A P-glycoprotein-overexpressing subline of KB cells used to study drug resistance reversal [35]. | Screening HG derivatives for P-gp inhibition and chemosensitization potential [35]. |

| Rhodamine 123 (Rh123) | P-gp Substrate & Probe. A fluorescent dye actively effluxed by P-gp; used to assess P-gp functional activity [35]. | Rh123 efflux assay to confirm HG derivatives inhibit P-gp function [35]. |

| P-gp ATPase Activity Assay Kit | Mechanistic Biochemical Assay. Measures the stimulation or inhibition of P-gp's ATP hydrolytic activity upon compound binding [35]. | Determining if a HG derivative interacts with P-gp as a substrate or inhibitor [35]. |

| Specific ELISA Kits | Biomarker Quantification. Enzyme-linked immunosorbent assays for precise measurement of cytokine concentrations in serum or tissue lysates [30]. | Quantifying IL-17, TNF-α, etc., in OA-treated psoriasis mouse models [30]. |

| Network Pharmacology Databases | Target Prediction. Bioinformatics platforms (SwissTargetPrediction, SuperPred) to predict potential protein targets of small molecules [30]. | Identifying putative targets (e.g., STAT3, MAPK3) for OA in psoriasis [30]. |

| Molecular Docking Software | Binding Mode Analysis. Computational tools (AutoDock Vina, Glide) to simulate and score the interaction between compound and protein target [30] [35]. | Validating predicted interactions, e.g., OA-STAT3 docking [30] or HG derivative-P-gp docking [35]. |

Integrated Toolkit: Computational and Experimental Methods for MOA Comparison

Core Platform Comparison

The following table provides a high-level comparison of major systems pharmacology platforms, highlighting their primary functions, data integration capabilities, and suitability for different research stages in natural compound analysis.

Table 1: Comparative Overview of Key Systems Pharmacology Platforms

| Platform Name | Primary Function & Specialization | Key Data Sources & Integration | Core Analytical Strengths | Ideal Research Phase |

|---|---|---|---|---|

| BATMAN-TCM [36] [37] | Bioinformatics tool specifically for TCM molecular mechanism analysis. | Integrates data on herbs, compounds, and predicted targets. Supports user-customized compound/herb lists [37]. | Target prediction for novel compounds; Functional enrichment (pathway, GO, disease); Direct comparison of multiple TCM formulas [37]. | Early-stage hypothesis generation for TCM formulas and natural product mixtures. |

| TCMSP (Traditional Chinese Medicine Systems Pharmacology Database and Analysis Platform) [36] [38] | Comprehensive database and systems pharmacology platform. | Contains herbs, compounds, targets, diseases, and ADME properties (e.g., oral bioavailability) [36] [38]. | ADME screening to filter bioactive compounds; Network construction for "Herb-Compound-Target-Disease" relationships [38]. | Compound screening and prioritization based on pharmacokinetic properties. |

| NeXus [39] | Automated platform for network pharmacology and multi-method enrichment analysis. | Handles multi-layer relationships (genes, compounds, plants). Automates data processing and network construction [39]. | Integrated ORA, GSEA, and GSVA enrichment analyses; High-throughput automated analysis; Publication-quality visualization [39]. | High-throughput, in-depth mechanistic analysis of complex multi-compound systems. |

| TCMID (Traditional Chinese Medicine Integrative Database) [36] [38] | Large-scale integrative database. | Aggregates data on formulas, herbs, compounds, targets, and diseases from multiple sources [38]. | Data mining and retrieval; Visualization of complex herb-compound-target-disease networks [36]. | Data collection and exploratory network analysis for broad research questions. |

| Cytoscape [39] [40] | General-purpose, open-source network visualization and analysis software. | Functions as a visualization and integration hub for data from other databases and analyses. | Highly customizable network visualization and topology analysis; Large plugin ecosystem for extended functionality [40]. | Final-stage network visualization, customization, and presentation of results. |
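
Whichever platform supplies the predictions, the core comparison for two related compounds is how much their target sets overlap. The sketch below computes a Jaccard index and per-target degree for a small compound-target network. It is an illustration only: the target sets are hypothetical placeholders loosely based on targets named in this article (NFE2L2 for Nrf2, RELA for NF-κB, ABCB1 for P-gp), not the output of any of the platforms above.

```python
from collections import Counter


def jaccard(targets_a, targets_b):
    """Jaccard index: shared targets divided by all distinct targets."""
    a, b = set(targets_a), set(targets_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


# Hypothetical compound -> target gene sets (placeholders, not predictions).
compound_targets = {
    "OA": {"NFE2L2", "RELA", "STAT3", "MAPK3"},
    "HG": {"NFE2L2", "ABCB1"},
}

shared = compound_targets["OA"] & compound_targets["HG"]
similarity = jaccard(compound_targets["OA"], compound_targets["HG"])

# Degree of each target node: how many compounds are linked to it.
target_degree = Counter(
    target for targets in compound_targets.values() for target in targets
)

print(f"Shared targets: {sorted(shared)}")
print(f"Jaccard index: {similarity:.2f}")
print(f"Targets hit by more than one compound: "
      f"{sorted(t for t, d in target_degree.items() if d > 1)}")
```

The same edge list (compound, target) can be exported to Cytoscape for visualization, with the degree values used to size or color target nodes.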

Performance Benchmarking and Experimental Data

Empirical data on processing efficiency and predictive accuracy are critical for selecting the appropriate tool. The benchmarks below highlight the performance of leading platforms.

Table 2: Performance Benchmarking of Analytical Platforms

| Platform / Method | Dataset Scale | Processing Time | Key Performance Metric | Experimental Validation Correlation |

|---|---|---|---|---|

| BATMAN-TCM Target Prediction [37] | Gold-standard drug-target interaction dataset. | N/A (Model Training) | ROC AUC = 0.9663 ("leave-one-interaction-out" cross-validation) [37]. | Successfully predicted targets for Qishen Yiqi Dripping Pill, with Renin-Angiotensin System function validated in vitro [37]. |

| NeXus v1.2 [39] | 111 genes, 32 compounds, 3 plants. | 4.8 seconds (peak memory: 480 MB) [39]. | Automated detection of 15 format inconsistencies, 3 duplicate entries [39]. | Enrichment results for functional modules (e.g., inflammatory response p=3.4×10⁻¹⁰) align with known biology [39]. |