Rational vs. Random: Navigating Antibody Library Design for Optimal Discovery Outcomes

This article provides a comparative analysis of rational and random design methodologies for constructing antibody libraries, a cornerstone of modern biotherapeutic discovery.


Abstract

This article provides a comparative analysis of rational and random design methodologies for constructing antibody libraries, a cornerstone of modern biotherapeutic discovery. Aimed at researchers and drug development professionals, it explores the foundational principles and historical context of both paradigms. The discussion details specific methodological workflows, from target-focused rational design and synthetic CDR resampling to random mutagenesis and degenerate codon strategies. It addresses common experimental challenges and optimization techniques for maximizing functional diversity. Finally, the piece establishes a framework for validating library quality and directly comparing the performance of rational versus random approaches in terms of hit rates, affinity, and developability. The synthesis offers strategic guidance for selecting and integrating these methods to accelerate therapeutic discovery.

Foundations of Diversity: Understanding the Core Philosophies of Rational and Random Library Design

The pursuit of novel materials, biologics, and therapeutics is fundamentally driven by the strategies employed to explore vast design spaces. This guide objectively compares two foundational paradigms: rational (knowledge-driven) design and random (stochastic) design. The rational approach uses prior knowledge, mechanistic models, and computational predictions to guide targeted experimentation [1]. In contrast, the random approach relies on stochastic sampling, diversification, and screening to discover solutions without prior mechanistic bias [2].

Recent advancements, particularly in machine learning (ML) and high-throughput experimentation, have transformed both paradigms, leading to sophisticated hybrids [3] [4]. This comparison is framed within a broader thesis that the optimal choice of paradigm is not absolute but depends on the specific research problem, the quality of available data, and the desired outcome, whether it is deep mechanistic understanding or broad exploration of uncharted space.

Defining the Paradigms

| Aspect | Rational (Knowledge-Driven) Design | Random (Stochastic) Design |

|---|---|---|

| Core Philosophy | Hypothesis-driven; uses existing knowledge to predict and design optimal candidates. | Exploration-driven; uses randomness to generate diversity for empirical screening. |

| Knowledge Dependency | High dependency on prior mechanistic understanding, structural data, or reliable models. | Low initial dependency; thrives in areas with limited prior knowledge or complex rules. |

| Typical Workflow | Model creation → In silico prediction → Targeted synthesis → Validation. | Library generation (randomized) → High-throughput screening → Hit identification → Iteration. |

| Role of Computation | Central: Used for simulation, prediction, and filtering candidates before any lab work. | Supportive/Optimizing: Often used to analyze results post-screening or to guide later iterations. |

| Key Advantage | High efficiency and deep understanding; aims for "first-time-right" designs. | Broad exploration; capable of discovering novel, unexpected solutions. |

| Primary Risk | Failure due to flawed or incomplete models; paradigm blindness to solutions outside the model. | Resource-intensive; low hit rates; may miss optimal candidates due to sampling limitations. |

| Common Applications | Protein & enzyme engineering [5], pharmaceutical formulation [1], materials design [3]. | Directed evolution, early-stage drug discovery, combinatorial chemistry, A/B testing [2]. |

Performance Comparison: Experimental Data and Outcomes

The following table summarizes quantitative findings from key studies implementing each paradigm, highlighting their performance in practical research scenarios.

| Study Focus | Design Paradigm & Method | Key Performance Outcome | Experimental Scale / Notes |

|---|---|---|---|

| Signal Peptide Engineering [4] | Hybrid (Rational + Random): Directed evolution of XPR2-pre signal peptide using degenerate oligos, coupled with ML analysis. | Identified novel signal peptides with up to 2.91-fold increase in secreted Nanoluc luciferase activity versus native sequence. | Characterized 447 SP mutants; top performers validated across 3 additional enzymes. |

| MOF Stability Prediction [3] | Rational/ML-Driven: Machine learning models trained on literature-extracted experimental data. | Predicted water/thermal stability of MOFs; models enabled screening of ~10,000 CoRE MOF structures for stable candidates. | Data extracted from ~4,000 manuscripts; created datasets of ~3,000 Td values and ~1,092 water stability labels. |

| Clinical Trial Randomization [6] | Random (Structured): Compared covariate adaptive vs. simple randomization in pre-post study designs. | Covariate adaptive randomization yielded substantial power gains, especially as number of covariates increased. | Simulation study showing superior statistical efficiency over simple randomization. |

| Protein Stability Design [5] | Rational (Evolution-guided): Combines analysis of natural sequence diversity with atomistic design calculations. | Enabled robust heterologous expression of challenging proteins (e.g., malaria vaccine candidate RH5) with ~15°C higher thermal stability. | Applied to dozens of protein families resistant to experimental optimization alone. |

| Pharmaceutical Formulation [1] | Rational (Mechanistic): Used conceptual/mechanistic models to identify rate-limiting step in drug absorption. | Formulations designed to enhance diffusion rate showed strong in vitro-in vivo correlation, leading to optimized solution. | Contrasted with traditional "trial-and-error" approach, highlighting efficiency gains. |

Detailed Experimental Protocols

To ensure reproducibility and provide clear methodological insight, this section details the protocols for one seminal study from each paradigm.

Protocol 1: Rational/ML-Driven Design of Metal-Organic Framework (MOF) Stability

This protocol, based on the work described in [3], outlines the process of using extracted experimental data to train ML models for predicting material properties.

- Data Curation & Extraction:

- Source: Begin with a curated structural database (e.g., CoRE MOF 2019 ASR with ~10,000 structures) [3].

- Text Mining: Use natural language processing (NLP) and named entity recognition to mine associated scientific literature for target properties (e.g., thermal degradation temperature T_d, water stability).

- Data Digitization: For graphical data (e.g., thermogravimetric analysis (TGA) curves), use tools like WebPlotDigitizer to extract numerical data [3]. Establish uniform rules for interpreting plots (e.g., defining T_d as the onset of weight loss).

- Feature Engineering:

- Structural Descriptors: Calculate geometric and chemical descriptors (e.g., pore size, volume, surface area, metal/linker identity) from the crystal structures.

- Stability Labels: Assign binary or continuous labels (e.g., stable/unstable in water, T_d value) to each MOF based on extracted data.

- Model Training & Validation:

- Split the dataset into training, validation, and test sets (e.g., 80/10/10).

- Train supervised ML models (e.g., random forest, gradient boosting, or neural networks) to predict stability labels from structural descriptors.

- Optimize hyperparameters using the validation set. Final model performance is reported on the held-out test set.

- Prediction & Design:

- Use the trained model to screen a large database of MOF structures for candidates with predicted high stability.

- Select top candidates for targeted synthesis and experimental validation.
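The 80/10/10 partition in the model training step can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the pipeline from [3]; the function name `split_dataset`, the record layout, and the synthetic values are all assumptions made for the example.

```python
import random


def split_dataset(records, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle records reproducibly and split them into train/validation/test lists."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)  # fixed seed keeps the split reproducible
    n_train = int(len(shuffled) * fractions[0])
    n_val = int(len(shuffled) * fractions[1])
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # remainder is held out for final reporting
    return train, val, test


# Hypothetical records: (structure id, descriptor vector, T_d label); values are synthetic.
records = [(f"mof_{i:04d}", [float(i), float(i % 7)], 300.0 + i % 50) for i in range(1000)]
train, val, test = split_dataset(records)
print(len(train), len(val), len(test))
```

Keeping the test partition untouched until hyperparameters are fixed on the validation partition is what allows the final reported performance to be read as an estimate on unseen structures.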

Protocol 2: Hybrid Directed Evolution of Signal Peptides

This protocol, derived from [4], describes a method that incorporates stochastic library generation with rational analysis and ML.

- Degenerate Library Construction:

- Design: Synthesize two long, overlapping oligonucleotides encoding the parent signal peptide (e.g., XPR2-pre) but containing a high proportion of degenerate nucleotides (e.g., N or K) at targeted positions to introduce randomness.

- Assembly: Use Gibson assembly to incorporate the degenerate oligos into a reporter vector (e.g., containing Nanoluc luciferase) upstream of the gene.

- Transformation: Transform the assembled library into the host organism (Yarrowia lipolytica) to create a diverse mutant library.

- High-Throughput Screening:

- Culture individual clones in a microtiter plate format.

- Assay: Measure intracellular and extracellular reporter enzyme activity (e.g., Nanoluc luciferase luminescence) for each clone. The key metric is the secretion efficiency (extracellular/total activity).

- Hit Identification & Validation:

- Rank all screened mutants (e.g., 447 mutants) by secretion efficiency [4].

- Isolate the gene sequences of top-performing variants.

- Generalizability Test: Clone the novel signal peptides in front of other genes of interest (e.g., β-galactosidase, PET hydrolase) and quantify secretion performance to confirm broader utility.

- Machine Learning Analysis (Post-hoc):

- Use the sequence and performance data from the screen as a training set.

- Engineer sequence-based features and train ML models (e.g., linear regression, tree-based models) to predict secretion efficiency from sequence.

- The model can provide insights into sequence-activity relationships and guide future rational design rounds.
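The screening metric and the ranking step can be illustrated with a short sketch. It assumes that total activity is the sum of the extracellular and intracellular readings, which the protocol implies but does not state explicitly; the clone names and luminescence values are invented for the example.

```python
def secretion_efficiency(extracellular, intracellular):
    """Fraction of total reporter activity found outside the cell."""
    total = extracellular + intracellular  # assumption: total = extracellular + intracellular
    if total <= 0:
        raise ValueError("total activity must be positive")
    return extracellular / total


def rank_clones(measurements):
    """Sort clones by secretion efficiency, best first.

    measurements maps clone id -> (extracellular, intracellular) luminescence.
    """
    scored = {cid: secretion_efficiency(ext, intra)
              for cid, (ext, intra) in measurements.items()}
    return sorted(scored.items(), key=lambda item: item[1], reverse=True)


# Made-up luminescence readings in arbitrary units, for illustration only.
plate = {
    "parent": (400.0, 600.0),
    "mut_A": (900.0, 300.0),
    "mut_B": (150.0, 850.0),
}
ranking = rank_clones(plate)
parent_eff = secretion_efficiency(*plate["parent"])
fold_change = {cid: eff / parent_eff for cid, eff in ranking}
print(ranking[0][0], round(fold_change[ranking[0][0]], 3))
```

Normalizing each clone to the parent sequence measured on the same plate gives a fold change that is comparable across plates and reporters.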

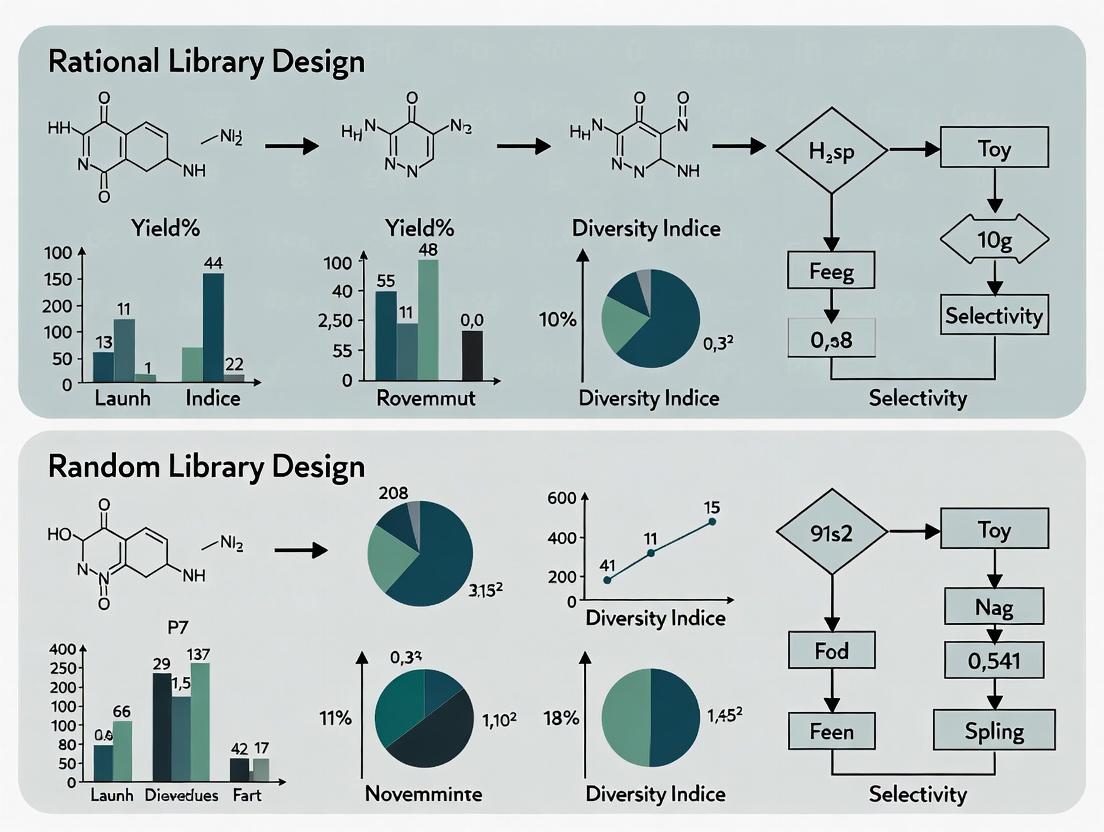

Visualization of Design Paradigms

Diagram 1: Rational Design Workflow

Diagram 2: Stochastic Random Design Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key materials and resources central to executing experiments within the compared design paradigms, as referenced in the cited studies.

| Item Name | Category | Primary Function in Design Research | Relevant Paradigm |

|---|---|---|---|

| Cambridge Structural Database (CSD) [3] | Data Resource | A repository of over half a million experimentally determined crystal structures for small molecules and materials (e.g., MOFs and transition metal complexes, TMCs). Serves as the foundational source of structural data for rational model building and training. | Rational |

| CoRE MOF Database [3] | Curated Dataset | A collection of ~10,000 experimentally derived, geometrically refined MOF structures. Provides a ready-to-screen library for computational property prediction and materials discovery. | Rational |

| Gibson Assembly Kit | Molecular Biology Reagent | An enzyme-based method for seamless assembly of multiple DNA fragments. Crucial for constructing variant libraries, such as those with degenerate oligonucleotides in directed evolution [4]. | Random/Hybrid |

| Degenerate Oligonucleotides | Synthetic DNA | Oligos containing randomized nucleotides (N, K, etc.) at specific positions. Used to introduce controlled randomness into gene sequences for creating diverse mutant libraries [4]. | Random/Hybrid |

| Nanoluc (Nluc) Luciferase [4] | Reporter Protein | A small, bright, and highly stable enzyme used as a quantitative reporter. Enables high-throughput screening of secretion efficiency by measuring extracellular vs. intracellular luminescence. | Random/Hybrid |

| WebPlotDigitizer [3] | Data Tool | A semi-automated software tool for extracting numerical data from published plot images (e.g., isotherms, TGA curves). Essential for curating experimental datasets from literature for ML. | Rational |

| Covariate Adaptive Randomization Algorithm [6] [2] | Statistical Software | A dynamic allocation algorithm (e.g., minimization by Pocock and Simon) that adjusts group assignments in real-time to balance prognostic factors across trial arms. Improves statistical power in complex experiments. | Random |

| Natural Language Processing (NLP) Toolkit [3] | AI/Software | Tools (e.g., ChemDataExtractor, custom models) for automated extraction of material names, properties, and synthesis conditions from scientific text. Automates the creation of large training datasets. | Rational |

The central thesis of modern protein engineering interrogates the comparative efficacy of rational versus random library design methods. This debate is rooted in a fundamental challenge: the sequence space for even a modest 100-residue protein is astronomically large (20¹⁰⁰ possibilities), making exhaustive exploration impossible [7]. Early combinatorial chemistry, pioneered in the 1990s, embraced unconstrained randomness, generating vast libraries of small molecules or random peptides with the hope of identifying rare, functional hits through high-throughput screening [8] [9]. However, this purely random approach proved inefficient for proteins, as randomly generated amino acid sequences rarely fold into stable, functional structures [7].

This limitation catalyzed an evolution towards informed design strategies. The field has progressively integrated increasing levels of rational insight to constrain and focus library diversity into productive regions of sequence space [5] [10]. This guide objectively compares the performance of key library design paradigms—from early random combinatorial libraries to modern semi-rational and fully computational de novo design—by examining their foundational principles, experimental success rates, and practical applications in drug development and biocatalyst engineering.
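To put the 20¹⁰⁰ figure quoted above in perspective, the arithmetic can be checked directly. The sketch below is illustrative only; the library size of 10¹¹ variants is taken as an assumed upper bound for a large display library.

```python
from math import log10


def sequence_space(length, alphabet=20):
    """Number of distinct sequences of a given length over an amino acid alphabet."""
    return alphabet ** length


n = sequence_space(100)
digits = len(str(n))        # number of decimal digits in 20**100
exponent = 100 * log10(20)  # same quantity expressed as a power of ten

# Fraction of the space sampled by an assumed library of 1e11 distinct variants.
library_size = 10 ** 11
fraction_covered = library_size / n

print(digits, round(exponent, 1), fraction_covered)
```

Even a library at the upper end of what display technologies can handle samples a vanishingly small fraction of this space, which is why the constraint strategies discussed in the following sections matter.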

Historical Progression and Methodological Comparison

The evolution of library design is characterized by a shift from size to smartness: the emphasis moved from screening immense, random collections to constructing smaller, smarter libraries enriched for functional variants.

Early Combinatorial Chemistry (Random Libraries): The initial paradigm, drawing from small-molecule chemistry, relied on solid-phase split-and-pool synthesis to generate libraries of millions to billions of random compounds [9]. For peptides, this method involves dividing solid support beads into batches, coupling a different amino acid to each, re-mixing, and repeating cycles to create vast, one-bead-one-compound libraries. While powerful for discovering simple binding motifs, this purely stochastic approach is poorly suited for protein folding, as it ignores the fundamental biophysical rules governing secondary structure formation and hydrophobic core packing [7].

The Rational Turn: Binary Patterning and Focused Libraries: A critical advance was the introduction of the "binary code" strategy for de novo protein design [7]. This rational method constrains randomness by specifying the pattern of polar (hydrophilic) and nonpolar (hydrophobic) amino acids along a sequence to match the structural periodicity of the desired secondary structure (e.g., a 3.6-residue repeat for α-helices). The precise identity of residues at each position remains variable, creating a focused combinatorial library where all members are predisposed to fold into amphiphilic structures with buried hydrophobic cores. This strategy successfully produced well-ordered de novo four-helix bundles, demonstrating that rational constraints could dramatically improve the functional yield of libraries [7].

The Modern Integration: Semi-Rational and Computational Design: Contemporary practice leverages hybrid semi-rational approaches and computational power [10] [11]. Semi-rational design uses evolutionary data (from multiple sequence alignments) or structural insights to identify "hot spot" residues for randomization, creating small, high-quality libraries (<1,000 variants) with a high probability of containing improved variants [10]. Fully computational de novo design, accelerated by machine learning (ML) tools such as RFdiffusion and AlphaFold, now generates entirely novel protein sequences and structures to meet precise functional specifications [5] [12]. This represents the apex of rational design, moving from filtering randomness to ab initio generation.

Table 1: Comparison of Library Design Methodologies

| Design Paradigm | Key Principle | Typical Library Size | Level of Rational Input | Primary Experimental Screening Burden |

|---|---|---|---|---|

| Early Random Combinatorial | Stochastic generation of all possible sequences/compounds [9]. | Millions to Billions [8] | None | Extremely High |

| Focused/Rational (e.g., Binary Patterning) | Biophysical rules (polar/nonpolar patterning) constrain sequence space [7]. | Thousands to Millions | Medium (Scaffold Design) | High |

| Semi-Rational & Knowledge-Based | Randomization focused on evolutionarily or structurally informed "hot spots" [10]. | Hundreds to Thousands | High | Medium |

| Computational De Novo Design | Ab initio sequence generation based on physics & AI models for target structure/function [5] [12]. | Tens to Hundreds (computationally pre-filtered) | Very High (Full In Silico Modeling) | Low |

Experimental Protocols and Performance Data

The performance of different design strategies is best evaluated through direct experimental outcomes, including success rates in producing stable, folded proteins and conferring novel functions.

Protocol 1: Binary-Patterned De Novo Library Construction & Screening [7]

- Design: Define a target fold (e.g., four-helix bundle) and impose a binary pattern of polar (O) and nonpolar (●) residues matching its structural periodicity.

- Gene Library Synthesis: Encode the pattern using degenerate DNA codons (e.g., VAN for polar residues; NTN for nonpolar).

- Expression & Initial Screening: Express the library in E. coli and screen for soluble expression.

- Biophysical Characterization: Purify individual clones and assess structure via circular dichroism (for secondary structure) and NMR spectroscopy (for tertiary fold and dynamics).

Key Finding: Early 74-residue libraries primarily yielded dynamic "molten globule" states. A redesigned 102-residue library with longer helices produced proteins with well-dispersed NMR spectra, and the solved solution structure of S-824 confirmed a native-like four-helix bundle [7].
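The degenerate codons named in the gene library synthesis step can be expanded against the standard genetic code to see which residues each one encodes. The sketch below is a minimal illustration; the helper names and the seven-residue example pattern are assumptions for this example, not sequences from [7].

```python
from itertools import product

# Standard genetic code, indexed by base order T, C, A, G at each codon position.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {a + b + c: AMINO[16 * i + 4 * j + k]
               for i, a in enumerate(BASES)
               for j, b in enumerate(BASES)
               for k, c in enumerate(BASES)}

# IUPAC degenerate base symbols used in this article.
IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T",
         "N": "ACGT", "V": "ACG", "K": "GT", "S": "CG"}


def expand(degenerate_codon):
    """List every concrete codon matched by a degenerate codon such as 'VAN'."""
    return ["".join(p) for p in product(*(IUPAC[b] for b in degenerate_codon))]


def encoded_residues(degenerate_codon):
    """Sorted one-letter residues ('*' = stop) encoded by a degenerate codon."""
    return sorted({CODON_TABLE[c] for c in expand(degenerate_codon)})


def pattern_to_dna(pattern, polar="VAN", nonpolar="NTN"):
    """Translate a binary pattern ('P' polar, 'N' nonpolar) into degenerate DNA."""
    codon_for = {"P": polar, "N": nonpolar}
    return "".join(codon_for[ch] for ch in pattern)


def protein_diversity(pattern, polar="VAN", nonpolar="NTN"):
    """Number of distinct protein sequences encoded by a binary pattern."""
    sizes = {"P": len(encoded_residues(polar)), "N": len(encoded_residues(nonpolar))}
    total = 1
    for ch in pattern:
        total *= sizes[ch]
    return total


helix_pattern = "PNPPNNP"  # hypothetical amphiphilic repeat, for illustration only
print(encoded_residues("VAN"), encoded_residues("NTN"))
print(pattern_to_dna(helix_pattern), protein_diversity(helix_pattern))
```

Under the standard code, VAN yields six polar residues and NTN five nonpolar residues, and neither can produce a stop codon, so every clone in such a library encodes a full-length protein.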

Protocol 2: Evolution-Guided Stability Design (A Semi-Rational Optimization Protocol) [5]

- Sequence Analysis: Generate a deep multiple sequence alignment (MSA) of homologs to the target protein.

- Variability Filtering: At each residue position, filter out amino acid identities that are extremely rare in natural evolution, as these may compromise folding.

- Atomistic Design Calculation: Use computational protein design software (e.g., Rosetta) to identify stabilizing mutations within the evolutionarily allowed sequence space.

- Experimental Validation: Express and test a small set of designed variants (often <20) for stability (e.g., thermal melting temperature, Tm) and function.

Key Finding: This method robustly increases protein stability and heterologous expression yields. For example, it enabled the stable bacterial expression of the malaria vaccine candidate RH5, raising its thermal denaturation midpoint by nearly 15°C [5].
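The variability filtering step can be sketched as a per-column frequency filter over an alignment. This is a simplified stand-in: a plain frequency cutoff is assumed here for illustration, and the threshold value, function names, and toy alignment are hypothetical rather than taken from the method in [5].

```python
from collections import Counter


def allowed_residues(msa, min_freq=0.15, gap="-"):
    """For each alignment column, return residues seen at or above min_freq (gaps ignored)."""
    if len({len(seq) for seq in msa}) != 1:
        raise ValueError("aligned sequences must all have the same length")
    allowed = []
    for column in zip(*msa):
        counts = Counter(res for res in column if res != gap)
        total = sum(counts.values())
        allowed.append({res for res, n in counts.items()
                        if total and n / total >= min_freq})
    return allowed


def filter_mutations(candidates, allowed):
    """Keep (position, residue) proposals compatible with the alignment; positions are 0-based."""
    return [(pos, res) for pos, res in candidates if res in allowed[pos]]


# Toy alignment of ten homologs, invented for illustration.
toy_msa = [
    "MKVLA", "MKILA", "MRVLA", "MKVLG", "MKV-A",
    "MKILA", "MRVLA", "MKVLA", "MKVMA", "MKILA",
]
allowed = allowed_residues(toy_msa)
proposals = [(1, "R"), (2, "I"), (3, "M"), (4, "G"), (2, "W")]
print(filter_mutations(proposals, allowed))
```

Only the substitutions that survive this filter would be passed on to the atomistic design calculation, which keeps the energy-based search inside sequence space that evolution has already sampled.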

Protocol 3: AI-Driven De Novo Design of Protein Binders [12]

- Specification: Define the target protein surface or small molecule for binding.

- Generative AI Design: Use a diffusion model or other generative neural network (e.g., RFdiffusion) to produce amino acid sequences predicted to fold into structures with complementary binding interfaces.

- In Silico Filtering: Score and rank designs using structure prediction networks (e.g., AlphaFold2 or RoseTTAFold).

- Experimental Testing: Synthesize a small number (often <100) of top-ranking designs.

Key Finding: This purely computational pipeline can generate high-affinity protein binders and enzymes from scratch, and for some target classes a substantial fraction of designs show experimentally validated binding [12].

Table 2: Comparative Experimental Performance Metrics

| Design Method & Example | Key Experimental Readout | Reported Performance/ Success Rate | Functional Outcome |

|---|---|---|---|

| Early Random Library (Fully random peptide library) [9] | Binding to a target (e.g., via phage display). | Very low hit rate; requires screening vast libraries. | Simple binding motifs (e.g., linear epitopes). |

| Focused Rational Library (Binary-patterned 102-residue 4-helix bundle) [7] | NMR structure determination & stability. | High fraction of soluble, helical proteins; native-like structure confirmed for specific clones. | De novo folded proteins with defined topology. |

| Semi-Rational Optimization (Evolution-guided stability design) [5] | Increase in thermal melting temperature (ΔTm) and soluble expression yield. | Reliable ΔTm increases of 5–15°C; can enable expression of previously intractable proteins. | Stabilized proteins with retained or improved function. |

| Computational De Novo Design (AI-generated protein binders) [12] | Affinity measurement (e.g., Kd) and structural validation. | Significant success rates for novel binding; high accuracy in structure prediction. | De novo enzymes, inhibitors, and vaccines. |

The Scientist's Toolkit: Essential Research Reagent Solutions

Implementing these methodologies requires specialized tools and reagents.

Table 3: Key Research Reagent Solutions for Library Design & Screening

| Reagent / Material | Function | Typical Application Context |

|---|---|---|

| Degenerate Oligonucleotides/Codon Sets (e.g., NNK, VAN/NTN) [7] | Encodes controlled amino acid diversity at specified positions during gene synthesis. | Constructing focused combinatorial libraries (binary patterning, site-saturation mutagenesis). |

| Solid-Phase Synthesis Resins & Linkers [9] | Provides an insoluble support for the stepwise chemical synthesis and compartmentalization of library compounds. | Split-and-pool combinatorial synthesis of peptides and small molecules. |

| Error-Prone PCR (EP-PCR) Kits [11] | Introduces random mutations throughout a gene during amplification. | Creating unbiased mutant libraries for directed evolution. |

| Phage or Yeast Display Vectors | Genetically links a protein variant to its encoding DNA, enabling selection based on binding. | Screening large (10⁷–10¹¹) libraries for binding interactions. |

| Next-Generation Sequencing (NGS) Services | Enables deep, parallel sequencing of entire library populations before and after selection. | Analyzing library diversity and tracking enrichment during directed evolution. |

| High-Throughput Thermal Shift Assay Dyes (e.g., SYPRO Orange) | Reports protein unfolding as a function of temperature in a plate-based format. | Rapid stability screening of hundreds of protein variants. |

Practical Applications and Current Frontiers

The choice of library design strategy directly impacts applications in drug development and industrial biotechnology.

Therapeutic Protein & Vaccine Development: Rational and computational design are paramount for crafting high-stability vaccine immunogens (e.g., for malaria [5]) and engineering viral vectors like AAV capsids for gene therapy [13]. Directed evolution remains crucial for optimizing antibody affinity and specificity [11].

Industrial Biocatalysis: Semi-rational design is highly effective for tailoring enzyme properties such as thermostability, solvent tolerance, and substrate specificity for green chemistry applications [10]. Autonomous protein engineering platforms (e.g., SAMPLE) that combine AI design with robotic assembly and testing are emerging to accelerate this cycle [11].

The frontier of the field is the integration of generative AI and physics-based models to solve the "inverse function" problem: not just designing a fold, but designing a protein to perform a specified chemical or biological function from first principles [5] [12]. This promises a future where design is truly predictive and programmable.

Protein Library Design and Testing Workflow

The evolution from early combinatorial chemistry to modern protein engineering demonstrates a clear trajectory: increasing rational guidance dramatically improves the efficiency and success of library design. Pure random search, while theoretically comprehensive, is experimentally intractable for complex functions like protein folding. The integration of biophysical principles (binary patterning), evolutionary wisdom (semi-rational design), and computational intelligence (AI-driven de novo design) successively constrains the search space to fruitful regions.

The comparative performance thesis finds its synthesis in hybrid empirical-rational strategies. The most powerful modern workflows use computational models to generate smart, small libraries, which are then validated experimentally. The resulting data further refine the models, creating a virtuous cycle [5] [3] [12]. Therefore, the dichotomy between rational and random methods is largely obsolete. The leading edge of the field lies in their integration, using the predictive power of computation to guide empirical exploration and accelerate drug and biocatalyst discovery.

This guide provides a comparative analysis of protein and peptide library design strategies, focusing on the central challenge of balancing three competing objectives: maximizing sequence diversity to explore a broad search space, ensuring functional fitness to yield viable candidates, and managing practical library size constraints. The field is defined by a paradigm shift from traditional, large random libraries toward smaller, rational, and semi-rational designs empowered by computational tools [14] [15]. Key findings indicate that purely random methods (e.g., NNK saturation mutagenesis) often create oversized libraries with low functional fitness, while modern rational methods (e.g., machine learning-guided design) co-optimize for diversity and fitness, achieving superior results with libraries orders of magnitude smaller [16] [17]. The integration of high-throughput data to map sequence-performance landscapes is now central to advancing both fundamental science and applied protein engineering [15].

Comparative Analysis of Library Design Methodologies

The following table summarizes the performance of major library design strategies against the three key objectives.

| Design Methodology | Typical Library Size | Primary Diversity Mechanism | Fitness Enrichment Strategy | Key Advantage | Major Limitation |

|---|---|---|---|---|---|

| Random Saturation Mutagenesis [16] | Very Large (10^6 - 10^9) | Degenerate codons (NNK, NNS) at selected sites. | Screening/selection of large variant pools; fitness not considered in design. | Simplicity; unbiased exploration of local sequence space. | Vast majority of variants are non-functional; screening burden is high. |

| Semi-Rational Design [14] | Small to Medium (10^2 - 10^4) | Focused diversity at "hotspot" positions informed by sequence/structure. | Evolutionary analysis (e.g., consensus sequences, phylogenetics) to prioritize likely functional substitutions. | High fraction of functional clones; enables hypothesis-driven engineering. | Requires prior knowledge (structure, MSA); diversity is restricted to pre-defined regions. |

| Algorithm-Supported Diversity Optimization [18] [19] | Tailored (Reduced from theoretical max) | Multi-objective genetic algorithms to maximize unique masses/sequences. | Not directly optimized; fitness is a downstream screening parameter. | Simplifies hit deconvolution (e.g., by MS); maximizes analytical diversity per library member. | Focuses on physicochemical diversity, not necessarily functional fitness. |

| Machine Learning-Guided Co-Optimization (e.g., MODIFY) [17] | Tailored & Optimized | Pareto optimization balancing sequence diversity and predicted fitness. | Ensemble ML models (e.g., protein language models) for zero-shot fitness prediction. | Actively balances exploration and exploitation; designs high-quality libraries from scratch. | Computational complexity; requires careful model training and validation. |

Detailed Experimental Protocols

Protocol 1: Machine Learning-Guided Library Design and Validation (MODIFY Framework) [17]

This protocol outlines the steps for designing a combinatorial library using the MODIFY algorithm, which co-optimizes predicted fitness and sequence diversity.

- Input Specification: Define the parent protein sequence and the set of M amino acid residues to be targeted for randomization.

- Zero-Shot Fitness Prediction: Utilize an ensemble machine learning model. This model combines a pre-trained protein language model (e.g., ESM-2) with a sequence density model (e.g., EVmutation) to predict the fitness F_v of any variant v without requiring experimental training data on the target protein.

- Pareto Frontier Calculation: Solve the multi-objective optimization problem: max [ Fitness(Library) + λ · Diversity(Library) ], where λ is a user-defined hyperparameter. Solving it across a range of λ values traces out a Pareto front, a set of optimal libraries where fitness cannot be increased without decreasing diversity, and vice versa.

- Library Selection and Filtering: Select a library from the Pareto front based on project needs (e.g., bias toward higher fitness or greater diversity). Filter the selected variant sequences based on additional criteria like predicted stability or foldability using tools like FoldX or Rosetta.

- DNA Synthesis and Library Construction: Encode the final list of variant sequences into oligonucleotides for gene synthesis or assembly (e.g., using TRIM oligos for antibody CDRs) [20].

- Validation with Next-Generation Sequencing (NGS): Sequence the constructed library using NGS to confirm the intended sequence diversity and coverage, identifying and quantifying any construction biases [20].
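The trade-off behind the Pareto frontier step can be shown on a toy problem. The sketch below is not the MODIFY implementation: it assumes mean predicted fitness and mean pairwise Hamming distance as the two objectives, and it compares a handful of hand-picked candidate libraries instead of optimizing over all possible ones. All sequences and fitness values are invented.

```python
from itertools import combinations


def mean_fitness(library, fitness):
    """Average predicted fitness of the variants in a library."""
    return sum(fitness[v] for v in library) / len(library)


def mean_hamming(library):
    """Average pairwise Hamming distance, used here as a simple diversity proxy."""
    pairs = list(combinations(library, 2))
    if not pairs:
        return 0.0
    return sum(sum(a != b for a, b in zip(x, y)) for x, y in pairs) / len(pairs)


def pareto_front(points):
    """Names of libraries not dominated on (fitness, diversity); higher is better for both."""
    front = []
    for name, (f, d) in points.items():
        dominated = any(f2 >= f and d2 >= d and (f2 > f or d2 > d)
                        for other, (f2, d2) in points.items() if other != name)
        if not dominated:
            front.append(name)
    return front


def best_for_lambda(points, lam):
    """Library maximizing fitness + lam * diversity for one value of lambda."""
    return max(points, key=lambda name: points[name][0] + lam * points[name][1])


# Hypothetical zero-shot fitness predictions for three-residue variants.
fitness = {"AKL": 0.9, "AKV": 0.8, "AKI": 0.85, "GKL": 0.7, "SRV": 0.3, "TEF": 0.2}
candidates = {
    "focused": ["AKL", "AKV", "AKI"],
    "balanced": ["AKL", "GKL", "SRV"],
    "diverse": ["AKL", "SRV", "TEF"],
    "poor": ["SRV", "TEF", "GKL"],
}
points = {name: (mean_fitness(lib, fitness), mean_hamming(lib))
          for name, lib in candidates.items()}

print(sorted(pareto_front(points)))
for lam in (0.0, 0.2, 1.0):
    print(lam, best_for_lambda(points, lam))
```

Small λ favors the focused, high-fitness library and large λ favors the most diverse one, while the library labeled "poor" never wins because another candidate matches its diversity with higher fitness.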

Protocol 2: Traditional Saturation Mutagenesis with NNK Codons [16]

This protocol describes a standard method for creating random diversity at specific positions.

- Target Selection: Choose M specific amino acid positions for randomization.

- Primer Design: Design mutagenic primers where the triplet codon for each target position is replaced with the degenerate NNK codon (N = A/T/G/C; K = G/T). This encodes all 20 amino acids and one stop codon with reduced bias compared to NNN.

- Library Construction: Perform PCR-based site-directed mutagenesis or gene assembly using the degenerate primers. Clone the resulting pool of genes into an appropriate expression vector.

- Transformation and Size Determination: Transform the plasmid library into a microbial host (e.g., E. coli). The number of transformants defines the experimental library size L. To ensure coverage, aim for L to be 3-5 times the theoretical diversity (e.g., for M = 4 positions with NNK, theoretical amino acid diversity = 20^4 = 160,000; target ~500,000 – 800,000 clones) [16].

- Functional Screening/Selection: Express the protein library and subject it to a high-throughput screen or selection (e.g., growth assay, FACS, absorbance/fluorescence assay) to identify functional variants.
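The library size arithmetic in the transformation step can be checked with a short calculation. The sketch below assumes every variant is equally likely and uses the standard Poisson approximation for sampling with replacement; the function names are illustrative. Because NNK encodes some residues with three codons (Leu, Ser, Arg) and others with one, coverage at the amino acid level will in practice be less uniform than this estimate suggests.

```python
from math import ceil, exp, log


def nnk_diversity(m):
    """Theoretical diversity for m NNK positions at the amino acid and codon level."""
    return {"amino_acid": 20 ** m, "codon": 32 ** m}


def expected_coverage(n_clones, n_variants):
    """Expected fraction of equally likely variants sampled at least once."""
    return 1.0 - exp(-n_clones / n_variants)


def clones_for_coverage(n_variants, target):
    """Clones needed to reach a target expected coverage (0 < target < 1)."""
    return ceil(-n_variants * log(1.0 - target))


diversity = nnk_diversity(4)
for fold in (1, 3, 5):
    clones = fold * diversity["amino_acid"]
    print(fold, clones, round(expected_coverage(clones, diversity["amino_acid"]), 4))

# Same target evaluated at the codon level, where 32**4 DNA variants compete for clones.
print(clones_for_coverage(diversity["codon"], 0.95))
```

Threefold oversampling corresponds to roughly 95% expected coverage and fivefold to roughly 99%, which is the rationale behind the 3-5 times rule; counting at the codon level (32^4, about 1.05 million DNA variants) pushes the required number of transformants several fold higher.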

Visualization of Key Workflows and Relationships

Diagram 1: MODIFY Algorithm Workflow for Library Co-Optimization

Diagram 2: Comparative Landscape of Library Design Strategies

The Scientist's Toolkit: Research Reagent Solutions

| Item/Reagent | Function in Library Design/Construction | Key Consideration |

|---|---|---|

| Degenerate Codon Oligos (NNK, NNS, etc.) [16] [20] | Introduce controlled randomness at DNA level during library construction. | NNK (32 codons) reduces stop codon frequency and amino acid bias vs. NNN (64 codons). TRIM oligos can offer more precise control [20]. |

| TRIM Oligonucleotides [20] | Pre-synthesized pools of trinucleotides representing specific codons used in gene assembly. | Minimizes codon bias and eliminates stop codons, leading to higher-quality libraries with more accurate amino acid distribution. |

| One-Bead-One-Compound (OBOC) Resins [18] [19] | Solid support for parallel synthesis of peptide libraries where each bead carries a single sequence. | Enables screening of synthetic peptide libraries without a cellular system; compatible with unnatural amino acids. |

| Phagemid or Yeast Display Vectors [20] | Genetic constructs for linking genotype (gene) to phenotype (displayed protein) in a cellular system. | Choice affects library size (phage: large, yeast: smaller) and screening method (panning vs. FACS). Eukaryotic yeast often improves folding of complex proteins [20]. |

| Next-Generation Sequencing (NGS) Services [20] | For deep sequencing of constructed DNA libraries pre- or post-selection. | Critical for quality control: validates library diversity, identifies biases, and deconvolutes hits from selection rounds. |

| Protein Language Models (e.g., ESM-2) [17] | Pre-trained deep learning models that learn evolutionary constraints from protein sequence databases. | Used for zero-shot fitness prediction and estimating variant stability, guiding rational library design without experimental data. |

The evolution of antibody discovery and protein engineering has been fundamentally shaped by the advent of in vitro display technologies. These platforms serve as critical technological enablers, bridging the gap between vast genetic libraries and functional protein leads. Within the broader thesis of comparative performance between rational and random library design methods, display technologies are not merely selection tools but active participants that influence evolutionary outcomes. Rational design employs structural knowledge and computational modeling to create focused, intelligent diversity, while random mutagenesis explores sequence space broadly but less efficiently [21]. The choice of display platform—phage, yeast, or ribosome display—profoundly affects the accessibility of this sequence space, the fidelity of selection, and the ultimate success of a campaign [22] [23]. This guide provides a comparative analysis of these three pivotal platforms, focusing on their operational parameters, experimental data, and their synergistic roles with different library design philosophies in modern drug discovery.

Platform Comparison: Principles, Performance, and Experimental Data

Phage Display

- Core Principle & Workflow: Phage display involves the genetic fusion of a protein (e.g., an antibody fragment) to a coat protein of a bacteriophage, typically the M13 phage. The resulting fusion is displayed on the phage surface while its genetic material resides inside. The selection process, called biopanning, involves incubating a phage library with an immobilized target, washing away unbound phages, and eluting and amplifying specifically bound phages for iterative rounds of selection [24] [25].

- Experimental Performance Data: A 2025 study demonstrated the construction of a synthetic nanobody library with a diversity of 2.4×10^10 individual clones displayed on phage. In screening against eight Drosophila secreted proteins, this platform yielded specific binders for five targets. For Carbonic anhydrase-related protein B (CARPB), polyclonal phage ELISA signals showed clear enrichment over three selection rounds, leading to the identification of five distinct monoclonal nanobodies [24] [26]. The technology's robustness is further validated by its clinical impact: 14 FDA-approved therapeutic antibodies, including adalimumab (Humira) and belimumab (Benlysta), have been developed using phage display [25].

- Protocol: Nanobody Screening via Phage Display [24]:

- Library Preparation: A phagemid library encoding nanobody-pIII fusions is electroporated into E. coli and rescued with helper phage to produce the display-ready phage library.

- Negative Selection: The phage library is incubated in a well coated with a negative control protein (e.g., mCherry-hIgG) to deplete non-specific binders.

- Positive Panning: The pre-cleared library is transferred to a target antigen-coated well (e.g., antigen-hIgG fusion).

- Stringent Washing: Non-specifically bound phages are removed with buffer washes. Stringency increases in subsequent rounds by reducing antigen density and increasing wash cycles.

- Elution & Amplification: Bound phages are eluted via bacterial infection. The infected bacteria are amplified, and helper phage is added to produce an enriched phage pool for the next panning round.

- Screening: After 2-3 rounds, polyclonal and monoclonal phage populations are screened by ELISA. DNA from positive clones is sequenced to identify nanobody variants.

Yeast Surface Display

- Core Principle & Workflow: In yeast display, proteins are fused to the Aga2p mating adhesion subunit, which anchors them to the cell wall of Saccharomyces cerevisiae. The display is coupled with an epitope tag (e.g., c-myc) for quantification. Selections are performed using fluorescence-activated cell sorting (FACS), which allows quantitative, multiparameter sorting based on binding affinity and expression level [24] [23].

- Experimental Performance Data: Yeast display excels in discriminating fine specificity, such as for conformational states of GPCRs [24]. However, its library diversity is typically constrained by yeast transformation efficiency. A prominent synthetic nanobody library used for yeast display contained approximately 10^8 variants—orders of magnitude lower than typical phage or ribosome libraries [24]. This platform requires relatively large amounts of soluble antigen (µg-mg quantities) for labeling and sorting, and the FACS-based process can be costly and require specialized instrumentation [24].

- Protocol: Affinity Maturation via Yeast Display [23]:

- Library Transformation: A mutagenized library is cloned into a yeast display vector and transformed into yeast cells to create the display library.

- Induction & Labeling: Library expression is induced. Cells are labeled with two fluorescent reagents: one for the displayed protein (via an epitope tag) and one for target binding (via a fluorescently labeled antigen).

- FACS Analysis & Sorting: Cells are analyzed by FACS. Dual-positive cells (high expression and high target binding) are gated and sorted. Gates can be set to collect only the top 0.1-1% of binders for very stringent selection.

- Recovery & Iteration: Sorted cells are recovered in growth medium, induced, and subjected to additional rounds of sorting with increasing stringency (e.g., lower antigen concentration).

- Characterization: Individual clones from later rounds are isolated, and their binding affinity is quantified via flow cytometry or surface plasmon resonance (SPR).
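The dual-positive gating step above can be illustrated on simulated flow cytometry events. This is a minimal sketch, not an instrument workflow: the function `sort_gate`, the expression threshold, and the simulated log-normal signals are all hypothetical, and ranking cells by binding signal per unit expression is an assumed (though commonly used) way to avoid favoring clones that merely display more protein.

```python
import random

def sort_gate(events, expr_min, top_fraction):
    """Return indices of events passing a dual-positive sort gate.

    events: list of (expression_signal, binding_signal) pairs, arbitrary units.
    expr_min: minimum expression signal (must be > 0) to count as displaying.
    top_fraction: fraction of all events to collect, e.g. 0.001 to 0.01.
    """
    displaying = [i for i, (expr, _) in enumerate(events) if expr >= expr_min]
    # Assumption: rank by binding normalized to expression (a "diagonal" gate).
    ranked = sorted(displaying,
                    key=lambda i: events[i][1] / events[i][0],
                    reverse=True)
    n_keep = max(1, round(len(events) * top_fraction))
    return ranked[:n_keep]

# Simulated library: log-normally distributed signals (hypothetical values).
rng = random.Random(1)
events = [(rng.lognormvariate(5, 1), rng.lognormvariate(3, 1))
          for _ in range(20000)]

sorted_cells = sort_gate(events, expr_min=100.0, top_fraction=0.005)
print(len(sorted_cells), "of", len(events), "events collected")
```

Lowering `top_fraction` in later rounds, alongside a lower antigen concentration, mirrors the increasing stringency described in the protocol.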

Ribosome Display

- Core Principle & Workflow: Ribosome display is a cell-free system. DNA libraries are transcribed and translated in vitro, but the lack of a stop codon causes the ribosome to stall, forming a stable ternary complex of mRNA, ribosome, and the nascent protein. This complex can be used for selection against an immobilized target. After selection, the bound mRNA is recovered, reverse-transcribed to cDNA, and amplified by PCR for the next round [22] [23].

- Experimental Performance Data: Its most significant advantage is the elimination of transformation, allowing for exceptionally high library diversities (>10^12 variants). A direct comparative study on affinity maturing an anti-IL-1R1 antibody found that ribosome display generated antibodies with distinct mutation patterns and greater structural diversity in the CDR3 loops compared to phage display. The lead candidate from ribosome display, Jedi067, achieved a ~3700-fold improvement in binding affinity (KD) over the parent antibody [22]. This highlights its superior capacity for exploring vast sequence spaces and recombining beneficial mutations.

- Protocol: VH/VL Recombination and Selection via Ribosome Display [22]:

- Library Construction: Separate libraries of mutated VH and VL CDR3 regions are generated by PCR. These are then spliced together by overlap extension PCR to create a full scFv library with recombined diversity.

- In Vitro Transcription-Translation: The DNA library, whose constructs lack stop codons, is purified and used as a template in a cell-free transcription-translation system (e.g., E. coli extract).

- Selection: The ribosomal complexes are incubated with biotinylated target antigen immobilized on streptavidin beads. The mixture is extensively washed.

- mRNA Recovery: mRNA from bound complexes is eluted, typically by EDTA-mediated ribosome dissociation.

- RT-PCR & Reiteration: The eluted mRNA is reverse-transcribed to cDNA and amplified by PCR. The product is used directly as the template for the next round of ribosome display, typically for 3-5 rounds.

- Clone Analysis: The final PCR product is cloned into an expression vector for sequencing and affinity characterization of individual clones.

Comparative Analysis Table

Table 1: Comparative Performance of Phage, Yeast, and Ribosome Display Platforms

| Parameter | Phage Display | Yeast Surface Display | Ribosome Display |

|---|---|---|---|

| Max Library Diversity | ~10^10 - 10^11 [24] [23] | ~10^7 - 10^9 [24] [23] | >10^12 - 10^13 [22] [23] |

| Selection Mechanism | Biopanning on immobilized antigen [24] | FACS/MACS using soluble antigen [24] | Selection of mRNA-ribosome-protein complexes [22] |

| Throughput | High (panning of whole library) | Medium-High (FACS throughput) | Very High (cell-free, no cloning) |

| Typical Antigen Requirement | Low (ng-µg, immobilized) [24] | High (µg-mg, soluble) [24] | Medium (µg, usually biotinylated) [22] |

| Affinity Maturation Efficacy | Proven, enables 10-1000x improvements [25] | Excellent for fine discrimination and stability [23] | Superior for large jumps; enables 1000-10,000x improvements [22] |

| Key Advantage | Robust, cost-effective, well-established [24] [25] | Direct link between phenotype & genotype, enables quantitative FACS [24] | Largest library size, no transformation bias, in vitro evolution [22] [23] |

| Primary Limitation | Limited by bacterial transformation efficiency [23] | Limited library diversity, requires soluble antigen [24] | Requires optimized cell-free system, protein folding in vitro [23] |

Table 2: Selected Approved Therapeutics Derived from Display Platforms [25]

| Platform | Therapeutic (Brand) | Target | Indication (First Approved) | Note |

|---|---|---|---|---|

| Phage Display | Adalimumab (Humira) | TNFα | Rheumatoid Arthritis (2002) | First fully human antibody from guided selection |

| Phage Display | Belimumab (Benlysta) | BLyS | Systemic Lupus Erythematosus (2011) | Isolated from a human naïve scFv library |

| Phage Display | Avelumab (Bavencio) | PD-L1 | Merkel Cell Carcinoma (2017) | Isolated from a human naïve Fab library |

| Phage Display | Caplacizumab (Cablivi) | vWF | aTTP (2018) | Nanobody derived from camelid library |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Research Reagent Solutions for Display Technologies

| Reagent/Material | Function/Description | Primary Platform |

|---|---|---|

| Phagemid Vector (e.g., pComb3X) | Plasmid containing phage origin and pIII fusion for antibody fragment display; allows helper phage-driven packaging [24]. | Phage Display |

| Helper Phage (e.g., M13KO7) | Provides all structural proteins in trans to package the phagemid DNA and display the fusion protein [24]. | Phage Display |

| MaxiSorp Plates | High protein-binding plates used for immobilizing antigens during biopanning selections [24]. | Phage Display |

| Protein A or G Resin | Used for purification of Fc-fused antigens (e.g., antigen-hIgG) prior to panning [24]. | Phage, Yeast, Ribosome |

| Fluorescently Labeled Antigen | Soluble antigen conjugated to a fluorophore (e.g., Alexa Fluor 647) for labeling yeast cells during FACS [24] [23]. | Yeast Display |

| Anti-epitope Tag Antibody (e.g., anti-c-myc) | Conjugated to a different fluorophore to quantify surface expression levels on yeast [23]. | Yeast Display |

| Cell-Free Transcription-Translation System | Commercially available extract (e.g., from E. coli or wheat germ) for generating ribosome display complexes [22] [23]. | Ribosome Display |

| Streptavidin Magnetic Beads | Used to capture biotinylated antigen during ribosome display selection steps [22]. | Ribosome Display |

Integrating Display Platforms with Rational and Random Design

The interplay between library design and display technology is critical. Random mutagenesis libraries (e.g., using error-prone PCR or NNS codon randomization) benefit immensely from the vast capacity of ribosome display, which can accommodate and effectively search their immense diversity [22] [21]. Conversely, rationally designed libraries—such as those focused on specific CDR residues or based on structural models—are highly compatible with yeast display. Yeast display's quantitative FACS can precisely select for desired traits (e.g., high stability, specific conformational recognition) from these more focused libraries [24] [27]. Phage display serves as a versatile and robust workhorse, effective for both naïve library screening and affinity maturation campaigns derived from either rational or random design starting points [24] [25].

The future lies in hybrid approaches. A common strategy is to use a rationally designed library for initial lead discovery on phage display, followed by affinity maturation using random mutagenesis and the superior diversity-handling of ribosome display [22]. Furthermore, the rise of computational and AI-driven protein design is generating in silico rational libraries of unprecedented quality, which will require high-fidelity display platforms for experimental validation and optimization [27].

Workflow and Relationship Diagrams

Diagram 1: Phage Display Biopanning and Screening Workflow

Diagram 2: Ribosome Display In Vitro Evolution Cycle

Diagram 3: Logic Flow for Integrating Library Design with Display Platform Selection

From Theory to Bench: Practical Workflows for Rational and Random Library Construction

The preclinical drug discovery pipeline is undergoing a fundamental shift from a trial-and-error mode to a rational, data-driven mode [27]. This transition is central to a critical thesis in modern pharmaceutical research: that rational design strategies, underpinned by structural biology and bioinformatics, systematically outperform random or naive screening methods in efficiency, cost, and success rate [28] [27]. Rational design leverages prior knowledge—be it a protein's three-dimensional structure, bioinformatic predictions of function, or the chemical scaffolds of known ligands—to make informed decisions. In contrast, random library design, while conceptually simple and unbiased, explores chemical space inefficiently [29]. This guide provides a comparative analysis of these paradigms, presenting experimental data and methodologies that quantify their performance within the broader drug and nanomaterial discovery workflow [28] [30] [27].

Quantitative Performance Comparison

The superiority of rational design is quantifiable across multiple metrics, from library efficiency to the predictive power of generated models.

Table 1: Comparative Efficiency of Rational vs. Random Library Design

| Performance Metric | Rational Design (Maximum Dissimilarity) | Random Selection | Efficiency Gain (Rational/Random) | Experimental Context |

|---|---|---|---|---|

| Library Size for Target Coverage | Minimal subset required [29] | 3.5 - 3.7x larger subset required [29] | 3.5x - 3.7x more efficient [29] | Covering 90% of biological target classes in a database [29]. |

| Model Predictive Power | Higher predictive power & more stable QSAR models [29] | Lower predictive power [29] | Significantly superior [29] | Comparative Molecular Field Analysis (CoMFA) on ACE inhibitors [29]. |

| Parameter Estimation Error | Lower mean absolute error [30] | Higher mean absolute error [30] | ~2x - 4x more accurate [30] | Parameter estimation for a saturating kinetic model using optimal vs. naive sampling [30]. |

| Optimal Sampling Density | 6-7 time points [30] | 12+ time points [30] | ~50% fewer measurements needed [30] | Informed by Parameter Sensitivity Clustering (PARSEC) for kinetic modeling [30]. |

Table 2: Experimental Data from a Model-Based Design of Experiments (MBDoE) Study [30]

| Experiment Design Strategy | Mean Absolute Error (Parameter θ₁) | Mean Absolute Error (Parameter θ₂) | Key Finding |

|---|---|---|---|

| PARSEC-Optimal Design (6 time points) | 0.081 | 0.134 | Clustering parameter sensitivities yields maximally informative samples. |

| Time-Equidistant Sampling (12 time points) | 0.165 | 0.287 | Doubling sample points does not compensate for poor design. |

| Random Sampling (6 time points) | 0.332 | 0.521 | Naive exploration yields the highest estimation error. |
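As a quick arithmetic check, the error values in Table 2 can be expressed as ratios relative to the PARSEC-optimal design. The snippet below only restates the tabulated numbers; the variable names are illustrative.

```python
# Mean absolute errors (theta1, theta2) copied from Table 2.
mae = {
    "PARSEC-optimal (6 points)": (0.081, 0.134),
    "Time-equidistant (12 points)": (0.165, 0.287),
    "Random (6 points)": (0.332, 0.521),
}

reference = mae["PARSEC-optimal (6 points)"]

# Error of each design divided by the error of the PARSEC design.
ratios = {
    name: tuple(value / ref for value, ref in zip(values, reference))
    for name, values in mae.items()
}

for name, (r1, r2) in ratios.items():
    print(f"{name}: theta1 error x{r1:.2f}, theta2 error x{r2:.2f}")
```

The equidistant design carries roughly twice the error of the PARSEC design and the random design roughly four times, consistent with the "~2x - 4x more accurate" entry in Table 1.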

Experimental Protocols & Methodologies

This protocol (maximum dissimilarity selection) is used to create a diverse, non-redundant compound library for screening [29].

- Database Preparation: Compile a chemical database and encode all molecules using 2D molecular fingerprints (e.g., MACCS keys, ECFP4) as structural descriptors.

- Similarity Metric Definition: Select a similarity coefficient (e.g., Tanimoto coefficient) to quantify pairwise molecular similarity.

- Seed Selection: Choose an initial compound at random as the first member of the subset.

- Iterative Selection: a. Calculate the similarity between all unchosen compounds in the database and the current subset. b. For each unchosen compound, identify its maximum similarity to any compound already in the subset (its "nearest neighbor" in the subset). c. Select the compound with the lowest maximum similarity (i.e., the most dissimilar compound) and add it to the subset.

- Threshold Application: Implement a similarity threshold (e.g., 0.70 or 0.85). If the selected compound's nearest neighbor similarity is below this threshold, add it. If above, the process can be terminated, ensuring no two subset members are excessively similar.

- Validation: Use the final subset in a validation assay (e.g., QSAR modeling for a specific target like angiotensin-converting enzyme) and compare hit rates or model quality against a randomly selected subset of equal size.
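The seed, iterative selection, and threshold steps above can be sketched in a few lines. This is an illustrative implementation under stated assumptions rather than the code used in the cited study: fingerprints are represented as plain Python sets of on-bit indices instead of real MACCS or ECFP4 fingerprints, and `maxmin_select` and the toy data are hypothetical names and values.

```python
import random

def tanimoto(a, b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)

def maxmin_select(fingerprints, threshold=0.85, max_size=None, seed=0):
    """Greedy maximum dissimilarity selection; returns indices of the subset."""
    rng = random.Random(seed)
    first = rng.randrange(len(fingerprints))      # random seed compound
    subset = [first]
    # nearest[i]: highest similarity of compound i to any subset member so far.
    nearest = [tanimoto(fp, fingerprints[first]) for fp in fingerprints]
    remaining = set(range(len(fingerprints))) - {first}
    while remaining and (max_size is None or len(subset) < max_size):
        # Most dissimilar candidate = lowest nearest-neighbor similarity.
        candidate = min(remaining, key=lambda i: (nearest[i], i))
        if nearest[candidate] >= threshold:
            break                                  # everything left is too similar
        subset.append(candidate)
        remaining.discard(candidate)
        for i in remaining:                        # update nearest-neighbor values
            sim = tanimoto(fingerprints[i], fingerprints[candidate])
            nearest[i] = max(nearest[i], sim)
    return subset

# Toy fingerprints forming three structural families (hypothetical data).
fps = [{1, 2, 3, 4}, {1, 2, 3, 5}, {10, 11, 12},
       {10, 11, 13}, {20, 21}, {1, 2, 3, 4}]
picked = maxmin_select(fps, threshold=0.5)
print("Selected compound indices:", picked)
```

With a threshold of 0.5 the toy example keeps one representative per family. Caching the nearest-neighbor similarity for each unchosen compound avoids recomputing all pairwise similarities on every iteration, which matters for large databases.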

This protocol (PARSEC-based model-based design of experiments) designs experiments to estimate model parameters with minimal measurements [30].

- Model Definition: Formulate a mathematical model (e.g., system of ODEs for a kinetic pathway) with parameters θ to be estimated.

- Parameter Sensitivity Analysis (PSA): a. For each candidate measurement (e.g., metabolite concentration at time t), compute its sensitivity to each parameter: PSIᵢⱼ = (∂yᵢ/∂θⱼ) × (θⱼ/yᵢ), where yᵢ is the measurable output. b. Sample parameter values from prior distributions to account for uncertainty, creating a conjoined PARSEC-PSI vector for each measurement.

- Clustering: Apply a clustering algorithm (e.g., k-means) to the set of all PARSEC-PSI vectors. Each cluster groups measurements that provide redundant information about the parameters.

- Design Selection: Select one representative measurement (e.g., a specific time point) from each cluster. The number of clusters (k) defines the optimal sample size.

- Evaluation via ABC-FAR: a. Use the Approximate Bayesian Computation - Fixed Acceptance Rate (ABC-FAR) method to estimate parameters from synthetic data generated for the PARSEC design. b. Iteratively sample parameters, simulate data, and accept samples where the χ² goodness-of-fit statistic between simulated and "observed" data is below a dynamically adjusted threshold. c. Compare the accuracy and precision of parameter estimates from PARSEC designs versus equidistant or random time-point designs.
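The sensitivity analysis, clustering, and design selection steps can be sketched as follows. This is a simplified illustration of the idea as described above, not the published PARSEC implementation: the saturating model y = θ₁·t/(θ₂ + t), the uniform priors, the finite-difference sensitivities, the plain k-means routine, and all function names are assumptions, and the ABC-FAR evaluation step is omitted.

```python
import math
import random

def model(t, theta1, theta2):
    """Hypothetical saturating kinetic model."""
    return theta1 * t / (theta2 + t)

def psi(t, theta, j, rel_step=1e-4):
    """Normalized sensitivity (dy/dtheta_j) * (theta_j / y), central differences."""
    up, down = list(theta), list(theta)
    h = theta[j] * rel_step
    up[j] += h
    down[j] -= h
    dy_dtheta = (model(t, *up) - model(t, *down)) / (2 * h)
    return dy_dtheta * theta[j] / model(t, *theta)

def psi_vector(t, theta_samples):
    """Concatenate sensitivities at time t over all sampled parameter sets."""
    return [psi(t, th, j) for th in theta_samples for j in range(len(th))]

def kmeans(vectors, k, max_iter=100):
    """Plain k-means (k >= 2); centroids start at evenly spaced input vectors."""
    n = len(vectors)
    centroids = [list(vectors[round(i * (n - 1) / (k - 1))]) for i in range(k)]
    labels = None
    for _ in range(max_iter):
        new_labels = [min(range(k), key=lambda c: math.dist(v, centroids[c]))
                      for v in vectors]
        if new_labels == labels:
            break
        labels = new_labels
        for c in range(k):
            members = [v for v, lab in zip(vectors, labels) if lab == c]
            if members:
                centroids[c] = [sum(col) / len(members) for col in zip(*members)]
    return labels, centroids

def select_design(times, theta_samples, k):
    """Pick one representative time point (closest to centroid) per cluster."""
    vectors = [psi_vector(t, theta_samples) for t in times]
    labels, centroids = kmeans(vectors, k)
    design = []
    for c in range(k):
        members = [i for i, lab in enumerate(labels) if lab == c]
        if members:
            best = min(members, key=lambda i: math.dist(vectors[i], centroids[c]))
            design.append(times[best])
    return sorted(design)

rng = random.Random(0)
theta_samples = [(rng.uniform(0.5, 2.0), rng.uniform(1.0, 10.0)) for _ in range(20)]
times = [0.5 * i for i in range(1, 41)]          # candidate measurement times
design = select_design(times, theta_samples, k=6)
print("Selected time points:", design)
```

For this particular model the normalized sensitivity to θ₁ is constant, so the clustering is driven by the θ₂ sensitivities. Models with more parameters would generally give richer sensitivity profiles.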

Visualizing Strategic Workflows

Diagram 1: Integrated Rational Drug Design Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Resources for Rational Design Research

| Category & Item | Function & Purpose in Rational Design | Representative Example / Note |

|---|---|---|

| Structural Biology | | |

| Cryo-Electron Microscopy (Cryo-EM) System | Determines high-resolution structures of large targets and complexes for structure-based design. | Essential for membrane proteins and RNA complexes. |

| High-Throughput Crystallography Platform | Accelerates fragment and co-crystal screening to inform ligand binding. | Key for fragment-based drug discovery (FBDD). |

| Bioinformatics & Data | | |

| Curated Structural Database | Provides experimentally resolved protein structures for homology modeling and docking. | Cambridge Structural Database (CSD) [3]; Protein Data Bank (PDB). |

| NLP-Powered Data Extraction Toolkit | Mines literature to build datasets linking material structures to experimental properties [3]. | ChemDataExtractor [3]; used for MOF stability data. |

| Computational Screening | | |

| Molecular Docking Suite | Screens virtual compound libraries against a target structure to predict binding poses and affinity. | Widely used in structure-based virtual screening (SBVS) [28]. |

| Molecular Dynamics (MD) Simulation Software | Simulates dynamic interactions, stability, and binding kinetics of designed compounds [27]. | Coarse-grained MD enables high-throughput screening [27]. |

| Library Design & Synthesis | | |

| Microfluidic Synthesis Platform | Enables high-throughput, reproducible synthesis of nanoparticle or compound libraries for testing [27]. | Crucial for creating lipid nanoparticle (LNP) libraries for mRNA delivery [27]. |

| Fragment Library | A curated collection of small, simple molecules for screening by X-ray crystallography or NMR to identify weak binders. | Foundation of FBDD campaigns. |

| Validation & Assays | | |

| Surface Plasmon Resonance (SPR) | Measures real-time binding kinetics (ka, kd) and affinity (KD) of designed ligands. | Gold-standard for biophysical interaction validation. |

| Isothermal Titration Calorimetry (ITC) | Quantifies binding affinity and thermodynamic profile (ΔH, ΔS) of molecular interactions. | Provides full thermodynamic signature. |

The comparative data substantiates the thesis that rational design strategies offer a decisive advantage in preclinical discovery [29] [30]. The integration of structural bioinformatics, experimental data mining [3], and model-based experiment design [30] creates a virtuous cycle that systematically outperforms random exploration. The future of rational design is being shaped by the convergence of AI/ML models for property prediction [28], the automation of high-throughput experimentation [27], and the creation of ever-larger, higher-quality experimental datasets [3]. This will further widen the efficiency gap, cementing rational, knowledge-driven strategies as the indispensable foundation for the next generation of drug and advanced material discovery [28] [27].

The discovery of monoclonal antibodies as therapeutics relies fundamentally on the quality of the starting library—the diverse collection of antibody variants from which binders are selected. The central challenge lies in maximizing functional diversity: the number of unique, well-folded, and expressible antibody clones capable of engaging antigens [31]. Traditional methods for library generation often prioritize maximizing sequence space through random or semi-random mutagenesis, particularly within the Complementarity Determining Regions (CDRs). Common techniques include using degenerate nucleotide codons (e.g., NNK) or error-prone PCR across variable regions [32]. While capable of generating vast theoretical diversity, these random approaches have a significant drawback: they inevitably produce a high percentage of non-functional clones due to stop codons, misfolding, aggregation, or framework-CDR incompatibility [31] [20]. This inefficiency necessitates screening larger library sizes to find rare, functional hits, increasing cost and time.

In contrast, rational design strategies seek to build quality into the library from inception by applying prior knowledge to enrich for functional sequences. This guide sits within a broader research context comparing these paradigms and argues that rational design yields libraries with a higher percentage of functional clones, leading to higher success rates in discovery campaigns [31]. A prime case study in this rational approach is the construction of antibody libraries via CDR resampling from validated databases. This method bypasses random sequence generation by combinatorially assembling naturally occurring, experimentally validated CDR sequences onto a single, optimized framework [31] [33]. It is predicated on the hypothesis that CDRs sourced from antibodies known to fold and function will maintain that functionality when transplanted, preserving critical intra-loop and loop-framework compatibilities often disrupted by random mutagenesis [31]. This guide provides a detailed comparison of this rational CDR resampling method against traditional and next-generation alternatives, supported by experimental data and methodological detail.

Core Methodology: The CDR Resampling Pipeline

The rational CDR resampling pipeline is a multi-step bioinformatic and molecular biology process designed to maximize functional output [31] [33].

1. Database Curation and Annotation: The process begins with a legacy database of antibody variable region sequences derived from experimentally validated binders (e.g., from phage display campaigns). Sequences are clustered based on framework similarity, and a subset with nearly identical frameworks is selected to ensure compatibility. CDR regions (primarily H2, H3, L2, L3) within these sequences are computationally identified and annotated using standard numbering schemes (e.g., Kabat, Chothia) [31] [32].

2. CDR Harvesting and Filtering: Unique CDR amino acid sequences are extracted, forming a curated "CDR database." This step captures natural diversity while filtering out improbable or problematic sequences. The sequences are back-translated into DNA using codon optimization for the desired expression system (e.g., E. coli) [31] [34].

3. Combinatorial Assembly via TRIM Technology: The predefined CDR DNA sequences are synthesized, often using trinucleotide mutagenesis (TRIM) technology. TRIM synthesizes codons as single units, allowing precise control over amino acid incorporation and eliminating stop codons and frameshifts, which are common in libraries built with degenerate nucleotides [20] [34]. These CDR cassettes are then assembled combinatorially into the chosen master framework vector using techniques like overlap extension PCR and Golden Gate assembly [33] [34].

4. Library Validation: The final physical library is transformed into a display system (e.g., phage). Its quality is assessed by next-generation sequencing (NGS) to confirm diversity and the absence of sequence skew. Functional validation involves small-scale panning against control antigens to verify the library's ability to generate specific binders [20] [34].

Diagram 1: Workflow for Rational CDR Resampling Library Construction. This diagram outlines the key bioinformatic and molecular biology steps in constructing a library from validated sequences [31] [33] [34].
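The harvesting and combinatorial assembly steps of this pipeline reduce to simple set and product operations, sketched below. The records, the CDR sequences, and the function names are hypothetical placeholders for illustration only; a real pipeline would read annotated sequences from the curated database and apply the filtering criteria described above.

```python
import random
from math import prod

CDR_NAMES = ("H2", "H3", "L2", "L3")

def harvest_cdrs(antibodies):
    """Collect the unique CDR amino acid sequences found at each loop position."""
    pools = {name: set() for name in CDR_NAMES}
    for record in antibodies:
        for name in CDR_NAMES:
            pools[name].add(record[name])
    return {name: sorted(seqs) for name, seqs in pools.items()}

def theoretical_diversity(pools):
    """Number of distinct CDR combinations on a single framework."""
    return prod(len(seqs) for seqs in pools.values())

def sample_clone(pools, rng):
    """Draw one random combination of harvested CDRs (one library member)."""
    return {name: rng.choice(seqs) for name, seqs in pools.items()}

# Hypothetical annotated binders sharing one framework (made-up sequences).
validated_binders = [
    {"H2": "AISGSGGSTY", "H3": "ARDYGMDV", "L2": "AASSLQS", "L3": "QQSYSTPLT"},
    {"H2": "AISGSGGSTY", "H3": "ARGGYFDY", "L2": "DASNRAT", "L3": "QQSYSTPLT"},
    {"H2": "VISYDGSNKY", "H3": "ARDYGMDV", "L2": "AASSLQS", "L3": "QQYNSYPWT"},
]

pools = harvest_cdrs(validated_binders)
diversity = theoretical_diversity(pools)
clone = sample_clone(pools, random.Random(0))
print("Unique CDRs per loop:", {k: len(v) for k, v in pools.items()})
print("Theoretical combinatorial diversity:", diversity)
```

Because diversity is the product of the pool sizes, even a few hundred validated sequences per loop yield a theoretical diversity in the range of typical phage library sizes.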

Performance Comparison: CDR Resampling vs. Alternative Methods

The efficacy of the rational CDR resampling approach is best demonstrated through direct comparison with other library generation strategies. Key performance metrics include the success rate (percentage of targets yielding specific binders), the number of unique hits per target, and the binding affinity of early-stage leads.

Table 1: Comparative Performance of Antibody Library Design Strategies

| Design Strategy | Core Principle | Typical Library Size | Key Advantage | Key Limitation | Experimental Success Rate (vs. Diverse Targets) | Representative Affinity of Initial Hits | Source |

|---|---|---|---|---|---|---|---|

| Rational CDR Resampling | Combinatorial assembly of validated natural CDRs on a single framework. | 10^10 - 10^11 | Very high percentage of functional, well-folded clones; preserves natural CDR motifs and correlations. | Diversity limited to known, curated CDR sequences; may miss novel structural motifs. | 93% (13/14 targets) [31] | Low nanomolar to sub-nanomolar (from panning) [31] [34] | [31] [33] [34] |

| Traditional Degenerate Codon (NNK/NNS) | Randomization of CDR positions using nucleotide mixtures. | 10^9 - 10^11 | Simple, low-cost design; can explore novel sequence space. | High frequency of stop codons and non-functional clones; disrupted CDR-framework compatibility. | Not explicitly stated; significantly lower than CDR resampling in head-to-head study [31]. | Variable; often requires affinity maturation. | [31] [32] [20] |

| Machine Learning-Guided Design | In silico sequence generation/optimization using models trained on natural antibody repertoires or binding data. | 10^4 - 10^7 (designed subset) | Can extrapolate beyond natural sequences to optimize specific properties (affinity, stability). | Requires large, high-quality training data; computational complexity; risk of generating non-expressible "in-silico" sequences. | N/A (target-specific) | 28.7-fold improved affinity over directed evolution baseline in a head-to-head study [35]. | [35] [36] |

| De Novo Computational Design (e.g., RFdiffusion) | Generative AI creates entirely new CDR loops and paratopes to fit a target epitope. | 10^3 - 10^4 (for screening) | Potential for atomic-level precision targeting of cryptic or conserved epitopes. | Emerging technology; requires high-resolution target structure; initial affinities often modest (µM-nM range). | Demonstrated for specific epitopes on viral proteins (e.g., Influenza HA, TcdB) [37]. | Tens to hundreds of nanomolar (initial designs), improved via maturation [37]. | [37] |

| Naïve/Large Synthetic Library | Large-scale synthesis mimicking natural human antibody diversity, often using TRIM tech. | 10^10 - 10^11 (e.g., >2.5x10^10) | Extremely large size and human-centric design aim for broad antigen coverage. | High construction cost; functional percentage may be lower than focused rational designs. | Successful against multiple therapeutically relevant antigens (e.g., TIM-3) [34]. | Sub-nanomolar to nanomolar (from panning) [34]. | [34] |

The data from the foundational CDR resampling study are particularly telling. In a head-to-head evaluation against libraries built with traditional degenerate codon methods, the rationally designed "PDC library" demonstrated a 93% success rate (13 out of 14 diverse targets, including peptides, cytokines, and folded proteins) in generating specific binders [31]. Furthermore, it yielded over 20-fold more unique hits per target on average [31]. This translates directly into a more efficient screening campaign, in which fewer resources are spent sequencing and characterizing non-binders or identical clones.

Table 2: Head-to-Head Experimental Outcome: CDR Resampling vs. Traditional Method

| Metric | Rational CDR Resampling Library (PDC Library) | Traditional Degenerate Codon Library | Fold Improvement/ Outcome |

|---|---|---|---|

| Success Rate (Targets yielding binders) | 13 / 14 targets (93%) | Significantly lower (specific rate not published) | Dramatically Higher [31] |

| Unique Hits per Target (Average) | >20-fold more hits | Baseline (1x) | >20x [31] |

| Functional Clone Percentage | Maximized by design (using pre-validated CDRs) | Reduced by stop codons, misfolding, incompatibility | Not quantified, but fundamental to design principle [31] |

| Key Experimental Evidence | Phage display panning against 14 biotinylated peptide and protein antigens, followed by ELISA and sequencing of ~200 clones per target [31] [33]. | Parallel panning under identical conditions with a library of comparable size but constructed via degenerate codon randomization [31]. | |

Diagram 2: Performance Comparison of Antibody Library Design Strategies. This diagram visually summarizes key success metrics from different rational design approaches, highlighting the high hit rate of CDR resampling [31], the affinity gains from ML [35], and the capabilities of de novo AI design [37].

Detailed Experimental Protocols

This section outlines the core protocols used to generate and validate the performance data for the CDR resampling approach, enabling researchers to reproduce or adapt the methodology.

- Template Design: Select a single, well-expressed, and stable scFv or Fab framework. The framework from which the CDRs were originally extracted is optimal for compatibility.

- CDR Cassette Synthesis: Based on the bioinformatic pipeline output, design oligonucleotides encoding each unique CDR sequence with flanking regions homologous to the framework. Synthesize these cassettes using TRIM technology to ensure fidelity.

- Assembly PCR: Perform a series of overlap extension PCRs. First, amplify individual CDR cassettes. Then, use these as megaprimers in a PCR with the master framework vector as template to insert the CDR. This is done sequentially or, for multiple CDRs, in a combinatorial assembly reaction.

- Cloning and Transformation: Digest the final assembled scFv gene and the phage display vector (e.g., pCANTAB 5E). Ligate and purify the product. Electroporate the ligation product into competent E. coli (e.g., TG1 strain). Pool all transformations to create the master library stock. Calculate library size by plating serial dilutions.

- Phage Production: Incubate the library stock with helper phage (e.g., M13KO7) to produce phage particles displaying the scFv library.

- Antigen Immobilization: Coat immunotubes or magnetic beads with the target antigen (5-20 µg/mL in PBS, overnight at 4°C). Block with MPBS (2-5% skimmed milk in PBS).

- Selection Rounds: Incubate the phage library with the immobilized antigen for 1-2 hours at room temperature. Wash extensively with PBST (PBS + 0.1% Tween-20) to remove non-specific binders. Elute bound phage with acidic glycine buffer (0.1 M, pH 2.2) or competitively with soluble antigen. Immediately neutralize the eluate.

- Amplification: Infect log-phase E. coli with the eluted phage to amplify the enriched pool for the next round. Typically, 3-4 rounds of panning are performed with increasing wash stringency.

- Monoclonal Phage ELISA: After the final panning round, pick ~200 individual bacterial colonies to produce monoclonal phage in a 96-well format. Use these supernatants in an ELISA against the target antigen (coated on a plate) and a non-target control (e.g., BSA). Detect binding with an anti-M13-HRP antibody.

- Sequencing and Clustering: Sequence the scFv gene from ELISA-positive clones. Cluster sequences based on CDR-H3/L3 identity to identify unique hits.

- Soluble Expression and Characterization: Subclone unique scFv hits into an expression vector with a purification tag (e.g., His-tag). Express in E. coli, purify via immobilized metal affinity chromatography (IMAC), and assess binding affinity using surface plasmon resonance (SPR) or bio-layer interferometry (BLI).
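Two bookkeeping steps in the protocol above, estimating library size from dilution plates and collapsing ELISA-positive clones into unique hits, can be sketched in Python. This is a minimal illustration rather than the cited study's pipeline: the function names, the example colony counts, the made-up CDR sequences, and the decision to treat clones as identical only when both CDR-H3 and CDR-L3 match exactly are all assumptions.

```python
from collections import defaultdict


def library_size(colonies, dilution_factor, plated_volume_ml, total_volume_ml):
    """Estimate total transformants from one countable dilution plate.

    colonies          -- colonies counted on the plate
    dilution_factor   -- fold dilution of the plated sample (e.g. 1e6)
    plated_volume_ml  -- volume spread on the plate
    total_volume_ml   -- volume of the pooled transformation culture
    """
    cfu_per_ml = colonies * dilution_factor / plated_volume_ml
    return cfu_per_ml * total_volume_ml


def unique_hits(clones):
    """Group ELISA-positive clones by exact (CDR-H3, CDR-L3) identity.

    clones -- iterable of (clone_id, cdr_h3, cdr_l3) tuples
    Returns a dict mapping each (cdr_h3, cdr_l3) pair to its clone ids.
    """
    clusters = defaultdict(list)
    for clone_id, cdr_h3, cdr_l3 in clones:
        clusters[(cdr_h3.upper(), cdr_l3.upper())].append(clone_id)
    return dict(clusters)


if __name__ == "__main__":
    # Hypothetical plate: 150 colonies from 0.1 mL of a 10^6-fold dilution,
    # taken from a 10 mL pooled recovery culture.
    print(f"Estimated library size: {library_size(150, 1e6, 0.1, 10):.2e}")

    # Hypothetical ELISA-positive clones (sequences are invented).
    positives = [
        ("A01", "ARDYYGSSYFDY", "QQSYSTPLT"),
        ("A02", "ARDYYGSSYFDY", "QQSYSTPLT"),
        ("B07", "ARGGWELLRFDP", "QQYNSYPWT"),
    ]
    hits = unique_hits(positives)
    print(f"{len(positives)} positives -> {len(hits)} unique hits")
```

Exact-match grouping is the strictest definition of a unique hit. A looser identity threshold would merge closely related clones and report fewer clusters.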

Table 3: Essential Research Reagents for Rational CDR Resampling & Validation

| Item | Function in the Workflow | Example/Details | Source |
|---|---|---|---|
| Validated Antibody Sequence Database | Source of natural, functional CDR sequences for resampling. | Private legacy databases from past campaigns; public repositories like SAbDab (Structural Antibody Database) can be filtered for quality. | [31] [32] |
| TRIM Oligonucleotide Synthesis | Enables synthesis of predefined CDR cassettes without stop codons or frameshifts, maximizing functional clones. | Services from specialized providers (e.g., Twist Bioscience, Integrated DNA Technologies). Essential for building high-quality synthetic or semi-synthetic libraries. | [20] [34] |
| Phage Display System | The primary workhorse for displaying and screening large (>10^10) antibody fragment libraries. | Vectors: pCANTAB 5E, pHEN. Host: E. coli TG1/SS320. Helper phage: M13KO7, Hyperphage (for valency modulation). | [31] [33] [34] |
| Next-Generation Sequencing (NGS) | Critical for quality control: validating library diversity, checking for synthesis errors, and tracking clonal enrichment during panning. | Platforms: Illumina MiSeq/NextSeq. Used pre-panning to assess library composition and post-panning to analyze enriched sequences. | [20] [34] |
| Biotinylated Antigens & Streptavidin Capture | Flexible antigen presentation for panning. Allows solution-phase binding followed by capture on streptavidin-coated beads, preserving conformation. | Biotinylated peptides and proteins used in the case study. Magnetic streptavidin beads (e.g., Dynabeads) enable efficient washing. | [31] [33] |
| High-Throughput Binding Assay | Rapid screening of hundreds of monoclonal outputs from panning (e.g., clones in 96-well plates). | Monoclonal phage ELISA or soluble expression followed by capture ELISA. Automated systems can increase throughput. | [31] [33] |
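The NGS row above mentions tracking clonal enrichment during panning. One simple way to express that is a per-clone fold change in read frequency between two rounds. The sketch below is illustrative only: it assumes reads have already been reduced to a clone identifier (here a hypothetical CDR-H3 string), and the pseudocount of 1 is an arbitrary choice that keeps clones absent from one round from causing a division by zero.

```python
from collections import Counter


def fold_enrichment(pre_reads, post_reads, pseudocount=1.0):
    """Fold change in clone frequency between two panning rounds.

    pre_reads, post_reads -- lists of clone identifiers, one per NGS read
    pseudocount           -- added to every clone count in both rounds
    """
    pre, post = Counter(pre_reads), Counter(post_reads)
    clones = set(pre) | set(post)
    pre_total = sum(pre.values()) + pseudocount * len(clones)
    post_total = sum(post.values()) + pseudocount * len(clones)
    return {
        clone: ((post[clone] + pseudocount) / post_total)
        / ((pre[clone] + pseudocount) / pre_total)
        for clone in clones
    }


if __name__ == "__main__":
    # Hypothetical read lists: clone "ARDY" rises, clone "ARGG" falls.
    before = ["ARDY"] * 1 + ["ARGG"] * 9
    after = ["ARDY"] * 8 + ["ARGG"] * 2
    for clone, ratio in sorted(fold_enrichment(before, after).items()):
        print(f"{clone}: {ratio:.2f}x")
```

Values above 1 indicate clones that gained share during selection. With real data, read depth differences and PCR bias would also need to be considered before interpreting the ratios.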

The case study of functional CDR resampling provides compelling evidence for the rational design paradigm in antibody library construction. By drawing on curated, validated CDR sequences (nature's own solutions), this method achieves a high functional clone percentage that translates directly into superior experimental outcomes: a higher success rate and more unique hits per campaign than libraries built by traditional degenerate codon randomization [31].

This approach does not seek to explore the entire theoretical sequence space but rather to densely populate the most productive regions of that space. It sits strategically between purely naive/random methods and cutting-edge de novo AI design. While machine learning [35] [36] and generative AI like RFdiffusion [37] represent the vanguard, capable of designing entirely novel paratopes, they often require significant experimental validation and affinity maturation. CDR resampling offers a robust, reliable, and immediately practical route to high-quality leads, especially for standard antigen classes.

For the drug development professional, the choice of library strategy involves a trade-off between novelty, resource allocation, and project risk. The rational CDR resampling method minimizes risk and maximizes efficiency for most conventional antibody discovery goals, solidifying its role as a cornerstone technique in the rational design toolkit. Its proven performance validates the core thesis that applying informed, data-driven constraints at the design phase yields libraries that outperform those built by the mere accumulation of random sequences.

In the field of protein and antibody engineering, library design methodologies are broadly categorized into rational and random approaches. Rational design relies on structural bioinformatics, computational modeling, and prior knowledge to predict and construct focused variant libraries [38]. In contrast, random design methods embrace stochasticity to explore vast sequence spaces without predefined hypotheses, making them invaluable for probing unknown function-structure relationships and discovering novel solutions. This guide focuses on two cornerstone random techniques: the use of NNK/NNS degenerate codons for targeted saturation mutagenesis and error-prone PCR (epPCR) for untargeted diversification. Framed within a broader thesis comparing rational and random strategies, this article provides an objective, data-driven comparison of these random methods, detailing their performance, optimal applications, and implementation protocols [39] [40] [41].

Method Comparison: Core Principles, Advantages, and Limitations

The choice between degenerate codon mutagenesis and error-prone PCR is fundamental and dictates the library's character. The following table summarizes their core attributes, drawing from established service data and research [39] [40].

Table 1: Comparative Overview of Degenerate Codon and Error-Prone PCR Methods

| Parameter | NNK/NNS Degenerate Codon Mutagenesis | Error-Prone PCR (epPCR) |
|---|---|---|
| Core Principle | Uses oligonucleotides with mixed bases (N=A/C/G/T, K=G/T, S=C/G) at defined codon positions to systematically encode all 20 amino acids [39] [41]. | Employs sub-optimal PCR conditions (low-fidelity polymerase, Mn²⁺, unbalanced dNTPs) to introduce random point mutations throughout the amplified sequence [39] [40]. |
| Control & Targeting | High. Mutations are confined to pre-selected codons (e.g., within antibody CDRs), allowing focused exploration [40]. | Low. Mutations are distributed randomly across the entire gene, including framework regions [40]. |
| Library Complexity | Defined and calculable. For n saturated sites, theoretical diversity is 32ⁿ at the codon level for NNK (20ⁿ at the amino acid level). Practical libraries often range from 10⁵ to 10⁸ clones [39] [40]. | Uncontrolled and variable. Diversity depends on error rate and screening depth; libraries can exceed 10¹⁰ variants but with high redundancy [39] [40]. |
| Amino Acid Bias | Predictable bias based on genetic code redundancy (e.g., in NNK, Serine has 3 codons and Tryptophan has 1). Stop codon frequency is ~3.1% (1 of 32 codons) [41]. | Unpredictable, polymerase-dependent bias. For example, Mutazyme II shows skewed transitions/transversions and cannot mutate certain amino acids to charged residues in a single step [40]. |
| Primary Application | Saturation mutagenesis for affinity maturation, enzyme active site engineering, and deep mutational scanning [40] [41]. | Directed evolution, stability engineering, and creating initial diversity when no structural guidance is available [39] [40]. |
| Key Technical Challenge | Cost and complexity of long degenerate oligonucleotide synthesis. Risk of stop codons in functional proteins [39]. | Difficulty in controlling mutational load and avoiding deleterious multi-mutation combinations that hinder functional screening [39]. |
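The codon arithmetic quoted in the table (32 codons per NNK position, three Serine codons, one Tryptophan codon, one stop codon) can be checked by enumerating the degenerate codon against the standard genetic code. The following Python sketch does that. The helper names `expand` and `codon_usage` are illustrative and not taken from any cited tool.

```python
from collections import Counter
from itertools import product

# Standard genetic code, with codons ordered TTT, TTC, TTA, TTG, TCT, ...
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}

# IUPAC ambiguity codes used by the degenerate schemes discussed here.
IUPAC = {"N": "ACGT", "K": "GT", "S": "CG"}


def expand(degenerate_codon):
    """List every concrete codon encoded by a degenerate codon such as 'NNK'."""
    choices = (IUPAC.get(base, base) for base in degenerate_codon)
    return ["".join(codon) for codon in product(*choices)]


def codon_usage(degenerate_codon):
    """Return (number of codons, Counter of encoded amino acids; '*' = stop)."""
    codons = expand(degenerate_codon)
    return len(codons), Counter(CODON_TABLE[c] for c in codons)


if __name__ == "__main__":
    for scheme in ("NNK", "NNS"):
        n_codons, usage = codon_usage(scheme)
        stop_fraction = usage["*"] / n_codons
        print(f"{scheme}: {n_codons} codons, "
              f"{len(set(usage) - {'*'})} amino acids, "
              f"stop fraction {stop_fraction:.1%}, "
              f"Ser x{usage['S']}, Trp x{usage['W']}")
    # Codon-level diversity for n saturated positions is 32**n.
    print("4 NNK positions:", 32 ** 4, "codon combinations")
```

The same enumeration works for any degenerate scheme whose symbols are added to the `IUPAC` mapping, which makes it easy to compare stop-codon load before ordering oligonucleotides.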

Comparative Performance in Key Applications

Case Study: Antibody Affinity Maturation

A direct comparative study of the two methods for antibody scFv affinity maturation provides robust experimental performance data [40].

Table 2: Experimental Outcomes from Antibody Affinity Maturation Study [40]

| Metric | NNK Combinatorial Mutagenesis (CDR-Targeted) | Error-Prone PCR (Full scFv) |
|---|---|---|
| Average Mutations per scFv | 2 (range 0–13) | 3 (range 0–11) |
| Mutation Distribution | >99% localized to Complementarity-Determining Regions (CDRs). | Even distribution across Framework Regions (FRs) and CDRs. |
| Theoretical Library Size | 3–6 × 10⁵ variants. | ~1 × 10¹⁰ variants. |
| Amino Acid Representation | All 20 amino acids represented at frequencies consistent with NNK codon probabilities. | Skewed by parental codon; e.g., Gln rarely mutated to polar, Val rarely to negative. |
| Affinity Improvement (Outcome) | Successfully generated binders with improved KD for multiple targets. | Successfully generated binders with improved KD for multiple targets, with similar efficiency. |
| Key Finding | Focused diversity leads to smaller, more efficient libraries. | Broad diversity can yield similar affinity gains, but with more screening burden and potential for destabilizing FR mutations. |

Interpretation: Both methods were effective at generating higher-affinity antibodies, demonstrating that untargeted diversification can match CDR-targeted saturation mutagenesis in this context. The choice hinges on resource allocation: NNK offers a smaller, more targeted library, while epPCR offers broader exploration at the cost of larger screening campaigns [40].
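The screening-burden trade-off can be made concrete with a standard sampling approximation. If a library contains V equally likely variants and N clones are sampled, the expected fraction of variants seen at least once is about 1 - exp(-N/V), so 95% coverage requires roughly three times as many clones as variants. The sketch below applies this under the equal-probability assumption, which neither NNK nor epPCR libraries strictly satisfy. The function names are illustrative.

```python
import math


def expected_coverage(num_variants, num_clones):
    """Expected fraction of variants sampled at least once (Poisson approximation)."""
    return 1.0 - math.exp(-num_clones / num_variants)


def clones_for_coverage(num_variants, coverage=0.95):
    """Clones to sample so the expected coverage reaches the requested fraction."""
    if not 0.0 < coverage < 1.0:
        raise ValueError("coverage must be between 0 and 1 (exclusive)")
    return math.ceil(-num_variants * math.log(1.0 - coverage))


if __name__ == "__main__":
    # Theoretical sizes quoted in the table above; 5e5 is the midpoint
    # of the 3-6 x 10^5 range given for the NNK library.
    for label, size in (("NNK, CDR-targeted", 5e5), ("epPCR, full scFv", 1e10)):
        needed = clones_for_coverage(size)
        print(f"{label}: {size:.0e} variants -> ~{needed:.2e} clones for 95% coverage")
```

Because real libraries are biased, these figures are lower bounds on the sampling effort. Over-represented variants consume clones that a uniform library would spend on new sequences.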

Technical Performance Metrics

Service provider data offers insight into the practical execution and quality of libraries constructed via these methods [39].

Table 3: Technical Performance and Quality Control Metrics [39]

| Performance Metric | Degenerate Codon/Chip-Based Libraries | Error-Prone PCR Libraries |
|---|---|---|
| Library Coverage | Typically >98% of designed variants. | Not specifically reported; highly variable based on conditions. |
| Uniformity | High sequence uniformity reported. | Often lower uniformity due to stochastic incorporation. |
| Achievable Complexity | Can exceed 10⁸ clones. | Can exceed 10⁸ clones. |
| Nucleotide Distribution | Closely matches theoretical frequencies (e.g., N=25% each base) [39]. | Deviates based on polymerase bias and condition bias [40]. |
| Primary Validation Method | Next-Generation Sequencing (NGS) for precise variant confirmation. | Often Sanger sequencing of random clones; full NGS is challenging due to high diversity. |
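The nucleotide-distribution check in the table can be approximated by comparing observed base frequencies at each position of a randomized codon with the design's theoretical values (25% per base at N, 50% each of G and T at K). The Python sketch below reports the largest absolute deviation. It assumes the randomized codons have already been extracted from the NGS reads, and the function names and any acceptance threshold are left to the reader as assumptions.

```python
from collections import Counter

# Theoretical base frequencies for the IUPAC symbols used in an NNK design.
EXPECTED = {
    "N": {"A": 0.25, "C": 0.25, "G": 0.25, "T": 0.25},
    "K": {"A": 0.0, "C": 0.0, "G": 0.5, "T": 0.5},
}


def base_frequencies(codons, position):
    """Observed frequency of each base at one codon position (0, 1 or 2)."""
    counts = Counter(codon[position] for codon in codons)
    total = sum(counts.values())
    return {base: counts.get(base, 0) / total for base in "ACGT"}


def max_deviation(codons, design="NNK"):
    """Largest absolute gap between observed and theoretical base frequency."""
    worst = 0.0
    for position, symbol in enumerate(design):
        observed = base_frequencies(codons, position)
        for base, expected in EXPECTED[symbol].items():
            worst = max(worst, abs(observed[base] - expected))
    return worst


if __name__ == "__main__":
    # Hypothetical codons pulled from one randomized position in NGS reads.
    observed_codons = ["GCT", "AAG", "TGG", "CAT", "GTG", "ACG", "TTT", "CGG"]
    print(f"Max deviation from NNK design: {max_deviation(observed_codons):.3f}")
```

With only a handful of reads the deviation is dominated by sampling noise, so the comparison becomes meaningful only at realistic read depths.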

Detailed Experimental Protocols

Protocol for Error-Prone PCR Library Construction

This protocol is adapted from standard practices using commercial low-fidelity polymerase mixes [39] [40].

- Reaction Setup: Prepare a 50-100 µL PCR reaction containing:
  - 1-10 ng of template DNA.
  - 1X proprietary reaction buffer (often supplied with Mg²⁺).
  - 0.2 mM each dATP and dGTP.
  - 1.0 mM each dCTP and dTTP (imbalanced dNTPs increase misincorporation).
  - 0.5 mM MnCl₂ (critical for reducing polymerase fidelity).
  - 0.3 µM each forward and reverse primer.
  - 5 U of a low-fidelity polymerase blend (e.g., Mutazyme II).

- Thermocycling: Use standard cycling conditions for your template but extend elongation time by 1-2 minutes per kb to promote misincorporation.

- Product Purification: Run the PCR product on an agarose gel, excise the correct band, and purify using a gel extraction kit.

- Cloning and Transformation: Digest the purified epPCR product and vector with appropriate restriction enzymes. Ligate and transform into highly competent E. coli (transformation efficiency >10⁹ cfu/µg) to maximize library size. Plate on selective agar, then scrape and pool the colonies and store the library as a glycerol stock.

- Quality Control: Sequence 10-20 random clones via Sanger sequencing to estimate mutation rate and spectrum.
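The quality-control step above can be turned into numbers by comparing each sequenced clone with the parental sequence. The sketch below counts substitutions per clone and per kilobase and splits them into transitions and transversions. It is a simplified illustration that assumes the Sanger reads are already trimmed and aligned to the parent with no insertions or deletions. The function names and example sequences are hypothetical.

```python
from collections import Counter

PURINES = {"A", "G"}


def classify(ref_base, alt_base):
    """Transition = purine<->purine or pyrimidine<->pyrimidine; otherwise transversion."""
    same_class = (ref_base in PURINES) == (alt_base in PURINES)
    return "transition" if same_class else "transversion"


def mutation_summary(parent, clones):
    """Summarize substitutions in pre-aligned, equal-length clone sequences.

    Returns (mutations per clone, mutations per kb, Counter of mutation types).
    Ambiguous base calls (anything other than A, C, G, T) are skipped.
    """
    parent = parent.upper()
    spectrum = Counter()
    for clone in clones:
        if len(clone) != len(parent):
            raise ValueError("clones must be aligned to the parent without indels")
        for ref, alt in zip(parent, clone.upper()):
            if alt not in "ACGT" or ref == alt:
                continue
            spectrum[classify(ref, alt)] += 1
    total = sum(spectrum.values())
    per_clone = total / len(clones)
    per_kb = 1000 * total / (len(parent) * len(clones))
    return per_clone, per_kb, spectrum


if __name__ == "__main__":
    # Toy 10-nt parent and three clones; real inputs would be full scFv reads.
    parent = "ATGCATGCAT"
    clones = ["ATGCATGCAT", "GTGCATGCAT", "ATGAATGCAT"]
    per_clone, per_kb, spectrum = mutation_summary(parent, clones)
    print(f"{per_clone:.2f} mutations/clone, {per_kb:.1f} per kb, {dict(spectrum)}")
```

With only 10-20 clones the estimate is coarse, but it is usually enough to tell whether the mutational load is in the intended range before committing to selections.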

Protocol for NNK Degenerate Codon Library Construction