Orthogonal Validation of LC-HRMS with NMR: A Strategic Framework for Enhanced Confidence in Metabolomics and Pharmaceutical Analysis

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the strategic integration of Liquid Chromatography-High-Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy. It explores the foundational principles of this orthogonal validation approach, detailing practical methodologies and data fusion techniques for applications ranging from drug impurity characterization to foodomics and clinical metabolomics. The content addresses common troubleshooting scenarios and optimization strategies for seamless instrument coupling, and finally establishes a robust framework for the comparative analysis and validation of findings, underscoring how this synergy delivers superior structural elucidation, accurate quantification, and increased confidence in metabolite identification.

The Power of Two: Unveiling the Synergistic Principles of LC-HRMS and NMR

In modern analytical chemistry, particularly within drug development and complex mixture analysis, the convergence of Liquid Chromatography-High-Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy represents a powerful orthogonal validation strategy. While each technique operates on fundamentally different physical principles, their combined application provides a comprehensive analytical framework that compensates for their individual limitations. LC-HRMS excels in sensitivity and specificity for targeted compound analysis, whereas NMR offers unparalleled structural elucidation capabilities and absolute quantification without requiring identical standards. This comparative guide objectively examines the performance characteristics of both platforms, providing researchers with a scientific foundation for selecting appropriate methodologies based on their specific analytical requirements. The integration of findings from these complementary techniques significantly enhances the credibility of analytical data in regulatory submissions, metabolomics studies, and complex product characterization, forming a robust backbone for modern analytical workflows in pharmaceutical and biotechnology industries.

Technical Principles and Instrumentation

LC-HRMS Fundamentals

Liquid Chromatography-High-Resolution Mass Spectrometry combines the physical separation capabilities of liquid chromatography with the precise mass measurement of high-resolution mass analyzers. In typical operation, samples are introduced via liquid chromatography where compounds are separated based on their chemical interactions with the stationary and mobile phases. The eluent then enters the mass spectrometer interface, where ionization sources such as Electrospray Ionization (ESI) or Atmospheric Pressure Chemical Ionization (APCI) convert analytes into gas-phase ions. These ions are subsequently separated in high-resolution mass analyzers including Time-of-Flight (ToF), Orbitrap, or Fourier Transform Ion Cyclotron Resonance (FTICR) instruments, which provide mass measurements with accuracy typically better than 5 ppm [1]. This high mass accuracy enables confident elemental composition assignment and facilitates the identification of unknown compounds in complex matrices. Tandem mass spectrometry (MS/MS) capabilities further enhance structural elucidation by producing diagnostic fragment ions through collision-induced dissociation (CID) or advanced fragmentation techniques like electron-activated dissociation (EAD), which provides complementary fragmentation pathways for challenging compounds such as steroids [2].
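
The 5 ppm accuracy figure can be made concrete with a short calculation. The following Python sketch computes the signed mass error and the m/z acceptance window for a given tolerance. The function names and the example m/z values are illustrative assumptions, not values taken from the cited work.

```python
def ppm_error(measured_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million (ppm)."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def mz_window(theoretical_mz: float, tol_ppm: float = 5.0) -> tuple[float, float]:
    """Lower and upper m/z bounds for a given ppm tolerance."""
    delta = theoretical_mz * tol_ppm / 1e6
    return theoretical_mz - delta, theoretical_mz + delta


def within_tolerance(measured_mz: float, theoretical_mz: float,
                     tol_ppm: float = 5.0) -> bool:
    """True if the measured m/z lies within the ppm tolerance."""
    return abs(ppm_error(measured_mz, theoretical_mz)) <= tol_ppm


if __name__ == "__main__":
    theoretical = 289.2162  # hypothetical [M+H]+ m/z, for illustration only
    measured = 289.2170     # hypothetical measurement
    print(f"error: {ppm_error(measured, theoretical):.2f} ppm")
    print("window:", mz_window(theoretical))
    print("accepted:", within_tolerance(measured, theoretical))
```

Because the tolerance is relative, the absolute window widens with m/z: 5 ppm corresponds to 0.0025 at m/z 500.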

NMR Spectroscopy Fundamentals

Nuclear Magnetic Resonance spectroscopy exploits the magnetic properties of certain atomic nuclei, most commonly protons (¹H) and carbon-13 (¹³C), which absorb and re-emit electromagnetic radiation at characteristic frequencies when placed in a strong static magnetic field. These resonance frequencies are exquisitely sensitive to the local chemical environment, providing detailed information about molecular structure, conformation, and dynamics. Modern NMR spectrometers use superconducting magnets with field strengths corresponding to ¹H resonance frequencies from 400 MHz to 1 GHz and higher, and increasing the field strength improves both spectral resolution and sensitivity [3] [4]. NMR experiments range from simple one-dimensional (1D) ¹H or ¹³C spectra to two-dimensional (2D) experiments such as COSY (Correlation Spectroscopy), TOCSY (Total Correlation Spectroscopy), HSQC (Heteronuclear Single Quantum Coherence), and HMBC (Heteronuclear Multiple Bond Correlation), which establish connectivity between nuclei through chemical bonds, and NOESY and ROESY, which reveal proximity through space [5]. The technique is inherently quantitative, as NMR signal intensity is directly proportional to the number of nuclei generating the signal, enabling absolute quantification without compound-specific calibration curves.
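
NMR magnets are usually labeled by their ¹H resonance frequency rather than by field in tesla. The following Python sketch converts between the two using the Larmor relation (frequency = γ/2π × B₀). The rounded gyromagnetic ratios and the function names are assumptions made for illustration.

```python
# Gyromagnetic ratios divided by 2*pi, in MHz per tesla (rounded values).
GAMMA_OVER_2PI_MHZ_PER_T = {
    "1H": 42.577,
    "13C": 10.708,
}


def field_from_proton_frequency(freq_mhz: float) -> float:
    """Static field B0 in tesla for a magnet labeled by its 1H frequency."""
    return freq_mhz / GAMMA_OVER_2PI_MHZ_PER_T["1H"]


def larmor_frequency_mhz(nucleus: str, field_tesla: float) -> float:
    """Resonance frequency in MHz of a nucleus at the given field."""
    return GAMMA_OVER_2PI_MHZ_PER_T[nucleus] * field_tesla


if __name__ == "__main__":
    for label in (400.0, 600.0, 1000.0):
        b0 = field_from_proton_frequency(label)
        f13c = larmor_frequency_mhz("13C", b0)
        print(f"{label:.0f} MHz magnet: B0 = {b0:.2f} T, 13C at {f13c:.1f} MHz")
```

A "400 MHz" instrument therefore operates at roughly 9.4 T, and ¹³C resonates near 100 MHz on the same magnet.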

Comparative Performance Analysis

Sensitivity and Limits of Detection

Table 1: Sensitivity Comparison Between LC-HRMS and NMR Techniques

| Technique | Typical LOD | Matrix Effects | Key Sensitivity Factors |
|---|---|---|---|
| LC-MS/MS (Triple Quadrupole) | 0.1-0.2 ng/mL (in urine) [6] | Significant ion suppression/enhancement | Ionization efficiency, matrix interference, mass analyzer type |
| LC-HRMS (High-Resolution) | 0.9-1.2 ng/mL (in urine) [6] | Moderate to significant | Mass resolving power, scan speed, ionization source |
| NMR (Conventional) | μM to mM range [3] | Minimal | Magnetic field strength, probe design, isotope nature |
| NMR (Hyperpolarized d-DNP) | >10,000x sensitivity enhancement [3] | Minimal | Polarization method, nucleus, T₁ relaxation |

The sensitivity disparity between LC-MS and NMR represents the most significant performance differentiator. LC-MS/MS operated in targeted multiple reaction monitoring (MRM) mode on triple quadrupole instruments achieves exceptional sensitivity, with limits of detection (LOD) in the sub-nanogram per milliliter range for many analytes in biological matrices [6]. This sensitivity enables the detection and quantification of low-abundance metabolites, pharmaceutical compounds, and biomarkers in complex samples. High-resolution MS systems, while slightly less sensitive than triple quadrupole instruments for targeted analysis, add untargeted screening capabilities, with typical LODs around 1 ng/mL [6].

In stark contrast, conventional NMR spectroscopy operates with sensitivity limitations that typically restrict detection to compounds in the micromolar to millimolar concentration range [3]. This fundamental sensitivity challenge stems from the small energy differences between nuclear spin states, resulting in low population differences and consequently weak signals. However, recent technological advances have begun to address this limitation through hyperpolarization techniques, particularly dissolution dynamic nuclear polarization (d-DNP), which can enhance NMR signals by several orders of magnitude—with some studies reporting sensitivity increases greater than 10,000-fold [3]. This dramatic improvement potentially bridges the sensitivity gap between NMR and MS, enabling the detection and quantification of minute metabolite concentrations in biological samples at natural abundance.
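
The link between small energy gaps and weak signals can be quantified. The following Python sketch estimates the thermal-equilibrium polarization of a spin-1/2 nucleus, P = tanh(hν / 2kT), which is the fractional population difference between the two spin states. The function name and the chosen frequencies and temperature are illustrative assumptions.

```python
import math

PLANCK_J_S = 6.62607015e-34      # Planck constant (exact SI value)
BOLTZMANN_J_PER_K = 1.380649e-23  # Boltzmann constant (exact SI value)


def thermal_polarization(freq_hz: float, temp_k: float) -> float:
    """Fractional population difference for a spin-1/2 nucleus.

    P = (N_lower - N_upper) / (N_lower + N_upper) = tanh(h*nu / (2*k*T))
    """
    return math.tanh(PLANCK_J_S * freq_hz / (2.0 * BOLTZMANN_J_PER_K * temp_k))


if __name__ == "__main__":
    for freq_mhz in (400.0, 1000.0):
        p = thermal_polarization(freq_mhz * 1e6, 300.0)
        print(f"1H at {freq_mhz:.0f} MHz, 300 K: polarization = {p:.2e}")
```

At 400 MHz and 300 K only a few tens of spins per million contribute net signal, which is why methods that raise the polarization itself can yield gains of several orders of magnitude.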

Structural Elucidation Capabilities

Table 2: Structural Elucidation Capabilities of NMR and LC-HRMS

| Aspect | NMR | LC-HRMS |
|---|---|---|
| Molecular Formula | Indirectly via ¹³C NMR, ¹H-¹³C correlation | Direct from accurate mass (< 5 ppm) [1] |
| Connectivity | Through-bond (COSY, TOCSY, HSQC, HMBC) [5] | Indirect via fragmentation patterns (MS/MS) |
| Stereochemistry | Excellent (NOESY, ROESY, J-couplings) | Limited |
| Unknown Identification | De novo structure elucidation [7] | Requires libraries or computational approaches |
| Isobar Discrimination | Excellent | Limited without separation or MS/MS |
| Through-space Interactions | Yes (NOE, ROE) | No |

Structural elucidation represents the most significant strength of NMR spectroscopy, providing comprehensive atomic-level information about molecular structure, including connectivity, stereochemistry, and conformation. Two-dimensional NMR experiments such as COSY and TOCSY establish through-bond connectivity between protons, while HSQC and HMBC correlations provide direct evidence of carbon-hydrogen bonding relationships and longer-range couplings, respectively [5]. The nuclear Overhauser effect (NOE), observed through NOESY or ROESY experiments, provides critical through-space distance constraints that enable determination of three-dimensional molecular structure and relative stereochemistry [5]. This comprehensive structural information allows NMR to perform complete de novo structure elucidation of unknown compounds, with recent advances in machine learning frameworks demonstrating the ability to predict molecular structure directly from 1D ¹H and ¹³C NMR spectra with high accuracy [7].

LC-HRMS provides complementary structural information primarily through accurate mass measurement and fragmentation patterns. High-resolution mass measurements enable confident determination of elemental composition, while tandem mass spectrometry produces fragment ions that reveal structural motifs and functional groups [1]. However, MS-based structural elucidation typically requires reference to spectral libraries or computational approaches for complete structural assignment, and struggles with stereochemical differentiation and positional isomers without additional separation dimensions or advanced fragmentation techniques. Recent innovations such as electron-activated dissociation (EAD) have improved structural characterization capabilities for challenging compounds like steroids, providing diagnostic fragments that enable distinction of isomers and isobars [2].
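
Elemental composition assignment works by comparing a measured m/z with the theoretical value for a candidate formula. The following Python sketch computes a monoisotopic mass and the corresponding [M+H]⁺ and [M-H]⁻ m/z values. The small element table, the simple formula parser (no parentheses or isotope labels), and the example formula C19H28O2 are assumptions chosen for illustration.

```python
import re

# Monoisotopic masses (u) of the most abundant isotopes, rounded.
MONOISOTOPIC_MASS = {
    "C": 12.0,
    "H": 1.00782503,
    "N": 14.00307401,
    "O": 15.99491462,
    "S": 31.97207117,
    "P": 30.97376200,
}
PROTON_MASS = 1.00727647  # mass of H+ (hydrogen atom minus one electron)


def monoisotopic_mass(formula: str) -> float:
    """Monoisotopic mass of a flat formula such as 'C19H28O2'."""
    total = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        if element not in MONOISOTOPIC_MASS:
            raise KeyError(f"element not in table: {element}")
        total += MONOISOTOPIC_MASS[element] * (int(count) if count else 1)
    return total


def protonated_mz(formula: str) -> float:
    """Theoretical m/z of the singly charged [M+H]+ ion."""
    return monoisotopic_mass(formula) + PROTON_MASS


def deprotonated_mz(formula: str) -> float:
    """Theoretical m/z of the singly charged [M-H]- ion."""
    return monoisotopic_mass(formula) - PROTON_MASS


if __name__ == "__main__":
    formula = "C19H28O2"  # example steroid-like formula, for illustration
    print(f"M      = {monoisotopic_mass(formula):.4f}")
    print(f"[M+H]+ = {protonated_mz(formula):.4f}")
    print(f"[M-H]- = {deprotonated_mz(formula):.4f}")
```

Isomers share the same formula and therefore the same theoretical m/z, which is why accurate mass alone cannot separate them.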

Quantification Performance

Table 3: Quantitative Performance Comparison

| Parameter | NMR | LC-HRMS |
|---|---|---|
| Dynamic Range | 10²-10³ | 10³-10⁶ |
| Accuracy | High (absolute quantification possible) [3] | Variable (matrix-dependent) |
| Precision | High (CV 1.5-4.6%) [8] | Moderate to high (CV 4-15%) |
| Matrix Effects | Minimal | Significant (ion suppression) |
| Standard Requirement | No compound-specific standard needed (a single reference suffices) | Compound-specific standards essential |
| Linearity | Excellent (inherently linear) | Requires calibration curves |

Quantitative analysis represents an area where both techniques exhibit distinct advantages and limitations. NMR spectroscopy provides inherently quantitative data, as signal intensity is directly proportional to the number of nuclei generating the signal. This property enables absolute quantification without compound-specific calibration curves, using certified reference materials or internal standards for concentration determination [3]. NMR quantification demonstrates excellent precision, with reported coefficients of variation (CV) between 1.5-4.6% for validated metabolomics applications [8]. The technique is remarkably robust against matrix effects, with minimal signal variation due to sample composition differences, making it particularly valuable for complex biological samples where matrix-matched calibration is challenging.
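
Because the integral per nucleus is the same for every compound in the tube, a concentration follows from a single ratio against the internal standard. The following Python sketch shows that calculation. It assumes fully relaxed, well-resolved signals; the function name and the example numbers are hypothetical.

```python
def qnmr_concentration(analyte_integral: float, analyte_nuclei: int,
                       ref_integral: float, ref_nuclei: int,
                       ref_conc: float) -> float:
    """Analyte concentration from integrals relative to an internal standard.

    C_analyte = (I_analyte / N_analyte) / (I_ref / N_ref) * C_ref
    The result carries the same unit as ref_conc.
    """
    if min(analyte_integral, analyte_nuclei, ref_integral, ref_nuclei, ref_conc) <= 0:
        raise ValueError("integrals, nuclei counts and concentration must be positive")
    per_nucleus_analyte = analyte_integral / analyte_nuclei
    per_nucleus_ref = ref_integral / ref_nuclei
    return per_nucleus_analyte / per_nucleus_ref * ref_conc


if __name__ == "__main__":
    # Hypothetical example: a 3-proton methyl signal measured against the
    # 9-proton trimethylsilyl signal of a reference at 0.5 mM.
    conc = qnmr_concentration(analyte_integral=6.0, analyte_nuclei=3,
                              ref_integral=9.0, ref_nuclei=9, ref_conc=0.5)
    print(f"analyte concentration: {conc:.3f} mM")
```

No response factor appears in the formula, which is the practical meaning of quantification without compound-specific calibration.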

LC-HRMS typically provides superior sensitivity and dynamic range for quantitative analysis, but requires compound-specific calibration curves and suffers from matrix effects that can cause significant ion suppression or enhancement [6]. Quantitative performance varies considerably based on instrument platform, with triple quadrupole MS systems generally providing better precision and lower limits of quantification compared to high-resolution instruments, though HRMS systems offer the advantage of simultaneous targeted and untargeted analysis [6]. Method validation studies for untargeted LC-HRMS metabolomics have demonstrated the capability to validate 47-55 metabolites with excellent reproducibility (median repeatability CV of 4.5-4.6%), supporting the use of these platforms for large-scale quantitative studies [8].
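
Two of the figures of merit discussed here are simple to compute from replicate data. The following Python sketch calculates a repeatability CV and a matrix effect, the latter using the common definition that compares the response of an analyte spiked into extracted matrix with its response in neat solvent. The function names and example values are illustrative assumptions.

```python
from statistics import mean, stdev


def cv_percent(replicates: list[float]) -> float:
    """Coefficient of variation (sample standard deviation / mean) in percent."""
    return stdev(replicates) / mean(replicates) * 100.0


def matrix_effect_percent(response_in_matrix: float,
                          response_in_solvent: float) -> float:
    """Matrix effect in percent; negative = suppression, positive = enhancement."""
    return (response_in_matrix / response_in_solvent - 1.0) * 100.0


if __name__ == "__main__":
    peak_areas = [98.0, 100.0, 102.0]  # hypothetical replicate injections
    print(f"repeatability CV: {cv_percent(peak_areas):.1f} %")
    print(f"matrix effect: {matrix_effect_percent(80.0, 100.0):.0f} %")
```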

Experimental Protocols for Orthogonal Validation

LC-HRMS Method for Steroid Analysis

A representative protocol for sensitive quantification and structural elucidation of steroids using LC-HRMS illustrates the technical capabilities of modern mass spectrometry platforms [2]:

Sample Preparation:

- Add 5 μL of deuterated internal standard mixture to 200 μL of plasma sample

- Precipitate proteins using 250 μL of 0.1 M zinc sulfate and 500 μL cold methanol

- Centrifuge at 2,637 rcf for 10 minutes and collect supernatant

- Perform solid-phase extraction using HLB SPE 30 mg (30 μm) plates

- Elute steroids with acetonitrile, dry under N₂, and reconstitute in 50 μL 50:50 methanol:water

Chromatography:

- Column: Kinetex biphenyl (2.6 μm, 100 × 2.1 mm)

- Mobile Phase A: Water with 0.2 mM ammonium fluoride

- Mobile Phase B: Methanol

- Gradient: 50% B to 95% B over 9 minutes, hold for 0.8 minutes

- Flow Rate: 400 μL/min

- Column Temperature: 50°C

- Injection Volume: 15 μL

Mass Spectrometry:

- System: ZenoTOF 7600

- Ionization: ESI positive/negative mode

- Acquisition: Scheduled high-resolution multiple reaction monitoring (sMRMHR)

- MS/MS: Collision-induced dissociation and electron-activated dissociation

- Mass Range: m/z 100-500 (positive mode), m/z 100-800 (negative mode)

This method enables robust, high-throughput quantitative analysis of hormonal steroids with lower limits of quantification sufficient for accurate measurement in plasma samples, while EAD fragmentation provides structural characterization that distinguishes steroid isomers and isobars [2].
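
Two numbers in the protocol above lend themselves to a quick check: the mobile phase composition at any time during the gradient, and the nominal enrichment from reconstituting a 200 μL sample in 50 μL. The following Python sketch encodes both. It assumes a linear ramp, treats times outside the stated ramp and hold as undefined, and takes extraction recovery as a user-supplied parameter (default 100%), none of which is specified in the cited method.

```python
def percent_b(t_min: float, start_b: float = 50.0, end_b: float = 95.0,
              ramp_min: float = 9.0, hold_min: float = 0.8) -> float:
    """%B at time t for a linear ramp followed by an isocratic hold."""
    if t_min < 0 or t_min > ramp_min + hold_min:
        raise ValueError("time outside the described gradient program")
    if t_min <= ramp_min:
        return start_b + (end_b - start_b) * t_min / ramp_min
    return end_b


def enrichment_factor(sample_ul: float, reconstitution_ul: float,
                      recovery: float = 1.0) -> float:
    """Nominal concentration gain from sample volume to reconstitution volume."""
    return sample_ul / reconstitution_ul * recovery


if __name__ == "__main__":
    for t in (0.0, 4.5, 9.0, 9.5):
        print(f"t = {t:.1f} min: {percent_b(t):.1f} % B")
    print(f"enrichment: {enrichment_factor(200.0, 50.0):.1f}x")
```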

NMR Method for Complex Mixture Analysis

A quantitative NMR protocol for complex mixture analysis demonstrates the application of NMR in metabolomics and complex sample characterization [3] [9]:

Sample Preparation:

- For wine metabolomics: Mix 300 μL of wine with 300 μL of deuterated phosphate buffer (pH 3.0) containing 0.1% TSP as internal standard [9]

- Centrifuge at 14,000 rpm for 10 minutes to remove particulate matter

- Transfer 550 μL to standard 5 mm NMR tubes

Data Acquisition:

- Spectrometer: 400 MHz Bruker Avance Neo

- Probe: 5 mm BBO cryoprobe

- Temperature: 300 K

- ¹H NMR Parameters: 128 scans, 4 prior dummy scans, 90° pulse, acquisition time 2.7 s, relaxation delay 5 s

- Water Presaturation: Employed during relaxation delay

- 2D Experiments: ¹H-¹H TOCSY (mixing time 80 ms), ¹H-¹³C HSQC

Data Processing:

- Exponential line broadening: 0.3 Hz

- Fourier transformation followed by phase and baseline correction

- Referencing: TSP methyl signal at δ 0.00 ppm

- Spectral binning: 0.01 ppm buckets for multivariate analysis

- Quantification: Relative to TSP internal standard or absolute quantification using electronic reference

This protocol enables both relative and absolute quantification of metabolites in complex mixtures, with applications demonstrated in wine metabolomics where it successfully discriminated samples based on withering time and yeast strains [9].
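
The spectral binning step in the processing list can be sketched as follows. This pure-Python example sums intensities into fixed-width chemical-shift buckets; the function name, the half-open bucket convention, and the toy data are assumptions, and production workflows would typically rely on dedicated NMR processing software.

```python
def bucket_spectrum(ppm: list[float], intensity: list[float],
                    lo: float, hi: float, width: float = 0.01) -> list[float]:
    """Sum intensities into equal-width buckets covering [lo, hi)."""
    if len(ppm) != len(intensity):
        raise ValueError("ppm and intensity must have the same length")
    n_bins = round((hi - lo) / width)
    buckets = [0.0] * n_bins
    for shift, value in zip(ppm, intensity):
        if lo <= shift < hi:
            # Clamp guards against floating-point overshoot at the upper edge.
            idx = min(int((shift - lo) / width), n_bins - 1)
            buckets[idx] += value
    return buckets


if __name__ == "__main__":
    # Toy spectrum: four data points spread over three 0.01 ppm buckets.
    shifts = [0.001, 0.004, 0.015, 0.026]
    values = [1.0, 2.0, 3.0, 4.0]
    print(bucket_spectrum(shifts, values, lo=0.0, hi=0.03))
```

Each row of bucketed intensities then becomes one sample vector for multivariate analysis.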

Integrated Workflows and Data Integration

Orthogonal Analysis Workflow

The complementary nature of LC-HRMS and NMR spectroscopy makes them ideal partners in orthogonal validation workflows. Integrated approaches leverage the sensitivity and compound-specific detection capabilities of LC-HRMS with the unambiguous structural elucidation and absolute quantification strengths of NMR. Studies have demonstrated that multi-omics data integration combining LC-HRMS and ¹H NMR provides superior classification accuracy and a more comprehensive metabolic characterization compared to either technique alone [9]. In wine metabolomics, this integrated approach successfully discriminated samples based on grape withering time and yeast strains, with significant variations observed in amino acids, monosaccharides, and polyphenolic compounds [9]. Similarly, in biopharmaceutical analysis, orthogonal characterization of monoclonal antibody glycosylation patterns using HRMS, NMR, and HILIC-FLD demonstrated agreement across all methods for major glycoforms, while increasing confidence in glycan characterization through methodological triangulation [10].
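
At its simplest, orthogonal validation of a quantitative result means checking whether two independent measurements of the same analyte agree. The following Python sketch compares concentrations reported by both platforms and flags those within a tolerance. The 20% tolerance, the function names, and the example values are hypothetical choices for illustration, not criteria from the cited studies.

```python
def relative_difference_percent(a: float, b: float) -> float:
    """Difference between two values relative to their mean, in percent."""
    return (a - b) / ((a + b) / 2.0) * 100.0


def cross_validate(nmr: dict[str, float], lcms: dict[str, float],
                   tol_percent: float = 20.0) -> dict[str, tuple[float, bool]]:
    """Compare analytes measured on both platforms.

    Returns {name: (LC-MS minus NMR difference in percent, agrees)}.
    Analytes seen on only one platform are skipped.
    """
    report = {}
    for name in sorted(nmr.keys() & lcms.keys()):
        diff = relative_difference_percent(lcms[name], nmr[name])
        report[name] = (diff, abs(diff) <= tol_percent)
    return report


if __name__ == "__main__":
    # Hypothetical concentrations in the same unit on both platforms.
    nmr_conc = {"alanine": 100.0, "glucose": 50.0, "citrate": 1.0}
    lcms_conc = {"alanine": 110.0, "glucose": 80.0}
    for name, (diff, ok) in cross_validate(nmr_conc, lcms_conc).items():
        print(f"{name}: {diff:+.1f} % -> {'agree' if ok else 'investigate'}")
```

A disagreement is not necessarily an error in one method; it can point to ion suppression, signal overlap, or a misassigned feature, each of which warrants a closer look.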

Essential Research Reagent Solutions

Table 4: Essential Research Reagents for LC-HRMS and NMR

| Reagent/Material | Application | Function | Example |
|---|---|---|---|
| Deuterated Solvents | NMR spectroscopy | Provides field frequency lock; minimizes solvent interference | D₂O, CD₃OD [9] |
| Internal Standards | Quantitative NMR | Chemical shift reference; quantification | TSP [9] |
| Deuterated Internal Standards | LC-HRMS quantification | Compensates for matrix effects; improves accuracy | Deuterated steroids [2] |
| SPE Cartridges | Sample preparation | Matrix clean-up; analyte concentration | HLB SPE 30 mg [2] |
| Mobile Phase Additives | LC-HRMS of steroids | Enhances ionization efficiency in ESI | Ammonium fluoride [2] |
| Stable Isotope Labels | Metabolic flux studies | Tracks metabolic pathways | ¹³C-labeled compounds |
| NMR Reference Compounds | Method validation | System suitability testing | Traceable standards |

The orthogonal validation of LC-HRMS findings with NMR spectroscopy represents a powerful paradigm in modern analytical chemistry, particularly for drug development and complex mixture analysis. LC-HRMS provides unparalleled sensitivity, broad dynamic range, and excellent specificity for targeted compound quantification, while NMR offers definitive structural elucidation, absolute quantification capabilities, and minimal matrix effects. The strategic integration of these complementary techniques creates an analytical framework whose combined capabilities exceed those of either technique in isolation, enabling comprehensive characterization of complex samples from molecular structure to quantitative abundance. Advances in both technologies—including hyperpolarization methods for NMR and EAD fragmentation for HRMS—continue to expand their synergistic potential, offering researchers increasingly powerful tools for solving complex analytical challenges in pharmaceutical development, metabolomics, and quality control. The objective selection criteria and performance data presented in this guide provide a scientific foundation for technique selection based on specific analytical requirements, supporting robust analytical workflows that deliver validated, defensible results for critical applications in research and regulatory environments.

The unambiguous identification and characterization of small molecules in complex biological and environmental matrices represents one of the most significant challenges in modern analytical science. Researchers in fields ranging from drug development to environmental exposomics consistently face the reality that even the most advanced analytical techniques possess inherent limitations that prevent comprehensive mixture analysis when used in isolation [11]. Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy have emerged as the two most powerful platforms for structural elucidation, yet they provide fundamentally different and complementary information [11] [12]. The integration of these techniques creates a powerful synergistic workflow that overcomes the limitations of either method used alone, enabling researchers to achieve a level of analytical confidence unattainable with single-technique approaches [13] [12]. This guide examines the technical foundations of this complementarity, provides experimental protocols for orthogonal validation, and demonstrates through case studies and quantitative data why a multi-technique approach is essential for confident characterization of complex mixtures.

Technical Comparison: Fundamental Differences and Complementarity Between LC-HRMS and NMR

The analytical advantages of LC-HRMS and NMR stem from their fundamentally different physical principles of operation, which in turn define their respective strengths and limitations in characterizing complex mixtures. Understanding these core differences is essential for designing effective integrated workflows.

Table 1: Fundamental Comparison of LC-HRMS and NMR for Complex Mixture Analysis

| Parameter | LC-HRMS | NMR |
|---|---|---|
| Analytical Principle | Mass-to-charge ratio of ions in gaseous phase [11] | Absorption of radiofrequency by atomic nuclei in magnetic field [11] [14] |
| Primary Structural Information | Molecular weight, elemental composition, fragmentation patterns [11] | Molecular framework, functional groups, atomic connectivity, stereochemistry [11] [14] |
| Sensitivity | Femtomole range (10⁻¹³ mol) [11] | Microgram range (10⁻⁹ mol) [11] |
| Quantitation Capability | Requires standards/internal calibrants [14] | Inherently quantitative without standards [11] [15] |
| Isomer Discrimination | Limited ability to distinguish positional isomers and stereoisomers [11] [14] | Excellent for distinguishing isobaric compounds and positional isomers [11] |
| Sample Throughput | High (seconds per analysis) [11] | Low (minutes to hours for 1D spectra; hours to days for 2D) [11] |
| Sample Recovery | Destructive analysis [14] | Non-destructive; sample can be recovered for further analysis [11] [14] |
| Key Limitations | Matrix effects, difficulty distinguishing isomers, requires authentic standards for definitive ID [11] | Low sensitivity, long acquisition times, solvent interference challenges [11] |

The complementarity between these techniques is perhaps most evident in their respective abilities to handle specific analytical challenges. MS can detect certain functional groups such as sulfate and nitro groups that are effectively "NMR silent," while NMR can distinguish isobaric compounds and positional isomers that MS cannot differentiate based on mass alone [11]. Furthermore, NMR provides direct information on molecular conformation and dynamics, including three-dimensional configuration through experiments like NOESY/ROESY [14], while MS provides superior sensitivity for detecting low-abundance metabolites in complex matrices [11].

Experimental Protocols: Methodologies for Orthogonal Validation

Integrated LC-HRMS and NMR Workflow for Natural Products Discovery

The application of complementary LC-HRMS and NMR methodologies is particularly valuable in natural product discovery, where researchers must identify novel compounds in complex biological extracts. The following protocol, adapted from research on endophytic fungi, demonstrates a robust approach for unambiguous structural identification [13]:

Sample Preparation: Extract fungal biomass (e.g., Fusarium petroliphilum) using ethyl acetate. Concentrate under reduced pressure and resuspend in appropriate LC-MS compatible solvents [13].

UHPLC-HRMS/MS Analysis:

- Column: Reversed-phase C18 column (e.g., 2.1 × 100 mm, 1.7 μm)

- Mobile Phase: Gradient of water (A) and acetonitrile (B), both with 0.1% formic acid

- Gradient: 5% to 100% B over 15 minutes

- Mass Analyzer: High-resolution mass spectrometer (e.g., Q-TOF) with data-dependent acquisition

- Ionization: Electrospray ionization in positive and negative modes

- Mass Range: m/z 50-1200

- Collision Energies: Ramped (e.g., 20-40 eV) for MS/MS fragmentation [13]

Semi-Preparative HPLC Fractionation:

- Column: Reversed-phase C18 column (e.g., 10 × 250 mm, 5 μm)

- Mobile Phase: Optimized gradient based on analytical separation

- Flow Rate: 3 mL/min

- Fraction Collection: Automated collection every 30 seconds

- Detection: UV at 210, 254, and 280 nm [13]

NMR Analysis:

- Sample Preparation: Reconstitute fractions in 600 μL deuterated solvent (e.g., CD₃OD)

- NMR Instrumentation: High-field NMR spectrometer (e.g., 600 MHz)

- Experiments: ¹H NMR, ¹³C NMR, COSY, HSQC, HMBC, NOESY/ROESY

- Temperature: 298 K

- Processing: Fourier transformation with appropriate window functions [13]

Data Integration:

- Assemble ¹H-NMR spectra from all fractions into a 2D contour map ("pseudo-LC-NMR")

- Correlate MS-derived molecular formulae and fragmentation patterns with NMR-derived structural information

- Combine data for unambiguous structural elucidation of both known and novel compounds [13]
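
The data integration steps above can be sketched in a few lines. This Python example stacks per-fraction 1D spectra into a fraction-by-chemical-shift matrix (the "pseudo-LC-NMR" map), maps a retention time to the fraction being collected at that moment, and finds the fraction in which a given NMR signal is most intense. It assumes that retention times are expressed on the semi-preparative time axis and that collection starts at time zero; all names and data are hypothetical.

```python
def fraction_index(rt_min: float, interval_s: float = 30.0) -> int:
    """Zero-based index of the fraction collected at retention time rt_min."""
    if rt_min < 0:
        raise ValueError("retention time must be non-negative")
    return int(rt_min * 60.0 // interval_s)


def pseudo_lc_nmr(spectra_by_fraction: dict[int, list[float]]
                  ) -> tuple[list[int], list[list[float]]]:
    """Stack 1D spectra row by row in fraction order."""
    fractions = sorted(spectra_by_fraction)
    return fractions, [spectra_by_fraction[f] for f in fractions]


def apex_fraction(fractions: list[int], matrix: list[list[float]],
                  bin_idx: int) -> int:
    """Fraction in which the chosen chemical-shift bin is most intense."""
    trace = [row[bin_idx] for row in matrix]
    return fractions[trace.index(max(trace))]


if __name__ == "__main__":
    # Toy data: three fractions, three chemical-shift bins each.
    spectra = {2: [0.0, 5.0, 1.0], 0: [1.0, 0.0, 0.0], 1: [0.0, 2.0, 3.0]}
    fractions, matrix = pseudo_lc_nmr(spectra)
    print("signal in bin 1 peaks in fraction", apex_fraction(fractions, matrix, 1))
    print("feature at 7.4 min falls in fraction", fraction_index(7.4))
```

When an MS feature and an NMR signal peak in the same fraction, that co-elution supports assigning both to the same compound.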

Statistical Heterospectroscopy (SHY) for Foodomics Applications

In food quality assessment, a multilevel correlation approach integrating LC-HRMS and NMR data has been successfully applied to table olives, providing a template for other complex mixtures [12]:

Parallel Sample Preparation:

- Extract identical samples using standardized protocols (e.g., methanol-water extraction)

- Split extracts for simultaneous LC-HRMS and NMR analysis

- Maintain consistent sample handling to minimize technical variability [12]

Untargeted UPLC-HRMS/MS Analysis:

- Chromatography: Reversed-phase UPLC with appropriate gradient

- Mass Detection: High-resolution mass spectrometer with ESI source

- Data Acquisition: Full-scan MS with data-dependent MS/MS

- Quality Control: Inject pooled quality control samples throughout sequence [12]

NMR Spectroscopy:

- Sample Preparation: Mix aliquots with deuterated solvent containing TMS reference

- Acquisition: Standard ¹H NMR with water suppression

- Parameters: Sufficient scans to achieve adequate S/N for low-abundance metabolites [12]

Multilevel Data Integration:

- Process LC-HRMS and NMR datasets independently using standard software

- Apply Statistical Heterospectroscopy (SHY) to analyze covariance between signal intensities from both platforms

- Identify statistically significant biomarkers through combined statistical power

- Validate annotations through comparison with authentic standards where available [12]
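
The core of the SHY step is a correlation analysis across samples between every NMR variable and every MS feature. The following pure-Python sketch builds that cross-correlation matrix with Pearson coefficients and lists strongly correlated pairs. The 0.8 threshold, the function names, and the toy data are assumptions; a real analysis would add significance testing and multiple-comparison control, which are omitted here.

```python
from math import sqrt


def pearson(x: list[float], y: list[float]) -> float:
    """Pearson correlation coefficient; 0.0 if either variable is constant."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("need two equally long series with at least 2 points")
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0
    return sxy / sqrt(sxx * syy)


def shy_matrix(nmr: list[list[float]], ms: list[list[float]]) -> list[list[float]]:
    """Cross-correlation matrix (NMR variables x MS features).

    Both inputs are samples x variables, with samples in the same order.
    """
    nmr_cols = [list(col) for col in zip(*nmr)]
    ms_cols = [list(col) for col in zip(*ms)]
    return [[pearson(n, m) for m in ms_cols] for n in nmr_cols]


def strong_pairs(corr: list[list[float]], threshold: float = 0.8
                 ) -> list[tuple[int, int, float]]:
    """(NMR index, MS index, r) for pairs with |r| at or above the threshold."""
    return [(i, j, r) for i, row in enumerate(corr)
            for j, r in enumerate(row) if abs(r) >= threshold]


if __name__ == "__main__":
    # Toy data: 4 samples, 2 NMR bins, 1 MS feature.
    nmr_data = [[1.0, 4.0], [2.0, 3.0], [3.0, 2.0], [4.0, 1.0]]
    ms_data = [[2.0], [4.0], [6.0], [8.0]]
    for i, j, r in strong_pairs(shy_matrix(nmr_data, ms_data)):
        print(f"NMR bin {i} vs MS feature {j}: r = {r:+.2f}")
```

Positive correlations suggest signals from the same molecule or a co-regulated one, while negative correlations can indicate pathway relationships.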

[Figure: Integrated LC-HRMS/NMR Workflow for Complex Mixtures]

Quantitative Data: Comparative Performance Metrics

The orthogonal nature of LC-HRMS and NMR produces distinct but complementary quantitative data that, when combined, provides a more comprehensive understanding of complex mixtures than either technique alone.

Table 2: Quantitative Comparison of Detection and Identification Capabilities

| Analysis Parameter | LC-HRMS Performance | NMR Performance | Complementary Advantage |
|---|---|---|---|
| Limit of Detection | Femtomole range (10⁻¹³ mol) [11] | Microgram range (10⁻⁹ mol) [11] | Roughly 10,000x sensitivity difference enables broad concentration range coverage |
| Quantitative Precision | Requires internal standards [14] | Stable within 0.1% for at least 4 weeks with internal reference method [15] | NMR provides absolute quantitation; MS provides sensitivity for trace analytes |
| Isomer Identification | Limited capability [11] | Distinguishes positional isomers and stereochemistry [14] | NMR resolves structural ambiguities remaining after MS analysis |
| Annotation Confidence | Tentative without standards [11] | Definitive structural determination [11] | Combined approaches achieve highest confidence levels (MSI Level 1) |
| Analysis Time | Seconds to minutes [11] | Minutes to days [11] | MS rapidly screens for features of interest; NMR provides definitive ID on selected targets |

The quantitative NMR (qNMR) approach demonstrates particularly robust performance characteristics, with the internal reference method showing exceptional precision (stable within 0.1% for at least 4 weeks) [15]. When applied to complex mixture analysis, integrated approaches have demonstrated the ability to correctly classify samples with error rates as low as 7.52% in wine metabolomics studies, significantly outperforming single-technique approaches [16].

Case Studies: Real-World Applications in Pharmaceutical and Food Science

Accelerated Drug Development Through Structural Elucidation

In pharmaceutical development, the integration of LC-HRMS and NMR has demonstrated significant value in accelerating drug discovery timelines. A case study involving the structural confirmation of a novel antihypertensive small molecule illustrates this advantage [14]. When internal analytical teams struggled to confirm the stereochemical integrity of a chiral compound critical to the drug's efficacy, outsourcing to specialists employing 2D NMR (COSY, HSQC, HMBC) and chiral NMR techniques revealed a stereochemical inversion at the fourth carbon, which was subsequently corrected in synthesis. This application of complementary techniques resulted in a 30% reduction in development time and significant cost savings due to early correction, ultimately leading to a successful Investigational New Drug (IND) application [14].

Food Quality Assessment Through Multi-Omics Data Fusion

In foodomics, the integration of LC-HRMS and NMR has proven valuable for quality control and authentication of valuable food commodities. A comprehensive study of table olives employed statistical heterospectroscopy (SHY) to combine LC-HRMS and NMR datasets, enabling the identification of biomarkers correlated to geographical origin, botanical variety, and processing parameters [12]. The approach identified phenyl alcohols, phenylpropanoids, flavonoids, secoiridoids, and triterpenoids as responsible for observed classifications, providing a methodological framework that could be extended to other food quality control applications [12]. Similarly, a study on Amarone wine classification demonstrated that data fusion of LC-HRMS and ¹H NMR profiles significantly improved predictive accuracy for classifying wines based on grape withering time and yeast strain, with significant variations observed in amino acids, monosaccharides, and polyphenolic compounds [16].

Essential Research Reagent Solutions

Successful implementation of integrated LC-HRMS/NMR workflows requires specific reagents and materials optimized for the technical requirements of both platforms.

Table 3: Essential Research Reagents and Materials for Integrated LC-HRMS/NMR Workflows

| Reagent/Material | Function/Purpose | Technical Considerations |
|---|---|---|
| Deuterated Solvents (e.g., CD₃OD, D₂O) | NMR solvent with minimal interference | Provides the deuterium lock signal and minimizes the solvent ¹H signal; D₂O relatively inexpensive while deuterated organics cost >$1/mL [11] |
| Internal Quantitative Standards (e.g., TMS, DSS) | Chemical shift reference for NMR; quantitation | Provides consistent internal reference for chemical shift calibration [15] |
| LC-MS Grade Solvents | Mobile phase for chromatography | High purity minimizes background noise and ion suppression in MS [11] |
| Formic Acid/Acetic Acid | Mobile phase modifiers | Improves ionization efficiency in MS; concentration (typically 0.1%) must be compatible with NMR [11] [13] |
| SPE Cartridges (C18, polymeric) | Sample cleanup and concentration | Removes interfering matrix components; enables analyte concentration for NMR detection [11] |
| Deuterated Internal Standards (e.g., D₄-TSP) | Quantitative NMR reference | Enables precise concentration determination without interference [15] |
| Cryoprobes/Microcoil Probes | NMR sensitivity enhancement | Cryoprobes provide 4x S/N improvement in organic solvents; microcoil probes enable <1.5 μL active volumes [11] |

The comprehensive analysis of complex mixtures represents a fundamental challenge that cannot be adequately addressed by any single analytical technique. LC-HRMS provides exceptional sensitivity, selectivity, and molecular weight information but struggles with definitive isomer identification and requires authentic standards for unambiguous compound identification. NMR spectroscopy delivers definitive structural information, stereochemical resolution, and inherent quantitation capabilities but suffers from relatively low sensitivity and longer analysis times. The integration of these complementary techniques creates a synergistic analytical platform that overcomes the limitations of either method used in isolation, enabling researchers to achieve a level of analytical confidence essential for demanding applications in pharmaceutical development, foodomics, clinical metabolomics, and environmental exposomics. As the complexity of analytical challenges continues to grow in scientific research, the implementation of orthogonal validation strategies employing both LC-HRMS and NMR will become increasingly essential for definitive characterization of complex mixtures.

Orthogonal validation, the practice of verifying results using methods that operate on different scientific principles, is a cornerstone of robust scientific research. In the analysis of complex biological samples, no single analytical technique can provide a complete picture. The synergistic use of Liquid Chromatography-High-Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy represents a powerful orthogonal partnership, merging their distinct strengths to deliver a level of confidence in data interpretation that neither could achieve alone [17].

This guide explores the core concepts of this approach, providing a detailed comparison of the techniques, standardized experimental protocols, and visual workflows to help researchers build a defensible foundation for their findings.

The "Why": Fundamental Principles of an LC-HRMS and NMR Partnership

Orthogonal validation is akin to using a reference weight to verify a scale's measurement: data from an independent method are used to cross-reference and verify the results of the primary experiment [18]. In the context of LC-HRMS and NMR, this means combining two powerful, yet fundamentally different, analytical techniques to control for the inherent limitations and potential biases of each.

- LC-HRMS excels at sensitivity and identification. It can detect and provide precise molecular weights for countless compounds in a complex mixture, even at very low concentrations. Its ability to generate fragmentation patterns (MS/MS) is invaluable for proposing molecular structures [19] [17].

- NMR provides definitive structural elucidation. It is a robust and reproducible technique that offers unparalleled insight into molecular conformation, functional groups, stereochemistry, and dynamics. It is quantitative by principle and does not require compound-specific optimization [14] [17].

The table below summarizes their complementary roles in an orthogonal framework:

Table 1: Orthogonal Roles of LC-HRMS and NMR

| Attribute | LC-HRMS | NMR |
|---|---|---|
| Primary Strength | High sensitivity; broad metabolite coverage; tentative identification via accurate mass and MS/MS | Absolute structural elucidation; stereochemistry resolution; quantification without standards |
| Structural Detail | Molecular formula, fragmentation pattern | Full molecular framework, atomic connectivity, 3D conformation |
| Sensitivity | High (picogram to nanogram) | Moderate (microgram) |
| Quantification | Possible, but requires internal standards | Inherently quantitative |
| Key Advantage | Identifies features of interest in complex mixtures | Confirms identity and resolves isomers with high confidence |
| Major Limitation | Structural ambiguity, especially for isomers | Lower sensitivity; signal overlap in complex mixtures |

The "How": Experimental Protocols for Integrated Workflows

Implementing an orthogonal strategy requires careful experimental planning. Below are detailed protocols for leveraging LC-HRMS and NMR, both in parallel and in a directly integrated fashion.

Protocol 1: Parallel Analysis with Data Fusion

This is a widely used approach where the same sample set is analyzed separately by LC-HRMS and NMR, with the data combined computationally.

Sample Preparation:

- Extract samples (e.g., plant material, biofluids) using a solvent system appropriate for the metabolite classes of interest (e.g., hydroethanolic for polar phenolics) [20].

- For LC-HRMS, clarify the extract via centrifugation and filtration (e.g., 0.22 µm membrane).

- For NMR, take an aliquot of the extract and dry it under a nitrogen stream. Reconstitute the dried material in a deuterated solvent (e.g., D₂O, CD₃OD) containing a known concentration of an internal standard (e.g., TSP for ¹H NMR) for chemical shift referencing and quantification [20] [17].
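The internal-standard quantification mentioned in the last step relies on the proportionality between an integrated ¹H signal and the number of nuclei that produce it. The following is a minimal sketch under stated assumptions: spectra are fully relaxed, the integrated signals are free of overlap, and the function name and all numbers are illustrative rather than taken from the cited studies.

```python
def qnmr_concentration(area_analyte, n_h_analyte, area_std, n_h_std, conc_std_mM):
    """Estimate analyte concentration (mM) from 1H integrals and an internal standard.

    Each integral is divided by the number of protons behind the signal, so the
    ratio compares signal per proton for analyte and standard.
    """
    if min(area_std, n_h_analyte, n_h_std) <= 0:
        raise ValueError("Standard integral and proton counts must be positive")
    per_proton_analyte = area_analyte / n_h_analyte
    per_proton_std = area_std / n_h_std
    return per_proton_analyte / per_proton_std * conc_std_mM


# Hypothetical example: TSP (9 equivalent methyl protons) added at 0.5 mM,
# compared with a 3-proton methyl signal of the analyte.
conc = qnmr_concentration(area_analyte=6.0, n_h_analyte=3,
                          area_std=9.0, n_h_std=9, conc_std_mM=0.5)
print(f"Estimated analyte concentration: {conc:.2f} mM")
```

In practice the result would also need correction for dilution during reconstitution, which is omitted here.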

LC-HRMS Analysis:

- Chromatography: Use a UHPLC system with a reversed-phase column (e.g., C18, 100 x 2.1 mm, 1.7 µm). Employ a water-acetonitrile gradient with 0.1% formic acid over 15-20 minutes [20].

- Mass Spectrometry: Acquire data on a high-resolution mass spectrometer (e.g., Orbitrap) in both positive and negative electrospray ionization (ESI) modes. Use a data-dependent acquisition (DDA) method to collect full-scan MS and subsequent MS/MS spectra for the most intense ions [21].
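Candidate annotations from full-scan HRMS data are usually screened by the mass error between an observed m/z and the theoretical m/z of a proposed ion. A short sketch of that arithmetic follows; the 5 ppm tolerance and the example m/z values are assumptions chosen for illustration, not settings from the cited methods.

```python
def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def within_tolerance(observed_mz, theoretical_mz, tol_ppm=5.0):
    """True if the observed m/z lies within +/- tol_ppm of the theoretical m/z."""
    return abs(ppm_error(observed_mz, theoretical_mz)) <= tol_ppm


# Illustrative values only: a theoretical [M+H]+ of 195.0877 and two observations.
good = ppm_error(195.0881, 195.0877)
bad = ppm_error(195.0900, 195.0877)
print(f"{good:.2f} ppm, accepted: {within_tolerance(195.0881, 195.0877)}")
print(f"{bad:.2f} ppm, accepted: {within_tolerance(195.0900, 195.0877)}")
```

A mass match alone narrows the molecular formula but does not distinguish isomers, which is where the NMR step contributes.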

NMR Analysis:

- Acquisition: Perform ¹H NMR experiments on a high-field spectrometer (e.g., 600 MHz). The standard 1D experiment with water suppression is essential. For complex mixtures, 2D experiments such as J-resolved (JRES), COSY, and HSQC are invaluable for disentangling signals and establishing atom connectivity [20] [14].

Data Fusion and Analysis:

- Process LC-HRMS data (peak picking, alignment) and NMR data (Fourier transformation, phasing, baseline correction) using specialized software.

- The datasets are concatenated using data fusion methods (e.g., Statistical HeterospectroscopY, SHY) and submitted to multivariate statistical analysis (e.g., PCA, sPLS-DA) to identify key discriminating metabolites responsible for sample classification [16] [17].

Protocol 2: Integrated LC-MS-NMR Platform with Active Segmentation

For the highest confidence in correlating data, a platform that physically splits the LC eluent for simultaneous MS and NMR analysis can be employed.

Platform Setup:

- The system uses an active segmentation platform with a four-port, two-way valve to transform the analytical LC flow rate eluent into a segmented flow [22].

- The LC eluent is mixed with a carrier phase (e.g., perfluorotributylamine, FC43) that is immiscible, lipophobic, non-ionizable in ESI, and transparent in ¹H NMR [22].

- The valve switches at a high frequency (~1 Hz) to create microliter-sized segments of eluent separated by the carrier phase [22].

Post-Separation Workflow:

- The segmented flow is split into two parallel paths.

- One path is directed online to the HRMS for real-time analysis.

- The other path is stored in capillary tubing as a "queue" of segments.

- Based on the MS results, segments containing analytes of interest are selected and delivered offline to a capillary NMR flow cell (e.g., a Protasis microcoil probe) for segment-specific ¹H NMR analysis [22].

Table 2: Key Research Reagent Solutions

| Reagent / Material | Function in the Workflow |
|---|---|
| Deuterated Solvents (e.g., D₂O, CD₃OD) | NMR solvent; provides a lock signal and defines the chemical shift reference [20]. |
| Internal Standard (e.g., TSP) | Chemical shift reference and quantitative standard in NMR spectroscopy [17]. |
| Perfluorotributylamine (FC43) | Immiscible carrier phase in active segmentation; MS-silent and NMR-transparent [22]. |
| PNGase F Enzyme | Enzymatically releases N-glycans from monoclonal antibodies for glycosylation analysis [21]. |
| Fluorophore (e.g., 2-AB) | Labels released glycans for sensitive fluorescence detection (FLD) in HILIC analysis [21]. |
| Trypsin Protease | Proteolytically digests proteins into peptides (including glycopeptides) for MAM analysis [21]. |

Visualizing the Workflow: From LC Separation to Structural Confidence

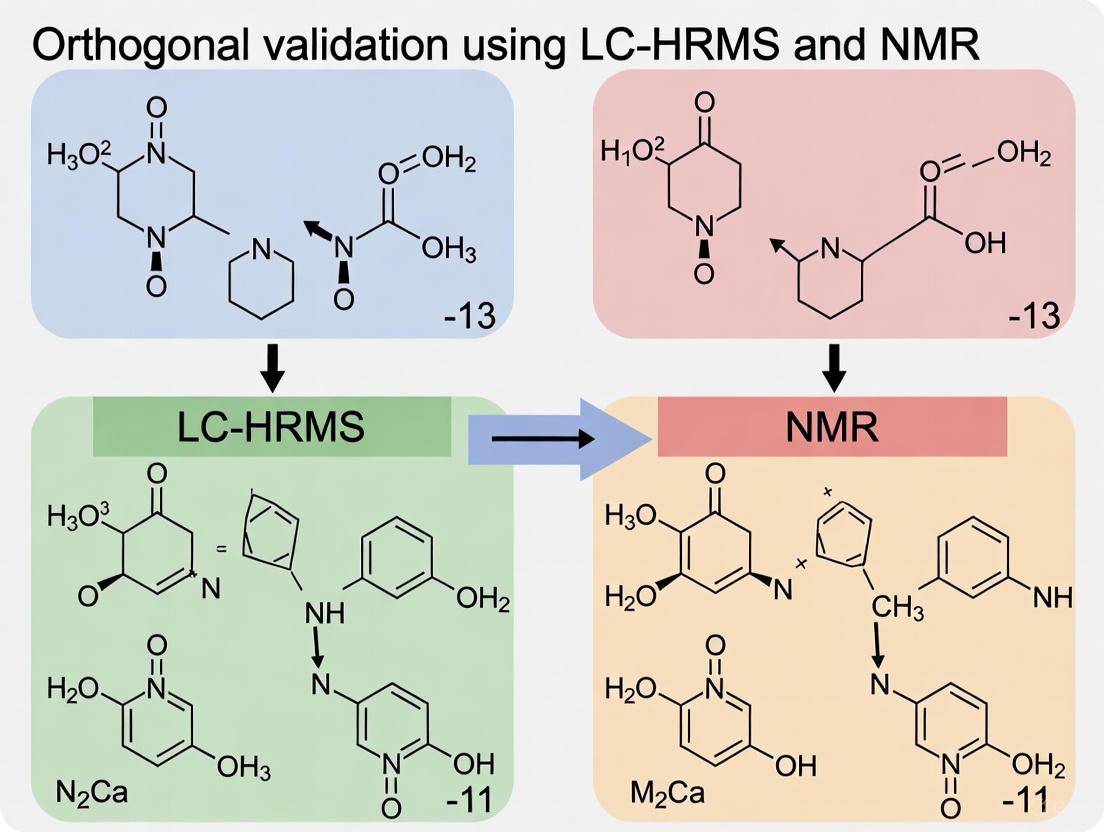

The following diagram illustrates the logical pathway of an orthogonal validation strategy, highlighting how LC-HRMS and NMR data converge to build confidence in metabolite identification.

Orthogonal LC-HRMS and NMR Workflow

Application in Action: Case Studies in Biopharma and Beyond

The power of this orthogonal approach is best demonstrated through real-world applications.

Case Study 1: Biosimilar Characterization

A study compared the glycosylation profiles of an FDA-approved innovator product (Rituximab) and a foreign-sourced product. The panel of methods included HILIC-FLD, MAM (LC-HRMS of glycopeptides), intact mass LC-MS, and middle-down NMR. The results demonstrated agreement across all methods for major glycoforms, showing how confidence in glycan characterization is dramatically increased by combining orthogonal methodologies. This is critical for demonstrating analytical comparability for biosimilar approval [21].

Case Study 2: Foodomics and Authentication

In the classification of Amarone wines, a data fusion approach that integrated LC-HRMS and ¹H NMR datasets provided a much broader characterization of the wine metabolome than either technique alone. The multi-omics model successfully classified wines based on withering time and yeast strains with a low error rate (7.52%), identifying discriminators like amino acids and polyphenols. The study noted a limited correlation between the datasets (RV-score=16.4%), underscoring their complementarity [16].
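The RV-score quoted for the wine study measures how much common structure two data blocks share across the same samples. The sketch below computes the standard RV coefficient with NumPy on synthetic data; it assumes that the reported score is this coefficient, and the block sizes and variable names are hypothetical.

```python
import numpy as np


def rv_coefficient(X, Y):
    """RV coefficient between two blocks measured on the same samples (rows)."""
    X = np.asarray(X, dtype=float)
    Y = np.asarray(Y, dtype=float)
    if X.shape[0] != Y.shape[0]:
        raise ValueError("Both blocks must have the same number of samples")
    # Column-center each block, then compare sample-by-sample configuration matrices.
    Xc = X - X.mean(axis=0)
    Yc = Y - Y.mean(axis=0)
    Sx = Xc @ Xc.T
    Sy = Yc @ Yc.T
    return np.trace(Sx @ Sy) / np.sqrt(np.trace(Sx @ Sx) * np.trace(Sy @ Sy))


# Synthetic stand-ins for an NMR block and an LC-HRMS block (20 samples each).
rng = np.random.default_rng(0)
nmr_block = rng.normal(size=(20, 50))
ms_block = rng.normal(size=(20, 300))

rv_same = rv_coefficient(nmr_block, nmr_block)   # identical blocks
rv_cross = rv_coefficient(nmr_block, ms_block)   # unrelated blocks
print(f"RV(NMR, NMR) = {rv_same:.3f}, RV(NMR, MS) = {rv_cross:.3f}")
```

A value near 1 indicates that the two blocks arrange the samples in nearly the same way, while a low value, as reported for the wine datasets, suggests that each platform carries information the other does not.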

Table 3: Performance Comparison in Metabolite Identification

| Metric | LC-HRMS Alone | NMR Alone | Orthogonal LC-HRMS + NMR |
|---|---|---|---|
| Confidence Level | Tentative annotation (MSI level 2) | Confident identification (MSI level 1) | Confirmed structure (Highest confidence) |
| Isomer Resolution | Limited; requires advanced MS/MS or separation | Excellent; via chemical shift and J-coupling | Definitive |
| Quantification | Semi-quantitative (needs standards) | Fully quantitative | Robust and quantitative |
| Throughput | High | Moderate | Moderate (workflow dependent) |
| Application Example | Detecting 100s of features in plant extract [20] | Profiling monosaccharide content in mAbs [21] | Classifying wine origin & processing [16] |

In an era demanding rigorous reproducibility and deep scientific insight, orthogonal validation is not a luxury but a necessity. The partnership between LC-HRMS and NMR forms a powerful foundation for confidence, transforming ambiguous data into confirmed chemical structures. As the presented protocols and case studies show, this integrated approach is indispensable for critical tasks in drug development, from characterizing complex biologics like monoclonal antibodies to ensuring the quality and authenticity of natural products. By systematically implementing these core concepts, researchers and drug development professionals can build a robust, defensible, and trustworthy analytical practice.

Metabolomics, the comprehensive analysis of small-molecule metabolites in biological systems, has become an indispensable tool across multiple scientific disciplines. Its power is greatly amplified when analytical techniques are combined to leverage their complementary strengths. Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS) provides exceptional sensitivity for detecting and quantifying a vast array of metabolites, while Nuclear Magnetic Resonance (NMR) spectroscopy offers unparalleled structural elucidation capabilities and precise quantification without requiring reference standards [23] [24]. This orthogonal validation—using independent methods to confirm analytical findings—represents a cornerstone of rigorous metabolomics research, enhancing data reliability and biological interpretation across drug development, foodomics, and clinical applications [23].

The following sections explore key applications in these fields, supported by experimental data, detailed methodologies, and visualizations of the integrated workflows that leverage both LC-HRMS and NMR technologies.

Drug Development and Pharmacometabolomics

Application in Target Identification and Mechanism of Action

Metabolomics has revolutionized early drug discovery by identifying novel therapeutic targets and elucidating mechanisms of action (MoA). A prominent example is the development of Ivosidenib and Enasidenib for treating acute myeloid leukemia (AML). Metabolomics studies identified D-2-hydroxyglutarate (D-2HG) as an oncometabolite that contributes to disease processes in AML and gliomas. This discovery revealed mutated isocitrate dehydrogenase (IDH) as a promising drug target, leading to the development of inhibitors that specifically block D-2HG production [25]. Similarly, glutamine metabolism was identified as a hallmark of cancer metabolism through metabolomics, leading to the development of CB-839 (Telaglenastat), a glutaminase inhibitor that demonstrated antitumor activity in triple-negative breast cancer models by reducing glutamate and downstream metabolites [25].

Table 1: Drug Development Applications Supported by Metabolomics

| Application Area | Specific Use Case | Metabolomics Contribution | Techniques Employed |
|---|---|---|---|
| Target Identification | IDH inhibitors for AML | Discovery of oncometabolite D-2-hydroxyglutarate | LC-MS, Stable Isotope Tracing [25] |
| MoA Elucidation | Glutaminase inhibitors | Revealed reduction in glutamate & downstream metabolites | LC-MS, Metabolic Flux Analysis [25] |
| Drug Safety | Preclinical toxicity assessment | Identification of metabolic signatures of adverse effects | NMR, LC-MS [26] |
| Pharmacometabolomics | Predicting drug response | Pre-treatment metabolome predicts efficacy and toxicity | LC-MS, NMR [26] |

Experimental Protocol: Target Engagement Studies Using Metabolic Flux Analysis

Objective: Determine whether a compound modulates its intended metabolic target in cellular models.

Methodology:

- Cell Treatment: Expose cell lines (e.g., cancer cells) to the drug candidate and appropriate controls.

- Stable Isotope Labeling: Incubate cells with stable isotope-labeled nutrients (e.g., [1-13C]-glucose or [3-2H]-glucose) to track metabolic activity [25].

- Sample Collection: Harvest cells at multiple time points and extract metabolites using methanol:water:chloroform solvent systems.

- LC-HRMS Analysis:

- NMR Validation:

- Employ 1H NMR to confirm structural identity of key metabolites showing significant changes.

- Utilize 2D NMR techniques (e.g., COSY, HSQC) to resolve structural ambiguities for novel metabolites [24].

- Data Integration: Combine flux measurements from LC-HRMS with structural confirmation from NMR to comprehensively assess target engagement.

This orthogonal approach confirmed the MoA of glutaminase inhibitors by showing reduced flux from glutamine to glutamate while structurally validating the identity of these metabolites [25].
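A change in flux of this kind is commonly read from how much label reaches the product pool. The sketch below computes a mean fractional enrichment from isotopologue intensities (M+0 to M+n). It is a simplified illustration: natural-abundance correction is omitted, the intensities are invented, and the example assumes a uniformly ¹³C-labeled glutamine tracer, which is not one of the tracers listed in the protocol.

```python
def fractional_enrichment(isotopologue_intensities):
    """Mean fraction of labeled positions from M+0..M+n intensities.

    The list index is the number of labeled atoms; n is the number of
    labelable positions (list length minus one).
    """
    n_positions = len(isotopologue_intensities) - 1
    total = sum(isotopologue_intensities)
    if n_positions < 1 or total <= 0:
        raise ValueError("Need at least M+0 and M+1 with a positive total intensity")
    weighted = sum(i * x for i, x in enumerate(isotopologue_intensities))
    return weighted / (n_positions * total)


# Hypothetical glutamate isotopologue intensities (5 carbons: M+0 .. M+5).
control = [20, 5, 5, 10, 10, 50]
treated = [60, 10, 5, 5, 5, 15]   # e.g. cells exposed to a glutaminase inhibitor

fe_control = fractional_enrichment(control)
fe_treated = fractional_enrichment(treated)
print(f"control: {fe_control:.2f}, treated: {fe_treated:.2f}")
```

A lower enrichment in the treated sample would be consistent with reduced conversion of labeled glutamine to glutamate, with NMR then confirming that the measured peak is in fact glutamate.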

Figure 1: Experimental workflow for orthogonal validation in drug target engagement studies

Foodomics and Agricultural Applications

Food Authentication and Quality Control

Foodomics applies metabolomics to address challenges in food safety, authenticity, and quality. LC-HRMS and NMR are powerfully combined to detect food adulteration and verify geographical origin. A study on Aloe vera leaf extracts from different geographical regions utilized untargeted LC-HRMS to analyze hydroalcoholic extracts, revealing 77 organic compounds including primary metabolites and natural products. Principal Component Analysis clearly separated samples based on geographical origins, with specific metabolites (e.g., aloe-emodin, tropine, and jasmonic acid) serving as discriminatory markers [27]. NMR provides complementary validation by confirming the structural identity of key marker compounds, essential for developing reliable authentication methods.

In halal meat authentication, metabolomics approaches combining LC-MS or GC-MS with multivariate data analysis successfully discriminate between halal and non-halal meats, addressing significant religious, ethical, and economic concerns in global food markets [28].

Experimental Protocol: Geographical Origin Authentication of Botanicals

Objective: Establish a reliable method to verify the geographical origin of botanical samples using orthogonal metabolomics.

Methodology:

- Sample Collection: Collect plant materials (e.g., Aloe vera leaves) from different geographical regions with documented cultivation practices [27].

- Metabolite Extraction: Prepare hydroalcoholic extracts (e.g., methanol:water mixtures) using standardized protocols.

- LC-HRMS Analysis:

- Chromatography: Employ reversed-phase C18 columns with gradient elution.

- Mass Spectrometry: Acquire data in positive and/or negative ionization modes using high-resolution mass analyzers.

- Data Processing: Use software tools (e.g., Compound Discoverer) with spectral libraries (mzCloud) for metabolite annotation following Metabolomics Standards Initiative guidelines [27].

- NMR Analysis:

- Prepare samples in deuterated solvents.

- Acquire 1D 1H NMR spectra and 2D spectra (COSY, HSQC, HMBC) for structural confirmation of key discriminatory metabolites [23].

- Chemometric Analysis:

- Apply unsupervised methods (Principal Component Analysis) to explore natural clustering.

- Use supervised models (OPLS-DA) to identify significant biomarkers.

- Data Fusion:

- Implement mid-level data fusion to combine selected features from LC-HRMS and NMR datasets [23].

- Build consolidated classification models with enhanced predictive power.

Table 2: Key Metabolites for Geographical Discrimination of Aloe Vera

| Geographical Origin | Discriminatory Metabolites | Analytical Technique | Identification Confidence Level |
|---|---|---|---|
| Portici, Italy (PO) | Aloe-emodin, Tropine, Tropinone | LC-HRMS, NMR | Level 1 (confirmed with standards) [27] |
| Gran Canaria (CAN) | Jasmonic acid, Quinic acid, Aloesin | LC-HRMS, NMR | Level 1-2 [27] |
| Brindisi, Italy (CA, E, MM) | Limonene, α-linolenic acid, Erythrose-4-phosphate | LC-HRMS | Level 1-2 [27] |

Clinical Metabolomics and Precision Medicine

Advancing Personalized Therapeutics through Pharmacometabolomics

Pharmacometabolomics, an emerging branch of metabolomics, integrates pre-treatment metabolome data with drug exposure information to predict individual variations in drug response. This approach addresses a critical challenge: approximately 60% of drugs fail in Phase III clinical trials, and only 25-60% of patients exhibit the anticipated treatment response [26]. By capturing the combined influence of genetics, environment, gut microbiome, and lifestyle on metabolic phenotype (metabotype), pharmacometabolomics provides a powerful tool for personalized therapy.

Notable applications include monitoring tamoxifen and its metabolites in breast cancer patients using LC-MS/MS to predict side effects and discontinuation rates [29]. Similarly, MS-based pharmacometabolomics identified citrate, oxaloacetate, α-ketoglutarate, and malate as predictors of response to spironolactone in resistant hypertension patients, achieving an AUC of 0.96 for discriminating responders from non-responders [29].

Experimental Protocol: Predicting Drug Response in Clinical Trials

Objective: Identify pre-treatment metabolic biomarkers that predict interindividual differences in drug response.

Methodology:

- Study Design: Prospective cohort study with baseline sample collection before treatment initiation.

- Sample Collection: Obtain biofluids (plasma, serum, urine) following standardized protocols to minimize pre-analytical variations.

- LC-HRMS Analysis (Untargeted):

- Sample Preparation: Protein precipitation with organic solvents (e.g., acetonitrile or methanol).

- Chromatographic Separation: Use reversed-phase and HILIC chromatography to maximize metabolite coverage.

- Data Acquisition: Employ full-scan high-resolution MS with data-dependent MS/MS for metabolite annotation.

- NMR Analysis:

- Sample Preparation: Mix biofluids with deuterated phosphate buffer for field locking.

- Spectral Acquisition: Collect 1D 1H NMR spectra with water suppression.

- Quantification: Use qNMR with internal standards for absolute quantification of key predictive metabolites [24].

- Data Integration and Modeling:

- Pre-processing: Normalize, scale, and transform data from both platforms.

- Feature Selection: Identify significantly altered metabolites associated with treatment outcome.

- Model Building: Develop predictive models using machine learning algorithms.

- Validation: Verify biomarkers in an independent cohort with orthogonal methods.
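Discrimination between responders and non-responders in a validation cohort is often summarized as an area under the ROC curve (AUC). The following sketch computes the AUC directly from its pairwise definition, using only the standard library; the labels and model scores are hypothetical and stand in for predictions made on an independent cohort.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a responder scores higher than a non-responder.

    labels: 1 for responder, 0 for non-responder; ties count as half a win.
    """
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("Both classes must be present")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(pos) * len(neg))


# Hypothetical predictions for six patients in an independent cohort.
validation_labels = [1, 1, 1, 0, 0, 0]
validation_scores = [0.9, 0.8, 0.4, 0.5, 0.3, 0.2]
auc = roc_auc(validation_labels, validation_scores)
print(f"Validation AUC = {auc:.3f}")
```

Computing the AUC only on samples that played no part in feature selection or model fitting keeps the estimate from being optimistically biased.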

Figure 2: Pharmacometabolomics workflow for predicting individual drug response

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Materials for LC-HRMS and NMR Metabolomics

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Deuterated Solvents (D₂O, CD₃OD) | Provides NMR field frequency lock; minimizes solvent interference | Sample preparation for NMR spectroscopy [23] [24] |
| Internal Standards (Maleic acid, Benzoic acid, DSS) | Enables quantitative NMR; reference compound for chemical shift calibration | Absolute quantification in qNMR [24] |
| Stable Isotope Tracers ([1-13C]-glucose, [3-2H]-glucose) | Tracks metabolic pathway activity in living systems | Metabolic flux analysis [25] |
| Derivatization Reagents (MSTFA, Methoxyamine) | Enhances volatility and detection of non-volatile compounds | GC-MS sample preparation [29] |
| Chromatography Columns (C18 RP, HILIC) | Separates complex metabolite mixtures prior to detection | LC-MS analysis of diverse metabolite classes [25] [30] |
| Quality Control Pools | Monitors instrument performance; normalizes batch effects | Quality assurance in large-scale metabolomic studies |

The orthogonal validation of LC-HRMS findings with NMR represents a powerful paradigm in modern metabolomics, enhancing data reliability across drug development, foodomics, and clinical applications. LC-HRMS provides exceptional sensitivity for detecting subtle metabolic alterations, while NMR delivers robust structural elucidation and absolute quantification. This synergistic combination enables more confident biomarker discovery, mechanistic understanding, and translational applications. As both technologies continue to advance—with improvements in MS sensitivity, NMR throughput, and data integration algorithms—their combined utility will further expand, ultimately accelerating precision medicine and enhancing quality control across diverse sectors.

From Theory to Practice: Implementing Integrated LC-HRMS and NMR Workflows

In analytical chemistry and metabolomics, the pursuit of a comprehensive understanding of complex biological systems often necessitates the use of multiple analytical platforms. Data fusion has emerged as a powerful multidisciplinary field that allows the integration of different datasets obtained using various independent techniques to provide better insights than each approach alone [23]. This approach is particularly valuable in the context of orthogonal validation, where techniques such as Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy provide complementary information that, when combined, offer a more holistic view of biochemical profiles [23] [31].

The most widely accepted classification system for data fusion in analytical science is based on levels of abstraction, categorizing approaches as low-level, mid-level, and high-level data fusion [23]. This progression represents increasing complexity in data handling, from the direct concatenation of raw data to the combination of model outputs. The fundamental principle underpinning all data fusion strategies is the synergistic combination of complementary data sources to achieve refined assessments that would be impossible with single-source data [32].

Theoretical Frameworks and Classifications

Historical Development and Definitions

The data fusion field was formally defined by the Joint Directors of Laboratories (JDL) workshop as "A multi-level process dealing with the association, correlation, combination of data and information from single and multiple sources to achieve refined position, identity estimates and complete and timely assessments of situations, threats and their significance" [33] [32]. This definition highlights the comprehensive nature of data fusion as a process that extends beyond simple data combination to include assessment and refinement.

A more specific definition provided by Hall and Llinas describes data fusion techniques as methods that "combine data from multiple sensors and related information from associated databases to achieve improved accuracy and more specific inferences than could be achieved by the use of a single sensor alone" [32]. In the context of analytical chemistry, this translates to combining data from orthogonal analytical platforms like LC-HRMS and NMR to achieve more accurate and comprehensive metabolite identification and quantification than either technique could provide independently.

Dasarathy's Classification System

One of the most influential classification systems in data fusion was developed by Dasarathy, who categorized techniques based on input and output data types [32]. This system comprises five distinct categories that provide a framework for understanding how data is transformed through the fusion process:

- Data In-Data Out (DAI-DAO): This most basic level inputs and outputs raw data, with results typically being more reliable or accurate data than the original sources.

- Data In-Feature Out (DAI-FEO): At this level, the fusion process employs raw data from sources to extract features or characteristics that describe entities in the environment.

- Feature In-Feature Out (FEI-FEO): Both input and output are features, with the fusion process aimed at improving, refining, or obtaining new features.

- Feature In-Decision Out (FEI-DEO): This category takes features as input and provides decisions as output, encompassing most classification systems.

- Decision In-Decision Out (DEI-DEO): This level fuses input decisions to obtain better or new decisions, also known as decision fusion [32].

This classification system is particularly valuable because it specifies the abstraction level of both inputs and outputs, providing a structured framework for classifying different methods and techniques used in data fusion workflows.

Levels of Data Fusion: Mechanisms and Methodologies

Low-Level Data Fusion (LLDF)

Low-level data fusion, also referred to as data-level fusion or block concatenation, represents the most straightforward strategy for integrating data from different analytical platforms [23]. This approach involves the concatenation of two or more data matrices originating from different sources into a single composite matrix [23] [34]. The fused matrix comprises m-rows (individual samples) and n-columns (measurement variables from each source) [34].

The implementation of LLDF requires careful pre-processing, which can be divided into three critical stages [23]:

- Pre-processing to correct artefacts from signal acquisition for each sensor or instrument.

- Equalizing contributions from each dataset using methods such as mean centering or unit variance scaling.

- Correcting weights of each block from different analytical sources to prevent dominance by blocks with the greatest variance.

In a practical demonstration, researchers applied LLDF to combine Raman and infrared spectroscopy data for assessing meat quality, resulting in a fused matrix of 713 variables (314 from Raman and 399 from infrared) [34]. The performance of this approach showed particular promise in predicting the percentage of intramuscular fat (% IMF) in red meat, with a normalized root mean square error of prediction (NRMSEP) of 8.5% [34].

Mid-Level Data Fusion (MLDF)

Mid-level data fusion addresses a significant limitation of LLDF: the challenge of managing datasets where the number of observations is much smaller than the number of variables [23]. MLDF employs a two-step methodology that first extracts the most important characteristics from the individual matrices before concatenating the outputs to build a single matrix for processing [23] [34].

The feature extraction step typically employs dimensionality reduction techniques, with Principal Component Analysis (PCA) being the most popular method for first-order data [23]. For more complex second-order data, methods such as Parallel Factor Analysis (PARAFAC), PARAFAC2, or Multivariate Curve Resolution–Alternating Least Squares (MCR-ALS) may be employed [23].

The power of MLDF was demonstrated in a comprehensive study on salmon authenticity, where researchers fused data from Rapid Evaporative Ionisation Mass Spectrometry (REIMS) and Inductively Coupled Plasma Mass Spectrometry (ICP-MS) [35]. This approach achieved a cross-validation classification accuracy of 100% for determining geographical origin and production methods and correctly identified all test samples (n=17), a result not achieved with single-platform methods [35].

High-Level Data Fusion (HLDF)

High-level data fusion, also known as decision-level fusion, represents the most complex approach to data integration [23] [34]. In this strategy, classification or regression models are built using data from individual techniques, and the model predictions are subsequently fused to obtain a final outcome [23] [34]. This approach is the least explored of the three fusion strategies but offers unique advantages in certain applications.

HLDF is particularly advantageous when integrating heterogeneous analytical platforms such as NMR and MS, which differ in dimensionality, scale, and pre-processing requirements [23]. Rather than fusing variables directly, this approach aggregates model-level outputs using strategies like majority voting, probabilistic averaging, or supervised meta-modeling [23]. A relevant application is the multiblock DD-SIMCA method, where full distances from individual models are combined into a single cumulative metric known as the Cumulative Analytical Signal (CAS) [23].
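Two of the aggregation strategies named above, majority voting and probabilistic averaging, can be sketched in a few lines. The class names, probabilities, and function names below are hypothetical; the sketch assumes that each platform-specific model has already been trained and returns either a label or class probabilities for a sample.

```python
from collections import Counter


def majority_vote(labels):
    """Return the most frequent label across models, or None on an empty list or a tie."""
    counts = Counter(labels).most_common()
    if not counts:
        return None
    if len(counts) > 1 and counts[0][1] == counts[1][1]:
        return None  # tie: leave the decision to another rule
    return counts[0][0]


def average_probabilities(model_probs, weights=None):
    """Weighted average of per-model class probabilities; returns (label, fused)."""
    if weights is None:
        weights = [1.0] * len(model_probs)
    total = sum(weights)
    classes = model_probs[0].keys()
    fused = {c: sum(w * p[c] for w, p in zip(weights, model_probs)) / total
             for c in classes}
    return max(fused, key=fused.get), fused


# Hypothetical outputs of an NMR-based and an LC-HRMS-based classifier for one sample.
nmr_probs = {"authentic": 0.7, "adulterated": 0.3}
ms_probs = {"authentic": 0.4, "adulterated": 0.6}

fused_label, fused_probs = average_probabilities([nmr_probs, ms_probs])
vote = majority_vote(["authentic", "authentic", "adulterated"])
print(fused_label, fused_probs, vote)
```

Because only model outputs are combined, neither platform's variables need to be rescaled against the other's, which is the practical appeal of decision-level fusion for NMR and MS data.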

In the assessment of red meat quality, HLDF demonstrated superior performance in predicting pH values, achieving a determination coefficient (R²) of 0.73 and NRMSEP of 12.9% [34]. This performance advantage over individual techniques and other fusion strategies highlights the potential of HLDF for specific analytical challenges.
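For reference, the two figures of merit quoted here can be computed as follows. This is a sketch under an explicit assumption: the RMSEP is normalized by the range of the observed values, which is one common convention and may differ from the normalization used in the cited study. The pH values are invented.

```python
import math


def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


def nrmsep(y_true, y_pred):
    """RMSEP as a percentage of the observed range (assumed normalization)."""
    rmsep = math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))
    return rmsep / (max(y_true) - min(y_true)) * 100.0


# Hypothetical measured and predicted pH values for four test samples.
ph_measured = [5.4, 5.6, 5.8, 6.0]
ph_predicted = [5.5, 5.6, 5.7, 6.0]

r2 = r_squared(ph_measured, ph_predicted)
err = nrmsep(ph_measured, ph_predicted)
print(f"R2 = {r2:.2f}, NRMSEP = {err:.1f}%")
```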

Comparative Performance Analysis

Table 1: Performance Comparison of Data Fusion Strategies in Practical Applications

| Fusion Level | Application Context | Performance Metrics | Key Advantages | Limitations |
|---|---|---|---|---|
| Low-Level Fusion | Prediction of % IMF in red meat using Raman & IR spectroscopy [34] | NRMSEP = 8.5% [34] | Simple implementation; Preserves all original information | Susceptible to dominant blocks; Requires careful preprocessing [23] |
| Mid-Level Fusion | Geographical origin authentication of salmon using REIMS & ICP-MS [35] | 100% classification accuracy; All test samples (n=17) correctly identified [35] | Handles high-dimensional data; Balances information content and complexity [23] | Feature extraction critical; Potential information loss if features poorly selected [23] |
| High-Level Fusion | Prediction of pH in red meat using Raman & IR spectroscopy [34] | R² = 0.73; NRMSEP = 12.9% [34] | Robust to technique failures; Can integrate heterogeneous data structures [23] | Significant information loss; Complex interpretation [34] |

Table 2: Characteristics of Data Fusion Levels for NMR and MS Integration

| Characteristic | Low-Level Fusion | Mid-Level Fusion | High-Level Fusion |
|---|---|---|---|
| Data Representation | Raw or pre-processed data matrices [23] | Extracted features (e.g., PCA scores) [23] | Model predictions or decisions [23] |
| Information Preservation | High - retains all original data [23] | Medium - preserves most relevant information [23] | Low - only final decisions preserved [34] |
| Implementation Complexity | Low [34] | Medium [23] | High [23] |
| Interpretability | Challenging with high-dimensional data [23] | Moderate - depends on feature extraction method [23] | Low - decision aggregation obscures individual contributions [23] |
| Suitability for NMR-MS Fusion | Good with proper block scaling [23] | Excellent - handles different data structures effectively [31] | Good for final decision integration [23] |

Experimental Protocols and Methodologies

Protocol for Low-Level Data Fusion

Implementing effective low-level data fusion requires systematic pre-processing to ensure meaningful integration of data from different analytical platforms:

Data Collection: Acquire data from complementary techniques such as LC-HRMS and NMR spectroscopy. For example, in a hazelnut authentication study, researchers acquired ¹H-NMR and LC-HRMS data from different cultivars and origins [31].

Individual Pre-processing:

Data Concatenation: Merge pre-processed data matrices from different techniques into a single composite matrix [34]. In a spectroscopy study, this resulted in a fused matrix with 713 variables (314 from Raman and 399 from infrared) [34].

Inter-block Scaling: Apply scaling methods such as Pareto scaling (dividing each variable by the square root of its standard deviation, 1/√σ), or weight each block by the inverse of the sum of its standard deviations (1/(∑σ)block), to equalize the contributions from different techniques [23].

Model Building: Utilize the fused matrix to build multivariate models using methods such as Principal Component Analysis (PCA) or Partial Least Squares regression (PLS) [23] [34].
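The concatenation, inter-block scaling, and model-building steps above can be sketched as follows. This is a minimal illustration rather than the procedure of any cited study: it assumes NumPy is available, uses randomly generated placeholder matrices, and applies only the equal-sum-of-standard-deviations block weighting before a PCA computed by singular value decomposition. All function and variable names are hypothetical.

```python
import numpy as np

def block_scale(block):
    """Mean-center a block and weight it so its column standard deviations sum to 1."""
    centered = block - block.mean(axis=0)
    total_sd = centered.std(axis=0, ddof=1).sum()
    if total_sd == 0:
        raise ValueError("block has no variance")
    return centered / total_sd

def low_level_fusion(blocks):
    """Concatenate block-scaled matrices column-wise (rows must be the same samples)."""
    if len({b.shape[0] for b in blocks}) != 1:
        raise ValueError("all blocks need the same number of samples")
    return np.hstack([block_scale(b) for b in blocks])

def pca_scores(centered_matrix, n_components):
    """PCA scores of an already centered matrix via singular value decomposition."""
    u, s, _ = np.linalg.svd(centered_matrix, full_matrices=False)
    return u[:, :n_components] * s[:n_components]

rng = np.random.default_rng(0)
nmr_block = rng.normal(size=(12, 40))          # placeholder binned 1H-NMR intensities
ms_block = 1e4 * rng.normal(size=(12, 300))    # placeholder LC-HRMS feature areas

fused = low_level_fusion([nmr_block, ms_block])
scores = pca_scores(fused, n_components=2)
print(fused.shape, scores.shape)
```

Without the block weighting, the LC-HRMS block in this example would dominate the model simply because it has more variables and larger numeric values, which is the "dominant block" problem noted in Table 1.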

Protocol for Mid-Level Data Fusion

The mid-level fusion protocol emphasizes feature extraction before integration:

Individual Data Processing: Process data from each analytical technique separately, including necessary pre-processing steps [35].

Feature Extraction: Apply dimensionality reduction techniques to each dataset independently. Common approaches include:

Feature Concatenation: Combine the extracted features (e.g., PCA scores) from different techniques into a single fused matrix [23] [35].

Model Development: Build classification or regression models using the fused feature matrix. In the salmon authentication study, this approach achieved 100% classification accuracy for geographical origin [35].

Validation: Implement rigorous cross-validation and testing with independent samples to validate model performance [35].
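As a rough sketch of the feature extraction and feature concatenation steps, the example below reduces each block to a few PCA scores and joins the scores into one fused matrix. It assumes NumPy, uses random placeholder data, and picks the number of components per block arbitrarily. None of these choices come from the salmon study [35].

```python
import numpy as np

def pca_scores(block, n_components):
    """Mean-center one block and return its first PCA scores (via SVD)."""
    centered = block - block.mean(axis=0)
    u, s, _ = np.linalg.svd(centered, full_matrices=False)
    return u[:, :n_components] * s[:n_components]

def mid_level_fusion(blocks, n_components):
    """Extract scores from each block separately, then concatenate them column-wise."""
    return np.hstack([pca_scores(b, k) for b, k in zip(blocks, n_components)])

rng = np.random.default_rng(1)
block_a = rng.normal(size=(17, 500))   # placeholder high-dimensional MS fingerprint
block_b = rng.normal(size=(17, 25))    # placeholder block from a second technique

fused_features = mid_level_fusion([block_a, block_b], n_components=[3, 2])
print(fused_features.shape)
```

In a validated workflow the PCA loadings would be estimated on the training samples only and then applied to the test samples, so that the feature extraction step does not leak information into the validation.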

Protocol for High-Level Data Fusion

High-level fusion focuses on combining model outputs rather than raw data or features:

Individual Model Development: Develop separate models for each analytical technique using appropriate algorithms [34]. In the red meat quality study, independent PLS models were built for Raman and infrared spectroscopy data [34].

Prediction Generation: Use each individual model to generate predictions for the samples of interest [34].

Decision Fusion: Apply fusion rules to combine the predictions from individual models. Common approaches include:

Performance Evaluation: Assess the fused model against validation datasets and compare performance with individual techniques and other fusion strategies [34].
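The decision fusion step can be illustrated with two simple rules: majority voting for class labels and an error-weighted average for numeric predictions. These two rules are chosen here for illustration and are not necessarily the ones used in [34]. The model outputs, validation errors, and function names below are hypothetical.

```python
from collections import Counter

def majority_vote(labels_per_model):
    """Fuse class labels sample by sample; ties resolve to the label seen first."""
    fused = []
    for sample_labels in zip(*labels_per_model):
        fused.append(Counter(sample_labels).most_common(1)[0][0])
    return fused

def weighted_average(predictions_per_model, errors):
    """Fuse numeric predictions, weighting each model by 1 / its validation error."""
    weights = [1.0 / e for e in errors]
    total = sum(weights)
    return [
        sum(w * p for w, p in zip(weights, sample)) / total
        for sample in zip(*predictions_per_model)
    ]

# Hypothetical class labels from three single-technique classifiers, four samples.
labels = [
    ["A", "B", "B", "A"],
    ["A", "B", "A", "A"],
    ["B", "B", "A", "A"],
]
fused_labels = majority_vote(labels)
print(fused_labels)

# Hypothetical pH predictions from two regression models (two samples each)
# and hypothetical validation errors for those models.
ph_predictions = [[5.6, 5.8], [5.4, 6.0]]
fused_ph = weighted_average(ph_predictions, errors=[0.1, 0.3])
print([round(v, 2) for v in fused_ph])
```

Because only the model outputs are combined, one technique can fail or be absent for a sample without breaking the other models, at the cost of discarding the underlying spectral information.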

Workflow Visualization

Diagram 1: Workflow of Data Fusion Strategies showing the parallel pathways for low-level, mid-level, and high-level fusion approaches in analytical data integration.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Essential Research Reagents and Solutions for Data Fusion Studies

| Reagent/Equipment | Function in Data Fusion Workflows | Application Examples |

|---|---|---|

| Deuterated Solvents (e.g., D₂O, CD₃OD) | NMR spectroscopy solvent for metabolite profiling and quantification [36] | Sample preparation for NMR analysis in metabolomic studies [36] [23] |

| Derivatization Reagents (e.g., propyl chloroformate) | Chemical modification of compounds to improve volatility and detection in GC-MS [36] | SCFA profiling via propyl esterification method in GC-MS [36] |

| Internal Standards (e.g., caproic acid-6,6,6-d3, TSP-d4) | Quantification reference and quality control for both NMR and MS techniques [36] | Concentration determination in NMR and MS analyses [36] |

| Chromatography Columns | Compound separation before MS detection in LC-HRMS workflows [31] | Metabolite separation in LC-HRMS analysis of hazelnuts [31] |

| Multivariate Analysis Software (e.g., SIMCA, MATLAB) | Statistical analysis and model building for fused datasets [35] | OPLS-DA, PCA-LDA, and PLS-DA modeling in salmon authentication [35] |

The strategic implementation of data fusion approaches—whether low-level, mid-level, or high-level—offers powerful capabilities for enhancing analytical outcomes when combining orthogonal techniques such as LC-HRMS and NMR. The selection of an appropriate fusion strategy depends on multiple factors, including data characteristics, analytical goals, and computational resources.

Mid-level fusion has demonstrated particular effectiveness in authentication studies, achieving perfect classification in salmon origin determination [35], while high-level fusion excelled in specific quantitative applications such as pH prediction in meat quality assessment [34]. Low-level fusion, though conceptually straightforward, requires careful implementation to balance the contributions of different analytical platforms [23].

As the field of metabolomics and pharmaceutical research continues to evolve, data fusion strategies will play an increasingly critical role in extracting maximum information from complementary analytical techniques, ultimately leading to more robust and comprehensive chemical and biological insights.

This guide objectively compares two principal workflow designs in analytical science for orthogonal validation: Sequential Analysis and Instrument Coupling. The comparison is framed within the critical context of validating Liquid Chromatography-High-Resolution Mass Spectrometry (LC-HRMS) findings with Nuclear Magnetic Resonance (NMR) spectroscopy, a cornerstone of rigorous research in drug development and complex matrix analysis.

The core distinction lies in how and when data from the complementary techniques are integrated. Sequential Analysis involves separate, independent experiments with subsequent data correlation, whereas Instrument Coupling involves the direct, automated physical or data-level integration of analytical systems [37] [21].

Core Conceptual Comparison

The following table summarizes the fundamental characteristics of each approach, highlighting their operational and philosophical differences.

| Feature | Sequential Analysis | Instrument Coupling |

|---|---|---|

| Core Principle | Independent experiments; data correlation post-analysis [37]. | Direct physical or data-level integration; automated transfer [21]. |

| Workflow Design | Linear, segmented phases (LC-HRMS then NMR) [37]. | Integrated, concurrent, or tandem operation [21]. |

| Typical Data Flow | Unidirectional, from one completed technique to the next [37]. | Bidirectional or real-time; can inform subsequent analyses [38]. |

| Automation Level | Lower; requires manual intervention and sample handling between steps. | Higher; streamlined, automated workflows reduce manual steps [39]. |

| Primary Application | In-depth, offline structural elucidation and confirmation [14]. | High-throughput analysis, quality control, and biomarker discovery [39] [21]. |

Experimental Protocols and Performance Data

Protocol for Sequential Analysis in Biosimilar Characterization

A detailed study comparing an FDA-approved innovator monoclonal antibody (mAb) product with a non-approved product demonstrates the sequential approach [21].

Methodology:

- Sample Preparation: Multiple lots of mAb drug products (Rituxan and Reditux) were prepared.

- LC-HRMS Analysis (Released Glycans): N-glycans were enzymatically released from the mAbs, labeled with a fluorophore (2-AB), and separated using Hydrophilic Interaction Chromatography (HILIC). Detection and quantification were performed via Fluorescence Detection (FLD) and High-Resolution Mass Spectrometry [21].

- NMR Analysis (Middle-Down): The Fc domain of the mAbs was separated and denatured. A middle-down NMR method was then used to generate monosaccharide fingerprints, providing a quantitative profile of glycan components like galactosylation [21].

- Data Correlation: Results from the independent LC-HRMS and NMR analyses were compared post-hoc to assess glycan distribution and demonstrate product comparability.

Performance Data: The study successfully identified and quantified major glycoforms using both techniques. The table below summarizes the agreement and focus of each method in the sequential workflow for quantifying key glycan attributes [21].

| Analytical Technique | Measured Attribute | Role in Sequential Workflow | Quantitative Agreement |

|---|---|---|---|

| HILIC-FLD | Relative abundance of specific glycan structures (e.g., FA2) | Primary separation and quantification of released glycans. | Strong correlation for major glycoforms between orthogonal methods. |

| LC-HRMS (MAM) | Site-specific glycosylation and other product quality attributes | Detailed characterization of glycopeptides and precise mass confirmation. | Strong correlation for major glycoforms between orthogonal methods. |

| Middle-Down NMR | Monosaccharide content (e.g., galactosylation) | Orthogonal validation of monosaccharide-level composition. | Strong correlation for major glycoforms between orthogonal methods. |
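One way to carry out the post-hoc data correlation step is to convert the released-glycan profile into a monosaccharide-level quantity that can be compared directly with the NMR result. The sketch below computes the abundance-weighted number of galactose residues per glycan from relative glycan abundances and compares it with an NMR-derived estimate. This comparison metric is an assumption made for illustration and is not stated as the method of [21]. All numeric values are hypothetical placeholders.

```python
def galactose_per_glycan(relative_abundance, galactose_count):
    """Abundance-weighted mean number of galactose residues per glycan."""
    total = sum(relative_abundance.values())
    if total <= 0:
        raise ValueError("relative abundances must sum to a positive value")
    weighted = sum(relative_abundance[g] * galactose_count[g] for g in relative_abundance)
    return weighted / total

def relative_difference_percent(value_a, value_b):
    """Absolute difference between two estimates, relative to their mean, in percent."""
    return 100.0 * abs(value_a - value_b) / ((value_a + value_b) / 2.0)

# Terminal galactose residues in three common Fc glycans (Oxford notation).
GALACTOSE_COUNT = {"FA2": 0, "FA2G1": 1, "FA2G2": 2}

# Hypothetical HILIC-FLD relative abundances in percent (not values from [21]).
hilic_fld = {"FA2": 50.0, "FA2G1": 40.0, "FA2G2": 10.0}
# Hypothetical galactose-per-glycan estimate from the NMR monosaccharide fingerprint.
nmr_estimate = 0.58

lc_estimate = galactose_per_glycan(hilic_fld, GALACTOSE_COUNT)
difference = relative_difference_percent(lc_estimate, nmr_estimate)
print(f"LC-derived: {lc_estimate:.2f}, NMR-derived: {nmr_estimate:.2f}")
print(f"Relative difference: {difference:.1f}%")
```

Expressing both results on the same scale makes the agreement between the orthogonal methods explicit and allows an acceptance threshold to be set per attribute and per lot.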

Protocol for Integrated Data Fusion in Metabolomics

A study on classifying Amarone wines based on withering time and yeast strain exemplifies a coupled approach through data fusion, integrating results from separate instrument runs into a unified model [16].

Methodology:

- Parallel Data Generation: 80 Amarone wine samples were analyzed using two untargeted metabolomics platforms:

  - LC-HRMS: For broad metabolite profiling.

  - ¹H NMR: For complementary quantitative structural data.

- Data Fusion and Integration: The datasets from both techniques were integrated using multi-omics data fusion approaches.

  - Unsupervised Exploration: Multiple Co-inertia Analysis (MCIA) was used to explore the combined dataset.

  - Supervised Modeling: Sparse Partial Least Squares-Discriminant Analysis (sPLS-DA) was used to build a classification model leveraging the fused LC-HRMS and NMR data [16].

- Validation: The model's predictive accuracy for classifying wines based on their characteristics was evaluated.

Performance Data: The multi-omics data fusion analysis showed only limited correlation between the LC-HRMS and NMR datasets (RV-score = 16.4%), indicating that the two platforms capture largely complementary information. The fused model achieved a lower classification error rate (7.52%) and characterized the wine metabolome more broadly than either technique alone [16].
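For readers unfamiliar with the RV-score, the sketch below computes the standard RV coefficient, a multivariate generalization of the squared correlation coefficient between two data blocks measured on the same samples. It assumes NumPy and uses random placeholder matrices. The exact preprocessing applied before the RV calculation in [16] is not given here, so this is an illustration of the statistic and not a reproduction of that analysis.

```python
import numpy as np

def rv_coefficient(x, y):
    """RV coefficient between two blocks measured on the same samples (rows)."""
    xc = x - x.mean(axis=0)
    yc = y - y.mean(axis=0)
    sx = xc @ xc.T      # sample-by-sample cross-product matrix of block X
    sy = yc @ yc.T      # sample-by-sample cross-product matrix of block Y
    numerator = np.trace(sx @ sy)
    denominator = np.sqrt(np.trace(sx @ sx) * np.trace(sy @ sy))
    return float(numerator / denominator)

rng = np.random.default_rng(2)
lcms = rng.normal(size=(80, 200))   # placeholder LC-HRMS feature table
nmr = rng.normal(size=(80, 60))     # placeholder 1H NMR bucket table

print(f"RV (two unrelated blocks) = {rv_coefficient(lcms, nmr):.3f}")
print(f"RV (block with itself)    = {rv_coefficient(lcms, lcms):.3f}")
```

The coefficient ranges from 0 (the blocks share no common sample structure) to 1 (the sample configurations are identical up to rotation and scaling), so a low value is consistent with two platforms that describe different parts of the metabolome.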

Direct Comparison: Sequential vs. Simultaneous Injection

A foundational chromatography study directly compared sequential and simultaneous injection methods for competitive binding immunoassays, highlighting inherent performance trade-offs [37].

- Methodology: The study compared two methods for injecting a sample and a labeled analyte analog onto an antibody-coated HPLC column:

  - Sequential Injection: Sample is injected first, followed by the labeled analog.

  - Simultaneous Injection: Sample and labeled analog are applied to the column at the same time [37].

- Performance Data: The results, summarized below, show a clear trade-off between sensitivity and dynamic range [37].

| Injection Method | Lower Limit of Detection & Sensitivity | Dynamic Range & Upper Limit of Detection |

|---|---|---|

| Sequential | Superior - Better lower limit of detection and higher sensitivity. | Narrower |

| Simultaneous | Inferior | Broader - Wider dynamic range and higher upper limit of detection. |

Workflow Visualization

Diagram 1: Sequential Analysis Workflow

Diagram 2: Instrument Coupling & Data Fusion Workflow

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key materials and software solutions critical for implementing the workflows discussed above.

| Tool / Reagent | Function in Workflow | Application Context |

|---|---|---|

| PNGase F Enzyme | Enzymatically releases N-glycans from glycoproteins for subsequent LC-HRMS or HILIC-FLD analysis [21]. | Sequential Analysis of biotherapeutics (mAbs). |

| Fluorophore Labels (2-AB, RapiFluor-MS) | Tags released glycans with a fluorescent group for sensitive detection in HILIC-FLD and improved MS performance [21]. | Sequential Analysis; Glycan quantification. |

| HILIC Column | Separates polar compounds, such as released and labeled glycans, based on hydrophilicity [21]. | Sequential Analysis; Metabolomics. |

| Deuterated NMR Solvents | Provide the deuterium lock signal for NMR spectrometers and avoid overwhelming solvent proton signals [14]. | All workflows involving NMR spectroscopy. |

| KNIME / OpenMS | Open-source workflow systems that combine small processing tools into complex, customizable analysis pipelines for LC-MS data [39]. | Automating and integrating data processing in coupled workflows. |