Optimizing Molecular Docking for Bioactive Analogs: A Computational Guide for Accelerated Natural Product Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing molecular docking workflows specifically for structurally related natural compounds and their analogs. We begin by establishing the foundational rationale for focusing on analog series from bioactive natural products, highlighting their advantages in drug discovery [1]. The guide then details advanced methodological pipelines, from virtual screening of analog libraries to interaction pattern analysis [1] [2]. A critical troubleshooting section addresses common pitfalls, such as scoring function inconsistencies and physically implausible AI-generated poses, offering practical optimization strategies [5] [7]. Finally, we outline a rigorous multi-layered validation framework, integrating molecular dynamics, binding free energy calculations, and ADMET profiling to translate computational hits into viable leads [1] [6]. This structured approach aims to enhance the efficiency and predictive accuracy of docking studies for similar natural compounds, bridging the gap between in silico prediction and experimental development.

The Strategic Rationale: Why Focus on Analog Series from Bioactive Natural Products?

This technical support center is designed for researchers engaged in the computationally driven discovery of bioactive natural products and their optimized analogs. The guidance provided here is framed within the critical thesis that optimizing molecular docking protocols—through rigorous validation, multi-conformer approaches, and integrated AI tools—is essential for accurately translating ethnopharmacological knowledge into viable drug candidates [1] [2]. The following troubleshooting guides and FAQs address recurrent challenges in this workflow, from initial virtual screening to advanced dynamic simulation.

Troubleshooting Guides & FAQs

FAQ: Core Concepts and Workflow

Q1: What is the primary value of molecular docking in natural product research, and how does it connect to ethnopharmacology? Molecular docking is a computational "handshake" that predicts how a small molecule (ligand) binds to a target protein [3]. In natural product research, it provides a rational framework to explain the traditional use of medicinal plants. By docking phytochemicals from an ethnobotanically relevant plant (e.g., Zingiber officinale for inflammation) against a modern disease target (e.g., COX-2 for pain), researchers can identify which specific compounds and molecular interactions are likely responsible for the observed therapeutic effect, moving from traditional knowledge to testable mechanistic hypotheses [4].

Q2: What are the key steps in a standard docking workflow for natural product screening? A robust workflow involves sequential steps:

- Target & Ligand Preparation: Obtain a 3D protein structure (e.g., from PDB or AlphaFold) and prepare it by removing water, adding hydrogens, and assigning charges. Prepare natural product ligands from databases (e.g., ChEMBL, PubChem) or by sketching, ensuring correct protonation states [3].

- Active Site Definition & Grid Generation: Define the binding site coordinates (often based on a co-crystallized ligand) and create a search grid [4].

- Docking Simulation: Run the docking algorithm (e.g., AutoDock Vina, Glide) to generate multiple ligand poses and binding affinity scores (in kcal/mol) [3].

- Pose Analysis & Validation: Visually inspect top poses for plausible interactions (hydrogen bonds, hydrophobic contacts). Critically, validate the docking protocol by re-docking a known co-crystal ligand and calculating the Root Mean Square Deviation (RMSD) between the docked and crystallographic poses; an RMSD below 2.0 Å is generally considered acceptable [4].

- Prioritization & Advanced Analysis: Prioritize hits based on affinity, interaction patterns, and drug-likeness. Refine top hits with molecular dynamics (MD) simulations to assess complex stability over time [2].
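
The grid-generation step above is often automated by centering the box on the co-crystallized ligand. The following is a minimal standard-library sketch, assuming the ligand can be identified by its residue name in the HETATM records of a PDB file. The residue name `LIG` and the 5 Å padding are hypothetical choices, not values from the cited studies.

```python
# Minimal sketch: derive a docking box from a co-crystallized ligand.
# Assumptions (hypothetical): ligand residue name and 5 Å padding per side.

def parse_hetatm_coords(pdb_lines, resname):
    """Return (x, y, z) tuples for HETATM records whose residue name matches."""
    coords = []
    for line in pdb_lines:
        if line.startswith("HETATM") and line[17:20].strip() == resname:
            # Fixed PDB columns: x = 31-38, y = 39-46, z = 47-54 (1-based)
            coords.append((float(line[30:38]), float(line[38:46]), float(line[46:54])))
    return coords


def box_from_ligand(coords, padding=5.0):
    """Center the box on the ligand's bounding box and add `padding` Å on each side."""
    if not coords:
        raise ValueError("no ligand atoms found")
    center, size = [], []
    for axis in range(3):
        values = [c[axis] for c in coords]
        lo, hi = min(values), max(values)
        center.append(round((lo + hi) / 2, 3))
        size.append(round(hi - lo + 2 * padding, 3))
    return tuple(center), tuple(size)


# Usage with made-up ligand coordinates (Å):
ligand = [(10.0, 4.0, -2.0), (14.0, 6.0, 0.0), (12.0, 8.0, 2.0)]
center, size = box_from_ligand(ligand)
print(center, size)  # (12.0, 6.0, 0.0) (14.0, 14.0, 14.0)
```

The padding gives the ligand's substituents room to move; larger analogs than the native ligand may need a larger value.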

Q3: How can I improve the accuracy of my docking predictions for flexible natural product scaffolds? Natural products are often flexible. To address this:

- Use Ensemble Docking: Dock your ligand into multiple pre-generated conformations of the target protein (e.g., from MD simulations) to account for receptor flexibility [2].

- Employ Advanced Sampling: For ligands with many rotatable bonds, use docking programs with robust conformational search algorithms, such as the Lamarckian genetic algorithm in AutoDock 4 or the Monte Carlo-based search in AutoDock Vina [2].

- Post-Docking Refinement: Subject your top docked complexes to short MD simulations. This allows for induced-fit adjustments where both the ligand and protein side chains relax, providing a more realistic binding pose [2].
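
With ensemble docking, each ligand receives one score per receptor conformation, and these scores must be combined. A minimal sketch, assuming scores are stored as `scores[ligand][conformer]` in kcal/mol. The first function keeps the best-scoring conformer. The second computes a Boltzmann-style soft minimum. Both aggregation rules are illustrative choices that the cited work does not prescribe, and the conformer names are hypothetical.

```python
import math

RT = 0.5925  # kcal/mol at ~298 K (assumed temperature)


def best_over_ensemble(scores):
    """Return {ligand: (best score, conformer)} using the most favorable (lowest) score."""
    result = {}
    for ligand, per_conf in scores.items():
        conf = min(per_conf, key=per_conf.get)
        result[ligand] = (per_conf[conf], conf)
    return result


def soft_min(values, rt=RT):
    """Boltzmann-style combination -RT * ln(sum(exp(-s / RT))), computed stably."""
    m = min(values)
    return m - rt * math.log(sum(math.exp(-(v - m) / rt) for v in values))


scores = {
    "ligand_1": {"conf_A": -7.2, "conf_B": -8.1, "conf_C": -6.9},
    "ligand_2": {"conf_A": -6.0, "conf_B": -5.5},
}
print(best_over_ensemble(scores))
print(round(soft_min(list(scores["ligand_1"].values())), 2))
```

The soft minimum is never higher than the best single score. It rewards ligands that score well in several conformers rather than in one outlier.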

FAQ: Common Technical Issues and Solutions

Q4: My docking runs keep crashing. What could be wrong? Crashes are often related to system setup or resource limits.

| Problem Area | Possible Cause | Solution |
|---|---|---|
| Ligand Preparation | Incorrect format, excessive rotatable bonds, or unusual valence. | Simplify the ligand by removing non-essential side chains for initial screening. Ensure the file format (.mol2, .pdbqt) is correct and charges are properly assigned [3]. |
| Grid Parameters | The grid box is too large, which greatly enlarges the search space (box volume grows with the cube of the edge length). | Reduce the grid box dimensions to focus on the active site. A size of 20-25 Å per side is often sufficient [3]. |
| System Resources | The docking job exceeds available memory (RAM) or CPU time. | Lower the exhaustiveness setting (AutoDock Vina) or the number of GA runs (AutoDock4). Alternatively, run docking on a machine with more computational capacity or on high-performance computing (HPC) clusters. |
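
Keeping box and search settings in a configuration file makes both crash diagnosis and reruns easier. A minimal sketch that writes an AutoDock Vina config (which Vina reads through its `--config` option) and reports the box volume. The option names are standard Vina config keys. The file names, box values, and seed are placeholders.

```python
# Sketch: build an AutoDock Vina config file and check the search-box volume.
# File names and numeric values are placeholders, not recommended settings.

def box_volume(size):
    """Search-box volume in Å^3; doubling every edge multiplies the volume by 8."""
    x, y, z = size
    return x * y * z


def vina_config(receptor, ligand, center, size, exhaustiveness=8, num_modes=9, seed=42):
    """Return the text of a Vina config file with an explicit box, effort, and seed."""
    if any(s <= 0 for s in size):
        raise ValueError("box edges must be positive")
    lines = [f"receptor = {receptor}", f"ligand = {ligand}"]
    lines += [f"center_{a} = {c:.3f}" for a, c in zip("xyz", center)]
    lines += [f"size_{a} = {s:.3f}" for a, s in zip("xyz", size)]
    lines += [f"exhaustiveness = {exhaustiveness}", f"num_modes = {num_modes}", f"seed = {seed}"]
    return "\n".join(lines) + "\n"


print(box_volume((22.0, 22.0, 22.0)), box_volume((44.0, 44.0, 44.0)))
print(vina_config("receptor.pdbqt", "ligand.pdbqt", (12.0, 6.0, 0.0), (22.0, 22.0, 22.0)))
```

The two volumes printed show why an oversized box is costly: a box twice as wide in every direction is eight times larger.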

Q5: How do I interpret binding affinity scores, and why might a good score not translate to biological activity? A more negative docking score (an estimate of the binding free energy, ΔG, in kcal/mol) indicates a stronger predicted interaction. However, a good score alone is not enough [3].

- Pose Quality: A high-affinity pose must be biologically plausible. Always visually inspect interactions with key catalytic or binding residues [4].

- Scoring Function Limitations: Scoring functions are approximations and may not accurately capture specific interactions like halogen bonds or complex solvation effects [2].

- Cell Permeability & Metabolism: The compound may not reach the target in a living system. Always integrate docking results with ADMET predictions (Absorption, Distribution, Metabolism, Excretion, Toxicity) [5].

- Off-Target Effects: High affinity for your target does not preclude stronger, undesired binding to other proteins.
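
A docking score can be converted to the dissociation constant it would imply through ΔG = RT ln Kd (1 M standard state), which puts it in more familiar units. A minimal sketch, assuming 298.15 K. Because docking scores only roughly approximate ΔG, the result is an order-of-magnitude indication, not a predicted Kd.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def dg_to_kd(dg_kcal, temp_k=298.15):
    """Dissociation constant (mol/L) implied by dG = RT ln Kd."""
    return math.exp(dg_kcal / (R_KCAL * temp_k))


def kcal_per_tenfold(temp_k=298.15):
    """Free-energy change that corresponds to a 10-fold change in Kd."""
    return R_KCAL * temp_k * math.log(10)


# -9.34 kcal/mol (reported for a shogaol analog against PLpro [5]) implies roughly 0.14 uM
print(f"{dg_to_kd(-9.34):.2e} M")
print(f"{kcal_per_tenfold():.2f} kcal/mol per 10-fold change in Kd")
```

At room temperature, each additional ~1.36 kcal/mol of predicted affinity corresponds to only a 10-fold change in Kd. Small score differences between analogs therefore rarely justify strong conclusions.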

Q6: How should I handle and dock natural product derivatives or analogs from a database? This is a core strategy for scaffold optimization [5].

- Analog Identification: Use chemical similarity algorithms (e.g., Tanimoto coefficient) in databases like ChEMBL to find structural analogs of your promising natural product hit [5].

- Focused Library Docking: Create a small, focused library of these analogs (often 100-1000 compounds) rather than screening millions of random compounds.

- Analysis: Compare the binding modes and affinities of the analogs. Look for derivatives that maintain or improve key interactions while showing better predicted pharmacokinetic properties in ADMET analysis [5].
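
The similarity search described above usually ranks candidates by the Tanimoto coefficient between molecular fingerprints, |A ∩ B| / |A ∪ B|. The plain-Python sketch below assumes fingerprints are already available as sets of on-bit indices. In practice, a toolkit such as RDKit would generate them (for example, Morgan fingerprints). The bit sets, compound names, and the 0.6 cutoff are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient for fingerprints represented as sets of on-bit indices."""
    shared = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - shared
    return shared / union if union else 0.0


def similar_analogs(query_fp, library, threshold=0.6):
    """Return (name, similarity) pairs at or above the threshold, most similar first."""
    hits = [(name, tanimoto(query_fp, fp)) for name, fp in library.items()]
    hits = [hit for hit in hits if hit[1] >= threshold]
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


query = {1, 2, 3, 4, 5}  # hypothetical fingerprint of the natural product hit
library = {
    "analog_A": {1, 2, 3, 4, 6},
    "analog_B": {1, 2, 3, 4, 5, 7},
    "analog_C": {8, 9},
}
for name, sim in similar_analogs(query, library):
    print(name, round(sim, 3))
```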

FAQ: Validation and Best Practices

Q7: What are the minimum validation steps required to trust my docking results for publication? At a minimum, you must perform and report:

- Self-Docking/Re-docking: Re-dock the native co-crystallized ligand into its original binding site. A successful reproduction, with an RMSD < 2.0 Å, validates your parameters (grid location, software settings) [4].

- Cross-Docking Benchmark: If multiple crystal structures of the target with different ligands exist, try docking each ligand into the other structures' coordinates to test the protocol's robustness to protein conformational changes [4].

- Comparison to Controls: Include known active and inactive compounds in your docking set. Your protocol should successfully rank the active compounds higher than the inactive ones.
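
The area under the ROC curve (AUC) summarizes whether actives rank above inactives. It equals the probability that a randomly chosen active receives a better (more negative) docking score than a randomly chosen inactive, so 0.5 corresponds to random ranking. A minimal sketch with hypothetical scores:

```python
def roc_auc(active_scores, inactive_scores):
    """AUC for docking scores where lower (more negative) is better; ties count as half."""
    if not active_scores or not inactive_scores:
        raise ValueError("need at least one active and one inactive")
    wins = 0.0
    for a in active_scores:
        for i in inactive_scores:
            if a < i:
                wins += 1.0
            elif a == i:
                wins += 0.5
    return wins / (len(active_scores) * len(inactive_scores))


actives = [-9.1, -8.4, -7.0]          # hypothetical known actives
inactives = [-7.5, -6.2, -5.9, -6.8]  # hypothetical inactives or decoys
print(round(roc_auc(actives, inactives), 3))
```

The pairwise loop is simple and adequate for control sets of a few hundred compounds. Larger benchmarks would typically use a sort-based implementation from a statistics library.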

Q8: When should I move from simple docking to more advanced simulations like Molecular Dynamics (MD)? MD simulations are resource-intensive but critical for:

- Validating Stability: When your docking pose looks good but may be strained. A 50-100 ns MD simulation can indicate whether the complex remains stable or the ligand drifts out of the pocket [4].

- Evaluating Induced Fit: When you suspect significant side-chain or backbone movement upon ligand binding, which rigid docking cannot capture.

- Calculating Binding Free Energy: Methods like MM/GBSA or MM/PBSA applied to MD trajectories provide a more rigorous estimate of binding affinity than docking scores alone [4].
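
In the common single-trajectory MM/GBSA approach, the binding free energy is estimated for each MD snapshot as ΔG_bind = G_complex − G_receptor − G_ligand, and the per-snapshot values are then averaged. A minimal sketch, assuming the three per-frame energies (kcal/mol) have already been computed by an MD/MM-GBSA tool. The numbers are invented, and the entropy term is ignored, as is often done when only relative rankings are needed.

```python
import statistics


def mmgbsa_estimate(frames):
    """frames: (G_complex, G_receptor, G_ligand) per snapshot in kcal/mol.
    Returns the mean dG_bind and its standard error, treating frames as independent."""
    deltas = [g_complex - g_receptor - g_ligand for g_complex, g_receptor, g_ligand in frames]
    mean = statistics.fmean(deltas)
    sem = statistics.stdev(deltas) / len(deltas) ** 0.5 if len(deltas) > 1 else float("nan")
    return mean, sem


frames = [(-5000.0, -4950.0, -20.0), (-5002.0, -4951.0, -19.0), (-4998.0, -4949.0, -21.0)]
mean, sem = mmgbsa_estimate(frames)
print(f"dG_bind = {mean:.1f} +/- {sem:.1f} kcal/mol")
```

Consecutive MD frames are correlated, so this naive standard error understates the true uncertainty. Spacing out snapshots or using block averaging gives a more honest estimate.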

Data Presentation: Quantitative Results from Case Studies

The following tables summarize key quantitative findings from recent studies that exemplify the optimized docking workflow for natural product scaffolds.

Table 1: Summary of Optimized Natural Product Analogs Against SARS-CoV-2 Proteases [5]

| Analog (Parent Scaffold) | Target Protease | Binding Affinity (kcal/mol) | Key Interacting Residues | Notable Property |
|---|---|---|---|---|
| CHEMBL1720210 (Shogaol) | PLpro | -9.34 | GLY163, LEU162, GLN269 (H-bonds); TYR268 (hydrophobic) | Strongest binder to PLpro in study |
| CHEMBL1495225 (6-Gingerol) | 3CLpro | -8.04 | ASP197, ARG131, TYR239 (H-bonds); LEU287 (hydrophobic) | High affinity for main protease (3CLpro) |
| CHEMBL4069090 (Not specified) | PLpro | Favorable (score not listed) | Analysis not detailed in abstract | Highlighted for favorable drug-likeness |

Table 2: Binding Affinities of Top Natural Compounds for the COX-2 Receptor [4]

| Compound (Class) | Binding Affinity to COX-2 (kcal/mol) | Comparative Stability (RMSD from MD) | MM/GBSA Binding Free Energy (kcal/mol) |
|---|---|---|---|
| Diclofenac (Reference Drug) | Not listed | Stable over 100 ns | Most favorable (exact value not listed) |
| Apigenin (Flavonoid) | Among top scores | Stable over 100 ns | Favorable (second to diclofenac) |
| Kaempferol (Flavonoid) | Among top scores | Stable over 100 ns | Calculated, value not specified |
| Quercetin (Flavonoid) | Among top scores | Stable over 100 ns | Calculated, value not specified |

Experimental Protocols

Protocol 1: Multi-Target Virtual Screening of Analgesic Phytochemicals [4]

This protocol details the comprehensive cross-docking study that identified apigenin, kaempferol, and quercetin as the top-scoring candidate COX-2 inhibitors.

- Ligand & Target Curation: Compile 3D structures of ~300 phytochemicals from 12 analgesic plants (e.g., Zingiber officinale, Curcuma longa). Retrieve 3D structures for 8 pain/inflammation targets (COX-2, TNF-α, opioid receptors, etc.) from the PDB.

- Docking Preparation: Prepare all proteins and ligands using AutoDockTools: add polar hydrogens, merge non-polar hydrogens, and assign Kollman charges to proteins and Gasteiger charges to ligands. Save all files in .pdbqt format.

- Grid Box Definition: For each target, define a docking grid centered on the native ligand with dimensions of 30 × 30 × 30 Å to encompass the entire binding site.

- Protocol Validation: For each target, re-dock the co-crystallized ligand. Confirm protocol accuracy by achieving an RMSD < 2.0 Å between the docked and crystal pose.

- Cross-Docking Virtual Screening: Dock the entire library of 300 phytochemicals against all 8 prepared targets using AutoDock Vina.

- Hit Prioritization: Apply a binding energy cutoff (e.g., ≤ -6.0 kcal/mol). Prioritize compounds showing good affinity across multiple targets (multi-target potential) and analyze their interaction patterns with key catalytic residues.

- Advanced Validation: Subject top hits (e.g., apigenin) and a reference drug (diclofenac) to 100 ns Molecular Dynamics simulation in explicit solvent using software like GROMACS. Analyze RMSD, RMSF, and ligand-protein contacts to confirm stability.

- Energetics Calculation: Perform MM/GBSA calculations on frames from the stable MD trajectory to obtain a refined estimate of the binding free energy.
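
The hit-prioritization step (an energy cutoff plus multi-target potential) can be expressed compactly. A sketch, assuming the best docking score is stored for each compound and target. The compound names, target labels, scores, and `min_targets` value are invented for illustration and are not results from [4].

```python
def multi_target_hits(scores, cutoff=-6.0, min_targets=2):
    """scores: {compound: {target: best score, kcal/mol}}.
    Keep compounds at or below `cutoff` on >= min_targets targets; rank by the
    number of targets passed, then by the mean score over those targets."""
    ranked = []
    for compound, per_target in scores.items():
        passing = {t: s for t, s in per_target.items() if s <= cutoff}
        if len(passing) >= min_targets:
            mean_score = sum(passing.values()) / len(passing)
            ranked.append((compound, sorted(passing), round(mean_score, 2)))
    ranked.sort(key=lambda row: (-len(row[1]), row[2]))
    return ranked


scores = {
    "cpd_A": {"COX-2": -8.5, "TNF-alpha": -6.4, "target_3": -5.2},
    "cpd_B": {"COX-2": -7.0, "TNF-alpha": -5.0, "target_3": -4.8},
    "cpd_C": {"COX-2": -6.1, "TNF-alpha": -6.3, "target_3": -6.9},
}
for row in multi_target_hits(scores):
    print(row)
```

Raw scores for different proteins are not strictly comparable. For that reason, the sketch ranks first by how many targets pass the cutoff and uses the mean score only as a tiebreaker.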

Protocol 2: Structure-Based Optimization of Natural Product Analogs [5]

This protocol describes the scaffold-hopping approach used to discover improved shogaol and gingerol analogs against SARS-CoV-2 proteases.

- Seed Compound Selection: Start with a natural product scaffold of interest with documented bioactivity (e.g., shogaol from ginger for anti-inflammatory/antiviral effects).

- Analog Retrieval: Use the chemical similarity search function in the ChEMBL database to retrieve over 600 structurally related analogs.

- Molecular Docking & Scoring: Dock the analog library against the target proteins (SARS-CoV-2 PLpro and 3CLpro) using automated docking software. Rank compounds based on docking score (binding affinity).

- Interaction Fingerprinting: For top-ranked analogs, perform detailed analysis of the predicted binding pose. Map specific interactions (hydrogen bonds, pi-stacking, hydrophobic contacts) with key amino acid residues in the active site.

- Multi-Criteria Profiling: Filter hits further using integrated ADMET prediction tools. Evaluate drug-likeness (Lipinski's Rule of Five), potential toxicity, and other pharmacokinetic parameters.

- Gene Expression Prediction: Use tools like DIGEP-Pred to predict if the top-ranked compounds influence biological pathways relevant to the disease pathology (e.g., inflammation and oxidative stress in COVID-19).
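
The drug-likeness filter in the multi-criteria step is commonly Lipinski's Rule of Five: molecular weight ≤ 500 Da, logP ≤ 5, at most 5 hydrogen-bond donors, and at most 10 hydrogen-bond acceptors. Compounds with more than one violation are flagged. A minimal sketch, assuming the descriptors were already computed (e.g., by SwissADME or a cheminformatics toolkit). The example values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    mw: float    # molecular weight, Da
    logp: float  # calculated logP
    hbd: int     # hydrogen-bond donors
    hba: int     # hydrogen-bond acceptors


def ro5_violations(d):
    """Count Rule-of-Five violations."""
    return sum([d.mw > 500, d.logp > 5, d.hbd > 5, d.hba > 10])


def passes_ro5(d, max_violations=1):
    """Lipinski's original guidance flags compounds with more than one violation."""
    return ro5_violations(d) <= max_violations


candidates = {
    "analog_1": Descriptors(mw=294.4, logp=2.5, hbd=2, hba=4),
    "analog_2": Descriptors(mw=510.6, logp=3.1, hbd=1, hba=5),
    "analog_3": Descriptors(mw=620.7, logp=5.8, hbd=6, hba=12),
}
for name, d in candidates.items():
    print(name, ro5_violations(d), passes_ro5(d))
```

Natural products are well-known exceptions to the Rule of Five, so violations are better treated as flags than as hard exclusions.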

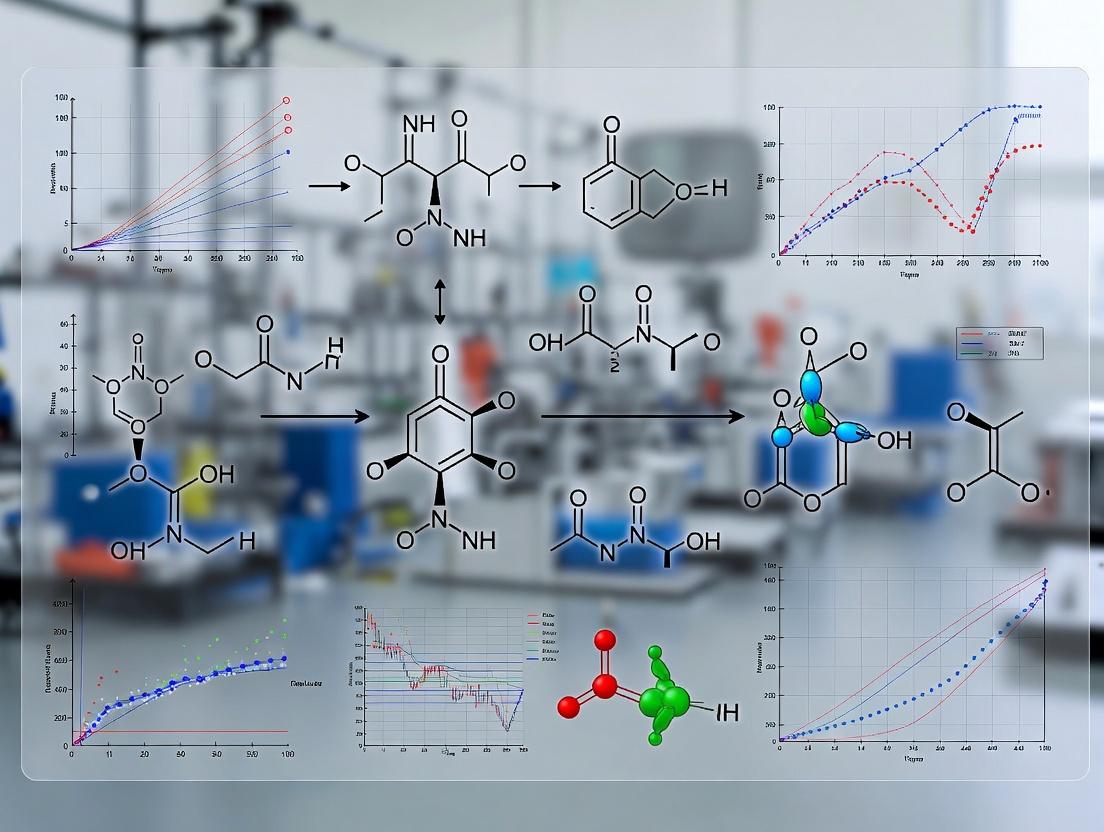

Visualizations

Diagram 1: Multi-Stage Computational Workflow

Diagram 2: Ligand Preparation & Conformational Search

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital Tools & Resources for Computational NP Research

| Tool/Resource Name | Category | Primary Function in Research | Key Consideration for NP Scaffolds |
|---|---|---|---|
| RCSB Protein Data Bank (PDB) | Target Structure | Source of experimentally determined 3D protein structures for docking targets. | Check if structure contains a bound ligand to inform active site definition. Prefer structures with high resolution (<2.5 Å). |
| ChEMBL / PubChem | Compound Database | Repositories of bioactive molecules with associated data. Used for finding natural products, their analogs, and bioactivity data [5]. | Use chemical similarity search to find analogs of a promising NP hit for scaffold optimization [5]. |
| AutoDock Vina / AutoDockTools | Docking Software | Widely used, open-source programs for molecular docking and ligand/receptor preparation [3]. | Handle NP flexibility by increasing search effort (exhaustiveness in Vina; number of energy evaluations in AutoDock4) and by treating all rotatable bonds as flexible. |
| PyMOL / UCSF ChimeraX | Visualization Software | Critical for visualizing protein-ligand complexes, analyzing binding interactions, and creating publication-quality figures. | Essential for inspecting the binding pose of complex NP scaffolds within the protein's active site. |
| GROMACS / NAMD | Molecular Dynamics (MD) | Software for running MD simulations to validate docking pose stability and study dynamic interactions [4]. | Requires parameterization (force fields) for unusual chemical moieties often found in NPs (e.g., specific glycosides, terpenes). |
| SwissADME / pkCSM | ADMET Prediction | Online tools to predict pharmacokinetics, drug-likeness, and toxicity of compounds from their chemical structure. | Important to flag NPs that may have poor bioavailability or potential toxicity despite good docking scores [5]. |
| AlphaFold Protein Structure Database | Predicted Structures | Source of highly accurate predicted protein structures for targets lacking experimental 3D data [2]. | Use with caution for docking; the predicted conformation may not represent the ligand-bound state. Can be used for ensemble docking. |
| Cell Painting Assay (CPA) | Phenotypic Profiling | A high-content imaging-based assay used to elucidate the mode of action of novel scaffolds by comparing their morphological impact on cells to known reference compounds [6]. | Particularly valuable for characterizing the biological activity of novel pseudo-natural product scaffolds created by fragment recombination [6]. |

Technical Support Center

Troubleshooting Guide: Common Issues in Docking Studies of Similar Compounds

This guide addresses frequent challenges researchers encounter when performing molecular docking studies on series of similar natural compounds, such as structural analogs and derivatives, within the context of drug discovery projects.

Issue 1: Poor Correlation Between Docking Scores and Experimental Activity

- Problem: High-affinity docking poses are obtained for compounds that show weak activity in biochemical assays, or vice versa.

- Diagnosis: This often stems from an over-reliance on a single scoring function or improper handling of ligand and receptor flexibility. Scoring functions have different accuracies and biases [7].

- Solution:

- Implement Multi-Objective Docking: Instead of minimizing only the binding energy, use algorithms that simultaneously optimize multiple competing objectives, such as the intermolecular (Einter) and intramolecular (Eintra) energies [8]. Algorithms like NSGA-II, SMPSO, and MOEA/D have shown promise in managing this trade-off [8].

- Use Consensus Scoring: Rank your docked poses using more than one scoring function (e.g., force-field based, empirical, knowledge-based) to improve prediction reliability [7].

- Validate with a Known Binder: Always dock a native ligand or a known active compound from literature to verify your protocol predicts a correct binding mode (Root Mean Square Deviation, RMSD < 2.0 Å is a common benchmark) [7].
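
Different scoring functions use different scales and sign conventions. A simple way to build the consensus described above is therefore to convert each function's scores to ranks and average them. A minimal sketch: the three score tables and their names (`vina_like`, `empirical_like`, `knowledge_like`) are hypothetical, and rank averaging is only one of several possible consensus schemes.

```python
def ranks(scores, lower_is_better=True):
    """Map {compound: score} to 1-based ranks; tied scores share their average rank."""
    order = sorted(scores, key=scores.get, reverse=not lower_is_better)
    result, i = {}, 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and scores[order[j + 1]] == scores[order[i]]:
            j += 1
        for k in range(i, j + 1):
            result[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return result


def consensus_rank(score_tables):
    """score_tables: list of ({compound: score}, lower_is_better) covering the same compounds."""
    per_function = [ranks(scores, lower) for scores, lower in score_tables]
    compounds = list(per_function[0])
    mean_rank = {c: sum(r[c] for r in per_function) / len(per_function) for c in compounds}
    return sorted(mean_rank.items(), key=lambda item: item[1])


vina_like = {"a": -9.0, "b": -8.0, "c": -8.5}          # lower is better
empirical_like = {"a": 40.0, "b": 55.0, "c": 50.0}     # higher is better
knowledge_like = {"a": -50.0, "b": -30.0, "c": -45.0}  # lower is better
print(consensus_rank([(vina_like, True), (empirical_like, False), (knowledge_like, True)]))
```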

Issue 2: Inconsistent Binding Poses within a Congeneric Series

- Problem: Structurally similar compounds dock in wildly different orientations within the same binding pocket, making Structure-Activity Relationship (SAR) analysis impossible.

- Diagnosis: This can be caused by insufficient sampling during the docking search or a lack of pharmacophoric constraints.

- Solution:

- Increase Search Exhaustiveness: Substantially increase the number of runs, energy evaluations, and population size in genetic algorithm-based docking runs (e.g., in AutoDock) [8].

- Apply Interaction Fingerprints: Post-docking, analyze poses using interaction fingerprints. Compare the patterns of hydrogen bonds, hydrophobic contacts, and ionic interactions. Clusters of compounds with similar fingerprints likely represent the correct binding mode [9].

- Define a Common Scaffold Anchor: If your congeneric series shares a core scaffold, apply soft constraints to keep this moiety in a consistent region of the binding site during docking.
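
The interaction-fingerprint comparison can be sketched in three steps. First, encode each pose as a set of (residue, interaction type) pairs. Second, derive the interactions shared by most of the series. Third, flag poses that deviate from that consensus. A minimal sketch, assuming a separate tool has already detected the interactions. The residue labels, the 0.5 thresholds, and the compound names are hypothetical.

```python
from collections import Counter


def consensus_interactions(ifps, min_fraction=0.5):
    """ifps: {compound: set of (residue, interaction_type)}.
    Return interactions present in at least `min_fraction` of the compounds."""
    counts = Counter(pair for ifp in ifps.values() for pair in ifp)
    n = len(ifps)
    return {pair for pair, count in counts.items() if count / n >= min_fraction}


def deviating_poses(ifps, min_fraction=0.5, min_similarity=0.5):
    """Flag compounds whose fingerprint has low Tanimoto similarity to the consensus."""
    core = consensus_interactions(ifps, min_fraction)
    flagged = []
    for compound, ifp in ifps.items():
        union = ifp | core
        similarity = len(ifp & core) / len(union) if union else 0.0
        if similarity < min_similarity:
            flagged.append((compound, round(similarity, 3)))
    return flagged


ifps = {
    "analog_1": {("Res_A", "hbond"), ("Res_B", "hydrophobic"), ("Res_C", "hbond")},
    "analog_2": {("Res_A", "hbond"), ("Res_B", "hydrophobic")},
    "analog_3": {("Res_A", "hbond"), ("Res_B", "hydrophobic"), ("Res_D", "pi_stack")},
    "analog_4": {("Res_E", "hbond"), ("Res_F", "hydrophobic")},
}
print(sorted(consensus_interactions(ifps)))
print(deviating_poses(ifps))  # analog_4 shares none of the consensus contacts
```

A flagged pose is a candidate for re-docking with more sampling or a scaffold constraint, not necessarily a wrong answer.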

Issue 3: Handling Receptor Flexibility for Broad Analog Series

- Problem: Docking fails when analogs induce or require side-chain movements in the receptor that are not accounted for in a rigid protein structure.

- Diagnosis: Using a single, rigid crystal structure is insufficient for docking diverse analogs, especially if the binding site is flexible [8].

- Solution:

- Use Ensemble Docking: Dock your compound library into an ensemble of multiple receptor conformations. These can be derived from different crystal structures, NMR models, or molecular dynamics simulation snapshots.

- Employ Flexible Residue Docking: If supported by your software (e.g., AutoDock 4.2 or AutoDock Vina), designate key binding-site side chains as flexible during the docking simulation [8].

- Consider Induced-Fit Docking (IFD): For high-priority compounds, use an IFD protocol that allows both the ligand and the receptor side chains to adapt to each other.

Issue 4: Difficulty Prioritizing Analogs for Synthesis or Purchase

- Problem: After virtual screening a database of analogs, numerous candidates have favorable scores, but resources for experimental validation are limited.

- Diagnosis: Relying solely on docking score is an incomplete metric for prioritization.

- Solution:

- Apply Multi-Objective Pareto Front Analysis: When using multi-objective optimization, the result is a set of non-dominated solutions (the Pareto front). Select compounds distributed along this front, representing different optimal balances between objectives (e.g., strongest binding vs. most favorable internal ligand strain) [8].

- Incorporate Drug-Likeness and ADMET Filters: Filter top-scoring docking hits using calculated properties like Lipinski's Rule of Five, polar surface area, and predicted toxicity flags to prioritize compounds with a higher probability of being developable drugs.

- Analyze Synthetic Accessibility: Prioritize analogs that are commercially available or appear synthetically tractable based on the complexity of the required chemical modifications from your lead compound.

Frequently Asked Questions (FAQs)

Q1: What exactly defines a "congeneric series" in computational studies? A: A congeneric series refers to a set of compounds that share a common core molecular framework or scaffold but differ by specific, usually small, structural modifications at defined positions (e.g., different substituents on a phenyl ring). In docking studies, the consistent behavior of this shared scaffold is key to generating interpretable SAR data [9].

Q2: Should I use rigid or flexible ligand docking for studying derivatives? A: For derivatives and analogs, flexible ligand docking is essential. These compounds share a core but have different rotatable bonds and substituent conformations. The docking algorithm must be able to sample the conformational space of each unique ligand to find its optimal fit in the binding pocket [7]. Rigid docking is only suitable for very preliminary screens of highly similar molecules.

Q3: How can I visually compare the binding modes of multiple analogs? A: After docking, generate a superimposed view of all top-ranked poses. Quality molecular visualization software (e.g., PyMOL, UCSF Chimera) allows you to align the poses based on the protein receptor and color-code by compound. This visual inspection is crucial for confirming a consistent binding mode and identifying specific interactions made by different substituents.

Q4: My natural compound lead is a flavonoid. How do I find or generate a library of similar compounds for docking? A: You have several options:

- Public Databases: Search specialized databases like ZINC, PubChem, or NPASS using the scaffold as a substructure query.

- Virtual Library Enumeration: Use chemical informatics software (e.g., RDKit, OpenEye) to systematically generate analogs by applying a set of defined chemical transformations (e.g., methylation, hydroxylation, halogenation) to the core positions of your lead compound.

- Commercial Libraries: Many vendors offer "focused libraries" based on common natural product scaffolds like flavonoids, alkaloids, or terpenoids.
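
The virtual-enumeration option can be illustrated with a simple R-group scheme: a scaffold SMILES with two attachment points, combined with lists of substituents. A minimal plain-Python sketch, assuming a flavone core with hypothetical substitution sites at C4′ (B ring) and C7 (A ring). The substituent lists are illustrative. In practice, each generated SMILES should be parsed and canonicalized with a toolkit such as RDKit to catch invalid structures and duplicates.

```python
from itertools import product

# Hypothetical flavone template: {r1} sits at C4' of the B ring, {r2} at C7 of the A ring.
FLAVONE_TEMPLATE = "O=C1C=C(c2ccc{r1}cc2)Oc2cc{r2}ccc12"

# Substituent SMILES fragments; "" means hydrogen (no branch is written).
R1_OPTIONS = ["", "O", "OC", "F"]
R2_OPTIONS = ["", "O", "OC"]


def branch(fragment):
    """Wrap a substituent as a SMILES branch, or return nothing for hydrogen."""
    return f"({fragment})" if fragment else ""


def enumerate_analogs(template, r1_options, r2_options):
    """Return one SMILES string per R1/R2 combination."""
    return [template.format(r1=branch(r1), r2=branch(r2))
            for r1, r2 in product(r1_options, r2_options)]


library = enumerate_analogs(FLAVONE_TEMPLATE, R1_OPTIONS, R2_OPTIONS)
print(len(library))
for smiles in library[:3]:
    print(smiles)
```

String templating keeps the example dependency-free. Reaction-based enumeration in a cheminformatics toolkit scales better when substituents attach through different atom types.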

Q5: What is a key validation step before docking a new series of analogs? A: The most critical step is redocking and cross-docking. If a crystal structure of the target with a known ligand (preferably similar to your series) exists:

- Extract the native ligand and re-dock it into the prepared receptor. A successful protocol should reproduce the experimental pose (low RMSD).

- Cross-dock other known ligands from different crystal structures into a single receptor structure to test the protocol's ability to handle slight variations in ligand structure [9].
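
The redocking RMSD check can be computed directly when the docked and crystallographic poses list the same atoms in the same order, usually heavy atoms only. A minimal sketch. No superposition is applied because both poses share the receptor's coordinate frame. This simple version does not match symmetry-equivalent atoms (for example, a flipped carboxylate), which can inflate the value. Symmetry-aware implementations exist in toolkits such as RDKit and Open Babel. The coordinates below are hypothetical.

```python
import math


def pose_rmsd(pose_a, pose_b):
    """RMSD (Å) between two poses of the same ligand with identical atom order.
    No superposition is applied and symmetry-equivalent atoms are NOT matched."""
    if len(pose_a) != len(pose_b) or not pose_a:
        raise ValueError("poses must contain the same, non-zero number of atoms")
    total = 0.0
    for (xa, ya, za), (xb, yb, zb) in zip(pose_a, pose_b):
        total += (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
    return math.sqrt(total / len(pose_a))


crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
docked = [(x + 0.3, y, z) for x, y, z in crystal]  # hypothetical pose shifted 0.3 Å in x
rmsd = pose_rmsd(crystal, docked)
print(f"RMSD = {rmsd:.2f} Å, {'pass' if rmsd < 2.0 else 'fail'} at the 2.0 Å criterion")
```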

Table 1: Performance Comparison of Multi-Objective Optimization Algorithms for Molecular Docking (Based on a benchmark of 11 HIV-protease complexes) [8].

| Algorithm | Key Strength | Notable Feature |
|---|---|---|
| NSGA-II | Good convergence & diversity | Widely used; many variants available |
| SMPSO | High convergence speed | Uses speed modulation in particle swarm |
| MOEA/D | Effective for many objectives | Decomposes problem into single-objective subproblems |
| SMS-EMOA | Excellent distribution of solutions | Selection based on dominated hypervolume |
| GDE3 | Robust performance | Based on differential evolution |

Table 2: Classification Criteria for "Similar Compounds" in Research Contexts.

| Category | Core Definition | Typical Variation | Utility in Docking Studies |
|---|---|---|---|
| Structural Analogs | Share a common functional core but may have significant structural differences. | Different ring systems, core scaffold modifications. | Explore bioisosteric replacements and scaffold hopping. |
| Derivatives | Direct chemical modifications of a parent compound (semi-synthetic). | Addition/removal of functional groups (e.g., -OH, -OCH₃, halogens). | Build quantitative Structure-Activity Relationships (QSAR). |
| Congeneric Series | A set of derivatives with systematic variations at specific positions. | Systematic changes at one or two defined sites (R-groups). | Ideal for computational SAR analysis and pharmacophore mapping. |

Experimental Protocols for Key Cited Studies

Protocol 1: Multi-Objective Docking for Binding Pose Optimization [8]

This protocol is implemented by integrating the jMetalCpp optimization framework with AutoDock 4.2.3.

- Receptor & Ligand Preparation: Prepare the target protein (e.g., HIV protease) and ligand files in PDBQT format, defining flexible torsions in the ligand and, optionally, in key receptor side chains.

- Grid Box Setup: Define a search space box centered on the binding site, ensuring it encompasses all relevant residues.

- Algorithm Configuration: Select a multi-objective algorithm (e.g., NSGA-II, SMPSO). Set objectives to minimize both intermolecular energy (Einter) and intramolecular energy (Eintra).

- Run Execution: Execute the docking run with a predefined number of function evaluations (e.g., 500,000). The output is a Pareto front of non-dominated solutions.

- Pose Analysis & Selection: Analyze the Pareto front. Solutions can be clustered, and representatives from the front's extremes and center can be selected for further analysis, rather than choosing a single "best" score.
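
The Pareto front described above can be filtered from any set of (Einter, Eintra) pairs with a plain dominance test, and representatives can then be picked from its two extremes and its middle. A minimal sketch, assuming both objectives are minimized. The pose IDs and energies are hypothetical. Frameworks such as jMetal maintain the front internally during optimization; this only shows the post-hoc selection logic.

```python
def dominates(a, b):
    """True if objective vector a dominates b (all <= and at least one <; minimization)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(solutions):
    """solutions: {pose_id: (E_inter, E_intra)}; return ids of non-dominated poses."""
    return [
        pid for pid, objs in solutions.items()
        if not any(dominates(other, objs) for oid, other in solutions.items() if oid != pid)
    ]


def representatives(solutions, front):
    """Pick both extremes of the front and a pose from its middle."""
    by_inter = sorted(front, key=lambda pid: solutions[pid][0])
    return {
        "best_inter": by_inter[0],
        "best_intra": min(front, key=lambda pid: solutions[pid][1]),
        "middle": by_inter[len(by_inter) // 2],
    }


poses = {  # hypothetical (E_inter, E_intra) values in kcal/mol
    "p1": (-12.0, 3.0), "p2": (-10.0, 1.0), "p3": (-11.0, 2.5),
    "p4": (-9.0, 2.0), "p5": (-12.0, 4.0),
}
front = pareto_front(poses)
print(front, representatives(poses, front))
```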

Protocol 2: Structure-Based 3D-QSAR Analysis of a Congeneric Series [9]

This protocol uses docking poses to align molecules for 3D-QSAR model generation.

- Dataset Curation: Collect a series of analogs with reported biological activity (e.g., Ki). Convert activities to pKi = -log10(Ki), with Ki expressed in mol/L.

- Molecular Docking: Dock all compounds into the target's binding site using a precise method (e.g., Glide XP). Ensure poses are consistent with a known pharmacophore or crystallographic reference ligand.

- Receptor-Based Alignment: Align all docked ligand poses based on the coordinates of the protein receptor, ensuring the common scaffold overlaps.

- 3D-QSAR Model Generation: Using the aligned poses, calculate interaction fields (e.g., steric, electrostatic) around the molecules. Use partial least squares (PLS) regression to correlate these fields with the biological activity (pKi).

- Model Validation: Validate the model using external test sets or robust cross-validation. The model contours can reveal regions where steric bulk or negative charge increases/decreases activity, guiding future analog design.
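
Two numerical steps surround the PLS fit itself: converting activities to pKi and scoring cross-validated predictions. The PLS regression is best left to a dedicated package. A minimal sketch, assuming Ki values are given in nM and that leave-one-out predictions have already been produced by the model. The pKi and prediction values are hypothetical.

```python
import math


def pki_from_ki(ki_nanomolar):
    """pKi = -log10(Ki in mol/L); the input is assumed to be in nM."""
    if ki_nanomolar <= 0:
        raise ValueError("Ki must be positive")
    return -math.log10(ki_nanomolar * 1e-9)


def q_squared(observed, predicted_loo):
    """Cross-validated q^2 = 1 - PRESS / SS, using leave-one-out predictions."""
    mean_obs = sum(observed) / len(observed)
    press = sum((o - p) ** 2 for o, p in zip(observed, predicted_loo))
    ss = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - press / ss


print(round(pki_from_ki(10.0), 2))  # 10 nM corresponds to pKi 8
observed = [6.0, 7.0, 8.0, 9.0]     # hypothetical pKi values
predicted = [6.2, 6.8, 8.1, 8.9]    # hypothetical leave-one-out predictions
print(round(q_squared(observed, predicted), 3))
```

A high q² on its own can still hide overfitting, which is why an external test set remains the stronger check.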

Visualizing Compound Relationships and Workflows

Diagram 1: Logical Flow for Defining and Using Similar Compounds

Diagram 2: Optimized Docking Workflow for Similar Compounds

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Resources for Docking Studies of Similar Compounds.

| Item Name | Category | Function in Research | Key Feature for Analog Studies |
|---|---|---|---|
| AutoDock Vina / AutoDock4 | Docking Software | Predicts ligand binding modes and affinities [7]. | Supports flexible ligand docking; AutoDock4 allows for side-chain flexibility [8]. |
| Glide (Schrödinger) | Docking Software | Performs high-precision flexible ligand docking and virtual screening [9]. | Extra-precision (XP) mode is useful for refining poses of closely related analogs [9]. |
| jMetal / jMetalCpp | Optimization Framework | Provides multi-objective optimization algorithms (NSGA-II, SMPSO, etc.) [8]. | Enables docking optimization against multiple energy objectives simultaneously [8]. |
| RDKit | Cheminformatics Toolkit | Handles chemical I/O, fingerprinting, and substructure searching. | Generate and enumerate virtual libraries of analogs from a core scaffold. |
| PyMOL / UCSF Chimera | Molecular Visualization | Visualizes 3D structures, docking poses, and interactions. | Superimpose and compare binding modes of multiple analogs to analyze interaction patterns. |
| Protein Data Bank (PDB) | Structural Database | Source of 3D atomic coordinates for target proteins. | Provides structures for ensemble docking; may contain structures with bound ligands similar to your series. |
| ZINC / PubChem | Compound Database | Source of purchasable compounds for virtual screening. | Allows substructure searches to find commercially available analogs of a lead compound. |

Technical Support Center: Optimizing Molecular Docking for Natural Compound Research

This technical support center is designed for researchers applying molecular docking in the discovery and optimization of bioactive natural compounds. Within the broader thesis that systematic in silico protocols can identify analogs with superior efficacy and safety profiles, this guide addresses common practical challenges [5].

Troubleshooting Guides & FAQs

FAQ 1: How can I ensure my docking results are reproducible and comparable across different studies or research groups?

- Answer: Reproducibility is a major challenge in computational studies. To standardize your workflow:

- Use Standardized Pipelines: Employ validated, open-source packages that automate and standardize preparation steps. For example, the dockstring package provides a robust protocol for ligand and target preparation, controlling sources of randomness (like random seeds) to minimize variance between runs [10].

- Start from Curated Data: When possible, begin your study with prepared protein structures from curated databases like the Directory of Useful Decoys Enhanced (DUD-E), which have been processed to improve correlation with experimental data [10].

- Document All Parameters: Exhaustively record every software parameter, including protonation states (e.g., pH 7.4), box center coordinates, dimensions, and exhaustiveness settings for stochastic algorithms.
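
One lightweight way to document every parameter is to serialize all settings to JSON with sorted keys and store a hash of that text alongside the results. Two runs can then be compared at a glance. A sketch using only the Python standard library; the parameter names and values are placeholders, not a required schema.

```python
import hashlib
import json


def run_manifest(params):
    """Serialize parameters deterministically; return (json_text, sha256_hex)."""
    text = json.dumps(params, sort_keys=True, indent=2)
    return text, hashlib.sha256(text.encode("utf-8")).hexdigest()


params = {
    "docking_program": "AutoDock Vina",
    "program_version": "record the exact version used",
    "protonation_pH": 7.4,
    "box_center": [12.0, 6.0, 0.0],
    "box_size": [22.0, 22.0, 22.0],
    "exhaustiveness": 16,
    "seed": 42,
}
text, digest = run_manifest(params)
print(text)
print("sha256:", digest)
```

Because keys are sorted before hashing, the same settings always produce the same digest regardless of the order in which they were entered.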

FAQ 2: During virtual screening of a natural product library, most compounds show poor docking scores. Should I conclude the library is inactive?

- Answer: Not necessarily. Poor initial scores often relate to preparation issues rather than true inactivity.

- Check Ligand Preparation: Ensure the ligand's 3D conformation is realistic and its protonation state is correct for physiological pH. Unrealistic starting conformations or incorrect charges can prevent proper binding [10].

- Verify the Binding Site: Confirm your defined search space (the docking "box") fully encompasses the known active site and adjacent allosteric pockets. An incorrectly placed box will yield non-meaningful scores.

- Consider Scaffold Expansion: Instead of discarding the library, use the core scaffolds of moderately scoring compounds for analog generation. As demonstrated in SARS-CoV-2 protease research, structurally related analogs of natural scaffolds (e.g., gingerol or shogaol derivatives) can achieve significantly better binding affinities (e.g., -9.34 kcal/mol for a shogaol analog vs. PLpro) than their parent compounds [5].
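
The binding-site check above can be automated by testing whether every atom of the known active-site residues falls inside the docking box. A minimal sketch, assuming the site-atom coordinates have already been extracted from the structure file and that the box is axis-aligned, as in Vina. The coordinates and the optional inward margin are hypothetical.

```python
def atoms_outside_box(atom_coords, center, size, margin=0.0):
    """Indices of atoms outside an axis-aligned box (all values in Å).
    `margin` requires atoms to sit at least that far inside each face."""
    outside = []
    for index, xyz in enumerate(atom_coords):
        for c, s, v in zip(center, size, xyz):
            if abs(v - c) > s / 2 - margin:
                outside.append(index)
                break
    return outside


site_atoms = [(5.0, 5.0, 5.0), (11.0, 0.0, 0.0), (-9.5, 2.0, 0.0)]  # hypothetical site atoms
print(atoms_outside_box(site_atoms, (0.0, 0.0, 0.0), (20.0, 20.0, 20.0)))
print(atoms_outside_box(site_atoms, (0.0, 0.0, 0.0), (20.0, 20.0, 20.0), margin=1.0))
```

A non-empty result means part of the site lies outside the search space, so poor scores may reflect the box rather than the compounds.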

FAQ 3: My top-docking compound has an excellent score, but ADMET predictions show high toxicity risk. How should I proceed?

- Answer: This is a critical step in prioritizing leads. An excellent docking score alone is insufficient.

- Integrate Multi-Parameter Optimization: Do not rely on a single scoring function. Use the docking score as one filter among many. Immediately after docking, perform in silico ADMET profiling (absorption, distribution, metabolism, excretion, toxicity) to flag compounds with poor pharmacokinetics or high toxicity risks [5].

- Analyze the Binding Pose: Examine why the score is good. If it is driven by physically unrealistic contacts or by a pose that clashes with the protein, discard the compound. Focus on compounds with networks of specific, consensus interactions (e.g., hydrogen bonds with key catalytic residues).

- Explore the Analogs: Use the high-scoring but toxic compound as a structural template. Search databases like ChEMBL for similar analogs with minor modifications that may retain binding but have improved predicted safety profiles, applying a true multi-objective optimization strategy [10] [5].
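One way to make the multi-objective step concrete is to keep only compounds that are not dominated on both criteria at once, meaning no other compound has both a better docking score and a lower predicted toxicity risk (a Pareto front). The sketch assumes each compound carries a docking score (kcal/mol, more negative is better) and a hypothetical toxicity probability between 0 and 1 taken from whatever ADMET tool you use. The IDs and values are made up.

```python
from typing import Dict, List


def dominates(a: Dict, b: Dict) -> bool:
    """True if `a` is no worse than `b` on both objectives and strictly better on one."""
    no_worse = a["score"] <= b["score"] and a["tox"] <= b["tox"]
    better = a["score"] < b["score"] or a["tox"] < b["tox"]
    return no_worse and better


def pareto_front(compounds: List[Dict]) -> List[Dict]:
    """Return compounds not dominated by any other (lower score and lower tox are better)."""
    return [c for c in compounds
            if not any(dominates(o, c) for o in compounds if o is not c)]


candidates = [
    {"id": "A", "score": -9.3, "tox": 0.80},  # best binder, high toxicity risk
    {"id": "B", "score": -8.5, "tox": 0.20},
    {"id": "C", "score": -8.0, "tox": 0.50},  # dominated by B
    {"id": "D", "score": -7.0, "tox": 0.10},
]
print([c["id"] for c in pareto_front(candidates)])
```

The potent but toxic compound A stays on the front, which fits the advice above to keep it as a template for analog searching rather than as a lead in its own right.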

FAQ 4: How can I move from a single-target docking result to a credible hypothesis about enhanced bioactivity in a cellular or physiological context?

- Answer: To bridge the gap between computational prediction and expected bioactivity:

- Perform Pathway Analysis: Use the chemical structure of your top-ranked compound(s) as input for gene expression prediction tools (e.g., DIGEP-Pred). This can predict whether the compound is likely to influence biological pathways relevant to the disease pathology, such as inflammation or oxidative stress in COVID-19 [5].

- Conduct Multi-Target Docking: Bioactivity often involves polypharmacology. Dock your promising compound against a panel of related targets (e.g., other kinases in a family) or off-targets linked to adverse effects (e.g., CYP450 enzymes) to predict selectivity and potential side effects [10] [11].

- Validate Experimentally: The final, essential step is to prioritize in silico hits for in vitro validation. This includes binding affinity assays (e.g., SPR, FRET) and functional cellular assays to confirm the predicted biological effect.
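For the multi-target panel, a simple summary is the gap between the compound's score on the intended target and its score on each off-target. This is a rough sketch: docking scores from different proteins are not strictly comparable, so a small gap is best read as "no predicted selectivity" rather than as a quantitative result. The protein names, scores, and the 1.0 kcal/mol minimum gap are illustrative assumptions.

```python
from typing import Dict, List


def selectivity_gaps(scores: Dict[str, float], target: str) -> Dict[str, float]:
    """Off-target score minus target score (kcal/mol); positive means the target scores better."""
    on_target = scores[target]
    return {p: round(s - on_target, 2) for p, s in scores.items() if p != target}


def close_off_targets(scores: Dict[str, float], target: str,
                      min_gap: float = 1.0) -> List[str]:
    """List off-targets whose score comes within `min_gap` kcal/mol of the target's."""
    return sorted(p for p, gap in selectivity_gaps(scores, target).items() if gap < min_gap)


panel = {"PLpro": -9.3, "CYP3A4": -9.0, "CYP2D6": -6.5}
print(selectivity_gaps(panel, "PLpro"))
print(close_off_targets(panel, "PLpro"))
```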

FAQ 5: What are the most common pitfalls that lead to a failure of docking hits in subsequent molecular dynamics (MD) simulations or experimental validation?

- Answer: Failures often originate in the docking stage itself.

- Ignoring Protein Flexibility: Traditional docking often uses a rigid protein. If your ligand induces side-chain or backbone movements, the static pose may be unstable. Consider using induced-fit docking protocols or short MD refinements of the top poses [11].

- Over-Reliance on Scoring Functions: Scoring functions are approximations and can be inaccurate. They may favor poses with excessive hydrophobic contacts or penalize correct polar interactions. Visually inspect the chemical reasonableness of every top pose [11].

- Poor Selection of Compound Library: Screening libraries with inappropriate chemical space (e.g., non-druglike molecules) yields useless hits. Always pre-filter libraries for drug-likeness (e.g., Lipinski's Rule of Five) and synthetic feasibility before docking [5].

The following table summarizes quantitative data from relevant studies that illustrate the application and outcomes of optimized molecular docking protocols.

Table 1: Summary of Key Molecular Docking Studies and Datasets

| Study / Resource | Key Objective | Scale & Data | Primary Outcome/Utility |
|---|---|---|---|
| DOCKSTRING Bundle [10] | Standardized benchmarking for ML & docking. | 260,000+ molecules docked against 58 targets (>15M scores). | Provides reproducible dataset & pipeline for virtual screening & multi-objective optimization. |
| SARS-CoV-2 Protease Screening [5] | Identify optimized natural analogs against viral proteases. | 600+ candidate analogs screened via docking & ADMET. | Identified lead analogs (e.g., CHEMBL1720210) with strong binding (PLpro: -9.34 kcal/mol) and favorable drug-likeness. |
| Breast Cancer Therapeutics Review [11] | Apply docking/MD to target discovery (e.g., ERα, HER2). | Analysis of studies targeting key breast cancer proteins. | Highlights docking's role in understanding resistance and designing selective inhibitors. |

Detailed Experimental Protocol: Virtual Screening of Natural Compound Analogs

This protocol outlines the steps for expanding a bioactive natural product scaffold into a set of analogs and virtually screening them against a target, as demonstrated in recent research [5].

Step 1: Analog Identification & Library Curation

- Input: Select a parent natural compound with documented bioactivity but suboptimal potency or ADMET properties.

- Process: Use a chemical database (e.g., ChEMBL, PubChem) and similarity search algorithms (e.g., Tanimoto similarity on molecular fingerprints) to retrieve structurally related analogs.

- Output: A curated library of 500-1000 analogs. Filter this library using rules for drug-likeness (e.g., molecular weight <500, LogP <5) and remove pan-assay interference compounds (PAINS).
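The similarity search in Step 1 rests on the Tanimoto coefficient: the number of fingerprint bits two molecules share, divided by the number of bits set in either. In practice the fingerprints would come from a cheminformatics toolkit (for example, RDKit Morgan fingerprints compared with `DataStructs.TanimotoSimilarity`). The stdlib sketch below assumes the fingerprints are already available as sets of on-bit indices. The compound IDs and bit sets are made up.

```python
from typing import Dict, FrozenSet, List, Tuple


def tanimoto(a: FrozenSet[int], b: FrozenSet[int]) -> float:
    """Tanimoto coefficient |A ∩ B| / |A ∪ B| for fingerprints stored as on-bit sets."""
    shared = len(a & b)
    union = len(a) + len(b) - shared
    return shared / union if union else 0.0


def similarity_search(query: FrozenSet[int], library: Dict[str, FrozenSet[int]],
                      threshold: float = 0.85) -> List[Tuple[str, float]]:
    """Return (compound_id, similarity) pairs at or above `threshold`, most similar first."""
    hits = [(cid, tanimoto(query, fp)) for cid, fp in library.items()]
    hits = [h for h in hits if h[1] >= threshold]
    return sorted(hits, key=lambda h: (-h[1], h[0]))


parent = frozenset({1, 2, 3, 4})  # fingerprint of the parent natural product
library = {
    "analog_a": frozenset({1, 2, 3, 4}),
    "analog_b": frozenset({1, 2, 3, 4, 5}),
    "analog_c": frozenset({9, 10}),
}
print(similarity_search(parent, library, threshold=0.75))
```

The default threshold of 0.85 matches the cutoff used in one of the featured protocols; lowering it widens the analog set at the cost of structural closeness to the parent.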

Step 2: Target & Ligand Preparation

- Protein Target: Obtain a high-resolution crystal structure (e.g., from DUD-E or PDB). Prepare the protein by adding polar hydrogens, assigning charges (e.g., using AutoDock Tools), and defining the binding site grid [10].

- Ligands: Generate 3D conformations for each analog. Ensure correct protonation states at physiological pH (e.g., pH 7.4) using tools like Open Babel or MOE [10] [12].

Step 3: Automated Molecular Docking

- Execution: Use a standardized docking wrapper (e.g., dockstring, which uses AutoDock Vina) to dock every prepared analog into the target's binding site [10].

- Parameters: Set a search space that encompasses the entire binding pocket. Use a consistent, high exhaustiveness value to ensure thorough sampling.

Step 4: Post-Docking Analysis & Prioritization

- Primary Filter: Rank compounds by docking score (estimated binding affinity in kcal/mol).

- Pose Inspection: Visually examine the top 50-100 poses. Prioritize compounds forming key interactions (e.g., hydrogen bonds with catalytic residues, optimal hydrophobic contacts).

- Secondary Profiling: Subject the top 20-30 compounds to in silico ADMET and toxicity prediction. Use gene expression perturbation tools to hypothesize on broader bioactivity [5].
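The ranking funnel in Step 4 reduces to a few lines of bookkeeping. The sketch below assumes a dictionary of best docking scores per compound (kcal/mol, more negative is better). The optional cutoff mirrors the ≤ -8.0 kcal/mol threshold used in one of the featured studies, and the tier sizes follow the ranges above. In a real campaign the ADMET tier would be chosen after visual inspection, not simply by score; the compound IDs are hypothetical.

```python
from typing import Dict, List, Optional, Tuple


def triage(scores: Dict[str, float], n_pose: int = 100, n_admet: int = 30,
           cutoff: Optional[float] = None) -> Tuple[List[str], List[str]]:
    """Return (pose-inspection tier, ADMET tier) compound IDs ranked by docking score."""
    # Best (most negative) first; ties broken by ID so the output is reproducible.
    ranked = sorted(scores.items(), key=lambda kv: (kv[1], kv[0]))
    if cutoff is not None:
        ranked = [(cid, s) for cid, s in ranked if s <= cutoff]
    pose_tier = [cid for cid, _ in ranked[:n_pose]]
    # Placeholder: the ADMET tier is simply the top of the pose tier here.
    admet_tier = pose_tier[:n_admet]
    return pose_tier, admet_tier


scores = {"cmpd1": -9.34, "cmpd2": -7.1, "cmpd3": -8.04, "cmpd4": -8.04, "cmpd5": -6.2}
print(triage(scores, n_pose=3, n_admet=2, cutoff=-8.0))
```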

Step 5: Experimental Triaging

- Output: A final shortlist of 3-5 lead analogs that combine strong predicted binding, favorable interactions, and acceptable ADMET profiles for in vitro testing.

Visualization of Workflows & Relationships

Virtual Screening & Optimization Workflow for Natural Compound Analogs

Decision Logic for Analyzing Molecular Docking Poses

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Computational Tools & Resources for Molecular Docking Studies

| Tool/Resource Name | Category | Primary Function in Research | Key Benefit for Natural Products |
|---|---|---|---|
| DOCKSTRING [10] | Python Package & Dataset | Standardized computation of docking scores and access to a massive pre-computed dataset (58 targets). | Enables reproducible benchmarking and screening against pharmaceutically relevant targets. |
| AutoDock Vina [10] | Docking Engine | Predicts ligand poses and binding affinities. | Fast, widely used core algorithm integrated into pipelines like dockstring. |
| ChEMBL Database [5] | Chemical Database | Repository of bioactive molecules with curated properties. | Source for finding structurally related analogs of natural product scaffolds. |
| Molecular Operating Environment (MOE) [12] | Software Suite | Integrated platform for structure preparation, visualization, docking, and molecular modeling. | Provides comprehensive tools for interactive analysis of docking poses and interactions. |
| Directory of Useful Decoys Enhanced (DUD-E) [10] | Benchmarking Database | Curated set of protein structures and ligands for validating docking protocols. | Provides high-quality, prepared protein structures to start a project. |
| ADMET Prediction Tools (e.g., pkCSM, SwissADME) | In Silico Profiling | Predicts pharmacokinetic and toxicity properties from chemical structure. | Critical for filtering out docking hits with poor predicted safety or drug-likeness [5]. |

This technical support center is framed within a broader research thesis aimed at optimizing molecular docking and integrated computational workflows for the discovery of bioactive natural compounds. The case studies presented focus on analogs derived from Zingiber officinale (ginger) and Allium sativum (garlic), which have shown promising in silico potential as inhibitors of key SARS-CoV-2 viral proteases [13] [5]. These success stories exemplify how advanced computational strategies—moving beyond simple docking to include covalent docking, molecular dynamics (MD) simulations, and multi-criteria pharmacological profiling—can efficiently prioritize candidates for experimental validation [14] [15]. The guidance provided here addresses common technical challenges in replicating and building upon these studies, facilitating robust and reproducible research in natural product-based drug discovery.

Technical Support Center

Troubleshooting Common Experimental Issues

Researchers often encounter specific issues when performing computational studies on natural compound analogs. The following table diagnoses common problems and provides evidence-based solutions derived from recent successful studies.

| Problem Category | Specific Issue | Possible Cause | Recommended Solution | Reference Methodology |
|---|---|---|---|---|
| Molecular Docking | Unrealistically favorable binding scores (e.g., below -12 kcal/mol) for all compounds. | Docking grid box is too large or incorrectly centered, allowing unrealistic ligand poses outside the binding pocket. | Center the grid precisely on key catalytic residues (e.g., Cys145 for Mpro) and use a box size just large enough to accommodate ligand flexibility (e.g., 25-40 ų) [14]. | Grid centered at coordinates (x = -20.111, y = -11.153, z = 2.684) for Mpro with 40 ų box [14]. |
| | Inconsistent or poor binding poses for covalent inhibitors. | Using standard docking for covalent ligands without accounting for bond formation. | Employ covalent docking protocols (e.g., in AutoDock4.2.6) that define the reactive residue (CYS145) and warhead (e.g., nitrile group) [14]. | Reversible covalent docking of nirmatrelvir analogs against SARS-CoV-2 Mpro [14]. |
| Molecular Dynamics (MD) | System instability: rapid increase in RMSD or simulation crash. | Inadequate system equilibration, incorrect water model, or missing counterions for neutralization. | Perform multi-step minimization and equilibration (NVT then NPT). Use TIP3P water model and add Na⁺/Cl⁻ ions to achieve physiological concentration (0.15 M) [14]. | Systems solvated in TIP3P water, neutralized, and salted to 0.15 M NaCl before 100 ns production MD [14]. |
| | Ligand parameterization errors during MD setup. | Using generic force fields without deriving specific parameters for novel covalent adducts. | For covalent complexes, optimize the capped residue-ligand adduct (e.g., CYS145-analog) at the DFT level (B3LYP/6-31G*) and derive RESP charges [14]. | GAFF2 for ligands, AMBER14SB for protein, with RESP charges for covalent adducts [14]. |
| Pharmacological Profiling | Promising docking hits fail ADMET filters or show toxicity. | Over-reliance on binding affinity without early-stage integrated pharmacokinetic assessment. | Integrate ADMET prediction early in the workflow. Use rules like Lipinski's Rule of Five and predict off-target effects using dedicated tools [13] [5]. | Multi-criteria optimization included drug-likeness, GI absorption, and CYP inhibition profiles for top analogs [13] [5]. |
| Data & Visualization | Published visualizations are misleading or inaccessible to color-blind readers. | Use of non-uniform, rainbow-like color palettes that distort data gradients [16]. | Adopt perceptually uniform color maps (e.g., viridis, cividis) for molecular surfaces and data plots. Use tools to check for color vision deficiency (CVD) accessibility [16] [17]. | Guidelines for scientific use of color to prevent data distortion and ensure universal readability [16]. |
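The 0.15 M salt target in the MD row translates into an ion count through N = c · V · N_A, with V in liters (1 Å³ = 10⁻²⁷ L). The sketch below uses the whole box volume; MD setup tools implement their own variants (some base the count on the solvent volume or on the number of water molecules), so treat the result as a sanity check on what your tool reports. The box size and the protein's net charge are illustrative.

```python
AVOGADRO = 6.02214076e23   # mol^-1
LITERS_PER_A3 = 1e-27      # 1 Å^3 = 1e-30 m^3 = 1e-27 L


def salt_pairs(box_volume_a3: float, conc_molar: float = 0.15) -> int:
    """Number of Na+/Cl- pairs giving `conc_molar` in a box of the given volume (Å^3)."""
    return round(conc_molar * box_volume_a3 * LITERS_PER_A3 * AVOGADRO)


def ions_to_add(net_charge: int, box_volume_a3: float, conc_molar: float = 0.15):
    """Return (n_Na, n_Cl): salt pairs plus the counterions that neutralize the solute."""
    pairs = salt_pairs(box_volume_a3, conc_molar)
    n_na = pairs + max(0, -net_charge)  # extra Na+ for a negatively charged solute
    n_cl = pairs + max(0, net_charge)   # extra Cl- for a positively charged solute
    return n_na, n_cl


box_volume = 100.0 ** 3  # hypothetical 100 Å cubic box
print(salt_pairs(box_volume))
print(ions_to_add(-4, box_volume))
```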

Frequently Asked Questions (FAQs)

Q1: Why focus on ginger and garlic analogs for antiviral protease inhibition? A1: Ginger and garlic contain foundational phytochemical scaffolds (e.g., gingerols, shogaols, organosulfur compounds) with documented anti-inflammatory and antioxidant properties, which are relevant to managing viral disease pathology [13] [5]. Computational studies have shown that analogs built upon these scaffolds can exhibit enhanced binding affinity to viral proteases like SARS-CoV-2 PLpro and 3CLpro compared to their parent compounds, making them excellent starting points for optimized drug design [13] [5] [18].

Q2: What are the key advantages of using covalent docking for protease inhibitors? A2: Covalent inhibitors can form stable, often reversible, covalent bonds with catalytic cysteine residues (e.g., CYS145 in Mpro), which prolongs inhibition and can confer high potency [14]. Covalent docking explicitly models this bond formation, providing more accurate binding modes and energies for such inhibitors than standard docking, which accounts only for non-covalent interactions [14].

Q3: How long should molecular dynamics simulations be to ensure reliable results? A3: While simulation time depends on the system, studies on protease-inhibitor complexes suggest that 100 ns simulations are often sufficient to assess complex stability, calculate robust binding free energies via MM-GBSA, and capture key conformational dynamics [14]. Time-dependent stability metrics such as protein and ligand RMSD typically plateau well before this point [15]; per-residue RMSF is then computed over the equilibrated part of the trajectory.
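Whether the RMSD has actually plateaued can be checked rather than judged by eye. The sketch below is one simple heuristic, not a standard convergence test: it averages RMSD within fixed time windows and reports the start of the final run of windows whose means differ by less than a tolerance. The 10 ns window and 0.3 Å tolerance are assumptions to tune per system; the input is a list of frame times (ns) and RMSD values (Å), and the trajectories below are synthetic.

```python
from statistics import mean
from typing import Dict, List, Optional, Sequence


def plateau_start(times_ns: Sequence[float], rmsd_a: Sequence[float],
                  window_ns: float = 10.0, tol_a: float = 0.3) -> Optional[float]:
    """Start time (ns) of the final run of windows whose mean RMSD changes by < tol_a."""
    windows: Dict[int, List[float]] = {}
    for t, r in zip(times_ns, rmsd_a):
        windows.setdefault(int(t // window_ns), []).append(r)
    keys = sorted(windows)
    means = [mean(windows[k]) for k in keys]
    if len(means) < 3:
        return None  # too short to judge
    start = None
    # Walk backwards from the end while consecutive window means stay within tolerance.
    for i in range(len(means) - 1, 0, -1):
        if abs(means[i] - means[i - 1]) < tol_a:
            start = keys[i - 1]
        else:
            break
    return None if start is None else start * window_ns


times = [float(t) for t in range(100)]           # 100 ns, one frame per ns
settling = [min(t / 15.0, 2.0) for t in times]  # rises, then flat at 2 Å
drifting = [0.05 * t for t in times]             # keeps increasing
print(plateau_start(times, settling))  # 30.0
print(plateau_start(times, drifting))  # None
```

A drifting trajectory returns `None`, which flags the need for longer sampling or a closer look at the starting pose.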

Q4: How can I validate my computational workflow before screening a large library? A4: Always begin with a positive control. Re-dock a known co-crystallized inhibitor (e.g., nirmatrelvir for Mpro) and ensure your protocol can reproduce the experimental binding pose within an acceptable RMSD (typically < 2.0 Å). Additionally, use a negative control (a known non-binder) to verify your scoring function can differentiate between binders and non-binders [14] [15].

Q5: What makes a natural compound analog a promising lead candidate beyond good binding affinity? A5: A promising lead requires a multi-parameter optimization. Beyond strong binding (ΔG), candidates should exhibit favorable drug-likeness (adhering to rules like Lipinski's), desirable ADMET properties (good absorption, low toxicity), and structural stability in MD simulations [13] [5]. Some analogs may also show predicted immunomodulatory effects, adding therapeutic value [13].

Detailed Experimental Protocols from Featured Studies

This protocol details the workflow for discovering ginger/garlic-derived analogs with dual inhibitory potential.

- Analog Library Curation: Using the ChEMBL database, perform a similarity search (e.g., Tanimoto coefficient > 0.85) using known antiviral phytochemicals (e.g., 6-gingerol, ajoene) as query structures. Retrieve over 600 structurally related analogs.

- Molecular Docking:

- Prepare protein structures (SARS-CoV-2 3CLpro and PLpro) by removing water, adding hydrogens, and assigning charges.

- Perform automated docking (e.g., using AutoDock Vina) for all analogs against both protease targets.

- Select top hits based on docking score (e.g., ≤ -8.0 kcal/mol) and visual inspection of interactions with catalytic residues.

- Interaction Analysis: For top-scoring complexes, analyze hydrogen bonds and hydrophobic interactions with key binding pocket residues (e.g., GLY163 and TYR268 for PLpro).

- ADMET & Drug-likeness Profiling: Predict pharmacokinetic properties (absorption, distribution, metabolism, excretion, toxicity) using tools like SwissADME or pkCSM. Filter compounds that violate more than one Lipinski rule or show high predicted toxicity.

- Gene Expression Prediction: Use the DIGEP-Pred tool to predict if the lead compounds might influence host pathways relevant to COVID-19 (e.g., inflammation, oxidative stress).
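The drug-likeness filter in this protocol (drop compounds with more than one Lipinski violation or a high predicted toxicity) is easy to encode once descriptors are computed. The sketch assumes the four Rule-of-Five properties have already been calculated (for example with RDKit's `Descriptors.MolWt`, `Crippen.MolLogP`, `Lipinski.NumHDonors`, and `Lipinski.NumHAcceptors`) and that the ADMET tool's output has been reduced to a boolean toxicity flag. The dictionary keys and values are hypothetical.

```python
from typing import Any, Dict, List

# Lipinski's Rule of Five limits; exceeding a limit counts as one violation.
RULE_OF_FIVE = {"mw": 500.0, "logp": 5.0, "hbd": 5, "hba": 10}


def lipinski_violations(props: Dict[str, Any]) -> int:
    """Count how many Rule-of-Five limits a compound exceeds."""
    return sum(1 for key, limit in RULE_OF_FIVE.items() if props[key] > limit)


def passes_filter(props: Dict[str, Any], max_violations: int = 1) -> bool:
    """Keep compounds with at most `max_violations` violations and no toxicity flag."""
    return (lipinski_violations(props) <= max_violations
            and not props.get("tox_flag", False))


hits: List[Dict[str, Any]] = [
    {"id": "hit1", "mw": 520.0, "logp": 4.1, "hbd": 2, "hba": 7},  # 1 violation: kept
    {"id": "hit2", "mw": 540.0, "logp": 5.6, "hbd": 3, "hba": 8},  # 2 violations: removed
    {"id": "hit3", "mw": 410.0, "logp": 3.2, "hbd": 1, "hba": 6, "tox_flag": True},
]
print([h["id"] for h in hits if passes_filter(h)])
```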

This protocol is for evaluating covalent inhibitors, such as nitrile-based analogs targeting the Mpro catalytic cysteine.

- System Preparation:

- Enzyme: Use a high-resolution Mpro crystal structure (e.g., PDB: 7VLP). Remove water and co-crystallized ligands, add hydrogens, and optimize protonation states at pH 7.

- Ligands: Obtain 3D structures of covalent analog libraries (e.g., from PubChem). Perform energy minimization using the MMFF94S force field.

- Covalent Docking:

- Use AutoDock4.2.6 with a defined covalent map for the reactive atom (e.g., nitrile carbon on the ligand and sulfur on CYS145).

- Set a grid box of 40 ų centered on the catalytic site. Perform multiple genetic algorithm (GA) runs (e.g., 250) for thorough conformational sampling.

- Molecular Dynamics Simulations:

- Parameterize the covalent protein-ligand complex using GAFF2 for the ligand and AMBER14SB for the protein.

- Solvate the system in a TIP3P water box, add ions, and minimize energy.

- Gradually heat the system to 310 K, equilibrate, and then run a 100 ns production simulation under NPT conditions.

- Binding Free Energy Calculation:

- Use the MM-GBSA method on frames extracted from the stable trajectory phase (e.g., last 50 ns).

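- Calculate ΔGbinding for each analog. Compounds with a more negative ΔG than the reference inhibitor (e.g., nirmatrelvir at -40.7 kcal/mol) are prioritized as potentially stronger binders; because MM-GBSA energies are approximate, such comparisons are most meaningful within a single, consistent protocol.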
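The per-frame MM-GBSA energies still need to be summarized before comparison with the reference. The sketch below averages the frames in the chosen window (the last 50 ns here) and reports a standard error. Because consecutive MD frames are correlated, this naive standard error underestimates the true uncertainty, so the `margin` argument is an assumed safety buffer rather than a statistical test. Frame times and energies are synthetic; -40.7 kcal/mol is the nirmatrelvir reference quoted above.

```python
import math
from statistics import mean, stdev
from typing import Sequence, Tuple


def summarize_dg(times_ns: Sequence[float], dg_kcal: Sequence[float],
                 start_ns: float = 50.0) -> Tuple[float, float]:
    """Mean and naive standard error of per-frame ΔG for frames at or after start_ns."""
    window = [dg for t, dg in zip(times_ns, dg_kcal) if t >= start_ns]
    if len(window) < 2:
        raise ValueError("need at least two frames in the analysis window")
    return mean(window), stdev(window) / math.sqrt(len(window))


def beats_reference(mean_dg: float, sem: float, reference: float = -40.7,
                    margin: float = 1.0) -> bool:
    """True if mean ΔG is more negative than the reference by more than margin + 2*SEM."""
    return mean_dg < reference - margin - 2.0 * sem


times = [float(t) for t in range(100)]
dg = [-30.0 if t < 50 else (-46.0 if t % 2 else -44.0) for t in range(100)]
m, sem = summarize_dg(times, dg)
print(round(m, 2), round(sem, 3), beats_reference(m, sem))
```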

Performance of Top Ginger and Garlic Analogs Against SARS-CoV-2 Proteases

The following table summarizes the most promising analogs identified in recent computational studies, highlighting their enhanced binding over parent compounds.

| Analog ID (Source) | Target Protease | Docking Score (kcal/mol) | Key Interacting Residues | MM-GBSA ΔG (kcal/mol) | ADMET Profile Highlights | Ref. |
|---|---|---|---|---|---|---|
| CHEMBL1720210 (Shogaol-derived) | PLpro | -9.34 | H-bonds: GLY163, LEU162, GLN269, TYR265, TYR273. Hydrophobic: TYR268, PRO248. | N/A | Favorable drug-likeness, predicted immunomodulatory potential. | [13] [5] |
| CHEMBL1495225 (6-Gingerol derivative) | 3CLpro | -8.04 | H-bonds: ASP197, ARG131, TYR239, LEU272, GLY195. Hydrophobic: LEU287. | N/A | Good oral bioavailability, no major toxicity alerts. | [13] [5] |
| PubChem-162-396-453 (Nirmatrelvir analog) | Mpro (Covalent) | Lower than -13.3 | Covalent bond with CYS145, supplemented by multiple non-covalent contacts. | -49.7 | Desirable oral bioavailability, compliant with drug-likeness rules. | [14] |
| L17 (Garlic TL extract) [18] | Spike RBD (ACE2 interface) | -7.5 to -6.9 | Binds at the RBD-ACE2 interface, blocking interaction. | N/A | High GI absorption, BBB permeable, compliant with drug-likeness. | [18] |

Comparison of Computational Methodologies and Outcomes

This table contrasts the methods used in different studies, providing a guide for selecting an appropriate research pipeline.

| Study Focus | Primary Method | Simulation Time | Binding Validation Method | Key Advantage | Identified Leads |
|---|---|---|---|---|---|
| Covalent Mpro Inhibitors [14] | Covalent Docking + MD | 100 ns | MM-GBSA ΔG Calculation | Accurate modeling of covalent bond formation; rigorous energy validation. | Three PubChem analogs with ΔG < -44.9 kcal/mol. |
| Multi-Target Natural Analogs [13] [5] | Virtual Screening + Multi-parameter Optimization | Not Applied | Docking Score + ADMET | Holistic evaluation against two targets with integrated safety profiling. | CHEMBL1720210 (PLpro) & CHEMBL1495225 (3CLpro). |
| PLpro Binder Prediction [15] | MD + Docking + Machine Learning | Long-timescale MD | Random Forest Classifier (76.4% accuracy) | Captures protein flexibility and uses ML for efficient screening of drug libraries. | Five repurposed FDA-approved drug candidates. |

The Scientist's Toolkit: Key Research Reagent Solutions

| Tool / Reagent Category | Specific Item / Software | Primary Function in Research | Key Consideration / Application |
|---|---|---|---|
| Protein Structure Database | Protein Data Bank (PDB) | Source of high-resolution 3D structures of target proteases (e.g., PDB: 7VLP for Mpro). | Select structures solved with covalent inhibitors for covalent docking studies [14]. |
| Compound Database | PubChem, ChEMBL | Repository of small molecules for retrieving analogs of natural product scaffolds. | Use similarity search tools to build focused analog libraries from ginger/garlic phytochemicals [14] [13]. |
| Covalent Docking Software | AutoDock4.2.6 | Predicts binding mode and energy for ligands that form reversible covalent bonds with the target. | Essential for screening nitrile-based or other electrophilic warheads targeting catalytic cysteines [14]. |
| Molecular Dynamics Suite | AMBER20, GROMACS | Simulates the physical movement of atoms in the protein-ligand complex over time to assess stability. | Used for 100 ns simulations to validate docking poses and calculate binding free energies via MM-GBSA [14] [15]. |
| Pharmacokinetics Predictor | SwissADME, pkCSM | Predicts ADMET properties and drug-likeness of hit compounds in silico. | Critical filter applied after docking to prioritize leads with a higher chance of in vivo success [13] [5]. |
| Visualization & Color Palette | PyMOL, SAMSON, Matplotlib | Visualizes molecular interactions and creates publication-quality figures. | Use perceptually uniform color maps (e.g., viridis) for surfaces and plots to ensure accurate, accessible data representation [16] [17] [19]. |

Visual Workflows and Mechanisms

Integrated Computational Workflow for Natural Analog Discovery

The diagram below outlines the multi-step in silico pipeline for discovering and optimizing natural compound analogs, integrating methodologies from the featured studies [14] [13] [5].

Diagram Title: Integrated In Silico Pipeline for Natural Analog Lead Prioritization.

Mechanism of Viral Protease Inhibition by Key Analogs

This diagram illustrates the proposed dual mechanism of action for successful analogs, combining direct protease inhibition with potential host immunomodulation [13] [5] [18].

Diagram Title: Dual Mechanism of Action for Ginger and Garlic Analogs Against SARS-CoV-2.

Current Trends and Databases for Sourcing and Curating Natural Product Analog Libraries

Technical Support Center: FAQs & Troubleshooting

This technical support center addresses common challenges researchers face when sourcing natural product (NP) analogs and applying them in molecular docking studies for drug discovery. The guidance is framed within the context of a thesis focused on optimizing docking protocols for similar natural compounds.

Section 1: Database Sourcing and Curation

FAQ 1.1: Which databases provide the most comprehensive and chemically diverse sets of natural products for building analog libraries?

- Problem: A researcher finds that their initial screening library lacks chemical diversity, leading to repetitive hits with similar scaffolds.

- Solution & Explanation: Prioritize large-scale, open-access databases that offer broad chemical space coverage. The chemical diversity of your starting library is critical for exploring novel bioactive scaffolds. Key databases include:

- COCONUT (Collection of Open Natural Products): This is one of the largest open resources, containing over 695,133 unique natural products [20]. Its size makes it an excellent starting point for comprehensive virtual screening campaigns.

- LANaPDB (Latin America Natural Product Database): This database provides a curated collection of 13,578 unique natural products specifically from Latin America, offering region-specific chemical diversity that can complement broader libraries [20].

- SuperNatural 3.0: This database of hundreds of thousands of natural compounds has been used in recent pharmacophore-based screening studies, for example to discover inhibitors of TIM-3 [21].

- Troubleshooting Steps:

- Assess Scope: For a broad, untargeted search, start with COCONUT.

- Augment Diversity: Integrate niche collections like LANaPDB to introduce underrepresented scaffolds.

- Check for Fragments: Utilize pre-computed fragment libraries derived from these databases (see Table 1) for fragment-based drug design approaches [20] [22].

FAQ 1.2: How can I efficiently curate a high-quality, drug-like subset from a massive natural product database?

- Problem: Downloading a database with millions of compounds results in an unmanageable dataset containing many non-drug-like molecules, slowing down computational analysis.

- Solution & Explanation: Apply sequential filtering criteria based on physicochemical properties and structural alerts to focus on "drug-like" chemical space. This process is essential for optimizing computational resources and identifying viable leads.

- Troubleshooting Steps:

- Property Filtering: Use tools like RDKit or OpenBabel to filter compounds based on Lipinski's Rule of Five (e.g., molecular weight < 500, LogP < 5).

- Structural Curation: Remove compounds with reactive or undesirable functional groups (e.g., pan-assay interference compounds, or PAINS).

- Utilize Pre-curated Libraries: Leverage existing fragment libraries generated from major NP databases. These have already been processed and can be directly used for screening (Table 1).

Table 1: Key Natural Product Databases and Derived Fragment Libraries

| Database Name | Total Unique NPs | Derived Fragment Count | Key Feature & Use-Case |
|---|---|---|---|
| COCONUT | >695,133 [20] | 2,583,127 [20] [22] | Largest open-access collection; ideal for initial broad virtual screening. |
| LANaPDB | 13,578 [20] | 74,193 [20] [22] | Geographically curated (Latin America); use to augment scaffold diversity. |
| CRAFT Library | N/A (Synthetic & NP-derived) | 1,214 [20] | Focused library of heterocyclic & NP fragments; benchmark for diversity analysis [22]. |

FAQ 1.3: What are current trends for discovering new analogs or variants of known natural products?

- Problem: A researcher identifies a promising NP hit but needs to find structurally similar analogs to build a structure-activity relationship (SAR).

- Solution & Explanation: Move beyond exact structure searching to use analog search algorithms that can identify structural variants. This is a major trend in modern NP curation [23].

- Troubleshooting Steps:

- Use Advanced Spectral Tools: For mass spectrometry data, employ algorithms like VInSMoC (Variable Interpretation of Spectrum–Molecule Couples). It can search spectral libraries to identify both known molecules and novel variants, having identified 85,000 previously unreported variants in a benchmark study [23].

- Leverage AI-Enhanced Prediction: Integrate tools that use machine learning to predict mass spectra from structures, facilitating the identification of unknown analogs in complex mixtures [23].

Section 2: Molecular Docking & Validation

FAQ 2.1: How do I validate my molecular docking protocol before screening a natural product analog library?

- Problem: Docking results are inconsistent or fail to reproduce known bioactive poses, casting doubt on the virtual screening outcome.

- Solution & Explanation: Perform a self-docking (or re-docking) validation. This is a critical step to ensure your docking parameters (software, grid box, search algorithm) are correctly configured for your specific target protein [4].

- Experimental Protocol:

- Prepare the Protein: Obtain the target protein structure (e.g., COX-2, PDB: 1pxx) from the Protein Data Bank. Remove water molecules and original ligand, then add hydrogen atoms and charges using your docking software tools [4].

- Define the Grid: Center a grid box on the co-crystallized ligand's binding site. A common choice is a box of 30 × 30 × 30 Å, which allows adequate sampling [4].

- Re-dock the Native Ligand: Extract the original co-crystallized ligand, re-prepare it, and dock it back into the defined grid.

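- Calculate RMSD: Compare the re-docked ligand with its crystallographic pose in the receptor's coordinate frame, without re-fitting the ligand (superposition would hide placement errors), and calculate the heavy-atom Root-Mean-Square Deviation (RMSD). An RMSD value below 2.0 Å generally indicates a reliable, validated protocol [4].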
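The RMSD check can be computed directly, without superposition, because the docked and crystallographic ligands already share the receptor's coordinate frame. The sketch assumes both poses list the same atoms in the same order (the usual case when re-docking the extracted native ligand) and skips hydrogens. It does not handle symmetry-equivalent atoms; for symmetric ligands a symmetry-corrected RMSD tool is the safer choice. The coordinates are made up.

```python
import math
from typing import Optional, Sequence, Tuple

Coord = Tuple[float, float, float]


def pose_rmsd(reference: Sequence[Coord], docked: Sequence[Coord],
              elements: Optional[Sequence[str]] = None) -> float:
    """Heavy-atom RMSD (Å) between two poses in the same frame; atom order must match."""
    if len(reference) != len(docked):
        raise ValueError("poses must contain the same atoms")
    keep = [i for i in range(len(reference)) if elements is None or elements[i] != "H"]
    if not keep:
        raise ValueError("no heavy atoms to compare")
    sq = sum(sum((reference[i][k] - docked[i][k]) ** 2 for k in range(3)) for i in keep)
    return math.sqrt(sq / len(keep))


crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (2.0, 1.0, 0.0)]
redocked = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0), (2.0, 1.0, 1.0)]
rmsd = pose_rmsd(crystal, redocked, elements=["C", "C", "O"])
print(rmsd, "validated" if rmsd < 2.0 else "re-check protocol")
```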

FAQ 2.2: What advanced computational steps should follow initial docking to prioritize NP analogs for experimental testing?

- Problem: Hundreds of NP analogs show good docking scores, but resources only allow for the experimental testing of a handful.

- Solution & Explanation: Implement a multi-stage computational filtering pipeline. Initial docking scores alone are insufficient; follow-up analyses assess stability, binding energy, and drug-likeness [4] [21].

- Troubleshooting Steps:

- Molecular Dynamics (MD) Simulations: Run short (50-100 ns) MD simulations on top-scoring complexes. Analyze Root-Mean-Square Deviation (RMSD) and Root-Mean-Square Fluctuation (RMSF) to confirm the stability of the protein-ligand complex over time [4] [21].

- Binding Free Energy Calculation: Use methods like MM/GBSA (Molecular Mechanics/Generalized Born Surface Area) on frames from the MD trajectory. This provides a more rigorous estimate of binding affinity than docking scores alone [4].

- ADMET Prediction: Finally, filter compounds by predicted Absorption, Distribution, Metabolism, Excretion, and Toxicity profiles to eliminate those with poor pharmacokinetic or safety profiles [4].

Diagram 1: Workflow for NP Analog Library Screening & Validation

Section 3: Building & Utilizing Fragment Libraries

FAQ 3.1: What is the value of a Natural Product Fragment Library compared to a full compound library?

- Problem: A researcher is exploring fragment-based drug design (FBDD) and wants to leverage the unique structural motifs found in nature.

- Solution & Explanation: NP fragment libraries decompose large, complex natural products into smaller, simpler chemical units. These fragments cover chemical spaces often different from synthetic libraries and can serve as optimal starting points for growing or linking strategies in FBDD [22].

- Troubleshooting Steps:

- Source a Pre-built Library: Download freely available NP fragment libraries, such as the one derived from COCONUT (2.58 million fragments) [22].

- Perform Diversity Analysis: Compare the coverage of your NP fragment library in chemical space (e.g., using principal component analysis on descriptors) against synthetic fragment libraries (like CRAFT) to understand its unique value [22].

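- Screen for Fragment Hits: Use a less stringent docking score cutoff (i.e., accept less negative scores) when screening fragments, because their small size limits the number of contacts they can make; they form weaker but potentially more efficient interactions with the target.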
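Size normalization makes the fragment cutoff less arbitrary. A common metric is ligand efficiency, LE = -ΔG / N_heavy, in kcal/mol per heavy atom. The sketch below uses the docking score as a stand-in for ΔG, which is only an approximation. The fragment IDs, scores, heavy-atom counts, and the 0.3 kcal/mol per heavy atom cutoff (an often-quoted rule of thumb) are assumptions for illustration.

```python
from typing import Dict, List, Tuple


def ligand_efficiency(score_kcal: float, heavy_atoms: int) -> float:
    """LE = -score / heavy-atom count (kcal/mol per heavy atom); score stands in for ΔG."""
    if heavy_atoms <= 0:
        raise ValueError("heavy atom count must be positive")
    return -score_kcal / heavy_atoms


def rank_by_efficiency(hits: Dict[str, Tuple[float, int]],
                       min_le: float = 0.3) -> List[Tuple[str, float]]:
    """Return (id, LE) for hits meeting min_le, most efficient first."""
    scored = [(cid, round(ligand_efficiency(s, n), 3)) for cid, (s, n) in hits.items()]
    return sorted([x for x in scored if x[1] >= min_le], key=lambda x: -x[1])


hits = {
    "frag_1": (-6.0, 18),   # small fragment, modest score
    "frag_2": (-5.2, 12),
    "full_np": (-9.0, 40),  # larger natural product, better raw score
}
print(rank_by_efficiency(hits))
```

The larger natural product has the best raw score but the lowest efficiency, which is why fragments are better compared on a per-atom basis.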

Diagram 2: From Natural Product to Fragment Library & Application

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Resources for NP Analog Docking Workflows

| Tool/Resource Name | Category | Primary Function in Workflow |
|---|---|---|
| AutoDock Vina / AutoDock 4 | Docking Software | Performs the molecular docking simulation, predicting binding poses and scores [4] [24]. |
| GROMACS / AMBER | MD Simulation | Runs molecular dynamics simulations to assess complex stability and dynamics after docking [4]. |
| PyMOL / UCSF Chimera | Visualization | Visualizes protein-ligand complexes, supports interaction analysis, and generates figures [24]. |
| VInSMoC Algorithm | Spectral Analysis | Searches mass spectra against databases to identify known NPs and novel structural variants [23]. |
| RDKit | Cheminformatics | Supports chemical curation, descriptor calculation, fingerprint generation, and fragment library management. |
| MM/GBSA Method | Energy Calculation | Estimates binding free energies from MD trajectories; typically more reliable than docking scores alone [4]. |
| ADMET Predictor | ADMET/PK Prediction | Estimates pharmacokinetic and toxicity properties to filter out compounds with poor drug-like profiles [4]. |
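
For orientation, the quantity that MM/GBSA estimates can be written in its standard textbook form. Protocols differ in which snapshots are averaged (single- or multiple-trajectory) and in whether the entropy term is included, so treat this as the general shape rather than any specific program's implementation.

```latex
\Delta G_{\text{bind}} \approx \langle G_{\text{complex}} \rangle - \langle G_{\text{receptor}} \rangle - \langle G_{\text{ligand}} \rangle,
\qquad
G = E_{\text{MM}} + G_{\text{GB}} + G_{\text{SA}} - TS
```

The terms are as follows:

- The angle brackets denote averages over MD snapshots.
- $E_{\text{MM}}$ is the gas-phase molecular mechanics energy (bonded, electrostatic, and van der Waals terms).
- $G_{\text{GB}}$ is the polar solvation energy from a Generalized Born model.
- $G_{\text{SA}}$ is a nonpolar solvation term proportional to the solvent-accessible surface area.
- $-TS$ is the solute entropy contribution. It is often omitted in screening because it is costly to estimate.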

Building the Pipeline: A Step-by-Step Docking Workflow for Analog Libraries

The following diagram encapsulates the integrated computational and experimental workflow for lead discovery from large compound libraries, optimized for research on similar natural compounds.

Key Experimental Protocols and Benchmarks

The table below summarizes core methodologies from recent, successful virtual screening campaigns, highlighting protocols applicable to natural compound research.

Table 1: Summary of Key Virtual Screening Protocols and Outcomes [25] [13] [26]

| Study Focus & Target | Compound Library & Scale | Core Virtual Screening Protocol | Key Experimental Validation | Outcome & Hit Rate |
|---|---|---|---|---|
| Schistosomiasis Kinase Inhibitors (SmERK1, SmJNK, etc.) [25] | Managed Chemical Compounds Collection (MCCC); ~85,000 molecules. | Molecular docking against homology models of five S. mansoni kinases. Selection based on predicted binding to the ATP site. | In vitro phenotypic screening against schistosomula and adult worms. Assessment of viability and morphological changes. | 52.6% (89/169) of selected molecules were active in vitro, demonstrating high enrichment over random screening [25]. |
| SARS-CoV-2 Protease Inhibitors (3CLpro, PLpro) [13] | >600 analogs derived from antiviral phytochemicals (e.g., gingerol, shogaol) via ChEMBL similarity search. | Automated molecular docking, interaction pattern analysis, and integrated ADMET profiling, focused on analogs of bioactive natural scaffolds. | In silico validation via gene expression prediction (DIGEP-Pred) for immunomodulatory effects. | Identification of analogs (e.g., CHEMBL1720210) with better docking scores than the parent scaffolds and favorable drug-likeness [13]. |
| AI-Accelerated Platform Demonstration (KLHDC2, NaV1.7) [26] | Multi-billion compound libraries. | RosettaVS workflow: 1) AI-triaged express docking (VSX), 2) high-precision flexible docking (VSH), with active learning to guide screening. | Surface plasmon resonance (SPR) for binding affinity (µM range). X-ray crystallography for pose validation (KLHDC2). | Hit rates of 14% (KLHDC2) and 44% (NaV1.7). Full screening completed in <7 days [26]. |

Technical Support Center: Troubleshooting Guides and FAQs

This section addresses common technical challenges within the described workflow, providing targeted solutions based on current methodologies.

Troubleshooting Guide: Virtual Screening Phase

| Problem | Possible Cause | Recommended Solution |
|---|---|---|
| Poor enrichment in initial screening (low hit rate). | Inaccurate scoring function or poor handling of target flexibility [27]. | Switch or validate the docking protocol. For known binding sites, physics-based methods such as Glide SP, or RosettaVS in VSH mode (which also models side-chain flexibility), often outperform pure deep learning models in pose accuracy and physical validity [26] [27]. |
| High computational cost for screening large libraries. | Exhaustive docking of ultra-large libraries is prohibitively expensive [26]. | Implement an AI-accelerated active learning platform. Tools like OpenVS train a target-specific model that triages compounds, so intensive docking calculations are spent only on the most promising subsets [26]. |
| Predicted poses lack physical realism (bad bonds, steric clashes). | Some deep learning-based docking methods optimize pose RMSD without enforcing physical constraints [27]. | Run PoseBusters (or a similar checker) on every pose as a physical plausibility step. Prefer traditional methods, which consistently show >94% physical validity rates, or hybrid methods (AI scoring + traditional search) [27]. |
| Difficulty identifying analogs of a weak natural product hit. | Searching only conventional vendor libraries. | Perform a similarity-based analog search in large bioactivity databases such as ChEMBL to find structurally related analogs with potentially improved properties, as demonstrated for gingerol derivatives [13]. |
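
To make the plausibility step concrete, here is a crude steric-clash screen. It is not PoseBusters and does not reproduce its checks or thresholds. It flags ligand-protein heavy-atom pairs closer than a fraction (0.75, an assumed tolerance) of their summed Bondi van der Waals radii. The function name and input layout are hypothetical.

```python
"""Crude steric-clash screen for docked poses (a stand-in, NOT PoseBusters).

Flags ligand-protein heavy-atom pairs closer than `tolerance` times the sum
of their van der Waals radii. Coordinates are in angstroms.
"""
import math

# Approximate Bondi van der Waals radii (angstroms) for common heavy atoms.
VDW_RADII = {"C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80, "P": 1.80,
             "F": 1.47, "Cl": 1.75, "Br": 1.85}


def find_clashes(ligand, protein, tolerance=0.75):
    """Return (ligand_index, protein_index, distance) for clashing pairs.

    ligand, protein: lists of (element, (x, y, z)). Elements missing from
    VDW_RADII raise KeyError rather than being silently skipped.
    """
    clashes = []
    for i, (el_i, xyz_i) in enumerate(ligand):
        for j, (el_j, xyz_j) in enumerate(protein):
            cutoff = tolerance * (VDW_RADII[el_i] + VDW_RADII[el_j])
            d = math.dist(xyz_i, xyz_j)
            if d < cutoff:
                clashes.append((i, j, round(d, 2)))
    return clashes


if __name__ == "__main__":
    ligand = [("C", (0.0, 0.0, 0.0)), ("O", (1.4, 0.0, 0.0))]
    protein = [("N", (0.0, 2.0, 0.0)), ("C", (5.0, 5.0, 5.0))]
    print(find_clashes(ligand, protein))
```

The 0.75 factor leaves typical hydrogen-bond distances (about 2.6 to 3.2 Å for N/O pairs) unflagged, while catching atoms that nearly overlap. The brute-force double loop is fine for single poses; large batches would need a spatial index.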

Frequently Asked Questions (FAQs)

Q1: For a novel target with a known active natural compound, should I start with a large diverse library or focus on analogs? A: A hybrid strategy is often the most efficient. Begin with analog screening based on your active natural scaffold (using similarity searches in ChEMBL or ZINC) to rapidly establish structure-activity relationships (SAR) and improve potency [13]. In parallel, screen a diverse library (50k-100k compounds) to identify novel chemotypes. This dual approach balances speed of optimization against the chance of discovering new scaffolds.
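
The core of a similarity-based analog search is the Tanimoto coefficient between binary fingerprints. The minimal sketch below assumes each fingerprint is already available as a set of "on" bit indices (for example, the on-bits of an RDKit Morgan fingerprint). The 0.6 threshold and the toy bit sets are illustrative placeholders, not values from the cited study.

```python
"""Rank candidate analogs by Tanimoto similarity to a parent scaffold.

Fingerprints are sets of "on" bit indices; the data below are made up.
"""


def tanimoto(a: set, b: set) -> float:
    """Tanimoto coefficient |A & B| / |A | B| for binary fingerprints."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def rank_analogs(parent: set, library: dict, threshold: float = 0.6):
    """Return (name, similarity) pairs at or above threshold, most similar first."""
    scored = [(name, tanimoto(parent, fp)) for name, fp in library.items()]
    return sorted((s for s in scored if s[1] >= threshold),
                  key=lambda s: s[1], reverse=True)


if __name__ == "__main__":
    parent = {1, 2, 3, 4, 5}
    library = {
        "analog_1": {1, 2, 3, 4, 6},
        "analog_2": {1, 2, 3, 4, 5, 7},
        "unrelated": {8, 9, 10},
    }
    for name, sim in rank_analogs(parent, library):
        print(name, round(sim, 3))
```

Database services perform this search server-side over precomputed fingerprints. The sketch only shows what the similarity score means and how a cutoff trims the analog list.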

Q2: How do I decide between pursuing promiscuous (polypharmacology) and selective hits? A: The choice depends on the therapeutic context. For complex diseases such as parasitic infections or multifactorial viral infections, promiscuity can be advantageous: the schistosomiasis study prioritized compounds predicted to bind multiple kinases and achieved a high rate of in vitro activity [25]. Where off-target effects cause toxicity, prioritize selectivity. In either case, compare docking scores early across a panel that includes the intended target, its orthologs, and related human proteins.
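
One way to summarize such a panel is to count how many intended targets a compound is predicted to bind, and to measure its margin over the best-scoring anti-target (for example, a human ortholog). This is a sketch under stated assumptions: the -8.0 kcal/mol "binder" cutoff, the protein names, and the scores are hypothetical. Docking scores are also only a rough proxy for selectivity.

```python
"""Summarize promiscuity vs. selectivity from a docking-score panel.

Scores are Vina-style (kcal/mol, more negative = stronger predicted binding).
Cutoff, protein names, and values are hypothetical placeholders.
"""


def profile(scores: dict, targets: set, antitargets: set, cutoff: float = -8.0):
    """Return (n_targets_hit, selectivity_margin).

    n_targets_hit: intended targets scoring at or below the cutoff.
    selectivity_margin: best anti-target score minus best target score;
    positive values mean the compound is predicted to prefer the targets.
    """
    n_hit = sum(1 for t in targets if scores[t] <= cutoff)
    best_target = min(scores[t] for t in targets)
    best_anti = min(scores[a] for a in antitargets)
    return n_hit, best_anti - best_target


if __name__ == "__main__":
    scores = {"parasite_kinase_1": -9.1, "parasite_kinase_2": -8.4,
              "parasite_kinase_3": -7.2, "human_ortholog": -7.0}
    targets = {"parasite_kinase_1", "parasite_kinase_2", "parasite_kinase_3"}
    n_hit, margin = profile(scores, targets, {"human_ortholog"})
    print(f"targets hit: {n_hit}, selectivity margin: {margin:.1f} kcal/mol")
```

A multi-target program would favor a high `n_hit`. A selectivity-driven program would favor a large positive margin.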

Q3: What are the most critical filters to apply between virtual screening and placing compound orders for testing? A: Beyond docking score, implement this cascade:

- Interaction Filter: Manually inspect top poses for key interactions (e.g., H-bonds with catalytic residues, hydrophobic filling).

- Drug-Likeness Filter: Apply rules such as Lipinski's rule of five, plus PAINS substructure filters, to remove compounds with poor drug-like properties or a high risk of assay interference [25].

- ADMET Prediction: Use tools like ADMETlab or those integrated in platforms like OpenVS to predict permeability, metabolic stability, and toxicity risks [13] [26].

- Commercial Availability: Check sourcing and cost.
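
The cascade above can be sketched as an ordered list of checks, where each compound records the first stage that rejects it. This assumes each compound is a dict of values computed beforehand (pose review, descriptors, PAINS and ADMET predictions, vendor lookup). All field names are hypothetical and do not match any specific tool's output. The rule-of-five thresholds are the standard ones, with one violation tolerated as is conventional.

```python
"""Sequential pre-order filter cascade (a sketch with hypothetical fields)."""


def passes_lipinski(c, max_violations=1):
    """Rule of five: MW <= 500, cLogP <= 5, HBD <= 5, HBA <= 10."""
    violations = sum([c["mw"] > 500, c["clogp"] > 5, c["hbd"] > 5, c["hba"] > 10])
    return violations <= max_violations


# Stages in the order given in the text; each check returns True to keep.
FILTERS = [
    ("interactions", lambda c: c["key_contacts_ok"]),  # manual pose review result
    ("drug_likeness", lambda c: passes_lipinski(c) and not c["pains_alert"]),
    ("admet", lambda c: not c["admet_flags"]),  # list of predicted liabilities
    ("availability", lambda c: c["in_stock"]),
]


def run_cascade(compounds):
    """Return (ids that pass every stage, {id: first failing stage})."""
    survivors, dropped = [], {}
    for c in compounds:
        for stage, check in FILTERS:
            if not check(c):
                dropped[c["id"]] = stage
                break
        else:
            survivors.append(c["id"])
    return survivors, dropped
```

Recording the failing stage makes it easy to see whether a campaign is losing most candidates at pose review, drug-likeness, or sourcing.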

Q4: Our experimental hit rate is consistently lower than the virtual screening enrichment factor suggests. Where is the bottleneck? A: This disconnect often arises from assay-specific factors not captured in silico. Re-evaluate:

- Compound Integrity: Confirm solubility and stability in your assay buffer.

- Target Flexibility: If your docking used a static crystal structure, critical induced-fit motions may be missed. Use a docking protocol that incorporates side-chain and limited backbone flexibility [26].

- Biological Relevance: The compound may bind but not inhibit function, or your phenotypic assay may involve additional pathways. Incorporate a secondary, target-specific biochemical assay to confirm mechanism.

Visualizing the Screening Cascade and Lead Prioritization Logic

The following diagram details the decision-making process for prioritizing virtual hits for experimental testing and advancing confirmed hits to lead series.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software, Databases, and Resources for the Workflow

| Tool/Resource Name | Type/Category | Primary Function in the Workflow | Key Application Note |
|---|---|---|---|
| RosettaVS (OpenVS Platform) [26] | Docking & Virtual Screening Software | Provides a high-accuracy, flexible docking protocol (VSX/VSH modes) integrated with AI-based active learning for screening ultra-large libraries. | Well suited to projects that need high pose accuracy and receptor flexibility. The open-source platform supports large-scale screening on HPC clusters. |
| ChEMBL [13] | Bioactivity Database | A curated database of bioactive molecules. Used to find structurally similar analogs (via similarity search) of promising natural product hits for lead expansion. | Useful for expanding a weak natural product hit into a series of analogs with existing bioactivity data, which can then guide the design of novel synthetic analogs. |
| PoseBusters [27] | Validation Toolkit | Checks the physical plausibility and chemical correctness of predicted protein-ligand complexes (e.g., bond lengths, steric clashes, atom hybridization). | Essential for benchmarking docking methods and for filtering out physically unrealistic poses before experimental testing, especially when using some DL-based methods. |
| DIGEP-Pred [13] | Gene Expression Predictor | Predicts biological pathways and processes modulated by a compound based on its structure. Used for in silico assessment of potential immunomodulatory or anti-inflammatory effects. | Adds a layer of mechanistic insight during prioritization, especially relevant for natural compounds and complex disease phenotypes. |
| Schistosoma mansoni Kinase Homology Models [25] | Target Structure | Custom-built 3D protein models for docking when experimental structures are unavailable. Successfully applied against parasitic kinase targets. | Highlights the utility of well-constructed homology models for neglected disease drug discovery. Template selection and loop modeling are critical steps. |
| Managed Chemical Compounds Collection (MCCC) [25] | Screening Compound Library | A curated, drug-like library used for successful virtual and phenotypic screening. A high-quality, medium-size library well suited to focused projects. | An example of a well-curated physical screening library that can be paired with virtual screening to achieve high hit rates. |

This technical support center provides troubleshooting guides and FAQs for researchers conducting molecular docking studies within a thesis focused on optimizing protocols for similar natural compounds. The questions address specific, practical issues encountered during the critical first step of preparing computational inputs.

Troubleshooting & FAQ

Q1: My docking results show unrealistic binding poses or poor affinity scores. Could this originate from errors in the initial preparation of my ligand library? Yes. Errors in ligand preparation are a common cause of poor docking outcomes [3]. Typical pitfalls include incorrect protonation states, inappropriate charge assignment, and unfavorable 3D geometries that do not reflect the ligand's bioactive conformation [2]. Before docking, make sure every ligand is in its predominant ionization state at physiological pH (about 7.4) and that its 3D structure has been energy-minimized. For natural compounds, also verify the stereochemistry of chiral centers retrieved from databases.
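
The ionization check can be made concrete with the Henderson-Hasselbalch equation. It gives the fraction of an ionizable group that is charged at a given pH. You must supply the pKa values yourself (for example, from a pKa predictor). The values in the usage lines are typical textbook ranges, not data for any particular compound.

```python
"""Fraction of an ionizable group that is charged at a given pH
(Henderson-Hasselbalch). pKa values are user-supplied inputs."""


def fraction_ionized(pka: float, ph: float = 7.4, acid: bool = True) -> float:
    """Acids: fraction deprotonated = 1 / (1 + 10**(pKa - pH)).
    Bases: fraction protonated = 1 / (1 + 10**(pH - pKa))."""
    exponent = (pka - ph) if acid else (ph - pka)
    return 1.0 / (1.0 + 10.0 ** exponent)


if __name__ == "__main__":
    print("carboxylic acid, pKa 4.4:", round(fraction_ionized(4.4), 4))
    print("phenol, pKa 10.0:", round(fraction_ionized(10.0), 4))
    print("aliphatic amine, pKa 10.6:", round(fraction_ionized(10.6, acid=False), 4))
```

A group whose pKa lies two or more units from the assay pH is at least 99% in one state, so a single protonation form is usually adequate. Groups with pKa near 7.4 are split between states, and both forms may be worth docking.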

Q2: When preparing my target protein from the PDB, what should I remove or modify, and what is essential to keep? Protein preparation is crucial for accurate docking. You should typically:

- Remove: extraneous water molecules, original co-crystallized ligands, and any heteroatoms not involved in binding (e.g., crystallization ions) [3] [24].

- Add/Model: missing hydrogen atoms and any missing side chains in the binding site region, using modeling software.

- Assign: appropriate atomic charges (e.g., Kollman charges for the protein and Gasteiger charges for ligands, as in AutoDock workflows) [24].

- Evaluate: whether to keep structural water molecules that mediate ligand binding. This decision requires careful analysis of the crystal structure.
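
The Remove step can be sketched for PDB-format files using the standard fixed columns (record name in columns 1-6, residue name in 18-20, residue number in 23-26). This version keeps only the heteroatoms you list explicitly, such as a catalytic metal, plus any structural waters you choose to retain. The function and its parameters are hypothetical. It does not handle chain IDs (water numbers can repeat across chains), alternate locations, or multi-model files, and it does not add hydrogens or charges. Dedicated preparation tools handle all of that.

```python
"""Strip waters and unwanted heteroatoms from PDB-format lines (a sketch)."""

WATER_NAMES = {"HOH", "WAT"}


def clean_pdb(lines, keep_hetero=(), keep_waters=()):
    """Return ATOM/TER/END lines plus only the HETATM lines explicitly kept.

    keep_hetero: residue names to retain (e.g., a metal or cofactor).
    keep_waters: residue sequence numbers (int) of structural waters to keep.
    All other records (CONECT, ANISOU, REMARK, ...) are dropped.
    """
    kept = []
    for line in lines:
        record = line[0:6].strip()
        if record in ("ATOM", "TER", "END"):
            kept.append(line)
        elif record == "HETATM":
            resname = line[17:20].strip()
            resseq = int(line[22:26])
            if resname in WATER_NAMES:
                if resseq in keep_waters:
                    kept.append(line)
            elif resname in keep_hetero:
                kept.append(line)  # co-crystallized ligands fall through and are dropped
    return kept
```

For example, `clean_pdb(open("receptor.pdb").read().splitlines(), keep_hetero={"ZN"}, keep_waters={301})` would keep the protein, a zinc ion, and water 301. The file name and the selections are placeholders.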

Q3: For my research on similar natural compounds, is it better to use a single protein conformation or an ensemble? Using an ensemble of receptor conformations (ensemble docking) is a best practice that can significantly improve the identification of true binders, especially for flexible targets like GPCRs [3] [28]. For a thesis focused on optimization, comparing results from the apo (unbound), holo (bound), and computationally sampled conformations of your target protein is recommended. This approach accounts for conformational changes like induced fit and reduces the risk of missing valid binding modes due to receptor rigidity [2].
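
A common way to merge ensemble-docking results is to keep, for each ligand, its best score across all receptor conformations, along with which conformation produced it. The sketch below assumes Vina-style scores (more negative is better). The conformation labels and scores are placeholders. Taking the minimum is only one aggregation choice; it can reward a pose in a rarely populated conformation, so averaged or weighted schemes are sometimes preferred.

```python
"""Aggregate ensemble-docking results: best score per ligand across receptor
conformations (Vina-style kcal/mol, more negative = better)."""


def best_across_ensemble(results):
    """results: iterable of (ligand, conformation, score).
    Returns {ligand: (best_score, conformation)}."""
    best = {}
    for ligand, conformation, score in results:
        if ligand not in best or score < best[ligand][0]:
            best[ligand] = (score, conformation)
    return best


if __name__ == "__main__":
    results = [
        ("analog_1", "apo", -7.2), ("analog_1", "holo", -8.5),
        ("analog_1", "md_frame_120", -8.1),
        ("analog_2", "apo", -6.0), ("analog_2", "holo", -6.3),
    ]
    for ligand, (score, conformation) in best_across_ensemble(results).items():
        print(ligand, score, conformation)
```

Keeping the winning conformation alongside the score helps you check whether apo, holo, or simulated structures drive the ranking, which is the comparison the question recommends.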

Q4: How do I define the docking search space (grid box) if there is no known ligand in my target's active site? When a co-crystallized ligand is absent, you must locate the putative binding site computationally. You can:

- Use computational tools to predict binding pockets based on geometry and energy [28].

- Reference known active sites from closely related homologous proteins with published structures.

- Perform blind docking over a larger portion of the protein surface, though this is computationally expensive.

Whichever route you take, once a potential site is identified, make the grid box large enough (e.g., at least 25 Å in each dimension) to allow full ligand rotation and translation [3].
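
Once pocket atoms are known, the box can be derived from their coordinates. This sketch assumes you already have Cartesian coordinates (Å) of the atoms lining the predicted or homology-inferred pocket. It emits keys in the form AutoDock Vina reads from a configuration file (`center_x` ... `size_z`). The 5 Å padding is an assumption; the 25 Å minimum edge follows the guideline in the text. The function name is hypothetical.

```python
"""Derive an AutoDock Vina-style search box from pocket atom coordinates."""


def grid_box(coords, padding=5.0, min_edge=25.0):
    """Center the box on the bounding-box midpoint of `coords`; each edge is
    the coordinate extent plus 2 * padding, floored at min_edge (angstroms)."""
    box = {}
    for axis, values in zip("xyz", zip(*coords)):
        lo, hi = min(values), max(values)
        box[f"center_{axis}"] = round((lo + hi) / 2, 3)
        box[f"size_{axis}"] = round(max(hi - lo + 2 * padding, min_edge), 3)
    return box


if __name__ == "__main__":
    pocket = [(10.0, 4.0, -2.0), (18.0, 9.0, 3.0), (14.0, 20.0, 1.0)]
    for key, value in grid_box(pocket).items():
        print(f"{key} = {value}")
```

The printed `key = value` lines can be pasted into a Vina config file. In this example the y-edge (26 Å) exceeds the floor because the pocket itself is elongated along y.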

Q5: What are the critical validation steps I should perform before proceeding to large-scale docking of my analog library? Prior to your main screen, run control calculations to validate your entire preparation and docking pipeline [28].

- Re-docking: Dock the native co-crystallized ligand back into its original structure. A successful protocol should reproduce the experimental pose with a Root Mean Square Deviation (RMSD) of < 2.0 Å.

- Decoy Screening: Dock a small set of known active compounds together with known inactive decoys, then calculate enrichment factors to check whether your protocol ranks the actives above the decoys.

These controls establish whether your preparation and docking workflow is valid and reproducible before you commit to the full screen [2].
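
Both control metrics are short to compute. The sketch below makes the following assumptions:

- `rmsd()` compares a re-docked pose with the crystal pose in the same receptor frame, with atoms listed in the same order. It performs no superposition, which is appropriate for re-docking because the receptor is fixed.
- It does not correct for symmetry-equivalent atoms, which dedicated tools handle.
- `enrichment_factor()` uses the usual definition, EF at a fraction x = (actives in the top x / size of the top x) / (all actives / all compounds).
- The toy coordinates and labels are made up.

```python
"""Pre-screen validation metrics: re-docking RMSD and enrichment factor."""
import math


def rmsd(pose, reference):
    """Heavy-atom RMSD (angstroms) without superposition or symmetry handling."""
    if not pose or len(pose) != len(reference):
        raise ValueError("poses must list the same atoms in the same order")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(pose, reference))
    return math.sqrt(squared / len(pose))


def enrichment_factor(ranked_labels, fraction=0.01):
    """EF for the top `fraction` of a ranked list (1 = active, 0 = decoy, best first)."""
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_actives == 0:
        raise ValueError("need at least one known active")
    n_top = max(1, round(fraction * n_total))
    hits_top = sum(ranked_labels[:n_top])
    # (hits_top / n_top) / (n_actives / n_total), rearranged to limit float error
    return hits_top * n_total / (n_top * n_actives)


if __name__ == "__main__":
    crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
    redocked = [(0.3, 0.1, 0.0), (1.6, 0.2, 0.1), (1.4, 1.9, 0.2)]
    value = rmsd(redocked, crystal)
    print(f"re-docking RMSD: {value:.2f} A ({'pass' if value < 2.0 else 'fail'} at 2.0 A)")
    labels = [1, 1, 0, 0, 0, 0, 0, 0, 0, 1] + [0] * 90
    print("EF at 10%:", enrichment_factor(labels, fraction=0.10))
```

An EF of 1 means the protocol does no better than random ordering; values well above 1 in the top few percent indicate useful discrimination between actives and decoys.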

Core Experimental Protocols

Protocol for Preparing a Natural Compound Analog Library

This protocol outlines the creation of a focused library for docking studies on natural product derivatives [24].

- Source Compound Selection: Identify core natural scaffold(s) of interest (e.g., quercetin, resveratrol) based on preliminary bioactivity or literature.