Open Access Natural Product Databases: A 2025 Guide for Researchers in Drug Discovery


Abstract

Natural products are a cornerstone of drug discovery, with over 50% of new drugs developed from 1981-2014 originating from these compounds [citation:2]. However, researchers face a fragmented landscape of over 120 databases, with only about 50 being truly open access [citation:2][citation:5]. This guide provides a comprehensive comparison of open-access natural product databases tailored for researchers, scientists, and drug development professionals. It covers foundational knowledge of available resources, practical methodologies for database utilization, strategies for troubleshooting common challenges like data quality and accessibility, and a framework for comparative evaluation to select the best tools for specific research intents, from virtual screening to dereplication.

Navigating the Landscape: An Introduction to Open-Access Natural Product Databases

The Critical Role of Natural Products in Modern Drug Discovery

The landscape of natural product (NP) discovery has undergone a profound transformation, driven by the digitization of chemical information and the advent of computational power. Historically, the discovery of bioactive NPs was a labor-intensive process rooted in ethnobotany and systematic bioassay-guided fractionation of crude extracts [1]. While this traditional approach yielded foundational therapeutics—such as the anticancer agent paclitaxel from the Pacific yew tree and the heart medicine digoxin from the foxglove plant—it is inherently low-throughput and resource-heavy [2]. The modern paradigm has shifted towards in silico screening and data-driven discovery, leveraging vast, curated databases of NP structures and properties [3]. This evolution is central to a broader thesis on open-access NP database research, which posits that the accessibility, quality, and interoperability of digital NP collections are now critical bottlenecks and opportunities in drug discovery [4].

Open-access databases have democratized research, allowing scientists to perform virtual screening of hundreds of thousands of compounds before any wet-lab work begins [3]. However, the field is fragmented, with over 120 different NP resources cited since 2000, of which only about 50 are truly open-access and provide retrievable molecular structures [4]. This comparison guide will objectively analyze the performance of different database strategies—from traditional, manually curated repositories to modern, computationally generated libraries—providing researchers with the experimental data and protocols needed to navigate this complex ecosystem.

Comparative Analysis of Database Strategies and Performance

The methodologies for building and utilizing NP databases fall into two primary categories: experimental compilation and computational generation. Each strategy offers distinct advantages and trade-offs in terms of data volume, novelty, and direct biological relevance, fundamentally shaping their utility in different stages of the drug discovery pipeline.

Performance Comparison: Experimental vs. Computational Databases

The table below summarizes the core characteristics of representative databases from both paradigms, highlighting their complementary roles.

Table 1: Comparison of Experimental and Computational Natural Product Database Strategies

| Strategy | Representative Database | Key Characteristics | Volume (Unique Compounds) | Primary Use Case | Key Limitation |
|---|---|---|---|---|---|
| Experimental Compilation | SuperNatural 3.0 (2022) [2] | Manually curated from literature; includes mechanisms, toxicity, vendors. | ~450,000 | Target identification, lead optimization, dereplication. | Limited to known chemical space; curation is time-intensive. |
| | COCONUT (2020) [3] [4] | Aggregated open-access NP collections; sparse annotations. | ~400,000 | Virtual screening foundation, dataset for model training. | Heterogeneous data quality; often lacks standardized metadata. |
| Computational Generation | Generated NP-Like Database (2023) [5] | Created by an LSTM neural network trained on known NPs. | ~67,000,000 | Exploring novel chemical space, ultra-large virtual screening. | Compounds are hypothetical; requires experimental validation. |
| | ZINC (for commercially available NPs) [6] | Curates and standardizes compounds from vendor catalogs. | Billions (subset are NPs) | Purchasable lead-like compound sourcing. | Not exclusively NPs; may lack detailed biological annotations. |

Experimental databases like SuperNatural 3.0 provide high-confidence data essential for dereplication (avoiding rediscovery of known compounds) and for understanding mechanisms of action [2]. Their main constraint is scale: they are confined to the several hundred thousand NPs that have been isolated and characterized. In contrast, the generated library of 67 million compounds represents a roughly 165-fold expansion over the known NP chemical space [5]. This library maintains "natural product-likeness", but its structures are hypothetical: they prioritize scaffold novelty and must be synthesized or sourced before biological testing.
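The 165-fold figure can be checked with simple arithmetic. The sketch below assumes the generated library holds about 67 million structures and takes the COCONUT count of 406,919 compounds cited elsewhere in this guide as the size of known NP space. Both inputs are rounded, so the result is approximate.

```python
# Rough check of the fold expansion; both counts are rounded figures from this guide.
generated_compounds = 67_000_000   # approximate size of the generated NP-like library
known_compounds = 406_919          # COCONUT compound count cited in this guide

fold_expansion = generated_compounds / known_compounds
print(f"Fold expansion: ~{fold_expansion:.0f}x")
```

Using the rounded figure of ~400,000 from Table 1 instead gives about 167.5, so the ratio is best read as an order-of-magnitude statement rather than a precise measurement.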

Database Functionality and Usability Comparison

Beyond content, the utility of a database is determined by its search functionalities and data interoperability. Advanced query capabilities directly impact a researcher's efficiency in identifying candidate molecules.

Table 2: Functionality Comparison of Major Open-Access NP Databases

| Database | Search Modalities | Key Integrated Features | Data Export & Interoperability | Target/Action Annotation |
|---|---|---|---|---|
| SuperNatural 3.0 [2] | Name/ID, properties, similarity, substructure. | Predicted toxicity (ProTox-II), mechanism of action, vendor data, taste prediction. | Downloadable structures and data. | Pathway mapping (via KEGG/ChEMBL), focused libraries (e.g., anticancer, antiviral). |
| TCM Database@Taiwan [1] | Chemical properties, substructures, TCM classification. | ChemAxon plugin for structure drawing. | Downloads in 2D (.cdx) and 3D (.mol2) formats. | Limited; focuses on herb-ingredient-compound relationships. |
| TCMID [1] | Network-based (herb, ingredient, target, disease). | Self-developed network visualization tools. | Network data accessible. | Strong; links herbal ingredients to disease-related protein targets. |
| CEMTDD [1] | Herb, compound, target queries. | Integrated Cytoscape Web for network visualization. | Network data accessible. | Strong; displays compound-target-disease networks. |

This comparison reveals a trend from simple structure repositories toward integrated knowledge systems. Modern platforms like SuperNatural 3.0 and TCMID do not just list compounds; they connect them to targets, diseases, and pathways, bridging traditional medicine and molecular pharmacology [1] [2]. This enables systems pharmacology approaches and multi-target drug discovery.

Experimental Protocols: From Data Generation to Validation

The creation and use of these databases rely on rigorous, reproducible experimental and computational protocols. Below are detailed methodologies for two critical processes: the computational generation of novel NP-like libraries and the experimental validation pathway for database-sourced hits.

Protocol for Generating a Novel NP-Like Chemical Library

This protocol, based on the work generating 67 million NP-like molecules, outlines the steps for creating a validated virtual screening library using deep learning [5].

1. Data Curation and Preparation:

- Source Data: Obtain canonical SMILES (Simplified Molecular Input Line Entry System) strings for known natural products from a comprehensive open-source collection like COCONUT [3].

- Preprocessing: Remove stereochemistry information to reduce model complexity. Split the data into training (e.g., 80%) and validation sets.

- Tokenization: Break SMILES strings into a vocabulary of unique characters (e.g., 'C', '=', 'O', '(', ')') to create a "molecular language" for the model to learn.

2. Model Training:

- Architecture Selection: Employ a Recurrent Neural Network (RNN) with Long Short-Term Memory (LSTM) units, which is effective for sequence generation tasks.

- Training: Train the LSTM model on the tokenized SMILES sequences. The model learns the statistical likelihood of character sequences that define valid and NP-like chemical structures.

3. Library Generation and Sanitization:

- Generation: Use the trained model to generate a massive set (e.g., 100 million) of novel SMILES strings.

- Validity Filtering: Use cheminformatics toolkits (e.g., RDKit) to parse each SMILES and filter out syntactically invalid structures.

- Deduplication: Convert all valid SMILES to canonical form and remove duplicates.

- Chemical Curation: Apply a standardized pipeline (e.g., the ChEMBL curation pipeline) to correct valency issues, remove salts, and generate "parent" structures [5].

4. Characterization and Scoring:

- Calculate NP-Likeness: Use a tool like NP-Score to assess how closely each generated molecule's structural fragments resemble those in known NP databases [5].

- Pathway Classification: Use a classifier (e.g., NPClassifier) to predict the likely biosynthetic origin (e.g., polyketide, alkaloid) of the novel molecules [5].

- Descriptor Calculation: Compute key physicochemical properties (molecular weight, logP, polar surface area) to profile the chemical space of the new library.

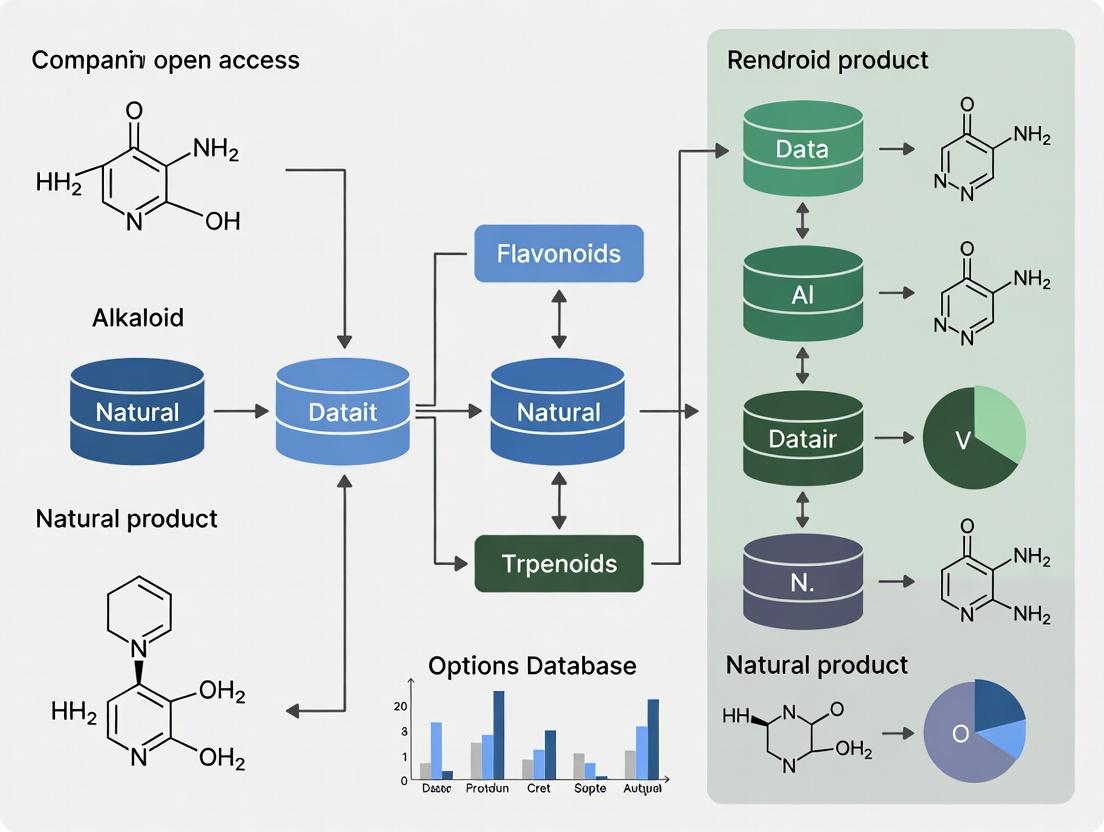

Diagram Title: Workflow for Generative AI in NP Library Design
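The data preparation steps of this protocol (character-level tokenization, vocabulary building, and a train/validation split) can be sketched in plain Python. This is a minimal illustration, not the pipeline used in [5]: the five SMILES strings, the helper names, and the fixed random seed are assumptions made for the example. Note that pure character-level tokenization splits two-letter element symbols such as `Cl` and `Br` into two tokens.

```python
import random

def tokenize(smiles):
    """Character-level tokenization: every character is one token."""
    return list(smiles)

def build_vocab(smiles_list):
    """Map each unique character in the corpus to an integer index."""
    chars = sorted({ch for s in smiles_list for ch in s})
    return {ch: i for i, ch in enumerate(chars)}

def split_train_val(items, train_frac=0.8, seed=0):
    """Shuffle a copy of the data and split it into training and validation sets."""
    shuffled = list(items)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * train_frac)
    return shuffled[:cut], shuffled[cut:]

# Toy corpus standing in for a real NP collection (hypothetical example data).
corpus = ["CC(=O)O", "c1ccccc1", "CCO", "CC(C)O", "C=O"]

vocab = build_vocab(corpus)
train_set, val_set = split_train_val(corpus)
encoded = [vocab[tok] for tok in tokenize("CC(=O)O")]

print(vocab)
print(encoded)
print(len(train_set), len(val_set))
```

The integer sequences produced this way are what a sequence model such as an LSTM would consume during training.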

Protocol for Validating Database-Hits via Biological Assay

This protocol describes the critical path from in silico hit identification to in vitro confirmation, a cornerstone of modern NP-driven discovery.

1. Virtual Screening:

- Target Preparation: Obtain a high-resolution 3D structure of the target protein (e.g., from the Protein Data Bank, PDB [6]) and prepare it for docking (add hydrogens, assign charges).

- Library Docking: Using docking software (e.g., AutoDock Vina, Glide), screen the prepared NP database (e.g., SuperNatural 3.0 or a generated library) against the target's active site.

- Hit Selection: Rank compounds by docking score (binding affinity estimate) and visually inspect top candidates for sensible binding interactions and chemical tractability.

2. Compound Sourcing:

- Source from Vendor: For hits found in annotated databases like SuperNatural 3.0 or ZINC, purchase the physical compound from the listed commercial supplier [2] [6].

- Custom Synthesis: For novel, computationally generated hits, initiate synthetic chemistry routes to produce the compound for testing.

3. In Vitro Bioactivity Assay:

- Assay Design: Develop or adopt a biochemical or cell-based assay relevant to the target's function (e.g., enzyme inhibition, cell proliferation).

- Dose-Response Testing: Treat the assay system with a serial dilution of the sourced/synthesized hit compound.

- Data Analysis: Calculate potency metrics (e.g., IC50, EC50) to confirm the activity predicted by virtual screening.

4. Counter-Screening and Specificity:

- Selectivity Testing: Test active compounds against related but non-target proteins to assess selectivity and reduce the risk of off-target effects.

- Cytotoxicity Assay: Perform a general cell viability assay (e.g., against mammalian cell lines) to identify compounds with non-specific toxic effects.
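As an illustration of the data analysis step, the sketch below estimates an IC50 from dose-response data by log-linear interpolation between the two concentrations that bracket 50% inhibition. This is a deliberate simplification: potency is usually obtained by fitting a four-parameter logistic curve to the full dilution series. The function name and the data points are hypothetical, and inhibition is assumed to increase with concentration.

```python
import math

def ic50_by_interpolation(concentrations, percent_inhibition):
    """Estimate IC50 by interpolating on a log10 concentration scale.

    Returns None if no pair of adjacent points brackets 50% inhibition.
    """
    points = sorted(zip(concentrations, percent_inhibition))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        if y_lo <= 50.0 <= y_hi and y_hi != y_lo:
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None

# Hypothetical 10-fold serial dilution (concentrations in micromolar).
concs = [0.1, 1.0, 10.0, 100.0]
inhibition = [5.0, 25.0, 75.0, 95.0]

ic50 = ic50_by_interpolation(concs, inhibition)
print(f"Estimated IC50: {ic50:.2f} uM")
```

A compound that never reaches 50% inhibition in the tested range returns `None`, which is a useful signal that the dilution series should be extended or the hit deprioritized.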

Effective natural product research in the modern era requires a suite of interoperable databases and software tools. The following table details key resources that form the essential toolkit for researchers.

Table 3: Research Reagent Solutions: Key Databases and Tools for NP Discovery

| Tool / Database | Type | Primary Function in NP Research | Access |
|---|---|---|---|
| COCONUT [3] [4] | NP Structure Collection | Provides the largest open collection of unique NP structures; serves as a foundational dataset for training generative models or virtual screening. | Open Access |
| SuperNatural 3.0 [2] | Annotated NP Database | Offers richly annotated data (target, pathway, toxicity, vendor) for hypothesis-driven search and lead prioritization. | Open Access |
| ChEMBL [6] | Bioactivity Database | Provides bioactivity data (IC50, Ki) for millions of compounds; crucial for understanding structure-activity relationships (SAR) and target profiling. | Open Access |
| ZINC [6] | Purchasable Compound Database | Hosts ready-to-dock 3D structures of commercially available compounds, including NPs, enabling the transition from virtual hit to purchasable lead. | Open Access |
| RDKit | Cheminformatics Software | An open-source toolkit for cheminformatics; used for handling chemical data, calculating descriptors, fingerprinting, and integrating with machine learning pipelines [5] [2]. | Open Source |
| NP-Score [5] | Scoring Function | Quantifies the "natural product-likeness" of a molecule based on substructure fragments, guiding the design or prioritization of NP-like compounds. | Open Source / Algorithm |
| Cytoscape [1] | Network Analysis Software | Visualizes and analyzes complex herb-compound-target-disease interaction networks extracted from databases like TCMID and CEMTDD. | Open Source |

Discussion and Future Perspectives

The comparative analysis reveals that the future of NP discovery lies in the strategic integration of computational and experimental database paradigms. The sheer scale of computationally generated libraries (~67 million compounds) solves the problem of limited chemical novelty but introduces the challenge of validation [5]. Conversely, traditional experimental databases offer high-fidelity, biologically annotated data but are constrained to known chemical space [1] [2]. The most efficient discovery pipeline will likely use generative models to explore vast, novel regions of chemical space, followed by stringent filtering for drug-likeness and NP-likeness, and finally mapping the filtered hits onto the rich biological context provided by curated knowledge bases like SuperNatural 3.0 and ChEMBL [5] [2] [6].

Key future directions include: 1) Improving FAIRness: Enhancing the Findability, Accessibility, Interoperability, and Reusability of open NP data to prevent information loss [4]; 2) Standardizing Metadata: Developing community standards for reporting NP source organism, extraction, and bioactivity data to improve database quality and comparability [3]; and 3) Integrating Omics Data: Linking NP databases with genomic and metabolomic data to predict biosynthetic pathways and discover new analogs intelligently [5].

In conclusion, the role of natural products in modern drug discovery is increasingly defined by digital access and computational exploitation. Which strategy performs better depends on context: for understanding traditional medicine or for dereplication, curated experimental databases are the better choice, while for pioneering unprecedented chemotypes, computationally generated libraries are indispensable. The combined use of both, supported by the tools and protocols outlined here, is likely the most effective route to the next generation of natural product-derived therapeutics.

Open access to research data, particularly in fields like natural product discovery, is foundational to accelerating scientific progress. The FAIR principles—ensuring data is Findable, Accessible, Interoperable, and Reusable—provide a critical framework for this endeavor [7]. In the context of natural product research, high-quality, open-access databases that adhere to these principles are indispensable tools for virtual screening, AI-driven discovery, and drug development [8]. This guide objectively compares leading open-access natural product databases, evaluating their performance, scale, and implementation of FAIR principles to aid researchers in selecting the most appropriate resources for their work.

The landscape of open-access natural product databases varies significantly in scale, origin, and specialization. The table below provides a high-level comparison of three distinct types of resources: a large-scale aggregated database, a focused regional collection, and a virtually generated library.

Table 1: Comparison of Open-Access Natural Product Database Characteristics

| Database Name | Primary Type & Scale | Key Features & Curation Approach | FAIR Emphasis & Access |
|---|---|---|---|
| COCONUT 2.0 [7] | Aggregated Collection (~400,000 known compounds) | Community curation; detailed provenance (organism, geography); substructure/similarity search. | High: Enables user submissions, has detailed metadata, and provides bulk downloads in multiple formats. |
| NAPRORE-CR [9] | Regional/Focused Collection (~1,161 compounds) | Compounds from Costa Rica; annotated with calculated properties (e.g., LogP, TPSA). | Medium: Freely available; includes structural data and properties but is smaller in scale. |
| 67M NP-Like Database [5] | AI-Generated Virtual Library (67 million compounds) | Generated via LSTM neural network; expands known chemical space by 165x; filtered for validity. | Medium: Openly available dataset; focuses on structural information with natural product-likeness scoring. |

Performance Analysis and Implementation of FAIR Principles

A deeper analysis of database performance and utility requires examining how each resource implements the core tenets of the FAIR principles.

Findability and Accessibility

Findability is achieved through persistent identifiers and rich metadata. COCONUT 2.0 excels here by assigning Digital Object Identifiers (DOIs) to contributed collections, making specific datasets citable and traceable [7]. Its advanced search interface allows queries by structure, substructure, name, and organism. In contrast, the 67M NP-Like Database is primarily findable as a single, massive dataset focused on structural information [5].

Accessibility is demonstrated by long-term retrieval and open protocols. All databases discussed are freely accessible online. COCONUT 2.0 enhances accessibility by offering multiple bulk download formats (SDF, CSV, SQL dump), facilitating offline analysis [7]. The regional NAPRORE-CR database is also openly available, supporting its mission of sharing biodiversity data [9].

Interoperability and Reusability

Interoperability refers to the ability to integrate with other data systems. COCONUT 2.0 uses standardized schemas (e.g., InChI, SMILES) and links to external ontology terms for organisms, fostering integration with other bioinformatics resources [7]. The AI-generated database uses canonical SMILES, a universal chemical language, ensuring compatibility with most cheminformatics software [5].

Reusability is paramount for data utility. It is ensured by rich descriptions of data provenance and licensing. COCONUT is strongest here, as each entry is annotated with source organism, geographic origin, and literature citations, providing essential context for reuse [7]. The virtual library, while vast, has less contextual metadata but is explicitly generated for reuse in in silico screening campaigns [5].

Table 2: Analysis of Database Performance in FAIR Principles

| FAIR Principle | COCONUT 2.0 [7] | NAPRORE-CR [9] | 67M NP-Like Database [5] |
|---|---|---|---|
| Findability | DOI for collections; rich metadata; multiple search modes. | DOI for dataset; basic metadata. | Accessible via repository; identified by study DOI. |
| Accessibility | Free web interface; bulk downloads (SDF, CSV, SQL). | Free download via Zenodo. | Free download from repository. |
| Interoperability | Uses standard identifiers; links to external taxonomies. | Uses standard chemical descriptors. | Uses canonical SMILES format. |
| Reusability | High: Detailed provenance, licensing, and community curation. | Moderate: Clear license but limited scope. | High: Created for screening; clear generation protocol. |

Experimental Protocols for Database Utilization and Validation

The value of these databases is realized through their application in structured research workflows. Below are detailed protocols for two key applications: virtual screening using existing databases and the generation of novel virtual libraries.

Protocol 1: Virtual Screening for Bioactive Compounds

This protocol is based on studies that screen databases like COCONUT for specific biological targets [10].

- Target and Database Selection: Define the protein target (e.g., NLRP3 inflammasome) [10]. Select a database (e.g., COCONUT, NAPRORE-CR) and download the structural data (SDF or SMILES format).

- Ligand Preparation: Use cheminformatics toolkits (e.g., RDKit) to sanitize structures: standardize tautomers, remove duplicates, add hydrogens, and generate plausible 3D conformations [5].

- Molecular Docking: Perform high-throughput docking against the target's active site using software like AutoDock Vina or Glide. Rank compounds based on docking scores (e.g., kcal/mol) [10].

- Post-Docking Analysis: Select top-ranked compounds (leads) for further analysis. Calculate binding free energies using more rigorous methods (e.g., MM-PBSA/GBSA) and run molecular dynamics simulations (100-200 ns) to assess complex stability [10].

- ADMET Prediction: Evaluate leads for drug-like properties (absorption, distribution, metabolism, excretion, toxicity) using in silico tools to prioritize candidates for experimental validation [10].
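The last two steps amount to ranking and triage. The sketch below ranks hits by docking score and applies Lipinski's rule of five as a simple drug-likeness filter. The rule of five is used here only as a stand-in for the fuller in silico ADMET tools mentioned above, and it is an assumption of this example rather than the method of [10]. The `Hit` class, the compound names, and all numeric values are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Hit:
    name: str
    docking_score: float  # kcal/mol; more negative means better predicted binding
    mol_weight: float     # g/mol
    logp: float
    h_donors: int
    h_acceptors: int

def lipinski_violations(hit):
    """Count rule-of-five violations (MW > 500, logP > 5, HBD > 5, HBA > 10)."""
    return sum([
        hit.mol_weight > 500,
        hit.logp > 5,
        hit.h_donors > 5,
        hit.h_acceptors > 10,
    ])

def triage(hits, top_n=2, max_violations=1):
    """Keep hits with few violations, then return the best-scoring ones."""
    passing = [h for h in hits if lipinski_violations(h) <= max_violations]
    return sorted(passing, key=lambda h: h.docking_score)[:top_n]

# Hypothetical docking results with precomputed descriptors.
hits = [
    Hit("NP-A", -9.8, 420.5, 3.1, 2, 6),
    Hit("NP-B", -11.2, 812.9, 6.4, 7, 14),
    Hit("NP-C", -8.5, 305.3, 1.9, 3, 5),
    Hit("NP-D", -9.1, 540.6, 4.2, 4, 9),
]

selected = triage(hits)
print([h.name for h in selected])
```

Many bioactive natural products fall outside the rule of five, so a threshold like `max_violations` is a tunable choice and not a hard exclusion criterion.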

Protocol 2: Generating and Validating an AI-Based Virtual Library

This protocol outlines the methodology for creating expansive virtual databases, as demonstrated in the 67M compound study [5].

- Data Curation: Assemble a high-quality training set of known natural products (e.g., 325,535 SMILES from COCONUT). Remove stereochemistry to simplify the chemical language for the model [5].

- Model Training: Train a deep generative model (e.g., a Long Short-Term Memory Recurrent Neural Network) on the tokenized SMILES strings. The model learns the statistical patterns and "rules" of natural product structures [5].

- Library Generation: Use the trained model to generate a massive number of novel SMILES strings (e.g., 100 million) [5].

- Validation and Filtering: Employ a multi-step cheminformatics pipeline to ensure quality:

  - Validity: Use RDKit's `Chem.MolFromSmiles()` to filter out syntactically invalid strings.

  - Uniqueness: Remove duplicates by converting SMILES to canonical forms and InChI identifiers.

  - Natural Product-Likeness: Score remaining structures using the NP Score tool to assess their similarity to known natural products [5].

- Characterization: Analyze the final library's physicochemical space (e.g., molecular weight, logP) and classify compounds by biosynthetic pathway using tools like NPClassifier to demonstrate its coverage and novelty [5].

Diagram: Two primary workflows for leveraging open-access natural product (NP) databases in research.

The effective use and development of natural product databases rely on a suite of specialized software tools and resources.

Table 3: Essential Research Reagent Solutions for NP Database Work

| Tool/Resource Name | Category | Primary Function in NP Research |
|---|---|---|
| RDKit [5] | Cheminformatics Library | Core functions for reading/writing chemical structures, calculating molecular descriptors, and performing substructure searches. Used for database sanitization and analysis. |
| COCONUT Web Interface [7] | Database Portal | Provides user-friendly access to search (text, structure, similarity) and browse a large aggregated collection of natural products with metadata. |
| NP Score [5] | Scoring Algorithm | Quantifies the "natural product-likeness" of a molecule by comparing its structural fragments to those in known NP databases. Critical for validating AI-generated libraries. |
| MARCUS Tool [11] | Literature Curation | An integrated platform that uses AI (GPT-4, OCSR engines) to extract chemical structures and metadata from PDFs, streamlining submission to databases like COCONUT. |
| DECIMER/MolScribe [11] | Optical Chemical Recognition (OCSR) | Converts images of chemical structures in literature into machine-readable SMILES or InChI format, a key step in automated database curation. |

Diagram: The workflow for making unstructured natural product data FAIR using the MARCUS curation platform and the COCONUT database.

The field of natural product (NP) research is defined by both immense chemical wealth and significant infrastructural complexity. With over 400,000 fully characterized compounds known to date, NPs are a cornerstone of drug discovery, forming the basis for a substantial proportion of approved therapeutics [5]. However, this valuable data is dispersed across a vast, fragmented ecosystem of resources. Researchers have cataloged over 120 distinct databases and libraries, ranging from physical sample repositories to virtual screening libraries [12] [7]. Within this, approximately 50 maintain a commitment to open-access principles, creating a critical but heterogeneous resource for the global scientific community.

This comparison guide aims to bring clarity to this complex landscape. We objectively evaluate the scope, functionality, and performance of key open-access databases and the computational tools built upon them. The analysis is framed within a broader thesis: that while fragmentation presents a challenge, the synergistic use of expansive open databases and advanced in silico methodologies—such as AI-driven molecular generation and target prediction—is revolutionizing NP-based discovery by making it more systematic, predictive, and cost-effective [5] [13] [14].

Database Classification and Comparative Analysis

The NP resource ecosystem can be categorized by content type and access model. The following table summarizes the core characteristics of major categories, highlighting key examples and their primary applications in research.

Table 1: Classification and Comparison of Major Natural Product Resource Types

| Resource Category | Description & Scope | Key Examples (Source) | Primary Research Application |
|---|---|---|---|
| Comprehensive Open-Access NP Databases | Large-scale, digitally curated collections of chemical structures and associated metadata (e.g., source organism, literature). | COCONUT (~406,919 compounds) [5] [7], NPASS, CMAUP [13] | Virtual screening, chemoinformatic analysis, data mining for biodiscovery. |
| Physical Extract & Compound Libraries | Collections of tangible samples (crude extracts, prefractionated libraries, pure compounds) available for biological screening. | NCI Natural Products Repository (>230,000 extracts) [12], MEDINA (>200,000 extracts) [12], Axxam Library (11,500 compounds) [12] | High-throughput phenotypic and target-based screening, assay-guided isolation. |
| Broad Cheminformatics Repositories | General-purpose chemical databases that include substantial NP data alongside synthetic molecules. | PubChem (119M+ compounds) [6], ChEMBL (2.4M+ bioactive molecules) [6] [15], ZINC (54B+ compounds for virtual screening) [6] | Large-scale virtual screening, bioactivity data mining, ligand-based prediction. |
| Specialized & Regional Databases | Focused collections centered on specific source types (e.g., marine, microbial) or geographic origins. | Dictionary of Marine Natural Products [16], NAPRORE-CR (Costa Rican NPs) [9], StreptomeDB [13] | Targeted discovery from specific ecological niches, study of regional biodiversity. |
| AI-Generated Virtual Libraries | Expansive libraries of novel, NP-like chemical structures created by deep generative models. | 67M NP-like molecule database [5], NPGPT-generated libraries [14] | Exploration of novel chemical space, in silico hit discovery beyond known compounds. |

Experimental Protocols for Database Utilization and Validation

The effective use of NP databases often relies on standardized computational workflows. Below are detailed methodologies for two key applications: the creation/validation of AI-generated virtual libraries and the prediction of biological targets for NP compounds.

3.1 Protocol for Generating and Validating AI-Driven NP-like Libraries

This protocol, based on the work of Tay et al. (2023) and subsequent studies, outlines the steps for creating a novel virtual library of NP-like molecules using deep learning [5] [14].

- Data Acquisition and Preprocessing: Obtain a canonical set of known NP structures, such as the ~406,919 compounds from COCONUT [5]. Standardize SMILES representations using a toolkit like RDKit or MolVS, which includes steps like removing salts and normalizing functional groups [14]. Filter structures based on desired criteria (e.g., atom count ≤ 150) [14].

- Model Training: Select a generative chemical language model architecture. Common choices include Recurrent Neural Networks with Long Short-Term Memory (RNN-LSTM) or Generative Pre-trained Transformers (GPT) [5] [14]. Tokenize the preprocessed SMILES or SELFIES strings from the training set. Train the model to learn the statistical patterns and "language" of NP structures.

- Sampling and Generation: Use the trained model to generate a large number (e.g., 100 million) of novel molecular string representations [5].

- Validation and Curation:

  - Syntactic Validity: Parse generated strings with RDKit's `Chem.MolFromSmiles()` to filter invalid outputs [5].

  - Uniqueness & Deduplication: Convert valid structures to canonical SMILES and InChI keys to identify and remove duplicates [5].

  - Chemical Sanity: Apply a chemical curation pipeline (e.g., ChEMBL's) to standardize structures and flag severe structural issues [5].

  - NP-likeness Evaluation: Calculate a Natural Product-likeness score (NP Score) for generated molecules and compare the distribution to that of the known NP training set [5].

- Characterization: Use tools like NPClassifier to assign biosynthetic pathway-based classifications [5]. Calculate key physicochemical descriptors (e.g., molecular weight, logP) and use dimensionality reduction (e.g., t-SNE) to visualize the library's coverage of chemical space compared to known NPs [5].
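For the tokenization step in model training, a regular-expression tokenizer is one common alternative to splitting SMILES character by character, because it keeps bracket atoms and two-letter elements such as `Cl` and `Br` together as single tokens. The pattern below is a simplified illustration written for this guide, not the tokenizer used in [5] or [14], and it does not cover every corner of the SMILES grammar.

```python
import re

# Order matters: bracket atoms and two-letter elements are tried before single characters.
SMILES_TOKEN = re.compile(
    r"\[[^\]]+\]"          # bracket atoms, e.g. [C@@H], [Na+]
    r"|Br|Cl"              # two-letter organic-subset elements
    r"|%\d{2}"             # two-digit ring-closure labels, e.g. %12
    r"|[A-Za-z]"           # single-letter atoms (aliphatic and aromatic)
    r"|\d"                 # single-digit ring closures
    r"|[=#$:/\\\-+().~@*]" # bonds, branches, dots, and other symbols
)

def tokenize_smiles(smiles):
    """Split a SMILES string into tokens and check that nothing was dropped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"Unrecognized characters in SMILES: {smiles!r}")
    return tokens

print(tokenize_smiles("ClCCBr"))
print(tokenize_smiles("[Na+].[Cl-]"))
print(tokenize_smiles("N[C@@H](C)C(=O)O"))
```

The round-trip check (joining the tokens must reproduce the input) is a cheap guard against silently discarding characters the pattern does not recognize.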

3.2 Protocol for Similarity-Based Target Prediction of Natural Products

This protocol details the use of the open-source tool CTAPred for predicting potential protein targets of a query NP [13].

- Reference Dataset Preparation: Compile a focused Compound-Target Activity (CTA) dataset from public sources like ChEMBL [13] [15]. Filter for compounds with measured bioactivities (e.g., IC50, Ki) against protein targets, prioritizing data relevant to NP-like chemical space.

- Query Input: Prepare the query NP compound(s) in an accepted format, such as SMILES.

- Fingerprint Calculation & Similarity Search: Encode both the query and reference compounds using a molecular fingerprint (e.g., Morgan fingerprint). Calculate the pairwise similarity (e.g., Tanimoto coefficient) between the query and all compounds in the reference dataset [13].

- Target Inference: Rank the reference compounds based on similarity to the query. Aggregate the known protein targets associated with the top N most similar reference compounds (N is optimized, often between 1-5) [13]. The frequency and potency of a target across this set contribute to its prediction score.

- Output & Prioritization: The tool outputs a ranked list of predicted protein targets for the query NP. Predictions can be prioritized based on the similarity scores, the prevalence of the target in the hit list, and the known biological context.
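The similarity search and target inference steps can be sketched without any cheminformatics dependency by representing each fingerprint as a set of "on" bit indices. This is an illustrative sketch only and does not reproduce CTAPred's implementation: the fingerprints, the target names, and the scoring rule (summing the similarities of the top-N neighbours per target) are assumptions made for the example. In practice the bit sets would come from a Morgan fingerprint computed with a toolkit such as RDKit.

```python
from collections import defaultdict

def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0

def predict_targets(query_fp, reference, top_n=3):
    """Rank targets by summed similarity over the top_n most similar reference compounds.

    reference: list of (fingerprint, set_of_target_names) pairs.
    """
    neighbours = sorted(
        ((tanimoto(query_fp, fp), targets) for fp, targets in reference),
        key=lambda pair: pair[0],
        reverse=True,
    )[:top_n]
    scores = defaultdict(float)
    for similarity, targets in neighbours:
        for target in targets:
            scores[target] += similarity
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)

# Hypothetical query and reference data (bit indices and targets are made up).
query = frozenset({1, 2, 3, 4})
reference = [
    (frozenset({1, 2, 3, 4, 5}), {"COX-2"}),
    (frozenset({1, 2, 3}), {"COX-2", "5-LOX"}),
    (frozenset({3, 4, 9, 10}), {"AChE"}),
    (frozenset({7, 8}), {"HDAC1"}),
]

ranked = predict_targets(query, reference, top_n=3)
for target, score in ranked:
    print(f"{target}: {score:.2f}")
```

Keeping `top_n` small, as the protocol suggests, limits the influence of weakly similar reference compounds on the final ranking.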

Visualizing Workflows and Relationships

Diagram 1: AI-driven workflow for virtual natural product library generation and screening.

Diagram 2: Similarity-based ligand-to-target prediction workflow for natural products.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Tools and Resources for Computational Natural Product Research

| Tool/Resource Name | Type | Primary Function in NP Research | Key Feature / Note |

|---|---|---|---|

| COCONUT [7] | Open Database | Provides the largest consolidated collection of open NP structures for dereplication and virtual screening. | Implements community curation and links to original source collections. |

| RDKit [5] | Cheminformatics Toolkit | Enables fundamental operations: molecule manipulation, descriptor calculation, fingerprinting, and image rendering. | Open-source; essential for preprocessing and analyzing chemical data. |

| ChEMBL [6] [15] | Bioactivity Database | Serves as a critical source of experimentally measured compound-target activities for building prediction models. | Manually curated; includes quantitative data (IC50, Ki) for model training. |

| CTAPred [13] | Target Prediction Tool | An open-source, command-line tool for predicting protein targets of NPs using similarity-based methods. | Focuses on NP-relevant chemical space; allows batch processing. |

| NP Score [5] | Computational Metric | Quantifies how "natural product-like" a molecule is based on substructure analysis. | Used to validate the chemical space of AI-generated libraries. |

| NPClassifier [5] | Classification Tool | Automatically classifies NPs into biosynthetic pathways (e.g., polyketide, alkaloid). | Helps in organizing and understanding the origin of novel or generated structures. |

| ZINC [6] | Virtual Screening Library | Provides commercially available compounds and 3D conformers for large-scale virtual docking screens. | Acts as a bridge between virtual hits and purchasable compounds for testing. |

This guide provides a comparative analysis of three fundamental categories of databases—generalistic, thematic, and spectral libraries—within the critical and expanding domain of open-access (OA) natural product research. As OA models face pivotal deadlines and evolving policies, the infrastructure for discovering and analyzing scientific data is more important than ever [17]. This comparison, framed within a broader thesis on OA resources, is designed for researchers, scientists, and drug development professionals who require efficient, high-fidelity data to accelerate discovery. We objectively evaluate these databases based on scope, data type, application, and supporting experimental evidence.

Understanding the Database Categories

The landscape of research databases can be effectively organized into three major categories, each serving a distinct purpose in the scientific workflow.

Generalistic Databases: These are broad repositories that aggregate chemical and biological data from a vast array of sources without a narrow focus on a single discipline. They excel at providing a comprehensive "first look" at a compound, integrating information on structure, properties, bioactivities, and literature. A premier example is PubChem, a public NIH resource containing over 119 million unique compounds and 295 million bioactivity data points from more than 1,000 sources [18]. It serves as a central hub for initial compound identification, sourcing, and high-level biological activity screening, crucial for early-stage drug discovery and cross-disciplinary research [18] [19].

Thematic Databases: These are specialized resources focused on a specific research domain, organism, or data type. They provide deep, curated content tailored to experts within that field. Examples include PubMed for biomedical literature [19], NPASS for natural products and their source species [18], and ERIC for education research [19]. In natural product research, thematic databases offer curated datasets on metabolites from specific organisms (e.g., Yeast Metabolome Database) or dedicated repositories for chemical spectra, which are essential for confident compound annotation and dereplication [18].

Spectral Libraries: These are highly specialized databases containing reference fragmentation patterns (spectra) of molecules, acquired via techniques like mass spectrometry (MS). They are the core tools for analytical identification and quantification. Libraries can be empirical (built from experimentally measured standards) or in silico (predicted using machine learning models like Prosit) [20]. Their primary application is in metabolomics, proteomics, and chemical analysis, where they enable the automated, high-throughput identification of compounds in complex biological samples by matching observed spectra to reference entries [20].

The following table summarizes the core characteristics of these database categories.

Table: Comparison of Major Database Categories for Natural Product Research

| Feature | Generalistic Databases (e.g., PubChem) | Thematic Databases (e.g., NPASS, PubMed) | Spectral Libraries (Empirical & Predicted) |

|---|---|---|---|

| Primary Scope | Broad, cross-disciplinary aggregation [18]. | Deep, domain-specific focus [18] [19]. | Analytical fingerprint matching [20]. |

| Core Data Type | Chemical structures, properties, bioactivities, literature links [18]. | Curated compound sets, species-source data, domain-specific literature [18] [19]. | Reference mass spectra (MS/MS), retention times, collision cross-section values [20] [18]. |

| Key Application | Compound discovery, sourcing, initial bioactivity screening [18]. | Targeted discovery, dereplication, in-depth literature review [18] [19]. | Definitive identification & quantification in complex mixtures (e.g., metabolomics) [20]. |

| Research Stage | Early discovery & prioritization. | Focused investigation & validation. | Analytical confirmation & quantification. |

| Access Model | Open Access (e.g., PubChem) [18]. | Mix of OA and subscription [19]. | Often institutional/commercial; growing OA repositories. |

Comparative Performance and Experimental Data

The utility of these databases is best demonstrated through experimental data. Recent advancements highlight the performance gains achievable with modern spectral libraries and intelligent data acquisition.

Quantitative Performance of Spectral Libraries: A landmark 2023 study developed a Real-Time Library Searching (RTLS) workflow for proteomics, demonstrating the power of large-scale spectral libraries. The researchers used a library of 4 million predicted spectra to enable intelligent, real-time decision-making on a mass spectrometer [20].

- Throughput and Efficiency: The RTLS method doubled instrument acquisition efficiency compared to traditional data-dependent methods. It quantified 15% more significantly regulated proteins in half the gradient time when profiling proteome responses to drug perturbations [20].

- Comparative Advantage: In a separate application integrating RTLS with tandem mass tags (TMTpro), researchers achieved a 42-fold increase in sample throughput for quantifying reactive cysteine residues, a critical task in chemical proteomics and drug mechanism studies [20].

These figures underscore the transformative impact of specialized spectral libraries paired with intelligent informatics. For context, the scale of generalistic databases is immense but serves a different purpose. PubChem, for instance, adds value through integration, connecting compounds to 41.5 million scientific articles and 50.8 million patents [18].

Table: Key Experimental Metrics from Spectral Library Study [20]

| Performance Metric | Traditional Method | RTLS with Spectral Library | Improvement |

|---|---|---|---|

| Instrument Acquisition Efficiency | Baseline | 2-fold increase | 100% improvement |

| Gradient Time for Equivalent Protein Regulation Data | 120 minutes | 60 minutes | 50% reduction |

| Significantly Regulated Proteins Quantified | Baseline | 15% more proteins | Increased sensitivity |

| Sample Throughput for Reactive Cysteine Quantification | Baseline | 42-fold increase | 4,100% improvement |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear understanding of the data generation behind spectral library performance, the following protocol is summarized from the cited RTLS study [20].

Protocol: Real-Time Library Searching (RTLS) for Sample-Multiplexed Quantitative Proteomics

1. Sample Preparation:

- Cell Culture & Lysis: Human cell lines (e.g., HCT116, A549) or yeast (S. cerevisiae) are grown, harvested, and lysed in a buffer containing 8M urea and protease inhibitors.

- Peptide Labeling: Proteins are digested, and the resulting peptides are labeled with isobaric tandem mass tags (TMTpro) to enable multiplexed quantification.

- Sample Mixing: A standard sample is prepared by mixing peptides from human and yeast cells in a known ratio (e.g., 90:10 human:yeast) to create a ground-truth benchmark [20].

2. Spectral Library Generation:

- An in silico spectral library is generated for the whole proteome (human and yeast) using a prediction tool like Prosit. The library contains sequence, precursor charge, precursor m/z, and predicted fragment ion intensities for millions of peptides [20].

3. Mass Spectrometry with RTLS:

- Chromatography: Peptides are separated on a reversed-phase C18 column using a 30-180 minute liquid chromatography gradient.

- Instrumentation: Analysis is performed on a high-resolution Orbitrap mass spectrometer (e.g., Eclipse or Ascend) equipped with FAIMS (High-Field Asymmetric Waveform Ion Mobility Spectrometry) for additional gas-phase separation.

- Real-Time Search: As MS2 spectra are acquired, software matches them against the pre-loaded spectral library in milliseconds. Based on a high-confidence match (using scores like dot product), the system intelligently triggers quantitative MS3 scans, avoiding wasted time on unidentifiable or low-quality spectra [20].

4. Data Analysis:

- Quantification is based on reporter ion intensities from the triggered MS3 scans. The increased efficiency of RTLS allows for more comprehensive and accurate quantification across the multiplexed sample set [20].
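The real-time search step of this protocol can be illustrated with a small sketch of dot-product matching. It is a simplified illustration, not the software used in the cited study: the 1 Da binning, the 0.7 trigger threshold, the function names, and the toy library entries are all assumptions made for the example.

```python
import math


def bin_spectrum(peaks, bin_width=1.0):
    """Sum peak intensities into fixed-width m/z bins: {bin_index: intensity}."""
    binned = {}
    for mz, intensity in peaks:
        idx = int(mz // bin_width)
        binned[idx] = binned.get(idx, 0.0) + intensity
    return binned


def normalized_dot_product(a, b):
    """Cosine similarity of two binned spectra (0 = no overlap, 1 = same shape)."""
    dot = sum(v * b.get(k, 0.0) for k, v in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def should_trigger_ms3(observed_peaks, library, threshold=0.7):
    """Return (best_entry, best_score, trigger) for one observed MS2 spectrum."""
    observed = bin_spectrum(observed_peaks)
    best_id, best_score = None, 0.0
    for entry_id, ref_peaks in library.items():
        score = normalized_dot_product(observed, bin_spectrum(ref_peaks))
        if score > best_score:
            best_id, best_score = entry_id, score
    return best_id, best_score, best_score >= threshold


# Hypothetical two-entry library of (m/z, intensity) peak lists.
library = {
    "PEPTIDEA": [(175.1, 100.0), (276.2, 50.0), (405.2, 80.0)],
    "PEPTIDEB": [(147.1, 100.0), (300.3, 60.0)],
}
observed = [(175.1, 90.0), (276.2, 55.0), (405.2, 70.0)]
peptide, score, trigger = should_trigger_ms3(observed, library)
noise_result = should_trigger_ms3([(500.5, 10.0)], library)
print(peptide, round(score, 3), trigger)
print(noise_result)
```

A spectrum with no confident library match returns `trigger=False`, which corresponds to skipping the MS3 scan and saving instrument time.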

Visualizing Workflows and Relationships

The integration of different database types is key to a successful research pipeline. The following diagrams illustrate a spectral library matching workflow and the logical relationship between database categories.

Diagram 1: Real-Time Spectral Library Matching Workflow. This diagram details the computational and instrumental workflow for real-time spectral library matching, as described in the experimental protocol [20].

Diagram 2: Database Categories in the Research Pipeline. This diagram shows how the three database categories logically connect and support different stages of the natural product research pipeline, from discovery to confirmation.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table lists key reagents, instruments, and software solutions essential for conducting experiments that generate and utilize spectral library data, as derived from the featured protocol [20].

Table: Essential Research Reagents and Materials for Spectral Library-Based Proteomics

| Item | Function/Description | Example/Note |

|---|---|---|

| TMTpro 16/18plex Isobaric Labels | Chemical tags for multiplexed sample quantification, allowing simultaneous analysis of up to 18 samples. | Critical for high-throughput quantitative experiments [20]. |

| FAIMS Device | High-Field Asymmetric waveform Ion Mobility Spectrometry; adds a separation dimension to reduce sample complexity and improve sensitivity. | Used with CV values typically at -40, -60, -80 [20]. |

| High-Resolution Mass Spectrometer | Instrument for accurate mass measurement and fragmentation (e.g., Orbitrap Eclipse/Ascend). | Enables the MS1, MS2, and SPS-MS3 scans required for the workflow [20]. |

| Prosit Software | A deep learning tool for predicting high-quality peptide MS/MS spectra from sequences. | Used to generate in silico spectral libraries for whole proteomes [20]. |

| Real-Time Search Software (Custom) | Software application that performs spectral matching against a large library within milliseconds of scan acquisition. | The core innovation enabling intelligent data acquisition [20]. |

| C18 Reverse-Phase LC Column | Chromatography column for separating peptides based on hydrophobicity prior to MS injection. | Standard for bottom-up proteomics; column length (e.g., 30cm) affects resolution [20]. |

This comparison establishes that generalistic, thematic, and spectral libraries are complementary pillars of modern natural product research. The future points toward greater integration and intelligence. Trends include the use of AI not just for spectral prediction but for autonomous database operations, anomaly detection, and enhanced data analytics [21]. Furthermore, the push for Open Access and FAIR data principles is making specialized resources like spectral libraries more accessible, fostering reproducibility and collaboration [17] [22]. Initiatives like NFDI4Chem aim to build a federated, FAIR data infrastructure for chemistry, which would seamlessly connect compound information from generalistic databases with analytical data from spectral libraries [23]. For the researcher, this evolving landscape means that strategic database selection—starting broad with generalistic resources, diving deep with thematic tools, and confirming with spectral libraries—will remain essential for efficient and impactful discovery.

The field of natural product (NP) discovery is undergoing a profound transformation, driven by the digitization of chemical information and the adoption of computational methodologies. This shift has precipitated a move from traditional, resource-intensive assay-guided exploration to data-driven, in silico discovery paradigms [5]. At the heart of this revolution are open-access databases, which serve as the foundational infrastructure for modern computational screening, machine learning, and genome mining. This comparison guide evaluates key databases within the broader thesis that accessible, well-curated, and interoperable data resources are critical for accelerating NP research and drug development.

The current landscape is characterized by a tension between breadth and specialization. Generalist databases aim to aggregate all known NPs into unified resources, thereby simplifying large-scale computational screening. In contrast, specialized microbial databases offer deep, contextual metadata—such as biosynthetic gene cluster (BGC) links and taxonomic provenance—that is essential for hypothesis-driven discovery [24] [25]. Furthermore, the advent of deep generative models has introduced a new category: ultra-large virtual libraries that dramatically expand the explorable chemical space beyond known compounds [5]. This guide objectively compares the scope, performance, and applications of these diverse resources, providing researchers with a framework to select the optimal tools for their specific workflows.

Comparative Analysis of Database Scale, Content, and Curation

A primary differentiator among NP databases is their scale, source of data, and the rigor of their curation pipelines. These factors directly impact their suitability for various research applications, from virtual screening to ecological studies.

Table 1: Comparison of Major Open Access Natural Product Databases by Scale and Content

| Database Name | Primary Scope | Number of Compounds | Key Data Sources & Curation Features | Primary Use Case |

|---|---|---|---|---|

| COCONUT [25] | Generalist: All known NPs | 406,919 (unique, flat structures) | Aggregated from 53 open sources; ChEMBL curation pipeline; 5-star annotation quality system. | Large-scale virtual screening, machine learning model training, broad chemical space analysis. |

| Generated NP-like DB [5] | Generative: AI-expanded library | 67,064,204 (generated molecules) | Created by LSTM-RNN trained on COCONUT; filtered via RDKit & ChEMBL pipeline. | Exploring novel chemical space, ultra-high-throughput in silico screening. |

| Natural Products Atlas [24] [26] | Specialist: Microbial NPs | 25,523 (as of 2019) | Expert-curated from literature; linked to MIBiG (BGCs) and GNPS (mass spectra). | Microbial NP discovery, dereplication, linking chemistry to genomics. |

| NPASS [24] | Specialist: NPs with activity data | ~35,032 (incl. ~9,000 microbial) | Focus on biological activities and source organisms. | Activity-guided discovery, target identification, pharmacology research. |

| StreptomeDB [24] [26] | Specialist: Streptomyces metabolites | >7,125 | Focus on compounds from the genus Streptomyces; includes some bioactivity data. | Research on actinobacterial metabolism, antibiotic discovery. |

COCONUT (Collection of Open Natural Products) establishes the benchmark for generalist, aggregated databases. Its construction involved unifying compounds from 53 disparate sources, followed by stringent standardization using the ChEMBL curation pipeline to check structural validity, remove salts, and generate parent structures [25]. A key innovation is its 5-star annotation system, which rates compounds based on the completeness of metadata (name, taxonomic origin, literature reference), guiding users toward higher-quality entries [25]. In contrast, specialist databases like the Natural Products Atlas prioritize depth over breadth. Its value lies in expert manual curation and its bi-directional links to genomic (MIBiG) and metabolomic (GNPS) databases, creating a networked resource for microbial natural products research [24].

The 67-million compound generated database represents a paradigm shift from curation to creation [5]. Its scale is enabled by a recurrent neural network (RNN) with long short-term memory (LSTM) units trained on the SMILES strings of known NPs from COCONUT. This model learned the underlying "molecular language" of NPs to generate novel, syntactically valid structures. While it sacrifices the detailed metadata of curated databases, it offers an unprecedented 165-fold expansion of NP-like chemical space for virtual screening [5].

Experimental Validation and Performance Metrics

The utility of a NP database is ultimately determined by the quality and chemical relevance of its contents. Rigorous experimental validation, using both cheminformatic and statistical measures, is essential to establish trust in these resources.

Validation of the Generative Database

The creation and validation of the 67-million compound database followed a multi-step computational protocol designed to ensure chemical validity, uniqueness, and "natural product-likeness" [5].

Experimental Protocol: Generation and Validation of AI-Derived NPs [5]

- Model Training: An LSTM-RNN was trained on 325,535 tokenized SMILES strings (stereochemistry removed) from the COCONUT database.

- Library Generation: The trained model generated 100 million novel SMILES strings.

- Validity & Uniqueness Filtering:

  - Syntax Check: RDKit's Chem.MolFromSmiles() filtered out 9.6 million invalid SMILES.

  - Deduplication: Structures were canonicalized and converted to InChI keys, removing 22.5 million duplicates.

  - Structural Curation: The ChEMBL pipeline removed 0.85 million molecules with severe structural issues (penalty score >5).

- Natural Product-Likeness Assessment:

  - The NP Score was calculated for all generated and known COCONUT molecules.

  - The Kullback-Leibler (KL) divergence between the score distributions of the two sets was computed (0.064 nats), indicating high similarity.

- Structural Classification: The NPClassifier tool was used to assign biosynthetic pathway classes, with 88% of generated molecules receiving a classification.

- Chemical Space Analysis: 10 key molecular descriptors were calculated, and t-SNE dimensionality reduction was performed to visualize and compare the physicochemical space covered by known versus generated compounds.
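As a quick consistency check, the filtering funnel in this protocol can be reproduced from the rounded counts quoted in the steps. The sketch below only does that arithmetic; the variable names are illustrative, and the small gap between the computed and reported final size presumably reflects rounding in the quoted step counts.

```python
# Rounded step counts as quoted in the protocol.
generated = 100_000_000
invalid_smiles = 9_600_000
duplicates = 22_500_000
curation_failures = 850_000

valid = generated - invalid_smiles          # passes the syntax check
unique = valid - duplicates                 # survives deduplication
curated = unique - curation_failures        # survives structural curation

# Final library size and COCONUT size as reported in this guide.
reported_final = 67_064_204
coconut_size = 406_919
fold_expansion = reported_final / coconut_size

print(f"valid SMILES:      {valid:,}")
print(f"after dedup:       {unique:,}")
print(f"after curation:    {curated:,}")
print(f"validity rate:     {valid / generated:.1%}")
print(f"fold expansion:    {fold_expansion:.1f}x over COCONUT")
```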

Table 2: Key Validation Metrics for the 67M+ Generated NP Database [5]

| Validation Metric | Result | Interpretation & Significance |

|---|---|---|

| Final Library Size | 67,064,204 compounds | A 165-fold expansion over known NPs (~400k), enabling exploration of vast novel space. |

| Syntactic Validity Rate | ~90.4% (90.4M valid from 100M generated) | Demonstrates the model's proficiency in learning chemical grammar. |

| Uniqueness Rate | 77% of valid SMILES were unique. | Indicates the model generates novel diversity, not just repetitions. |

| NP Score KL Divergence | 0.064 nats | Low divergence indicates that the NP Score distribution of generated molecules closely tracks that of known NPs, supporting their "NP-likeness". |

| NPClassifier Coverage | 88% classified | Suggests most generated structures align with known biosynthetic logic; unclassified 12% may represent novel classes. |

| Chemical Space Expansion | t-SNE shows significant expansion beyond COCONUT space. | Generated molecules cover new regions of physicochemical property space, promising novel scaffolds. |
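For readers unfamiliar with the KL divergence metric in the table, the following sketch shows one way to compute it in nats from two sets of scores. It is a minimal illustration under stated assumptions: the histogram range, bin count, pseudocount, and the direction D_KL(generated || known) are choices made here, and the score lists are invented toy values, not data from the cited study.

```python
import math


def histogram(values, lo, hi, n_bins, pseudocount=1e-9):
    """Normalized histogram on shared bins; the pseudocount avoids log(0)."""
    counts = [0] * n_bins
    width = (hi - lo) / n_bins
    for v in values:
        idx = min(max(int((v - lo) / width), 0), n_bins - 1)
        counts[idx] += 1
    total = sum(counts) + pseudocount * n_bins
    return [(c + pseudocount) / total for c in counts]


def kl_divergence(p, q):
    """D_KL(P || Q) in nats for two discrete distributions on the same bins."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


# Toy NP Score samples (hypothetical values).
known_scores = [-1.0, 0.0, 0.5, 1.0, 1.5, 2.0]
generated_scores = [-0.8, 0.1, 0.6, 0.9, 1.4, 2.2]

p_generated = histogram(generated_scores, lo=-5.0, hi=5.0, n_bins=10)
p_known = histogram(known_scores, lo=-5.0, hi=5.0, n_bins=10)
divergence = kl_divergence(p_generated, p_known)
print(f"KL divergence: {divergence:.3f} nats")
```

A value of 0 means the two binned distributions are identical; larger values mean the generated scores drift further from the reference distribution. The result depends on the binning, so values are only comparable when the same bins are used.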

Cheminformatic Benchmarking of Database Utility

Specialized computational fingerprints and scores have been developed to better handle the unique structural complexity of NPs. A key study benchmarked a novel neural network-derived fingerprint against traditional methods using NP-specific tasks [27].

Experimental Protocol: Benchmarking NP-Specific Fingerprints [27]

- Data Curation: A training set was created from COCONUT (394,939 NPs) and similar synthetic decoys from ZINC (210,412 compounds).

- Model Training: A multi-layer perceptron was trained to distinguish NPs from synthetic molecules.

- Fingerprint Extraction: The activations from a hidden layer of the trained network were used as a new "neural fingerprint."

- Benchmarking: This neural fingerprint was evaluated on three external validation tasks against traditional (ECFP4, MACCS) and NP-specific fingerprints:

  - NP Identification: Distinguishing NPs from synthetic molecules.

  - Target Identification: Distinguishing active from inactive NPs for specific protein targets.

  - Mixed Screening: A realistic virtual screening scenario containing both NP and synthetic actives/inactives.

- Score Development: The activation of the network's output neuron was proposed as a new, data-driven Natural Product Likeness score.

The study concluded that the neural fingerprint outperformed all other methods in the "Mixed Screening" task, which most closely resembles a real-world drug discovery campaign [27]. This demonstrates that databases like COCONUT are not merely static repositories but are essential for training next-generation tools that unlock more effective NP discovery.
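The idea of reusing a classifier's internals as a fingerprint and its output as a likeness score can be shown with a toy forward pass. This is a conceptual sketch only: the network size, the ReLU and sigmoid activations, and the hand-written weights are assumptions for illustration and are not taken from the cited study, where the weights would come from training on NP and synthetic molecules.

```python
import math


def dense(x, weights, bias):
    """One fully connected layer: weights is a list of rows, one per output unit."""
    return [sum(w * xi for w, xi in zip(row, x)) + b for row, b in zip(weights, bias)]


def relu(x):
    return [max(0.0, v) for v in x]


def forward(input_bits, params):
    """Return (hidden activations, output score) for one input fingerprint."""
    hidden = relu(dense(input_bits, params["W1"], params["b1"]))
    logit = dense(hidden, params["W2"], params["b2"])[0]
    score = 1.0 / (1.0 + math.exp(-logit))
    return hidden, score


# Hypothetical untrained parameters: 4 input bits -> 3 hidden units -> 1 output.
params = {
    "W1": [[0.5, -0.2, 0.1, 0.0], [-0.3, 0.8, 0.0, 0.4], [0.2, 0.2, -0.6, 0.1]],
    "b1": [0.0, 0.1, 0.0],
    "W2": [[1.0, -1.0, 0.5]],
    "b2": [0.0],
}

neural_fingerprint, np_likeness = forward([1, 0, 1, 1], params)
print("hidden-layer fingerprint:", neural_fingerprint)
print("output-neuron score:", round(np_likeness, 4))
```

The hidden activations play the role of the "neural fingerprint" and can be compared between molecules like any other vector, while the single output value plays the role of the data-driven likeness score.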

Diagram: Workflow for Generating and Validating an AI-Expanded NP Library

Leveraging NP databases effectively requires a suite of complementary software tools and reagents. The following table details key resources frequently employed in conjunction with databases for discovery workflows.

Table 3: Essential Research Tools and Reagents for NP Database Workflows

| Tool/Resource Name | Type | Primary Function in NP Research | Typical Application with Databases |

|---|---|---|---|

| RDKit [5] | Cheminformatics Toolkit | Provides fundamental functions for reading, writing, and manipulating chemical structures (SMILES, InChI), calculating molecular descriptors, and generating fingerprints. | Used for standardizing database structures, filtering invalid entries, and computing properties for analysis [5] [27]. |

| ChEMBL Curation Pipeline [5] [25] | Standardization Protocol | A standardized set of rules for checking chemical structure validity, removing salts and solvents, and generating parent molecules according to FDA/IUPAC guidelines. | Applied to raw data in COCONUT and the generated DB to ensure high-quality, consistent chemical representations [5]. |

| NP Score [5] | Computational Metric | A Bayesian score quantifying a molecule's similarity to the structural space of known natural products based on atom-centered fragments. | Used to validate the "natural product-likeness" of AI-generated libraries and to prioritize compounds from virtual screens [5]. |

| NPClassifier [5] | Deep Learning Classifier | A tool that classifies NPs into biosynthetic pathway classes (e.g., polyketide, non-ribosomal peptide) based on structural features. | Annotates database entries with putative biosynthetic origin, enabling organized exploration and targeted mining [5]. |

| antiSMASH [24] | Genomic Analysis Platform | Identifies and annotates Biosynthetic Gene Clusters (BGCs) in genomic DNA sequences. | Used alongside genomic data to link database compounds to their genetic blueprints, enabling genome-mining approaches. |

| GNPS [24] | Tandem MS Database | A platform for community-wide organization and sharing of raw, processed, or annotated tandem MS data. | Used with the Natural Products Atlas for spectral dereplication, identifying known compounds in mixtures quickly. |

Microbial natural products are a prolific source of antibiotics and other therapeutics. Research in this area relies on both digital databases and tangible strain collections, each playing a complementary role.

Specialized Microbial Databases

For microbial NPs, deep annotation is as critical as chemical structure. The Natural Products Atlas is the leading open-access resource, distinguished by its manual curation by NP specialists and its integration with genomic (MIBiG) and metabolomic (GNPS) data [24]. NPASS provides valuable supplemental bioactivity data, while StreptomeDB offers a focused lens on the chemically rich genus Streptomyces [24] [26]. These resources address a critical gap, as generalist databases often lack the detailed taxonomic and biosynthetic metadata required for microbial strain prioritization and dereplication.

Bridging Digital and Physical Collections

The ultimate source of novel microbial NPs is biological material. Large-scale strain collections, such as the Natural Products Discovery Center (NPDC) at The Wertheim UF Scripps Institute, represent an indispensable physical counterpart to digital databases [28]. The NPDC houses over 125,000 microbial strains, estimated to encode the potential for more than 3.75 million natural products—a figure that contextualizes the scale of known chemical space (~20,000 microbial NPs) and highlights the vast potential that remains unexplored [28].

The workflow connecting these resources is powerful: Genomic sequencing of strain collections identifies promising BGCs (digital data). These BGCs can be compared against databases like MIBiG to assess novelty. Subsequently, strains are cultured, and their extracts are analyzed with techniques like NMR-based metabolomics [29]. The resulting spectroscopic data is used to dereplicate against structural databases (e.g., Natural Products Atlas) to avoid rediscovery and to identify truly novel compounds for isolation.

Diagram: Integrated Workflow Linking Physical Repositories and Digital Databases

The expanding ecosystem of open-access NP databases offers tailored solutions for different research objectives. The choice of resource should be guided by the specific stage and goal of the discovery campaign.

For large-scale virtual screening and machine learning, comprehensive and computationally ready resources like COCONUT and the 67M+ generated database are indispensable. Their scale and structural consistency enable the application of AI models and high-throughput in silico screens [5] [27]. For microbial natural product discovery and dereplication, deeply annotated and expertly curated resources like the Natural Products Atlas are critical. Their links to genomic and spectroscopic data provide the contextual information needed to guide experimental work and avoid rediscovery [24]. Furthermore, access to physical strain collections like the NPDC is essential for translating digital predictions into novel chemical entities [28].

The future of NP discovery lies in the deeper integration of these resources. Advancing the FAIR (Findable, Accessible, Interoperable, Reusable) principles for all databases will enable more powerful meta-analyses and cross-domain searches [24]. Continued development of specialized computational tools—such as NP-optimized fingerprints and scores—will further enhance the utility of these databases. By strategically leveraging the complementary strengths of generalist aggregators, specialist repositories, AI-generated libraries, and physical collections, researchers can more effectively navigate the vast chemical potential of nature to address pressing challenges in drug development.

From Data to Discovery: Practical Workflows for Database Utilization

The systematic comparison of open-access natural product (NP) databases represents a critical thesis in modern cheminformatics, focusing on their utility, chemical diversity, and integration into efficient drug discovery pipelines. Virtual screening (VS) stands as the computational cornerstone of this research, enabling the systematic interrogation of these expansive chemical libraries to identify novel bioactive compounds [30]. The evolution of publicly available databases, from curated collections of known NPs like LOTUS and SuperNatural 3.0 to generated libraries of tens of millions of novel, NP-like structures, has fundamentally transformed the scale and scope of computer-aided drug design [2] [5]. This guide objectively compares the performance of various database structures, virtual screening methodologies, and computational platforms, providing researchers with a framework to select optimal strategies for lead discovery. The discussion is grounded in experimental data and protocols that highlight the tangible outputs of integrating open-access NP databases into virtual workflows, from initial virtual hits to experimentally validated leads [31] [32].

Comparative Analysis of Database Structures and Screening Platforms

The performance of a virtual screening campaign is intrinsically linked to the characteristics of the compound database and the computational platform used. The following tables provide a comparative overview of prominent open-access natural product databases and virtual screening software.

Table 1: Comparison of Key Open-Access Natural Product Databases for Virtual Screening

| Database Name | Size (Compounds) | Key Features & Content | Access & Format | Primary Use Case in VS |

|---|---|---|---|---|

| LOTUS [33] | ~276,518 | Dedicated NP database; provides species origin (e.g., Kingdom Plantae). | Freely available online. | Structure-based screening for specific biological targets (e.g., acetylcholinesterase). |

| SuperNatural 3.0 [2] | ~449,058 | Annotated with predicted toxicity, mechanism of action, pathways, and vendor data. Includes targeted libraries for diseases. | Freely available via web server. | Ligand- and structure-based screening with pre-filtered libraries for specific indications. |

| Zimbabwe NP Database (ZiNaPoD) [32] | 6,220 | Curated library of natural products from Zimbabwe. | Presumably accessible upon request/research collaboration. | Regional NP discovery and pharmacophore-based screening. |

| 67M NP-Like Database [5] | ~67 million | Generated via machine learning (RNN) on known NPs; greatly expands novel chemical space. | Openly available data descriptor. | Exploration of ultra-large, novel NP-like chemical space for de novo hit discovery. |

| COCONUT [5] | ~406,919 | A large collection of open natural products; used as a training set for generative models. | Freely accessible online. | Benchmarking, training generative models, and general NP screening. |

Table 2: Performance Comparison of Virtual Screening Software & Platforms

| Software / Platform | Type | Key Algorithmic Features | Reported Performance Metrics | Access Model |
|---|---|---|---|---|
| RosettaVS / OpenVS Platform [31] | Structure-Based (SBVS) | Physics-based force field (RosettaGenFF-VS); models receptor flexibility; integrates active learning for billion-scale libraries. | Hit rates of 14% (KLHDC2) and 44% (NaV1.7); top enrichment factor (EF1% = 16.72) on CASF2016. | Open-source. |
| VSFlow [34] | Ligand-Based (LBVS) | Integrates 2D fingerprint, substructure, and 3D shape-based screening within one tool. Built on RDKit. | Enables rapid screening of large databases on standard CPUs; demonstrated with FDA-approved drug library. | Open-source command-line tool. |
| AutoDock Vina [32] | Structure-Based (SBVS) | Widely used docking program for binding pose and affinity prediction. | Used in pipeline yielding hits with binding energies ≤ -8 kcal/mol; part of validated workflow [32]. | Open-source. |
| LigandScout [32] | Ligand-Based (LBVS) | Used for pharmacophore model generation and screening. | Generated model with 80% accuracy, 95% sensitivity, 80% specificity for glucokinase activators [32]. | Commercial. |
| SwissSimilarity [34] | Ligand-Based (LBVS) | Web tool for 2D fingerprint and 3D shape screening against public and vendor libraries. | Enables easy web-based screening of common databases. | Freely accessible web server. |
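
The enrichment factor quoted for RosettaVS in the table above (EF1%) compares the hit rate among the top-ranked 1% of a library with the hit rate of the library as a whole. The sketch below shows that calculation under stated assumptions: the function name, the 1,000-compound library, and the positions of the actives are all hypothetical and are not taken from CASF2016 or from [31].

```python
def enrichment_factor(scores, labels, fraction=0.01, higher_is_better=True):
    """Return EF at the given top fraction of a ranked screening library.

    EF = (actives in top slice / size of top slice) / (all actives / library size)
    """
    if not scores or len(scores) != len(labels):
        raise ValueError("scores and labels must be non-empty and of equal length")
    total_actives = sum(labels)
    if total_actives == 0:
        raise ValueError("the library must contain at least one known active")

    # Rank compounds from best to worst predicted score.
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0],
                    reverse=higher_is_better)
    n_top = max(1, round(fraction * len(ranked)))
    actives_in_top = sum(label for _, label in ranked[:n_top])
    return (actives_in_top / n_top) / (total_actives / len(ranked))


# Hypothetical benchmark: 1,000 compounds, 10 known actives (label 1).
# Docking-style scores: more negative is better, so higher_is_better=False.
scores = [-12.0 + 0.01 * i for i in range(1000)]
labels = [0] * 1000
for idx in (0, 1, 2, 3, 500, 501, 502, 503, 504, 505):
    labels[idx] = 1

ef1 = enrichment_factor(scores, labels, fraction=0.01, higher_is_better=False)
print(f"EF1% = {ef1:.1f}")
```

In this toy case four of the ten actives fall in the top ten compounds, so the value is (4/10) / (10/1000) = 40. Random ranking gives an expected EF of 1, which is the baseline against which a reported value should be read.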

Experimental Protocols and Validation from Case Studies

Protocol: Integrated NP Virtual-Interaction-Phenotypic (NP-VIP) Target Characterization

This novel protocol combines virtual screening with experimental 'omics' to deconvolute the complex targets of natural product extracts [35].

- Virtual Screening: A multi-target docking approach is performed against a proteome-wide target panel using constituents of an NP extract (e.g., Salvia miltiorrhiza).

- Chemical Proteomics: The NP extract is immobilized on a resin to create an affinity-based probe. Incubation with cell or tissue lysates pulls down putative protein targets, which are identified via mass spectrometry.

- Metabolomics: The biological system (e.g., cell or animal model) is treated with the NP extract, and subsequent changes in the endogenous metabolite profile are analyzed.

- Data Integration & Triangulation: Overlap analysis is performed on the target lists from the three independent methods. Targets identified by at least two methods are considered high-confidence. For S. miltiorrhiza, this identified five high-confidence targets for ischemic stroke treatment, including PARP1 and STAT3 [35].

- Experimental Validation: High-confidence targets are validated using methods like surface plasmon resonance (SPR), isothermal titration calorimetry (ITC), or functional enzymatic assays.

Diagram 1: NP-VIP Multi-Method Target Identification Workflow
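
The triangulation step of the NP-VIP protocol, which keeps targets reported by at least two of the three methods, can be sketched as a simple vote count. This is a minimal illustration, not the authors' implementation: the function name is hypothetical, `TARGET_A` to `TARGET_C` are placeholders, and the assignment of PARP1 and STAT3 to particular methods is invented for the example.

```python
from collections import Counter


def high_confidence_targets(method_hits, min_methods=2):
    """Return targets reported by at least `min_methods` independent methods."""
    counts = Counter()
    for targets in method_hits.values():
        # set() ensures each method contributes at most one vote per target.
        counts.update(set(targets))
    return sorted(t for t, n in counts.items() if n >= min_methods)


# Hypothetical target lists; which method found which target is an assumption.
hits = {
    "virtual_screening": ["PARP1", "STAT3", "TARGET_A"],
    "chemical_proteomics": ["PARP1", "TARGET_B"],
    "metabolomics": ["STAT3", "PARP1", "TARGET_C"],
}

print(high_confidence_targets(hits))
```

Raising `min_methods` to 3 would keep only targets supported by every method, trading recall for confidence.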

Protocol: Structure-Based Virtual Screening for Glucokinase Activators

This protocol details a virtual screening cascade applied to a regional NP database, in which a ligand-based pharmacophore pre-filter precedes structure-based docking [32].

1. Pharmacophore Generation & Validation:

- A set of known active compounds (pEC50 ≥ 8) is used to generate a common feature pharmacophore model using software like LigandScout.

- The model is validated using the DUD-E benchmark dataset, calculating accuracy, sensitivity, and specificity.

2. Database Filtering:

- The validated pharmacophore model is used as a rapid pre-filter against the target database (e.g., 6,220 compounds in ZiNaPoD). This step reduces the number of compounds for more computationally intensive docking.

3. Molecular Docking:

- The pharmacophore hits are docked into the target protein's active site (e.g., glucokinase, PDB: 4NO7) using programs like AutoDock Vina in PyRx.

- Compounds are ranked by predicted binding affinity (kcal/mol). A threshold (e.g., ≤ -8 kcal/mol) is applied to select top candidates.

4. ADME/Tox Prediction:

- The top docked hits are subjected to in silico ADME (Absorption, Distribution, Metabolism, Excretion) screening using tools like SwissADME to filter out compounds with poor drug-like or pharmacokinetic properties.

5. Molecular Dynamics (MD) Simulation:

- The stability of the final shortlisted protein-ligand complexes is assessed using MD simulations (e.g., with GROMACS using the CHARMM36m force field).

- Key metrics include root mean square deviation (RMSD) of the ligand-protein complex and calculation of binding free energies (e.g., via MM-PBSA/GBSA).

This protocol identified four stable glucokinase activators from ZiNaPoD, with two (Sphenostylisin I and DMDBC) showing particularly favorable binding free energies (-30.30 and -30.20 kcal/mol) and stable RMSD profiles [32].
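
The pharmacophore validation step in the protocol above reports accuracy, sensitivity, and specificity. As a reminder of how these follow from a confusion matrix of actives and decoys, here is a minimal sketch. The function name and the counts are hypothetical and are not the values from [32].

```python
def classification_metrics(tp, fp, tn, fn):
    """Compute accuracy, sensitivity, and specificity from confusion-matrix counts.

    tp: actives matched by the model      fn: actives missed by the model
    tn: decoys rejected by the model      fp: decoys matched by the model
    """
    if tp + fn == 0 or tn + fp == 0:
        raise ValueError("need at least one active and one decoy")
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total,
        "sensitivity": tp / (tp + fn),   # fraction of actives recovered
        "specificity": tn / (tn + fp),   # fraction of decoys rejected
    }


# Hypothetical validation set: 20 actives and 100 decoys.
metrics = classification_metrics(tp=18, fp=10, tn=90, fn=2)
for name, value in metrics.items():
    print(f"{name}: {value:.2f}")
```

Because decoys usually far outnumber actives in benchmark sets, accuracy tends to track specificity, so sensitivity is worth reporting separately, as the protocol does.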

Diagram 2: Cascade for Structure-Based VS of NP Databases
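
The docking step of this cascade ranks compounds by predicted binding affinity and keeps those at or below a threshold such as -8 kcal/mol. A minimal sketch of that selection follows; the function name, compound names, and scores are hypothetical, and in practice the affinities would be parsed from the docking program's output.

```python
def select_top_candidates(docking_results, threshold=-8.0):
    """Keep compounds with affinity <= threshold, sorted most favorable first.

    docking_results maps compound name -> predicted binding affinity (kcal/mol);
    more negative values indicate stronger predicted binding.
    """
    selected = [(name, energy) for name, energy in docking_results.items()
                if energy <= threshold]
    return sorted(selected, key=lambda item: item[1])


# Hypothetical docking scores for four pharmacophore hits.
docking_results = {
    "cmpd_A": -9.1,
    "cmpd_B": -7.4,
    "cmpd_C": -8.0,
    "cmpd_D": -8.6,
}

candidates = select_top_candidates(docking_results, threshold=-8.0)
for name, energy in candidates:
    print(f"{name}: {energy:.1f} kcal/mol")
```

The comparison is inclusive, so a compound scoring exactly -8.0 kcal/mol passes, matching the "≤ -8 kcal/mol" criterion stated in the protocol.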

Table 3: Key Research Reagent Solutions for NP Virtual Screening

| Tool / Resource | Category | Primary Function | Access / Example |
|---|---|---|---|
| Curated NP Databases (LOTUS, SuperNatural 3.0) | Chemical Library | Provide structurally diverse, annotated, and often biologically pre-characterized starting points for screening. | [33] [2] |
| Generated NP-Like Libraries (e.g., 67M Database) | Chemical Library | Drastically expand accessible chemical space with novel, synthetically tractable NP-like scaffolds for discovery. | [5] |
| VSFlow | Software Tool | An integrated, open-source tool for performing 2D (substructure, fingerprint) and 3D shape-based ligand screening on local databases. | [34] |
| OpenVS / RosettaVS | Software Platform | An open-source, AI-accelerated platform for high-performance structure-based screening of ultra-large libraries, incorporating receptor flexibility. | [31] |
| AutoDock Vina & PyRx | Software Tool | A widely adopted, open-source docking suite for predicting binding poses and affinities in structure-based VS. | [32] |
| RDKit | Software Library | The fundamental open-source cheminformatics toolkit used for molecule handling, descriptor calculation, fingerprinting, and more in custom VS pipelines. | [2] [34] [5] |
| Pharmacophore Modeling Software (e.g., LigandScout) | Software Tool | Creates and validates 3D pharmacophore queries from active compounds for efficient database filtering. | [32] |
| ADME Prediction Tools (e.g., SwissADME) | Software Service | Provides in silico predictions of key pharmacokinetic and drug-likeness parameters to prioritize viable leads. | [32] |
| Molecular Dynamics Software (e.g., GROMACS) | Software Tool | Simulates the dynamic behavior of protein-ligand complexes to assess binding stability and calculate free energies. | [32] |

In natural product (NP) discovery, dereplication is the process of rapidly identifying known compounds early in the discovery pipeline to avoid redundant rediscovery and conserve resources [36]. It relies on comparing analytical data, typically from mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy, against reference databases [37]. The efficiency and success of dereplication are therefore directly governed by the scale, quality, and accessibility of these reference databases.

The shift toward open-access databases is a central theme in modern NP research, aiming to democratize data and accelerate discovery. These repositories range from large-scale, global collections to specialized, region-specific libraries, each employing different strategies for data organization and querying. This guide compares the performance of these database architectures and dereplication methodologies, providing a framework for researchers to select optimal tools within the context of a broader, computationally driven NP discovery workflow [36].

Database Architectures and Strategic Comparison

The performance of a dereplication strategy is intrinsically linked to the design and scope of its underlying database. The following table summarizes the core characteristics of representative database types, from curated knowledgebases to generative libraries.

Table 1: Comparison of Open-Access Natural Product Database Architectures for Dereplication

| Database / Strategy | Core Approach & Scale | Key Query Method | Primary Advantage | Notable Limitation |
|---|---|---|---|---|
| COCONUT (Curated Knowledgebase) | Collection of ~406,919 fully characterized, known natural products [5]. | Spectral matching; substructure search; metadata filtering. | High confidence in annotations; direct link to literature and experimental data. | Limited to known chemical space; scale is static and resource-intensive to expand. |
| DEREP-NP (Fragment-Based Screening) | Database of 65 structural fragments derived from 229,358 pre-2013 NP structures [37]. | Matching counts of structural features inferred from NMR/MS data. | Rapid pre-filtering; handles complex or novel scaffolds via partial feature matching. | Dependent on accurate spectral interpretation to infer fragments; older core dataset. |
| Generative Database (e.g., 67M NP-like) | 67,064,204 computer-generated, natural product-like molecules (165x expansion) [5]. | Virtual screening (docking, similarity); AI-based property prediction. | Explores vast, novel chemical space beyond known NPs; enables in silico discovery. | Contains hypothetical molecules without known biological or spectral data; requires validation. |
| Specialized Repository (e.g., NAPRORE-CR) | Focused collection (e.g., 1,161 compounds from Costa Rica) with curated metadata [9]. | Taxonomy/ecology-based filtering; combined property and structural search. | High relevance for targeted biogeographic studies; enriched contextual metadata. | Limited general applicability; small scale reduces chance of random hits in broad screening. |

Performance Metrics and Experimental Validation

Query Performance and Specificity

The practical utility of a database is measured by its query speed and accuracy. Traditional spectral matching against curated libraries like COCONUT offers high specificity but can be computationally intensive for large-scale searches. In contrast, fragment-based methods like DEREP-NP use a cheminformatic pre-filter. This strategy first reduces the search space by matching simple structural feature counts deduced from spectra, leading to faster retrieval of candidate structures for final confirmation [37].
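
The pre-filter idea described above, reducing the search space by matching counts of structural features, can be illustrated with a small sketch. It assumes exact matching on the features the analyst specifies and ignores unspecified features; the actual matching logic in DEREP-NP may differ. The function name, feature names, and structures are hypothetical.

```python
def match_fragment_counts(query, database):
    """Return names of structures whose counts equal the query for every queried feature.

    query maps feature name -> count inferred from spectra.
    database maps structure name -> {feature name: count}.
    Features absent from the query are not constrained.
    """
    matches = []
    for name, profile in database.items():
        if all(profile.get(feature, 0) == count for feature, count in query.items()):
            matches.append(name)
    return matches


# Hypothetical feature names and structures, for illustration only.
database = {
    "structure_1": {"methoxy": 2, "aromatic_CH": 3, "olefinic_CH": 0},
    "structure_2": {"methoxy": 2, "aromatic_CH": 3, "olefinic_CH": 2},
    "structure_3": {"methoxy": 1, "aromatic_CH": 5, "olefinic_CH": 0},
}

query = {"methoxy": 2, "aromatic_CH": 3}
candidates = match_fragment_counts(query, database)
print(candidates)
```

Each additional feature in the query narrows the candidate list further; here, adding `"olefinic_CH": 0` would leave only `structure_1` for final confirmation.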

For the largest-scale databases, such as generative libraries, conventional spectral search is not applicable because the generated molecules have no experimental spectra. Performance is instead measured by virtual screening throughput and by the enrichment of bioactive hits during in silico campaigns. The 67-million-compound database, for example, was shown to occupy a significantly expanded physicochemical space compared to known NPs, increasing the probability of identifying novel scaffolds [5].

Experimental Validation of Dereplication Workflows

The effectiveness of a dereplication strategy must be validated experimentally. The following table synthesizes key experimental data from validation studies.

Table 2: Experimental Validation of Dereplication Strategies

| Validated System / Study | Experimental Input | Methodology | Reported Outcome | Key Performance Insight |
|---|---|---|---|---|
| DEREP-NP [37] | 1H, HSQC, and/or HMBC NMR data and/or MS data from purified compounds or simple fractions. | 1. Infer structural fragments from spectra. 2. Query database with fragment count vector. 3. Retrieve matching structures for verification. | Successfully dereplicated compounds from plant, marine invertebrate, and fungal sources, including in mixtures. | Fragment-based query is robust for partial or mixed compound data, accelerating the identification step before full structure elucidation. |
| Generative Model (67M NP-like) [5] | Known NP structures from COCONUT (training set: 325,535 molecules). | 1. Train RNN (LSTM) on SMILES strings. 2. Generate 100M novel SMILES. 3. Filter for validity, uniqueness, and NP-likeness (NP Score). | Produced 67M valid, unique structures. NP Score distribution of generated molecules closely matched that of known NPs (KL divergence: 0.064 nats). | AI can generate chemically valid molecules that occupy NP-like chemical space, providing a vast resource for in silico screening. |
| NAPRORE-CR [9] | Computed molecular descriptors (MW, LogP, TPSA, etc.) for NPs, drugs, pesticides, and cosmetics. | Chemical space visualization (e.g., PCA) and diversity analysis to compare property profiles. | NAPRORE-CR compounds showed property overlap with approved drugs and natural pesticides, suggesting potential cross-applications. | Focused, well-annotated databases enable efficient analysis of chemical space for specific bioactivity or application prediction. |
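
The KL divergence quoted for the generative model in the table above measures how far one distribution of NP Scores departs from another; values near zero indicate closely matching distributions. The sketch below computes it in nats for two binned histograms. The bin counts are hypothetical, and the direction of the divergence (generated relative to known) is an assumption, since the text does not state which was used in [5].

```python
import math


def normalize(counts):
    """Convert raw histogram counts into a probability distribution."""
    total = sum(counts)
    if total <= 0:
        raise ValueError("histogram must contain at least one observation")
    return [c / total for c in counts]


def kl_divergence(p, q):
    """Return D_KL(P || Q) in nats for two discrete distributions on the same bins."""
    if len(p) != len(q):
        raise ValueError("distributions must share the same bins")
    divergence = 0.0
    for p_i, q_i in zip(p, q):
        if p_i == 0:
            continue  # bins with zero probability in P contribute nothing
        if q_i == 0:
            return math.inf  # P has mass where Q has none
        divergence += p_i * math.log(p_i / q_i)
    return divergence


# Hypothetical NP Score histograms over five shared bins.
generated = normalize([120, 340, 510, 280, 90])
known = normalize([100, 300, 550, 300, 110])

print(f"KL(generated || known) = {kl_divergence(generated, known):.4f} nats")
```

Using the natural logarithm gives the result in nats; replacing `math.log` with `math.log2` would give bits. The measure is not symmetric, so swapping the arguments generally changes the value.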

Detailed Experimental Protocols

Protocol: Fragment-Based Dereplication with DEREP-NP

This protocol outlines the core experimental workflow for using a fragment-based dereplication system, as validated in the literature [37].

1. Sample Preparation & Data Acquisition:

- Purify the natural product extract to obtain individual compounds or simple fractions containing 2-3 components.

- Acquire spectroscopic data. Minimum required: 1H NMR spectrum. Enhanced capability with: 2D NMR (HSQC, HMBC) and/or Mass Spectrometry (MS) data.

2. Spectral Analysis & Fragment Inference:

- Analyze the NMR/MS spectra to identify diagnostic structural features present in the unknown compound (e.g., presence of a phenolic group, olefinic protons, sugar moieties, specific heterocycles).

- Map these identified features to a predefined list of structural fragments (e.g., the 65 fragments used in DEREP-NP).

3. Database Query:

- In the database interface (e.g., DataWarrior platform for DEREP-NP), input the numeric count of each identified structural fragment.

- Execute the search. The database engine retrieves all structures whose fragment profile matches the input vector.

4. Result Verification:

- Review the list of candidate structures returned by the query.