Navigating Complexity: Modern Strategies for Deconvoluting Bioactive Natural Products from Complex Extract Libraries

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the contemporary challenges and solutions in handling complex natural product extract libraries. It details the fundamental bottlenecks of traditional workflows and the necessity for standardized library construction. The article systematically explores a suite of advanced methodological tools, from bioassay-guided fractionation and dereplication techniques to AI-driven predictive modeling and modern extraction technologies like ultrasound-assisted and supercritical fluid extraction. It addresses common troubleshooting scenarios, including analytical interferences and scalability issues, while offering optimization strategies. Finally, the article establishes a framework for validation and comparative analysis, covering biological confirmation, analytical benchmarking, and the regulatory considerations essential for translating discoveries into viable therapeutic candidates. By bringing these four areas together (foundations, methods, troubleshooting, and validation), the article aims to equip scientists with a practical, integrated strategy to accelerate bioactive natural product discovery.

Understanding the Tapestry: Defining Complexities and Bottlenecks in Natural Product Extract Libraries

Within the field of natural product research for drug discovery, the central, inherent challenge is the effective definition, handling, and analysis of complex mixtures. These mixtures, derived from botanical, microbial, or marine sources, are not simple solutions but intricate matrices containing hundreds to thousands of unique chemical constituents with diverse polarities, concentrations, and biological activities [1] [2]. The core thesis of this technical support framework is that overcoming methodological hurdles in managing these mixtures—from reproducible extraction and standardized analysis to intelligent screening and accurate target identification—is the fundamental prerequisite for meaningful discovery [1] [3].

This Technical Support Center is designed within that thesis context. It provides researchers, scientists, and drug development professionals with targeted troubleshooting guides and FAQs to navigate the specific, recurring experimental issues encountered when working with natural product extract libraries. Our goal is to translate the theoretical challenge of "complexity" into practical, actionable solutions for the laboratory.

Technical Support & Troubleshooting Hub

Frequently Asked Questions (FAQs)

Q1: Our natural product extracts yield inconsistent bioactivity results between assay runs. What are the most likely causes and how can we fix this? A: Inconsistent bioactivity is a critical issue that often stems from the complex nature of the samples. The primary causes and solutions are summarized in the table below:

Table 1: Troubleshooting Inconsistent Bioactivity in Natural Product Screens

| Potential Cause | Diagnostic Check | Corrective Action |
|---|---|---|
| Variable Extract Composition | Compare HPLC-UV/PDA chromatograms of different extract batches [4]. | Implement standardized, validated extraction protocols (e.g., Accelerated Solvent Extraction) and rigorous quality control of source material [2] [5]. |
| Presence of Assay Interferants | Run interference counterscreens (e.g., testing for fluorescence quenching, promiscuous aggregation) [2]. | Employ prefractionation to separate interferants (e.g., tannins, chlorophyll) [2] or switch to a more robust assay format less susceptible to interference. |
| Compound Degradation | Re-analyze "inactive" sample plates via HPLC after storage and compare to fresh samples [4]. | Optimize storage conditions (e.g., -80°C, inert atmosphere, DMSO as solvent). Use lyophilized fractions and reconstitute immediately before screening [2]. |
| Low Concentration of Active Principle | Test a dose-response of the crude extract; weak concentration-dependence suggests a minor constituent is active. | Switch from crude extract to a prefractionated library to concentrate minor metabolites, thereby increasing the probability of detection [2] [3]. |

Q2: When performing bioassay-guided fractionation (BGF), we frequently "lose" activity after the first chromatographic step. Why does this happen? A: Loss of activity during BGF is a classic problem in complex mixture analysis. It can occur due to:

- Synergistic Effects: The biological activity is the result of multiple compounds acting together. Separation destroys the synergistic interaction [1]. Solution: Use mixture-based screening approaches or network pharmacology analysis to identify co-dependent active fractions [1] [6].

- Compound Instability: The active compound is labile and degrades under the chromatographic conditions used (e.g., specific pH, solvent, or exposure to light). Solution: Use milder, faster separation techniques (e.g., low-temperature HPLC) and characterize stability profiles early [3].

- Inefficient Recovery: The active compound has poor solubility in the collection solvent or adheres irreversibly to the stationary phase. Solution: Modify the mobile phase, use alternative column chemistries, or employ mass-directed fractionation to track the compound of interest directly [4] [3].

Q3: How can we rapidly prioritize which active fractions to pursue for costly and time-consuming isolation and structure elucidation? A: Prioritization is essential for efficiency. Implement a dereplication pipeline before full isolation:

- Early-Stage Profiling: Subject active fractions immediately to high-resolution LC-MS/MS for molecular formula determination [3].

- Database Mining: Query the obtained molecular features against natural product databases (e.g., NP-MRD, UNPD) to check for known compounds [1] [3].

- Bioactivity Correlations: Use techniques like (bio)chemometric analysis, which correlates LC-MS data with bioactivity data across multiple fractions, to pinpoint the spectral features most likely linked to the observed effect [3].

- Advanced NMR: Apply microcoil or capillary NMR on partially purified material for preliminary structural insight [3].
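
The database-mining step above can be illustrated with a minimal Python sketch. It assumes a tiny hand-built library and [M+H]+ adducts only; the `KnownCompound` class, the `LIBRARY` contents, and the 5 ppm tolerance are illustrative assumptions, not the API of NP-MRD or any other database.

```python
from dataclasses import dataclass
from typing import List

PROTON_MASS = 1.007276  # Da; added to a neutral mass to get the [M+H]+ m/z


@dataclass
class KnownCompound:
    name: str
    monoisotopic_mass: float  # neutral monoisotopic mass in Da


# Hypothetical mini-library; a real pipeline would query a natural product database.
LIBRARY = [
    KnownCompound("quercetin", 302.042653),         # C15H10O7
    KnownCompound("chlorogenic acid", 354.095082),  # C16H18O9
]


def ppm_error(observed: float, theoretical: float) -> float:
    """Signed mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6


def match_feature(mz: float, tol_ppm: float = 5.0) -> List[str]:
    """Return library compounds whose [M+H]+ m/z lies within tol_ppm of mz."""
    hits = []
    for compound in LIBRARY:
        theoretical_mz = compound.monoisotopic_mass + PROTON_MASS
        if abs(ppm_error(mz, theoretical_mz)) <= tol_ppm:
            hits.append(compound.name)
    return hits
```

A mass match alone only narrows the candidate list, since isomers share the same formula; MS/MS or NMR evidence is still needed before a feature is treated as known.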

Troubleshooting Guides

Guide 1: Addressing Low Spectral Resolution in HPLC-UV/MS Analysis of Crude Extracts

- Problem: Overlapping peaks (co-elution) in chromatograms, preventing clear compound differentiation.

- Step-by-Step Solution:

- Modify Gradient: Increase gradient time or shallow the organic solvent slope for better separation [4].

- Change Stationary Phase: Switch to a column with different chemistry (e.g., from C18 to phenyl-hexyl or HILIC) to alter selectivity [4] [7].

- Optimize Temperature: Increase column temperature (typically 30-60°C) to improve peak shape and resolution [7].

- Implement 2D-LC: For persistently complex regions, employ comprehensive two-dimensional liquid chromatography (LC×LC) for vastly increased peak capacity [4].

- Prevention: Develop methods using design-of-experiment (DoE) software to optimally combine variables like gradient, temperature, and pH.
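
To judge whether a method change actually reduced co-elution, the resolution between two adjacent peaks can be computed before and after the change. The sketch below uses the standard definition Rs = 2(t2 - t1) / (w1 + w2) with baseline peak widths; the function name and the example values are illustrative assumptions.

```python
def resolution(t1: float, w1: float, t2: float, w2: float) -> float:
    """Chromatographic resolution between two peaks.

    t1, t2: retention times; w1, w2: baseline peak widths (same time units).
    Rs of about 1.5 or higher is conventionally taken as baseline separation.
    """
    if w1 <= 0 or w2 <= 0:
        raise ValueError("peak widths must be positive")
    return 2.0 * abs(t2 - t1) / (w1 + w2)


# Hypothetical example: the same peak pair before and after shallowing the gradient.
before = resolution(t1=5.0, w1=0.4, t2=5.2, w2=0.4)  # about 0.5, co-eluting
after = resolution(t1=7.0, w1=0.4, t2=7.6, w2=0.4)   # about 1.5, baseline resolved
```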

Guide 2: Overcoming Challenges in Heterologous Expression of Biosynthetic Gene Clusters (BGCs)

- Problem: A BGC cloned from an environmental isolate fails to produce the target compound in a heterologous host (e.g., Streptomyces albus) [8].

- Step-by-Step Solution:

- Verify Cluster Integrity: Sequence the entire cloned construct to ensure no deletions or mutations occurred [8].

- Check Transcription: Use RT-PCR to confirm key biosynthetic genes are being transcribed. Silence often indicates missing native regulation [8].

- Supply Missing Regulation: Identify and co-express predicted pathway-specific positive regulatory genes from the BGC (e.g., SARP family regulators) [8].

- Address Host-Specific Bottlenecks: Compare transcription levels of all cluster genes between native and heterologous hosts. Identify and supplement rate-limiting steps (e.g., a poorly expressed ketoreductase) [8].

- Related Protocol: Activating Silent BGCs via Regulatory Gene Overexpression

- Clone the putative pathway-specific regulator gene into an expression vector with a strong, constitutive promoter (e.g., ermE*).

- Introduce this regulator plasmid into the heterologous host carrying the silent BGC.

- Culture the engineered strain under appropriate production conditions.

- Analyze metabolite profiles via LC-MS and compare to controls to detect newly produced compounds [8].

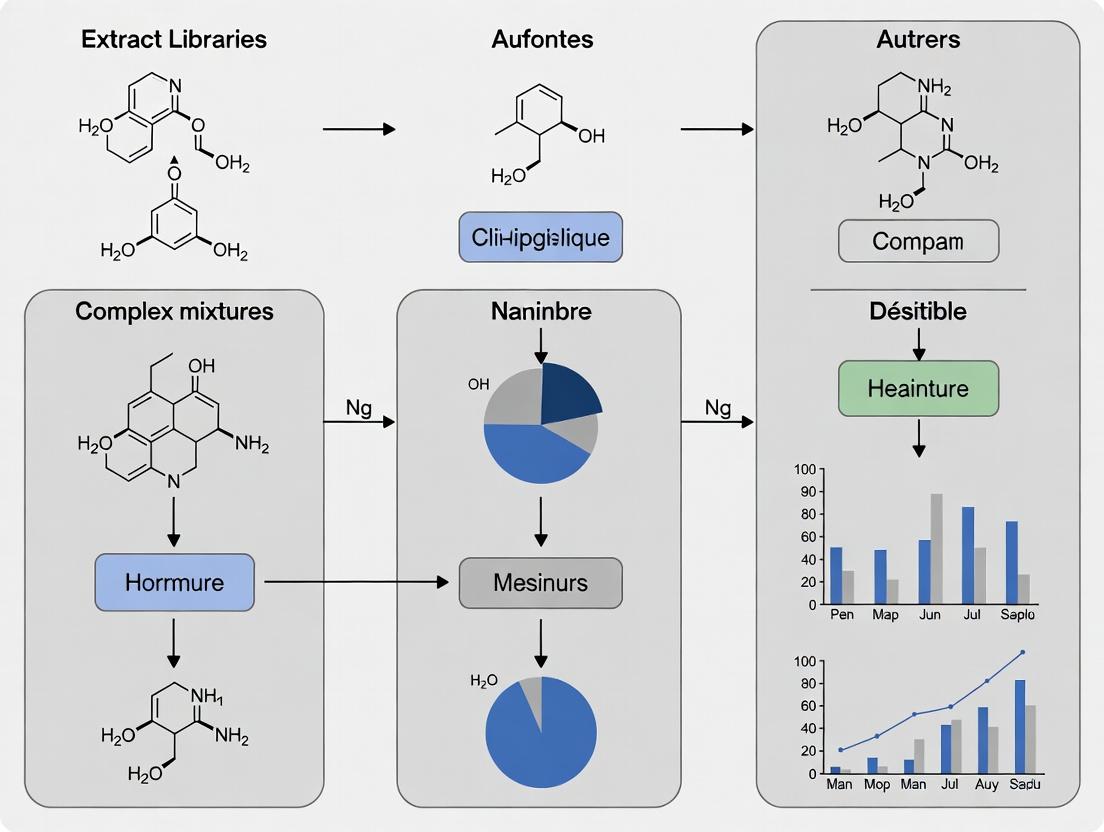

Visualization of Workflows & Relationships

Natural Product Discovery from Complex Mixtures Workflow

Troubleshooting Bioactivity Loss in Fractionation

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Research Reagent Solutions for Complex Mixture Analysis

| Item / Reagent | Primary Function in Natural Product Research | Key Considerations for Use |
|---|---|---|
| Solid Phase Extraction (SPE) Cartridges (C18, Diol, Ion-Exchange) | Pre-fractionation of crude extracts to remove nuisance compounds (e.g., salts, pigments) and fractionate by polarity/charge [5]. | Select sorbent chemistry based on target compound properties. Use orthogonal phases (e.g., C18 then Ion-Exchange) for comprehensive clean-up. |
| HPLC/UHPLC Columns (C18, Phenyl, HILIC, Chiral) | High-resolution analytical and preparative separation of complex mixtures for profiling, purification, and isolation [4] [7]. | Column choice dictates selectivity. Maintain a toolkit of columns with different chemistries to resolve diverse compound classes. |
| LC-MS Grade Solvents & Buffers | Mobile phase for HPLC-MS analysis, ensuring low background noise, high sensitivity, and preventing ion source contamination. | Essential for reproducible MS and NMR results. Avoid non-volatile buffers (e.g., phosphate) in MS mobile phases; use formate/ammonium acetate instead. |
| Deuterated Solvents for NMR (DMSO-d6, CD3OD, D2O) | Solvents for nuclear magnetic resonance spectroscopy, required for structure elucidation of purified compounds [3]. | Choose solvent based on compound solubility. Use highest isotopic purity (>99.8% D) for optimal spectral quality. |
| Stable Isotope-Labeled Precursors (13C-acetate, 15N-glycine) | Feeding experiments to elucidate biosynthetic pathways of natural products in microbial cultures [8]. | Crucial for tracing atom incorporation. Requires careful experimental design and MS/NMR analysis for detection. |
| Bioassay Kits & Reagents | Functional screening of extracts and fractions for specific biological activities (e.g., enzyme inhibition, receptor antagonism). | Validate kit performance in the presence of natural product matrix (solvent, potential interferants) before large-scale screening [2]. |

Detailed Experimental Protocols

Protocol 1: Creation of a Prefractionated Natural Product Library for HTS

- Objective: To generate a semi-purified fraction library from plant biomass suitable for high-throughput screening, minimizing assay interference.

- Materials: Freeze-dried plant material, accelerated solvent extractor (ASE), rotary evaporator, solid-phase extraction (SPE) vacuum manifold, C18 SPE cartridges (10g), hexane, dichloromethane, ethyl acetate, methanol, water, 96-well deep-well plates, centrifugal evaporator.

- Method:

- Extraction: Load ~5g of dried, powdered plant material into an ASE cell. Perform sequential extraction with solvents of increasing polarity (e.g., hexane -> dichloromethane -> ethyl acetate -> methanol). Collect extracts separately [5].

- Solvent Removal: Concentrate each extract using rotary evaporation. If the methanol extract retains water, lyophilize it to dryness.

- Prefractionation: Reconstitute each dried extract in a minimal volume of methanol. Load onto a pre-conditioned C18 SPE cartridge. Elute using a step-gradient of increasing methanol in water (e.g., 20%, 40%, 60%, 80%, 100% MeOH). Collect 5 fractions per crude extract [2].

- Library Formatting: Concentrate each fraction, weigh, and dissolve in DMSO at a standardized concentration (e.g., 10 mg/mL). Transfer to 96-well or 384-well microplates using a liquid handler. Store at -80°C [2].

- Quality Control: Randomly select plates for analysis by UHPLC-UV/PDA to confirm chromatographic reproducibility and complexity reduction across fractions.
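
The library formatting step relies on a simple mass-to-volume calculation, which is easy to get wrong at scale. A minimal sketch follows, assuming fraction masses in milligrams and a target stock concentration in mg/mL; the function name and the example mass are illustrative.

```python
def dmso_volume_ul(fraction_mass_mg: float, target_mg_per_ml: float = 10.0) -> float:
    """Volume of DMSO (in microliters) needed to reach the target concentration."""
    if fraction_mass_mg <= 0 or target_mg_per_ml <= 0:
        raise ValueError("mass and concentration must be positive")
    volume_ml = fraction_mass_mg / target_mg_per_ml
    return volume_ml * 1000.0  # convert mL to uL


# Hypothetical example: a 3.2 mg fraction at 10 mg/mL needs about 320 uL of DMSO.
example_volume = dmso_volume_ul(3.2)
```

Fractions too small to reach a pipettable volume at the target concentration are worth flagging before plating rather than diluting ad hoc.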

Protocol 2: Dereplication of an Active Fraction Using LC-HRMS and Database Mining

- Objective: To rapidly identify known compounds in an active natural product fraction prior to undertaking isolation.

- Materials: Active fraction in solution, UHPLC system coupled to high-resolution mass spectrometer (Q-TOF or Orbitrap), data analysis software (e.g., Compound Discoverer, MZmine), access to natural product databases (GNPS, NP-MRD, SciFinder).

- Method:

- Data Acquisition: Inject the active fraction onto a UHPLC-HRMS system. Use a generic reversed-phase gradient (e.g., 5-95% acetonitrile in water over 15 min). Acquire MS data in both positive and negative ionization modes with data-dependent MS/MS fragmentation [3].

- Feature Extraction: Process the raw data to deconvolute peaks, align features, and assign molecular formulas based on accurate mass and isotopic patterns.

- Database Querying: Export the list of molecular formulas and MS/MS spectra. Search against public spectral libraries (e.g., GNPS) for spectral matches. Query molecular formulas against structural databases [1] [3].

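- Activity Correlation: If multiple fractions are active, perform supervised statistical analysis (e.g., PLS regression or elastic net regression; PCA alone is unsupervised and only useful for exploring the data) to correlate MS features with bioactivity intensity, highlighting ions likely responsible for the effect [3].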

- Output: A report listing putative identifications for major and minor components, flagging known bioactive compounds, and prioritizing unknown features for further investigation.
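
The activity-correlation idea in this protocol can be sketched with a plain per-feature Pearson correlation. This is a deliberately simple univariate stand-in for multivariate (bio)chemometric models, not the method of any cited study; the feature labels and intensities in the usage note are hypothetical.

```python
import math
from typing import Dict, List, Tuple


def pearson(x: List[float], y: List[float]) -> float:
    """Pearson correlation coefficient; returns 0.0 if either input is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return 0.0
    return cov / (sd_x * sd_y)


def rank_features(
    intensities: Dict[str, List[float]], activity: List[float]
) -> List[Tuple[str, float]]:
    """Rank MS features by correlation of their intensity with bioactivity.

    intensities maps a feature label to its intensity in each fraction;
    activity holds the bioassay readout for the same fractions, in the same order.
    """
    scores = {label: pearson(values, activity) for label, values in intensities.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)
```

For example, `rank_features({"feature_A": [1, 2, 3, 4], "feature_B": [4, 3, 2, 1]}, [10, 20, 30, 40])` places `feature_A` first. With few fractions and many features, such rankings are prone to chance correlations, so top-ranked ions are candidates for follow-up rather than confirmed actives.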

Technical Support Center

Traditional bioassay-guided fractionation (BGF) is a sequential process of separating complex natural product extracts and testing each fraction for biological activity to isolate the active constituent [4]. While historically successful, this approach faces significant bottlenecks that hinder efficiency in modern drug discovery [3]. The primary challenges researchers encounter include the time-consuming and labor-intensive iterative cycle of separation and testing, the high risk of rediscovering known compounds after lengthy purification, and the potential for active compounds to be lost or degraded during multi-step processes [9]. Furthermore, the inherent complexity of crude extracts can lead to assay interference, producing false positives or negatives [10]. This technical support center addresses these specific operational hurdles with targeted troubleshooting guides and FAQs.

Troubleshooting Guides

Problem 1: Low Throughput and Prolonged Discovery Timelines

- Symptoms: The process from crude extract to identified active compound takes months to years; only a limited number of samples can be processed.

- Root Cause: Reliance on sequential, low-resolution separation techniques (e.g., open column chromatography, flash chromatography) coupled with off-line bioassay steps that require gram quantities of material [11].

- Solution: Implement microfractionation techniques. Utilize analytical-scale or semi-preparative HPLC/UPLC coupled with automated fraction collectors to generate many fractions from milligram quantities of extract in a single run [12] [11]. This allows for parallel bioactivity testing and dramatically accelerates the initial screening phase.

- Preventive Measures: Adopt an at-line or on-line profiling strategy where possible. Methods like HPLC-based activity profiling link the separation directly to a biochemical or cellular assay, enabling real-time or rapid identification of active chromatographic zones [12].

Problem 2: Frequent Rediscovery of Known Compounds (Dereplication Failure)

- Symptoms: Isolated compound is a known natural product with previously reported activity, wasting significant resources.

- Root Cause: Lack of early-stage chemical characterization. Fractions are tested for activity before their chemical composition is assessed.

- Solution: Integrate early and robust dereplication. Employ High-Resolution Mass Spectrometry (HRMS) and tandem MS/MS on active fractions or crude extracts before major purification efforts. Compare data against natural product databases (e.g., GNPS, Dictionary of Natural Products) [9] [13].

- Preventive Measures: Establish a standardized dereplication pipeline. As soon as bioactivity is detected in a crude extract or early fraction, acquire HRMS and MS/MS data. Use molecular networking tools (like GNPS) to visualize chemical relationships and quickly pinpoint potentially novel compounds [9].
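
Molecular networking rests on pairwise similarity between MS/MS spectra. The sketch below shows a plain cosine score on binned fragment peaks to make the idea concrete. It is a simplified illustration and not the modified cosine scoring that GNPS itself applies; the 0.01 Da bin width and the function names are assumptions.

```python
import math
from typing import Dict, List, Tuple

Peak = Tuple[float, float]  # (fragment m/z, intensity)


def bin_spectrum(peaks: List[Peak], bin_width: float = 0.01) -> Dict[int, float]:
    """Sum intensities of fragment peaks that fall into the same m/z bin."""
    binned: Dict[int, float] = {}
    for mz, intensity in peaks:
        key = round(mz / bin_width)
        binned[key] = binned.get(key, 0.0) + intensity
    return binned


def cosine_similarity(a: List[Peak], b: List[Peak], bin_width: float = 0.01) -> float:
    """Cosine similarity between two binned spectra (0 = no shared peaks, 1 = identical)."""
    vec_a, vec_b = bin_spectrum(a, bin_width), bin_spectrum(b, bin_width)
    dot = sum(vec_a[k] * vec_b[k] for k in vec_a.keys() & vec_b.keys())
    norm_a = math.sqrt(sum(v * v for v in vec_a.values()))
    norm_b = math.sqrt(sum(v * v for v in vec_b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)
```

Spectra with a high score against a library entry suggest a known compound or a close analogue, while features that cluster with nothing known are natural candidates for prioritization.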

Problem 3: Loss of Bioactivity During Purification

- Symptoms: Strong activity in a crude extract diminishes or disappears as fractions become more purified.

- Root Cause: Synergistic effects (multiple compounds acting together), instability of the pure compound under isolation conditions, or irreversible adsorption to chromatographic media [11].

- Solution: Investigate synergy early. Use designed mixture experiments (e.g., testing recombined sub-fractions) to check for synergistic interactions [11]. Optimize isolation conditions: Use inert solvents, control temperature and light exposure, and consider alternative stationary phases to minimize compound degradation or loss.

- Diagnostic Step: After each purification step, recombine all inactive fractions and re-test. If activity reappears, it strongly suggests synergistic activity or that the active component was split across multiple fractions due to poor resolution.

Problem 4: Assay Interference from Extract Components

- Symptoms: False positive hits in target-based assays (e.g., enzyme inhibition) due to non-specific binding, aggregation, or fluorescence/quenching; high cytotoxicity masking specific activity in cell-based assays.

- Root Cause: Crude extracts contain "nuisance compounds" like tannins, polyphenols, lipids, or colored pigments that interfere with assay readouts [2] [14].

- Solution: Employ prefractionation with cleanup steps. Use solid-phase extraction (SPE) with selective phases (e.g., polyamide to remove polyphenols) to simplify the extract before bioassay [14]. Switch to a more robust assay format: For problematic extracts, consider moving from a biochemical assay to a phenotypic or whole-organism assay (e.g., zebrafish) that is less prone to certain interferences [12].

- Verification: Always include appropriate control experiments for assay interference, such as testing fractions in a counter-screen or using detection methods orthogonal to the primary readout.

Frequently Asked Questions (FAQs)

Q1: How can I make my BGF workflow faster and more efficient? A: Transition from large-scale, low-resolution separations to micro-scale, high-resolution platforms. Ultra-Micro-Scale-Fractionation (UMSF) using UPLC systems can fractionate sub-milligram extracts into 96- or 384-well plates in under 15 minutes, enabling direct high-throughput screening of simplified mixtures [11]. This replaces months of iterative work with a week-long, parallelizable process.

Q2: What is the best strategy to avoid isolating known compounds? A: Implement a "dereplication-first" strategy. Before embarking on full isolation, use LC-HRMS/MS to generate a chemical fingerprint of your active extract or fraction. Process this data with computational tools like the Global Natural Product Social Molecular Networking (GNPS) platform. This visual map clusters related molecules, allowing you to quickly see if your active component is related to known compounds and prioritize novel chemical scaffolds [9] [13].

Q3: My crude extract is active, but I can't isolate a single active compound. What should I do? A: This may indicate synergy or compound instability.

- Test for Synergy: Recombine your purified but inactive fractions in various combinations and re-test for activity.

- Check Stability: Analyze your pure compound immediately after isolation by NMR and HRMS to confirm it hasn't degraded.

- Consider Alternative Goals: The field is increasingly recognizing the value of defined, multi-component mixtures. If a specific combination of 2-3 compounds shows reproducible synergy, this can be a valid research outcome [11].
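
The synergy test above quickly becomes a bookkeeping problem once more than a few fractions are involved. A minimal sketch for enumerating the recombinations to test follows, assuming fractions are identified by simple labels and that mixtures of up to three fractions are of interest (both are illustrative choices).

```python
from itertools import combinations
from typing import List, Sequence, Tuple


def recombination_plan(fractions: Sequence[str], max_size: int = 3) -> List[Tuple[str, ...]]:
    """List every combination of 2..max_size fractions to re-test for activity."""
    plan: List[Tuple[str, ...]] = []
    for size in range(2, max_size + 1):
        plan.extend(combinations(fractions, size))
    return plan


# Hypothetical example: four inactive fractions give 6 pairs and 4 triples.
plan = recombination_plan(["F1", "F2", "F3", "F4"])
```

The number of combinations grows rapidly with the number of fractions, so in practice it helps to restrict the plan to fractions adjacent to the originally active chromatographic zone.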

Q4: How little starting material do I need with modern methods? A: Modern integrated platforms can complete a full BGF cycle with as little as 20 mg of crude extract. By coupling microfractionation, microflow NMR for structure elucidation, and microtiter plate-based bioassays (e.g., using zebrafish embryos), researchers can identify bioactive compounds at the microgram scale [12].

Q5: Are there public libraries of pre-fractionated natural products to screen? A: Yes. Initiatives like the NCI Program for Natural Product Discovery (NPNPD) are creating publicly accessible libraries. The NPNPD aims to generate over 1,000,000 partially purified fractions from more than 125,000 extracts, plated in 384-well plates and available free of charge for screening against any disease [2] [14]. This bypasses the initial extraction and prefractionation bottlenecks entirely.

The following tables summarize key quantitative data related to library scale, method efficiency, and bioactive compound identification.

Table 1: Scale of Selected Natural Product Libraries [2] [14]

| Company/Institute | Sample Type | Number of Extracts | Number of Fractions | Key Feature |
|---|---|---|---|---|
| U.S. National Cancer Institute (NCI) Repository | Plant, Marine, Microbial | > 230,000 | Not Applicable | One of the world's largest and most diverse collections [14]. |
| NCI Program for Natural Product Discovery (NPNPD) | Prefractionated Libraries | > 125,000 (source) | Target: >1,000,000 | Publicly available, HTS-amenable library in 384-well plates [14]. |
| Various Academic/Industry Libraries | Prefractionated Extracts | Not Specified | Few hundred to >30,000 | Demonstrate the trend towards prefractionated sample sets for screening [2]. |

Table 2: Correlation of Molecular Features with Bioactivity in a Case Study [9]

Case Study: Identifying neuroprotective compounds in Centella asiatica using 21 fractions and computational modeling.

| Rank (Elastic Net Model) | m/z Value | Annotation | Key Role in Bioactivity (Neuroprotection) |
|---|---|---|---|
| 1 | 515.1191 | Dicaffeoylquinic Acids (Di-CQAs) | Top predictor of cell viability in MC65 Alzheimer's model. |
| 2 (tie) | 353.0874 | Monocaffeoylquinic Acids (Mono-CQAs) | Strong predictor of neuroprotective activity. |
| 2 (tie) | 257.0554 | Not Annotated | High importance in Selectivity Ratio model. |
| 47 | 303.0502 | Quercetin | Top compound identified by Selectivity Ratio model. |

Experimental Protocols

Protocol 1: Ultra-Micro-Scale-Fractionation (UMSF) for High-Throughput Screening [11]

- Sample Preparation: Dissolve 1-5 mg of crude natural product extract in a suitable solvent (e.g., methanol). Centrifuge and filter (0.2 µm) to remove particulates.

- UPLC-MS Analysis & Fractionation:

- Use an analytical UPLC system equipped with a fraction collector manager (e.g., Waters W-FMA).

- Inject 1-10 µL of the sample onto a reversed-phase column (e.g., C18).

- Run a fast, linear gradient (e.g., 5-95% acetonitrile in water over 10 minutes).

- Simultaneously collect MS and UV data.

- Program the fraction collector to dispense eluent into a 96- or 384-well microtiter plate at fixed time intervals (e.g., every 0.2 or 0.5 minutes).

- Solvent Removal: Dry the plates using a centrifugal evaporator or lyophilizer.

- Bioassay: Re-dissolve fractions in a small volume of assay-compatible buffer directly in the plate and proceed with your high-throughput bioassay.
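
Time-based fraction collection implies a fixed mapping between retention time and plate well, which is needed later to trace an active well back to a chromatographic zone. The sketch below assumes row-wise filling (A1, A2, ...) and times expressed in seconds; the actual fill order depends on the fraction collector configuration, and the function name is hypothetical.

```python
import string


def well_for_time(t_s: float, interval_s: float = 30.0, rows: int = 8, cols: int = 12) -> str:
    """Map an elution time (seconds from collection start) to a well ID.

    Defaults describe a 96-well plate (8 x 12) with 30 s fractions;
    use rows=16, cols=24 for a 384-well plate.
    """
    if t_s < 0 or interval_s <= 0:
        raise ValueError("time must be non-negative and interval positive")
    index = int(t_s // interval_s)  # zero-based fraction number
    if index >= rows * cols:
        raise ValueError("elution time falls beyond the last well of the plate")
    row, col = divmod(index, cols)
    return f"{string.ascii_uppercase[row]}{col + 1}"
```

With 30 s fractions on a 96-well plate, a peak eluting at 372 s falls in fraction 13, which is well B1 under these assumptions.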

Protocol 2: Integrated Microfractionation, Bioassay, and Microflow NMR Analysis [12]

- Microfractionation: Separate 5-20 mg of extract using semi-preparative HPLC. Collect fractions based on UV peaks into 96-well plates.

- In Vivo Bioassay: Test each fraction using a microscale in vivo model (e.g., zebrafish embryo). Use a quantitative endpoint (e.g., angiogenesis inhibition, survival).

- Hit Identification & Dereplication: Analyze active fractions by HRMS and search natural product databases for known compounds.

- Microflow NMR Structure Elucidation: For novel or promising hits, inject the entire microfraction (tens of micrograms) into a microflow NMR probe. Acquire 1D and 2D NMR spectra (e.g., 1H, COSY, HSQC, HMBC) for structure determination.

- Quantitative Analysis (qNMR): Using an internal standard in the NMR solvent, quantify the amount of the bioactive compound directly in the mixture via 1H-NMR integration. This allows for accurate dose-response experiments with the isolated material.
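
The qNMR step reduces to a ratio of integrals normalized by proton count and molar mass. A minimal sketch of the standard internal-standard relation follows, assuming a pure internal standard and fully relaxed, non-overlapping signals; parameter names and example values are illustrative.

```python
def analyte_mass_ug(
    integral_analyte: float,
    protons_analyte: int,
    molar_mass_analyte: float,
    integral_standard: float,
    protons_standard: int,
    molar_mass_standard: float,
    mass_standard_ug: float,
) -> float:
    """Mass of analyte from 1H-NMR integrals relative to an internal standard.

    m_a = m_std * (I_a / I_std) * (N_std / N_a) * (M_a / M_std)
    where I = integral, N = number of protons in the integrated signal,
    and M = molar mass (g/mol).
    """
    return (
        mass_standard_ug
        * (integral_analyte / integral_standard)
        * (protons_standard / protons_analyte)
        * (molar_mass_analyte / molar_mass_standard)
    )


# Hypothetical example: 10 ug of a 150 g/mol standard (2H signal, integral 2.0)
# against a 300 g/mol analyte (1H signal, integral 1.0) gives about 20 ug of analyte.
example_mass = analyte_mass_ug(1.0, 1, 300.0, 2.0, 2, 150.0, 10.0)
```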

Visualization of Workflows and Strategies

Diagram 1: Traditional BGF Bottleneck Workflow

Diagram 2: Modern Integrated BGF Strategy

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Modern Bioassay-Guided Fractionation

| Item | Function & Rationale | Key Consideration |
|---|---|---|
| Solid-Phase Extraction (SPE) Cartridges (C18, Diol, Polyamide) | Pre-fractionation and clean-up. Removes nuisance compounds (e.g., salts, polyphenols) and simplifies extracts into broad polarity-based fractions, enhancing assay compatibility [14]. | Select phase chemistry based on target compound classes and interference removal needs. |
| UPLC/HPLC Columns (Analytical & Semi-Prep, C18) | High-resolution chromatographic separation. Essential for microfractionation (UMSF) and final compound purification. Provides the peak resolution needed to separate complex mixtures [11]. | Balance between resolution, loading capacity, and solvent consumption. |
| 384-Well Microtiter Plates | The standard platform for high-throughput bioassays and fraction collection. Compatible with automated liquid handlers and readers, enabling parallel processing of hundreds of fractions [2] [11]. | Ensure plate material is compatible with your solvents and assay detection method (e.g., low fluorescence background). |
| High-Resolution Mass Spectrometer (HRMS) | The cornerstone of dereplication. Provides accurate mass for formula prediction and enables MS/MS fragmentation for structural characterization and database matching [9] [13]. | Q-TOF or Orbitrap instruments are preferred for their high mass accuracy and resolution. |
| Microflow NMR Probe | Structure elucidation at the microgram scale. Allows acquisition of critical 2D NMR spectra (COSY, HSQC, HMBC) with very limited sample, enabling structure determination early in the pipeline [12]. | Drastically reduces the amount of plant material needed and speeds up the final identification step. |
| Bioassay-Specific Reagents (e.g., MTT, Fluorogenic Substrates) | Detection of biological activity. The choice of assay endpoint (viability, enzyme activity, fluorescence) must be robust and validated for use with natural product mixtures, which may interfere [10]. | Include appropriate controls (interference, cytotoxicity) to validate hits from natural product libraries. |

The construction of high-quality natural product extract libraries is a foundational pillar of modern drug discovery. These libraries provide access to unparalleled chemical diversity, with natural products and their derivatives constituting a significant percentage of approved drugs worldwide [13]. However, the inherent complexity of natural extracts—each a unique mixture of compounds with varying polarity, solubility, and concentration—poses significant challenges for reliable screening and data interpretation [2]. Strategic standardization is therefore not merely a procedural step but a critical scientific requirement to ensure biological activity is attributable to genuine hits rather than to assay interference, nuisance compounds, or inconsistent sample preparation [2]. This technical support center is designed to guide researchers in building robust, reproducible, and high-performing natural product libraries, framed within the essential thesis that managing complexity through standardization is the key to unlocking the true potential of natural products in drug discovery.

Technical Support Center: Troubleshooting Common Experimental Challenges

Frequently Asked Questions (FAQs)

Q1: Why is prefractionation recommended over screening crude extracts? A1: Crude natural product extracts are complex mixtures that often contain colored compounds, fluorophores, or toxins that can interfere with modern high-throughput screening (HTS) assays, leading to false positives or negatives [2]. Prefractionation reduces this complexity by separating the extract into simpler fractions. This concentrates minor active metabolites, sequesters common nuisance compounds, and improves screening performance by providing higher confidence in hit identification [2].

Q2: What are the primary regulatory considerations when sourcing biological material? A2: Ethical and legal sourcing is paramount. Researchers must comply with the United Nations Convention on Biological Diversity (CBD) and the Nagoya Protocol on Access and Benefit-Sharing (ABS) [2] [15]. This requires obtaining prior informed consent from source countries and establishing mutually agreed terms for fair and equitable sharing of benefits arising from research. In countries like Brazil, research involving native biodiversity often requires registration with national systems (e.g., SisGen) and collaboration with a local institution [15].

Q3: How can I assess whether my library provides sufficient chemical diversity? A3: A combined genetic and metabolomic strategy is effective. Sequencing a barcode region (e.g., fungal ITS) clusters organisms into genetic clades [16]. Parallel LC-MS metabolomics analysis of these clades generates chemical feature accumulation curves. These curves show how many isolates are needed to capture the majority of chemical diversity within a group, allowing for rational, data-driven library expansion [16].
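
A feature accumulation curve can be sketched in a few lines, assuming each isolate is represented by the set of LC-MS features detected in it. The function names, the single fixed ordering of isolates, and the 75% coverage threshold are illustrative assumptions; published analyses typically average the curve over many random orderings.

```python
from typing import Iterable, List, Set


def accumulation_curve(feature_sets: Iterable[Set[str]]) -> List[int]:
    """Cumulative number of unique features as isolates are added one by one."""
    seen: Set[str] = set()
    curve: List[int] = []
    for features in feature_sets:
        seen |= set(features)
        curve.append(len(seen))
    return curve


def isolates_needed(curve: List[int], coverage: float = 0.75) -> int:
    """Smallest number of isolates that captures the given share of all observed features."""
    target = coverage * curve[-1]
    for count, total in enumerate(curve, start=1):
        if total >= target:
            return count
    return len(curve)
```

A curve that flattens early indicates that additional isolates from the same clade add little new chemistry, whereas a curve that is still climbing argues for deeper sampling.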

Q4: What is dereplication, and why is it a critical step post-screening? A4: Dereplication is the process of rapidly identifying known compounds from active library samples early in the discovery pipeline. Its purpose is to avoid redundant investment of resources in the re-isolation of known substances. By using techniques like LC-MS with databases of known natural products, researchers can prioritize novel chemistry for further investigation [2] [13].

Troubleshooting Guide

| Problem | Possible Cause | Recommended Solution |
|---|---|---|
| High rate of false-positive hits in HTS | Assay interference from compounds in crude extracts (e.g., promiscuous inhibitors, fluorescent compounds) [2]. | Implement a prefractionation step (e.g., SPE, HPLC) to separate components [2]. Use counter-screening assays to identify and filter nuisance compounds. |
| Low biological hit rate from library | Insufficient chemical diversity; library is biased toward common metabolites [16]. | Employ clade-based collection strategies informed by genetic barcoding to target phylogenetically distinct organisms [16]. |
| Irreproducible activity during hit confirmation | Inconsistent extract composition due to variable extraction protocols or degradation [15]. | Standardize all protocols: specimen drying, particle size, solvent system, extraction time/temperature, and storage conditions. Document all parameters meticulously. |
| Difficulty isolating the active compound | Activity is due to synergy of multiple compounds, or the active is present in very low concentration [17]. | Use bioassay-guided fractionation. If activity is lost upon fractionation, test combinations of fractions for synergistic effects. Employ LC-MS to identify low-abundance ions in active fractions [13]. |
| Poor yield of extract from scaled-up material | Inefficient extraction method does not fully capture metabolites [2]. | Optimize extraction technique (e.g., switch from maceration to accelerated solvent extraction or ultrasound-assisted extraction) for the specific source material [2]. |

Standardized Methodologies for Library Construction

1. Protocol for Building a Prefractionated Natural Product Library

This protocol outlines the creation of a semi-purified fraction library from plant material, designed to reduce complexity and enhance screening reliability [2].

Step 1: Source Material Authentication & Documentation Collect voucher specimens and document taxonomy, location, date, and collector. Obtain necessary permits and comply with access and benefit-sharing (ABS) agreements [2] [15]. Material should be cleaned, freeze-dried, and milled to a consistent particle size.

Step 2: Standardized Extraction Perform extraction using a standardized solvent system (e.g., 1:1 methanol-dichloromethane) and method (e.g., sonication for 30 min at room temperature). The goal is reproducible metabolic profiling, not exhaustive extraction. Filter and concentrate the crude extract under reduced pressure [2].

Step 3: Solid Phase Extraction (SPE) Prefractionation Use a reversed-phase C18 SPE cartridge. Condition with methanol followed by water. Load the crude extract. Elute with a step gradient of increasing organic solvent (e.g., 20%, 50%, 80%, 100% methanol in water). Because reversed-phase sorbents release the most polar compounds first, this generates 4-5 fractions of decreasing polarity from a single extract, simplifying the mixture [2].

Step 4: Normalization & Plating Redissolve each fraction in DMSO to a standardized concentration (e.g., 2 mg/mL for a fraction, versus 10 mg/mL for a crude extract). Transfer to 384-well plates using an automated liquid handler. Seal plates with inert seals and store at -20°C or -80°C.

2. Protocol for Chemical Diversity Assessment

This methodology uses LC-MS metabolomics and genetic data to quantitatively guide library development, ensuring maximal chemical diversity [16].

Step 1: Genetic Barcoding For microbial or fungal isolates, extract genomic DNA and amplify the Internal Transcribed Spacer (ITS) region via PCR. Sequence the amplicons and perform phylogenetic analysis to group isolates into genetic clades [16].

Step 2: LC-MS Metabolomic Profiling Prepare standardized extracts from all isolates. Analyze each extract using a consistent LC-MS method with a C18 column and a water-acetonitrile gradient with mass detection in positive and negative modes. Use software (e.g., MZmine, XCMS) to detect, align, and quantify all ion features (m/z-retention time pairs) [16].

Step 3: Generating Feature Accumulation Curves Using the metabolomic data, perform rarefaction analysis. Randomly select an increasing number of isolates from a clade and plot the cumulative number of unique chemical features detected against the number of isolates sampled. This curve shows the rate at which new chemistry is discovered [16].

Step 4: Data-Driven Library Curation Analyze the curves to determine the point of diminishing returns (e.g., where 95% of chemical features are captured). Use this to decide how many isolates per clade are necessary. Identify "singleton" features (unique to one isolate) to prioritize for preservation and bioactivity screening [16].
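The rarefaction and curation steps above can be sketched in a few lines of Python. This is a minimal illustration, not the method used in [16]: it assumes feature detection and alignment (e.g., in MZmine or XCMS) has already reduced each isolate to a set of feature identifiers, and the function names `accumulation_curve`, `isolates_needed`, and `singleton_features` are hypothetical.

```python
import random


def accumulation_curve(features_by_isolate, n_permutations=100, seed=0):
    """Mean cumulative number of unique features vs. number of isolates sampled.

    features_by_isolate maps an isolate ID to the set of feature IDs
    (m/z-retention time pairs) detected in its extract.
    """
    rng = random.Random(seed)
    isolates = list(features_by_isolate)
    totals = [0.0] * len(isolates)
    for _ in range(n_permutations):
        rng.shuffle(isolates)  # random sampling order for this permutation
        seen = set()
        for i, isolate in enumerate(isolates):
            seen |= features_by_isolate[isolate]
            totals[i] += len(seen)
    return [t / n_permutations for t in totals]


def isolates_needed(curve, fraction=0.95):
    """Smallest sample size whose mean feature count reaches `fraction` of the total."""
    target = fraction * curve[-1]
    for n, value in enumerate(curve, start=1):
        if value >= target:
            return n
    return len(curve)


def singleton_features(features_by_isolate):
    """Features detected in exactly one isolate, grouped by that isolate."""
    counts = {}
    for feats in features_by_isolate.values():
        for f in feats:
            counts[f] = counts.get(f, 0) + 1
    return {iso: {f for f in feats if counts[f] == 1}
            for iso, feats in features_by_isolate.items()}
```

Averaging over many random orderings smooths the curve so that the point of diminishing returns does not depend on the order in which isolates happened to be collected.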

Data Presentation: Key Performance Metrics

Table 1: Comparative Analysis of Natural Product Library Formats

This table summarizes the key characteristics of different library types, aiding in strategic selection.

| Library Format | Typical Sample Concentration | Key Advantage | Primary Challenge | Best Suited For |
|---|---|---|---|---|
| Crude Extract | 5-20 mg/mL [2] | Lower cost, faster production, captures full metabolic profile [2] | High complexity, assay interference, high false-positive rate [2] | Initial, broad-scale phenotypic screening |
| Prefractionated (SPE/HPLC) | 1-5 mg/mL (per fraction) [2] | Reduced complexity, concentrated actives, fewer nuisance compounds [2] | Higher initial production cost and time | Targeted and HTS campaigns with molecular assays |
| Pure Natural Product | 0.1-1 mM | No interference, straightforward structure-activity relationship (SAR) | Extremely resource-intensive to isolate and curate | Confirmatory screening and lead optimization |

Table 2: Essential Research Reagent Solutions for Extract Library Work

This table lists critical materials and their functions in the library construction and analysis pipeline.

| Reagent / Material | Function in Library Construction | Key Consideration |
|---|---|---|
| Solid Phase Extraction (SPE) Cartridges (C18, Diol, Ion-Exchange) | Prefractionates crude extracts by polarity or chemical function, reducing complexity for screening [2]. | Select cartridge chemistry based on target compound classes in your source material. |
| LC-MS Grade Solvents | Used for extraction, chromatography, and mass spectrometry to minimize background noise and ion suppression. | Purity is critical for reproducible chromatographic separation and sensitive MS detection [13]. |
| Stable Isotope-Labeled Internal Standards | Enables quantitative metabolomics and corrects for instrument variability during chemical diversity assessment [16]. | Use a mix of standards covering a range of polarities and masses. |
| Standardized Natural Product Reference Compounds | Serves as controls for dereplication via LC-MS retention time and fragmentation pattern matching [13]. | Build a curated in-house library of common secondary metabolites relevant to your source organisms. |
| Bioassay-Ready Solvent (e.g., DMSO) | Universal solvent for re-dissolving dried extracts/fractions for biological screening. | Ensure high purity and store under anhydrous conditions to prevent sample degradation. |

Visualization of Workflows and Relationships

Diagram: Standardized Workflows for Extract Library Construction & Assessment

Diagram: SPE Prefractionation Simplifies Crude Extract Complexity

Technical Support Center for Complex Mixture Research

This technical support center is designed to assist researchers navigating the challenges of screening and characterizing complex natural product libraries. The guidance is framed within the critical thesis that effective handling of these mixtures—from crude extracts to semi-purified fractions—is foundational to successful dereplication, target identification, and the eventual development of synthetic mimetics.

Section 1: Troubleshooting Common Experimental Issues

FAQ 1: Our high-throughput screening (HTS) of a crude extract library is yielding an unacceptably high rate of false positives or nonspecific inhibition. What steps should we take?

- Problem Analysis: Crude extracts are complex mixtures containing compounds that can interfere with assay readouts (e.g., colored compounds, fluorophores, reactive toxins) or cause general cytotoxicity [2].

- Solution & Protocol:

- Confirm Activity: Repeat the assay for the putative "hit" extracts. Use a dose-response curve to assess potency and confirm the effect is concentration-dependent [18].

- Employ Orthogonal Assays: Test active extracts in a functionally different secondary assay targeting the same biological pathway. True hits should show activity across multiple relevant assays.

- Implement Prefractionation: Transition to a semi-purified fraction library. A single-step solid-phase extraction (SPE) or low-resolution HPLC prefractionation can separate major nuisance compounds from active components, significantly improving assay performance and hit confidence [2].

- Include Robust Controls: Ensure your assay plate includes controls for nonspecific inhibition, fluorescence/quenching interference, and general cell health (viability assays) [18].
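The "confirm activity" step can be made concrete with a small sketch that checks whether inhibition rises with concentration and estimates an IC₅₀. It assumes percent-inhibition readings at a handful of concentrations and uses simple linear interpolation on a log10 concentration axis, which is a deliberate simplification rather than a full four-parameter logistic fit; the function names are hypothetical.

```python
import math


def is_concentration_dependent(inhibitions, tolerance=5.0):
    """True if % inhibition never drops by more than `tolerance` between steps.

    `inhibitions` must be ordered by increasing test concentration.
    """
    return all(b >= a - tolerance for a, b in zip(inhibitions, inhibitions[1:]))


def interpolate_ic50(concentrations, inhibitions):
    """Estimate IC50 by interpolating % inhibition on log10(concentration).

    Returns None when 50% inhibition is not bracketed by the tested range.
    """
    points = sorted(zip(concentrations, inhibitions))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        if y_lo < 50.0 <= y_hi:
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None
```

A hit that fails the monotonicity check, or whose curve never crosses 50% within the tested range, is a reasonable candidate for deprioritization or retesting before any fractionation effort is committed.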

FAQ 2: During the dereplication of an active fraction using LC-MS, the mass spectra are overly complex, and we cannot pinpoint the active constituent. How do we proceed?

- Problem Analysis: Semi-purified fractions, while less complex than crude extracts, still contain multiple compounds with similar physicochemical properties.

- Solution & Protocol:

- Fractionation & Bioactivity-Guided Isolation: Sub-fractionate the active primary fraction using an orthogonal chromatographic method (e.g., if the first pass was reversed-phase HPLC, use a normal-phase or size-exclusion method). Test all sub-fractions for biological activity to pinpoint the active sub-pool [2].

- Apply Advanced MS Techniques: For the active sub-fraction, switch from single-stage MS to tandem MS/MS (e.g., GC/MS/MS or LC-MS/MS). This provides fragmentation patterns that are crucial for structural elucidation and database searching [19].

- Utilize Accurate Mass: Use high-resolution accurate mass (HRAM) spectrometry (e.g., GC/Q-TOF) to determine the elemental composition of molecular ions, dramatically narrowing the list of possible candidates [19] [20].

- Cross-Check Specialized Databases: Search the obtained accurate mass and MS/MS spectra against natural product-specific resources (e.g., LOTUS) in addition to general libraries like NIST. NMR-based databases such as NAPROC-13 become useful once ¹³C data are available for the candidate [19].

FAQ 3: Our GC-MS analysis for metabolite profiling is showing poor peak shape, low sensitivity, or inconsistent results. What are the key maintenance and setup checks?

- Problem Analysis: GC-MS performance degrades due to column issues, a dirty ion source, improper calibration, or suboptimal method parameters [19].

- Solution & Protocol:

- System Suitability Test: Regularly run a test mixture of known standards. Check for parameters like peak resolution, symmetry, retention time stability, and signal-to-noise ratio [19].

- Maintain the Ion Source: A dirty source is a leading cause of sensitivity loss. Follow a routine cleaning schedule or consider instrumentation with self-cleaning ion source technology [19].

- Verify Instrument Tune: Perform an autotune (and periodic "check tunes") to ensure the mass spectrometer's electronic parameters (lens voltages, detector gain) are optimized for peak performance [19].

- Check the GC System: Inspect the liner and septum for degradation, ensure the carrier gas is pure and leak-free, and confirm the GC oven temperature program is stable. For active compounds, use an Ultra Inert liner and column to reduce adsorption and tailing [19].

- Optimize Sample Preparation: Ensure your sample is clean and dry. Use appropriate derivatization techniques for non-volatile metabolites, and select a suitable internal standard (e.g., a stable isotope-labeled analog) that elutes near your analytes of interest [19].

FAQ 4: We have isolated a pure natural product hit and want to develop a synthetic mimetic. How do we use spectroscopic data to guide synthetic chemistry?

- Problem Analysis: The leap from structure elucidation to synthetic design requires identifying the core pharmacophore and regions amenable to modification.

- Solution & Protocol:

- Define the Minimum Pharmacophore: Use data from structure-activity relationship (SAR) studies. If sub-fractions or analogs are available, correlate structural features (e.g., presence/absence of a hydroxyl group, stereochemistry) with biological activity to identify essential moieties.

- Analyze for Synthetic Handles: Examine the 2D NMR spectra (COSY, HSQC, HMBC) to identify key carbon-carbon and carbon-hydrogen connectivities. Look for simpler, synthetically accessible fragments within the complex molecule that can serve as starting points for a total synthesis or a divergent synthesis library.

- Plan for Analog Generation: Identify sites on the molecule that are likely tolerant of modification (e.g., ester groups, non-critical hydroxyls). These become points for introducing diversity via semi-synthesis or for improving drug-like properties (e.g., solubility, metabolic stability).

Section 2: Quantitative Data and Sample Library Comparisons

The following table summarizes key characteristics of different sample types used in natural product screening, highlighting the trade-offs between complexity, cost, and informational value [2].

Table 1: Comparison of Natural Product Sample Types for Screening Libraries

| Sample Type | Typical Composition | Relative Screening Cost | Hit Confidence | Downstream Work (Dereplication) | Primary Utility |
|---|---|---|---|---|---|
| Crude Extract | Thousands of compounds, full metabolic profile | Low | Low; high interference potential | Very High; highly complex mixtures | Initial, low-cost biodiversity surveys |
| Semi-Purified Fraction | 10s-100s of compounds, simplified mixtures | Medium | High; reduced interference | Moderate; simplified mixtures | Mainstream HTS campaigns, reliable hit identification |
| Pure Natural Product | Single chemical entity | Very High (isolation cost) | Definitive | None (structure known) | SAR studies, mechanism of action, synthetic target |
| Synthetic Mimetic | Single chemical entity | High (synthesis cost) | Definitive | None (structure known) | Lead optimization, patentability, scalable production |

The performance of analytical instruments is critical for dereplication. The table below outlines key specifications for common mass spectrometry configurations [19] [20].

Table 2: Key Specifications for Mass Spectrometry Methods in Dereplication

| MS Configuration | Ionization Technique | Typical Mass Accuracy | Key Advantage for Natural Products | Best Use Case |
|---|---|---|---|---|
| GC-MS (Single Quad) | Electron Ionization (EI) | Unit mass (1 Da) | Extensive, searchable library spectra (e.g., >300,000 in NIST) [19] | Volatile metabolite profiling, dereplication of known compounds |
| GC-MS/MS (Triple Quad) | EI or Chemical Ionization (CI) | Unit mass | High selectivity in MRM mode; reduces background noise | Targeted analysis of specific compound classes in complex matrices |
| GC/Q-TOF | EI, CI, or Low-energy EI | High Resolution (<5 ppm) | Accurate mass for elemental composition; soft ionization preserves molecular ion [19] | Identification of unknown compounds, structural elucidation |
| LC-MS/MS (Q-TOF) | Electrospray (ESI) | High Resolution (<5 ppm) | Analysis of non-volatile, polar compounds; MS/MS for sequencing | Peptide, glycoside, and other large NP analysis; biomolecule interaction |

Section 3: Detailed Experimental Protocols

Protocol 1: Generation of a Semi-Purified Natural Product Fraction Library via Solid-Phase Extraction (SPE) [2]

- Objective: To partially purify a crude natural product extract, sequestering common nuisance compounds (e.g., tannins, chlorophyll) and enriching secondary metabolites into distinct fractions based on polarity.

- Materials: Crude dry extract, C18 or polyamide SPE cartridges (e.g., 500 mg/6 mL), HPLC-grade solvents (water, methanol, ethyl acetate, hexane), vacuum manifold, collection tubes.

- Procedure:

- Conditioning: Activate the C18 sorbent by passing 5 mL of methanol through the cartridge, followed by 5 mL of water. Do not let the cartridge run dry.

- Sample Loading: Dissolve 50-100 mg of crude extract in a minimal volume of water-methanol mixture (e.g., 1:1). Load the solution onto the conditioned cartridge.

- Stepwise Elution (Fractionation): Apply a gradient of solvents of increasing elution strength, collecting each eluate separately in a pre-weighed tube. A typical sequence may be:

- Fraction 1: 5 mL H₂O (elutes polar salts, sugars).

- Fraction 2: 5 mL 25% Methanol/H₂O.

- Fraction 3: 5 mL 50% Methanol/H₂O.

- Fraction 4: 5 mL 75% Methanol/H₂O.

- Fraction 5: 5 mL 100% Methanol (elutes mid-polarity compounds).

- Fraction 6: 5 mL Ethyl Acetate.

- Fraction 7: 5 mL Hexane (elutes non-polar lipids, chlorophyll).

- Concentration: Evaporate all fractions to dryness under a gentle stream of nitrogen or by centrifugal evaporation. Weigh the dried fractions to determine yield.

- Storage & Plating: Re-dissolve each fraction in DMSO at a standardized concentration (e.g., 10 mg/mL) for storage and transfer into 384-well assay plates.
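The bookkeeping behind the concentration and plating steps is simple arithmetic, sketched below. The helper names are hypothetical, and the default of 10 mg/mL is just the example concentration quoted in the protocol.

```python
def fraction_yield_mg(tube_tare_mg, tube_with_fraction_mg):
    """Dry mass of a fraction from the pre-weighed (tare) and final tube weights."""
    mass = tube_with_fraction_mg - tube_tare_mg
    if mass < 0:
        raise ValueError("final weight is below the tare weight; re-weigh the tube")
    return mass


def dmso_volume_ul(mass_mg, target_mg_per_ml=10.0):
    """Volume of DMSO (in microliters) that dissolves mass_mg at the target concentration."""
    return mass_mg / target_mg_per_ml * 1000.0


def percent_recovery(fraction_masses_mg, loaded_mg):
    """Total mass recovered across all fractions as a percentage of the loaded extract."""
    return 100.0 * sum(fraction_masses_mg) / loaded_mg
```

Tracking recovery per cartridge is a cheap way to spot irreversible binding or sample loss before a whole library has been processed.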

Protocol 2: Dereplication of an Active Fraction Using LC-HRMS/MS and Database Mining

- Objective: To identify the chemical structure of a bioactive compound within an active semi-purified fraction.

- Materials: Active dried fraction, UHPLC system coupled to a high-resolution tandem mass spectrometer (e.g., Q-TOF), data analysis software, natural product and generic MS/MS spectral libraries.

- Procedure:

- LC-HRMS Analysis: Reconstitute the fraction in a suitable solvent and inject onto a reversed-phase UHPLC column. Use a water-acetonitrile gradient with 0.1% formic acid. Acquire data in both positive and negative electrospray ionization modes with data-dependent acquisition (DDA) enabled. The DDA method should select the top N most intense ions from the full MS scan for subsequent MS/MS fragmentation.

- Data Processing: Process the raw data to find chromatographic peaks. The software will generate a list of molecular features, each with a retention time, measured accurate mass (m/z), and associated MS/MS spectrum.

- Database Search:

- Perform a precursor ion search using the measured accurate mass (± 5 ppm) against natural product databases.

- For significant matches, compare the experimental MS/MS spectrum with the library reference spectrum. A high spectral match score (e.g., >80%) indicates a probable identity.

- If no match is found, use the accurate mass to calculate possible elemental formulas. Use the MS/MS fragmentation pattern to propose a partial structure or identify characteristic substructures [20].

- Validation: If a standard for the proposed compound is commercially available, co-inject it with your sample to confirm matching retention time and mass spectra. Otherwise, proceed to micro-scale isolation for NMR confirmation.
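The two database-search steps (a precursor match within ± 5 ppm, then an MS/MS comparison) can be illustrated with a minimal sketch. It assumes the database is a plain mapping from compound name to the theoretical m/z of the same ion species as the measurement, and it uses an unweighted cosine on binned peaks; production tools typically apply intensity weighting and tolerance-based peak matching, so scores will not be identical to vendor or GNPS values. All names here are hypothetical.

```python
import math


def ppm_error(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def precursor_search(measured_mz, database, tol_ppm=5.0):
    """Return (name, ppm error) for database entries within tol_ppm, best match first."""
    hits = []
    for name, theoretical_mz in database.items():
        err = ppm_error(measured_mz, theoretical_mz)
        if abs(err) <= tol_ppm:
            hits.append((name, err))
    return sorted(hits, key=lambda h: abs(h[1]))


def cosine_score(spec_a, spec_b, bin_width=0.01):
    """Cosine similarity of two MS/MS spectra given as lists of (m/z, intensity)."""
    def binned(spec):
        vec = {}
        for mz, intensity in spec:
            key = round(mz / bin_width)  # peaks in the same bin are summed
            vec[key] = vec.get(key, 0.0) + intensity
        return vec

    a, b = binned(spec_a), binned(spec_b)
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0
```

On this scale, the ">80%" match score mentioned in the procedure corresponds to a cosine above 0.8, although the exact threshold depends on the scoring scheme of the software in use.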

Section 4: Visual Workflows for Experiment Design and Analysis

Diagram: Workflow for Natural Product Discovery to Synthetic Mimetic

Diagram: Dereplication Strategy for an Active Fraction

Section 5: The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for Natural Product Library Research

| Item | Function / Application | Key Considerations |
|---|---|---|
| Solid-Phase Extraction (SPE) Cartridges (C18, Polyamide) | Prefractionation of crude extracts to remove nuisance compounds and simplify mixtures [2]. | Select sorbent chemistry based on target compound classes (C18 for broad-range, polyamide for polyphenols). |
| Ultra-Inert (UI) GC Liners & Columns | Gas chromatography analysis of volatile metabolites; reduces adsorption and tailing of active compounds [19]. | Essential for maintaining peak shape and sensitivity, especially for trace-level or polar analytes. |
| High-Resolution Accurate Mass (HRAM) Mass Spectrometer | Provides exact mass measurements for elemental composition determination and confident compound identification [19] [20]. | Q-TOF and Orbitrap instruments are industry standards for dereplication workflows. |
| Stable Isotope-Labeled Internal Standards | Used in quantitative GC-MS or LC-MS to correct for sample loss and matrix effects during analysis [19]. | Deuterated analogs of target analytes are ideal for ensuring accurate quantification. |
| 384-Well Microtiter Plates | Standard format for high-throughput screening of extract and fraction libraries [2]. | Use low-binding plates to prevent adsorption of hydrophobic natural products. |
| Electron Ionization (EI) & Chemical Ionization (CI) Sources | GC-MS ionization; EI provides reproducible, library-searchable spectra, while CI is a "softer" technique that preserves the molecular ion [19]. | Most analyses use EI; CI is valuable when the molecular ion is weak or absent in EI mode. |
| NIST/Wiley Mass Spectral Libraries | Reference databases for compound identification by matching experimental GC-EI-MS spectra to known standards [19]. | The NIST library contains >300,000 spectra and is a foundational tool for dereplication. |

The Modern Deconvolution Toolkit: Advanced Techniques for Isolating and Identifying Bioactives

Within the broader thesis of handling complex mixtures in natural product extract libraries, a significant challenge lies in efficiently identifying novel bioactive compounds amidst thousands of known metabolites [21]. Traditional bioassay-guided fractionation, while effective, is often slow and labor-intensive, risking the re-isolation of known compounds. Conversely, high-throughput dereplication can quickly annotate metabolites but may overlook novel or synergistically active components [22]. The evolved workflow integrates these two paradigms, creating a cyclical, informatics-driven process where biological activity and chemical annotation continuously inform each other. This technical support center is designed to help researchers implement and troubleshoot this integrated approach, which is critical for advancing drug discovery from natural sources [21] [23].

Troubleshooting Guide: Common Experimental Issues & Solutions

This section addresses specific, practical problems researchers may encounter when implementing the integrated workflow.

Issue Category 1: Bioassay Interference & False Positives

- Problem: Non-selective activity or cytotoxicity in initial crude extracts, halting further fractionation.

- Root Cause: Often due to polyphenols (tannins) or other nuisance compounds that cause false positives by non-specifically binding proteins or altering cellular redox potential [24].

- Solution:

- Implement a Pre-fractionation Clean-up Step: Use polyamide solid-phase extraction (SPE) cartridges to selectively remove polyphenols prior to biological screening [24].

- Protocol - Polyamide SPE for Polyphenol Removal:

- Condition cartridge with methanol, then water.

- Load crude extract dissolved in water.

- Elute non-polyphenolic compounds with a stepwise gradient of methanol in water (e.g., 20%, 50%, 100%). Most flavonoids and desired alkaloids will elute, while polyphenols remain bound [24].

- Test for polyphenol removal using a FeCl₃ solution spot test: a bluish-black (hydrolyzable tannins) or greenish-black (condensed tannins) color indicates presence [24].

- Adjust Screening Concentration: Titrate the concentration of crude extracts in primary assays to a level that minimizes non-specific toxicity while retaining specific activity.

Issue Category 2: Inefficient or Low-Resolution Fractionation

- Problem: Poor separation during chromatography leads to complex fractions that obscure structure-activity relationships.

- Root Cause: Inappropriate chromatographic scale, solvent system, or method for the extract's chemical complexity.

- Solution:

- Adopt an Automated High-Throughput Fractionation System: As described in [24], a system that combines preparative HPLC with automated fraction collection, drying, and weighing can process thousands of samples per year, yielding 0.5-10 mg fractions suitable for 384-well plate screening.

- Protocol - Automated Reversed-Phase Fractionation:

- Column: C18 preparative column.

- Mobile Phase: Methanol and water (no additives to maximize broad compound compatibility).

- Gradient: 2% to 100% methanol over 12-15 minutes, hold at 100% methanol for 6 minutes to elute non-polar compounds.

- Collection: Collect fractions every 30 seconds [24].

- Detection: Use both Photodiode Array (PDA) and Evaporative Light Scattering (ELSD) detectors in tandem. ELSD signal often correlates well with fraction mass [24].
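The gradient and collection parameters in this protocol translate directly into a fraction schedule, sketched below. The 12-minute ramp is the lower end of the quoted 12-15 minute range, and the collection window passed to `fraction_windows` is an assumption, since the protocol states only the 30-second interval. Both function names are hypothetical.

```python
def methanol_percent(t_min, gradient_min=12.0, hold_min=6.0, start=2.0, end=100.0):
    """Percent methanol at time t_min for a linear ramp followed by a hold at `end`."""
    if t_min < 0 or t_min > gradient_min + hold_min:
        raise ValueError("time is outside the programmed run")
    if t_min >= gradient_min:
        return end
    return start + (end - start) * t_min / gradient_min


def fraction_windows(collect_start_min, collect_end_min, interval_s=30.0):
    """(start, end) times in minutes for each collected fraction."""
    step = interval_s / 60.0
    n = int(round((collect_end_min - collect_start_min) / step))
    return [(collect_start_min + i * step, collect_start_min + (i + 1) * step)
            for i in range(n)]
```

Under these assumptions a 12-minute collection window at 30-second intervals yields 24 fractions, which is consistent with the 24 fractions per extract reported for the system in [24].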

Issue Category 3: Failed or Ambiguous Dereplication

- Problem: Mass spectrometry data does not lead to confident compound identification, or known compounds are incorrectly prioritized.

- Root Cause: Over-reliance on molecular formula search alone; inadequate MS/MS fragmentation data; poor database matching [22].

- Solution:

- Utilize Molecular Networking: Platforms like GNPS (Global Natural Products Social Molecular Networking) can visualize the chemical relationships within your fractions. Clusters of similar MS/MS spectra often correspond to structurally related compounds, guiding isolation toward novel scaffolds [23].

- Protocol - LC-MS/MS for Dereplication:

- Use High-Resolution ESI-MS (e.g., Q-TOF, Orbitrap).

- Employ Data-Dependent Acquisition (DDA): Perform MS/MS on the top 5-10 most intense ions from the full scan.

- Process raw data with software like MZmine to detect features, align peaks, and annotate isotopes.

- Search processed data against specialized NP databases (e.g., Dictionary of Natural Products, AntiMarin) using both molecular formula and MS/MS spectral matching where possible [22].

- Target Halogenated Clusters: For marine invertebrates, prioritize clusters with isotopic patterns indicative of bromine or chlorine, as these are often bioactive and taxonomically significant [23].
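Flagging halogenated ions can be approximated by comparing the relative intensities of the M, M+2, and M+4 peaks with the ratios expected from natural chlorine and bromine isotope abundances. The sketch below is a rough screen under stated assumptions: singly charged ions, approximate expected ratios, and no correction for contributions from ¹³C, ¹⁸O, or ³⁴S. The names and the tolerance values are hypothetical.

```python
# Approximate (M+2)/M and (M+4)/M intensity ratios derived from natural
# isotope abundances (35Cl/37Cl about 75.8/24.2, 79Br/81Br about 50.7/49.3).
EXPECTED_PATTERNS = {
    "Cl1": (0.32, 0.00),
    "Cl2": (0.64, 0.10),
    "Br1": (0.97, 0.00),
    "Br2": (1.95, 0.95),
}
ISOTOPE_SPACING = 1.998  # approximate m/z gap between halogen isotopologues (z = 1)


def peak_intensity(peaks, target_mz, mz_tol=0.01):
    """Highest intensity among peaks within mz_tol of target_mz, or 0.0 if none."""
    matches = [intensity for mz, intensity in peaks if abs(mz - target_mz) <= mz_tol]
    return max(matches) if matches else 0.0


def classify_halogen_pattern(peaks, mono_mz, ratio_tol=0.15):
    """Return the best-matching label from EXPECTED_PATTERNS, or None."""
    base = peak_intensity(peaks, mono_mz)
    if base <= 0:
        return None
    m2 = peak_intensity(peaks, mono_mz + ISOTOPE_SPACING) / base
    m4 = peak_intensity(peaks, mono_mz + 2 * ISOTOPE_SPACING) / base
    best_label, best_dist = None, ratio_tol
    for label, (exp_m2, exp_m4) in EXPECTED_PATTERNS.items():
        dist = max(abs(m2 - exp_m2), abs(m4 - exp_m4))
        if dist <= best_dist:
            best_label, best_dist = label, dist
    return best_label
```

A dibrominated compound such as the tyramine derivative discussed in [23] would be expected to show the roughly 1:2:1 pattern labeled `Br2` here; any flagged ion should still be confirmed against the full isotope envelope in the vendor or MZmine software.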

Frequently Asked Questions (FAQs)

Q1: How do we balance throughput with the need for sufficient material for structure elucidation? A1: The evolved workflow is designed for efficient triage. High-throughput fractionation generates sub-milligram to low-milligram quantities (0.5-10 mg per fraction), which is enough for hundreds of bioassays in nanoliter formats [24]. Only fractions displaying promising and reproducible activity are scaled up. The key is using microgram-scale analytical techniques (microcoil NMR, capillary HPLC) early in the dereplication phase to obtain structural hints before committing to larger-scale isolation.

Q2: Our active fraction contains a mixture of several compounds with similar masses. How do we pinpoint the true active? A2: This is a core strength of integration. First, use molecular networking to see if all compounds are structurally related (suggesting a compound family). Second, employ bioactivity correlation: if you have a series of sub-fractions with varying potencies, plot bioactivity against the chromatographic peak area/intensity of each candidate compound. The one with the strongest correlation is the most likely active constituent [23].
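The bioactivity correlation described in A2 can be sketched as a Pearson correlation between a potency readout and each candidate's peak area across the sub-fractions. This is a minimal sketch with hypothetical names; it assumes a readout where higher means more active (e.g., percent inhibition at a fixed dose), so IC₅₀ values would need to be inverted or log-transformed first.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    if n != len(y) or n < 3:
        raise ValueError("need two sequences of equal length with at least 3 points")
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return 0.0  # a constant series carries no correlation information
    return sxy / math.sqrt(sxx * syy)


def rank_candidates(activity, peak_areas):
    """Rank candidate features by correlation of peak area with bioactivity.

    activity: potency readout per sub-fraction (higher = more active).
    peak_areas: dict mapping feature name to its peak area in each sub-fraction.
    """
    scores = {name: pearson(areas, activity) for name, areas in peak_areas.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)
```

With only a handful of sub-fractions, a high correlation is suggestive rather than conclusive, so the top-ranked candidate should still be confirmed by isolation and retesting.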

Q3: What are the most common pitfalls in interpreting LC-MS/MS data for dereplication? A3:

- Misassigning Molecular Ions: Mistaking in-source fragments (e.g., [M+H-H₂O]⁺) or adducts (e.g., [M+Na]⁺) for the protonated molecule [22].

- Solution: Carefully examine the chromatogram for related peaks with mass differences corresponding to common neutral losses (18 Da for water, 44 Da for CO₂) or adducts (22 Da difference between [M+H]⁺ and [M+Na]⁺).

- Overlooking Isomers: Many natural products share the same molecular formula [22].

- Solution: Do not rely on accurate mass alone. Use retention time and, critically, MS/MS spectral comparison against standards or literature data. Isomers often have distinct fragmentation patterns.
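The mass-difference check for adducts and in-source fragments can be automated for co-eluting features. The sketch below uses the monoisotopic differences for sodium versus proton adducts (21.98194 Da), water loss (18.01056 Da), and CO₂ loss (43.98983 Da); the tolerances and the function name are assumptions to be tuned to the instrument.

```python
# Monoisotopic m/z differences between related singly charged ions.
MASS_DELTAS = {
    "[M+Na]+ vs [M+H]+": 21.98194,
    "loss of H2O": 18.01056,
    "loss of CO2": 43.98983,
}


def related_ions(features, rt_tol=0.05, mz_tol=0.005):
    """Find co-eluting feature pairs separated by a known mass difference.

    features: list of (m/z, retention time in minutes).
    Returns a list of (lower m/z, higher m/z, relationship label).
    """
    pairs = []
    ordered = sorted(features)
    for i, (mz_a, rt_a) in enumerate(ordered):
        for mz_b, rt_b in ordered[i + 1:]:
            if abs(rt_a - rt_b) > rt_tol:
                continue  # not co-eluting, so unlikely to be the same compound
            diff = mz_b - mz_a
            for label, delta in MASS_DELTAS.items():
                if abs(diff - delta) <= mz_tol:
                    pairs.append((mz_a, mz_b, label))
    return pairs
```

Grouping such pairs before a database search keeps a single compound from being counted, and searched, as several independent "molecular ions".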

Q4: How can we manage the data from these parallel processes? A4: A Laboratory Information Management System (LIMS) or a dedicated workflow application is essential [24]. It should link sample IDs, chromatographic data (PDA, ELSD traces), mass spectra, fraction weights, biological assay results (e.g., IC₅₀ values), and dereplication annotations in a searchable format. This integrated data view is critical for making informed decisions.
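As an illustration of the linked record described in A4, the sketch below models one fraction as a small data class and shows a query that surfaces active, unannotated fractions. The field names and the `prioritize` helper are hypothetical and are not drawn from any particular LIMS product; the default cutoff mirrors the example threshold of IC₅₀ < 100 µg/mL used elsewhere in this guide.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional


@dataclass
class FractionRecord:
    """One fraction with its chemistry, biology, and annotation data linked."""
    sample_id: str
    parent_extract_id: str
    mass_mg: float
    ic50_ug_per_ml: Optional[float] = None  # None until the fraction has been assayed
    annotations: List[str] = field(default_factory=list)  # dereplication hits
    data_files: Dict[str, str] = field(default_factory=dict)  # e.g. {"pda": path, "msms": path}


def prioritize(records, ic50_cutoff=100.0):
    """Active fractions with no known-compound annotation, most potent first."""
    hits = [
        r for r in records
        if r.ic50_ug_per_ml is not None
        and r.ic50_ug_per_ml < ic50_cutoff
        and not r.annotations
    ]
    return sorted(hits, key=lambda r: r.ic50_ug_per_ml)
```

Keeping assay results and annotations on the same record is what makes a query like this a one-liner instead of a manual cross-reference between spreadsheets.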

Data Presentation: Key Metrics from Integrated Workflows

Table 1: Performance Metrics of an Automated High-Throughput Fractionation System [24]

| Metric | Specification/Output | Implication for Workflow |
|---|---|---|
| System Throughput | ~2,600 unique extracts/year | Enables screening of large, diverse libraries. |
| Fraction Output | ~62,000 fractions/year | Generates a vast resource for HTS campaigns. |
| Fraction Mass Range | 0.5 - 10 mg | Sufficient for 100s of assays using nanogram transfers. |
| Polyphenol Removal Recovery | 49.3% - 84.4% (Avg. ~60%) | Significant mass loss acceptable for removing assay interferents. |
| Chromatographic Resolution | 24 fractions/extract (30-sec intervals) | Good separation for medium-complexity mixtures. |

Table 2: Bioactivity Tracking During Integrated Isolation of a Marine Sponge Metabolite [23]

| Fraction / Step | IC₅₀ on HepG2 Cells (µg/mL) | Action & Rationale |
|---|---|---|
| Crude Organic Extract | 214.29 ± 2.06 | Proceed with fractionation; confirmed baseline activity. |
| RP-C18 Fraction A4 | 134.28 ± 1.82 | Selected for dereplication; showed increased potency. |
| HPLC Sub-fraction (A4_HPLC 3) | 37.49 ± 1.94 | Activity peak; target for isolation and structure elucidation. |
| Isolated Pure Compound (N,N,N-trimethyl-3,5-dibromotyramine) | 37.49 ± 1.94 (Confirmed) | Validated target. Dereplication via molecular networking placed it in a brominated alkaloid cluster. |

Detailed Experimental Protocols

Protocol 1: Integrated Bioassay-Guided Fractionation with Real-Time Dereplication

This protocol outlines the core cyclical workflow.

- Primary Screening & Triage: Screen a prefractionated library in a target bioassay. Select hits with a threshold activity (e.g., IC₅₀ < 100 µg/mL).

- Microscale Re-fractionation & Analysis: Subject the active crude extract to an analytical-scale LC separation, collecting 96+ micro-fractions in a plate. Use a splitter to simultaneously analyze via HR-LC-MS/MS.

- Dereplication & Network Analysis:

- Process MS data with MZmine.

- Upload to GNPS for molecular networking.

- Annotate nodes using databases.

- Correlate the bioactivity of micro-fractions (from a parallel miniaturized bioassay) with specific clusters in the network.

- Targeted Isolation: Scale up the isolation of compounds from the cluster most correlated with bioactivity.

- Validation & Mechanism: Confirm the activity of the pure compound and initiate mechanistic studies (e.g., gene expression as in [23]).

Protocol 2: Rapid LC-MS/MS Profiling for Dereplication

- Instrument Setup:

- Column: C18 (50 x 2.1 mm, 1.7-1.8 µm).

- Gradient: 5-100% Acetonitrile in water (with 0.1% formic acid) over 15-20 min.

- MS: ESI positive/negative switching, DDA mode. Resolution > 25,000.

- Data Processing (MZmine): Perform peak picking, deisotoping, alignment, and gap filling. Export feature lists (m/z, RT, intensity) and associated MS/MS spectra.

- Database Query: Search exact mass (± 5 ppm) against an in-house or commercial NP database. For matches, compare isotopic patterns and MS/MS spectra if available.

Workflow & Process Visualizations

Diagram 1: Integrated NP Discovery Workflow. This cyclical process integrates biological screening with chemical analysis to prioritize novel bioactive compounds [24] [23].

Diagram 2: Dereplication Decision Logic. The process for determining whether an active contains novel or known compounds, guiding the decision to isolate or deprioritize [22] [23].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Equipment & Materials for the Integrated Workflow

| Item | Function in Workflow | Key Specification/Note |
|---|---|---|
| Polyamide SPE Cartridges | Pre-fractionation to remove polyphenols and tannins, reducing assay false positives [24]. | Test loading capacity (~700 mg polyamide per 100 mg extract) [24]. |
| Automated Prep-HPLC System | High-throughput, reproducible fractionation of active extracts into discrete samples for screening and analysis [24]. | Should interface with auto-samplers, fraction collectors, and weighing stations. |
| Photodiode Array (PDA) & Evaporative Light Scattering (ELSD) Detectors | Complementary detection during prep-HPLC. PDA identifies chromophores; ELSD responds to non-UV active compounds and correlates with mass [24]. | Use in tandem for comprehensive detection. |
| High-Resolution LC-MS/MS System | Core of dereplication. Provides accurate mass for formula assignment and MS/MS spectra for structural comparison/networking [22] [23]. | Q-TOF or Orbitrap with ESI source capable of Data-Dependent Acquisition. |
| Molecular Networking Software (GNPS) | Visualizes relationships between MS/MS spectra from fractions, grouping similar compounds to identify novel chemical families [23]. | Cloud-based platform; requires formatted .mzML or .mzXML files. |
| Microtiter Plates (384-/1536-well) | Enable miniaturized bioassays and nanogram-scale compound screening, matching the scale of fraction output [24]. | Compatible with liquid handling robots and plate readers. |

Thesis Context: Managing Complexity in Natural Product Libraries

The research journey from a complex natural product extract to a characterized bioactive compound is fraught with bottlenecks. Modern high-throughput screening (HTS) of the vast chemical space contained within natural product libraries is hindered by the inherent complexity of the extracts, which can cause assay interference and obscure true hits [2]. The subsequent steps of dereplication (identifying known compounds), isolation of novel entities, and structure elucidation remain time-intensive [25]. This technical support center is framed within a thesis aimed at overcoming these hurdles through an integrated workflow: by applying machine learning (ML) for in silico bioactivity prediction and advanced analytics for mixture deconvolution, researchers can strategically prioritize the most promising leads from complex libraries and thereby accelerate the discovery pipeline.

Technical Support Center: Troubleshooting Guides & FAQs

This section addresses common technical challenges encountered when integrating AI/ML and advanced analytical techniques into natural product research.

Troubleshooting Guide 1: Poor Performance in Bioactivity Prediction from Genomic Data

- Problem: Machine learning models trained on biosynthetic gene cluster (BGC) data show low accuracy or poor generalizability when predicting bioactivity (e.g., antibacterial, antifungal).

- Investigation & Solution:

  - Check Training Data Quality & Balance: The most common issue is a small or imbalanced training set. For example, a model trained to predict antifungal activity may fail if such BGCs represent only 20% of the data [26]. Solution: Apply an over-sampling method such as the Synthetic Minority Over-sampling Technique (SMOTE), or collect more data for underrepresented classes.

  - Evaluate Feature Selection: Models relying on limited genetic features (e.g., only PFAM domains) may lack predictive power [26]. Solution: Expand the feature vector to include sub-PFAM domains (from sequence similarity networks), resistance gene identifiers (RGI), and predictions of chemical substructures (e.g., sugars, halogens) [26].

  - Validate Model Rigorously: Plain accuracy can be misleading when classes are imbalanced. Solution: Use 10-fold cross-validation and report balanced accuracy. Compare model performance against a classifier trained on scrambled data to confirm that it learns true signal [26].
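To see why balanced accuracy is the safer metric here, consider the 20% antifungal example from the guide above. The sketch below uses invented labels, not data from [26], and scores a useless "classifier" that always predicts the majority class. Balanced accuracy is computed as the mean of per-class recall.

```python
def accuracy(y_true, y_pred):
    """Fraction of predictions that match the true labels."""
    correct = sum(t == p for t, p in zip(y_true, y_pred))
    return correct / len(y_true)


def balanced_accuracy(y_true, y_pred):
    """Mean of per-class recall, so each class counts equally."""
    recalls = []
    for cls in sorted(set(y_true)):
        idx = [i for i, t in enumerate(y_true) if t == cls]
        recalls.append(sum(y_pred[i] == cls for i in idx) / len(idx))
    return sum(recalls) / len(recalls)


# Hypothetical labels: 20 antifungal BGCs (1) among 100 in total.
y_true = [1] * 20 + [0] * 80
# A degenerate model that always predicts "inactive".
y_majority = [0] * 100

print(accuracy(y_true, y_majority))           # 0.8
print(balanced_accuracy(y_true, y_majority))  # 0.5
```

The model never finds a single antifungal cluster, yet plain accuracy reports 80%. Balanced accuracy drops to 50%, the value expected from guessing on a two-class problem.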

Troubleshooting Guide 2: Challenges in Annotating Metabolites from Complex Mixtures

- Problem: LC-HRMS/MS data from prefractionated libraries yields thousands of features, but manual annotation via spectral matching is slow and hits are limited by reference library coverage [27].

- Investigation & Solution:

  - Leverage Molecular Networking: Group MS/MS spectra into molecular networks based on spectral similarity. This clusters related analogues and can propagate annotations within a cluster [27].

  - Implement Annotation Tools: Use platforms like SNAP-MS (Structural similarity Network Annotation Platform for Mass Spectrometry), which annotates molecular networking clusters by matching the distribution of molecular formulae in a subnetwork to known compound families, bypassing the need for a direct spectral match [27].

  - Apply In-Silico MS/MS Prediction: For novel scaffolds not in libraries, use tools that predict MS/MS fragmentation patterns from chemical structures to rank candidate matches [27].
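The spectral-similarity grouping behind molecular networking can be illustrated with a plain binned cosine score. This is a simplified sketch under stated assumptions: production platforms use more elaborate scoring (for example, scores that also align peaks shifted by the precursor mass difference), and the spectra, bin width, and 0.7 cutoff below are illustrative choices rather than values taken from [27].

```python
import math
from collections import defaultdict


def bin_spectrum(peaks, bin_width=0.01):
    """Sum intensities into fixed-width m/z bins; peaks is [(mz, intensity), ...]."""
    binned = defaultdict(float)
    for mz, intensity in peaks:
        binned[round(mz / bin_width)] += intensity
    return binned


def cosine_similarity(peaks_a, peaks_b, bin_width=0.01):
    """Cosine of the angle between two binned intensity vectors (0 to 1)."""
    a = bin_spectrum(peaks_a, bin_width)
    b = bin_spectrum(peaks_b, bin_width)
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def build_network(spectra, threshold=0.7):
    """Return edges (id_a, id_b, score) for spectrum pairs scoring >= threshold."""
    ids = sorted(spectra)
    edges = []
    for i, id_a in enumerate(ids):
        for id_b in ids[i + 1:]:
            score = cosine_similarity(spectra[id_a], spectra[id_b])
            if score >= threshold:
                edges.append((id_a, id_b, score))
    return edges


# Hypothetical fragment spectra for three features.
spectra = {
    "f1": [(105.07, 100), (133.06, 40), (161.06, 20)],
    "f2": [(105.07, 90), (133.06, 50), (175.08, 10)],
    "f3": [(91.05, 100), (119.05, 30)],
}
edges = build_network(spectra)
print(edges)
```

Features `f1` and `f2` share their two most intense fragments and are linked, while `f3` shares none and remains a singleton. An annotation assigned to one node in a connected cluster can then be proposed, with caution, for its neighbours. Fixed-width binning can split peaks that sit near a bin edge, so real implementations match peaks within a tolerance instead.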

Frequently Asked Questions (FAQs)

Q1: We have a large library of microbial extracts. Should we screen crude extracts or prefractionated libraries?

- A: Prefractionation is generally recommended for modern target-based HTS. Although it costs more up front, it reduces complexity, concentrates minor metabolites, sequesters nuisance compounds (such as tannins or pigments), and yields higher-confidence hits and more streamlined downstream isolation [2].

Q2: How can we make our chromatographic isolation workflow more efficient and targeted?

- A: Move from traditional bioassay-guided fractionation to a "profiling-guided" approach. Use UHPLC-HRMS/MS for in-depth metabolite profiling of active extracts to pinpoint the exact features correlating with activity. Then, use chromatographic calculation software to accurately transfer the high-resolution analytical separation conditions to the semi-preparative scale for targeted isolation of those specific peaks [25].
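The core arithmetic behind such a method transfer is geometric scaling. The sketch below is not the algorithm of any specific software package. It assumes the same stationary phase chemistry and particle size on both columns, keeps linear velocity constant by scaling flow with the column cross-section, and preserves the gradient in column volumes. Dwell-volume and particle-size corrections, which dedicated tools handle, are ignored, and the column dimensions are illustrative.

```python
def scale_method(flow_ml_min, inj_ul, gradient_min,
                 d_anal_mm, l_anal_mm, d_prep_mm, l_prep_mm):
    """Geometrically scale an analytical LC method to a larger column."""
    # Flow scales with cross-sectional area to keep linear velocity constant.
    area_ratio = (d_prep_mm / d_anal_mm) ** 2
    # Injection volume scales with total column volume.
    volume_ratio = area_ratio * (l_prep_mm / l_anal_mm)
    flow_prep = flow_ml_min * area_ratio
    inj_prep = inj_ul * volume_ratio
    # Keep the number of column volumes delivered during the gradient constant.
    gradient_prep = gradient_min * volume_ratio * flow_ml_min / flow_prep
    return {
        "flow_ml_min": flow_prep,
        "injection_ul": inj_prep,
        "gradient_min": gradient_prep,
    }


# Illustrative transfer: 4.6 x 250 mm analytical to 10 x 250 mm semi-preparative.
scaled = scale_method(flow_ml_min=1.0, inj_ul=10.0, gradient_min=30.0,
                      d_anal_mm=4.6, l_anal_mm=250.0,
                      d_prep_mm=10.0, l_prep_mm=250.0)
print(scaled)
```

With equal column lengths the gradient time is unchanged, while flow and injection volume both increase by the area ratio of about 4.7.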

Q3: Are there sustainable ("green") alternatives for our chromatography work that won't compromise performance?

- A: Yes. Techniques like Supercritical Fluid Chromatography (SFC), which uses recycled CO₂ as the primary mobile phase, and Micellar Liquid Chromatography (MLC) can significantly reduce consumption of toxic organic solvents. Natural Deep Eutectic Solvents (NADES) are also emerging as green alternatives for extraction [28].

Q4: Can AI really predict toxicity early in the discovery process?

- A: Yes, predictive toxicology is a major application of AI in drug discovery. ML models can integrate chemical structures, omics data, and historical assay data to forecast adverse drug reactions (ADRs) and toxicity risks, helping to prioritize safer compounds and reduce late-stage attrition [29].

Detailed Experimental Protocols

Protocol 1: Building a Classifier for BGC-based Bioactivity Prediction

This protocol outlines the method for training a machine learning model to predict biological activity from Biosynthetic Gene Cluster sequences [26].

- Dataset Curation: Assemble a labeled dataset from the MIBiG database. For each BGC, manually curate literature-reported bioactivities (e.g., antibacterial, antifungal) as binary labels (active/inactive).

- Feature Engineering:

  - Run BGC sequences through antiSMASH to identify core biosynthetic genes and domains.

  - Annotate protein families (PFAM), biosynthetic domains (CDS motifs, smCOGs), and predicted chemical monomers.

  - Use the Resistance Gene Identifier (RGI) to flag potential resistance genes.

  - For enriched PFAM domains, perform Sequence Similarity Network (SSN) analysis to create more precise sub-PFAM features.

  - Encode each BGC as a feature vector counting the occurrences of all annotations.

- Model Training & Validation:

  - Test classifiers like Random Forest, Support Vector Machine (SVM), and Logistic Regression (e.g., using Python's scikit-learn).

  - Optimize hyperparameters via grid search.

  - Critical: Evaluate performance using 10-fold cross-validation and report the balanced accuracy metric to account for class imbalance.

  - Validate that the model performs significantly better (p < 0.001) than a classifier trained on scrambled/randomized feature data.
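The encoding step in the protocol above, where each BGC becomes a vector of annotation counts, can be sketched as follows. The BGC identifiers and annotation labels are hypothetical placeholders rather than real antiSMASH, PFAM, or RGI output, and `encode_bgcs` is an illustrative helper, not part of the published method in [26].

```python
from collections import Counter


def encode_bgcs(annotations_by_bgc):
    """Turn {bgc_id: [annotation, ...]} into (feature_names, {bgc_id: counts}).

    Each count vector follows the order of `feature_names`, so the vectors
    can be stacked into a feature matrix for a classifier.
    """
    feature_names = sorted(
        {ann for anns in annotations_by_bgc.values() for ann in anns}
    )
    vectors = {}
    for bgc_id, anns in annotations_by_bgc.items():
        counts = Counter(anns)
        # Counter returns 0 for annotations absent from this BGC.
        vectors[bgc_id] = [counts[name] for name in feature_names]
    return feature_names, vectors


# Hypothetical annotation lists for two clusters.
annotations = {
    "BGC_A": ["PFAM:domain_1", "PFAM:domain_1", "PFAM:domain_2",
              "RGI:resistance_hit"],
    "BGC_B": ["PFAM:domain_2", "PFAM:domain_3"],
}
feature_names, vectors = encode_bgcs(annotations)
print(feature_names)
print(vectors)
```

The rows of the resulting matrix, paired with the curated binary activity labels, are what the classifiers in the training step would consume.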

Table 1: Example Performance of BGC Classifiers (Based on [26])

| Predicted Activity | Best Model Balanced Accuracy | Key Predictive Features |
|---|---|---|
| Antibacterial (Broad) | ~80% | Presence of specific resistance genes (RGI), certain sub-PFAM domains related to peptide synthesis [26]. |
| Anti-Gram-positive | ~78% | Similar to broad antibacterial, with specific monomer predictions [26]. |
| Antifungal | ~57-64% | Often co-occurs with antitumor/cytotoxic activity; prediction benefits from a combined "anti-eukaryotic" class [26]. |
| Antitumor/Cytotoxic | ~69-73% | Features related to polyketide synthase (PKS) tailoring domains and oxidation levels [26]. |

Protocol 2: AI-Driven Virtual Screening for Bioactivity

This protocol describes a virtual screening workflow to prioritize compounds from libraries against a specific target, as demonstrated for SARS-CoV-2 3CLpro [30].

- Data Collection & Curation: Gather bioactivity data (IC50, Ki, active/inactive labels) for your target from public databases like ChEMBL and PubChem. Carefully clean the data, removing duplicates and standardizing measurements.

- Molecular Featurization: Compute molecular descriptors or fingerprints (e.g., using PaDEL software) for all compounds. These numerical representations capture structural and physicochemical properties.

- Model Development:

  - Split data into training and test sets.

  - Train and compare multiple ML classifiers (e.g., XGBoost, Random Forest, Neural Networks).

  - For deep learning models, consider architectures like Graph Convolutional Networks (GCNs) that operate directly on molecular graphs.

- Interpretation & Prioritization:

  - Use explainable AI (XAI) tools like SHAP (SHapley Additive exPlanations) to identify which molecular substructures contribute most to predicted activity.

  - Use the trained model to screen an in-house virtual compound library or a purchasable library, ranking compounds by predicted activity.

  - Select the top-ranked compounds for in vitro experimental validation.
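The data curation step at the start of this protocol (standardizing measurements and removing duplicates) is often where most of the effort goes. The sketch below shows one common convention: convert every IC50 to molar units, express it as pIC50 = -log10(IC50 in M), collapse replicate measurements to their median, and assign a binary label. The record format, the compound identifiers, and the activity cutoff of pIC50 >= 6 (1 µM) are assumptions made for illustration and are not taken from [30].

```python
import math
from collections import defaultdict
from statistics import median

# Conversion factors from reported concentration units to molar.
UNIT_TO_MOLAR = {"M": 1.0, "mM": 1e-3, "uM": 1e-6, "nM": 1e-9}


def to_pic50(value, unit):
    """Convert an IC50 in the given unit to pIC50 (-log10 of molar IC50)."""
    return -math.log10(value * UNIT_TO_MOLAR[unit])


def curate(records, active_pic50=6.0):
    """records: [(compound_id, ic50_value, unit)] -> {id: (median_pic50, label)}.

    Records with missing, non-positive, or unrecognized-unit values are dropped.
    """
    grouped = defaultdict(list)
    for compound_id, value, unit in records:
        if value is None or value <= 0 or unit not in UNIT_TO_MOLAR:
            continue
        grouped[compound_id].append(to_pic50(value, unit))
    curated = {}
    for compound_id, values in grouped.items():
        mid = median(values)
        curated[compound_id] = (mid, int(mid >= active_pic50))
    return curated


# Hypothetical raw records, including a duplicate and an invalid entry.
records = [
    ("cmpd_1", 0.5, "uM"),
    ("cmpd_1", 500, "nM"),   # same measurement reported in different units
    ("cmpd_2", 50, "uM"),
    ("cmpd_3", -1, "nM"),    # invalid value, dropped
]
curated = curate(records)
print(curated)
```

In this example `cmpd_1` collapses to a single pIC50 of about 6.3 and is labeled active, `cmpd_2` (pIC50 about 4.3) is labeled inactive, and `cmpd_3` is discarded. The choice of cutoff strongly affects class balance, so it should be set per target rather than copied from this sketch.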

Table 2: Selected AI/ML Tools for Natural Product Research

| Tool Name | Primary Application | Key Feature | Access |
|---|---|---|---|
| DeepChem | General ML in Drug Discovery | Open-source Python library with pre-built models for toxicity, activity prediction [31]. | Open-Source |
| IBM RXN | Retrosynthesis & Reaction Prediction | Predicts forward chemical reactions and plans retrosynthetic pathways [31]. | Freemium |
| SNAP-MS | Metabolite Annotation | Annotates molecular networking clusters using formula distributions without need for MS/MS libraries [27]. | Open Access Web Tool |
| Schrödinger Suite | Molecular Modeling & Docking | Physics-based and AI-enhanced platform for virtual screening and binding affinity prediction [31]. | Commercial |

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials for Integrated AI/Analytics Workflow

| Item / Reagent | Function in the Workflow | Specific Application Notes |
|---|---|---|
| antiSMASH Software | Identifies and annotates Biosynthetic Gene Clusters (BGCs) in genomic data [26]. | Critical for generating the feature vectors used to train BGC-based bioactivity predictors. |
| PaDEL Descriptor Software | Calculates chemical fingerprints and molecular descriptors from compound structures [30]. | Converts chemical structures into numerical data suitable for machine learning model training. |
| HPLC/SFC-grade CO₂ | Mobile phase for Green Chromatography. | Primary solvent in Supercritical Fluid Chromatography (SFC), reducing organic solvent use [28]. |
| Natural Deep Eutectic Solvents (NADES) | Green extraction and chromatography solvent. | Biodegradable solvents formed from natural primary metabolites, used in sample prep and separations [28]. |
| Semi-Preparative HPLC Columns (e.g., C18, 5µm) | High-resolution purification of target compounds. | Used for the final targeted isolation of compounds pinpointed by analytical profiling [25]. |
| MIBiG & NP Atlas Databases | Curated repositories of known natural products and their BGCs. | Essential sources of training data for AI models and reference for dereplication [26] [27]. |

Workflow Visualization

AI-Powered Workflow for Complex Mixture Analysis

Annotation of Complex Mixtures via Molecular Networking

Technical Support Center: Troubleshooting Complex Natural Product Extractions

Welcome to the Technical Support Center for Modern Extraction Methodologies. This resource is designed for researchers and drug development professionals working with complex natural product extract libraries. Efficiently navigating the challenges of extraction and separation is critical for obtaining high-yield, high-purity bioactive compounds for downstream analysis and screening. The following guides address common operational issues, provide preventive protocols, and frame solutions within the context of handling intricate biological matrices [32].

Core Troubleshooting Guides

This section provides diagnostic flowcharts and targeted solutions for the most frequent issues encountered in modern extraction and purification workflows.

1.1. Extraction Process Troubleshooting

Problems during the initial extraction can compromise yield and quality. Use this guide to diagnose common issues with Ultrasound-Assisted Extraction (UAE) and Supercritical Fluid Extraction (SFE).

1.2. Chromatographic Separation Troubleshooting

Following extraction, chromatographic purification is often hindered by peak anomalies. This guide addresses common HPLC/GC issues critical for isolating pure compounds from complex mixtures [35] [36].

Frequently Asked Questions (FAQs)

Q1: We have a limited amount of rare plant material. Which extraction technique should we prioritize to maximize information from a single sample?

- A: For precious, limited samples, Ultrasound-Assisted Extraction (UAE) is often recommended for initial screening. It requires smaller sample amounts (e.g., 1-5 g), uses less solvent, and is highly effective at disrupting cells to release a broad profile of metabolites quickly [34] [32]. The extract can then be used for initial bioactivity screening and chemical profiling. For targeted isolation of specific non-polar compounds, SFE can be applied subsequently or in a hybrid approach, as it provides cleaner extracts with less co-extraction of chlorophylls and waxes, simplifying downstream analysis [33] [32].