Navigating Chemical Space: A Comparative Analysis of Natural Products, Approved Drugs, and Combinatorial Libraries

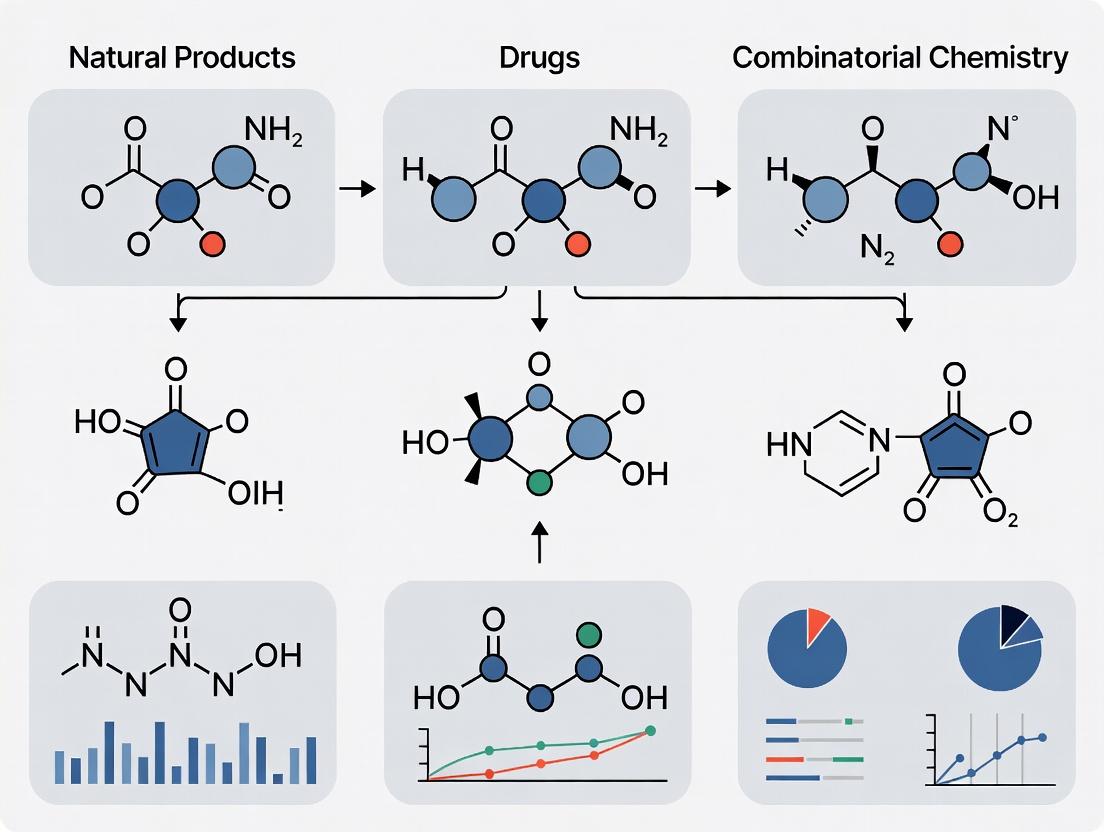

Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on the distinct and overlapping regions of chemical space occupied by natural products (NPs), approved drugs, and combinatorial compounds. It explores foundational definitions and historical evolution, delves into modern computational methodologies for exploration and analysis, addresses key challenges in data and methodology, and presents a comparative validation of their structural diversity and biological relevance. The synthesis offers actionable insights for library design and future hybrid strategies in drug discovery.

Defining the Terrain: Foundational Concepts and Historical Evolution of Chemical Spaces

Conceptualizing Chemical Space and the Biologically Relevant Chemical Space (BioReCS) Framework

The concept of chemical space (CS) provides a fundamental framework for understanding and navigating the universe of all possible chemical compounds [1]. This multidimensional space is defined by molecular properties—both structural and functional—that serve as coordinates, positioning compounds based on their characteristics and relationships [1]. Within this vast theoretical universe lies the Biologically Relevant Chemical Space (BioReCS), the subset of molecules that interact with living systems, encompassing both beneficial and detrimental biological activities [1].

BioReCS spans numerous application domains including drug discovery, agrochemistry, flavor and odor science, food chemistry, and natural product research [1]. It includes not only therapeutic agents but also promiscuous compounds, poly-active molecules, and substances with toxic or allergenic effects [1]. The systematic exploration of this space is central to modern chemoinformatics and drug discovery, requiring specialized databases, molecular descriptors, and visualization techniques to map its complex topography [1] [2].

This comparison guide examines key regions of BioReCS—specifically natural products, combinatorial libraries, and approved drugs—within the context of a broader thesis on chemical space exploration. We provide objective performance comparisons, supporting experimental data, detailed methodologies, and essential resources to equip researchers with tools for effective navigation of biologically relevant chemical territories.

Quantitative Comparison of Chemical Subspaces

The exploration of BioReCS proceeds through distinct chemical subspaces (ChemSpas), each characterized by shared structural or functional features [1]. The following tables provide a quantitative foundation for comparing the key regions relevant to drug discovery.

Table 1: Representative Public Databases for BioReCS Exploration [1] [3]

| Type of Data Set / Area Covered | Exemplary Data Sets | Size Range (Number of Compounds) | Primary Utility in BioReCS Mapping |

|---|---|---|---|

| Drugs & Clinical Candidates | DrugBank, ChEMBL, ClinicalTrials.gov | ~4,500 approved (DrugBank) to ~2.4 million (ChEMBL) | Source of annotated bioactive molecules; defines "drug-like" subspace [3]. |

| Natural Products | COCONUT, NPASS | ~695,000 (COCONUT) to ~13,500 (NPASS with activity) | Covers evolved bioactive scaffolds; high structural diversity [3]. |

| Peptides | Peptipedia v2.0 | ~3.9 million sequences | Represents beyond Rule of 5 (bRo5) space; important for PPI modulation [3]. |

| Macrocycles | MacrolactoneDB | ~14,000 | Specialized class for challenging targets (e.g., PPIs, membrane proteins) [3]. |

| Food & Flavor Chemicals | FooDB, Flavor Molecule Compilations | >14,000 unique flavor molecules | Maps sensory BioReCS; intersection with nutraceuticals [1] [3]. |

| Toxic Chemicals | TOXNET, DSSTox | >35,000 chemical weapons | Defines "dark" BioReCS; crucial for safety prediction [3]. |

| Virtual Libraries (Synthetically Accessible) | Enamine REAL, GDB | Billions to 10^26 (proprietary spaces) | Represents vast unexplored synthetic regions of chemical space [4]. |

Table 2: Comparison of Natural Products, Combinatorial Compounds, and Approved Drugs [1] [5] [6]

| Property / Metric | Natural Products (NPs) & NP-Derived Drugs | Combinatorial & Synthetic Libraries | Approved Drugs (All Sources) |

|---|---|---|---|

| Chemical Space Coverage | Explore evolved, biologically pre-validated regions; high scaffold diversity. | Can target specific regions theoretically; bias towards synthetic feasibility. | Occupies a well-defined "drug-like" subspace within BioReCS. |

| Typical Molecular Complexity | Higher: More sp3 carbons, stereocenters, oxygen atoms; often macrocyclic [6]. | Lower: Designed for synthesis; often comply with Rule of 5. | Variable, but trend towards increased complexity for novel targets [6]. |

| Bioactivity Hit Rate | Historically high due to evolutionary selection. | Lower, but improving with DNA-encoded libraries and better design. | N/A (Endpoint). |

| Role in New Approvals (2014-2024) | 44 NP-derived NCEs approved (11.3% of all NCEs) [5]. | Primary source for synthetic NCEs (majority of small-molecule approvals). | 579 total drugs approved (388 NCEs, 191 NBEs) [5]. |

| Major Challenge | Supply, synthesis, and characterization [6]. | Achieving sufficient complexity and 3D shape diversity. | Optimizing multiple properties simultaneously (efficacy, safety, PK). |

| Key Discovery Method | Bioassay-guided isolation, genome mining, phenotypic screening [6]. | High-throughput screening (HTS), virtual screening (VS), combinatorial chemistry [4]. | Lead optimization from various starting points [4]. |
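
The Rule of 5 compliance mentioned in the table can be made concrete with a short check. The sketch below uses the standard Lipinski thresholds; the function names, the one-violation tolerance, and the two example property sets are illustrative assumptions, not data from the cited sources.

```python
def ro5_violations(mol_weight, logp, h_bond_donors, h_bond_acceptors):
    """Count Lipinski Rule of Five violations for one compound."""
    return sum((
        mol_weight > 500,        # molecular weight above 500 Da
        logp > 5,                # calculated logP above 5
        h_bond_donors > 5,       # more than 5 hydrogen-bond donors
        h_bond_acceptors > 10,   # more than 10 hydrogen-bond acceptors
    ))


def is_ro5_compliant(mol_weight, logp, h_bond_donors, h_bond_acceptors,
                     max_violations=1):
    """Treat a compound as compliant if it has at most `max_violations`.

    Allowing one violation is a common convention and an assumption here.
    """
    count = ro5_violations(mol_weight, logp, h_bond_donors, h_bond_acceptors)
    return count <= max_violations


# Hypothetical property sets, chosen only to illustrate the two regimes.
synthetic_like = dict(mol_weight=350.0, logp=3.2,
                      h_bond_donors=1, h_bond_acceptors=5)
macrocycle_like = dict(mol_weight=1200.0, logp=3.0,
                       h_bond_donors=5, h_bond_acceptors=12)

print(is_ro5_compliant(**synthetic_like))
print(is_ro5_compliant(**macrocycle_like))
```

In practice the four properties would come from a cheminformatics toolkit rather than being typed in by hand.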

Table 3: Clinical Pipeline Analysis of Natural Product-Derived Compounds (Data up to 2025) [5]

| Category | Number Identified | Key Trend |

|---|---|---|

| NP-derived NCEs Approved (2014-Jun 2025) | 45 | Average of ~5 approvals per year; includes antibiotics, anticancer agents. |

| NP-Antibody Drug Conjugates (ADCs) Approved | 13 | Growing modality; uses NP toxins as warheads. |

| NP Compounds in Clinical Trials / Registration (End of 2024) | 125 | Demonstrates continued pipeline activity. |

| New NP Pharmacophores in Development | 33 | Indicates ongoing innovation, though only one discovered in the past 15 years. |

Experimental Protocols for BioReCS Navigation

High-Throughput Screening (HTS) of Compound Libraries

Objective: To experimentally probe regions of BioReCS by testing large physical libraries for activity against a therapeutic target.

Protocol Summary:

- Library Curation: Select a diverse collection of 100,000 to several million compounds from corporate or commercial sources [4].

- Assay Development: Implement a robust biochemical or cell-based assay with a high signal-to-noise ratio, suitable for automation.

- Automated Screening: Utilize robotic liquid handlers and plate readers to test compounds at a single concentration (typically 10 µM) in microtiter plates.

- Hit Identification: Apply statistical thresholds (e.g., >3 standard deviations from mean) to identify initial "hits" from the primary screen.

- Hit Validation: Confirm activity of primary hits through dose-response experiments to generate IC50/EC50 values.

Performance Consideration: While HTS directly tests physical-chemical space, it is constrained by library size (typically <5 million compounds), a mere fraction of the vast virtual chemical space estimated to contain up to 10^63 drug-like molecules [4].
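
The hit-identification step (a threshold of more than 3 standard deviations from the mean) can be sketched as follows. This is a minimal illustration that assumes the mean and standard deviation are estimated from neutral control wells on the same plate; the well identifiers and signal values are hypothetical.

```python
from statistics import mean, stdev


def call_hits(compound_signals, control_signals, n_sd=3.0):
    """Return compound IDs whose signal deviates from the control mean
    by more than `n_sd` control standard deviations."""
    mu = mean(control_signals)
    sd = stdev(control_signals)  # sample standard deviation of the controls
    return sorted(
        compound_id
        for compound_id, signal in compound_signals.items()
        if abs(signal - mu) > n_sd * sd
    )


# Hypothetical raw plate-reader signals (arbitrary units).
controls = [100.0, 98.0, 102.0, 101.0, 99.0, 100.0]
compounds = {"cmpd_A": 99.0, "cmpd_B": 62.0, "cmpd_C": 103.5, "cmpd_D": 95.0}

print(call_hits(compounds, controls))
```

Estimating the spread from controls rather than from the test wells keeps strong actives from inflating the standard deviation and masking weaker hits.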

Virtual Screening (VS) of Ultra-Large Chemical Spaces

Objective: To computationally search massively enlarged regions of chemical space (billions to trillions of virtual molecules) for potential hits.

Protocol Summary:

- Target Preparation: Generate a 3D structure of the target protein, often from crystallography or homology modeling.

- Virtual Library Preparation: Access an on-demand database like Enamine REAL (containing billions of makeable compounds) or a proprietary chemical space [4].

- Molecular Docking: Use high-performance computing (e.g., thousands of CPU cores) to predict how each virtual molecule binds to the target. Advanced platforms like VirtualFlow can dock billions of compounds within weeks [4].

- Hit Selection: Rank compounds based on docking scores and visual inspection of predicted binding poses.

- Synthesis & Testing: Procure or synthesize the top-ranking virtual hits for experimental validation.

Performance Data: A landmark study docked 281 million compounds from the ZINC database over a week using 500 CPU cores [4]. This approach can explore a chemical space orders of magnitude larger than HTS, accessing novel scaffolds outside traditional libraries.
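
The throughput figure quoted above (281 million compounds, 500 CPU cores, about a week) implies a per-compound docking cost that is useful when planning larger campaigns. The sketch below does that arithmetic, assuming "a week" means exactly 7 days of continuous use; the billion-compound scenario is a hypothetical extrapolation, not a reported result.

```python
SECONDS_PER_DAY = 24 * 3600


def core_seconds_per_compound(n_compounds, n_cores, days):
    """Average CPU core-seconds spent per docked compound."""
    return n_cores * days * SECONDS_PER_DAY / n_compounds


def days_needed(n_compounds, n_cores, cost_core_seconds):
    """Wall-clock days to dock `n_compounds` at a given per-compound cost,
    assuming perfect scaling across cores."""
    return n_compounds * cost_core_seconds / n_cores / SECONDS_PER_DAY


# Figures from the text: 281 million compounds, 500 cores, ~7 days.
cost = core_seconds_per_compound(281_000_000, 500, 7)
print(f"{cost:.2f} core-seconds per compound")  # roughly 1.08

# Hypothetical campaign: 1 billion compounds on 10,000 cores.
print(f"{days_needed(1_000_000_000, 10_000, cost):.2f} days")
```

Real campaigns rarely scale perfectly, so the second number is best read as a lower bound.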

Genome Mining for Natural Product Discovery

Objective: To explore the biosynthetic gene cluster (BGC) encoded region of BioReCS by predicting and engineering novel natural products.

Protocol Summary:

- Genome Sequencing: Sequence the genome of a microbial strain (bacteria, fungi) or an environmental metagenomic sample [6].

- BGC Prediction: Use bioinformatics tools (e.g., antiSMASH, DeepBGC) to identify genomic regions encoding NP biosynthetic machinery [6].

- Priority Assessment: Predict the chemical structure of the putative NP and prioritize BGCs based on novelty and bioactivity potential.

- Cluster Activation/Heterologous Expression: Employ synthetic biology to "awaken" silent BGCs in a host organism for production [6].

- Compound Isolation & Characterization: Isolate the newly produced NP and determine its structure and biological activity.

Performance Consideration: This method accesses the underexplored "dark matter" of microbial BioReCS, potentially yielding completely novel scaffolds with evolved bioactivity, but requires significant effort in genetic engineering and characterization [6].

Visualizing Chemical Space Relationships and Workflows

Diagram 1: Hierarchical Organization of Chemical Space and BioReCS

Diagram 2: Integrated Workflow for Exploring BioReCS

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents and Materials for Chemical Space Research

| Item / Solution | Function in BioReCS Research | Example / Application |

|---|---|---|

| Curated Bioactivity Databases | Provide ground-truth data to map known regions of BioReCS and train AI/ML models. | ChEMBL: Annotated bioactive molecules for target-based exploration [1]. InertDB: Curated inactive molecules to define boundaries of BioReCS [1]. |

| Molecular Descriptors & Fingerprints | Translate chemical structures into numerical vectors for computational analysis and similarity searching. | Molecular Quantum Numbers (MQNs): 42 integer descriptors for universal chemical space mapping [2]. MAP4 Fingerprint: Works across small molecules to peptides [1]. |

| On-Demand Virtual Libraries | Provide access to synthetically tractable, ultra-large regions of chemical space for virtual screening. | Enamine REAL Space: Billions of makeable compounds for structure-based VS [4]. GDB Databases: Enumerated small molecules from first principles [2]. |

| Specialized Compound Libraries | Probe specific chemical subspaces with focused diversity. | Natural Product Libraries: Isolated or semi-synthetic NPs for phenotypic screening [6]. Macrocycle Libraries: For targeting PPIs and membrane proteins [1]. |

| Gene Cluster Prediction Software | Identifies biosynthetic potential in genomes to access novel NP chemical space. | antiSMASH: Predicts BGCs in microbial genomes [6]. DeepBGC: Uses deep learning for improved BGC prediction [6]. |

| Metabolomics Platforms | De-replicates known compounds and validates the production of novel NPs from activated BGCs. | LC-MS/MS with GNPS: Annotates NP structures by mass spectrometry networking [6]. |

| Color Palette Tools (for Visualization) | Ensures clarity, accessibility, and effective communication in chemical space visualizations. | SAMSON HCL Palette: Perceptually uniform color mapping for molecular attributes [7]. Color Deficiency Emulators: Check visualizations for colorblind accessibility [7] [8]. |

Thesis Context: Chemical Space and Drug Discovery

The exploration of chemical space—the theoretical universe of all possible organic molecules—remains a central challenge in drug discovery. This guide frames the comparison between natural products (NPs) and combinatorial/synthetic compound libraries within the broader thesis that these two sources occupy complementary and often non-overlapping regions of biologically relevant chemical space [9]. NPs are the result of evolutionary tuning over millions of years, yielding structures pre-validated for interactions with biological macromolecules [10]. In contrast, combinatorial chemistry offers rapid, exhaustive exploration of synthetic accessibility but may not consistently probe regions of chemical space with high biological relevance [10]. Modern strategies, including pseudo-natural product design and generative AI, seek to merge these paradigms, leveraging evolutionary wisdom to guide synthetic exploration toward novel, bioactive chemotypes [10] [11].

Comparative Performance Guide: Natural Products vs. Combinatorial Libraries

The following tables provide an objective, data-driven comparison of the performance, structural characteristics, and screening outcomes of NPs and combinatorial compounds.

Clinical Success and Molecular Characteristics

Table 1: Comparative Analysis of Clinical Output and Drug-Likeness

| Metric | Natural Products & NP-Derived Drugs | Combinatorial/Synthetic Libraries (Typical) | Data Source & Notes |

|---|---|---|---|

| New Chemical Entities (NCEs) Approved (2014-2024) | 44 (7.6% of all 579 approved drugs; 11.3% of NCEs) [5]. | Majority of small molecule NCEs. | Analysis of global drug approvals [5]. |

| Average Annual Approval Rate (2014-2025) | ~5 NP/NP-derived drugs per year [5]. | Variable; dominates annual NCE output. | Includes 45 NP/NP-D NCEs and 13 NP-antibody drug conjugates [5]. |

| Novel Pharmacophores in Pipeline (as of 2024) | 33 new pharmacophores in clinical development [5]. | Predominant source of novel scaffolds, but often less complex. | Only one new NP pharmacophore discovered in the past 15 years, highlighting a discovery gap [5]. |

| Typical Molecular Complexity | Higher fraction of sp³-hybridized carbons, more stereogenic centers, increased oxygenation [10] [6]. | Higher fraction of sp²-hybridized carbons, more aromatic rings, simpler stereochemistry. | Complexity is linked to evolutionary selection for specific bioactivity [10]. |

| Compliance with "Rule of Five" | Often non-compliant (higher MW, more H-bond donors/acceptors) [6]. | Designed for high compliance. | Despite non-compliance, many NPs show excellent oral bioavailability [6]. |

| Structural Uniqueness | Scaffolds often not represented in synthetic libraries; high density of functional groups [9]. | Scaffolds may be over-represented in corporate screening collections [9]. | Uniqueness underpins ability to hit "difficult" biological targets. |
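
The percentages in the first row of this table can be reproduced from the approval counts reported in this article (388 NCEs and 191 NBEs, 579 approvals in total). The short check below is plain arithmetic; the variable and function names are illustrative.

```python
def percent(part, whole):
    """Share of `part` in `whole`, as a percentage rounded to one decimal."""
    return round(100 * part / whole, 1)


TOTAL_NCES = 388          # new chemical entities approved, 2014-2024
TOTAL_NBES = 191          # new biological entities approved, 2014-2024
TOTAL_APPROVALS = TOTAL_NCES + TOTAL_NBES
NP_DERIVED_NCES = 44      # NP and NP-derived NCEs in the same period

share_of_all_drugs = percent(NP_DERIVED_NCES, TOTAL_APPROVALS)
share_of_nces = percent(NP_DERIVED_NCES, TOTAL_NCES)

print(TOTAL_APPROVALS, share_of_all_drugs, share_of_nces)
```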

Screening and Hit Identification Performance

Table 2: Comparison of Screening and Hit-Finding Efficiency

| Aspect | Natural Product Extracts/Libraries | Combinatorial/Diversity-Oriented Libraries | Supporting Experimental Data & Context |

|---|---|---|---|

| Hit Rate in Phenotypic Screens | Historically high; NPs account for a disproportionate number of first-in-class drugs [9]. | Often lower, but improved with better library design (e.g., fragment-based, NP-inspired) [10]. | High hit rate attributed to evolutionary pre-validation for bioactivity [10]. |

| Chemical Feasibility & Resupply | Major challenge: sourcing, total synthesis, or engineered production required [12]. | High: synthesis routes and building blocks are defined from the outset. | A key historical reason for pharma's shift away from NPs [12]. |

| Speed from Hit to Identified Compound | Slow: requires bioassay-guided fractionation and structure elucidation [12]. | Fast: compound structure is known immediately upon hit identification. | Technological advances (LC-MS/MS, metabolomics) are accelerating NP dereplication [6]. |

| Exploration of Chemical Space | Covers a deep but narrow region honed by evolution [10]. | Can explore broad, synthetically accessible regions, but may be biologically sparse [10]. | Pseudo-NP design aims to combine depth and breadth [10]. |

| Cost of Library Curation | High: collection, extraction, standardization [9] [12]. | Lower: based on automated, parallel synthesis. | Early combinatorial chemistry promised lower cost and unlimited size [9]. |

Chemical Space Analysis: Coverage and Overlap

Advanced cheminformatic methods enable the comparison of vast chemical spaces that cannot be fully enumerated [13].

Table 3: Comparison of Large, Defined Chemical Spaces

| Chemical Space / Library Type | Estimated Size | Design Principle & Coverage | Key Characteristic |

|---|---|---|---|

| Natural Product Space (defined by known NPs) | ~2,000 core fragment groups [10]. | Defined by biosynthetic pathways and evolutionary selection. | Biologically pre-validated but limited by evolutionary constraints [10]. |

| REAL Space (Enamine) | ~4 billion (10⁹) accessible compounds [13]. | Built from reliable reactions and in-stock building blocks; high synthesis success rate (>80%). | Focus on readily accessible and synthesizable molecules [13]. |

| KnowledgeSpace (Public) | Up to 10¹⁴ virtual compounds [13]. | Built from published reactions and commercial building blocks. | Large and diverse, but variable chemical feasibility [13]. |

| BICLAIM (Corporate) | >10²⁰ virtual products [13]. | Scaffold-centric, defined by deconstructing known products into cores and side chains. | Focus on scaffold exploration and novelty [13]. |

Key Finding from Comparative Analysis: A study comparing BICLAIM, REAL, and KnowledgeSpace using 100 drug-like query molecules found remarkably low structural overlap: only three compounds appeared in the nearest-neighbor hit sets of all three spaces, demonstrating their complementarity [13]. Although that study compared synthetic spaces only, the result is consistent with the thesis that NP space and high-quality synthetic spaces are likely non-redundant.

Diagram 1: Chemical Space Relationships

Experimental Protocols for Key Comparisons

Protocol: Phenotypic Screening of Pseudo-Natural Product Libraries

This protocol is used to evaluate novel pseudo-NP scaffolds designed to explore new regions of biologically relevant chemical space [10].

- Library Design & Synthesis: Deconstruct known NPs into fragment-sized pieces (MW 120-350 Da, AlogP < 3.5) [10]. Combine fragments from different biosynthetic origins in unprecedented connectivities (e.g., fused, spiro, bridged) via complexity-generating synthesis to create a pseudo-NP library [10].

- Cell-Based Phenotypic Assay: Treat target cells (e.g., reporter cell lines, primary immune cells) with compounds at relevant concentrations (e.g., 1-10 µM). Use unbiased, target-agnostic assays to probe broad biology [10].

- Examples: Monitor glucose uptake, autophagy flux, Wnt/Hedgehog signaling activity, T-cell differentiation markers, or induction of reactive oxygen species [10].

- Morphological Profiling (Cell Painting Assay): As a higher-content follow-up. Stain cells with fluorescent dyes for multiple organelles. Acquire high-content images and extract ~1,000 morphological features to generate a perturbation "fingerprint" for each active compound [10].

- Hit Validation & Target Identification: Confirm activity in dose-response. For promising hits, use chemoproteomics (e.g., affinity-based protein profiling), CRISPR-based genetic screens, or biophysical methods to identify the molecular target(s).
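
Morphological "fingerprints" from the Cell Painting step are typically compared by profile similarity. The sketch below ranks reference profiles by Pearson correlation with a query profile; the choice of Pearson correlation, the four-feature vectors (real profiles have on the order of 1,000 features), and the reference names are all illustrative assumptions.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length feature vectors."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("profiles must have equal length of at least 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    norm_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    norm_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if norm_x == 0 or norm_y == 0:
        raise ValueError("a constant profile has no defined correlation")
    return cov / (norm_x * norm_y)


def rank_references(query, references):
    """Sort reference profiles from most to least similar to the query."""
    scores = {name: pearson(query, profile)
              for name, profile in references.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical, already-normalized feature vectors.
query_profile = [1.0, -0.5, 2.0, 0.0]
reference_profiles = {
    "ref_similar": [0.9, -0.4, 2.1, 0.1],
    "ref_opposite": [-1.0, 0.5, -2.0, 0.0],
}

for name, score in rank_references(query_profile, reference_profiles):
    print(name, round(score, 3))
```

A high correlation with a reference compound of known mechanism can suggest a target hypothesis to test in the validation step.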

Protocol: Cheminformatic Comparison of Large Chemical Spaces

This protocol is used to assess the overlap and complementarity of virtual chemical spaces too large to enumerate [13].

- Query Panel Selection: Curate a panel of 100 reference molecules. For drug-relevant comparisons, filter approved small molecule drugs by standard drug-like properties (e.g., MW < 600, cLogP < 6) and select randomly [13].

- Nearest-Neighbor Search: For each query molecule, search each chemical space (e.g., BICLAIM, REAL) using a topological pharmacophore descriptor like Feature Trees (FTrees). Retrieve the 10,000 most similar molecules from each space without full enumeration [13].

- Overlap Analysis: Pool the unique hits from each space for all queries. Calculate pairwise structural overlaps using traditional fingerprints (e.g., MDL public keys, ECFP4) and Tanimoto similarity [13].

- Feasibility Scoring: Assess the synthetic feasibility of the retrieved hits using computational scores such as the Synthetic Accessibility score (SAscore) or retrosynthetic analysis tools [13].
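
The overlap-analysis step can be illustrated with fingerprints represented as sets of "on" bit indices. This is a minimal sketch of Tanimoto similarity and a thresholded overlap count; the bit sets and the 0.7 threshold are hypothetical, and a real analysis would generate fingerprints with a cheminformatics toolkit.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = bits_a | bits_b
    if not union:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(bits_a & bits_b) / len(union)


def count_shared(hits_a, hits_b, threshold=1.0):
    """Count compounds in `hits_a` that have at least one neighbor in
    `hits_b` with Tanimoto similarity >= `threshold`."""
    return sum(
        1
        for fp_a in hits_a
        if any(tanimoto(fp_a, fp_b) >= threshold for fp_b in hits_b)
    )


# Hypothetical hit sets retrieved from two chemical spaces.
space_a_hits = [{1, 2, 3}, {7, 8}]
space_b_hits = [{1, 2, 3, 4}, {9}]

print(tanimoto({1, 2, 3}, {2, 3, 4}))
print(count_shared(space_a_hits, space_b_hits, threshold=0.7))
```

With `threshold=1.0` the count reduces to identical fingerprints, which is the strictest notion of overlap.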

Research Reagent Solutions: The Scientist's Toolkit

Table 4: Essential Reagents and Materials for NP/Combinatorial Comparative Research

| Reagent / Material | Function in Research | Key Application in Comparison Studies |

|---|---|---|

| Feature Trees (FTrees) Software [13] | A topological, pharmacophore-based molecular descriptor and search tool. | Enables similarity searching and comparison of non-enumerable fragment-based chemical spaces [13]. |

| Cell Painting Assay Kits [10] | A multiplexed fluorescent dye set for staining organelles (nucleus, ER, mitochondria, etc.). | Provides an unbiased phenotypic fingerprint to compare the bioactivity profiles of NP-derived vs. synthetic compounds [10]. |

| Validated Building Block Sets (e.g., for REAL Space) [13] | Curated collections of chemically diverse and synthetically reliable reagents. | Used to construct high-quality combinatorial libraries or pseudo-NP scaffolds with a high predicted synthesis success rate. |

| DNA-Encoded Library (DEL) Kits | Allows combinatorial synthesis where each compound is linked to a unique DNA barcode. | Facilitates the ultra-high-throughput screening (billions of compounds) of synthetic combinatorial spaces against purified protein targets. |

| LC-MS/MS and GNPS Platform [6] | Liquid chromatography-tandem mass spectrometry for compound separation, detection, and identification. | Critical for dereplicating natural product extracts (avoiding rediscovery) and characterizing novel pseudo-NPs [6]. |

Diagram 2: Integrated Drug Discovery Workflow

The comparative data underscore that natural products and combinatorial compounds are not mutually exclusive but are powerful complements. NPs provide evolutionarily refined starting points with high success rates in hitting novel biology, while combinatorial methods offer scalable exploration [9]. The future lies in integrative strategies—such as pseudo-NP design [10], biosynthetic engineering [6], and CSP-informed evolutionary algorithms [14]—that use computational tools to translate the lessons of evolutionary tuning into the efficient exploration of synthetically accessible chemical space. This synergy aims to generate novel, "beautiful" molecules that are both biologically relevant and pragmatically developable [11].

The pursuit of new therapeutic agents is a fundamental exploration of chemical space—the vast universe of all possible small organic molecules. Historically, this exploration has followed two parallel paths: the investigation of natural products (NPs) evolved by biology and the construction of combinatorial compound libraries synthesized by chemists. These two paradigms occupy complementary yet distinct regions of chemical space, a fact with profound implications for drug discovery success [15] [9].

The advent of combinatorial chemistry in the late 20th century promised a revolution: the ability to synthesize thousands to millions of compounds in parallel, creating an "explosion" of synthetic molecules for high-throughput screening (HTS) [16]. This shifted industry focus away from natural products, which were seen as difficult and costly to source and characterize [9]. However, the initial promise of combinatorial chemistry—that sheer volume would yield a plethora of new drugs—was not fully realized, leading to a critical reassessment of library design principles [17] [9].

Today, the field recognizes that quality and design trump sheer quantity. The modern thesis posits that the most effective drug discovery strategy lies not in choosing between natural or synthetic sources, but in intelligently integrating their strengths. This involves designing combinatorial libraries that capture the desirable, biologically relevant molecular features of natural products while leveraging synthetic efficiency and scalability [17] [18]. This comparison guide objectively examines the performance, design principles, and experimental approaches of combinatorial libraries relative to natural products, providing researchers with a framework for strategic chemical space exploration.

Comparative Analysis of Molecular Properties and Chemical Space

Combinatorial compounds and natural products differ systematically in their underlying structural and physicochemical properties. These differences directly influence their performance in biological screens, their "drug-likeness," and their success in progressing through development pipelines.

Key Property Distributions: A landmark comparative study analyzed the property distributions of drugs, natural products, and early-generation combinatorial compounds [18]. The findings reveal that combinatorial libraries often occupy a different, and sometimes narrower, region of chemical space than natural products and marketed drugs.

Table 1: Comparative Analysis of Molecular Properties Across Compound Classes [18]

| Molecular Property | Typical Combinatorial Compounds (Early Libraries) | Natural Products | Marketed Drugs |

|---|---|---|---|

| Average Molecular Weight | Lower (often <500 Da) | Higher | Intermediate |

| Number of Chiral Centers | Fewer (often 0 or 1) | More numerous | Intermediate |

| Aromatic Ring Count | Higher prevalence | Lower prevalence | Intermediate |

| Saturation (Fsp3) | Lower (more flat, aromatic) | Higher (more complex, 3D shapes) | Intermediate |

| Heteroatom Ratio (O, N, S) | Different patterns (e.g., more N) | Distinct, varied patterns | Balanced |

| Structural Complexity | Often simpler, more linear | High (complex ring systems, bridged cycles) | Variable, optimized for synthesis |

The data indicate that while drug molecules derive from both synthetic and natural sources, they often occupy a hybrid property space. Early combinatorial libraries, designed for synthetic ease, tended to be achiral, aromatic, and planar, lacking the stereochemical and scaffold complexity characteristic of many natural products [18]. This "complexity gap" may explain why some large combinatorial screens failed to produce high-quality leads, as the molecules did not sufficiently interrogate the biologically relevant regions of chemical space occupied by natural macromolecule-interacting ligands [9].
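
The saturation metric in the table, Fsp3, is simply the fraction of carbon atoms that are sp3-hybridized. The sketch below computes it from atom counts entered by hand; the helper name is illustrative, and in practice the counts would be derived from a structure file by a cheminformatics toolkit.

```python
def fsp3(n_sp3_carbons, n_carbons):
    """Fraction of sp3-hybridized carbons: sp3 carbon count / total carbon count."""
    if n_carbons <= 0:
        raise ValueError("molecule must contain at least one carbon")
    if not 0 <= n_sp3_carbons <= n_carbons:
        raise ValueError("sp3 carbon count must lie between 0 and n_carbons")
    return n_sp3_carbons / n_carbons


# (sp3 carbons, total carbons) counted by hand for three familiar molecules.
examples = {
    "benzene": (0, 6),       # flat, fully aromatic
    "ibuprofen": (6, 13),    # 6 aliphatic carbons, 6 aromatic, 1 carboxyl
    "cyclohexane": (6, 6),   # fully saturated ring
}

for name, (sp3, total) in examples.items():
    print(f"{name}: Fsp3 = {fsp3(sp3, total):.2f}")
```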

Chemical Space Coverage: Natural products are the result of billions of years of evolutionary selection for biological interaction. Consequently, they exhibit privileged scaffold architectures and pharmacophores that are pre-validated for binding to proteins and nucleic acids [15]. Combinatorial chemistry, in its modern, more sophisticated form, seeks to mimic this by designing libraries based on natural product-inspired scaffolds or by using computational methods to ensure library members populate desirable, "drug-like" regions of property space [17].

Library Design Principles: From Diversity-Oriented to Focused Synthesis

The philosophy of combinatorial library design has evolved significantly, moving from massive, diversity-driven collections to smaller, smarter, and more focused libraries.

The Evolution of Design Strategy: The initial paradigm of maximizing molecular diversity as the primary goal proved insufficient [17]. Contemporary design is a multi-objective optimization problem that balances synthetic feasibility, predicted Absorption, Distribution, Metabolism, Excretion, and Toxicity (ADMET) properties, and relevance to a biological target or target family [17].
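
One common way to handle a multi-objective problem of this kind is to keep only candidates that are not dominated on every objective (a Pareto front). The sketch below applies that idea to hypothetical library designs scored on synthetic feasibility, predicted ADMET quality, and target relevance; Pareto filtering is offered here as an illustrative technique, not as the method of the cited work, and all scores are invented.

```python
def dominates(scores_a, scores_b):
    """True if A is at least as good as B on every objective and strictly
    better on at least one (higher scores are better)."""
    return (all(a >= b for a, b in zip(scores_a, scores_b))
            and any(a > b for a, b in zip(scores_a, scores_b)))


def pareto_front(candidates):
    """Return the names of candidates that no other candidate dominates."""
    return sorted(
        name
        for name, scores in candidates.items()
        if not any(
            dominates(other_scores, scores)
            for other_name, other_scores in candidates.items()
            if other_name != name
        )
    )


# Hypothetical scores: (synthetic feasibility, ADMET quality, target relevance).
library_designs = {
    "lib_A": (0.9, 0.4, 0.5),
    "lib_B": (0.6, 0.8, 0.7),
    "lib_C": (0.5, 0.3, 0.4),  # worse than lib_A on every objective
    "lib_D": (0.6, 0.8, 0.6),  # never better than lib_B
}

print(pareto_front(library_designs))
```

The front leaves the final trade-off to the chemist, which avoids committing to arbitrary weights across objectives.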

Table 2: Evolution of Combinatorial Library Design Principles

| Design Paradigm | Primary Goal | Typical Library Size | Advantages | Limitations |

|---|---|---|---|---|

| Early Diversity-Oriented | Maximize structural diversity | Very Large (10⁵ - 10⁶) | Broad exploration of chemical space; many novel structures. | Often poor drug-likeness; high attrition; high cost of synthesis/screening. |

| Focused/Target-Family | Optimize binding to a specific target or protein family | Medium (10³ - 10⁴) | Higher hit rates; more relevant chemical space; incorporates known SAR. | Requires prior target/structure knowledge; limited serendipity. |

| Lead-Like/Drug-Like | Optimize physicochemical properties for developability | Medium (10³ - 10⁴) | Improved pharmacokinetic predictions; lower late-stage attrition. | May exclude valid chemotypes; relies on accuracy of predictive models. |

| Natural Product-Inspired | Mimic structural complexity & features of NPs | Variable | Biologically pre-validated scaffolds; novel yet relevant chemical space. | Synthetic challenge; complex chiral synthesis. |

| Dynamic Combinatorial (DCC) | Identify best binders via template-directed amplification | Small (10² - 10³) | Direct selection by biological target; thermodynamic optimization of binders [19]. | Requires compatible, reversible chemistry; analytical complexity. |

Modern Computational Design: Computational tools are now central to library design. They enable virtual screening of proposed libraries for ADMET properties, prediction of synthetic accessibility, and selection of building blocks to maximize desired diversity or similarity metrics [17]. This in-silico filtering helps ensure that synthesized libraries have a higher probability of containing viable lead compounds.

Dynamic Combinatorial Chemistry (DCC): DCC represents a powerful convergence of synthesis and screening. In DCC, libraries are formed under thermodynamic control using reversible chemical reactions (e.g., formation of acylhydrazones, imines, or disulfides) [19]. When a biological target (a protein or nucleic acid) is introduced, it acts as a template, selectively amplifying the library members that bind to it most strongly, in accordance with Le Chatelier's principle. This process directly identifies high-affinity ligands from a complex mixture, effectively performing synthesis and screening simultaneously [19].

Diagram: Workflow for Target-Directed Dynamic Combinatorial Chemistry (DCC). The process involves generating a library under thermodynamic control, introducing the biological target to amplify the best binders, and analyzing the shifted equilibrium to identify hits [19].

Experimental Protocols & Analytical Comparisons

Robust experimental and analytical methods are critical for both generating combinatorial libraries and comparing their outputs to natural product leads. Key protocols involve parallel synthesis, purification, and high-throughput characterization.

Representative Synthetic & Screening Protocol: Dynamic Combinatorial Library (DCL) Formation and Analysis

This protocol, adapted from contemporary DCC practices, is used to generate and screen a library for binders to a protein target [19].

- Objective: To identify novel acylhydrazone-based inhibitors of a target enzyme (e.g., α-Glucosidase) from a dynamic combinatorial library.

- Materials:

- Building Blocks: A set of 5 acylhydrazides and 3 aldehydes, each solubilized in DMSO to create 100 mM stock solutions.

- Template: Purified target protein in a compatible aqueous buffer (e.g., PBS, pH ~6.5).

- Catalyst: Aniline (100 mM in buffer).

- Controls: Library without template; template alone.

- Procedure:

a. DCL Assembly: In a 96-well plate, combine building blocks in buffer (final concentration 1-2 mM each) with 5 mM aniline catalyst. Final DMSO concentration ≤ 5%.

b. Equilibration: Divide the master library mix. To the test sample, add the target protein (final concentration 1-5 µM). The control sample receives buffer only. Seal the plate and incubate at room temperature with gentle shaking for 48-72 hours to reach thermodynamic equilibrium.

c. Quenching: Lower the pH of the solution to ~3.0 using a dilute acid (e.g., formic acid) to freeze the dynamic exchange by protonating the aniline catalyst.

d. Analysis: Analyze both test and control samples via LC-MS (Liquid Chromatography-Mass Spectrometry). Use reverse-phase chromatography (C18 column) with a water/acetonitrile gradient.

- Data Analysis: Compare the LC-MS chromatograms and extracted ion counts for all possible acylhydrazone products between the test (+protein) and control (-protein) samples. Ligands amplified in the presence of the template will show a significant increase in peak area/height. Identify these hits by their mass.

- Validation: Independently synthesize the amplified hits and measure their potency or binding affinity (e.g., IC₅₀, Kd) using standard enzymatic or biophysical assays (e.g., Microscale Thermophoresis, MST) [19].
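The data-analysis step of the protocol can be sketched in a few lines. This is a minimal illustration, not the cited method: the building-block labels (`H1`..`H5`, `A1`..`A3`), the peak areas, the 1.5-fold hit threshold, and the function names are all hypothetical placeholders chosen for the example.

```python
from itertools import product

def amplification_factors(test_areas, control_areas, min_control_area=1.0):
    """Fold change of each product: peak area (+protein) / peak area (-protein)."""
    factors = {}
    for name, control in control_areas.items():
        if control < min_control_area:
            continue  # too weak in the control to quantify a ratio reliably
        factors[name] = test_areas.get(name, 0.0) / control
    return factors

def call_hits(factors, threshold=1.5):
    """Products amplified at least `threshold`-fold, strongest first (threshold is an assumption)."""
    hits = [name for name, f in factors.items() if f >= threshold]
    return sorted(hits, key=lambda name: -factors[name])

# 5 acylhydrazides x 3 aldehydes give 15 possible acylhydrazone products.
hydrazides = [f"H{i}" for i in range(1, 6)]
aldehydes = [f"A{i}" for i in range(1, 4)]
library = [f"{h}-{a}" for h, a in product(hydrazides, aldehydes)]

# Invented peak areas: a flat control, with three products shifted by the template.
control = {name: 100.0 for name in library}
test = dict(control)
test["H2-A1"] = 240.0   # amplified
test["H4-A3"] = 160.0   # amplified
test["H1-A2"] = 70.0    # depleted

factors = amplification_factors(test, control)
hits = call_hits(factors)
print(len(library), hits)
```

In practice the threshold would be set from replicate variability, and depleted products are worth inspecting too, since amplification of one member necessarily draws building blocks away from others.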

Analytical Method Comparison: HPLC vs. UPLC for Library Analysis

The analysis of complex mixtures from combinatorial or natural product extracts demands high-resolution chromatography. Ultra-Performance Liquid Chromatography (UPLC) has largely superseded HPLC for this purpose.

Table 3: Performance Comparison of HPLC vs. UPLC for Compound Library Analysis [20]

| Parameter | High-Performance LC (HPLC) | Ultra-Performance LC (UPLC) | Implication for Library Analysis |

|---|---|---|---|

| Typical Particle Size | 3-5 μm | <2 μm | Smaller particles in UPLC reduce band broadening. |

| Operating Pressure | <6000 psi | Up to 15,000 psi | Higher pressure enables use of smaller particles. |

| Theoretical Plates | Lower | ≥2x Higher | Greatly improved resolution of complex mixtures. |

| Analysis Time | Longer (10-60 min) | ~3-5x Faster (2-10 min) | Higher throughput for screening fractions or purity checks. |

| Mobile Phase Consumption | Higher | ≥80% Reduction [20] | Lower cost and environmental impact (Green Chemistry). |

| Peak Capacity | Lower | Higher | Can separate more components in a single run, crucial for complex natural product extracts or DCLs. |

A specific comparative study demonstrated that for gradient separations of active pharmaceutical ingredients (APIs) and intermediates, UPLC methods provided equivalent or superior resolution while saving over 80% of mobile phase solvent compared to HPLC methods [20].

The Scientist's Toolkit: Essential Reagents & Materials

Successful execution of combinatorial and comparative natural product research requires specialized reagents, materials, and instrumentation.

Table 4: Key Research Reagent Solutions & Materials

| Category | Item | Typical Function & Application | Key Consideration |

|---|---|---|---|

| Library Synthesis | Diverse Building Blocks (e.g., amino acids, carboxylic acids, boronic acids, aldehydes, acylhydrazides). | Provide structural variation in combinatorial libraries. Sourced from commercial "large stock" collections. | Chemical diversity, purity, compatibility with chosen reaction chemistry. |

| Library Synthesis | Solid Supports (e.g., polystyrene resins, functionalized PEG). | Enable solid-phase parallel synthesis; excess reagents drive reactions; simplifies purification. | Swelling properties, loading capacity, linker chemistry for cleavage. |

| Dynamic Chemistry | Reversible Reaction Components (e.g., aniline, p-anisidine, nucleophilic catalysts). | Catalyze the reversible formation of imines, acylhydrazones, etc., in DCC for library equilibration [19]. | Biocompatibility (aqueous buffer, mild pH), catalytic efficiency. |

| Analytical | UPLC/HPLC Columns (e.g., C18 reverse-phase, sub-2 μm particles). | High-resolution separation of complex library mixtures or natural product extracts [20]. | Particle size, pressure rating, stationary phase chemistry for analyte retention. |

| Analytical | LC-MS & HRMS Systems | Primary tool for analyzing DCLs, purity checks, and identifying compounds in mixtures. Provides mass and fragmentation data. | Sensitivity, mass accuracy, compatibility with high-flow UPLC. |

| Screening | Validated Biological Targets (e.g., purified enzymes, protein domains, nucleic acid constructs). | Act as templates in DCC or targets in HTS for identifying bioactive library members [19]. | Stability under assay conditions, purity, relevance to disease pathway. |

| Natural Products | Metabolomics Tools (e.g., LC-MS with multivariate analysis software). | Profiling and comparing chemical feature diversity across natural product extracts to guide library building [21]. | Ability to detect a broad range of secondary metabolites. |

The rise of combinatorial chemistry has fundamentally transformed drug discovery from a linear, one-compound-at-a-time endeavor into a parallelized, systems-oriented science. However, its greatest lesson has been that synthetic explosion must be guided by intelligent design. The comparative analysis clearly shows that the most promising path forward is a hybrid one.

Future research will continue to blur the lines between natural and synthetic chemical space. This will be achieved through:

- Advanced Library Design: Increased use of AI and machine learning to design libraries that optimally populate the biologically relevant "middle earth" of chemical space between flat combinatorial compounds and highly complex natural products.

- Integration of Biosynthesis: Employing synthetic biology to create engineered natural product "libraries" via pathway refactoring and combinatorial biosynthesis.

- Broader DCC Applications: Expanding dynamic combinatorial and DNA-encoded library technologies to more challenging targets, including protein-protein interactions and RNA structures [19].

- Quantitative Natural Product Library Development: Implementing metabolomics-driven strategies, as demonstrated with fungal genera like Alternaria, to rationally build natural product libraries with maximized chemical diversity from a minimal set of isolates [21].

The ultimate goal is not to declare one approach the winner, but to develop a synergistic toolkit. By leveraging the synthetic power of combinatorial chemistry, the biologically validated inspiration of natural products, and the predictive power of computational design, researchers can more efficiently navigate the vastness of chemical space toward new and more effective therapeutics.

The concept of "chemical space" refers to the set of all possible organic molecules, estimated to exceed 10⁶⁰ compounds for small carbon-based molecules alone [22]. Within this vast universe, the subset of biologically relevant chemical space, where molecules interact with living systems, is the primary hunting ground for drug discovery. This guide provides a comparative analysis of three principal sources that populate this space: clinically approved drugs, natural products (NPs), and compounds from combinatorial chemistry.

Approved drugs represent a unique, pre-validated region of chemical space. Their passage through clinical trials confirms not only their efficacy against specific biological targets but also their adherence to critical pharmacokinetic and safety profiles in humans. Consequently, they serve as an indispensable benchmark for evaluating and mapping new chemical entities. Understanding how the chemical spaces of NPs and combinatorial libraries overlap with, or diverge from, this validated region is fundamental to designing more efficient discovery strategies. This comparison is framed within an ongoing paradigm shift: from serendipitous discovery and massive random screening toward rational, target-aware design informed by computational power and a deeper understanding of chemical biology [23] [24].

Comparative Analysis of Chemical Space Occupancy

The physicochemical and structural properties of molecules from different origins reveal distinct footprints within chemical space. Analysis using tools like ChemGPS-NP and Principal Component Analysis (PCA) allows for the visualization and comparison of these footprints [22].

Table 1: Comparative Physicochemical and Structural Profiles of Chemical Spaces

| Property / Characteristic | Approved Drugs (Benchmark) | Natural Products (NPs) | Combinatorial Compounds | Implication for Discovery |

|---|---|---|---|---|

| Primary Source | Synthetic, semi-synthetic, natural-derived | Biological organisms (plants, microbes, marine life) | Synthetic combinatorial libraries [25] | Defines starting diversity and novelty potential. |

| Molecular Complexity & Rigidity | Moderate complexity; balance of flexibility/rigidity | High complexity and structural rigidity; more stereocenters [22] | Often lower complexity; more flexible bonds [22] | NP rigidity favors selective target binding; combinatorial flexibility aids optimization. |

| Aromaticity | Moderate aromatic ring count | Lower aromaticity; more aliphatic and heterocyclic rings [22] | Higher aromaticity on average [22] | Impacts planarity, solubility, and protein interaction modes. |

| Compliance with "Rule of 5" (Ro5) | ~95% compliant for oral drugs [24] | ~60% compliant; many are bioavailable "beyond Ro5" [22] | Designed for high Ro5 compliance [24] | NPs access unique, "druggable" space beyond traditional rules. |

| Typical Molecular Weight | Optimized for oral bioavailability (often <500 Da) | Broader distribution; can be higher | Tightly controlled for library design | Influences membrane permeability and ADME properties. |

| Chemical Space Coverage | Defines the "clinically validated" region | Covers unique regions sparsely populated by synthetic libraries [22] | Often clusters in high-density regions around common scaffolds [26] | NPs can pioneer novel target interactions; combinatorial libraries may over-sample known areas. |

| Lead/Drug-Likeness | Inherently "drug-like" (post-validation) | High "lead-likeness"; pre-validated by evolution [22] | Varies; can be optimized for "drug-likeness" | NPs provide privileged starting points; combinatorial libraries require filtering. |

The data indicates that NPs occupy regions of chemical space distinct from typical synthetic medicinal chemistry compounds, including many combinatorial libraries. They exhibit greater structural rigidity, higher sp³ carbon count (greater three-dimensionality), and lower aromatic character [22]. Importantly, a significant portion of NPs violates Lipinski's Rule of Five while remaining pharmacologically active, demonstrating that the orally druggable chemical space extends beyond these classic guidelines [22]. This makes NPs invaluable for targeting challenging protein classes like protein-protein interactions.

Conversely, combinatorial chemistry, while capable of generating immense numbers of compounds, has faced criticism for producing libraries with limited structural diversity and a bias toward flat, aromatic structures that may not optimally interact with complex biological targets [23]. The modern trend has shifted from "larger is better" to designing smaller, focused, and smarter libraries based on known pharmacophores or target structural information [23] [27].
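The Rule of Five comparison discussed above can be made concrete with a small counting function. The sketch below assumes the usual cut-offs (molecular weight > 500 Da, logP > 5, more than 5 hydrogen-bond donors, more than 10 acceptors) and the common convention that one violation is tolerated. The property values are approximate and purely illustrative; the function names are hypothetical.

```python
def ro5_violations(mw, logp, hbd, hba):
    """Count Lipinski Rule-of-Five violations from precomputed properties."""
    checks = [
        mw > 500,    # molecular weight above 500 Da
        logp > 5,    # calculated logP above 5
        hbd > 5,     # more than 5 hydrogen-bond donors
        hba > 10,    # more than 10 hydrogen-bond acceptors
    ]
    return sum(checks)

def is_ro5_compliant(mw, logp, hbd, hba, max_violations=1):
    """Compliant if at most `max_violations` rules are broken (assumed convention)."""
    return ro5_violations(mw, logp, hbd, hba) <= max_violations

# Approximate, illustrative property values (not taken from the cited sources).
compounds = {
    "aspirin":       dict(mw=180.2,  logp=1.2, hbd=1, hba=4),
    "cyclosporin A": dict(mw=1202.6, logp=7.5, hbd=5, hba=12),
}

for name, props in compounds.items():
    print(name, ro5_violations(**props), is_ro5_compliant(**props))
```

Cyclosporin A is the textbook case of an orally used natural product that breaks several of these rules, which is why the filter is better treated as a flag than as a hard gate for NP collections.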

Benchmarking Performance: Computational and Experimental Metrics

Evaluating how well compounds from different sources perform in the drug discovery pipeline requires robust benchmarking. The Compound Activity benchmark for Real-world Applications (CARA) provides a framework for assessing computational activity prediction models by distinguishing between two key real-world tasks: Virtual Screening (VS) and Lead Optimization (LO) [26].

Table 2: Benchmarking Compound Libraries: A CARA Framework Perspective [26]

| Benchmarking Aspect | Virtual Screening (VS) Assay Context | Lead Optimization (LO) Assay Context | Implications for Library Strategy |

|---|---|---|---|

| Objective | Identify initial "hit" compounds from large, diverse libraries. | Optimize potency & properties of a congeneric series from a hit. | Guides library design for specific discovery phases. |

| Chemical Distribution | Diffused pattern: Compounds are structurally diverse with low pairwise similarity. | Aggregated pattern: Compounds are highly similar (congeneric). | VS requires broad, diverse libraries (e.g., diverse NP sets). LO requires focused, analog libraries. |

| Typical Library Source | Diverse NP extracts, large combinatorial libraries, commercial screening collections. | Focused combinatorial libraries, medicinal chemistry analog series. | Matches library diversity to the task. |

| Key Predictive Challenge | Identifying active scaffolds from vast chemical space ("needle in a haystack"). | Accurately ranking subtle potency changes from minor structural modifications. | VS models require good recall of actives; LO models require precise quantitative prediction. |

| Performance of Data-Driven Models | Meta-learning and multi-task learning strategies show effectiveness [26]. | Traditional single-assay QSAR models can perform decently [26]. | No single model excels at both tasks; strategy must be task-aware. |

This benchmarking reveals a critical insight: no single chemical library or computational model is optimal for all stages of discovery. Natural product libraries, with their broad, evolutionarily pre-validated diversity, are exceptionally well-suited for the Virtual Screening phase, where the goal is to identify novel chemical starting points [28]. In contrast, focused combinatorial libraries are indispensable for the Lead Optimization phase, where systematic, incremental structural changes are needed to refine potency and drug-like properties [23] [27].
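The "diffused" versus "aggregated" distinction in Table 2 rests on pairwise structural similarity, and one plausible way to operationalize it is the mean pairwise Tanimoto coefficient of the compounds in an assay. The sketch below is an assumption-laden illustration: fingerprints are toy sets of on-bit indices, and the 0.5 cut-off and function names are hypothetical, not the criterion used by CARA [26].

```python
from itertools import combinations

def tanimoto(a, b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def mean_pairwise_similarity(fingerprints):
    """Average Tanimoto over all unordered pairs (quadratic in the number of compounds)."""
    pairs = list(combinations(fingerprints, 2))
    return sum(tanimoto(a, b) for a, b in pairs) / len(pairs)

def assay_pattern(fingerprints, threshold=0.5):
    """Label an assay by its similarity pattern; the threshold is a hypothetical choice."""
    if mean_pairwise_similarity(fingerprints) >= threshold:
        return "aggregated (LO-like)"
    return "diffused (VS-like)"

# Toy fingerprints: a congeneric series sharing a core, and an unrelated set.
congeneric = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 6}]
diverse = [{1, 2}, {3, 4}, {1, 5, 6}]

print(assay_pattern(congeneric), assay_pattern(diverse))
```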

Methodologies for Comparative Analysis and Validation

Core Experimental Protocols

To systematically compare and validate compounds from different chemical spaces against the approved drug benchmark, researchers employ several key methodologies.

Protocol 1: Adjusted Indirect Comparison for Efficacy Benchmarking

This statistical method is used to compare the efficacy of two treatments (e.g., a new NP-derived candidate vs. an approved drug) when head-to-head trial data are unavailable but both have been tested against a common comparator (e.g., placebo or standard therapy) [29].

- Identify Studies: Locate two separate randomized controlled trials (RCTs). RCT A compares Drug X to Common Comparator C. RCT B compares Approved Drug Y to the same Common Comparator C.

- Extract Effect Estimates: For each trial, extract the relative treatment effect (e.g., mean difference, risk ratio, hazard ratio) of the experimental drug versus C, along with its variance (standard error²).

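- Calculate Indirect Effect: The adjusted indirect comparison estimate for X vs. Y is the difference between the two direct effects: Effect(X vs. Y) = Effect(X vs. C) – Effect(Y vs. C). For ratio measures (risk ratio, odds ratio, hazard ratio), take this difference on the log scale, using the standard errors of the log ratios, and exponentiate the result.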

- Calculate Variance: The variance of the indirect estimate is the sum of the variances of the two direct comparisons: Var(X vs. Y) = Var(X vs. C) + Var(Y vs. C). This results in a wider confidence interval, reflecting greater uncertainty [29].

- Interpretation: A result where the confidence interval for the indirect effect excludes the null value (e.g., 0 for mean difference, 1 for risk ratio) suggests a statistically significant difference between X and Y.
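The arithmetic of Protocol 1 is short enough to sketch directly. The example below works with hazard ratios on the log scale and uses a normal-approximation 95% confidence interval (z = 1.96). The trial estimates are invented for illustration, and the function name is hypothetical.

```python
import math

def indirect_comparison(effect_xc, se_xc, effect_yc, se_yc, z=1.96):
    """Adjusted indirect comparison of X vs. Y through common comparator C.

    Effects must be on an additive scale (e.g., mean difference or log ratio).
    Returns (effect, standard error, (ci_low, ci_high)).
    """
    effect = effect_xc - effect_yc                 # difference of direct effects
    se = math.sqrt(se_xc ** 2 + se_yc ** 2)        # variances add
    return effect, se, (effect - z * se, effect + z * se)

# Invented inputs: hazard ratios vs. placebo with standard errors of the log HR.
log_hr_xc, se_xc = math.log(0.70), 0.10   # Drug X vs. C
log_hr_yc, se_yc = math.log(0.80), 0.12   # Approved Drug Y vs. C

log_effect, se, (low, high) = indirect_comparison(log_hr_xc, se_xc, log_hr_yc, se_yc)

hr_xy = math.exp(log_effect)
ci = (math.exp(low), math.exp(high))
significant = not (ci[0] <= 1.0 <= ci[1])   # null value for a ratio is 1

print(f"HR(X vs. Y) = {hr_xy:.3f}, 95% CI {ci[0]:.3f} to {ci[1]:.3f}, significant: {significant}")
```

Even though both drugs beat the comparator in this toy case, the indirect interval spans 1, which illustrates the wider uncertainty the protocol warns about.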

Protocol 2: Chemical Space Mapping with ChemGPS-NP

This protocol maps and visualizes the position of compound collections within a global chemical space framework [22].

- Compound Set Preparation: Curate datasets (e.g., a list of approved drugs, an NP library, a combinatorial library) in SMILES or structure file format.

- Descriptor Calculation: For each compound, calculate a standard set of 35 molecular descriptors covering size, lipophilicity, polarity, polarizability, flexibility, and hydrogen-bonding capacity.

- PCA Score Prediction: Using the web-based ChemGPS-NP tool, project the descriptor values for the new compounds onto the existing principal component analysis (PCA) model. This model is built on a reference set that defines the chemical space map [22].

- Visualization & Analysis: Plot the compounds using the first few principal components (e.g., PC1 vs. PC2, PC3 vs. PC4). Analyze clusters, outliers, and density distributions. Regions densely populated by approved drugs define the "clinically validated" space. Regions that are sparsely populated by synthetic compounds but occupied by NPs indicate opportunity zones for novel discovery [22].
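The "PCA score prediction" step amounts to scaling each new compound with the reference set's statistics and multiplying by the fixed loadings of the existing model. The sketch below shows that operation on a toy model with three descriptors and two components. All numbers, descriptor choices, and names here are hypothetical; the actual ChemGPS-NP model uses 35 descriptors and its parameters are not reproduced.

```python
def project(descriptors, means, stds, loadings):
    """Project one compound onto a fixed PCA model.

    descriptors: raw descriptor values for the new compound
    means, stds: per-descriptor statistics of the REFERENCE set (not the new data)
    loadings:    one weight vector per principal component
    """
    scaled = [(x - m) / s for x, m, s in zip(descriptors, means, stds)]
    return [sum(w * v for w, v in zip(component, scaled)) for component in loadings]

# Hypothetical reference model: (molecular weight, logP, polar surface area).
ref_means = [300.0, 2.0, 60.0]
ref_stds = [100.0, 1.5, 30.0]
ref_loadings = [
    [0.6, 0.8, 0.0],    # PC1
    [-0.8, 0.6, 0.0],   # PC2
]

at_centre = project([300.0, 2.0, 60.0], ref_means, ref_stds, ref_loadings)
heavier = project([400.0, 2.0, 60.0], ref_means, ref_stds, ref_loadings)
print(at_centre, heavier)
```

The key design point is that the reference statistics and loadings stay frozen, so coordinates from different studies land on the same map and remain comparable.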

Protocol 3: In vitro Bioactivity and Selectivity Profiling

This protocol benchmarks the biological performance of new hits against approved drugs.

- Panel Selection: Assemble a panel of related target proteins (e.g., a kinase family, GPCR subtypes) including the primary intended target.

- Dose-Response Assays: Test the new hit compound and a relevant approved drug in parallel across the panel at a range of concentrations (e.g., 0.1 nM – 100 µM) using standardized biochemical or cell-based assays.

- Data Analysis: Calculate IC₅₀ or EC₅₀ values for each compound/target pair. Generate a selectivity heatmap or radar chart.

- Benchmarking: Compare the potency (IC₅₀) and selectivity index (ratio of IC₅₀ for off-target vs. primary target) of the new hit to the approved drug. A new NP-derived hit may show comparable potency but a distinct selectivity profile, indicating a potentially differentiated therapeutic mechanism.
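The selectivity index used in the benchmarking step is a simple ratio, sketched below. The target names and IC₅₀ values are invented for illustration, and the function name is hypothetical.

```python
def selectivity_indices(ic50_nm, primary):
    """Selectivity index per off-target: IC50(off-target) / IC50(primary target).

    Values above 1 mean the compound is more potent on the primary target.
    """
    primary_ic50 = ic50_nm[primary]
    return {
        target: value / primary_ic50
        for target, value in ic50_nm.items()
        if target != primary
    }

# Invented IC50 values in nM across a three-member target panel.
new_hit = {"KinaseA": 20.0, "KinaseB": 2000.0, "KinaseC": 400.0}
approved = {"KinaseA": 10.0, "KinaseB": 100.0, "KinaseC": 5000.0}

si_new = selectivity_indices(new_hit, primary="KinaseA")
si_approved = selectivity_indices(approved, primary="KinaseA")
print(si_new, si_approved)
```

In this toy panel the new hit is only two-fold less potent on the primary target, but its selectivity pattern is inverted relative to the approved drug, which is the kind of differentiated profile the protocol is designed to surface.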

The Scientist's Toolkit: Essential Reagents & Platforms

Table 3: Key Research Reagents and Platforms for Chemical Space Exploration

| Tool / Reagent | Category | Primary Function in Benchmarking | Key Consideration |

|---|---|---|---|

| ChEMBL Database [26] | Bioactivity Database | Provides curated bioactivity data for approved drugs and millions of other compounds, enabling the extraction of assay data for indirect comparisons and model training. | Critical for defining benchmark activity values and understanding structure-activity relationships (SAR). |

| Cortellis Drug Discovery Intelligence [30] | Commercial Intelligence Platform | Integrates biological, chemical, and pharmacological data to benchmark experimental performance of drug candidates against historical and competitor data. | Used for assessing the competitive landscape and validating target-drug-disease linkages. |

| DNA-Encoded Library (DEL) Technology [25] | Combinatorial Library Platform | Enables the synthesis and affinity-based screening of ultra-large libraries (billions of compounds) to identify novel binders for a protein target. | Useful for rapidly exploring vast synthetic chemical space and generating hits for difficult targets. |

| High-Resolution Mass Spectrometry (HR-MS) & NMR [28] | Analytical Chemistry | Enables the dereplication (identification of known compounds) and structural elucidation of novel natural products, crucial for mapping NP space. | Essential for quality control and confirming the novelty of isolates from NP sources. |

| ChemGPS-NP Web Service [22] | Computational Chemistry Tool | Provides a publicly available platform for mapping and navigating the chemical space of large compound collections relative to a defined reference space. | The standard 35-descriptor set ensures consistent, comparable projections across studies. |

| Rule of 5 (Ro5) and PAINS Filters | Computational Filters | Initial filters to assess drug- or lead-likeness and flag compounds with substructures prone to assay interference. | While useful, they should not be used rigidly, especially for NPs which may be active beyond Ro5 [22]. |

Integrated Pathways and Strategic Workflows

The following diagrams illustrate the logical relationships between chemical sources, discovery strategies, and benchmarking outcomes.

Chemical Space Navigation to Clinical Validation

Decision Tree for Comparative Efficacy Analysis

Synthesis and Strategic Outlook

Mapping chemical space with approved drugs as the benchmark reveals a complementary relationship between NPs and combinatorial chemistry. Natural products serve as pioneering explorers, uncovering biologically relevant but synthetically underserved regions of chemical space. They provide privileged, evolutionarily refined scaffolds ideal for initial hit discovery, particularly for challenging targets. Combinatorial chemistry, guided by computational design, serves as the optimizing engineer, efficiently populating the regions around these hits to refine potency, selectivity, and drug-like properties toward the validated benchmark space [23] [27].

The future of effective chemical space navigation lies in integrating these paradigms. Strategies include:

- Biology-Inspired Combinatorial Synthesis: Using NP scaffolds as cores for generating combinatorial libraries to explore related chemical space more systematically [28].

- AI-Enabled De Novo Design: Using generative models trained on approved drugs and NP structures to propose novel compounds that inherently possess drug-like properties while exploring new regions [24] [27].

- Advanced Benchmarking Platforms: Utilizing comprehensive platforms like CARA [26] and Cortellis [30] to make data-driven decisions by continuously benchmarking new entities against the validated performance of approved drugs across multiple parameters.

The clinically validated chemical space defined by approved drugs is not a static endpoint but a dynamic, expanding frontier. By using it as a foundational benchmark, researchers can strategically direct the exploration of natural product diversity and the power of combinatorial synthesis to populate this frontier with the next generation of effective therapeutics.

Tools for Navigation: Computational and Analytical Methods for Chemical Space Exploration

The systematic representation of chemical structures is a cornerstone of modern computational drug discovery. Molecular descriptors and fingerprints translate the vast, multidimensional space of chemical structures into quantifiable data, enabling comparison, prediction, and navigation [31]. This capability is critical within the broader thesis of chemical space comparison, which seeks to understand the relationships and coverage differences between the rich, evolutionarily refined space of Natural Products (NPs), the vast, synthetically accessible realm of combinatorial compounds, and the focused libraries of drug-like molecules [5] [6].

Natural products are distinguished by high structural complexity, including more sp³-hybridized carbons and oxygen atoms, which often translate to potent and selective bioactivity [6]. Despite a historical decline in focus, NPs and NP-derived compounds accounted for 9.7% (56 of 579) of all new drug approvals between 2014 and 2024, underscoring their enduring relevance [5]. Conversely, combinatorial chemistry can generate libraries of unprecedented size, with proprietary collections like GSK's XXL space containing up to 10²⁶ virtual compounds [32]. Bridging these domains requires robust molecular representations that can capture essential structural and chiral features to enable meaningful comparison and identify complementary regions of chemical space for new therapeutic leads [31] [33].

Comparative Performance of Molecular Representations

Different molecular representations capture varying aspects of chemical structure, leading to significant differences in performance for predictive modeling tasks. The following tables summarize key experimental findings from benchmarking studies.

Table 1: Performance Benchmark of Fingerprints and Descriptors in Odor Prediction [34]

| Feature Set | Model | AUROC | AUPRC | Accuracy (%) | Precision (%) | Recall (%) |

|---|---|---|---|---|---|---|

| Morgan Fingerprints (ST) | XGBoost | 0.828 | 0.237 | 97.8 | 41.9 | 16.3 |

| Morgan Fingerprints (ST) | LightGBM | 0.810 | 0.228 | 97.7 | 39.5 | 17.4 |

| Morgan Fingerprints (ST) | Random Forest | 0.784 | 0.216 | 97.6 | 37.2 | 15.8 |

| Classical Descriptors (MD) | XGBoost | 0.802 | 0.200 | 97.6 | 36.1 | 15.1 |

| Functional Group (FG) | XGBoost | 0.753 | 0.088 | 97.0 | 22.3 | 9.8 |

Table Note: Benchmark on a dataset of 8,681 odorants. Results show Morgan (circular) fingerprints paired with a gradient-boosting algorithm (XGBoost) deliver superior performance for capturing complex structure-property relationships [34].

Table 2: Performance of Chirality-Sensitive Descriptors in Enantiomer Separation Prediction [33]

| Descriptor Type | Base Model | Chirality Enhancement | Prediction Accuracy (Elution Order) |

|---|---|---|---|

| Morgan Fingerprints | Random Forest | Integrated CIP labels | 0.82 |

| Latent Space Vector (Transformer) | Random Forest | Delta (ori-opp) | 0.75 |

| Latent Space Vector (CDDD) | Random Forest | Delta (ori-ns) | 0.71 |

| Latent Space Vector (Transformer) | Random Forest | Original (no enhancement) | 0.65 |

Table Note: Evaluation on a dataset of 1,929 enantiomer pairs for Chiralpak AD-H column. Classical fingerprints outperformed latent space vectors from SMILES encoders, but "delta" operations (arithmetic between molecule and enantiomer descriptors) significantly improved chiral encoding [33].

Table 3: Drug Approvals by Origin (2014-2024) and Representation Challenge [5]

| Compound Class | Number of Approvals | % of Total (579) | Key Representation Challenges |

|---|---|---|---|

| All NP-derived | 56 | 9.7% | High complexity, stereochemistry, polycyclic scaffolds |

| NP-derived New Chemical Entities | 44 | 7.6% | Capturing 3D conformation and pharmacophore geometry |

| NP Antibody-Drug Conjugates | 12 | 2.1% | Linker chemistry and payload-specific descriptors |

| Synthetic/Small Molecule | 523 | 90.3% | Focus on drug-likeness, lead-like property ranges |

Experimental Protocols for Benchmarking Representations

Protocol: Benchmarking Fingerprints and Descriptors for Property Prediction

This protocol is adapted from a large-scale comparative study of machine learning models for odor decoding [34].

Dataset Curation:

- Source: Assemble a multi-label dataset from curated public sources (e.g., Pyrfume-data archive).

- Standardization: Merge entries by PubChem CID. Retrieve canonical SMILES via PubChem's PUG-REST API.

- Label Consolidation: Standardize diverse property labels (e.g., odor descriptors) into a controlled vocabulary with expert guidance to minimize noise.

Feature Generation:

- Morgan Fingerprints: Generate using the RDKit library (radius=2, nBits=2048 is common). Consider using the Morgan algorithm from optimized MolBlock conformations [34].

- Classical 2D Descriptors: Calculate using RDKit or similar. Standard set includes Molecular Weight, LogP, Topological Polar Surface Area (TPSA), hydrogen bond donors/acceptors, and rotatable bond count [34].

- Functional Group Fingerprints: Generate by scanning SMILES against a predefined list of SMARTS patterns for key functional groups.

Model Training & Evaluation:

- Algorithm Selection: Benchmark tree-based algorithms (Random Forest, XGBoost, LightGBM) known for handling high-dimensional, sparse fingerprint data [34].

- Validation: Implement stratified k-fold cross-validation (e.g., 5-fold, so that each fold trains on 80% of the data and evaluates on the remaining 20%). For multi-label tasks, use a one-vs-rest strategy.

- Metrics: Report Area Under the ROC Curve (AUROC), Area Under the Precision-Recall Curve (AUPRC), accuracy, precision, and recall.
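Because each odor label is rare, accuracy alone can look excellent while most positives are missed, which is the pattern visible in Table 1 (accuracy near 98% with recall below 20%). The sketch below computes the threshold-based metrics from confusion-matrix counts. The counts are invented to reproduce that pattern and are not taken from [34].

```python
def classification_metrics(tp, fp, fn, tn):
    """Accuracy, precision, and recall from confusion-matrix counts for one label."""
    total = tp + fp + fn + tn
    return {
        "accuracy": (tp + tn) / total,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }

# Invented counts for a rare label: 20 positives among 1,000 molecules.
metrics = classification_metrics(tp=4, fp=5, fn=16, tn=975)
print({name: round(value, 3) for name, value in metrics.items()})
```

This is why the protocol also reports AUROC and AUPRC, with AUPRC being the more informative of the two when positives are scarce.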

Protocol: Evaluating Chirality-Sensitive Descriptors

This protocol is based on a study evaluating descriptors for chiral chromatography prediction [33].

Chiral Data Preparation:

- Dataset: Obtain a set of enantiomer pairs with an associated chiral property (e.g., chromatographic elution order).

- Critical Splitting: Split data into training and test sets by enantiomer pair to prevent data leakage. Both enantiomers must reside in the same set.
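A pair-aware split can be sketched by shuffling pair identifiers rather than individual molecules. The record layout (a `pair_id` field per molecule), the 20% test fraction, and the function name are assumptions made for this example.

```python
import random

def split_by_pair(records, test_fraction=0.2, seed=0):
    """Split records so that both enantiomers of a pair land in the same subset."""
    pair_ids = sorted({record["pair_id"] for record in records})
    rng = random.Random(seed)
    rng.shuffle(pair_ids)                       # shuffle pairs, not molecules
    n_test = max(1, round(len(pair_ids) * test_fraction))
    test_ids = set(pair_ids[:n_test])
    train = [r for r in records if r["pair_id"] not in test_ids]
    test = [r for r in records if r["pair_id"] in test_ids]
    return train, test

# Toy dataset: 10 enantiomer pairs, two records each (labels are placeholders).
records = [
    {"pair_id": pair, "enantiomer": label}
    for pair in range(10)
    for label in ("first", "second")
]

train, test = split_by_pair(records)
print(len(train), len(test))
```

Splitting at the molecule level would let a model see one enantiomer in training and its mirror image in testing, which inflates apparent accuracy because the two share almost every non-chiral feature.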

Descriptor Calculation:

- Baseline Fingerprints: Generate Morgan fingerprints with chirality tags enabled (e.g., use `useChirality=True` in RDKit).

- Latent Space Descriptors:

- Use a pre-trained SMILES encoder (e.g., Transformer, CDDD model) to generate a latent vector for each canonical SMILES.

- Create "delta" descriptors to enhance chiral information: calculate the vector difference between (a) original molecule and its enantiomer ("ori-opp"), or (b) original molecule and its stereochemistry-depleted SMILES ("ori-ns") [33].
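The "delta" operation is an element-wise vector difference, sketched below. The `toy_encode` function is a deliberately crude, hypothetical stand-in for a pre-trained SMILES encoder (it only counts chirality tags) so that the example runs without any model; a real workflow would substitute the Transformer or CDDD encoder.

```python
def delta(vec_a, vec_b):
    """Element-wise difference between two descriptor vectors of equal length."""
    return [a - b for a, b in zip(vec_a, vec_b)]

def delta_descriptors(encode, smiles, enantiomer_smiles, achiral_smiles):
    """Build the two delta variants described in the protocol."""
    original = encode(smiles)
    return {
        "ori-opp": delta(original, encode(enantiomer_smiles)),  # vs. mirror image
        "ori-ns": delta(original, encode(achiral_smiles)),      # vs. stereo-stripped form
    }

def toy_encode(smiles):
    """Hypothetical placeholder encoder: counts of '@@' and lone '@' tags."""
    double = smiles.count("@@")
    single = smiles.count("@") - 2 * double
    return [float(double), float(single)]

# One enantiomer of alanine, its mirror image, and the stereo-stripped SMILES.
one = "C[C@H](N)C(=O)O"
mirror = "C[C@@H](N)C(=O)O"
flat = "CC(N)C(=O)O"

forward = delta_descriptors(toy_encode, one, mirror, flat)
reverse = delta_descriptors(toy_encode, mirror, one, flat)
print(forward, reverse)
```

The "ori-opp" vector of one enantiomer is the exact negative of its partner's, so the two members of a pair receive mirrored features even if the raw latent vectors are nearly identical.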

Modeling & Analysis:

- Train a classifier (e.g., Random Forest) to predict the chiral property.

- Compare the performance of different descriptor sets. Analyze misclassifications to understand model and descriptor limitations.

Visualization of Workflows and Chemical Space

Molecular Representation to Chemical Space Analysis

Molecular Representation for Predictive Modeling

Table 4: Key Software Tools and Resources for Molecular Representation

| Tool/Resource Name | Type | Primary Function in Representation | Application Context |

|---|---|---|---|

| RDKit | Open-source Cheminformatics Library | Calculates molecular descriptors, generates Morgan fingerprints, handles SMILES I/O and stereochemistry. | Core toolkit for standard descriptor/fingerprint generation [34] [33]. |

| CDDD Model | Pre-trained Neural Network | Generates continuous latent space vector descriptors from SMILES strings. | Exploring novel, data-driven descriptors; transfer learning [33]. |

| GTM (Generative Topographic Mapping) | Dimensionality Reduction Algorithm | Creates interpretable 2D maps of chemical space from high-dimensional descriptors. | Visualizing and comparing libraries (e.g., NP vs. combinatorial) [31] [32]. |

| CoLiNN | Specialized Neural Network | Predicts chemical space projection for combinatorial products directly from building blocks, avoiding enumeration. | Ultra-large combinatorial library (e.g., DEL) analysis and design [32]. |

| PUG-REST API (PubChem) | Web API | Retrieves canonical SMILES and standardized compound data by identifier. | Essential for dataset curation and standardization [34]. |

| AntiSMASH/DeepBGC | Bioinformatics Platform | Identifies biosynthetic gene clusters (BGCs) in genomic data for NP discovery. | Genome mining for novel natural product scaffolds [6]. |

The systematic exploration of chemical space—a theoretical multi-dimensional space where each point represents a unique molecule defined by its properties—is foundational to modern drug discovery and cheminformatics [35]. With public repositories like ChEMBL and PubChem now containing millions of compounds and the emergence of ultra-large virtual libraries exceeding a billion molecules, the practical analysis of this space presents a monumental computational challenge [35] [36]. A core thesis in contemporary research interrogates whether the rapid growth in the number of available compounds translates to a corresponding increase in meaningful chemical diversity, particularly when comparing distinct regions such as natural products, approved drugs, and combinatorial synthetic compounds [35].

Traditional tools for assessing similarity and diversity, such as pairwise Tanimoto similarity calculations and classic clustering algorithms like Taylor-Butina, scale quadratically (O(N²)) with library size. This scaling makes them prohibitively expensive for analyzing today's massive datasets [37] [38]. This guide provides a comparative analysis of two innovative solutions to this bottleneck: the iSIM (instant similarity) framework and the BitBIRCH clustering algorithm. We objectively evaluate their performance against established alternatives, detailing experimental protocols and presenting data within the critical context of comparative chemical space research.

Methodological Foundations: iSIM and BitBIRCH Explained

The iSIM Framework: Linear-Scaling Similarity Assessment

The iSIM framework provides an exact or highly accurate approximation of the average pairwise similarity within a set of N molecules in linear time (O(N)), bypassing the need for N² comparisons [39].

Core Protocol: For a library represented by binary fingerprints (e.g., ECFP4, RDKit), molecules are arranged in an N×M matrix, where M is the fingerprint length. The key step is the column-wise sum, producing a vector K = [k₁, k₂, …, kₘ], where each kᵢ is the count of "on" bits in that column [39]. From this vector, the instant Tanimoto (iT) is calculated as:

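iT = Σᵢ [kᵢ(kᵢ−1)/2] / Σᵢ [kᵢ(kᵢ−1)/2 + kᵢ(N−kᵢ)] [35] [39].

Here kᵢ(kᵢ−1)/2 counts the molecule pairs that both have bit i on, and kᵢ(N−kᵢ) counts the pairs in which only one molecule has it, so the ratio mirrors the Tanimoto form (shared on bits divided by shared plus mismatched bits) summed over all pairs at once.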

This iT value represents the library's average internal similarity (lower values indicate greater diversity). The framework also introduces the concept of complementary similarity to identify molecules central to (medoids) or on the periphery of (outliers) the chemical space [35].
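The column-sum calculation can be sketched in a few lines of plain Python. This is a minimal illustration rather than the reference iSIM implementation: the function names are hypothetical, fingerprints are assumed to be lists of 0/1 values, and the pair counts use kᵢ(kᵢ−1)/2 for shared on bits and kᵢ(N−kᵢ) for mismatches. For real libraries the same arithmetic would normally run on array types instead of lists.

```python
def column_sums(fps):
    """Return K = [k_1, ..., k_M]: the number of on bits in each fingerprint column."""
    return [sum(col) for col in zip(*fps)]


def instant_tanimoto(k, n):
    """Instant Tanimoto (iT) from the column sums k of a set of n molecules."""
    shared = sum(ki * (ki - 1) // 2 for ki in k)   # pairs with bit i on in both
    mismatched = sum(ki * (n - ki) for ki in k)    # pairs with bit i on in only one
    total = shared + mismatched
    return shared / total if total else 0.0


def complementary_similarities(fps):
    """iT of the library after leaving each molecule out in turn (still O(N*M))."""
    n = len(fps)
    k = column_sums(fps)
    return [
        instant_tanimoto([ki - bit for ki, bit in zip(k, fp)], n - 1)
        for fp in fps
    ]


# Toy library: two identical molecules and one that differs.
fingerprints = [
    [1, 1, 0, 0],
    [1, 1, 0, 0],
    [1, 0, 1, 0],
]
it_value = instant_tanimoto(column_sums(fingerprints), len(fingerprints))
comp = complementary_similarities(fingerprints)
print(it_value)  # 0.5
print(comp)      # the third molecule has the highest complementary similarity
```

In this toy set, removing the third molecule leaves the most similar remainder, which is the behavior expected of an outlier under the usual reading of complementary similarity. The mean of the three explicit pairwise Tanimoto values is 5/9 (about 0.56), so on very small sets iT is best read as an approximation of the pairwise average.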

The BitBIRCH Algorithm: Efficient Hierarchical Clustering

BitBIRCH is a clustering algorithm designed for binary fingerprints that adapts the Balanced Iterative Reducing and Clustering using Hierarchies (BIRCH) approach for cheminformatics [37] [38].

Core Protocol: BitBIRCH constructs a CF-tree (Clustering Feature tree) using compact Bit Feature (BF) vectors to represent subclusters. A BF for a cluster j is defined as BFⱼ = [Nⱼ, lsⱼ, cⱼ, molsⱼ], where:

- Nⱼ: Number of molecules in the cluster.

- lsⱼ: Linear sum vector of the fingerprints.

- cⱼ: Centroid of the cluster.

- molsⱼ: List of molecule indices [37] [38].

The lsⱼ vector, in conjunction with iSIM, allows for the efficient calculation of cluster radius and diameter using the Tanimoto metric as molecules are absorbed into leaf nodes of the tree. This structure enables single-pass clustering with O(N) time complexity [37].
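To make the Bit Feature idea concrete, the sketch below models a BF as a small Python class. It is not the BitBIRCH package's code: the class and method names are hypothetical, the centroid is assumed to be a majority vote over the linear sum, and the absorption test is an assumed diameter-style check on the merged cluster's instant Tanimoto. The CF-tree itself (branching, node splits) is left out.

```python
from dataclasses import dataclass, field


@dataclass
class BitFeature:
    """Hypothetical stand-in for BF_j = [N_j, ls_j, c_j, mols_j]."""
    n: int                                     # N_j: number of molecules
    ls: list                                   # ls_j: per-bit linear sum
    mols: list = field(default_factory=list)   # mols_j: molecule indices

    @classmethod
    def from_fingerprint(cls, fp, index):
        return cls(1, list(fp), [index])

    @property
    def centroid(self):
        # c_j by majority vote (assumption): bit is on if set in at least half the members
        return [1 if 2 * k >= self.n else 0 for k in self.ls]

    def merged(self, other):
        # BFs are additive, so absorbing a molecule or subcluster costs O(M)
        return BitFeature(
            self.n + other.n,
            [a + b for a, b in zip(self.ls, other.ls)],
            self.mols + other.mols,
        )

    def internal_similarity(self):
        # iSIM-style instant Tanimoto computed from ls alone, no pairwise loop
        shared = sum(k * (k - 1) // 2 for k in self.ls)
        mismatched = sum(k * (self.n - k) for k in self.ls)
        total = shared + mismatched
        return shared / total if total else 1.0


def can_absorb(cluster, newcomer, threshold):
    """Assumed diameter-style test: the merged cluster must stay similar enough."""
    return cluster.merged(newcomer).internal_similarity() >= threshold


a = BitFeature.from_fingerprint([1, 1, 0, 0], 0)
b = BitFeature.from_fingerprint([1, 1, 0, 0], 1)
c = BitFeature.from_fingerprint([1, 0, 1, 0], 2)
ab = a.merged(b)
print(ab.centroid, ab.internal_similarity())   # [1, 1, 0, 0] 1.0
print(can_absorb(ab, c, threshold=0.65))       # False
```

Because a BF stores only counts and sums, the decision to absorb a molecule never revisits the fingerprints already in the cluster, which is what makes a single pass over the library possible.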

Table 1: Core Technical Specifications of iSIM and BitBIRCH

| Feature | iSIM Framework | BitBIRCH Algorithm |
|---|---|---|
| Primary Function | Calculate average similarity/internal diversity of a set | Partition molecules into similarity-based clusters |
| Computational Scaling | O(N) with number of molecules (N) | O(N) with number of molecules (N) |
| Core Innovation | Column-wise fingerprint summation enabling n-ary comparison | Bit Feature (BF) vector & CF-tree for binary data |
| Key Metric Output | Instant Tanimoto (iT), Complementary Similarity | Cluster membership, centroids, and diameters |
| Representation Compatibility | Binary fingerprints, real-value descriptors (normalized) | Binary molecular fingerprints |

Diagram Title: iSIM Calculation Workflow for Library Diversity

Quantitative Performance Comparison with Alternative Tools

Computational Efficiency Benchmarks

The main advantage of iSIM and BitBIRCH over traditional pairwise methods is computational: their cost grows linearly with library size rather than quadratically.

Experimental Protocol for Timing Benchmarks: Libraries of varying sizes (e.g., 50k to 1.5 million molecules) are prepared using standardized RDKit 2048-bit fingerprints [40]. For each library, the time to compute the average Tanimoto similarity is measured for iSIM versus the exhaustive pairwise method. Similarly, total clustering time is measured for BitBIRCH versus the standard RDKit implementation of Taylor-Butina clustering. Experiments are run on identical hardware (e.g., a single 10 GB compute node) [41] [40].

Table 2: Computational Performance Benchmark

| Library Size (Molecules) | Task | Traditional Method (Time) | iSIM / BitBIRCH (Time) | Speed-Up Factor | Source/Experimental Context |
|---|---|---|---|---|---|
| ~5,000 | Clustering | Taylor-Butina (RDKit): ~1.46 s | BitBIRCH: ~0.78 s | ~1.9x | OpenCADD dataset; user time measured [40]. |
| 1,500,000 | Clustering | Taylor-Butina (RDKit): Projected hours to days | BitBIRCH: Minutes | >1,000x | Theoretical projection based on O(N) vs. O(N²) scaling [37] [42]. |
| 1,000,000,000 | Clustering | Taylor-Butina: Impossible on standard hardware | BitBIRCH: ~5 hours | Not Applicable | Parallel/iterative BitBIRCH approximation on high-performance computing resources [42]. |
| Variable (N) | Avg. Similarity | Pairwise Tanimoto: O(N²) scaling | iSIM: O(N) scaling | Increases with N | Fundamental algorithmic scaling [39]. |
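The scaling argument behind the projected rows can be checked with simple arithmetic: an exhaustive comparison visits N(N−1)/2 pairs, whereas a linear method touches each molecule once. The short sketch below only counts operations for the library sizes in Table 2. It says nothing about wall-clock time, which also depends on constants, memory, and implementation.

```python
def pair_count(n):
    """Number of distinct molecule pairs an exhaustive comparison must visit."""
    return n * (n - 1) // 2


for n in (5_000, 1_500_000, 1_000_000_000):
    pairs = pair_count(n)
    # pairs / n is the work ratio between a quadratic and a linear pass
    print(f"N = {n:>13,}  pairs = {pairs:.3e}  pairs per molecule = {pairs / n:,.1f}")
```

At 5,000 molecules there are roughly 1.2 × 10⁷ pairs, which is still cheap and consistent with the modest measured speed-up. At 1.5 million molecules the count is roughly 1.1 × 10¹² pairs, about 750,000 pairs per molecule, which is why the gap widens so sharply with N.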

Clustering Quality and Outcome Analysis

Increased speed is meaningless if it compromises result quality. Studies compare clustering outcomes using internal validation metrics and structural analysis.

Experimental Protocol for Quality Assessment: A standardized library (e.g., ChEMBL33 natural products subset, n=64,086) is clustered using BitBIRCH and Taylor-Butina at a comparable Tanimoto threshold [41]. Quality is assessed using:

- Internal Validation Indices: Calinski-Harabasz (higher is better) and Davies-Bouldin (lower is better) indices [42].

- Structural Analysis: The number of unique Murcko scaffolds within generated clusters, measuring chemical diversity preservation [41].

- Visual Inspection: t-SNE visualization of chemical space colored by cluster assignment [41].
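The structural-analysis step reduces to counting distinct scaffolds inside each cluster. The sketch below assumes cluster labels and Murcko scaffold SMILES have already been computed (for example with RDKit's `MurckoScaffold` module); the function name and the six-molecule input are hypothetical and only illustrate the bookkeeping.

```python
from collections import defaultdict


def scaffold_summary(cluster_labels, scaffolds):
    """Map each cluster label to (cluster size, number of unique scaffolds)."""
    members = defaultdict(list)
    for label, scaffold in zip(cluster_labels, scaffolds):
        members[label].append(scaffold)
    return {label: (len(s), len(set(s))) for label, s in members.items()}


# Hypothetical cluster assignments and scaffold SMILES for six molecules.
labels = [0, 0, 0, 1, 1, 2]
scaffolds = ["c1ccccc1", "c1ccccc1", "c1ccncc1", "C1CCCCC1", "C1CCCCC1", "c1ccccc1"]
summary = scaffold_summary(labels, scaffolds)
print(summary)  # {0: (3, 2), 1: (2, 1), 2: (1, 1)}
```

A cluster whose unique-scaffold count approaches its size is mixing unrelated chemotypes, while a count close to one indicates a structurally coherent cluster.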

Table 3: Clustering Quality Comparison (ChEMBL33 Natural Products)

| Quality Metric | Taylor-Butina Clustering | Original BitBIRCH | BitBIRCH with Refinement (Prune+Diameter) | Interpretation |
|---|---|---|---|---|
| Number of Clusters | Baseline | Often fewer, with one very large cluster | More balanced cluster distribution | Refinement strategies correct over-absorption. |
| Avg. Molecules per Cluster | Varies widely | Skewed by dominant cluster | More uniform distribution | Improved "granularity" of chemical space dissection [41]. |
| Unique Scaffolds per Cluster | Baseline | High count in large cluster indicates mixing | Tighter scaffold focus per cluster | Refined BitBIRCH produces more structurally coherent clusters [41]. |
| Internal Validation Indices | Baseline | Comparable or superior [38] | Improved over original BitBIRCH | BitBIRCH efficiency does not come at the cost of quality. |

Diagram Title: BitBIRCH Tree Structure and Molecule Absorption

Application in Chemical Space Comparison Research

This article's central comparison is between the chemical spaces of natural products (NPs), approved drugs, and combinatorial libraries. iSIM and BitBIRCH make that comparison feasible at the scale of full database releases.

Experimental Protocol for Time-Evolution Analysis: Using successive yearly releases of databases like ChEMBL and DrugBank [35]:

- Subset Extraction: Isolate NPs, approved drugs, and synthetic compounds using metadata.

- Diversity Trend Analysis: Apply iSIM to each subset for each release year to calculate iT. Plot iT over time to assess if diversity increases with size [35].

- Space Zone Tracking: Use complementary similarity to identify medoids (core) and outliers (periphery) for each subset. Compute the Jaccard similarity (J) of these zones between consecutive years to measure core/periphery stability [35].

- Granular Clustering: Apply BitBIRCH to the entire library for key release years. Analyze the distribution of NPs, drugs, and synthetic compounds across the resulting clusters to visualize overlap and uniqueness.
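The space-zone tracking step relies on the Jaccard similarity between the sets of compounds assigned to a zone in consecutive releases. A minimal sketch follows, with hypothetical release names and compound identifiers. In practice, the sets would hold the identifiers of the medoid or outlier molecules selected by complementary similarity.

```python
def jaccard(a, b):
    """Jaccard similarity |A ∩ B| / |A ∪ B| of two identifier collections."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def zone_stability(zones_by_release):
    """Jaccard similarity of a zone between each pair of consecutive releases."""
    releases = list(zones_by_release)
    return {
        (prev, curr): jaccard(zones_by_release[prev], zones_by_release[curr])
        for prev, curr in zip(releases, releases[1:])
    }


# Hypothetical medoid zones for three successive releases.
medoid_zone = {
    "release_A": {"cpd1", "cpd2", "cpd3", "cpd4"},
    "release_B": {"cpd2", "cpd3", "cpd4", "cpd5"},
    "release_C": {"cpd2", "cpd3", "cpd4", "cpd5"},
}
stability = zone_stability(medoid_zone)
print(stability[("release_A", "release_B")])  # 0.6
print(stability[("release_B", "release_C")])  # 1.0
```

Values of J near 1 indicate that the core (or periphery) of a subset is unchanged between releases, while lower values signal that newly added compounds have shifted it.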

Table 4: Hypothetical iSIM Analysis of Chemical Space Subsets (Time-Evolution)

| Database Release | Natural Products (iT) | Approved Drugs (iT) | Combinatorial Compounds (iT) | Key Insight |
|---|---|---|---|---|
| ChEMBL25 (2019) | 0.152 | 0.189 | 0.121 | Initial baseline diversity measures. |
| ChEMBL29 (2021) | 0.149 | 0.185 | 0.119 | Minimal iT change suggests new compounds expand space without collapsing diversity. |
| ChEMBL33 (2023) | 0.148 | 0.184 | 0.118 | Stabilizing iT indicates managed diversity growth across all subsets [35]. |

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 5: Key Research Reagents and Software for Large-Scale Chemical Space Analysis

| Item Name | Type | Function in Workflow | Relevance to iSIM/BitBIRCH |
|---|---|---|---|
| RDKit | Open-Source Cheminformatics Library | Molecule I/O, standardization, fingerprint generation (Morgan/ECFP), scaffold analysis. | Primary tool for preparing the binary fingerprint matrices required as input for both iSIM and BitBIRCH [43] [40]. |
| ChEMBL / DrugBank / PubChem | Public Chemical/Bioactivity Databases | Source of curated, annotated molecular structures for natural products, drugs, and synthetic compounds. | Provides the raw data for time-evolution studies and comparative chemical space analysis [35]. |
| BitBIRCH Python Package | Specialized Clustering Algorithm | Efficient O(N) clustering of binary fingerprints. | The implementation of the algorithm, available on GitHub (mqcomplab/bitbirch), includes refinement options like pruning [41]. |
| scikit-learn | Machine Learning Library | Provides t-SNE for visualization and utilities for calculating cluster validation indices (e.g., Calinski-Harabasz). | Used for post-clustering analysis and quality validation [41]. |
| High-Performance Computing (HPC) Node | Computational Resource | Provides the memory and parallel processing capabilities for billion-molecule clustering. | Essential for running the parallel/iterative version of BitBIRCH on ultra-large libraries [42]. |

Practical Implementation and Integration

BitBIRCH is designed for integration into modern cheminformatics pipelines. Its Python API follows a scikit-learn-like syntax for ease of adoption [40]. The package includes refinement strategies such as:

- Pruning: Removing and reinserting the largest cluster to prevent dominance.

- Diameter Merge Criterion: Enforcing that new molecules are similar to all cluster members, not just the centroid, creating tighter clusters [41].

- Tolerance Parameter (ε): Controlling how much a new molecule can decrease a cluster's internal similarity [41].

These refinements ensure the algorithm is not only fast but also robust and tunable for specific research needs, such as ensuring high purity in clusters derived from mixed-origin chemical spaces.
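One way to read the tolerance parameter is as a cap on how far a single absorption may lower a cluster's instant Tanimoto. The sketch below encodes that reading and should be taken as an assumption, not as the package's actual rule: the function names, the combination with a fixed threshold, and the default-free signature are all hypothetical.

```python
def instant_tanimoto(ls, n):
    """iSIM-style similarity of a cluster from its linear sum ls and size n."""
    shared = sum(k * (k - 1) // 2 for k in ls)
    mismatched = sum(k * (n - k) for k in ls)
    total = shared + mismatched
    return shared / total if total else 1.0


def accepts(ls, n, fp, threshold, tolerance):
    """Assumed rule: stay above the threshold and lose at most `tolerance` similarity."""
    before = instant_tanimoto(ls, n)
    after = instant_tanimoto([k + bit for k, bit in zip(ls, fp)], n + 1)
    return after >= threshold and after >= before - tolerance


cluster_ls, cluster_n = [3, 3, 0, 0], 3   # three identical members, similarity 1.0
newcomer = [1, 1, 1, 0]                    # shares two bits, adds one new bit

print(accepts(cluster_ls, cluster_n, newcomer, threshold=0.65, tolerance=0.05))  # False
print(accepts(cluster_ls, cluster_n, newcomer, threshold=0.65, tolerance=0.50))  # True
```

Here the newcomer would lower the cluster's similarity from 1.0 to 0.8. That clears the 0.65 threshold, but it is rejected under the tight tolerance, which illustrates how a small ε keeps already coherent clusters from being diluted.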

The iSIM framework and BitBIRCH algorithm make the scale of modern chemical data tractable. In the benchmarks above, they are orders of magnitude faster than traditional pairwise methods on large libraries, without sacrificing analytical quality. For chemical space comparison, these tools enable rigorous, large-scale temporal and structural analyses that were previously impractical, so researchers can quantitatively test hypotheses about the growth and convergence of the spaces occupied by natural products, drugs, and synthetic compounds.

Future development lies in tighter integration with active learning and generative AI pipelines in drug discovery, where rapid, iterative diversity assessment and cluster-based selection are crucial. By overcoming the computational bottleneck, iSIM and BitBIRCH shift the research question from "Can we analyze this?" to "What meaningful patterns can we find?"

The pursuit of novel therapeutics is a journey through immense and structurally diverse chemical spaces. Historically, these spaces have been navigated via two primary, often divergent, paths: the exploration of Natural Products (NPs) and the construction of Synthetic Compounds (SCs). NPs, the products of biological evolution, occupy a region of chemical space characterized by high scaffold complexity, rich stereochemistry, and biological pre-validation [10]. In contrast, SCs, particularly those from combinatorial chemistry, often explore areas defined by synthetic accessibility and adherence to drug-like rules, resulting in different structural and property profiles [44]. A time-dependent chemoinformatic analysis reveals that while NPs have evolved to become larger and more complex, SCs have undergone more constrained shifts in physicochemical properties, influenced by NPs but not fully converging with them [44].

This divergence presents both a challenge and an opportunity for modern drug discovery. Virtual Screening (VS) has long been the computational workhorse for sifting through large libraries, but its success is inherently limited to the chemical space defined by the screened collection [45]. AI-Driven De Novo Design promises a paradigm shift, generating novel, optimized molecules from scratch rather than selecting from a pre-defined list [46]. This article provides a comparative guide to these methodologies, framing their performance and experimental validation within the broader thesis of bridging the distinct but complementary chemical spaces of natural products and synthetic compounds. By integrating the biological relevance of NPs with the expansive explorative power of generative AI and large synthetic libraries, researchers can now design novel chemical entities—pseudo-natural products and optimized synthetic leads—that transcend traditional boundaries [10] [47].

Performance Comparison of Virtual Screening Methodologies

Virtual screening is a critical first step in computationally identifying potential drug candidates. Its efficacy depends on accurate scoring functions and robust benchmarking. Recent advances have focused on improving both the metrics for evaluation and the algorithms for screening ultra-large libraries.

Benchmarking Metrics and Model Performance