Multi-Omics Integration in Natural Product Research: A Comprehensive Roadmap from Gene Discovery to Clinical Translation



Abstract

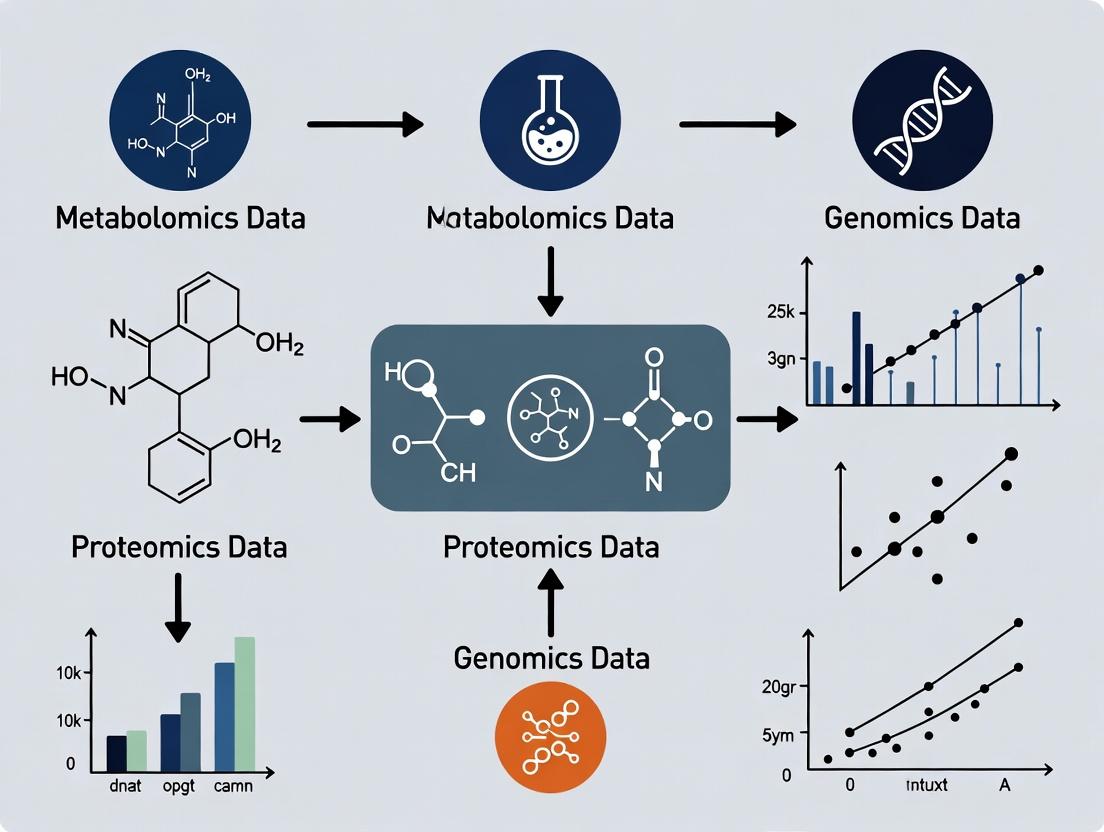

This article provides a comprehensive guide for researchers and drug development professionals on leveraging multi-omics data integration to revolutionize natural product discovery and development. It begins by establishing the foundational principles of genomics, transcriptomics, proteomics, and metabolomics, and their synergistic role in moving from gene clusters to bioactive molecules [citation:1][citation:9]. The core of the article details methodological workflows, from genome mining and molecular networking to AI-driven predictive modeling, with practical applications in identifying novel antibiotics and plant-derived medicines [citation:6][citation:8]. To ensure robust research, we address critical troubleshooting steps for overcoming data heterogeneity, batch effects, and integration challenges [citation:3][citation:10]. Finally, the article evaluates and compares state-of-the-art computational frameworks and validation strategies for biomarker and target identification, essential for translating discoveries into clinical candidates [citation:2][citation:4]. This integrated roadmap aims to equip scientists with the knowledge to accelerate the pipeline from natural resource to novel therapeutic.

Building the Base: Core Omics Technologies and Experimental Design for Natural Product Discovery

This technical guide deconstructs the foundational omics technologies—genomics, transcriptomics, proteomics, and metabolomics—within the critical context of multi-omics data integration for natural product research. The integration of these disparate but complementary data layers is revolutionizing the discovery, characterization, and mechanistic understanding of bioactive natural compounds. By moving beyond single-layer analysis, researchers can connect a compound's genetic blueprint in a host organism to its expression, protein synthesis, and ultimate metabolic output, thereby accelerating the translation of natural products into viable therapeutics. This primer details the core principles, state-of-the-art methodologies, and integrative computational strategies essential for modern, systems-level research in this field [1] [2].

The Four Pillars of Omics Technology

Genomics: The Static Blueprint

Genomics involves the comprehensive study of an organism's complete set of DNA, including all its genes and non-coding sequences. It provides the static, heritable blueprint that encodes the potential for natural product biosynthesis.

- Core Technology: Next-Generation Sequencing (NGS), including Whole Genome Sequencing (WGS) and targeted amplicon sequencing.

- Key Output: Identifies Biosynthetic Gene Clusters (BGCs) responsible for producing secondary metabolites. It reveals single nucleotide polymorphisms (SNPs) and structural variations that may influence compound production [1].

- Role in Natural Product Research: Serves as the starting point for identifying the genetic potential of microbial communities or plants to produce novel compounds.

Transcriptomics: The Dynamic Expression

Transcriptomics measures the complete set of RNA transcripts (the transcriptome) produced by the genome under specific conditions. It reflects the dynamically expressed genes at a given time point.

- Core Technology: RNA sequencing (RNA-seq), including bulk and single-cell modalities.

- Key Output: Quantifies gene expression levels (mRNA abundance), revealing which BGCs are actively transcribed in response to environmental stimuli, co-culture, or stress [1].

- Role in Natural Product Research: Connects genetic potential to active biosynthesis, guiding the optimization of fermentation or cultivation conditions to activate silent gene clusters.

Proteomics: The Functional Effectors

Proteomics is the large-scale study of the entire complement of proteins (the proteome), including their structures, modifications, interactions, and abundances. Proteins are the functional executors of cellular processes, including the enzymes that catalyze natural product synthesis.

- Core Technology: Mass spectrometry (MS), often coupled with liquid chromatography (LC-MS/MS).

- Key Output: Identifies and quantifies proteins and their post-translational modifications (PTMs). Confirms the translation of key enzymes in a biosynthetic pathway [1].

- Role in Natural Product Research: Validates the functional expression of predicted biosynthetic machinery and can elucidate regulatory mechanisms controlling metabolic flux.

Metabolomics: The Phenotypic Signature

Metabolomics focuses on the comprehensive profiling of small-molecule metabolites (the metabolome) within a biological system. It represents the ultimate downstream output of genomic, transcriptomic, and proteomic activity.

- Core Technology: Mass spectrometry (MS) and Nuclear Magnetic Resonance (NMR) spectroscopy.

- Key Output: Identifies and quantifies endogenous and exogenous metabolites, providing a direct snapshot of the physiological state [1].

- Role in Natural Product Research: Directly detects and characterizes the final natural products and their intermediates. It is essential for profiling chemical diversity and understanding the metabolic response of a host to a bioactive compound.

Table 1: Core Omics Layers: Technologies, Outputs, and Applications in Natural Product Research

| Omics Layer | Core Molecular Target | Primary Technologies | Key Output for Natural Products | Temporal Dynamics |
|---|---|---|---|---|
| Genomics | DNA | NGS, WGS, PacBio | Biosynthetic Gene Clusters (BGCs), genetic potential | Static (with variation) |
| Transcriptomics | RNA | RNA-seq, scRNA-seq | Expression levels of BGC genes | Highly dynamic (minutes/hours) |
| Proteomics | Proteins | LC-MS/MS, 2D gels | Abundance/activity of biosynthetic enzymes | Dynamic (hours/days) |
| Metabolomics | Metabolites | LC/GC-MS, NMR | Identification/quantification of natural products & intermediates | Highly dynamic (seconds/minutes) |

Foundational Experimental Protocols

Multi-Omics Sample Preparation Workflow

A critical first step is designing an experiment that yields high-quality, integrable data from the same biological source material [3].

Diagram: Parallel sample processing workflow for multi-omics

Key Protocol Steps:

- Standardized Sampling: Collect and immediately snap-freeze material in liquid nitrogen to preserve all molecular layers. For microbial cultures, ensure harvest is during the target production phase.

- Homogenization: Use a standardized method (e.g., bead beating in a chilled homogenizer) to simultaneously lyse cells for parallel extractions.

- Parallel Fractionation: Aliquot the homogenate for dedicated, optimized extraction protocols:

  - Genomics: Use silica-column or CTAB-based methods for high-molecular-weight DNA.

  - Transcriptomics: Use guanidinium thiocyanate-phenol solutions (e.g., TRIzol) to isolate RNA while protecting it from degradation.

  - Proteomics: Use urea- or detergent-based lysis buffers with protease/phosphatase inhibitors. Follow with protein precipitation, digestion (e.g., with trypsin), and desalting.

  - Metabolomics: Quench metabolism rapidly, then use cold methanol/water/chloroform extraction to recover polar and non-polar metabolites.

Data Preprocessing and Normalization

Raw data from each platform must be standardized to be comparable and integrable [3] [1].

- Genomics: Quality trimming (FastQC, Trimmomatic), adapter removal, and alignment to a reference genome or de novo assembly. BGC prediction using tools like antiSMASH.

- Transcriptomics: Read alignment (HISAT2, STAR), gene/transcript quantification (featureCounts, Salmon), and normalization (e.g., TPM, FPKM) to account for library size and gene length.

- Proteomics: Peak picking and alignment from MS raw files, peptide identification via database searching (against a genome-informed proteome database), and quantification with label-free (e.g., MaxLFQ) or label-based methods, followed by normalization.

- Metabolomics: Peak detection, alignment, and annotation using libraries (e.g., GNPS, HMDB). Normalization by total ion count, sample weight, or internal standards, followed by scaling (e.g., Pareto scaling).
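The length and library-size normalization mentioned for transcriptomics can be illustrated with a minimal TPM calculation. This is a sketch under stated assumptions: the gene names, counts, and lengths are hypothetical, and production pipelines (e.g., Salmon) use effective transcript lengths rather than the raw lengths used here.

```python
def tpm(counts, lengths_bp):
    """Convert raw read counts to transcripts per million (TPM).

    counts:     dict gene -> raw read count for one sample
    lengths_bp: dict gene -> gene length in base pairs (assumed known)
    """
    # Step 1: length-normalize each count to reads per kilobase (RPK).
    rpk = {g: counts[g] / (lengths_bp[g] / 1000.0) for g in counts}
    # Step 2: rescale so the values within the sample sum to one million.
    scale = sum(rpk.values()) / 1e6
    if scale == 0:
        return {g: 0.0 for g in counts}
    return {g: v / scale for g, v in rpk.items()}


# Hypothetical sample: two genes from one BGC plus a housekeeping gene.
sample = {"bgcA_pks": 500, "bgcA_tailoring": 100, "housekeeping": 400}
lengths = {"bgcA_pks": 5000, "bgcA_tailoring": 1000, "housekeeping": 2000}
values = tpm(sample, lengths)
```

In this toy sample the long PKS gene and the short tailoring gene end up with the same TPM even though their raw counts differ fivefold, which is the point of correcting for gene length before comparing genes within a cluster.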

Critical Preprocessing Step: Batch Effect Correction. Technical variation between processing batches can obscure biological signals. Approaches such as ComBat, or linear-model (ANOVA-type) adjustment that includes batch as a factor, should be applied before integration [1].
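To make the idea of batch adjustment concrete, the sketch below applies per-batch mean centering to a single feature. This is a deliberately simplified stand-in, not ComBat (which additionally uses empirical Bayes shrinkage and adjusts variances). It assumes biological groups are balanced across batches; if they are not, centering would remove real biological signal. The intensities and batch labels are hypothetical.

```python
from collections import defaultdict
from statistics import mean


def center_by_batch(values, batches):
    """Subtract each batch's mean from its samples, then restore the grand mean."""
    grand_mean = mean(values)
    by_batch = defaultdict(list)
    for value, batch in zip(values, batches):
        by_batch[batch].append(value)
    batch_mean = {b: mean(vs) for b, vs in by_batch.items()}
    return [v - batch_mean[b] + grand_mean for v, b in zip(values, batches)]


# Hypothetical metabolite intensities measured in two instrument runs;
# run2 reads about 10 units higher for purely technical reasons.
intensity = [10.0, 12.0, 20.0, 22.0]
batch = ["run1", "run1", "run2", "run2"]
corrected = center_by_batch(intensity, batch)
```

After centering, both runs share the same mean while the within-run differences between samples are preserved.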

Strategies for Multi-Omics Data Integration

Integration is not a one-size-fits-all process; the strategy depends on the biological question and data structure [4] [1].

Table 2: Multi-Omics Data Integration Strategies

| Integration Type | Description | Key Methods/Tools | Advantages | Challenges |
|---|---|---|---|---|
| Early (Feature-level) | Concatenating raw or preprocessed features from all omics into a single matrix before analysis. | Simple concatenation, some deep learning models. | Preserves all raw information; can capture complex, unforeseen interactions. | Extremely high dimensionality; prone to noise; dominant datasets may overshadow others [1]. |
| Intermediate (Model-level) | Transforming each omics dataset into an intermediate representation (e.g., similarity networks, kernels, or latent factors) and combining these representations in a joint model. | Similarity Network Fusion (SNF), Multiple Kernel Learning, MOFA+ [3] [4]. | Reduces complexity; can incorporate biological context (e.g., pathways). Effective for patient/subtype stratification. | Requires careful design; may lose some granular information [1]. |
| Late (Decision-level) | Building separate predictive models for each omics type and combining their final outputs (e.g., predictions). | Ensemble methods (stacking, weighted voting). | Robust to missing data; computationally efficient; uses best model per data type. | May miss subtle cross-omics interactions not captured by individual models [1]. |
| Knowledge-Based | Using existing biological knowledge (pathways, networks) as a scaffold to overlay and connect multi-omics data. | Pathway enrichment (KEGG, Reactome), network analysis (Cytoscape). | Highly interpretable; leverages prior knowledge to guide integration. | Limited to known biology; may miss novel interactions. |
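The difference between early and late integration in the table above can be sketched in a few lines. The example is illustrative only: the matrices, scores, and weights are hypothetical, per-feature z-scoring is one common way (assumed here) to keep a high-magnitude omics block from dominating the concatenated matrix, and the per-omics scores stand in for the outputs of separately trained models.

```python
from statistics import mean, pstdev


def zscore_columns(matrix):
    """Standardize each feature (column) to mean 0 and unit variance."""
    scaled_cols = []
    for col in zip(*matrix):
        mu, sd = mean(col), pstdev(col)
        scaled_cols.append([(x - mu) / sd if sd > 0 else 0.0 for x in col])
    return [list(row) for row in zip(*scaled_cols)]


def early_integration(blocks):
    """Early integration: concatenate standardized blocks sample by sample."""
    scaled = [zscore_columns(block) for block in blocks]
    n_samples = len(blocks[0])
    return [sum((blk[i] for blk in scaled), []) for i in range(n_samples)]


def late_integration(per_omics_scores, weights):
    """Late integration: weighted average of per-omics model outputs."""
    total = sum(weights)
    n_samples = len(per_omics_scores[0])
    return [
        sum(w * scores[i] for w, scores in zip(weights, per_omics_scores)) / total
        for i in range(n_samples)
    ]


# Hypothetical data: 3 samples, 2 transcript features and 1 metabolite feature.
transcripts = [[10.0, 200.0], [12.0, 180.0], [30.0, 50.0]]
metabolites = [[0.1], [0.2], [0.9]]
fused = early_integration([transcripts, metabolites])

# Hypothetical per-sample scores from two separately trained models.
combined = late_integration([[0.9, 0.2, 0.6], [0.7, 0.4, 0.2]], weights=[2, 1])
```

The early route yields one 3 x 3 matrix for a single downstream model, whereas the late route never merges features and only combines the final scores.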

Diagram: Conceptual flow of multi-omics data integration strategies

A prominent example of a structured integration pipeline is XomicsToModel, a semi-automated protocol that integrates bibliomic, transcriptomic, proteomic, and metabolomic data with a generic genome-scale metabolic reconstruction to generate a thermodynamically consistent, context-specific metabolic model [5]. This is particularly powerful for natural product research, as it can predict how an organism redistributes metabolic flux in response to the production of a secondary metabolite or upon exposure to a bioactive compound.

Application in Natural Product Research: A Multi-Omics Workflow

Integrating multi-omics transforms the natural product discovery pipeline from a linear process to a systems-level cycle of hypothesis generation and testing.

- Discovery & Prioritization: Metagenomic or genomic sequencing of complex microbiomes or plant tissues identifies novel BGCs. Transcriptomic data from induced vs. control conditions prioritizes which of these BGCs are actively expressed and likely producing compounds [2].

- Characterization & Validation: Proteomic data confirms the production of key enzymes from the prioritized BGC. Metabolomic profiling (e.g., using Molecular Networking on GNPS) links the expressed BGC to its specific chemical products, discovering novel analogs [5].

- Mode-of-Action Studies: Treating a target organism (e.g., a pathogenic bacterium or cancer cell line) with a purified natural product and applying multi-omics (transcriptomics, proteomics, metabolomics) reveals the compound's system-wide impact, identifying pathways involved in its therapeutic effect and potential resistance mechanisms [1] [2].

- Biosynthesis Optimization: Integrating transcriptomic, proteomic, and metabolomic data from a producing host under different fermentation conditions identifies bottlenecks in the biosynthetic pathway. This guides metabolic engineering strategies to overproduce the desired compound.
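The prioritization step in the workflow above (ranking BGCs by induced versus control expression) can be sketched as a log2 fold-change filter. The BGC identifiers, expression values, pseudocount, and threshold are all hypothetical; a real analysis would use replicates and a statistical framework such as DESeq2 or edgeR rather than a bare ratio.

```python
import math


def log2_fold_change(induced, control, pseudocount=1.0):
    """log2 ratio of induced to control expression; pseudocount avoids division by zero."""
    return math.log2((induced + pseudocount) / (control + pseudocount))


def prioritize_bgcs(expression, min_log2fc=1.0):
    """Return (bgc, log2FC) pairs at or above the threshold, strongest induction first.

    expression: dict bgc_id -> (induced_value, control_value)
    """
    ranked = []
    for bgc, (induced, control) in expression.items():
        lfc = log2_fold_change(induced, control)
        if lfc >= min_log2fc:
            ranked.append((bgc, lfc))
    return sorted(ranked, key=lambda pair: pair[1], reverse=True)


# Hypothetical mean expression (e.g., TPM) of core genes in three predicted BGCs.
expression = {
    "BGC_01": (150.0, 4.0),   # strongly induced
    "BGC_02": (30.0, 28.0),   # essentially unchanged
    "BGC_03": (63.0, 15.0),   # induced fourfold
}
hits = prioritize_bgcs(expression)
```

Here `BGC_01` and `BGC_03` pass the filter and `BGC_02` is dropped, which mirrors how transcriptomics narrows a long list of predicted clusters to those worth following up with proteomics and metabolomics.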

Table 3: Essential Research Reagent Solutions for Multi-Omics Studies

| Reagent/Tool Category | Specific Example | Function in Multi-Omics Workflow |
|---|---|---|
| Nucleic Acid Stabilization | RNAlater, TRIzol Reagent | Preserves RNA integrity at sample collection for accurate transcriptomics; TRIzol allows simultaneous isolation of RNA, DNA, and proteins. |
| Protease/Phosphatase Inhibitors | EDTA, PMSF, Commercial Cocktails (e.g., from Roche) | Added during protein extraction to prevent degradation and preserve post-translational modification states for proteomics. |
| Metabolite Quenching Solvents | Cold 60% Aqueous Methanol | Rapidly halts cellular metabolism during sample harvest for metabolomics, providing a true snapshot of the metabolome. |
| Internal Standards for MS | Labeled Amino Acids (¹³C, ¹⁵N), SILAC kits; Stable Isotope-Labeled Metabolites | Enables accurate quantification in proteomics and metabolomics by correcting for technical variation during mass spectrometry. |
| Bioinformatics Pipelines | nf-core pipelines, COBRA Toolbox [5] | Standardized, version-controlled computational workflows for reproducible analysis and integration of omics data (e.g., for building metabolic models). |
| Multi-Omics Databases | The Cancer Genome Atlas (TCGA) [2], GNPS (for metabolomics) | Public repositories providing reference datasets for method benchmarking and discovery of connections between molecular layers. |

The future of multi-omics in natural product research lies in temporal and spatial integration, single-cell omics, and advanced artificial intelligence. Time-series (longitudinal) omics data will map the dynamic sequence of events leading to compound production or therapeutic response. Spatial transcriptomics and metabolomics will localize biosynthesis within a tissue or microbial biofilm. AI and graph neural networks will increasingly mine integrated datasets to predict novel BGC-product relationships and optimize synthetic biology designs [4] [1].

Successful multi-omics integration requires meticulous experimental design, rigorous standardization, and choosing an integration strategy aligned with the research goal [3]. By embracing this holistic approach, researchers can fully deconstruct the complexity of natural product biosynthesis and mechanism, leading to a new era of rational discovery and development.

The field of natural product research is undergoing a paradigm shift, driven by the exponential growth of genomic data. Sequencing technologies have revealed a staggering reservoir of biosynthetic potential, with marine bacterial genomes alone predicted to contain tens of thousands of biosynthetic gene clusters (BGCs) [6]. In the fungal subphylum Pezizomycotina, estimates suggest the existence of 1.4 to 4.3 million secondary metabolites, indicating that over 90% of fungal chemical diversity remains undiscovered [7]. However, this genomic promise is met with a central experimental challenge: the majority of these BGCs are "silent" or "cryptic," not expressed under standard laboratory conditions, creating a profound disconnect between genetic potential and characterized chemical output [8]. Establishing a definitive link between a BGC and its corresponding bioactive metabolite is therefore the critical bottleneck in modern drug discovery from natural sources.

This challenge is framed within the essential context of multi-omics data integration. Isolated genomics or metabolomics provides only a fragment of the picture. The solution lies in the concurrent and correlative application of genomics, transcriptomics, proteomics, and metabolomics to illuminate the complex pathway from gene sequence to functional small molecule [9] [10]. This technical guide details the core strategies, experimental protocols, and integrative analytical frameworks designed to solve this central challenge and accelerate the discovery of novel therapeutic agents.

Multi-Omics Integration: The Core Analytical Framework

The linkage of BGCs to metabolites is not a linear process but a cyclical, hypothesis-generating workflow powered by multi-omics integration. This framework systematically layers biological data to converge on validated gene-metabolite pairs.

Foundational Omics Layers and Their Interplay

- Genomics & Metagenomics: Serves as the starting point for in silico discovery. Tools like antiSMASH (antibiotics & Secondary Metabolite Analysis Shell) are used to scan sequenced genomes or metagenome-assembled genomes (MAGs) for BGCs [6] [11]. Clustering algorithms like BiG-SCAPE (Biosynthetic Gene Similarity Clustering and Prospecting Engine) group predicted BGCs into Gene Cluster Families (GCFs) based on sequence similarity, prioritizing novelty [6] [7]. For example, a study of marine bacteria clustered vibrioferrin BGCs into 12 families at 10% sequence similarity, highlighting fine-scale diversity [6].

- Transcriptomics: Identifies which prioritized BGCs are actively transcribed under specific conditions (e.g., stress, co-culture). RNA sequencing (RNA-seq) and co-expression network analysis can reveal clusters of co-regulated genes, providing strong circumstantial evidence for a BGC's boundary and activity [10].

- Metabolomics: Provides the chemical phenotype. High-resolution mass spectrometry (HR-MS) and molecular networking platforms like GNPS (Global Natural Products Social Molecular Networking) analyze the metabolome, grouping detected ions by spectral similarity into "molecular families" [12] [13].

- Proteomics: Validates the translation of BGC genes into functional enzymes. Quantitative techniques can confirm the production of key biosynthetic proteins when a pathway is activated [14] [10].
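The grouping of ions into molecular families rests on a spectral similarity score. The sketch below computes a plain binned cosine similarity between two MS/MS spectra. It is a simplification: GNPS uses a modified cosine that also aligns peaks shifted by the precursor mass difference, which is not implemented here. The spectra and bin width are hypothetical.

```python
import math


def bin_spectrum(peaks, bin_width=0.01):
    """Map (m/z, intensity) peaks to integer bins, summing intensities per bin."""
    binned = {}
    for mz, intensity in peaks:
        key = round(mz / bin_width)
        binned[key] = binned.get(key, 0.0) + intensity
    return binned


def cosine_similarity(peaks_a, peaks_b, bin_width=0.01):
    """Cosine of the angle between two binned spectra (0 = no shared peaks)."""
    a = bin_spectrum(peaks_a, bin_width)
    b = bin_spectrum(peaks_b, bin_width)
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


# Hypothetical fragment spectra as (m/z, intensity) pairs.
spec_a = [(100.05, 50.0), (150.10, 100.0), (210.15, 30.0)]
spec_b = [(100.05, 45.0), (150.10, 110.0), (305.20, 20.0)]  # shares two fragments
spec_c = [(420.30, 80.0)]                                   # unrelated

score_related = cosine_similarity(spec_a, spec_b)
score_unrelated = cosine_similarity(spec_a, spec_c)
```

In a molecular network, pairs of spectra whose score exceeds a chosen cutoff are connected by an edge, so `spec_a` and `spec_b` would fall in the same family while `spec_c` would not.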

The integrative power is realized by correlating these layers: a BGC (genomics) that is highly transcribed (transcriptomics) should coincide with the production of its corresponding enzymes (proteomics) and a specific molecular family in the metabolome (metabolomics). Pathway-targeted molecular networking is a key strategy that refines this correlation. By comparing metabolomes of a wild-type strain and a mutant with a deleted or inactivated BGC, metabolites that disappear in the mutant can be specifically linked to that genetic locus [13].
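The cross-layer correlation described above can be sketched as a search for BGC expression profiles that track metabolite feature intensities across the same conditions. The identifiers, values, and threshold are hypothetical, and with only a handful of conditions a high correlation is circumstantial evidence at best; it generates hypotheses for the perturbation experiments rather than proving a link.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y) if sd_x and sd_y else 0.0


def link_candidates(bgc_expression, feature_abundance, min_r=0.9):
    """Return (bgc, feature, r) for every pair correlated at or above min_r."""
    links = []
    for bgc, expr in bgc_expression.items():
        for feature, abundance in feature_abundance.items():
            r = pearson(expr, abundance)
            if r >= min_r:
                links.append((bgc, feature, round(r, 3)))
    return links


# Hypothetical profiles across four culture conditions.
bgc_expression = {"BGC_07": [1, 2, 8, 9], "BGC_12": [5, 5, 4, 6]}
feature_abundance = {
    "mz_433.21": [10, 22, 79, 91],   # rises with BGC_07 expression
    "mz_288.10": [50, 48, 52, 49],   # roughly constant
}
links = link_candidates(bgc_expression, feature_abundance)
```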

Diagram: Multi-Omics Integration Workflow for BGC-Metabolite Linking. This diagram illustrates the parallel generation and integration of omics data layers to form and validate testable hypotheses linking specific BGCs to their metabolite products.

Quantitative Landscape of BGC Diversity

The scale of the challenge is underscored by quantitative surveys of BGC diversity across different environments and taxa.

Table 1: BGC Diversity in Selected Genomic and Metagenomic Studies

| Study Source / Environment | Number of Genomes/MAGs Analyzed | Predominant BGC Types Identified | Key Quantitative Findings | Reference |
|---|---|---|---|---|
| Marine Bacteria (21 species) | 199 genomes | Non-ribosomal peptide synthetases (NRPS), Betalactone, NI-siderophores | 29 total BGC types identified; Vibrioferrin BGCs formed 12 distinct families at 10% sequence similarity. | [6] |
| Alkaline Soda Lake Chitu (Metagenomic) | Metagenome-assembled genomes (MAGs) | Terpene-precursors (32%), Terpenes (25%), RiPPs (9%), NRPS (7%) | 13 major BGC types identified; highlights extremophiles as a rich source of diverse biosynthesis. | [11] |
| Fungal Genus Aspergillus | 135 genomes | Multiple classes (NRPS, PKS, Terpene, Hybrid) | Avg. ~52 BGCs per genome; 80% of Gene Cluster Families (GCFs) were species-specific. | [7] |
| Pezizomycotina Fungi (Projection) | Modeled from genomic surveys | Not specified | Estimated 2.55–4.25 million BGCs across known species, encoding 1.4–4.3 million metabolites. | [7] |

Core Experimental Strategies for Establishing BGC-Metabolite Links

Beyond bioinformatic correlation, definitive proof requires experimental perturbation of the BGC and observation of the corresponding metabolic change. Two primary, complementary strategies are employed.

Strategy 1: BGC-First (Gene Manipulation in Native or Heterologous Host)

This approach starts with a genetically tractable BGC and aims to elicit or transfer its expression to observe metabolic output.

- Protocol: Heterologous Expression of Marine Bacterial BGCs.

  - Step 1 - BGC Selection & Capture: A prioritized BGC (e.g., >10 kb) is captured from genomic DNA. For large clusters, this often involves cosmids, Bacterial Artificial Chromosomes (BACs), or direct synthesis [8].

  - Step 2 - Host Selection: The choice of heterologous host is critical. Common hosts include Streptomyces spp. (for actinomycete BGCs), Escherichia coli (optimized with accessory genes), or Aspergillus nidulans (for fungal BGCs). Selection criteria include genetic tractability, native precursor supply, and compatibility with BGC regulatory elements [8].

  - Step 3 - Vector Assembly & Transformation: The captured BGC is cloned into an appropriate expression vector, often containing strong, constitutive promoters to drive expression of "silent" clusters. The vector is then introduced into the heterologous host via transformation or conjugation.

  - Step 4 - Fermentation & Metabolite Analysis: Transformed hosts are cultured. Metabolite extracts are compared to controls (host with empty vector) using HPLC-MS and molecular networking to identify new compounds specific to the BGC [8] [10]. A successful example is the heterologous expression of a vibrioferrin siderophore BGC from marine metagenomic DNA in E. coli [8].

Strategy 2: Metabolite-First (Comparative Omics and Pathway-Targeted Analysis)

This approach begins with an observed metabolite or metabolic profile and works backward to identify the responsible BGC.

- Protocol: Pathway-Targeted Molecular Networking with Genetic Mutants.

  - Step 1 - Cultivation & Metabolomics: The native producing organism is cultivated under conditions that stimulate metabolite production (the OSMAC approach: One Strain, Many Compounds). A crude metabolite extract is analyzed by untargeted HR-LC-MS/MS [12].

  - Step 2 - Genetic Inactivation: A key gene within the suspected BGC (e.g., a core biosynthetic enzyme like a polyketide synthase) is knocked out via homologous recombination or CRISPR-Cas9, creating an isogenic mutant strain [15].

  - Step 3 - Comparative Analysis: The mutant and wild-type strains are cultivated identically, and their metabolomes are analyzed. The resulting MS/MS data files are processed and uploaded to the GNPS platform.

  - Step 4 - Network Construction & Analysis: A molecular network is created from all fragmentation spectra, with spectra clustered by similarity. Metabolites that are present in the wild-type network but absent in the mutant are directly linked to the inactivated BGC. This method was pivotal in characterizing metabolites from the colibactin BGC [13].
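The comparative logic of Steps 3 and 4 can be sketched as a feature-matching problem: find LC-MS features detected in the wild type that have no counterpart in the mutant. The feature lists, the 10 ppm mass tolerance, and the 0.2 min retention-time tolerance are hypothetical illustration values, not parameters taken from GNPS or from the cited studies.

```python
def bgc_dependent_features(wild_type, mutant, ppm_tol=10.0, rt_tol=0.2):
    """Return wild-type features that have no m/z and retention-time match in the mutant.

    Each feature is a dict with 'mz' (precursor m/z) and 'rt' (retention time, min).
    """
    def matches(f, g):
        ppm_error = abs(f["mz"] - g["mz"]) / f["mz"] * 1e6
        return ppm_error <= ppm_tol and abs(f["rt"] - g["rt"]) <= rt_tol

    return [f for f in wild_type if not any(matches(f, g) for g in mutant)]


# Hypothetical feature tables from identically cultivated strains.
wild_type = [
    {"mz": 301.1410, "rt": 5.20},
    {"mz": 455.2903, "rt": 8.90},
    {"mz": 720.3301, "rt": 12.40},  # candidate product of the inactivated BGC
]
mutant = [
    {"mz": 301.1412, "rt": 5.25},
    {"mz": 455.2900, "rt": 8.85},
]
missing_in_mutant = bgc_dependent_features(wild_type, mutant)
```

Features returned by this comparison are the ones to inspect in the molecular network, since related analogs and intermediates often sit in the same cluster as a lost parent ion.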

Diagram: Pathway-Targeted Molecular Networking Workflow. This workflow uses genetic inactivation of a BGC to pinpoint its specific metabolic products through comparative analysis of molecular networks.

The Scientist's Toolkit: Key Research Reagent Solutions

Successful execution of these strategies depends on a suite of specialized reagents, software, and biological materials.

Table 2: Essential Research Toolkit for Linking BGCs and Metabolites

| Tool / Reagent Category | Specific Example(s) | Primary Function in Workflow | Key Consideration / Application |
|---|---|---|---|
| Bioinformatics Software | antiSMASH [6], DeepBGC, PRISM | BGC Prediction & Annotation: Identifies and annotates BGCs in genome sequences. | Foundation of genome mining; accuracy is critical for downstream steps. |
| Clustering & Analysis Tools | BiG-SCAPE [6] [7], CORASON | GCF Analysis: Clusters BGCs by similarity to prioritize novelty and study diversity. | Used to contextualize a BGC within known chemical space. |
| Molecular Networking Platform | GNPS (Global Natural Products Social Molecular Networking) [12] [10] | Metabolome Visualization & Dereplication: Organizes MS/MS data into networks of related molecules. | Core platform for metabolite-first and comparative strategies; essential for dereplication. |
| Heterologous Host Strains | Streptomyces coelicolor, Aspergillus nidulans, E. coli (BAP1) [8] | BGC Expression Chassis: Provides a genetically tractable background to express silent BGCs. | Host must supply necessary precursors and folding machinery, and must tolerate pathway products. |
| Cloning & Assembly Systems | Gibson Assembly, Yeast Recombination, Cosmids/BACs [8] | BGC Capture & Engineering: Enables isolation, manipulation, and transfer of large DNA clusters. | Critical for handling BGCs often >30 kb in size. |
| Genetic Manipulation Tools | CRISPR-Cas9, Lambda-RED Recombination [15] | Gene Knockout/Knock-in: Creates isogenic mutant strains for comparative analysis. | Allows precise genetic perturbation to establish causality. |
| Mass Spectrometry Standards | Deuterated solvents, stable isotope-labeled precursors (e.g., ¹³C-acetate) | Metabolite Detection & Tracing: Aids in compound identification and elucidates biosynthetic pathways. | Used in isotopic labeling experiments to confirm a metabolite originates from a specific pathway. |

Future Perspectives: AI and Integrative Knowledge Graphs

The future of solving the BGC-metabolite linkage challenge lies in deeper, automated integration. Artificial Intelligence (AI) and Machine Learning (ML) are being harnessed to predict BGC boundaries, substrate specificity of enzymes, and even the chemical structures of final metabolites from sequence data alone [10]. The next frontier is the construction of integrative knowledge graphs that systematically link genomic entities (BGCs, enzymes), chemical entities (metabolites, spectra), and phenotypic data (bioactivity, regulation) [10]. These graphs, analyzed by graph neural networks, will allow for predictive reasoning across the entire natural product discovery pipeline, transforming the central challenge from a serial bottleneck into an integrated, predictive science. This evolution within the framework of multi-omics integration is poised to unlock the vast, untapped reservoir of bioactive metabolites encoded in the global microbiome [9] [7].

Principles of Systems Biology and Holistic Experimental Design for Multi-Omics Studies

The discovery and development of therapeutics from natural products represent a cornerstone of modern pharmacology, yielding compounds with unprecedented chemical structures and potent biological activities [16]. However, the transition from identifying a bioactive natural extract to understanding its precise mechanism of action remains a significant bottleneck. Traditional reductionist approaches, which study molecular components in isolation, often fail to capture the complex, multi-layered interactions through which natural products exert their effects. This gap necessitates a paradigm shift toward systems biology, a holistic framework that examines biological systems as integrated and interacting networks of genes, proteins, and metabolites [17].

As part of a broader treatment of multi-omics data integration for natural product research, this section establishes the foundational principles and practical methodologies for designing and executing holistic multi-omics studies. The integration of genomics, transcriptomics, proteomics, and metabolomics data provides a comprehensive, systems-level view of a biological response to a natural product, moving beyond single-target identification to elucidate entire perturbed pathways and networks [14]. This guide details the core tenets of systems biology as they apply to experimental design, outlines actionable protocols for generating robust multi-omics data, and reviews computational strategies for integrative analysis, all aimed at accelerating and de-risking natural product-based drug discovery.

Foundational Principles of Systems Biology for Experimental Design

Systems biology is defined by several key principles that directly inform the design of meaningful multi-omics experiments, particularly in the context of natural products with potentially pleiotropic effects.

2.1 The Hierarchical and Interconnected Nature of Biological Systems

Biological function emerges from the dynamic interactions across multiple organizational layers. The flow of information and regulation is not strictly linear but involves complex feedback and feedforward loops across these layers [17]. A natural product intervention can induce changes at the epigenetic or transcriptional level that subsequently alter the proteome and metabolome, while metabolic changes can themselves signal back to modify gene expression. An effective experimental design must therefore plan to capture data from multiple, complementary omics layers to map these interactions.

Diagram: Hierarchical & Interconnected Nature of Biological Systems

2.2 Dynamic and Context-Dependent Responses

The cellular state is not static. The effect of a natural product is dependent on the temporal context (time of exposure), the cellular context (cell type, tissue), and the environmental context (nutrient availability, co-treatments) [17]. Systems biology experiments must incorporate these variables. For instance, a time-series design is critical to distinguish primary, direct targets from secondary, adaptive responses. Similarly, comparing omics profiles across different relevant cell types can reveal cell-specific mechanisms of action or toxicity.

2.3 Emergent Properties and Network Analysis

The core analytic approach in systems biology is network-based. The goal is to integrate omics data to reconstruct molecular interaction networks (e.g., gene regulatory, protein-protein interaction, metabolic networks). Perturbations by a natural product are analyzed not just as a list of differentially expressed entities, but as localized or global rewiring of these networks. Key emergent properties, such as the identification of highly connected "hub" nodes or disrupted functional modules, can point to critical leverage points in the mechanism of action that might not be apparent from single-omics analysis [18].
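The notion of a "hub" node can be made concrete with a degree count over an interaction network. The sketch below uses node degree as the only centrality measure, which is an assumption made for brevity; betweenness or other centralities are also used in practice. The edge list and node names are hypothetical.

```python
from collections import defaultdict


def node_degrees(edges):
    """Count distinct neighbors of each node in an undirected edge list."""
    neighbors = defaultdict(set)
    for a, b in edges:
        if a != b:  # ignore self-loops
            neighbors[a].add(b)
            neighbors[b].add(a)
    return {node: len(nbrs) for node, nbrs in neighbors.items()}


def hubs(edges, top_n=1):
    """Return the top_n most connected nodes (ties broken alphabetically)."""
    degree = node_degrees(edges)
    return sorted(degree, key=lambda n: (-degree[n], n))[:top_n]


# Hypothetical integrated network: a regulator, two genes, an enzyme, a metabolite.
edges = [
    ("TF1", "geneA"),
    ("TF1", "geneB"),
    ("TF1", "enzC"),
    ("enzC", "metD"),
    ("geneA", "geneB"),
]
top_hub = hubs(edges, top_n=1)
```

In this toy network the regulator `TF1` is the most connected node, the kind of leverage point that a list of differentially expressed genes alone would not single out.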

Holistic Experimental Design Framework

Designing a multi-omics study requires careful upfront planning to ensure biological relevance, technical feasibility, and analytical power. The following framework outlines the critical decision points.

3.1 Defining the Precise Research Question

The design is dictated by the question. In natural product research, common questions include:

- Target Deconvolution: What are the direct protein targets of this compound? [16]

- Mechanism of Action: What signaling pathways and biological processes are altered?

- Toxicity Prediction: What off-target or stress responses are induced at different doses?

- Biomarker Discovery: What omics signatures predict sensitivity or resistance to the compound?

The question determines the choice of omics layers, experimental model, and sampling strategy [19].

3.2 Selection of Omics Technologies

Each omics layer provides unique and complementary information. The table below compares key technologies relevant to natural product research.

Table 1: Comparative Analysis of Core Omics Technologies in Natural Product Research

| Omics Layer | Key Technologies | Information Gained | Advantages for NP Research | Key Challenges |
|---|---|---|---|---|
| Genomics | Whole Genome Sequencing, SNP Arrays | Genetic blueprint, mutations, polymorphisms. | Identify genetic biomarkers of response; assess compound's effect on genome stability. | Static information; does not directly inform dynamic response [17]. |
| Transcriptomics | RNA-Seq, Single-Cell RNA-Seq (scRNA-Seq) | Global gene expression (mRNA) levels. | Highly sensitive; reveals regulated pathways; scRNA-Seq uncovers heterogeneity in response [17] [14]. | mRNA levels may not correlate with protein activity; post-transcriptional regulation missed. |
| Proteomics | LC-MS/MS (Label-free, TMT), Affinity Proteomics | Protein abundance, post-translational modifications (PTMs). | Directly profiles functional effectors; chemical proteomics can identify direct drug-binding proteins [16] [14]. | Lower throughput & depth than transcriptomics; dynamic range challenges [17]. |
| Metabolomics | LC/GC-MS, NMR | Abundance of small-molecule metabolites. | Closest readout of phenotypic state; reveals metabolic rewiring and potential on-/off-target effects [17]. | Extreme chemical diversity; requires multiple platforms; compound identification difficult. |

3.3 Critical Design Considerations

- Temporal Design: A time-course experiment is superior to a single endpoint. It allows for the construction of causal networks and distinguishes direct from indirect effects. Key time points should capture early signaling events, mid-term transcriptional responses, and longer-term phenotypic adaptations.

- Dose-Response Design: Including multiple concentrations (from sub-therapeutic to toxic) helps differentiate specific mechanisms from general stress responses and aids in understanding the therapeutic window.

- Replication and Batch Effects: Biological replication (multiple independent samples) is non-negotiable for statistical power. Technical replication and randomization are crucial to minimize batch effects, which are particularly pernicious when integrating data generated from different platforms at different times [19].

- Sample-Matched Design: The most powerful design for integration is one in which all omics layers are profiled from the same biological sample aliquot or from aliquots taken from a homogenized pool. This eliminates inter-sample variability and is ideal for network inference [19].
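
The randomization point above can be sketched as a simple randomized block assignment, so that no treatment condition is confined to a single processing batch. The condition names, replicate counts, and the `assign_batches` helper are illustrative assumptions, not part of any cited protocol.

```python
import random
from collections import defaultdict

def assign_batches(samples, n_batches, seed=0):
    """Shuffle samples within each condition, then deal them round-robin into batches.

    samples: list of (sample_id, condition) tuples.
    Returns {batch_index: [(sample_id, condition), ...]}.
    """
    rng = random.Random(seed)  # fixed seed so the plan is reproducible
    by_condition = defaultdict(list)
    for sample_id, condition in samples:
        by_condition[condition].append(sample_id)

    batches = defaultdict(list)
    slot = 0
    for condition in sorted(by_condition):
        ids = by_condition[condition]
        rng.shuffle(ids)  # random order within the condition
        for sample_id in ids:
            batches[slot % n_batches].append((sample_id, condition))
            slot += 1
    return dict(batches)

# Hypothetical design: three conditions with four biological replicates each.
samples = [(f"{cond}_rep{i}", cond)
           for cond in ("vehicle", "low_dose", "high_dose")
           for i in range(1, 5)]
plan = assign_batches(samples, n_batches=2, seed=42)
for batch, members in sorted(plan.items()):
    print(batch, members)
```

With this layout every batch contains the same number of samples from each condition, so a batch effect cannot be confounded with the treatment effect.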

Diagram: Holistic Multi-Omics Experimental Workflow

Detailed Methodologies and Protocols

4.1 Protocol for Single-Cell Multi-Omics from Primary Cells Single-cell technologies are emerging as powerful tools for natural product research, as they can resolve heterogeneous cell populations within a tissue or tumor that may respond differently to treatment [14]. The following adapts a protocol for high-quality single-cell multi-omics from human peripheral blood mononuclear cells (PBMCs) [20], a model relevant for immunomodulatory natural products.

- Sample Collection & PBMC Isolation: Collect fresh blood in anticoagulant tubes. Isolate PBMCs via density gradient centrifugation (e.g., Ficoll-Paque). Maintain samples at 4°C throughout. Assess cell viability (>95%) using Trypan Blue or an automated cell counter.

- Cell Processing for Single-Cell Sequencing: Resuspend PBMC pellet in a suitable buffer containing a viability dye. Use fluorescence-activated cell sorting (FACS) to sort single, live cells into 384-well plates containing lysis buffer. Plates are immediately frozen.

- Library Preparation: Perform reverse transcription and PCR amplification to generate cDNA. Construct sequencing libraries using kits compatible with your single-cell technology (e.g., a plate-based chemistry for FACS-sorted plates; droplet platforms such as 10x Genomics instead take a cell suspension). Include unique molecular identifiers (UMIs) and cell barcodes to assign transcripts to individual cells.

- Parallel Omics from the Same Population: From an aliquot of the same PBMC pool used for single-cell sorting, isolate material for bulk omics.

- Bulk RNA-seq: Extract total RNA for sequencing to provide a high-depth transcriptome baseline.

- Proteomics: Pellet cells, lyse, digest proteins with trypsin, and prepare peptides for LC-MS/MS analysis.

- Metabolomics: Quench metabolism (e.g., cold methanol), extract metabolites, and analyze by LC-MS.
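
To make the role of cell barcodes and UMIs in the library preparation step concrete, the following minimal sketch collapses reads that share the same cell barcode, gene, and UMI, treating them as PCR duplicates of one original molecule. The read tuples and names are invented for illustration, and exact matching is an assumption: production pipelines also correct sequencing errors in barcodes and UMIs.

```python
from collections import defaultdict

def count_umis(reads):
    """Count unique molecules per cell and gene.

    reads: iterable of (cell_barcode, umi, gene) tuples.
    Reads with an identical (cell, gene, UMI) combination are counted once.
    """
    seen = set()
    counts = defaultdict(lambda: defaultdict(int))
    for cell_barcode, umi, gene in reads:
        key = (cell_barcode, gene, umi)
        if key in seen:
            continue  # same molecule amplified by PCR; skip the duplicate
        seen.add(key)
        counts[cell_barcode][gene] += 1
    return {cell: dict(genes) for cell, genes in counts.items()}

# Hypothetical aligned reads: (cell barcode, UMI, gene).
reads = [
    ("AAAC", "TTGA", "IL6"),
    ("AAAC", "TTGA", "IL6"),   # PCR duplicate of the first read
    ("AAAC", "CCGT", "IL6"),
    ("AAAC", "TTGA", "TNF"),
    ("GGGT", "TTGA", "IL6"),
]
umi_counts = count_umis(reads)
print(umi_counts)
```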

4.2 Chemical Proteomics for Direct Target Identification This protocol is central to natural product target deconvolution [16] [14].

- Probe Synthesis: Chemically modify the natural product to incorporate a "handle" (e.g., an alkyne or azide for click chemistry) and a photoreactive group (e.g., a diazirine). Control: Synthesize an inactive analog with the same handle.

- Cell Treatment and Photo-Crosslinking: Treat live cells or cell lysates with the active probe or inactive control. Irradiate with UV light (e.g., 365 nm) to activate the diazirine, covalently crosslinking the probe to its interacting proteins.

- Click Chemistry and Enrichment: Lyse cells. Use copper-catalyzed azide-alkyne cycloaddition (CuAAC) to "click" a biotin or a solid-support tag onto the alkyne handle of the probe. Capture probe-bound proteins using streptavidin beads or the solid support.

- Protein Identification and Validation: Wash beads stringently. Elute bound proteins and identify them by high-sensitivity LC-MS/MS. Compare proteins enriched by the active probe versus the inactive control. Validate top hits through orthogonal methods (e.g., cellular thermal shift assay - CETSA, surface plasmon resonance).
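
The comparison of active probe versus inactive control in the final step can be sketched as a log2 enrichment ratio per protein. The intensities, protein names, pseudocount, and the twofold-log cutoff below are assumptions chosen for illustration; a real analysis would add a statistical test across replicates with multiple-testing correction.

```python
import math
from statistics import mean

def log2_enrichment(active, control, pseudocount=1.0):
    """log2 ratio of mean intensities; the pseudocount avoids division by zero."""
    return math.log2((mean(active) + pseudocount) / (mean(control) + pseudocount))

def candidate_targets(intensities, min_log2_fc=2.0):
    """Return proteins enriched by the active probe, strongest first.

    intensities: {protein: (active_replicates, control_replicates)}
    """
    hits = []
    for protein, (active, control) in intensities.items():
        score = log2_enrichment(active, control)
        if score >= min_log2_fc:
            hits.append((protein, round(score, 2)))
    return sorted(hits, key=lambda h: -h[1])

# Hypothetical LC-MS/MS intensities (three replicates per arm).
intensities = {
    "HSP90AA1": ([799, 799, 799], [99, 99, 99]),
    "KEAP1": ([1599, 1599, 1599], [399, 399, 399]),
    "ACTB": ([500, 510, 490], [480, 500, 520]),  # abundant background binder
}
targets = candidate_targets(intensities)
print(targets)
```

Proteins such as the background binder in this toy data show similar signal in both arms and drop out, which is the reason the inactive control probe is needed.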

Data Integration and Computational Analysis Strategies

The integration of heterogeneous omics datasets is the most critical analytical step. Methods can be categorized by the stage at which integration occurs [19].

5.1 Integration Methodologies

- Early Integration (Concatenation): Datasets from different omics are merged into a single large matrix (e.g., genes + proteins + metabolites as features) for analysis with multivariate statistics or machine learning. This is simple but challenging due to different data scales, noise structures, and the "curse of dimensionality" [19].

- Late Integration (Model-based): Analyses are performed separately on each omics dataset (e.g., differential expression analysis), and the results (p-values, pathway enrichments) are combined meta-analytically. This is flexible but may miss cross-omics interactions [19].

- Intermediate Integration (Transformation-based): This is often the most powerful approach. Each dataset is first transformed into a lower-dimensional or relational representation (e.g., per-layer PCA components, kernels, or graphs), and these representations are then combined; joint factor models such as MOFA instead learn latent factors shared across layers directly. Network-based integration (e.g., constructing multi-layered networks where edges connect different entity types) is particularly aligned with systems biology principles and can reveal key inter-omic drivers [17] [18].
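
Two of the strategies above can be sketched in a few lines. The first function illustrates early integration by z-scoring each feature before concatenation, which addresses the scale mismatch between layers. The second illustrates late integration by combining per-layer p-values with Fisher's method, using the closed-form chi-square tail for even degrees of freedom. The layer names and values are hypothetical, and Fisher's method assumes independent p-values, which matched omics layers may not satisfy.

```python
import math
from statistics import mean, pstdev

def zscore(values):
    """Standardise one feature across samples (population standard deviation)."""
    mu, sigma = mean(values), pstdev(values)
    return [0.0 if sigma == 0 else (v - mu) / sigma for v in values]

def early_integration(layers):
    """Concatenate z-scored features from all layers into one feature map.

    layers: {layer_name: {feature_name: [value per sample]}}
    """
    merged = {}
    for layer, features in layers.items():
        for feature, values in features.items():
            merged[f"{layer}:{feature}"] = zscore(values)
    return merged

def fisher_combined_p(p_values):
    """Fisher's method: X = -2 * sum(ln p) is chi-square with 2k df under the null."""
    k = len(p_values)
    half_x = -sum(math.log(p) for p in p_values)  # equals X / 2
    # Survival function of a chi-square distribution with even df = 2k.
    return math.exp(-half_x) * sum(half_x ** i / math.factorial(i) for i in range(k))

# Hypothetical data: one transcript and one metabolite measured in three samples.
layers = {
    "rna": {"GENE_A": [1.0, 2.0, 3.0]},
    "metabolite": {"cmpd_X": [100.0, 400.0, 250.0]},
}
matrix = early_integration(layers)
combined = fisher_combined_p([0.01, 0.01])  # same pathway, two omics layers
print(sorted(matrix), combined)
```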

Diagram: Multi-Omics Data Integration Strategies

5.2 Pathway and Network Analysis The final analytical step involves interpreting integrated results in a biological context. Enrichment analysis tools (e.g., Gene Ontology, KEGG) are applied to combined gene/protein/metabolite lists. More sophisticated approaches involve mapping data onto prior knowledge networks (PKNs) of protein-protein interactions, signaling pathways, or metabolic models. The natural product's impact is visualized as a subnetwork of significantly perturbed interactions, highlighting key hubs and bridging molecules that connect different omics layers, thereby proposing testable mechanistic hypotheses [18].
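
Over-representation analysis of the kind performed by enrichment tools is commonly based on a hypergeometric tail probability. The sketch below computes that probability with exact integer arithmetic; the function name and the example counts are illustrative assumptions, and real tools additionally correct for testing many pathways.

```python
from math import comb

def hypergeom_enrichment_p(n_universe, n_pathway, n_hits, n_overlap):
    """P(overlap >= n_overlap) when n_hits entities are drawn at random.

    n_universe: all measured entities (genes, proteins, or metabolites)
    n_pathway:  entities in the universe annotated to the pathway
    n_hits:     entities flagged as perturbed
    n_overlap:  perturbed entities that belong to the pathway
    """
    upper = min(n_pathway, n_hits)
    total = comb(n_universe, n_hits)
    tail = sum(
        comb(n_pathway, k) * comb(n_universe - n_pathway, n_hits - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total

# Hypothetical counts: 8 of 50 perturbed entities fall in a 40-member pathway.
p_value = hypergeom_enrichment_p(n_universe=1000, n_pathway=40, n_hits=50, n_overlap=8)
print(p_value)
```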

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Platforms for Multi-Omics Studies

| Category | Item/Reagent | Function in Multi-Omics Workflow | Key Consideration for Natural Product (NP) Research |

|---|---|---|---|

| Sample Preparation | Phase Lock/Barrier Tubes | Provides clean separation of organic and aqueous phases during metabolite/protein extraction, minimizing cross-contamination. | Critical for preparing high-quality samples for both proteomics and metabolomics from the same lysate. |

| Sample Preparation | Membrane-based Protein Extraction Kits | Efficiently separates cytoplasmic, nuclear, and membrane protein fractions for deeper proteome coverage. | Many NP targets are membrane-bound receptors or transporters. |

| Sample Preparation | Stable Isotope-Labeled Internal Standards (SIL-IS) | Spiked into samples pre-extraction for metabolomics & proteomics to correct for technical variability and enable absolute quantification. | Essential for robust quantification, especially when comparing NP-treated vs. control samples. |

| Target Identification | Alkyne/Azide-modified NP Probes | Chemically modified versions of the NP for click chemistry-enabled target enrichment (chemical proteomics) [16]. | Probe design must retain the biological activity of the parent NP. An inactive control probe is mandatory. |

| Target Identification | Diazirine-based Photo-Crosslinkers | Incorporated into NP probes to covalently capture transient or low-affinity protein interactions upon UV exposure. | Crucial for "fishing" direct targets from complex cellular milieus. |

| Target Identification | Streptavidin Magnetic Beads | Used to capture biotin-tagged proteins after click chemistry for subsequent mass spec analysis. | High binding capacity and low non-specific binding are required. |

| Single-Cell Analysis | Cell Viability Dyes (e.g., Propidium Iodide) | Distinguishes live from dead cells during FACS sorting for single-cell sequencing, ensuring high-quality input. | Dead cells can cause significant background noise in single-cell data. |

| Single-Cell Analysis | Single-Cell 3' or 5' Gene Expression Kits | Enables barcoding and library construction from thousands of individual cells for transcriptomic profiling. | Allows dissection of heterogeneous responses to NP treatment within a tumor or tissue sample [14]. |

| Data Analysis | Multi-Omics Integration Software (e.g., MOFA+, mixOmics) | Statistical packages designed specifically for the integration of heterogeneous omics datasets. | Prefer tools that provide visualization of inter-omic relationships and factor trajectories over time/dose. |

| Network Visualization & Analysis Tools (e.g., Cytoscape) | Platforms for building, visualizing, and analyzing molecular interaction networks from integrated data. | Essential for moving from lists to systems-level models of NP action. Plugins allow connection to pathway databases. |

Abstract

Within the paradigm of multi-omics data integration for natural product discovery, the initial biological handling phases are paramount. This technical guide delineates the critical, interconnected procedures for sample collection, preservation, and biomass standardization that underpin successful genomics, metabolomics, and proteomics workflows. Drawing from contemporary studies on microbial and environmental sources, we detail standardized protocols for maintaining molecular integrity from field to lab, discuss biomass requirements for diverse analytical platforms, and present a unified workflow. Adherence to these foundational steps is essential for generating high-fidelity, interoperable data layers required for comprehensive biosynthetic gene cluster (BGC) mining, metabolite profiling, and ultimate natural product target discovery [21] [9] [14].

The discovery of novel natural products (NPs) has been fundamentally transformed by multi-omics approaches, which integrate genomics, transcriptomics, proteomics, and metabolomics to deconstruct the complex biosynthetic networks of source organisms [9]. However, the analytical power of these advanced technologies is contingent upon the quality and integrity of the starting biological material. Inconsistencies introduced during initial sample handling—such as metabolite degradation, RNA hydrolysis, or protein denaturation—propagate irreversibly through downstream workflows, leading to data artifacts that compromise integration and confound biological interpretation [21] [22].

This guide frames these technical prerequisites within the broader thesis of multi-omics integration for NP research. Effective integration relies on data layers that are not only individually robust but also temporally and contextually aligned. For instance, correlating the expression of a specific BGC (genomics/transcriptomics) with the production of its associated metabolite (metabolomics) requires that the biomass for each analysis be harvested from an identical physiological state [14] [22]. Therefore, the standardization of sample collection, rapid preservation, and biomass partitioning is not a formality but the foundation that dictates the success of the entire multi-omics enterprise.

Core Methodologies for Sample Collection and Preservation

The chosen methodology must align with the target omics layers and the nature of the source material, whether it is environmental biomass, microbial cultures, or plant tissue.

2.1 Collection Strategies for Diverse Sources

- Environmental & Marine Samples: As demonstrated in a multi-omics characterization of tropical marine cyanobacteria, macroscopic tufts were collected from subtidal ecosystems. A key consideration for meta-omics is minimizing heterotrophic bacterial contamination, which can complicate genome assembly and metabolite attribution. Immediate processing or preservation in the field is essential [21].

- Microbial Cultures: For controlled omics studies, automated cultivation platforms (e.g., custom Tecan or BioLector systems) enable reproducible growth and precise, time-resolved sampling from microtiter plates or bioreactors. These systems facilitate the acquisition of samples from a defined physiological state (e.g., mid-log phase), which is crucial for integrating data across platforms [22].

2.2 Preservation Protocols for Molecular Integrity Preservation aims to instantaneously "snapshot" the molecular profile of the sample at the point of harvest.

- For Genomics/Transcriptomics: Immediate freezing in liquid nitrogen is the gold standard. The use of commercial stabilizing reagents like RNAlater is highly effective for field samples, as it permeates tissue to stabilize and protect RNA (and DNA) at ambient temperatures for later processing [21].

- For Metabolomics: Metabolism must be quenched within sub-second timescales. Methods include rapid filtration followed by immersion in cold (-40°C to -80°C) quenching solvents (e.g., 60% methanol), or directly spraying culture broth into cold solvent. Speed is critical to prevent turnover of labile metabolites [22].

- For Proteomics: Similar to metabolomics, samples are typically snap-frozen in liquid nitrogen. Subsequent storage at -80°C prevents protein degradation and modification.

Table 1: Standardized Preservation Methods by Omics Layer

| Omics Layer | Primary Goal | Recommended Method | Key Consideration |

|---|---|---|---|

| Genomics | Preserve DNA integrity & prevent shearing. | Snap-freeze in liquid N₂; or RNAlater for composite samples [21]. | Avoid repeated freeze-thaw cycles. |

| Transcriptomics | Arrest RNase activity & prevent degradation. | Immediate immersion in RNAlater or snap-freeze in liquid N₂ [21]. | Ensure preservative fully penetrates tissue. |

| Metabolomics | Quench enzymatic activity instantaneously. | <1 sec transfer to cold (-40°C) methanol/buffer [22]. | Speed is paramount; validate quenching efficiency. |

| Proteomics | Prevent proteolysis & post-translational modifications. | Snap-freeze in liquid N₂; store at -80°C. | Add protease/phosphatase inhibitors if needed. |

Biomass Considerations for Multi-Omics Workflows

Different omics techniques have varying biomass requirements and compatibility with extraction protocols. Planning for sufficient biomass and its rational subdivision is a key strategic element.

3.1 Biomass Requirements and Sample Partitioning A single sample harvest must often be partitioned for concurrent multi-omics analysis. The following workflow, adapted from automated microbial studies, illustrates this division [22]:

- Harvest: Collect biomass from a homogeneous culture or sample under defined conditions.

- Quench/Preserve: Immediately process for metabolomics (most time-critical), then stabilize aliquots for other analyses.

- Partition:

- Metabolomics/Lipidomics: Allocate biomass for cold solvent extraction (typically 1-10 mg wet cell weight).

- Proteomics: Allocate pellet for lysis and protein digestion.

- Genomics/Transcriptomics: Allocate pellet for nucleic acid extraction.

3.2 Scaling and High-Throughput Considerations Advanced automated platforms enable high-throughput omics by cultivating microorganisms in 96-well plates and integrating automated sampling. Key innovations include custom 3D-printed lids that control gas exchange (for aerobic/anaerobic studies) and enable reproducible sampling, minimizing "edge effects" that cause variance between wells [22]. This automation ensures that the biomass used for different omics analyses originates from an identical, controlled microenvironment.

Table 2: Typical Biomass and Handling Parameters for Microbial Omics

| Parameter | Genomics | Metabolomics | Proteomics | Primary Challenge |

|---|---|---|---|---|

| Min. Biomass | ~10⁸ cells [21] | 1-5 mg (wet weight) [22] | ~10⁷ cells [22] | Metabolomics requires minimal biomass but maximal speed. |

| Processing Temp. | 4°C (post-thaw) | -20°C to -40°C (quench) | 4°C (post-thaw) | Maintaining cold chain for metabolomics/proteomics. |

| Compatible with Automation | Yes (cell lysis) | Yes (rapid quenching & extraction) | Yes (digestion protocols) | Integrating fast sampling (<1s) for metabolomics [22]. |

Detailed Experimental Protocols for Key Workflows

4.1 Protocol: Genomic DNA Extraction and Sequencing for BGC Mining (Adapted from [21])

- Sample Lysis: For filamentous cyanobacteria or tough tissues, use mechanical disruption (bead beating) combined with chemical lysis (CTAB/SDS buffers).

- DNA Purification: Purify lysate using phenol-chloroform-isoamyl alcohol extraction, followed by precipitation with isopropanol. RNase treatment is recommended.

- Quality Control: Assess DNA purity (A260/A280 ~1.8), integrity (via gel electrophoresis), and quantity. Use fluorometric assays for accuracy.

- Library Prep & Sequencing: For complex genomes rich in repetitive BGCs, employ a hybrid sequencing strategy. Use Illumina short-read data for accuracy combined with Oxford Nanopore or PacBio long-read data to scaffold and resolve repetitive regions [21].

- Bioinformatic Processing: Assemble reads using hybrid-aware assemblers (e.g., metaSPAdes). Identify BGCs using specialized tools like antiSMASH, then group them into gene cluster families with BiG-SCAPE to prioritize novel clusters [21].
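
The quality-control step in this protocol can be sketched as two small checks on spectrophotometer readings. The conversion of roughly 50 ng/µL of double-stranded DNA per A260 unit at a 1 cm path length is a standard approximation; the acceptance window of 1.7 to 2.0 around the A260/A280 target of ~1.8 is an assumption, as are the function names. As the protocol notes, fluorometric assays remain the more accurate way to quantify DNA.

```python
def dsdna_concentration_ng_per_ul(a260, dilution_factor=1.0, path_length_cm=1.0):
    """Estimate dsDNA concentration from absorbance at 260 nm.

    Uses the approximation that 1 A260 unit corresponds to ~50 ng/uL dsDNA
    at a 1 cm path length.
    """
    return a260 * 50.0 * dilution_factor / path_length_cm

def passes_purity(a260, a280, low=1.7, high=2.0):
    """Return (ok, ratio) for the A260/A280 purity check.

    The 1.7-2.0 window is an assumed tolerance around the ~1.8 target.
    """
    ratio = a260 / a280
    return low <= ratio <= high, ratio

# Hypothetical reading from a tenfold-diluted extract.
concentration = dsdna_concentration_ng_per_ul(a260=0.5, dilution_factor=10)
ok, ratio = passes_purity(a260=0.9, a280=0.5)
print(concentration, ok, round(ratio, 2))
```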

4.2 Protocol: LC-MS/MS-Based Metabolomics for Natural Product Dereplication

- Metabolite Extraction: To a quenched cell pellet, add cold extraction solvent (e.g., 80% methanol). Agitate vigorously for 15 minutes at 4°C, then centrifuge. Transfer supernatant for analysis.

- LC-MS/MS Analysis:

- Chromatography: Use reversed-phase C18 columns with a water-acetonitrile gradient (both mobile phases containing 0.1% formic acid) for broad metabolite separation.

- Mass Spectrometry: Employ data-dependent acquisition (DDA) in positive and negative ionization modes. Collision-induced dissociation (CID) generates MS/MS spectra for compound identification.

- Data Processing: Convert raw files to open formats (e.g., .mzML). Use tools like MZmine or MS-DIAL for peak picking, alignment, and annotation. Dereplicate by matching MS/MS spectra against public libraries (GNPS, MassBank) [21].
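
Dereplication by spectral matching can be illustrated with a plain binned cosine score between a query MS/MS spectrum and library entries. This is a simplified stand-in: library search tools typically use more elaborate scoring (mass tolerances, intensity scaling, precursor filtering), and the bin width, score threshold, peak lists, and compound names below are all hypothetical.

```python
import math
from collections import defaultdict

def bin_spectrum(peaks, bin_width=0.01):
    """Sum peak intensities into fixed-width m/z bins."""
    binned = defaultdict(float)
    for mz, intensity in peaks:
        binned[round(mz / bin_width)] += intensity
    return binned

def cosine_similarity(query, reference, bin_width=0.01):
    """Cosine of the angle between two binned spectra (0 = no shared peaks)."""
    q = bin_spectrum(query, bin_width)
    r = bin_spectrum(reference, bin_width)
    dot = sum(q[b] * r[b] for b in q if b in r)
    norm = math.sqrt(sum(v * v for v in q.values())) * math.sqrt(sum(v * v for v in r.values()))
    return dot / norm if norm else 0.0

def best_library_match(query, library, min_score=0.7):
    """Return (name, score) of the best match, or (None, score) if below threshold."""
    scored = [(name, cosine_similarity(query, ref)) for name, ref in library.items()]
    name, score = max(scored, key=lambda s: s[1])
    return (name, score) if score >= min_score else (None, score)

# Hypothetical spectra as (m/z, intensity) pairs.
query = [(100.05, 50.0), (150.10, 100.0), (200.20, 25.0)]
library = {
    "compound_A": [(100.05, 100.0), (150.10, 200.0), (200.20, 50.0)],
    "compound_B": [(90.00, 10.0), (300.30, 80.0)],
}
match_name, match_score = best_library_match(query, library)
print(match_name, round(match_score, 3))
```

A feature with no match above the threshold is a candidate for a previously undescribed compound, which is the outcome dereplication is meant to surface.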

Integration with Downstream Multi-Omics Analysis

The meticulously collected and preserved samples feed into parallel analytical pipelines whose data converge for integrated analysis. Genomics reveals the potential (BGCs), transcriptomics and proteomics reveal the expression, and metabolomics reveals the chemical output. Bioinformatics integration, often facilitated by KEGG or antiSMASH pathway mapping, links compound spectra to biosynthetic genes, guiding targeted isolation of novel NPs [9] [14]. This integrated workflow, from critical first steps to final discovery, is visualized below.

Multi-Omics Integration Workflow from Sample to Insight

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Research Reagent Solutions for Multi-Omics Sample Preparation

| Reagent/Material | Function | Primary Omics Application |

|---|---|---|

| RNAlater Stabilization Solution | Penetrates tissue to stabilize and protect RNA (and DNA) integrity at ambient temperatures, crucial for field-collected samples [21]. | Genomics, Transcriptomics |

| Cold Methanol/Quenching Buffer | Rapidly quenches cellular metabolism to "snapshot" the metabolome, preventing turnover of labile compounds [22]. | Metabolomics |

| CTAB or SDS Lysis Buffer | Effective for lysing difficult cell types (e.g., filamentous cyanobacteria, plant tissue) to release high-molecular-weight DNA [21]. | Genomics |

| Solid Phase Extraction (SPE) Cartridges | Used post-extraction to clean metabolite samples, remove salts, and fractionate compounds prior to LC-MS to reduce complexity [21]. | Metabolomics |

| Protease & Phosphatase Inhibitor Cocktails | Added to lysis buffers to prevent protein degradation and preserve post-translational modification states during protein extraction [14]. | Proteomics |

| Automated Cultivation Platform | Enables high-throughput, reproducible growth and precise sampling of microbial cultures under controlled conditions (e.g., Tecan robot with custom lid) [22]. | All (Sample Generation) |

From Data to Discovery: Integrated Workflows and AI-Powered Applications in NP Research

The discovery of natural products (NPs), such as antibiotics and anticancer agents, has historically relied on activity-guided screening of microbial extracts. While successful, this approach is plagued by high rediscovery rates and inefficiency [23]. The advent of rapid, low-cost genome sequencing revealed a vast untapped potential: a single bacterial genome can harbor over 30 biosynthetic gene clusters (BGCs), with less than 0.25% of all identified BGCs experimentally linked to known compounds [23]. This disparity underscores the paradigm shift towards genome mining—the use of computational tools to identify, analyze, and prioritize BGCs for targeted natural product discovery [24].

This shift aligns with the broader thesis of multi-omics data integration, which seeks to synthesize information from genomics, transcriptomics, metabolomics, and proteomics to fully elucidate biosynthetic pathways and their regulation [25]. Within this framework, genome mining provides the essential genomic blueprint. Tools like antiSMASH and PRISM serve as the critical first step, translating raw DNA sequence into testable biochemical hypotheses about potential novel metabolites [26] [27]. This guide details the core functionalities, applications, and integration of these pivotal tools within a modern multi-omics workflow for natural product research.

Table 1: The Scale of Opportunity and Challenge in Microbial Genome Mining

| Metric | Figure | Implication for Discovery | Source |

|---|---|---|---|

| Sequenced bacterial genomes (as of 2019) | >211,000 | Vast genetic resource for mining. | [23] |

| BGCs per bacterial genome | 30 or more in prolific strains | Each genome is a rich source of potential compounds. | [23] |

| Characterized BGCs (experimentally linked to product) | <0.25% | Immense unexplored chemical space remains. | [23] |

| BGCs in Streptomyces avermitilis (model strain) | 40 total (23 "silent") | Even well-studied strains harbor unexpressed potential. | [23] |

Core Genome Mining Tools: antiSMASH and PRISM

antiSMASH: The Comprehensive BGC Detection Platform

The Antibiotics & Secondary Metabolite Analysis Shell (antiSMASH) is the most widely used tool for the identification and annotation of BGCs in bacterial, fungal, and archaeal genomes [27]. Its core strength lies in a rule-based system that uses profile hidden Markov models (pHMMs) to detect signature biosynthetic enzymes across a growing number of BGC families.

Key Features and Advancements (antiSMASH 7.0):

- Expanded Detection: Identifies 81 distinct BGC types (up from 71), including newly added clusters like methanobactins and crocagins [27].

- Structure Prediction: Provides chemical structure predictions for major classes like nonribosomal peptides (NRPs), polyketides (PKs), and ribosomally synthesized and post-translationally modified peptides (RiPPs). Its new NRPyS library improves adenylation (A) domain substrate prediction using an expanded database of over 2,300 entries [27].

- Regulatory Insights: A new transcription factor binding site (TFBS) finder module scans for putative regulatory sites using position weight matrices from the LogoMotif database, offering clues on BGC regulation [27].

- Dereplication and Comparison: Integrates with the MIBiG (Minimum Information about a Biosynthetic Gene Cluster) repository for comparing identified BGCs against known clusters [27].

PRISM: The Chemical Structure Prediction Engine

Where antiSMASH excels at broad detection, the PRediction Informatics for Secondary Metabolomes (PRISM) platform specializes in detailed, accurate prediction of the final chemical structure encoded by a BGC [26] [28]. PRISM 4 employs a combinatorial approach, mapping genes to enzymatic reactions to reconstruct biosynthetic pathways in silico.

Key Features and Advancements (PRISM 4):

- Comprehensive Reaction Library: Utilizes 1,772 HMMs and implements 618 in silico tailoring reactions to predict structures for 16 classes of metabolites, including all clinically relevant bacterial antibiotic classes (e.g., β-lactams, aminoglycosides) [26].

- Combinatorial Logic: When enzyme specificity is ambiguous (e.g., a halogenase that could act on multiple sites), PRISM generates all chemically plausible product variants, providing a ranked set of predictions [26].

- Activity Prediction: The accuracy of its structural predictions enables the use of machine learning models to predict the likely biological activity (e.g., antibacterial) of the encoded molecule [26].
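
The combinatorial idea described above can be illustrated generically: when several biosynthetic steps each have more than one plausible outcome, the candidate products are the Cartesian product of those outcomes. The sketch below is not PRISM's implementation or data model; the scaffold label, step names, and candidate outcomes are hypothetical, and no ranking is attempted.

```python
from itertools import product

def enumerate_variants(scaffold, ambiguous_steps):
    """List every combination of outcomes for the ambiguous tailoring steps.

    ambiguous_steps: {step_name: [candidate outcomes]}
    Returns one dict per candidate product.
    """
    names = list(ambiguous_steps)
    variants = []
    for combo in product(*(ambiguous_steps[n] for n in names)):
        variants.append({"scaffold": scaffold, **dict(zip(names, combo))})
    return variants

# Hypothetical cluster: a halogenase with two possible sites and an
# optional glycosylation step.
ambiguous_steps = {
    "halogenation_site": ["C5", "C7"],
    "glycosylation": ["none", "O-glucose"],
}
variants = enumerate_variants("polyketide_core", ambiguous_steps)
for v in variants:
    print(v)
```

The number of candidates grows multiplicatively with each ambiguous step, which is why a ranked output is more practical than an unordered list.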

Table 2: Comparative Performance: antiSMASH 5 vs. PRISM 4

| Evaluation Metric | antiSMASH 5 | PRISM 4 | Implication |

|---|---|---|---|

| Detection Sensitivity (on 1,281 known BGCs) | Detected 1,212 BGCs (94.6%) | Detected 1,230 BGCs (96.0%) | Both tools show high sensitivity for BGC identification. |

| Structure Prediction Rate (on detected BGCs) | Predicted structures for 753 BGCs | Predicted structures for 1,157 BGCs | PRISM generates chemical hypotheses for a significantly larger subset of BGCs. |

| Structural Accuracy (Tanimoto Coefficient to known product) | Lower median similarity | Significantly higher median similarity (p < 10⁻¹⁵) | PRISM's predicted structures are more chemically accurate. |

| Predicted "Natural-Product-Likeness" | Lower molecular complexity, more "drug-like" | Higher molecular weight & complexity, closer to known NPs | PRISM's predictions better capture the complex scaffolds typical of natural products. |

Diagram 1: Genome mining tool workflow integration

Experimental Protocols for Tool Validation and Application

Protocol: Benchmarking Structure Prediction Accuracy (PRISM 4 Evaluation)

This protocol outlines the methodology used in [26] to validate PRISM 4's predictive power against known benchmarks.

- Reference Set Curation: Assemble a manually curated set of 1,281 BGCs with experimentally verified products from public databases (e.g., MIBiG) and literature. Correct any errors in deposited chemical structures or BGC boundaries.

- Tool Execution: Run both PRISM 4 and antiSMASH 5 on the genomic sequences harboring these reference BGCs using default parameters.

- Detection & Prediction Metrics: Record the number of BGCs detected and the number for which each tool outputs a predicted chemical structure.

- Structural Similarity Analysis:

- For each BGC with a predicted structure, calculate the Tanimoto Coefficient (Tc) between the predicted molecule(s) and the known true product. Tc is a measure of chemical similarity based on shared molecular fingerprints.

- Use statistical tests (e.g., paired Brunner-Munzel test) to compare the distribution of Tc scores between tools.

- Chemical Property Analysis: Compare predicted structures against known products using metrics like Bertz topological complexity index, molecular weight, and "natural product-likeness" score to assess if predictions inhabit biologically relevant chemical space.
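
The structural similarity step relies on the Tanimoto coefficient, Tc = |A ∩ B| / |A ∪ B|, where A and B are the sets of "on" bits in two molecular fingerprints. The sketch below represents fingerprints as plain sets of bit indices; in practice they would come from a cheminformatics toolkit. Taking the best score when a tool emits several predicted structures is an assumption made here for illustration, not necessarily the rule used in [26].

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def best_tanimoto(predicted_fps, true_fp):
    """Highest similarity among several predicted structures (assumed rule)."""
    return max(tanimoto(fp, true_fp) for fp in predicted_fps)

# Hypothetical fingerprints: bit indices only, not real molecules.
true_product = {1, 2, 3, 8, 13}
predictions = [
    {1, 2, 3, 21},          # shares 3 of 6 distinct bits with the true product
    {1, 2, 3, 8, 13, 34},   # shares 5 of 6 distinct bits
]
score = best_tanimoto(predictions, true_product)
print(round(score, 3))
```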

Protocol: Activating and Linking a Cryptic BGC to Its Product

Following computational prioritization, this core experimental protocol connects a "silent" or cryptic BGC to its metabolic product [23] [24].

- BGC Prioritization: Use antiSMASH/PRISM to identify a cryptic BGC of interest (e.g., one with novel architecture or predicted novel activity).

- Host Strain Engineering:

- Heterologous Expression: Clone the entire predicted BGC into an amenable expression host (e.g., Streptomyces lividans). This often requires specialized techniques like Transformation-Associated Recombination (TAR) cloning due to large cluster sizes.

- Native Host Activation: Manipulate the native producer by:

- Overexpressing a predicted pathway-specific transcriptional activator.

- Deleting or inhibiting a global repressor (e.g., using CRISPR interference).

- Culturing under various OSMAC (One Strain Many Compounds) conditions to elicit production.

- Metabolite Analysis: Analyze the culture extract of the engineered strain versus the control using High-Resolution Liquid Chromatography-Mass Spectrometry (HR-LC-MS).

- Metabolite Purification & Structure Elucidation: Purify the compound(s) unique to the activated strain using preparative chromatography. Determine the complete 2D structure using Nuclear Magnetic Resonance (NMR) spectroscopy.

- Genetic Confirmation: Perform gene knockout or mutation of a core biosynthetic gene in the activated strain. Confirm the loss of compound production, providing genetic evidence linking the BGC to the metabolite.

Multi-Omics Integration for BGC Prioritization and Analysis

Genome mining is the foundational genomic layer in a multi-omics strategy. Integrating its outputs with other data types dramatically improves BGC prioritization and functional prediction [25].

- Transcriptomics: RNA-Seq data shows which BGCs are actively transcribed under specific conditions and, by contrast, flags "silent" clusters as candidates for activation. Tools like antiSMASH can integrate expression data for visualization [24].

- Metabolomics: Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) profiling of culture extracts, coupled with molecular networking (e.g., via Global Natural Products Social Molecular Networking), can detect novel metabolites. Correlating their production with BGC activation provides a direct link between genotype and chemotype [24].

- Proteomics: Detecting the biosynthetic enzymes themselves confirms BGC translation and can help pinpoint the timing of production.
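
One simple way to connect the layers above is to correlate BGC expression with metabolite feature abundance across the same set of culture conditions. The sketch below uses a Pearson correlation and a fixed cutoff; the BGC identifiers, feature labels, values, and the 0.8 threshold are hypothetical, and with only a handful of conditions such correlations are suggestive rather than conclusive.

```python
import math

def pearson(x, y):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def link_bgcs_to_features(bgc_expression, feature_abundance, min_r=0.8):
    """Pair each BGC with metabolite features whose abundance tracks its expression."""
    links = []
    for bgc, expr in bgc_expression.items():
        for feature, abundance in feature_abundance.items():
            r = pearson(expr, abundance)
            if r >= min_r:
                links.append((bgc, feature, round(r, 3)))
    return sorted(links, key=lambda link: -link[2])

# Hypothetical measurements across four culture conditions.
bgc_expression = {"BGC_07": [1, 2, 3, 4], "BGC_12": [4, 3, 2, 1]}
feature_abundance = {"mz_455.29": [10, 20, 30, 40], "mz_612.33": [5, 5, 6, 5]}
links = link_bgcs_to_features(bgc_expression, feature_abundance)
print(links)
```

Candidate links from such a screen would still need the genetic confirmation described in the activation protocol.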

Diagram 2: Multi-omics BGC prioritization workflow

Advanced Integration Strategies

- Phylogeny-Based Mining: Construct phylogenetic trees of core biosynthetic genes (e.g., polyketide synthase ketosynthase domains). Clades that diverge from known systems may produce novel chemical variants, guiding targeted exploration [24].

- Resistance Gene-Guided Mining: BGCs for antibiotics often include a self-resistance gene. Identifying novel resistance genes (e.g., divergent antibiotic efflux pumps) can pinpoint clusters producing compounds with new mechanisms of action [24].

- Metagenomic Mining: Tools like biosyntheticSPAdes are designed to reconstruct complete BGCs from fragmented metagenomic assembly graphs, unlocking the biosynthetic potential of unculturable microbes [29].

Table 3: Research Reagent Solutions for Genome Mining & Validation

| Tool / Resource Name | Type | Primary Function in Workflow |
|---|---|---|
| antiSMASH [27] | Software / Web Server | The standard for comprehensive BGC identification, annotation, and boundary prediction in genomic sequences. |
| PRISM [26] [28] | Software / Web Server | Predicts the detailed chemical structure of the natural product encoded by a BGC, with high accuracy for multiple classes. |
| MIBiG (Minimum Information about a BGC) [23] [27] | Curated Database | A repository of experimentally characterized BGCs used as a gold-standard reference for comparison and dereplication. |
| biosyntheticSPAdes [29] | Software | A specialized assembler that reconstructs complete BGCs from fragmented genomic or metagenomic assembly graphs. |
| BiG-SCAPE / BiG-FAM [23] [24] | Software / Database | Analyzes and classifies BGCs into gene cluster families (GCFs) based on protein domain sequence similarity, enabling global analysis of BGC diversity. |
| Flexynesis [30] | Software Toolkit | A deep learning framework for integrating bulk multi-omics data (transcriptome, methylome, etc.), useful for building predictive models of BGC activity or compound bioactivity. |

Challenges and Future Directions

Despite advances, significant challenges remain in realizing the full potential of genome mining [23].

- BGC Assembly and "Cryptic" Clusters: Long, repetitive BGCs are frequently fragmented during genome sequencing. Tools like biosyntheticSPAdes address this by leveraging assembly graphs [29]. Furthermore, a large majority of BGCs are "silent" under laboratory conditions, necessitating advanced activation strategies.

- Prediction Limitations: Structure prediction for highly modified peptides (e.g., glycopeptides) or clusters with unusual biochemistry remains error-prone. Tailoring enzyme function is especially difficult to predict precisely from sequence alone [23].

- Integration with AI and Automation: The future lies in deeper integration of artificial intelligence. Machine learning models are already being used to predict biological activity from PRISM's structures [26]. Tools like Flexynesis demonstrate the power of deep learning to integrate multi-omics data for predictive modeling in biology [30]. The next generation of tools will likely feature end-to-end AI pipelines that prioritize BGCs, predict products and activities, and suggest optimal expression hosts—moving closer to a fully in silico guided discovery cycle.

In conclusion, genome mining tools like antiSMASH and PRISM have fundamentally transformed natural product research from a screening-based to a hypothesis-driven endeavor. By providing the critical link between genetic sequence and chemical structure, they form the indispensable genomic core of a multi-omics integration thesis. As these tools evolve with improved algorithms and embrace AI-driven integration, they will continue to accelerate the targeted discovery of novel bioactive molecules from the microbial world.

This technical guide details the integration of metabolomics and molecular networking via the Global Natural Products Social Molecular Networking (GNPS) platform as a cornerstone strategy for dereplication and novel compound detection in natural product research. The core analytical workflow, exemplified by a 2025 Sophora flavescens study [31], combines Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) with complementary Data-Dependent (DDA) and Data-Independent Acquisition (DIA) modes to enable comprehensive metabolite profiling. Within a broader multi-omics framework [14] [9], this approach accelerates the identification of known compounds and prioritizes unique chemical entities for downstream pharmacological investigation. The guide provides explicit experimental protocols, data processing parameters, and visualization strategies to implement a reference data-driven analysis pipeline [32], directly addressing the critical bottlenecks of time and resource allocation in drug discovery [12].

Natural products (NPs) remain an unparalleled source of novel chemical scaffolds for drug development [14] [12]. However, traditional bioactivity-guided fractionation is plagued by the frequent re-isolation of known compounds, a costly and time-consuming obstacle. Dereplication—the rapid identification of known metabolites early in the discovery pipeline—is essential to focus resources on truly novel leads [12].

Metabolomics, particularly untargeted LC-MS/MS, provides a high-throughput solution by generating comprehensive chemical profiles of complex extracts [33]. The principal challenge lies in annotating the hundreds to thousands of mass spectral features in each analysis. Molecular networking, as implemented by the GNPS platform, transforms this challenge by organizing MS/MS spectra based on spectral similarity, creating a visual map where structurally related molecules cluster together [31] [34]. This strategy not only facilitates the propagation of annotations within clusters but also highlights orphan nodes that may represent novel compounds [32].

Integrating this metabolomic layer with other omics data (genomics, transcriptomics) creates a powerful, hypothesis-generating framework for targeted NP discovery, allowing researchers to connect chemical signatures to biosynthetic gene clusters [9].

Core Concepts: Metabolomics, Molecular Networking, and the GNPS Ecosystem

- Untargeted Metabolomics via LC-MS/MS: This analytical foundation uses liquid chromatography to separate metabolites in a complex sample, followed by mass spectrometry to measure their mass-to-charge ratio (m/z). Tandem MS (MS/MS) fragments precursor ions, generating unique spectral fingerprints crucial for identification [31] [33]. Two primary acquisition modes are employed:

  - Data-Dependent Acquisition (DDA): Selects the most intense ions for fragmentation. It yields cleaner, simpler MS/MS spectra ideal for library matching but may undersample low-abundance ions.

  - Data-Independent Acquisition (DIA): Fragments all ions within sequential, broad m/z windows (e.g., SWATH). It provides comprehensive data on all detectable analytes but generates complex, multiplexed spectra that require specialized deconvolution software (e.g., MS-DIAL) prior to analysis [31].

- Molecular Networking Logic: Molecular networking calculates pairwise similarity scores (e.g., cosine score) between all MS/MS spectra in a dataset. Spectra with scores above a defined threshold are connected, forming nodes (metabolites) and edges (structural relationships) in a network graph [34]. This visualization groups analogs and derivatives, enabling compound family-based analysis.

- The GNPS Platform: GNPS is a web-based ecosystem that provides workflows for creating molecular networks, searching spectra against reference libraries, and performing reference data-driven analysis [34] [32]. Its crowd-sourced libraries and publicly available reference datasets dramatically enhance annotation confidence and contextual interpretation.
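The networking logic described above can be sketched in a few lines. This is a simplified illustration, not the GNPS implementation: it uses a plain cosine score with greedy one-to-one peak matching and omits the precursor-mass-shifted matching that GNPS's modified cosine adds. The function names, the spectra, and the thresholds passed in the example are hypothetical.

```python
from itertools import combinations
from math import sqrt


def cosine_score(spec_a, spec_b, tol=0.02):
    """Return (score, n_matched) for two spectra given as [(mz, intensity), ...]."""
    # All fragment pairs within tolerance, ranked by intensity product.
    candidates = [
        (int_a * int_b, i, j)
        for i, (mz_a, int_a) in enumerate(spec_a)
        for j, (mz_b, int_b) in enumerate(spec_b)
        if abs(mz_a - mz_b) <= tol
    ]
    candidates.sort(reverse=True)

    used_a, used_b = set(), set()
    dot, matched = 0.0, 0
    for product, i, j in candidates:  # greedy one-to-one assignment
        if i in used_a or j in used_b:
            continue
        used_a.add(i)
        used_b.add(j)
        dot += product
        matched += 1

    norm_a = sqrt(sum(inten ** 2 for _, inten in spec_a))
    norm_b = sqrt(sum(inten ** 2 for _, inten in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0, 0
    return dot / (norm_a * norm_b), matched


def build_network(spectra, min_cos=0.7, min_matched=6, tol=0.02):
    """Connect every pair of spectra that passes both thresholds."""
    edges = []
    for (id_a, a), (id_b, b) in combinations(spectra.items(), 2):
        score, matched = cosine_score(a, b, tol)
        if score >= min_cos and matched >= min_matched:
            edges.append((id_a, id_b, round(score, 3)))
    return edges


# Hypothetical three-peak spectra; min_matched is lowered to suit the toy data.
spectra = {
    "A": [(100.0, 10), (150.0, 50), (200.0, 100)],
    "B": [(100.01, 12), (150.0, 45), (200.01, 100)],
    "C": [(80.0, 100), (120.0, 30)],
}
edges = build_network(spectra, min_cos=0.7, min_matched=2)
print(edges)
```

Spectra A and B share all three fragments and are linked; C shares none and stays unconnected, which is how singleton nodes arise in a real network.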

The following diagram illustrates the integration of these core concepts into a cohesive dereplication strategy, from sample preparation to biological insight.

Diagram 1: Integrated Dereplication and Discovery Workflow

Experimental Protocol: A Case Study on Sophora flavescens

A 2025 study on the medicinal plant Sophora flavescens provides a robust, published protocol for dereplication [31]. The following table summarizes key quantitative outcomes from this integrated DIA/DDA approach.

Table 1: Dereplication Results from Sophora flavescens Root Extract [31]

| Analytical Metric | Result | Technical Significance |
|---|---|---|
| Total Compounds Annotated | 51 | Demonstrates the comprehensiveness of the combined workflow. |
| Primary Compound Classes | Alkaloids, Flavonoids, Triterpenoids | Confirms known phytochemistry and validates method accuracy. |
| Key Annotation Outcome | DIA and DDA approaches were complementary. | DIA provided broader coverage; DDA provided cleaner spectra for matching. |
| Strategic Advantage | Molecular networking overcame trace compound identification challenges vs. direct DB matching. | Highlights the power of network context for annotating low-abundance ions. |

3.1. Step-by-Step Methodology

A. Sample Preparation:

- Material: Dried root powder of Sophora flavescens.

- Extraction: 50 mg powder extracted with 10 mL methanol/water/formic acid (49:49:2, v/v/v) via 60-minute sonication [31].

- Processing: Centrifugation, supernatant collection, drying under nitrogen, and reconstitution in H2O/ACN (95:5). Final concentration: 10 mg/mL. Filter through 0.22 µm PTFE membrane before LC-MS injection [31].

B. LC-MS/MS Analysis (Dual Acquisition):

- Instrumentation: UPLC system coupled to a high-resolution Q-TOF mass spectrometer [31].

- Chromatography: C18 column; gradient elution with ammonium acetate in water (mobile phase A) and acetonitrile (B); 20-minute run [31].

- Mass Spectrometry: Positive ionization mode.

- DDA Parameters: Top 4 ions selected for fragmentation per cycle; collision energy (CE): 50 eV [31].

- DIA Parameters: SWATH acquisition with 50 Da windows covering 100-1000 Da; CE: 50 eV [31].
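To make the two acquisition schemes concrete, the sketch below lays out the isolation windows implied by the DIA settings above and mimics the top-4 precursor selection used in DDA. It assumes contiguous, non-overlapping, fixed-width windows; real SWATH methods often add a small overlap or use variable widths, and real DDA also applies dynamic exclusion. The function names and the MS1 peak list are hypothetical.

```python
def swath_windows(start=100.0, stop=1000.0, width=50.0):
    """Contiguous, non-overlapping isolation windows as (low, high) tuples."""
    windows = []
    low = start
    while low < stop:
        windows.append((low, min(low + width, stop)))
        low += width
    return windows


def window_for(mz, windows):
    """Return the window containing mz, or None if mz is outside the range."""
    for low, high in windows:
        if low <= mz < high:
            return (low, high)
    return None


def dda_top_n(ms1_peaks, n=4):
    """Pick the n most intense precursors from one MS1 scan of (mz, intensity)."""
    return sorted(ms1_peaks, key=lambda peak: peak[1], reverse=True)[:n]


windows = swath_windows()  # 18 windows for 100-1000 at 50 Da width

# Hypothetical MS1 survey scan.
ms1_scan = [
    (249.2, 5e5), (265.2, 2e4), (439.2, 8e4),
    (247.2, 3e5), (301.1, 1e3), (455.3, 6e4),
]
selected = dda_top_n(ms1_scan, n=4)

print(len(windows), windows[0], windows[-1])
print([mz for mz, _ in selected])
print(window_for(301.1, windows))
```

The weakest ion in the toy scan (m/z 301.1) is never selected by DDA, yet it still falls inside a DIA window and is fragmented there, which is the complementarity the study reports.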

C. Data Processing for GNPS:

- DIA Data: Convert .raw files to mzML. Process with MS-DIAL (v5.3) for feature detection, deconvolution of multiplexed DIA spectra, and alignment across replicates. Export an "MS/MS spectral file" for GNPS [31].

- DDA Data: Convert .raw files to mzML. Process with MZmine (v4.3.0) for chromatogram building, deconvolution, and feature alignment. Export an "MS/MS spectral file" for GNPS [31].
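Before uploading, it can be useful to sanity-check the exported spectral file. The sketch below is a minimal reader for the plain-text MGF layout (BEGIN IONS / END IONS blocks with KEY=VALUE headers followed by m/z-intensity pairs). It is an illustration only: the exact header fields written by MS-DIAL or MZmine may differ, and the example spectra, feature IDs, and peak values are invented.

```python
def parse_mgf(text):
    """Parse MGF-formatted text into a list of {'params': ..., 'peaks': ...} dicts."""
    spectra, current = [], None
    for raw_line in text.splitlines():
        line = raw_line.strip()
        if not line:
            continue
        if line == "BEGIN IONS":
            current = {"params": {}, "peaks": []}
        elif line == "END IONS":
            if current is not None:
                spectra.append(current)
            current = None
        elif current is not None:
            if "=" in line and not line[0].isdigit():
                # Header line such as PEPMASS=249.1961
                key, value = line.split("=", 1)
                current["params"][key.upper()] = value
            else:
                # Peak line: m/z and intensity separated by whitespace
                mz, intensity = line.split()[:2]
                current["peaks"].append((float(mz), float(intensity)))
    return spectra


# Invented two-spectrum example in MGF layout.
example_mgf = """
BEGIN IONS
FEATURE_ID=1
PEPMASS=249.1961
CHARGE=1+
MSLEVEL=2
148.1121 1200.0
150.1277 830.5
249.1961 5400.0
END IONS

BEGIN IONS
FEATURE_ID=2
PEPMASS=439.2115
CHARGE=1+
MSLEVEL=2
179.0339 410.0
END IONS
"""

spectra = parse_mgf(example_mgf)
for spectrum in spectra:
    params = spectrum["params"]
    print(params["FEATURE_ID"], params["PEPMASS"], len(spectrum["peaks"]))
```

A quick check like this catches empty spectra before a networking job is submitted; a spectrum with fewer peaks than the minimum matched fragment peaks setting can never form an edge.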

D. GNPS Molecular Networking & Analysis:

- Upload: Submit the generated MS/MS spectral files (.mgf) to the GNPS platform [34].

- Parameters (Critical for Results):

- Precursor Ion Mass Tolerance: 0.02 Da (for high-resolution Q-TOF data) [32].

- Fragment Ion Mass Tolerance: 0.02 Da [32].

- Minimum Cosine Score (Min Pairs Cos): 0.7 (or as determined by FDR analysis) [32].

- Minimum Matched Fragment Peaks: 6 [34].

- Library Search Parameters: Set score threshold and min matched peaks for searching public libraries (e.g., GNPS, NIST14) [34].

- Job Submission & Visualization: Execute the workflow. Results can be visualized online or explored in Cytoscape after downloading the network file (GraphML) [32]. The analysis yields a table of library matches and a network where annotated nodes (e.g., matrine, kurarinone) serve as references for characterizing nearby unknown nodes [31].
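The idea of using annotated nodes as references for neighbouring unknowns can be sketched on a plain edge list. The example assumes the network has already been reduced to (node, node, cosine) tuples plus a dictionary of library matches; reading the GraphML export itself is not shown. Node IDs, scores, and the function name are hypothetical. The compound names are borrowed from the text purely as labels.

```python
from collections import defaultdict


def propagate_annotations(edges, annotations, all_nodes):
    """Suggest reference compounds for unannotated nodes and list singletons.

    Returns (hints, singletons):
      hints      - {node: [(reference_name, cosine), ...]} sorted by cosine
      singletons - unannotated nodes with no edges at all
    """
    neighbors = defaultdict(list)
    for node_a, node_b, score in edges:
        neighbors[node_a].append((node_b, score))
        neighbors[node_b].append((node_a, score))

    hints, singletons = {}, []
    for node in all_nodes:
        if node in annotations:
            continue
        linked = neighbors.get(node, [])
        references = sorted(
            ((annotations[other], score) for other, score in linked if other in annotations),
            key=lambda ref: ref[1],
            reverse=True,
        )
        if references:
            hints[node] = references
        elif not linked:
            singletons.append(node)
    return hints, singletons


# Hypothetical network: n1 and n4 have library matches.
annotations = {"n1": "matrine", "n4": "kurarinone"}
edges = [("n1", "n2", 0.91), ("n2", "n3", 0.78), ("n4", "n5", 0.83)]
all_nodes = ["n1", "n2", "n3", "n4", "n5", "n6"]

hints, singletons = propagate_annotations(edges, annotations, all_nodes)
print(hints)
print(singletons)
```

Only direct neighbours are considered here, so n3 (linked solely to the unannotated n2) receives no hint. A hint means "probably the same compound family", not an identification, and should be confirmed against the mass difference and fragmentation pattern. The unconnected node n6 is the kind of singleton that may merit a closer look as a potentially novel compound.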

Integration within a Multi-Omics Thesis Framework

Metabolomics and GNPS-based dereplication do not operate in isolation. They gain predictive power when integrated into a multi-omics data triangulation strategy, forming the core thesis of modern NP research [14] [9].

- Genomics/Transcriptomics: Genome mining can reveal biosynthetic gene clusters (BGCs) encoding pathways for novel NPs. Transcriptomics under specific stimuli (e.g., stress, co-culture) can identify upregulated BGCs. The molecular fingerprints from metabolomics provide the crucial link to confirm the actual production of the compounds predicted by these genetic clues [9].

- Proteomics & Target Discovery: Bioactive fractions flagged by GNPS can be subjected to chemical proteomics (e.g., affinity-based protein profiling) to identify potential molecular targets, elucidating the Mode of Action (MoA) [14] [12].

This integrated framework creates a virtuous cycle for discovery, as depicted in the following diagram.

Diagram 2: Multi-Omics Integration for NP Discovery

The GNPS Analysis Workflow: From Raw Data to Novelty Detection

The process within the GNPS environment is highly configurable. The following diagram details the key steps and decision points in a reference data-driven analysis workflow [32], which is essential for robust novel compound detection.

Diagram 3: GNPS Reference Data-Driven Analysis Steps

The Scientist’s Toolkit: Essential Research Reagents & Software

Successful implementation of this workflow requires specific materials and computational tools.

Table 2: Essential Research Reagents and Software Solutions

| Category | Item/Software | Function & Rationale |
|---|---|---|
| Analytical Standards | Matrine, Sophoridine, Kurarinone [31] | Provides retention time and MS/MS spectral validation for key compounds, anchoring network annotations. |
| Chromatography | UPLC/HPLC-grade solvents (MeOH, ACN, H₂O); Formic Acid/Ammonium Acetate [31] | Ensures optimal separation (chromatography) and ionization (mass spec) for a broad metabolite range. |
| Sample Prep | PTFE Syringe Filters (0.22 µm) [31] | Removes particulates to protect LC column and instrument. |
| Data Conversion | MSConvert (ProteoWizard) [31] | Universal tool to convert proprietary MS vendor files (.raw, .d) to open formats (.mzML, .mgf) for GNPS. |
| DIA Deconvolution | MS-DIAL [31] | Specialized software to demultiplex complex DIA (e.g., SWATH) data into pseudo-MS/MS spectra for networking. |
| DDA Processing | MZmine [31] | Open-source platform for feature detection, alignment, and MS/MS spectral export from DDA data. |
| GNPS Platform | GNPS Web Interface [34] [32] | Cloud-based ecosystem for molecular networking, library search, and reference data-driven analysis. |
| Network Visualization | Cytoscape [32] | Powerful desktop software for in-depth exploration, customization, and analysis of molecular networks. |
| Statistical Analysis | R & Python (e.g., ggplot2, seaborn) [35] | Essential for downstream statistical analysis, quantification, and generation of publication-quality figures. |

The future of dereplication lies in deeper automation and intelligence. This includes:

- Advanced Analytics: Integration of MS²LDA to discover substructural motifs (Mass2Motifs) within networks, providing another layer of structural insight beyond spectral similarity [36].

- Automated Structure Prediction: Coupling GNPS outputs with in-silico tools (e.g., CSI:FingerID, DEREPLICATOR+) to predict molecular structures directly from MS/MS spectra for novel nodes [12].