Large-Scale Molecular Docking for Natural Products: Strategies, Benchmarks, and AI-Driven Advances in Drug Discovery

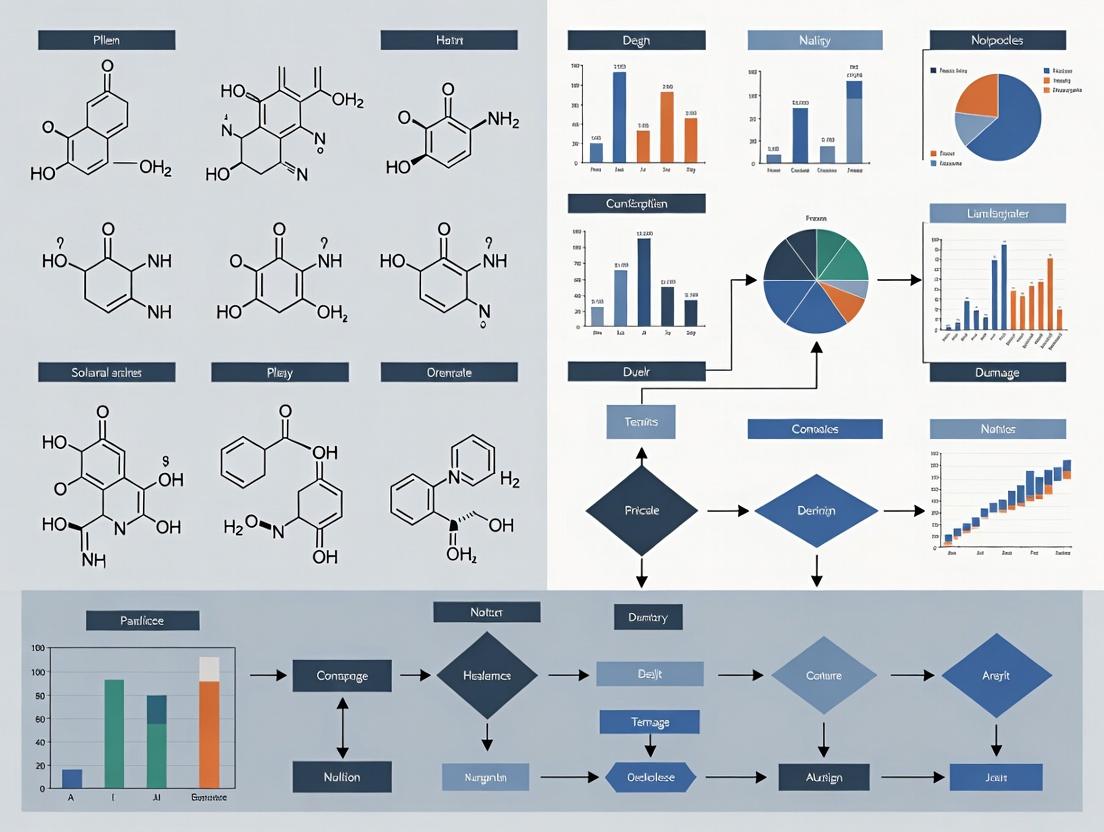

Large-Scale Molecular Docking for Natural Products: Strategies, Benchmarks, and AI-Driven Advances in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing and optimizing large-scale molecular docking for natural product discovery. It covers the foundational role of natural products as drug leads and the core principles of docking[citation:3]. The methodological section details end-to-end workflows for screening ultra-large libraries, including preparation, tool selection, and integration with machine learning for hit enrichment[citation:2][citation:7]. A critical troubleshooting section addresses common pitfalls in screening natural products, such as handling structural complexity and scoring function limitations, and offers optimization strategies[citation:1][citation:5]. Finally, the article presents a framework for the validation and comparative analysis of docking protocols, emphasizing the importance of benchmarking against experimental data and employing consensus approaches[citation:1][citation:5]. The synthesis aims to equip scientists with practical knowledge to design efficient and reliable computational campaigns for identifying novel bioactive compounds from nature.

The Foundation: Why Natural Products and Molecular Docking are Cornerstones of Modern Drug Discovery

The Historical and Contemporary Significance of Natural Products as Drug Leads

Natural products have been a cornerstone of pharmacotherapy for millennia, serving as the original source of a significant proportion of modern therapeutics, particularly in the realms of anti-infectives and oncology [1] [2]. These compounds, derived from plants, microorganisms, and marine organisms, possess unique chemical diversity and evolutionarily optimized biological activities that are difficult to replicate with synthetic libraries [3] [2]. Historically, their discovery was largely serendipitous or based on traditional knowledge, leading to blockbuster drugs like penicillin, artemisinin, and paclitaxel [1].

Despite a decline in interest beginning in the late 20th century, driven by challenges in sourcing, isolation, and compatibility with high-throughput screening, natural products are experiencing a powerful renaissance [2]. This resurgence is driven by technological advancements in analytical chemistry (e.g., high-resolution mass spectrometry), genomics, and critically, computational power [3] [2]. Today, the field is being redefined within a new paradigm that integrates these traditional assets with large-scale molecular docking and virtual screening. This computational approach allows researchers to systematically evaluate billions of compound-target interactions in silico, positioning natural product libraries (both pure compounds and virtual databases of natural product-like scaffolds) as indispensable resources for identifying novel drug leads against increasingly challenging therapeutic targets [4] [5].

Large-Scale Docking: A Foundational Tool for Modern Natural Product Research

Large-scale molecular docking is a computational technique that predicts how a small molecule (ligand) binds to a target protein receptor and estimates the strength of that interaction (binding affinity) [6] [7]. In the context of natural product research, it serves as a high-throughput pre-filter to prioritize a handful of promising candidates from vast chemical libraries for costly and time-consuming experimental validation [4] [8].

Conceptually, docking models molecular recognition in terms of the "lock and key" or, more accurately, the "induced fit" mechanism, in which both ligand and binding site can adjust their conformations [7]; for speed, however, most large-scale screens treat the receptor as rigid and sample only ligand flexibility. Search algorithms (systematic or stochastic) explore possible binding poses, which are then ranked by scoring functions (force-field-based, empirical, or knowledge-based) [6]. Modern advances enable the screening of ultra-large libraries containing hundreds of millions to billions of compounds on reasonable computing clusters, making the exploration of expansive natural product-derived chemical space feasible [4].

Table 1: Key Quantitative Data on Natural Products in Drug Discovery

| Metric | Value/Statistic | Context & Source |
|---|---|---|
| FDA-approved drugs based on natural products or derivatives | Approx. 25-33% of all small-molecule drugs | Significant contribution over the past 40 years [1] [2]. |
| Exemplar: Marine-derived natural products | >26,680 compounds identified by 2015 | Illustrates the vast, underexplored chemical space in nature [7]. |
| Docking success exemplar | Subnanomolar agonists discovered for melatonin receptor | Achieved by following a controlled, large-scale docking protocol [4]. |
| Typical drug discovery timeline & cost | 10-15 years, >$1 billion | Highlights the value of computational tools in reducing early-stage risk and cost [8]. |
| Success rate for new drug approvals | < 15% | Emphasizes the need for efficient lead identification strategies [8]. |

Application Notes: Integrating Docking into the Research Pipeline

The integration of molecular docking transforms the natural product research workflow from a purely bioassay-guided fractionation process to a targeted, hypothesis-driven endeavor.

3.1 Target Identification and Mechanism Elucidation

For a natural product with observed phenotypic activity but an unknown molecular target, reverse docking can be employed. The compound is docked against a panel of potential protein targets to identify the most likely binding partners, thereby elucidating its mechanism of action [7] [5].
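Raw docking scores are not directly comparable across targets, because binding pockets differ in size and in their typical score range. The sketch below shows one heuristic for ranking targets in a reverse-docking run. It assumes that each target has also been docked with a shared reference ligand set, and it normalizes the query's score against that per-target background as a z-score. The target names, the scores, and the choice of z-score normalization are all illustrative assumptions, not part of the cited protocols.

```python
from statistics import mean, stdev


def rank_targets(query_scores, background_scores):
    """Rank candidate targets for one query compound (reverse docking).

    query_scores: {target: docking score of the query, kcal/mol, lower = better}
    background_scores: {target: scores of a shared reference ligand set on that target}
    Returns (target, z) pairs, most favourable (most negative z) first.
    """
    ranked = []
    for target, score in query_scores.items():
        background = background_scores[target]
        # z < 0 means the query scores better than is typical for this pocket
        z = (score - mean(background)) / stdev(background)
        ranked.append((target, z))
    return sorted(ranked, key=lambda pair: pair[1])


# Hypothetical example: T1 gives the better raw score, but T2 is the stronger outlier.
query = {"T1": -9.0, "T2": -8.0}
background = {"T1": [-8.0, -9.0, -10.0], "T2": [-6.0, -6.5, -7.0]}
ranking = rank_targets(query, background)
print(ranking)
```

In this example, T2 ranks first despite its less negative raw score. That reordering is the kind of target bias the normalization is meant to expose.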

3.2 Virtual Screening of Natural Product Libraries

This is the most direct application within large-scale docking research. Custom libraries are constructed from several sources:

- Pure Compound Libraries: Digitized 3D structures of isolated natural compounds.

- Fraction Libraries: Virtual representations of semi-purified fractions, though this is computationally complex.

- Natural Product-Inspired Virtual Libraries: Billions of readily synthesizable compounds generated using rules derived from natural product scaffolds [4].

3.3 Lead Optimization and Analogue Design

Once a natural product hit is identified, docking guides the rational design of analogues. By analyzing the binding pose and interaction map, chemists can predict which structural modifications (e.g., adding or removing functional groups) might enhance affinity or selectivity [5].

Table 2: Exemplar Applications of Docking in Natural Product Research

| Therapeutic Area | Target | Natural Product/Class | Key Finding from Docking | Source |
|---|---|---|---|---|
| Respiratory & Cardiovascular | β2-Adrenergic Receptor (GPCR) | Quercetin, Catechin, Resveratrol | Quercetin showed highest binding affinity; interactions with key residues (Asp113, Ser203) mapped. | [9] |
| Oncology & Infectious Diseases | Various (e.g., tubulin, DNA polymerase) | Marine compounds (Cytarabine, Eribulin) | Docking used to elucidate protein-ligand interaction mechanisms for approved drugs. | [7] |
| General Drug Discovery | Melatonin Receptor (GPCR) | Ultra-large virtual library | Protocol exemplar leading to discovery of subnanomolar agonists. | [4] |
| Nutraceutical Research | Various disease targets (cancer, neurodegenerative) | Dietary bioactive compounds | Identifies molecular targets and predicts mechanisms for disease management. | [6] |

Detailed Experimental Protocols

Protocol 1: Large-Scale Docking Screen for Natural Product Hit Identification

This protocol adapts established large-scale docking guidelines [4] for natural product libraries.

Library Preparation:

- Source natural product structures from databases (e.g., PubChem, ZINC Natural Products subset) or generate 3D structures from isolated compounds.

- Prepare ligands: Convert to appropriate format (e.g., MOL2, SDF). Add hydrogen atoms, compute partial charges (e.g., Gasteiger), and minimize energy. For virtual libraries, apply standard cheminformatics filters (e.g., for reactivity, drug-likeness).

- Output: A curated library file in a docking-ready format.
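The deduplication and drug-likeness filtering step can be sketched in plain Python. The sketch assumes that descriptors (molecular weight, cLogP, and hydrogen-bond donor and acceptor counts) and a standard InChIKey have already been computed for each structure, for example with a cheminformatics toolkit such as RDKit or Open Babel. The record fields, placeholder keys, and thresholds are illustrative assumptions. Many natural products fall outside Lipinski's rule of five, so whether and how strictly to apply it is a judgment call for each campaign.

```python
def ro5_violations(rec):
    """Count Lipinski rule-of-five violations from precomputed descriptors."""
    return sum([
        rec["mw"] > 500,   # molecular weight (Da)
        rec["clogp"] > 5,  # calculated logP
        rec["hbd"] > 5,    # hydrogen-bond donors
        rec["hba"] > 10,   # hydrogen-bond acceptors
    ])


def curate(records, max_violations=1):
    """Drop duplicate structures (same full InChIKey), then filter on rule-of-five violations."""
    seen, kept = set(), []
    for rec in records:
        if rec["inchikey"] in seen:
            continue  # duplicate entry, e.g. the same compound from two source databases
        seen.add(rec["inchikey"])
        if ro5_violations(rec) <= max_violations:
            kept.append(rec)
    return kept


# Hypothetical records; the InChIKey values are placeholders, not real keys.
library = [
    {"id": "np1", "inchikey": "KEY-A", "mw": 302.2, "clogp": 1.5, "hbd": 5, "hba": 7},
    {"id": "np2", "inchikey": "KEY-A", "mw": 302.2, "clogp": 1.5, "hbd": 5, "hba": 7},
    {"id": "np3", "inchikey": "KEY-B", "mw": 880.0, "clogp": 3.0, "hbd": 4, "hba": 14},
    {"id": "np4", "inchikey": "KEY-C", "mw": 610.0, "clogp": 2.1, "hbd": 3, "hba": 9},
]
curated = curate(library)
print([rec["id"] for rec in curated])
```

Deduplicating on the full InChIKey keeps stereoisomers as separate entries. This is usually desirable for natural products, where stereochemistry often determines activity.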

Target Protein Preparation:

- Obtain a high-resolution 3D structure of the target protein from the PDB (e.g., PDB ID: 2RH1 for β2-AR [9]).

- Process the structure: Remove water molecules and non-essential cofactors. Add missing hydrogen atoms and side chains. Assign correct protonation states for key residues (e.g., Asp, Glu, His).

- Define the binding site: Use the coordinates of a co-crystallized ligand or known active site residues to generate a 3D grid box that encompasses the site with sufficient margin.
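Defining the grid box from a co-crystallized ligand can be sketched as follows. The code reads the ligand's HETATM coordinates from the fixed PDB columns, takes their bounding box, and pads it on every side. The 5 Å margin and the ligand residue name "LIG" are assumptions, and the `hetatm` helper exists only to build example input. The `center_x`/`size_x`-style lines follow the AutoDock Vina configuration-file keys. Other engines such as DOCK3.7 define the site differently.

```python
def ligand_coords(pdb_lines, resname):
    """Collect x, y, z of HETATM records for one residue name (fixed PDB columns 31-54)."""
    coords = []
    for line in pdb_lines:
        if line.startswith("HETATM") and line[17:20].strip() == resname:
            coords.append((float(line[30:38]), float(line[38:46]), float(line[46:54])))
    return coords


def grid_box(coords, margin=5.0):
    """Axis-aligned box around the ligand, padded by `margin` Å on every side."""
    lo = [min(c[i] for c in coords) for i in range(3)]
    hi = [max(c[i] for c in coords) for i in range(3)]
    center = [(a + b) / 2 for a, b in zip(lo, hi)]
    size = [(b - a) + 2 * margin for a, b in zip(lo, hi)]
    return center, size


def vina_box_config(center, size):
    """Format the box as AutoDock Vina-style configuration lines."""
    lines = [f"center_{axis} = {value:.3f}" for axis, value in zip("xyz", center)]
    lines += [f"size_{axis} = {value:.3f}" for axis, value in zip("xyz", size)]
    return "\n".join(lines)


def hetatm(serial, name, resname, x, y, z, resseq=1):
    """Build a minimal fixed-width HETATM line (hypothetical helper for this example only)."""
    return f"HETATM{serial:5d} {name:<4} {resname:>3} A{resseq:4d}    {x:8.3f}{y:8.3f}{z:8.3f}"


# Hypothetical structure: two atoms of ligand "LIG" plus one water that must be ignored.
pdb = [
    hetatm(1, "C1", "LIG", 1.0, 2.0, 3.0),
    hetatm(2, "O1", "LIG", 3.0, 6.0, 5.0),
    hetatm(3, "O", "HOH", 40.0, 40.0, 40.0),
]
center, size = grid_box(ligand_coords(pdb, "LIG"))
print(vina_box_config(center, size))
```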

Docking Execution & Control:

- Software Selection: Choose docking software suited for large-scale tasks (e.g., DOCK3.7, AutoDock Vina, FRED). Configure parameters (search algorithm, scoring function) as per software documentation.

- Control Calculations: Perform a control dock of a known active ligand (positive control) and decoy molecules to validate that the setup can correctly identify and rank true binders (enrichment control) [4].

- Large-Scale Run: Execute the docking job on a computing cluster, screening the entire natural product library against the prepared target grid.

Post-Docking Analysis:

- Rank all docked compounds by their computed binding energy (kcal/mol).

- Visually inspect the top-ranking poses (e.g., using PyMOL, Chimera) to assess the quality of interactions (hydrogen bonds, hydrophobic contacts, salt bridges) with key binding site residues [9].

- Cluster results to identify recurring chemotypes or scaffolds among the top hits.
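Grouping top hits into recurring chemotypes can be sketched with the Taylor-Butina algorithm on Tanimoto similarity. The sketch assumes that each hit already has a fingerprint stored as a set of on-bit indices, for example from a Morgan/ECFP fingerprint computed elsewhere. The fingerprints and the 0.55 similarity threshold are illustrative choices, not values from the cited protocol.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def butina_clusters(fps, threshold=0.55):
    """Taylor-Butina clustering: returns lists of indices, centroid first."""
    n = len(fps)
    neighbours = [
        {j for j in range(n) if j != i and tanimoto(fps[i], fps[j]) >= threshold}
        for i in range(n)
    ]
    # Compounds with the most neighbours become cluster centroids first.
    order = sorted(range(n), key=lambda i: len(neighbours[i]), reverse=True)
    assigned, clusters = set(), []
    for i in order:
        if i in assigned:
            continue
        members = [i] + sorted(j for j in neighbours[i] if j not in assigned)
        assigned.update(members)
        clusters.append(members)
    return clusters


# Hypothetical fingerprints for four top-ranked hits (the first three share a scaffold).
hits = [{1, 2, 3, 4}, {1, 2, 3, 5}, {1, 2, 3, 4, 5}, {10, 11, 12}]
clusters = butina_clusters(hits)
print(clusters)
```

Picking the best-scoring member of each cluster then gives a scaffold-diverse shortlist for visual inspection.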

Protocol 2: Experimental Validation of Docking Hits

Computational predictions require empirical confirmation [6] [5].

- Compound Acquisition/Synthesis: Source the top-ranked natural product hits from commercial suppliers, or if novel, initiate isolation or synthesis.

- Primary In Vitro Bioassay: Test the compounds in a biochemical or cell-based assay relevant to the target's function (e.g., enzyme inhibition, receptor binding assay, cell viability). Determine IC50/EC50 values.

- Secondary Profiling & Specificity: Confirm activity is on-target using counter-screens (e.g., related receptor subtypes) and assess selectivity. Evaluate cytotoxicity in relevant cell lines.

- Characterization of Binding: For direct binding confirmation, employ techniques like Surface Plasmon Resonance (SPR) or Isothermal Titration Calorimetry (ITC) to measure binding affinity (KD), providing an experimental benchmark against which the docking predictions can be judged.
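To put measured affinities on the same kcal/mol scale as docking output, a KD can be converted with ΔG = RT ln(KD / 1 M). A minimal sketch follows. The compound names and KD values are hypothetical, and 298.15 K is an assumed temperature. Docking scores are not calibrated free energies, so such a comparison is mainly useful for checking rank order.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def kd_to_dg(kd_molar, temperature=298.15):
    """Binding free energy (kcal/mol) from K_D in mol/L, relative to a 1 M standard state."""
    return R_KCAL * temperature * math.log(kd_molar)


# Hypothetical SPR/ITC results for two hits, converted to kcal/mol.
measured_kd = {"hit_a": 1e-9, "hit_b": 2.5e-6}
measured_dg = {name: kd_to_dg(kd) for name, kd in measured_kd.items()}
for name, dg in measured_dg.items():
    print(f"{name}: {dg:.2f} kcal/mol")
```

At 298 K, each tenfold change in KD corresponds to about 1.36 kcal/mol (RT ln 10). This is a useful yardstick when judging how meaningful a given docking score difference is.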

Table 3: Key Research Reagent Solutions for NP Docking & Validation

| Category | Item/Resource | Function & Application | Exemplars / Notes |
|---|---|---|---|
| Computational Software | Molecular Docking Suite | Performs the virtual screening calculation. | DOCK3.7 [4], AutoDock Vina [6] [9], Glide [6]. |
| Computational Databases | Compound Structure Library | Provides the digital ligands for screening. | ZINC database [4], PubChem [9], commercial NP libraries. |
| Computational Databases | Protein Structure Repository | Source of 3D target protein coordinates. | Protein Data Bank (PDB) [7] [9]. |
| Visualization & Analysis | Molecular Graphics Software | Visualizes docking poses and protein-ligand interactions. | PyMOL [9], UCSF Chimera [9], BIOVIA Discovery Studio. |
| Wet-Lab Reagents | Purified Target Protein | Essential for biochemical binding or activity assays. | Recombinantly expressed and purified protein. |
| Wet-Lab Reagents | Validated Bioassay Kit | Measures the functional activity or binding of hit compounds. | Kinase inhibition, GPCR functional, cell viability assay kits. |
| Wet-Lab Instruments | Biophysical Characterization Instrument | Quantifies binding affinity and kinetics of confirmed hits. | Surface Plasmon Resonance (SPR), Isothermal Titration Calorimetry (ITC). |

The historical significance of natural products as drug leads is undeniable, but their contemporary value is now being unlocked through computational methodologies like large-scale molecular docking. This synergy creates a powerful engine for drug discovery, enabling the efficient navigation of nature's vast chemical diversity towards specific, modern therapeutic targets [3] [5].

Future progress hinges on overcoming current challenges. Improved scoring functions are needed to more accurately predict binding affinities, especially for the complex, often flexible, structures of natural products [6] [7]. Integrating machine learning models trained on bioactivity data can reduce false positives and improve hit rates [3]. Furthermore, advances in handling molecular flexibility and simulating more realistic solvated binding environments will enhance predictive accuracy [7].

Ultimately, the most productive path forward is a tightly integrated cycle of in silico prediction and in vitro and in vivo validation. Docking prioritizes nature's most promising molecules, and experimental feedback refines the computational models. As these technologies mature, natural products, framed within the context of large-scale computational screening, will remain an essential and vibrant wellspring for the next generation of therapeutic agents [1] [2].

Molecular docking is a cornerstone computational technique in structure-based drug design, primarily used to predict how a small molecule (ligand) binds to a target protein and to estimate the strength of that interaction [10]. For natural products research, which deals with structurally complex and diverse chemical scaffolds, molecular docking enables the rapid in silico screening of vast phytochemical libraries against biological targets, prioritizing the most promising candidates for costly and time-consuming experimental validation [11]. This approach is framed within a broader thesis on large-scale molecular docking, which aims to systematically interrogate extensive chemical space—including millions of natural and synthetic compounds—to identify novel bioactive entities [4].

The core challenge of molecular docking is two-fold: accurate pose prediction (determining the correct binding geometry of the ligand) and reliable affinity scoring (ranking the predicted poses or different ligands based on estimated binding strength) [10]. Traditional methods rely on physics-based scoring functions and heuristic search algorithms, but they face well-known limitations in accuracy and speed [10]. The field is undergoing a paradigm shift with the integration of data-driven deep learning (DL) methods, which leverage large datasets of protein-ligand complexes to achieve superior performance in certain tasks, though not without new challenges related to generalizability and physical plausibility [12]. For natural products, which often exhibit high flexibility and unique chemotypes, these challenges are accentuated, requiring robust and well-validated protocols [11].

Core Principles and Methodologies

Pose Prediction: From Traditional Docking to Data-Driven Templates

The primary goal of pose prediction is to generate the three-dimensional conformation and placement (pose) of a ligand within a protein's binding site that most closely resembles the biologically active binding mode [10]. This prediction serves as the critical starting point for downstream modeling and analysis.

2.1.1 Traditional Molecular Docking

Traditional docking software (e.g., AutoDock Vina, GLIDE, GOLD, LeDock) operates on a core principle combining a search algorithm and a scoring function [10] [11]. The search algorithm (e.g., genetic algorithm, Monte Carlo, incremental construction) explores the rotational, translational, and conformational degrees of freedom of the ligand within the defined binding site. The scoring function, which is often a simplified empirical or force-field based equation, evaluates and ranks each generated pose based on estimated interaction energy [13]. A significant limitation is the approximate nature of these scoring functions, which trade physical rigor for computational speed, sometimes leading to incorrect pose ranking [4].
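The interplay of a stochastic search algorithm and a scoring function can be illustrated with a toy Metropolis Monte Carlo search. The sketch is deliberately minimal and is not how any named docking program works internally. It moves a rigid ligand by random translations only, scores each pose with a simple 12-6 Lennard-Jones-style pair term, and keeps the best pose seen. Real engines also sample rotations and torsions and use far richer scoring terms. The coordinates, `r0`, `eps`, step size, and `kT` are all arbitrary assumptions.

```python
import math
import random


def pair_energy(r, r0=4.0, eps=0.2):
    """Toy 12-6 Lennard-Jones-style term with its minimum of -eps at r = r0."""
    x = (r0 / r) ** 6
    return eps * (x * x - 2.0 * x)


def score(ligand, receptor):
    """Toy scoring function: sum of pair terms over all ligand-receptor atom pairs."""
    return sum(pair_energy(math.dist(a, b)) for a in ligand for b in receptor)


def translate(atoms, shift):
    """Rigidly shift every atom by the same displacement vector."""
    return [tuple(c + d for c, d in zip(atom, shift)) for atom in atoms]


def monte_carlo_dock(ligand, receptor, steps=2000, step_size=0.5, kT=0.6, seed=0):
    """Metropolis Monte Carlo over rigid-body translations only (no rotations or torsions)."""
    rng = random.Random(seed)
    current, current_e = ligand, score(ligand, receptor)
    best, best_e = current, current_e
    for _ in range(steps):
        trial = translate(current, [rng.uniform(-step_size, step_size) for _ in range(3)])
        trial_e = score(trial, receptor)
        # Accept every downhill move; accept uphill moves with Boltzmann probability.
        if trial_e <= current_e or rng.random() < math.exp(-(trial_e - current_e) / kT):
            current, current_e = trial, trial_e
            if current_e < best_e:
                best, best_e = current, current_e
    return best, best_e


# Hypothetical pocket: four receptor atoms in a ring; a two-atom ligand starts above it.
receptor = [(4.0, 0.0, 0.0), (-4.0, 0.0, 0.0), (0.0, 4.0, 0.0), (0.0, -4.0, 0.0)]
ligand = [(0.0, 0.0, 10.0), (0.0, 0.0, 11.5)]
start_e = score(ligand, receptor)
best_pose, best_e = monte_carlo_dock(ligand, receptor)
print(f"start {start_e:.3f} -> best {best_e:.3f}")
```

Occasionally accepting uphill moves lets the search escape local minima. The crude scoring function is also exactly where the inaccuracies noted above enter.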

2.1.2 The Rise of Data-Driven and Deep Learning Methods

Recent advancements have introduced powerful data-driven alternatives that often outperform traditional docking in pose prediction accuracy on standard benchmarks [10] [12]. These can be categorized into:

- Deep Learning-based Pose Prediction: Methods like EquiBind and DiffDock use E(3)-equivariant networks or diffusion models trained on protein-ligand complex datasets to directly predict ligand poses [10].

- Cofolding Methods: Exemplified by AlphaFold3, these approaches predict the protein structure and ligand pose concurrently from sequence, eliminating the need for a pre-existing protein structure [10].

- Template-Based Baseline (TEMPL): This simpler, ligand-based approach underscores the "template effect." It identifies the Maximal Common Substructure (MCS) between a new ligand and a reference ligand with a known pose, then uses constrained 3D embedding to generate poses where the common substructure atoms are locked to the reference coordinates [10]. Its strong performance in certain challenges highlights the importance of using known structural data and the risks of data leakage in evaluating more complex DL methods [10].

2.1.3 Performance Comparison of Pose Prediction Methods

The table below summarizes the characteristics and performance considerations of different pose prediction paradigms, particularly in the context of large-scale natural product screening.

Table 1: Comparison of Pose Prediction Methodologies for Large-Scale Screening

| Method Category | Key Example(s) | Core Principle | Relative Speed | Key Advantage | Key Limitation for Natural Products |
|---|---|---|---|---|---|
| Traditional Docking | AutoDock Vina, LeDock [13] [11] | Physics-based scoring + heuristic search | Fast | Well-established, interpretable, high throughput | Scoring function inaccuracies; handling of ligand flexibility |
| Deep Learning Pose Prediction | DiffDock, EquiBind [10] | Deep learning on 3D complex structures | Very Fast (after training) | High pose accuracy on benchmarks | Risk of generating physically implausible poses; steric clashes [12] |
| Cofolding | AlphaFold3 [10] | Joint protein-ligand structure prediction | Moderate to Slow | No pre-existing protein structure needed | Computationally intensive; less suitable for ultra-large libraries |
| Template-Based (Ligand-Centric) | TEMPL [10] | Maximal Common Substructure (MCS) alignment | Fast | Excellent for analogs; simple baseline | Requires a close template; limited for novel scaffolds |

Binding Affinity Scoring: Beyond Docking Scores

While docking programs produce a score, these values are typically not accurate predictors of absolute binding affinity (e.g., Ki, ΔG) [14]. Refined scoring is therefore a crucial secondary step.

2.2.1 End-Point Free Energy Methods

More rigorous, physics-based methods like MM/PBSA and MM/GBSA are widely used for binding affinity estimation and pose re-ranking. These end-point free energy methods calculate the free energy difference between the bound and unbound states using molecular mechanics energies and implicit solvation models [15]. A study on protein-cyclic peptide complexes showed that a fine-tuned MM/PBSA(GBSA) workflow could double the correlation (Rp = -0.732) with experimental binding affinities compared to a standard docking program [15]. This makes them valuable for refining results from large-scale docking screens, though they are more computationally demanding.
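The end-point decomposition shared by these methods is usually written as shown below. This is the textbook form, not the specific fine-tuned workflow of [15]. Angle brackets denote averages over simulation snapshots. The polar solvation term is what distinguishes MM/PBSA (Poisson-Boltzmann) from MM/GBSA (generalized Born), and the nonpolar term is commonly estimated from the solvent-accessible surface area. In the common single-trajectory protocol, receptor and ligand snapshots are extracted from the complex trajectory, so the bonded terms cancel. The entropic -TS term is often omitted or estimated separately because it is expensive and noisy.

```latex
\begin{aligned}
\Delta G_{\mathrm{bind}} &= \langle G_{\mathrm{complex}} \rangle - \langle G_{\mathrm{receptor}} \rangle - \langle G_{\mathrm{ligand}} \rangle \\
G_{x} &= E_{\mathrm{MM}} + G_{\mathrm{solv}} - TS, \qquad x \in \{\mathrm{complex}, \mathrm{receptor}, \mathrm{ligand}\} \\
E_{\mathrm{MM}} &= E_{\mathrm{bonded}} + E_{\mathrm{elec}} + E_{\mathrm{vdW}} \\
G_{\mathrm{solv}} &= G_{\mathrm{polar}} + G_{\mathrm{nonpolar}}
\end{aligned}
```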

2.2.2 Machine Learning-Enhanced Scoring

A modern approach combines docking-generated poses with machine learning. The DockBind framework, for instance, uses docking poses generated by DiffDock as input to a graph neural network (MACE) that learns to predict affinity from atomic interactions [14]. Key strategies include using multiple top poses for training as data augmentation and ensembling predictions across poses to improve robustness [14]. This hybrid approach aims to overcome the limitations of classical scoring functions by learning complex patterns from data while retaining structural information.
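The pose-ensembling idea can be sketched in a few lines. This is not DockBind's code. It assumes that some trained model has already produced one affinity prediction per pose, and it averages the predictions over the top-k poses. The pose-ranking value is treated as lower-is-better (like a docking score). If poses come with a confidence value where higher is better, as with DiffDock's confidence ranking, negate it first. The `top_k=3` default and the numbers are hypothetical.

```python
from statistics import mean


def ensemble_affinity(pose_predictions, top_k=3):
    """Average a model's per-pose affinity predictions over a ligand's top-k poses.

    pose_predictions: list of (pose_rank_score, predicted_affinity) tuples, where a
    lower pose_rank_score marks a more trusted pose.
    """
    ranked = sorted(pose_predictions, key=lambda p: p[0])
    return mean(pred for _, pred in ranked[:top_k])


# Hypothetical predictions (pKd units) for four poses of one ligand.
poses = [(-9.1, 7.2), (-8.7, 6.8), (-8.5, 7.0), (-6.0, 4.0)]
print(round(ensemble_affinity(poses), 2))
```

Averaging over several plausible poses dampens the effect of a single mis-docked pose on the final affinity estimate.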

2.2.3 Performance of Affinity Scoring Methods

The accuracy of different scoring strategies varies significantly, as shown in the comparison below.

Table 2: Performance of Binding Affinity Scoring and Re-Ranking Methods

| Scoring Method | Typical Use Case | Theoretical Basis | Computational Cost | Reported Performance (Example) | Suitability for Large-Scale NP Screening |
|---|---|---|---|---|---|
| Docking Score (e.g., Vina) | Initial pose ranking & virtual screening | Empirical or force-field-based | Low | Variable; often poor correlation with experiment [14] | Core method for initial screening; requires downstream validation |
| MM/PBSA/GBSA [15] | Pose re-ranking & affinity estimation | Molecular Mechanics + Implicit Solvent | Medium to High | Rp = -0.73 for cyclic peptides [15] | Applicable for top hits refinement; too costly for entire libraries |
| ML-based (e.g., DockBind) [14] | Affinity prediction from poses | Machine Learning on physical graphs | Low (after pose generation) | Superior to classical scoring on kinase datasets [14] | Promising for post-docking prioritization; depends on quality of input poses |

Application Notes for Natural Product Discovery

A Workflow for Large-Scale Virtual Screening of Natural Product Libraries

The following diagram outlines a complete, optimized workflow for identifying natural product inhibitors against a defined protein target, integrating the principles and methods discussed above.

3.2 Protocol: Optimizing and Validating the Virtual Screening Protocol

Before screening a large library, the docking protocol must be optimized and validated for the specific target to minimize the risk of failure [4] [11]. This involves two key sequential phases, detailed in the protocol below.

Diagram Title: Virtual Screening Protocol Optimization & Validation Workflow

Phase 1: Pose Prediction Accuracy

- Objective: Ensure the docking software can reproduce known experimental poses.

- Procedure:

- Re-docking: Extract the native ligand from a protein's co-crystal structure (e.g., from PDB). After standard protein preparation, re-dock this ligand back into its original binding site. The root-mean-square deviation (RMSD) between the top-ranked docked pose and the experimental pose is calculated. An RMSD ≤ 2.0 Å is typically considered successful [11].

- Cross-docking: To test robustness, dock known active ligands from other crystal structures of the same target into the prepared receptor. This assesses the protocol's ability to handle variations both in ligand structure and in the receptor conformation each ligand was originally crystallized with [11].

- Optimization: If RMSD values are poor, adjust docking parameters (e.g., search space size, exhaustiveness) or try different docking software/scoring function combinations.
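The re-docking RMSD check can be sketched as follows. The sketch assumes that the docked and crystal poses list the same heavy atoms in the same order. No superposition is applied, because both poses already share the receptor's coordinate frame and re-aligning them would hide placement errors. It also does not account for molecular symmetry (for example, the equivalent oxygens of a carboxylate), for which symmetry-aware RMSD tools such as those in RDKit are preferable. The coordinates are hypothetical.

```python
import math


def rmsd(pose, reference):
    """Heavy-atom RMSD (Å) between two poses with identical atom ordering, no alignment."""
    if len(pose) != len(reference):
        raise ValueError("poses must contain the same atoms in the same order")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(pose, reference))
    return math.sqrt(squared / len(pose))


# Hypothetical two-atom fragment: every atom of the docked pose is shifted by 1 Å.
crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
docked = [(0.0, 0.0, 1.0), (1.5, 0.0, 1.0)]
value = rmsd(docked, crystal)
print(f"RMSD = {value:.2f} Å, success = {value <= 2.0}")
```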

Phase 2: Virtual Screening Enrichment

- Objective: Ensure the protocol can distinguish true binders from non-binders in a blind screen.

- Procedure:

- Prepare Active & Decoy Sets: Curate a set of molecules with confirmed experimental activity against the target (actives). Generate a set of decoy molecules that are physicochemically similar to the actives (e.g., in molecular weight, logP, and net charge) but topologically dissimilar, so that they are presumed inactive (resources like DUD-E provide these) [11].

- Perform Screening: Dock the combined active and decoy library using the protocol from Phase 1.

- Analyze Enrichment: Plot a Receiver Operating Characteristic (ROC) curve and calculate Enrichment Factors (EFs), such as EF1%: the fraction of actives among the top 1% of the ranked list divided by the fraction of actives in the whole library. A good protocol will rank actives significantly higher than decoys [11].

- Optimization: Use the enrichment metrics to select the best-performing scoring function or protein conformation before proceeding to the full natural product library.
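Both enrichment metrics can be computed from a ranked active/decoy screen. The sketch below assumes that lower docking scores are better. It computes the enrichment factor at a chosen fraction of the list, and it computes ROC AUC as the probability that a random active outscores a random decoy (ties count half). The pairwise AUC loop is quadratic and meant for illustration; rank-based formulas scale better to large decoy sets. The 100-compound example is hypothetical. Because the top 1% of such a small set is a single compound, the example reports EF5% instead.

```python
def enrichment_factor(scores, is_active, fraction=0.01):
    """EF at a fraction of the ranked list: active rate in the top slice / overall active rate."""
    order = sorted(range(len(scores)), key=lambda i: scores[i])  # lower score = better
    n_top = max(1, int(round(len(scores) * fraction)))
    actives_top = sum(is_active[i] for i in order[:n_top])
    return (actives_top / n_top) / (sum(is_active) / len(scores))


def roc_auc(scores, is_active):
    """Probability that a random active scores better (lower) than a random decoy."""
    actives = [s for s, a in zip(scores, is_active) if a]
    decoys = [s for s, a in zip(scores, is_active) if not a]
    wins = sum(1.0 if a < d else 0.5 if a == d else 0.0 for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))


# Hypothetical screen of 100 compounds (10 actives, 90 decoys).
scores = [-11.0, -10.5, -10.2, -10.0, -9.9] + [-9.0] * 6 + [-7.0] * 89
is_active = [True, True, True, True, False] + [True] * 6 + [False] * 89
print(f"EF5% = {enrichment_factor(scores, is_active, 0.05):.1f}")
print(f"AUC  = {roc_auc(scores, is_active):.3f}")
```

An EF of 1 means no better than random selection. The maximum achievable EF at a given fraction depends on how many actives the library contains.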

The Scientist's Toolkit: Reagents, Software, and Data

Table 3: Essential Research Reagent Solutions for Molecular Docking

| Category | Item / Software | Primary Function in Docking Workflow | Key Notes for Natural Product Research |
|---|---|---|---|
| Protein Structure | RCSB Protein Data Bank (PDB) | Source of experimental 3D structures of targets and target-ligand complexes. | Prioritize high-resolution structures co-crystallized with a ligand to define the binding site [11]. |
| Preparation & Modeling | RDKit [10], Biotite [10], Open Babel, MOE, Schrödinger Suite | Prepare protein (add H, assign charges, optimize sidechains) and ligand (generate tautomers, conformers, assign charges) structures. | Essential for handling diverse natural product stereochemistry and charge states. |
| Docking Software | AutoDock Vina [13] [11], LeDock [13] [11], GOLD [11], GLIDE, DOCK3.7 [4] | Core engines for performing pose search and initial scoring. | Use multiple programs or the consensus of multiple scoring functions to improve reliability [11]. |
| Data-Driven Tools | DiffDock [10] [14], AlphaFold3 [10], TEMPL [10] | Provide alternative, data-driven pose prediction, especially useful if no template exists (AF3) or if many analogs are known (TEMPL). | Test against traditional docking during protocol validation [12]. |
| Scoring & Refinement | gmx_MMPBSA (for MM/PBSA) [15], DockBind [14] | Re-rank docked poses and estimate binding affinities with greater accuracy than docking scores. | Apply to top hits (e.g., 100-1000) from the initial virtual screen. |
| Compound Libraries | In-house NP Databases, ZINC, COCONUT, NPASS | Sources of natural product structures for virtual screening. | Curate carefully: standardize structures, check for duplicates, consider accessible conformations [11]. |
| Validation & Analysis | Directory of Useful Decoys (DUD-E) [11], PyMOL, RDKit, Matplotlib | Generate decoy sets for validation; visualize poses and interactions; analyze and plot results. | Critical for the pre-screening optimization phase to avoid false positives [4] [11]. |

The field of virtual screening in drug discovery is undergoing a profound transformation, moving from the docking of curated libraries containing thousands to millions of compounds, to the systematic computational exploration of ultra-large, make-on-demand libraries encompassing billions to trillions of synthesizable molecules [16]. This paradigm shift is driven by the advent of tangible virtual libraries, such as the Enamine REAL Space, which has grown from 3.5 million "in-stock" compounds to over 37 billion readily accessible molecules [17] [18]. Where traditional high-throughput virtual screening (HTVS) was limited by synthetic and computational feasibility, new methodologies now enable researchers to interrogate unprecedented swathes of chemical space to discover novel, potent ligands with high hit rates [19] [20].

This shift holds particular significance for natural products research. Natural products (NPs) have historically been a rich source of drug leads but are often characterized by structural complexity and limited availability for large-scale experimental screening [5]. Ultra-large library docking offers a complementary strategy: it can identify novel, synthetically accessible scaffolds that mimic the favorable binding properties of NPs or directly screen vast digital repositories of natural compounds [21]. Furthermore, as libraries expand, the inherent bias of traditional screening decks toward "bio-like" molecules (metabolites, NPs, drugs) diminishes [18]. This allows for the discovery of entirely new chemotypes that are not inherently similar to known natural products but may possess superior drug-like properties, thereby expanding the therapeutic landscape beyond traditional NP-inspired chemistry.

Quantitative Evidence: The Impact of Scale

The theoretical advantage of screening larger libraries is now supported by compelling empirical data. Comparative studies demonstrate that increasing the library size by orders of magnitude directly enhances key discovery metrics, including hit rates, ligand potency, and scaffold novelty.

Table 1: Impact of Library Size on Virtual Screening Outcomes

| Target Protein | Small Library Size | Large Library Size | Key Improvement with Larger Library | Source |
|---|---|---|---|---|
| AmpC β-lactamase | 99 million molecules | 1.7 billion molecules | 2-fold increase in hit rate; 50x more inhibitors found; discovery of more new scaffolds [17]. | [17] |
| KLHDC2 (Ubiquitin Ligase) | N/A (Focused library follow-up) | Multi-billion library | 14% hit rate (7 hits) with single-digit µM affinity achieved from initial ultra-large screen [19]. | [19] |
| NaV1.7 (Sodium Channel) | N/A | Multi-billion library | 44% hit rate (4 hits) with single-digit µM affinity achieved [19]. | [19] |
| D4 Dopamine, σ2, 5HT2A Receptors | 10^5 molecules | Over 10^9 molecules | Docking scores of top-ranked molecules improve log-linearly with library size [18]. | [18] |

Table 2: Performance of Advanced Ultra-Large Screening Platforms

| Platform/Method | Core Strategy | Library Size Screened | Computational Efficiency | Reported Enrichment/Performance | Source |
|---|---|---|---|---|---|
| OpenVS (RosettaVS) | AI-accelerated active learning | Multi-billion compounds | ~7 days on 3000 CPUs + 1 GPU | State-of-the-art performance on CASF-2016 (EF1% = 16.72); high hit rates (14-44%) [19]. | [19] |
| REvoLd | Evolutionary algorithm in combinatorial space | 20+ billion compounds (Enamine REAL) | ~50,000-76,000 docking calculations per target | Hit rate improvements by factors of 869 to 1622 vs. random selection [20]. | [20] |
| HIDDEN GEM | Generative modeling + similarity search | 37 billion compounds | ~2 days (single GPU + CPU cluster) | Up to 1000-fold enrichment over random; docks <600k molecules per cycle [22]. | [22] |

Application Notes: Methodologies for the Ultra-Large Scale

Navigating billion-scale chemical spaces requires innovative strategies that move beyond exhaustive brute-force docking. The following application notes summarize leading methodologies.

2.1 Active Learning & AI-Acceleration (OpenVS/RosettaVS)

This approach integrates a high-accuracy physics-based docking method (RosettaVS) with an active learning framework to dynamically prioritize docking calculations [19]. The platform uses a target-specific neural network trained iteratively during the screening process. It starts by docking a random subset, uses the results to train a model that predicts promising regions of chemical space, and then selectively docks compounds from those regions. This cycle repeats, dramatically reducing the number of full docking calculations required to identify top hits. The method employs two docking modes: a fast initial screen (VSX) and a high-precision mode with full receptor flexibility (VSH) for final ranking [19]. This is particularly useful for targets requiring induced-fit docking.
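The active-learning loop can be illustrated with a toy example. The sketch is not the OpenVS implementation. A k-nearest-neighbour average stands in for the target-specific neural network, compounds are 2-D feature vectors, and a distance to a hidden optimum stands in for the docking score. The library size, batch size, number of rounds, and k are arbitrary assumptions.

```python
import heapq
import math
import random


def knn_predict(x, labelled, k=5):
    """Toy surrogate model: mean score of the k nearest already-docked compounds."""
    nearest = heapq.nsmallest(k, labelled, key=lambda item: math.dist(x, item[0]))
    return sum(score for _, score in nearest) / len(nearest)


def active_learning_screen(library, dock, n_init=50, batch=50, rounds=5, seed=0):
    """Dock a random seed set, then repeatedly dock the compounds the surrogate ranks best."""
    rng = random.Random(seed)
    order = list(range(len(library)))
    rng.shuffle(order)
    docked = {i: dock(library[i]) for i in order[:n_init]}
    remaining = order[n_init:]
    for _ in range(rounds):
        labelled = [(library[i], s) for i, s in docked.items()]
        # Retrain (here: re-query) the surrogate and dock its best-predicted batch.
        remaining.sort(key=lambda i: knn_predict(library[i], labelled))  # lower = better
        for i in remaining[:batch]:
            docked[i] = dock(library[i])
        remaining = remaining[batch:]
    return docked


def toy_dock(x):
    """Hypothetical docking score that improves towards a hidden optimum at (7, 3)."""
    return math.dist(x, (7.0, 3.0)) - 10.0


rng = random.Random(42)
library = [(rng.uniform(0, 10), rng.uniform(0, 10)) for _ in range(1000)]
docked = active_learning_screen(library, toy_dock)
true_top50 = set(sorted(range(len(library)), key=lambda i: toy_dock(library[i]))[:50])
found = len(true_top50 & set(docked))
print(f"docked {len(docked)} of {len(library)}; recovered {found} of the true top 50")
```

Docking 30% of this toy library at random would be expected to recover about 15 of the top 50. The surrogate-guided loop should recover far more, which is the effect active learning exploits at billion scale.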

2.2 Evolutionary Algorithms in Combinatorial Space (REvoLd)

Designed explicitly for make-on-demand libraries built from chemical reactions and building blocks, REvoLd uses an evolutionary algorithm to optimize molecules directly within the vast combinatorial space without enumerating all possibilities [20]. It starts with a population of random molecules from the space, docks them, and selects the best scorers ("fittest"). Through operations mimicking mutation (swapping fragments) and crossover (combining parts of high-scoring molecules), it generates new candidate molecules for the next "generation." This process efficiently explores the chemical landscape, discovering high-scoring scaffolds with a minimal number of docking evaluations (tens of thousands versus billions) [20].
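The selection, crossover, and mutation cycle can be sketched on a toy two-component reaction space, where a "molecule" is a pair of building-block indices. This is an illustration of the general evolutionary strategy, not REvoLd's Rosetta-based implementation. The additive `toy_dock` score, population size, number of generations, and operator probabilities are all hypothetical. A cache ensures that each product is "docked" at most once, so the docking count can be compared against the size of the full space.

```python
import random


def evolve(n_a, n_b, dock, pop_size=20, generations=15, n_parents=6, seed=0):
    """Toy evolutionary search over a two-component combinatorial space (lower score = fitter)."""
    rng = random.Random(seed)
    cache = {}  # every (a, b) product is "docked" at most once

    def fitness(mol):
        if mol not in cache:
            cache[mol] = dock(mol)
        return cache[mol]

    population = [(rng.randrange(n_a), rng.randrange(n_b)) for _ in range(pop_size)]
    for _ in range(generations):
        parents = sorted(set(population), key=fitness)[:n_parents]  # selection
        children = []
        while len(children) < pop_size - len(parents):
            p1, p2 = rng.sample(parents, 2)
            r = rng.random()
            if r < 0.5:
                child = (p1[0], p2[1])               # crossover: block A from p1, B from p2
            elif r < 0.75:
                child = (p1[0], rng.randrange(n_b))  # mutation: swap building block B
            else:
                child = (rng.randrange(n_a), p1[1])  # mutation: swap building block A
            children.append(child)
        population = parents + children
    best = min(cache, key=cache.get)
    return best, cache[best], len(cache)


# Hypothetical 60 x 60 reaction space with an additive toy score per building block.
rng = random.Random(1)
value_a = [rng.random() for _ in range(60)]
value_b = [rng.random() for _ in range(60)]


def toy_dock(mol):
    return -5.0 * (value_a[mol[0]] + value_b[mol[1]])


best, best_score, n_docked = evolve(60, 60, toy_dock)
print(f"best {best} score {best_score:.2f} after {n_docked} of {60 * 60} dockings")
```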

2.3 Generative Chemistry-Guided Workflows (HIDDEN GEM)

This methodology synergizes molecular docking with generative AI and massive chemical similarity searching [22]. The workflow begins by docking a small, diverse initial library (e.g., ~460,000 compounds). The results are used to fine-tune a generative AI model and train a filter to create and select novel, high-scoring virtual compounds. These de novo hits are then used as queries for ultra-fast similarity searches against a multi-billion compound purchasable library (e.g., Enamine REAL). The most similar purchasable compounds are subsequently docked to finalize the hit list. This approach leverages generative AI to explore beyond the enumerated library while ensuring final hits are synthetically accessible via similarity matching [22].
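The similarity-matching step can be sketched as a brute-force nearest-neighbour lookup. Assumptions: fingerprints are Python sets of on-bit indices (for example, ECFP-style bits computed elsewhere), and the "purchasable library" is a small in-memory dictionary. At multi-billion scale, a dedicated ultra-fast similarity search engine replaces this loop; the sketch only shows the ranking logic.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) similarity of two fingerprints stored as sets of on-bit indices."""
    inter = len(fp_a & fp_b)
    union = len(fp_a) + len(fp_b) - inter
    return inter / union if union else 0.0

def nearest_purchasable(queries, library, k=3, min_sim=0.0):
    """For each generated query, return up to k (library_id, similarity) pairs, most similar first.

    queries, library: dicts mapping an identifier to a fingerprint bit set.
    """
    hits = {}
    for qid, qfp in queries.items():
        scored = [(lid, tanimoto(qfp, lfp)) for lid, lfp in library.items()]
        scored = [pair for pair in scored if pair[1] >= min_sim]
        scored.sort(key=lambda pair: (-pair[1], pair[0]))  # highest similarity, then ID
        hits[qid] = scored[:k]
    return hits
```

The retrieved purchasable neighbours, not the generated queries themselves, are what get docked in the final round.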

2.4 Integrating Natural Product Libraries

While ultra-large synthetic libraries offer novelty, dedicated screening of natural product libraries remains crucial. Protocols exist for constructing and curating phytochemical libraries for virtual screening against targets like quorum-sensing receptors [11]. A best-practice workflow involves: 1) Library Preparation: Curating 3D structures of natural compounds from databases like ZINC; 2) Protocol Validation: Performing control re-docking of known co-crystallized ligands and benchmarking against decoy sets to optimize docking parameters; 3) Hierarchical Screening: Employing multi-step docking (e.g., HTVS → SP → XP in Glide) to filter large libraries down to a manageable number of high-confidence hits for further study [21] [11]. This structured approach brings the rigor of ultra-large screening methodologies to the unique chemical space of natural products.
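The hierarchical idea (cheap scoring for everything, expensive scoring only for survivors) can be expressed generically. This is a sketch, not a Glide workflow: each stage's scoring function is a placeholder supplied by the caller (standing in for HTVS-, SP-, or XP-like modes), and the keep fractions are illustrative assumptions, not recommended settings.

```python
def hierarchical_screen(compounds, stages):
    """Pass compounds through successively more expensive scoring stages.

    stages: list of (name, score_fn, keep_fraction); lower scores are better.
    Only the top keep_fraction of each stage's input proceeds to the next stage.
    Returns (final survivors, log of (stage name, n_in, n_kept)).
    """
    survivors = list(compounds)
    log = []
    for name, score_fn, keep_fraction in stages:
        ranked = sorted(survivors, key=score_fn)
        n_keep = max(1, round(len(ranked) * keep_fraction))
        survivors = ranked[:n_keep]
        log.append((name, len(ranked), n_keep))
    return survivors, log
```

For example, stages of [("HTVS", fast_fn, 0.1), ("SP", medium_fn, 0.1), ("XP", slow_fn, 0.5)] with hypothetical scoring functions reduce 10,000 compounds to 1,000, then 100, then 50, so the slowest mode only ever sees 100 molecules.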

Experimental Protocols

3.1 Protocol: Preparation for an Ultra-Large Virtual Screen

Adapted from best-practice guides for large-scale docking [4] [11].

Objective: To properly prepare the target protein structure and define parameters prior to launching a resource-intensive ultra-large screen.

Steps:

Target Selection and Preparation:

- Obtain a high-resolution crystal structure of the target protein, preferably in a ligand-bound conformation.

- Using software like Schrödinger's Protein Preparation Wizard or UCSF Chimera, process the structure: add missing hydrogen atoms, assign correct protonation states at biological pH (paying special attention to histidine residues), and optimize hydrogen bonding networks.

- Perform restrained energy minimization to relieve steric clashes.

Binding Site Definition:

- Define the binding site using the coordinates of a co-crystallized native ligand.

- Alternatively, use computational site detection tools (e.g., FTMap, SiteMap) if the site is unknown.

- Create a 3D grid box centered on the binding site. The box dimensions should be large enough to accommodate potential ligands but not so large as to drastically increase computation time. A common starting point is a box extending 10-15 Å from the site center.
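A minimal sketch of the grid-box rule above, assuming the native ligand's heavy-atom coordinates (in Å) have already been read from the crystal structure. It centres a cubic box on the ligand centroid, uses a 10 Å reach from the centre by default, and enlarges the box if the ligand itself extends further; the 2 Å margin and the cubic shape are assumptions. The (center, size) output matches the convention of Vina-style config files.

```python
def grid_box(ligand_coords, half_width=10.0, margin=2.0):
    """Cubic docking box centred on the native ligand.

    ligand_coords: list of (x, y, z) heavy-atom coordinates in Å.
    half_width: default reach of the box from its centre (10-15 Å is a common start).
    margin: assumed extra clearance used only if the ligand reaches beyond half_width.
    Returns (center, size), each an (x, y, z) tuple in Å.
    """
    n = len(ligand_coords)
    center = tuple(sum(atom[i] for atom in ligand_coords) / n for i in range(3))
    # Largest per-axis distance of any ligand atom from the centre.
    reach = max(abs(atom[i] - center[i]) for atom in ligand_coords for i in range(3))
    half = max(half_width, reach + margin)
    return center, (2 * half, 2 * half, 2 * half)
```

A 10 Å reach gives a 20 Å edge; widen half_width toward 15 Å for larger or more flexible ligands, at the cost of longer docking runs.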

Control Docking and Validation:

- Re-docking: Extract the native ligand, re-dock it into the prepared binding site, and calculate the Root-Mean-Square Deviation (RMSD) between the docked and crystal poses. An RMSD < 2.0 Å typically indicates a well-validated setup [21] [11].

- Decoy Enrichment: If known active compounds for the target are available, perform a small-scale enrichment test using the Directory of Useful Decoys, Enhanced (DUD-E). A good protocol should successfully rank known actives above decoy molecules, as measured by the area under the ROC curve (AUC) [11].
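Both control metrics are short calculations once poses and scores are in hand. The sketch below assumes the crystal and re-docked poses list the same atoms in the same order, and that lower docking scores are better. The RMSD is computed in place (no superposition, since both poses share the receptor frame) and ignores molecular symmetry, which symmetry-aware tools handle; the AUC uses the Mann-Whitney formulation.

```python
import math

def pose_rmsd(pose_a, pose_b):
    """In-place heavy-atom RMSD (Å) between two poses of the same ligand.

    Assumes identical atom ordering; no superposition and no symmetry correction.
    """
    if len(pose_a) != len(pose_b) or not pose_a:
        raise ValueError("poses must be non-empty and have the same atom count")
    sq_dev = sum((a - b) ** 2 for pa, pb in zip(pose_a, pose_b) for a, b in zip(pa, pb))
    return math.sqrt(sq_dev / len(pose_a))

def roc_auc(active_scores, decoy_scores):
    """ROC AUC for docking scores where lower is better.

    Probability that a random active outranks a random decoy; ties count as half.
    """
    wins = 0.0
    for a in active_scores:
        for d in decoy_scores:
            if a < d:
                wins += 1.0
            elif a == d:
                wins += 0.5
    return wins / (len(active_scores) * len(decoy_scores))
```

A setup passes the re-docking check when pose_rmsd(docked, crystal) < 2.0; an AUC of 0.5 means random ranking. The pairwise loop is adequate for benchmark-sized sets, while a sort-based implementation scales better.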

Hardware and Resource Assessment:

- Estimate the computational cost based on library size and docking speed. For brute-force docking of billions, a large CPU cluster (thousands of cores) is required [19].

- For accelerated methods (Active Learning, REvoLd, HIDDEN GEM), confirm access to necessary hardware (GPUs for AI models) and software licenses.
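The resource estimate is simple arithmetic. This sketch assumes a fixed per-compound docking time and perfect parallel scaling; the example inputs (10 CPU-seconds per compound, 10,000 cores) are illustrative assumptions, not benchmarks of any particular engine.

```python
def docking_cost(n_compounds, cpu_sec_per_compound, n_cores):
    """Back-of-envelope brute-force docking cost.

    Returns (total CPU-hours, wall-clock days), assuming perfect parallel scaling
    and no queueing or I/O overhead, so real campaigns will take longer.
    """
    cpu_hours = n_compounds * cpu_sec_per_compound / 3600.0
    wall_days = cpu_hours / n_cores / 24.0
    return cpu_hours, wall_days
```

For example, docking_cost(1_000_000_000, 10, 10_000) gives about 2.78 million CPU-hours, or roughly 11.6 days of wall-clock time, which is why accelerated methods that dock only a small fraction of the library are attractive.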

3.2 Protocol: Hit Triage and Post-Docking Analysis for a Billion-Compound Screen

Adapted from large-scale experimental validation studies [17].

Objective: To rationally select a manageable number of diverse, high-priority compounds for synthesis and experimental testing from millions of top-scoring virtual hits.

Steps:

Initial Ranking and Filtering:

- Rank the entire docked library by its primary scoring function (e.g., docking score, binding energy).

- Apply basic property filters (e.g., Lipinski's Rule of Five, pan-assay interference compound (PAINS) filters, synthetic accessibility score) to remove undesirable chemotypes.

Clustering and Diversity Selection:

- From the top 0.1%-1% of ranked compounds (which may still number in the hundreds of thousands), cluster molecules based on chemical similarity (e.g., using ECFP4 fingerprints and Tanimoto similarity).

- Select cluster heads (representative compounds) from major clusters to ensure scaffold diversity. Prioritize clusters that are chemically distinct from known binders.
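One simple way to combine ranking with diversity selection is leader (sphere-exclusion) clustering: walk down the score-ranked list, make the best-scoring unassigned compound a cluster head, and absorb every remaining compound within a Tanimoto threshold. This is a named swap-in, not the Taylor-Butina algorithm; fingerprints are assumed to be sets of on-bit indices (e.g., from ECFP4), and the 0.4 default threshold is an illustrative choice.

```python
def tanimoto(a, b):
    """Tanimoto similarity of two fingerprints stored as sets of on-bit indices."""
    inter = len(a & b)
    union = len(a) + len(b) - inter
    return inter / union if union else 0.0

def leader_cluster(hits, threshold=0.4):
    """Score-ordered leader clustering.

    hits: list of (compound_id, docking_score, fingerprint_bit_set); lower score = better.
    Returns a list of clusters; the first member of each cluster is its head.
    """
    remaining = sorted(hits, key=lambda h: h[1])
    clusters = []
    while remaining:
        head, rest = remaining[0], remaining[1:]
        members = [head] + [h for h in rest if tanimoto(head[2], h[2]) >= threshold]
        clusters.append(members)
        remaining = [h for h in rest if tanimoto(head[2], h[2]) < threshold]
    return clusters
```

Because heads are taken in score order, each head is the best-docked member of its cluster, so selecting heads preserves both score and scaffold diversity.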

Visual Inspection and Interaction Analysis:

- Manually inspect the predicted binding poses of the selected cluster heads.

- Prioritize compounds that form key, sensible interactions with the protein target (e.g., hydrogen bonds with catalytic residues, optimal hydrophobic packing). Discard poses with strained conformations or unrealistic interactions.

Commercial Availability and Synthesis Planning:

- For make-on-demand libraries, check the synthetic feasibility and estimated delivery time for selected compounds.

- For novel designs or natural product analogs, plan a synthetic route or source the natural material.

Experimental Validation Cascade:

- Primary Assay: Test purchased or synthesized compounds in a primary biochemical or cellular assay at a single high concentration (e.g., 100 µM) to confirm activity.

- Dose-Response: For confirmed hits, determine half-maximal inhibitory concentration (IC50) or binding affinity (Ki/Kd) values.

- Orthogonal Assays: Use secondary, orthogonal assays to rule out false positives from assay-specific artifacts (e.g., aggregation, fluorescence interference) [17].

- Structural Validation: If possible, solve a co-crystal structure of the hit compound bound to the target to confirm the predicted docking pose, as was successfully done for a KLHDC2 hit [19].

The Scientist's Toolkit for Ultra-Large Screening

Table 3: Essential Research Reagents & Resources

| Category | Item/Resource | Function & Relevance | Example/Note |
|---|---|---|---|
| Software & Platforms | DOCK3.7/3.8, AutoDock Vina, Rosetta, Schrödinger Glide | Core docking engines for pose prediction and scoring. Open-source options (DOCK, Vina, Rosetta) are critical for accessible large-scale work [4]. | RosettaLigand enables flexible receptor docking [20]. |
| | Active Learning Platforms (OpenVS, DeepDocking) | AI-driven platforms that reduce computational cost by orders of magnitude for billion-compound screens [19] [22]. | OpenVS integrates RosettaVS with active learning [19]. |
| | Generative Chemistry Software | Used to design novel, optimized hit compounds in silico, which can then be mapped to purchasable libraries [22]. | Used in the HIDDEN GEM workflow [22]. |
| Computational Resources | High-Performance Computing (HPC) Cluster | Essential for brute-force docking of large libraries. Scaling to thousands of CPU cores is standard [19] [4]. | Cloud computing (AWS, Google Cloud) offers scalable alternatives. |
| | GPUs (e.g., NVIDIA RTX/V100) | Accelerate training of AI/ML models used in active learning and generative workflows [19] [22]. | A single high-end GPU can be sufficient for some accelerated workflows [22]. |
| Chemical Libraries | Make-on-Demand Virtual Libraries (Enamine REAL, eMolecules eXplore) | Ultra-large spaces (billions to trillions) of synthetically accessible compounds, representing the new frontier for screening [17] [16]. | Enamine REAL Space >37B compounds; eXplore Space >7T compounds [22] [16]. |
| | Natural Product Databases (ZINC, COCONUT, NPASS) | Curated collections of natural product structures for virtual screening and inspiration [5] [21]. | ZINC contains over 80,000 natural compounds [21]. |
| Validation Tools | Directory of Useful Decoys, Enhanced (DUD-E) | Provides decoy molecules to benchmark and optimize virtual screening protocols for enrichment [11]. | Critical for control calculations before a large screen [11]. |
| | Visualization Software (PyMOL, Chimera, Discovery Studio) | For visualizing protein-ligand interactions, inspecting docking poses, and preparing publication-quality figures. | Used in pose inspection and triage steps. |

Diagrams of Core Workflows and Concepts

Ultra-Large vs Focused Library Screening Workflow

HIDDEN GEM Accelerated Screening Cycle

The Evolution from Traditional to Ultra-Large Screening

Virtual screening of ultra-large chemical libraries presents a transformative opportunity for natural product (NP) research, enabling the systematic exploration of vast, synthetically accessible chemical space derived from or inspired by biological sources. However, the structural complexity, three-dimensionality, and distinct physicochemical profiles of NPs introduce significant challenges that extend beyond conventional small-molecule docking. These include managing the computational cost of flexible docking for large, flexible scaffolds, accurately scoring interactions driven by unique functional groups, and ensuring the synthetic feasibility and favorable pharmacokinetic profiles of identified hits. This application note, framed within a thesis on large-scale molecular docking for NP discovery, details these unique considerations. It provides targeted protocols for the preparation of NP-focused libraries, the implementation of advanced sampling algorithms like evolutionary frameworks for efficient screening, and a comprehensive post-docking validation workflow integrating molecular dynamics and ADMET prediction. By outlining these specialized strategies, this guide aims to equip researchers with a robust methodological framework to harness the potential of complex NP libraries in computational drug discovery.

The integration of natural products (NPs) into modern drug discovery pipelines offers an unparalleled source of molecular diversity, structural complexity, and evolved bioactivity. Framed within a broader thesis on large-scale molecular docking, this work addresses the critical junction between the immense potential of NP chemical space and the computational realities of screening billion-compound libraries. Contemporary "make-on-demand" libraries, such as the Enamine REAL space containing tens of billions of readily synthesizable compounds, now include vast sections inspired by NP scaffolds, providing a golden opportunity for in-silico discovery [20]. The core challenge transitions from merely accessing chemical space to efficiently and intelligently exploring it.

Traditional virtual high-throughput screening (vHTS) often relies on rigid docking for speed, sacrificing accuracy in modeling the flexible interactions characteristic of many NPs [20]. Conversely, flexible docking, while more accurate, becomes computationally prohibitive at the billion-molecule scale [23]. This is exacerbated by the unique attributes of NPs: they often possess high stereochemical complexity, a greater proportion of sp³-hybridized carbons, and macrocyclic or polycyclic ring systems that challenge conformational search algorithms [24]. Furthermore, their "drug-likeness" often falls outside Lipinski's Rule of Five, necessitating specialized assessment of pharmacokinetics and synthetic accessibility [25] [24].

Recent advances in algorithmic screening and deep learning (DL) are beginning to bridge this gap. Evolutionary algorithms can efficiently traverse combinatorial library space without exhaustive enumeration, while DL-based docking methods promise fast and accurate pose prediction [20] [26]. However, as highlighted in a 2025 review, DL methods can struggle with generalization to novel protein pockets and often produce physically implausible poses, indicating that hybrid or carefully validated approaches are essential [26] [23]. This application note details the specific considerations and provides actionable protocols for docking complex NP libraries, from initial library preparation to final hit validation.

Defining the Computational Challenge

Docking complex NP libraries amplifies standard vHTS challenges. The primary bottlenecks are computational cost, accurate scoring, and the biological relevance of predictions, each intensified by NP properties.

Table 1: Key Computational Challenges in Docking Complex Natural Product Libraries

| Challenge Category | Specific Issue | Impact on Natural Product Docking |
|---|---|---|
| Sampling & Flexibility | High-dimensional conformational space of flexible NPs [23]. | Macrocycles and long aliphatic chains require extensive torsion sampling. Rigid docking is often inadequate [20]. |
| Scoring & Interactions | Scoring functions trained on synthetic, lead-like compounds [26]. | May poorly estimate affinity for NP-specific interactions (e.g., complex hydrogen-bonding networks, halogen bonds). |
| Chemical Space & Library Preparation | NP libraries contain high stereochemical and 3D complexity [24]. | Requires accurate 3D conformer generation, stereochemistry assignment, and potential tautomer enumeration. |
| Synthetic Feasibility | NP-inspired hits must be readily synthesizable from available building blocks [20]. | "Make-on-demand" compatibility is crucial. Hits from de novo design may be synthetically inaccessible. |
| Pharmacokinetic (PK) Profile | NPs frequently violate standard drug-likeness rules [25]. | Early filtering using NP-aware ADMET models is essential to avoid late-stage attrition due to poor PK. |

The performance gap between docking methods is critical. A 2025 benchmark study categorized docking methods into four tiers: traditional physics-based methods (e.g., Glide SP) and hybrid AI-scoring methods showed the highest combined success rates (accurate and physically valid poses), followed by generative diffusion models (e.g., SurfDock), with regression-based DL models performing poorest [26]. Importantly, while diffusion models excelled in pose accuracy on known targets, their physical validity and generalization to novel pockets were weaker [26]. This underscores the need for rigorous validation in NP screening, where targets and scaffolds may be novel.

Diagram Title: Challenges in Docking Complex Natural Products

Application Note 1: Protocol for Focused NP Library Preparation & Docking

This protocol adapts the automated virtual screening pipeline principles for NP-focused libraries, emphasizing 3D conformer generation and property filtering [27].

Objective: To generate a target-ready, property-filtered 3D compound library from a curated list of NP structures for initial flexible docking screens.

Materials & Software:

- Input: A list of NP SMILES or SDF files, curated from sources like the Natural Products Atlas or internal collections.

- Software: Open Babel (v3.0.0+), RDKit (v2023+), UCSF Chimera or PyMOL for visualization, AutoDockTools [27].

- Computing: Unix/Linux command-line environment or Windows Subsystem for Linux (WSL) [27].

Experimental Protocol:

Step 1: Structure Standardization & Tautomer Enumeration

- Standardize: Using RDKit, load the initial SDF/SMILES. Apply chemical standardization: neutralize charges, remove solvents, and generate canonical tautomers. This ensures consistency.

- Enumerate: For NPs known to have significant tautomeric forms, use RDKit's TautomerEnumerator to generate relevant tautomers for docking. Limit to a maximum of 3-5 predominant physiological forms to manage library size.

Step 2: 3D Conformer Generation & Minimization

- Generate: Use RDKit's ETKDGv3 method, which adds improved torsional preferences for small rings and macrocycles and is therefore well suited to the complex 3D geometry of NPs. Generate a minimum of 50 conformers per molecule. For macrocycles, increase this to 100-200 and consider using specialized macrocycle conformer generators (e.g., ConfGen-Macrocycle).

- Minimize & Select: Perform a brief MMFF94 force field minimization on each conformer. Select the lowest-energy conformer as the representative 3D structure for initial docking. Save the multi-conformer model for possible later use in flexible docking.

Step 3: Property-Based Filtering for NP "Developability"

- Apply NP-Aware Filters: Instead of strict Rule of Five, use softened metrics or NP-specific guidelines [24]. Filter based on:

- Molecular Weight (MW): < 800 Da.

- Calculated LogP (cLogP): < 6.

- Rotatable Bonds: < 15.

- Pan-Assay Interference Compounds (PAINS): Remove structures matching PAINS substructures using an RDKit filter.

- Synthetic Accessibility: Calculate the Synthetic Accessibility Score (SAScore). Flag or filter compounds with a score > 6 (on a 1-10 scale, where 10 is most difficult) [24].
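The NP-aware thresholds above can be applied as a simple rule check. The sketch assumes properties were computed beforehand (in RDKit, for instance, with Descriptors.MolWt, Crippen.MolLogP, rdMolDescriptors.CalcNumRotatableBonds, a FilterCatalog built from the PAINS catalogs, and the SA score script in RDKit's Contrib directory) and stored in a dictionary under the hypothetical keys shown. It treats SAScore > 6 as a rejection; change that branch to a flag if such compounds should be kept for review.

```python
def np_filter(props, max_mw=800.0, max_clogp=6.0, max_rotb=15, max_sa=6.0):
    """Apply softened, NP-aware developability rules to precomputed properties.

    props: dict with hypothetical keys 'mw', 'clogp', 'rotb', 'pains' (bool), 'sascore'.
    Returns (passed, list of failed criteria).
    """
    failed = []
    if props["mw"] >= max_mw:
        failed.append("MW")
    if props["clogp"] >= max_clogp:
        failed.append("cLogP")
    if props["rotb"] >= max_rotb:
        failed.append("rotatable bonds")
    if props["pains"]:
        failed.append("PAINS")
    if props["sascore"] > max_sa:  # 1-10 scale, 10 = hardest to synthesize
        failed.append("SAScore")
    return (not failed), failed
```

Returning the failed criteria, rather than a bare boolean, makes it easy to audit how many NPs each rule removes before deciding whether a threshold is too strict.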

Step 4: Preparation for Docking (AutoDock Vina Example)

- Convert Format: Use Open Babel to convert the final filtered SDF to PDBQT format, adding Gasteiger charges and writing one file per ligand with the -m flag (AutoDock Vina and QuickVina 2 dock a single ligand per input file):

obabel -isdf filtered_library.sdf -opdbqt -O ligand.pdbqt -m --partialcharge gasteiger

- Prepare Receptor: Prepare the target protein PDB file (remove water, add hydrogens, assign charges) using AutoDockTools or jamreceptor from the automated pipeline [27]. Define the docking grid box centered on the binding site with sufficient size (e.g., 25 × 25 × 25 Å) to accommodate large NP scaffolds.
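The grid box can be stored in the config.txt passed to the docking program. This sketch writes AutoDock Vina-style "key = value" lines using the standard center_*, size_*, exhaustiveness, and num_modes options; the exhaustiveness and num_modes values are commonly used settings chosen for illustration, and any centre coordinates you pass in are your own.

```python
def vina_config(center, size, exhaustiveness=8, num_modes=9):
    """Render a Vina/QuickVina-style config file body for the search box.

    center, size: (x, y, z) tuples in Å.
    """
    axes = ("x", "y", "z")
    lines = [f"center_{ax} = {c:.3f}" for ax, c in zip(axes, center)]
    lines += [f"size_{ax} = {s:.3f}" for ax, s in zip(axes, size)]
    lines += [f"exhaustiveness = {exhaustiveness}", f"num_modes = {num_modes}"]
    return "\n".join(lines) + "\n"
```

For example, Path("config.txt").write_text(vina_config(center, (25, 25, 25))) (with pathlib's Path) produces a 25 Å cubic box around a binding-site centre you have already determined; the receptor and ligand are still given on the command line.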

Step 5: Execution & Initial Analysis

- Docking Run: Execute docking with AutoDock Vina or QuickVina 2, running one job per ligand file (e.g., from a shell loop or a job scheduler):

qvina02 --receptor protein.pdbqt --ligand <ligand>.pdbqt --config config.txt --out <ligand>_out.pdbqt

- Ranking: Rank compounds by docking score (the estimated binding affinity in kcal/mol; more negative is better). Visually inspect the top 50-100 poses for key interactions and sensible binding geometry.
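Ranking requires pulling the score out of each docked output file. AutoDock Vina and QuickVina 2 write a "REMARK VINA RESULT:" line for every pose, with poses listed best first, so the first such line holds the top score. The *_out.pdbqt file-name pattern is an assumption about how outputs were named.

```python
import re
from pathlib import Path

# One "REMARK VINA RESULT:" line per pose; the first one belongs to the best pose.
VINA_RESULT = re.compile(r"^REMARK VINA RESULT:\s+(-?\d+(?:\.\d+)?)", re.MULTILINE)

def best_vina_score(pdbqt_text):
    """Return the top-pose score (kcal/mol) from docked PDBQT text, or None if absent."""
    match = VINA_RESULT.search(pdbqt_text)
    return float(match.group(1)) if match else None

def rank_outputs(out_dir, pattern="*_out.pdbqt"):
    """Rank docked output files by best score, most negative (best) first."""
    ranked = []
    for path in Path(out_dir).glob(pattern):
        score = best_vina_score(path.read_text())
        if score is not None:
            ranked.append((score, path.name))
    return sorted(ranked)
```

Files that contain no result line (for example, from failed jobs) are skipped rather than ranked, so it is worth comparing the number of ranked entries against the number of ligands submitted.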

Application Note 2: Protocol for Evolutionary Algorithm-Guided Ultra-Large Screening

For screening billion-scale make-on-demand NP-inspired libraries, exhaustive flexible docking is impossible. This protocol outlines the use of the REvoLd algorithm as a case study [20].

Objective: To efficiently identify high-affinity NP-like hits from an ultra-large combinatorial library (e.g., Enamine REAL) using an evolutionary algorithm (EA) integrated with flexible docking in Rosetta.

Materials & Software:

- Target: Prepared protein structure (PDB format).

- Library: Access to the REACTION-R1R2R3 definition of a make-on-demand library (e.g., Enamine REAL Space) [20].

- Software: Rosetta software suite with REvoLd application installed [20].

- Computing: High-performance computing (HPC) cluster with multiple cores.

Experimental Protocol:

Step 1: Define the Combinatorial Space & Algorithm Parameters

- Configure REvoLd to access the desired subset of the REAL space (e.g., a specific set of reactions and building blocks known to generate NP-like scaffolds).

- Set EA hyperparameters as optimized in the REvoLd study [20]:

- Population size: 200 random initial molecules.

- Generations: 30.

- Individuals advancing per generation: 50.

- Selection pressure and mutation rates as per default REvoLd protocol.

Step 2: Execute the Evolutionary Screening

- Launch REvoLd runs (a minimum of 20 independent runs is recommended to explore diverse chemical space [20]).

- The algorithm will iteratively [20]:

- Select: Choose high-scoring ligands (parents) from the current population.

- Crossover: Combine fragments from different parents to create new child molecules.

- Mutate: Replace fragments with chemically similar alternatives or change the core reaction.

- Dock & Score: Use RosettaLigand's flexible docking to score new individuals.

- Populate: Form the next generation from the best individuals.

Step 3: Analysis & Hit Selection

- Aggregate Results: Combine the output from all independent runs. REvoLd typically docks only 50,000-80,000 unique molecules per target to achieve significant enrichment [20].

- Identify Top Hits: Sort all docked molecules by Rosetta interface score (docking score). The top-ranking compounds represent the evolutionary "fittest" hits.

- Assess Diversity: Cluster the top 1000 hits by molecular fingerprint (e.g., Tanimoto similarity on Morgan fingerprints) to ensure a diversity of chemotypes, not just variations of a single scaffold.
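Aggregating independent runs mainly means de-duplicating molecules that several runs found and keeping each one's best score. This sketch does not assume REvoLd's actual output format: each run is represented as a hypothetical list of (molecule_id, score) pairs, with lower scores better.

```python
def aggregate_runs(runs, top_n=1000):
    """Merge independent evolutionary runs into one ranked, de-duplicated hit list.

    runs: list of runs, each an iterable of (molecule_id, score); lower is better.
    Returns (top_n best (molecule_id, score) pairs, number of unique molecules).
    """
    best = {}
    for run in runs:
        for mol_id, score in run:
            # Keep the best score seen for each molecule across all runs.
            if mol_id not in best or score < best[mol_id]:
                best[mol_id] = score
    ranked = sorted(best.items(), key=lambda kv: (kv[1], kv[0]))
    return ranked[:top_n], len(best)
```

The unique-molecule count is the figure to compare against the total docked per target when judging how much of the budget overlapping runs consumed.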

Diagram Title: REvoLd Evolutionary Screening Workflow

Table 2: REvoLd Performance Benchmark on Drug Targets [20]

| Drug Target | Total Unique Molecules Docked | Approx. Library Size Searched | Reported Hit Rate Enrichment vs. Random |
|---|---|---|---|
| Target A | 49,000 | >20 Billion | 869-fold |
| Target B | 76,000 | >20 Billion | 1622-fold |
| Target C | ~65,000 (avg.) | >20 Billion | ~1200-fold (avg. factor) |

Application Note 3: Protocol for Post-Docking Validation & ADMET Profiling

Docking scores serve only as an initial filter. This protocol details a multi-stage validation cascade for NP hits, as exemplified in a 2025 study on natural analgesics [28].

Objective: To validate the stability, interaction fidelity, and drug-like potential of top docking hits from an NP library screen.

Materials & Software:

- Input: Top scoring protein-ligand complexes from docking (PDB format).

- Software: GROMACS or AMBER for MD, PyMOL for analysis, SwissADME or ADMETLab for PK prediction [28] [25].

- Computing: HPC cluster for MD simulations.

Experimental Protocol:

Step 1: Molecular Dynamics (MD) Simulation for Stability

- System Setup: Solvate the docked complex in a water box (e.g., TIP3P) and add ions to neutralize the system charge. Use a protein force field such as CHARMM36 (with CGenFF ligand parameters) or AMBER ff14SB (with GAFF2 ligand parameters).

- Equilibration: Perform energy minimization, followed by NVT and NPT equilibration (100-500 ps each).

- Production Run: Run an unrestrained MD simulation for a minimum of 100 ns (recommended for NP complexes) [28]. Use two or more independent replicas.

- Analysis:

- Root Mean Square Deviation (RMSD): Calculate for the protein backbone and ligand heavy atoms. A stable plateau indicates a stable complex.

- Root Mean Square Fluctuation (RMSF): Identify flexible protein regions; the binding site should show reduced fluctuation.

- Ligand-Protein Interactions: Use tools like VMD or Maestro to monitor key hydrogen bonds and hydrophobic contacts over time. Critical docking-predicted interactions should be maintained >60% of the simulation time.
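The >60% persistence criterion reduces to counting frames. This sketch assumes per-frame interaction detection has already been done (for example, with VMD, MDAnalysis, or Maestro) and that each analysed frame is summarized as a set of interaction labels; the labels are hypothetical.

```python
def interaction_occupancy(frames):
    """Fraction of MD frames in which each interaction is present.

    frames: list with one set of interaction labels per analysed frame.
    """
    if not frames:
        raise ValueError("no frames supplied")
    counts = {}
    for present in frames:
        for label in present:
            counts[label] = counts.get(label, 0) + 1
    return {label: c / len(frames) for label, c in counts.items()}

def maintained(occupancy, docking_interactions, cutoff=0.6):
    """Report which docking-predicted interactions persist for more than `cutoff` of the run."""
    return {label: occupancy.get(label, 0.0) > cutoff for label in docking_interactions}
```

Interactions predicted by docking but never observed in the trajectory get an occupancy of 0.0, which flags poses that fall apart during simulation.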

Step 2: Binding Free Energy Refinement (MM/GBSA)

- Extract 100-200 equally spaced snapshots from the stable phase of the MD trajectory.

- Perform MM/GBSA (Molecular Mechanics/Generalized Born Surface Area) calculations on each snapshot to estimate the binding free energy (ΔG_bind).

- Compare the average MM/GBSA ΔG_bind with the initial docking score. While absolute values may differ, a strong correlation in ranking lends credibility to the docking results [28].
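Averaging per-snapshot energies and checking rank agreement with docking takes only a few lines. The sketch assumes per-snapshot ΔG_bind values have already been produced by an MM/GBSA tool. It computes the Spearman rank correlation as the Pearson correlation of average ranks, which handles ties.

```python
from statistics import mean, stdev

def summarize_mmgbsa(per_snapshot):
    """Mean and standard deviation of per-snapshot ΔG_bind values (kcal/mol)."""
    return mean(per_snapshot), stdev(per_snapshot)

def rankdata(values):
    """1-based ranks; tied values share their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks

def spearman(x, y):
    """Spearman rank correlation between two equal-length score lists."""
    rx, ry = rankdata(x), rankdata(y)
    mx, my = mean(rx), mean(ry)
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    norm = (sum((a - mx) ** 2 for a in rx) * sum((b - my) ** 2 for b in ry)) ** 0.5
    return cov / norm
```

For instance, four hits whose docking ranks are 1-2-3-4 and whose mean MM/GBSA ranks are 1-3-2-4 give a Spearman correlation of 0.8.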

Step 3: In-silico ADMET and Toxicity Profiling

- Profile Prediction: Submit the SMILES of validated hits to web servers like SwissADME or ADMETLab [25].

- Key Parameters to Assess:

- Absorption: Gastrointestinal (GI) absorption prediction, Caco-2 permeability.

- Metabolism: Interaction with major Cytochrome P450 isoforms (e.g., whether the compound is a CYP3A4 or CYP2D6 inhibitor or substrate).

- Distribution: Blood-Brain Barrier (BBB) permeability if relevant to target.

- Toxicity: hERG channel inhibition (cardiotoxicity risk), Ames test (mutagenicity).

- Decision: Prioritize hits with favorable predicted ADMET profiles for experimental testing.

Diagram Title: Post-Docking Validation Cascade for NP Hits

Table 3: Key In-silico ADMET Prediction Methods for Natural Products [25]

| ADMET Property | Common In-silico Method | Application Note for NPs |
|---|---|---|
| Metabolism (CYP450) | QSAR models, Pharmacophore modeling, Docking to CYP isoforms. | Particularly crucial for polyphenols and terpenoids. Docking can predict regioselectivity of oxidation [25]. |
| Permeability/Absorption | PAMPA prediction models, Rule-based filters (e.g., modified RO5). | NPs like glycosides may have poor passive permeability; models must account for this [24]. |
| Toxicity (e.g., hERG) | Ligand-based classifiers, Structure-alert screening. | Essential for alkaloid-containing NPs, which can have intrinsic ion channel activity. |
| Solubility | Quantum-mechanical (QM) calculations (logS), Empirical models. | Low solubility is a major NP hurdle; QM can inform salt or prodrug design [25]. |

The Scientist's Toolkit: Essential Research Reagents & Software

Table 4: Key Research Reagent Solutions for Docking NP Libraries

| Item / Resource | Function / Purpose | Relevance to NP Docking |
|---|---|---|
| Enamine REAL Space | A >20 billion compound "make-on-demand" combinatorial library defined by reaction rules [20]. | Provides a vast, synthetically accessible chemical space that includes NP-like scaffolds for ultra-large screening. |
| ZINC Database | A free public resource of commercially available compounds for virtual screening [27]. | Source for purchasable NP analogs or building blocks for validation. |
| Rosetta Software Suite | A comprehensive software suite for modeling macromolecular structures. Includes RosettaLigand for flexible docking [20]. | The backend for the REvoLd algorithm, enabling flexible docking within evolutionary screening. |
| AutoDock Vina / QuickVina 2 | Widely used, open-source docking programs with a good balance of speed and accuracy [27] [26]. | Accessible workhorses for initial library screening and protocol validation. |
| RDKit | Open-source cheminformatics toolkit. | Essential for NP library preprocessing: standardization, tautomer enumeration, 3D conformer generation, and property calculation [24]. |
| GROMACS/AMBER | Molecular dynamics simulation packages. | Required for post-docking validation of NP-complex stability via MD and MM/GBSA [28]. |
| SwissADME / ADMETLab | Free web tools for predicting pharmacokinetic and toxicity properties. | Critical for early-stage filtering of NP hits based on predicted ADMET profiles [28] [25]. |

Blueprint for Success: Designing and Executing a Large-Scale Docking Campaign

In large-scale molecular docking campaigns for natural products research, the meticulous preparation of targets and libraries is not merely a preliminary step but the critical determinant of success. This phase involves curating high-quality, three-dimensional protein structures and assembling chemically diverse, well-characterized natural product libraries. The exponential growth of structural data, fueled by experimental methods and AI-based predictions like AlphaFold, alongside massive natural product repositories, presents both an opportunity and a challenge [29] [30]. Effective curation filters this wealth of data to construct reliable, docking-ready inputs. A well-prepared target ensures the accurate modeling of the binding site, while a well-prepared library maximizes the chemical space screened and minimizes artifacts [4] [31]. This foundational work directly impacts the accuracy of binding pose predictions, the enrichment of true hits, and the ultimate translation of computational findings into biologically active leads [32] [5]. The following protocols detail systematic approaches to navigate these expansive datasets and prepare robust resources for billion-compound virtual screens.

Curating Target Protein Structures for Docking

The selection and preparation of a target protein structure require careful evaluation of experimental quality, functional relevance, and conformational state to ensure the docking grid accurately represents a biologically relevant, ligand-binding competent site.

Structure Sourcing and Evaluation

The primary source for experimental structures is the Protein Data Bank (PDB). For targets lacking experimental data, predicted structures from AlphaFold DB or similar repositories are invaluable alternatives [29] [30]. Selection criteria must be applied rigorously [4] [31]:

- Resolution and Quality Metrics: Prefer crystal structures with resolution ≤ 2.5 Å. For cryo-EM maps, assess local quality using metrics like the LIVQ or DAQ score to ensure reliability in the binding pocket region [31].

- Functional State and Completeness: Select structures in the desired functional state (e.g., active/inactive). Ensure the binding pocket is fully resolved, with no missing loops or side chains critical for ligand interaction.

- Biological Relevance: Structures co-crystallized with a native ligand or receptor-specific modulator provide the most reliable template, as they often capture a relevant conformation.

Pre-docking Structure Preparation

A standardized preparation protocol minimizes variability and error. The workflow involves:

- Protein Cleaning: Remove all non-essential molecules (water, ions, solvents, and heteroatoms), except for crystallographic waters or ions that are structurally integral to the binding site.

- Protonation and Assignment: Add hydrogen atoms. Assign correct protonation states and tautomers to key binding site residues (e.g., His, Asp, Glu) at the intended physiological pH, typically using computational tools like PROPKA.

- Side-Chain and Loop Modeling: Optimize the orientation of ambiguous side chains and model any missing loops near the binding site using comparative modeling or refinement software.

- Binding Site Definition: Precisely define the docking search space. This can be done based on the centroid of a cocrystallized ligand, through computational pocket detection algorithms (e.g., FPocket), or using functional site prediction tools [4].

Validation through Control Docking

Before proceeding to large-scale screening, validate the prepared target and chosen parameters through control docking experiments [4]:

- Self-Docking: Redock the native co-crystallized ligand. A successful protocol should reproduce the experimental pose with a root-mean-square deviation (RMSD) of ≤ 2.0 Å.

- Decoy Enrichment: Perform a small-scale screen against a database containing known active ligands and inactive decoys for the target (e.g., from DUD-E or DEKOIS). A robust setup should show significant enrichment of actives in the top-ranked compounds.
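"Significant enrichment of actives in the top-ranked compounds" is usually quantified as an enrichment factor (EF) at a fixed fraction of the ranked list. This sketch uses the standard definition and assumes lower docking scores are better; ties in score are broken by input order, which is an arbitrary choice.

```python
def enrichment_factor(scores, is_active, fraction=0.01):
    """Enrichment factor at the top `fraction` of a ranked list (lower score = better).

    EF = (actives in top / compounds in top) / (all actives / all compounds).
    """
    n = len(scores)
    n_top = max(1, round(n * fraction))
    total_actives = sum(bool(a) for a in is_active)
    if total_actives == 0:
        raise ValueError("at least one active is required")
    # Stable sort: tied scores keep their input order.
    ranked = sorted(zip(scores, is_active), key=lambda pair: pair[0])
    actives_top = sum(bool(a) for _, a in ranked[:n_top])
    return (actives_top / n_top) / (total_actives / n)
```

A random ranking gives an EF of about 1, and the maximum achievable EF at fraction x is min(1/x, N/actives), so EF values should be read against that ceiling rather than compared across datasets with different active/decoy ratios.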

Table 1: Key Public Databases for Target and Ligand Curation

| Database Name | Type | Key Content/Utility | Scale/Size | Reference |
|---|---|---|---|---|
| Protein Data Bank (PDB) | Experimental Structures | Curated 3D structures of proteins, nucleic acids, and complexes from X-ray, cryo-EM, NMR. | >200,000 entries | [33] [31] |
| AlphaFold DB | Predicted Structures | AI-predicted protein structures for entire proteomes. | 214+ million structures | [29] [30] |
| RepeatsDB | Specialized Structures | Annotated database of structured tandem repeat proteins (STRPs) from PDB and AlphaFold DB. | 34,319 unique sequences | [29] |
| GNDC (Gene-encoded Natural Diverse Components) | Natural Product Library | AI-curated repository of secondary metabolites, peptides, RNAs, and carbohydrates from herbal genomes. | 234 million components | [34] |
| NCI Natural Products Repository | Natural Product Library | Physical library of crude extracts and prefractionated samples from global biodiversity collections. | >230,000 extracts; 1M fractions planned | [35] |
| ChEMBL / PubChem | Bioactivity Data | Public repositories of bioactivity data (IC50, Ki, etc.) for drug-like compounds and natural products. | 24.2M+ activity records (ChEMBL) | [31] |

Diagram 1: Workflow for Curating a Docking-Ready Protein Structure.

Curating Natural Product Libraries for Screening

Natural product (NP) libraries offer unparalleled chemical diversity but present unique challenges in standardization, complexity, and potential interference. Effective curation involves strategic sourcing, chemical standardization, and rigorous quality control to create libraries suitable for high-throughput virtual screening [35].

Library Sourcing and Ethical Collection

Libraries can be sourced from physical sample collections or virtual compound databases.

- Physical Sample Libraries: Initiatives like the NCI Program for Natural Product Discovery create massive libraries (e.g., 1 million prefractionated samples) from globally collected organisms [35]. Critical ethical and legal requirements include adherence to the Nagoya Protocol on Access and Benefit Sharing (ABS) and obtaining all necessary collection and export permits [35].

- Virtual Compound Databases: Digital repositories like the Gene-encoded Natural Diverse Components (GNDC) database use AI and genomics to catalog hundreds of millions of virtual NP compounds, offering a vast, pre-curated chemical space for in silico screening [34].

From Crude Extract to Screen-Ready Library

Processing raw biological material into a screen-ready library is a multi-step pipeline designed to balance chemical diversity with sample quality [35].

- Extraction: Use standardized, high-throughput methods (e.g., accelerated solvent extraction) to generate crude extracts that capture the metabolic profile of the source organism.

- Prefractionation: This critical step reduces complexity and concentrates minor metabolites. Common techniques include:

  - Solid-Phase Extraction (SPE): Separates compounds based on polarity into distinct fractions.

  - High-Performance Liquid Chromatography (HPLC): Provides higher-resolution separation, generating well-defined fractions ideal for bioassay and dereplication.

- Chemical Standardization & Dereplication: Early-stage identification of known compounds (dereplication) using hyphenated techniques like LC-MS/MS with NP spectral libraries is essential to prioritize novel chemistry. AI tools are increasingly used to annotate massive virtual NP libraries [34].

- Library Formatting for Docking: Virtual libraries require conversion into 3D chemical structures with correct protonation states and tautomers. Generate multiple conformers for each compound to account for flexibility.
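Dereplication by spectral matching commonly relies on a cosine score between fragment spectra. The sketch below implements a plain cosine with greedy one-to-one peak matching. It is an illustrative assumption, not the GNPS implementation, which additionally uses a "modified cosine" that can pair peaks shifted by the precursor mass difference. The 0.02 Da tolerance and the example peak lists are also assumptions.

```python
import math


def cosine_score(spec_a, spec_b, tol=0.02):
    """Cosine similarity of two centroided MS/MS spectra.

    Each spectrum is a list of (m/z, intensity) tuples. Peaks are paired
    one-to-one, greedily by intensity product, when their m/z values differ
    by at most `tol` Da (an assumed tolerance).
    """
    norm_a = math.sqrt(sum(i * i for _, i in spec_a))
    norm_b = math.sqrt(sum(i * i for _, i in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0

    # All candidate peak pairs within the m/z tolerance.
    pairs = [
        (int_a * int_b, a, b)
        for a, (mz_a, int_a) in enumerate(spec_a)
        for b, (mz_b, int_b) in enumerate(spec_b)
        if abs(mz_a - mz_b) <= tol
    ]
    pairs.sort(reverse=True)  # strongest matches first

    used_a, used_b, dot = set(), set(), 0.0
    for product, a, b in pairs:
        if a not in used_a and b not in used_b:  # each peak used at most once
            used_a.add(a)
            used_b.add(b)
            dot += product
    return dot / (norm_a * norm_b)


query = [(91.054, 40.0), (119.049, 100.0), (147.044, 25.0)]
library_hit = [(91.055, 38.0), (119.050, 100.0), (165.055, 10.0)]
print(round(cosine_score(query, library_hit), 3))
```

Practical pipelines often square-root-transform intensities and require a minimum number of matched peaks before accepting a hit. Both choices are left out here for brevity.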

Quality Control and Challenge Mitigation

Natural product libraries pose specific screening challenges that must be addressed during curation [35]:

- Assay Interference: Remove or flag fractions containing common nuisance compounds (e.g., tannins, saponins, fluorescent or colored compounds) that can cause false positives.

- Solubility and Stability: For physical libraries, dissolve samples in DMSO or another suitable solvent and monitor their stability during storage.

- Redundancy and Diversity Analysis: Apply cheminformatic analysis to ensure chemical diversity and minimize structural redundancy within the virtual library.
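Redundancy filtering is often done with Tanimoto similarity on structural fingerprints, followed by a greedy "leader" (sphere-exclusion) pick. The minimal sketch below assumes fingerprints were computed elsewhere, for example with a cheminformatics toolkit, and are represented here as sets of on-bit indices. The 0.7 threshold and compound IDs are illustrative assumptions, not values from the source.

```python
def tanimoto(fp_a: frozenset, fp_b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity of two sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 1.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def leader_pick(fingerprints: dict, threshold: float = 0.7) -> list:
    """Greedy sphere exclusion: keep a compound only if its similarity to
    every compound kept so far is below `threshold`. Iteration order matters,
    so order the input (e.g., by priority) before calling."""
    kept = []
    for cid, fp in fingerprints.items():
        if all(tanimoto(fp, fingerprints[k]) < threshold for k in kept):
            kept.append(cid)
    return kept


library = {
    "np_001": frozenset({1, 5, 9, 12}),
    "np_002": frozenset({1, 5, 9, 12, 30}),  # near-duplicate of np_001 (Tc = 0.8)
    "np_003": frozenset({2, 40, 77}),
}
picked = leader_pick(library)
print(picked, f"redundancy removed: {1 - len(picked) / len(library):.0%}")
# ['np_001', 'np_003'] redundancy removed: 33%
```

The pairwise loop is fine for a sketch. For libraries of millions of compounds, a toolkit's bulk similarity routines or clustering methods are the practical choice.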

Diagram 2: Pipeline for Preparing a Screen-Ready Natural Product Library.

Experimental Protocols

The first protocol prepares and validates a protein structure for a high-throughput virtual screen.

- Step 1: Structure Selection and Retrieval. Identify all available structures for your target from the PDB. Prioritize human (or relevant species) structures co-crystallized with a high-affinity ligand. If none exist, use a high-confidence predicted structure from AlphaFold DB. Download the PDB file.

- Step 2: Initial Processing and Cleaning. Using molecular visualization/editing software (e.g., UCSF Chimera, Maestro):

  - Remove all non-protein entities except for crystallographic waters within 5 Å of the binding site.

  - Add missing hydrogen atoms.

  - Optimize the orientation of asparagine, glutamine, and histidine side chains using a hydrogen-bonding network analysis tool.

- Step 3: Binding Site Preparation and Grid Generation. Define the binding site using the centroid of the co-crystallized ligand or a key catalytic residue. Generate a docking grid that encompasses the entire site with an additional 5-10 Å margin in all directions to allow for ligand flexibility.

- Step 4: Control Docking and Enrichment Test. To validate the setup:

  - Perform self-docking (redocking) of the native ligand. Reproducing the crystallographic pose with a heavy-atom RMSD < 2.0 Å is the conventional success criterion.

  - Conduct an enrichment test using a known actives/decoys set. Screen this small library and calculate the enrichment factor (EF) in the top 1% of the ranked database. An EF₁% > 10 typically indicates a well-prepared target capable of distinguishing actives from decoys.
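Parts of Steps 2 to 4 can be scripted. The standard-library Python sketch below does three things:

- It keeps protein ATOM records plus waters within 5 Å of the co-crystallized ligand.
- It centers a box on the ligand centroid with a margin and formats it as AutoDock Vina box parameters (`center_x` through `size_z`).
- It computes the in-place RMSD used for the self-docking check.

The sketch assumes fixed-column PDB input, a ligand identified by a three-letter residue name, waters named HOH, no alternate locations, and identical atom ordering in the crystal and docked poses (no symmetry correction). Hydrogen addition and side-chain flipping are left to the dedicated tools named above. All function names are hypothetical.

```python
import math


def _xyz(line):
    # PDB fixed columns 31-38, 39-46 and 47-54 hold x, y, z.
    return (float(line[30:38]), float(line[38:46]), float(line[46:54]))


def clean_receptor(pdb_lines, ligand_resname, water_cutoff=5.0):
    """Step 2 (partial): keep ATOM records and HOH within `water_cutoff` Å of
    any ligand atom; drop the ligand, ions and other HETATM records.
    Returns (receptor_lines, ligand_coords)."""
    ligand = [_xyz(l) for l in pdb_lines
              if l.startswith("HETATM") and l[17:20].strip() == ligand_resname]
    if not ligand:
        raise ValueError(f"ligand {ligand_resname!r} not found")
    receptor = []
    for line in pdb_lines:
        if line.startswith("ATOM"):
            receptor.append(line)
        elif line.startswith("HETATM") and line[17:20].strip() == "HOH":
            if min(math.dist(_xyz(line), a) for a in ligand) <= water_cutoff:
                receptor.append(line)
    receptor.append("END")
    return receptor, ligand


def docking_box(ligand_coords, margin=8.0):
    """Step 3: box centered on the ligand centroid, wide enough to hold every
    ligand atom plus `margin` Å on each side (5-10 Å in the protocol)."""
    n = len(ligand_coords)
    center = tuple(sum(c[k] for c in ligand_coords) / n for k in range(3))
    size = tuple(2 * (max(abs(c[k] - center[k]) for c in ligand_coords) + margin)
                 for k in range(3))
    return center, size


def vina_box_config(center, size):
    """Format the box as AutoDock Vina configuration lines."""
    lines = [f"center_{ax} = {v:.3f}" for ax, v in zip("xyz", center)]
    lines += [f"size_{ax} = {v:.3f}" for ax, v in zip("xyz", size)]
    return "\n".join(lines)


def rmsd(pose_a, pose_b):
    """Step 4: in-place RMSD with no superposition, since redocked and crystal
    poses share the receptor frame. Pass heavy-atom coordinates in the same
    atom order; no symmetry correction is applied."""
    if len(pose_a) != len(pose_b) or not pose_a:
        raise ValueError("poses must be non-empty with matching atom counts")
    sq = sum(math.dist(p, q) ** 2 for p, q in zip(pose_a, pose_b))
    return math.sqrt(sq / len(pose_a))
```

In use, the ligand coordinates returned by `clean_receptor` feed `docking_box`. The self-docking check then passes when `rmsd(crystal_pose, docked_pose) < 2.0`.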

The second protocol outlines the creation of a physical, prefractionated library from plant material.

- Step 1: Sample Acquisition and Documentation. Acquire plant material with proper permits and ABS agreements. Create a detailed voucher specimen deposited in a recognized herbarium. Record all metadata (location, date, collector, taxonomic ID).

- Step 2: Bulk Extraction. Lyophilize and mill 100 g of plant material. Perform exhaustive sequential extraction with solvents of increasing polarity (e.g., hexane, dichloromethane, methanol) in an accelerated solvent extractor (ASE). For each solvent, combine the repeated extracts and evaporate them under reduced pressure, yielding three crude dried extracts.

- Step 3: Medium-Throughput Prefractionation. Using an automated HPLC system with a fraction collector:

  - Reconstitute the methanol extract (typically the most bioactive) and inject it onto a reverse-phase C18 column.

  - Employ a linear gradient from 5% to 100% acetonitrile in water over 20 minutes.

  - Collect fractions every 30 seconds, yielding ~40 fractions per extract.

  - Dry fractions in a SpeedVac and store them in tared 384-well plates at -20 °C.

- Step 4: Library Quality Control. Randomly select 5% of fractions for:

  - LC-MS Analysis: To create a chemical fingerprint and identify major components.

  - Dereplication: Compare MS/MS spectra against natural product databases (e.g., GNPS) to flag known nuisance compounds or major metabolites.
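The arithmetic behind Steps 3 and 4 can be turned into a plate map and a reproducible QC sample list: a 20-minute linear gradient cut every 30 seconds gives 20 / 0.5 = 40 fractions. The sketch below rests on several assumptions:

- A purely linear 5% to 100% acetonitrile ramp with no delay volume or re-equilibration segment.
- Row-major filling of a 384-well plate (rows A to P, columns 1 to 24).
- A recorded random seed for the 5% QC draw. The seed value and the fraction IDs are hypothetical.

```python
import math
import random


def fraction_schedule(run_min=20.0, interval_min=0.5, b_start=5.0, b_end=100.0):
    """Return (fraction_no, t_start, t_end, %ACN_start, %ACN_end) for each
    fraction of a linear gradient collected at fixed time intervals."""
    n = round(run_min / interval_min)           # 20 / 0.5 -> 40 fractions
    slope = (b_end - b_start) / run_min         # % acetonitrile per minute
    schedule = []
    for i in range(n):
        t0, t1 = i * interval_min, (i + 1) * interval_min
        schedule.append((i + 1, t0, t1, b_start + slope * t0, b_start + slope * t1))
    return schedule


def well_384(index):
    """Map a 0-based index to a 384-well position, filling row by row."""
    if not 0 <= index < 384:
        raise ValueError("a 384-well plate holds indices 0-383")
    return f"{'ABCDEFGHIJKLMNOP'[index // 24]}{index % 24 + 1}"


def select_qc(fraction_ids, percent=5.0, seed=2024):
    """Draw a reproducible random subset (at least one) for LC-MS QC."""
    k = max(1, math.ceil(len(fraction_ids) * percent / 100))
    return sorted(random.Random(seed).sample(list(fraction_ids), k))


schedule = fraction_schedule()
plate = {f"EXT01-F{no:02d}": well_384(no - 1) for no, *_ in schedule}
print(len(schedule), schedule[-1][4], plate["EXT01-F40"])  # 40 100.0 B16
```

Nine 40-fraction extracts fit on one plate (360 of 384 wells). Calling `select_qc` on those 360 fraction IDs returns 18 fractions for QC. Storing the seed alongside the plate metadata lets the same subset be regenerated later.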

Table 2: Key Reagents, Software, and Databases for Target and Library Curation

| Category | Item/Resource | Function in Preparation | Key Features / Notes |
|---|---|---|---|
| Target Preparation Software | UCSF Chimera / ChimeraX | Structure visualization, cleaning, hydrogen addition, basic editing. | Open-source, extensible. Essential for initial PDB inspection. |
| | Schrödinger Maestro / BIOVIA Discovery Studio | Comprehensive suite for protein preparation, protonation, grid generation. | Industry-standard, includes robust algorithms for H-bond optimization. |
| | DOCK3.7, AutoDock Vina, Glide | Docking software used for control validation and large-scale screening. | DOCK3.7 is specifically cited for large-scale protocols [4]. |
| Structural Data & Search | Protein Data Bank (PDB) | Primary repository for experimental 3D structural data. | Use quality filters (resolution, R-factor) during search [31]. |
| | AlphaFold Database | Repository for AI-predicted protein structures. | Critical for targets without experimental structures [30]. |
| | SARST2 | High-throughput protein structure alignment tool. | Enables rapid similarity searches against massive structural DBs [30]. |
| Natural Product Libraries | NCI NP Repository | Source of physical prefractionated natural product samples. | Available to researchers via application; includes extensive metadata [35]. |
| | GNDC Database | Virtual database of gene-encoded natural components. | Contains 234M+ AI-annotated entries for virtual screening [34]. |
| NP Analysis & Dereplication | LC-MS/MS System | Chemical profiling and dereplication of fractions. | Couples separation with mass spectral identification. |
| | Global Natural Products Social (GNPS) | Platform for crowd-sourced MS/MS spectral matching. | Essential for dereplication against known NP spectra. |
| Bioactivity Data | ChEMBL / PubChem | Source of bioactivity data for validation and benchmarking. | Provides pChEMBL values for known ligands [31]. |
| Computational Infrastructure | High-Performance Computing (HPC) Cluster | Running large-scale docking and structural searches. | Necessary for screening libraries >1 million compounds. |

For large-scale molecular docking of natural products, selecting computational tools is not a mere technical step but a foundational strategic decision. The unique challenges of natural products (structural complexity, diverse scaffolds, and often novel mechanisms of action) demand a nuanced understanding of the available docking paradigms. The field has evolved from purely physics-based algorithms to include artificial intelligence (AI)-powered predictions and sophisticated hybrids [26] [23]. This evolution offers unprecedented opportunities, but it also makes choosing the right tool for a given research question more complex.

This guide provides a detailed, practical comparison of Traditional, AI-Powered, and Hybrid docking software. It moves beyond theoretical performance to offer application notes and experimental protocols tailored for researchers embarking on large-scale virtual screening of natural product libraries. The goal is to equip scientists with the decision-making framework and methodological details necessary to efficiently identify hits with a high probability of experimental validation, thereby accelerating the translation of complex natural product chemistry into viable drug leads.

Software Classification and Strategic Comparison

Molecular docking software can be categorized into three distinct paradigms, each with a unique operational philosophy and performance profile. The following table provides a high-level strategic comparison to guide initial selection.

Table 1: Strategic Comparison of Docking Software Paradigms

| Paradigm | Core Philosophy | Representative Tools | Key Strengths | Primary Limitations | Ideal Use Case in Natural Products Research |
|---|---|---|---|---|---|
| Traditional (Physics-Based) | Uses force fields and empirical scoring functions to search conformational space and rank poses based on calculated binding energy. | Glide (Schrödinger), AutoDock Vina, GOLD, DOCK [36] [37] [38] | High physical plausibility, interpretable results, robust with well-defined pockets, extensive validation history. | Computationally intensive; limited by rigid receptor approximation; scoring function inaccuracies can miss key interactions [26] [23]. | Target-focused screening where a high-quality holo (ligand-bound) protein structure is available. Excellent for lead optimization of known scaffolds. |
| AI-Powered (Deep Learning) | Employs deep neural networks (e.g., diffusion models, GNNs) trained on protein-ligand complex databases to directly predict binding poses and affinities. | DiffDock, DynamicBind, SurfDock, EquiBind [26] [23] | Exceptional speed (seconds per compound); superior performance on novel or cryptic pockets; strong pose accuracy on known complexes [26]. | Can generate physically implausible structures (bad bond lengths, clashes) [26]; poor generalization to protein/ligand types outside training data; "black box" predictions [26] [23]. | Ultra-high-throughput primary screening of massive libraries (e.g., >1 million compounds). Exploration of proteins with significant flexibility or predicted structures. |
| Hybrid | Integrates AI-driven scoring functions with traditional conformational search algorithms, or uses AI to pre-filter poses. | Interformer, Glide (with NN scoring), Gnina [26] | Optimal balance of speed and accuracy; combines physical realism of sampling with pattern recognition of AI scoring; improved virtual screening enrichment [26]. | More complex setup than pure AI methods; performance depends on the quality of both the search algorithm and the AI model. | Tiered screening campaigns. Ideal for re-ranking top poses from traditional or AI docking to improve hitlist confidence and biological relevance. |

The selection process is not static. A rational workflow for choosing and applying these tools is visualized below, outlining a path from project definition to final candidate selection.

Diagram: A logical workflow for selecting molecular docking software based on project-specific parameters such as target structure quality, need for speed, and site knowledge.
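The selection logic in Table 1 can be written down as a simple rule set. The function below is a hypothetical sketch, not a published decision procedure. It encodes only the use cases listed in the table:

- Traditional docking when a high-quality holo structure is available.
- AI-powered docking for libraries above roughly one million compounds, or for flexible or predicted structures.
- Hybrid re-ranking of top poses as a second tier.

It also adds the physical-validity filtering that the benchmark below recommends for AI poses. The class, field names, and tier labels are illustrative.

```python
from dataclasses import dataclass


@dataclass
class ScreeningProject:
    library_size: int              # number of compounds to dock
    has_holo_structure: bool       # high-quality ligand-bound experimental structure available
    flexible_or_predicted: bool    # flexible/cryptic pocket or predicted (e.g., AlphaFold) model


def suggest_tiers(project: ScreeningProject) -> list:
    """Ordered docking tiers following the 'Ideal Use Case' column of Table 1.
    Hypothetical rule set; the thresholds are illustrative, not validated."""
    needs_ai_first = (
        project.library_size > 1_000_000
        or project.flexible_or_predicted
        or not project.has_holo_structure
    )
    tiers = []
    if needs_ai_first:
        tiers.append("AI-powered primary screen")
        # AI poses can be physically implausible, so filter them before re-ranking.
        tiers.append("physical-validity filter on AI poses")
    else:
        tiers.append("traditional physics-based docking")
    # Hybrid methods re-rank the top poses from either route.
    tiers.append("hybrid re-ranking of top poses")
    return tiers


print(suggest_tiers(ScreeningProject(50_000, True, False)))
# ['traditional physics-based docking', 'hybrid re-ranking of top poses']
```

Real campaigns weigh further factors, such as compute budget, licensing, and site knowledge. The rule set is meant as a starting checklist rather than an automated decision.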

Quantitative Performance Benchmarks

Recent comprehensive studies provide critical data for informed tool selection. A 2025 benchmark evaluated nine methods across five dimensions critical for drug discovery: pose prediction accuracy, physical plausibility, interaction recovery, virtual screening (VS) efficacy, and generalization [26]. The data reveal clear performance tiers.

Table 2: Quantitative Performance Benchmark of Docking Methods (2025 Data) [26]

| Method (Paradigm) | Pose Accuracy (RMSD ≤ 2 Å) | Physical Validity (PB-Valid) | Combined Success Rate (RMSD ≤ 2 Å & PB-Valid) | Virtual Screening Enrichment (AUC) | Key Finding & Recommendation |
|---|---|---|---|---|---|
| Glide SP (Traditional) | 85.0% | 97.7% | 83.0% | 0.80 | Gold standard for physical validity. Best choice when pose realism is critical. |
| AutoDock Vina (Traditional) | 78.0% | 94.0% | 74.0% | 0.75 | Robust, open-source benchmark. Good balance for general use. |
| SurfDock (AI: Diffusion) | 91.8% | 63.5% | 61.2% | 0.78 | Best pure pose accuracy, but many poses are physically invalid. Use with strict post-filtering. |
| DiffBindFR (AI: Diffusion) | 75.3% | 47.2% | 33.9% | 0.72 | Moderate accuracy, poor physical validity. Limited utility in rigorous campaigns. |
| DynamicBind (AI: Diffusion) | 65.0% | 55.0% | 40.0% | N/A | Designed for flexible/blind docking. Performance lags in standard tests [26]. |
| Interformer (Hybrid) | 82.0% | 92.0% | 76.0% | 0.82 | Best virtual screening enrichment. Excellent balance, highly recommended for hit identification. |
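The screening and pose metrics in Table 2 can be reproduced on an in-house benchmark set. The sketch below computes two of them:

- ROC AUC, as the probability that a randomly chosen active outranks a randomly chosen decoy (the Mann-Whitney formulation, with ties counted as half).
- The combined success rate, as the fraction of complexes whose top pose has RMSD ≤ 2 Å and also passes a physical-validity check.

It assumes lower docking scores are better. It also assumes the validity flag comes from an external checker; the "PB-Valid" label suggests PoseBusters. The toy numbers are invented for illustration.

```python
def roc_auc(scores, is_active, lower_is_better=True):
    """ROC AUC as P(random active outranks random decoy); ties count 0.5.
    The O(actives x decoys) pair loop suits benchmark sets, not millions."""
    actives = [s for s, a in zip(scores, is_active) if a]
    decoys = [s for s, a in zip(scores, is_active) if not a]
    if not actives or not decoys:
        raise ValueError("need at least one active and one decoy")
    wins = 0.0
    for s_act in actives:
        for s_dec in decoys:
            if s_act == s_dec:
                wins += 0.5
            elif (s_act < s_dec) == lower_is_better:
                wins += 1.0
    return wins / (len(actives) * len(decoys))


def combined_success_rate(poses, rmsd_cutoff=2.0):
    """Fraction of complexes whose top pose has RMSD <= cutoff (Å) and also
    passes validity checks. `poses` holds (rmsd, is_physically_valid) pairs."""
    poses = list(poses)
    if not poses:
        raise ValueError("no poses given")
    hits = sum(1 for r, valid in poses if r <= rmsd_cutoff and valid)
    return hits / len(poses)


print(roc_auc([-11.2, -9.8, -7.5, -9.9, -6.1], [True, True, False, False, False]))  # 5/6 ≈ 0.833
print(combined_success_rate([(1.2, True), (1.5, False), (3.4, True), (0.9, True)]))  # 0.5
```

A pose must pass both criteria, so the combined rate can never exceed either individual rate. This is why methods with high RMSD accuracy but low physical validity, such as SurfDock in the table, drop sharply on the combined metric.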