Intelligent Prioritization: Transformative Strategies for Screening Natural Product Extracts in Drug Discovery

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on modern, efficient methods for prioritizing natural product extracts for biological screening. It explores the foundational challenges of complexity and redundancy inherent in natural product libraries and details advanced methodological approaches, including artificial intelligence (AI)-driven prediction, in silico screening, and bioaffinity techniques. The guide addresses practical troubleshooting for common assay interferences and offers optimization strategies for library design and data analysis. Finally, it presents frameworks for the validation and comparative evaluation of prioritization methods, synthesizing these insights into a strategic roadmap to accelerate the discovery of novel bioactive compounds from nature.

Navigating the Complexity: Foundational Principles for Prioritizing Natural Product Libraries

Technical Support & Troubleshooting Center

Welcome to the Technical Support Center for Natural Product Screening. This resource is designed to assist researchers, scientists, and drug development professionals in navigating common experimental challenges related to natural product (NP) libraries. Framed within a broader thesis on methods for prioritizing natural product extracts for biological screening, this guide focuses on overcoming the inefficiencies of structural redundancy and compound rediscovery [1].

This center employs a structured troubleshooting framework to help you diagnose and solve problems efficiently [2]. The following FAQs and guides are organized by category, moving from broad conceptual challenges to specific experimental protocols.

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Category 1: Library Sourcing and Prioritization

Q1: How can I select a natural product library that minimizes my risk of screening predominantly known or redundant compounds?

- Problem: Initial screens yield high hit rates that dereplicate to common nuisance compounds or known actives, wasting resources [1].

- Root Cause: Many commercial libraries, while diverse, may over-represent certain taxonomic groups or compound classes. A library's construction method (crude extract vs. prefractionated) significantly impacts its "dereplication burden" [1].

- Solutions:

- Prioritize Prefractionated Libraries: Libraries of partially purified fractions (e.g., the NCI's NP Library) separate compounds during production, reducing interference and increasing the probability of isolating novel chemotypes [1].

- Demand Metadata: Choose libraries that provide extensive sample metadata (e.g., taxonomic source, geographic origin, genomic data of microbial strains). This allows for intelligent pre-selection and diversity analysis before screening [3] [1].

- Consider Source Novelty: Explore libraries specializing in understudied sources (e.g., microbial microbiomes from extreme environments, cyanobacteria) [3].

Q2: What are the key considerations for establishing ethical and legally compliant access to novel biological resources?

- Problem: Uncertainty regarding permits, benefit-sharing agreements, and compliance with international treaties delays or halts research.

- Impact: Inability to access novel biodiversity, legal repercussions, and damage to institutional reputations [1].

- Context: Essential for international collection efforts and receiving samples from external partners.

- Solutions:

- Adhere to International Frameworks: Ensure all collections comply with the Nagoya Protocol on Access and Benefit-Sharing (ABS) and the Convention on Biological Diversity (CBD). The NCI's Letter of Collection (LOC) provides a historical model for equitable agreements [1].

- Secure Comprehensive Documentation: Obtain all necessary collecting, export, and import permits. For domestic "citizen science" soil collections, ensure institutional permits from relevant agencies (e.g., USDA) are in place [1].

- Actionable Step: Before collection, consult your institution's technology transfer or legal office to establish a Material Transfer Agreement (MTA) template that includes benefit-sharing terms.

Category 2: Assay Design and Screening

Q3: My cell-based phenotypic assay is plagued by high toxicity or nonspecific inhibition from natural product extracts. How can I adapt my assay?

- Problem: Crude extracts cause widespread cell death or assay interference, masking specific bioactive signals [1].

- Context: Common with extracts containing tannins, saponins, or other promiscuous inhibitors.

- Solutions:

- Employ Prefractionated Libraries: This is the most effective strategy to dilute nuisance compounds and separate them from actives [1].

- Adjust Assay Conditions:

- Reduce Test Concentration: Screen extracts/fractions at lower concentrations (e.g., 10 µg/mL instead of 100 µg/mL).

- Add Quenchers: Include non-interfering agents like polyvinylpolypyrrolidone (PVPP) to bind polyphenols or albumin to sequester fatty acids.

- Use Orthogonal Assays: Confirm hits in a secondary, mechanistically different assay to filter out false positives.

- Protocol - Cell Viability Counter-Screen: Run a parallel cell viability assay (e.g., ATP-based luminescence) on all samples. Flag samples where general cytotoxicity correlates with the primary assay signal for cautious follow-up.
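The counter-screen logic above can be sketched in a few lines. This is a minimal illustration, not a validated pipeline: the `WellResult` record, the 50% inhibition hit cutoff, and the 70% viability cutoff are all assumptions chosen for the example and should be replaced with values appropriate to your assay.

```python
from dataclasses import dataclass


@dataclass
class WellResult:
    sample_id: str
    primary_inhibition_pct: float  # primary assay signal, % inhibition
    viability_pct: float           # counter-screen, % of vehicle control


def flag_cytotoxic_hits(results, hit_cutoff=50.0, viability_cutoff=70.0):
    """Split primary hits into clean hits and hits flagged for cytotoxicity.

    Both cutoffs are illustrative assumptions, not recommended values.
    """
    clean, flagged = [], []
    for r in results:
        if r.primary_inhibition_pct < hit_cutoff:
            continue  # not a primary hit, nothing to triage
        if r.viability_pct < viability_cutoff:
            flagged.append(r.sample_id)  # activity may reflect cell death
        else:
            clean.append(r.sample_id)
    return clean, flagged


# Hypothetical example data
demo = [
    WellResult("EXT-001", 82.0, 95.0),
    WellResult("EXT-002", 91.0, 12.0),
    WellResult("EXT-003", 20.0, 98.0),
]
clean_hits, flagged_hits = flag_cytotoxic_hits(demo)
```

Flagged samples are not discarded here; they are kept in a separate list for the cautious follow-up described above.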

Q4: My target-based biochemical assay shows no activity. Are natural products incompatible with my purified enzyme target?

- Problem: Lack of hits in a high-throughput screening (HTS) campaign against a molecular target.

- Root Cause: Crude extracts have low concentrations of any single compound, which may be below the assay's limit of detection. The target's active site may also be inaccessible to certain natural product scaffolds [1].

- Solutions:

- Switch to a Purified or Prefractionated Library: Prefractionation enriches individual components, increasing the effective concentration in the well and the probability of detection [1].

- Re-evaluate Assay Sensitivity: Optimize the assay for a higher signal-to-noise ratio so that weaker inhibitors can be detected. Consider using a more sensitive detection method (e.g., fluorescence polarization, TR-FRET).

- Consider Alternative Mechanisms: If targeting protein-protein interactions, explore natural product libraries known for complex molecular scaffolds, such as those from microbes or marine invertebrates [3].

Category 3: Hit Triage and Dereplication

Q5: My primary screen generated hundreds of hits. What is a systematic triage protocol to prioritize the most promising ones for dereplication?

- Problem: Overwhelming number of active samples with limited resources for follow-up.

- Solution - Implement a Prioritization Workflow:

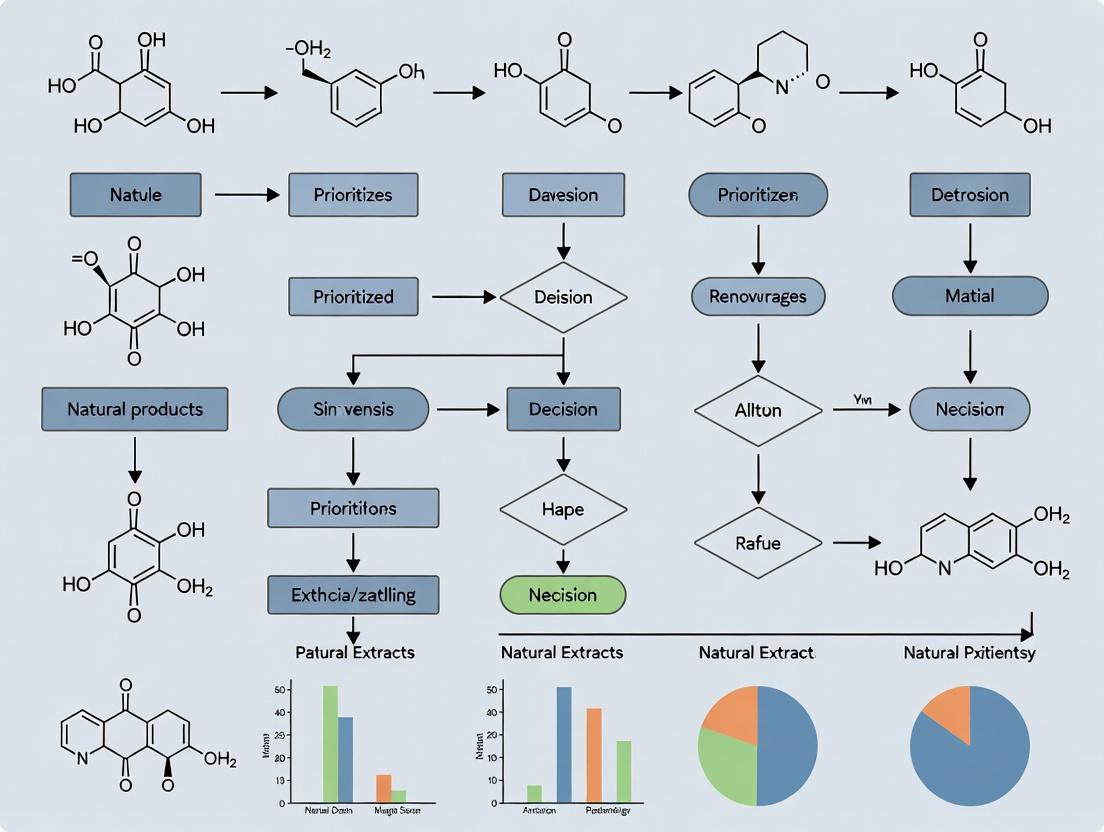

The following diagram outlines a decision-making framework to efficiently triage screening hits based on activity, chemical novelty, and resource allocation.

Q6: During dereplication, how do I distinguish a genuinely novel compound from a new derivative of a known scaffold?

- Problem: LC-MS data shows a molecular weight not in databases, but the MS/MS fragmentation pattern looks familiar.

- Solutions:

- Utilize Molecular Networking: Visualize the MS/MS data as a molecular network. A genuinely novel scaffold will often form a distinct cluster separate from known compound families. New derivatives will cluster closely with their parent compound [1].

- Consult Genomic Data (if available): For microbial hits, check if the source strain's genome contains biosynthetic gene clusters (BGCs) predicted to produce known compound families. This can provide early warning of potential redundancy.

- Rapid Mini-Purification: Isolate a microgram quantity of the compound via analytical-scale HPLC and acquire 1D NMR (e.g., 1H). Even this minimal data can often confirm or deny structural novelty.

Detailed Experimental Protocols

Protocol 1: Prefractionation of Crude Extracts by MPLC

This protocol reduces redundancy by separating components early, creating a more screening-friendly library.

Principle: Crude extract is subjected to a standardized mid-pressure liquid chromatography (MPLC) separation to generate a consistent number of fractions across all samples, deconvoluting the mixture.

Materials:

- Crude natural product extracts (lyophilized)

- MPLC system (e.g., CombiFlash series) with C18 reversed-phase column

- Solvents: Water (Milli-Q), HPLC-grade Acetonitrile, Methanol

- Fraction collector

- Deepwell 96-well or 384-well plates for library storage

- Centrifugal vacuum concentrator

Procedure:

- Sample Preparation: Weigh 100-200 mg of crude extract. Dissolve in a suitable solvent (e.g., 50% DMSO in methanol) and centrifuge to remove particulate matter.

- MPLC Method Development: Establish a generic gradient suitable for a wide polarity range. Example: C18 column, 30 g; flow rate: 40 mL/min; gradient: 5% to 100% acetonitrile in water (with 0.1% formic acid) over 15 column volumes.

- Fractionation: Inject the prepared sample. Collect fractions based on either (a) fixed time intervals (e.g., every 15 seconds) or (b) UV peak detection. The NCI method typically generates 96 fractions per extract.

- Pooling Strategy (Critical): To create a manageable library size, combine fractions according to a strategic pooling algorithm (e.g., combine fractions 1-4, 5-8, etc., or use a "windowed" pooling method). This retains separation while controlling library scale.

- Transfer to Screening Plates: Concentrate pooled fractions using a centrifugal evaporator. Reconstitute in DMSO at a standardized concentration (e.g., 2 mg/mL based on original crude extract weight). Transfer to 96-well or 384-well master plates using a liquid handler.

- Quality Control: For each plate, include control wells (solvent blank, reference inhibitor/activator). Randomly select fractions for LC-UV analysis to verify consistency of separation across samples.
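The fixed-block pooling scheme mentioned in the procedure (fractions 1-4, 5-8, and so on) can be sketched as follows. This is only an illustration of the bookkeeping: the function name and the choice of four fractions per pool are assumptions, and the "windowed" alternative is not covered here.

```python
def pool_fractions(n_fractions, pool_size):
    """Group consecutive 1-based fraction numbers into fixed-size pools.

    The last pool is shorter if n_fractions is not a multiple of pool_size.
    """
    if n_fractions <= 0 or pool_size <= 0:
        raise ValueError("n_fractions and pool_size must be positive")
    fractions = list(range(1, n_fractions + 1))
    return [fractions[i:i + pool_size] for i in range(0, n_fractions, pool_size)]


# 96 collected fractions pooled four at a time give 24 library samples.
pools = pool_fractions(96, 4)
```

Keeping the pool-to-fraction mapping alongside the plate map makes it straightforward to return to the individual fractions when a pooled sample is active.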

Protocol 2: Rapid Dereplication of Active Fractions using LC-HRMS and Molecular Networking

Principle: Uses high-resolution mass spectrometry and database mining to quickly identify known compounds, focusing resources on unknowns.

Materials:

- Active fraction in solvent (e.g., DMSO, methanol)

- UHPLC system coupled to High-Resolution Mass Spectrometer (Q-TOF or Orbitrap)

- Software: Compound Discoverer, MZmine, or Global Natural Products Social Molecular Networking (GNPS) platform.

- Databases: In-house NP library, public databases (PubChem, NP Atlas, MarinLit).

Procedure:

- LC-HRMS Analysis:

- Column: C18, 2.1 x 100 mm, 1.7 µm.

- Gradient: 5% to 100% acetonitrile in water (0.1% formic acid) over 12 min.

- MS Settings: Data-Dependent Acquisition (DDA) mode. Full MS scan (m/z 150-2000) followed by MS/MS fragmentation of top ions.

- Data Processing:

- Convert raw files to .mzML or .mzXML format.

- Use MZmine or similar to detect features, align peaks, and deisotope.

- Database Search:

- Search exact mass (± 5 ppm) of the [M+H]+ or [M-H]- ion against in-house and online NP databases.

- If a match is found, compare the observed MS/MS spectrum with the reference spectrum (if available).

- Molecular Networking (for novel compounds):

- Upload the processed MS/MS data to the GNPS website (https://gnps.ucsd.edu).

- Create a molecular network using the classic workflow.

- Visualize the network (e.g., in Cytoscape). Active fractions containing known compounds will cluster with database nodes. Isolated clusters or nodes with no edges to known compounds represent priority novel leads.

- Reporting: Document the putative identification, confidence level (Level 1-4 as per the Metabolomics Standards Initiative), and recommendation for follow-up (discard or prioritize).
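The exact-mass search step (± 5 ppm on the [M+H]+ ion) can be illustrated with a short sketch. The two-entry lookup table stands in for an in-house database and is purely hypothetical in structure; the neutral monoisotopic masses are computed from the molecular formulas of caffeine and quercetin. Only the protonated adduct is handled, which is a simplifying assumption.

```python
PROTON_MASS = 1.007276  # Da, mass shift for an [M+H]+ ion


def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def match_mh_adduct(observed_mz, database, tol_ppm=5.0):
    """Return (name, ppm error) pairs whose [M+H]+ lies within tol_ppm.

    database maps compound name -> neutral monoisotopic mass in Da.
    """
    hits = []
    for name, neutral_mass in database.items():
        err = ppm_error(observed_mz, neutral_mass + PROTON_MASS)
        if abs(err) <= tol_ppm:
            hits.append((name, round(err, 2)))
    return sorted(hits, key=lambda h: abs(h[1]))


# Stand-in for an in-house table (neutral monoisotopic masses, Da)
in_house = {
    "caffeine": 194.080376,   # C8H10N4O2
    "quercetin": 302.042655,  # C15H10O7
}
close_match = match_mh_adduct(195.0880, in_house)  # about 1.8 ppm from caffeine
no_match = match_mh_adduct(195.0900, in_house)     # about 12 ppm, outside tolerance
```

A mass match alone is only a putative identification; the MS/MS comparison in the next bullet of the procedure is still needed before a fraction is deprioritized.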

The Scientist's Toolkit: Research Reagent Solutions

The following table details key resources for accessing diverse natural product libraries and essential tools for screening and dereplication [3] [1].

| Resource Name / Reagent | Type | Key Function / Description | Relevance to Reducing Redundancy |
|---|---|---|---|
| NCI Program for Natural Product Discovery Repository [3] [1] | Prefractionated Library | One of the world's largest, most comprehensive collections. Provides ~230,000 crude extracts and is producing 1,000,000 prefractionated samples in 384-well plates free of charge. | High. Prefractionation separates components, reducing interference. Extensive source diversity (global collections) increases novelty potential. |
| MEDINA Natural Products Library [3] | Microbial Extract Library | One of the world's largest libraries of microbial-derived NPs (>200,000 extracts from terrestrial and marine microbes). | High. Specialization in under-explored microbial diversity from unique environments targets novel chemotypes. |
| Axxam/AXXSense Natural Compound Library [3] | Pure Compounds & Extracts | Offers 11,500 pure NPs, 63,000 purified fractions, and 21,200 pre-purified extracts from plants and microbes. | Medium-High. Access to purified fractions and pure compounds simplifies screening but requires due diligence on source novelty. |
| ChromaDex Natural Product Libraries [3] | Botanical Extracts & Fractions | Proprietary extraction process yielding 1,200 characterized botanical extracts and 2,550 fractions, preserving cross-fraction synergy. | Medium. Focus on characterized extracts aids dereplication. Synergy preservation can reveal new bioactivity from known plants. |
| NatureBank (Griffith University) [3] | Extract, Fraction & Pure Compound Libraries | A unique, lead-like enhanced library from Australian biodiversity (>18,000 extracts, >90,000 fractions). | High. Focus on biogeographically unique (Australian) biota and generation of lead-like enhanced fractions targets novel chemical space. |
| Global Natural Products Social Molecular Networking (GNPS) | Analysis Platform | A web-based platform for crowdsourced MS/MS data analysis and molecular networking. | Critical. Enables rapid visual dereplication and identification of novel molecular families by comparing MS/MS spectra to a global library. |
| Solid Phase Extraction (SPE) Cartridges (e.g., C18, Diatomaceous Earth) | Laboratory Consumable | Used for rapid, low-pressure fractionation of crude extracts to remove nuisance compounds (e.g., chlorophyll, tannins) before screening. | Medium. A simple, low-cost prefractionation step that can reduce assay interference and simplify downstream active fraction analysis. |

Visual Guide: Strategic Approaches to Overcome Redundancy

The following diagram synthesizes the core strategies discussed to form a cohesive workflow for managing structural redundancy, from library selection through to novel compound identification.

This technical support center provides researchers with practical troubleshooting guides and FAQs for defining and optimizing key success metrics in natural product (NP) screening campaigns. Framed within the broader thesis of prioritizing NP extracts for biological screening, this resource addresses the common experimental and analytical challenges in measuring hit rates, assessing scaffold diversity, and confirming chemical novelty. The guidance below is based on current literature and protocols to help you efficiently triage screening results, validate findings, and build high-quality libraries for drug discovery.

Foundational Concepts & Quantitative Benchmarks

Before troubleshooting, it is essential to understand standard metrics and benchmarks. The tables below summarize key performance data from recent screening campaigns and library design studies.

Table 1: Representative Hit Rates Across Screening Campaigns

This table compares hit rates from different screening approaches, highlighting the impact of library design and assay type [4] [5] [6].

| Screening Campaign / Library Type | Assay Target | Initial Library Size | Hit Rate (%) | Key Activity Cut-off | Citation |
|---|---|---|---|---|---|
| Full Fungal Extract Library | Plasmodium falciparum (phenotypic) | 1,439 extracts | 11.3 | Not Specified | [4] |
| Rational Library (80% Scaffold Diversity) | Plasmodium falciparum (phenotypic) | 50 extracts | 22.0 | Not Specified | [4] |
| AnalytiCon NATx Library | Clostridioides difficile (whole-cell) | 5,000 compounds | 0.2 (10 hits) | MIC: 0.5-2 µg/mL | [6] |
| AI-Driven Hit Identification (ChemPrint) | BRD4 (target-based) | 12 compounds tested | 58.3 | ≤ 20 µM | [5] |
| Virtual Screening (Literature Analysis) | Various | Variable | Highly Variable | Often 1-100 µM | [7] |

Table 2: Impact of Scaffold-Centric Library Design on Performance

Data demonstrates how prioritizing scaffold diversity reduces library size while increasing hit rates and retaining bioactive features [4].

| Metric | Full Library (1,439 Extracts) | 80% Scaffold Diversity Library (50 Extracts) | 100% Scaffold Diversity Library (216 Extracts) |
|---|---|---|---|
| Library Size Reduction | Baseline | 28.8-fold reduction | 6.6-fold reduction |
| Hit Rate vs. P. falciparum | 11.26% | 22.00% | 15.74% |
| Hit Rate vs. Neuraminidase | 2.57% | 8.00% | 5.09% |
| Retention of Bioactive Features* | 10 features | 8 features retained | 10 features retained |

* Features significantly correlated with anti-Plasmodium activity in the full library [4].

Core Experimental Protocols & Workflows

Protocol: MS/MS-Based Library Prioritization for Scaffold Diversity

This protocol details a method to rationally minimize a natural product extract library based on liquid chromatography-tandem mass spectrometry (LC-MS/MS) data to maximize scaffold diversity and hit rates [4].

1. Sample Preparation & Data Acquisition:

- Prepare crude natural product extracts (e.g., fungal, bacterial) in appropriate solvents for LC-MS/MS analysis.

- Acquire untargeted LC-MS/MS data for all extracts in the library. Use standardized gradients and collision energies to ensure reproducible fragmentation spectra.

2. Molecular Networking & Scaffold Detection:

- Process the raw MS/MS data using the GNPS (Global Natural Products Social Molecular Networking) platform or similar software.

- Use classical molecular networking to group MS/MS spectra based on fragmentation pattern similarity. Each spectral family (or molecular network node) corresponds to a unique molecular scaffold. Adducts and in-source fragments of the same molecule will group together [4].

3. Rational Library Selection:

- Use custom algorithms (e.g., in R or Python) to select extracts based on scaffold diversity.

- Step 1: Rank all extracts by the number of unique scaffolds they contain.

- Step 2: Select the extract with the highest scaffold count for your new "rational library."

- Step 3: Iteratively add the extract that contributes the most new scaffolds not already present in the rational library.

- Step 4: Continue until a pre-defined threshold (e.g., 80% or 100% of total scaffolds in the full library) is reached [4].

4. Validation:

- Screen the rationally selected minimal library and the full library in parallel using your biological assay.

- Compare hit rates. The rational library should yield a significantly higher hit rate due to reduced redundancy [4].
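The iterative selection in step 3 is a greedy coverage procedure and can be sketched as below. This is an illustrative reading of the steps above, not the published implementation: the input format (a mapping from extract ID to a set of scaffold IDs taken from the molecular network), the function name, and the alphabetical tie-breaking rule are assumptions.

```python
def select_rational_library(extract_scaffolds, coverage_target=0.8):
    """Greedily pick extracts until the target share of scaffolds is covered.

    extract_scaffolds: dict of extract ID -> set of scaffold IDs.
    Returns (selected extract IDs in pick order, fraction of scaffolds covered).
    """
    if not 0 < coverage_target <= 1:
        raise ValueError("coverage_target must be in (0, 1]")
    all_scaffolds = set().union(*extract_scaffolds.values())
    if not all_scaffolds:
        return [], 0.0
    needed = coverage_target * len(all_scaffolds)
    covered, selected = set(), []
    remaining = dict(extract_scaffolds)
    while len(covered) < needed and remaining:
        # Extract adding the most new scaffolds; ties go to the first ID alphabetically.
        best = max(sorted(remaining), key=lambda e: len(remaining[e] - covered))
        gain = remaining.pop(best) - covered
        if not gain:
            break  # no remaining extract adds anything new
        covered |= gain
        selected.append(best)
    return selected, len(covered) / len(all_scaffolds)


# Hypothetical toy library: five extracts, seven scaffolds in total
toy = {"A": {1, 2, 3, 4}, "B": {3, 4, 5}, "C": {5, 6}, "D": {1, 2}, "E": {7}}
subset_80, coverage_80 = select_rational_library(toy, 0.8)
subset_100, coverage_100 = select_rational_library(toy, 1.0)
```

On the first pass nothing is covered yet, so the extract with the highest scaffold count is chosen, which matches Steps 1 and 2. Later passes implement Step 3, and the loop condition implements the threshold in Step 4.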

Protocol: Hit Validation Cascade for Natural Product Hits

This protocol outlines the standard workflow to confirm and prioritize initial hits from a primary screen, crucial for accurate hit rate calculation [7] [6].

1. Primary Screening:

- Conduct a high-throughput screen (e.g., 384-well plate) of your library. Use a single-point activity measurement (e.g., % inhibition at 3-10 µM).

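- Define a hit threshold: Use a statistical cutoff (e.g., 3 standard deviations above the mean of the sample or negative-control signal) or a fixed percentage inhibition (e.g., >50%) to identify primary hits [7]. The Z'-factor is a plate-level assay quality metric rather than a hit threshold; use it to confirm that each plate is fit for hit calling.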

2. Hit Confirmation (Cherry-Picking & Re-test):

- Physically cherry-pick the primary hit samples from source plates.

- Re-test them in the same primary assay, ideally in a dose-response format (e.g., 8-point dilution series), to confirm activity and rule out false positives from screening artifacts [6].

3. Counter-Screens & Selectivity:

- Test confirmed hits in a counter-screen against related but undesired targets or in a cytotoxicity assay (e.g., MTS assay on mammalian cell lines like Caco-2) to identify non-selective or cytotoxic compounds [7] [6].

- For antimicrobials, test against commensal or probiotic strains (e.g., Bifidobacterium, Bacteroides) to assess selectivity over beneficial flora [6].

4. Orthogonal Assay & Mechanism:

- Validate activity in an orthogonal, mechanistically distinct assay. For a whole-cell phenotype, use a target-based enzyme assay. For an enzyme target, use a cellular reporter assay [7].

- Employ biophysical methods (e.g., SPR, ITC, NMR) to confirm direct binding to the intended target [7].

5. Hit Criteria Definition:

- A validated hit should meet predefined potency (e.g., IC50/EC50/Ki < 10-20 µM for Hit Identification), demonstrate selectivity in counter-screens, and show confirmed activity in an orthogonal assay [7] [5].

- The final hit rate is calculated as: (Number of validated hits) / (Number of extracts/compounds tested in the primary screen) * 100.
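The statistical pieces of this cascade can be sketched together: a plate-quality check, a mean-plus-3-SD hit cutoff, and the final hit-rate formula. The sketch makes several assumptions: the function names are illustrative, the cutoff is computed from all sample wells rather than from negative controls, and the plate data are invented. The Z'-factor formula used is the standard one, 1 - 3(SD_pos + SD_neg) / |mean_pos - mean_neg|.

```python
from statistics import mean, stdev


def z_prime(pos_controls, neg_controls):
    """Plate quality: Z' = 1 - 3*(sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    spread = 3 * (stdev(pos_controls) + stdev(neg_controls))
    return 1 - spread / abs(mean(pos_controls) - mean(neg_controls))


def call_primary_hits(signals, n_sd=3.0):
    """Return sample IDs whose signal exceeds mean + n_sd * SD of all samples."""
    values = list(signals.values())
    cutoff = mean(values) + n_sd * stdev(values)
    return sorted(s for s, v in signals.items() if v > cutoff)


def hit_rate_pct(n_validated, n_screened):
    """Final hit rate = validated hits / samples in the primary screen * 100."""
    if n_screened <= 0:
        raise ValueError("n_screened must be positive")
    return n_validated / n_screened * 100


# Hypothetical plate: 20 inactive wells plus one strong inhibitor.
plate = {f"S{i:02d}": (4.0 if i % 2 else 6.0) for i in range(20)}
plate["S20"] = 90.0
primary_hits = call_primary_hits(plate)
quality = z_prime([95.0, 100.0, 105.0], [0.0, 5.0, 10.0])
rate = hit_rate_pct(10, 5000)  # the 10-of-5,000 case from Table 1
```

Note that the denominator of the hit rate is the number of samples in the primary screen, not the number of primary hits, so the validated rate is directly comparable between campaigns.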

Protocol: Assessing Novelty via Genome Mining and Dereplication

This protocol describes a strategy to prioritize extracts with high novelty potential by targeting silent biosynthetic gene clusters (BGCs) and dereplicating known compounds [8].

1. Genomic DNA Extraction & Sequencing:

- Isolate high-quality genomic DNA from microbial strains in your collection.

- Perform whole-genome sequencing (e.g., Illumina, Nanopore).

2. In Silico Genome Mining for BGCs:

- Annotate sequenced genomes using the antiSMASH (antibiotics & Secondary Metabolite Analysis Shell) tool. This identifies and characterizes putative BGCs for polyketides, non-ribosomal peptides, terpenes, etc. [8].

- Prioritize strains containing a high number of "silent" or "cryptic" BGCs—clusters not associated with known metabolites from that strain under standard lab conditions.

3. Elicitation to Activate Silent BGCs:

- Use High-Throughput Elicitor Screening (HiTES). Grow prioritized strains in 96- or 384-well microtiter plates, each well with a different growth condition (varying media, co-culture, small molecule inducers, etc.) [8].

- After cultivation, perform rapid chemical analysis directly from the culture broth or extracts using techniques like LAESI-IMS (Laser Ablation Electrospray Ionization-Imaging Mass Spectrometry) [8].

4. Metabolomic Dereplication:

- Analyze LC-MS/MS data from elicited cultures and standard extracts.

- Use molecular networking (GNPS) to compare MS/MS spectra of your active hits against public spectral libraries (e.g., GNPS, MassBank), and search candidate masses or structures in curated databases (e.g., Reaxys, SciFinder) to identify known compounds quickly.

- Prioritize hits that form new molecular network nodes not connected to known compounds, indicating potential novelty [4].
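The final prioritization step, finding parts of the network with no connection to a known compound, can be sketched as a connected-component search. The edge list, node names, and annotation set below are hypothetical; in practice they would come from an exported molecular network and its library matches.

```python
from collections import defaultdict, deque


def novel_components(nodes, edges, annotated):
    """Return connected components that contain no library-annotated node.

    nodes: iterable of node IDs; edges: iterable of (a, b) pairs;
    annotated: set of node IDs with a spectral library match.
    """
    adjacency = defaultdict(set)
    for a, b in edges:
        adjacency[a].add(b)
        adjacency[b].add(a)
    seen, result = set(), []
    for start in sorted(nodes):
        if start in seen:
            continue
        component, queue = set(), deque([start])
        seen.add(start)
        while queue:  # breadth-first walk of one component
            node = queue.popleft()
            component.add(node)
            for neighbour in adjacency[node]:
                if neighbour not in seen:
                    seen.add(neighbour)
                    queue.append(neighbour)
        if component.isdisjoint(annotated):
            result.append(sorted(component))
    return result


# Hypothetical network: one annotated family, one unannotated family, one singleton
demo_nodes = {"n1", "n2", "n3", "n4", "n5", "n6"}
demo_edges = [("n1", "n2"), ("n2", "n3"), ("n4", "n5")]
candidates = novel_components(demo_nodes, demo_edges, annotated={"n2"})
```

Unannotated components are only candidates for novelty; as noted elsewhere in this guide, spectral libraries are incomplete, so further dereplication is still required.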

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for NP Screening & Hit Triage

| Item | Function & Rationale | Example/Specification |
|---|---|---|
| Prefractionated NP Libraries | Increases hit confidence by separating bioactive minor metabolites from nuisance compounds; reduces assay interference [9]. | Libraries generated via HPLC, SFC, or SPE; e.g., NCI's prefractionated library [9]. |
| LC-MS/MS System with UHPLC | Essential for chemical profiling, quality control, molecular networking, and dereplication. Enables scaffold diversity analysis [4]. | Systems capable of high-resolution mass spectrometry and data-dependent MS/MS acquisition. |
| GNPS Platform Access | Free, cloud-based platform for processing MS/MS data to create molecular networks, essential for scaffold analysis and dereplication [4]. | https://gnps.ucsd.edu |
| AntiSMASH Software | Key bioinformatics tool for in silico genome mining to identify Biosynthetic Gene Clusters (BGCs) and prioritize strains for novelty [8]. | https://antismash.secondarymetabolites.org |
| Cell-Based Viability Assay Kits | For counter-screens to assess cytotoxicity of hits, a critical selectivity filter. | MTS, MTT, or CellTiter-Glo assays for mammalian cells (e.g., Caco-2) [6]. |
| Orthogonal Assay Reagents | Materials for secondary, mechanistically distinct assays to confirm primary hit activity and target engagement [7]. | May include purified recombinant enzyme, substrate, detection antibodies, or reporter cell lines. |
| Reference Standard Antibiotics/Inhibitors | Essential positive and negative controls for biological assays to ensure proper function and for comparison of hit potency/selectivity [6]. | e.g., Vancomycin for C. difficile assays; staurosporine for kinase panels. |

Troubleshooting Guides & FAQs

FAQ 1: Our primary screen yielded a high hit rate (>15%). Is this promising or indicative of an artifact?

- Investigate: A very high hit rate can signal assay interference. Common culprits with natural product extracts include:

- Pan-assay interference compounds (PAINS): Perform a chemical database check for known PAINS scaffolds in your hits.

- Aggregators: Test hits in the presence of a non-ionic detergent (e.g., 0.01% Triton X-100); if activity is lost, aggregation is likely.

- Fluorescence/Quenching: Check if hit samples are fluorescent or absorb at assay detection wavelengths.

- Non-selective cytotoxicity: Run a rapid viability counter-screen.

- Solution: Progress only hits that pass the Hit Validation Cascade (see the protocol "Hit Validation Cascade for Natural Product Hits" above). Your true, validated hit rate will likely be lower but more reliable [7].

FAQ 2: How do we meaningfully calculate and report scaffold diversity for our library?

- Problem: Simple compound counts are poor proxies for diversity. Two libraries of equal size can have vastly different scaffold diversities.

- Solution: Use MS/MS-based molecular networking as described in the protocol "MS/MS-Based Library Prioritization for Scaffold Diversity" above.

- The number of distinct spectral families (nodes) in the network represents the number of unique molecular scaffolds.

- Report diversity as "scaffold hit rate" (number of unique active scaffolds / total tested) or as the percentage of total library scaffold space captured in a subset [4].

- A rational, scaffold-diverse library of 50 extracts can capture 80% of the scaffolds found in the full 1,439-extract library [4].

FAQ 3: Our active compound appeared to be novel after MS dereplication, but we later found it to be a known compound. What went wrong?

- Investigate: This is typically a database coverage issue. Public MS/MS libraries are not exhaustive.

- Solution: Implement a multi-tiered dereplication strategy:

- MS/MS level: Compare against GNPS, MassBank, and in-house libraries.

- Physicochemical property level: Calculate the exact mass and search against comprehensive structure databases (e.g., SciFinder, Reaxys, MarinLit) applying relevant filters (source organism, phylum).

- Literature level: Perform a thorough literature search on the proposed structural class and biological activity.

- Ultimate confirmation requires isolation and full structure elucidation by NMR [8].

FAQ 4: How do we define an appropriate activity cut-off for declaring a "hit" in a natural product screen?

- Context: For early-stage Hit Identification from NPs, the goal is to find a novel scaffold with a promising structure-activity relationship (SAR) starting point, not a final drug candidate.

- Guideline: A cutoff of ≤ 20 µM (IC50/EC50/MIC) is a common and pragmatic threshold for hit declaration. It balances the need for meaningful activity with the reality that minor metabolites in extracts may be diluted but are highly optimizable [5].

- Critical Step: Ligand Efficiency (LE) or Lipophilic Ligand Efficiency (LLE) should be calculated for pure compounds. This normalizes activity for molecular size/lipophilicity and is a better indicator of optimization potential than potency alone [7].
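For pure compounds, the efficiency metrics named above can be computed with the commonly used approximations LE ≈ 1.37 × pIC50 / (number of heavy atoms), in kcal/mol per heavy atom, and LLE = pIC50 - cLogP. The sketch below assumes IC50 is supplied in µM; the example compound (10 µM, 25 heavy atoms, cLogP 3.0) is hypothetical.

```python
import math


def pic50(ic50_um):
    """Convert an IC50 in micromolar to pIC50 (-log10 of molar IC50)."""
    if ic50_um <= 0:
        raise ValueError("IC50 must be positive")
    return -math.log10(ic50_um * 1e-6)


def ligand_efficiency(ic50_um, heavy_atoms):
    """Approximate LE in kcal/mol per heavy atom: 1.37 * pIC50 / heavy atoms."""
    return 1.37 * pic50(ic50_um) / heavy_atoms


def lipophilic_ligand_efficiency(ic50_um, clogp):
    """LLE = pIC50 - cLogP."""
    return pic50(ic50_um) - clogp


# Hypothetical hit: IC50 = 10 µM, 25 heavy atoms, cLogP = 3.0
le = ligand_efficiency(10.0, 25)
lle = lipophilic_ligand_efficiency(10.0, 3.0)
```

Two hits with the same 10 µM potency can differ substantially in LE if one has far more heavy atoms, which is why these metrics complement a plain potency cutoff.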

This technical support center provides essential guidance for researchers prioritizing natural product extracts for biological screening within the legal and ethical frameworks of the Convention on Biological Diversity (CBD) and the Nagoya Protocol on Access and Benefit-Sharing (ABS). The Nagoya Protocol, which entered into force on 12 October 2014 and has been ratified by 142 Parties as of August 2025, establishes legally binding international obligations for accessing genetic resources and sharing the benefits from their utilization [10]. Non-compliance can lead to legal disputes, research embargoes, and reputational damage.

The core challenge is integrating robust ABS due diligence into the early stages of research, where biological material is often prioritized based on scant preliminary data. This guide offers troubleshooting and FAQs to navigate these complexities.

The following table summarizes the core components of the ABS framework that researchers must understand.

Table: Core Components of the ABS Framework for Researchers

| Component | Definition & Scope | Key Obligations for Researchers (Users) |
|---|---|---|
| Genetic Resource (GR) | Biological material containing functional units of heredity, of actual or potential value. Includes plants, animals, microbes (in-situ or ex-situ) [11]. | Determine if your sample is a GR under the Protocol. Access requires prior informed consent (PIC) and mutually agreed terms (MAT) with the provider country [11]. |
| Traditional Knowledge (TK) | Knowledge, innovations, and practices of indigenous and local communities associated with GR [11]. | If research is based on TK, additional PIC and MAT with the relevant communities are required [11]. |
| Utilization | Conducting research and development on the genetic or biochemical composition of GR, including through biotechnology [11]. | All research (non-commercial and commercial) on biochemical composition constitutes "utilization" and triggers ABS obligations [11]. |
| Prior Informed Consent (PIC) | The permission given by a provider country (or indigenous community) for access to GR or TK, based on full information [11]. | Obtain PIC before accessing the resource. Document the process and keep the permit/certificate. |
| Mutually Agreed Terms (MAT) | A contract between provider and user outlining the terms of access, use, and benefit-sharing [11]. | Negotiate and sign MAT before access. MAT must address benefit-sharing (monetary: royalties; non-monetary: collaboration, capacity building) [10]. |
| Internationally Recognized Certificate of Compliance (IRCC) | A permit issued by a provider country's Competent National Authority (CNA) proving legal access under PIC and MAT [12]. | Request an IRCC from the provider country. It is the key document for proving due diligence to funders, publishers, and checkpoints [12]. |

Troubleshooting Guides & FAQs

Scenario 1: "My sample was obtained from an international culture collection before 2014. Do I need ABS documentation?"

- Problem: Uncertain applicability of the Nagoya Protocol to ex-situ collections.

- Diagnosis: The Nagoya Protocol applies to GR accessed on or after 12 October 2014, provided the provider country has ratified it and established domestic ABS measures [11]. However, many countries established national ABS laws before 2014 under the CBD. The legality of access is determined by the national law in effect at the time and place of collection [11].

- Solution:

- Provenance Investigation: Contact the culture collection to request all available documentation (collection date, location, original PIC/MAT, Material Transfer Agreements).

- Check National Law: Use the ABS Clearing-House (ABSCH) to check the national legislation of the country of origin. Determine if ABS measures were in force at the time of collection [12] [11].

- Risk Assessment: If documentation is incomplete and the country had strict pre-2014 laws, treat the sample as potentially non-compliant. Seek new, fully documented access or discontinue use.

- Prevention: Source materials exclusively from repositories that provide "Nagoya-compliant" certifications and detailed provenance metadata [10].
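The decision logic in this scenario can be sketched in a few lines. This is a minimal illustration, not legal advice: the function name, its three inputs, and the returned labels are hypothetical, and the facts it consumes (collection date, Party status, existence of domestic ABS measures) must still be established from provenance records and the ABSCH.

```python
from datetime import date

# Entry-into-force date of the Nagoya Protocol, as stated in the Diagnosis above.
NAGOYA_ENTRY_INTO_FORCE = date(2014, 10, 12)


def classify_access(collection_date, provider_is_party, had_national_abs_law):
    """Return a coarse triage label for a sample from an ex-situ collection.

    The function only encodes the decision logic; it performs no lookups.
    The labels are illustrative placeholders, not official categories.
    """
    in_nagoya_scope = (
        collection_date >= NAGOYA_ENTRY_INTO_FORCE
        and provider_is_party
        and had_national_abs_law
    )
    if in_nagoya_scope:
        return "nagoya scope: PIC/MAT documentation required"
    if had_national_abs_law:
        # Pre-2014 access (or a non-Party) can still be governed by national law.
        return "national law: verify permits valid at time of collection"
    return "no ABS measure identified: record the check and keep evidence"


if __name__ == "__main__":
    print(classify_access(date(2009, 6, 1), True, True))
```

A sample collected in 2009 from a country that already had ABS legislation falls into the second branch, which matches the risk-assessment step above.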

Scenario 2: "My preliminary screening identified a promising extract, but I have no ABS documents. Can I proceed with lead optimization?"

- Problem: Discovery of promising activity in a sample with unclear or non-existent legal provenance.

- Diagnosis: Proceeding with utilization (including further R&D) without complying with ABS obligations can breach the provider country's ABS law and user-compliance legislation such as the EU ABS Regulation (Regulation (EU) No 511/2014). This jeopardizes future patent applications, publications, and collaborations [10] [13].

- Solution:

- Immediate Pause: Halt all research on the specific extract.

- Retrospective Due Diligence: Attempt to trace the sample back through all lab notebooks, suppliers, and collaborators to its country of origin.

- Contact Authorities: Use the ABSCH to find the National Focal Point (NFP) and Competent National Authority (CNA) of the provider country [11]. Inquire about the possibility of obtaining PIC and MAT retrospectively. Document all communication attempts [11].

- Contingency Plan: If retroactive compliance is impossible, deprioritize this lead. The cost, time, and legal risk outweigh the potential benefit.

- Prevention: Implement a mandatory pre-screening documentation check. No biological material enters the screening pipeline without a completed ABS checklist and supporting documents.

Scenario 3: "I am collaborating with a researcher in a provider country. They sent me extracts. Is their permit valid for my lab?"

- Problem: Validity of permits across jurisdictions and for secondary users.

- Diagnosis: PIC and MAT are often specific to the original researcher/institution and the stated research purpose. Sharing materials or results with a new user (you) usually requires an amendment to the MAT or a new Material Transfer Agreement (MTA) that references the original terms [10].

- Solution:

- Request Original Documents: Ask your collaborator for a copy of the IRCC, PIC, and MAT.

- Analyze MAT Terms: Scrutinize the MAT for clauses on "third-party transfers," "collaborators," and "change of purpose." Many MATs explicitly forbid transfer without provider country approval.

- Joint Amendment: Work with your collaborator and the provider country's CNA to formally amend the MAT to include your institution, role, and the terms of benefit-sharing.

- Prevention: Address future collaboration and material transfer explicitly during initial MAT negotiations. Use standardized MTA templates that align with ABS principles.

Scenario 4: "My genomic study uses only Digital Sequence Information (DSI) from a public database, derived from a foreign GR. Does the Nagoya Protocol apply?"

- Problem: Legal ambiguity surrounding DSI (e.g., gene sequence data).

- Diagnosis: This is a highly contentious area. The Nagoya Protocol text does not explicitly mention DSI. In 2022, the CBD COP15 agreed to develop a new multilateral benefit-sharing mechanism for DSI, decoupling it from the bilateral access rules of the Protocol [10]. Currently, most national ABS laws do not regulate the use of DSI alone, but the legal landscape is evolving.

- Solution:

- Check Specific Laws: Consult the ABSCH for the latest position and laws of the country from which the original physical material was sourced [12].

- Practice Diligence: Although not strictly required, documenting the provenance of DSI (source organism, country) is a best practice for future-proofing your research.

- Engage with Policy: Stay informed on the development of the new global DSI mechanism through institutional research offices.

- Prevention: When generating new DSI, publish with rich, FAIR (Findable, Accessible, Interoperable, Reusable) metadata that includes ABS compliance information for the source material [14].

Experimental Protocols: Integrating Provenance with Prioritization

To prioritize extracts for screening while ensuring ethical and legal provenance, follow this integrated workflow.

Protocol: Tiered Prioritization of Natural Product Extracts with ABS Due Diligence

A. Initial Triage & Documentation Audit

- Objective: To quickly eliminate samples with insurmountable legal risks before committing scientific resources.

- Materials: Sample inventory, ABS Clearing-House, document management system.

- Method:

- For each extract, create a record with: Unique ID, taxonomic ID, date/geographic origin of collection, name of collector, and current custodian.

- Demand the following documents: Internationally Recognized Certificate of Compliance (IRCC), or equivalent permit; Signed Mutually Agreed Terms (MAT); Material Transfer Agreement (MTA) for ex-situ sourced materials [12] [10].

- Cross-check the country of origin against the ABSCH to confirm its status as a Party to the Nagoya Protocol and review its specific domestic requirements [11].

- Prioritization Criterion: Samples with complete, valid documentation (IRCC, MAT) proceed to Tier 1. Samples with partial or unclear documentation are placed on hold. Samples with no documentation are deprioritized or discarded.
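The record structure and prioritization criterion of Part A could be captured as follows. This is a sketch under stated assumptions: the class name, field names, and tier labels are hypothetical, and the check only confirms that documents are present. Whether a document is valid (or an acceptable "equivalent permit") still requires human review.

```python
from dataclasses import dataclass, field

# Documents that make a record "complete" under the criterion above.
REQUIRED_DOCS = frozenset({"IRCC", "MAT"})


@dataclass
class ExtractRecord:
    unique_id: str
    taxon: str
    collection_date: str
    origin: str
    collector: str
    custodian: str
    documents: set = field(default_factory=set)  # e.g. {"IRCC", "MAT", "MTA"}


def triage(record):
    """Map a record to 'tier1', 'hold', or 'deprioritize' by documents held."""
    if REQUIRED_DOCS <= record.documents:
        return "tier1"          # complete documentation
    if record.documents:
        return "hold"           # partial or unclear documentation
    return "deprioritize"       # no documentation at all


if __name__ == "__main__":
    rec = ExtractRecord("NP-0001", "Picea glauca", "2019-07-02",
                        "CA", "J. Doe", "Lab A", {"IRCC", "MAT", "MTA"})
    print(rec.unique_id, triage(rec))
```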

B. Tier 1: High-Throughput Biochemical Profiling (Legal & Safe Samples)

- Objective: To generate preliminary chemical data for prioritization from compliant samples.

- Materials: Liquid Chromatography-High Resolution Mass Spectrometry (LC-HRMS), metabolomics software, 96-well plate systems.

- Method:

- Perform untargeted LC-HRMS on all compliant extracts [13].

- Process data to identify molecular features (mass-to-charge ratio, retention time).

- Use computational tools (e.g., Molecular Networking via GNPS) to dereplicate features against known natural product databases, identifying novelty and potential chemical classes [13].

- Prioritization Criterion: Extracts with a high number of unique molecular features not found in databases ("chemical novelty score") are promoted to Tier 2. Document all raw and processed data with the sample's unique ABS ID to maintain an unbroken chain of provenance [14].
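One simple way to express a "chemical novelty score" is the number (and fraction) of detected features with no database match. The sketch below matches on m/z alone within a ppm tolerance, which is a deliberate simplification: tools such as GNPS compare MS/MS spectra, and the function names, the 10 ppm default, and the example masses here are assumptions for illustration only.

```python
def is_known(mz, known_mzs, ppm=10.0):
    """True if mz lies within `ppm` parts-per-million of any reference m/z."""
    return any(abs(mz - ref) / ref * 1e6 <= ppm for ref in known_mzs)


def novelty_score(feature_mzs, known_mzs, ppm=10.0):
    """Return (count, fraction) of features with no reference match."""
    if not feature_mzs:
        return 0, 0.0
    unmatched = [mz for mz in feature_mzs if not is_known(mz, known_mzs, ppm)]
    return len(unmatched), len(unmatched) / len(feature_mzs)


if __name__ == "__main__":
    features = [300.0, 500.0, 700.0]      # hypothetical feature m/z values
    reference = [300.001, 650.0]          # hypothetical database m/z values
    print(novelty_score(features, reference))
```

Ranking extracts by the count favors chemically rich samples, while ranking by the fraction favors samples dominated by unknowns; which is preferable depends on the goals of the screening program.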

C. Tier 2: Targeted Bioactivity Screening & Genomic Correlation

- Objective: To assess biological activity and explore genetic basis of production.

- Materials: Phenotypic or target-based assay kits, RNA/DNA extraction kits, RT-qPCR or RNA-Seq platforms.

- Method:

- Subject prioritized extracts to relevant biological assays (e.g., antimicrobial, cytotoxicity).

- For promising hits, if the source organism is available, conduct gene expression analysis. For example, quantify expression levels of key biosynthetic genes (e.g., β-glu-1 for acetophenone defence in Picea glauca) using RT-qPCR [15].

- Correlate bioactivity levels with gene expression data and chemical profiles from Tier 1.

- Prioritization Criterion: Extracts showing significant, reproducible bioactivity with a plausible chemical-genetic correlation become high-priority leads for further development. This integrated data package (chemical + biological + genetic + legal) is critical for securing downstream funding and partnerships.
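The correlation step in Tier 2 can be illustrated with a rank-based (Spearman) coefficient, which does not assume a linear relationship between expression and activity. The choice of Spearman is an assumption of this sketch rather than something the protocol prescribes, and the example values are invented.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; raises ZeroDivisionError if either input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman correlation: Pearson correlation of the rank vectors."""
    return pearson(average_ranks(x), average_ranks(y))


if __name__ == "__main__":
    expression = [0.8, 1.5, 2.1, 3.9]     # hypothetical relative expression
    inhibition = [12.0, 30.0, 41.0, 77.0]  # hypothetical % inhibition
    print(spearman(expression, inhibition))
```

With only a handful of extracts the coefficient is unstable, so it is best read as a prioritization hint rather than evidence of a causal link.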

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table: Essential Reagents and Tools for ABS-Compliant Natural Product Research

| Item / Solution | Function in Research | Role in ABS Compliance & Provenance |

|---|---|---|

| ABS Clearing-House (ABSCH) | Online global information portal [12]. | Primary tool for due diligence. Check country profiles, National Focal Points, Competent National Authorities, and published Internationally Recognized Certificates of Compliance (IRCC) [11]. |

| Document Management System | Digital repository for research data (e.g., Electronic Lab Notebook). | Maintains an immutable, timestamped record of all ABS documents (PIC, MAT, IRCC, MTAs), correspondence with authorities, and links to experimental data [14]. |

| Material Transfer Agreement (MTA) | Contract governing the transfer of tangible research materials between institutions. | Legally binds the recipient to the terms (including ABS terms) under which the material was originally accessed. Critical for transfers from ex-situ collections [10]. |

| Metabolomics/LC-HRMS Platform | Analytical chemistry for characterizing small molecules in extracts [13]. | Generates chemical provenance data ("metabolomic fingerprint"). Links biological activity to specific chemical features of a legally-sourced sample. |

| RNA/DNA Extraction & Sequencing Kits | Molecular biology tools for genomic and transcriptomic analysis. | Enables gene expression studies (e.g., RT-qPCR for β-glu-1 [15]) that can correlate bioactivity with genetic traits of the source organism, adding value to the resource. |

| Standardized MAT Template | A model contract for benefit-sharing negotiations. | Expedites negotiations and helps ensure all legally required elements (benefit-sharing, dispute resolution, reporting) are included, providing legal certainty [11]. |

Technical Support Center: Frequently Asked Questions & Troubleshooting

This technical support center provides targeted guidance for researchers facing common experimental challenges in the early stages of natural product research. The following FAQs and troubleshooting guides are framed within the critical context of prioritizing high-quality, reproducible extracts for downstream biological screening.

Frequently Asked Questions (FAQs)

Q1: How can I ensure my botanical extract is both representative of consumer products and scientifically authenticated for a screening campaign?

- Answer: You must reconcile two key requirements: representativeness and authentication. First, identify the most commonly used consumer product via national surveys, sales reports, or the NIH's Dietary Supplement Label Database to ensure ecological validity [16]. Second, for authentication, procure material from a lot for which a voucher specimen can be obtained. This specimen—a pressed, dried sample of the plant including key morphological features (e.g., flowers, leaves)—must be verified by a trained botanist and deposited in a publicly accessible herbarium with a unique accession number [16] [17]. This two-pronged approach satisfies both real-world relevance and the rigorous scientific standard required for publication and grant funding (e.g., NCCIH policy) [18].

Q2: What are the minimum characterization requirements for a natural product extract before it can be used in a biological screening assay?

- Answer: Prior to biological screening, a multi-tiered characterization is essential to define your "test article." The minimum requirements, as outlined by guidelines like ConPhyMP, include [19]:

- Plant Material Description: Full taxonomic identification (genus, species, authority), plant part used, geographic origin, and harvest time.

- Extract Preparation Details: Exact extraction protocol (solvent, solvent-to-material ratio, time, temperature, equipment).

- Chemical Fingerprint: A chromatographic profile (e.g., HPLC-UV or LC-MS) of the extract batch used for screening.

- Quantification of Markers: Measurement of one or more key bioactive constituents or chemical markers, expressed as weight percent.

- Contaminant Screening: Testing for heavy metals, pesticide residues, and microbial contaminants to avoid false positives/negatives in bioassays [18].

Without this baseline data, bioassay results cannot be reliably reproduced or attributed to specific chemistries.

Q3: My initial biological screen showed promising activity, but I cannot replicate it with a new batch of extract. Where should I start troubleshooting?

- Answer: Irreproducible bioactivity most commonly stems from inconsistent starting material or extraction. Follow this troubleshooting cascade:

- Audit the Source: Verify the new batch is from the same authenticated species and plant part. Re-examine the voucher specimen from the original active batch [16].

- Compare Chemical Fingerprints: Run HPLC or TLC analyses of both the active and inactive batches side-by-side. Significant differences in the chromatographic profile point to material or processing variability [20] [19].

- Review Extraction Parameters: Meticulously compare all extraction variables: particle size of plant powder, solvent purity, extraction duration, and temperature. Even minor deviations can alter metabolite profiles [21].

- Check Stability: Determine if the original extract was stored properly (e.g., -20°C, protected from light) and if degradation could explain the loss of activity [16].
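The side-by-side fingerprint comparison can be made quantitative with a similarity score. The sketch below pairs peaks by retention time and computes the cosine similarity of their areas. The greedy matching rule, the 0.1 min tolerance, and the peak lists are assumptions for illustration, not a validated chemometric method.

```python
import math


def align(peaks_a, peaks_b, rt_tol=0.1):
    """Pair (retention_time, area) peaks from two runs.

    Each peak in A is paired with the first unused peak in B whose retention
    time differs by at most rt_tol minutes; unpaired peaks get area 0.0 in
    the other run.
    """
    used = set()
    vec_a, vec_b = [], []
    for rt_a, area_a in peaks_a:
        match = None
        for idx, (rt_b, _) in enumerate(peaks_b):
            if idx not in used and abs(rt_a - rt_b) <= rt_tol:
                match = idx
                break
        vec_a.append(area_a)
        if match is None:
            vec_b.append(0.0)
        else:
            used.add(match)
            vec_b.append(peaks_b[match][1])
    for idx, (_, area_b) in enumerate(peaks_b):
        if idx not in used:
            vec_a.append(0.0)
            vec_b.append(area_b)
    return vec_a, vec_b


def cosine_similarity(u, v):
    """Cosine of the angle between two area vectors (0.0 if either is all zero)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0


if __name__ == "__main__":
    active = [(5.02, 100.0), (12.31, 50.0), (18.70, 20.0)]   # hypothetical
    inactive = [(5.00, 95.0), (12.35, 5.0)]                   # hypothetical
    print(cosine_similarity(*align(active, inactive)))
```

A score near 1 suggests the batches are chemically similar and the problem lies elsewhere (assay, storage); a low score points back to source material or extraction.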

Q4: What is the most efficient extraction method for an untargeted screening program where the active constituents are unknown?

- Answer: For untargeted screening, the goal is broad metabolite recovery. A sequential or graded solvent extraction is often the most efficient strategy. Start with a mid-polarity solvent like methanol or ethanol-water mixtures, which extract a wide range of semi-polar compounds (e.g., alkaloids, flavonoids, saponins) [20]. Follow this with a more non-polar solvent (e.g., dichloromethane) to capture terpenoids and fatty acids. This approach generates multiple fractions for screening from a single plant sample, increasing the probability of identifying bioactive chemotypes while providing preliminary information on compound polarity [22].

Troubleshooting Guides

Problem: Suspected misidentification of plant material.

- Symptoms: Chemical profile differs drastically from literature for the claimed species; biological activity is absent or contradictory to published data.

- Solution:

- Immediate Action: Cease all experimentation with the material in question.

- Re-authentication: If possible, have a second taxonomic expert re-examine the original voucher specimen or raw plant material [23].

- DNA Barcoding: As a definitive check, perform DNA barcoding (e.g., using rbcL, matK, or ITS2 regions) on the material and compare sequences with trusted databases [24].

- Prevention: For future work, always obtain a voucher specimen before processing material for extraction and ensure it is deposited in a recognized herbarium. Document the identification with high-quality photographs of key morphological characters [17] [23].

Problem: Low yield of target bioactive compounds from an optimized extract.

- Symptoms: The extraction produces adequate total mass but a low concentration of the marker/active compounds of interest.

- Solution:

- Parameter Optimization: Systematically optimize extraction parameters using Design of Experiments (DOE), such as a Box-Behnken design. Test variables like solvent concentration, temperature, extraction time, and solid-to-liquid ratio to find the ideal conditions for your target compounds [25].

- Technique Upgrade: Transition from conventional methods (e.g., maceration) to an advanced technique like Ultrasound-Assisted Extraction (UAE) or Microwave-Assisted Extraction (MAE). These methods enhance cell wall disruption and improve compound release efficiency, often leading to higher yields of sensitive bioactive molecules [21] [22].

- Post-Extraction Concentration: Employ techniques like rotary evaporation under reduced pressure to gently concentrate the extract without degrading thermolabile constituents.
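To make the Design of Experiments step concrete, the sketch below generates the coded runs of a Box-Behnken design and converts them to real settings. It uses the standard pairwise construction (each pair of factors at ±1 with the rest at the midpoint), which this sketch restricts to 3 to 5 factors. The factor ranges and the default of three centre points are hypothetical choices.

```python
from itertools import combinations, product


def box_behnken(n_factors, n_center=3):
    """Coded Box-Behnken runs (-1, 0, +1) for 3 to 5 factors."""
    if not 3 <= n_factors <= 5:
        raise ValueError("this sketch supports 3 to 5 factors only")
    runs = []
    for i, j in combinations(range(n_factors), 2):
        for a, b in product((-1, 1), repeat=2):
            row = [0] * n_factors
            row[i], row[j] = a, b
            runs.append(tuple(row))
    runs.extend([(0,) * n_factors] * n_center)  # replicated centre points
    return runs


def decode(run, levels):
    """Convert coded levels to real values; levels is [(low, high), ...]."""
    return tuple(lo + (c + 1) / 2 * (hi - lo) for c, (lo, hi) in zip(run, levels))


if __name__ == "__main__":
    # Hypothetical ranges: ethanol %, temperature in deg C, time in min.
    ranges = [(40, 80), (30, 60), (10, 30)]
    for run in box_behnken(3):
        print(run, decode(run, ranges))
```

For three factors this yields 12 edge runs plus the centre replicates (15 runs in total), far fewer than the 27 runs of a full three-level factorial.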

Problem: Complex extract causes interference in a high-throughput screening (HTS) assay.

- Symptoms: High background noise, false positives (e.g., assay compound precipitation, fluorescence quenching), or cytotoxic effects at low concentrations that mask specific activity.

- Solution:

- Prefractionation: Simplify the extract by pre-fractionation using solid-phase extraction (SPE) or quick flash chromatography to separate it into less complex fractions (e.g., by polarity) before screening [20].

- Assay Controls: Include rigorous controls: a) an extract vehicle control, b) a "spiked" control where a known assay inhibitor is added to the extract to see if activity is masked, and c) a counter-screen for general assay interference (e.g., fluorescence at relevant wavelengths).

- Bioassay-Guided Fractionation: If activity is confirmed, immediately initiate a bioassay-guided fractionation (BGF) process. Use a rapid, small-scale bioassay to track the activity through subsequent purification steps (e.g., TLC-bioautography for antimicrobials) [20], isolating the responsible compound(s) for cleaner follow-up assays.

Core Experimental Protocols

Protocol 1: Creation and Deposition of a Voucher Specimen

Objective: To preserve a permanent, verifiable record of the biological material used in research.

Materials: Fresh plant material (including reproductive structures if possible), plant press, blotting paper, herbarium mounting sheets, labels, access to a recognized herbarium.

Procedure:

- Collection: Collect representative plant samples in triplicate from the same population and lot used for extraction. Include all key morphological parts (roots, stems, leaves, flowers/fruits) [16].

- Pressing & Drying: Place specimens in a plant press with absorbent paper. Change paper daily until specimens are completely dry to prevent mold.

- Labeling: Create a durable label with essential data: taxonomic identification (when confirmed), GPS coordinates, collection date, collector's name, habitat notes, and a unique project code [17].

- Taxonomic Authentication: Submit one pressed specimen to a qualified taxonomist or botanist for definitive identification. Attach their determination label to the specimen sheet.

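- Deposition: Formally request deposition of the authenticated specimen in a public herbarium. Obtain a unique accession number, typically the institution's code followed by a specimen number. Other natural-history collections follow the same convention (e.g., "OSUC 123456" for the Triplehorn Insect Collection) [17].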

- Citation: In all related publications, cite the voucher specimen by its herbarium and accession number [16].

Protocol 2: Chemical Profiling by HPLC-UV/PDA for Extract Standardization

Objective: To generate a reproducible chemical fingerprint for batch-to-batch comparison and quality control.

Materials: Test extract, analytical-grade solvents, HPLC system with photodiode array (PDA) detector, reversed-phase C18 column, analytical balance, syringe filters (0.22 or 0.45 µm).

Procedure:

- Sample Prep: Accurately weigh ~10 mg of extract. Dissolve in appropriate HPLC-grade solvent (e.g., methanol), sonicate, and dilute to a known concentration (e.g., 1 mg/mL). Filter through a syringe filter before injection.

- Chromatographic Conditions:

- Column: C18 (e.g., 150 x 4.6 mm, 5 µm particle size).

- Mobile Phase: Binary gradient. Common: Water (A) and Acetonitrile (B), both with 0.1% Formic Acid.

- Gradient: Example: 5% B to 95% B over 30-40 minutes.

- Flow Rate: 1.0 mL/min.

- Detection: PDA from 200-400 nm. Monitor at 254 nm and 280 nm as standard wavelengths.

- Injection Volume: 10-20 µL.

- Analysis: Run the sample and a solvent blank. Integrate the chromatogram to note retention times and peak areas of major signals. This profile serves as the "fingerprint" for the extract [20] [19].

- Standardization: If marker compounds are available, run authentic standards under identical conditions to calibrate and quantify their levels in the extract.
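The standardization step amounts to fitting a calibration line from the reference standard and reading the sample off it. The sketch below uses ordinary least squares and reports the marker as % w/w of the extract. The standard concentrations, peak areas, and function names are invented for illustration, and a linear detector response over the calibrated range is assumed.

```python
def fit_line(conc, area):
    """Least-squares slope and intercept for area = slope * conc + intercept."""
    n = len(conc)
    mx, my = sum(conc) / n, sum(area) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(conc, area))
    sxx = sum((x - mx) ** 2 for x in conc)
    slope = sxy / sxx
    return slope, my - slope * mx


def marker_percent_ww(peak_area, slope, intercept, extract_mg_per_ml):
    """Marker content as weight percent of the extract.

    The calibration is in ug/mL; the extract concentration is in mg/mL.
    """
    marker_ug_per_ml = (peak_area - intercept) / slope
    return marker_ug_per_ml / (extract_mg_per_ml * 1000.0) * 100.0


if __name__ == "__main__":
    std_conc = [10.0, 20.0, 40.0, 80.0]          # ug/mL, hypothetical
    std_area = [505.0, 1005.0, 2005.0, 4005.0]   # detector units, hypothetical
    slope, intercept = fit_line(std_conc, std_area)
    print(marker_percent_ww(1255.0, slope, intercept, extract_mg_per_ml=1.0))
```

Sample peak areas should fall inside the range spanned by the standards; values outside it call for dilution or additional calibration points rather than extrapolation.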

Protocol 3: Bioassay-Guided Fractionation Using TLC-Bioautography

Objective: To rapidly localize antimicrobial compounds within a crude extract on a chromatographic plate.

Materials: Crude extract, TLC plates (silica gel), solvents for mobile phase, microbial culture (e.g., Staphylococcus aureus), nutrient agar, incubation chamber.

Procedure (Agar Overlay Method):

- TLC Development: Spot the crude extract on a TLC plate and develop in a suitable solvent system to separate constituents. Dry the plate thoroughly to remove all solvent [20].

- Agar Overlay: Prepare molten, sterile nutrient agar seeded with a log-phase microbial culture. Carefully pour a thin layer of the seeded agar over the developed and dried TLC plate. Allow it to solidify [20].

- Incubation: Incubate the plate (agar-side up) at the microbe's optimal growth temperature (e.g., 37°C for 24 hours).

- Visualization: After incubation, clear zones of growth inhibition in the agar layer correspond to the location of antimicrobial compounds on the underlying TLC plate. Mark each zone and record its Rf value.

- Isolation: Use preparative TLC to isolate the compound(s) from the active zone(s) for further purification and identification [20].
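The Rf value referred to in the Visualization step is the distance travelled by the zone divided by the distance travelled by the solvent front, both measured from the origin line. A small helper such as the following (function name and measurements are hypothetical) keeps the calculation and its sanity checks in one place.

```python
def rf_value(zone_distance_cm, solvent_front_cm):
    """Retention factor: zone migration divided by solvent-front migration."""
    if solvent_front_cm <= 0:
        raise ValueError("solvent front distance must be positive")
    if not 0 <= zone_distance_cm <= solvent_front_cm:
        raise ValueError("zone must lie between origin and solvent front")
    return zone_distance_cm / solvent_front_cm


if __name__ == "__main__":
    # Hypothetical inhibition zone centred 3.2 cm from the origin,
    # with the solvent front at 8.0 cm.
    print(round(rf_value(3.2, 8.0), 2))
```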

Data Presentation

Table 1: Key Steps for Authentication and Vouchering of Plant Material

| Step | Description | Purpose | Key Considerations & References |

|---|---|---|---|

| 1. Literature Review | Research traditional use, common species, and plant parts. | Ensures study material is relevant and justifies species selection. | Use consumer surveys, sales data, ethnopharmacological literature [16]. |

| 2. Sourcing | Procure material from reputable supplier or collect wild/cultivated plants. | Obtains sufficient, consistent raw material. | For wild collection, obtain necessary permits; document location (GPS) [18]. |

| 3. Voucher Collection | Collect representative plant samples (flowers, leaves, stem) in triplicate. | Provides physical specimen for taxonomic verification. | Must be from the exact same batch used for extraction [16] [17]. |

| 4. Taxonomic Authentication | Have specimen identified by a trained botanist. | Confirms genus, species, and authority. | Essential for publication; attach determiner's label [23]. |

| 5. Herbarium Deposition | Deposit authenticated specimen in a public herbarium. | Creates permanent, citable record for scientific community. | Obtain accession number; cite in all publications [16] [17]. |

| 6. Documentation | Maintain detailed records and high-quality photographs. | Enables verification and supports reproducibility. | Photos allow preliminary remote verification [23]. |

Table 2: Comparison of Common Extraction Techniques for Natural Products

| Method | Principle | Typical Conditions | Advantages | Disadvantages | Best For |

|---|---|---|---|---|---|

| Maceration | Solvent soaking at room temperature. | Room temp, 3-4 days, variable solvent volume [20]. | Simple, no special equipment, good for thermolabile compounds. | Slow, inefficient, high solvent use. | Initial exploration, fragile compounds. |

| Soxhlet | Continuous reflux and percolation. | Solvent boiling point, 3-18 hrs, 150-200 mL solvent [20]. | High efficiency, good for exhaustive extraction of non-polar compounds. | High heat, long time, not suitable for thermolabile compounds. | Exhaustive extraction of stable, non-polar compounds. |

| Ultrasound-Assisted (UAE) | Cell disruption via acoustic cavitation. | Lower temps (30-60°C), minutes to 1 hour, reduced solvent [21]. | Fast, efficient, lower temperatures, improves yield. | Potential for radical formation, scale-up challenges. | General purpose, improving yield from many matrices. |

| Microwave-Assisted (MAE) | Heating via microwave dielectric effect. | Elevated temps, very fast (minutes), reduced solvent [21]. | Extremely rapid, efficient, highly controllable. | Requires specialized equipment, thermal degradation risk. | Fast, targeted extraction of compounds stable to brief heating. |

Table 3: Summary of Essential Material Characterization Protocols

| Characterization Type | Recommended Technique(s) | Minimum Reporting Requirement | Purpose in Prioritization | Reference |

|---|---|---|---|---|

| Authentication | Voucher specimen + taxonomic ID; DNA barcoding (if disputed). | Herbarium name and accession number in publication. | Ensures biological reproducibility; prevents misidentification. | [16] [17] [23] |

| Chemical Fingerprinting | HPLC-UV/PDA or LC-MS. | Chromatogram showing major peaks (e.g., in publication appendix). | Provides a "batch fingerprint" for quality control and comparison. | [20] [19] |

| Marker Quantification | HPLC with reference standard calibration. | Concentration (e.g., % w/w) of 1-3 key markers in the extract. | Enables standardization and dose-reproducibility in bioassays. | [19] [18] |

| Contaminant Screening | ICP-MS (metals), GC-MS (pesticides), microbial tests. | Statement of testing and that levels were below permissible limits. | Eliminates bioactivity from contaminants; ensures safety. | [18] |

| Stability Assessment | Repeated chemical fingerprinting over time under storage conditions. | Description of storage conditions and stability duration. | Guarantees consistent material throughout the study period. | [16] [18] |

Visualizations

[Figure: Workflow for Plant Material Authentication & Vouchering]

[Figure: Material Characterization & Standardization Workflow]

The Scientist's Toolkit: Key Research Reagent Solutions

Table 4: Essential Materials for Authentication and Characterization

| Item | Function/Description | Key Application & Notes |

|---|---|---|

| Herbarium-Grade Press & Blotter Paper | For properly drying and flattening plant specimens to preserve morphological integrity. | Voucher specimen preparation. Prevents rotting and facilitates mounting [16]. |

| Acid-Free, Rag Paper Labels | Durable labels for specimen data that will not degrade or damage the voucher over decades. | Labeling voucher specimens with collection metadata. Ensures permanent legibility [17]. |

| DNA Extraction & PCR Kits | For isolating and amplifying specific genomic regions (e.g., rbcL, ITS2) from plant tissue. | Molecular authentication via DNA barcoding. Used to resolve ambiguous identifications [24]. |

| HPLC-Grade Solvents | Ultra-pure solvents (MeOH, ACN, Water, with modifiers like formic acid) for reproducible chromatography. | Preparing mobile phases and sample solutions for HPLC/LC-MS analysis. Minimizes background interference [20]. |

| Chemical Reference Standards | Authentic, high-purity samples of known marker or bioactive compounds. | Quantifying specific constituents in extracts via HPLC calibration. Essential for standardization [19] [18]. |

| Certified Reference Materials (CRMs) for Contaminants | Standard solutions with known concentrations of heavy metals, pesticide residues, etc. | Calibrating instruments (ICP-MS, GC-MS) for accurate contaminant quantification [18]. |

| Stable Isotope-Labeled Internal Standards | Compounds identical to analytes but with heavier isotopes (e.g., ¹³C, ²H) for mass spectrometry. | Used in LC-MS for precise, matrix-effect-corrected quantification of metabolites [19]. |

| Solid-Phase Extraction (SPE) Cartridges | Cartridges with various sorbents (C18, silica, ion-exchange) for fractionation or clean-up. | Simplifying complex extracts pre-screening or removing interfering compounds before analysis [20]. |

Within a research thesis focused on methods for prioritizing natural product (NP) extracts for biological screening, the format of the screening "library" is a fundamental variable that dictates experimental strategy, resource allocation, and ultimate success. The evolution from testing crude, complex extracts toward using pre-fractionated or highly defined genetic libraries represents a critical path from discovery to mechanistic understanding. This technical support center provides guidance on selecting and implementing different library formats, framed within the NP drug discovery workflow, to efficiently identify bioactive compounds and their cellular targets [26].

Library Formats Comparison and Selection

Choosing the appropriate library format is the first critical step in designing a screening campaign. The decision balances the breadth of discovery against the depth of mechanistic insight and is constrained by available resources.

Comparative Analysis of Primary Library Formats

The table below summarizes the core characteristics, advantages, and applications of three primary library types relevant to modern natural product and functional genomics research.

Table 1: Comparison of Key Screening Library Formats [26] [27] [28]

| Library Format | Description & Composition | Key Advantages | Primary Screening Applications | Typical Hit Rate & Complexity |

|---|---|---|---|---|

| Crude Natural Product Extracts | Complex mixtures of metabolites from microbial, plant, or marine sources. | • Maximizes chemical diversity and novelty potential. • Preserves natural synergies (additive/potentiating effects). • Lower initial preparation cost. | • Primary bioactivity screening (antibacterial, anticancer, etc.). • Identifying novel pharmacophores. | Highly variable (0.1-1%). Very high complexity, leading to major deconvolution challenges. |

| Pre-fractionated Libraries | Crude extracts separated into distinct fractions (e.g., by HPLC) based on chemical properties. | • Reduces mixture complexity for easier target identification. • Enriches minor components, increasing detection sensitivity. • Provides early chemical profiling data (LC-MS/NMR). | • Bioactivity-guided fractionation. • Prioritizing extracts for full dereplication. • Creating semi-purified sublibraries for HTS. | More consistent than crude extracts. Moderate complexity; activity can often be traced to a single fraction. |

| CRISPR-based Genetic Libraries (Pooled) | Defined pools of sgRNAs delivered via lentivirus to perturb genes genome-wide in a cell population [27]. | • Enables systematic, unbiased interrogation of gene function (knockout, inhibition, activation) [29] [28]. • High consistency and reproducibility. • Direct link between phenotype and target gene. | • Identifying host genes essential for pathogen infection or drug resistance. • Uncovering genetic modifiers of NP toxicity or efficacy (target deconvolution). • Synthetic lethality screens. | Designed for high signal-to-noise; hit rates depend on screen type (positive/negative selection) [27]. Low complexity per cell (single guide), high complexity for the pool. |

Key Decision Factors for Library Selection

Selecting a format depends on your specific project goals within the NP screening pipeline.

Table 2: Decision Matrix for Selecting a Library Format

| Decision Factor | Favoring Crude/Pre-fractionated NP Libraries | Favoring CRISPR Genetic Libraries |

|---|---|---|

| Project Goal | Discovery of novel chemical entities with bioactivity. | Discovery of gene functions and pathways involved in a phenotype. |

| Stage in Workflow | Early-stage, phenotype-first discovery. | Mid- to late-stage, mechanism-first investigation (e.g., target ID). |

| Available Resources | Access to unique biological source material and analytical chemistry (LC-MS, NMR) [26]. | Access to cell culture facilities, viral work, and NGS sequencing capabilities [27]. |

| Deconvolution Strategy | Willing to invest in bioassay-guided fractionation and compound purification. | Requires bioinformatics pipelines for NGS data analysis (e.g., MAGeCK for pooled-screen sgRNA counts; CRISPResso2 for verifying editing outcomes at individual loci). |

Frequently Asked Questions (FAQs)

Q1: When should I move from screening crude extracts to a pre-fractionated library? Prioritize pre-fractionation when you have a confirmed bioactive crude extract and need to reduce complexity for the next step. This is crucial when the crude extract activity is weak (to enrich minor components) or when early LC-MS data suggests the presence of a known compound you wish to quickly exclude. Pre-fractionation is the essential bridge between crude discovery and compound isolation [26].

Q2: Can I use CRISPR screens to find the target of my natural product? Yes, this is a powerful application called target deconvolution. You would perform a positive selection CRISPR knockout or activation screen in the presence of a lethal dose of your NP. Cells carrying perturbations that confer resistance survive, so their sgRNAs become enriched in the population; these sgRNAs point to the NP's direct cellular target or to genes in its resistance pathway [27] [28].

Q3: What is the critical difference between a pooled and an arrayed CRISPR screen, and which should I use?

- Pooled Screens: All sgRNAs are delivered together to a large cell population, which is then subjected to a bulk selection pressure (e.g., a drug). The relative abundance of each sgRNA before and after selection is quantified by NGS. Ideal for simple, selectable phenotypes (viability, drug resistance) and whole-genome screening [27].

- Arrayed Screens: Each sgRNA or gene perturbation is delivered to cells in separate wells of a plate. This allows for complex, multi-parameter phenotypic readouts (e.g., high-content imaging, metabolomics). Best for focused libraries and when you need per-well data [27].

For most genome-wide loss-of-function screens in NP research (e.g., finding essential genes or resistance mechanisms), pooled formats are the standard due to their lower cost and operational simplicity.

Q4: Why is a low Multiplicity of Infection (MOI ~0.3-0.4) critical for pooled CRISPR screens? A low MOI ensures that most transduced cells receive only a single sgRNA. This maintains a clear, unambiguous link between an observed phenotype and the genetic perturbation causing it. High MOI leads to multiple sgRNAs per cell, making it impossible to determine which one is responsible for the phenotype [27].
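
The effect of MOI on guide multiplicity can be estimated with a simple Poisson model. The sketch below assumes that viral integrations per cell follow a Poisson distribution with mean equal to the MOI, which is a common approximation rather than a property of any particular cell line; the function names are illustrative.

```python
import math


def poisson_pmf(k: int, moi: float) -> float:
    """Probability that a cell receives exactly k integrations (Poisson assumption)."""
    return math.exp(-moi) * moi ** k / math.factorial(k)


def single_guide_fraction(moi: float) -> float:
    """Among transduced cells (>= 1 integration), the fraction carrying exactly one sgRNA."""
    p_none = poisson_pmf(0, moi)
    p_one = poisson_pmf(1, moi)
    return p_one / (1.0 - p_none)


for moi in (0.3, 0.4, 1.0, 3.0):
    transduced = 1.0 - poisson_pmf(0, moi)
    print(
        f"MOI {moi:.1f}: {transduced:.1%} of cells transduced, "
        f"{single_guide_fraction(moi):.1%} of those carry a single sgRNA"
    )
```

Under this assumption, roughly 86% of transduced cells carry a single sgRNA at an MOI of 0.3, compared with about 58% at an MOI of 1. The trade-off is that only about a quarter of the cells are transduced at all, so more starting cells are needed.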

Q5: How many sgRNAs per gene are optimal in a library? Modern optimized libraries (e.g., Brunello, Dolcetto) use 4-5 highly active sgRNAs per gene. This provides sufficient redundancy to overcome occasional inactive guides while maintaining a compact library size, which reduces screening cost and increases cell coverage per guide [29] [28]. Historical libraries used 6-10 guides, but improved algorithms for predicting sgRNA efficiency have made smaller libraries more effective.

Troubleshooting Common Experimental Issues

| Problem | Possible Causes | Recommended Solutions |
|---|---|---|
| Low or No Hit Rate in Crude Extract Screen | • Extract toxicity masking specific bioactivity. • Concentration too low for minor active components. • Inappropriate assay or readout. | • Test a range of concentrations. • Pre-fractionate to enrich components and reduce toxicity. • Validate assay with known controls. |
| Activity "Disappears" During Pre-fractionation | • Bioactive compound is unstable under separation conditions. • Activity depends on synergy between multiple compounds separated into different fractions. | • Use milder chromatographic conditions (e.g., avoid acidic/basic mobile phases). • Test combinations of adjacent inactive fractions for restored activity. |
| Poor Dynamic Range in CRISPR Positive Selection Screen | • Selection pressure is too weak or too strong. • Insufficient library coverage or cell population bottlenecking. | • Titrate the selecting agent (e.g., NP concentration) to achieve 90-99% cell death in the control population. • Maintain a minimum of 500 cells per sgRNA through the entire screen to prevent stochastic dropout [28]. |
| High False-Positive Rate in CRISPR Negative Selection (Dropout) Screen | • "Cutting toxicity" from non-specific DNA damage by Cas9, especially with promiscuous sgRNAs [28]. • Inadequate number of biological replicates. | • Use CRISPRi screens with nuclease-dead Cas9 (dCas9), which avoid cutting toxicity and are well suited to essential gene identification [29] [28]. • Perform at least 3 biological replicates and use robust statistical models (e.g., MAGeCK RRA) that account for guide-level variance. |
| Inconsistent Results Between sgRNAs Targeting the Same Gene | • Variable on-target activity due to local chromatin state or sequence features [29]. • Off-target effects from individual sgRNAs. | • Use sgRNAs designed with modern on-target activity algorithms (e.g., Rule Set 2) and, where available, designs that also take chromatin accessibility into account [29] [28]. • Base hit calls on consistent phenotype across multiple sgRNAs targeting the same gene, not a single guide. |
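
The coverage rule in the table (at least 500 cells per sgRNA) translates directly into cell numbers. The following is a minimal sketch of that arithmetic; the library size of 60,000 sgRNAs is a hypothetical placeholder, and the transduced fraction is estimated from a Poisson model of the MOI, which is an assumption rather than a measured value.

```python
import math


def cells_to_keep(n_sgrnas: int, coverage: int) -> int:
    """Minimum number of transduced cells to carry through every passage and harvest."""
    return n_sgrnas * coverage


def cells_to_transduce(n_sgrnas: int, coverage: int, moi: float) -> int:
    """Cells to expose to virus so that the transduced subset still meets the coverage."""
    fraction_transduced = 1.0 - math.exp(-moi)  # Poisson: P(at least one integration)
    return math.ceil(n_sgrnas * coverage / fraction_transduced)


N_SGRNAS = 60_000  # hypothetical library size; substitute the size of your library
COVERAGE = 500     # cells per sgRNA, as recommended in the table above

print(f"Keep at every step: {cells_to_keep(N_SGRNAS, COVERAGE):,} cells")
print(f"Transduce at MOI 0.3: {cells_to_transduce(N_SGRNAS, COVERAGE, 0.3):,} cells")
```

Running the same calculation for the actual library before starting the screen shows whether planned flask numbers and harvest sizes are large enough to avoid bottlenecking.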

Detailed Experimental Protocols

Protocol: Generating a Pre-fractionated Natural Product Library for Activity Screening

Objective: To fractionate a bioactive crude extract into a manageable sub-library that facilitates dereplication and isolation of the active compound(s).

Materials: Active crude NP extract, analytical and preparative HPLC systems with UV/ELSD/MS detection, fraction collector, 96-deep-well plates, solvent evaporator (nitrogen or centrifugal), bioassay plates and reagents.

Workflow:

- Profiling: Analyze the crude extract via analytical LC-MS to establish a chromatographic baseline and gain initial metabolomic data [26].

- Method Scaling: Scale the chromatographic method (column dimensions, flow rate, gradient) to preparative HPLC.

- Fractionation: Inject the crude extract onto the preparative column. Collect fractions based on a fixed time interval (e.g., every 15-30 seconds) or triggered by UV threshold.

- Processing: Transfer fractions to 96-well plates. Evaporate solvents completely using a nitrogen blowdown or centrifugal evaporator.

- Re-dissolution: Re-dissolve each fraction residue in a uniform volume of assay-compatible solvent (e.g., DMSO).

- Sublibrary Creation: This plate now constitutes your pre-fractionated library. It can be used directly in bioassays, and the associated LC-MS data for each well enables rapid correlation of activity with specific chemical features [26].
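
The last step, correlating activity with chemical features across wells, can be sketched as a simple ranking exercise. The example below is illustrative only: the feature labels, intensities, and inhibition values are invented, and Pearson correlation is used as one plausible scoring choice, not as the method prescribed by the cited work.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation between two equal-length numeric sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        return 0.0  # a flat profile carries no correlation information
    return cov / sqrt(var_x * var_y)


# Hypothetical data: percent inhibition measured in six consecutive fraction wells
activity = [2, 5, 60, 85, 30, 4]

# Hypothetical LC-MS feature intensities in the same six wells
features = {
    "m/z 415.21 @ 6.2 min": [0, 1e4, 8e5, 1.2e6, 4e5, 0],
    "m/z 289.07 @ 3.1 min": [9e5, 7e5, 1e4, 0, 0, 0],
    "m/z 611.16 @ 9.8 min": [0, 0, 0, 2e4, 6e5, 9e5],
}

# Rank features by how closely their elution profile tracks the bioactivity profile
ranked = sorted(features, key=lambda name: pearson(features[name], activity), reverse=True)
for name in ranked:
    print(f"{name}: r = {pearson(features[name], activity):+.2f}")
```

Features whose intensity profile rises and falls with the activity profile are the first candidates for dereplication. A high correlation is only a lead, since co-eluting compounds share the same profile.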

Protocol: Essential Gene Discovery Using a Pooled CRISPRi Knockdown Screen

Objective: To identify genes essential for cell viability in your model cell line using a pooled, genome-wide CRISPR interference (CRISPRi) library.

Principle: A lentiviral library of sgRNAs is transduced at low MOI into cells stably expressing dCas9-KRAB (a transcriptional repressor). Cells expressing sgRNAs that knock down essential genes are depleted from the population over time. NGS-based quantification reveals depleted sgRNAs and their target genes [29] [28].

Workflow Diagram: The following diagram illustrates the key steps in a pooled CRISPRi knockdown screen workflow.

Procedure:

- Cell Line Preparation: Generate a cell line (e.g., K562, A375) that stably expresses dCas9-KRAB via lentiviral transduction and blasticidin selection. Confirm expression by western blot.

- Viral Transduction: Transduce the dCas9-KRAB cells with the pooled sgRNA library (e.g., Dolcetto CRISPRi library) [28] at an MOI of 0.3-0.4 to ensure most cells get ≤1 sgRNA. Begin puromycin selection 24 hours post-transduction.

- Coverage and Passaging: Maintain a representation of at least 500 cells per sgRNA throughout the screen. Passage cells for a minimum of 14 population doublings to allow depletion of cells carrying sgRNAs that target essential genes.

- Genomic DNA (gDNA) Harvest: Harvest gDNA from a minimum of 20 million cells once puromycin selection is complete (T0 baseline) and again at the end of the screen (Tfinal). For larger libraries, harvest more cells so that each sample still represents at least 500 cells per sgRNA. Use maxiprep-scale kits to ensure high-quality, high-quantity gDNA.

- NGS Library Prep & Sequencing: Amplify the integrated sgRNA sequences from the gDNA via a two-step PCR. The first PCR amplifies the region from genomic DNA; the second adds Illumina adapters and sample barcodes for multiplexing. Sequence to a depth of 50-100 reads per sgRNA.

- Data Analysis: Align sequencing reads to the sgRNA library reference. Count reads per sgRNA in T0 and Tfinal samples. Use statistical packages like MAGeCK to compare the depletion of sgRNAs, rank target genes, and identify essential genes with high confidence [28].
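
To make the data analysis step concrete, the sketch below computes normalized log2 fold changes per sgRNA and summarizes them per gene by the median. It is a deliberately simplified stand-in for the logic of a dropout analysis and does not reproduce MAGeCK's statistical model; all guide names, gene names, and counts are hypothetical.

```python
import math
from collections import defaultdict
from statistics import median


def normalize(counts):
    """Scale raw read counts to counts per million so samples are comparable."""
    total = sum(counts.values())
    return {guide: c / total * 1e6 for guide, c in counts.items()}


def gene_scores(t0, tfinal, guide_to_gene, pseudocount=1.0):
    """Median log2 fold change (Tfinal vs T0) of all sgRNAs targeting each gene."""
    norm_t0, norm_tf = normalize(t0), normalize(tfinal)
    per_gene = defaultdict(list)
    for guide, gene in guide_to_gene.items():
        ratio = (norm_tf.get(guide, 0.0) + pseudocount) / (norm_t0.get(guide, 0.0) + pseudocount)
        per_gene[gene].append(math.log2(ratio))
    return {gene: median(lfcs) for gene, lfcs in per_gene.items()}


# Hypothetical read counts: one gene with depleted guides, one neutral gene
guide_to_gene = {
    "ess_1": "GENE_ESS", "ess_2": "GENE_ESS", "ess_3": "GENE_ESS",
    "neu_1": "GENE_NEUTRAL", "neu_2": "GENE_NEUTRAL", "neu_3": "GENE_NEUTRAL",
}
t0 = {"ess_1": 500, "ess_2": 480, "ess_3": 520, "neu_1": 510, "neu_2": 490, "neu_3": 500}
tfinal = {"ess_1": 40, "ess_2": 60, "ess_3": 450, "neu_1": 700, "neu_2": 650, "neu_3": 720}

scores = gene_scores(t0, tfinal, guide_to_gene)
for gene, score in sorted(scores.items(), key=lambda item: item[1]):
    print(f"{gene}: median log2FC = {score:+.2f}")
```

In this toy data one of the three guides against GENE_ESS is inactive, and the median still reports strong depletion. This is the reason hit calls are based on several guides per gene. A real analysis should add replicates and a proper statistical test.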

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Reagents for Library-Based Screening

| Item | Function in Workflow | Key Considerations & Examples |
|---|---|---|
| LC-MS/MS System | Profiling crude extracts and annotating fractions. Provides molecular weight and fragmentation data for dereplication [26]. | High-resolution mass accuracy (HRMS) is critical for formula prediction. Coupling with NMR (LC-MS/NMR) is powerful but specialized. |
| Optimized CRISPR Library | The core reagent for genetic screens. Defines the quality and coverage of the screen. | Use modern, algorithm-designed libraries (e.g., Brunello for KO, Dolcetto for CRISPRi) [28]. Ensure it is cloned in the backbone you require (e.g., lentiGuide, lentiCRISPR). |
| Lentiviral Packaging System | Producing the infectious virus to deliver the CRISPR library into target cells. | 2nd or 3rd generation systems for safety. Include necessary packaging plasmids (psPAX2, pMD2.G). Always follow institutional biosafety protocols. |
| Next-Generation Sequencer | Quantifying sgRNA abundance in pooled screens. The readout for CRISPR screen results. | Illumina platforms (NextSeq, NovaSeq) are standard. Ensure sufficient read depth and multiplexing capacity for your library size [27]. |
| Bioinformatics Pipeline | Analyzing NGS data from CRISPR screens to identify hit genes. | Essential for statistical analysis. MAGeCK is a widely used open-source tool. Commercial software (e.g., Geneious Biomanger) offers user-friendly interfaces. |
| Validated Control sgRNAs/Compounds | Assay validation and quality control. | Include non-targeting control sgRNAs in your library. Use known essential gene targeting sgRNAs (e.g., RPA3) and non-essential gene targets as positive/negative controls. For NP screens, use standard bioactive compounds (e.g., staurosporine). |

The Modern Toolbox: AI, Omics, and Advanced Assays for Strategic Prioritization

Technical Support Center: Troubleshooting AI-Driven Natural Product Research

This technical support center is designed for researchers employing artificial intelligence (AI) and machine learning (ML) to predict the bioactivity and mechanism of action (MoA) of natural product extracts. Its purpose is to troubleshoot common experimental and computational hurdles within the broader thesis context of developing robust methods for prioritizing natural product extracts for biological screening [24]. The following FAQs and guides address specific, practical issues encountered in this interdisciplinary workflow.

Frequently Asked Questions (FAQs)

1. My ML model performs well on training data but fails to generalize to new natural product libraries. What could be wrong? This is a classic sign of overfitting or a domain shift problem, highly prevalent in natural product research due to small, imbalanced datasets [24]. First, audit your data for batch variability and incomplete provenance (e.g., missing extraction method or species taxonomy), which can create hidden biases [24]. Ensure your training set encompasses the chemical diversity you intend to screen. Implement scaffold and time-split benchmarks during model validation instead of simple random splits; this tests the model's ability to predict truly novel scaffolds [24]. Furthermore, use applicability domain (AD) estimation techniques. Before applying your model to a new library, calculate whether the new compounds fall within the chemical space of the training data. Compounds outside the AD should be flagged as low-confidence predictions [24].
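
One simple way to implement an applicability domain check is a nearest-neighbor similarity threshold. The sketch below assumes that each compound is already encoded as a set of "on" fingerprint bits (for example, from a cheminformatics toolkit) and uses a Tanimoto cutoff of 0.4; both the toy fingerprints and the cutoff are illustrative assumptions, not recommended values.

```python
def tanimoto(a: frozenset, b: frozenset) -> float:
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def in_domain(query: frozenset, training: list, threshold: float = 0.4):
    """Return (is_in_domain, best_similarity) for one query compound.

    A compound counts as inside the applicability domain if at least one
    training compound is at least `threshold` similar to it.
    """
    best = max(tanimoto(query, fp) for fp in training)
    return best >= threshold, best


# Hypothetical fingerprints: each set holds the indices of the bits that are set
training_set = [
    frozenset({1, 2, 3, 4, 5}),
    frozenset({2, 3, 4, 6, 7}),
    frozenset({10, 11, 12, 13}),
]
new_library = {
    "NP-near": frozenset({1, 2, 3, 4, 8}),
    "NP-far": frozenset({2, 20, 21, 22}),
}

for name, fp in new_library.items():
    ok, best = in_domain(fp, training_set)
    label = "inside AD" if ok else "outside AD, low-confidence prediction"
    print(f"{name}: nearest-neighbor Tanimoto = {best:.2f} ({label})")
```

Predictions for compounds flagged as outside the domain can still be reported, but they should be ranked or annotated separately so that they do not drive prioritization decisions.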

2. How can I reliably predict the Mechanism of Action (MoA) for a hit compound from a complex natural product extract? Predicting MoA for mixtures is challenging. Move beyond single-target docking. Implement network pharmacology approaches that construct herb–ingredient–target–pathway graphs to propose synergistic effects [24]. For a more rigorous test, design mechanistic add-back experiments based on AI predictions [24]. For instance, if the model predicts inhibition of a specific kinase pathway, you can attempt to rescue the observed phenotype in a cell-based assay by adding a downstream activator. Also, leverage multi-omics operational gates [24]. Compare the transcriptomic or proteomic signature of cells treated with your extract against signatures from treatments with compounds of known MoA (reference databases). AI can then infer the MoA by identifying reversed disease signatures or shared pathway perturbations [24].
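
The signature comparison described above can be sketched as a similarity ranking. In this minimal example, each signature is a mapping from gene to log2 fold change, and cosine similarity over shared genes is used as the score; the gene labels, reference names, and values are all hypothetical, and cosine similarity is only one of several possible scoring schemes.

```python
from math import sqrt


def cosine(sig_a: dict, sig_b: dict) -> float:
    """Cosine similarity between two expression signatures over their shared genes."""
    shared = sorted(set(sig_a) & set(sig_b))
    dot = sum(sig_a[g] * sig_b[g] for g in shared)
    norm_a = sqrt(sum(sig_a[g] ** 2 for g in shared))
    norm_b = sqrt(sum(sig_b[g] ** 2 for g in shared))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


# Hypothetical log2 fold changes after treatment with the extract
extract_signature = {"G1": 2.0, "G2": -1.5, "G3": 0.2, "G4": 1.0}

# Hypothetical reference signatures from compounds of known mechanism
reference_signatures = {
    "ref_kinase_inhibitor": {"G1": 1.8, "G2": -1.2, "G3": 0.0, "G4": 0.9},
    "ref_proteasome_inhibitor": {"G1": -0.5, "G2": 1.9, "G3": 1.1, "G4": -0.2},
    "ref_tubulin_binder": {"G1": 0.1, "G2": 0.2, "G3": -1.6, "G4": 0.3},
}

ranked = sorted(
    ((name, cosine(extract_signature, sig)) for name, sig in reference_signatures.items()),
    key=lambda pair: pair[1],
    reverse=True,
)
for name, score in ranked:
    print(f"{name}: similarity = {score:+.2f}")
```

A strongly positive score suggests a shared mechanism with the reference compound, while a strongly negative score against a disease signature would correspond to the "reversed signature" case. Either result is a hypothesis to test with an add-back or orthogonal assay.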

3. What are the best practices for integrating diverse and messy natural product data (structures, spectra, bioactivity) for AI analysis? The core challenge is data fragmentation. The leading strategy is to construct or utilize a Natural Product Knowledge Graph [30]. Unlike simple tables, a knowledge graph can link heterogeneous nodes (e.g., a plant species, a mass spectrometry peak, a gene cluster, a bioactivity result) with defined relationships (e.g., "produces," "has_fragment," "inhibits") [30]. This structure preserves multimodal data context and enables advanced AI reasoning. Start by standardizing your data using minimal information metadata standards for provenance [24]. Tools like the Experimental Natural Products Knowledge Graph (ENPKG) demonstrate how to convert unstructured data into connected, queryable resources [30]. This foundational work is critical for models to emulate a scientist's decision-making by traversing connected biological and chemical evidence [30].
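
The idea of linking heterogeneous nodes with typed relationships can be illustrated with a handful of subject-predicate-object triples and a breadth-first traversal. This is a toy sketch in plain Python, not the ENPKG schema or its query interface; all node identifiers are invented, and the predicate `encoded_by_cluster` is a hypothetical addition alongside the relationships named above.

```python
from collections import defaultdict, deque

# Hypothetical triples: (subject, predicate, object)
triples = [
    ("species:example_plant", "produces", "compound:C1"),
    ("compound:C1", "has_fragment", "ms_peak:285.04"),
    ("compound:C1", "inhibits", "target:T1"),
    ("compound:C1", "encoded_by_cluster", "bgc:cluster_7"),
]


def build_index(edges):
    """Index outgoing edges by subject for fast traversal."""
    index = defaultdict(list)
    for subject, predicate, obj in edges:
        index[subject].append((predicate, obj))
    return index


def find_path(index, start, goal):
    """Breadth-first search returning the chain of triples linking start to goal."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        node, path = queue.popleft()
        if node == goal:
            return path
        for predicate, neighbor in index.get(node, []):
            if neighbor not in seen:
                seen.add(neighbor)
                queue.append((neighbor, path + [(node, predicate, neighbor)]))
    return None  # no connection found


index = build_index(triples)
evidence = find_path(index, "species:example_plant", "target:T1")
for subject, predicate, obj in evidence:
    print(f"{subject} --{predicate}--> {obj}")
```

The returned path is the chain of evidence connecting a source organism to a bioactivity result, which is the kind of traversal a graph-based model relies on. A production knowledge graph would typically use RDF or a graph database rather than in-memory lists.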