Innovative Strategies to Minimize Bioactive Rediscovery in Natural Product Screening: A 2025 Guide for Drug Discovery Researchers

Abstract

This article provides a comprehensive framework for overcoming the pervasive challenge of bioactive compound rediscovery in natural product-based drug discovery. It details foundational principles on the origins of structural redundancy, explores modern methodological solutions like mass spectrometry-based library rationalization and in-silico dereplication, and offers troubleshooting for common implementation hurdles. By comparing the efficacy of emerging strategies—from genome mining to artificial intelligence—and outlining robust validation protocols, the article equips researchers with the knowledge to design efficient screening campaigns that maximize the discovery of novel chemical scaffolds and accelerate the identification of new therapeutic leads.

Understanding the Rediscovery Problem: Sources of Redundancy and Its Impact on Natural Product Screening Efficiency

In natural product screening, structural redundancy refers to the presence of identical or highly similar bioactive molecular scaffolds across multiple extracts or fractions within a library [1]. This redundancy is an inherent challenge because it leads directly to bioactive rediscovery, where valuable screening resources are wasted repeatedly identifying the same known compounds.

From a technical support perspective, this problem manifests as diminishing returns in high-throughput screening (HTS). You invest significant time and resources screening thousands of extracts, only to find that a high percentage of "hits" are the same familiar compounds or chemical classes [2]. This not only wastes assay reagents and personnel time but also obscures potentially novel, low-abundance bioactives.

The core thesis for overcoming this challenge is that strategic library design and informatics-driven prioritization can dramatically reduce redundancy before screening begins. By focusing on scaffold diversity rather than sheer sample number, you can design smaller, smarter libraries that increase your probability of discovering novel bioactives [1].

Troubleshooting Guide: Identifying and Solving Redundancy Issues

Problem: My screening hit rate is high, but most leads are known compounds.

This is the classic symptom of library redundancy. A high initial hit rate followed by rapid dereplication of known compounds indicates your library has high chemical similarity across many samples.

Diagnostic Steps:

- Perform Retrospective LC-MS Analysis: Re-examine 10-20 of your most potent "hit" extracts by LC-MS. Create a simple molecular network using freely available tools like GNPS (Global Natural Products Social Molecular Networking). Clustering of MS/MS spectra from different hits will visually indicate if they share similar metabolites [1].

- Check Source Metadata: Correlate hit data with the source of your extracts (e.g., fungal strain, plant genus, collection site). Redundancy often clusters around specific taxonomic groups or isolation conditions [2].

Solution: Implement Pre-Screen Informatics Filtering

- Procedure: Before your next screen, analyze a representative subset of your library (e.g., 10%) by untargeted LC-MS/MS.

- Software: Process data through GNPS to generate a molecular network.

- Action: Identify large "molecular families" (clusters of >10 similar spectra) that represent common scaffolds. If your library's goal is novel discovery, consider temporarily deprioritizing or blending extracts that are over-represented in these large clusters for the next screen [1].
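The "Action" step above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the input is a hypothetical list of (spectrum ID, molecular family ID, extract ID) tuples that you would assemble yourself from your networking results, and the function name and the `min_spectra` threshold are placeholders, not part of GNPS.

```python
from collections import defaultdict

def summarize_families(spectrum_rows, min_spectra=10):
    """Flag molecular families holding more than `min_spectra` spectra.

    spectrum_rows: iterable of (spectrum_id, family_id, extract_id) tuples
    (a hypothetical export format, assumed for this sketch).
    Returns {family_id: sorted list of extracts contributing to that family}.
    """
    spectra = defaultdict(set)
    extracts = defaultdict(set)
    for spectrum_id, family_id, extract_id in spectrum_rows:
        spectra[family_id].add(spectrum_id)
        extracts[family_id].add(extract_id)
    flagged = {}
    for family_id, members in spectra.items():
        if len(members) > min_spectra:
            flagged[family_id] = sorted(extracts[family_id])
    return flagged

# Toy data: family F1 has 12 spectra spread over 4 extracts, F2 has only 3.
rows = [(f"s{i}", "F1", f"E{i % 4}") for i in range(12)]
rows += [(f"t{i}", "F2", "E9") for i in range(3)]
flagged = summarize_families(rows)
print(flagged)
```

Extracts listed under a flagged family are the candidates to deprioritize or blend for the next screen.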

Problem: My assay is overwhelmed by nuisance compounds (e.g., tannins, saponins).

Nuisance compounds cause false positives or non-specific inhibition, clogging the pipeline. Because these compound classes are widespread across source organisms, they appear in many extracts.

Diagnostic Steps:

- Assay Interference Profile: Run your assay with purified standards of common nuisance compounds (e.g., tannic acid) to establish their interference signature.

- LC-MS Correlation: Use computational tools to correlate the presence of specific mass features (m/z and retention time) with the nuisance activity across hundreds of screening data points.

Solution: Apply Targeted Fractionation or Library Pre-Treatment

- Protocol for Solid-Phase Extraction (SPE) Cleanup:

- Condition a reverse-phase C18 SPE cartridge with methanol, then equilibrate with water.

- Load your crude natural product extract dissolved in water.

- Wash with 10-20% aqueous methanol to elute highly polar nuisance compounds like salts and sugars.

- Elute your desired mid-to-nonpolar compounds with 80-100% methanol. This step often removes many polyphenolic nuisance compounds [2].

- Alternative Strategy: Switch from crude extracts to a pre-fractionated library. A single crude extract separated into 8-12 fractions by HPLC reduces the concentration of any single nuisance compound per well and separates it from potential true bioactives [2].

Problem: My library is too large to screen in its entirety.

Screening a 10,000-extract library is impractical for many academic labs. The key is not to screen randomly but to select an informative subset.

Diagnostic Step: Quantify your library's diversity. If you lack LC-MS data, use phylogenetic diversity as a proxy. Map your extracts on a phylogenetic tree based on source organism. Tight clustering indicates potential for chemical redundancy [3].

Solution: Construct a Rational Mini-Library Using MS-Based Diversity Selection

This method uses LC-MS/MS data to select the minimal set of extracts that capture maximal scaffold diversity [1].

- Experimental Protocol:

- Data Acquisition: Analyze all library extracts by standardized untargeted LC-MS/MS.

- Molecular Networking: Process all files through GNPS to group MS/MS spectra into molecular families (scaffolds).

- Scaffold Inventory: For each extract, create a list of all unique molecular family scaffolds it contains.

- Rational Selection via Iterative Algorithm:

  a. Select the single extract with the highest number of unique scaffolds.

  b. Add this extract to your new "Rational Mini-Library."

  c. From the remaining unsampled extracts, select the one that adds the greatest number of new scaffolds not already present in the Rational Mini-Library.

  d. Repeat step (c) until you reach your desired sample count (e.g., 5% of the original library) or until 80-90% of the total unique scaffolds in the full library are represented.

- Expected Outcome: Research shows this method can reduce a library by 6.6- to 28.8-fold while increasing bioassay hit rates by removing redundant, non-bioactive extracts [1].
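The iterative selection described in this protocol is a greedy coverage procedure, and a minimal Python sketch may make the stopping rules concrete. It is not the published code from [1]; the function name, the dictionary input, and the alphabetical tie-breaking are assumptions made for illustration.

```python
def select_extracts(scaffolds_by_extract, max_extracts=None, target_coverage=0.8):
    """Greedy selection: repeatedly add the extract contributing the most new scaffolds.

    scaffolds_by_extract: {extract_id: set of scaffold (molecular family) IDs}.
    Stops at `max_extracts`, at `target_coverage` of all scaffolds, or when
    no remaining extract adds anything new.
    """
    all_scaffolds = set().union(*scaffolds_by_extract.values())
    covered, selected = set(), []
    remaining = dict(scaffolds_by_extract)
    while remaining:
        if max_extracts is not None and len(selected) >= max_extracts:
            break
        if all_scaffolds and len(covered) / len(all_scaffolds) >= target_coverage:
            break
        # Ties are broken alphabetically so the result is reproducible (an assumption).
        best = max(sorted(remaining), key=lambda e: len(remaining[e] - covered))
        if not remaining[best] - covered:
            break
        selected.append(best)
        covered |= remaining.pop(best)
    coverage = (len(covered) / len(all_scaffolds)) if all_scaffolds else 0.0
    return selected, coverage

# Toy library with five scaffolds (A-E) spread over five extracts.
library = {
    "E1": {"A", "B", "C"},
    "E2": {"A", "B"},
    "E3": {"C", "D"},
    "E4": {"E"},
    "E5": {"A"},
}
selected, coverage = select_extracts(library, target_coverage=0.8)
print(selected, coverage)
```

In the toy library, E1 is picked first (three scaffolds), then E3 (adds D), at which point 4 of 5 scaffolds are covered and the 80% target is met.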

Table 1: Comparison of Library Reduction Methods

| Method | Key Principle | Data Required | Typical Library Size Reduction | Risk of Losing Novel Bioactives |
|---|---|---|---|---|
| Random Selection | Simple random sampling | None | User-defined | High, uncontrolled |
| Phylogenetic Selection | Diverse source organisms | DNA barcoding or taxonomy | Moderate | Medium, chemistry ≠ phylogeny |
| MS-Based Rational Selection [1] | Maximize unique MS/MS scaffolds | LC-MS/MS data | High (6.6 to 28.8-fold) | Low (controlled by algorithm) |
| Bioactivity-Guided Selection | Prioritize historically active extracts | Historical screening data | Low to Moderate | High (biased toward known chemistry) |

Detailed Experimental Protocols

Protocol 1: LC-MS/MS-Based Library Dereplication and Redundancy Assessment

Goal: To quickly identify groups of extracts in your library that share identical major metabolites.

Materials:

- LC-MS/MS system (Q-TOF or Orbitrap preferred)

- Standardized extraction solvent (e.g., 80% methanol)

- GNPS account (gnps.ucsd.edu)

Procedure:

- Sample Preparation: Reconstitute all dried extracts to a standard concentration (e.g., 1 mg/mL) in MS-grade methanol. Centrifuge and transfer supernatant to MS vials.

- LC-MS/MS Data Acquisition:

- Column: C18 reversed-phase (e.g., 2.1 x 100 mm, 1.7-1.9 µm).

- Gradient: 5% to 100% acetonitrile in water (both with 0.1% formic acid) over 20 minutes.

- MS Settings: Data-Dependent Acquisition (DDA) mode. Collect full scan MS1 (m/z 100-1500), then fragment the top 10 most intense ions for MS2.

- Data Processing:

- Convert raw files to .mzML format using MSConvert (ProteoWizard).

- Upload to GNPS and create a Classical Molecular Network using default parameters.

- Analyze the Network: Large clusters of nodes (MS/MS spectra) connected by thick edges (high spectral similarity) indicate commonly occurring, redundant metabolites. Extracts whose spectra appear in the same cluster are chemically redundant.

Protocol 2: Building a Redundancy-Minimized Screening Library

Goal: To select a subset of 100 extracts from a 2000-extract library that maximizes chemical diversity.

Materials:

- Full library LC-MS/MS data (from Protocol 1)

- R statistical software with ggplot2 and tidyverse

- Custom R script for iterative selection (publicly available code from [1] can be adapted).

Procedure:

- From the GNPS output, generate a binary matrix: rows = extracts, columns = molecular families (scaffolds), value = 1 (present) or 0 (absent).

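- Run the iterative selection algorithm on this matrix, repeatedly adding the extract that contributes the most scaffolds not yet represented, until 100 extracts have been selected.

- The resulting selected_extracts list is your optimized, redundancy-minimized library. Physically retrieve these 100 extracts for screening.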
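The binary matrix from the first step of this procedure can be built from a simple list of (extract, molecular family) pairs. The sketch below uses Python rather than the R environment listed in the materials, purely for illustration; the pair list is a hypothetical intermediate you would derive from your own GNPS results, and the function name is a placeholder.

```python
def build_presence_matrix(pairs):
    """Build a presence/absence matrix: rows = extracts, columns = molecular families.

    pairs: list of (extract_id, family_id) tuples (assumed input format).
    Returns (extract_ids, family_ids, matrix) with matrix[i][j] in {0, 1}.
    """
    extracts = sorted({extract for extract, _ in pairs})
    families = sorted({family for _, family in pairs})
    present = set(pairs)
    matrix = [[1 if (e, f) in present else 0 for f in families] for e in extracts]
    return extracts, families, matrix

pairs = [("E1", "F1"), ("E1", "F2"), ("E2", "F2"), ("E3", "F3")]
extracts, families, matrix = build_presence_matrix(pairs)

# Column sums show how many extracts share each family (a quick redundancy check).
family_counts = [sum(row[j] for row in matrix) for j in range(len(families))]
print(extracts, families, matrix, family_counts)
```

Families with high column sums are the widely shared scaffolds; the selection step then works row by row on this matrix.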

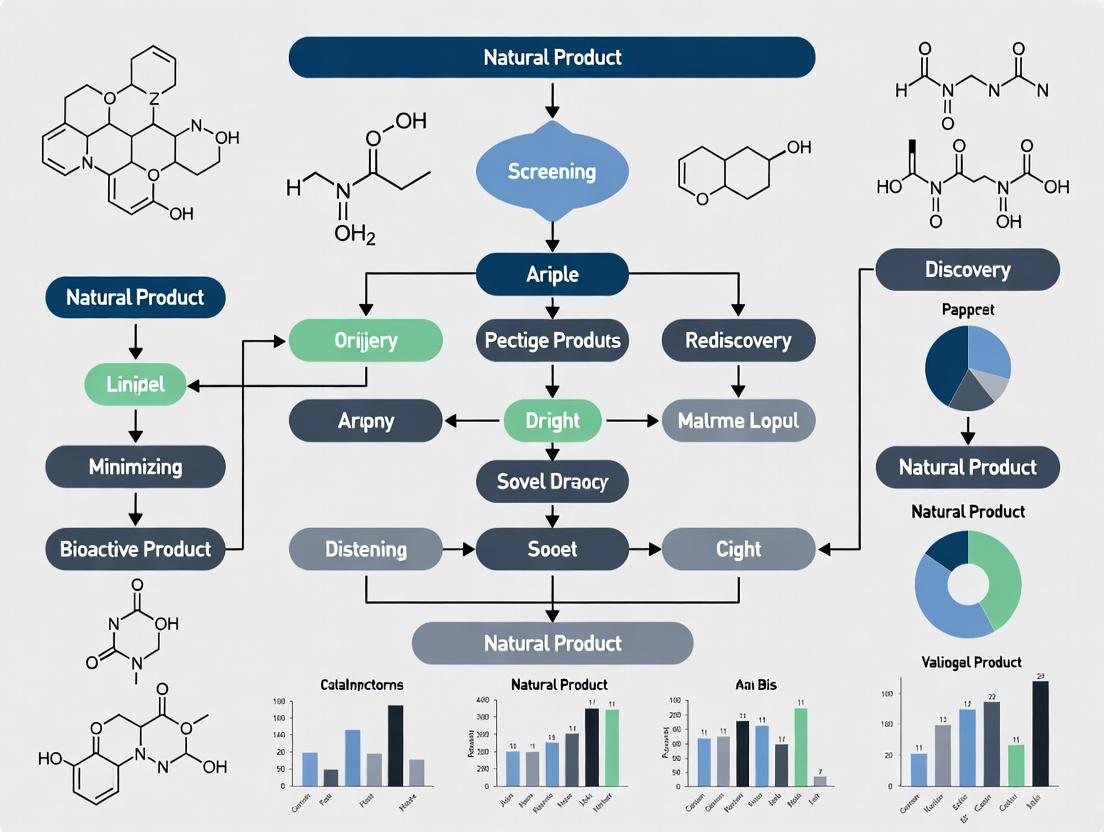

Visualization of Concepts and Workflows

Diagram: Workflow for Rational Library Minimization [1]

Diagram: Structural Redundancy in Library Design

Data Interpretation Guide

How to Read Molecular Networks for Redundancy

- Large, Dense Clusters: Indicate a commonly produced scaffold present in many extracts (high redundancy). The thickness of the lines (edges) between nodes indicates spectral similarity.

- Many Singletons: Many unconnected nodes (single MS/MS spectra) indicate high unique chemical diversity.

- Extract Overlap: If the spectra from 10 different extracts all fall into one large cluster, those 10 extracts are chemically redundant for that major compound class.

Quantifying the Benefit: Key Performance Indicators (KPIs)

After implementing redundancy reduction, track these metrics:

Table 2: Expected Improvement from Redundancy Reduction (Based on Published Data) [1]

| Performance Metric | Typical Full Library | Rational Mini-Library (80% Diversity) | Improvement Factor |
|---|---|---|---|
| Library Size (No. of Extracts) | 1,439 | 50 | 28.8x smaller |
| Hit Rate vs. P. falciparum | 11.26% | 22.00% | 1.95x higher |
| Hit Rate vs. T. vaginalis | 7.64% | 18.00% | 2.36x higher |
| Hit Rate vs. Neuraminidase | 2.57% | 8.00% | 3.11x higher |
| Scaffold Diversity Retained | 100% | 80% | Controlled trade-off |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Redundancy Assessment

| Item | Function in Redundancy Minimization | Example/Supplier Notes |
|---|---|---|
| Fungal/Bacterial Extract Library | The raw material for screening. Diversity of source organisms is the starting point for chemical diversity. | In-house collections; commercially available from suppliers like AnalytiCon Discovery or the NCI Program for Natural Product Discovery [2]. |
| LC-MS/MS System with DDA | Generates the spectral data required for molecular networking and scaffold-based analysis. | Q-TOF (e.g., Agilent 6545/6546) or Orbitrap (e.g., Thermo Exploris) systems are ideal [1]. |
| GNPS Platform Access | Free, cloud-based platform for performing molecular networking and analyzing LC-MS/MS data for redundancy. | Essential. Accounts are free at https://gnps.ucsd.edu. |
| Solid Phase Extraction (SPE) Cartridges | For quick clean-up of crude extracts to remove common nuisance compounds that cause assay interference. | C18 reversed-phase cartridges (e.g., Waters Oasis, Agilent Bond Elut). Used for partial fractionation [2]. |
| Standardized Bioassay Kits | To test the performance of your minimized library. Higher hit rates validate the reduction strategy. | Use assays relevant to your field (e.g., anti-parasitic, enzyme inhibition) [1]. |
| R or Python Software Environment | For running custom scripts to perform the iterative diversity selection algorithm. | R packages: tidyverse, igraph. Python libraries: pandas, networkx. |

Frequently Asked Questions (FAQs)

Q: I don't have an LC-MS/MS. Can I still reduce redundancy in my library? A: Yes, but with less precision. You can:

- Use Phylogenetic Data: If your library is from microbial isolates, use 16S rRNA gene sequencing data. Select strains that are phylogenetically distant, as this often (but not always) correlates with metabolic diversity [3].

- Use Historical Bioactivity Data: If you have past screening data, use chemometric tools like Principal Component Analysis (PCA) to cluster extracts based on their bioactivity profiles across multiple assays. Select one representative from each major bioactivity cluster.

- Employ Simple Chromatography: Run all extracts on standardized thin-layer chromatography (TLC). Visually cluster extracts with similar TLC profiles and select a subset from each cluster.

Q: Doesn't a smaller library mean I'm more likely to miss a rare, potent bioactive? A: This is a common and valid concern. The rational selection method is designed to maximize scaffold diversity, and rare scaffolds are, by definition, found in few extracts. Once the common scaffolds are represented in the mini-library, the algorithm will prioritize an extract that contributes a single rare scaffold over one that contains many common scaffolds already covered. Published data show that 95-100% of features correlated with bioactivity in a full library were retained in a rationally selected mini-library [1], so the method minimizes the loss of rare actives.

Q: How do I handle regulatory and ethical issues related to sourcing natural products? A: This is critical. Always ensure compliance with the Convention on Biological Diversity (CBD) and the Nagoya Protocol on Access and Benefit-Sharing (ABS). Before collecting or acquiring samples, you must have:

- Prior Informed Consent (PIC) from the source country.

- Mutually Agreed Terms (MAT) that outline fair and equitable benefit-sharing arising from commercialization [2] [4].

- Properly documented voucher specimens deposited in a recognized herbarium or culture collection. Work with established programs that have these frameworks in place, such as the NCI Natural Products Repository [2].

Q: What's the difference between "structural redundancy" and "biological redundancy" in this context? A: This is an important distinction.

- Structural Redundancy (our focus): The presence of the same or highly similar chemical molecules or scaffolds across multiple library samples. It's a property of your chemical library.

- Biological Redundancy [3]: A property of living systems where multiple genes, pathways, or processes can perform the same function, ensuring resilience. In screening, biological redundancy in a target pathway can make it harder to find a single potent inhibitor, but that is a separate challenge from library design.

A persistent and costly challenge in natural product (NP) screening is the frequent rediscovery of known bioactive compounds, which diminishes the efficiency and economic viability of discovery pipelines [5]. This technical support center provides researchers and drug development professionals with targeted troubleshooting guides and methodological protocols framed within a strategic thesis: overcoming rediscovery requires an integrated understanding of the primary causes—phylogenetic relationships, common biosynthetic pathways, and environmental factors [6] [7]. By employing advanced pre-screening strategies such as phylogenetic dereplication, genome mining, and reactivity-based screening, researchers can prioritize novel chemical space and silence the expression of common pathways [8] [5].

Troubleshooting Guide & FAQ

This section addresses common experimental pitfalls and provides solutions based on contemporary research strategies.

Section 1: Troubleshooting Phylogenetic & Genomic Analysis

Problem: My microbial isolate is phylogenetically related to a known prolific producer, leading to high rediscovery rates. How can I prioritize it for novel discovery?

- Solution & Strategy: Move beyond species-level taxonomy. Perform phylogenomic analysis of Biosynthetic Gene Clusters (BGCs) and their regulatory elements. Clusters in common pathways (e.g., polyketide synthases (PKS) and nonribosomal peptide synthetases (NRPS)) can be highly conserved across species [8]. Use tools like the Natural Product Domain Seeker (NaPDoS) to classify ketosynthase (KS) and condensation (C) domains phylogenetically. This can predict structural motifs and differentiate between common and unique biosynthetic potentials [8]. Focus on strains where BGC phylogeny suggests divergent evolution or novel domain architecture, even if the organismal phylogeny is common.

Problem: Genome mining predicts many BGCs, but they remain "silent" under standard laboratory culture conditions.

- Solution & Strategy: This is often an environmental and regulatory factor issue. Silence is frequently due to the lack of correct environmental or regulatory triggers [7]. Implement a phylogenetic classification of regulatory mechanisms. Analyze and compare the regulatory genes (e.g., transcription factors, histidine kinases) associated with silent BGCs to those of characterized clusters in databases like MIBiG [7]. If the regulatory apparatus is phylogenetically similar to a cluster activated by a known chemical elicitor (e.g., a specific histone deacetylase inhibitor or signaling molecule), apply that elicitor to your culture. This "phylogenetic activation" strategy leverages conserved regulatory logic [7].

Experimental Protocol: Phylogenetic Analysis of BGC Regulatory Elements [7]

- BGC Prediction & Curation: Use antiSMASH on your target genome(s). Filter results for "complete" or "high confidence" BGCs using the BiG-FAM database to ensure analyzable genetic units.

- Regulatory Protein Identification: From the BGC sequences, identify genes encoding putative regulatory proteins (e.g., transcription factors (TFs), histidine kinases (HKs)). Use Hidden Markov Models (HMMs) from the Pfam database (e.g., PF00512, the His Kinase A (HisKA) phospho-acceptor domain) with HMMER software (E-value < 0.01) for domain detection.

- Sequence Alignment & Tree Construction: Extract the protein sequences of the regulatory domains. Perform a multiple sequence alignment with reference sequences from characterized BGCs (e.g., from MIBiG) using CLUSTAL Omega or MAFFT. Construct a phylogenetic tree using a maximum-likelihood method (e.g., RAxML) with appropriate bootstrapping (e.g., 100 replicates).

- Phylogenetic Classification & Hypothesis Generation: Classify your unknown regulatory element within the phylogenetic tree. If it clusters closely with a regulator known to respond to a specific stimulus, design a cultivation experiment incorporating that stimulus (e.g., sub-inhibitory antibiotic, metal stress, co-culture).
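For the domain-detection step of this protocol, the E-value cutoff can be applied when parsing HMMER's tabular output. The sketch below assumes you ran hmmsearch with the `--tblout` option and that the standard column order applies (target name, target accession, query name, query accession, full-sequence E-value, and so on). The sample text, gene names, and model name are invented for illustration.

```python
def parse_tblout(text, max_evalue=0.01):
    """Return (target, query, evalue) for hits below the E-value cutoff.

    Assumes the whitespace-delimited hmmsearch --tblout layout, where field 0 is
    the target name, field 2 the query (profile) name, and field 4 the
    full-sequence E-value. Comment lines start with '#'.
    """
    hits = []
    for line in text.splitlines():
        if not line.strip() or line.startswith("#"):
            continue
        fields = line.split(maxsplit=18)  # the last field is a free-text description
        target, query, evalue = fields[0], fields[2], float(fields[4])
        if evalue < max_evalue:
            hits.append((target, query, evalue))
    return hits

# Hypothetical output: geneA is a confident hit, geneB is not.
sample = """\
# target name  accession  query name  accession  E-value  score  bias  ...
geneA - HK_domain PF00512 1.2e-15 55.3 0.1 3.0e-15 54.0 0.1 1.0 1 0 0 1 1 1 1 sensor kinase
geneB - HK_domain PF00512 0.5 8.1 0.0 0.9 7.2 0.0 1.0 1 0 0 1 1 1 0 hypothetical protein
"""
hits = parse_tblout(sample)
print(hits)
```

The retained target names identify the regulatory proteins whose domain sequences go forward to alignment and tree construction.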

Section 2: Troubleshooting Biosynthetic Pathway-Driven Rediscovery

Problem: My extracts show promising activity, but dereplication consistently identifies common scaffold molecules (e.g., macrolides, tetracyclines).

- Solution & Strategy: Employ a chemistry-first, reactivity-based screening (RBS) approach before bioassay [5]. Functional groups common to well-known compound classes can be targeted. Use chemoselective probes to tag metabolites containing specific reactive moieties (e.g., epoxides, Michael acceptors, electron-rich olefins) in crude extracts. Analysis via LC-MS identifies only tagged metabolites, effectively filtering out non-reactive common compounds and highlighting potentially novel reactive scaffolds [5].

Problem: I want to explore novel chemical space inspired by natural scaffolds without synthesizing vast libraries blindly.

- Solution & Strategy: Adopt a Biology-Oriented Synthesis (BIOS) or Diversity-Oriented Synthesis (DOS) strategy informed by phylogenetics [6]. Use phylogenetic analysis of successful natural product classes to identify core "privileged" scaffolds that target specific protein families (e.g., protein-protein interactions). Then, synthesize focused libraries around these scaffolds by ring distortion, pruning, or hybridization [6]. This balances exploration of chemical space with a higher probability of bioactivity.

Experimental Protocol: Reactivity-Based Screening with a Thiol Probe [5]

- Objective: To selectively label and identify natural products containing electrophilic functional groups (e.g., Michael acceptors, epoxides) in a bacterial extract.

- Materials: Crude ethyl acetate extract of bacterial culture, thiol-reactive probe (e.g., 2-mercaptoethanol-derived probe with a biotin or fluorophore tag), dimethyl sulfoxide (DMSO), LC-MS system.

- Procedure:

- Probe Reaction: Dissolve the crude extract in a suitable buffer (e.g., PBS pH 7.4) or aqueous acetonitrile. Add the thiol-reactive probe (final concentration ~100 µM) from a DMSO stock solution. Incubate at 25°C for 1-2 hours.

- Control Reaction: Prepare an identical sample with no probe or with an inactive, "scrambled" probe.

- Analysis: Quench reactions and analyze by LC-MS. Compare the total ion chromatograms (TIC) and extracted ion chromatograms (XIC) of the probe-treated vs. control samples.

- Data Interpretation: Look for new chromatographic peaks present only in the probe-treated sample. These correspond to probe-metabolite adducts. The mass shift between an adduct and its parent ion equals the mass added by the probe (e.g., about +78 Da for the addition of untagged 2-mercaptoethanol; a tagged probe adds correspondingly more), which helps identify the parent ion of the reactive natural product. This parent ion can then be targeted for isolation and structure elucidation.
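The comparison in the data interpretation step can be automated by searching the probe-treated feature list for ions that sit one probe mass above a control ion and are absent from the control. The sketch below is a simplification under stated assumptions: it compares m/z values only, assumes parent and adduct share the same charge state and ion type, and uses an invented `probe_shift` value, since the real shift depends on the probe you use.

```python
def find_adducts(control_mz, treated_mz, probe_shift, ppm=10.0):
    """Pair control ions with treated-only ions found at parent m/z + probe_shift.

    control_mz, treated_mz: lists of m/z values from the two LC-MS runs.
    probe_shift: mass added by the probe (hypothetical value in the example below).
    ppm: mass tolerance in parts per million.
    """
    pairs = []
    for parent in control_mz:
        expected = parent + probe_shift
        tol = expected * ppm / 1e6
        for observed in treated_mz:
            is_match = abs(observed - expected) <= tol
            in_control = any(abs(observed - c) <= tol for c in control_mz)
            if is_match and not in_control:
                pairs.append((parent, observed))
    return pairs

control = [250.1200, 412.2100]
treated = [250.1200, 412.2100, 550.2205]
# 300.1000 is an illustrative probe mass, not a value from the protocol.
adducts = find_adducts(control, treated, probe_shift=300.1000)
print(adducts)
```

Each returned pair links a candidate reactive parent ion to its putative adduct; retention time and MS/MS evidence would still be needed to confirm the assignment.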

Section 3: Troubleshooting Environmental & Cultivation Issues

- Problem: Isolates from unique environments still produce common metabolites when cultured in standard media.

- Solution & Strategy: Standard lab media create a common environmental factor that selects for the expression of common, "fast-growth" BGCs. To access environment-specific chemistry, you must mimic key ecological parameters. This goes beyond nutrient composition. Consider:

- Physical Stressors: Simulate substrate attachment (biofilm reactors), shear stress (agitation variations), or light cycles.

- Chemical Cues: Use extracts from co-habitating organisms or supplement with species-specific signaling molecules (e.g., acyl-homoserine lactones).

- Co-culture: Cultivate with other microbes from the same niche to trigger defensive or communicative metabolite production [9].

Table 1: Quantitative Performance of Strategies to Minimize Rediscovery

| Strategy | Core Principle | Key Metric/Outcome | Example/Reference |
|---|---|---|---|
| Phylogenetic Dereplication | Analyze evolutionary relatedness of BGCs to predict novelty. | Classification of KS domains into >8 distinct clades predicting enzyme architecture [8]. | NaPDoS tool for KS/C domain analysis [8]. |
| Regulatory Phylogenetics | Use phylogeny of regulatory genes to activate silent BGCs. | Framework tested on 2,694 BGCs from diverse environments; identified common regulatory patterns across habitats [7]. | Prediction of activators for uncharacterized BGCs in actinobacteria [7]. |
| Reactivity-Based Screening (RBS) | Chemoselective tagging of metabolites with specific functional groups. | Direct detection of rare electrophilic NPs, bypassing bioactivity screens dominated by common hits [5]. | Probes for thiols, tetrazines, aminooxy groups target unique chemotypes [5]. |
| Diversity-Oriented Synthesis (DOS) | Generate skeletally diverse libraries from NP-inspired scaffolds. | A 2,070-member macrolactone library identified robotnikinin, a Hedgehog inhibitor with 91% efficacy (ECmax) [6]. | Discovery of novel antibiotic gemmacin from a 242-molecule NP-like library [6]. |

Visualization of Integrated Strategies

Diagram 1: Integrated Workflow for Minimizing Rediscovery

Diagram 2: Reactivity-Based Screening (RBS) Process

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Reagents and Materials for Featured Strategies

| Item | Function / Application | Example/Note |
|---|---|---|
| antiSMASH Software | Predicts BGCs in genomic data. Foundational for genome mining and phylogenetic analysis of BGCs [7]. | Use version 6.0 or higher for comprehensive predictions [7]. |
| NaPDoS (Web Tool) | Performs phylogenetic analysis of KS and C domains from PKS/NRPS sequences to predict cluster type and novelty [8]. | Input: KS or C domain sequence. Output: Phylogenetic clade assignment. |
| MIBiG Database | Repository of experimentally characterized BGCs. Essential reference for comparative phylogenetics and regulatory analysis [7]. | Use as a source of reference sequences for tree building. |
| Reactivity-Based Probes | Chemoselective tags for functional groups (thiol, aminooxy, tetrazine). Enrich or detect NPs with specific reactive moieties [5]. | Example: Thiol probes label epoxide- or β-lactone-containing metabolites [5]. |
| Histidine Kinase HMMs (Pfam) | Hidden Markov Models (e.g., PF00512) used to identify and classify regulatory domains within BGCs for phylogenetic studies [7]. | Used with HMMER software for sensitive domain detection. |
| Solid-Supported Phosphonate | Building block for DOS libraries. Enables divergent synthesis of multiple NP-like scaffolds from a common intermediate [6]. | Key reagent in the synthesis of gemmacin and related antibiotic libraries [6]. |
| Elicitor Molecules | Chemical signals (e.g., antibiotics, metals, quorum sensing molecules) used to mimic environmental cues and activate silent BGCs [7] [9]. | Choice is guided by phylogenetic analysis of BGC regulators. |

Technical Support Center: Troubleshooting Rediscovery in Natural Product Screening

Welcome to the Technical Support Center. This resource is designed within the broader thesis that strategic pre-screening analysis is paramount to minimizing bioactive rediscovery—a major inefficiency that consumes time, budgets, and scientific momentum. The following guides and FAQs address common experimental pitfalls and provide data-driven solutions to optimize your natural product screening campaigns.

Troubleshooting Guide: Common Issues & Solutions

Problem 1: Declining or Stagnant Hit Rates in High-Throughput Screening (HTS)

- Symptoms: Consistently low hit rates (<2-3%) in phenotypic or target-based assays; high rate of hits identifying known compounds upon validation.

- Diagnosis: This is a classic sign of screening a library with high chemical redundancy, where the same or similar scaffolds are represented repeatedly, drowning out novel bioactivity [1].

- Solution: Implement a rational library reduction strategy prior to HTS.

- Protocol: Utilize untargeted LC-MS/MS with molecular networking (e.g., GNPS) to profile your extract library [1]. Construct a rational sub-library by iteratively selecting extracts that add the most new molecular scaffolds, aiming for 80-95% of total diversity [1].

- Expected Outcome: A dramatically smaller library (e.g., 50 extracts vs. 1,439) that delivers a higher hit rate (e.g., 22% vs. 11.3% for P. falciparum) and preserves most bioactive compounds correlated with activity in the full library [1].

Problem 2: High Costs and Long Timelines for Library Screening

- Symptoms: Screening budgets exhausted on early phases; lead discovery timeline is protracted.

- Diagnosis: Screening excessively large, redundant libraries multiplies reagent, labor, and time costs without improving output.

- Solution: Adopt a tiered, in silico-first screening pipeline.

- Protocol: Before wet-lab assays, employ virtual screening. Use molecular docking (e.g., AutoDock) against your target and apply machine learning models to predict ADMET properties and bioactivity [10] [11]. Prioritize only the top-ranking, computationally validated candidates for experimental testing.

- Expected Outcome: Significant reduction in the number of extracts or compounds requiring physical screening, compressing the initial discovery timeline and focusing resources on high-probability leads [10].

Problem 3: Frequent Rediscovery of Known Bioactives (Dereplication Failure)

- Symptoms: Promising assay hits are later identified as well-known compounds (e.g., common flavonoids, mycotoxins).

- Diagnosis: Inadequate dereplication at the screening or early hit-confirmation stage.

- Solution: Integrate real-time, automated dereplication into the workflow.

- Protocol: Couple your primary bioassay with high-resolution LC-MS/MS analysis. Use tools like the Global Natural Products Social Molecular Networking (GNPS) platform to automatically compare MS/MS spectra of active fractions against public libraries of known natural products [12] [1].

- Expected Outcome: Immediate flagging of known compounds, allowing researchers to deprioritize them early and focus resources on novel chemotypes.
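Library matching of this kind rests on a spectral similarity score. The sketch below shows a plain binned cosine score between two MS/MS peak lists, which is a simplification: GNPS uses its own scoring and parameters, and the bin width, function names, and toy spectra here are assumptions chosen for illustration.

```python
import math

def bin_spectrum(peaks, bin_width=0.1):
    """Sum peak intensities into fixed-width m/z bins."""
    binned = {}
    for mz, intensity in peaks:
        key = round(mz / bin_width)
        binned[key] = binned.get(key, 0.0) + intensity
    return binned

def cosine_score(peaks_a, peaks_b, bin_width=0.1):
    """Cosine similarity between two binned spectra (0 = no shared peaks, 1 = identical)."""
    a = bin_spectrum(peaks_a, bin_width)
    b = bin_spectrum(peaks_b, bin_width)
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def best_match(query, library):
    """Return (name, score) of the highest-scoring library spectrum."""
    return max(((name, cosine_score(query, peaks)) for name, peaks in library.items()),
               key=lambda item: item[1])

# Toy spectra as (m/z, intensity) pairs; names are placeholders.
query = [(105.07, 100.0), (133.06, 50.0), (161.10, 20.0)]
library = {
    "known_compound_X": [(105.07, 90.0), (133.06, 55.0), (161.10, 25.0)],
    "unrelated_compound_Y": [(90.05, 80.0), (200.12, 40.0)],
}
name, score = best_match(query, library)
print(name, round(score, 3))
```

A hit above a chosen score threshold would flag the active fraction as a likely known compound; the threshold itself has to be tuned on your own data.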

Problem 4: Difficulty Linking Bioactivity to a Specific Compound in Crude Extracts

- Symptoms: An extract shows activity, but isolation efforts fail or lead to an inactive pure compound due to synergy or trace components.

- Diagnosis: Lack of a method to pinpoint the exact feature (mass-retention time pair) responsible for activity within a complex mixture.

- Solution: Perform bioactivity-correlation analysis using metabolomic data.

- Protocol: After acquiring LC-MS/MS data for all library samples, use statistical tools (e.g., Spearman correlation) to find MS features whose intensity across samples strongly correlates with the bioactivity score from your HTS [1]. These features become priority targets for isolation.

- Expected Outcome: Precise targeting of the ions responsible for bioactivity, increasing the efficiency and success rate of the subsequent isolation and structure elucidation phases.
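The correlation step in this protocol can be illustrated with a small, dependency-free Spearman implementation. The feature names, intensities, and activity scores below are invented toy values; in practice a statistics package would normally be used, and correlation alone does not prove that a feature is the active compound.

```python
import math

def rank(values):
    """Assign 1-based ranks, giving tied values their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        average = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = average
        i = j + 1
    return ranks

def pearson(x, y):
    """Pearson correlation of two equal-length sequences."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sx = math.sqrt(sum((a - mx) ** 2 for a in x))
    sy = math.sqrt(sum((b - my) ** 2 for b in y))
    return cov / (sx * sy) if sx and sy else 0.0

def spearman(x, y):
    """Spearman correlation = Pearson correlation of the ranks."""
    return pearson(rank(x), rank(y))

# Percent inhibition for five extracts, and intensities of two MS features in them.
activity = [5, 20, 40, 70, 90]
features = {
    "feature_A": [100, 400, 900, 2000, 5000],  # rises with activity
    "feature_B": [900, 100, 500, 300, 700],    # unrelated to activity
}
scores = {name: spearman(values, activity) for name, values in features.items()}
print(scores)
```

Features with a correlation close to 1 (here, feature_A) would be the first candidates for targeted isolation.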

Frequently Asked Questions (FAQs)

Q1: What is the tangible cost of rediscovery in natural product screening? A1: Rediscovery is a multi-faceted cost sink. Primarily, it wastes the direct screening budget (reagents, assay plates, instrumentation time) on uninformative data points. A study demonstrated that by rationally reducing a fungal extract library from 1,439 to 50 samples (aiming for 80% scaffold diversity), the hit rate against P. falciparum increased from 11.3% to 22% [1]. Screening the full library therefore consumes 28.8 times more samples while yielding roughly half as many hits per sample screened, a large multiplier on screening costs and time.

Q2: How can I justify the upfront time and cost of LC-MS/MS profiling for rational library design? A2: The investment is rapidly offset. The quantitative data shows that reaching 80% scaffold diversity required screening 109 random extracts versus only 50 rationally selected extracts [1]. The cost of LC-MS/MS analysis for 1,439 extracts is fixed. The ongoing, variable cost of screening an additional 59 samples per assay—and across multiple future assays—is where savings compound. Furthermore, the increased hit rate means more valuable leads are identified sooner, accelerating the entire discovery pipeline and improving return on investment.
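The figures quoted in these two answers can be turned into a quick back-of-the-envelope calculation. The sketch below uses only numbers stated in this article (1,439 and 50 extracts, hit rates of 11.26% and 22.00%, 109 random extracts for 80% diversity); the function names and the choice of ten assays are illustrative.

```python
def samples_per_hit(hit_rate_percent):
    """Average number of samples that must be screened to obtain one hit."""
    return 100.0 / hit_rate_percent

full_library_size = 1439
rational_library_size = 50
random_needed_for_80pct = 109  # random extracts needed to reach 80% scaffold diversity

fold_reduction = full_library_size / rational_library_size
per_hit_full = samples_per_hit(11.26)      # P. falciparum, full library
per_hit_rational = samples_per_hit(22.00)  # P. falciparum, rational library
extra_samples_per_assay = random_needed_for_80pct - rational_library_size

def samples_saved(n_assays):
    """Samples not screened over n assays, relative to random selection at equal diversity."""
    return extra_samples_per_assay * n_assays

print(f"Library is {fold_reduction:.1f}x smaller")
print(f"Samples per hit: {per_hit_full:.2f} (full) vs {per_hit_rational:.2f} (rational)")
print(f"Samples saved over 10 assays: {samples_saved(10)}")
```

The fixed LC-MS/MS profiling cost is not modeled here; it would be weighed against the per-assay savings, which grow with every additional screen.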

Q3: Are these strategies only relevant for microbial or fungal extract libraries? A3: No. The principle of minimizing redundancy via pre-screening analysis is universal. The rational library design method based on LC-MS/MS spectral similarity has been validated on fungal libraries [1], but the underlying workflow is applicable to plant, marine, or any other crude extract libraries. Similarly, in silico docking and AI-based prediction tools are agnostic to the compound source and are being widely applied across all domains of natural product research [12] [10].

Q4: We have a small lab. Can we implement these strategies without extensive computational infrastructure? A4: Yes, with strategic use of public resources. For molecular networking and dereplication, the free, web-based GNPS platform is a powerful starting point [1]. For basic in silico screening, user-friendly tools such as SwissADME (for drug-likeness) and AutoDock (for docking) are accessible [11]. Cloud-based computing services can also be used on demand for more intensive tasks. Collaboration with bioinformatics groups is another effective pathway.

Q5: How do AI and machine learning specifically help reduce rediscovery? A5: AI/ML tackles rediscovery proactively. Models can:

- Predict Novelty: Train models on known natural product libraries to score new compounds for structural novelty or similarity to known bioactives [12].

- Prioritize Unlikely Targets: Predict potential targets for a compound, helping avoid pathways saturated with known inhibitors [12].

- Design Focused Libraries: Generative AI can propose novel, synthetically accessible structures inspired by but distinct from known natural scaffolds, creating intellectual property space [12].
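The "Predict Novelty" idea can be illustrated with a much simpler baseline than a trained model: score a candidate by how dissimilar it is to its nearest known compound. The sketch below assumes each compound is already encoded as a fingerprint, represented here as a set of on-bit indices. The fingerprints, function names, and example values are hypothetical, and a real workflow would likely use a cheminformatics toolkit and a learned model rather than this nearest-neighbour rule.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    union = fp_a | fp_b
    if not union:
        return 0.0  # two empty fingerprints carry no information
    return len(fp_a & fp_b) / len(union)


def novelty_score(candidate_fp, known_fps):
    """Return 1 minus the similarity to the closest known compound (1.0 = fully novel)."""
    nearest = max((tanimoto(candidate_fp, fp) for fp in known_fps), default=0.0)
    return 1.0 - nearest


# Hypothetical toy fingerprints standing in for a reference library of known bioactives.
known_library = [{1, 2, 3, 4}, {5, 6, 7}]

close_analogue = {1, 2, 3, 8}   # shares 3 of 5 bits with the first known compound
unrelated = {9, 10}             # shares no bits with any known compound

print(novelty_score(close_analogue, known_library))
print(novelty_score(unrelated, known_library))
```

Candidates with low scores are likely rediscoveries and can be deprioritized before any wet-lab work; the cut-off that separates "known" from "novel" is a project-specific choice.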

Quantitative Impact of Rediscovery & Strategic Solutions

The following tables summarize the direct experimental impact of bioactive rediscovery and the quantifiable benefits of implementing rational library design.

Table 1: The Cost of Redundancy - Hit Rate Penalty in Full vs. Rational Libraries [1]

| Bioassay Target | Hit Rate: Full Library (1,439 extracts) | Hit Rate: 80% Diversity Rational Library (50 extracts) | Hit Rate: 100% Diversity Rational Library (216 extracts) |

|---|---|---|---|

| Phenotypic: P. falciparum | 11.26% | 22.00% (95% increase) | 15.74% |

| Phenotypic: T. vaginalis | 7.64% | 18.00% (136% increase) | 12.50% |

| Target-Based: Neuraminidase | 2.57% | 8.00% (211% increase) | 5.09% |

Table 2: Efficiency Gains from Rational Library Design [1]

| Metric | Random Selection | Rational LC-MS/MS-Based Selection | Efficiency Gain |

|---|---|---|---|

| Extracts needed for 80% scaffold diversity | 109 (average) | 50 | 2.2-fold more efficient |

| Extracts needed for 100% scaffold diversity | 755 (average) | 216 | 3.5-fold more efficient |

| Library size reduction (to 100% diversity) | N/A | From 1,439 to 216 extracts | 6.6-fold reduction |

| Retention of bioactivity-correlated features | N/A | 8 out of 10 retained in 80% library; All retained in 100% library [1] | Minimal loss of key actives |
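The percentage increases and fold gains in the two tables above follow directly from the reported hit rates and library sizes. A minimal sketch of that arithmetic, using only numbers taken from the tables (the helper names are illustrative):

```python
def percent_increase(before, after):
    """Relative increase of `after` over `before`, in percent."""
    return (after - before) / before * 100.0


def fold(larger, smaller):
    """How many times larger one quantity is than another."""
    return larger / smaller


# Hit rates (%) from Table 1: full library vs. 80% diversity rational library.
hit_rates = {
    "P. falciparum": (11.26, 22.00),
    "T. vaginalis": (7.64, 18.00),
    "Neuraminidase": (2.57, 8.00),
}

for assay, (full, rational) in hit_rates.items():
    print(f"{assay}: {percent_increase(full, rational):.0f}% increase")

# Extract counts from Table 2: random vs. rational selection.
print(f"80% diversity: {fold(109, 50):.1f}-fold more efficient")
print(f"100% diversity: {fold(755, 216):.1f}-fold more efficient")
```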

Detailed Experimental Protocols

Protocol: Rational Natural Product Library Design via LC-MS/MS and Molecular Networking

Objective: To create a minimized screening library that maximizes chemical diversity and bioactive potential while minimizing redundancy.

Materials:

- Library of crude natural product extracts (e.g., microbial, plant).

- UHPLC system coupled to a high-resolution tandem mass spectrometer (e.g., Q-TOF, Orbitrap).

- Classical Molecular Networking workflow on the GNPS platform (https://gnps.ucsd.edu).

- Custom R scripts for iterative diversity selection (see data availability in [1]).

Method:

- Untargeted LC-MS/MS Profiling: Analyze all library extracts using a standardized, untargeted LC-MS/MS method in data-dependent acquisition (DDA) mode.

- Molecular Networking: Process the raw MS/MS data through the GNPS classical molecular networking workflow. This clusters MS/MS spectra based on similarity, forming "molecular families" that represent similar chemical scaffolds [1].

- Scaffold Diversity Matrix: Generate a binary matrix where rows are extracts and columns are molecular families (scaffolds). A value of 1 indicates the presence of that scaffold in the extract.

- Iterative Library Construction: a. Select the extract containing the highest number of unique scaffolds. b. Add this extract to the "rational library" list and remove all scaffolds it contains from the available pool. c. Recalculate and select the next extract that adds the most new scaffolds to the rational library. d. Repeat until a pre-defined threshold of total scaffold diversity (e.g., 80%, 95%, 100%) is achieved [1].

- Validation: The resulting minimal library of 'n' extracts is ready for high-throughput screening. Its performance can be benchmarked retrospectively or prospectively against random subsets of equal size.
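The iterative construction step above is a greedy coverage selection. The referenced study implements it in R [1]; the Python sketch below is an independent illustration of the same logic, not the authors' code. It assumes the scaffold diversity matrix has already been reduced to a mapping from extract ID to the set of scaffold IDs it contains. The extract names and scaffold IDs are invented toy data.

```python
def greedy_rational_library(extract_scaffolds, coverage=0.8):
    """Greedily pick extracts until `coverage` of all scaffolds is represented.

    extract_scaffolds: dict mapping extract ID -> set of scaffold (molecular family) IDs.
    Returns (selected extract IDs in pick order, fraction of scaffolds covered).
    """
    all_scaffolds = set().union(*extract_scaffolds.values())
    if not all_scaffolds:
        return [], 0.0

    target = coverage * len(all_scaffolds)
    covered, selected = set(), []
    remaining = dict(extract_scaffolds)

    while len(covered) < target and remaining:
        # Pick the extract adding the most scaffolds not yet covered.
        # Sorting the IDs makes tie-breaking deterministic.
        best = max(sorted(remaining), key=lambda e: len(remaining[e] - covered))
        gain = remaining[best] - covered
        if not gain:
            break  # nothing left can add new scaffolds
        selected.append(best)
        covered |= gain
        del remaining[best]

    return selected, len(covered) / len(all_scaffolds)


# Toy library: five extracts, seven scaffolds in total.
toy_library = {
    "A": {1, 2, 3, 4},
    "B": {3, 4, 5},
    "C": {5, 6},
    "D": {1, 2},
    "E": {7},
}

print(greedy_rational_library(toy_library, coverage=0.8))
print(greedy_rational_library(toy_library, coverage=1.0))
```

A greedy pass like this is fast and simple, but it is a heuristic: it is not guaranteed to find the absolute smallest subset for a given coverage threshold.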

Visualizing the Workflows

Rational vs. Legacy Natural Product Screening Pipelines

Algorithm for Rational Natural Product Library Design [1]

The Scientist's Toolkit: Essential Reagents & Solutions

Table 3: Key Research Reagents & Tools for Minimizing Rediscovery

| Item / Solution | Function / Role in Pipeline | Key Benefit for Avoiding Rediscovery |

|---|---|---|

| High-Resolution LC-MS/MS System | Untargeted metabolomic profiling of extract libraries. | Enables molecular networking and scaffold-based diversity analysis prior to bioassay [1]. |

| GNPS (Global Natural Products Social Molecular Networking) Platform | Public web-platform for mass spectrometry data analysis and molecular networking [1]. | Provides free, community-powered tools for dereplication and visualizing chemical redundancy. |

| CETSA (Cellular Thermal Shift Assay) | Confirms target engagement of hits in physiologically relevant cellular environments [11]. | Validates mechanism early, ensuring resource investment in compounds with confirmed, relevant bioactivity. |

| AI/ML Model for ADMET & Bioactivity Prediction | In silico prediction of pharmacokinetics and biological activity (e.g., SwissADME) [10] [11]. | Filters out compounds with poor developability or predictable off-target effects before experimental screening. |

| AutoDock / Similar Docking Software | Predicts binding affinity and pose of small molecules to a protein target [10]. | Enables virtual screening to prioritize extracts or compounds with a higher probability of specific activity. |

| Stable Isotope Labeling Precursors | Used in microbial cultivation to aid in tracing biosynthetic origins and differentiating novel compounds [13]. | Accelerates the deconvolution of novel versus known biosynthetic pathways in active hits. |

The persistent rediscovery of known bioactive compounds remains a critical bottleneck in natural product-based drug discovery. Screening large libraries of extracts often leads to high costs and lengthy timelines with diminishing returns, as these libraries are frequently burdened with structural redundancy [1]. This technical support center is designed within the context of a strategic thesis: proactively maximizing scaffold diversity—the representation of distinct molecular cores or frameworks—is the most effective method to minimize bioactive rediscovery and enhance the identification of novel chemotypes.

This guide provides researchers and drug development professionals with targeted troubleshooting advice, experimental protocols, and essential tools to implement the scaffold diversity principle, transforming natural product screening from a numbers game into a rational search for novelty.

Core Concepts: Scaffolds, Diversity, and Novelty

- Molecular Scaffold: The core ring system and linker structure of a molecule, excluding peripheral side chains. It defines the fundamental topology and shape [14]. In natural products, this core is often honed by evolution for specific biological interactions [13].

- Scaffold Diversity: A measure of the variety and distribution of unique scaffolds within a compound library. High scaffold diversity is a key indicator of broad functional diversity and increased probability of identifying novel bioactive compounds [15].

- Scaffold Hopping: A medicinal chemistry strategy to modify a known active compound's core structure to generate a novel chemotype while retaining or improving biological activity [16]. Screening applies the same idea in reverse: it seeks diverse cores in order to find novel activities.

- Bioactive Rediscovery: The repeated identification of already known active compounds during screening campaigns, a direct consequence of low scaffold diversity in screening libraries [1].

Troubleshooting Guide: Common Issues & Solutions

Problem 1: Persistently Low Hit Rates in High-Throughput Screening (HTS)

- Symptoms: Screening large natural product extract libraries yields very few confirmed hits, or hits are consistently from known chemical classes.

- Root Cause: The library has low effective scaffold diversity due to chemical redundancy. Many extracts contain similar sets of common, well-characterized natural products [1].

- Solution: Implement pre-screening library rationalization.

- Profile your extract library using untargeted LC-MS/MS to obtain fragmentation (MS/MS) data for all detectable metabolites [1].

- Process the data through molecular networking software (e.g., GNPS) to cluster MS/MS spectra based on structural similarity, creating networks where each cluster represents a distinct molecular scaffold or closely related analogues [1].

- Analyze the network to assess scaffold redundancy.

- Select a rational subset of extracts. Use an algorithm to iteratively choose the extract that adds the greatest number of new, unrepresented scaffold clusters to the subset until a desired coverage threshold (e.g., 80-95% of total scaffolds) is reached [1].

- Expected Outcome: Dramatically reduced library size with minimal loss of chemical diversity, leading to significantly increased bioassay hit rates. For example, one study reached maximal scaffold diversity with an 84.9% smaller library (216 of 1,439 extracts), and its 50-extract library covering 80% of scaffolds raised the hit rate in a target-based assay from 2.57% to 8.00% [1].

Problem 2: Frequent Dereplication of Known Compounds

- Symptoms: Promising hits from primary screens are repeatedly identified as well-known natural products (e.g., staurosporine, geldanamycin).

- Root Cause: Library construction is biased towards prolific, easily cultured source organisms or does not prioritize taxonomic and metabolic novelty.

- Solution: Integrate genomic and metabolomic prioritization.

- Sequence potential source organisms (bacteria, fungi) to identify Biosynthetic Gene Clusters (BGCs) using tools like AntiSMASH [13].

- Prioritize strains that contain a high abundance of "cryptic" or rare BGCs not commonly associated with known metabolites [13].

- Use metabolomics (LC-MS) to correlate gene cluster expression with the production of novel secondary metabolites under varied culture conditions [13].

- Focus screening efforts on extracts from these pre-validated, genetically novel strains.

- Expected Outcome: A higher proportion of hits correspond to novel chemical scaffolds, reducing the time and resources wasted on dereplicating known compounds.

Problem 3: Inability to Quantify Library Diversity

- Symptoms: No objective metric to compare libraries or guide procurement and synthesis decisions.

- Root Cause: Reliance on subjective measures or single parameters (e.g., compound count) instead of multi-faceted diversity assessment.

- Solution: Employ a Consensus Diversity Plot (CDP) analysis.

- Calculate scaffold diversity using metrics like the area under the cyclic system recovery (CSR) curve or Shannon Entropy [17].

- Calculate fingerprint diversity using molecular fingerprints (e.g., MACCS keys, ECFP) and Tanimoto similarity [17].

- Plot each library as a point on a 2D CDP with scaffold diversity on one axis and fingerprint diversity on the other. A third dimension (e.g., physicochemical property diversity) can be represented by color [17].

- Interpret: Libraries in the high-scaffold/high-fingerprint quadrant have the greatest global diversity and are most likely to explore novel chemical space [17].

- Expected Outcome: Data-driven decision-making for library design, acquisition, and synthesis focus, enabling strategic investment in areas of chemical space with low representation.
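The two coordinates of a Consensus Diversity Plot can be approximated with a few lines of code. The sketch below assumes every compound already has a scaffold label and a fingerprint, represented here as a set of on-bit indices. Using scaled Shannon entropy for the scaffold axis and median pairwise Tanimoto similarity for the fingerprint axis is one reasonable reading of the approach in [17], not a reproduction of the published tool, and all data shown are invented.

```python
import math
from collections import Counter
from itertools import combinations
from statistics import median


def scaled_shannon_entropy(scaffold_labels):
    """Shannon entropy of the scaffold distribution, scaled to the range 0..1."""
    counts = Counter(scaffold_labels)
    n_scaffolds = len(counts)
    if n_scaffolds <= 1:
        return 0.0  # a single scaffold (or no data) has no diversity
    total = sum(counts.values())
    entropy = -sum((c / total) * math.log2(c / total) for c in counts.values())
    return entropy / math.log2(n_scaffolds)


def median_pairwise_tanimoto(fingerprints):
    """Median Tanimoto similarity over all compound pairs (lower = more diverse)."""
    sims = [
        len(a & b) / len(a | b)
        for a, b in combinations(fingerprints, 2)
        if a | b
    ]
    return median(sims) if sims else 0.0


# Hypothetical library: scaffold labels and toy fingerprints per compound.
scaffolds = ["s1", "s1", "s1", "s2", "s3"]
fingerprints = [{1, 2}, {1, 2, 3}, {1, 2, 4}, {5, 6}, {7, 8}]

point = (median_pairwise_tanimoto(fingerprints), scaled_shannon_entropy(scaffolds))
print(point)  # (fingerprint axis, scaffold axis) for this library
```

Each library becomes one point, so several libraries can be compared by computing this pair for each and plotting them together.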

Table 1: Impact of Scaffold-Based Library Rationalization on Screening Efficiency [1]

| Activity Assay | Hit Rate in Full Library (1,439 extracts) | Hit Rate in 80% Scaffold Diversity Library (50 extracts) | Fold Library Size Reduction |

|---|---|---|---|

| P. falciparum (phenotypic) | 11.26% | 22.00% | 28.8-fold |

| T. vaginalis (phenotypic) | 7.64% | 18.00% | 28.8-fold |

| Neuraminidase (target-based) | 2.57% | 8.00% | 28.8-fold |

Frequently Asked Questions (FAQs)

Q1: What exactly is a "scaffold" and how is it different from the whole molecule? A scaffold is the core structure of a molecule—its ring systems and the linkers that connect them—with all variable side chains trimmed back to attachment points [14]. Think of it as the molecular "backbone." Two molecules can have identical scaffolds but very different side chains (appendages), leading to different properties. Focusing on scaffolds prioritizes fundamental shape and topology, which are primary determinants of biological activity [15].

Q2: Why is scaffold diversity more important than just having a large number of compounds? Large compound libraries are often dominated by many analogues of the same few scaffolds [14]. This leads to redundancy. Since similar scaffolds often produce similar biological activities, screening a massive but redundant library increases cost and time without increasing the chance of finding truly novel hits. A smaller library deliberately constructed for high scaffold diversity samples a broader area of biologically relevant chemical space, making novel discoveries more probable [1] [15].

Q3: Can you give a real-world example of scaffold modification leading to a new drug? Yes. The evolution from morphine to tramadol is a classic "scaffold hopping" example. By breaking open morphine's complex, fused multi-ring system (scaffold A), chemists arrived at the much simpler, more flexible scaffold of tramadol (scaffold B), in which a single cyclohexane ring carries the aromatic ring [16]. Despite the dramatic 2D structural change, key 3D pharmacophore elements (a basic amine and an aromatic ring) were maintained, preserving analgesic activity while significantly improving the safety and pharmacokinetic profile [16].

Q4: How do I balance exploring novel scaffolds with the need for "drug-like" properties? Novelty and drug-likeness are not mutually exclusive. The strategy is to apply property filters after scaffold selection. When designing or selecting a diverse scaffold set, first ensure synthetic feasibility and structural novelty. Then, during the decoration phase—where side chains are added to the scaffold to create actual screening compounds—apply stringent medicinal chemistry filters (e.g., modified Lipinski's rules, Veber parameters) to the building blocks used for decoration [18] [19]. This ensures the final compound library is both novel and has a high probability of favorable physicochemical properties.

Table 2: Glossary of Key Scaffold Analysis Terms

| Term | Definition | Application in Troubleshooting |

|---|---|---|

| Murcko Framework | An objective, algorithmic definition of a scaffold: all ring systems and the linkers between them [14]. | Standardized scaffold assignment for consistent library analysis and comparison. |

| Scaffold Tree | A hierarchical breakdown of a molecule, iteratively removing rings to reveal scaffold relationships [14]. | Useful for analyzing scaffold complexity and for clustering similar scaffolds. |

| Cyclic System Recovery (CSR) Curve | A plot showing the cumulative percentage of compounds recovered as a function of the cumulative percentage of scaffolds, ordered from most to least frequent [17]. | Quantifies scaffold redundancy. A steep initial curve indicates high redundancy (few scaffolds account for many compounds). |

| Shannon Entropy (SE) | A metric from information theory that measures the "evenness" of the distribution of compounds across scaffolds [17]. | A high SE indicates a library where compounds are evenly distributed across many scaffolds (high diversity). A low SE indicates a library dominated by a few scaffolds. |
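The CSR curve defined in the glossary is straightforward to compute once each compound has a scaffold label. The sketch below builds the curve, integrates it with the trapezoid rule, and reports the fraction of scaffolds needed to recover half of the compounds. The function names and toy labels are illustrative assumptions. Under this construction an area near 0.5 indicates an even, diverse library, and an area approaching 1.0 indicates heavy redundancy.

```python
from collections import Counter


def csr_curve(scaffold_labels):
    """Points (fraction of scaffolds, fraction of compounds), most frequent scaffold first."""
    counts = sorted(Counter(scaffold_labels).values(), reverse=True)
    points = [(0.0, 0.0)]
    if not counts:
        return points
    n_scaffolds, n_compounds = len(counts), sum(counts)
    running = 0
    for rank, count in enumerate(counts, start=1):
        running += count
        points.append((rank / n_scaffolds, running / n_compounds))
    return points


def csr_auc(points):
    """Area under the CSR curve by the trapezoid rule."""
    return sum((x1 - x0) * (y0 + y1) / 2 for (x0, y0), (x1, y1) in zip(points, points[1:]))


def fraction_for_half(points):
    """Smallest fraction of scaffolds that recovers at least 50% of compounds."""
    return next(x for x, y in points if y >= 0.5)


# Toy libraries: one scaffold per compound vs. one dominant scaffold.
diverse = ["a", "b", "c", "d"]
redundant = ["a"] * 8 + ["b", "c"]

for name, labels in (("diverse", diverse), ("redundant", redundant)):
    curve = csr_curve(labels)
    print(name, round(csr_auc(curve), 3), round(fraction_for_half(curve), 3))
```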

Detailed Experimental Protocols

Protocol 1: LC-MS/MS and Molecular Networking for Extract Library Rationalization

- Objective: To reduce the size of a natural product extract library while retaining >95% of its scaffold diversity.

- Materials: Natural product extract library in suitable solvent (e.g., DMSO), UHPLC system coupled to high-resolution tandem mass spectrometer (e.g., Q-TOF, Orbitrap), GNPS account or similar molecular networking software.

- Procedure:

- Data Acquisition: Analyze each extract via untargeted LC-MS/MS. Use a generic gradient (e.g., water/acetonitrile + 0.1% formic acid) and data-dependent acquisition (DDA) to fragment the top ions in each cycle.

- Data Conversion: Convert raw mass spectral files to open formats (.mzML, .mzXML).

- Molecular Networking: Upload files to the GNPS platform. Use the "Classical Molecular Networking" workflow with standard parameters. This clusters MS/MS spectra based on similarity, where each cluster represents a unique molecular family (scaffold) [1].

- Scaffold Diversity Analysis: Download the network information. Each extract is associated with a list of scaffold clusters it contains.

- Rational Library Selection: Implement a greedy algorithm:

- Step 1: Select the extract containing the highest number of scaffold clusters.

- Step 2: Add the extract that contributes the greatest number of scaffold clusters not already present in the selected set.

- Step 3: Iterate Step 2 until a predefined percentage (e.g., 95%) of all unique scaffold clusters in the full library is represented in the selected subset [1].

- Validation: Test the bioactivity of the rationalized library versus the full library in a pilot screen. The rationalized library should achieve a comparable or higher hit rate [1].
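Before committing assay resources, it can be useful to check how much the greedy selection in this protocol gains over simply picking extracts at random. The sketch below estimates the average number of randomly ordered extracts needed to reach a scaffold coverage threshold, which can then be compared with the size of the rational subset. It assumes a mapping from extract ID to the set of scaffold cluster IDs it contains; the function names, toy data, trial count, and seed are all illustrative.

```python
import random


def extracts_needed(order, extract_scaffolds, coverage):
    """Number of extracts, taken in `order`, needed to reach the coverage threshold."""
    all_scaffolds = set().union(*extract_scaffolds.values())
    target = coverage * len(all_scaffolds)
    covered = set()
    for n, extract in enumerate(order, start=1):
        covered |= extract_scaffolds[extract]
        if len(covered) >= target:
            return n
    return len(order)


def random_baseline(extract_scaffolds, coverage=0.8, trials=1000, seed=0):
    """Average number of randomly ordered extracts needed to reach the threshold."""
    rng = random.Random(seed)  # fixed seed so the estimate is reproducible
    ids = sorted(extract_scaffolds)
    total = 0
    for _ in range(trials):
        rng.shuffle(ids)
        total += extracts_needed(ids, extract_scaffolds, coverage)
    return total / trials


# Toy library: five extracts, seven scaffold clusters in total.
toy_library = {
    "A": {1, 2, 3, 4},
    "B": {3, 4, 5},
    "C": {5, 6},
    "D": {1, 2},
    "E": {7},
}

rational_order = ["A", "C", "E"]  # a greedy-style selection for this toy library
print("rational:", extracts_needed(rational_order, toy_library, coverage=1.0))
print("random average:", random_baseline(toy_library, coverage=1.0))
```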

Protocol 2: Build-Up Library Synthesis for Natural Product Optimization

- Objective: To rapidly generate and screen analogue libraries of a complex natural product lead without full synthesis of each analogue.

- Materials: A core fragment of the natural product containing a reactive handle (e.g., an aldehyde), a diverse collection of accessory fragments with a complementary handle (e.g., hydrazides), 96-well plates, centrifugal concentrator.

- Procedure (Adapted from MraY inhibitor optimization [20]):

- Design: Divide the natural product into two fragments: a core (containing essential pharmacophore elements) and an accessory part (modifiable for SAR).

- Fragment Synthesis: Chemically prepare the core fragment with a ketone/aldehyde group. Acquire or synthesize a library of accessory fragments with a hydrazide group.

- In-Situ Library Synthesis:

- Pipette 10 mM DMSO solutions of the core fragment and one accessory fragment into a well of a 96-well plate.

- Mix and allow the hydrazone formation reaction to proceed at room temperature for 30-60 minutes.

- Remove solvent via centrifugal evaporation.

- The residue contains the crude product for direct testing.

- In-Situ Screening: Redissolve the residue in buffer/DMSO and directly add to a biochemical or cell-based assay plate. No purification is needed as the reaction is high-yielding and clean [20].

- Validation: Identify active wells, then synthesize and purify the corresponding hydrazone analogue for full characterization and dose-response analysis.

Scaffold-Based Library Rationalization Workflow

Scaffold Hopping Strategies for Novelty

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools for Scaffold-Diverse Discovery

| Category | Item / Resource | Function & Relevance | Example / Source |

|---|---|---|---|

| Analytical & Computational | High-Resolution LC-MS/MS System | Generates the spectral data for molecular networking and dereplication. Essential for Protocol 1. | Q-Exactive Orbitrap (Thermo), timsTOF (Bruker) |

| Analytical & Computational | Molecular Networking Platform | Clusters MS/MS data by structural similarity to visualize and quantify scaffold diversity. | GNPS (Global Natural Products Social Molecular Networking) [1] |

| Analytical & Computational | Consensus Diversity Plot (CDP) Tool | Provides a 2D visualization of library diversity using multiple metrics (scaffold, fingerprint, properties). | Online Shiny App [17] |

| Analytical & Computational | Biosynthetic Gene Cluster (BGC) Miner | Identifies cryptic BGCs in genomic data to prioritize organisms likely to produce novel scaffolds. | AntiSMASH [13], DeepBGC |

| Chemical Libraries | Scaffold-Diverse Screening Libraries | Commercially available libraries designed explicitly for high scaffold/chemotype diversity. | Life Chemicals Scaffold Library (1,580 scaffolds) [19], ChemDiv Novel Scaffolds [18] |

| Chemical Libraries | Building Block Collections | Diverse sets of fragments for decorating core scaffolds during library synthesis, ensuring final compounds are drug-like. | Enamine, Sigma-Aldrich Building Blocks |

| Synthetic & Optimization | In-Situ Screening Kits | Microplates and reagents optimized for performing reactions directly in assay plates (e.g., amine aldehydes, hydrazides). | Useful for implementing Protocol 2 (Build-Up Libraries). |

| Synthetic & Optimization | Diversity-Oriented Synthesis (DOS) Pathways | Synthetic routes designed to yield multiple distinct scaffolds from common intermediates, maximizing skeletal diversity [15]. | Published DOS pathways (e.g., using branching cascades). |

Overcoming the challenge of bioactive rediscovery requires a paradigm shift from screening sheer volume to screening intelligent diversity. The Scaffold Diversity Principle provides the framework for this shift. By leveraging modern analytical techniques like LC-MS/MS-based molecular networking to rationally design screening libraries, employing computational tools like Consensus Diversity Plots for assessment, and adopting efficient strategies like build-up libraries for optimization, researchers can systematically prioritize novel core structures.

This focused approach minimizes redundancy, increases hit rates, and maximizes the return on investment in natural product drug discovery, ensuring that this historically fertile field continues to deliver the novel chemotypes needed to address emerging therapeutic challenges.

Practical Solutions: Methodologies for Rational Library Design and Advanced Dereplication

Technical Support Center: Troubleshooting and FAQs

This technical support center provides resources for researchers implementing LC-MS/MS and molecular networking to rationally minimize natural product screening libraries. The content is framed within the broader thesis that reducing chemical redundancy is a primary strategy for minimizing bioactive rediscovery and accelerating drug discovery pipelines [1].

Frequently Asked Questions (FAQs)

Q1: What is the core principle behind MS-based library rationalization? A1: The method uses untargeted LC-MS/MS data to group molecules from large extract libraries into scaffolds based on MS/MS spectral similarity, which correlates with structural similarity [1]. A computational algorithm then selects a small subset of extracts that captures the maximum scaffold diversity of the original library, dramatically reducing its size while retaining bioactive potential [1].

Q2: How significant is the library size reduction, and does it affect bioactivity? A2: The reduction is substantial. In one study, a full library of 1,439 fungal extracts was reduced to a rational library of 216 extracts (an 85% reduction) while retaining 100% of the detected scaffolds [1]. Crucially, bioassay hit rates often increase in the rationalized library because chemical redundancy is minimized. For example, hit rates against P. falciparum increased from 11.3% in the full library to 22.0% in a highly reduced (50-extract) rational library [1].

Q3: What types of screening assays is this method validated for? A3: The method has been validated across major assay types used in high-throughput screening (HTS). This includes phenotypic whole-organism assays (e.g., against the parasites P. falciparum and T. vaginalis) and target-based assays using purified enzymes (e.g., influenza neuraminidase) [1]. The increased hit rate holds true across these different formats.

Q4: Are the specific chemical features correlated with bioactivity lost during rationalization? A4: Data shows excellent retention of bioactive features. In a validation study, 10 MS features significantly correlated with anti-Plasmodium activity were identified in the full library. All 10 were retained in the rational library designed for 100% scaffold diversity, and 8 were retained in the more aggressively reduced (80% diversity) library [1].

Q5: What software and computational tools are required? A5: The workflow requires standard LC-MS/MS data processing software, the GNPS (Global Natural Products Social Molecular Networking) platform for classical molecular networking, and custom R code for the scaffold-based selection algorithm [1]. The referenced study makes its R code freely available.

Troubleshooting Guides

Guide 1: Low MS/MS Signal or Poor-Quality Spectra

Problem: Weak, noisy, or inconsistent MS/MS spectra, leading to poor molecular networking results.

- Potential Causes & Solutions:

- Cause: Contaminated ion source or mobile phase.

- Solution: Perform systematic maintenance. Clean the MS/MS interface and source components [21]. Replace mobile phases and solvents with fresh, high-purity grades. Check for buffer deposits or discolored fittings indicating slow leaks [21].

- Cause: Declining LC column performance or pump issues.

- Solution: Review pressure traces against archived records to detect overpressure or leaks [21]. Replace the LC column if peak shape deteriorates (e.g., fronting, tailing). Ensure pump seals and check valves are functioning correctly.

- Cause: Incorrect MS/MS parameters or calibration drift.

- Solution: Regularly perform and review System Suitability Tests (SST) with neat standards to monitor instrument health [21]. Verify mass calibration, detector voltage, and resolution settings. Compare post-column infusion peak heights to historical data to isolate sensitivity loss to the MS/MS [21].

Guide 2: Ineffective Library Rationalization (Poor Diversity or Bioactivity Loss)

Problem: The rationalized library does not achieve expected scaffold coverage or shows decreased bioactivity.

- Potential Causes & Solutions:

- Cause: Inadequate molecular networking parameters.

- Solution: Optimize parameters on the GNPS platform (precursor/product ion mass tolerance, cosine score threshold) to ensure scaffolds meaningfully group structurally related molecules. Validate networking results with known standards.

- Cause: The selection algorithm is not prioritizing true scaffold diversity.

- Solution: Verify that the algorithm selects extracts based on unique, non-overlapping scaffolds. Ensure adducts and in-source fragments are properly accounted for to avoid inflating apparent diversity [1].

- Cause: The original extract library has extremely low chemical diversity.

- Solution: The method relies on existing diversity. Assess the chemical space of your full library via principal component analysis (PCA) of MS data prior to rationalization.

Guide 3: Failed Bioactivity Correlation with MS Features

Problem: Unable to reliably correlate bioactive assay hits with specific MS/MS features or scaffolds.

- Potential Causes & Solutions:

- Cause: Bioactivity is caused by synergistic effects of multiple compounds, not a single scaffold.

- Solution: Consider more complex correlation models or use bioaffinity-guided purification techniques (e.g., affinity ultrafiltration, magnetic separation) to directly isolate target-binding compounds from active extracts [22].

- Cause: The active compound is present at very low abundance or ionizes poorly.

- Solution: Employ alternative ionization modes (e.g., switch from ESI+ to ESI-). Use fractionation prior to LC-MS/MS to concentrate minor components.

- Cause: The bioactive scaffold is not detected under the used chromatographic conditions.

- Solution: Broaden the chromatographic method (e.g., wider polarity gradient) or use multiple separation methods to capture a more comprehensive metabolome.

Experimental Protocols

Protocol 1: Core Workflow for LC-MS/MS-Based Library Rationalization

This protocol is adapted from the method validated for fungal extract libraries [1].

Sample Preparation:

- Prepare crude natural product extracts (e.g., from microbial fermentation, plants) in a suitable solvent for LC-MS/MS, typically at a standardized concentration.

- Include a solvent blank and quality control (QC) samples pooled from all extracts.

Untargeted LC-MS/MS Data Acquisition:

- Analyze all library extracts using a standardized, high-resolution LC-MS/MS method.

- LC Conditions: Use a reversed-phase C18 column with a water/acetonitrile gradient (e.g., 5% to 100% organic over 20-30 minutes) containing 0.1% formic acid.

- MS Conditions: Use data-dependent acquisition (DDA) in positive and/or negative electrospray ionization (ESI) mode. Acquire full-scan MS spectra (e.g., m/z 100-1500) followed by MS/MS scans of the top N most intense ions.

Data Processing and Molecular Networking:

- Convert raw data files (.d, .raw) to open formats (.mzML, .mzXML).

- Upload all files to the GNPS platform.

- Perform "Classical Molecular Networking" analysis. Key parameters: precursor ion mass tolerance (0.02 Da), product ion tolerance (0.02 Da), minimum cosine score for network edges (0.7).

- The output is a network where nodes represent consensus MS/MS spectra and edges connect spectra with high similarity. Each connected cluster (scaffold family) groups molecules with shared structural cores [1].
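To make the cosine threshold and the notion of a "connected cluster" concrete, the sketch below scores pairs of spectra with a plain binned cosine similarity and groups spectra into families wherever the score reaches 0.7. This is a deliberately simplified stand-in: GNPS uses its own peak-matching and scoring implementation, so results will differ. The spectra, identifiers, and function names are hypothetical, and the 0.02 bin width simply mirrors the product ion tolerance listed above.

```python
import math
from collections import defaultdict


def binned(spectrum, tol=0.02):
    """Sum peak intensities into m/z bins of width `tol`."""
    bins = defaultdict(float)
    for mz, intensity in spectrum:
        bins[round(mz / tol)] += intensity
    return bins


def cosine(spec_a, spec_b, tol=0.02):
    """Cosine similarity between two binned spectra (0 = unrelated, 1 = identical)."""
    a, b = binned(spec_a, tol), binned(spec_b, tol)
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def molecular_families(spectra, min_cosine=0.7):
    """Group spectrum IDs into connected components of the similarity network."""
    ids = list(spectra)
    parent = {i: i for i in ids}

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path compression
            i = parent[i]
        return i

    for idx, i in enumerate(ids):
        for j in ids[idx + 1:]:
            if cosine(spectra[i], spectra[j]) >= min_cosine:
                parent[find(i)] = find(j)  # an edge merges the two families

    families = defaultdict(set)
    for i in ids:
        families[find(i)].add(i)
    return sorted(families.values(), key=sorted)


# Hypothetical consensus spectra as lists of (m/z, intensity) peaks.
toy_spectra = {
    "s1": [(100.00, 10.0), (150.06, 5.0)],
    "s2": [(100.00, 9.0), (150.06, 6.0)],
    "s3": [(200.10, 10.0), (250.20, 3.0)],
}

print(molecular_families(toy_spectra))
```

Raising the threshold splits families into smaller, more tightly related groups, while lowering it merges them, which is why this parameter directly affects the apparent scaffold count.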

Scaffold-Based Library Selection:

- Using custom R code (as provided in the original study [1]), analyze the molecular network.

- The algorithm identifies all unique scaffold clusters present in the library.

- It iteratively selects the extract that contributes the highest number of scaffolds not yet represented in the growing rational library.

- The process continues until a user-defined threshold (e.g., 80%, 95%, 100%) of total scaffold diversity is captured.

Validation:

- Test the bioactivity of the rationalized library versus the full library in relevant phenotypic or target-based assays [1].

- Use statistical correlation (e.g., Spearman rank) to link bioactivity scores in the full library to specific MS1 features (m/z-RT pairs). Confirm the retention of these bioactive features in the rational library [1].
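The correlation and retention check can be sketched without any external libraries. The example below computes a Spearman rank correlation between one feature's intensity and the bioactivity score across extracts, then checks which correlated features survive in a rational library. All intensities, scores, and feature names are invented, the function names are illustrative, and a real analysis would also need a significance test and multiple-testing correction, which are omitted here.

```python
import math


def average_ranks(values):
    """Rank values from 1..n, giving tied values the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        avg = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = avg
        i = j + 1
    return ranks


def spearman(x, y):
    """Spearman rank correlation: Pearson correlation of the rank vectors."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(x)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy) if sx and sy else 0.0


def retained(correlated_features, rational_library_features):
    """Correlated features still present in the rational library."""
    return sorted(set(correlated_features) & set(rational_library_features))


# Hypothetical data for one MS1 feature across five extracts.
intensity = [1200.0, 300.0, 4500.0, 50.0, 2200.0]
inhibition = [40.0, 15.0, 95.0, 5.0, 60.0]  # percent inhibition in the bioassay
print(spearman(intensity, inhibition))

# Hypothetical feature IDs (m/z_RT pairs) before and after rationalization.
print(retained(["301.14_5.2", "455.29_9.8"], ["301.14_5.2", "120.08_1.1"]))
```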

Protocol 2: System Suitability Test (SST) for LC-MS/MS Performance Monitoring

A robust SST is critical for troubleshooting [21].

- SST Solution: Prepare a neat standard mixture containing 5-10 known natural products or metabolites covering a range of masses and polarities.

- Daily Procedure: Inject the SST solution at the beginning and end of each analytical batch.

- Key Performance Indicators (KPIs) to Monitor and Archive:

- Chromatography: Retention time stability (±0.1 min), peak shape (asymmetry factor), and column pressure.

- MS: Signal intensity (peak height/area), signal-to-noise ratio (S/N), and mass accuracy (ppm error).

- Action: Establish acceptable ranges for each KPI. Investigate the root cause if any KPI falls outside its range before processing experimental samples [21].
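The KPI checks above lend themselves to a simple automated gate. The sketch below flags any SST standard whose retention time drifts by more than 0.1 min, whose mass error exceeds 5 ppm, or whose signal-to-noise falls below a floor. The retention time and ppm limits come from this guide; the signal-to-noise floor of 10, the data layout, and the standard names are assumptions for illustration and should be replaced with ranges established on your own instrument.

```python
def ppm_error(observed_mz, theoretical_mz):
    """Mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def check_sst(measured, reference, rt_tol_min=0.1, ppm_tol=5.0, min_sn=10.0):
    """Return a list of (standard, failed KPI) pairs; an empty list means the SST passed."""
    failures = []
    for name, ref in reference.items():
        obs = measured[name]
        if abs(obs["rt"] - ref["rt"]) > rt_tol_min:
            failures.append((name, "retention time"))
        if abs(ppm_error(obs["mz"], ref["mz"])) > ppm_tol:
            failures.append((name, "mass accuracy"))
        if obs["sn"] < min_sn:
            failures.append((name, "signal-to-noise"))
    return failures


# Hypothetical reference values and one day's measurements for two standards.
reference = {
    "std_A": {"rt": 5.10, "mz": 500.0000},
    "std_B": {"rt": 12.40, "mz": 250.0000},
}
measured = {
    "std_A": {"rt": 5.25, "mz": 500.0010, "sn": 150.0},  # retention time has drifted
    "std_B": {"rt": 12.42, "mz": 250.0002, "sn": 80.0},  # within all limits
}

print(check_sst(measured, reference))
```

Archiving the raw KPI values alongside the pass or fail result makes gradual drift visible long before a hard limit is crossed.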

Data Presentation

Table 1: Bioactivity Hit Rate Comparison: Full Library vs. Rationalized Libraries [1]

| Activity Assay | Hit Rate: Full Library (1,439 extracts) | Hit Rate: 80% Scaffold Diversity Library (50 extracts) | Hit Rate: 100% Scaffold Diversity Library (216 extracts) |

|---|---|---|---|

| P. falciparum (phenotypic) | 11.26% | 22.00% | 15.74% |

| T. vaginalis (phenotypic) | 7.64% | 18.00% | 12.50% |

| Neuraminidase (target-based) | 2.57% | 8.00% | 5.09% |

Table 2: Retention of Bioactivity-Correlated MS Features in Rational Libraries [1]

| Activity Assay | # of Correlated Features in Full Library | # Retained in 80% Diversity Library | # Retained in 100% Diversity Library |
|---|---|---|---|
| P. falciparum | 10 | 8 | 10 |
| T. vaginalis | 5 | 5 | 5 |
| Neuraminidase | 17 | 16 | 17 |

Experimental and Data Analysis Workflows

Workflow for LC-MS/MS-Based Library Rationalization

LC-MS/MS Troubleshooting Decision Tree

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagents and Materials for MS-Based Library Rationalization

| Item | Function in the Workflow | Key Considerations |
|---|---|---|
| High-Purity Solvents (ACN, MeOH, Water) | Mobile phase components for LC-MS/MS. | Use LC-MS grade to minimize background noise and ion suppression [21]. |
| Volatile Additives (Formic Acid, Ammonium Acetate) | Mobile phase modifiers to promote ionization. | Typically used at 0.1% concentration. Choose acid or buffer based on ionization mode. |
| U/HPLC Column (e.g., C18) | Chromatographic separation of complex extracts. | Column choice (length, particle size, pore size) defines resolution and run time. |
| MS Calibration Solution | Accurate mass calibration of the mass spectrometer. | Required daily or per analytical batch to keep mass error below 5 ppm. |
| System Suitability Test (SST) Mix | A cocktail of standard compounds to verify LC and MS performance [21]. | Should include compounds covering a range of RT and m/z relevant to your samples. |
| Solid Support for Bioaffinity Fishing (e.g., Magnetic Beads) | For validating bioactive scaffolds via target-binding assays [22]. | Beads can be coated with target proteins (e.g., enzymes) to directly isolate ligands from active extracts. |
| Molecular Networking Software (GNPS) | Cloud-based platform for processing MS/MS data into scaffold networks [1]. | Central to the workflow; requires data in open formats (.mzML). |

FAQs: Common Technical Challenges in Virtual Dereplication

This section addresses frequent technical issues encountered by researchers when implementing AI-driven virtual dereplication workflows.

FAQ 1: My AI model for activity prediction consistently yields high false-positive rates. What could be the root cause, and how can I address it?

- Answer: High false-positive rates often stem from biased or imbalanced training data [12]. If your dataset overrepresents certain compound classes or active motifs, the model will be biased toward them. To address this:

- Audit Your Data: Use cheminformatics toolkits (e.g., RDKit) to analyze the chemical space coverage of your training set. Check for overrepresentation of specific scaffolds.

- Apply Data Balancing: Implement techniques such as the Synthetic Minority Over-sampling Technique (SMOTE) to augment under-represented classes, or under-sample the majority classes [12].

- Validate Rigorously: Use a time-split or scaffold-split validation protocol instead of random splitting. This tests the model's ability to generalize to truly novel chemotypes, a critical requirement in dereplication [12].
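
A scaffold split can be sketched without any cheminformatics dependency once scaffolds are available as strings. The example below assumes scaffolds were precomputed elsewhere (for instance as Murcko scaffold SMILES); the function name, the "largest groups to training first" policy, and the toy records are illustrative choices rather than a prescribed method.

```python
from collections import defaultdict


def scaffold_split(records, test_fraction=0.2):
    """Split (compound_id, scaffold) records so no scaffold spans both sets.

    Scaffolds are assumed to be precomputed strings; this sketch only
    performs the grouping and assignment.
    """
    groups = defaultdict(list)
    for compound_id, scaffold in records:
        groups[scaffold].append(compound_id)
    # Largest scaffold groups go to training first, so the test set is
    # built from the rarer chemotypes.
    ordered = sorted(groups.items(), key=lambda kv: (-len(kv[1]), kv[0]))
    n_train_target = (1 - test_fraction) * len(records)
    train, test = [], []
    for _, members in ordered:
        if len(train) + len(members) <= n_train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Toy records: compound ID paired with a placeholder scaffold label
records = [("c1", "A"), ("c2", "A"), ("c3", "A"), ("c4", "B"), ("c5", "B"),
           ("c6", "C"), ("c7", "D"), ("c8", "D"), ("c9", "E"), ("c10", "A")]
train_ids, test_ids = scaffold_split(records, test_fraction=0.2)
print(train_ids, test_ids)
```

Because every member of a scaffold group lands on the same side of the split, test-set performance reflects prediction on chemotypes the model has not seen, which is the situation a dereplication workflow actually faces.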

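FAQ 2: My in-house MS/MS spectral data fail to integrate with public dereplication platforms such as GNPS. How do I resolve this?

- Answer: Integration failures typically involve data formatting or preprocessing discrepancies. Follow this protocol: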

- Standardize Your Data: Convert all raw spectra to open formats (e.g., .mzML) using tools like MSConvert (ProteoWizard). Ensure consistent collision energy settings across datasets.

- Align Metadata: Confirm that your compound metadata (precursor m/z, retention time, ionization mode) matches the required fields for the target platform, such as the Global Natural Products Social Molecular Networking (GNPS) platform [23].

- Pre-process with GNPS Tools: Use the GNPS data pipeline (available on GitHub) to filter noise, peak-pick, and align spectra before uploading. This maximizes spectral matching fidelity [23] [9].

FAQ 3: When performing virtual screening, my docking scores do not correlate with subsequent experimental bioassay results. Why does this happen?

- Answer: A lack of correlation suggests a disconnect between the computational model and the biological reality. Key factors include:

- Inappropriate Protein Structure: The crystal structure used may be in an inactive conformation or lack crucial water molecules/cofactors. Solution: Use homology modeling with MD relaxation or try ensemble docking against multiple protein conformations.

- Over-simplified Scoring: Standard docking scores estimate affinity poorly for complex NPs. Solution: Post-process hits with more rigorous Molecular Mechanics/Generalized Born Surface Area (MM/GBSA) calculations or apply machine-learning-based affinity predictors trained on NP-like compounds [24].

- Ignoring Compound Stability: The simulation assumes a pure, stable ligand. In reality, compounds in a crude extract may degrade or interact. Always cross-reference virtual hits with analytical chemistry data (LC-MS) from your extract [23].

FAQ 4: How can I assess the "novelty" of a natural product candidate identified through an AI dereplication pipeline to avoid rediscovery?

- Answer: Novelty assessment requires multi-layered database interrogation. Do not rely on a single source.

- Perform Multi-Database Queries: Search candidate structures against comprehensive, curated NP databases (e.g., COCONUT, NPASS, LOTUS) using both exact and substructure searches [23].

- Analyze the Chemical Context: Use a tool like ChemMN to visualize the candidate's position within a larger molecular network of known compounds. True novelty is suggested by a cluster of related, unknown spectra distinct from clusters of known compounds [23] [9].

- Check Patent Literature: Use commercial tools like SciFinder or Reaxys to search patent claims, which often contain novel structures not yet in academic databases.
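
The exact-match layer of a multi-database query can be sketched with InChIKeys, whose first 14-character block hashes the connectivity of the structure. The example assumes each database has been exported locally as a set of InChIKey strings; no database API is called, substructure searching is not covered, and the database names and keys shown are placeholders rather than real identifiers.

```python
def novelty_report(candidate_key, databases):
    """Classify a candidate InChIKey against several local database exports.

    databases: dict mapping database name -> set of InChIKey strings
    (hypothetical local exports).
    """
    skeleton = candidate_key.split("-")[0]  # first block: connectivity hash
    report = {}
    for name, keys in databases.items():
        if candidate_key in keys:
            report[name] = "exact match"
        elif any(k.split("-")[0] == skeleton for k in keys):
            report[name] = "same connectivity (e.g., stereoisomer)"
        else:
            report[name] = "no match"
    return report


def is_putatively_novel(report):
    """Novel only if every queried database returned no match."""
    return all(status == "no match" for status in report.values())


# Placeholder keys, not real compounds
databases = {
    "db_a": {"AAAAAAAAAAAAAA-BBBBBBBBSA-N"},
    "db_b": {"AAAAAAAAAAAAAA-CCCCCCCCSA-N"},
    "db_c": set(),
}
report = novelty_report("AAAAAAAAAAAAAA-BBBBBBBBSA-N", databases)
print(report, is_putatively_novel(report))
```

Reporting per-database results, rather than a single yes/no, makes it visible when a candidate is absent from one source but present in another.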

FAQ 5: My institution has limited HPC resources. Can I run meaningful AI-based dereplication?

- Answer: Yes, by leveraging optimized, pre-trained models and cloud-based workflows.

- Use Pre-trained Models: Platforms like Insilico Medicine's Chemistry42 or Atomwise offer access to cloud-based, pre-trained models for property prediction and target identification, requiring only compound SMILES as input [24].

- Employ Transfer Learning: Start with a large model pre-trained on general chemical libraries (e.g., PubChem). Fine-tune it on your smaller, specialized NP dataset using cost-effective cloud GPU instances, which requires less computational power than training from scratch [12].

- Utilize Efficient Algorithms: For similarity searching and clustering, use lightweight methods such as Tanimoto similarity on bit-vector fingerprints or the GROUPE algorithm for MS/MS spectra, which are less resource-intensive than deep learning [23].
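
To show why fingerprint similarity is cheap, the sketch below computes Tanimoto coefficients on fingerprints stored as plain Python integers, using only bitwise operations. The fingerprints are toy bit patterns, not output from a real fingerprinting method, and the function names are illustrative.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient of two fingerprints stored as Python ints."""
    shared = bin(fp_a & fp_b).count("1")  # bits set in both
    total = bin(fp_a | fp_b).count("1")   # bits set in either
    return shared / total if total else 0.0


def most_similar(query_fp, library):
    """Return (name, similarity) of the closest library fingerprint."""
    return max(((name, tanimoto(query_fp, fp)) for name, fp in library.items()),
               key=lambda pair: pair[1])


# Toy 6-bit fingerprints (illustrative only)
library = {"known_a": 0b101001, "known_b": 0b010010}
print(most_similar(0b101101, library))
```

Here the query shares three of four set bits with `known_a` (similarity 0.75) and none with `known_b`, so a laptop-scale search over a large library reduces to repeated AND, OR, and bit-count operations.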

Step-by-Step Troubleshooting Guides

The guides below adapt a structured five-step troubleshooting framework for technical problem-solving to the context of computational NP research [25].

Guide 1: Troubleshooting Failed Spectral Dereplication in GNPS

Issue: Molecular networking in GNPS fails to link new MS/MS spectra to any known library spectra, resulting in no annotations.

Step 1: Identify the Problem

- Action: Precisely define the failure. Is it a universal match failure, or does it affect only specific ionization modes or m/z ranges? Check job logs on the GNPS website for error messages [23].

- Success Indicator: A clear statement such as: "MS/MS spectra from positive-mode ESI data in the 300-800 m/z range yield zero library matches, despite strong signal intensity."

Step 2: Establish Probable Cause

- Action: Analyze the data preprocessing steps. The most common causes are:

- Incorrect preprocessing: Poor peak picking or excessive noise filtering removed genuine fragment ions.

- Parameter mismatch: The precursor/fragment mass tolerance set in the GNPS job is stricter than your instrument's accuracy.

- Gap in library coverage: The natural product chemotype in your sample is not represented in the selected reference libraries [23] [9].

- Success Indicator: Hypothesis formation, e.g., "The most probable cause is a mismatch between the 0.05 Da fragment tolerance setting and the instrument's true 0.1 Da accuracy."

Step 3: Test a Solution

- Action: Test one parameter change at a time. First, re-submit a subset of data with relaxed mass tolerance parameters (e.g., increase from 0.01 Da to 0.02 Da for precursor mass, and 0.05 Da to 0.1 Da for fragment ions). Use the "Filter Spectrum" module in GNPS to apply minimal noise filtering [23].

- Success Indicator: The GNPS job completes and yields a higher number of spectral matches for the test subset.
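
When choosing tolerances, it helps to translate between absolute (Da) and relative (ppm) units, since instrument accuracy is usually specified in ppm while the tolerances in this guide are given in Da. The helper names below are illustrative, and the m/z values are taken from the 300-800 range used in the Step 1 example.

```python
def da_to_ppm(tolerance_da, mz):
    """Express an absolute tolerance (Da) as ppm at a given m/z."""
    return tolerance_da / mz * 1e6


def ppm_to_da(tolerance_ppm, mz):
    """Express a relative tolerance (ppm) as Da at a given m/z."""
    return tolerance_ppm * mz / 1e6


# A fixed 0.02 Da window is looser in relative terms at low m/z
for mz in (300.0, 800.0):
    print(f"0.02 Da at m/z {mz:.0f} = {da_to_ppm(0.02, mz):.1f} ppm")
print(f"5 ppm at m/z 500 = {ppm_to_da(5.0, 500.0):.4f} Da")
```

A 0.02 Da window corresponds to about 67 ppm at m/z 300 but 25 ppm at m/z 800, so compare the setting with the instrument's stated accuracy at the low end of your mass range as well as the high end.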

Step 4: Implement the Solution

- Action: Apply the validated parameter set (e.g., relaxed mass tolerances) to the entire dataset. Re-run the full molecular networking job. Document the exact parameters used in your laboratory information management system (LIMS) [25].

- Success Indicator: The full job completes successfully with improved annotation rates.

Step 5: Verify Functionality

- Action: Validate the biological relevance of the new annotations. Cross-check a few matched compounds against your bioassay data. Does the predicted compound class align with the observed activity? Perform a manual check of raw vs. library spectra for a high-scoring match to confirm quality [9].

- Success Indicator: A significant proportion of new annotations are biologically plausible, and manual inspection confirms spectral match quality.
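
For the manual spectral check in Step 5, a simplified cosine score can be computed locally to sanity-check a reported match. This is a sketch only: it uses greedy peak pairing within a fragment tolerance and raw intensities, so its values will not necessarily equal those reported by GNPS, whose scoring may differ in normalization and matching details. The function name and example spectra are hypothetical.

```python
import math


def cosine_score(spec_a, spec_b, fragment_tol=0.02):
    """Greedy-matched cosine similarity between two centroided spectra.

    spec_a, spec_b: lists of (m/z, intensity) pairs.
    Returns (score, number_of_matched_peaks).
    """
    # Candidate pairs within tolerance, strongest intensity products first.
    pairs = sorted(
        ((int_a * int_b, i, j)
         for i, (mz_a, int_a) in enumerate(spec_a)
         for j, (mz_b, int_b) in enumerate(spec_b)
         if abs(mz_a - mz_b) <= fragment_tol),
        reverse=True,
    )
    used_a, used_b, dot, matched = set(), set(), 0.0, 0
    for product, i, j in pairs:
        if i not in used_a and j not in used_b:  # each peak is matched once
            used_a.add(i)
            used_b.add(j)
            dot += product
            matched += 1
    norm_a = math.sqrt(sum(inten * inten for _, inten in spec_a))
    norm_b = math.sqrt(sum(inten * inten for _, inten in spec_b))
    score = dot / (norm_a * norm_b) if norm_a and norm_b else 0.0
    return score, matched


# Hypothetical query and library spectra
query = [(100.00, 10.0), (150.00, 50.0), (200.00, 100.0)]
reference = [(100.01, 12.0), (150.00, 45.0), (200.01, 100.0), (250.00, 5.0)]
print(cosine_score(query, reference))
```

Returning the matched-peak count alongside the score mirrors the two thresholds commonly set in a networking job (a minimum cosine score and a minimum number of matched fragment ions).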

Guide 2: Debugging a Machine Learning Model with Poor Generalization

Issue: An in-house ML model for predicting antibacterial activity performs well on training/validation data but fails to predict the activity of new, structurally distinct NP batches.

Step 1: Identify the Problem

- Action: Confirm the generalization failure. Use the model to predict a held-out external test set comprising compounds from a new microbial source. Quantify the drop in performance metrics (e.g., AUC-ROC, precision) [12].

- Success Indicator: Clear metrics showing a large performance gap between internal validation (>0.8 AUC) and external testing (<0.6 AUC).
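
The performance gap can be quantified with a small AUC-ROC calculation. The sketch below uses the pairwise (Mann-Whitney) definition of AUC in plain Python, which is quadratic in the number of compounds and intended for illustration only; the labels and scores are invented to show an internal/external gap.

```python
def auc_roc(labels, scores):
    """AUC as the probability that a random active outscores a random inactive.

    labels: 1 = active, 0 = inactive; scores: model outputs (higher = more
    likely active). Ties count as half a win (Mann-Whitney formulation).
    """
    pos = [s for l, s in zip(labels, scores) if l == 1]
    neg = [s for l, s in zip(labels, scores) if l == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


labels = [1, 1, 1, 0, 0, 0]
internal_scores = [0.9, 0.8, 0.45, 0.4, 0.3, 0.6]   # invented validation scores
external_scores = [0.6, 0.3, 0.5, 0.7, 0.4, 0.2]    # invented external scores
print(f"internal AUC = {auc_roc(labels, internal_scores):.3f}")
print(f"external AUC = {auc_roc(labels, external_scores):.3f}")
```

In this toy case the internal AUC is about 0.89 and the external AUC about 0.56, the kind of gap that Step 1 treats as confirmation of a generalization failure.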

Step 2: Establish Probable Cause

- Action: Diagnose model bias. Analyze the model's applicability domain. Project the external test set compounds into the chemical space of the training set using Principal Component Analysis (PCA) or t-SNE. The probable cause is that the new compounds fall outside the chemical space the model learned from (domain shift) [12].

- Success Indicator: Visualization shows a clear separation between the chemical space clusters of the training and new test compounds.

Step 3: Test a Solution

- Action: Implement a domain-adversarial neural network (DANN) or similar technique designed to learn features invariant to the source (e.g., microbial genus). Train a simplified version on a small subset of data that includes both old and new chemotypes [12].

- Success Indicator: The retrained model shows improved (though not perfect) predictive accuracy on the new test subset.

Step 4: Implement the Solution

- Action: Retrain the main production model using the domain-adversarial architecture on the entire available dataset, ensuring representation from diverse biological sources. Deploy the new model with a built-in applicability domain checker that flags predictions for compounds outside the training space [24] [12].

- Success Indicator: The new model is deployed, and its applicability domain checker actively flags low-confidence predictions.
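
An applicability domain checker can be as simple as a nearest-neighbor distance test in descriptor space. The sketch below is one common approach and not necessarily the one used in the cited work: it assumes compounds are represented as numeric descriptor vectors, and the distance cutoff, function names, and example vectors are hypothetical.

```python
import math


def nearest_distance(vector, reference_vectors):
    """Euclidean distance from `vector` to its nearest reference vector."""
    return min(math.dist(vector, ref) for ref in reference_vectors)


def flag_out_of_domain(query_vectors, training_vectors, max_distance):
    """Return True for each query compound lying outside the training space."""
    return [nearest_distance(q, training_vectors) > max_distance
            for q in query_vectors]


# Toy 2-D descriptor vectors (illustrative only)
training = [(0.0, 0.0), (1.0, 0.0), (0.0, 1.0)]
queries = [(0.5, 0.5), (5.0, 5.0)]
print(flag_out_of_domain(queries, training, max_distance=1.0))
```

The first query sits within the training cloud and passes, while the second is far from every training compound and is flagged; in practice the cutoff would be calibrated on held-out data rather than fixed by hand.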

Step 5: Verify Functionality

- Action: Establish a continuous validation pipeline. Routinely test the model's predictions on new, experimentally confirmed active/inactive compounds. Monitor performance drift over time and schedule periodic model retraining with newly acquired data [12].

- Success Indicator: A dashboard tracking model performance on new data shows stable, reliable metrics over several months.

Detailed Experimental Protocols

Protocol 1: Molecular Networking for Dereplication via GNPS

This protocol details the creation of a molecular network to group related spectra and identify known compounds [23] [9].

Objective: To rapidly group MS/MS data from a natural product extract and annotate known compounds via spectral matching.

Materials: LC-MS/MS data files in vendor format (e.g., .raw, .d, .wiff), a computer with internet access, and a GNPS account.

Method:

- Data Conversion: Convert raw LC-MS/MS files to open .mzML format using MSConvert (ProteoWizard). Enable peak picking for MS2 spectra.

- File Upload: Navigate to the GNPS website (gnps.ucsd.edu). Use the "Upload Files" function to transfer your .mzML files to the GNPS/MassIVE server.

- Job Parameterization: In the "Molecular Networking" workflow, set key parameters:

- Precursor Ion Mass Tolerance: 0.02 Da.

- Fragment Ion Mass Tolerance: 0.02 Da.

- Minimum Cosine Score: 0.7 (for relatedness).

- Minimum Matched Fragment Ions: 6.

- Library Search Parameters: Set to search against public GNPS libraries and enable advanced search options like "search analogs."

- Job Submission & Monitoring: Submit the job. Processing time varies with data size. Monitor job status via the provided link.