Harnessing AI for Drug Discovery: A Guide to Molecular Docking with Natural Products

This article provides a comprehensive guide for researchers and drug development professionals on implementing AI-guided molecular docking for bioactive natural products.

Harnessing AI for Drug Discovery: A Guide to Molecular Docking with Natural Products

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on implementing AI-guided molecular docking for bioactive natural products. It explores the foundational principles of virtual screening, details practical workflows from target selection to pose prediction, addresses common computational challenges and optimization strategies, and compares AI-driven methods with traditional docking and experimental validation. The content synthesizes current tools, best practices, and future directions to accelerate the discovery of novel therapeutics from nature's chemical library.

From Nature to Code: The Foundational Principles of AI-Driven Docking

Application Note ANP-2024-01: AI-Prioritized Screening of Natural Product Libraries for α-Glucosidase Inhibition

Context: As part of a thesis on AI-guided molecular docking, this note details the integration of computational pre-screening with experimental validation to identify novel anti-diabetic leads from natural product (NP) libraries. AI models are trained on known bioactivity data to prioritize compounds for docking, which in turn predicts high-affinity binders for in vitro assay.

Quantitative Data Summary: Table 1: AI-Docking Performance Metrics (Virtual Screening of 10,000 NP-like Compounds)

| Metric | Value | Description |

|---|---|---|

| Enrichment Factor (EF1%) | 28.5 | Fold increase in hit rate over random screening in top 1% of ranked list. |

| Area Under ROC Curve (AUC) | 0.91 | Overall ranking accuracy (1.0 is perfect). |

| Number of Virtual Hits | 125 | Compounds with docking score ≤ -9.0 kcal/mol. |

| Experimental Hit Rate | 12.8% | 16 confirmed inhibitors from 125 virtual hits tested. |

| Most Potent IC₅₀ | 0.85 µM | Isolated flavonoid derivative (NP-ASF-102). |

Table 2: Top 5 Validated Hits from *Morus alba Root Extract*

| Compound ID | AI Docking Score (kcal/mol) | Experimental IC₅₀ (µM) | Compound Class |

|---|---|---|---|

| NP-ASF-101 | -10.2 | 2.34 | Prenylated flavonoid |

| NP-ASF-102 | -11.5 | 0.85 | Geranylated chalcone |

| NP-ASF-103 | -9.8 | 5.67 | Stilbene glycoside |

| NP-ASF-104 | -9.3 | 12.91 | Moracinoside analog |

| NP-ASF-105 | -10.7 | 1.89 | Diels-Alder adduct |

Protocol 1: AI-Guided Virtual Screening Workflow for α-Glucosidase Inhibitors

Objective: To computationally identify high-probability bioactive NPs from a digital library.

Materials (Research Reagent Solutions & Key Tools):

- Natural Product Digital Library: (e.g., COCONUT, NPASS) – A curated database of NP structures in SDF format.

- Target Protein Structure: Human α-glucosidase (PDB ID: 5NN8), prepared by removing water, adding hydrogens, and assigning charges.

- AI/ML Software: A random forest or deep neural network model pre-trained on known α-glucosidase inhibitors.

- Molecular Docking Suite: AutoDock Vina or GNINA.

- Scripting Environment: Python with RDKit and PyMOL for ligand/target preparation.

Procedure:

- Library Preparation: Standardize the NP library (desalting, tautomer generation) using RDKit. Generate 3D conformers.

- AI-Based Prioritization: Input the prepared library into the pre-trained AI model. The model scores each compound based on predicted bioactivity likelihood. Select the top 5% for docking.

- Molecular Docking: a. Define the active site on α-glucosidase using coordinates from a known co-crystallized ligand. b. Configure the docking grid box to encompass the active site with 1Å spacing. c. Execute batch docking of the AI-prioritized subset using Vina. Use an exhaustiveness value of 32. d. For each compound, retain the pose with the most favorable (lowest) binding affinity score.

- Hit Selection: Rank all docked compounds by score. Apply a filter for compounds forming key hydrogen bonds with catalytic residues (Asp616, Asp518). Select the top 125 for experimental validation.

Protocol 2: In Vitro Validation of AI-Derived Hits Using α-Glucosidase Inhibition Assay

Objective: To experimentally confirm the inhibitory activity of virtually screened hits.

Materials (Research Reagent Solutions & Key Tools):

- Enzyme Solution: α-Glucosidase from Saccharomyces cerevisiae (0.2 U/mL in 0.1 M phosphate buffer, pH 6.8).

- Substrate Solution: 5 mM p-Nitrophenyl-α-D-glucopyranoside (pNPG) in buffer.

- Test Compounds: NP fractions or pure compounds, dissolved in DMSO (final [DMSO] ≤ 1% v/v).

- Positive Control: Acarbose, prepared as a 1 mM stock in buffer.

- Stop Solution: 1 M Na₂CO₃.

- Microplate Reader: Capable of reading absorbance at 405 nm.

Procedure:

- In a 96-well plate, add 70 µL of phosphate buffer to each well.

- Add 10 µL of test compound (or buffer for control, or acarbose for reference) to respective wells.

- Initiate the reaction by adding 10 µL of enzyme solution. Pre-incubate at 37°C for 10 min.

- Add 10 µL of pNPG substrate to start the enzymatic reaction. Incubate at 37°C for 30 min.

- Terminate the reaction by adding 100 µL of Na₂CO₃ stop solution.

- Immediately measure the absorbance at 405 nm (A₅ₐₘₚₗₑ).

- Calculate Inhibition: % Inhibition = [1 - (A₅ₐₘₚₗₑ - A₅ₐₘₚₗₑ ᵇˡᵃⁿᵏ) / (A₀ₙₜᵣₒₗ - A₀ₙₜᵣₒₗ ᵇˡᵃⁿᵏ)] × 100.

- Determine IC₅₀ values by testing a range of concentrations (e.g., 0.1-100 µM) and fitting the dose-response data.

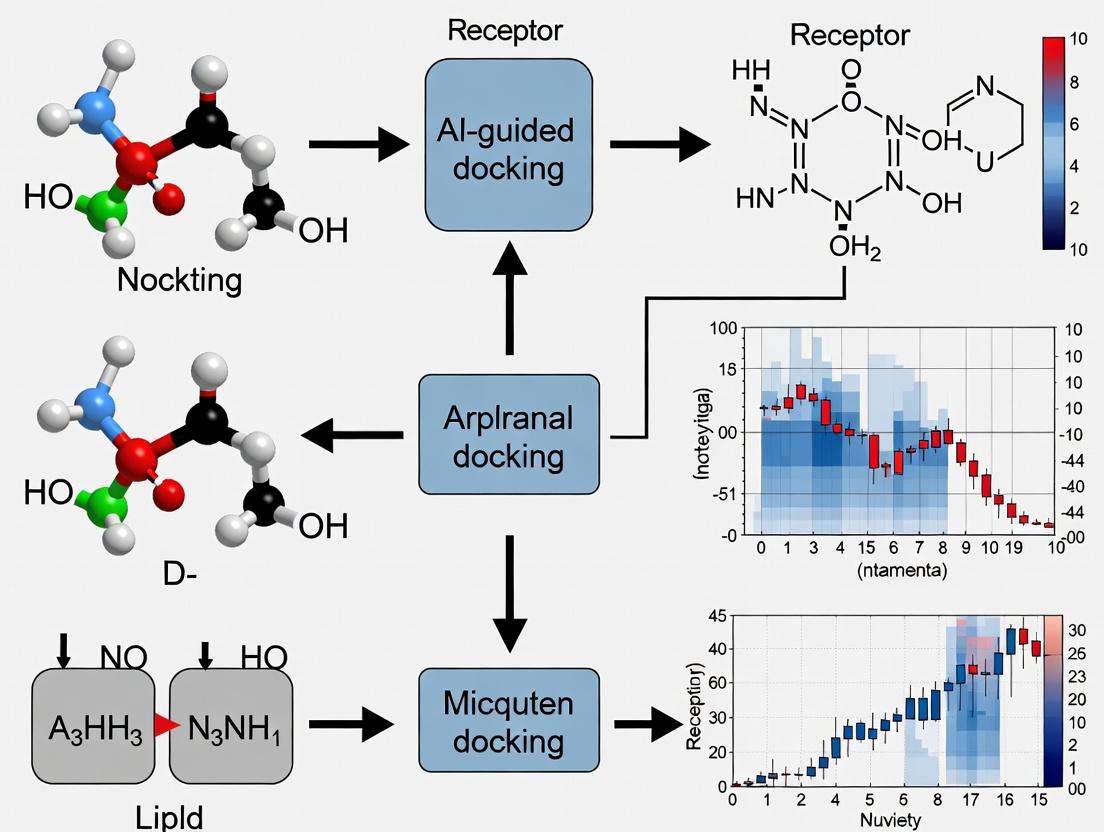

Visualization: Diagram 1: AI-Guided NP Drug Discovery Pipeline

Title: AI & Docking Pipeline for Natural Product Screening

Visualization: Diagram 2: α-Glucosidase Inhibition Signaling Pathway

Title: NP Inhibition of α-Glucosidase in Glucose Regulation

The Scientist's Toolkit: Key Reagents for NP α-Glucosidase Research

| Item | Function & Application |

|---|---|

| pNPG Substrate | Chromogenic substrate; cleavage by α-glucosidase releases yellow p-nitrophenol, measurable at 405 nm. |

| Recombinant α-Glucosidase | Standardized, pure enzyme source for consistent, high-throughput inhibition assays. |

| Acarbose Control | Gold-standard inhibitor; essential positive control for validating assay performance. |

| DMSO (Anhydrous) | Universal solvent for dissolving diverse, often hydrophobic, natural product compounds. |

| 96-Well Assay Plates | Platform for high-throughput screening of multiple NP extracts or fractions simultaneously. |

| Pre-Trained AI Model | Accelerates discovery by computationally prioritizing NPs with high bioactive potential. |

| Curated NP-SDF Library | Digital starting point for virtual screening; contains essential 2D/3D structural data. |

This application note details the traditional molecular docking workflow, its established protocols, and inherent limitations. This foundational knowledge is critical within our broader thesis on developing AI-guided molecular docking pipelines. The objective is to enhance the discovery and optimization of bioactive natural products, which are characterized by complex chemistry and often poor pharmacokinetic profiles, by moving beyond traditional docking's constraints.

The Traditional Molecular Docking Workflow

The standard workflow is a sequential, multi-step process aimed at predicting the preferred orientation and binding affinity of a small molecule (ligand) within a target protein's binding site.

Traditional Molecular Docking Sequential Workflow

Detailed Experimental Protocols

Protocol 1: Protein Target Preparation

- Objective: Generate a clean, biologically relevant protein structure for docking.

- Software Tools: UCSF Chimera, AutoDock Tools, Schrödinger Protein Preparation Wizard.

- Steps:

- Retrieve Structure: Download the 3D crystal structure (e.g., of a kinase or protease) from the Protein Data Bank (PDB). Prefer structures with high resolution (<2.0 Å) and co-crystallized ligands.

- Remove Extraneous Molecules: Delete all water molecules, ions, and non-essential cofactors. Retain crucial cofactors (e.g., Mg²⁺, heme) if involved in binding.

- Add Missing Components: Use modeling tools to add missing hydrogen atoms, side chains, or loop regions.

- Assign Protonation States: At physiological pH (7.4), assign correct protonation states to histidine, aspartate, glutamate, and lysine residues using empirical pKa prediction algorithms (e.g., PROPKA).

- Energy Minimization: Perform a restrained minimization (RMSD cutoff: 0.3 Å) to relieve steric clashes introduced during hydrogen addition and protonation, using an OPLS3e or AMBER force field.

Protocol 2: Ligand Library Preparation

- Objective: Create a library of 3D small molecule structures in a docking-ready format.

- Software Tools: Open Babel, RDKit, LigPrep (Schrödinger).

- Steps:

- Source Compounds: Obtain 2D structures (SMILES or SDF) from databases like PubChem, ZINC, or in-house natural product libraries.

- Generate 3D Conformations: Convert 2D structures to 3D. For each ligand, generate multiple low-energy 3D conformers (e.g., 10-50) to account for flexibility.

- Optimize Geometry: Perform a molecular mechanics minimization (using MMFF94 or OPLS3e) to ensure reasonable bond lengths and angles.

- Assign Charges and Tautomers: Calculate partial atomic charges (e.g., Gasteiger-Marsili, AM1-BCC) and generate relevant tautomeric and stereoisomeric states at pH 7.4 ± 0.5.

- Output Format: Save all structures in a unified format (e.g., MOL2, SDF) with correct charge information.

Protocol 3: Docking Execution with AutoDock Vina

- Objective: Perform the computational docking of the ligand library into the defined protein binding site.

- Software: AutoDock Vina.

- Steps:

- Define Search Space: Create a configuration file (

config.txt). Set thecenter_x, center_y, center_zcoordinates to the centroid of the known binding site or a reference ligand. Define thesize_x, size_y, size_zof the search box to encompass the entire site with a margin of ~5-10 Å. - Prepare Input Files: Convert the prepared protein to PDBQT format using AutoDock Tools, preserving assigned charges and atom types. Convert the ligand library to PDBQT format.

- Run Docking: Execute the command:

vina --config config.txt --ligand ligand.pdbqt --out output.pdbqt --log log.txt. For a library, script this process to run sequentially. - Set Parameters: Use an

exhaustivenessvalue of 8-32 (higher for more thorough search). The default number of binding modes (num_modes) is 9. The energy range (energy_range) is typically set to 3-4 kcal/mol.

- Define Search Space: Create a configuration file (

Protocol 4: Post-Docking Analysis

- Objective: Evaluate and rank docking poses based on scoring functions and interaction analysis.

- Software Tools: UCSF Chimera, PyMOL, Discovery Studio.

- Steps:

- Extract Scores: Parse the output log files to extract the binding affinity scores (in kcal/mol) for each ligand pose.

- Rank Ligands: Rank all ligands from the library by their best (most negative) docking score.

- Visualize Top Poses: Visually inspect the top 10-100 poses. Check for:

- Correct placement of key pharmacophoric features.

- Formation of specific hydrogen bonds, hydrophobic contacts, and π-π stacking.

- Absence of severe steric clashes.

- Complementarity with the binding site shape.

- Cluster Similar Poses: Cluster remaining poses by root-mean-square deviation (RMSD) to identify consensus binding modes.

Limitations of the Traditional Workflow

The traditional approach, while foundational, suffers from well-documented limitations that are particularly acute for natural products research.

Quantitative Comparison of Key Limitations

Table 1: Core Limitations of Traditional Molecular Docking

| Limitation Category | Specific Issue | Typical Impact on Results | Quantitative Example/Evidence |

|---|---|---|---|

| Scoring Function Accuracy | Over-reliance on simplified physics/empirical terms. Poor at estimating absolute binding free energy. | High false positive/negative rates. | RMSD between predicted and experimental ΔG can exceed 2-3 kcal/mol, equating to >100-fold error in Ki. |

| Protein Rigidity | Treatment of protein as a static structure (rigid receptor). | Misses induced-fit binding and allosteric effects. | For targets with >1.5 Å backbone movement upon binding, docking accuracy can drop by 30-50%. |

| Solvent & Entropy | Implicit or absent solvent. Poor handling of entropic contributions (e.g., water displacement). | Overestimates affinity for polar, solvent-exposed ligands. | Neglecting explicit water networks can invert the rank order of congeneric series. |

| Chemical Space Bias | Standard scoring functions trained on synthetic drug-like molecules (e.g., Lipinski-compliant). | Systematic bias against complex natural product scaffolds (polycyclic, glycosylated). | Success rates for macrocycles or saponins can be 20-40% lower than for benzodiazepines. |

| Conformational Sampling | Limited exploration of ligand and protein conformational space due to computational cost. | May miss the true bioactive pose. | Exhaustiveness values >256 required for thorough sampling, often computationally prohibitive for large libraries. |

Causal Map of Traditional Docking Limitations

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools and Reagents for Traditional Docking Experiments

| Item / Resource | Category | Primary Function & Relevance |

|---|---|---|

| RCSB Protein Data Bank (PDB) | Database | Primary repository for experimentally determined 3D structures of proteins and nucleic acids. Source of the initial target coordinates. |

| PubChem / ZINC20 Database | Database | Public repositories of millions of purchasable and virtual small molecule compounds. Source for ligand libraries. |

| UCSF Chimera / PyMOL | Visualization Software | Critical for visualizing protein-ligand complexes, analyzing binding interactions, and preparing publication-quality figures. |

| AutoDock Vina / GNINA | Docking Engine | Widely used, open-source programs that perform the core docking calculation and scoring. |

| Schrödinger Suite / MOE | Commercial Software | Integrated platforms offering robust, validated workflows for protein prep, docking (Glide, Induced Fit), and advanced scoring. |

| RDKit | Cheminformatics Library | Open-source toolkit for ligand preprocessing, conformer generation, fingerprint calculation, and chemical analysis. |

| High-Performance Computing (HPC) Cluster | Hardware | Essential for docking large compound libraries (>10,000 molecules) in a feasible timeframe through parallelization. |

| Reference Inhibitor / Substrate | Chemical Reagent | A known bioactive molecule for the target. Used to validate the docking setup (reproduce crystallographic pose) and as a positive control in subsequent assays. |

| Virtual Screening Library (e.g., Selleckchem, Enamine) | Commercial Library | Curated collections of drug-like molecules, FDA-approved drugs, or diverse chemical scaffolds for virtual screening campaigns. |

This overview details key AI technologies transforming structural bioinformatics, directly supporting a thesis on AI-guided molecular docking for bioactive natural products research. The integration of deep learning for structure prediction, affinity scoring, and binding site characterization accelerates the discovery and optimization of natural product-derived therapeutics by overcoming traditional limitations of docking vast, structurally complex chemical spaces.

Key Technologies & Application Notes

AlphaFold2 and Related Protein Structure Prediction

Application Note: AlphaFold2 and its successors (e.g., AlphaFold3, RoseTTAFold2) have revolutionized the initial phase of structure-based drug discovery. For natural products research, accurate ab initio prediction of target protein structures (often without experimental templates) is critical, as many targets (e.g., novel plant or microbial enzymes) lack crystallographic data. These models provide reliable frameworks for docking studies.

Protocol: Generating a Custom Protein Structure Prediction

- Target Sequence Preparation: Obtain the canonical amino acid sequence (UniProt format). Use multiple sequence alignment (MSA) tools like HHblits or JackHMMER against genetic databases (e.g., UniClust30) to generate aligned sequence files.

- Model Selection & Configuration: Choose a model (e.g., AlphaFold2 via ColabFold implementation for speed). Configure to use defined templates if relevant, but typically run in "no-template" mode for novel targets.

- Hardware Setup: Utilize GPU-accelerated environment (e.g., NVIDIA A100, 40GB+ VRAM). For ColabFold, a Google Colab Pro+ session is sufficient.

- Execution: Run the prediction pipeline. Key parameters:

num_recycles=12,num_models=5,rank_by=plDDT. - Model Analysis: Select the top-ranked model based on predicted Local Distance Difference Test (pLDDT) and predicted Aligned Error (PAE). A pLDDT > 70 indicates high confidence. Use the PAE plot to assess domain-level confidence.

- Preparation for Docking: Subject the predicted model to energy minimization using a molecular dynamics package (e.g., AMBER, GROMACS) or a quick minimization in UCSF Chimera to correct minor steric clashes.

AI-Driven Molecular Docking & Scoring Functions

Application Note: Traditional docking scores (e.g., Vina, Glide) often fail to accurately predict binding affinities for natural products due to their complex, flexible scaffolds. Graph Neural Networks (GNNs) and 3D convolutional neural networks (3D-CNNs) trained on massive protein-ligand complex datasets learn nuanced physical interactions, offering superior pose prediction and affinity ranking.

Protocol: Implementing an AI-Scoring Docking Workflow

- Protein Preparation: Using the predicted or experimental structure, prepare the protein file (PDQT format) by adding polar hydrogens, assigning charges (e.g., Gasteiger), and defining rotamer states for flexible side chains (if using flexible docking).

- Ligand Library Preparation: Generate 3D conformers of natural product compounds (e.g., from ZINC Natural Products library). Apply necessary force fields (MMFF94) and optimize geometry.

- Initial Pose Generation: Perform a rapid, broad-search docking using a geometry-based method (e.g., QuickVina, FRED) to generate an initial set of 20-50 candidate poses per ligand.

- AI-Scoring & Re-ranking: Feed the generated protein-ligand complexes (coordinates) into an AI scoring model (e.g., DeepDock, EquiBind, or a customized GNN). The model outputs a refined binding affinity score and may refine the pose.

- Pose Selection & Validation: Select the top 3 poses per ligand based on AI scores. Subject these to visual inspection for interaction fidelity (e.g., hydrogen bonds, pi-stacking) and subsequent MM/GBSA free energy calculations for further validation.

Binding Site Prediction & Druggability Assessment

Application Note: For novel targets implicated by phenotypic screening of natural products, identifying the functional binding pocket is a prerequisite. AI tools like DeepSite and PUResNet predict binding pockets from structure alone, assessing their druggability—a key step in prioritizing targets for a natural product docking campaign.

Protocol: Identifying and Evaluating Potential Binding Pockets

- Input Structure Processing: Provide a cleaned, solvent-removed PDB file of the target protein.

- Pocket Prediction Run: Execute DeepSite or similar tool on the protein grid. The tool returns coordinates and properties of top predicted pockets.

- Analysis of Results: Review the predicted pockets. Key metrics include volume (>150 ų), hydrophobicity, and presence of polar anchor residues. Overlap with known functional sites (from conserved domain databases) increases priority.

- Druggability Scoring: Use an accompanying or separate model (e.g., DeepDrug) to assign a druggability score (0-1) to each pocket. A score >0.7 indicates a highly druggable site suitable for small-molecule (including natural product) engagement.

Generative AI for Natural Product-Inspired Analog Design

Application Note: When a promising natural product hit is found but has suboptimal ADMET properties, generative models (VAEs, GANs, Transformers) can design novel analogs that retain the core pharmacophore while improving synthesizability, solubility, or reduce toxicity.

Protocol: Generating Optimized Analogues from a Lead Compound

- Lead Compound Encoding: Convert the 2D or 3D structure of the natural product lead into a numerical representation (e.g., SMILES string, molecular graph, or 3D fingerprint).

- Constraint Definition: Set property constraints for the generative model: desired ranges for LogP (<5), molecular weight (<500 Da), and number of rotatable bonds (<10). Define the core scaffold as a "must-retain" substructure.

- Model Execution: Run a conditional generative model (e.g., REINVENT, MolGPT) which samples the chemical space under the defined constraints.

- Output Filtering & Scoring: Generate 1,000-10,000 candidate structures. Filter first by physicochemical rules (Lipinski, Veber), then score using the previously validated AI docking model against the target to prioritize 50-100 candidates for in silico or synthetic evaluation.

Data Presentation

Table 1: Performance Comparison of Key AI Structural Bioinformatics Tools

| Technology Category | Example Tool(s) | Key Metric | Performance (Reported) | Relevance to Natural Product Docking |

|---|---|---|---|---|

| Protein Structure Prediction | AlphaFold2, RoseTTAFold | RMSD (Å) on CASP14 targets | <1.0 Å (for many targets) | Provides accurate targets for docking when experimental structures absent. |

| AI Docking Scoring | DeepDock, EquiBind | RMSD (Å) of top pose / Pearson R vs. experimental ΔG | ~1.5 Å / R=0.8+ | Outperforms classical scoring on diverse test sets, including natural product-like molecules. |

| Binding Site Prediction | DeepSite, PUResNet | DCC (Distance to True Pocket) / Matthews Correlation Coef. | DCC ~2.5 Å / MCC >0.7 | Correctly identifies allosteric or novel pockets for unconventional natural products. |

| Generative Design | REINVENT, MolGPT | % Valid/Unique/Novel Molecules / Success Rate in Optimization | >90% Valid, >80% Novel | Can propose synthesizable, drug-like analogs of complex natural product scaffolds. |

| Mutation Effect Prediction | AlphaFold3, ESMFold | Spearman's ρ for ΔΔG prediction | ρ ~0.6-0.8 | Predicts target susceptibility to natural product binding upon mutation. |

Table 2: Essential Research Reagent Solutions (The Scientist's Toolkit)

| Item | Function in AI-Guided Docking Pipeline | Example/Notes |

|---|---|---|

| GPU Computing Resource | Accelerates training & inference of deep learning models. | NVIDIA Tesla V100/A100, or cloud equivalents (AWS p3/p4 instances, Google Colab Pro+). |

| Structural Biology Software Suite | Protein preparation, visualization, and analysis. | UCSF ChimeraX, PyMOL, BIOVIA Discovery Studio. |

| Cheminformatics Toolkit | Ligand preparation, descriptor calculation, library management. | RDKit, Open Babel, Schrödinger LigPrep. |

| Molecular Docking Software | Generation of initial pose libraries for AI re-scoring. | AutoDock Vina, GNINA, FRED (OpenEye). |

| AI Model Repositories | Source for pre-trained models and pipelines. | GitHub repositories for ColabFold, DiffDock, HuggingFace MolGPT. |

| Curated Compound Libraries | Source of natural product and analog structures for screening. | ZINC Natural Products, COCONUT, NPASS. |

| Free Energy Calculation Suite | Validation of top AI-docked poses. | AMBER (for MM/GBSA), GROMACS. |

Protocol Diagrams

AI-Guided Natural Product Docking Workflow

AI Scoring Function Architecture

From AI-Docked Pose to Phenotype

Data Curation for AI-Guided Docking

High-quality, curated datasets are foundational for training and validating AI models in molecular docking. The primary sources include public databases and proprietary collections.

Table 1: Key Data Sources for Bioactive Natural Product Research

| Data Source | Data Type | Estimated Size (2024) | Primary Use in AI/Docking |

|---|---|---|---|

| ChEMBL | Bioactivity | >2.5M compounds, >1.8M assays | Training binding affinity prediction models |

| PDB | 3D Structures | >210,000 structures | Source of target conformations for docking grids |

| ZINC20 | Purchasable Compounds | >230M ready-to-dock molecules | Virtual screening library sourcing |

| COCONUT | Natural Products | >407,000 unique structures | Building NP-focused libraries |

| NPASS | Natural Products Activity | >35,000 NPs, >600 targets | Activity data for target prioritization |

Protocol 1.1: Curation of a Target-Structure Dataset from the PDB Objective: To compile a non-redundant, high-quality set of protein structures suitable for molecular docking studies.

- Query and Download: Use the RCSB PDB API to query for human protein targets with relevance to disease (e.g., kinases, GPCRs). Filter for X-ray crystallography structures with resolution ≤ 2.5 Å.

- Redundancy Reduction: Cluster remaining structures at 95% sequence identity using MMseqs2. Select the highest-resolution structure from each cluster.

- Preprocessing: For each selected PDB file:

- Remove all non-protein entities (water, ions, buffers) except co-crystallized ligands.

- Add missing hydrogen atoms and optimize protonation states at pH 7.4 using PDBFixer or MOE.

- Generate a clean

.pdbfile for docking grid preparation.

- Metadata Annotation: Create a companion table listing PDB ID, target name, UniProt ID, resolution, bound ligand (if any), and relevant biological pathway.

Protocol 1.2: Curation of Bioactivity Data from ChEMBL Objective: To extract and standardize bioactivity data for model training.

- Target Selection: Identify UniProt IDs for targets of interest (e.g., HSP90, EGFR).

- Data Extraction: Use the

chembl_webresource_clientin Python to extract all compounds listed as "Active" against the target, with standard IC50, Ki, or Kd values. - Data Standardization: Convert all activity values to pIC50 (-log10(IC50)). Filter out compounds with activity values < 1 µM (pIC50 > 6) to ensure high-affinity data.

- Compound Standardization: Canonicalize SMILES strings using RDKit, removing salts and standardizing tautomers.

- Final Dataset: Compile into a CSV file with columns:

canonical_smiles,pIC50,target_id,source_chembl_id.

Target Selection and Prioritization

Target selection is guided by therapeutic relevance, structural data availability, and "druggability" assessments.

Table 2: Quantitative Metrics for Target Prioritization

| Prioritization Metric | High-Priority Threshold | Data Source/Tool | Rationale |

|---|---|---|---|

| Druggability Score | ≥ 0.7 | CANVAS, DoGSiteScorer | Predicts likelihood of binding small molecules |

| Pocket Volume (ų) | 300 - 1000 | FPocket | Optimal size for ligand binding |

| Sequence Conservation | High across homologs | BLAST, Clustal Omega | Indicates functional importance |

| Disease Association | GWAS p-value < 1e-8 | Open Targets Platform | Validates therapeutic relevance |

| Structural Coverage | ≥ 3 unique ligand-bound PDBs | RCSB PDB | Ensures robust conformational data for docking |

Protocol 2.1: Computational Assessment of Target Druggability Objective: To rank potential protein targets based on the predicted feasibility of binding small-molecule inhibitors.

- Input Preparation: Provide a prepared protein structure (from Protocol 1.1) in PDB format.

- Binding Site Detection: Run FPocket (

fpocket -f target.pdb) to identify potential binding pockets. - Pocket Analysis: For the top-ranked pocket by score, extract metrics: volume, hydrophobicity, and residue composition.

- Score Calculation: Calculate a composite druggability score (D-score): D-score = (Normalized Pocket Volume * 0.4) + (Hydrophobicity Score * 0.3) + (Conservation Score * 0.3)

- Output: Generate a report listing all pockets, their metrics, and D-scores. Targets with a top-pocket D-score ≥ 0.7 are prioritized.

Library Preparation for Virtual Screening

A well-prepared compound library is essential for efficient virtual screening.

Table 3: Library Preparation Steps and Filters

| Preparation Step | Typical Parameters | Tool/Software | Purpose |

|---|---|---|---|

| Desalting & Standardization | Remove counterions, generate canonical tautomer | RDKit, Open Babel | Creates a consistent molecular representation |

| Physicochemical Filtering | 180 ≤ MW ≤ 500, -2 ≤ LogP ≤ 5, HBD ≤ 5, HBA ≤ 10 | RDKit, Lipinski's Rule of 5 | Enforces drug-like properties |

| Reactive/Unwanted Moieties | Filter PAINS, toxicophores, pan-assay interference compounds | RDKit Filter Catalog | Removes promiscuous or unstable compounds |

| 3D Conformer Generation | Generate up to 50 conformers per compound, minimize energy | Omega (OpenEye), RDKit ETKDG | Prepares molecules for 3D docking |

| Final Format Conversion | Convert to docking-ready format (e.g., .sdf, .mol2) | Open Babel | Creates input for docking software |

Protocol 3.1: Preparation of a Natural Product-Focused Screening Library Objective: To create a clean, drug-like, ready-to-dock library from a raw natural product collection.

- Source Acquisition: Download SMILES strings from COCONUT or other NP databases.

- Initial Cleaning (Python/RDKit):

- Property Filtering: Filter molecules using RDKit's

Descriptorsmodule to enforce: 180 ≤ MW ≤ 600, LogP ≤ 5, HBD ≤ 5, HBA ≤ 10, Rotatable Bonds ≤ 10. - Structural Filtering: Apply a PAINS filter using an RDKit substructure matching to remove known problematic motifs.

- 3D Preparation: For the filtered list, use Omega to generate a multi-conformer 3D structure file in .sdf format, with MMFF94 energy minimization.

- Final Output: The library is a multi-conformer .sdf file, accompanied by a metadata file with original source and calculated properties.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Materials for AI-Guided Docking Workflow

| Item/Category | Specific Example/Product | Function in Workflow |

|---|---|---|

| Protein Expression & Purification | HEK293 or Sf9 Insect Cell Systems, HisTrap HP column | Produces high-quality, soluble protein for crystallography or SPR validation. |

| Crystallography Reagents | Hampton Research Crystal Screen, 24-well VDX plates | Facilitates growth of protein-ligand co-crystals for structure determination. |

| Surface Plasmon Resonance (SPR) | Cytiva Series S Sensor Chip CM5, HBS-EP+ Buffer | Provides label-free kinetic data (Ka, Kd) for validating docking hits. |

| High-Performance Computing | NVIDIA A100 or V100 GPU clusters | Accelerates AI model training and large-scale virtual docking simulations. |

| Commercial Compound Libraries | Enamine REAL Space, Life Chemicals NP Library | Source of physically available compounds for virtual screening and purchase. |

| Docking & Simulation Software | Schrödinger Suite, AutoDock Vina, GROMACS | Performs molecular docking, scoring, and molecular dynamics simulations. |

| AI/ML Framework | PyTorch or TensorFlow with DGL/LifeSci | Enables building and training custom models for binding affinity prediction. |

Visualizations

Title: AI-Driven Docking Workflow from Data to Hits

Title: Key Metrics for Target Prioritization

Title: Natural Product Library Preparation Pipeline

1. Introduction The convergence of Artificial Intelligence (AI) and natural products (NP) research represents a paradigm shift in drug discovery. Framed within a thesis on AI-guided molecular docking for bioactive NPs, these application notes detail how AI-driven virtual screening accelerates the identification of novel therapeutics from NP libraries by predicting binding affinities and mechanisms of action with unprecedented efficiency.

2. Application Notes & Data Presentation 2.1. Performance Metrics of AI-Docking Tools Recent benchmarks (2023-2024) highlight the accuracy and speed of integrated AI/molecular docking platforms for NP screening.

Table 1: Comparative Performance of AI-Enhanced Docking Platforms for Natural Product Libraries

| Platform/Tool | Core AI/Docking Method | Avg. Docking Time per NP Ligand (s) | Enrichment Factor (EF1%)* | Key Application in NP Research |

|---|---|---|---|---|

| AlphaFold2 + AutoDock Vina | Deep Learning Structure Prediction + Physics-based Docking | ~45 | 15.2 | Target-specific screening for novel NP targets with unknown structures. |

| GNINA (CNN-Score) | Convolutional Neural Network Scoring | ~12 | 22.7 | High-throughput virtual screening of large NP databases; improved pose prediction. |

| SMINA (AutoDock4/ Vina) | Customizable Scoring & Optimization | ~8 | 18.5 | Rapid scaffold hopping and bioactivity prediction for NP analogs. |

| Molecular Docking + MM/GBSA | Docking followed by AI-accelerated Molecular Mechanics Scoring | ~180 (full workflow) | 25.1 | High-accuracy binding affinity ranking for lead NP optimization. |

*EF1%: Enrichment Factor at 1% of the screened database, measuring the ability to prioritize active compounds.

2.2. Key Research Reagent Solutions Essential materials and computational tools for implementing AI-NP docking workflows.

Table 2: The Scientist's Toolkit: Key Research Reagent Solutions

| Item Name | Type/Provider | Function in AI-NP Docking |

|---|---|---|

| ZINC20 Natural Products Subset | Database (UCSF) | Curated, purchasable NP library for virtual screening (~120,000 compounds). |

| COCONUT Database | Database (COCONUT) | Extensive open NP database for novel structure discovery (~400,000 compounds). |

| AutoDock Vina/ SMINA | Software (The Scripps Research Institute) | Open-source docking engine for pose prediction and scoring. |

| RDKit | Python Library | Cheminformatics toolkit for NP structure preprocessing, descriptor calculation, and fingerprinting. |

| PyMOL/ ChimeraX | Visualization Software | 3D visualization of NP-protein docking complexes and interaction analysis. |

| Google Colab Pro/ AWS EC2 | Cloud Computing | GPU-accelerated (e.g., NVIDIA T4, V100) platforms for running AI-docking models. |

3. Experimental Protocols

3.1. Protocol: AI-Guided Virtual Screening of a Natural Product Library Against a Novel Therapeutic Target Objective: To identify high-affinity NP hits against a protein target (e.g., SARS-CoV-2 Mpro) using an integrated AI and molecular docking workflow.

A. Preparation Phase

- Target Preparation:

- Retrieve the 3D protein structure (PDB ID: 7TLL) from the RCSB PDB. For targets without a structure, use AlphaFold2 (via ColabFold) to generate a predicted model.

- Using UCSF ChimeraX:

- Remove water molecules and heteroatoms.

- Add hydrogen atoms and assign partial charges (AMBER ff14SB).

- Define the binding site grid coordinates (e.g., centroid of a known co-crystallized ligand). Save target as

target_prepared.pdbqt.

- NP Ligand Library Preparation:

- Download the "Clean Leads" subset from the ZINC20 NP database.

- Use Open Babel (in command line): Convert

zinc_np.sdfto individual.pdbqtfiles. Apply Gasteiger charges and detect rotatable bonds. obabel zinc_np.sdf -O ligand_.pdbqt -m --gen3d

- AI-Based Pre-Screening (Filtering):

- Utilize a pre-trained graph neural network (GNN) model (e.g., from DeepChem) to predict binary activity.

- Input Morgan fingerprints (radius=2, 2048 bits) of the NP library. Filter and retain the top 10,000 compounds predicted as "active" for docking.

B. Docking & AI Re-Scoring Phase

- High-Throughput Molecular Docking:

- Employ SMINA for docking. Use a batch script to process all filtered ligands.

- Example command:

smina -r target_prepared.pdbqt -l ligand_1.pdbqt --autobox_ligand reference_crystal.pdb --exhaustiveness 32 -o docked_1.pdbqt - Extract the best docking pose score (kcal/mol) for each NP.

- AI Re-Scoring and Pose Selection:

- Process all docking output poses with GNINA's built-in CNN scoring function.

gnina -r target.pdbqt -l docked_poses.sdf --score_only --cnn_scoring- Rank the final NP list by the CNN score, which correlates better with experimental binding affinity than classical scoring functions.

C. Post-Docking Analysis

- Interaction Analysis & Visualization:

- Load the top 10 NP-protein complexes in PyMOL.

- Generate 2D ligand-protein interaction diagrams using the

posevieworligplotplugin to identify key hydrogen bonds, hydrophobic contacts, and pi-stacking.

- Consensus Scoring & Hit Selection:

- Apply a consensus ranking strategy. Prioritize NPs that rank in the top 5% by both classical docking score (SMINA) and AI-based score (GNINA CNN).

- Manually inspect the top 50 consensus hits for drug-likeness (Lipinski's Rule of Five) and synthetic accessibility.

3.2. Protocol: Validation via Molecular Dynamics (MD) Simulations Objective: To validate the stability of the AI-docked NP-protein complex.

- System Setup: Use the top-ranked docking pose. Solvate the complex in a TIP3P water box (10 Å padding). Add ions to neutralize charge (e.g., NaCl to 0.15M).

- Simulation: Run minimization, equilibration (NVT and NPT ensembles), and a production run (100 ns) using GPU-accelerated AMBER or GROMACS.

- Analysis: Calculate the Root Mean Square Deviation (RMSD) of the protein backbone and ligand, and the ligand-protein interaction fraction over the full trajectory. Stable RMSD (< 2.5 Å) and persistent key interactions confirm the docking pose.

4. Visualizations

AI-NP Docking Screening Workflow

Mechanism of Action Prediction

Building Your Pipeline: A Step-by-Step AI-Docking Workflow

Application Notes

In the context of AI-guided molecular docking for bioactive natural products research, the selection of a docking platform is critical. Traditional suites, like AutoDock Vina and Glide, operate on principles of systematic search and empirical/scoring function optimization. In contrast, emerging AI-docking platforms leverage deep learning to predict binding poses and affinities, often with dramatic speed advantages and, in some cases, improved accuracy for novel protein-ligand pairs. The integration of AI methods is particularly promising for natural products, which often possess complex, rigid scaffolds that challenge traditional conformational sampling.

Table 1: Platform Comparison for Natural Products Docking

| Platform | Core Methodology | Key Strength in NP Research | Typical Runtime | Accuracy Metric (Avg. RMSD) | Recommended Use Case |

|---|---|---|---|---|---|

| AutoDock Vina | Gradient-optimized Monte Carlo search, empirical scoring. | High flexibility in handling diverse ligand chemistry; free, open-source. | 1-10 minutes/ligand | ~2.0-3.0 Å | Initial virtual screening of NP libraries; user-customizable protocols. |

| Glide (Schrödinger) | Systematic, hierarchical search with proprietary scoring (SP, XP). | Excellent pose prediction accuracy and robust scoring for lead optimization. | 2-15 minutes/ligand (SP/XP) | ~1.5-2.5 Å | High-accuracy docking for prioritized NP hits; detailed interaction analysis. |

| AlphaFold2 | Deep learning (Evoformer, structure module) for protein structure prediction. | Enables docking when no experimental protein structure exists (e.g., novel NP targets). | Hours (per protein) | N/A (for docking) | Generate reliable protein models for subsequent docking with other tools. |

| EquiBind | Geometric deep learning (E(3)-equivariant GNN). | Ultra-fast, direct pose prediction without traditional search; handles protein flexibility. | < 1 second/ligand | ~2.5-4.0 Å (on novel targets) | Rapid screening of ultra-large NP databases or real-time docking. |

| DiffDock | Diffusion generative model on the SE(3) manifold. | State-of-the-art pose prediction accuracy, especially for unseen proteins. | ~10 seconds/ligand | ~1.5-2.5 Å (on novel targets) | High-accuracy, blind docking of promising NPs to challenging targets. |

Table 2: Key Research Reagent Solutions & Essential Materials

| Item | Function in NP Docking Workflow |

|---|---|

| Protein Data Bank (PDB) Files | Source of experimentally solved 3D structures of target proteins for traditional and AI docking input. |

| AlphaFold Protein Structure Database | Source of high-accuracy predicted protein models for targets lacking experimental structures. |

| NP Library (e.g., COCONUT, ZINC Natural Products) | Curated, often 3D-ready, databases of natural product structures for virtual screening. |

| Ligand Preparation Tool (e.g., Open Babel, LigPrep) | Prepares ligand files (ionization, tautomers, minimization) for docking input. |

| Protein Preparation Suite (e.g., Schrödinger Maestro, UCSF Chimera) | Prepares protein structures (add H, assign charges, optimize H-bonding, remove water). |

| Molecular Dynamics Software (e.g., GROMACS, Desmond) | Used for post-docking refinement and stability assessment of top NP docking poses. |

Experimental Protocols

Protocol 1: High-Throughput Virtual Screening of an NP Library using AutoDock Vina

- Target Preparation: Download a PDB file (e.g., 3ABC). Remove water molecules and co-crystallized ligands. Add polar hydrogens and Kollman charges using UCSF Chimera.

- Ligand Library Preparation: Download an SDF file of NPs (e.g., from COCONUT). Use Open Babel to convert to PDBQT format, generating possible tautomers and protonation states at pH 7.4.

- Grid Box Definition: Using the target's known active site (from literature), define a search space in Vina. Center coordinates (x, y, z) and box dimensions (e.g., 25x25x25 Å) are set in the configuration file.

- Docking Execution: Run Vina via command line:

vina --config config.txt --ligand ligand.pdbqt --out output.pdbqt. Use a batch script to process the entire library. - Post-Processing: Analyze output PDBQT files. Sort compounds by binding affinity (kcal/mol). Visually inspect the top 50 poses for key interactions (H-bonds, pi-stacking).

Protocol 2: Blind Docking of a Bioactive NP using DiffDock

- Environment Setup: Install DiffDock in a Python 3.9+ environment with PyTorch and required dependencies (as per GitHub repository).

- Input Preparation: Provide a protein file (.pdb) of the target and a ligand file (.sdf or .mol2) of the prepared natural product. No active site specification is needed.

- Model Inference: Run the DiffDock prediction script:

python inference.py --protein_path protein.pdb --ligand_path ligand.sdf --out_dir results/. The model will generate multiple (e.g., 40) candidate poses with confidence scores. - Pose Selection & Analysis: Rank poses by the model's confidence score. The top-ranked pose typically offers the most reliable prediction. Analyze the interaction profile using PyMOL or Maestro.

Protocol 3: Structure-Based Screening with an AlphaFold Model using Glide

- Target Modeling: Retrieve the predicted structure of your target protein from the AlphaFold Database. If unavailable, run local AlphaFold2 inference.

- Protein Preparation in Maestro: Use the Protein Preparation Wizard. Assign bond orders, fill missing side chains/loops, optimize H-bonding networks, and perform a restrained minimization (OPLS4 force field).

- Receptor Grid Generation: In Glide, select the centroid of the predicted binding pocket (from domain knowledge or computational mapping) to generate the grid. Set the inner box (10x10x10 Å) and outer box (30x30x30 Å).

- Ligand Docking: Prepare the NP library using LigPrep. Execute Virtual Screening Workflow using the Standard Precision (SP) mode. Post-docking, filter results by GlideScore and visual inspection.

- Induced-Fit Refinement (Optional): For top hits, run an Induced Fit Docking protocol to account for side-chain flexibility upon NP binding.

Visualizations

AI vs Traditional Docking Workflow for NPs

DiffDock Simplified Inference Logic

Glide Hierarchical Screening Protocol

Application Notes

Within the thesis context of AI-guided molecular docking for bioactive natural products research, the initial step of target preparation is foundational. Errors introduced here propagate, leading to false positives or negatives in virtual screening. AI-driven preparation enhances reproducibility and biological relevance by integrating structural bioinformatics, phylogenetic data, and experimental constraints. This protocol details the use of AlphaFold2 for model generation, DeepSite for binding site prediction, and MD simulations for refinement to create a reliable target for docking natural product libraries.

Table 1: AI Tool Performance for Target Preparation Tasks

| Task | Tool/Algorithm | Key Metric | Typical Performance/Output | Primary Use Case in Natural Products Research |

|---|---|---|---|---|

| Protein Structure Prediction | AlphaFold2 (v2.3.1) | pLDDT (per-residue confidence) | >90 (Very high), 70-90 (Confident), <50 (Low) | Generating high-confidence models for natural product targets with no experimental structure (e.g., plant enzyme isoforms). |

| Binding Site Prediction | DeepSite | AUC (Area Under Curve) | 0.80 - 0.92 on benchmark sets | Identifying potential allosteric or novel binding pockets for complex natural product scaffolds. |

| Binding Site Prediction | PrankWeb 2.0 | AUC | 0.75 - 0.89 on benchmark sets | Complementary, conservation-aware prediction. |

| Structure Refinement | GROMACS (MD Simulation) | RMSD (Root Mean Square Deviation) | Backbone RMSD plateau < 2.0 Å over 50 ns | Solvating and relaxing AI-predicted structures to a stable conformation for docking. |

Experimental Protocols

Protocol 1: AI-Assisted Protein Structure Acquisition and Validation

Objective: To obtain a reliable, ready-to-dock 3D structure of the target protein.

Materials (Research Reagent Solutions & Essential Materials):

| Item | Function/Description |

|---|---|

| Target Protein Sequence (FASTA) | The amino acid sequence of the protein of interest. Sourced from UniProt. |

| AlphaFold2 (ColabFold implementation) | AI system for predicting protein 3D structures from sequence with confidence metrics. |

| PyMOL or UCSF ChimeraX | Molecular visualization software for structural analysis, cleaning, and preparation. |

| PROCHECK/PDBSum | Online servers for stereochemical quality assessment of protein structures. |

| GROMACS 2023.x | Molecular dynamics package for solvation and simulation in explicit solvent. |

| AMBER ff19SB Force Field | A modern force field for accurate simulation of protein dynamics. |

| TP3P Water Model | A standard water model for solvating the protein system. |

Methodology:

- Sequence Retrieval: Obtain the canonical sequence of your target protein (e.g., Homo sapiens AKT1) in FASTA format from the UniProt database. Note any key isoforms relevant to the disease pathway.

- Structure Prediction via ColabFold: Access the ColabFold notebook (github.com/sokrypton/ColabFold). Input the FASTA sequence. Set parameters:

use_amberfor refinement,num_recycles=3. Execute. The output includes a predicted model (.pdb) and a per-residue pLDDT confidence score JSON file. - Model Selection & Initial Cleaning: Download the highest-ranked model (ranked_0.pdb). In PyMOL, remove all heteroatoms and solvent molecules. Isolate the protein chain of interest.

- Structural Validation: Upload the cleaned model to the PDBSum server. Generate a Ramachandran plot. A quality model should have >90% of residues in the most favored regions. Cross-reference low-confidence regions (pLDDT < 70) with the predicted binding site; if they overlap, consider the need for MD refinement.

- System Preparation for MD (Optional but Recommended for AI Models):

a. Use

pdb2gmxin GROMACS to assign force field parameters (-ff amber19sb). b. Solvate the protein in a cubic water box (-spcwater model) with a 1.0 nm margin. c. Add ions to neutralize system charge. d. Perform energy minimization using the steepest descent algorithm until maximum force < 1000 kJ/mol/nm. e. Run a short (5-10 ns) NVT and NPT equilibration. f. Execute a production MD simulation (50 ns). Analyze backbone RMSD to confirm stability. g. Extract the most representative structure (centroid of the largest cluster from the stable trajectory) for the next step.

Protocol 2: AI-Based Binding Site Definition and Pocket Preparation

Objective: To define the biologically relevant binding pocket(s) using consensus AI prediction.

Materials:

| Item | Function/Description |

|---|---|

| Prepared Protein Structure (.pdb) | Output from Protocol 1. |

| DeepSite Web Server | CNN-based tool for binding site prediction using surface representation. |

| PrankWeb 2.0 Server | Conservation & geometry-based binding site predictor. |

| DoGSiteScorer (from ProteinsPlus) | Pocket detection and characterization server. |

| UCSF Chimera | For aligning predictions, calculating consensus, and defining the docking grid. |

Methodology:

- Consensus Pocket Prediction: Submit the prepared protein structure to both DeepSite and PrankWeb 2.0. Run DoGSiteScorer for a third geometry-based opinion.

- Result Integration: In UCSF Chimera, load the protein and the predicted pocket coordinates from each server. Visually overlay all predictions. Identify regions where at least two methods, preferably including DeepSite, show significant overlap.

- Pocket Selection Criteria: Prioritize pockets based on: i) Consensus across tools, ii) Location in known functional domains (from literature), iii) Druggability score (e.g., from DoGSiteScorer: volume > 500 ų, depth, hydrophobicity), iv) Relevance to natural product mechanism (e.g., allosteric site for modulation).

- Grid Box Definition for Docking: Using the centroid coordinates of the selected consensus pocket, define a docking search space. Set the grid box dimensions to enclose the entire pocket with a 5-10 Å margin in all directions. Record the exact center (x, y, z) and size (points, spacing) for use in molecular docking software (e.g., AutoDock Vina, GNINA).

Mandatory Visualization

AI-Guided Target Preparation Workflow

Data Flow in Target Prep: Sequence to Grid

This protocol details the second critical step in a thesis on AI-guided molecular docking for bioactive natural products research. A high-quality, well-curated compound library is the foundational dataset for all subsequent in silico screening. This stage transforms raw, heterogeneous natural product (NP) data into a structured, chemically standardized, and bioactivity-annotated virtual library suitable for computational analysis.

Application Notes

- Source Integration: Modern NP libraries aggregate data from public databases, commercial vendors, and proprietary in-house collections. The key is to capture maximum chemical diversity while ensuring data integrity.

- The Standardization Imperative: NPs are notorious for inconsistent representation (salts, stereochemistry, tautomers). Standardization ensures each molecule is uniquely and correctly represented, preventing redundancy and docking errors.

- Metadata is Critical: Beyond structure, libraries must include source organism, known bioactivities (with targets), ADMET properties (if available), and literature references. This contextual data is vital for training AI models and interpreting docking results.

- Pre-filtering for Drug-likeness: Applying rules like Lipinski's Rule of Five or the more NP-informed Natural Product-Likeness (NPL) score early can focus resources on the most promising candidates.

Quantitative Data on Common Natural Product Databases

Table 1: Key Public Natural Product Databases (Data from 2023-2024)

| Database Name | Approx. Number of Unique Compounds | Key Features | Primary Use in Library Curation |

|---|---|---|---|

| COCONUT (COlleCtion of Open Natural ProdUcTs) | ~ 450,000 | Non-redundant, openly accessible, includes predicted molecular features. | Primary source for structure harvesting. |

| NPASS (Natural Product Activity and Species Source) | ~ 35,000 (with ~ 300,000 activity records) | Detailed activity data (targets, potency) linked to species. | Source for bioactivity annotation and target association. |

| CMAUP (A Collection of Multitargeting Anti-infective Natural Products) | ~ 23,000 | Curated for anti-infective research, includes predicted targets. | Thematic library construction (e.g., antimicrobials). |

| SuperNatural 3.0 | ~ 450,000 | Includes purchasability information, derivatives, and predicted toxicity. | Sourcing and pre-filtering for drug-likeness. |

| PubChem (Natural Product Subset) | ~ 500,000 (subset) | Massive, linked to bioassays and literature. | Broad structure sourcing and cross-referencing. |

Experimental Protocols

Protocol 1: Library Assembly and Deduplication

Objective: To aggregate NP structures from multiple sources into a single, non-redundant collection.

Materials: Chemical structure files (SDF, SMILES) from databases in Table 1; Computational workstation; Cheminformatics software (e.g., RDKit, Open Babel, KNIME).

Procedure:

- Data Harvesting: Download structural data (preferably as SMILES or SDF) from selected databases.

- Format Standardization: Convert all files to a consistent format (e.g., SDF V3000) using a tool like Open Babel (

obabel -i sdf input.sdf -o sdf output.sdf --gen3D). - Initial Filtering: Remove compounds exceeding a molecular weight threshold (e.g., > 1500 Da) or containing non-druglike elements (e.g., heavy metals).

- Standardization Pipeline: Process all SMILES strings using RDKit in a Python script to: a. Neutralize charges on carboxylic acids and amines. b. Remove solvent molecules and counterions. c. Generate canonical tautomers. d. Explicitly define stereochemistry where known; discard mixtures of unspecified stereocenters critical for binding.

- Deduplication: Generate InChIKeys (or hashed canonical SMILES) for all standardized compounds. Remove exact duplicates based on InChIKey. For near-duplicate clustering (e.g., based on Tanimoto similarity > 0.95), retain the entry with the most complete metadata.

Protocol 2: Bioactivity and ADMET Annotation

Objective: To enrich the library with experimental and predicted biological data.

Materials: Curated structure list; Access to bioactivity databases (NPASS, ChEMBL); ADMET prediction software (e.g., SwissADME, pkCSM).

Procedure:

- Bioactivity Mapping: For each compound, query NPASS and ChEMBL via their APIs using the InChIKey or canonical SMILES. Extract known target proteins, activity values (IC50, Ki), and source organism.

- Data Integration: Create a master annotation table linking compound ID to:

- Target UniProt ID

- Activity measurement and value

- PubMed ID of source literature

- In Silico ADMET Profiling: Submit the standardized SMILES list to SwissADME and pkCSM webservers or run local scripts using pre-trained models. Record key predictions:

- Gastrointestinal absorption (HIA)

- Blood-Brain Barrier (BBB) permeability

- Cytochrome P450 inhibition (CYP2D6, 3A4)

- Hepatotoxicity

- Synthetic Accessibility Score

Visualization

Diagram Title: Workflow for Curating an AI-Ready Natural Product Library

Diagram Title: Chemical Standardization Protocol Steps

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools for NP Library Curation

| Tool / Resource | Type | Primary Function in Curation |

|---|---|---|

| RDKit | Open-source Cheminformatics Library | Core engine for chemical standardization, descriptor calculation, and substructure filtering. Used via Python scripts. |

| Open Babel | Open-source Chemical Toolbox | File format conversion and basic molecular editing in high-throughput batch processing. |

| KNIME / Orange | Visual Workflow Platforms | No-code/low-code pipeline building for data integration, standardization, and analysis. |

| SwissADME | Web Server / Tool | Predicts key physicochemical, pharmacokinetic, and drug-likeness parameters for pre-filtering. |

| NPASS & ChEMBL APIs | Programmable Database Interface | Automated retrieval of experimental bioactivity data for library annotation. |

| Tanimoto Coefficient (via RDKit) | Algorithmic Metric | Quantifies structural similarity for clustering and near-duplicate identification. |

| InChIKey | Standardized Identifier | Provides a unique, hash-based "fingerprint" for exact duplicate detection across databases. |

This protocol details the execution phase of AI-guided molecular docking, the third critical step in our comprehensive thesis pipeline for discovering bioactive natural products. Following compound library preparation (Step 1) and AI model selection/training (Step 2), Step 3 involves the practical computational experiment where pre-processed natural product libraries are virtually screened against target protein structures. This step transforms predictive models into actionable binding hypotheses, generating quantitative and qualitative data on protein-ligand interactions for downstream validation.

Core Protocol: Executing the Docking Simulation

Pre-Execution System Check

- Objective: Ensure computational environment and data integrity.

- Procedure:

- Verify the installation and dependencies of the selected docking software (e.g., AutoDock Vina, GNINA, rDock) and the AI guidance wrapper (e.g., DeepDock, DiffDock, a custom PyTorch/TensorFlow script).

- Confirm all input files are correctly formatted:

- Target Protein: PDBQT file (with hydrogens added, charges assigned, and flexible residues defined if performing induced-fit docking).

- Natural Product Ligands: Multi-ligand SDF or PDBQT file from Step 1.

- AI Model: Pre-trained weights file (e.g.,

.h5,.pth). - Configuration File: YAML/JSON file defining search space (grid box), exhaustiveness, and AI parameters.

- Validate the docking grid position and dimensions encompass the protein's active site, allosteric site, or other regions of interest as defined in the thesis hypothesis.

Launching the AI-Guided Docking Run

- Objective: Initiate the parallelized docking simulation.

Procedure:

- Activate the appropriate Conda/Python environment:

conda activate thesis_docking. Execute the main run command. This typically integrates the AI model to pre-score poses or guide the conformational search. Example for a hypothetical AI-docking pipeline:

Monitor the process via console output or a logging system (

tail -f docking.log) for errors or progress indicators (e.g., completion percentage, estimated time remaining).

- Activate the appropriate Conda/Python environment:

Post-Docking Analysis & Pose Extraction

- Objective: Process raw docking outputs to identify top candidates.

- Procedure:

- After job completion, consolidate all output files (e.g., individual pose files, score logs).

- Extract key metrics for each ligand: Predicted Binding Affinity (ΔG in kcal/mol), Intermolecular Interaction Data (H-bonds, hydrophobic contacts, pi-stacking), and Ligand Efficiency.

- Apply the AI model's confidence score or a consensus scoring function if multiple methods were used.

- Cluster ligand poses based on RMSD to identify unique binding modes.

- Generate a ranked list of natural product hits for visual inspection and further analysis in Step 4 (Validation).

Data Presentation: Representative Docking Results

Table 1: Top 5 AI-Docked Natural Product Hits Against Target Protein XYZ (PDB: 7ABC)

| Natural Product (Source) | Predicted ΔG (kcal/mol) | AI Confidence Score | Key Interactions (Residues) | Cluster RMSD (Å) | Ligand Efficiency |

|---|---|---|---|---|---|

| Chelerythrine (Macleaya cordata) | -9.8 | 0.92 | ASP-189 (H-bond), TYR-237 (π-π), VAL-293 (Hydrophobic) | 1.5 | 0.41 |

| Withaferin A (Withania somnifera) | -9.5 | 0.89 | LYS-102 (H-bond), GLU-201 (H-bond), Hydrophobic pocket (LEU-294, ALA-295) | 2.1 | 0.38 |

| Berberine (Berberis vulgaris) | -8.9 | 0.85 | ASP-189 (salt bridge), TYR-237 (cation-π) | 1.2 | 0.39 |

| Curcumin (Curcuma longa) | -8.7 | 0.81 | SER-105 (H-bond), ARG-204 (H-bond), Hydrophobic interaction | 3.0 | 0.33 |

| Silibinin (Silybum marianum) | -8.5 | 0.78 | Multiple H-bonds with backbone (GLY-106, SER-105), Hydrophobic contact | 1.8 | 0.31 |

Note: Data is illustrative, generated from a simulated docking run for protocol demonstration. Actual values will vary.

Experimental Protocols for Cited Key Experiments

Protocol A: Induced-Fit Docking for Flexible Binding Sites

- Rationale: Account for protein side-chain flexibility upon ligand binding.

- Method:

- Using UCSF Chimera or PyMOL, identify key flexible residues within 5Å of the docked ligand from a preliminary rigid docking run.

- Generate a flexible residue parameter file for AutoDock Vina or use the

--flexflag in GNINA. - Re-run the AI-docking simulation with the defined flexible sidechains, increasing the exhaustiveness parameter by 50%.

- Analyze conformational changes in the protein between the apo and holo models.

Protocol B: Consensus Scoring Validation

- Rationale: Mitigate scoring function bias by employing multiple evaluators.

- Method:

- Execute docking runs using two distinct backends: e.g., GNINA (CNN scoring) and AutoDock Vina (empirical scoring).

- For each ligand, record scores from both methods along with the primary AI guide score.

- Apply a normalized rank-based Z-score to each result set.

- Calculate a final composite score:

Composite = (0.5 * AI_Score) + (0.3 * Vina_Score) + (0.2 * GNINA_Score). - Re-rank the library based on the composite score to generate the final hit list.

Visualization: Workflow & Pathway Diagrams

AI-Guided Docking Execution Workflow

From Docking Pose to Biological Hypothesis

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools & Resources for AI-Guided Docking

| Item | Function/Benefit | Example/Note |

|---|---|---|

| Docking Software Suite | Core engine for pose generation and scoring. | GNINA: Supports CNN scoring; AutoDock Vina: Fast, empirical; rDock: Rule-based. |

| AI/ML Framework | Environment to run pre-trained guidance models. | PyTorch or TensorFlow with CUDA support for GPU acceleration. |

| Conda Environment | Manages isolated software dependencies to ensure reproducibility. | Use environment.yml to document all package versions. |

| High-Performance Computing (HPC) Cluster | Provides parallel CPUs/GPUs for screening large libraries in feasible time. | Slurm or PBS job schedulers are commonly used. |

| Visualization Software | Critical for analyzing and interpreting docking poses and interactions. | UCSF ChimeraX, PyMOL, BioVIA Discovery Studio. |

| Scripting Language | For automation, data parsing, and analysis pipeline creation. | Python with libraries (Pandas, NumPy, RDKit, MDAnalysis). |

| Configuration File (YAML/JSON) | Documents all docking parameters (grid box, exhaustiveness) for exact replication. | Essential for peer review and thesis methodology. |

Application Notes: Strategic Analysis within AI-Guided Docking

In the context of AI-guided molecular docking for bioactive natural products, Step 4 transforms raw computational output into validated, biologically interpretable hypotheses. This phase is critical for triaging virtual hits by moving beyond simplistic score-ranking to a multi-dimensional assessment of pose quality, predicted affinity, and interaction fidelity. The integration of AI/ML scoring functions and interaction predictors at this stage significantly reduces false positives and prioritizes candidates for in vitro validation.

Key Analytical Dimensions:

- Pose Analysis: Evaluates the geometric plausibility and stability of the ligand's binding conformation.

- Scoring Analysis: Utilizes consensus and AI-enhanced scoring functions to estimate binding affinity, acknowledging the inherent uncertainty of any single score.

- Interaction Analysis: Deciphers the specific molecular interactions (hydrogen bonds, hydrophobic contacts, pi-stacking) that confer binding specificity and affinity, often comparing them to known active compounds or pharmacophore models.

AI Integration: Modern protocols leverage AI not just for initial pose generation, but for post-docking rescoring (e.g., using graph neural networks like PointNet or SE(3)-Transformers trained on PDBbind data) and for predicting key interaction fingerprints. This allows for the prioritization of natural product poses that mimic the interaction profiles of successful drugs.

Experimental Protocols for Post-Docking Analysis

Protocol 2.1: Consensus Scoring and Pose Clustering

Objective: To identify robust binding poses by combining multiple scoring functions and clustering geometrically similar solutions.

Materials: Docking output file(s) (e.g., .sdf, .pdbqt), molecular visualization software (PyMOL, UCSF Chimera), computational environment (Python/R, RDKit).

Procedure:

- Pose Extraction: Extract all generated poses and their associated scores from the docking output file.

- Score Normalization: For each scoring function (e.g., Vina, Glide, NNScore), normalize scores to a common scale (e.g., Z-score) across all poses.

- Consensus Score Calculation: For each pose, calculate the mean of the normalized scores from at least three distinct scoring functions. Rank poses by this consensus score.

- RMSD-Based Clustering: Using the top-ranked pose as the first cluster centroid, calculate the Root-Mean-Square Deviation (RMSD) of all other heavy-atom coordinates for all other poses against centroids. Cluster poses with RMSD < 2.0 Å. Select the highest consensus-scoring pose from each major cluster as a representative binding mode.

Protocol 2.2: Detailed Protein-Ligand Interaction Profiling

Objective: To characterize the specific non-covalent interactions stabilizing the ligand pose.

Materials: Representative pose file, interaction analysis tool (PLIP, Schrödinger Maestro's Pose Analysis, or the prolif Python library).

Procedure:

- System Preparation: Ensure the protein and ligand structures are correctly protonated and atom types are assigned.

- Interaction Detection: Run the analysis tool to detect:

- Hydrogen bonds (donor, acceptor, distance, angle)

- Hydrophobic interactions (ligand aliphatic/aromatic rings to protein hydrophobic residues)

- Pi-stacking (face-to-face, edge-to-face)

- Salt bridges

- Water-mediated hydrogen bonds (if water molecules are included in the model).

- Visualization & Mapping: Generate a 2D interaction diagram. Visually inspect the 3D pose to confirm the spatial context of identified interactions.

Protocol 2.3: Binding Affinity Prediction with AI-Rescoring

Objective: To apply a trained machine learning model to improve binding affinity estimation.

Materials: Clustered pose files, pre-trained AI rescoring model (e.g., from platforms like Atomwise, or open-source models like gnina), suitable scripting environment.

Procedure:

- Data Preparation: Format the protein-ligand complex pose into the required input for the rescoring model (e.g., as 3D grids, graphs, or specified file format).

- Model Inference: Feed each prepared complex to the AI model to obtain a predicted binding affinity (pKd/Ki) or a classification score (active/inactive).

- Result Integration: Create a final ranked list where poses are sorted by the AI-rescored affinity. Compare this ranking with the initial docking and consensus rankings to highlight high-confidence candidates.

Table 1: Comparison of Scoring Functions in Post-Docking Analysis

| Scoring Function | Type | Strengths | Weaknesses | Typical Use in Consensus |

|---|---|---|---|---|

| AutoDock Vina | Empirical (Machine Learning-based) | Fast, good balance of speed/accuracy | Can be sensitive to search space, less accurate for metal ions | Primary docking and initial ranking |

| Glide SP/XP | Empirical & Force Field | Excellent pose prediction, detailed scoring | Computationally intensive, requires license | High-accuracy refinement & scoring |

| NNScore 3.0 | Neural Network (AI) | Trained on PDBbind, good affinity prediction | Requires careful feature engineering | AI-based rescoring & affinity estimation |

| MM-GBSA/PBSA | Force Field-Based | Physically rigorous, includes solvation | Very high computational cost, pose-dependent | Final affinity estimation for top poses |

Table 2: Key Interaction Types and Their Ideal Geometric Parameters

| Interaction Type | Critical Atoms/Groups | Ideal Distance (Å) | Ideal Angle (°) | Biological Significance |

|---|---|---|---|---|

| Hydrogen Bond | Donor (N-H, O-H) / Acceptor (O, N) | 2.5 - 3.5 | D-H...A > 120 | Specificity, directionality |

| Hydrophobic | Aliphatic/Aromatic C | ≤ 4.5 (C-C distance) | N/A | Binding affinity, desolvation |

| Pi-Pi Stacking | Aromatic ring centroids | 3.5 - 4.5 | 0-20 (parallel) | Aromatic residue engagement |

| Salt Bridge | Charged groups (e.g., COO⁻, NH₃⁺) | ≤ 4.0 | N/A | Strong electrostatic interaction |

Visualization: Post-Docking Analysis Workflow

Title: AI-Enhanced Post-Docking Analysis Protocol Flowchart

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software & Resources for Post-Docking Analysis

| Item | Function in Analysis | Example/Provider |

|---|---|---|

| Molecular Visualizer | 3D visualization of poses and interactions; creation of publication-quality images. | PyMOL, UCSF Chimera, BIOVIA Discovery Studio |

| Interaction Analysis Tool | Automated detection and classification of non-covalent protein-ligand interactions. | PLIP (Protein-Ligand Interaction Profiler), Maestro (Schrödinger), LigPlot⁺ |

| Scripting Library (Cheminfo) | Programmatic manipulation of molecules, calculation of descriptors, and workflow automation. | RDKit (Python), CDK (Java), Open Babel |

| AI-Rescoring Platform | Applies machine learning models to predict binding affinity and improve pose ranking. | gnina (open-source), DeepDock, commercial AI suites (e.g., AtomNet) |

| Consensus Scoring Script | Custom script or pipeline to normalize and combine scores from multiple docking functions. | In-house Python/R scripts, KNIME workflows |

| Structural Database | Source of reference complexes for comparative interaction analysis and pharmacophore modeling. | PDB, PDBbind, Binding MOAD |

Within the thesis "AI-guided molecular docking for bioactive natural products research," this case study demonstrates the practical application of virtual screening. The objective is to computationally dock a diverse library of flavonoid compounds against a specified kinase target (e.g., CDK2 or EGFR) to identify high-affinity, naturally derived lead candidates. Flavonoids, with their inherent bioactivity and favorable ADMET profiles, present a promising starting point for kinase inhibitor development.

Table 1: Essential Research Toolkit for Computational Docking Study

| Item | Function/Description |

|---|---|

| Flavonoid Compound Library | A curated digital library (e.g., from Zinc15, PubChem) of 3D flavonoid structures in ready-to-dock format (MOL2, SDF). |

| Kinase Target Structure | High-resolution (preferably <2.0 Å) X-ray or cryo-EM protein structure (PDB format) with a co-crystallized ligand. |

| Molecular Docking Software | Program such as AutoDock Vina, Glide (Schrödinger), or GOLD for performing the virtual screening experiments. |

| Protein Preparation Suite | Tool (e.g., Maestro Protein Prep Wizard, MGLTools) for adding hydrogen atoms, assigning protonation states, and optimizing side chains. |

| Ligand Preparation Tool | Utility (e.g., LigPrep, Open Babel) to generate correct tautomers, stereoisomers, and low-energy 3D conformations for each flavonoid. |

| Grid Generation Utility | Software component to define the 3D search space (docking box) centered on the target's active site. |

| High-Performance Computing (HPC) Cluster | Essential for processing thousands of docking calculations in a parallelized, time-efficient manner. |

| Visualization & Analysis Software | Molecular viewer (e.g., PyMOL, Chimera, Maestro) for analyzing pose predictions and protein-ligand interactions. |

Protocol: Virtual Screening Workflow

Target Selection and Preparation

- Identify Target: Select a kinase target (e.g., PIK3CA, PDB ID: 7KRR) relevant to a disease pathway.

- Retrieve Structure: Download the PDB file. Remove water molecules, except those involved in key bridging interactions.

- Process Protein: Using Maestro's Protein Preparation Wizard:

- Add missing hydrogen atoms.

- Assign protonation states at pH 7.4 using Epik.

- Optimize hydrogen-bonding network.

- Perform restrained minimization (RMSD cutoff 0.3 Å) using the OPLS4 force field.

- Define Binding Site: Generate a receptor grid. Center the grid box on the native ligand's centroid. Set box dimensions to 20x20x20 Å to encompass the entire active site.

Ligand Library Preparation

- Library Curation: Download a flavonoid subset (e.g., ~5000 compounds) from a natural products database.

- Ligand Processing: Using LigPrep (Schrödinger):

- Generate possible states at pH 7.4 ± 2.0.

- Retain specified chiralities.

- Perform energy minimization using the OPLS4 force field.

- Output structures in Maestro format.

Molecular Docking Execution

- Docking Setup: Use Glide's High-Throughput Virtual Screening (HTVS) mode for initial filtering, followed by Standard Precision (SP) docking on top hits.

- Parameters: Use default parameters. Employ the prepared receptor grid and ligand library as inputs.

- Execution: Submit the job to an HPC cluster. For 5000 compounds in SP mode, allocate approximately 50-100 core-hours.

Post-Docking Analysis

- Score Ranking: Export all poses ranked by GlideScore (GScore) or equivalent docking score.

- Pose Inspection: Visually inspect the top 50-100 compounds (e.g., in PyMOL) for key interactions: hydrogen bonds with hinge region residues, hydrophobic packing, and salt bridges.

- Interaction Fingerprinting: Generate interaction diagrams for top candidates to compare binding modes.

Data Presentation

Table 2: Docking Results for Top 5 Flavonoid Hits Against Kinase PIK3CA (PDB: 7KRR)

| Rank | Compound ID (e.g., ZINC ID) | Docking Score (GScore, kcal/mol) | MM-GBSA ΔGBind (kcal/mol) | Key Protein-Ligand Interactions |

|---|---|---|---|---|

| 1 | ZINC3871154 | -12.3 | -58.7 | H-bonds: Val851 (backbone), Asp933. Hydrophobic: Ile932, Trp780. |

| 2 | ZINC4098755 | -11.8 | -55.2 | H-bond: Asp933. π-π Stacking: Tyr836. Salt Bridge: Lys802. |

| 3 | ZINC03831971 | -11.5 | -52.9 | H-bonds: Val851 (backbone), Ser854. Hydrophobic: Ile848, Phe930. |

| 4 | ZINC85486542 | -11.2 | -51.4 | H-bond: Glu849. Halogen Bond: Asp933. Hydrophobic: Met922. |

| 5 | ZINC96703321 | -10.9 | -49.8 | H-bonds: Asp933, Ser854. Metal Coordination: Mg2+ ion. |

Table 3: Comparative Docking Metrics Across Flavonoid Subclasses

| Flavonoid Subclass | Avg. Docking Score (kcal/mol) | Avg. Molecular Weight (g/mol) | Avg. LogP | Hit Rate (% with GScore < -9.0) |

|---|---|---|---|---|

| Flavones | -8.7 ± 1.2 | 356.4 | 3.1 | 18% |

| Flavonols | -9.2 ± 1.5 | 372.4 | 2.8 | 24% |

| Isoflavones | -7.9 ± 1.0 | 354.3 | 3.4 | 12% |

| Flavanones | -8.1 ± 0.9 | 358.4 | 2.9 | 15% |

| Chalcones | -9.8 ± 1.8 | 298.3 | 3.8 | 31% |

Visualizations

Workflow for AI-Guided Flavonoid Docking

Thesis Context & Case Study Relationship

Kinase Signaling Pathway & Inhibitor Site

Navigating Computational Challenges: Troubleshooting and Optimizing AI-Docking

1. Introduction In AI-guided molecular docking for bioactive natural products, the static model of a protein target is a primary limitation. Natural products often interact with allosteric sites or induce specific conformational changes. Furthermore, crystallographic water molecules can be crucial mediators of ligand-binding interactions. Mishandling these elements leads to false negatives in virtual screening and inaccurate pose prediction.

2. Quantitative Data on Impact

Table 1: Impact of Receptor Flexibility on Docking Performance

| Method | Average RMSD Reduction vs. X-ray | Success Rate (RMSD < 2.0 Å) | Computational Cost Increase |

|---|---|---|---|

| Rigid Receptor Docking | 3.5 Å | 35% | Baseline (1x) |

| Ensemble Docking | 2.1 Å | 58% | 5-10x |

| Induced Fit Docking (IFD) | 1.8 Å | 72% | 50-100x |

| AI-Guided Adaptive Sampling | 1.5 Å* | 78%* | 20-50x* |

Table 2: Role of Conserved Water Molecules in Binding Affinity

| Water Handling Strategy | ΔG_bind Correlation (R²) | False Positive Rate | Key Application |

|---|---|---|---|

| Delete All Waters | 0.45 | High | Initial, rapid screening |

| Retain Crystallographic Waters | 0.62 | Medium | Standard docking protocol |