From Nature to Novel Drugs: How AI and Machine Learning Are Revolutionizing Natural Product Discovery


Abstract

This article provides a comprehensive overview of the transformative role of artificial intelligence (AI) and machine learning (ML) in natural product drug discovery. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles of using AI to decode nature's chemical library, details cutting-edge methodologies for virtual screening and de novo design, addresses key challenges in data integration and model interpretability, and critically evaluates validation frameworks and comparative performance against traditional methods. The synthesis offers a roadmap for integrating AI/ML into discovery pipelines to accelerate the identification of bioactive compounds from natural sources.

Decoding Nature's Pharmacy: AI as the New Lens for Natural Product Discovery

1. Introduction

Traditional natural product (NP) screening has been the cornerstone of drug discovery, yielding landmark therapeutics like penicillin, paclitaxel, and artemisinin. However, this approach is embedded within a research and development framework characterized by significant inefficiencies. This application note details the principal challenges, providing quantitative data and standard protocols that illustrate the historical bottleneck. Understanding these limitations is critical for appreciating the transformative potential of AI and machine learning in de-risking and accelerating NP-based discovery.

2. Quantifying the Bottleneck: Core Challenges

The challenges of traditional NP screening are multidimensional, spanning from sourcing to isolation. The table below summarizes key quantitative hurdles.

Table 1: Quantitative Challenges in Traditional NP Screening

| Challenge Category | Typical Metric/Data Point | Impact on Discovery Timeline |

|---|---|---|

| Source Acquisition & Dereplication | 10,000–100,000 extracts screened per hit; >30% rediscovery rate of known compounds. | Adds 3–6 months for sourcing, extraction, and preliminary analysis. |

| Bioassay Throughput | Manual or semi-automated assays process 100–1,000 samples per week. | Initial screening for a single target can take 6–12 months. |

| Compound Isolation & Structure Elucidation | 100 mg–1 g of raw extract required for isolation; leads to 1–10 mg of pure compound. Takes 2–6 months per active lead. | The major rate-limiting step, consuming 6–18 months of effort per promising extract. |

| Hit-to-Lead Optimization Complexity | NPs often have complex scaffolds with 5–10 chiral centers, making synthetic modification difficult. | Medicinal chemistry cycles are protracted, often >24 months per scaffold. |

3. Detailed Experimental Protocols

Protocol 3.1: Standard Bioassay-Guided Fractionation Workflow

Objective: To isolate the active constituent(s) from a crude natural product extract.

Materials: See "Research Reagent Solutions" below.

Procedure:

- Crude Extract Preparation (1-2 weeks): Macerate 1 kg of dried, ground source material (plant, marine sponge, etc.) sequentially with solvents of increasing polarity (e.g., hexane, dichloromethane, ethyl acetate, methanol). Concentrate each fraction in vacuo to yield crude extracts.

- Primary High-Throughput Screening (HTS) (2-4 weeks): Screen all crude extracts at a single concentration (e.g., 100 µg/mL) against the target (e.g., enzyme inhibition, cell viability). Confirm hits in dose-response (IC50/EC50 determination).

- Fractionation of Active Extract (2-3 months):

a. Open-Column Chromatography (CC): Load active crude extract (~10 g) onto a normal-phase silica gel column. Elute with a stepwise or gradient solvent system (e.g., hexane:ethyl acetate:methanol). Collect 200-500 fractions.

b. Thin-Layer Chromatography (TLC) Analysis: Analyze every 10th fraction by TLC (UV/chemical staining). Pool fractions with similar TLC profiles.

c. Secondary Bioassay: Test all pooled fractions in the target bioassay. Select the most active pool for further separation.

d. Iterative Chromatography: Repeat steps a-c using orthogonal methods (e.g., reverse-phase C18 column, Sephadex LH-20 size exclusion, HPLC) until pure compounds are obtained.

- Structure Elucidation (1-2 months): Analyze pure active compound using a suite of spectroscopic techniques: High-Resolution Mass Spectrometry (HR-MS), 1D/2D Nuclear Magnetic Resonance (NMR) (¹H, ¹³C, COSY, HSQC, HMBC). Compare data to published databases.

Protocol 3.2: Dereplication by LC-MS/MS Analysis

Objective: To rapidly identify known compounds in an active extract prior to intensive isolation.

Procedure:

- Prepare a dilute solution of the active crude extract.

- Perform Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) using a reverse-phase column coupled to a high-resolution mass spectrometer.

- Acquire data in both positive and negative ionization modes with data-dependent MS/MS fragmentation.

- Process raw data: deconvolute peaks, calculate exact masses, and extract MS/MS fragmentation patterns.

- Query processed data against NP-specific databases (e.g., GNPS, DNP, COCONUT) using spectral matching algorithms.
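The database query in the last step can be illustrated with a minimal exact-mass lookup. This is only a sketch: the three-compound table, the `match_precursor` helper, and the 5 ppm tolerance are assumptions made for illustration, and the listed monoisotopic masses are approximate values. A real workflow would query GNPS, DNP, or COCONUT and confirm candidates by MS/MS spectral matching.

```python
# Illustrative dereplication by exact-mass lookup (hypothetical mini-database).
PROTON_MASS = 1.007276  # Da, mass shift of an [M+H]+ adduct

# Hypothetical reference table: name -> approximate monoisotopic neutral mass (Da).
KNOWN_COMPOUNDS = {
    "artemisinin": 282.1467,
    "penicillin G": 334.0987,
    "paclitaxel": 853.3310,
}


def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def match_precursor(observed_mz, tolerance_ppm=5.0):
    """Return (name, ppm error) for every [M+H]+ candidate within tolerance."""
    hits = []
    for name, neutral_mass in KNOWN_COMPOUNDS.items():
        theoretical_mz = neutral_mass + PROTON_MASS
        error = ppm_error(observed_mz, theoretical_mz)
        if abs(error) <= tolerance_ppm:
            hits.append((name, round(error, 2)))
    return hits


# A precursor observed at m/z 283.1540 in positive mode.
candidates = match_precursor(283.1540)
print(candidates)
```

A precursor-mass hit only nominates a candidate; isomers share the same mass, which is why the protocol pairs exact mass with MS/MS fragmentation patterns.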

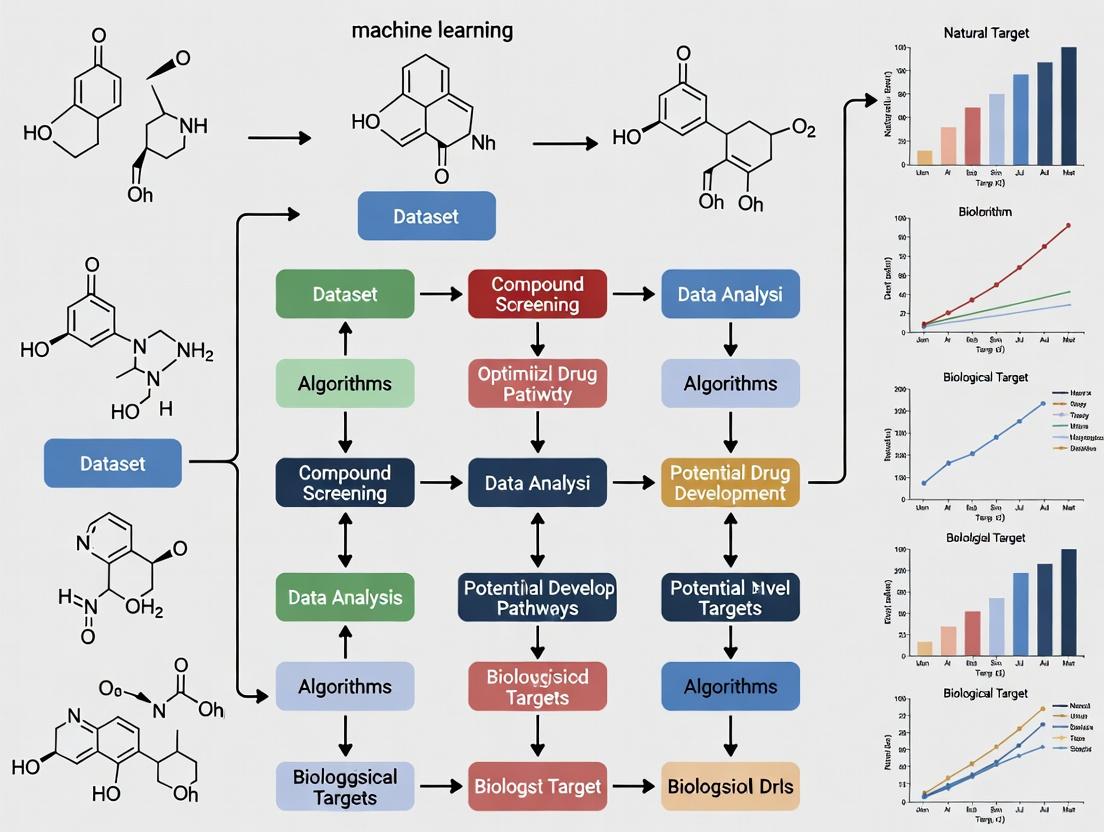

4. Visualizing the Workflow and Challenges

Diagram 1: Traditional NP Screening Workflow & Bottlenecks

Diagram 2: Key Challenges & Their Consequences

5. The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials for Traditional NP Screening

| Reagent/Material | Function & Application |

|---|---|

| Silica Gel (40-63 µm, 60 Å pore) | Stationary phase for open-column chromatography (CC); separates compounds by polarity. |

| Sephadex LH-20 | Size-exclusion chromatography gel; separates compounds by molecular size in organic solvents. |

| C18 Reverse-Phase Resin | Stationary phase for medium-pressure liquid chromatography (MPLC) or HPLC; separates by hydrophobicity. |

| Deuterated NMR Solvents (CDCl3, DMSO-d6, Methanol-d4) | Solvents for NMR spectroscopy that do not interfere with the sample's proton signal. |

| Bioassay Kits (e.g., CellTiter-Glo, Kinase-Glo) | Homogeneous, ready-to-use assay systems for high-throughput screening of cell viability or enzyme activity. |

| LC-MS Grade Solvents (Acetonitrile, Methanol, Water) | Ultra-pure solvents for LC-MS analysis to minimize background noise and ion suppression. |

| TLC Plates (Silica GF254) | Analytical plates for monitoring fractionation progress; UV-active indicator (254 nm) for visualization. |

The discovery of bioactive natural products (NPs) is shifting from serendipity to a data-driven science. The chemical space involved is immense (commonly cited estimates for drug-like chemical space exceed \(10^{60}\) possible molecules), so efficient exploration requires computational approaches. This document frames three core AI and Machine Learning (ML) paradigms, Supervised, Unsupervised, and Deep Learning, within the specific context of accelerating NP-based drug development, from dereplication and target prediction to activity forecasting and synthesis planning.

Core Paradigms: Definitions and Chemical Data Applications

Supervised Learning: Models learn a mapping function from input chemical data (e.g., molecular fingerprints) to known output labels (e.g., IC\(_{50}\), toxic/non-toxic). It is the cornerstone for predictive modeling in cheminformatics.

- Primary Use Cases: Quantitative Structure-Activity Relationship (QSAR) models, property prediction (ADMET), target identification, reaction yield prediction.

Unsupervised Learning: Models identify inherent patterns, clusters, or structures in unlabeled chemical data. It is essential for data exploration and dimensionality reduction.

- Primary Use Cases: Compound clustering and diversity analysis, anomaly detection in high-throughput screening (HTS), novel scaffold discovery, molecular representation learning.

Deep Learning (DL): A subset of ML utilizing multi-layered neural networks to learn hierarchical representations directly from raw or minimally processed data (e.g., SMILES strings, 2D graphs, 3D structures).

- Primary Use Cases: De novo molecular design, prediction of protein-ligand binding poses, synthesis pathway prediction, advanced property prediction from molecular graphs.

Application Notes & Protocols

Application Note 1: Supervised Learning for NP Activity Prediction

Objective: Train a supervised model to predict the anti-malarial activity of natural product derivatives from molecular descriptors.

Quantitative Data Summary: Table 1: Performance Metrics of Supervised Models on Anti-Malarial Activity Dataset (n=2,450 compounds)

| Model Algorithm | Accuracy (%) | Precision | Recall | F1-Score | AUC-ROC | Key Molecular Descriptors Used |

|---|---|---|---|---|---|---|

| Random Forest | 87.2 | 0.85 | 0.88 | 0.86 | 0.93 | MW, AlogP, NumHDonors, NumHAcceptors, Topological Polar Surface Area (TPSA) |

| Gradient Boosting | 89.5 | 0.90 | 0.87 | 0.88 | 0.95 | Same as above, plus Molecular Fragmentation Indices |

| Support Vector Machine | 83.1 | 0.81 | 0.84 | 0.82 | 0.89 | Same as Random Forest |

| Feed-Forward Neural Net | 90.1 | 0.89 | 0.91 | 0.90 | 0.96 | Extended-Connectivity Fingerprints (ECFP6) |
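As a quick consistency check on the table above, the F1-score can be recomputed from the reported precision and recall using F1 = 2PR / (P + R). The short sketch below does this for each row; the `f1_score` helper and the `reported` dictionary are names introduced here for illustration.

```python
def f1_score(precision, recall):
    """Harmonic mean of precision and recall."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


# (precision, recall, reported F1) copied from the table above.
reported = {
    "Random Forest": (0.85, 0.88, 0.86),
    "Gradient Boosting": (0.90, 0.87, 0.88),
    "Support Vector Machine": (0.81, 0.84, 0.82),
    "Feed-Forward Neural Net": (0.89, 0.91, 0.90),
}

for model, (p, r, f1_reported) in reported.items():
    f1 = f1_score(p, r)
    print(f"{model}: computed F1 = {f1:.3f}, reported = {f1_reported:.2f}")
```

Each recomputed value agrees with the reported F1 to within rounding at two decimal places.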

Protocol 1.1: Building a QSAR Classification Model

- Data Curation: Assemble assay data for 2,450 NP derivatives with binary labels (Active: pIC\(_{50}\) > 7.0; Inactive: pIC\(_{50}\) < 5.0). Apply curation steps (remove duplicates, check for assay interference compounds).

- Descriptor Calculation & Featurization: Compute a set of 200 standard 2D molecular descriptors (e.g., using RDKit or Mordred). Standardize features by removing low-variance descriptors and applying RobustScaler.

- Data Splitting: Split data into stratified Training (70%), Validation (15%), and Test (15%) sets. The validation set is for hyperparameter tuning.

- Model Training & Validation: Train multiple algorithms (e.g., Random Forest, XGBoost). Optimize hyperparameters via grid search or Bayesian optimization using 5-fold cross-validation on the training set, then confirm the selected configuration on the validation set.

- Model Evaluation: Apply the final tuned model to the held-out Test Set. Report standard metrics (Accuracy, Precision, Recall, F1, AUC-ROC). Perform applicability domain analysis (e.g., using leverage) to define the model's reliable prediction space.

- Deployment & Inference: Deploy the serialized model for predicting activity of newly designed or virtual NP libraries.
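The labeling and splitting steps of this protocol can be sketched without any third-party dependency. The example below assumes IC50 values supplied in nanomolar, leaves compounds between the two thresholds unlabeled, and uses a toy dataset; the function names and record layout are hypothetical, and in practice a library routine such as scikit-learn's stratified splitters would normally be used instead.

```python
import math
import random
from collections import defaultdict


def pic50_from_nm(ic50_nm):
    """pIC50 = -log10(IC50 in mol/L); the input is assumed to be in nanomolar."""
    return -math.log10(ic50_nm * 1e-9)


def label_activity(pic50, active_cut=7.0, inactive_cut=5.0):
    """1 = active, 0 = inactive, None = between thresholds (left unlabeled)."""
    if pic50 > active_cut:
        return 1
    if pic50 < inactive_cut:
        return 0
    return None


def stratified_split(records, fractions=(0.70, 0.15, 0.15), seed=42):
    """Split records into train/validation/test while preserving label ratios."""
    rng = random.Random(seed)
    by_label = defaultdict(list)
    for rec in records:
        by_label[rec["label"]].append(rec)
    train, valid, test = [], [], []
    for group in by_label.values():
        rng.shuffle(group)
        n_train = round(len(group) * fractions[0])
        n_valid = round(len(group) * fractions[1])
        train += group[:n_train]
        valid += group[n_train:n_train + n_valid]
        test += group[n_train + n_valid:]
    return train, valid, test


# Toy data: 20 potent (10 nM), 20 weak (100 uM), 5 intermediate (1 uM) compounds.
toy_ic50_nm = [10.0] * 20 + [100_000.0] * 20 + [1_000.0] * 5
records = []
for i, ic50 in enumerate(toy_ic50_nm):
    label = label_activity(pic50_from_nm(ic50))
    if label is not None:
        records.append({"id": f"cmpd_{i}", "label": label})

train, valid, test = stratified_split(records)
print(len(train), len(valid), len(test))
```

Splitting within each class keeps the active/inactive ratio identical across the three sets, which matters when one class is rare.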

Application Note 2: Unsupervised Learning for NP Dereplication

Objective: Apply unsupervised clustering to a mass spectrometry (MS) dataset of marine invertebrate extracts to group structurally similar NPs and prioritize novel compounds.

Quantitative Data Summary: Table 2: Clustering Results for LC-MS/MS Data from 500 Marine Extracts

| Clustering Method | Number of Molecular Families Identified | Average Silhouette Score | Key Features Used | Computational Time (min) |

|---|---|---|---|---|

| Hierarchical Clustering (Ward) | 38 | 0.65 | MS1 (Precursor m/z), RT, MS2 (Tanimoto similarity of fingerprints) | 45 |

| DBSCAN | 41 (plus 120 noise points) | 0.71 | Same as above | 22 |

| t-SNE + HDBSCAN | 45 | 0.78 | Same as above | 38 |

| Variational Autoencoder (VAE) Latent Space + K-means | 40 | 0.82 | Learned 32-dimensional latent representation from MS2 spectra | 65 (incl. training) |

Protocol 2.1: MS-Based Dereplication Workflow

- Feature Extraction: Process raw LC-MS/MS data (`.raw` or `.mzML`). Use tools like MZmine 3 or MS-DIAL to perform peak picking, alignment, and gap filling. Export a feature table with columns for Precursor m/z, Retention Time (RT), and peak intensity across samples.

- MS2 Spectral Networking: For each MS1 feature, aggregate its associated MS2 spectra. Calculate pairwise spectral similarities (e.g., modified cosine score) to create an undirected graph of spectral matches.

- Molecular Network Construction: Use tools like GNPS to create a molecular network. Nodes represent consensus MS2 spectra; edges connect spectra with similarity scores above a threshold (e.g., >0.7). Visualize with Cytoscape.

- Cluster Analysis & Novelty Prioritization: Extract clusters (molecular families) from the network. Cross-reference cluster members against public NP databases (e.g., NPASS, COCONUT) via precursor mass and spectral matching. Flag clusters with no or weak database matches for novel compound discovery.

- Isolation Guidance: Prioritize extracts containing ions from high-priority novel clusters for subsequent bioassay-guided fractionation.
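The networking and novelty-flagging steps above can be sketched in a few lines. The example below is a simplification under stated assumptions: it uses a plain cosine score on pre-binned spectra rather than the modified cosine score used by GNPS, the toy spectra and feature names are invented, and `molecular_families` and `flag_novel` are hypothetical helpers.

```python
import math
from itertools import combinations


def cosine(spec_a, spec_b):
    """Plain cosine between two spectra given as {binned m/z: intensity}."""
    shared = set(spec_a) & set(spec_b)
    dot = sum(spec_a[mz] * spec_b[mz] for mz in shared)
    norm_a = math.sqrt(sum(v * v for v in spec_a.values()))
    norm_b = math.sqrt(sum(v * v for v in spec_b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def molecular_families(spectra, threshold=0.7):
    """Connect spectra scoring above the threshold; return connected components."""
    adjacency = {name: set() for name in spectra}
    for a, b in combinations(spectra, 2):
        if cosine(spectra[a], spectra[b]) > threshold:
            adjacency[a].add(b)
            adjacency[b].add(a)
    families, seen = [], set()
    for start in spectra:
        if start in seen:
            continue
        stack, family = [start], set()
        while stack:
            node = stack.pop()
            if node in family:
                continue
            family.add(node)
            stack.extend(adjacency[node] - family)
        seen |= family
        families.append(family)
    return families


def flag_novel(families, library_hits):
    """Keep families in which no member matched a reference library spectrum."""
    return [fam for fam in families if not (fam & library_hits)]


# Toy consensus spectra (invented values).
spectra = {
    "f1": {100: 1.0, 150: 0.5, 200: 0.2},
    "f2": {100: 0.9, 150: 0.6, 200: 0.1},
    "f3": {300: 1.0, 350: 0.8},
    "f4": {300: 0.9, 350: 0.9, 400: 0.1},
    "f5": {500: 1.0},
}
families = molecular_families(spectra)
novel = flag_novel(families, library_hits={"f1"})
print(families)
print(novel)
```

Here `f1` and `f2` form an annotated family, while the `f3`/`f4` family and the singleton `f5` have no library match and would be prioritized.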

Application Note 3: Deep Learning for De Novo NP Design

Objective: Utilize a Generative Adversarial Network (GAN) or a Transformer model to generate novel, synthetically accessible NP-like molecules with predicted activity against a kinase target.

Quantitative Data Summary: Table 3: Evaluation of Generated NP-like Molecules (n=10,000) by a Transformer Model

| Evaluation Metric | Result | Benchmark (ZINC NPs) | Pass/Fail Criteria |

|---|---|---|---|

| Validity (SMILES parsable) | 99.8% | 100% | >95% |

| Uniqueness | 88.5% | - | >80% |

| Novelty (Not in Training Set) | 85.2% | - | >75% |

| Drug-likeness (QED Score > 0.6) | 91.1% | 78.3% | >70% |

| Synthetic Accessibility (SA Score < 4.5) | 76.4% | 81.2% | >70% |

| Predicted Activity (pIC50 > 8.0) | 22.3% | 0.5% (Random) | Maximize |
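The first three metrics in the table can be computed from a list of generated structures once each has been canonicalized. The sketch below assumes one common convention (validity over all samples, uniqueness over valid samples, novelty over unique samples); the source does not state which denominators were used, and the `generation_metrics` helper and toy SMILES are illustrative. Invalid outputs are represented as `None`, standing in for a failed RDKit parse.

```python
def generation_metrics(canonical_smiles, training_set):
    """Validity, uniqueness, and novelty for a batch of generated molecules.

    canonical_smiles: list of canonical SMILES, with None for unparsable outputs.
    training_set: set of canonical SMILES seen during training.
    """
    total = len(canonical_smiles)
    valid = [s for s in canonical_smiles if s is not None]
    unique = set(valid)
    novel = unique - set(training_set)
    return {
        "validity": len(valid) / total if total else 0.0,
        "uniqueness": len(unique) / len(valid) if valid else 0.0,
        "novelty": len(novel) / len(unique) if unique else 0.0,
    }


# Toy batch: one duplicate, one invalid output, one molecule already in training.
generated = ["CCO", "CCO", "c1ccccc1", None, "CC(=O)O"]
metrics = generation_metrics(generated, training_set={"CCO"})
print(metrics)
```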

Protocol 3.1: Training a Molecular Transformer Generator

- Data Preparation: Curate a dataset of 50,000 known bioactive NPs and NP-like molecules in SMILES notation. Canonicalize and sanitize molecules using RDKit. Tokenize the SMILES strings into a vocabulary of characters/substructures.

- Model Architecture: Implement a Transformer decoder-only architecture (similar to GPT). Use embedding layers for tokens, followed by multiple transformer blocks with multi-head self-attention and feed-forward networks.

- Training: Train the model with a causal language modeling objective (predicting the next token in a sequence). Use the AdamW optimizer with a learning rate scheduler. Monitor the loss on a validation set.

- Conditional Generation: Fine-tune the pre-trained model on a smaller set of molecules active against the specific kinase target (e.g., p38 MAPK). This conditions the model to generate molecules biased towards the desired activity.

- Generation & Filtering: Sample new SMILES strings from the fine-tuned model (e.g., using nucleus sampling). Filter the generated molecules through a pipeline assessing validity, uniqueness, drug-likeness (QED), synthetic accessibility (RAscore, SAScore), and predicted activity (using a pre-trained supervised model from Protocol 1.1).

- Output: Produce a ranked list of 100-500 novel, synthesizable NP-inspired candidate structures for in silico docking or synthesis planning.
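The nucleus (top-p) sampling mentioned in the generation step can be sketched independently of any model. The example below operates on a single next-token probability distribution; the `nucleus_sample` function and the toy token probabilities are assumptions for illustration, whereas a real generator would apply the same rule to the Transformer's softmax output at every decoding step.

```python
import random


def nucleus_sample(token_probs, top_p=0.9, rng=None):
    """Sample one token from the smallest set whose cumulative probability >= top_p."""
    if rng is None:
        rng = random.Random()
    ranked = sorted(token_probs.items(), key=lambda kv: kv[1], reverse=True)
    nucleus, cumulative = [], 0.0
    for token, prob in ranked:
        nucleus.append((token, prob))
        cumulative += prob
        if cumulative >= top_p:
            break
    tokens = [t for t, _ in nucleus]
    weights = [p for _, p in nucleus]
    # random.choices renormalizes the weights within the nucleus.
    return rng.choices(tokens, weights=weights, k=1)[0]


# Toy next-token distribution over SMILES characters (invented values).
next_token_probs = {"C": 0.50, "c": 0.30, "O": 0.15, "N": 0.04, "#": 0.01}
rng = random.Random(0)
samples = [nucleus_sample(next_token_probs, top_p=0.9, rng=rng) for _ in range(10)]
print(samples)
```

With `top_p=0.9` the low-probability tokens `N` and `#` are never drawn, which trims unlikely continuations while keeping some diversity.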

Visualization: Workflows & Logical Frameworks

Diagram 1: AI/ML Framework for NP Drug Discovery

Diagram 2: Unsupervised MS Dereplication Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 4: Key Research Reagents & Computational Tools for AI/ML in NP Research

| Item/Category | Function in AI/ML Workflow | Example Specific Tools / Libraries |

|---|---|---|

| Chemical Database | Source of labeled/training data for supervised learning and generative model training. | NPASS, COCONUT, PubChem, ChEMBL, ZINC (NP subset) |

| Cheminformatics Suite | Calculates molecular descriptors, fingerprints, and performs basic molecule manipulations. | RDKit, OpenBabel, CDK (Chemistry Development Kit) |

| Mass Spectrometry Processing Software | Processes raw spectral data into feature tables for unsupervised dereplication workflows. | MZmine 3, MS-DIAL, OpenMS, GNPS Platform |

| Machine Learning Framework | Provides algorithms and infrastructure for building, training, and deploying models. | Scikit-learn (SL/UL), PyTorch (DL), TensorFlow (DL), XGBoost (SL) |

| Molecular Visualization & Analysis | Visualizes chemical structures, clusters, molecular networks, and model interpretations. | Cytoscape (for networks), RDKit (embedding), matplotlib/Plotly (graphs) |

| High-Performance Computing (HPC) / Cloud Resources | Provides necessary compute for training large DL models and processing massive datasets. | Local GPU clusters, Google Cloud AI Platform, AWS SageMaker, Azure ML |

| Model Validation & Benchmarking Suite | Ensures model robustness, reproducibility, and adherence to OECD QSAR principles. | scikit-learn metrics, DeepChem model evaluation, Applicability Domain tools |

Application Notes

The integration of genomic, metabolomic, and ethnobotanical databases through AI/ML pipelines is revolutionizing the identification and prioritization of natural product (NP) leads for drug discovery. This multi-omics approach enables the de-replication of known compounds, the prediction of novel bioactive scaffolds, and the targeted exploration of biodiversity, significantly accelerating the early discovery pipeline.

Table 1: Core Database Types for AI-Driven NP Discovery

| Database Type | Key Examples (2024-2025) | Primary Data | Utility in AI/ML Pipeline |

|---|---|---|---|

| Genomic | MIBiG 3.0, antiSMASH DB, NCBI GenBank, JGI MycoCosm | Biosynthetic Gene Clusters (BGCs) encoding NP pathways. | Predicts NP chemical class and potential novelty via BGC analysis. |

| Metabolomic | GNPS, Metabolights, COCONUT, NP Atlas, HMDB | MS/MS spectral data, molecular fingerprints, physico-chemical properties. | Enables spectral networking for compound identification and similarity-based novel compound prediction. |

| Ethnobotanical | NAPRALERT, Dr. Duke's Phytochemical and Ethnobotanical DBs, TKWB | Traditional use records, plant taxonomy, reported bioactivities. | Provides pre-filtered biological context, prioritizing species for multi-omics analysis. |

Table 2: Quantitative Output from an Integrated AI Prioritization Pipeline

| Analysis Step | Input Data | ML Model Used | Output Metric (Typical Yield) |

|---|---|---|---|

| Ethnobotanical Pre-filtering | 10,000 species records | NLP-based text mining | 500 species with high-priority traditional use claims. |

| Genomic Prioritization | 500 species genome skims | Random Forest Classifier | 120 species predicted to contain novel NRPS/PKS-type BGCs. |

| Metabolomic Matching | LC-MS/MS from 120 species | Spectral Network Analysis (GNPS) | 15 putative novel molecular families linked to prioritized BGCs. |

| Overall Pipeline Efficiency | 10,000 candidate species | Integrated AI workflow | 15 high-probability novel NP leads (0.15% hit rate) |
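The stage-by-stage attrition in the table can be worked through explicitly. The sketch below computes the fraction retained at each step and cumulatively; note that it treats the counts as directly comparable even though the final stage counts molecular families rather than species, which is a simplification. The `funnel_report` helper is introduced here for illustration.

```python
# (stage name, number of candidates remaining) taken from the table above.
stages = [
    ("Candidate species", 10_000),
    ("Ethnobotanical pre-filtering", 500),
    ("Genomic prioritization", 120),
    ("Metabolomic matching", 15),
]


def funnel_report(stages):
    """Percentage retained relative to the previous stage and to the start."""
    start = stages[0][1]
    report = []
    for (_, prev_count), (name, count) in zip(stages, stages[1:]):
        report.append({
            "stage": name,
            "retained": count,
            "step_pct": 100 * count / prev_count,
            "overall_pct": 100 * count / start,
        })
    return report


report = funnel_report(stages)
for row in report:
    print(f"{row['stage']}: {row['step_pct']:.1f}% of previous, "
          f"{row['overall_pct']:.2f}% overall")
```

The per-step retention is 5%, 24%, and 12.5%, which multiplies out to the 0.15% overall figure in the last row.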

Experimental Protocols

Protocol 1: Integrated Multi-Omics Collection for AI Training Data

Objective: To generate linked genomic, metabolomic, and ethnobotanical data from a plant or microbial sample for building and validating AI prediction models.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- Ethnobotanical Curation: For the sampled organism, query databases (e.g., NAPRALERT) using its binomial name. Extract and structure all traditional use indications, geographical origin, and previously isolated compounds into a JSON format using a scripted API call or manual curation.

- Genomic DNA Extraction & Sequencing:

a. Flash-freeze 100 mg of sample (e.g., microbial mycelia, plant leaf) in liquid N₂.

b. Perform genomic DNA extraction using a kit optimized for high polysaccharide/polyphenol content (e.g., CTAB method for plants).

c. Assess DNA purity (A260/280 ~1.8) and integrity via agarose gel.

d. Prepare and sequence an Illumina paired-end (2x150 bp) whole-genome shotgun library. Target coverage: >50x.

- Metabolite Profiling via LC-MS/MS:

a. Homogenize a separate 50 mg sample in 1 mL of 80% methanol/H₂O.

b. Centrifuge at 14,000 x g for 15 min at 4°C. Transfer supernatant to an LC-MS vial.

c. Perform reversed-phase UHPLC (C18 column, gradient: 5-100% acetonitrile in H₂O + 0.1% formic acid over 18 min).

d. Acquire data-dependent acquisition (DDA) MS/MS on a high-resolution Q-TOF mass spectrometer in positive and negative ionization modes (m/z 100-1500).

- Data Packaging for AI: Create a dedicated directory and place the following files in it: `sample_X_ethnobotanical.json`, `sample_X_genomic_R1.fastq.gz`, `sample_X_genomic_R2.fastq.gz`, `sample_X_metabolomic.mzML`. This linked dataset forms one training instance for an ML model.
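Before a sample directory is handed to a training pipeline, it helps to confirm that all four linked files are present. The following is a minimal sketch of such a check; the `missing_files` helper is hypothetical, and the file-name suffixes are taken from the packaging step above.

```python
import tempfile
from pathlib import Path

# File-name suffixes expected for every packaged sample.
REQUIRED_SUFFIXES = (
    "_ethnobotanical.json",
    "_genomic_R1.fastq.gz",
    "_genomic_R2.fastq.gz",
    "_metabolomic.mzML",
)


def missing_files(sample_dir, sample_id):
    """Return the expected file names that are absent from sample_dir."""
    sample_dir = Path(sample_dir)
    return [
        f"{sample_id}{suffix}"
        for suffix in REQUIRED_SUFFIXES
        if not (sample_dir / f"{sample_id}{suffix}").is_file()
    ]


# Usage on a throwaway directory with the metabolomics file deliberately left out.
with tempfile.TemporaryDirectory() as tmp:
    for suffix in REQUIRED_SUFFIXES[:-1]:
        (Path(tmp) / f"sample_X{suffix}").touch()
    missing = missing_files(tmp, "sample_X")

print(missing)
```

An empty list means the sample is a complete training instance; anything else names the modality that still needs to be generated.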

Protocol 2: AI-Powered Prioritization Workflow Using Public Databases

Objective: To computationally prioritize samples for fractionation based on the likelihood of yielding novel bioactive NPs.

Procedure:

- BGC Prediction & Quantification:

a. Assemble genomic reads using SPAdes. Retain contigs >1 kb.

b. Submit assembled contigs to the antiSMASH 7.0 web server or run it locally. This identifies BGCs and predicts their product class (e.g., terpene, nonribosomal peptide).

c. Extract the "BGC novelty score" (a metric comparing predicted BGCs to the MIBiG database) and the count of unique BGC classes per sample. Store in a table.

- Metabolomic Spectral Networking:

a. Convert all `.mzML` files to `.mgf` format using MSConvert.

b. Upload to the GNPS platform. Perform a Feature-Based Molecular Networking (FBMN) analysis with default parameters.

c. Download the network file (`graphml`) and cluster information. Count the number of nodes (MS/MS spectra) not connected to any library spectrum ("orphan nodes") as a metric of chemical novelty.

- Ethnobotanical Scoring:

a. Using the curated JSON data, apply a scoring system: +2 points for a traditional use aligning with the target disease area (e.g., "anti-inflammatory" for pain), +1 point for any other medicinal use, 0 for no record.

- Integrated Ranking with ML:

a. Create a feature matrix: Rows=samples, Columns=[`BGC_novelty_score`, `BGC_class_count`, `Metabolomic_orphan_nodes`, `Ethnobotanical_score`].

b. Train a simple Random Forest regressor on a labeled subset (where "hit" = known novel NP discovery) to predict a composite "Priority Score."

c. Apply the model to unlabeled samples. Rank samples by the predicted Priority Score for lab investigation.
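The ethnobotanical scoring rule and the feature-matrix layout can be sketched as follows. Two assumptions are made: the points are treated as tiers (a sample receives the highest applicable score rather than a sum), and target alignment is detected by simple substring matching. The helper names, dictionary keys, and sample values are hypothetical; the Random Forest step itself is left out because it needs a labeled training subset.

```python
FEATURE_COLUMNS = [
    "BGC_novelty_score",
    "BGC_class_count",
    "Metabolomic_orphan_nodes",
    "Ethnobotanical_score",
]


def ethnobotanical_score(traditional_uses, target_terms):
    """2 = a use matches the target area, 1 = other medicinal use, 0 = no record."""
    uses = [u.strip().lower() for u in traditional_uses if u.strip()]
    if any(term.lower() in use for use in uses for term in target_terms):
        return 2
    if uses:
        return 1
    return 0


def feature_row(sample, target_terms):
    """Build one row of the feature matrix in FEATURE_COLUMNS order."""
    return [
        sample["bgc_novelty_score"],
        sample["bgc_class_count"],
        sample["orphan_nodes"],
        ethnobotanical_score(sample["traditional_uses"], target_terms),
    ]


# Invented sample records for illustration only.
target_terms = ["anti-inflammatory", "pain"]
samples = [
    {"bgc_novelty_score": 0.8, "bgc_class_count": 5, "orphan_nodes": 42,
     "traditional_uses": ["Anti-inflammatory poultice"]},
    {"bgc_novelty_score": 0.3, "bgc_class_count": 2, "orphan_nodes": 7,
     "traditional_uses": []},
]
feature_matrix = [feature_row(s, target_terms) for s in samples]
print(FEATURE_COLUMNS)
print(feature_matrix)
```

The resulting rows can be passed directly to a regressor such as a Random Forest once labeled examples are available.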

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in NP Discovery Pipeline |

|---|---|

| CTAB DNA Extraction Buffer | Lysis buffer for tough plant/microbial cell walls; complexes polysaccharides for clean gDNA. |

| Illumina DNA Prep Kit | Library preparation for whole-genome sequencing; ensures compatible adapter ligation for NGS. |

| 80% Methanol / 0.1% Formic Acid | Standard metabolomics extraction/solvent; quenches enzymes and is compatible with LC-MS. |

| C18 UHPLC Column (1.7µm) | High-resolution separation of complex natural product extracts prior to MS detection. |

| antiSMASH 7.0 Software | Standard tool for BGC identification and classification from genomic data. |

| GNPS (Global Natural Products Social) Platform | Cloud-based ecosystem for mass spectrometry data processing, sharing, and molecular networking. |

Visualizations

AI-Driven Multi-Omics Prioritization Workflow

Multi-Omics Data Generation Protocol for AI

Within the broader thesis on AI and machine learning for natural product drug discovery, foundational chemical models represent a paradigm shift. These models, pre-trained on vast chemical corpora, learn fundamental representations of molecular structure, properties, and reactivity. They provide a powerful, transferable starting point for downstream tasks specific to natural product research, such as predicting bioactive conformations, identifying potential biosynthesis pathways, or screening for novel scaffolds with desired pharmacological profiles. This moves beyond traditional quantitative structure-activity relationship (QSAR) models by capturing a deeper, more generalizable chemical "language."

Core Concepts & Data Presentation

Types of Chemical Language Models & Embeddings

Table 1: Comparison of Major Chemical Representation Methods for Foundational Models

| Representation Method | Description | Example Model/Approach | Typical Embedding Dimension | Key Advantage for Natural Products |

|---|---|---|---|---|

| SMILES/String-Based | Treats Simplified Molecular-Input Line-Entry System strings as a sequence of tokens (e.g., atoms, brackets). | ChemBERTa, SMILES-BERT | 128 - 768 | Can handle complex, ring-rich structures common in natural products. |

| SELFIES | Treats SELF-referencIng Embedded Strings (SELFIES) as a sequence. Every SELFIES string decodes to a syntactically valid molecule. | SELFIES-based Transformer | 128 - 512 | Enables robust generative tasks without invalid structure generation. |

| Graph-Based | Represents molecules as graphs with atoms as nodes and bonds as edges. Uses Graph Neural Networks (GNNs). | GROVER, MPNN, GraphTransformer | 300 - 1024 | Inherently captures topological and spatial relationships, ideal for stereochemistry. |

| 3D Conformer-Aware | Incorporates 3D molecular geometry (atomic coordinates) into the representation. | 3D-Transformer, GeoMol | 256 - 1024 | Critical for modeling pharmacophore and protein-ligand interactions. |

| Reaction-Aware | Trained on reaction data, learning transformations between reactants and products. | Molecular Transformer | 256 - 512 | Useful for predicting biosynthetic or synthetic pathways for natural product analogs. |

Table 2: Performance Benchmarks of Selected Foundational Chemical Models (Representative Tasks)

| Model Name (Year) | Representation | Pre-training Dataset Size | Fine-tuned Task (Dataset) | Key Metric (Score) | Relevance to NP Discovery |

|---|---|---|---|---|---|

| ChemBERTa-2 (2023) | SMILES | 77M SMILES | BBB Penetration (MoleculeNet) | ROC-AUC: 0.898 | Predicting natural product bioavailability. |

| GROVER (2020) | Graph | 11M Molecules | Toxicity Prediction (Tox21) | Avg. ROC-AUC: 0.855 | Early-stage safety screening of NP hits. |

| MoLFormer (2022) | SMILES (linear-attention Transformer) | 1.1B SMILES | Quantum Property (QM9) | MAE on µ: 0.30 D | Estimating electronic properties of novel scaffolds. |

| 3D-EquiBind (2024) | 3D Graph | PDBBind (~20k complexes) | Protein-Ligand Docking (POSEIDON) | RMSD < 2.0 Å (Success Rate) | Rapid pose prediction for NP-target complexes. |

Experimental Protocols

Protocol: Fine-Tuning a Pre-trained Chemical LM for Activity Prediction

Objective: Adapt a general-purpose chemical language model (e.g., a SMILES-based transformer) to predict the activity of natural product-like compounds against a specific target.

Materials & Reagent Solutions:

- Pre-trained Model Weights: (e.g., `ChemBERTa-77M-MLM` from Hugging Face).

- Fine-tuning Dataset: Curated CSV file with columns: `canonical_smiles`, `activity_label` (e.g., 1/0 for active/inactive).

- Software: Python 3.9+, PyTorch 2.0+, Transformers library, RDKit, scikit-learn.

- Computing Environment: GPU with >8GB VRAM recommended.

Procedure:

- Data Preparation:

- Using RDKit, standardize SMILES (neutralization, salt removal, tautomer canonicalization).

- Split data into training (70%), validation (15%), and test (15%) sets using stratified splitting based on `activity_label`.

- Create a tokenizer compatible with the pre-trained model or use its default.

- Model Setup:

- Load the pre-trained model architecture and weights.

- Replace the pre-training head (e.g., masked language modeling head) with a classification head (a dropout layer followed by a linear layer projecting to 2 output neurons).

- Initialize the classification head with random weights.

- Training Loop:

- Freezing (Optional): Initially freeze all layers except the classification head for 2-3 epochs.

- Hyperparameters: Set batch size (16-32), learning rate (2e-5 to 5e-5), number of epochs (20-50). Use AdamW optimizer.

- Execution: Unfreeze all layers. For each epoch, forward propagate batches of tokenized SMILES, compute cross-entropy loss between predictions and true labels, and backpropagate.

- Validation: After each epoch, evaluate on the validation set. Save the model with the best validation ROC-AUC score.

- Evaluation:

- Load the best saved model and evaluate on the held-out test set.

- Report standard metrics: ROC-AUC, Precision-Recall AUC, F1-score, and generate a confusion matrix.
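The checkpoint-selection rule in the validation step depends on ROC-AUC, which can be computed directly from its rank interpretation: the probability that a randomly chosen active scores higher than a randomly chosen inactive. The sketch below is a dependency-free illustration with invented scores; `roc_auc` and `best_epoch` are hypothetical helpers, and a real run would more likely call an existing metrics library on the model's predicted probabilities.

```python
def roc_auc(labels, scores):
    """ROC-AUC as the fraction of (active, inactive) pairs ranked correctly."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("ROC-AUC needs at least one active and one inactive")
    wins = sum(
        1.0 if p > n else 0.5 if p == n else 0.0
        for p in pos
        for n in neg
    )
    return wins / (len(pos) * len(neg))


def best_epoch(history):
    """history: list of (epoch, validation ROC-AUC); return the one to checkpoint."""
    return max(history, key=lambda item: item[1])


# Invented validation labels and predicted probabilities of the active class.
val_labels = [1, 1, 0, 0]
val_scores = [0.9, 0.4, 0.6, 0.1]
auc = roc_auc(val_labels, val_scores)

# Invented per-epoch validation results.
history = [(1, 0.70), (2, 0.82), (3, 0.79)]
print(auc, best_epoch(history))
```

The pairwise form is quadratic in the number of compounds, which is fine for validation sets of a few hundred molecules.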

Protocol: Generating Molecular Embeddings for Virtual Screening

Objective: Use a foundational model to create a fixed-dimensional vector (embedding) for each compound in a library to enable similarity-based virtual screening.

Materials & Reagent Solutions:

- Embedding Model: A pre-trained model capable of producing pooled molecular representations (e.g., `GROVER-base`, `ChemBERTa` with mean pooling).

- Compound Library: A large database of natural products and synthetic analogs in SDF or SMILES format.

- Query Molecule: The known active natural product ("hit").

- Software: Python, PyTorch/TensorFlow, RDKit, NumPy, FAISS (Facebook AI Similarity Search) library.

Procedure:

- Library Standardization:

- Process all library molecules with RDKit: generate canonical SMILES, remove duplicates, filter by basic drug-like properties (e.g., MW < 800, LogP < 5).

- Embedding Generation:

- Load the pre-trained model in inference mode (`.eval()`).

- For each canonical SMILES in the library:

  - Tokenize/encode the SMILES for the model.

  - Pass the tokens through the model.

  - Extract the embedding from the model's pooling layer (e.g., the [CLS] token embedding or mean of last hidden layer).

  - Store the resulting vector (e.g., 768-dimensional) in a NumPy array.

- Indexing for Search:

- Use the FAISS library to create an efficient similarity search index (e.g., `IndexFlatIP` for inner-product search; L2-normalize the embeddings first so that the inner product equals cosine similarity).

- Add all library embeddings to the index.

- Similarity Search:

- Generate an embedding for the query natural product following Step 2.

- Query the FAISS index with the query embedding, requesting the top k (e.g., 100) most similar vectors.

- Retrieve the corresponding compound IDs and SMILES for the top results.

- Validation:

- Visually inspect top hits for structural similarity.

- If possible, evaluate retrieved hits using independent molecular docking or known bioactivity data.
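The pooling and search steps of this protocol reduce to three operations: mean-pool token vectors into one embedding, L2-normalize, and rank by inner product. The sketch below shows them in plain Python as a brute-force stand-in for a FAISS flat inner-product index; the three-dimensional toy embeddings, compound IDs, and helper names are invented for illustration, and real embeddings would come from the model.

```python
import math


def mean_pool(token_vectors):
    """Average per-token vectors into one fixed-length molecule embedding."""
    n = len(token_vectors)
    return [sum(column) / n for column in zip(*token_vectors)]


def l2_normalize(vec):
    """Scale a vector to unit length so inner product equals cosine similarity."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / norm for x in vec] if norm else list(vec)


def top_k(query, library, k=3):
    """Brute-force cosine search: return the k most similar (id, score) pairs."""
    q = l2_normalize(query)
    scored = []
    for compound_id, embedding in library.items():
        e = l2_normalize(embedding)
        scored.append((sum(a * b for a, b in zip(q, e)), compound_id))
    scored.sort(reverse=True)
    return [(compound_id, round(score, 3)) for score, compound_id in scored[:k]]


# Toy library of pre-computed embeddings (invented values).
library = {
    "NP-001": [1.0, 0.0, 0.0],
    "NP-002": [0.9, 0.1, 0.0],
    "NP-003": [0.0, 1.0, 0.0],
    "NP-004": [-1.0, 0.0, 0.0],
}
query_embedding = mean_pool([[1.0, 0.0, 0.0], [1.0, 0.0, 0.0]])
hits = top_k(query_embedding, library, k=2)
print(hits)
```

Exhaustive search like this scales linearly with library size, which is the cost FAISS indexes are designed to reduce for large collections.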

Mandatory Visualizations

Diagram 1: Foundational Models in NP Discovery Workflow

Diagram 2: From Molecule to Embedding: Two Pathways

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Digital "Reagents" for Working with Chemical Foundational Models

| Item (Software/Library) | Function in Experiment | Key Notes for Implementation |

|---|---|---|

| RDKit | Core cheminformatics toolkit for molecule standardization, descriptor calculation, SMILES parsing, and image rendering. | Use for mandatory pre-processing to ensure input quality. Critical for handling natural product stereochemistry. |

| PyTorch / TensorFlow | Deep learning frameworks for loading, modifying, and training foundational model architectures. | Required for fine-tuning protocols. PyTorch is commonly used in recent model implementations. |

| Hugging Face Transformers | Provides easy access to thousands of pre-trained transformer models (including ChemBERTa). | Simplifies tokenization, model loading, and training loops via the Trainer API. |

| DeepChem | High-level library wrapping many molecular deep learning models and datasets. | Useful for quick prototyping and access to curated molecular datasets (e.g., MoleculeNet). |

| FAISS | Library for efficient similarity search and clustering of dense vectors. | Essential for performing virtual screening on large libraries using molecular embeddings. Runs on CPU/GPU. |

| MolVS / Cactvs | Specialized tools for rigorous molecular validation and standardization (tautomer normalization, charge correction). | Use for advanced pre-processing when RDKit's standard rules are insufficient. |

| Streamlit / Dash | Frameworks for building simple web applications to demo embedding models and similarity search tools. | Enables creation of shareable, user-friendly interfaces for project collaborators. |

This protocol details the integrated pipeline for natural product (NP) discovery, framed within the broader thesis that AI and machine learning (ML) are transformative for de-risking and accelerating NP research. By bridging classical microbiology, analytical chemistry, and modern bioinformatics, the pipeline generates structured, high-quality data essential for training robust AI models aimed at novel hit identification and mechanism prediction.

Application Notes: Core Stages & Data Generation for AI

Stage 1: Intelligent Sample Collection & Metadata Curation

Application Note: Geographic, taxonomic, and ecological metadata are critical features for AI models predicting chemical novelty. Standardized digital capture is mandatory.

Protocol: Environmental Sample Collection & Metadata Recording

- Site Selection: Use GIS tools to target unique or underrepresented biomes (e.g., marine sediments, plant endophytes from threatened ecosystems).

- Collection: Aseptically collect the sample (e.g., 1 g soil, 5 mL water, plant tissue). Perform in-situ measurements (pH, temperature, GPS coordinates).

- Preservation: Immediately place samples in sterile containers. For microbial communities, use nucleic acid stabilization buffers or cryopreservation.

- Digital Metadata Entry: Log all data into a structured database (e.g., MIxS standards). Fields must include:

Sample_ID,Date_Time,GPS,Habitat,Host_Taxon,Depth,pH,Collector.
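
A structured record is easier to enforce when it is checked at entry time. The sketch below, offered as one possible approach rather than part of any MIxS tooling, validates a metadata record against the field list above. The range checks on pH and GPS, the tuple format for coordinates, and the example values are assumptions added for illustration.

```python
REQUIRED_FIELDS = (
    "Sample_ID", "Date_Time", "GPS", "Habitat",
    "Host_Taxon", "Depth", "pH", "Collector",
)


def validate_record(record):
    """Return a list of problems found in a sample record (empty if none)."""
    problems = [
        f"missing field: {name}"
        for name in REQUIRED_FIELDS
        if record.get(name) in (None, "")
    ]
    ph = record.get("pH")
    if isinstance(ph, (int, float)) and not 0.0 <= ph <= 14.0:
        problems.append("pH outside 0-14")
    gps = record.get("GPS")
    if isinstance(gps, tuple) and len(gps) == 2:
        lat, lon = gps
        if not (-90.0 <= lat <= 90.0 and -180.0 <= lon <= 180.0):
            problems.append("GPS out of range")
    return problems


# Hypothetical example records.
good = {
    "Sample_ID": "S-0001", "Date_Time": "2024-05-01T09:30",
    "GPS": (12.5, -61.4), "Habitat": "marine sediment",
    "Host_Taxon": "none", "Depth": 15.0, "pH": 7.9, "Collector": "A. Researcher",
}
bad = dict(good, pH=15.2, Collector="")
print(validate_record(good))
print(validate_record(bad))
```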

Stage 2: Strain Prioritization & Culturing

Application Note: The goal is to maximize chemical diversity for downstream analysis. AI models can prioritize strains based on genomic or morphological features.

Protocol: High-Throughput Culturing & Morphological Screening

- Selective Cultivation: Inoculate sample homogenate onto diverse media (ISP2, AIA, GYM, R2A, Chitin). Incubate at varied temperatures (12°C, 28°C, 37°C) for 7-28 days.

- Colony Picking: Use robotic pickers or manual selection to isolate unique morphotypes based on color, texture, diffusible pigments.

- Genomic DNA Extraction: Extract gDNA from biomass using a kit (e.g., FastDNA Spin Kit for Soil). Elute in 50 µL TE buffer.

- 16S/ITS Sequencing: Amplify rRNA gene regions via PCR. Sequence and perform taxonomic assignment via BLAST against NCBI or SILVA.

- AI-Prioritization Input: Data table for model training includes

Strain_ID,Morphotype_Class,Growth_Rate,Taxonomic_Lineage,Isolation_Medium.

Table 1: Example Strain Prioritization Data

| Strain_ID | Phylum | Growth_Score | Pigment_Production | AI_Priority_Rank |

|---|---|---|---|---|

| NPML001 | Actinobacteria | 0.85 | Blue (455 nm) | 1 |

| NPML002 | Ascomycota | 0.62 | Yellow | 3 |

| NPML003 | Proteobacteria | 0.71 | None | 2 |

Stage 3: Metabolite Extraction & LC-MS/MS Analysis

Application Note: Generate high-resolution, tandem MS data as the core input for molecular networking and AI-based structure prediction.

Protocol: Small Molecule Profiling

- Fermentation & Extraction: Inoculate strain in 50 mL production medium. Shake (180 rpm) for 7 days. Centrifuge. Extract broth and cell pellet separately with equal volume EtOAc (x3). Combine, dry under vacuum.

- LC-MS/MS Analysis:

- Column: C18 (2.1 x 100 mm, 1.7 µm).

- Gradient: 5-95% MeCN in H2O (+0.1% Formic acid) over 18 min.

- MS: High-resolution Q-TOF in data-dependent acquisition (DDA) mode.

- Settings: ESI (+/-), scan range 100-2000 m/z, top 10 precursors per cycle for MS/MS.

Stage 4: AI-Powered Data Analysis & Hit Identification

Application Note: Use computational tools to cluster MS data, predict structures, and correlate with bioactivity, reducing the need for exhaustive isolation.

Protocol: Molecular Networking & In-Silico Dereplication

- Data Conversion: Convert .raw files to .mzML using MSConvert (ProteoWizard).

- Feature Detection: Process with MZmine3 or GNPS Feature-Based Molecular Networking (FBMN) workflow.

- Molecular Network: Upload to GNPS. Create network with parameters: Cos Score > 0.7, TopK > 10.

- AI-Driven Dereplication:

- Use DEREPLICATOR+ or NAP on GNPS to annotate molecular families.

- Input MS/MS data to SIRIUS for molecular formula and CSI:FingerID for structure prediction.

- Use MolDiscovery or similar ML models to predict a novelty score.

- Bioactivity Integration: If bioassay data exists (e.g., antimicrobial zone of inhibition), link activity to specific nodes/clusters in the network.
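
To make the cosine score threshold concrete, the sketch below computes a plain binned cosine similarity between two MS/MS peak lists and applies the 0.7 cutoff used for network edges. It is a deliberate simplification: GNPS uses a modified cosine that also accounts for precursor mass shifts, and the bin width, function names, and toy spectra here are assumptions.

```python
import math
from collections import defaultdict


def bin_spectrum(peaks, bin_width=0.02):
    """Sum intensities of (m/z, intensity) peaks into fixed-width m/z bins."""
    binned = defaultdict(float)
    for mz, intensity in peaks:
        binned[round(mz / bin_width)] += intensity
    return binned


def spectral_cosine(peaks_a, peaks_b, bin_width=0.02):
    """Cosine similarity between two binned fragment spectra."""
    a = bin_spectrum(peaks_a, bin_width)
    b = bin_spectrum(peaks_b, bin_width)
    dot = sum(a[i] * b[i] for i in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0


# Toy spectra (hypothetical peaks).
spec_1 = [(100.0, 50.0), (150.0, 100.0)]
spec_2 = [(100.0, 50.0), (175.0, 100.0)]
spec_3 = [(200.0, 100.0)]

score = spectral_cosine(spec_1, spec_2)
connect_nodes = score > 0.7  # edge criterion from the protocol
print(round(score, 3), connect_nodes)
```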

Table 2: AI/ML Tools for NP Discovery

| Tool Name | Function | Data Input | Output |

|---|---|---|---|

| GNPS | Molecular Networking | MS/MS (.mzML) | Spectral Networks |

| SIRIUS/CSI:FingerID | Structure Prediction | MS/MS | Molecular Formula & Structure |

| antiSMASH | Biosynthetic Gene Cluster Prediction | Genomic FASTA | BGC Type & Prediction |

| NPClassifier | Compound Classification | Chemical Structure | Class & Pathway |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for the Modern NP Pipeline

| Item | Function | Example Product |

|---|---|---|

| DNA/RNA Shield | Stabilizes microbial community DNA/RNA at collection | Zymo Research R1100 |

| ISP Media Series | Selective cultivation of Actinomycetes | BD Difco ISP Media |

| FastDNA Spin Kit | Rapid gDNA extraction from complex samples | MP Biomedicals 116560200 |

| SDB Liquid Media | Fungal secondary metabolite production | HiMedia M108 |

| Solid Phase Extraction (SPE) Cartridges | Fractionation of crude extracts | Waters Oasis HLB |

| LC-MS Grade Solvents | High-resolution metabolomics analysis | Fisher Chemical Optima |

| 96-well Microtiter Plates | High-throughput bioassays | Corning 3631 |

| Resazurin Sodium Salt | Cell viability assay for antimicrobial screening | Sigma R7017 |

Visualizations

Title: Modern NP Discovery Pipeline Workflow

Title: AI Model Inputs & Outputs for NP Discovery

AI in Action: Key Methodologies and Real-World Applications in NP Discovery

Within the broader thesis that artificial intelligence and machine learning represent a paradigm shift in natural product (NP) drug discovery, this document details practical applications. Virtual Screening (VS) 1.0, reliant on molecular docking to physical target structures, struggles with the unique chemical space, stereochemical complexity, and lack of explicit targets for many NPs. VS 2.0 employs an ensemble of AI models to overcome these barriers, moving from simple target-ligand matching to predictive systems that prioritize NPs with a high probability of exhibiting a desired phenotypic or target-based activity, thereby accelerating the identification of novel bioactive leads.

Application Notes

Note 1: AI Model Ensemble for Target-Agnostic Prioritization When a specific protein target is unknown but high-throughput phenotypic screening data exists, an ensemble model can prioritize NPs for validation. This approach uses results from a cell-based assay (e.g., inhibition of cancer cell proliferation) as training labels.

Key Quantitative Results: Table 1: Performance Metrics of AI Ensemble on a Phenotypic Screening Dataset (Hypothetical Example)

| Model Type | Training Set Size | Validation AUC-ROC | Precision @ Top 100 | Key Function |

|---|---|---|---|---|

| Graph Neural Network (GNN) | 5,000 NP-activity pairs | 0.78 | 0.25 | Captures complex molecular topology |

| Transformer (ChemBERTa) | 5,000 NP-activity pairs | 0.75 | 0.22 | Understands SMILES syntax semantics |

| 3D-Convolutional Neural Network | 1,200 NP-3D structures | 0.71 | 0.18 | Analyzes spatial pharmacophore features |

| Ensemble (Weighted Average) | Combined | 0.82 | 0.31 | Synthesizes predictions, reduces variance |

Note 2: Target-Specific Screening with Interaction Predictors For a known target (e.g., kinase EGFR), VS 2.0 integrates more than docking. A hybrid pipeline uses a deep learning model trained on protein-ligand interaction fingerprints (PLIF) from the PDBbind database to score NP binding, complementing physics-based docking scores.

Key Quantitative Results: Table 2: Comparison of VS Methods for EGFR Inhibitor Identification

| Virtual Screening Method | Screening Library Size | Enrichment Factor (EF₁%) | Hit Rate in Experimental Validation | Runtime |

|---|---|---|---|---|

| Traditional Docking (VS 1.0) | 50,000 NPs | 5.2 | 8% | 48 hours |

| AI Scoring (PLIF Model Only) | 50,000 NPs | 8.7 | 12% | 2 hours |

| Consensus Docking + AI Scoring (VS 2.0) | 50,000 NPs | 15.3 | 22% | 50 hours |
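
For readers unfamiliar with the enrichment factor reported in Table 2, the sketch below shows one common way to compute it: the hit rate in the top-scoring fraction of the ranked library divided by the hit rate of the whole library. The function name and the toy label list are hypothetical and do not reproduce the values in the table.

```python
def enrichment_factor(ranked_labels, fraction=0.01):
    """Enrichment factor for the top `fraction` of a ranked library.

    ranked_labels: activity labels (1 = active, 0 = inactive), ordered from
    best-scoring to worst-scoring compound.
    """
    n_total = len(ranked_labels)
    n_top = max(1, int(n_total * fraction))
    actives_total = sum(ranked_labels)
    if actives_total == 0:
        raise ValueError("library contains no actives")
    hit_rate_top = sum(ranked_labels[:n_top]) / n_top
    hit_rate_all = actives_total / n_total
    return hit_rate_top / hit_rate_all


# Toy ranking: 1,000 compounds, 20 actives, 3 of them in the top 1% (10 compounds).
ranked = [1, 1, 1] + [0] * 7 + [1] * 17 + [0] * 973
ef_1pct = enrichment_factor(ranked, fraction=0.01)
print(round(ef_1pct, 1))
```

An enrichment factor of 1 corresponds to random selection, so larger values indicate that actives are concentrated near the top of the ranking.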

Experimental Protocols

Protocol 1: Building a Target-Agnostic Prioritization Pipeline

Aim: To rank a library of 100,000 natural products for predicted anti-inflammatory activity using historical phenotypic screening data.

Materials & Software: See "The Scientist's Toolkit" below.

Procedure:

- Data Curation: Assemble a training set of 10,000 NPs with known binary activity labels (active/inactive) from a TNF-α inhibition assay in macrophages. Standardize SMILES strings, remove duplicates, and apply scaffold splitting to separate training and test sets.

- Feature Generation:

- For GNN: Convert SMILES to molecular graph objects with nodes (atoms) and edges (bonds).

- For Transformer: Tokenize SMILES strings for direct model input.

- For 3D-CNN: Generate low-energy 3D conformers for each NP using RDKit's ETKDG method and align to a reference grid.

- Model Training: Independently train the three AI models (GNN, Transformer, 3D-CNN) on the same training set, using 20% of the data for validation and early stopping.

- Ensemble Construction: On the validation set, optimize weights for a linear combination of the three models' prediction scores to maximize the AUC-ROC, reserving the held-out test set for the final performance estimate. Final weights: GNN (0.5), Transformer (0.3), 3D-CNN (0.2).

- Library Prioritization: Apply the weighted ensemble model to the entire 100,000 NP library. Rank compounds by descending predicted activity probability.

- Experimental Triaging: Select the top 500 ranked NPs for in vitro experimental validation.
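
The ensemble and ranking steps above can be sketched as follows. The weights are the ones quoted in the procedure; the dictionary layout, function names, compound IDs, and per-model probabilities are hypothetical placeholders for real model outputs.

```python
# Weights quoted in the procedure: GNN 0.5, Transformer 0.3, 3D-CNN 0.2.
WEIGHTS = {"gnn": 0.5, "transformer": 0.3, "cnn3d": 0.2}


def ensemble_score(model_probs, weights=WEIGHTS):
    """Weighted average of per-model activity probabilities."""
    return sum(weights[name] * model_probs[name] for name in weights)


def rank_library(predictions, top_n=500):
    """Return compound IDs sorted by descending ensemble score.

    predictions maps compound_id -> {model_name: probability}.
    """
    scores = {cid: ensemble_score(p) for cid, p in predictions.items()}
    return sorted(scores, key=scores.get, reverse=True)[:top_n]


# Hypothetical model outputs for three compounds.
predictions = {
    "NP-A": {"gnn": 0.9, "transformer": 0.8, "cnn3d": 0.7},
    "NP-B": {"gnn": 0.2, "transformer": 0.9, "cnn3d": 0.9},
    "NP-C": {"gnn": 0.6, "transformer": 0.6, "cnn3d": 0.6},
}
shortlist = rank_library(predictions, top_n=2)
print(shortlist)
```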

Protocol 2: Experimental Validation of AI-Prioritized Hits

Aim: To confirm the anti-inflammatory activity of the top 10 AI-prioritized NPs.

Procedure:

- Sample Preparation: Reconstitute dried NP samples in DMSO to 10 mM stock solutions.

- Cell Culture: Seed RAW 264.7 macrophage cells in 96-well plates at 50,000 cells/well and incubate overnight.

- Compound Treatment & Stimulation: Treat cells with NPs at 10 µM (n=3). After 1 hr, stimulate with LPS (100 ng/mL). Include controls: vehicle (DMSO), LPS-only, and dexamethasone (10 µM) as positive control.

- TNF-α ELISA: After 18 hours, collect cell culture supernatants. Perform TNF-α ELISA according to manufacturer protocol. Measure absorbance at 450 nm.

- Viability Assay (MTT): To the same cells, add MTT reagent (0.5 mg/mL). Incubate for 3 hours, solubilize with DMSO, and measure absorbance at 570 nm.

- Data Analysis: Normalize TNF-α secretion to cell viability and LPS-only control. Compounds showing >50% inhibition of TNF-α secretion with <20% cytotoxicity are considered confirmed hits.
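
One way to implement the data analysis step is sketched below. It assumes background-subtracted readings, expresses viability relative to the LPS-only wells, divides the TNF-α signal by viability before comparing it with the LPS-only control, and then applies the two hit criteria. The exact normalization order is an assumption, as are the function name and example readings.

```python
def call_hit(tnf_sample, mtt_sample, tnf_lps, mtt_lps,
             min_inhibition=50.0, max_cytotoxicity=20.0):
    """Return (% TNF-alpha inhibition, % cytotoxicity, is_hit) for one compound.

    All inputs are background-subtracted readings; the *_lps values come
    from the LPS-only control wells.
    """
    viability = mtt_sample / mtt_lps
    if viability <= 0:
        raise ValueError("non-positive viability; check MTT readings")
    cytotoxicity = 100.0 * (1.0 - viability)
    # Correct the TNF-alpha signal for cell loss, then express vs. LPS-only.
    tnf_normalized = (tnf_sample / viability) / tnf_lps
    inhibition = 100.0 * (1.0 - tnf_normalized)
    is_hit = inhibition > min_inhibition and cytotoxicity < max_cytotoxicity
    return inhibition, cytotoxicity, is_hit


# Hypothetical readings (arbitrary absorbance-derived units).
result_a = call_hit(tnf_sample=0.36, mtt_sample=0.9, tnf_lps=1.0, mtt_lps=1.0)
result_b = call_hit(tnf_sample=0.20, mtt_sample=0.5, tnf_lps=1.0, mtt_lps=1.0)
print(result_a[2], result_b[2])
```

The second compound lowers TNF-α mainly by killing cells, so it fails the cytotoxicity criterion even though its raw signal is low.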

Pathway & Workflow Diagrams

Title: VS 2.0 AI Ensemble Workflow for NP Prioritization

Title: Anti-inflammatory Pathway & AI-NP Target Hypotheses

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for AI-Driven NP Screening & Validation

| Item / Reagent | Provider Examples | Function in VS 2.0 Pipeline |

|---|---|---|

| Curated NP Libraries | AnalytiCon, Selleckchem, In-house Collections | Source of chemically diverse, often novel, compounds for AI prediction and experimental testing. |

| Cheminformatics Software (RDKit, OpenBabel) | Open Source | Fundamental for SMILES standardization, molecular descriptor calculation, fingerprint generation, and file format conversion. |

| AI/ML Platforms | DeepChem, PyTorch, TensorFlow, scikit-learn | Frameworks for building, training, and deploying GNNs, Transformers, and other models. |

| High-Performance Computing (HPC) / Cloud GPU | AWS, Google Cloud, Azure | Provides necessary computational power for training complex AI models and processing large libraries. |

| Docking Software | AutoDock Vina, Glide, GOLD | Generates initial pose and score for target-specific screening, used as input feature for AI. |

| Cell-Based Assay Kits (e.g., TNF-α ELISA) | R&D Systems, BioLegend, Abcam | Provides standardized, reliable methods for experimental validation of AI-prioritized hits in biological systems. |

| Raw Natural Product Extracts | NCI, USDA, Marine Biobanks | Complex starting material for the isolation of novel NPs, which can be characterized and added to screening libraries. |

Within the broader thesis on the application of artificial intelligence (AI) and machine learning (ML) to natural product drug discovery, predictive bioactivity modeling emerges as a critical computational bridge. It accelerates the identification of promising bioactive compounds from vast natural product libraries by predicting their interactions with specific molecular targets (target-specific) or their phenotypic outcomes in complex biological systems (phenotypic screening). This application note details the integration of ML workflows to enhance the efficiency and predictive power of both screening paradigms.

Effective models require high-quality, structured data. Key public and proprietary data sources are leveraged and must undergo rigorous curation.

Table 1: Key Data Sources for Predictive Bioactivity Modeling

| Data Source | Data Type | Typical Volume | Primary Use in ML |

|---|---|---|---|

| ChEMBL | Target-binding affinities (Ki, IC50) | >2M compounds, 1.4M assays | Training target-specific models. |

| PubChem BioAssay | Phenotypic & biochemical assay outcomes | >1M bioassays | Training phenotypic & target models. |

| DrugBank | Approved drug targets & actions | ~14k drug entries | Feature engineering, model validation. |

| GNPS (Natural Products) | MS/MS spectra of natural products | >1M spectra | Building NP-specific molecular libraries. |

| In-house HTS Data | Proprietary screening results | Project-dependent (10^4 - 10^6 data points) | Model fine-tuning and validation. |

Protocol 1.1: Data Curation and Standardization Workflow

- Data Retrieval: Programmatically access databases via REST APIs (e.g., ChEMBL, PubChem) using Python packages like requests and pandas.

- Activity Thresholding: Define consistent activity thresholds (e.g., IC50 < 10 µM = 'Active'; IC50 > 20 µM = 'Inactive'). Discard borderline values.

- Chemical Standardization: Using RDKit in Python, standardize all molecular structures: neutralize charges, remove salts, generate canonical SMILES, and compute parent compounds.

- Descriptor Calculation: Generate a unified set of molecular features (descriptors) for all compounds. This includes:

- 1D/2D Descriptors: Molecular weight, LogP, topological polar surface area (TPSA), number of hydrogen bond donors/acceptors (via RDKit).

- Fingerprints: Morgan fingerprints (ECFP4) for structural similarity and machine learning input.

- Dataset Splitting: Partition the curated dataset into training (70%), validation (15%), and hold-out test (15%) sets using stratified splitting based on activity labels to maintain class balance.
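
The thresholding and splitting steps of this workflow can be sketched in plain Python. The example below labels compounds with the thresholds given above, drops borderline values, and performs a simple per-class (stratified) 70/15/15 split. The function names, the fixed random seed, and the synthetic IC50 values are assumptions; a production pipeline would more likely use scikit-learn for the split.

```python
import random


def label_activity(ic50_um, active_below=10.0, inactive_above=20.0):
    """Return 1 (active), 0 (inactive), or None for borderline IC50 values."""
    if ic50_um < active_below:
        return 1
    if ic50_um > inactive_above:
        return 0
    return None


def stratified_split(records, fractions=(0.70, 0.15, 0.15), seed=0):
    """Split (compound_id, label) pairs into train/val/test, class by class."""
    rng = random.Random(seed)
    train, val, test = [], [], []
    for label in sorted({lab for _, lab in records}):
        group = [r for r in records if r[1] == label]
        rng.shuffle(group)
        n_train = round(len(group) * fractions[0])
        n_val = round(len(group) * fractions[1])
        train.extend(group[:n_train])
        val.extend(group[n_train:n_train + n_val])
        test.extend(group[n_train + n_val:])
    return train, val, test


# Synthetic measurements (µM): 20 actives, 20 inactives, 5 borderline.
measurements = (
    [(f"ACT-{i}", 1.0) for i in range(20)]
    + [(f"INA-{i}", 50.0) for i in range(20)]
    + [(f"BRD-{i}", 15.0) for i in range(5)]
)
labelled = [(cid, label_activity(ic50)) for cid, ic50 in measurements]
labelled = [(cid, lab) for cid, lab in labelled if lab is not None]
train, val, test = stratified_split(labelled)
print(len(train), len(val), len(test))
```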

Target-Specific Predictive Modeling

This approach trains ML models to predict the binding affinity or inhibitory activity of a compound against a defined protein target.

Protocol 2.1: Building a Target-Specific Random Forest Classifier

- Objective: To classify natural products as active or inactive against a specific target (e.g., kinase EGFR).

- Input: Standardized dataset of compounds with known activity against EGFR from Table 1 sources.

- Software/Tools: Python, scikit-learn, RDKit, imbalanced-learn (if needed).

- Steps:

- Feature Generation: Compute ECFP4 (2048 bits) fingerprints for all compounds.

- Model Training: Initialize a RandomForestClassifier. Use the validation set to optimize hyperparameters (n_estimators, max_depth) via grid search.

- Addressing Imbalance: If inactive compounds vastly outnumber actives, apply SMOTE (Synthetic Minority Over-sampling Technique) from the imbalanced-learn library during training only.

- Evaluation: Predict on the hold-out test set. Calculate metrics: Accuracy, Precision, Recall, F1-score, and Area Under the ROC Curve (AUC-ROC).

Table 2: Example Performance of Target-Specific ML Models (Hypothetical EGFR Inhibitor Prediction)

| Model Algorithm | AUC-ROC | Balanced Accuracy | Precision (Active) | Recall (Active) |

|---|---|---|---|---|

| Random Forest | 0.89 | 0.81 | 0.75 | 0.70 |

| Graph Neural Network | 0.92 | 0.84 | 0.78 | 0.73 |

| Support Vector Machine | 0.85 | 0.78 | 0.72 | 0.65 |
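
The threshold-based metrics in Table 2 all derive from the confusion matrix. As a minimal illustration (AUC-ROC is omitted because it needs ranked scores rather than hard labels), the sketch below computes precision, recall, F1-score, and balanced accuracy from true and predicted labels. The function name and the toy label vectors are hypothetical and unrelated to the table's values.

```python
def classification_metrics(y_true, y_pred):
    """Precision, recall, F1 and balanced accuracy for binary labels (1 = active)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = (2 * precision * recall / (precision + recall)
          if precision + recall else 0.0)
    return {
        "precision": precision,
        "recall": recall,
        "f1": f1,
        "balanced_accuracy": (recall + specificity) / 2,
    }


# Toy test-set labels: 4 actives, 6 inactives.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 1, 0, 1, 0, 0, 0, 0, 0]
metrics = classification_metrics(y_true, y_pred)
print({name: round(value, 3) for name, value in metrics.items()})
```

Balanced accuracy averages recall on actives and inactives, which makes it more informative than plain accuracy when inactives dominate the dataset.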

ML Workflow for NP Bioactivity Prediction

Phenotypic Screening Prediction with Deep Learning

Predicting complex phenotypic outcomes (e.g., cell viability, morphology change) from chemical structure requires models capable of capturing intricate structure-activity relationships.

Protocol 3.1: Training a Graph Convolutional Network (GCN) on Phenotypic Data

- Objective: Predict a compound's effect on cell viability (e.g., % inhibition) from its molecular graph.

- Input: Compounds with associated high-content imaging readouts or viability metrics.

- Software/Tools: Python, PyTorch or TensorFlow, DeepChem, RDKit.

- Steps:

- Graph Representation: Convert each compound's SMILES string into a graph object (nodes=atoms, edges=bonds) with atom and bond features.

- Model Architecture: Implement a Graph Convolutional Network (GCN) or Message Passing Neural Network (MPNN).

- GCN Layers (2-3): Update atom embeddings by aggregating information from neighboring atoms.

- Global Pooling: Aggregate atom embeddings into a single molecular fingerprint.

- Fully Connected Layers: Map the pooled fingerprint to a regression output (% viability).

- Training: Use Mean Squared Error (MSE) loss and the Adam optimizer. Monitor loss on the validation set.

- Interpretation: Use gradient-based attribution methods (e.g., Integrated Gradients) to highlight molecular substructures influential to the prediction.
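
The core operations of the architecture above, neighbor aggregation followed by global pooling, can be shown in a few lines of dependency-free Python. This is a simplified sketch: it uses mean aggregation over each atom and its neighbors, a fixed weight matrix instead of learned parameters, and a two-element one-hot atom feature, all of which are assumptions chosen for readability. A real model would be built in PyTorch or DeepChem.

```python
def gcn_layer(features, neighbours, weight):
    """One graph-convolution step.

    For every atom: average its feature vector with those of its bonded
    neighbours, apply a linear map (weight), then a ReLU.
    """
    n_in, n_out = len(features[0]), len(weight[0])
    updated = []
    for atom, nbrs in enumerate(neighbours):
        group = [atom] + list(nbrs)
        mean = [sum(features[j][d] for j in group) / len(group)
                for d in range(n_in)]
        linear = [sum(mean[d] * weight[d][k] for d in range(n_in))
                  for k in range(n_out)]
        updated.append([max(0.0, v) for v in linear])
    return updated


def mean_pool(features):
    """Aggregate atom embeddings into one molecule-level vector."""
    n_atoms = len(features)
    return [sum(row[d] for row in features) / n_atoms
            for d in range(len(features[0]))]


# Ethanol (C-C-O) heavy-atom graph; features are one-hot [is_carbon, is_oxygen].
features = [[1.0, 0.0], [1.0, 0.0], [0.0, 1.0]]
neighbours = [[1], [0, 2], [1]]
identity = [[1.0, 0.0], [0.0, 1.0]]  # placeholder for a learned weight matrix

hidden = gcn_layer(features, neighbours, identity)
molecule_vector = mean_pool(hidden)
print([round(v, 3) for v in molecule_vector])
```

After one layer the oxygen's feature has already spread to its carbon neighbor, which is the mechanism by which stacked layers capture larger substructures.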

GCN for Phenotypic Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for Implementing Predictive Modeling Workflows

| Item / Reagent | Function / Purpose | Example Vendor / Tool |

|---|---|---|

| Curated Bioactivity Database | Provides labeled data for supervised ML model training. | ChEMBL, PubChem BioAssay |

| Chemical Standardization Suite | Cleans and standardizes molecular structures for consistent feature generation. | RDKit (Open Source), ChemAxon |

| Molecular Descriptor & Fingerprint Calculator | Generates numerical representations (features) of compounds for ML algorithms. | RDKit, PaDEL-Descriptor |

| ML/DL Framework | Provides libraries for building, training, and evaluating predictive models. | scikit-learn, PyTorch, TensorFlow |

| High-Performance Computing (HPC) / Cloud GPU | Accelerates model training, especially for deep learning on large datasets. | AWS EC2 (P3 instances), Google Cloud AI Platform, local GPU cluster |

| Model Interpretation Library | Helps explain model predictions and identify important molecular features. | SHAP, Captum, LIME |

| In-house HTS Dataset | Proprietary data for fine-tuning and validating models on specific disease models or compound libraries. | Organization's internal screening facility |

Integrated Validation Protocol

Predictions must be experimentally validated to close the AI-driven discovery loop.

Protocol 4.1: Experimental Validation of ML-Predicted Hits

- Objective: Biochemically validate top predictions from target-specific and phenotypic models.

- Materials: Predicted hit compounds, positive/negative controls, assay kits.

- Target-Specific Validation (e.g., Enzyme Inhibition):

- Source or synthesize predicted hit compounds.

- Perform a dose-response assay (e.g., 10-point, 1 nM - 100 µM) using a fluorescence- or luminescence-based activity kit for the target enzyme.

- Calculate IC50 values. A compound is considered validated if IC50 < 10 µM and shows a dose-dependent response.

- Phenotypic Validation (e.g., Cytotoxicity):

- Treat relevant cell lines (e.g., cancer line for an oncology phenotype) with predicted hits in a 96-well plate.

- After 72 hours, measure cell viability using a resazurin (Alamar Blue) or ATP-based (CellTiter-Glo) assay.

- Determine GI50 values. Validate hits that show GI50 < 20 µM and confirm morphology changes via high-content imaging if applicable.
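
The IC50 and GI50 criteria above presuppose a way to estimate a half-maximal concentration from dose-response data. The sketch below uses log-linear interpolation between the two concentrations that bracket 50% effect. This is a rough approximation chosen for brevity; a four-parameter logistic fit is the more usual choice in practice. The function name and the example data are hypothetical.

```python
import math


def half_max_concentration(concs_um, effect_pct):
    """Estimate IC50/GI50 (µM) by log-linear interpolation.

    concs_um must be in ascending order; effect_pct is % inhibition at each
    concentration. Returns None if the curve never crosses 50%.
    """
    points = list(zip(concs_um, effect_pct))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        if y_lo < 50.0 <= y_hi:
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_c = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_c
    return None


# Hypothetical 4-point dose-response series.
concs = [0.1, 1.0, 10.0, 100.0]
inhibition = [5.0, 30.0, 70.0, 95.0]
ic50 = half_max_concentration(concs, inhibition)
validated = ic50 is not None and ic50 < 10.0  # threshold from the protocol
print(round(ic50, 2), validated)
```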

De Novo Design of Natural Product-Inspired Compounds

Natural products (NPs) are a privileged source of drug leads but are often complex and difficult to synthesize or optimize. De novo design, powered by artificial intelligence (AI) and machine learning (ML), generates novel, synthetically accessible molecular structures inspired by NP scaffolds. This approach integrates generative models, predictive algorithms, and synthesis planning to accelerate the discovery of new chemical entities within therapeutically relevant chemical space. This Application Note details protocols for implementing AI-driven de novo design within a modern NP-inspired drug discovery pipeline.

Key Data & Performance Metrics of AI Models for De Novo Design

Table 1: Comparative Performance of AI/ML Models for De Novo Design (Summarized from Recent Literature)

| Model Type | Key Architecture/Technique | Primary Application | Reported Metric (Typical Range) | Key Advantage |

|---|---|---|---|---|

| Generative AI | Variational Autoencoder (VAE) | Latent space exploration of NP-like scaffolds | Validity: 85-98%; Uniqueness: 60-90% | Smooth latent space interpolation. |

| Generative AI | Generative Adversarial Network (GAN) | Generating novel structures from NP distributions | Novelty: >80% (vs. training set) | Can produce highly novel structures. |

| Generative AI | Transformer-based (e.g., MolGPT) | Sequence-based generation of SMILES strings | Syntactic Validity: >90% | Captures long-range molecular dependencies. |

| Reinforcement Learning (RL) | REINFORCE, PPO | Optimization for target properties (e.g., binding affinity) | Success Rate*: 40-70% per optimization cycle | Directly optimizes for multi-parameter objectives. |

| Hybrid | VAE/RL + Synthesizability Filter | Generating synthetically accessible leads | Synthetic Accessibility Score (SAscore) Improvement: 20-40% reduction | Balances novelty and synthetic feasibility. |

*Success Rate: Defined as the percentage of generated molecules meeting predefined target criteria.

Detailed Application Protocols

Protocol 1: Building and Training a VAE for NP-Inspired Scaffold Generation

Objective: To create a generative model that learns the chemical space of a curated NP library and samples novel, valid structures from its latent space.

Materials & Reagents:

- Hardware: GPU-equipped workstation (e.g., NVIDIA V100/A100).

- Software: Python 3.8+, PyTorch/TensorFlow, RDKit, pandas, NumPy.

- Data: Curated NP database (e.g., COCONUT, NP Atlas) in SMILES format, filtered for organic compounds and canonicalized.

Procedure:

- Data Preprocessing: a. Load SMILES strings from your NP dataset. b. Filter molecules based on molecular weight (e.g., 150-800 Da) and remove salts/metals using RDKit. c. Canonicalize SMILES and remove duplicates. d. Encode SMILES into one-hot tensors or using a tokenizer (character-level). Pad sequences to uniform length.

- Model Architecture Setup: a. Define an encoder network: 1-2 LSTM/GRU layers, followed by dense layers mapping to mean (mu) and log-variance (log_var) vectors of the latent space (dimension z_dim=128). b. Define a decoder network: A dense layer to project latent vector z to initial hidden state, followed by 1-2 LSTM/GRU layers and a final dense layer with softmax to predict the token sequence. c. Use a Gaussian prior for the latent space.

- Model Training: a. Use Adam optimizer (lr=0.0005). b. Loss function: Reconstruction Loss (Categorical Cross-Entropy) + β * KL Divergence Loss (β can be annealed from 0 to ~0.01). c. Train for 100-200 epochs, monitoring validation set loss for early stopping. d. Validate by decoding random latent vectors to SMILES and checking chemical validity with RDKit.
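
The loss described in the training step combines a reconstruction term with a β-weighted KL divergence. The sketch below writes out the closed-form KL term for a diagonal Gaussian posterior against a standard normal prior, together with a linear β annealing schedule. The schedule shape, the `anneal_epochs` value, and the function names are assumptions; only the 0 to ~0.01 range comes from the protocol.

```python
import math


def kl_divergence(mu, log_var):
    """KL(q(z|x) || N(0, I)) for a diagonal Gaussian with the given parameters."""
    return -0.5 * sum(
        1.0 + lv - m * m - math.exp(lv) for m, lv in zip(mu, log_var)
    )


def beta_schedule(epoch, anneal_epochs=50, beta_max=0.01):
    """Linearly ramp beta from 0 to beta_max over anneal_epochs, then hold."""
    return beta_max * min(1.0, epoch / anneal_epochs)


def vae_loss(reconstruction_loss, mu, log_var, epoch):
    """Total loss = reconstruction + beta(epoch) * KL."""
    return reconstruction_loss + beta_schedule(epoch) * kl_divergence(mu, log_var)


# A posterior that matches the prior has zero KL cost.
print(abs(kl_divergence([0.0, 0.0], [0.0, 0.0])))
# Early in training the KL term barely contributes; later it is fully weighted.
print(vae_loss(2.0, [1.0], [0.0], epoch=5), vae_loss(2.0, [1.0], [0.0], epoch=100))
```

Keeping β small early on lets the decoder learn to reconstruct SMILES before the latent space is regularized, which is the usual motivation for annealing.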

Protocol 2: Reinforcement Learning (RL) Fine-Tuning for Target Property Optimization

Objective: To fine-tune a pre-trained generative model (from Protocol 1) to bias generation towards molecules with desired properties (e.g., high predicted activity, drug-likeness).

Materials & Reagents:

- Pre-trained Model: VAE from Protocol 1.

- Software: Custom RL environment (OpenAI Gym style), reward calculation scripts.

- Predictive Models: QSAR model(s) for target activity (e.g., Random Forest, GNN) or calculated property functions (e.g., QED, LogP).

Procedure:

- Environment Setup:

a. Create an agent where the action is the generation of a complete molecule from the generative model.

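b. Define the state as the latent vector z or the sequence of generated tokens.

c. Define the reward function R: R = w1 * pActivity + w2 * QED + w3 * SAscore + w4 * NP-likeness, where the weights w are tuned and pActivity is the output from a predictive model. Because a higher SAscore indicates a harder synthesis, w3 should be negative (or the SAscore term inverted) so that synthetic accessibility is rewarded.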

- RL Fine-Tuning Loop:

a. Initialize the policy network as the decoder of the pre-trained VAE. Freeze the encoder.

b. Use a policy gradient method (e.g., REINFORCE or Proximal Policy Optimization - PPO).

c. For N iterations:

i. Sample a batch of latent vectors z from the prior.

ii. Decode z to molecules using the current policy (decoder).

iii. Calculate the reward R for each generated molecule.

iv. Compute policy loss and update decoder parameters to maximize expected reward.

d. Periodically sample molecules and assess diversity to avoid mode collapse.
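
A minimal sketch of the reward calculation is given below. It assumes the four property values have already been computed elsewhere, rescales SAscore (1 = easy, 10 = hard) and NP-likeness (roughly -5 to 5) onto a 0-1 scale where higher is better, and then applies a weighted sum. The weights, the rescaling choices, and the function name are illustrative assumptions rather than tuned values.

```python
def reward(p_activity, qed, sa_score, np_likeness,
           weights=(0.4, 0.2, 0.2, 0.2)):
    """Scalar reward for one generated molecule; every term is in [0, 1].

    p_activity  : predicted probability of activity (0-1)
    qed         : quantitative estimate of drug-likeness (0-1)
    sa_score    : synthetic accessibility score, 1 (easy) to 10 (hard)
    np_likeness : natural-product-likeness score, roughly -5 to 5
    """
    w1, w2, w3, w4 = weights
    # Invert SAscore so that easier-to-make molecules earn a higher reward.
    sa_term = (10.0 - sa_score) / 9.0
    # Shift and clip NP-likeness onto [0, 1].
    np_term = min(1.0, max(0.0, (np_likeness + 5.0) / 10.0))
    return w1 * p_activity + w2 * qed + w3 * sa_term + w4 * np_term


# Two hypothetical molecules that differ only in synthetic accessibility.
easy = reward(p_activity=0.8, qed=0.6, sa_score=3.0, np_likeness=1.0)
hard = reward(p_activity=0.8, qed=0.6, sa_score=8.0, np_likeness=1.0)
print(round(easy, 3), round(hard, 3))
```

Putting all terms on a common scale keeps any single property from dominating the policy gradient simply because of its units.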

Protocol 3: In Silico Validation and Synthesis Prioritization

Objective: To computationally validate and rank AI-generated molecules for synthesis and testing.

Materials & Reagents:

- Software: Molecular docking suite (e.g., AutoDock Vina, GNINA), ADMET prediction tools (e.g., SwissADME, pkCSM), retrosynthesis software (e.g., AiZynthFinder, ASKCOS).

- Data: Target protein structure (PDB file), generated molecules in SDF format.

Procedure:

- Virtual Screening: a. Prepare the protein receptor (add hydrogens, assign charges). b. Prepare ligand libraries (generated molecules + reference NPs) for docking (generate 3D conformers, minimize energy). c. Perform molecular docking to a defined binding site. Record docking scores and poses.

- ADMET & Property Profiling: a. Use batch processing in SwissADME to calculate key properties: LogP, TPSA, #Rotatable bonds, Lipinski/Veber rule compliance, synthetic accessibility score. b. Use pkCSM or similar to predict key ADMET endpoints: Caco-2 permeability, CYP inhibition, hERG liability, Ames toxicity.

- Retrosynthesis Analysis & Prioritization: a. Input the top-scoring molecules (by docking and ADMET) into a retrosynthesis planning tool (e.g., AiZynthFinder). b. Set availability criteria for building blocks (e.g., "in-stock" catalog). c. Rank molecules by the number of plausible routes and the estimated complexity of the shortest route (e.g., number of steps). d. Select final candidates for synthesis based on a composite score: 0.4 × (Docking Score) + 0.2 × (SAscore) + 0.2 × (ADMET Score) + 0.2 × (Route Feasibility Score).
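
The composite score only makes sense once its four components share a scale and direction. The sketch below is one possible implementation under stated assumptions: docking scores (more negative is better) and SAscores (lower is better) are min-max scaled across the candidate set and inverted, while the ADMET and route feasibility scores are assumed to be supplied already in the 0-1 range with higher being better. Candidate IDs and values are hypothetical.

```python
def min_max(values, higher_is_better=True):
    """Scale values to [0, 1] across the candidate set."""
    lo, hi = min(values), max(values)
    if hi == lo:
        return [1.0 for _ in values]
    scaled = [(v - lo) / (hi - lo) for v in values]
    return scaled if higher_is_better else [1.0 - s for s in scaled]


def composite_ranking(candidates):
    """Rank candidates by 0.4*docking + 0.2*SA + 0.2*ADMET + 0.2*route."""
    dock = min_max([c["docking"] for c in candidates], higher_is_better=False)
    sa = min_max([c["sa_score"] for c in candidates], higher_is_better=False)
    scores = {
        c["id"]: 0.4 * d + 0.2 * s + 0.2 * c["admet"] + 0.2 * c["route"]
        for c, d, s in zip(candidates, dock, sa)
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical candidates: docking in kcal/mol, admet and route in [0, 1].
candidates = [
    {"id": "GEN-1", "docking": -9.5, "sa_score": 3.0, "admet": 0.8, "route": 0.9},
    {"id": "GEN-2", "docking": -7.0, "sa_score": 2.0, "admet": 0.9, "route": 1.0},
    {"id": "GEN-3", "docking": -10.0, "sa_score": 6.0, "admet": 0.4, "route": 0.2},
]
ranking = composite_ranking(candidates)
for compound_id, score in ranking:
    print(compound_id, round(score, 3))
```

In this toy set the best-docking molecule ranks last because it is hard to make and has a poor predicted ADMET profile, which is the trade-off the composite score is meant to expose.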

Diagrams

Title: AI-Driven De Novo Design Workflow

Title: In Silico Validation & Prioritization Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Tools & Resources for AI-Driven De Novo Design

| Item/Category | Example/Specific Tool | Primary Function in Protocol |

|---|---|---|

| Natural Product Database | COCONUT, NP Atlas, CMAUP | Provides the foundational chemical structures for model training and inspiration. |

| Cheminformatics Library | RDKit (Python) | Core toolkit for molecule manipulation, fingerprinting, descriptor calculation, and validation. |

| Deep Learning Framework | PyTorch, TensorFlow/Keras | Enables building, training, and deploying generative (VAE, GAN) and predictive models. |

| Generative Model Library | GuacaMol, MolGPT, PyTorch Geometric | Offers pre-implemented architectures for molecular generation, accelerating development. |

| Reinforcement Learning Environment | Custom (Gym-based), ChEMBL | Provides the framework for implementing policy gradient methods for molecule optimization. |

| Molecular Docking Software | AutoDock Vina, GNINA, GLIDE | Performs structure-based virtual screening of generated molecules against a biological target. |

| ADMET Prediction Platform | SwissADME, pkCSM, ADMETlab 2.0 | Computes pharmacokinetic and toxicity profiles to filter out undesirable compounds early. |

| Retrosynthesis Planner | AiZynthFinder, ASKCOS, IBM RXN | Assesses synthetic feasibility and proposes routes for top-ranked AI-generated candidates. |

| High-Performance Computing (HPC) | Local GPU Cluster / Cloud (AWS, GCP) | Provides necessary computational power for training large models and high-throughput virtual screening. |

Genome Mining and Biosynthetic Gene Cluster Analysis Enhanced by AI

Application Notes

The integration of artificial intelligence (AI) into genome mining is revolutionizing the discovery of natural products (NPs) for drug development. This paradigm shift addresses the historical challenges of dereplication, silent/cryptic biosynthetic gene cluster (BGC) activation, and functional prediction.

Key AI-Enhanced Applications:

- BGC Prediction & Prioritization: Deep learning models (e.g., CNNs, transformers) analyze genome sequences to predict BGC boundaries and chemical class (e.g., NRPS, PKS, RiPPs), with reported accuracy above 90% on benchmark datasets, in some evaluations outperforming traditional rule-based tools such as antiSMASH.

- Chemical Structure Prediction: Models such as DeepBGC and PRISM 4 now predict the putative product class or chemical scaffold encoded by a BGC, linking genetic architecture to chemical space prior to laborious heterologous expression.

- Expression Activation of Cryptic Clusters: AI algorithms analyze multi-omics data (transcriptomics, metabolomics) to identify optimal environmental or genetic perturbation strategies to "awaken" silent BGCs.

- Targeted Genome Mining: Embedding models enable similarity searching across vast genomic databases (e.g., GenBank, MIBiG) to find BGCs encoding compounds with structural similarities to known bioactive molecules.

Quantitative Performance of Selected AI Tools in BGC Analysis (2023-2024 Benchmark Data)

| AI Tool Name | Primary Function | Reported Accuracy/Sensitivity | Benchmark Dataset | Key Advantage |

|---|---|---|---|---|

| DeepBGC | BGC detection & product class prediction | 94% Precision (PKS/NRPS) | MIBiG 2.0 | Embeddings for novelty scoring |

| PRISM 4 | BGC mapping & structure prediction | 88% Structure recall | In-house microbial genomes | Hybrid (rule + neural network) approach |

| GECCO | BGC detection & product prediction | 0.97 AUC-ROC (PKS I) | 1,200 bacterial genomes | Lightweight, classifier-agnostic |

| aiSCOPE | Metagenomic BGC mining | 92% Cluster detection | Simulated metagenomes | Optimized for fragmented assemblies |

| CLUSEEN | BGC boundary determination | 89% Boundary F1-score | Intergenic validation set | Uses DNA language models |

Research Reagent Solutions Toolkit

| Item | Function in AI-Enhanced Workflow |
|---|---|
| High-Molecular-Weight DNA Extraction Kit | Provides ultra-pure DNA for long-read sequencing, essential for high-contiguity genomes for AI analysis. |
| Nanopore PromethION / PacBio Revio | Long-read sequencing platforms to generate complete microbial genomes or metagenome-assembled genomes (MAGs). |
| Strain Libraries (e.g., ATCC, DSMZ) | Source of diverse, taxonomically identified genomes for training and validating AI models. |
| HTS Metabolomics Standard Mixes | LC-MS/MS standards for validating AI-predicted chemical structures from activated BGCs. |
| Induction Media Toolkit | Variety of media (ISP, R2A, seawater-based) for physiological perturbations to trigger cryptic BGC expression. |
| Heterologous Expression Host & Vector System | Streptomyces chassis (e.g., S. albus Chassis) and BAC vectors for BGC cloning and expression based on AI prioritization. |
| GPU-Accelerated Compute Instance (Cloud) | Essential for running large-scale AI inference on genomic databases (e.g., AWS p3.2xlarge, Azure NCv3). |

Detailed Experimental Protocols

Protocol 2.1: AI-Prioritized BGC Heterologous Expression

Objective: To clone, express, and characterize a high-priority BGC identified by an AI mining pipeline.

Materials:

- Bacterial genomic DNA (host and source).

- pCAP01-based BAC vector or similar.

- E. coli GBdir-gyrA462 & GB05-red.

- Streptomyces albus Chassis strain.

- PCR reagents, Gel extraction kit.

- Antibiotics (apramycin, kanamycin, nalidixic acid).

- TSB, MS, R2YE media.

Methodology:

- AI Prioritization: Input the assembled genome of the NP-producing strain into DeepBGC. Rank predicted BGCs by novelty score and predicted product class.

- BGC Capture: Design PCR primers targeting ~50 bp flanking regions of the top-priority BGC (30-80 kb). Perform PCR using long-range, high-fidelity polymerase.

- BAC Assembly: Digest the PCR product and the pCAP01 BAC vector with appropriate restriction enzymes. Ligate and transform into E. coli GBdir-gyrA462 for direct cloning. Select with kanamycin.

- Conjugation: Isolate the recombinant BAC from E. coli and transform into the conjugation donor strain E. coli GB05-red. Mate with Streptomyces albus spores. Select exconjugants on MS agar with apramycin (for BAC) and nalidixic acid (to counter E. coli).

- Metabolite Analysis: Incubate exconjugants in R2YE liquid medium for 5-7 days. Extract metabolites with ethyl acetate. Analyze extract by LC-HRMS. Compare mass spectra and retention times to AI-predicted structures or databases.
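The prioritization step at the start of this protocol can be sketched as a simple filter-and-sort. The example assumes the mining tool's predictions have already been exported into plain records. The `PredictedBGC` fields, the 0-1 novelty scale, and the example clusters are hypothetical, and the 30-80 kb window is taken from the capture step above.

```python
from dataclasses import dataclass


@dataclass
class PredictedBGC:
    bgc_id: str
    product_class: str
    novelty_score: float  # hypothetical 0-1 scale; higher means more novel
    length_kb: float


def prioritize(bgcs, wanted_classes, min_kb=30.0, max_kb=80.0):
    """Keep BGCs of a wanted class inside the size window, most novel first."""
    eligible = [
        b for b in bgcs
        if b.product_class in wanted_classes and min_kb <= b.length_kb <= max_kb
    ]
    return sorted(eligible, key=lambda b: b.novelty_score, reverse=True)


# Hypothetical predictions for one genome.
predictions = [
    PredictedBGC("cluster_01", "NRPS", 0.42, 55.0),
    PredictedBGC("cluster_02", "PKS", 0.91, 72.0),
    PredictedBGC("cluster_03", "PKS", 0.95, 110.0),     # outside size window
    PredictedBGC("cluster_04", "terpene", 0.88, 35.0),  # class not requested
]

ranked = prioritize(predictions, {"PKS", "NRPS"})
print([b.bgc_id for b in ranked])  # ['cluster_02', 'cluster_01']
```

Filtering on size before ranking keeps a highly novel but unclonable cluster from occupying the top slot.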

Protocol 2.2: Activation of a Cryptic BGC Using AI-Informed Culturing

Objective: To induce expression of a silent BGC predicted by in silico analysis but not expressed under standard lab conditions.

Materials:

- Producer strain fermentation broth.

- 48-well deep-well microtiter plates.

- Library of 50+ unique cultivation media (varied carbon/nitrogen/trace elements).

- RNAprotect reagent & RNA extraction kit.

- RT-qPCR reagents, primers for target BGC genes.

- LC-MS/MS system.

Methodology:

- Cryptic BGC Identification: Use antiSMASH with the DeepBGC classifier to identify all BGCs. Use PRISM 4 to predict structures. Flag BGCs with no associated metabolite detected in standard extracts.

- AI-Optimized Media Design: Input genomic data (including regulator genes within the BGC) and standard metabolomic data into an algorithm (e.g., OmetaBox) to predict 3-5 key nutrients or stressors for activation.

- Micro-Scale Cultivation: Inoculate the producer strain in 48-well plates containing 1 mL of each AI-suggested medium and controls. Culture with agitation for 96-168 hours.

- Dual Analysis:

  - Transcriptomics: Harvest cells, stabilize RNA. Perform RT-qPCR on key biosynthetic genes from the target BGC (e.g., polyketide synthase).

  - Metabolomics: Quench broth, extract metabolites with a solvent mixture. Analyze by LC-MS/MS.

- Correlation: Identify cultivation conditions where both transcript levels of the BGC and unique, predicted secondary metabolite peaks are significantly upregulated (>5-fold vs. control).
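The correlation step amounts to a joint fold-change filter, sketched below. The data layout, the medium names, and the numbers are hypothetical; transcript values are taken to be relative expression from RT-qPCR, and metabolite values to be LC-MS/MS peak areas. The sketch applies only the >5-fold criterion and does not test statistical significance, which would require replicate wells.

```python
def fold_change(treated, control):
    """Ratio of a treated measurement to its control measurement."""
    if control <= 0:
        raise ValueError("control value must be positive")
    return treated / control


def activating_conditions(measurements, control_name="control", threshold=5.0):
    """Return media where transcript AND metabolite both exceed the threshold."""
    control = measurements[control_name]
    hits = []
    for medium, values in measurements.items():
        if medium == control_name:
            continue
        fc_transcript = fold_change(values["transcript"], control["transcript"])
        fc_metabolite = fold_change(values["metabolite_peak"],
                                    control["metabolite_peak"])
        if fc_transcript > threshold and fc_metabolite > threshold:
            hits.append((medium, fc_transcript, fc_metabolite))
    return hits


# Hypothetical measurements for three AI-suggested media plus the control.
data = {
    "control":   {"transcript": 1.0,  "metabolite_peak": 2.0e4},
    "medium_07": {"transcript": 12.0, "metabolite_peak": 3.0e5},
    "medium_12": {"transcript": 8.0,  "metabolite_peak": 4.0e4},  # transcript only
    "medium_31": {"transcript": 1.5,  "metabolite_peak": 2.5e5},  # metabolite only
}

activated = activating_conditions(data)
print([medium for medium, _, _ in activated])  # ['medium_07']
```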


Within the broader thesis on AI and machine learning (AI/ML) for natural product (NP) drug discovery, this document provides application notes and protocols for three successful case studies. These exemplify the integration of computational pipelines with experimental validation to accelerate the discovery of antimicrobial, anticancer, and neuroprotective agents from complex NP sources.

Application Note 1: Discovery of Novel Antimicrobial Lipopeptides

Objective: To identify novel antimicrobial peptides from marine Bacillus spp. using genome mining and molecular networking.

AI/ML Context: An ensemble model combining Random Forest and Convolutional Neural Networks (CNNs) was trained on known antimicrobial peptide sequences (from databases like APD3) to predict novel biosynthetic gene clusters (BGCs) in metagenome-assembled genomes (MAGs).

Key Results & Data:

Table 1: Predicted and Validated Antimicrobial Peptides from Marine Bacillus

| Compound ID (Predicted) | Core Sequence (AA) | Predicted BGC Type | MIC vs. S. aureus (µg/mL) | MIC vs. E. coli (µg/mL) | Hemolysis (HC50, µg/mL) |
|---|---|---|---|---|---|
| MarBac-001 | FAWWFLGK | Lipopeptide (Fengycin-like) | 4 | >128 | >256 |
| MarBac-002 | VQIVYKN | Lipopeptide (Surfactin-like) | 8 | 32 | 128 |
| MarBac-003 | GLFDIIKQ | Unknown (Novel) | 2 | 64 | >256 |

Experimental Protocol: In Vitro Antimicrobial and Cytotoxicity Assay

- Bacterial Culture: Inoculate S. aureus (ATCC 29213) and E. coli (ATCC 25922) in Mueller-Hinton Broth (MHB). Grow overnight at 37°C, then dilute to ~5 x 10^5 CFU/mL in fresh MHB.

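- Compound Preparation: Serially dilute purified peptides (from HPLC fractionation) 2-fold in a 96-well microtiter plate using MHB. Prepare the dilutions at twice the intended final concentrations so that, after the 1:1 addition of inoculum, the assay covers a final range of 0.5 to 128 µg/mL.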

- Inoculation & Incubation: Add 100 µL of the standardized bacterial inoculum to each well containing 100 µL of compound dilution. Include growth control (bacteria + media) and sterility control (media only). Incubate plates at 37°C for 18-24 hours.

- MIC Determination: The Minimum Inhibitory Concentration (MIC) is the lowest concentration that completely inhibits visible growth, as observed visually or measured with a microplate reader at OD600.

- Hemolysis Assay: Prepare a 4% (v/v) suspension of fresh human red blood cells (hRBCs) in PBS. Add 100 µL of compound dilution (in PBS) to 100 µL of hRBC suspension in a 96-well plate. Incubate at 37°C for 1 hour. Centrifuge plates at 800 x g for 5 minutes. Measure hemoglobin release in the supernatant at 540 nm. Calculate HC50 (concentration causing 50% hemolysis) relative to 0.1% Triton X-100 (100% lysis) and PBS (0% lysis).
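The MIC read-out and the hemolysis normalization in this protocol reduce to two small calculations, sketched below. The 10% OD600 cutoff used to call a well "no growth" is an assumption made for illustration (the protocol itself defines MIC by visible growth), and all absorbance values are hypothetical.

```python
def mic_from_od600(od_by_conc, growth_control_od, sterility_od, cutoff=0.1):
    """Lowest concentration at which it and all higher concentrations show no growth.

    A well counts as 'no growth' when its blank-corrected OD600 is below
    cutoff * blank-corrected growth control (assumed criterion).
    Returns None if the highest tested concentration still allows growth.
    """
    growth = growth_control_od - sterility_od
    mic = None
    for conc in sorted(od_by_conc, reverse=True):  # walk down from the top
        if od_by_conc[conc] - sterility_od < cutoff * growth:
            mic = conc
        else:
            break  # first well with growth ends the inhibited run
    return mic


def percent_hemolysis(a540_sample, a540_pbs, a540_triton):
    """Hemolysis relative to PBS (0% lysis) and Triton X-100 (100% lysis)."""
    return 100.0 * (a540_sample - a540_pbs) / (a540_triton - a540_pbs)


# Hypothetical OD600 readings keyed by final concentration (µg/mL).
plate = {0.5: 0.62, 1: 0.60, 2: 0.58, 4: 0.05, 8: 0.05,
         16: 0.04, 32: 0.05, 64: 0.04, 128: 0.04}
mic = mic_from_od600(plate, growth_control_od=0.65, sterility_od=0.04)
hemolysis = percent_hemolysis(a540_sample=0.30, a540_pbs=0.05, a540_triton=1.05)
print(mic, round(hemolysis, 1))
```

When `mic_from_od600` returns `None`, the result would be reported as greater than the highest tested concentration, as in the ">128" entries of Table 1. HC50 can then be estimated by interpolating `percent_hemolysis` values across the dilution series.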

Visualization: Antimicrobial Discovery Workflow

Title: AI-Driven Antimicrobial Peptide Discovery Pipeline

Research Reagent Solutions Toolkit

| Reagent/Material | Function in Protocol |
|---|---|
| Mueller-Hinton Broth (MHB) | Standardized medium for antimicrobial susceptibility testing. |
| 96-well Microtiter Plate | Platform for high-throughput broth microdilution assays. |
| Human Red Blood Cells (hRBCs) | Primary cells for assessing compound hemolytic toxicity. |
| Triton X-100 (0.1%) | Positive control for 100% lysis in hemolysis assays. |
| B. subtilis expression system (e.g., BS54 strain) | Heterologous host for expressing predicted peptide BGCs. |

Application Note 2: Identification of a Plant-Derived Anticancer Lead

Objective: To isolate and characterize a novel pro-apoptotic compound from Tabernaemontana elegans root extract using bioactivity-guided fractionation and target prediction.

AI/ML Context: A Graph Neural Network (GNN) trained on drug-target interaction databases (ChEMBL, BindingDB) was used to predict the molecular target of the isolated compound based on its structural features.

Key Results & Data:

Table 2: In Vitro Anticancer Activity and Predicted Targets of Tabelegin-A

| Cell Line | IC50 (µM) | Apoptosis Induction (% at 10 µM) | Predicted Primary Target (GNN, Probability) | Validated Target (Experimental) |
|---|---|---|---|---|
| A549 (Lung) | 1.2 ± 0.3 | 65% ± 8% | BCL-2 (0.87) | BCL-2 (SPR KD = 45 nM) |
| MCF-7 (Breast) | 0.8 ± 0.2 | 72% ± 6% | BCL-2 (0.91) | BCL-2 (SPR KD = 38 nM) |
| HepG2 (Liver) | 2.1 ± 0.5 | 45% ± 7% | BCL-XL (0.79) | BCL-2 (SPR KD = 52 nM) |

Experimental Protocol: Annexin V/PI Apoptosis Assay by Flow Cytometry

- Cell Treatment: Seed cancer cells in 6-well plates (3 x 10^5 cells/well) and incubate overnight. Treat cells with the compound (Tabelegin-A) at the desired concentrations (e.g., 1x and 5x IC50) or with a vehicle control (e.g., 0.1% DMSO) for 24 hours.

- Cell Harvesting: Collect both floating and adherent cells (using mild trypsinization). Pool cells per condition and wash twice with cold PBS.

- Staining: Resuspend cell pellet (~1 x 10^6 cells) in 100 µL of 1X Annexin V Binding Buffer. Add 5 µL of FITC-conjugated Annexin V and 5 µL of Propidium Iodide (PI) solution (50 µg/mL). Gently vortex and incubate at room temperature in the dark for 15 minutes.

- Analysis: Add 400 µL of 1X Annexin V Binding Buffer to each tube. Analyze samples using a flow cytometer within 1 hour. Use 488 nm excitation; measure FITC (Annexin V) emission at 530 nm (FL1 channel) and PI emission at >575 nm (FL2 or FL3 channel).

- Gating: Plot quadrants on an Annexin V-FITC vs. PI scatter plot: viable cells (Annexin V-/PI-), early apoptotic (Annexin V+/PI-), late apoptotic (Annexin V+/PI+), and necrotic (Annexin V-/PI+).
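The quadrant gating described above can be expressed as a short classification routine. The sketch assumes compensated fluorescence values have already been exported per event. The gate positions and the eight example events are hypothetical; in practice the gates are set from unstained and single-stained controls.

```python
from collections import Counter

QUADRANTS = ("viable", "early_apoptotic", "late_apoptotic", "necrotic")


def classify_event(annexin_fitc, pi, annexin_gate, pi_gate):
    """Assign one event to a quadrant of the Annexin V-FITC vs. PI plot."""
    annexin_pos = annexin_fitc >= annexin_gate
    pi_pos = pi >= pi_gate
    if annexin_pos and pi_pos:
        return "late_apoptotic"   # Annexin V+/PI+
    if annexin_pos:
        return "early_apoptotic"  # Annexin V+/PI-
    if pi_pos:
        return "necrotic"         # Annexin V-/PI+
    return "viable"               # Annexin V-/PI-


def quadrant_percentages(events, annexin_gate, pi_gate):
    """Percentage of events in each quadrant."""
    if not events:
        raise ValueError("no events to gate")
    counts = Counter(classify_event(a, p, annexin_gate, pi_gate)
                     for a, p in events)
    return {name: 100.0 * counts[name] / len(events) for name in QUADRANTS}


# Hypothetical (Annexin V-FITC, PI) intensities for eight events.
events = [(120, 80), (150, 90), (2500, 110), (3100, 95),
          (4000, 5200), (90, 4800), (3500, 100), (200, 60)]
result = quadrant_percentages(events, annexin_gate=1000, pi_gate=1000)
total_apoptosis = result["early_apoptotic"] + result["late_apoptotic"]
print(result, total_apoptosis)
```

Summing the early and late apoptotic quadrants, as in `total_apoptosis`, is one common way to report a single apoptosis-induction percentage. Whether Table 2 was computed this way is not stated in the source.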

Visualization: Apoptotic Signaling Pathway of Tabelegin-A

Title: Pro-Apoptotic Mechanism of Anticancer Lead Compound

Research Reagent Solutions Toolkit

| Reagent/Material | Function in Protocol |
|---|---|
| FITC Annexin V Apoptosis Detection Kit | Contains binding buffer and fluorescent conjugates for detecting phosphatidylserine externalization. |
| Propidium Iodide (PI) Solution | Membrane-impermeable DNA dye to distinguish late apoptotic/necrotic cells. |
| Flow Cytometer with 488 nm laser | Instrument for quantifying fluorescence of single-cell suspensions. |
| DMSO (Cell Culture Grade) | Vehicle for solubilizing hydrophobic compounds in cell-based assays. |
| BCL-2 Coated SPR Chip | Biosensor chip for validating direct target binding via Surface Plasmon Resonance. |

Application Note 3: Screening for Neuroprotective Agents in a Microbial Library

Objective: To identify neuroprotective compounds from a filamentous fungal library using a phenotypic high-content screening (HCS) assay and AI-based cheminformatic clustering.

AI/ML Context: An autoencoder-derived molecular fingerprint was used to cluster active hits from HCS into distinct chemotypes, guiding the selection of structurally unique leads for downstream development.
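To make the chemotype-clustering idea concrete, the sketch below groups hits by distance between their latent fingerprints. It uses a simple greedy leader clustering as a stand-in, since the clustering algorithm actually used is not specified here. The 3-dimensional vectors, the hit names, and the radius are hypothetical.

```python
import math


def euclidean(a, b):
    """Euclidean distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))


def leader_cluster(fingerprints, radius):
    """Greedy leader clustering.

    Each compound joins the first cluster whose leader lies within `radius`;
    otherwise it becomes the leader of a new cluster.
    """
    leaders = []      # list of (cluster_id, leader_vector)
    assignment = {}   # compound name -> cluster_id
    for name, vec in fingerprints.items():
        for cluster_id, leader in leaders:
            if euclidean(vec, leader) <= radius:
                assignment[name] = cluster_id
                break
        else:  # no existing leader was close enough
            cluster_id = len(leaders) + 1
            leaders.append((cluster_id, vec))
            assignment[name] = cluster_id
    return assignment


# Hypothetical autoencoder latent vectors for five active hits.
latent = {
    "hit_01": [0.10, 0.90, 0.05],
    "hit_02": [0.12, 0.88, 0.07],
    "hit_03": [0.80, 0.10, 0.20],
    "hit_04": [0.78, 0.15, 0.22],
    "hit_05": [0.40, 0.40, 0.90],
}

clusters = leader_cluster(latent, radius=0.2)
print(clusters)
```

Picking one representative per cluster (for example the most potent member) then yields a set of structurally distinct leads. Leader clustering depends on input order, so hierarchical or density-based methods are often preferred for production use.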

Key Results & Data:

Table 3: Neuroprotective Activity of Clustered Fungal Metabolites in an Oxidative Stress Model

| Cluster | Lead Compound | % Neuronal Viability (vs. Control) | ROS Reduction (% vs. Stressor) | Predicted BBB Permeability (logPS) | Chemotype |
|---|---|---|---|---|---|
| 1 | Asperginol D | 85% ± 5% | 60% ± 10% | -2.1 | Dihydroisocoumarin |
| 2 | Penicitrinol F | 92% ± 4% | 75% ± 8% | -1.8 | Alkaloid |
| 3 | Novel (F-147) | 88% ± 6% | 68% ± 7% | -1.5 | Depsipeptide |

Experimental Protocol: High-Content Screening for Neuronal Viability & ROS

- Cell Culture & Stress Model: Plate differentiated SH-SY5Y neuroblastoma cells or primary cortical neurons in 96-well imaging plates. Pre-treat cells with test compounds (10 µM) from fungal fractions for 2 hours. Induce oxidative stress by adding 200 µM H2O2 for 24 hours.

- Staining: After treatment, wash cells with PBS. Incubate with live-cell stains: Hoechst 33342 (5 µg/mL) for nuclei, CellMask Green (1:5000) for plasma membrane/cell morphology, and CellROX Deep Red (5 µM) for reactive oxygen species (ROS). Incubate for 30 minutes at 37°C.

- Image Acquisition: Using a high-content imaging system (e.g., ImageXpress), acquire 4 fields per well with 20x objective. Use DAPI, FITC, and Cy5 filter sets for Hoechst, CellMask, and CellROX, respectively.

- Image Analysis: Use software (e.g., MetaXpress, CellProfiler) to:

  - Segment nuclei (Hoechst) and cells (CellMask).

  - Measure CellROX median intensity per cell as a proxy for ROS levels.

  - Calculate neuronal viability as the count of intact, CellMask-positive cells with normal morphology relative to control wells.
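The two read-outs produced by the image analysis can be computed as follows. This is a minimal sketch assuming per-well cell counts and per-cell CellROX intensities have already been exported from the image-analysis software. The formula for ROS reduction (one minus the ratio of treated to stressor-only median intensity) is an assumed reading of "% vs. Stressor", and all numbers are hypothetical.

```python
from statistics import median


def percent_viability(intact_cells_treated, intact_cells_control):
    """Intact, morphologically normal cells as a percentage of control wells."""
    if intact_cells_control <= 0:
        raise ValueError("control count must be positive")
    return 100.0 * intact_cells_treated / intact_cells_control


def percent_ros_reduction(cellrox_treated, cellrox_stressor):
    """Drop in median CellROX intensity relative to stressor-only wells."""
    stressor_median = median(cellrox_stressor)
    if stressor_median <= 0:
        raise ValueError("stressor median must be positive")
    return 100.0 * (1.0 - median(cellrox_treated) / stressor_median)


# Hypothetical exported values for one compound.
viability = percent_viability(intact_cells_treated=352, intact_cells_control=400)
ros_reduction = percent_ros_reduction(
    cellrox_treated=[300, 320, 340],     # compound + H2O2 wells
    cellrox_stressor=[1000, 1100, 900],  # H2O2-only wells
)
print(round(viability, 1), round(ros_reduction, 1))
```

Using the median rather than the mean limits the influence of a few very bright or poorly segmented cells on the per-well ROS estimate.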

Visualization: Neuroprotective Screening & AI Triage Workflow

Title: Integrated HCS and AI Workflow for Neuroprotection

Research Reagent Solutions Toolkit

| Reagent/Material | Function in Protocol |
|---|---|
| CellROX Deep Red Reagent | Fluorogenic probe that becomes fluorescent upon oxidation by ROS. |
| Hoechst 33342 | Cell-permeant nuclear counterstain for viability and cell counting. |
| CellMask Green Plasma Membrane Stain | Plasma membrane stain used to outline cells and assess morphology in live cells. |
| 96-well Imaging Plates (µClear) | Optically clear plates with black walls for automated fluorescence imaging. |
| Automated High-Content Imager | Microscope system for automated, multi-parametric image acquisition. |

Overcoming the Hurdles: Troubleshooting Data, Model, and Pipeline Challenges