From Molecule to Mechanism: Advanced Strategies to Improve Natural Product Target Prediction Accuracy in Drug Discovery

Abstract

This article provides a comprehensive overview of contemporary strategies to enhance the accuracy of target prediction for natural products (NPs), a critical bottleneck in modern drug discovery. It details the unique challenges posed by NP structural complexity and data scarcity and evaluates a spectrum of computational and experimental methodologies, from similarity-based tools and AI-driven models to chemical proteomics and single-cell multiomics. The content further addresses common troubleshooting issues, benchmarks current prediction platforms, and outlines robust validation frameworks. Synthesizing insights from foundational concepts to translational applications, this guide equips researchers and drug development professionals with actionable knowledge to accelerate the elucidation of NP mechanisms and the development of novel therapeutics.

The Landscape and Challenge: Why Accurate Target Prediction for Natural Products is Crucial Yet Difficult

The Historical Significance and Modern Relevance of Natural Products in Drug Discovery

Natural products (NPs) and their structural analogues have been foundational to pharmacotherapy, contributing to over 60% of all small-molecule drugs approved for cancer and infectious diseases [1] [2]. Their unique chemical diversity, evolved through biological interaction, provides privileged scaffolds that often exhibit potent bioactivity and high target specificity [3] [4]. Despite a period of declining interest in the late 20th century due to technical challenges in screening and supply, a powerful renaissance is now underway [1]. This resurgence is driven by the convergence of artificial intelligence (AI), advanced analytics, and synthetic biology, which are collectively overcoming historical bottlenecks and creating new paradigms for discovery [5] [6]. This article establishes the continuous thread from traditional medicine to modern high-throughput discovery and frames current research within the critical thesis of improving predictive accuracy for natural product target identification. The subsequent technical support center is designed to provide practical solutions for researchers navigating this complex and promising field.

Historical Significance: The Foundation of Modern Therapeutics

The use of natural products in medicine is as old as human civilization itself, with traditional knowledge systems providing the first documented "screening libraries" [4]. The formal scientific journey began in the early 19th century with the isolation of pure alkaloids like morphine, quinine, and atropine, demonstrating that discrete chemical entities from nature could produce profound physiological effects [4].

Table 1: Landmark Natural Product-Derived Drugs and Their Origins

| Natural Product | Source Organism | Therapeutic Area | Year of Discovery/Isolation | Significance |

|---|---|---|---|---|

| Aspirin (from salicin) | Willow bark (Salix spp.) | Analgesic, Anti-inflammatory | 1897 (synthesis) | First synthetic derivative of a natural product; widely used. |

| Penicillin | Fungus (Penicillium rubens) | Antibiotic | 1928 | Revolutionized treatment of bacterial infections. |

| Artemisinin | Sweet wormwood (Artemisia annua) | Antimalarial | 1972 | Key therapy for malaria; Nobel Prize in Physiology or Medicine 2015. |

| Paclitaxel (Taxol) | Pacific yew tree (Taxus brevifolia) | Anticancer | 1971 | Major chemotherapeutic agent for ovarian, breast cancer. |

| Statins (e.g., Lovastatin) | Fungus (Aspergillus terreus) | Cardiovascular | 1978 | First statin (HMG-CoA reductase inhibitor) approved for clinical use to lower cholesterol. |

The period from the 1940s to the 1980s is often considered the "golden age" of antibiotic and anticancer discovery from natural sources, particularly from soil-dwelling microorganisms [1]. This era yielded not only drugs but also the fundamental chromatographic and spectroscopic techniques (e.g., HPLC, NMR, MS) that became standard for isolation and structure elucidation [3]. The principal advantage of NPs has always been their structural complexity and "biological relevance"—their evolution alongside biological systems often grants them superior binding affinity and selectivity compared to purely synthetic libraries [4] [1].

Modern Relevance: A Renaissance Powered by Technology

The decline in NP research was precipitated by challenges of supply, rediscovery, and compatibility with high-throughput screening (HTS) of synthetic combinatorial libraries [4] [1]. Today, a suite of technological advancements is systematically addressing these issues, revitalizing the field.

1. AI and Machine Learning for Prediction and Design: AI has moved from a disruptive promise to a foundational platform [6]. Applications now include:

- Target Prediction: Machine learning models trained on chemogenomic data predict the most likely protein targets for a novel NP, streamlining mechanistic deconvolution [7] [8].

- Virtual Screening: In silico docking and pharmacophore models pre-filter vast digital NP libraries, enriching hit rates. Integrated approaches have shown over 50-fold enrichment compared to traditional methods [6].

- Structure Elucidation: Tools like NatGen, a deep learning framework, predict the 3D chiral configurations of NPs with 96.87% accuracy on benchmark datasets, solving a critical bottleneck for the >20% of known NPs with undefined stereochemistry [2].

- Activity Prediction: Quantitative Structure-Activity Relationship (QSAR) models forecast bioactivity, though their accuracy depends heavily on data quality and diversity [8].

2. Advanced Analytical and Target Engagement Platforms: The integration of high-resolution mass spectrometry (HR-MS) with NMR enables rapid dereplication and structural characterization [1]. Crucially, technologies like the Cellular Thermal Shift Assay (CETSA) and its proteome-wide variants (e.g., thermal proteome profiling) allow for the direct confirmation of target engagement within a physiologically relevant cellular context, moving beyond simple biochemical assays [6].

3. Synthetic Biology and Engineered Production: To address supply and sustainability, genetic tools are revolutionizing NP access.

- Genome Mining: Sequencing microbial genomes reveals cryptic "silent" biosynthetic gene clusters (BGCs) that are not expressed under standard lab conditions [5] [9].

- CRISPR-Cas and Refactoring: CRISPR-based tools are used to activate these silent BGCs or refactor them into amenable host organisms (e.g., Streptomyces or Aspergillus) for reliable production [5] [9].

- Cell-Free Biosynthesis: This emerging strategy bypasses cellular viability constraints altogether, using extracted enzymatic machinery to produce and diversify NPs in vitro, enabling the synthesis of otherwise toxic or low-yield compounds [9].

Table 2: Performance of Modern AI Tools in Natural Product Research

| Tool/Technology | Primary Application | Key Performance Metric | Impact |

|---|---|---|---|

| NatGen [2] | 3D Structure & Chirality Prediction | 96.87% accuracy on benchmark set; <1 Å RMSD. | Provides predicted 3D structures, including stereochemistry, for 684,619+ NPs in the COCONUT database. |

| Integrated AI Virtual Screening [6] | Hit Identification | >50-fold enrichment over traditional screening. | Dramatically reduces cost and time for lead discovery. |

| CETSA [6] | Cellular Target Engagement | Quantifies target stabilization in intact cells/tissues. | Validates mechanistic hypothesis in physiologically relevant system. |

| CRISPR Activation [5] [9] | Silent Gene Cluster Activation | Enables production of previously inaccessible NP classes. | Expands the accessible NP universe from a single genome. |

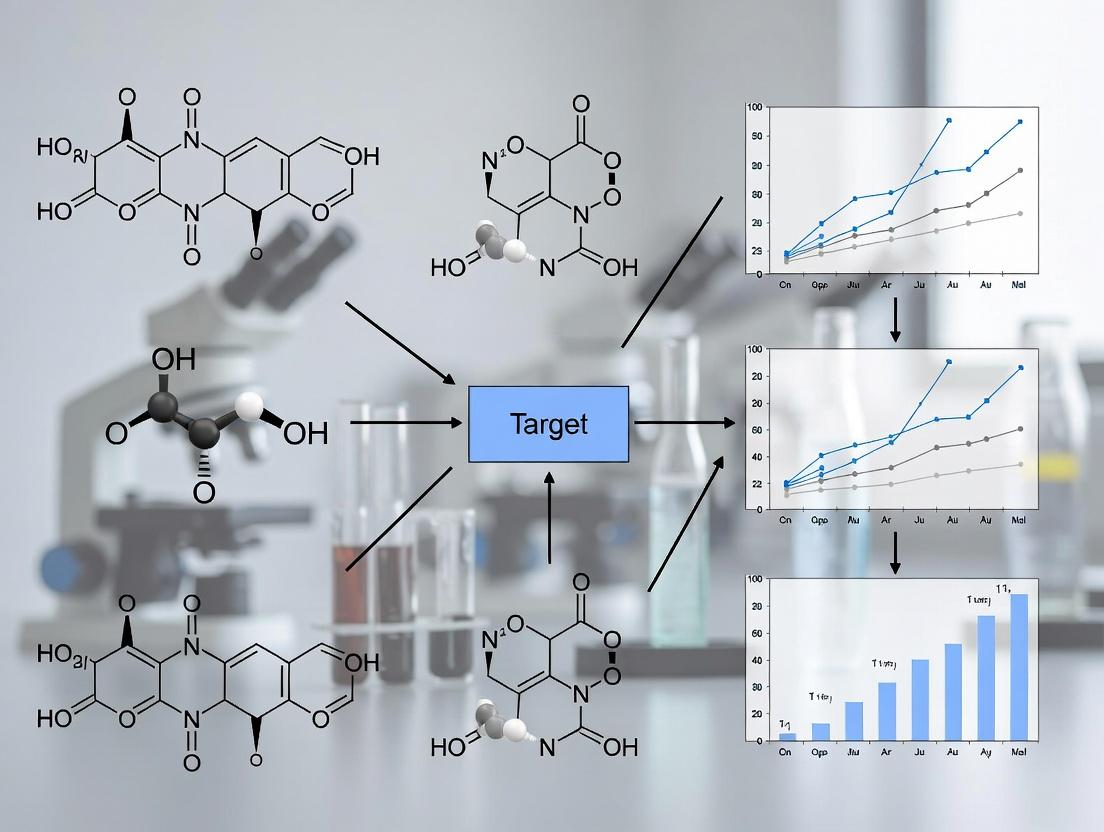

Diagram: Modern NP Drug Discovery Workflow Integrating AI and Experimental Validation. This closed-loop system emphasizes how experimental feedback refines predictive models, directly supporting the thesis of improved prediction accuracy.

Technical Support Center: Troubleshooting Natural Product Research

This section provides targeted guidance for common experimental challenges, framed within the goal of enhancing predictive model accuracy through reliable data generation.

Frequently Asked Questions (FAQs)

Q1: Our in silico virtual screening identified a promising NP hit from a database, but the compound is not commercially available. How can we proceed? A: This is a common challenge [4]. Your options are:

- Custom Synthesis: If the structure is known, engage a specialized organic synthesis laboratory. This is feasible for simpler structures but can be prohibitively expensive for complex ones.

- Source the Native Organism: If the source is known, acquire biomass (plant, microbial culture) through botanical gardens, culture collections, or ethical/bioprospecting-compliant field collection. You must then isolate the compound yourself [4].

- Engineered Biosynthesis: For microbial NPs, if the Biosynthetic Gene Cluster (BGC) is known, consider heterologous expression. Using CRISPR and refactoring tools, clone the BGC into a model host (like S. coelicolor) for production [5] [9].

- Seek an Analogue: Search commercial databases for structurally similar, available analogues that might share activity. Use this as a starting point for preliminary validation.

Q2: We isolated a novel compound, but standard target identification approaches (affinity pulldown) have failed. What are the next steps? A: Move to more holistic, systems-level technologies:

- CETSA/TPP: Implement a Cellular Thermal Shift Assay or Thermal Proteome Profiling. This method detects protein target engagement in cell lysates or intact live cells by measuring ligand-induced thermal stabilization, requiring no chemical modification of the NP [6].

- Transcriptomics/Proteomics: Treat relevant cell lines with the NP and perform RNA-seq or mass spectrometry-based proteomics. Pathway analysis of differentially expressed genes or proteins can reveal the affected biological processes and infer potential targets [7].

- Network Pharmacology: Integrate your 'omics data with public bioinformatics databases to construct a compound-target-disease network, generating testable hypotheses about multi-target mechanisms [7].

Q3: How can we improve the accuracy of our QSAR models for predicting NP activity? A: Model accuracy hinges on data quality [8]. Focus on:

- Data Curation: Use high-confidence, consistently generated bioactivity data. Include well-validated negative (inactive) data to improve model discrimination [8].

- Representation: Employ advanced molecular descriptors or graph-based representations that capture the complex stereochemistry of NPs, potentially using AI-predicted 3D structures from tools like NatGen [2] [8].

- Define Applicability Domain: Clearly state the chemical space your model is trained on. Predictions for NPs falling outside this domain are unreliable [8].

- External Validation: Never rely solely on internal validation (e.g., cross-validation). Test your model on a truly external, blinded dataset to assess its real-world predictive power [8].
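The applicability-domain point can be made concrete with a simple range-based check. The sketch below is only one of several possible definitions (a bounding box over descriptor values) and is not taken from the cited references; the descriptor names and all numeric values are hypothetical placeholders.

```python
from typing import Dict, List, Tuple

Descriptor = Dict[str, float]
Bounds = Dict[str, Tuple[float, float]]


def fit_domain(training: List[Descriptor], margin: float = 0.0) -> Bounds:
    """Record the min/max of every descriptor seen in the training set.

    `margin` optionally widens each range by a fraction of its width.
    """
    bounds: Bounds = {}
    for name in training[0]:
        values = [row[name] for row in training]
        low, high = min(values), max(values)
        pad = (high - low) * margin
        bounds[name] = (low - pad, high + pad)
    return bounds


def outside_domain(query: Descriptor, bounds: Bounds) -> List[str]:
    """Return the descriptors for which the query lies outside the training range."""
    return [name for name, (low, high) in bounds.items()
            if not low <= query[name] <= high]


# Hypothetical descriptor values for three training compounds.
training_set = [
    {"mol_weight": 310.4, "logp": 2.1, "n_stereocenters": 2},
    {"mol_weight": 452.6, "logp": 3.8, "n_stereocenters": 5},
    {"mol_weight": 388.5, "logp": 1.2, "n_stereocenters": 4},
]
bounds = fit_domain(training_set)

in_query = {"mol_weight": 400.0, "logp": 2.5, "n_stereocenters": 3}
out_query = {"mol_weight": 853.9, "logp": 3.0, "n_stereocenters": 11}

print(outside_domain(in_query, bounds))   # []
print(outside_domain(out_query, bounds))  # ['mol_weight', 'n_stereocenters']
```

A non-empty result is a signal to treat the model's prediction for that compound as unreliable rather than a hard rejection; distance-based or similarity-based domain definitions are common alternatives.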

Troubleshooting Guides

Issue: Inconsistent or Unreproducible Bioactivity in Cell-Based Assays

- Potential Cause 1: Compound Purity or Stability. NPs in crude extracts or imperfectly purified fractions can have synergistic or antagonistic effects. Degradation can also occur.

- Solution: Re-analyze compound purity via HPLC/HR-MS. Test stability under assay conditions (medium, temperature). Use fresh stock solutions prepared in appropriate solvents (DMSO, ethanol).

- Potential Cause 2: Subtle Stereochemistry. Bioactivity can be highly specific to one stereoisomer.

- Solution: Confirm the absolute stereochemistry of your compound via computational prediction (NatGen) [2] or experimental methods (X-ray crystallography, chiral NMR analysis). Compare activity of different stereoisomers if available.

- Potential Cause 3: Cell Line Variability or Contamination.

- Solution: Authenticate your cell lines (STR profiling). Regularly test for mycoplasma contamination. Use consistent passage numbers and culture conditions.

Issue: Low Yield or Inaccessible Natural Product from Native Source

- Problem: The source organism is rare, slow-growing, or the NP is produced in trace amounts, making scaling impossible [4].

- Solutions:

- Pathway Engineering: If the biosynthetic pathway is known, use CRISPR-mediated gene editing in the native host to upregulate key enzymes or remove regulatory bottlenecks [5] [9].

- Heterologous Production: Refactor the entire BGC into a tractable industrial host (e.g., S. cerevisiae, E. coli with engineered pathways) [9].

- Cell-Free Synthesis: For complex pathways, explore in vitro cell-free protein expression systems that express the enzymatic machinery without cellular growth constraints, allowing precise control and potentially higher yields of toxic compounds [9].

Diagram: Troubleshooting Logic for NP Structure Elucidation. A clear structural definition is the critical first step for generating reliable data for predictive models.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Modern Natural Product Research

| Reagent/Material | Function/Application | Key Considerations |

|---|---|---|

| CRISPR-Cas9 Gene Editing Kits | Activation of silent biosynthetic gene clusters; gene knockouts in host organisms [5] [9]. | Choose kits optimized for your host (actinomycetes, fungi). Requires prior genomic sequence data. |

| CETSA / TPP Assay Kits | Confirming direct target engagement of NPs in physiologically relevant cellular systems [6]. | Kits provide standardized protocols for cell lysis, heating, and protein quantification. Compatible with downstream MS or Western blot. |

| Cell-Free Protein Synthesis Systems | In vitro production of NPs using purified enzymatic machinery, bypassing cellular toxicity and yield issues [9]. | Systems are organism-specific (e.g., E. coli, wheat germ). Require purified DNA templates for biosynthetic enzymes. |

| Chiral Chromatography Columns | Separation and analysis of NP stereoisomers during purification and quality control. | Critical for validating AI-predicted chirality [2] and ensuring compound homogeneity for bioassays. |

| Stable Isotope-Labeled Precursors (e.g., ¹³C-glucose) | Feeding studies to trace biosynthetic pathways and aid in NMR-based structure elucidation. | Essential for deciphering complex NP biosynthesis prior to engineering efforts. |

| AI/Cheminformatics Software Licenses (e.g., for molecular docking, QSAR, ADMET prediction) | In silico screening, property prediction, and analog design [6] [8]. | Ensure software can handle the structural complexity and stereochemistry of NPs. Cloud-based platforms offer scalability. |

Experimental Protocols

Protocol 1: Validating NP Target Engagement Using Cellular Thermal Shift Assay (CETSA)

- Based on: Mazur et al. (2024) application of CETSA to quantify drug-target engagement ex vivo and in vivo [6].

- Principle: A ligand binding to its protein target often increases the protein's thermal stability. This shift can be measured by detecting the remaining soluble protein after heat denaturation.

- Method:

- Cell Treatment: Treat intact cells (or use tissue homogenates) with your NP at relevant concentrations and a vehicle control. Incubate (e.g., 30-60 min).

- Heating: Aliquot cell suspensions into PCR tubes. Heat each aliquot at a different temperature spanning a defined range (e.g., 37–67°C) for a fixed time (e.g., 3 min) in a thermal cycler.

- Lysis & Clarification: Rapidly lyse heated cells, followed by centrifugation to remove aggregated, denatured proteins.

- Detection: Analyze the soluble protein fraction by Western blot (for specific target proteins) or quantitative mass spectrometry (for proteome-wide TPP).

- Analysis: Plot the amount of soluble protein remaining vs. temperature. A rightward shift in the melting curve for NP-treated samples indicates thermal stabilization and direct target engagement.
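The analysis step can be illustrated with a small calculation of the apparent melting temperature (Tm) and its shift. This is a minimal sketch that assumes soluble-protein signals have already been normalised to the lowest-temperature sample and that uses linear interpolation instead of the sigmoidal curve fitting usually applied in practice; the data values are invented for illustration.

```python
from typing import Sequence


def melting_temperature(temps: Sequence[float], soluble: Sequence[float]) -> float:
    """Temperature at which the soluble fraction first drops below 0.5.

    `soluble` holds fractions normalised to the lowest temperature (1.0 = fully
    soluble). The crossing point is found by linear interpolation between the
    two neighbouring measurements.
    """
    for i in range(1, len(temps)):
        before, after = soluble[i - 1], soluble[i]
        if before >= 0.5 > after:
            t0, t1 = temps[i - 1], temps[i]
            return t0 + (before - 0.5) * (t1 - t0) / (before - after)
    raise ValueError("melting curve does not cross 0.5 within the measured range")


# Hypothetical normalised band intensities from a Western blot.
temps = [37, 43, 49, 55, 61, 67]
vehicle = [1.00, 0.95, 0.70, 0.30, 0.08, 0.02]
treated = [1.00, 0.98, 0.90, 0.60, 0.20, 0.05]

tm_vehicle = melting_temperature(temps, vehicle)
tm_treated = melting_temperature(temps, treated)

print(f"Tm vehicle: {tm_vehicle:.1f} C")               # Tm vehicle: 52.0 C
print(f"Tm treated: {tm_treated:.1f} C")               # Tm treated: 56.5 C
print(f"Delta Tm:   {tm_treated - tm_vehicle:.1f} C")  # Delta Tm:   4.5 C
```

A positive Delta Tm corresponds to the rightward shift described above. Whether a given shift is meaningful depends on replicate variability, which this sketch does not model.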

Protocol 2: Activating a Silent Biosynthetic Gene Cluster Using CRISPR-a

- Based on: Strategies reviewed by Madden et al. (2025) for enhancing microbial NP discovery [5] [9].

- Principle: A catalytically dead Cas9 (dCas9) fused to a transcriptional activator is guided by specific sgRNAs to the promoter region of a silent BGC, inducing its expression.

- Method:

- Bioinformatics: Identify a putative silent BGC in a microbial genome via antiSMASH or similar tools. Design 2-3 sgRNAs targeting the promoter region of the key biosynthetic gene.

- Vector Construction: Clone the sgRNAs into an expression plasmid containing the dCas9-activator fusion (e.g., dCas9-VPR). Use a host-specific shuttle vector.

- Transformation: Introduce the plasmid into the native NP-producing host strain or a suitable heterologous host containing the refactored BGC.

- Cultivation & Induction: Grow transformed cells under appropriate conditions and induce the expression of the CRISPR-a system.

- Metabolite Analysis: Extract metabolites from culture broth and analyze via LC-HRMS. Compare chromatograms to the control strain (without sgRNA or dCas9) to identify newly produced compounds.
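For the sgRNA design step, the following sketch shows the simplest possible candidate search. It assumes an SpCas9-derived dCas9 (NGG PAM, 20-nt spacer) and scans only the strand supplied; neither assumption comes from the protocol above, and the function name and example sequence are hypothetical. Dedicated design tools additionally score off-targets and position relative to the transcription start site.

```python
from typing import List, Tuple


def find_sgrna_candidates(promoter: str, spacer_len: int = 20) -> List[Tuple[int, str, str]]:
    """List (start, spacer, pam) for every spacer followed directly by an NGG PAM.

    Only the given strand is scanned; reverse-complement the input to cover
    the opposite strand.
    """
    seq = promoter.upper()
    hits = []
    # The last valid start leaves room for the spacer plus a 3-nt PAM.
    for start in range(len(seq) - spacer_len - 2):
        pam = seq[start + spacer_len:start + spacer_len + 3]
        if pam[1:] == "GG":
            hits.append((start, seq[start:start + spacer_len], pam))
    return hits


# Hypothetical 27-nt promoter fragment containing a single NGG site.
promoter_fragment = "ATGCATGCATGCATGCATGC" + "TGG" + "AAAA"

for start, spacer, pam in find_sgrna_candidates(promoter_fragment):
    print(start, spacer, pam)  # 0 ATGCATGCATGCATGCATGC TGG
```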

The journey of natural products from ancient remedies to AI-predicted drug candidates underscores their unparalleled historical significance and ever-evolving modern relevance. The central challenge—and opportunity—lies in bridging the gap between the vast, complex chemical space of NPs and predictable, high-probability outcomes in drug discovery. By systematically addressing technical hurdles through the integrated use of AI prediction, robust target validation (e.g., CETSA), and innovative sourcing (e.g., synthetic biology), researchers can generate the high-fidelity data necessary to build and refine accurate predictive models. This virtuous cycle of prediction, experimental validation, and feedback is the cornerstone of the next generation of natural product-based therapeutics, ensuring that nature's chemical ingenuity continues to serve as a primary wellspring for human health.

Accurate prediction of the biological targets for natural products (NPs) is a cornerstone of modern drug discovery, given that approximately 60% of medicines approved in recent decades are NPs or NP derivatives [10]. However, this field is constrained by three interrelated fundamental challenges:

- Structural Complexity: NPs possess unique, often rigid scaffolds with multiple chiral centers and complex ring systems. Over 20% of known NPs lack complete chiral configuration annotations, and only 1–2% have fully resolved 3D crystal structures, making accurate molecular representation difficult [2].

- Data Sparsity: Bioactivity data for NPs is severely limited. Major databases contain structures with minimal associated target information, creating a "data desert" for training predictive computational models [10].

- Polypharmacology: NPs frequently interact with multiple protein targets due to their complex structures. This multi-target action is therapeutically valuable but exponentially complicates the accurate prediction of a compound's complete interaction profile [10].

Overcoming these barriers is essential to de-risk NP-based discovery and unlock new therapeutic candidates.

Troubleshooting Guides

This section employs a systematic 5-step troubleshooting framework [11] to address common experimental and computational obstacles.

Guide: Poor Target Prediction Accuracy for a Novel Natural Product

- Problem Description: A newly isolated NP yields low-confidence or implausible target predictions using standard similarity-based tools, delaying downstream validation.

- Impact: Inability to hypothesize a mechanism of action stalls the research project and consumes resources on blind screening.

- Context: Common when querying NPs with high structural complexity or novel scaffolds not well-represented in standard reference databases [10].

| Step | Action | Details & Tools |

|---|---|---|

| 1. Collect Information | Profile the query compound. | Determine molecular weight, key functional groups, and obtain the best possible 2D or 3D structure. Calculate molecular fingerprints (e.g., ECFP4, MACCS). |

| 2. Analyze Your Approach | Diagnose the likely failure mode. | If the structure is novel: The compound may have low similarity to all entries in a general-purpose database [10]. If chiral centers are undefined: 2D similarity searches are inherently limited [2]. |

| 3. Implement Your Solution | Apply specialized NP-focused tools. | Use CTAPred [10]: This tool uses a reference database focused on NP-relevant targets. Run: CTAPred predict -q your_compound.smi -o results.csv. Use NatGen [2]: If stereochemistry is unknown, first predict the 3D configuration with NatGen to enable 3D similarity searches. |

| 4. Assess the Solution | Evaluate prediction plausibility. | Check if predicted targets share a therapeutic theme. Manually inspect the top 3-5 most similar reference compounds for shared substructures. Are Tanimoto scores >0.5? |

| 5. Document the Process | Record parameters and outcomes. | Document the tool, database version, fingerprint type, similarity scores, and final target list. This creates a reproducible workflow for similar future compounds. |

Guide: Insufficient Bioactivity Data for Model Training

- Problem Description: You aim to build a machine learning (ML) model to predict activity for a NP class but have fewer than 100 reliable data points.

- Impact: Standard deep learning (DL) models fail to converge or severely overfit, producing unreliable and non-generalizable predictions [12].

- Context: A typical scenario in NP research where isolation and testing are low-throughput [10].

| Step | Action | Details & Tools |

|---|---|---|

| 1. Collect Information | Audit available data. | Compile all data (IC50, Ki, active/inactive labels). Annotate data sources and confidence levels. Quantify the exact data gap. |

| 2. Analyze Your Approach | Select a low-data strategy. | Choose a technique matching your goal: Transfer Learning (TL) for leveraging related large datasets [12]. Multi-Task Learning (MTL) if you have sparse data across several related targets [12]. Data Augmentation (DA) to artificially expand your dataset [12]. |

| 3. Implement Your Solution | Apply the chosen strategy. | For TL: Download a pre-trained model (e.g., on ChEMBL bioactivities) and fine-tune the final layers on your small NP dataset. For MTL: Frame the problem to jointly predict activity against 3-5 phylogenetically related target proteins. |

| 4. Assess the Solution | Validate rigorously. | Use stringent nested cross-validation. Compare performance to a baseline model trained only on your small data. Key metric: Improvement in the Area Under the Precision-Recall Curve (AUPRC) for the hold-out test set. |

| 5. Document the Process | Report the data strategy. | Detail the pre-trained model source, fine-tuning protocol, augmentation methods, and final validation results to ensure transparency. |
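The key metric in step 4 can be computed without any library. The sketch below uses average precision, a common step-wise estimate of the area under the precision-recall curve; it assumes binary labels and untied scores, and the example labels and scores are made up.

```python
from typing import Sequence


def average_precision(labels: Sequence[int], scores: Sequence[float]) -> float:
    """Average precision: mean of the precision values at each true positive.

    Compounds are ranked by descending score; ties are not handled specially.
    """
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("at least one active compound is required")
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    total = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / rank  # precision at this recall step
    return total / n_pos


# Hypothetical hold-out set: 3 actives among 8 compounds.
labels = [1, 0, 1, 0, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2]

ap = average_precision(labels, scores)
baseline = sum(labels) / len(labels)  # expected AUPRC of a random ranking

print(f"AUPRC (average precision): {ap:.3f}")  # AUPRC (average precision): 0.722
print(f"Random baseline:           {baseline:.3f}")  # Random baseline:           0.375
```

Comparing against the prevalence baseline matters for NP datasets because actives are usually rare, so a seemingly modest AUPRC can still represent a large improvement over chance.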

Guide: Ambiguous Stereochemistry Blocking Research

- Problem Description: NMR data for a purified NP is inconclusive, leaving multiple stereoisomers possible. Chemical synthesis of each candidate for testing is prohibitively slow.

- Impact: The absolute structure—and therefore its accurate computational modeling and structure-activity relationship (SAR)—remains unknown [13].

- Context: A frequent bottleneck where distal stereocenters are separated by rotatable bonds, confounding NMR analysis [13].

| Step | Action | Details & Tools |

|---|---|---|

| 1. Collect Information | Gather all existing analytical data. | Collate NMR, MS, HR-MS, and any chromatography data. Precisely list the remaining stereochemical possibilities. |

| 2. Analyze Your Approach | Evaluate structure elucidation options. | Option A (Computational): If crystals are unavailable, use ab initio 3D structure prediction. Option B (Experimental): If microcrystals exist, use definitive diffraction methods. |

| 3. Implement Your Solution | Execute the chosen method. | Option A: Submit the 2D structure to NatGen [2] for chiral configuration and 3D conformation prediction. Option B: Attempt Microcrystal Electron Diffraction (MicroED) on sub-micron crystals, which has succeeded with <1 µg of sample [13]. |

| 4. Assess the Solution | Resolve the ambiguity. | For NatGen: Evaluate prediction confidence scores (reported as % accuracy). For MicroED: Solve the crystal structure; a final R1 value < 0.2 indicates a reliable solution [13]. |

| 5. Document the Process | Archive the definitive structure. | Deposit the final 3D structure (e.g., as a .SDF or .CIF file) in the project repository and public databases if applicable. |

Frequently Asked Questions (FAQs)

Q1: What is the most practical first step for predicting targets of a newly isolated natural product with no known analogs?

A1: Begin with the CTAPred tool [10]. Its curated Compound-Target Activity (CTA) dataset is focused on proteins relevant to NP interactions, increasing the chance of meaningful hits even for unique structures. Start with the default ECFP4 fingerprint and the --top-n 3 parameter, as using the top 3 most similar references is often optimal [10].

Q2: Our ML model for NP activity performs well on training data but poorly on new compounds. Is this due to data scarcity, and how can we fix it? A2: Yes, this is a classic sign of overfitting from data scarcity. Implement Multi-Task Learning (MTL) [12]. By training a single model to predict activities for multiple related targets simultaneously, you allow the model to learn more generalized features from the combined data, which improves performance on your primary, data-sparse task.

Q3: We have a promising NP hit from phenotypic screening. How can we efficiently identify its protein target(s) to understand the mechanism? A3: Employ a similarity-based polypharmacology screening [10]. Use the NP's structure to query platforms like TargetHunter or SEA. These tools will generate a ranked list of putative targets based on known ligands. Prioritize targets that are biologically plausible within your phenotypic context for experimental validation (e.g., cellular thermal shift assay).

Q4: Why is 3D structural information critical for natural product research, and how can I obtain it without a crystal suitable for X-ray diffraction? A4: The 3D conformation dictates all molecular interactions. For NPs, undefined stereochemistry is a major barrier [2]. If traditional X-ray crystallography fails due to crystal size or quality, MicroED is a powerful alternative that can determine structures from nanogram quantities of microcrystalline powder [13]. For computational prediction, the NatGen framework offers high-accuracy 3D structure prediction from 2D inputs [2].

Q5: What strategies exist for collaborating on NP drug discovery when bioactivity data is proprietary and cannot be shared centrally? A5: Federated Learning (FL) is designed for this challenge [12]. In an FL framework, collaborators train a shared model locally on their private datasets and only share model parameter updates (not the raw data). A central server aggregates these updates to improve a global model. This maintains data privacy while leveraging the collective knowledge across institutions.
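The aggregation step described in A5 can be sketched as a sample-weighted average of the parameter vectors each collaborator sends back (the federated averaging idea). This is an illustration only: it assumes every site trains the same model architecture, represents parameters as flat lists, and uses hypothetical site data; production frameworks add secure aggregation and communication handling.

```python
from typing import List, Tuple

SiteUpdate = Tuple[List[float], int]  # (locally trained parameters, number of local samples)


def federated_average(updates: List[SiteUpdate]) -> List[float]:
    """Combine site parameters into a global model, weighting by local sample count."""
    total_samples = sum(n for _, n in updates)
    n_params = len(updates[0][0])
    return [
        sum(params[i] * n for params, n in updates) / total_samples
        for i in range(n_params)
    ]


# Hypothetical updates from two institutions; raw bioactivity data never leaves each site.
site_a = ([0.2, 1.0], 100)
site_b = ([0.6, 0.0], 300)

global_params = federated_average([site_a, site_b])
print([round(p, 3) for p in global_params])  # [0.5, 0.25]
```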

Detailed Experimental & Computational Protocols

Protocol: Similarity-Based Target Prediction with CTAPred

Objective: To predict potential protein targets for a NP query compound using a focused reference database.

- Installation: Clone the CTAPred repository with `git clone https://github.com/Alhasbary/CTAPred.git`, then install dependencies per the `requirements.txt` file [10].

- Input Preparation: Prepare a query file (`query.smi`) containing the SMILES string of your NP, one per line.

- Run Prediction: Execute the core command: `python CTAPred.py predict -i query.smi -d CTA_reference.db -f ECFP4 -n 3 -o predictions.tsv`. Here `-d` specifies the curated CTA database, `-f` selects the ECFP4 fingerprint for similarity calculation, and `-n 3` considers the 3 most similar reference compounds for prediction, a recommended setting [10].

- Output Analysis: The `predictions.tsv` file will list predicted targets, associated similarity scores, and the source reference compounds. Visually inspect the top reference compounds to assess chemical rationale.
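The idea behind the protocol above (rank reference compounds by fingerprint similarity, then transfer the targets of the top few) can be sketched in a few lines. This is not CTAPred's actual implementation or scoring scheme: fingerprints are represented as hypothetical sets of on-bit indices, the reference entries are invented, and each target is simply scored by the highest Tanimoto similarity among the top-n references annotated with it.

```python
from typing import List, Set, Tuple

Reference = Tuple[str, Set[int], List[str]]  # (compound id, fingerprint on-bits, known targets)


def tanimoto(a: Set[int], b: Set[int]) -> float:
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_targets(query: Set[int], references: List[Reference],
                    top_n: int = 3) -> List[Tuple[str, float]]:
    """Rank targets by the best similarity among the top_n most similar references."""
    ranked = sorted(references, key=lambda ref: tanimoto(query, ref[1]), reverse=True)
    scores = {}
    for _, fingerprint, targets in ranked[:top_n]:
        similarity = tanimoto(query, fingerprint)
        for target in targets:
            scores[target] = max(scores.get(target, 0.0), similarity)
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Hypothetical query and reference fingerprints (on-bit indices only).
query_fp = {1, 2, 3, 4, 5, 6}
reference_db = [
    ("ref_A", {1, 2, 3, 4, 5, 7}, ["TOP2A"]),
    ("ref_B", {1, 2, 3, 8, 9}, ["PTGS2", "TOP2A"]),
    ("ref_C", {1, 2, 3, 4, 10, 11}, ["HSP90AA1"]),
    ("ref_D", {20, 21}, ["EGFR"]),
]

for target, score in predict_targets(query_fp, reference_db):
    print(f"{target}\t{score:.3f}")
# TOP2A     0.714
# HSP90AA1  0.500
# PTGS2     0.375
```

In this toy example the fourth reference shares no bits with the query and falls outside the top 3, so its target never appears in the ranking. In a real workflow the bit sets would come from a cheminformatics toolkit's ECFP4 implementation.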

Protocol: Overcoming Data Scarcity with Transfer Learning (TL)

Objective: To build a predictive model for a sparse NP dataset by leveraging knowledge from a large, related chemical dataset.

- Base Model Selection: Obtain a pre-trained deep neural network (DNN) model trained on a large-scale bioactivity dataset (e.g., ChEMBL).

- Model Adaptation: Remove the final classification/regression layer of the pre-trained model. Replace it with new layers tailored to your specific prediction task (e.g., active/inactive for your target).

- Two-Stage Training:

- Stage 1 (Feature Extraction): Freeze the weights of all pre-trained layers. Train only the newly added final layers on your small NP dataset. This allows the model to learn a task-specific mapping from the general features.

- Stage 2 (Fine-Tuning): Unfreeze some of the deeper layers of the pre-trained model and continue training with a very low learning rate (e.g., 1e-5) on your NP data. This gently adjusts the general features to your specific chemical space.

- Validation: Use leave-one-out or 5-fold cross-validation on your NP data to assess performance gains over a model trained from scratch.
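The two-stage schedule can be demonstrated on a deliberately tiny model so that the freeze/unfreeze logic is visible without a deep learning framework. Everything here is a toy assumption: a one-weight "pre-trained" feature extractor (`w_base`), a new logistic head (`a_head`, `b_head`), invented data, and learning rates chosen for this toy problem rather than the 1e-5 suggested above for real networks.

```python
import math
from typing import Dict, List, Sequence, Tuple

Params = Dict[str, float]
Data = List[Tuple[float, int]]


def forward(params: Params, x: float) -> Tuple[float, float]:
    """Pre-trained feature h = tanh(w_base * x), then a logistic head on h."""
    h = math.tanh(params["w_base"] * x)
    z = params["a_head"] * h + params["b_head"]
    return h, 1.0 / (1.0 + math.exp(-z))


def bce_loss(params: Params, data: Data) -> float:
    """Mean binary cross-entropy over the dataset."""
    total = 0.0
    for x, y in data:
        _, p = forward(params, x)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(data)


def train(params: Params, data: Data, trainable: Sequence[str],
          lr: float, epochs: int) -> Params:
    """Full-batch gradient descent that updates only the parameters in `trainable`."""
    params = dict(params)  # leave the caller's parameters untouched
    for _ in range(epochs):
        grads = {name: 0.0 for name in params}
        for x, y in data:
            h, p = forward(params, x)
            err = p - y  # dLoss/dz for sigmoid + cross-entropy
            grads["a_head"] += err * h
            grads["b_head"] += err
            grads["w_base"] += err * params["a_head"] * (1.0 - h * h) * x
        for name in trainable:  # frozen parameters are simply skipped
            params[name] -= lr * grads[name] / len(data)
    return params


# Hypothetical small NP dataset: (descriptor value, active label).
np_data = [(-2.0, 0), (-1.0, 0), (-0.5, 0), (0.5, 1), (1.0, 1), (2.0, 1)]
pretrained = {"w_base": 0.8, "a_head": 0.0, "b_head": 0.0}  # new head starts at zero

# Stage 1: feature extraction, base weight frozen, head trained.
stage1 = train(pretrained, np_data, ["a_head", "b_head"], lr=0.5, epochs=100)
# Stage 2: fine-tuning, everything unfrozen, much smaller learning rate.
stage2 = train(stage1, np_data, ["w_base", "a_head", "b_head"], lr=0.005, epochs=100)

print("base weight after stage 1:", stage1["w_base"])  # unchanged from the pre-trained value
print("loss before / stage 1 / stage 2:",
      bce_loss(pretrained, np_data), bce_loss(stage1, np_data), bce_loss(stage2, np_data))
```

In a framework such as PyTorch the same pattern is usually expressed by disabling gradients on the pre-trained layers for stage 1 and re-enabling them with a lower learning rate for stage 2.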

Protocol: 3D Structure Determination via MicroED

Objective: To determine the atomic structure of a natural product from microcrystals.

- Sample Preparation: Gently grind a few micrograms of the purified NP to a fine powder. Apply the powder to a TEM grid. Blot and rapidly plunge-freeze the grid in liquid ethane [13].

- Data Collection: Load the grid into a cryo-electron microscope. Identify crystalline domains at low dose. Collect continuous-rotation MicroED data by rotating the stage through a defined angular range (e.g., ±50°) while the crystal is exposed to a low-dose electron beam [13].

- Data Processing: Use software (e.g., XDS, DIALS) to index diffraction patterns, integrate intensities, and scale the data. Merge data from multiple crystals if necessary.

- Structure Solution: Use direct methods or intrinsic phasing (e.g., in SHELXT) to obtain an initial structural model. The high resolution (<1 Å) typically achieved allows for ab initio solution [13].

- Refinement & Validation: Refine the atomic coordinates and displacement parameters against the diffraction data using crystallographic software (e.g., SHELXL). Validate the final model using standard crystallographic R-factors and geometry checks.
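The R-factor used for validation is the conventional residual R1 = Σ| |Fo| − |Fc| | / Σ|Fo|, computed over observed (Fo) and model-calculated (Fc) structure-factor amplitudes. The sketch below evaluates it for a handful of invented amplitudes; refinement software reports this value directly, so the code is only meant to show what the number measures.

```python
from typing import Sequence


def r1_factor(f_obs: Sequence[float], f_calc: Sequence[float]) -> float:
    """Conventional crystallographic R1: sum of amplitude differences over sum of observed amplitudes."""
    if len(f_obs) != len(f_calc) or not f_obs:
        raise ValueError("need two non-empty reflection lists of equal length")
    numerator = sum(abs(abs(obs) - abs(calc)) for obs, calc in zip(f_obs, f_calc))
    denominator = sum(abs(obs) for obs in f_obs)
    return numerator / denominator


# Hypothetical structure-factor amplitudes for four reflections.
observed = [100.0, 50.0, 25.0, 25.0]
calculated = [90.0, 55.0, 30.0, 15.0]

r1 = r1_factor(observed, calculated)
print(f"R1 = {r1:.3f}")  # R1 = 0.150
```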

Diagram: Workflow for Similarity-Based Target Prediction [10]

Diagram: MicroED Workflow for NP Structure Elucidation [13]

Diagram: Transfer Learning Protocol for Sparse NP Data [12]

The Scientist's Toolkit: Research Reagent Solutions

| Item / Resource | Function & Application | Key Notes |

|---|---|---|

| CTAPred Tool & CTA Dataset [10] | Open-source command-line tool for target prediction. Uses a focused reference database of compound-target activities relevant to NPs. | Optimized for NPs. Use --top-n 3 parameter. Superior to general databases for NP queries. |

| NatGen Framework & Database [2] | Deep learning model for predicting the 3D chiral configurations and conformations of NPs from 2D structures. | Achieves ~97% accuracy. Provides predicted 3D structures for over 684,000 NPs in the COCONUT database. |

| MicroED (Microcrystal Electron Diffraction) [13] | A Cryo-EM technique for determining atomic structures from sub-micron crystals. | Requires only nanogram quantities. Solves stereochemistry ambiguities where NMR fails. Essential for complex NPs. |

| ChEMBL Database [10] | A large, publicly available database of bioactive molecules with curated target annotations. | A primary source for building reference datasets and pre-training models. Version control is critical. |

| COCONUT (COlleCtion of Open Natural prodUcTs) [10] [2] | One of the largest open repositories of both elucidated and predicted NP structures. | Contains largely unannotated structures. Serves as a primary source for virtual screening and structure prediction (e.g., via NatGen). |

| Similarity Ensemble Approach (SEA) & TargetHunter [10] | Web servers for similarity-based target prediction. | Useful for initial, user-friendly queries. SEA uses statistical significance; TargetHunter offers a customizable Tanimoto threshold. |

| Pre-Trained Deep Learning Models (e.g., on ChEMBL) [12] | Models trained on large, general bioactivity datasets. | The starting point for Transfer Learning strategies to adapt to small, specific NP datasets, saving time and data. |

| Federated Learning (FL) Framework [12] | A distributed machine learning approach that trains an algorithm across decentralized devices holding local data samples. | Enables collaborative model training across institutions without sharing raw, proprietary NP bioactivity data, addressing privacy concerns. |

In modern pharmaceutical research, the journey from a promising compound to an approved therapy remains fraught with risk: development averages more than 12 years and $2.5 billion per drug [14]. About 90% of drug candidates that enter clinical trials fail, with approximately 40-50% of these failures attributed to a lack of clinical efficacy [15]. A core contributor to this inefficiency is inaccurate target prediction, that is, the flawed identification of the biological molecule a drug is designed to modulate.

This technical support center is designed within the critical context of improving prediction accuracy, especially for natural products research. Natural products are a vital source of novel therapeutics, constituting more than 60% of approved drugs since 1981 [16]. However, their unique and complex chemical structures make traditional target prediction models, often trained on synthetic compounds, less reliable [16]. Poor prediction at this earliest stage creates a cascade of problems, misdirecting the entire optimization process and ultimately leading to clinical failure due to inadequate efficacy or unmanageable toxicity [15] [17].

The following guides and FAQs address specific, high-impact experimental challenges, providing actionable protocols and frameworks to enhance the accuracy of target identification and validation, thereby de-risking the subsequent phases of drug development.

Troubleshooting Guide: Common Target Prediction & Validation Issues

This guide diagnoses frequent problems encountered during early-stage discovery and provides targeted solutions to improve outcomes.

Problem 1: Lack of Efficacy in Animal Models Despite Strong In Vitro Data

- Symptoms: A lead compound shows potent activity in biochemical or cell-based assays but fails to demonstrate the expected therapeutic effect in a preclinical disease model.

- Potential Causes & Solutions:

- Cause: Poor Target Engagement in vivo. The compound may not effectively reach or bind to its intended target within the complex physiological environment of a living organism [17].

- Solution: Implement Cellular Thermal Shift Assay (CETSA) or similar label-free technologies. CETSA allows for the direct measurement of drug-target engagement in intact cells, tissue samples, or even in vivo, providing a physiologically relevant validation step before committing to extensive animal studies [17].

- Cause: Incorrect Target Prediction. The compound's mechanism of action may be different from the hypothesized target.

- Solution: Employ broad phenotypic screening or chemo-proteomic profiling (e.g., using activity-based protein profiling) to identify the true biological target(s) of the compound before proceeding with optimization [15].

Problem 2: High Attrition During Lead Optimization Due to Poor Drug-Like Properties

- Symptoms: Promising hit compounds consistently fail during optimization cycles due to issues with solubility, permeability, metabolic stability, or toxicity.

- Potential Causes & Solutions:

- Cause: Over-reliance on Structure-Activity Relationship (SAR) alone. Optimization focuses solely on improving potency and specificity for the target, neglecting Structure-Tissue Exposure/Selectivity Relationship (STR) [15].

- Solution: Adopt a STAR (Structure-Tissue Exposure/Selectivity-Activity Relationship) framework. Classify leads not just by potency, but by their tissue exposure and selectivity profile. This helps identify compounds (Class I & III) that require lower doses for efficacy, improving the therapeutic window and clinical success rate [15].

- Cause: Inefficient chemical exploration. The synthetic exploration of analogs is slow and does not effectively probe the chemical space for optimal properties.

- Solution: Integrate High-Throughput Experimentation (HTE) with deep learning-based reaction prediction. As demonstrated in a recent Nature study, this combination can rapidly generate large, diverse virtual libraries from a hit scaffold and accurately predict synthesizable compounds with improved potency and properties, accelerating the hit-to-lead phase [18].

Problem 3: AI/ML Target Prediction Model Performs Poorly on Natural Products

- Symptoms: A target prediction model built on large chemical databases (e.g., ChEMBL) shows high accuracy for synthetic drug-like molecules but generates unreliable predictions for novel natural product scaffolds.

- Potential Causes & Solutions:

- Cause: Data Scarcity and Distribution Shift. Natural products are under-represented in training datasets and occupy a different region of chemical space (often higher molecular weight, greater structural complexity) than typical synthetic molecules [16].

- Solution: Utilize Transfer Learning. Pre-train a deep learning model (e.g., a Multilayer Perceptron) on a large, diverse dataset of synthetic compound-target interactions. Then, fine-tune this model on a smaller, curated dataset of natural product bioactivities. This approach leverages general learning from big data while adapting to the specific features of natural products, significantly improving prediction AUROC (e.g., from ~0.87 to over 0.91) [16].

Table: Root Causes of Clinical Failure and Their Link to Early Prediction

| Primary Cause of Failure | Approximate % of Failures | Connection to Poor Target Prediction |

|---|---|---|

| Lack of Clinical Efficacy | 40-50% [15] | Drug modulates an irrelevant or incorrectly validated target; poor tissue exposure at disease site [15]. |

| Unmanageable Toxicity | 30% [15] | Off-target effects due to low selectivity; on-target toxicity in vital organs due to poor tissue selectivity prediction [15]. |

| Poor Drug-Like Properties | 10-15% [15] | Early optimization focused only on potency (SAR), ignoring exposure/selectivity (STR), leading to compounds with insurmountable PK/PD issues [15]. |

Frequently Asked Questions (FAQs)

Q1: Our lead compound engages the target in cellular assays but shows no efficacy in the disease model. What should we do next? A: This discrepancy strongly suggests a target engagement or validation issue in a physiological context. Your immediate next step should be to confirm target engagement in the relevant disease model tissue using a direct method like CETSA [17]. Concurrently, revisit your target hypothesis. The lack of efficacy may indicate that the target's role in the disease pathway is not as critical as assumed, a problem known as inadequate preclinical target validation [17].

Q2: How can we improve target prediction accuracy for understudied natural products with limited bioactivity data? A: The most effective strategy is transfer learning [16]. Do not try to build a model from scratch on sparse natural product data. Instead:

- Start with a model pre-trained on a massive, general dataset of drug-target interactions (e.g., from ChEMBL).

- Fine-tune this model on your specialized, smaller dataset of natural product activities. This allows the model to apply broad principles of molecular recognition learned from millions of data points to the specific domain of natural products, achieving high accuracy even with limited task-specific data [16].

Q3: What is the most common mistake in building machine learning models for lead prioritization, and how can we avoid it? A: A critical mistake is temporal misalignment of data or information leakage [19]. This occurs when a model is trained on data (e.g., a compound's full toxicity profile or late-stage assay results) that would not be available at the time you need to make the actual prediction (e.g., early after initial synthesis). This creates models that perform well in validation but fail in real-world use. Solution: Build your training dataset using "snapshots" of data that mirror the real decision point. For example, train your model to predict clinical success using only the types of data (e.g., in vitro potency, early ADMET) that are available at the end of the lead optimization phase, not including data from later-stage animal studies [19].
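The "snapshot" idea in Q3 can be sketched in a few lines. The example below keeps only the measurements that existed on or before a chosen decision date, so that later-stage results cannot leak into the training features. The record layout, assay names, and dates are hypothetical and stand in for whatever your data warehouse provides.

```python
from datetime import date


def snapshot_features(records, decision_date):
    """Keep only measurements recorded on or before the decision date."""
    return [r for r in records if r["measured_on"] <= decision_date]


# Hypothetical measurement history for one compound
records = [
    {"assay": "in_vitro_potency", "measured_on": date(2023, 1, 10), "value": 7.2},
    {"assay": "early_admet", "measured_on": date(2023, 2, 1), "value": 0.8},
    {"assay": "animal_efficacy", "measured_on": date(2023, 9, 15), "value": 0.3},
]

# Decision point: end of lead optimization (assumed date)
available = snapshot_features(records, decision_date=date(2023, 3, 1))
available_assays = [r["assay"] for r in available]
print(available_assays)
```

Applying the same cutoff logic to every compound in the training set, each with its own decision date, keeps validation performance closer to what the model will see in real use.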

Q4: Beyond potency (IC50/Ki), what are the most critical factors to optimize early to reduce clinical failure risk? A: You must optimize for tissue exposure and selectivity (STR) alongside activity (SAR). The STAR framework defines this integrated approach [15]. A compound with moderately high potency but excellent exposure in the disease tissue (and low exposure in organs prone to toxicity) – a Class III drug – often has a better clinical outlook than a super-potent compound with poor tissue distribution – a Class II drug. Early attention to properties that govern tissue distribution (e.g., logP, polarity, transporter affinity) is essential [15].
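As a rough illustration of the STAR classification described in Q4, the sketch below assigns a class from two yes/no judgments: whether potency is high and whether tissue exposure/selectivity is high. The numeric cutoffs (`potency_cutoff`, `exposure_cutoff`) and the input scales are placeholders chosen for the example, not values from [15]. Class IV (low on both axes) is not discussed in the text above and is included here only as an assumption to complete the two-by-two grid.

```python
def star_class(pic50, tissue_exposure_ratio,
               potency_cutoff=8.0, exposure_cutoff=3.0):
    """Assign a STAR-style class from potency and tissue exposure/selectivity.

    pic50: potency on a -log10(IC50 in M) scale.
    tissue_exposure_ratio: disease-tissue to normal-tissue exposure ratio.
    Both cutoffs are hypothetical and must be set per project.
    """
    high_potency = pic50 >= potency_cutoff
    high_exposure = tissue_exposure_ratio >= exposure_cutoff
    if high_potency and high_exposure:
        return "Class I"
    if high_potency:
        return "Class II"   # potent, but poor tissue exposure/selectivity
    if high_exposure:
        return "Class III"  # moderate potency, good tissue exposure/selectivity
    return "Class IV"       # assumed label for low potency and low exposure


print(star_class(9.1, 0.8))  # super-potent, poor distribution
print(star_class(7.0, 6.5))  # moderate potency, strong disease-tissue exposure
```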

Featured Experimental Protocols

Protocol 1: Transfer Learning for Target Prediction of Natural Products

This protocol, based on [16], details how to adapt a general prediction model to the natural product domain.

- Data Curation:

- Source Data: Obtain a large-scale drug-target interaction dataset (e.g., from ChEMBL). Remove any known natural products or their derivatives from this set.

- Target Data: Curate a smaller, high-quality dataset of natural products with experimentally confirmed protein targets. Ensure a balanced representation of active and inactive pairs.

- Model Pre-training:

- Select a deep learning architecture, such as a Multilayer Perceptron (MLP).

- Using the large source dataset, train the model to predict binary compound-target interaction. Use a low learning rate (e.g., 5×10⁻⁵) and a large batch size (e.g., 1024) for stable convergence [16].

- Validate using 5-fold cross-validation. The pre-trained model should achieve a high AUROC (>0.85) on its own test set.

- Model Fine-tuning:

- Take the pre-trained model and replace its final classification layer.

- Train (fine-tune) the model on your natural product dataset. Use a higher learning rate (e.g., 5×10⁻³) for this stage to allow the model to adapt to the new data distribution [16].

- Freeze the weights of the early layers of the network at the start of fine-tuning, then gradually unfreeze them in later training epochs.

- Validation:

- Evaluate the fine-tuned model on a held-out test set of natural products. A successful transfer learning approach should yield a final AUROC > 0.90 [16].

- Perform embedding space analysis to visualize how the model has learned to map natural products relative to synthetic molecules.
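The AUROC thresholds quoted in this protocol can be checked with a small, dependency-free function. The sketch below computes AUROC as the fraction of (active, inactive) pairs in which the active compound-target pair receives the higher score, counting ties as one half. The labels and scores are invented for illustration; for real evaluations a library routine such as scikit-learn's `roc_auc_score` is the more practical choice.

```python
def auroc(labels, scores):
    """AUROC via pairwise comparison of positive and negative scores."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("need at least one positive and one negative example")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


# Hypothetical held-out natural product test set: 1 = interacts, 0 = does not
labels = [1, 1, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.1]
test_auroc = auroc(labels, scores)
print(f"AUROC = {test_auroc:.3f}")
```

Computing this value on the same held-out set before and after fine-tuning gives a direct measure of what the transfer step contributed.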

Protocol 2: Integrated Hit-to-Lead Optimization Using HTE and Deep Learning

This protocol, based on the workflow from [18], accelerates lead discovery through systematic chemical exploration.

- Reaction Scope Definition & HTE:

- Define a versatile chemical reaction applicable to your hit scaffold (e.g., Minisci-type C-H alkylation for heteroarenes).

- Perform High-Throughput Experimentation (HTE) in microtiter plates to test thousands of reaction conditions (variations in reagent, catalyst, solvent, temperature). This generates a robust dataset of successful reactions (e.g., 13,490 data points) [18].

- Reaction Outcome Prediction Model:

- Train a deep graph neural network (GNN) on the HTE data. The model learns to predict the success and yield of novel reactant combinations within the defined reaction space.

- Virtual Library Enumeration & Multi-Parameter Optimization:

- Use your hit compound as a core scaffold. Enumerate a large virtual library (e.g., >26,000 molecules) by applying the predicted reactions to available modification sites [18].

- Screen this library in silico using a cascade of filters:

- Synthetic Accessibility: Apply the trained GNN to predict if a molecule can be reliably synthesized.

- Physicochemical Properties: Filter based on rules (e.g., Lipinski's) and predicted ADMET.

- Target Binding: Use structure-based scoring (e.g., docking, binding affinity prediction) to prioritize molecules.

- Synthesis & Validation:

- Synthesize the top-ranking candidates (e.g., 14 compounds) from the virtual screen.

- Test them in biological assays. The integrated workflow has demonstrated the ability to identify compounds with potency improvements up to 4500-fold over the original hit [18].

- Validate binding modes through methods like co-crystallography.
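The in silico filter cascade from the multi-parameter optimization step can be sketched as three successive list filters. In the example below, every field (`synth_score`, `dock_score`, the property names) and every value is hypothetical: the synthesizability score stands in for the output of the trained reaction model, and the docking score for whatever structure-based method is used. The drug-likeness step applies Lipinski's rule of five with at most one violation allowed, which may be too strict for some natural-product-like scaffolds.

```python
def passes_lipinski(props, max_violations=1):
    """Lipinski's rule of five, tolerating up to max_violations."""
    violations = sum([
        props["mol_weight"] > 500,
        props["logp"] > 5,
        props["h_donors"] > 5,
        props["h_acceptors"] > 10,
    ])
    return violations <= max_violations


def cascade(library, min_synth_score=0.5, top_k=2):
    """Filter by predicted synthesizability, then drug-likeness, then rank by docking."""
    synthesizable = [m for m in library if m["synth_score"] >= min_synth_score]
    drug_like = [m for m in synthesizable if passes_lipinski(m)]
    # Assumption: a more negative docking score means better predicted binding
    return sorted(drug_like, key=lambda m: m["dock_score"])[:top_k]


# Hypothetical virtual library entries with precomputed properties
library = [
    {"name": "A", "synth_score": 0.90, "mol_weight": 350, "logp": 2.1,
     "h_donors": 2, "h_acceptors": 5, "dock_score": -9.5},
    {"name": "B", "synth_score": 0.30, "mol_weight": 380, "logp": 2.8,
     "h_donors": 1, "h_acceptors": 4, "dock_score": -11.0},
    {"name": "C", "synth_score": 0.80, "mol_weight": 720, "logp": 6.3,
     "h_donors": 6, "h_acceptors": 12, "dock_score": -10.2},
    {"name": "D", "synth_score": 0.70, "mol_weight": 410, "logp": 3.0,
     "h_donors": 1, "h_acceptors": 6, "dock_score": -8.1},
    {"name": "E", "synth_score": 0.95, "mol_weight": 480, "logp": 4.5,
     "h_donors": 3, "h_acceptors": 8, "dock_score": -7.0},
]

selected = cascade(library)
selected_names = [m["name"] for m in selected]
print(selected_names)
```

Ordering the filters from cheapest to most expensive keeps the costly binding calculations restricted to molecules that are already plausible to make and develop.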

Visualization of Key Concepts and Workflows

Diagram 1: The cascade of failure from poor target prediction to clinical failure.

Diagram 2: Integrated workflow for accelerated hit-to-lead optimization [18].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table: Key Resources for Advanced Target Prediction and Optimization

| Item / Solution | Function / Purpose | Example / Notes |

|---|---|---|

| CETSA Kits & Reagents | To measure direct drug-target engagement in physiologically relevant environments (cells, tissues). Critical for validating that a compound binds its intended target in a complex biological system before costly in vivo studies [17]. | Commercial CETSA kits (e.g., from Pelago Bioscience) or established lab protocols. Requires a thermostable target protein and a detection method (e.g., Western blot, immunoassay). |

| Curated Natural Product-Target Datasets | For training and validating specialized AI prediction models. The quality and scope of this data are the limiting factors for model accuracy [16]. | Databases like NPASS, CMAUP, or proprietary in-house collections. Must include both active and confirmed inactive pairs for reliable model training. |

| Pre-trained AI/ML Model Weights | A starting point for transfer learning. Saves immense computational time and data resources compared to building models from scratch [14] [16]. | Models published on platforms like GitHub (e.g., from studies like [16]) or available through commercial AI drug discovery platforms (e.g., Insilico Medicine). |

| High-Throughput Experimentation (HTE) Kits | To rapidly generate large, high-quality datasets on chemical reactions or biological interactions, which fuel predictive AI models [18]. | Commercially available HTE kits for common reaction types (e.g., amide coupling, cross-coupling) or for ADMET profiling (e.g., metabolic stability microsomal kits). |

| Graph Neural Network (GNN) Software | To build models that learn directly from molecular graph structures, ideal for predicting reaction outcomes, properties, and activities [18]. | Libraries such as PyTorch Geometric, Deep Graph Library (DGL), or commercial software. Requires significant computational expertise and GPU resources. |

| Structure-Tissue Exposure/Selectivity (STR) Assay Panel | To experimentally determine the tissue distribution profile of lead compounds, a key component of the STAR framework [15]. | May include assays for tissue-specific transporter affinity, tissue homogenate binding, or advanced imaging techniques (e.g., quantitative whole-body autoradiography) in animal models. |

This technical support center provides guidance for researchers employing Guilt-by-Association (GBA) and similarity-based paradigms to predict targets for natural products (NPs). The content supports the broader thesis that integrating these computational principles with robust experimental validation is key to improving prediction accuracy and accelerating NP drug discovery.

Foundational Concepts FAQ

Q1: What are the core principles of 'Guilt-by-Association' (GBA) and similarity-based prediction?

- GBA Principle: This principle operates on the hypothesis that biologically related entities (e.g., genes, proteins, compounds) share similar functions or interactions. If a novel entity is associated with a network of entities known to be involved in a specific process, it is inferred to be "guilty" of participating in that same process [20]. In drug discovery, this translates to predicting that a query compound will interact with the protein targets of its structurally or functionally similar neighbors [21].

- Similarity-Based Prediction: This is a direct application of GBA for small molecules. It is based on the premise that structurally similar compounds are likely to have similar biological activities and bind to similar protein targets [10]. Methods typically involve calculating molecular fingerprints (numerical representations of structure) and using similarity metrics like the Tanimoto coefficient to find the closest matches in a reference database of known compound-target pairs [22].
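To make the Tanimoto coefficient concrete, the sketch below treats each fingerprint as the set of its "on" bit positions and computes the ratio of shared bits to total distinct bits. The two bit sets are invented for illustration; real fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    a, b = set(fp_a), set(fp_b)
    union = a | b
    if not union:
        return 0.0  # convention chosen here for two empty fingerprints
    return len(a & b) / len(union)


# Hypothetical on-bit positions for a query NP and a reference compound
query_bits = {1, 4, 7, 9, 15}
reference_bits = {1, 4, 8, 9, 20, 33}
similarity = tanimoto(query_bits, reference_bits)
print(similarity)
```

The value ranges from 0 (no shared bits) to 1 (identical fingerprints); here three shared bits out of eight distinct bits give 0.375.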

Q2: How do these paradigms specifically address challenges in natural product research?

Natural products pose unique challenges, including structural complexity, scarce bioactivity data, and the "cold-start" problem for novel compounds. GBA and similarity-based methods address these by:

- Leveraging Limited Data: They can make predictions even for NPs with no known targets by associating them with well-characterized similar compounds [10].

- Data Augmentation: Advanced frameworks like GBA-Mixup artificially expand training data by interpolating the embeddings of neighboring drugs and targets, improving model performance in sparse data regions [21].

- Focused Libraries: Tools like CTAPred create specialized reference datasets focused on proteins relevant to NP activity, reducing noise from non-relevant targets found in broader chemical databases [10].
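The interpolation behind mixup-style augmentation can be shown with plain lists. The sketch below is the generic mixup operation only: it blends two embeddings and their affinity labels with a weight `lam`, drawn from a Beta distribution. How GBA-Mixup selects neighboring drugs and targets, and how it sets the mixing weight, is not described here, so `sample_lambda`, `alpha`, and the example vectors are assumptions for illustration.

```python
import random


def mixup(emb_a, label_a, emb_b, label_b, lam):
    """Linearly interpolate two embeddings and their labels with weight lam."""
    mixed_emb = [lam * a + (1 - lam) * b for a, b in zip(emb_a, emb_b)]
    mixed_label = lam * label_a + (1 - lam) * label_b
    return mixed_emb, mixed_label


def sample_lambda(alpha=0.2, rng=random):
    """Draw a mixing weight from Beta(alpha, alpha), as in standard mixup."""
    return rng.betavariate(alpha, alpha)


# Two hypothetical neighboring compound embeddings with binding-affinity labels
emb_1, affinity_1 = [1.0, 0.0], 8.0
emb_2, affinity_2 = [0.0, 1.0], 6.0

mixed_emb, mixed_affinity = mixup(emb_1, affinity_1, emb_2, affinity_2, lam=0.75)
print(mixed_emb, mixed_affinity)
```

Restricting the pairs to genuine neighbors is what ties the augmentation to the GBA principle: the synthetic point is only meaningful if the two originals are expected to behave alike.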

Q3: What is the typical performance improvement when using advanced GBA frameworks?

Recent implementations demonstrate significant gains in predictive accuracy. The table below summarizes key quantitative improvements from the MixingDTA framework [21].

Table 1: Performance Improvement of the MixingDTA GBA Framework

| Model Component | Key Improvement | Reported Performance Gain |

|---|---|---|

| MEETA Backbone Model | Uses pretrained language models for molecules and proteins. | Up to 19% improvement in Mean Squared Error (MSE) over prior state-of-the-art models. |

| GBA-Mixup Augmentation | Interpolates embeddings based on GBA principle to tackle label sparsity. | Contributes a further 8.4% improvement in MSE to the MEETA model. |

| Model-Agnostic Benefit | The GBA-Mixup strategy can be applied to other model architectures. | Delivers performance gains of up to 16.9% across all tested backbone models. |

Troubleshooting Common Experimental Issues

Issue 1: High False Positive Rates in Target Predictions

- Problem: Your in silico screen returns an implausibly large number of potential protein targets, many of which fail experimental validation.

- Diagnosis & Solution:

- Refine Similarity Thresholds and Neighbor Counts: Basing a prediction on too many reference compounds increases false positives [10]. Optimize the number of top-ranked similar neighbors used for each query (often between 1 and 5) [10].

- Curate Your Reference Database: Ensure your reference library is high-quality and domain-specific. For NPs, use databases like COCONUT, NPASS, or CMAUP [10] instead of general chemical libraries. Tools like CTAPred pre-filter targets to those more relevant to NPs [10].

- Apply Pharmacophore Filters: Integrate shape and chemical feature matching (e.g., using ROCS) post-similarity search to ensure predicted hits share critical interaction motifs [10].

Issue 2: Poor Performance on Novel or Structurally Unique Natural Products ("Cold-Start")

- Problem: Prediction models fail for NPs that have no close analogs in existing bioactivity databases.

- Diagnosis & Solution:

- Utilize Pretrained Foundation Models: Employ models like MolFormer for molecules or ESM for proteins. These models learn general representations from vast datasets and can generate meaningful embeddings even for novel entities [21].

- Implement GBA-Mixup Strategies: Adopt data augmentation techniques that interpolate between a novel compound and its distant neighbors in the embedding space, effectively creating synthetic training data to inform the prediction [21].

- Leverage Multi-Modal Similarity: Move beyond 2D structure. Incorporate 3D shape similarity (e.g., with Electroshape) or predicted target profile similarity to find functional, rather than purely structural, neighbors [10].

Issue 3: Inability to Reproduce Published Algorithm Results

- Problem: You cannot replicate the performance or predictions of a published similarity-based tool.

- Diagnosis & Solution:

- Check for Full Disclosure: Many web servers do not disclose their specific algorithms or reference data, making reproduction impossible [10]. Solution: Prefer using open-source, command-line tools (e.g., CTAPred) [10] where the code and data pipeline are transparent.

- Verify Dataset Versions: Bioactivity databases (ChEMBL, COCONUT) are frequently updated. Ensure you are using the same version as the original study [10].

- Audit for "Multifunctionality Bias": GBA methods can be biased toward genes/proteins that are simply well-studied and highly connected in networks, rather than being specifically informative [20]. Critically evaluate if predictions are driven by true biological signals or general gene annotation statistics.

Detailed Experimental Protocols

Protocol 1: Implementing a Similarity-Based Target Prediction Workflow with CTAPred

This protocol outlines steps to predict targets for a list of NP query compounds using the open-source CTAPred tool [10].

- Input Preparation: Prepare a text file (query_smiles.txt) containing the SMILES strings of your query NP compounds, one per line.

- Reference Database Construction: Download and preprocess the required compound-target activity (CTA) dataset from the specified sources (ChEMBL, COCONUT, NPASS, CMAUP) as per CTAPred documentation. This creates a focused reference set.

- Fingerprint Generation & Similarity Search: Run CTAPred's first stage to generate molecular fingerprints (e.g., ECFP4) for both query and reference compounds, then execute a similarity search (e.g., using Tanimoto coefficient).

- Target Prediction & Ranking: Execute the second stage, which ranks the protein targets associated with the top N most similar reference compounds for each query. The default is the single most similar compound, but this parameter can be optimized [10].

- Output Analysis: The tool outputs a ranked list of predicted UniProt IDs for each query compound. Results should be prioritized for experimental validation.
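The logic of the similarity search and ranking stages can be sketched independently of any particular tool. The example below is not CTAPred's code or interface; it is a minimal illustration of the general idea, in which targets are inherited from the top N most similar reference compounds and ranked by the best similarity that supports them. Fingerprints are represented as sets of on-bits, and the reference entries and target labels are placeholders.

```python
from collections import defaultdict


def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two sets of on-bits."""
    union = fp_a | fp_b
    return len(fp_a & fp_b) / len(union) if union else 0.0


def predict_targets(query_fp, reference, top_n=1):
    """Rank targets of the top_n most similar reference compounds."""
    scored = [(tanimoto(query_fp, ref["fp"]), ref) for ref in reference]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    best = defaultdict(float)
    for sim, ref in scored[:top_n]:
        for target in ref["targets"]:
            best[target] = max(best[target], sim)  # keep strongest supporting similarity
    # Sort by similarity (descending), then by name for a stable order
    return sorted(best.items(), key=lambda kv: (-kv[1], kv[0]))


# Hypothetical reference set: fingerprints and placeholder target labels
reference = [
    {"fp": {1, 2, 3, 4}, "targets": ["TARGET_A", "TARGET_B"]},
    {"fp": {1, 2, 3, 9}, "targets": ["TARGET_B", "TARGET_C"]},
    {"fp": {7, 8, 9}, "targets": ["TARGET_D"]},
]
query_fp = {1, 2, 3, 4, 5}

top1 = predict_targets(query_fp, reference, top_n=1)
top2 = predict_targets(query_fp, reference, top_n=2)
print(top1)
print(top2)
```

Raising `top_n` from 1 to 2 adds TARGET_C, supported only by a weaker neighbor, which illustrates why larger neighbor counts widen coverage at the cost of more false positives.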

Protocol 2: Experimental Validation of Predicted Targets using CETSA

After in silico prediction, use the Cellular Thermal Shift Assay (CETSA) to confirm direct target engagement in a physiologically relevant cellular context [6].

- Cell Treatment: Treat live cells with your NP compound at various concentrations. Include a DMSO-only vehicle control.

- Heat Denaturation: Heat the cell aliquots to a range of temperatures (e.g., from 37°C to 65°C) to denature proteins.

- Cell Lysis & Protein Solubility Assessment: Lyse the heated cells and separate the soluble (non-denatured) protein fraction from the insoluble (denatured) fraction by centrifugation.

- Target Protein Quantification: Detect and quantify the amount of your specific predicted target protein remaining in the soluble fraction using Western blot or, for higher throughput, quantitative mass spectrometry [6].

- Data Interpretation: A positive interaction is indicated by a shift in the protein's thermal stability curve, meaning the target protein remains soluble at higher temperatures in compound-treated samples compared to the control. This confirms direct, cellular target engagement [6].
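The thermal shift in the interpretation step can be quantified as the difference in apparent melting temperature between treated and control samples. The sketch below estimates the temperature at which the soluble fraction falls to 0.5 by linear interpolation between neighboring measurements. The curves are invented numbers, and linear interpolation is a simplification; fitting a sigmoidal melting curve is the more common approach with real data.

```python
def melting_temp(temps, soluble_fraction):
    """Temperature where the soluble fraction first drops below 0.5 (linear interpolation)."""
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= 0.5 > f1:
            return t0 + (f0 - 0.5) * (t1 - t0) / (f0 - f1)
    raise ValueError("curve does not cross a soluble fraction of 0.5")


# Hypothetical normalized band intensities across the heating range (deg C)
temps = [37, 41, 45, 49, 53, 57, 61, 65]
vehicle = [1.00, 0.98, 0.90, 0.60, 0.20, 0.05, 0.02, 0.01]
treated = [1.00, 0.99, 0.96, 0.90, 0.70, 0.30, 0.08, 0.02]

tm_vehicle = melting_temp(temps, vehicle)
tm_treated = melting_temp(temps, treated)
delta_tm = tm_treated - tm_vehicle
print(f"Tm vehicle = {tm_vehicle:.1f}, Tm treated = {tm_treated:.1f}, shift = {delta_tm:.1f}")
```

A positive shift that grows with compound concentration supports stabilization of the target by the NP; a shift seen at only one concentration deserves a repeat before it is interpreted.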

Protocol 3: Building a Multi-Feature Similarity Model for Therapeutic Effect Prediction

This protocol is based on a method that predicts NP therapeutic effects by similarity to human metabolites [22].

- Data Collection: Gather structure (SMILES) and known target protein data for NPs (from TCM-ID, CTD) and human metabolites (from HMDB, KEGG). Collect phenotype/disease associations for metabolites from KEGG and HMDB.

- Similarity Feature Calculation:

- Structural: Calculate pairwise Tanimoto coefficients based on molecular fingerprints [22].

- Target: For each NP-metabolite pair, compute the average sequence alignment score (e.g., Smith-Waterman) of all their associated target proteins [22].

- Phenotype: Use a network propagation algorithm (e.g., Random Walk with Restart) on a biological network to compute phenotypic similarity scores [22].

- Model Training: Use known drug-metabolite pairs as a positive training set. Train a Support Vector Machine (SVM) classifier using the three similarity features as input to distinguish similar from dissimilar pairs [22].

- Prediction & Mapping: Apply the trained model to novel NP-metabolite pairs. For NPs paired with a metabolite, map the known therapeutic effects of that metabolite onto the NP as its predicted indication [22].
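The network propagation used for the phenotype feature can be illustrated with a small Random Walk with Restart implementation. The sketch below iterates p ← r·p0 + (1 − r)·W·p on an unweighted graph until the scores stop changing, where p0 places all probability on the seed nodes and r is the restart probability. The four-node graph, the node names, and the restart value of 0.3 are assumptions for illustration; the function also assumes every node has at least one neighbor.

```python
def random_walk_with_restart(adj, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Steady-state visiting probabilities of a walker that restarts at the seeds."""
    nodes = sorted(adj)
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: restart * p0[n] for n in nodes}
        for u in nodes:
            # Spread the non-restarting mass of u equally over its neighbors
            share = (1 - restart) * p[u] / len(adj[u])
            for v in adj[u]:
                nxt[v] += share
        if sum(abs(nxt[n] - p[n]) for n in nodes) < tol:
            return nxt
        p = nxt
    return p


# Hypothetical undirected network written as adjacency lists (a simple chain)
adj = {
    "P1": ["P2"],
    "P2": ["P1", "P3"],
    "P3": ["P2", "P4"],
    "P4": ["P3"],
}
scores = random_walk_with_restart(adj, seeds={"P1"})
print({n: round(s, 4) for n, s in scores.items()})
```

Nodes closer to the seed receive higher scores, and comparing the score vectors obtained from two different seed sets is one way to turn the propagation into a similarity measure.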

Core Algorithm & Workflow Visualizations

Similarity-Based Target Prediction Workflow

GBA-Mixup Data Augmentation Principle

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Resources for GBA and Similarity-Based NP Research

| Resource Name | Type | Primary Function in Research | Key Application / Note |

|---|---|---|---|

| ChEMBL [10] | Bioactivity Database | Provides a large, curated source of compound-target interaction data for building reference libraries. | Essential for training and benchmarking prediction models. |

| COCONUT [10] | Natural Product Database | One of the most extensive open repositories of elucidated and predicted NPs. | Critical for sourcing NP structures and building NP-focused datasets. |

| CTAPred [10] | Open-Source Tool | Command-line tool for similarity-based target prediction tailored for natural products. | Offers transparency and reproducibility; uses a focused NP-relevant target dataset. |

| CETSA [6] | Experimental Assay | Validates direct target engagement of compounds in intact cells and tissues. | Confirms computational predictions in a physiologically relevant context. |

| MolFormer / ESM [21] | Pretrained Language Model | Generates informative molecular or protein sequence embeddings for novel entities. | Solves the "cold-start" problem for NPs or proteins with little known data. |

| Tanimoto Coefficient | Similarity Metric | Quantifies the structural similarity between two molecular fingerprints. | The standard metric for 2D similarity-based virtual screening. |

| Random Walk with Restart | Network Algorithm | Measures phenotypic similarity by propagating information through biological networks [22]. | Enables prediction of therapeutic effects based on systems-level associations. |

The Methodological Toolkit: Computational and Experimental Strategies for Target Identification

Within the broader thesis context of improving target prediction accuracy for natural products (NPs), this technical support center addresses the operational use of essential similarity-based in silico target fishing tools. NPs are a vital source of novel therapeutics, but their complex, often poorly annotated structures pose a significant challenge for accurate target identification [23]. Computational tools that leverage the similarity principle—that similar molecules share similar biological targets—are indispensable workhorses for generating testable hypotheses [24]. This resource provides focused troubleshooting, protocols, and best practices for researchers and drug development professionals to optimize the use of these tools, thereby enhancing the reliability and efficiency of NP-based drug discovery pipelines.

Technical Specifications & Comparative Performance

Selecting the appropriate tool is the first critical step. The following table summarizes the core specifications and performance metrics of two highly recommended ligand-based target prediction methods, as evaluated in a 2023 comparative study [25].

Table 1: Comparative Analysis of Key Similarity-Based Target Prediction Tools

| Tool Name | Core Algorithm & Principle | Underlying Data (as of latest update) | Key Performance Metric | Primary Use Case & Strength |

|---|---|---|---|---|

| SwissTargetPrediction [24] | Combined 2D (FP2 fingerprint/Tanimoto) and 3D (Electroshape 5D/Manhattan) similarity scoring via logistic regression. | 376,342 compounds with >580,000 activities across 3,068 protein targets (ChEMBL23) [24]. | Achieves at least one correct human target in the top 15 predictions for >70% of external compounds [24]. | High-Precision Fishing: Best for producing reliable, high-confidence target predictions from a well-characterized chemical space. |

| Similarity Ensemble Approach (SEA) [25] | Calculates similarity between the query and each target's ligand set using Tanimoto coefficients on ECFP4 fingerprints, then aggregates the scores with a statistical model. | Not specified in the cited sources; the algorithm focuses on statistical enrichment. | High Recall: Able to find real targets for more query compounds compared to other methods [25]. | Broad-Spectrum Discovery: Optimal for casting a wide net and identifying potential targets outside the most obvious ones. |

Troubleshooting Guides & FAQs

A. Input & Job Submission Issues

Q1: My job on SwissTargetPrediction fails immediately or the molecule sketch appears incorrect. What should I check?

- Cause: This is typically an issue with the molecular structure input. The tool requires a valid, drug-like small molecule. Common errors include incorrect SMILES syntax, drawing disconnected structures, or inputting molecules that are too large (e.g., peptides or polymers) [24].

- Solution:

- Verify SMILES: If using a SMILES string, validate it using a dedicated chemical validator or re-sketch the molecule in the integrated MarvinJS editor [24].

- Check Structure: Ensure the drawn structure is a single, connected molecule. Remove any stray atoms or bonds.

- Use Provided Inputs: Utilize the tool's "Examples" to test the system and confirm your input method is working.

- Browser Compatibility: The website is optimized for Chrome, Firefox, and Safari. Using an unsupported browser may cause interface issues [24].
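Some of the input problems listed above can be caught before submission with a quick text-level check. The sketch below is deliberately shallow: it flags empty or whitespace-containing strings, disconnected components (a `.` in the SMILES), and unbalanced parentheses or square brackets. It does not verify chemistry or ring closures, so a string that passes can still be invalid; a full parser, for example RDKit's `Chem.MolFromSmiles`, which returns `None` for unparsable input, is the stronger check where available.

```python
def smiles_precheck(smiles):
    """Return a list of obvious text-level problems in a SMILES string."""
    problems = []
    if not smiles or any(ch.isspace() for ch in smiles):
        problems.append("empty string or contains whitespace")
    if "." in smiles:
        problems.append("disconnected components ('.' present)")
    stack = []
    closers = {")": "(", "]": "["}
    for ch in smiles:
        if ch in "([":
            stack.append(ch)
        elif ch in closers:
            if not stack or stack.pop() != closers[ch]:
                problems.append("unbalanced brackets or parentheses")
                break
    else:
        # Loop finished without a mismatch; anything left open is still an error
        if stack:
            problems.append("unbalanced brackets or parentheses")
    return problems


print(smiles_precheck("CC(=O)Oc1ccccc1C(=O)O"))  # aspirin
print(smiles_precheck("CC(=O)[O-].[Na+]"))       # salt form: two components
print(smiles_precheck("CC(=O"))                  # truncated string
```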

Q2: I receive no predictions or very low probability scores for my natural product. Is the tool not working?

- Cause: This is often a data gap issue, not a tool failure. The prediction is based on similarity to a library of known actives. If your NP is highly novel and structurally unique, there may be no sufficiently similar reference compounds in the tool's database [24] [23].

- Solution:

- Run Multiple Tools: Employ a consensus strategy. Submit your molecule to both SwissTargetPrediction and SEA. SEA's high-recall nature may identify targets missed by others [25].

- Check Tautomers/Chirality: For NPs, correct stereochemistry is critical. If possible, generate and submit the major tautomer and the correct stereoisomer.

- Consider Precursor or Fragment: Try predicting targets for known biosynthetic precursors or major structural fragments of your NP. A positive hit can provide a valuable starting point for investigation.
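
To see why a structurally novel NP can return weak predictions, it helps to look at the similarity measure itself. The sketch below computes the Tanimoto coefficient on fingerprints represented as sets of "on" bit indices. The bit sets and reference names are toy values invented for illustration; real tools derive fingerprints from the structure and apply their own scoring on top.

```python
def tanimoto(a: set[int], b: set[int]) -> float:
    """Tanimoto coefficient: shared on-bits divided by total distinct on-bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)


def best_reference_match(query: set[int], library: dict[str, set[int]]) -> tuple[str, float]:
    """Return the most similar reference ligand and its similarity to the query."""
    return max(
        ((name, tanimoto(query, bits)) for name, bits in library.items()),
        key=lambda pair: pair[1],
    )


if __name__ == "__main__":
    # Toy reference library (hypothetical bit sets, not real fingerprints).
    library = {"ref_a": {1, 2, 3, 4, 5}, "ref_b": {10, 11}}
    familiar_query = {1, 2, 3, 4}   # overlaps heavily with ref_a
    novel_query = {100, 101, 102}   # shares no features with any reference
    print(best_reference_match(familiar_query, library))
    print(best_reference_match(novel_query, library))
```

When the best match for a query is close to zero, as for `novel_query`, a similarity-based tool has nothing to anchor a prediction on, which is the data gap described above rather than a malfunction.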

B. Performance & Technical Issues

Q3: The tool is running much slower than the advertised 15-20 seconds. What could be the problem?

- Cause: Server load or local internet connectivity issues. SwissTargetPrediction uses a queuing system (Slurm) to manage computations [24].

- Solution:

- Be Patient During Peak Times: High user traffic (common during business hours in major research regions) can delay job processing.

- Refresh the Page: The results page may not auto-refresh. Manually refresh your browser after a minute to check for job completion.

- Check Your Connection: A slow or unstable internet connection can delay the initial submission and final loading of results.

Q4: How can I improve the computational efficiency of my virtual screening workflow with these tools?

- Cause: Manual submission of large compound libraries is impractical.

- Solution: For batch processing, check whether the tool offers an API (Application Programming Interface). Tools like SwissTargetPrediction are often built on open-source cheminformatics libraries such as RDKit, so the same kinds of calculations can be integrated into automated pipelines for high-throughput screening [26].

C. Interpretation & Validation Issues

Q5: I get a long list of predicted targets with varying probabilities. How do I prioritize them for experimental validation?

- Cause: The probabilistic output requires careful biological triage.

- Solution:

- Focus on High Probability & Consensus: Prioritize targets with the highest combined scores (SwissTargetPrediction) or the lowest p-values (SEA), since a lower p-value indicates a more significant match. Give the highest priority to targets that appear in the top ranks of multiple prediction tools [25].

- Analyze Target Class: Use the provided target class visualization (e.g., kinases, GPCRs) to see if predictions cluster in a biologically relevant family for your NP's known or suspected activity [24].

- Apply Biological Context: Cross-reference predictions with gene expression data in your disease model or known pathways associated with the NP's phenotypic effect.
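
The consensus step can be sketched as a simple rank aggregation. The example below assumes each tool's output has already been reduced to a list of target names ordered best first. The function name `consensus_rank`, the `top_n` cutoff, and the scoring rule (number of supporting tools, then summed reciprocal rank) are illustrative choices, not a method prescribed by either tool.

```python
from collections import defaultdict


def consensus_rank(rankings: dict[str, list[str]], top_n: int = 15) -> list[tuple[str, int, float]]:
    """Merge per-tool ranked target lists into one prioritized list.

    Returns (target, number_of_supporting_tools, summed_reciprocal_rank),
    sorted by support first, then by score, then alphabetically.
    """
    score = defaultdict(float)
    support = defaultdict(int)
    for targets in rankings.values():
        for rank, target in enumerate(targets[:top_n], start=1):
            score[target] += 1.0 / rank   # rank 1 contributes most
            support[target] += 1
    return sorted(
        ((t, support[t], score[t]) for t in score),
        key=lambda row: (-row[1], -row[2], row[0]),
    )


if __name__ == "__main__":
    # Toy rankings; tool names and target lists are invented for illustration.
    rankings = {
        "tool_a": ["PTGS2", "EGFR", "ESR1"],
        "tool_b": ["EGFR", "PTGS2", "CA2"],
    }
    for target, n_tools, s in consensus_rank(rankings):
        print(f"{target:6s} tools={n_tools} score={s:.2f}")
```

Using ranks rather than raw scores sidesteps the fact that a probability from one tool and a p-value from another are not on a comparable scale.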

Q6: My experimental validation contradicts the computational prediction. Does this mean the tool is inaccurate?

- Cause: Not necessarily. Several factors can explain this:

- Off-target Effects: The predicted target may be hit in vitro but the observed cellular phenotype is driven by a different, unpredicted target.

- Probe Dependency: The effect may require the compound to act on a protein complex or pathway network not captured by single-target prediction.

- Data Gap: The true target may not be present in the tool's training dataset.

- Solution:

- Use Prediction as a Guide: Treat computational predictions as high-quality hypotheses, not definitive answers. They are designed to direct and accelerate experimental work [27].

- Iterative Investigation: Use the initial prediction and experimental data to refine your search. For example, known ligands of the incorrectly predicted target can be used in new similarity searches to find related proteins.

Experimental Protocols for Enhanced Prediction

Accurate input structure is paramount, especially for the 3D similarity component of tools like SwissTargetPrediction. This protocol focuses on obtaining reliable 3D conformations for natural products.

Protocol: Generating 3D Structural Inputs for Natural Product Target Fishing

Objective: To prepare an accurate, energetically reasonable 3D molecular structure of a natural product for use in similarity-based target prediction tools that utilize 3D information.

Rationale: The 3D shape and electrostatic potential of a molecule are critical for its interaction with biological targets. Many NPs lack experimentally resolved 3D structures, and standard conformation generation tools may fail to correctly assign their complex chiral centers [2]. Using a specialized tool like NatGen significantly improves input accuracy.

Materials (The Scientist's Toolkit):

- NatGen: A deep learning framework for predicting chiral configurations and 3D conformations of natural products [2].

- COCONUT Database: The source of NP structures for pre-predicted files, or to find your compound of interest [2].

- RDKit or OpenBabel: Open-source cheminformatics toolkits for fundamental structure manipulation, format conversion, and basic conformation generation if NatGen is not used [26].

- SwissTargetPrediction Website: The primary target fishing tool that accepts 3D structure files (e.g., SDF) [24].

Methodology:

- Structure Acquisition & Preparation:

- Obtain the 2D molecular structure (SMILES or MOL file) of your natural product. Ensure the stereochemistry is defined as completely as possible from the literature or analytical data.

- If stereochemistry is unknown or ambiguous, note this for later interpretation of results.

- 3D Conformation Generation (NatGen-Preferred Method):

- Access the NatGen resource (https://www.lilab-ecust.cn/natgen/) [2].

- Option A: Search if your NP's 3D structure is already available in the NatGen-predicted database of 684,619 NPs from COCONUT. If found, download the 3D structure file (SDF format).

- Option B: If not pre-predicted, submit the 2D structure (SMILES) to the NatGen server for prediction. NatGen reports 96.87% accuracy on chiral configuration prediction [2].

- Download the predicted 3D structure file.

- Alternative 3D Conformation Generation (Standard Method):

- If NatGen is unavailable, use a conformational search algorithm within software like RDKit or Schrödinger's LigPrep [27].

- Generate multiple low-energy conformers. For rigid molecules, one conformer may suffice. For flexible molecules, select a representative low-energy conformer or consider submitting multiple conformations as separate queries.

- Critical Note: This method may produce incorrect chiral assignments for complex NPs, reducing prediction reliability [2].
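
Choosing which conformers to submit can be made systematic once a conformational search has produced energies. The sketch below assumes the energies (in kcal/mol) have already been exported from whichever program generated the conformers. It keeps those within an energy window of the minimum and reports Boltzmann weights at 298 K. The 3 kcal/mol window and the function name `select_conformers` are assumptions for illustration, not values taken from the cited tools.

```python
import math

# Thermal energy kT at 298 K in kcal/mol (gas constant 0.0019872 kcal/(mol K)).
KT_KCAL_PER_MOL = 0.0019872 * 298.15


def select_conformers(energies: dict[str, float], window: float = 3.0) -> list[tuple[str, float, float]]:
    """Keep conformers within `window` kcal/mol of the lowest-energy one.

    Returns (conformer_id, relative_energy, boltzmann_weight) sorted by
    energy; weights are normalized over the retained conformers only.
    """
    e_min = min(energies.values())
    kept = {cid: e - e_min for cid, e in energies.items() if e - e_min <= window}
    factors = {cid: math.exp(-de / KT_KCAL_PER_MOL) for cid, de in kept.items()}
    z = sum(factors.values())
    return sorted(
        ((cid, kept[cid], factors[cid] / z) for cid in kept),
        key=lambda row: row[1],
    )


if __name__ == "__main__":
    # Toy energies for three conformers of a hypothetical flexible NP.
    energies = {"conf_1": 10.0, "conf_2": 10.5, "conf_3": 16.0}
    for cid, rel_e, weight in select_conformers(energies):
        print(f"{cid}: +{rel_e:.1f} kcal/mol, weight {weight:.2f}")
```

For a rigid molecule the first entry usually dominates; for a flexible one, several entries with comparable weights suggest submitting each as a separate query.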

- Tool Submission:

- Navigate to the SwissTargetPrediction website.

- Instead of sketching, use the "Choose File" option to upload your 3D structure file (e.g., .sdf, .mol2).

- Select the appropriate species (Human/Mouse/Rat) and run the prediction.

Diagram: Workflow for Natural Product Target Prediction

This technical support center is designed for researchers, scientists, and drug development professionals working to improve prediction accuracy in natural product target research. The integration of Machine Learning (ML), Graph Neural Networks (GNNs), and Ensemble Models represents a transformative shift from manual, trial-and-error screening to data-driven, model-guided discovery pipelines [28]. Artificial Intelligence (AI) can accelerate the discovery of bioactive natural products by enabling efficient analysis of extensive datasets for virtual screening, compound optimization, and pharmacological mechanism elucidation [29].

However, implementing these advanced computational approaches presents unique challenges. This guide directly addresses specific, practical issues you might encounter during your experiments, framed within the broader thesis of enhancing predictive accuracy. The landscape is rapidly evolving, with regulatory bodies like the U.S. Food and Drug Administration (FDA) actively developing frameworks for the use of AI in drug development, underscoring the need for robust and reliable methodologies [30] [31].

Core Technical Challenges and Troubleshooting

This section provides targeted solutions for common technical problems in AI-driven natural product research.

Data Preparation and Modeling Issues

Q1: My dataset of natural product compounds and associated bioactivities is relatively small and imbalanced. Which model architectures should I prioritize to avoid overfitting and poor generalization?

A1: Small, imbalanced datasets are a pervasive challenge in natural product research [32]. Prioritize the following strategies:

- Use Ensemble Models: Begin with tree-based ensemble methods like Gradient Boosting or Random Forest. These models are generally more robust to overfitting on small data than deep neural networks. A comparative study on multiclass prediction found Gradient Boosting achieved the highest macro accuracy (67%) among several algorithms on a limited dataset [33].

- Employ Transfer Learning: Leverage pre-trained models on large, general chemical databases (e.g., ChEMBL, PubChem). Fine-tune these models on your specific natural product dataset. This approach allows the model to start with learned chemical representations, reducing the data required for training [28].

- Implement Data Augmentation: For structural data, use validated techniques like SMILES randomization or adding synthetic data points through scaffold- or property-based sampling to artificially enlarge your training set.

- Apply Robust Validation: Use scaffold splitting (splitting data by molecular scaffold) instead of random splitting for train/test/validation sets. This ensures your model's performance is evaluated on novel chemotypes, providing a truer estimate of its generalization ability [32].
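
Scaffold splitting can be sketched in a few lines once each compound has a scaffold identifier. The example assumes the scaffold strings (for instance Bemis-Murcko scaffolds as SMILES) have already been computed with a cheminformatics toolkit; here they are replaced by placeholder letters. The function name `scaffold_split` and the greedy fill rule are illustrative assumptions, one common variant among several.

```python
from collections import defaultdict


def scaffold_split(scaffolds: list[str], test_fraction: float = 0.2) -> tuple[list[int], list[int]]:
    """Split sample indices so that no scaffold appears in both sets.

    Greedy rule: process scaffold groups from largest to smallest and add a
    group to the training set while it still fits; remaining groups go to
    the test set, which therefore consists of the rarer scaffolds.
    """
    groups = defaultdict(list)
    for idx, scaffold in enumerate(scaffolds):
        groups[scaffold].append(idx)
    ordered = sorted(groups.values(), key=lambda g: (-len(g), g[0]))
    n_train_target = len(scaffolds) - round(len(scaffolds) * test_fraction)
    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


if __name__ == "__main__":
    # Placeholder scaffold labels standing in for real scaffold SMILES.
    scaffolds = ["A", "A", "A", "A", "B", "B", "C", "D", "A", "B"]
    train_idx, test_idx = scaffold_split(scaffolds)
    print("train:", sorted(train_idx))
    print("test: ", sorted(test_idx))
```

Because whole groups are moved at once, the realized test fraction only approximates the requested one, which is usually an acceptable price for a leakage-free estimate.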

Q2: I am trying to model the complex relationships between natural products, their protein targets, and associated disease pathways. Why are standard ML models underperforming, and what is a better approach?

A2: Standard ML models (e.g., SVMs, feed-forward neural networks) typically require tabular, fixed-size inputs and struggle with the inherently relational and heterogeneous data in biology. A superior approach is to structure your data as a knowledge graph and apply Graph Neural Networks (GNNs) [34].

- Root Cause: The power in this domain lies in the connections—e.g., how a compound interacts with a target, which is part of a pathway, which is implicated in a disease. Tabular models lose this relational information.

- Solution: Build a heterogeneous knowledge graph where nodes represent different entity types (Compound, Target, Pathway, Disease) and edges represent their relationships (binds-to, participates-in, associated-with). A GNN can then learn rich embeddings for each node by propagating information across the network, capturing the system's topology. This is fundamental for tasks like target fishing, drug repurposing, and polypharmacology prediction [28].

- Troubleshooting Step: If a GNN model is training slowly or consuming too much memory, check for densely connected "hub" nodes (e.g., a promiscuous compound). Consider graph sampling techniques like neighborhood sampling to manage computational load.
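
Neighborhood sampling, mentioned in the troubleshooting step, caps how many neighbors are expanded per node so that a single hub cannot blow up a mini-batch. The following is a minimal, framework-free sketch on an adjacency dictionary. The node naming scheme (`compound:`, `target:`, `pathway:`), the function name, and the default fan-out are assumptions for illustration; GNN libraries ship their own samplers with different interfaces.

```python
import random


def sample_neighborhood(adjacency, seeds, fanout=5, hops=2, rng=None):
    """Sample a bounded subgraph around the seed nodes.

    adjacency: dict mapping a node to a list of neighbor nodes.
    At each hop, at most `fanout` neighbors are drawn per frontier node.
    Returns (visited_nodes, sampled_edges).
    """
    rng = rng or random.Random(0)  # fixed seed keeps the sketch reproducible
    visited = set(seeds)
    frontier = list(seeds)
    edges = []
    for _ in range(hops):
        next_frontier = []
        for node in frontier:
            neighbors = adjacency.get(node, [])
            if len(neighbors) > fanout:
                neighbors = rng.sample(neighbors, fanout)
            for nb in neighbors:
                edges.append((node, nb))
                if nb not in visited:
                    visited.add(nb)
                    next_frontier.append(nb)
        frontier = next_frontier
    return visited, edges


if __name__ == "__main__":
    # Toy heterogeneous graph: one promiscuous compound linked to 100 targets.
    graph = {
        "compound:hub": [f"target:{i}" for i in range(100)],
        "target:0": ["pathway:x"],
    }
    nodes, edges = sample_neighborhood(graph, ["compound:hub"], fanout=5, hops=1)
    print(len(nodes), "nodes,", len(edges), "edges sampled from a 100-neighbor hub")
```

The cost per seed is then bounded by roughly `fanout ** hops` edges regardless of how densely connected the hub is.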

Q3: My ensemble model's performance plateaued. How can I strategically combine different model types (e.g., a GNN and a gradient boosting model) to achieve better predictive accuracy?

A3: A naive ensemble (e.g., simple averaging) of diverse models may not yield optimal gains. Implement a learned ensemble or stacking strategy.

- Protocol for a Two-Stage Stacking Ensemble:

- Base Models: Train your diverse set of "base learners" (e.g., a GNN, a Random Forest, an XGBoost model) using k-fold cross-validation on your training data.

- Generate Meta-Features: For each training sample, collect each base model's out-of-fold prediction, that is, the prediction made by the copy of the model that was trained without that sample's fold. This creates a new "meta-feature" dataset where each column is a base model's prediction, and the target remains the original label.

- Train Meta-Model: Train a relatively simple "meta-model" (e.g., logistic regression or a shallow neural network) on this new dataset. This meta-model learns the optimal way to weight and combine the predictions of the base models.

- Inference: For final prediction on new data, pass the input through all base models, then feed their outputs into the trained meta-model.

- Advanced Method: For dynamic, context-dependent weighting, consider a reinforcement learning-based ensemble framework. Research has shown that an ensemble model based on GNN and reinforcement learning (EMGRL) can effectively leverage the strengths of different base models by learning a policy for model selection and weighting [35].
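
The step of the stacking protocol that most often goes wrong is meta-feature generation, because any prediction made by a model that saw the sample during training leaks the label into the meta-model. The sketch below shows only that step, in plain Python. Base learners are represented as hypothetical `fit(X_train, y_train)` functions that return a `predict(x)` callable; the two toy learners are placeholders for a GNN or tree ensemble, and the fold assignment rule is an assumption chosen for determinism.

```python
def kfold_indices(n: int, k: int) -> list[list[int]]:
    """Assign sample i to fold i % k (shuffle the data first if its order carries signal)."""
    return [[i for i in range(n) if i % k == fold] for fold in range(k)]


def out_of_fold_meta_features(base_learners, X, y, k=5):
    """Build the meta-feature matrix: one row per sample, one column per base learner.

    Each entry is predicted by a model trained WITHOUT the fold that
    contains the sample, so the meta-model never sees leaked labels.
    """
    n = len(X)
    meta = [[None] * len(base_learners) for _ in range(n)]
    for held_out in kfold_indices(n, k):
        held = set(held_out)
        train_idx = [i for i in range(n) if i not in held]
        X_train = [X[i] for i in train_idx]
        y_train = [y[i] for i in train_idx]
        for col, fit in enumerate(base_learners):
            predict = fit(X_train, y_train)
            for i in held_out:
                meta[i][col] = predict(X[i])
    return meta


# Two toy base learners on a single numeric feature (placeholders only).
def fit_mean(X_train, y_train):
    mean = sum(y_train) / len(y_train)
    return lambda x: mean


def fit_nearest_neighbor(X_train, y_train):
    pairs = list(zip(X_train, y_train))
    return lambda x: min(pairs, key=lambda p: abs(p[0] - x))[1]


if __name__ == "__main__":
    X = [0.1, 0.2, 0.3, 0.8, 0.9, 1.0]
    y = [0, 0, 0, 1, 1, 1]
    meta = out_of_fold_meta_features([fit_mean, fit_nearest_neighbor], X, y, k=3)
    for features, label in zip(meta, y):
        print(features, "->", label)
```

The rows of `meta`, paired with the original labels, form the training set for the meta-model; at inference time the base learners are refit on all training data before their outputs are passed to it.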

Validation and Regulatory Compliance

Q4: My AI model shows excellent cross-validation metrics, but it fails during external validation or prospective testing. What critical steps might I have missed?

A4: This is a common pitfall. It usually indicates that the new compounds fall outside the model's applicability domain or that the validation protocol is flawed.

- Critical Check 1: Data Drift & Representativeness. Ensure your external/prospective data comes from the same distribution as your training data. Analyze the chemical space coverage (e.g., using PCA or t-SNE plots). If the new compounds fall outside the space covered during training, the model is extrapolating and its predictions are unreliable.

- Critical Check 2: Prospective Validation Protocol. AI-driven discovery must move beyond retrospective analysis. Pre-register your experimental validation plan before running the model on new data. Define the success criteria, the number of compounds to be tested, and the assay protocols. This prevents unintentional cherry-picking of positive results post-hoc [36].

- Critical Check 3: Uncertainty Quantification. Implement methods for your model to report prediction uncertainty (e.g., using ensemble variance, Bayesian methods, or conformal prediction). High uncertainty on a prediction is a flag for manual review. The FDA's draft guidance on AI emphasizes the challenge of uncertainty quantification and the need for it in regulatory submissions [31].
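
Of the uncertainty methods listed, ensemble variance is the simplest to prototype. The sketch below takes the predicted probabilities from several independently trained ensemble members for one compound-target pair and flags the prediction for manual review when the members disagree. The function name and the 0.15 standard-deviation threshold are illustrative assumptions; a real threshold would need to be calibrated on held-out data.

```python
from statistics import mean, pstdev


def ensemble_uncertainty(member_predictions: list[float], max_std: float = 0.15) -> dict:
    """Summarize agreement among ensemble members for a single prediction.

    Returns the mean prediction, the population standard deviation across
    members, and a flag that is True when the spread exceeds `max_std`.
    """
    mu = mean(member_predictions)
    sigma = pstdev(member_predictions)
    return {"mean": mu, "std": sigma, "needs_review": sigma > max_std}


if __name__ == "__main__":
    # Toy member outputs (hypothetical probabilities of binding).
    confident = ensemble_uncertainty([0.90, 0.88, 0.92])
    uncertain = ensemble_uncertainty([0.10, 0.90, 0.50])
    print("members agree:   ", confident)
    print("members disagree:", uncertain)
```

Disagreement between members tends to grow for compounds far from the training chemical space, so this flag complements the chemical space coverage analysis in Critical Check 1.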