From Maceration to Machine Learning: A Comparative Analysis of Traditional vs. AI-Powered Natural Product Discovery

Abstract

This article provides a comprehensive, comparative analysis of traditional and artificial intelligence (AI)-driven approaches in natural product (NP) research for drug discovery. Aimed at researchers, scientists, and drug development professionals, it explores the foundational principles, methodological applications, practical challenges, and validation strategies of both paradigms. The scope spans from conventional extraction and bioassay-guided isolation to modern AI applications in virtual screening, activity prediction, and de novo design. The analysis synthesizes current evidence, addresses key operational and regulatory challenges, and outlines a forward-looking perspective on integrating these complementary methodologies to accelerate the translation of natural products into novel therapeutics.

The Pillars of Discovery: Core Principles of Traditional and AI-Driven Natural Product Research

Thesis Context: This guide is framed within a broader thesis comparing traditional and artificial intelligence (AI) approaches for natural products research, aiming to provide researchers, scientists, and drug development professionals with an objective, data-driven comparison of their performance and applications.

Historical Foundations and Modern Evolution of Natural Products Research

The investigation of natural products (NPs) for therapeutic purposes is one of the oldest scientific traditions and forms the cornerstone of modern pharmacology [1]. Historical records from ancient Mesopotamia (2600 B.C.), Egypt (Ebers Papyrus, c. 1550 B.C.), and China (Shennong Herbal, ~100 B.C.) document the extensive use of plant oils, extracts, and other natural substances to treat ailments ranging from coughs and inflammation to more complex diseases [1]. This empirical knowledge, developed through centuries of trial and error, established the foundational paradigm of traditional NP research: bioactivity-guided isolation and characterization [1].

The 19th and 20th centuries marked the systematization of this paradigm. Techniques such as solvent extraction, chromatography for purification, and spectroscopic methods (like NMR and mass spectrometry) for structural elucidation became standard [2]. This workflow led to landmark discoveries, including salicin (the precursor to aspirin) from willow bark, morphine from opium poppy, and penicillin from fungal mold [1] [3]. The core approach was—and in many labs remains—experimental and iterative: extract, fractionate, test for bioactivity, and identify the active constituent. However, this process is often labor-intensive, time-consuming, and limited by the complexity of natural mixtures and the scarcity of source material [2] [4]. The development of a single drug like Taxol from the Pacific yew tree spanned decades, highlighting the bottlenecks of traditional methods [4].

In the late 20th century, advances in genomics, analytical chemistry, and computational power began to reshape the field. The integration of omics technologies (genomics, metabolomics, proteomics) provided a more holistic view of the biosynthetic capabilities of organisms and the complexity of natural extracts [2] [5]. Concurrently, the advent of artificial intelligence (AI), particularly machine learning (ML) and deep learning (DL), has introduced a complementary, data-driven paradigm. AI leverages algorithms to find patterns in vast, multimodal datasets—including chemical structures, genomic sequences, spectral data, and pharmacological profiles—to predict bioactivity, elucidate structures, and even design novel NP-inspired molecules in silico before physical testing begins [6] [7] [4].

This evolution has created two interconnected yet distinct paradigms: the traditional, empirically-driven approach and the modern, AI-prediction-guided approach. The following sections provide a detailed, objective comparison of their methodologies, performance, and practical applications.

Comparative Analysis of Research Paradigms: Methodologies and Performance

The fundamental difference between the traditional and AI-enhanced paradigms lies in the starting point and sequence of the discovery workflow. The following table summarizes the core characteristics, advantages, and limitations of each approach.

Table 1: Core Paradigm Comparison in Natural Products Research

| Aspect | Traditional/Empirical Paradigm | AI-Enhanced/Predictive Paradigm |
|---|---|---|
| Primary Driver | Experimental observation & bioassay-guided fractionation [1] [2]. | Data-driven prediction & in silico modeling prior to experimentation [6] [4]. |
| Typical Workflow | Collection → Extraction → Bioactivity Screening → Bioassay-Guided Fractionation → Isolation → Structural Elucidation [2] [4]. | Data Curation & Integration → In Silico Target/Activity Prediction → Virtual Screening → Prioritization → Targeted Isolation/Synthesis [6] [7]. |
| Key Strengths | • Direct empirical validation. • Unbiased discovery of novel scaffolds & serendipitous findings. • Well-established, reproducible techniques [1] [2]. | • High speed & scalability in analyzing vast chemical space. • Ability to predict complex properties (ADMET, bioactivity). • Can overcome supply limitations via de novo design [6] [3] [4]. |
| Major Limitations | • Low-throughput, resource- & time-intensive. • High rediscovery rate of known compounds (dereplication challenge). • Limited by source availability & extraction yields [2] [4]. | • Dependent on quality, quantity, and standardization of training data. • Risk of model bias & "black box" interpretability issues. • Predictions require final empirical validation [6] [7] [8]. |
| Data Utilization | Relies on data generated from each sequential experiment to guide the next step. | Integrates and learns from pre-existing, multimodal datasets (chemical, genomic, phenotypic) to generate hypotheses [7] [4]. |

The performance of these paradigms can be quantified in key discovery phases. Recent studies leveraging AI demonstrate significant acceleration.

Table 2: Performance Comparison in Key Research Phases

| Research Phase | Traditional Paradigm Metrics | AI-Enhanced Paradigm Metrics & Examples |
|---|---|---|
| Initial Screening & Hit Identification | • Months to years for extract library screening. • Hit rate often < 0.1% in random screening [2]. | • Virtual screening of millions of structures in days [4]. • Example: Integration of pharmacophore & protein-ligand data reported >50-fold enrichment in hit rates compared to traditional methods [9]. |
| Structure Elucidation | • Relies on extensive NMR/MS analysis; can take weeks per compound. • Challenging for novel or complex scaffolds [2]. | • AI models (e.g., Deep Neural Networks) can predict NMR spectra or suggest structures from spectral data. • Example: DP4-AI automates NMR analysis for configuration assignment, speeding up the process [8]. |
| Lead Optimization | • Iterative synthesis & testing cycles; each cycle can take months. • Guided by structure-activity relationship (SAR) intuition [2]. | • Generative AI designs novel analogs meeting multiple criteria. • Example: Deep graph networks generated >26,000 virtual analogs, leading to inhibitors with a >4,500-fold potency improvement from initial hits [9]. |
| Mechanism Prediction | • Target identification requires extensive biochemical & cellular assays (e.g., pull-down assays, knockouts). | • Network pharmacology & knowledge graphs predict herb-ingredient-target-pathway interactions in silico [6] [5]. |
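Fold-enrichment figures such as the >50-fold value above are usually the ratio of the hit rate among prioritized compounds to the hit rate of a baseline screen. The sketch below computes that ratio; the counts are hypothetical and are not taken from [9].

```python
def hit_rate(hits: int, tested: int) -> float:
    """Fraction of tested compounds confirmed as active."""
    if tested <= 0:
        raise ValueError("tested must be a positive count")
    return hits / tested


def enrichment_factor(hits_sel: int, n_sel: int, hits_base: int, n_base: int) -> float:
    """Hit rate of the prioritized set divided by the baseline hit rate."""
    baseline = hit_rate(hits_base, n_base)
    if baseline == 0:
        raise ValueError("baseline hit rate is zero; enrichment is undefined")
    return hit_rate(hits_sel, n_sel) / baseline


# Hypothetical counts: 10 hits from 10,000 randomly screened samples (0.1%)
# versus 6 hits from 100 computationally prioritized compounds (6%).
ef = enrichment_factor(hits_sel=6, n_sel=100, hits_base=10, n_base=10_000)
print(f"Enrichment factor: {ef:.0f}-fold")  # Enrichment factor: 60-fold
```

Because the result depends directly on the baseline hit rate, fold-enrichment values from different studies are only comparable when their baselines are similar.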

Detailed Experimental Protocols for Key Methodologies

Protocol for Traditional Bioassay-Guided Fractionation

This remains the gold standard for isolating bioactive pure compounds from complex natural extracts [2].

- Sample Preparation & Extraction: Source material (plant, marine organism, microbial culture) is dried, ground, and sequentially extracted with solvents of increasing polarity (e.g., hexane, dichloromethane, ethyl acetate, methanol/water) to create a crude extract library [2].

- Primary Bioactivity Screening: Crude extracts are screened in relevant in vitro biological assays (e.g., antimicrobial, anticancer, enzyme inhibition). The most potent extract is selected for further study.

- Bioassay-Guided Fractionation:

- The active crude extract is subjected to coarse separation, typically using vacuum liquid chromatography (VLC) or flash column chromatography with a normal-phase (e.g., silica gel) or reversed-phase (e.g., C18) stationary phase.

- Collected fractions are concentrated, and aliquots are tested in the same bioassay.

- Active fractions are further purified using high-performance liquid chromatography (HPLC) (analytical or semi-preparative scale) with optimized solvent systems.

- This cycle of fractionation and bioassay is repeated until pure, active compounds are obtained [2] [4].

- Structural Elucidation: The pure compound is subjected to:

- High-Resolution Mass Spectrometry (HR-MS) for molecular formula.

- Nuclear Magnetic Resonance (NMR) Spectroscopy (1D: 1H, 13C; 2D: COSY, HSQC, HMBC) for structural determination.

- Comparison with literature data or databases for dereplication to avoid rediscovery of known compounds [2].
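Database dereplication often starts with accurate-mass matching: the HR-MS ion is compared with the expected [M+H]+ of known compounds within a ppm tolerance. The sketch below assumes positive-mode [M+H]+ ions, formulas containing only C, H, N and O, and a 5 ppm window; the three-compound library is illustrative and is not a real NP database.

```python
import re

# Monoisotopic masses (u) of the most abundant isotopes, plus the proton mass.
MONO = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048, "O": 15.99491461956}
PROTON = 1.007276466


def monoisotopic_mass(formula: str) -> float:
    """Sum isotope masses for a simple formula such as 'C8H10N4O2'."""
    mass = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        mass += MONO[element] * (int(count) if count else 1)
    return mass


def ppm_error(observed: float, theoretical: float) -> float:
    """Mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6


def dereplicate(observed_mz: float, library: dict, tol_ppm: float = 5.0) -> list:
    """Return library entries whose [M+H]+ lies within tol_ppm of observed_mz."""
    matches = []
    for name, formula in library.items():
        expected_mz = monoisotopic_mass(formula) + PROTON
        err = ppm_error(observed_mz, expected_mz)
        if abs(err) <= tol_ppm:
            matches.append((name, formula, round(err, 2)))
    return matches


# Illustrative library of known compounds (name -> molecular formula).
LIBRARY = {"caffeine": "C8H10N4O2", "quercetin": "C15H10O7", "morphine": "C17H19NO3"}

print(dereplicate(195.0877, LIBRARY))  # [('caffeine', 'C8H10N4O2', 0.25)]
```

Accurate mass alone cannot distinguish isomers that share a formula, which is why MS/MS spectra, retention times and NMR data are compared as well before a compound is declared known.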

Protocol for AI-Driven Virtual Screening & Validation

This protocol outlines a modern, prediction-first workflow for identifying NP-derived drug candidates [6] [4].

- Data Curation & Model Training:

- Collect and curate a multimodal dataset linking NP structures (from databases like COCONUT, NPASS) to associated data: genomic biosynthetic gene clusters (BGCs), mass spectra, and bioactivity profiles [7].

- Train a machine learning model (e.g., Graph Neural Network for structures, Convolutional Neural Network for spectra) on this data to learn patterns correlating features with a desired biological activity.

- Virtual Screening & Prioritization:

- Use the trained model to screen an in silico library of NP structures or hypothetical structures generated de novo.

- Rank candidates based on predicted activity scores, drug-likeness, and synthetic accessibility.

- In Vitro Experimental Validation:

- Targeted Isolation or Synthesis: Procure the top-ranked AI-predicted compounds either by targeted isolation from the natural source (if known) or by chemical synthesis.

- Primary Assay Confirmation: Test the compounds in the same biological assay used to define the model's training data. A key study validated several AI-predicted natural compounds in vitro, confirming their translational potential [6].

- Mechanistic Validation: Employ Cellular Thermal Shift Assay (CETSA) to confirm direct target engagement in live cells, providing functional validation of the AI prediction in a physiologically relevant context [9].
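The virtual screening and prioritization steps can be illustrated with a deliberately simple stand-in for the trained model: a k-nearest-neighbour predictor based on Tanimoto similarity between fingerprints, followed by a weighted score that also rewards drug-likeness and filters out hard-to-synthesize structures. The fingerprints (sets of "on" bit indices), the precomputed drug-likeness (QED-like, 0 to 1) and synthetic-accessibility (1 = easy to 10 = hard) values, the weights and the cut-off are all hypothetical; a GNN or CNN as described in the protocol would replace `predict_activity`.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    fingerprint: frozenset  # indices of "on" fingerprint bits (hypothetical)
    qed: float              # drug-likeness in [0, 1], assumed precomputed
    sa_score: float         # synthetic accessibility, 1 (easy) to 10 (hard), assumed precomputed


def tanimoto(a: frozenset, b: frozenset) -> float:
    """Shared on-bits divided by all on-bits present in either fingerprint."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_activity(fp: frozenset, training: list, k: int = 2) -> float:
    """k-nearest-neighbour stand-in for a trained model: mean label of the k most similar compounds."""
    neighbours = sorted(training, key=lambda item: tanimoto(fp, item[0]), reverse=True)[:k]
    return sum(label for _, label in neighbours) / len(neighbours)


def prioritize(candidates, training, max_sa=6.0, w_activity=0.7, w_qed=0.3):
    """Drop hard-to-make candidates, then rank the rest by a weighted score."""
    ranked = []
    for c in candidates:
        if c.sa_score > max_sa:
            continue  # judged too difficult to synthesize
        score = w_activity * predict_activity(c.fingerprint, training) + w_qed * c.qed
        ranked.append((round(score, 3), c.name))
    return sorted(ranked, reverse=True)


# Hypothetical training data: (fingerprint, 1.0 = active / 0.0 = inactive).
TRAINING = [
    (frozenset({1, 2, 3, 4}), 1.0),
    (frozenset({1, 2, 3, 5}), 1.0),
    (frozenset({7, 8, 9}), 0.0),
    (frozenset({7, 8, 10}), 0.0),
]
CANDIDATES = [
    Candidate("NP-A", frozenset({1, 2, 3}), qed=0.6, sa_score=3.0),
    Candidate("NP-B", frozenset({7, 8, 9, 10}), qed=0.9, sa_score=4.0),
    Candidate("NP-C", frozenset({1, 2, 4, 5}), qed=0.8, sa_score=8.5),  # removed by the SA filter
]

for score, name in prioritize(CANDIDATES, TRAINING):
    print(name, score)
```

Only the top of such a ranked list would then go forward to targeted isolation or synthesis and to the in vitro confirmation steps listed above.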

Visualization of Paradigms and Pathways

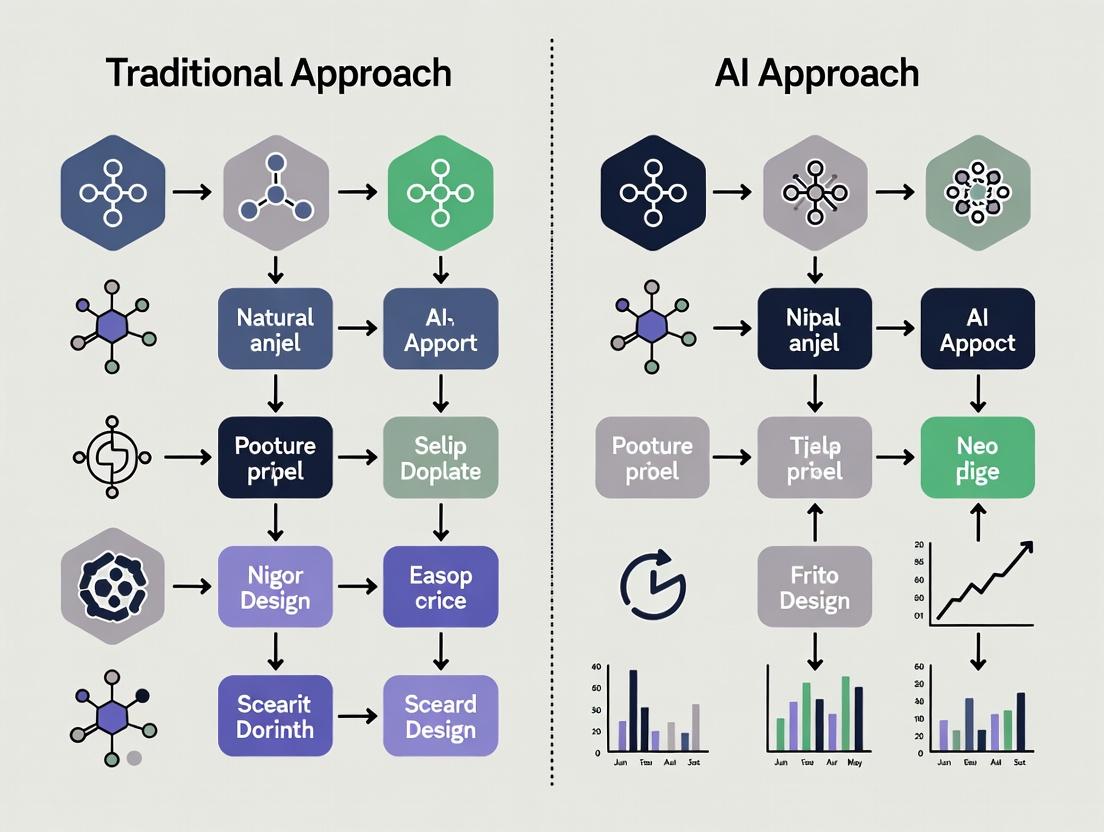

Comparative Research Workflow: Traditional vs. AI-Enhanced

This diagram contrasts the sequential, experiment-led traditional pathway with the integrated, prediction-led AI pathway.

Diagram 1: Comparative Workflow of Natural Product Research Paradigms

Network Pharmacology Mechanism for Multi-Target Natural Products

This diagram illustrates the AI-facilitated, systems-level approach to understanding how complex natural products interact with biological networks, moving beyond the single-target model [6] [5].

Diagram 2: AI and Network Pharmacology in Multi-Target Natural Product Action

The Scientist's Toolkit: Essential Research Reagent Solutions

The following table details key reagents, materials, and computational tools essential for conducting research within both paradigms.

Table 3: Essential Research Toolkit for Natural Products Research

| Tool/Reagent Category | Specific Examples & Functions | Primary Paradigm |
|---|---|---|
| Separation & Purification | Silica Gel / C18 Resin: Stationary phases for open column or flash chromatography for initial fractionation [2]. | Traditional |
| | HPLC/Semi-prep HPLC Columns: For high-resolution purification of compounds to homogeneity. Critical for obtaining pure samples for NMR [2]. | Both |
| Structural Elucidation | Deuterated Solvents (CDCl3, DMSO-d6): For NMR spectroscopy to provide a stable lock signal and avoid interfering proton signals [2]. | Both |
| | NMR Reference Standards (TMS): Provides the chemical shift zero point for 1H and 13C NMR spectra [2]. | Both |
| Bioactivity Validation | Cell-Based Assay Kits (e.g., MTT, Caspase-Glo): For measuring cytotoxicity, proliferation, or specific pathway activities in live cells [4]. | Both |
| | CETSA (Cellular Thermal Shift Assay) Reagents: To confirm direct target engagement of a predicted compound in a physiologically relevant cellular context, bridging AI prediction and functional validation [9]. | AI-Enhanced |
| Data Generation & Analysis | LC-HRMS Systems: Couples separation with high-resolution mass detection for metabolomic profiling and dereplication [2]. | Both |
| | AI/ML Software Platforms (e.g., Python with RDKit, DeepChem): Open-source libraries for building predictive models for virtual screening and property prediction [4]. | AI-Enhanced |
| Data Integration | Public NP Databases (COCONUT, NPASS, GNPS): Provide structured chemical and spectral data for training AI models [7]. | AI-Enhanced |
| | Knowledge Graph Frameworks (e.g., Neo4j): Enable the integration of multimodal data (chemical, biological, omics) to uncover complex relationships as depicted in Diagram 2 [7]. | AI-Enhanced |

Synthesis and Future Trajectory

The historical, empirical paradigm and the emerging, AI-enhanced paradigm are not mutually exclusive but are increasingly synergistic. The traditional approach provides the critical, ground-truthed experimental data required to train and validate robust AI models [7]. In turn, AI offers powerful tools to overcome the historic bottlenecks of traditional research—speed, scale, and the dereplication challenge—by guiding researchers toward the most promising candidates within complex mixtures or vast chemical spaces [6] [4].

The future of natural product research lies in integrated, hybrid workflows. The ideal pipeline begins with AI mining multimodal knowledge graphs to predict promising source organisms or molecular scaffolds [7]. This is followed by targeted cultivation or synthesis and efficient, focused isolation using advanced analytics. Finally, AI-predicted mechanisms are validated using functional cellular assays like CETSA [9]. This convergence accelerates discovery while maintaining the empirical rigor that is the hallmark of traditional natural products chemistry. As data standardization improves and models become more interpretable, this synergistic paradigm is poised to unlock the vast, untapped potential of natural products for drug discovery more efficiently than ever before.

For decades, the systematic journey from biological source to isolated active compound has been the cornerstone of drug discovery. Traditional natural product research, responsible for approximately 32% of new small-molecule drugs introduced between 1981 and 2019 [10], relies on a rigorous, iterative process anchored by bioassay-guided fractionation (BGF). This workflow is defined by its activity-driven hypothesis testing, where each separation step is validated by a biological assay to track the desired effect [10].

This guide details the core components of this traditional paradigm—source selection, extraction, bioassay-guided fractionation, and structural elucidation—and objectively compares its performance against emerging artificial intelligence (AI)-driven approaches. The analysis is framed within a critical thesis: while AI promises revolutionary speed and scale [11], the traditional workflow remains indispensable for its empirical validation and proven track record in delivering clinically successful drugs from complex natural matrices [12] [13].

Foundational Stages of the Traditional Workflow

Source Selection and Prioritization

The initial stage involves selecting a promising biological source (plant, fungus, marine organism). This has historically been informed by ethnobotanical knowledge, ecological observations, or taxonomic relatedness to known prolific producers. A contemporary shift emphasizes sustainable sourcing, such as cultivating plants to prevent overexploitation of wild populations, as demonstrated with Salvia canariensis for biopesticide development [12].

Extraction and Initial Processing

The selected biomass undergoes extraction, typically using solvents of varying polarity. The goal is to obtain a chemically complex crude extract containing the source's secondary metabolites. Recent advancements apply Design of Experiments (DOE) to optimize extraction parameters (solvent, time, temperature) for improved yield and reduced environmental impact [14]. The initial crude extract is the starting material for all subsequent steps.
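As an illustration of the DOE idea, a two-level full factorial design tests every combination of low and high factor settings and estimates each factor's main effect as the difference between the mean yields at its high and low levels. The factor levels and yields below are hypothetical, and the source does not specify which design [14] used; real studies typically also randomize run order and add replicates or centre points.

```python
from itertools import product

# Hypothetical low/high levels for three extraction factors.
FACTORS = {
    "ethanol_pct": (50, 96),
    "time_h": (2, 24),
    "temperature_C": (25, 50),
}


def full_factorial(factors: dict) -> list[dict]:
    """Every combination of factor levels (2**k runs for k two-level factors)."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*(factors[n] for n in names))]


def main_effect(runs: list[dict], yields: list[float], factor: str, factors: dict) -> float:
    """Mean response at the factor's high level minus mean response at its low level."""
    low, high = factors[factor]
    at_high = [y for run, y in zip(runs, yields) if run[factor] == high]
    at_low = [y for run, y in zip(runs, yields) if run[factor] == low]
    return sum(at_high) / len(at_high) - sum(at_low) / len(at_low)


runs = full_factorial(FACTORS)
# Hypothetical extract yields (% w/w), listed in the same order as `runs`.
yields = [4.0, 4.4, 5.0, 5.4, 6.0, 6.4, 7.0, 7.4]
for name in FACTORS:
    print(name, round(main_effect(runs, yields, name, FACTORS), 2))
```

With three factors this needs only eight extractions, and the largest main effect indicates which parameter to refine first.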

The Core Engine: Bioassay-Guided Fractionation (BGF)

BGF is the iterative heart of the traditional workflow. The crude extract is subjected to sequential separation techniques (e.g., liquid-liquid partitioning, column chromatography) to generate simpler fractions. The critical differentiator is that each fraction is simultaneously tested in a relevant biological assay. Only fractions retaining the desired bioactivity are selected for further separation, progressively enriching the active component(s) until pure compounds are obtained [12] [10].
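The selection logic of BGF can be expressed as a loop: separate, assay every fraction, keep the most active one, and stop at a pure compound or when activity is lost. In the sketch below, fractions are just labels, and `separate`, `assay` and `is_pure` are hypothetical stand-ins for chromatography, the bioassay and a purity check (e.g. HPLC or NMR). The separation tree and activity values are invented for illustration.

```python
from typing import Callable, List

Fraction = str  # a label standing in for a physical sample


def bioassay_guided_fractionation(
    extract: Fraction,
    separate: Callable[[Fraction], List[Fraction]],
    assay: Callable[[Fraction], float],
    is_pure: Callable[[Fraction], bool],
    threshold: float = 50.0,
    max_rounds: int = 10,
) -> List[Fraction]:
    """Follow the most active fraction through successive separations.

    Returns the chain of selected samples, from the crude extract to the last
    fraction that still met the activity threshold.
    """
    path = [extract]
    current = extract
    for _ in range(max_rounds):
        if is_pure(current):
            break  # single compound reached: hand over to structure elucidation
        fractions = separate(current)
        if not fractions:
            break  # no further separation possible
        activities = {f: assay(f) for f in fractions}
        best = max(activities, key=activities.get)
        if activities[best] < threshold:
            break  # activity lost, e.g. through synergy or degradation
        current = best
        path.append(current)
    return path


# Hypothetical separation tree and % inhibition values.
TREE = {
    "crude": ["F1", "F2", "F3"],
    "F1": ["F1.1", "F1.2", "F1.3"],
    "F1.2": ["compound-1"],
}
ACTIVITY = {"F1": 70.0, "F2": 40.0, "F3": 10.0,
            "F1.1": 20.0, "F1.2": 85.0, "F1.3": 35.0, "compound-1": 90.0}

path = bioassay_guided_fractionation(
    "crude",
    separate=lambda f: TREE.get(f, []),
    assay=lambda f: ACTIVITY[f],
    is_pure=lambda f: f.startswith("compound"),
)
print(" -> ".join(path))  # crude -> F1 -> F1.2 -> compound-1
```

Following only the single most active fraction is a simplification; in practice several active fractions may be pursued, and a drop in activity on fractionation can signal synergy between constituents.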

Case Study: Discovery of a Biofungicide from Salvia canariensis

A 2024 study provides a quintessential example of the modern BGF workflow [12]:

- Objective: Identify antifungal compounds from cultivated S. canariensis.

- Bioassay: Growth inhibition (% GI) of phytopathogenic fungi (Botrytis cinerea, Fusarium oxysporum, Alternaria alternata).

- Process:

- The ethanolic leaf extract showed significant activity (~41-69% GI at 1 mg/mL).

- Liquid-liquid partition yielded hexane (Hx), ethyl acetate (EtOAc), and aqueous fractions.

- The Hx fraction showed the highest activity, guiding its selection for further separation.

- The Hx fraction was separated via silica gel column chromatography into 13 sub-fractions (A1-A13).

- Sub-fraction A9 exhibited the strongest and broadest antifungal activity.

- Final purification of A9 led to the isolation of the known abietane diterpenoid salviol, confirmed as the key bioactive compound.

Table 1: Key Antifungal Activity Data from Bioassay-Guided Fractionation of S. canariensis [12]

| Sample | Concentration | % Growth Inhibition (Alternaria alternata) | % Growth Inhibition (Botrytis cinerea) | % Growth Inhibition (Fusarium oxysporum) |
|---|---|---|---|---|
| Crude Ethanolic Extract | 1 mg/mL | 68.6% | 41.1% | 53.6% |
| Hexane Fraction (Hx) | 1 mg/mL | 73.5% | 52.4% | 70.1% |
| Active Sub-fraction (A9) | 0.5 mg/mL | 82.5% | 68.8% | 88.9% |
| Isolated Compound (Salviol) | Variable | Concentration-dependent activity confirmed | | |
| Commercial Fungicide (Positive Control) | Label rate | ~90% (Fosbel-Plus) | ~90% (Fosbel-Plus) | ~90% (Fosbel-Plus) |

Evolution of Traditional Methods: The PLANTA Protocol

The core BGF logic is being enhanced by advanced analytical integrations. The PLANTA protocol (2025) exemplifies this evolution by combining 1H NMR profiling, HPTLC, and bioassays with chemometric tools before isolation [13]. It uses statistical correlation (NMR-HeteroCovariance Approach) to generate a "pseudospectrum" highlighting NMR signals correlated with bioactivity, allowing for the pre-isolation identification of active constituents in a complex mixture. In a proof-of-concept study, this method achieved an 89.5% detection rate of active metabolites [13].
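The core of the HetCA idea, covariance between each NMR variable and the bioactivity measured across a series of fractions, can be sketched in a few lines. This is a minimal illustration, not the published NMR-HetCA implementation used in [13], which may involve additional preprocessing (binning, normalization, scaling). The four fractions, chemical-shift bins and activity values are hypothetical. Each bin receives a covariance (the pseudospectrum intensity) and a Pearson correlation, and bins with a high positive correlation are flagged as candidate signals of active constituents.

```python
from math import sqrt


def covariance(a: list[float], b: list[float]) -> float:
    """Sample covariance of two equally long sequences."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    return sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / (n - 1)


def pearson(a: list[float], b: list[float]) -> float:
    """Pearson correlation; returns 0.0 if either variable is constant."""
    sd_a, sd_b = sqrt(covariance(a, a)), sqrt(covariance(b, b))
    return covariance(a, b) / (sd_a * sd_b) if sd_a and sd_b else 0.0


def hetca(spectra: list[list[float]], activity: list[float]) -> list[tuple[float, float]]:
    """(covariance, correlation) of every spectral bin with the activity vector."""
    return [(covariance(list(col), activity), pearson(list(col), activity))
            for col in zip(*spectra)]


# Hypothetical data: rows = fractions, columns = 1H NMR bins (ppm).
PPM = [7.10, 6.80, 3.70, 1.25]
SPECTRA = [
    [0.9, 0.1, 0.5, 0.2],
    [0.6, 0.3, 0.5, 0.8],
    [0.3, 0.6, 0.5, 0.4],
    [0.1, 0.9, 0.5, 0.6],
]
ACTIVITY = [80.0, 55.0, 30.0, 10.0]  # e.g. % radical scavenging per fraction

pseudospectrum = hetca(SPECTRA, ACTIVITY)
active_bins = [ppm for ppm, (_, r) in zip(PPM, pseudospectrum) if r >= 0.8]
print(active_bins)  # [7.1]
```

The bin at 3.70 ppm has the same intensity in every fraction, so it carries no information about activity and receives zero covariance and correlation.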

Diagram 1: The Traditional Bioassay-Guided Fractionation Workflow

Performance Comparison: Traditional BGF vs. AI-Enabled Approaches

The traditional workflow is increasingly contrasted with data-driven AI methodologies. The comparison below is based on stated capabilities and limitations of each paradigm.

Table 2: Comparative Analysis of Traditional and AI-Enabled Workflows in Natural Product Research

| Performance Metric | Traditional Bioassay-Guided Workflow | AI/Computational Workflow | Supporting Data & Context |
|---|---|---|---|
| Primary Driver | Biological activity (phenotype-first). | Predictive algorithms and pattern recognition (data-first). | BGF is activity-driven [10]; AI uses models for target and molecule prediction [4]. |
| Typical Timeline (Hit to Lead) | Several months to years. | Potentially weeks to months for virtual screening and in silico design. | AI can compress early discovery phases [11]; traditional isolation is inherently labor-intensive [3]. |
| Key Strength | Empirical validation. Direct link between compound and biological effect in physiologically relevant assays. Unbiased discovery of novel mechanisms. | Speed & scale. Can screen millions of virtual compounds or analyze vast omics datasets almost instantaneously. | BGF delivers confirmed bioactive entities [12]. AI can evaluate vast chemical spaces [11] and integrate multi-omics data [4]. |
| Key Limitation | Low throughput, resource-intensive. Prone to rediscovery of known compounds (dereplication challenge). Slow and requires large amounts of starting material. | Data dependency & "black box". Relies on quality training data; predictions require experimental validation. Limited ability to model complex natural product interactions. | Dereplication is a major hurdle [13]. AI models are only as good as their data and lack intuitive explainability [4] [11]. |
| Success Rate (Early Phase) | Historically the source of many drugs; success is linked to assay quality and source novelty. | Reported 80-90% Phase I success for AI-designed drugs vs. 40-65% industry average, though this field is nascent [11]. | Metrics suggest AI improves early candidate selection [11]. Traditional methods have a proven, but slower, track record [10]. |
| Best Suited For | Exploring uncharacterized sources, phenotype-based discovery, isolating compounds with complex or unknown mechanisms. | Dereplication, virtual screening of analogs, target prediction, optimizing ADMET properties, and analyzing genomic data for biosynthetic potential. | AI excels at data mining and prediction [4] [3]. BGF is essential for genuine novelty from complex extracts [10]. |

Diagram 2: Logic for Choosing a Research Strategy

Detailed Experimental Protocols

Protocol for Bioassay-Guided Isolation of Antifungal Compounds

Objective: To isolate antifungal compounds from plant material.

Key Materials: Lyophilized plant powder, ethanol, chromatography-grade solvents (hexane, ethyl acetate), silica gel for column chromatography, fungal strains (Botrytis cinerea, Fusarium oxysporum, Alternaria alternata), potato dextrose agar (PDA).

Procedure:

- Extraction: Macerate dried leaf powder in ethanol. Filter and concentrate under vacuum to obtain crude ethanolic extract.

- Liquid-Liquid Partition: Suspend crude extract in water. Partition sequentially with hexane and ethyl acetate. Concentrate each organic layer to obtain hexane (Hx) and ethyl acetate (EtOAc) fractions.

- Antifungal Disk Diffusion Assay: Prepare PDA plates inoculated with fungal mycelium. Apply sterile filter disks loaded with test samples (crude extract, fractions at 1 mg/mL). Incubate plates and measure zones of growth inhibition.

- Bioassay-Guided Column Chromatography: Pack a glass column with silica gel. Load the most active fraction (e.g., Hx fraction). Elute with a gradient of increasing polarity (e.g., hexane to ethyl acetate to methanol). Collect multiple sub-fractions.

- Assay of Sub-fractions: Test all sub-fractions in the antifungal disk diffusion assay described above. Select the sub-fraction(s) with the highest activity for further purification (e.g., repeated chromatography or preparative TLC).

- Structure Elucidation: Analyze the pure active compound using NMR spectroscopy (¹H, ¹³C, 2D experiments) and mass spectrometry to determine its chemical structure.
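The protocol does not state how raw measurements are converted into the % growth inhibition values reported for S. canariensis [12]. A common convention in mycelial growth assays, assumed here, compares colony diameters on treated and solvent-control plates: %GI = (d_control - d_treated) / d_control x 100. The sketch applies that formula to hypothetical replicate measurements.

```python
from statistics import mean, stdev


def percent_growth_inhibition(control_mm: float, treated_mm: float) -> float:
    """%GI from colony diameters (mm) on control and treated plates."""
    if control_mm <= 0:
        raise ValueError("control diameter must be positive")
    return (control_mm - treated_mm) / control_mm * 100.0


def summarize_gi(control_reps: list[float], treated_reps: list[float]) -> tuple[float, float]:
    """Mean and standard deviation of %GI, using the mean control diameter as reference."""
    d_control = mean(control_reps)
    values = [percent_growth_inhibition(d_control, d) for d in treated_reps]
    return mean(values), stdev(values)


# Hypothetical colony diameters (mm) after incubation, three replicates each.
gi_mean, gi_sd = summarize_gi([40.0, 41.0, 39.0], [12.0, 14.0, 10.0])
print(f"%GI = {gi_mean:.1f} +/- {gi_sd:.1f}")  # %GI = 70.0 +/- 5.0
```

Reporting the spread across replicates alongside the mean makes it easier to judge whether differences between fractions are large enough to guide the next separation step.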

Protocol for Pre-Isolation Identification of Bioactive Constituents (PLANTA Workflow)

Objective: To identify bioactive compounds in a complex mixture prior to physical isolation.

Key Materials: Complex natural extract, deuterated NMR solvent (e.g., methanol-d4), HPTLC plates, bioassay reagents (e.g., DPPH for antioxidant assay), NMR spectrometer, HPTLC system.

Procedure:

- Fractionation & Bioassay: Fractionate the crude extract (e.g., by FCPC or column chromatography). Test each fraction for bioactivity (e.g., DPPH radical scavenging).

- ¹H NMR Profiling: Acquire ¹H NMR spectra for all fractions.

- NMR-HetCA Analysis: Use statistical software (e.g., MATLAB, Python) to perform HeteroCovariance Analysis. Calculate covariance between the ¹H NMR spectral data matrix and the vector of bioactivity values across fractions. Generate a HetCA pseudospectrum where peak intensities correlate with contribution to bioactivity.

- HPTLC Analysis & sHetCA: Run HPTLC on all fractions. Use densitometry to convert the HPTLC plate image into a chromatographic data matrix. Perform sparse HetCA (sHetCA) to correlate chromatographic bands with bioactivity.

- Cross-Correlation (SH-SCY): Implement Statistical Heterocovariance–SpectroChromatographY (SH-SCY) to correlate specific NMR peaks from HetCA with specific bands from HPTLC sHetCA. This orthogonally validates the bioactive components.

- STOCSY-Guided Dereplication: For key bioactive NMR peaks, use Statistical Total Correlation Spectroscopy (STOCSY) to identify all NMR signals from the same molecule. "Deplete" the full spectrum of non-correlating signals to generate a clean, database-compatible spectrum for querying natural product NMR libraries.
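The STOCSY step can be sketched as correlating one "driver" NMR variable with every other variable across the set of spectra. Variables that correlate strongly with the driver are likely to belong to the same molecule, or to compounds that co-vary with it, which is why the result still needs checking. The data, chemical shifts and the 0.9 cut-off are hypothetical, and four spectra are far fewer than a real analysis would use.

```python
from math import sqrt


def pearson(a: list[float], b: list[float]) -> float:
    """Pearson correlation; returns 0.0 if either variable is constant."""
    n = len(a)
    ma, mb = sum(a) / n, sum(b) / n
    sab = sum((x - ma) * (y - mb) for x, y in zip(a, b))
    saa = sum((x - ma) ** 2 for x in a)
    sbb = sum((y - mb) ** 2 for y in b)
    return sab / sqrt(saa * sbb) if saa and sbb else 0.0


def stocsy(spectra: list[list[float]], ppm: list[float],
           driver_ppm: float, cutoff: float = 0.9) -> list[tuple[float, float]]:
    """Bins whose intensities correlate with the driver bin at r >= cutoff."""
    columns = [list(col) for col in zip(*spectra)]
    driver = columns[ppm.index(driver_ppm)]
    correlated = []
    for shift, col in zip(ppm, columns):
        r = pearson(driver, col)
        if r >= cutoff:
            correlated.append((shift, round(r, 3)))
    return correlated


# Hypothetical data: rows = fractions, columns = 1H NMR bins (ppm).
PPM = [7.10, 6.85, 5.30, 3.70, 1.25]
SPECTRA = [
    [0.9, 0.45, 0.2, 0.5, 1.8],
    [0.6, 0.30, 0.7, 0.4, 1.2],
    [0.3, 0.15, 0.1, 0.6, 0.6],
    [0.1, 0.05, 0.5, 0.5, 0.2],
]

for shift, r in stocsy(SPECTRA, PPM, driver_ppm=7.10):
    print(shift, r)
```

The retained shifts form the "depleted" spectrum that would then be queried against an NMR library.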

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Traditional Natural Product Workflows

| Item/Category | Function in Workflow | Specific Example/Note |
|---|---|---|
| Chromatography Media | Physical separation of compounds based on polarity, size, or affinity. | Silica Gel: Standard for open column and flash chromatography. Sephadex LH-20: Size-exclusion chromatography for desalting or separating by molecular weight. |
| Bioassay Components | To provide a measurable biological endpoint for guiding fractionation. | Fungal Spores/Mycelia: For antifungal assays [12]. DPPH (2,2-diphenyl-1-picrylhydrazyl): Stable radical for antioxidant activity assays [13]. Cell Lines & Reagents: For cytotoxicity or specific target-based assays. |
| Solvents for Extraction & Partitioning | To dissolve and separate metabolites based on solubility. | Ethanol/Methanol: For polar extraction. Hexane: For non-polar fractionation. Ethyl Acetate: For medium-polarity fractionation. Water: Aqueous phase in partitions [12]. |
| Deuterated NMR Solvents | To provide an isotopic lock and non-interfering signal for NMR spectroscopy. | Methanol-d4, Chloroform-d: Standard solvents for acquiring ¹H and ¹³C NMR spectra for structure elucidation [13]. |
| Spectroscopic Standards | To calibrate instruments and provide reference points for structural analysis. | Tetramethylsilane (TMS): Internal standard for chemical shift calibration in NMR (δ = 0 ppm) [13]. |
| Culture Media | To grow and maintain test organisms for bioassays. | Potato Dextrose Agar (PDA): For cultivating phytopathogenic fungi [12]. LB Broth/Agar: For cultivating bacterial strains. |

The discovery of therapeutic agents from natural products (NPs) has historically been a cornerstone of medicine, contributing to approximately 50% of all FDA-approved drugs, including landmark treatments like penicillin and paclitaxel [3]. However, traditional NP research is characterized by formidable challenges: it is notoriously labor-intensive, time-consuming, and resource-heavy. The journey from source material to a characterized bioactive compound involves exhaustive extraction, complex purification, and challenging structural elucidation, often spanning years or even decades [3] [4].

This article presents a comparative analysis of traditional methodologies versus modern artificial intelligence (AI)-enabled approaches within NP-based drug discovery. The central thesis is that AI is not merely an incremental improvement but a transformative force that redefines the entire research pipeline [8]. By integrating machine learning (ML), deep learning (DL), and advanced data architectures, AI addresses the core inefficiencies of traditional work. It enables the rapid analysis of vast, multimodal datasets—from genomic and metabolomic information to chemical structures and pharmacological data—allowing researchers to predict bioactivity, elucidate mechanisms, and prioritize candidates with unprecedented speed and scale [6] [7].

The transition is driven by necessity. The traditional model, reliant on trial-and-error and manual expertise, struggles with the chemical complexity and low yield typical of NPs [4]. In contrast, the AI-enabled pipeline offers a systematic, data-driven framework for navigating this complexity, promising to accelerate the delivery of novel therapeutics for oncology, infectious diseases, neurodegeneration, and beyond [6] [15].

Core Comparison: Traditional vs. AI-Enabled Pipelines

The fundamental divergence between traditional and AI-enabled research lies in their approach to data, hypothesis generation, and experimental design. The table below provides a high-level comparison of the two paradigms across key dimensions of the drug discovery workflow.

Table: Comparative Overview of Traditional and AI-Enabled Approaches in Natural Product Research

| Aspect | Traditional Pipeline | AI-Enabled Pipeline | Key Implications |
|---|---|---|---|
| Primary Driver | Hypothesis-driven, based on ethnobotany, literature, or observed bioactivity [3]. | Data-driven, leveraging patterns discovered algorithmically from large-scale datasets [6] [16]. | Shifts from targeted, linear exploration to broad, parallelized discovery of novel patterns and connections. |
| Data Utilization | Relies on limited, manually curated datasets from targeted experiments (e.g., a single plant extract's bioassay results) [4]. | Integrates and mines massive, multimodal datasets (genomic, spectroscopic, bioassay, literature) [7] [17]. | Enables the discovery of relationships invisible to manual analysis, improving prediction accuracy and candidate novelty. |
| Compound Screening | Bioassay-guided fractionation; sequential, physical testing of extracts and compounds [3]. | Virtual screening of millions of compounds in silico; AI-prioritized shortlists for physical validation [8] [4]. | Dramatically increases throughput, reduces reagent costs, and focuses lab resources on the most promising leads. |
| Lead Optimization | Iterative, synthetic modification based on medicinal chemistry intuition and structure-activity relationship (SAR) tables [4]. | Generative AI and predictive modeling propose novel analogs with optimized properties (potency, solubility, ADMET) [17]. | Accelerates the design of better drug candidates and explores a broader chemical space around a natural scaffold. |
| Timeline & Resource Impact | Process can take 10-15 years from discovery to market, with high rates of late-stage failure [15]. | Can compress early discovery (target to pre-clinical candidate) from years to under 18 months [15]. | Reduces the multi-billion dollar cost of drug development and accelerates patient access to new therapies. |
| Key Limitation | Low throughput, high cost, difficult to scale, and limited by human bias and cognitive capacity [18]. | Dependent on data quality, quantity, and standardization; risk of model bias; "black box" interpretability issues [6] [7]. | Success hinges on solving data infrastructure challenges and developing transparent, robust AI models. |

The AI Pipeline Deconstructed: Data, Algorithms, and Predictive Modeling

Data: The New Foundation - From Silos to Knowledge Graphs

The efficacy of any AI system is fundamentally constrained by the quality, quantity, and structure of its training data. Traditional NP data is often fragmented, unstandardized, and scattered across proprietary lab notebooks, published papers, and disparate databases [7]. This "siloed" nature makes it poorly suited for ML models that require large, consistent datasets.

Increasingly, AI-enabled NP research is being built on knowledge graphs [7]. Unlike simple relational databases, knowledge graphs represent entities (e.g., a specific compound, a gene, a disease) as nodes and the relationships between them (e.g., "inhibits," "is biosynthesized by," "treats") as edges. This framework is inherently multimodal: it can link chemical structures from mass spectrometry, biosynthetic pathways from genomic data, bioactivity from assay results, and textual information from the scientific literature into a single, queryable network [7].
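To make the node-and-edge idea concrete, here is a minimal sketch assuming the graph is stored as plain (subject, relation, object) triples. All entity and relation names are hypothetical placeholders, and a production system would use a graph database or RDF store rather than an in-memory dictionary. The query enumerates relation paths linking a compound to a disease, the kind of multi-hop question a knowledge graph is meant to answer.

```python
from collections import defaultdict

# Hypothetical toy triples (subject, relation, object); names are placeholders.
TRIPLES = [
    ("compound_A", "inhibits", "protein_P"),
    ("compound_A", "is_biosynthesized_by", "bgc_1"),
    ("protein_P", "participates_in", "pathway_Q"),
    ("pathway_Q", "implicated_in", "disease_D"),
    ("compound_B", "inhibits", "protein_R"),
]

def build_graph(triples):
    """Index edges by source node: node -> list of (relation, target)."""
    graph = defaultdict(list)
    for subj, rel, obj in triples:
        graph[subj].append((rel, obj))
    return graph

def find_paths(graph, start, goal, max_hops=4):
    """Depth-first enumeration of relation paths from start to goal (bounded length)."""
    paths = []
    stack = [(start, [])]
    while stack:
        node, path = stack.pop()
        if node == goal and path:
            paths.append(path)
            continue
        if len(path) >= max_hops:
            continue
        for rel, nxt in graph.get(node, []):
            stack.append((nxt, path + [(node, rel, nxt)]))
    return paths

graph = build_graph(TRIPLES)
for path in find_paths(graph, "compound_A", "disease_D"):
    print(" -> ".join(f"{s} [{r}] {o}" for s, r, o in path))
```

The `max_hops` bound keeps the search finite if the graph contains cycles; real knowledge-graph reasoning would add edge weights, provenance, and evidence scores to each triple.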

Table: Key Data Types and Their Role in the AI-Enabled Pipeline

| Data Modality | Description | AI/ML Application Examples | Traditional Challenge Addressed |
|---|---|---|---|
| Chemical & Metabolomic Data | Molecular structures, mass spectra (MS), nuclear magnetic resonance (NMR) spectra. | MS/NMR prediction & dereplication: AI models predict spectra from structures (and vice versa) to rapidly identify known compounds and flag novel ones [4]. | Avoids redundant isolation of known compounds, saving months of lab work. |
| Genomic & Biosynthetic Data | DNA sequences, identified biosynthetic gene clusters (BGCs). | BGC prediction & pathway elucidation: ML models predict the type of NP a BGC produces and simulate its biosynthetic pathway [6] [7]. | Uncovers the genetic basis of compound production, enabling engineered biosynthesis or metagenomic mining. |
| Bioactivity & Pharmacological Data | Results from in vitro and in vivo assays (IC50, toxicity, etc.). | Predictive bioactivity modeling: QSAR and DL models predict a compound's activity against a target from its structure alone [8] [17]. | Prioritizes which compounds to isolate and test, moving from random screening to informed prediction. |
| Textual & Knowledge Data | Scientific literature, patents, electronic health records. | Literature mining with NLP: Natural Language Processing extracts implicit relationships (e.g., "compound X reduces inflammation in model Y") to generate new hypotheses [8] [4]. | Synthesizes knowledge across millions of documents, uncovering hidden connections between NPs, targets, and diseases. |

Diagram: The Central Role of the Knowledge Graph in AI-Enabled Discovery. Multimodal data sources are integrated into a structured knowledge graph, which serves as the foundational data layer for various AI applications that ultimately converge on validated lead candidates [7].

Algorithms: The Engine of Prediction and Design

AI algorithms transform integrated data into actionable predictions and designs. These algorithms operate at different stages of the pipeline, from initial screening to lead optimization.

Table: Key AI/ML Algorithm Classes and Their Applications in NP Research

| Algorithm Class | How It Works | Specific Application in NP Research | Comparative Advantage |
|---|---|---|---|
| Supervised Learning (e.g., Random Forest, SVM, Neural Nets) | Learns a mapping function from labeled input-output pairs (e.g., chemical structure -> biological activity). | QSAR modeling, ADMET prediction, spectral matching. Predicts properties of unknown compounds from known data [17]. | Replaces or prioritizes costly, low-throughput experimental assays. A single model can screen millions of virtual compounds. |
| Unsupervised & Self-Supervised Learning (e.g., Clustering, Autoencoders) | Discovers inherent patterns, groupings, or representations in unlabeled data. | Chemical space exploration, molecular representation learning. Groups NPs by structural similarity or creates compressed molecular "fingerprints" [7] [4]. | Uncovers novel structural families and bioactivity clusters without pre-existing labels, enabling de novo insight generation. |
| Deep Learning (DL) & Graph Neural Networks (GNNs) | Uses multi-layered neural networks to model highly complex, non-linear relationships. GNNs operate directly on graph-structured data. | Molecular property prediction, protein-ligand docking, knowledge graph reasoning. Excels at tasks where molecular structure or relational context is paramount [6] [17]. | Often achieves higher accuracy on complex prediction tasks by learning directly from molecular graphs or the knowledge graph itself. |
| Generative AI (e.g., VAEs, GANs, Transformers) | Learns the underlying distribution of data (e.g., bioactive molecules) to generate novel, similar instances with desired properties. | De novo design of NP-inspired compounds. Generates novel molecular structures optimized for multiple parameters (potency, synthesizability, safety) [4] [17]. | Moves beyond screening existing libraries to inventing new chemical entities, greatly expanding accessible chemical space. |
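As an illustration of the supervised-learning row, the sketch below implements k-nearest-neighbours regression on Tanimoto similarity, one of the simplest QSAR-style learners (swapped in here for clarity; it is not the Random Forest or neural-network method named in the table). The fingerprints are hand-written sets of "on" bits standing in for real structural fingerprints that a cheminformatics toolkit would compute, and the pIC50 labels are hypothetical.

```python
def tanimoto(a: set, b: set) -> float:
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    if not a and not b:
        return 0.0
    return len(a & b) / len(a | b)

def knn_predict(query, training, k=3):
    """Similarity-weighted mean activity of the k most similar training compounds."""
    neighbours = sorted(training, key=lambda item: tanimoto(query, item[0]), reverse=True)[:k]
    weights = [tanimoto(query, fp) for fp, _ in neighbours]
    if sum(weights) == 0:
        # No structural overlap with the training set: outside the applicability domain.
        return None
    return sum(w * act for w, (_, act) in zip(weights, neighbours)) / sum(weights)

# Hypothetical labelled data: (fingerprint bits, pIC50)
TRAINING = [
    ({1, 2, 3, 4}, 6.0),
    ({1, 2, 3, 5}, 6.5),
    ({7, 8, 9}, 4.0),
    ({7, 8, 10}, 4.2),
]
print(knn_predict({1, 2, 3}, TRAINING, k=2))
```

Returning `None` when no neighbour shares any bits is a crude applicability-domain check: the model declines to extrapolate to chemistry it has never seen, a concern that is especially relevant for structurally unusual natural products.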

Predictive Modeling: From Correlation to Causal Inference

The ultimate goal of the AI pipeline is to make accurate, translational predictions. This evolves from simple statistical correlations toward more robust causal inference.

Traditional Forecasting vs. AI Predictive Modeling: Traditional methods, such as linear regression on a handful of variables, are limited in handling the high-dimensional, noisy data of biology [16]. AI forecasting models, particularly DL models, can process thousands of interacting features (e.g., gene expression, metabolite levels, clinical phenotypes) to predict clinical outcomes, drug response, or synthetic viability; one analysis reports accuracy gains of 10-50% over traditional approaches [16].

The Next Frontier - Causal AI: Current models often identify correlations rather than causation. The next generation of AI aims for causal inference—understanding the underlying cause-and-effect mechanisms (e.g., which specific compound in a herbal extract inhibits which protein to cause an anti-inflammatory effect) [7]. This is critical for understanding NP mechanisms of action, which are often multi-target and synergistic. Knowledge graphs are a key stepping stone, as their relational structure is more amenable to causal reasoning than tabular data [7].

Experimental Validation & Case Studies

Experimental Protocols: Validating AI Predictions

The AI pipeline is iterative and requires rigorous experimental validation. A standard protocol for validating an AI-predicted NP lead involves:

- In Silico Prediction & Prioritization: A generative or screening model proposes candidate molecules with predicted activity against a target (e.g., PD-L1 for immuno-oncology). Candidates are ranked by multi-parameter optimization scores balancing predicted affinity, solubility, and synthetic accessibility [17].

- De Novo Synthesis or Procurement: The top-ranked candidates, which are often novel structures, are synthesized via AI-assisted retrosynthetic planning [4] or, if known, sourced from compound libraries.

- In Vitro Biochemical/Biophysical Assay: Purified compounds are tested in a target-specific assay (e.g., enzyme inhibition, binding displacement) to measure IC50/EC50. This step provides the first ground-truth validation of the AI's prediction [15].

- Cellular Phenotypic Assay: Active compounds move to cell-based assays (e.g., cancer cell cytotoxicity, immune cell activation reporter assays) to confirm functional activity in a more complex biological environment [17].

- Mechanistic Profiling & Multi-Target Analysis: For NPs known for polypharmacology, techniques like cellular thermal shift assay (CETSA) or network pharmacology analysis are used to identify all engaged protein targets and map them to disease pathways [6].

- In Vivo Efficacy Studies: The most promising lead undergoes testing in a relevant animal disease model to evaluate pharmacokinetics, toxicity, and therapeutic efficacy, bridging the gap to preclinical development [15].
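The multi-parameter optimization score in the first step can be sketched as a desirability function: each predicted property is mapped to a 0-1 "desirability" and the values are combined by a geometric mean, so a single unacceptable property drags the whole score down. This is one common MPO scheme, assumed here for illustration; the property names, thresholds, and candidates are hypothetical, and the synthetic-accessibility score follows the usual 1 (easy) to 10 (hard) convention.

```python
import math

def ramp(value, low, high):
    """Linear desirability from 0 at `low` to 1 at `high`; works in either direction."""
    if low < high:
        frac = (value - low) / (high - low)
    else:
        frac = (low - value) / (low - high)
    return min(1.0, max(0.0, frac))

def mpo_score(candidate):
    """Geometric mean of per-property desirabilities (Derringer-style)."""
    d = [
        ramp(candidate["pred_pic50"], 5.0, 8.0),   # higher predicted affinity is better
        ramp(candidate["pred_logS"], -6.0, -3.0),  # more soluble is better
        ramp(candidate["sa_score"], 6.0, 3.0),     # lower SA score = easier to synthesize
    ]
    if min(d) == 0.0:
        return 0.0  # any fully unacceptable property vetoes the candidate
    return math.exp(sum(math.log(x) for x in d) / len(d))

# Hypothetical candidates with model-predicted properties
CANDIDATES = {
    "cand_1": {"pred_pic50": 8.0, "pred_logS": -3.0, "sa_score": 3.0},
    "cand_2": {"pred_pic50": 6.5, "pred_logS": -4.5, "sa_score": 4.5},
    "cand_3": {"pred_pic50": 4.0, "pred_logS": -2.0, "sa_score": 2.0},
}
ranking = sorted(CANDIDATES, key=lambda name: mpo_score(CANDIDATES[name]), reverse=True)
print(ranking)
```

The geometric mean is a deliberate choice: unlike an arithmetic mean, it does not let a very potent but insoluble compound outrank a balanced one.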

Case Study: AI in Accelerating Small-Molecule Immunotherapy

A compelling illustration of the AI pipeline is in designing small-molecule immunotherapies, an area dominated by biologic drugs (antibodies).

The Challenge: Antibodies against targets like PD-1/PD-L1 are effective but costly, require infusion, and have poor tumor penetration. Small-molecule inhibitors could offer oral availability and better distribution but are extremely difficult to design for large, flat protein-protein interaction interfaces [17].

The AI-Enabled Approach:

- Target & Data Integration: AI integrates structural data (PD-L1 protein dimers), known active compounds (from literature/patents), and cellular expression data.

- Generative Design: A Generative Adversarial Network (GAN) or Reinforcement Learning (RL) model is tasked with generating novel chemical structures that fit the PD-L1 binding pocket and meet drug-like criteria [17].

- Virtual Screening & Optimization: Thousands of generated molecules are virtually screened using a DL-based docking model. Top hits are further optimized by another AI model for improved potency and ADMET properties.

- Validation: The final AI-designed molecule is synthesized and shown to successfully disrupt PD-L1 dimerization, enhance T-cell activation in vitro, and inhibit tumor growth in a mouse model [17].

The Result: Companies such as Insilico Medicine and Exscientia have used this kind of approach to produce preclinical candidates for similarly complex targets in roughly 12-18 months, a fraction of the traditional timeline [15] [19].

Diagram: AI-Enabled Workflow for Small-Molecule Immunotherapy Design. The process integrates AI-driven generative design and in-silico screening with focused experimental validation, creating a highly efficient, iterative loop from concept to pre-clinical candidate [17].

The Scientist's Toolkit: Essential Research Reagent Solutions

Transitioning to or integrating with an AI-enabled pipeline requires both computational tools and advanced experimental reagents.

Table: Key Research Reagent Solutions for AI-Integrated NP Discovery

| Tool/Reagent Category | Specific Example | Function in the AI Pipeline | Provider/Technology |
|---|---|---|---|
| Multimodal Data Generation | Untargeted Metabolomics Kits | Generate the mass spectrometry data that feeds AI models for dereplication and novel compound discovery. | Agilent, Waters, Bruker |
| | Single-Cell RNA Sequencing Reagents | Provide high-resolution transcriptomic data to understand the biosynthetic potential of individual cells within a complex source (e.g., plant tissue, microbiome). | 10x Genomics, PacBio |
| Target Engagement & Validation | Cellular Thermal Shift Assay (CETSA) Kits | Experimentally validate AI-predicted drug-target interactions in a cellular context, crucial for polypharmacology studies [6]. | Thermo Fisher Scientific |
| | Phospho-Specific Antibody Panels | Verify AI-predicted signaling pathway modulation (e.g., JAK-STAT, NF-κB) following NP treatment. | Cell Signaling Technology |
| High-Content Screening | Fluorescent Cell-Based Reporter Assays | Generate rich, quantitative phenotypic data (images, fluorescence intensity) for training AI models that predict complex bioactivities. | PerkinElmer, Revvity |
| In Silico & AI Software | Molecular Modeling & Simulation Suites | Provide the physics-based computational environment for docking, MD simulations, and hybrid AI-physics modeling [19]. | Schrödinger, OpenEye |
| | Cloud-Based AI Drug Discovery Platforms | Offer access to pre-trained models for target identification, molecule generation, and property prediction without building in-house AI infrastructure [19]. | Insilico Medicine (Pharma.AI), Exscientia (CentaurAI) |

This comparison suggests that an AI-enabled pipeline can substantially strengthen traditional NP research methods. By systematically addressing the bottlenecks of data fragmentation, low-throughput screening, and inefficient optimization, AI provides a scalable, predictive, and faster framework for discovery, provided its predictions are rigorously validated in the laboratory.

The future of this field will be shaped by several key developments:

- Widespread Adoption of Knowledge Graphs: Efforts like the Natural Product Science Knowledge Graph will become essential infrastructure, breaking down data silos and enabling more sophisticated causal AI models [7].

- Rise of Generative Biology: AI will move beyond small molecules to design novel enzymes, biosynthetic pathways, and optimized microbial strains for the sustainable production of complex NPs [6] [19].

- Enhanced Explainability and Trust: Developing "explainable AI" (XAI) techniques will be critical for interpreting model predictions and gaining regulatory acceptance, moving away from the "black box" perception [6].

- Democratization through Cloud Platforms: Cloud-based AI tools (e.g., from Atomwise, Cyclica) will make these powerful technologies accessible to academic labs and smaller biotechs, further accelerating innovation [19].

In conclusion, the integration of AI into natural products research is not a replacement for scientific intuition or experimental rigor but a powerful augmentation. The most successful future research programs will be those that effectively combine domain expertise with AI-driven insights, creating a synergistic loop where human knowledge trains better models, and model predictions guide more insightful experiments. This partnership holds the key to unlocking the vast, untapped therapeutic potential of the natural world.

The discovery and development of drugs from natural products stand at a methodological crossroads. On one path lies the traditional empirical approach, characterized by its deep, mechanistic richness and validation through direct biological observation. On the other is the modern computational-AI paradigm, defined by its unprecedented scale, speed, and ability to navigate vast chemical spaces. This guide provides an objective comparison of these two paradigms within natural products research, examining their performance, experimental foundations, and synergistic potential for researchers and drug development professionals [6].

Quantitative Comparison of Paradigm Performance

The following tables summarize the core performance metrics of traditional and AI-enhanced approaches across key stages of natural product research.

Table 1: Performance Metrics in Early Discovery & Screening

| Performance Metric | Traditional Empirical Approach | AI-Enhanced Computational Approach | Supporting Data & Notes |
|---|---|---|---|
| Library/Collection Scale | Hundreds to thousands of physical extracts or compounds [20]. | Billions of virtual compounds in screenable libraries [20]. | Ultra-large virtual libraries (e.g., ZINC20, Enamine REAL) contain >1 billion make-on-demand molecules [20]. |
| Primary Screening Throughput | Medium- to high-throughput screening (HTS): 10⁴–10⁵ compounds per campaign [21]. | Ultra-large virtual screening: 10⁸–10⁹ compounds per campaign [20]. | Computational pre-filtering drastically reduces the number of compounds requiring physical HTS [9]. |
| Hit Identification Rate | Typically low (often <0.1%) in blind HTS [20]. | Significantly enriched via virtual screening; one study reported a 50-fold enrichment over random selection [9]. | AI/ML models integrate pharmacophore and interaction data to prioritize candidates [9]. |
| Time for Hit Identification | Months to years, depending on library size and assay complexity. | Days to weeks for virtual screening of billion-compound libraries [20]. | Case: An AI-driven platform nominated a novel preclinical candidate for fibrosis in 18 months (vs. 4-6 years traditionally) [15]. |
| Multi-Target & Synergy Analysis | Challenging; requires sequential or multiplexed assays. | Native capability via network pharmacology and polypharmacology models [22]. | AI-driven network pharmacology (AI-NP) constructs herb-ingredient-target-pathway graphs to propose synergistic effects [22] [6]. |
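Enrichment claims such as the 50-fold figure in the table are usually expressed as an enrichment factor (EF): the hit rate among the top-ranked fraction of a virtual screen divided by the hit rate of the library as a whole. The sketch below assumes that standard definition (the cited study may define or normalize it differently) and uses a synthetic ranked list in which 5 of 10 actives in a 10,000-compound library land in the top 1%, which gives an EF of about 50.

```python
def enrichment_factor(ranked_is_active, fraction):
    """Enrichment factor at the top `fraction` of a ranked list.

    ranked_is_active: booleans ordered from best to worst predicted score.
    EF = (hit rate in the selected top fraction) / (hit rate in the whole library).
    """
    n_total = len(ranked_is_active)
    n_selected = max(1, round(n_total * fraction))
    hits_total = sum(ranked_is_active)
    if hits_total == 0:
        raise ValueError("library contains no actives")
    hits_selected = sum(ranked_is_active[:n_selected])
    return (hits_selected / n_selected) / (hits_total / n_total)

# Synthetic example: 10,000 compounds, 10 actives (0.1% base rate), 5 of them in the top 100.
ranked = [True] * 5 + [False] * 95 + [True] * 5 + [False] * 9895
print(round(enrichment_factor(ranked, 0.01), 1))
```

An EF of 1 means the model performs no better than random picking; the maximum achievable EF at a given fraction is capped by how many actives can fit in the selected set.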

Table 2: Performance in Lead Optimization & Development

| Performance Metric | Traditional Empirical Approach | AI-Enhanced Computational Approach | Supporting Data & Notes |
|---|---|---|---|
| SAR (Structure-Activity Relationship) Cycle Time | Long (months per cycle) due to sequential synthesis and testing. | Compressed (weeks) via generative AI and predictive property models [9]. | AI-guided "design-make-test-analyze" (DMTA) cycles accelerate optimization [9]. |
| Potency Optimization Efficiency | Iterative, guided by medicinal chemist intuition. | Data-driven; one study used deep graph networks to generate 26,000 analogs, achieving a >4,500-fold potency improvement [9]. | Generative models explore chemical space around a hit far more exhaustively [6]. |
| ADMET Prediction | Late-stage, relying on in vivo studies; high attrition. | Early-stage in silico filters (e.g., SwissADME) improve developability likelihood [21] [9]. | Tools predict pharmacokinetics and toxicity before synthesis, de-risking pipelines [21]. |
| Target Engagement Validation | Gold-standard but low-throughput (e.g., SPR, CETSA). | Predictive docking and simulation; AI can prioritize compounds for validation [9]. | CETSA provides empirical, cell-based validation that complements computational predictions [9]. |
| Clinical Translation Success Rate | Historically low (<10% from Phase I to approval) [15]. | Emerging evidence of improved efficiency; AI-derived molecules are now in clinical trials [15]. | AI's impact on late-stage success rates is promising but requires long-term tracking [15]. |

Detailed Experimental Protocols

A synergistic research program leverages the scale of computation and the richness of empirical validation. Below are detailed protocols for two integrative experiments.

Protocol: AI-Guided Virtual Screening for Natural Product-Inspired Compounds

This protocol leverages computational scale to identify novel bioactive candidates from virtual libraries [20].

Target Selection and Preparation:

- Select a protein target with a resolved 3D structure (from PDB) or a high-quality homology model.

- Prepare the target structure using standard software (e.g., Schrödinger's Protein Preparation Wizard, UCSF Chimera): remove non-essential water molecules, add hydrogen atoms, assign protonation states, and perform a restrained energy minimization.

Virtual Library Curation:

- Access an ultra-large virtual compound library (e.g., ZINC20, Enamine REAL Space).

- Apply drug-likeness pre-filters (e.g., Lipinski's Rule of Five: molecular weight ≤500 Da, cLogP ≤5, ≤5 hydrogen-bond donors, ≤10 hydrogen-bond acceptors) to create a focused screening library of 100 million to 1 billion compounds [20].
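A Rule-of-Five pre-filter reduces to counting threshold violations on pre-computed descriptors. The sketch below assumes the descriptors have already been calculated by a cheminformatics toolkit; the compound names and values are hypothetical. Allowing at most one violation is a common convention rather than a fixed rule, and it is exposed as a parameter because many natural products and NP-derived drugs fall outside Ro5 space, so an overly strict filter can discard exactly the scaffolds an NP-inspired screen is looking for.

```python
from dataclasses import dataclass

@dataclass
class Descriptors:
    """Pre-computed descriptors for one compound (values here are hypothetical)."""
    name: str
    mol_weight: float   # Da
    clogp: float
    h_donors: int
    h_acceptors: int

def ro5_violations(d: Descriptors) -> int:
    """Count Lipinski Rule-of-Five violations."""
    return sum([
        d.mol_weight > 500,
        d.clogp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])

def drug_like(library, max_violations=1):
    """Keep compounds with at most `max_violations` Rule-of-Five violations."""
    return [d for d in library if ro5_violations(d) <= max_violations]

LIBRARY = [
    Descriptors("cmpd_1", 350.4, 2.1, 2, 5),   # no violations
    Descriptors("cmpd_2", 610.7, 5.8, 3, 9),   # MW and cLogP violations
    Descriptors("cmpd_3", 520.0, 3.0, 2, 6),   # MW violation only
]
print([d.name for d in drug_like(LIBRARY)])
```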

AI-Powered Docking and Scoring:

- Method A (Structure-Based): Perform high-throughput molecular docking (e.g., using AutoDock-GPU, FRED) to generate predicted binding poses and scores for each compound [9].

- Method B (AI-Prediction): Employ a pre-trained deep learning model (e.g., a Graph Neural Network) to predict binding affinity or activity directly from molecular structures, bypassing explicit docking [6] [20].

- Rank the library by docking score, AI-predicted score, or a composite of the two (e.g., a weighted average of the ranks assigned by each method).
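One simple way to build a composite from Methods A and B is rank averaging, sketched below under stated assumptions: docking scores follow the usual "more negative is better" convention, AI scores are predicted activities where higher is better, and the equal weighting is arbitrary. Working with ranks rather than raw values avoids having to put two differently scaled scores on a common scale. The compound names and scores are hypothetical.

```python
def ranks(values, higher_is_better):
    """Return 1-based ranks (1 = best); ties broken by input order."""
    order = sorted(range(len(values)), key=lambda i: values[i], reverse=higher_is_better)
    result = [0] * len(values)
    for rank, idx in enumerate(order, start=1):
        result[idx] = rank
    return result

def consensus_rank(names, dock_scores, ai_scores, w_dock=0.5):
    """Order compounds by weighted mean rank (docking: lower = better; AI: higher = better)."""
    r_dock = ranks(dock_scores, higher_is_better=False)
    r_ai = ranks(ai_scores, higher_is_better=True)
    combined = [w_dock * d + (1 - w_dock) * a for d, a in zip(r_dock, r_ai)]
    return [name for _, name in sorted(zip(combined, names))]

NAMES = ["c1", "c2", "c3"]
DOCK = [-9.1, -7.5, -8.2]   # hypothetical docking scores (kcal/mol)
AI = [6.9, 7.2, 6.8]        # hypothetical predicted pActivity
print(consensus_rank(NAMES, DOCK, AI))
```

Setting `w_dock` to 1.0 or 0.0 recovers a pure Method A or pure Method B ranking, which makes it easy to compare the two during retrospective validation.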

Post-Screening Analysis & Prioritization:

- Cluster top-ranked compounds by chemical scaffold.

- Apply additional AI-based filters for synthetic accessibility and predicted ADMET properties [21].

- Select 50-100 diverse, high-priority virtual hits for procurement or synthesis.
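Selecting a diverse subset from the top-ranked hits can be illustrated with greedy MaxMin picking, a widely used diversity-selection heuristic shown here as a complement to scaffold clustering rather than as the protocol's prescribed method. Starting from the best-ranked compound, it repeatedly adds the compound whose highest similarity to anything already picked is lowest. Fingerprints are hypothetical bit sets.

```python
def tanimoto(a, b):
    """Tanimoto similarity between two sets of fingerprint bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def maxmin_pick(fingerprints, n_pick, first=0):
    """Greedy MaxMin: repeatedly add the compound least similar to everything already picked."""
    picked = [first]
    # Highest similarity of each compound to any picked compound so far.
    max_sim = [tanimoto(fp, fingerprints[first]) for fp in fingerprints]
    while len(picked) < min(n_pick, len(fingerprints)):
        candidates = [i for i in range(len(fingerprints)) if i not in picked]
        nxt = min(candidates, key=lambda i: max_sim[i])
        picked.append(nxt)
        max_sim = [max(s, tanimoto(fp, fingerprints[nxt])) for s, fp in zip(max_sim, fingerprints)]
    return picked

# Hypothetical fingerprints of top-ranked hits, listed in rank order.
FPS = [{1, 2, 3}, {1, 2, 4}, {7, 8}, {7, 9}, {20}]
print(maxmin_pick(FPS, 3))
```

Passing the best-ranked compound as `first` keeps the strongest prediction in the set while the remaining picks spread across chemical space.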

Experimental Validation:

- Subject the acquired compounds to a biochemical or cell-based primary assay to confirm activity.

- Validate true hits through dose-response experiments to determine IC50/EC50 values.
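In practice IC50 values are usually obtained by fitting a four-parameter logistic (Hill) model to the dose-response data. For a quick first estimate, the sketch below instead uses log-linear interpolation between the two tested concentrations that bracket 50% inhibition; the concentrations and responses are hypothetical.

```python
import math

def estimate_ic50(concs, inhibition):
    """Interpolate the concentration giving 50% inhibition on a log10 scale.

    concs: ascending concentrations (e.g., µM); inhibition: % inhibition at each.
    Returns None if the 50% level is never crossed.
    """
    points = list(zip(concs, inhibition))
    for (c1, y1), (c2, y2) in zip(points, points[1:]):
        if y1 < 50 <= y2:
            frac = (50 - y1) / (y2 - y1)
            log_ic50 = math.log10(c1) + frac * (math.log10(c2) - math.log10(c1))
            return 10 ** log_ic50
    return None

# Hypothetical dose-response data
print(estimate_ic50([0.1, 1, 10, 100], [5, 30, 70, 95]))
```

Interpolating on the log scale matters because dose-response curves are roughly symmetric in log concentration; linear interpolation would bias the estimate toward the higher concentration.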

Protocol: Empirical Validation of Multi-Target Mechanisms via Network Pharmacology & CETSA

This protocol uses empirical methods to validate the holistic, multi-target mechanisms predicted for a natural product extract [22] [9].

Network Pharmacology Analysis (In Silico):

- Component Identification: Use LC-MS/MS to characterize the chemical constituents of the natural product extract [21].

- Target Prediction: Input the identified compounds into multiple target prediction algorithms (e.g., SwissTargetPrediction, PharmMapper) to generate a list of putative protein targets.

- Network Construction: Integrate the compound-target predictions with protein-protein interaction (PPI) data and pathway databases (KEGG, Reactome) to build a holistic "herb-ingredient-target-pathway" network [22].

- Core Target Identification: Use network topology analysis (degree, betweenness centrality) to identify key mechanistic targets within the network.
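The topology step can be sketched in a few lines. Degree counts a node's direct neighbours; betweenness centrality counts how many shortest paths between other node pairs pass through it, and below it is computed with Brandes' algorithm for an unweighted, undirected graph. Tools such as Cytoscape or NetworkX (`networkx.betweenness_centrality`, normalized by default) provide this directly; this stdlib version, on a hypothetical compound-target network, is only meant to show what is being measured.

```python
from collections import defaultdict, deque

def build_undirected(edges):
    """Adjacency sets for an undirected compound-target network."""
    adj = defaultdict(set)
    for u, v in edges:
        adj[u].add(v)
        adj[v].add(u)
    return adj

def degree(adj):
    """Number of direct neighbours of each node."""
    return {node: len(nbrs) for node, nbrs in adj.items()}

def betweenness(adj):
    """Unnormalised betweenness centrality (Brandes' algorithm, unweighted, undirected)."""
    bc = dict.fromkeys(adj, 0.0)
    for source in adj:
        # Breadth-first search counting shortest paths from `source`.
        order, preds = [], defaultdict(list)
        sigma = dict.fromkeys(adj, 0)
        sigma[source] = 1
        dist = dict.fromkeys(adj, -1)
        dist[source] = 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies in reverse BFS order.
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != source:
                bc[w] += delta[w]
    # Every undirected pair was counted once from each endpoint.
    return {node: value / 2 for node, value in bc.items()}

# Hypothetical toy network: three constituents converge on target T1.
EDGES = [("C1", "T1"), ("C2", "T1"), ("C3", "T1"), ("T1", "T2"), ("T2", "T3")]
adj = build_undirected(EDGES)
print(degree(adj))
print(betweenness(adj))
```

In this toy network, T1 has both the highest degree and the highest betweenness, which is the pattern network-pharmacology studies typically read as a candidate "core target".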

Cellular Target Engagement Validation (In Vitro/In Situ):

- CETSA (Cellular Thermal Shift Assay) Setup: Treat live cells (relevant to the extract's indication) with the natural product extract or vehicle control [9].

- Heat Challenge & Protein Isolation: Heat aliquots of the treated cells (or, in the lysate format, of cell lysates) across a temperature gradient (e.g., 37°C–67°C), lyse the cells, and centrifuge to separate the soluble (stabilized) protein fraction from aggregated protein.

- Target Detection: Use Western blotting with antibodies against the core targets identified by the network analysis to quantify the amount of soluble protein remaining at each temperature.

- Data Analysis: Generate melt curves. A rightward shift in the thermal stability curve (increased Tm) for a target protein in the treated sample is consistent with ligand binding and target engagement within the complex cellular environment [9].
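The melt-curve analysis can be sketched as follows, assuming band intensities have already been quantified and normalized to the lowest temperature. Tm is taken here as the temperature at which the soluble fraction falls to 0.5, found by linear interpolation; published CETSA analyses often fit a sigmoidal (e.g., Boltzmann) curve instead. The intensity values are hypothetical.

```python
def melting_temperature(temps, soluble_fraction):
    """Temperature at which the soluble fraction falls to 0.5, by linear interpolation.

    temps: ascending temperatures (°C); soluble_fraction: intensity normalised to the lowest temperature.
    Returns None if the curve never drops below 0.5.
    """
    pts = list(zip(temps, soluble_fraction))
    for (t1, f1), (t2, f2) in zip(pts, pts[1:]):
        if f1 >= 0.5 > f2:
            return t1 + (f1 - 0.5) / (f1 - f2) * (t2 - t1)
    return None

# Hypothetical normalised Western blot intensities for one core target
TEMPS = [37, 42, 47, 52, 57, 62, 67]
vehicle = [1.00, 0.95, 0.80, 0.40, 0.15, 0.05, 0.02]
treated = [1.00, 0.98, 0.90, 0.70, 0.35, 0.10, 0.03]

tm_vehicle = melting_temperature(TEMPS, vehicle)
tm_treated = melting_temperature(TEMPS, treated)
print(f"Tm vehicle = {tm_vehicle:.2f} °C, Tm treated = {tm_treated:.2f} °C, ΔTm = {tm_treated - tm_vehicle:.1f} °C")
```

With these illustrative numbers the treated curve shifts right by about 4 °C. Replicate curves are needed before reading a shift of this size as meaningful.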

Functional Phenotypic Corroboration:

- In parallel, perform a relevant phenotypic assay (e.g., anti-inflammatory cytokine secretion, cell viability).

- Correlate the dose- and time-dependent phenotypic effects with the target engagement profiles from CETSA to establish a mechanistic link between pathway modulation and biological outcome.

Visualizing the Integrated Workflow

The synergy between computational and empirical methods is best understood as an iterative, reinforcing cycle.

AI-Enhanced Natural Product Discovery Workflow

This diagram illustrates the integrated pipeline from virtual discovery to empirical validation.

Knowledge Graph Construction for Mechanism Elucidation

This diagram details the core AI-driven method for predicting the multi-scale mechanisms of complex natural products.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents & Platforms for Integrated Research

| Tool/Reagent Category | Specific Examples | Primary Function in Research | Paradigm Alignment |
|---|---|---|---|
| Virtual Screening & Docking | AutoDock-GPU, FRED, Schrödinger Suite [20] [9] | Predicts binding pose and affinity of small molecules to a protein target. | Computational Scale |
| AI/ML Modeling Platforms | Deep Graph Networks, GNN Libraries (PyTorch Geometric), Random Forest/SVM models [22] [6] | Predicts activity, properties, or generates novel molecular structures. | Computational Scale & Speed |
| ADMET Prediction | SwissADME, pkCSM, QikProp [21] [9] | Estimates pharmacokinetic and toxicity profiles from chemical structure. | Computational De-risking |
| Target Engagement Validation | CETSA (Cellular Thermal Shift Assay) kits, SPR (Biacore) systems [9] | Provides direct, cell-based evidence of physical drug-target interaction. | Empirical Richness |
| Multi-Omics Analysis | LC-MS/MS systems, scRNA-seq platforms, Proteomics suites (DIA) [21] | Empirically characterizes the full chemical and biological response profile. | Empirical Richness |
| Network Analysis & Databases | Cytoscape, KEGG, STRING, TCM databases [22] | Integrates disparate biological data into unified networks for systems-level insight. | Integrative (Both) |

Tools of the Trade: A Deep Dive into Techniques and Their Real-World Applications

The discovery and development of bioactive natural products (NPs) remain a cornerstone of modern therapeutics, with many successful drugs originating from plant, microbial, and marine sources [4]. This pipeline fundamentally depends on the efficient extraction and isolation of target compounds from complex biological matrices, a process that has evolved from simple solvent-based methods to sophisticated chromatographic technologies [23] [24]. These physical separation techniques constitute the essential, experimental arsenal for NP researchers.

Concurrently, a paradigm shift is underway with the integration of Artificial Intelligence (AI) and machine learning into NP research. AI promises to accelerate discovery by predicting bioactivity, elucidating complex mechanisms, and prioritizing compounds for isolation [4] [6]. However, the predictive power of AI is ultimately grounded in and validated by high-quality experimental data generated through these very extraction and isolation processes. This guide provides a comparative analysis of the traditional physical separation toolkit, framing it within the broader thesis of a synergistic future where empirical laboratory science and computational intelligence converge to overcome the historical challenges of time, cost, and complexity in NP drug discovery [4].

Comparative Analysis of Extraction Methodologies

The initial extraction step is critical for liberating bioactive compounds from cellular structures. The choice of method significantly impacts yield, compound stability, solvent consumption, and time.

Solvent-Based Conventional Extraction

These classical techniques rely on the solubility of target compounds and the use of heat and/or agitation.

- Maceration: Plant material is soaked in a solvent at room temperature with occasional agitation. It is simple and cost-effective but characterized by long extraction times (hours to days), high solvent consumption, and potentially low yields [24] [25].

- Soxhlet Extraction: Continuous extraction using solvent reflux. It is highly efficient and does not require filtration, but uses high temperatures unsuitable for thermolabile compounds and involves significant solvent use [24] [25].

- Percolation: Solvent passes slowly through a packed bed of material. It offers higher efficiency than maceration but remains a time-consuming process with variable yields [24].

Advanced Solvent-Assisted Extraction Techniques

Modern techniques enhance extraction efficiency by applying physical energy to disrupt cell walls and improve mass transfer.

- Ultrasound-Assisted Extraction (UAE): Uses ultrasonic cavitation to disrupt cells. It drastically reduces time and solvent use while increasing yields. A potential drawback is heat generation, which may degrade sensitive compounds [24] [26] [25].

- Microwave-Assisted Extraction (MAE): Employs microwave energy to heat solvents and plant interiors directly, causing cell rupture from internal pressure. It is exceptionally fast, efficient, and provides high yields of bioactive compounds, as demonstrated in comparative studies [26] [25]. Careful temperature control is necessary.

- Supercritical Fluid Extraction (SFE): Uses supercritical CO₂ as a solvent. It is a green technology, operates at low temperatures, and allows easy solvent removal. However, it has high capital cost and is less effective for very polar molecules without modifiers [23] [24].

- Accelerated Solvent Extraction (ASE): Uses conventional solvents at elevated temperatures and pressures. It automates extraction, reduces time and solvent volume, and is highly reproducible [23] [24].

Table 1: Performance Comparison of Key Extraction Techniques [23] [26] [25]

| Method | Typical Extraction Time | Solvent Consumption | Operational Temperature | Key Advantage | Major Limitation |
|---|---|---|---|---|---|
| Maceration | 12-72 hours | Very High | Ambient | Simplicity, low cost | Very slow, low efficiency |
| Soxhlet | 4-24 hours | High | High (solvent b.p.) | Exhaustive extraction | Degrades thermolabile compounds |
| UAE | 10-60 minutes | Low | Low-Moderate | Fast, good for thermolabile compounds | Possible heat buildup |
| MAE | 1-10 minutes | Very Low | Moderate-High | Very fast, high yields | Requires polar solvents |
| SFE | 30-90 minutes | Low (CO₂) | Low | Solvent-free extract, green | High capital cost; limited to low-polarity compounds without modifiers |
| ASE | 12-20 minutes | Low | High | Automated, reproducible | Requires high-pressure equipment |

Table 2: Experimental Yield Data from Matthiola ovatifolia Extraction (Ethanol Solvent) [26]. This comparative study shows quantitative differences in phytochemical recovery between conventional solvent extraction, UAE, MAE, and combined ultrasound-microwave-assisted extraction (UMAE).

| Phytochemical Class | Conventional Solvent (mg/g) | UAE (mg/g) | MAE (mg/g) | UMAE (mg/g) |
|---|---|---|---|---|
| Total Phenolics | 52.1 ± 0.2 | 60.3 ± 0.4 | 69.6 ± 0.3 | 65.8 ± 0.3 |
| Total Flavonoids | 32.4 ± 0.1 | 40.1 ± 0.2 | 44.5 ± 0.1 | 42.2 ± 0.2 |
| Total Alkaloids | 58.7 ± 0.3 | 66.4 ± 0.2 | 71.6 ± 0.2 | 69.1 ± 0.3 |
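The relative gains in Table 2 can be made explicit with a little arithmetic: percent gain = 100 × (method − conventional) / conventional, computed from the mean values only (the ± spreads are ignored). On these numbers MAE gives the highest mean yield in every class, roughly 34% more phenolics, 37% more flavonoids, and 22% more alkaloids than conventional solvent extraction.

```python
# Mean yields (mg/g) transcribed from Table 2
YIELDS = {
    "Total Phenolics":  {"Conventional": 52.1, "UAE": 60.3, "MAE": 69.6, "UMAE": 65.8},
    "Total Flavonoids": {"Conventional": 32.4, "UAE": 40.1, "MAE": 44.5, "UMAE": 42.2},
    "Total Alkaloids":  {"Conventional": 58.7, "UAE": 66.4, "MAE": 71.6, "UMAE": 69.1},
}

def percent_gain(method_value, baseline):
    """Relative increase over the baseline, in percent."""
    return 100 * (method_value - baseline) / baseline

for compound_class, values in YIELDS.items():
    base = values["Conventional"]
    gains = {m: round(percent_gain(v, base), 1) for m, v in values.items() if m != "Conventional"}
    best = max(values, key=values.get)
    print(f"{compound_class}: gains vs conventional {gains}; best method {best}")
```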

Chromatographic Isolation and Purification Techniques

Following extraction, chromatographic techniques separate complex mixtures into individual compounds based on differential partitioning between mobile and stationary phases.

Table 3: Comparison of Chromatographic Isolation Techniques [27] [24] [28]

| Technique | Separation Principle | Scale | Resolution | Speed | Best For |
|---|---|---|---|---|---|
| Flash Chromatography | Adsorption on silica | Preparative | Low-Medium | Fast | Initial fractionation |
| Vacuum Liquid Chromatography (VLC) | Adsorption, with eluent drawn through by vacuum | Preparative | Low | Fast | Quick bulk separation |
| Medium-Pressure LC (MPLC) | Adsorption/partition on packed columns at moderate pressure | Preparative | Medium | Medium | Milligram- to gram-scale isolation |
| High-Performance LC (HPLC) | High-pressure separation on small-particle columns | Analytical to preparative | Very High | Slow (analytical) to medium (preparative) | Final purification, analytics |
| Preparative HPLC | Scaled-up HPLC conditions | Large preparative | High | Medium | Isolating 10 mg to gram quantities |
| Gas Chromatography (GC) | Volatility and interaction with the stationary phase | Analytical to micro-preparative | High | Fast | Volatile, thermostable compounds |

High-Performance Liquid Chromatography (HPLC) is the workhorse for final purification. Its dominance stems from high resolution, precision, reproducibility, and versatility across diverse analytes [28]. Coupling HPLC with mass spectrometry (LC-MS) gives it a decisive advantage for identification and quantification [28]. Modern advances such as ultra-high-performance LC (UHPLC), which uses sub-2 µm particles, offer higher speed, resolution, and sensitivity [28].
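To make "high resolution" concrete: the resolution R_s between two adjacent peaks is commonly defined from their retention times t_R and baseline peak widths w (first expression below). The Purnell equation (second expression) relates it to the plate number N, the selectivity α, and the retention factor k₂ of the later-eluting peak.

```latex
R_s = \frac{2\,(t_{R,2} - t_{R,1})}{w_1 + w_2}
\qquad\qquad
R_s = \frac{\sqrt{N}}{4}\left(\frac{\alpha - 1}{\alpha}\right)\left(\frac{k_2}{1 + k_2}\right)
```

Because R_s scales with √N, and smaller particles raise the plate number achievable on a given column, the sub-2 µm particles used in UHPLC improve resolution. An R_s of about 1.5 is the usual benchmark for baseline separation.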

Detailed Experimental Protocols

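Protocol: Modified Bligh-Dyer vs. Matyash Extraction of Polar Metabolites for GC-MS

This protocol compares the modified Bligh-Dyer (mBD) and modified Matyash (mMat) methods for extracting polar metabolites from tissue prior to GC-MS analysis.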

1. Tissue Homogenization:

- Freeze tissue (bone or muscle) in liquid N₂.

- Homogenize using a TissueLyser (e.g., 2 min at 30 Hz) or a pulverizer.

- Weigh 20-30 mg of homogenized powder.

2. Modified Bligh-Dyer (mBD) Extraction:

- Add 400 μL of methanol and 200 μL of water to the powder. Vortex.

- Add 400 μL of chloroform. Vortex vigorously.

- Add 400 μL of chloroform and 400 μL of water. Vortex.

- Centrifuge at 14,000 × g for 15 min at 4°C to separate phases.

- Collect the upper aqueous phase containing polar metabolites.

- Dry under a gentle stream of nitrogen.

3. Modified Matyash (mMat) Extraction:

- Add 375 μL of methanol to the powder. Vortex.

- Add 1,250 μL of methyl tert-butyl ether (MTBE). Vortex vigorously.

- Add 313 μL of water. Vortex.

- Centrifuge at 14,000 × g for 15 min at 4°C.

- Collect the lower aqueous phase.

- Dry under nitrogen.

4. Derivatization for GC-MS:

- Redissolve dried extract in 20 μL of methoxyamine hydrochloride in pyridine (15 mg/mL). Incubate at 70°C for 1 hour.

- Add 30 μL of N-methyl-N-(trimethylsilyl)trifluoroacetamide (MSTFA). Incubate at 70°C for 1 hour.

- Analyze by GC-MS.

Protocol: Microwave-Assisted Extraction (MAE) of Phenolics and Flavonoids

This optimized protocol targets high-yield extraction of bioactive phenolics and flavonoids.

1. Sample Preparation:

- Lyophilize plant material and grind to a fine powder.

- Weigh 1.0 g of powder into a sealed microwave extraction vessel.

2. Extraction:

- Add 30 mL of ethanol (material-to-liquid ratio 1:30).

- Set microwave parameters: power = 550 W, time = 165 s, temperature controlled to remain below the solvent's boiling point.

- Start the extraction cycle.

3. Post-Extraction Processing:

- Allow the vessel to cool. Transfer contents to a centrifuge tube.

- Centrifuge at 10,000 × g for 10 minutes at 4°C to pellet debris.

- Collect the supernatant.

- Concentrate the supernatant using a rotary evaporator at 40°C.

- Store the dry extract at -18°C for analysis.

4. Analysis:

- Determine Total Phenolic Content (TPC) using the Folin-Ciocalteu method.

- Determine Total Flavonoid Content (TFC) using an aluminum chloride colorimetric assay.
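The TPC value in step 4 is normally read off a calibration curve. A minimal sketch follows, assuming a gallic acid standard curve in µg/mL, results expressed as mg gallic acid equivalents (GAE) per g dry weight, and a dried extract redissolved in a known volume; the function names, standard concentrations, and absorbances are hypothetical and are not taken from the protocol.

```python
def linear_fit(x, y):
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def tpc_mg_gae_per_g(absorbance, slope, intercept, volume_ml, dilution, dry_mass_g):
    """Convert a sample absorbance to mg gallic acid equivalents per g dry weight.

    The calibration is assumed to be in ug/mL gallic acid, so
    (ug/mL x mL x dilution) / g gives ug/g; dividing by 1000 gives mg/g.
    """
    conc_ug_per_ml = (absorbance - intercept) / slope
    return conc_ug_per_ml * volume_ml * dilution / dry_mass_g / 1000


# Hypothetical gallic acid standards (ug/mL) and their absorbances.
standards = [0, 50, 100, 150, 200]
absorbances = [0.02, 0.27, 0.52, 0.77, 1.02]
slope, intercept = linear_fit(standards, absorbances)
# Hypothetical sample: extract from 1.0 g powder redissolved in 10 mL, read undiluted.
print(round(tpc_mg_gae_per_g(0.52, slope, intercept, 10, 1, 1.0), 2), "mg GAE/g")
```

The same pattern applies to TFC, with a flavonoid standard (for example quercetin) in place of gallic acid.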

Integration with AI-Driven Discovery Workflows

The traditional extraction-isolation pipeline is being transformed from a linear, trial-and-error process into an intelligent, iterative cycle powered by AI [4] [6].

1. Predictive Prioritization: AI models trained on chemical and biological data can predict the bioactivity of extracts, or even of specific metabolites within a complex mixture. This lets researchers prioritize which plant sources or chromatographic fractions to investigate, reducing the effort spent isolating inactive leads [4].

2. Dereplication Acceleration: A major time sink in NP research is the re-isolation of known compounds. AI, particularly machine learning models applied to LC-MS or NMR data, can rapidly compare spectral fingerprints against vast databases to identify known compounds early in the process—a task known as dereplication [4] [6].

3. Experimental Design & Optimization: AI can help optimize extraction and separation parameters. For example, machine learning algorithms can model the effect of solvent polarity, temperature, and time on yield, suggesting the most efficient conditions for a target compound class [6].

4. Target Identification & Mechanism Prediction: Network pharmacology, a computational approach increasingly combined with AI, constructs herb-ingredient-target-pathway networks. These networks help propose molecular targets and therapeutic mechanisms for isolated NPs, guiding subsequent biological testing [6].
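Dereplication (point 2) rests on comparing spectra. As a simplified, non-ML baseline for illustration, the sketch below bins MS/MS peaks to unit m/z and scores a query spectrum against a small library by cosine similarity. The library entries, peak values, and the 0.7 threshold are hypothetical; production library searches typically add precursor-mass filtering and peak-matching tolerances, and learned similarity models can replace the cosine score.

```python
import math


def bin_spectrum(peaks, bin_width=1.0):
    """Sum intensities of (m/z, intensity) peaks into fixed-width m/z bins."""
    binned = {}
    for mz, intensity in peaks:
        key = int(mz // bin_width)
        binned[key] = binned.get(key, 0.0) + intensity
    return binned


def cosine_similarity(a, b):
    """Cosine of the angle between two binned spectra (1.0 = identical shape)."""
    dot = sum(a[k] * b.get(k, 0.0) for k in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def dereplicate(query_peaks, library, threshold=0.7):
    """Return (name, score) for library spectra at or above threshold, best first."""
    q = bin_spectrum(query_peaks)
    hits = [(name, cosine_similarity(q, bin_spectrum(p))) for name, p in library.items()]
    return sorted((h for h in hits if h[1] >= threshold), key=lambda h: h[1], reverse=True)


# Hypothetical reference library and query spectrum.
LIBRARY = {
    "compound_A": [(153.0, 100), (289.1, 40), (245.1, 20)],
    "compound_B": [(121.0, 100), (93.0, 60)],
}
QUERY = [(153.02, 95), (289.08, 42), (245.1, 18)]
print(dereplicate(QUERY, LIBRARY))
```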

The synergy is clear: AI provides the predictive intelligence to guide the physical separation arsenal, which in turn generates the high-quality experimental data required to validate and refine AI models.
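The herb-ingredient-target-pathway networks described under Target Identification & Mechanism Prediction are, at their core, graphs. This minimal sketch builds one from an edge list and ranks targets by how many ingredients connect to them, a simple degree-based heuristic. All node names are hypothetical; published analyses usually draw edges from curated databases and add further network measures and enrichment analysis.

```python
from collections import defaultdict

# Hypothetical edges for illustration only.
EDGES = [
    ("herb:H1", "ingredient:C1"),
    ("herb:H1", "ingredient:C2"),
    ("herb:H2", "ingredient:C3"),
    ("ingredient:C1", "target:T1"),
    ("ingredient:C2", "target:T1"),
    ("ingredient:C3", "target:T1"),
    ("ingredient:C2", "target:T2"),
    ("target:T1", "pathway:P1"),
    ("target:T2", "pathway:P1"),
    ("target:T2", "pathway:P2"),
]


def build_graph(edges):
    """Undirected adjacency sets for the herb-ingredient-target-pathway network."""
    graph = defaultdict(set)
    for u, v in edges:
        graph[u].add(v)
        graph[v].add(u)
    return graph


def rank_targets(graph):
    """Rank target nodes by the number of distinct ingredients linked to them."""
    scores = {
        node: sum(1 for nb in neighbors if nb.startswith("ingredient:"))
        for node, neighbors in graph.items()
        if node.startswith("target:")
    }
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


print(rank_targets(build_graph(EDGES)))
```

A target reached by several ingredients from the same herb (T1 here) would be a natural first candidate for biological testing under this heuristic.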

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Reagents, Materials, and Instruments for Extraction & Isolation

| Item | Category | Function & Application | Key Consideration |
|---|---|---|---|
| Methanol, Acetonitrile, Chloroform | Solvents | Common extraction solvents for metabolites; used in mobile phases for chromatography [29] [30]. | Purity (LC-MS grade), toxicity, environmental impact. |
| Methyl tert-butyl ether (MTBE) | Solvent | Less toxic alternative to chloroform in biphasic extraction (e.g., Matyash method) [29]. | Stability, evaporation rate. |
| Supercritical CO₂ | Solvent & Fluid | Green extraction medium in SFE; non-toxic, easily removed [23] [24]. | Requires high-pressure equipment. |
| Silica Gel, C18-bonded Silica | Stationary Phase | Adsorbents for normal-phase (Silica) and reversed-phase (C18) column chromatography [24]. | Particle size, pore size, surface area. |
| HPLC/UHPLC Columns | Consumable | High-resolution separation of complex mixtures for analysis and purification [28]. | Chemistry (C18, HILIC, etc.), particle size (e.g., 1.7-5 μm), dimensions. |
| Solid-Phase Extraction (SPE) Cartridges | Consumable | Rapid cleanup and fractionation of crude extracts; removal of phospholipids from biofluids [24] [30]. | Selectivity (phase chemistry), capacity. |
| Ultrasonic Bath/Probe | Instrument | Applies ultrasonic energy for UAE [24] [26]. | Power control, temperature management. |
| Microwave Reactor | Instrument | Applies controlled microwave energy for MAE [26] [25]. | Temperature and pressure monitoring, safety. |
| Rotary Evaporator | Instrument | Gently removes bulk solvent from extracts under reduced pressure. | Bath temperature, condenser efficiency. |
| GC-MS System | Instrument | Analyzes volatile and derivatized metabolites; provides identification [29]. | Requires sample derivatization for polar compounds. |
| LC-MS (or LC-MS/MS) System | Instrument | The gold-standard platform for analyzing non-volatile NPs; combines separation with identification and quantification [4] [28] [30]. | High resolution enables confident ID. |
| Preparative HPLC System | Instrument | Scales up analytical HPLC conditions to isolate milligram to gram quantities of pure compound [24]. | Flow rate, column diameter, detector sensitivity. |

The traditional arsenal of extraction and chromatography remains irreplaceable for physically obtaining pure, bioactive natural products. As the comparative data show, the evolution from basic maceration to advanced MAE, and from simple column chromatography to UHPLC, has delivered substantial gains in speed, yield, and resolution [26] [28].

The future of efficient NP discovery, however, lies not in choosing between this empirical toolkit and AI, but in their strategic integration. AI acts as a force multiplier for the laboratory arsenal, providing predictive insights that guide researchers toward the most promising sources, compounds, and separation conditions [4] [6]. In turn, the meticulous work of extraction and isolation provides the validated, high-fidelity experimental data essential for building and refining trustworthy AI models. This synergistic loop between computational prediction and physical separation promises to accelerate the translation of nature's chemical diversity into the next generation of therapeutic agents.

This guide objectively compares the performance of ultrasound-assisted extraction (UAE), microwave-assisted extraction (MAE), and supercritical fluid extraction (SFE) against conventional methods and each other. Framed within a broader thesis on AI-enhanced natural products research, it provides experimental data, detailed protocols, and analysis of how modern techniques and computational tools are transforming extraction efficiency, compound preservation, and process sustainability for drug development [6] [9] [31].

Quantitative Performance Comparison of Modern Extraction Techniques

The following tables summarize key performance metrics for modern extraction techniques, based on comparative experimental studies for different natural product classes.

Table 1: Comparative Yield and Efficiency for Bioactive Compound Extraction

| Extraction Technique | Target Compound / Matrix | Key Performance Metric vs. Comparator Method | Optimal Conditions (Simplified) | Source Study |
|---|---|---|---|---|
| Microwave-Assisted Extraction (MAE) | Phenolics, Flavonoids, Antioxidants (Stevia leaves) [32] | Yield: 8.07-11.34% higher TPC/TFC; Time: 58.33% less [32] | 5.15 min, 284.05 W, 53.10% EtOH, 53.89°C [32] | Kumar & Tripathy, 2025 [32] |
| Ultrasound-Assisted Extraction (UAE) | Phenolics, Flavonoids, Antioxidants (Stevia leaves) [32] | Lower yield than MAE; Higher than conventional [32] | Varied with RSM/ANN-GA optimization [32] | Kumar & Tripathy, 2025 [32] |
| Ultrasonic-Microwave-Assisted (UMAE) | Polysaccharides (Alpinia officinarum) [33] | Max extraction yield: 18.28% ± 2.23% [33] | 19 min, 410 W ultrasonic power [33] | 2025 Study [33] |
| Ultrasound-Assisted Extraction (UAE) | Protein (Acacia seeds) [34] | Protein yield increase: 6.3-10.92% [34] | 80 W, 20 kHz, 20 min [34] | 2025 Study [34] |
| Modified QuEChERS | Hesperidin (Lemon peel) [35] | Yield: 48.7% higher than UAE; Time: 75% shorter [35] | Method-specific solvent & sorbent use [35] | 2025 Study [35] |

Table 2: Functional and Economic Parameters of Extraction Techniques

| Parameter | Ultrasound (UAE) | Microwave (MAE) | Supercritical Fluid (SFE) | Traditional (e.g., Soxhlet, Maceration) |
|---|---|---|---|---|
| Primary Mechanism | Acoustic cavitation [32] | Dielectric heating [32] | Tunable solvation power of supercritical fluids (e.g., CO₂) [36] | Solvent diffusion, heat |
| Typical Duration | Minutes to tens of minutes [32] [34] | Very fast (minutes) [32] | Moderate (tens of minutes to hours) | Very long (hours to days) |
| Operational Temperature | Low to Moderate (often < 50-60°C) [34] | Moderate to High (precise control) [32] | Near-ambient to Moderate (e.g., 31-60°C for CO₂) [36] | High (reflux) or Ambient |
| Solvent Consumption | Moderate to Low [32] | Low [32] | Very Low (CO₂ is recycled) [36] | High |
| Selectivity | Moderate | Moderate | High (tunable) [36] | Low to Moderate |
| Capital Investment | Moderate | Moderate | Very High [36] | Low |
| Best For | Heat-sensitive compounds, proteins [34], cell disruption | Rapid extraction of robust polyphenols [32] [37] | High-value, sensitive compounds; solvent-free requirement [36] | Universal, low-budget applications |

Detailed Experimental Protocols from Key Studies

Protocol 1: Comparative MAE and UAE for Stevia Bioactives [32]

- Objective: Optimize and compare MAE and UAE for total phenolic content (TPC), total flavonoid content (TFC), and antioxidant activity (AA) from Stevia rebaudiana leaves.

- Sample Prep: Dried leaves ground and sieved to ~250 microns [32].

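- Experimental Design: Process variables were modeled and optimized using response surface methodology (RSM) and a hybrid artificial neural network-genetic algorithm (ANN-GA) approach [32].

- Extraction: MAE and UAE were each optimized; the reported optimal MAE conditions were 5.15 min, 284.05 W, 53.10% ethanol, and 53.89°C [32].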

- Analysis: TPC by Folin-Ciocalteu assay, TFC by aluminum chloride method, AA by DPPH radical scavenging assay [32].

- Key Result: MAE outperformed UAE, yielding significantly higher TPC, TFC, and AA with 58.33% less extraction time [32]. The ANN-GA model (R²=0.9985 for MAE) provided highly accurate predictions [32].
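The antioxidant activity in Protocol 1 comes from the DPPH assay, whose result is conventionally expressed as percent radical scavenging relative to a DPPH control (absorbance typically read near 517 nm). A minimal sketch of that calculation follows; the optional sample-blank correction for colored extracts is an assumption, not something the cited study is stated to use, and the readings are hypothetical.

```python
def dpph_scavenging_percent(a_control, a_sample, a_blank=0.0):
    """Percent DPPH radical scavenging.

    a_control: absorbance of the DPPH solution without extract
    a_sample:  absorbance of DPPH plus extract
    a_blank:   absorbance of the extract alone, subtracted to correct for the
               extract's own color (optional; an assumption, not from [32])
    """
    if a_control <= 0:
        raise ValueError("control absorbance must be positive")
    return (a_control - (a_sample - a_blank)) / a_control * 100


# Hypothetical readings.
print(round(dpph_scavenging_percent(0.80, 0.20), 1))
print(round(dpph_scavenging_percent(0.80, 0.20, a_blank=0.05), 2))
```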

Protocol 2: UAE for Acacia Seed Protein Functionality [34]

- Objective: Enhance protein yield and techno-functional properties from Acacia seeds.

- Sample Prep: Seeds ground into flour and defatted [34].

- Extraction: Flour dispersed in water (1:10 w/v), pH adjusted to 9. UAE performed using a probe (e.g., 80 W, 20 kHz, 20 min) with temperature controlled below 35°C [34]. Protein precipitated at isoelectric point (pH 4.5) [34].

- Analysis: Protein yield calculated. Functionality tests included emulsifying activity index, foaming capacity/stability, water/oil holding capacity, and protein digestibility [34].

- Key Result: UAE increased protein yield by 6.3-10.9% and significantly improved all functional properties compared to non-ultrasonic extraction [34].
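Protocol 2 reports protein yield and foaming properties without giving formulas. The functions below sketch common textbook definitions, which is an assumption: published studies differ, for example in whether protein yield is expressed against flour protein or flour mass, and in how long foam is held before stability is measured. All numbers are hypothetical.

```python
def protein_yield_percent(protein_in_isolate_g, protein_in_flour_g):
    """Share of the flour's protein recovered in the precipitated isolate (%)."""
    return 100 * protein_in_isolate_g / protein_in_flour_g


def foaming_capacity_percent(volume_before_ml, volume_after_whipping_ml):
    """Volume increase right after whipping, relative to the starting volume (%)."""
    return 100 * (volume_after_whipping_ml - volume_before_ml) / volume_before_ml


def foam_stability_percent(foam_volume_initial_ml, foam_volume_after_hold_ml):
    """Foam volume remaining after a holding time, relative to the initial foam (%)."""
    return 100 * foam_volume_after_hold_ml / foam_volume_initial_ml


# Hypothetical measurements for one extraction.
print(protein_yield_percent(2.0, 8.0))   # recovered 2.0 g of 8.0 g flour protein
print(foaming_capacity_percent(50, 80))  # 50 mL dispersion whipped to 80 mL
print(foam_stability_percent(30, 24))    # 30 mL of foam shrinks to 24 mL
```

Comparing these values between ultrasonic and non-ultrasonic extracts of the same flour is the kind of contrast the study reports.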

Protocol 3: Optimizing Polysaccharide Extraction via UMAE [33]

- Objective: Optimize UMAE for polysaccharides from Alpinia officinarum rhizomes and compare with hot reflux extraction (HRE).

- Sample Prep: Dried rhizomes powdered and de-fatted with ethanol [33].

- Experimental Design: A Box-Behnken Design (BBD) with three factors: liquid-solid ratio, extraction time, and ultrasonic power [33].

- Extraction: Performed in a combined microwave-ultrasound reactor. Optimal UMAE conditions were 19 min, 410 W ultrasonic power [33]. Compared against traditional HRE.

- Analysis: Polysaccharide yield calculated. Extracts purified and characterized for monosaccharide composition, molecular weight, and antioxidant activity [33].

- Key Result: UMAE achieved a maximum yield of 18.28%. The UMAE-derived polysaccharide (PAOR-1) showed different structural characteristics and higher antioxidant activity than the HRE-derived one (PAOR-2) [33].
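For the Box-Behnken Design in Protocol 3, the run list for three factors can be generated directly. This sketch builds the design in coded units (-1, 0, +1) and maps it onto real units. The factor ranges in `RANGES` and the choice of three center points are hypothetical, since the protocol reports only the optimum.

```python
from itertools import combinations, product


def box_behnken(n_factors=3, n_center=3):
    """Box-Behnken design in coded units (-1, 0, +1).

    For every pair of factors, run the four (+/-1, +/-1) combinations with the
    remaining factors at 0, then append center points. This pairwise
    construction matches the standard design for 3 to 5 factors.
    """
    runs = []
    for i, j in combinations(range(n_factors), 2):
        for a, b in product((-1, 1), repeat=2):
            run = [0] * n_factors
            run[i], run[j] = a, b
            runs.append(run)
    runs.extend([0] * n_factors for _ in range(n_center))
    return runs


def decode(run, ranges):
    """Map coded levels to real units, given (low, high) for each factor."""
    return [low + (level + 1) * (high - low) / 2 for level, (low, high) in zip(run, ranges)]


# Hypothetical ranges: liquid-solid ratio (mL/g), time (min), ultrasonic power (W).
RANGES = [(20, 40), (10, 30), (300, 500)]
for run in box_behnken():
    print(run, decode(run, RANGES))
```

With three factors this gives 12 edge-midpoint runs plus the center points, which is why a BBD needs fewer runs than a full three-level factorial (27 runs) while still supporting a quadratic model.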

The Scientist's Toolkit: Essential Reagents & Materials

Table 3: Key Reagents and Materials for Modern Extraction Protocols

| Item Name / Category | Typical Specification / Example | Primary Function in Extraction |
|---|---|---|
| Green Solvents | Ethanol (50-100%), Water [32] [33] | Extraction medium for phenolics and flavonoids; a greener alternative to more toxic organic solvents such as methanol or chloroform. |
| Characterization Reagents | Folin-Ciocalteu reagent [32] [37], DPPH [32] [37], Aluminum chloride [32] | Quantification of total phenolic content (TPC; Folin-Ciocalteu) and total flavonoid content (TFC; aluminum chloride), and assessment of antioxidant activity (DPPH). |
| Sorbents for Clean-up | Primary Secondary Amine (PSA), C18, Graphitized Carbon Black (GCB) [35] | Used in QuEChERS to remove co-extracted interferences such as organic acids, pigments, and sugars. |
| Supercritical Fluid | Supercritical Carbon Dioxide (scCO₂) [36] | Primary solvent in SFE; inert, tunable, leaves no toxic residue. |
| Co-solvents/Modifiers | Ethanol, Methanol (for SFE) [36] | Added to scCO₂ to modify polarity and improve extraction yield of more polar compounds. |
| Protease/Analytical Enzyme | Pepsin, Trypsin (for digestibility) [34] | Used in vitro to simulate gastrointestinal digestion and assess protein digestibility. |

AI-Enhanced Optimization in Extraction Research

Artificial intelligence (AI) and machine learning (ML) are increasingly used to go beyond traditional statistical models in extraction optimization. Response Surface Methodology (RSM) has been the standard approach for modeling interactions between process variables (e.g., time, power, solvent concentration) [32] [33]. However, because RSM typically fits a second-order polynomial, it can struggle to capture highly complex, non-linear relationships [32].
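To make the RSM baseline concrete, the sketch below fits the full second-order polynomial in two coded factors by least squares (normal equations solved with Gaussian elimination, in plain Python for self-containment), reports R², and locates the stationary point of the fitted surface. The synthetic response is hypothetical and only serves to show that the fit recovers known coefficients; responses with structure beyond quadratic terms are where this model form runs out of flexibility.

```python
def quadratic_terms(x1, x2):
    """Regressors of a full second-order model: 1, x1, x2, x1*x2, x1^2, x2^2."""
    return [1.0, x1, x2, x1 * x2, x1 ** 2, x2 ** 2]


def solve_linear(a, b):
    """Solve a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [bi] for row, bi in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x


def fit_rsm(points, y):
    """Least-squares fit of the quadratic model; returns (coefficients, R^2)."""
    X = [quadratic_terms(x1, x2) for x1, x2 in points]
    p = len(X[0])
    xtx = [[sum(row[i] * row[j] for row in X) for j in range(p)] for i in range(p)]
    xty = [sum(row[i] * yi for row, yi in zip(X, y)) for i in range(p)]
    coefs = solve_linear(xtx, xty)
    fitted = [sum(c * t for c, t in zip(coefs, row)) for row in X]
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - fi) ** 2 for yi, fi in zip(y, fitted))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return coefs, 1 - ss_res / ss_tot


def stationary_point(coefs):
    """Solve grad = 0 for the fitted surface (a maximum if the surface is concave)."""
    _, b1, b2, b12, b11, b22 = coefs
    return solve_linear([[2 * b11, b12], [b12, 2 * b22]], [-b1, -b2])


# Hypothetical 3x3 factorial in coded units with a known quadratic response.
levels = (-1.0, 0.0, 1.0)
points = [(a, b) for a in levels for b in levels]
y = [10 + 2 * a + b - 0.5 * a * b - 3 * a ** 2 - 2 * b ** 2 for a, b in points]
coefs, r2 = fit_rsm(points, y)
print([round(c, 3) for c in coefs], round(r2, 4), stationary_point(coefs))
```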

Artificial Neural Network (ANN) models, particularly when hybridized with optimization algorithms such as Genetic Algorithms (GA), can offer higher predictive accuracy. In stevia extraction, an ANN-GA model for MAE achieved an R² of 0.9985, outperforming the RSM model and identifying optimum extraction conditions [32]. Similarly, ensemble ML models such as LSBoost combined with Random Forest have been used to optimize MAE of pomegranate peel phenolics, identifying microwave power as the most critical parameter [37].
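The GA half of an ANN-GA workflow searches the input space of a trained model for the settings with the best predicted response. The sketch below is a simple real-coded genetic algorithm (truncation selection, blend crossover, Gaussian mutation); it is not the algorithm used in [32]. A hypothetical smooth function, `surrogate_yield`, stands in for the trained ANN's prediction, and the coded bounds could represent factors such as time, power, and ethanol concentration.

```python
import random

PEAK = (0.2, -0.3, 0.5)  # hypothetical optimum of the stand-in model, coded units


def surrogate_yield(x):
    """Hypothetical stand-in for a trained ANN's prediction (maximum 10 at PEAK)."""
    return 10.0 - sum((xi - pi) ** 2 for xi, pi in zip(x, PEAK))


def clip(value, low, high):
    """Keep a gene inside its allowed range."""
    return max(low, min(high, value))


def genetic_search(fitness, bounds, pop_size=40, generations=60, mutation_sd=0.1, seed=0):
    """Maximize `fitness` over a box with a simple real-coded genetic algorithm."""
    rng = random.Random(seed)
    pop = [[rng.uniform(lo, hi) for lo, hi in bounds] for _ in range(pop_size)]
    for _ in range(generations):
        # Truncation selection: the better half survives unchanged (elitism).
        parents = sorted(pop, key=fitness, reverse=True)[: pop_size // 2]
        children = []
        while len(parents) + len(children) < pop_size:
            a, b = rng.sample(parents, 2)
            w = rng.random()
            # Blend crossover: a random convex combination of two parents.
            child = [w * ai + (1 - w) * bi for ai, bi in zip(a, b)]
            # Gaussian mutation, clipped back into the search box.
            child = [clip(c + rng.gauss(0, mutation_sd), lo, hi)
                     for c, (lo, hi) in zip(child, bounds)]
            children.append(child)
        pop = parents + children
    return max(pop, key=fitness)


BOUNDS = [(-1.0, 1.0)] * 3  # coded levels, e.g., time, power, ethanol concentration
best = genetic_search(surrogate_yield, BOUNDS)
print([round(v, 2) for v in best], round(surrogate_yield(best), 4))
```

Because the better half of each generation is carried over unchanged, the best predicted yield never decreases between generations; the settings the search returns are only as reliable as the surrogate model, so they still need experimental confirmation.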