Dereplication in Natural Product Discovery: Preventing Rediscovery to Accelerate Drug Development

This article provides a comprehensive overview of dereplication strategies essential for natural product drug discovery, aimed at researchers, scientists, and drug development professionals.


Abstract

This article provides a comprehensive overview of dereplication strategies essential for natural product drug discovery, aimed at researchers, scientists, and drug development professionals. It explores how dereplication prevents the costly and time-consuming rediscovery of known compounds through four core perspectives: foundational concepts and historical evolution; methodological applications of advanced techniques like LC-MS/MS and molecular networking; troubleshooting and optimization of workflows; and validation through comparative analysis. The scope covers current tools, challenges, and best practices to enhance efficiency in identifying novel bioactive leads.

Foundations of Dereplication: Core Concepts, Historical Context, and the Rediscovery Problem

The discovery of novel Natural Products (NPs) from microbial, marine, and plant sources remains a cornerstone of drug development, particularly for antimicrobial and anticancer agents [1]. However, this process is notoriously resource-intensive, expensive, and prone to high rates of rediscovery. Within this context, dereplication—defined as the rapid, early-stage identification of known compounds in crude extracts—has emerged as a critical, non-negotiable step in the NP research pipeline [2]. Its primary function is to prevent the futile expenditure of time and resources on the isolation and elucidation of already-characterized molecules, thereby refocusing efforts on true novelty [3].

The scale of the challenge is significant. Since April 2014 alone, nearly 1,240 publications have focused on dereplication, including 908 research articles that have together received over 40,520 citations, underscoring its prominence in the field [2]. The economic and intellectual rationale is clear: by efficiently filtering out known entities, dereplication accelerates the discovery pipeline and increases the probability of identifying novel bioactive leads. It also supports the broader thesis that strategic front-end analysis is essential for sustainable biodiscovery in an era of increasing chemical redundancy [2] [4].

The Conceptual and Technical Foundations of Dereplication

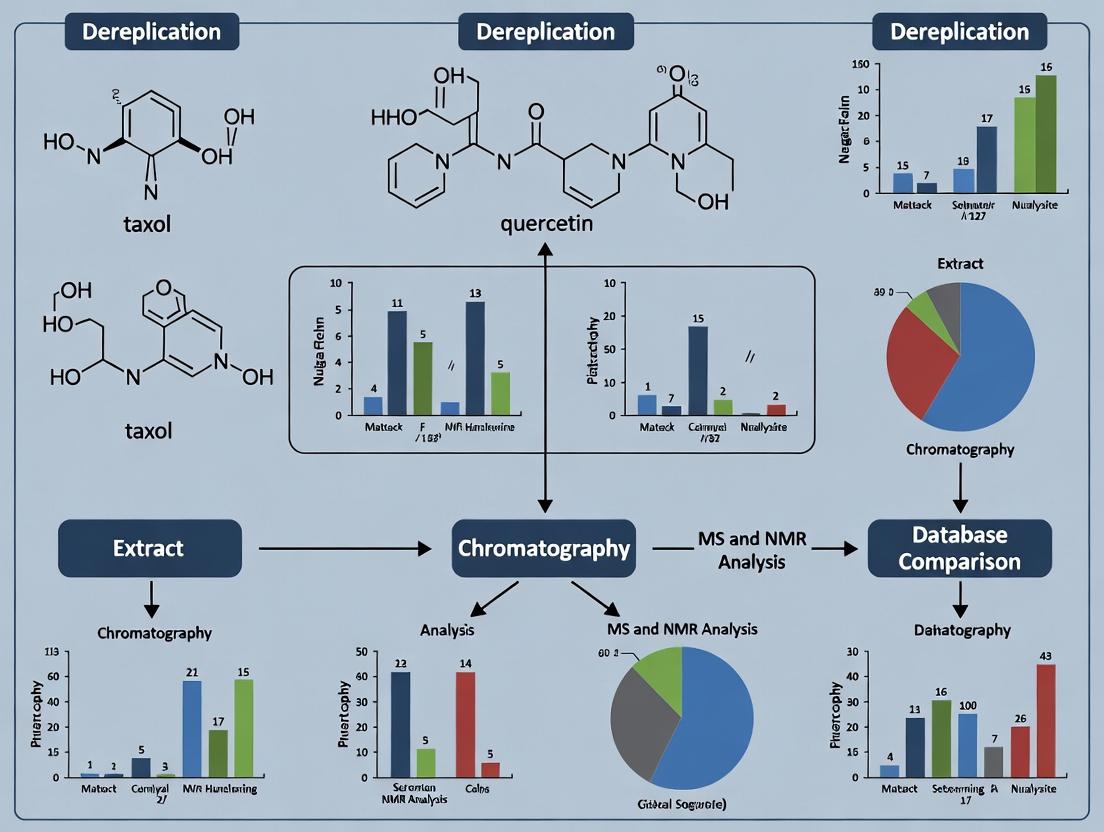

Effective dereplication operates on a triangulation model, integrating three core pillars of information: taxonomy, spectroscopy, and molecular structure databases [3].

- Taxonomy: The biological source of an extract provides the first filter. Organisms within related taxa often produce similar secondary metabolites. Knowledge of the chemical profile of a genus or family allows researchers to prioritize extracts from under-explored branches or deprioritize those from well-studied sources likely to contain known compounds [3].

- Spectroscopy: Analytical data, primarily from High-Resolution Mass Spectrometry (HRMS) and Nuclear Magnetic Resonance (NMR) spectroscopy, provide the experimental fingerprint of a compound. HRMS delivers accurate molecular mass and formula, while NMR offers detailed structural insights [5]. The combined use of LC-MS/MS and NMR is considered a gold standard for high-confidence identification [1].

- Databases & Bioinformatics: This pillar encompasses the curated libraries of known compounds and their associated data. Successful dereplication relies on querying experimental spectral data against these repositories. Key databases include AntiBase (microbial metabolites), MarinLit (marine NPs), PubChem, and GNPS (tandem MS spectra) [5]. The integration of bioinformatics and cheminformatics tools for data mining and pattern recognition is what transforms raw data into actionable knowledge [2].

The synergy of these pillars creates a powerful dereplication strategy. For example, detecting a mass signal in a Streptomyces extract that matches the exact mass and isotopic pattern of a known streptomycin-like compound in a database, supported by similar UV profiles and taxonomic precedent, allows for its swift classification as a known entity [5].

Quantitative Impact and Core Methodologies

The adoption of dereplication is reflected in the scholarly landscape and is driven by specific, high-throughput methodologies.

Table 1: Quantitative Impact of Dereplication in Natural Products Research (2014-2023)

| Metric | Figure | Significance |
|---|---|---|
| Research Articles on Dereplication (since Apr 2014) | 908 articles | Indicates a sustained, high level of research activity and methodological development [2]. |
| Total Citations for Dereplication Research | > 40,520 citations | Demonstrates the foundational importance and widespread influence of dereplication work [2]. |
| Reviews Published on Dereplication | 89 reviews | Highlights the field's rapid evolution and the need for continual synthesis of best practices [2]. |
| Common Dereplication Success Rate (Method-Dependent) | High (for known compounds in databases) | Efficiency is contingent on database comprehensiveness and analytical data quality [3] [5]. |

Core Methodological Workflow: The standard dereplication protocol involves a cascade of analytical techniques, increasing in specificity and confirmatory power.

- Initial Profiling (LC-UV-HRMS): A crude extract is analyzed by liquid chromatography coupled with diode-array UV detection and high-resolution mass spectrometry. This provides retention time, UV spectrum (indicative of chromophore), and an accurate molecular formula for each major component [5] [4].

- Database Query: The molecular formula and UV data are queried against NP databases. A match tentatively identifies the compound.

- Confirmation (MS/MS & NMR): Tentative identifications are confirmed by comparing experimental tandem mass spectrometry (MS/MS) fragmentation patterns with library spectra (e.g., in GNPS). For critical or ambiguous cases, 1D and 2D NMR data on the crude extract or semi-purified fractions provide definitive structural confirmation without the need for full isolation [5] [1].

Detailed Experimental Protocol: A Metabolomics-Driven Dereplication Workflow

The following protocol, adapted from a study on antitrypanosomal actinomycetes, details a robust, metabolomics-based dereplication pipeline using HRMS and statistical analysis [5].

Objective: To rapidly identify known metabolites and highlight unknown signals in a bioactive bacterial crude extract.

Materials & Reagents:

- Sample: Crude ethyl acetate extract of fermented microbial culture.

- Analytical Instrument: High-Resolution Fourier Transform Mass Spectrometer (HRFTMS) coupled to UPLC (e.g., Orbitrap Exploris).

- Chromatography: Reversed-phase C18 column (e.g., 2.1 x 50 mm, 1.8 μm).

- Solvents: LC-MS grade water (with 0.1% formic acid) and acetonitrile.

- Software: MZmine 2.10 for data processing; SIMCA 13.0.2 for multivariate statistics; GNPS for MS/MS networking.

- Databases: AntiBase, MarinLit, in-house spectral libraries.

Procedure:

Sample Preparation: Dissolve crude extract in methanol to a concentration of 1 mg/mL. Centrifuge and filter (0.2 μm) prior to injection.

LC-HRMS Data Acquisition:

- Inject 2 μL of sample.

- Employ a gradient elution: 5% to 100% acetonitrile in water over 15 minutes.

- Operate the mass spectrometer in both positive and negative electrospray ionization (ESI) modes with a mass resolution >70,000.

- Acquire data in full-scan mode (m/z 100-1500).

Data Processing & Dereplication with MZmine:

- Import raw data files.

- Perform peak detection, deconvolution, and alignment across samples.

- Deisotope and group adducts.

- Predict molecular formulas for detected features using an isotope pattern matching algorithm (mass tolerance < 5 ppm).

- Export a list of features with m/z, retention time, and predicted formula.
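The 5 ppm mass tolerance used in the formula-prediction step can be made concrete with a short calculation. The sketch below is not MZmine's algorithm, which also scores isotope patterns; it only checks whether an observed [M+H]+ value lies within tolerance of a candidate formula. The function names and the caffeine example are illustrative assumptions, not part of the cited protocol.

```python
# Monoisotopic masses (u) of the most abundant isotopes.
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048, "O": 15.9949146196}
PROTON = 1.007276466812  # mass of a proton (u)


def neutral_mass(formula):
    """Monoisotopic mass of a formula given as {element: count}."""
    return sum(MONOISOTOPIC[element] * count for element, count in formula.items())


def mz_protonated(formula):
    """Theoretical m/z of the singly charged [M+H]+ ion."""
    return neutral_mass(formula) + PROTON


def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


def within_tolerance(observed_mz, formula, tol_ppm=5.0):
    """True if the observed m/z matches the candidate formula within tol_ppm."""
    return abs(ppm_error(observed_mz, mz_protonated(formula))) < tol_ppm


# Illustrative candidate: caffeine, C8H10N4O2.
caffeine = {"C": 8, "H": 10, "N": 4, "O": 2}
print(round(mz_protonated(caffeine), 4))
print(within_tolerance(195.0880, caffeine))  # roughly 2 ppm off
print(within_tolerance(195.0900, caffeine))  # roughly 12 ppm off
```

In practice the same check is applied to every candidate formula for a feature, and isotope-pattern agreement is then used to rank the survivors.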

Database Mining:

- Query the list of predicted molecular formulas against natural product databases (AntiBase, MarinLit).

- Filter results by considering the taxonomic origin of the sample.

- Tentatively assign structures to database matches.
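The database-mining step above can be sketched as a lookup followed by a taxonomy check. AntiBase and MarinLit are not queried here; the example uses a small in-memory list with made-up placeholder records, and the `Record` class and `annotate` function are hypothetical names introduced only for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Record:
    name: str          # placeholder compound name
    formula: str       # molecular formula as a plain string
    genera: frozenset  # genera the compound has been reported from


# Toy, made-up entries standing in for an export from a real database.
TOY_DB = [
    Record("known_compound_1", "C20H16O6", frozenset({"Streptomyces", "Actinokineospora"})),
    Record("known_compound_2", "C20H16O6", frozenset({"Dysidea"})),
    Record("known_compound_3", "C15H10O5", frozenset({"Streptomyces"})),
]


def annotate(formulas, source_genus, db=TOY_DB):
    """Map each predicted formula to a list of (name, taxonomy_supported) hits."""
    result = {}
    for formula in formulas:
        hits = [(r.name, source_genus in r.genera) for r in db if r.formula == formula]
        hits.sort(key=lambda hit: not hit[1])  # stable sort: taxonomy-supported hits first
        result[formula] = hits
    return result


hits = annotate(["C20H16O6", "C30H40N2O9"], "Streptomyces")
for formula, matches in hits.items():
    print(formula, matches if matches else "no database hit: candidate unknown")
```

Ranking rather than discarding the taxonomically unsupported hits is a design choice in this sketch: a formula match from an unrelated organism is weaker evidence, but it is still worth showing to the analyst.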

Statistical Prioritization (Optional but Powerful):

- If multiple extracts are compared (e.g., from different fermentation conditions), perform Principal Component Analysis (PCA) or Orthogonal Projections to Latent Structures Discriminant Analysis (OPLS-DA) in SIMCA.

- Identify features (mass signals) that are statistically significant for the desired bioactivity or that distinguish one condition from another. These become high-priority targets for further investigation, whether for dereplication or novel compound isolation [5].
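As a rough illustration of activity-based prioritization, the sketch below ranks features by how strongly their intensity tracks bioactivity across extracts. This is a plain per-feature Pearson correlation, a much simpler stand-in for the PCA and OPLS-DA models run in SIMCA, and the feature names and numbers are invented toy values.

```python
from math import sqrt


def pearson(x, y):
    """Pearson correlation coefficient; returns 0.0 if either series is constant."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = sqrt(sum((b - mean_y) ** 2 for b in y))
    if sd_x == 0 or sd_y == 0:
        return 0.0
    return cov / (sd_x * sd_y)


def rank_features(intensities, activity):
    """Sort features by correlation with activity, strongest positive first."""
    scores = {feat: pearson(values, activity) for feat, values in intensities.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Toy data: percent inhibition for four extracts and peak areas for three features.
activity = [10, 50, 90, 30]
intensities = {
    "feat_A": [1e4, 5e4, 9e4, 3e4],  # rises with activity
    "feat_B": [5e4, 5e4, 5e4, 5e4],  # constant across extracts
    "feat_C": [9e4, 5e4, 1e4, 7e4],  # falls as activity rises
}

ranked = rank_features(intensities, activity)
for feature, r in ranked:
    print(feature, round(r, 3))
```

With only a handful of extracts such correlations are fragile, which is one reason multivariate models with cross-validation are preferred in the protocol.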

Expected Outcomes: The output is an annotated chromatogram distinguishing known compounds (dereplicated) from unknown features. The unknown features, particularly those correlated with bioactivity in statistical models, are prioritized for subsequent isolation and structure elucidation.

Advanced Strategies: Molecular Networking and Integrative Omics

Beyond single-database queries, advanced computational strategies have revolutionized dereplication.

Molecular Networking via GNPS: This is a paradigm-shifting tool that visualizes the chemical space of an entire extract library [4]. MS/MS spectra from all samples are compared; spectrally similar molecules cluster together as nodes in a network graph. Known compounds act as "anchors" in the network, allowing for the tentative identification of their close structural analogues (neighboring nodes). More importantly, clusters that contain no known compounds or that are distant from known compound clusters visually flag putative novel compound families for targeted isolation [2] [4].
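The clustering idea behind molecular networking can be shown with a minimal sketch: spectra become nodes, pairs above a similarity threshold become edges, and connected components are the candidate compound families. This uses a plain binned cosine score rather than the scoring GNPS actually applies, and the 0.7 threshold, bin width, and toy spectra are all assumptions made for illustration.

```python
from itertools import combinations
from math import sqrt


def bin_spectrum(peaks, bin_width=0.01):
    """Collapse (m/z, intensity) peaks into integer m/z bins."""
    binned = {}
    for mz, intensity in peaks:
        key = round(mz / bin_width)
        binned[key] = binned.get(key, 0.0) + intensity
    return binned


def cosine(a, b):
    """Cosine similarity between two binned spectra."""
    dot = sum(value * b.get(key, 0.0) for key, value in a.items())
    norm_a = sqrt(sum(v * v for v in a.values()))
    norm_b = sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def build_edges(spectra, threshold=0.7):
    """Return node pairs whose spectra score at or above the threshold."""
    binned = {name: bin_spectrum(peaks) for name, peaks in spectra.items()}
    return [(u, v) for u, v in combinations(sorted(binned), 2)
            if cosine(binned[u], binned[v]) >= threshold]


def components(nodes, edges):
    """Group nodes into connected components with a small union-find."""
    parent = {n: n for n in nodes}

    def find(n):
        while parent[n] != n:
            parent[n] = parent[parent[n]]
            n = parent[n]
        return n

    for u, v in edges:
        parent[find(u)] = find(v)
    groups = {}
    for n in nodes:
        groups.setdefault(find(n), set()).add(n)
    return list(groups.values())


# Toy MS/MS spectra: an annotated anchor, a likely analogue, and an unrelated feature.
spectra = {
    "known_anchor": [(105.03, 100), (133.06, 60), (161.05, 30)],
    "analogue": [(105.03, 90), (133.06, 70), (175.07, 20)],
    "unrelated": [(91.05, 100), (119.08, 40)],
}
edges = build_edges(spectra)
families = components(list(spectra), edges)
print(edges)
print(families)
```

A component that contains an annotated anchor suggests its neighbours are analogues of a known scaffold, while a component with no anchor is the kind of cluster the text describes as a flag for possible novelty.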

Table 2: The Scientist's Toolkit: Essential Reagents and Resources for Dereplication

| Tool/Reagent | Function in Dereplication | Key Consideration |
|---|---|---|
| High-Resolution Mass Spectrometer (e.g., Q-TOF, Orbitrap) | Provides accurate mass (< 5 ppm error) for molecular formula prediction. The cornerstone of modern dereplication. | High mass resolution and stability are critical for reliable formula assignment [5]. |
| Hyphenated LC-MS System (UPLC/HRMS) | Separates complex mixtures and delivers clean, chromatographically resolved mass data for individual components. | Ultra-HPLC provides superior separation speed and resolution for complex extracts [6]. |
| Reference Databases (AntiBase, MarinLit, GNPS) | Digital libraries for comparing experimental data against known compounds. GNPS specializes in crowd-sourced MS/MS spectra. | Database coverage, accuracy, and search algorithms directly impact success rate [3] [5]. |
| NMR Spectrometer (High-Field) | Provides definitive structural information to confirm database matches or elucidate novel structures. | Used on crude or fractionated samples for confirming dereplication hits or solving new structures [1]. |
| Statistical Software (e.g., SIMCA, MZmine) | Enables multivariate analysis of metabolomic data to correlate chemical features with biological activity or origin. | Essential for prioritizing features in complex datasets from multiple samples [5]. |

Integrative Genomics and Metabolomics: The most progressive pipelines combine metabolomic dereplication with genome mining. Sequencing the genome of the source organism can reveal biosynthetic gene clusters (BGCs) for non-ribosomal peptide synthetases (NRPS) or polyketide synthases (PKS). Researchers can then specifically search for the predicted masses and features of these compounds in the metabolomic data, creating a highly targeted "hunting license" for novel molecules predicted to exist [2] [7]. This approach directly tests the link between genetic potential and chemical expression.

Case Studies and Practical Applications

The practical value of dereplication is best illustrated through case studies:

- Resurrecting a "Depleted" Library: A library of 960 marine sponge extracts, considered chemically exhausted after 30+ years of study, was reanalyzed using GNPS molecular networking. This led to the discovery of entirely new classes of alkaloids and terpenes in genera like Dysidea and Cacospongia, which were previously thought to be well-characterized. Dereplication here distinguished rare novel scaffolds from common known ones, breathing new life into an old resource [4].

- Targeted Isolation of Novel Bioactives: In the study of Actinokineospora sp. EG49, HRMS and NMR-based dereplication quickly identified numerous known angucycline analogs in the crude extract. By focusing on unknown mass signals that also showed characteristic angucycline MS/MS fragments, researchers prioritized and successfully isolated two novel O-glycosylated angucyclines, actinosporins A and B, with selective antitrypanosomal activity [5].

Dereplication has evolved from a simple checkpoint to a dynamic, informatics-driven strategy that is central to efficient natural product discovery. Its critical role in preventing the rediscovery of known compounds is the foundational thesis for modernizing NP research. By integrating high-throughput analytics, spectral networking, genomic context, and intelligent databases, dereplication no longer just says "what is known"—it actively guides researchers toward what is unknown and likely to be novel.

Future directions point towards greater automation, the integration of artificial intelligence for spectral prediction and classification, and the development of universal, federated databases [2] [7]. As these tools mature, dereplication will solidify its position as the indispensable gatekeeper and guide, ensuring that the vast effort invested in natural product research yields maximum innovation in drug discovery and development.

The Historical Evolution of Dereplication Strategies in Drug Discovery

The discovery of novel bioactive compounds from natural sources—including plants, microbes, and marine organisms—has been a cornerstone of therapeutic development for decades, with over half of all approved drugs originating from such products [8]. However, this field faces a persistent and costly challenge: the rediscovery of known compounds. Traditional bioactivity-guided fractionation is a labor-intensive process, often requiring weeks or months of work only to culminate in the isolation and characterization of a substance already documented in the scientific literature. This inefficiency severely hampers research productivity and resource allocation in drug discovery [8].

Dereplication, defined as the process of rapidly identifying known compounds within a complex mixture at the earliest possible stage, was developed as a strategic solution to this problem. Its core thesis is that by efficiently filtering out known entities, researchers can focus their efforts exclusively on novel chemistry, thereby accelerating the discovery pipeline and conserving valuable resources [6]. This whitepaper traces the historical evolution of dereplication methodologies, from early, low-throughput techniques to today's integrated, informatics-driven platforms, framing this progression within the overarching goal of preventing rediscovery.

The Historical Trajectory of Dereplication

The evolution of dereplication strategies mirrors advances in analytical technology and computational power. The field has progressed from reliance on simple physicochemical properties to the sophisticated integration of multi-dimensional data.

Table 1: Historical Evolution of Dereplication Strategies

| Era | Dominant Strategy | Key Technologies | Primary Goal | Throughput & Speed |
|---|---|---|---|---|
| Pre-1990s (Early) | Bioassay-guided fractionation with late-stage identification | Column chromatography, TLC, UV-Vis spectroscopy | Isolate pure compound for structure elucidation (often rediscovery) | Very low; weeks to months per compound |
| 1990s-2000s (Chromatographic) | Chromatographic profiling & spectral libraries | HPLC-DAD, GC-MS, LC-MS, early database searching | Compare profiles/spectra to libraries of knowns | Medium; days to weeks |
| 2000s-2010s (Spectroscopic Revolution) | High-resolution mass spectrometry & hyphenated techniques | HR-MS (e.g., Q-TOF, Orbitrap), LC-MS/MS, NMR microprobes | Determine molecular formula & fragment patterns for database matching | High; hours to days |
| 2010s-Present (Integrated & Predictive) | Multimodal data integration & in silico prediction | LC-HRMS/MS, molecular networking, online bioassays, AI/ML tools | Annotate knowns and prioritize novel scaffolds in complex mixtures | Very high; real-time to hours |

Early Era: Manual Separation and Late-Stage Identification

Initially, natural product discovery was a linear process. Crude extracts showing bioactivity were subjected to sequential chromatographic separations (e.g., open column chromatography, thin-layer chromatography) guided by repetitive biological testing. The structure of a purified active compound was elucidated only at the endpoint, typically using nuclear magnetic resonance (NMR) and mass spectrometry (MS) [6]. This approach carried a high risk of rediscovery, as the chemical identity remained unknown until significant effort had been expended.

Chromatographic Profiling and Spectral Library Matching

The introduction of high-performance liquid chromatography (HPLC) coupled with diode-array detection (DAD) marked a significant advance. Researchers could generate ultraviolet-visible (UV-Vis) spectral profiles of mixtures and compare chromatographic retention times and UV spectra against in-house or commercial libraries of known compounds [6]. This allowed for the tentative identification of known compounds before isolation. The subsequent coupling of HPLC with mass spectrometry (LC-MS) added a new dimension of specificity through molecular weight information.

The Spectroscopic Revolution: High-Resolution and Tandem MS

The advent of high-resolution mass spectrometry (HRMS) was transformative. Instruments like quadrupole-time-of-flight (Q-TOF) and Orbitrap analyzers could provide exact molecular masses, enabling the calculation of precise elemental compositions. This allowed researchers to search chemical databases with high confidence using mass queries alone [8]. Tandem MS/MS further empowered dereplication by providing diagnostic fragment ion patterns, creating a unique "fingerprint" for a molecule that could be matched against growing spectral libraries such as MassBank, GNPS, and mzCloud [8].

Modern Era: Integrated and Predictive Workflows

Today, dereplication is a multimodal, informatics-rich process. The state of the art integrates chromatographic separation, HRMS/MS, and sometimes NMR or online biochemical assays into a unified workflow. Key developments include:

- Molecular Networking: Visualizes the chemical relationships within a sample based on MS/MS similarity, allowing clusters of known compounds to be identified rapidly while highlighting unique, potentially novel molecules for further investigation [6].

- Online Bioactivity Screening: Techniques like online DPPH radical scavenging assays couple directly to LC-MS, enabling the simultaneous detection of chemical constituents and their antioxidant activity, directly linking structure to function [9].

- Data Fusion and AI: Advanced platforms integrate HRMS, NMR, and retention time data with bioactivity readouts. Tools like the CATHEDRAL annotation platform use such fused data to assign confidence levels to compound identifications, while machine learning models begin to predict both identity and bioactivity from spectral data [9].

Diagram: The Historical Evolution of Dereplication Strategy Goals

Core Methodologies and Experimental Protocols

Modern dereplication relies on robust, standardized protocols that combine separation science, advanced spectroscopy, and data analysis.

Protocol 1: LC-HRMS/MS Library Construction for Rapid Dereplication

This protocol, adapted from a 2025 study, details the creation of an in-house tandem MS spectral library for dereplicating common phytochemicals [8].

1. Sample Preparation and Pooling Strategy:

- Obtain pure analytical standards (≥97% purity) of target compounds.

- Adopt a pooling strategy to increase efficiency. Group compounds into pools based on their calculated log P (lipophilicity) and exact mass to minimize co-elution and the presence of isomers in the same LC-MS run [8].

- Prepare stock solutions of individual standards in methanol or DMSO, then combine according to pool design.
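The pooling idea can be sketched as a greedy assignment: a standard joins the first pool in which it has no near-isobaric partner and sits far enough from every other member in log P. The cited study's actual pooling rules are not given here, so the tolerances, the function name, and the placeholder standards with invented values are all assumptions.

```python
def assign_pools(standards, mass_tol=0.01, min_logp_gap=0.5):
    """Greedily place (name, exact_mass, logp) standards into pools.

    Two standards may share a pool only if their exact masses differ by more
    than mass_tol (so isomers are split) and their log P values differ by at
    least min_logp_gap (a crude proxy for avoiding co-elution).
    """
    pools = []
    for name, mass, logp in sorted(standards, key=lambda s: s[2]):
        for pool in pools:
            compatible = all(
                abs(mass - other_mass) > mass_tol and abs(logp - other_logp) >= min_logp_gap
                for _, other_mass, other_logp in pool
            )
            if compatible:
                pool.append((name, mass, logp))
                break
        else:
            # No existing pool can take this standard, so open a new one.
            pools.append([(name, mass, logp)])
    return pools


# Placeholder standards with invented values; std_A and std_B are isomers.
standards = [
    ("std_A", 302.0427, 1.5),
    ("std_B", 302.0427, 1.8),
    ("std_C", 290.0790, 0.4),
    ("std_D", 448.1006, 0.6),
]

pools = assign_pools(standards)
for index, pool in enumerate(pools, start=1):
    print(f"pool {index}:", [name for name, _, _ in pool])
```

A greedy pass does not guarantee the minimum number of pools, but it is easy to audit, which matters when the pool design has to be reproduced at the bench.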

2. LC-HRMS/MS Analysis:

- Chromatography: Use a reversed-phase C18 column. Employ a gradient elution with mobile phase A (0.1% formic acid in water) and B (0.1% formic acid in methanol or acetonitrile). Optimize the gradient to achieve baseline separation of compounds in the pool.

- Mass Spectrometry: Operate an electrospray ionization (ESI) source in positive and/or negative mode. For library construction, collect data in data-dependent acquisition (DDA) mode.

- Full scan (m/z 100-1500) at high resolution (e.g., 70,000 FWHM).

- Isolate the top N most intense ions for fragmentation.

- Fragment ions at multiple collision energies (e.g., 10, 20, 30, 40 eV) to capture comprehensive fragmentation patterns. A normalized collision energy ramp (e.g., 25-62 eV) can also be used [8].

- Acquire MS/MS spectra for both [M+H]+ and [M+Na]+ adducts where applicable.
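When targeting both adducts, the expected precursor m/z values follow directly from the neutral monoisotopic mass. The short sketch below computes them for quercetin, one of the standards named in this article; the helper names are hypothetical and only singly charged positive-mode adducts are covered.

```python
# Mass added to the neutral molecule for each singly charged adduct (u).
ADDUCT_SHIFT = {
    "[M+H]+": 1.007276,    # proton
    "[M+Na]+": 22.989218,  # sodium atom minus one electron
}


def adduct_mz(neutral_mass, adduct):
    """Theoretical m/z of a singly charged adduct ion."""
    return neutral_mass + ADDUCT_SHIFT[adduct]


def target_list(neutral_mass):
    """Expected precursor m/z for each supported adduct, rounded to 4 decimals."""
    return {adduct: round(adduct_mz(neutral_mass, adduct), 4) for adduct in ADDUCT_SHIFT}


QUERCETIN_MASS = 302.042653  # monoisotopic mass of C15H10O7
targets = target_list(QUERCETIN_MASS)
print(targets)
```

The difference between the two values (about 21.9819) is also what adduct-grouping steps look for when deciding that two co-eluting ions belong to the same compound.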

3. Library Construction and Data Processing:

- Process raw files to extract for each compound: precursor m/z (with <5 ppm mass error), retention time, and all associated MS/MS spectra.

- Compile a structured library containing: compound name, molecular formula, exact mass, observed adducts, retention time, and collision energy-dependent MS/MS spectra.

- Submit data to public repositories (e.g., MetaboLights) to enhance community resources [8].

4. Dereplication of Unknown Extracts:

- Analyze crude plant or microbial extracts under identical LC-HRMS/MS conditions.

- Process the unknown dataset: detect features (m/z, RT), and perform MS/MS spectral matching against the constructed library.

- Apply a matching score threshold (e.g., cosine score >0.7) and consider retention time alignment to confidently annotate known compounds present in the extract.
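The annotation rule in the last step can be expressed as a simple filter over candidate matches. The sketch below assumes the cosine scores, precursor mass errors, and retention time differences have already been computed by the processing software; the 0.7 cosine and 5 ppm limits come from this protocol, while the 0.2 min retention time window, the `Candidate` class, and the example values are assumptions.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    feature_id: str      # feature detected in the extract
    library_name: str    # library entry it was compared against
    cosine: float        # MS/MS spectral similarity score
    ppm_error: float     # precursor mass error in ppm
    rt_delta_min: float  # retention time difference in minutes


def annotate(candidates, min_cosine=0.7, max_ppm=5.0, max_rt_delta=0.2):
    """Keep the best-scoring library match per feature that passes all filters."""
    best = {}
    for c in candidates:
        passes = (
            c.cosine > min_cosine
            and abs(c.ppm_error) < max_ppm
            and abs(c.rt_delta_min) <= max_rt_delta
        )
        if passes and (c.feature_id not in best or c.cosine > best[c.feature_id].cosine):
            best[c.feature_id] = c
    return {feature_id: c.library_name for feature_id, c in best.items()}


# Invented candidate matches for three features.
candidates = [
    Candidate("F1", "quercetin", 0.92, 1.2, 0.05),
    Candidate("F1", "placeholder_isomer", 0.75, 2.0, 0.10),
    Candidate("F2", "catechin", 0.65, 0.8, 0.02),  # fails the cosine threshold
    Candidate("F3", "catechin", 0.90, 1.0, 1.50),  # fails the retention time window
]

annotations = annotate(candidates)
print(annotations)
```

Features that pass no filter stay unannotated, which is the desired outcome: they remain in the pool of unknowns for prioritization.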

Protocol 2: Integrated Online Bioactivity Screening and Dereplication

This advanced protocol integrates biochemical screening directly with chromatographic separation for targeted dereplication of active compounds [9].

1. System Configuration:

- Configure an online HPLC-DPPH-UV-HRMS/MS system. The effluent from the HPLC column is split post-column.

- One stream goes directly to the ESI source of the HRMS.

- The other stream mixes with a stable DPPH radical solution in a reaction coil. The decrease in DPPH absorbance (monitored at 517 nm) indicates radical scavenging activity.

2. Analysis of Complex Extract:

- Inject the fractionated or crude natural extract.

- Acquire data in parallel: 1) full scan and DDA MS/MS data from the HRMS, and 2) the UV trace at 517 nm from the DPPH reaction coil.

- The bioactivity (UV) trace is aligned in time with the total ion chromatogram (TIC) from the MS, allowing direct correlation of active peaks with specific MS features.
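Aligning the two traces amounts to finding dips in the 517 nm absorbance and mapping them back to MS retention times. The sketch below assumes a fixed transit delay through the reaction coil and a flat baseline, neither of which is specified in the source; the function names, thresholds, and toy trace are illustrative only.

```python
def find_dips(times, absorbance, min_drop):
    """Return times of local minima at least min_drop below the baseline.

    The baseline is taken as the highest reading in the trace, which assumes
    the DPPH signal is flat when no scavenger is eluting.
    """
    baseline = max(absorbance)
    dips = []
    for i in range(1, len(absorbance) - 1):
        value = absorbance[i]
        is_local_min = value < absorbance[i - 1] and value <= absorbance[i + 1]
        if is_local_min and baseline - value >= min_drop:
            dips.append(times[i])
    return dips


def match_active(dip_times, features, coil_delay, rt_tol):
    """Return IDs of MS features whose retention time lines up with a dip."""
    active = []
    for feature_id, rt in features:
        if any(abs((dip - coil_delay) - rt) <= rt_tol for dip in dip_times):
            active.append(feature_id)
    return active


# Toy DPPH trace (minutes, absorbance at 517 nm) with one scavenging event.
times = [4.0, 4.2, 4.4, 4.6, 4.8, 5.0, 5.2]
absorbance = [1.00, 0.99, 0.80, 0.55, 0.82, 0.99, 1.00]
features = [("F1", 4.28), ("F2", 6.10)]  # (feature ID, MS retention time in minutes)

dips = find_dips(times, absorbance, min_drop=0.2)
active = match_active(dips, features, coil_delay=0.3, rt_tol=0.1)
print(dips, active)
```

On a real system the coil delay would be measured with a known scavenger standard rather than assumed, and peak broadening in the coil may call for a wider retention time tolerance.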

3. Data Fusion and Annotation:

- Extract HRMS and MS/MS data for features corresponding to active peaks in the DPPH trace.

- Search this data against commercial and public spectral libraries.

- For higher confidence, subject active fractions to further purification (e.g., by Centrifugal Partition Chromatography) and obtain micro-scale 13C NMR data. Use computer-assisted tools like CATHEDRAL to match 13C NMR profiles against databases [9].

- Fuse results from HRMS/MS and NMR to assign confidence levels (Level 1: confirmed structure with reference standard; Level 2: probable structure by spectral similarity; etc.) to each active compound.

Diagram: Integrated Modern Dereplication Workflow

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for Dereplication

| Item | Function in Dereplication | Example/Note |
|---|---|---|
| Analytical Reference Standards | Provide definitive RT and spectral data for library construction; used as positive controls. | Pure compounds (e.g., quercetin, catechin) from Sigma-Aldrich [8]. |
| Chromatography Solvents (HPLC/MS Grade) | Mobile phase components for high-resolution separation without MS interference. | Methanol, acetonitrile, water with 0.1% formic acid [8]. |
| LC Columns (Reversed-Phase) | Separate complex mixtures prior to detection. Essential for obtaining reliable RT. | C18 columns (e.g., 2.1 x 100 mm, 1.7-1.9 μm particle size). |
| DPPH Radical (2,2-Diphenyl-1-picrylhydrazyl) | Reagent for online antioxidant activity screening; detects free radical scavengers. | Prepared in methanol for post-column reaction assays [9]. |
| Deuterated NMR Solvents | For micro-scale NMR structure verification of prioritized unknowns. | Chloroform-d, methanol-d4, DMSO-d6. |
| Solid-Phase Extraction (SPE) Cartridges | Rapid cleanup and pre-fractionation of crude extracts to reduce complexity. | C18, Diol, or Ion-Exchange phases. |
| Chemical & Spectral Databases | Digital libraries for matching experimental data to known compounds. | SciFinder, Reaxys, GNPS, MassBank, NIST [8]. |

Impact and Future Perspectives

The systematic implementation of dereplication has fundamentally altered the natural product discovery workflow. By frontloading the identification process, it has dramatically reduced wasted effort on rediscovery, allowing teams to prioritize novel chemotypes with greater efficiency [6] [10]. This is evidenced by studies that rapidly annotate 50+ compounds in a single extract, simultaneously reporting new discoveries within a genus [9].

The future of dereplication lies in deeper integration and predictive analytics. The convergence of high-throughput analytical data with machine learning and artificial intelligence is poised to create predictive dereplication engines. These systems will not only identify knowns but also predict the structural novelty and potential bioactivity of unknown features directly from their spectral signatures. Furthermore, the expansion of open-access, crowd-sourced spectral libraries will continue to enhance the global capability to distinguish the known from the unknown, ensuring that drug discovery efforts remain focused on true innovation [8] [10].

Whitepaper Abstract

Compound rediscovery represents a critical inefficiency in natural product and drug discovery pipelines, incurring substantial financial costs and significant temporal delays. This whitepaper evaluates the economic burden of redundant research, details advanced dereplication protocols to prevent it, and provides a framework for quantifying the return on investment from implementing these strategies. Framed within the critical thesis that systematic dereplication is essential for sustainable innovation, this document serves as a technical guide for research and development (R&D) leaders, medicinal chemists, and laboratory scientists dedicated to optimizing discovery workflows.

The High Stakes of Rediscovery: Quantifying Economic and Temporal Drain

The process of discovering and developing a new drug is among the most capital- and time-intensive endeavors in modern science. Within this high-stakes landscape, the rediscovery of already known compounds constitutes a profound source of waste, diverting resources from truly novel research.

1.1 The Baseline Cost of Drug Development

Recent analyses confirm a substantial range in the estimated cost to develop a new drug, influenced by methodology, data sources, and the definition of a "new" drug. A comprehensive 2022 review synthesized 17 studies to contextualize this variance, finding that industry R&D costs per new prescription drug range from $113 million to over $6 billion (in 2018 dollars). For novel New Molecular Entities (NMEs), the category most relevant to new natural product leads, the range is narrower but still significant, at $318 million to $2.8 billion [11]. These figures encapsulate the immense financial risk against which the cost of rediscovery must be measured.

1.2 The Direct Cost of Delay

Time is a direct driver of cost in drug development. Delays in clinical stages have a quantifiable dual impact: the direct operational cost of running trials and the opportunity cost of lost sales. A 2024 empirical study by the Tufts Center for the Study of Drug Development provides granular estimates [12]:

- The direct daily cost to conduct Phase II and III clinical trials is approximately $40,000.

- A single day of delay in launching a new drug equals approximately $500,000 in lost prescription drug sales.

The study further notes that daily sales are highest for drugs targeting infectious, hematologic, cardiovascular, and gastrointestinal diseases, making rediscovery delays in these areas particularly costly [12].
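To show how these daily figures compound, the sketch below multiplies them out for a hypothetical delay. Summing the two components assumes the delay both extends a Phase II/III trial and postpones launch by the same number of days, which is a simplification; the 30-day figure and the function name are illustrative.

```python
DAILY_TRIAL_COST = 40_000   # approximate direct daily cost of Phase II/III trials (USD) [12]
DAILY_LOST_SALES = 500_000  # approximate lost sales per day of launch delay (USD) [12]


def delay_cost(days):
    """Estimate the direct, opportunity, and combined cost of a delay in days."""
    direct = days * DAILY_TRIAL_COST
    lost_sales = days * DAILY_LOST_SALES
    return {"direct": direct, "lost_sales": lost_sales, "total": direct + lost_sales}


estimate = delay_cost(30)  # hypothetical one-month delay
for label, value in estimate.items():
    print(f"{label}: ${value:,}")
```

Under these assumptions a one-month delay costs about $1.2 million in trial operations and $15 million in lost sales, so the opportunity cost dominates the total.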

1.3 Attributing Cost to Rediscovery

While the exact proportion of R&D expenditure wasted on rediscovery is challenging to isolate, its impact is felt across the discovery pipeline. Resources consumed include:

- Screening Costs: High-Throughput Screening (HTS) campaigns against biological targets or phenotypic assays.

- Isolation & Characterization Costs: Personnel time, consumables, and instrument time for the chromatographic separation and spectroscopic analysis (MS, NMR) required to purify and identify an active compound.

- Opportunity Cost: The most significant impact. The time and resources spent pursuing a known compound could have been allocated to investigating a novel lead with true therapeutic and commercial potential.

Table 1: Summary of Key Cost Metrics in Drug Development and Delay

| Cost Metric | Estimated Value (USD) | Key Notes & Source |
|---|---|---|
| Industry R&D Cost per New Drug | $113M - $6B+ | Broad range based on 17 studies; 2018 dollars [11]. |
| Industry R&D Cost per NME | $318M - $2.8B | Narrower range for novel molecular entities [11]. |
| Direct Daily Clinical Trial Cost (Ph II/III) | ~$40,000 | Operational cost of running trials [12]. |
| Lost Sales per Day of Delay | ~$500,000 | Opportunity cost of delayed market launch [12]. |

The Dereplication Solution: Methodologies to Prevent Rediscovery

Dereplication is the process of rapidly identifying known compounds within a complex mixture early in the discovery pipeline, thereby preventing redundant investment in their isolation and characterization. It is a multidisciplinary strategy integrating analytical chemistry, bioinformatics, and data science.

2.1 The Core Analytical Workflow

The standard dereplication protocol is centered on hyphenated analytical techniques, primarily Liquid Chromatography coupled with High-Resolution Mass Spectrometry (LC-HRMS) and tandem MS/MS. The workflow involves separating a crude extract via LC, obtaining accurate mass and isotopic pattern data for each component, and fragmenting ions to obtain structural MS/MS spectra [2]. This data forms the digital fingerprint for each metabolite.

Diagram Title: Core Analytical Dereplication Workflow

2.2 Informatics & Database Interrogation

The power of dereplication is unlocked by comparing acquired spectral data against curated databases.

- Spectral Libraries: Tools like the Global Natural Products Social Molecular Networking (GNPS) platform allow direct matching of experimental MS/MS spectra against community-contributed reference libraries [2] [1].

- In-Silico Prediction & Molecular Networking: When no direct match is found, computational tools predict chemical structures from MS/MS data. Molecular networking clusters MS/MS spectra by similarity, visualizing chemical relationships within a sample; known compounds cluster together, quickly highlighting unique, potentially novel metabolites for prioritization [2].

2.3 Integrating Orthogonal Data for Confidence

Advanced dereplication increases confidence by layering multiple data streams:

- UV/Vis & NMR Data: Hyphenating LC with UV/Vis diode-array detection (DAD) or, with more technical effort, with NMR (LC-NMR) provides orthogonal structural information [1].

- Genomic Context: For microbial products, analyzing the biosynthetic gene cluster (BGC) data from the source organism can predict the structural class of metabolites produced, guiding the chemical analysis [2].

Table 2: Experimental Protocol for High-Confidence Dereplication

| Protocol Step | Description | Key Instrumentation/Technique | Output & Purpose |

|---|---|---|---|

| 1. Sample Preparation | Generation of a soluble, particulate-free crude extract from biological material. | Solvent extraction, centrifugation, filtration. | A standardized sample compatible with LC injection. |

| 2. Chromatographic Separation | Separation of metabolite mixture based on chemical polarity. | Ultra-High-Performance Liquid Chromatography (UHPLC). | Reduced complexity, temporal resolution of metabolites for cleaner spectral data. |

| 3. High-Resolution Mass Spectrometry | Detection, accurate mass measurement, and fragmentation of eluted compounds. | LC-HRMS with tandem MS/MS capability (e.g., Q-TOF, Orbitrap). | Molecular formula data (from accurate mass) and structural fragment fingerprints (MS/MS). |

| 4. Data Acquisition & Processing | Conversion of raw instrument data to analyzable spectra and peak lists. | Vendor and open-source software (e.g., MZmine, MS-DIAL). | Aligned, deconvoluted mass spectral data for all detected features. |

| 5. Database Query & Analysis | Interrogation of spectral and chemical databases. | GNPS, NAPROC-13, internal libraries; Molecular Networking software. | Annotation of known compounds & visualization of novel chemical space. |

| 6. Orthogonal Validation (Tier 2) | Application of additional techniques for ambiguous or high-priority hits. | Microfractionation + Bioassay; LC-NMR; Genomic mining. | Confirmation of bioactivity and/or structural class, final prioritization decision. |

The Scientist's Toolkit: Essential Reagents & Technologies for Modern Dereplication

Implementing an effective dereplication strategy requires both chemical reagents and computational resources.

Table 3: Key Research Reagent Solutions for Dereplication Workflows

| Item/Category | Function in Dereplication | Technical Notes |

|---|---|---|

| LC-MS Grade Solvents (Acetonitrile, Methanol, Water) | Used for sample preparation, mobile phase preparation, and instrument calibration. | High purity is critical to minimize background noise and ion suppression in MS. |

| Internal Mass Standards | Calibrate the mass spectrometer in real time during runs, ensuring sustained high mass accuracy. | Commonly infused separately (e.g., lock mass) or included in the mobile phase. Essential for reliable formula prediction. |

| Chemical Derivatization Agents | Modifies specific functional groups (e.g., amines, carboxylic acids) to alter chromatographic retention or fragmentation patterns for difficult compounds. | Can improve detection, separation, or provide additional structural clues. |

| Standardized Natural Product Extracts & Pure Compounds | Serve as positive controls and for building in-house spectral libraries. | Used to validate instrument performance and develop/curate local reference databases. |

| Bioinformatics Software & Computational Resources | Platforms for data processing, molecular networking, and database querying. | GNPS is the central public platform [2]. Local servers or cloud compute may be needed for large datasets. |

| Curated Spectral & Structural Databases | The reference knowledge base against which unknown spectra are compared. | Public: GNPS, NIST, NAPROC-13. Commercial: Dictionary of Natural Products (Chapman & Hall/CRC), AntiBase. Institutional curation is often required. |

Quantifying the Return on Investment (ROI) in Dereplication

Investing in dereplication infrastructure—both hardware and expertise—provides a measurable return by shifting resources from low-yield rediscovery to higher-probability novel discovery.

4.1 An ROI Estimation Framework

The ROI can be modeled by comparing the costs of dereplication against the avoided costs of rediscovery:

ROI = (Cost of Rediscovery Avoided − Cost of Dereplication Program) / Cost of Dereplication Program

- Cost of Dereplication Program: Includes capital equipment (LC-HRMS), annual maintenance, consumables, bioinformatics tools, and specialized personnel.

- Cost of Rediscovery Avoided: The pro-rata share of R&D costs that would have been spent screening, isolating, and characterizing a known compound, plus the opportunity cost of the resulting delay. Using the metrics in Table 1, the full cost of advancing a single rediscovered compound through even the early stages can reach millions of dollars once allocated overhead and lost time are counted.
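
The ROI formula above can be illustrated with a short Python sketch. All dollar figures below are hypothetical placeholders chosen only to show the arithmetic; they are not taken from the cited cost studies, and the function name `dereplication_roi` is an illustrative assumption.

```python
def dereplication_roi(rediscovery_cost_avoided: float, program_cost: float) -> float:
    """ROI = (avoided rediscovery cost - program cost) / program cost."""
    if program_cost <= 0:
        raise ValueError("program_cost must be positive")
    return (rediscovery_cost_avoided - program_cost) / program_cost


# Hypothetical annual figures in USD (assumptions, for illustration only).
program_cost = (
    400_000    # amortized LC-HRMS capital equipment
    + 60_000   # maintenance contract
    + 40_000   # consumables and software
    + 250_000  # specialized personnel
)
avoided = 12 * 150_000  # 12 rediscoveries avoided at an assumed 150k each

roi = dereplication_roi(avoided, program_cost)
print(f"Program cost: {program_cost:,} USD; avoided cost: {avoided:,} USD; ROI = {roi:.2f}")
```

A positive value means the avoided rediscovery cost exceeds the program cost; the result is only as reliable as the estimate of how many rediscoveries were actually avoided.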

4.2 Strategic Implementation and Future Directions

To maximize ROI, dereplication must be positioned as the first step in any discovery pipeline, not an afterthought. The future of the field lies in deeper integration:

- Artificial Intelligence & Machine Learning: AI models are being developed to predict novel chemical structures directly from MS/MS data and to design optimized screening libraries, pushing dereplication from mere recognition to predictive avoidance of redundancy [13].

- Automated & Integrated Workflows: Coupling automated extraction, LC-MS analysis, and real-time database querying can deliver dereplication decisions in near-real-time, guiding immediate decisions on which fractions to pursue for isolation [2].

Diagram Title: ROI Logic of Dereplication Investment

Conclusion

The economic and temporal costs of compound rediscovery are severe and quantifiable drains on pharmaceutical and natural product R&D. Implementing robust, early-stage dereplication protocols is not merely a technical optimization but a strategic financial imperative. By integrating advanced analytical chemistry with bioinformatics and AI, research organizations can convert wasteful rediscovery cycles into efficient engines for novel discovery, directly improving their probability of technical and commercial success while stewarding finite resources responsibly.

Key Challenges Driving Modern Dereplication Efforts in Academia and Industry

Dereplication, the rapid identification of known compounds within complex mixtures, stands as a critical gatekeeper in natural product research and drug discovery. Its primary function is to prevent the costly and time-consuming rediscovery of known entities, thereby focusing resources on the identification of novel chemical scaffolds with potential therapeutic value [14]. In both academia and industry, modern dereplication is driven by the convergence of several pressing challenges: the immense structural redundancy in natural product libraries, the soaring costs and extended timelines of high-throughput screening, and the acute need for innovative scaffolds in areas of unmet medical need, such as antibiotic discovery [15]. This section details the core technical challenges, examines advanced methodological solutions leveraging mass spectrometry and NMR, and provides a framework for integrating these approaches to streamline the path from extract to novel lead compound.

The Core Challenges in Modern Dereplication

The process of dereplication is fundamentally challenged by the scale and complexity of natural chemical space and the economic and operational constraints of modern research.

1.1 Chemical Redundancy and Library Inflation

Natural product (NP) libraries, derived from microbial, fungal, or plant extracts, are plagued by significant structural overlap. Organisms from similar ecological niches or phylogenetic lineages often produce identical or analogous secondary metabolites. This redundancy means that traditional screening of large libraries (often comprising thousands of extracts) results in a high rate of re-identifying known bioactive compounds. A 2025 study demonstrated that, in a library of 1,439 fungal extracts, rational dereplication-based selection achieved an 84.9% reduction in the library size required to reach maximal scaffold diversity compared to random selection. This redundancy drastically reduces the probability of discovering novel chemotypes in initial screens [14].

1.2 Economic and Temporal Pressures in Drug Discovery

The financial burden of drug development is prohibitive, and early-stage inefficiencies have cascading effects. High-throughput screening (HTS) of massive, redundant NP libraries is both time-consuming and expensive. Each screen against a biological target requires significant reagents, personnel time, and data management resources. Dereplication directly addresses this by pruning libraries prior to screening, ensuring that only the most chemically distinct extracts are tested. This rationalization increases the bioassay hit rate for novel entities. For instance, the same 2025 study showed that a rationally reduced library achieved an anti-Plasmodium falciparum hit rate of 22%, nearly double the 11.26% hit rate of the full library [14].

1.3 The Innovation Gap in Critical Therapeutic Areas

The challenge of rediscovery is particularly acute in antibiotic development. The pipeline for new antibiotic classes, especially against Gram-negative pathogens, has been stagnant for decades [15]. Most "new" approvals are derivatives of existing scaffolds, which are vulnerable to pre-existing resistance mechanisms. Effective dereplication is therefore not merely an efficiency tool but a strategic imperative to uncover truly novel scaffolds with new modes of action. The global health threat of antimicrobial resistance (AMR) underscores the necessity of deploying dereplication to mine uncharted chemical space from under-explored biological sources [15].

Table 1: Impact of Rational Dereplication on Screening Efficiency [14]

| Activity Assay | Hit Rate in Full Library (1,439 extracts) | Hit Rate in 80% Diversity Library (50 extracts) | Fold Library Size Reduction |

|---|---|---|---|

| P. falciparum (phenotypic) | 11.26% | 22.00% | 28.8-fold |

| T. vaginalis (phenotypic) | 7.64% | 18.00% | 28.8-fold |

| Neuraminidase (target-based) | 2.57% | 8.00% | 28.8-fold |
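
The figures in the table above can be cross-checked with a few lines of Python. The library sizes and hit rates are copied from the table; the hit counts are derived here by rounding and are not reported values from the study.

```python
full_size, reduced_size = 1439, 50

# Hit rates in percent, from the table: (full library, 80% diversity library).
hit_rates = {
    "P. falciparum": (11.26, 22.00),
    "T. vaginalis": (7.64, 18.00),
    "Neuraminidase": (2.57, 8.00),
}

fold_reduction = full_size / reduced_size
print(f"Fold library size reduction: {fold_reduction:.1f}")

enrichment = {}
for assay, (full_pct, reduced_pct) in hit_rates.items():
    enrichment[assay] = reduced_pct / full_pct
    hits_full = round(full_size * full_pct / 100)          # derived, not reported
    hits_reduced = round(reduced_size * reduced_pct / 100)  # derived, not reported
    print(
        f"{assay}: ~{hits_full} hits in {full_size} extracts vs "
        f"~{hits_reduced} hits in {reduced_size} extracts "
        f"({enrichment[assay]:.2f}x hit-rate enrichment)"
    )
```

The hit rate rises in the reduced library, but the absolute number of hits falls, so the reduced library trades total hit count for screening effort per hit.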

Advanced Methodological Frameworks for Dereplication

Modern dereplication relies on a synergistic combination of analytical techniques, primarily liquid chromatography-tandem mass spectrometry (LC-MS/MS) and nuclear magnetic resonance (NMR) spectroscopy, integrated with powerful computational tools.

2.1 Mass Spectrometry-Based Molecular Networking

LC-MS/MS has become the workhorse of dereplication due to its high sensitivity and throughput. The contemporary strategy involves untargeted LC-MS/MS analysis followed by computational organization of the data via molecular networking (MN).

Experimental Protocol: LC-MS/MS-Based Dereplication Workflow [14] [16]

- Sample Preparation: Extracts are prepared using standardized solvent systems (e.g., methanol/water/formic acid). For plant material like Sophora flavescens, dried root powder is extracted via sonication [16].

- LC-MS/MS Analysis: Analyses are performed on a UPLC system coupled to a high-resolution mass spectrometer (e.g., Q-TOF).

  - Chromatography: A reverse-phase C18 column is used with a gradient of water (with ammonium acetate) and acetonitrile [16].

  - Mass Spectrometry: Data are acquired in both Data-Dependent Acquisition (DDA) and Data-Independent Acquisition (DIA, e.g., SWATH) modes. DDA selects the top ions for fragmentation, while DIA fragments all ions in sequential windows, providing a more complete MS/MS map [16].

- Data Processing: Raw files are converted (e.g., to mzML format) and processed through platforms like MZmine or MS-DIAL for feature detection, alignment, and isotope grouping [16].

- Molecular Networking: Processed MS/MS data is uploaded to the Global Natural Products Social Molecular Networking (GNPS) platform. GNPS clusters MS/MS spectra based on similarity, creating a network where each node is a consensus spectrum and edges represent spectral similarities. Clusters correspond to molecular families of structurally related compounds [14].

- Annotation and Dereplication: Nodes are annotated by searching against public spectral libraries (e.g., GNPS, MassBank). The network visualization allows researchers to quickly identify clusters of known compounds (dense, well-connected regions) and prioritize unknown or rare clusters for isolation [16].

Diagram 1: LC-MS/MS Molecular Networking Dereplication Pipeline

2.2 NMR-Based Dereplication with MADByTE

While MS is highly sensitive, NMR provides superior structural information and can differentiate isomers. The MADByTE (Metabolomics And Dereplication By Two-dimensional Experiments) platform represents a significant advance in NMR dereplication [17].

Experimental Protocol: NMR-Based Dereplication with MADByTE [17]

- Database Construction: A reference database is created by acquiring 2D NMR spectra (primarily HSQC and TOCSY) of pure compound standards. For example, a database for resorcylic acid lactones (RALs) and spirobisnaphthalenes was constructed from 29 isolated metabolites [17].

- Extract Analysis: Crude or pre-fractionated extracts are analyzed using the same standardized 2D NMR experiments.

- Data Analysis with MADByTE: Peak lists from the HSQC and TOCSY spectra of both standards and extracts are input into the MADByTE platform. The software identifies spin systems (groups of coupled nuclei) and builds connectivity networks.

- Network-Based Dereplication: MADByTE generates similarity networks. Shared spin system features between an extract and the reference database cluster together, allowing for the detection of specific compound classes directly in the complex mixture. This can rapidly identify both target compounds (e.g., bioactive RALs) and nuisance compounds (e.g., common cytotoxins) to guide isolation [17].
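
The idea of matching shared NMR features between a reference compound and an extract can be sketched as follows. This is a deliberately simplified illustration and not MADByTE's actual algorithm, which builds spin systems from combined HSQC and TOCSY data; here only HSQC cross-peaks are compared within shift tolerances. The function name, the tolerance values, and the peak lists are all assumptions made for the example.

```python
from typing import List, Tuple

Peak = Tuple[float, float]  # (1H shift in ppm, 13C shift in ppm)


def matched_fraction(
    reference: List[Peak],
    extract: List[Peak],
    h_tol: float = 0.03,  # assumed 1H tolerance in ppm
    c_tol: float = 0.4,   # assumed 13C tolerance in ppm
) -> float:
    """Fraction of reference HSQC cross-peaks that have a partner in the extract."""
    if not reference:
        return 0.0
    hits = 0
    for h_ref, c_ref in reference:
        if any(abs(h_ref - h) <= h_tol and abs(c_ref - c) <= c_tol for h, c in extract):
            hits += 1
    return hits / len(reference)


# Made-up peak lists, for illustration only.
reference_peaks = [(6.25, 102.1), (6.40, 108.3), (5.30, 72.5), (1.38, 20.9)]
extract_peaks = [(6.26, 102.0), (6.41, 108.5), (3.55, 61.0), (1.37, 21.0), (0.90, 14.1)]

score = matched_fraction(reference_peaks, extract_peaks)
print(f"{score:.2f} of reference cross-peaks found in the extract")
```

A high fraction suggests that the reference compound or a close analog may be present; in practice, shift tolerances depend on solvent, concentration, and instrument, so any cut-off would need to be calibrated on real data.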

Table 2: Comparison of MS-Based and NMR-Based Dereplication Approaches [17]

| Characteristic | MS-Based Dereplication | NMR-Based Dereplication (e.g., MADByTE) |

|---|---|---|

| Primary Strength | High sensitivity; excellent for high-throughput, large-scale library profiling. | Provides definitive structural connectivity; superior for isomer differentiation and structure class identification. |

| Key Limitation | Cannot differentiate isomers unambiguously; dependent on ionization efficiency. | Lower sensitivity; requires larger sample amounts; longer acquisition times. |

| Typical Data | MS1 and MS/MS fragmentation patterns. | 2D NMR correlations (e.g., HSQC, TOCSY). |

| Best Application | Initial rapid triaging of large extract libraries. | In-depth characterization of prioritized extracts and isomer resolution. |

Diagram 2: NMR-Based Dereplication via the MADByTE Platform

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for Dereplication Experiments

| Item/Category | Function in Dereplication | Example from Protocols |

|---|---|---|

| Chromatography Columns | Separate complex mixtures for MS or NMR analysis. | 2.1 x 150 mm, 1.8 µm ECLIPSE PLUS-C18 column for UPLC [16]. |

| MS-Grade Solvents & Additives | Ensure compatibility with MS detection and achieve optimal chromatographic separation. | Ammonium acetate in water (mobile phase A); acetonitrile (mobile phase B); formic acid as modifier [16]. |

| Pure Natural Product Standards | Serve as references for constructing spectral databases for both MS and NMR. | Matrine, kurarinone, hypothemycin standards for library matching [17] [16]. |

| Deuterated NMR Solvents | Required for locking and shimming in NMR spectroscopy. | Deuterated methanol (CD₃OD), dimethyl sulfoxide (DMSO-d₆), or chloroform (CDCl₃). |

| Data Processing Software | Convert, align, and analyze raw instrumental data. | MSConvert (ProteoWizard), MZmine, MS-DIAL for MS data; MADByTE for NMR data [14] [17] [16]. |

| Spectral Libraries | Enable automated annotation of MS/MS spectra. | GNPS public spectral libraries, MassBank, in-house curated libraries [14] [16]. |

Integrated Workflow and Future Outlook

The most effective modern dereplication employs a sequential, orthogonal strategy. MS-based molecular networking is used first for high-throughput triaging of large libraries, rapidly identifying known clusters and highlighting unique chemical entities. Subsequently, promising, chemically distinct extracts are subjected to deeper NMR analysis (like MADByTE) for compound class verification, isomer discrimination, and partial structure elucidation prior to isolation.

The future of dereplication is inextricably linked to artificial intelligence and data integration. Machine learning models trained on vast MS/MS and NMR spectral libraries will improve prediction accuracy and novelty scoring. Furthermore, integrating genomic data (e.g., biosynthetic gene cluster analysis) with metabolomic dereplication data creates a powerful "triangulation" approach for targeting specific chemical phenotypes. As articulated in the 2021 roadmap for antibiotic discovery, overcoming the rediscovery bottleneck through such advanced dereplication is a non-negotiable prerequisite for rebuilding a sustainable pipeline of novel therapeutic agents [15]. The ongoing evolution of dereplication from a simple filtering step to an intelligent, predictive discovery engine is fundamental to translating nature's chemical diversity into the next generation of medicines.

Methodological Advances: Applying LC-MS/MS, Molecular Networking, and Bioinformatics Tools

Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) as a Dereplication Workhorse

The Critical Imperative: Dereplication in the Context of Rediscovery

In the pursuit of novel bioactive compounds from natural sources, researchers face a fundamental and costly challenge: the rediscovery of known metabolites. This process of repeatedly isolating and characterizing compounds that are already documented in the scientific literature consumes immense resources, time, and intellectual capital [18]. Within the broader thesis of efficient natural product discovery, dereplication—the early and rapid identification of known compounds in crude extracts—stands as the essential gatekeeper. Its primary function is to prevent redundant research, allowing programs to focus exclusively on novel chemistry with potential therapeutic or biotechnological value [19].

The economic and temporal costs of rediscovery are substantial. Historically, the significant expenses associated with advancing known compounds into the late stages of isolation and characterization contributed to a decline in natural product discovery programs within the pharmaceutical industry [19]. Beyond economics, rediscovery represents a systemic inefficiency in scientific progress. As noted in broader scientific discourse, claims of "discovery" are frequently made for phenomena already established in the literature, a trend exacerbated by modern "kit culture" and sometimes insufficient engagement with historical research [18]. In natural product research, this is not merely an academic concern; it directly impacts the pipeline for new drugs and lead compounds.

Therefore, effective dereplication is not a peripheral analytical task but a core strategic competency. It requires a powerful analytical technique capable of delivering high-confidence identifications from complex mixtures with minimal material. Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) has emerged as the preeminent workhorse for this role, offering the requisite combination of sensitivity, specificity, and compatibility with high-throughput workflows [2] [20].

Technical Foundation: Principles of LC-MS/MS for Dereplication

The power of LC-MS/MS for dereplication stems from its two-dimensional separation and identification process. First, liquid chromatography (LC) separates the complex mixture of metabolites in a crude extract based on physicochemical properties such as polarity. This reduces ion suppression and simplifies the mass spectrometric analysis. The separated analytes are then introduced into the mass spectrometer [21].

Electrospray ionization (ESI) is the most common soft ionization technique, gently converting liquid-phase molecules into gas-phase ions ([M+H]⁺ or [M-H]⁻) with minimal fragmentation, providing the intact molecular weight [21]. For less polar compounds, alternative techniques like atmospheric pressure chemical ionization (APCI) may be employed [21].

The tandem mass spectrometry (MS/MS) component adds a critical layer of specificity. In a common triple quadrupole configuration, the first quadrupole (Q1) selects the precursor ion of interest. This ion is then fragmented in a collision cell (q2) through collision-induced dissociation (CID), and the resulting product ion spectrum is recorded by the third quadrupole (Q3) [21] [22]. This MS/MS spectrum serves as a "fingerprint" of the compound: it reflects the compound's specific chemical structure and is far more diagnostic than the molecular weight alone.

Table 1: Key Ionization Techniques and Mass Analysers in LC-MS/MS for Dereplication

| Component | Common Types in Dereplication | Primary Function & Advantage |

|---|---|---|

| Ionization Source | Electrospray Ionization (ESI) | Soft ionization for polar molecules; produces [M+H]⁺/[M-H]⁻ ions [21]. |

| Ionization Source | Atmospheric Pressure Chemical Ionization (APCI) | Suitable for less polar, thermally stable small molecules (e.g., some steroids) [21]. |

| Mass Analyser | Triple Quadrupole (QqQ) | Excellent for targeted quantification (MRM) and library matching; robust and sensitive [21] [22]. |

| Mass Analyser | Quadrupole-Time of Flight (Q-TOF) | High-resolution and accurate mass measurement for untargeted profiling; enables formula prediction [20]. |

| Mass Analyser | Ion Trap (e.g., LTQ) | Allows multiple stages of fragmentation (MSⁿ); useful for elucidating fragmentation pathways [19]. |

High-resolution mass spectrometers (HRMS), such as Q-TOF or Orbitrap instruments, provide accurate mass measurements to determine elemental composition, adding another definitive filter for compound identification [20].
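
As a minimal sketch of how accurate mass acts as a filter, the following Python computes the theoretical [M+H]⁺ m/z of a candidate formula and the mass error of an observed ion in parts per million. Matrine (C15H24N2O) is used as the example; the "measured" m/z is a hypothetical value, and the small element table and simple formula parser are assumptions that cover only unbracketed formulas.

```python
import re

# Monoisotopic masses (u) of the most abundant isotope of each element.
MONOISOTOPIC = {
    "C": 12.0,
    "H": 1.00782503,
    "N": 14.00307401,
    "O": 15.99491462,
    "S": 31.97207117,
}
PROTON = 1.00727646  # mass of H+ (hydrogen atom minus one electron)


def monoisotopic_mass(formula: str) -> float:
    """Sum monoisotopic masses for a simple formula such as 'C15H24N2O'."""
    total = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        total += MONOISOTOPIC[element] * (int(count) if count else 1)
    return total


def ppm_error(measured_mz: float, theoretical_mz: float) -> float:
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


theoretical = monoisotopic_mass("C15H24N2O") + PROTON  # [M+H]+ of matrine
measured = 249.1965                                    # hypothetical observed m/z
error = ppm_error(measured, theoretical)
print(f"theoretical m/z {theoretical:.4f}, error {error:+.1f} ppm")
```

A candidate formula is usually kept only if the error falls inside the instrument's mass accuracy window (often a few ppm for Q-TOF and Orbitrap instruments); isotope pattern and MS/MS evidence are then needed because many formulas can share one accurate mass.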

The Dereplication Workflow: From Raw Extract to Confident Identification

A modern dereplication workflow integrates LC-MS/MS analysis with powerful computational tools and databases. The following diagram illustrates this integrated process.

Figure 1: Integrated LC-MS/MS Dereplication Workflow for Natural Products [19] [2] [20].

The workflow begins with a crude extract, which is separated by LC. Each chromatographic peak is analyzed by MS to obtain its molecular ion and then fragmented to yield an MS/MS spectrum [19]. The critical, high-throughput step is the automated comparison of this experimental MS/MS data against reference spectral libraries.

Platforms like Global Natural Products Social Molecular Networking (GNPS) are central to this effort [19] [2]. GNPS hosts a crowdsourced MS/MS library. A query spectrum is matched against this library using similarity metrics (e.g., cosine score). A high-score match provides a putative identification, effectively dereplicating the compound.
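
The cosine score mentioned above can be sketched in a few lines. This is a simplified greedy implementation written for illustration, not the scoring code used by GNPS; production tools apply additional spectrum filtering and scoring refinements. The 0.02 Da fragment tolerance and the example spectra are assumptions.

```python
import math
from typing import List, Tuple

Spectrum = List[Tuple[float, float]]  # (fragment m/z, intensity)


def cosine_score(query: Spectrum, reference: Spectrum, tol: float = 0.02) -> float:
    """Greedy cosine similarity: pair fragments within `tol` Da, best products first."""

    def unit(spec: Spectrum) -> Spectrum:
        norm = math.sqrt(sum(i * i for _, i in spec))
        return [(mz, i / norm) for mz, i in spec] if norm > 0 else []

    q, r = unit(query), unit(reference)
    candidates = [
        (qi * ri, a, b)
        for a, (qmz, qi) in enumerate(q)
        for b, (rmz, ri) in enumerate(r)
        if abs(qmz - rmz) <= tol
    ]
    used_q, used_r, score = set(), set(), 0.0
    for product, a, b in sorted(candidates, reverse=True):
        if a not in used_q and b not in used_r:  # each peak is matched at most once
            used_q.add(a)
            used_r.add(b)
            score += product
    return score


# Hypothetical spectra, for illustration only.
query = [(98.06, 20.0), (148.11, 100.0), (176.14, 35.0)]
library_entry = [(98.06, 25.0), (148.11, 100.0), (176.15, 30.0), (231.18, 5.0)]

score = cosine_score(query, library_entry)
print(f"cosine = {score:.3f}")
```

A score near 1 indicates near-identical fragmentation, and a score of 0 means no fragments were shared within tolerance. A match above a chosen cut-off is still only a putative identification and should be checked against precursor mass, adduct, and retention behavior.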

Furthermore, GNPS enables molecular networking, an untargeted approach that visualizes the chemical relationships within a dataset. Spectra are clustered based on similarity, forming molecular families. Known compounds identified in one node can help annotate structurally related, potentially novel analogues in the same cluster, guiding the discovery process beyond simple dereplication [19] [2].

Validation and Quality Assurance: Ensuring Confidence in Dereplication

Confident dereplication requires that the underlying LC-MS/MS data be reliable, reproducible, and of high quality. Adherence to rigorous method validation and routine series quality control is non-negotiable. Validation characterizes the method's performance capabilities, while series validation confirms that performance is maintained for each analytical batch [23].

Table 2: Essential Validation Parameters for Quantitative LC-MS/MS Dereplication Methods [23] [24]

| Validation Parameter | Definition & Purpose | Typical Acceptance Criteria (Example) |

|---|---|---|

| Accuracy | Closeness of measured value to true value. Assesses method correctness. | Mean accuracy within ±15% of nominal (±20% at LLOQ). |

| Precision | Closeness of repeated measurements. Includes within-run (repeatability) and between-run. | CV ≤15% (≤20% at LLOQ). |

| Specificity/Selectivity | Ability to measure analyte unequivocally in presence of matrix. | No significant interference from blank matrix (interfering response below 20% of the LLOQ response). |

| Lower Limit of Quantification (LLOQ) | Lowest concentration measurable with acceptable accuracy and precision. | Signal-to-noise ≥10:1; accuracy/precision as above. |

| Linearity | Ability to produce results proportional to concentration across a range. | Coefficient of determination (R²) ≥0.99. |

| Recovery | Efficiency of extraction process. | Consistent and reproducible recovery, not necessarily 100%. |

| Matrix Effect | Suppression or enhancement of ionization by co-eluting matrix. | Evaluated; internal standard correction typically applied. |

| Stability | Analyte integrity during storage, processing, and analysis. | Measured concentration within ±15% of nominal. |
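
The example accuracy and precision criteria in the table above can be applied programmatically to replicate QC results. The sketch below is a minimal illustration; the function name, the replicate values, and the choice to report bias and CV as percentages are assumptions, and the limits are the example values from the table rather than a regulatory requirement for discovery work.

```python
from statistics import mean, stdev
from typing import Dict, List


def check_qc_level(measured: List[float], nominal: float, is_lloq: bool = False) -> Dict[str, object]:
    """Check mean accuracy (bias) and precision (CV) for one QC concentration level."""
    limit = 20.0 if is_lloq else 15.0  # percent; wider limit at the LLOQ
    avg = mean(measured)
    bias_pct = (avg - nominal) / nominal * 100
    cv_pct = stdev(measured) / avg * 100
    return {
        "bias_pct": bias_pct,
        "cv_pct": cv_pct,
        "passes": abs(bias_pct) <= limit and cv_pct <= limit,
    }


# Hypothetical replicate results for a QC sample with nominal 10.0 ng/mL.
replicates = [9.6, 10.4, 10.1, 9.8, 10.6]
result = check_qc_level(replicates, nominal=10.0)
print(f"bias {result['bias_pct']:+.1f}%, CV {result['cv_pct']:.1f}%, pass={result['passes']}")
```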

For ongoing quality assurance, each analytical batch or "series" must pass predefined acceptance criteria before its data can be used for dereplication. A comprehensive approach, as outlined in the following checklist derived from clinical standards, is highly applicable to ensure data integrity in discovery settings [23].

Figure 2: Key Checks for LC-MS/MS Analytical Series Validation [23].

Detailed Experimental Protocols for Dereplication

Protocol 1: Untargeted Dereplication of Plant Extracts Using LC-QTOF-MS/MS and GNPS [19] [20]

This protocol is designed for the high-throughput profiling and dereplication of secondary metabolites in crude plant extracts.

- Sample Preparation: Weigh 10-50 mg of dried, powdered plant material. Extract with an appropriate solvent (e.g., 80% methanol/water, acetone) via sonication or agitation for 30-60 minutes. Centrifuge, filter (0.22 µm PTFE or nylon), and dilute the supernatant as needed for analysis.

- LC Conditions:

  - Column: Reversed-phase C18 (e.g., 2.1 x 100 mm, 1.7-1.8 µm particle size).

  - Mobile Phase: A: Water with 0.1% Formic Acid; B: Acetonitrile with 0.1% Formic Acid.

  - Gradient: 5% B to 100% B over 15-25 minutes, hold, re-equilibrate.

  - Flow Rate: 0.3-0.4 mL/min. Column temperature: 40°C. Injection volume: 1-5 µL.

- MS Conditions (QTOF):

  - Ionization: ESI positive and/or negative mode.

  - Mass Range: 100-1500 m/z for MS¹.

  - Data-Dependent Acquisition (DDA): MS¹ survey scan followed by MS/MS fragmentation of the top N most intense ions (e.g., top 10). Dynamic exclusion enabled.

  - Collision Energies: Ramped (e.g., 20-40 eV) to generate informative fragments.

- Data Processing & Dereplication:

  - Convert raw data files (.d, .raw) to open formats (.mzML, .mzXML).

  - Upload files to the GNPS platform (https://gnps.ucsd.edu).

  - Perform a "library search" workflow. Set precursor and fragment ion mass tolerances (e.g., 0.02 Da for high-res data). Set minimum cosine score for matches (e.g., 0.7).

  - Inspect results: Matches with high cosine scores and fragment coverage are putative identifications. Review manually for plausibility (retention time, adducts).
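
The data-dependent acquisition logic in the MS conditions above (top-N selection with dynamic exclusion) is implemented in instrument firmware, but its behavior can be sketched in Python. The function below is a simplified illustration: it excludes ions by m/z rounded to two decimals rather than by a tolerance window, and the 15 s exclusion time, function name, and peak values are assumptions.

```python
from typing import Dict, List, Tuple


def select_precursors(
    survey_scan: List[Tuple[float, float]],  # (m/z, intensity) peaks in one MS1 scan
    scan_time: float,                        # acquisition time in seconds
    excluded_until: Dict[float, float],      # rounded m/z -> time at which exclusion ends
    top_n: int = 10,
    exclusion_s: float = 15.0,               # assumed dynamic exclusion duration
) -> List[float]:
    """Pick up to top_n most intense ions that are not under dynamic exclusion."""
    chosen: List[float] = []
    for mz, _intensity in sorted(survey_scan, key=lambda p: p[1], reverse=True):
        key = round(mz, 2)
        if excluded_until.get(key, 0.0) > scan_time:
            continue  # fragmented recently; skip so weaker ions get a turn
        chosen.append(mz)
        excluded_until[key] = scan_time + exclusion_s
        if len(chosen) == top_n:
            break
    return chosen


# Hypothetical survey scan repeated one second apart, with top_n=2 for brevity.
exclusion: Dict[float, float] = {}
scan = [(249.196, 9e5), (411.217, 4e5), (301.141, 2e5), (133.086, 5e4)]
first = select_precursors(scan, scan_time=60.0, excluded_until=exclusion, top_n=2)
second = select_precursors(scan, scan_time=61.0, excluded_until=exclusion, top_n=2)
print(first, second)
```

In this example the second scan skips the two ions already fragmented and moves on to the next most intense ones, which is how dynamic exclusion increases MS/MS coverage of lower-abundance metabolites.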

Protocol 2: Targeted Quantitative Profiling of Known Bioactives Using LC-QqQ-MS/MS [20]

This protocol is for quantifying specific, known compound classes (e.g., flavonoids, terpenoids) after initial untargeted dereplication.

- Method Development:

  - Standard Solutions: Prepare analytical standards for target compounds.

  - MRM Optimization: Directly infuse each standard to select the optimal precursor ion (Q1) and the 2-3 most intense product ions (Q3). Optimize collision energy for each transition. The most intense transition is used for quantification, others for qualification.

  - Chromatography Optimization: Adjust gradient and mobile phase to achieve baseline separation of critical pairs and short run times.

- Validated Method Execution:

  - Calibration Curve: Prepare matrix-matched calibration standards covering the expected concentration range (e.g., 5 points).

  - Internal Standards: Use stable isotope-labeled (SIL) internal standards for each analyte or class when possible.

  - LC-QqQ-MS/MS Analysis: Use Multiple Reaction Monitoring (MRM) mode. The instrument cycles through each defined MRM transition, dwell time ~10-50 ms.

  - Quantification: Use the calibration curve to calculate the concentration of each target compound in the unknown samples based on the analyte-to-internal standard peak area ratio [21] [22].
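
The quantification step can be sketched as a linear calibration of the analyte-to-internal-standard peak area ratio against concentration. The example below uses an unweighted least-squares fit in plain Python; the calibration points are hypothetical, and real methods often use weighted regression (for example 1/x), which is not shown here.

```python
from typing import List, Tuple


def fit_line(x: List[float], y: List[float]) -> Tuple[float, float, float]:
    """Ordinary least squares; returns slope, intercept, and R^2."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    intercept = my - slope * mx
    ss_res = sum((yi - (slope * xi + intercept)) ** 2 for xi, yi in zip(x, y))
    ss_tot = sum((yi - my) ** 2 for yi in y)
    return slope, intercept, 1 - ss_res / ss_tot


# Hypothetical 5-point calibration: concentration (ng/mL) vs analyte/IS area ratio.
conc = [1.0, 5.0, 10.0, 50.0, 100.0]
ratio = [0.021, 0.098, 0.205, 1.010, 1.995]

slope, intercept, r2 = fit_line(conc, ratio)


def quantify(sample_ratio: float) -> float:
    """Invert the calibration line to get concentration from an area ratio."""
    return (sample_ratio - intercept) / slope


unknown = quantify(0.50)
print(f"slope={slope:.5f}, intercept={intercept:.5f}, R^2={r2:.4f}, unknown={unknown:.1f} ng/mL")
```

Results should only be reported for samples whose area ratio falls inside the calibrated range; values outside it require dilution or an extended curve.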

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for LC-MS/MS Dereplication

| Item | Function & Role in Dereplication |

|---|---|

| High-Purity Solvents & Additives | LC-MS grade water, acetonitrile, methanol, and formic acid/ammonium formate are essential to minimize chemical noise, prevent ion source contamination, and ensure reproducible chromatography [20]. |

| Stable Isotope-Labeled (SIL) Internal Standards | Chemically identical to the analyte but heavier (e.g., ¹³C, ²H). Added to samples to correct for losses during preparation and variability in ionization efficiency (matrix effects), crucial for accurate quantification [23] [24]. |

| Analytical Reference Standards | Pure chemical compounds used to build calibration curves for quantification and to acquire reference MS/MS spectra for library generation and method development [20]. |

| Solid-Phase Extraction (SPE) Cartridges | Used for rapid fractionation or clean-up of crude extracts to reduce complexity, concentrate analytes of interest, or remove interfering salts/polymers prior to LC-MS/MS analysis [19]. |

| Quality Control (QC) Materials | Pooled sample or commercially available control matrices spiked with known amounts of analytes. Run in every batch to monitor method accuracy, precision, and stability over time [23]. |

| Matrix-Matched Calibrators | Calibration standards prepared in a blank matrix that mimics the sample (e.g., extracted control plant tissue). Corrects for matrix effects on ionization, providing more accurate quantification than solvent-based calibrators [23]. |

Within the overarching thesis that efficient discovery hinges on avoiding redundant effort, LC-MS/MS stands as the indispensable technological pillar of modern dereplication. By integrating high-resolution chromatographic separation with highly specific mass spectrometric detection and fragmentation, it provides a rapid, sensitive, and information-rich profile of complex natural mixtures. When this analytical power is coupled with public spectral libraries and computational platforms like GNPS for molecular networking, the dereplication process is transformed from a simple check against knowns into an active strategy for guiding the discovery of novelty [19] [2].

The rigorous application of method validation and series quality assurance protocols ensures that the data driving these critical "go/no-go" decisions are trustworthy [23] [24]. As natural product research continues to evolve towards ever-greater throughput and complexity, the role of LC-MS/MS as the dereplication workhorse will only become more central, ensuring that scientific and financial resources are invested in truly novel compounds with the greatest potential to become the therapeutics of the future.

The discovery of natural products (NPs) has been a cornerstone of therapeutic development, yielding countless drugs. However, a persistent and costly challenge has been the rediscovery of known compounds late in the isolation pipeline, which wastes significant resources and time [25]. Dereplication—the early identification of known molecules—is therefore a critical gatekeeping step in modern NP research. It aims to filter out known entities to focus efforts on novel chemistry, thereby accelerating discovery and improving the return on investment [25].

Traditional dereplication relies on hyphenated techniques (e.g., HPLC-MS, HPLC-NMR) and bioactivity fingerprints, which compare physical characteristics like retention time, UV profiles, or biological responses [25]. While powerful, these methods can struggle with complex mixtures and often fail to identify structural analogs—molecules with slight modifications that may possess novel bioactivities [25].

Molecular networking (MN) has emerged as a transformative computational and visualization strategy that directly addresses these gaps. By organizing tandem mass spectrometry (MS/MS) data based on spectral similarity, MN provides a global, visual map of chemical space. Within this map, known compounds can be rapidly pinpointed (dereplicated), and, crucially, their structurally related analogs are visually clustered around them, revealing hidden novelty within families of known scaffolds [25]. This guide details the technical foundations, protocols, and applications of molecular networking as an indispensable tool for efficient dereplication and analog discovery.

Technical Foundations of Molecular Networking

The core premise of molecular networking is that structurally similar molecules share similar fragmentation patterns when subjected to collision-induced dissociation in a mass spectrometer [25]. This chemical similarity is quantified and visualized as a network.

Core Concepts and Terminology: In a molecular network, each node represents a consensus MS/MS spectrum (a merged spectrum from ions of similar mass and pattern). Nodes are connected by edges when the similarity of their spectra exceeds a defined threshold. A group of interconnected nodes forms a molecular family, visually representing a class of related metabolites [26]. The primary metric for spectral similarity is the cosine score, a normalized dot-product where a score of 1 indicates identical spectra and 0 indicates no similarity [26].
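As an illustration of the cosine score described above, the following is a minimal sketch rather than the scoring code used by any particular platform. It assumes spectra are given as lists of (m/z, intensity) pairs, pairs peaks greedily within a fragment tolerance, and applies no intensity scaling; the function name `cosine_score` and the default tolerance are illustrative choices.

```python
import math

def cosine_score(spec_a, spec_b, tol=0.02):
    """Return (score, matched_peaks) for two spectra given as [(m/z, intensity), ...]."""
    # Candidate pairs: every peak pair whose m/z values agree within the tolerance.
    candidates = []
    for i, (mz_a, int_a) in enumerate(spec_a):
        for j, (mz_b, int_b) in enumerate(spec_b):
            if abs(mz_a - mz_b) <= tol:
                candidates.append((int_a * int_b, i, j))
    # Greedy assignment: take the largest intensity products first, using each peak once.
    candidates.sort(reverse=True)
    used_a, used_b = set(), set()
    dot = 0.0
    for product, i, j in candidates:
        if i in used_a or j in used_b:
            continue
        used_a.add(i)
        used_b.add(j)
        dot += product
    # Normalize by the vector lengths so the score falls between 0 and 1.
    norm_a = math.sqrt(sum(inten ** 2 for _, inten in spec_a))
    norm_b = math.sqrt(sum(inten ** 2 for _, inten in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0, 0
    return dot / (norm_a * norm_b), len(used_a)
```

Two identical spectra score approximately 1 with every peak matched, while spectra with no fragment ions in common score 0. The matched-peak count is returned alongside the score because networking workflows typically filter on both.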

Evolution of Methodologies: The field has evolved from Classical Molecular Networking (CMN) to more advanced, information-rich workflows.

- Classical Molecular Networking (CMN): The foundational method, which networks spectra based purely on MS/MS similarity using algorithms like MS-Cluster to merge near-identical spectra [26] [27].

- Feature-Based Molecular Networking (FBMN): This now-standard advance integrates data from LC-MS feature detection tools (e.g., MZmine, XCMS). FBMN links network nodes to chromatographic features, enabling relative quantification (using peak areas), resolution of isomers with different retention times, and reduction of spectral redundancy [28].

- Specialized Networking Workflows: The ecosystem has expanded to include targeted strategies such as Ion Identity Molecular Networking (for adduct and complexation relationships), Bioactive Molecular Networking (integrating bioassay data), and Library-Enhanced Networking (for more confident annotations) [29].

Table 1: Comparison of Molecular Networking Approaches

| Approach | Core Data Input | Key Advantages | Primary Use Case |
|---|---|---|---|
| Classical (CMN) | Raw MS/MS spectra (.mzML, .mgf) | Fast, simple, ideal for large-scale or repository-scale meta-analysis [28]. | Initial exploration of spectral datasets; meta-analysis across studies. |
| Feature-Based (FBMN) | Aligned LC-MS features + MS/MS | Quantification, isomer resolution, reduced redundancy, enables robust statistics [28]. | Detailed analysis of individual LC-MS/MS studies; comparative metabolomics. |
| Ion Identity (IIN) | FBMN output + peak shape correlation | Groups different ion forms (e.g., [M+H]⁺, [M+Na]⁺) of the same molecule [26]. | Simplifying networks and correctly assessing metabolite abundance. |
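The ion-identity idea in the last row can be illustrated with a small check for one adduct pair. This is a simplified sketch, not the Ion Identity Molecular Networking algorithm: it tests only whether two features differ by the [M+Na]⁺ minus [M+H]⁺ mass shift and co-elute, using a retention-time window as a stand-in for the peak shape correlation named in the table. The function name and the tolerances are assumptions made for illustration.

```python
PROTON = 1.007276       # mass of a proton in Da
SODIUM_ION = 22.989218  # mass of Na+ in Da

def is_h_na_pair(mz_low, mz_high, rt_low, rt_high, mz_tol=0.005, rt_tol=0.1):
    """Return True if two features look like [M+H]+ and [M+Na]+ of one molecule."""
    # Expected m/z gap between the sodiated and protonated forms (about 21.9819 Da).
    expected_shift = SODIUM_ION - PROTON
    same_shift = abs((mz_high - mz_low) - expected_shift) <= mz_tol
    # Ion forms of the same molecule should elute together (retention time in minutes).
    coeluting = abs(rt_high - rt_low) <= rt_tol
    return same_shift and coeluting
```

Features that pass such a test can be collapsed into one molecule, which is what keeps adducts from inflating the apparent number of metabolites in a network.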

Core Experimental and Computational Methodologies

The Molecular Networking Workflow

A standard MN workflow involves sequential steps from data acquisition to biological interpretation [25] [27].

Diagram 1: Conceptual Workflow for Molecular Networking

Detailed Protocol: Executing a Feature-Based Molecular Network on GNPS

The Global Natural Products Social Molecular Networking (GNPS) platform is the most widely used resource for performing MN [29] [27]. Below is a protocol for a typical FBMN job.

Step 1: Data Acquisition and Conversion

- Acquire LC-MS/MS data in data-dependent acquisition (DDA) mode.

- Convert vendor files (.raw, .d) to an open format (.mzML, .mzXML) using tools like MSConvert.
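The conversion step can be scripted. The sketch below only assembles an MSConvert command line and assumes that the `msconvert` executable from ProteoWizard is installed and on the system path. The vendor peak-picking filter is an addition not stated in the protocol above, included on the assumption that centroided spectra are wanted downstream; the helper name and the output folder are hypothetical.

```python
from pathlib import Path

def msconvert_command(vendor_file, out_dir="converted"):
    """Build the argument list for converting one vendor file to mzML."""
    return [
        "msconvert",
        str(Path(vendor_file)),
        "--mzML",                                         # write the open mzML format
        "--filter", "peakPicking vendor msLevel=1-",      # assumption: centroid all MS levels
        "-o", str(out_dir),                               # output directory
    ]
```

The returned list can be passed to `subprocess.run(command, check=True)` on a machine where MSConvert and the required vendor libraries are available.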

Step 2: LC-MS Feature Detection with MZmine 3

- Input: .mzML files.

- Process: Use MZmine 3 to perform mass detection, chromatogram building, deconvolution, isotope grouping, and alignment across samples.

- Output: A feature quantification table (.CSV) and a representative MS/MS spectral summary file (.MGF). These are linked via feature IDs [28].
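To make the feature-ID link between the two output files concrete, the sketch below parses MGF text and joins each spectrum to its row in the quantification table. It assumes that the MGF carries a `FEATURE_ID=` line per spectrum and that the CSV has a `row ID` column; both names are assumptions about the export format and should be checked against the actual files.

```python
import csv
import io

def parse_mgf(text):
    """Parse MGF text into a dict of spectra keyed by feature ID."""
    spectra, current = {}, None
    for line in text.splitlines():
        line = line.strip()
        if line == "BEGIN IONS":
            current = {"params": {}, "peaks": []}
        elif line == "END IONS":
            if current is not None:
                spectra[current["params"].get("FEATURE_ID")] = current
            current = None
        elif current is not None and line:
            if "=" in line:
                # Header line such as PEPMASS=301.0073
                key, value = line.split("=", 1)
                current["params"][key] = value
            else:
                # Peak line: m/z followed by intensity
                mz, intensity = line.split()[:2]
                current["peaks"].append((float(mz), float(intensity)))
    return spectra

def link_features(mgf_text, csv_text, id_column="row ID"):
    """Map each feature ID to (quantification row, MS/MS spectrum or None)."""
    spectra = parse_mgf(mgf_text)
    rows = csv.DictReader(io.StringIO(csv_text))
    return {row[id_column]: (row, spectra.get(row[id_column])) for row in rows}
```

Features without an MS/MS spectrum map to `None`, which mirrors the practical point that only features with fragmentation data can become network nodes.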

Step 3: Molecular Networking Job on GNPS

- Upload: Import the .MGF and .CSV files to GNPS.

- Parameter Selection: Critical parameters directly impact network quality and interpretation [27].

  - Precursor Ion Mass Tolerance: Set according to instrument accuracy (e.g., ±0.02 Da for high-resolution instruments).

  - Fragment Ion Mass Tolerance: Typically ±0.02 Da for high-resolution instruments.

  - Minimum Cosine Score: Usually 0.7 for connecting nodes. A higher value (e.g., 0.8) is more stringent and yields smaller, more closely related families.

  - Minimum Matched Peaks: Set to 4-6 so that a minimum number of shared fragment ions is required for a valid edge.

- Library Search: Enable spectral library matching against public (e.g., GNPS) or custom libraries. A score threshold of 0.7 is standard for confident annotations [27].
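The effect of the cosine and matched-peak thresholds above can be sketched as a filter over pairwise comparisons followed by grouping into molecular families. This is an illustrative sketch, not the GNPS implementation: it assumes the pairwise scores have already been computed, and the function name, the input layout, and the default of 6 matched peaks are choices made for the example.

```python
def build_network(pair_scores, min_cosine=0.7, min_matched=6):
    """pair_scores maps (node_a, node_b) -> (cosine, matched_peaks).

    Returns the retained edges and the molecular families (connected components).
    """
    # Keep an edge only if both thresholds are met.
    edges = [pair for pair, (cosine, matched) in pair_scores.items()
             if cosine >= min_cosine and matched >= min_matched]

    # Union-find groups nodes that are connected through any chain of edges.
    parent = {}

    def find(node):
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path compression
            node = parent[node]
        return node

    for a, b in edges:
        parent[find(a)] = find(b)

    families = {}
    for node in list(parent):
        families.setdefault(find(node), set()).add(node)
    return edges, sorted(families.values(), key=lambda family: sorted(family))
```

Nodes with no retained edge are left out of the result, which corresponds to the unconnected singletons seen in a molecular network. Raising `min_cosine` or `min_matched` removes edges and therefore splits families into smaller groups.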

Step 4: Visualization and Analysis

- Online Viewer: Explore networks directly in the GNPS browser. Nodes can be colored by sample origin or annotated compound class.

- Advanced Visualization: Export the network (as a .graphML file) to Cytoscape for advanced layout customization, filtering, and graphical design [26]. Apply principles from network visualization best practices to optimize clarity [30].

Step 5: Annotation Enhancement

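- Use integrated tools on GNPS to boost annotations, for example MolNetEnhancer, which combines the results of multiple in-silico annotation tools [26].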

Diagram 2: Technical FBMN Protocol from Data to Annotation

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 2: Key Software and Platforms for Molecular Networking

| Tool/Platform | Function | Role in Workflow | Access/Reference |
|---|---|---|---|
| GNPS (Global Natural Products Social) | Web-based ecosystem for MS/MS data analysis. | Core platform for running MN jobs, library searches, and storing/publicizing data [29] [27]. | https://gnps.ucsd.edu |
| MZmine 3 | Open-source software for LC-MS data processing. | Performs feature detection, alignment, and deconvolution for FBMN input [28]. | https://mzmine.github.io |
| Cytoscape | Open-source platform for complex network visualization and analysis. | Advanced visualization, customization, and exploration of molecular networks exported from GNPS [26]. | https://cytoscape.org |
| MSConvert (ProteoWizard) | Tool for converting mass spectrometer vendor files to open formats. | Converts .raw, .d, etc., to .mzML or .mzXML for GNPS compatibility [27]. | Part of ProteoWizard. |
| SIRIUS | Software for molecular structure annotation from MS/MS data. | Provides molecular formula and structure predictions; can be integrated via MolNetEnhancer [29]. | https://bio.informatik.uni-jena.de/software/sirius/ |

Applications in Dereplication and Analog Discovery

Molecular networking's power lies in its dual capability for dereplication and novel analog discovery, as demonstrated in numerous studies.

Comprehensive Dereplication: In a landmark study, MN was applied to diverse marine and terrestrial microbial samples, leading to the dereplication of 58 known molecules, including compounds like carmabin A, tumonoic acid I, and barbamide [25]. The process was accelerated by including "seed" spectra of known standards in the network.

Targeted Analog Discovery: More importantly, the networks revealed clusters of uncharacterized nodes around these known compounds. For example, the dereplication of barbamide also highlighted the presence of 4-O-demethylbarbamide and a putative dechlorobarbamide analog [25]. Similarly, networks from Moorea bouillonii suggested novel chlorinated, methylated, and deoxygenated analogs of the known cytotoxins lyngbyabellin A and the apratoxins [25]. This visual guidance prioritizes isolation efforts toward novel variants within a bioactive scaffold.
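The kind of analog relationship described above can often be flagged from the precursor mass difference between two connected nodes. The sketch below is a hedged illustration: it assumes both nodes are the same ion type and singly charged, uses monoisotopic mass shifts, and matches only the three modification types named in the text. The m/z values in any usage are hypothetical and are not measured values for the compounds discussed.

```python
# Monoisotopic mass shifts in Da for the modification types named above.
MODIFICATIONS = {
    "methylation or demethylation (CH2)": 14.01565,
    "oxygenation or deoxygenation (O)": 15.99491,
    "chlorination or dechlorination (Cl vs H)": 33.96103,
}

def annotate_delta(mz_a, mz_b, tol=0.005):
    """Return the modifications whose mass shift matches the m/z difference."""
    delta = abs(mz_a - mz_b)
    return [name for name, shift in MODIFICATIONS.items()
            if abs(delta - shift) <= tol]
```

For example, `annotate_delta(400.0, 414.01565)` returns the CH2 entry, suggesting that the two hypothetical nodes differ by one methyl group. A match is only a hypothesis about the structural change and still needs confirmation from the fragmentation pattern or from isolation.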

Quantitative and Isomeric Analysis: In food science, FBMN coupled with a quantitative ion strategy was used to profile glucosinolates in broccoli and cauliflower, leading to the discovery of two new indole-glucosinolates [31]. The quantitative aspect of FBMN allowed for accurate profiling alongside discovery.

Molecular networking has fundamentally changed the strategy of natural product discovery by making dereplication a proactive, discovery-oriented process. The field continues to evolve rapidly. Future directions include the deeper integration of ion mobility spectrometry for enhanced isomer resolution [28], tighter coupling with genomic data (metabologenomics) to link molecules to their biosynthetic pathways [29], and the development of real-time networking for guiding fraction collection.

The integration of advanced annotation pipelines like MolNetEnhancer, which synthesizes results from multiple in-silico tools, is making structural proposals more accessible and more reliable [26]. As these tools become more user-friendly and integrated, molecular networking will solidify its role as a central platform in the metabolomics and natural products workflow, ensuring that research resources are invested in true novelty and accelerating the journey from complex extract to new therapeutic lead.

The discovery of novel bioactive natural products is a cornerstone of drug development, particularly for antibiotics, anticancer agents, and other therapeutics. However, this field has long been hampered by a persistent and costly challenge: the high rate of rediscovering known compounds [32]. Historically, researchers would invest substantial time and resources in the isolation and structural elucidation of a promising compound, only to find it was already documented. This inefficiency stifled innovation and wasted valuable research capital.

Dereplication—the process of rapidly identifying known compounds within a complex mixture early in the discovery pipeline—was developed as the solution to this problem [33]. By using chemical or spectroscopic information to screen out known entities, researchers can focus their efforts on truly novel chemistry. The advent of liquid chromatography coupled with tandem mass spectrometry (LC-MS/MS) provided a powerful tool for this purpose, generating unique fragmentation "fingerprints" for compounds. The subsequent challenge became developing computational methods to compare these experimental fingerprints against vast repositories of known chemical data [34].

This whitepaper examines the integrated ecosystem of tools that has transformed dereplication from a manual screening process into a high-throughput, computational science. We focus on the Global Natural Products Social (GNPS) molecular networking infrastructure, its in-silico dereplication algorithms (DEREPLICATOR and DEREPLICATOR+), and the foundational role of curated spectral libraries. Framed within the broader thesis that efficient dereplication is essential to prevent the rediscovery of known compounds, this guide provides researchers with a technical roadmap for implementing these powerful strategies in their drug discovery workflows.

Foundational Technologies: Spectral Libraries and the GNPS Infrastructure

The Central Role of Mass Spectral Libraries

Spectral library searching is the most common and reliable approach for annotating compounds in untargeted metabolomics [34]. The concept is based on matching an experimental MS/MS spectrum against a reference library of spectra acquired from authentic chemical standards. A high-similarity match results in the transfer of the compound's identity from the library to the unknown spectrum, constituting a Level 2 (putatively annotated compound) or Level 3 (putatively characterized compound class) identification according to the Metabolomics Standards Initiative [34].

The landscape of publicly accessible spectral libraries has expanded dramatically. As illustrated in Table 1, their size has increased more than 60-fold in recent years, a growth driven by community efforts and aggregation platforms [34].

Table 1: Key Public and Commercial Spectral Libraries for Dereplication

| Library Name | Type | Approximate Scale (Compounds/Spectra) | Primary Focus & Notes | Source/Access |
|---|---|---|---|---|
| GNPS Community Libraries | Public, Aggregated | Hundreds of thousands of spectra | Natural products, microbial metabolites, lipids, drugs; exchanged with MoNA & MassBank EU. | GNPS Platform [34] |
| MassBank of North America (MoNA) | Public, Aggregated | Hundreds of thousands of spectra | Aggregates community and institutional libraries in an open repository. | MoNA Website [34] |
| NIST Tandem Mass Spectral Library | Commercial | 1.32 million spectra / 31k compounds | Broad small molecule coverage; includes human & plant metabolites. Considered a gold standard. | National Institute of Standards and Technology [34] [35] |