Dereplication in Drug Discovery: Accelerating the Pipeline from Natural Products to Novel Leads

Abstract

This comprehensive article examines the pivotal role of dereplication within the drug discovery pipeline, specifically for researchers and drug development professionals. It establishes foundational principles, detailing the historical evolution and the three core pillars of taxonomy, molecular structures, and spectroscopy that underpin the process. The scope covers methodological advancements, including integrated LC-MS strategies and molecular networking, followed by practical troubleshooting for common challenges like nuisance compounds and workflow optimization. Finally, it explores validation through case studies, comparative analysis of tools, and the integration of emerging artificial intelligence and machine learning technologies, outlining a complete framework for efficient natural product lead identification.

The Foundation of Dereplication: Core Principles and Its Strategic Role in Drug Discovery

Foundational Understanding and Core Purpose

Dereplication is a strategic, early-stage process in natural product (NP) drug discovery defined as the use of chromatographic and spectroscopic analyses to recognize previously isolated or known substances present in a complex biological extract [1]. Its primary mandate is to expedite the discovery of novel bioactive compounds by systematically identifying and setting aside known entities or "nuisance" compounds, thereby preventing the costly and time-consuming rediscovery of known molecules [1] [2].

The operational scope of dereplication has evolved from simple comparison techniques to a sophisticated, multi-parametric decision gate within the drug discovery pipeline. Traditionally, it involved methods like UV comparison and thin-layer chromatography [1]. Today, it integrates hyphenated analytical techniques (e.g., LC-MS, LC-NMR), bioactivity profiling, and database mining to evaluate the chemical novelty of an active extract before committing to full-scale bioassay-guided fractionation [1] [3]. This is particularly critical because natural product extracts are inherently complex mixtures, and biological assays alone cannot distinguish between novel and known bioactive components [1].

The core purposes of dereplication are threefold:

- Novelty Filtering: To identify known compounds—including common interferents like tannins, fatty acids, or saponins—responsible for observed biological activity [1].

- Resource Prioritization: To recognize multiple extracts containing the same active profile, allowing researchers to prioritize the most promising, chemically unique leads for downstream isolation [1].

- Efficiency Enhancement: To condense multiple rounds of purification and testing into streamlined workflows, dramatically accelerating the early discovery timeline [4] [3].

Core Methodologies and Workflows

Modern dereplication employs a suite of orthogonal analytical and computational strategies. The choice of methodology depends on the sample origin, the nature of the bioassay, and the desired depth of information.

- Hyphenated Analytical Techniques: Liquid chromatography coupled with mass spectrometry (LC-MS) and photodiode array detection (LC-PDA-MS) forms the backbone of dereplication, providing data on molecular weight, fragmentation patterns, and UV profiles for comparison with libraries [4] [2]. Supercritical fluid chromatography-MS (SFC-MS) is an emerging greener alternative, offering rapid isolation without the need for dry-down steps common in reversed-phase LC [1].

- Ligand Fishing Assays: These target-based approaches, such as the ultrafiltration-based LLAMAS (Lickety-Split Ligand-Affinity-Based Molecular Angling System), transform the biological target into a sorbent. They selectively isolate binding molecules from a mixture in a single step, directly linking bioactivity to chemical identity [4].

- Mass Spectrometry-Based Molecular Networking: This computational strategy organizes MS/MS data based on chemical similarity, visually clustering related molecules. It not only dereplicates known compounds but also reveals structural analogs within a sample, guiding the discovery of novel variants [2].

- In-Silico Database Search Algorithms: Tools like DEREPLICATOR+ search experimental tandem mass spectra against vast databases of natural product structures (e.g., Dictionary of Natural Products, AntiMarin). By using detailed fragmentation models, they can identify diverse classes of metabolites beyond peptides, such as polyketides and terpenes, with high statistical confidence [5].
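The spectral-similarity idea behind molecular networking can be illustrated with a minimal sketch. The function below is a simplified cosine score between two MS/MS spectra, assuming each spectrum is a list of (m/z, intensity) pairs and that peaks are paired greedily within a fixed m/z tolerance. The function name, the 0.02 Da tolerance, and the greedy pairing are illustrative assumptions; production platforms use more elaborate scoring, for example variants that account for precursor mass differences.

```python
import math

def cosine_score(spec_a, spec_b, tol=0.02):
    """Return (score, n_matched) for two spectra given as lists of (mz, intensity).

    Simplified sketch: peaks are paired greedily within `tol` Da (an assumed value).
    """
    # Normalise by the Euclidean length of each intensity vector,
    # so that identical spectra score 1.0.
    norm_a = math.sqrt(sum(i * i for _, i in spec_a))
    norm_b = math.sqrt(sum(i * i for _, i in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0, 0

    # Enumerate every peak pair whose m/z values agree within the tolerance.
    pairs = []
    for ia, (mz_a, int_a) in enumerate(spec_a):
        for ib, (mz_b, int_b) in enumerate(spec_b):
            if abs(mz_a - mz_b) <= tol:
                pairs.append((int_a * int_b, ia, ib))

    # Greedy assignment: highest intensity products first, each peak used once.
    pairs.sort(reverse=True)
    used_a, used_b = set(), set()
    total = 0.0
    for product, ia, ib in pairs:
        if ia in used_a or ib in used_b:
            continue
        used_a.add(ia)
        used_b.add(ib)
        total += product
    return total / (norm_a * norm_b), len(used_a)

# Hypothetical spectra for illustration only.
reference = [(100.0, 10.0), (150.0, 50.0), (200.0, 100.0)]
query = [(100.01, 12.0), (150.0, 45.0), (220.0, 30.0)]
score, matched = cosine_score(reference, query)
```

Returning the number of matched peaks alongside the score lets a downstream step require a minimum number of shared fragments before two spectra are linked.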

Table 1: Comparison of Core Dereplication Methodologies

| Methodology | Key Principle | Typical Data Output | Primary Strength | Common Tool/Platform |
|---|---|---|---|---|
| LC-PDA-MS/MS | Separation coupled with mass and UV spectral acquisition | Retention time, parent mass, fragment ions, UV spectrum | High sensitivity, robust and standardized workflows | Common commercial LC and MS systems |
| Ligand Fishing (e.g., LLAMAS) | Affinity capture of bioactive compounds using immobilized target | List of target-binding compounds from a mixture | Direct link between structure and bioactivity; high selectivity | Ultrafiltration plates; target protein/DNA [4] |
| Molecular Networking | Cosine-based clustering of MS/MS spectra by similarity | Visual network of related compounds; clusters of analogs | Discovers analogs and compound families; visually intuitive output | Global Natural Products Social Molecular Networking (GNPS) [2] |
| Database Search (e.g., DEREPLICATOR+) | In-silico matching of experimental spectra to theoretical fragmentation | Compound identity with statistical score (e.g., FDR) | High-throughput, automated identification from large spectral datasets | DEREPLICATOR+ algorithm; GNPS platform [5] |

Detailed Experimental Protocol: The LLAMAS Workflow

The following protocol details the LLAMAS, an integrated method for dereplicating DNA-binding molecules from complex natural product extracts [4].

1. Principle: LLAMAS combines ultrafiltration-based ligand fishing with LC-PDA-MS/MS analysis and database mining. Compounds with affinity for DNA are selectively retained in an incubation complex, while unbound molecules are removed. Comparative analysis of filtrates from DNA-containing and control samples reveals the binding agents.

2. Reagents and Materials:

- Biological Target: Bulk salmon sperm DNA (or specific DNA sequences).

- Ultrafiltration Units: 100 kDa molecular weight cut-off (MWCO) modified poly(ether sulfone) membrane filters.

- Incubation/Wash Buffer: Tris-EDTA buffer modified with glycerol and 33% (v/v) methanol (MeOH) to maintain DNA structure and solubilize diverse natural products.

- Elution Solvent: MeOH spiked with 0.1% formic acid.

- Analytical Instrumentation: Ultra-high-performance liquid chromatography (UHPLC) system coupled to a photodiode array detector and an ion-trap mass spectrometer capable of MS/MS.

3. Step-by-Step Procedure:

- Step 1 – Sample Incubation: Incubate the natural product extract (in incubation buffer) with DNA (experimental) or without DNA (control) for a defined period (e.g., 30 minutes) at room temperature.

- Step 2 – Ultrafiltration and Washing: Transfer the incubation mixture to the 100 kDa MWCO ultrafiltration device. Centrifuge (e.g., 5000 × g) to obtain the filtrate containing unbound compounds. Wash the retentate (DNA-compound complex) with incubation buffer to remove non-specifically bound materials. Collect all wash filtrates.

- Step 3 – Target Elution: Add the organic elution solvent (MeOH + 0.1% formic acid) to the retentate to disrupt DNA-ligand interactions. Centrifuge and collect the eluate containing the DNA-binding compounds.

- Step 4 – LC-PDA-MS/MS Analysis: Analyze the final wash filtrates and the eluates from both experimental and control samples using UHPLC-PDA-MS/MS.

- Step 5 – Data Analysis and Dereplication:

- Compare chromatograms of experimental vs. control samples. Compounds that show a significant decrease in peak area in the experimental wash filtrate or appear in the experimental eluate are candidate DNA binders.

- Use the acquired MS/MS spectral data and precursor masses to search natural product databases (e.g., GNPS, SciFinder, Dictionary of Natural Products) for identity confirmation [4].
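The comparison in Step 5 can be sketched as a simple peak-area calculation. The example below assumes that peak areas from the control and DNA-containing wash filtrates have already been integrated and keyed by a feature label. The function name, the feature labels, the peak areas, and the 50% decrease threshold are all hypothetical; the protocol itself only calls for a "significant decrease", so the cut-off would need to be set from replicate variability.

```python
def candidate_binders(control_areas, experimental_areas, min_decrease=0.5):
    """Flag features whose wash-filtrate peak area drops when DNA is present.

    control_areas / experimental_areas: dict mapping a feature label to peak area.
    min_decrease: fractional drop required to flag a feature (assumed value).
    """
    candidates = {}
    for feature, control in control_areas.items():
        if control <= 0:
            continue  # cannot compute a relative decrease without a control signal
        # A feature missing from the experimental filtrate counts as fully retained.
        experimental = experimental_areas.get(feature, 0.0)
        decrease = (control - experimental) / control
        if decrease >= min_decrease:
            candidates[feature] = round(decrease, 3)
    return candidates

# Hypothetical peak areas for illustration only.
control = {"feature_1": 1000.0, "feature_2": 800.0}
with_dna = {"feature_1": 200.0, "feature_2": 780.0}
flagged = candidate_binders(control, with_dna)
```

The sketch covers only the filtrate criterion; checking whether a feature also appears in the experimental eluate would be a natural second filter.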

Modern Approaches and Integrative Strategies

Contemporary dereplication is characterized by integration with other 'omics' technologies and high-throughput workflows.

- Integration with Genomics and Metagenomics: Genome mining tools predict biosynthetic gene clusters (BGCs) for novel compounds. Dereplication validates these predictions by matching the observed metabolites from the organism's extract to known compounds, ensuring effort is focused on truly novel BGC products [6] [5]. This synergy between genetic potential and chemical analysis is a cornerstone of modern NP discovery.

- High-Throughput Analytical Platforms: Advances in UHPLC, automated fractionation, and rapid scanning mass spectrometers have drastically increased the throughput of dereplication. SFC-MS is notable for its speed and reduced solvent consumption [1]. Furthermore, ambient ionization techniques like nanoDESI allow for direct analysis of microbial colonies, feeding spectra directly into molecular networking for real-time dereplication [2].

- Advanced Informatics and Artificial Intelligence: AI and machine learning are transforming dereplication. Models now predict bioactive compounds, infer mechanisms of action, and assist in structure elucidation [7] [8]. Large language models (LLMs) can standardize and curate data from literature and patents, populating the very databases essential for dereplication [7].

Table 2: Key Integrated 'Omics' and Informatics Tools for Dereplication

| Tool/Strategy | Function in Dereplication | Associated Technique | Outcome |
|---|---|---|---|
| Genome Mining (e.g., antiSMASH) | Predicts biosynthetic potential for novel compounds from genetic data [6]. | Genomics / Metagenomics | Prioritizes strains with high novelty potential for chemical analysis. |
| Global Natural Products Social Molecular Networking (GNPS) | Public repository and platform for sharing, processing, and comparing MS/MS spectra [2] [5]. | Tandem Mass Spectrometry | Enables crowdsourced dereplication and discovery of analogs via molecular networking. |
| Machine Learning / AI Models | Predicts chemical identity, bioactivity, or structural class from spectral or genomic data [7] [8]. | Cheminformatics / Bioinformatics | Accelerates preliminary identification and prioritizes unknown signals for investigation. |
| Spectral Library Search Algorithms (e.g., DEREPLICATOR+) | Automates high-confidence matching of experimental spectra to vast compound libraries [5]. | Tandem Mass Spectrometry | Provides rapid, automated identifications with controlled false discovery rates (FDR). |

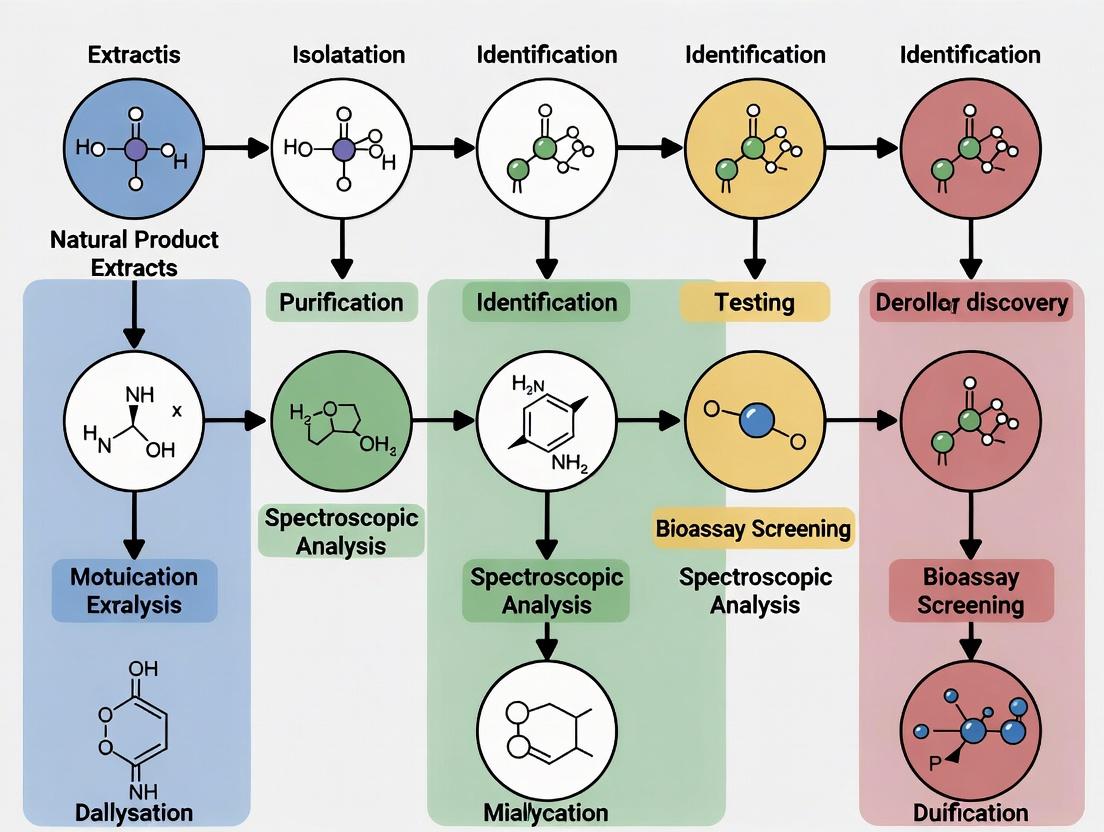

Diagram 1: Molecular Networking & Informatics Workflow for Dereplication

Future Directions and Evolving Challenges

The future of dereplication is inextricably linked to broader trends in sustainable drug discovery and digital transformation.

- Sustainability-Driven Discovery: Dereplication supports green chemistry principles by minimizing wasted resources on rediscovery. It aligns with sustainable sourcing (e.g., using genome mining to access compounds without overharvesting) and green analytical techniques like SFC-MS [1] [6].

- Advanced AI and Automation: The next generation of dereplication will see deeper AI integration. This includes using graph neural networks for better structure-spectrum predictions, generative AI for designing NP-inspired libraries, and large language models for intelligent database curation and literature mining [7] [8].

- Persisting and Emerging Challenges: Key hurdles remain, including handling extreme chemical complexity and "cocktail effects", the high cost of proprietary databases, and integrating disparate data types (genomic, spectral, bioassay) [3]. Ensuring data quality, standardization, and open access will be critical for advancing the field.

Diagram 2: Integrated Dereplication in the Drug Discovery Pipeline

Table 3: Key Research Reagent Solutions for Dereplication

| Item / Resource | Function in Dereplication | Example / Note |
|---|---|---|
| Hyphenated LC-MS System | Separates complex mixtures and provides mass spectral data for compound detection and fragmentation analysis. | UHPLC coupled to high-resolution Q-TOF or ion trap MS. |
| Standardized Bioassay Kits | Provides reliable biological activity data to trigger and guide the dereplication of active extracts. | Commercial enzyme inhibition or cell viability assay kits. |
| Ultrafiltration Devices | Enables ligand-fishing assays by size-based separation of target-compound complexes from unbound molecules. | 100 kDa MWCO centrifugal units for protein/DNA target assays [4]. |
| Natural Product Databases | Reference libraries for comparing spectral, chromatographic, and structural data. | Dictionary of Natural Products (commercial), AntiMarin, GNPS spectral libraries (public) [2] [5]. |
| Informatics Software Platforms | Processes, analyzes, and visualizes complex dereplication data. | GNPS for molecular networking, DEREPLICATOR+ for automated identification, Cytoscape for network visualization [2] [5]. |
| Specialized Chromatography | Offers orthogonal separation to resolve challenging compounds, improving MS detection. | SFC-MS for rapid, green analysis of non-polar metabolites [1]. |

The discovery of therapeutics from natural products (NPs) has been a cornerstone of medicine for millennia. From the use of opium and myrrh in ancient Mesopotamia to the modern application of paclitaxel and artemisinin, NPs and their derivatives have consistently provided novel lead compounds [9]. Historically, the predominant method for uncovering these bioactive entities was bioassay-guided fractionation (BGF), a linear, labor-intensive process of separating complex extracts based on biological activity. Despite its success, this approach presented significant bottlenecks, including the frequent rediscovery of known compounds and the inefficient allocation of resources [1].

Dereplication has emerged as the critical strategic pivot addressing these inefficiencies within the modern drug discovery pipeline. It is defined as the early and rapid identification of known compounds in complex mixtures before committing to full isolation and characterization [3]. By integrating advanced analytical chemistry, bioinformatics, and data mining, dereplication acts as a triage system, allowing researchers to prioritize novel chemotypes and avoid redundant work. Since approximately 2012, dereplication has experienced a publication boom, reflecting its role as a multidisciplinary field essential for accelerating the pace of NP discovery [10]. This article details the historical evolution from classical BGF to integrated dereplication workflows, framing it within the broader thesis that modern dereplication is not merely an auxiliary technique but a fundamental and indispensable component of an efficient, data-driven NP drug discovery pipeline.

The Traditional Paradigm: Bioassay-Guided Fractionation

2.1 Principles and Historical Workflow

Bioassay-guided fractionation is an iterative, feedback-driven process. It begins with the selection and preparation of a crude natural extract (e.g., from plants, marine organisms, or microbes), which is then subjected to a biological assay relevant to a therapeutic target (e.g., antimicrobial, cytotoxic, or enzyme inhibition activity). The active crude extract is systematically separated, typically using chromatographic techniques like open-column or flash chromatography, into a series of less complex fractions. Each fraction is re-evaluated in the bioassay. Only those fractions retaining the desired activity are selected for the next round of fractionation, which employs higher-resolution separation methods (e.g., HPLC). This cycle of separation, bioassay, and selection continues until a pure, active compound is isolated, at which point structure elucidation (primarily via NMR and MS) is performed [9] [11].

2.2 Strengths and Inherent Limitations

The principal strength of BGF is its unbiased, activity-centric approach. It requires no prior knowledge of the extract's chemical composition, and any compound carried through to isolation has shown activity in the chosen assay at every stage [12]. This method was responsible for the discovery of many blockbuster drugs.

However, its limitations became increasingly apparent:

- Inefficiency and Resource Intensity: The process is slow, often requiring months to years to isolate a single compound, and consumes large quantities of solvents and assay reagents.

- Rediscovery of Known Compounds: The most significant bottleneck was the high probability of painstakingly isolating well-characterized, ubiquitous "nuisance" compounds (e.g., tannins, fatty acids, common flavonoids) or known active compounds [1].

- "Cocktail Effect" Misidentification: Bioactivity in a crude fraction can result from the synergistic interaction of multiple compounds rather than a single potent agent. BGF can inadvertently break this synergistic combination during fractionation, causing the loss of activity and misleading the isolation pathway [1].

- Compound Degradation: Repeated handling and lengthy processes risk the degradation of labile metabolites.

The following table quantifies the historical success of NPs, underscoring the importance of the source material that BGF sought to mine, while also highlighting the need for more efficient methods [9] [11].

Table 1: Quantitative Impact of Natural Products in Drug Discovery

| Metric | Data | Time Period / Context |
|---|---|---|
| New Chemical Entities (NCEs) from Natural Sources | 28% | 1981-2002 |
| NCEs developed from natural product pharmacophores | 24% | 1981-2002 |
| FDA-approved drugs that are NPs or NP-derived | ~34% | 1981-2014 (of 1562 drugs) |
| Proportion in Antibiotic & Anticancer Agents | 60-80% | 1983-1994 |
| Prescription drugs in USA based on NPs | 84 of top 150 | 1997 analysis |
| Annual global medicine market from NPs | ~35% | - |

The Paradigm Shift: The Rise of Dereplication

Dereplication evolved as a solution to the core inefficiencies of BGF. Its primary objective is to "race to identify" known substances as early as possible in the discovery pipeline. The conceptual shift moved the point of chemical analysis from the end of the process (after isolation of a pure compound) to the very beginning (profiling of crude or semi-purified extracts) [10].

3.1 Core Objectives and Strategic Advantages

The implementation of dereplication provides several key strategic advantages that streamline the NP pipeline:

- Priority Setting: Rapidly identifies extracts or fractions containing novel or rare chemotypes worthy of further investment.

- Resource Economy: Prevents the costly and time-consuming isolation of known or undesirable compounds.

- Chemical Ecology Insight: Can identify clusters of related metabolites within an extract, guiding the discovery of analogue series.

- Data-Rich Foundation: Creates searchable, annotated chemical profiles of extract libraries for future mining.

3.2 Evolution of Enabling Technologies

The feasibility of modern dereplication is entirely dependent on technological advances in separation science, spectroscopy, and data processing:

- Hyphenated Analytical Techniques: The coupling of high-resolution separation with spectroscopic detection became foundational. LC-MS/MS and UHPLC-HRMS provide precise molecular formulae and fragment fingerprints. LC-NMR offers critical structural information from partially purified mixtures [3] [13].

- Databases and Cheminformatics: The development of comprehensive, searchable NP databases (e.g., AntiBase, MarinLit, GNPS) allows for the cross-referencing of acquired analytical data (exact mass, MS/MS spectra, UV profiles) against known compounds [3] [14].

- Molecular Networking: A transformative, visualization-based tool. Platforms like GNPS create networks where related MS/MS spectra cluster together. This allows for the immediate visualization of known compound clusters (dereplication) and the simultaneous highlighting of unique, potentially novel spectral nodes in a complex dataset [3] [14].

- Advanced Structure Elucidation: Computer-Assisted Structure Elucidation (CASE) systems and quantum chemical calculations for NMR and optical properties aid in solving complex structures, particularly stereochemistry, with less material and greater speed [3].

Integrated Modern Workflow: Combining Bioassay and Dereplication

The contemporary NP discovery pipeline is not an abandonment of bioactivity but a synergistic integration of biological screening with upfront chemical intelligence. The modern workflow is parallelized and data-driven.

Diagram: Modern Dereplication-First Workflow in Natural Product Discovery. This integrated pipeline conducts biological screening and chemical profiling in parallel, with a dereplication "engine" triaging results to prioritize novel chemotypes for targeted isolation [3] [14] [10].

4.1 Detailed Experimental Protocols

Protocol 1: High-Throughput Bioassay Coupled with Microfractionation for Dereplication

- Objective: To identify the specific chromatographic peak(s) responsible for activity in a complex extract.

- Procedure:

- Extract Preparation: Prepare a concentrated solution of the active crude extract in a suitable solvent (e.g., DMSO).

- Analytical Separation: Inject the extract onto an analytical-scale UHPLC system.

- Microfractionation: Using an automated fraction collector, collect the column effluent at fixed time intervals (e.g., every 6-15 seconds) into a 96- or 384-well microtiter plate. Evaporate solvents to leave dried fractions.

- Daughter Plate Bioassay: Re-dissolve each fraction in assay buffer and subject it to the relevant bioassay (e.g., a cell-based or enzymatic assay).

- Chemical Analysis: In parallel, run the same UHPLC method coupled to HR-MS on the extract. Correlate the bioactivity results from the microtiter plate wells with the corresponding retention times and MS data.

- Outcome: The active compound(s) are pinpointed to a specific retention time window and associated with a precise mass and MS/MS spectrum for immediate database dereplication [13].
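The correlation step in Protocol 1 amounts to mapping each well back to its collection window and looking up the LC-MS features that elute within it. The sketch below assumes fixed-interval collection starting at injection, no delay between the detector and the fraction collector, and activity reported as percent inhibition per well. The function names, the 10-second interval, the 50% activity threshold, and the example values are all hypothetical.

```python
def well_rt_window(well_index, start_min=0.0, interval_s=10.0):
    """Return the (start, end) retention-time window in minutes for a 0-based well index."""
    start = start_min + well_index * interval_s / 60.0
    return start, start + interval_s / 60.0

def features_in_active_wells(activity, features, threshold=50.0, interval_s=10.0):
    """Map each active well to the LC-MS features eluting in its collection window.

    activity: list of percent-inhibition values, one per well in collection order.
    features: list of dicts with at least 'mz' and 'rt_min' keys.
    """
    hits = {}
    for well, percent_inhibition in enumerate(activity):
        if percent_inhibition < threshold:
            continue  # well is inactive under the assumed threshold
        lo, hi = well_rt_window(well, interval_s=interval_s)
        hits[well] = [f for f in features if lo <= f["rt_min"] < hi]
    return hits

# Hypothetical data for illustration only.
activity = [5.0, 8.0, 92.0, 10.0]
features = [
    {"mz": 301.07, "rt_min": 0.40},
    {"mz": 455.35, "rt_min": 0.10},
]
active = features_in_active_wells(activity, features)
```

In practice the retention-time axis of the HR-MS run may need an offset to account for tubing delay before the windows are compared.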

Protocol 2: LC-HRMS/MS and Molecular Networking for Dereplication of an Active Extract

- Objective: To rapidly visualize the chemical composition of an active extract and identify both known and novel metabolite families.

- Procedure:

- Data Acquisition: Analyze the crude or pre-fractionated extract using RP-UHPLC coupled to a high-resolution tandem mass spectrometer (e.g., Q-TOF, Orbitrap). Acquire data in both positive and negative ionization modes with data-dependent acquisition (DDA) to obtain MS1 and MS2 spectra.

- Data Processing: Convert raw data files to an open format (.mzML). Use software like MZmine or MS-DIAL for peak picking, alignment, and generation of a feature table (containing m/z, RT, and intensity).

- Molecular Networking: Upload the MS2 data files (.mgf) to the GNPS platform (https://gnps.ucsd.edu). Set networking parameters (cosine score, minimum matched peaks). The analysis clusters MS2 spectra based on similarity.

- Dereplication Analysis: Within the GNPS environment, search MS2 spectra against reference spectral libraries. Nodes (metabolites) with library matches are annotated as known compounds. Clusters containing only unmatched nodes represent potential novel metabolite families.

- Bioactivity Overlay: Import the feature table from the Data Processing step and overlay quantitative bioassay data (if available), or annotate which fraction or cluster showed activity. This visually links bioactivity to specific regions of the chemical network [3] [14].
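The networking and dereplication steps of Protocol 2 can be sketched as graph construction followed by a check for library annotations. The example below assumes pairwise similarity scores have already been computed and keeps an edge only when both the cosine score and the matched-peak count pass a threshold. It then groups nodes into connected components and returns the clusters with no library match. The function names, the thresholds (0.7 and 6), and the example data are illustrative assumptions, not settings prescribed by the protocol.

```python
def build_clusters(n_nodes, scored_pairs, min_cosine=0.7, min_matched=6):
    """Group spectra into clusters (connected components) using union-find.

    scored_pairs: iterable of (node_a, node_b, cosine, matched_peaks).
    """
    parent = list(range(n_nodes))

    def find(x):
        # Follow parent links to the root, shortening the path on the way.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b, cosine, matched in scored_pairs:
        # An edge is kept only if both networking parameters are satisfied.
        if cosine >= min_cosine and matched >= min_matched:
            parent[find(a)] = find(b)

    clusters = {}
    for node in range(n_nodes):
        clusters.setdefault(find(node), []).append(node)
    return sorted(clusters.values())

def unannotated_clusters(clusters, library_hits):
    """Return the clusters in which no node has a spectral library match."""
    return [c for c in clusters if not any(node in library_hits for node in c)]

# Hypothetical scores and library hits for illustration only.
pairs = [(0, 1, 0.85, 8), (1, 2, 0.75, 6), (3, 4, 0.90, 7), (2, 3, 0.40, 10)]
clusters = build_clusters(5, pairs)
novel_candidates = unannotated_clusters(clusters, library_hits={1})
```

Clusters returned by `unannotated_clusters` correspond to the "only unmatched nodes" case described above and would be the first candidates for targeted isolation.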

4.2 The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for Dereplication

| Reagent / Material | Function in Dereplication |
|---|---|
| Ultra-High-Performance Liquid Chromatography (UHPLC) Systems | Provides high-resolution, rapid separation of complex natural extracts, essential for obtaining pure compound spectra and accurate microfractionation [13]. |
| High-Resolution Mass Spectrometer (HR-MS/MS; e.g., Q-TOF, Orbitrap) | Delivers exact mass measurements for molecular formula determination and generates fragmentation spectra (MS/MS) for structural comparison and database matching [3] [14]. |
| Global Natural Products Social Molecular Networking (GNPS) Platform | A cloud-based ecosystem for processing MS/MS data, performing spectral library searches (dereplication), and creating visual molecular networks to explore chemical relationships [3] [14]. |
| Natural Product Databases (e.g., AntiBase, MarinLit, LOTUS, NP Atlas) | Curated repositories of chemical, spectral, and biological data for known NPs. Used to query acquired MS, MS/MS, and NMR data for identification [3] [14]. |
| Computer-Assisted Structure Elucidation (CASE) Software | Uses algorithms to interpret spectroscopic data (primarily NMR) and generate plausible structural candidates, drastically accelerating the structure elucidation process [3]. |
| Microfractionation & Automated Liquid Handling Systems | Enables the precise collection of HPLC peaks into microtiter plates for parallelized biological testing, directly linking chromatographic peaks to bioactivity [12] [13]. |

Impact and Future Directions in the Drug Discovery Pipeline

The integration of dereplication has fundamentally reshaped the economics and output of the NP discovery pipeline. It has enabled a shift from low-throughput, single-compound isolation to the high-throughput characterization of chemical libraries. This allows academic and industrial labs to interrogate biodiversity more comprehensively, focusing efforts on the most promising, novel leads.

Future advancements are poised to deepen this integration:

- Artificial Intelligence and Machine Learning: AI models are being trained to predict MS/MS fragmentation patterns, propose structures from spectral data, and even predict bioactivity from chemical fingerprints, further accelerating the identification and prioritization steps [3].

- Integrated Multi-Omics: Combining metabolomics (dereplication) with genomics (genome mining for biosynthetic gene clusters) and transcriptomics allows for a holistic understanding of an organism's chemical potential and its regulation, guiding targeted discovery efforts [3] [14].

- Open Data and Collaboration: Platforms like GNPS exemplify the power of community-shared data. The growth of open-access spectral libraries continues to enhance the power and accuracy of dereplication for the global scientific community [14].

The historical journey from bioassay-guided fractionation to modern dereplication represents a paradigm shift in natural product drug discovery. Dereplication has evolved from a simple screening step to a sophisticated, data-centric discipline that sits at the heart of the discovery pipeline. By frontloading chemical intelligence, it effectively de-risks the resource-intensive process of natural product isolation, ensuring that effort is invested in truly novel and promising chemotypes. As part of a broader thesis on modern drug discovery, dereplication is the critical filter that transforms the vast complexity of nature into a tractable stream of innovative lead compounds, thereby securing the continued relevance and productivity of natural products as an indispensable source of future medicines.

The drug discovery pipeline is a high-stakes endeavor characterized by immense investments of time and capital, where the efficient triage of potential leads is paramount. Within this context, especially in natural product research, dereplication stands as a critical, proactive strategy. It is defined as the process of rapidly identifying known compounds within a crude extract or fraction early in the discovery workflow, thereby preventing the redundant expenditure of resources on the re-isolation and re-elucidation of previously characterized molecules [15]. The core thesis of modern dereplication is that this rapid identification is not reliant on a single data point but on the synergistic integration of three foundational pillars: the biological taxonomy of the source organism, the molecular structure of the compound, and its spectroscopic signature [15].

The convergence of these three data streams creates a powerful filter. Taxonomic information provides a prior probability, guiding the search toward compounds known from related organisms. The definitive identification is achieved by matching experimental spectroscopic data—most crucially from mass spectrometry (MS) and nuclear magnetic resonance (NMR)—against the structural and spectral data of known compounds within curated databases [15]. This integrated approach accelerates the discovery process, allowing researchers to swiftly bypass known entities and focus efforts on truly novel chemistry with potential therapeutic value. The following sections deconstruct each pillar, detail their integration, and provide a practical protocol illustrating the complete workflow.

Deconstructing the Three Pillars

Pillar I: Biological Taxonomy as a Predictive Filter

Taxonomy, the science of classifying living organisms, serves as the first logical filter in dereplication. It operates on the principle of chemotaxonomy, which posits that evolutionary relationships are often reflected in metabolic profiles. Organisms within the same genus or family frequently biosynthesize similar or identical secondary metabolites [15]. Therefore, knowing the precise taxonomic identity of a source organism (e.g., the marine sponge Aplysina cauliformis) allows researchers to narrow the search space significantly. Instead of comparing spectral data against all known natural products, the search can be focused on compounds reported from the same genus, family, or order, dramatically increasing efficiency and hit accuracy.

- Key Tools & Databases: Navigating modern taxonomy requires digital resources. The NCBI Taxonomy Browser provides a constantly updated hierarchical framework for species classification [15]. For linking taxonomy to chemistry, databases like KNApSAcK and UNPD (Universal Natural Products Database) are essential, as they explicitly connect compound records to their biological sources [15].
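The narrowing of the search space by taxonomy can be sketched as a rank-by-rank filter. The example below assumes each candidate compound record carries the lineage of its reported source organism, and it returns the candidates that share the narrowest available rank with the organism under study. The record layout, function name, and placeholder taxon and compound names are hypothetical and do not reflect the schema of any specific database.

```python
RANKS = ("genus", "family", "order")  # tried from narrowest to broadest

def taxonomy_filter(candidates, source_lineage):
    """Return (rank, matches) for the narrowest rank shared with the source organism.

    candidates: list of dicts with a 'lineage' dict mapping rank -> taxon name.
    source_lineage: dict mapping rank -> taxon name for the organism under study.
    Falls back to (None, all candidates) when no rank is shared.
    """
    for rank in RANKS:
        target = source_lineage.get(rank)
        if target is None:
            continue  # rank unknown for the source organism; try a broader one
        matches = [c for c in candidates if c["lineage"].get(rank) == target]
        if matches:
            return rank, matches
    return None, list(candidates)

# Placeholder records for illustration only.
source = {"genus": "GenusA", "family": "FamilyX", "order": "OrderQ"}
records = [
    {"name": "compound_1", "lineage": {"genus": "GenusB", "family": "FamilyX", "order": "OrderQ"}},
    {"name": "compound_2", "lineage": {"genus": "GenusC", "family": "FamilyY", "order": "OrderQ"}},
    {"name": "compound_3", "lineage": {"genus": "GenusD", "family": "FamilyZ", "order": "OrderR"}},
]
rank, shortlist = taxonomy_filter(records, source)
```

Falling back to the full candidate list when nothing matches keeps taxonomy as a prior and not a hard exclusion, since related metabolites can occur in distant organisms.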

Pillar II: Molecular Structures as the Definitive Identity

The molecular structure is the ultimate identifier of a compound. In silico, chemical structures are represented as mathematical graphs (atoms as nodes, bonds as edges). For dereplication, the accurate and standardized representation of these structures in databases is critical [15].

- Representation Formats: Common machine-readable formats include:

- SMILES (Simplified Molecular-Input Line-Entry System): A linear string notation [15].

- InChI (International Chemical Identifier): A non-proprietary, layered identifier standard developed by IUPAC [15].

- MOL/SDF files: File formats that include atomic coordinates, essential for depicting 2D/3D structure and for computational analysis [15].

- Critical Databases: Comprehensive structural databases are the reference libraries for dereplication. PubChem and CAS (Chemical Abstracts Service) are among the largest public and commercial collections, respectively [15]. For natural products, resources like COCONUT offer large, curated, open-access collections of unique NP structures [15]. The integrity of dereplication hinges on the quality and comprehensiveness of these structural repositories.
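The graph view of a structure can be made concrete with a small sketch: heavy atoms as nodes, bonds as edges with a bond order, and implicit hydrogens filled in from standard valences. From that graph the example derives a molecular formula and a monoisotopic mass, the two quantities most often compared against database records. The function name and the restriction to neutral C/N/O/H molecules with standard valences are simplifying assumptions; dedicated cheminformatics toolkits handle charges, aromaticity, and other elements.

```python
from collections import Counter

# Standard valences and monoisotopic masses (Da) for the few elements covered here.
VALENCE = {"C": 4, "N": 3, "O": 2}
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.00307401, "O": 15.99491462}

def formula_and_mass(atoms, bonds):
    """Return (formula, monoisotopic mass) for a neutral molecular graph.

    atoms: list of heavy-atom element symbols (the nodes).
    bonds: list of (i, j, order) tuples indexing into atoms (the edges).
    """
    # Sum the bond orders at each atom to find how much valence is already used.
    used = [0] * len(atoms)
    for i, j, order in bonds:
        used[i] += order
        used[j] += order

    counts = Counter(atoms)
    # Remaining valence on each heavy atom is filled with implicit hydrogens.
    counts["H"] += sum(VALENCE[el] - u for el, u in zip(atoms, used))

    # Hill order: carbon, hydrogen, then the other elements alphabetically.
    others = sorted(e for e in counts if e not in ("C", "H") and counts[e])
    order = [e for e in ("C", "H") if counts[e]] + others
    formula = "".join(f"{e}{counts[e] if counts[e] > 1 else ''}" for e in order)
    mass = sum(MONOISOTOPIC[e] * n for e, n in counts.items())
    return formula, mass

# Ethanol (SMILES: CCO) as a three-node graph with two single bonds.
formula, mass = formula_and_mass(["C", "C", "O"], [(0, 1, 1), (1, 2, 1)])
```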

Pillar III: Spectroscopy as the Analytical Interrogator

Spectroscopy encompasses the suite of analytical techniques that probe the interaction of matter with electromagnetic radiation or other energy sources to produce a characteristic "fingerprint" [16]. In dereplication, spectroscopy provides the experimental data that is matched against theoretical or library data associated with known structures.

Core Techniques:

- Mass Spectrometry (MS): Determines the mass-to-charge ratio (m/z) of ions. High-Resolution MS (HRMS) delivers accurate mass measurements, typically within a few ppm, that constrain the molecular formula with high confidence. Tandem MS (MS/MS or MSⁿ) fragments precursor ions, providing information about molecular substructures [15] [17].

- Nuclear Magnetic Resonance (NMR) Spectroscopy: Provides detailed information on the carbon-hydrogen framework of a molecule. ¹H and ¹³C NMR chemical shifts, along with 2D experiments (e.g., COSY, HSQC, and HMBC for through-bond connectivity; NOESY for through-space proximity), reveal connectivity and spatial relationships between atoms, offering the most definitive confirmation of a structural match [15].

- Hybrid & Supplementary Techniques: LC-MS/MS and LC-NMR combine separation with spectral analysis for complex mixtures. Other techniques like infrared (IR) and ultraviolet-visible (UV-Vis) spectroscopy provide supportive data on functional groups and chromophores [16].
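The role of HRMS in formula assignment can be illustrated with a short calculation: compute the theoretical m/z of a candidate formula and express the deviation of a measured value in ppm. The sketch below is a minimal example under stated assumptions. Quercetin (C15H10O7) is used only as a familiar test case, the "observed" value is invented, and the helper names are hypothetical.

```python
import re

# Monoisotopic masses (u) of the most abundant isotopes; a small subset only.
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.00307401, "O": 15.99491462}
PROTON = 1.00727646  # mass of a proton (u), used for [M+H]+


def parse_formula(formula):
    """Parse a simple formula such as 'C15H10O7' into element counts."""
    counts = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + int(number or 1)
    return counts


def monoisotopic_mass(formula):
    """Sum the monoisotopic masses of all atoms in the neutral formula."""
    return sum(MONOISOTOPIC[el] * n for el, n in parse_formula(formula).items())


def protonated_mz(formula):
    """Theoretical m/z of the singly charged [M+H]+ ion."""
    return monoisotopic_mass(formula) + PROTON


def ppm_error(observed_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6


theoretical = protonated_mz("C15H10O7")  # quercetin, [M+H]+
observed = 303.0503                      # hypothetical measurement
print(round(theoretical, 4))                       # 303.0499
print(abs(ppm_error(observed, theoretical)) <= 5)  # True
```

A tolerance of a few ppm usually leaves more than one candidate formula at higher masses, which is why isotopic patterns and MS/MS fragments are used alongside the accurate mass.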

The Data Gap and Predictive Solution: A major historical challenge has been the lack of accessible, high-quality experimental spectral libraries for many natural products. A powerful workaround is the use of computational prediction. Software tools (e.g., CNMR Predictor, nmrshiftdb2) can predict NMR chemical shifts for a given candidate structure with high accuracy. This allows for dereplication by comparing experimental spectra against predicted spectra for all candidate structures from a taxonomically informed search [15].
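The comparison of experimental and predicted spectra can be sketched as a simple ranking problem. The example below scores each candidate by the mean absolute error (MAE) between sorted ¹³C shift lists. This is a deliberate simplification that ignores signal assignment, and all shift values and candidate names are invented for illustration. In practice the predicted shifts would come from a prediction tool such as those named above.

```python
def shift_mae(experimental, predicted):
    """Mean absolute error (ppm) between two sorted 13C shift lists."""
    if len(experimental) != len(predicted):
        raise ValueError("shift lists must have the same number of carbons")
    pairs = zip(sorted(experimental), sorted(predicted))
    return sum(abs(e - p) for e, p in pairs) / len(experimental)


def rank_candidates(experimental, predicted_by_candidate):
    """Rank candidates with a matching carbon count by ascending MAE."""
    scored = [
        (name, shift_mae(experimental, shifts))
        for name, shifts in predicted_by_candidate.items()
        if len(shifts) == len(experimental)
    ]
    return sorted(scored, key=lambda item: item[1])


# Invented shift values (ppm), for illustration only.
experimental = [152.1, 133.4, 118.2, 67.9, 53.6, 29.0]
predicted = {
    "candidate_A": [151.0, 134.0, 117.5, 68.5, 53.0, 28.4],
    "candidate_B": [170.2, 140.1, 128.3, 77.0, 40.2, 21.5],
    "candidate_C": [150.0, 130.0, 60.0],  # wrong carbon count, skipped
}
ranking = rank_candidates(experimental, predicted)
print(ranking[0][0])  # candidate_A
```

Restricting `predicted_by_candidate` to structures reported from related taxa keeps the candidate list short, which is where the taxonomic pillar pays off.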

The Integrated Dereplication Workflow

The power of the three-pillar approach is realized when the pillars are integrated within a systematic workflow. The process is not linear but iterative, with each piece of evidence refining the hypothesis.

Diagram: The Three-Pillar Dereplication Workflow

- Input: The process begins with a biologically active crude extract.

- Taxonomic Filtering (Pillar I): The source organism is identified, and its taxonomy is used to query databases (e.g., KNApSAcK, UNPD) for a focused list of known compounds associated with related organisms.

- Analytical Interrogation (Pillar III): The extract is analyzed via HRMS and NMR to generate experimental spectroscopic data (e.g., molecular ion, fragmentation pattern, chemical shifts).

- Integrated Query & Matching: An annotated query is created, combining the taxonomic prior with the experimental spectral data. This query is used to search structural databases (Pillar II). Searches can be for:

- Experimental library spectra (if available).

- Predicted spectra generated in real-time for database candidates.

- Decision Point: A confident match leads to dereplication (identification of a known compound). A partial or absent match flags the compound as a potential novel entity, warranting further isolation and full structure elucidation. This step may trigger a refinement of the taxonomic or analytical parameters in an iterative loop.
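The workflow steps above can be sketched as a small decision function: restrict the reference records by taxonomy, match the observed m/z within a ppm tolerance, and return either a known-compound hit or a novelty flag. This is a minimal sketch under stated assumptions. The record fields, the genus-then-family fallback, the 5 ppm default, and all placeholder names and values are hypothetical and do not reflect the schema of KNApSAcK, UNPD, or any other database.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class KnownCompound:
    name: str
    genus: str
    family: str
    ion_mz: float  # m/z of the expected ion


def taxonomic_candidates(records, genus, family):
    """Prefer same-genus records; fall back to the family if none exist."""
    same_genus = [r for r in records if r.genus == genus]
    return same_genus or [r for r in records if r.family == family]


def dereplicate(observed_mz, records, genus, family, tol_ppm=5.0):
    """Return ('known', hits) or ('potentially novel', [])."""
    hits = [
        r for r in taxonomic_candidates(records, genus, family)
        if abs(observed_mz - r.ion_mz) / r.ion_mz * 1e6 <= tol_ppm
    ]
    return ("known", hits) if hits else ("potentially novel", [])


# Placeholder records; names, taxa, and m/z values are invented.
RECORDS = [
    KnownCompound("compound_A", "GenusOne", "FamilyOne", 335.9593),
    KnownCompound("compound_B", "GenusOne", "FamilyOne", 412.1234),
    KnownCompound("compound_C", "GenusTwo", "FamilyOne", 500.2500),
]

status, hits = dereplicate(335.9589, RECORDS, "GenusOne", "FamilyOne")
print(status, [h.name for h in hits])  # known ['compound_A']
```

A mass match alone is only a putative annotation. In the workflow described above, MS/MS or NMR evidence would be required before a feature is set aside as known.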

Quantitative Landscape of Dereplication Tools and Data

The efficacy of dereplication is directly tied to the scale and quality of available data resources. The table below summarizes key metrics for databases and research activity central to the three-pillar approach.

Table 1: Key Databases and Metrics for Dereplication

| Database/Tool Name | Primary Focus | Key Metric / Scale | Role in the Three Pillars |

|---|---|---|---|

| PubChem [15] | Chemical Structures | >100 million compounds [15] | Pillar II: Definitive structural repository. |

| COCONUT [15] | Natural Products | ~400,000 unique NP structures [15] | Pillar II: Curated NP-specific structural data. |

| KNApSAcK [15] | Species-Metabolite Relationships | Links compounds to source species | Pillars I & II: Integrates taxonomy (I) with structures (II). |

| GNPS (Global Natural Products Social Molecular Networking) [3] [17] | Tandem MS Spectral Networking | Community-driven MS/MS library & tools | Pillar III: Enables MS-based dereplication via spectral matching and molecular networking. |

| Research Publications (2014-2023) [3] | Dereplication & Structure Elucidation | ~908 articles, ~40,520 citations [3] | Indicator of high field activity and methodological evolution. |

Experimental Protocol: Bioassay-Guided Dereplication in Action

The following protocol, adapted from a 2025 study on the marine sponge Aplysina cauliformis, exemplifies the integrated three-pillar approach in a drug discovery context [17].

Title: Bioassay-Guided Dereplication for the Identification of an Antiproliferative Bromotyramine.

Objective: To rapidly isolate and identify the bioactive constituent(s) from a crude organic extract with cytotoxic activity against HepG2 liver cancer cells.

Materials & Methods:

- Source Material & Taxonomy: The marine sponge Aplysina cauliformis (Phylum: Porifera, Order: Verongida) was collected, identified, and voucher specimens deposited. This taxonomic classification immediately suggests the potential presence of brominated tyrosine-derived alkaloids, a known chemotaxonomic marker for this genus [17].

- Bioactivity Screening: The crude organic extract was tested for cytotoxicity against HepG2 cells using an MTT assay, confirming activity (IC₅₀ = 214.29 ± 1.19 µg/mL) [17].

- Fractionation & Bioassay: The crude extract was fractionated using reversed-phase (RP-C18) chromatography. All fractions were re-screened for bioactivity. The most active fraction (A4, IC₅₀ = 134.28 ± 1.05 µg/mL) was selected for detailed analysis [17].

- Integrated Spectroscopic Analysis (LC-HRMS/MS & Molecular Networking):

- Fraction A4 was analyzed by LC-HRMS/MS on an Orbitrap mass spectrometer.

- Raw data was processed (MZmine software) to detect features, align peaks, and deconvolute isotopes.

- The processed data was uploaded to the GNPS platform to create a molecular network. In this network, each node represents a molecular ion, and connecting edges indicate shared MS/MS fragmentation patterns, visually clustering structurally related molecules [17].

- Dereplication Decision:

- The molecular network revealed a distinct cluster containing all features unique to the bioactive Fraction A4. Nodes within this cluster showed isotopic patterns indicative of dibrominated compounds [17].

- One node (m/z 335.9589, [M]+) was putatively annotated as N,N,N-trimethyl-3,5-dibromotyramine by matching its exact mass and MS/MS fragments against in silico predictions and literature data for the Aplysina genus (leveraging Pillars I & III) [17].

- Targeted Isolation & Validation: Guided by this annotation, Fraction A4 was subjected to targeted HPLC purification to isolate the predicted compound. The isolated compound was confirmed to be N,N,N-trimethyl-3,5-dibromotyramine by full NMR analysis and exhibited antiproliferative activity (IC₅₀ = 37.49 ± 1.94 µg/mL on HepG2) [17].
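The dibrominated isotopic pattern mentioned in the dereplication decision follows directly from the near-equal natural abundances of ⁷⁹Br and ⁸¹Br. The sketch below computes the bromine-only isotopologue pattern with a binomial expansion, starting from the reported monoisotopic m/z of 335.9589. It is a simplified illustration: contributions from ¹³C and other isotopes are ignored, and the function name is an assumption.

```python
from math import comb

# Natural abundances of the two stable bromine isotopes.
ABUNDANCE_79BR = 0.5069
ABUNDANCE_81BR = 0.4931
MASS_SHIFT_81BR = 1.99795  # mass difference 81Br - 79Br (u)


def bromine_pattern(n_br, monoisotopic_mz, charge=1):
    """Return [(m/z, relative intensity %)] for the Br isotopologues only."""
    # Probability of k heavy (81Br) atoms among n_br bromines.
    raw = [
        comb(n_br, k) * ABUNDANCE_79BR ** (n_br - k) * ABUNDANCE_81BR ** k
        for k in range(n_br + 1)
    ]
    top = max(raw)
    return [
        (round(monoisotopic_mz + k * MASS_SHIFT_81BR / charge, 4),
         round(100 * p / top, 1))
        for k, p in enumerate(raw)
    ]


pattern = bromine_pattern(2, 335.9589)
for mz, relative_intensity in pattern:
    print(mz, relative_intensity)
```

For two bromine atoms the result is the characteristic triplet of roughly 1:2:1 intensity spaced about 2 m/z units apart, which is the kind of signature used to recognize the dibrominated cluster in the network.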

Diagram: Experimental Protocol Workflow

The Scientist's Toolkit: Essential Reagents & Materials

Table 2: Key Research Reagent Solutions for Dereplication

| Item | Function / Application | Specific Example/Note |

|---|---|---|

| RP-C18 Stationary Phase | Fractionation of crude extracts based on hydrophobicity. | Used in flash chromatography or cartridges for initial bioassay-guided fractionation [17]. |

| Deuterated NMR Solvents | Required for NMR spectroscopy to provide a signal lock and avoid interference from protonated solvents. | Chloroform-d (CDCl₃), Methanol-d₄ (CD₃OD), DMSO-d₆. |

| LC-MS Grade Solvents | Essential for high-performance liquid chromatography coupled to mass spectrometry to minimize background noise and ion suppression. | Acetonitrile, Methanol, Water with 0.1% Formic Acid. |

| Cell Lines & Assay Kits | For bioactivity-guided isolation. Provides the phenotypic anchor for the discovery process. | HepG2 (cancer), IHH (normal) cells; MTT assay kit for cell viability [17]. |

| Internal MS Calibrants | Ensures accurate mass measurement in HRMS. | Calibration solution specific to the mass spectrometer (e.g., ESI-L Low Concentration Tuning Mix). |

| Molecular Networking Software | Processes untargeted MS/MS data to visualize chemical relationships within a sample. | GNPS2 (web platform), MZmine (for data preprocessing), Cytoscape (for visualization) [17]. |

The integration of taxonomy, molecular structures, and spectroscopy has transformed dereplication from a defensive check against redundancy into an offensive engine for discovery. Current trends point toward even greater integration and automation:

- Artificial Intelligence & Machine Learning: AI models are being trained to predict not just NMR shifts, but also MS/MS fragmentation patterns and even bioactivity from structural or spectral input, potentially bypassing multiple traditional steps [3].

- Genomic Integration: Linking biosynthetic gene cluster (BGC) data from the source organism's genome to spectroscopic and taxonomic data creates a fourth, predictive pillar. This "genome-mining" approach can forecast the type of molecules an organism can produce before extraction even begins [3].

In conclusion, the three-pillar framework is the cornerstone of a lean and effective natural product drug discovery pipeline. By strategically employing taxonomic prediction, structural database mining, and advanced spectroscopic analysis in a convergent workflow, researchers can accelerate the journey from raw biological material to novel therapeutic lead. As databases grow and algorithms become more sophisticated, this integrated approach will continue to be indispensable in navigating the complex chemical landscape of nature for drug discovery.

The drug discovery pipeline is notoriously inefficient and is often likened to finding "a needle in a haystack." A fundamental and persistent contributor to this inefficiency is the rediscovery of known compounds, a problem known as the dereplication challenge [18]. The issue is particularly acute in natural product research, a field credited with roughly 70% of approved pharmaceuticals, because the same bioactive molecules are isolated and characterized repeatedly, wasting time and resources [19]. The conventional discovery process, reliant on labor-intensive trial and error and high-throughput screening, is slow, costly, and often inaccurate [20]. With the projected pipeline value for new therapeutic modalities now at $197 billion, representing 60% of the total pharmaceutical pipeline, the economic stakes for optimizing discovery efficiency have never been higher [21]. This article frames dereplication not merely as a technical step in the workflow but as an economic imperative. By strategically avoiding rediscovery through advanced computational and analytical methods, the industry can conserve finite resources, accelerate the delivery of novel therapies, and ensure that research investments yield truly innovative returns.

The Economic and Temporal Cost of Conventional Discovery

The traditional drug discovery model is unsustainable from both a financial and temporal perspective. The process is measured in years and billions of dollars, with a significant portion of that investment yielding no novel information due to redundant rediscovery.

Table 1: The Economic Scale of Drug Discovery and Savings (2024-2025)

| Metric | Data | Source/Context |

|---|---|---|

| Projected Pipeline Value (New Modalities) | $197 billion (60% of total pipeline) [21] | BCG 2025 Report |

| Total Savings from Generics & Biosimilars (2024) | $467 billion [22] | AAM/IQVIA Report |

| Savings from Biosimilars Since 2015 | $56.2 billion [23] | Biosimilars Council Report |

| Typical Discovery-to-Preclinical Timeline | ~5 years [24] | Industry standard |

| AI-Accelerated Discovery Timeline (Example) | 18 months to Phase I [24] | Insilico Medicine's IPF drug |

| High-Throughput Screening Attrition Rate | ~1 marketable drug per 1 million screened compounds [25] | Scientific Reports 2024 |

The data underscores a dual economic reality: the immense value trapped in the innovation pipeline and the staggering savings unlocked by overcoming exclusivity—a process that efficient dereplication can initiate earlier. The "dereplication problem" in natural product discovery is a primary bottleneck, leading to diminishing returns on screening efforts [18]. Furthermore, while new modalities like antibodies and nucleic acids are driving growth, they are not immune to the inefficiencies of redundant target pursuit and molecule optimization [21]. Each cycle of rediscovery consumes resources that could be allocated to pioneering research, directly impacting a company's bottom line and the industry's capacity to address unmet medical needs.

Dereplication: Core Concepts and Strategic Importance

Dereplication is the process of rapidly identifying known compounds within a test sample early in the discovery pipeline to prioritize novel leads. Its primary objective is to avoid the costly and time-consuming isolation and full characterization of substances already documented in the scientific literature or proprietary databases.

The strategic implementation of dereplication transforms the discovery workflow:

- Front-Loaded Efficiency: By identifying known entities at the crude extract or early fraction stage, resources are focused solely on unknown chemistry.

- Informed Prioritization: It enables data-driven decisions, steering medicinal chemists and biologists toward the most promising, novel leads.

- Foundation for Innovation: It clears the "noise" of known biology, allowing true innovation to emerge. Effective dereplication is especially critical in natural product research, where chemical diversity is vast but the rediscovery rate is high [19] [26].

Table 2: Analytical Techniques for Dereplication

| Technique | Key Output | Role in Dereplication | Typical Throughput |

|---|---|---|---|

| LC-HRMS/MS (Liquid Chromatography-High-Resolution Tandem Mass Spectrometry) | Exact mass, isotopic pattern, fragmentation spectrum [26] | Gold standard. Provides precise molecular formula and structural fingerprints for database matching. | Medium-High |

| NMR (Nuclear Magnetic Resonance) Spectroscopy | Detailed structural and conformational data | Provides definitive structural elucidation but is lower throughput; often used after MS-based triage. | Low |

| UV/Vis Spectroscopy | Chromophore information | Supports compound class identification (e.g., flavonoids, alkaloids). | High |

| Database Mining & Molecular Networking | Spectral similarity networks, putative identifications | Uses algorithms to compare experimental data against spectral libraries (e.g., GNPS, MassBank) [26]. | Very High (in silico) |

Methodologies: Implementing Effective Dereplication Protocols

A robust dereplication strategy integrates standardized experimental protocols with computational validation. The following detailed methodology, adapted from a 2025 study, outlines a systematic approach for LC-MS/MS-based dereplication [26].

Experimental Protocol: Construction and Use of an In-House Tandem Mass Spectral Library for Dereplication [26]

1. Objective: To develop a rapid, high-confidence LC-ESI-MS/MS method for dereplicating 31 common phytochemicals from complex plant and food extracts.

2. Materials and Reagents:

- Standards: 31 purified natural product standards (e.g., quercetin, apigenin, chlorogenic acid), purity 97-98%.

- Solvents: LC-MS grade methanol, formic acid, Type-I ultrapure water.

- Equipment: UHPLC system coupled to a high-resolution tandem mass spectrometer (e.g., Q-TOF or Orbitrap).

3. Experimental Procedure:

- A. Sample Pooling Strategy: To maximize efficiency, standards are pooled based on log P values and exact masses to minimize co-elution and the presence of isomers in the same LC run.

- B. LC-MS/MS Data Acquisition:

- Chromatography: Use a reverse-phase C18 column. A binary gradient of water (0.1% formic acid) and methanol is typical.

- Mass Spectrometry: Operate in positive electrospray ionization (ESI+) mode.

- Data-Dependent Acquisition (DDA): Full MS scan (e.g., m/z 100-1500) followed by MS/MS scans of the most intense precursors.

- Collision Energies: Acquire data at multiple collision energies (e.g., 10, 20, 30, 40 eV) to capture comprehensive fragmentation patterns.

- C. Library Construction: For each standard, compile the following into a database entry:

- Compound name, molecular formula, class.

- Observed exact mass (with <5 ppm error) for [M+H]+ and/or [M+Na]+ adducts.

- Retention time (RT).

- MS/MS spectrum at various collision energies.

- D. Dereplication of Unknown Extracts:

- Prepare test samples (e.g., plant extract).

- Analyze under identical LC-MS/MS conditions used for the library.

- Process data: For each peak in the unknown, extract precursor exact mass and MS/MS spectrum.

- Query the in-house library: Match observed RT (with a tolerance window, e.g., ±0.2 min), exact mass (e.g., ±5 ppm), and MS/MS spectral similarity.

- A positive identification requires consensus across these three parameters.

4. Data Analysis and Validation: The developed library was validated by successfully dereplicating compounds in 15 different plant and food extracts. The use of pooled standards, standardized conditions, and multi-parameter matching significantly reduces analytical time and cost compared to analyzing each standard individually [26].
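The three-parameter consensus in step D can be sketched as a filter over library entries. In the example below, the ±0.2 min retention-time window and the ±5 ppm mass tolerance follow the protocol text. The fragment-overlap score, its 0.6 threshold, the 0.01 Da fragment tolerance, the retention times, and the fragment lists are assumptions chosen for illustration and are not taken from the cited study.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LibraryEntry:
    name: str
    mz: float         # [M+H]+ m/z
    rt_min: float     # retention time in minutes
    fragments: tuple  # reference fragment m/z values


def ppm_error(observed, reference):
    return (observed - reference) / reference * 1e6


def fragment_overlap(query, reference, tol_da=0.01):
    """Fraction of reference fragments found in the query spectrum."""
    if not reference:
        return 0.0
    matched = sum(1 for ref in reference if any(abs(ref - q) <= tol_da for q in query))
    return matched / len(reference)


def match_feature(mz, rt_min, fragments, library,
                  ppm_tol=5.0, rt_tol=0.2, min_overlap=0.6):
    """Return (name, score) hits that pass all three criteria, best first."""
    hits = []
    for entry in library:
        if abs(ppm_error(mz, entry.mz)) > ppm_tol:
            continue  # accurate mass does not agree
        if abs(rt_min - entry.rt_min) > rt_tol:
            continue  # retention time does not agree
        score = fragment_overlap(fragments, entry.fragments)
        if score >= min_overlap:
            hits.append((entry.name, score))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Illustrative entries; retention times and fragments are placeholders.
LIBRARY = [
    LibraryEntry("quercetin", 303.0499, 6.80, (153.018, 229.050, 257.045)),
    LibraryEntry("apigenin", 271.0601, 7.90, (119.049, 153.018)),
]

print(match_feature(303.0502, 6.75, (153.018, 229.049, 257.044), LIBRARY))
# [('quercetin', 1.0)]
```

Requiring all three criteria to pass is what separates isomers that share a formula: they pass the mass filter together but typically differ in retention time or fragmentation.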

The AI and Computational Revolution in Dereplication

Artificial Intelligence (AI) and Machine Learning (ML) are overcoming the limitations of traditional dereplication by moving beyond simple database lookups to predictive and generative modeling. This represents a paradigm shift from recognizing known compounds to predicting novel bioactivity.

Deep Learning for Predictive Dereplication & Discovery: Modern deep neural networks can learn complex structure-activity relationships from existing data. A landmark 2020 study demonstrated this by training a deep learning model on just 2,335 molecules to predict antibacterial activity [18]. Applied to the Drug Repurposing Hub, the model identified halicin, a structurally novel antibiotic with broad-spectrum activity; a subsequent in silico screen of over 107 million molecules from ZINC15 yielded eight further promising antibacterial compounds [18]. This approach inverts the traditional workflow: instead of physically screening millions of compounds to find a few hits, a trained model virtually screens millions to hundreds of millions of molecules and prioritizes a handful for empirical testing, increasing efficiency and reducing cost.

Integrated AI Platforms in the 2025 Landscape: The field has rapidly evolved, with several platforms now integrating AI throughout the discovery pipeline [24].

- Generative Chemistry (e.g., Exscientia): Uses AI to design novel drug-like molecules de novo that satisfy specific target product profiles, compressing design cycles [24].

- Phenomics-First Systems (e.g., Recursion): Leverages AI to analyze high-content cellular imaging data to identify disease phenotypes and potential drug modulators [24].

- Physics-Plus-ML (e.g., Schrödinger): Combines molecular simulations with machine learning for accurate binding affinity prediction and lead optimization [24].

Table 3: Performance Metrics of Leading AI Discovery Platforms (2025 Landscape)

| Platform / Company | Core AI Approach | Reported Efficiency Gain | Clinical-Stage Pipeline |

|---|---|---|---|

| Exscientia | Generative Chemistry, Centaur Chemist | ~70% faster design cycles; 10x fewer compounds synthesized [24] | Multiple Phase I/II candidates (e.g., CDK7, LSD1 inhibitors) [24] |

| Insilico Medicine | Generative AI & Target Discovery | 18 months from target to Phase I (idiopathic pulmonary fibrosis) [24] | Phase IIa results for ISM001-055 [24] |

| Schrödinger | Physics-Based Simulation + ML | Advanced TYK2 inhibitor (zasocitinib) to Phase III [24] | Late-stage clinical validation of platform [24] |

| VirtuDockDL (Research Platform) | Graph Neural Network (GNN) for Virtual Screening | 99% accuracy in benchmarking vs. HER2 target; superior to traditional tools [25] | Research tool for accelerating lead identification [25] |

These platforms exemplify the transition to an AI-augmented pipeline, where dereplication is no longer a discrete step but a continuous, intelligent filtering process embedded from virtual screening to lead optimization.

The Scientist's Toolkit: Essential Reagents and Solutions

Implementing a state-of-the-art dereplication strategy requires both wet-lab and computational tools.

Table 4: Research Reagent Solutions for Advanced Dereplication

| Item / Solution | Function in Dereplication | Example / Specification |

|---|---|---|

| High-Resolution Mass Spectrometer | Provides exact mass and MS/MS fragmentation data for unambiguous compound identification [26]. | Q-TOF or Orbitrap LC-MS/MS systems. |

| Validated Natural Product Standards | Essential for building and calibrating in-house spectral libraries [26]. | Purified compounds (e.g., flavonoids, alkaloids) from Sigma-Aldrich, etc. |

| LC-MS Grade Solvents & Columns | Ensure reproducibility and sensitivity in chromatographic separation prior to MS detection. | Methanol, acetonitrile, formic acid; reverse-phase C18 UHPLC columns. |

| Curated Spectral Databases | Provide reference data for matching unknown spectra against known compounds. | GNPS, MassBank, NIST, mzCloud [26]. |

| AI/ML Software Platforms | Enable predictive screening, generative design, and complex data integration. | Proprietary (Exscientia, Schrödinger) or open-source (VirtuDockDL [25], DeepChem). |

| Chemical Structure Databases | Large-scale libraries for virtual screening and novelty assessment. | ZINC15 (>107 million molecules) [18], Drug Repurposing Hub [18]. |

Dereplication has evolved from a defensive tactic to avoid wasted effort into a proactive, strategic engine for innovation. By integrating sophisticated analytical chemistry with powerful AI, the drug discovery pipeline can shed its inefficiencies and redirect resources toward true breakthrough science. The economic imperative is clear: in an era where new modalities dominate a $197 billion pipeline [21] and the cost of failure is astronomical, avoiding rediscovery is not just prudent—it is critical for sustainability and growth.

The future of dereplication lies in the seamless fusion of experimental and computational domains. Advances in automated, robotics-driven synthesis and screening will generate high-quality data at scale [24], which will, in turn, fuel more accurate AI models. Explainable AI (XAI) will build trust in algorithmic predictions [20], while federated learning may allow for collaborative model training across institutions without compromising proprietary data. As these tools mature, the vision of a fully integrated, AI-driven discovery pipeline—where dereplication is a continuous, intelligent process from hypothesis to candidate—will become a reality, fundamentally accelerating the delivery of new therapies to patients.

Dereplication as a Strategic Gatekeeper in Natural Product Screening Campaigns

Within the natural product (NP) drug discovery pipeline, dereplication functions as an indispensable strategic gatekeeper. Its primary role is the early and rapid identification of known compounds within bioactive extracts, thereby preventing the costly and time-consuming rediscovery of common metabolites [27]. By acting as a critical filter, dereplication ensures that research resources are allocated efficiently toward the discovery of novel chemical entities with therapeutic potential [3].

The re-emergence of NPs as a vital source of drug leads is directly tied to advances in dereplication methodologies [27]. The process is driven by two interconnected factors: the expansion of large, annotated NP databases and significant improvements in analytical technologies, particularly in mass spectrometry (MS) and nuclear magnetic resonance (NMR) spectroscopy [27]. Modern dereplication integrates chemical profiling, biological screening, and computational data analysis into a cohesive workflow, transitioning from a simple negative filter to an active, knowledge-guided prioritization engine. This evolution solidifies its role as a non-negotiable, strategic checkpoint that governs the flow of candidates through the discovery pipeline, from initial screening to lead development [3] [28].

Core Dereplication Technologies: Principles and Comparative Analysis

Effective dereplication relies on hyphenated analytical techniques that separate complex mixtures and provide structural data for rapid compound identification. The selection of technology is dictated by the need for sensitivity, speed, and informational depth.

Liquid Chromatography-Mass Spectrometry (LC-MS) is the cornerstone of modern dereplication. Ultra-high-performance LC (UHPLC) coupled with high-resolution mass spectrometry (HR-MS) enables the rapid profiling of crude extracts [28]. Tandem mass spectrometry (MS/MS) generates fragmentation patterns that serve as molecular fingerprints, which can be searched against spectral libraries such as Global Natural Products Social Molecular Networking (GNPS) [29].

Affinity Selection Mass Spectrometry (AS-MS) represents a targeted, label-free biophysical approach. It directly probes non-covalent interactions between a biological target and ligands from a complex mixture, identifying binders based on mass [30]. AS-MS is particularly valuable for identifying active compounds without prior fractionation, streamlining the path from screening to identification.
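MS/MS library searching and molecular networking both rest on a spectral similarity score. The sketch below computes a plain cosine similarity between binned spectra and keeps pairs above a threshold as network edges. It is a simplified stand-in and not the GNPS implementation, which uses a modified cosine that also accounts for precursor mass differences. The bin width, the 0.7 threshold, and the three spectra are invented for illustration.

```python
import math
from itertools import combinations


def bin_spectrum(peaks, bin_width=0.01):
    """Sum intensities of (m/z, intensity) peaks into fixed-width bins."""
    binned = {}
    for mz, intensity in peaks:
        key = round(mz / bin_width)
        binned[key] = binned.get(key, 0.0) + intensity
    return binned


def cosine_similarity(peaks_a, peaks_b, bin_width=0.01):
    """Cosine of the angle between two binned intensity vectors."""
    a, b = bin_spectrum(peaks_a, bin_width), bin_spectrum(peaks_b, bin_width)
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * \
        math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def network_edges(spectra, min_cosine=0.7):
    """Return (node_1, node_2, score) for every pair above the threshold."""
    edges = []
    for name_1, name_2 in combinations(sorted(spectra), 2):
        score = cosine_similarity(spectra[name_1], spectra[name_2])
        if score >= min_cosine:
            edges.append((name_1, name_2, round(score, 3)))
    return edges


# Invented spectra: feature_1 and feature_2 share two fragments.
SPECTRA = {
    "feature_1": [(100.0, 50.0), (150.0, 100.0), (200.0, 30.0)],
    "feature_2": [(100.0, 40.0), (150.0, 100.0), (250.0, 20.0)],
    "feature_3": [(120.0, 100.0), (180.0, 60.0)],
}
edges = network_edges(SPECTRA)
print(edges)
```

With these inputs only the pair feature_1 and feature_2 is connected, mirroring how structurally related molecules cluster in a network while unrelated features remain isolated nodes.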

Nuclear Magnetic Resonance (NMR) Spectroscopy, while less high-throughput, provides unparalleled structural detail, including stereochemistry. It is often employed as a secondary, confirmatory technique following MS-based screening or for the detailed analysis of prioritized unknowns [27].

The following table summarizes the core technologies, their output, and primary applications in dereplication workflows.

Table 1: Core Analytical Technologies for Dereplication

| Technology | Key Output | Primary Role in Dereplication | Throughput |

|---|---|---|---|

| LC-HR-MS/MS | Accurate mass, isotopic pattern, MS/MS fragmentation spectrum | Initial chemical profiling, molecular formula assignment, library searching [27] [29] | High |

| AS-MS | Mass of target-bound ligands | Direct identification of bioactive binders from mixtures; orthogonal to functional assays [30] | Medium-High |

| NMR Spectroscopy (e.g., 1H, 13C, HSQC, HMBC) | Detailed structural and stereochemical information | Confirmation of knowns, partial or full structure elucidation of novel compounds [27] [3] | Low-Medium |

Integrated Methodologies: From Workflow to Experimental Protocol

A robust dereplication strategy integrates orthogonal data streams to maximize confidence in identification. A contemporary, high-throughput workflow combines chemical analysis with biological mechanism profiling.

Strategic Workflow Integration

The strategic position and integration of dereplication within a broader NP screening campaign is visualized below. This workflow emphasizes its gatekeeper function, preventing known compounds from proceeding to costly downstream development.

Detailed Experimental Protocol: Affinity Selection Mass Spectrometry (AS-MS)

AS-MS is a powerful, non-functional assay method for identifying ligands directly from complex NP libraries [30]. The following protocol outlines a solution-based ultrafiltration AS-MS experiment.

1. Incubation:

- Prepare the target protein (e.g., an enzyme) in a suitable buffer (e.g., PBS, Tris-HCl) at a concentration in the low micromolar range (typically 1-10 µM).

- Incubate the protein with the crude NP extract or fraction library. The target is usually in molar excess over individual library components to minimize ligand competition [30].

- Optimize incubation time and temperature to reach binding equilibrium.

2. Separation (Ultrafiltration):

- Transfer the incubation mixture to an ultrafiltration device with a molecular weight cutoff (MWCO) selected to retain the protein-ligand complex (e.g., 10-30 kDa).

- Apply centrifugal force to filter the solution. Unbound small molecules pass through the membrane, while the protein and bound ligands are retained.

- Wash the retentate with buffer to remove non-specifically bound compounds.

3. Dissociation:

- Dissociate ligands from the target protein complex in the retentate. Denaturing conditions are commonly used, such as adding a 50:50 mixture of methanol or acetonitrile with 1% formic acid [30].

- For reusable immobilized targets, gentler dissociation methods like pH change or competitive displacement with a high-affinity ligand may be used [30].

4. Analysis & Identification:

- Analyze the dissociated ligand eluate via LC-MS/MS.

- Compare acquired masses and MS/MS spectra to in-house or public databases.

- Perform control experiments (incubation without target) to calculate affinity ratios and distinguish specific binders from background [30].
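The control comparison in step 4 can be sketched numerically. The source does not define the affinity ratio, so the example below assumes a common working definition: the intensity of a feature in the target incubation divided by its intensity in the no-target control, with a noise floor to avoid division by zero. The noise floor, the ratio threshold of 2, and all feature names and intensities are hypothetical.

```python
def affinity_ratios(target_run, control_run, noise_floor=1.0e4):
    """Ratio of each feature's intensity with target vs. without target."""
    return {
        feature: intensity / max(control_run.get(feature, 0.0), noise_floor)
        for feature, intensity in target_run.items()
    }


def specific_binders(target_run, control_run, min_ratio=2.0):
    """Features enriched in the target run, highest ratio first."""
    ratios = affinity_ratios(target_run, control_run)
    kept = [feature for feature, ratio in ratios.items() if ratio >= min_ratio]
    return sorted(kept, key=lambda feature: ratios[feature], reverse=True)


# Invented peak intensities keyed by feature label.
TARGET_RUN = {"feature_301": 9.0e5, "feature_447": 2.1e5, "feature_611": 5.0e4}
CONTROL_RUN = {"feature_301": 1.0e5, "feature_447": 1.9e5}

print(specific_binders(TARGET_RUN, CONTROL_RUN))
# ['feature_301', 'feature_611']
```

Here feature_447 appears at similar intensity with and without the target, so it is treated as background carry-over rather than a specific binder.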

Detailed Experimental Protocol: Integrated LC-MS/MS and Chemical Genomics

A recent study on antifungal discovery demonstrated a powerful integrated protocol combining structural and functional dereplication [29].

1. Sample Preparation & Screening:

- Generate a prefractionated library from prioritized bacterial strains (e.g., from marine or insect microbiomes).

- Screen fractions at multiple concentrations against target pathogens (e.g., Candida albicans) and counter-screen for cytotoxicity.

2. Structural Dereplication (LC-MS/MS):

- Analyze active fractions using high-resolution LC-MS/MS.

- Process data using GNPS for spectral library matching and SIRIUS 5 for database-independent structure prediction and classification [29].

- Annotate compounds by matching MS/MS spectra and calculated molecular formulas to known antifungal families.

3. Functional Dereplication (Yeast Chemical Genomics - YCG):

- Expose a pooled library of DNA-barcoded Saccharomyces cerevisiae knockout strains to the active fraction in a 384-well plate format.

- After incubation, extract genomic DNA, amplify barcodes via PCR, and sequence.

- Use software (e.g., BEAN-counter) to quantify strain abundance and generate a chemical genomic profile—a vector of hypersensitivity/resistance for each knockout [29].

- Cluster this profile against reference profiles of known antifungals. Similar profiles suggest a shared or similar mechanism of action (MoA).

4. Data Integration:

- Triangulate results. A fraction where LC-MS/MS identifies a known polyene and YCG clusters with an amphotericin B profile provides high-confidence dereplication.

- Fractions with novel chemistry and a unique YCG profile are prioritized for full isolation and characterization.
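The profile comparison in step 3 can be illustrated with a correlation against reference profiles. The sketch below uses Pearson correlation over per-strain scores and reports the closest reference. It is an assumption-laden simplification: the cited study clusters profiles rather than taking a single best match, and the five-strain vectors, the second reference name, and all values are invented.

```python
import math


def pearson(x, y):
    """Pearson correlation coefficient between two equal-length vectors."""
    if len(x) != len(y):
        raise ValueError("profiles must cover the same strains")
    mean_x, mean_y = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sd_x = math.sqrt(sum((a - mean_x) ** 2 for a in x))
    sd_y = math.sqrt(sum((b - mean_y) ** 2 for b in y))
    return cov / (sd_x * sd_y) if sd_x and sd_y else 0.0


def closest_reference(profile, references):
    """Return (reference name, correlation) for the best-matching profile."""
    scored = [(name, pearson(profile, ref)) for name, ref in references.items()]
    return max(scored, key=lambda item: item[1])


# Invented scores per knockout strain (negative = hypersensitive).
REFERENCES = {
    "amphotericin B": [-2.8, 0.0, 0.3, -2.9, 0.2],
    "hypothetical reference B": [0.2, -2.7, -0.1, 0.3, -3.0],
}
QUERY = [-3.1, -0.2, 0.4, -2.5, 0.1]

name, correlation = closest_reference(QUERY, REFERENCES)
print(name, round(correlation, 2))
```

A high correlation with a reference profile supports a shared mechanism of action, which is then weighed together with the LC-MS/MS annotation in the data integration step. A fraction whose best correlation is low across all references would be a candidate for a unique profile.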

The integrated workflow of this dual-method approach is detailed below.

Quantitative Impact and AI-Enhanced Dereplication

The efficiency gain from dereplication is quantifiable. In the antifungal campaign cited, screening over 40,000 fractions yielded 450 active hits. Integrated dereplication rapidly identified known compounds like the macrotetrolides (e.g., nonactin), allowing efforts to focus on the most promising novel leads [29]. This filtering prevented the redundant expenditure of resources on rediscovery.

Table 2: Impact Metrics from an Integrated Dereplication Campaign [29]

| Metric | Result | Implication |
|---|---|---|
| Fractions Screened | >40,000 | Scale of the initial screening library |
| Primary Actives | 450 (~1.1% hit rate) | Candidates entering the dereplication gateway |
| Confirmed Knowns via LC-MS/MS & YCG | Multiple families (e.g., Macrotetrolides) | Resources saved by early termination |
| Key Outcome | Focus on fractions with novel chemistry & MoA | Strategic reallocation to highest-value targets |

Artificial Intelligence (AI) and Machine Learning (ML) are transforming dereplication from a database-matching exercise into a predictive science. Key applications include:

- Spectrum Prediction and Matching: ML models predict MS/MS spectra from structures and vice versa, improving identification accuracy, especially for compounds not in libraries [31] [8].

- Bioactivity Prediction: Models trained on chemical structures and associated bioactivity data can predict the potential therapeutic action of a dereplicated but not fully identified metabolite, aiding in prioritization [31].

- Database Enhancement: AI helps manage and cross-link disparate data sources (spectral, genomic, taxonomic), creating more powerful knowledge networks for search and annotation [8]. For example, AI models are increasingly used for the virtual screening of NP databases and predicting candidates with specific pharmacological properties [31].

Table 3: Research Reagent Solutions for Dereplication Workflows

| Item/Category | Function in Dereplication | Example/Specification |
|---|---|---|
| Ultrafiltration Units | Separation of protein-ligand complexes from unbound molecules in AS-MS protocols [30]. | Devices with 10-30 kDa MWCO membranes. |
| Magnetic Microbeads (for MagMASS) | Solid support for immobilizing protein targets in affinity capture AS-MS setups [30]. | Beads functionalized with NHS ester or streptavidin for target conjugation. |
| LC-MS Grade Solvents | Ensure high sensitivity and low background in MS analysis for reliable metabolite detection. | Methanol, Acetonitrile, Water with 0.1% Formic Acid. |
| Yeast Knockout Strain Pool | Essential reagent for Yeast Chemical Genomics (YCG) to generate mechanism-of-action profiles [29]. | A pooled library of barcoded S. cerevisiae deletion strains (e.g., ~310 diagnostic strains). |
| Reference Standard Library | Critical for definitive compound identification by matching retention time, mass, and fragmentation. | In-house or commercial collections of known natural products and drugs. |
| DNA Barcode Primers | Amplification of unique sequence tags from YCG strain pools for NGS quantification [29]. | Primers specific to the upstream/downstream sequences flanking the knockout barcodes. |

Dereplication has firmly evolved into a strategic gatekeeper, essential for the sustainability and productivity of NP drug discovery. By integrating advanced analytical technologies like LC-MS/MS and AS-MS with functional genomics and AI-driven informatics, modern dereplication platforms deliver more than just identification—they provide mechanistic insight and predictive prioritization.

The future of the field lies in deeper integration and automation. The convergence of AI-predicted properties, real-time analytics coupled with screening, and standardized data-sharing platforms will further compress the timeline from extract to novel lead. Overcoming challenges related to mixture complexity, stereochemistry determination, and the "known-unknown" gap will require continuous innovation [8] [3]. As these tools mature, dereplication will solidify its role not merely as a gate, but as an intelligent guide, steering NP research toward the most promising frontiers of chemical and therapeutic novelty.

Methodologies in Practice: Advanced Analytical Tools and Integrated Application Workflows

In the resource-intensive journey of drug discovery, dereplication serves as a critical, early-stage filter to avoid the costly rediscovery of known compounds. The process involves the rapid identification of previously characterized metabolites within complex biological extracts, allowing researchers to prioritize novel chemical entities with therapeutic potential [32]. Historically, the inability to effectively dereplicate natural products contributed to the decline of such programs in the pharmaceutical industry, as significant investment was exhausted on isolating and characterizing known substances [32]. Today, the integration of advanced analytical techniques into the discovery pipeline—spanning from initial lead identification through preclinical development—is fundamental to improving efficiency and success rates.

The modern drug discovery pipeline encompasses target identification, lead discovery, lead optimization, and preclinical assessment before a candidate enters clinical trials [33]. Analytical chemistry is pivotal at multiple junctures, particularly in characterizing compounds derived from natural sources, synthetic libraries, or biotransformation studies. Liquid Chromatography-Mass Spectrometry (LC-MS), Liquid Chromatography-Nuclear Magnetic Resonance Spectroscopy (LC-NMR), and High-Resolution Mass Spectrometry (HRMS) form a complementary triad of technologies that provide the structural elucidation, sensitivity, and high-throughput capability necessary for effective dereplication and compound characterization [32] [34] [35]. This section provides an in-depth technical guide to these core techniques, detailing their principles, applications, and specific methodologies within the context of a streamlined drug discovery workflow.

High-Resolution Mass Spectrometry (HRMS): Principles and Pharmaceutical Applications

High-Resolution Mass Spectrometry is defined by its ability to measure the mass-to-charge ratio (m/z) of ions with high accuracy and resolving power, typically ≥ 10,000 Full Width at Half Maximum (FWHM) [34]. This resolution discriminates between ions of very similar mass, and the resulting accurate mass measurements narrow the candidate elemental compositions, often to a single formula for small molecules [34]. Unlike low-resolution mass spectrometers that report nominal mass, HRMS reports accurate mass to 4-5 decimal places, enabling the distinction of compounds with the same nominal mass but different elemental formulas (e.g., CO vs. C₂H₄) [34] [36].
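The CO vs. C₂H₄ example can be worked through numerically. The sketch below computes monoisotopic masses for the neutral molecules from standard isotope masses, the mass error in parts per million (ppm) for a measurement, and the resolving power needed to separate two species, taken here as m/Δm. The measured value of 27.9950 is a made-up number for illustration, and the electron mass difference between a neutral molecule and its ion is ignored.

```python
# Monoisotopic masses (Da) of the most abundant isotopes.
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.00307401, "O": 15.99491462}

def exact_mass(composition):
    """Theoretical monoisotopic mass of a neutral molecule, e.g. {"C": 2, "H": 4}."""
    return sum(MONOISOTOPIC[element] * count for element, count in composition.items())

def ppm_error(measured, theoretical):
    """Relative mass error in parts per million."""
    return (measured - theoretical) / theoretical * 1e6

def required_resolving_power(m1, m2):
    """Approximate resolving power (m / delta m) needed to distinguish two masses."""
    return ((m1 + m2) / 2) / abs(m1 - m2)

co = exact_mass({"C": 1, "O": 1})        # nominal mass 28
ethylene = exact_mass({"C": 2, "H": 4})  # nominal mass 28
n2 = exact_mass({"N": 2})                # nominal mass 28

print(f"CO   = {co:.5f} Da")
print(f"C2H4 = {ethylene:.5f} Da")
print(f"N2   = {n2:.5f} Da")
print(f"R needed, CO vs C2H4: {required_resolving_power(co, ethylene):.0f}")
print(f"R needed, CO vs N2:   {required_resolving_power(co, n2):.0f}")
print(f"ppm error of a 27.9950 reading against CO: {ppm_error(27.9950, co):.1f}")
```

All three species share a nominal mass of 28, so a unit-resolution readout cannot tell them apart, whereas the differences in the second decimal place are readily assigned at low-ppm accuracy. The required resolving power grows quickly for larger molecules, because the same absolute mass difference must be resolved at a higher m/z.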

HRMS Instrumentation and Performance

The key performance characteristics of HRMS analyzers are resolving power, mass accuracy, and mass range. Common HRMS platforms include Time-of-Flight (TOF), Orbitrap, and Fourier Transform Ion Cyclotron Resonance (FT-ICR) mass analyzers, each with distinct advantages [34].

Table 1: Comparison of Common Mass Analyzers, with Low-Resolution Quadrupole and Ion Trap Analyzers Shown for Reference [34]

| Mass Analyzer Type | Typical Resolving Power (FWHM) | Mass Accuracy (ppm) | m/z Range (Upper Limit) | Relative Cost |
|---|---|---|---|---|
| Quadrupole (Q) | < 5 x 10³ | > 100 | 2,000 - 4,000 | Lower |
| Ion Trap (IT) | < 5 x 10³ | < 30 | 4,000 - 20,000 | Lower |
| Time-of-Flight (TOF) | 10 - 60 x 10³ | 0.5 - 5 | 100,000 | Moderate |
| Orbitrap | 120 - 1,000 x 10³ | 0.5 - 5 | 20,000 | Higher |
| FT-ICR | 100 - 10,000 x 10³ | 0.05 - 1 | 30,000 | High |

Orbitrap and FT-ICR instruments offer superior resolution and mass accuracy, making them ideal for elucidating complex mixtures and new drug modalities like peptides, oligonucleotides, and antibody-drug conjugates [34]. Hybrid instruments, such as quadrupole-Orbitrap systems, combine the selectivity of quadrupole precursor ion selection with the high resolution of an Orbitrap analyzer, proving exceptionally powerful for quantitative and qualitative analyses in regulated bioanalytical laboratories [37].

Application in Drug Discovery and Development

HRMS has become indispensable across the pharmaceutical development continuum. Its applications include:

- Metabolite Identification (MetID): HRMS is used to identify and characterize in vitro and in vivo drug metabolites, providing critical data for lead optimization and toxicology studies [34] [37]. The technique's high resolution helps separate metabolite signals from biological matrix interferences.

- Impurity and Degradant Profiling: The accurate mass capabilities support the identification of low-level impurities and degradation products during drug substance and product development [34].

- Quantitative Bioanalysis: While traditionally the domain of triple quadrupole mass spectrometers, HRMS is increasingly used for targeted quantitative analysis, especially for complex molecules where its superior selectivity improves sensitivity and accuracy [37].

- Dereplication: HRMS provides exact mass data for molecular ions and fragments, which can be searched against chemical databases to rapidly identify known compounds in natural product extracts, a cornerstone of efficient dereplication [32] [38].
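The database search described in the dereplication bullet can be illustrated with a minimal accurate-mass lookup. This is a sketch under simplifying assumptions: the three-entry database is a toy stand-in for a real natural product database, only the protonated adduct [M+H]+ is considered, the formula parser handles only simple formulas without brackets, and the 5 ppm tolerance and the observed m/z value are illustrative.

```python
import re

MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.00307401, "O": 15.99491462}
PROTON = 1.00727647  # mass of a proton (Da), added for the [M+H]+ adduct

# Toy database: compound name -> molecular formula.
TOY_DATABASE = {
    "rosmarinic acid": "C18H16O8",
    "nonactin": "C40H64O12",
    "amphotericin B": "C47H73NO17",
}

def formula_mass(formula):
    """Monoisotopic mass of a simple formula such as 'C18H16O8' (no brackets)."""
    mass = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        mass += MONOISOTOPIC[element] * (int(count) if count else 1)
    return mass

def search_mz(observed_mz, database, tol_ppm=5.0):
    """Return (name, formula, ppm error) for entries whose [M+H]+ lies within tolerance."""
    hits = []
    for name, formula in database.items():
        theoretical = formula_mass(formula) + PROTON
        error = (observed_mz - theoretical) / theoretical * 1e6
        if abs(error) <= tol_ppm:
            hits.append((name, formula, round(error, 2)))
    return sorted(hits, key=lambda hit: abs(hit[2]))

hits = search_mz(361.0920, TOY_DATABASE)
for name, formula, error in hits:
    print(f"{name} ({formula}): {error} ppm")
```

A mass match only narrows the candidates to a molecular formula. Isomers share the same accurate mass, which is why fragment data, retention time, and reference standards are still needed to confirm an identification.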

A key advantage in troubleshooting is HRMS's capability for untargeted (full-scan or data-independent) acquisition and retrospective data mining. Unlike targeted triple quadrupole methods, which record only preselected transitions, a single HRMS full-scan acquisition can be revisited to investigate unforeseen analytes or stability issues without re-injecting the sample [37].

Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) for Dereplication

The coupling of liquid chromatography with tandem mass spectrometry (LC-MS/MS) is the workhorse technique for dereplication. LC separates the complex mixture of an extract, and MS/MS provides structural information via fragmentation patterns, which are matched against reference spectral libraries [32].

The Dereplication Workflow

A standardized LC-MS/MS dereplication protocol, as applied to natural product extracts, involves several key stages [32] [38].

Diagram 1: LC-MS/MS dereplication workflow.

Detailed Experimental Protocol for LC-MS/MS Dereplication

The following protocol is adapted from a high-throughput dereplication study of Salvia species and an undergraduate laboratory experiment [32] [38].

1. Sample Preparation:

- Plant material is dried, ground, and extracted using solvents like methanol, acetone, or water [32].

- The crude extract may be fractionated using solid-phase extraction (SPE) to simplify the mixture [32].

- Extracts are filtered (e.g., 0.22 µm) prior to LC injection.

2. Liquid Chromatography:

- Column: Reversed-phase C18 column (e.g., 2.1 x 100 mm, 1.8 µm particle size).

- Mobile Phase: Binary gradient. Typical solvents are water (A) and acetonitrile (B), both with 0.1% formic acid to enhance ionization.

- Gradient: Optimized for the sample. Example: 5% B to 100% B over 25 minutes, held, then re-equilibrated [38].

- Flow Rate: 0.3 - 0.4 mL/min.

- Injection Volume: 1-5 µL.

3. Mass Spectrometry:

- Instrument: ESI-QTOF (high-resolution) or ion trap mass spectrometer capable of MS/MS [32] [38].

- Ionization Mode: Electrospray Ionization (ESI), positive and/or negative mode.

- Data Acquisition: Full-scan MS survey (e.g., m/z 100-1500) followed by data-dependent MS/MS scans on the most intense ions. Collision energies are set to induce fragmentation (e.g., 20-40 eV).

4. Data Processing and Dereplication:

- MS/MS data files are converted to open formats (e.g., .mzXML).

- Data are uploaded to a spectral matching platform such as Global Natural Products Social Molecular Networking (GNPS) [32].

- The platform matches experimental MS/MS spectra against reference libraries (e.g., GNPS, MassBank). A cosine similarity score quantifies match quality.

- Putative identifications are made for high-scoring matches, flagging those features as likely known compounds. Confirmation requires comparison with a reference standard.
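The cosine similarity score used in step 4 can be sketched as follows. This is a simplified illustration rather than the scoring code used by GNPS: it square-root scales intensities, normalizes each spectrum to unit length, pairs peaks greedily within an m/z tolerance, and sums the products of paired intensities. The peak lists and the 0.02 Da tolerance are invented for the example, and a real library search would also require the precursor m/z to agree and a minimum number of matched peaks.

```python
import math

def normalize(peaks):
    """Square-root scale intensities, then scale the spectrum to unit vector length."""
    scaled = [(mz, math.sqrt(intensity)) for mz, intensity in peaks]
    norm = math.sqrt(sum(i * i for _, i in scaled))
    if norm == 0:
        return []
    return [(mz, i / norm) for mz, i in scaled]

def cosine_score(query, reference, tol=0.02):
    """Greedy cosine similarity between two MS/MS peak lists of (m/z, intensity)."""
    q, r = normalize(query), normalize(reference)
    # All peak pairs whose m/z values agree within the tolerance.
    candidates = [
        (qi * ri, a, b)
        for a, (q_mz, qi) in enumerate(q)
        for b, (r_mz, ri) in enumerate(r)
        if abs(q_mz - r_mz) <= tol
    ]
    candidates.sort(reverse=True)  # take the strongest pairs first
    used_q, used_r, score = set(), set(), 0.0
    for product, a, b in candidates:
        if a not in used_q and b not in used_r:  # each peak is used at most once
            score += product
            used_q.add(a)
            used_r.add(b)
    return score

# Invented peak lists: (fragment m/z, intensity).
library_spectrum = [(135.04, 40.0), (161.02, 100.0), (179.03, 60.0), (197.05, 25.0)]
experimental = [(135.05, 35.0), (161.02, 100.0), (179.04, 70.0), (250.10, 10.0)]
unrelated = [(91.05, 100.0), (119.09, 50.0)]

print(round(cosine_score(experimental, library_spectrum), 3))
print(round(cosine_score(unrelated, library_spectrum), 3))
```

A score of 1 indicates identical spectra under this scheme and 0 indicates no shared fragments. The cutoff for accepting a match is a user choice that trades false positives against missed annotations.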

The Scientist's Toolkit: LC-MS/MS Dereplication

Table 2: Essential Research Reagents and Tools for LC-MS/MS Dereplication

| Item | Function in Dereplication | Example/Notes |
|---|---|---|
| High-Resolution Mass Spectrometer | Provides accurate mass and MS/MS fragmentation data for compound identification. | Q-TOF, Quadrupole-Orbitrap, Ion Trap [34] [38]. |
| Reversed-Phase UHPLC Column | Separates complex mixtures of metabolites prior to mass analysis. | C18 column, 1.7-1.8 µm particle size for high resolution [38]. |
| Global Natural Products Social Molecular Networking (GNPS) | Online platform for spectral library matching and creating molecular networks based on shared fragments [32]. | Freely accessible platform crucial for dereplication. |
| Solvents & Mobile Phase Additives | Extraction and chromatographic separation. | LC-MS grade Acetonitrile, Water, Methanol; Formic Acid for pH control/ionization [32] [38]. |
| Solid-Phase Extraction (SPE) Cartridges | Pre-fractionates crude extracts to reduce complexity and concentrate analytes of interest. | C18 or modified silica phases [32]. |
| Reference Standard Compounds | Validates identifications by comparing retention time and MS/MS spectrum. | Commercially available bioactive natural products (e.g., rosmarinic acid in Salvia) [38]. |

Liquid Chromatography-Nuclear Magnetic Resonance (LC-NMR) Spectroscopy

LC-NMR integrates the separation power of chromatography with the unparalleled structural elucidation capabilities of Nuclear Magnetic Resonance spectroscopy. It is a premier technique for the de novo structure determination of unknown compounds in mixtures, especially when MS data is insufficient [39] [35].

Principles and Modes of Operation

NMR detects nuclei with nonzero spin (e.g., ¹H, ¹³C) in a strong magnetic field, providing detailed information on molecular structure, connectivity, and stereochemistry. Coupling it with LC presents significant technical challenges due to NMR's inherently low sensitivity compared to MS [35]. Several operational modes have been developed:

- On-Flow Mode: NMR spectra are acquired continuously as the LC eluent passes through the flow probe. This is fast but has low sensitivity.