Dereplication in Blue Biotechnology: A Critical Strategy for Efficient Drug Discovery and Sustainable Innovation

Abstract

This article addresses the pivotal role of dereplication in streamlining blue biotechnology research and development, aimed at researchers, scientists, and drug development professionals. The content provides a foundational understanding of dereplication as a solution to the costly problem of compound rediscovery, which can cost millions and delay projects for years [2]. It details methodological workflows integrating modern analytical tools like HPLC-MS and AI-driven informatics to accelerate the identification of novel marine-derived compounds [4] [8]. The article further tackles common troubleshooting scenarios and offers strategies to optimize dereplication protocols for enhanced efficiency. Finally, it provides a framework for validating dereplication outcomes and compares various approaches to help professionals select the most effective strategies for their specific research goals in pharmaceuticals, nutraceuticals, and biomaterials [6] [10].

Unveiling Dereplication: The Strategic Cornerstone of Efficient Marine Bioprospecting

Dereplication represents a critical early-stage filtering process in natural product discovery, enabling researchers to rapidly identify known compounds within complex biological extracts. In blue biotechnology, where marine organisms present both unparalleled chemical diversity and significant rediscovery challenges, dereplication serves as an essential efficiency engine. This technical guide examines dereplication's scientific foundations, methodologies, and transformative applications within marine biodiscovery pipelines. We detail how advanced computational tools, integrated with high-throughput analytical platforms, are accelerating the identification of novel marine-derived pharmaceuticals, nutraceuticals, and biomaterials while preventing costly redundant research.

The ocean, covering more than 70% of Earth's surface, hosts immense biological and chemical diversity, with marine organisms producing unique secondary metabolites with valuable biological activities [1]. Blue biotechnology—the application of science to living aquatic resources for goods and services—aims to harness this potential [2]. However, discovering novel marine natural products (MNPs) is an expensive and time-consuming process, historically plagued by the high rate of re-isolating known compounds [2] [3].

Dereplication is defined as the early identification of known compounds within a discovery pipeline. Its primary objective is to avoid redundant characterization efforts, thereby accelerating the path to novel chemical entities [2]. In the context of blue biotechnology, dereplication is not merely a convenience but a fundamental necessity. Marine sampling is often logistically challenging and costly, involving organisms from extreme or deep-sea environments that may be difficult to culture under laboratory conditions [2] [1]. Furthermore, the chemical complexity of marine extracts is exceptionally high. Efficient dereplication ensures that limited resources are focused solely on truly novel leads with potential for drug development or other biotechnological applications [4].

The economic and temporal stakes are substantial. The journey from marine bioprospecting to a commercial drug, for example, can span 15-20 years with costs exceeding 800 million USD [4]. By integrating robust dereplication strategies early, researchers can streamline this pipeline, significantly reducing wasted effort on known compounds and enhancing the probability of breakthrough discoveries in sectors ranging from pharmaceuticals to cosmetics and biofuels [5] [6].

Scientific Foundations: Why Dereplication is a Blue Biotech Bottleneck

The need for dereplication in blue biotechnology is underscored by several intersecting factors: the sheer scale of marine chemical space, the historical context of natural products research, and the specific challenges of working with marine specimens.

The Scale of the Challenge

Marine ecosystems are reservoirs of extraordinary, yet underexplored, biodiversity. It is estimated that 2.2 ± 0.18 million marine eukaryotic species exist, with only about 600 new species cataloged annually [4]. This biodiversity translates into a vast, untapped chemical repertoire. However, this potential is counterbalanced by a high probability of rediscovery. Since the 1990s, the pace of novel antibiotic discovery from natural sources has declined, partly due to repeated identification of known metabolites [3].

Table 1: Key Challenges in Marine Natural Product Discovery Addressed by Dereplication

| Challenge | Impact on Discovery Pipeline | Dereplication Solution |
|---|---|---|
| Extreme Sample Acquisition | Logistically difficult and expensive collection from deep-sea or remote environments [2]. | Prioritizes samples with highest novelty potential before intensive investment. |
| Complex Extract Chemistry | Marine organism extracts contain hundreds to thousands of metabolites, complicating analysis [2]. | Rapidly filters out known compounds, highlighting unknown signatures for focus. |
| Unculturable Organisms | Many marine microbes cannot be cultured in the lab, limiting re-supply [2]. | Enables full chemical profiling from single, precious samples. |
| Sustainable Supply | Harvesting bulk biomass from marine habitats is often ecologically unsustainable [2]. | Minimizes the need for large-scale recollection by maximizing information from initial samples. |

The Analytical-Computational Gap

Modern high-resolution mass spectrometry (HR-MS) and nuclear magnetic resonance (NMR) can generate vast amounts of data from a single marine extract [2]. The central challenge has shifted from data generation to data interpretation. Without dereplication, researchers drown in data, unable to distinguish known from novel compounds efficiently. This gap creates a major bottleneck, slowing the entire discovery process [3].

The integration of metabolomics and genomics further amplifies both the challenge and the opportunity. Genomic data can predict the potential of an organism to produce novel compounds, but only through metabolomic analysis and dereplication can these predictions be confirmed and the actual metabolites identified [2]. This synergy is a cornerstone of modern blue biotechnology.

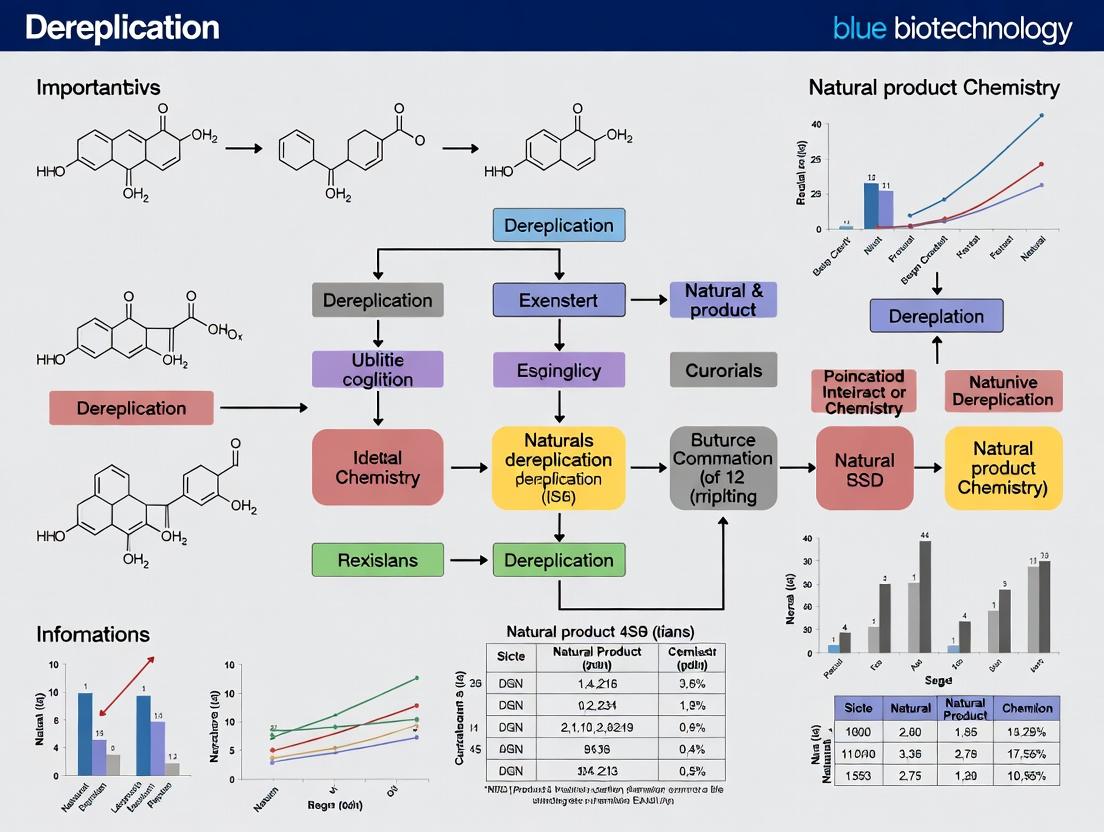

Diagram Title: Dereplication Resolves the Analytical Data Bottleneck in Blue Biotech

Core Methodologies and Experimental Protocols

Dereplication strategies have evolved from simple library comparisons to sophisticated workflows integrating multiple data types and computational intelligence.

The Dereplication Workflow: A Multi-Tiered Approach

An effective dereplication protocol is not a single test but a cascade of complementary techniques.

Table 2: Tiered Experimental Protocol for Comprehensive Dereplication

| Tier | Primary Technique | Data Output | Key Action | Common Tools/Resources |
|---|---|---|---|---|
| Tier 1: Rapid Screening | HPLC-UV/HR-MS | Retention time, UV spectrum, accurate mass, isotopic pattern. | Compare to in-house library of standard compounds. | LC-MS systems, open-access software. |
| Tier 2: Tentative Identification | Tandem MS/MS | Fragmentation pattern (spectral fingerprint). | Search against public spectral libraries (e.g., GNPS). | GNPS platform, DEREPLICATOR+. |
| Tier 3: Confirmation & Novelty Assessment | Microscale NMR (1D & 2D), Bioactivity Assay | Partial/Full planar structure, biological activity profile. | Compare NMR data and bioactivity to literature/databases. | NMR suites, AntiMarin, Dictionary of Natural Products. |
| Tier 4: Absolute Configuration | Chiral analysis, NMR calculation, CD spectroscopy | 3D stereochemical structure. | Determine for novel bioactive compounds. | DP4 analysis, quantum chemical calculations [2]. |
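Tier 1 comparison against an in-house library can be sketched as a joint accurate-mass and retention-time filter. This is a minimal illustration, not a prescribed method: the `Standard` record, the 5 ppm and 0.2 min tolerances, and the library entries (placeholder names and values) are all assumptions, and RT matching is only meaningful when samples and standards are run on the same LC method.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Standard:
    """One entry in a hypothetical in-house library of purified standards."""
    name: str
    mz: float      # observed [M+H]+ m/z of the standard
    rt_min: float  # retention time (min) on the same LC method


def ppm_error(observed, reference):
    """Mass error of an observed m/z relative to a reference, in ppm."""
    return (observed - reference) / reference * 1e6


def tier1_matches(mz, rt_min, library, ppm_tol=5.0, rt_tol_min=0.2):
    """Names of standards matching a feature on both accurate mass and retention time."""
    return [
        std.name
        for std in library
        if abs(ppm_error(mz, std.mz)) <= ppm_tol and abs(rt_min - std.rt_min) <= rt_tol_min
    ]


# Hypothetical library entries (placeholder names and values, not real compounds)
LIBRARY = [
    Standard("std_A", 500.2500, 6.10),
    Standard("std_B", 500.2600, 6.15),
    Standard("std_C", 650.3000, 9.80),
]

print(tier1_matches(500.2510, 6.12, LIBRARY))  # ['std_A']
print(tier1_matches(500.2510, 7.50, LIBRARY))  # [] (right mass, wrong RT)
```

Requiring both criteria is what makes this a Tier 1 screen rather than a formula search: isomers share an accurate mass but usually separate chromatographically.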

Protocol: Integrated LC-MS/MS and Molecular Networking for Dereplication

- Sample Preparation: Prepare a crude extract from marine biomass (e.g., sponge, microbial culture). Perform a standardized solid-phase extraction (SPE) to fractionate and remove salts.

- LC-HR-MS/MS Analysis:

  - Instrument: Use a UPLC system coupled to a Q-TOF or Orbitrap mass spectrometer.

  - Chromatography: Employ a reverse-phase C18 column with a water-acetonitrile gradient (both solvents modified with 0.1% formic acid).

  - Acquisition: Acquire data in data-dependent acquisition (DDA) mode. Collect full-scan HR-MS data (e.g., m/z 100-1500) and automatically trigger MS/MS scans on the top N most intense ions.

- Data Processing:

  - Convert raw data to open formats (.mzXML, .mzML).

  - Use software like MZmine or MS-DIAL for feature detection, alignment, and deconvolution to create a list of detected ions (mass, RT, intensity).

- Dereplication via GNPS Molecular Networking:

  - Upload the processed MS/MS data to the Global Natural Products Social Molecular Networking (GNPS) platform [2] [3].

  - Run the "Library Search" workflow to compare experimental MS/MS spectra against reference spectra in spectral libraries (e.g., the public GNPS and MassBank collections; the NIST library is commercially licensed).

  - Simultaneously, run the "Molecular Networking" workflow. This clusters MS/MS spectra based on similarity, visualizing the chemical space of the extract. Known compounds identified via library search will appear in clusters (molecular families), and their connected, unannotated nodes may represent structural analogs or novel compounds in the same chemical family.

- Validation: Isolate the compound of interest (guided by the network) for microgram-scale 1D NMR to confirm the dereplication hypothesis before committing to large-scale isolation.
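The "top N" precursor selection in the acquisition step can be illustrated with a short sketch. It is a simplified model of what instrument control software does in DDA mode, not any vendor's implementation: the intensity threshold, the 10 ppm dynamic-exclusion window (a feature not described above), and the function name are assumptions, and real methods add further rules such as charge-state filtering and exclusion durations.

```python
def select_top_n(survey_peaks, n=5, min_intensity=1e4,
                 excluded_mz=(), exclusion_ppm=10.0):
    """Choose up to n precursor m/z values for MS/MS from one full-scan spectrum.

    survey_peaks: list of (mz, intensity) tuples from the survey (MS1) scan.
    excluded_mz: m/z values fragmented recently (a simple dynamic-exclusion list).
    """
    def is_excluded(mz):
        return any(abs(mz - ex) / ex * 1e6 <= exclusion_ppm for ex in excluded_mz)

    candidates = [
        (mz, intensity) for mz, intensity in survey_peaks
        if intensity >= min_intensity and not is_excluded(mz)
    ]
    # "Top N": the most intense eligible ions are fragmented first
    candidates.sort(key=lambda peak: peak[1], reverse=True)
    return [mz for mz, _ in candidates[:n]]


# Hypothetical survey scan: (m/z, intensity)
survey = [(301.1410, 5e5), (455.2900, 2e6), (609.2810, 8e4),
          (820.4500, 5e3), (512.3000, 1.2e6)]
print(select_top_n(survey, n=2))                          # [455.29, 512.3]
print(select_top_n(survey, n=2, excluded_mz=[455.2905]))  # [512.3, 301.141]
```

Excluding recently fragmented ions lets lower-abundance metabolites receive MS/MS scans, which matters for dereplication because minor analogues are often the novel ones.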

Advanced Computational Tools: The Role of DEREPLICATOR+

While library matching is powerful, it fails when a compound's spectrum is not in the reference library. Tools like DEREPLICATOR+ address this by searching chemical structure databases directly [3].

Algorithm Protocol: DEREPLICATOR+ Workflow

DEREPLICATOR+ operates by predicting fragmentation patterns from chemical structures.

- Input: An experimental MS/MS spectrum and a database of chemical structures (e.g., AntiMarin, Dictionary of Natural Products).

- Fragmentation Graph Construction: For each candidate structure, the algorithm generates a "fragmentation graph." This involves:

  - Converting the chemical structure into a molecular graph (atoms as nodes, bonds as edges).

  - Systematically breaking bonds between heavy atoms to simulate potential fragmentation pathways, generating a set of theoretical fragment masses [3].

- Spectral Annotation & Scoring: The theoretical fragment masses are matched against peaks in the experimental MS/MS spectrum. A score is computed based on the number and intensity of matched peaks.

- Decoy Generation & FDR Control: To ensure identifications are statistically significant, the algorithm generates decoy (randomized) fragmentation graphs. The False Discovery Rate (FDR) is estimated by comparing scores from real and decoy matches [3].

- Output: A ranked list of candidate structures with associated confidence scores (p-values), enabling the identification of natural product classes like polyketides and terpenes beyond just peptides [3].
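The fragmentation, scoring, and decoy steps above can be illustrated with a deliberately simplified toy model. This is not the DEREPLICATOR+ implementation: each node is a hypothetical heavy-atom group with a made-up mass, only single cleavages of bonds outside rings are considered (ring opening would need two cuts), hydrogen rearrangements and multiple charges are ignored, the score is a plain matched-peak count rather than DEREPLICATOR+'s scoring and p-value computation, and the FDR helper shows only the generic target-decoy ratio. All function names are hypothetical.

```python
from collections import deque

PROTON_MASS = 1.007276  # Da, added to neutral fragment masses to give [M+H]+ m/z


def _reachable(start, adjacency, cut_bond):
    """Nodes reachable from `start` when the bond `cut_bond` is removed."""
    seen, queue = {start}, deque([start])
    while queue:
        node = queue.popleft()
        for nxt in adjacency[node]:
            if frozenset((node, nxt)) == cut_bond or nxt in seen:
                continue
            seen.add(nxt)
            queue.append(nxt)
    return seen


def theoretical_fragments(node_mass, bonds):
    """Toy fragmentation graph: [fragment+H]+ m/z values from single-bond cleavages.

    node_mass: {node: mass of a heavy-atom group including its hydrogens (Da)}
    bonds: list of (u, v) pairs. Only bridge bonds (not in a ring) split the molecule.
    """
    adjacency = {node: set() for node in node_mass}
    for u, v in bonds:
        adjacency[u].add(v)
        adjacency[v].add(u)
    total = sum(node_mass.values())
    fragments = set()
    for u, v in bonds:
        side = _reachable(u, adjacency, frozenset((u, v)))
        if v in side:  # ring bond: a single cut leaves the molecule connected
            continue
        mass = sum(node_mass[n] for n in side)
        fragments.add(round(mass + PROTON_MASS, 4))
        fragments.add(round(total - mass + PROTON_MASS, 4))
    return sorted(fragments)


def match_score(spectrum_mz, fragment_mz, tol=0.01):
    """Number of experimental peaks explained by at least one theoretical fragment."""
    return sum(any(abs(p - f) <= tol for f in fragment_mz) for p in spectrum_mz)


def target_decoy_fdr(threshold, target_scores, decoy_scores):
    """Estimated FDR at a score threshold: decoy hits / target hits at or above it."""
    n_target = sum(s >= threshold for s in target_scores)
    n_decoy = sum(s >= threshold for s in decoy_scores)
    return n_decoy / n_target if n_target else 0.0


# Hypothetical linear molecule A-B-C with made-up group masses
frags = theoretical_fragments({"A": 100.0, "B": 50.0, "C": 30.0}, [("A", "B"), ("B", "C")])
print(frags)                                            # [31.0073, 81.0073, 101.0073, 151.0073]
print(match_score([31.01, 101.0, 200.0], frags))        # 2
print(target_decoy_fdr(3, [5, 4, 3, 1], [3, 1, 0, 0]))  # 0.3333333333333333
```

In a real search, decoy scores would come from randomized fragmentation graphs scored against the same spectra, so the ratio estimates how many target hits at a given score are expected by chance.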

Diagram Title: The DEREPLICATOR+ Algorithm Workflow for Dereplication

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Dereplication in Blue Biotechnology

| Resource Type | Specific Example(s) | Function in Dereplication | Key Characteristics/Utility |
|---|---|---|---|
| Spectral Libraries | GNPS Public Libraries, MassBank, HMDB (open access); NIST MS/MS (commercially licensed) [2] [3]. | Reference for direct MS/MS spectrum matching. | GNPS libraries are crowdsourced and community-reviewed; GNPS is central to marine NP research. |
| Chemical Structure Databases | AntiMarin (~60k compounds), Dictionary of Natural Products (~300k compounds), PubChem, MarinLit [3]. | Source of structures for in silico fragmentation & prediction. | AntiMarin and MarinLit are specialized for marine compounds. |
| Bioinformatics Platforms | Global Natural Products Social Molecular Networking (GNPS) [2] [3]. | Cloud platform for data analysis, library search, and molecular networking. | Enables workflow execution and data sharing without local compute infrastructure. |
| Dereplication Software | DEREPLICATOR+ [3], SIRIUS/CSI:FingerID, MolDiscovery. | Algorithms for identifying compounds from MS/MS data against structure DBs. | DEREPLICATOR+ is designed for diverse microbial and marine metabolites, including non-peptidic classes [3]. |
| Reference Material | In-house library of purified natural product standards. | Provides definitive retention time, MS, and NMR data for comparison. | Gold standard for confirmation; built over time from previous work. |

Applications and Impact in Blue Biotechnology

Effective dereplication directly fuels innovation across the blue economy by making discovery pipelines viable and efficient.

1. Accelerating Marine Drug Discovery: Dereplication is pivotal in the search for new pharmaceuticals. For example, the discovery of chalcomycin variants from actinomycetes was dramatically accelerated by DEREPLICATOR+, which identified not only the core compound but also related analogs through molecular networking [3]. Such approaches are highly relevant to companies like PharmaMar, which has successfully developed marine-derived drugs such as Yondelis (trabectedin). By quickly discarding known cytotoxins, researchers can focus resources on novel anticancer or antimicrobial leads from sponges, tunicates, and marine microbes [5] [1].

2. Supporting Sustainable Bioprospecting: In microalgae biotechnology—a pillar of the blue bioeconomy for products ranging from nutraceuticals (omega-3s, astaxanthin) to biofuels—dereplication aids strain selection and optimization [6]. By chemically profiling different strains of Chlorella or Nannochloropsis, researchers can identify which produce the highest yields of desired compounds or which harbor unique chemistries, guiding sustainable cultivation efforts without exhaustive bioassay-guided fractionation [6].

3. Enabling Metabolomics-Guided Discovery: Dereplication is the essential step that translates metabolomic profiles into actionable insights. Studies of marine symbionts, such as bacteria associated with sponges or ascidians, use dereplication to pinpoint which specific metabolites are likely produced by the symbiont and are responsible for observed biological activities (e.g., antibacterial, antiviral) [1]. This precise understanding is key for subsequent genetic or cultivation studies aimed at sustainable production.

The future of dereplication in blue biotechnology lies in deeper integration and increased predictive power.

- Artificial Intelligence and Machine Learning: AI models are being trained to predict not just identity, but also bioactivity from spectral data, further prioritizing novel leads [2].

- Real-Time Dereplication: Coupling automated analytics with dereplication software will enable real-time decisions during purification, drastically reducing bench time.

- Integrated Multi-Omics Platforms: Combining dereplication with genome mining tools will create a powerful feedback loop. A gene cluster predicting a novel compound class can trigger targeted metabolomics, whose results, once dereplicated, can validate genomic predictions [2] [3].

In conclusion, dereplication has matured from a simple avoidance tactic into a strategic cornerstone of blue biotechnology. It is the critical filter that transforms the overwhelming chemical complexity of the ocean into a navigable discovery landscape. By embracing the advanced computational and analytical workflows outlined here, researchers can accelerate the sustainable translation of marine biodiversity into solutions for health, industry, and environmental challenges, fully realizing the promise of the blue bioeconomy.

The pharmaceutical industry and the burgeoning field of blue biotechnology are grappling with a persistent R&D productivity crisis, characterized by escalating costs and extended timelines for bringing new therapeutics to market. A central, addressable contributor to this inefficiency is the rediscovery of known natural compounds—a drain on resources that dereplication strategies aim to prevent. This whitepaper provides an in-depth technical analysis of the economic and temporal costs of rediscovery within blue biotechnology R&D pipelines. It details advanced, high-throughput dereplication methodologies, including molecular networking and integrative informatics, which are critical for early-stage identification of novel marine-derived bioactive compounds. By framing this discussion within the broader context of sustainable marine bioeconomy growth, this guide equips researchers and drug development professionals with the protocols and tools necessary to enhance pipeline efficiency, reduce attrition, and accelerate the discovery of unique marine natural products.

Bioprospecting in marine environments offers an unparalleled resource for novel drug leads due to the immense and largely untapped biodiversity of oceanic ecosystems. Marine organisms have evolved unique biochemical pathways, resulting in compounds with novel mechanisms of action and high therapeutic potential [2]. The commercialization rate of marine-derived pharmaceuticals can be up to four times higher than that of their terrestrial counterparts [7]. However, the discovery process is fraught with a major recurring hurdle: the re-isolation and redundant characterization of already-known compounds, commonly termed "rediscovery."

Rediscovery represents a profound drain on R&D resources. It consumes finite financial capital, researcher time, and operational capacity without advancing the pipeline toward novel intellectual property or clinical candidates. In blue biotechnology, where sourcing and cultivating marine organisms can be logistically complex and costly, the penalty for rediscovery is particularly severe [2]. Dereplication—the process of rapidly identifying known compounds within crude extracts or fractions early in the discovery workflow—is therefore not merely a technical step but a critical strategic imperative for economic viability and scientific progress. This guide examines the cost of failure to dereplicate effectively and provides a technical roadmap for implementing robust dereplication within marine natural product (MNP) discovery pipelines.

The R&D Productivity Crisis: Quantifying the Drain

The broader pharmaceutical industry has faced a well-documented decline in R&D efficiency for decades. This context underscores the acute need for optimization in all discovery stages, including early bioprospecting.

Table 1: Key Metrics of R&D Inefficiency in Pharmaceutical Development

| Metric | Data | Implication |
|---|---|---|
| Cost per Novel Approved Drug | Exceeds $3.5 billion [8] | Justifies significant investment in early-stage efficiency measures like dereplication. |
| Clinical Attrition Rate (Phase I to III) | Up to 60% failure [9] | Highlights the need to ensure only the most promising, novel candidates enter costly clinical stages. |
| Industry-Wide R&D Spend | Projected at $265 billion globally [9] | Even small percentage savings from avoiding rediscovery free up substantial capital for innovative work. |
| Trial Timelines (Oncology/CNS) | Median >7.5 years [9] | Early dereplication accelerates the pre-clinical discovery phase, shortening the overall pipeline. |

This productivity gap forces a strategic evolution. The industry has shifted from a closed model to an open, collaborative ecosystem encompassing biotech innovators and specialized service providers [10]. In this competitive landscape, blue biotechnology companies that master efficient discovery through dereplication gain a distinct advantage in securing investment and partnerships.

Blue Biotechnology: Potential and Pipeline Challenges

Blue biotechnology, the application of science and technology to marine organisms, is a high-growth sector central to the sustainable blue bioeconomy [11] [12]. Its promise is vast, spanning pharmaceuticals, nutraceuticals, cosmetics, and biomaterials [5].

Table 2: The EU Blue Biotechnology Sector: Economic Snapshot (2022-2023)

| Economic Indicator | 2022 Value | 2023 Estimate | Notes |
|---|---|---|---|
| Turnover | €942 million | ~3% increase [12] | Demonstrates steady market growth. |
| Gross Value Added (GVA) | €327 million | ~3% increase [12] | Germany and France lead, contributing 29% and 21% of GVA respectively [12]. |
| Direct Employment | ~2,400 persons | ~3% increase [12] | High-skill sector with an average wage of ~€66,300 [12]. |
| Market Value by Application (2021) | - | - | Drug discovery represents the largest segment (24%), followed by vaccine development (13%) [12]. |

Despite this growth, the MNP discovery pipeline faces specific technical and economic challenges that amplify the cost of rediscovery:

- Sustainable Sourcing: Cultivating marine microbes or accessing deep-sea organisms is complex and expensive [2] [6].

- Structural Complexity: Elucidating the absolute configuration of novel MNPs with multiple stereogenic centers remains a major technical bottleneck [2].

- High-Throughput Compatibility: Crude marine extracts are chemically complex, creating challenges for target-based screening campaigns [2].

These factors make every step in the pipeline resource-intensive. Wasting these resources on rediscovering known compounds, such as the well-known tambjamines from marine Pseudoalteromonas spp., is a luxury the field cannot afford.

Foundational Dereplication Methodologies: From Classical to Integrated

Effective dereplication requires a tiered, multi-technique approach. The goal is to filter out knowns with increasing confidence before committing to full structure elucidation.

Bioactivity-Coupled High-Throughput Screening (HTS)

While HTS of complex extracts presents challenges, innovative assays are being developed for MNP discovery.

- Protocol Example: Image-Based Biofilm Inhibitor Screening [2]

  - Objective: Identify compounds from marine microbial extracts that inhibit biofilm formation in Pseudomonas aeruginosa.

  - Workflow:

    - Strain Preparation: Use a constitutively GFP-tagged P. aeruginosa strain.

    - Assay Setup: Dispense test extracts and bacterial culture into 384-well plates. Include controls (e.g., DMSO, known inhibitors).

    - Incubation & Staining: Allow for biofilm formation, then add the redox dye XTT to assess metabolic activity.

    - Image Acquisition: Use automated, non-z-stack epifluorescence microscopy to capture biofilm biomass (GFP signal), and record metabolic activity from XTT conversion (a colorimetric formazan readout).

    - Data Analysis: Employ automated image analysis scripts to quantify biofilm coverage and cellular activity. Prioritize hits that show biofilm inhibition without general antibacterial activity (i.e., little reduction in XTT signal).

  - Utility: This phenotypic HTS method discovers inhibitors of a complex virulence trait and, when coupled with rapid chemical analysis of active wells, enables early dereplication.
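The hit-prioritization rule in the data-analysis step can be expressed as a small triage function. This is a sketch under stated assumptions: both readouts are normalized to the mean of DMSO wells, and the 50% biofilm-inhibition and 20% XTT-reduction cut-offs, the labels, the control values, and the function names are hypothetical; a real campaign would derive thresholds from its own controls and plate-quality statistics.

```python
from statistics import mean


def percent_reduction(signal, control_signals):
    """Reduction of a well's signal relative to the mean of vehicle (DMSO) controls, in %."""
    control = mean(control_signals)
    return 100.0 * (control - signal) / control


def classify_well(gfp, xtt, dmso_gfp, dmso_xtt,
                  min_biofilm_inhibition=50.0, max_xtt_reduction=20.0):
    """Triage one extract well from the GFP (biofilm) and XTT (metabolic activity) readouts."""
    biofilm_inhibition = percent_reduction(gfp, dmso_gfp)
    xtt_reduction = percent_reduction(xtt, dmso_xtt)
    if biofilm_inhibition >= min_biofilm_inhibition:
        if xtt_reduction <= max_xtt_reduction:
            return "anti-biofilm hit"   # biofilm reduced, cells still metabolically active
        return "general antibacterial"  # biofilm loss likely due to killing or growth arrest
    return "no biofilm inhibition"


dmso_gfp = [1000.0, 1100.0, 900.0]  # hypothetical control readings
dmso_xtt = [0.80, 0.82, 0.78]
print(classify_well(300.0, 0.76, dmso_gfp, dmso_xtt))  # anti-biofilm hit
print(classify_well(200.0, 0.10, dmso_gfp, dmso_xtt))  # general antibacterial
print(classify_well(950.0, 0.79, dmso_gfp, dmso_xtt))  # no biofilm inhibition
```

Only wells labeled as anti-biofilm hits would then be passed to rapid chemical analysis for dereplication.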

Diagram Title: Dereplication Workflow Following Bioactive HTS

Analytical Dereplication: The Core of Modern Workflows

The integration of separation science with spectroscopy forms the backbone of dereplication.

- Primary Tool: High-Resolution LC-MS/MS

  - Protocol: Extracts are separated via Ultra-High-Performance Liquid Chromatography (UHPLC) and analyzed in real-time using a high-resolution mass spectrometer (e.g., Q-TOF, Orbitrap). Data-Dependent Acquisition (DDA) or Data-Independent Acquisition (DIA) modes are used to collect precursor ion m/z, retention time (RT), and MS/MS fragmentation spectra for as many detectable metabolites as possible [2]. DDA fragments only the most intense precursors in each scan cycle, whereas DIA fragments all ions within wide isolation windows, producing mixed spectra that require deconvolution.

  - Data Processing: Software (e.g., MZmine, MS-DIAL) deconvolutes raw data into discrete molecular features (RT-m/z pairs) and aligns them across samples.
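Alignment across samples can be pictured as grouping features that agree within an m/z and RT tolerance. Below is a deliberately simple greedy version for illustration; MZmine and MS-DIAL use their own, more elaborate alignment algorithms, and the tolerances, data layout, sample names, and function name here are assumptions.

```python
def align_features(samples, ppm_tol=5.0, rt_tol_min=0.1):
    """Greedily align (mz, rt, intensity) features across samples into a feature table.

    samples: {sample_name: [(mz, rt_min, intensity), ...]}
    Returns rows of {"mz", "rt", "intensities": {sample_name: intensity}}; each row keeps
    the m/z and RT of the first feature assigned to it.
    """
    rows = []
    for name, features in samples.items():
        for mz, rt, intensity in features:
            for row in rows:
                same_mass = abs(mz - row["mz"]) / row["mz"] * 1e6 <= ppm_tol
                same_rt = abs(rt - row["rt"]) <= rt_tol_min
                if same_mass and same_rt and name not in row["intensities"]:
                    row["intensities"][name] = intensity
                    break
            else:  # no existing row matched: start a new aligned feature
                rows.append({"mz": mz, "rt": rt, "intensities": {name: intensity}})
    return rows


# Hypothetical features from two marine bacterial isolates
samples = {
    "isolate_1": [(412.2101, 5.02, 3.1e5), (623.3302, 8.40, 1.2e5)],
    "isolate_2": [(412.2106, 5.05, 2.7e5), (701.4000, 9.10, 5.0e4)],
}
for row in align_features(samples):
    print(round(row["mz"], 4), row["rt"], row["intensities"])
```

The resulting feature table (one row per aligned feature, one column per sample) is the input that database queries and molecular networking operate on.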

Informatics & Database Interrogation

The extracted molecular features are queried against specialized databases.

- Key Databases:

  - GNPS (Global Natural Products Social Molecular Networking): A community-wide, open-access platform for storing and sharing MS/MS spectra [2].

  - MarinLit: A curated database dedicated to marine natural products literature.

  - PubChem, ChemSpider: Broad chemical databases.

- Matching Strategy: Searches are based on precursor mass (with a narrow tolerance, e.g., 5 ppm) and MS/MS spectral similarity (e.g., cosine score). A high spectral match strongly indicates rediscovery.
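The two matching criteria above can be combined in a short sketch. Assumptions, all illustrative: centroided spectra, a fixed 0.02 Da fragment tolerance, square-root intensity scaling, greedy one-to-one peak pairing, and the thresholds shown. This is a plain cosine; GNPS molecular networking uses a modified cosine that also pairs peaks shifted by the precursor mass difference, which is omitted here.

```python
import math


def ppm_error(observed, reference):
    """Precursor mass error in parts per million."""
    return (observed - reference) / reference * 1e6


def cosine_score(spec_a, spec_b, tol=0.02):
    """Cosine similarity of two centroided MS/MS spectra, plus number of matched peaks.

    Spectra are lists of (mz, intensity). Peaks within `tol` Da are paired greedily
    (largest intensity products first), each peak used at most once.
    """
    # Square-root scaling damps the influence of a few dominant fragment ions
    a = [(mz, math.sqrt(i)) for mz, i in spec_a]
    b = [(mz, math.sqrt(i)) for mz, i in spec_b]
    norm = math.sqrt(sum(w * w for _, w in a)) * math.sqrt(sum(w * w for _, w in b))
    if norm == 0:
        return 0.0, 0
    pairs = sorted(
        ((wa * wb, i, j)
         for i, (ma, wa) in enumerate(a)
         for j, (mb, wb) in enumerate(b)
         if abs(ma - mb) <= tol),
        reverse=True,
    )
    used_a, used_b, dot = set(), set(), 0.0
    for product, i, j in pairs:
        if i in used_a or j in used_b:
            continue
        used_a.add(i)
        used_b.add(j)
        dot += product
    return dot / norm, len(used_a)


def is_library_match(query_mz, query_spec, ref_mz, ref_spec,
                     ppm_tol=5.0, min_cosine=0.7, min_matched=6):
    """Flag a putative rediscovery: precursor within ppm_tol and a high spectral match."""
    if abs(ppm_error(query_mz, ref_mz)) > ppm_tol:
        return False
    score, matched = cosine_score(query_spec, ref_spec)
    return score >= min_cosine and matched >= min_matched


spec = [(100.0, 100.0), (150.0, 400.0), (200.0, 900.0)]  # hypothetical spectrum
print(cosine_score(spec, spec))  # identical spectra: score 1.0 (up to rounding), 3 peaks
print(is_library_match(500.2510, spec, 500.2500, spec, min_matched=3))  # True
```

The minimum matched-peak requirement guards against high cosine scores driven by only one or two shared fragments.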

Advanced Strategies: Molecular Networking and AI-Driven Workflows

To address novel compounds not in databases, advanced comparative and predictive strategies are employed.

Molecular Networking via GNPS

This is a powerful visual and computational tool for dereplication and novelty targeting.

- Protocol: Molecular Network Creation [2]

  - Data Submission: Convert aligned MS/MS data from a set of related samples (e.g., multiple marine bacterial isolates) into the open .mzML format.

  - GNPS Analysis: Upload files to the GNPS workflow. Parameters define MS/MS spectral similarity (cosine score > 0.7) and minimum matched peaks.

  - Network Visualization: Nodes (representing consensus MS/MS spectra) cluster together based on similarity, forming molecular families. Known compounds, identified via database match, anchor clusters of related analogues.

  - Dereplication & Novelty Detection: Annotate nodes by spectral match to reference libraries. Compounds in the same cluster as known metabolites are likely to be structurally related, guiding the targeted isolation of new analogues (putative novelty). Compounds in unannotated clusters may represent distinct chemotypes worthy of prioritization.
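Once pairwise spectral similarities are available, the clustering and triage logic described above reduces to finding connected components. A minimal sketch, assuming precomputed (i, j, cosine, matched peaks) tuples; the cosine > 0.7 threshold follows the example above, while the minimum of six matched peaks, the labels, the annotation name, and the function names are illustrative assumptions. GNPS applies further rules not reproduced here, such as limiting each node's neighbours (TopK) and capping connected-component size.

```python
def molecular_families(n_nodes, pair_scores, min_cosine=0.7, min_matched=6):
    """Group MS/MS spectra (nodes 0..n_nodes-1) into molecular families.

    pair_scores: iterable of (i, j, cosine, matched_peaks) for spectrum pairs.
    Pairs passing both thresholds become edges; families are connected components.
    """
    parent = list(range(n_nodes))

    def find(x):
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    for i, j, cosine, matched in pair_scores:
        if cosine > min_cosine and matched >= min_matched:
            parent[find(i)] = find(j)

    families = {}
    for node in range(n_nodes):
        families.setdefault(find(node), []).append(node)
    return sorted(families.values())


def triage(families, annotations):
    """Label each family from library annotations {node: compound name}."""
    labelled = []
    for family in families:
        known = sorted({annotations[n] for n in family if n in annotations})
        has_unknown = any(n not in annotations for n in family)
        if known and has_unknown:
            label = "known family: candidate new analogues"
        elif known:
            label = "known compounds only: deprioritize"
        else:
            label = "unannotated: candidate new chemotype"
        labelled.append((family, known, label))
    return labelled


pairs = [(0, 1, 0.92, 8), (1, 2, 0.81, 7), (3, 4, 0.75, 6),
         (4, 5, 0.65, 9),   # cosine too low: no edge
         (2, 3, 0.90, 3)]   # too few matched peaks: no edge
families = molecular_families(6, pairs)
print(families)  # [[0, 1, 2], [3, 4], [5]]
for row in triage(families, {0: "library_hit_X"}):
    print(row)
```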

In Silico Structure Prediction and AI

When database searches fail, computational methods predict structures from spectral data.

- Computer-Assisted Structure Elucidation (CASE): Systems combine NMR, MS, and other spectroscopic data with algorithmic rules to generate plausible structural candidates [2].

- Machine Learning (ML) Models: Trained on large datasets of known compound-structure-spectra relationships, ML models can predict structural features or even propose likely structures for unknown MS/MS or NMR spectra, accelerating the prioritization process [2].

Diagram Title: Integrated Dereplication & Novelty Prioritization Pathway

Integrating Dereplication into the R&D Pipeline: A Strategic Framework

To mitigate economic and temporal drains, dereplication must be a foundational, integrated component, not an ancillary check.

Table 3: The Scientist's Toolkit for Dereplication in Blue Biotechnology

| Tool/Reagent Category | Specific Examples | Primary Function in Dereplication |
|---|---|---|
| Separation & Analysis | UHPLC-HR-MS/MS (Q-TOF, Orbitrap) | Provides accurate mass, RT, and fragmentation data for all metabolites in a complex extract. |
| Informatics & Databases | GNPS Platform, MarinLit, MZmine | Enables spectral matching, molecular networking, and data management. |
| Reference Standards | In-house library of known marine metabolites | Allows for co-injection (spiking) experiments to confirm identity via identical RT and MS/MS. |
| Bioassay Components | GFP-tagged reporter strains, viability dyes (XTT) | Couples chemical analysis with biological activity to dereplicate bioactive knowns rapidly. |
| Computational Tools | CASE software, machine learning models (e.g., from studies like Prihoda et al. [2]) | Predicts structures for unknowns and prioritizes novel chemical space. |

Strategic Implementation:

- Front-Loading: Perform HR-MS/MS profiling and molecular networking on crude extracts or early fractions before large-scale purification or extensive bioassay.

- Iterative Feedback: Use dereplication data to guide fractionation—only pursue fractions containing nodes of putative novelty.

- Cross-Disciplinary Collaboration: Integrate genomic data (e.g., biosynthetic gene cluster analysis) with metabolomic networks to predict novel compound families from genetically unique strains [2].

- Economic Justification: The cost of implementing these advanced dereplication tools is offset by avoiding the far greater expense of purifying, elucidating, and testing a known compound through later pipeline stages, which can waste months of work and hundreds of thousands of dollars.
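A minimal sketch of the iterative-feedback rule, assuming fractions have already been profiled and their features annotated by library matching. Ranking by the count of unannotated features is one simple, hypothetical criterion (weighting by intensity or bioactivity would be natural refinements), and the fraction and feature identifiers are placeholders.

```python
def prioritize_fractions(fraction_features, annotated, min_unannotated=1):
    """Rank fractions by how many detected features lack a known-compound annotation.

    fraction_features: {fraction_id: set of feature ids detected in that fraction}
    annotated: set of feature ids already matched to known compounds
    Fractions with fewer than min_unannotated such features are dropped.
    """
    ranked = []
    for fraction, features in fraction_features.items():
        n_unannotated = len(features - annotated)
        if n_unannotated >= min_unannotated:
            ranked.append((n_unannotated, fraction))
    # Most putatively novel features first; ties broken by fraction id
    ranked.sort(key=lambda item: (-item[0], item[1]))
    return [fraction for _, fraction in ranked]


fractions = {
    "F1": {"feat_a", "feat_b"},
    "F2": {"feat_b", "feat_c", "feat_d"},
    "F3": {"feat_a"},
    "F4": {"feat_e"},
}
print(prioritize_fractions(fractions, annotated={"feat_a", "feat_b"}))  # ['F2', 'F4']
```

Fractions containing only annotated features (F1 and F3 here) drop out, which is the point at which purification effort is saved.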

The high cost of rediscovery is a preventable drain on the economic and innovative potential of blue biotechnology. Implementing robust, integrated dereplication protocols is a critical leverage point for improving R&D productivity. As the field advances, future gains will come from:

- Enhanced Data Integration: Seamlessly linking genomic, metabolomic, and bioactivity data in unified platforms.

- Advanced AI Models: Developing more accurate models for de novo structure prediction from minimal spectroscopic data.

- Global Collaboration: Expanding open-access spectral libraries and fostering pre-competitive sharing to elevate the entire field's efficiency.

By adopting the methodologies and strategic framework outlined here, researchers and organizations can ensure their pipelines are focused squarely on true novelty, accelerating the sustainable delivery of marine-derived solutions to global health and industrial challenges.

Biodiversity and Complexity of Marine Ecosystems

Marine environments encompass over 70% of the Earth's surface and host an enormous, largely untapped reservoir of biological diversity with immense potential for biotechnology and drug discovery [5]. The complexity of these ecosystems, ranging from sunlit coastal waters to deep-sea hydrothermal vents, presents both a unique resource and a significant challenge for systematic research and development.

Extent of Biodiversity: It is estimated that there are approximately 2.2 ± 0.18 million marine eukaryotic species globally [4]. However, current cataloging efforts identify only around 600 new marine species per year, suggesting it could take an extraordinarily long time to fully document this diversity [4]. For prokaryotes alone, an estimated 1.3 million species exist worldwide, with only about half currently cataloged [4]. This vast, undocumented biodiversity is a primary driver for blue biotechnology—the application of biotechnological techniques to marine organisms to develop new products and processes [5] [13].

Environmental and Biological Complexity: Marine ecosystems are characterized by extreme gradients and a complex interplay of abiotic and biotic factors. Key environmental variables include hydrostatic pressure (increasing by 1 atmosphere per 10 meters depth), temperature (from -2°C in polar waters to over 400°C at hydrothermal vents), light availability, salinity, nutrient concentrations, and oxygen levels [4] [14]. Biologically, marine organisms have evolved unique adaptations to thrive in these conditions. For instance, their cellular membranes may contain negatively charged phospholipids to maintain fluidity under high pressure, and they can produce specialized compounds like docosahexaenoic acid (DHA) and eicosapentaenoic acid (EPA) [4].
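As a worked example of the rule of thumb above (ignoring variations in seawater density), absolute pressure at depth d is approximately the 1 atm of surface atmospheric pressure plus 1 atm for every 10 m of water:

$$P(d) \approx 1\,\text{atm} + \frac{d}{10\,\text{m}} \times 1\,\text{atm}, \qquad P(500\,\text{m}) \approx 51\,\text{atm}, \qquad P(4000\,\text{m}) \approx 401\,\text{atm}$$

The 500 m value corresponds to the shallow/deep boundary used in the biodiversity study discussed next.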

Knowledge Gaps and Identification Challenges: A critical analysis of biodiversity data reveals profound knowledge gaps, especially in deep-sea environments. A 2025 study focusing on the Norwegian continental shelf—an area of interest for deep-sea mining—analyzed over 10.5 million species occurrence records spanning 149 years [14]. The findings were stark: 97% of records were from shallow waters (<500 m), with only 3% from deep waters (≥500 m). Furthermore, the study concluded that the species identities in deep-sea data are insufficient to quantify reliable area-based biodiversity indices, highlighting a massive deficit in our understanding of benthic (seafloor) life [14]. This data paucity directly complicates the identification of organisms and their associated bioactive compounds.

Expert Disagreement in Species and Habitat Sensitivity: The challenge of identification extends to assessing ecosystem vulnerability. A 2025 meta-analysis of 21 studies that used expert judgment to rate the sensitivity of marine species and habitats to human pressures found significant inconsistencies [15]. While there was broad agreement on major threats (e.g., bottom trawling, climate change), scores from individual experts varied widely within and across studies. Sensitivity scores were often more similar when collected with the same methodology in different regions than when collected with different methods in the same region [15]. This inconsistency underscores the difficulty of achieving standardized, reliable identification and assessment in complex marine systems, even among specialists.

Table 1: Documented Biodiversity and Knowledge Gaps in Marine Environments

| Metric | Shallow Waters (<500 m) | Deep Waters (≥500 m) | Source/Notes |
|---|---|---|---|
| Species Occurrence Records | 97% of total records | 3% of total records | From a 149-year dataset in the N. Atlantic [14] |
| Grid Cells with Data | 32,274 cells | 15,528 cells | Analysis of 122,955 total grid cells [14] |
| Estimated Eukaryotic Species | Part of 2.2 ± 0.18 million total marine species | Part of 2.2 ± 0.18 million total marine species | Global estimate [4] |
| Cataloging Rate | ~600 new species identified per year | ~600 new species identified per year | Current global pace [4] |
| Data Sufficiency | Relatively higher, but patchy | Insufficient to calculate biodiversity indices | Major gap for benthic communities [14] |
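The percentages and cell counts in Table 1 can be converted into absolute figures with simple arithmetic. The sketch below assumes that "over 10.5 million" records means exactly 10.5 million and that the 122,955 grid cells are the common denominator for both depth zones. The resulting numbers are derived estimates, not values reported in [14].

```python
# Back-of-envelope figures derived from Table 1 (assumptions stated above).
TOTAL_RECORDS = 10_500_000
RECORD_SHARE = {"shallow (<500 m)": 0.97, "deep (>=500 m)": 0.03}
TOTAL_CELLS = 122_955
CELLS_WITH_DATA = {"shallow (<500 m)": 32_274, "deep (>=500 m)": 15_528}


def summarize() -> dict[str, tuple[int, float]]:
    """Return approximate record counts and grid-cell coverage per depth zone."""
    summary = {}
    for zone, share in RECORD_SHARE.items():
        n_records = round(TOTAL_RECORDS * share)
        coverage = CELLS_WITH_DATA[zone] / TOTAL_CELLS
        summary[zone] = (n_records, coverage)
    return summary


for zone, (n_records, coverage) in summarize().items():
    print(f"{zone}: ~{n_records:,} records; data in {coverage:.1%} of grid cells")
```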

The Centrality of Dereplication in Blue Biotechnology

In the context of these challenges—overwhelming biodiversity, extreme complexity, and difficult identification—dereplication emerges as a non-negotiable, foundational practice for efficient and sustainable blue biotechnology research. Dereplication is the process of early and rapid identification of known compounds within crude biological extracts to avoid redundant rediscovery, thereby streamlining the path to novel discoveries.

Economic and Temporal Imperative: The drug discovery pipeline from marine sources is exceptionally long and costly. For example, the biopharmaceutical company Pharma Mar S.A. invested 802 million USD and 15-20 years of development to bring a marine-derived cancer therapy to market [4]. This process yielded five active molecules, but only two were commercially viable enough to recover the investment [4]. The global marine-derived drugs market, valued at $12.4 billion in 2024, is projected to grow to $20.96 billion by 2030, intensifying the race for novel compounds [16] [17]. Without dereplication, research resources are wasted on re-isolating and re-characterizing known entities like the ubiquitous bacteriocin classes or common sponge alkaloids, draining funds and delaying genuine innovation.
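The two market figures quoted above imply a compound annual growth rate (CAGR), which can be checked directly with CAGR = (end / start)^(1 / years) - 1. This sketch assumes both values are in the same currency units and span the six years from 2024 to 2030. The implied rate (roughly 9% per year) is derived here, not quoted from [16] or [17].

```python
def cagr(start_value: float, end_value: float, years: float) -> float:
    """Compound annual growth rate implied by two values `years` apart."""
    if start_value <= 0 or years <= 0:
        raise ValueError("start_value and years must be positive")
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Marine-derived drugs market, USD billions (figures quoted in the text).
rate = cagr(12.4, 20.96, 2030 - 2024)
print(f"Implied CAGR 2024-2030: {rate:.1%}")
```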

Navigating Chemical Redundancy: Marine organisms, particularly microbes and invertebrates, often produce similar or identical bioactive compounds. This can be due to convergent evolution, shared symbiotic microbes, or horizontal gene transfer. For instance, many Bacillus and Vibrio species from different geographic locations produce similar bacteriocins [4]. Advanced dereplication employs genomic tools to identify biosynthetic gene clusters (BGCs) responsible for compound synthesis before laborious isolation begins. This allows researchers to prioritize strains with unique genetic potential, ensuring that effort is focused on truly novel chemistry.

Enabling Sustainable Bioprospecting: The principle of sustainable and ethical sourcing is central to the blue economy [5]. Collecting marine organisms, especially from vulnerable deep-sea habitats, has an ecological footprint. Efficient dereplication maximizes the information and potential yield from each collected sample, reducing the need for repeated, invasive sampling campaigns. This aligns with the goals of the European Union's Marine Strategy Framework Directive and other policies requiring sustainable ocean management [15] [18].

Table 2: The High Cost and Long Timeline of Marine Drug Development

| Stage | Typical Duration | Key Challenges & Costs | Role of Dereplication |
|---|---|---|---|
| Discovery & Preclinical | 3-7 years | Sample collection (ROVs, expeditions), extraction, in vitro/in vivo testing. Highest attrition rate. | Crucial for prioritizing novel leads early, saving millions in R&D. |
| Clinical Trials (Phases I-III) | 6-9 years | Extremely costly human trials. High failure rate due to lack of efficacy or safety concerns. | Ensures clinical candidates are based on unique chemistry with clear IP. |
| Regulatory Approval & Commercialization | 1-3 years | FDA/EMA review, manufacturing scale-up, market launch. | Strong patent position for novel compounds is key to commercial viability. |
| Total Timeline & Cost | 15-20 years, ~$800M+ | Cumulative cost of failures, high technical barriers for marine sourcing. | Reduces redundant work, focuses resources, shortens time to novel candidates. |

Foundational Experimental Protocols for Marine Bioprospecting

Effective dereplication is integrated into a structured workflow. Below are detailed protocols for key stages in marine bioprospecting, from sample collection to initial bioactivity screening.

Protocol 1: Integrated Sample Collection & Metagenomic Library Construction

This protocol is designed for the simultaneous collection of organism specimens and environmental DNA (eDNA), maximizing data from a single sampling effort.

- Site Selection & Collection: Using remotely operated vehicles (ROVs) or manned submersibles, target ecologically distinct niches (e.g., hydrothermal vent chimneys, deep-sea coral mounds, nutrient-rich brine pools) [14]. Precisely document GPS coordinates, depth, temperature, and salinity.

- Sterile Processing: In an onboard clean lab, aseptically subsample the specimen. For a sponge, for example, separate exterior and interior tissue.

- DNA Extraction & Sequencing: Extract high-molecular-weight DNA from a tissue subsample using a kit or protocol optimized for samples rich in polysaccharides and other inhibitors (e.g., a CTAB-based method). Prepare sequencing libraries for both Illumina short-read (for assembly) and Oxford Nanopore long-read (for scaffolding) technologies. Sequence to high coverage (>50x).

- Metagenomic Analysis: Assemble reads into contigs using a hybrid assembler (e.g., SPAdes, metaSPAdes). Use antiSMASH or PRISM software to identify and annotate Biosynthetic Gene Clusters (BGCs) [19]. Compare BGCs against public databases (MIBiG, NCBI) for dereplication at the genetic level.

- Culture Attempts: Homogenize a separate tissue subsample in sterile seawater. Use dilution plating on multiple media types (e.g., marine agar, chitin agar, low-nutrient agar) incubated at in situ temperatures to isolate associated microorganisms.
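The genetic-level dereplication step above (comparing BGCs against references such as MIBiG) can be illustrated with a deliberately simplified similarity measure. The sketch below scores each query BGC by the Jaccard index of its protein-domain content against a small reference set. Tools such as BiG-SCAPE combine this kind of domain-content index with further measures (e.g., domain adjacency and sequence similarity), so this is not a substitute for them. The domain identifiers, cluster names, and the 0.7 cut-off are illustrative assumptions.

```python
def jaccard(a: set[str], b: set[str]) -> float:
    """Jaccard index of two domain sets (1.0 = identical, 0.0 = disjoint)."""
    if not a and not b:
        return 1.0
    return len(a & b) / len(a | b)


def triage_bgcs(queries: dict[str, set[str]], references: dict[str, set[str]],
                known_threshold: float = 0.7) -> dict[str, tuple[str, float, str]]:
    """For each query BGC, report its closest reference and a triage label."""
    results = {}
    for bgc_id, domains in queries.items():
        # Closest reference by domain-content similarity (first one wins ties).
        best_ref, best_score = max(
            ((ref_id, jaccard(domains, ref_domains))
             for ref_id, ref_domains in references.items()),
            key=lambda item: item[1],
        )
        label = "likely known" if best_score >= known_threshold else "prioritize"
        results[bgc_id] = (best_ref, round(best_score, 2), label)
    return results


# Illustrative Pfam-style domain sets; these are not real MIBiG entries.
REFERENCES = {
    "ref_nrps_1": {"PF00501", "PF00550", "PF00668", "PF00975"},
    "ref_t1pks_1": {"PF00109", "PF02801", "PF00698", "PF00550"},
}
QUERIES = {
    "contig12_region1": {"PF00501", "PF00550", "PF00668", "PF00975"},
    "contig40_region2": {"PF00109", "PF02801", "PF00501", "PF13193"},
}

for bgc_id, (ref, score, label) in triage_bgcs(QUERIES, REFERENCES).items():
    print(f"{bgc_id}: closest {ref} (Jaccard {score}) -> {label}")
```

In this toy example `contig12_region1` shares its full domain set with a reference cluster and would be deprioritized, while `contig40_region2` falls below the cut-off and would be kept for follow-up.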

Protocol 2: Bioactivity-Guided Fractionation with In-Line Dereplication

This protocol links biological screening directly to chemical analysis to rapidly identify the active principle.

- Crude Extract Preparation: Lyophilize organism tissue or microbial biomass. Perform sequential extraction with solvents of increasing polarity (hexane, dichloromethane, ethyl acetate, methanol/water), keeping each solvent's extract as a separate fraction. Pool repeat extractions made with the same solvent and evaporate each fraction under reduced pressure.

- High-Throughput Screening (HTS): Test all crude fractions in a panel of automated, microtiter plate-based bioassays (e.g., anti-cancer against a cell line panel, antibacterial against ESKAPE pathogens, enzyme inhibition) [19]. Use robotics for consistency and speed.

- LC-MS/MS Analysis of Active Fractions: Immediately analyze active fractions via High-Resolution Liquid Chromatography-Tandem Mass Spectrometry (HR-LC-MS/MS). Use a C18 column with a water-acetonitrile gradient. Acquire data in both positive and negative ionization modes.

- Dereplication Analysis: Process MS/MS data with software like GNPS (Global Natural Products Social Molecular Networking) or SIRIUS. Compare observed molecular formulas, isotopic patterns, and fragmentation spectra against databases such as MarinLit, AntiBase, and the in-house library. Molecular networking clusters similar spectra, visually highlighting both known compounds and unique, potentially novel clusters for isolation.

- Targeted Isolation: Based on dereplication results, use semi-preparative HPLC to isolate only the peaks corresponding to novel or high-priority compounds for downstream structural elucidation (NMR).
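The HTS step depends on separating true actives from plate noise. A common approach, sketched here under stated assumptions, first checks plate quality with the widely used Z'-factor, Z' = 1 - 3(σ_pos + σ_neg) / |μ_pos - μ_neg|, and then converts each well's signal into percent inhibition relative to the controls. The control values, well IDs, the Z' ≥ 0.5 acceptance rule, and the 50% hit cut-off are assumptions for illustration, not values from the protocol.

```python
from statistics import mean, stdev


def z_prime(pos: list[float], neg: list[float]) -> float:
    """Z'-factor from positive- and negative-control wells on one plate."""
    return 1.0 - 3.0 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))


def percent_inhibition(signal: float, pos_mean: float, neg_mean: float) -> float:
    """0% = negative (vehicle) control level, 100% = positive (full-inhibition) level."""
    return 100.0 * (neg_mean - signal) / (neg_mean - pos_mean)


def call_hits(samples: dict[str, float], pos: list[float], neg: list[float],
              min_z: float = 0.5, hit_cutoff: float = 50.0) -> tuple[float, dict[str, float]]:
    """Reject plates with poor Z', then return wells at or above the hit cut-off."""
    z = z_prime(pos, neg)
    if z < min_z:
        raise ValueError(f"plate failed QC: Z' = {z:.2f}")
    pos_mean, neg_mean = mean(pos), mean(neg)
    hits = {}
    for well, signal in samples.items():
        inhibition = percent_inhibition(signal, pos_mean, neg_mean)
        if inhibition >= hit_cutoff:
            hits[well] = round(inhibition, 1)
    return z, hits


# Hypothetical viability-assay signals (e.g., luminescence units).
NEG = [1000.0, 980.0, 1020.0, 1010.0, 990.0]  # vehicle only: full signal
POS = [100.0, 110.0, 90.0, 105.0, 95.0]       # reference inhibitor
SAMPLES = {"A01": 950.0, "B07": 300.0, "C12": 520.0, "D03": 150.0}

z, hits = call_hits(SAMPLES, POS, NEG)
print(f"Z' = {z:.2f}; hits: {hits}")
```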

Marine Bioprospecting and Dereplication Workflow

Protocol 3: Genome Mining and Heterologous Expression for Supply

This protocol addresses the critical supply problem by expressing marine-derived BGCs in cultivable model hosts.

- BGC Selection & Engineering: Identify a promising, dereplicated novel BGC from metagenomic data. Use software (e.g., ClonTractor, RED) to design optimal PCR or Gibson assembly primers for the entire gene cluster (often 30-80 kb).

- Vector Construction: Capture the BGC into a suitable shuttle vector (e.g., a BAC or cosmid vector) capable of replication in both E. coli (for cloning) and a chosen expression host like Streptomyces coelicolor or Pseudomonas putida.

- Heterologous Expression: Introduce the constructed vector into the expression host via conjugation or electroporation. Cultivate the engineered host under various fermentation conditions to activate the silent BGC.

- Metabolite Analysis & Scaling: Screen culture extracts for the target compound using LC-MS. Optimize fermentation media and conditions (pH, temperature, aeration) in bioreactors for yield maximization, providing a sustainable supply for preclinical studies.
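Because a 30-80 kb cluster is difficult to amplify as a single PCR product, cloning strategies typically split it into overlapping pieces. The sketch below only plans fragment coordinates: it divides a cluster into near-equal fragments no longer than a chosen maximum, with a fixed overlap between neighbours for Gibson-style assembly. The 10 kb maximum, the 40 bp overlap, and the function name are assumptions. Real primer design must also check melting temperature, secondary structure, and specificity, which this sketch does not do, and it is not the algorithm used by the tools named above.

```python
def tile_region(length: int, max_fragment: int, overlap: int) -> list[tuple[int, int]]:
    """Split a region of `length` bp into near-equal fragments of at most
    `max_fragment` bp, each overlapping the next by `overlap` bp.

    Coordinates are 0-based and half-open, relative to the start of the BGC.
    """
    if max_fragment <= overlap:
        raise ValueError("max_fragment must be larger than overlap")
    if length <= max_fragment:
        return [(0, length)]
    # Fewest fragments that fit (ceiling division on integers).
    n = -(-(length - overlap) // (max_fragment - overlap))
    # Sum of fragment sizes when every internal junction is counted twice.
    covered = length + (n - 1) * overlap
    base, extra = divmod(covered, n)
    fragments, start = [], 0
    for i in range(n):
        size = base + (1 if i < extra else 0)
        fragments.append((start, start + size))
        start += size - overlap  # next fragment begins inside this one
    return fragments


# Hypothetical 30 kb cluster, <=10 kb amplicons, 40 bp overlaps.
for frag_start, frag_end in tile_region(30_000, 10_000, 40):
    print(f"{frag_start:>6}-{frag_end:<6} ({frag_end - frag_start} bp)")
```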

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful marine bioprospecting and dereplication rely on specialized tools and reagents.

Table 3: Key Research Reagent Solutions for Marine Biodiscovery

| Reagent / Material | Function & Specificity | Application in Dereplication |
|---|---|---|
| Standardized Marine Media (e.g., Marine Agar 2216, ATCC Medium 802) | Provides essential ions (Na+, Mg2+, Cl-) and nutrients to cultivate fastidious marine bacteria that fail on terrestrial media. | Cultivating the true producer of a bioactive compound from a complex holobiont (e.g., sponge). |
| Inhibitor-Removal DNA/RNA Extraction Kits (e.g., with CTAB or SPRI beads) | Counteracts potent PCR inhibitors common in marine samples (polysaccharides, polyphenols, humic acids) for high-quality NGS library prep. | Enabling metagenomic sequencing for early BGC-based dereplication. |
| LC-MS Grade Solvents & Ion-Pairing Reagents (e.g., Trifluoroacetic Acid - TFA) | Provides ultra-pure mobile phases for high-resolution chromatography. TFA improves peak shape for peptides and polar compounds but suppresses electrospray ionization, so formic acid is usually preferred when MS sensitivity is the priority. | Essential for generating reproducible, high-quality MS data for reliable database matching. |
| Commercial & In-House Natural Product Spectral Libraries (e.g., GNPS, MarinLit) | Curated databases of mass spectra, NMR shifts, and bioactivity data for known natural products. | The core reference for comparing analytical data to flag known compounds during dereplication. |
| Heterologous Expression Hosts & Vectors (e.g., Streptomyces strains, fosmid vectors) | Model, genetically tractable microorganisms and DNA carriers designed to express large, foreign BGCs. | Solving supply and production issues for novel compounds after successful dereplication and prioritization. |
| High-Throughput Bioassay Kits (e.g., ATP-based viability, fluorescence protease assays) | Miniaturized, robust biochemical assays formatted for 384-well plates to test many fractions with low reagent volume. | Rapidly identifying fractions with desirable bioactivity for downstream targeted analysis. |

Advanced Technologies and Future Trajectories

The field is being revolutionized by a suite of advanced technologies that enhance both dereplication and the overall discovery pipeline.

AI and Machine Learning: AI-driven platforms are now used to predict the biological activity, toxicity, and chemical novelty of compounds directly from spectral data or genomic sequences, prioritizing candidates for isolation with unprecedented speed [19].

Hyphenated Analytical Systems: The integration of separation, spectroscopy, and bioactivity detection is key. Examples include:

- LC-MS-NMR: LC fractions are routed automatically to NMR analysis, providing structural information in near real time.

- Bioaffinity Chromatography: A biological target (e.g., an enzyme) is immobilized on a column to selectively capture active compounds from a crude extract.

Sustainable Bioproduction: Advances in synthetic biology and metabolic engineering are moving the field beyond collection. By inserting marine-derived BGCs into industrial microbial chassis, researchers can create "cell factories" for the sustainable, large-scale production of valuable marine compounds, mitigating environmental impact [5].

Strategic Role of Dereplication in Marine Drug Discovery

The unique challenges of marine environments—their profound biodiversity, extreme physicochemical complexity, and the associated difficulties in species and compound identification—define the frontier of blue biotechnology. Within this context, dereplication is not merely a technical step but a critical strategic framework. It is the essential filter that allows researchers to navigate this complexity efficiently, transforming an overwhelming biological resource into a tractable pipeline for innovation. By integrating advanced genomic, spectroscopic, and bioinformatic tools into standardized protocols from the moment of sample collection, the field can overcome the historical burdens of cost, time, and redundancy. This rigorous approach ensures that the quest for new medicines, materials, and solutions from the ocean is both scientifically productive and aligned with the principles of sustainability and conservation that are essential for the future of the blue economy.

1. Introduction: The Imperative of Dereplication in Blue Biotechnology

The systematic identification of known compounds, or dereplication, is a critical gatekeeping step in natural product discovery. Its primary function is to prevent the costly and time-consuming reinvestigation of previously characterized molecules, thereby accelerating the path toward novel bioactive leads [2]. In terrestrial settings, dereplication methodologies matured alongside the golden age of antibiotic discovery from soil microbes. However, the paradigm has fundamentally shifted with the rise of blue biotechnology—the application of science and technology to living aquatic organisms for products and services [2] [5]. The marine environment presents a vastly underexplored reservoir of biodiversity, estimated to contain approximately 2.2 million eukaryotic species, with thousands of new microbial species cataloged annually [4]. This immense biological potential is matched by unique challenges: extreme physicochemical conditions (high pressure, salinity, low temperature), difficulties in culturing marine organisms, and the sheer structural novelty of marine natural products (MNPs) [4] [2]. Within this context, dereplication transforms from a mere efficiency tool into an essential strategic framework. It is the critical filter that enables researchers to navigate the overwhelming complexity of marine extracts and focus resources on truly unique chemotypes, making the pursuit of MNPs for drug development and other biotechnological applications economically and practically viable [2] [20].

2. Methodological Evolution: From Terrestrial Foundations to Marine Adaptation

The core philosophy of dereplication—early identification to prioritize novelty—remains constant, but its technical execution has evolved significantly to meet the demands of different environments.

Table 1: Evolution of Key Dereplication Parameters from Terrestrial to Marine Focus

| Parameter | Classical Terrestrial Approach | Modern Marine-Integrated Approach |
|---|---|---|
| Cultivation Source | Primarily soil isolates (e.g., Streptomyces), often amenable to lab culture [21]. | Marine sediment, water column, symbionts, extremophiles; requires specialized cultivation (e.g., diffusion chambers) [21]. |
| Chemical Library & Database | Reliance on terrestrial compound libraries (e.g., AntiBase, Chapman & Hall Dictionary of Natural Products). | Integration of marine-focused and general natural product databases (e.g., MarinLit, NPASS) and untargeted mass spectral networks [2]. |
| Primary Analytical Tool | HPLC-UV/VIS, coupled with literature review on specific taxa. | Hyphenated LC-HRMS/MS coupled with molecular networking (e.g., GNPS) [21] [2]. |
| Integrative Data | Limited, mostly bioactivity and taxonomy. | Multi-omics: Metabolomics linked with genomics (BGC analysis) and metagenomics [2] [20]. |
| Scale & Throughput | Lower throughput, more targeted. | High-throughput (HTS) compatible, automated from screening to analysis [2]. |

2.1 Terrestrial Foundations and Their Limitations

Traditional terrestrial dereplication relied heavily on bioactivity-guided fractionation coupled with techniques like thin-layer chromatography (TLC) and standard HPLC-UV. Identification was often tentative, based on comparing spectral properties and retention times with published data for related taxa. The cultivation of soil bacteria, particularly Actinomycetes, was relatively standardized. A 2025 study on Australian soil samples exemplifies the modern terrestrial approach: using microbial diffusion chambers for in situ cultivation, researchers recovered 1,218 bacterial isolates, 16% of which showed antibiotic activity [21]. Dereplication via Global Natural Products Social Molecular Networking (GNPS) identified known antibiotics in 33% of bioactive strains, immediately streamlining the discovery pipeline [21]. This study highlights a key terrestrial challenge: even with advanced cultivation, a significant portion of bioactivity stems from known compounds, underscoring dereplication's value.
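As a rough worked example of the triage these percentages imply, the sketch below converts them into approximate isolate counts. It assumes the 33% figure applies to the bioactive subset and rounds at each step, so the counts may differ slightly from the exact numbers in [21]. `triage_counts` is a hypothetical helper.

```python
def triage_counts(total_isolates: int, active_fraction: float,
                  known_fraction: float) -> tuple[int, int, int]:
    """Approximate (bioactive, dereplicated-as-known, remaining) isolate counts."""
    bioactive = round(total_isolates * active_fraction)
    known = round(bioactive * known_fraction)
    return bioactive, known, bioactive - known


bioactive, known, remaining = triage_counts(1_218, 0.16, 0.33)
print(f"~{bioactive} bioactive isolates; ~{known} flagged as known; "
      f"~{remaining} left for follow-up")
```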

2.2 Marine Adaptation and Integration

Marine dereplication inherits these tools but operates under expanded complexity. The initial cultivation hurdle is higher: while diffusion chambers also aid marine isolate recovery [21], many marine microbes require simulated natural conditions. Following cultivation, the chemical analysis must account for greater structural diversity. High-Resolution Mass Spectrometry (HR-MS/MS) has become the cornerstone. It provides accurate mass and fragmentation fingerprints for compounds, which are queried against public spectral libraries [2]. The most significant evolutionary leap is the adoption of molecular networking, as implemented by the GNPS platform. This technique visualizes the chemical space of an extract by clustering MS/MS spectra based on similarity, creating a network where related molecules (e.g., analogs within a biosynthetic family) cluster together [2] [20]. This allows both the rapid annotation of known molecule families and the immediate spotting of unique, unclustered nodes that represent strong candidates for novel chemistry.

Furthermore, marine dereplication is increasingly multi-omic. Genomic DNA sequencing of an active marine isolate can reveal biosynthetic gene clusters (BGCs) that code for secondary metabolite pathways. Correlating expressed BGCs with mass spectrometric features in the metabolome, and flagging "silent" BGCs that have no detectable product, provides powerful orthogonal evidence for novelty; this is a central application of genome mining [2] [20]. This integrated approach is crucial for the sustainable and rational exploration of marine genetic resources.
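The clustering logic behind molecular networking can be sketched with a union-find structure: features become nodes, pairs whose spectral similarity meets a threshold become edges, and connected components become molecular families. This is a simplification of what GNPS does (GNPS computes modified cosine scores from the spectra and also limits each node's neighbours and the size of each component). Here the pairwise scores, feature indices, library hit, and the 0.7 cut-off are hypothetical inputs.

```python
def build_network(n_features: int, edges: list[tuple[int, int, float]],
                  min_cosine: float = 0.7) -> list[list[int]]:
    """Group features into connected components using edges at or above min_cosine."""
    parent = list(range(n_features))

    def find(x: int) -> int:
        # Union-find root lookup with path halving.
        while parent[x] != x:
            parent[x] = parent[parent[x]]
            x = parent[x]
        return x

    for a, b, score in edges:
        if score >= min_cosine:
            root_a, root_b = find(a), find(b)
            if root_a != root_b:
                parent[root_b] = root_a

    components: dict[int, list[int]] = {}
    for node in range(n_features):
        components.setdefault(find(node), []).append(node)
    return sorted(components.values())


def label_components(components: list[list[int]],
                     library_hits: dict[int, str]) -> list[tuple[list[int], str]]:
    """Mark each component as a known family or a candidate for isolation."""
    labels = []
    for members in components:
        names = sorted({library_hits[m] for m in members if m in library_hits})
        if names:
            status = "known family (" + ", ".join(names) + ")"
        elif len(members) == 1:
            status = "unannotated singleton: candidate novel"
        else:
            status = "unannotated cluster: candidate novel family"
        labels.append((members, status))
    return labels


# Hypothetical pairwise spectral similarities between six MS/MS features.
EDGES = [(0, 1, 0.92), (1, 2, 0.81), (2, 3, 0.40), (3, 4, 0.75), (4, 5, 0.55)]
LIBRARY_HITS = {0: "compound_A"}  # feature 0 matched a library spectrum

for members, status in label_components(build_network(6, EDGES), LIBRARY_HITS):
    print(members, "->", status)
```

Features 1 and 2 inherit the family annotation of feature 0 and would be treated as putative analogs, while the unannotated cluster and the singleton are the isolation candidates.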

3. Quantitative Data: Comparing Terrestrial and Marine Dereplication Outcomes

The practical impact of dereplication is quantifiable in key metrics that differentiate terrestrial and marine campaigns.

Table 2: Comparative Quantitative Outcomes of Dereplication Studies

| Study Focus | Dereplication Method | Key Quantitative Outcome | Implication |
|---|---|---|---|
| Terrestrial Soil Bacteria [21] | GNPS-based MS/MS networking & genomics. | Of bioactive isolates, 33% were dereplicated as known antibiotics (actinomycin D, valinomycin). Genomics revealed an additional ~5% producing known compounds not detected by MS. | Highlights the need for multi-layered dereplication; MS alone may miss some knowns. |
| Marine Bacteriocins [4] | Activity-guided isolation with molecular weight/activity comparison. | Source [4] tabulates >20 marine bacteriocins with molecular weights ranging from ~1.35 kDa to >4.5 kDa, a chemical diversity that requires advanced MS for dereplication. | Simple comparison is insufficient; database integrity and spectral matching are critical. |
| General NP Discovery [2] | Integrated metabolomics-genomics workflow. | Dereplication and structure elucidation are cited as the two major bottlenecks, consuming significant time and resource investment in discovery pipelines. | Validates dereplication as a primary target for methodological innovation in both fields. |

These data underscore that dereplication's efficiency gain is universal. The terrestrial study [21] shows a clear metric: one-third of bioactive isolates can be deprioritized early. The marine example [4] implicitly shows the challenge: without robust dereplication, characterizing each unique compound from the immense structural pool becomes prohibitive.

4. Experimental Protocols: Detailed Methodologies

4.1 Protocol 1: Integrated Dereplication of Soil-Derived Bacteria Using Diffusion Chambers and GNPS [21]

This protocol details a modern, integrated approach for terrestrial microbial dereplication.

- Sample Preparation & Cultivation: Soil is suspended in a sterile saline solution. Microbial diffusion chambers are constructed using 0.03 µm semi-permeable membranes sealed to a 96-well plate frame. The chambers are filled with a low-nutrient SMS agar, inoculated with a diluted soil slurry (~1 cell/100 µL), and sealed. Chambers are incubated in situ by burying them in the source soil for 2-4 weeks to allow growth of uncultivable species via nutrient diffusion.

- Isolate Retrieval & Bioactivity Screening: Colonies from chamber wells are retrieved and domesticated on R2A agar. Isolates are grown in liquid culture for 7 days. Antibiotic activity is screened via overlay assays against target pathogens (e.g., E. coli, S. aureus, multidrug-resistant strains).

- Metabolite Extraction & MS Analysis: Biomass from bioactive isolates is extracted with organic solvents (e.g., ethyl acetate). Crude extracts are analyzed by reversed-phase LC-HRMS/MS (e.g., C18 column, water-acetonitrile gradient, positive/negative ESI modes).

- Mass Spectrometric Dereplication: Raw MS/MS data is processed (peak picking, alignment) and uploaded to the GNPS platform. A molecular network is created using the feature-based molecular networking (FBMN) workflow. Spectra are compared against GNPS spectral libraries (e.g., GNPS, NIST, MassBank). Matches with high cosine scores (>0.7) and significant library presence are annotated as known compounds.

- Genomic Corroboration: DNA from the isolate is sequenced (Illumina). The genome is assembled, and BGCs are predicted using tools like antiSMASH. The presence of BGCs matching the dereplicated compound class (e.g., nonribosomal peptide synthetase for valinomycin) provides confirmatory evidence.
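The library-matching step (cosine score above 0.7) can be illustrated with a greedy cosine similarity between centroided MS/MS spectra. In this sketch, intensities are square-root scaled and normalized, peaks are paired one-to-one within an m/z tolerance (largest products first), and a hit also requires a minimum number of matched peaks. The 0.02 m/z tolerance, the six-peak minimum, the intensity scaling, and the spectra are assumptions. GNPS and libraries such as matchms implement more complete versions, including the modified cosine used for analogs.

```python
import math

Spectrum = list[tuple[float, float]]  # centroided (m/z, intensity) pairs


def _unit_weights(spectrum: Spectrum) -> list[tuple[float, float]]:
    """Square-root scale intensities, then normalize to a unit vector."""
    if not spectrum:
        raise ValueError("empty spectrum")
    weights = [math.sqrt(intensity) for _, intensity in spectrum]
    norm = math.sqrt(sum(w * w for w in weights))
    return [(mz, w / norm) for (mz, _), w in zip(spectrum, weights)]


def cosine_score(query: Spectrum, reference: Spectrum,
                 tolerance: float = 0.02) -> tuple[float, int]:
    """Greedy one-to-one peak matching; returns (cosine score, matched peaks)."""
    q, r = _unit_weights(query), _unit_weights(reference)
    # All peak pairs within the m/z tolerance, best products first.
    pairs = sorted(((qw * rw, qi, ri)
                    for qi, (qmz, qw) in enumerate(q)
                    for ri, (rmz, rw) in enumerate(r)
                    if abs(qmz - rmz) <= tolerance), reverse=True)
    used_q: set[int] = set()
    used_r: set[int] = set()
    score = 0.0
    for product, qi, ri in pairs:
        if qi not in used_q and ri not in used_r:
            used_q.add(qi)
            used_r.add(ri)
            score += product
    return score, len(used_q)


def library_search(query: Spectrum, library: dict[str, Spectrum],
                   min_cosine: float = 0.7, min_matched_peaks: int = 6):
    """Return (name, score, matched peaks) for library spectra passing both cut-offs."""
    hits = []
    for name, ref in library.items():
        score, matched = cosine_score(query, ref)
        if score >= min_cosine and matched >= min_matched_peaks:
            hits.append((name, round(score, 3), matched))
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Hypothetical library and query spectra.
LIBRARY = {
    "ref_A": [(100.0, 10.0), (150.0, 20.0), (200.0, 40.0),
              (250.0, 80.0), (300.0, 30.0), (350.0, 5.0)],
    "ref_B": [(101.0, 50.0), (151.0, 50.0), (200.0, 10.0), (400.0, 90.0)],
}
QUERY = [(100.005, 12.0), (150.003, 18.0), (200.001, 42.0),
         (250.004, 75.0), (300.002, 33.0), (350.006, 6.0)]

print(library_search(QUERY, LIBRARY))
```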

4.2 Protocol 2: Dereplication of Marine Extracts via Molecular Networking and Database Integration [2] [20]

This protocol is tailored for the complex extracts typical of marine invertebrates or microbial symbionts.

- Extract Preparation: Marine organism tissue is lyophilized and homogenized. Metabolites are exhaustively extracted using a sequential solvent system (e.g., hexane, dichloromethane, methanol) to capture compounds of varying polarity.

- High-Throughput LC-MS/MS Analysis: Extracts are analyzed using a UHPLC system coupled to a Q-TOF or Orbitrap mass spectrometer. A short, fast gradient is used for initial high-throughput profiling. Data-Dependent Acquisition (DDA) or Data-Independent Acquisition (DIA) modes are used to collect MS/MS spectra for all detectable ions.

- Digital Dereplication Workflow:

  - Step A - In-Silico Filtering: Acquired HRMS data (precise m/z) is queried against marine-focused and general natural product databases (e.g., MarinLit, NPASS) using molecular formula or exact mass search. This provides a preliminary list of knowns.

  - Step B - Molecular Networking: MS/MS data is subjected to molecular networking on GNPS. This clusters related molecules, often exposing families of analogs. Library search within the network annotates entire clusters of known compounds simultaneously.

  - Step C - Metadata Integration: Taxonomic information of the source organism is integrated. If the extract is from a well-studied genus like Penicillium, and the network cluster matches known Penicillium metabolites, confidence in dereplication is high.

- Prioritization: Features with no database match (by mass or spectrum) and belonging to unannotated clusters in the network are flagged as high-priority for novel compound isolation. Nodes connected to annotated clusters but with different spectra may represent new analogs.
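Step A (exact-mass filtering) and the prioritization rule can be sketched together: each observed m/z is compared with the [M+H]+ of every database entry, matches within a ppm tolerance are reported, and features with no match are flagged. The sketch assumes a single adduct, a 5 ppm tolerance, and a two-entry database using the neutral monoisotopic masses of valinomycin and actinomycin D (antibiotics mentioned earlier in this article). A real query against MarinLit or NPASS would cover many more adducts and entries and would also check isotope patterns.

```python
PROTON_MASS = 1.007276  # u; only the [M+H]+ adduct is considered here


def ppm_error(observed: float, theoretical: float) -> float:
    """Signed mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6


def match_feature(observed_mz: float, database: dict[str, float],
                  tol_ppm: float = 5.0) -> list[tuple[str, float]]:
    """Return (name, ppm error) for entries whose [M+H]+ lies within tol_ppm."""
    hits = []
    for name, neutral_mass in database.items():
        error = ppm_error(observed_mz, neutral_mass + PROTON_MASS)
        if abs(error) <= tol_ppm:
            hits.append((name, round(error, 2)))
    return hits


def triage_features(features: dict[str, float], database: dict[str, float],
                    tol_ppm: float = 5.0) -> dict[str, list[tuple[str, float]]]:
    """Map each feature ID to its candidate matches (empty list = no match)."""
    return {fid: match_feature(mz, database, tol_ppm) for fid, mz in features.items()}


# Neutral monoisotopic masses (u); illustrative entries, not a MarinLit export.
DATABASE = {"valinomycin": 1110.63116, "actinomycin D": 1254.62847}
# Hypothetical observed [M+H]+ values from an LC-HRMS run.
FEATURES = {"F1": 1111.6390, "F2": 1255.6360, "F3": 845.4731}

for fid, hits in triage_features(FEATURES, DATABASE).items():
    print(fid, hits if hits else "no match: flag for prioritization")
```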

5. Visualization: Dereplication Workflows

Diagram 1: Integrated Dereplication Workflow from Source to Decision.

Diagram 2: Molecular Networking Logic for Dereplication & Prioritization.

6. The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent Solutions for Dereplication Workflows

| Item | Function in Dereplication | Typical Specification/Example |
|---|---|---|
| Semi-permeable Membranes [21] | Enables in situ cultivation of uncultivable microbes in diffusion chambers by allowing nutrient exchange. | 0.03 µm polycarbonate track-etched membrane (e.g., Whatman Nuclepore). Pore size allows passage of nutrients but not cells. |
| Low-Nutrient Cultivation Media [21] | Mimics oligotrophic environmental conditions to promote growth of slow-growing or fastidious microbes. | SMS Agar, R2A Agar; lower nutrient concentration than standard media (e.g., LB, TSB). |
| LC-MS Grade Solvents | Essential for reproducible, high-sensitivity metabolite extraction and LC-MS analysis. Minimal impurities prevent background noise. | Acetonitrile, Methanol, Water, Ethyl Acetate (all LC-MS grade). |
| MS Calibration Solution | Ensures mass accuracy of the HRMS instrument, which is critical for reliable molecular formula assignment. | ESI Positive/Negative Ion Calibration Kit (e.g., from Agilent, Thermo Fisher). |
| Internal Standards for Metabolomics | Used to monitor instrument performance, correct for retention time shifts, and enable semi-quantification. | Stable isotope-labeled compounds or chemical analogs not expected in samples. |
| DNA Extraction Kit (Microbial) | High-quality genomic DNA extraction is the first step for genome sequencing and BGC analysis. | Kits optimized for Gram-positive/Gram-negative bacteria or fungi (e.g., from Qiagen, Macherey-Nagel). |
| PCR Reagents for 16S/ITS Sequencing | For rapid taxonomic identification of microbial isolates, informing dereplication based on taxonomic novelty. | 16S rRNA gene primers (27F, 1492R), high-fidelity DNA polymerase, dNTPs. |
| Bioinformatics Software Suites | For processing and interpreting omics data. Essential for the integrated dereplication approach. | antiSMASH (BGC prediction), MZmine (MS data processing), Cytoscape (network visualization). |

7. Conclusion and Future Trajectory

Dereplication has evolved from a simple checklist activity into a sophisticated, multi-omic strategic intelligence engine central to blue biotechnology. The transition from terrestrial to marine exploration has driven this evolution, necessitating the integration of advanced cultivation, high-throughput metabolomics, genomics, and bioinformatics into a unified workflow. The future of dereplication lies in increased automation and artificial intelligence. Machine learning models trained on vast spectral and genomic datasets will predict novel compound classes and bioactivity directly from crude extract data, further compressing the discovery timeline [2]. As blue biotechnology strives to unlock the ocean's sustainable bounty for drug development, materials science, and beyond [22] [5] [6], continued innovation in dereplication will remain the critical factor in ensuring that this exploration is both efficient and effective, turning the vast chemical mystery of the ocean into a tractable resource for discovery.

Modern Dereplication Workflows: Techniques and Applications in Marine Drug Discovery

The exploration of marine biological resources—blue biotechnology—represents a frontier for discovering novel bioactive compounds with applications in pharmaceuticals, nutraceuticals, and agrochemicals [13]. The marine metabolome is vast and largely uncharted, estimated to contain orders of magnitude more unique chemical structures than currently cataloged [23]. This immense diversity, however, presents a significant bottleneck: the high probability of rediscovering known compounds during resource-intensive bioassay-guided fractionation campaigns. Dereplication—the rapid identification of known compounds within complex mixtures at the earliest stages of screening—is therefore not merely a convenience but a critical economic and strategic necessity [24]. It accelerates the discovery pipeline by allowing researchers to prioritize truly novel leads, conserving precious marine samples and research funding.

The economic context underscores this urgency. The blue economy, encompassing sustainable marine resource use, is projected to reach three trillion USD and employ 40 million people by 2030 [25]. Realizing this potential depends on efficient bioprospecting. Yet, traditional dereplication relying on a single analytical technique is often insufficient, leading to ambiguous identifications and missed opportunities. The integration of High-Performance Liquid Chromatography-Mass Spectrometry (HPLC-MS) and Nuclear Magnetic Resonance (NMR) spectroscopy, powered by specialized databases, provides a synergistic solution. This tandem approach delivers a higher level of confidence in compound annotation, transforming dereplication from a bottleneck into a catalyst for sustainable innovation in blue biotechnology [26].

Foundational Analytical Techniques: HPLC-MS and NMR

The power of integrated dereplication stems from the complementary strengths and weaknesses of HPLC-MS and NMR spectroscopy. A comparative analysis reveals how their synergy provides a more comprehensive analytical profile than either technique alone [26].

HPLC-MS excels in sensitivity and separation power. It can detect metabolites at very low concentrations (femtomolar to attomolar range) and boasts high resolution (~10³–10⁴). Its primary strengths include the ability to determine exact molecular mass (yielding molecular formulae), generate fragment ions for structural clues via tandem MS (MS/MS), and handle complex mixtures through chromatographic separation. Its major limitations are its dependence on a compound's ionization efficiency—leaving some classes "MS-silent"—and challenges with absolute quantification due to ion suppression effects from co-eluting matrix components [26].
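Accurate mass is useful for formula assignment because each candidate formula has a precisely computable monoisotopic mass. The sketch below computes it for simple formulas from standard monoisotopic element masses and adds a proton to give the [M+H]+ ion. It does not handle parentheses, charges, or isotope labels, and valinomycin is used only as a worked example.

```python
import re

# Monoisotopic masses (u) of the most abundant isotope of each element.
MONOISOTOPIC_MASS = {
    "C": 12.0,
    "H": 1.00782503207,
    "N": 14.0030740048,
    "O": 15.99491461956,
    "P": 30.97376163,
    "S": 31.97207100,
}
PROTON_MASS = 1.007276  # u


def monoisotopic_mass(formula: str) -> float:
    """Neutral monoisotopic mass of a simple formula such as 'C54H90N6O18'.

    Parentheses, hydrates, charges, and isotope labels are not supported.
    """
    if not re.fullmatch(r"(?:[A-Z][a-z]?\d*)+", formula):
        raise ValueError(f"unsupported formula: {formula!r}")
    total = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        if element not in MONOISOTOPIC_MASS:
            raise ValueError(f"no mass defined for element {element!r}")
        total += MONOISOTOPIC_MASS[element] * (int(count) if count else 1)
    return total


# Valinomycin, one of the known antibiotics mentioned in this article.
neutral = monoisotopic_mass("C54H90N6O18")
print(f"M = {neutral:.4f}; [M+H]+ = {neutral + PROTON_MASS:.4f}")
```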

NMR spectroscopy, in contrast, is a quantitative and highly reproducible technique that provides definitive structural information. It elucidates atomic connectivity, functional groups, and stereochemistry through parameters like chemical shift, J-coupling, and nuclear Overhauser effect (NOE). It is non-destructive and requires minimal sample preparation. Its principal limitation is relatively lower sensitivity, typically detecting compounds in the micromolar (≥1 μM) range, making it less suited for trace-level analysis in crude extracts [26].

Table 1: Core Complementary Strengths of HPLC-MS and NMR Spectroscopy

| Analytical Parameter | HPLC-MS | NMR | Synergistic Advantage |
|---|---|---|---|
| Sensitivity | Very High (fM-aM) | Moderate (μM) | Broad dynamic range for major & minor components |
| Structural Output | Molecular formula, fragment ions | Atomic connectivity, functional groups, stereochemistry | Holistic structural elucidation |
| Quantification | Relative (prone to matrix effects) | Absolute (inherently quantitative) | Robust quantitative data |
| Sample Throughput | High | Moderate | Balanced workflow |
| Key Limitation | Ionization bias, matrix suppression | Lower sensitivity | Techniques compensate for each other's blind spots |

Recent work has addressed the compatibility challenges that historically discouraged combined use. A 2025 study demonstrated that a single sample preparation protocol could serve both techniques sequentially. It confirmed that using deuterated buffers for NMR analysis did not lead to detectable deuterium incorporation into metabolites, nor did it adversely affect subsequent LC-MS feature abundance [27]. In this protocol, proteins are first removed by solvent precipitation or molecular weight cut-off (MWCO) filtration, a step identified as the major factor influencing metabolite recovery. The extract is then resuspended in a compatible buffer, analyzed by NMR first, and subsequently by LC-MS [27].

Integrated Dereplication Workflow: From Sample to Annotation

A modern, efficient dereplication pipeline for marine extracts strategically sequences analytical tools and data interrogation steps. The following workflow (Figure 1) outlines this integrated process.

Figure 1: Integrated Dereplication Workflow for Marine Natural Products. The process begins with a single extract aliquot processed through a unified protocol [27], followed by parallel HPLC-MS and NMR analyses. Data streams converge for simultaneous database querying, leading to a confident dereplication decision.

1. Sample Preparation: The workflow begins with a unified preparation protocol applicable to both techniques. For a marine microbial broth or invertebrate extract, this involves homogenization, solvent extraction (e.g., with methanol/water), and a critical protein/salt removal step via solid-phase extraction or filtration to prevent instrument interference and ion suppression [27]. The cleaned extract is then split: one portion is analyzed directly, and another may be pre-fractionated if the extract is highly complex.

2. Parallel Analytical Runs:

- HPLC-MS Analysis: The extract is separated via reverse-phase HPLC coupled to a high-resolution mass spectrometer (e.g., Q-TOF or Orbitrap). Data-Dependent Acquisition (DDA) or Data-Independent Acquisition (DIA) is used to collect MS¹ (precursor) and MS² (fragment) data for all detected features. Key outputs are retention time (RT), accurate mass (for molecular formula assignment), and MS/MS fragmentation patterns [28].

- NMR Analysis: The parallel sample is analyzed, typically starting with 1D ¹H NMR for a rapid fingerprint. Based on need, 2D experiments like COSY (correlation spectroscopy), HSQC (heteronuclear single quantum coherence), and HMBC (heteronuclear multiple bond correlation) are performed to map proton-proton networks and carbon-proton connectivities, revealing the compound's skeletal framework [26].

3. Data Integration and Database Query: The molecular formula from MS and substructural motifs from NMR are used as joint search criteria. This dual input significantly narrows the candidate pool in databases compared to using either data type alone. Search strategies include:

- Exact Molecular Formula Search, filtered by marine natural product sources.
- MS/MS Spectral Library Matching against platforms like GNPS (Global Natural Products Social Molecular Networking) or in-house libraries [28].
- NMR Chemical Shift Prediction & Matching, using tools that predict shifts for candidate structures.
- Peak Dictionary Lookups in specialized marine natural product databases.

4. Dereplication Decision: A confidence level is assigned to each annotation. When RT, accurate mass, MS/MS spectrum, and key NMR signals all match those of an authentic reference standard analyzed under the same conditions, the annotation constitutes a Level 1 identification (highest confidence). Discrepancies trigger a review: the feature may be a novel derivative of a known compound or a genuinely novel scaffold, in which case it is flagged for priority isolation [24].
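Steps 3 and 4 of the workflow above can be sketched in a few lines. The sketch assumes a small in-memory list of candidate records; the `Candidate` and `Evidence` fields, the function names, and the example values are hypothetical, and real resources such as MarinLit or GNPS expose their own interfaces, which are not modeled here. It also assumes protonated, singly charged ions, a 5 ppm mass window, and a 0.05 ppm tolerance on ¹H chemical shifts. The confidence levels loosely follow the four-level Metabolomics Standards Initiative scheme (Level 1 requires an authentic standard), reduced to boolean evidence flags.

```python
from dataclasses import dataclass, field

PROTON = 1.007276466812  # proton mass (u), for [M+H]+ ions


@dataclass
class Candidate:
    """Hypothetical database record."""
    name: str
    monoisotopic_mass: float
    marine: bool
    h_shifts: list[float] = field(default_factory=list)  # reported 1H shifts (delta, ppm)


def count_shift_matches(observed, reference, tol=0.05):
    """Number of observed 1H shifts lying within tol of some reference shift."""
    return sum(any(abs(o - r) <= tol for r in reference) for o in observed)


def joint_query(observed_mz, observed_shifts, candidates, mass_tol_ppm=5.0, marine_only=True):
    """Step 3: filter by source and [M+H]+ mass error, then rank by NMR agreement."""
    hits = []
    for c in candidates:
        if marine_only and not c.marine:
            continue
        theoretical = c.monoisotopic_mass + PROTON
        error_ppm = (observed_mz - theoretical) / theoretical * 1e6
        if abs(error_ppm) <= mass_tol_ppm:
            hits.append((c.name, error_ppm, count_shift_matches(observed_shifts, c.h_shifts)))
    # Most NMR matches first; ties broken by smallest absolute mass error
    return sorted(hits, key=lambda h: (-h[2], abs(h[1])))


@dataclass
class Evidence:
    accurate_mass: bool = False  # within the ppm tolerance
    msms_match: bool = False     # MS/MS agrees with a library or standard spectrum
    nmr_match: bool = False      # key NMR signals agree
    standard_rt: bool = False    # RT matches an authentic standard run under the same conditions
    class_only: bool = False     # only compound-class evidence (e.g., diagnostic fragments)


def confidence_level(ev: Evidence) -> int:
    """Step 4: simplified MSI-style level, 1 (identified) to 4 (unknown)."""
    if ev.standard_rt and ev.accurate_mass and (ev.msms_match or ev.nmr_match):
        return 1
    if ev.accurate_mass and (ev.msms_match or ev.nmr_match):
        return 2
    if ev.class_only:
        return 3
    return 4


def triage(level: int) -> str:
    """Map a confidence level to a dereplication decision."""
    if level <= 2:
        return "known: deprioritize"
    if level == 3:
        return "review: possible new derivative"
    return "unknown: prioritize for isolation"
```

Ranking by NMR agreement before mass error reflects the point of the joint query: several candidates usually share a formula, and it is the substructural evidence that separates them.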

The Critical Role of Integrated Databases and Bioinformatics

Databases are the enablers that transform analytical data into knowledge. Effective dereplication requires navigating a multi-layered database ecosystem, categorized below.

Table 2: Key Database Categories for Dereplication in Blue Biotechnology

| Database Category | Primary Function | Representative Examples | Utility in Dereplication |
|---|---|---|---|
| General Metabolomic/Chemical | Store chemical structures, properties, and spectral data. | PubChem [29], SciFinder-n [30], METLIN, MassBank | Broad search for molecular formula, structure; source filtering is key. |
| Specialized Natural Product | Curate compounds from biological sources with associated biological data. | MarinLit, NPASS, AntiBase | Essential for filtering results to marine or microbial origins. |
| Tandem MS Spectral Libraries | Archive experimental MS/MS fragmentation patterns. | GNPS [28], mzCloud, ReSpect | Direct spectral matching for high-confidence annotation. |
| Genomic & Metagenomic | Link biosynthetic gene clusters (BGCs) to potential metabolites. | MIBiG, IMG-ABC, MGnify [25] | Predict compound class from genomic data of the source organism. |
| Literature Citation | Provide access to full-text research for validation. | MEDLINE [30], Biotechnology Source [31], Elsevier ScienceDirect | Contextualize findings and locate original isolation reports. |

The current trend moves beyond simple querying toward predictive and integrative bioinformatics. Tools like SIRIUS/CSI:FingerID use MS/MS data to predict molecular fingerprints and search structural databases in silico, which is invaluable for novel compounds absent from spectral libraries [23]. Molecular Networking on GNPS clusters MS/MS spectra by similarity, visually relating compounds within an extract and allowing for annotation propagation; if one node is identified, structurally similar neighbors can be hypothesized [28]. The ultimate frontier is the integration of genomic and metabolomic data ("genome mining"), where the presence of a specific biosynthetic gene cluster in the source organism's genome is used to predict the type of compound produced, guiding the dereplication search [25].
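Molecular networking rests on pairwise MS/MS similarity. The sketch below uses a plain cosine score with greedy one-to-one peak matching inside an m/z tolerance, builds edges above a threshold, and propagates annotations to neighbors as hypotheses. GNPS itself uses a modified cosine that also matches fragments shifted by the precursor mass difference; this sketch does not implement that shift. The 0.02 tolerance, the 0.7 threshold, and the feature IDs are illustrative assumptions.

```python
import math
from itertools import combinations

Spectrum = list[tuple[float, float]]  # (fragment m/z, intensity)


def cosine_score(a: Spectrum, b: Spectrum, tol: float = 0.02) -> float:
    """Plain (unshifted) cosine similarity with greedy one-to-one peak matching."""
    # All peak pairs within tolerance, largest intensity product first
    pairs = sorted(
        (
            (ia * ib, i, j)
            for i, (ma, ia) in enumerate(a)
            for j, (mb, ib) in enumerate(b)
            if abs(ma - mb) <= tol
        ),
        reverse=True,
    )
    used_a: set[int] = set()
    used_b: set[int] = set()
    dot = 0.0
    for product, i, j in pairs:
        if i in used_a or j in used_b:
            continue  # each peak may be matched at most once
        used_a.add(i)
        used_b.add(j)
        dot += product
    norm = math.sqrt(sum(x * x for _, x in a)) * math.sqrt(sum(x * x for _, x in b))
    return dot / norm if norm else 0.0


def network_edges(spectra: dict[str, Spectrum], threshold: float = 0.7):
    """Connect every pair of features whose cosine score reaches the threshold."""
    edges = []
    for (name_a, spec_a), (name_b, spec_b) in combinations(spectra.items(), 2):
        score = cosine_score(spec_a, spec_b)
        if score >= threshold:
            edges.append((name_a, name_b, round(score, 3)))
    return edges


def propagate_annotations(edges, annotations: dict[str, str]) -> dict[str, set[str]]:
    """Hypothesize that unannotated neighbors of an identified node are analogs of it."""
    hypotheses: dict[str, set[str]] = {}
    for a, b, _score in edges:
        for known, unknown in ((a, b), (b, a)):
            if known in annotations and unknown not in annotations:
                hypotheses.setdefault(unknown, set()).add(annotations[known])
    return hypotheses
```

An edge to an annotated node supports, but does not establish, a structural-analog hypothesis; the propagated label still needs orthogonal evidence such as NMR.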

The architecture of this integrated data ecosystem is visualized in Figure 2.

Figure 2: Architecture of an Integrated Database Ecosystem for Dereplication. Analytical data feeds into and queries an interconnected network of specialized databases. Bioinformatics tools leverage these connections to generate confident annotations.

Advanced and Emerging Methodologies

To address the challenge of annotating novel compounds not found in libraries, advanced methodologies are emerging.

Multiplexed Chemical Metabolomics (MCheM): This cutting-edge strategy uses post-column derivatization reactions integrated with LC-MS/MS to probe specific functional groups. As analytes elute from the HPLC, they mix with reagents that selectively label groups like amines, carbonyls, or epoxides, causing a predictable mass shift. Detecting this shift confirms the presence of that functional group in the unknown compound [23]. In a 2025 study, MCheM improved the rate of correct top-ranked annotations by 31.9% for library compounds and by 48.8% for authentic natural product standards when used with in-silico prediction tools [23]. For blue biotechnology, this method can rapidly flag novel derivatives (e.g., a glycosylated analog with a new sugar moiety) by revealing specific reactive sites.
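The mass-shift logic can be sketched as a lookup of expected per-label shifts. The reagents and shifts below are assumptions for illustration, derived from the expected reaction stoichiometry (oxime formation for hydroxylamine with carbonyls, urea formation for AQC with amines); they are not taken from the cited study. The function name is hypothetical, and the sketch assumes singly charged ions whose derivatized forms have already been matched to the native feature by retention time.

```python
# Net mass shift (Da) per label, assumed from reaction stoichiometry (illustrative):
#   carbonyl + hydroxylamine -> oxime:   +NH2OH - H2O = +NH
#   amine + AQC -> urea derivative:      +C10H6N2O
REAGENT_SHIFTS = {
    "carbonyl": 15.010899,
    "amine": 170.048013,
}


def infer_groups(mz_native: float, mz_derivatized: list[float],
                 mz_tol: float = 0.005, max_labels: int = 2) -> list[tuple[str, int]]:
    """Return (functional group, number of labels) for each derivatized ion whose
    mass difference from the native ion equals n times a reagent shift."""
    found = []
    for mz_d in mz_derivatized:
        delta = mz_d - mz_native
        for group, shift in REAGENT_SHIFTS.items():
            for n in range(1, max_labels + 1):
                if abs(delta - n * shift) <= mz_tol:
                    found.append((group, n))
    return found
```

Counting labels (n) matters because a doubled shift indicates two reactive sites, which is the kind of detail that distinguishes a new derivative from its known parent.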

Quantitative Microscale NMR: Advances in cryoprobes and microcoil technology have dramatically reduced the amount of material needed for NMR analysis, pushing sensitivity toward the nanogram scale. This is transformative for blue biotechnology, where often only minute quantities of a precious marine compound are available after initial purification. It allows for acquiring crucial 2D NMR data earlier in the isolation pipeline.

In-silico Fragmentation and NMR Prediction: When a library match fails, computational tools predict the MS/MS fragmentation pattern or NMR spectrum of candidate structures generated from molecular formula. The candidate whose predicted spectra best match the experimental data is prioritized. These tools are constantly improving with machine learning models trained on larger datasets.
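Ranking candidates by predicted versus experimental spectra can be illustrated with ¹³C shifts. In this sketch the predicted shifts are assumed to come from an external prediction tool (not modeled), and the candidate names and values are hypothetical. Pairing the sorted lists is an assignment-free shortcut: for equal-length lists it yields the pairing with the smallest summed absolute deviation, but it ignores multiplicities, 2D correlations, and carbons missing from the experimental list through overlap or symmetry, all of which real tools take into account.

```python
def mean_abs_error(experimental: list[float], predicted: list[float]) -> float:
    """Assignment-free MAE: pair sorted experimental and predicted shifts."""
    if not experimental or len(experimental) != len(predicted):
        return float("inf")  # different carbon count: treat as non-matching
    pairs = zip(sorted(experimental), sorted(predicted))
    return sum(abs(e - p) for e, p in pairs) / len(experimental)


def rank_candidates(experimental: list[float],
                    predictions: dict[str, list[float]]) -> list[tuple[str, float]]:
    """Order candidates from best to worst agreement with the experimental shifts."""
    scored = [(name, mean_abs_error(experimental, shifts)) for name, shifts in predictions.items()]
    return sorted(scored, key=lambda s: s[1])
```

The same ranking pattern applies to predicted MS/MS fragments, with a spectral similarity score in place of the shift error.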

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Integrated Dereplication Workflows

| Reagent / Material | Function | Application Note |
|---|---|---|
| Deuterated NMR Solvents (e.g., D₂O, CD₃OD) | Provides deuterium lock signal for stable NMR field; dissolves sample without obscuring ¹H spectrum. | Compatibility with LC-MS confirmed; no significant H/D exchange during analysis [27]. |
| LC-MS Grade Solvents (MeOH, ACN, H₂O) | Mobile phase for HPLC-MS; ensures minimal background ions and consistent chromatography. | Volatile additives (e.g., formic acid) promote protonation in ESI+ mode [28]. |
| Solid-Phase Extraction (SPE) Cartridges (C18, HLB) | Desalting and clean-up of crude marine extracts; removes interfering salts and macromolecules. | Critical step to prevent ion suppression in MS and improve column longevity. |
| Molecular Weight Cut-Off (MWCO) Filters | Physical removal of proteins and large biomolecules via centrifugal filtration [27]. | Key step in unified prep protocol; major factor affecting metabolite recovery [27]. |
| Chemical Derivatization Reagents (e.g., AQC, Hydroxylamine) | Selective labeling of functional groups for MCheM workflows [23]. | Post-column infusion provides orthogonal structural data (e.g., confirms amine group). |
| Authentic Chemical Standards | Reference compounds for building in-house spectral libraries and validating identifications. | Pooling strategy by logP/mass optimizes library creation efficiency [28]. |
| Database Subscriptions (e.g., MarinLit, SciFinder-n) | Access to curated structural and spectral data for marine natural products. | Foundational resource for confident dereplication; requires institutional access [30]. |

Case Study: Dereplication in a Marine Streptomyces Discovery Campaign

Consider a research team screening marine Streptomyces strains from coastal sediments for novel antibiotics. The crude ethyl acetate extract of a fermentation broth shows promising activity against methicillin-resistant Staphylococcus aureus (MRSA).

- Initial Triage: HPLC-DAD-ESIMS analysis of the crude extract reveals a dominant UV-active peak with [M+H]⁺ at m/z 688.4267. A quick molecular formula search (C₃₅H₆₁NO₁₂) in a marine natural product database like MarinLit returns several possible macrolides.

- Integrated Analysis: The team uses the unified prep protocol [27]. 1D ¹H NMR of the semi-purified active fraction shows characteristic olefinic and downfield oxymethine protons. HRMS/MS yields key fragments suggesting a glycosidic cleavage and a lactone ring.

- Database Interrogation: Two queries run in parallel. The MS/MS spectrum, with its precursor m/z, is searched against the GNPS spectral libraries, while the molecular formula and key NMR chemical shifts (e.g., an anomeric proton at δ 5.40 ppm) are queried in MarinLit. Both point to oleandromycin, a known macrolide antibiotic.

- Confirmation & Decision: Co-injection of the fraction with an oleandromycin standard shows identical RT, MS/MS spectrum, and UV profile. The activity is thus dereplicated to this known compound. The team deprioritizes this strain for further large-scale isolation and instead focuses resources on other active extracts with no database matches, which represent potential novel leads.

This streamlined process, completed in days, avoids months of labor spent isolating a known compound, exemplifying the power of the integrated approach.

The tandem integration of HPLC-MS, NMR, and databases represents the state-of-the-art in dereplication for blue biotechnology. This synergistic paradigm compensates for the limitations of individual techniques, provides multi-layered evidence for confident annotation, and dramatically accelerates the discovery pipeline. As marine bioprospecting scales to meet the demands of the growing blue economy, such efficiency is paramount [25].

The future of this field lies in deeper automation and artificial intelligence. Machine learning models will better predict NMR and MS spectra from structures (and vice versa). Real-time, cloud-based dereplication is emerging, where analytical instruments stream data to platforms that instantly query constantly updated global databases and return annotations while an experiment is still running. Furthermore, the systematic integration of metagenomic data from projects like TREC [25] will allow for genome-guided dereplication, predicting chemical scaffolds from biosynthetic potential before they are even isolated. By embracing these integrated analytical powerhouses, researchers can navigate the vast chemical diversity of the oceans with unprecedented precision, ensuring that blue biotechnology fulfills its promise as a sustainable source of innovation.

Harnessing Bioinformatics and AI for Rapid Compound Annotation and Prioritization