Decoding the Black Box: Achieving Transparency and Trust in AI-Driven Drug Discovery

Abstract

This article provides a comprehensive analysis of the 'black box' problem in AI for drug discovery, addressing the critical need for transparency among researchers and development professionals. It explores the fundamental risks of opaque models, details practical methodologies of Explainable AI (XAI), outlines strategies for troubleshooting bias and implementation challenges, and examines frameworks for validation and regulatory compliance. By synthesizing current research and solutions, the article offers a roadmap for integrating interpretable AI to enhance scientific rigor, foster trust, and accelerate the development of safe, effective therapeutics.

The Black Box Dilemma in Drug Discovery: Understanding the Core Risks and Ethical Imperatives

In the context of AI-driven drug discovery, the "Black Box Effect" refers to systems that deliver predictions without revealing the internal logic behind their conclusions [1]. For researchers and scientists, this opacity is more than a technical curiosity—it is a significant stumbling block that complicates the validation of targets, the understanding of biological mechanisms, and the justification of costly experimental follow-ups [1] [2]. The inability to interpret a model's decision-making process raises critical concerns about trust, efficacy, and safety, particularly in the high-stakes field of therapeutic development [3] [2].

This Technical Support Center is designed to assist drug development professionals in diagnosing, troubleshooting, and overcoming the challenges posed by opaque AI/ML models. By providing clear guidance on Explainable AI (XAI) techniques and practical troubleshooting steps, we aim to bridge the gap between powerful predictive algorithms and the interpretable, actionable insights required for rigorous scientific research.

Core Concepts: Interpretability vs. Explainability

Understanding the tools available to open the black box begins with clarifying key terminology:

- Interpretability refers to the ability to discern the cause-and-effect relationships within a model, often by analyzing how changes in input features affect the output, even if the model's complete internal workings remain complex [4].

- Explainability is a related concept that seeks to answer the "why" behind a specific model prediction in human-understandable terms [4].

A primary strategy is to use inherently interpretable models (like linear regression or decision trees) whose structures are transparent by design [5]. However, for complex tasks requiring deep learning or ensemble methods, post-hoc interpretability techniques are essential. These methods, applied after a model is trained, can be model-agnostic (applicable to any model) or model-specific [5].

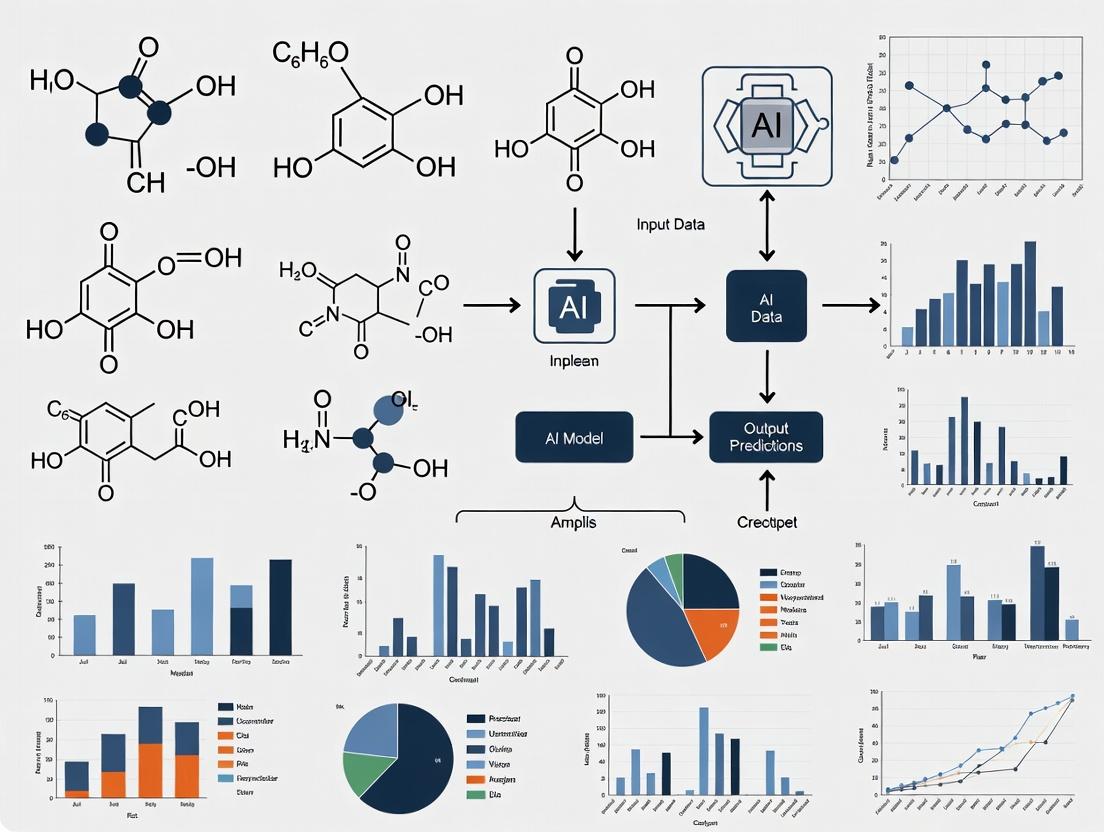

The following diagram categorizes the main approaches to tackling model opacity, illustrating the path from a trained black-box model to human-understandable insights.

Technical Support Center: Troubleshooting Guide & FAQs

Frequently Asked Questions (FAQs)

Q1: My deep learning model for toxicity prediction has high accuracy, but reviewers keep asking for "mechanistic insight." How can I provide this from a black box model? [3] [2]

A: This is a common hurdle in publishing and validating AI work in drug discovery. To address it:

- Employ Post-Hoc Explainability Techniques: Use model-agnostic methods like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to generate feature importance scores for individual predictions [4]. For a global view, use Partial Dependence Plots (PDP) to show the average relationship between a key molecular descriptor (e.g., logP, presence of a toxicophore) and the predicted toxicity [4].

- Link Features to Domain Knowledge: Map the high-importance features identified by SHAP or LIME back to known chemical or biological principles. For example, if the model consistently highlights a specific substructure, correlate this with literature on structural alerts for toxicity.

- Validate with Experimental Data: Use the model's explanations to form a testable hypothesis. If the model predicts toxicity based on feature X, design a small wet-lab experiment (e.g., a cell-based assay) to confirm that modifying feature X alters the toxic outcome. This bridges the AI prediction and biological validation [1].
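The partial dependence idea mentioned above can be sketched in a few lines. This is a minimal illustration, not a replacement for a plotting library: `toy_toxicity_model`, the feature layout `[logP, toxicophore_present]`, and the compound values are all hypothetical stand-ins for a trained model and real descriptors.

```python
from statistics import mean


def partial_dependence(predict, rows, feature_index, grid):
    """Average model output when one feature is forced to each grid value.

    `predict` is any callable that maps a feature list to a number.
    """
    curve = []
    for value in grid:
        # Overwrite the chosen feature in every row; keep the rest as observed.
        forced = [row[:feature_index] + [value] + row[feature_index + 1:] for row in rows]
        curve.append(mean(predict(row) for row in forced))
    return curve


# Hypothetical stand-in for a trained toxicity model.
# Features are [logP, toxicophore_present (0 or 1)].
def toy_toxicity_model(row):
    logp, toxicophore_present = row
    return 0.1 * logp + 0.5 * toxicophore_present


compounds = [[1.0, 0], [2.5, 1], [4.0, 0], [3.0, 1]]
logp_grid = [0.0, 2.0, 4.0]
pd_curve = partial_dependence(toy_toxicity_model, compounds, 0, logp_grid)

for logp, avg in zip(logp_grid, pd_curve):
    print(f"logP={logp:.1f} -> mean predicted toxicity {avg:.2f}")
```

Because the curve averages over all compounds, it can hide interactions between descriptors, so it is best read alongside the local explanations rather than instead of them.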

Q2: We are using a random forest model to prioritize novel drug targets from genomic data. How can we be confident it's learning real biology and not just dataset artifacts? [1] [5]

A: Ensuring biological fidelity is critical.

- Conduct Permutation Feature Importance Analysis: This technique shuffles each feature column in turn and measures the resulting increase in model error on held-out data. Features whose permutation causes a large error increase are the ones the model relies on. This separates informative features from noise, although it does not by itself show that a feature is causal [4].

- Implement Rigorous Data Sanitization: The most common source of artifact is data leakage or batch effects. Ensure that no information from the validation/test sets leaks into the training process. For genomic data, correct for batch effects and platform-specific biases before training.

- Use Domain Knowledge as a Filter: Integrate prior biological knowledge into your model's feature set or as a post-processing filter. For instance, Envisagenics incorporates RNA-protein interaction data and known regulatory circuits of the spliceosome directly into its predictive features, grounding the model in mechanistic biology [1].
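The shuffling procedure described above can be sketched as follows. This is an illustrative, dependency-free version under stated assumptions: `toy_model` is a hypothetical fitted model that uses the first feature and ignores the second, and the data are random placeholders rather than genomic features.

```python
import random


def mean_squared_error(y_true, y_pred):
    return sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true)


def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Mean increase in MSE after shuffling each feature column."""
    rng = random.Random(seed)
    baseline = mean_squared_error(y, [predict(row) for row in X])
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            # Shuffle one column to break its link with the target.
            column = [row[j] for row in X]
            rng.shuffle(column)
            shuffled = [row[:j] + [v] + row[j + 1:] for row, v in zip(X, column)]
            error = mean_squared_error(y, [predict(row) for row in shuffled])
            increases.append(error - baseline)
        importances.append(sum(increases) / n_repeats)
    return importances


# Hypothetical fitted model that uses feature 0 and ignores feature 1.
def toy_model(row):
    return 2.0 * row[0]


data_rng = random.Random(1)
X_test = [[data_rng.random(), data_rng.random()] for _ in range(50)]
y_test = [toy_model(row) for row in X_test]

importances = permutation_importance(toy_model, X_test, y_test)
print(importances)  # feature 1 scores zero because the model never reads it
```

A feature that carries a batch label or a leaked identifier would also score highly here, which is why the importance ranking should be reviewed together with the data sanitization checks.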

Q3: What are the simplest first steps to make my AI/ML workflow more interpretable for a drug discovery project? [4] [5]

A: Start with straightforward, actionable practices:

- Baseline with Simple Models: Before deploying a complex neural network, always train a simpler, inherently interpretable model like a logistic regression or a shallow decision tree on the same data. Use its performance as a benchmark. If the complex model's superior accuracy doesn't justify its opacity, consider sticking with the simpler one [5].

- Generate and Review Feature Importance: For your chosen model, consistently calculate and document global feature importance (e.g., via mean decrease in impurity for tree-based models or coefficients for linear models). Have a domain expert review the top features for biological plausibility.

- Create Standard Interpretation Reports: For key predictions (e.g., a top-ranked drug candidate), automate a report that includes: the prediction, the confidence score, a local explanation (e.g., SHAP values showing the top 5 features driving this specific prediction), and a counterfactual suggestion (e.g., "Which molecular property would most reduce the predicted off-target risk?").
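One possible shape for the automated interpretation report is sketched below. It assumes local attributions (for example, SHAP values) have already been computed elsewhere; the function name, field names, and descriptor values are hypothetical.

```python
def build_interpretation_report(candidate_id, prediction, confidence, attributions, top_k=5):
    """Assemble a per-prediction report from precomputed local attributions.

    `attributions` maps feature name -> signed contribution to the predicted
    risk. The counterfactual hint names the feature that pushes the predicted
    risk up the most, as a starting point for a "what would reduce it?" question.
    """
    ranked = sorted(attributions.items(), key=lambda item: abs(item[1]), reverse=True)
    risk_drivers = [(name, value) for name, value in ranked if value > 0]
    return {
        "candidate_id": candidate_id,
        "prediction": prediction,
        "confidence": confidence,
        "top_features": ranked[:top_k],
        "counterfactual_hint": risk_drivers[0][0] if risk_drivers else None,
    }


# Hypothetical attributions for one candidate's predicted off-target risk.
example_attributions = {
    "logP": 0.21,
    "aromatic_ring_count": 0.08,
    "hbond_donors": -0.15,
    "molecular_weight": 0.03,
    "tpsa": -0.30,
    "rotatable_bonds": 0.01,
}

report = build_interpretation_report("candidate_001", "high off-target risk", 0.87, example_attributions)
for name, value in report["top_features"]:
    print(f"{name:>20}: {value:+.2f}")
print("Largest risk driver:", report["counterfactual_hint"])
```

Keeping the report as a plain dictionary makes it easy to serialize and attach to each ranked candidate for expert review.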

Q4: Our team has developed a promising predictive model, but the clinical team doesn't trust it because they "can't see how it works." How do we build trust? [1] [2]

A: Building trust requires transparency and collaboration.

- Visualize the Decision Pathway: Create intuitive visualizations of how data flows through your model to a prediction. For a model analyzing cell images, use saliency maps to highlight which regions of the image most influenced the classification [4] [6].

- Implement a "Glass Box" Prototype: Develop a simplified, interpretable version of your model (a surrogate model) that mimics the predictions of the black-box system for the most common cases. A clinician can interact with and understand this surrogate, building confidence in the overall system's logic [4].

- Facilitate Interactive Exploration: Allow users to ask "what-if" questions. Build a simple interface where scientists can adjust input parameters (e.g., gene expression levels) and see how the model's prediction changes in real-time. This interactive exploration demystifies the model's behavior [6].
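The surrogate idea can be illustrated with the simplest possible "glass box": a single-threshold rule fitted to the black box's own outputs. The sketch below is a toy under stated assumptions: `black_box` is a hypothetical opaque model, and the key number to report is fidelity, the fraction of cases where the surrogate agrees with the model it imitates.

```python
def black_box(row):
    """Hypothetical opaque model: a nonlinear rule over two features."""
    return int(row[0] + 0.1 * row[1] ** 2 > 2.3)


def fit_stump(X, labels):
    """Find the one-feature threshold rule that best reproduces `labels`."""
    best = None
    for j in range(len(X[0])):
        values = sorted({row[j] for row in X})
        for low, high in zip(values, values[1:]):
            threshold = (low + high) / 2
            for positive_above in (True, False):
                preds = [int((row[j] > threshold) == positive_above) for row in X]
                fidelity = sum(p == l for p, l in zip(preds, labels)) / len(X)
                if best is None or fidelity > best["fidelity"]:
                    best = {
                        "feature": j,
                        "threshold": threshold,
                        "positive_above": positive_above,
                        "fidelity": fidelity,
                    }
    return best


# The surrogate is trained on the black box's predictions, not on ground truth.
X = [[x0, x1] for x0 in range(5) for x1 in range(3)]
black_box_labels = [black_box(row) for row in X]
surrogate = fit_stump(X, black_box_labels)

print(
    f"Rule: feature {surrogate['feature']} > {surrogate['threshold']} "
    f"(fidelity {surrogate['fidelity']:.2f})"
)
```

A fidelity below 1.0, as here, is a reminder that the surrogate explains most but not all of the black box's behavior; the disagreeing cases are worth showing to clinicians explicitly.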

Q5: Are there specific XAI techniques recommended for different stages of the drug discovery pipeline? [3]

A: Yes, the choice of XAI technique can be tailored to the stage-specific question.

| Drug Discovery Stage | Primary AI Task | Recommended XAI Techniques | Goal of Interpretation |
|---|---|---|---|
| Target Identification | Prioritizing genes/proteins from omics data. | Permutation Feature Importance, Global Surrogate Models (e.g., a decision tree) [4] [5]. | Understand which genomic or pathway features the model uses globally to identify high-priority targets. |
| Compound Screening & Design | Predicting activity, toxicity, or ADMET properties. | SHAP, LIME, Counterfactual Explanations [4]. | Explain why a specific compound was predicted to be active/toxic and suggest structural modifications. |
| Preclinical Validation | Analyzing high-content imaging or biomarker data. | Layer-wise Relevance Propagation (LRP), Attention Mechanisms, Saliency Maps [6]. | Identify which parts of an image or which biomarkers the model focused on to make its assessment. |

Featured Experimental Protocol: Decoding Splicing Modulation with Interpretable AI

This protocol is adapted from the methodology of Envisagenics, which uses its SpliceCore platform to transparently predict splice-switching oligonucleotide (SSO) drug targets [1].

Objective: To identify and validate novel, druggable splicing events in a disease context (e.g., triple-negative breast cancer) using an interpretable AI/ML workflow.

Detailed Methodology:

Data Curation & Feature Engineering:

- Input: Collect RNA-sequencing data from diseased and healthy control tissues. Perform alternative splicing analysis to quantify splicing event levels (e.g., percent spliced in, PSI).

- Feature Construction: Instead of using raw data, engineer biologically meaningful features. Map splicing events to 32 unique regulatory circuits of the spliceosome—defined networks of RNA-protein interactions. The presence and activity level of each circuit for a given splicing event become the discrete, quantifiable predictive features for the ML model [1].

Model Training with Interpretability by Design:

- Train a model (e.g., a gradient boosting machine) to predict splicing disruption using the regulatory circuit features.

- Key Transparency Step: Prioritize model transparency. This may involve sacrificing marginal predictive accuracy for a model whose feature weights (importance) can be clearly articulated and mapped back to the biological function of the corresponding spliceosome circuit [1].

Prediction & Druggability Mapping:

- Apply the trained model to the transcriptome of the target disease to predict dysregulated, disease-driving splicing events.

- Overlay RNA-binding protein (RBP) binding site data and secondary structure information onto the top predictions to create a "druggability map." This map identifies optimal binding sites for antisense oligonucleotides (ASOs) or splice-switching oligonucleotides (SSOs) designed to correct the splicing defect [1].

In Vitro Validation:

- Design SSOs targeting the highest-ranked predicted sites.

- Transfect cell lines with the SSOs and measure the correction of the aberrant splicing event via RT-PCR or NanoString.

- Assess downstream functional effects using relevant cell viability, migration, or gene expression assays.
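The percent spliced in (PSI) quantity used in the data curation step, and the "correction" measured in the validation step, can be expressed with a small calculation. This is a simplified sketch: the read counts are hypothetical, and dedicated tools such as rMATS apply further corrections (for example, for effective isoform length) that are omitted here.

```python
def percent_spliced_in(inclusion_reads, exclusion_reads):
    """PSI = inclusion / (inclusion + exclusion); None when there is no coverage."""
    total = inclusion_reads + exclusion_reads
    if total == 0:
        return None
    return inclusion_reads / total


def delta_psi(psi_condition, psi_reference):
    """Signed shift in exon inclusion relative to a reference condition."""
    return psi_condition - psi_reference


# Hypothetical junction read counts for one exon-skipping event.
psi_control = percent_spliced_in(90, 10)
psi_disease = percent_spliced_in(30, 70)
psi_treated = percent_spliced_in(75, 25)  # after SSO treatment

print(f"Disease vs. control dPSI: {delta_psi(psi_disease, psi_control):+.2f}")
print(f"Treated vs. disease dPSI: {delta_psi(psi_treated, psi_disease):+.2f}")
```

Reporting both shifts side by side shows how much of the disease-associated splicing change the SSO recovers.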

The workflow below illustrates this integrated cycle of computational prediction and experimental validation.

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential computational and biological reagents for implementing interpretable AI workflows in splicing-targeted drug discovery, as exemplified in the protocol above.

| Research Reagent / Solution | Primary Function in Interpretable AI Workflow | Key Consideration for Transparency |
|---|---|---|
| SpliceCore or Similar AI Platform [1] | Cloud-based platform for exon-centric analysis; identifies splicing events and maps them to regulatory circuits for use as interpretable ML features. | Ensures features are grounded in RNA biology, making model outputs relatable and actionable. |
| RNA-seq Datasets (Disease & Control) | Primary input data for quantifying alternative splicing events (e.g., using tools like rMATS, LeafCutter). | Quality and batch effect correction are critical to prevent model from learning technical artifacts instead of biology. |
| Spliceosome Regulatory Circuit Database | A curated knowledge base defining the 32+ mechanistic units (RNA-protein interaction networks) of the spliceosome [1]. | Provides the ontology for translating raw splicing data into biologically meaningful, interpretable model features. |
| RNA-Protein Interaction (CLIP-seq) Data | Maps binding sites of RNA-binding proteins (RBPs) across the transcriptome. | Integrated post-prediction to assess "druggability" by identifying accessible sites for oligonucleotide binding. |
| Splice-Switching Oligonucleotide (SSO) Libraries | Molecules designed to hybridize to pre-mRNA and modulate splicing. Used for experimental validation of AI predictions [1]. | Validation of AI predictions with SSOs provides a direct functional readout, closing the loop between computation and biology. |
| Interpretable ML Software Libraries (e.g., SHAP, LIME, ELI5) | Python/R packages that implement post-hoc explanation algorithms on top of existing black-box models. | Allows researchers to add interpretability layers to complex models without redesigning the entire AI pipeline. |

The integration of Artificial Intelligence (AI) and Machine Learning (ML) into drug discovery has accelerated target identification, molecular design, and preclinical analysis. However, the "black box" nature of many complex models—particularly deep learning—poses a significant risk to the foundational pillars of drug development: safety, efficacy, and scientific trust. When researchers cannot interrogate how an AI model arrived at a novel drug candidate or a toxicity prediction, it undermines the rigorous validation processes required by regulators and the scientific method itself. This technical support center provides actionable guidance for researchers to implement transparent, interpretable, and reproducible AI-driven workflows, thereby mitigating the risks associated with opaque models.

Technical Support Center: Troubleshooting AI-Driven Experiments

Frequently Asked Questions (FAQs) & Troubleshooting Guides

Q1: My AI model for virtual screening identified a lead compound, but I cannot explain its decision. How can I validate this finding before proceeding to synthesis? A: This is a classic "black box" output. Follow this protocol:

- Employ Post-Hoc Interpretation Tools: Use SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to generate feature importance scores for the predicted compound.

- Perform Similarity Analysis: Calculate Tanimoto coefficients or use Matched Molecular Pair analysis against known actives in your training set. High similarity may provide a traditional chemical rationale.

- Initiate a Focused In Silico Assay: Dock the compound into the target's crystal structure (if available) and analyze interaction fingerprints. Compare these to interactions made by known binders.

- Design a Control Set: Synthesize or acquire a small set of analogues with systematic variations on the unexplained pharmacophore to test the model's sensitivity.
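The Tanimoto coefficient in the similarity step is the size of the intersection of two fingerprints divided by the size of their union. The sketch below represents each fingerprint as a set of on-bit indices; the bit values and compound names are hypothetical, and in practice the fingerprints would come from a cheminformatics toolkit.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bits."""
    union = len(bits_a | bits_b)
    if union == 0:
        return 0.0
    return len(bits_a & bits_b) / union


# Hypothetical on-bit sets for the AI-selected lead and two known actives.
lead = {3, 17, 42, 88, 130}
known_actives = {
    "active_1": {3, 17, 42, 90},
    "active_2": {5, 61, 200},
}

ranked = sorted(
    ((tanimoto(lead, bits), name) for name, bits in known_actives.items()),
    reverse=True,
)
for score, name in ranked:
    print(f"{name}: {score:.2f}")
```

A lead with low similarity to every known active is not necessarily wrong, but it sits outside the chemistry the model was trained on and deserves the extra validation steps listed above.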

Q2: My predictive model for cytotoxicity shows high accuracy on test data but fails drastically in preliminary wet-lab experiments. What could be wrong? A: This indicates a potential "domain shift" or hidden bias in your training data.

- Troubleshooting Steps:

- Audit Your Training Data: Check for source bias. Was data pooled from multiple cell lines or assay types? Use t-SNE or UMAP to visualize the chemical space of your training set versus your experimental compounds.

- Check for Data Leakage: Ensure no experimental compounds (or their close analogues) were inadvertently present in the training data. Examine the model's performance on truly external data.

- Implement Uncertainty Quantification: Use models that provide prediction confidence intervals (e.g., Bayesian Neural Networks, ensemble methods). Discard predictions with high epistemic uncertainty.

- Validate with a Simpler Model: Train a simple, interpretable model (like a shallow decision tree or linear model) on the same data. If it performs comparably, use its decision rules or coefficients to cross-check the deep learning model's predictions.
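For the uncertainty quantification step, one simple proxy is the disagreement between members of an ensemble. The sketch below assumes the per-model predictions are already available; the compound identifiers, prediction values, and the `max_std` cutoff are hypothetical and would need to be calibrated for a real assay.

```python
from statistics import mean, pstdev


def summarize_ensemble(member_predictions, max_std=0.15):
    """Summarize per-compound ensemble output and flag high disagreement.

    `member_predictions` maps compound id -> list of predictions, one per
    ensemble member. The spread between members is used as a rough proxy
    for epistemic uncertainty.
    """
    summary = {}
    for compound_id, preds in member_predictions.items():
        spread = pstdev(preds)
        summary[compound_id] = {
            "mean": mean(preds),
            "std": spread,
            "trusted": spread <= max_std,
        }
    return summary


# Hypothetical cytotoxicity probabilities from a four-member ensemble.
ensemble_output = {
    "cmpd_A": [0.82, 0.79, 0.85, 0.80],  # members agree
    "cmpd_B": [0.10, 0.90, 0.45, 0.70],  # members disagree
}

summary = summarize_ensemble(ensemble_output)
for compound_id, stats in summary.items():
    status = "keep" if stats["trusted"] else "flag for review"
    print(f"{compound_id}: mean={stats['mean']:.2f} std={stats['std']:.2f} -> {status}")
```

Compounds that fall far from the training chemical space tend to produce the widest spread, which ties this check back to the data audit in the first step.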

Q3: How can I ensure the reproducibility of my AI-based drug response prediction model? A: Reproducibility is a cornerstone of transparency.

- Mandatory Documentation Checklist:

- Code & Environment: Publish code in a repository (e.g., GitHub, GitLab) with a detailed `README.md` and an environment file (e.g., `environment.yml`, `Dockerfile`).

- Data Provenance: Document the exact source, version, and pre-processing steps (including all normalization and filtering parameters) for all training and test data. Use unique identifiers (like DOIs) where possible.

- Hyperparameter Logging: Record every hyperparameter (learning rate, batch size, architecture specifics, dropout rates) using a framework like Weights & Biases or MLflow.

- Random Seed Declaration: Fix and report all random seeds for Python, NumPy, and deep learning frameworks (PyTorch, TensorFlow).
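The random seed item in the checklist can be handled by one helper called at the start of every run. The sketch below is a minimal version: `set_global_seed` is a hypothetical helper name, and it seeds only Python's `random` module and NumPy (when NumPy is installed).

```python
import random


def set_global_seed(seed):
    """Seed the random number generators that are available.

    Returns the names of the libraries that were seeded so the list can be
    written to the run log alongside the seed value.
    """
    seeded = ["random"]
    random.seed(seed)
    try:
        import numpy as np  # optional dependency

        np.random.seed(seed)
        seeded.append("numpy")
    except ImportError:
        pass
    return seeded


seeded_libraries = set_global_seed(42)
first_draw = random.random()

# Re-seeding reproduces the same sequence.
set_global_seed(42)
second_draw = random.random()
print(seeded_libraries, first_draw == second_draw)
```

Deep learning frameworks keep their own generators and need their own calls, such as `torch.manual_seed(seed)` for PyTorch or `tf.random.set_seed(seed)` for TensorFlow. Fixed seeds alone may not give bit-identical results on GPUs, so the hardware and library versions belong in the documentation as well.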

Key Experimental Protocols for Transparent AI Validation

Protocol 1: Implementing SHAP for Compound Prioritization Explainability

Objective: To explain the output of any ML model that predicts compound activity.

Methodology:

- Train your predictive model (e.g., a graph neural network for property prediction).

- For the compound(s) of interest, instantiate a `shap.Explainer()` using the appropriate explainer (e.g., `KernelExplainer` for any model, `DeepExplainer` for neural networks).

- Calculate SHAP values on a representative background dataset (e.g., 100 randomly sampled training compounds).

- Visualize the results using `shap.plots.waterfall()` for single-prediction explanation or `shap.plots.beeswarm()` for global feature importance.

- Map critical molecular features (e.g., specific functional groups identified by SHAP) back to structural alerts or pharmacophoric hypotheses.
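To make clear what the SHAP values in this protocol represent, the sketch below computes exact Shapley values by enumerating every feature coalition. It deliberately does not use the `shap` library's API; it is a brute-force illustration of the quantity that explainers such as `KernelExplainer` approximate, and it is only feasible for a handful of features. The toy model, the three binary substructure flags, and the all-zero background compound are hypothetical.

```python
from itertools import combinations
from math import factorial
from statistics import mean


def coalition_value(predict, x, background, coalition):
    """Mean prediction when features in `coalition` come from x, the rest from background."""
    outputs = []
    for reference in background:
        mixed = [x[i] if i in coalition else reference[i] for i in range(len(x))]
        outputs.append(predict(mixed))
    return mean(outputs)


def exact_shapley(predict, x, background):
    """Exact Shapley values by enumerating all coalitions (exponential cost)."""
    n = len(x)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                with_i = coalition_value(predict, x, background, set(subset) | {i})
                without_i = coalition_value(predict, x, background, set(subset))
                phi[i] += weight * (with_i - without_i)
    return phi


# Hypothetical activity model over three binary substructure flags:
# an additive effect for feature 0 and an interaction between features 1 and 2.
def toy_activity_model(features):
    return 2.0 * features[0] + features[1] * features[2]


compound = [1, 1, 1]
background = [[0, 0, 0]]  # hypothetical reference compound lacking all three flags
shapley_values = exact_shapley(toy_activity_model, compound, background)
print(shapley_values)
```

The values sum to the difference between the compound's prediction and the average background prediction, and the interaction term is split evenly between the two features that jointly cause it. Both properties are what make the waterfall plot in the protocol readable as a decomposition of a single prediction.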

Protocol 2: Counterfactual Analysis for Model Interrogation

Objective: To understand the minimal changes required to flip a model's prediction (e.g., from "active" to "inactive").

Methodology:

- Start with a seed molecule predicted as "active."

- Use a library like `DiBS` or `Moliverse` to generate a set of similar molecules via small, rational structural perturbations (e.g., adding/removing a methyl, changing a heteroatom).

- Run the perturbed molecules through the trained AI model.

- Identify the molecule(s) with the highest structural similarity to the seed but a flipped prediction ("inactive").

- Analyze the structural difference between the seed and the counterfactual; this difference highlights the chemical feature the model deems critical for activity, providing a testable hypothesis.
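The search loop in this protocol can be sketched without any molecule-generation library by treating a molecule as a set of feature flags and a perturbation as toggling one flag. Everything in the example is a hypothetical stand-in: the feature names, the rule inside `is_active`, and the single-edit neighborhood replace a real generator and a trained model.

```python
def is_active(features):
    """Hypothetical trained model: needs a donor and a ring, penalizes nitro."""
    return (
        "hbond_donor" in features
        and "aromatic_ring" in features
        and "nitro" not in features
    )


def find_counterfactuals(predict, seed, vocabulary):
    """Return (toggled_feature, candidate) pairs whose prediction differs from the seed's.

    Each candidate differs from the seed by exactly one added or removed
    feature, so every hit is a minimal edit.
    """
    seed_label = predict(seed)
    hits = []
    for feature in vocabulary:
        candidate = seed ^ {feature}  # add the feature if absent, remove it if present
        if predict(candidate) != seed_label:
            hits.append((feature, candidate))
    return hits


seed_molecule = frozenset({"hbond_donor", "aromatic_ring", "methyl"})
feature_vocabulary = ["hbond_donor", "aromatic_ring", "methyl", "nitro"]

counterfactuals = find_counterfactuals(is_active, seed_molecule, feature_vocabulary)
for feature, candidate in counterfactuals:
    action = "remove" if feature in seed_molecule else "add"
    print(f"{action} {feature} -> prediction flips")
```

Edits that do not flip the prediction (here, removing the methyl group) are informative too: they mark features the model treats as irrelevant, which can be checked against medicinal chemistry intuition.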

Data & Materials

Quantitative Comparison of AI Explainability Techniques

Table 1: Comparison of Post-Hoc AI Interpretability Methods in Drug Discovery Contexts

| Method | Model Agnostic | Output Type | Computational Cost | Best Use Case in Drug R&D |
|---|---|---|---|---|
| SHAP | Yes | Global & Local Feature Importance | Medium-High | Explaining individual compound predictions & identifying key molecular descriptors. |
| LIME | Yes | Local Feature Importance | Low | Generating simple, intuitive explanations for a single prediction for interdisciplinary teams. |
| Attention Mechanisms | No (Built-in) | Feature Weights | Low | Interpreting sequence-based (proteins, genes) or graph-based (molecules) models inherently. |
| Counterfactual Analysis | Yes | Example-Based | Medium | Generating testable chemical hypotheses by finding minimal change to alter prediction. |
| Partial Dependence Plots | Yes | Global Feature Effect | Medium | Understanding the marginal effect of a specific molecular feature on model output. |

The Scientist's Toolkit: Essential Reagents for Transparent AI Workflows

Table 2: Research Reagent Solutions for Interpretable AI-Driven Research

| Item / Tool | Function / Purpose | Example/Provider |
|---|---|---|
| Explainability Libraries | Provide post-hoc analysis of black-box models. | SHAP, LIME, Captum (for PyTorch), ALIBI |
| Cheminformatics Toolkits | Handle molecular representation, featurization, and similarity analysis. | RDKit, OpenBabel, ChemPy |
| Uncertainty Quantification Frameworks | Estimate model confidence and reliability of predictions. | Monte Carlo Dropout (in TensorFlow/PyTorch), Bayesian Neural Networks (via Pyro, TensorFlow Probability) |
| Experiment Tracking Platforms | Log hyperparameters, code versions, and results for full reproducibility. | Weights & Biases, MLflow, Neptune.ai |
| Standardized Datasets | Provide benchmark data for fair comparison and model validation. | MoleculeNet, Therapeutics Data Commons, ChEMBL |
| Molecular Docking Suite | Perform structural validation of AI-predicted active compounds. | AutoDock Vina, Glide, GOLD |

Visual Workflows & Diagrams

Diagram 1: Workflow for Integrating XAI into Drug Discovery

Diagram 2: SHAP Protocol for Explaining Compound Activity

Technical Support Center: AI Ethics & Equity in Drug Discovery

Welcome to the Technical Support Center for AI Ethics and Equity in Drug Discovery Research. This resource is designed for researchers, scientists, and drug development professionals navigating the challenges of algorithmic transparency and fairness. The guidance below is framed within the critical thesis of addressing the "black box" problem in AI to build more accountable and equitable research pipelines [7] [8].

Troubleshooting Guides

Problem Category 1: Suspected Algorithmic Bias in Pre-Clinical Screening

- Symptoms: Your AI model for target identification or compound screening shows high performance overall but consistently fails or performs poorly for specific molecular subgroups or patient-derived cell lines. You suspect the training data may be unrepresentative.

- Diagnostic Steps:

- Audit Training Data Demographics: Quantify the representation of biological sex, genetic ancestry, and disease subtypes in your training datasets. A 2023 systematic review found that 50% of healthcare AI studies had a high risk of bias, often due to imbalanced or incomplete datasets [8].

- Implement Explainable AI (xAI) Techniques: Use tools like SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations) to identify which features (e.g., specific genetic markers) are most influential in your model's predictions. This can reveal if predictions are unfairly driven by proxies for demographic factors [7].

- Perform Disaggregated Evaluation: Break down your model's performance metrics (accuracy, sensitivity, AUC-ROC) by subgroup (e.g., by sex or ancestral population) instead of relying on aggregate scores. This can uncover hidden disparities [9].

- Resolution Protocol:

- Data Augmentation & Rebalancing: If gaps are found, employ techniques like synthetic data generation (using GANs or SMOTE) to carefully augment underrepresented groups in your training set, ensuring biological plausibility is maintained [7] [9].

- Incorporate Bias Mitigation Algorithms: Integrate fairness-aware learning algorithms (e.g., adversarial debiasing, reweighting methods) during model training to penalize unfair associations [8].

- Adopt Dual-Track Verification: As recommended by ethical frameworks, synchronize AI virtual model predictions with targeted in vitro or animal experiments specifically designed to test the subgroups where AI performance was weak [10]. Do not rely on AI prediction alone for safety and efficacy.
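The disaggregated evaluation step above amounts to computing the same metrics once per subgroup instead of once overall. The sketch below uses hypothetical labels, predictions, and group assignments to show how an acceptable aggregate score can hide a subgroup where the model fails.

```python
from collections import defaultdict


def disaggregated_metrics(y_true, y_pred, groups):
    """Accuracy and sensitivity per subgroup (labels are 0/1)."""
    counts = defaultdict(lambda: {"n": 0, "correct": 0, "tp": 0, "fn": 0})
    for truth, pred, group in zip(y_true, y_pred, groups):
        c = counts[group]
        c["n"] += 1
        c["correct"] += int(truth == pred)
        if truth == 1:
            c["tp" if pred == 1 else "fn"] += 1
    report = {}
    for group, c in counts.items():
        positives = c["tp"] + c["fn"]
        report[group] = {
            "n": c["n"],
            "accuracy": c["correct"] / c["n"],
            # Sensitivity is undefined when a subgroup has no positive cases.
            "sensitivity": c["tp"] / positives if positives else None,
        }
    return report


# Hypothetical predictions for cell lines from two ancestry groups.
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 0, 0, 0, 1]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

overall_accuracy = sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)
by_group = disaggregated_metrics(y_true, y_pred, groups)

print(f"overall accuracy: {overall_accuracy:.3f}")
for group, metrics in sorted(by_group.items()):
    print(group, metrics)
```

Subgroups with very few samples give noisy estimates, so the per-group `n` should be reported next to every metric.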

Problem Category 2: Unrepresentative Patient Recruitment in AI-Optimized Clinical Trials

- Symptoms: An AI tool used to optimize trial site selection or patient pre-screening is resulting in a recruited cohort that lacks diversity compared to the real-world disease population. This threatens the generalizability of your trial results.

- Diagnostic Steps:

- Interrogate Historical Data Bias: Analyze the historical clinical trial data used to train the recruitment AI. Check for documented underrepresentation of racial and ethnic minorities, women, or older adults [11] [12]. For instance, a 2024 analysis of SLE trials found only 4.7% implemented specific strategies to recruit from marginalized populations [12].

- Map "Digital Phenotype" Bias: Determine if the digital biomarkers or eligibility criteria suggested by the AI (e.g., specific lab ranges, imaging features) are known to vary demographically and may inadvertently exclude groups [9].

- Community Feedback Loop: Establish a process for community advisory boards to review AI-suggested recruitment plans and identify potential structural or cultural barriers they may create [11].

- Resolution Protocol:

- Apply Recruitment Best Practices Proactively: Integrate evidence-based strategies into your trial design from the start, as reactive measures are less effective [11].

- Budget for Equity: Allocate funds for participant support (travel, parking, childcare) [11].

- Staff Training: Require cultural competency and communication training for all research staff [11].

- Community Partnership: Collaborate with community health centers and trusted local leaders for trial design and outreach [11].

- Leverage Decentralized Trial (DCT) Tools: Use FDA-endorsed DCT frameworks and digital health technologies to lower geographic and mobility barriers to participation [13].

- Continuous Monitoring with xAI: Use explainable AI tools to continuously monitor the demographics of recruited patients versus targets. If bias is detected, use counterfactual explanations to ask, "What factors would need to change for this patient to have been eligible?" and adjust protocols accordingly [7].

Problem Category 3: The "Black Box" Problem Impeding Regulatory and Scientific Trust

- Symptoms: Your deep learning model is highly predictive, but you cannot explain its reasoning to internal peers, journal reviewers, or regulatory bodies. This opacity hinders adoption and challenges fundamental scientific principles.

- Diagnostic Steps:

- Assess Model Complexity vs. Need: Determine if a simpler, more interpretable model (e.g., linear model, decision tree) could achieve acceptable performance for the task. Often, complexity is over-applied.

- Document the "Explainability Gap": Systematically list all questions about the model that cannot be answered (e.g., "Why did compound A score higher than B?", "Which molecular substructure is key to the predicted toxicity?").

- Resolution Protocol:

- Build an Explainability-by-Design Pipeline: Integrate xAI methods not as an afterthought, but as a core component of the model development cycle [7]. For drug discovery, prioritize techniques that provide biological insight, such as attention mechanisms that highlight relevant protein domains or chemical substructures.

- Develop a Model Fact Sheet: Create standardized documentation detailing the model's intended use, training data demographics, known limitations, fairness evaluations, and example explanations.

- Align with Regulatory Frameworks: Understand that while AI for pure research may have exemptions, systems influencing clinical decisions are increasingly regulated. The EU AI Act, for example, mandates transparency for high-risk systems [7]. Proactively adopt these principles.
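A model fact sheet is easier to keep current when it is stored as structured data next to the model. The sketch below shows one possible layout using the fields listed above; the class name, field names, and all example values are hypothetical placeholders rather than a prescribed standard.

```python
import json
from dataclasses import asdict, dataclass, field


@dataclass
class ModelFactSheet:
    """Structured record of what a model is for and where it is known to fail."""

    model_name: str
    intended_use: str
    training_data_demographics: dict
    known_limitations: list = field(default_factory=list)
    fairness_evaluations: dict = field(default_factory=dict)
    example_explanations: list = field(default_factory=list)

    def to_json(self):
        return json.dumps(asdict(self), indent=2, sort_keys=True)


# Placeholder values for illustration only.
fact_sheet = ModelFactSheet(
    model_name="toxicity-classifier-example",
    intended_use="Research-stage compound triage; not for clinical decisions.",
    training_data_demographics={"cell_line_sex": {"female": "TBD", "male": "TBD"}},
    known_limitations=["Not evaluated on compounds outside the training chemical space."],
    fairness_evaluations={"disaggregated_by": ["sex", "genetic_ancestry"]},
    example_explanations=["Top attribution for compound X: structural alert Y."],
)

print(fact_sheet.to_json())
```

Versioning the JSON output with the model weights keeps the documentation and the model in step across retraining cycles.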

Frequently Asked Questions (FAQs)

Q1: Our training data is inevitably skewed because historical biomedical data lacks diversity. How can we ever build fair AI? A1: Perfectly representative historical data is rare, but this is not an insurmountable barrier. The strategy is threefold: First, acknowledge and quantify the bias in your current data. Second, use complementary techniques like transfer learning from related domains, synthetic data augmentation for underrepresented groups, and active learning to strategically collect new, balanced data. Third, implement robust, ongoing bias detection throughout the model lifecycle, not just at training [9] [8]. The goal is progressive improvement, not instant perfection.

Q2: What are the most practical first steps to make our clinical trial recruitment AI more equitable? A2: Begin with three actionable steps:

- Set and Monitor Explicit Diversity Targets: Based on disease epidemiology, proactively set enrollment goals for underrepresented groups (e.g., "30% of our cohort for Disease X will be from Population Y") [11]. Monitor this in real-time.

- Expand Eligibility Criteria Review: Use AI not to restrict, but to simulate expanded criteria. Work with clinicians to analyze how relaxing certain strict, non-safety-critical eligibility criteria (common in oncology) could increase diversity while maintaining scientific integrity [13].

- Incorporate Social Determinants of Health (SDOH) Data: If possible and privacy-compliant, use anonymized ZIP code-level SDOH data (e.g., transportation access, income level) to identify and mitigate geographic recruitment deserts, moving beyond purely clinical site selection [9].

Q3: How do we balance the demand for explainable AI with the superior performance of complex "black box" models like deep neural networks? A3: This is a key trade-off. The resolution involves shifting the question from "which model" to "what explanation." The goal is not always a fully interpretable model but a reliable explanation of a complex model's output. Use post-hoc xAI techniques (e.g., counterfactual explanations) to generate trustworthy insights. For example, "The model predicts high toxicity because this molecule contains a reactive thioester group, similar to known toxic compound Z." This provides the necessary scientific insight without sacrificing performance [7] [8]. Furthermore, regulatory guidance is evolving to accept well-validated explanations even for complex models [7].

Q4: Who is ultimately responsible if an AI tool leads to biased outcomes in drug discovery? A4: Responsibility is shared across the ecosystem, but primary accountability lies with the drug development sponsor and the AI tool developers. Researchers have a professional obligation to conduct due diligence. This includes auditing AI tools for bias, demanding transparency from vendors, and adhering to ethical frameworks like the four-principle approach (autonomy, justice, non-maleficence, beneficence) for the entire AI-assisted R&D cycle [10]. Regulatory agencies like the FDA are clarifying guidelines, but the implementation responsibility rests with the industry [13].

Table 1: Survey of Stroke Clinical Trial Researchers on Minority Inclusion Practices (n=93) [11]

| Practice | Number of Researchers | Percentage |
|---|---|---|
| Proactively set minority recruitment goals | 43 | 51.2% |
| Required cultural competency staff training | 29 | 36.3% |
| Collaborated with community on trial design | 44 | 51.2% |
| Reported being "successful" in minority recruitment | 31 | 36.9% |

Table 2: Analysis of Bias Risk in Healthcare AI Studies [8]

| Study Focus | Finding | Implication for Drug Discovery |
|---|---|---|
| General Healthcare AI Models (n=48) | 50% had high risk of bias (ROB); only 20% low ROB. | Half of published models may have significant fairness issues. |
| Neuroimaging AI for Psychiatry (n=555) | 83% rated high ROB; 97.5% used only high-income region data. | Extreme geographic/data bias limits global applicability of tools. |

Experimental Protocol: Dual-Track Validation for AI-Predicted Toxicity

Objective: To validate and mitigate risk from an AI model predicting a novel compound's intergenerational toxicity, a known blind spot in accelerated AI-driven development [10].

Background: AI can simulate virtual animal models, but a "black box" prediction of long-term safety is insufficient. This protocol synchronizes in silico and in vivo tracks.

Materials: See "The Scientist's Toolkit" below.

Procedure:

- AI Prediction Phase: Input the novel compound structure into the trained toxicity prediction model (e.g., using DeepChem). Record the predicted toxicity score and the xAI-derived rationale (e.g., highlighted molecular alerts) [10] [7].

- Dual-Track Experimental Design:

- Track A (AI-Informed In Vivo): Design a targeted animal study focusing on the specific toxicity endpoint and organ system predicted by the AI model. Use the xAI rationale to select relevant biomarkers for monitoring.

- Track B (Standard In Vivo): Run a parallel, traditional toxicology study following standard regulatory guidelines (e.g., ICH S5), which is broader and not informed by the AI prediction.

- Analysis & Reconciliation:

- Compare outcomes from Tracks A and B. Does Track A confirm the AI prediction with greater efficiency?

- Critically, does Track B reveal any unpredicted toxicities missed by the AI? This is crucial for risk mitigation.

- Use the combined data to refine the AI model, closing the loop between prediction and biological reality.
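The reconciliation step above can be sketched as simple set comparisons. This is a minimal illustration, not part of the cited protocol: the finding labels, the `reconcile` helper, and the representation of a finding as an (organ system, endpoint) pair are all hypothetical.

```python
# Hypothetical findings: each is an (organ system, endpoint) pair.
ai_predicted = {("liver", "steatosis")}
track_a_observed = {("liver", "steatosis")}
track_b_observed = {("liver", "steatosis"), ("testis", "reduced F1 fertility")}


def reconcile(predicted, track_a, track_b):
    """Sort findings into confirmed, missed, and unconfirmed sets."""
    observed = track_a | track_b
    return {
        "confirmed": predicted & observed,      # predicted and seen in vivo
        "missed_by_ai": track_b - predicted,    # seen only by the standard study
        "unconfirmed": predicted - observed,    # predicted but never observed
    }


report = reconcile(ai_predicted, track_a_observed, track_b_observed)
for label, findings in report.items():
    print(label, sorted(findings))
```

Anything in `missed_by_ai` is a candidate for the model-refinement step, since it marks a toxicity that only the broader Track B study surfaced.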

Ethical Note: This protocol aligns with the non-maleficence and beneficence principles by actively seeking to uncover harm missed by accelerated AI cycles [10].

Visualizing the Workflow and Framework

Diagram 1: The Bias Amplification Cycle & Mitigation Points in AI Drug Discovery

Diagram 2: Operational AI Ethics Framework for the Drug Development Lifecycle [10]

Table 3: Essential Resources for Bias-Aware AI Research in Drug Discovery

| Tool / Resource | Function / Purpose | Key Consideration for Bias Mitigation |

|---|---|---|

| DeepChem | Open-source toolkit for deep learning in drug discovery, chemistry, and biology [10]. | Allows for building and dissecting models. Use to implement and test fairness-aware graph neural networks. |

| SHAP/LIME Libraries | Explainable AI (xAI) libraries for interpreting model predictions [7] [8]. | Core diagnostic tool. Use to audit which features drive predictions and identify proxy bias. |

| Synthetic Data Generation Tools (GANs, SMOTE) | Generates synthetic data samples to balance underrepresented classes in training sets [7] [9]. | Apply carefully to augment rare subgroups. Must validate that synthetic data preserves real biological variance. |

| AI Fairness 360 (AIF360) / Fairlearn | Open-source toolkits containing algorithms to detect and mitigate bias throughout the ML lifecycle [8]. | Provides standardized metrics (demographic parity, equalized odds) and debiasing algorithms for systematic use. |

| Community Advisory Board (CAB) Framework | Structured partnership with patient and community representatives [11]. | Critical for external validity. Use CABs to review AI-driven recruitment plans, consent forms, and trial design for cultural appropriateness. |

| FDA Guidance on Enhancing Clinical Trial Diversity | Final guidance document outlining approaches to broaden eligibility criteria, enrollment practices, and trial designs [13]. | Regulatory benchmark. Use to inform the development of AI tools for recruitment, ensuring they align with agency expectations for diversity. |

The integration of Artificial Intelligence (AI) into drug discovery promises to revolutionize the field by accelerating target identification, compound screening, and predictive toxicology [14]. However, the inherent "black box" problem—where the decision-making process of complex AI models is opaque—poses a fundamental challenge to scientific validation, regulatory approval, and ethical deployment [7]. This opacity conflicts with core ethical principles essential to biomedical research: respect for autonomy, which requires understandable information for consent; justice, which demands fair and unbiased outcomes; and non-maleficence, the duty to prevent harm [15].

This technical support center is designed to help researchers, scientists, and drug development professionals navigate these challenges. By framing common technical issues within an ethical framework and providing actionable troubleshooting guides, we aim to bridge the gap between advanced AI capabilities and the rigorous, principled standards required for trustworthy drug discovery.

Troubleshooting Guide: Ethical AI in Practice

This section addresses specific, high-impact problems grouped by the ethical principle they most directly impact. Each entry follows a problem-diagnosis-solution format.

Principle: Explicability

- Core Question: How can we understand and trust AI model predictions? [15]

- Common Issue: Model Interpretability & "Black Box" Predictions

- Problem: A deep learning model for predicting compound efficacy shows high accuracy but provides no insight into which molecular features drove the prediction, making scientific validation and hypothesis generation impossible [7].

- Diagnosis: This is a classic explicability deficit. The model lacks integrated Explainable AI (xAI) techniques, preventing researchers from transforming predictions into actionable biological insights [7].

- Solution: Implement post-hoc and intrinsic xAI methods.

- Apply SHAP (SHapley Additive exPlanations) or LIME (Local Interpretable Model-agnostic Explanations): Use these tools on your model to generate feature importance scores for individual predictions, highlighting key molecular descriptors [7].

- Employ Counterfactual Analysis: Use xAI tools to ask "what-if" questions (e.g., "How would the prediction change if this functional group were removed?") to explore the model's decision boundaries and refine compound design [7].

- Document Rationale: For regulatory compliance, especially under frameworks like the EU AI Act, maintain an audit trail of the xAI methods used and the insights derived to demonstrate transparency [7].

Principle: Justice

- Core Question: How do we ensure AI promotes fairness and does not perpetuate bias? [15]

- Common Issue: Bias in Training Data and Model Outputs

- Problem: A model trained primarily on genomic data from populations of European ancestry performs poorly when predicting drug responses for other demographic groups, risking inequitable health outcomes [7].

- Diagnosis: The training data suffers from a representation bias and potentially a historical bias, leading the AI to reproduce and amplify existing healthcare disparities [7].

- Solution: Proactively identify and mitigate bias throughout the AI lifecycle.

- Bias Audit: Before training, use tools like Aequitas or Fairlearn to audit your dataset for representation gaps across key demographic variables (e.g., sex, ancestry) [7].

- Mitigation Strategies:

- Technical: Apply algorithmic fairness constraints during model training or use post-processing techniques to calibrate outputs for different groups [7].

- Data-Centric: Augment data using synthetic data generation techniques (e.g., via Generative Adversarial Networks) to carefully balance underrepresented scenarios without compromising privacy [7].

- Continuous Monitoring: Establish fairness metrics (e.g., equalized odds, demographic parity) and monitor them on validation sets that mirror real-world diversity [16].
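To make the two fairness metrics named above concrete, here is a minimal pure-Python sketch. It assumes binary labels and predictions, that every group contains both positive and negative cases, and that equalized odds is summarized as the larger of the true-positive-rate gap and the false-positive-rate gap. The toy data and helper names are illustrative only. In practice a toolkit such as Fairlearn or AIF360 would normally be used instead.

```python
from collections import defaultdict


def group_rates(y_true, y_pred, groups):
    """Per-group selection rate, true-positive rate, and false-positive rate."""
    counts = defaultdict(lambda: {"n": 0, "sel": 0, "pos": 0, "tp": 0, "neg": 0, "fp": 0})
    for t, p, g in zip(y_true, y_pred, groups):
        c = counts[g]
        c["n"] += 1
        c["sel"] += p
        if t == 1:
            c["pos"] += 1
            c["tp"] += p
        else:
            c["neg"] += 1
            c["fp"] += p
    # Assumes each group has at least one positive and one negative example.
    return {
        g: {
            "selection_rate": c["sel"] / c["n"],
            "tpr": c["tp"] / c["pos"],
            "fpr": c["fp"] / c["neg"],
        }
        for g, c in counts.items()
    }


def gap(rates, key):
    """Largest between-group difference for one rate."""
    values = [r[key] for r in rates.values()]
    return max(values) - min(values)


# Toy validation set with two demographic groups (illustrative values).
y_true = [1, 1, 0, 0, 1, 1, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

rates = group_rates(y_true, y_pred, groups)
demographic_parity_diff = gap(rates, "selection_rate")
equalized_odds_diff = max(gap(rates, "tpr"), gap(rates, "fpr"))
print(demographic_parity_diff, equalized_odds_diff)
```

In this toy set both groups are selected at the same rate, so demographic parity looks satisfied, yet the error rates differ between groups. That is why monitoring more than one metric is useful.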


Principle: Non-Maleficence

- Core Question: How do we prevent AI from causing harm? [15] [17]

- Common Issue: AI Hallucinations and Erroneous Predictions

- Problem: A generative AI model for de novo molecular design proposes compounds with chemically impossible structures or unrealistic binding affinities, wasting valuable experimental resources [14].

- Diagnosis: The model may be generating "hallucinations"—confident but incorrect outputs—due to overfitting, training on noisy data, or a lack of grounding in physical laws [14] [7].

- Solution: Implement robust validation and grounding pipelines.

- Rule-Based Filtering: Integrate a post-generation filter that checks all proposed molecules against fundamental rules of chemistry (e.g., valency, synthetic accessibility scores).

- Multi-Model Consensus: Use a panel of different, independently trained models (an "ensemble") to evaluate outputs. Predictions are only advanced if a consensus is reached [16].

- Iterative Human-in-the-Loop (HITL) Review: Design workflows where AI-generated candidates are automatically flagged for expert review based on uncertainty metrics (e.g., high prediction variance) or novelty scores [14].
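The consensus and human-in-the-loop steps above could be combined along the following lines. This is a sketch under stated assumptions: the thresholds (`advance_at`, `max_spread`), the candidate IDs, and the use of population standard deviation as the uncertainty metric are all hypothetical choices, not values from the cited sources.

```python
from statistics import mean, pstdev


def triage(candidate_scores, advance_at=0.7, max_spread=0.15):
    """Route each candidate by ensemble agreement.

    candidate_scores maps a candidate ID to the scores produced by
    independently trained models (higher means more promising).
    """
    decisions = {}
    for cid, scores in candidate_scores.items():
        avg, spread = mean(scores), pstdev(scores)
        if spread > max_spread:
            decisions[cid] = "expert_review"  # models disagree: flag for a human
        elif avg >= advance_at:
            decisions[cid] = "advance"        # consensus that the candidate is good
        else:
            decisions[cid] = "reject"         # consensus that it is not
    return decisions


# Illustrative scores from a three-model ensemble.
scores = {
    "cand-001": [0.82, 0.85, 0.80],
    "cand-002": [0.95, 0.30, 0.60],
    "cand-003": [0.20, 0.25, 0.15],
}
decisions = triage(scores)
print(decisions)
```

Checking disagreement before the mean is deliberate: a high average produced by one overconfident model should go to an expert rather than straight to the bench.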

Principle: Autonomy

- Core Question: How does AI support, rather than undermine, informed human decision-making? [15] [18]

- Common Issue: Informed Consent for AI-Driven Clinical Trials

- Problem: Patients struggle to understand how their data will be used by complex AI algorithms in a clinical trial, compromising the validity of informed consent [18].

- Diagnosis: Traditional consent forms are ill-suited for explaining dynamic, data-driven AI processes. This fails to provide the "epistemic scaffolding" needed for genuine autonomous decision-making [18].

- Solution: Develop AI-enhanced consent processes that scaffold understanding.

- Structured LLM Interaction: Implement a secure, clinically validated Large Language Model (LLM) interface. This allows patients to ask questions in their own words about AI's role, data privacy, and algorithm-based decisions at their own pace [18].

- Dynamic Documentation: The system generates a report summarizing the patient's questions, concerns, and level of understanding, which is then reviewed by the study physician to address any remaining gaps [18].

- Transparency of Process: Clearly communicate the core principles: that AI is a tool to analyze patterns, that human researchers oversee all decisions, and that patients retain the right to withdraw their data [19].

Experimental Protocols for Ethical AI Validation

Protocol 1: xAI Validation for a Target Identification Model

- Objective: To validate and explain the predictions of a deep learning model identifying novel disease-associated protein targets.

- Materials: Trained target identification model, hold-out validation dataset, SHAP/LIME libraries, access to biological pathway databases (e.g., KEGG, Reactome).

- Method:

- Prediction & Baseline Interpretation: Run the validation dataset through the model. For each high-priority predicted target, generate a baseline SHAP force plot.

- Biological Plausibility Check: Cross-reference the top 10 features (e.g., gene expression levels, protein interaction network centrality) driving each prediction with known biological literature and pathway databases.

- Counterfactual Testing: For a subset of predictions, systematically alter key input features (e.g., simulate a different mutation status) and re-run the model to confirm the direction of change aligns with biological expectation [7].

- Expert Review Panel: Present the xAI outputs (SHAP plots, counterfactual results) to a panel of disease biologists for blind assessment of the rationale's plausibility.

- Success Metric: >80% of high-priority predictions must receive a "biologically plausible" or "very plausible" rating from the expert panel based on the xAI-provided rationale.

Protocol 2: Bias Detection and Mitigation in a Toxicity Predictor

- Objective: To audit and mitigate subgroup performance disparity in a model predicting compound hepatotoxicity.

- Materials: Toxicity prediction model, training and test datasets annotated with compound structural classes and demographic origin of the underlying assay data, fairness auditing toolkit (Fairlearn).

- Method:

- Disparity Measurement: Segment your test set by compound structural class (e.g., kinase inhibitors vs. GPCR ligands). Calculate performance metrics (AUC, precision, recall) for each subgroup [7].

- Root Cause Analysis: If performance disparity >15% is found, audit the training data distribution for the underperforming class. Analyze feature importance differences between groups using xAI.

- Mitigation Intervention: Employ a re-weighting technique (assigning higher sample weights to the underrepresented class during training) or use a fairness-constrained optimizer [7].

- Validation: Re-train the model with the mitigation strategy. Re-evaluate performance on the held-out test set to confirm reduction in disparity without significant overall performance loss.

- Success Metric: Post-mitigation, the maximum performance gap between any two major compound structural classes is reduced to <10%.
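The disparity measurement and re-weighting steps of Protocol 2 can be sketched as follows. The example assumes the 15% trigger is read as an absolute gap in AUC, uses toy labels and scores, and implements inverse-frequency sample weights as one simple re-weighting scheme. None of these specifics come from the cited sources.

```python
from collections import Counter


def auc(labels, scores):
    """AUC as the probability that a random positive outranks a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def subgroup_auc(labels, scores, classes):
    """AUC computed separately for each structural class."""
    result = {}
    for cls in set(classes):
        idx = [i for i, c in enumerate(classes) if c == cls]
        result[cls] = auc([labels[i] for i in idx], [scores[i] for i in idx])
    return result


def inverse_frequency_weights(classes):
    """Sample weights that give every class the same total weight."""
    counts = Counter(classes)
    n, k = len(classes), len(counts)
    return [n / (k * counts[c]) for c in classes]


# Toy test set: six kinase inhibitors, four GPCR ligands (illustrative values).
classes = ["kinase"] * 6 + ["gpcr"] * 4
labels = [1, 1, 1, 0, 0, 0, 1, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.2, 0.1, 0.6, 0.4, 0.5, 0.2]

per_class = subgroup_auc(labels, scores, classes)
auc_gap = max(per_class.values()) - min(per_class.values())
needs_root_cause_analysis = auc_gap > 0.15
weights = inverse_frequency_weights(classes)
print(per_class, auc_gap, needs_root_cause_analysis)
```

The resulting `weights` list could be passed as per-sample weights when re-training, after which the same `subgroup_auc` check is repeated on the held-out test set.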

Table 1: AI in Biotech: Market Forecast and Performance Metrics

This table synthesizes key quantitative data on the impact and expectations for AI in drug discovery [16].

| Metric Category | Specific Metric | 2024/2025 Value | 2034 Projection/Note | Source / Context |

|---|---|---|---|---|

| Market Size | Global AI in Biotech Market | $4.70B (2024) | $27.43B (2034) | Projected CAGR of 19.29% [16]. |

| Market Size | Global AI in Biotech Market | $5.60B (2025) | - | Expected yearly growth [16]. |

| Pipeline Impact | Share of AI-discovered drugs | - | ~30% (by 2025) | Projection from World Economic Forum [16]. |

| Efficiency Gains | Time savings for pre-clinical stage | - | Up to 40% saved | For challenging targets [16]. |

| Efficiency Gains | Cost savings for pre-clinical stage | - | Up to 30% saved | For challenging targets [16]. |

| Benchmarking | Phase 2 trial failure rates | No significant difference | - | Between AI-discovered and traditional drugs [16]. |
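As a quick consistency check on the market-size rows, the compound annual growth rate implied by the 2024 and 2034 figures can be recomputed directly. The snippet only uses the numbers already shown in the table. The variable names are illustrative.

```python
start_value = 4.70   # USD billions, 2024 (from the table)
end_value = 27.43    # USD billions, 2034 (from the table)
years = 2034 - 2024

# CAGR = (end / start) ** (1 / years) - 1
cagr = (end_value / start_value) ** (1 / years) - 1

# Forward checks using the quoted 19.29% rate.
value_2025 = start_value * (1 + 0.1929)
value_2034 = start_value * (1 + 0.1929) ** years

print(f"implied CAGR: {cagr:.2%}")
print(f"2025 at 19.29%: ${value_2025:.2f}B")
print(f"2034 at 19.29%: ${value_2034:.2f}B")
```

The implied rate and the one-year projection should land close to the quoted 19.29% and $5.60B, which suggests the three market-size figures are mutually consistent.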

Table 2: Research Reagent Solutions for Ethical AI Experimentation

Essential software tools and frameworks for implementing the ethical principles discussed [16] [18] [7].

| Item Name | Category | Function/Brief Explanation |

|---|---|---|

| SHAP (SHapley Additive exPlanations) | xAI Library | Explains the output of any machine learning model by calculating the contribution of each feature to a specific prediction, based on game theory. |

| LIME (Local Interpretable Model-agnostic Explanations) | xAI Library | Creates a local, interpretable model to approximate the predictions of a black-box model for individual instances. |

| Fairlearn | Fairness Toolkit | An open-source Python package to assess and improve the fairness of AI systems, including metrics and mitigation algorithms. |

| AI Fairness 360 (AIF360) | Fairness Toolkit | A comprehensive open-source toolkit from IBM with metrics, datasets, and algorithms to check and mitigate bias throughout the AI lifecycle. |

| Clinically-Validated LLM Interface | Autonomy Scaffold | A controlled Large Language Model system, fine-tuned on medical literature and consent guidelines, to support patient Q&A and comprehension monitoring [18]. |

| Synthetic Data Generation Platform (e.g., GANs) | Data Augmentation | Generates realistic, artificial datasets to balance underrepresented groups in training data, mitigating bias while protecting privacy [7]. |

| Multi-Agent Lab System (e.g., BioMARS) | Autonomous Research | A system using LLM agents to design, execute, and inspect biological experiments, enhancing reproducibility but requiring human oversight for complex tasks [16]. |

Visual Guides: Workflows and Frameworks

Diagram 1: xAI-Integrated Validation Workflow for Drug Discovery AI

This diagram outlines a robust workflow integrating Explainable AI (xAI) techniques to validate and interpret predictions from a black-box model, ensuring scientific and ethical scrutiny.

Diagram 2: Scaffolded Autonomy Process for AI-Informed Consent

This diagram illustrates the scaffolded autonomy process using LLMs to enhance patient understanding and support truly informed consent for AI-involved clinical research [18].

Technical Support Center: Troubleshooting AI in Drug Discovery Research

Welcome, Researcher. This support center provides targeted guidance for addressing the "black box" problem in AI-driven drug discovery. The following troubleshooting guides and FAQs are designed to help you diagnose failures, improve model interpretability, and validate AI outputs within your experimental workflows [20] [7].

Troubleshooting Guide: Common Experimental Failures

This guide addresses frequent pain points where a lack of explainability can derail a research pipeline.

Issue 1: AI Model for Target Identification Shows High Validation Accuracy but Suggests Biologically Implausible Targets.

- Root Cause: The model is likely exploiting dataset bias or confounding features rather than learning the true biological signal [20] [7]. For example, a model may associate a disease with lab-specific slide preparation artifacts or with patient demographic data correlated with the condition, not the underlying pathology.

- Diagnostic Step: Perform feature attribution analysis using explainable AI (XAI) techniques like SHAP or LIME. Examine the top features influencing the model's predictions.

- Solution: If features are non-biological (e.g., image background, batch identifiers), you must deconfound your training data. Apply techniques such as batch effect correction, stratified sampling, or use a custom explainability framework to design a test that isolates the signal of interest [20] [21].

- Preventive Measure: Integrate XAI checks during model development, not just post-hoc. Use diverse, multi-center datasets for training to reduce bias [21] [7].

Issue 2: AI-Designed Compound Fails in Preclinical Toxicity Studies Despite Favorable In Silico ADMET Predictions.

- Root Cause: The toxicity was not represented in the model's training data, or the model's chemical space exploration prioritized binding efficacy over safety, venturing into regions with unknown toxicity profiles [22] [23].

- Diagnostic Step: Use counterfactual explanation tools. Ask: "What minimal changes to the compound's structure would flip the model's prediction from 'non-toxic' to 'toxic'?" Analyze if those structural motifs are known in toxicology databases [7].

- Solution: Enhance training data with high-quality toxicity data, including published failure data. Implement multi-objective optimization in your generative AI, explicitly penalizing predicted toxicity and structural alerts during molecule generation [14] [23].

- Preventive Measure: Move toxicity prediction earlier in the workflow. Use AI to run in silico panels against a wider range of off-target proteins beyond the standard secondary pharmacology panel [22].
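The counterfactual question in the diagnostic step ("what minimal changes would flip the prediction?") can be illustrated with a brute-force search over binary structural features. Everything here is a hypothetical stand-in: the feature names, the weights, and the `predict_toxic` function replace a real trained model, and exhaustive search is only practical for a handful of features.

```python
from itertools import combinations

# Hypothetical structural-alert features and weights for a toy classifier.
FEATURES = ["nitro_group", "michael_acceptor", "aromatic_amine", "carboxylic_acid"]
WEIGHTS = {
    "nitro_group": 0.45,
    "michael_acceptor": 0.35,
    "aromatic_amine": 0.30,
    "carboxylic_acid": -0.20,
}
THRESHOLD = 0.5


def predict_toxic(molecule):
    """Stand-in for a trained model: weighted sum of the features present."""
    score = sum(WEIGHTS[f] for f in FEATURES if molecule[f])
    return score >= THRESHOLD


def minimal_counterfactuals(molecule):
    """Return every smallest set of feature flips that changes the prediction."""
    original = predict_toxic(molecule)
    for size in range(1, len(FEATURES) + 1):
        hits = []
        for flips in combinations(FEATURES, size):
            variant = dict(molecule)
            for f in flips:
                variant[f] = not variant[f]  # add or remove the motif
            if predict_toxic(variant) != original:
                hits.append(flips)
        if hits:
            return hits  # stop at the smallest size that flips the label
    return []


compound = {
    "nitro_group": False,
    "michael_acceptor": True,
    "aromatic_amine": False,
    "carboxylic_acid": False,
}
flips = minimal_counterfactuals(compound)
print(predict_toxic(compound), flips)
```

The motifs returned in `flips` are the ones worth checking against toxicology databases, because they mark where the compound sits closest to the model's decision boundary.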

Issue 3: High-Performing Diagnostic AI Model Fails in External Clinical Validation.

- Root Cause: Shortcut learning. The model has learned spurious correlations in your training set (e.g., associating a brand of imaging equipment with a disease prevalent at a specific hospital) [20].

- Diagnostic Step: Employ model behavior visualization tools (like the LENS system). Test if the model activates on irrelevant background features or if its attention maps align with a clinician's expert focus areas [20].

- Solution: Create a challenge test set designed to break the shortcut. For a histopathology model, this could involve slides with the disease but without the confounding feature, or slides with the confounding feature but without the disease. Retrain the model using methods that force it to focus on the core pathology [20].

- Preventive Measure: Collaborate closely with clinical domain experts during dataset curation and model evaluation to identify potential shortcuts [20].
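A challenge set of the kind described in the solution step can be scored per combination of disease status and confounder. The sketch below assumes a balanced 2x2 design and uses a deliberately flawed, hypothetical `shortcut_model` to show the signature such a test exposes.

```python
from collections import defaultdict


def accuracy_by_cell(records, predict):
    """Accuracy for each (disease, confounder) combination."""
    hits, totals = defaultdict(int), defaultdict(int)
    for rec in records:
        cell = (rec["disease"], rec["confounder"])
        totals[cell] += 1
        hits[cell] += int(predict(rec) == rec["disease"])
    return {cell: hits[cell] / totals[cell] for cell in totals}


def shortcut_model(rec):
    # Hypothetical model that keys on the confounder (e.g., scanner brand)
    # instead of the pathology itself.
    return rec["confounder"]


# Balanced challenge set: five samples per combination.
challenge_set = [
    {"disease": d, "confounder": c}
    for d in (True, False)
    for c in (True, False)
    for _ in range(5)
]

cells = accuracy_by_cell(challenge_set, shortcut_model)
for cell, acc in sorted(cells.items()):
    print(cell, acc)
```

A model that has learned the pathology should score similarly in all four cells. Perfect accuracy where disease and confounder agree, combined with failure where they disagree, points to shortcut learning.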

Table 1: Summary of Common AI Model Failures and Diagnostic Actions

| Failure Mode | Likely Root Cause | Key Diagnostic Action | Primary Solution Path |

|---|---|---|---|

| Biologically implausible predictions | Dataset bias & confounding features | Perform feature attribution analysis (SHAP, LIME) | Deconfound training data; use multi-source datasets [20] [7] |

| Unexpected toxicity in vivo | Gaps in toxicity training data; generative model bias | Conduct counterfactual explanation analysis | Augment data with toxicity failures; implement multi-objective AI design [22] [23] |

| Poor external validation performance | Shortcut learning (spurious correlations) | Use model visualization (e.g., attention maps) | Create and test against a challenge set; apply robust training [20] |

Frequently Asked Questions (FAQs)

Q1: Our team prioritizes getting accurate predictions to accelerate projects. Why should we slow down to implement explainability? A1: Because unexplainable accuracy is a high-risk liability. A model with 95% accuracy on your test set may have learned a shortcut that fails completely upon deployment or in the next phase of research [2] [20]. In drug discovery, where decisions cascade into years of investment and impact patient safety, understanding the "why" is non-negotiable for risk mitigation. Explainability is not about slowing down; it's about ensuring your project's foundation is solid [14] [7].

Q2: What is a practical first step to make our existing "black box" model more interpretable? A2: Start with post-hoc explanation techniques applied to critical predictions. For a compound prioritization model, use a tool like SHAP to generate a list of the molecular features (e.g., specific functional groups, solubility parameters) that most contributed to a single compound's high score. Present this list to your medicinal chemists. Their feedback on whether these features make sense will immediately validate or question the model's logic and build collaborative trust [21] [7].

Q3: We found a significant demographic bias in our training data. How can we fix the model without recollecting all the data? A3: Several technical strategies can mitigate bias:

- Data Augmentation: Use synthetic data generation or weighting techniques to balance the representation of underrepresented groups in your training set [7].

- Algorithmic Fairness: Employ fairness-aware machine learning algorithms that include constraints or penalties for biased predictions during model training [7].

- Federated Learning: If data silos are the issue, consider a federated learning approach. This allows you to train a model across multiple, diverse datasets without the data ever leaving its secure source, naturally improving representativeness [23].

The key is to use XAI tools to first quantify the bias and then monitor its reduction during these mitigation steps [7].

Q4: Are we legally required to use explainable AI for drug discovery? A4: The regulatory landscape is evolving. While AI used "for the sole purpose of scientific research and development" may be exempt from strict regulations like the EU AI Act, the principle of transparency is becoming a standard expectation [7]. Regulatory agencies like the FDA and EMA emphasize the need for understanding and validating AI tools used in the development process. Furthermore, if an AI-derived product enters clinical trials or clinical use, its validation will require substantial evidence of robustness and understanding, which XAI directly supports [24] [7]. Proactively adopting explainability is a best practice for future-proofing your research.

Q5: How can we validate an AI model that claims to perform a "superhuman" task, like predicting genetic mutations from histology images? A5: You must design a "superhuman test" with a human-verifiable ground truth [20]. The protocol from Serre's group is exemplary:

- Isolate the Signal: Use a precise method like laser capture microdissection to extract pure cell populations of interest, removing confounding tissue features [20].

- Obtain Definitive Ground Truth: Genetically sequence these isolated cells to obtain a definitive label (e.g., KRAS+ or KRAS-) [20].

- Train and Test on Clean Data: Train your model only on these isolated, genetically confirmed image patches. Then test it on a held-out set of similarly clean patches [20].

- Analyze Failures: If the model fails on this clean test, it cannot perform the superhuman task. If it succeeds, you have strong evidence it is learning the true visual signature of the mutation. This method moves validation beyond correlation to causation [20].

Table 2: Experimental Protocol for Validating a "Superhuman" AI Diagnostic Model [20]

| Step | Protocol Detail | Purpose | Key Reagent/Instrument |

|---|---|---|---|

| 1. Sample Preparation | Perform laser capture microdissection (LCM) on tissue slides to isolate specific, homogeneous cell clusters. | To create a purified input signal, removing microenvironmental confounders. | Laser Capture Microdissection System |

| 2. Ground Truthing | Subject the isolated cell clusters to genetic sequencing (e.g., RNA-seq, PCR) or proteomic analysis. | To obtain a definitive, molecular-level label for each image patch. | Next-Generation Sequencer |

| 3. Data Curation | Pair each high-resolution image patch of isolated cells with its molecular profile label. Curate training and test sets. | To create a clean, causally-linked dataset for model training and evaluation. | Image Database Management Software |

| 4. Model Training & Testing | Train a vision model (e.g., CNN) on the training set. Evaluate its performance on the held-out test set of isolated patches. | To assess the model's ability to learn the genuine visual correlate of the molecular state. | GPU Cluster, Deep Learning Framework |

| 5. Explanation & Analysis | Use XAI methods (saliency maps, feature visualization) on model predictions to see what image features it used. | To verify the model is focusing on biologically plausible cellular morphology, not artifacts. | Explainability Toolbox (e.g., Captum, iNNvestigate) |

The Scientist's Toolkit: Research Reagent Solutions

Essential computational and data resources for building explainable, robust AI in drug discovery.

- Explainability Software Libraries: SHAP (SHapley Additive exPlanations) and LIME (Local Interpretable Model-agnostic Explanations). Used for post-hoc explanation of any machine learning model's individual predictions [21] [7].

- Specialized XAI Platforms: Tools like the LENS (Learnable Explanation via Network Structures) visualization system. Allows researchers to interactively probe which features a deep neural network uses for object recognition, crucial for diagnosing shortcut learning [20].

- High-Quality, Curated Public Databases: ChEMBL (bioactivity data), Protein Data Bank (PDB) (3D structures), UK Biobank (genomic and health data). Provide essential, structured data for training and validating models. Must be carefully harmonized to avoid batch effects [23].

- Federated Learning Platforms: Software like the Lifebit Federated AI Platform. Enables training models on distributed, private datasets across institutions without data movement, addressing data silo and privacy challenges while improving data diversity [23].

- In Silico Toxicity Prediction Suites: Tools that expand beyond standard panels to predict interactions with a wide range of off-target proteins and adverse outcome pathways. Critical for early toxicity flagging [22].

Visualizing the Black Box Problem & Solution Workflow

The following diagrams map the logical consequences of opaque AI models and outline a rigorous validation workflow to ensure reliability.

Diagram 1: Mapping how unexplainable AI models create multidimensional risks in biomedical research, synthesizing issues from algorithmic shortcuts to patient harm [2] [20] [22].

Diagram 2: A step-by-step experimental workflow for rigorously validating an AI model's claim to perform a "superhuman" biomedical task, ensuring it learns true biological signals and not data artifacts [20].

Explainable AI (XAI) in Action: Key Techniques and Real-World Applications in Pharma

The integration of Artificial Intelligence (AI) into drug discovery has revolutionized the identification of therapeutic targets and the optimization of drug candidates [25]. However, the "black box" nature of advanced AI models, where inputs and outputs are visible but the internal decision-making logic is obscured, poses a significant barrier to trust and adoption in the scientifically rigorous and safety-critical field of pharmaceutical research [2] [26]. This opacity makes it difficult for researchers to validate predictions, understand failure modes, and comply with regulatory standards for safety and efficacy.

Explainable AI (XAI) has emerged as a crucial interdisciplinary field aimed at making AI models more transparent, interpretable, and trustworthy [27]. Its core goals are to provide human-understandable insights into model predictions, establish accountability for AI-driven decisions, and ultimately build the confidence necessary for deploying AI in high-stakes scenarios like drug development [25]. This technical support center is designed to assist researchers in implementing XAI techniques to address the black box problem within their drug discovery workflows.

Understanding the XAI Landscape in Drug Discovery

The application of XAI in drug discovery is a rapidly growing field. A bibliometric analysis of research from 2002 to mid-2024 shows a decisive shift from theoretical exploration to active application.

Table: Annual Publication Trends in XAI for Drug Research (2017-2024) [3]

| Year | Total Publications (TP) | Description of Trend |

|---|---|---|

| 2017 & prior | <5 per year | Field in early exploration stage. |

| 2019-2021 | Avg. 36.3 per year | Period of significant growth and high-quality development. |

| 2022-2024 | >100 per year (avg.) | Steady development and increased academic attention. |

| Future | Projected continuous rise | Field is expected to maintain an upward trajectory. |

Geographically, research is concentrated in major scientific hubs, with specific regions developing distinct strengths.

Table: Top Countries Contributing to XAI in Drug Research (by Publication Count) [3]

| Rank | Country | Total Publications (TP) | Total Citations (TC) | TC/TP Ratio | Notable Research Focus |

|---|---|---|---|---|---|

| 1 | China | 212 | 2949 | 13.91 | High volume of research output. |

| 2 | USA | 145 | 2920 | 20.14 | Broad applications across the pipeline. |

| 3 | Germany | 48 | 1491 | 31.06 | Multi-target compounds, drug response prediction. |

| 4 | Switzerland | 19 | 645 | 33.95 | Molecular property prediction, drug safety. |

| 5 | Thailand | 19 | 508 | 26.74 | Biologics, peptides for infections and cancer. |

The high TC/TP ratios for countries like Germany, Switzerland, and Thailand indicate influential, high-impact research, often characterized by deep interdisciplinary collaboration between computational and biomedical scientists [3].

Core Technical Protocols for XAI in Drug Discovery

Implementing XAI requires systematic methodologies. Below are detailed protocols for two fundamental XAI approaches.

Experimental Protocol 1: Generating Model-Agnostic Explanations with SHAP (SHapley Additive exPlanations)

- Objective: To explain the prediction of any machine learning model (e.g., a bioactivity classifier) by quantifying the marginal contribution of each input feature (e.g., molecular descriptor, fingerprint bit).

- Materials: A trained AI/ML model, a dataset of molecular instances for explanation, the shap Python library.

- Methodology:

- Model Preparation: Load your pre-trained model (e.g., a random forest or deep neural network predicting binding affinity).

- Background Selection: Select a representative subset of your training data (~100-500 instances) to serve as the "background" distribution.

- Explainer Initialization: Instantiate a SHAP explainer object (e.g., `shap.KernelExplainer` for model-agnostic use) by passing your model's prediction function and the background dataset.

- Explanation Calculation: For a specific molecule of interest (the "query instance"), compute SHAP values using the explainer. Exact Shapley values compare the model's output with and without each feature across every possible coalition of the remaining features. Because the number of coalitions grows exponentially with the feature count, `KernelExplainer` approximates the values by sampling coalitions.

- Visualization & Interpretation:

- Use `shap.summary_plot` to view global feature importance across the dataset.

- Use `shap.force_plot` or `shap.decision_plot` to visualize the local explanation for the single query molecule, showing how each feature pushed the prediction from the base value to the final output.
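To make the "with and without each feature" idea in the protocol concrete, the following is a minimal sketch of an exact Shapley computation in plain Python. It does not use the `shap` library: `toy_model`, the three binary features, and the four background rows are hypothetical stand-ins, and exhaustive enumeration is only feasible for a handful of features.

```python
from itertools import combinations
from math import factorial

def toy_model(x):
    """Hypothetical bioactivity score with an interaction between features 0 and 1."""
    return 2.0 * x[0] + 1.0 * x[1] + 3.0 * x[0] * x[1] - 0.5 * x[2]

def coalition_value(model, query, background, coalition):
    """Mean model output when features in `coalition` take the query's values
    and all other features take their values from each background row."""
    total = 0.0
    for row in background:
        mixed = [query[i] if i in coalition else row[i] for i in range(len(query))]
        total += model(mixed)
    return total / len(background)

def exact_shapley(model, query, background):
    """Enumerate every coalition; the cost is exponential in the feature count."""
    n = len(query)
    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                gain = (coalition_value(model, query, background, s | {i})
                        - coalition_value(model, query, background, s))
                phi[i] += weight * gain
    return phi

# Hypothetical background distribution and query molecule (binary descriptors).
background = [[0, 0, 1], [1, 0, 0], [0, 1, 1], [1, 1, 0]]
query = [1, 1, 1]

base_value = coalition_value(toy_model, query, background, set())
shap_values = exact_shapley(toy_model, query, background)
prediction = toy_model(query)
```

The attributions sum to the gap between the prediction and the base value, which is the property the force and decision plots visualize. For real descriptor sets, a sampling-based explainer is the practical route.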

Experimental Protocol 2: Implementing Local Interpretable Explanations with LIME (Local Interpretable Model-agnostic Explanations)

- Objective: To create a simple, interpretable local surrogate model (like linear regression) that approximates the complex model's predictions for a specific instance and its immediate neighbors.

- Materials: A trained AI/ML model, a single query instance for explanation, and the `lime` Python library.

- Methodology:

- Instance Perturbation: Generate a new dataset of perturbed samples around the query instance (e.g., for text or image data, turn words/pixels on/off; for tabular data, perturb feature values).

- Black-Box Prediction: Use the complex, original "black-box" model to make predictions for each of these perturbed samples.

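- Surrogate Model Fitting: Fit an interpretable model (e.g., a linear model with Lasso regularization) on this new dataset, weighting each perturbed sample by its proximity to the query instance so that nearby samples dominate the fit. The features are the perturbations, and the labels are the corresponding black-box predictions.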

- Interpretation: Analyze the weights of the fitted linear model. Features with the largest absolute weights are the most important for the black-box model's prediction on the specific query instance. This provides an intuitive, localized explanation.
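The four steps above can be sketched without the `lime` package. This is a simplified illustration under stated assumptions, not the library's implementation: `black_box` is a hypothetical scorer over four fingerprint bits, perturbation means flipping bits, and a small ridge penalty is swapped in for Lasso so the fit can be solved in closed form.

```python
import math
import random

def black_box(bits):
    """Hypothetical opaque activity score over four fingerprint bits."""
    z = 3.0 * bits[0] - 2.0 * bits[1] + 0.2 * bits[2] + 1.5 * bits[0] * bits[3] - 1.0
    return 1.0 / (1.0 + math.exp(-z))

def solve(a, b):
    """Solve a small linear system by Gaussian elimination with partial pivoting."""
    n = len(b)
    m = [a[i][:] + [b[i]] for i in range(n)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    w = [0.0] * n
    for r in range(n - 1, -1, -1):
        w[r] = (m[r][n] - sum(m[r][c] * w[c] for c in range(r + 1, n))) / m[r][r]
    return w

def lime_explain(model, query, n_samples=1000, kernel_width=0.75, ridge=1e-3, seed=0):
    rng = random.Random(seed)
    d = len(query)
    xtwx = [[0.0] * (d + 1) for _ in range(d + 1)]
    xtwy = [0.0] * (d + 1)
    for _ in range(n_samples):
        # Instance perturbation: keep[i] == 1 keeps the query's bit, 0 flips it.
        keep = [rng.randint(0, 1) for _ in range(d)]
        sample = [q if k else 1 - q for q, k in zip(query, keep)]
        # Black-box prediction for the perturbed sample.
        y = model(sample)
        # Proximity weight: samples with fewer flipped bits count more.
        distance = (d - sum(keep)) / d
        weight = math.exp(-(distance ** 2) / (kernel_width ** 2))
        # Accumulate weighted normal equations (intercept column first).
        row = [1.0] + [float(k) for k in keep]
        for i in range(d + 1):
            xtwy[i] += weight * row[i] * y
            for j in range(d + 1):
                xtwx[i][j] += weight * row[i] * row[j]
    for i in range(d + 1):
        xtwx[i][i] += ridge  # ridge penalty, used here instead of Lasso
    return solve(xtwx, xtwy)[1:]  # surrogate weights without the intercept

query = [1, 0, 1, 1]
weights = lime_explain(black_box, query)
ranking = sorted(range(len(query)), key=lambda i: abs(weights[i]), reverse=True)
```

Each weight estimates how much keeping the query's value for that bit, rather than flipping it, shifts the black-box score near the query. `ranking` orders the bits by that local influence.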

The XAI-Integrated Drug Discovery Pipeline

The following diagram illustrates how XAI techniques integrate into and enhance a modern AI-supported drug development pipeline [25].

How SHAP Values Explain a Model's Prediction

This diagram details the computational process behind SHAP values, which are a cornerstone of model-agnostic explanation [3].

The Scientist's Toolkit: Essential XAI Research Reagents

Table: Key XAI Tools and Resources for Drug Discovery Research

| Tool/Resource Name | Type | Primary Function in Drug Discovery | Key Reference/Source |

|---|---|---|---|

| SHAP (SHapley Additive exPlanations) | Model-agnostic explanation library | Quantifies the contribution of each molecular feature (e.g., a chemical substructure) to a model's prediction, enabling local and global interpretability. | [3] [25] |

| LIME (Local Interpretable Model-agnostic Explanations) | Model-agnostic explanation library | Creates simple, local surrogate models (e.g., linear models) to approximate and explain individual predictions of complex models. | [3] [25] |

| ChEMBL, PubChem | Chemical & Bioactivity Databases | Provide large-scale, structured datasets of molecules and their biological properties essential for training and validating predictive AI models. | [3] |

| RDKit | Cheminformatics Toolkit | Handles molecular representation (e.g., SMILES, fingerprints), descriptor calculation, and substructure analysis, which are foundational for both model input and XAI output interpretation. | Implied in [3] [25] |

| Atomwise, Insilico Medicine Platforms | AI-Driven Drug Discovery Platforms | Real-world examples of integrated AI platforms where XAI is critical for validating target and compound selection decisions. | [26] |

Technical Support Center: FAQs & Troubleshooting

Q1: How do I validate if the explanations provided by an XAI method (like SHAP or LIME) are correct or trustworthy?

A1: Validating explanations is an active research area. A pragmatic multi-method approach is recommended [27]:

- Sanity Checks: Remove a feature deemed important by the explanation. Does the model's prediction change significantly? Conversely, does adding random noise to an "unimportant" feature leave the prediction stable?

- Stability Testing: Apply the XAI method multiple times with slight variations (e.g., different background samples for SHAP). Trustworthy explanations should be stable and not vary wildly.

- Human-in-the-Loop Evaluation: Present explanations to domain experts (medicinal chemists, biologists). Do the highlighted molecular features or biological pathways align with established domain knowledge?

- Explanation Accuracy: For surrogate-based methods like LIME, measure how well the simple explanation model fits the complex model's predictions in the local neighborhood (e.g., using R-squared).
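Two of the checks above, the deletion-style sanity check and the surrogate-fit R-squared, can be sketched in a few lines. Everything here is illustrative: `model` is a hypothetical stand-in for a trained predictor, the attribution vector is made up, and replacing a feature with an all-zero baseline is only one possible way to "remove" it.

```python
def model(x):
    """Hypothetical scorer standing in for a trained black-box model."""
    return 4.0 * x[0] + 0.1 * x[1] - 2.0 * x[2]

def deletion_effect(predict, x, baseline, index):
    """Absolute change in prediction when one feature is reset to its baseline."""
    ablated = list(x)
    ablated[index] = baseline[index]
    return abs(predict(x) - predict(ablated))

def r_squared(black_box_outputs, surrogate_outputs):
    """Fraction of the black box's local variance reproduced by the surrogate."""
    mean = sum(black_box_outputs) / len(black_box_outputs)
    ss_res = sum((y - s) ** 2 for y, s in zip(black_box_outputs, surrogate_outputs))
    ss_tot = sum((y - mean) ** 2 for y in black_box_outputs)
    return 1.0 - ss_res / ss_tot

x = [1.0, 1.0, 1.0]
baseline = [0.0, 0.0, 0.0]
attributions = [4.0, 0.1, -2.0]  # hypothetical output of an XAI method

# Sanity check: ablating the top-ranked feature should move the prediction
# far more than ablating the bottom-ranked one.
ranked = sorted(range(len(x)), key=lambda i: abs(attributions[i]), reverse=True)
top_effect = deletion_effect(model, x, baseline, ranked[0])
bottom_effect = deletion_effect(model, x, baseline, ranked[-1])

# Explanation accuracy: made-up black-box vs. surrogate predictions
# on four perturbed neighbours of the query.
fidelity = r_squared([0.9, 0.7, 0.4, 0.2], [0.85, 0.72, 0.41, 0.18])
```

If `top_effect` is not clearly larger than `bottom_effect`, or the local R-squared is low, the explanation deserves scrutiny before it informs any experiment.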

Q2: My model uses complex data types like molecular graphs or protein sequences. Which XAI methods are most suitable?

A2: For non-tabular data, you need specialized XAI approaches:

- Graph Neural Networks (GNNs): Use GNNExplainer or PGExplainer, which are designed to identify important nodes (atoms) and edges (bonds) within a molecular graph that contribute to the prediction.

- Sequence Models (for proteins/genes): Methods like attention visualization (for Transformer-based models) or input perturbation (systematically masking sequence segments) can highlight which residues or regions of the sequence the model "attends to."

- Integrated Gradients: A gradient-based method that can be applied to various data types by accumulating the model's gradients along a path from a baseline input (e.g., a blank graph or zeroed sequence) to the actual input.
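The path accumulation behind Integrated Gradients can be illustrated on a toy function. This sketch assumes a hypothetical smooth `model` over three inputs and uses finite-difference gradients so that it stays dependency-free; with a real neural network, an automatic-differentiation framework would supply the gradients instead.

```python
import math

def model(x):
    """Hypothetical smooth scorer: a logistic unit with one interaction term."""
    z = 1.5 * x[0] - 2.0 * x[1] + 0.5 * x[0] * x[2]
    return 1.0 / (1.0 + math.exp(-z))

def numerical_gradient(f, x, eps=1e-5):
    """Central finite differences, standing in for autodiff."""
    grad = []
    for i in range(len(x)):
        up, down = list(x), list(x)
        up[i] += eps
        down[i] -= eps
        grad.append((f(up) - f(down)) / (2 * eps))
    return grad

def integrated_gradients(f, x, baseline, steps=200):
    """Average the gradient along the straight path from baseline to x,
    then scale by the input difference (midpoint Riemann sum)."""
    n = len(x)
    avg_grad = [0.0] * n
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [baseline[i] + alpha * (x[i] - baseline[i]) for i in range(n)]
        grad = numerical_gradient(f, point)
        for i in range(n):
            avg_grad[i] += grad[i] / steps
    return [(x[i] - baseline[i]) * avg_grad[i] for i in range(n)]

x = [1.0, 0.5, 2.0]
baseline = [0.0, 0.0, 0.0]  # assumed "empty" input
attributions = integrated_gradients(model, x, baseline)
completeness_gap = abs(sum(attributions) - (model(x) - model(baseline)))
```

A small `completeness_gap` shows the attributions adding up to the difference between the prediction and the baseline prediction, which is a useful check that enough integration steps were used.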

Q3: The XAI output highlights thousands of features from my high-dimensional omics data. How can I make this interpretable?

A3: High-dimensional explanations require aggregation and contextualization:

- Pathway/Functional Enrichment Analysis: Do not interpret individual genes. Instead, map the top-weighted genes from the XAI output to biological pathways (using tools like DAVID, Enrichr, or GSEA). An explanation implicating a coherent biological pathway (e.g., "p53 signaling") is more meaningful than a list of genes.

- Hierarchical Summarization: For structural data, aggregate atomic-level SHAP values up to functional group or chemical scaffold levels to provide chemist-friendly explanations.

- Dimensionality Reduction: Visualize the explanation vectors using t-SNE or UMAP to see if molecules with similar explanations cluster together biologically.
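The hierarchical summarization step can be as simple as summing additive attributions over a grouping. The sketch below assumes made-up per-atom values and a hand-written atom-to-group mapping; in practice the mapping would more likely come from substructure matching in a cheminformatics toolkit such as RDKit.

```python
from collections import defaultdict

# Hypothetical per-atom attributions (atom index -> contribution to the prediction).
atom_attributions = {0: 0.12, 1: 0.30, 2: -0.05, 3: 0.22, 4: -0.10, 5: 0.01}

# Hypothetical assignment of each atom to a functional group.
atom_to_group = {
    0: "carboxylic acid",
    1: "carboxylic acid",
    2: "aromatic ring",
    3: "ester",
    4: "aromatic ring",
    5: "ester",
}

def aggregate_by_group(atom_attr, mapping):
    """Sum atom-level attributions within each functional group."""
    totals = defaultdict(float)
    for atom, value in atom_attr.items():
        totals[mapping[atom]] += value
    return dict(totals)

group_attributions = aggregate_by_group(atom_attributions, atom_to_group)
ranked_groups = sorted(group_attributions.items(), key=lambda kv: abs(kv[1]), reverse=True)
```

Summation is appropriate for additive attributions such as SHAP values because the group totals still add up to the same overall contribution. Other attribution types may need a different aggregation rule.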

Q4: I'm preparing a regulatory submission. How can XAI help demonstrate the validity of our AI-derived drug candidate?

A4: XAI provides critical evidence for regulatory science:

- Mechanistic Plausibility: Use XAI to demonstrate that the model's prediction for efficacy relies on features (e.g., binding to a specific protein pocket) with a known mechanistic link to the disease biology. This builds a "biologically plausible story."

- Safety Risk Assessment: Apply XAI to toxicity predictors to understand why a compound is flagged as potentially toxic (e.g., is it activating an adverse outcome pathway?). This informs safer lead optimization.

- Model Transparency Dossier: Compile XAI analyses as part of your documentation, showing consistent, stable, and intelligible reasoning behind model decisions, which aligns with regulatory pushes for transparent AI [2].

Q5: What are the most common pitfalls when implementing XAI, and how can I avoid them?

A5: Common pitfalls and their mitigations include:

- Pitfall: Confusing Correlation with Causation. An XAI method highlights a feature as important, but it may be a spurious correlation in the data.

- Mitigation: Corroborate findings with experimental data or established domain knowledge. Use controlled in silico experiments.

- Pitfall: Misinterpreting Local for Global. A feature important for one molecule's prediction may not be globally important.

- Mitigation: Always complement local explanations (for one compound) with global summaries (across the entire test set or representative clusters).

- Pitfall: Over-reliance on a Single XAI Method. Different methods can yield different explanations for the same prediction.

- Mitigation: Adopt a multi-method strategy. If SHAP, LIME, and a gradient-based method all highlight similar features, confidence in the explanation increases.

- Pitfall: Ignoring Data Quality. "Garbage in, garbage out" applies to explanations. Biased or poor-quality training data leads to untrustworthy explanations.

- Mitigation: Rigorously audit and curate training data. Use XAI itself as a diagnostic to see if models are relying on artifacts or biases in the data [26].
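One way to put the multi-method mitigation into practice is to compare the top-ranked features from each method. The sketch below uses invented attribution values for five common descriptors. The method names are only labels, and top-k Jaccard overlap is one of several reasonable agreement measures.

```python
from itertools import combinations

# Hypothetical attributions for the same prediction from three XAI methods.
methods = {
    "shap": {"logP": 0.40, "TPSA": -0.30, "MW": 0.05, "HBD": -0.20, "aromatic_rings": 0.02},
    "lime": {"logP": 0.35, "TPSA": -0.25, "MW": 0.22, "HBD": -0.10, "aromatic_rings": 0.01},
    "gradient": {"logP": 0.50, "TPSA": -0.10, "MW": 0.02, "HBD": -0.30, "aromatic_rings": 0.15},
}

def top_k(attributions, k):
    """Names of the k features with the largest absolute attribution."""
    ordered = sorted(attributions, key=lambda name: abs(attributions[name]), reverse=True)
    return set(ordered[:k])

def jaccard(a, b):
    """Overlap between two feature sets, from 0 (disjoint) to 1 (identical)."""
    return len(a & b) / len(a | b)

k = 3
top_sets = {name: top_k(attr, k) for name, attr in methods.items()}

# Pairwise agreement between methods.
agreement = {
    (m1, m2): jaccard(top_sets[m1], top_sets[m2])
    for m1, m2 in combinations(methods, 2)
}

# Features every method places in its top k.
consensus = set.intersection(*top_sets.values())
```

Features in `consensus` are the strongest candidates for experimental follow-up, while low pairwise agreement is a signal to investigate before trusting any single explanation.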

The integration of Artificial Intelligence (AI) and Machine Learning (ML) into drug discovery has revolutionized the identification of novel drug targets and the prediction of compound efficacy [7]. However, the "black box" nature of many advanced models—where inputs and outputs are visible but the internal logic is not—poses a critical barrier to scientific trust, regulatory acceptance, and the iterative refinement of hypotheses [1] [7]. This lack of transparency is particularly problematic in molecular analysis, where understanding why a model predicts a molecule to be active, toxic, or synthesizable is as crucial as the prediction itself [7].

This technical support center is framed within a broader thesis addressing the black box problem. It provides actionable guidance for implementing three cornerstone model-agnostic Explainable AI (XAI) techniques—SHAP, LIME, and Anchors—specifically for molecular analysis tasks. Model-agnostic methods are essential as they allow researchers to explain any existing black-box model (e.g., deep neural networks, complex ensembles) without requiring access to its internal architecture [28]. By enabling post-hoc interpretability, these techniques help researchers validate models, uncover spurious correlations, generate biologically plausible hypotheses, and build the confidence necessary to translate AI-driven insights into viable laboratory experiments and, ultimately, clinical applications [1] [29].

Technical Support Center: FAQs & Troubleshooting Guides

FAQ: Foundational Concepts

Q1: What are the core differences between SHAP, LIME, and Anchors, and when should I use each?

A1: These techniques offer complementary approaches to explainability. Your choice depends on whether you need local or global insights, the required explanation format, and the nature of your molecular data.

Table 1: Comparison of Core XAI Techniques for Molecular Analysis

| Criteria | SHAP (SHapley Additive exPlanations) | LIME (Local Interpretable Model-agnostic Explanations) | Anchors |

|---|---|---|---|

| Core Philosophy | Based on cooperative game theory, attributing prediction value fairly among input features [30] [31]. | Approximates the black-box model locally with an interpretable surrogate model (e.g., linear model) [30] [28]. | Finds a "sufficient" condition (a set of "if" rules) that anchors the prediction with high probability [28]. |

| Explanation Scope | Both Local & Global. Provides feature importance for single predictions and across the dataset [30]. | Primarily Local. Explains individual predictions [30] [28]. | Local. Provides a rule-based explanation for a single instance. |

| Output Format | Numeric (Shapley values) and visual plots (summary, dependence, force plots). | Visual, textual, or numeric highlights of contributing features [30]. | Human-readable "IF-THEN" rules (e.g., "IF molecular weight > 500 AND presence of carboxyl group THEN predict: High Permeability"). |

| Best Use Case in Molecular Analysis | Identifying which molecular descriptors/features consistently drive activity across a compound series (global). Quantifying the contribution of a specific substructure to a single molecule's predicted toxicity (local). | Understanding why a specific novel molecule was misclassified. Debugging individual predictions on complex molecular graphs or images. | Generating clear, Boolean rules for a prediction that are easily communicated and validated in a wet-lab context. |

| Key Consideration | Computationally expensive for many features or large datasets. Values can be affected by feature collinearity [32]. | Explanations can be unstable; small changes in the sampling neighborhood may alter the result [28]. | The search for an anchor rule can be computationally intensive for high-dimensional data. |
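The "sufficient condition with high probability" idea behind Anchors can be illustrated by scoring a single candidate rule. This sketch does not implement the Anchors search itself; it only estimates precision (how often the black box returns the target label when the rule holds) and coverage (how often the rule applies) by sampling. The classifier, descriptor ranges, and rule thresholds are all hypothetical.

```python
import random

def black_box(mol):
    """Hypothetical permeability classifier over two descriptors."""
    return "high" if mol["logP"] > 2.0 and mol["tpsa"] < 90.0 else "low"

def candidate_rule(mol):
    """Candidate anchor: IF logP > 2.5 AND TPSA < 80."""
    return mol["logP"] > 2.5 and mol["tpsa"] < 80.0

def sample_molecule(rng):
    """Draw descriptor values from assumed uniform ranges (illustrative only)."""
    return {"logP": rng.uniform(-1.0, 6.0), "tpsa": rng.uniform(20.0, 140.0)}

def precision_and_coverage(model, rule, target, n=5000, seed=0):
    """Monte Carlo estimate of rule precision and coverage."""
    rng = random.Random(seed)
    covered = 0
    hits = 0
    for _ in range(n):
        mol = sample_molecule(rng)
        if rule(mol):
            covered += 1
            if model(mol) == target:
                hits += 1
    precision = hits / covered if covered else 0.0
    return precision, covered / n

precision, coverage = precision_and_coverage(black_box, candidate_rule, "high")
```

A rule with high precision but very low coverage explains the query molecule well yet generalizes to few others, which is the trade-off an anchor search tries to balance.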

Q2: How do I choose the right explainability metric for my molecular analysis project?

A2: Beyond standard performance metrics (AUC, Accuracy), evaluating the explanations themselves is critical for scientific rigor. Use a combination of the following metrics [31]:

Table 2: Key Metrics for Evaluating XAI Explanations

| Metric | Definition | Interpretation in Molecular Context |

|---|---|---|

| Fidelity | How well the explanation (e.g., LIME's surrogate model) matches the original black-box model's behavior locally. | Ensures the features you're told are important for a specific molecule truly reflect what the complex model used. High fidelity is non-negotiable for reliable insight [31]. |

| Stability/Robustness | The consistency of explanations for similar inputs or under slight perturbations. | If two molecules with nearly identical fingerprints yield vastly different explanation maps, the explanation is unstable and less trustworthy [28]. |

| Comprehensibility | How easily a domain expert (e.g., a medicinal chemist) can understand and act on the explanation. | Rule-based Anchors often score highly here. Complex SHAP summary plots may require more expertise to interpret. |