Decoding Nature: A Comprehensive Guide to LC-MS/MS Profiling for Natural Product Identification and Drug Discovery

This article provides a comprehensive roadmap for researchers and drug development professionals on leveraging Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) for natural product (NP) identification.


Abstract

This article provides a comprehensive roadmap for researchers and drug development professionals on leveraging Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) for natural product (NP) identification. Beginning with foundational principles, it explores the critical role of NPs in drug discovery and the core components of an LC-MS/MS system[citation:3]. The guide details advanced methodological workflows, including untargeted profiling, molecular networking via platforms like GNPS for dereplication, and quantitative techniques[citation:5][citation:8]. It addresses common operational challenges with symptom-based troubleshooting strategies to ensure data integrity and method robustness[citation:7]. Finally, the article establishes a framework for method validation—covering accuracy, precision, specificity, and matrix effects—and discusses comparative approaches to standardize analyses across diverse plant matrices[citation:4][citation:8][citation:10]. The synthesis aims to equip scientists with the knowledge to efficiently translate complex NP extracts into validated, biologically relevant leads.

The Blueprint of Nature: Foundational Principles of LC-MS for Natural Product Discovery

The Critical Role of Natural Products in Modern Drug Discovery and Biomedical Research

Natural products (NPs) and their structural analogues have been the cornerstone of pharmacotherapy for centuries, making unparalleled contributions to treating cancer, infectious diseases, and other critical conditions [1]. More than one-third of all FDA-approved small-molecule drugs are derived from or inspired by natural sources, with this figure rising to 67% for anti-infectives and 83% for anticancer agents [2] [3]. Iconic therapeutics such as paclitaxel (Taxol) from the Pacific yew tree, artemisinin from sweet wormwood, and penicillin from Penicillium fungi underscore the profound biological relevance and evolutionary optimization of natural chemical scaffolds [1] [2].

Despite this legacy, NP research experienced a decline in the late 20th century. The pharmaceutical industry shifted towards combinatorial chemistry and high-throughput screening of synthetic libraries, driven by challenges inherent to NPs: complex isolation and characterization, supply chain uncertainties, and intellectual property complexities [1]. However, the relentless rise of antimicrobial resistance, coupled with unmet therapeutic needs in areas like oncology and neurodegenerative diseases, has catalyzed a powerful renaissance.

This revival is fundamentally enabled by technological breakthroughs in analytical chemistry and genomics. Advanced analytical tools, particularly liquid chromatography-mass spectrometry (LC-MS) and its multidimensional variants, are now capable of deconvoluting the immense chemical complexity of natural extracts with unprecedented speed and sensitivity [4] [5]. Concurrently, genome mining reveals that the biosynthetic potential of microorganisms is vastly underestimated; for each known microbial natural product, genomic data suggest that approximately 30 more "silent" or unexpressed compounds await discovery [3]. This whitepaper frames the critical role of NPs within the context of LC-MS profiling for identification research, detailing the quantitative impact, cutting-edge methodologies, and integrated workflows that are redefining NP-based drug discovery for the 21st century.

Quantitative Impact and Current Landscape

The following tables summarize the decisive quantitative evidence for the role of natural products in therapy and the corresponding analytical tools required for their study.

Table 1: Impact of Natural Products on Approved Therapeutics

| Therapeutic Area | Percentage of Approved Drugs Derived from or Inspired by Natural Products [2] [3] | Notable Examples [1] [2] |
|---|---|---|
| All FDA-Approved Small Molecules | ~34% | Morphine, Digoxin, Aspirin (derivative) |
| Anti-Infective Agents | 67% | Penicillin, Tetracycline, Artemisinin |
| Anticancer Agents | 83% | Paclitaxel, Doxorubicin, Vinblastine |

| Key Statistic | Estimate of Undiscovered Potential [3] | Source |
|---|---|---|
| Natural products in a major microbial strain collection | ~3.75 million | Natural Products Discovery Center (125,000 strains) |
| Known bacterial NPs vs. estimated potential | ~1% (20,000 known vs. millions estimated) | Genomic analysis of biosynthetic gene clusters |

Table 2: Analytical Publication Trends and Global Utilization of LC-MS and GC-MS

| Analytical Technique | Estimated Yearly Publication Rate (1995-2023) [6] | Publication Ratio, This Technique : Other Technique (2024 estimate) [6] | Leading Countries by Publication Volume [6] |
|---|---|---|---|
| GC-MS / GC-MS/MS | 3,042 articles/year | 1 : 1.5 | 1. China (16,863), 2. Japan (5,165), 3. Germany (6,662) |
| LC-MS / LC-MS/MS | 3,908 articles/year | 1.5 : 1 | 1. China (23,018), 2. USA (~15,000 est.), 3. Germany (8,016) |

Key trend: LC-MS/MS usage now dominates quantitative bioanalysis, with at least 60% of LC-MS articles employing MS/MS, compared to ~5% for GC-MS articles [6].

Core Experimental Protocols: LC-MS Workflows for Natural Product Analysis

The identification and characterization of bioactive natural products rely on sophisticated, tiered analytical workflows. The following protocols are central to modern NP research.

Protocol: Untargeted Metabolomic Profiling and Dereplication

Objective: To comprehensively characterize the chemical composition of a crude natural extract and rapidly identify known compounds (dereplication) to prioritize novel leads [1] [7].

- Sample Preparation: Extract plant or microbial biomass using a standardized solvent system (e.g., methanol-water). Employ prefractionation or solid-phase extraction to reduce complexity and remove interfering compounds like polyphenolic tannins [2].

- LC-HRMS Analysis:

- Chromatography: Utilize reversed-phase UHPLC with a C18 column (1.7-1.8 µm particle size) for high-resolution separation. A water-acetonitrile gradient with 0.1% formic acid is standard [4].

- Mass Spectrometry: Acquire data in data-dependent acquisition (DDA) mode on a Q-TOF or Orbitrap mass spectrometer. Collect full-scan MS data at high resolution (>30,000 FWHM) and automatically trigger MS/MS scans on the top N most intense ions [7].

- Data Processing & Dereplication:

- Process raw data (peak picking, alignment, deisotoping) using software like MZmine or MS-DIAL.

- Search accurate mass and MS/MS spectra against public databases (GNPS, MassBank, MetLin) and in-house libraries for dereplication [1].

- Employ molecular networking via the GNPS platform to visualize spectral similarity and cluster related compounds, highlighting novel chemical families [1].
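The spectral-similarity idea behind the networking step can be sketched in a few lines. This is a minimal illustration, assuming centroided MS/MS spectra supplied as (m/z, intensity) pairs and a fixed fragment tolerance; the function name `cosine_score` and the peak lists are hypothetical, and this plain cosine leaves out the precursor-shift matching of the modified cosine that GNPS applies.

```python
from math import sqrt

def cosine_score(spec_a, spec_b, tol=0.02):
    """Greedy cosine similarity between two centroided MS/MS spectra.

    Each spectrum is a list of (mz, intensity) tuples; `tol` is the
    fragment m/z tolerance in Da (an assumed value, tune per instrument).
    """
    # Collect every pair of peaks whose m/z values agree within tolerance.
    candidates = []
    for i, (mz_a, int_a) in enumerate(spec_a):
        for j, (mz_b, int_b) in enumerate(spec_b):
            if abs(mz_a - mz_b) <= tol:
                candidates.append((int_a * int_b, i, j))

    # Greedily keep the strongest pairs, using each peak at most once.
    candidates.sort(reverse=True)
    used_a, used_b = set(), set()
    dot = 0.0
    for product, i, j in candidates:
        if i not in used_a and j not in used_b:
            dot += product
            used_a.add(i)
            used_b.add(j)

    norm_a = sqrt(sum(inten ** 2 for _, inten in spec_a))
    norm_b = sqrt(sum(inten ** 2 for _, inten in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)

# Hypothetical fragment lists for two related features.
query = [(135.04, 100.0), (107.05, 30.0)]
library = [(135.05, 90.0), (107.05, 35.0), (89.04, 10.0)]
print(round(cosine_score(query, library), 3))
```

Pairs of features scoring above a chosen threshold become edges in the network; the threshold itself is a user setting rather than a fixed constant.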

Protocol: Targeted Quantitative Analysis of Bioactive Compound Classes

Objective: To accurately quantify specific, known NP classes (e.g., phenolic acids, flavonoids) in complex matrices for quality control or bioactivity correlation studies [7].

- Calibration and Internal Standards:

- Prepare a calibration curve using authentic reference standards across a physiologically relevant concentration range.

- Use stable isotope-labeled internal standards (e.g., ¹³C-labeled compounds) where available. This corrects for matrix effects and variability in extraction and ionization efficiency, providing the highest analytical accuracy [6].

- LC-MS/MS Analysis:

- Chromatography: Optimize LC conditions (column chemistry, gradient) for separation of target isomers.

- Mass Spectrometry: Operate a triple quadrupole (QQQ) instrument in Multiple Reaction Monitoring (MRM) mode. For each analyte, optimize declustering potential, collision energy, and monitor one quantitative and one or two confirmatory ion transitions [7].

- Example (Phenolic Acid): For caffeic acid ([M-H]⁻ m/z 179), the primary quantifier transition is 179→135 (decarboxylation), with 179→134 as a qualifier [7].

- Validation: Establish method validation parameters including limit of detection (LOD), limit of quantification (LOQ), linearity, precision, and accuracy. Use matrix-matched calibration or standard addition to account for signal suppression/enhancement [7].
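Two of the validation quantities named above reduce to simple arithmetic. The sketch below assumes the widely used calibration-based estimates (LOD = 3.3σ/S, LOQ = 10σ/S, with σ the standard deviation of the response and S the calibration slope) and a slope-ratio definition of matrix effect; the function names and numbers are illustrative, not taken from the cited method.

```python
def lod_loq(sd_response, slope):
    """Calibration-based detection and quantification limits.

    sd_response: standard deviation of blank or low-level responses.
    slope: slope of the calibration curve (response per concentration unit).
    Returns (LOD, LOQ) in concentration units.
    """
    return 3.3 * sd_response / slope, 10.0 * sd_response / slope

def matrix_effect_percent(slope_matrix, slope_solvent):
    """Signal suppression (<0) or enhancement (>0) in percent,
    from matrix-matched versus solvent calibration slopes."""
    return (slope_matrix / slope_solvent - 1.0) * 100.0

# Hypothetical values: response SD of 2.0 counts, slope of 100 counts per ng/mL.
lod, loq = lod_loq(2.0, 100.0)
print(f"LOD = {lod:.3f} ng/mL, LOQ = {loq:.3f} ng/mL")
print(f"Matrix effect = {matrix_effect_percent(80.0, 100.0):.1f}%")
```

A strongly negative matrix effect is the usual cue to switch from solvent calibration to matrix-matched calibration or standard addition.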

Protocol: Advanced Two-Dimensional LC (LC×LC) for Complex Extract Resolution

Objective: To achieve maximum separation power for deeply profiling complex NP mixtures where one-dimensional LC is insufficient [5].

- System Configuration:

- Implement a comprehensive LC×LC system with two independent separation mechanisms. A common orthogonal setup is HILIC × Reversed-Phase, separating compounds first by polarity then by hydrophobicity [5].

- Employ a focusing modulation interface (e.g., a switching valve with dual trapping loops) to capture and refocus effluent from the first dimension (¹D) before injection into the second dimension (²D). This preserves ¹D separation fidelity [5].

- Optimized Operation:

- Use microLC (e.g., 1 mm ID column) in the first dimension for low flow rates, compatible with effective modulation.

- Employ very fast gradients on a short, narrow column in the second dimension (e.g., 5-60 second runs) to analyze multiple fractions from the ¹D run [5].

- Detection: Couple the LC×LC system to a high-resolution mass spectrometer (HRMS). The vastly increased peak capacity enables the detection and differentiation of thousands of features, including minor but potentially bioactive constituents [5].
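The gain in separation power can be estimated with the product rule, under which the ideal two-dimensional peak capacity is the product of the peak capacities of the two dimensions. The sketch below assumes that idealization (real systems fall short because of undersampling and imperfect orthogonality); the function name and all input values are hypothetical.

```python
def lcxlc_estimates(n1, n2, first_dim_minutes, modulation_seconds):
    """Idealized LCxLC planning numbers.

    n1, n2: peak capacities of the first and second dimensions.
    first_dim_minutes: length of the first-dimension run.
    modulation_seconds: time per second-dimension cycle (one fraction).
    """
    second_dim_runs = int(first_dim_minutes * 60 // modulation_seconds)
    return {
        "ideal_peak_capacity": n1 * n2,      # product rule, an upper bound
        "second_dim_runs": second_dim_runs,  # fractions analyzed in 2D
    }

# Hypothetical setup: 60 min first dimension, 30 s second-dimension cycles.
print(lcxlc_estimates(n1=50, n2=20, first_dim_minutes=60, modulation_seconds=30))
```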

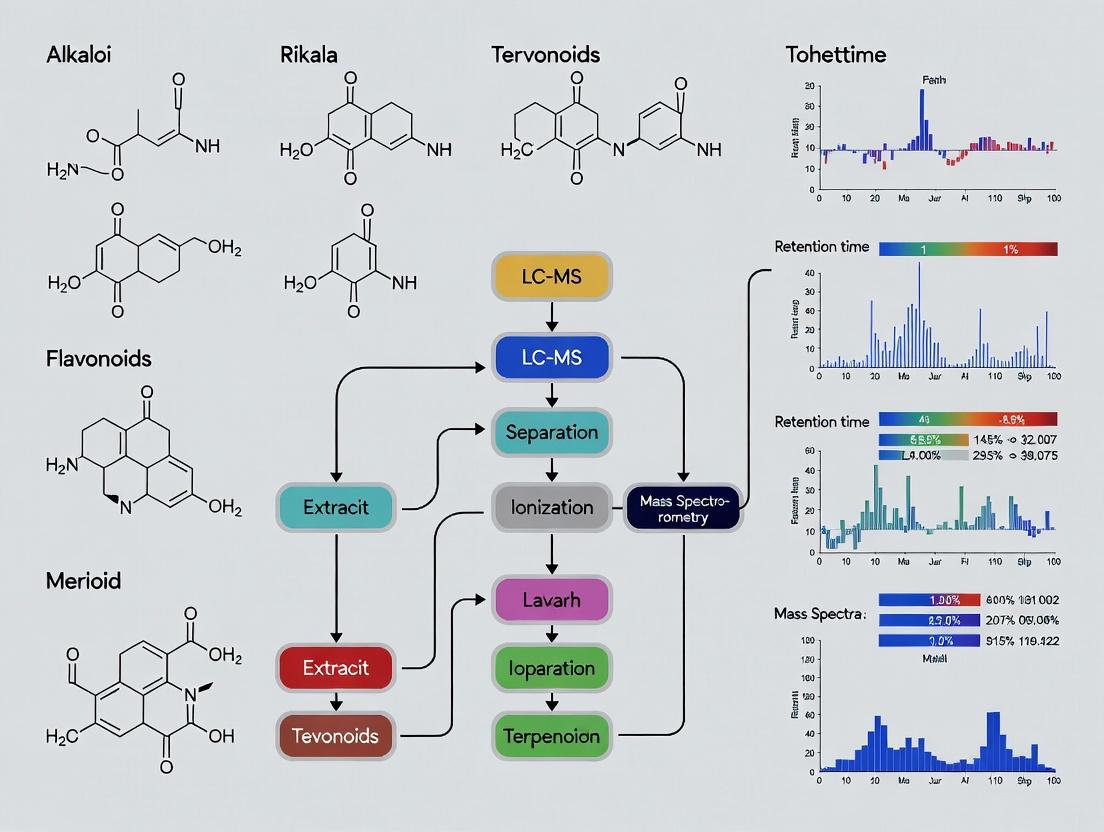

Visualization of Workflows and Pathways

The following diagrams, generated using Graphviz DOT language, illustrate the core logical and experimental relationships in NP drug discovery and LC-MS analysis.

[Diagram 1: Integrated NP Drug Discovery & LC-MS Workflow]

[Diagram 2: Evolution of LC-MS Tech in NP Research]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for NP LC-MS Profiling

| Item | Function & Role in NP Research | Technical Consideration |
|---|---|---|
| Stable Isotope-Labeled Internal Standards (SIL-IS) [6] | Provides the highest accuracy in quantification by correcting for matrix effects and analyte loss during sample workup. Acts as an identical chemical "scale weight" within the sample. | Essential for rigorous targeted quantification. Use ¹³C or ²H-labeled analogues of target NPs where commercially available. |
| Authentic Natural Product Reference Standards | Enables definitive identification (via chromatographic co-elution and spectral match) and creation of calibration curves for quantification. | Source from reputable suppliers (e.g., Sigma-Aldrich, Extrasynthese). Purity should be >95% (HPLC grade). |
| Solid-Phase Extraction (SPE) Cartridges | Cleans up crude extracts by removing salts, pigments (e.g., chlorophyll), and highly polar or non-polar interfering compounds. Pre-fractionates extracts to simplify profiles [2]. | Choose sorbent chemistry (C18, HLB, silica, ion-exchange) based on target NP polarity and known interferences. |
| LC-MS Grade Solvents & Additives | Ensures low background noise, prevents system contamination, and provides consistent ionization efficiency. Critical for reproducible retention times and sensitive detection. | Use solvents (acetonitrile, methanol, water) with low UV cutoff and specified LC-MS purity. Additives like formic acid must be volatile and pure. |
| Specialized Chromatography Columns | Provides the critical separation required before MS detection. Different column chemistries resolve different NP classes. | C18: General workhorse for medium-nonpolar NPs. HILIC: For polar, glycosylated compounds. Phenyl-Hexyl: For isomer separation of flavonoids [7]. |

Core Components and Workflow of an LC-MS/MS System for Natural Product Analysis

Liquid Chromatography-Tandem Mass Spectrometry (LC-MS/MS) has become the cornerstone analytical technology for the discovery, profiling, and characterization of natural products (NPs). In LC-MS profiling for natural product identification, it bridges the gap between complex biological matrices and actionable structural data. Natural products, derived from plants, microbes, and marine organisms, are renowned for their structural diversity and potent bioactivities, serving as crucial leads for drug development in areas such as oncology, infectious diseases, and neurology [8]. However, this same complexity presents a significant analytical challenge. LC-MS/MS addresses this by coupling high-resolution chromatographic separation with sensitive and selective mass analysis, enabling researchers to detect thousands of metabolites in a single run, characterize novel compounds, and quantify bioactive constituents at trace levels in intricate samples like plant extracts, cell lysates, or biological fluids [4] [9].

The evolution of this platform—from early interfaces to modern ultra-high-performance systems and high-resolution mass analyzers—has been driven by the needs of natural product research [4]. The integration of advanced ionization techniques, such as electrospray ionization (ESI), has been particularly transformative, allowing for the analysis of a wide range of polar, non-polar, and high-molecular-weight compounds [9]. Today, LC-MS/MS workflows are fundamental to various 'omics' disciplines, including metabolomics and proteomics, which are applied to map the mechanisms of action of natural products and discover their cellular targets [8]. This guide provides an in-depth examination of the core components, standardized workflows, and advanced methodologies that define modern LC-MS/MS analysis in the field of natural products.

Core System Components and Configuration

An LC-MS/MS system is an integrated instrument consisting of two main units: the liquid chromatography (LC) module for compound separation and the tandem mass spectrometer (MS/MS) for detection and structural analysis. The configuration and selection of components within each unit are critical for method sensitivity, specificity, and throughput.

Liquid Chromatography (LC) Module

The LC module is responsible for the temporal separation of the complex mixture of compounds in a natural product extract prior to introduction into the mass spectrometer.

- Pump Systems: Modern systems use binary or quaternary high-pressure pumps capable of delivering precise, pulse-free gradients at pressures exceeding 1000 bar, as seen in Ultra-High-Performance Liquid Chromatography (UHPLC). This allows for faster separations with superior resolution compared to traditional HPLC [4].

- Autosampler: A temperature-controlled autosampler enables the automated injection of multiple samples (often from 96-well plates), improving reproducibility and throughput for large-scale studies [10].

- Chromatographic Column: The column is the heart of the separation. Selection is based on the chemical properties of the target analytes.

- Reversed-Phase (RP) Columns (e.g., C18): These are the most widely used for natural product analysis. They separate compounds based on hydrophobicity, with polar compounds eluting first. Core-shell particle columns (e.g., 2.1 x 100 mm, 1.7 µm) are popular for their high efficiency and lower backpressure [11].

- Hydrophilic Interaction Liquid Chromatography (HILIC) Columns: Used for the retention and separation of highly polar metabolites that are poorly retained on RP columns [9].

- Specialty Phases: Columns like pentafluorophenyl (PFP or F5) are used for challenging separations of structural isomers commonly found in natural products [11].

- Mobile Phase: Typically consists of water (aqueous phase, A) and an organic solvent like methanol or acetonitrile (organic phase, B). Additives such as 0.1% formic acid are common to promote protonation in positive ionization mode or to improve peak shape [11] [12].

Tandem Mass Spectrometry (MS/MS) Module

The MS/MS module ionizes the separated compounds, filters and fragments the ions, and detects them to provide mass and structural information.

- Ionization Source: This converts liquid-phase analytes into gas-phase ions.

- Mass Analyzers: These separate ions based on their mass-to-charge ratio (m/z). Hybrid systems combine analyzers for enhanced performance.

  - Quadrupole (Q): Filters specific m/z ions; often used in series as a triple quadrupole (QQQ) for highly sensitive and selective targeted quantification.

  - Time-of-Flight (TOF): Measures the flight time of ions to determine m/z with high mass accuracy and resolution, essential for untargeted profiling and determining molecular formulas.

  - Orbitrap: Utilizes an electrostatic field to trap ions, offering extremely high resolution and mass accuracy, crucial for distinguishing between closely related natural product derivatives [4].

  - Ion Trap (IT): Traps and sequentially ejects ions, useful for multiple stages of fragmentation (MSⁿ) for detailed structural elucidation.

- Common Hybrid Configurations:

  - Q-TOF: Combines quadrupole mass filtering with TOF analysis, ideal for accurate mass measurement of both precursor and fragment ions in untargeted workflows.

  - Q-Orbitrap: Similar to Q-TOF but offers even higher resolution, making it a premier tool for complex natural product mixtures [13].

  - Triple Quadrupole (QQQ): The workhorse for targeted quantification (e.g., pharmacokinetic studies). The first (Q1) and third (Q3) quadrupoles act as mass filters, selecting predefined precursor and product ions, respectively, while the second (q2) serves as the collision cell. This arrangement gives exceptional sensitivity and specificity [10] [11].
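Mass accuracy and resolving power, the two figures of merit that distinguish these analyzers, can be made concrete with a short calculation. The sketch below assumes singly charged [M-H]⁻ ions and uses caffeic acid (C9H8O4) purely as an illustration; the helper names, the observed m/z, and the peak width are hypothetical.

```python
# Monoisotopic masses (Da) of the elements used in this example.
MONO = {"C": 12.0, "H": 1.00782503, "O": 15.99491462}
PROTON = 1.00727647  # mass of a proton (Da)

def neutral_mass(formula):
    """Monoisotopic mass of a neutral molecule given {element: count}."""
    return sum(MONO[element] * count for element, count in formula.items())

def mz_deprotonated(formula):
    """m/z of the singly charged [M-H]- ion."""
    return neutral_mass(formula) - PROTON

def ppm_error(observed_mz, theoretical_mz):
    """Mass error in parts per million."""
    return (observed_mz - theoretical_mz) / theoretical_mz * 1e6

def resolving_power(mz, fwhm):
    """Resolving power R = m / delta-m, with delta-m the peak width at half height."""
    return mz / fwhm

caffeic_acid = {"C": 9, "H": 8, "O": 4}
theoretical = mz_deprotonated(caffeic_acid)
print(f"[M-H]- theoretical m/z: {theoretical:.4f}")
print(f"Error for an observed m/z of 179.0359: {ppm_error(179.0359, theoretical):.1f} ppm")
print(f"R for a 0.006 Da wide peak: {resolving_power(theoretical, 0.006):.0f}")
```

Molecular formula assignment typically filters candidates by a ppm window, which is why the mass accuracy of TOF and Orbitrap analyzers matters for untargeted work.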

Table 1: Common LC-MS/MS Instrument Configurations for Natural Product Analysis

| Configuration | Key Strengths | Typical Application in NP Research | Example from Literature |
|---|---|---|---|
| Triple Quadrupole (QQQ) | High sensitivity, excellent reproducibility, robust quantification | Targeted analysis of known bioactive compounds; pharmacokinetic studies [10] [11] | Quantification of ADC payloads (MMAE) in mouse serum [11] |
| Quadrupole-Time of Flight (Q-TOF) | High mass accuracy, fast acquisition, good resolution | Untargeted metabolomics, profiling of unknown compounds, molecular formula assignment | Profiling of phytohormones across diverse plant matrices [12] |
| Quadrupole-Orbitrap | Very high resolution and mass accuracy, high dynamic range | Detailed characterization of complex extracts, identification of minor constituents, distinguishing isomers | Advanced annotation workflows (e.g., MCheM integration) [13] |
| Ion Trap (IT) or Linear IT | Multiple stages of fragmentation (MSⁿ) | Elucidation of detailed fragmentation pathways for structural determination | A classical tool; no specific example in the cited sources. |

Standardized Workflow for Natural Product Analysis

A robust LC-MS/MS analysis follows a structured sequence from sample preparation to data reporting. Adherence to this workflow ensures reliable and interpretable results.

Sample Preparation and Extraction

Effective sample preparation is critical for removing interfering compounds and concentrating analytes. The optimal method depends heavily on the sample matrix (plant tissue, cell culture, serum) and the chemical properties of the target NPs.

- Principles: The goal is to maximize the recovery of target metabolites while minimizing co-extraction of salts, proteins, lipids, and other interferences that can suppress ionization or damage the instrument.

- Solvent Selection: Methanol, ethanol, acetonitrile, and mixtures with water are commonly used. A study optimizing extraction for botanicals found methanol (often with 10% deuterated methanol for NMR compatibility) to be the most effective single solvent for broad metabolite coverage across diverse species like Camellia sinensis and Cannabis sativa [14].

- Techniques:

- Protein Precipitation: Essential for biological fluids. Involves adding an organic solvent (e.g., cold methanol or a methanol-ethanol mixture) to precipitate proteins, followed by centrifugation [11].

- Solid-Phase Extraction (SPE): Provides selective cleanup and concentration using cartridges with various sorbents.

- Automation: Automated liquid handling systems can perform solvent dispensing, mixing, and transfer in 96-well plate formats, drastically improving throughput, reproducibility, and safety when handling clinical or large sample sets [10].

Chromatographic Separation

Following extraction, the complex mixture is separated chromatographically to reduce ion suppression and allow individual compounds to enter the MS detector at distinct times.

- Method Development: This involves optimizing the column chemistry, mobile phase gradient, flow rate, and temperature to achieve baseline separation of critical analyte pairs.

- Gradient Elution: A typical reversed-phase gradient for a natural product extract might start at 5-20% organic solvent (B), ramp to 95-100% B over 5-20 minutes, hold to wash the column, and then re-equilibrate to the starting conditions [11] [12].

- High-Throughput Methods: For screening applications, fast gradients (e.g., 2-5 minutes) on UHPLC systems are employed, significantly increasing daily sample capacity [4].
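A gradient program of this kind is just a list of time and composition breakpoints with linear interpolation between them. The sketch below assumes a hypothetical program within the ranges quoted above (5% B start, ramp to 95% B, hold, re-equilibrate); the function name and breakpoints are illustrative.

```python
def percent_b(t, program):
    """Mobile phase composition (%B) at time t (min).

    program: list of (time_min, percent_b) breakpoints in ascending,
    strictly increasing time order; composition is interpolated linearly.
    """
    if t <= program[0][0]:
        return float(program[0][1])
    for (t0, b0), (t1, b1) in zip(program, program[1:]):
        if t0 <= t <= t1:
            return b0 + (b1 - b0) * (t - t0) / (t1 - t0)
    return float(program[-1][1])  # after the last breakpoint

# Hypothetical reversed-phase gradient: ramp, wash hold, re-equilibration.
GRADIENT = [(0.0, 5.0), (15.0, 95.0), (18.0, 95.0), (18.1, 5.0), (22.0, 5.0)]

for minute in (0, 7.5, 16, 20):
    print(f"{minute:>5} min: {percent_b(minute, GRADIENT):.1f}% B")
```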

Mass Spectrometric Analysis and Data Acquisition

The separated compounds are ionized and analyzed based on the selected operational mode.

- Data Acquisition Modes:

- Full Scan (MS¹): Records all ions within a specified m/z range. Used in untargeted profiling to capture a comprehensive snapshot of the sample.

- Tandem Mass Spectrometry (MS/MS or MS²): A precursor ion is selected, fragmented (typically by collision-induced dissociation, CID), and the product ions are analyzed. This provides structural fingerprint data.

- Data-Dependent Acquisition (DDA): Automatically selects the most intense ions from an MS¹ scan for subsequent MS/MS analysis. Common in discovery workflows but can miss low-abundance ions.

- Data-Independent Acquisition (DIA): Fragments all ions in sequential, broad m/z windows (e.g., SWATH). Provides comprehensive MS/MS data for all detectable compounds, beneficial for retrospective analysis [15].

- Multiple Reaction Monitoring (MRM): Used exclusively on QQQ instruments. Monitors specific, predefined transitions (precursor ion → product ion) for each analyte. It offers the highest sensitivity and selectivity for targeted quantification [11].
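The precursor selection logic of DDA, and the reason it can miss low-abundance ions, is easy to see in a simplified form. This is a sketch only, assuming one MS¹ scan given as (m/z, intensity) pairs and a dynamic exclusion list of recently fragmented m/z values; real instrument firmware applies further rules (charge state, isotope filtering, exclusion timers), and all names and values here are hypothetical.

```python
def select_precursors(ms1_peaks, top_n, excluded=(), tol=0.01, min_intensity=0.0):
    """Pick up to top_n precursor m/z values from one MS1 scan.

    ms1_peaks: list of (mz, intensity) tuples.
    excluded: m/z values under dynamic exclusion (recently fragmented).
    tol: m/z tolerance (Da) for matching against the exclusion list.
    """
    chosen = []
    # Walk the peaks from most to least intense.
    for mz, intensity in sorted(ms1_peaks, key=lambda p: p[1], reverse=True):
        if intensity < min_intensity:
            break  # everything after this is weaker still
        if any(abs(mz - ex) <= tol for ex in excluded):
            continue  # skip ions fragmented in a recent cycle
        chosen.append(mz)
        if len(chosen) == top_n:
            break
    return chosen

# Hypothetical scan: one dominant ion, two mid-level ions, one trace ion.
scan = [(179.03, 1.0e6), (353.09, 5.0e5), (191.06, 2.0e5), (515.12, 1.0e4)]
print(select_precursors(scan, top_n=2))                     # first cycle
print(select_precursors(scan, top_n=2, excluded=[179.03]))  # next cycle
```

Even with dynamic exclusion, the trace ion at m/z 515.12 is not selected in either cycle, which illustrates the coverage gap that DIA is designed to close.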

Data Processing, Annotation, and Analysis

This is often the most time-intensive step, transforming raw spectral data into biological insights.

- Raw Data Processing: Software tools (e.g., MZmine, XCMS, MetaboAnalystR) perform peak detection, alignment across samples, and filtering [15].

- Compound Annotation: Identifying unknowns by matching experimental data against reference databases.

  - Level 1 (Confirmed): Matches to an authentic standard analyzed under identical conditions (RT, m/z, MS/MS).

  - Level 2 (Probable): Matches based on accurate mass and MS/MS spectrum to a library entry.

  - Level 3 (Tentative): Matches based on accurate mass and/or diagnostic fragments to a compound class [16].

- Statistical Analysis: Multivariate statistical methods (PCA, PLS-DA) are used to identify differentially abundant metabolites between sample groups (e.g., treated vs. control) [8].

- Pathway Analysis: Enrichment analysis tools map annotated metabolites onto biochemical pathways to interpret biological effects [15].
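The three annotation levels listed above amount to a small decision rule over the available evidence. The sketch below encodes that rule as described in this section; the function name and boolean flags are hypothetical, and features with no supporting evidence are returned as `None` (unannotated), which is an assumption rather than a level defined in the text.

```python
def annotation_level(mass_match, msms_library_match=False,
                     standard_match=False, class_fragments=False):
    """Assign an annotation confidence level to one feature.

    mass_match: accurate mass agrees with a candidate.
    msms_library_match: MS/MS spectrum matches a library entry.
    standard_match: RT, m/z, and MS/MS match an authentic standard
        analyzed under identical conditions.
    class_fragments: diagnostic fragments point to a compound class.
    """
    if standard_match:
        return 1  # confirmed
    if mass_match and msms_library_match:
        return 2  # probable
    if mass_match or class_fragments:
        return 3  # tentative
    return None   # unannotated

print(annotation_level(True, msms_library_match=True, standard_match=True))
print(annotation_level(True, msms_library_match=True))
print(annotation_level(False, class_fragments=True))
```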

Detailed Methodologies for Key Experiment Types

Protocol 1: Automated, High-Throughput Quantification for Therapeutic Monitoring

This protocol, adapted from a study on monitoring antiseizure medications, is ideal for the precise quantification of one or several known natural products or their metabolites in biological fluids [10].

- Internal Standard (IS) Addition: To each aliquot of sample (e.g., 50 µL of serum), add a stable isotope-labeled analog of the target analyte (e.g., CBD-d3 for cannabidiol).

- Automated Sample Preparation:

- Use an automated liquid handling platform.

- Dispense precipitation solvent (e.g., cold acetonitrile with 0.1% formic acid) to each well of a 96-well plate containing the samples and IS.

- Seal the plate, mix thoroughly, and centrifuge (e.g., 4000 × g, 10 min, 4°C) to pellet proteins.

- Supernatant Transfer: Automatically transfer the clarified supernatant to a new 96-well analysis plate.

- LC-MS/MS Analysis (QQQ):

- Column: Reversed-phase C18 (e.g., 2.1 x 50 mm, 1.7 µm).

- Mobile Phase: (A) Water with 0.1% formic acid; (B) Methanol with 0.1% formic acid.

- Gradient: Rapid linear gradient from 30% B to 95% B over 2.5 minutes.

- MS Detection: Operate in positive MRM mode. Use optimized compound-specific transitions (Precursor m/z → Product m/z) and collision energies.

- Quantification: Generate a calibration curve using spiked matrix standards. Quantify samples by comparing the analyte/IS peak area ratio to the curve.
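The quantification step can be sketched as an ordinary least-squares fit of the analyte/IS area ratio against concentration, followed by back-calculation of unknowns. This is a minimal, unweighted illustration with made-up calibration data; bioanalytical methods often apply weighting such as 1/x, which is not shown here, and the function names are hypothetical.

```python
def fit_line(x, y):
    """Unweighted least-squares line through (x, y); returns (slope, intercept)."""
    n = len(x)
    mean_x = sum(x) / n
    mean_y = sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x

def quantify(analyte_area, is_area, slope, intercept):
    """Back-calculate concentration from the analyte/IS peak area ratio."""
    return (analyte_area / is_area - intercept) / slope

# Hypothetical spiked-matrix calibrators: concentration vs. analyte/IS ratio.
cal_conc = [1.0, 2.0, 5.0, 10.0]
cal_ratio = [0.12, 0.22, 0.52, 1.02]

slope, intercept = fit_line(cal_conc, cal_ratio)
unknown = quantify(analyte_area=26000.0, is_area=50000.0,
                   slope=slope, intercept=intercept)
print(f"slope={slope:.4f}, intercept={intercept:.4f}, unknown={unknown:.2f}")
```

Because the ratio divides out losses that affect analyte and internal standard equally, the same curve stays valid across wells with different recovery.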

Protocol 2: Highly Sensitive, Multi-Payload Quantification in Serum

This protocol, developed for antibody-drug conjugate (ADC) payloads, is exemplary for quantifying potent, low-abundance natural product-like toxins (e.g., auristatins, calicheamicin) at sub-nanomolar levels [11].

- Micro-Sample Preparation:

- To just 5 µL of mouse serum, add 2 µL of internal standard solution (e.g., Nicotinamide-D4).

- Add 15 µL of ice-cold methanol:ethanol (50:50, v/v) for protein precipitation.

- Vortex for 5 minutes, incubate at -20°C for 20 minutes, then centrifuge at 14,000 × g for 10 minutes at 4°C.

- LC-MS/MS Analysis (QQQ):

- Column: Pentafluorophenyl (F5) core-shell column (2.1 x 100 mm, 1.7 µm) for selective separation.

- Mobile Phase: (A) 0.1% formic acid in water; (B) 0.1% formic acid in methanol.

- Gradient: 20% B to 70% B over 2 min, hold for 5 min, then wash and re-equilibrate. Total run time: 11 min.

- MS Detection: Positive ion MRM mode. The use of a simple methanol/water/formic acid system and a focused gradient enhances sensitivity for the target compounds.

- Validation: The method demonstrated linearity from 0.04-100 nM for some payloads, recoveries >85%, and was successfully applied to a mouse pharmacokinetic study.
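The micro-sample volumes in this protocol fix the dilution that must be accounted for when relating the injected concentration to the serum concentration. The sketch below works through that arithmetic with the volumes given above and adds a simple recovery calculation; the function names and the recovery inputs are illustrative.

```python
def dilution_factor(sample_ul, *added_ul):
    """Total volume after additions divided by the original sample volume."""
    return (sample_ul + sum(added_ul)) / sample_ul

def percent_recovery(measured, nominal):
    """Measured concentration as a percentage of the nominal spiked value."""
    return measured / nominal * 100.0

# Volumes from the protocol: 5 uL serum + 2 uL IS + 15 uL precipitation solvent.
factor = dilution_factor(5.0, 2.0, 15.0)
print(f"Dilution factor: {factor:.1f}")

# Hypothetical QC sample: 0.9 nM measured against 1.0 nM nominal.
print(f"Recovery: {percent_recovery(0.9, 1.0):.0f}%")
```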

Protocol 3: Untargeted Phytohormone Profiling Across Diverse Plant Matrices

This protocol outlines a unified approach to analyze multiple hormone classes in different plant species, a common challenge in plant natural product research [12].

- Matrix-Specific Extraction:

- Homogenize ~1.0 g of frozen plant tissue (e.g., leaf, fruit) under liquid nitrogen.

- Extract with a tailored solvent system. For example, use methanol/water/formic acid for many tissues, or a two-step acidified solvent for sugar-rich matrices like dates.

- Add a suitable internal standard (e.g., salicylic acid-D4).

- Centrifuge, filter (0.22 µm), and dilute the supernatant with the initial mobile phase.

- LC-MS/MS Analysis (Q-TOF or QQQ):

- Column: Reversed-phase C18 (e.g., 4.6 x 100 mm, 3.5 µm).

- Mobile Phase: (A) 0.05% formic acid in water; (B) methanol or acetonitrile.

- Gradient: Optimized to resolve the hormone classes, separating ABA, SA, and JA from IAA, CKs, and GAs.

- MS Detection: Use negative ionization mode for acidic hormones (ABA, SA) and positive mode for others (IAA, CKs). For Q-TOF, use full scan for profiling and auto-MS/MS for identification. For QQQ, use scheduled MRM for quantification.

- Profiling and Quantification: Use calibration curves for absolute quantification or normalized peak areas for comparative profiling across species.
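Scheduled MRM monitors each transition only in a window around the analyte's expected retention time, which keeps the number of concurrent transitions low. The sketch below shows the bookkeeping, using the polarity assignments given above (negative for ABA and SA, positive for IAA and CKs); the retention times, window width, and class and function names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Transition:
    analyte: str
    polarity: str            # "negative" or "positive"
    rt_min: float            # expected retention time (min), hypothetical
    window_min: float = 1.0  # total width of the monitoring window (min)

    def active_at(self, t):
        """True if time t falls inside this transition's window."""
        return abs(t - self.rt_min) <= self.window_min / 2

# Illustrative method table; retention times are placeholders.
METHOD = [
    Transition("ABA", "negative", 6.2),
    Transition("SA", "negative", 5.8),
    Transition("IAA", "positive", 5.1),
    Transition("CK", "positive", 3.4),
]

def active_transitions(method, t):
    """Names of analytes being monitored at time t."""
    return [tr.analyte for tr in method if tr.active_at(t)]

for t in (3.5, 5.5, 6.0):
    print(t, active_transitions(METHOD, t))
```

When windows of opposite polarity overlap, as at 5.5 min in this example, the instrument must switch polarity within the cycle or the analytes must be split into separate injections.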

Protocol 4: Advanced Annotation via Multiplexed Chemical Metabolomics (MCheM)

MCheM is a cutting-edge workflow that integrates post-column derivatization reactions to gain orthogonal chemical data, vastly improving confidence in annotating unknown natural products [13].

- Hardware Setup:

- Install a post-column reagent infusion setup: a T-splitter, a PEEK capillary, and a syringe pump to deliver derivatization reagents into the post-column effluent before the ESI source.

- Iterative Analysis:

- Run the same natural product extract multiple times, each time infusing a different reagent that targets specific functional groups (e.g., hydroxylamine for aldehydes/ketones, AQC for amines, cysteine for β-lactones/Michael acceptors).

- Data Acquisition and Processing:

- Acquire data in standard DDA mode.

- Use specialized software (a module in MZmine) to process the data. The software correlates shifts in mass (due to derivatization) and changes in MS/MS spectra with specific reagent reactions.

- Enhanced Annotation:

- The software generates an "MCheM spectrum" file annotated with functional group information.

- Submit this file to annotation tools like SIRIUS or GNPS. The functional group data constrains the chemical search space, allowing the tools to filter and re-rank structural candidates, leading to more confident annotations.
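The mass-shift correlation at the heart of this workflow can be illustrated with a simple pairing routine. This sketch is not the MZmine module's algorithm; it assumes singly charged ions, a ppm tolerance, and the shift expected for oxime formation with hydroxylamine (addition of NH2OH with loss of H2O, a net gain of NH). The function name and feature lists are hypothetical.

```python
# Monoisotopic masses (Da).
N_MASS = 14.0030740
H_MASS = 1.00782503

# Oxime formation: carbonyl + NH2OH -> C=N-OH + H2O, net change of +NH.
HYDROXYLAMINE_SHIFT = N_MASS + H_MASS

def find_derivatized_pairs(control_mz, treated_mz, shift, ppm=5.0):
    """Pair features from the untreated run with features in the
    reagent-infused run that appear at m/z + shift (within ppm tolerance)."""
    pairs = []
    for mz in control_mz:
        target = mz + shift
        tolerance = target * ppm / 1e6
        for candidate in treated_mz:
            if abs(candidate - target) <= tolerance:
                pairs.append((mz, candidate))
    return pairs

# Hypothetical feature lists from a control run and a hydroxylamine run.
control = [151.0390, 300.1000]
treated = [166.0499, 300.1000]
print(find_derivatized_pairs(control, treated, HYDROXYLAMINE_SHIFT))
```

A feature that gains the expected shift is flagged as carrying an aldehyde or ketone, while one that persists unchanged (m/z 300.1000 here) is not.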

Data Analysis and Computational Workflows

Modern LC-MS/MS generates vast datasets, necessitating automated, reproducible bioinformatics pipelines.

- Integrated Analysis Platforms: Tools like MetaboAnalystR 4.0 provide an end-to-end solution, from raw LC-MS data processing (peak picking, alignment) to statistical analysis and functional interpretation. It supports both DDA and DIA (SWATH) data, performs MS/MS deconvolution, and searches against comprehensive spectral libraries [15].

- Automated Compound Annotation: Specialized software like AutoAnnotatoR addresses the bottleneck of annotating plant-specific natural products. It allows users to import in-house databases and diagnostic fragment ion rules, enabling efficient batch annotation of complex samples like Fritillaria alkaloids, characterizing thousands of constituents in a few hours [16].

- From Data to Biological Insight: The final step involves using statistical results and annotated metabolite lists for pathway enrichment analysis (using databases like KEGG) to generate hypotheses about the biological mechanisms underlying observed changes, such as the response to a natural product treatment [8] [15].
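Pathway enrichment is commonly assessed with an over-representation test based on the hypergeometric distribution. The sketch below shows that calculation in isolation, as one common approach rather than a description of what any particular package does internally; the function name and the counts are hypothetical.

```python
from math import comb

def enrichment_p_value(total, in_pathway, hits, hits_in_pathway):
    """One-sided hypergeometric p-value for pathway over-representation.

    total: number of metabolites in the background set.
    in_pathway: background metabolites that belong to the pathway.
    hits: number of significant metabolites in the experiment.
    hits_in_pathway: significant metabolites that belong to the pathway.
    Returns P(X >= hits_in_pathway).
    """
    upper = min(in_pathway, hits)
    favorable = sum(
        comb(in_pathway, i) * comb(total - in_pathway, hits - i)
        for i in range(hits_in_pathway, upper + 1)
    )
    return favorable / comb(total, hits)

# Hypothetical counts: 1,000 background metabolites, 40 in the pathway,
# 50 significant metabolites of which 8 fall in the pathway.
print(f"p = {enrichment_p_value(1000, 40, 50, 8):.2e}")
```

In practice the p-values from all tested pathways are then corrected for multiple testing before interpretation.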

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for LC-MS/MS Analysis of Natural Products

| Category | Item | Function in NP Analysis | Key Considerations & Examples |
|---|---|---|---|
| Extraction Solvents | LC-MS Grade Methanol, Acetonitrile, Ethanol, Water | Primary solvents for metabolite extraction from solid or liquid matrices. | Methanol is often the most versatile for broad metabolite coverage [14]. Acetonitrile excels in protein precipitation for cleaner samples. |
| Mobile Phase Additives | Formic Acid, Ammonium Acetate, Ammonium Hydroxide | Modifies pH to control analyte ionization in ESI. Improves chromatographic peak shape. | 0.1% Formic Acid is standard for positive mode. Ammonium acetate buffers (5-10 mM) are used for both positive and negative modes. |
| Internal Standards (IS) | Stable Isotope-Labeled Analogs (¹³C, ²H, ¹⁵N) | Corrects for variability in sample prep, ionization efficiency, and instrument performance. Essential for accurate quantification. | CBD-d3 for cannabidiol studies [10]. Salicylic acid-D4 for phytohormone analysis [12]. Should be added as early as possible in the protocol. |
| Chromatography Columns | Reversed-Phase C18, HILIC, PFP (F5) Core-Shell Columns | Separate the complex mixture of natural products based on hydrophobicity, polarity, or specific interactions. | C18: General purpose. HILIC: For polar metabolites. PFP: For separating challenging isomers [11] [12] [9]. |
| Derivatization Reagents | e.g., AQC, Hydroxylamine, Cysteine (for MCheM) | Chemically modifies analytes post-column to impart functional group information or improve detectability. | Used in advanced workflows like MCheM to tag amines, carbonyls, or reactive electrophiles, aiding structural annotation [13]. |
| Reference Standards | Authentic Natural Product Compounds | Provides definitive identification (RT, m/z, MS/MS match) and is required for creating calibration curves for absolute quantification. | Commercially available for many common NPs. Critical for method validation and reporting Level 1 identifications [16]. |

The identification and characterization of bioactive natural products (NPs) from complex biological matrices represent a cornerstone of modern drug discovery and development. Within this pipeline, liquid chromatography-mass spectrometry (LC-MS) has emerged as the preeminent analytical platform, enabling the sensitive detection, quantification, and structural elucidation of metabolites across a vast chemical space [4]. However, the fidelity and success of any LC-MS analysis are fundamentally constrained by the steps taken before the sample enters the instrument. Effective sample preparation—encompassing extraction, clean-up, and concentration—is not merely a preliminary step but a strategic determinant of data quality, impacting sensitivity, reproducibility, and the breadth of metabolite coverage [17].

This section places strategic sample preparation within the broader context of LC-MS profiling for natural product identification. The goal is to transform a raw, heterogeneous biological sample (e.g., plant leaf, microbial culture) into a purified extract suitable for high-resolution LC-MS analysis, while preserving the integrity of the native metabolome. The challenge is multifaceted: methods must efficiently liberate analytes from intricate cellular structures, remove interfering compounds (e.g., lipids, pigments, salts, proteins) that suppress ionization or degrade chromatographic separation, and be adaptable to both targeted quantification and untargeted discovery workflows [18] [19].

Failure to address these challenges can lead to significant matrix effects, false negatives, instrument contamination, and ultimately, the misprioritization of leads in a drug discovery campaign. Therefore, the development and optimization of sample preparation protocols are as critical as the choice of the LC-MS instrument itself. This guide provides an in-depth examination of established and emerging strategies for handling diverse plant and microbial matrices, supported by current experimental data and methodological details.

Foundational Principles and Method Selection

The design of a sample preparation strategy must be guided by the analytical objective (targeted vs. non-targeted), the physico-chemical properties of the analytes of interest (polarity, stability, molecular weight), and the specific challenges posed by the sample matrix.

- Targeted vs. Untargeted Analysis: Targeted methods focus on a predefined set of analytes, allowing for optimization of extraction solvents and clean-up sorbents for maximum recovery of those specific compounds. In contrast, untargeted metabolomics or non-target screening aims for comprehensive coverage of the metabolome, necessitating broader, more inclusive protocols that balance the extraction of diverse chemical classes [18] [19].

- Analyte and Matrix Considerations: The log P (or log Kow) of target compounds is a key predictor of optimal extraction solvent. Complex matrices like breast milk (~4% lipid) or plant tissues rich in polyphenols and pigments require robust clean-up to remove interferences that cause ion suppression in the MS source [18] [20]. Microbial matrices often present challenges from polysaccharides, proteins, and salts from growth media.

- The Universality Trade-off: While a single, universal protocol is desirable for high-throughput labs, the chemical diversity of NPs often requires customized approaches. The trend is toward developing multiresidue methods capable of handling a wide log Kow range (e.g., -0.3 to 10) within a single workflow [18].

Core Methodologies: Extraction and Clean-up Techniques

Extraction Strategies

The primary goal of extraction is to quantitatively transfer analytes from the solid or semi-solid matrix into a solvent compatible with LC-MS. The choice of solvent system is paramount.

- Solvent Selection: Methanol, acetonitrile, and acetone, often acidified (e.g., with 1% formic acid), are commonly used for their ability to denature proteins and extract a broad polarity range [20] [21]. For example, a systematic evaluation for PFAS analysis in ten plant species found methanol to be the optimal solvent, outperforming acetonitrile-water mixtures [20]. In multiresidue methods for environmental contaminants, the acetonitrile-based partition step from the QuEChERS (Quick, Easy, Cheap, Effective, Rugged, and Safe) approach is frequently adopted as a starting point due to its effectiveness for both polar and non-polar compounds [18].

- Assisted Extraction Techniques: To improve efficiency and reduce extraction time, techniques like ultrasound-assisted extraction (UAE), microwave-assisted extraction (MAE), and pressurized liquid extraction (PLE) are employed. These methods enhance cell lysis and mass transfer, leading to higher yields, especially for solid plant tissues [9].

Clean-up Strategies

Post-extraction, the crude extract contains co-extracted matrix components that must be removed to ensure analytical robustness.

- Solid-Phase Extraction (SPE): SPE remains a gold standard for selective clean-up. Cartridges like Oasis HLB (hydrophilic-lipophilic balanced) are versatile for retaining a wide analyte range, while specific phases like ENVI-Carb (graphitized carbon) are highly effective for removing pigments and other planar molecules. In PFAS analysis of plant tissues, for example, 1 g ENVI-Carb cartridges gave recoveries of 90-120% with RSD below 20% [20] [21].

- Dispersive-SPE (d-SPE): Integral to the QuEChERS workflow, d-SPE involves adding a loose sorbent directly to the extract. Primary sorbents include:

  - PSA (Primary Secondary Amine): Effective for removing fatty acids, sugars, and some pigments.

  - C18: Removes non-polar interferences like lipids and sterols.

  - Zirconium dioxide-based sorbents (e.g., Z-Sep): Particularly effective for removing phospholipids and fats from complex matrices like animal tissues and breast milk, significantly reducing matrix effects in GC-MS and LC-MS analysis [18].

- Filtration and Precipitation: Specialized filter cartridges, such as Captiva ND Lipid plates, offer a convenient pass-through clean-up method for efficient protein and lipid removal prior to LC-MS [18].

Table 1: Comparison of Extraction and Clean-up Methods for Different Matrices and Analytes

| Matrix | Target Analytes | Optimal Extraction Solvent | Optimal Clean-up Method | Key Outcome | Source |
|---|---|---|---|---|---|
| Fish Muscle, Breast Milk | 77 Polar/Lipophilic Contaminants (log Kow -0.3 to 10) | Acetonitrile (QuEChERS) | d-SPE: Zirconium dioxide sorbents (GC-MS); Captiva ND Lipids filter (LC-MS) | Mean recoveries 70-120%, RSD <20% for most compounds. | [18] |
| Various Plant Tissues | 24 PFAS Compounds | Methanol | SPE: ENVI-Carb Cartridge (1 g) | Recovery 90-120%, precision RSD <20%, low MDL (0.04–4.8 ng/g). | [20] |
| Medicinal Plant Parts | Bioactive Metabolites (e.g., Antioxidants) | Water or Acetone | Fractionation via SPE C18 Cartridge | Enabled bioactivity-guided fractionation and LC-MS/MS identification. | [22] |
| Chicken/Cattle Tissues, Milk | Aflatoxins (B1, B2, G1, G2, M1, M2) | 1% Formic Acid in Acetonitrile | Multi-modal: QuEChERS (muscle), QuEChERS+Oasis Ostro (liver), Oasis PRiME HLB (milk). | High-throughput (96 samples/batch), validated per EU guidelines. | [21] |
| Annona crassiflora Plant Parts | Larvicidal Acetogenins | Hexane, Ethyl Acetate, Methanol | Partitioning using Diol Cartridges | Simplified chemical profiles for metabolomics analysis. | [19] |

Detailed Experimental Protocols

Protocol A: Multi-Residue Extraction and Clean-up for LC-MS Non-Target Screening (Adapted from Baduel et al.)

This protocol is designed for the simultaneous extraction of a wide range of organic chemicals from medium-lipid content biological matrices (e.g., plant tissue, animal tissue) [18].

- Homogenization: Weigh 2.0 ± 0.1 g of homogenized frozen sample into a 50 mL PTFE centrifuge tube.

- Extraction: Add 10 mL of acetonitrile and internal standards. Shake vigorously for 1 minute. Add a salt packet (containing MgSO₄ and NaCl) from a commercial QuEChERS kit. Shake immediately and vigorously for another 3 minutes.

- Centrifugation: Centrifuge at >4000 rpm for 5 minutes. Transfer the acetonitrile (upper) layer to a clean tube.

- Clean-up (for LC-MS): Pass approximately 2 mL of the acetonitrile extract through a Captiva ND Lipid 1 mL filtration cartridge. Collect the filtrate.

- Concentration and Reconstitution: Evaporate the clean extract to near-dryness under a gentle stream of nitrogen. Reconstitute the residue in 200 µL of a methanol/water (50:50, v/v) mixture suitable for LC-MS injection.

- Analysis: Analyze by LC-QTOF-MS/MS in data-dependent acquisition (DDA) mode for non-target screening.
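The volumes in Protocol A fix how much of the original sample each millilitre of the final extract represents, which is needed when converting instrument concentrations back to a per-gram basis. The sketch below works through that arithmetic. It assumes the acetonitrile layer is still 10 mL after salting out and that the whole 2 mL filtrate is evaporated and reconstituted; neither is stated in the protocol, and the function name is hypothetical.

```python
def sample_equivalent_g_per_ml(sample_g, extract_ml, aliquot_ml, final_ml):
    """Grams of original sample represented by each mL of the final extract."""
    fraction_taken = aliquot_ml / extract_ml      # share of the extract carried forward
    return sample_g * fraction_taken / final_ml   # concentrated into the final volume

# Protocol A volumes: 2.0 g sample, 10 mL acetonitrile, 2 mL aliquot, 200 µL final.
conc = sample_equivalent_g_per_ml(sample_g=2.0, extract_ml=10.0,
                                  aliquot_ml=2.0, final_ml=0.2)
print(f"{conc:.1f} g sample equivalent per mL of final extract")
```

Under these assumptions the final extract is ten times more concentrated than the raw acetonitrile extract (0.2 g/mL).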

Protocol B: Optimized PFAS Analysis in Plant Matrices (Adapted from the 2022 Study)

This protocol details a method validated for 24 PFAS in roots, stems, leaves, and needles [20].

- Drying and Milling: Freeze-dry plant material and mill to a fine powder.

- Extraction: Weigh 0.2 g dry weight into a 15 mL tube. Spike with mass-labeled internal standards. Add 5 mL of methanol.

- Shaking and Sonication: Shake horizontally at 250 rpm for 60 minutes, followed by ultrasonication in a water bath for 15 minutes.

- Centrifugation and Collection: Centrifuge at 4500 × g for 10 minutes. Decant the supernatant into a new tube. Repeat the extraction with another 5 mL of methanol and combine the supernatants.

- Clean-up: Condition a 1 g ENVI-Carb SPE cartridge with 5 mL methanol. Load the combined extract. Elute with 12 mL of methanol. Collect the entire eluate.

- Concentration and Analysis: Evaporate the eluate to near dryness under nitrogen. Reconstitute in 1 mL of methanol. Filter through a 0.22 µm nylon filter into an LC vial. Analyze by LC-MS/MS using negative electrospray ionization and multiple reaction monitoring (MRM).
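Because Protocol B ends with 0.2 g of dry tissue represented in 1 mL of methanol, vial concentrations and tissue concentrations differ by a fixed factor. The following sketch shows the conversion in both directions. It assumes losses are already corrected by the mass-labeled internal standards, and the helper names are hypothetical.

```python
def tissue_conc_ng_per_g(vial_ng_per_ml, final_volume_ml=1.0, sample_dry_g=0.2):
    """Convert a concentration measured in the LC vial to ng per g dry weight."""
    return vial_ng_per_ml * final_volume_ml / sample_dry_g

def vial_conc_ng_per_ml(tissue_ng_per_g, final_volume_ml=1.0, sample_dry_g=0.2):
    """Convert a tissue concentration to the concentration expected in the vial."""
    return tissue_ng_per_g * sample_dry_g / final_volume_ml

# 1 ng/mL in the vial corresponds to 5 ng/g dry weight.
print(tissue_conc_ng_per_g(1.0))
# The lowest reported MDL (0.04 ng/g) corresponds to roughly 0.008 ng/mL in the vial.
print(vial_conc_ng_per_ml(0.04))
```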

Integration with Downstream LC-MS Analysis and Dereplication

Effective sample preparation is the first link in an analytical chain. A clean extract directly enhances chromatographic performance (peak shape, resolution) and MS sensitivity by reducing ion suppression. This is crucial for the subsequent step of dereplication—the rapid identification of known compounds to prioritize novel leads [22] [19].

Modern dereplication relies on hyphenated techniques and databases. LC-MS/MS data from prepared extracts can be processed through platforms like:

- Global Natural Products Social Molecular Networking (GNPS): Creates molecular networks based on MS/MS fragmentation similarity, visually clustering related compounds and allowing library spectrum matching for rapid annotation [22] [19].

- MetaboAnalyst: Performs statistical analysis (PCA, PLS-DA) on LC-MS feature tables to identify ions that discriminate between sample groups (e.g., bioactive vs. inactive fractions), guiding the isolation of responsible metabolites [19].
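Molecular networking rests on a pairwise similarity score between MS/MS spectra. The sketch below shows a plain cosine score with greedy peak matching. It is a simplified illustration, not the GNPS implementation (which uses a modified cosine that also matches peaks shifted by the precursor mass difference). The square-root intensity scaling and the 0.02 m/z tolerance are assumptions chosen for the example.

```python
from math import sqrt

def cosine_score(spec_a, spec_b, tol=0.02):
    """Cosine similarity between two centroided spectra given as [(mz, intensity), ...]."""
    # Square-root scaling so a few dominant peaks do not overwhelm the score.
    a = [(mz, sqrt(i)) for mz, i in spec_a]
    b = [(mz, sqrt(i)) for mz, i in spec_b]
    # All peak pairs within tolerance, largest intensity products first.
    pairs = sorted(
        ((ia * ib, x, y)
         for x, (ma, ia) in enumerate(a)
         for y, (mb, ib) in enumerate(b)
         if abs(ma - mb) <= tol),
        reverse=True,
    )
    used_a, used_b, dot = set(), set(), 0.0
    for prod, x, y in pairs:
        # Each peak may be matched at most once.
        if x not in used_a and y not in used_b:
            used_a.add(x)
            used_b.add(y)
            dot += prod
    norm = sqrt(sum(i * i for _, i in a)) * sqrt(sum(i * i for _, i in b))
    return dot / norm if norm else 0.0

# Hypothetical spectra: the second shares two of three fragments with the first.
spectrum_1 = [(100.0, 10.0), (150.0, 50.0), (200.0, 100.0)]
spectrum_2 = [(100.01, 12.0), (150.0, 45.0), (250.0, 80.0)]
print(round(cosine_score(spectrum_1, spectrum_2), 3))
```

In a network, spectra become nodes and an edge is drawn when the score exceeds a chosen threshold.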

The ionization source (e.g., ESI, APCI, APPI) should also be matched to the composition of the cleaned extract, because it determines which analyte classes are detected [9] [4].

Table 2: Performance Metrics of Validated Sample Preparation Methods

| Method Description | Matrix | Recovery Range (%) | Precision (RSD%) | Limit of Quantification (LOQ) | Key Innovation/Note |
|---|---|---|---|---|---|
| Multi-residue QuEChERS + d-SPE [18] | Fish Muscle, Breast Milk | 70 – 120 | <20% (most) | GC-MS/MS: 0.08-3 µg/kg; LC-QTOF: 0.2-9 µg/kg | One protocol for polar & lipophilic contaminants (log Kow -0.3 to 10). |
| Methanol + ENVI-Carb SPE [20] | 10 Plant Species (Leaves, Roots, etc.) | 90 – 120 | <20% (within/between day) | 0.04 – 4.8 ng/g (dry weight) | Optimized for challenging PFAS in complex plant tissues. |
| Multi-modal for Aflatoxins [21] | Chicken Liver, Muscle, Egg, Milk | Data meets EU criteria | Data meets EU criteria | Not specified; method validated per EU guidelines. | High-throughput (96 samples/batch), tailored clean-up per matrix. |
| Bioactivity-Guided w/ SPE C18 [22] | Medicinal Plants (e.g., Rosemary, Ashwagandha) | N/A (Qualitative) | N/A (Qualitative) | N/A | Integrated with antioxidant assay and student training. |
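Recovery and precision figures like those in the table come from replicate spiked samples. The sketch below computes mean recovery and relative standard deviation and checks them against the 70-120% and <20% RSD windows quoted above. The replicate values are invented for illustration, and the function names are hypothetical.

```python
from statistics import mean, stdev

def recovery_and_rsd(measured, spiked):
    """Mean recovery (%) and RSD (%) from replicate measurements of a spiked sample.

    Needs at least two replicates, because the sample standard deviation is used.
    """
    recoveries = [100.0 * m / spiked for m in measured]
    avg = mean(recoveries)
    rsd = 100.0 * stdev(recoveries) / avg
    return avg, rsd

def passes_criteria(avg, rsd, recovery_window=(70.0, 120.0), max_rsd=20.0):
    """True if mean recovery lies inside the window and RSD is below the limit."""
    return recovery_window[0] <= avg <= recovery_window[1] and rsd < max_rsd

# Hypothetical replicates (ng/g) for a 10 ng/g spike.
avg, rsd = recovery_and_rsd([9.1, 8.7, 9.6, 10.2, 9.4], spiked=10.0)
print(f"recovery = {avg:.1f}%, RSD = {rsd:.1f}%, pass = {passes_criteria(avg, rsd)}")
```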

Advanced Context: Sample Preparation for Proteomics in Natural Product Mechanism Studies

Beyond metabolomics, LC-MS-based proteomics is a powerful tool for elucidating the mechanisms of action of bioactive natural products. Here, sample preparation focuses on proteins [8].

- Cell/Tissue Treatment: Treat cell lines (e.g., MCF-7, A549) with the NP extract or pure compound.

- Protein Extraction: Lyse cells in a suitable buffer (e.g., RIPA with protease/phosphatase inhibitors) to extract the full proteome.

- Digestion: Digest proteins into peptides using a sequence-specific protease (typically trypsin). This "bottom-up" proteomics approach is standard.

- Peptide Clean-up: Use StageTips (C18 membrane) or similar micro-SPE to desalt and concentrate peptides before LC-MS/MS.

- Analysis and Quantification: Analyze peptides by nanoLC-MS/MS. Use label-free quantification (e.g., as computed by MaxQuant) or label-based quantification (e.g., TMT, SILAC) to identify proteins with significantly altered expression following NP treatment [8].
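The digestion step determines which peptides the instrument can observe. As a minimal illustration, the sketch below applies the common trypsin rule (cleave C-terminal to lysine or arginine unless the next residue is proline) to a made-up sequence. It ignores missed cleavages, and the minimum-length filter is an assumption.

```python
import re

def tryptic_peptides(sequence, min_length=6):
    """In silico trypsin digest: cleave after K or R, but not before P."""
    # Zero-width split points (requires Python 3.7+): preceded by K/R, not followed by P.
    pieces = re.split(r"(?<=[KR])(?!P)", sequence)
    # Very short peptides are rarely useful for identification, so drop them.
    return [p for p in pieces if len(p) >= min_length]

# Hypothetical sequence: the K before P is not cleaved.
peptides = tryptic_peptides("GASGASKPLLLLLRVVVVVVK")
print(peptides)
```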

Diagram 1: Strategic Workflow for Natural Product LC-MS Profiling

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents and Materials for Sample Preparation

| Item | Function | Example Application |
|---|---|---|
| QuEChERS Extraction Kits | Provides optimized salt mixtures (MgSO₄, NaCl) for phase separation and initial extraction of broad analyte classes. | Multi-residue extraction of contaminants from biological matrices [18]. |
| Zirconium Dioxide-based d-SPE Sorbents (e.g., Z-Sep) | Selectively removes phospholipids and fatty acids, significantly reducing matrix effects in LC-MS. | Clean-up of lipid-rich samples like breast milk, liver, or avocado [18]. |
| ENVI-Carb SPE Cartridges | Graphitized carbon sorbent effective at removing pigments, polyphenols, and other planar interfering compounds. | Essential for clean-up of plant extracts prior to PFAS or other contaminant analysis [20]. |
| Oasis HLB & PRiME HLB SPE Cartridges | Hydrophilic-Lipophilic Balanced polymer. Retains a wide range of analytes; PRiME HLB requires no conditioning for simpler protocols. | General purpose clean-up for toxins (e.g., aflatoxins) in milk, plasma, and food samples [21]. |
| Captiva ND Lipid Filtration Cartridges | A pass-through, phospholipid removal device. Simple and fast clean-up for proteinaceous and lipid-rich samples. | Rapid clean-up of biological extracts prior to LC-MS for metabolomics [18]. |
| C18 and Diol Phase SPE Cartridges | C18 binds non-polar compounds; Diol phase (silica with diol groups) is used for normal-phase separation of different polarity fractions. | Fractionation of crude plant extracts to simplify profiles for bioactivity testing [22] [19]. |

Diagram 2: Proteomics Workflow for Natural Product Mechanism Studies

Strategic sample preparation is a dynamic and critical component of the natural product research pipeline. As demonstrated, there is no single "best" method; rather, success lies in the rational selection and optimization of extraction and clean-up techniques based on a clear understanding of the matrix, the analytes, and the analytical goals. The integration of robust, validated preparation protocols—such as QuEChERS with advanced d-SPE sorbents or optimized SPE for specific interferences—with powerful LC-MS/MS instrumentation and bioinformatics platforms like GNPS, creates a formidable pipeline for accelerating the discovery and identification of novel bioactive natural products. Future advancements will continue to lean towards automation, green chemistry principles, and even more selective sorbents to improve throughput, sustainability, and specificity in unraveling the complex chemistry of life.

Within the framework of LC-MS profiling for natural product (NP) identification research, three primary data outputs form the analytical cornerstone: chromatograms, mass spectra, and fragmentation patterns. The chromatogram provides the first dimension of separation, resolving a complex extract into individual components over time. The mass spectrum delivers the molecular signature for each component, revealing its mass-to-charge ratio and isotopic pattern. Finally, fragmentation patterns (MS/MS or MSⁿ spectra) offer a structural blueprint by illustrating how the molecule breaks apart, enabling definitive identification and differentiation of isomers [23] [24]. Mastering the interpretation of this interdependent data triad is essential for dereplicating known compounds and discovering novel bioactive entities from natural sources [25] [26].

Foundational Concepts in LC-MS Profiling for Natural Products

Liquid Chromatography-Mass Spectrometry (LC-MS) is the central analytical platform in modern natural product research. It synergistically combines the physical separation capability of liquid chromatography with the mass-resolving and detecting power of mass spectrometry [27] [24]. In this workflow, a crude natural product extract is first injected into the LC system. Components separate based on their differential interaction with the stationary phase (e.g., C18 silica) and the mobile phase (a gradient of water and organic solvents) [28]. As each compound elutes from the column, it is introduced into the mass spectrometer.

The mass spectrometer functions by converting neutral molecules into gas-phase ions in the ion source (e.g., Electrospray Ionization - ESI), separating these ions according to their mass-to-charge ratio (m/z) in the mass analyzer, and detecting them [24]. The primary output is a plot of ion intensity versus m/z, known as a mass spectrum. The most intense peak is designated the base peak (relative abundance 100%), and the peak corresponding to the intact ionized molecule is the molecular ion peak [29] [24]. For structural elucidation, a specific molecular ion can be isolated and fragmented via Collision-Induced Dissociation (CID), generating a secondary mass spectrum (MS/MS or MS2) that reveals characteristic fragmentation patterns [29] [30]. Advanced instruments can perform multiple rounds of fragmentation (MSⁿ), providing deeper structural insights [23].

Data Outputs: Interpretation and Interrelationship

The Chromatogram: The Dimension of Separation

A chromatogram is a two-dimensional plot depicting detector response (abundance) against retention time (RT). Each peak represents a distinct chemical species or a set of co-eluting compounds.

- Retention Time (RT): A compound's RT is a reproducible characteristic under identical chromatographic conditions (column, mobile phase, gradient, temperature). It provides a primary diagnostic for compound matching against standards [28].

- Peak Shape and Width: Symmetric, sharp peaks indicate good separation and column health. Tailing or broadening peaks can suggest secondary interactions with the column or instrumental issues [28].

- Peak Area/Height: This is proportional to the compound's concentration in the sample, enabling quantitative analysis.

The chromatogram's role is to reduce sample complexity, delivering purified components to the mass spectrometer for sequential analysis. Effective separation is critical, as co-elution leads to ion suppression and mixed mass spectra, complicating interpretation [23] [27].
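Peak area, the quantity used for quantification, is simply the integral of detector response over the elution window. The sketch below integrates a peak with the trapezoidal rule after subtracting a flat baseline. Real peak-picking software also detects the peak boundaries and fits the baseline, which this hypothetical helper does not attempt.

```python
def peak_area(times_s, intensities, baseline=0.0):
    """Trapezoidal area under a chromatographic peak above a flat baseline."""
    if len(times_s) != len(intensities):
        raise ValueError("times and intensities must have the same length")
    area = 0.0
    for i in range(1, len(times_s)):
        dt = times_s[i] - times_s[i - 1]
        left = intensities[i - 1] - baseline
        right = intensities[i] - baseline
        area += dt * (left + right) / 2.0
    return area

# Hypothetical peak sampled once per second across its elution window.
times = [0.0, 1.0, 2.0, 3.0, 4.0]
signal = [0.0, 40.0, 100.0, 40.0, 0.0]
print(peak_area(times, signal))
```

The example also shows why narrow peaks need fast scan rates: with too few points across the peak, the trapezoids no longer follow its shape.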

Table 1: Key Chromatographic Parameters and Their Impact on Natural Product Analysis

| Parameter | Typical Setup for NP Profiling | Impact on Data Output |
|---|---|---|
| Column Chemistry | Reversed-Phase (C18), HILIC | Determines selectivity; C18 separates by hydrophobicity, HILIC by polarity [27] [28]. |
| Gradient | Water/Acetonitrile with 0.1% Formic Acid | Controls resolution and run time; shallower gradients improve separation of complex mixtures [27]. |
| Retention Time | Compound-specific | Primary identifier for alignment and dereplication across samples [28]. |
| Peak Width | 5-30 seconds (for LC-MS) | Affects spectral quality; narrower peaks yield higher signal-to-noise ratios [28]. |

The Mass Spectrum: The Dimension of Mass

The mass spectrum provides the molecular fingerprint. Key features include:

- Molecular Ion: Identifies the intact ionized molecule (e.g., [M+H]⁺, [M-H]⁻). Its accurately measured m/z gives the monoisotopic mass of the molecule once the ion type is accounted for, which is critical for determining elemental composition [24].

- Isotopic Pattern: The characteristic "clusters" of peaks (e.g., from Cl, Br, S, or ¹³C) provide immediate clues about the presence of specific elements. The relative abundance of the M+1 peak helps estimate the number of carbon atoms.

- Adduct Ions: Molecules may form ions with other species present (e.g., [M+Na]⁺, [M+NH₄]⁺, [M+HCOO]⁻). Recognizing these patterns is essential for correct molecular weight assignment [24].

- In-Source Fragmentation: Some labile bonds may break in the ion source before mass analysis, generating fragments that appear in the full MS scan. These can be informative but may also complicate the spectrum.
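Two of the checks described in this list are easy to make concrete: converting adduct m/z values back to a neutral mass, and estimating the carbon count from the M+1 peak. The sketch below is illustrative only. The adduct shifts are approximate monoisotopic values, the ¹³C/¹²C ratio of about 0.0108 ignores contributions from other elements, and all m/z and intensity values are hypothetical.

```python
# Approximate mass shifts (Da) added to the neutral monoisotopic mass M
# for singly charged ions.
ADDUCT_SHIFT = {
    "[M+H]+": 1.007276,
    "[M+Na]+": 22.989221,
    "[M+NH4]+": 18.033826,
    "[M-H]-": -1.007276,
    "[M+HCOO]-": 44.998203,
}

def neutral_mass(mz, adduct):
    """Neutral monoisotopic mass implied by an observed m/z and an assumed adduct."""
    return mz - ADDUCT_SHIFT[adduct]

def same_compound(mz_a, adduct_a, mz_b, adduct_b, tol=0.005):
    """True if two ions point to the same neutral mass within tol (Da)."""
    return abs(neutral_mass(mz_a, adduct_a) - neutral_mass(mz_b, adduct_b)) <= tol

def estimate_carbons(intensity_m, intensity_m1, c13_ratio=0.0108):
    """Rough carbon count from the M+1/M intensity ratio (13C contribution only)."""
    return round((intensity_m1 / intensity_m) / c13_ratio)

# Two co-eluting ions that differ by the Na/H mass difference.
print(same_compound(279.1591, "[M+H]+", 301.1410, "[M+Na]+"))
# An M+1 peak at 17.3% of M suggests about 16 carbons.
print(estimate_carbons(100.0, 17.3))
```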

For natural products, high-resolution accurate mass (HRAM) measurement is indispensable. It allows an ion's mass to be measured to several decimal places (e.g., 279.1591 Da) rather than only as a nominal mass (279 Da). This precision dramatically narrows down the possible molecular formulas from hundreds to just a few [23] [24].
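The narrowing effect of accurate mass can be shown by computing the mass error, in parts per million, between a measured m/z and the theoretical value for a candidate formula. In the sketch below, the two candidate formulas are hypothetical choices that share the nominal mass 279 as [M+H]⁺ ions; the text does not say which compound the example value belongs to.

```python
# Monoisotopic masses (Da) of the most abundant isotopes, plus the proton.
MONOISOTOPIC = {"C": 12.0, "H": 1.00782503, "N": 14.00307401, "O": 15.99491462}
PROTON = 1.00727646

def protonated_mz(formula):
    """Theoretical m/z of [M+H]+ for a formula given as {element: count}."""
    return sum(MONOISOTOPIC[el] * n for el, n in formula.items()) + PROTON

def ppm_error(measured, theoretical):
    """Signed mass error in parts per million."""
    return (measured - theoretical) / theoretical * 1e6

measured = 279.1591
candidates = {
    "C16H22O4": {"C": 16, "H": 22, "O": 4},
    "C17H26O3": {"C": 17, "H": 26, "O": 3},
}
for name, formula in candidates.items():
    theo = protonated_mz(formula)
    print(f"{name}: theoretical {theo:.4f}, error {ppm_error(measured, theo):+.1f} ppm")
```

With a typical tolerance of a few ppm, only the first candidate survives, even though both are indistinguishable at nominal mass.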

Fragmentation Patterns (MS/MS): The Dimension of Structure

Fragmentation spectra are the most informative data layer for structural elucidation. When a precursor ion is activated (e.g., via CID), it breaks at chemically favored bonds to yield product ions.

- Fragmentation Rules: Cleavage is guided by chemical principles: the stability of the resulting fragment ions, charge retention on the more favorable site, and known rearrangement reactions (e.g., retro-Diels-Alder, neutral losses of H₂O, CO₂) [30].

- Spectral Trees (MSⁿ): Sequential fragmentation (MS3, MS4) creates a tree of related spectra, mapping connectivity between fragments and the precursor ion. This is particularly powerful for elucidating glycosylation patterns or peptide sequences in NPs [23].

- Spectral Libraries: Experimental MS/MS spectra can be matched against reference libraries (e.g., GNPS, MassBank) for rapid dereplication [25] [30]. The match score indicates confidence in the identification.

- In Silico Fragmentation: Computational tools like MassKG predict fragmentation patterns from chemical structures using knowledge-based rules or deep learning models. This aids in annotating spectra for which no reference exists and in proposing structures for novel compounds [25] [30].
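One routine step in reading an MS/MS spectrum is checking whether fragments differ from the precursor by a known neutral loss. The sketch below does this for the two losses named above, H₂O and CO₂. The m/z values are hypothetical, the tolerance is an assumption, and the lookup table would need to be extended for real work.

```python
# Monoisotopic masses (Da) of the neutral losses mentioned in the text.
NEUTRAL_LOSSES = {"H2O": 18.010565, "CO2": 43.989829}

def annotate_losses(precursor_mz, fragment_mzs, tol=0.005):
    """Return (fragment m/z, loss name) for fragments matching a known neutral loss."""
    hits = []
    for frag in fragment_mzs:
        delta = precursor_mz - frag
        for name, mass in NEUTRAL_LOSSES.items():
            if abs(delta - mass) <= tol:
                hits.append((frag, name))
    return hits

# Hypothetical precursor and product ions.
hits = annotate_losses(303.0499, [285.0393, 259.0601, 153.0182])
print(hits)
```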

Table 2: Comparative Utility of MSⁿ Levels in Natural Product Identification

| MS Level | Information Provided | Typical Application in NP Research | Advantage | Limitation |
|---|---|---|---|---|
| Full MS (MS1) | Molecular mass, isotopic pattern, adduct formation [24]. | Molecular formula assignment, initial profiling. | Fast, high sensitivity. | No structural information; isomers are indistinguishable. |
| Tandem MS (MS2) | Primary fragmentation pattern, characteristic neutral losses [29]. | Dereplication against libraries, partial structure elucidation. | Good balance of speed and structural insight. | May be insufficient for complete structure or isomer distinction. |
| Multi-stage MS (MS3+) | Secondary fragmentation, reveals connectivity between MS2 fragments [23]. | Detailed structural elucidation of novel scaffolds, sequencing of glycosides. | Provides deeper structural evidence. | Lower signal intensity, requires more sample, longer acquisition times. |

The interrelationship of these data outputs is sequential and hierarchical. The chromatogram selects when to analyze. The full mass spectrum reveals what is present at that time. The fragmentation pattern explains how that molecule is built.

LC-MS Data Generation Workflow for Natural Products

Experimental Protocol for Untargeted LC-MS/MS Profiling of Natural Products

The following protocol is adapted from established untargeted metabolomics methods for the analysis of natural product extracts, such as those from plant material or microbial cultures [27].

Sample Preparation

- Extraction: Weigh freeze-dried biomass (e.g., 10 mg plant material). Add a suitable extraction solvent (e.g., 1 mL of 70% methanol/water or acetonitrile/methanol/formic acid mixture [27]). Vortex vigorously for 1 minute.

- Sonication: Sonicate the mixture in an ice-water bath for 15 minutes.

- Centrifugation: Centrifuge at 13,000 × g for 10 minutes at 4°C to pellet insoluble debris.

- Filtration: Transfer the supernatant to an LC vial via a 0.2 µm PTFE or nylon membrane filter.

- Internal Standards: Add a cocktail of stable isotope-labeled internal standards (e.g., l-Phenylalanine-d8) to the extraction solvent or final extract for quality control of extraction efficiency and instrument performance [27].

Instrumental Analysis (LC-HRMS/MS)

Liquid Chromatography:

- Column: Reversed-phase C18 column (e.g., 2.1 x 100 mm, 1.7 µm particle size) or a HILIC column for polar compounds [27].

- Mobile Phase: (A) Water with 0.1% formic acid; (B) Acetonitrile with 0.1% formic acid [27].

- Gradient: Optimized for the sample. Example: 5% B to 95% B over 20 minutes, hold 5 minutes, re-equilibrate.

- Flow Rate: 0.3 mL/min. Column Oven: 40°C. Injection Volume: 2-5 µL.
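The example gradient can be written as a simple function of time, which is handy when relating a retention time to the solvent composition at elution. This sketch assumes a linear ramp and an instantaneous return to starting conditions after the hold; the re-equilibration time is not specified in the protocol, so none is modelled.

```python
def percent_b(t_min, start=5.0, end=95.0, ramp_min=20.0, hold_min=5.0):
    """Mobile phase B (%) at time t for a linear ramp followed by a hold."""
    if t_min <= 0:
        return start
    if t_min < ramp_min:
        return start + (end - start) * t_min / ramp_min  # linear ramp
    if t_min <= ramp_min + hold_min:
        return end                                       # high-organic hold
    return start                                         # back to initial conditions

for t in (0, 10, 20, 24, 27):
    print(f"t = {t:>2} min -> {percent_b(t):.0f}% B")
```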

Mass Spectrometry (Orbitrap or Q-TOF):

- Ionization: Electrospray Ionization (ESI), positive and/or negative mode.

- Full MS (MS1) Parameters: Resolution: 70,000 (at m/z 200); Scan Range: m/z 100-1500; Automatic Gain Control (AGC) Target: 1e6.

- Data-Dependent Acquisition (DDA) for MS/MS: Top N (e.g., 10) most intense ions from each MS1 scan are selected for fragmentation. Collision Energy: Stepped or fixed (e.g., 20, 35, 50 eV for small molecules). Isolation Window: 1.0 m/z. Dynamic Exclusion: 15 seconds to prevent repeated fragmentation of abundant ions.
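The interaction between Top N selection and dynamic exclusion is easier to see in a toy simulation. The sketch below is an illustration of the logic only, not vendor acquisition software: for each MS1 scan it picks the most intense ions that are not on the exclusion list, then excludes them for a fixed time. The scan data and the m/z matching tolerance are hypothetical.

```python
def select_precursors(scans, top_n=10, exclusion_s=15.0, mz_tol=0.01):
    """Simulate Top-N precursor selection with dynamic exclusion.

    scans: list of (time_s, [(mz, intensity), ...]) MS1 scans in time order.
    Returns a list of (time_s, [selected m/z values]).
    """
    excluded = []    # (mz, expiry_time_s) entries
    selections = []
    for time_s, peaks in scans:
        # Drop exclusion entries that have expired.
        excluded = [(mz, exp) for mz, exp in excluded if exp > time_s]
        picked = []
        for mz, _intensity in sorted(peaks, key=lambda p: p[1], reverse=True):
            if len(picked) == top_n:
                break
            if any(abs(mz - ex_mz) <= mz_tol for ex_mz, _ in excluded):
                continue  # recently fragmented, skip it
            picked.append(mz)
            excluded.append((mz, time_s + exclusion_s))
        selections.append((time_s, picked))
    return selections

# The same three ions appear in every scan; top_n=2 for readability.
peaks = [(300.1, 1e6), (450.2, 5e5), (120.0, 1e4)]
result = select_precursors([(0.0, peaks), (1.0, peaks), (20.0, peaks)], top_n=2)
print(result)
```

In the second scan the two abundant ions are excluded, so the low-intensity ion at m/z 120.0 finally gets fragmented, which is the purpose of dynamic exclusion.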

Data Processing and Annotation Workflow

- Conversion: Convert raw instrument files to an open format (e.g., .mzML).

- Feature Detection: Use software (e.g., MZmine, XCMS, Compound Discoverer) to detect chromatographic peaks, align features across samples, and deconvolute adducts and isotopes [27] [31]. Output: A feature table with m/z, RT, and intensity for each sample.

- Compound Annotation:

  - Level 1: Confident identification using an authentic standard (matching RT and MS/MS spectrum).

  - Level 2: Probable structure based on MS/MS spectral library match (e.g., via GNPS) [25] [30].

  - Level 3: Tentative candidate based on in silico fragmentation prediction (e.g., using MassKG, SIRIUS/CSI:FingerID) [25] [30].

  - Level 4: Molecular formula from exact mass and isotopic pattern.

- Advanced Analysis: Perform molecular networking on GNPS to visualize spectral similarity and cluster related compounds [26].

The Scientist's Toolkit: Essential Reagents and Materials

Table 3: Key Research Reagent Solutions for LC-MS Profiling of Natural Products

| Item | Function/Description | Critical Considerations |
|---|---|---|
| LC-MS Grade Solvents (Water, Acetonitrile, Methanol) | Used for mobile phases and sample extraction. Minimizes chemical noise and ion suppression. | Purity is paramount; contaminants cause background ions and reduced sensitivity [27]. |
| Formic Acid / Ammonium Formate / Ammonium Acetate | Mobile phase additives. Aid in protonation/deprotonation (formic acid) and provide consistent adduct formation (ammonium salts) [27]. | Concentration (typically 0.1% for formic acid; millimolar levels for ammonium salts) must be consistent for reproducibility. |
| Stable Isotope-Labeled Internal Standards (e.g., l-Phenylalanine-d8) | Added to all samples and blanks. Monitor extraction efficiency, instrument stability, and aid in semi-quantitation [27]. | Should not be endogenous to the sample. |
| Natural Product Standards | Authentic chemical standards. Used to create in-house spectral libraries and validate retention times for Level 1 identification [23]. | Purity should be verified (e.g., by NMR). |
| LC Columns (C18, HILIC) | Stationary phase for compound separation. Different chemistries separate compounds based on hydrophobicity or polarity [27] [28]. | Column lot-to-lot variability can shift RTs; conditioning is essential. |
| Solid Phase Extraction (SPE) Cartridges | For sample clean-up and fractionation prior to LC-MS to remove salts or interfering matrix components. | Select sorbent (C18, HLB, etc.) based on target compound chemistry. |
| In Silico Tools & Databases (MassKG, GNPS, COCONUT) | Software and spectral libraries for data processing, dereplication, and structural prediction [25] [30] [26]. | Integral to modern workflows for annotating unknown spectra. |

Advanced Applications in Natural Product Research

Rational Library Minimization for Drug Discovery

Large libraries of natural product extracts present a screening bottleneck. A method using LC-MS/MS spectral similarity via molecular networking can rationally reduce library size by selecting extracts with maximal scaffold diversity. This approach prioritizes chemical novelty and has been shown to increase bioassay hit rates by reducing redundancy. For instance, a library of 1,439 fungal extracts was reduced to 50 extracts representing 80% of the chemical diversity, which increased the hit rate against Plasmodium falciparum from 11.3% to 22% [26].
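The idea of shrinking a library while keeping most of its chemical diversity can be illustrated with a greedy selection. The sketch below is not the published method. It assumes each extract has already been reduced to a set of scaffold (or molecular-network cluster) identifiers, and it repeatedly picks the extract that adds the most uncovered scaffolds until a coverage target is reached. All identifiers are hypothetical.

```python
def minimize_library(extract_scaffolds, coverage_target=0.8):
    """Greedy selection of extracts until the target share of scaffolds is covered.

    extract_scaffolds: dict mapping extract id -> set of scaffold/cluster ids.
    Returns (chosen extract ids in pick order, achieved coverage fraction).
    """
    all_scaffolds = set().union(*extract_scaffolds.values())
    if not all_scaffolds:
        return [], 0.0
    needed = coverage_target * len(all_scaffolds)
    covered, chosen = set(), []
    remaining = dict(extract_scaffolds)
    while len(covered) < needed and remaining:
        # Extract contributing the most scaffolds not yet covered.
        best = max(remaining, key=lambda e: len(remaining[e] - covered))
        if not remaining[best] - covered:
            break  # nothing new left to gain
        covered |= remaining.pop(best)
        chosen.append(best)
    return chosen, len(covered) / len(all_scaffolds)

library = {
    "E1": {1, 2, 3, 4},
    "E2": {3, 4, 5},
    "E3": {5, 6},
    "E4": {1, 2},
    "E5": {7, 8, 9, 10},
}
chosen, coverage = minimize_library(library, coverage_target=0.8)
print(chosen, coverage)
```

Here two of five extracts already cover 80% of the scaffolds, which mirrors the redundancy argument made above on a very small scale.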

Knowledge-Based and AI-Driven Structural Annotation

The challenge of annotating novel NPs is being addressed by computational tools like MassKG. This algorithm combines a knowledge-based fragmentation generator, trained on statistical analysis of existing NP MS/MS libraries, with a deep learning-based molecule generation model. It can annotate spectra against a vast database of known and computer-generated novel NP structures (over 670,000 in total), providing a powerful resource for dereplication and de novo structure elucidation [25] [30].

Data Annotation Pathway for Known and Novel Natural Products

Chromatograms, mass spectra, and fragmentation patterns are the fundamental, interconnected data pillars of LC-MS-based natural product research. The chromatogram provides the temporal axis of separation, the mass spectrum delivers the molecular identity, and the fragmentation pattern reveals the structural architecture. Proficiency in interpreting this integrated data stream is what transforms a complex analytical profile into a logical series of chemical identities. As the field advances, the integration of higher-order MSⁿ experiments [23], computational prediction tools [25] [30], and strategic bioactivity-guided workflows [26] continues to enhance the speed and success of discovering novel, bioactive natural products. This robust analytical framework ensures that LC-MS profiling remains an indispensable engine for innovation in drug discovery from natural sources.

From Raw Data to Biological Insights: Advanced LC-MS/MS Workflows and Applications

The identification of novel secondary metabolites from natural sources represents a cornerstone of drug discovery. However, researchers face the significant challenge of efficiently differentiating novel compounds from the vast number of known molecules, a process known as dereplication [32]. High-Resolution Accurate-Mass (HRAM) Liquid Chromatography-Mass Spectrometry (LC-MS) has emerged as the pivotal technology for addressing this challenge. By providing exceptional m/z resolution, sensitivity, and mass accuracy, HRAM instruments, notably Orbitrap and quadrupole time-of-flight (qTOF) analyzers, enable the acquisition of detailed chemical fingerprints from complex natural extracts [32]. This technical guide details the systematic design of untargeted profiling experiments, focusing on robust data acquisition and pre-processing methodologies. These protocols are designed to transform raw, complex spectral data into clean, representative information suitable for confident metabolite identification and novelty assessment, directly supporting the broader thesis objective of advancing natural product lead discovery.

Foundational Experimental Design

The success of an untargeted profiling study is determined before the first sample is injected. Careful experimental design helps ensure that the acquired data reflect meaningful biological variation rather than technical artifacts.

- Sample Preparation & Chromatography: For plant or microbial extracts, a balance between comprehensiveness and complexity must be struck. Generalized extraction with solvents like methanol-water mixtures is common, but may require clean-up steps (e.g., solid-phase extraction) to reduce matrix interference. Ultra-High-Performance Liquid Chromatography (UHPLC) with sub-2-µm particle columns is standard, providing superior peak capacity and separation efficiency critical for resolving complex metabolite mixtures [33]. The choice of column chemistry (e.g., C18, HILIC, PFP) dictates the metabolite space covered [33].

- Quality Controls (QCs): A robust design incorporates multiple QC types. A pooled QC, created by mixing a small aliquot of every sample, is analyzed repeatedly throughout the acquisition sequence to monitor system stability and to correct for instrumental drift. Processed blanks (extraction solvents taken through the full preparation protocol) are essential for identifying background and contamination ions during data pre-processing [34].

- Data Acquisition Strategy: Untargeted profiling typically employs data-dependent acquisition (DDA). The instrument first performs a full MS scan at high resolution to detect all ions above a threshold. Subsequently, the most intense ions from that scan are sequentially isolated and fragmented to produce MS/MS spectra for structural elucidation. Advanced software solutions, such as AcquireX, automate this process by using iterative injections to build and update exclusion lists, preventing repeated fragmentation of background ions and ensuring comprehensive coverage of sample-derived compounds [34].

Table 1: Key Experimental Design Elements for Untargeted Profiling

| Design Element | Purpose | Recommendation |
|---|---|---|
| Pooled QC Sample | Monitors instrumental drift, evaluates reproducibility, normalizes data. | Create from equal aliquots of all study samples; inject at start, end, and regularly throughout batch. |
| Processed Blanks | Identifies background ions, solvent impurities, and contaminants for post-acquisition filtering. | Subject extraction solvent to the entire sample preparation workflow. |
| Acquisition Order | Minimizes systematic bias. | Randomize injection order of biological samples; bracket with QCs and blanks. |
| Data Acquisition Mode | Balances breadth of detection with depth of structural information. | Use DDA with dynamic exclusion; consider advanced iterative modes (e.g., AcquireX) for complex samples [34]. |
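The acquisition-order recommendations in Table 1 can be sketched as a small run-order generator. This is a minimal illustration only: the function name, the labels `ProcessedBlank` and `PooledQC`, and the default of one pooled QC every five samples are assumptions chosen for the example, not values from the cited protocols.

```python
import random


def build_injection_sequence(sample_ids, qc_every=5, n_start_qcs=1, seed=0):
    """Return a run order with randomized samples bracketed by QCs and blanks.

    qc_every and n_start_qcs are illustrative defaults, not recommendations.
    """
    rng = random.Random(seed)      # fixed seed keeps the run order reproducible
    samples = list(sample_ids)
    rng.shuffle(samples)           # randomize biological samples

    sequence = ["ProcessedBlank"]
    sequence += ["PooledQC"] * n_start_qcs
    for position, sample in enumerate(samples, start=1):
        sequence.append(sample)
        # Insert a pooled QC at regular intervals, but not directly before
        # the closing QC that is always appended after the last sample.
        if position % qc_every == 0 and position != len(samples):
            sequence.append("PooledQC")
    sequence += ["PooledQC", "ProcessedBlank"]
    return sequence


if __name__ == "__main__":
    for run in build_injection_sequence([f"S{i}" for i in range(1, 13)]):
        print(run)
```

Fixing the random seed means the same sequence can be regenerated later when the acquisition order needs to be matched against drift observed in the pooled QC injections.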

HRAM Data Acquisition: Core Parameters and Configuration

Configuring the mass spectrometer correctly is paramount for generating high-fidelity data. The following parameters are critical for untargeted natural product profiling.

- Resolution: For the full MS scan, a resolution of ≥ 60,000 (at m/z 200) is recommended for sufficient separation of isobaric ions and accurate determination of monoisotopic mass. For MS/MS scans, a resolution of 15,000-30,000 is often a suitable compromise between spectral detail and scan speed [35].

- Mass Accuracy: HRAM systems should deliver sub-2-ppm mass error with internal calibration. This accuracy is fundamental for generating reliable molecular formulas.

- Scan Range: A typical range of m/z 100-1500 covers most secondary metabolites. A lower start (m/z 70-100) may be needed for very small molecules.

- Automatic Gain Control (AGC) & Maximum Injection Time: These settings control ion population in the analyzer and significantly impact dynamic range and sensitivity. For comprehensive profiling, use an AGC target of 1e6 ions for full MS and 5e4-1e5 for MS/MS, with maximum injection times of 100 ms and 50-100 ms, respectively, to ensure adequate sampling of both high- and low-abundance features [35].

- Fragmentation Settings: Higher-energy collisional dissociation (HCD) is common. Normalized collision energy (NCE) should be optimized; a stepped NCE (e.g., 20, 40, and 60%) can provide more comprehensive fragment information in a single run.
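The mass accuracy criterion above is easiest to apply when expressed as a calculation. The sketch below computes the signed ppm error of a measured m/z and the m/z window implied by a ppm tolerance; the function names and the numeric values in the example are illustrative assumptions, not data from the cited studies.

```python
def ppm_error(measured_mz, theoretical_mz):
    """Signed mass error in parts per million."""
    return (measured_mz - theoretical_mz) / theoretical_mz * 1e6


def mz_window(theoretical_mz, tol_ppm=2.0):
    """Lower and upper m/z bounds for a given ppm tolerance."""
    delta = theoretical_mz * tol_ppm / 1e6
    return theoretical_mz - delta, theoretical_mz + delta


if __name__ == "__main__":
    # Illustrative values only: a 0.0006 m/z deviation near m/z 303
    print(f"{ppm_error(303.0505, 303.0499):.2f} ppm")
    low, high = mz_window(500.0, tol_ppm=2.0)
    print(f"2 ppm window at m/z 500: {low:.4f} to {high:.4f}")
```

Because the tolerance scales with m/z, a fixed 2 ppm limit corresponds to only about ±0.001 m/z at m/z 500, which is why candidate molecular formulas are filtered on ppm error rather than on an absolute m/z difference.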

Table 2: Representative HRAM-MS Acquisition Parameters for Untargeted Profiling

| Parameter | Full MS Scan | dd-MS/MS Scan | Rationale |
|---|---|---|---|
| Resolution | 60,000 - 120,000 | 15,000 - 30,000 | High resolution for accurate mass; moderate resolution for faster MS/MS cycling [35]. |
| Scan Range | m/z 100 - 1500 | Determined by precursor | Covers typical natural product masses. |
| AGC Target | 1e6 | 5e4 - 1e5 | Optimizes ion trapping for wide dynamic range [35]. |
| Max. Injection Time | 100 ms | 50 - 100 ms | Balances sensitivity and scan duty cycle [35]. |
| Isolation Window | N/A | 1.0 - 2.0 m/z | Isolates precursor with minimal co-fragmentation. |
| Fragmentation | N/A | HCD with stepped NCE (e.g., 20, 40, 60%) | Generates rich, structurally informative fragment spectra. |

[Figure: HRAM untargeted profiling and pre-processing workflow]

Data Pre-processing: From Raw Spectra to a Clean Feature Table

Raw HRAM data is a complex series of spectra containing information from metabolites, matrix, background, and noise. Pre-processing transforms this into a structured feature table suitable for statistical analysis.

1. Peak Picking & Feature Detection: Software algorithms (e.g., in Compound Discoverer, MZmine) detect chromatographic peaks across all samples. A "feature" is defined by its precise m/z (from the accurate mass measurement) and retention time (RT). The peak area or height provides the intensity value [32].

2. Noise Filtering & Background Subtraction: This critical step removes non-sample-derived signals. Features consistently present in processed blank injections are flagged or subtracted. Signal-to-noise ratio thresholds are applied to eliminate stochastic noise [32].

3. Deisotoping & Adduct Annotation: A single metabolite generates multiple ions in the mass spectrometer: the [M+H]+ or [M-H]- ion, isotopic peaks (e.g., M+1, M+2 from 13C), and adducts (e.g., [M+Na]+, [M+NH4]+). Algorithms group these related ions into a single feature representing the neutral molecule [32].

4. Alignment & Gap Filling: Minor shifts in m/z and RT across samples are corrected (alignment). If a feature is not detected in some samples due to low abundance, the software may "fill the gap" by integrating the expected m/z/RT region to recover a weak signal.
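Step 3 can be illustrated with a deliberately simplified adduct-grouping sketch for positive-mode data. It treats each feature in turn as a candidate [M+H]+ ion, derives the neutral mass, and looks for co-eluting features at the m/z expected for other adducts. The function name, tolerances, and feature values are assumptions for illustration; the mass shifts are commonly tabulated values that should be checked against your own adduct table, and isotope grouping is omitted. Tools such as MZmine or Compound Discoverer use more elaborate logic.

```python
# Commonly tabulated monoisotopic mass shifts for singly charged positive adducts
ADDUCT_SHIFTS = {
    "[M+H]+": 1.007276,
    "[M+NH4]+": 18.033823,
    "[M+Na]+": 22.989218,
}


def group_adducts(features, rt_tol=0.05, ppm_tol=5.0):
    """Group co-eluting adducts of the same neutral molecule.

    features: list of (mz, rt_min) tuples.
    Returns a list of (neutral_mass, {adduct_name: (mz, rt_min)}) tuples.
    """
    groups = []
    for mz_h, rt_h in features:
        neutral = mz_h - ADDUCT_SHIFTS["[M+H]+"]   # hypothesis: feature is [M+H]+
        members = {"[M+H]+": (mz_h, rt_h)}
        for name, shift in ADDUCT_SHIFTS.items():
            if name == "[M+H]+":
                continue
            expected = neutral + shift
            for mz, rt in features:
                same_rt = abs(rt - rt_h) <= rt_tol
                same_mz = abs(mz - expected) / expected * 1e6 <= ppm_tol
                if same_rt and same_mz:
                    members[name] = (mz, rt)
        if len(members) > 1:                        # keep only supported hypotheses
            groups.append((round(neutral, 4), members))
    return groups


if __name__ == "__main__":
    # Hypothetical feature list: three co-eluting ions plus one unrelated feature
    demo = [(303.0500, 5.20), (325.0319, 5.21), (320.0765, 5.19), (450.1234, 7.80)]
    for neutral_mass, members in group_adducts(demo):
        print(neutral_mass, sorted(members))
```

Requiring at least one supporting adduct before accepting the [M+H]+ hypothesis is a conservative choice: unsupported features stay ungrouped rather than being assigned a neutral mass that may be wrong.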

As demonstrated in research on Agrimonia pilosa, optimizing pre-processing parameters like similarity score thresholds (e.g., 0.95) is essential for correctly grouping scans from a single metabolite while separating co-eluting compounds [32]. The final output is a matrix where rows are features, columns are samples, and values are intensities.
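The blank-based filtering from step 2 can be applied directly to such a feature matrix. The sketch below keeps a feature only if its mean intensity in the samples exceeds a chosen multiple of its mean intensity in the processed blanks. The dictionary layout, the function name, and the threefold ratio are assumptions for illustration and should be tuned per study.

```python
from statistics import mean


def filter_blank_features(table, sample_cols, blank_cols, min_ratio=3.0):
    """Drop features that are not clearly above the processed-blank level.

    table: {feature_id: {column_name: intensity}}
    min_ratio: required sample-to-blank intensity ratio (illustrative default).
    """
    kept = {}
    for feature_id, intensities in table.items():
        sample_mean = mean(intensities[col] for col in sample_cols)
        blank_mean = mean(intensities[col] for col in blank_cols)
        if sample_mean > min_ratio * blank_mean:
            kept[feature_id] = intensities
    return kept


if __name__ == "__main__":
    # Hypothetical feature table: rows are features, columns are injections
    feature_table = {
        "F1_303.0500_5.20": {"S1": 1000.0, "S2": 1200.0, "Blank1": 50.0},
        "F2_149.0233_9.85": {"S1": 300.0, "S2": 280.0, "Blank1": 250.0},
    }
    cleaned = filter_blank_features(feature_table, ["S1", "S2"], ["Blank1"])
    print(sorted(cleaned))
```

A feature that is absent from the blanks has a blank mean of zero and is therefore kept whenever it shows any signal in the samples.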

[Figure: Data pre-processing logical pipeline]

The Scientist's Toolkit: Essential Research Reagents and Software

Successful untargeted profiling relies on a suite of reliable materials and informatics tools.

Table 3: Essential Toolkit for HRAM Untargeted Profiling Experiments

| Category | Item / Solution | Function / Purpose | Example / Note |
|---|---|---|---|
| Chromatography | UHPLC-grade solvents (MeOH, ACN, Water) | Mobile phase for high-sensitivity, low-background separation. | With 0.1% formic acid or ammonium acetate for ionization. |
| Chromatography | Analytical Column (C18, HILIC, PFP) | Separates complex metabolite mixtures. | 2.1 x 100-150 mm, sub-2-µm particles for UHPLC [33]. |
| Mass Spectrometry | Calibration Solution | Ensures sub-ppm mass accuracy of the HRAM instrument. | Vendor-supplied mixture (e.g., Pierce LTQ Velos ESI). |
| Mass Spectrometry | Internal Standards (ISTDs) | Monitors ionization efficiency and system performance. | Stable isotope-labeled compounds not expected in samples. |
| Software & Informatics | Acquisition Software (e.g., Xcalibur, MassHunter) | Controls instrument, creates methods, acquires raw data [34] [36]. | Vendor-specific. Enables advanced workflows like AcquireX [34]. |
| Software & Informatics | Pre-processing Software (e.g., Compound Discoverer, MZmine) | Converts raw data to feature tables via peak picking, alignment, annotation. | Critical for reproducible data reduction [34] [32]. |
| Software & Informatics | Spectral Libraries (e.g., mzCloud, GNPS) | Provides reference MS/MS spectra for metabolite identification by spectral matching [34]. | mzCloud is a high-resolution, curated MS/MS library [34]. |
| Sample Preparation | Solid-Phase Extraction (SPE) Sorbents | Fractionates or cleans up crude extracts to reduce complexity. | C18, polymeric, or mixed-mode sorbents. |

Designing a rigorous untargeted profiling experiment requires integrating meticulous wet-lab practice, optimized HRAM instrument parameters, and a robust computational pre-processing pipeline. By implementing the strategies outlined here, from pooled QCs and advanced DDA with background exclusion [34] to systematic noise filtering and deisotoping [32], researchers can generate data of high integrity. This disciplined approach to acquisition and pre-processing is the foundation for all downstream analyses: the resulting clean, representative feature table enables reliable statistical analysis, confident metabolite annotation, and ultimately the dereplication and discovery of novel bioactive natural products.

In the structured pipeline of LC-MS profiling for natural product (NP) identification, dereplication—the rapid identification of known compounds—is a critical, upfront challenge. The primary goal is to avoid the costly and time-consuming rediscovery of known entities, thereby focusing resources on truly novel and bioactive molecules [37]. Molecular Networking (MN), particularly through platforms like the Global Natural Products Social Molecular Networking (GNPS), has emerged as a transformative strategy that moves beyond simple spectral matching [38]. By organizing complex tandem mass spectrometry (MS/MS) data based on chemical similarity, MN visualizes the "chemical space" of an extract, enabling the simultaneous dereplication of known compounds and the targeted discovery of their structurally related analogues [37]. This guide details the integration of MN into NP research, providing technical workflows, experimental protocols, and strategic frameworks to enhance the efficiency of LC-MS-based discovery campaigns.

The Evolution and Imperative of Dereplication in Natural Product Research

Natural products have been the source of nearly two-thirds of all small-molecule drugs approved over recent decades [38]. However, the field faces a significant bottleneck: the high probability of rediscovering known compounds from complex biological extracts. Traditional dereplication methods, which rely on comparing UV, NMR, or MS data against databases, are often manual, slow, and ill-suited for detecting novel analogues of known compound families [38].

The introduction of LC-MS/MS-based molecular networking in 2012 marked a paradigm shift [38]. Its core principle is that compounds with similar structures produce similar MS/MS fragmentation patterns. By calculating spectral similarity scores (e.g., cosine score), algorithms can cluster related molecules into visual networks [38]. Within these networks, the annotation of a single "node" (representing one MS/MS spectrum) using a reference library can propagate to nearby, unannotated nodes, suggesting they are structural analogues [37]. This capability makes MN uniquely powerful for identifying both known compounds and the novel variants that often escape traditional database searches, directly addressing a key limitation in the field.
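The clustering principle can be made concrete with a plain cosine score between two centroided MS/MS spectra, followed by thresholding into network edges. This is a simplified sketch, not the GNPS implementation: GNPS uses a modified cosine that also accounts for precursor mass differences, and the fragment tolerance, the 0.7 edge threshold, the function names, and the spectra below are all assumptions for illustration.

```python
import math


def cosine_score(spec_a, spec_b, mz_tol=0.02):
    """Cosine similarity of two spectra given as lists of (mz, intensity).

    Fragment peaks are matched one-to-one within mz_tol, taking the
    highest intensity products first (greedy assignment).
    """
    candidates = []
    for i, (mz_a, int_a) in enumerate(spec_a):
        for j, (mz_b, int_b) in enumerate(spec_b):
            if abs(mz_a - mz_b) <= mz_tol:
                candidates.append((int_a * int_b, i, j))
    candidates.sort(reverse=True)

    used_a, used_b = set(), set()
    dot = 0.0
    for product, i, j in candidates:
        if i not in used_a and j not in used_b:
            dot += product
            used_a.add(i)
            used_b.add(j)

    norm_a = math.sqrt(sum(intensity ** 2 for _, intensity in spec_a))
    norm_b = math.sqrt(sum(intensity ** 2 for _, intensity in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def network_edges(spectra, min_cosine=0.7):
    """Return (node_a, node_b, score) for every pair scoring at or above min_cosine."""
    names = sorted(spectra)
    edges = []
    for index, a in enumerate(names):
        for b in names[index + 1:]:
            score = cosine_score(spectra[a], spectra[b])
            if score >= min_cosine:
                edges.append((a, b, round(score, 3)))
    return edges


if __name__ == "__main__":
    # Hypothetical spectra: A and B share fragments, C is unrelated
    demo = {
        "A": [(100.00, 10.0), (150.00, 50.0), (200.00, 100.0)],
        "B": [(100.01, 12.0), (150.00, 45.0), (200.00, 100.0)],
        "C": [(80.00, 100.0), (120.00, 30.0)],
    }
    print(network_edges(demo))
```

In the resulting network, A and B would be connected while C remains a singleton, so a library annotation for A could be proposed as a starting hypothesis for B.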

Core Technical Workflow: From Sample to Annotated Network

A standard MN-based dereplication pipeline integrates LC-MS/MS analysis with data processing and visualization via GNPS. The following diagram outlines this core workflow.

[Figure: Workflow for molecular networking-based dereplication]

Experimental Protocol for LC-MS/MS Data Acquisition

High-quality MS/MS data is the foundation of a reliable molecular network. The following protocol, adapted from a 2025 study on Sophora flavescens, can be generalized for plant or microbial extracts [39].

- Sample Preparation: Dry and finely grind biological material. Extract (e.g., 50 mg powder) with a suitable solvent system (e.g., methanol/water/formic acid, 49:49:2, v/v/v) via sonication for 60 minutes. Centrifuge, combine supernatants, dry under nitrogen or vacuum, and reconstitute in a compatible solvent (e.g., H₂O/acetonitrile, 95:5). Filter through a 0.22 µm membrane prior to analysis [39].

- LC Conditions:

- Column: Reversed-phase C18 (e.g., 2.1 x 150 mm, 1.8 µm).

- Mobile Phase: (A) 8 mM ammonium acetate in water; (B) acetonitrile.

- Gradient: Optimize for your sample. An example: 3-5% B (0-3 min), 5-15% (5-8 min), 15-60% (8-12 min), 60-98% (12-20 min), hold (20-21 min).