Decoding Herbal Synergy: How AI is Revolutionizing the Mechanistic Understanding of Traditional Medicine Formulas


Abstract

This article provides a comprehensive exploration of how artificial intelligence (AI) and machine learning (ML) are transforming the research into the mechanisms of action (MOA) of complex herbal formulas. Aimed at researchers, scientists, and drug development professionals, it addresses the core challenge of elucidating the polypharmacology of multi-herb, multi-component systems. The scope encompasses foundational AI strategies, practical methodologies for network pharmacology and target identification, solutions for common data and validation bottlenecks, and frameworks for comparative analysis and clinical translation. By synthesizing current evidence and future directions, this article serves as a guide for leveraging AI to bridge traditional empirical knowledge with modern systems biology, accelerating the development of safe, effective, and evidence-based phytomedicines.

From Complexity to Clarity: Foundational AI Strategies for Deconstructing Herbal Formula Synergy

The scientific investigation of Traditional Chinese Medicine (TCM) formulas confronts a fundamental paradox: their celebrated clinical efficacy stands in stark contrast to the profound obscurity of their molecular mechanisms. Unlike conventional drugs designed for single targets, herbal formulas are complex systems engineered under the principle of "Jun-Chen-Zuo-Shi" (monarch-minister-assistant-courier), where multiple botanical ingredients interact to produce a holistic therapeutic effect [1]. This gives rise to a multi-component, multi-target, multi-pathway mode of action, presenting a research challenge of extraordinary complexity [2].

The core puzzle consists of three interlocked layers: the chemical complexity of numerous, often synergistic, bioactive metabolites; the biological complexity of their simultaneous interaction with diverse proteins, genes, and cellular pathways; and the systems complexity of the emergent therapeutic outcome that cannot be predicted from the study of isolated compounds [3]. For decades, reductionist approaches have struggled to deconvolute this puzzle. Today, the integration of Artificial Intelligence (AI) with advanced omics technologies and network pharmacology is catalyzing a paradigm shift. AI provides the computational framework necessary to model these high-dimensional, non-linear interactions, transforming the puzzle from an intractable problem into a decipherable code for modern drug discovery and systems pharmacology [2] [4].

Deconstructing the Puzzle: Components, Targets, and Synergistic Networks

The Chemical Dimension: From Herbal Mixture to Defined Metabolites

A single herbal formula is a repository of vast chemical diversity. A formula can contain hundreds to thousands of distinct phytochemicals, including alkaloids, flavonoids, terpenoids, saponins, and polysaccharides [4]. The primary challenge is distinguishing the pharmacologically active components from inert or matrix substances. This is not merely an identification task; it also requires understanding the bioavailability, metabolic fate, and dynamic concentration of each compound.

Critical to this dimension is rigorous quality control to ensure the consistent chemical profile of research materials. Modern methodologies, such as the Vector Control Quantitative Analysis (VCQA), have been developed to accurately quantify the presence of specific plant species within a complex formula. For instance, VCQA can detect Asarum sieboldii Miq. in the formula ChuanXiong ChaTiao Wan down to a limit of quantification of 1%, addressing issues of product mislabeling and species fraud [5].

Table 1: Key Methodologies for Deconvoluting the Chemical Dimension

| Methodology | Primary Function | Key Output | Representative Tool/Example |
|---|---|---|---|
| High-Performance Liquid Chromatography (HPLC) & Mass Spectrometry | Separation, identification, and quantification of chemical constituents. | Chemical fingerprint, metabolite identification. | Used in metabolomics for quality control [3]. |
| Vector Control Quantitative Analysis (VCQA) | Absolute DNA-based quantification of multiple plant species in a formula. | Species-specific percentage composition (e.g., mg/mg). | Quantified 8 species in ChuanXiong ChaTiao Wan [5]. |
| AI-Enhanced Metabolomics | Unsupervised pattern recognition to link chemical profiles to bioactivity. | Clusters of co-varying metabolites predictive of efficacy. | Machine learning analysis of spectral data [3]. |
| ADME Prediction Models | In silico prediction of absorption, distribution, metabolism, and excretion. | Bioavailability scores, likely bioactive metabolites. | TCM-ADMEpred and other AI models [1]. |

The Biological Dimension: Mapping the Multi-Target Landscape

The "multi-target" nature of herbal formulas means their metabolites interact with a broad network of proteins, receptors, enzymes, and genes. A single compound may modulate several targets, while multiple compounds may converge on a single pivotal target, creating a dense and robust network of interactions [2]. The biological challenge is to move from a list of putative targets to a mechanistic model of polypharmacology.

This involves identifying primary protein targets (e.g., kinases, receptors), mapping to downstream signaling pathways (e.g., NF-κB, PI3K-Akt), and linking these to disease-related gene modules. For example, research on Gegen Qinlian Decoction for diabetes identified key targets like Nfkb1, Stat1, and Ifngr1 by integrating genomics and AI-driven consensus clustering [4]. Similarly, compounds from Huangqin Decoction were found to act on targets like PTGS2 and IL-6 to mediate effects on ulcerative colitis [1].
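The step of mapping predicted targets onto signaling pathways is often framed as an over-representation test. The following is a minimal sketch of a one-sided hypergeometric enrichment test, not the method used in the cited studies. The gene symbols, the pathway membership, and the background size of 20,000 genes are illustrative assumptions.

```python
from math import comb


def enrichment_p_value(n_background, n_pathway, n_targets, n_overlap):
    """One-sided hypergeometric p-value: P(overlap >= n_overlap).

    n_background: number of genes in the background universe (assumed)
    n_pathway:    number of background genes annotated to the pathway
    n_targets:    number of predicted targets drawn from the background
    n_overlap:    predicted targets that fall inside the pathway
    """
    upper = min(n_pathway, n_targets)
    total = comb(n_background, n_targets)
    tail = sum(
        comb(n_pathway, k) * comb(n_background - n_pathway, n_targets - k)
        for k in range(n_overlap, upper + 1)
    )
    return tail / total


# Hypothetical inputs for illustration only.
predicted_targets = {"PTGS2", "IL6", "NFKB1", "STAT1", "IFNGR1"}
pathway_genes = {"NFKB1", "STAT1", "IL6", "TNF", "RELA"}  # assumed membership
overlap = len(predicted_targets & pathway_genes)

p = enrichment_p_value(20000, len(pathway_genes), len(predicted_targets), overlap)
print(f"overlap={overlap}, p={p:.3g}")
```

In practice the p-values from many pathways would need multiple-testing correction, and the choice of background gene set strongly affects the result.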

Table 2: Experimental & Computational Approaches for Target Identification

| Approach | Description | Strengths | Limitations |
|---|---|---|---|
| Affinity Purification Mass Spectrometry | Isolates protein complexes bound to immobilized drug molecules. | Identifies direct physical interactors; unbiased. | May miss low-affinity or transient interactions. |
| Molecular Docking & Dynamics | Computational simulation of compound binding to protein structures. | High-throughput; provides structural insights. | Accuracy depends on protein structure quality; static. |
| Network Pharmacology | Constructs "compound-target-pathway-disease" networks from databases. | Holistic, systems-level view; hypothesis-generating. | Prone to false positives from database noise [2]. |
| AI-Driven Target Prediction | ML/DL models trained on chemical/biological data to infer novel targets. | Integrates multi-omics data; powerful pattern recognition. | "Black box" nature; requires large, high-quality datasets [4]. |
| CRISPR-based Functional Genomics | High-throughput gene knockout/activation screens with treatment. | Establishes causal gene-efficacy relationships. | Costly and complex; primarily for cellular models. |

The Systems Dimension: Emergent Synergy and Network Pharmacology

The therapeutic effect of a formula is an emergent property of the entire component-target network, not a simple sum of individual actions. This synergy can be pharmacodynamic (enhanced effect on a biological system) or pharmacokinetic (improved absorption or distribution of active compounds) [1]. Network Pharmacology (NP) provides the foundational framework for modeling this systems dimension by representing drugs, targets, and diseases as interconnected nodes within a large graph [2].

However, conventional NP has limitations: it often relies on static databases, produces high-noise networks, and struggles with dynamic or causal interpretation. AI-driven Network Pharmacology (AI-NP) addresses these limitations by applying machine learning (ML), deep learning (DL), and graph neural networks (GNNs) to mine heterogeneous data, predict novel interactions, and prioritize key network nodes. This shifts NP from a descriptive tool toward a predictive and analytical engine for mechanism elucidation [2].

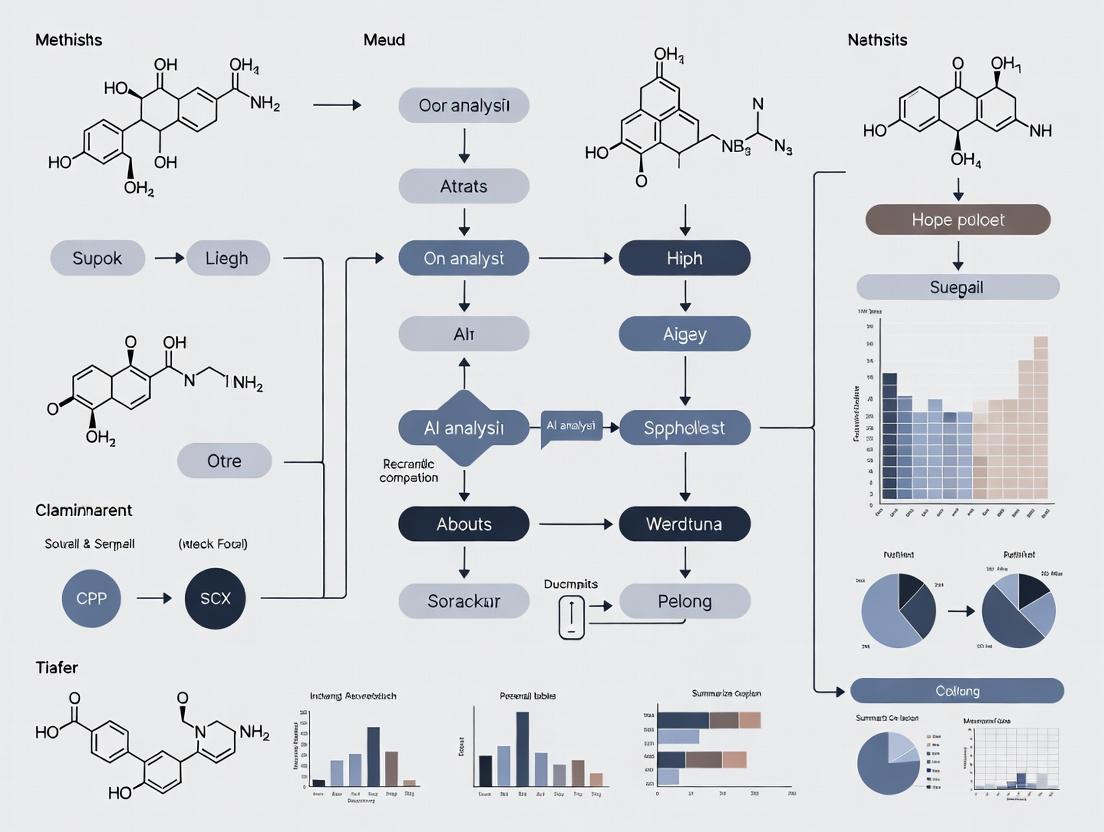

Diagram: The Multi-Scale Puzzle of Herbal Formula Mechanism. The diagram illustrates the flow from multi-herbal components through a cloud of metabolites, which interact with a multi-target biological network. An AI-NP engine analyzes these interactions to model the emergent therapeutic synergy.

The AI Arsenal: Computational Strategies for Puzzle Solving

Data Integration and Knowledge Graph Construction

The first step in AI-enabled research is synthesizing fragmented data. Specialized databases like TCMSP, HERB, and TCM-ID curate information on herbs, compounds, and targets [4]. AI, particularly natural language processing (NLP), can mine millions of scientific articles to extract novel relationships. These disparate data streams are integrated into a unified TCM knowledge graph, where entities (herbs, compounds, genes) are nodes and relationships (contains, targets, associates-with) are edges. Graph Neural Networks (GNNs) can then traverse these graphs to predict missing links and identify novel herb-target-disease associations [2] [3].
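To make the node-and-edge structure concrete, here is a minimal sketch of a knowledge graph stored as (head, relation, tail) triples, with a two-hop query from a herb to its putative targets. It uses plain graph traversal rather than a GNN, and the triples are invented placeholders, not records from TCMSP, HERB, or TCM-ID.

```python
from collections import defaultdict

# Illustrative triples only; entity names are hypothetical.
TRIPLES = [
    ("herb_A", "contains", "compound_1"),
    ("herb_A", "contains", "compound_2"),
    ("herb_B", "contains", "compound_2"),
    ("compound_1", "targets", "PTGS2"),
    ("compound_2", "targets", "IL6"),
    ("compound_2", "targets", "PTGS2"),
    ("PTGS2", "associates-with", "ulcerative colitis"),
]


def build_graph(triples):
    """Index triples as graph[head][relation] -> set of tails."""
    graph = defaultdict(lambda: defaultdict(set))
    for head, relation, tail in triples:
        graph[head][relation].add(tail)
    return graph


def herb_targets(graph, herb):
    """Follow herb -contains-> compound -targets-> protein.

    Returns {target: set of compounds that support the link}.
    """
    supported = defaultdict(set)
    for compound in graph.get(herb, {}).get("contains", ()):
        for target in graph.get(compound, {}).get("targets", ()):
            supported[target].add(compound)
    return dict(supported)


graph = build_graph(TRIPLES)
for target, compounds in sorted(herb_targets(graph, "herb_A").items()):
    print(target, sorted(compounds))
```

A link-prediction model would operate on the same triple representation; the traversal here only retrieves relationships that are already recorded.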

Predictive Modeling for Target and Mechanism Discovery

With a structured knowledge base, predictive AI models take center stage.

- Target Prediction: Models trained on known compound-protein interaction data can predict targets for novel TCM metabolites. Deep learning architectures like Graph Convolutional Networks (GCNs) are particularly effective as they operate directly on molecular graph structures [4].

- Synergy Prediction: AI models can analyze high-throughput screening data to identify metabolite pairs with synergistic effects, moving beyond trial-and-error experimentation. Unsupervised ML can detect clusters of compounds that co-vary with therapeutic outcomes, suggesting functional groups [3].

- Pathway and Mechanism Inference: By integrating predicted targets with pathway databases (KEGG, Reactome) and multi-omics data (transcriptomics, proteomics from treated cells), AI can reconstruct the perturbed biological network and infer the dominant mechanisms of action [2] [4].
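As a point of reference for the target-prediction step, the sketch below implements a simple similarity-based baseline: a query compound inherits the targets of reference compounds whose fingerprints exceed a Tanimoto threshold. This is a deliberately simpler stand-in for the GCN models mentioned above. The fingerprints (sets of "on" bit indices), the reference annotations, and the 0.5 threshold are all assumptions for illustration.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bits."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        return 0.0
    return len(a & b) / len(union)


def predict_targets(query_fp, reference, threshold=0.5):
    """Rank candidate targets by the best similarity to any annotated compound.

    reference: iterable of (fingerprint_bits, set_of_known_targets)
    Returns a list of (target, score) sorted by descending score.
    """
    scores = {}
    for fingerprint, targets in reference:
        similarity = tanimoto(query_fp, fingerprint)
        if similarity >= threshold:
            for target in targets:
                scores[target] = max(scores.get(target, 0.0), similarity)
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical reference set: fingerprint bits and annotated targets.
reference_compounds = [
    ({1, 2, 3, 4}, {"PTGS2"}),
    ({2, 3, 9}, {"IL6", "PTGS2"}),
    ({7, 8}, {"STAT1"}),
]
print(predict_targets({1, 2, 3}, reference_compounds))
```

Real workflows would derive fingerprints from molecular structures with a cheminformatics toolkit and would calibrate the threshold against held-out interaction data.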

Table 3: Comparison of Conventional vs. AI-Driven Network Pharmacology

| Comparison Dimension | Conventional Network Pharmacology | AI-Driven Network Pharmacology (AI-NP) | Implications for TCM Research |
|---|---|---|---|
| Data Acquisition & Integration | Relies on manual curation from public databases; fragmented, slow updates [2]. | Integrates multimodal data (omics, EMR, text) dynamically; automated fusion. | Enables real-time, high-dimensional data synthesis for complex formulas. |
| Algorithmic Core | Based on statistics, topology analysis, and expert interpretation [2]. | Utilizes ML, DL, and GNN to automatically identify complex, non-linear patterns. | Shifts from experience-driven hypothesis to data-driven discovery. |
| Model Interpretability | Generally good, but limited in handling high-dimensional data. | Often complex ("black box"); but eXplainable AI (XAI) tools (SHAP, LIME) can help. | Critical for gaining biological insights; a key area of development [2]. |
| Dynamic & Causal Analysis | Predominantly static network analysis; poor at capturing temporal dynamics. | Can incorporate time-series omics data and perform causal inference modeling. | Essential for understanding how effects unfold over time in biological systems. |
| Clinical Translation Potential | Focused on mechanistic validation; limited direct predictive utility for patients. | Can integrate clinical big data (EMRs, real-world data) for precision prediction. | Bridges the gap between molecular mechanism and personalized patient outcomes [2]. |

Diagram: AI-Driven Workflow for TCM Mechanism Elucidation. The workflow shows the integration of multi-source data into a knowledge graph, which fuels AI modeling to generate testable predictions, creating a closed-loop validation system.

Experimental Protocols for Validation

AI-generated hypotheses must be rigorously validated. Below is a detailed protocol for a key experimental method cited in the research.

Protocol: Vector Control Quantitative Analysis (VCQA) for Species Quantification in Herbal Formulas [5]

Objective: To absolutely quantify the proportion of multiple plant species in a finished complex herbal formula product.

Principle: The method uses the nuclear ribosomal DNA Internal Transcribed Spacer (ITS) region as a species-specific barcode. Species-specific ITS fragments are cloned into a single "quantitative vector" that serves as an absolute standard in quantitative PCR (qPCR), controlling for variations in DNA extraction efficiency.

Materials & Reagents:

- Sample: Powdered herbal formula product (e.g., ChuanXiong ChaTiao Wan tablets).

- DNA Extraction Kit: A plant-optimized kit for complex, polysaccharide/polyphenol-rich samples.

- PCR Reagents: High-fidelity DNA polymerase, dNTPs, species-specific and universal ITS primers with added BsaI recognition sites.

- Cloning Enzymes: BsaI restriction enzyme and T4 DNA Ligase for Golden Gate assembly; Gateway BP/LR Clonase II enzyme mix.

- qPCR Master Mix: SYBR Green or TaqMan-based mix for absolute quantification.

- Standard Vector: pDONR221 and destination vector (e.g., pDEST) for Gateway cloning.

- Equipment: Thermal cycler, real-time PCR system, electrophoresis equipment, spectrophotometer.

Procedure:

- DNA Extraction: Extract total genomic DNA from the formula powder and from authentic, vouchered reference plant materials using a standardized kit. Assess DNA quality/purity via spectrophotometry and gel electrophoresis.

- Amplification of ITS Fragments: Perform PCR on reference samples using universal ITS primers flanked by BsaI sites. For the formula sample, use a multiplex PCR with a pool of species-specific primers.

- Golden Gate Assembly: Digest the PCR products with BsaI and ligate them together in a one-pot reaction to create a concatemer of all species-specific ITS fragments.

- Gateway Recombination: Recombine the assembled fragment mix into the pDONR221 donor vector via BP reaction, then subclone into the destination vector via LR reaction to create the final quantitative control vector.

- Standard Curve Generation: Prepare a serial dilution of the quantified control vector. Run qPCR with species-specific primers for each target species.

- Sample Quantification: Run qPCR on DNA extracted from the commercial formula product using the same species-specific primer sets. Use the standard curve to calculate the absolute copy number of each species' ITS region in the sample.

- Data Conversion: Convert the DNA copy number ratio to a mass/mass percentage ratio using pre-established calibration, allowing comparison with pharmacopeial standards.

Key Advantages: Eliminates variability from DNA extraction; enables simultaneous quantification of many species in one assay; results are traceable to an absolute DNA standard.
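The standard-curve and sample-quantification steps follow the usual absolute-qPCR arithmetic: Ct is regressed on log10(copy number) for the dilution series, and the fitted line is inverted for unknown samples. The sketch below shows that arithmetic under stated assumptions. The Ct values and dilution series are invented, and the final conversion from copy number to mass percentage is not shown because it depends on the pre-established calibration described in [5].

```python
import math


def fit_standard_curve(copies, ct_values):
    """Least-squares fit of Ct = slope * log10(copies) + intercept."""
    xs = [math.log10(c) for c in copies]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ct_values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ct_values))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, intercept


def copies_from_ct(ct, slope, intercept):
    """Invert the standard curve to estimate absolute copy number."""
    return 10 ** ((ct - intercept) / slope)


def amplification_efficiency(slope):
    """Efficiency implied by the slope (1.0 means perfect doubling per cycle)."""
    return 10 ** (-1 / slope) - 1


# Hypothetical 10-fold dilution series of the control vector.
standard_copies = [1e7, 1e6, 1e5, 1e4, 1e3]
standard_ct = [14.76, 18.08, 21.40, 24.72, 28.04]

slope, intercept = fit_standard_curve(standard_copies, standard_ct)
sample_copies = copies_from_ct(23.0, slope, intercept)  # Ct of an unknown sample
print(f"slope={slope:.2f}, efficiency={amplification_efficiency(slope):.2%}")
print(f"estimated copies={sample_copies:.3g}")
```

Each species-specific primer set would get its own curve, since amplification efficiency can differ between amplicons.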

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 4: Key Research Reagent Solutions for Herbal Formula Research

| Category | Item | Function & Role in Research | Example/Note |
|---|---|---|---|
| Quality Control & Authentication | DNA Barcoding Primers (ITS, psbA-trnH) | To authenticate plant species in raw herbs and detect adulteration. | Essential for verifying starting material prior to compound extraction [5]. |
| Quality Control & Authentication | Quantitative Vector (VCQA) | An absolute DNA standard for multiplex qPCR quantification of species in formulas. | Crucial for ensuring formula consistency and detecting ingredient fraud [5]. |
| Quality Control & Authentication | Chemical Reference Standards | Pure compounds for HPLC/UPLC calibration to create chemical fingerprints. | Used for batch-to-batch quality assessment of formula extracts [3]. |
| Target Identification | Activity-Based Protein Profiling (ABPP) Probes | Chemical probes that label the active sites of enzymes in complex proteomes. | Identifies direct protein targets of reactive metabolites in cell lysates. |
| Target Identification | Phospho-Specific & Total Antibodies | To detect activation/inhibition of specific signaling pathway proteins (e.g., p-ERK, p-AKT). | Validates AI-predicted pathway perturbations in cell-based assays [4]. |
| Omics Analysis | Multi-Omics Kits (RNA-Seq, Proteomics, Metabolomics) | For comprehensive, unbiased profiling of molecular changes induced by formula treatment. | Generates validation data for AI models and discovers novel mechanisms [2] [4]. |
| AI & Computation | TCM-Specific Databases (TCMSP, HERB, TCM-ID) | Curated knowledge bases linking herbs, compounds, targets, and diseases. | Foundational data source for building network pharmacology models and knowledge graphs [4]. |
| AI & Computation | XAI Software Libraries (SHAP, LIME) | Explainable AI tools to interpret predictions from complex ML/DL models. | Vital for translating model outputs into biologically interpretable insights [2]. |

Defining the multi-component, multi-target puzzle of herbal formulas is no longer a purely philosophical challenge but a tractable computational and systems biology problem. AI, particularly when fused with network pharmacology and rigorous experimental omics, provides an unprecedented toolkit to navigate this complexity. It enables researchers to transition from asking "What are the active ingredients?" to "How does the emergent network of interactions produce a therapeutic effect?" The future lies in developing more transparent, interpretable, and causally-aware AI models that can seamlessly integrate multi-scale data—from molecular interactions to patient-reported outcomes. This convergence will not only demystify TCM but also contribute a revolutionary network-based pharmacology paradigm to the broader field of drug discovery for complex diseases [2] [3] [4].

The fundamental challenge in modernizing Traditional Chinese Medicine (TCM) and herbal formula research lies in reconciling its holistic, multi-target philosophy with the reductionist, target-centric paradigms of contemporary Western drug discovery [4]. TCM utilizes complex formulations where therapeutic efficacy emerges from the synergistic interplay of multiple active metabolites—such as alkaloids, polyphenols, and terpenoids—acting on diverse biological targets simultaneously [4]. This "multi-component, multi-target, multi-pathway" mode of action offers distinct advantages for treating complex, systemic diseases but poses significant challenges for mechanistic elucidation using conventional methods [2].

Artificial Intelligence (AI), particularly machine learning (ML) and deep learning (DL), has emerged as the essential bridge capable of integrating TCM's holistic tradition with the analytical power of systems biology [6]. By processing high-dimensional, multi-scale biological data, AI enables researchers to model the non-linear, dynamic interactions that characterize herbal formula pharmacology [4]. This technical guide outlines the core frameworks, methodologies, and experimental protocols for applying AI-driven systems biology to elucidate the mechanisms of action (MoA) of herbal formulas, translating traditional wisdom into validated, precision medicine.

Core AI-Systems Biology Frameworks for Herbal Research

The integration of AI with systems biology has given rise to specialized computational frameworks designed to handle the complexity of herbal medicine. The most transformative is AI-driven Network Pharmacology (AI-NP), which represents a significant evolution from conventional network pharmacology approaches [2].

Table 1: Comparison of Conventional vs. AI-Driven Network Pharmacology (AI-NP)

| Comparison Dimension | Conventional Network Pharmacology | AI-Driven Network Pharmacology (AI-NP) | Key Advancement |
|---|---|---|---|
| Data Acquisition & Integration | Relies on static public databases; fragmented, slow updates [2]. | Integrates dynamic, multimodal data (omics, EHR, real-world data) [2]. | Enables dynamic, high-dimensional data fusion for a more holistic foundation. |
| Algorithmic Core | Based on statistical correlation and topology analysis; expert-dependent [2]. | Utilizes ML, DL, and Graph Neural Networks (GNN) for automatic pattern recognition [2]. | Shifts from experience-driven to data-driven discovery of complex, non-linear interactions. |
| Model Interpretability | Good interpretability but limited handling of high-dimensional data [2]. | Complex "black-box" models, but tools like SHAP and LIME enhance transparency [2]. | Balances predictive power with explainability, crucial for scientific validation. |
| Computational Scalability | Manual or semi-automated processing; low efficiency for large networks [2]. | High-throughput parallel computing suitable for genome- and proteome-scale networks [2]. | Makes the analysis of full herbal formula-target-disease networks computationally feasible. |
| Translational Potential | Focused on mechanistic hypotheses; weak direct link to clinical outcomes [2]. | Integrates clinical big data for precision prediction and patient stratification [2]. | Directly bridges molecular mechanisms with patient-level efficacy and biomarkers. |

AI-NP operates through a multi-stage analytical workflow. The process begins with data integration from TCM databases (e.g., TCMSP, TCMID), multi-omics sources (genomics, proteomics, metabolomics), and clinical repositories [4]. Graph Neural Networks (GNNs) are then used to model the resulting "herb-ingredient-target-pathway" network, where they are particularly effective at capturing the higher-order relationships and dependencies within this complex graph structure [2]. Next, predictive modeling using ML classifiers (e.g., Random Forest, Support Vector Machines) or DL architectures identifies potential bioactive compounds and key targets [7]. Finally, the model's predictions and inferred mechanisms must be subjected to rigorous experimental validation in wet-lab settings [6].

Diagram 1: AI-NP Analytical Workflow for Herbal Formula Research

Multi-Omics Integration: The Data Foundation for AI Models

The predictive power of AI models is contingent on the quality and comprehensiveness of the input data. A multi-omics strategy is therefore non-negotiable for constructing a systems-level understanding of herbal formula action [4].

Genomics and Epigenomics identify genetic predispositions and how herbal compounds influence gene expression networks. For instance, consensus clustering algorithms have been used to identify driver genes (e.g., Nfkb1, Stat1) targeted by formulations like Gegen Qinlian Decoction for diabetes [4]. Epigenomic analyses reveal how compounds like curcumin exert anticancer effects by modulating DNA methyltransferase (DNMT) and histone deacetylase (HDAC) activity [4].

Proteomics is critical for mapping the direct and indirect protein targets of herbal metabolites, quantifying their expression changes, and characterizing post-translational modifications [4]. Proteomic profiling helps move beyond gene expression to confirm actual protein-level activity and interaction.

Metabolomics, both targeted and untargeted, serves a dual role: it profiles the complex metabolite composition of the herbal formula itself and measures the endogenous metabolic changes induced in the biological system. This creates a direct link between formula chemistry and host biochemical response [6].

Spatial omics and single-cell sequencing add further resolution, allowing researchers to pinpoint mechanism-relevant activity to specific tissue regions or cell types [4]. The integration of these layers via AI creates a powerful, multi-dimensional view of pharmacological action.

Diagram 2: Multi-Omics Integration to Elucidate Herbal Formula Mechanisms

Experimental Protocols for AI-Hypothesis Validation

AI-generated predictions require rigorous experimental validation. Below are detailed protocols for key validation stages.

Protocol for High-Content Screening (HCS) of AI-Predicted Synergistic Pairs

Objective: To experimentally validate synergistic herb-compound or compound-compound pairs predicted by AI association rule mining or network models [8].

Materials:

- Cell line relevant to the disease pathology.

- AI-prioritized individual compounds and putative synergistic pairs.

- Fluorescent probes for multiplexed readouts (e.g., CellEvent Caspase-3/7 for apoptosis, DCFDA for ROS, FLIPR Calcium 4 for signaling).

- High-content imaging system (e.g., ImageXpress Micro Confocal).

Method:

- Cell Seeding: Seed cells in 96- or 384-well microplates at optimal density.

- Compound Treatment: Treat cells with a matrix of concentrations for each single compound and their combinations (e.g., 8x8 serial dilution). Include controls.

- Staining & Fixation: At the assay endpoint (e.g., 24h/48h), stain with live-cell or fixable fluorescent dyes according to probe protocols.

- Image Acquisition & Analysis: Acquire images in multiple channels. Use automated image analysis software to quantify cell count, fluorescence intensity, and morphological features per well.

- Data Analysis: Calculate synergy using the Combination Index (CI) method via software like CompuSyn. A CI < 1 indicates synergy, validating the AI prediction.
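The Combination Index used in the data-analysis step can be written out directly. The sketch below assumes the Chou-Talalay median-effect form for a two-compound combination, where each compound alone is described by a median-effect dose `dm` and slope `m`. The numeric values are invented, and the function names are hypothetical helpers rather than part of CompuSyn.

```python
def dose_for_effect(fa, dm, m):
    """Dose of a single compound needed to reach fraction affected `fa`.

    Median-effect equation: fa / (1 - fa) = (D / dm) ** m
    """
    if not 0 < fa < 1:
        raise ValueError("fa must be strictly between 0 and 1")
    return dm * (fa / (1 - fa)) ** (1 / m)


def combination_index(d1, d2, fa, dm1, m1, dm2, m2):
    """CI = d1/Dx1 + d2/Dx2 at the effect level `fa` produced by (d1, d2).

    CI < 1 suggests synergy, CI = 1 additivity, CI > 1 antagonism.
    """
    return d1 / dose_for_effect(fa, dm1, m1) + d2 / dose_for_effect(fa, dm2, m2)


# Hypothetical example: each compound alone needs 10 uM for 50% effect,
# but 2 uM + 3 uM in combination already reaches 50% effect.
ci = combination_index(d1=2.0, d2=3.0, fa=0.5, dm1=10.0, m1=1.2, dm2=10.0, m2=0.9)
print(f"CI = {ci:.2f}")
```

The single-agent parameters `dm` and `m` would be fitted from the single-compound rows and columns of the dose matrix before any combination well is scored.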

Protocol for Target Engagement Validation using Cellular Thermal Shift Assay (CETSA)

Objective: To confirm direct binding of an AI-predicted herbal compound to its purported protein target in a cellular context.

Materials:

- Cell lysate or intact cells.

- AI-predicted compound and inactive analog/vehicle control.

- Thermal cycler with gradient function.

- Antibodies for target protein and loading control (for Western Blot readout) or mass spectrometry setup.

Method:

- Compound Treatment: Aliquot cell lysate or seed cells for intact-CETSA. Treat with compound or vehicle for a predetermined time.

- Heating: For lysate-CETSA, heat compound-treated and control lysates at a temperature gradient (e.g., 37°C – 67°C) for 3 min. For intact-CETSA, heat the whole cells.

- Protein Solubilization: Cool samples, then solubilize proteins. For intact-CETSA, lyse cells first.

- Analysis: Centrifuge to separate soluble protein. Analyze the soluble fraction by Western Blot (for specific targets) or quantitative proteomics.

- Interpretation: A shift in the thermal stabilization curve (increased soluble target protein at higher temperatures) in the compound-treated sample confirms target engagement.
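The interpretation step is usually summarized as a shift in apparent melting temperature (Tm) between vehicle and compound-treated samples. The sketch below estimates Tm as the temperature at which the normalized soluble fraction crosses 0.5, using linear interpolation between measured points. The band intensities are invented, and many analyses fit a sigmoid instead of interpolating.

```python
def melting_temperature(temps, soluble_fraction):
    """Temperature where the soluble fraction falls through 0.5.

    temps must be ascending; soluble_fraction is normalized to the
    lowest-temperature sample (1.0 = fully soluble).
    """
    points = list(zip(temps, soluble_fraction))
    for (t0, f0), (t1, f1) in zip(points, points[1:]):
        if f0 >= 0.5 >= f1 and f0 != f1:
            return t0 + (f0 - 0.5) * (t1 - t0) / (f0 - f1)
    raise ValueError("curve does not cross 0.5 in the measured range")


# Hypothetical normalized band intensities across the gradient.
temps = [37, 42, 47, 52, 57, 62, 67]
vehicle = [1.00, 0.95, 0.80, 0.40, 0.15, 0.05, 0.00]
treated = [1.00, 0.98, 0.92, 0.75, 0.45, 0.15, 0.05]

tm_vehicle = melting_temperature(temps, vehicle)
tm_treated = melting_temperature(temps, treated)
print(f"Tm vehicle={tm_vehicle:.2f} C, treated={tm_treated:.2f} C, "
      f"shift={tm_treated - tm_vehicle:.2f} C")
```

A positive shift is consistent with ligand-induced stabilization, but replicate curves and an inactive-analog control are needed before treating it as evidence of engagement.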

Protocol for In Vivo Validation of AI-Optimized Formula in a Disease Model

Objective: To assess the efficacy and systemic effects of an AI-optimized herbal formula in a preclinical animal model.

Materials:

- Animal model of disease (e.g., rodent model of metabolic syndrome, colitis, or cancer).

- AI-optimized herbal formula and standard-of-care control.

- Equipment for physiological monitoring, blood collection, and tissue harvesting.

- Assay kits for serum biomarkers (e.g., cytokines, liver enzymes) and materials for histopathology.

Method:

- Study Design: Randomize animals into groups: disease model + AI-formula, disease model + vehicle, disease model + positive control, healthy control.

- Dosing: Administer formula orally or via injection at an AI-suggested or pharmacologically derived dose for the study duration.

- Phenotypic Monitoring: Record clinical signs, body weight, and disease-specific metrics (e.g., glucose tolerance, tumor volume) regularly.

- Terminal Analysis: Collect blood for plasma metabolomics and biomarker analysis. Harvest relevant tissues for transcriptomic/proteomic analysis and histopathology.

- Systems Analysis: Integrate phenotypic, multi-omics, and histology data. Use statistical and pathway analysis to confirm if the in vivo mechanism aligns with the AI-NP model prediction, closing the translational loop.

The Scientist's Toolkit: Essential Reagents and Solutions

Table 2: Key Research Reagent Solutions for AI-Guided Herbal Formula Research

| Category & Item | Function & Application | Key Consideration |
|---|---|---|
| AI & Data Analysis | | |
| TCM-Specific Databases (TCMSP, TCMID) | Provide curated chemical, target, and ADMET data for herbal compounds for network construction [4]. | Data quality and update frequency are critical for model accuracy. |
| Graph Neural Network (GNN) Software (PyTorch Geometric, DGL) | Model complex herb-ingredient-target-disease networks and predict novel interactions [2]. | Requires expertise in graph-based ML. |
| eXplainable AI (XAI) Tools (SHAP, LIME) | Interpret "black-box" AI model predictions to generate testable biological hypotheses [2]. | Essential for translating model output into mechanistic insight. |
| Multi-Omics Profiling | | |
| Untargeted Metabolomics Kits | Profile the full spectrum of small molecules in herbal extracts and biological samples [4]. | Crucial for capturing formula complexity and host metabolic response. |
| Phospho-/Total Protein Antibody Arrays | Simultaneously measure activity changes in signaling pathways predicted to be modulated by the formula [4]. | Validates network pharmacology predictions of pathway regulation. |
| Spatial Transcriptomics Reagents | Map gene expression changes within tissue architecture, linking mechanism to specific anatomical sites [4]. | Confirms tissue- or cell-type-specific activity predicted by models. |
| Validation & Screening | | |
| Recombinant Human Protein Targets | Used in biochemical assays (e.g., enzyme inhibition, binding) to confirm direct AI-predicted compound-target interactions. | Must match the specific protein isoform predicted by the model. |
| Multiplex Cytokine & Signaling Panels (Luminex/MSD) | Quantify a panel of secreted proteins to validate AI-predicted effects on immune or inflammatory pathways. | Enables high-throughput verification of multi-target effects. |
| CRISPRa/i Gene Modulation Kits | Functionally validate key target genes identified by AI models by overexpressing or knocking them down in cellular assays. | Establishes causal relationship between target gene and phenotypic effect. |

AI serves as the indispensable bridge connecting the holistic, systems-oriented epistemology of TCM with the quantitative, mechanistic framework of modern systems biology. By leveraging AI-NP, multi-omics integration, and rigorous validation protocols, researchers can systematically deconvolute the "multi-component, multi-target, multi-pathway" mechanisms of herbal formulas [2].

The future of this field lies in enhancing model interpretability and translational fidelity. This will be achieved through the development of dynamic, temporal AI models that capture the pharmacokinetic-pharmacodynamic progression of formula effects, and the closer integration of AI with advanced experimental systems like organs-on-chips and digital twins [6]. Furthermore, applying natural language processing (NLP) to mine classical TCM texts and modern biomedical literature in tandem will continue to generate novel, testable hypotheses rooted in both traditional wisdom and contemporary science [8]. The ultimate goal is a fully realized, AI-powered pipeline that accelerates the discovery of synergistic herbal combinations, validates their mechanism, and paves the way for their development into next-generation, precision phytotherapeutics.

The global resurgence of interest in herbal medicines (HMs) and traditional systems like Traditional Chinese Medicine (TCM) is driven by their potential to treat complex, chronic diseases through holistic, multi-target mechanisms [9] [10]. However, this potential is locked behind a formidable scientific challenge: the inherent chemical and biological complexity of herbal formulas. A single formula comprises dozens to hundreds of phytochemicals, which may interact with a network of biological targets, leading to synergistic, additive, or antagonistic effects that are difficult to decipher using conventional "one drug, one target" paradigms [11] [12].

Artificial Intelligence (AI) has emerged as an indispensable set of tools for decoding this complexity. By integrating and analyzing vast, multidimensional datasets, AI disciplines provide a systematic framework for transitioning from traditional, experience-based herbal medicine to a modern, evidence-based scientific practice [9] [13]. This whitepaper delineates the synergistic roles of three core AI disciplines—Network Pharmacology, Cheminformatics, and Natural Language Processing (NLP)—in elucidating the mechanisms of action (MoA) of herbal formulas. This integrated, in-silico-first approach enables the prediction of bioactive compounds, their protein targets, associated disease pathways, and eventual experimental validation, thereby accelerating the scientific validation and drug discovery potential of herbal medicine [14] [15].

Network Pharmacology: Mapping the Polypharmacology of Herbal Systems

Network Pharmacology provides the foundational theoretical and computational framework for understanding herbal formulas. It shifts the paradigm from a single target to a "network-target, multiple-component therapeutics" model, which aligns perfectly with the holistic nature of HMs [15] [12]. It treats biological systems as interconnected networks, where nodes represent entities like herbs, compounds, proteins, or diseases, and edges represent the interactions or relationships between them.

Core Methodologies and Workflow

A standard network pharmacology workflow for herbal formula analysis involves several key stages, as exemplified by a study on Taohong Siwu Decoction (THSWD) for osteoarthritis [11]:

- Compound Identification and Collection: Bioactive constituents of each herb in the formula are compiled from specialized databases (e.g., TCM Database@Taiwan, CMAUP) [14].

- Target Prediction: The putative protein targets of these compounds are identified using methods like reverse docking, similarity-based prediction, or machine learning models.

- Network Construction and Analysis: Multiple layers of networks are built (e.g., Compound-Target, Target-Pathway, Target-Disease). Tools like Cytoscape are used for visualization and topological analysis to identify key "hub" targets and central biological pathways [11].

- Mechanistic and Therapeutic Insight: The integrated network is analyzed to hypothesize the synergistic MoA of the formula against specific diseases.
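The network-construction stage above can be sketched in a few lines. This is a minimal illustration using only the Python standard library, not the pipeline used in the THSWD study; the compound and target names are hypothetical placeholders, and `build_network` is a helper introduced here for illustration.

```python
from collections import defaultdict

# Hypothetical compound-target edges (placeholders, not study data).
edges = [
    ("compound_A", "target_1"),
    ("compound_A", "target_2"),
    ("compound_B", "target_1"),
    ("compound_C", "target_3"),
    ("compound_C", "target_1"),
    ("compound_C", "target_4"),
]

def build_network(edge_list):
    """Return two adjacency maps: compound -> targets and target -> compounds."""
    compound_targets = defaultdict(set)
    target_compounds = defaultdict(set)
    for compound, target in edge_list:
        compound_targets[compound].add(target)
        target_compounds[target].add(compound)
    return compound_targets, target_compounds

compound_targets, target_compounds = build_network(edges)

# Compounds linked to more than one target (polypharmacology candidates).
multi_target = sorted(c for c, ts in compound_targets.items() if len(ts) > 1)

# Targets ranked by the number of compounds that hit them (a simple hub proxy).
target_ranking = sorted(target_compounds, key=lambda t: (-len(target_compounds[t]), t))

print(multi_target)
print(target_ranking[0])
```

In practice the same edge list would typically be exported to Cytoscape for visualization and richer topological analysis.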

The following diagram illustrates this multi-stage workflow from herbal formula to mechanistic hypothesis.

Quantitative Insights from Network Analysis

The application of this workflow yields concrete, quantitative insights. In the THSWD study, network analysis revealed its multi-target nature [11].

Table 1: Key Quantitative Findings from a Network Pharmacology Study of Taohong Siwu Decoction (THSWD) for Osteoarthritis [11]

| Analysis Aspect | Finding | Interpretation |
|---|---|---|
| Total Compounds Identified | 206 compounds from 6 herbs | The formula constitutes a natural combinatorial chemical library. |
| Multi-Target Compounds | 19 compounds correlated with >1 target; maximum connectivity = 7 targets/compound. | Individual compounds exhibit polypharmacology, capable of modulating multiple proteins simultaneously. |
| Potential Disease Coverage | Targets associated with 69 diseases. | Suggests a broad therapeutic potential and possible new indications (drug repositioning). |
| Key Target Proteins | Included MMPs (1,3,9,13), COX-2, iNOS, TNF-α, PPARγ. | Indicates a concerted action on inflammation, cartilage degradation, and metabolic regulation. |

Advanced Integration: From Static Networks to Dynamic AI

Traditional network analysis is limited by the static, incomplete nature of the underlying knowledge graphs. The field is now advancing through integration with AI, particularly Large Language Models (LLMs) and Graph Neural Networks (GNNs) [15]. LLMs can process vast textual corpora (literature, patents, clinical records) to extract latent relationships and expand network connections dynamically. GNN-based models such as DeepH-DTA learn directly from the graph structure of biological networks to predict drug-target interactions and drug combinations [15]. The two approaches are complementary: network pharmacology provides the interpretable, biologically grounded scaffold, while AI models extend its predictive power and coverage.

Cheminformatics: Decoding the Chemical Language of Natural Products

Cheminformatics provides the essential tools to represent, analyze, and predict the properties of the small molecules at the heart of herbal medicine. It translates chemical structures into a numerical or graphical language that computers can process, enabling the virtual screening and prioritization of the vast chemical space of natural products [14] [16].

Molecular Representation: The Foundation of AI Models

The choice of molecular representation is critical for downstream AI tasks. Representations move from human-readable to machine-computable formats [16].

Table 2: Common Molecular Representations in Cheminformatics [16]

| Representation | Format | Description | Primary Use in AI |
|---|---|---|---|
| SMILES | Text (Linear Notation) | A string of characters representing atomic symbols and bond types in a depth-first traversal of the molecular graph. | Simple input for various models; requires preprocessing (tokenization). |
| Molecular Fingerprint (e.g., ECFP) | Bit Vector (Binary) | A fixed-length vector where set bits indicate the presence of specific molecular substructures or paths. | Feature vector for traditional machine learning models (e.g., Random Forest, SVM). |
| Molecular Graph | Graph (Adjacency + Feature Matrices) | Atoms as nodes (with features like atom type) and bonds as edges (with features like bond order). | Direct input for Graph Neural Networks (GCNs/GNNs), preserving topological information. |
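To make the first two representations concrete, the sketch below tokenizes a SMILES string and compares two fingerprints by Tanimoto similarity. It is a simplified illustration under stated assumptions: the regular expression covers only common SMILES tokens rather than the full grammar, and the bit sets are toy values, not real ECFP output. A production pipeline would normally rely on a toolkit such as RDKit.

```python
import re

# Simplified SMILES token pattern (an illustrative assumption, not a full grammar):
# bracket atoms, two-letter halogens, two-digit ring closures, single letters,
# ring-closure digits, and bond/branch symbols.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|%\d{2}|[A-Za-z]|\d|[=#\-+()/\\.:@*~$]")

def tokenize_smiles(smiles):
    """Split a SMILES string into tokens; fail loudly if any character is skipped."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"Unrecognized characters in SMILES: {smiles!r}")
    return tokens

def tanimoto(bits_a, bits_b):
    """Tanimoto similarity between two fingerprints given as sets of 'on' bit indices."""
    union = bits_a | bits_b
    return len(bits_a & bits_b) / len(union) if union else 0.0

aspirin_tokens = tokenize_smiles("CC(=O)Oc1ccccc1C(=O)O")
chiral_tokens = tokenize_smiles("C[C@H](Cl)Br")
similarity = tanimoto({1, 5, 9, 12}, {1, 5, 7})  # toy bit sets, not real ECFP output

print(chiral_tokens)
print(similarity)
```

Representing fingerprints as sets of set-bit indices keeps the similarity calculation readable; dense bit vectors give the same result.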

AI-Driven Predictive Modeling

With molecules effectively represented, AI models can be trained to predict biological activity, a process central to identifying active components in herbal mixtures.

- Graph Convolutional Networks (GCNs): GCNs operate directly on the molecular graph structure. They iteratively aggregate information from a node's neighbors, allowing the model to learn complex, sub-structural features that correlate with bioactivity. Studies have shown GCNs built solely from 2D structural information can quantitatively predict activity (pIC50) against diverse protein targets with high accuracy [17].

- Virtual Screening Workflow: A typical AI-enhanced cheminformatics pipeline for herbal formula analysis involves: 1) curating a library of formula constituents; 2) representing them as molecular graphs or fingerprints; 3) applying a pre-trained GCN or other ML model to predict binding affinity against a panel of disease-relevant targets; and 4) ranking compounds for further experimental testing [14] [17].
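The neighbor-aggregation idea behind GCNs can be illustrated without a deep learning framework. The sketch below performs one round of mean aggregation on a three-atom graph (the heavy atoms of ethanol, C-C-O). It is a deliberately reduced example: a real GCN layer also applies learned weight matrices and a nonlinearity, and the one-hot features and function names are assumptions made for illustration.

```python
# Heavy-atom graph of ethanol (C-C-O); node features are one-hot [is_carbon, is_oxygen].
features = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
bonds = [(0, 1), (1, 2)]

def adjacency(bonds, n_nodes):
    """Build an undirected neighbor list from a bond list."""
    adj = {i: [] for i in range(n_nodes)}
    for a, b in bonds:
        adj[a].append(b)
        adj[b].append(a)
    return adj

def aggregate(features, adj):
    """One message-passing round: average each node's vector with its neighbors' vectors."""
    updated = {}
    for node, nbrs in adj.items():
        group = [features[node]] + [features[n] for n in nbrs]
        dim = len(features[node])
        updated[node] = [sum(vec[k] for vec in group) / len(group) for k in range(dim)]
    return updated

def readout(features):
    """Mean-pool node vectors into a single molecule-level vector."""
    dim = len(next(iter(features.values())))
    return [sum(vec[k] for vec in features.values()) / len(features) for k in range(dim)]

adj = adjacency(bonds, n_nodes=3)
hidden = aggregate(features, adj)
molecule_vector = readout(hidden)
print(hidden)
```

After one round, the central carbon already carries information about its oxygen neighbor; stacking rounds lets each atom see progressively larger substructures.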

The diagram below outlines this predictive cheminformatics pipeline.

Addressing the Natural Product Bottleneck

A major hurdle in natural product research is the physical availability of compounds for testing. Cheminformatics directly addresses this by enabling virtual screening of hundreds of thousands of compounds in silico, prioritizing only the most promising candidates for costly and time-consuming laboratory work [14]. Furthermore, analyses show that while over 250,000 natural product structures are known, only about 10% (~25,000) are readily purchasable, highlighting the critical role of computational prioritization [14].

Natural Language Processing: Mining Knowledge from Textual Data

NLP, and specifically Large Language Models (LLMs), unlocks a different dimension of data critical for herbal medicine research: the vast, unstructured textual knowledge found in historical texts, modern scientific literature, electronic health records, and clinical trial reports [15] [13].

Applications in Herbal Formula Research

- Knowledge Graph Enhancement: NLP can automatically extract entities (herb names, compounds, diseases, targets) and relationships (treats, inhibits, associates with) from millions of publications. This populates and expands the knowledge graphs that underpin network pharmacology, filling gaps and revealing novel connections not yet captured in structured databases [15].

- Intelligent Syndrome Differentiation and Formula Generation: In TCM practice, treatment begins with syndrome differentiation (bian zheng). AI-powered systems use NLP to analyze patient clinical notes (symptoms, tongue, pulse descriptions) and match them to TCM syndrome patterns. Based on this, the system can suggest personalized herbal formula compositions, optimizing classic prescriptions for the individual patient [13].

- Literature-Based Discovery and Hypothesis Generation: LLMs can synthesize information across disparate documents to propose novel mechanistic hypotheses. For example, an LLM might connect a herb known for "activating blood" in TCM literature to modern studies on platelet aggregation and vascular endothelial growth factor (VEGF) pathways, suggesting specific molecular targets for validation [15].

Table 3: Key Research Reagent Solutions & Computational Tools for AI-Enabled Herbal Formula Research

| Category | Item / Resource | Function & Application | Key Features / Examples |
|---|---|---|---|
| Chemical & Biological Databases | TCM Database@Taiwan [14], CMAUP [14], Super Natural II [14] | Provide structured data on herbal constituents, chemical structures, and associated biological activities. Foundational for building compound libraries. | Contain tens to hundreds of thousands of natural product entries with bioactivity annotations. |
| Cheminformatics & Modeling Software | RDKit [14] [17], DeepChem [17], KNIME [14] | Open-source toolkits for cheminformatics operations, molecule manipulation, and building machine learning pipelines. Essential for molecular representation and model development. | RDKit is a core library for converting SMILES to graphs. DeepChem provides implementations of GCNs and other deep learning models for chemistry. |
| Network Analysis & Visualization | Cytoscape [11] | Platform for visualizing and analyzing complex biological networks. Used to construct and interrogate compound-target-disease networks. | Extensive plugin ecosystem (e.g., for network topology analysis, pathway enrichment). |
| AI/ML Libraries & Frameworks | PyTorch, TensorFlow, scikit-learn [14] | Core libraries for developing and training custom deep learning (GNNs, LLMs) and traditional machine learning models. | Provide flexibility for implementing state-of-the-art architectures like heterogeneous graph attention networks for target prediction [15]. |
| Omics Data Repositories | GEO (Gene Expression Omnibus), Metabolomics Workbench | Sources of transcriptomic, proteomic, and metabolomic data for experimental validation of network pharmacology predictions. | Used to verify if treatment with an herbal formula alters the expression of predicted key targets and pathways. |

Integrated Workflow and Future Outlook

The true power of AI in elucidating herbal formula MoA lies in the sequential and iterative integration of these three disciplines. A robust workflow may begin with NLP mining the literature to define a disease-specific target network. Cheminformatics models then screen the herbal formula's chemical library against these targets, producing a ranked list of candidate active compounds. Network pharmacology integrates these predictions to construct a hypothetical, formula-specific MoA network, identifying key hubs and pathways. This hypothesis then informs targeted biological experiments (e.g., testing compound effects on hub protein activity in vitro). Results from these experiments are fed back to refine the AI models and the network, creating a closed-loop, iterative discovery system [18] [13].

Despite rapid progress, challenges remain. Key issues include the variable quality and incompleteness of data in herbal medicine databases, the "black-box" nature of some complex AI models which can hinder biological interpretability, and the need for standardized experimental protocols to generate high-quality data for AI training and validation [12] [18]. Future advancements will depend on improved, curated data resources, the development of more interpretable AI models, and closer interdisciplinary collaboration among computational scientists, phytochemists, and pharmacologists. By addressing these challenges, the integrated application of Network Pharmacology, Cheminformatics, and NLP will continue to transform herbal medicine from a traditional practice into a cornerstone of next-generation, precision multi-target therapeutics [9] [10].

The systematic elucidation of the mechanisms of action (MoA) for complex herbal formulas represents a significant bottleneck in modern pharmacology. Traditional medicine (TM), with its millennia of documented practice in texts like Pu-Ji Fang, offers a vast repository of untapped therapeutic hypotheses [8]. However, the complexity of multi-herb, multi-target formulations and the unstructured nature of historical literature have hindered efficient scientific validation [19] [20]. Within the broader thesis of employing artificial intelligence (AI) to decode these MoAs, Natural Language Processing (NLP) serves as the critical first pillar: the knowledge mining and digitization engine.

NLP and text mining transcend simple information retrieval by transforming unstructured textual data into structured, analyzable knowledge. They categorize information, make connections between disparate documents, and generate visual maps of concepts, thereby uncovering latent patterns and predictions buried within vast corpora [21]. This capability is paramount for distilling testable hypotheses from historical TM texts and linking them to modern biomedical literature. The integration of AI-powered methods—including machine learning (ML), deep learning (DL), and large language models (LLMs)—enables researchers to link chemical composition, herbs, targets, and diseases, offering new approaches to screen major components and reveal MoAs [19] [22]. This technical guide details the core methodologies, experimental protocols, and tools required to build this NLP pipeline, from digitizing fragile manuscripts to generating novel herbal formula candidates for mechanistic study.

Foundational NLP Techniques for Textual Knowledge Mining

The journey from physical manuscript to computational insight involves a multi-stage pipeline. The initial step is the digitization of historical sources, which often involves high-resolution image capture (at 300-600 DPI or higher) using planetary scanners or multispectral imaging to handle fragile materials and recover faded text [23]. Subsequent Optical Character Recognition (OCR) converts images to machine-encoded text. For historical documents with stylistic variations, poor print quality, or archaic language, standard OCR is error-prone [24]. Advanced solutions employ deep learning-based object detection models to first identify text blocks and illustrations before recognition, improving accuracy for subsequent analysis [25].

Once digitized, raw text undergoes a series of NLP transformations to extract structured knowledge.

- Tokenization and Part-of-Speech (POS) Tagging: This foundational step breaks text into words (tokens) and labels them by grammatical role (nouns, verbs). For classical Chinese texts, which lack word separators, specialized segmentation tools (e.g., Jieba) supplemented with TM-specific lexicons are essential [8].

- Named Entity Recognition (NER): NER models are trained to identify and classify key entities within the text. In the TM domain, critical entities include herbal names (e.g., Salvia miltiorrhiza), formula names (e.g., "Ning Fei Ping Xue decoction"), symptoms, and body parts [8] [24]. Machine learning models, such as conditional random fields or deep neural networks, can be trained on annotated corpora to recognize these entities even amidst spelling variations or synonyms common in historical documents [24].

- Relationship Extraction: This technique identifies semantic relationships between entities, such as "herb X treats symptom Y" or "formula Z contains herb A and herb B." Rule-based patterns or supervised ML models can extract these relationships, building a network of knowledge [24].

- Topic Modeling and Keyword Analysis: Algorithms like Latent Dirichlet Allocation (LDA) can uncover thematic clusters across a large corpus (e.g., all texts related to "lung ailments"). An iterative keyword extraction approach can systematically discern herbs and concepts, as demonstrated in the analysis of the Pu-Ji Fang compendium [8].
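A rule-based version of the entity and relationship steps above might look like the following sketch. It uses dictionary lookup in place of a trained NER model and a crude co-occurrence rule in place of a supervised relation extractor; the lexicons, trigger verbs, and example sentences are all invented for illustration and carry no pharmacological claim.

```python
import re

# Hypothetical mini-lexicons; a real pipeline would use curated TM dictionaries.
HERBS = {"Huang Qi", "Dang Shen", "Gan Cao"}
SYMPTOMS = {"fatigue", "cough"}
TRIGGER = re.compile(r"\b(treats?|relieves?|alleviates?)\b", re.IGNORECASE)

def find_entities(sentence, lexicon):
    """Dictionary-based entity lookup: return lexicon terms present in the sentence."""
    return sorted(
        term for term in lexicon
        if re.search(r"\b" + re.escape(term) + r"\b", sentence, re.IGNORECASE)
    )

def extract_relations(sentence):
    """Emit (herb, 'treats', symptom) triples when a trigger verb co-occurs with both entity types."""
    if not TRIGGER.search(sentence):
        return []
    herbs = find_entities(sentence, HERBS)
    symptoms = find_entities(sentence, SYMPTOMS)
    return [(herb, "treats", symptom) for herb in herbs for symptom in symptoms]

# Invented example sentences (toy input only).
text = ("Huang Qi combined with Dang Shen relieves fatigue. "
        "Gan Cao alleviates cough. Bai Zhu is bitter and sweet.")
sentences = [s.strip() for s in text.split(".") if s.strip()]
triples = [t for s in sentences for t in extract_relations(s)]
print(triples)
```

The resulting triples are the raw material for a knowledge graph; the co-occurrence rule will over-generate on complex sentences, which is one reason supervised models are often preferred.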

Table 1: Core NLP Techniques for Traditional Medicine Text Analysis

| Technique | Primary Function | Application in TM Research | Key Challenge |
|---|---|---|---|
| Optical Character Recognition (OCR) | Converts scanned images to machine-readable text. | Digitizing historical formularies and medical journals. | Poor source quality, archaic fonts, and linguistic variations reduce accuracy [25] [24]. |
| Named Entity Recognition (NER) | Identifies and classifies predefined entities (e.g., drugs, diseases). | Extracting herb names, formula names, symptoms, and targets from literature. | Requires domain-specific model training; handling synonymy and historical terminology [8] [24]. |
| Relationship Extraction | Identifies semantic relations between entities. | Mapping "herb-treats-disease" or "formula-contains-herb" relationships. | Often requires complex, supervised models or carefully crafted rules [24]. |
| Topic Modeling | Discovers abstract themes across a document collection. | Identifying clusters of herbs used for specific therapeutic approaches (e.g., "heat-clearing"). | Output topics can be abstract and require expert interpretation [8]. |
| Association Rule Learning | Finds frequent co-occurring itemsets in transactional data. | Discovering statistically significant herb-pair combinations in classical formulas. | Generating rules that are both statistically sound and pharmacologically meaningful [8]. |

Experimental Protocols: From Text to Testable Hypothesis

The following protocols outline a replicable pipeline for generating mechanistic research hypotheses from textual data.

Protocol 1: Mining Herb-Pair Associations from Classical Texts

This protocol, adapted from research on Pu-Ji Fang, details the extraction of novel herb-pair candidates for further study [8].

Objective: To automatically identify and statistically validate frequently co-occurring herb pairs in a classical TM corpus.

Materials: Digital corpus of classical text (e.g., Pu-Ji Fang, Sheng Ji Zong Lu); TM-specific dictionary/lexicon; computational environment (Python/R).

Method:

- Corpus Preprocessing: Clean digitized text, apply custom word segmentation using a tool like Jieba enhanced with a TM lexicon.

- Entity Recognition: Apply a NER model to tag all herbal names within each formula or medical passage.

- Transaction Database Creation: Transform each formula/passage into a "transaction" record listing all unique herbs identified within it.

- Association Rule Mining: Apply the Apriori or FP-Growth algorithm to the transaction database. Set minimum thresholds for support (frequency of co-occurrence) and confidence (conditional probability that herb B appears given herb A appears).

- Validation & Prioritization: Filter generated rules by lift (the ratio of observed co-occurrence to the co-occurrence expected if the two herbs were independent; values above 1 indicate positive association) and statistical significance (e.g., p-value from a Chi-square test). Prioritize pairs that are frequent in historical texts but under-explored in modern PubMed literature [8].
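The support, confidence, and lift calculations in this protocol can be sketched as follows. For clarity the example counts herb pairs by brute force rather than implementing Apriori or FP-Growth, which matter mainly for larger itemsets and corpora; the significance test is omitted. The transactions and thresholds are hypothetical, and `pair_rules` is a helper name introduced here.

```python
from collections import Counter
from itertools import combinations

# Hypothetical transactions: each set lists the herbs found in one formula.
formulas = [
    {"Huang Qi", "Dang Shen", "Gan Cao"},
    {"Huang Qi", "Dang Shen"},
    {"Huang Qi", "Bai Zhu"},
    {"Gan Cao", "Bai Zhu"},
    {"Huang Qi", "Dang Shen", "Bai Zhu"},
]

def pair_rules(transactions, min_support=0.4, min_confidence=0.6):
    """Return (antecedent, consequent, support, confidence, lift) for herb pairs."""
    n = len(transactions)
    item_counts = Counter(h for t in transactions for h in t)
    pair_counts = Counter(p for t in transactions for p in combinations(sorted(t), 2))
    rules = []
    for (a, b), count in pair_counts.items():
        support = count / n                      # fraction of formulas containing both herbs
        if support < min_support:
            continue
        for ante, cons in ((a, b), (b, a)):
            confidence = count / item_counts[ante]        # P(cons | ante)
            lift = confidence / (item_counts[cons] / n)   # > 1 means positive association
            if confidence >= min_confidence:
                rules.append((ante, cons, support, confidence, lift))
    return sorted(rules, key=lambda r: -r[4])

rules = pair_rules(formulas)
for ante, cons, support, confidence, lift in rules:
    print(f"{ante} -> {cons}: support={support:.2f}, confidence={confidence:.2f}, lift={lift:.2f}")
```

Rules with lift below 1 (herbs that co-occur less often than chance would predict) survive the support and confidence thresholds here, which is why the protocol applies lift as a separate filter.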

Protocol 2: Linking Historical Herbal Pairs to Modern Molecular Pathways

This protocol bridges historical knowledge with contemporary biomedical evidence.

Objective: To contextualize a historically derived herb-pair within modern molecular biology via literature-based enrichment.

Materials: Target herb-pair (e.g., "Huang Qi - Dang Shen"); biomedical literature database (e.g., PubMed); gene annotation databases (e.g., GO, KEGG).

Method:

- Gene-Herb Literature Search: Perform a systematic cross-search on PubMed using queries pairing each herb's Latin name and common synonyms with "gene" or "target." Use APIs (e.g., Biopython's Entrez) for automation. Record the number of associated publications for each herb [8].

- Gene List Compilation: Extract the unique set of genes/proteins mentioned in the retrieved abstracts for each herb.

- Pathway Enrichment Analysis: Use the combined gene list as input for enrichment analysis against the KEGG pathway database. Employ tools like clusterProfiler (R) or Enrichr. Apply a false discovery rate (FDR) correction.

- Hypothesis Generation: Identify significantly enriched pathways (FDR < 0.05). For example, a pair might be enriched for "PI3K-Akt signaling pathway" or "T cell receptor signaling," suggesting a potential MoA framework for experimental design [19].
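The statistics behind the enrichment step can be sketched with the standard library alone. The example below computes a one-sided hypergeometric p-value per pathway and applies the Benjamini-Hochberg FDR correction. It is an illustration of the underlying calculation, not a description of how clusterProfiler or Enrichr are implemented; the background size, pathway sizes, and overlaps are hypothetical.

```python
from math import comb

def hypergeom_sf(k, N, K, n):
    """P(X >= k) when drawing n genes from N background genes, K of which are in the pathway."""
    upper = min(K, n)
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / comb(N, n)

def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values (FDR) in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down to the smallest
        idx = order[rank - 1]
        running_min = min(running_min, pvalues[idx] * m / rank)
        adjusted[idx] = running_min
    return adjusted

# Hypothetical toy setting: 1,000 background genes, 40 genes in the query list.
N_BACKGROUND, N_QUERY = 1000, 40
pathways = {  # name: (pathway size K, overlap k with the query list)
    "pathway_A": (50, 10),
    "pathway_B": (80, 4),
    "pathway_C": (30, 1),
}
names = list(pathways)
pvals = [hypergeom_sf(k, N_BACKGROUND, K, N_QUERY) for K, k in pathways.values()]
fdr = benjamini_hochberg(pvals)
significant = [name for name, q in zip(names, fdr) if q < 0.05]
print(significant)
```

The choice of background gene set strongly affects the result, so it should match the universe from which the query genes could have been drawn.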

Protocol 3: Generative Modeling for Novel Formula Proposals

This protocol uses deep learning to extend historical patterns into novel, plausible formulations.

Objective: To train a generative model that proposes new multi-herb formulations based on the patterns learned from classical texts.

Materials: A large, structured dataset of historical formulas (herb sequences); deep learning framework (e.g., TensorFlow, PyTorch).

Method:

- Data Preparation: Represent each formula as a sequence of herb tokens. Create a unified vocabulary of all herbs. Pad sequences to a uniform length.

- Model Architecture: Implement a Long Short-Term Memory (LSTM) neural network, an architecture effective for sequence generation. The model takes a sequence of herbs as input and learns to predict the next herb in the sequence [8].

- Training: Train the model on the corpus of historical formulas. Use cross-entropy loss and an optimizer like Adam.

- Generation: To generate a new formula, provide the model with a seed herb or a short sequence. The model samples the next herb from its predicted probability distribution, and the process iterates until a complete formula of desired length is generated.

- Evaluation: Use computational metrics (perplexity) and expert review by TM practitioners to assess the coherence and plausibility of generated formulas.
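The Data Preparation step of this protocol can be sketched independently of any deep learning framework. The example below builds a herb vocabulary, pads sequences, and derives the (prefix, next herb) training pairs that a next-token model such as an LSTM would consume. The formulas are hypothetical, the helper names are introduced here for illustration, and the model itself is not shown.

```python
PAD = "<pad>"

# Hypothetical formulas, each an ordered list of herb tokens.
formulas = [
    ["Huang Qi", "Dang Shen", "Gan Cao"],
    ["Huang Qi", "Bai Zhu"],
]

def build_vocab(seqs):
    """Map every herb to an integer id, reserving 0 for padding."""
    herbs = sorted({h for seq in seqs for h in seq})
    return {PAD: 0, **{h: i + 1 for i, h in enumerate(herbs)}}

def encode_and_pad(seqs, vocab, max_len):
    """Convert herbs to ids, truncate to max_len, and right-pad with the padding id."""
    return [
        [vocab[h] for h in seq][:max_len] + [vocab[PAD]] * (max_len - len(seq))
        for seq in seqs
    ]

def next_herb_pairs(encoded_seq, pad_id=0):
    """Yield (prefix, next_herb) training examples from one encoded formula."""
    tokens = [t for t in encoded_seq if t != pad_id]
    return [(tokens[:i], tokens[i]) for i in range(1, len(tokens))]

vocab = build_vocab(formulas)
encoded = encode_and_pad(formulas, vocab, max_len=3)
pairs = [p for seq in encoded for p in next_herb_pairs(seq)]
print(encoded)
print(pairs)
```

Because herb order within a classical formula may not be meaningful, treating formulas as sequences is itself a modeling assumption worth checking with domain experts.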

Diagram 1: NLP-Driven Workflow for Herbal Formula Hypothesis Generation.

Case Studies: NLP in Action for Mechanism Elucidation

Case Study 1: Decoding a Formula for Acute Respiratory Distress Syndrome (ARDS)

Researchers integrated network pharmacology, AI, and transcriptome analysis to study the Ning Fei Ping Xue (NFPX) decoction, a 20-herb formula. The initial step likely involved text mining to define the formula's standardized composition and identify 37 active ingredients (e.g., astragaloside IV). NLP-facilitated database searches linked these ingredients to targets, which were then analyzed against lung tissue transcriptomic data from ARDS models. The AI analysis inferred that the formula's MoA involved modulation of the immune-inflammatory response via regulation of HRAS, AMPK, and SMAD4 gene expression, providing a focused pathway for validation [19].

Case Study 2: Discovering Anti-Influenza Agents from Isatis tinctoria (Banlangen)

A network-based AI framework was applied to this herb. Text mining of literature and databases compiled its known chemical constituents. An AI model then screened these constituents against viral and host targets. The model prioritized six candidates (e.g., acacetin, tryptanthrin), which were subsequently confirmed to have anti-influenza activity in vitro. This demonstrates an "in silico prediction → experimental validation" pipeline, in which NLP and data mining front-load the process with high-probability candidates [19].

Case Study 3: Analyzing Wogonin's Action in Lung Cancer

This study combined a clinical ML model with NLP-driven mechanism investigation. An optimized support vector machine model was built for lung cancer diagnosis. Separately, the flavonoid wogonin (from Scutellaria baicalensis) was investigated. Literature mining and network analysis were used to construct its target profile and downstream signaling pathway. The study exemplifies how diagnostic AI and NLP-derived MoA analysis can run in parallel to provide a more comprehensive picture of a TM component's role [19].

Table 2: Quantitative Outcomes from NLP- and AI-Driven Herbal Research Studies

| Study Focus | Data Source | NLP/AI Method | Key Quantitative Output | Experimental Validation |
|---|---|---|---|---|
| Ning Fei Ping Xue (NFPX) for ARDS [19] | 20-herb formula, transcriptome data | Network pharmacology, AI integration | Identified 37 active ingredients; predicted regulation of HRAS, AMPK, SMAD4 genes. | Lung tissue transcriptome analysis confirmed gene expression changes. |
| Isatis tinctoria vs. Influenza [19] | Herbal constituent databases | Network-based screening framework | Prioritized 6 active candidates (e.g., acacetin, tryptanthrin) from a larger chemical set. | In vitro anti-viral assay confirmed activity. |
| Pu-Ji Fang Herb-Pair Mining [8] | 426-volume classical text | Iterative keyword extraction, association rule mining | Analyzed 16,384 keyword combinations; generated novel herb-pair rules. | Literature-based validation via 7,664 PubMed gene-herb cross-search entries. |
| Wogonin in Lung Cancer [19] | Biomedical literature | Network pharmacological analysis | Constructed target network and signaling pathway for a single flavonoid. | Linked to in vitro experimental data on mechanism. |

Table 3: Research Reagent Solutions for NLP-Driven Herbal Pharmacology

| Tool/Resource Category | Specific Example | Function in Research | Relevance to MoA Elucidation |
|---|---|---|---|
| Specialized NLP Tools | Jieba (Chinese text segmentation) | Segment classical Chinese medical text into words for analysis. | Foundational step for all subsequent entity and relationship extraction [8]. |
| TM-Specific Databases | TCMBank [20], ETCM v2.0 [20] | Provide standardized data on herbs, ingredients, targets, and diseases. | Essential for linking text-mined herb names to chemical and target data for network construction [20]. |
| Biomedical Literature APIs | PubMed E-utilities (Entrez) | Programmatically search and retrieve scientific literature for gene-herb associations. | Enables systematic, large-scale validation of historical findings against modern molecular biology [8]. |
| Generative AI Models | Custom LSTM/Transformer models | Learn patterns from classical formula sequences to generate novel, plausible formulations. | Proposes new multi-herb combinations for testing synergistic MoAs [8]. |
| Network Analysis Software | Cytoscape, Gephi | Visualize and analyze complex herb-target-pathway-disease networks. | Provides a systems-level view of a formula's potential MoA, identifying key hubs and pathways [19]. |
| Experimental Validation Suite | LC-MS/MS, Transcriptomics (RNA-seq), in vivo models | Validate computational predictions (e.g., confirm compound presence, pathway activity). | The critical step to transition from in silico hypothesis to confirmed biological mechanism [19] [8]. |

Visualization of Mechanistic Pathways Derived from Text Mining

The endpoint of the NLP pipeline is a testable mechanistic hypothesis. For instance, the literature-mined analysis of wogonin, a flavonoid from Scutellaria baicalensis, in lung cancer can be visualized as a candidate signaling pathway [19].

Diagram 2: Candidate Signaling Pathway for an Herb Mined from Literature.

NLP provides the indispensable foundation for a data-driven, AI-powered research thesis aimed at elucidating the MoA of herbal formulas. By systematically converting millennia of documented empirical knowledge into structured, analyzable data, it generates high-quality hypotheses for experimental validation. The field is evolving rapidly with the incorporation of large language models (LLMs) capable of deeper semantic understanding and more sophisticated reasoning about textual content [22]. The future lies in tighter integration between these advanced NLP models, comprehensive knowledge graphs that link historical use with multi-omics data, and automated experimental platforms. This closed loop, running from historical text to wet-lab validation and back, will significantly accelerate the translation of traditional herbal wisdom into evidence-based, mechanistically understood modern therapeutics.

The AI Toolbox in Action: Methodologies for Predicting Targets, Pathways, and Synergies

The holistic nature of Traditional Chinese Medicine (TCM) and other herbal medicinal systems, characterized by a “multi-component, multi-target, multi-pathway” therapeutic model, presents a significant challenge for mechanistic elucidation using conventional single-target drug discovery paradigms [26]. Network pharmacology (NP) has emerged as a transformative systems biology-based methodology that aligns perfectly with this complexity by constructing multidimensional herb–component–target–disease networks [27]. The integration of artificial intelligence (AI) and multi-omics technologies is now pushing this field beyond static correlations, enabling the dynamic, predictive, and mechanism-driven analysis of herbal formula actions [26] [28]. This convergence represents the core of a new framework for sustainable drug discovery, aiming to decode the "black box" of herbal medicine by bridging empirical knowledge with modern precision science [26].

The Quantitative Landscape of Network Pharmacology Research

The field has experienced exponential growth, particularly in applications to TCM. A systematic analysis of 7,288 publications in PubMed from 2007 to mid-2025 reveals clear trends [26].

Table 1: Publication Trends in Network Pharmacology (2007-2025)

| Analysis Category | Number of Publications | Key Finding/Proportion |
|---|---|---|
| Total NP Publications | 7,288 | Found via PubMed search [26] |
| NP + Omics Studies | 808 | Represents integrated multi-omics validation [26] |
| NP + AI Studies | 773 | Shows growing use of AI enhancement [26] |
| NP + TCM Focus | 6,773 | 92.95% of total NP publications [26] |
| TCM Studies with Experimental Validation | 79 (from 239 screened) | Qualified cases meeting rigorous design criteria [26] |

The data indicates a dominant focus on TCM, with applications to TCM theory, prescriptions, and herbs accounting for 40.12% (2,924/7,288) of all NP publications in 2024, a 28-fold increase over a decade [26]. This underscores the proven feasibility and intense interest in using NP to deconvolute herbal formulas.

Evolution of the Core Workflow: From Basic Construction to AI-Enhanced Prediction

The fundamental workflow of network pharmacology involves three integrated stages: network construction, interaction analysis, and experimental verification [26]. This process has evolved from a manual, database-dependent approach to an AI-augmented predictive science.

Figure 1: The foundational three-stage workflow of network pharmacology for herbal medicine research [26] [27].

AI technologies are now deeply embedded in this workflow, transforming each stage. Graph Neural Networks (GNNs) analyze complex component-target-disease networks, while natural language processing (NLP) mines unstructured text from classical texts and electronic health records for novel relationships [26] [28]. AlphaFold3 and similar tools predict protein structures for improved molecular docking with phytochemicals, and generative AI platforms like Chemistry42 facilitate the design and optimization of novel derivatives from herbal leads [26].

Figure 2: An AI-enhanced network pharmacology workflow, showing the integration of predictive modeling, dynamic network analysis, and multi-omics data fusion [26] [28].

Detailed Experimental Protocols for Network Construction and Validation

Protocol for Constructing a Herb-Target-Disease Network

This protocol details the steps for building a foundational network.

Compound Identification and ADME Screening:

- Extract chemical constituents of the herbal formula from specialized databases (e.g., TCMSP, ETCM). For a formula like Jianpi-Yishen, this may yield 200+ candidates [26].

- Filter compounds using pharmacokinetic ADME criteria (Oral Bioavailability ≥30%, Drug-likeness ≥0.18) to identify bioactive molecules likely to reach systemic circulation [26].
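The ADME filter above can be sketched in a few lines. This is a minimal illustration, assuming compound records have already been exported with oral bioavailability (OB, %) and drug-likeness (DL) fields; the `Compound` class, the compound names, and all OB/DL values are hypothetical placeholders, not data from TCMSP or ETCM.

```python
from dataclasses import dataclass

OB_MIN = 30.0   # oral bioavailability threshold (%), as stated in the protocol
DL_MIN = 0.18   # drug-likeness threshold, as stated in the protocol


@dataclass(frozen=True)
class Compound:
    name: str
    ob: float   # oral bioavailability, percent
    dl: float   # drug-likeness score


def passes_adme(c: Compound) -> bool:
    """Return True when the compound meets both screening thresholds."""
    return c.ob >= OB_MIN and c.dl >= DL_MIN


# Hypothetical records with invented OB/DL values, for illustration only.
candidates = [
    Compound("compound_A", ob=46.4, dl=0.28),
    Compound("compound_B", ob=12.1, dl=0.45),   # fails the OB threshold
    Compound("compound_C", ob=33.0, dl=0.05),   # fails the DL threshold
    Compound("compound_D", ob=30.0, dl=0.18),   # passes: both cutoffs are inclusive
]

bioactive = [c for c in candidates if passes_adme(c)]
print([c.name for c in bioactive])
```

Keeping the thresholds as named constants makes it easy to rerun the screen with stricter or looser cutoffs and see how sensitive the downstream network is to them.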

Target Prediction and Disease Association:

- Retrieve predicted and known protein targets for the filtered compounds from the TCMSP and PharmMapper databases.

- Collect disease-associated targets from GeneCards, OMIM, and DisGeNET using a relevance score threshold (e.g., >10 for GeneCards) [29].

- Intersect the compound-target list with the disease-target list to identify putative therapeutic targets.
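The intersection step reduces to set operations once both lists are in memory. The sketch below assumes the target predictions and disease relevance scores have already been downloaded and parsed; the mappings, compound names, and scores are invented for illustration, and the gene symbols are used only as familiar labels.

```python
GENECARDS_MIN_RELEVANCE = 10.0  # example cutoff from the protocol (score must exceed it)

# Hypothetical compound -> predicted target sets (illustrative only).
compound_targets = {
    "compound_A": {"AKT1", "TNF", "PTGS2"},
    "compound_D": {"IL6", "AKT1", "ESR1"},
}

# Hypothetical disease-gene relevance scores (illustrative only).
disease_scores = {"AKT1": 35.2, "IL6": 18.7, "TNF": 9.4, "VEGFA": 22.0}

# Keep only disease genes whose relevance score exceeds the cutoff.
disease_targets = {
    gene for gene, score in disease_scores.items()
    if score > GENECARDS_MIN_RELEVANCE
}

# Pool every target hit by at least one screened compound.
formula_targets = set().union(*compound_targets.values())

# Putative therapeutic targets: hit by the formula AND associated with the disease.
putative = formula_targets & disease_targets

# Record which compounds support each putative target, for later network edges.
support = {
    target: sorted(c for c, hits in compound_targets.items() if target in hits)
    for target in sorted(putative)
}
print(support)
```

The `support` mapping is what would typically be exported as the compound-target edge list for network assembly.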

Network Assembly and Topological Analysis:

- Import the compound-target and target-disease relationships into Cytoscape (v3.10.2) to construct a visual network [26].

- Perform topological analysis using Cytoscape plugins (e.g., CytoNCA) to calculate degree, betweenness centrality, and closeness centrality for each node [27].

- Identify hub targets (e.g., AKT1, TNF, IL6, VEGFA) and core pathways by applying cutoff values (e.g., degree ≥2× median degree).
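For readers who want to see what the topological step computes, the following is a minimal pure-Python sketch of degree, closeness centrality, and the example hub cutoff (degree at least twice the median). It is not a substitute for Cytoscape or CytoNCA; the edge list is a hypothetical toy network, and betweenness centrality is omitted for brevity.

```python
from collections import defaultdict, deque
from statistics import median

# Hypothetical compound-target edges (illustrative only).
edges = [
    ("c1", "AKT1"), ("c1", "TNF"),
    ("c2", "AKT1"), ("c2", "IL6"),
    ("c3", "AKT1"), ("c3", "VEGFA"),
    ("c4", "AKT1"), ("c4", "TNF"),
]

# Build an undirected adjacency map.
adj = defaultdict(set)
for u, v in edges:
    adj[u].add(v)
    adj[v].add(u)

# Degree: number of direct neighbours of each node.
degree = {node: len(neighbours) for node, neighbours in adj.items()}


def closeness(source):
    """Closeness = (reachable nodes) / (sum of shortest-path lengths), via BFS."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        u = queue.popleft()
        for w in adj[u]:
            if w not in dist:
                dist[w] = dist[u] + 1
                queue.append(w)
    total = sum(dist.values())
    return (len(dist) - 1) / total if total else 0.0


closeness_centrality = {node: closeness(node) for node in list(adj)}

# Example cutoff from the protocol: degree >= 2 x median degree.
cutoff = 2 * median(degree.values())
hubs = sorted(node for node, d in degree.items() if d >= cutoff)
print(hubs, round(closeness_centrality["AKT1"], 2))
```

In this toy network the cutoff is computed over all nodes; a study may prefer to compute it over target nodes only, which is a design choice worth reporting.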

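Pathway and Functional Enrichment:

- Run KEGG pathway enrichment on the putative therapeutic targets with tools such as DAVID or clusterProfiler (R) to identify significantly enriched pathways (e.g., PI3K-Akt signaling) [29].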
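Pathway over-representation of this kind is commonly scored with a hypergeometric (one-sided Fisher) test. The sketch below shows the underlying calculation only; the background size, pathway size, and overlap counts are hypothetical, and real analyses would also correct for multiple testing across pathways.

```python
from math import comb


def hypergeom_sf(k: int, N: int, K: int, n: int) -> float:
    """P(X >= k) when n genes are drawn from N, of which K belong to the pathway.

    N: background genes, K: pathway genes, n: query targets, k: observed overlap.
    """
    upper = min(K, n)
    favourable = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return favourable / comb(N, n)


# Hypothetical numbers: 40 core targets, 8 of which fall in a 350-gene pathway,
# against a background of 20,000 annotated genes.
p_value = hypergeom_sf(k=8, N=20_000, K=350, n=40)
print(f"enrichment p-value: {p_value:.2e}")
```

Exact integer arithmetic via `math.comb` avoids the underflow that a naive floating-point product would hit for large backgrounds.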

Protocol for AI-Enhanced Target and Synergy Prediction

This advanced protocol integrates machine learning for deeper analysis.

Data Preparation and Featurization:

- Represent the herb-target-disease network as a graph where nodes (compounds, targets) are featurized using molecular descriptors (ECFP6 fingerprints) and protein sequence embeddings (from UniProt).

- Use historical herb-treatment-outcome data from electronic medical records (EMRs), processed via NLP, to label node relationships [28] [30].

Model Training for Target Prediction:

- Train a Graph Convolutional Network (GCN) or Graph Attention Network (GAT) to learn the network structure [28].

- The model propagates compound and target features along the graph's edges to learn node embeddings that encode each node's network context. Implement using PyTorch Geometric with a 70/15/15 train/validation/test split.

- The objective is a link prediction task: to accurately predict missing edges (interactions) between compounds and targets not present in the original database.
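Before any GNN is trained, the known compound-target edges have to be partitioned and paired with negative examples. The sketch below illustrates one way to do that with the standard library only; it is an assumption that the 70/15/15 split is applied to edges, the helper names `split_edges` and `sample_negatives` are hypothetical, and the toy edge list is synthetic. Note that sampled "negatives" are merely unobserved pairs, not confirmed non-interactions.

```python
import random


def split_edges(edges, train=0.70, val=0.15, seed=0):
    """Shuffle known edges and cut them into train/validation/test lists."""
    rng = random.Random(seed)
    shuffled = list(edges)
    rng.shuffle(shuffled)
    n_train = round(train * len(shuffled))
    n_val = round(val * len(shuffled))
    return (
        shuffled[:n_train],
        shuffled[n_train:n_train + n_val],
        shuffled[n_train + n_val:],
    )


def sample_negatives(compounds, targets, known, k, seed=0):
    """Sample k compound-target pairs that are absent from the known edge set."""
    rng = random.Random(seed)
    unobserved = [(c, t) for c in compounds for t in targets if (c, t) not in known]
    return rng.sample(unobserved, min(k, len(unobserved)))


# Synthetic toy graph: 5 compounds x 8 targets, 20 known interactions.
compounds = [f"c{i}" for i in range(5)]
targets = [f"t{j}" for j in range(8)]
known = [(f"c{i}", f"t{j}") for i in range(5) for j in range(8) if (i + j) % 2 == 0]

train_pos, val_pos, test_pos = split_edges(known)
negatives = sample_negatives(compounds, targets, set(known), k=len(known))
print(len(train_pos), len(val_pos), len(test_pos), len(negatives))
```

Only the training edges should be used for message passing during training; otherwise the held-out edges leak into the embeddings and inflate test performance.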

Synergy Prediction and Mechanism Hypothesis:

- Formulate synergy prediction as a graph classification problem. Subgraphs representing combinations of herbs or compounds are fed into the GNN.

- The model outputs a probability score for therapeutic synergy, trained on labeled data from combination therapy clinical studies or high-throughput screens [31].

- Use explainable AI (XAI) tools like GNNExplainer or SHAP to interpret the model and identify which sub-network motifs (e.g., a specific target cluster) are most influential for the predicted synergy, generating testable mechanistic hypotheses [28].
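To make the attribution idea concrete, the sketch below computes exact Shapley values, the quantity that SHAP approximates, for a three-compound combination. The value function is a deliberately simple stand-in (fraction of disease targets covered), not a trained GNN, and the compound-target sets are invented; exact enumeration is only feasible for a handful of players.

```python
from itertools import combinations
from math import factorial

# Hypothetical compound -> target sets and disease targets (illustrative only).
compound_targets = {
    "c1": {"AKT1", "TNF"},
    "c2": {"AKT1", "IL6"},
    "c3": {"VEGFA"},
}
disease_targets = {"AKT1", "TNF", "IL6", "VEGFA"}


def coverage(coalition):
    """Toy stand-in for a model score: fraction of disease targets covered."""
    hit = set()
    for compound in coalition:
        hit |= compound_targets[compound]
    return len(hit & disease_targets) / len(disease_targets)


def shapley_values(players, value):
    """Exact Shapley values by enumerating every coalition of the other players."""
    n = len(players)
    phi = {p: 0.0 for p in players}
    for p in players:
        others = [q for q in players if q != p]
        for r in range(n):
            weight = factorial(r) * factorial(n - r - 1) / factorial(n)
            for subset in combinations(others, r):
                s = frozenset(subset)
                # Marginal contribution of p when it joins coalition s.
                phi[p] += weight * (value(s | {p}) - value(s))
    return phi


phi = shapley_values(list(compound_targets), coverage)
print({p: round(v, 3) for p, v in phi.items()})
```

The attributions sum to the score of the full combination, so each compound's share of the predicted effect can be read off directly. Compounds with overlapping targets (here c1 and c2) split the credit for the shared target.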

Protocol for Multi-Omics Experimental Validation

This protocol validates NP predictions using systems biology.

In Vivo/In Vitro Model Treatment:

- Administer the herbal formula (e.g., Jianpi-Yishen decoction) to a disease model (e.g., adenine-induced chronic kidney disease in rats) at a clinical equivalent dose [26].

- Include model control and positive drug control groups. Collect tissue samples (e.g., kidney, serum) after a prescribed treatment period.

Multi-Omics Profiling:

- Transcriptomics: Perform RNA sequencing (RNA-seq) on tissue. Identify differentially expressed genes (DEGs) (|log2FC|>1, adj. p<0.05) between treatment and control groups.

- Proteomics: Conduct LC-MS/MS-based label-free quantitative proteomics on the same tissue. Identify differentially expressed proteins (DEPs) with similar thresholds.

- Metabolomics: Analyze serum samples using UPLC-QTOF-MS. Identify differentially abundant metabolites.
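The DEG thresholds above can be applied as follows. This is a minimal sketch, assuming per-gene log2 fold changes and raw p-values are already available; the gene labels and numbers are invented, and the Benjamini-Hochberg procedure is used here as one common choice of p-value adjustment (the source does not specify which was used). In practice the adjusted p-values usually come from the differential-expression tool itself.

```python
def benjamini_hochberg(pvalues):
    """Return Benjamini-Hochberg adjusted p-values in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical results: (gene, log2 fold change, raw p-value).
results = [
    ("g1", 2.3, 0.0001),
    ("g2", -1.8, 0.004),
    ("g3", 0.4, 0.001),    # significant but small effect
    ("g4", 1.5, 0.03),
    ("g5", -1.2, 0.2),     # large effect but not significant
]

adj_p = benjamini_hochberg([p for _, _, p in results])

# Thresholds from the protocol: |log2FC| > 1 and adjusted p < 0.05.
degs = [
    gene
    for (gene, log2fc, _), q in zip(results, adj_p)
    if abs(log2fc) > 1 and q < 0.05
]
print(degs)
```

The same filter applies unchanged to the proteomics table when DEPs are called with matching thresholds.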

Integrative Bioinformatics Analysis:

- Overlap the NP-predicted hub targets with the DEGs and DEPs to obtain a mechanistically validated core target list.

- Perform pathway enrichment on this core list. Construct a causal network by linking enriched pathways (e.g., glycine/serine metabolism from metabolomics) with upstream regulatory proteins (from proteomics) and genes (from transcriptomics) [26].

- Use tools like MetaboAnalyst for joint pathway analysis of transcriptomic and metabolomic data to identify key regulated pathways such as "tryptophan metabolism" [26].
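The overlap step in this analysis can be sketched as a set intersection that also records which omics layer supports each predicted hub. The gene sets below are hypothetical placeholders (labels such as `GENE_X` are invented), and requiring support from both layers is one possible stringency, not a rule stated in the source.

```python
# NP-predicted hub targets (example symbols from the protocol text).
hub_targets = {"AKT1", "TNF", "IL6", "VEGFA"}

# Hypothetical differential-expression calls (illustrative only).
degs = {"AKT1", "TNF", "IL6", "GENE_X"}   # transcriptomics
deps = {"AKT1", "IL6", "GENE_Y"}          # proteomics

layers = (("transcriptome", degs), ("proteome", deps))

# Evidence table: which omics layers confirm each predicted hub.
evidence = {
    target: [name for name, hits in layers if target in hits]
    for target in sorted(hub_targets)
}

# Core list: hubs confirmed at both the transcript and protein level.
core_targets = sorted(t for t, support in evidence.items() if len(support) == len(layers))

print(core_targets)
print(evidence)
```

Keeping the full evidence table, rather than only the intersection, makes it visible which predictions were supported by a single layer and which had no experimental support at all.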

Table 2: Essential Databases for Network Pharmacology Construction

| Database Type | Name | Key Function | Website / Reference |
|---|---|---|---|
| TCM Compound | TCMSP | Contains herbs, compounds, ADME properties, targets, and diseases. | https://tcmsp-e.com/ [26] |
| TCM Formula | ETCM 2.0 | Provides information on TCM formulas, herbs, ingredients, and predictive targets. | http://www.tcmip.cn/ETCM/ [26] |
| General Compound | PubChem | A comprehensive repository of chemical molecules and their biological activities. | https://pubchem.ncbi.nlm.nih.gov/ [26] |
| Disease Target | GeneCards | Integrates human genes with annotations and disease associations. | https://www.genecards.org/ [26] |
| Therapeutic Target | TTD (Therapeutic Target Database) | Documents known and explored therapeutic protein targets. | http://db.idrblab.net/ttd/ [26] |
| Pathway | KEGG | Resource for understanding high-level functions of biological systems from pathways. | https://www.genome.jp/kegg/ [26] |
| Protein Interaction | STRING | Database of known and predicted protein-protein interactions. | https://string-db.org/ [29] |

The Scientist's Toolkit: Research Reagent Solutions

The table below details essential computational and experimental resources for implementing the described protocols.

Table 3: Research Reagent Solutions for NP and Multi-Omics Integration

| Tool Category | Specific Tool/Reagent | Function in Research | Key Application Example |
|---|---|---|---|
| Network Visualization & Analysis | Cytoscape v3.10.2 | Open-source platform for visualizing complex networks and integrating with attribute data. | Visualizing "herb-compound-target-pathway" networks and performing topological analysis [26]. |
| Molecular Docking | AutoDock Vina, Schrödinger Suite | Predicts the preferred orientation and binding affinity of a small molecule (herbal compound) to a protein target. | Validating interactions between a predicted active component (e.g., salvianolic acid B) and a hub target (e.g., AKT1) [26] [27]. |
| AI/ML Modeling | PyTorch Geometric (PyG) | A library for deep learning on graphs, built upon PyTorch. Essential for GNN implementation. | Building a GCN model for link prediction in a herb-target network [28]. |
| Multi-Omics Profiling | Illumina NovaSeq (Transcriptomics), Q Exactive HF (Proteomics), UPLC-QTOF-MS (Metabolomics) | High-throughput platforms for generating genome-wide expression, protein abundance, and metabolite abundance data. | Generating validation data from animal models treated with herbal formulas to confirm network predictions [26]. |
| Pathway & Enrichment Analysis | DAVID, MetaboAnalyst, clusterProfiler (R) | Bioinformatics tools for functional interpretation of gene/protein lists and integrated pathway analysis. | Identifying KEGG pathways significantly enriched by the core targets of an herbal formula (e.g., PI3K-Akt signaling) [29]. |
| Explainable AI (XAI) | SHAP (SHapley Additive exPlanations), GNNExplainer | Frameworks for interpreting the output of machine learning models, crucial for AI-driven hypothesis generation. | Identifying which specific herb compounds and targets in a network were most important for a model's prediction of efficacy [28]. |

Multi-Scale Mechanism Analysis: From Molecules to Patients

The ultimate goal of AI-enhanced NP is to elucidate mechanisms across biological scales. A landmark study on the Jianpi-Yishen formula for chronic kidney disease exemplifies this [26]. NP predicted core targets related to inflammation and metabolism. Integrated transcriptomic, proteomic, and metabolomic profiling of treated rat models revealed that the formula's efficacy was mediated through:

- Molecular Scale: Regulation of betaine-mediated glycine/serine/threonine metabolism.

- Cellular Scale: Reprogramming of tryptophan metabolism.

- Tissue/Systems Scale: Synergistic modulation of M1/M2 macrophage polarization to restore inflammatory microenvironment homeostasis [26].

This demonstrates how the convergence of NP, AI, and multi-omics can construct a detailed causal chain from molecular targets to tissue-level phenotype, providing a comprehensive and testable mechanistic model for complex herbal formulas.

The integration of network pharmacology with AI and multi-omics has matured into a robust, predictive framework for deconstructing the systemic mechanisms of herbal medicines. By moving from descriptive network mapping to dynamic, AI-powered prediction and multi-omics validation, this paradigm effectively addresses the "multi-component, multi-target" challenge. It transforms herbal medicine from an experience-based practice into a mechanism-driven discipline, enabling sustainable drug discovery, rational formula optimization, and the development of precision herbal prescriptions tailored to individual patient networks [26] [28]. Future progress hinges on improving data quality, developing more interpretable AI models, and fostering interdisciplinary collaboration to fully unlock the therapeutic wisdom of traditional medicine.

The elucidation of the mechanism of action (MOA) of herbal formulas is a formidable scientific challenge because of their inherent multi-component, multi-target, and multi-pathway nature [31]. Traditional reductionist approaches, focused on isolating single active compounds, often fail to capture the synergistic therapeutic effects and holistic network regulation that are central to traditional medicine systems such as Traditional Chinese Medicine (TCM) [6] [32]. This complexity leaves significant gaps in the understanding of the pharmacokinetic profiles, precise molecular targets, and polypharmacological networks underlying formula efficacy.

Artificial Intelligence (AI) and machine learning (ML) are emerging as transformative tools to navigate this complexity [20]. By integrating and analyzing high-dimensional data—from chemical structures and omics profiles to clinical phenotypes—AI provides a robust framework for predictive modeling and simulation [33]. Within the context of herbal medicine research, AI-driven approaches enable the deconvolution of herbal mixtures, prediction of compound-target interactions, and simulation of systems-level pharmacological effects [31] [13]. This technical guide details the core AI methodologies of ADMET prediction, target docking, and polypharmacology modeling, framing them as essential, interconnected components for a new, mechanism-driven paradigm in herbal formula research.

Table 1: Core AI Methodologies and Their Application in Herbal Formula Research

| AI Methodology | Primary Function | Key Challenge in Herbal Research | AI-Driven Solution |
|---|---|---|---|
| ADMET Prediction | Forecasts absorption, distribution, metabolism, excretion, and toxicity of molecules. | Predicting PK/PD for complex mixtures; herb-drug interaction risk [33]. | Multitask deep learning models using molecular fingerprints and structural descriptors [34]. |
| Target Docking | Predicts binding pose and affinity of a small molecule to a protein target. | Screening thousands of phytochemicals against proteome-wide targets [35]. | High-throughput virtual screening accelerated by AI scoring functions and AlphaFold2-predicted structures [36]. |
| Polypharmacology Modeling | Identifies and analyzes multi-target action of single compounds or mixtures. | Mapping synergistic "herb-ingredient-target-pathway" networks for formulas [6]. | Network pharmacology integrated with graph neural networks to predict multi-target synergy and side effects [37]. |

AI for ADMET Prediction: Ensuring Safety and Viability of Herbal Constituents

Predicting the ADMET properties of phytochemicals is a critical first step in prioritizing lead compounds and assessing clinical viability. For herbal formulas, this extends to evaluating potential drug-herb interactions (DHIs), a major clinical safety concern [33].

Technical Foundations and Modeling Approaches

Modern computational toxicology employs a hierarchy of AI models, evolving from single-endpoint to multi-endpoint joint modeling [34]. Models are trained on large-scale toxicological databases (e.g., TOXNET, PubChem) using molecular representations such as molecular fingerprints, molecular graphs, and SMILES strings.

- Rule/Statistical-Based Models: Use predefined structural alerts (e.g., for genotoxicity) or quantitative structure-activity relationship (QSAR) models.

- Machine Learning Models: Random Forest, Support Vector Machines, and Gradient Boosting models integrate diverse chemical and biological features for classification (toxic/non-toxic) and regression (e.g., IC50 values) tasks.

- Deep Learning & Graph-Based Models: Advanced multitask deep learning models and graph neural networks (GNNs) capture complex, non-linear relationships and learn directly from molecular graph structures, improving prediction for novel scaffolds [34].

Experimental Protocol: In Silico ADMET Screening and DHI Risk Assessment