Comparative Analysis of Structural Features in Biologically Active Datasets: From Molecular Networks to Drug Discovery

Abstract

This article provides a comprehensive comparative analysis of structural features in biologically active datasets for researchers, scientists, and drug development professionals. We explore foundational concepts from protein-protein interaction networks (e.g., STRING) and bioactive compound databases (e.g., ChEMBL, PubChem). Methodological approaches include structural alignment tools, sequence analysis, and machine learning applications. Troubleshooting strategies address data quality and computational challenges, while validation techniques involve benchmarking and comparative assessments. The synthesis offers actionable insights for advancing drug discovery and biomedical research.

Foundations of Biological Structures: Exploring Core Datasets and Key Features

Protein-Protein Interaction Networks (PPINs) provide a systems-level framework for modeling the interactome, enabling researchers to decipher the complex relationships governing cellular processes, from signal transduction to disease mechanisms [1]. Within the broader thesis on the comparative analysis of structural features in biologically active datasets, this guide serves as a foundational comparison of the methodologies, data sources, and analytical tools used to construct, analyze, and derive biological meaning from PPINs. For researchers and drug development professionals, the choice of database, experimental validation protocol, and computational prediction model directly impacts the identification of robust drug targets and functional biomarkers. This guide objectively compares these critical alternatives, supported by experimental and topological data.

The foundation of any network analysis is the underlying data. Various public databases catalog PPIs, but they differ significantly in content, curation methods, and consequently, their topological properties. A 2024 topological review of four human PPINs revealed that while networks share many common protein-encoding genes, they diverge markedly in their specific interactions and neighborhood connectivities [1]. This inconsistency underscores the importance of database selection for specific research goals, such as functional enrichment or cancer driver gene discovery [1].

Table 1: Comparison of Key Protein-Protein Interaction Databases and Their Characteristics [1] [2] [3]

| Database | Primary Focus / Species | Interaction Sources | Key Strength | Reported Global Network Density Range |
|---|---|---|---|---|
| BioGRID | Multi-species, extensive genetic & protein interactions | Manual curation from literature, high-throughput studies | High-quality, curated physical and genetic interactions | 0.0012 - 0.0018 (varies by build) |
| STRING | Known & predicted interactions across >14,000 organisms | Experimental, curated, textmining, predictive algorithms | Integrative scores, functional partner prediction, broad coverage | ~0.0015 (human, high-confidence) |
| IntAct | Molecular interaction data, emphasis on curation | Manually curated experimental data from literature | Detailed annotation of experimental methods and conditions | N/A |
| HPRD | Human protein reference database | Manual curation of literature for human proteins | Comprehensive human-specific data with functional annotations | ~0.0009 |
| DIP | Experimentally verified interactions | Curated core dataset of verified interactions | Focus on reliability and reducing false positives | 0.0010 - 0.0021 |

Supporting Experimental Data: The topological comparison study calculated standard network metrics for networks sourced from different databases [1]. It found that small, functionally coherent sub-networks (e.g., cancer pathways) showed improved topological consistency across different source databases compared to the whole networks. This suggests that pathway-specific analyses may be more reproducible. Furthermore, centrality analysis demonstrated that the same genes (e.g., TP53, MYC) can occupy dramatically different topological roles (like betweenness or degree centrality) depending on the network they are placed in, which could alter their perceived biological importance in a study [1].

Comparison of Methodologies for PPI Discovery and Validation

PPI data is generated through wet-lab experiments and, increasingly, computational predictions. The choice of method involves trade-offs between throughput, cost, and reliability.

Experimental Protocol 1: Imaging-Based Phenotypic Profiling (Cell Painting)

This untargeted screening assay measures hundreds to thousands of cellular features to capture a compound's phenotypic impact [4].

- Cell Culture & Treatment: Seed U-2 OS osteosarcoma cells in 384-well plates. Treat with test compounds across a concentration range (e.g., 8 concentrations, 0.03 – 100 μM) for 24 hours [4].

- Multiplex Staining: Use a cocktail of fluorescent dyes: Hoechst 33342 (nucleus/DNA), concanavalin A (endoplasmic reticulum), wheat germ agglutinin (Golgi apparatus and plasma membrane), phalloidin (actin cytoskeleton), and others [4].

- High-Content Imaging: Acquire images using an automated microscope across all fluorescent channels.

- Feature Extraction: Use image analysis software (e.g., CellProfiler) to extract ~1,300 morphological features (size, shape, texture, intensity) per cell [4].

- Data Normalization & Hit Identification: Normalize cell-level data to solvent controls. Aggregate to well-level medians. Apply hit-calling strategies: multi-concentration analysis (curve-fitting per feature or category) or single-concentration analysis (signal strength, profile correlation) [4].
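The normalization and single-concentration "signal strength" hit-calling steps above can be sketched in Python with NumPy. This is a minimal illustration under stated assumptions, not the cited study's pipeline: cell-level features are scaled as robust z-scores against pooled solvent-control cells (median and MAD), collapsed to well-level medians, and a well is flagged when the Euclidean norm of its profile exceeds a threshold taken from the control wells (a hypothetical 95th percentile). The data are synthetic.

```python
import numpy as np

def robust_z(cells, control_cells):
    """Scale cell-level features against solvent-control cells using median and MAD."""
    med = np.median(control_cells, axis=0)
    mad = np.median(np.abs(control_cells - med), axis=0)
    # 1.4826 makes the MAD comparable to a standard deviation for normal data;
    # the floor avoids division by zero for constant features.
    scale = np.maximum(1.4826 * mad, 1e-9)
    return (cells - med) / scale

def well_profile(cells, control_cells):
    """Aggregate normalized cell-level features to a well-level median profile."""
    return np.median(robust_z(cells, control_cells), axis=0)

def call_hits(treated_wells, control_wells, control_cells, percentile=95.0):
    """Flag wells whose profile magnitude exceeds a control-derived threshold.

    treated_wells / control_wells: lists of (n_cells, n_features) arrays.
    control_cells: pooled solvent-control cells used as the normalization reference.
    """
    ctrl_norms = [np.linalg.norm(well_profile(w, control_cells)) for w in control_wells]
    threshold = float(np.percentile(ctrl_norms, percentile))
    norms = np.array([np.linalg.norm(well_profile(w, control_cells)) for w in treated_wells])
    return norms > threshold, norms, threshold

# Synthetic example: 16 solvent-control wells, one inactive and one strongly active well.
rng = np.random.default_rng(0)
n_features = 20
control_wells = [rng.normal(0, 1, (200, n_features)) for _ in range(16)]
control_cells = np.vstack(control_wells)
inactive = rng.normal(0, 1, (200, n_features))
active = rng.normal(0, 1, (200, n_features)) + 2.0  # shifted in every feature
hits, norms, thr = call_hits([inactive, active], control_wells, control_cells)
print(hits, norms.round(2), round(thr, 2))
```

Profile-correlation and multi-concentration curve-fitting strategies would replace the norm-based score in `call_hits`; the normalization steps stay the same.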

Experimental Protocol 2: Structural Bioinformatics for Target Identification

This computational protocol identifies and evaluates drug targets, as applied to the Hepatitis C Virus (HCV) proteome [5].

- Data Retrieval & Preprocessing: Obtain target protein sequences (e.g., HCV NS3, NS5B) from UniProt. Cluster sequences to remove redundancy (e.g., using CD-HIT at 90% identity) [5].

- Homology Modeling: For proteins without solved structures, identify a high-resolution template (sequence identity >30%, coverage >80%) from the PDB. Generate 3D models using software like MODELLER or I-TASSER [5].

- Binding Site Prediction & Molecular Docking: Predict druggable pockets on the protein surface. Prepare a library of small molecules (e.g., from ZINC database). Dock ligands into the binding site using AutoDock Vina, scoring poses by calculated binding affinity (ΔG) [5].

- Molecular Dynamics (MD) Simulation: Solvate the top-ranked protein-ligand complex in a water box. Run MD simulations (e.g., using GROMACS with AMBER force field) for 50-100 nanoseconds to assess complex stability, root-mean-square deviation (RMSD), and interaction persistence [5].

- Validation: Redock known inhibitors to benchmark the docking protocol (target RMSD < 2.0 Å). Compare predicted binding poses and affinities with existing experimental data [5].
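The redocking check in the validation step, and the RMSD tracked during MD, reduce to a short calculation. The NumPy sketch below is illustrative and assumes both coordinate sets list the same atoms in the same order (real pipelines must first match atoms, including symmetric groups). For redocking, the docked pose and the crystallographic ligand share the receptor's frame, so RMSD is computed without fitting; for MD frames, a Kabsch superposition is usually applied first to remove overall rotation and translation.

```python
import numpy as np

def rmsd(a, b):
    """RMSD between two (N, 3) coordinate arrays with matched atom order, no fitting."""
    diff = np.asarray(a, float) - np.asarray(b, float)
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))

def kabsch_rmsd(mobile, reference):
    """RMSD after optimal rigid superposition of `mobile` onto `reference` (Kabsch)."""
    p = np.asarray(mobile, float)
    q = np.asarray(reference, float)
    p_c = p - p.mean(axis=0)          # remove translation
    q_c = q - q.mean(axis=0)
    h = p_c.T @ q_c                    # 3x3 covariance matrix
    u, _, vt = np.linalg.svd(h)
    # Flip the last axis if needed so the result is a proper rotation, not a reflection.
    d = np.sign(np.linalg.det(vt.T @ u.T))
    r = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    return rmsd(p_c @ r.T, q_c)

crystal = np.array([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0], [1.5, 1.5, 0.0], [0.0, 1.5, 0.5]])
docked = crystal + np.array([0.5, 0.0, 0.0])   # pose shifted 0.5 Å along x
print(rmsd(docked, crystal))                    # 0.5, within a < 2.0 Å criterion

theta = np.pi / 2
rot = np.array([[np.cos(theta), -np.sin(theta), 0.0],
                [np.sin(theta), np.cos(theta), 0.0],
                [0.0, 0.0, 1.0]])
frame = crystal @ rot.T + 3.0                   # same geometry, rotated and translated
print(kabsch_rmsd(frame, crystal))              # close to zero after superposition
```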

Table 2: Comparison of PPI Investigation Methodologies [4] [3] [5]

| Method Category | Example Techniques | Throughput | Key Output | Primary Advantage | Primary Limitation |
|---|---|---|---|---|---|
| High-Throughput Experimental | Yeast Two-Hybrid (Y2H), Affinity Purification-MS (AP-MS) | Very High | Binary interaction pairs, protein complexes | Unbiased, genome-scale discovery | High false positive/negative rates [2] |
| Targeted Experimental | Co-Immunoprecipitation (Co-IP), Surface Plasmon Resonance (SPR) | Low to Medium | Validated direct interactions, binding kinetics | High confidence, quantitative data | Low throughput, hypothesis-driven |
| Phenotypic Profiling | Cell Painting [4] | High | Morphological profiles, inferred functional associations | Captures system-wide phenotypic impact | Indirect measure of PPIs, complex data analysis |
| Computational Prediction | Deep Learning (GNNs, Transformers) [3], Docking [5] | Very High | Predicted interaction probability, binding poses | Scalable, low cost, can predict unseen pairs | Dependent on training data quality, requires validation |
| Structural Analysis | Homology Modeling, MD Simulations [5] | Medium | 3D structural models, dynamic interaction details | Mechanistic insight, enables rational design | Computationally intensive, requires template/sequence |

Comparison of Computational Models for PPI Prediction

Deep learning now underpins much of computational PPI prediction, and different architectures are suited to capturing different aspects of protein data.

Table 3: Comparison of Deep Learning Architectures for PPI Prediction [3]

| Model Architecture | Core Mechanism | Typical Input Data | Strength for PPI | Representative Tool/Approach |
|---|---|---|---|---|
| Graph Neural Network (GNN) | Message-passing between nodes in a graph | PPI networks, residue contact graphs | Captures topological relationships and neighborhood structure in networks | GCN, GAT, GraphSAGE [3] |
| Convolutional Neural Network (CNN) | Local filter convolution across spatial dimensions | Protein sequences (1D), structural images (2D/3D) | Extracts local sequence motifs or spatial features from structures | 1D-CNN for sequences, 3D-CNN for grids |
| Recurrent Neural Network (RNN) | Processing sequential data with internal memory | Protein amino acid sequences | Models long-range dependencies in sequences | LSTM, GRU |
| Transformer | Self-attention weighting across all sequence positions | Protein sequences, multiple sequence alignments | Captures global context and remote homology; excels with large datasets | ProteinBERT, ESM-2 [3] |
| Multimodal / Hybrid | Integration of multiple model types | Sequence + structure + network data | Leverages complementary information for higher accuracy | AG-GATCN (GAT+TCN) [3] |
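To make the GNN row concrete, the NumPy sketch below implements one graph-convolution (GCN) propagation step using the widely used symmetric-normalization rule H' = ReLU(D̂^-1/2 (A + I) D̂^-1/2 H W), where D̂ is the degree matrix of A + I. It is a toy illustration of message passing, not any of the cited tools; the adjacency matrix, node features, and weights are made-up values.

```python
import numpy as np

def gcn_layer(adj, features, weights):
    """One GCN step: each node combines degree-normalized features of itself and its neighbors."""
    a_hat = adj + np.eye(adj.shape[0])            # add self-loops
    deg = a_hat.sum(axis=1)
    d_inv_sqrt = np.diag(1.0 / np.sqrt(deg))
    a_norm = d_inv_sqrt @ a_hat @ d_inv_sqrt      # symmetric normalization
    return np.maximum(a_norm @ features @ weights, 0.0)  # linear transform, then ReLU

# Toy PPI graph: protein 0 interacts with proteins 1 and 2; protein 3 is isolated.
adj = np.array([[0, 1, 1, 0],
                [1, 0, 0, 0],
                [1, 0, 0, 0],
                [0, 0, 0, 0]], dtype=float)
features = np.eye(4)          # one-hot node features
weights = np.ones((4, 2))     # hypothetical weights producing a 2-dim embedding
h = gcn_layer(adj, features, weights)
print(h.round(3))
```

Stacking such layers lets information flow over multi-hop neighborhoods, which is how GNNs capture the network context listed in the table.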

Supporting Experimental Data: A 2025 review notes that models integrating multiple data types (multimodal) and architectures (hybrid) are setting new performance benchmarks [3]. For instance, the RGCNPPIS system, which combines Graph Convolutional Networks (GCN) and GraphSAGE, can simultaneously extract macro-scale topological patterns and micro-scale structural motifs from PPI data [3]. Benchmarking studies often use metrics like Area Under the Precision-Recall Curve (AUPR) on datasets from DIP or STRING to compare models, with top-performing hybrid models frequently achieving AUPR scores above 0.95 on gold-standard test sets [3].
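AUPR is commonly estimated as average precision (AP): rank candidate pairs by predicted score and average the precision measured at the rank of each true interaction. The stdlib-only sketch below is illustrative and assumes no tied scores; library implementations such as scikit-learn's `average_precision_score` handle ties and are preferable in practice. The labels and scores are invented.

```python
def average_precision(labels, scores):
    """Average precision: mean of precision@k over the ranks k of true positives."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    n_pos = sum(labels)
    if n_pos == 0:
        raise ValueError("need at least one positive pair")
    hits, total = 0, 0.0
    for k, (_, label) in enumerate(ranked, start=1):
        if label:
            hits += 1
            total += hits / k      # precision at this rank
    return total / n_pos

# 1 = interaction in a gold-standard set, 0 = negative pair (hypothetical values).
labels = [1, 0, 1, 1, 0, 0]
scores = [0.95, 0.90, 0.80, 0.40, 0.30, 0.10]
print(round(average_precision(labels, scores), 4))  # 0.8056
```

Because AP depends on the fraction of positives, AUPR values are only comparable across models evaluated on the same positive/negative ratio.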

Visualization and Analysis: Network Alignment and Complex Detection

Comparing networks across species or conditions is achieved through network alignment, while identifying functional modules within a single network relies on complex detection algorithms.

Network Alignment Protocols:

- Problem Definition: Given K networks G_1 = (V_1, E_1), ..., G_K = (V_K, E_K), find a mapping M between nodes (proteins) across networks based on biological similarity (e.g., sequence homology from BLAST) and topological similarity (conservation of interaction patterns) [2].

- Algorithm Selection: Choose an aligner based on need: Local alignment (e.g., NetworkBLAST) finds conserved subnetworks; Global alignment (e.g., IsoRank) finds a consistent mapping across entire networks [2].

- Evaluation: Use biological metrics such as Functional Coherence (FC), the average GO term similarity of aligned protein pairs, and topological metrics such as Edge Correctness (EC), the fraction of edges in one network that are mapped onto edges of the other [2].
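Both evaluation measures can be computed directly from an alignment. In the stdlib sketch below, EC is the fraction of edges in network A whose endpoints map onto an edge of network B, and FC is approximated as the mean Jaccard similarity of the GO term sets of aligned proteins. The Jaccard choice is an assumption, since published FC variants differ in how they compare GO annotations; all protein names and term labels are hypothetical placeholders.

```python
def edge_correctness(edges_a, edges_b, mapping):
    """Fraction of edges in network A conserved in network B under `mapping` (A node -> B node)."""
    target = {frozenset(e) for e in edges_b}
    conserved = sum(
        1 for u, v in edges_a
        if u in mapping and v in mapping and frozenset((mapping[u], mapping[v])) in target
    )
    return conserved / len(edges_a)

def functional_coherence(mapping, go_a, go_b):
    """Mean Jaccard similarity of GO term sets over aligned pairs that both have annotations."""
    scores = []
    for u, v in mapping.items():
        terms_u, terms_v = go_a.get(u, set()), go_b.get(v, set())
        if terms_u and terms_v:
            scores.append(len(terms_u & terms_v) / len(terms_u | terms_v))
    return sum(scores) / len(scores) if scores else 0.0

edges_a = [("a1", "a2"), ("a2", "a3"), ("a3", "a4")]
edges_b = [("b1", "b2"), ("b2", "b3")]
mapping = {"a1": "b1", "a2": "b2", "a3": "b3", "a4": "b4"}
go_a = {"a1": {"t1", "t2"}, "a2": {"t3"}}   # placeholder GO term labels
go_b = {"b1": {"t1"}, "b2": {"t3"}}
print(edge_correctness(edges_a, edges_b, mapping))  # 2 of 3 edges conserved
print(functional_coherence(mapping, go_a, go_b))    # (0.5 + 1.0) / 2 = 0.75
```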

Table 4: Comparison of Protein Complex Detection Algorithms [6]

| Algorithm Type | Example (Year) | Core Principle | Performance Metric (F1-Score Range) | Advantage |
|---|---|---|---|---|
| Unsupervised Clustering | MCODE (2003), MCL (2002) | Clusters densely connected subgraphs in PPI network | 0.30 - 0.45 (varies by dataset) | Fast, intuitive, no training data needed |
| Supervised Learning | ClusterEPs (2016) [6] | Learns contrast patterns ("Emerging Patterns") between true complexes and random subgraphs | 0.45 - 0.60 | Can detect sparse complexes, provides explanatory patterns |
| Semi-Supervised | NN (2014) | Uses neural networks with labeled and unlabeled data | ~0.40 | Leverages both known and unknown data |
| Core-Attachment | COACH (2009) | Identifies dense cores and attaches peripherals | 0.35 - 0.50 | Reflects biological complex architecture |
| Ensemble Clustering | Ensemble (2012) | Aggregates results from multiple clustering methods | 0.40 - 0.55 | Improved robustness and coverage |

Supporting Experimental Data: The ClusterEPs study reported superior performance. On benchmark yeast PPI datasets, ClusterEPs achieved a higher maximum matching ratio (a measure of how well predicted complexes match known complexes under a one-to-one assignment) than seven unsupervised methods [6]. Crucially, it detected challenging complexes, such as the sparse RNA polymerase I complex (14 proteins) and the small, poorly separated RecQ helicase-Topo III complex (3 proteins), which many density-based methods missed [6]. This suggests that supervised methods leveraging multiple topological features can outperform traditional density-based clustering.
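F1 scores such as those in Table 4 depend on how predicted and reference complexes are matched. A common convention, assumed here rather than taken from the cited study, scores a predicted complex P against a known complex K with the neighborhood affinity |P ∩ K|² / (|P| · |K|) and counts a match when it reaches 0.2. Precision is the fraction of predicted complexes that match at least one known complex, recall is the fraction of known complexes matched, and F1 is their harmonic mean. The complexes below are toy examples.

```python
def overlap_score(p, k):
    """Neighborhood affinity between two protein sets."""
    shared = len(p & k)
    return shared * shared / (len(p) * len(k))

def complex_f1(predicted, known, threshold=0.2):
    """Precision, recall, and F1 for predicted vs. reference complexes."""
    matched_pred = sum(1 for p in predicted if any(overlap_score(p, k) >= threshold for k in known))
    matched_known = sum(1 for k in known if any(overlap_score(p, k) >= threshold for p in predicted))
    precision = matched_pred / len(predicted) if predicted else 0.0
    recall = matched_known / len(known) if known else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1

known = [{"A", "B", "C"}, {"D", "E"}, {"F", "G", "H", "I"}]
predicted = [{"A", "B", "C", "X"}, {"D", "Y"}, {"J", "K"}]
print(complex_f1(predicted, known))  # two of three matched on each side
```

The maximum matching ratio used in the ClusterEPs comparison differs from this F1 in that it enforces a one-to-one assignment between predicted and known complexes.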

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 5: Key Research Reagents and Resources for PPI Network Studies

| Item / Resource | Category | Function in PPI Research | Example / Source |
|---|---|---|---|
| Hoechst 33342 | Fluorescent Dye | Stains nucleus (DNA); used in Cell Painting for cellular segmentation and nuclear feature extraction [4]. | Thermo Fisher Scientific |
| Phalloidin (conjugated) | Fluorescent Probe | Binds filamentous actin (F-actin); visualizes cytoskeletal morphology in phenotypic profiling [4]. | Sigma-Aldrich |
| Magnetic Protein A/G Beads | Chromatography Media | Used for Co-Immunoprecipitation (Co-IP) to pull down protein complexes with antibody specificity. | Pierce, Dynabeads |
| STRING Database | Bioinformatics Database | Provides known and predicted PPIs with confidence scores; essential for network construction and analysis [2] [3]. | https://string-db.org |
| Cytoscape | Software Platform | Open-source platform for visualizing, analyzing, and modeling molecular interaction networks [7]. | https://cytoscape.org |
| AutoDock Vina | Software Tool | Performs molecular docking to predict protein-ligand binding modes and affinities [5]. | Scripps Research |
| Gene Ontology (GO) | Annotation Resource | Provides standardized functional terms; used for enrichment analysis to interpret biological themes in PPI modules [2]. | http://geneontology.org |

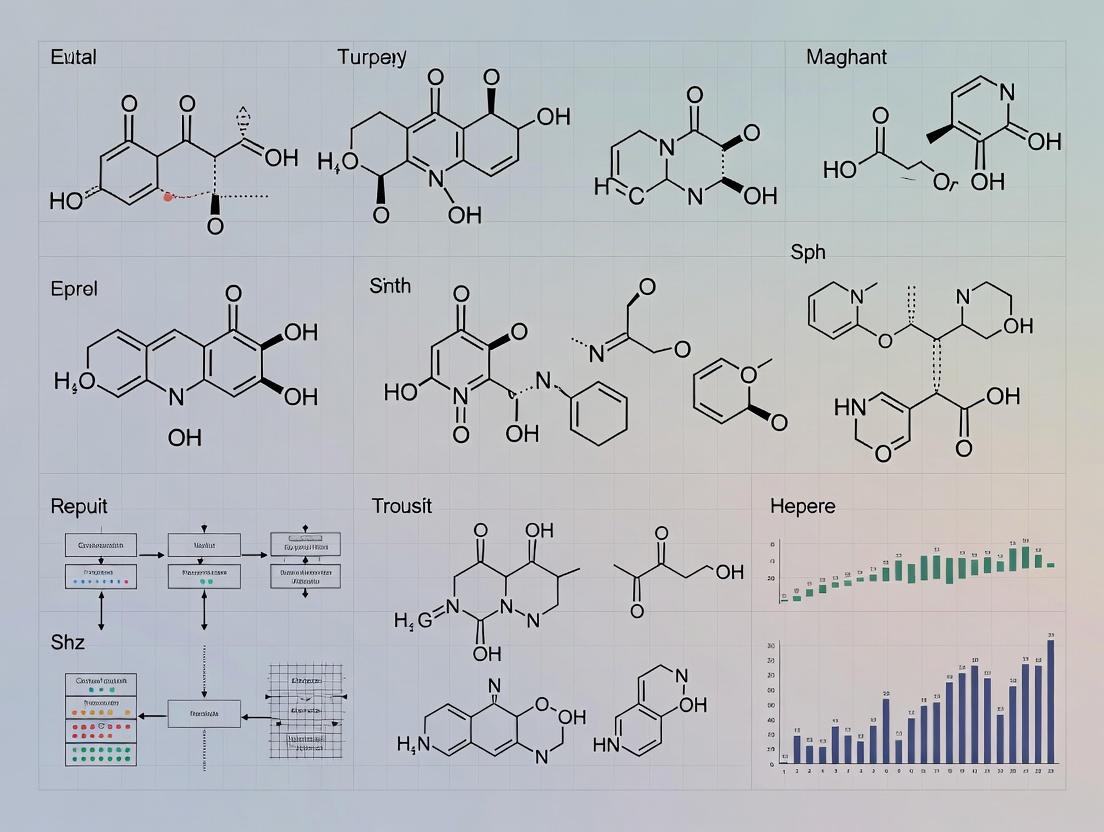

Visual Guides to PPI Analysis Workflows

Diagram 1: PPI Analysis and Discovery Workflow.

Diagram 2: From PPI Networks to Drug Target Identification.

In the field of chemogenomics and data-driven drug discovery, publicly accessible databases of bioactive compounds are foundational resources. They enable the translation of genomic information into therapeutic hypotheses and provide the essential datasets for training predictive computational models [8]. Within this landscape, ChEMBL and PubChem are two preeminent, open-access repositories, yet they are architected with distinct philosophies, scope, and curation standards [9]. A precise comparative analysis of their structural and bioactivity data is not merely an inventory exercise but a critical research activity. It directly informs the reliability of downstream scientific conclusions, affecting areas such as virtual screening, machine learning model development, and the identification of chemical probes [10] [11].

This guide provides an objective comparison of ChEMBL and PubChem, framed within the broader thesis of understanding variability and concordance in biologically active datasets. By dissecting their content, curation, and overlap, we equip researchers to make informed choices about database utility for specific tasks and to critically assess the integration of data from multiple sources [9].

Quantitative Database Comparison

The following tables summarize the core characteristics, content, and overlap between ChEMBL and PubChem, highlighting their complementary natures.

Table 1: Core Characteristics and Data Model

| Feature | ChEMBL | PubChem |
|---|---|---|
| Primary Focus | Manually curated bioactivity data for drug-like molecules [12] [8]. | Public repository for chemical structures and biological test results [13]. |
| Curation Approach | High: manual extraction from literature; data normalized to uniform endpoints [14] [15]. | Automated and submitter-driven; aggregates data from hundreds of sources with varying curation [9]. |
| Data Model | Assay- and target-centric, with detailed confidence tags and a sophisticated target classification system (e.g., SINGLE PROTEIN, PROTEIN FAMILY) [16]. | Substance- and compound-centric; links substances (SIDs) to unique chemical structures (CIDs) and assay data (AIDs) [13]. |
| Key Strength | Quality, consistency, and depth of annotated bioactivity data for drug discovery [14]. | Unparalleled breadth of chemical structures and a vast aggregation of screening data [9]. |

Table 2: Content Scale and Overlap (Current & Historical)

| Content Metric | ChEMBL | PubChem | Notes on Overlap |
|---|---|---|---|
| Unique Compounds | ~2.4 million (Release 33, 2023) [14]. | ~111 million total CIDs; subset with bioactivity is smaller [11]. | A 2022 study found only 0.14% of molecules were present in ChEMBL, PubChem, and three other specialized DBs [11]. |
| Bioactivity Records | >20.3 million measurements (Release 33) [14]. | Tens of millions of bioactivity outcomes [13]. | Significant divergence in activity annotations for shared compounds due to different sourcing and curation [11]. |
| Target Coverage | >17,000 targets (incl. proteins, cell-lines) [14]. | Broad but less uniformly annotated; linked via assay descriptions [13]. | Protein target mapping in ChEMBL is more precise and explicitly modeled [16]. |
| Growth Trajectory | Steady growth via manual curation and deposited datasets; literature coverage from 1970s onward [14]. | Rapid, linear growth dominated by vendor catalogs and automated patent extraction [9]. | A 2013 analysis noted ChEMBL's content in PubChem can differ from its direct download due to submission timing and processing rules [10]. |

Experimental Methodology for Comparative Analysis

Objective comparison requires standardized protocols to handle structural representation and data merging. The following methodology, derived from published comparative studies, outlines a robust approach [10] [11].

1. Data Acquisition and Pre-processing:

- Source: Obtain canonical structure files (e.g., SDF) directly from each database's official download portal to ensure version consistency [10].

- Standardization: Apply a consistent chemical structure normalization pipeline. This typically involves:

- Structure Cleaning: Correcting valences, removing duplicate fragments, and standardizing functional group representation.

- Tautomer Standardization: Using a canonical tautomer representation (e.g., via the non-standard InChI KET and 15T options, or toolkit-defined tautomer rules) to merge different tautomeric forms of the same compound [10].

- Descriptor Calculation: Generate standardized identifiers (InChIKey, canonical SMILES) and molecular properties (Molecular Weight, logP) for all structures after normalization [11].

2. Structural Overlap Analysis:

- Exact Match: Determine the set of compounds shared between databases by matching canonical SMILES or standard InChIKeys [11].

- Scaffold-Level Analysis: Perform Murcko scaffold decomposition to compare the diversity and overlap of core chemical frameworks. This reveals if databases cover distinct regions of chemical space despite sharing some compounds [11].
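Exact-match overlap reduces to set operations on standardized identifiers. The stdlib sketch below assumes each database has already been exported as standard InChIKeys generated by the same toolkit and standardization pipeline. It reports overlap on the full key and on the first 14-character block, which encodes the connectivity layer, so stereoisomers and isotopologues that differ only in later layers collapse together. The keys are hypothetical placeholders, not real compounds.

```python
def _jaccard(x, y):
    """Jaccard index of two sets (0.0 if both are empty)."""
    return len(x & y) / len(x | y) if x | y else 0.0

def overlap_report(keys_a, keys_b):
    """Compare two collections of standard InChIKeys at full-key and connectivity level."""
    full_a, full_b = set(keys_a), set(keys_b)
    skel_a = {k.split("-")[0] for k in full_a}   # first block: connectivity layer
    skel_b = {k.split("-")[0] for k in full_b}
    return {
        "shared_full": len(full_a & full_b),
        "jaccard_full": _jaccard(full_a, full_b),
        "shared_connectivity": len(skel_a & skel_b),
        "jaccard_connectivity": _jaccard(skel_a, skel_b),
    }

# Hypothetical keys; the "B" entries differ only in the second (stereo/isotope) block.
db_a = ["AAAAAAAAAAAAAA-UHFFFAOYSA-N", "BBBBBBBBBBBBBB-UHFFFAOYSA-N", "CCCCCCCCCCCCCC-UHFFFAOYSA-N"]
db_b = ["AAAAAAAAAAAAAA-UHFFFAOYSA-N", "BBBBBBBBBBBBBB-ZZZZZZZZSA-N", "DDDDDDDDDDDDDD-UHFFFAOYSA-N"]
print(overlap_report(db_a, db_b))
```

Scaffold-level overlap follows the same pattern once each structure has been reduced to its Murcko scaffold identifier with a cheminformatics toolkit.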

3. Bioactivity Data Concordance Assessment:

- Compound Matching: For compounds with shared structures, retrieve all associated bioactivity data (e.g., IC50, Ki, % inhibition).

- Endpoint Normalization: Convert all activity values to a common unit (e.g., nM) and log-scale.

- Statistical Comparison: For a given compound-target pair present in both databases, calculate the correlation or discrepancy between reported potency values. Large-scale analysis can identify systematic biases or outlier data points requiring manual curation [11].
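The endpoint normalization and concordance steps can be sketched as follows. This stdlib-only illustration assumes each record is a (compound key, target ID, value, unit) tuple; it converts values to nM, expresses them as pActivity = -log10(molar) = 9 - log10(nM), averages replicates per compound-target pair, and reports the Pearson correlation and mean absolute difference over pairs present in both sources. The record layout, unit table, and values are hypothetical.

```python
import math
from collections import defaultdict

# Conversion factors to nanomolar (hypothetical subset of units).
TO_NM = {"nM": 1.0, "uM": 1e3, "mM": 1e6, "M": 1e9}

def p_activity(value, unit):
    """Convert a potency value to pActivity (-log10 of the molar concentration)."""
    return 9.0 - math.log10(value * TO_NM[unit])

def pair_means(records):
    """Average pActivity per (compound, target) pair."""
    groups = defaultdict(list)
    for compound, target, value, unit in records:
        groups[(compound, target)].append(p_activity(value, unit))
    return {pair: sum(v) / len(v) for pair, v in groups.items()}

def concordance(records_a, records_b):
    """Number of shared pairs, Pearson r, and mean absolute pActivity difference."""
    a, b = pair_means(records_a), pair_means(records_b)
    shared = sorted(a.keys() & b.keys())
    xs = [a[p] for p in shared]
    ys = [b[p] for p in shared]
    mx, my = sum(xs) / len(xs), sum(ys) / len(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = math.sqrt(sum((x - mx) ** 2 for x in xs))
    sy = math.sqrt(sum((y - my) ** 2 for y in ys))
    mad = sum(abs(x - y) for x, y in zip(xs, ys)) / len(xs)
    return len(shared), cov / (sx * sy), mad

source_a = [("cpd1", "T1", 10, "nM"), ("cpd2", "T1", 1, "uM"), ("cpd3", "T1", 100, "nM")]
source_b = [("cpd1", "T1", 0.01, "uM"), ("cpd2", "T1", 2, "uM"),
            ("cpd3", "T1", 50, "nM"), ("cpd4", "T1", 5, "nM")]
print(concordance(source_a, source_b))
```

Pairs with large absolute differences (for example more than one log unit) are natural candidates for the manual curation step described above.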

4. Target Space Comparison:

- Identifier Mapping: Map protein targets to a common nomenclature (e.g., UniProt IDs) using database cross-references.

- Coverage Analysis: Compare the coverage of major druggable protein families (e.g., kinases, GPCRs) to identify database-specific biases [11].

Database Relationships and Data Flow

The public bioactivity data ecosystem is interconnected. Understanding how ChEMBL and PubChem relate to each other and to other resources is key for meta-analysis.

The following table lists key reagents, software, and resources essential for performing rigorous comparative analyses of bioactive compound databases.

Table 3: Essential Research Reagent Solutions for Database Comparison

| Tool/Resource | Function in Comparative Analysis | Example/Note |
|---|---|---|
| Cheminformatics Toolkit | Performs structure standardization, canonicalization, descriptor calculation, and fingerprint generation. | RDKit, OpenBabel, or CACTVS [10] are used for normalizing structures to a consistent representation. |
| Standardized Identifier | Provides a universal key for exact chemical structure matching across databases. | The InChIKey (including standard and tautomer-sensitive forms) is critical for overlap assessment [10] [11]. |
| Consensus Bioactivity Dataset | Serves as a benchmark for validating database quality and completeness. | Datasets that merge and curate records from multiple sources help identify errors and gaps [11]. |
| Target Mapping Service | Translates diverse database target identifiers to a common ontology. | UniProt ID mapping is essential for comparing protein coverage across resources [10]. |
| Meta-Portal/Linking Database | Reveals the provenance and multiplicity of records across the ecosystem. | UniChem explicitly tracks connections between identical structures in hundreds of sources, including ChEMBL and PubChem [9]. |

Defining Key Structural Features in Small Molecules and Their Bioactivity

In the contemporary landscape of rational drug design, the systematic elucidation of relationships between molecular structure and biological activity forms the cornerstone of efficient therapeutic development [17]. The transition from traditional, intuition-driven approaches to data-driven methodologies has been catalyzed by advances in structural bioinformatics and artificial intelligence, enabling researchers to search vast chemical spaces far more systematically [18] [19]. This guide presents a comparative analysis of the computational and experimental frameworks central to defining key structural features in small molecules, framed within the broader thesis of comparative analysis of biologically active datasets. The objective is to provide a clear, evidence-based comparison of methods for quantifying structural features, predicting bioactivity, and optimizing lead compounds, supported by experimental data and standardized protocols for researchers and drug development professionals [20] [21].

Comparative Analysis of Structural Feature Quantification Methods

The accurate representation of a small molecule's structure is the foundational step in predicting its behavior. Traditional and modern methods offer different trade-offs between interpretability, computational efficiency, and predictive power [21].

Table 1: Comparison of Molecular Representation Methods for Feature Quantification

| Method Category | Key Examples | Description | Strengths | Weaknesses | Primary Application |
|---|---|---|---|---|---|
| 1D String-Based | SMILES, SELFIES, InChI | Linear strings encoding atom and bond sequences. | Human-readable, simple, compact storage [21]. | Poor capture of spatial relationships; single string can represent multiple tautomers. | Chemical database storage and registration [22]. |
| 2D Fingerprint-Based | MACCS, ECFP4, Morgan | Binary bit vectors indicating presence/absence of substructures. | Computationally efficient; excellent for similarity searching [20]. | Hand-crafted; may miss complex, non-linear structure-activity relationships. | Ligand-based virtual screening, similarity assessment [20] [21]. |
| 3D Descriptor-Based | Pharmacophore, 3D Molecule | Numerical descriptors of shape, electrostatic potential, and hydrophobic fields. | Directly encodes spatial information critical for binding. | Conformationally dependent; higher computational cost. | Structure-based drug design, docking studies [18]. |
| AI-Driven Learned Representations | Graph Neural Networks, Transformers | High-dimensional vectors learned by models from large datasets. | Captures complex, non-obvious patterns; superior for novel scaffold prediction [19] [21]. | "Black-box" nature reduces interpretability; requires large, curated datasets. | Scaffold hopping, property prediction, de novo design [21]. |
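Fingerprint-based similarity searching usually scores pairs with the Tanimoto (Jaccard) coefficient, |A ∩ B| / |A ∪ B|, over the bits that are switched on. The stdlib sketch below treats a fingerprint as a set of on-bit indices; in practice those indices would come from a toolkit such as RDKit (for example Morgan or MACCS fingerprints), and the bit sets here are invented for illustration.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def rank_by_similarity(query_bits, library):
    """Return (name, similarity) pairs sorted from most to least similar to the query."""
    scored = [(name, tanimoto(query_bits, bits)) for name, bits in library.items()]
    return sorted(scored, key=lambda item: item[1], reverse=True)

library = {
    "cmpd_A": {1, 5, 9, 42, 77},
    "cmpd_B": {1, 5, 13, 42},
    "cmpd_C": {200, 311, 512},
}
query = {1, 5, 42, 77}
for name, sim in rank_by_similarity(query, library):
    print(f"{name}: {sim:.2f}")   # cmpd_A: 0.80, cmpd_B: 0.60, cmpd_C: 0.00
```

Swapping the fingerprint type changes only how the bit sets are generated, which is why the choice between Morgan and MACCS features can shift ranking quality without changing the similarity code.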

Experimental evidence underscores the impact of representation choice. A 2025 benchmark study comparing target prediction methods found that the MolTarPred algorithm performed best when using Morgan fingerprints (a 2D method) over MACCS keys, demonstrating the importance of the feature set for predictive accuracy [20]. Conversely, for tasks like predicting RNA-binding affinity, quantitative structure-activity relationship (QSAR) models often rely on 3D descriptors or learned representations to capture subtle electronic and steric effects critical for interaction, as seen in studies of cobalamin derivatives targeting riboswitches [23].

Comparative Performance in Bioactivity Prediction

Predicting a molecule's biological activity—its binding affinity, efficacy, or mechanism of action—from its structure is a central challenge. The performance of different computational approaches varies significantly based on the prediction task and data availability.

Table 2: Comparative Performance of Bioactivity Prediction Methods

| Prediction Method | Underlying Principle | Typical Performance Metrics | Key Experimental Finding | Data Requirements |
|---|---|---|---|---|
| Quantitative Structure-Activity Relationship (QSAR) | Statistical model linking descriptors to measured activity. | R², RMSE, Q². | A 2025 study on acylshikonin derivatives achieved a Principal Component Regression model with R² = 0.912 and RMSE = 0.119, identifying hydrophobic and electronic descriptors as key [24]. | Curated dataset of compounds with homogeneous activity data. |
| Molecular Docking | Computational simulation of ligand binding to a protein target. | Docking score (kcal/mol), pose accuracy. | For acylshikonin derivatives, compound D1 showed a docking score of -7.55 kcal/mol with target 4ZAU, indicating strong predicted binding [24]. | High-resolution 3D structure of the target protein. |
| Ligand-Centric Target Prediction | Compares query molecule to a database of known ligand-target pairs. | Recall, Precision, AUC. | MolTarPred was identified as the most effective method in a 2025 benchmark, particularly using Morgan fingerprints [20]. | Large, annotated database of ligand-target interactions (e.g., ChEMBL). |
| AI-Driven Property Prediction | End-to-end deep learning models trained on diverse datasets. | Varies by task (AUC, RMSE). | Models like FP-BERT and MolMapNet show state-of-the-art results for ADMET and activity prediction by transforming fingerprints into learnable features [21]. | Very large, diverse, and well-structured datasets. |

A critical insight from comparative studies is that no single method is universally superior. For instance, while docking provides mechanistic insight, its accuracy is limited by the quality of the protein structure and scoring function [18] [17]. QSAR models, by contrast, can be highly accurate for congeneric series but often fail to extrapolate to novel scaffolds [24]. The emerging best practice is a consensus or integrated approach. The acylshikonin study exemplifies this, combining QSAR, docking, and ADMET prediction into a single workflow to prioritize lead compounds [24].

Experimental Protocols for Key Methodologies

Integrated QSAR Modeling Workflow

The following protocol is adapted from a 2025 study on acylshikonin derivatives [24]:

- Dataset Curation: Compile a series of 24 structurally related compounds with experimentally determined cytotoxic activity (e.g., IC50 values).

- Descriptor Calculation: Compute a wide range of molecular descriptors (e.g., topological, electronic, hydrophobic) for each compound using software like RDKit or PaDEL.

- Data Reduction: Apply Principal Component Analysis (PCA) to reduce descriptor dimensionality and mitigate multicollinearity.

- Model Building & Validation:

- Split data into training and test sets.

- Build multiple model types (e.g., Multiple Linear Regression (MLR), Partial Least Squares (PLS), Principal Component Regression (PCR)).

- Validate models using cross-validation and external test sets. Evaluate with R² and Root Mean Square Error (RMSE). The cited study found the PCR model performed best [24].

- Interpretation: Analyze model coefficients to identify which structural descriptors (e.g., hydrophobicity, electron density) are most statistically significant for activity.
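The data-reduction and PCR steps can be sketched with NumPy. This is a generic principal component regression under stated assumptions, not the cited study's model: descriptors are standardized with training-set statistics, PCA is computed by SVD, the leading components are regressed against activity by least squares, and R² and RMSE are reported on a held-out test set. The 24 × 10 descriptor matrix and activities are synthetic.

```python
import numpy as np

def fit_pcr(x_train, y_train, n_components):
    """Fit principal component regression; returns the fitted parameters."""
    mean, std = x_train.mean(axis=0), x_train.std(axis=0)
    std[std == 0] = 1.0                          # guard against constant descriptors
    z = (x_train - mean) / std
    _, _, vt = np.linalg.svd(z, full_matrices=False)
    loadings = vt[:n_components].T               # (n_descriptors, n_components)
    scores = z @ loadings
    design = np.column_stack([np.ones(len(scores)), scores])
    coef, *_ = np.linalg.lstsq(design, y_train, rcond=None)
    return {"mean": mean, "std": std, "loadings": loadings, "coef": coef}

def predict_pcr(model, x):
    """Project new compounds onto the training components and apply the regression."""
    scores = ((x - model["mean"]) / model["std"]) @ model["loadings"]
    return model["coef"][0] + scores @ model["coef"][1:]

def r2_rmse(y_true, y_pred):
    resid = y_true - y_pred
    ss_res = float(resid @ resid)
    ss_tot = float(((y_true - y_true.mean()) ** 2).sum())
    return 1.0 - ss_res / ss_tot, float(np.sqrt(np.mean(resid ** 2)))

# Synthetic example: 24 compounds x 10 correlated descriptors driven by 2 latent factors.
rng = np.random.default_rng(42)
latent = rng.normal(size=(24, 2))
x = latent @ rng.normal(size=(2, 10)) + 0.05 * rng.normal(size=(24, 10))
y = 1.5 * latent[:, 0] - 0.8 * latent[:, 1] + 0.05 * rng.normal(size=24)
train, test = np.arange(18), np.arange(18, 24)
model = fit_pcr(x[train], y[train], n_components=2)
r2, rmse = r2_rmse(y[test], predict_pcr(model, x[test]))
print(f"test R2 = {r2:.3f}, RMSE = {rmse:.3f}")
```

Interpreting descriptors then means mapping the regression coefficients back through `loadings` to see which original descriptors dominate the retained components.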

Benchmarking Target Prediction Methods

This protocol is based on a 2025 comparative analysis [20]:

- Database Preparation:

- Use a comprehensive database like ChEMBL (version 34). Filter bioactivity records for high-confidence interactions (confidence score ≥ 7) and standard values (IC50/Ki/EC50 < 10,000 nM).

- Remove non-specific targets and duplicate compound-target pairs to create a clean benchmark set.

- Separate a subset of FDA-approved drugs not present in the training database to use as a blinded query set.

- Method Evaluation:

- Select a panel of stand-alone and web-server methods (e.g., MolTarPred, PPB2, RF-QSAR, TargetNet).

- Run each method to predict targets for the blinded query molecules.

- Compare predictions to known, experimentally validated interactions from the literature.

- Performance Analysis:

- Calculate standard metrics (Recall, Precision, AUC) for each method.

- The cited study concluded MolTarPred with Morgan fingerprints provided the best overall performance [20].
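The sketch below illustrates the principle behind similarity-based (ligand-centric) target prediction and the recall evaluation used in such benchmarks. It is a generic nearest-neighbour scheme, not the MolTarPred implementation: each target is scored by the highest Tanimoto similarity between the query and that target's known ligands, the top-k targets are returned, and recall@k is the fraction of a query's validated targets found in that list. Fingerprints are sets of on-bit indices, and every compound, target, and bit value is hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto coefficient between two sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0

def predict_targets(query_fp, reference, k=3):
    """Rank targets by max similarity of the query to each target's known ligands.

    reference: dict mapping target -> list of ligand fingerprints (sets of on-bit indices).
    """
    best = {t: max(tanimoto(query_fp, fp) for fp in ligands) for t, ligands in reference.items()}
    ranked = sorted(best.items(), key=lambda item: item[1], reverse=True)
    return [t for t, _ in ranked[:k]]

def recall_at_k(predictions, known_targets):
    """Fraction of experimentally validated targets recovered in the top-k list."""
    return len(set(predictions) & known_targets) / len(known_targets)

reference = {
    "target_1": [{1, 2, 3, 4}, {1, 2, 5}],
    "target_2": [{10, 11, 12}],
    "target_3": [{1, 2, 3, 20}],
    "target_4": [{30, 31}],
}
query = {1, 2, 3, 4, 6}
top = predict_targets(query, reference, k=2)
print(top, recall_at_k(top, {"target_1", "target_3"}))
```

Averaging `recall_at_k` over the blinded query set gives one summary number per method; precision and AUC follow from the same ranked lists.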

Diagram 1: Generalized Workflow for Comparative Method Analysis.

The Scientist's Toolkit: Essential Research Reagents and Platforms

Effective research in this field relies on a combination of software tools, databases, and data management platforms.

Table 3: Key Research Reagent Solutions for Structural Bioinformatics

| Tool/Resource Name | Type | Primary Function | Application in Comparative Analysis |
|---|---|---|---|
| ChEMBL Database | Public Database | Repository of bioactive molecules with drug-like properties, annotated with targets and activities [20]. | Provides the essential, curated datasets for training and benchmarking ligand-centric prediction models. |
| RDKit | Open-Source Cheminformatics Library | Provides functions for calculating molecular descriptors, fingerprints, and handling chemical data [21]. | The workhorse for standardizing molecules, generating input features for QSAR, and performing similarity searches. |
| AlphaFold & PDB | Structure Prediction & Database | Provides high-quality 3D protein structure predictions (AlphaFold) and experimentally solved structures (PDB) [18] [25]. | Supplies target structures for structure-based methods like molecular docking and dynamics simulations. |
| CDD Vault | Scientific Data Management Platform (SDMP) | Centralizes and structures experimental data for small molecules and biologics, linking structures to assay results [22]. | Critical for maintaining AI-ready datasets, ensuring reproducibility, and enabling cross-team collaboration on SAR studies. |
| Graph Neural Network Libraries (e.g., PyTorch Geometric) | AI/ML Framework | Facilitates the development of deep learning models on graph-structured molecular data [21]. | Enables the creation and testing of modern AI-driven molecular representation and property prediction models. |
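Ligand-centric target prediction ranks candidate targets by how similar a query molecule is to known ligands of each target. The sketch below shows that idea in its simplest nearest-neighbour form, assuming fingerprints are already available as sets of on-bit indices (in practice they would be computed with a cheminformatics library such as RDKit, e.g. Morgan fingerprints). It is a generic illustration, not MolTarPred's implementation, and the bit sets and target names in any example are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two fingerprints given as sets of on-bit indices."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def rank_targets(query_bits, reference):
    """Rank targets by their most similar known ligand (1-nearest-neighbour scoring).

    reference: iterable of (fingerprint_bits, target_id) pairs for annotated ligands.
    """
    best = {}
    for bits, target in reference:
        score = tanimoto(query_bits, bits)
        if score > best.get(target, -1.0):
            best[target] = score
    # Highest similarity first; ties broken by target name for reproducibility.
    return sorted(best.items(), key=lambda item: (-item[1], item[0]))
```

Scoring each target by its single closest ligand keeps the method simple and interpretable; variants that average over the top-k ligands per target are a common alternative.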

The integration of these tools into a cohesive pipeline is paramount. As noted in a 2025 perspective, the value of AI models is contingent on robust, structured, and accessible data [22]. Platforms like CDD Vault are highlighted as critical for transforming raw experimental results into AI-ready datasets, directly supporting use cases like SAR optimization and hit triage [22].

Diagram 2: Standard QSAR Modeling Workflow.

The comparative analysis of methods for defining structural features and predicting bioactivity reveals a dynamic field moving towards hybrid and integrated workflows. Combining traditional, interpretable descriptors with AI-driven models is emerging as a promising paradigm for balancing accuracy with interpretability [19] [21]. Future progress hinges on addressing key challenges: improving the interpretability of deep learning models, generating high-quality, standardized public datasets, and developing more physiologically relevant computational models that account for dynamics and cellular context [25] [17]. As these tools evolve, their systematic comparison within well-defined frameworks will remain essential for guiding researchers toward the most efficient and reliable strategies for unlocking the therapeutic potential encoded in small-molecule structures.

Diagram 3: Benchmarking Process for Target Prediction Methods.

The Role of Pathway Databases and Biological Network Contexts

Abstract

This comparison guide provides a systematic evaluation of major pathway databases and biological network analysis methods, contextualized within a broader thesis on comparative structural analysis of biologically active datasets. We synthesize current experimental data to benchmark the performance, robustness, and interpretability of these critical resources. Direct comparisons reveal that the structural features inherent to each database, such as curation focus, hierarchical organization, and topological detail, profoundly influence downstream analytical outcomes in enrichment analysis, predictive modeling, and machine learning applications. For researchers and drug development professionals, this guide offers evidence-based recommendations for tool selection based on specific biological questions and data types.

Comparative Analysis of Major Pathway Database Architectures

Pathway databases serve as foundational blueprints for systems biology, but their structural heterogeneity directly impacts analytical conclusions. The choice between primary and integrative resources represents a critical first decision.

Table 1: Comparative Structural Features and Usage of Major Pathway Databases [26] [27] [28]

| Database Name | Type | Primary Curation Focus | Typical Pathway Count & Scope | Key Structural Characteristics | Reported Publication Count (Example) |
|---|---|---|---|---|---|
| KEGG | Primary | Metabolic and signaling pathways | ~500 pathways; Broad organism coverage | Hierarchical, manual curation; Classic pathway maps | 27,713 [26] |
| Reactome | Primary | Human biological processes | ~2,500 pathways; Detailed reaction-level data | Event-oriented, peer-reviewed; Detailed hierarchical structure | 3,765 [26] |
| WikiPathways | Primary | Community-curated pathways | ~700 pathways; Diverse model organisms | Collaborative, open curation; Rapidly updated | 651 [26] |
| MSigDB | Integrative | Gene sets for GSEA | ~30,000 sets; Includes Hallmarks, curated gene sets | Collection of multiple resources (incl. KEGG, Reactome); Categorical divisions | 2,892 [26] |
| Pathway Commons | Integrative | Aggregated pathway information | ~3,000 pathways from multiple sources | Meta-database; Standardized BioPAX format | 1,640 [26] |
| MPath (Integrative Example) | Integrative | Merged equivalent pathways | ~2,900 pathways (merged from KEGG, Reactome, WikiPathways) [26] | Graph union of analogous pathways from primary sources; Reduces redundancy | N/A (Benchmarking study) |

Performance Implications of Database Choice: Experimental benchmarking demonstrates that the choice of database is non-trivial. A 2019 study analyzing five TCGA cancer datasets showed that equivalent pathways (pathways describing the same biological process) in KEGG, Reactome, and WikiPathways yielded disparate enrichment results [26]. Furthermore, the performance of machine learning models on clinical prediction tasks depended significantly on the underlying pathway resource, and the size of this effect varied by dataset. The study's integrative database, MPath, created by merging analogous pathways via graph union, improved prediction performance and reduced result variance in some cases, demonstrating the potential of integrated structural contexts [26].
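The graph-union idea behind MPath can be illustrated with pathways represented as sets of directed edges. This is a minimal sketch under stated assumptions: node identifiers must first be mapped to a shared namespace (the `id_map` argument is a hypothetical stand-in for a real identifier-mapping step, shown with a KEGG-style gene ID), and edge types such as activation or inhibition are ignored, although a full integration would need to reconcile them.

```python
def harmonize(edges, id_map):
    """Map node identifiers to a shared namespace; unmapped identifiers are kept as-is."""
    return {(id_map.get(u, u), id_map.get(v, v)) for u, v in edges}


def graph_union(*pathways):
    """Merge analogous pathways, each a set of directed (source, target) edges,
    into one graph; edges shared by several sources appear only once."""
    edges = set().union(*pathways)
    nodes = {node for edge in edges for node in edge}
    return nodes, edges
```

For example, merging `harmonize({("hsa:1956", "GRB2"), ("GRB2", "SOS1")}, {"hsa:1956": "EGFR"})` with `{("GRB2", "SOS1"), ("SOS1", "HRAS")}` yields three edges over four genes, because the shared GRB2 to SOS1 edge is counted once.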

Benchmarking Pathway and Network Analysis Methods

Analysis methods leverage database structures to infer biological activity. They fall broadly into non-Topology-Based (non-TB) and Pathway Topology-Based (PTB) categories, which differ markedly in robustness in benchmarking studies [29].

Table 2: Performance Benchmarking of Pathway Activity Inference Methods [29] [30]

| Method Category | Example Methods | Key Principle | Performance Metric (Robustness) | Comparative Insight |
|---|---|---|---|---|
| Non-Topology-Based (non-TB) | GSVA, PLAGE, COMBINER, PAC | Treats pathways as flat gene lists; no interaction data used. | Lower mean reproducibility power (range: 10-493 across studies) [29]. | Sensitivity to sample size and DEG threshold; may miss pathway deregulation patterns [30]. |
| Pathway Topology-Based (PTB) | SPIA, CePa, DEGraph, e-DRW | Incorporates interaction type, direction, and network topology. | Higher mean reproducibility power (range: 43-766) [29]. e-DRW consistently ranked top [29]. | Generally superior robustness; better at identifying biologically relevant, context-specific deregulation [29] [30]. |
| Advanced Network Dynamics | RACIPE, DSGRN | Models parameter space of gene regulatory networks (GRNs). | High agreement (>90% in studies) between randomized-parameter ODE simulation (RACIPE) and combinatorial parameter analysis (DSGRN) for core networks [31]. | Provides comprehensive view of potential network behaviors (e.g., multistability) beyond static enrichment [31]. |

Experimental Protocol for Robustness Evaluation: A key 2024 benchmarking study provides a template for comparison [29]. The protocol involved:

- Data Preparation: Six public cancer microarray gene expression datasets (e.g., BRCA, LUAD) were uniformly processed.

- Method Application: Seven methods (4 non-TB, 3 PTB) were applied to each dataset to infer pathway activity scores.

- Robustness Assessment (Two-pronged):

- Pathway Activity Robustness: The top-k active pathways were selected. Their reproducibility power was quantified using the C-score metric, which measures consistency of pathway rankings across repeated subsampling of the dataset [29].

- Prediction Robustness: Active pathways were used to build classifiers to predict sample phenotypes. The reproducibility of identified risk-active pathways and gene markers was assessed [29].

- Statistical Comparison: The mean reproducibility power and coefficient of variation were calculated across all datasets for each method.
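The subsampling logic of the robustness assessment can be sketched as follows. The `score_fn` argument is a hypothetical placeholder for any pathway activity method, and the mean pairwise Jaccard overlap of top-k pathway sets is used here only as a simple reproducibility proxy; it is not the C-score defined in [29].

```python
import itertools
import random
import statistics


def top_k(scores, k):
    """Names of the k highest-scoring pathways (ties broken alphabetically)."""
    ranked = sorted(scores.items(), key=lambda item: (-item[1], item[0]))
    return {name for name, _ in ranked[:k]}


def topk_overlap(score_fn, samples, k, n_rounds=20, frac=0.8, seed=0):
    """Mean pairwise Jaccard overlap of top-k pathway sets over random subsamples.

    score_fn maps a list of samples to a dict {pathway: activity score}.
    """
    rng = random.Random(seed)
    size = max(1, int(frac * len(samples)))
    tops = [top_k(score_fn(rng.sample(samples, size)), k) for _ in range(n_rounds)]
    overlaps = [len(a & b) / len(a | b) for a, b in itertools.combinations(tops, 2)]
    return statistics.mean(overlaps)


def coefficient_of_variation(values):
    """Sample standard deviation divided by the mean (e.g. of a method's scores across datasets)."""
    return statistics.stdev(values) / statistics.mean(values)
```

A method whose top-k pathways barely change between subsamples scores close to 1; the coefficient of variation then summarizes how stable that score is across datasets.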

Pathway Integration in Interpretable Artificial Intelligence

Pathway-Guided Interpretable Deep Learning Architectures (PGI-DLAs) directly embed database structures as model blueprints, making AI decisions biologically interpretable.

Table 3: Impact of Database Choice on PGI-DLA Model Design & Performance [27]

| Database Used as Blueprint | Compatible Omics | Model Architecture Examples | Interpretability Advantage |
|---|---|---|---|
| Gene Ontology (GO) | Genomics, Transcriptomics | DCell, DrugCell, VNNs [27] | Functional hierarchy (BP, MF, CC) provides multi-scale explanation from process to component. |
| KEGG | Transcriptomics, Metabolomics | KP-NET, PathDNN, sparse DNNs [27] | Well-defined pathway maps allow mapping of activity to specific pathway modules (e.g., KEGG modules). |
| Reactome | Multi-omics, Clinical data | P-NET, IBPGNET, GNNs [27] | Detailed reaction hierarchy and event-based structure enable mechanistic tracing of signal flow. |
| MSigDB (e.g., Hallmarks) | Transcriptomics, Survival data | Cox-PASNet, PASNet [27] | Curated, cancer-relevant gene sets (like Hallmarks) yield compact, disease-focused explanations. |

Experimental Insight: The choice fundamentally shapes the model. For instance, using Reactome's detailed hierarchy allows a model to not only predict drug response but also indicate whether the effect is mediated through specific signaling events like "Signaling by ERBB4" [27]. In contrast, MSigDB Hallmarks provide a higher-level, more condensed view of cellular programs like "Epithelial-Mesenchymal Transition" [27].
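One common way to turn a pathway database into a model blueprint is a masked layer in which a gene node connects to a pathway node only if the database lists that gene as a member. The NumPy sketch below illustrates that mechanism under simplifying assumptions (a single layer, tanh activation, toy gene sets); it is not the code of DCell, P-NET, or any other specific PGI-DLA.

```python
import numpy as np


def build_mask(genes, pathways):
    """Binary gene x pathway mask: mask[i, j] = 1 if gene i is a member of pathway j."""
    index = {gene: i for i, gene in enumerate(genes)}
    names = sorted(pathways)
    mask = np.zeros((len(genes), len(names)))
    for j, name in enumerate(names):
        for gene in pathways[name]:
            if gene in index:
                mask[index[gene], j] = 1.0
    return mask, names


def masked_layer(x, weights, mask):
    """Pathway-node activations. Weights outside the mask are zeroed, so each
    pathway node only sees its member genes and can be read as pathway activity."""
    return np.tanh(x @ (weights * mask))
```

Because every hidden unit corresponds to a named pathway, attributions computed for those units translate directly into pathway-level explanations, which is the interpretability property described above.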

Advanced Network Contexts: From Single-Cell to Dynamic Modeling

Moving beyond static pathways, recent methods place omics data in broader network contexts.

Single-Cell Network Integration (scNET): The scNET method exemplifies advanced integration by combining single-cell RNA-seq data with a global Protein-Protein Interaction (PPI) network using a dual-view graph neural network (GNN) [32]. The workflow is as follows:

- Input scRNA-seq data (high noise, dropout) and a PPI network (e.g., from STRING).

- Joint embedding via GNNs propagates gene expression information across PPI edges and refines cell-cell similarity graphs.

- Output: denoised gene embeddings and cell embeddings. In the reported evaluation, scNET embeddings showed a mean correlation of ~0.17 with Gene Ontology semantic similarity, outperforming methods without prior network integration; this translated into better functional gene clustering and improved differential pathway enrichment analysis across cell types [32].
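The idea of propagating expression information across PPI edges can be illustrated with a single, fixed smoothing step. This is a deliberately simplified stand-in for learned GNN message passing, not scNET's dual-view architecture; `alpha` is a hypothetical mixing weight.

```python
import numpy as np


def propagate(expression, adjacency, alpha=0.5):
    """One smoothing step over a PPI graph.

    expression: genes x cells matrix; adjacency: symmetric genes x genes 0/1 matrix.
    Each gene's profile is mixed with the mean profile of its interaction partners,
    so a dropout zero can be partly filled in from neighbours.
    """
    expression = np.asarray(expression, dtype=float)
    adjacency = np.asarray(adjacency, dtype=float)
    degree = adjacency.sum(axis=1, keepdims=True)
    # Genes without partners keep their own profile (avoids division by zero).
    neighbour_mean = np.divide(adjacency @ expression, degree,
                               out=expression.copy(), where=degree > 0)
    return (1 - alpha) * expression + alpha * neighbour_mean
```

For two interacting genes with profiles `[0, 2]` and `[4, 0]`, one step with `alpha=0.5` gives both genes `[2, 1]`, so the zero in the first gene is replaced by information from its partner; an isolated gene is left unchanged.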

Comparative Network Dynamics (RACIPE vs. DSGRN): For analyzing Gene Regulatory Network (GRN) dynamics, two methods are compared [31]:

- RACIPE (Random Circuit Perturbation): Uses random parameter sampling within a physiologically plausible range to simulate ODE models (Hill functions). It generates an ensemble of steady states to identify prevalent network behaviors (e.g., bistability) [31].

- DSGRN (Dynamic Signatures Generated by Regulatory Networks): Uses combinatorial decomposition of the entire parameter space into regions with equivalent dynamics. It provides a rigorous, discrete map of all possible dynamic regimes without continuous simulation [31].

Finding: Studies on toggle-switch and feedback-loop networks show strong agreement (>90%) between the two approaches, validating that DSGRN's parameter domains accurately predict ODE dynamics even for biologically realistic, finite Hill coefficients [31].
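A RACIPE-style ensemble analysis of a two-gene toggle switch can be sketched as below: sample many parameter sets, integrate each model from several random initial conditions, and count the distinct end states per model (two states indicating bistability). The plain inhibitory Hill function, forward-Euler integration, clustering tolerances, and parameter ranges are simplifying assumptions chosen for illustration; RACIPE itself uses shifted Hill functions and its own sampling scheme, and DSGRN is not reproduced here.

```python
from collections import Counter

import numpy as np


def inhibit(x, threshold, n):
    """Inhibitory Hill function: 1 at x = 0, 0.5 at x = threshold."""
    return 1.0 / (1.0 + (x / threshold) ** n)


def sample_models(n_models, rng):
    """Random toggle-switch parameter sets, one row per model (illustrative ranges)."""
    return {
        "g": rng.uniform(1.0, 10.0, (n_models, 2)),  # max production of A, B
        "k": rng.uniform(0.1, 1.0, (n_models, 2)),   # degradation of A, B
        "t": rng.uniform(0.5, 5.0, (n_models, 2)),   # thresholds: A acting on B, B acting on A
        "n": rng.integers(1, 7, (n_models, 1)).astype(float),  # Hill coefficient 1..6
    }


def count_steady_states(p, n_init=20, dt=0.01, t_end=100.0, seed=0):
    """Integrate every model from n_init random starts (forward Euler) and
    return the number of distinct end states per model."""
    rng = np.random.default_rng(seed)
    g, k, t, n = p["g"], p["k"], p["t"], p["n"]
    m = g.shape[0]
    # Initial conditions spread between 0 and each gene's maximal level g/k.
    a = rng.uniform(0.0, 1.0, (m, n_init)) * (g[:, :1] / k[:, :1])
    b = rng.uniform(0.0, 1.0, (m, n_init)) * (g[:, 1:] / k[:, 1:])
    for _ in range(int(round(t_end / dt))):
        da = g[:, :1] * inhibit(b, t[:, 1:], n) - k[:, :1] * a  # B represses A
        db = g[:, 1:] * inhibit(a, t[:, :1], n) - k[:, 1:] * b  # A represses B
        a, b = a + dt * da, b + dt * db
    counts = []
    for i in range(m):
        states = []
        for point in zip(a[i], b[i]):
            if not any(np.allclose(point, s, rtol=0.05, atol=0.05) for s in states):
                states.append(point)
        counts.append(len(states))
    return counts


def summarize(n_models=100, seed=1):
    """Distribution of steady-state counts over a random model ensemble."""
    models = sample_models(n_models, np.random.default_rng(seed))
    return Counter(count_steady_states(models))
```

With a Hill coefficient of 4 and symmetric parameters the switch ends in two states (A high or B high), whereas with a coefficient of 1 every start converges to a single state; the ensemble summary reports how often each behaviour occurs across the sampled parameter space.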

Visualization of the scNET Integrative Architecture

Essential Research Toolkit

This table catalogs key resources for conducting analyses within pathway and network contexts.

Table 4: Research Reagent Solutions for Pathway & Network Analysis [26] [27] [28]

| Resource Type | Name | Primary Function / Description |
|---|---|---|
| Primary Pathway DBs | KEGG, Reactome, WikiPathways | Provide foundational, curated pathway knowledge with distinct structural focuses (metabolic, reaction-level, community-driven) [26]. |
| Integrative DBs/Tools | MSigDB, Pathway Commons, MPath, OmniPath | Aggregate multiple sources to increase coverage or create consensus resources, reducing single-database bias [26] [27]. |
| PPI Networks | STRING, BioGRID | Provide large-scale protein interaction data (physical/functional) for network-based analysis and integration with omics data [28] [32]. |
| Enrichment Analysis | GSEA, clusterProfiler | Standard tools for performing gene set enrichment analysis (non-TB methods) [26]. |
| Topology-Based Analysis | SPIA, CePa, e-DRW (R) | Software packages implementing PTB methods that incorporate pathway structure into significance testing [29] [30]. |
| Network Dynamics | RACIPE, DSGRN | Tools for modeling and analyzing the dynamic behavior of gene regulatory networks across parameter spaces [31]. |
| AI Integration | DCell, P-NET, scNET | Reference implementations of PGI-DLAs that use pathway/network structures as model backbones for interpretable prediction [27] [32]. |
| Visualization | Cytoscape, Graphviz | Essential platforms for visualizing and exploring biological networks and pathways [33]. |

Synthesis and Strategic Recommendations for Comparative Research

Within the thesis framework of comparing structural features in biological datasets, the evidence indicates that the architecture of the knowledge base is as consequential as the algorithm applied to it.

Key Structural Determinants of Performance:

- Granularity vs. Coverage: High-detail databases (Reactome) support mechanistic deep dives but may be complex for screening. Broad-coverage resources (KEGG, MSigDB) are efficient for initial discovery [26] [27].

- Static vs. Dynamic Context: Static pathway enrichment (non-TB/PTB) identifies perturbed modules. Dynamic modeling (RACIPE/DSGRN) explains how perturbations alter system stability or state transitions [31].

- Flat vs. Hierarchical Organization: Flat gene sets simplify analysis. Hierarchical structures (GO, Reactome) enable multi-resolution interpretation, crucial for PGI-DLAs [27].

Actionable Recommendations:

- For Novel Biomarker Discovery: Use integrative databases (MPath, MSigDB) or PTB methods (e-DRW, SPIA) to maximize robustness and biological consistency across heterogeneous datasets [26] [29].

- For Mechanistic Hypothesis Generation: Use detailed primary databases (Reactome) coupled with PTB or dynamic methods (CePa, RACIPE) to pinpoint specific pathway components and interactions for experimental validation [31] [30].

- For Developing Interpretable AI Models: Select a database whose structural hierarchy aligns with the desired explanation level (e.g., GO for cellular function, Reactome for signaling mechanisms) as the model's architectural blueprint [27].

- For Single-Cell Omics Analysis: Employ network integration methods (scNET) that contextualize sparse data within PPI networks to improve functional inference and cell state characterization [32].

Conclusion: The role of pathway databases and network contexts is foundational and transformative. Their structural features—curation philosophy, topological completeness, and organizational logic—propagate through the analytical pipeline, systematically influencing the identification of biomarkers, the elucidation of disease mechanisms, and the interpretability of complex models. A deliberate, comparative approach to selecting these resources, informed by their documented performance characteristics, is therefore a critical component of rigorous research in systems biology and translational drug development.

Advanced Methodologies for Structural Analysis and Application in Drug Discovery

Within the broader thesis of comparative analysis of structural features in biologically active datasets, the computational alignment and comparison of protein structures serve as a foundational pillar. Understanding protein function, elucidating evolutionary relationships, and guiding rational drug design all depend on the ability to accurately quantify structural similarity. However, proteins are dynamic molecules that adopt different conformations to perform their functions. This inherent flexibility presents a significant challenge for traditional rigid-body alignment algorithms. The field has therefore developed specialized tools to address varying needs: detecting flexible hinge motions, comparing multiple structures simultaneously, and performing rapid, large-scale database searches. This guide compares three established tools (FlexProt, MultiProt, and MASS) alongside two recent ones (SARST2 and DeepSCFold), framed within the context of contemporary research needs and supported, where available, by experimental performance data and detailed methodological protocols.

Comparative Analysis of Tool Performance and Capabilities

The following table synthesizes the core characteristics and performance metrics of the featured structural alignment tools based on available literature and experimental benchmarks.

Table: Comparative Overview of Structural Alignment Tools

| Tool | Primary Method | Key Strength | Typical Use Case | Reported Performance | Scalability |
|---|---|---|---|---|---|
| FlexProt [34] [35] | Graph theory & clustering of maximal congruent rigid fragments. | Flexible alignment without predefined hinges. Simultaneously aligns rigid subparts and detects hinge regions. | Comparing conformers of a single protein or homologous proteins with domain motions. | ~7 seconds for a 300-residue pair on a 400 MHz PC [34]. | Designed for pairwise comparison. |
| MultiProt | Not summarized here (no method details or benchmark data are cited in this guide). | Multiple structure alignment. Aligns several protein structures concurrently to identify common cores. | Identifying conserved structural motifs across a protein family or functional group. | N/A | Limited to moderate-sized sets. |
| MASS | Alignment based on secondary-structure elements (details not summarized here; no benchmark data are cited in this guide). | Alignment of spatially similar regions regardless of connectivity. | Detecting common binding sites or structural motifs in spatially distant regions. | N/A | Multiple structures. |
| SARST2 [36] | Filter-and-refine with machine learning, using AAT, SSE, WCN, and PSSM entropy. | High-throughput database search. Extreme speed and memory efficiency for massive databases. | Searching entire predicted structure databases (e.g., AlphaFold DB) for homologs. | 3.4 min for AlphaFold DB search (32 CPUs); 96.3% avg. precision [36]. | Exceptionally high; handles hundreds of millions of structures. |
| DeepSCFold [37] | Deep learning prediction of structural similarity (pSS-score) and interaction probability (pIA-score). | Protein complex structure modeling. Constructs paired MSAs for accurate multimer prediction. | Predicting quaternary structures of complexes, especially antibody-antigen interfaces. | 11.6% & 10.3% TM-score improvement over AF-Multimer & AF3 on CASP15 [37]. | Computationally intensive; focused on high-accuracy complex prediction. |

Detailed Experimental Protocols

FlexProt Flexible Alignment Protocol

The FlexProt algorithm is designed to align pairs of flexible protein structures without prior knowledge of hinge locations [34] [35]. Its workflow is as follows:

- Input Structures: Provide two protein structures in PDB format.

- Fragment Detection: The algorithm efficiently detects all maximal congruent rigid fragments (MCRFs) between the two molecules. These are pairs of contiguous backbone segments, one from each protein, that can be superimposed within a defined RMSD threshold.

- Graph Construction: Each detected MCRF-pair is represented as a node in a graph. Edges are drawn between nodes if their corresponding MCRF-pairs are compatible—meaning they do not violate the protein's sequence order and can be part of the same global alignment.

- Clustering & Alignment: A graph theoretic clustering procedure groups nodes (MCRF-pairs) that share the same three-dimensional rigid transformation. Each cluster represents a larger, aligned rigid body. The final flexible alignment is the optimal arrangement of these clusters, with the regions between them defined as flexible hinges.

- Output: The result is a set of aligned rigid subparts, the transformation matrices for each, the location of hinge regions, and a corresponding sequence alignment.
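The congruence test used during fragment detection can be sketched with the standard Kabsch superposition, together with a simple version of the sequence-order compatibility rule used to connect graph nodes. The RMSD cutoff and the `(start1, end1, start2, end2)` fragment-pair layout are hypothetical choices; FlexProt's own fragment enumeration and clustering are not reproduced.

```python
import numpy as np


def superposed_rmsd(p, q):
    """RMSD of two equal-length N x 3 coordinate sets after optimal rigid-body
    superposition (Kabsch algorithm, reflections excluded)."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    p = p - p.mean(axis=0)
    q = q - q.mean(axis=0)
    u, _, vt = np.linalg.svd(p.T @ q)
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0 else -1.0
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = p @ rotation.T - q
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))


def is_congruent(frag1, frag2, max_rmsd=1.0):
    """Two fragments (e.g. C-alpha coordinates) are congruent if they superimpose within max_rmsd."""
    return len(frag1) == len(frag2) and superposed_rmsd(frag1, frag2) <= max_rmsd


def precedes(x, y):
    """x, y are fragment pairs (start1, end1, start2, end2), inclusive residue indices.
    True if x ends before y starts in both proteins."""
    return x[1] < y[0] and x[3] < y[2]


def compatible(x, y):
    """Edge criterion: the two fragment pairs can coexist in one sequence-ordered alignment."""
    return precedes(x, y) or precedes(y, x)
```

Excluding reflections matters because a mirror-image fragment is not physically superimposable; fragment pairs that overlap or cross in sequence fail the compatibility check and receive no edge.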

QuanTest Benchmarking Protocol for Alignment Quality

To objectively evaluate and compare the quality of multiple sequence alignments (MSAs), which are often used as input for structural analysis, the QuanTest benchmark was developed [38]. Its protocol is:

- Dataset Construction: Create test sets by combining sequences from the Pfam database (for volume) with structurally aligned sequences from the Homstrad database (for reference truth). For example, build test cases with 200 or 1000 sequences.

- Alignment Generation: Run the MSA tool to be evaluated (e.g., T-Coffee, Clustal Omega) on each test set.

- Alignment Filtering: For each generated MSA, select three reference sequences with known structure. Create a filtered version of the alignment by removing all columns where the reference sequence has a gap.

- Secondary Structure Prediction (SSP): Submit each filtered alignment to the JPred server via its API to predict the secondary structure of the reference sequence.

- Accuracy Calculation: Compare the predicted secondary structure against the known reference from Homstrad. Calculate the prediction accuracy (Q3 score) as the percentage of correctly assigned residues (helix, strand, coil).

- Score Aggregation: The final QuanTest score for the MSA tool is the average secondary structure prediction accuracy across all reference sequences and test cases. The underlying assumption is that a better MSA leads to more accurate secondary structure prediction [38].
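The filtering, scoring, and aggregation steps can be sketched in a few functions. The JPred submission is not shown; the sketch assumes the predicted and reference secondary structures have already been reduced to three-state strings (H, E, C) of equal length, and any example alignment is hypothetical.

```python
def filter_columns(alignment, ref_id):
    """Remove every alignment column in which the reference sequence has a gap ('-')."""
    keep = [i for i, residue in enumerate(alignment[ref_id]) if residue != "-"]
    return {name: "".join(seq[i] for i in keep) for name, seq in alignment.items()}


def q3(predicted, reference):
    """Q3: percentage of residues whose three-state label (H, E, C) is predicted correctly."""
    if not reference or len(predicted) != len(reference):
        raise ValueError("secondary-structure strings must be non-empty and of equal length")
    matches = sum(p == r for p, r in zip(predicted, reference))
    return 100.0 * matches / len(reference)


def quantest_score(q3_values):
    """Average Q3 over all reference sequences and test cases."""
    return sum(q3_values) / len(q3_values)
```

After filtering, the alignment has exactly one column per reference residue, which is what allows the predicted secondary structure to be compared position by position with the known structure.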

FlexProt Flexible Alignment Workflow

QuanTest MSA Benchmarking Protocol

Table: Key Resources for Structural Alignment Research

| Resource Name | Type | Primary Function in Analysis |
|---|---|---|
| SCOP Database [34] | Classification Database | Provides a curated, hierarchical classification of protein structures (Family, Superfamily, Fold), serving as a gold standard for benchmarking homology detection tools. |
| Protein Data Bank (PDB) Files | Experimental Data | The source files of atomic coordinates for protein structures, serving as the fundamental input for all structural alignment and comparison algorithms. |
| JPred Server [38] | Web Service / Tool | Provides secondary structure prediction from sequence or alignment; used as the evaluation engine in the QuanTest benchmark to infer MSA quality. |
| Homstrad Database [38] | Benchmark Dataset | A collection of protein families with structurally aligned members, used to create reference "true" alignments for testing and validation purposes. |
| AlphaFold Protein Structure Database [36] | Predicted Structure Database | A massive repository of predicted protein structures; tools like SARST2 are specifically optimized for high-throughput searches against this scale of data. |

The selection of an appropriate structural alignment tool must be driven by the specific research question within the comparative analysis of biologically active datasets. For studying intrinsic protein flexibility and conformational changes, FlexProt remains a foundational method for its robust hinge-detection capability [34] [35]. For the emerging challenge of navigating the vast landscape of predicted structures, modern, ultra-efficient tools like SARST2 are indispensable, offering unparalleled speed and manageable memory footprints for database-scale analysis [36]. Meanwhile, for the critical task of predicting protein-protein interactions and complex structures, deep learning approaches like DeepSCFold represent the cutting edge, leveraging sequence-derived structural information to achieve superior accuracy [37].

This evolving toolkit allows researchers to move seamlessly from analyzing detailed molecular motions to mapping the structural relationships across entire proteomes, directly supporting drug development efforts through target identification, functional annotation, and interaction surface analysis.

Sequence-Based Analysis with BLAST for Evolutionary and Functional Insights

Within the broader thesis of comparative analysis of structural features in biologically active datasets, sequence-based analysis stands as the fundamental first step. The exponential growth of biological sequence data has rendered manual comparison impossible, necessitating robust computational tools [39]. The Basic Local Alignment Search Tool (BLAST) is the de facto standard for this task, enabling researchers to infer functional and evolutionary relationships by finding regions of local similarity between nucleotide or protein sequences [40] [41]. For drug development professionals, this is often the critical initial step in target identification, where a protein sequence of interest is compared against vast databases to find homologs, understand conserved functional domains, and predict structure [42]. This guide provides a comparative framework for utilizing BLAST and alternative tools to extract maximum evolutionary and functional insight from sequence data, supported by experimental data on performance and efficacy.

Comparative Performance Analysis: BLAST Versus Alternative Tools

Selecting the correct sequence analysis tool depends on the specific research goal, whether it’s raw speed, sensitivity for distant homology, or specialized database searches. The following table compares BLAST (represented by its standard protein search, BLASTp) against other common tools.

Table 1: Comparative Analysis of Sequence Similarity Search Tools

| Tool | Primary Use Case & Strength | Typical Speed | Sensitivity for Distant Homologs | Key Limitation |
|---|---|---|---|---|
| BLAST (BLASTp) | Fast, versatile local alignment; ideal for general homology searches and functional annotation [40] [41]. | Very Fast | Moderate | May miss very distant evolutionary relationships (remote homologs). |
| PSI-BLAST | Iterative, profile-based search; excellent for finding remote protein homologs by building a position-specific scoring matrix [42]. | Fast (per iteration) | High | Risk of "profile drift" accumulating errors over iterations if not carefully monitored. |
| HMMER (hmmscan) | Profile Hidden Markov Model search; superior for identifying membership in protein families and domains using curated models (e.g., Pfam) [39]. | Moderate | Very High | Requires pre-built, high-quality HMM profiles; slower than BLAST for single sequences. |
| DIAMOND | Ultra-fast protein alignment; designed for high-throughput metagenomic analysis against large databases (e.g., nr) [39]. | Extremely Fast | Low to Moderate | Less sensitive than BLASTp for standard searches; a trade-off for speed. |
| Clustal Omega / MAFFT | Multiple Sequence Alignment (MSA) tools; used for deep evolutionary analysis and consensus building after homologs are identified [41] [43]. | Slow (for MSA) | N/A (Alignment tool) | Computationally intensive; not a database search tool. Requires pre-identified sequence set. |

Experimental Protocols for Evolutionary and Functional Analysis

Core Protocol: Identifying a Protein and Its Homologs

This fundamental protocol is used to annotate an unknown protein sequence and find its evolutionary relatives [41].

- Sequence Submission: Navigate to the NCBI BLAST web interface. Select BLASTp (for a protein query). Paste your amino acid sequence (e.g., in FASTA format) into the query box [41].

- Database Selection: Choose a non-redundant protein database such as "nr", or a curated database such as UniProtKB/Swiss-Prot [44]. For evolutionary studies, restricting the search to a specific taxonomic group (e.g., Mammalia) can be useful.

- Parameter Configuration: Key parameters influence results:

- Max Target Sequences: Determines the number of results. Set to 100-500 for a broad view [39].

- Expect Threshold (E-value): The statistical significance threshold. A lower value (e.g., 0.001, 0.01) yields more stringent, reliable matches [41].

- Word Size: Larger word sizes (e.g., 6 for proteins) increase speed but decrease sensitivity for short matches.

- Execution and Analysis: Run BLAST. Analyze the "Descriptions" tab, sorting by E-value (lowest is best) and Percent Identity. A high Query Cover indicates the match spans much of your protein [41]. Examine top hits for functional annotation and potential homologs across species.
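For command-line work, BLAST+ can write hits in tabular form, for example `blastp -query query.fasta -db swissprot -evalue 0.001 -max_target_seqs 500 -outfmt 6 -out hits.tsv` (the file names and the locally installed database are assumptions). The sketch below parses the default 12 columns of that format and applies an E-value threshold and a query-coverage filter. Coverage is computed per HSP here, so it may differ from the web interface's Query Cover when a subject has several HSPs.

```python
import csv
import io

# Default column order of BLAST+ tabular output (-outfmt 6).
FIELDS = ["qseqid", "sseqid", "pident", "length", "mismatch", "gapopen",
          "qstart", "qend", "sstart", "send", "evalue", "bitscore"]


def parse_outfmt6(text):
    """Parse tab-separated BLAST+ hits into dicts with numeric fields converted."""
    rows = []
    for record in csv.reader(io.StringIO(text), delimiter="\t"):
        if not record:
            continue
        row = dict(zip(FIELDS, record))
        for key in ("pident", "evalue", "bitscore"):
            row[key] = float(row[key])
        for key in ("length", "mismatch", "gapopen", "qstart", "qend", "sstart", "send"):
            row[key] = int(row[key])
        rows.append(row)
    return rows


def filter_hits(rows, query_length, max_evalue=1e-3, min_coverage=0.5):
    """Keep hits at or below max_evalue whose HSP spans at least min_coverage
    of the query; return them sorted by E-value (lowest first)."""
    kept = []
    for row in rows:
        coverage = (abs(row["qend"] - row["qstart"]) + 1) / query_length
        if row["evalue"] <= max_evalue and coverage >= min_coverage:
            kept.append({**row, "coverage": coverage})
    return sorted(kept, key=lambda r: (r["evalue"], -r["bitscore"]))
```

Combining a strict E-value with a coverage floor removes hits that are statistically significant but span only a small domain of the query, which matters when the goal is full-length functional annotation.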

Advanced Protocol: Sampling Strategy for Efficient Multiple Alignment Construction

A common challenge is that a single BLASTp search can yield hundreds to thousands of homologs, making downstream structural/functional analysis cumbersome [39]. This protocol tests different sampling methods to reduce dataset size while preserving critical functional information, such as active site residues.

- Initial Search: Perform a BLASTp search for a query protein with a known, annotated active site against the UniRef90 database (clustered at 90% identity to reduce redundancy) [39].

- Sequence Sampling: Apply different sampling methods to the results (sequences with E-value ≤ 0.001):

- Strips Method (sm): User-defined selection of the top N sequences.

- Random Method (rm): Random selection of a user-defined percentage of sequences.

- Automatic Methods: Mean Method (mm) and Second Derivative Method (sdm), which use the distribution of E-values to automatically determine a cutoff point [39].

- Multiple Alignment: For each sampled set and the original full set, construct a Multiple Alignment of Complete Sequences (MACS) using a tool like COBALT or MAFFT [43] [39].

- Evaluation Metrics: Quantify the performance of each sampling method:

- Reduction Rate: (1 - [Sampled Sequences] / [Total Sequences]) * 100.

- Alignment Quality: Measured via column score or consensus residue agreement.

- Functional Information Retention: Percentage of known active site residues from the query that remain conserved in the consensus of the sampled alignment [39].
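The evaluation metrics and the two user-driven sampling methods can be sketched as follows. The automatic Mean and Second Derivative methods depend on cutoff rules defined in [39] and are not reproduced; the consensus-based retention score is one simple reading of the "functional information retention" metric, and any toy data are hypothetical.

```python
import random
from collections import Counter


def reduction_rate(n_sampled, n_total):
    """Percentage of sequences removed by sampling: (1 - sampled/total) * 100."""
    return (1 - n_sampled / n_total) * 100


def strips_sample(hits, n):
    """Strips method: keep the top-n hits ranked by E-value. hits: list of (seq_id, evalue)."""
    return sorted(hits, key=lambda hit: hit[1])[:n]


def random_sample(hits, fraction, seed=0):
    """Random method: keep a fixed fraction of the hits, chosen at random."""
    rng = random.Random(seed)
    return rng.sample(hits, max(1, round(fraction * len(hits))))


def active_site_retention(alignment, query_id, active_columns):
    """Percentage of active-site columns whose consensus residue matches the query residue.

    alignment: dict of id -> aligned sequence (all the same length);
    active_columns: 0-based alignment column indices of the known active site.
    """
    query = alignment[query_id]
    retained = 0
    for col in active_columns:
        residues = [seq[col] for seq in alignment.values() if seq[col] != "-"]
        consensus = Counter(residues).most_common(1)[0][0]
        retained += consensus == query[col]
    return 100.0 * retained / len(active_columns)
```

Running `active_site_retention` on the alignment built from the full hit list and on each sampled subset shows directly whether a sampling method has discarded the homologs that define the active-site consensus.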

Table 2: Performance of Sampling Methods on a Test Set of 284 Protein Families [39]

| Sampling Method | Mean Sequence Reduction Rate (%) | Standard Deviation | Key Characteristic | Impact on Active Site Conservation |
|---|---|---|---|---|
| Mean Method (mm) | 70 | 14 | Automatic, based on E-value distribution. | Preserves >95% of active site information in >90% of test cases. |
| Second Derivative Method (sdm) | 70 | 14 | Automatic, identifies inflection point in E-value list. | Comparable to mm; effective at retaining functional signals. |
| Strips Method (sm, top N) | 71 | 26 | User-controlled, simple. | Performance varies widely; can omit distant but informative homologs. |
| Random Method (rm, 70%) | 70 (set) | Not Provided | Random sampling. | Unreliable; risks losing key sequences and degrading alignment quality. |

Visualization of Analysis Workflows

BLAST Analysis Workflow for Functional and Evolutionary Insight

Table 3: Key Resources for BLAST-Based Structural and Functional Analysis

| Resource Category | Specific Item / Database | Function & Utility in Analysis |
|---|---|---|
| Core Search Programs [40] [41] | BLASTn, BLASTp, BLASTx, tBLASTn | Each optimized for specific query/database types (nucleotide/protein). tBLASTn is crucial for finding protein-coding regions in unannotated nucleotide data (e.g., ESTs). |
| Curated Protein Databases [39] [44] | UniProtKB/Swiss-Prot, UniRef90, PDB | Provide high-quality, annotated sequences. UniRef90 reduces redundancy. PDB links sequences to known 3D structures for direct structural inference. |
| Specialized Databases | ClusteredNR, organism-specific databases | ClusteredNR groups similar sequences to simplify taxonomic assessment. Organism-specific databases focus searches for targeted comparisons [43]. |
| Downstream Analysis Tools [43] | COBALT, MSA Viewer, TreeViewer | COBALT creates multiple alignments. TreeViewer generates phylogenetic trees from BLAST results to visualize evolutionary relationships. |
| Analysis Parameter | Expect Value (E-value), Percent Identity | E-value: Primary metric for statistical significance of a hit. Percent Identity: Direct measure of sequence conservation; high values suggest conserved function [41]. |

Application in Drug Discovery: A Strategic Pathway

In computer-aided drug design (CADD), BLAST initiates the target discovery pipeline [42]. A protein implicated in a disease is used as a query to identify two kinds of homolog. Orthologs are homologs in other species that diverged through speciation and usually retain the same function, so they inform the choice of animal models. Paralogs are homologs within the same genome that arose by gene duplication and may have diverged in function, so they may reveal selectivity constraints. Crucially, identifying conserved functional domains and active-site residues across homologs supports the case for the target's functional importance and defines a conserved region for drug binding. The following diagram integrates BLAST into this broader context.

BLAST in the Drug Target Discovery and Validation Pipeline
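The ortholog step described above is often approximated with reciprocal best hits (RBH). Under this heuristic, gene a in species A and gene b in species B are called putative orthologs when each is the other's top-scoring BLAST hit. The text does not prescribe RBH; it is swapped in here for illustration, and it can miss orthologs after lineage-specific duplications. The sketch assumes hits have already been parsed into (query, subject, bitscore) triples, and all identifiers are hypothetical.

```python
from typing import Dict, List, Tuple

# (query_id, subject_id, bitscore) from one BLAST search direction.
Hit = Tuple[str, str, float]


def best_hits(hits: List[Hit]) -> Dict[str, str]:
    """Map each query to its highest-bitscore subject (first seen wins ties)."""
    best: Dict[str, Tuple[str, float]] = {}
    for query, subject, score in hits:
        if query not in best or score > best[query][1]:
            best[query] = (subject, score)
    return {query: subject for query, (subject, _) in best.items()}


def reciprocal_best_hits(a_vs_b: List[Hit], b_vs_a: List[Hit]) -> List[Tuple[str, str]]:
    """Pairs (a, b) where b is a's best hit in B and a is b's best hit in A."""
    forward = best_hits(a_vs_b)
    reverse = best_hits(b_vs_a)
    return sorted((a, b) for a, b in forward.items() if reverse.get(b) == a)


if __name__ == "__main__":
    human_vs_mouse = [("hA1", "mB1", 500.0), ("hA1", "mB2", 300.0), ("hA2", "mB2", 200.0)]
    mouse_vs_human = [("mB1", "hA1", 490.0), ("mB2", "hA1", 310.0), ("mB2", "hA2", 150.0)]
    print(reciprocal_best_hits(human_vs_mouse, mouse_vs_human))
```

In the demo, hA2's best hit is mB2, but mB2's best hit in the reverse search is hA1, so the pair is not reported. Non-reciprocal pairs like this can reflect paralogy or domain-level similarity, and they are worth inspecting for the selectivity concerns noted above.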

For researchers conducting comparative analyses of structural features, BLAST remains an indispensable, high-performance tool for initial homology detection. The comparative data and experimental protocols above support the following recommendations:

- For General Purpose Annotation & Homology Search: Standard BLASTp or BLASTn, with careful attention to E-value and organism filters, provides the best balance of speed and reliability [41].

- For Deep Evolutionary Studies & Remote Homology: PSI-BLAST or HMMER are superior choices, as they utilize sequence profiles that capture more subtle patterns conserved across deep evolutionary time [42] [39].

- For Managing Large Result Sets: Employ an automatic sampling method like the Mean Method (mm) prior to multiple alignment construction. This strategy reduces computational load by approximately 70% while robustly preserving the critical functional information contained in active site residues [39].

The integration of BLAST-driven sequence analysis with structural databases and downstream bioinformatic tools creates a powerful framework for generating testable hypotheses about protein function, evolution, and druggability, directly supporting the goals of modern biologically active dataset research.

Within the broader thesis of comparative analysis of structural features in biologically active datasets, the systematic integration and robust analysis of bioassay data stand as critical foundational steps. The PubChem database, established by the National Center for Biotechnology Information (NCBI), has evolved into the largest public repository of chemical information and biological activity data [45]. It serves as an indispensable resource for researchers, scientists, and drug development professionals engaged in cheminformatics, chemical biology, and early-stage drug discovery [46] [45].

PubChem organizes its vast data into three interlinked primary databases: the Substance database (containing depositor-provided chemical descriptions), the Compound database (housing unique, standardized chemical structures), and the BioAssay database (storing descriptions and results from biological experiments) [45]. As of late 2024, PubChem aggregates data from over 1,000 sources, encompassing more than 119 million unique compounds, 295 million bioactivity data points, and results from 1.67 million biological assays [46]. This massive scale, combined with a suite of integrated analytical tools, enables researchers to perform comparative analyses, identify structure-activity relationships (SAR), and prioritize compounds for further experimental validation within a unified platform. The following analysis provides a comparative guide to PubChem's capabilities, benchmarking its performance and utility against other key resources in the field.
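The three databases correspond to the `substance`, `compound` and `assay` domains of PubChem's PUG REST web service. The following is a minimal sketch that uses only the Python standard library. The URL patterns follow PUG REST conventions but should be checked against the current documentation. The network call is kept separate so that the URL building and JSON parsing can be tested offline. CID 2244 (aspirin) is used only as an example.

```python
import json
import urllib.request
from typing import Dict, List

PUG_REST = "https://pubchem.ncbi.nlm.nih.gov/rest/pug"


def sid_to_cids_url(sid: int) -> str:
    # Substance (depositor record) -> standardized Compound record(s).
    return f"{PUG_REST}/substance/sid/{sid}/cids/JSON"


def compound_property_url(cids: List[int], properties: List[str]) -> str:
    # Compound domain: computed properties for one or more CIDs.
    cid_list = ",".join(str(cid) for cid in cids)
    return f"{PUG_REST}/compound/cid/{cid_list}/property/{','.join(properties)}/JSON"


def assay_table_url(aid: int) -> str:
    # BioAssay domain: the assay's data table as CSV.
    return f"{PUG_REST}/assay/aid/{aid}/CSV"


def parse_property_table(payload: str) -> Dict[int, Dict[str, object]]:
    """Turn a PropertyTable JSON response into {CID: {property: value}}."""
    rows = json.loads(payload)["PropertyTable"]["Properties"]
    return {row["CID"]: {k: v for k, v in row.items() if k != "CID"} for row in rows}


def fetch(url: str) -> str:
    # Live request; respect PubChem's published request-rate limits.
    with urllib.request.urlopen(url, timeout=30) as response:
        return response.read().decode("utf-8")
```

A live call such as `parse_property_table(fetch(compound_property_url([2244], ["MolecularFormula", "MolecularWeight"])))` should return a dictionary keyed by CID. Mapping SIDs to CIDs first is what lets results from different depositors be compared on the same standardized structure.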

To contextualize PubChem's utility in structural feature analysis, its features and performance are compared against other widely used databases. Each resource offers unique strengths, making them suited for different stages of research, from target identification and chemical screening to pathway analysis and structural biology.

Table 1: Comparative Analysis of PubChem and Key Alternative Resources

| Feature / Resource | PubChem [46] [45] | ChEMBL [45] | STRING [47] | AlphaFold DB [48] |
|---|---|---|---|---|
| Primary Content Type | Chemicals, substances, bioactivity data | Bioactive drug-like molecules, ADMET data | Protein-protein interaction networks | Protein structure predictions |
| Key Strength | Largest public bioactivity repository; highly integrated chemical/assay data | High-quality, curated bioactivity data from literature | Comprehensive functional & physical protein networks | Highly accurate 3D protein structure models |
| Data Volume (Representative) | 119M compounds, 295M bioactivities, 1.67M assays [46] | ~2M compounds, ~16M bioactivities | 67.6M proteins, 2B+ interactions (v12.5) | >200M predicted structures |
| Structural Analysis | 2D/3D structure search, similarity, substructure, physicochemical property profiling | SAR analysis, target prediction, molecular profiling | Not applicable (protein-centric) | 3D structure visualization, quality metrics (pLDDT), fold search |
| Bioassay Integration | Direct storage of HTS/screening data, protocols, and results | Extracted and curated bioactivity results from publications | Pathway and functional enrichment from assay gene lists | Limited; structural context for assay targets |
| Best For | Chemical screening, hit identification, SAR exploration, data aggregation | Drug discovery, lead optimization, literature-based data mining | Understanding target biology, pathway context, network pharmacology | Target selection, structure-based drug design, understanding mutations |
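The "similarity" entry in the Structural Analysis row is usually scored with the Tanimoto coefficient, T(A, B) = |A ∩ B| / |A ∪ B|, over binary fingerprints. PubChem's own 2D similarity search, for example, uses Tanimoto scores on its substructure fingerprint. The following is a minimal sketch that assumes fingerprints are already available as sets of "on" bit positions, such as bits exported from ECFP4-style fingerprints. The bit sets, compound names and 0.7 cutoff are made up for illustration.

```python
from typing import Dict, FrozenSet, List, Tuple

Fingerprint = FrozenSet[int]  # indices of bits set to 1


def tanimoto(a: Fingerprint, b: Fingerprint) -> float:
    """Tanimoto (Jaccard) coefficient between two binary fingerprints."""
    if not a and not b:
        return 0.0  # convention chosen here for two empty fingerprints
    shared = len(a & b)
    return shared / (len(a) + len(b) - shared)


def similarity_search(query: Fingerprint, library: Dict[str, Fingerprint],
                      threshold: float = 0.7) -> List[Tuple[str, float]]:
    """Return (id, score) pairs with score >= threshold, best first."""
    scored = [(name, tanimoto(query, fp)) for name, fp in library.items()]
    hits = [pair for pair in scored if pair[1] >= threshold]
    return sorted(hits, key=lambda pair: pair[1], reverse=True)


if __name__ == "__main__":
    query = frozenset({1, 4, 9, 16, 25})
    library = {
        "cmpd_A": frozenset({1, 4, 9, 16, 25, 36}),  # 5 shared bits / 6 in union
        "cmpd_B": frozenset({1, 4, 7}),              # 2 shared bits / 6 in union
    }
    print(similarity_search(query, library))
```

Only cmpd_A (T ≈ 0.83) clears the cutoff. Note that Tanimoto values are not comparable across fingerprint types, so a threshold tuned on one fingerprint should not be reused on another.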

PubChem vs. ChEMBL: While both are pillars for bioactivity data, their origins dictate different use cases. PubChem is a primary deposition repository that includes raw and summarized data from high-throughput screening (HTS) campaigns, government agencies, and chemical vendors [46] [49]. This makes it unparalleled for accessing original screening data and a vast chemical space. ChEMBL, in contrast, is a manually curated database derived from published medicinal chemistry and pharmacology literature [45]. Its data is typically of higher consistency and is explicitly tailored for drug discovery, making it a preferred source for building predictive models for lead optimization. For a comparative analysis of structural features, PubChem offers a broader, more diverse chemical starting point, while ChEMBL provides deeper, more reliable annotations for known bioactive chemotypes.

PubChem vs. STRING and AlphaFold: This comparison highlights the difference between chemical-centric and target-centric resources. STRING specializes in mapping protein-protein interaction networks and functional associations, which is crucial for understanding the biological context of a drug target identified through bioassay screening [47]. It complements PubChem data by enabling pathway enrichment analysis for hit lists. AlphaFold DB provides predicted 3D structures for most protein sequences in UniProt [48]. In a combined workflow, AlphaFold structures can be used to understand the structural context of a target from a PubChem bioassay, enabling hypothesis generation about binding sites and mechanisms of action. PubChem itself does not generate these network or deep structural views. Instead, it links to such resources and serves as the chemical data hub that connects to these specialized biological tools.
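Pathway enrichment of a hit list is commonly framed as an over-representation test. The following is a minimal sketch of the one-sided hypergeometric test with Benjamini-Hochberg correction. This is a standard textbook formulation and is not claimed to reproduce STRING's exact statistics, background sets or term filtering. The variables are defined as follows:

- N is the number of genes in the background.
- K is the number of background genes annotated to a pathway.
- n is the size of the hit list.
- k is the number of hits in that pathway.

The p-value is P(X ≥ k), the sum over i from k to min(n, K) of C(K, i)·C(N−K, n−i) / C(N, n). The pathway names and counts in the demo are hypothetical.

```python
from math import comb
from typing import List


def hypergeom_sf(N: int, K: int, n: int, k: int) -> float:
    """P(X >= k) for X ~ Hypergeometric(N, K, n): the chance of seeing at
    least k pathway genes among n hits drawn from a background of N genes."""
    upper = min(n, K)
    if k > upper:
        return 0.0
    # math.comb returns 0 when n - i exceeds N - K, so impossible terms vanish.
    favourable = sum(comb(K, i) * comb(N - K, n - i) for i in range(max(k, 0), upper + 1))
    return favourable / comb(N, n)


def benjamini_hochberg(p_values: List[float]) -> List[float]:
    """Benjamini-Hochberg adjusted p-values (FDR), returned in input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # walk from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, p_values[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


if __name__ == "__main__":
    # Hypothetical: 20,000 background genes and a 150-gene hit list.
    pathways = {"pathway_X": (120, 9), "pathway_Y": (400, 4)}  # name -> (K, k)
    raw = [hypergeom_sf(20000, K, 150, k) for K, k in pathways.values()]
    for name, p, q in zip(pathways, raw, benjamini_hochberg(raw)):
        print(f"{name}: p={p:.2e}, FDR={q:.2e}")
```

The choice of background (N) matters as much as the test itself. Using the assay's tested gene set rather than the whole genome avoids inflating significance for pathways that were simply over-represented in the screen.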

The Core Challenge: Data Heterogeneity and Integration

A central theme in the comparative analysis of structural datasets is the challenge of data heterogeneity. PubChem’s greatest strength—aggregating data from countless sources—is also the source of its most significant analytical challenge. Discrepancies in experimental protocols, measurement units, activity thresholds, and reporting standards across different depositors can lead to distributional misalignments and annotation conflicts [49] [50].

This issue is not unique to PubChem but is acute due to its scale. For instance, a 2020 review noted that many PubChem assays are cell-based without a specific protein target, and activity summaries can be inconsistently applied [49]. Similarly, a 2025 analysis of pharmacokinetic (ADME) data found significant misalignments between gold-standard literature datasets and commonly used benchmarks, where naive integration degraded machine learning model performance [50]. These findings underscore a critical point for researchers: data extraction from PubChem must be followed by rigorous consistency assessment. Tools like AssayInspector, a model-agnostic package that identifies outliers, batch effects, and discrepancies across datasets, have been developed specifically to address this need before modeling [50].

Table 2: Common Data Heterogeneity Issues in PubChem and Mitigation Strategies

| Issue Category | Description | Impact on Analysis | Recommended Mitigation Strategy |
|---|---|---|---|
| Protocol Variability | Differences in assay type (biochemical vs. cell-based), concentration, incubation time [49]. | Prevents direct comparison of activity values across assays. | Use normalized activity scores (e.g., % inhibition, PubChem Activity Score); group analyses by assay type. |
| Activity Annotation | Inconsistent use of "active," "inconclusive," or "inactive" labels between depositors [49]. | Introduces noise in active/inactive classification for model training. | Re-annotate activities based on deposited dose-response data or uniform thresholds. |
| Chemical Representation | Same compound represented by different salts, stereochemistry, or as part of a mixture (Substance vs. Compound) [46]. | Inflates compound counts and obscures true SAR. | Analyze data at the Compound ID (CID) level, which represents unique, standardized structures. |
| Target Ambiguity | Many assays, especially cell-based, list no specific protein target or list multiple targets [49]. | Limits target-centric SAR and mechanistic insight. | Use cross-links to Gene and Protein databases; prioritize target-specific AIDs for focused studies. |
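Two of the mitigations above reduce to simple transformations. The following sketch performs them under stated assumptions:

1. It converts raw plate signals to percent inhibition using a negative control (no inhibition, e.g. DMSO) and a positive control (full inhibition).
2. It collapses substance-level (SID) measurements to one value per CID by taking the median.

The control definitions, the median as the aggregation rule and the sample records are assumptions. PubChem's Activity Score is supplied by depositors and is not recomputed here.

```python
from collections import defaultdict
from statistics import median
from typing import Dict, Iterable, List, Tuple


def percent_inhibition(signal: float, neg_ctrl: float, pos_ctrl: float) -> float:
    """0% when signal equals the uninhibited (negative) control,
    100% when it equals the fully inhibited (positive) control."""
    window = neg_ctrl - pos_ctrl
    if window == 0:
        raise ValueError("controls do not separate; assay window is zero")
    return 100.0 * (neg_ctrl - signal) / window


def collapse_to_cid(records: Iterable[Tuple[int, int, float]]) -> Dict[int, float]:
    """records: (SID, CID, value). Several SIDs (e.g. the same structure
    deposited by different sources) can map to one CID; report the median."""
    by_cid: Dict[int, List[float]] = defaultdict(list)
    for _sid, cid, value in records:
        by_cid[cid].append(value)
    return {cid: median(values) for cid, values in by_cid.items()}


if __name__ == "__main__":
    # Hypothetical plate: negative control 1000 RFU, positive control 100 RFU.
    print(percent_inhibition(550.0, neg_ctrl=1000.0, pos_ctrl=100.0))
    print(collapse_to_cid([(1, 10, 40.0), (2, 10, 60.0), (3, 11, 5.0)]))
```

The sketch uses the median rather than the mean so that a single aberrant sample deposited under one SID does not dominate the CID-level value.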

Experimental Protocols for Comparative Analysis

To ensure reproducible and meaningful comparative analyses, researchers must adopt systematic protocols for data retrieval, curation, and integration. The following methodologies are essential when working with PubChem and complementary datasets.

Protocol 1: Building a Benchmarking Dataset from PubChem BioAssay

This protocol is adapted from practices used to create validated datasets for virtual screening [49].

- Assay Selection: Use the PubChem Advanced Search to identify assays (AIDs) with a clear protein target, dose-response data, and a sufficient number of active and inactive compounds. Filter by "Confirmatory" or "Dose-Response" screening stage.

- Data Retrieval: Download the full data table for the selected AID via the "Download" button. Ensure the download includes SIDs, CIDs, standard activity values (e.g., IC50, Ki), and activity summaries.

- Activity Standardization: Re-annotate activity using uniform thresholds. For example, define actives as compounds with IC50 < 10 µM and inactives as those with IC50 > 20 µM or reported as inactive. Discard compounds with IC50 between 10 and 20 µM, which fall in a gray zone between the two thresholds, together with "inconclusive" compounds.

- Structure Curation: Use the parent Compound ID (CID) as the primary identifier. Download the corresponding canonical SMILES and 2D structures for all unique CIDs.

- Deduplication and Finalization: For assays against the same target, merge compound lists. Remove compounds that conflict in activity annotation across merged assays (or assign a consensus label) to create a final clean dataset.
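Steps 3 to 5 can be expressed compactly. The following is a minimal sketch that assumes each row has already been reduced to a tuple of (CID, IC50 in µM or None, outcome text). The outcome strings mirror PubChem's "Active"/"Inactive"/"Inconclusive" labels. The thresholds are the example values from step 3, and conflicting CIDs are dropped rather than given a consensus label. Extracting columns from the downloaded table is left out because activity column names differ between assays.

```python
from collections import defaultdict
from typing import Dict, Iterable, Optional, Set, Tuple

ACTIVE_BELOW_UM = 10.0    # IC50 < 10 µM -> active (example threshold, step 3)
INACTIVE_ABOVE_UM = 20.0  # IC50 > 20 µM -> inactive (example threshold, step 3)

# (CID, IC50 in µM or None, outcome text) for one tested sample in one AID.
Record = Tuple[int, Optional[float], str]


def relabel(ic50_um: Optional[float], outcome: str) -> Optional[str]:
    """Uniform re-annotation; None means 'discard this measurement'.
    A measured IC50 takes precedence over the depositor's label (an assumption)."""
    if outcome == "Inconclusive":
        return None
    if ic50_um is not None and ic50_um < ACTIVE_BELOW_UM:
        return "active"
    if (ic50_um is not None and ic50_um > INACTIVE_ABOVE_UM) or outcome == "Inactive":
        return "inactive"
    return None  # gray zone (10 to 20 µM) or no usable value


def merge_assays(records: Iterable[Record]) -> Dict[int, str]:
    """Merge AIDs for one target at the CID level and drop CIDs whose
    labels conflict across assays (no consensus voting here)."""
    labels: Dict[int, Set[str]] = defaultdict(set)
    for cid, ic50_um, outcome in records:
        label = relabel(ic50_um, outcome)
        if label is not None:
            labels[cid].add(label)
    return {cid: next(iter(found)) for cid, found in labels.items() if len(found) == 1}


if __name__ == "__main__":
    rows = [
        (101, 0.5, "Active"), (101, 2.0, "Active"),     # consistent actives
        (102, 50.0, "Inactive"),                        # clear inactive
        (103, 15.0, "Active"),                          # gray zone -> dropped
        (104, 1.0, "Active"), (104, None, "Inactive"),  # conflict -> dropped
        (105, None, "Inconclusive"),                    # discarded
    ]
    print(merge_assays(rows))
```

With these rows only CIDs 101 (active) and 102 (inactive) survive. Replacing the conflict rule with majority voting is a one-line change, but it hides exactly the disagreements that Protocol 2 is meant to surface.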

Protocol 2: Data Consistency Assessment Prior to Integration

This protocol is based on the workflow enabled by the AssayInspector tool [50] and is critical before integrating data from multiple PubChem assays or external sources.

- Dataset Assembly: Gather the molecular datasets (e.g., from multiple relevant PubChem AIDs or from PubChem and an external source like ChEMBL).

- Descriptor Calculation: Generate consistent molecular descriptors (e.g., ECFP4 fingerprints, physicochemical properties) for all molecules across all datasets.

- Distribution Analysis: Use statistical tests (e.g., the Kolmogorov–Smirnov test for continuous properties and the chi-square test for categorical ones) to compare the distributions of key endpoints and descriptors between datasets.

- Chemical Space Visualization: Employ dimensionality reduction (e.g., UMAP) on the descriptor space to visualize overlap and coverage between datasets.

- Conflict Identification: For molecules appearing in multiple datasets, flag significant discrepancies in reported activity values (e.g., active in one source, inactive in another).

- Informed Integration: Based on the assessment, decide whether to merge datasets (if they are aligned), transform data, or keep them separate for analysis. The AssayInspector tool provides automated alerts and recommendations for this step [50].
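The distribution-analysis and conflict-identification steps can be sketched without external libraries. This is not AssayInspector's implementation. The sketch computes the two-sample Kolmogorov–Smirnov statistic D = sup over x of |F1(x) − F2(x)| from the empirical CDFs, and it lists compounds whose labels disagree between two sources. The compound keys (e.g. InChIKeys or CIDs) and the example values are assumptions. If p-values are needed, `scipy.stats.ks_2samp` returns the same statistic together with a p-value.

```python
from bisect import bisect_right
from typing import Dict, List, Sequence


def ks_statistic(x: Sequence[float], y: Sequence[float]) -> float:
    """Two-sample Kolmogorov-Smirnov statistic: the largest vertical gap
    between the empirical CDFs of x and y."""
    xs, ys = sorted(x), sorted(y)
    if not xs or not ys:
        raise ValueError("both samples must be non-empty")
    d = 0.0
    # Both ECDFs are right-continuous step functions, so the supremum is
    # reached at one of the pooled sample points.
    for v in xs + ys:
        fx = bisect_right(xs, v) / len(xs)
        fy = bisect_right(ys, v) / len(ys)
        d = max(d, abs(fx - fy))
    return d


def flag_conflicts(source_a: Dict[str, str], source_b: Dict[str, str]) -> List[str]:
    """Compounds present in both sources whose activity labels disagree."""
    shared = source_a.keys() & source_b.keys()
    return sorted(key for key in shared if source_a[key] != source_b[key])


if __name__ == "__main__":
    # Hypothetical logP values for the same endpoint from two sources.
    print(ks_statistic([1.0, 2.0, 3.0, 4.0], [3.0, 4.0, 5.0, 6.0]))
    print(flag_conflicts({"X": "active", "Y": "inactive"},
                         {"Y": "active", "Z": "inactive"}))
```

A large D together with many flagged conflicts argues for keeping the datasets separate or transforming one of them. A small D with few conflicts is consistent with a direct merge.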

Visualization of Integrated Analysis Workflows

Visualizing the logical flow of data integration and analysis helps in designing robust studies. The diagrams below, created using the Graphviz DOT language, map out standard and advanced workflows that leverage PubChem.

Standard PubChem-Driven Workflow for Hit Identification

Multi-Database Integration for Systems-Chemical Biology

The Scientist's Toolkit: Essential Research Reagent Solutions

Beyond software tools, effective analysis requires access to well-characterized reagents and data resources. The following table details key "research reagent solutions" essential for experimental validation and advanced computational studies stemming from PubChem-based analyses.

Table 3: Essential Research Reagent Solutions for Experimental Follow-up

| Resource / Reagent | Provider / Source | Primary Function in Workflow | Key Consideration for Integration |

|---|---|---|---|