Bridging Prediction and Reality: Validating AI-Generated Molecular Models with Molecular Dynamics Simulations


Abstract

This article provides a comprehensive guide for researchers on the critical integration of Molecular Dynamics (MD) simulations for validating and refining artificial intelligence (AI)-predicted molecular interactions, crucial in drug discovery and structural biology. It explores the fundamental principles of AI-based structural prediction tools like AlphaFold2 and their limitations. A detailed methodological framework is presented for applying MD to assess the stability, dynamics, and energetic profiles of AI-generated models. The article further addresses common pitfalls in the validation workflow and offers strategies for optimization. Finally, it establishes rigorous protocols for comparative analysis and validation against experimental data, underscoring MD's indispensable role in transforming high-potential AI predictions into reliable, physics-based models for biomedical research.

From Sequence to Structure: Understanding AI's Predictive Power and Its Physical Limits

The advent of deep learning has irrevocably transformed structural biology, with AlphaFold2 heralded as a solution to the decades-old protein folding problem [1]. However, the remarkable success in predicting single, static structures has illuminated a more profound challenge: proteins are dynamic machines that sample multiple conformational states to perform their functions [2] [3]. This reality creates a critical gap between AI-predicted static models and the conformational ensembles relevant for understanding mechanisms and designing therapeutics, particularly for intrinsically disordered proteins (IDPs) and flexible drug targets like GPCRs [2] [4].

This comparison guide is framed within a broader thesis on the molecular dynamics validation of AI-predicted interactions. The central premise is that the next frontier in computational structural biology is not merely prediction accuracy, but the accurate prediction of functional dynamics and binding-competent states. We objectively compare leading AI tools—AlphaFold2, RoseTTAFold, and next-generation ensemble and generative models—by evaluating their performance against experimental data, their capacity to model conformational diversity, and their utility in therapeutic design. The integration of AI predictions with physics-based simulation and experimental validation is now the essential pathway for reliable drug discovery [5] [4].

Comparative Performance Analysis of AI Structure Prediction Platforms

The table below provides a systematic, quantitative comparison of leading AI structure prediction tools, evaluating their core architectural approaches, performance on key benchmarks, and suitability for different research applications, particularly in drug discovery.

Table 1: Comparative Analysis of Major AI Protein Structure Prediction Tools

| Tool (Primary Developer) | Core Architectural Approach | Key Performance Metric (Typical Range) | Strengths | Key Limitations & Dynamic Validation Gaps | Primary Use Case in Drug Discovery |
|---|---|---|---|---|---|
| AlphaFold2 (DeepMind) [1] | Evoformer trunk + structure module; MSA-dependent deep learning. | Backbone accuracy: 0.96 Å RMSD95 (CASP14 median) [1]. pLDDT confidence score. | Exceptional accuracy for single, stable folds; high side-chain precision; reliable confidence metrics. | Predicts single, static conformation; misses alternative states and binding-induced changes; systematically underestimates flexible pocket volumes (e.g., by 8.4% in nuclear receptors) [6]. | High-confidence template generation for structured targets; initial pocket identification. |
| RoseTTAFold (Baker Lab) [7] | Three-track neural network (1D seq, 2D pair, 3D coord); more compute-efficient. | Accuracy comparable to AF2 for many targets; successful in CASP14 [8]. | Good accuracy with lower hardware demand; adaptable for design (e.g., ProteinGenerator) [7]. | Similar single-state limitation as AF2; performance varies (e.g., lower success on antibody-antigen docking (20%) [5]). | Rapid initial modeling; basis for generative design (sequence space diffusion). |
| FiveFold (Ensemble Method) [2] | Consensus ensemble from AF2, RoseTTAFold, OmegaFold, ESMFold, EMBER3D. | Functional Score (composite metric: diversity, exp. agreement, etc.). Outperforms single methods on IDPs. | Explicitly models conformational diversity; better captures spectra of IDP states; addresses "undruggable" target challenge. | Computationally intensive; consensus may average out rare but critical states. | Targeting intrinsically disordered proteins and flexible interfaces; allosteric drug discovery. |
| BoltzGen (MIT) [9] | Unified generative model for prediction & design; built-in physical constraints. | Successfully generated binders for 26 diverse targets, including "undruggable" ones in wet-lab tests [9]. | Unifies prediction and de novo design; focuses on challenging targets with low homology. | Novel model; full independent benchmarking against established tools is ongoing. | De novo generation of protein binders against targets with few or no known binders. |
| AlphaFold-MultiState / AlphaRED [5] [4] | AF2 modified with state-specific templates or coupled with physics-based docking (ReplicaDock). | AlphaRED achieved 43% success on antibody-antigen targets (vs. 20% for AF-multimer alone) [5]. | Integrates AI with physics; captures binding-induced conformational change. | Pipeline complexity; success depends on accurate flexibility estimation from AF2 confidence metrics. | Modeling protein-protein complexes with flexibility; antibody-antigen docking. |

Architectural and Functional Comparison

Beyond benchmark performance, the underlying architecture dictates a model's capabilities and limitations. The next table contrasts the technical foundations that enable or constrain the prediction of biologically relevant dynamics.

Table 2: Architectural and Functional Comparison of AI Prediction Approaches

| Feature | AlphaFold2 [1] | RoseTTAFold & Variants [7] [8] | Next-Generation Ensemble & Generative Models [2] [9] |
|---|---|---|---|
| Input Paradigm | Heavily reliant on deep Multiple Sequence Alignment (MSA) evolutionary information. | Utilizes MSA but with a three-track network integrating sequence, distance, and coordinates. | Varies: from ensemble of MSAs (FiveFold) [2] to single-sequence generative approaches (BoltzGen) [9]. |
| Output Type | Single, high-confidence 3D structure with per-residue pLDDT. | Single 3D structure. Can be adapted for sequence-structure co-generation (ProteinGenerator) [7]. | Ensembles of plausible conformations (FiveFold) [2] or novel sequence-structure pairs for design (BoltzGen, ProteinGenerator) [7] [9]. |
| Explicit Handling of Dynamics | No. Outputs a static "average" conformation biased by training data. Implicit uncertainty may correlate with flexibility [4]. | No inherent dynamics. However, its sequence-space diffusion model (ProteinGenerator, PG) can design multistate proteins [7]. | Yes. Core objective is to sample conformational landscape (FiveFold) [2] or generate diverse binders (BoltzGen) [9]. |
| Physical Constraints | Learned implicitly from Protein Data Bank (PDB) structures. Stereochemical violations are typically mild and relaxable [4]. | Similar implicit learning. ProteinGenerator incorporates sequence-based potentials for physicochemical control [7]. | Often explicitly incorporated (e.g., BoltzGen's built-in constraints from wet-lab feedback) [9] or via post-prediction MD refinement. |
| Typical Computational Cost | High (significant GPU memory and time for large MSAs). | Moderate to High (generally more efficient than AF2). | Very High (ensemble methods run multiple predictors; generative design requires sampling). |

Specialized Application Performance

Performance is highly dependent on target class. The following table summarizes key experimental findings for therapeutically relevant protein families, highlighting where dynamic validation is most critical.

Table 3: Performance Across Key Therapeutic Target Classes

| Target Class | Key Experimental Findings & Validation Gap | Implication for Structure-Based Drug Discovery |
|---|---|---|
| Intrinsically Disordered Proteins (IDPs) | Single-state predictors (AF2) fail. Ensemble method (FiveFold) better captures conformational diversity of alpha-synuclein [2]. | Enables rational approach to previously "undruggable" targets comprising ~30-40% of human proteome [2]. |
| GPCRs [4] | AF2/RoseTTAFold achieve ~1 Å Cα RMSD in TM domains but struggle with extracellular loops and ligand-pocket side chains. Models often represent an "average" or training-data-biased state, not a specific functional state. | Direct docking to raw AF2 models often fails; requires state-specific modeling (AlphaFold-MultiState) or MD refinement for reliable pose prediction. |
| Antibodies [8] [5] | RoseTTAFold models antibodies with reasonable accuracy but may be outperformed by specialized tools (ABodyBuilder) on overall structure. AF-multimer has low success rate (20%) on antibody-antigen docking [5]. | Hybrid AI+physics pipelines (AlphaRED) significantly improve complex prediction success (to 43%) [5]. |
| Nuclear Receptors [6] | AF2 shows high accuracy for stable domains but systematically underestimates ligand-binding pocket volumes by 8.4% on average and misses functional asymmetry in homodimers. | Highlights risk of using static AF2 models for pocket-sized small molecule design; dynamic refinement is essential. |
| Cyclic Peptides [10] | Modified AF2 (AfCycDesign) accurately predicts cyclic peptide structures (median RMSD 0.8 Å), enabling de novo design of macrocyclic binders. | Opens avenue for designing constrained peptide therapeutics targeting difficult PPI interfaces. |

Detailed Experimental Protocols for Validation

A critical component of the molecular dynamics validation thesis is the methodology for testing and refining AI predictions. Below are detailed protocols for key experiments cited in this guide.

This protocol, based on the FiveFold ensemble method [2], generates multiple plausible conformations for a target protein, which is crucial for studying its dynamics.

- Input Preparation: Provide the target protein's amino acid sequence.

- Parallel Structure Prediction: Run the sequence through five complementary algorithms: AlphaFold2, RoseTTAFold, OmegaFold, ESMFold, and EMBER3D.

- Consensus Building & Variation Mapping:

  - Use the Protein Folding Shape Code (PFSC) system to translate each predicted 3D structure into a standardized string code representing secondary structure elements per residue.

  - Construct a Protein Folding Variation Matrix (PFVM) by aligning the PFSC strings, cataloging the frequency of different structural states at each residue position across all five predictions.

- Ensemble Sampling: Use a probabilistic algorithm to sample distinct combinations of structural states from the PFVM, guided by user-defined diversity constraints (e.g., minimum RMSD between output models).

- 3D Model Reconstruction: Convert each sampled PFSC string back into a full atomic 3D model using homology modeling against a structural database.

- Quality Control: Filter final ensemble through stereochemical validation (e.g., MolProbity) to ensure physical realism.
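
The variation-matrix and sampling steps above can be sketched in a few lines. This is an illustrative toy under stated assumptions, not the FiveFold implementation: the three-letter `H`/`E`/`C` alphabet stands in for the actual PFSC alphabet, positions are sampled independently, and a Hamming distance between state strings replaces the RMSD-based diversity constraint. The function names `build_pfvm` and `sample_ensemble` are hypothetical.

```python
import random
from collections import Counter

def build_pfvm(codes):
    """Count how often each structural-state letter occurs at every position."""
    length = len(codes[0])
    if any(len(c) != length for c in codes):
        raise ValueError("all state strings must be aligned to the same length")
    return [Counter(c[i] for c in codes) for i in range(length)]

def sample_ensemble(pfvm, n_models, min_diff, seed=0, max_tries=10_000):
    """Sample state strings from the per-position frequencies, keeping diverse ones."""
    rng = random.Random(seed)
    ensemble = []
    for _ in range(max_tries):
        if len(ensemble) == n_models:
            break
        candidate = "".join(
            rng.choices(list(col.keys()), weights=list(col.values()))[0]
            for col in pfvm
        )
        # Assumption: Hamming distance is a cheap proxy for a minimum-RMSD constraint.
        if all(sum(a != b for a, b in zip(candidate, m)) >= min_diff for m in ensemble):
            ensemble.append(candidate)
    return ensemble

# Five toy per-residue state strings, one per predictor (made-up data).
codes = ["HHHCCEEE", "HHHCCCEE", "HHCCCEEE", "HHHHCEEE", "HHHCCEEC"]
pfvm = build_pfvm(codes)
ensemble = sample_ensemble(pfvm, n_models=3, min_diff=1)
print(pfvm[3], ensemble)
```

Each sampled string would then be passed to the 3D reconstruction step; residues where all predictors agree (a single-entry counter) are never varied by this scheme.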

This protocol designs novel protein sequences and structures with desired properties using ProteinGenerator, a RoseTTAFold-based sequence-space diffusion model [7].

- Conditioning Setup: Define design objectives (e.g., "scaffold a structural motif," "enrich amino acid composition to 20% tryptophan," "achieve target isoelectric point").

- Noise Initialization: Begin with a sequence tensor of Gaussian noise and a blank structure initialization.

- Iterative Denoising: At each diffusion timestep:

  - The RoseTTAFold-based network predicts a step toward the ground-truth sequence and structure.

  - Guidance: Gradients from external "guide" functions (e.g., a classifier that scores tryptophan content) are injected to steer the denoising toward the objective.

  - The predicted sequence is noised to prepare for the next step.

- Output Generation: After the final step, a full amino acid sequence and its predicted 3D structure are generated.

- In-silico Validation: Filter designs using independent structure prediction networks (AlphaFold2, ESMFold) for fold self-consistency (RMSD < 2Å, pLDDT > 90).
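
The final in-silico filter can be expressed as a simple predicate. The sketch below assumes that the RMSD between the designed backbone and the independently predicted structure, and the mean pLDDT of that prediction, have already been computed elsewhere; the `Design` record and its field names are hypothetical.

```python
from dataclasses import dataclass

@dataclass
class Design:
    name: str
    rmsd_to_design: float  # Å, independent prediction vs. designed backbone
    mean_plddt: float      # 0-100, averaged over residues

def passes_self_consistency(design, max_rmsd=2.0, min_plddt=90.0):
    """Apply the thresholds quoted in the protocol (RMSD < 2 Å, pLDDT > 90)."""
    return design.rmsd_to_design < max_rmsd and design.mean_plddt > min_plddt

# Made-up example values for three candidate designs.
designs = [
    Design("d1", rmsd_to_design=1.2, mean_plddt=93.5),
    Design("d2", rmsd_to_design=2.7, mean_plddt=95.0),  # fails RMSD
    Design("d3", rmsd_to_design=0.9, mean_plddt=88.0),  # fails pLDDT
]
kept = [d.name for d in designs if passes_self_consistency(d)]
print(kept)
```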

This protocol, the AlphaRED pipeline [5], docks two protein structures where one or both undergo binding-induced conformational change.

- Template Generation with AF2: Input the sequences of the binding partners. Run AlphaFold-multimer (AFm) to generate a preliminary complex template and, crucially, the per-residue pLDDT confidence metrics.

- Flexibility Analysis: Interpret regions of low pLDDT in the AFm model as putative flexible regions likely to change upon binding.

- Replica Exchange Docking Initiation: Feed the AFm model and the flexibility map into the ReplicaDock 2.0 physics-based docking engine.

- Enhanced Sampling: ReplicaDock performs replica-exchange sampling at multiple temperatures, focusing backbone moves on the identified flexible regions, to extensively sample the conformational landscape.

- Pose Selection: The ensemble of docked poses is clustered and scored using Rosetta energy functions to identify low-energy, structurally plausible complexes.

- Validation: Success is defined by producing a model with at least "acceptable" quality (according to CAPRI criteria) compared to the experimental bound structure.
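
The flexibility-analysis step amounts to finding contiguous stretches of low-confidence residues. A minimal sketch follows; the pLDDT cutoff of 70 and the minimum segment length of 3 are illustrative assumptions, not values taken from the AlphaRED pipeline, and `flexible_segments` is a hypothetical helper.

```python
def flexible_segments(plddt, threshold=70.0, min_len=3):
    """Return (start, end) index ranges (0-based, end exclusive) with pLDDT < threshold."""
    segments = []
    start = None
    for i, score in enumerate(plddt):
        if score < threshold:
            if start is None:
                start = i  # open a new low-confidence run
        elif start is not None:
            if i - start >= min_len:
                segments.append((start, i))
            start = None
    # Close a run that extends to the C-terminus.
    if start is not None and len(plddt) - start >= min_len:
        segments.append((start, len(plddt)))
    return segments

# Made-up per-residue confidence values.
plddt = [92, 90, 65, 60, 55, 88, 91, 68, 93, 50, 45, 40]
segs = flexible_segments(plddt)
print(segs)
```

The isolated low-confidence residue at index 7 is ignored because it is shorter than `min_len`; in practice the resulting ranges would be passed to the docking engine as the regions where backbone moves are concentrated.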

Visualizing Workflows and Validation Pipelines

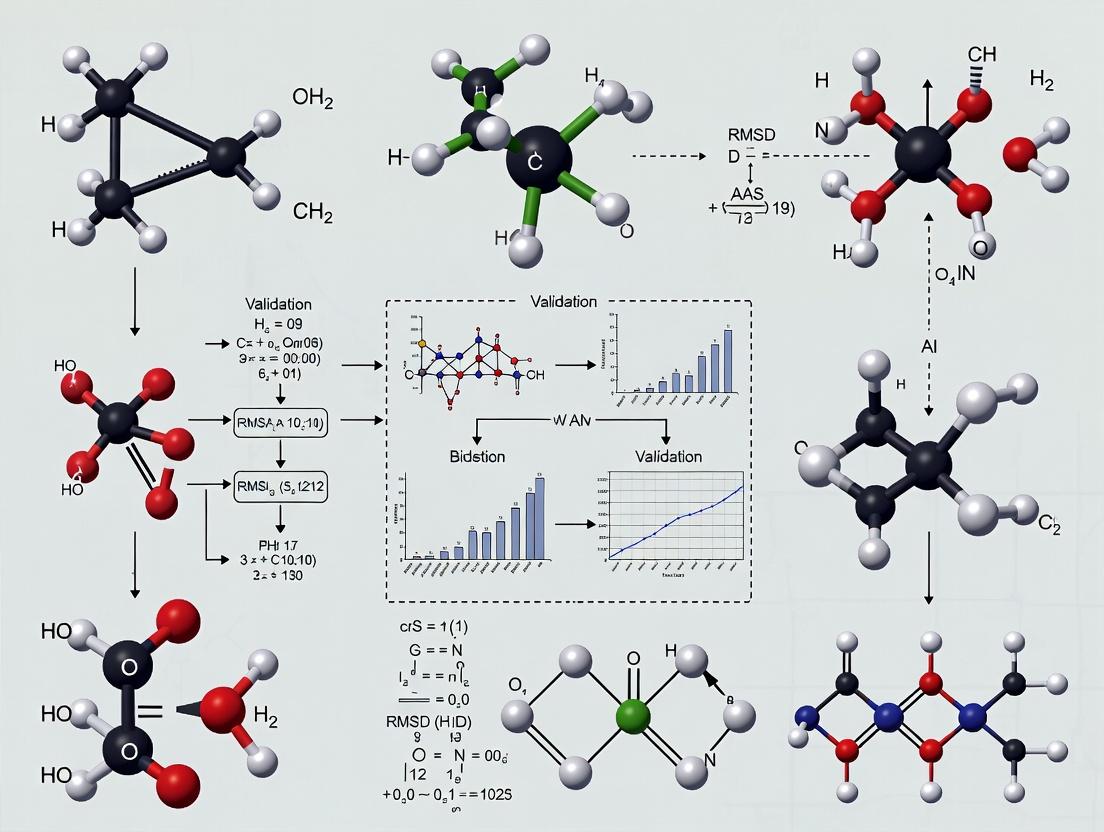

Diagram 1: Comparative workflows of major AI structure prediction and design approaches, converging on molecular dynamics and experimental validation.

Diagram 2: A hybrid AI-physics pipeline for the molecular dynamics validation of AI-predicted structures.

Table 4: Key Research Reagent Solutions for AI Model Validation

| Category | Tool / Resource | Primary Function in Validation Pipeline |
|---|---|---|
| Computational Prediction & Design | AlphaFold2 / ColabFold [1], RoseTTAFold [7], ESMFold | Generate initial static structural models or sequence embeddings. |
| Ensemble & Conformational Sampling | FiveFold framework [2], BioEmu [4], MODELLER [8] | Generate multiple plausible conformations to model flexibility and uncertainty. |
| Physics-Based Simulation & Refinement | GROMACS / AMBER / CHARMM, ReplicaDock 2.0 [5], Rosetta Relax [8] | Perform molecular dynamics simulations to assess stability, sample dynamics, and refine models. |
| Specialized Structure Prediction | AfCycDesign (cyclic peptides) [10], ABodyBuilder (antibodies) [8] | Predict structures for specialized, therapeutically relevant target classes. |
| Experimental Structure Databases | Protein Data Bank (PDB) [4], SAbDab (antibodies) [8] | Source of ground-truth structures for training AI and validating predictions. |
| Validation & Analysis Metrics | pLDDT / pTM (confidence), RMSD / RMSF, TM-score [1], MolProbity (clashes) | Quantify model accuracy, confidence, flexibility, and stereochemical quality. |
| Hybrid Docking Pipelines | AlphaRED (AlphaFold + ReplicaDock) [5] | Predict protein-protein complexes involving conformational change. |

The fundamental challenge of predicting how molecules interact—be it a drug binding to a protein target or two proteins forming a complex—from their sequence information alone represents a central problem in modern computational biology and drug discovery [11]. Traditional drug discovery is notoriously lengthy, expensive, and carries a high risk of failure, with the overall probability of a drug candidate succeeding from Phase I trials to approval being only about 8.1% [12]. This inefficiency has catalyzed a paradigm shift towards artificial intelligence (AI)-driven methodologies that promise to extract predictive rules directly from molecular sequences, thereby accelerating the identification of viable therapeutic candidates [11] [12].

The premise is deceptively simple: given the amino acid sequence of a protein or the chemical notation (e.g., SMILES string) of a ligand, an AI model must infer the likelihood and nature of their interaction. However, beneath this lies the profound complexity of molecular biophysics. AI models, particularly deep learning architectures, attempt to learn the hidden patterns and physical principles that govern these interactions from vast datasets of known examples, effectively building an internal, nonlinear map from sequence space to interaction space [13] [14]. This capability is transformative, enabling the high-throughput screening of millions of compounds against novel disease targets, a task infeasible with experimental methods alone [15].

Nevertheless, the predictive power of these "black box" models must be rigorously validated. This is where molecular dynamics (MD) simulations provide a critical bridge. MD offers a physics-based, mechanistic lens to scrutinize AI predictions, allowing researchers to simulate the temporal evolution of a predicted complex, assess its stability, calculate binding energies, and validate whether the inferred interaction is thermodynamically plausible [16]. Thus, the synergy between AI's predictive speed and MD's mechanistic depth forms the core thesis of contemporary molecular interaction research: sequence-based AI predictions provide testable hypotheses, which are then validated and refined through physics-based simulation [16] [3].

Comparative Guide to AI Model Architectures for Interaction Prediction

Different AI architectures approach the problem of learning from molecular sequences with distinct strategies, leading to variations in performance, interpretability, and computational demand. The following comparison is based on benchmark studies across diverse pharmaceutical endpoints, from target binding to toxicity [13] [11] [14].

Table 1: Performance Comparison of AI/ML Models on Diverse Pharmaceutical Prediction Tasks

| Model Architecture | Typical Application | Key Strength | Key Limitation | Reported Performance (AUC Range) | Interpretability |
|---|---|---|---|---|---|
| Deep Neural Networks (DNN) | ADME/Tox, Bioactivity Classification [13] | Learns complex, non-linear feature hierarchies; High predictive accuracy on large datasets. | Requires very large datasets; Prone to overfitting; Computational black box. | 0.80 - 0.95 [13] | Low |
| Graph Neural Networks (GNN) | Protein-Ligand Binding Affinity, Virtual Screening [11] | Natively handles molecular graph structure; Captures topological and spatial relationships. | Performance can depend on graph quality; Computationally intensive for large graphs. | >0.90 on specific docking benchmarks [11] | Medium (via attention mechanisms) |
| Transformer Models | Protein-Ligand Interaction, Sequence-Based Binding Site ID [11] [14] | Superior at capturing long-range dependencies in sequences; Effective with pre-training. | Extremely high parameter count; Demands massive compute and data. | Varies widely; can match or exceed GNNs [14] | Medium (via attention maps) |
| Support Vector Machine (SVM) | Binary Classification (e.g., hERG inhibition) [13] | Effective in high-dimensional spaces; Robust with smaller datasets. | Poor scalability to very large data; Kernel choice is critical. | 0.75 - 0.90 [13] | Medium |
| Random Forest (RF) | Bioactivity Classification, ADMET Prediction [13] | Handles non-linearities well; Provides feature importance metrics. | Can overfit noisy data; Less accurate than DL for complex patterns. | 0.70 - 0.88 [13] | High |
| Factorization Machines (e.g., survivalFM) | Modeling Pairwise Feature Interactions for Risk Prediction [17] | Efficiently models all pairwise interactions; Maintains interpretability. | Primarily designed for tabular data, not raw sequences. | Improved C-index in 41.7% of disease risk scenarios [17] | High |

Key Insights from Comparative Analysis: The landscape is not monolithic. A seminal comparative study found that Deep Neural Networks (DNNs) consistently ranked highest across eight diverse datasets (including solubility, hERG, and pathogen bioactivity) when evaluated using a composite of metrics like AUC and F1 score, outperforming SVM, which in turn outperformed other classical methods [13]. This highlights deep learning's power for direct pattern recognition.

However, for structured prediction tasks like binding pose or affinity, Geometric Deep Learning models, such as GNNs and SE(3)-equivariant networks, have taken precedence. They explicitly incorporate 3D structural inductive biases, leading to more accurate predictions when structural information is available or can be reliably predicted [11].

A critical caveat emerged from the analysis of models like DeepPurpose: many state-of-the-art models can exploit "topological shortcuts." They often learn to predict based on the network connectivity of proteins and ligands in the training database (i.e., how promiscuous a molecule is) rather than on their intrinsic chemical features. This leads to a catastrophic drop in generalizability to novel, unseen molecules [14]. This finding underscores the necessity for robust validation and model designs that force learning from sequence/structure features.
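
One way to probe for such shortcuts is to compare a model against a baseline that sees only network connectivity. The sketch below is a hypothetical diagnostic, not the procedure used in [14]: it scores each pair from the fraction of positive labels its protein and ligand received in training, and evaluates that score with a rank-based AUC. The data, function names, and the 0.5 default for unseen nodes are all assumptions.

```python
from collections import defaultdict

def degree_ratio_scores(train, test):
    """Score test pairs using only each node's share of positive training labels."""
    pos, tot = defaultdict(int), defaultdict(int)
    for protein, ligand, label in train:
        for node in (("P", protein), ("L", ligand)):
            tot[node] += 1
            pos[node] += label

    def ratio(node):
        # Assumption: nodes never seen in training get an uninformative 0.5.
        return pos[node] / tot[node] if tot[node] else 0.5

    return [(ratio(("P", p)) + ratio(("L", l))) / 2 for p, l, _ in test]

def auc(scores, labels):
    """Probability that a positive outscores a negative (ties count one half)."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Made-up (protein, ligand, label) triples.
train = [("P1", "L1", 1), ("P1", "L2", 1), ("P2", "L1", 1),
         ("P3", "L3", 0), ("P3", "L4", 0), ("P2", "L4", 0)]
seen = [("P2", "L2", 1), ("P3", "L1", 0), ("P1", "L4", 1), ("P3", "L2", 0)]
novel = [("P9", "L9", 1), ("P8", "L8", 0)]

auc_seen = auc(degree_ratio_scores(train, seen), [y for *_, y in seen])
auc_novel = auc(degree_ratio_scores(train, novel), [y for *_, y in novel])
print(auc_seen, auc_novel)
```

If a trained model's AUC on pairs of known molecules is no better than this feature-free baseline, and both collapse to chance on novel molecules, the model is likely relying on connectivity rather than chemistry.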

Experimental Protocols for Training and Validating AI Interaction Models

The reliability of an AI prediction is fundamentally tied to the quality of the experimental data and protocols used to create the model. Below are detailed methodologies for key steps in the pipeline.

Protocol 1: Dataset Curation and Feature Engineering for a Binary Binding Classifier

- Objective: To create a robust dataset for training a model to classify whether a ligand binds to a protein target.

- Materials:

  - Source Databases: BindingDB [14], ChEMBL [13], DrugBank [14] for positive (binding) pairs.

  - Negative Sampling Strategy: Crucial for avoiding bias. Use network-based distant sampling [14]: select non-binding pairs where the protein and ligand are far apart in the known interaction network (e.g., shortest path distance >3). Combine with experimental non-binders from databases like Tox21 [14].

  - Descriptors: For ligands, use Extended-Connectivity Fingerprints (ECFP) or SMILES strings. For proteins, use amino acid sequence or pre-trained embeddings (e.g., from ProtBERT).

- Procedure:

  - Extract all confirmed binding pairs for a target family of interest (e.g., kinases) from source databases, applying a consistent binding affinity threshold (e.g., Kd < 10 µM).

  - Apply the distant negative sampling algorithm to generate a set of putative non-binders of equal size to the positive set.

  - Split the data into training, validation, and test sets using a temporal split or a clustered split (based on molecular similarity) to prevent data leakage and better simulate real-world generalization [13].

  - Convert all molecular entities into their chosen descriptor format (e.g., tokenize SMILES, generate fingerprints).
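
The distant negative sampling step can be illustrated with a breadth-first search over the bipartite interaction network. This is a minimal sketch, not the AI-Bind code: the function names are hypothetical, and pairs in disconnected components are excluded here as a design assumption (a real pipeline might treat them as maximally distant instead).

```python
import random
from collections import deque

def shortest_path_lengths(adj, source):
    """Breadth-first search distances from source to every reachable node."""
    dist = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in adj.get(node, ()):
            if neighbor not in dist:
                dist[neighbor] = dist[node] + 1
                queue.append(neighbor)
    return dist

def distant_negatives(positives, n, min_dist=3, seed=0):
    """Pick up to n protein-ligand pairs whose network distance exceeds min_dist."""
    adj = {}
    for protein, ligand in positives:
        adj.setdefault(("P", protein), set()).add(("L", ligand))
        adj.setdefault(("L", ligand), set()).add(("P", protein))
    proteins = sorted(k[1] for k in adj if k[0] == "P")
    ligands = sorted(k[1] for k in adj if k[0] == "L")
    candidates = []
    for protein in proteins:
        dist = shortest_path_lengths(adj, ("P", protein))
        for ligand in ligands:
            d = dist.get(("L", ligand))
            # Assumption: unreachable pairs (d is None) are skipped.
            if d is not None and d > min_dist:
                candidates.append((protein, ligand))
    random.Random(seed).shuffle(candidates)
    return candidates[:n]

# Toy chain network: P1-L1-P2-L2-P3-L3 (made-up binding pairs).
positives = [("P1", "L1"), ("P2", "L1"), ("P2", "L2"), ("P3", "L2"), ("P3", "L3")]
negatives = distant_negatives(positives, n=5)
print(negatives)
```

Because the network is bipartite, protein-ligand distances are always odd, so a cutoff of "greater than 3" selects pairs at distance 5 or more; in the toy chain only P1 and L3 qualify.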

Protocol 2: Unsupervised Pre-training of Molecular Embeddings (as in AI-Bind)

- Objective: To learn general, informative representations of proteins and ligands from large, unlabeled databases to improve downstream binding prediction, especially for novel entities [14].

- Materials:

  - Large-scale molecular databases (e.g., PubChem for ligands, UniProt for protein sequences).

  - A self-supervised learning framework (e.g., a Transformer autoencoder).

- Procedure:

  - Assemble a corpus of millions of ligand SMILES strings and/or protein sequences.

  - For ligands, train a model on tasks like masked token prediction (mask parts of the SMILES string and predict them) or contrastive learning between similar molecules.

  - For proteins, train on next-amino-acid prediction or homology-based contrastive tasks.

  - Use the trained encoder to generate fixed-dimensional embedding vectors for all molecules in the supervised binding dataset. These embeddings, rather than raw sequences, serve as the input features for the binding classifier.
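
To make the masked token prediction task concrete, the sketch below prepares one training example from a SMILES string. It is an assumption-laden illustration: the regular expression is a simplified tokenizer (bracket atoms, `Cl`, `Br`, otherwise single characters), the 15% masking rate is a common convention rather than a value from [14], and `tokenize` and `mask_tokens` are hypothetical names.

```python
import random
import re

# Simplified SMILES tokenizer: bracket atoms, two-letter halogens, else one character.
TOKEN_RE = re.compile(r"\[[^\]]+\]|Br|Cl|.")

def tokenize(smiles):
    return TOKEN_RE.findall(smiles)

def mask_tokens(tokens, mask_rate=0.15, seed=0, mask="[MASK]"):
    """Replace a random subset of tokens with a mask symbol; return inputs and targets."""
    rng = random.Random(seed)
    n_mask = max(1, round(mask_rate * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_mask))
    masked = list(tokens)
    labels = {}
    for i in positions:
        labels[i] = masked[i]  # the token the model must recover
        masked[i] = mask
    return masked, labels

tokens = tokenize("CC(=O)Oc1ccccc1C(=O)O")  # aspirin
masked, labels = mask_tokens(tokens)
print(masked, labels)
```

The model is trained to predict `labels` from `masked`; the encoder learned this way then supplies the fixed-dimensional embeddings used in the last step of the procedure.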

Protocol 3: Benchmarking an AI Model in a Virtual Screening Challenge (DO Challenge)

- Objective: To evaluate an AI agent's ability to strategically identify top candidate molecules from a vast library with limited resources [15].

- Materials:

  - The DO Challenge benchmark dataset (1 million molecular conformations with a hidden "DO Score").

  - A computational environment where the agent can write and execute code.

- Procedure:

  - The agent is allowed to query the true DO Score for a maximum of 10% (100,000) of the library.

  - The agent must develop and execute a strategy (e.g., active learning with a GNN) to select 3,000 molecules predicted to have the highest scores.

  - Performance is scored by the percentage overlap between the agent's selection and the true top 1,000 molecules.

- This protocol tests not just model accuracy, but strategic planning, resource allocation, and iterative learning [15].
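
The scoring rule can be written as a short function. The sketch assumes the overlap is normalized by the size of the true top set (1,000), which is one reading of "percentage overlap"; the benchmark's official scorer may differ, and `overlap_score` is a hypothetical name.

```python
def overlap_score(selected_ids, true_scores, top_k=1000, max_select=3000):
    """Percentage of the true top_k molecules that appear in the agent's selection."""
    selected = set(selected_ids)
    if len(selected) > max_select:
        raise ValueError(f"selection exceeds the {max_select}-molecule budget")
    ranked = sorted(true_scores, key=true_scores.get, reverse=True)
    top = set(ranked[:top_k])
    return 100.0 * len(selected & top) / top_k

# Scaled-down toy library: 10 molecules, top 3 count, budget of 5 picks.
true_scores = {i: float(i) for i in range(10)}
score = overlap_score([9, 8, 1, 2, 3], true_scores, top_k=3, max_select=5)
print(round(score, 2))
```

In the toy run the selection captures two of the three best molecules; with the real settings the same function would be called with the defaults `top_k=1000` and `max_select=3000`.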

The Validation Bridge: Molecular Dynamics of AI-Predicted Complexes

AI models provide a static prediction—a snapshot of a potential interaction. Molecular dynamics (MD) simulation is the essential tool for validating the dynamic stability and thermodynamic feasibility of this snapshot [16]. This process transforms a computational prediction into a physically credible hypothesis.

Table 2: MD Simulation Protocols for Validating AI-Predicted Interactions

| Simulation Stage | Protocol for a Globular Protein-Ligand Complex | Protocol for an Intrinsically Disordered Protein (IDP) Complex | Key Metrics for Validation |
|---|---|---|---|
| System Preparation | 1. Place AI-predicted pose in a solvation box. 2. Add ions to neutralize charge. 3. Apply force fields (e.g., CHARMM36, AMBER). | 1. Start from an ensemble of AI-generated IDP conformations. 2. Solvate and neutralize. Use force fields tuned for IDPs (e.g., CHARMM36m). | System size, charge neutrality. |
| Equilibration | 1. Energy minimization. 2. Gradual heating to 310 K over 100 ps. 3. Pressure equilibration (1 atm) over 100 ps. | 1. Energy minimization. 2. Extended equilibration (ns timescale) to relax the flexible chain. | Stable temperature, pressure, density; Root-mean-square deviation (RMSD) plateau. |
| Production Run | Unrestrained simulation for 100 ns to 1 µs. Multiple replicates from different initial velocities are recommended. | Enhanced sampling (e.g., Gaussian accelerated MD) is often required to capture rare transitions over ~1 µs [16]. | Complex stability (ligand RMSD), binding mode persistence, residence time. |
| Analysis & Validation | 1. Calculate binding free energy (e.g., via MM/PBSA or FEP). 2. Analyze interaction fingerprints (H-bonds, hydrophobic contacts). 3. Compare to experimental data (Kd, IC50) if available. | 1. Analyze ensemble properties: radius of gyration, secondary structure propensity. 2. Calculate contact maps with binding partner. 3. Validate against experimental data (NMR chemical shifts, SAXS profiles) [16]. | Quantitative binding affinity, mechanistic interaction details, agreement with biophysical experiments. |

The Critical Role of MD for IDPs: AI predictions for IDPs are exceptionally challenging due to their lack of a fixed structure. Here, AI and MD roles can reverse: AI generative models can rapidly sample the vast conformational ensemble of an unbound IDP, which would be prohibitively expensive for MD alone [16]. MD simulations then take these AI-generated conformations as starting points and simulate their binding to a partner, testing which conformational sub-states are competent for interaction. For example, a study on the disordered protein ArkA used Gaussian accelerated MD to reveal how proline isomerization acts as a conformational switch regulating SH3 domain binding [16], a detail beyond the scope of static AI prediction.
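
Two of the metrics named in the validation table, RMSD and radius of gyration, are simple to compute once coordinates are in hand. The sketch below uses plain Python on toy coordinates and makes several assumptions: frames are already superimposed on the reference (no fitting is performed), the radius of gyration is unweighted by mass, and the plateau test with its window and tolerance is an illustrative heuristic. In practice these quantities would come from a trajectory-analysis package.

```python
import math

def rmsd(frame, ref):
    """RMSD between two equally sized coordinate lists (assumed pre-aligned)."""
    sq = sum((a - b) ** 2 for p, q in zip(frame, ref) for a, b in zip(p, q))
    return math.sqrt(sq / len(ref))

def radius_of_gyration(frame):
    """Unweighted radius of gyration around the geometric center."""
    n = len(frame)
    center = [sum(p[k] for p in frame) / n for k in range(3)]
    sq = sum((p[k] - center[k]) ** 2 for p in frame for k in range(3))
    return math.sqrt(sq / n)

def has_plateaued(series, window=5, tol=0.5):
    """True if the last `window` values all lie within `tol` of their mean."""
    if len(series) < window:
        return False
    tail = series[-window:]
    mean = sum(tail) / window
    return all(abs(v - mean) <= tol for v in tail)

# Toy two-atom system (coordinates in Å, made-up values).
ref = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
frame = [(0.0, 0.0, 1.0), (1.0, 0.0, 1.0)]
print(rmsd(frame, ref), radius_of_gyration(ref))

# Made-up ligand RMSD time series (Å): one that settles, one that keeps drifting.
stable = [0.5, 1.2, 1.9, 2.0, 2.1, 2.0, 1.9, 2.0]
drifting = [0.5, 1.0, 1.5, 2.0, 2.5, 3.0]
print(has_plateaued(stable), has_plateaued(drifting))
```

A ligand RMSD that plateaus across independent replicates supports the AI-predicted pose, whereas a steadily drifting series suggests the pose is not stable under the chosen force field.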

Diagram: AI Prediction and MD Validation Workflow. The pipeline shows how AI models generate static structural hypotheses from sequence, which are then solvated and simulated using MD to produce dynamic, energetically validated insights. A feedback loop allows MD results to improve future AI training [16] [14].

The Scientist's Toolkit: Essential Research Reagent Solutions

Moving from concept to practice requires a suite of computational tools and data resources. The following toolkit is essential for building, validating, and interpreting sequence-based interaction models.

Table 3: Essential Toolkit for AI-Driven Molecular Interaction Research

| Tool/Resource Name | Type | Primary Function | Key Application in Workflow |
|---|---|---|---|
| RDKit | Open-Source Cheminformatics Library | Generation and manipulation of chemical molecules, calculation of molecular descriptors and fingerprints [13]. | Featurization of ligand SMILES strings into model-ready inputs (e.g., ECFP fingerprints). |
| PyTorch Geometric / DGL-LifeSci | Deep Learning Library | Implements Graph Neural Networks and other geometric deep learning models tailored for molecules [11]. | Building and training models that learn directly from molecular graphs or 3D structures. |
| AlphaFold2 / OpenFold | Protein Structure Prediction Model | Predicts highly accurate 3D protein structures from amino acid sequences [3]. | Provides structural inputs for models that require 3D protein data when experimental structures are unavailable. |
| GROMACS / AMBER | Molecular Dynamics Simulation Suite | Performs high-performance MD simulations using physics-based force fields [16]. | Validating the stability and thermodynamics of AI-predicted complexes (Production Run & Analysis). |
| BindingDB / ChEMBL | Interaction Database | Curated repositories of experimental protein-ligand binding affinities and bioactivities [13] [14]. | Source of ground-truth data for training and testing supervised AI models. |
| AI-Bind Pipeline | Specialized Prediction Pipeline | Combines network science and unsupervised learning to improve predictions for novel proteins/ligands [14]. | Tackling the "cold start" problem in drug discovery for targets with little known binding data. |
| DO Challenge Benchmark | Evaluation Benchmark | Simulates a resource-constrained virtual screening campaign [15]. | Benchmarking the end-to-end strategic performance of AI agentic systems in drug discovery. |

Future Directions and Integrative Frameworks

The field is rapidly evolving beyond static prediction. The next frontier involves integrative agentic systems that don't just predict but plan and execute entire discovery campaigns. As demonstrated by the Deep Thought system in the DO Challenge, future AI will manage the entire loop: proposing targets, generating molecules, predicting interactions, prioritizing compounds for MD validation, and designing subsequent experiments [15].

A major focus is overcoming the generalizability challenge. Solutions like AI-Bind's unsupervised pre-training and network-aware negative sampling are critical steps toward models that reason from first principles of chemistry rather than database biases [14]. Furthermore, the integration of physics directly into AI models is a growing trend. This includes developing hybrid models that use neural networks to approximate energy functions or guide MD sampling, blending the speed of learning with the rigor of physics [16].

Finally, the community is moving toward dynamic ensemble predictions, especially for disordered systems. The goal is to predict not a single structure but a probabilistic ensemble of conformations and their interaction probabilities, which can then be tested by MD and experiment [16] [3]. This shift from a static to a dynamic view is an important step toward opening the black box and turning it into a principled, predictive, and interpretable engine for molecular discovery.

The integration of Artificial Intelligence (AI) into molecular research and drug discovery represents a paradigm shift, promising to compress traditional development timelines from years to months [18]. AI platforms now generate novel molecular structures, predict protein-ligand interactions, and nominate therapeutic candidates with unprecedented speed. However, this acceleration has revealed a critical gap: static computational predictions frequently fail to capture the dynamic, energetic, and context-dependent realities of biological systems [19]. A prediction of high binding affinity is meaningless if the compound cannot adopt the necessary conformation in solution or if it disrupts essential protein dynamics.

This guide argues that the transformative potential of AI in molecular sciences is contingent on robust, physics-based validation. It compares leading approaches and platforms, not by their computational prowess alone, but by their commitment to and frameworks for dynamic and energetic validation through molecular dynamics simulations (MDS) and iterative experimental cycles. The convergence of AI with high-fidelity simulation and automated experimentation forms the essential bridge across the credibility gap, turning fast predictions into reliable discoveries.

Comparative Analysis of Platforms and Methods

This section provides a structured comparison of leading AI-driven discovery platforms and the computational methods used to validate their predictions.

Comparison of Leading AI Drug Discovery Platforms

The following table compares major AI-driven drug discovery companies based on their core validation philosophy and recorded outcomes.

| Platform (Company) | Core AI Approach | Primary Validation Strategy | Key Metric/Outcome | Clinical Stage Example (as of 2025) |

|---|---|---|---|---|

| Generative Chemistry (Exscientia) | Generative AI for molecular design; "Centaur Chemist" human-AI collaboration. | Integrated design-make-test-analyze (DMTA) cycles with patient-derived tissue phenotyping [18]. | ~70% faster design cycles; 10x fewer compounds synthesized than industry norm [18]. | CDK7 inhibitor (GTAEXS-617) in Phase I/II for solid tumors [18]. |

| Physics-Enabled Design (Schrödinger) | First-principles physics (e.g., free-energy perturbation) combined with machine learning. | Rigorous physics-based simulations (e.g., FEP, MD) for binding affinity and selectivity prediction prior to synthesis [18]. | Advanced TYK2 inhibitor (zasocitinib) from Nimbus Therapeutics into Phase III trials [18]. | TAK-279 (zasocitinib) for psoriasis in Phase III [18]. |

| Phenomics-First Systems (Recursion) | AI analysis of high-content cellular imaging (phenomics) to infer biology and drug activity. | Large-scale phenotypic screening in disease models; validation of AI-hypothesized mechanisms [18]. | Merger with Exscientia to combine phenomics with generative chemistry [18]. | Pipeline includes candidates for oncology and genetic diseases [18]. |

| Knowledge-Graph Repurposing (BenevolentAI) | Mining scientific literature and data to identify novel drug-target-disease associations. | In silico evidence strengthening followed by in vitro biological assay validation [18]. | Identified BAR-Therapeutic's latent TGF-β binding protein 4 (LTBP4) program for muscular dystrophy [18]. | Preclinical and clinical-stage pipeline across neurology, psychiatry, immunology [18]. |

| End-to-End Generative (Insilico Medicine) | Generative AI for target discovery and molecular design (Chemistry42). | Multimodal validation including MDS for binding mode stability and in vitro / in vivo testing [18]. | First AI-discovered drug (ISM001-055) reached Phase I in 18 months from target discovery [18]. | TNIK inhibitor (ISM001-055) for idiopathic pulmonary fibrosis in Phase IIa [18]. |

Comparison of Computational Validation Methods

This table contrasts different computational methods used to assess the stability and energetics of AI-predicted molecular interactions, such as protein-ligand complexes.

| Validation Method | Underlying Principle | Key Output Metrics | Strengths | Limitations | Role in Bridging the "Critical Gap" |

|---|---|---|---|---|---|

| Classical Molecular Dynamics (MDS) | Numerical integration of Newton's equations of motion for all atoms using a molecular mechanics force field. | Root-mean-square deviation (RMSD), radius of gyration (Rg), solvent-accessible surface area (SASA), hydrogen bond counts, free energy landscapes [19]. | Provides time-resolved insight into conformational stability, flexibility, and essential dynamics. Can simulate microseconds. | Computationally expensive; accuracy limited by the empirical force field parameters. | Directly assesses the dynamic stability of a predicted pose, revealing if it is a stable minimum or a transient state. |

| Neural Network Potentials (NNPs) (e.g., Meta's UMA/eSEN) | Machine-learned potentials trained on vast datasets of high-accuracy quantum chemical calculations [20]. | Potential energy, forces, and properties at near-quantum mechanics (QM) accuracy but at MD speed. | Near-DFT accuracy with MD scalability. Can model reactive chemistry. Excellent for geometry optimization [20]. | Requires massive training datasets (~100M calculations for OMol25); inference slower than classical MD [20]. | Enables high-fidelity energy evaluations and dynamics for systems where QM is too slow and classical MD is insufficiently accurate. |

| Free Energy Perturbation (FEP) | Computes the free energy difference between two states (e.g., bound/unbound, different ligands) via thermodynamic perturbation. | Relative binding free energy (ΔΔG) in kcal/mol. | Gold standard for in silico binding affinity prediction when configured correctly. Highly quantitative. | Extremely computationally intensive; sensitive to setup (alignment, sampling); requires expert knowledge. | Provides the energetic validation of AI predictions, quantifying whether a predicted interaction is thermodynamically favorable. |

| Static Docking & Scoring | Rigid or semi-flexible docking of a ligand into a protein active site, scored with an empirical or knowledge-based function. | Docking score (unitless), predicted binding pose. | Extremely fast, allowing virtual screening of billions of compounds. | Ignores dynamics, solvation, and entropic effects. High false-positive rate. Prone to the "critical gap." | The starting point for AI predictions that must be followed by dynamic and energetic validation methods. |
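
The FEP row above rests on exponential averaging of energy differences between two states. The sketch below shows that idea in its simplest single-step form (the Zwanzig relation) and the thermodynamic-cycle subtraction that yields a relative binding free energy. It is a minimal illustration, not a production workflow: real FEP calculations use many intermediate windows and estimators such as BAR or MBAR, and the function names and the 300 K default are assumptions introduced here.

```python
import math

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def zwanzig_delta_g(delta_u, temperature=300.0):
    """Single-step exponential-averaging free energy estimate (kcal/mol).

    delta_u: energy differences U_B - U_A (kcal/mol) evaluated on
             configurations sampled in state A.
    """
    kt = K_B * temperature
    # Shift by the minimum so the exponentials stay numerically well behaved.
    shift = min(delta_u)
    avg = sum(math.exp(-(du - shift) / kt) for du in delta_u) / len(delta_u)
    return shift - kt * math.log(avg)

def relative_binding_free_energy(dg_complex, dg_solvent):
    """ddG of binding from the two legs of the thermodynamic cycle."""
    return dg_complex - dg_solvent
```

Because the estimator is dominated by the lowest-energy samples, it converges poorly when the two states overlap weakly, which is one reason the table describes FEP as sensitive to setup and sampling.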

Experimental Protocols for Dynamic Validation

Protocol: Molecular Dynamics Simulation for Mutation Pathogenicity

This protocol, based on the Dynamicasome study, details how MDS can validate AI predictions of mutation effects [19].

System Preparation:

- Model Generation: For a protein of interest (e.g., PMM2), generate 3D structural models for the wild-type and all possible missense variants using in silico mutagenesis tools.

- Solvation and Ionization: Embed each protein model in a rectangular water box (e.g., TIP3P water model), keeping a minimum distance (commonly about 10 Å) between the protein and the box edge. Add counterions (e.g., Na⁺, Cl⁻) to neutralize the system's charge, plus additional salt at a physiological concentration to mimic ionic strength.

- Parameter Assignment: Build the system topology by assigning force field parameters (e.g., CHARMM36, AMBER ff19SB) to all atoms in the system.
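
The ionization step comes down to simple arithmetic: counterions to cancel the solute charge, plus salt pairs for the target concentration. The sketch below assumes monovalent ions, a 0.15 M default, and the full box volume as the solvent volume (a slight overestimate, since the protein displaces water). The function names are hypothetical; MD packages provide their own tools for this step.

```python
AVOGADRO = 6.02214076e23  # particles per mole
NM3_TO_LITRE = 1e-24      # 1 nm^3 = 1e-24 L

def salt_pairs(box_nm, molar):
    """Number of cation/anion pairs needed for a target salt concentration."""
    x, y, z = box_nm
    volume_l = x * y * z * NM3_TO_LITRE
    return round(molar * AVOGADRO * volume_l)

def ion_counts(box_nm, net_charge, molar=0.15):
    """Return (n_cations, n_anions) of monovalent ions: salt plus counterions."""
    pairs = salt_pairs(box_nm, molar)
    n_cations = pairs + max(0, -net_charge)  # extra Na+ offsets a negative solute
    n_anions = pairs + max(0, net_charge)    # extra Cl- offsets a positive solute
    return n_cations, n_anions

# An 8 nm cubic box holding a solute with net charge -4:
print(ion_counts((8.0, 8.0, 8.0), net_charge=-4))  # (50, 46)
```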

Simulation Execution:

- Energy Minimization: Perform steepest descent and conjugate gradient minimization to remove steric clashes and bad contacts from the initial structure.

- Equilibration: Conduct a two-stage equilibration in the NVT (constant Number, Volume, Temperature) and NPT (constant Number, Pressure, Temperature) ensembles. Gradually heat the system to the target temperature (e.g., 310 K) and adjust the pressure to 1 atm using a Berendsen barostat. Apply positional restraints on protein heavy atoms during initial equilibration, which are gradually released.

- Production Run: Perform an unrestrained production MD simulation for a duration sufficient to capture relevant dynamics (e.g., 100 ns to 1 μs per variant). Save atomic coordinates at regular intervals (e.g., every 10-100 ps) for analysis.
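
The production-run settings translate directly into integrator steps and saved frames, which is useful for estimating cost and storage before launching one simulation per variant. The sketch below assumes a 2 fs timestep, a common choice with constrained bonds to hydrogen that the protocol does not itself specify. The function name is hypothetical.

```python
def production_plan(length_ns, timestep_fs=2.0, save_every_ps=10.0):
    """Convert a simulation length into integrator steps and saved frames."""
    n_steps = round(length_ns * 1e6 / timestep_fs)            # 1 ns = 1e6 fs
    save_interval = round(save_every_ps * 1e3 / timestep_fs)  # 1 ps = 1e3 fs
    return {
        "n_steps": n_steps,
        "save_interval_steps": save_interval,
        "n_frames": n_steps // save_interval,
    }

# 100 ns saved every 10 ps: 50,000,000 steps and 10,000 frames.
print(production_plan(100))
```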

Feature Extraction for AI Training:

- From the production trajectory, calculate a suite of dynamic stability metrics for both wild-type and variant proteins:

  - Root-mean-square deviation (RMSD) of the protein backbone.

  - Radius of gyration (Rg) as a measure of compactness.

  - Solvent-accessible surface area (SASA).

  - Number of intramolecular hydrogen bonds.

  - Secondary structure composition over time.

- These features form the dynamic dataset used to train or validate AI models, moving beyond static structural features [19].
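
Two of the metrics listed above, RMSD and radius of gyration, can be written in a few lines. The sketch below uses only the standard library and assumes each frame is a list of (x, y, z) tuples already superimposed on the reference. In practice a trajectory library such as MDAnalysis or MDTraj would handle file reading, alignment, and atom selection. The function names are illustrative.

```python
import math

def rmsd(coords, reference):
    """RMSD between two conformations given as lists of (x, y, z) tuples.

    Assumes the frame has already been superimposed on the reference.
    """
    if len(coords) != len(reference):
        raise ValueError("coordinate sets must have the same number of atoms")
    sq = sum((a - b) ** 2 for p, q in zip(coords, reference) for a, b in zip(p, q))
    return math.sqrt(sq / len(coords))

def radius_of_gyration(coords, masses=None):
    """Mass-weighted radius of gyration; equal masses if none are given."""
    masses = masses or [1.0] * len(coords)
    total = sum(masses)
    com = [sum(m * p[i] for m, p in zip(masses, coords)) / total for i in range(3)]
    sq = sum(m * sum((p[i] - com[i]) ** 2 for i in range(3))
             for m, p in zip(masses, coords))
    return math.sqrt(sq / total)

def summarize(trajectory, reference):
    """Per-frame (RMSD, Rg) pairs for a trajectory given as a list of frames."""
    return [(rmsd(frame, reference), radius_of_gyration(frame)) for frame in trajectory]
```

Running `summarize` on the wild-type and on each variant trajectory gives comparable per-frame series that can be reduced to means, variances, or drift rates before being used as model features.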


Protocol: Closed-Loop AI-Driven Experimental Validation (CRESt Framework)

This protocol describes an automated, robotic workflow for validating and optimizing AI-predicted materials, as exemplified by the MIT CRESt platform for catalyst discovery [21].

Human-AI Co-Design:

- A researcher defines the objective in natural language (e.g., "maximize power density of a formate fuel cell catalyst").

- The AI system (e.g., a multimodal large language model) integrates this goal with knowledge from scientific literature, existing databases, and prior experimental results to propose an initial set of candidate material recipes.

Robotic Synthesis and Processing:

- A liquid-handling robot precisely combines precursor solutions according to the AI-proposed recipe.

- A carbothermal shock system or other automated reactors perform the rapid, controlled synthesis of the candidate material.

Automated Characterization and Testing:

- The synthesized material is transferred to an automated electrochemical workstation for performance testing (e.g., cyclic voltammetry, impedance spectroscopy).

- Parallel characterization is performed using automated electron microscopy (SEM/TEM) and X-ray diffraction to analyze morphology and structure.

Analysis, Learning, and New Proposal:

- Test and characterization data are fed back to the AI models.

- A Bayesian optimization active learning loop analyzes the results, quantifies uncertainty, and balances exploration of new chemistries with exploitation of promising leads.

- The system proposes a new batch of refined material recipes, and the loop (robotic synthesis, automated characterization, and analysis) repeats. This closed cycle was used to explore over 900 chemistries and run 3,500 electrochemical tests autonomously [21].
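
The exploration/exploitation balance in the final stage can be illustrated with an upper-confidence-bound (UCB) acquisition rule, one common choice in Bayesian optimization. This is a sketch of the general technique, not the CRESt implementation. It assumes a surrogate model (for example, a Gaussian process from a library such as BoTorch) has already supplied a predicted mean and uncertainty for each candidate. The recipe labels and numbers are made up.

```python
def ucb(mean, std, kappa=2.0):
    """Upper confidence bound: predicted value plus an uncertainty bonus."""
    return mean + kappa * std

def select_next_batch(predictions, batch_size=2, kappa=2.0):
    """Pick the candidates with the highest UCB score.

    predictions: dict mapping a recipe label to (mean, std) from a surrogate.
    """
    ranked = sorted(predictions,
                    key=lambda recipe: ucb(*predictions[recipe], kappa),
                    reverse=True)
    return ranked[:batch_size]

# Hypothetical surrogate output for four candidate recipes.
predictions = {
    "A": (0.80, 0.02),
    "B": (0.60, 0.20),
    "C": (0.75, 0.01),
    "D": (0.30, 0.05),
}
print(select_next_batch(predictions))             # ['B', 'A']
print(select_next_batch(predictions, kappa=0.0))  # ['A', 'C']
```

With `kappa=0` the rule is purely exploitative and ignores uncertainty. Larger values push the next batch toward poorly characterized regions of the search space.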

Visualizing Validation Workflows

Diagram 1: Molecular Dynamics Validation Workflow for AI Predictions [19]

Diagram 2: Closed-Loop AI-Driven Experimental Validation Cycle [21] [22]

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential computational and experimental resources for implementing dynamic validation of AI predictions.

| Item Name | Type/Provider | Primary Function in Validation | Key Consideration for Use |

|---|---|---|---|

| Open Molecules 2025 (OMol25) Dataset & UMA Models | Dataset & Pre-trained NNPs (Meta FAIR) [20] | Provides a massive, high-accuracy quantum chemical dataset and neural network potentials for performing molecular dynamics or geometry optimization at near-DFT accuracy, crucial for validating electronic properties and reaction energies. | Models are computationally demanding for inference. Best for final-stage validation of promising candidates rather than high-throughput screening. |

| GROMACS/AMBER/NAMD | Molecular Dynamics Simulation Software | Industry-standard suites for running classical all-atom MD simulations. Used to calculate dynamic stability metrics (RMSD, Rg, SASA) for proteins, complexes, or materials predicted by AI. | Choice of force field (e.g., CHARMM36, AMBER ff19SB) and water model is critical for biological accuracy. Requires significant HPC resources. |

| Schrödinger's Desmond & FEP+ | Integrated MD & Free-Energy Simulation Suite | Provides a streamlined workflow for running MD and free energy perturbation calculations to validate binding modes and predict relative binding affinities of AI-generated compounds. | Commercial software with high licensing costs. FEP+ requires careful system preparation for reliable results. |

| CRESt-like Robotic Platform | Integrated Robotic System (e.g., custom) [21] | Automates the physical synthesis, characterization, and testing of AI-predicted molecules or materials, creating a closed validation loop. Components include liquid handlers, electrochemical workstations, and automated microscopes. | High capital investment and maintenance. Requires interdisciplinary expertise to integrate robotics, chemistry, and AI software. |

| Bayesian Optimization Libraries (BoTorch, GPyOpt) | Python Software Libraries [22] | Implements Bayesian optimization and active learning algorithms to intelligently select the next best experiment based on previous results, maximizing information gain from each validation cycle. | Effective design requires a well-defined search space and a suitable surrogate model (e.g., Gaussian Process). |

In the rapidly advancing field of computational structural biology, molecular dynamics (MD) simulation remains an indispensable tool for the validation of artificial intelligence (AI)-predicted molecular interactions. This is particularly critical for research focused on drug development, where understanding the stability, dynamics, and binding mechanisms of protein-ligand complexes directly impacts the discovery of new therapeutics. While AI models, such as AlphaFold and RoseTTAFold, have achieved remarkable success in predicting static protein structures, they provide limited information on dynamic behavior, conformational plasticity, and the thermodynamic feasibility of interactions, all of which are essential for understanding biological function and drug efficacy [23].

MD simulations bridge this gap by providing an atomic-resolution, time-evolving perspective based on physics-based principles. The foundational pillars of any reliable MD study are the force field—the mathematical model defining interatomic potentials—and the sampling methodology—the strategy for exploring the conformational landscape. The accuracy of the force field dictates how realistically the simulation represents true physical behavior, while the comprehensiveness of the sampling determines whether the observed dynamics are statistically representative or merely artifacts of limited exploration [24] [25]. Consequently, the systematic comparison and selection of these components are not merely technical choices but are central to constructing a robust validation pipeline for AI predictions. This guide provides an objective, data-driven comparison of contemporary force fields and sampling strategies, contextualized within the workflow of validating AI-predicted biomolecular interactions.

Comparative Analysis of Biomolecular Force Fields

The choice of force field is arguably the most consequential factor affecting the outcome and credibility of an MD simulation. Modern biomolecular force fields share a common mathematical form, comprising terms for bonded interactions (bonds, angles, dihedrals) and non-bonded interactions (van der Waals and electrostatic forces), but differ in their parameterization philosophies and target applications [26] [25]. Their performance is not universal; it varies significantly with the type of molecule (e.g., protein, nucleic acid, lipid) and the property of interest (e.g., structural stability, loop dynamics, binding free energy).
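
For reference, the common functional form mentioned above is usually written as a sum of bonded and non-bonded terms. The expression below is a generic textbook version. Individual force fields differ in details such as factors of 1/2, additional cross terms (e.g., Urey-Bradley or CMAP corrections in CHARMM), and the treatment of 1-4 interactions, so the symbols should be read as illustrative rather than as any one family's definition.

```latex
U(\mathbf{r}) =
    \sum_{\text{bonds}} k_b \,(b - b_0)^2
  + \sum_{\text{angles}} k_\theta \,(\theta - \theta_0)^2
  + \sum_{\text{dihedrals}} k_\phi \left[ 1 + \cos(n\phi - \delta) \right]
  + \sum_{i<j} \left\{
        4\varepsilon_{ij} \left[
            \left( \frac{\sigma_{ij}}{r_{ij}} \right)^{12}
          - \left( \frac{\sigma_{ij}}{r_{ij}} \right)^{6}
        \right]
      + \frac{q_i q_j}{4\pi\varepsilon_0\, r_{ij}}
    \right\}
```

The first three sums are the bonded terms (bonds, angles, dihedrals). The last sum combines the Lennard-Jones model of van der Waals interactions with the Coulomb term for electrostatics between partial charges.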

The following tables synthesize key findings from recent benchmarking studies across different biological systems. A critical insight is that a force field performing excellently for one system or property may be inadequate for another, underscoring the need for system-specific selection.

Table 1: Performance of Force Fields for Folded Protein Simulations. Data derived from 10 µs simulations of ubiquitin (Ubq) and the GB3 domain, compared to NMR experimental data [24].

| Force Field | Class/Category | Agreement with NMR Data (Ubq/GB3) | Key Observations on Structural Ensemble |

|---|---|---|---|

| CHARMM27 | Classical (All-Atom) | Good / Good | Samples a relatively narrow, well-defined native-like ensemble. Reliable for stable, folded proteins [24]. |

| CHARMM22* | Modern (Backbone-Corrected) | Good / Good | Similar to CHARMM27. Improved torsion parameters enhance sampling accuracy [24]. |

| Amber ff99SB-ILDN | Modern (Side-Chain Refined) | Good / Good | Balanced ensemble for folded proteins. A widely used standard in protein simulations [24]. |

| Amber ff99SB*-ILDN | Modern (Backbone & Side-Chain) | Good / Good | Despite different helical propensity parameters, ensemble is indistinguishable from ff99SB-ILDN for these folded proteins [24]. |

| Amber ff03 | Classical (All-Atom) | Intermediate / Intermediate | Samples a distinct, native-like ensemble but shows systematic deviations from ff99SB-derived fields [24]. |

| Amber ff03* | Modern (Backbone-Corrected) | Intermediate / Intermediate | Similar to ff03. Differences from experiment are likely due to fundamental parameterization [24]. |

| OPLS-AA | Classical (All-Atom) | Poor (Drift) / Poor (Drift) | Exhibits substantial conformational drift over time, leading to decreasing agreement with experiment [24]. |

| CHARMM22 | Classical (All-Atom) | Poor (Drift) / Very Poor (Unfolding) | Samples an overly broad ensemble; can lead to partial unfolding in long simulations [24]. |

Table 2: Performance of Force Fields for Specialized Systems. Data compiled from studies on liquid membranes, polyamide membranes, and intrinsically disordered proteins (IDPs) [27] [23] [28].

| System Type | Tested Force Fields | Top Performing Force Field(s) | Key Benchmarking Metric(s) | Performance Notes |

|---|---|---|---|---|

| Ether-Based Liquid Membranes (Diisopropyl Ether) [27] | GAFF, OPLS-AA/CM1A, CHARMM36, COMPASS | CHARMM36 | Density, shear viscosity, interfacial tension, partition coefficients | CHARMM36 predicted density within 0.5% and viscosity within 15% of experiment. GAFF and OPLS overestimated viscosity by 60-130% [27]. |

| Polyamide Reverse-Osmosis Membranes [28] | PCFF, CVFF, SwissParam, CGenFF, GAFF, DREIDING | SwissParam, CGenFF, CVFF | Dry density, porosity, Young's modulus, pure water permeability | Top performers predicted pure water permeability within the experimental 95% confidence interval. GAFF showed significant deviations in dry-state properties [28]. |

| Intrinsically Disordered Proteins (IDPs) [23] | Traditional (e.g., Amber, CHARMM variants) | Specialized MD (GaMD) & AI Methods | Conformational diversity, agreement with SAXS/CD data | Traditional fixed-charge force fields often struggle with IDP ensembles. Enhanced sampling (e.g., Gaussian accelerated MD) and AI-based generative models show superior sampling efficiency [23]. |

Table 3: Key Parameterization Features of Major Force Field Families

| Force Field Family | Parameterization Philosophy | Typical Water Model Partner | Strengths | Common Application Domains |

|---|---|---|---|---|

| AMBER | Fit to quantum mechanics (QM) calculations and experimental data for proteins/nucleic acids. [26] | TIP3P, SPC/E, OPC | Accurate torsional potentials for proteins and nucleic acids. Extensive parameter libraries. [25] | Protein folding, protein-ligand binding, DNA/RNA dynamics. [25] |

| CHARMM | Empirical optimization to reproduce experimental thermodynamic and QM data. [26] | TIP3P (CHARMM-modified) | Excellent for heterogeneous systems (e.g., proteins with lipids/membranes). Detailed lipid parameters. [25] | Membrane proteins, lipid bilayers, protein-nucleic acid complexes. [25] |

| OPLS-AA | Optimized for liquid-state properties and cohesive energy densities. [26] | TIP3P, TIP4P | Highly accurate for organic liquids and small molecule thermodynamics. [27] | Solvent modeling, ligand binding free energies, materials science. [27] [25] |

| GROMOS | Parameterized based on condensed-phase simulations to match thermodynamic properties. [26] | SPC | High computational efficiency. Good for long timescale simulations of large systems. [25] | Large-scale biomolecular systems, lipid membrane dynamics. [25] |

Methodologies for Enhanced Conformational Sampling

Achieving sufficient sampling of the conformational landscape is as critical as force field accuracy. Standard MD simulations are often limited by high energy barriers that trap the system in metastable states, a problem acutely felt when validating AI-predicted complexes that may reside in shallow energy minima.

Table 4: Comparison of Advanced Sampling Strategies

| Sampling Method | Core Principle | Typical Workflow | Advantages | Limitations & Considerations |

|---|---|---|---|---|

| Multiple Independent Simulations (MIS) [29] | Run many parallel, short simulations from diverse starting conformations. | 1. Generate diverse initial structures (e.g., from AI prediction or docking). 2. Run 10s-100s of independent, short (e.g., 100 ns) MD replicates. 3. Pool and analyze trajectories using cluster/PCA analysis. | Efficiently explores broad conformational space; reduces risk of single-trajectory trapping; naturally parallelizable. [29] | Determining optimal number and length of replicates is system-dependent. Convergence must be assessed globally (coverage) and locally (overlap). [29] |

| Enhanced Sampling via Collective Variables (CVs) [30] | Apply a biasing potential along predefined reaction coordinates (CVs) to drive transitions. | 1. Identify relevant CVs (e.g., distance, angle, RMSD). 2. Choose method (e.g., Umbrella Sampling, Metadynamics). 3. Run biased simulation(s) to sample along CVs. 4. Re-weight data to reconstruct unbiased free energy surface. | Directly targets and overcomes specific barriers; enables calculation of free energies. | Choice of CVs is critical and non-trivial. Poor CVs lead to ineffective sampling. Can be computationally demanding to set up. |

| AI-Enhanced & Machine-Learned Sampling [23] [31] | Use generative AI models to produce diverse conformations or machine learning to create accurate force fields. | A) Generative AI: Train a model on structural databases to directly generate plausible conformational ensembles. B) ML Force Fields: Train a model (e.g., sGDML) on high-level QM data to create a highly accurate potential. [31] | Generative AI: Extremely efficient at exploring vast conformational spaces for IDPs. [23] ML Force Fields: Achieves quantum chemical (e.g., CCSD(T)) accuracy for small molecules. [31] | Generative AI: May generate physically unrealistic states; validation with physics-based methods is essential. [23] ML Force Fields: Currently limited to small systems (<~50 atoms); requires costly QM training data. [31] |

| Hybrid AI/MD Protocols | Use AI to generate initial states or guide CV selection, then refine with physics-based MD. | 1. Generate initial diverse ensemble with a generative AI model. 2. Refine and score ensembles using short, parallel MD simulations. 3. Validate final ensemble against experimental data (e.g., SAXS, NMR). | Leverages AI's exploration power and MD's physical rigor. Provides a robust validation pipeline for AI predictions. [23] | Requires integration of different software pipelines. Validation strategy must be carefully designed. |

Experimental Protocol: Multiple Independent Simulations (MIS) for Validating a Predicted Protein-Ligand Complex

This protocol, adapted from a study on RNA aptamer sampling, is highly effective for assessing the stability and dynamics of an AI-predicted protein-ligand pose [29].

Initial Structure Preparation:

- Start with the AI-predicted protein-ligand complex structure.

- Generate structural variations to create a diverse starting set. Methods include:

  - Using alternate AI model outputs (e.g., different AlphaFold models).

  - Molecular docking with multiple scoring functions.

  - Perturbing the ligand pose with short, high-temperature MD simulations.

- A minimum of 5-10 distinctly different starting conformations is recommended.

System Setup and Equilibration:

- For each starting conformation, prepare the simulation system (solvation, ionization).

- Perform a standard energy minimization and equilibration protocol in stages: (i) restraint on heavy atoms, (ii) restraint on protein backbone, (iii) full system equilibration in the NPT ensemble.

Production Simulations:

- Launch N independent, unbiased MD simulations from each equilibrated starting structure (typically N=5-10 per starting conformation).

- Use an appropriate force field (see Tables 1 and 2). Simulation length should be sufficient to observe local relaxation and initial stability; 100-500 ns is a common starting point.

- This results in a total of M (e.g., 50-100) independent simulation trajectories.

Convergence and Sampling Analysis:

- Global Convergence: Calculate the root-mean-square deviation (RMSD) and radius of gyration (Rg) over time for all trajectories. Use Principal Component Analysis (PCA) on the combined trajectory data to visualize the total conformational space covered.

- Local/Overlap Analysis: Perform cluster analysis on the combined ensemble. Assess whether trajectories starting from different points converge to similar clusters, indicating robust sampling of stable states.

- Property Monitoring: Track key interaction metrics (e.g., hydrogen bonds, contact maps, binding pocket distances) to identify stable vs. dissociated states.

Validation Outcome:

- Strong Support for AI Prediction: If a majority of independent trajectories remain stable in the predicted binding mode, with low RMSD and persistent key interactions.

- Inconclusive/Needs Refinement: If trajectories show high variance, multiple metastable states, or ligand dissociation. This suggests the AI pose may be in a shallow minimum or that further sampling (longer times, enhanced methods) is required.

- Refutation of AI Prediction: If all trajectories rapidly diverge from the predicted pose and converge to a different, consistent state.
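
The three outcomes above can be expressed as a small decision rule over the pooled trajectories. The sketch below assumes each trajectory has been reduced to two numbers: the ligand RMSD from the predicted pose (for example, averaged over the final portion of the run) and the cluster label of its final state. The 2.0 Å cutoff is a commonly used rule of thumb rather than a value from the protocol, and the function name is hypothetical.

```python
def classify_validation(trajectories, stable_cutoff=2.0):
    """Classify an AI-predicted pose from replicate MD results.

    trajectories: list of (ligand_rmsd_angstrom, final_cluster_id) tuples,
                  one per independent simulation.
    """
    stable = [rmsd <= stable_cutoff for rmsd, _ in trajectories]
    # Majority of replicates stay in the predicted binding mode.
    if sum(stable) > len(trajectories) / 2:
        return "supported"
    # Every replicate leaves the pose and all end in the same alternative state.
    final_clusters = {cluster for _, cluster in trajectories}
    if not any(stable) and len(final_clusters) == 1:
        return "refuted"
    # Mixed behaviour: shallow minimum or insufficient sampling.
    return "inconclusive"

print(classify_validation([(1.0, 0), (1.5, 0), (6.0, 1)]))  # supported
print(classify_validation([(6.0, 1), (7.0, 1), (8.0, 1)]))  # refuted
```

A rule like this is only a first pass. Persistent key interactions and the convergence analysis described above should still be inspected before a prediction is accepted or rejected.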

Integration of AI and MD for Next-Generation Validation

The frontier of molecular simulation lies in the synergistic integration of AI and MD, moving beyond using MD merely as a validation tool to creating hybrid, iterative workflows.

Diagram 1: An iterative AI-MD validation and refinement pipeline for predicting molecular interactions. The feedback loop (dashed lines) allows experimental discrepancies to refine AI models.

A primary application is overcoming the sampling challenge for Intrinsically Disordered Proteins (IDPs). Traditional MD struggles to capture their vast conformational landscapes. Generative AI models, such as variational autoencoders (VAEs) or diffusion models trained on protein structure databases, can rapidly produce a wide array of plausible disordered conformations [23]. These AI-generated ensembles are not final but serve as an excellent starting point. They can be filtered and refined through short, parallel MD simulations to ensure physical realism (e.g., proper stereochemistry, energy minimization) and then validated against experimental data like small-angle X-ray scattering (SAXS) profiles or NMR chemical shifts [23]. This hybrid approach leverages the exploration strength of AI and the physical rigor of MD.

A second transformative integration is the development of machine-learned force fields (ML-FFs). Models like the symmetrized gradient-domain machine learning (sGDML) framework can construct force fields directly from high-level quantum mechanical calculations (e.g., CCSD(T)) [31]. These ML-FFs achieve "spectroscopic accuracy" for small molecules, allowing for converged MD simulations with fully quantized electrons and nuclei at a fraction of the computational cost of direct ab initio MD. While currently applicable to systems of only a few dozen atoms, they represent the future for simulating chemical reactions, excited states, or systems where electronic polarization is critical—scenarios where classical force fields fail. In a validation pipeline, an ML-FF could be used to perform ultra-accurate, short simulations of a ligand binding site or a catalytic core to definitively assess the stability of an AI-predicted pose.

Diagram 2: Workflow for AI-driven ensemble generation refined by physics-based MD simulation.

Table 5: Key Software, Platforms, and Resources

| Category | Tool Name | Primary Function | Relevance to Validation | Key Feature |

|---|---|---|---|---|

| Simulation Engines | GROMACS, AMBER, NAMD, OpenMM, LAMMPS | Performing high-performance MD calculations. | Core workhorse for running production simulations. | OpenMM and GROMACS offer strong GPU acceleration. AMBER/NAMD are standards for biomolecules. |

| Enhanced Sampling Suites | PLUMED, PySAGES [30], SSAGES | Implementing advanced sampling methods (Metadynamics, ABF, etc.). | Essential for overcoming barriers and calculating free energies of binding or conformational change. | PySAGES provides GPU-accelerated methods and easy Python integration [30]. PLUMED is the most widely used. |

| Analysis & Visualization | VMD, PyMOL, MDTraj, MDAnalysis, Bio3D | Trajectory analysis, visualization, and metric calculation. | Critical for analyzing RMSD, RMSF, interactions, and preparing figures. | MDAnalysis/MDTraj are programmable Python libraries for automated analysis pipelines. |

| AI/ML Integration | PyTorch, TensorFlow, JAX | Building and deploying custom AI/ML models. | For developing or using generative models for sampling or ML force fields. | JAX is central to modern libraries like PySAGES for differentiable programming [30]. |

| Specialized Platforms | ANTON Supercomputer, Google Cloud TPUs, Folding@home | Specialized hardware for extremely long timescale simulations. | Enables microsecond-to-millisecond simulations for direct observation of rare events. | ANTON has been pivotal for force field benchmarking studies [24]. |

| Benchmark Datasets | Protein Data Bank (PDB), NMR data for Ubq/GB3 [24], experimental membrane data [27] [28] | Sources of ground-truth experimental structures and properties. | The ultimate reference for validating both AI predictions and MD simulation accuracy. | Force field selection should be guided by performance on relevant benchmark systems. |

A Practical Workflow: Applying MD Simulations to Validate and Refine AI-Generated Models

Within the broader thesis on validating AI-predicted molecular interactions, the integrity of the entire computational pipeline hinges on the foundational step of system preparation. An accurately constructed, physics-ready simulation box is non-negotiable for producing molecular dynamics (MD) trajectories that can reliably test artificial intelligence (AI) forecasts of binding affinities, conformational changes, and resistance mechanisms [32]. This guide objectively compares the dominant methodologies and software suites for transforming a raw Protein Data Bank (PDB) file into a solvated, ionized, and neutralized simulation system, providing the empirical scaffolding for subsequent AI validation.

Methodological Comparison of System Preparation Workflows

The initial setup of a molecular dynamics system involves a series of critical decisions that directly impact simulation stability, computational cost, and biological relevance. The table below compares the predominant approaches for key stages of system preparation, informed by current community practices and literature.

Table 1: Comparison of System Preparation Methodologies and Outcomes

| Preparation Stage | Primary Methodologies | Key Performance Considerations | Typical Software Suites | Impact on AI Validation |

|---|---|---|---|---|

| Structure Repair & Completion | Homology Modeling (e.g., MODELLER): Rebuilds missing loops/termini using structural templates. Physics-Based Refinement: Uses energy minimization to fix steric clashes. | Accuracy vs. Risk: Homology modeling can introduce template bias; physics-based methods may not correct large gaps. Essential for ensuring the protein's functional state is modeled [33]. | MODELLER, Rosetta, CHARMM-GUI, SwissModel | Directly affects the starting conformation for assessing AI-predicted poses or interaction networks. Gaps lead to non-physical dynamics. |

| Solvation (Water Box) | Explicit Solvent: Surrounds solute with thousands of water molecules (e.g., TIP3P, SPC/E, OPC). Implicit Solvent: Models water as a continuous dielectric field. | Accuracy/Cost Trade-off: Explicit solvent is computationally expensive but captures specific water-mediated interactions critical for binding. Implicit solvent is fast but misses these details [34]. | GROMACS, AMBER, NAMD, OpenMM | Explicit solvent is the gold standard for validating detailed AI interaction predictions. Implicit models may suffice for high-throughput pre-screening. |

| Neutralization & Ion Placement | Random Replacement: Replaces random water molecules with ions. Electrostatic Potential Mapping: Places ions at points of strongest electrostatic potential [34]. | Equilibration Time: Random placement requires longer equilibration to achieve realistic ion distributions. Potential-based placement is more physically realistic and accelerates convergence [34]. | AMBER tleap/addions, GROMACS genion, CHARMM-GUI | Correct charge environment is crucial for simulating pH effects and ion-dependent binding predicted by AI models. |

| System Size & Shape | Isotropic (Cube): Equal box dimensions. Truncated Octahedron: Minimizes water count for a given solute-wall distance. Rectangular: Used for membrane simulations. | Computational Efficiency: Truncated octahedron saves ~25% water molecules vs. a cube. Artifact Risk: Box must be large enough to prevent solute from interacting with its periodic image [34]. | All major MD packages | System size balances computational cost (limiting sampling depth) with the need to avoid finite-size artifacts, which can skew free energy estimates for AI-predicted binding. |
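The box-shape trade-off in the last row can be checked with a few lines of geometry. The sketch below assumes both boxes are built for the same nearest-image distance `d` (solute diameter plus twice the buffer) and uses the standard result that a truncated octahedron then has volume (4/9)·√3·d³. The solute diameter and buffer values are hypothetical, and the real water count also depends on solute shape, so the result is only a rough estimate of the saving.

```python
import math


def image_distance(solute_diameter_nm: float, buffer_nm: float) -> float:
    """Distance between periodic images: solute plus a buffer on each side."""
    return solute_diameter_nm + 2.0 * buffer_nm


def cubic_box_volume(d_nm: float) -> float:
    """Volume of a cubic box with nearest-image distance d."""
    return d_nm ** 3


def truncated_octahedron_volume(d_nm: float) -> float:
    """Volume of a truncated octahedron with the same nearest-image distance.

    Geometric result: V = (4/9) * sqrt(3) * d^3, roughly 0.77 * d^3.
    """
    return (4.0 / 9.0) * math.sqrt(3.0) * d_nm ** 3


# Hypothetical example: a 4 nm wide solute with a 1.5 nm buffer.
d = image_distance(solute_diameter_nm=4.0, buffer_nm=1.5)
cube = cubic_box_volume(d)
octa = truncated_octahedron_volume(d)
saving = 1.0 - octa / cube

print(f"image distance: {d:.1f} nm")
print(f"cube volume: {cube:.1f} nm^3, truncated octahedron: {octa:.1f} nm^3")
print(f"fractional volume saved: {saving:.1%}")
```

Because the saving is a fixed ratio of the two volumes, it does not depend on the chosen distance; only the absolute number of water molecules removed does.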

The choice of force field, although not a preparation step per se, is a critical parallel decision. The chosen force field (e.g., AMBER's ff19SB [34], CHARMM36m, OPLS-AA/M) must be compatible with the water model (e.g., OPC [34], TIP3P), because force fields are parameterized against specific water models and a mismatched pair degrades thermodynamic accuracy.

Detailed Experimental Protocols for Key Steps

Neutralization and Ion Concentration Matching

A system must be electrically neutral for long-range electrostatic calculations under periodic boundary conditions to be valid [34]. The protocol involves two steps: neutralization and achieving physiological ionic strength.

- Neutralization: After loading the protein and applying a force field, the total charge is calculated. A corresponding number of counter-ions (e.g., Na⁺ for a negatively charged protein) are added. In AMBER's `tleap`, this is done with a command like `addions s Na+ 0`, where `0` tells the program to add enough ions to neutralize the net charge [34].

- Adding Physiological Salt Concentration: To mimic a physiological environment (e.g., 150 mM NaCl), additional ion pairs must be added. The number can be estimated using the formula `N_ions = 0.0187 * [Molarity] * N_water` [34]. For example, for a 0.15 M solution in a box with 10,202 water molecules: `0.0187 * 0.15 * 10202 ≈ 29` ion pairs [34]. More accurate methods, like the SLTCAP server, account for the solute's excluded volume and screening effects, which may yield a different number (e.g., 24 pairs for the same system) [34]. Ions are typically added by replacing random water molecules, though placement via electrostatic potential is recommended for stability [34].
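The two-step arithmetic above can be sketched in a few lines of Python. The function names are illustrative and not part of any MD package, and the 0.0187 factor is taken directly from the formula quoted above. This is the simple estimate only; it does not reproduce the excluded-volume correction applied by SLTCAP.

```python
def counter_ions_for_neutrality(net_charge: int):
    """Return (ion name, count) needed to cancel an integer net charge."""
    if net_charge < 0:
        return ("Na+", -net_charge)   # negative solute: add cations
    if net_charge > 0:
        return ("Cl-", net_charge)    # positive solute: add anions
    return ("none", 0)


def estimate_ion_pairs(molarity: float, n_water: int, factor: float = 0.0187) -> int:
    """Estimate salt ion pairs from N_ions = factor * molarity * N_water."""
    return round(factor * molarity * n_water)


# Hypothetical solute with a net charge of -5.
print(counter_ions_for_neutrality(-5))

# Worked example from the text: 0.15 M in a box of 10,202 water molecules.
pairs = estimate_ion_pairs(molarity=0.15, n_water=10202)
print(pairs)  # 29
```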

Complete Workflow for AMBER/tleap

The following protocol, adapted from a tutorial for the protein 1RGG, outlines a reproducible setup sequence [34]:

- Load Force Fields and Structure: Source the appropriate protein and water force field files (e.g., `leaprc.protein.ff19SB`, `leaprc.water.opc`).

- Load and Neutralize: Load the PDB file and add counter-ions to neutralize the system's net charge.

- Solvate: Place the solute in a predefined water box (e.g., `solvatebox s SPCBOX 15 iso`). The `15` specifies a 15 Å buffer between the solute and the box edge, and `iso` creates an isotropic (cubic) box [34].

- Add Salt: Add the calculated number of ion pairs (e.g., `addionsrand s Na+ 24 Cl- 24`) to reach the target concentration.

- Handle Special Features: Form disulfide bonds or other covalent modifications (e.g., `bond s.7.SG s.96.SG`).

- Output Files: Save the topology (`parm7`) and coordinate (`rst7`) files for simulation.

This sequence can be automated in a script (solvate_1RGG.leap) for reproducibility [34].
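One way to automate the sequence is to generate the LEaP input from Python so that the buffer, salt count, and disulfide pairs are recorded as explicit parameters. The sketch below assembles only the commands quoted in the steps above, plus `loadpdb`, `saveamberparm`, and `quit`. The function name and the PDB and output file names are assumptions for illustration and do not come from the tutorial.

```python
def build_leap_script(pdb_file, out_prefix, buffer_angstrom=15,
                      n_salt_pairs=24, disulfides=((7, 96),)):
    """Assemble a tleap input script following the setup steps in order."""
    lines = [
        "source leaprc.protein.ff19SB",            # protein force field
        "source leaprc.water.opc",                 # water model parameters
        f"s = loadpdb {pdb_file}",                 # load the structure
        "addions s Na+ 0",                         # 0 = neutralize net charge
        f"solvatebox s SPCBOX {buffer_angstrom} iso",   # cubic water box
        f"addionsrand s Na+ {n_salt_pairs} Cl- {n_salt_pairs}",  # add salt
    ]
    # Covalent links between cysteine sulfur atoms (residue index pairs).
    for res_i, res_j in disulfides:
        lines.append(f"bond s.{res_i}.SG s.{res_j}.SG")
    lines.append(f"saveamberparm s {out_prefix}.parm7 {out_prefix}.rst7")
    lines.append("quit")
    return "\n".join(lines) + "\n"


# Hypothetical file names for illustration only.
script = build_leap_script("1RGG_chain_A.pdb", "1RGG_chain_A")
print(script)
```

Writing the returned string to `solvate_1RGG.leap` and running `tleap -f solvate_1RGG.leap` would then execute the setup, assuming AmberTools is installed and the PDB file is present.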

Workflow Diagram: From PDB to Simulation Box

[Diagram: the logical sequence and decision points in a robust system preparation pipeline.]

The Scientist's Toolkit: Essential Research Reagent Solutions

The "reagents" in computational biochemistry are software tools, force fields, and parameters. This table details the essential components for the system preparation phase.

Table 2: Essential Research Reagent Solutions for MD System Preparation

| Reagent Category | Specific Examples | Primary Function | Considerations for AI Validation Studies |

|---|---|---|---|

| Structure Preparation Suites | MODELLER [33], UCSF Chimera, CHARMM-GUI | Rebuilds missing residues and atoms, adds hydrogens, optimizes side-chain rotamers. | Ensures the initial atomic model is complete and chemically plausible, providing a correct baseline for testing AI predictions. |

| Force Fields | AMBER (ff19SB) [34], CHARMM36m, OPLS-AA/M, GROMOS | Defines the potential energy function governing bonded and non-bonded atomic interactions. | Choice must be validated for the specific molecule class (proteins, lipids, nucleic acids). Inconsistency between AI training data force field and simulation force field can invalidate comparisons. |

| Solvent Models | Explicit Water (TIP3P, SPC/E, OPC) [34], Implicit Solvent (GB/SA) | Mimics the aqueous environment. Explicit models are standard for accuracy; implicit models offer speed. | Explicit water is critical for validating predictions of water-mediated hydrogen bonds or hydrophobic interactions. |

| Ion Parameters | Joung-Cheatham (for AMBER), CHARMM, GROMOS | Defines the van der Waals and electrostatic properties for ions like Na⁺, K⁺, Cl⁻, Mg²⁺. | Correct ion parameters are vital for simulating ion-dependent processes or allosteric regulation predicted by AI. |

| Automation & Scripting Tools | Python/MDAnalysis, Bash Scripting, Jupyter Notebooks | Automates repetitive preparation and analysis steps, ensuring reproducibility. | Essential for creating large, consistent datasets of prepared systems to benchmark or train AI models [35]. |

Integration with AI Validation Frameworks

The prepared simulation system is the launchpad for rigorous AI validation. For instance, an explainable AI (xAI) framework like NeurixAI can predict key genes influencing drug response by modeling drug-gene interactions [36]. Molecular dynamics of the drug-target complex, initiated from a properly prepared system, can test these predictions at an atomic level, visualizing and quantifying the stability of the binding pose and the involvement of specific residues [32].

This iterative validation loop—where AI identifies potential interaction hotspots and MD simulations physically test them—requires the simulation's initial conditions to be beyond reproach. Advanced sampling simulations, which start from the prepared box, can then compute binding free energies to provide quantitative metrics for validating AI-predicted affinities [33].
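As a minimal illustration of how binding pose stability might be quantified, the sketch below computes the ligand heavy-atom RMSD of each trajectory frame against the AI-predicted pose. It assumes the frames have already been aligned on the protein, so no fitting is performed here. The 2.0 Å stability threshold is a commonly used heuristic rather than a value from the cited studies, and the coordinates are toy data.

```python
import math


def rmsd(coords_a, coords_b):
    """Root-mean-square deviation between two equal-length coordinate lists."""
    if len(coords_a) != len(coords_b) or not coords_a:
        raise ValueError("coordinate sets must be non-empty and the same size")
    total = 0.0
    for (xa, ya, za), (xb, yb, zb) in zip(coords_a, coords_b):
        total += (xa - xb) ** 2 + (ya - yb) ** 2 + (za - zb) ** 2
    return math.sqrt(total / len(coords_a))


def pose_stability(reference, frames, threshold=2.0):
    """Return per-frame RMSD values and the fraction at or below threshold."""
    values = [rmsd(reference, frame) for frame in frames]
    fraction = sum(v <= threshold for v in values) / len(values)
    return values, fraction


# Toy data: a two-atom "ligand" (coordinates in angstroms).
predicted_pose = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
trajectory = [
    [(1.0, 0.0, 0.0), (2.0, 0.0, 0.0)],   # shifted by 1 A: still bound
    [(3.0, 0.0, 0.0), (4.0, 0.0, 0.0)],   # shifted by 3 A: drifted away
]

values, fraction = pose_stability(predicted_pose, trajectory)
print(values, fraction)
```

In practice the coordinates would be read from the production trajectory with a tool such as MDAnalysis, and the fraction of frames under the threshold would be reported alongside energetic metrics.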

Diagram: AI Validation Loop via Molecular Dynamics

[Diagram: how a prepared MD system integrates into a cycle for validating AI-predicted interactions.]

In conclusion, the meticulous preparation of a solvated and ionized simulation box is a critical, non-trivial step that transforms a static PDB coordinate set into a dynamic, physics-based model. The methodologies and tools compared here provide researchers with a roadmap for establishing a solid foundation. In the context of AI validation, this rigorous preparation ensures that the subsequent simulation data provides a trustworthy ground truth against which intelligent predictions are measured, thereby accelerating the discovery of novel therapeutic interactions [32].

In the modern paradigm of AI-driven drug discovery, computational pipelines rapidly generate predictions for novel drug-target interactions (DTIs) and lead compounds [12]. Before investing in costly experimental validation, molecular dynamics (MD) simulation serves as a crucial intermediary step to assess the structural stability and binding dynamics of these AI-proposed complexes. The reliability of this assessment hinges entirely on a foundational step: the equilibration protocol.

Equilibration prepares a molecular system—often starting from an AI-predicted pose or a static crystal structure—for production simulation by stabilizing its temperature and pressure to match target experimental or physiological conditions (e.g., 300 K, 1 bar). A poorly equilibrated system yields non-physical artifacts, rendering subsequent trajectory analysis misleading. This is particularly critical when validating AI predictions, as the goal is to distinguish genuinely stable interactions from false positives. Research indicates that assuming equilibrium without rigorous checks is a common oversight that can invalidate simulation results [37]. Therefore, selecting an efficient and robust equilibration protocol is not merely a technical prerequisite but a fundamental determinant of success in the molecular validation of AI-predicted interactions.

This guide compares three equilibration methodologies (Conventional Annealing, the Lean Method, and a more recent Ultrafast Algorithm) within the context of this research workflow. It summarizes reported data on their computational efficiency and their effectiveness in achieving stable system properties.

Comparative Analysis of Equilibration Protocols

The following table summarizes a quantitative comparison of three key equilibration protocols, based on performance data from simulations of ion exchange polymers, a complex system relevant to membrane protein studies [38]. The "Ultrafast Algorithm" represents a modern, optimized approach.

Table: Performance Comparison of Equilibration Protocols [38]

| Protocol | Key Steps (Ensemble Sequence) | Typical Time to Density Convergence (Relative) | Computational Efficiency (Relative to Annealing) | Primary Use Case & Notes |

|---|---|---|---|---|

| Conventional Annealing | Repeated cycles of NVT and NPT ensembles across a wide temperature range (e.g., 300 K to 1000 K). | 1.0x (Baseline) | 1.0x (Baseline) | Historically common; considered robust but computationally expensive for large systems. |

| Lean Method | A simplified two-step process: an initial NPT ensemble (often at elevated temperature) followed by a long NVT ensemble at target temperature. | ~1.5x - 2x faster than Annealing | ~200% more efficient than Annealing [38] | Used for faster equilibration; may require careful parameter tuning to ensure proper stabilization. |

| Ultrafast Algorithm | A robust, optimized sequence of NVT and NPT stages with intelligent scaling and relaxation steps, avoiding brute-force temperature cycling. | ~3x faster than Annealing | ~600% more efficient than the Lean Method [38] | Designed for maximum speed and reliability in large-scale systems (e.g., multi-chain membranes). |

Key Comparative Insights:

- Efficiency Gap: The data shows a significant efficiency gradient, with the Ultrafast Algorithm outperforming the Lean Method by 600%, which itself is 200% more efficient than Conventional Annealing [38]. This translates to substantial savings in computational time and resources.

- Accuracy Consideration: While faster, the Lean and Ultrafast methods must not compromise the stability of the equilibrated system. The cited study demonstrated that the Ultrafast Algorithm successfully achieved target density and stable energy levels, making it suitable for subsequent production simulations [38].

- Practical Selection: The choice depends on system size, complexity, and available resources. For initial validation of AI-predicted small molecule-protein complexes, the Lean Method may suffice. For larger systems like membrane proteins or multi-chain assemblies, the Ultrafast Algorithm offers a superior balance of speed and reliability.

Detailed Experimental Protocols

Protocol for Conventional Annealing

This traditional method uses thermal cycling to overcome energy barriers and achieve a stable state [38].

1. Energy Minimization: The initial structure is minimized using algorithms like steepest descent to remove steric clashes.

2. Heating Phase (NVT): The system is heated from a low temperature (e.g., 10 K) to the target temperature (e.g., 300 K) over tens to hundreds of picoseconds.

3. Annealing Cycles: Multiple cycles are run, each consisting of:

   - A high-temperature phase (e.g., 600 K or 1000 K) under NPT or NVT ensembles to encourage conformational exploration.

   - A cooling phase back to the target temperature.

4. Density Stabilization (NPT): The system is simulated under an NPT ensemble at the target temperature and pressure (1 bar) until the system density fluctuates around a stable value. Steps 3 and 4 are repeated until the target experimental density is achieved [38].
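The stopping criterion in the density stabilization step can be made explicit with a simple block-averaging check. The sketch below is one possible heuristic, not the procedure used in [38]: it looks at the second half of a density time series, splits it into blocks, and requires both that the block means agree with each other and that the overall mean sits near the target. The 1% tolerance, the block count, and the density values are all assumptions.

```python
from statistics import mean


def block_means(series, n_blocks):
    """Mean of each of n_blocks equal-sized consecutive blocks."""
    size = len(series) // n_blocks
    if size == 0:
        raise ValueError("series is too short for the requested block count")
    return [mean(series[i * size:(i + 1) * size]) for i in range(n_blocks)]


def density_has_plateaued(density, target, rel_tol=0.01, n_blocks=4):
    """Heuristic check that density is stable and close to the target value."""
    tail = density[len(density) // 2:]        # ignore the early transient
    means = block_means(tail, n_blocks)
    drift = max(means) - min(means)           # residual trend across blocks
    offset = abs(mean(tail) - target)         # distance from the target
    return drift <= rel_tol * target and offset <= rel_tol * target


# Hypothetical density series in kg/m^3 with a target of 1000.
still_drifting = [900 + i for i in range(100)]
fluctuating = [1000 + (-1) ** i for i in range(100)]

print(density_has_plateaued(still_drifting, target=1000))  # False
print(density_has_plateaued(fluctuating, target=1000))     # True
```

A check of this kind would decide whether another annealing cycle is needed. Energy and temperature series can be screened the same way before starting production runs.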

Protocol for the Lean Method

This streamlined approach aims for faster equilibration with fewer steps [38].

- Initial Solvation and Minimization: The solute is solvated in a water box, and ions are added for neutrality. The entire system undergoes energy minimization.