Beyond the Black Box: Solving AI Pharmacology's Data Dilemma for Smarter Drug Development

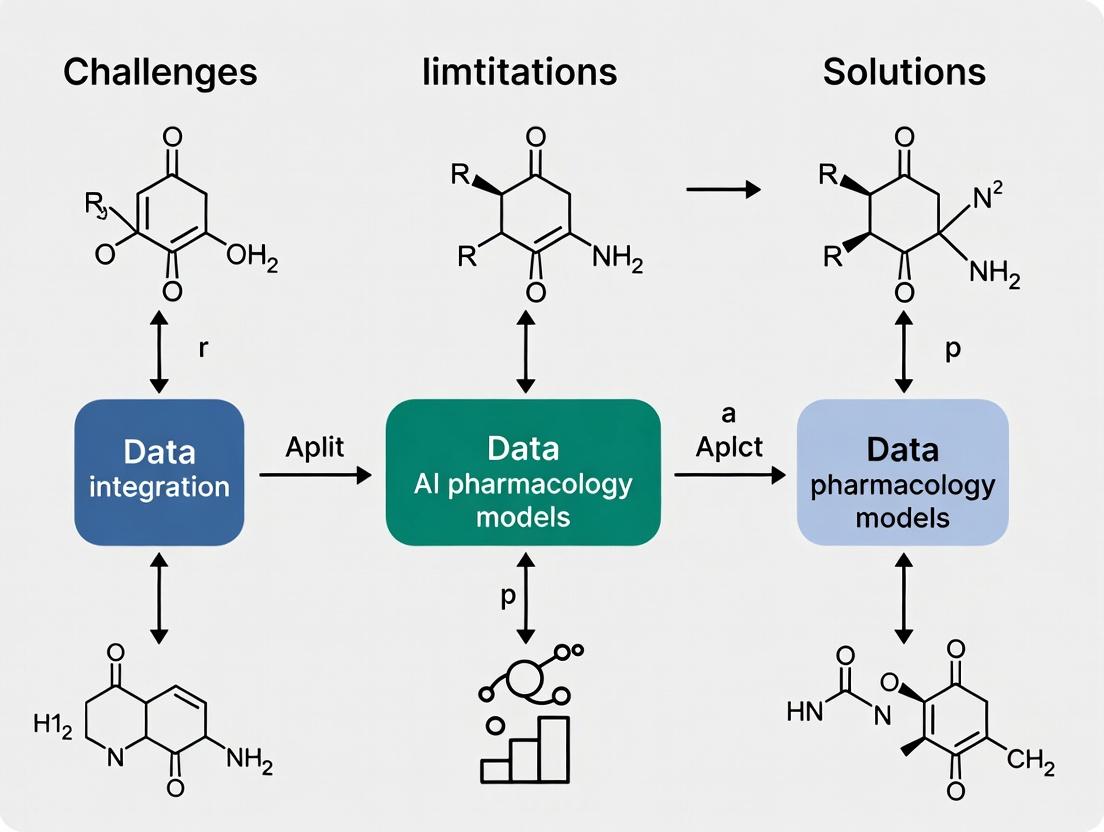


Abstract

This article provides a comprehensive analysis for researchers and drug development professionals on overcoming the critical data limitations hindering AI pharmacology models. We explore the fundamental challenges of data scarcity, quality, and bias that create bottlenecks in pharmacokinetics, pharmacodynamics, and drug discovery [1] [2]. The scope extends to methodological innovations like synthetic data generation and hybrid modeling, practical strategies for troubleshooting model opacity and ethical risks, and frameworks for rigorous validation. By synthesizing current research and industry insights, this guide outlines a pathway to build more robust, generalizable, and trustworthy AI tools capable of accelerating precision medicine and therapeutic innovation [5] [9].

The Data Bottleneck: Diagnosing Scarcity, Noise, and Bias in AI Pharmacology

Characterizing the 'Small Data' Problem in Clinical Pharmacology and Rare Diseases

In clinical pharmacology and rare disease research, the promise of artificial intelligence (AI) to accelerate discovery and personalize treatment collides with a fundamental constraint: the severe scarcity of high-quality, relevant data. While "Big Data" has transformed many fields, drug development for rare conditions operates in a "Small Data" regime, defined by limited patient populations, heterogeneous disease presentations, and costly, sparse experimental data points [1] [2]. This technical support center is built on the premise that overcoming these data limitations is the critical path to reliable AI in pharmacology. The following guides address the most pressing operational challenges researchers face, with actionable strategies, protocols, and resources for working in the small data landscape.

Frequently Asked Questions & Troubleshooting Guides

Category 1: Challenges in Data Generation & Collection

Q1: How can I design a meaningful pharmacokinetic/pharmacodynamic (PK/PD) study for a rare disease with an extremely small and heterogeneous patient cohort?

- Problem: Traditional study designs require large sample sizes for statistical power, which is impossible for rare diseases. Heterogeneity in disease manifestation and progression further complicates the extraction of generalizable signals.

- Solution & Protocol: Combine a population PK (PopPK) approach, built on sparse sampling from many patients and rich covariate collection, with physiologically based pharmacokinetic (PBPK) modeling to extract the most information from every data point.

- Study Design: Opt for sparse sampling per patient but enroll as many patients as possible across multiple clinical sites. Collect rich covariate data (genetics, organ function, concomitant medications) [3].

- Sample Analysis: Utilize a core facility like a Clinical Pharmacology Shared Resource (CPSR) for Good Laboratory Practice (GLP)-compliant, sensitive bioanalytical assays to quantify drug and metabolite concentrations from minimal sample volumes (e.g., dried blood spots) [3].

- Data Integration: Use PopPK software (e.g., NONMEM, Monolix) to build a model that describes between-subject variability. Integrate prior knowledge from in vitro assays or similar compounds using PBPK platforms (e.g., GastroPlus, Simcyp) to inform and constrain the model.

- Leverage Real-World Data (RWD): Augment trial data with RWD from registries or electronic health records to understand natural disease history and treatment patterns [2].
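To make the sparse-sampling design concrete, the Python sketch below simulates the kind of dataset a PopPK tool such as NONMEM or Monolix would then fit: many patients, two randomly timed samples each, and a weight covariate for everyone. It assumes a one-compartment IV bolus model with allometric weight scaling and log-normal variability; the function name `simulate_sparse_poppk` and every parameter value are hypothetical placeholders, not estimates for any real drug.

```python
import math
import random


def simulate_sparse_poppk(n_patients, dose_mg=100.0, cl_pop=5.0, v_pop=50.0,
                          omega_cl=0.3, sigma=0.1, samples_per_patient=2, seed=42):
    """Simulate a sparse-sampling PopPK dataset (one-compartment IV bolus model).

    All parameter values are hypothetical placeholders, not drug-specific estimates.
    """
    rng = random.Random(seed)
    rows = []
    for pid in range(n_patients):
        weight_kg = rng.uniform(40.0, 100.0)  # covariate collected for every patient
        # Covariate model: allometric weight scaling plus log-normal between-subject variability on CL
        cl_i = cl_pop * (weight_kg / 70.0) ** 0.75 * math.exp(rng.gauss(0.0, omega_cl))
        v_i = v_pop * (weight_kg / 70.0)
        # Sparse design: only a few randomly timed samples per patient
        times = sorted(rng.uniform(0.5, 24.0) for _ in range(samples_per_patient))
        for t in times:
            conc = dose_mg / v_i * math.exp(-cl_i / v_i * t)
            conc *= math.exp(rng.gauss(0.0, sigma))  # log-normal residual error
            rows.append({"id": pid, "weight_kg": weight_kg, "time_h": t, "conc_mg_l": conc})
    return rows


rows = simulate_sparse_poppk(n_patients=30)
print(len(rows))  # 60 (30 patients x 2 samples)
```

No single patient's two samples define a concentration-time curve, but pooled across patients the sampling times tend to span the dosing interval, which is what allows a population model to estimate typical parameters and between-subject variability.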

Q2: My in vitro drug sensitivity data (e.g., IC50) from cancer cell lines seems to predict drug potency but fails to translate to patient-specific response. What is wrong?

- Problem: Standard metrics like IC50 or AUC are often dominated by a drug's inherent potency or toxicity, creating high correlation across diverse cell lines and masking subtler, biologically relevant differences crucial for personalized prediction [4].

- Solution & Protocol: Re-normalize drug response metrics to focus on relative, not absolute, effects.

- Re-analysis Workflow: For your dose-response matrix (drugs x cell lines/organoids), calculate a z-score for each drug separately. Formula:

z-score = (Individual Response - Mean Response for that Drug) / Standard Deviation for that Drug [4].

- Interpretation: This transformation removes the drug-specific bias. For a metric where a higher value means a stronger response, a high z-score indicates a cell line is unusually sensitive to that drug relative to the average, while a low z-score indicates unusual resistance. For IC50, where a lower value means greater sensitivity, the signs are reversed.

- Validation: Train your AI/ML model to predict these z-scored values. A model that succeeds is more likely to be learning biological signatures of differential response than memorizing generic drug potency or toxicity [4].

- Alternative Metrics: Consider using the Normalized Growth Rate Inhibition (GR) metric, which accounts for confounding effects of cell division rate [4].

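The per-drug z-scoring step can be sketched in a few lines of standard-library Python. The nested-dictionary input layout, the function name `zscore_per_drug`, and the example values are assumptions for illustration; the sketch uses the sample standard deviation and assumes a response metric where higher means a stronger effect.

```python
from statistics import mean, stdev


def zscore_per_drug(responses):
    """Z-score each drug's responses across cell lines.

    responses: {drug: {cell_line: value}}, where a higher value means a stronger
    response (e.g., percent inhibition). For IC50-type metrics, where lower means
    more sensitive, negate (log) IC50 first so the sign convention matches.
    """
    z = {}
    for drug, by_line in responses.items():
        values = list(by_line.values())
        mu, sd = mean(values), stdev(values)  # per-drug mean and sample standard deviation
        if sd == 0:
            raise ValueError(f"{drug}: zero variance across cell lines; z-score undefined")
        z[drug] = {line: (v - mu) / sd for line, v in by_line.items()}
    return z


# Two hypothetical drugs with a tenfold potency difference but the same relative pattern
responses = {
    "potent_drug": {"line_1": 10.0, "line_2": 20.0, "line_3": 30.0},
    "weak_drug": {"line_1": 1.0, "line_2": 2.0, "line_3": 3.0},
}
z = zscore_per_drug(responses)
print(z)  # both drugs: line_1 = -1.0, line_2 = 0.0, line_3 = 1.0
```

The two drugs differ tenfold in raw response, yet their z-scores are identical, which is exactly the potency signal this normalization is meant to strip out.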

Table 1: Summary of Key Quantitative Data on AI Limitations and Data Challenges

| Data Aspect | Key Finding/Statistic | Implication for Small Data Problems | Source |
|---|---|---|---|
| AI Hallucination Rate | Up to 90% in certain medical domains; 50% accuracy for drug info queries vs. specialist centers. | Highlights extreme risk of using general AI without domain-specific tuning and validation on scarce data. | [5] |
| Diagnostic Error Rates | Median discrepancy rate between pathologists: 18.3%; major discrepancies: 5.9%. | Provides a benchmark for human performance; AI tools must be assessed for clinical impact, not just technical metrics. | [6] |
| Prescription Error Impact | ~1.5 million preventable adverse events, ~$3.5 billion annual cost in the U.S. | Demonstrates the high stakes of getting pharmacology decisions right, even with incomplete data. | [7] [8] |
| Drug Response Correlation | Very high correlation of IC50 across different cancer cell lines, driven by drug potency. | Shows why raw experimental data can mislead AI models; normalization (e.g., z-scoring) is essential. | [4] |

Category 2: Challenges in AI Model Training & Development

Q3: I want to build a predictive model for drug-target interaction, but I have fewer than 100 positive examples for my rare disease target. How can I train a robust model?

- Problem: Deep learning models are data-hungry and will overfit on tiny datasets, producing unreliable and non-generalizable predictions.

- Solution & Protocol: Employ Transfer Learning (TL) and Multi-Task Learning (MTL) frameworks.

- Transfer Learning Protocol:

- Step 1 - Source Model: Obtain a pre-trained model (e.g., a graph neural network) trained on a large, general molecular dataset (e.g., ChEMBL, PubChem) to predict broad chemical properties or interactions.

- Step 2 - Model Adaptation: Replace the final output layer of the source model. Keep the earlier layers (which encode fundamental chemical features) "frozen" or apply a very low learning rate to them.

- Step 3 - Fine-Tuning: Re-train (fine-tune) the modified model on your small, specific dataset for the rare disease target. This allows the model to apply general chemical knowledge to your specific problem [9].

- Multi-Task Learning Protocol:

- Step 1 - Task Selection: Identify several related prediction tasks (e.g., activity against multiple related targets, ADMET properties). These tasks should share underlying biological or chemical features.

- Step 2 - Shared Architecture: Design a neural network with shared hidden layers that learn a common representation from all tasks.

- Step 3 - Joint Training: Train the model simultaneously on all tasks. The shared layers benefit from the combined signal of all data, improving generalization on your primary small-data task [9].

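The freeze-and-replace idea behind the transfer learning protocol can be shown without a deep learning framework. In this sketch, `FrozenEncoder` stands in for the pre-trained early layers (its weights are hypothetical rather than learned from ChEMBL or PubChem), and a new logistic-regression output layer is the only part trained on the small target dataset. In a real framework you would achieve the same effect by disabling gradient updates for the encoder's parameters.

```python
import math


class FrozenEncoder:
    """Stand-in for the pre-trained early layers of a source model (hypothetical weights)."""

    def __init__(self, weights):
        self.weights = weights  # one row of input weights per hidden feature; never updated

    def __call__(self, x):
        return [math.tanh(sum(w * xi for w, xi in zip(row, x))) for row in self.weights]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def fine_tune_head(encoder, X, y, lr=0.5, epochs=200):
    """Fit only a new logistic output layer on top of the frozen encoder."""
    feats = [encoder(x) for x in X]  # encoder is frozen, so features are computed once
    n_hidden, n = len(feats[0]), len(X)
    w, b = [0.0] * n_hidden, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * n_hidden, 0.0
        for f, target in zip(feats, y):
            # Gradient of binary cross-entropy with respect to the logit is (p - target)
            err = sigmoid(sum(wj * fj for wj, fj in zip(w, f)) + b) - target
            grad_w = [g + err * fj for g, fj in zip(grad_w, f)]
            grad_b += err
        w = [wj - lr * g / n for wj, g in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


def predict(encoder, head, x):
    w, b = head
    return sigmoid(sum(wj * fj for wj, fj in zip(w, encoder(x))) + b)


encoder = FrozenEncoder([[1.0, -1.0], [-1.0, 1.0]])
X = [[1.0, 0.0], [0.9, 0.1], [0.0, 1.0], [0.1, 0.9]]  # tiny hypothetical target dataset
y = [1, 1, 0, 0]
head = fine_tune_head(encoder, X, y)
print(predict(encoder, head, [1.0, 0.0]), predict(encoder, head, [0.0, 1.0]))
```

Only `head` changes during training; the encoder's weights are identical before and after, which is the property worth verifying in a real framework as well.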

Q4: My proprietary data on a rare disease is too limited to build a good model. Collaborating is difficult due to privacy and IP concerns. What are my options?

- Problem: Data silos prevent the pooling of scarce datasets necessary to build powerful AI models.

- Solution & Protocol: Implement a Federated Learning (FL) collaboration framework.

- Collaboration Setup: Partner with other institutions holding relevant data. A central server coordinates the process.

- Training Cycle:

- Step 1: The central server sends the current global AI model to each participating institution.

- Step 2: Each institution trains the model locally on its own private data. No raw data leaves the institution.

- Step 3: Each institution sends only the model updates (e.g., gradients or weights) back to the central server.

- Step 4: The server aggregates these updates to improve the global model. Steps 1-4 are repeated [9].

- Technical Consideration: Use frameworks like PySyft or TensorFlow Federated. Establish clear agreements on model ownership and the use of the final global model.
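The training cycle above can be sketched without any federated framework. This is a minimal illustration, assuming a one-feature linear model, three hypothetical sites with noiseless data from y = 2x + 1, and sample-size-weighted averaging of client weights (the FedAvg scheme). It does not reflect the APIs of PySyft or TensorFlow Federated, and it omits the communication, security, and governance layers a real deployment needs.

```python
def local_update(global_weights, private_data, lr=0.1, epochs=10):
    """Step 2: one institution trains locally; its (x, y) pairs never leave this function."""
    w, b = global_weights
    for _ in range(epochs):
        for x, y in private_data:
            err = (w * x + b) - y  # squared-error gradient for a linear model
            w -= lr * err * x
            b -= lr * err
    return (w, b), len(private_data)  # Step 3: share only weights and a sample count


def federated_averaging(sites, rounds=30):
    """Steps 1-4 repeated: broadcast, local training, sample-size-weighted aggregation."""
    global_weights = (0.0, 0.0)
    for _ in range(rounds):
        updates = [local_update(global_weights, data) for data in sites]
        total = sum(n for _, n in updates)
        global_weights = tuple(
            sum(weights[i] * n for weights, n in updates) / total for i in range(2)
        )
    return global_weights


# Three hypothetical sites with different cohort sizes; the underlying relation is y = 2x + 1
sites = [
    [(i / 9, 2 * (i / 9) + 1) for i in range(10)],
    [(i / 4, 2 * (i / 4) + 1) for i in range(5)],
    [((i + 0.5) / 8, 2 * ((i + 0.5) / 8) + 1) for i in range(8)],
]
global_model = federated_averaging(sites)
print(global_model)  # close to (2.0, 1.0)
```

Only `(w, b)` and a sample count ever leave `local_update`. Weighting by sample count keeps a large site's update from being diluted by smaller ones.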

Diagram 1: Federated Learning Workflow for Multi-Institution Collaboration

Category 3: Challenges in Validation & Deployment

Q5: How do I validate my AI model when there is no large hold-out test set available, and traditional performance metrics seem insufficient?

- Problem: With small data, splitting into train/validation/test sets severely reduces learning signal. Standard metrics (e.g., accuracy, AUC-ROC) may not reflect clinical utility or error severity.

- Solution & Protocol: Adopt nested cross-validation and implement clinical impact assessment.

- Nested Cross-Validation Protocol:

- Step 1 - Outer Loop: Split your total data into K folds (e.g., K=5). Reserve one fold as the "final test" set.

- Step 2 - Inner Loop: On the remaining K-1 folds, perform another cross-validation to select optimal model hyperparameters and perform feature selection.

- Step 3 - Training & Evaluation: Train the model with the selected settings on the K-1 folds and evaluate it on the held-out outer test fold. Repeat for all K outer folds. Averaging the outer-fold scores gives an estimate of performance on unseen data that is not inflated by the hyperparameter search, while every sample is used for both training and testing.

- Clinical Error Assessment Protocol:

- Step 1 - Error Audit: Work with a clinical pharmacologist to categorize the model's errors not just as "false positives/negatives," but by clinical severity (e.g., "Error leading to potential toxicity" vs. "Error suggesting a suboptimal but safe alternative") [6].

- Step 2 - Near-Miss Analysis: If deploying in a workflow (e.g., prescription aid), instrument the system to flag "near-miss" events where the AI output was corrected by a human expert. The reduction in this rate is a key safety metric [8].

- Step 3 - Guardrails: Implement rule-based safety guardrails that halt AI output if it violates core clinical logic (e.g., dose exceeding maximum daily limit, dangerous drug-disease contradiction) [8].

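The nested cross-validation protocol can be sketched as follows. A k-nearest-neighbours regressor on a single synthetic feature stands in for your model, and the number of neighbours `k` is the hyperparameter being tuned; the fold counts, the grid, and the data are assumptions chosen only to keep the example small and dependency-free.

```python
import random
from statistics import mean


def kfold(indices, n_folds, seed):
    """Shuffle indices with a fixed seed and deal them into n_folds folds."""
    idx = list(indices)
    random.Random(seed).shuffle(idx)
    return [idx[i::n_folds] for i in range(n_folds)]


def knn_predict(train, x, k):
    """Mean outcome of the k nearest training points (1-D feature)."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    return mean(y for _, y in nearest)


def mse(train, test, k):
    return mean((knn_predict(train, x, k) - y) ** 2 for x, y in test)


def nested_cv(data, k_grid, outer_k=5, inner_k=3, seed=0):
    results = []
    for fold_no, test_idx in enumerate(kfold(range(len(data)), outer_k, seed)):
        held_out = set(test_idx)
        test = [data[i] for i in test_idx]
        train = [data[i] for i in range(len(data)) if i not in held_out]
        inner_folds = kfold(range(len(train)), inner_k, seed + fold_no + 1)

        def inner_cv_error(k):
            # Inner loop: tune k using only the outer-training data
            errors = []
            for val_idx in inner_folds:
                val_set = set(val_idx)
                fit = [train[i] for i in range(len(train)) if i not in val_set]
                val = [train[i] for i in val_idx]
                errors.append(mse(fit, val, k))
            return mean(errors)

        best_k = min(k_grid, key=inner_cv_error)
        # Refit on the full outer-training set and score once on the untouched outer fold
        results.append((best_k, mse(train, test, best_k)))
    return results


rng = random.Random(1)
data = [(x, 0.5 * x + rng.gauss(0, 0.2)) for x in [i / 10 for i in range(40)]]  # synthetic
per_fold = nested_cv(data, k_grid=[1, 3, 5, 7])
print("chosen k per fold:", [k for k, _ in per_fold])
print("nested-CV MSE:", mean(err for _, err in per_fold))
```

The selected `k` may differ between outer folds. That is expected: nested cross-validation estimates the performance of the whole tuning procedure rather than of one fixed model.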

Q6: How can I use AI to assist with medication safety without introducing new risks from "hallucinations" or incorrect data?

- Problem: General-purpose large language models (LLMs) confidently generate incorrect or fabricated information ("hallucinations"), a critical risk in pharmacology [5].

- Solution & Protocol: Develop a domain-specific, guardrail-protected "copilot" system following the MEDIC (medication direction copilot) blueprint [8].

- System Design: The AI should not operate autonomously but as an assistant within a human-in-the-loop workflow (e.g., pharmacist verification).

- Training Data: Fine-tune a compact, efficient model (e.g., DistilBERT) on a small, high-quality dataset of expert-annotated medical instructions (~1000 examples can suffice) [8].

- Architecture: Decompose the task. First, use the AI to extract discrete clinical components (drug, dose, route, frequency) from text. Then, use a separate, rules-based module to assemble these into a standard instruction using a verified medication database [8].

- Safety Guardrails: Program hard stops if the AI output: conflicts with the drug database, is internally inconsistent, misses a critical component, or suggests an implausible administration form [8].
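The hard-stop logic can be sketched as a small rules layer that sits between the extraction model and the assembled instruction. The `DRUG_DB` entry, the field names, and the `drug_a` limits below are hypothetical and illustrative only, not clinical guidance; the checks mirror the guardrail conditions listed above (database conflict, internal inconsistency, missing component, implausible form) plus a maximum daily dose rule of the kind described in Q5.

```python
from dataclasses import dataclass
from typing import List, Optional

# Hypothetical verified medication database (illustrative values only)
DRUG_DB = {
    "drug_a": {"routes": {"oral"}, "forms": {"tablet", "capsule"}, "max_daily_mg": 3000.0},
}


@dataclass
class ExtractedDirection:
    """Discrete components the AI extractor pulled out of free-text instructions."""
    drug: Optional[str]
    dose_mg: Optional[float]
    route: Optional[str]
    form: Optional[str]
    times_per_day: Optional[int]


def guardrail_violations(d: ExtractedDirection) -> List[str]:
    """Return reasons to halt; an empty list means the direction may be assembled."""
    missing = [name for name, value in vars(d).items() if value is None]
    if missing:
        return [f"missing component: {name}" for name in missing]
    entry = DRUG_DB.get(d.drug)
    if entry is None:
        return [f"{d.drug!r} not found in verified database"]
    problems = []
    if d.dose_mg <= 0 or d.times_per_day <= 0:
        problems.append("internally inconsistent dose or frequency")
    if d.route not in entry["routes"]:
        problems.append(f"route {d.route!r} conflicts with database")
    if d.form not in entry["forms"]:
        problems.append(f"implausible administration form {d.form!r}")
    if d.dose_mg * d.times_per_day > entry["max_daily_mg"]:
        problems.append("total daily dose exceeds database maximum")
    return problems


def assemble_direction(d: ExtractedDirection) -> Optional[str]:
    """Rules-based assembly; returns None (hard stop, route to pharmacist) on any violation."""
    if guardrail_violations(d):
        return None
    return f"{d.drug}: {d.dose_mg:g} mg {d.route} {d.form}, {d.times_per_day} times daily"


ok = ExtractedDirection("drug_a", 500.0, "oral", "tablet", 4)
too_high = ExtractedDirection("drug_a", 1000.0, "oral", "tablet", 4)
print(assemble_direction(ok))  # drug_a: 500 mg oral tablet, 4 times daily
print(guardrail_violations(too_high))  # ['total daily dose exceeds database maximum']
```

Any non-empty violation list returns `None` rather than a partially correct instruction, so the case falls back to pharmacist review.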

Diagram 2: AI Copilot Architecture with Safety Guardrails

Table 2: Research Reagent Solutions: Key Databases & Core Facilities

| Resource Name | Type | Primary Function in Small Data Context | Access / Notes |
|---|---|---|---|
| Clinical Pharmacology [10] | Database | Provides peer-reviewed drug monographs & off-label use info. Critical for establishing prior knowledge for modeling. | Restricted institutional access. |
| BenchSci [10] | AI-Powered Search | Uses ML to find specific antibodies from published figures. Accelerates reagent selection for validation experiments. | Free with academic email. |
| PubMed / MEDLINE [10] | Literature Database | Foundational for systematic reviews, hypothesis generation, and identifying analogous research. | Open access. |
| Scopus / Web of Science [10] | Citation Database | Enables literature mapping and identification of key researchers for potential collaboration. | Institutional subscription. |
| Clinical Pharmacology Shared Resource (CPSR) [3] | Core Facility | Provides end-to-end PK/PD study support: protocol design, GLP bioanalysis, PK modeling. Essential for generating high-quality primary data. | Fee-for-service at cancer centers (e.g., KU). |

The integration of Artificial Intelligence (AI) into pharmacology promises a revolution in drug discovery, personalized dosing, and safety monitoring [11]. However, this potential is constrained by a foundational challenge: data quality. In AI pharmacology, models for predicting drug behavior or patient response are only as reliable as the data used to train them [5]. Poor data quality cascades through the research pipeline, leading to irreproducible experiments in the lab and unreliable evidence from sparse clinical trials [12] [13].

This technical support center is designed to help researchers, scientists, and drug development professionals diagnose, troubleshoot, and overcome critical data quality limitations. By providing actionable guides and frameworks, we aim to support the broader thesis that overcoming data limitations is not merely a technical step, but the essential prerequisite for building robust, trustworthy, and clinically impactful AI models in pharmacology.

Troubleshooting Guides

Guide 1: Diagnosing and Remedying Irreproducible AI Model Outputs

A model that yields different results on the same data indicates a core reproducibility failure, often rooted in code and data practices [14].

- Problem: Your AI/ML script produces different effect size estimates or predictions each time it is run, even with the same input dataset.

- Investigation & Solution:

- Check for Random Seeds: Ensure all random number generators (e.g., in Python's `numpy`, `random`, or PyTorch libraries) are seeded at the beginning of your script. Document these seeds in the code comments [14].

- Audit Code Transparency: Review your analytical code. Is every data transformation, inclusion/exclusion criterion, and feature engineering step clearly documented? Reproducibility failures in real-world evidence studies are frequently due to ambiguous operational definitions (e.g., "first diagnosis date") [12]. Annotate your code to eliminate these ambiguities.

- Verify Package Environments: Different versions of software packages can alter results. Use environment management tools (e.g., Conda, Docker) to containerize your project with exact package versions [14].

- Implement Peer Code Review: Have a colleague review your code using a structured checklist. This practice, common in software engineering, catches errors and improves clarity, directly enhancing reproducibility [14].

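A minimal seeding helper might look like this. It assumes the project may use Python's `random`, NumPy's legacy global generator, and PyTorch, and it skips any library that is not installed; the helper name `set_global_seeds` and the seed value are illustrative.

```python
import random


def set_global_seeds(seed: int) -> None:
    """Seed every random number generator the project might use, once, at script start."""
    random.seed(seed)
    try:
        import numpy as np
        np.random.seed(seed)  # NumPy's legacy global generator
    except ImportError:
        pass
    try:
        import torch
        torch.manual_seed(seed)  # seeds PyTorch's generators on all devices
    except ImportError:
        pass


SEED = 2024  # illustrative value; record it in comments and in the run log
set_global_seeds(SEED)
first_run = [random.random() for _ in range(3)]
set_global_seeds(SEED)
second_run = [random.random() for _ in range(3)]
print(first_run == second_run)  # True
```

Seeding alone does not guarantee bit-identical results on GPUs; PyTorch additionally offers `torch.use_deterministic_algorithms(True)` for that purpose. Code that uses NumPy's newer `np.random.default_rng(seed)` generators should pass the seeded generator explicitly rather than rely on the global seed.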

Guide 2: Addressing Poor Performance in Predictive PK/PD Models

When a pharmacokinetic/pharmacodynamic (PK/PD) model performs well on training data but fails on new clinical data, the issue often lies with the data's representativeness or quality [11].

- Problem: Your machine learning model for predicting drug exposure or effect generalizes poorly to external patient cohorts or trial data.

- Investigation & Solution:

- Assess Data Sparsity and Timing: PK/PD data from clinical trials can be sparse and irregularly sampled. Standard models may fail. Consider switching to or integrating AI architectures designed for such data, like Recurrent Neural Networks (RNNs) or Neural Ordinary Differential Equations (NeuralODEs), which can handle irregular time-series data more effectively [11].

- Evaluate Data Provenance: Scrutinize the source of your training data. Does it come from a homogeneous population? Models trained on narrow demographic or clinical trial data may fail in broader, real-world populations. Seek out or generate more diverse training datasets where possible.

- Hybrid Modeling Approach: Instead of a pure AI model, develop a hybrid model that combines a traditional physiologically based PK model with a machine learning component. The mechanistic model provides a strong biological prior, while the ML component corrects for individual variability, often leading to more robust predictions [11].

- Implement Rigorous External Validation: Never rely solely on internal validation. Strictly partition your data or, better, validate the model on a completely independent dataset from a different source or clinical site to test true generalizability [11].
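The hybrid structure can be illustrated with a deliberately simple sketch: a one-compartment IV bolus model with population parameters acts as the mechanistic prior, and a data-driven correction, fit on the log ratio of observed to predicted concentrations, adjusts it for a covariate. All parameter values, the creatinine-clearance dependence, and the noiseless simulated "truth" are hypothetical; a linear least-squares fit stands in for the ML component so the example needs only the standard library.

```python
import math

# Hypothetical population parameters for the mechanistic prior (one-compartment IV bolus)
DOSE_MG, V_L, CL_POP = 100.0, 50.0, 5.0


def mechanistic_conc(t_h):
    """Mechanistic prior: C(t) = Dose / V * exp(-(CL / V) * t) with population CL and V."""
    return DOSE_MG / V_L * math.exp(-CL_POP / V_L * t_h)


def residual_features(t_h, crcl):
    # Hypothetical features for the correction; an ML model could learn richer mappings
    return (t_h, t_h * crcl)


def fit_residual_model(rows):
    """Least-squares fit, without intercept, of log(observed / mechanistic) on the features."""
    s11 = s12 = s22 = r1 = r2 = 0.0
    for t_h, crcl, observed in rows:
        f1, f2 = residual_features(t_h, crcl)
        resid = math.log(observed / mechanistic_conc(t_h))
        s11 += f1 * f1
        s12 += f1 * f2
        s22 += f2 * f2
        r1 += f1 * resid
        r2 += f2 * resid
    det = s11 * s22 - s12 * s12  # 2x2 normal equations solved by Cramer's rule
    return ((s22 * r1 - s12 * r2) / det, (s11 * r2 - s12 * r1) / det)


def hybrid_conc(t_h, crcl, coef):
    """Mechanistic prediction multiplied by the learned correction."""
    f1, f2 = residual_features(t_h, crcl)
    return mechanistic_conc(t_h) * math.exp(coef[0] * f1 + coef[1] * f2)


def simulated_truth(t_h, crcl):
    # Hypothetical ground truth: clearance scales with creatinine clearance (mL/min)
    cl = CL_POP * crcl / 100.0
    return DOSE_MG / V_L * math.exp(-cl / V_L * t_h)


train = [(t, crcl, simulated_truth(t, crcl)) for crcl in (40, 70, 100, 130) for t in (1, 4, 12)]
coef = fit_residual_model(train)
truth = simulated_truth(8.0, 55)  # a new patient not in the training set
print(abs(mechanistic_conc(8.0) - truth), abs(hybrid_conc(8.0, 55, coef) - truth))
```

On the new patient the mechanistic model alone misses by roughly 30%, while the hybrid recovers the simulated concentration. With real, noisy data the correction would be learned from many covariates and, as noted above, must be checked on an independent external dataset.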

Guide 3: Managing Variable Data Quality in Decentralized and Real-World Trials

Flexible trial designs improve access but introduce variability in data collection methods and quality [15] [13].

- Problem: Data streaming from wearable devices, electronic health records (EHRs), or multiple trial sites is inconsistent, fragmented, and contains unexpected missing values.

- Investigation & Solution:

- Deploy Automated Data Quality (DQ) Checks: Use automated tools to run validation on incoming data streams. Key checks include trend analysis for physiological readings, unit of measure consistency, and range validation for critical clinical variables [13]. Automation is essential for scaling these checks.

- Establish a Risk-Based Monitoring Protocol: Move away from 100% source data verification. Use centralized monitoring tools to statistically identify outlier sites or anomalous data patterns for targeted, on-site review. This approach is more efficient and effectively ensures data integrity [16].

- Standardize at Point of Capture: Work with technology providers to enforce standardized data formats and value sets (e.g., SNOMED CT codes) within digital case report forms (eCRFs) and device software to reduce entry errors and fragmentation [13].

- Create a Unified Data Pipeline: Implement a central data platform that ingests data from all sources (EHRs, wearables, eCRFs), applies transformation and quality rules, and creates a single, analysis-ready dataset to break down data silos [13].
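Range and unit checks like those described above can be expressed as declarative rules applied to each incoming record. The rule table, variable names, units, and plausible ranges below are hypothetical; trend analysis is not shown.

```python
# Hypothetical rule set: expected unit and plausible range for each critical variable
DQ_RULES = {
    "heart_rate": {"unit": "beats/min", "min": 20.0, "max": 250.0},
    "body_temp": {"unit": "degC", "min": 30.0, "max": 45.0},
}


def check_record(record):
    """Return the data quality issues for one incoming measurement (empty list = passes)."""
    variable = record.get("variable")
    rule = DQ_RULES.get(variable)
    if rule is None:
        return [f"unknown variable {variable!r}"]
    value = record.get("value")
    if value is None:
        return [f"{variable}: missing value"]
    if record.get("unit") != rule["unit"]:
        # Skip the range check: comparing against a range in another unit would be meaningless
        return [f"{variable}: unit {record.get('unit')!r}, expected {rule['unit']!r}"]
    if not rule["min"] <= value <= rule["max"]:
        return [f"{variable}: value {value} outside [{rule['min']}, {rule['max']}]"]
    return []


def check_stream(records):
    """Run checks over a batch and group flagged records by site for targeted review."""
    flagged = {}
    for record in records:
        issues = check_record(record)
        if issues:
            flagged.setdefault(record.get("site", "unknown"), []).append(issues)
    return flagged


batch = [
    {"site": "A", "variable": "heart_rate", "value": 72.0, "unit": "beats/min"},
    {"site": "B", "variable": "body_temp", "value": 98.6, "unit": "degF"},
    {"site": "B", "variable": "heart_rate", "value": 400.0, "unit": "beats/min"},
]
flagged = check_stream(batch)
print(flagged)
```

Grouping flagged records by site turns the output directly into the kind of targeted review list that risk-based monitoring relies on.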

Frequently Asked Questions (FAQs)

Q1: Our lab’s cell-based assay results for a compound’s EC50 are inconsistent with a collaborator’s findings. Where should we start troubleshooting?

Start by standardizing your reagent preparation. The most common reason for inter-lab EC50/IC50 variability is differences in compound stock solution preparation (typically at the 1 mM stage) [17]. Ensure identical solvents, storage conditions, and dilution protocols. Next, verify that both labs are using the same assay format (e.g., binding vs. activity assay) and that the instrument filter sets are correctly configured for the detection method (e.g., exact filters for TR-FRET) [17].

Q2: What is the minimum acceptable standard for an assay’s data quality before we can confidently use it for screening?

Do not rely on the assay window size alone. The key metric is the Z'-factor, which incorporates both the signal dynamic range and the data variation [17]. Calculate it using positive and negative control samples. A Z'-factor > 0.5 is widely considered the threshold for an assay robust enough for screening purposes. An assay with a large window but high noise (low Z'-factor) is less reliable than one with a smaller, more precise window [17].
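The Z'-factor uses the standard definition Z' = 1 - 3(σp + σn) / |μp - μn|, where μ and σ are the means and standard deviations of the positive (p) and negative (n) controls. The sketch below computes it in Python; the control values are invented to illustrate the point about window size versus noise.

```python
from statistics import mean, stdev


def z_prime(positive_controls, negative_controls):
    """Z'-factor = 1 - 3 * (sd_pos + sd_neg) / |mean_pos - mean_neg|."""
    separation = abs(mean(positive_controls) - mean(negative_controls))
    if separation == 0:
        raise ValueError("control means are identical; Z' is undefined")
    return 1.0 - 3.0 * (stdev(positive_controls) + stdev(negative_controls)) / separation


# Hypothetical plate controls (arbitrary signal units)
tight = z_prime([100.0, 102.0, 98.0], [10.0, 12.0, 8.0])  # window of 90, low noise
noisy = z_prime([200.0, 260.0, 140.0], [30.0, 60.0, 0.0])  # window of 170, high noise
print(round(tight, 3), round(noisy, 3))  # 0.867 -0.588
```

The noisy plate has the larger window yet a negative Z', while the tight plate clears the 0.5 threshold comfortably.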

Q3: How can we improve the reproducibility of our real-world evidence (RWE) studies using EHR data?

Focus on methodological transparency. An analysis of 150 RWE studies found that incomplete reporting of operational details (e.g., exact algorithms for defining exposure windows, covariate measurements, and cohort entry dates) is the primary barrier to reproducibility [12]. Provide a detailed attrition flow diagram, publish your analysis code, and use a structured template to report all data transformation decisions. This moves your study from being merely "replicable in principle" to independently reproducible [14] [12].

Q4: Can AI language models like ChatGPT be used to source or validate drug information for research?

Use extreme caution. While tempting, current general-purpose Large Language Models (LLMs) have high hallucination rates for technical medical information, generating false citations or incorrect mechanistic data with a confident tone [5]. They are not reliable standalone resources for drug information. Their current utility is in education and drafting, but all outputs must be rigorously verified against authoritative, primary sources like biomedical literature and trusted databases [5].

Q5: What are the regulatory consequences of poor data quality in drug development?

They are severe and direct. Regulatory agencies like the FDA and EMA can deny drug applications based on insufficient or poor-quality data from clinical trials [13]. Inspections can reveal data integrity lapses (e.g., inadequate record-keeping), leading to warnings, fines, and placement on import alert lists, which can devastate a company's credibility and market access [13]. Robust data governance is a regulatory imperative, not just a technical best practice.

The following tables synthesize key quantitative findings on reproducibility and data quality practices.

Table 1: Reproducibility of Real-World Evidence Studies (Analysis of 150 Studies) [12]

| Metric | Finding | Implication |
|---|---|---|
| Correlation of Effect Sizes | Pearson’s correlation = 0.85 between original and reproduced results. | Strong overall reproducibility, but significant room for improvement exists. |
| Relative Effect Magnitude | Median ratio (original/reproduction) = 1.0 [IQR: 0.9, 1.1]. Range: [0.3, 2.1]. | While most results are closely reproduced, a subset diverges substantially (up to 3-fold differences). |
| Sample Size Reproduction | 21% of reproduction cohorts were <50% or >200% the size of the original. | Ambiguity in defining study populations (inclusion/exclusion, index date) is a major source of irreproducibility. |
| Reporting of Key Parameters | Median of 4 out of 6 key design categories required assumptions to be made during reproduction. | Published methods sections are consistently incomplete, forcing guesswork and hindering independent verification. |

Table 2: Data Quality Management Practices in Clinical Trials (Survey of 20 Australian Trial Sites) [16]

| Practice | Prevalence Among Sites | Note |
|---|---|---|
| Use of Centralized Monitoring | 65% | The most common procedure, aligning with modern risk-based approaches. |
| Existence of a Data Management Plan | 50% | Highlights that half of the sites may lack a formal, documented strategy for data quality. |
| Pre-defined Error Acceptance Level | 10% | Only 2 sites had a defined threshold (e.g., <5% discrepancy), indicating a lack of standardized benchmarks. |
| Average Staff Training on Data Quality | 11.58 hours/person/year | Suggests variable investment in building data competency among trial staff. |

Detailed Experimental Protocol: Establishing a Reproducible AI Pharmacology Workflow

This protocol outlines a standardized workflow for developing an AI model for a pharmacology task (e.g., predicting trough concentrations of a drug) while embedding reproducibility at each step.

1. Project Initialization & Environment Setup

- Objective: Create a stable, documented computational environment.

- Steps:

- Initialize a version-controlled repository (e.g., Git).

- Create a `README.md` file specifying the project title, aim, and data source descriptions.

- Use a package manager (e.g., `conda`) to create a new environment. Document all installed packages and their versions in an `environment.yml` file [14].

- For higher reproducibility, write a `Dockerfile` to define a container with the exact OS and software stack.
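Alongside `conda env export > environment.yml`, which records the conda environment itself, a short Python helper can capture the exact version of every installed Python distribution for the project log. This sketch uses the standard-library `importlib.metadata` module; the function name is illustrative, and it does not capture non-Python dependencies, which is one reason the Dockerfile step is still worthwhile.

```python
import platform
from importlib import metadata


def freeze_environment():
    """Return sorted 'name==version' pins for every installed Python distribution."""
    pins = set()
    for dist in metadata.distributions():
        name = dist.metadata.get("Name")
        if name:  # skip distributions with broken metadata
            pins.add(f"{name}=={dist.version}")
    return sorted(pins, key=str.lower)


if __name__ == "__main__":
    print(f"# Python {platform.python_version()}")
    for pin in freeze_environment():
        print(pin)
```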

2. Data Ingestion & Preprocessing

- Objective: Transform raw data into an analysis-ready dataset with transparent, auditable steps.

- Steps:

- Keep raw data immutable. Perform all transformations via code.

- Create a single, well-commented script (e.g., `01_data_preprocessing.R`) that performs data cleaning, handling of missing values, variable derivation, and application of inclusion/exclusion criteria.

- Critical: Generate and save a participant flow diagram (attrition table) showing cohort counts at each filtering step [12].

- Output a clean dataset and an accompanying data dictionary detailing each variable, its source, and its transformation logic [14].
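The attrition table can be produced by the same code that applies the criteria, so the counts cannot drift from the actual filtering logic. The sketch is in Python for consistency with the other examples (the same pattern works in an R script); the records, criteria, and function name are hypothetical.

```python
def apply_criteria(records, criteria):
    """Apply inclusion/exclusion criteria in order, logging cohort size after each step.

    criteria: ordered list of (description, keep_predicate) pairs.
    Returns the final cohort and an attrition table of (step, n_remaining, n_excluded).
    """
    cohort = list(records)
    attrition = [("Source population", len(cohort), 0)]
    for description, keep in criteria:
        before = len(cohort)
        cohort = [r for r in cohort if keep(r)]
        attrition.append((description, len(cohort), before - len(cohort)))
    return cohort, attrition


# Hypothetical raw records and criteria
patients = [
    {"id": 1, "age": 45, "has_baseline_level": True},
    {"id": 2, "age": 17, "has_baseline_level": True},
    {"id": 3, "age": 62, "has_baseline_level": False},
    {"id": 4, "age": 70, "has_baseline_level": True},
]
criteria = [
    ("Age >= 18 years", lambda r: r["age"] >= 18),
    ("Baseline drug level available", lambda r: r["has_baseline_level"]),
]
cohort, attrition = apply_criteria(patients, criteria)
for step, remaining, excluded in attrition:
    print(f"{step:<32} n={remaining:<4} excluded={excluded}")
```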

3. Model Development & Training

- Objective: Build a predictive model with traceable hyperparameters and training splits.

- Steps:

- Explicitly set and record random seeds for data splitting and model initialization.

- Partition data into training, validation, and test sets. Save the unique identifiers for each split to allow exact reconstruction.

- Develop the model (e.g., a hybrid PK-ML model [11]). Use version control to track changes to the model architecture code.

- Log all hyperparameters, training metrics, and final model artifacts using an experiment tracking tool (e.g., MLflow, Weights & Biases).
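Saving split membership by identifier, rather than relying on re-running a shuffle, can be sketched as follows. The ID format, split fractions, seed, and function names are hypothetical; `save_splits` writes the assignment to a JSON file that can be committed with the code.

```python
import json
import random


def split_ids(ids, seed, fractions=(0.7, 0.15, 0.15)):
    """Deterministically assign IDs to train/validation/test from a recorded seed."""
    ordered = sorted(ids)  # sort first so the result does not depend on input row order
    random.Random(seed).shuffle(ordered)
    n_train = round(fractions[0] * len(ordered))
    n_val = round(fractions[1] * len(ordered))
    return {
        "seed": seed,
        "train": ordered[:n_train],
        "validation": ordered[n_train:n_train + n_val],
        "test": ordered[n_train + n_val:],  # remainder, so every ID is assigned exactly once
    }


def save_splits(splits, path):
    """Write the split assignment to JSON so it can be versioned alongside the code."""
    with open(path, "w", encoding="utf-8") as fh:
        json.dump(splits, fh, indent=2)


ids = [f"PT{i:03d}" for i in range(20)]  # hypothetical patient identifiers
splits = split_ids(ids, seed=1234)
print({name: len(members) for name, members in splits.items() if name != "seed"})
# save_splits(splits, "splits.json")
```

Sorting before shuffling makes the assignment depend only on the set of IDs and the seed, not on the row order in which the data happened to be loaded.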

4. Analysis, Reporting & Sharing

- Objective: Generate reproducible results and share all research artifacts.

- Steps:

- Create analysis scripts that generate all final tables and figures from the clean data and saved model.

- Use literate programming tools (e.g., Jupyter Notebook, R Markdown) to weave narrative, code, and outputs into a final report.

- Perform a peer code review using a checklist focused on clarity, structure, and logic [14].

- Archive and Share: Deposit the final code repository, data dictionary, and analysis report on a persistent, publicly accessible archive (e.g., Zenodo, OSF) and cite the DOI in any resulting publication [14].

Visualizing the Data Quality Ecosystem

Diagram 1: The Impact Pathway of Data Quality on AI Pharmacology Research

Diagram 2: Workflow for a Reproducible AI Pharmacology Analysis

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Tools for Robust AI Pharmacology Research

| Item Category | Specific Example / Function | Role in Overcoming Data Limitations |
|---|---|---|
| Assay Quality Control Reagents | Z'-factor Control Compounds [17] | Provide standardized positive/negative controls to quantitatively assess assay robustness and suitability for screening, preventing poor-quality data from entering model training. |
| Standardized Bioassays | TR-FRET Kinase Assays (e.g., LanthaScreen) [17] | Offer a homogeneous, ratiometric readout (acceptor/donor emission ratio) that minimizes well-to-well variability and corrects for pipetting errors, generating more consistent potency (IC50) data. |
| Data Validation Software | Automated DQ Tools (e.g., DataBuck) [13] | Use machine learning to automatically profile data, detect anomalies, and enforce quality rules across large, complex datasets from trials or real-world sources, ensuring data integrity. |
| Reproducibility & Coding Tools | Containerization (Docker), Version Control (Git), Environment Managers (Conda) [14] | Create frozen, executable computational environments and track all code changes. This eliminates "works on my machine" problems and is foundational for reproducible analysis. |
| Hybrid PK/PD Modeling Platforms | Software integrating NLME solvers with ML libraries (e.g., PyTorch/TensorFlow) [11] | Enable the development of hybrid pharmacokinetic models that combine mechanistic understanding with data-driven flexibility, improving predictions from sparse clinical data. |
| Centralized Monitoring Platforms | Risk-based clinical trial monitoring software [16] | Shift monitoring from 100% source verification to statistical surveillance of aggregated data, enabling efficient quality oversight in flexible and decentralized trial designs. |

Technical Support Center: Overcoming Data Limitations in AI Pharmacology Models

Welcome to the Technical Support Center for AI Pharmacology Research. This resource is designed for researchers, scientists, and drug development professionals encountering challenges related to biased or limited training data. The following troubleshooting guides, FAQs, and protocols are framed within the critical thesis that overcoming historical data gaps is essential for building equitable, effective, and clinically translatable AI models.

Understanding the Core Problem: Data Bias in Healthcare and AI

Historical and systemic inequities in healthcare delivery directly influence the data used to train AI models. These biases, if unaddressed, are perpetuated and can even be amplified by algorithmic systems.

- Evidence of Clinical Inequity: In the U.S., significant health disparities exist, such as a life expectancy of 76.4 years compared to 81.1 years in other high-income countries, with marginalized groups disproportionately affected [18]. A 2025 study found that state-level anti-Black implicit bias among the public significantly predicted higher Black infant mortality rates, accounting for 30-39% of the variance across studied years [19].

- AI's Data Dependency: AI models learn patterns from existing data. In pharmacology, this data includes electronic health records (EHRs), clinical trial results, genomic databases, and published literature. Gaps and biases in these sources directly compromise AI outputs [20] [21].

- The Consequence for AI Pharmacology: Models trained on non-representative or biased data may fail to generalize across diverse populations, leading to inaccurate predictions for drug efficacy, safety, or optimal dosage in underrepresented groups [5] [21]. One review noted that AI models can show "systemic bias and factual inaccuracies... even when the AI responded with high confidence" [5].

Troubleshooting Guide: Common Data & Model Failure Modes

| Problem Symptom | Potential Root Cause (Data Bias) | Recommended Diagnostic Check |
|---|---|---|
| Model performs well in validation but fails in real-world clinical application. | Training/validation data lacks demographic, genomic, or socioeconomic diversity; does not reflect real-world patient population [18] [21]. | Audit dataset composition. Compare the distributions of key variables (e.g., ancestry, age, gender, comorbidities) against the target patient population. |
| AI suggests drug candidates or dosages that contradict clinical guidelines for specific patient groups. | Historical undertreatment or diagnostic bias for certain groups is encoded in the training data (e.g., EHRs showing unequal pain management) [18] [19]. | Conduct subgroup analysis. Evaluate model performance and recommendations stratified by race, ethnicity, gender, and age. |
| Model exhibits "hallucinations" or high-confidence errors in drug mechanism or interaction details. | Reliance on incomplete or biased textual corpora (e.g., published literature with positive-result bias) without robust biomedical grounding [5] [20]. | Implement source verification. Cross-check AI-generated outputs against authoritative, curated databases and primary literature. |
| Difficulty replicating published AI model results with a new, similar dataset. | Underlying data is fragmented, collected with different protocols, or lacks standardized ontologies, leading to poor model generalizability [20] [22]. | Assess data provenance and harmonization. Check for batch effects and variability in data collection methods. |

FAQs and Step-by-Step Mitigation Protocols

FAQ 1: How can I identify if my dataset has problematic gaps or representation biases?

- Step 1 – Demographic Inventory: Create a table quantifying the representation of relevant demographic groups in your dataset. Compare these proportions with the demographic composition of the population affected by the disease, or of the intended-use population.

- Step 2 – Clinical Variable Correlation Analysis: Test for correlations between demographic variables and key clinical outcomes or treatments in your data. A strong correlation may indicate a historical care bias. For example, analyze if pain medication prescription levels vary by patient race for similar conditions [19].

- Step 3 – Utilize Bias Detection Tools: Employ algorithmic auditing tools (e.g., AI Fairness 360, Fairlearn) to measure disparities in model error rates (such as false positive and false negative rates) across subgroups, both before and after applying mitigation techniques.
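Steps 1 and 3 can be prototyped in a few lines before reaching for a dedicated toolkit. The sketch below is plain Python; `representation_gap` and `subgroup_error_rates` are hypothetical helper names (not AI Fairness 360 or Fairlearn APIs), labels are assumed to be binary 0/1, and the reference shares must come from your own epidemiological source.

```python
from collections import Counter

def representation_gap(dataset_groups, reference_shares):
    """Share of each group in the dataset minus its share in the reference
    (intended-use) population. Positive values mean over-representation."""
    counts = Counter(dataset_groups)
    n = len(dataset_groups)
    return {g: round(counts.get(g, 0) / n - share, 4)
            for g, share in reference_shares.items()}

def subgroup_error_rates(y_true, y_pred, groups):
    """False positive rate and false negative rate per subgroup (binary labels)."""
    tallies = {}
    for truth, pred, g in zip(y_true, y_pred, groups):
        t = tallies.setdefault(g, {"fp": 0, "neg": 0, "fn": 0, "pos": 0})
        if truth == 1:
            t["pos"] += 1
            t["fn"] += int(pred == 0)
        else:
            t["neg"] += 1
            t["fp"] += int(pred == 1)
    nan = float("nan")  # undefined when a subgroup has no positives/negatives
    return {g: {"fpr": t["fp"] / t["neg"] if t["neg"] else nan,
                "fnr": t["fn"] / t["pos"] if t["pos"] else nan}
            for g, t in tallies.items()}
```

For example, a dataset that is 80% group A when the intended-use population is 60% A yields a gap of +0.2 for A and -0.2 for B. Large between-group differences in FPR or FNR are the disparities Step 3 asks you to track.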

FAQ 2: What are practical strategies to mitigate bias when historical data is limited or biased?

- Strategy A – Intentional Data Augmentation:

- Protocol: Proactively collect "negative data" (failed experiments, null results) and data from underrepresented cohorts [21]. Partner with research consortia focused on diverse population health.

- Materials: Federated learning platforms (e.g., Lifebit) can enable analysis across decentralized data sources without transferring raw data, addressing privacy concerns while improving diversity [20].

- Strategy B – Algorithmic De-biasing Techniques:

- Protocol: During model training, apply techniques such as re-sampling (over-sampling underrepresented groups), re-weighting (assigning higher importance to samples from rare groups), or adversarial de-biasing (where the model is simultaneously trained to perform its task and to conceal protected attributes like race) [23].

- Validation: After applying these techniques, rigorously validate model performance on held-out test sets that are deliberately diverse.

- Strategy C – Synthetic Data Generation:

- Protocol: Use generative AI models to create synthetic patient data that mirrors the statistical properties of real data but increases representation of minority groups. Critical Note: This data must be rigorously validated to ensure it does not introduce new, unrealistic artifacts or perpetuate existing biases [21].

- Workflow Diagram: The following diagram illustrates a robust synthetic data generation and validation workflow.

Synthetic Data Generation and Validation Workflow
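Strategy B's re-weighting option can be sketched as inverse-frequency sample weights. This is a minimal illustration that assumes a single categorical group label per sample; `inverse_frequency_weights` is a hypothetical helper. Many scikit-learn estimators accept such a list through the `sample_weight` argument of `fit`.

```python
from collections import Counter

def inverse_frequency_weights(groups):
    """Per-sample weights inversely proportional to group frequency.

    Each of the k groups receives the same total weight (n / k), so the
    weights average to 1 and rare groups are not drowned out in the loss.
    """
    counts = Counter(groups)
    n, k = len(groups), len(counts)
    return [n / (k * counts[g]) for g in groups]
```

With three samples from group A and one from group B, each A sample receives a weight of about 0.67 and the B sample receives 2.0, so both groups contribute the same total weight.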

FAQ 3: How do I validate an AI pharmacology model for fairness and generalizability?

- Protocol: Multi-Scale Validation Framework

- Molecular/Cellular Scale: Ensure predictions (e.g., binding affinity, toxicity) hold across genetic variants or cell lines from diverse backgrounds [23].

- Clinical Trial Simulation: Test the model's patient stratification or outcome prediction on synthetic or real-world cohorts mirroring diverse trial populations.

- Real-World Evidence (RWE) Benchmarking: Compare model predictions against high-quality RWE datasets that include outcomes from diverse healthcare settings [21].

- Explainability Audit: Use Explainable AI (XAI) tools like SHAP or LIME to ensure model decisions are driven by clinically relevant features, not protected attributes [23] [21].

The Scientist's Toolkit: Key Research Reagent Solutions

| Item / Resource | Function in Bias Mitigation | Key Consideration |
|---|---|---|
| Federated Learning Platform (e.g., Lifebit, NVIDIA Clara) | Enables training models on decentralized data sources without centralizing raw data. Crucial for incorporating diverse, privacy-sensitive data from multiple institutions [20]. | Requires robust data harmonization protocols and secure infrastructure. |
| Graph Neural Networks (GNNs) | Well suited to biological network data. Can integrate multi-omic data to uncover complex, systems-level interactions that may be more consistent across populations than single biomarkers [23]. | Model interpretability can be challenging; requires XAI techniques. |
| Knowledge Graphs (KGs) | Integrate structured knowledge from disparate sources (drugs, targets, diseases, pathways). Helps ground LLMs and prevent hallucinations by providing a verified factual scaffold [23]. | Construction and curation are resource-intensive. Must be updated regularly. |
| Explainable AI (XAI) Tools (e.g., SHAP, LIME, Integrated Gradients) | Provide post-hoc explanations for model predictions. Allows researchers to audit whether decisions are based on spurious correlations or genuine biomedical signals [23] [21]. | Explanations are approximations; should be used as a guide, not a definitive truth. |
| Synthetic Data Generators (e.g., GANs, VAEs) | Can augment rare populations or create balanced datasets for training. Useful for stress-testing models under various scenarios [21]. | Critical: Synthetic data must be meticulously validated for biological plausibility and fidelity. |
| Regulatory Guidance (e.g., ISPE GAMP AI Guide, FDA discussion papers) | Provides frameworks for risk-based validation, lifecycle management, and demonstrating model robustness and fairness to regulators [22]. | Essential for translational research. Early engagement with regulatory principles is recommended. |

Experimental Protocol: A Network Pharmacology Case Study for Holistic Analysis

This protocol outlines how to use AI-driven network pharmacology to elucidate multi-scale mechanisms, which can help overcome biases inherent in single-target, single-population approaches [23].

- Objective: To identify the systemic therapeutic mechanisms of a complex intervention (e.g., a traditional medicine compound or multi-drug combination) across molecular, cellular, and patient scales.

- Materials:

- Compound/Target Databases (ChEMBL, TCMSP)

- Protein-Protein Interaction Databases (STRING, BioGRID)

- Omics Data Repositories (TCGA, GEO) with diverse sample metadata.

- Clinical EHR or Trial Data (with necessary IRB approval).

- AI/ML Tools: GNN libraries (PyTorch Geometric, DGL), NLP tools for literature mining, and XAI libraries.

- Methodology:

- Network Construction: Build a heterogeneous knowledge graph integrating compound-protein, protein-protein, protein-disease, and gene-expression relationships.

- Multi-Scale Modeling: Apply GNNs to analyze this network. Train models to predict patient-level outcomes (from EHR/trial data) based on molecular and cellular network features derived from omics data.

- Bias-Conscious Validation:

- Split data by ancestral background or demographic cohort.

- Validate model predictions separately on each hold-out test cohort.

- Use XAI to identify the key network features driving predictions for each cohort and assess their biological consistency.

- Interpretation: A robust, equitable model should identify core, conserved biological pathways as key drivers across populations, while also potentially revealing cohort-specific modulating factors. Significant divergence in key features may indicate underlying data bias or genuine pharmacogenomic differences requiring further study.
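The bias-conscious validation step can be organized as a leave-one-cohort-out loop. In this sketch `train_fn` and `score_fn` are placeholders for your actual GNN training and evaluation code; the majority-class model exists only so the example runs, and the cohort labels are hypothetical.

```python
def per_cohort_validation(records, train_fn, score_fn):
    """Leave-one-cohort-out: train on all other cohorts, score the held-out one.

    records:  list of (features, label, cohort) tuples
    train_fn: list of (features, label) -> model
    score_fn: (model, list of (features, label)) -> float
    """
    cohorts = sorted({c for _, _, c in records})
    results = {}
    for held_out in cohorts:
        train = [(x, y) for x, y, c in records if c != held_out]
        test = [(x, y) for x, y, c in records if c == held_out]
        results[held_out] = score_fn(train_fn(train), test)
    return results

# Toy stand-ins so the loop is runnable; replace with real model code.
def majority_class(train):
    labels = [y for _, y in train]
    return max(set(labels), key=labels.count)

def accuracy(model, test):
    return sum(y == model for _, y in test) / len(test)
```

A large spread in per-cohort scores is the signal the Interpretation step describes: it points either to data bias or to genuine pharmacogenomic differences that need follow-up.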

Conclusion and Path Forward: Overcoming systemic biases in training data is not merely an ethical imperative but a technical necessity for building effective AI pharmacology models. By adopting the troubleshooting practices, mitigation protocols, and toolkit resources outlined in this support center, researchers can proactively address historical gaps. The future of equitable drug discovery depends on rigorous, intentional methods that prioritize diverse data acquisition, algorithmic fairness, and transparent, multi-scale validation.

Welcome to the Technical Support Center for AI Pharmacology. This resource addresses common experimental and computational challenges faced when developing predictive models under real-world data constraints, specifically the systemic absence of negative trial results.

Troubleshooting Guide: Core Model Performance Issues

Problem Statement: Model Performance Degrades in Real-World Validation

Q1: My AI model for predicting drug efficacy shows excellent validation metrics (AUC >0.9) during development, but its performance drops significantly when applied to prospectively planned clinical trials. What is the most likely cause?

A1: This is a classic symptom of training data censoring, primarily due to the "missing negative" problem. Your model was likely trained and validated on a biased dataset composed predominantly of successful trials or published research, which represents a small, non-representative subset of all research conducted [24]. This inflates apparent accuracy. In reality, approximately 90% of investigational drugs fail to reach approval [25]. When your model encounters the broader spectrum of candidate compounds, including those with a high probability of failure, its predictions become unreliable.

- Primary Root Cause: Publication bias and selective reporting. Negative or inconclusive trial results are significantly less likely to be published or deposited in accessible databases [26]. For instance, one analysis found that industry-sponsored trials were more likely to be terminated for futility or toxicity, reasons that may not be fully documented in public sources [26].

- Technical Manifestation: Your model has learned patterns associated with the characteristics of published studies, not the underlying biology of success/failure. It may be keying in on spurious correlations related to trial design (e.g., certain endpoints, specific patient subgroups common in successful trials) rather than the drug's true pharmacodynamic profile.

Recommended Protocol for Diagnosis & Mitigation:

- Data Audit: Conduct a provenance audit of your training data. Trace the source of each data point (compound, trial result, biomarker) to a publication or registry entry. Estimate the percentage of data derived from:

- Positive/statistically significant outcomes.

- Trials leading to regulatory approval.

- Preclinical studies without subsequent clinical translation.

- Synthetic Negative Augmentation: Implement a data augmentation strategy. Use historical data on trial failure reasons (e.g., from sources like ClinicalTrials.gov) to generate synthetic "negative" examples [27].

- Method: For a given successful compound in your training set, algorithmically modify key features (e.g., adjust pharmacokinetic parameters, introduce structural alerts associated with toxicity, simulate enrollment difficulties) to create a plausible "failure" counterpart. Label these as negative instances.

- Protocol: Use an oversampling technique such as SMOTE (Synthetic Minority Over-sampling Technique), applied with domain-informed constraints so that the synthetic failures remain biologically plausible.

- Failure-Prediction Parallel Model: Develop a dedicated machine learning model to predict the risk of trial failure based on protocol design features. Research shows models analyzing thousands of features from trial protocols can predict failure risk and identify modifiable factors (e.g., eligibility complexity, site selection) [26]. Integrate this risk score as a feature or a filter in your primary efficacy model.
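A domain-constrained SMOTE variant might look like the following. It uses the standard SMOTE scheme (interpolating between a minority-class sample and one of its k nearest minority neighbours) and then rejects candidates that fail a plausibility check. `is_plausible` is a hypothetical stand-in for your own rules (for example, ADMET ranges), and features are assumed to be numeric and already scaled. For production work, the imbalanced-learn library provides a standard `SMOTE` implementation, which this sketch does not replicate.

```python
import random

def constrained_smote(minority, n_new, is_plausible, k=3, seed=0, max_tries=10_000):
    """SMOTE-style oversampling of minority-class feature vectors (e.g., failed
    compounds), rejecting synthetic points that fail a domain plausibility check."""
    rng = random.Random(seed)

    def sq_dist(a, b):
        return sum((ai - bi) ** 2 for ai, bi in zip(a, b))

    synthetic, tries = [], 0
    while len(synthetic) < n_new and tries < max_tries:
        tries += 1
        i = rng.randrange(len(minority))
        base = minority[i]
        others = [m for j, m in enumerate(minority) if j != i]
        # pick one of the k nearest minority neighbours of the base sample
        neighbour = rng.choice(sorted(others, key=lambda m: sq_dist(base, m))[:k])
        lam = rng.random()  # interpolation factor in [0, 1)
        candidate = [b + lam * (n - b) for b, n in zip(base, neighbour)]
        if is_plausible(candidate):  # domain-informed rejection step
            synthetic.append(candidate)
    return synthetic
```

Because interpolation stays on the line segment between two observed failures, this densifies the known failure region rather than inventing new failure mechanisms. If the constraint rejects most candidates, fewer than `n_new` points are returned.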

Problem Statement: AI Models Ignore Critical Negative Keywords

Q2: Our natural language processing (NLP) model, trained on medical literature and trial reports, is poor at identifying exclusion criteria or adverse event narratives. It seems to ignore words like "no," "not," or "absent." Why?

A2: This is a known, fundamental limitation in many vision-language and large language models called "affirmation bias." Models are typically trained on image-caption or text pairs that describe what is present (e.g., "the chest X-ray shows an enlarged heart") [27]. They are rarely trained on pairs that explicitly negate or describe the absence of features (e.g., "the chest X-ray shows no sign of an enlarged heart") [27] [28]. Consequently, they learn to prioritize the presence of object keywords and ignore negation modifiers.

- Impact: In pharmacology, this flaw can be catastrophic. Misinterpreting "no sign of cardiotoxicity" as "cardiotoxicity" alters risk-benefit assessment entirely [28].

- Evidence: Studies testing vision-language models on negation tasks found performance could drop by nearly 25% when captions included negation words, with some models performing at or below random chance [27].

Recommended Protocol for Diagnosis & Mitigation:

- Benchmark Testing: Create a dedicated benchmark set for negation. For example:

- Image/Text Pairs: Curate a set of medical images (e.g., histology slides, radiographs) with two captions: one accurate (e.g., "no neutrophil infiltration") and one inaccurate (e.g., "neutrophil infiltration").

- Text Classification: Create sentence pairs for adverse event extraction: "The patient did not report nausea" vs. "The patient reported nausea."

- Measure your model's accuracy on this set. Performance below 80% indicates a severe negation blindness issue [27].

- Focused Retraining (Finetuning): Finetune your model on a dataset enriched with negations.

- Protocol: Use a large language model to augment existing image captions or text snippets. Prompt the LLM to generate related captions that specify what is excluded from the image or context [27]. For example, from a caption "a graph of tumor volume reduction," generate a synthetic counterpart: "a graph of tumor volume reduction, with no adverse effect on body weight noted."

- Validation: Research using this method showed it could improve model performance on negation-based image retrieval by about 10% and on multiple-choice QA tasks by about 30% [27]. Retrain your model on a mix of original and synthetic negation-rich data.
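A negation benchmark of the kind described above can be scored with a small harness. The detectors here are deliberately simple stand-ins for a real NLP model: one flags a term whenever it appears, the other applies a much-simplified NegEx-style rule (a negation cue within a few preceding tokens). The cue list and window size are illustrative assumptions, and terms are assumed to be single tokens.

```python
import re

NEGATION_CUES = {"no", "not", "without", "denied", "denies", "absent", "negative"}

def keyword_detector(term):
    """Naive extractor: flags the term whenever it appears (negation-blind)."""
    return lambda sentence: term in sentence.lower()

def negation_aware_detector(term, window=4):
    """Flags the term unless a negation cue occurs within `window` tokens before it."""
    def detect(sentence):
        tokens = re.findall(r"[a-z]+", sentence.lower())
        for i, tok in enumerate(tokens):
            if tok == term:
                preceding = tokens[max(0, i - window):i]
                return not any(t in NEGATION_CUES for t in preceding)
        return False
    return detect

def negation_accuracy(detector, pairs):
    """pairs: list of (sentence, expected_bool). Returns the fraction correct."""
    return sum(detector(s) == expected for s, expected in pairs) / len(pairs)

benchmark = [
    ("The patient reported nausea.", True),
    ("The patient did not report nausea.", False),
    ("Nausea was noted on day 3.", True),
    ("No nausea was observed.", False),
]
```

On these four sentences the keyword detector scores 0.5 (it cannot separate the negated sentences) and the rule-based detector scores 1.0. Any callable mapping a sentence to True/False, including a wrapper around your model, can be passed to `negation_accuracy`.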

Problem Statement: Inability to Quantify "Unknown-Unknown" Failure Risk

Q3: We can account for known failure modes (e.g., hERG toxicity, poor solubility), but our models cannot anticipate novel, unforeseen mechanisms of failure that derail late-phase trials. How can we model this uncertainty?

A3: You are facing the challenge of epistemic uncertainty—uncertainty arising from incomplete knowledge. Traditional models operate within the manifold of known data and are ill-equipped to flag when a new compound falls outside this distribution in a meaningful way.

Recommended Protocol for Diagnosis & Mitigation:

- Out-of-Distribution (OOD) Detection: Implement an OOD detection framework as a sentinel for novel failure risk.

- Method: Use techniques like Deep Deterministic Uncertainty (DDU) or model ensembles. Train your primary model to not only make a prediction but also to output an uncertainty score. This score should be calibrated to be high when the input data (the new compound's features) is dissimilar to the training data manifold.

- Protocol: During inference, if a compound receives a high uncertainty score alongside a positive efficacy prediction, it should be flagged for extreme scrutiny. This indicates the model is making a guess in the dark.

- Causal Reasoning Integration: Move beyond correlative patterns. Incorporate known pharmacologic causal pathways (e.g., signaling pathways, metabolic networks) as a graph-based prior into your model architecture.

- Method: Use Graph Neural Networks (GNNs) where the initial graph structure is defined by established biological knowledge (e.g., protein-protein interaction networks, disease pathways). This grounds the model in mechanistic biology, making its predictions more interpretable and potentially more robust to novel chemistries that interact with known pathways in unexpected ways.

- Validation: The model's predictions should be accompanied by an "explanation" highlighting the sub-graph of the biological network that most influenced the decision, allowing human experts to evaluate biological plausibility.
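As a lightweight illustration of the flagging rule, the sketch below uses nearest-neighbour distance to the training set as the uncertainty score. This is a swapped-in, simpler technique: it is neither DDU (which fits a density to a trained network's feature space) nor a model ensemble. It assumes numeric, comparably scaled features (for example, standardized descriptors or learned embeddings), and the 95th-percentile threshold is an arbitrary choice.

```python
import math

def nn_distance(x, reference, skip=None):
    """Euclidean distance from x to its nearest neighbour in `reference`."""
    return min(math.dist(x, r) for i, r in enumerate(reference) if i != skip)

def ood_threshold(train, quantile=0.95):
    """Quantile of each training point's distance to its nearest *other* training point."""
    d = sorted(nn_distance(x, train, skip=i) for i, x in enumerate(train))
    return d[min(len(d) - 1, int(quantile * len(d)))]

def triage(x, train, efficacy_prob, threshold, positive_cutoff=0.5):
    """Escalate compounds predicted effective but far from the training data."""
    out_of_dist = nn_distance(x, train) > threshold
    if out_of_dist and efficacy_prob >= positive_cutoff:
        return "flag"  # positive prediction outside the training manifold
    return "ood" if out_of_dist else "in-distribution"
```

The design choice is to flag only the combination of a positive prediction and a large distance, matching the protocol's concern with confident-looking guesses; out-of-distribution negatives are reported but not escalated.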

Table 1: Comparison of Data Sources for AI Pharmacology Models: The Visibility Gap

| Data Source | Typical Content | Availability of Negative/Failure Data | Risk of Introducing Bias | Recommended Use Case |
|---|---|---|---|---|
| Published Literature | Positive results, significant findings, successful trials. | Very Low. Publication bias is well-documented [24]. | Very High. Models will learn a "success-only" manifold. | Hypothesis generation, understanding biological mechanisms. |
| Clinical Trial Registries (e.g., ClinicalTrials.gov) | Protocol details, some results (mandated), completion status. | Moderate. Includes terminated/suspended trials, sometimes with reasons [26]. | Medium. Better but incomplete; some failures may go unreported or lack detailed results. | Training trial outcome predictors, analyzing design risk factors [26]. |
| Regulatory Submission Archives | Comprehensive data on both successful and failed applications for approved drugs. | High for a subset. | Low for the chemical space covered, but limited to entities that reached late-stage trials. | Gold standard for validating predictive models, understanding regulatory benchmarks. |
| Internal Pharmaceutical Company Data | Full spectrum of preclinical and clinical data on all programs. | Very High (theoretically). | Low (if fully utilized). The most complete dataset but is proprietary and siloed. | Ideal but inaccessible for public research. Emphasizes need for secure, multi-party collaboration frameworks. |

FAQs on Data Sourcing & Experimental Design

Q1: Where can I find data on failed trials to re-balance my training sets? A1: Start with clinical trial registries. ClinicalTrials.gov requires summary results to be posted for many applicable trials, including some that are terminated, and other WHO-linked registries have varying reporting requirements. Filter for trials with the status "Terminated," "Withdrawn," or "Suspended" and review the "Why Stopped" field [26]. Be aware, however, that data completeness is variable. Controlled-access platforms that share anonymized individual-participant data from sponsor-contributed trials (e.g., Vivli, the YODA Project) are another avenue, although their coverage of failed studies varies. Literature searches should include terms such as "failed trial," "negative trial," and "futility," and should cover databases such as PubMed Central and Europe PMC systematically.
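For programmatic access, a sketch against the ClinicalTrials.gov v2 REST API follows. The endpoint, the `query.cond`, `filter.overallStatus`, and `pageSize` parameters, and the JSON field names (`protocolSection`, `statusModule`, `overallStatus`, `whyStopped`) reflect our understanding of the v2 API and should be checked against the official documentation before use; pagination is omitted.

```python
import json
from urllib.parse import urlencode
from urllib.request import urlopen

# Assumed v2 endpoint and status codes; verify against the official API docs.
BASE_URL = "https://clinicaltrials.gov/api/v2/studies"
STOPPED = ("TERMINATED", "WITHDRAWN", "SUSPENDED")

def build_query(condition, page_size=100):
    """URL for one page of stopped trials for a condition (no pagination)."""
    params = {
        "query.cond": condition,
        "filter.overallStatus": ",".join(STOPPED),
        "pageSize": page_size,
    }
    return BASE_URL + "?" + urlencode(params)

def fetch_page(url):
    """Download and parse one JSON page (requires network access)."""
    with urlopen(url, timeout=30) as resp:
        return json.load(resp)

def extract_stopped(page):
    """NCT ID, status, and free-text stop reason for each stopped study."""
    rows = []
    for study in page.get("studies", []):
        proto = study.get("protocolSection", {})
        status = proto.get("statusModule", {})
        if status.get("overallStatus") in STOPPED:
            rows.append({
                "nct_id": proto.get("identificationModule", {}).get("nctId"),
                "status": status.get("overallStatus"),
                "why_stopped": status.get("whyStopped", ""),
            })
    return rows
```

Calling `extract_stopped(fetch_page(build_query("heart failure")))` would return one row per stopped study on the first page. The free-text stop reasons still need manual or NLP-based coding into failure categories before they are useful as labels.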

Q2: What are the key experimental design flaws in trials that AI should help avoid? A2: AI models trained on comprehensive data can predict and mitigate several key design flaws:

- Inappropriate Patient Selection: Contributing to ~35% of design-related failures. Models can analyze real-world patient data (EHRs) to simulate whether eligibility criteria are too restrictive (causing recruitment failure) or too broad (introducing noisy heterogeneity) [25].

- Poor Endpoint Selection: Contributing to ~30% of design-related failures. AI can analyze historical trials to assess if a chosen surrogate endpoint correlates with the true clinical outcome of interest [25].

- Wrong Dose Selection: Contributing to ~25% of design-related failures. Pharmacokinetic/pharmacodynamic (PK/PD) AI models, like those using Neural Ordinary Differential Equations, can optimize dosing regimens from sparse Phase I/II data before Phase III [11].

- Operational Complexity: Overly complex protocols contribute to failures. Natural Language Processing can analyze protocol documents to predict site and patient burden [26].
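To check whether eligibility criteria are too restrictive, one simple approach is to apply them to a real-world cohort and record the attrition after each criterion. The field names, thresholds, and patient rows used here are hypothetical, and a real analysis would run on de-identified EHR extracts under appropriate approvals.

```python
def eligibility_funnel(patients, criteria):
    """Apply criteria in order; report how many patients remain after each.

    patients: list of dicts (e.g., de-identified EHR rows)
    criteria: list of (name, predicate) pairs, predicate(patient) -> bool
    """
    remaining = list(patients)
    funnel = [("all patients", len(remaining))]
    for name, keep in criteria:
        remaining = [p for p in remaining if keep(p)]
        funnel.append((name, len(remaining)))
    return funnel, remaining

# Hypothetical criteria for illustration only.
criteria = [
    ("age 18-75", lambda p: 18 <= p["age"] <= 75),
    ("eGFR >= 60", lambda p: p["egfr"] >= 60),
]
```

Because sequential attrition depends on the order in which criteria are applied, it is also worth recomputing each criterion's exclusion rate on the full cohort.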

Q3: How do I validate an AI model knowing the available data is biased? A3: Employ rigorous, prospective-validation-in-simulation techniques:

- Create a Rebalanced Test Set: Use the augmentation and registry-sourcing methods above to construct a test set with a plausible ratio of successes to failures (e.g., close to the industry average of ~10% success from Phase I to approval) [25].

- Temporal Hold-Out Validation: Never train on data from trials that concluded after those in your validation set. Always hold out the most recent data to simulate real-world forecasting. This tests the model's ability to generalize to future, unseen compounds.

- External Validation Consortiums: Participate in or initiate community challenges (e.g., using platforms like Synapse by Sage Bionetworks) where a hold-out dataset, often with proprietary negative data contributed by partners, serves as the final arbiter of model performance.
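Two pieces of this validation logic are easy to get wrong: the direction of the temporal split, and the effect of the success base rate on precision. The sketch assumes each trial record carries a hypothetical `completed` date; the PPV function is Bayes' rule.

```python
from datetime import date

def temporal_split(trials, cutoff):
    """Train on trials completed before `cutoff`; validate on later ones,
    so nothing that concluded after the validation trials leaks into training."""
    train = [t for t in trials if t["completed"] < cutoff]
    test = [t for t in trials if t["completed"] >= cutoff]
    return train, test

def ppv_at_prevalence(sensitivity, specificity, prevalence):
    """Positive predictive value via Bayes' rule at a given success base rate."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)
```

For a model with 80% sensitivity and 80% specificity, `ppv_at_prevalence(0.8, 0.8, 0.5)` gives 0.8 on a balanced test set, but `ppv_at_prevalence(0.8, 0.8, 0.1)` gives about 0.31 at a ~10% success rate. This is why the test set's success ratio should be realistic.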

Table 2: Experimental Protocol for Mitigating the "Missing Negative" Problem

| Step | Action | Detailed Methodology | Expected Output |
|---|---|---|---|
| 1. Data Audit & Enrichment | Identify gaps in negative data. | 1. Map training data sources. 2. Cross-reference compound IDs with trial registries to find unreported outcomes. 3. Augment text data using LLM-generated negations [27]. | A report quantifying the % of known negative outcomes missing from the training set. An enriched dataset. |
| 2. Failure Risk Prediction | Build a parallel model for trial failure. | 1. Extract ~2,000 features from trial protocols (design, endpoints, eligibility text via NLP) [26]. 2. Train a classifier (e.g., XGBoost) to predict termination. 3. Use SHAP analysis to identify top modifiable risk factors [26]. | A model that outputs a failure risk score and recommends protocol modifications. |
| 3. Causal Integration | Ground models in biology. | 1. Construct a knowledge graph from databases like KEGG, Reactome. 2. Use a Graph Neural Network where molecule features are mapped to graph nodes/edges. 3. Train for the prediction task. | A model whose predictions are explainable via sub-pathway activation, reducing reliance on spurious correlations. |
| 4. Prospective Simulation | Validate model robustness. | 1. Use the enriched data from Step 1 to create a realistic, balanced test set. 2. Apply the model to design simulated trials for new compounds. 3. Compare the model's predicted success rate against the historical baseline (e.g., a 6.7% likelihood of approval, or LOA [10]). | A quantifiable estimate of how much the model could improve trial success rates, with confidence intervals. |
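Step 3's idea of letting biological network structure shape a prediction rests on message passing. The sketch shows one mean-aggregation step without learned weights, which is the propagation that a GNN layer (for example in PyTorch Geometric) augments with trainable transformations. The three-protein graph and the use of a compound's known targets as the initial signal are illustrative assumptions.

```python
def propagate(graph, signal, steps=1):
    """Mean-aggregation message passing with no learned weights.

    graph:  dict mapping node -> list of neighbours (list both directions)
    signal: dict mapping node -> initial value (e.g., 1.0 for known targets)
    """
    h = {v: signal.get(v, 0.0) for v in graph}
    for _ in range(steps):
        # each node's new value is the mean over itself and its neighbours
        h = {v: (h[v] + sum(h[u] for u in graph[v])) / (1 + len(graph[v]))
             for v in graph}
    return h

# Toy protein-interaction graph (illustrative only).
ppi = {"EGFR": ["GRB2"], "GRB2": ["EGFR", "SOS1"], "SOS1": ["GRB2"]}
```

After one step a signal seeded at EGFR reaches its direct interactor GRB2; after two steps it reaches SOS1. Averaging the propagated values over pathway members is one simple way to approximate the sub-pathway activation scores mentioned in the table.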

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Tools & Resources for Overcoming Data Limitations

| Item / Resource | Function / Purpose | Key Considerations |
|---|---|---|
| ClinicalTrials.gov API | Programmatic access to registry data, including trial status, conditions, and some results. | Essential for sourcing data on terminated trials. Data quality and completeness vary; requires careful curation [26]. |
| SHAP (SHapley Additive exPlanations) | Explainable AI (XAI) library. Quantifies the contribution of each feature (e.g., a trial design element) to a model's prediction [26]. | Critical for interpreting black-box models and identifying actionable protocol risks (e.g., "complex visit schedule increases failure risk by X%") [26]. |
| Neural Ordinary Differential Equations (Neural ODEs) | A neural network architecture for modeling continuous, time-dependent systems. | Superior for modeling irregularly sampled pharmacokinetic/pharmacodynamic (PK/PD) data, improving dose prediction and optimization [11]. |
| Synthetic Data Generation Framework (e.g., using GPT-4, Claude 3) | Generates biologically plausible negative data points or negation-rich text for augmentation. | Crucial: Must be tightly constrained by domain knowledge (e.g., SMILES strings, known ADMET rules) to avoid generating nonsense chemical or clinical data [27]. |
| Graph Neural Network (GNN) Framework (e.g., PyTorch Geometric) | Implements models that operate on graph-structured data. | Used to integrate biological knowledge graphs (e.g., protein interactions, disease pathways) as a prior, promoting causal reasoning over correlation [11]. |
| ARTIREV or Similar Hybrid Bibliometric AI Tools | AI-assisted literature review and analysis platforms. | Helps systematically scan vast literature for negative findings or design flaws that might be missed in manual reviews, overcoming confirmation bias [11]. |

Model Workflow & Failure Analysis Visualization

AI Pharmacology Model Workflow: Flawed vs. Improved Pathways

Analysis of Clinical Trial Failure Factors and AI Intervention Points

Building from Scarcity: Innovative Methods to Augment and Leverage Pharmacological Data

Technical Support Center: Troubleshooting and FAQs

This technical support center is designed for researchers and scientists working to overcome data limitations in AI pharmacology models through synthetic data generation (SDG). The guidance is framed within the critical trade-offs of data fidelity, analytical utility, and privacy preservation—the core criteria for synthetic data acceptance in pharmaceutical research [29].

Section 1: Data Fidelity and Statistical Quality

Q1: My synthetic pharmacogenetic dataset has similar overall averages to my real data, but machine learning models trained on it perform poorly. What's wrong? A: High-level statistical similarity does not guarantee the preservation of complex, non-linear relationships crucial for prediction. This is a common pitfall where broad utility (overall distribution) and specific utility (predictive power) are not strongly correlated [30].

- Troubleshooting Steps:

- Diagnose: Move beyond summary statistics. Use the Train-Synthetic-Test-Real (TSTR) framework [30]. Train a model (e.g., Random Forest) on your synthetic data and test it on the held-out real data. Compare its performance (e.g., F1-score, AUC) to a model trained and tested on real data.

- Analyze Relationships: Check the preservation of key pharmacogenetic associations. For example, if your real data shows a specific hazard ratio (HR) for a gene variant and drug outcome, fit the same Cox proportional hazards model on your synthetic data and compare the HR estimates [31].

- Action: If specific utility is low, consider switching your SDG method. Studies find that for high-dimensional, small-sample pharmacogenetic data, methods like Copula or Avatar (with k=10) often better preserve internal covariate relationships than some deep learning models [31] [30].
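The TSTR comparison can be expressed as a short function. To stay dependency-free, this sketch swaps the Random Forest mentioned above for a nearest-centroid classifier and uses accuracy instead of F1 or AUC; the helper names are hypothetical, and in practice you would substitute your own model and metric.

```python
def fit_centroids(rows):
    """rows: list of (features, label). Returns {label: mean feature vector}."""
    sums, counts = {}, {}
    for x, y in rows:
        acc = sums.setdefault(y, [0.0] * len(x))
        for i, v in enumerate(x):
            acc[i] += v
        counts[y] = counts.get(y, 0) + 1
    return {y: [v / counts[y] for v in acc] for y, acc in sums.items()}

def accuracy(centroids, rows):
    """Fraction of rows whose nearest centroid carries the correct label."""
    def predict(x):
        return min(centroids,
                   key=lambda y: sum((a - b) ** 2 for a, b in zip(x, centroids[y])))
    return sum(predict(x) == y for x, y in rows) / len(rows)

def tstr_vs_trtr(synthetic_train, real_train, real_test):
    """(Train-Synthetic-Test-Real, Train-Real-Test-Real) scores on the same real test set."""
    tstr = accuracy(fit_centroids(synthetic_train), real_test)
    trtr = accuracy(fit_centroids(real_train), real_test)
    return tstr, trtr
```

A TSTR score close to the TRTR score suggests the synthetic data preserve the predictive structure; a large gap is the symptom described in Q1, even when the summary statistics match.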

Q2: When I use CT-GAN, the generated data for rare categorical features (e.g., a specific haplotype or phenotype) seems incorrect or missing. How can I fix this? A: CT-GAN can struggle with imbalanced categorical distributions, a common feature in pharmacogenetics where certain alleles or phenotypes are rare [32]. The generator may fail to learn the true distribution of minority classes.

- Troubleshooting Steps:

- Diagnose: Compare the frequency tables for all categorical variables (genotype, phenotype, disease code) between real and synthetic data. Pay close attention to categories with less than 5-10% frequency.

- Adjust Training: CT-GAN has a `log_frequency` parameter. Setting it to `True` can help the model handle imbalanced categorical columns by sampling categories from a log-frequency distribution during training. It is the default in the ctgan and SDV implementations, so first confirm it has not been disabled.

- Action: If the problem persists, test TVAE. Variational Autoencoders may handle imbalanced data differently and sometimes produce more robust results across varied datasets [32]. Alternatively, data augmentation (generating more synthetic samples than the original dataset) can sometimes help recover rare category proportions [31].
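The frequency-table diagnosis can be automated per column. In this sketch `category_frequency_gaps` is a hypothetical helper, and the 10% rarity threshold mirrors the 5-10% range mentioned above.

```python
from collections import Counter

def category_frequency_gaps(real_col, synth_col, rare_threshold=0.10):
    """For categories rare in the real data (< rare_threshold), report
    (category, real frequency, synthetic frequency), sorted by category."""
    real_freq = {k: v / len(real_col) for k, v in Counter(real_col).items()}
    synth_counts = Counter(synth_col)
    report = []
    for cat, rf in sorted(real_freq.items()):
        if rf < rare_threshold:
            sf = synth_counts.get(cat, 0) / len(synth_col)  # 0.0 if missing
            report.append((cat, round(rf, 3), round(sf, 3)))
    return report
```

A category that is present in the real data but absent from the synthetic data shows a synthetic frequency of 0.0 in the report, which is the "missing rare category" failure described in this question.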

Section 2: Analytical Utility and Model Performance

Q3: Can synthetic data ever be better than real data for training predictive models in pharmacogenetics? A: Surprisingly, yes. Under specific conditions, synthetic data can act as a regularizer, improving model generalization. A 2024 study found that synthetic data from CTAB-GAN+ could achieve higher Random Forest accuracy than the original dataset [33]. Similarly, Copula and synthpop have been shown to outperform original data in predictive tasks under conditions of noise or data imbalance [30].

- Guidance: This "synthetic boost" is not guaranteed. It depends on the SDG method, the dataset, and the task. Rigorously validate using the TSTR framework. Do not assume improved performance; always measure it.

Q4: I am generating synthetic data for survival analysis (time-to-event). Which method is most reliable for preserving key hazard ratios? A: The choice of method significantly impacts the accuracy of survival estimates. A focused 2024/2025 study on a pharmacogenetic kidney transplant dataset (n=253) found clear differences [31] [34]:

- Avatar (with k=10 neighbors) produced HR estimates closest to the original data.

- CT-GAN slightly underestimated the HR.

- TVAE showed the most significant deviation from the original HR.

- Recommendation: For non-longitudinal survival data, Avatar with a tuned `k` parameter is recommended. Furthermore, applying the chosen algorithm multiple times (e.g., 100 seeds) and aggregating the results improves the stability and reliability of HR estimates, especially for small datasets [31].
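To make the role of `k` concrete, here is a simplified Avatar-style generator for numeric columns. It follows the outline given in Protocol 1 (standardize, find the k nearest neighbours, take a randomly weighted barycenter), but the weighting scheme (independent Exp(1) draws) is our assumption rather than the published algorithm. PCA is omitted: when all components are kept, PCA is an orthogonal rotation of the standardized data, so neighbourhoods and barycenters are unchanged, whereas the published method also uses it for dimensionality reduction. Categorical variables and survival times would need separate handling.

```python
import random
import statistics

def avatar_like(data, k=10, seed=0):
    """Simplified Avatar-style synthesis for numeric rows (list of lists).

    For each real record, take its k nearest neighbours in standardized space
    (the record itself included) and return a random convex combination of them.
    """
    rng = random.Random(seed)
    cols = list(zip(*data))
    means = [statistics.fmean(c) for c in cols]
    sds = [statistics.pstdev(c) or 1.0 for c in cols]  # guard constant columns
    z = [[(v - m) / s for v, m, s in zip(row, means, sds)] for row in data]

    def sq_dist(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))

    synthetic = []
    for row in z:
        neighbours = sorted(z, key=lambda other: sq_dist(row, other))[:k]
        w = [rng.expovariate(1.0) for _ in neighbours]  # random positive weights
        total = sum(w)
        centre = [sum(wi * nb[j] for wi, nb in zip(w, neighbours)) / total
                  for j in range(len(row))]
        # map back to the original units
        synthetic.append([c * s + m for c, s, m in zip(centre, sds, means)])
    return synthetic
```

With `k=1` each synthetic record is a copy of its source record, which illustrates why a small `k` carries re-identification risk; larger values of `k` smooth the data more, trading fidelity for privacy.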

Section 3: Privacy and Re-identification Risk

Q5: How do I measure and ensure that my synthetic pharmacogenetic data protects patient privacy? A: Privacy is not automatic. You must evaluate it using dedicated metrics. A key risk is membership inference, where an attacker could determine if a specific individual's data was in the training set [30].

- Evaluation Protocol:

- Primary Metric: Use ε-Identifiability [30] [32]. It measures the likelihood that a real record is closer to a synthetic record than to any other real record. A lower ε (e.g., 0.1-0.3) indicates lower re-identification risk. Studies suggest classical methods like Copula and synthpop often achieve lower ε-identifiability (0.25-0.35) compared to some deep learning models (>0.4) [30].

- Diagnostic Check: Perform a nearest neighbor distance analysis. For a sample of real records, check if the nearest synthetic record is too similar. A healthy synthetic dataset should maintain distance from individual real points while capturing population statistics.

- Action: If privacy risk is too high, consider methods with built-in privacy guarantees, such as differentially private generation (e.g., DP-GAN) [35], or use the synthetic data in a hybrid model mixed with real data under a secure federated learning framework [29].
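Both checks can be sketched together. The function below computes a simplified, unweighted version of ε-identifiability as described above (the published metric applies feature weights, which are omitted here); the per-record nearest-neighbour distances it relies on are the same quantities used in the diagnostic check. Features are assumed numeric and comparably scaled.

```python
import math

def _nearest(x, pool, skip=None):
    """Distance from x to its nearest point in pool, optionally skipping one index."""
    return min(math.dist(x, p) for i, p in enumerate(pool) if i != skip)

def epsilon_identifiability(real, synthetic):
    """Fraction of real records whose nearest synthetic record is closer than
    their nearest *other* real record (unweighted simplification)."""
    hits = sum(
        _nearest(x, synthetic) < _nearest(x, real, skip=i)
        for i, x in enumerate(real)
    )
    return hits / len(real)
```

A synthetic set that simply copies the real records scores 1.0, the worst case; values in the 0.1-0.3 range cited above indicate that most real records are not unusually close to any synthetic record.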

Q6: What is the core privacy vs. utility trade-off, and how do I manage it for my project? A: There is an inherent tension: maximizing data utility (making synthetic data very realistic) often increases the risk that it can be traced back to real individuals, and vice-versa [29].

- Management Strategy:

- Define Acceptance Criteria Before Generation: For your project, decide on minimum thresholds for utility (e.g., TSTR model performance must be >90% of baseline) and maximum thresholds for privacy risk (e.g., ε-identifiability < 0.3) [29].

- Choose Method Based on Priority: The 2025 evaluation of 7 methods provides a guide [30]:

- Iterate: Generate data, evaluate both utility and privacy metrics, adjust method or parameters, and repeat until your predefined criteria are met.
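The acceptance criteria can be encoded as an explicit gate that each iteration must pass. The thresholds below are the example values from this answer (TSTR performance above 90% of baseline, ε-identifiability below 0.3), and `meets_acceptance_criteria` is a hypothetical helper.

```python
def meets_acceptance_criteria(tstr_score, trtr_score, eps_identifiability,
                              min_utility_ratio=0.90, max_eps=0.30):
    """Check one synthetic dataset against predefined utility and privacy limits."""
    utility_ratio = tstr_score / trtr_score
    utility_ok = utility_ratio > min_utility_ratio
    privacy_ok = eps_identifiability < max_eps
    return {
        "utility_ratio": round(utility_ratio, 3),
        "utility_ok": utility_ok,
        "privacy_ok": privacy_ok,
        "accept": utility_ok and privacy_ok,
    }
```

Fixing the thresholds in code before generation makes it harder to relax them after seeing the results.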

Section 4: Technical Implementation and Scalability

Q7: TVAE performs well, but training is extremely slow on my high-dimensional genomic dataset. How can I improve this? A: This is a known scalability challenge. Deep learning-based SDG methods demand substantial computational resources [32].

- Troubleshooting and Solutions:

- Hardware: Ensure access to adequate GPUs (e.g., NVIDIA H100) and RAM (hundreds of GB may be needed for large datasets) [32].

- Dimensionality Reduction: As a preprocessing step, apply Principal Component Analysis (PCA) to your genetic variant data to reduce dimensionality before synthesis. The Avatar algorithm inherently uses PCA for this reason [31] [30].

- Alternative Methods: For very high-dimensional data (e.g., 100+ genetic variants), consider Copula-based methods. They are statistically grounded and often less computationally intensive than neural network approaches while maintaining strong performance [30] [32].

- Epochs and Batch Size: Do not arbitrarily train for 10,000 epochs. Use a validation metric (like a utility score on a hold-out set) for early stopping. Increasing batch size can improve training stability and speed [32].
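To show why copula methods are comparatively cheap, the sketch below implements a basic Gaussian copula synthesizer in NumPy: each column is mapped to normal scores, a single correlation matrix is estimated (no gradient-based training), and new rows are drawn and mapped back through each column's empirical quantiles. It is an illustrative stand-in, not the SDV `GaussianCopulaSynthesizer` or the copula implementation benchmarked in [30]; it assumes a purely numeric matrix with at least two columns (e.g., genotypes coded 0/1/2) and NumPy 1.22 or later for `np.quantile(..., method=...)`. The function names are hypothetical.

```python
import numpy as np
from statistics import NormalDist

_STD_NORMAL = NormalDist()  # standard normal: mean 0, sd 1

def _mid_ranks(col: np.ndarray) -> np.ndarray:
    """Ranks 1..n; tied values (common in 0/1/2 genotypes) share their average rank."""
    ranks = np.empty(len(col))
    ranks[np.argsort(col, kind="stable")] = np.arange(1, len(col) + 1)
    for v in np.unique(col):
        tied = col == v
        ranks[tied] = ranks[tied].mean()
    return ranks

def gaussian_copula_sample(X, n_samples: int, seed: int = 0) -> np.ndarray:
    """Draw rows that keep each column's empirical marginal and the
    normal-score correlation structure of X (assumes >= 2 numeric columns)."""
    X = np.asarray(X, dtype=float)
    n, d = X.shape
    rng = np.random.default_rng(seed)
    to_normal = np.vectorize(_STD_NORMAL.inv_cdf)
    to_uniform = np.vectorize(_STD_NORMAL.cdf)

    # 1. Map every column to normal scores via its mid-ranks, scaled into (0, 1).
    u = np.column_stack([_mid_ranks(X[:, j]) for j in range(d)]) / (n + 1)
    z = to_normal(u)

    # 2. Estimate the latent correlation; constant columns yield NaN and are treated as independent.
    with np.errstate(invalid="ignore", divide="ignore"):
        corr = np.corrcoef(z, rowvar=False)
    corr = np.nan_to_num(corr)
    np.fill_diagonal(corr, 1.0)

    # 3. Draw correlated latent normals and convert them to uniforms.
    u_new = to_uniform(rng.multivariate_normal(np.zeros(d), corr, size=n_samples))

    # 4. Map back through each column's empirical quantiles, so outputs are observed values.
    return np.column_stack([
        np.quantile(X[:, j], u_new[:, j], method="inverted_cdf") for j in range(d)
    ])
```

Because samples are mapped back through observed quantiles, genotype columns stay within {0, 1, 2}. The trade-off is that dependence is captured only through one correlation matrix, so more complex interactions between variants are not modeled.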

Q8: How many synthetic samples should I generate from my original dataset of size N? A: The optimal size depends on your goal.

- For Privacy-Preserving Replacement: Generate a dataset of similar size (N) to share or publish [31].

- For Data Augmentation: You can generate a larger dataset (e.g., 4xN) to enhance model training. However, be cautious: one study noted that data augmentation, while improving stability, can also increase the number of false-positive findings in associative analyses [31]. Always validate findings on a real hold-out set.

- For Stabilizing Estimates: As noted in Q4, generating multiple synthetic datasets (e.g., M datasets of size N) and aggregating results (e.g., averaging hazard ratios) is a robust strategy for small-N studies [31].
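The aggregation step behind the stabilizing-estimates strategy can be sketched as follows. This is a minimal sketch, assuming the hazard ratio (or any other effect estimate) has already been refitted on each of the M synthetic datasets; `summarize_estimates` is a hypothetical helper. It reports the simple mean mentioned above and, as a design choice, also a geometric mean, since ratio measures such as HRs are often pooled on the log scale.

```python
import numpy as np

def summarize_estimates(estimates, original_estimate):
    """Summarize one effect estimate (e.g. a hazard ratio) refitted on M synthetic datasets."""
    est = np.asarray(estimates, dtype=float)
    median = float(np.median(est))
    p5, p95 = (float(q) for q in np.percentile(est, [5, 95]))
    return {
        "median": median,
        "p5": p5,    # 5th and 95th percentiles show run-to-run variability
        "p95": p95,
        "mean": float(est.mean()),  # simple average across the M datasets
        # Ratio measures are often pooled on the log scale (geometric mean).
        "geometric_mean": float(np.exp(np.log(est).mean())),
        "deviation": median - original_estimate,  # negative = underestimation
    }
```

For Protocol 1 below, `estimates` would hold the 100 hazard ratios from the Cox models and `original_estimate` would be 9.346.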

Experimental Protocols from Key Studies

Protocol 1: Comparative Evaluation of CT-GAN, TVAE, and Avatar

This protocol is based on a 2024/2025 study evaluating SDG for a pharmacogenetic survival analysis [31] [34].

- Original Data: A tabular dataset of 253 renal transplant recipients. Variables: donor/recipient age (continuous), sex (categorical), ABCB1 haplotype (ordinal: 0,1,2), acute rejection (binary), time-to-graft-loss (survival).

- SDG Methods & Implementation:

- Avatar: A simplified implementation in R. Key hyperparameter: `k` (number of nearest neighbors), tested at 5, 10, and 20. Data were standardized, PCA was applied, and synthetic samples were generated as weighted barycenters of the k neighbors with exponential noise.

- CT-GAN & TVAE: Implemented using the Synthetic Data Vault (SDV) library's `CTGANSynthesizer` and `TVAESynthesizer` [31].

- Evaluation Workflow:

- For each method, generate 100 synthetic datasets of size N=253.

- On each synthetic dataset, fit a Cox proportional hazards model for graft loss based on haplotype, adjusted for acute rejection.

- Record the Hazard Ratio (HR) estimate from each model.

- Calculate: (a) The median HR across 100 runs, (b) The 5th and 95th percentiles (showing variability), (c) The deviation from the original HR (9.346).

- Key Outcome: Compare the median HR and its variability across methods to assess accuracy and stability.
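The Avatar steps described in this protocol (standardize, project with PCA, replace each record by a randomly weighted barycenter of its k nearest neighbors) can be illustrated with the simplified NumPy sketch below. It is not the published Avatar algorithm or the R implementation used in [31]: it assumes purely numeric columns, uses Euclidean neighbors that exclude the record itself, and draws barycenter weights from an exponential distribution without any further weighting. `avatar_like_sample` is a hypothetical name.

```python
import numpy as np

def avatar_like_sample(X, k=10, n_components=None, seed=0):
    """Simplified Avatar-style generator: one synthetic record per original record."""
    X = np.asarray(X, dtype=float)
    n = X.shape[0]
    if not 1 <= k < n:
        raise ValueError("k must be between 1 and n - 1")
    rng = np.random.default_rng(seed)

    # 1. Standardize each column (constant columns are left unscaled).
    mu, sd = X.mean(axis=0), X.std(axis=0)
    sd[sd == 0] = 1.0
    Z = (X - mu) / sd

    # 2. PCA via SVD of the (already centred) standardized data.
    _, _, Vt = np.linalg.svd(Z, full_matrices=False)
    V = Vt[: (n_components or Vt.shape[0])].T
    P = Z @ V

    # 3. k nearest neighbours of each record in PCA space, excluding the record itself.
    dist = np.linalg.norm(P[:, None, :] - P[None, :, :], axis=-1)
    np.fill_diagonal(dist, np.inf)
    nn = np.argsort(dist, axis=1)[:, :k]

    # 4. Random exponential weights, normalized to sum to 1 for each record.
    w = rng.exponential(1.0, size=nn.shape)
    w /= w.sum(axis=1, keepdims=True)

    # 5. Weighted barycentre in PCA space, then back to the original units.
    P_new = np.einsum("ik,ikc->ic", w, P[nn])
    return (P_new @ V.T) * sd + mu
```

In this sketch, a larger `k` averages over more neighbors, which pulls each synthetic record further from any single real record but also smooths away structure. That tension is what testing k at 5, 10, and 20 explores. The full pairwise distance matrix is O(n²) in memory, which is unproblematic at n = 253.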

Protocol 2: Evaluating Privacy and Utility for High-Dimensional PGx Data

This protocol is based on a 2025 benchmark of 7 SDG methods on high-dimensional Swiss PGx cohort data [30].

- Original Data: Two datasets from 142 patients: a Genotype dataset (104 genetic variant columns) and a Phenotype dataset (24 clinical/demographic columns).

- SDG Methods: synthpop, avatar, copula, copulagan, ctgan, tvae, tabula (LLM-based).

- Evaluation Metrics:

- Broad Utility: Propensity Mean Squared Error (pMSE). Lower scores indicate that the synthetic data distribution is statistically closer to the real data.

- Specific Utility: Weighted F1 score in a Train-Synthetic-Test-Real (TSTR) framework for a relevant prediction task.

- Privacy Risk: ε-Identifiability, calculated as the proportion of real records whose nearest synthetic neighbor is closer than their nearest real neighbor.

- Key Outcome: A multi-metric profile for each method, revealing trade-offs (e.g., Copula offers low ε-identifiability and strong utility, while deep learning models may have higher fidelity but higher risk).
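The broad-utility and privacy metrics above can be sketched in NumPy. Both functions are illustrative assumptions rather than the benchmark's code. `epsilon_identifiability` follows the simplified definition given here with unweighted Euclidean distances, whereas the originally proposed metric uses a feature-weighted distance. `pmse` uses a main-effects logistic regression fitted by plain gradient descent, whereas pMSE tools commonly offer richer propensity models (for example, interaction terms or CART). With equal real and synthetic sample sizes, pMSE ranges from 0 (indistinguishable) to 0.25.

```python
import numpy as np

def epsilon_identifiability(real, synth):
    """Share of real records whose nearest synthetic record is closer than
    their nearest other real record (plain Euclidean distance)."""
    real, synth = np.asarray(real, float), np.asarray(synth, float)
    d_rr = np.linalg.norm(real[:, None, :] - real[None, :, :], axis=-1)
    np.fill_diagonal(d_rr, np.inf)  # ignore each record's zero distance to itself
    d_rs = np.linalg.norm(real[:, None, :] - synth[None, :, :], axis=-1)
    return float(np.mean(d_rs.min(axis=1) < d_rr.min(axis=1)))

def pmse(real, synth, n_iter=2000, lr=0.1):
    """Propensity MSE with a main-effects logistic regression fitted by gradient descent."""
    X = np.vstack([real, synth]).astype(float)
    y = np.r_[np.zeros(len(real)), np.ones(len(synth))]  # label 1 = synthetic
    X = (X - X.mean(axis=0)) / (X.std(axis=0) + 1e-12)    # standardize features
    X = np.hstack([np.ones((len(X), 1)), X])               # intercept column
    w = np.zeros(X.shape[1])
    for _ in range(n_iter):
        p = 1.0 / (1.0 + np.exp(-X @ w))
        w -= lr * X.T @ (p - y) / len(y)                   # log-loss gradient step
    p = 1.0 / (1.0 + np.exp(-X @ w))                       # propensity scores
    c = len(synth) / len(y)                                # expected score if indistinguishable
    return float(np.mean((p - c) ** 2))
```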

The following tables consolidate quantitative findings from recent studies to guide method selection.

Table 1: Performance in Pharmacogenetic Survival Analysis (n=253) [31] [34]

| SDG Method | Key Parameter | Median Hazard Ratio (HR) | Deviation from Original HR (9.346) | Privacy-Performance Trade-off |
|---|---|---|---|---|
| Original Data | - | 9.346 | Baseline | N/A |
| Avatar | k=10 | Closest to original | Smallest deviation | Best balance of utility and privacy |
| CT-GAN | Default | ~8.5 (estimated) | Slight underestimation | Good overall performance |
| TVAE | Default | Most significant deviation | Largest deviation | Lower performance in this context |

Table 2: Multi-Metric Benchmark on High-Dimensional PGx Data (Genotype Dataset) [30]

| SDG Method | Type | Broad Utility (pMSE ↓) | Specific Utility (F1 ↑) | Privacy Risk (ε-Identifiability ↓) |
|---|---|---|---|---|
| Copula | Statistical | Low | High (can exceed original) | Low (0.25-0.35) |
| synthpop | Statistical | Low | High | Low |
| Avatar | PCA/KNN | Moderate | Moderate | Moderate |
| TVAE | Deep Learning | Very low (high fidelity) | Moderate | High (>0.4) |
| CT-GAN | Deep Learning | Low | Moderate | High |
| tabula | LLM-based | Low | Moderate | High |

Workflow and Conceptual Diagrams

Diagram 1: Synthetic data validation workflow for pharmacogenetics.

Diagram 2: Core trade-offs between synthetic data properties.

Diagram 3: The Avatar algorithm workflow for synthetic data generation.

Table 3: Key Research Reagent Solutions for SDG in Pharmacogenetics

| Tool / Resource | Category | Description & Function | Primary Source / Reference |
|---|---|---|---|
| Synthetic Data Vault (SDV) | Software Library | Open-source Python library providing unified access to multiple SDG models (CTGAN, TVAE, CopulaGAN, etc.) for tabular data. Essential for implementation and benchmarking. | [30] [32] |
| PharmGKB & CPIC Guidelines | Knowledge Base | Curated databases linking genetic variants to drug response. Critical for defining meaningful variables and validating the clinical relevance of synthetic data associations. | [36] |
| Propensity Score (pMSE) & ε-Identifiability | Evaluation Metric | Statistical metrics for assessing broad utility and privacy risk, respectively. Required for rigorous, multi-faceted validation of synthetic datasets. | [30] [29] |
| High-Performance Computing (HPC) | Infrastructure | Access to GPU clusters (e.g., NVIDIA H100) and substantial RAM (>500 GB) is often necessary for training deep learning-based SDG models on genomic-scale data. | [32] |
| Train-Synthetic-Test-Real (TSTR) | Evaluation Framework | A critical validation protocol that measures the specific utility of synthetic data by testing models trained on it against held-out real data. | [30] |
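A TSTR evaluation reduces to three steps: fit a model on synthetic rows, predict held-out real rows, and score the predictions. The sketch below uses a deliberately simple nearest-centroid classifier as a hypothetical stand-in for whatever prediction model a study actually uses, and computes the support-weighted F1 score by hand so that NumPy is the only dependency. All function names are hypothetical.

```python
import numpy as np

def weighted_f1(y_true, y_pred):
    """Per-class F1, averaged with weights equal to each class's support in y_true."""
    y_true, y_pred = np.asarray(y_true), np.asarray(y_pred)
    classes, support = np.unique(y_true, return_counts=True)
    scores = []
    for c in classes:
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        scores.append(2 * tp / denom if denom else 0.0)  # F1 = 2TP / (2TP + FP + FN)
    return float(np.average(scores, weights=support))

def nearest_centroid_fit_predict(X_train, y_train, X_test):
    """Toy stand-in model: assign each test row to the closest class centroid."""
    classes = np.unique(y_train)
    centroids = np.array([X_train[y_train == c].mean(axis=0) for c in classes])
    d = np.linalg.norm(X_test[:, None, :] - centroids[None, :, :], axis=-1)
    return classes[d.argmin(axis=1)]

def tstr_score(X_syn, y_syn, X_real_test, y_real_test):
    """Train on synthetic data, test on held-out real data."""
    y_pred = nearest_centroid_fit_predict(
        np.asarray(X_syn, float), np.asarray(y_syn), np.asarray(X_real_test, float)
    )
    return weighted_f1(y_real_test, y_pred)
```

Running the same scoring with a model trained on the real training split gives the baseline against which the TSTR score is compared.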

Technical Support Center: Troubleshooting Digital Twin Platforms for Clinical Research

This support center provides targeted solutions for common technical issues encountered when building and validating AI-driven digital twins for clinical trial simulation. The guidance is framed within the critical research challenge of overcoming data limitations—such as sparse, biased, or non-representative datasets—to develop robust pharmacological models [37] [38].

Troubleshooting Guides

Issue 1: Entity Instances and Time Series Data Missing from Digital Twin Explorer

- Symptoms: The exploration or visualization interface of your digital twin platform appears empty after mapping data sources.

- Diagnosis & Resolution:

- Verify Operations: Check the platform's operation management log (e.g., "Manage operations" tab). Ensure all data mapping operations (both non-time series and time series) have completed successfully. Rerun any failed operations, starting with the non-time series mappings [39].

- Check SQL Endpoint: If mappings are successful, the issue may be a delay or failure in provisioning the SQL endpoint for the connected data lakehouse. Navigate to your workspace root to locate the SQL endpoint (often named after your digital twin instance). If missing, follow platform-specific prompts to reprovision it [39].

- Review Data Links: For missing time series data on otherwise visible entities, verify that the "link property" in your time series mapping configuration exactly matches the corresponding entity type property. Even minor mismatches will cause failures. Redo the mapping if values are not identical [39].
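Because the link property must match exactly, comparing the two value sets before redoing a mapping can save a round trip. The helper below is a hypothetical, platform-agnostic sketch. It assumes you can export the entity type's property values and the time series link values as plain Python lists. It reports every link value without an exact match, together with any entity value that differs only in case, surrounding whitespace, or type (for example `1` vs `"1"`).

```python
def diagnose_link_mismatches(entity_values, timeseries_link_values):
    """Map each unmatched link value to a near-match entity value (or None)."""
    entity_set = set(entity_values)
    # Normalized lookup: ignore case, surrounding whitespace, and int/str differences.
    normalized = {str(v).strip().casefold(): v for v in entity_values}
    report = {}
    for link in set(timeseries_link_values):
        if link in entity_set:
            continue  # exact match: this link will resolve
        report[link] = normalized.get(str(link).strip().casefold())  # None = no near match
    return report
```

An entry with a non-`None` value points to a formatting mismatch that can be fixed in the source data or mapping; a `None` value means the entity itself is missing.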

Issue 2: Failed Operations During Model Building or Data Integration

- Symptoms: Operations in your digital twin pipeline fail with error statuses.

- Diagnosis & Resolution:

- Inspect Error Details: Select the "Details" link for the failed operation. Examine the run history to determine if the failure was in a specific ad-hoc operation or a larger automated flow [39].

- Decode Common Errors:

- Error: "Concurrent update to the log. Multiple streaming jobs detected...": This indicates multiple instances of a mapping operation are running in conflict. Solution: Rerun the mapping operation [39].

- Error: Empty or Generic Failure Message: If the error log is empty, gather the Job Instance ID from the platform's Monitor Hub and create a support ticket with this information [39].

- Validate Source Data: Often, failures originate from poor-quality input data. Before retrying, ensure your source data (e.g., EHRs, biomarker data) has undergone rigorous cleaning to address noise, missing values, and artifacts that can lead to model bias and overfitting [40].

Issue 3: AI Model Hallucinations or Low Resiliency in Digital Twin Predictions

- Symptoms: The digital twin generates confident but factually incorrect predictions about disease progression or drug response. Outputs lack consistency when queries are rephrased.

- Diagnosis & Resolution:

- Identify Hallucination: Cross-reference all AI-generated predictions (e.g., simulated clinical endpoints, biomarker changes) against established biomedical literature and known disease pathways. Be wary of fabricated citations or plausible-sounding mechanistic explanations [5].

- Implement Human-in-the-Loop (HITL) Verification: Establish a protocol where a pharmacologist or clinician manually verifies key virtual trial outputs against real-world evidence or preclinical data. Do not rely on AI as a standalone resource for critical go/no-go decisions [5].

- Audit Training Data: Hallucinations often stem from biased or non-representative training data. Audit your historical clinical trial dataset for diversity in demographics, disease subtypes, and determinants of health. Use data augmentation or synthetic data generation techniques to improve coverage [37] [40].

- Test for Resiliency: Pose the same clinical query (e.g., "predicted HbA1c change at 12 weeks for subgroup X") multiple times using slightly different phrasings. A high-resiliency model should provide consistent, reproducible answers [5].
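The resiliency test can be made quantitative when the queried output is numeric. This sketch assumes you have already collected one numeric answer per phrasing of the same query. The relative-spread statistic, the function name, and the 5% default tolerance are illustrative assumptions, not an established standard, and should be set per endpoint.

```python
import statistics
from typing import Sequence

def resiliency_check(predictions: Sequence[float], max_rel_spread: float = 0.05) -> dict:
    """Summarize how much numeric answers to rephrased versions of one query disagree."""
    if len(predictions) < 2:
        raise ValueError("need answers from at least two phrasings")
    med = statistics.median(predictions)
    spread = max(predictions) - min(predictions)
    if med != 0:
        rel_spread = spread / abs(med)
    else:
        rel_spread = float("inf") if spread > 0 else 0.0
    return {
        "median": med,
        "spread": spread,
        "relative_spread": rel_spread,
        "resilient": rel_spread <= max_rel_spread,  # hypothetical pass/fail rule
    }
```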

Frequently Asked Questions (FAQs)

Q1: What are the primary data requirements for building a valid digital twin of a patient population? A: The foundation is high-quality, multi-scale data. This includes baseline clinical variables, genomics, proteomics, longitudinal biomarker data, and real-world evidence from sources like disease registries [37] [41]. Crucially, data must be representative of the target population to avoid bias. Incorporate social determinants of health where possible, as their absence in standard EHRs limits model generalizability [37]. The model's accuracy is directly dependent on the quality and relevance of its training data [38].

Q2: How can we validate a digital twin model before using it to simulate a clinical trial? A: Employ a "blind prediction" protocol. Train your model on historical data, then ask it to predict the outcomes of a completed clinical trial without using that trial's results. Compare the simulation's output to the actual trial data [41]. Key validation metrics include Area Under the Receiver Operating Characteristic Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC), with an AUROC >0.80 often considered good [40]. External validation on an independent dataset is mandatory to ensure generalizability [40].
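For the blind-prediction comparison, both metrics can be computed from the completed trial's observed binary outcomes and the twin's predicted probabilities. The following is a minimal NumPy sketch, assuming both outcome classes are present. `auroc` uses the pairwise (Mann-Whitney) formulation with ties counted as one half, and `average_precision` computes the step-wise average precision summary commonly reported as AUPRC. Both function names are hypothetical.

```python
import numpy as np

def auroc(y_true, scores):
    """Probability that a random positive outranks a random negative (ties count 1/2)."""
    y = np.asarray(y_true, bool)
    s = np.asarray(scores, float)
    pos, neg = s[y], s[~y]
    greater = (pos[:, None] > neg[None, :]).mean()
    ties = (pos[:, None] == neg[None, :]).mean()
    return float(greater + 0.5 * ties)

def average_precision(y_true, scores):
    """Sum over thresholds of (recall increase) x (precision at that threshold)."""
    y = np.asarray(y_true, int)
    s = np.asarray(scores, float)
    order = np.argsort(-s, kind="stable")   # highest scores first
    y, s = y[order], s[order]
    tp, fp = np.cumsum(y), np.cumsum(1 - y)
    last = np.r_[np.diff(s) != 0, True]     # one threshold per distinct score
    tp, fp = tp[last], fp[last]
    precision = tp / (tp + fp)
    recall = tp / y.sum()
    return float(np.sum((recall - np.r_[0.0, recall[:-1]]) * precision))
```

Checking the rule of thumb is then a comparison such as `auroc(y, p) > 0.80`, which should be repeated on the external validation dataset.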

Q3: Can digital twins reduce the number of patients needed in a randomized controlled trial (RCT)? A: Yes. By generating synthetic control arms, digital twins can reduce the number of patients assigned to a placebo or standard-of-care group. Each real participant in the treatment arm can be paired with a highly matched digital twin that simulates the disease course under control conditions [37]. Industry reports indicate this can reduce control arm size by approximately 33% and save over 4 months in enrollment time [42]. This approach is recognized by regulatory bodies like the EMA and FDA [42].

Q4: What is the most significant limitation of current digital twin technology in pharmacology? A: The technology is most robust for diseases with well-understood biology, such as single-gene disorders. Its predictive power diminishes for complex, multifactorial diseases (e.g., many cancers, neurological conditions) where the underlying pathways, genetic influences, and microenvironment interactions are not fully characterized [41]. The "black box" nature of some complex AI models also poses challenges for regulatory explainability [11].

Q5: How do we address the ethical concerns of using digital twins in clinical research? A: Key concerns include data privacy, algorithmic bias, and informed consent. Solutions involve implementing robust data anonymization, actively seeking diverse training data to mitigate bias, and developing clear patient consent forms that explain the use of their data for creating synthetic cohorts [37]. Institutional Review Boards (IRBs) must develop expertise to evaluate these unique ethical challenges [37].

Experimental Protocols & Workflows