Beyond Cosine: A Strategic Guide to Evaluating Spectral Similarity Scores for Accurate Compound Identification

Abstract

This article provides a comprehensive framework for researchers, scientists, and drug development professionals to evaluate and select spectral similarity scoring methods for mass spectrometry-based compound identification. It explores foundational algorithms like Cosine Correlation and Shannon Entropy, examines cutting-edge machine learning models, addresses critical preprocessing and noise challenges, and establishes robust validation methodologies. By synthesizing the latest research, this guide aims to equip practitioners with the knowledge to improve identification accuracy, reduce false discoveries, and accelerate discovery in metabolomics, natural products research, and pharmaceutical development.

Core Concepts: Understanding the Landscape of Spectral Similarity Scoring Algorithms

The Central Role of Similarity Scores in Mass Spectrometry Workflows

In mass spectrometry-based metabolomics and proteomics, identifying unknown compounds is a fundamental challenge. The process relies on comparing experimentally acquired fragmentation spectra against reference libraries or in-silico predictions. At the heart of this comparison lies the calculation of a spectral similarity score, a quantitative metric that serves as a proxy for structural similarity between molecules [1] [2]. The accuracy, efficiency, and reliability of compound identification are therefore intrinsically tied to the performance of these scoring algorithms.

Similarity scores are broadly categorized into binary and continuous measures. Binary scores simplify spectra into presence/absence data of peaks, while continuous measures utilize the full intensity information, typically yielding more reliable identifications [1] [3]. The choice of scoring algorithm impacts every downstream application, from library matching and molecular networking to the emerging fields of untargeted exposomics and biomarker discovery [1]. This guide provides a comparative analysis of established and next-generation similarity scoring methods, detailing their experimental performance, computational demands, and optimal application contexts to inform researchers' selection for their specific workflows.

Comparative Performance of Core Similarity Algorithms

This section presents a direct comparison of the most widely used and recently developed spectral similarity scoring methods. The data, synthesized from recent comparative studies, highlights key metrics such as identification accuracy and computational efficiency.

Table 1: Performance Comparison of Primary Similarity Scoring Algorithms

| Similarity Score | Type | Key Principle | Reported Top-1 Accuracy | Computational Cost | Best Application Context |

|---|---|---|---|---|---|

| Cosine Correlation (Dot Product) [1] | Continuous | Angle between spectral intensity vectors | High (Enhanced with weight factor) [1] | Very Low [1] | General-purpose LC-MS/GC-MS library search |

| Weighted Cosine Similarity (WCS) [1] [4] | Continuous | Cosine correlation with m/z-dependent weighting | High [4] | Low | Standard for GC-MS; robust baseline for LC-MS |

| Shannon Entropy Correlation [1] | Continuous | Information entropy of matched peaks | Moderate to High [1] | High [1] | LC-MS metabolomics (without weight factor) |

| Tsallis Entropy Correlation [1] | Continuous | Generalized entropy with tunable parameter | Higher than Shannon [1] | Very High [1] | Research on specialized, non-extensive systems |

| Spec2Vec [2] [4] | ML-Based | Unsupervised word2vec embeddings from peak co-occurrence | High (Superior to cosine) [2] | Medium (Fast similarity computation) [2] | Large-scale library matching & molecular networking |

| LLM4MS [4] | ML-Based | Embeddings from a fine-tuned Large Language Model | 66.3% (Recall@1, highest reported) [4] | Very High (Training), Very Low (Query) [4] | Ultra-fast, accurate search in million-scale libraries |

Table 2: Comparison of Binary Similarity Measures for Structure-Based Identification

| Similarity Measure | Theoretically Identical Accuracy Group [3] | Performance in EI-MS (GC-MS) [3] | Performance in ESI-MS (LC-MS) [3] | Notes |

|---|---|---|---|---|

| Jaccard (Tanimoto) | Group 1 (1,2,3,4,12) [3] | Moderate | Moderate | Most widely used without formal justification [3] |

| Dice, Sokal-Sneath, Kulczynski | Group 1 (1,2,3,4,12) [3] | Moderate | Moderate | Mathematically order-preserving with Jaccard [3] |

| McConnaughey | Group 3 (7,8) [3] | Best Performance [3] | N/A | Top performer for EI mass spectra [3] |

| Cosine (Binary) | Group 2 (5,15) [3] | N/A | Best Performance [3] | Top performer for ESI mass spectra [3] |

| Fager-McGowan | Unique [3] | Second-Best [3] | Second-Best [3] | Most robust across EI and ESI platforms [3] |

Experimental Protocols and Methodological Insights

The performance of similarity scores is highly dependent on correct spectral preprocessing and algorithmic implementation. Below are detailed protocols for key experiments and critical preprocessing steps cited in the literature.

Protocol: Evaluating Continuous Measures with Weight Factor Transformation

This protocol is derived from the comparative analysis of Cosine, Shannon Entropy, and Tsallis Entropy correlations [1].

- Spectral Library Curation: Obtain reference libraries. For LC-MS, use an ESI mass spectral library (e.g., MassBank). For GC-MS, use an EI mass spectral library (e.g., NIST) [1].

- Preprocessing:

- Apply standard preprocessing: centroiding, noise removal, and peak matching [1].

- Apply Weight Factor Transformation: Transform peak intensities using a function like weight = (m/z)^k or weight = log(m/z) to increase the importance of high-mass fragment ions [1].

- Note: The order is critical. For optimal Cosine Correlation, apply the weight factor after noise removal but before final normalization [1].

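- Similarity Calculation: Compute the Cosine, Shannon Entropy, and Tsallis Entropy correlations between each query spectrum and every library spectrum.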

- Validation: Use a leave-one-out cross-validation. Rank library matches by score and record the Top-1 and Top-10 identification accuracy [1].
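The weight factor step in this protocol can be sketched in a few lines. This is a minimal illustration, not the implementation used in [1]: it assumes spectra are already centroided, denoised, and binned into dictionaries of `{m/z bin: intensity}`, and it uses only the `(m/z)^k` form of the weight. The function names and the default `k` are hypothetical.

```python
import math

def apply_weight_factor(spectrum, k=1.0):
    """Scale each intensity by (m/z)**k so high-mass fragments count more."""
    return {mz: intensity * (mz ** k) for mz, intensity in spectrum.items()}

def cosine(a, b):
    """Cosine correlation between two spectra given as {m/z bin: intensity}."""
    dot = sum(a[mz] * b[mz] for mz in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)

def weighted_cosine(a, b, k=1.0):
    """Apply the weight factor to both spectra, then compute the cosine.

    The cosine itself performs the final normalization, so the weighting
    happens after noise removal and before normalization.
    """
    return cosine(apply_weight_factor(a, k), apply_weight_factor(b, k))

# Illustrative spectra (made-up values, not from any library).
query = {41: 100.0, 55: 80.0, 150: 10.0}
reference = {41: 20.0, 55: 10.0, 150: 10.0}

plain_score = weighted_cosine(query, reference, k=0.0)     # k=0 is the unweighted cosine
weighted_score = weighted_cosine(query, reference, k=1.0)  # high-mass peak now matters more
```

Setting `k=0` recovers the plain cosine, which makes it easy to compare weighted and unweighted rankings on the same library.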

Protocol: Noise Filtering for Improved Similarity and Networking

This protocol is based on research demonstrating that noise removal significantly improves score reliability and molecular network quality [5].

- Data Acquisition: Collect MS/MS spectra for a set of standard compounds and complex biological samples.

- Denoising:

- Apply an intensity-based threshold (e.g., remove peaks with intensity < 0.5-2% of base peak).

- Alternatively, use a data-specific method to determine the optimal threshold by analyzing the distribution of peak intensities and the number of fragment ions explainable by in-silico fragmentation [5].

- Similarity Score Calculation: Compute pairwise modified cosine or Spec2Vec scores for denoised spectra.

- Molecular Network Construction: Create networks where nodes are spectra and edges represent similarity scores above a threshold (e.g., >0.7).

- Evaluation:

- Quantitative: Perform Minimum Spanning Tree (MST) analysis on the network. Denoised networks should show denser clustering of related compounds and longer distances between unrelated clusters [5].

- Qualitative: Assess the reduction in false-positive connections and the enhanced interpretability of compound families [5].
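The denoising and network construction steps above can be sketched as follows. This is a simplified illustration that assumes a fixed relative threshold (the data-specific threshold selection from [5] is not reproduced) and that pairwise scores have already been computed by some similarity function. The names `remove_noise` and `edges_above` are hypothetical.

```python
def remove_noise(spectrum, relative_threshold=0.01):
    """Drop peaks whose intensity is below a fraction of the base peak.

    spectrum: {m/z: intensity}. A relative_threshold of 0.01 means 1% of
    the most intense peak, within the 0.5-2% range mentioned above.
    """
    if not spectrum:
        return {}
    cutoff = relative_threshold * max(spectrum.values())
    return {mz: i for mz, i in spectrum.items() if i >= cutoff}

def edges_above(pairwise_scores, threshold=0.7):
    """Keep only the spectrum pairs whose similarity exceeds the threshold.

    pairwise_scores: {(node_a, node_b): score}. The result is the edge
    list of the molecular network.
    """
    return [(a, b, s) for (a, b), s in pairwise_scores.items() if s > threshold]

# Illustrative values only.
raw = {100.0: 1000.0, 101.0: 5.0, 150.0: 20.0}
clean = remove_noise(raw, relative_threshold=0.01)

scores = {("s1", "s2"): 0.92, ("s1", "s3"): 0.41, ("s2", "s3"): 0.70}
edges = edges_above(scores, threshold=0.7)
```

The strict `>` comparison mirrors the ">0.7" example in the protocol; whether the boundary value is included is a choice left to the analyst.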

Implementation: Fast Library Searching with Locality-Sensitive Hashing (LSH)

This method, implemented in tools like msSLASH, dramatically accelerates library searches [6].

- Indexing (Offline):

- Convert all library spectra to sparse intensity vectors.

- Apply L random SimHash functions to each library spectrum, generating an L-bit hash string (signature) for each [6].

- Store spectra in hash tables bucketed by their signatures.

- Querying (Online):

- For a query spectrum, generate its L-bit hash string using the same SimHash functions.

- Retrieve all library spectra from buckets whose hash strings are within a small Hamming distance of the query's string.

- Compute the exact cosine similarity only against this small subset of candidate spectra, not the entire library [6].

- Outcome: This probabilistic method achieves a 2-9x speedup over exhaustive searching (e.g., SpectraST) while maintaining high identification sensitivity [6].
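The indexing and querying steps above follow the general SimHash pattern, which can be sketched as below. This is not msSLASH code: the function names are hypothetical, spectra are assumed to be binned as `{bin index: intensity}`, and the query scans every bucket for simplicity, whereas a production index would enumerate only nearby signatures.

```python
import random
from collections import defaultdict

def make_hyperplanes(num_bits, num_bins, seed=0):
    """One random Gaussian hyperplane per signature bit."""
    rng = random.Random(seed)
    return [[rng.gauss(0.0, 1.0) for _ in range(num_bins)] for _ in range(num_bits)]

def simhash(spectrum, hyperplanes):
    """Signature bit is 1 if the spectrum lies on the positive side of a hyperplane."""
    bits = []
    for plane in hyperplanes:
        projection = sum(intensity * plane[b] for b, intensity in spectrum.items())
        bits.append(1 if projection >= 0.0 else 0)
    return tuple(bits)

def build_index(library, hyperplanes):
    """Offline step: bucket library spectra by signature."""
    index = defaultdict(list)
    for name, spectrum in library.items():
        index[simhash(spectrum, hyperplanes)].append(name)
    return index

def hamming(a, b):
    return sum(x != y for x, y in zip(a, b))

def candidates(query, index, hyperplanes, max_hamming=1):
    """Online step: collect spectra from buckets close to the query signature."""
    signature = simhash(query, hyperplanes)
    found = []
    for bucket_signature, names in index.items():
        if hamming(signature, bucket_signature) <= max_hamming:
            found.extend(names)
    return found

# Illustrative library with made-up spectra.
planes = make_hyperplanes(num_bits=16, num_bins=200, seed=42)
library = {"ref_a": {41: 100.0, 55: 80.0, 150: 10.0}, "ref_b": {77: 100.0, 105: 60.0}}
index = build_index(library, planes)

# A rescaled copy of ref_a points in the same direction, so it hashes identically.
query = {b: 2.0 * i for b, i in library["ref_a"].items()}
shortlist = candidates(query, index, planes, max_hamming=0)
```

The exact cosine is then computed only for the names in `shortlist`. Because the signature depends on the sign of each projection, it is unaffected by overall intensity scale, which matches the scale invariance of the cosine score itself.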

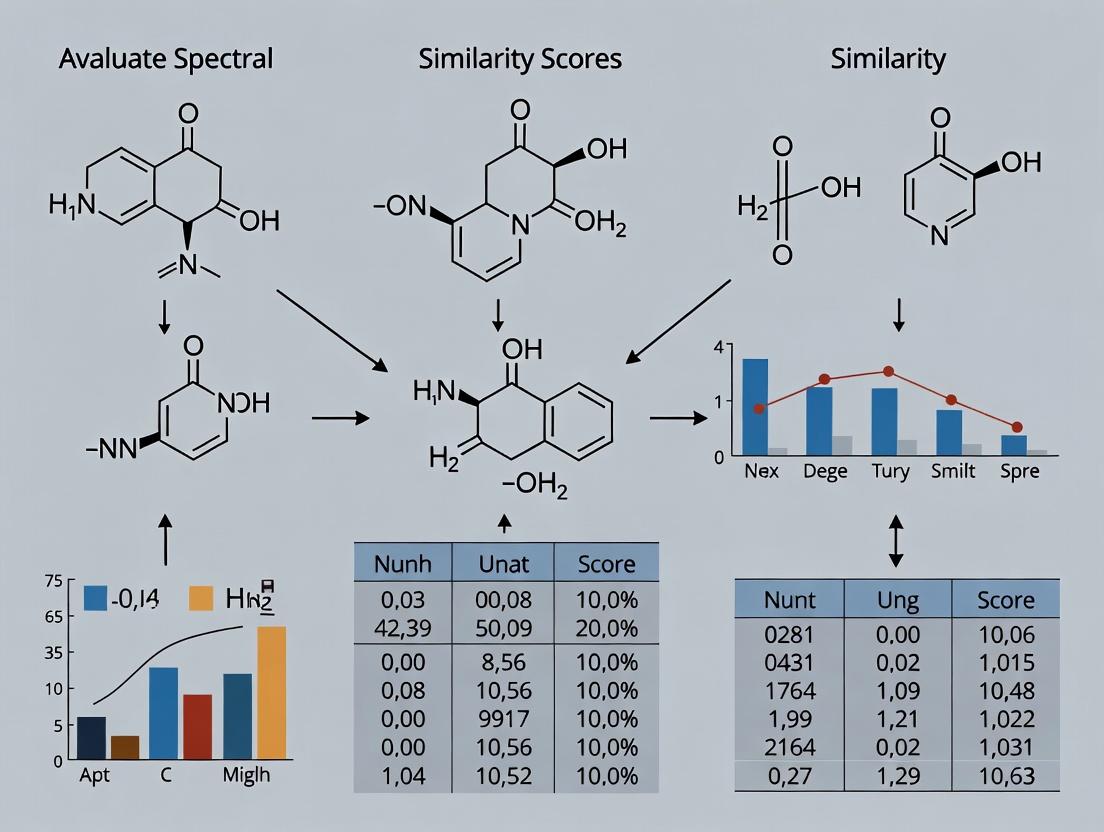

Diagram Title: Core Workflow for Spectral Similarity-Based Compound Identification

The Evolution of Algorithms: From Cosine to AI-Based Embeddings

The development of similarity scores has progressed from simple geometric measures to sophisticated AI-driven models that learn complex relationships within spectral data.

Diagram Title: Evolution of Spectral Similarity Scoring Algorithms

Traditional Scores (Cosine & Entropy): The weighted cosine similarity remains a robust benchmark due to its simplicity and low computational cost. Its performance is significantly enhanced by the weight factor transformation, which emphasizes higher m/z fragments [1]. Entropy-based measures like the Shannon and Tsallis correlations offer a different theoretical framework, with Tsallis providing tunable performance at a higher computational cost [1].

Machine Learning Embeddings (Spec2Vec & LLM4MS): Spec2Vec represents a paradigm shift, using unsupervised learning (Word2Vec) on peak co-occurrences to create spectral embeddings. This allows it to recognize structural analogues even with few direct peak matches, leading to a better correlation with structural similarity than cosine scores [2]. The state-of-the-art LLM4MS method fine-tunes a Large Language Model to generate spectral embeddings. It incorporates latent chemical knowledge, allowing it to prioritize diagnostically critical peaks (like the base peak), achieving a 13.7% improvement in Recall@1 accuracy over Spec2Vec on a million-scale library test [4].

Table 3: Essential Research Reagent Solutions and Computational Tools

| Tool/Resource Name | Type | Primary Function in Workflow | Key Reference/Resource |

|---|---|---|---|

| NIST MS/MS Library | Spectral Library | Gold-standard reference library of experimental EI and MS/MS spectra for library-based searching. | NIST [4] |

| MassBank / GNPS | Public Spectral Repository | Public, community-curated databases of mass spectra for library matching and training ML models like Spec2Vec. | MassBank [1], GNPS [2] |

| In-silico EI-MS Library (Yang et al.) | Predicted Spectral Library | A million-scale library of predicted EI-MS spectra used to evaluate scalable search algorithms like LLM4MS. | Yang et al. [4] |

| Weight Factor Transformation | Preprocessing Algorithm | Critical preprocessing step that weights peak intensities by m/z to improve Cosine Correlation accuracy. | Kim et al. [1] |

| Locality-Sensitive Hashing (LSH) | Computational Index | Hashing technique to group similar spectra, enabling fast approximate nearest-neighbor searches in large libraries. | Implemented in msSLASH [6] |

| Noise Filtering Algorithm | Preprocessing Algorithm | Removes low-intensity noise peaks from spectra to improve similarity score reliability and molecular network clarity. | Dalla Valle et al. [5] |

Selecting the optimal similarity score requires balancing accuracy, computational cost, and the specific identification context.

- For general-purpose, robust library searching: Start with Weighted Cosine Similarity. Ensure the weight factor transformation is correctly applied during preprocessing, as this is essential for high accuracy [1]. It provides an excellent, computationally cheap baseline.

- For identifying structural analogues and molecular networking: Use Spec2Vec. Its unsupervised embeddings correlate better with structural similarity and are highly scalable for large databases and network analyses [2].

- For maximum identification accuracy in large libraries: Consider cutting-edge methods like LLM4MS, which currently sets the state-of-the-art for recall accuracy by leveraging embedded chemical knowledge [4].

- For structure-based identification (in-silico predictions): Use binary measures. Select McConnaughey for GC-MS (EI) data and binary Cosine for LC-MS (ESI) data, as they were identified as top performers in their respective domains [3].

- For working with large-scale datasets: Implement computational optimizations like Locality-Sensitive Hashing (LSH) to achieve substantial speedups in library search times (2-9x reported for msSLASH) without significant loss of sensitivity [6].

- Always preprocess effectively: Incorporate noise filtering tailored to your data to eliminate spurious peaks that degrade score reliability and complicate molecular networks [5].

The field is moving rapidly toward AI-driven methods that learn complex spectral relationships. However, traditional scores, when properly implemented with key preprocessing steps, remain indispensable tools. The choice is not necessarily one or the other; a tiered strategy employing fast traditional filters followed by refined AI-based matching may offer the most powerful and efficient solution for the modern mass spectrometry workflow.

In compound identification research, selecting an appropriate spectral similarity score is a foundational decision that directly impacts the accuracy and reliability of results. This guide provides an objective comparison between binary and continuous similarity measures, contextualized within metabolomics and mass spectrometry. Binary scores, operating on presence/absence data, are mathematically distinct from continuous scores, which utilize full intensity values. Recent experimental data indicate that no single measure is universally superior; optimal performance depends on the data type (e.g., EI vs. ESI mass spectra), available computational resources, and the specific identification task [3] [7]. Emerging approaches, such as ensemble methods and probabilistic scores, demonstrate promising pathways to overcome the limitations of individual metrics [8] [9].

Definitions and Core Mathematical Principles

The fundamental distinction between these score types lies in their input data and mathematical formulation.

Binary Similarity Scores: These measures compare binary fingerprints, where molecular or spectral features are encoded as 1 (present) or 0 (absent). They are based on counting coincidences in bit positions. Common examples include:

- Jaccard (Tanimoto): Ratio of shared "on" bits to the total number of "on" bits across both samples.

- Dice (Sørensen-Dice): Emphasizes shared presence, giving double weight to the intersection.

Binary measures are computationally efficient and are the only choice for structure-based identification where reliable continuous intensity predictions are not yet possible [3].

Continuous Similarity Scores: These measures compare full vector representations, utilizing continuous intensity or abundance values. They calculate similarity based on both the pattern and magnitude of features.

- Cosine Similarity: Measures the cosine of the angle between two vectors, assessing profile shape independently of magnitude.

- Dot Product: A foundational measure, often weighted or normalized, that considers both intensity and coincidence.

- Entropy-Based Correlations (e.g., Shannon, Tsallis): Gauge the shared information content between spectra, potentially capturing non-linear relationships [7].

Extended (n-ary) Similarity: A novel framework extends similarity calculations beyond pairwise comparisons to simultaneously assess multiple objects. This approach, which can be applied to both binary and continuous data, offers significant computational speed-ups for tasks like diversity analysis and provides a single metric for set compactness [10].

Table 1: Core Characteristics of Binary and Continuous Similarity Scores

| Characteristic | Binary Similarity Scores | Continuous Similarity Scores |

|---|---|---|

| Input Data | Binary fingerprints (0/1) | Continuous vectors (intensities, abundances) |

| Typical Use Case | Structure-based prediction; presence/absence of features | Library matching; comparison of full spectral profiles |

| Key Advantage | Computational simplicity; invariant to scaling | Utilizes full information content; can capture intensity relationships |

| Primary Limitation | Discards intensity information | Sensitive to noise and normalization; computationally heavier |

| Common Examples | Jaccard, Dice, Sokal-Sneath, Cosine (binary variant) | Dot product, Cosine similarity, Spectral entropy |

Comparative Analysis of Methods and Performance

Performance is highly dependent on the analytical technique and data context.

Performance in Mass Spectrometry-Based Metabolomics

Direct comparisons reveal that the best-performing metric varies with the ionization method and data structure.

- Electron Ionization (EI) Mass Spectra: For GC-EI-MS data, binary measures like McConnaughey and Driver–Kroeber have shown top identification accuracy. The Fager–McGowan measure has been noted for its robustness, being the second-best performer across different data types [3].

- Electrospray Ionization (ESI) Mass Spectra: For LC-ESI-MS data, binary Cosine and Hellinger measures have demonstrated superior performance [3]. In continuous scoring, the Cosine correlation, when combined with a weight factor transformation during preprocessing, achieves high accuracy with low computational expense. While novel measures like Tsallis Entropy Correlation can outperform Shannon Entropy, they come with increased computational cost [7].

- Theoretical Equivalency: Mathematical analysis proves that groups of binary measures (e.g., {Jaccard, Dice, Sokal–Sneath} and {Cosine, Hellinger}) are strictly order-preserving. This means they will produce identical ranking and identification accuracy despite generating different raw score values [3].
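The order-preserving property can be checked directly for Jaccard and Dice. For any pair of binary spectra, Dice equals 2J / (1 + J), where J is the Jaccard score. That is a strictly increasing function of J, so the two measures always rank candidates identically. The sketch below uses made-up peak sets and hypothetical library names.

```python
def jaccard(a, b):
    """Shared peaks divided by all peaks present in either spectrum."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0

def dice(a, b):
    """Twice the shared peaks divided by the summed peak counts."""
    a, b = set(a), set(b)
    total = len(a) + len(b)
    return 2 * len(a & b) / total if total else 0.0

def rank(query, library, measure):
    """Library entry names ordered from most to least similar."""
    return sorted(library, key=lambda name: measure(query, library[name]), reverse=True)

# Binary spectra as sets of m/z values where a peak is present.
query = {41, 43, 55, 57, 71}
library = {
    "ref_a": {41, 43, 55, 57, 71, 85},
    "ref_b": {41, 43, 99},
    "ref_c": {55, 57, 71},
}

jaccard_ranking = rank(query, library, jaccard)
dice_ranking = rank(query, library, dice)
```

The raw scores differ (for example 5/6 versus 10/11 for `ref_a`), but both rankings come out as `ref_a`, `ref_c`, `ref_b`, so top-1 accuracy is the same under either measure.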

Table 2: Experimental Performance of Select Similarity Scores in Compound Identification

| Similarity Score | Type | Optimal Context (Data) | Reported Key Finding | Source |

|---|---|---|---|---|

| McConnaughey / Driver–Kroeber | Binary | EI Mass Spectra (GC-MS) | Best identification accuracy for EI data. | [3] |

| Cosine / Hellinger | Binary | ESI Mass Spectra (LC-MS) | Best identification accuracy for ESI data. | [3] |

| Fager–McGowan | Binary | EI & ESI Mass Spectra | Most robust (second-best in both EI & ESI). | [3] |

| Cosine Correlation (with weight factor) | Continuous | LC-MS & GC-MS | Highest accuracy with lowest computational expense. | [7] |

| Tsallis Entropy Correlation | Continuous | LC-MS | Outperforms Shannon Entropy but is more computationally expensive. | [7] |

| Harmonic Mean of KS Statistics | Probabilistic | Replicate EI Spectra | Accuracy comparable to High Dimensional Consensus (HDC) score. | [9] |

Ensemble and Probabilistic Approaches

Given the lack of a single standard metric, advanced strategies are being developed.

- Ensemble Methods: These approaches combine the collective information from multiple individual similarity metrics to form a globally improved, more representative score. Evaluations on over 88,000 spectra show ensemble metrics improve the accurate ranking of the correct reference spectrum compared to any single score [8].

- Probabilistic Scores: For analyzing sets of replicate spectra, novel scores based on averaged Kolmogorov-Smirnov or t-test statistics compare peak intensity distributions. These methods, such as the harmonic mean of KS statistics, can outperform traditional scores while minimizing user-defined parameters and distributional assumptions [9].
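One simple way to combine several metrics is to average the rank each metric assigns to every candidate. The sketch below is only an illustration of that idea: it is an assumption made here, not necessarily the combination scheme evaluated in [8], and the metric names and score values are made up.

```python
def mean_rank_ensemble(score_table):
    """Order candidates by their average rank across metrics (best first).

    score_table: {metric name: {candidate: score}}, higher score is better.
    Every metric is assumed to score the same set of candidates.
    """
    candidates = list(next(iter(score_table.values())))
    rank_sums = {c: 0 for c in candidates}
    for scores in score_table.values():
        ordered = sorted(candidates, key=lambda c: scores[c], reverse=True)
        for position, c in enumerate(ordered, start=1):
            rank_sums[c] += position
    n_metrics = len(score_table)
    return sorted(candidates, key=lambda c: rank_sums[c] / n_metrics)

# Cosine alone prefers ref_b, but the other two metrics prefer ref_a.
scores = {
    "cosine":  {"ref_a": 0.91, "ref_b": 0.93, "ref_c": 0.40},
    "entropy": {"ref_a": 0.88, "ref_b": 0.70, "ref_c": 0.35},
    "jaccard": {"ref_a": 0.80, "ref_b": 0.60, "ref_c": 0.50},
}
consensus = mean_rank_ensemble(scores)
```

Working on ranks rather than raw scores avoids having to put metrics with different ranges on a common scale, at the cost of discarding how large the score gaps are.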

Experimental Protocols and Methodologies

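The evaluation of similarity scores requires standardized workflows. The first five steps below describe a benchmark for binary measures; the remaining four describe the corresponding benchmark for continuous measures.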

- Data Preparation: Convert experimental and library mass spectra to binary strings, where a bit represents the presence (1) or absence (0) of a signal at a given m/z value.

- Measure Selection: Select a panel of binary similarity measures (e.g., Jaccard, Dice, Cosine, McConnaughey, Fager–McGowan).

- Similarity Calculation: For each query spectrum, calculate its similarity score against all reference spectra in the library using each selected measure.

- Ranking & Identification: Rank the reference spectra for each query from highest to lowest similarity score. The top match is proposed as the identity.

- Accuracy Assessment: Compare proposed identities against known truth. Calculate accuracy metrics (e.g., top-1 accuracy, ROC-AUC) for each similarity measure across the entire dataset, stratified by ionization technique (EI vs. ESI).

- Preprocessing: Apply necessary spectral preprocessing (normalization, alignment). Critically, apply a weight factor transformation to intensity values if evaluating measures like Cosine correlation.

- Library Matching: Calculate continuous similarity scores (e.g., Cosine, Dot Product, Shannon Entropy, Tsallis Entropy) between query and reference spectra.

- Performance Evaluation: Assess performance using receiver operating characteristic (ROC) curves and associated area under the curve (AUC) values. Compute false discovery rates (FDR) at different similarity thresholds.

- Computational Benchmarking: Record the computational time required for similarity searches using each measure on standardized hardware/software.
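The ranking and accuracy steps above reduce to a few counting operations. The sketch below shows top-k accuracy and the false discovery rate at a score threshold. It assumes ground-truth identities are known for every query, and the function names and data are hypothetical.

```python
def top_k_accuracy(ranked_hits, truth, k=1):
    """Fraction of queries whose correct identity is among the k best hits.

    ranked_hits: {query id: [candidate ids, best first]}
    truth:       {query id: correct candidate id}
    """
    correct = sum(1 for q, hits in ranked_hits.items() if truth[q] in hits[:k])
    return correct / len(ranked_hits)

def fdr_at_threshold(top_matches, truth, threshold):
    """False discovery rate among top matches accepted at a score threshold.

    top_matches: {query id: (candidate id, score)}
    FDR = wrong accepted matches / all accepted matches.
    """
    accepted = [(q, c) for q, (c, score) in top_matches.items() if score >= threshold]
    if not accepted:
        return 0.0
    wrong = sum(1 for q, c in accepted if truth[q] != c)
    return wrong / len(accepted)

# Made-up results for three queries.
truth = {"q1": "A", "q2": "B", "q3": "F"}
ranked = {"q1": ["A", "B"], "q2": ["C", "B"], "q3": ["D", "E"]}
top = {"q1": ("A", 0.90), "q2": ("C", 0.80), "q3": ("D", 0.50)}

top1 = top_k_accuracy(ranked, truth, k=1)
top2 = top_k_accuracy(ranked, truth, k=2)
fdr = fdr_at_threshold(top, truth, threshold=0.75)
```

Sweeping `threshold` over a range of values yields the FDR curve, and running the same functions separately on EI and ESI subsets gives the stratified comparison described above.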

Diagram Title: Decision Workflow for Selecting Similarity Score Type

Diagram Title: Ensemble Method for Spectral Similarity Scoring

Practical Implications and Selection Guidelines

Choosing the right similarity score is context-dependent. Researchers should consider the following:

- For Structure-Based Identification where only predicted fragments are available, binary similarity measures are the mandatory choice. Within this category, selection should be guided by the ionization method: McConnaughey/Driver–Kroeber for EI data and Cosine/Hellinger for ESI data, with Fager–McGowan as a robust general-purpose alternative [3].

- For Library Matching with full experimental spectra, continuous similarity measures generally leverage more information. The Cosine correlation with proper weight factor transformation offers a strong balance of accuracy and speed [7].

- When Maximum Reliability is Critical, especially with complex or noisy spectra, ensemble approaches that combine multiple metrics provide a more robust and accurate identification by mitigating the weaknesses of any single measure [8].

- For Analyzing Technical Replicates, novel probabilistic scores based on statistical tests of intensity distributions offer a principled alternative to traditional metrics [9].

Future Directions and the Evolving Toolkit

The field is moving beyond the binary vs. continuous dichotomy towards more integrative and intelligent systems.

- Hybrid and Ensemble Scoring: The future lies in systematically combining scores from multiple mathematical families to create more powerful meta-scores, as demonstrated by recent ensemble methods [8].

- Machine Learning Integration: Similarity metrics are increasingly serving as features for machine learning models that learn to weigh different spectral features or even different similarity concepts for optimal identification.

- Extended (n-ary) Comparisons: The ability to compute the similarity of an entire set of objects simultaneously (e.g., a cluster of related spectra) provides new tools for assessing dataset diversity, cluster compactness, and for accelerating large-scale comparisons [10].

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Tools and Resources for Spectral Similarity Research

| Item / Resource | Type | Primary Function in Similarity Research |

|---|---|---|

| Mass Spectral Libraries (e.g., NIST, MassBank, GNPS) | Data | Provide reference spectra for calculating similarity scores and benchmarking identification accuracy. |

| Cheminformatics Toolkits (e.g., RDKit, CDK) | Software | Generate molecular fingerprints (for binary similarity) and handle chemical data for structure-based prediction. |

| Spectral Processing Software (e.g., MZmine, MS-DIAL) | Software | Preprocess raw spectra (peak picking, alignment, normalization) to prepare data for continuous similarity calculation. |

| Custom Scripts for Extended Similarity (e.g., Python code from [10]) | Software/Code | Enable calculation of n-ary similarity indices for comparing multiple spectra or molecules simultaneously. |

| Probabilistic Scoring Algorithms (e.g., KS-statistic based methods [9]) | Algorithm | Provide statistical frameworks for comparing sets of replicate spectra, moving beyond deterministic scores. |

The accurate identification of chemical compounds in complex biological and environmental samples represents a foundational challenge in analytical chemistry, with direct implications for drug discovery, metabolomics, and exposomics research [11]. This process predominantly relies on matching experimental mass spectra against reference libraries, where the spectral similarity score is the decisive metric [12]. Among the array of available algorithms, the cosine similarity measure—often termed cosine correlation or dot product in this context—has achieved widespread adoption as a benchmark due to its computational efficiency and intuitive geometric interpretation [13] [14]. However, the pursuit of higher confidence identifications and lower false discovery rates (FDR) has spurred the development of numerous variants and competing algorithms [11] [4].

This guide provides an objective, data-driven comparison of cosine similarity and its principal variants within the critical application of compound identification. Framed within a broader thesis on evaluating spectral similarity scores, we synthesize findings from recent, large-scale benchmarking studies to compare performance metrics such as identification accuracy and robustness to noise. We detail experimental protocols, present quantitative results in structured tables, and outline the essential computational toolkit for researchers and scientists engaged in method selection and development.

Theoretical Foundations and Algorithmic Variants

Core Cosine Similarity Algorithm

Cosine similarity measures the directional alignment between two non-zero vectors, independent of their magnitude. For two n-dimensional vectors representing spectra, A and B, it is defined as the cosine of the angle between them [13]:

$$ S_C(A,B) = \cos(\theta) = \frac{A \cdot B}{\|A\|\,\|B\|} = \frac{\sum_{i=1}^{n} A_i B_i}{\sqrt{\sum_{i=1}^{n} A_i^2} \cdot \sqrt{\sum_{i=1}^{n} B_i^2}} $$

In general, the score ranges from -1 (perfectly opposite) to +1 (identical direction), with 0 indicating orthogonality [13]. Because peak intensities are non-negative, scores for mass spectra fall between 0 and 1. In mass spectrometry, vectors typically contain peak intensities at corresponding mass-to-charge (m/z) values, and the score is often referred to as the "dot product" [14]. Its key advantage is invariance to scale, making it suitable for comparing spectra of differing total ion counts [15].

Key Variants and Related Measures

The standard formulation has been adapted to address specific challenges in spectral matching.

- Weighted Cosine Similarity: Recognizes that peaks at higher m/z, though often less intense, can be more informative. It applies a transformation to intensity (raised to power a) and m/z (raised to power b) before calculating similarity [14]. Optimal weights (e.g., a=0.53, b=1.3 for certain libraries) are database-dependent [14].

- Composite Measures: Combine cosine similarity with other features. The Stein and Scott composite similarity integrates the dot product with a measure of peak ratio similarity (S_R) [14].

- Correlation-Based Variants:

- Pearson Correlation: Often confused with cosine similarity, Pearson correlation is a doubly normalized measure. It subtracts the vector means before computing cosine similarity, making it sensitive to linear relationships but invariant to both scale and absolute offset [16] [17]. The formulas coincide only when the vector means are zero.

- Partial & Semi-Partial Correlation: Advanced variants introduced for mass spectrometry that aim to isolate the unique relationship between two spectra by removing the common features each shares with a set of other reference spectra, potentially reducing false matches among structurally similar compounds [14].
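The relationship between cosine similarity and Pearson correlation described above is easy to verify numerically: Pearson is the cosine of the mean-centered vectors. The sketch below uses made-up intensity vectors over a shared set of m/z bins.

```python
import math

def cosine(a, b):
    """Cosine of the angle between two equal-length intensity vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def pearson(a, b):
    """Pearson correlation, computed as the cosine of mean-centered vectors."""
    mean_a = sum(a) / len(a)
    mean_b = sum(b) / len(b)
    return cosine([x - mean_a for x in a], [y - mean_b for y in b])

a = [10.0, 0.0, 50.0, 100.0]
scaled = [3.0 * x for x in a]      # same shape, different total ion count
shifted = [x + 20.0 for x in a]    # same shape plus a constant baseline

cos_scaled = cosine(a, scaled)     # scale does not change the cosine
cos_shifted = cosine(a, shifted)   # a baseline offset lowers the cosine
pear_shifted = pearson(a, shifted) # Pearson ignores the offset as well
```

Both scores are unaffected by rescaling, but only Pearson is unaffected by the constant offset. This is the practical difference to keep in mind when spectra carry a baseline.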

Competing Algorithm Families

Cosine similarity belongs to the Inner Product family of metrics. Large-scale evaluations categorize spectral similarity scores into distinct families with different mathematical properties [12]:

- Correlative Family: Includes Pearson, Spearman, and Kendall Tau correlations.

- Intersection Family: Utilizes minimum or maximum operations per m/z bin.

- L_p Distance Family: Includes Euclidean (L2) and Manhattan (L1) distances.

- Entropy-Based Measures: A fundamentally different approach, such as spectral entropy similarity, which treats a normalized spectrum as a probability distribution and measures the shared information content [11].
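To make the entropy-based idea concrete, the sketch below computes an unweighted entropy similarity: each spectrum is normalized to sum to 1, the two are merged 1:1, and the score is 1 - (2·S_AB - S_A - S_B) / ln 4, where S denotes Shannon entropy. The formula is the commonly cited unweighted form and is an assumption here, not taken from this article. The intensity reweighting that published implementations apply to low-entropy spectra is omitted, and peaks are assumed to be already aligned to shared m/z keys.

```python
import math

def normalize(spectrum):
    """Turn {m/z: intensity} into a probability distribution over peaks."""
    total = sum(spectrum.values())
    if total <= 0:
        raise ValueError("spectrum has no positive intensity")
    return {mz: i / total for mz, i in spectrum.items() if i > 0}

def entropy(p):
    """Shannon entropy (natural log) of a normalized spectrum."""
    return -sum(v * math.log(v) for v in p.values())

def entropy_similarity(a, b):
    """Unweighted entropy similarity: 1 for identical spectra, 0 for no shared peaks."""
    pa, pb = normalize(a), normalize(b)
    merged = {mz: 0.5 * (pa.get(mz, 0.0) + pb.get(mz, 0.0))
              for mz in pa.keys() | pb.keys()}
    divergence = 2 * entropy(merged) - entropy(pa) - entropy(pb)
    return 1.0 - divergence / math.log(4)

# Made-up spectra for illustration.
spec_a = {41: 100.0, 55: 80.0, 150: 10.0}
spec_b = {41: 90.0, 55: 70.0, 150: 30.0}
spec_c = {77: 100.0, 105: 60.0}

same = entropy_similarity(spec_a, spec_a)
close = entropy_similarity(spec_a, spec_b)
disjoint = entropy_similarity(spec_a, spec_c)
```

Dividing by ln 4 bounds the score between 0 and 1, because merging two spectra with no peaks in common raises the entropy by exactly ln 2 relative to their average.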

Diagram: Hierarchical Classification of Spectral Similarity Algorithms. This diagram maps the relationship between the core cosine similarity algorithm, its direct variants, and other major families of competing metrics used in compound identification [12].

Performance Comparison: Experimental Data and Benchmarks

Recent large-scale studies provide empirical data to objectively compare the performance of these algorithms. The following tables summarize key findings on identification accuracy and robustness.

Large-Scale Benchmarking of Algorithm Families

A 2023 study evaluating 66 similarity metrics across over 4.5 million candidate matches provides a high-level comparison of algorithm family performance [12].

Table 1: Performance of Spectral Similarity Algorithm Families (GC-MS Data)

| Algorithm Family | Representative Metrics | Average True Positive Identification Rate | Key Characteristics |

|---|---|---|---|

| Inner Product | Cosine, Weighted Cosine | High | Robust, performs well across diverse spectra [12]. |

| Correlative | Pearson, Partial Correlation | High | Good for linear relationships; partial variants reduce common noise [12] [14]. |

| Intersection | Wave-Hedges | Moderate | Sensitive to peak presence/absence [12]. |

| L_p Distance | Euclidean, Manhattan | Moderate to Low | Sensitive to magnitude and small intensity changes [12]. |

| Entropy-Based | Spectral Entropy | N/A (See Table 2) | Models information content, robust to noise [11]. |

Note: The study concluded that Inner Product and Correlative families tended to outperform others, but no single metric was optimal for all spectra [12].

Head-to-Head Comparison in MS/MS Identification

A pivotal 2021 study compared spectral entropy similarity directly against the classical dot product (cosine similarity) and 41 other alternatives using a large tandem MS (MS/MS) library [11].

Table 2: Performance Comparison in MS/MS Library Matching

| Similarity Metric | Test Library | Key Performance Outcome | False Discovery Rate (FDR) at Threshold |

|---|---|---|---|

| Dot Product (Cosine) | NIST20 (434,287 spectra) | Baseline performance | Not explicitly stated; outperformed by entropy. |

| Spectral Entropy Similarity | NIST20 (434,287 spectra) | Outperformed all 42 alternative metrics, including dot product [11]. | <10% at entropy similarity score ≥ 0.75 [11]. |

| Dot Product (Cosine) | 37,299 Natural Product Spectra | Baseline performance | Higher than entropy method. |

| Spectral Entropy Similarity | 37,299 Natural Product Spectra | Superior robustness to added noise ions [11]. | <10% at entropy similarity score ≥ 0.75 [11]. |

Advanced and Emerging Algorithms

The landscape continues to evolve with methods that move beyond direct spectral comparison.

Table 3: Performance of Advanced and Next-Generation Algorithms

| Algorithm | Category | Key Advantage | Reported Performance (Recall@1) |

|---|---|---|---|

| Partial/Semi-Partial Correlation [14] | Correlation Variant | Removes common background, improving specificity in GC-MS. | 84.6% accuracy, outperforming standard composite dot product [14]. |

| Spec2Vec [4] | Machine Learning Embedding | Captures spectral context via word2vec model. | State-of-the-art baseline for embedding methods. |

| LLM4MS (2025) [4] | LLM-Derived Embedding | Leverages implicit chemical knowledge from pre-trained LLMs. | 66.3%, a 13.7% improvement over Spec2Vec on a million-scale library [4]. |

Experimental Protocols and Methodologies

To ensure reproducibility and provide context for the data in the comparison tables, this section outlines the standard and advanced methodologies cited in the referenced studies.

Protocol for Benchmarking Similarity Metrics (GC-MS)

The comprehensive evaluation of 66 metrics [12] followed this workflow:

- Data Acquisition: Samples (fungi, soil, human biofluids) were analyzed using an Agilent GC 7890A coupled with a 5975C MSD (mass range: 50–550 m/z).

- Spectral Matching: Query spectra were matched to a reference library using CoreMS software (v1.0.0). This generated over 4.5 million candidate spectral matches.

- Truth Annotation: A qualified chemist manually verified all matches using the Automated Spectral Deconvolution and Identification System (AMDIS) to label true positives, true negatives, and unknowns.

- Metric Calculation & Evaluation: All 66 similarity metrics were coded in Python. Their effectiveness was evaluated based on the ability to rank true positive matches higher than incorrect matches across the diverse sample types.
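As a rough illustration of the metric calculation and ranking step, the sketch below implements one Inner Product metric (cosine) and one Correlative metric (Pearson) and ranks library candidates by score. It assumes that query and reference spectra have already been aligned onto a common m/z grid; the function names and the toy library are hypothetical and are not taken from CoreMS or from the cited study.

```python
import numpy as np


def cosine_similarity(a, b):
    """Cosine of the angle between two aligned intensity vectors."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    denom = np.linalg.norm(a) * np.linalg.norm(b)
    # An empty (all-zero) spectrum cannot match anything.
    return float(a @ b / denom) if denom > 0 else 0.0


def pearson_similarity(a, b):
    """Pearson correlation between two aligned intensity vectors."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    # Correlation is undefined for a constant vector; treat it as no match.
    if a.std() == 0 or b.std() == 0:
        return 0.0
    return float(np.corrcoef(a, b)[0, 1])


def rank_candidates(query, library, metric):
    """Return library keys sorted from best to worst match for the query."""
    scores = {name: metric(query, ref) for name, ref in library.items()}
    return sorted(scores, key=scores.get, reverse=True)


if __name__ == "__main__":
    query = [0, 10, 0, 5]
    library = {"candidate_a": [0, 9, 1, 5], "candidate_b": [8, 0, 3, 0]}
    print(rank_candidates(query, library, cosine_similarity))
```

A metric is judged effective in this setting when the verified true positive appears at or near the top of the returned ranking.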

Protocol for Evaluating Spectral Entropy (MS/MS)

The study establishing spectral entropy's superiority [11] used this method:

- Library & Query Sets: The high-quality NIST20 tandem MS library (434,287 spectra) served as the reference. Separate query sets were derived from NIST20 replicates and 37,299 experimental natural product spectra.

- Noise Robustness Testing: Controlled levels of random noise ions were added to query spectra to test metric robustness.

- Metric Calculation: Each spectrum was first normalized to a probability distribution (intensities summing to one), from which its spectral entropy was calculated. The entropy similarity between two spectra was then derived from the entropy of their 1:1 mixed spectrum and the entropies of the two individual spectra, quantifying shared information.

- Performance Assessment: Accuracy was measured by the metric's ability to rank the correct library entry first. False Discovery Rate (FDR) was calculated across similarity score thresholds.
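The performance assessment step can be sketched as follows. This is a minimal example that assumes each query contributes its top-ranked hit, its similarity score, and a ground-truth flag saying whether that hit is correct; the function names are hypothetical, and the convention of returning 0.0 when no hit passes the threshold is an assumption made here.

```python
import numpy as np


def top1_accuracy(top_hit_is_correct):
    """Fraction of queries whose first-ranked library entry is the correct one."""
    return float(np.mean(np.asarray(top_hit_is_correct, dtype=bool)))


def fdr_at_threshold(scores, top_hit_is_correct, threshold):
    """False discovery rate among top hits with score >= threshold."""
    scores = np.asarray(scores, dtype=float)
    correct = np.asarray(top_hit_is_correct, dtype=bool)
    accepted = scores >= threshold
    n_accepted = int(accepted.sum())
    if n_accepted == 0:
        # Assumed convention: no accepted identifications means no false discoveries.
        return 0.0
    n_false = int((accepted & ~correct).sum())
    return n_false / n_accepted


if __name__ == "__main__":
    scores = [0.90, 0.80, 0.76, 0.50]
    correct = [True, True, False, False]
    for t in (0.5, 0.75, 0.85):
        print(t, fdr_at_threshold(scores, correct, t))
```

Sweeping the threshold in this way produces the FDR-versus-score curve from which a working cutoff such as 0.75 can be read off.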

Protocol for Partial Correlation in GC-MS

The protocol for the partial and semi-partial correlation method is as follows [14]:

- Data Preparation: The NIST mass spectral library is used as the reference. Query spectra are from a replicate library.

- Intensity Transformation: Both query and reference spectral intensities are transformed using an optimal weighting (e.g., intensity^0.53 * m/z^1.3).

- Correlation Matrix Computation: A matrix of pairwise correlations (e.g., Pearson) is computed between the query spectrum and all reference spectra, and among reference spectra.

- Partial Correlation Calculation: For a query spectrum q and a candidate reference spectrum r, the partial correlation removes the linear influence of a third reference spectrum z (or a set Z). It is calculated using the standard formula based on the correlation matrix.

- Mixture Similarity Score: The final score is a weighted composite of the partial correlation and the traditional transformed dot product, optimizing identification accuracy.
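The steps above can be sketched in a few lines. The example uses the standard first-order partial correlation formula for a single controlling spectrum z, namely (r_qr - r_qz r_rz) / sqrt((1 - r_qz^2)(1 - r_rz^2)). The function names, the mixing weight `weight`, and the use of a single z rather than a set Z are assumptions for illustration; the cited study's exact composite is not reproduced here.

```python
import numpy as np


def weight_transform(mz, intensity, a=0.53, b=1.3):
    """Apply the intensity^a * (m/z)^b weighting to a spectrum."""
    mz = np.asarray(mz, dtype=float)
    intensity = np.asarray(intensity, dtype=float)
    return intensity ** a * mz ** b


def pearson(x, y):
    """Pearson correlation of two aligned vectors."""
    return float(np.corrcoef(np.asarray(x, float), np.asarray(y, float))[0, 1])


def partial_correlation(q, r, z):
    """Correlation of q and r after removing the linear influence of z."""
    r_qr, r_qz, r_rz = pearson(q, r), pearson(q, z), pearson(r, z)
    denom = np.sqrt((1.0 - r_qz ** 2) * (1.0 - r_rz ** 2))
    # If q or r is perfectly explained by z, nothing is left to correlate.
    return float((r_qr - r_qz * r_rz) / denom) if denom > 0 else 0.0


def mixture_score(q, r, z, weight=0.5):
    """Weighted composite of partial correlation and dot product (hypothetical weight)."""
    q = np.asarray(q, dtype=float)
    r = np.asarray(r, dtype=float)
    cosine = float(q @ r / (np.linalg.norm(q) * np.linalg.norm(r)))
    return weight * partial_correlation(q, r, z) + (1.0 - weight) * cosine


if __name__ == "__main__":
    mz = np.array([57.0, 71.0, 85.0, 99.0])
    q = weight_transform(mz, [100.0, 60.0, 30.0, 5.0])
    r = weight_transform(mz, [95.0, 65.0, 25.0, 8.0])
    z = weight_transform(mz, [80.0, 20.0, 60.0, 40.0])
    print(mixture_score(q, r, z))
```

In practice the mixing weight would be tuned on a replicate library, which is what "optimizing identification accuracy" refers to in the last step.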

Diagram: Generalized Workflow for Compound Identification via Spectral Matching. This workflow underpins the experimental protocols for benchmarking similarity algorithms, from sample analysis to final validation [11] [12] [14].

The Scientist's Toolkit: Research Reagent Solutions

Selecting and implementing spectral similarity algorithms requires both software tools and curated data resources. The following table details essential "research reagents" for this field.

Table 4: Essential Computational Tools and Data for Spectral Similarity Research

| Item Name | Type | Function & Purpose | Key Feature / Note |

|---|---|---|---|

| CoreMS [12] | Open-Source Software | A framework for processing mass spectrometry data and performing spectral library matching. | Used in large-scale benchmarking studies; allows for implementation of custom similarity metrics. |

| Spectral Entropy Python Package [11] | Open-Source Code | Implements the calculation of spectral entropy similarity. | Available on GitHub; provides the algorithm that outperformed cosine similarity in MS/MS. |

| NIST Mass Spectral Library | Reference Database | The industry-standard library of reference spectra for GC-MS and LC-MS/MS. | Commercial; essential as a ground-truth reference for developing and testing algorithms [11] [14]. |

| MassBank of North America | Reference Database | A public domain repository of mass spectral data. | Free resource for accessing experimental spectra for testing [11]. |

| Scikit-learn | Python Library | Provides optimized, production-ready functions for calculating cosine similarity, Euclidean distance, and other metrics. | Essential for efficient implementation and integration into data pipelines [15]. |

| Million-Scale In-Silico EI-MS Library [4] | Reference Database | A large library of predicted Electron Ionization (EI) mass spectra. | Used for testing next-generation algorithms (e.g., LLM4MS) at scale; addresses coverage gaps in experimental libraries. |

The experimental data indicates that while classical cosine similarity (dot product) remains a robust and widely implemented benchmark, specific variants and alternative algorithms can offer superior performance depending on the context. For GC-MS data, weighted cosine and partial correlation methods have demonstrated higher accuracy by emphasizing informative peaks and removing shared background [14]. For tandem MS (MS/MS) identification, spectral entropy similarity has shown remarkable robustness and lower false discovery rates compared to the dot product and a wide array of alternatives [11].

The emerging trend is a shift from purely mathematical comparisons of peak lists towards machine learning and knowledge-informed methods. Algorithms like Spec2Vec and the recently proposed LLM4MS generate spectral embeddings that capture deeper contextual and chemical relationships, leading to significant gains in identification accuracy on large-scale libraries [4].

For researchers and drug development professionals selecting a spectral similarity algorithm, the choice should be guided by the instrumentation (GC-MS vs. LC-MS/MS), the size and quality of the reference library, and the required balance between sensitivity and specificity. Implementing a multi-metric approach or adopting the latest embedding-based methods may provide the most confident compound identifications, ultimately strengthening downstream biological conclusions.

The quantitative evaluation of similarity is a foundational task in computational sciences, directly impacting the accuracy of applications ranging from compound identification in metabolomics to medical image analysis and outcome prediction. Within this context, entropy-based measures from information theory have emerged as powerful tools for quantifying uncertainty, information content, and distributional similarity. Shannon entropy, the cornerstone of classical information theory, is extensively used due to its well-understood properties and extensive theoretical framework [18]. Its generalization, Tsallis entropy, introduces a tuning parameter that enables the modeling of non-extensive systems and offers flexibility in handling complex, real-world data where Shannon entropy may be suboptimal [1].

This comparison guide objectively evaluates the performance of Shannon and Tsallis entropy measures within a critical area of analytical science: evaluating spectral similarity scores for compound identification. This process is vital in fields like drug development, untargeted metabolomics, and exposomics, where correctly identifying molecules from tandem mass spectrometry (MS/MS) data is paramount [19]. The guide synthesizes findings from recent, high-impact studies that apply these entropies not only in spectral matching but also in related biomedical research contexts such as cancer recurrence prediction and ion channel gating analysis [18] [20]. By presenting comparative experimental data, detailed methodologies, and practical considerations, this guide aims to equip researchers and scientists with the knowledge to select and implement the most appropriate entropy measure for their specific analytical challenges.

Foundational Concepts and Mathematical Formulation

At their core, both Shannon and Tsallis entropy measure the uncertainty or information content inherent in a probability distribution. Their mathematical divergence leads to significantly different behaviors in practical applications.

Shannon Entropy (H) : For a discrete probability distribution \( P = (p_1, p_2, \ldots, p_k) \), Shannon entropy is defined as \( H(P) = -\sum_{i=1}^{k} p_i \log(p_i) \). In spectral similarity analysis, the probability distribution is often derived by normalizing the intensity vector of a mass spectrum so that all fragment ion intensities sum to one [19]. The corresponding cross-entropy, used as a loss function in machine learning, is \( H(Q;P) = -\sum_{i=1}^{k} p_i \log(q_i) \), which measures the difference between the true distribution \( P \) and the estimated distribution \( Q \) [18].

Tsallis-Havrda-Charvat Entropy (Hα) : This is a generalized, non-extensive entropy defined by the parameter \( \alpha \) (where \( \alpha > 0, \alpha \neq 1 \)): \( H_\alpha(P) = \frac{1}{\alpha - 1} \left( 1 - \sum_{i=1}^{k} p_i^\alpha \right) \) [18]. The associated cross-entropy is \( H_\alpha(Q;P) = \frac{1}{\alpha - 1} \left( 1 - \sum_{i=1}^{k} q_i^{\alpha-1} p_i \right) \). A key property is that Shannon entropy is a special case of Tsallis entropy when the parameter \( \alpha \) approaches 1 [18] [21]. This parameter provides a tunable "knob": values of \( \alpha < 1 \) enhance the influence of low-probability events, while \( \alpha > 1 \) amplifies the influence of high-probability events. This allows Tsallis entropy to be tailored to specific data characteristics or system behaviors, such as those with long-range interactions or fractal structures [1].
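To make the two definitions concrete, the following minimal sketch computes both entropies for a spectrum normalized to a probability distribution and shows numerically that the Tsallis value approaches the Shannon value as α approaches 1. The function names are illustrative, and natural logarithms are assumed.

```python
import numpy as np


def normalize(intensities):
    """Scale fragment intensities so that they sum to one."""
    x = np.asarray(intensities, dtype=float)
    total = x.sum()
    if total <= 0:
        raise ValueError("spectrum has no signal")
    return x / total


def shannon_entropy(p):
    """H(P) = -sum p_i log p_i, with 0 log 0 taken as 0."""
    p = np.asarray(p, dtype=float)
    p = p[p > 0]
    return float(-(p * np.log(p)).sum())


def tsallis_entropy(p, alpha):
    """H_alpha(P) = (1 - sum p_i^alpha) / (alpha - 1), for alpha > 0."""
    if alpha <= 0:
        raise ValueError("alpha must be positive")
    if np.isclose(alpha, 1.0):
        # Limiting case: Tsallis entropy reduces to Shannon entropy.
        return shannon_entropy(p)
    p = np.asarray(p, dtype=float)
    p = p[p > 0]
    return float((1.0 - (p ** alpha).sum()) / (alpha - 1.0))


if __name__ == "__main__":
    p = normalize([100.0, 40.0, 10.0, 1.0])
    print("Shannon:", shannon_entropy(p))
    for alpha in (0.5, 0.999, 1.001, 2.0):
        print("Tsallis", alpha, tsallis_entropy(p, alpha))
```

Running the loop shows the α < 1 value rising above the Shannon entropy (the weak peaks count for more) and the α > 1 value falling below it.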

From Entropy to Spectral Similarity : For compound identification, entropy is used to compute a similarity score between two mass spectra. One advanced method involves creating a "mixed spectrum" from the query and reference spectra and calculating the entropy distance. The similarity is derived from the Jensen-Shannon divergence or related constructs, effectively measuring how much information (or "chaos") increases when the two spectra are combined [19].

Diagram: Conceptual relationship between generalized entropy measures and their primary applications in spectral analysis and machine learning. Shannon entropy is a limiting case of the more general Tsallis entropy.

Performance Comparison in Key Application Domains

The theoretical advantages of Tsallis entropy translate into measurable performance differences in practical experiments, though the optimal choice depends heavily on the task, data characteristics, and computational constraints.

The table below summarizes key experimental findings comparing the performance of Shannon and Tsallis-based methods against standard benchmarks like the dot product (cosine similarity).

| Application Domain | Metric | Shannon Entropy Performance | Tsallis Entropy Performance | Benchmark (e.g., Dot Product) | Notes & Experimental Context |

|---|---|---|---|---|---|

| MS/MS Spectral Similarity for Compound ID [19] [1] | Top-1 Identification Accuracy | Outperformed dot product and 41 other algorithms. [19] | Tsallis Entropy Correlation showed higher accuracy than Shannon in LC-MS/MS tests. [1] | Lower accuracy than entropy methods; highly sensitive to noise ions. [19] | Tested on NIST20 library (434,287 spectra). Tsallis performance is parameter (α)-dependent. |

| MS/MS Spectral Similarity for Compound ID [19] [1] | False Discovery Rate (FDR) at Score 0.75 | FDR <10% for natural product spectra. [19] | Not explicitly reported, but implied to be competitive or superior. [1] | Higher FDR than entropy methods for equivalent similarity thresholds. [19] | Study on 37,299 experimental spectra of natural products. |

| Cancer Recurrence Prediction [18] [21] | Prediction Accuracy (Dataset: 580 patients) | Served as the baseline (α=1). | Achieved better performance for some α values (α ≠ 1). [18] | Not applicable (entropy used as loss function, not a similarity score). | Multitask deep neural network using CT images and clinical data. |

| Computational Cost [1] | Relative Expense | Lower computational cost. | Higher computational cost than Shannon. [1] | Lowest computational expense (especially with weighting). [1] | Cosine correlation is the simplest to compute. Tsallis requires exponentiation for α. |

In-Depth Analysis of Core Applications

Mass Spectrometry-Based Compound Identification : A landmark study demonstrated that spectral entropy similarity, based on Shannon entropy, outperformed the classical dot product and 41 other alternative similarity algorithms when searching hundreds of thousands of experimental spectra against reference libraries [19]. The entropy method proved significantly more robust to the addition of random noise ions, a common problem in MS/MS data. Building on this, a subsequent comparative analysis introduced a Tsallis Entropy Correlation measure. While this novel measure showed potential for higher accuracy than the Shannon-based version, the study concluded that the cosine correlation with a weight factor transformation achieved the best balance of top accuracy and the lowest computational expense [1]. This highlights a critical trade-off: Tsallis may offer a tunable advantage, but it comes with increased computational cost.

Biomedical Prediction Models : In a medical imaging context, researchers quantitatively compared loss functions derived from both entropies for training a deep neural network to predict cancer recurrence. The network used CT images and patient data from 580 individuals. The key finding was that the Tsallis cross-entropy loss function, with its tunable α parameter, could achieve better prediction accuracy than the standard Shannon cross-entropy loss [18] [21]. This illustrates Tsallis's utility in optimizing complex machine learning models for specific, data-scarce biomedical tasks where even a small performance gain is valuable.

Detailed Experimental Protocols

To ensure reproducibility and provide a clear technical understanding, this section outlines the core methodologies from the cited comparative studies.

- Objective : To compare the accuracy and robustness of spectral entropy similarity against the dot product and other algorithms for compound identification via library matching.

- Data : 434,287 MS/MS spectra queried against the high-quality, manually validated NIST20 mass spectral library. Additional testing used 37,299 spectra of natural products.

- Preprocessing : Spectra were typically centroided and peak-matched. For entropy calculation, the intensity vector of each spectrum is normalized to sum to 1, creating a discrete probability distribution.

- Similarity Calculation (Shannon-based Spectral Entropy) :

  - Given two normalized spectra A and B, a mixed spectrum M is constructed (e.g., M = (A + B)/2).

  - The Jensen-Shannon divergence (D) is computed: \( D = H(M) - \frac{1}{2}[H(A) + H(B)] \), where H is Shannon entropy (natural logarithm).

  - The entropy similarity score (S) is derived: \( S = 1 - \frac{D}{\ln(2)} \) (equivalently \( 1 - \frac{2D}{\ln(4)} \)), scaling the result between 0 and 1, since D reaches at most \( \ln(2) \) when the two spectra share no peaks.

- Evaluation : Performance was assessed using top-1 identification accuracy (correct match ranked first) and false discovery rate (FDR) at specific similarity score thresholds. Robustness was tested by adding random noise ions to spectra.
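The similarity calculation in this protocol can be sketched as below. The example assumes the two spectra are already peak-matched onto the same m/z positions and uses natural logarithms. The score is normalized by ln 2 (equivalently, 2D/ln 4), the largest value D can take, which is a choice made here so that identical spectra score 1 and spectra with no shared peaks score 0. The function name is illustrative and is not the API of the published package.

```python
import numpy as np


def _shannon(p):
    """Shannon entropy with 0 log 0 taken as 0."""
    p = p[p > 0]
    return float(-(p * np.log(p)).sum())


def entropy_similarity(a, b):
    """Entropy similarity of two peak-matched intensity vectors, in [0, 1]."""
    a = np.asarray(a, dtype=float)
    b = np.asarray(b, dtype=float)
    a = a / a.sum()          # normalize each spectrum to a probability distribution
    b = b / b.sum()
    mixed = 0.5 * (a + b)    # 1:1 mixed spectrum M
    d = _shannon(mixed) - 0.5 * (_shannon(a) + _shannon(b))
    return 1.0 - d / np.log(2.0)


if __name__ == "__main__":
    reference = [100.0, 50.0, 10.0, 0.0]
    noisy_query = [95.0, 55.0, 8.0, 3.0]   # same compound plus one small noise ion
    print(entropy_similarity(reference, noisy_query))
```

Because a weak noise ion carries little probability mass, it changes the mixed-spectrum entropy only slightly, which is one way to see why the score is tolerant of added noise.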

- Objective : To quantitatively compare Shannon and Tsallis-Havrda-Charvat cross-entropy as loss functions for a multitask deep network predicting cancer recurrence.

- Model Architecture : A multitask neural network with a U-Net backbone. One branch performed recurrence prediction (classification task), and another performed image reconstruction (for feature learning).

- Loss Functions :

  - Task 1 (Reconstruction) : Mean Squared Error (MSE).

  - Task 2 (Prediction) : Cross-entropy loss. For a true class \( i_0 \) (Dirac distribution) and predicted probability vector \( q \), the losses are:

    - Shannon Cross-Entropy: \( L_{SH} = -\log(q_{i_0}) \)

    - Tsallis Cross-Entropy: \( L_{Ts}(\alpha) = \frac{1}{\alpha-1}(1 - q_{i_0}^{\alpha-1}) \)

- Training & Evaluation : The model was trained on a dataset of 580 patients with head-neck or lung cancers, using both CT images and clinical data. The influence of the Tsallis parameter α on final prediction accuracy was systematically studied.
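The two classification losses in this protocol can be written out directly. The sketch below is a plain NumPy version for a single sample, intended only to show how the Tsallis loss behaves as α varies; it is not the training code of the cited study, and the function names are illustrative.

```python
import numpy as np


def shannon_cross_entropy(q, true_class):
    """L_SH = -log(q[i0]) for a one-hot (Dirac) target on class i0."""
    q = np.asarray(q, dtype=float)
    return float(-np.log(q[true_class]))


def tsallis_cross_entropy(q, true_class, alpha):
    """L_Ts(alpha) = (1 - q[i0]^(alpha - 1)) / (alpha - 1)."""
    if np.isclose(alpha, 1.0):
        # Limiting case: recovers the Shannon cross-entropy.
        return shannon_cross_entropy(q, true_class)
    q = np.asarray(q, dtype=float)
    return float((1.0 - q[true_class] ** (alpha - 1.0)) / (alpha - 1.0))


if __name__ == "__main__":
    predicted = [0.7, 0.2, 0.1]   # softmax output for one patient (toy values)
    print("Shannon:", shannon_cross_entropy(predicted, 0))
    for alpha in (0.5, 1.5, 2.0):
        print("Tsallis", alpha, tsallis_cross_entropy(predicted, 0, alpha))
```

For α = 2 the loss reduces to 1 - q[i0], which is bounded, whereas the Shannon loss grows without limit as q[i0] approaches 0; this difference in how confident mistakes are penalized is what the α sweep explores.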

Diagram: A generalized experimental workflow for evaluating spectral entropy similarity scores, showing parallel paths for Shannon and Tsallis-based methods leading to a common evaluation stage.

Successfully implementing entropy-based similarity measures requires both data and software tools. The following table details key resources referenced in the studies.

| Resource Name | Type | Primary Function in Research | Key Characteristics & Relevance |

|---|---|---|---|

| NIST20 Tandem Mass Spectral Library [19] [1] | Reference Database | Provides the ground-truth reference spectra for evaluating and benchmarking similarity search algorithms. | High-quality, manually curated commercial library. Used as the primary benchmark in performance studies. |

| MassBank of North America (MassBank.us) [19] | Reference Database | A public repository of mass spectra used for library matching in open-source workflows. | Contains publicly submitted spectra; broader coverage but potentially more variable quality than NIST. |

| Global Natural Products Social (GNPS) Molecular Networking [19] | Database & Platform | A crowdsourced platform for sharing mass spectra, particularly of natural products and microbial metabolites. | Contains diverse, experimentally rich data but may include noisy spectra; useful for testing robustness. |

| Weight Factor Transformation [1] | Data Preprocessing Method | Enhances the contribution of heavier fragment ions (with larger m/z) to the similarity score, as they are often more informative. | Critical for achieving high accuracy with cosine correlation; also improves Shannon/Tsallis entropy correlation performance. |

| Low-Entropy Transformation [1] | Data Preprocessing Method | Applied prior to entropy calculation to address the relative importance of large fragment ions. | Used in conjunction with Shannon Entropy Correlation to boost its performance. |

| U-Net Neural Network Architecture [18] | Deep Learning Model | Serves as a backbone for feature extraction from medical images in multitask learning scenarios. | Used in the cancer prediction study where entropy functions served as the loss for the classification branch. |

Discussion and Strategic Guidance for Researchers

The comparative data indicates that there is no universal "best" entropy measure. The choice between Shannon and Tsallis entropy, or even a classical metric like the weighted dot product, is situational.

When to Prioritize Shannon Entropy : Shannon entropy is the most straightforward choice for establishing a robust baseline. It is well-understood, computationally efficient, and has been shown to significantly outperform a wide array of traditional similarity measures like the dot product, especially in noisy MS/MS data [19]. It is ideal when interpretability and speed are priorities and when hyperparameter tuning should be avoided.

When to Explore Tsallis Entropy : Tsallis entropy should be considered when there is evidence of system non-extensivity (where the whole cannot be described as the sum of its independent parts) or when initial results with Shannon entropy suggest room for optimization. Its tunable α parameter allows researchers to adapt the sensitivity of the measure to specific data characteristics, such as emphasizing rare or common spectral features. This can lead to marginal but critical gains in accuracy, as seen in medical prediction models [18] [21]. However, researchers must be prepared for increased computational cost and the need for parameter optimization [1].

Critical Consideration of Preprocessing : A pivotal insight from recent studies is that preprocessing choices can outweigh the choice of similarity function itself. The application of a weight factor transformation, which emphasizes higher m/z fragment ions, was shown to be essential for achieving top performance, regardless of whether cosine, Shannon, or Tsallis correlation was used [1]. Therefore, researchers should invest equal effort in optimizing their spectral preprocessing pipeline as in selecting their core similarity algorithm.

Future Outlook : The integration of these entropy measures into end-to-end deep learning frameworks represents a promising frontier. Rather than being used as standalone scoring functions, they can be embedded as loss functions or layers within neural networks (e.g., Spec2Vec, MS2DeepScore) [1]. This approach can learn chemically informed representations where the power of entropy-based comparison is leveraged within a more powerful, data-driven model.

In compound identification research, particularly in fields like untargeted metabolomics and exposome studies, the accuracy of matching experimental tandem mass spectrometry (MS/MS) spectra against reference libraries is paramount [19]. The measured spectral data, however, is inherently contaminated by various sources of interference, including instrumental noise, baseline drift, scattering effects, and artifacts from co-eluting compounds [22] [19]. These perturbations degrade measurement accuracy and can severely bias the feature extraction crucial for machine learning-based analysis [22]. Preprocessing serves as the essential first line of defense, transforming raw, noisy spectral data into a clean, reliable signal. Within this context, the choice of spectral similarity scoring algorithm—the mathematical function that quantifies the match between two spectra—becomes a critical downstream decision that is profoundly influenced by the quality of the preprocessing upstream [19] [2]. This guide provides a comparative evaluation of leading similarity scoring methods, focusing on their performance, robustness, and practical implementation, to inform researchers in drug development and related fields.

Comparative Analysis of Spectral Similarity Scoring Algorithms

Selecting the optimal similarity score is fundamental to confident compound identification. The following table provides a high-level comparison of the most significant algorithms.

Table 1: Overview of Key Spectral Similarity Scoring Algorithms

| Algorithm | Core Principle | Key Strengths | Primary Limitations | Typical Use Case |

|---|---|---|---|---|

| Dot Product (Cosine) | Cosine of the angle between two spectra treated as vectors in intensity space [19]. | Simple, intuitive, computationally fast; well-established benchmark [19] [2]. | Sensitive to noise and low-abundance ions; poor at identifying structurally related analogues [19] [2]. | Initial library screening; applications where spectral purity is high. |

| Spectral Entropy | Measures the difference in information content (Shannon entropy) between spectra [19]. | Highly robust to noise ions; superior false discovery rate (FDR) control; reflects spectral information content [19]. | Conceptually more complex; requires understanding of entropy calculations. | High-confidence identification in noisy data (e.g., natural products, complex matrices). |

| Spec2Vec | Unsupervised machine learning; learns fragment relationships from spectral corpora to create abstract embeddings [2]. | Excels at identifying structural analogues; scalable to large databases; captures latent spectral relationships [2]. | Requires a large training corpus of spectra; model performance depends on training data quality and relevance. | Molecular networking; analogue search; exploring unknown chemical space. |

| Modified Cosine | Adapts dot product to account for potential mass shifts by aligning peaks using precursor mass difference [2]. | Improves on the dot product for spectra of related compounds whose precursor masses differ, because fragments shifted by the precursor mass difference can still be matched [2]. | Still inherits dot product's sensitivity to noise; limited to addressing mass shifts only [2]. | Molecular networking; comparing spectra of structurally related compounds that differ by a chemical modification. |

The performance of these algorithms has been rigorously tested in controlled experiments. A landmark study compared spectral entropy similarity against 42 alternative similarity metrics by searching 434,287 query spectra against the high-quality NIST20 library [19]. The spectral entropy similarity method consistently outperformed all others, including dot product. Crucially, it demonstrated exceptional robustness when up to 50% random noise ions were added to test spectra, maintaining high accuracy while traditional scores degraded [19]. When applied to 37,299 experimental spectra of natural products, a false discovery rate (FDR) of less than 10% was achieved at an entropy similarity threshold of 0.75 [19].

In a separate comparative study focused on structural similarity, Spec2Vec was trained on nearly 13,000 unique molecules from the GNPS library [2]. The correlation between spectral similarity and true structural similarity (measured by Tanimoto scores on molecular fingerprints) was significantly stronger for Spec2Vec than for cosine-based methods [2]. For the top 0.1% of spectral matches, Spec2Vec retrieved pairs with a mean structural similarity nearly twice as high as those retrieved by the modified cosine score [2].
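The structural-similarity evaluation described here can be illustrated with a small sketch. It assumes molecular fingerprints are represented as sets of "on" bit indices and that each spectrum pair already has both a spectral score and a Tanimoto score; the helper names and toy numbers are hypothetical, and fingerprint generation (for example with a cheminformatics toolkit) is outside the scope of the example.

```python
import numpy as np


def tanimoto(fp_a, fp_b):
    """Tanimoto score: shared on-bits divided by the union of on-bits."""
    fp_a, fp_b = set(fp_a), set(fp_b)
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def mean_structural_similarity_of_top(spectral_scores, structural_scores, fraction):
    """Mean Tanimoto score of the pairs in the top `fraction` by spectral score."""
    spectral = np.asarray(spectral_scores, dtype=float)
    structural = np.asarray(structural_scores, dtype=float)
    n_top = max(1, int(round(fraction * len(spectral))))
    top_idx = np.argsort(spectral)[::-1][:n_top]   # highest spectral scores first
    return float(structural[top_idx].mean())


if __name__ == "__main__":
    print(tanimoto({1, 2, 3}, {2, 3, 4}))
    spectral = [0.9, 0.1, 0.5, 0.8]
    structural = [0.8, 0.2, 0.4, 0.6]
    print(mean_structural_similarity_of_top(spectral, structural, 0.5))
```

Computing this mean for each scoring method at the same fraction (0.1% in the cited comparison) gives a direct, like-for-like measure of how well high spectral scores track true structural similarity.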

Table 2: Experimental Performance Comparison of Scoring Algorithms

| Evaluation Metric | Dot Product / Cosine | Spectral Entropy | Spec2Vec | Experimental Context |

|---|---|---|---|---|

| Library Match Robustness to Noise | Performance degrades significantly with added noise ions [19]. | >99% accuracy maintained even with 50% added noise ions [19]. | Not explicitly tested in noise model, but learns from data containing noise [2]. | Searching 434,287 spectra against NIST20 [19]. |

| False Discovery Rate (FDR) Control | Higher FDR at comparable match thresholds [19]. | <10% FDR at a similarity threshold of 0.75 for natural products [19]. | N/A | Analysis of 37,299 experimental spectra of natural products [19]. |

| Correlation with Structural Similarity | Weak to moderate correlation; high false positive rate for analogues [2]. | Not the primary metric for this algorithm. | Strongest correlation; retrieves analogue pairs with high structural similarity [2]. | Analysis of 12,797 unique compound spectra from GNPS [2]. |

| Computational Scalability | Fast, but can be burdensome for all-pairs comparisons in large databases [2]. | Computationally efficient for pairwise comparison. | Highly scalable; once trained, similarity calculation is very fast, ideal for large DB searches [2]. | Molecular networking and searching large spectral libraries [2]. |

Workflow and Methodological Protocols

Implementing these advanced scoring methods requires an integrated workflow from raw data to confident identification. The following diagram illustrates this process, highlighting where preprocessing and different scoring choices have their impact.

Diagram: Spectral Identification Workflow from Preprocessing to Scoring.

The core innovation of spectral entropy scoring lies in its application of information theory. The following diagram details the calculation process for entropy similarity, which is fundamental to its robustness.

Diagram: Calculating Spectral Entropy Similarity Score.

Key Experimental Protocol for Evaluating Preprocessing & Scoring

Based on comparative studies [19] [23], a robust protocol for evaluating pipeline performance is:

- Dataset Curation: Obtain a high-quality, annotated spectral library (e.g., NIST20 for well-validated data or GNPS for diverse natural products [19]). Split into a reference library and a query set, ensuring each query has at least one correct match.

- Controlled Perturbation: Introduce realistic noise, baseline artifacts, or scaled intensities to the query set to simulate experimental variance [19] [23]. This tests robustness.

- Preprocessing Application: Apply a standardized preprocessing sequence (e.g., baseline correction, followed by normalization such as Standard Normal Variate (SNV) or Total Ion Current) [23].

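- Similarity Scoring & Evaluation: Calculate matches using different algorithms (Dot Product, Spectral Entropy, Spec2Vec). Evaluate each algorithm on the criteria summarized in Table 2: robustness of library matching to noise, FDR control, correlation with structural similarity, and computational scalability.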
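The controlled perturbation step of this protocol can be sketched as follows. The example interprets "x% noise ions" as adding a number of random peaks equal to that fraction of the original peak count, with intensities capped at a fraction of the base peak; both interpretations, the default m/z range, and the function name are assumptions made for illustration rather than the cited studies' noise model.

```python
import numpy as np


def add_noise_ions(mz, intensity, noise_fraction, seed=0,
                   mz_range=(50.0, 550.0), max_rel_intensity=0.1):
    """Return a copy of the spectrum with random noise peaks added.

    noise_fraction    : number of noise peaks relative to the original peak count
    max_rel_intensity : upper bound of noise intensity relative to the base peak
    """
    rng = np.random.default_rng(seed)
    mz = np.asarray(mz, dtype=float)
    intensity = np.asarray(intensity, dtype=float)

    n_noise = int(round(noise_fraction * len(mz)))
    noise_mz = rng.uniform(mz_range[0], mz_range[1], size=n_noise)
    noise_int = rng.uniform(0.0, max_rel_intensity * intensity.max(), size=n_noise)

    all_mz = np.concatenate([mz, noise_mz])
    all_int = np.concatenate([intensity, noise_int])
    order = np.argsort(all_mz)   # keep the peak list sorted by m/z
    return all_mz[order], all_int[order]


if __name__ == "__main__":
    clean_mz = [100.0, 150.0, 200.0, 300.0]
    clean_int = [10.0, 100.0, 50.0, 5.0]
    noisy_mz, noisy_int = add_noise_ions(clean_mz, clean_int, noise_fraction=0.5)
    print(noisy_mz)
    print(noisy_int)
```

Fixing the seed keeps the perturbed query set identical across scoring algorithms, so differences in accuracy can be attributed to the score rather than to the noise draw.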

Transitioning to advanced methods requires specific tools and resources. The following table outlines key software and libraries.

Table 3: Research Reagent Solutions for Spectral Analysis

| Tool / Resource Name | Type | Primary Function | Relevance to Preprocessing & Scoring |

|---|---|---|---|

| MS2DeepScore / Spec2Vec | Python Library | Implements Spec2Vec and related ML-based similarity scoring [2]. | Enables state-of-the-art analogue search and molecular networking. Must be trained on a relevant corpus of spectra. |

| Matchms | Python Toolkit | Provides standardized workflows for processing MS/MS data, including filtering, cleaning, and computing similarity scores (cosine, entropy, etc.) [19] [2]. | Essential for reproducible preprocessing and for calculating entropy and other scores in a unified pipeline. |

| NIST MS/MS Library | Commercial Database | A manually curated library of high-resolution MS/MS spectra with extensive metadata [19]. | The gold-standard reference library for benchmarking and high-confidence identification. Critical for training and evaluation. |

| GNPS Public Spectral Libraries | Open-Access Database | A large, crowdsourced repository of MS/MS spectra, particularly rich in natural products [19] [2]. | Ideal for discovering novel compounds and analogues. Useful for training Spec2Vec models on specialized chemical spaces. |

| Standard Normal Variate (SNV) | Preprocessing Algorithm | Scales each spectrum by subtracting its mean and dividing by its standard deviation [23]. | A highly effective normalization method shown to reduce glare and height variation artifacts while preserving chemical contrast in hyperspectral data [23]. |
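For reference, the two normalizations named in the protocol are short enough to write out. This is a minimal sketch: the SNV version uses the sample standard deviation (ddof=1), which is a common convention but an assumption here, and the function names are illustrative.

```python
import numpy as np


def snv(spectrum):
    """Standard Normal Variate: subtract the mean, divide by the standard deviation."""
    x = np.asarray(spectrum, dtype=float)
    sd = x.std(ddof=1)
    if sd == 0:
        raise ValueError("constant spectrum cannot be SNV-scaled")
    return (x - x.mean()) / sd


def tic_normalize(intensities):
    """Total Ion Current normalization: scale intensities to sum to one."""
    x = np.asarray(intensities, dtype=float)
    total = x.sum()
    if total <= 0:
        raise ValueError("spectrum has no signal")
    return x / total


if __name__ == "__main__":
    print(snv([1.0, 2.0, 3.0, 4.0]))
    print(tic_normalize([1.0, 3.0]))
```

Note that SNV produces negative values, so it is not a drop-in input for entropy-based scores, which need a non-negative distribution; TIC normalization is the form that yields the probability vector those scores expect.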

The experimental data clearly indicates that moving beyond the traditional dot product is necessary for rigorous compound identification. The choice of algorithm should be strategic, based on the specific research question and data quality:

- For Maximum Confidence and Low FDR in Noisy Data: Spectral Entropy is the recommended choice. Its mathematical foundation in information theory makes it inherently robust to noise and low-abundance interfering ions, directly translating to lower false discovery rates, as validated in large-scale studies [19].

- For Structural Analogue Search and Molecular Networking: Spec2Vec and related machine-learning approaches are superior. By learning the latent relationships between fragment ions, they can identify structurally related compounds even when spectra share few direct peak matches, a task where cosine-based methods fail [2].

- For Standardized, High-Purity Screening: The traditional Dot Product (Cosine) remains a fast, understandable benchmark. However, its results should be interpreted with caution, especially in complex matrices, due to its sensitivity to uncorrected noise and its poor performance in identifying analogues [19] [2].

Ultimately, preprocessing is not a separate step but the foundational stage that determines the ceiling of performance for any subsequent scoring algorithm. A pipeline combining rigorous preprocessing (like SNV normalization) [23] with an advanced, purpose-driven similarity score like spectral entropy or Spec2Vec represents the current standard for confident, high-throughput compound identification in critical applications like drug development.
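As an illustration of the SNV step mentioned above, the following is a minimal sketch in plain Python. The function name `snv`, the example intensities, and the use of the sample standard deviation (n - 1 denominator) are assumptions made for this example and are not taken from [23]; some implementations divide by n instead.

```python
import math

def snv(intensities):
    """Standard Normal Variate: subtract the spectrum's own mean and
    divide by its own standard deviation."""
    n = len(intensities)
    mean = sum(intensities) / n
    # Assumption: sample standard deviation (n - 1 denominator).
    variance = sum((x - mean) ** 2 for x in intensities) / (n - 1)
    std = math.sqrt(variance)
    if std == 0:
        raise ValueError("SNV is undefined for a constant spectrum")
    return [(x - mean) / std for x in intensities]

# Hypothetical intensity vector, for illustration only.
spectrum = [10.0, 200.0, 35.0, 980.0, 5.0]
scaled = snv(spectrum)
```

Because SNV centers each spectrum on zero, the scaled values include negatives. Scores that assume non-negative intensities, such as entropy-based measures, would need a different normalization or an additional shift.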

When evaluating spectral similarity scores for compound identification, the choice of performance metrics is not a technical formality but a foundational determinant of scientific validity. In mass spectrometry-based metabolomics, a field critical to One Health modeling that connects human, animal, plant, and environmental ecosystems, the metabolomic "snapshot" is only as reliable as the compounds identified within it [12]. The process hinges on matching a query mass spectrum against a reference library and ranking candidates by a spectral similarity (SS) score. With dozens of available metrics, the lack of consensus introduces analytical uncertainty and threatens reproducibility across studies [12]. This comparison guide evaluates three performance metrics within this research context: Accuracy, Receiver Operating Characteristic (ROC) curves (with the Area Under the Curve, AUC), and Computational Cost. It synthesizes recent experimental data to provide researchers, scientists, and drug development professionals with evidence-based recommendations for designing robust and interpretable compound identification workflows.

Foundational Metrics: Definitions, Interpretations, and Pitfalls

Accuracy

Accuracy is defined as the proportion of total correct predictions (both positive and negative) among the total number of cases examined [24]. Mathematically, for binary classification, it is expressed as (TP + TN) / (TP + TN + FP + FN), where TP, TN, FP, and FN denote True Positives, True Negatives, False Positives, and False Negatives, respectively [25].

- Interpretation and Use Case: Its simplicity and intuitiveness make accuracy a common starting point for reporting model performance. It is most reliable and informative when evaluating datasets with balanced class distributions and when the costs of different types of errors (false positives vs. false negatives) are roughly equivalent [26].

- The Critical Pitfall – The Accuracy Paradox: Accuracy becomes a severely misleading metric in the presence of class imbalance, a hallmark of spectral matching where correct hits (true positives) are vastly outnumbered by incorrect candidates (true negatives) [12] [24]. A model can achieve deceptively high accuracy by simply predicting the majority class (e.g., always calling a "non-match"), thereby failing entirely to identify the compounds of interest. This paradox underscores that high accuracy does not equate to a useful model for the task at hand [24].
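The accuracy paradox can be made concrete with a small worked example. The counts below are hypothetical (1,000 candidate matches, of which 10 are true matches) and the helper names are illustrative. The sketch scores a degenerate classifier that labels every candidate a "non-match".

```python
def accuracy(tp, tn, fp, fn):
    """(TP + TN) / (TP + TN + FP + FN)."""
    return (tp + tn) / (tp + tn + fp + fn)

def sensitivity(tp, fn):
    """True positive rate: TP / (TP + FN)."""
    return tp / (tp + fn)

# Hypothetical library search: 10 true matches among 1,000 candidates.
n_true_matches, n_non_matches = 10, 990

# A classifier that always predicts "non-match":
tp, fn = 0, n_true_matches   # every true match is missed
tn, fp = n_non_matches, 0    # every non-match is "correctly" rejected

always_negative_accuracy = accuracy(tp, tn, fp, fn)
always_negative_sensitivity = sensitivity(tp, fn)
```

Here the accuracy is 990/1000 = 0.99 while the sensitivity is 0: the classifier looks excellent by accuracy alone yet identifies no compounds.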

ROC Curves and AUC

The Receiver Operating Characteristic (ROC) curve is a graphical plot that illustrates the diagnostic ability of a binary classifier across all possible classification thresholds [25]. It plots the True Positive Rate (TPR/Sensitivity) against the False Positive Rate (FPR; 1-Specificity) [25] [27].

- Interpretation: A curve that arcs toward the top-left corner indicates better performance. The diagonal line from (0,0) to (1,1) represents the performance of random guessing (AUC = 0.5) [28].

- Area Under the Curve (AUC): The AUC provides a single scalar value summarizing the overall ranking ability of the model. An AUC of 1.0 denotes perfect discrimination, while 0.5 indicates no discriminative power [29]. Conventionally, AUC values are interpreted as: 0.9-1.0 = outstanding; 0.8-0.9 = excellent; 0.7-0.8 = acceptable; 0.6-0.7 = poor; 0.5-0.6 = very poor [27].

- Key Theoretical Insight: Recent research clarifies that ROC-AUC is invariant to class imbalance in datasets [30]. Its calculation depends on the ranking of predictions, not on absolute numbers predicted correctly. Therefore, it provides a fairer comparison of models across datasets with different imbalances compared to metrics like precision-recall AUC, which are intrinsically tied to the imbalance ratio [30].
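The ranking interpretation of AUC can be sketched directly: AUC equals the probability that a randomly chosen true match scores higher than a randomly chosen non-match, with ties counted as one half. The function name and the scores below are hypothetical. Replicating the negatives changes the class ratio without changing the per-class score distributions, which is the condition under which the invariance holds.

```python
def rank_auc(pos_scores, neg_scores):
    """AUC as the fraction of (positive, negative) pairs ranked correctly.
    Ties contribute 0.5. Quadratic in the number of scores, so this is
    only meant for small illustrative inputs."""
    wins = 0.0
    for p in pos_scores:
        for q in neg_scores:
            if p > q:
                wins += 1.0
            elif p == q:
                wins += 0.5
    return wins / (len(pos_scores) * len(neg_scores))

# Hypothetical similarity scores for verified matches and non-matches.
pos = [0.95, 0.80, 0.60]
neg = [0.70, 0.40, 0.30, 0.10]

auc_balanced = rank_auc(pos, neg)          # 3 positives vs 4 negatives
auc_imbalanced = rank_auc(pos, neg * 100)  # 3 positives vs 400 negatives
```

Both calls give 11/12 (about 0.917), since 11 of every 12 positive-negative pairs are ordered correctly regardless of how many negatives are present.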

Computational Cost

Computational Cost refers to the resources required to compute a spectral similarity score, typically measured in terms of execution time and memory usage. This pragmatic metric determines the feasibility of applying a scoring algorithm to large-scale libraries or high-throughput workflows.

- Interpretation: Cost is influenced by algorithmic complexity (e.g., O(n) for simple dot products vs. more complex transformations), implementation efficiency, and required pre-processing steps (e.g., normalization, alignment) [12].

- Trade-off: There is often, but not always, a trade-off between discriminatory performance (high accuracy/AUC) and computational efficiency. The optimal metric balances sufficient performance with practical runtime constraints.
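Execution time is straightforward to benchmark for a given implementation. The sketch below is one possible harness, not a method from the cited studies. The helper `time_metric`, the synthetic random "library", and the two pure-Python metrics are all assumptions for illustration, and absolute timings will depend on hardware and implementation.

```python
import math
import random
import time

def cosine(a, b):
    """Cosine similarity of two aligned intensity vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

def manhattan(a, b):
    """L1 distance between two aligned intensity vectors."""
    return sum(abs(x - y) for x, y in zip(a, b))

def time_metric(metric, query, library, repeats=3):
    """Best-of-N wall-clock time (seconds) to score one query
    against every spectrum in the library."""
    best = float("inf")
    for _ in range(repeats):
        start = time.perf_counter()
        for reference in library:
            metric(query, reference)
        best = min(best, time.perf_counter() - start)
    return best

# Synthetic data: 200 random "spectra" binned into 500 m/z bins.
rng = random.Random(0)
n_bins = 500
library = [[rng.random() for _ in range(n_bins)] for _ in range(200)]
query = [rng.random() for _ in range(n_bins)]

timings = {
    name: time_metric(fn, query, library)
    for name, fn in [("cosine", cosine), ("manhattan", manhattan)]
}
```

Taking the best of several repeats reduces the influence of background load. Memory usage would need a separate measurement, for example with Python's `tracemalloc` module.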

Table 1: Core Metric Summary and Primary Applications

| Metric | Primary Calculation | Optimal Use Case | Key Weakness |
|---|---|---|---|
| Accuracy | (TP+TN) / Total Samples [25] | Balanced datasets; Initial baseline assessment | Highly misleading under class imbalance [24] |
| ROC-AUC | Area under TPR vs. FPR curve [27] | Model ranking & comparison; Imbalanced data [30] [29] | Does not indicate optimal threshold; Less intuitive |
| Computational Cost | Execution time & memory usage | Scaling to large libraries; Real-time applications | Context-dependent; Requires benchmarking |

Application to Spectral Similarity Scoring: An Evidence-Based Comparison

Experimental Insights from Large-Scale Evaluations

A landmark 2023 study evaluated 66 similarity metrics across ten metric families using over 4.5 million hand-verified candidate spectral matches from diverse biological samples (fungi, soil, human biofluids) [12]. This work provides the most comprehensive empirical basis for comparing metric performance in GC-MS identification.

Table 2: Performance Summary of Spectral Similarity Metric Families [12]

| Metric Family | Representative Metrics | Key Characteristics | Reported Performance |
|---|---|---|---|
| Inner Product | Cosine Similarity, Dot Product | Uses product of query and reference intensities; widely adopted. | Tends to perform better than most other families. |
| Correlative | Pearson, Spearman Correlation | Measures linear correlation; range from -1 to 1. | Tends to perform better; effective for linearly related data. |
| Intersection | Intersection, Wave Hedges | Utilizes min/max intensity per m/z; sensitive to outliers. | Tends to perform better. |
| Lp / L1 | Euclidean (L2), Manhattan (L1) | Calculates geometric or absolute distance; sensitive to small changes. | Variable performance. |
| Entropy-Based | Shannon, Rényi, Tsallis | Assumes peak independence (often violated in MS) [12]. | Generally underperforms traditional leaders. |

Findings: The study concluded that no single metric was optimal for all spectra, but Inner Product (e.g., Cosine), Correlative, and Intersection families consistently demonstrated superior ability to delineate true positives from true negatives [12]. This research underscores the importance of family-level characteristics over individual metrics.
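To make the family distinctions concrete, the sketch below implements one representative from four of the families in Table 2 on aligned, binned intensity vectors. These are common textbook definitions and may differ in detail (weighting, normalization) from the implementations evaluated in [12]. The function names and the three example spectra are hypothetical.

```python
import math

def unit_sum(v):
    """Scale intensities so they sum to 1."""
    total = sum(v)
    return [x / total for x in v]

def cosine(a, b):
    """Inner Product family: normalized dot product."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))

def pearson(a, b):
    """Correlative family: linear correlation, in [-1, 1]."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)

def intersection(a, b):
    """Intersection family: sum of per-bin minima after unit-sum scaling."""
    return sum(min(x, y) for x, y in zip(unit_sum(a), unit_sum(b)))

def euclidean(a, b):
    """Lp family (p = 2): a distance, so smaller means more similar."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(unit_sum(a), unit_sum(b))))

# Hypothetical binned spectra: a query, a near-identical reference,
# and an unrelated spectrum with different dominant peaks.
query = [0.0, 120.0, 30.0, 999.0, 0.0, 45.0]
reference = [5.0, 100.0, 40.0, 950.0, 0.0, 60.0]
unrelated = [800.0, 0.0, 0.0, 10.0, 600.0, 0.0]

scores = {
    fn.__name__: (fn(query, reference), fn(query, unrelated))
    for fn in (cosine, pearson, intersection, euclidean)
}
```

On this toy input every measure separates the near-identical reference from the unrelated spectrum. Note that the Euclidean value is a distance and must be inverted or negated before it can be ranked alongside similarity scores.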

Direct Performance Comparison: Accuracy and AUC

Specific validation studies offer direct numeric comparisons. A study on an open-source spectral matching package reported the following accuracy on two reference libraries [31]:

- NIST GC-MS Library: Cosine Similarity achieved 84.28% accuracy (95% CI: 83.75%, 84.73%), outperforming entropy-based measures (Shannon: 80.69%, Rényi: 81.09%, Tsallis: 81.68%) [31].

- GNPS LC-MS/MS Library: Cosine Similarity again led with 69.07% accuracy (CI: 67.26%, 71.01%), though confidence intervals overlapped with entropy-based measures [31].

While these accuracy figures are useful, the imbalanced nature of library searches (one true hit among many decoys) necessitates AUC analysis. The large-scale study [12] used AUC to fairly compare the 66 metrics across its imbalanced datasets, finding the top-performing families mentioned above. This aligns with the theoretical robustness of AUC to imbalance [30].
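Confidence intervals such as those quoted above can be estimated in several ways, and the source does not state which method [31] used. As one possibility, the sketch below applies a percentile bootstrap to per-query identification outcomes. The function name, the resampling settings, and the outcome vector are assumptions for illustration only.

```python
import random

def bootstrap_accuracy_ci(correct, n_boot=2000, alpha=0.05, seed=0):
    """Percentile bootstrap CI for identification accuracy.

    `correct` holds one entry per query spectrum: 1 if the top-ranked
    candidate was the verified compound, 0 otherwise.
    Returns (accuracy, lower bound, upper bound)."""
    rng = random.Random(seed)
    n = len(correct)
    resampled = []
    for _ in range(n_boot):
        # Resample queries with replacement and recompute accuracy.
        sample = [correct[rng.randrange(n)] for _ in range(n)]
        resampled.append(sum(sample) / n)
    resampled.sort()
    lower = resampled[int(n_boot * alpha / 2)]
    upper = resampled[int(n_boot * (1 - alpha / 2)) - 1]
    return sum(correct) / n, lower, upper

# Hypothetical outcomes for 100 query spectra (84 correct top hits).
outcomes = [1] * 84 + [0] * 16
acc, ci_low, ci_high = bootstrap_accuracy_ci(outcomes)
```

With only 100 queries the interval is much wider than those reported for full libraries. Overlapping intervals between two scores, as noted for the GNPS library, mean the observed accuracy difference may not be statistically meaningful.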

Computational Cost Considerations

Computational cost varies significantly. Simple metrics like Cosine Similarity and Euclidean distance have lower computational complexity and are extremely fast to compute, facilitating real-time search in large libraries. More complex metrics, including entropy-based measures or those requiring spectral alignment or weighted transformations, incur higher computational overhead [12] [31]. For large-scale or high-throughput applications, this cost can become a bottleneck, making simpler, high-performing metrics like Cosine attractive.

Table 3: Comparative Analysis of Key Metrics for Spectral Matching

| Evaluation Dimension | Accuracy | ROC-AUC | Computational Cost |
|---|---|---|---|
| Sensitivity to Class Imbalance | High (Misleading) [24] | Low (Robust) [30] | Not Applicable |
| Primary Use in Research | Reporting final hit rates (with caution) | Model/Algorithm comparison & selection [12] [29] | Workflow feasibility & scaling |
| Interpretability | High (intuitive) | Moderate (requires statistical understanding) | Concrete (time, memory) |
| Outcome of Optimization | Maximizing correct classifications | Maximizing ranking quality | Minimizing resource usage |
| Guidance for Spectral Matching | Use only with clear context of balance; supplement with other metrics. | Preferred metric for evaluating and comparing similarity scores. | Critical for practical implementation; benchmark against needs. |

Experimental Protocols and Implementation

Protocol for Evaluating Spectral Similarity Scores

The following workflow synthesizes best practices from recent studies [12] [31]:

Diagram 1: Spectral Similarity Score Evaluation Workflow

- Data Acquisition & Curation: Use authentic, diverse biological samples (e.g., from fungi, human biofluids, environmental isolates) [12]. Acquire spectra using standard instruments (e.g., GC-MS).

- Library Matching & Ground Truth Annotation: Match query spectra against a curated reference library (e.g., NIST, GNPS). Crucially, candidate matches must be manually verified by an expert chemist to establish a definitive ground truth for "true positive" and "true negative" matches [12]. This step is non-negotiable for reliable evaluation.

- Metric Computation: Implement a broad set of metrics spanning different families (e.g., Inner Product, Correlative, L_p). Studies have evaluated up to 66 metrics [12]. Use consistent pre-processing (normalization, peak alignment).

- Performance Evaluation: Calculate ROC-AUC as the primary comparison metric to account for class imbalance [30] [12]. Report accuracy and precision-recall metrics as secondary, context-specific measures.

- Statistical Comparison: Formally compare AUC values using established methods (e.g., DeLong test for paired curves) [27] to determine if performance differences are statistically significant.

Protocol for ROC Curve Generation and Analysis

- Data Preparation: For each similarity score, generate a list of prediction scores (the similarity values) and the corresponding true binary labels (1 for verified match, 0 for verified non-match) [25] [27].

- Threshold Sweep: Vary the classification threshold from the minimum to the maximum observed similarity score. At each threshold, calculate the TPR and FPR from the resulting confusion matrix [27] [28].

- Curve Plotting & AUC Calculation: Plot all (FPR, TPR) points to form the ROC curve. Calculate the AUC using the trapezoidal rule or a dedicated statistical function [27].

- Optimal Threshold Selection: The "optimal" threshold depends on the application cost. Use the Youden Index (J = Sensitivity + Specificity - 1) to find a threshold that maximizes overall discriminative ability if costs are equal [27]. For imbalanced problems like compound identification where finding true hits is critical, a threshold favoring higher sensitivity may be preferable.
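The four steps above can be sketched in plain Python as follows. The function names and the example scores and labels are hypothetical. A candidate is classified as a match when its score is greater than or equal to the threshold, and tied scores are processed together so that they produce a single ROC point.

```python
def roc_points(scores, labels):
    """Sweep thresholds from the highest score down.
    Returns a list of (FPR, TPR, threshold), starting at (0, 0)."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    ranked = sorted(zip(scores, labels), key=lambda pair: -pair[0])
    points = [(0.0, 0.0, float("inf"))]
    tp = fp = 0
    i = 0
    while i < len(ranked):
        threshold = ranked[i][0]
        # Consume every candidate tied at this score before emitting a point.
        while i < len(ranked) and ranked[i][0] == threshold:
            if ranked[i][1] == 1:
                tp += 1
            else:
                fp += 1
            i += 1
        points.append((fp / n_neg, tp / n_pos, threshold))
    return points

def trapezoid_auc(points):
    """Area under the ROC curve by the trapezoidal rule."""
    area = 0.0
    for (x0, y0, _), (x1, y1, _) in zip(points, points[1:]):
        area += (x1 - x0) * (y0 + y1) / 2.0
    return area

def youden_threshold(points):
    """Threshold maximizing J = Sensitivity + Specificity - 1 = TPR - FPR."""
    fpr, tpr, threshold = max(points[1:], key=lambda p: p[1] - p[0])
    return threshold, tpr - fpr

# Hypothetical similarity scores with verified labels (1 = match).
scores = [0.95, 0.80, 0.70, 0.60, 0.40, 0.30, 0.10]
labels = [1, 1, 0, 1, 0, 0, 0]

points = roc_points(scores, labels)
auc = trapezoid_auc(points)
best_threshold, best_j = youden_threshold(points)
```

For this toy data the AUC is 11/12 (about 0.917) and the Youden index peaks at J = 0.75 with a threshold of 0.60, where all three true matches are recovered at the cost of one false positive. A workflow that weights missed identifications more heavily than false hits could instead pick the lowest threshold that meets a required sensitivity.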

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 4: Key Computational Tools & Resources for Evaluation

| Tool / Resource | Function | Relevance to Performance Evaluation |
|---|---|---|
| CoreMS / Custom Python Scripts [12] | Frameworks for calculating a wide array of spectral similarity metrics. | Essential for implementing and benchmarking the 66+ metrics evaluated in recent studies. |
| ROC Curve Calculators (e.g., StatsKingdom, MedCalc) [25] [27] | Tools to generate ROC curves, compute AUC, confidence intervals, and compare curves statistically. | Critical for robust AUC analysis without extensive programming; uses established methods like DeLong [27]. |
| scikit-learn (Python) | Machine learning library with built-in functions roc_curve, auc, accuracy_score. | The standard for integrated metric calculation within custom analysis pipelines. |
| Manual Verification Protocol [12] | Expert-led inspection of spectral matches using tools like AMDIS. | The ultimate "reagent" for generating reliable ground truth data, the foundation of all valid evaluation. |
| Predictive Analytics Platforms (e.g., DataRobot, SAS Viya) [32] | Automated machine learning platforms with model evaluation suites. | Useful for broader ML model development that may incorporate spectral scores as features, offering advanced evaluation dashboards. |

Synthesizing the experimental evidence and theoretical analysis: