Benchmarking Natural Product Scaffold Diversity: A Comparative Analysis with Synthetic Drug Libraries for Enhanced Drug Discovery

This article provides a comprehensive analysis of benchmarking natural product scaffold diversity against synthetic drug collections, tailored for researchers and drug development professionals.


Abstract

This article provides a comprehensive analysis of benchmarking natural product scaffold diversity against synthetic drug collections, tailored for researchers and drug development professionals. It covers foundational concepts of natural product chemical space and its importance in drug discovery, methodological approaches including computational screening and AI-driven techniques, troubleshooting strategies for common challenges, and validation through comparative studies. The scope integrates insights from recent advances in virtual screening, scaffold-hopping, and benchmark sets to evaluate diversity, offering practical guidance for leveraging natural products in modern therapeutic development.

Unlocking Nature's Chemical Blueprint: The Foundation of Natural Product Scaffold Diversity

The strategic analysis of scaffold diversity—the variation in core molecular frameworks within a compound collection—is a fundamental pursuit in drug discovery. It serves as a critical benchmark for assessing the potential of chemical libraries to yield novel bioactive leads. This guide provides a comparative analysis of scaffold diversity in two paramount sources of bioactive compounds: natural products (NPs) and synthetic drug collections. NPs, honed by millions of years of evolutionary selection, represent a unique reservoir of biologically pre-validated chemical scaffolds [1]. In contrast, modern drug collections, including commercial screening libraries and make-on-demand spaces, are designed to explore vast tracts of synthetic chemical space with an emphasis on drug-like properties [2] [3]. Framed within a broader thesis on benchmarking NP scaffold diversity, this guide objectively compares the structural characteristics, design principles, and performance of these two sources, providing researchers with a framework for informed library selection and design.

Comparative Analysis of Scaffold Diversity

The assessment of scaffold diversity requires quantitative metrics and qualitative insights. The following tables compare NPs and synthetic drug collections across key dimensions.

Table 1: Quantitative Comparison of Scaffold Diversity Metrics

| Metric | Natural Products (Microbial Focus) | Synthetic Drug Collections (e.g., Make-on-Demand) | Implication for Diversity |

|---|---|---|---|

| Representative Source/Size | Natural Products Atlas (36,454 compounds) [4] | Enamine REAL Space (Billions of compounds) [3] | Synthetic libraries offer unparalleled scale. |

| Scaffold Clustering Profile | 82.6% of compounds fall into 4,148 clusters; median cluster size = 3 [4]. | Designed for high uniqueness; lower inherent clustering by scaffold [2]. | NPs show "islands" of highly related scaffolds; synthetic libraries aim for uniform spread. |

| Structural Complexity (avg.) | Higher fraction of sp³-hybridized carbons (Fsp³), more stereogenic centers [1] [5]. | Typically lower Fsp³, fewer stereocenters, optimized for synthetic accessibility [1]. | NP scaffolds are more three-dimensional, which may influence target selectivity [5]. |

| Biological Relevance | Evolutionarily pre-validated; scaffolds result from co-evolution with biological targets [6] [1]. | Designed for drug-likeness (e.g., Rule of 5); bio-relevance is a design goal, not an inherent trait [7] [3]. | NPs sample a "biologically relevant" region of chemical space, potentially increasing hit rates for certain targets. |

| Discovery Rate of Novel Scaffolds | Slowing; high rates of known scaffold rediscovery [4]. | Extremely high; scaffolds are computationally enumerated or derived from novel reactions [2] [7]. | Synthetic chemistry is the primary engine for novel scaffold generation. |

Table 2: Performance in Biological Screening

| Aspect | Natural Product-Inspired Libraries | Traditional/Generic Synthetic Libraries | Supporting Evidence |

|---|---|---|---|

| Hit Rate Enrichment | Often higher in phenotypic and target-based screens due to biological relevance [1] [5]. | Can be lower; hit rates improve when libraries are biased toward "bio-like" molecules [3]. | A PNP collection of 154 compounds yielded unique inhibitors for four distinct pathways [5]. |

| Breadth of Bioactivity | Capable of yielding diverse bioactivities from a single collection [5]. | Bioactivity is highly dependent on library design; can be broad or narrow. | Cheminformatic diversity in a PNP library translated directly to diverse phenotypic profiles [5]. |

| Scaffold Novelty vs. Utility | New scaffolds are rare but often highly impactful (e.g., new modes of action) [4]. | Novel scaffolds are common, but translation to useful probes/drugs requires optimization [2]. | The "great biosynthetic gene cluster anomaly" suggests many novel NP scaffolds remain undiscovered [4]. |

| Role of AI in Screening | AI models predict NP activity and mechanism, accelerating identification from complex mixtures [8]. | AI is crucial for virtual screening ultra-large libraries (billions of compounds) [8] [3]. | AI bridges the scale-relevance gap, prioritizing NPs or synthetic compounds for testing [8] [9]. |

Methodologies for Analyzing and Generating Scaffold Diversity

Experimental Protocols for Cheminformatic Analysis

A standard protocol for quantifying scaffold diversity, as applied to NP databases [4], involves:

- Data Standardization: Curate a compound set (e.g., SDF file) using software like RDKit. Remove salts, standardize tautomers, and enforce correct chirality.

- Molecular Framing: Apply an algorithm (e.g., the Bemis-Murcko method) to extract the central scaffold (ring systems with connecting linkers) from each molecule.

- Fingerprint Generation: Encode each scaffold using a molecular fingerprint. The Morgan fingerprint (circular fingerprint, radius 2) is widely used for its balance of detail and computational efficiency [4].

- Similarity Calculation & Clustering: Calculate pairwise similarities using the Dice coefficient. The Tanimoto coefficient is a common alternative for binary fingerprints, but the two are not interchangeable, so a threshold must be chosen for the coefficient actually in use. Cluster scaffolds using a threshold (e.g., Dice similarity ≥ 0.75) [4]. Hierarchical clustering or sphere-exclusion algorithms are commonly used.

- Diversity Metrics Calculation:

- Number of Unique Clusters: The total count of distinct scaffold clusters.

- Mean Pairwise Dissimilarity: 1 - average(similarity) over all pairs in the set.

- Scaffold Hit Rate (SHR): The number of active compounds divided by the number of unique scaffolds they represent. Under this definition, an SHR close to 1 indicates that actives are spread across many scaffolds, whereas a higher SHR indicates that actives are concentrated on a few scaffolds.
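The similarity, clustering, and metric steps above can be sketched in a few lines of plain Python. This is a minimal illustration, not the implementation used in [4]: it assumes the scaffold fingerprints have already been computed (for example with RDKit) and are represented as sets of on-bit indices, and it substitutes a simple greedy leader clustering for the hierarchical or sphere-exclusion methods mentioned. The function names and the toy bit sets are hypothetical.

```python
from itertools import combinations


def dice(a, b):
    """Dice coefficient between two fingerprints given as sets of on-bit indices."""
    if not a and not b:
        return 1.0
    return 2 * len(a & b) / (len(a) + len(b))


def leader_cluster(fps, threshold=0.75):
    """Greedy leader clustering (an assumed stand-in for sphere exclusion).

    Each fingerprint joins the first cluster whose leader it matches at or
    above the threshold; otherwise it starts a new cluster.
    """
    clusters = []  # each cluster is a list of indices; element 0 is the leader
    for i, fp in enumerate(fps):
        for members in clusters:
            if dice(fps[members[0]], fp) >= threshold:
                members.append(i)
                break
        else:
            clusters.append([i])
    return clusters


def mean_pairwise_dissimilarity(fps):
    """1 - average Dice similarity over all unordered pairs."""
    pairs = list(combinations(fps, 2))
    if not pairs:
        return 0.0
    return 1 - sum(dice(a, b) for a, b in pairs) / len(pairs)


def scaffold_hit_rate(active_scaffolds):
    """Number of actives divided by the number of unique scaffolds among them."""
    return len(active_scaffolds) / len(set(active_scaffolds))


# Toy fingerprints: hypothetical bit sets, not derived from real molecules.
fps = [{1, 2, 3, 4}, {1, 2, 3, 5}, {10, 11, 12}, {10, 11, 13}, {20, 21}]
clusters = leader_cluster(fps, threshold=0.75)
mpd = mean_pairwise_dissimilarity(fps)
shr = scaffold_hit_rate(["A", "A", "B"])  # three actives on two scaffolds
```

Leader clustering is order-dependent, so for a real benchmark a deterministic ordering (or a hierarchical method) would be the safer choice. The cluster count and the mean pairwise dissimilarity can then be compared directly between an NP set and a synthetic set processed with identical settings.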

Synthesis Protocol for Diverse Pseudo-Natural Products (PNPs)

The synthesis of a diverse Pseudo-Natural Product (dPNP) library [5] exemplifies a modern strategy to merge NP-like relevance with high scaffold diversity:

- Design: Select biologically relevant NP fragments (e.g., indole, indanone). Combine them in novel arrangements not found in nature using a "divergent intermediate" strategy.

- Key Dearomatization Reaction:

- Substrate: Prepare 3-alkylindole with a tethered aryl bromide at the alkyl chain.

- Conditions: React substrate with N-formyl saccharin (CO surrogate), Pd(OAc)₂ (5 mol%), Xantphos ligand (10 mol%), and Na₂CO₃ base in DMF at 100°C for 16 hours [5].

- Outcome: A palladium-catalyzed carbonylation/intramolecular dearomatization cascade yields a complex spiroindolylindanone scaffold (PNP Class A).

- Diversification: Subject the common divergent intermediate (Class A) to various pairing reactions (e.g., reduction, amidation, cross-coupling) to generate multiple distinct compound classes (e.g., Classes B-E) [5].

- Cheminformatic Validation: Analyze the final collection to confirm it occupies diverse, NP-like chemical space distinct from common screening libraries [5].

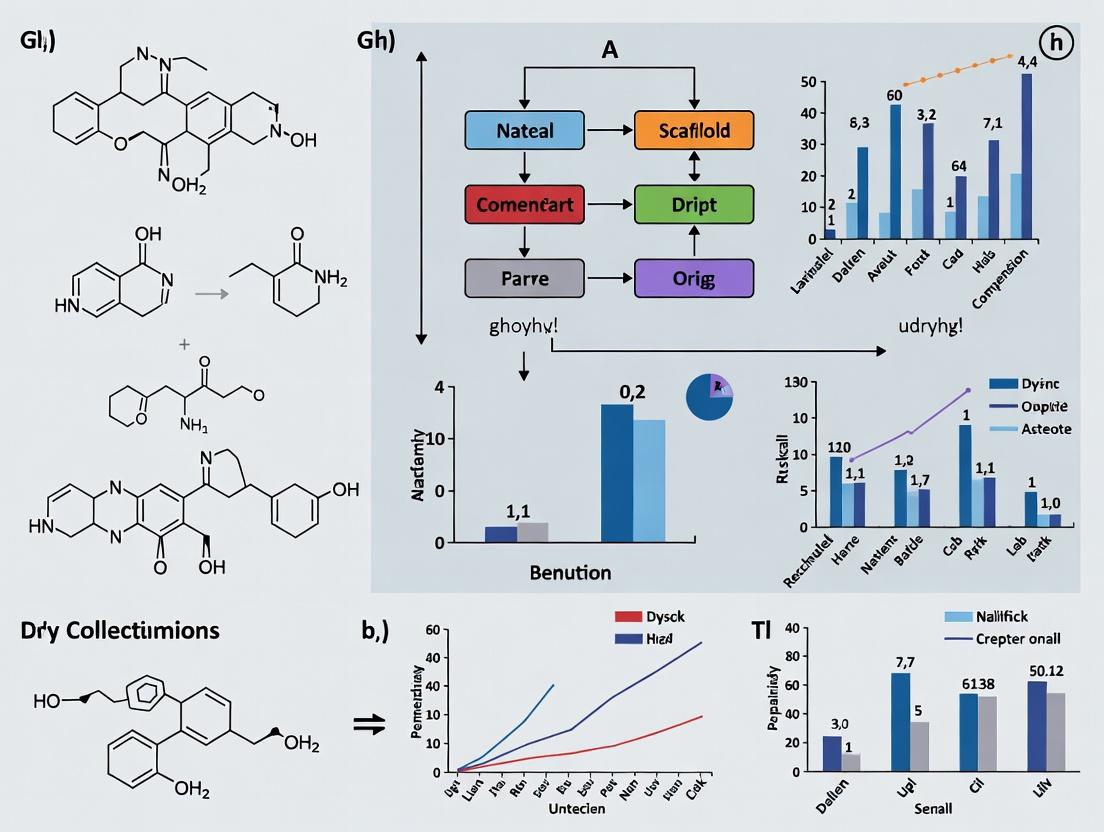

Scaffold diversity analysis workflow for comparative benchmarking.

Strategic Design Principles for Diverse Collections

The design of compound libraries exists on a continuum from purely synthetic to naturally derived [1].

Figure: Continuum of library design strategies based on similarity to natural product scaffolds.

- Biology-Oriented Synthesis (BIOS) & Pseudo-Natural Products (PNPs): BIOS uses an NP scaffold as a starting point for analog synthesis [1]. PNPs deconstruct NPs into fragments and recombine them into novel, non-natural scaffolds that retain biological relevance [5]. The diverse PNP (dPNP) strategy combines the PNP concept with diversification tactics from Diversity-Oriented Synthesis (DOS) to generate libraries with high scaffold diversity from a common intermediate [5].

- Diversity-Oriented Synthesis (DOS): Aims to synthesize structurally complex and diverse small molecules, often incorporating NP-like features (high sp³ content), but not necessarily starting from an NP template [1].

- Make-on-Demand & Focused Libraries: These are often designed using combinatorial reaction principles or are focused around a specific pharmacophore ("informacophore") [3]. Their primary goal is to maximize accessible chemical space or optimize a known activity, rather than prioritize NP-like complexity [2] [7].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Reagents, Databases, and Tools for Scaffold Diversity Research

| Item | Type | Function in Scaffold Diversity Research | Example / Source |

|---|---|---|---|

| Natural Products Atlas | Database | Curated database of microbial NP structures for analyzing natural scaffold distribution and clustering [4]. | https://www.npatlas.org/ |

| MolPILE Dataset | Database | Large-scale, curated dataset of 222M compounds for training ML models to better represent chemical space [7]. | Publicly available dataset [7]. |

| N-Formyl Saccharin | Chemical Reagent | Safe and efficient in-situ carbon monoxide surrogate used in key dearomatization reactions to build complex PNP scaffolds [5]. | Commercial chemical supplier (e.g., Sigma-Aldrich, TCI). |

| RDKit | Cheminformatics Software | Open-source toolkit for standardizing molecules, generating fingerprints (Morgan/ECFP), calculating descriptors, and scaffold analysis. | https://www.rdkit.org/ |

| Pd(OAc)₂ / Xantphos System | Catalysis | Catalyst-ligand system for facilitating pivotal carbonylation and cross-coupling reactions in complex scaffold synthesis [5]. | Commercial chemical supplier. |

| Enamine REAL Space | Virtual Library | Make-on-demand virtual compound library representing billions of synthetically accessible, diverse scaffolds for virtual screening [2] [3]. | https://enamine.net/compound-collections/real-compounds |

| AI/ML Models (e.g., GNNs) | Computational Tool | Graph Neural Networks and other models learn complex molecular representations to predict activity, classify scaffolds, or generate novel NP-like structures [8] [9]. | Implementations in libraries like PyTorch Geometric and DeepChem. |

The systematic comparison of chemical libraries is a foundational exercise in modern drug discovery. Research consistently demonstrates that the chemical space occupied by synthetic screening libraries is both limited and heavily biased towards flat, aromatic structures that adhere to conventional "drug-like" rules [10]. This homogeneity contributes to high attrition rates and a failure to engage novel biological targets. In contrast, natural products (NPs) are validated by evolution as privileged scaffolds with superior chemical diversity, structural complexity, and biological relevance. Framed within a thesis on benchmarking, this guide provides an objective, data-driven comparison between natural product-derived compounds and those from purely synthetic origins. It aims to equip researchers with the analytical frameworks and experimental evidence necessary to quantify this diversity gap and leverage natural product scaffolds for next-generation library design.

Comparative Analysis of Structural and Physicochemical Properties

A principal component analysis of New Chemical Entities (NCEs) approved between 1981–2010 provides quantitative evidence of the divergent chemical spaces explored by natural product-derived versus purely synthetic drugs [10]. The analysis categorizes drugs as Natural Products (NP), Natural Product-Derived (ND), Synthetic with a natural product pharmacophore (S*), and Purely Synthetic (S).

Table 1: Cheminformatic Comparison of Approved Drug Origins (1981-2010) [10]

| Property | Natural Products (NP) | Natural Product-Derived (ND) | Synthetic, NP-Pharmacophore (S*) | Purely Synthetic (S) | Implication for Drug Discovery |

|---|---|---|---|---|---|

| Molecular Weight | Higher | Higher | Moderate | Lower | NPs access "beyond Rule of 5" space effectively. |

| Fraction sp3 (Fsp3) | Highest (>0.5) | High | Moderate | Lowest (<0.3) | Greater 3D shape complexity enhances target selectivity. |

| Number of Stereocenters | Highest | High | Moderate | Lowest | Increased chiral complexity is linked to successful clinical progression. |

| Aromatic Ring Count | Lowest | Low | Moderate | Highest | Synthetic libraries are biased towards flat, aromatic scaffolds. |

| Topological Polar Surface Area | Higher | Higher | Moderate | Lower | NPs tend to be more polar and less hydrophobic. |

| Calculated LogP | Lower | Lower | Moderate | Higher | Lower hydrophobicity may reduce off-target toxicity. |

The data confirms that NPs and ND drugs occupy a broader, more complex region of chemical space characterized by greater three-dimensionality (high Fsp3), enriched stereochemistry, and lower aromatic ring fraction. Synthetic drugs based on NP pharmacophores (S*) retain some of these advantageous traits, bridging the gap between purely synthetic compounds and true NPs. This structural diversity directly translates to biological target diversity; for instance, approximately 67% of anti-infective and 83% of anticancer small-molecule drugs are natural products or derivatives [11].

Table 2: Coverage Gaps in Commercial Compound Libraries [12]

| Chemical Space Region | Coverage in Commercial Libraries | Example Query Type | Status in NP Libraries |

|---|---|---|---|

| Classic 'Drug-like' (Lipinski) | Excellent | Flat, aromatic scaffolds | Present, but not dominant |

| Polar / Hydrophilic | Significant blind spot | Nucleotides, charged groups | Highly represented (e.g., glycosides) |

| Natural-Product-like (sp3-rich) | Significant blind spot | High Fsp3, stereocomplexity | Core competency; highly represented |

| bRo5 (Beyond Rule of 5) | Limited | Macrocycles, peptides | Well-represented (e.g., cyclosporine) |

| Medium-Sized Rings (7-11 membered) | Under-represented | Polycyclic with 8-10 membered rings | Accessible via NP diversification [13] |

A 2025 benchmark study of commercial combinatorial spaces and enumerated libraries identified a critical blind spot: these sources consistently fail to provide analogs for complex, hydrophilic, and natural-product-like compounds [12]. This deficiency stems from a lack of suitable building blocks and the synthetic challenge of creating such molecules, underscoring the irreplaceable value of naturally evolved scaffolds.

Experimental Protocols for Assessing and Generating NP Diversity

Protocol 1: Cheminformatic Analysis for Library Benchmarking

This protocol outlines the principal component analysis used to generate the data in Table 1 [10].

- Objective: To quantify and visualize differences in the structural and physicochemical properties of drug molecules from different origins.

- Materials: A curated dataset of New Chemical Entities (NCEs) approved between 1981-2010, categorized by origin (NP, ND, S*, S). Software for molecular descriptor calculation (e.g., RDKit, OpenBabel) and multivariate analysis (e.g., R, Python with scikit-learn).

- Procedure:

- Data Curation: Compile SMILES or structure files for each NCE. Annotate each compound with its origin category using established criteria [10].

- Descriptor Calculation: For each molecule, calculate a standard set of 20+ 2D and 3D molecular descriptors. Essential descriptors include Molecular Weight (MW), Fraction sp3 (Fsp3), number of stereocenters, number of aromatic rings (RngAr), Topological Polar Surface Area (TPSA), and calculated LogP/LogD.

- Data Normalization: Scale all descriptor values to a common range (e.g., zero mean and unit variance) to prevent bias from parameter magnitude.

- Principal Component Analysis (PCA): Perform PCA on the normalized descriptor matrix. The first 2-3 principal components typically capture the majority of variance.

- Visualization & Interpretation: Generate 2D/3D scatter plots of the compounds, colored by origin category. Analyze the loadings of the original descriptors on the principal components to interpret the chemical meaning of the spatial distribution.
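The normalization and PCA steps of this procedure can be illustrated with a small, dependency-free sketch. It is not the analysis pipeline of [10]: the six descriptor rows are invented values chosen only to resemble NP-like and synthetic-like compounds, only the first principal component is extracted (by power iteration), and in practice a library routine such as scikit-learn's PCA would normally be used instead.

```python
import math


def zscore_columns(rows):
    """Scale each descriptor column to zero mean and unit variance."""
    n = len(rows)
    scaled_cols = []
    for col in zip(*rows):
        mean = sum(col) / n
        std = math.sqrt(sum((v - mean) ** 2 for v in col) / (n - 1))
        # Constant columns carry no information; map them to zeros.
        scaled_cols.append([(v - mean) / std if std > 0 else 0.0 for v in col])
    return [list(r) for r in zip(*scaled_cols)]


def covariance(rows):
    """Covariance matrix of already-centered rows."""
    n, d = len(rows), len(rows[0])
    return [[sum(r[i] * r[j] for r in rows) / (n - 1) for j in range(d)]
            for i in range(d)]


def first_component(cov, iters=500):
    """Dominant eigenvector and eigenvalue of a symmetric matrix (power iteration)."""
    d = len(cov)
    v = [1.0 / (i + 1) for i in range(d)]  # arbitrary non-uniform start vector
    for _ in range(iters):
        w = [sum(cov[i][j] * v[j] for j in range(d)) for i in range(d)]
        norm = math.sqrt(sum(x * x for x in w))
        v = [x / norm for x in w]
    eigval = sum(v[i] * sum(cov[i][j] * v[j] for j in range(d)) for i in range(d))
    return v, eigval


# Hypothetical descriptor rows: (MW, Fsp3, aromatic ring count).
rows = [
    (650, 0.60, 0), (720, 0.55, 1), (580, 0.65, 0),  # NP-like (invented)
    (350, 0.25, 3), (400, 0.30, 2), (320, 0.20, 3),  # synthetic-like (invented)
]
scaled = zscore_columns(rows)
cov = covariance(scaled)
pc1, eigval = first_component(cov)
explained = eigval / sum(cov[i][i] for i in range(len(cov)))
scores = [sum(x * w for x, w in zip(r, pc1)) for r in scaled]
```

The entries of `pc1` are the loadings referred to in the interpretation step: descriptors with large absolute loadings drive the separation along that axis, and `scores` gives each compound's coordinate for plotting.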

Figure: Cheminformatic benchmarking workflow.

Protocol 2: Diversifying NP Scaffolds via C-H Oxidation & Ring Expansion

This protocol details a modern chemical strategy to synthetically amplify NP diversity, as demonstrated with polycyclic terpenes [13].

- Objective: To generate diverse, complex libraries with medium-sized rings from a common natural product scaffold.

- Materials: Natural product starting material (e.g., steroid like dehydroepiandrosterone), C-H oxidation reagents (electrochemical set-up or chemical oxidants like Cr or Cu complexes), ring-expansion reagents (e.g., diazo compounds for cycloaddition, reagents for Schmidt or Beckmann rearrangements). Purification equipment (HPLC, flash chromatography).

- Procedure:

- Site-Selective C-H Functionalization: Employ a selective C-H oxidation method (e.g., electrochemical, metal-mediated) on the NP core to install new oxygen-based functional handles (alcohols, ketones) at previously inaccessible positions.

- Intermediate Characterization: Purify and fully characterize (NMR, HRMS) the functionalized intermediates.

- Ring Expansion Reaction: Subject the ketone or alcohol intermediates to ring-expanding transformations. For example, perform a Beckmann rearrangement on a ketone to form a medium-sized lactam, or a formal [2+2] cycloaddition with an activated alkyne (e.g., DMAD) to expand a cyclic β-ketoester by two carbons.

- Library Synthesis: Systematically vary the oxidation site and the ring-expansion pathway to produce a library of analogs featuring varied ring sizes (7-11 membered) and functional group arrangements.

- Chemical Space Analysis: Calculate physicochemical descriptors for the new library and map them alongside the parent NP and commercial libraries to confirm entry into underexplored chemical space [13].

The Natural Product Discovery and Diversification Pipeline

The journey from a biological specimen to a diversified natural product-inspired library involves a multi-stage pipeline. Contemporary approaches integrate traditional microbiology with modern genomics, synthetic biology, and chemistry [14] [11].

Figure: Modern NP discovery and diversification pipeline.

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Resources for NP Diversity Research

| Category | Item / Resource | Function / Description | Source / Example |

|---|---|---|---|

| Biological Resources | Actinobacterial Strain Collection | Primary source of NP diversity; >125k strains with an estimated 3.75M BGCs [11]. | Natural Products Discovery Center [11] |

| Biological Resources | Culturing Media Kits | Maximizes expression of secondary metabolites via varied nutrient stress. | ISP, R2A, and custom media formulations [11] |

| Analytical Tools | HPLC-HRMS/MS System | Critical for dereplication, metabolite profiling, and structural characterization. | e.g., UHPLC coupled to Q-TOF or Orbitrap MS [14] |

| Analytical Tools | NMR Spectroscopy | Definitive tool for determining planar and stereochemical structure of purified NPs. | High-field (≥500 MHz) with cryoprobes [14] |

| Chemical Reagents | C-H Oxidation Reagents | Enables site-selective diversification of NP cores (e.g., electrochemical, Cr, Cu setups) [13]. | Commercial catalysts & electrochemical cells |

| Chemical Reagents | Ring-Expansion Reagents | Facilitates synthesis of underexplored medium-sized rings (e.g., diazo compounds) [13]. | e.g., Ethyl diazoacetate, DMAD |

| Computational Resources | NP-Specific Databases | Source of known structures for benchmarking and training generative models. | COCONUT, NP Atlas [15] |

| Computational Resources | Generative AI Models (RNN/LSTM) | Expands virtual NP chemical space by orders of magnitude for in silico screening [15]. | Custom models trained on NP SMILES strings |

| Computational Resources | Cheminformatics Software (RDKit) | Calculates molecular descriptors, fingerprints, and similarity scores for analysis. | Open-source cheminformatics toolkit |

The evolutionary advantage of natural products is quantifiable: they exhibit superior scaffold diversity, greater three-dimensional complexity, and a proven track record of hitting challenging therapeutic targets. Benchmarking studies reveal that this chemical space remains largely untapped by commercial synthetic libraries [10] [12]. The future of NP-inspired drug discovery lies in integrating this evolutionary wisdom with cutting-edge technologies. This includes leveraging genome mining to access silent biosynthetic pathways [11], employing generative AI to design vast virtual libraries of NP-like molecules (67 million+ and growing) [15], and using synthetic chemistry strategies like C-H functionalization to diversify complex cores into novel regions of chemical space [13]. For researchers, the imperative is to adopt these benchmarking and diversification strategies to build the next generation of screening libraries that finally capture the full, potent diversity honed by nature.

The strategic evaluation of chemical starting points is a cornerstone of modern drug discovery. Within this context, natural products (NPs) represent a unique class of biologically pre-validated scaffolds that have historically contributed to a disproportionate number of approved therapies [16] [14]. Despite a decline in dedicated NP programs within the pharmaceutical industry since the 1990s, approximately half of all new small-molecule drug approvals continue to trace their structural origins to a natural product [10] [17]. This enduring success, contrasted with the high attrition rates of purely synthetic libraries, necessitates a rigorous, data-driven benchmarking approach.

This comparison guide objectively analyzes the performance of natural product-derived scaffolds against synthetic compound collections. The core thesis is that NPs occupy a distinct and privileged region of chemical space characterized by greater structural complexity, three-dimensionality, and scaffold diversity, which directly correlates with higher success rates in clinical development [10] [17]. We present comparative quantitative data, detailed experimental protocols for key benchmarking analyses, and visual tools to guide researchers in leveraging NP scaffolds for library design and lead discovery.

Benchmark Comparisons: Quantitative Performance Data

The following tables consolidate key experimental and cheminformatic data comparing natural product-derived compounds with their synthetic counterparts across critical parameters for drug discovery success.

Table 1: Comparison of Physicochemical Properties and Structural Features

| Parameter | Natural Products & Derivatives (NP, ND) | Synthetic Drugs (S) | Synthetic, NP-Inspired (S*) | Implication for Drug Discovery |

|---|---|---|---|---|

| Molecular Weight | Larger | Smaller | Intermediate | NPs explore beyond strict "Rule of 5" space [10]. |

| Fraction sp3 (Fsp3) | Higher (~0.45) | Lower (~0.33) | Intermediate | Higher Fsp3 correlates with clinical success and greater 3D complexity [10]. |

| Number of Stereocenters | Greater | Fewer | Intermediate | Increased stereochemical content is linked to improved binding selectivity [10]. |

| Calculated LogP/LogD | Lower (Less hydrophobic) | Higher (More hydrophobic) | Intermediate | Favors better solubility and absorption profiles [10]. |

| Number of Aromatic Rings | Fewer | More | Intermediate | Reduces molecular flatness, potentially improving target selectivity [10]. |

| Oxygen Atom Count | Higher | Lower | Varies | Reflects biosynthetic origins and influences polarity [10]. |

| Nitrogen Atom Count | Lower | Higher | Varies | Differentiates biosynthetic pathways from common synthetic chemistry [10]. |

Table 2: Clinical Development Success Rates (2018-2022 Analysis)

| Development Phase | Proportion of Synthetic Compounds (%) | Proportion of NP & NP-Derived Compounds (%) | Trend & Implication |

|---|---|---|---|

| Phase I Entry | ~65% | ~35% (NP: ~20%, Hybrid: ~15%) | Synthetic compounds dominate initial clinical entry [17]. |

| Phase III | ~55.5% | ~45% (NP: ~26%, Hybrid: ~19%) | Significant increase in NP/NP-derived share [17]. |

| FDA Approval (1981-2019) | ~25% (Purely Synthetic) | ~75% (All NPs, Derivatives & Mimics) | NP-inspired compounds show markedly higher approval success [17]. |

Table 3: Scaffold Diversity Analysis of Commercial vs. NP Libraries

| Library / Database | Description | Key Scaffold Diversity Metric | Comparative Insight |

|---|---|---|---|

| Traditional Chinese Medicine Database (TCMCD) | 54,206 natural product compounds [18]. | High structural complexity but more conservative core scaffolds [18]. | Scaffolds are biologically relevant but may offer less peripheral diversity for combinatorial chemistry. |

| Commercial Libraries (ChemBridge, Mcule, etc.) | Large, purchasable screening libraries (e.g., Mcule: ~4.9M compounds) [18]. | High overall scaffold diversity in standardized subsets [18]. | Diversity is broad but may lack the biological pre-validation and complexity of NP scaffolds. |

| FDA-Approved Drugs | Reference set of successful drug molecules. | NP-derived drugs occupy a broader, more diverse region of chemical space than synthetic drugs [10]. | Validates the NP chemical space as a rich source for lead-like scaffolds. |

Experimental Protocols for Benchmarking Scaffold Diversity

To objectively compare chemical libraries, standardized experimental and computational protocols are essential. The following methodologies are central to the analyses cited in this guide.

Protocol: Cheminformatic Analysis of Physicochemical Properties

This protocol is used to generate the data in Table 1 and is foundational for comparing chemical spaces [10].

- Compound Set Curation: Assemble datasets of approved drugs categorized by origin (NP, ND, S, S*) [10]. Standardize structures: remove salts, neutralize charges, and generate canonical tautomers.

- Descriptor Calculation: For each molecule, calculate a panel of 20+ structural and physicochemical descriptors. Essential parameters include:

- Molecular Weight (MW), Hydrogen Bond Donors/Acceptors (HBD/HBA)

- Fraction sp3 (Fsp3): (Number of sp3 hybridized carbons) / (Total carbon count).

- Topological Polar Surface Area (tPSA), Calculated LogP/LogD (e.g., using ALOGP or XLOGP methods).

- Number of Stereocenters, Number of Aromatic Rings (RngAr), Counts of Oxygen and Nitrogen atoms.

- Rotatable Bonds, Number of Ring Systems (RngSys).

- Statistical Comparison: Perform principal component analysis (PCA) or other multivariate analyses on the descriptor matrix. Statistically compare the mean and distribution of each parameter between compound classes (e.g., NP-derived vs. purely synthetic) using t-tests or Mann-Whitney U tests.

- Visualization: Plot compounds in 2D or 3D chemical space using the first principal components to visualize the distinct regions occupied by different compound classes.
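As a rough illustration of the descriptor and statistical-comparison steps, the sketch below computes Fsp3 from carbon counts using the ratio given above and evaluates the Mann-Whitney U statistic for two groups. The Fsp3 values are hypothetical, the helper names are assumptions, and no p-value is derived here; a statistics package (for example `scipy.stats.mannwhitneyu`) would normally supply that.

```python
def fsp3(n_sp3_carbons, n_carbons):
    """Fraction sp3: sp3-hybridized carbons divided by total carbon count."""
    return n_sp3_carbons / n_carbons if n_carbons else 0.0


def mann_whitney_u(x, y):
    """Return (U_x, U_y) for two samples, using midranks for tied values."""
    pooled = sorted([(v, 0) for v in x] + [(v, 1) for v in y])
    ranks = [0.0] * len(pooled)
    i = 0
    while i < len(pooled):
        j = i
        # Extend j over the run of values tied with pooled[i].
        while j + 1 < len(pooled) and pooled[j + 1][0] == pooled[i][0]:
            j += 1
        midrank = (i + j) / 2 + 1  # ranks are 1-based
        for k in range(i, j + 1):
            ranks[k] = midrank
        i = j + 1
    rank_sum_x = sum(r for r, (_, group) in zip(ranks, pooled) if group == 0)
    u_x = rank_sum_x - len(x) * (len(x) + 1) / 2
    return u_x, len(x) * len(y) - u_x


# Hypothetical Fsp3 values for two origin classes (not taken from [10]).
np_derived = [0.60, 0.55, 0.65]
purely_synthetic = [0.25, 0.30, 0.20]
u_np, u_syn = mann_whitney_u(np_derived, purely_synthetic)
```

Here `u_np` counts the pairs in which the NP-derived value exceeds the synthetic one (ties count one half), so a value equal to the product of the two sample sizes means complete separation of the groups on that descriptor.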

Protocol: Scaffold Diversity Analysis Using the Scaffold Tree

This protocol, based on the Scaffold Tree methodology, is used to analyze and compare the scaffold composition of libraries (Table 3) [18] [19].

- Library Standardization: Download and curate compound libraries (e.g., from ZINC15). Apply filters: remove inorganic molecules, salts, and duplicates. Generate a standardized subset with a matched molecular weight distribution (e.g., 100-700 Da) for fair comparison [18].

- Scaffold Generation:

- Murcko Framework Generation: For each molecule, generate the Murcko framework by removing all side chain atoms, retaining only ring systems and linkers between them.

- Hierarchical Scaffold Tree Construction: For each Murcko framework, iteratively prune rings based on a set of prioritization rules (e.g., retain heterocycles over carbocycles, larger rings before smaller ones) until a single ring remains. This creates a hierarchical tree where each level represents a simplified scaffold [18].

- Diversity Metrics Calculation:

- Scaffold Counts: Calculate the total number of unique scaffolds (Level 1 or Murcko frameworks) and the number of singletons (scaffolds appearing only once).

- Scaffold Recovery Curves: Plot the cumulative fraction of compounds recovered (Y-axis) against the cumulative fraction of scaffolds analyzed from most to least frequent (X-axis). Calculate the Area Under the Curve (AUC); a lower AUC indicates greater scaffold diversity [19].

- Shannon Entropy (SE): Calculate SE based on the frequency distribution of scaffolds. A higher SE indicates a more even distribution of compounds across scaffolds, signifying higher diversity [19].

- Comparative Visualization: Use Tree Maps to visualize the relative abundance of different scaffold clusters within each library, providing an intuitive comparison of scaffold diversity and coverage [18].
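The scaffold-level metrics in this protocol reduce to simple frequency statistics once each compound has been assigned a scaffold identifier. The following sketch assumes that assignment has already been done (for example, canonical SMILES of Murcko frameworks produced by a cheminformatics toolkit) and uses placeholder labels in place of real scaffolds; the function name and output keys are illustrative only.

```python
import math
from collections import Counter


def scaffold_metrics(scaffolds):
    """Scaffold counts, singletons, recovery-curve AUC, and Shannon entropy.

    `scaffolds` holds one scaffold identifier per compound.
    """
    freqs = sorted(Counter(scaffolds).values(), reverse=True)
    n_compounds = len(scaffolds)
    n_scaffolds = len(freqs)

    # Recovery curve: x = fraction of scaffolds (most frequent first),
    # y = cumulative fraction of compounds recovered.
    xs, ys = [0.0], [0.0]
    covered = 0
    for rank, f in enumerate(freqs, start=1):
        covered += f
        xs.append(rank / n_scaffolds)
        ys.append(covered / n_compounds)
    # Trapezoidal area under the curve; 0.5 is the most diverse case.
    auc = sum((xs[i] - xs[i - 1]) * (ys[i] + ys[i - 1]) / 2
              for i in range(1, len(xs)))

    entropy = -sum((f / n_compounds) * math.log2(f / n_compounds) for f in freqs)
    return {
        "n_scaffolds": n_scaffolds,
        "n_singletons": sum(1 for f in freqs if f == 1),
        "auc": auc,
        "shannon_entropy": entropy,
    }


# Placeholder scaffold labels for two toy libraries.
even_library = scaffold_metrics(["A", "B", "C", "D"])
skewed_library = scaffold_metrics(["A"] * 7 + ["B", "C", "D"])
```

In the toy comparison, the evenly distributed library sits on the diagonal of the recovery plot (AUC 0.5, maximal entropy for four scaffolds), while the library dominated by one scaffold shows a higher AUC and lower entropy, consistent with the interpretation given above.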

Protocol: Assessing Clinical Trial Progression Rates

This methodology underpins the longitudinal analysis of success rates shown in Table 2 [17].

- Data Compilation:

- Patent Analysis (Early Stage Proxy): Mine patent databases (e.g., via SureChEMBL) for compounds, classifying them as Synthetic, NP, or Hybrid (NP-derived). Track annual filing proportions over decades.

- Clinical Trial Data Extraction: Aggregate data from clinical trial registries (e.g., ClinicalTrials.gov) for phases I, II, and III. Link trial compounds to their structural classifications.

- Approved Drug List: Use authoritative sources (e.g., FDA Orange Book, Newman & Cragg reviews) to compile approved drugs and classify their origin.

- Classification Logic: Apply a consistent rule set for chemical classification:

- NP: Unaltered natural product.

- ND: Semisynthetic derivative of an NP scaffold.

- Hybrid/S*: Synthetic compound whose pharmacophore is inspired by an NP.

- Synthetic: Purely synthetic compound with no NP-inspired pharmacophore.

- Longitudinal Tracking & Statistical Analysis: For each development phase, calculate the proportion of compounds belonging to each class. Perform trend analysis (e.g., Chi-squared test for trend) to determine if the change in proportion from Phase I to Phase III/Approval is statistically significant. This reveals the differential attrition rates between classes.
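The proportion and trend calculations in the final step can be sketched as follows. The compound counts are hypothetical placeholders, since the analysis in [17] is reported here only as percentages, and the trend statistic shown is the standard Cochran-Armitage form of the chi-squared test for trend with equally spaced phase scores, which is one reasonable reading of the test named above rather than a confirmed detail of the cited study.

```python
def class_proportions(counts_by_phase):
    """Share of each origin class within each development phase."""
    shares = {}
    for phase, counts in counts_by_phase.items():
        total = sum(counts.values())
        shares[phase] = {cls: n / total for cls, n in counts.items()}
    return shares


def chi2_trend(successes, totals, scores=None):
    """Cochran-Armitage chi-squared statistic for a linear trend (1 d.f.).

    successes[i] of totals[i] compounds at phase i belong to the class of
    interest; scores default to 0, 1, 2, ... for ordered phases.
    """
    if scores is None:
        scores = list(range(len(totals)))
    n = sum(totals)
    p = sum(successes) / n  # pooled proportion under the null hypothesis
    t = sum(x * (r - m * p) for x, r, m in zip(scores, successes, totals))
    sx = sum(m * x for x, m in zip(scores, totals))
    sxx = sum(m * x * x for x, m in zip(scores, totals))
    variance = p * (1 - p) * (sxx - sx * sx / n)
    return t * t / variance


# Hypothetical counts per phase (illustration only, not data from [17]).
counts_by_phase = {
    "Phase I": {"Synthetic": 650, "NP/NP-derived": 350},
    "Phase II": {"Synthetic": 300, "NP/NP-derived": 200},
    "Phase III": {"Synthetic": 110, "NP/NP-derived": 90},
}
shares = class_proportions(counts_by_phase)
np_counts = [c["NP/NP-derived"] for c in counts_by_phase.values()]
totals = [sum(c.values()) for c in counts_by_phase.values()]
chi2 = chi2_trend(np_counts, totals)
```

With one degree of freedom, a statistic above 3.84 corresponds to p < 0.05, so for these invented counts the rise in the NP-derived share across phases would be judged significant. A rising share across phases is what the protocol interprets as lower attrition for that class.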

Visualizing the Pathway: From Natural Product to Clinical Success

The following diagram, generated using Graphviz DOT language, illustrates the differential progression of natural product-inspired versus purely synthetic compounds through the drug development pipeline, based on the comparative success rates analyzed [17].

Diagram 1: Comparative clinical progression pathways for NP-inspired versus purely synthetic drug candidates, illustrating the "survival rate" advantage of NP-inspired compounds [17].

Experimental Workflow for Chemical Space Analysis

A critical step in benchmarking is mapping the chemical space of different compound collections. The following diagram outlines a standardized computational workflow for comparative scaffold diversity analysis [18] [19].

Diagram 2: A standardized cheminformatic workflow for the scaffold diversity analysis of compound libraries, enabling objective comparison between natural product collections and synthetic libraries [18] [19].

The Scientist's Toolkit: Key Research Reagents & Solutions

The experimental protocols described rely on specific software tools, databases, and chemical resources. This table details essential components of the benchmarking toolkit.

Table 4: Essential Research Reagents & Computational Tools for Scaffold Benchmarking

| Tool/Resource | Type | Primary Function in Benchmarking | Key Application / Note |

|---|---|---|---|

| ZINC15 Database | Online Database | Primary source for downloading purchasable compound libraries (e.g., Mcule, Enamine) [18]. | Provides standardized structures for synthetic library analysis. |

| Traditional Chinese Medicine Compound Database (TCMCD) | Specialized Database | Curated collection of NP structures from herbal medicine for comparative diversity analysis [18]. | Serves as a representative, biologically relevant NP library. |

| RDKit | Open-Source Cheminformatics Toolkit | Python library for molecular standardization, descriptor calculation, fingerprint generation, and scaffold manipulation [20]. | Core engine for curating datasets and calculating properties in Protocols 3.1 & 3.2. |

| Molecular Operating Environment (MOE) | Commercial Software Suite | Used for structure curation, physicochemical property calculation, and generating Scaffold Trees via its sdfrag command [18]. | Commonly used in cited studies for detailed scaffold analysis. |

| Pipeline Pilot | Data Science Platform | Provides workflow components for high-throughput molecular filtering, duplicate removal, and fragment generation [18]. | Facilitates the preprocessing of large compound libraries. |

| Consensus Diversity Plot (CDP) Tool | Web Application | Generates 2D plots integrating diversity metrics from scaffolds, fingerprints, and properties for global library comparison [19]. | Implements the visualization method described in Protocol 3.2. |

| PubChem PUG REST API | Web Service | Retrieves standardized chemical structures (SMILES) using CAS numbers or names for dataset curation [20]. | Essential for reconciling and standardizing compound identifiers from diverse sources. |

| Opera (QSAR Models) | Open-Source Software Battery | Provides robust QSAR models for predicting key physicochemical properties (e.g., LogP, solubility) for property-based analysis [20]. | Useful for augmenting experimental property data in cheminformatic comparisons. |

The pursuit of novel bioactive compounds remains a central challenge in drug discovery. This guide objectively compares two foundational sources of chemical matter: Natural Products (NPs) and Synthetic Compound Libraries (SCs). The analysis is framed within the broader thesis that natural products provide superior and underutilized scaffold diversity compared to conventional synthetic libraries, a diversity that is crucial for interrogating novel biological targets and overcoming discovery bottlenecks [10] [21].

Historically, NPs have been the source of approximately half of all approved small-molecule drugs [10]. However, the rise of combinatorial chemistry and high-throughput screening (HTS) in the late 20th century led the pharmaceutical industry to prioritize synthetic libraries, often designed under strict "drug-like" filters like Lipinski's Rule of Five [10] [21]. This shift did not yield the expected surge in new molecular entities, in part due to the limited structural diversity and "flatness" of many synthetic collections [21]. Consequently, a renaissance in NP research is underway, driven by the hypothesis that NPs occupy distinct and more biologically relevant regions of chemical space [14] [22].

This guide employs a cheminformatic lens to benchmark NPs against SCs. We define chemical space as a multidimensional framework where molecules are positioned based on calculated structural and physicochemical properties [10] [23]. The core thesis posits that NPs exhibit greater scaffold complexity, three-dimensionality, and structural uniqueness, making them a critical resource for expanding the frontiers of druggable chemical space [10] [21] [22].

Quantitative Comparison of Chemical Spaces

A direct, data-driven comparison reveals fundamental and statistically significant differences between NPs and SCs. These differences underscore the complementary value of NPs in discovery campaigns.

Physicochemical and Structural Properties

A principal component analysis of drugs approved between 1981 and 2010 shows that drugs derived from or inspired by NPs occupy larger, more diverse regions of chemical space than completely synthetic drugs [10]. The following table summarizes key differentiating properties.

Table 1: Comparative Physicochemical and Structural Properties of Natural Products and Synthetic Compounds

| Property / Descriptor | Natural Products (NPs) | Synthetic Compounds (SCs) | Biological & Discovery Implication |

|---|---|---|---|

| Molecular Complexity | Higher | Lower | NPs are more likely to achieve selective target binding [10]. |

| Fraction of sp³ Carbons (Fsp³) | Higher (>0.35 avg.) [10] | Lower | Correlates with clinical success; contributes to 3D shape [10]. |

| Number of Stereocenters | Significantly higher [10] | Lower | Increases specificity and reduces off-target effects [10]. |

| Aromatic Ring Count | Fewer [10] | More prevalent [21] | SCs are often "flatter," potentially limiting target scope [10]. |

| Oxygen & Nitrogen Content | More oxygen atoms [10] [21] | More nitrogen atoms [21] | Reflects different biosynthetic vs. synthetic building blocks. |

| Hydrophobicity (LogP/D) | Generally lower [10] [22] | Often higher | NPs maintain bioavailability despite larger size, partly via lower LogP [22]. |

| Molecular Weight/Size | Generally larger [10] [21] | Constrained by "drug-like" rules [21] | NP complexity isn't captured by simple molecular weight rules [22]. |

| Scaffold & Ring Systems | Larger, more fused/aliphatic rings [21] | More aromatic rings, smaller systems [21] | NP scaffolds offer more complex, pre-validated structural templates. |

Scaffold and Fragment Diversity

Fragment-based analysis provides a granular view of core structural diversity. A 2025 study comparing fragment libraries derived from large NP databases (COCONUT, LANaPDB) with a synthetic library (CRAFT) quantified these differences [24].

Table 2: Fragment Library Diversity Analysis [24]

| Library (Source) | Number of Parent Compounds | Number of Fragments | Key Diversity Finding |

|---|---|---|---|

| COCONUT NP Library | ~695,133 NPs | ~2.58 million | Fragments exhibit high structural complexity and uniqueness. |

| LANaPDB NP Library | ~13,578 NPs | ~74,193 | Covers distinct, often underrepresented, chemical space. |

| CRAFT Synthetic Library | Not specified | ~1,214 | Based on novel heterocycles & NP-inspired cores; more focused. |

Comparative conclusion: NP-derived fragments access broader, more complex chemical space, providing a rich source of novel scaffolds for design [24].

Evolution of Chemical Space Over Time

A critical 2024 time-dependent analysis reveals that NPs and SCs have evolved along divergent trajectories [21].

- NPs have become larger, more complex, and more hydrophobic over recent decades, with increases in molecular weight, ring count, and glycosylation. Their chemical space has expanded and become less concentrated [21].

- SCs have shown a continuous shift in properties but within a constrained range dictated by synthetic feasibility and historical "drug-like" filters. While their structural diversity is broad, their biological relevance may be declining [21].

This divergent evolution underscores that SCs have not converged toward NP-like chemical space, reinforcing the uniqueness and enduring value of NPs for discovery [21].

Experimental Protocols for Chemical Space Comparison

Robust comparison of vast chemical spaces requires specialized computational methodologies. Below are detailed protocols for two key approaches cited in the literature.

Protocol 1: Principal Component Analysis (PCA) of Drug Properties

This protocol, based on the analysis in [10], is used to visualize and compare the chemical space of different compound sets (e.g., NP-derived vs. synthetic drugs).

1. Compound Curation & Categorization:

- Source a dataset of approved New Chemical Entities (NCEs) with associated approval dates.

- Categorize each compound by origin: Natural Product (NP), Natural Product-Derived (ND), Natural Product-Inspired Synthetic (S*), or Completely Synthetic (S) using established criteria [10].

2. Molecular Descriptor Calculation:

- For all compounds, calculate a standardized panel of 20+ structural and physicochemical descriptors [10]. Essential descriptors include:

- Molecular Weight (MW), Hydrogen Bond Donors/Acceptors (HBD/HBA)

- Topological Polar Surface Area (tPSA), Number of Rotatable Bonds

- Fraction sp³ (Fsp³), Number of Stereocenters

- Number of Aromatic Rings, Calculated LogP/D

3. Data Standardization & PCA Execution:

- Standardize the descriptor matrix (mean-centering and scaling to unit variance).

- Perform PCA using standard linear algebra packages (e.g., in Python or R) to reduce dimensionality.

- Retain the first 2-3 principal components (PCs), which typically capture the majority of variance.

4. Visualization & Interpretation:

- Generate 2D/3D scatter plots (PC1 vs. PC2).

- Color-code points by compound origin category.

- Analyze the distribution: Greater spread and occupancy of distinct regions by NP-based categories indicate broader chemical space coverage [10].
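Steps 3 and 4 of this protocol can be sketched with NumPy alone. The example below is a minimal illustration, not the procedure used in [10]: it standardizes a small descriptor matrix and performs PCA through a singular value decomposition. The function name `pca_project` and the descriptor values (MW, Fsp³, stereocenters, aromatic rings) are hypothetical.

```python
import numpy as np

def pca_project(descriptors, n_components=2):
    """Standardize columns, then project rows onto the leading principal components."""
    x = np.asarray(descriptors, dtype=float)
    mean = x.mean(axis=0)
    std = x.std(axis=0, ddof=1)
    std[std == 0] = 1.0  # avoid division by zero for constant descriptors
    z = (x - mean) / std  # mean-centered, unit-variance matrix
    u, s, vt = np.linalg.svd(z, full_matrices=False)
    scores = u[:, :n_components] * s[:n_components]  # coordinates on PC1, PC2, ...
    explained = (s ** 2) / np.sum(s ** 2)  # fraction of variance per component
    return scores, explained[:n_components]

# Hypothetical descriptor rows: [MW, Fsp3, stereocenters, aromatic rings]
descriptors = [
    [350.0, 0.25, 0, 3],   # synthetic-like
    [420.0, 0.30, 1, 3],
    [310.0, 0.20, 0, 2],
    [780.0, 0.65, 9, 0],   # NP-like
    [650.0, 0.55, 6, 1],
    [820.0, 0.70, 11, 0],
]
origin = ["S", "S", "S", "NP", "NP", "NP"]

scores, explained = pca_project(descriptors)
for label, (pc1, pc2) in zip(origin, scores):
    print(f"{label}: PC1 = {pc1:.2f}, PC2 = {pc2:.2f}")
print("explained variance ratio:", np.round(explained, 3))
```

The resulting `scores` array can be passed to any plotting library and colored by `origin` to produce the scatter plot described in step 4.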

Protocol 2: Query-Based Comparison of Ultra-Large Chemical Spaces

Exhaustive pairwise comparisons are computationally intractable for billion-molecule "make-on-demand" libraries. This protocol, adapted from [23], uses a query-centric approach instead.

1. Selection of Query Panel:

- Assemble a panel of 100 reference molecules considered biologically relevant (e.g., randomly selected marketed drugs passing standard drug-like filters) [23].

2. Neighborhood Searching in Fragment Spaces:

- Define the target ultra-large chemical spaces (e.g., Enamine REAL, KnowledgeSpace) [23].

- For each query, use a fuzzy, topology-preserving similarity search method (e.g., Feature Trees / FTrees-FS) [23].

- Retrieve the top 10,000 most similar molecules from each target space for every query.

3. Overlap and Uniqueness Analysis:

- For each chemical space, compile a unique set of all retrieved hits.

- Calculate the intersection of these unique hit sets across different spaces. A very low overlap (e.g., single-digit common molecules) indicates high complementarity [23].

- Analyze the distribution of overlaps per query to identify regions of chemical space where libraries converge or diverge.

4. Feasibility and Density Assessment (Optional):

- Apply synthetic feasibility scores (e.g., SAscore, rsynth) to the hit sets [23].

- Assess the local "density" of molecules around queries within each space to infer library coverage granularity.
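The overlap analysis in step 3 reduces to set operations once the hits have been retrieved. The sketch below is a minimal illustration under stated assumptions: hits are already available as canonical identifiers (for example canonical SMILES or InChIKeys) so that identical molecules compare equal, and the names `overlap_report`, `per_query_overlap`, `space_A`, and `space_B` are hypothetical. It does not perform the similarity search itself.

```python
from itertools import combinations

def overlap_report(hits_by_space):
    """hits_by_space: {space_name: {query_id: iterable of canonical identifiers}}."""
    # Unique hit set per chemical space, pooled over all queries
    unique_hits = {
        space: set().union(*per_query.values())
        for space, per_query in hits_by_space.items()
    }
    pairwise = {}
    for a, b in combinations(sorted(unique_hits), 2):
        shared = unique_hits[a] & unique_hits[b]
        union = unique_hits[a] | unique_hits[b]
        pairwise[(a, b)] = {
            "shared": len(shared),
            "jaccard": len(shared) / len(union) if union else 0.0,
        }
    return unique_hits, pairwise

def per_query_overlap(hits_by_space, space_a, space_b):
    """Number of common hits for each query answered by both spaces."""
    queries = set(hits_by_space[space_a]) & set(hits_by_space[space_b])
    return {
        q: len(set(hits_by_space[space_a][q]) & set(hits_by_space[space_b][q]))
        for q in queries
    }

# Hypothetical hit identifiers (illustration only)
hits = {
    "space_A": {"q1": ["m1", "m2", "m3"], "q2": ["m3", "m4"]},
    "space_B": {"q1": ["m3", "m9"], "q2": ["m7", "m8"]},
}
unique_hits, pairwise = overlap_report(hits)
by_query = per_query_overlap(hits, "space_A", "space_B")
print(pairwise[("space_A", "space_B")], by_query)
```

A low shared count alongside a near-zero Jaccard index would correspond to the high complementarity described above, while the per-query counts show where the spaces converge.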

Visualization of Core Concepts and Workflows

Diagram 1: Evolutionary Trajectories of Chemical Space (title: Divergent Evolution of Natural and Synthetic Chemical Spaces)

Diagram 2: Workflow for Comparing Ultra-Large Libraries (title: Query-Based Comparison Workflow for Vast Chemical Spaces)

Table 3: Key Reagents, Databases, and Software for Chemical Space Analysis

| Item / Resource Name | Type | Primary Function in Analysis | Relevant Citation |

|---|---|---|---|

| Dictionary of Natural Products (DNP) | Database | Authoritative source for curated NP structures for time-series and property analysis. | [21] |

| COCONUT / LANaPDB | Database | Large, publicly available NP collections for generating fragment libraries and diversity assessments. | [24] |

| Enamine REAL Space | Make-on-Demand Library | Ultra-large (billions) virtual library of readily synthesizable compounds; used as a benchmark for synthetic chemical space. | [23] [3] |

| RDKit | Software Cheminformatics Toolkit | Open-source platform for descriptor calculation, fingerprint generation, scaffold decomposition, and standardization. | [25] [21] |

| Feature Trees (FTrees) / FTrees-FS | Software / Descriptor | Topological pharmacophore descriptor and search system for scaffold-hopping and similarity searching in fragment spaces. | [23] |

| Principal Component Analysis (PCA) | Statistical Method | Dimensionality reduction technique to project high-dimensional chemical descriptor data into 2D/3D for visual comparison. | [10] [21] |

| SAscore & rsynth | Predictive Model | Computes synthetic accessibility score (SAscore) and retrosynthetic feasibility (rsynth) to assess compound practicality. | [23] |

| MolPILE Dataset | Machine Learning Dataset | Large-scale, curated dataset of 222M compounds for training ML models to better navigate and predict chemical space properties. | [25] |

Cutting-Edge Methodologies: Benchmarking Scaffold Diversity with Computational and AI Tools

Within modern drug discovery, assessing and exploiting molecular diversity is paramount for identifying novel bioactive compounds. This is particularly critical in the context of benchmarking natural product (NP) scaffold diversity against synthetic drug collections, as NPs occupy unique and biologically relevant regions of chemical space often under-represented in conventional screening libraries [26] [27]. Computational tools, specifically Virtual Screening (VS) and Inverse Virtual Screening (iVS), have become indispensable for navigating this vast chemical landscape. VS efficiently prioritizes compounds likely to bind a single protein target from immense libraries, while iVS elucidates the potential protein targets of a single query compound, crucial for understanding polypharmacology and deconvoluting phenotypic screening results [28] [29]. This guide objectively compares the performance, applications, and experimental underpinnings of these complementary computational methodologies, providing researchers with a framework for their effective deployment in diversity-oriented drug discovery campaigns.

Comparison of Computational Methodologies

Virtual Screening (VS) and Inverse Virtual Screening (iVS) are complementary strategies applied at different stages of the drug discovery pipeline. The table below summarizes their core principles, objectives, and applications.

| Feature | Virtual Screening (VS) | Inverse Virtual Screening (iVS) |

|---|---|---|

| Primary Objective | Identify ligands that bind to a defined protein target from a chemical library. | Identify potential protein targets for a defined query compound. |

| Typical Query | A single, prepared 3D structure of a protein target. | A single, prepared 3D structure of a small-molecule ligand. |

| Screened Library | Large database of small molecule compounds (e.g., ZINC, commercial libraries, NP databases). | A panel of prepared protein structures (e.g., a focused target family, or a proteome-wide database). |

| Key Challenge | Balancing computational speed with scoring accuracy for ligand pose and affinity prediction. | Managing the structural and chemical diversity of the protein panel to ensure fair, comparable docking scores. |

| Main Application | Hit identification and lead optimization in target-based drug discovery. | Target identification/deconvolution, mechanism of action studies, drug repurposing, and side-effect prediction [29]. |

| Representative Outcome | A ranked list of candidate compounds for experimental testing. | A ranked list of potential protein targets for the query ligand. |

Performance Benchmarking and Experimental Data

The efficacy of VS and iVS workflows is critically dependent on the performance of their constituent docking algorithms and scoring functions. Rigorous benchmarking using standardized datasets is essential to guide tool selection.

Scaffold Diversity in Compound Libraries

A foundational analysis of scaffold diversity reveals significant gaps in current screening libraries. A comparative study of public molecular datasets quantified the overlap of molecular frameworks (scaffolds) between different compound classes [26].

Table: Scaffold Diversity Analysis Across Biologically Relevant Compound Classes [26]

| Dataset | Key Finding on Scaffold Space | Implication for Library Design |

|---|---|---|

| Current Lead Libraries | Only 23% of scaffolds are shared with human metabolites. | Limited sampling of biologically pre-validated chemical space. |

| Approved Drugs | 42% of drug scaffolds are shared with human metabolites. | Drugs show a nearly two-fold enrichment (42% vs. 23%) of metabolite-like scaffolds vs. lead libraries. |

| Natural Products (NPs) | Only 5% of NP scaffold space is shared with current lead libraries. | Vast, untapped reservoir of unique scaffolds exists in NPs. |

| Synthetic Toxics | Drugs are more similar to toxics than to metabolites in physicochemical property space. | Highlights the importance of selectivity and ADMET filtering. |

Conclusion for Thesis Context: These data directly support the thesis that NP collections possess vast, under-utilized scaffold diversity compared to conventional lead and drug libraries. Computational tools are required to efficiently mine this unique chemical space [26] [27].

Benchmarking Docking Tools and Machine Learning Re-Scoring

Performance in structure-based VS varies significantly between tools and is enhanced by machine learning (ML). A 2025 study benchmarked three docking programs against wild-type and drug-resistant Plasmodium falciparum Dihydrofolate Reductase (PfDHFR), with and without ML-based re-scoring [30].

Table: Benchmarking Docking and ML Re-scoring Performance for PfDHFR Variants [30]

| Docking Tool | Re-scoring Function | Wild-Type (WT) PfDHFR EF1% | Quadruple Mutant (Q) PfDHFR EF1% | Key Insight |

|---|---|---|---|---|

| AutoDock Vina | None (Default) | Worse-than-random | Worse-than-random | Default scoring may be insufficient for challenging targets. |

| AutoDock Vina | CNN-Score | Better-than-random | Better-than-random | ML re-scoring significantly rescues performance. |

| PLANTS | None (Default) | 15 | 18 | Good baseline performance. |

| PLANTS | CNN-Score | 28 | 25 | Optimal combination for WT variant. |

| FRED | None (Default) | 12 | 20 | Strong performance against the resistant variant. |

| FRED | CNN-Score | 22 | 31 | Optimal combination for Q resistant variant. |

Experimental Protocol Summary (Benchmarking) [30]:

- Dataset Preparation: The DEKOIS 2.0 protocol was used to create benchmark sets for WT and Q PfDHFR, each containing 40 known active molecules and 1,200 property-matched decoy molecules (1:30 ratio).

- Protein Preparation: Crystal structures (PDB: 6A2M for WT, 6KP2 for Q) were prepared by removing water, adding hydrogens, and optimizing.

- Ligand Preparation: Active and decoy molecules were prepared (e.g., generating multiple conformers) using tools like Omega2 and standardized into SDF files.

- Docking Experiments: Three docking programs (AutoDock Vina, PLANTS, FRED) were used to screen each benchmark set against its respective protein structure.

- ML Re-scoring: The top poses from each docking run were re-scored using two pretrained ML scoring functions: CNN-Score and RF-Score-VS v2.

- Performance Evaluation: Enrichment Factor at 1% (EF1%), area under the semi-logarithmic ROC curve (pROC-AUC), and chemotype enrichment plots were used to evaluate and compare how well each docking/re-scoring combination prioritized active compounds over decoys.
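The enrichment factor used in the evaluation step is the active rate in the top-ranked fraction divided by the active rate in the whole set. The sketch below is a minimal illustration, assuming that lower docking scores are better (this varies by program and re-scoring function) and that the top 1% is rounded up to a whole number of compounds. The rounding convention, the function name `enrichment_factor`, and the scores are assumptions and are not taken from [30].

```python
import math

def enrichment_factor(scores, labels, fraction=0.01, higher_is_better=False):
    """EF = (active rate in the top fraction) / (active rate in the whole set)."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0],
                    reverse=higher_is_better)
    n_top = max(1, math.ceil(fraction * len(ranked)))  # assumption: round up
    actives_top = sum(label for _, label in ranked[:n_top])
    actives_total = sum(labels)
    return (actives_top / n_top) / (actives_total / len(ranked))

# Hypothetical scores, sized like the benchmark sets described above:
# 40 actives (label 1) and 1,200 decoys (label 0).
labels = [1] * 40 + [0] * 1200
perfect_scores = [-12.0] * 40 + [-6.0] * 1200   # every active outranks every decoy
inverted_scores = [-6.0] * 40 + [-12.0] * 1200  # every decoy outranks every active

ef_perfect = enrichment_factor(perfect_scores, labels)
ef_inverted = enrichment_factor(inverted_scores, labels)
print(ef_perfect, ef_inverted)
```

With 40 actives in 1,240 compounds, a perfect ranking gives an EF1% of about 31 under this convention and a fully inverted ranking gives 0, which brackets the range in which the tabulated values can be read. A random ranking corresponds to an EF of about 1.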

Detailed Methodologies and Workflows

Integrated iVS Platform for Target Deconvolution

A 2025 study demonstrated an advanced iVS workflow integrated with omics data for the target identification of novel antitumor compounds from a diversity-oriented synthesis (DOS) library [28].

Experimental Protocol Summary (Integrated iVS) [28]:

- Phenotypic Screening: A DOS library was synthesized and screened for antitumor activity in cell-based assays, identifying hit compounds (e.g., compounds 31 and 63).

- Target Database Curation: A panel of protein structures related to oncology pathways (e.g., kinases, apoptosis regulators) was prepared for docking.

- Inverse Docking: The 3D structures of hit compounds were docked against the entire curated protein panel using a molecular docking program.

- Bioinformatics & Omics Integration: Docking predictions were integrated with transcriptomic and proteomic data from compound-treated cells to identify consistently implicated pathways and targets.

- Biophysical Validation: Top-ranked candidate targets (e.g., proteins involved in calcium regulation and ER stress) were validated using surface plasmon resonance (SPR) and cellular thermal shift assays (CETSA).

- Functional Confirmation: Target involvement in the compound's mechanism was confirmed via gene knockdown/overexpression and downstream pathway analysis in cellulo.

Expanding NP-Like Chemical Space with Generative AI

Traditional VS/iVS screens known chemical space. Generative AI models now enable the de novo creation of novel, NP-like compounds, massively expanding explorable space. A 2023 study used a Recurrent Neural Network (RNN) trained on known NP SMILES strings to generate a database of 67 million novel, NP-like compounds [15].

Key Workflow Steps [15]:

- Model Training: An RNN with Long Short-Term Memory (LSTM) units was trained on ~325,000 known NP structures from the COCONUT database.

- SMILES Generation: The trained model generated 100 million novel SMILES strings.

- Curation & Filtering: Generated SMILES were filtered for chemical validity, uniqueness, and "NP-likeness" using tools like RDKit and the NP Score, resulting in 67 million final compounds.

- Analysis: The generated library showed a similar distribution of NP-likeness scores and biosynthetic pathway classifications (via NPClassifier) to real NPs, but covered a significantly broader physicochemical space, confirming the generation of novel yet biologically plausible scaffolds.

Visualizing Key Workflows and Relationships

Diagram: Integrated Inverse Virtual Screening (iVS) Workflow for Target Deconvolution

Diagram: Structure-Based Virtual Screening (SBVS) Benchmarking Process

The Scientist's Toolkit: Research Reagent Solutions

Essential computational and data resources for conducting VS/iVS studies in NP diversity assessment include:

| Resource Name | Type | Primary Function in VS/iVS | Key Feature / Relevance to NPs |

|---|---|---|---|

| COCONUT Database [15] | Compound Library | Provides authentic NP structures for training generative models or as a screening library. | Largest open collection of ~400,000 curated NPs; the reference set for "NP-likeness". |

| 67M NP-Like Database [15] | Generated Library | Expands screening space with novel, synthetically accessible compounds inspired by NP scaffolds. | 165-fold expansion of NP chemical space via AI (RNN), enabling discovery of novel scaffolds. |

| DEKOIS 2.0 [30] | Benchmarking Set | Evaluates docking tool performance with challenging decoys, preventing false optimism. | Provides rigorous, target-specific benchmarks to select the best VS pipeline before screening NPs. |

| AlphaFold Protein DB [31] | Protein Structure DB | Provides high-accuracy predicted 3D models for targets without experimental structures. | Enables iVS across the proteome; caution: models may require refinement for docking success [31]. |

| AutoDock Vina, FRED, PLANTS [30] | Docking Engine | Performs the core molecular docking calculation to predict ligand-receptor binding poses and scores. | Each has strengths/weaknesses; benchmarking (as above) is required for optimal tool selection. |

| CNN-Score / RF-Score-VS [30] | ML Scoring Function | Re-scores docking outputs to improve ranking of true active compounds (hits). | Crucially improves enrichment in benchmarks, especially for difficult targets like resistant enzymes. |

| NP Score & NPClassifier [15] | Analysis Tool | Quantifies "NP-likeness" and classifies compounds into biosynthetic pathways. | Essential for analyzing and filtering screening outputs or generated libraries for NP-like properties. |

Computational tools for diversity assessment, namely Virtual Screening and Inverse Virtual Screening, are powerful and complementary engines for drug discovery. Benchmarking data reveals that ML-enhanced docking pipelines (e.g., FRED/PLANTS with CNN-Score) significantly outperform traditional methods, a critical consideration for successfully screening complex NP-like chemical space [30]. The experimental success of integrated iVS platforms demonstrates their utility in deconvoluting the mechanism of action for novel scaffolds emerging from diversity-oriented synthesis [28]. Crucially, these tools are essential for addressing the core thesis that natural products represent a vast, under-exploited reservoir of scaffold diversity [26] [27]. By leveraging generative AI to create expansive NP-inspired libraries [15] and applying rigorously benchmarked VS/iVS pipelines, researchers can systematically explore this privileged chemical space to identify novel, biologically pre-validated starting points for next-generation therapeutics.

The convergence of artificial intelligence (AI) and medicinal chemistry is fundamentally reshaping the early stages of drug discovery. A core challenge in this field is the efficient exploration of chemical space to identify novel, bioactive scaffolds—core molecular structures with therapeutic potential. This guide provides a comparative analysis of contemporary computational methodologies that leverage machine learning (ML) to predict bioactivity while explicitly accounting for and enriching scaffold diversity. Framed within the critical context of benchmarking natural product scaffolds against synthetic drug collections, this review equips researchers with an objective evaluation of tools and protocols designed to overcome chemical bias and accelerate the discovery of innovative lead compounds [3].

Comparison of AI/ML Approaches for Scaffold and Bioactivity Prediction

The following section objectively compares four dominant paradigms in AI-driven drug discovery, evaluating their performance, experimental underpinnings, and specific utility for scaffold-diverse hit identification.

Scaffold-Centric Cheminformatics & Machine Learning

This approach directly utilizes chemical structure representations to build predictive models and assess library design, making scaffold analysis a central, interpretable component.

- Performance Comparison Table

| Method / Tool Name | Key Features & Algorithms | Reported Performance Metrics | Impact on Scaffold Diversity | Experimental Validation |

|---|---|---|---|---|

| Murcko Scaffold-Based Predictive Model [32] | Uses Bemis-Murcko scaffolds for representation; Random Forest classifier; addresses dataset bias. | Model accuracy: ~0.85; Identified two previously proven hit molecules from DrugBank virtual screen. | Explicitly uses scaffold-based splits to ensure model generalizability across diverse cores. | Validated via molecular docking and molecular dynamics simulations (200 ns) against DPP-4 target [32]. |

| DEL Scaffold & Target Analysis Tool [33] | Combines scaffold network analysis with ML classification; evaluates library "target-orientedness." | Enables distinction between generalist (hit-finding) and focused (hit-optimization) library designs. | Quantifies scaffold diversity within DNA-encoded libraries (DELs) to guide design. | Case study applied to two in-house DELs; tool available as a web app and Python script [33]. |

| Benchmark Set Analysis (e.g., BioSolveIT) [12] | Uses PCA-balanced benchmark sets (e.g., Set S with ~2.9k molecules) to probe chemical space coverage. | Finds combinatorial "Spaces" yield more/better analogs than enumerated libraries; identifies blind spots (e.g., polar, NP-like compounds). | Directly measures ability of commercial sources to deliver unique scaffolds similar to bioactive queries. | Screened 6 combinatorial Spaces (billions-trillions) & 4 enumerated libraries; used FTrees, SpaceLight, SpaceMACS search methods [12]. |

- Detailed Experimental Protocol: Murcko Scaffold-Based Model Development [32]

- Data Preparation and Scaffold Generation: Curate a dataset of known active and inactive compounds for a target (e.g., DPP-4 inhibitors). Process each molecule to extract its Bemis-Murcko scaffold using a toolkit like RDKit, representing the core ring system and linker atoms.

- Descriptor Calculation & Splitting: Calculate molecular descriptors or fingerprints for each compound. Critically, split the data into training and test sets based on scaffold similarity to ensure scaffolds in the test set are not represented in the training set, preventing chemical bias and testing true generalizability.

- Model Training and Evaluation: Train a machine learning classifier (e.g., Random Forest) to predict activity using the training set. Optimize hyperparameters via cross-validation. Evaluate the final model on the scaffold-separated test set, using metrics like AUC-ROC, accuracy, and precision.

- Virtual Screening & Validation: Apply the trained model to screen a large virtual database (e.g., DrugBank). Top predicted actives undergo molecular docking into the target's binding site to assess pose and interaction plausibility. Final validation involves molecular dynamics simulations (e.g., 200 ns) to confirm binding stability and calculate free energy of binding (ΔG) using methods like MM/GBSA [32].
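The scaffold-separated split in the second step is the part of this protocol that is easiest to get wrong, so a short sketch may help. The example below is a minimal illustration, not the implementation from [32]. It assumes each compound already carries a scaffold key, for instance a Bemis-Murcko scaffold SMILES computed beforehand with a toolkit such as RDKit, and it assigns whole scaffold groups to either the training or the test set. The function name `scaffold_split`, the compound identifiers, and the scaffold strings are hypothetical.

```python
import random
from collections import defaultdict

def scaffold_split(records, test_fraction=0.2, seed=0):
    """records: list of (compound_id, scaffold_key). Returns (train_ids, test_ids)."""
    groups = defaultdict(list)
    for compound_id, scaffold in records:
        groups[scaffold].append(compound_id)
    scaffolds = sorted(groups)                  # deterministic starting order
    random.Random(seed).shuffle(scaffolds)      # reproducible shuffle
    target = test_fraction * len(records)
    train, test = [], []
    for scaffold in scaffolds:
        # A scaffold group goes to the test set only if it fits the test budget
        bucket = test if len(test) + len(groups[scaffold]) <= target else train
        bucket.extend(groups[scaffold])
    return train, test

# Hypothetical compounds with precomputed scaffold keys (illustration only)
records = [
    ("c01", "c1ccccc1"), ("c02", "c1ccccc1"), ("c03", "c1ccccc1"), ("c04", "c1ccccc1"),
    ("c05", "c1ccncc1"), ("c06", "c1ccncc1"),
    ("c07", "C1CCCCC1"), ("c08", "C1CCCCC1"),
    ("c09", "c1ccc2ccccc2c1"),
    ("c10", "C1CCOC1"),
]
train_ids, test_ids = scaffold_split(records)
scaffold_of = dict(records)
print(len(train_ids), len(test_ids))
```

Because groups are never divided, no scaffold can appear on both sides of the split. If every scaffold group is larger than the test budget, the test set stays empty, so the fraction may need adjusting for small or highly clustered datasets.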

Workflow for Scaffold-Based Virtual Screening and Validation

Phenotypic Profiling & Deep Learning

This paradigm shifts from chemical structure to biological response, using cellular imaging data to predict bioactivity, thereby facilitating scaffold hopping.

- Performance Comparison Table

| Method / Tool Name | Key Features & Algorithms | Reported Performance Metrics | Impact on Scaffold Diversity | Experimental Validation |

|---|---|---|---|---|

| Cell Painting Bioactivity Prediction [34] | Uses deep learning (ResNet50) on Cell Painting images; trained with single-concentration activity data. | Average ROC-AUC of 0.744 ± 0.108 across 140 diverse assays; 30% of assays achieved AUC ≥0.8 [34]. | Outperforms structure-based models in the structural diversity of top-ranked actives; enables scaffold hopping. | In vitro follow-up assays confirmed enrichment of active compounds; validated on public datasets (JUMP-CP) [34]. |

| Morphological Profiling Benchmarks [34] | Compares fluorescence vs. brightfield images and image-based vs. structure-based models. | Brightfield-only models performed nearly as well as fluorescence in many cases. | Image-based models consistently identified chemically distinct actives compared to structure-based models. | Performance analyzed across assay types, technologies, and target classes; kinases and cell-based assays were particularly predictable [34]. |

- Detailed Experimental Protocol: Cell Painting-Based Bioactivity Prediction [34]

- Cell Painting Assay Execution: Seed cells (e.g., U2OS) in multi-well plates. Treat with a diverse library of compounds (e.g., 8,300) at a single concentration. Stain with the Cell Painting dye set (labeling nuclei, nucleoli, ER, mitochondria, actin, Golgi, plasma membrane, RNA). Acquire high-content microscopy images for all channels.

- Image Processing & Feature Extraction: Segment cells and extract morphological features (e.g., shape, texture, intensity) or use deep learning embeddings directly from image patches.

- Model Training: Assemble a training set pairing morphological profiles with binary bioactivity labels from primary HTS (single-concentration). Train a multi-task deep neural network (e.g., a pre-trained ResNet50 adapted for multi-channel input) to predict activity profiles across multiple assays simultaneously.

- Prediction & Scaffold Hopping Analysis: Apply the model to predict activity for all compounds in a larger, untested library. Rank compounds by predicted activity. Analyze the chemical scaffolds of top predictions and compare their diversity to those identified by traditional QSAR models to quantify scaffold-hopping potential [34].
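The final comparison step can be illustrated with a minimal sketch. It assumes each compound already carries a scaffold key (for example, a Murcko scaffold SMILES computed with a cheminformatics toolkit) and a predicted activity score. The function name, the data, and the choice of "fraction of unique scaffolds among the top k" as the diversity measure are illustrative assumptions, not the metric used in [34].

```python
from collections import Counter

def top_k_scaffold_diversity(predictions, k):
    """Summarize scaffold diversity among the k highest-scoring compounds.

    predictions: iterable of (compound_id, scaffold_key, score) tuples.
    Returns the number of compounds kept, the number of distinct scaffolds,
    and the fraction of distinct scaffolds per compound.
    """
    top = sorted(predictions, key=lambda row: row[2], reverse=True)[:k]
    scaffolds = Counter(scaffold for _, scaffold, _ in top)
    n_top = len(top)
    return {
        "n_compounds": n_top,
        "n_scaffolds": len(scaffolds),
        "unique_fraction": len(scaffolds) / n_top if n_top else 0.0,
    }

# Hypothetical predictions: (compound id, scaffold key, predicted activity).
image_model = [("c1", "A", 0.95), ("c2", "B", 0.91), ("c3", "C", 0.88), ("c4", "A", 0.40)]
qsar_model = [("c1", "A", 0.97), ("c4", "A", 0.93), ("c5", "A", 0.90), ("c2", "B", 0.35)]

image_div = top_k_scaffold_diversity(image_model, k=3)
qsar_div = top_k_scaffold_diversity(qsar_model, k=3)
print(image_div, qsar_div)
```

In this toy data the image-based ranking places three distinct scaffolds in its top three, while the structure-based ranking returns a single scaffold three times, which is the kind of gap the protocol aims to quantify.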

Generative AI for De Novo Design & Scaffold Invention

These methods learn the grammar of chemical structures and bioactivity to generate novel molecular entities from scratch, prioritizing desired properties.

- Performance Comparison Table

| Method / Tool Name | Key Features & Algorithms | Reported Performance Metrics | Impact on Scaffold Diversity | Experimental Validation |
|---|---|---|---|---|
| Generative AI Frameworks (e.g., GANs, VAEs) [35] | Deep Generative Models (DGMs) learn chemical space; conditioned on properties or target constraints. | Can generate novel, synthetically accessible scaffolds with predicted high activity and drug-likeness. | Directly creates new scaffold diversity not present in training libraries, ideal for exploring uncharted chemical space. | Case studies show progression to in vitro testing; challenges remain in synthetic accessibility and high-fidelity experimental confirmation [35]. |
| PoLiGenX (Pose-Conditioned Ligand Generator) [36] | Diffusion model conditioned on 3D protein pocket and a reference ligand pose. | Generates ligands with lower steric clashes and strain energy compared to other diffusion models. | Generates novel ligands tailored to a specific binding geometry, potentially yielding new core structures for a target. | Validation via computational docking scores and molecular mechanics calculations of generated molecules [36]. |
| CardioGenAI (for hERG Mitigation) [36] | Autoregressive Transformer conditioned on scaffold and properties; filters outputs with hERG toxicity models. | Demonstrated re-engineering of known drugs (e.g., astemizole) to reduce hERG liability while preserving activity. | Retains the core scaffold but suggests decorative modifications to optimize safety, a key step in lead optimization. | Validated by in silico property prediction and comparison to known structure-activity relationships [36]. |

- Detailed Experimental Protocol for Generative AI Scaffold Design

- Model Training: Train a generative model (e.g., Variational Autoencoder (VAE), Generative Adversarial Network (GAN), or Transformer) on a large corpus of chemical structures (e.g., SMILES strings from ChEMBL). The model learns the probability distribution of chemical space.

- Conditioning and Sampling: Condition the model on desired properties, such as a target activity prediction from a separate QSAR model, or on a 3D pharmacophore or molecular shape. Sample new molecules from the conditioned model's latent space.

- Filtering and Prioritization: Pass generated molecules through a filter cascade: a) Synthetic accessibility (SA) score, b) Drug-likeness filters (e.g., Rule of Five), c) In silico activity prediction, d) Off-target toxicity prediction (e.g., hERG).

- Experimental Cycle: Synthesize and test top-priority, novel scaffolds in biochemical or cellular assays. Use the resulting experimental data to refine and retrain the generative model, creating an iterative design-make-test-analyze (DMTA) cycle [36] [35].
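The filtering and prioritization step of this protocol can be sketched as a simple cascade. The sketch assumes that all properties (descriptors, synthetic accessibility score, predicted activity, predicted hERG liability) have already been computed by upstream tools. The `Candidate` class, the candidate values, and every threshold below are hypothetical choices for illustration, not values taken from [35] or [36]; only the Rule of Five cutoffs themselves are standard.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    name: str
    mol_weight: float   # molecular weight in Da
    logp: float         # calculated logP
    h_donors: int       # hydrogen-bond donors
    h_acceptors: int    # hydrogen-bond acceptors
    sa_score: float     # synthetic accessibility, 1 (easy) to 10 (hard)
    p_active: float     # predicted probability of on-target activity
    p_herg: float       # predicted probability of hERG liability

def rule_of_five_violations(c):
    """Count violations of the standard Rule of Five cutoffs."""
    return sum([c.mol_weight > 500, c.logp > 5, c.h_donors > 5, c.h_acceptors > 10])

def filter_cascade(candidates, max_sa=4.5, max_ro5_violations=1,
                   min_p_active=0.7, max_p_herg=0.3):
    """Apply filters (a) to (d) in order; thresholds are illustrative assumptions."""
    stages = [
        ("synthetic_accessibility", lambda c: c.sa_score <= max_sa),
        ("drug_likeness", lambda c: rule_of_five_violations(c) <= max_ro5_violations),
        ("predicted_activity", lambda c: c.p_active >= min_p_active),
        ("herg_liability", lambda c: c.p_herg <= max_p_herg),
    ]
    survivors = list(candidates)
    counts = {"input": len(survivors)}
    for stage_name, keep in stages:
        survivors = [c for c in survivors if keep(c)]
        counts[stage_name] = len(survivors)  # survivors remaining after this stage
    survivors.sort(key=lambda c: c.p_active, reverse=True)
    return survivors, counts

# Hypothetical generated molecules with precomputed properties.
pool = [
    Candidate("gen_1", 342.4, 2.9, 2, 5, 3.1, 0.91, 0.12),
    Candidate("gen_2", 612.8, 6.3, 4, 9, 3.8, 0.88, 0.10),  # two Rule of Five violations
    Candidate("gen_3", 298.3, 1.7, 1, 4, 6.2, 0.95, 0.05),  # hard to synthesize
    Candidate("gen_4", 410.5, 3.8, 3, 6, 2.7, 0.81, 0.55),  # predicted hERG risk
    Candidate("gen_5", 377.4, 3.1, 2, 6, 3.5, 0.76, 0.20),
]
selected, counts = filter_cascade(pool)
print([c.name for c in selected], counts)
```

Recording the survivor count after each stage makes it easy to see which filter removes the most candidates, which is useful when tuning thresholds between design cycles.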

[Figure: Iterative Generative AI Design Cycle]

Robust model evaluation and training require high-quality, unbiased data that includes both active and confirmed inactive compounds.

- Performance Comparison Table

| Method / Tool Name | Key Features & Algorithms | Reported Performance Metrics | Impact on Scaffold Diversity | Experimental Validation |
|---|---|---|---|---|
| Bioactive Benchmark Sets (e.g., Set S) [12] | PCA-balanced subsets of ChEMBL (e.g., ~2,900 molecules); designed for uniform chemical space coverage. | Enables systematic benchmarking of library and chemical space coverage; identifies regional blind spots. | Directly assesses a source's ability to provide scaffolds similar to diverse bioactive queries. | Used to evaluate commercial compound sources; results show combinatorial spaces outperform enumerated libraries in scaffold uniqueness [12]. |
| InertDB (Inactive Compound Database) [37] | Contains 3,205 Curated Inactive Compounds (CICs) from PubChem and 64,368 Generated Inactives (GICs) via AI. | 97.2% of CICs comply with Rule of Five. Provides reliable negative data, improving model accuracy versus random decoys. | Expands coverage of "inactive" chemical space, reducing model bias toward actives and improving generalizability. | CICs selected via NLP-based bioassay diversity metric (Dassay) and stringent inactivity criteria; improves phenotypic activity prediction models [37]. |

The Scientist's Toolkit: Key Research Reagent Solutions

The following materials and software are essential for implementing the experimental protocols discussed above.

| Item Name | Type (Software/Physical) | Primary Function in Research | Key Feature / Application |
|---|---|---|---|
| Cell Painting Assay Kit | Physical Reagent | Provides the optimized set of fluorescent dyes to stain key cellular components for high-content morphological profiling [34]. | Enables generation of phenotypic profiles for bioactivity prediction models. |
| DNA-Encoded Library (DEL) | Physical Chemical Collection | An ultra-large library of compounds (10⁸–10¹²) tethered to DNA barcodes for affinity-based ultra-high-throughput screening [33]. | Hit discovery from vast chemical space; requires scaffold analysis tools for design/interpretation. |
| Benchmark Compound Set (e.g., Set S) [12] | Digital/Physical Collection | A PCA-balanced, scaffold-diverse set of known bioactive molecules used to evaluate chemical library coverage and diversity. | Essential for benchmarking natural product-like and drug-like chemical space coverage. |
| RDKit or OpenChemLib | Software (Cheminformatics) | Open-source toolkits for chemical informatics, including Murcko scaffold decomposition, fingerprint generation, and descriptor calculation [32]. | Core component for scaffold-based analysis and featurization in ML models. |
| NovaWebApp / Python Script [33] | Software (Web App/Script) | Dedicated tool for evaluating scaffold diversity and target addressability of DNA-encoded libraries (DELs). | Guides decision-making between generalist vs. focused library design for specific projects. |
| Gnina 1.3 [36] | Software (Structure-Based) | Deep learning-based molecular docking software with convolutional neural network scoring functions, including for covalent docking. | Provides high-accuracy pose prediction and scoring for validating virtual screening hits. |
| Generative AI Model (e.g., PyTorch/TensorFlow) | Software (AI Framework) | Implementation of VAEs, GANs, or Transformers for de novo molecular generation, often conditioned on biological activity [35]. | Used to invent novel scaffolds with optimized properties in unexplored regions of chemical space. |

This guide compares contemporary computational scaffold-hopping techniques for translating bioactive natural product (NP) features into synthetically accessible mimetics. Framed within a broader thesis on benchmarking natural product scaffold diversity against drug collections, we evaluate methods on their ability to discover novel, isofunctional synthetic chemotypes from complex NP starting points, a key challenge in expanding viable chemical space for drug discovery [38] [39].

Performance Comparison of Scaffold-Hopping Techniques

The following table compares the core methodologies, performance metrics, and key advantages of leading scaffold-hopping techniques, with a focus on applications involving natural products.

Table 1: Comparison of Leading Scaffold-Hopping Techniques for Natural Product Translation

| Technique (Representation Type) | Core Methodology | Key Performance Metric (Natural Product Context) | Demonstrated NP-to-Synthetic Success | Key Advantage for NP Translation |
|---|---|---|---|---|
| WHALES (3D Holistic) [38] [39] | Holistic 3D descriptors capturing atom distribution, shape, and partial charges via atom-centered Mahalanobis distances. | Scaffold Diversity (SDA%) of 89-92% in benchmarking; 35% experimental hit rate for novel cannabinoid receptor modulators from phytocannabinoid queries [38] [39]. | 7 novel synthetic CB1/CB2 modulators identified from 4 phytocannabinoid templates; 4 novel RXR agonist chemotypes from synthetic queries [38] [39]. | Captures overall pharmacophore and shape of complex NPs without relying on specific fragments or connectivity. |
| ChemBounce (Fragment & Shape-Based) [40] | Systematic scaffold replacement using a library of synthesis-validated fragments, filtered by Tanimoto and 3D electron shape similarity. | Generates compounds with higher synthetic accessibility (SAscore) and drug-likeness (QED) than several commercial tools [40]. | Validated on diverse molecules including peptides and macrocycles; generates patentable novel cores with retained pharmacophores [40]. | Explicitly prioritizes synthetic accessibility and uses a large, validated fragment library for practical design. |
| ShapeAlign/CSNAP3D (3D Shape & Pharmacophore) [41] | Ligand alignment maximizing combined shape overlap and pharmacophore feature matching (ComboScore). | Achieved >95% success rate in target prediction benchmarks; effective for identifying diverse HIV reverse transcriptase inhibitor scaffolds [41]. | Applied to identify novel Taxol-like microtubule stabilizers with different scaffolds but similar 3D pharmacophore [41]. | Excellent for "true" scaffold hops where core topology differs but 3D binding pose is conserved. |
| LEMONS Analysis (2D Fingerprint Benchmarking) [42] | Algorithm to enumerate hypothetical modular NP structures and benchmark similarity methods' ability to recognize biosynthetically related scaffolds. | Circular fingerprints (ECFP) performed best among 2D methods; retrobiosynthetic alignment (GRAPE/GARLIC) was superior for recognizing NP analogs [42]. | Provides framework to evaluate method performance specifically on NP-like chemical space (e.g., peptides, polyketides) [42]. | Provides critical benchmarking specific to the structural complexity and modularity of natural products. |
| Modern AI-Driven Representations (Graph/Language Models) [9] | Deep learning models (e.g., GNNs, Transformers) learn continuous molecular embeddings from large datasets. | Enable generation of novel scaffolds absent from existing libraries and exploration of broader chemical space [9]. | Increasingly applied to de novo generation of NP-inspired scaffolds with desired properties [9]. | Data-driven discovery of non-obvious scaffolds beyond the constraints of rule-based or similarity search. |
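Several entries in Table 1 rely on fingerprint similarity, for example the Tanimoto filter mentioned for ChemBounce and the circular fingerprints benchmarked by LEMONS. As a minimal sketch of the underlying measure, the Tanimoto coefficient can be computed over sets of fingerprint on-bits. The bit sets, candidate names, and the 0.5 cutoff below are hypothetical; this is not the implementation or default setting of any tool in the table.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two collections of fingerprint on-bits."""
    a, b = set(bits_a), set(bits_b)
    union = len(a | b)
    if union == 0:
        return 0.0  # two empty fingerprints: define similarity as 0
    return len(a & b) / union

# Hypothetical on-bit indices for a query and three scaffold-hop candidates.
query_bits = {1, 4, 9, 16, 25}
candidates = {
    "hop_1": {1, 4, 9, 36},
    "hop_2": {1, 4, 9, 16, 25, 49},
    "hop_3": {2, 3, 5},
}

scores = {name: tanimoto(query_bits, bits) for name, bits in candidates.items()}

# Keep candidates at or above an illustrative similarity cutoff.
min_similarity = 0.5
kept = sorted(name for name, score in scores.items() if score >= min_similarity)
print(scores, kept)
```

In practice a 2D similarity filter like this is usually paired with a 3D shape or pharmacophore criterion, since a successful scaffold hop often has low 2D similarity to the query by design.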

Experimental Protocols & Methodologies

The successful application of these techniques relies on rigorous computational and experimental workflows. Below are detailed protocols for two key prospective studies.

This protocol details the study that validated WHALES descriptors for scaffold hopping from natural products.

1. Query Selection & Preparation:

- Queries: Four phytocannabinoids (Δ9-tetrahydrocannabinol, cannabidiol, cannabigerol, cannabichromene).

- Conformation Generation & Minimization: Generate a single, low-energy 3D conformation for each query molecule. Use the MMFF94 force field for geometry optimization [38].

- Partial Charge Calculation: Calculate Gasteiger-Marsili partial charges for all atoms [38].

2. WHALES Descriptor Calculation:

- For each atom j in the molecule, compute a weighted atom-centered covariance matrix (Sw(j)), using atomic coordinates and the absolute values of partial charges as weights [38].

- For every atom pair (i, j), calculate the Atom-Centered Mahalanobis (ACM) distance. This creates an ACM matrix representing normalized interatomic distances [38].

- From the ACM matrix, derive three atomic indices for each atom: Remoteness (row average), Isolation degree (column minimum), and their ratio [38].

- Convert these atomic indices into a fixed-length molecular descriptor vector by calculating their deciles, minimum, and maximum values (33 descriptors total) [38].
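The descriptor calculation in step 2 can be written compactly as follows. This is a sketch reconstructed from the description above, not a transcription of [38]: the symbols x_i (3D coordinates of atom i), δ_i (its partial charge), and n (number of atoms) are notation introduced here, and details such as the normalization of the covariance matrix and the exclusion of the diagonal in the minimum are assumptions that should be checked against the original publication.

```latex
% x_i: 3D coordinates of atom i; \delta_i: partial charge of atom i; n: number of atoms
\[
S_w(j) = \frac{\sum_{i=1}^{n} |\delta_i| \, (\mathbf{x}_i - \mathbf{x}_j)(\mathbf{x}_i - \mathbf{x}_j)^{\top}}
              {\sum_{i=1}^{n} |\delta_i|}
\]

\[
\mathrm{ACM}(i,j) = (\mathbf{x}_i - \mathbf{x}_j)^{\top} \, S_w(j)^{-1} \, (\mathbf{x}_i - \mathbf{x}_j)
\]

\[
\mathrm{Rem}(i) = \frac{1}{n} \sum_{j=1}^{n} \mathrm{ACM}(i,j), \qquad
\mathrm{Isol}(j) = \min_{i \neq j} \mathrm{ACM}(i,j), \qquad
\mathrm{IR}(j) = \frac{\mathrm{Isol}(j)}{\mathrm{Rem}(j)}
\]

% Descriptor length: 3 atomic indices x (9 deciles + minimum + maximum) = 33 values
```

The count in the last comment shows where the 33 descriptors come from: each of the three atomic indices contributes nine deciles plus its minimum and maximum, so molecules of any size map to a vector of the same length.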

3. Database Screening:

- Database: Screen a large library of commercially available synthetic compounds (e.g., ZINC).

- Similarity Search: Calculate WHALES descriptors for all database compounds. Rank the database based on cosine similarity to each of the four NP queries.

- Compound Selection: Visually inspect top-ranked compounds and select 20 candidate molecules for experimental testing, prioritizing structural novelty versus known cannabinoid receptor ligands [38].
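The similarity search in step 3 amounts to ranking library compounds by cosine similarity to a query descriptor vector. The sketch below uses toy 3-dimensional vectors in place of the 33-dimensional WHALES descriptors and assumes any descriptor scaling has already been applied. The function names and compound identifiers are hypothetical and do not come from [38].

```python
import math

def cosine_similarity(u, v):
    """Cosine of the angle between two equal-length descriptor vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    if norm_u == 0 or norm_v == 0:
        return 0.0  # undefined for zero vectors; treat as no similarity
    return dot / (norm_u * norm_v)

def rank_by_similarity(query, library, top_n=None):
    """Return (compound_id, similarity) pairs sorted from most to least similar."""
    scored = [(cid, cosine_similarity(query, vec)) for cid, vec in library.items()]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:top_n]  # top_n=None keeps the full ranking

# Toy descriptor vectors standing in for precomputed WHALES descriptors.
query = [1.0, 2.0, 3.0]
library = {
    "cmpd_a": [2.0, 4.0, 6.0],     # same direction as the query
    "cmpd_b": [3.0, 2.0, 1.0],
    "cmpd_c": [-1.0, -2.0, -3.0],  # opposite direction
}

ranked = rank_by_similarity(query, library)
for compound_id, similarity in ranked:
    print(f"{compound_id}: {similarity:.3f}")
```

With four queries, as in the protocol, the same ranking would be run once per query and the top of each list passed on to visual inspection.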

4. Experimental Validation:

- Assay: Test purchased compounds in cell-based functional assays (e.g., cAMP accumulation or β-arrestin recruitment) for human CB1 and CB2 receptor activity.

- Hit Criteria: Confirm dose-dependent agonist or antagonist activity with potencies (EC50 or IC50) in the low-micromolar range [38].

- Result: 7 out of 20 tested compounds (35%) were confirmed as activators or inhibitors, with five representing novel chemotypes compared to ChEMBL annotations [38].

This protocol outlines the process for using the open-source ChemBounce tool to generate novel, synthetically accessible analogs.

1. Input Preparation:

- Provide the SMILES string of the active input molecule (can be an NP or any lead compound).

- (Optional) Specify any core substructures (