Benchmarking Dereplication Algorithms on GNPS Datasets: A Comprehensive Guide for Metabolomics and Drug Discovery

Abstract

This article provides a systematic evaluation of dereplication algorithms using the Global Natural Products Social Molecular Networking (GNPS) mass spectrometry data ecosystem. Aimed at researchers and drug development professionals, it explores the foundational principles of dereplication, details the methodologies of key algorithms such as DEREPLICATOR+ and VInSMoC, addresses common troubleshooting and optimization challenges in large-scale analysis, and presents comparative validation frameworks. The synthesis offers actionable insights for selecting and improving tools to accelerate natural product discovery and biomedical research.

Foundations of Dereplication and the GNPS Ecosystem: Core Concepts and Dataset Landscape

Dereplication is the critical process of rapidly identifying known compounds within a complex natural extract before engaging in time-intensive isolation and structure elucidation [1]. Its primary role is to prevent the redundant "re-discovery" of common metabolites, ubiquitous nuisance compounds, or previously reported active agents, thereby conserving resources and accelerating the discovery pipeline [1] [2]. In the context of metabolomics, dereplication is equally vital for accurate metabolite annotation, distinguishing known from novel biochemical features in untargeted profiling studies [3] [4].

Dereplication is therefore the natural starting point for benchmarking algorithms on GNPS datasets. The Global Natural Products Social (GNPS) molecular networking infrastructure is a massive, crowdsourced repository of tandem mass spectrometry data and serves as a demanding, real-world proving ground for computational tools [5] [6]. Effective dereplication algorithms must cope with the scale and complexity of GNPS to annotate spectra reliably, a challenge that drives continuous methodological innovation. This guide compares the leading analytical approaches and computational strategies that define the modern dereplication toolkit.

Core Analytical Approaches and Technologies

Dereplication strategies are built on integrated analytical platforms that separate and characterize complex mixtures. The choice of technique significantly influences the depth, speed, and accuracy of the process.

Table: Comparison of Key Analytical Platforms for Dereplication

| Platform | Core Principle | Key Advantages | Primary Limitations | Best Suited For |

|---|---|---|---|---|

| LC-MS(/MS) | Separation by liquid chromatography followed by mass spectral detection/fragmentation [2]. | Broad applicability, excellent sensitivity, enables MS/MS for structure [3]. | Can miss poorly ionizing compounds; requires robust libraries [6]. | Untargeted profiling of semi-polar to polar metabolites (e.g., most NPs) [3]. |

| GC-MS | Separation by gas chromatography of volatile or derivatized compounds [7]. | Highly reproducible, robust EI spectra libraries, excellent for volatiles [7]. | Requires derivatization for many metabolites; limited to thermally stable compounds [7]. | Targeted analysis of primary metabolites, fatty acids, volatiles [7]. |

| SFC-MS | Separation by supercritical fluid chromatography [1]. | Fast separations, "greener" solvents, complementary selectivity to LC [1]. | Less established; narrower range of available columns and methods [1]. | Chiral separations, lipophilic compound analysis [1]. |

| Direct MS/MS Analysis | Ambient ionization or direct infusion without prior chromatography [2]. | Extreme high-throughput; minimal sample prep [2]. | Prone to ion suppression; limited dynamic range [2]. | Rapid screening of microbial colonies or simple mixtures [2]. |

The workflow integrates these platforms with informatics: an extract is analyzed, spectra are acquired, and computational tools search these against spectral or structural databases to provide putative identifications [4]. Advanced strategies like micro-fractionation link biological activity to specific chromatographic peaks, while molecular networking on platforms like GNPS visualizes spectral similarity, grouping related compounds and propagating annotations within clusters [2] [6].

Benchmarking Dereplication Algorithms on GNPS Datasets

The GNPS platform provides a standardized environment to benchmark algorithm performance on real-world, complex data. Key metrics include the number of unique identifications, false discovery rate (FDR), sensitivity for variant discovery, and computational speed [8] [6]. The following table compares three seminal algorithms designed to tackle the dereplication challenge at scale.

Table: Benchmarking Performance of Advanced Dereplication Algorithms

| Algorithm | Core Innovation | Reported Performance on GNPS Data | Key Strength | Identified Limitation |

|---|---|---|---|---|

| DEREPLICATOR (2017) | Spectral network propagation for variant identification of peptidic natural products (PNPs) [8]. | Identified hundreds of PNPs & variants [6]. | First to enable high-throughput PNP variant discovery via networks [8]. | Limited to PNPs; relies on network having a known "parent" node [8]. |

| DEREPLICATOR+ (2018) | Extended fragmentation graph approach to multiple NP classes (PKs, terpenes, etc.) [6]. | 5x more unique IDs than DEREPLICATOR; ID'd 488 compounds at 1% FDR in Actinomyces set [6]. | Broad class coverage; detailed fragmentation model improves sensitivity [6]. | Computationally intensive for very large structural databases [6]. |

| VarQuest (2018) | Modification-tolerant search without dependency on spectral networks [8]. | Found an order of magnitude more PNP variants than prior tools; illuminated 78% "orphan" networks [8]. | Unlocks "dark matter" (networks without known parents); extremely fast [8]. | Initially focused on PNPs; modification mass may combine multiple changes [8]. |

A critical insight from benchmarking is the prevalence of "orphan" molecular families in GNPS data: clusters with no known reference spectrum. VarQuest revealed that 78% of PNP families in GNPS were orphans, underscoring the limitation of network-propagation methods and the vast uncharted chemical space [8]. More recent algorithms, such as VInSMoC (2025), continue this evolution by enabling scalable database searches for molecular variants across hundreds of millions of spectra, identifying tens of thousands of unreported variants [9].

Experimental Protocols for Method Validation

Robust benchmarking requires standardized experimental and computational protocols. Below are detailed methodologies for two critical aspects: validating dereplication accuracy and preparing samples for analysis.

Protocol 1: Validating Dereplication Accuracy with Spiked Extracts

This protocol tests an algorithm's ability to identify known compounds in a complex matrix.

- Spike Solution Preparation: Prepare standard solutions of 2-3 well-characterized natural products relevant to the sample type (e.g., an antibiotic for a microbial extract) [2].

- Complex Matrix Preparation: Generate a crude natural extract expected to be devoid of the spike compounds (e.g., from a different taxonomic source) [7].

- Sample Spiking: Spike the crude extract with the standard solutions at low, medium, and high concentrations (e.g., 1, 10, and 100 µM). Prepare unspiked controls and solvent blanks [3].

- LC-MS/MS Analysis: Analyze all samples using a standardized reversed-phase LC-ESI-MS/MS method. Use data-dependent acquisition to collect MS2 spectra for top ions [3] [4].

- Data Processing & Dereplication:

- Validation Metrics: Calculate the recall (percentage of spikes correctly identified) and precision (percentage of correct IDs among all reported IDs for the spiked samples) at each concentration level.
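The validation metrics in the last step can be sketched in a few lines of Python. This is a minimal illustration, not part of the published protocol: the compound names, the `precision_recall` helper, and the example results are hypothetical. It also assumes that any reported identification that is not one of the spikes counts as incorrect, so identifications already present in the unspiked control should be subtracted beforehand.

```python
def precision_recall(spiked, reported):
    """Return (precision, recall) for one spiked sample.

    spiked   -- set of compound names added to the extract (ground truth)
    reported -- set of compound names the algorithm reported
    """
    true_positives = len(spiked & reported)
    precision = true_positives / len(reported) if reported else 0.0
    recall = true_positives / len(spiked) if spiked else 0.0
    return precision, recall


# Hypothetical identifications at three spike concentrations (in µM).
spiked = {"compound_A", "compound_B", "compound_C"}
reported_by_level = {
    1: {"compound_A", "compound_X"},
    10: {"compound_A", "compound_B", "compound_X"},
    100: {"compound_A", "compound_B", "compound_C"},
}

for level, reported in sorted(reported_by_level.items()):
    p, r = precision_recall(spiked, reported)
    print(f"{level} µM: precision={p:.2f}, recall={r:.2f}")
```

Reporting both values per concentration level shows whether an algorithm loses sensitivity (recall) or starts producing spurious hits (precision) as the spike approaches the detection limit.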

Protocol 2: GC-MS-Based Dereplication for Plant Metabolomics

This protocol details an optimized GC-MS workflow for identifying known metabolites, integrating deconvolution tools to improve accuracy [7].

- Sample Derivatization: Dry 50-100 µL of plant extract under nitrogen. Add 20 µL of methoxyamine hydrochloride (20 mg/mL in pyridine) and incubate at 30°C for 90 minutes. Then add 80 µL of MSTFA (N-methyl-N-trimethylsilyltrifluoroacetamide) and incubate at 37°C for 30 minutes [7].

- GC-MS Analysis: Inject 1 µL in splitless mode. Use a non-polar capillary column (e.g., DB-5MS). Employ a temperature gradient (e.g., 60°C to 330°C). Set the electron ionization source to 70 eV and collect full-scan data (e.g., m/z 50-600) [7].

- Data Deconvolution & Identification:

- Process raw data with AMDIS using parameters optimized via factorial design to balance sensitivity and specificity [7].

- Apply a Compound Detection Factor (CDF) to filter false positives from AMDIS results [7].

- For co-eluting peaks with poor AMDIS deconvolution, apply the Ratio Analysis of Mass Spectrometry (RAMSY) tool as a complementary digital filter to recover low-intensity ions [7].

- Database Matching: Match deconvoluted spectra against retention-index locked libraries (e.g., the Fiehn GC/MS Metabolomics RTL Library) using matching criteria (e.g., similarity >700) [7].

The Scientist's Toolkit: Essential Research Reagents & Materials

Successful dereplication relies on both analytical standards and computational resources.

Table: Key Research Reagent Solutions for Dereplication

| Category | Item / Solution | Function in Dereplication | Example / Specification |

|---|---|---|---|

| Chromatography | UHPLC / HPLC Grade Solvents | Mobile phase for high-resolution separation, minimizing background noise [2]. | Methanol, Acetonitrile, Water (with 0.1% Formic Acid for LC-MS). |

| Sample Prep | Derivatization Reagents | Chemically modifies metabolites for volatile analysis by GC-MS [7]. | MSTFA with 1% TMCS; Methoxyamine hydrochloride [7]. |

| Internal Standards | Stable Isotope-Labeled Compounds | Controls for extraction efficiency, instrument response, and quantitative normalization [3]. | ¹³C or ²H-labeled amino acids, fatty acids, or generic internal standards. |

| Reference Libraries | Authentic Natural Product Standards | Provides Level 1 identification confidence; essential for validating algorithm hits [4]. | Commercially available purified compounds (e.g., from Sigma-Aldrich, Cayman Chemical). |

| Computational | Spectral & Structural Databases | Reference for matching experimental MS/MS or EI spectra [6]. | GNPS Spectral Libraries, NIST EI Library, AntiMarin, Dictionary of Natural Products [7] [6]. |

| Software & Platforms | Dereplication Algorithms & Workflows | Executes the core computational identification and annotation tasks. | DEREPLICATOR+ [6], VarQuest [8], GNPS Molecular Networking [5], VInSMoC [9]. |

Dereplication has evolved from a simple library matching exercise into a sophisticated computational discipline central to natural product discovery and metabolomics. Benchmarking on GNPS datasets has driven progress, revealing that modern algorithms must be scalable, modification-tolerant, and capable of illuminating the "dark matter" of orphan molecular families [9] [8].

The future of dereplication lies in the deeper integration of orthogonal data types. The next generation of tools will likely correlate spectral networks with genomic predictions (e.g., from antiSMASH), using in-silico MS/MS prediction powered by machine learning to score candidate structures [9]. Furthermore, the adoption of FAIR data principles and public repositories like GNPS and MetaboLights will provide ever-larger, higher-quality training data for these models, creating a virtuous cycle of improvement [3] [4]. For researchers, the strategic application of the compared platforms and algorithms—selecting LC-MS with DEREPLICATOR+ for broad profiling or GC-MS with advanced deconvolution for targeted volatile analysis—will be key to efficiently navigating the complex chemistry of life.

The Global Natural Products Social (GNPS) molecular networking platform represents a paradigm shift in mass spectrometry data sharing and analysis for natural products and metabolomics [10]. As a community-curated knowledge base, GNPS provides an open-access infrastructure where researchers can deposit, analyze, and collaboratively interpret raw, processed, and identified tandem mass spectrometry (MS/MS) data [10]. The platform addresses a critical bottleneck in the field by transforming the traditionally isolated analysis of natural products into a high-throughput, data-driven science capable of processing hundreds of millions of spectra [11] [6].

This capacity for large-scale data generation creates an urgent need for robust dereplication algorithms—computational tools that identify known compounds in experimental samples to avoid redundant rediscovery and prioritize novel chemistry [11] [6]. Effective dereplication is the cornerstone of efficient natural product discovery pipelines. Benchmarking these algorithms on authentic GNPS datasets is therefore essential for assessing their real-world performance, guiding tool selection, and driving methodological improvements within the framework of a broader thesis on computational metabolomics [12].

Comparative Performance Analysis of Dereplication Tools

The performance of dereplication algorithms is measured by their accuracy, sensitivity, and scope when analyzing complex mass spectrometry datasets. The table below provides a quantitative comparison of leading tools benchmarked on GNPS data.

Table 1: Performance Benchmarking of Dereplication Algorithms on GNPS Datasets

| Algorithm | Primary Scope | Key Benchmark Dataset | Identifications at 1% FDR | Unique Metabolite Classes Identified | Variable Dereplication | Statistical Framework |

|---|---|---|---|---|---|---|

| DEREPLICATOR [11] | Peptidic Natural Products (PNPs: NRPs & RiPPs) | SpectraGNPS (248M spectra) | 8,622 PSMs (150 unique peptides) [11] | Peptides and amino acid derivatives [6] | Yes, via spectral networks [11] | p-values via MS-DPR; FDR via decoy database [11] |

| DEREPLICATOR+ [6] | Broad NP classes (PNPs, Polyketides, Terpenes, etc.) | SpectraActiSeq (Actinomyces) | 488 unique compounds (8,194 MSMs) [6] | Peptides, Lipids, Benzenoids, Terpenes, Polyketides [6] | Yes, via molecular networking [6] | Score-based threshold; FDR estimation [6] |

| Classic Molecular Networking [10] [13] | Global metabolomics, analog discovery | Variable (user datasets) | Not directly comparable (library matching) | All (depends on reference libraries) [10] | Yes, via network proximity [10] | Cosine score thresholds; FDR via decoy spectra [13] |

| GNPS Library Search [10] | Library-based annotation | All public GNPS data | ~1.01% of public spectra matched [10] | All (limited by library coverage) [10] | Limited (analog search) | Cosine score; optional FDR [5] [13] |

Analysis of Key Performance Metrics: The data reveals a clear evolution in capability. DEREPLICATOR+ represents a fivefold increase in identified unique compounds over its predecessor when analyzing Actinomyces spectra, demonstrating the critical advantage of expanding beyond a peptide-only fragmentation model [6]. A significant challenge across all methods is the limited coverage of reference spectral libraries; even the aggregated libraries in GNPS initially matched only about 1% of public spectra, highlighting the vast "dark matter" of metabolomics [10]. This underscores the value of molecular networking and variable dereplication, which propagate annotations within clusters of related spectra, thereby extending identification beyond exact library matches [11] [6].

Experimental Protocols for Benchmarking on GNPS

Benchmarking dereplication algorithms requires standardized workflows to ensure fair and reproducible comparisons. The following protocols are derived from methodologies used in foundational studies.

Protocol for Benchmarking Dereplication Algorithms

A robust benchmarking experiment involves several critical steps:

Dataset Selection and Curation: Select appropriate, well-characterized public datasets from the GNPS/MassIVE repository [6]. Common benchmarks include SpectraGNPS (broad scale), SpectraActiSeq (for microbial metabolites), and SpectraLibrary (for validation against known standards) [11] [6]. Ensure metadata on sample origin (e.g., bacterial strain, plant extract) is available.

Reference Database Preparation: Prepare a target database of known chemical structures (e.g., AntiMarin, Dictionary of Natural Products) [6]. Generate a corresponding decoy database of the same size, typically by randomizing stereochemistry or introducing unnatural modifications, to facilitate False Discovery Rate (FDR) estimation [11].

Algorithm Execution with FDR Control: Run the dereplication algorithm (e.g., DEREPLICATOR+) against the combined target and decoy database. Use the tool's inherent scoring system (e.g., p-values from MS-DPR for DEREPLICATOR) [11] or a standardized score like the modified cosine score [13].

Calculation of False Discovery Rate (FDR): For a given score threshold, calculate the FDR. A standard approach is FDR = (Decoy Hits) / (Target Hits). Set a threshold (e.g., 1% FDR) and report all identifications above this threshold [13]. This controls the rate of false positive annotations.

Validation and Manual Curation: For high-priority identifications, especially of novel variants, validate results by inspecting raw spectral matches, checking for supporting genomic data (e.g., from MIBiG), and reviewing the context within a molecular network [6] [14].
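The FDR step above can be illustrated with a short Python sketch. It applies the ratio stated in the protocol, FDR = decoy hits / target hits, to a list of scored matches and finds the most permissive score threshold that still satisfies a chosen FDR limit. The function names and the example scores are hypothetical, and the quadratic scan over thresholds is chosen for readability rather than speed.

```python
def fdr_at_threshold(matches, threshold):
    """Estimate FDR as decoy hits / target hits at or above `threshold`.

    matches -- list of (score, is_decoy) tuples from a target+decoy search
    """
    targets = sum(1 for score, is_decoy in matches
                  if score >= threshold and not is_decoy)
    decoys = sum(1 for score, is_decoy in matches
                 if score >= threshold and is_decoy)
    if targets == 0:
        return 0.0 if decoys == 0 else float("inf")
    return decoys / targets


def lowest_threshold_at_fdr(matches, max_fdr=0.01):
    """Return the lowest observed score whose FDR is <= max_fdr, or None."""
    best = None
    for threshold in sorted({score for score, _ in matches}, reverse=True):
        if fdr_at_threshold(matches, threshold) <= max_fdr:
            best = threshold
    return best


# Hypothetical search results: (score, is_decoy).
matches = [(20, False), (18, False), (15, False),
           (12, True), (10, False), (8, True)]

print(lowest_threshold_at_fdr(matches, max_fdr=0.01))
print(lowest_threshold_at_fdr(matches, max_fdr=0.25))
```

Because the estimated FDR does not fall monotonically as the threshold rises, the sketch checks every observed score instead of stopping at the first failure.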

Protocol for Constructing a Molecular Network for Validation

Molecular networking is used both as a dereplication tool and to validate and extend algorithm results [10].

Data Preparation: Convert raw LC-MS/MS files to open formats (.mzXML, .mzML). Optionally, group files by experimental attribute (e.g., strain, treatment) in a metadata table [5] [13].

Network Creation via GNPS: Submit files to the GNPS "Molecular Networking" job. Key parameters include: Precursor ion mass tolerance (0.02 Da for high-res), Fragment ion tolerance (0.02 Da), Minimum matched peaks (6), and Minimum cosine score (e.g., 0.7) [5] [13]. The cosine score measures spectral similarity.

Library Annotation: Enable library search against GNPS spectral libraries. Set the score threshold based on an FDR estimation workflow (e.g., using the Passatutto tool) to ensure annotation reliability [13].

Network Analysis and Interpretation: Visualize the network (e.g., in Cytoscape). Identified nodes act as anchors. The propagation of annotations to neighboring nodes in the network enables the "variable dereplication" of structural analogs, even if their spectra are not in reference libraries [11] [6].
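To make the networking parameters concrete, the following Python sketch computes a plain cosine score between two peak lists and applies the example thresholds quoted above (fragment tolerance 0.02 Da, at least 6 matched peaks, cosine of at least 0.7). It is a simplified illustration and not the GNPS implementation: the greedy peak pairing, the function names, and the toy spectrum are assumptions, and the modified cosine used for networking additionally considers peaks shifted by the precursor mass difference.

```python
import math


def cosine_score(spec_a, spec_b, fragment_tol=0.02):
    """Greedy cosine similarity between two peak lists.

    spec_a, spec_b -- lists of (mz, intensity) tuples
    Returns (score, number_of_matched_peaks).
    """
    norm_a = math.sqrt(sum(i * i for _, i in spec_a))
    norm_b = math.sqrt(sum(i * i for _, i in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0, 0

    # All candidate peak pairs within tolerance, largest product first.
    pairs = [
        (ia * ib, a_idx, b_idx)
        for a_idx, (mza, ia) in enumerate(spec_a)
        for b_idx, (mzb, ib) in enumerate(spec_b)
        if abs(mza - mzb) <= fragment_tol
    ]
    pairs.sort(reverse=True)

    # Use each peak at most once, taking the best remaining pair first.
    used_a, used_b = set(), set()
    dot, matched = 0.0, 0
    for product, a_idx, b_idx in pairs:
        if a_idx in used_a or b_idx in used_b:
            continue
        used_a.add(a_idx)
        used_b.add(b_idx)
        dot += product
        matched += 1
    return dot / (norm_a * norm_b), matched


def is_network_edge(spec_a, spec_b, min_cosine=0.7, min_matched=6):
    """Apply the example networking thresholds to a pair of spectra."""
    score, matched = cosine_score(spec_a, spec_b)
    return score >= min_cosine and matched >= min_matched


# Toy spectrum with six peaks, compared with itself.
toy = [(100.0, 10.0), (150.0, 50.0), (200.0, 100.0),
       (250.0, 30.0), (300.0, 80.0), (350.0, 20.0)]
print(cosine_score(toy, toy), is_network_edge(toy, toy))
```

Requiring a minimum number of matched peaks alongside the cosine threshold guards against high scores that rest on one or two dominant fragments.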

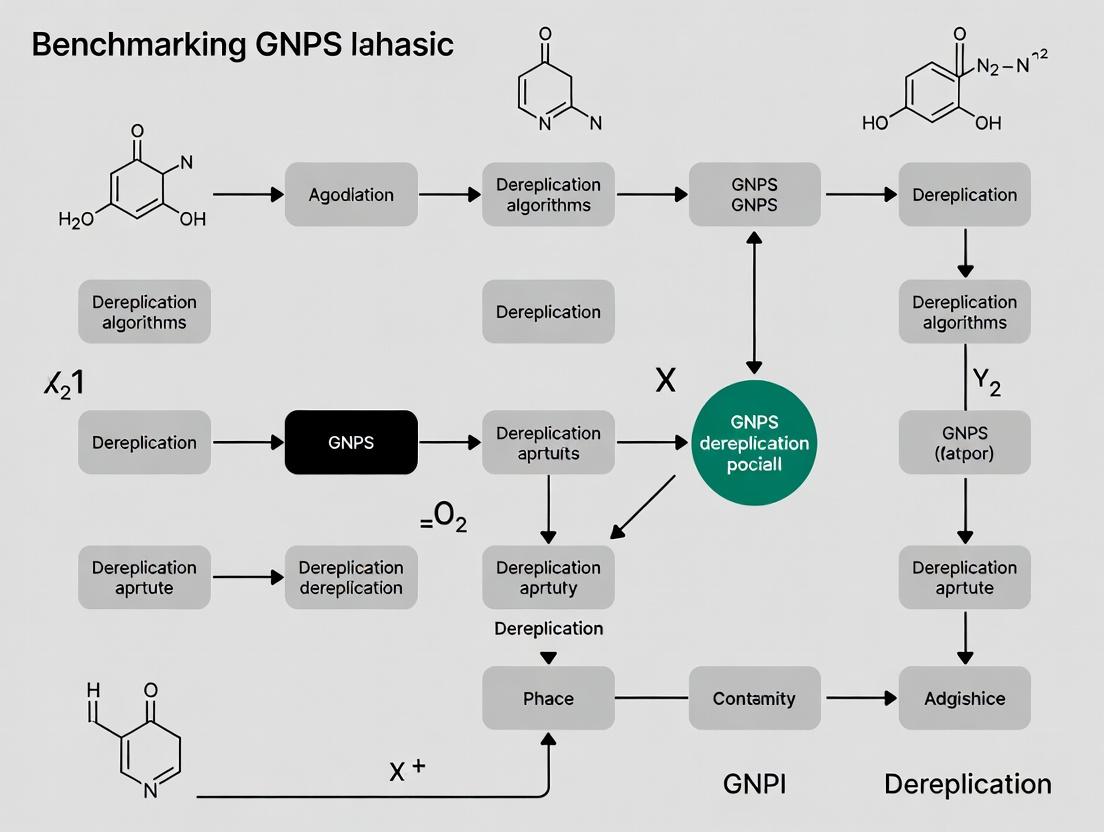

Diagram Title: Benchmarking Workflow for Dereplication Algorithms

Visualization of Algorithm Architectures and Workflows

Understanding the logical flow of advanced dereplication tools is key to comparing their approaches. The diagram below contrasts the architectures of DEREPLICATOR and DEREPLICATOR+.

Diagram Title: Architecture of DEREPLICATOR vs. DEREPLICATOR+

Architectural Comparison: The core difference lies in the fragmentation model. DEREPLICATOR uses a rule-based model specific to peptide bonds (amide bond disconnections) [11], while DEREPLICATOR+ first converts a metabolite's structure into a general metabolite graph, from which it generates a more comprehensive fragmentation graph that can represent breaks in various chemical backbones (e.g., polyketide chains) [6]. This allows DEREPLICATOR+ to dereplicate a vastly expanded array of natural product classes.
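The idea of deriving fragments from a structure graph can be illustrated with a deliberately small Python sketch. It is a toy model under stated assumptions and does not reproduce the DEREPLICATOR or DEREPLICATOR+ fragmentation rules: atoms or building blocks are nodes with hypothetical masses, bonds are edges, and a fragment is produced only when removing a single bond splits the graph in two. Ring bonds therefore yield no fragment on their own.

```python
def components(nodes, edges):
    """Return the connected components of an undirected graph as sets."""
    adjacency = {n: set() for n in nodes}
    for u, v in edges:
        adjacency[u].add(v)
        adjacency[v].add(u)
    seen, result = set(), []
    for start in nodes:
        if start in seen:
            continue
        stack, comp = [start], set()
        while stack:
            node = stack.pop()
            if node in comp:
                continue
            comp.add(node)
            stack.extend(adjacency[node] - comp)
        seen |= comp
        result.append(comp)
    return result


def single_cut_fragment_masses(masses, edges):
    """Masses of fragments obtained by removing exactly one bond.

    masses -- dict mapping node name to mass
    edges  -- list of (node, node) bonds
    """
    fragments = set()
    for cut in edges:
        remaining = [e for e in edges if e != cut]
        parts = components(masses, remaining)
        if len(parts) == 2:  # the removed bond was a bridge
            for part in parts:
                fragments.add(round(sum(masses[n] for n in part), 4))
    return sorted(fragments)


# Hypothetical structure: a three-membered ring (a, b, c) with a side group d.
masses = {"a": 10.0, "b": 20.0, "c": 30.0, "d": 40.0}
edges = [("a", "b"), ("b", "c"), ("c", "a"), ("c", "d")]
print(single_cut_fragment_masses(masses, edges))
```

A dereplication tool would compare such theoretical fragment masses with the peaks of an experimental spectrum. Handling ring openings requires removing more than one bond at a time, which this sketch omits.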

The Scientist's Toolkit for Dereplication Research

Conducting rigorous benchmarking research requires a specific set of data, software, and reference material resources.

Table 2: Essential Research Reagent Solutions for Dereplication Benchmarking

| Tool/Resource Name | Type | Primary Function in Benchmarking | Key Features for Comparison Studies |

|---|---|---|---|

| GNPS Platform [10] | Data Repository & Analysis Infrastructure | Hosts public datasets, spectral libraries, and provides analysis workflows (networking, library search). | Centralized access to benchmark datasets (e.g., SpectraGNPS); enables reproducible workflow execution [10] [13]. |

| MassIVE Repository | Data Repository | Stores and shares mass spectrometry raw data linked to GNPS. | Source for downloading specific dataset files for local benchmarking and validation [10]. |

| GNPS Spectral Libraries (GNPS-Collections, Community) [10] | Reference Data | Gold-standard spectra for validating algorithm identifications and training models. | Tiered curation (Gold/Silver/Bronze) indicates confidence; essential for calculating precision/recall [10]. |

| AntiMarin & Dictionary of Natural Products (DNP) [6] | Chemical Structure Databases | Target databases of known natural products for dereplication algorithms to search against. | Provide the "ground truth" chemical structures for generating theoretical spectra [11] [6]. |

| DEREPLICATOR+ Software [6] | Dereplication Algorithm | The primary tool being benchmarked for broad-class natural product identification. | Command-line tool for large-scale database search; outputs scores and FDR estimates [6]. |

| matchMS Library Cleaning Pipeline [15] | Data Curation Software | Cleans and harmonizes public spectral libraries (like GNPS) before use in benchmarking. | Ensures high-quality, reproducible training/validation data by fixing annotations and metadata [15]. |

| Cytoscape with GNPS Plugin | Visualization Software | Visualizes molecular networks to manually verify and contextualize algorithm hits. | Allows inspection of annotation propagation within spectral networks, a key validation step [14] [13]. |

| GNPS Dashboard [16] | Collaborative Data Exploration Tool | Enables remote, collaborative inspection of raw LC-MS data linked to network results. | Critical for validating hits by examining raw chromatograms and spectra, supporting reproducible research [14] [16]. |

Benchmarking studies on GNPS datasets have unequivocally demonstrated that algorithmic advancements directly translate to discoveries. The evolution from DEREPLICATOR to DEREPLICATOR+ increased identification yields fivefold and expanded the chemical space accessible to dereplication [6]. The integration of these tools with the molecular networking and community data sharing facets of GNPS creates a powerful, iterative cycle for natural product discovery [10].

Future benchmarking efforts must address several frontiers. First, as machine learning-based annotation tools proliferate, standardized benchmarks on common GNPS datasets are urgently needed to prevent performance ambiguity [12]. Second, the quality and curation of reference data remain a limiting factor. Tools like the matchMS cleaning pipeline are vital for creating reliable "ground truth" datasets [15]. Finally, benchmarking should expand beyond identification to assess how well algorithms prioritize novel and bioactive compounds, the ultimate goal of discovery pipelines. As GNPS continues to grow into a "living data" repository with continuous reanalysis [10], it will provide the ever-improving substrate for these essential computational evaluations.

Scope and Significance of GNPS Datasets for Algorithm Benchmarking

The Global Natural Products Social Molecular Networking (GNPS) platform has evolved from a collaborative spectral library into a foundational ecosystem for benchmarking computational metabolomics algorithms [5]. Its vast, publicly available repository of mass spectrometry data provides an essential, real-world testbed for evaluating the performance of tools designed for metabolite annotation, dereplication, and identification [12]. In the context of a broader thesis on benchmarking dereplication algorithms, GNPS datasets address a critical community need: the ability to compare novel computational methods against standardized, large-scale data to assess their accuracy, scalability, and practical utility [12]. This objective comparison is vital as the field moves beyond isolated validation studies, helping researchers and drug development professionals select optimal tools for discovering novel molecules and variants, such as microbial natural products with therapeutic potential [9] [17].

The benchmarking significance of GNPS stems from several key attributes. First, it provides access to millions of experimental mass spectra from diverse biological sources, enabling stress-testing of algorithms against the complexity and noise inherent in real data [9] [5]. Second, its datasets facilitate the evaluation of different algorithmic strategies—from classic spectral library matching and molecular networking to modern machine learning-based variant discovery—under consistent conditions [9] [12]. Finally, by serving as a common reference point, GNPS helps clarify methodological trade-offs, such as the balance between annotation speed and accuracy or the sensitivity for detecting known versus novel molecular variants [18]. The following sections provide a performance comparison of leading algorithms benchmarked on GNPS data, detail their experimental protocols, and visualize the integrated workflows that define this field.

Performance Benchmarking of Key Dereplication and Processing Algorithms

Benchmarking studies on GNPS and related mass spectrometry datasets reveal distinct performance profiles across different algorithmic categories. The quantitative comparisons below highlight strengths in accuracy, speed, and novel compound discovery.

Table 1: Benchmarking Performance of Spectral Search and Annotation Algorithms

| Algorithm | Core Function | Key Benchmark Metric (GNPS/Related Data) | Reported Performance | Primary Advantage |

|---|---|---|---|---|

| VInSMoC [9] | Variant-tolerant database search | Identification of knowns & novel variants from 483M GNPS spectra | 43k knowns; 85k novel variants identified [9] | Discovers structural variants beyond exact matches |

| MS2DeepScore [12] | Deep learning spectral similarity | Accuracy of analogue search vs. traditional cosine score | Improved ranking of correct annotations [12] | Better handles spectra from different instruments |

| MS2Query [12] | Mass spectral analogue search | Reliability of predicting analogous structures | Enables large-scale analogue search [12] | Integrates spectral similarity with metadata |

| MassCube Feature Detection [18] | LC-MS peak picking & processing | Accuracy vs. speed on synthetic benchmark data | 96.4% accuracy; 64 min for 105 GB data [18] | High accuracy & speed; excellent isomer detection |

| DeepRTAlign [19] | Retention time alignment | Alignment accuracy on large cohort proteomic/metabolomic data | Improved ID sensitivity without compromising quant accuracy [19] | Handles both monotonic & non-monotonic RT shifts |

Table 2: Comparative Analysis of Integrated Workflow Platforms

| Platform / Workflow | Typical Use Case | Benchmarking Focus | Strengths | Limitations / Challenges |

|---|---|---|---|---|

| Classic GNPS MN [5] [17] | Dereplication via molecular networking | Network connectivity & annotation propagation | Visual discovery of related compounds; community tools [17] | Less automated; requires manual inspection |

| Feature-Based MN (FBMN) [17] | Integrating chromatographic data | Improved isomer separation & quantitative analysis | Links spectral similarity with LC peak shape [17] | Dependent on upstream feature detection accuracy |

| VInSMoC Large-Scale Search [9] | Exhaustive search for variants | Scalability & statistical significance | Searched 483M spectra vs. 87M molecules [9] | Computational resource requirements |

| End-to-End Pipeline (e.g., MassCube) [18] | Full raw data to annotation workflow | Overall accuracy, false positive rate, speed | 100% signal coverage; integrated modules reduce errors [18] | Newer platform; community size vs. established tools |

Detailed Experimental Protocols for Benchmarking Studies

Adopting standardized experimental protocols is essential for reproducible and meaningful benchmarking. The following methodologies are derived from key studies that have utilized GNPS data for algorithm evaluation.

Protocol 1: Benchmarking Variant-Tolerant Database Search (Based on VInSMoC Study [9])

This protocol evaluates an algorithm's ability to identify both known molecules and novel structural variants from large-scale spectral libraries.

- Dataset Curation: Compile a benchmark dataset from GNPS, consisting of millions of tandem mass spectra (e.g., the 483 million spectra used by VInSMoC). Simultaneously, prepare a structured molecular database (e.g., from PubChem, COCONUT) [9].

- Algorithm Execution: Run the search algorithm in two modes: (a) Exact search mode, matching spectra to identical molecular structures, and (b) Variable or "variant-tolerant" mode, allowing for small structural differences (e.g., substitutions, rearrangements).

- Statistical Validation: For each match, calculate a statistical significance score (e.g., p-value or E-value) to estimate the false discovery rate (FDR). Apply a threshold to control the FDR at a specified level (e.g., 1%).

- Performance Assessment: Quantify outputs: (i) Number of known molecules correctly identified (validated against reference libraries). (ii) Number of high-confidence novel structural variants reported. (iii) Computational time and scalability metrics.

- Biological Validation: For a subset of novel variant predictions (e.g., putative modified natural products), attempt to confirm the prediction through genomic analysis (e.g., identifying biosynthetic gene clusters with antiSMASH) [9].
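The difference between the exact and variant-tolerant search modes can be sketched at the level of candidate selection by precursor mass. The Python example below is a simplified illustration under stated assumptions, not the VInSMoC algorithm: the `candidate_matches` function, the tolerance values, and the three-entry database are hypothetical, and a real tool would go on to score each candidate against the fragment peaks.

```python
def candidate_matches(precursor_mass, database, mode="exact",
                      mass_tol=0.02, max_mod_mass=100.0):
    """Select database molecules compatible with a precursor mass.

    database -- dict mapping molecule name to monoisotopic mass
    mode     -- "exact" keeps only molecules within mass_tol;
                "variant" also keeps molecules whose mass differs by up to
                max_mod_mass, reporting the difference as a modification mass
    Returns a list of (name, mass_difference) tuples.
    """
    hits = []
    for name, mol_mass in database.items():
        delta = precursor_mass - mol_mass
        if abs(delta) <= mass_tol:
            hits.append((name, round(delta, 4)))
        elif mode == "variant" and abs(delta) <= max_mod_mass:
            hits.append((name, round(delta, 4)))
    return hits


# Hypothetical database of monoisotopic masses.
database = {"mol_A": 500.0, "mol_B": 514.0157, "mol_C": 900.0}

print(candidate_matches(514.0157, database, mode="exact"))
print(candidate_matches(514.0157, database, mode="variant"))
```

In variant mode the candidate list grows quickly with database size, which is why the statistical validation step in the protocol matters more there than in exact mode.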

Protocol 2: Evaluating Dereplication Workflows for Complex Plant Extracts (Based on Sophora flavescens Study [17])

This protocol compares the complementary strengths of different spectral acquisition and analysis methods for dereplication.

- Sample Preparation & Data Acquisition:

- Prepare an extract from a well-studied biological source (e.g., plant root).

- Analyze the same sample using both Data-Dependent Acquisition (DDA) and Data-Independent Acquisition (DIA) modes on a high-resolution LC-MS/MS system [17].

- Parallel Data Processing:

- For DDA Data: Process raw files (e.g., with MZmine). Submit the resulting MS/MS spectral file directly to GNPS for classic molecular networking and library search [17].

- For DIA Data: Process raw files with software capable of deconvolution (e.g., MS-DIAL) to reconstruct pseudo-MS/MS spectra. Submit the resulting spectral file to GNPS for feature-based molecular networking (FBMN) [17].

- Result Integration & Analysis:

- Combine annotations from both the DDA-direct search and the DIA-FBMN approach.

- Use Extracted Ion Chromatograms (EICs) to separate and confirm isomeric compounds that have similar spectra but different retention times [17].

- Validate annotations using authentic chemical standards where available.

- Metric Calculation: Report the (i) total number of compounds annotated, (ii) number of annotations unique to each method (DDA vs. DIA), and (iii) the ability to resolve isomers.
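The first two metrics in the final step reduce to set operations on the annotation lists from each acquisition mode. The Python sketch below is illustrative only: the `compare_annotations` helper and the compound labels are hypothetical placeholders, not results from the cited study.

```python
def compare_annotations(dda, dia):
    """Summarise overlap between two sets of annotated compound names."""
    return {
        "total": len(dda | dia),
        "shared": len(dda & dia),
        "dda_only": sorted(dda - dia),
        "dia_only": sorted(dia - dda),
    }


# Hypothetical annotation sets from the two workflows.
dda_annotations = {"alkaloid_1", "alkaloid_2", "flavonoid_1"}
dia_annotations = {"alkaloid_2", "flavonoid_1", "flavonoid_2", "flavonoid_3"}

summary = compare_annotations(dda_annotations, dia_annotations)
for key, value in summary.items():
    print(key, value)
```

Comparing on compound names assumes both workflows use the same naming scheme. In practice, a structure identifier such as an InChIKey is a safer key, since the same compound can carry different names in different libraries.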

Protocol 3: Benchmarking Feature Detection and Alignment Algorithms (Based on MassCube & DeepRTAlign Studies [19] [18])

This protocol assesses the foundational steps of peak detection and cross-sample alignment, which underpin all quantitative and comparative analyses.

- Dataset Creation: Use both synthetic datasets (with known, inserted peak properties) and real experimental datasets (e.g., from GNPS or controlled metabolomic studies) [18].

- Synthetic Benchmarking: For feature detection, inject synthetic peaks with varying signal-to-noise ratios, peak resolutions, and intensity ratios into a real MS data matrix. The "ground truth" is precisely known, allowing for exact calculation of true positive and false positive rates [18].

- Experimental Benchmarking: For retention time (RT) alignment, use datasets from large cohort studies in which a subset of features is identified via database search. These identifications provide anchor points to assess alignment accuracy between runs [19].

- Algorithm Comparison: Run multiple tools (e.g., MassCube, XCMS, MZmine, DeepRTAlign) on the same datasets. For feature detection, compare accuracy, sensitivity, and speed [18]. For RT alignment, compare the number of correctly aligned features across samples and the preservation of quantitative fidelity [19].

- Downstream Impact Assessment: Feed the results from different processing tools into a standard classification model (e.g., to predict a phenotype). The resulting classifier performance (accuracy, AUC) indicates the practical, biological relevance of the data processing quality [19] [18].
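For the synthetic benchmarking step, scoring a feature detector against inserted peaks can be sketched as below. This is a simplified illustration, not the evaluation code of the cited studies: the greedy one-to-one matching, the function name `score_detection`, and the tolerance values are all assumptions chosen for the example.

```python
def score_detection(truth, detected, mz_tol=0.01, rt_tol=0.1):
    """Greedily match detected features to inserted (ground-truth) peaks.

    truth, detected: lists of (mz, rt) tuples.
    mz_tol and rt_tol are illustrative tolerances, not recommended settings.
    """
    unmatched = list(detected)
    tp = 0
    for mz, rt in truth:
        for cand in unmatched:
            if abs(cand[0] - mz) <= mz_tol and abs(cand[1] - rt) <= rt_tol:
                unmatched.remove(cand)  # each detected feature is used once
                tp += 1
                break
    fp = len(unmatched)                  # detected features with no true peak
    fn = len(truth) - tp                 # inserted peaks that were missed
    sensitivity = tp / len(truth) if truth else 0.0
    precision = tp / len(detected) if detected else 0.0
    return {"tp": tp, "fp": fp, "fn": fn,
            "sensitivity": sensitivity, "precision": precision}


# Hypothetical inserted peaks and detector output.
truth = [(200.10, 1.0), (350.20, 2.5), (500.30, 4.0)]
detected = [(200.105, 1.02), (350.20, 2.9), (500.30, 4.0), (610.0, 5.0)]
result = score_detection(truth, detected)
print(result)
```

A real evaluation would likely use an optimal assignment rather than greedy matching when peaks are dense, since greedy matching can pair a feature with the wrong neighbor.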

Methodological and Conceptual Frameworks

The benchmarking of algorithms relies on well-defined computational and experimental workflows. The following diagrams, generated using Graphviz DOT language, illustrate the logical relationships and standard processes in the field.

Diagram 1: GNPS-Centric Benchmarking Workflow for Dereplication Algorithms

Diagram 2: Integrated Dereplication Strategy Combining DDA and DIA Data

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful benchmarking and dereplication studies require a combination of reliable chemical reagents, standardized samples, and specialized software.

Table 3: Key Research Reagent Solutions for GNPS Benchmarking Studies

| Category | Item / Solution | Function in Benchmarking | Example from Literature |

|---|---|---|---|

| Reference Standards | Authentic chemical standards | Provide ground truth for validating algorithm identifications (MSI Level 1 evidence). | Matrine, sophoridine used to validate Sophora annotations [17]. |

| Standardized Extracts | Well-characterized biological extracts | Serve as complex, real-world test samples with known components. | Sophora flavescens root extract used in dereplication workflow [17]. |

| Chromatography Reagents | LC-MS grade solvents & modifiers | Ensure reproducible chromatographic separation, critical for isomer resolution and retention time alignment. | Ammonium acetate/water and acetonitrile used in mobile phase [17]. |

| Data Processing Software | Open-source pipelines (e.g., MZmine, MS-DIAL) | Convert raw data into formats suitable for GNPS and perform essential pre-processing (feature detection, deconvolution). | MZmine used for DDA data; MS-DIAL for DIA deconvolution [17]. |

| Benchmarking Databases | Curated spectral libraries (e.g., GNPS itself) | Act as the reference against which search and annotation algorithms are evaluated. | GNPS libraries, PubChem, COCONUT used in large-scale searches [9]. |

| Validation Tools | Genomic analysis software (e.g., AntiSMASH) | Provide orthogonal, biological validation for putative novel natural product variants predicted by algorithms. | AntiSMASH used to link variants to biosynthetic pathways [9]. |

The systematic benchmarking of dereplication algorithms on GNPS datasets represents a cornerstone for progress in computational metabolomics and natural products discovery. As evidenced by the comparative data, no single algorithm excels in all metrics; rather, tools like VInSMoC for variant discovery, MassCube for high-fidelity data processing, and integrated DDA/DIA workflows for dereplication each address specific challenges [9] [18] [17]. The significance of GNPS lies in its role as a neutral, large-scale proving ground that allows these methodological trade-offs to be objectively quantified.

For researchers and drug development professionals, the outcome of such benchmarking is not merely academic. It directly informs the selection of efficient pipelines to prioritize novel chemical entities from vast biological datasets, thereby accelerating the discovery of new therapeutic leads [9] [17]. Moving forward, the community must adopt the standardized experimental protocols and performance metrics outlined here to ensure benchmarking studies are reproducible and comparable. The continued expansion and curation of GNPS datasets, coupled with rigorous algorithm evaluation, will be critical in transforming untargeted metabolomics from a predominantly analytical technique into a more predictive and reliable discovery science.

Key Challenges in Metabolite Identification that Dereplication Aims to Solve

Core Challenges in Metabolite Identification

The identification of metabolites from complex biological samples via mass spectrometry (MS) is a cornerstone of modern natural product discovery and drug development. This process, however, is fraught with significant challenges that impede efficiency and the rate of novel compound discovery.

A primary challenge is the high rediscovery rate of known compounds. Researchers invest substantial resources in isolating and characterizing molecules, only to find that they are already documented; effort is spent on "knowns" rather than on uncovering "unknowns" [6]. Dereplication directly addresses this by screening datasets against libraries of known compounds early in the pipeline.

The extreme chemical diversity of natural products presents another major hurdle. Metabolites span numerous classes—including peptidic natural products (PNPs), polyketides, terpenes, and alkaloids—each with unique and complex fragmentation patterns [11] [6]. Traditional spectral library searches fail when a compound's spectrum is absent from reference libraries. Furthermore, structural variations such as mutations, modifications (e.g., methylation, oxidation), and adducts generate families of related molecules, making precise identification difficult [11].

Finally, the sheer scale of modern MS datasets has created a computational bottleneck [6] [20]. Repositories such as the Global Natural Products Social (GNPS) molecular networking infrastructure contain hundreds of millions of spectra. Manual analysis at this scale is impossible, and existing tools have struggled with speed, accuracy, and the reliable statistical validation of identifications across this vast chemical space [11].

The following diagram illustrates this multifaceted challenge and the role of dereplication in the natural product discovery workflow.

Diagram: The dereplication workflow solves key bottlenecks in metabolite identification.

Algorithm Comparison: Capabilities and Performance

Dereplication algorithms have evolved to tackle the outlined challenges. The table below compares key algorithms, highlighting the progression from class-specific tools to more comprehensive solutions.

Table 1: Comparison of Dereplication Algorithms and Their Capabilities

| Algorithm | Primary Scope | Key Innovation | Handles Variants (Variable Dereplication) | Statistical Validation | Integration with GNPS |

|---|---|---|---|---|---|

| NRP-Dereplication [11] | Cyclic Non-Ribosomal Peptides (NRPs) | Early computational dereplication for cyclic peptides | Yes | Limited | Limited |

| iSNAP [11] | Cyclic & Branch-Cyclic Peptides | Expanded structural scope beyond NRP-Dereplication | No | Limited | Limited |

| DEREPLICATOR [11] | Peptidic Natural Products (PNPs: NRPs & RiPPs) | Spectral networks for variant discovery; Decoy DB for FDR | Yes | Yes (p-values, FDR) | Yes, high-throughput |

| DEREPLICATOR+ [6] | Broad metabolites (PNPs, Polyketides, Terpenes, Alkaloids, etc.) | Extended fragmentation model & graph theory for diverse classes | Yes | Yes (p-values, FDR) | Yes, high-throughput |

Performance Benchmarking on GNPS Datasets

Benchmarking on real GNPS data quantitatively demonstrates the evolution of these tools. DEREPLICATOR set a new standard by applying a rigorous statistical framework, using decoy databases to estimate false discovery rates (FDR), a method adapted from proteomics [11].

Table 2: Benchmarking Performance on GNPS Datasets

| Dataset (Description) | Algorithm | Key Benchmarking Result | Statistical Threshold |

|---|---|---|---|

| SpectraGNPS (All GNPS spectra) | DEREPLICATOR [11] | 8,622 PSMs*, 150 unique peptides identified | 0.2% PSM-FDR (p<10⁻¹⁰) |

| Spectra4 (4 low-res datasets) | DEREPLICATOR [11] | 374 PSMs, 37 unique PNPs identified; 0 decoy hits | Estimated 0% FDR (p<10⁻¹¹) |

| SpectraActiSeq (Actinomyces extracts) | DEREPLICATOR [6] | 73 unique compounds identified | 1% FDR |

| SpectraActiSeq (Actinomyces extracts) | DEREPLICATOR+ [6] | 488 unique compounds identified (6.7x more than DEREPLICATOR) | 1% FDR |

| SpectraActiSeq (Actinomyces extracts) | DEREPLICATOR+ [6] | 154 unique compounds identified (2.3x more than DEREPLICATOR) | 0% FDR (p<10⁻⁸) |

*PSM: Peptide-Spectrum Match

DEREPLICATOR+ demonstrated a dramatic improvement in coverage. At a stringent 0% FDR, it identified over twice as many unique compounds as DEREPLICATOR from the same Actinomyces dataset [6]. Critically, its expanded scope was confirmed by the identification of crucial non-peptidic compound classes—such as the polyketide chalcomycin and its variants—which were entirely missed by the PNP-focused DEREPLICATOR [6].

Experimental Protocols for Benchmarking

Robust benchmarking requires standardized methodologies. The following protocols are derived from the foundational studies of DEREPLICATOR and DEREPLICATOR+ [11] [6].

Protocol 1: Standard Dereplication and FDR Estimation

This protocol outlines the core steps for database matching and statistical validation used by both DEREPLICATOR and DEREPLICATOR+.

- Database Preparation: Compile a target database of known compound structures (e.g., AntiMarin). Generate a corresponding decoy database of the same size by shuffling or randomizing molecular structures within the target database to model false matches [11].

- Theoretical Spectrum Generation: For each compound in the target and decoy databases, generate an in silico theoretical tandem mass spectrum. DEREPLICATOR models fragmentation by disconnecting amide bonds and bridges in peptides [11]. DEREPLICATOR+ uses a more generalized fragmentation graph approach, breaking bonds between heavy atoms for diverse metabolite classes [6].

- Spectral Matching & Scoring: Search each experimental MS/MS spectrum from the test dataset (e.g., a GNPS subset) against all theoretical spectra. Compute a similarity score (e.g., cosine score) for each compound-spectrum match (CSM).

- Statistical Significance & FDR Calculation:

- Compute a p-value for each CSM using algorithms like MS-DPR to estimate the probability of achieving the observed score by chance [11].

- Apply a p-value threshold to filter CSMs.

- Calculate the False Discovery Rate (FDR) as the ratio of the number of accepted decoy database matches to the number of accepted target database matches at the chosen threshold [11].
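The FDR calculation in the last step can be sketched in a few lines. This is a minimal illustration of the decoy-to-target ratio described above, assuming each match has already been assigned a p-value and labeled as target or decoy; the function name `fdr_at_threshold` and the example matches are hypothetical.

```python
def fdr_at_threshold(matches, p_cutoff):
    """Estimate FDR as accepted decoy matches / accepted target matches.

    matches: list of (p_value, is_decoy) tuples.
    Returns (n_target, n_decoy, fdr).
    """
    accepted = [m for m in matches if m[0] <= p_cutoff]
    n_decoy = sum(1 for _, is_decoy in accepted if is_decoy)
    n_target = len(accepted) - n_decoy
    fdr = n_decoy / n_target if n_target else 0.0
    return n_target, n_decoy, fdr


# Hypothetical compound-spectrum matches: (p-value, matched a decoy?)
matches = [
    (1e-12, False), (1e-11, False), (1e-9, False),
    (1e-9, True), (1e-6, False), (1e-5, True),
]
loose = fdr_at_threshold(matches, 1e-8)    # admits one decoy hit
strict = fdr_at_threshold(matches, 1e-10)  # admits no decoy hits
print(loose, strict)
```

Tightening the p-value cutoff until no decoy matches remain corresponds to the "0% FDR" operating point reported in the benchmark tables, at the cost of fewer accepted target matches.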

Protocol 2: Variable Dereplication via Molecular Networking

This advanced protocol enables the discovery of structural variants of known compounds, a key feature of modern dereplication.

- Molecular Network Construction: Create a spectral network where nodes are MS/MS spectra. Connect two nodes with an edge if their spectral similarity (cosine score) exceeds a threshold (e.g., 0.7), implying structural relatedness [11] [20].

- Annotation Propagation: Identify nodes that have been confidently annotated via Protocol 1 (standard dereplication at a stringent FDR threshold).

- Variant Discovery: Propagate annotations through the network. Spectra connected to an annotated node are hypothesized to be structural variants (e.g., with a methylation, oxidation, or amino acid substitution) of the known compound [11]. This allows for the identification of compound families.
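Annotation propagation can be illustrated with a one-hop pass over the network edges. The sketch below is an assumption-laden simplification: it treats the precursor m/z difference as a mass difference (valid only for singly charged ions), recognizes just two common shifts (CH2, 14.01565 Da, and O, 15.99491 Da), and uses hypothetical names (`propagate`, `KNOWN_SHIFTS`, `compound_X`). It is not the propagation logic of DEREPLICATOR or GNPS.

```python
# Monoisotopic mass differences for two common modifications.
KNOWN_SHIFTS = {"CH2": 14.01565, "O": 15.99491}


def propagate(edges, annotations, precursor_mz, tol=0.01):
    """One-hop propagation from annotated nodes to unannotated neighbors.

    edges: list of (node_a, node_b) pairs from the molecular network.
    annotations: dict mapping node -> compound name (from Protocol 1).
    precursor_mz: dict mapping node -> precursor m/z (singly charged assumed).
    Returns dict mapping node -> (parent compound, mass delta, shift label).
    """
    hypotheses = {}
    for a, b in edges:
        for known, neighbor in ((a, b), (b, a)):
            if known in annotations and neighbor not in annotations:
                delta = precursor_mz[neighbor] - precursor_mz[known]
                label = next(
                    (name for name, shift in KNOWN_SHIFTS.items()
                     if abs(abs(delta) - shift) <= tol),
                    "unexplained shift",
                )
                hypotheses[neighbor] = (annotations[known], round(delta, 4), label)
    return hypotheses


# Hypothetical three-node network with one annotated spectrum.
edges = [("s1", "s2"), ("s2", "s3")]
annotations = {"s1": "compound_X"}
precursor_mz = {"s1": 500.2500, "s2": 514.2657, "s3": 530.2606}
hypotheses = propagate(edges, annotations, precursor_mz)
print(hypotheses)
```

Here `s2` is flagged as a putative CH2 variant of `compound_X`, while `s3` is left unannotated because it is two hops away. Restricting propagation to direct neighbors is a conservative choice; each additional hop weakens the structural inference.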

The following diagram integrates these protocols into a complete benchmarking methodology for evaluating dereplication algorithms.

Diagram: Integrated experimental protocol for benchmarking dereplication algorithms.

Successful dereplication relies on a suite of computational and data resources. The table below details key components used in the featured studies.

Table 3: Essential Resources for Dereplication Research

| Resource Name | Type | Primary Function in Dereplication | Key Feature / Relevance |

|---|---|---|---|

| Global Natural Products Social (GNPS) [11] [6] [20] | Mass Spectrometry Data Repository & Ecosystem | Provides the massive, real-world spectral datasets required for benchmarking and discovery. | Public repository of hundreds of millions of MS/MS spectra; includes analysis tools like molecular networking [20]. |

| AntiMarin Database [11] [6] | Chemical Structure Database | Serves as a core target database of known microbial natural products for spectral matching. | Contains approximately 60,000 compounds, extensively used for benchmarking dereplication algorithms [11]. |

| Dictionary of Natural Products (DNP) [6] | Chemical Structure Database | Provides a broader collection of natural product structures for expanded dereplication scope. | Used by DEREPLICATOR+ to extend identification beyond peptides to diverse chemical classes [6]. |

| Molecular Networking [11] [20] | Computational Analysis Method | Enables variable dereplication by grouping related spectra to discover structural variants. | Core feature of GNPS; allows annotation propagation from known to unknown spectra in a network [11] [20]. |

| Decoy Database [11] | Computational Control | Enables estimation of False Discovery Rates (FDR), critical for validating algorithm accuracy. | Generated by randomizing target databases; matches to decoys estimate the rate of false positives [11]. |

| ClassyFire [6] | Chemical Classification Tool | Automatically classifies identified compounds into chemical classes (e.g., peptide, polyketide). | Used to analyze and report the diversity of compounds identified by DEREPLICATOR+ [6]. |

Algorithmic Approaches and Practical Workflows for Dereplication on GNPS

The exponential growth of public mass spectrometry data, primarily through the Global Natural Products Social (GNPS) molecular networking infrastructure, has transformed natural product discovery [21] [11]. A central challenge in this field is dereplication—the rapid identification of known compounds within complex mixtures to prioritize novel chemical entities for isolation and characterization [21] [11]. As datasets scale to hundreds of millions of spectra, traditional spectral library matching becomes insufficient due to limited library coverage and an inability to identify structural variants of known molecules [9].

This analysis compares three advanced dereplication algorithms (DEREPLICATOR+, VInSMoC, and MS2Query) in the context of benchmarking studies on GNPS datasets. Together, these tools represent a shift from simple spectral matching toward in-silico fragmentation, database search against extensive structural databases, and learned analogue search, enabling the identification of known compounds and their unreported variants [9] [21] [22]. Their performance directly impacts the efficiency of drug discovery pipelines by reducing redundant rediscovery and highlighting novel chemical space.

The following table summarizes the core characteristics and benchmarked performance of the three dereplication algorithms based on large-scale GNPS dataset analyses.

Table: Benchmark Comparison of Dereplication Algorithms on GNPS Datasets

| Algorithm | Core Innovation | Benchmark Dataset (GNPS) | Key Reported Performance | Primary Compound Classes |

|---|---|---|---|---|

| DEREPLICATOR+ [21] [22] | Generalized fragmentation graph (N–C, O–C, C–C bonds; multi-stage fragmentation). | 248.1 million spectra (SpectraGNPS); 178,635 to 11.9 million spectra in the Actinomyces and cyanobacteria datasets [21]. | Identified 5x more molecules than prior tools; 1.2% of spectra in Actinomyces set matched at 1% FDR [21]. | Peptides, polyketides, terpenes, benzenoids, alkaloids, flavonoids [21] [22]. |

| VInSMoC [9] | Variable search for molecular variants with statistical significance estimation. | 483 million spectra searched against 87 million molecules from PubChem/COCONUT [9]. | Revealed 43,000 known molecules and 85,000 previously unreported variants [9]. | Broad small molecules, demonstrated on promothiocin B, depsidomycin variants [9]. |

| MS2Query [9] | Analogue search using a deep learning spectral similarity measure (MS2DeepScore). | Not specified in the cited source [9]. | Described as a reliable and scalable MS2-based analogue search tool [9]. | Broad small molecules (analogue search) [9]. |

Detailed Experimental Protocols for Benchmarking

The benchmarking of dereplication tools requires standardized protocols for data processing, database search, and statistical validation. The following methodologies are synthesized from the key publications.

- Dataset Curation: DEREPLICATOR+ benchmarking used specific subsets of GNPS, including SpectraActiSeq (Actinomyces strains), SpectraCyan (cyanobacteria), and the comprehensive SpectraGNPS (248.1 million spectra).

- Database Preparation: Searches were performed against structural databases like AntiMarin (≈60k compounds) and the Dictionary of Natural Products (≈255k compounds), with duplicates removed.

- Fragmentation Graph Construction: The algorithm constructs metabolite graphs from chemical structures and generates theoretical fragmentation graphs considering multiple bond types (N–C, O–C, C–C) and multi-stage fragmentation.

- Decoy Database & Scoring: Decoy fragmentation graphs are constructed for false discovery rate (FDR) estimation. Metabolite-spectrum matches (MSMs) are scored based on shared peaks.

- Statistical Validation: The MS-DPR algorithm computes p-values for individual MSMs. FDR is controlled at thresholds (e.g., 0% or 1%) by using the decoy database to estimate the false positive rate [21] [11].

- Result Expansion via Molecular Networking: Identifications are propagated through spectral networks to discover structural variants of the core identified metabolites.

- Large-Scale Search: VInSMoC searched 483 million mass spectra from public GNPS repositories [9].

- Extensive Structural Database: The search space consisted of 87 million molecular structures aggregated from PubChem and the COCONUT natural products database.

- Variable Identification Mode: Unlike exact matching, VInSMoC performs a modification-tolerant "variable mode" search to identify structural variants of database molecules.

- Statistical Significance Estimation: A key feature is the algorithm's built-in estimation of the statistical significance of matches between spectra and molecular structures, which helps filter false identifications.

- Validation via Biosynthetic Pathways: Putative identifications of variant molecules (e.g., promothiocin B, depsidomycin) were cross-referenced with microbial biosynthesis pathways in the source organisms (Streptomyces bellus, Streptomyces sp. F-2747) for biological plausibility.

General GNPS Workflow Integration

All tools are integrated into the GNPS platform, requiring standardized data pre-processing [23] [22] [5]:

- Data Format Conversion: Raw instrument data is converted to open formats (mzML, mzXML, .MGF).

- Parameter Configuration: Users set mass tolerances (precursor and fragment ion), choose databases, and set statistical thresholds.

- Job Submission & Analysis: Jobs are submitted via the GNPS web interface, with results available for visualization, including spectral matching views and network propagation.
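To make the mass tolerance parameters concrete, the sketch below shows how an absolute tolerance in Da decides whether an observed peak matches a theoretical one, and how the same tolerance translates to ppm at a given m/z. The helper names and peak lists are hypothetical; the 0.02 Da and 0.5 Da values mirror the high- and low-resolution settings listed in the toolkit table later in this section.

```python
def within_tolerance(observed_mz, theoretical_mz, tol_da):
    """True if two m/z values agree within an absolute tolerance in Da."""
    return abs(observed_mz - theoretical_mz) <= tol_da


def da_to_ppm(tol_da, mz):
    """Express an absolute tolerance (Da) as a relative tolerance (ppm) at mz."""
    return tol_da / mz * 1e6


def count_matched_fragments(observed, theoretical, tol_da):
    """Count theoretical fragments with at least one observed peak in tolerance."""
    return sum(
        any(within_tolerance(o, t, tol_da) for o in observed)
        for t in theoretical
    )


# Hypothetical fragment lists.
observed = [100.005, 250.1, 399.7]
theoretical = [100.0, 250.0, 400.0]
high_res = count_matched_fragments(observed, theoretical, 0.02)
low_res = count_matched_fragments(observed, theoretical, 0.5)
ppm_at_500 = da_to_ppm(0.02, 500.0)
print(high_res, low_res, ppm_at_500)
```

The wider low-resolution window accepts all three fragments while the tighter window accepts one, which illustrates why tolerance settings should reflect the instrument that acquired the data: too wide inflates chance matches, too narrow discards true ones.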

Logical Framework and Benchmarking Relationships

The diagram below illustrates the logical relationship between the GNPS data ecosystem, the core algorithmic functions of the three dereplication tools, and the benchmarking process that evaluates their performance.

The Scientist's Toolkit: Key Reagents and Parameters

Successful dereplication requires careful experimental and computational setup. The following toolkit details essential components derived from benchmark studies and platform documentation.

Table: Essential Research Toolkit for Dereplication Experiments

| Tool / Parameter | Typical Setting or Example | Function in Dereplication |

|---|---|---|

| GNPS Platform [23] [5] | gnps.ucsd.edu | Central repository and workflow environment for data analysis, algorithm access, and molecular networking. |

| Structural Databases | PubChem, COCONUT, AntiMarin, Dictionary of Natural Products [9] [21] | Reference libraries of known chemical structures used for in-silico fragmentation and matching. |

| Data Pre-processing Tools | MSConvert, MZmine2 [24] | Converts raw instrument data to open formats (mzML, mzXML) and performs feature detection for FBMN. |

| Precursor Mass Tolerance [23] [22] | ±0.02 Da (high-res), ±0.5 Da (low-res) | Defines the window for matching the parent ion mass between experimental and theoretical spectra. |

| Fragment Ion Mass Tolerance [23] [22] | ±0.02 Da (high-res), ±0.5 Da (low-res) | Defines the window for matching fragment ion masses. Critical for scoring spectrum matches. |

| False Discovery Rate (FDR) Threshold [21] [11] | 1% or 0% | Statistical cutoff, often estimated using decoy databases, to filter confident identifications. |

| LC-MS/MS Acquisition Parameters [25] | Optimized collision energy, precursors/cycle | Parameters like collision energy and number of precursors per cycle significantly affect spectral quality and network topology, impacting downstream dereplication success. |

The benchmarking of DEREPLICATOR+, VInSMoC, and MS2Query underscores a significant evolution in dereplication capacity, moving from simple library lookups to high-throughput, statistically rigorous identification of molecules and their variants directly from massive GNPS spectral datasets [9] [21].

Each algorithm offers a distinct strategic advantage: DEREPLICATOR+ provides broad coverage across diverse natural product classes through its generalized fragmentation model [21] [22]; VInSMoC scales to hundreds of millions of spectra and focuses on discovering statistically validated structural variants [9]; MS2Query contributes a deep learning-based approach for analogue searching [9]. For researchers, the choice depends on the primary need: class breadth (DEREPLICATOR+), variant discovery at scale (VInSMoC), or analogue similarity (MS2Query).

Future development will likely involve the integration of these complementary approaches—combining robust fragmentation graphs with deep learning similarity measures and rigorous statistical validation—into unified workflows. Furthermore, tighter integration with genomic data for biosynthetic pathway validation, as previewed in VInSMoC's study [9], will enhance the biological relevance of identifications. As public spectral libraries grow, the continued benchmarking of these tools on standardized, challenging GNPS datasets will be essential for driving the next generation of high-throughput natural product and drug discovery.

The Global Natural Products Social Molecular Networking (GNPS) platform is a community-driven, web-based mass spectrometry ecosystem designed for organizing, sharing, and analyzing tandem mass spectrometry (MS/MS) data [26]. Its core function is to aid in the identification and discovery of molecules, particularly natural products and metabolites, throughout the data life cycle [26]. For researchers engaged in benchmarking dereplication algorithms—the process of efficiently identifying known compounds within complex mixtures to prioritize novel discoveries—GNPS provides an indispensable real-world testing environment. The platform hosts a vast, continuously growing repository of public MS/MS spectra against which new algorithms can be validated and compared [9] [27].

This guide details the step-by-step workflows within GNPS, with a specific focus on providing an objective comparison of its native tools against other emerging algorithms and informatic strategies. The analysis is framed within a thesis on benchmarking, evaluating performance based on experimental data related to identification accuracy, computational efficiency, and utility in drug development pipelines [28] [29].

Foundational Concepts: Molecular Networking and Dereplication

At the heart of GNPS analysis is Molecular Networking (MN), a visualization strategy that groups MS/MS spectra based on spectral similarity, implying structural relatedness [24]. Spectra (represented as nodes) are connected by edges when their cosine similarity score exceeds a defined threshold. This organizes complex datasets into visual "molecular families," dramatically streamlining the discovery process [26] [24].
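The edge rule can be illustrated with a plain binned cosine score. This sketch is a simplification and not the scoring used by GNPS, which is more elaborate (for example, it also accounts for fragment peaks shifted by the precursor mass difference). The bin width, the 0.7 threshold, and the spectra are illustrative assumptions.

```python
import math
from collections import defaultdict
from itertools import combinations


def binned(spectrum, bin_width=0.02):
    """Sum peak intensities into fixed-width m/z bins."""
    vec = defaultdict(float)
    for mz, intensity in spectrum:
        vec[round(mz / bin_width)] += intensity
    return vec


def cosine(spec_a, spec_b, bin_width=0.02):
    """Cosine similarity between two binned spectra (0 = no shared peaks)."""
    a, b = binned(spec_a, bin_width), binned(spec_b, bin_width)
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = (math.sqrt(sum(v * v for v in a.values()))
            * math.sqrt(sum(v * v for v in b.values())))
    return dot / norm if norm else 0.0


def build_edges(spectra, threshold=0.7):
    """Connect every pair of spectra whose cosine score exceeds the threshold."""
    return [
        (i, j)
        for (i, a), (j, b) in combinations(spectra.items(), 2)
        if cosine(a, b) > threshold
    ]


# Hypothetical spectra as lists of (m/z, intensity) peaks.
spectra = {
    "s1": [(100.0, 1.0), (200.0, 2.0)],
    "s2": [(100.0, 1.0), (200.0, 1.8)],
    "s3": [(150.0, 1.0)],
}
network_edges = build_edges(spectra)
print(network_edges)
```

In this toy example `s1` and `s2` share both peaks and form one molecular family, while `s3` remains a singleton node.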

Dereplication within this network is performed via library search, where experimental spectra are matched against reference spectral libraries. GNPS maintains extensive, curated public libraries for this purpose [26]. The benchmarking of dereplication algorithms centers on their ability to accurately and sensitively match spectra to known structures, and even more critically, to identify analogs and variants of known molecules—a key step in novel discovery [9].

Comprehensive GNPS Analysis Workflow

The end-to-end GNPS workflow transforms raw mass spectrometry data into biological insights through a series of standardized yet configurable steps.

Data Preparation and Upload

- File Conversion: Raw vendor-specific data files must be converted to open formats (e.g., .mzXML, .mzML, .mgf) using tools like MSConvert [24] [30].

- Account Creation & Upload: Users register for a free GNPS account. Data is uploaded via an FTP client (like FileZilla) to the GNPS/MassIVE repository [30].
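Of the open formats mentioned above, MGF is plain text and simple enough to inspect directly, which helps when checking that a conversion produced sensible spectra before upload. The sketch below is a minimal reader for illustration only: it assumes well-formed `BEGIN IONS` / `END IONS` blocks with `KEY=value` headers and whitespace-separated peak lines, and the function name `parse_mgf` and the example spectrum are hypothetical. Established libraries would be preferable for production use.

```python
def parse_mgf(text):
    """Parse MGF-formatted text into a list of spectra.

    Each spectrum is a dict with 'params' (header key/value strings)
    and 'peaks' (list of (mz, intensity) floats).
    """
    spectra, current = [], None
    for line in text.splitlines():
        line = line.strip()
        if line == "BEGIN IONS":
            current = {"params": {}, "peaks": []}
        elif line == "END IONS":
            if current is not None:
                spectra.append(current)
            current = None
        elif current is not None and line:
            if "=" in line:
                key, value = line.split("=", 1)   # header line, e.g. PEPMASS=...
                current["params"][key] = value
            else:
                mz, intensity = line.split()[:2]  # peak line: m/z intensity
                current["peaks"].append((float(mz), float(intensity)))
    return spectra


# Hypothetical single-spectrum MGF content.
example_mgf = """BEGIN IONS
TITLE=example_scan_1
PEPMASS=500.25
CHARGE=1+
100.0 10.0
250.5 99.0
END IONS
"""
parsed = parse_mgf(example_mgf)
print(parsed)
```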

Core Analytical Workflows

GNPS offers several interconnected workflows. The Feature-Based Molecular Networking (FBMN) workflow, which integrates chromatographic feature detection, is now the most widely used for its improved quantification and reduced redundancy [29] [24].

Diagram Title: The Complete GNPS Feature-Based Molecular Networking (FBMN) Workflow

Advanced and Specialized Workflows

Beyond classical networking, GNPS2 (an updated version of the platform) offers specialized workflows crucial for applied research:

- Drug Metabolism Studies: A protocol for identifying in vitro and in vivo drug metabolites using molecular networking and tools like ChemWalker [29].

- Reverse Metabolomics: A discovery framework starting with an MS/MS spectrum of interest. Researchers use the Mass Spectrometry Search Tool (MASST) to find all public datasets containing that spectrum, then use the ReDU interface to analyze associated biological metadata (e.g., disease state, sample type) [27]. This reverses the traditional hypothesis-driven approach.

Benchmarking Dereplication Tools: GNPS Versus Alternatives

A core task in modern metabolomics is evaluating the performance of different informatics tools. The table below compares the native GNPS library search with other state-of-the-art algorithms, based on published studies [28] [9].

Table 1: Benchmarking Dereplication and Spectral Matching Tools

| Tool / Algorithm | Type / Platform | Key Strength | Reported Performance Metric | Primary Use Case |

|---|---|---|---|---|

| GNPS Library Search | Library-based, Web | Community-curated libraries, integrated networking [26]. | Standard for exact matching; analog search limited to pre-defined masses. | Initial dereplication within the GNPS ecosystem. |

| VInSMoC [9] | Database search algorithm | Scalable search of 483M spectra; identifies molecular variants (modified forms). | Identified 85,000 previously unreported variants from PubChem/COCONUT. | Discovering analogs and modified forms of known molecules. |

| MS2Query [9] | Analog search tool | Machine learning for reliable analog search. | Enables finding structurally similar compounds not in libraries. | Extended dereplication beyond exact matches. |

| MS2DeepScore [9] | Similarity measure | Deep learning-based spectral similarity score. | Superior to cosine score for structural similarity prediction. | Improving edge accuracy in molecular networks. |

| DIA-NN [28] | DIA Data Analysis Software | High quantitative precision (CV: 16.5-18.4%). | Quantified 11,348 ± 730 peptides in single-cell benchmark. | Quantitative proteomics/metabolomics data analysis. |

| Spectronaut (directDIA) [28] | DIA Data Analysis Software | High proteome coverage (3066 ± 68 proteins). | Highest identification coverage in single-cell benchmark. | Maximum identification in library-free DIA analysis. |

Experimental Protocol for Benchmarking

To objectively compare tools, a standardized experimental and computational protocol is essential. The following methodology is adapted from contemporary benchmarking studies [28] [29]:

- Sample Preparation: Use a defined mixture with ground-truth composition. For drug metabolism, incubate a parent drug (e.g., Sildenafil) with liver microsomes [29]. For complex mixtures, use simulated samples combining digests from different organisms (e.g., human, yeast, E. coli) in known ratios [28].

- LC-MS/MS Acquisition: Analyze samples using high-resolution tandem MS, preferably in data-dependent acquisition (DDA) mode for library building or data-independent acquisition (DIA) mode for quantitative benchmarks.

- Data Processing: Process the raw data through parallel pipelines:

- GNPS Workflow: Convert data, perform FBMN and classical library search.

- Alternative Tools: Export peak lists or feature tables for analysis with tools like VInSMoC [9] or MS2Query.

- Performance Evaluation: Compare outputs using metrics such as:

- Identification Metrics: Number of known compounds correctly dereplicated.

- Variant Discovery: Number of plausible novel analogs or metabolites found.

- Quantitative Accuracy: For spiked samples, calculate the coefficient of variation (CV) and accuracy of fold-change measurements [28].

- Computational Efficiency: Processing time and resource use.
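The quantitative accuracy metrics can be computed with the standard library. The sketch below assumes replicate intensity measurements for one analyte and a known spiked ratio between two conditions; the function names and the numbers are illustrative and not drawn from the cited benchmark.

```python
from statistics import mean, stdev


def coefficient_of_variation(values):
    """CV in percent: sample standard deviation relative to the mean."""
    return stdev(values) / mean(values) * 100.0


def fold_change_error(measured_a, measured_b, expected_ratio):
    """Observed A/B ratio of means and its percent deviation from the expected ratio."""
    observed = mean(measured_a) / mean(measured_b)
    error_pct = (observed - expected_ratio) / expected_ratio * 100.0
    return observed, error_pct


# Hypothetical replicate intensities.
replicates = [90.0, 100.0, 110.0]
cv = coefficient_of_variation(replicates)

# Hypothetical spiked samples with an expected 2:1 ratio.
condition_a = [210.0, 220.0, 230.0]
condition_b = [100.0, 100.0, 100.0]
observed_ratio, error_pct = fold_change_error(condition_a, condition_b, 2.0)
print(cv, observed_ratio, error_pct)
```

A lower CV indicates better precision across replicates, while the fold-change error reflects accuracy against the known spike ratio; a tool can score well on one and poorly on the other, so both are worth reporting.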

Diagram Title: Framework for Benchmarking Dereplication and Analysis Algorithms

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for GNPS-Based Studies

| Item / Reagent | Function in Workflow | Application Notes |

|---|---|---|

| Methanol/Chloroform Solvent System | Biphasic liquid-liquid extraction of metabolites from biological samples [3]. | Classical Folch/Bligh & Dyer method. Ratios (e.g., 2:1 MeOH:CHCl3) can be optimized for polar vs. non-polar metabolites. |

| Stable Isotope-Labeled Internal Standards | Enables accurate quantification and corrects for variability during sample prep and analysis [3]. | Added at known concentration prior to extraction. Should mimic target metabolite classes. |

| Liver Microsomes (e.g., Human, Mouse) | In vitro metabolic system for drug metabolism studies [29]. | Used with NADPH cofactor to generate Phase I metabolites for identification workflows. |

| Quality Control (QC) Pooled Sample | Monitors instrument performance and data reproducibility throughout an LC-MS sequence [3]. | Created by pooling small aliquots of all experimental samples; injected at regular intervals. |

| Reference Standard Compounds | Provides authentic MS/MS spectra for library building and validation of identifications [29]. | Essential for confirming the structure of putative metabolites or novel compounds. |

Performance Comparison in Applied Research: Drug Development

GNPS workflows show distinct advantages and limitations in applied settings like drug development. A direct comparison can be made between using GNPS and using a streamlined commercial software suite for a specific task like metabolite identification.

Table 3: Comparison of GNPS and Alternative Workflows for Drug Metabolite ID

| Aspect | GNPS2 Molecular Networking Workflow [29] | Typical Commercial Software Suite |
|---|---|---|
| Core Methodology | Molecular networking based on MS/MS spectral similarity; analog search via MASST/ReDU [29] [27]. | Peak finding, isotope pattern matching, and fragment ion prediction from a parent drug structure. |
| Primary Output | Visual network of related spectra, highlighting clusters of parent drug and potential metabolites. | List of predicted metabolites with chromatographic peaks, requiring manual MS/MS verification. |
| Key Strength | Unbiased discovery of unexpected metabolites and analogs without prior knowledge [29]. Integrated public data search (reverse metabolomics) for biological context [27]. | Fast, automated processing with a structured workflow tailored to regulatory needs. |
| Key Limitation | Requires understanding of network interpretation; less automated for routine high-throughput analysis. | Relies heavily on prediction algorithms; may miss novel metabolic pathways not in its rulesets. |
| Best Suited For | Early discovery, investigating complex metabolism, and discovering entirely novel metabolite scaffolds. | Later-stage development where metabolism is more characterized and high-throughput sample analysis is needed. |
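The "MS/MS spectral similarity" that underlies molecular networking can be illustrated with a plain cosine score between two binned spectra. This is a simplified sketch: GNPS networking uses a modified cosine that also accounts for precursor mass shifts, which is not reproduced here, and the 0.02 Da bin width is an assumed value chosen for illustration.

```python
import math
from collections import defaultdict


def binned(peaks, bin_width=0.02):
    """Sum peak intensities into fixed-width m/z bins."""
    vec = defaultdict(float)
    for mz, intensity in peaks:
        vec[int(mz / bin_width)] += intensity
    return vec


def cosine(peaks_a, peaks_b, bin_width=0.02):
    """Plain cosine similarity between two spectra given as (m/z, intensity) pairs."""
    a, b = binned(peaks_a, bin_width), binned(peaks_b, bin_width)
    dot = sum(a[k] * b[k] for k in a.keys() & b.keys())
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


# Toy spectra: a parent drug and a spectrum sharing one of its two fragments.
parent = [(100.0, 10.0), (150.0, 5.0)]
related = [(100.0, 8.0), (166.0, 5.0)]
unrelated = [(300.0, 1.0)]
score_related = cosine(parent, related)
score_unrelated = cosine(parent, unrelated)
```

Spectra whose score exceeds a chosen cutoff would be connected by an edge in the network; the cutoff itself is a workflow parameter and is not fixed by this sketch.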

Integrated Dereplication Benchmarking Strategy

For a comprehensive thesis, benchmarking should evaluate the integrated performance of a workflow, not just a single algorithm. The most effective strategy for novel natural product or metabolite discovery often involves a sequential, hybrid approach:

Diagram Title: Hybrid Strategy for Sequential Dereplication and Novelty Prioritization

This strategy first removes knowns via GNPS, then uses advanced algorithms (VInSMoC) to find variants, clusters remaining unknowns via networking, and finally uses reverse metabolomics to prioritize spectra with interesting biological associations [9] [27]. Benchmarking this pipeline's overall efficiency and hit rate against standalone tools provides critical insight for the field.
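The sequential logic can be sketched as a chain of filters in which each stage claims the spectra it can explain and passes the rest on. The sketch below is illustrative only: the field names (`library_score`, `variant_score`) and the cutoffs are hypothetical placeholders, not outputs or defaults of GNPS or VInSMoC.

```python
from typing import Callable, Dict, List, Tuple

Spectrum = Dict[str, float]
Stage = Tuple[str, Callable[[Spectrum], bool]]


def run_sequential(spectra: List[Spectrum], stages: List[Stage]) -> Dict[str, List[Spectrum]]:
    """Assign each spectrum to the first stage that accepts it."""
    remaining = list(spectra)
    assigned: Dict[str, List[Spectrum]] = {}
    for name, accepts in stages:
        assigned[name] = [s for s in remaining if accepts(s)]
        remaining = [s for s in remaining if not accepts(s)]
    # Spectra that no stage explains go on to networking and prioritization.
    assigned["unexplained"] = remaining
    return assigned


# Hypothetical scores and cutoffs, for illustration only.
stages: List[Stage] = [
    ("exact_library_match", lambda s: s["library_score"] >= 0.7),
    ("variant_match", lambda s: s["variant_score"] >= 0.6),
]
spectra = [
    {"id": 1, "library_score": 0.9, "variant_score": 0.9},
    {"id": 2, "library_score": 0.2, "variant_score": 0.8},
    {"id": 3, "library_score": 0.1, "variant_score": 0.1},
]
result = run_sequential(spectra, stages)
```

Counting how many spectra each stage claims, and how many remain unexplained, gives a simple per-stage yield that a pipeline-level benchmark could compare against a standalone tool.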

GNPS provides a powerful, free, and community-accessible platform for MS/MS data analysis, with molecular networking and library search forming its core, benchmarkable dereplication functions. Experimental benchmarking studies reveal that while GNPS's native tools excel at exact matching and visualization, emerging algorithms like VInSMoC offer superior capabilities for identifying molecular variants at scale [9].

The future of dereplication lies in integrating these specialized tools into cohesive pipelines. The most robust benchmarking for a drug development thesis will not ask which single tool is best, but rather what sequence of tools—from fast exact matching to sensitive analog search and biological contextualization—maximizes the efficiency of novel compound discovery. As public data repositories grow, reverse metabolomics and tools like MASST will become increasingly critical for translating spectral data into biological and clinical insights [27].

The discovery of novel, biologically active natural products from microbial sources is a cornerstone of pharmaceutical development, particularly in the search for new antibiotics and anticancer agents. However, this process is significantly hindered by the frequent re-discovery of known compounds, which wastes valuable time and resources. Dereplication—the rapid identification of known molecules within complex extracts—is therefore a critical first step in the discovery pipeline [31].

Modern dereplication strategies are built upon mass spectrometry (MS) and genomic data, integrated through platforms like the Global Natural Products Social Molecular Networking (GNPS) infrastructure [32]. The challenge lies in developing and selecting algorithms that can accurately and efficiently sift through billions of mass spectra to annotate known compounds and highlight novelty. This guide provides a comparative benchmark of leading dereplication algorithms, specifically evaluating their performance on two prolific microbial groups: Actinobacteria (notably Actinomyces strains) and Cyanobacteria. These groups are renowned for their biosynthetic potential and are extensively studied within public GNPS datasets [33] [6].

The performance of an algorithm is not absolute but depends on the chemical class of the analyte (e.g., peptides, polyketides), the spectral quality, and the composition of the reference database. This comparison, framed within broader research on benchmarking methodologies [34] [35], aims to provide researchers with actionable insights for selecting the optimal tool for their specific GNPS dataset.

This section introduces the core algorithms benchmarked in this guide, focusing on their evolution and key design philosophies for handling microbial natural product data.

DEREPLICATOR was a seminal tool designed specifically for peptidic natural products (PNPs), including non-ribosomal peptides (NRPs) and ribosomally synthesized and post-translationally modified peptides (RiPPs). It operates by constructing theoretical spectra of peptides through in silico fragmentation of amide bonds [23] [6]. Its successor, DEREPLICATOR+, represents a major expansion. It extends the in silico fragmentation approach to a vast array of natural product classes, including polyketides, terpenes, benzenoids, and alkaloids, by utilizing a more general molecular graph fragmentation model [6].
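To make "in silico fragmentation of amide bonds" concrete, the following minimal sketch computes singly charged b- and y-ion m/z values for a short linear peptide. It is a deliberate simplification: DEREPLICATOR also handles cyclic and branched peptides and non-standard residues, none of which are modeled here, and the function name `b_y_ions` is introduced only for this example.

```python
# Monoisotopic residue masses (Da) for a few standard amino acids.
RESIDUE_MASS = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203,
    "P": 97.05276, "V": 99.06841, "L": 113.08406,
}
PROTON = 1.007276
WATER = 18.010565


def b_y_ions(sequence):
    """Return singly charged b- and y-ion m/z values for a linear peptide."""
    masses = [RESIDUE_MASS[aa] for aa in sequence]
    b_ions, y_ions = [], []
    for i in range(1, len(masses)):  # one cut per amide bond
        # N-terminal fragment keeps the charge: b ion.
        b_ions.append(sum(masses[:i]) + PROTON)
        # C-terminal fragment keeps the charge and the terminal water: y ion.
        y_ions.append(sum(masses[i:]) + WATER + PROTON)
    return b_ions, y_ions


# y_ions is ordered by cut position, so its last entry is the y1 ion.
b_ions, y_ions = b_y_ions("GAVL")
```

The resulting list of theoretical m/z values is what gets compared against the peaks of an experimental spectrum during scoring.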

NPLinker is not a dereplication algorithm per se but a metabologenomics integration platform. It addresses a related but distinct bottleneck: linking mass spectral features from metabolomics data to the Biosynthetic Gene Clusters (BGCs) identified in genomics data. It employs various scoring methods (e.g., its novel "Rosetta" metric) to predict which BGC likely produced which compound, thereby prioritizing strains based on combined genomic and chemical novelty [32].
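The general idea of scoring links between spectral features and BGCs can be illustrated with a strain co-occurrence score. The sketch below uses a plain Jaccard index as a stand-in; it is not NPLinker's actual scoring function, and the strain, family, and cluster identifiers are made up for the example.

```python
def jaccard(a, b):
    """Jaccard index between two collections of strain identifiers."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 0.0


def rank_links(family_strains, gcf_strains):
    """Score every (spectral family, gene cluster family) pair, best first."""
    links = [
        (fam, gcf, jaccard(fam_set, gcf_set))
        for fam, fam_set in family_strains.items()
        for gcf, gcf_set in gcf_strains.items()
    ]
    return sorted(links, key=lambda link: link[2], reverse=True)


# Hypothetical presence data: which strains show each family or cluster.
family_strains = {"family_1": {"s1", "s2", "s3"}, "family_2": {"s4"}}
gcf_strains = {"gcf_A": {"s1", "s2", "s3", "s5"}, "gcf_B": {"s4", "s5"}}
ranked = rank_links(family_strains, gcf_strains)
```

A pair that co-occurs across many strains ranks high; a real metabologenomics score would also need to account for chance co-occurrence and unequal sampling.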

Table 1: Core Algorithm Characteristics and Evolution

| Algorithm | Primary Purpose | Core Methodology | Chemical Class Coverage | Key Evolution |
|---|---|---|---|---|
| DEREPLICATOR | Dereplication of known compounds | In silico fragmentation of amide bonds in peptides | Peptidic Natural Products (NRPs, RiPPs) | First dedicated tool for PNPs on GNPS [6]. |
| DEREPLICATOR+ | Dereplication of known compounds | Generalized molecular graph fragmentation | Extended coverage: Peptides, Polyketides, Terpenes, Alkaloids, etc. [6] | Expanded beyond peptides; increased sensitivity for variant detection. |
| VarQuest (Mode of DEREPLICATOR) | Discovery of structural variants | Modification-tolerant database search | Peptidic Natural Products [23] | Enables "blind" search for analogs of known PNPs. |
| NPLinker | Metabologenomics linking | Correlative scoring between MS features & BGCs | Agnostic to compound class [32] | Integrates genomics & metabolomics to prioritize novel BGCs. |

The benchmarking workflow for evaluating these tools involves a structured process from raw data to performance metrics, as visualized in the following diagram.

Diagram 1: Workflow for Benchmarking Dereplication Algorithms. The process begins with specific GNPS datasets, utilizes reference databases and genomic data, and concludes with a performance report.

Experimental Protocols for Benchmarking

To ensure fair and reproducible comparisons, benchmarking studies must implement standardized protocols for data preparation, algorithm execution, and validation. The following methodologies are synthesized from key studies on Actinobacteria and Cyanobacteria.

Dataset Curation & Preparation

- Actinomyces Dataset (SpectraActiSeq): A benchmark dataset can be constructed from public GNPS datasets (e.g., MSV000078604, MSV000078839) containing LC-MS/MS spectra from extracts of 36 Actinomyces strains with sequenced genomes. This provides a direct link between chemical and genomic data [6]. Spectra should be converted to standard formats (e.g., .mzML, .mzXML, .mgf) and blank samples should be included to identify and filter background contaminants [23] [6].

- Cyanobacteria Dataset: Studies highlight the use of diverse strain collections. For example, one can use 62 cyanobacterial strains from Brazilian biomes [36] or 24 tropical marine filamentous cyanobacteria genomes and their associated metabolomes [33]. Organic extracts (e.g., methanol) are typically analyzed via high-resolution LC-MS/MS, and the resulting spectra are uploaded to GNPS.

Algorithm Execution Parameters

- DEREPLICATOR+: On the GNPS platform, key parameters must be set judiciously. For high-resolution mass spectrometer data (q-TOF, Orbitrap), precursor and fragment ion mass tolerances are typically set to ±0.02 Da. The "Search analog" (VarQuest) option should be enabled to detect variants. The Extended PNP database is recommended for broader coverage, albeit with longer processing time [23].

- NPLinker: The protocol involves independent generation of genomics and metabolomics data. Genomes are assembled (e.g., using SPAdes), and BGCs are predicted using antiSMASH. Metabolomics data are processed through GNPS to create a molecular network. NPLinker is then run to score potential links between spectral families (molecular clusters) and BGCs, using its built-in correlation metrics [32].
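Returning to the ±0.02 Da tolerance given for DEREPLICATOR+ above, the effect of that setting can be shown with a small peak-matching sketch. The function `matched_peaks` is a hypothetical helper written for this example and does not reproduce the scoring used by any GNPS tool.

```python
from bisect import bisect_left


def matched_peaks(theoretical, observed, tol_da=0.02):
    """Return theoretical m/z values that have an observed peak within tol_da."""
    obs = sorted(observed)
    hits = []
    for mz in theoretical:
        # First observed peak at or above the lower edge of the window.
        i = bisect_left(obs, mz - tol_da)
        if i < len(obs) and obs[i] <= mz + tol_da:
            hits.append(mz)
    return hits


theoretical = [100.0, 200.0, 300.0]
observed = [100.015, 200.05, 299.99]
hits = matched_peaks(theoretical, observed)
```

Here the peak at 200.05 falls outside the ±0.02 Da window and is not matched. Widening the tolerance recovers more matches at the cost of more chance hits, which is why the setting should follow the instrument's actual mass accuracy.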

Validation & Ground Truth

Establishing ground truth is critical. For dereplication, a manually curated list of known compounds identified from literature for the specific strains serves as a positive control [31]. For metabologenomics links, validated pairs—where a BGC product has been conclusively identified—are used (e.g., linking the BGC for chloramphenicol to its spectrum) [32]. Performance is measured by the algorithm's ability to rediscover these known links while minimizing false positives.
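Rediscovery of known compounds and control of false positives correspond to recall and precision against the curated list. A minimal sketch follows, using an invented set of compound names as toy data rather than results from any cited study.

```python
def precision_recall(predicted, ground_truth):
    """Precision and recall of predicted annotations against a curated list."""
    predicted, ground_truth = set(predicted), set(ground_truth)
    true_positives = len(predicted & ground_truth)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(ground_truth) if ground_truth else 0.0
    return precision, recall


# Toy data: names are placeholders, not benchmark results.
predicted = {"compound_A", "compound_B", "compound_X"}
ground_truth = {"compound_A", "compound_B", "compound_C", "compound_D"}
precision, recall = precision_recall(predicted, ground_truth)
```

Because a curated list is rarely exhaustive, an annotation absent from it may still be correct, so precision computed this way is best read as a conservative estimate.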

Performance Evaluation on Target Datasets

Quantitative benchmarking reveals the distinct strengths and applications of each algorithm. The following data, drawn from large-scale studies, provides a clear comparison.

Table 2: Benchmarking Performance on Actinomyces and Polar Actinobacteria GNPS Datasets

| Performance Metric | DEREPLICATOR+ (on Actinomyces Data) [6] | NPLinker (on Polar Actinobacteria Data) [32] | Context & Notes |
|---|---|---|---|
| Identification Yield | 488 unique compounds (at 1% FDR) from ~652k spectra. | Successfully linked known compounds (ectoine, chloramphenicol) to their BGCs. | DEREPLICATOR+ identifies ~5x more compounds than original DEREPLICATOR on same data [6]. |
| Compound Class Coverage | Peptides (92), Lipids (32), Benzenoids (5), Terpenes (6), Polyketides (2). | Not designed for broad dereplication; focused on linking MS features to BGCs. | Demonstrates DEREPLICATOR+'s expansion beyond peptides [6]. |
| Variant Discovery | 24 high-confidence metabolites revealed 557 additional variants via molecular networking. | Can propose links for variant families if core structure-BGC link is established. | DEREPLICATOR+ with VarQuest is specifically engineered for analog detection [23]. |
| Integration Capability | Output can be mapped onto GNPS molecular networks for visualization. | Core function: Integrates genomic (BGC) and metabolomic (MS network) data. | NPLinker addresses the "missing link" in metabologenomics [32]. |
| Typical Use Case | High-throughput dereplication of known compounds from LC-MS/MS data. | Prioritizing strains and BGCs for novel compound discovery based on 'omics data. | Complementary tools in the discovery pipeline. |
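The "1% FDR" qualifier in the table refers to a false discovery rate cutoff on match scores. One common way to estimate such a cutoff is a target-decoy comparison, sketched below under the assumption that every spectrum is searched against both a real (target) and a decoy database. The function name and toy scores are illustrative and do not reproduce the exact procedure of any cited tool.

```python
def score_threshold_at_fdr(target_scores, decoy_scores, max_fdr=0.01):
    """Lowest score cutoff whose estimated FDR (decoy hits / target hits) is <= max_fdr.

    Returns None when no cutoff satisfies the requested FDR.
    """
    best = None
    for cutoff in sorted(set(target_scores), reverse=True):
        n_target = sum(score >= cutoff for score in target_scores)
        n_decoy = sum(score >= cutoff for score in decoy_scores)
        if n_decoy / n_target <= max_fdr:
            best = cutoff  # keep lowering the cutoff while the FDR stays acceptable
    return best


# Toy scores for illustration only.
target_scores = [10, 9, 8, 7, 6]
decoy_scores = [6.5, 3]
cutoff = score_threshold_at_fdr(target_scores, decoy_scores, max_fdr=0.1)
```

With these toy values, lowering the cutoff from 7 to 6 would admit one decoy among five target hits (an estimated FDR of 20%), so the cutoff stays at 7.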

The relationship between these algorithms and the types of data they process is shown in the following diagram, illustrating their positions in the discovery pipeline.

Diagram 2: Algorithm Roles in the Natural Product Discovery Pipeline. Tools like DEREPLICATOR+ act on metabolomic data to identify known compounds, while NPLinker integrates genomic and metabolomic results to propose novel discovery targets.

Successful execution of the described protocols relies on a suite of specialized bioinformatic tools and reference databases.

Table 3: Essential Research Tools and Databases for Dereplication Benchmarking

| Tool/Resource Name | Category | Primary Function in Benchmarking | Key Reference/Source |
|---|---|---|---|
| GNPS Platform | Analysis Infrastructure | Hosts dereplication algorithms (DEREPLICATOR+), molecular networking, and public datasets. | Global Natural Products Social [5] |
| AntiMarin / Dictionary of Natural Products (DNP) | Reference Database | Curated chemical structure databases used as the ground truth for dereplication searches. | Laatsch H.; Blunt J. [31] [6] |
| MIBiG Repository | Reference Database | Repository of experimentally characterized BGCs, used to validate genome mining and links. | Consortium Repository [6] |
| antiSMASH | Genome Mining Tool | Identifies and annotates Biosynthetic Gene Clusters (BGCs) in genomic data. | Blin et al. [32] [33] |
| BiG-SCAPE / CORASON | Genome Analysis Tool | Clusters BGCs into Gene Cluster Families (GCFs) for comparative analysis. | Navarro-Muñoz et al. [32] [33] |
| Cytoscape | Visualization Software | Visualizes molecular networks from GNPS with overlaid dereplication annotations. | Open Source Platform [23] |
| MaSS-Simulator | Benchmarking Utility | Simulates MS/MS spectra under controlled parameters to test algorithm performance. | Gul Awan & Saeed [35] |

Integrating Dereplication with Molecular Networking for Novel Variant Discovery

The discovery of novel Natural Products (NPs) with therapeutic potential is fundamentally hampered by two major bottlenecks: the efficient dereplication of known compounds and the subsequent identification of structural variants [37]. Dereplication, the process of early identification of known entities to avoid redundant rediscovery, is critical for focusing resources on truly novel chemistry [24] [37]. Traditional methods often struggle with the complexity of NP extracts and the sheer volume of data generated by modern liquid chromatography-tandem mass spectrometry (LC-MS/MS).