Benchmarking AI for Natural Product Discovery: A Practical Guide to Model Selection for Bioactivity Prediction

Abstract

This article provides a comprehensive, comparative guide for researchers and drug development professionals on selecting and applying artificial intelligence (AI) models for predicting the bioactivity of natural products. We explore the foundational principles of AI in this specialized domain, detail the methodologies of leading model architectures from graph neural networks to transformers, and address critical challenges like data scarcity and model interpretability. A core focus is the empirical validation and comparative benchmarking of models across different prediction tasks. By synthesizing current trends and practical considerations, this guide aims to equip scientists with the knowledge to effectively integrate AI into natural product-based drug discovery pipelines, accelerating the translation of complex chemical diversity into viable therapeutic candidates [3] [6].

The AI Revolution in Natural Product Discovery: Foundations, Models, and Core Challenges

Why Natural Products Remain a Critical Frontier for AI-Driven Drug Discovery

Natural products (NPs), chemical compounds produced by living organisms, have been a cornerstone of drug discovery for millennia, with more than 30% of FDA-approved new molecular entities originating from or inspired by natural sources [1]. Their intricate, evolutionarily refined structures offer broad chemical diversity and a high propensity for biological activity, and NP-derived candidates are reported to have a higher clinical trial success rate than synthetic compounds [1]. However, traditional NP discovery is slow and labor-intensive, and it is hampered by complex mixture analysis, low yields, and rediscovery of known compounds [2].

The integration of Artificial Intelligence (AI) is transforming this field by turning these challenges into tractable problems. AI and machine learning (ML) models accelerate the entire pipeline—from predicting the bioactive components in a crude extract and elucidating novel structures to forecasting target pathways and optimizing ADMET (Absorption, Distribution, Metabolism, Excretion, and Toxicity) properties [3] [2]. This paradigm shift promises to unlock nature's chemical library with unprecedented speed and precision, making NP-based discovery more efficient, cost-effective, and scalable than ever before [1] [4].

Comparative Analysis of AI Models for Natural Product Activity Prediction

Different AI model architectures offer distinct strengths and weaknesses for various tasks in NP research. The selection of an appropriate model depends on the data type (e.g., molecular structures, spectral data, biological networks) and the specific prediction goal (e.g., activity, target, pharmacokinetics).

Table 1: Comparison of Key AI Model Classes for Natural Product Research

| Model Class | Key Subtypes/Examples | Primary Applications in NP Research | Strengths | Limitations & Challenges |
|---|---|---|---|---|
| Tree-Based Ensemble Models | Random Forest, XGBoost, LightGBM | Bioactivity classification, ADMET prediction, dereplication [2] [5]. | High interpretability, robust with small-to-medium datasets, handles diverse feature types. | Limited ability to generalize to novel chemical scaffolds outside training data. |
| Deep Neural Networks (DNNs) | Fully Connected Networks, Multi-Layer Perceptrons (MLPs) | Quantitative Structure-Activity Relationship (QSAR) modeling, property prediction [2]. | Can model complex, non-linear relationships in high-dimensional data. | Requires very large datasets; prone to overfitting on small NP datasets. |
| Graph Neural Networks (GNNs) | Message Passing Neural Networks (MPNNs), Graph Convolutional Networks | Molecular property prediction, binding affinity estimation, learning directly from molecular graphs [5]. | Natively models molecular structure (atoms as nodes, bonds as edges), capturing spatial relationships. | Computationally intensive; performance depends heavily on graph representation quality. |
| Generative Models | Variational Autoencoders (VAEs), Generative Adversarial Networks (GANs), Transformers | De novo design of NP-inspired compounds, scaffold hopping, generating novel structures [2] [6]. | Explores vast chemical space, designs molecules with optimized multi-parameter profiles. | Can generate synthetically infeasible structures; requires rigorous validation. |
| Knowledge Graph (KG) Models | Heterogeneous graph learning, link prediction algorithms | Target identification, mechanism inference, polypharmacology prediction, integrating multi-omics data [7]. | Integrates disparate data types (chemical, genomic, phenotypic), enables causal inference and hypothesis generation. | Complex to construct and maintain; relies on high-quality, structured data. |

Table 2: Performance Comparison of AI Models in Specific NP-Related Tasks (Experimental Data)

| Prediction Task | Model Type | Dataset & Key Metric | Reported Performance | Experimental Context & Notes |
|---|---|---|---|---|
| Pharmacokinetic (PK) Parameter Prediction | Stacking Ensemble (RF, XGBoost, GNN) | >10,000 compounds from ChEMBL; R², MAE [5]. | R² = 0.92, MAE = 0.062 | Outperformed standalone GNNs (R²=0.90) and Transformers (R²=0.89) in predicting ADME properties [5]. |
| Bioactivity Classification (e.g., Anticancer) | Graph Neural Network (GNN) | NP-specific library; Precision-Recall AUC [3]. | High predictive accuracy (validated by in vitro assays) | Several AI-predicted anticancer NPs were confirmed active in lab experiments, demonstrating translational potential [3]. |
| Dereplication & Novelty Detection | Ensemble of MLP & Random Forest | Tandem Mass Spectrometry (MS/MS) data from microbial extracts; Accuracy [7]. | Significantly reduces rediscovery rate | Core tool in modern workflows to prioritize unknown signals for isolation, saving months of wasted effort [2] [7]. |
| Target & Pathway Prediction | Knowledge Graph Link Prediction | Heterogeneous KG (herb–ingredient–target–pathway) [3] [7]. | Proposes synergistic mechanisms and polypharmacology | Maps NP signatures to clinical outcomes; foundational for network pharmacology approaches [3]. |

Detailed Experimental Protocols for Key Methodologies

Protocol for AI-Driven Pharmacokinetic Prediction of Natural Product-Like Compounds

This protocol is based on a study demonstrating state-of-the-art PK prediction using ensemble AI models [5].

- Data Curation: Compile a dataset of >10,000 molecules with experimentally measured PK parameters (e.g., clearance, volume of distribution, half-life) from sources like ChEMBL. Include both NPs and NP-like synthetic molecules.

- Molecular Representation:

  - Encode each molecule as a SMILES string, from which the 2D molecular graph is derived.

  - Calculate a suite of 200+ molecular descriptors (e.g., topological, electronic, thermodynamic) using software like RDKit.

  - For GNNs, convert SMILES into graph objects where nodes are atoms (featurized with atom type, hybridization) and edges are bonds (featurized with bond type).

- Model Training & Ensemble Construction:

  - Base Models: Train three separate models: a) a Random Forest (RF) on molecular descriptors, b) an XGBoost model on descriptors, and c) a Message-Passing Graph Neural Network (MP-GNN) on molecular graphs.

  - Stacking Ensemble: Use the predictions from the RF, XGBoost, and GNN as meta-features to train a final "meta-learner" model (e.g., a linear regression or a shallow neural network).

  - Hyperparameter Optimization: Employ Bayesian optimization to tune the hyperparameters (e.g., learning rate, network depth, tree depth) for each base model and the meta-learner, maximizing the R² score on a held-out validation set.

- Validation: Evaluate the final stacked ensemble model on a completely independent test set. Report key metrics: R² (coefficient of determination), MAE (Mean Absolute Error), and RMSE (Root Mean Square Error). Compare its performance against each individual base model.
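The three validation metrics named above can be computed without any external library. The following is a minimal sketch, not the cited study's evaluation code; the function name `regression_metrics` and the toy numbers are illustrative assumptions.

```python
import math

def regression_metrics(y_true, y_pred):
    """Return R², MAE, and RMSE for paired measured and predicted values."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("y_true and y_pred must be non-empty and equal in length")
    n = len(y_true)
    mean_true = sum(y_true) / n
    # Residual and total sums of squares used by the coefficient of determination.
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    mae = sum(abs(t - p) for t, p in zip(y_true, y_pred)) / n
    rmse = math.sqrt(ss_res / n)
    # R² is undefined when every measured value is identical.
    r2 = 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")
    return {"r2": r2, "mae": mae, "rmse": rmse}

# Toy held-out test set (invented values, not data from the study).
measured = [1.0, 2.0, 3.0, 4.0]
predictions = {
    "base_model": [2.0, 2.0, 3.0, 3.0],
    "stacked_ensemble": [1.1, 2.0, 3.1, 3.9],
}
for name, preds in predictions.items():
    print(name, regression_metrics(measured, preds))
```

Reporting all three metrics for every base model and for the ensemble on the same test set makes the comparison in the final step direct.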

Protocol for Knowledge Graph-Driven Target Identification for a Novel Natural Product

This protocol outlines the use of a biomedical knowledge graph to hypothesize mechanisms of action [7].

- Knowledge Graph (KG) Construction:

  - Nodes (Entities): Ingest structured data for chemical compounds (from PubChem, NP Atlas), protein targets (UniProt), diseases (MONDO), pathways (KEGG), and side effects (SIDER).

  - Edges (Relationships): Define relationships such as "compound-binds-target," "target-involved-in-pathway," "pathway-associated-with-disease," and "compound-causes-side-effect."

- Entity Linking: For a novel NP with an elucidated structure, query the KG to find the most similar known compounds based on chemical fingerprint (e.g., Tanimoto similarity > 0.85). Extract all known targets and associated pathways for these similar compounds.

- Link Prediction & Hypothesis Generation:

  - Use a KG embedding algorithm (e.g., TransE, ComplEx) to learn vector representations of all nodes and edges.

  - Apply a link prediction model to rank potential "binds" relationships between the novel NP node and all potential target nodes in the graph. Prioritize targets that are top-ranked and reside in pathways biologically relevant to the observed phenotypic activity of the NP.

- Experimental Triaging: The output is a ranked list of predicted protein targets. The top 3-5 high-confidence, druggable targets are selected for in vitro validation using binding assays (e.g., SPR) or functional cellular assays.
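Two steps of this protocol reduce to short calculations: the Tanimoto similarity used for entity linking, and the TransE score used to rank candidate "binds" edges. The sketch below assumes fingerprints are given as sets of on-bit indices and that embeddings have already been learned. All compound names, target names, and vectors are hypothetical placeholders.

```python
import math

def tanimoto(fp_a, fp_b):
    """Tanimoto similarity between two fingerprints given as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)

def link_by_similarity(query_fp, known_fps, threshold=0.85):
    """Return (name, similarity) for known compounds above the threshold, best first."""
    hits = [(name, tanimoto(query_fp, fp)) for name, fp in known_fps.items()]
    return sorted((h for h in hits if h[1] > threshold), key=lambda h: -h[1])

def transe_score(head, relation, tail):
    """TransE plausibility: negative L2 distance between head + relation and tail."""
    return -math.sqrt(sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail)))

def rank_targets(np_vec, binds_vec, target_vecs):
    """Rank target names by TransE score for the triple (NP, binds, target)."""
    scores = {name: transe_score(np_vec, binds_vec, vec) for name, vec in target_vecs.items()}
    return sorted(scores, key=scores.get, reverse=True)

# Hypothetical fingerprints and 2-D embeddings, for illustration only.
novel_np = set(range(1, 21))
known = {"compound_A": set(range(1, 20)), "compound_B": set(range(1, 11))}
print(link_by_similarity(novel_np, known))
print(rank_targets([0.0, 0.0], [1.0, 0.0], {"target_1": [1.0, 0.0], "target_2": [3.0, 0.0]}))
```

In practice the fingerprints would come from a cheminformatics toolkit and the embeddings from a trained KG model; only the ranking logic is shown here.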

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Reagents and Platforms for AI-Enhanced Natural Product Research

| Tool/Reagent Category | Specific Examples/Names | Primary Function in AI-NP Workflow | Key Considerations |
|---|---|---|---|
| Public Chemical & Genomic Databases | NP Atlas, COCONUT, ChEMBL, PubChem, UniProt, GNPS [2] [7] | Provide structured, (semi-)annotated data for training AI models (e.g., structures, spectra, targets). | Data quality, completeness, and standardization vary; requires careful curation. |
| Mass Spectrometry & NMR Raw Data | Vendor-specific files (.raw, .d, .fid); Open formats (mzML) [7] | Raw analytical data for training AI on spectral interpretation and dereplication. | Critical for developing domain-specific AI for structure elucidation. |
| Specialized AI Software Platforms | Enveda Biosciences (AI/ML for metabolomics), Basecamp Research (AI on biodiversity data), Insilico Medicine (generative AI) [1] [4] | Turn-key or collaborative platforms applying proprietary AI to NP discovery challenges. | Often closed-box; access may be through partnerships or licensing. |
| In Silico Prediction & Modeling Suites | Schrödinger Suite, OpenEye Toolkits, RDKit (open-source), DeepChem [4] [6] | Provide environments for molecular modeling, descriptor calculation, and implementing custom AI pipelines. | Balance between user-friendly GUI (commercial) and flexibility (open-source). |
| Validated Bioassay Kits & Reagents | Cell-based reporter assays (e.g., for NF-κB, STAT pathways), recombinant proteins, fluorescence-based enzymatic assay kits [3] [6] | Generate high-quality experimental data to validate AI predictions and train new models. | Assay relevance to human biology is crucial for translational AI. |
| Knowledge Graph Construction Tools | Neo4j, Apache TinkerPop, semantic web toolkits (RDF, OWL) [7] | Enable researchers to build custom KGs integrating private and public NP data for advanced querying and inference. | Requires significant bioinformatics and data engineering expertise. |

AI has undeniably transformed the frontier of natural product drug discovery, offering powerful tools to navigate their complexity. However, significant challenges persist. A primary issue is data fragmentation and poor standardization; NP data is multimodal (spectral, genomic, activity-based), scattered, and often of inconsistent quality, making it difficult to train robust AI models [7]. There is a critical need for a unified, community-adopted Natural Product Knowledge Graph to integrate these disparate data streams and enable causal inference beyond simple prediction [7]. Furthermore, small, imbalanced datasets for many NP classes limit model generalizability, leading to issues of "domain shift" where models fail on truly novel scaffolds [3].

Future progress hinges on addressing these foundational data challenges while advancing AI methodologies. Key directions include:

- Developing hybrid models that combine the interpretability of knowledge graphs with the power of deep learning for more explainable predictions [7].

- Implementing active learning frameworks where AI guides which experiment to perform next, optimizing the costly and time-consuming process of NP isolation and testing [3].

- Expanding the use of generative AI not just for designing NP-mimetics, but for planning the synthesis of complex NPs and predicting optimal cultivation or engineering conditions for their production [2] [6].

By systematically tackling these challenges, the research community can fully realize the potential of AI to serve as an indispensable partner in deciphering nature's chemical code, accelerating the delivery of novel, effective, and safe therapeutics derived from the natural world.

The quest to discover and develop therapeutic agents from natural products is being transformed by artificial intelligence (AI). For researchers and drug development professionals, selecting the appropriate AI model is a critical decision that balances predictive performance, data requirements, and interpretability within a domain characterized by complex chemical structures and often limited, heterogeneous datasets [3] [8].

This guide provides a structured, evidence-based comparison of the AI landscape, from traditional machine learning (ML) to advanced deep learning (DL) architectures. The central thesis is that model selection must be driven by the specific research question—whether predicting bioactivity, elucidating biosynthetic pathways, or prioritizing compounds for synthesis. We objectively evaluate performance through published experimental data, detail core methodologies, and provide a practical toolkit to empower research in natural product-based drug discovery.

Traditional Machine Learning: The Accessible Workhorses

Traditional ML algorithms remain foundational tools for quantitative structure-activity relationship (QSAR) modeling and bioactivity prediction, particularly when well-curated datasets of moderate size are available. They are prized for their computational efficiency, relative interpretability, and strong performance on structured tabular data.

- Random Forest (RF): An ensemble method that constructs multiple decision trees, offering robustness against overfitting and the ability to rank feature importance [9] [10].

- Support Vector Machine (SVM): Effective in high-dimensional spaces, SVM finds an optimal hyperplane to separate different classes of compounds and is known for performance stability with smaller training sets [9] [11].

- XGBoost: A gradient-boosting algorithm that sequentially builds models to correct errors from previous ones, often achieving top-tier performance in classification and regression tasks [11].

Performance in Natural Product Activity Prediction: A 2024 study on predicting antioxidant activity from molecular structure provides a direct comparison. Using a cleaned dataset of ~1,900 compounds represented by ECFP-4 fingerprints, RF and SVM performed comparably to each other and outperformed logistic regression, XGBoost, and a deep neural network (DNN) in external validation on natural product data [11].

Table 1: Comparative Performance of Traditional ML Models in Antioxidant Activity Prediction (2024 Study) [11]

| Algorithm | Average Accuracy (5-Fold CV) | Average F1-Score (5-Fold CV) | Key Strength | Computational Efficiency |
|---|---|---|---|---|
| Random Forest (RF) | 0.91 | 0.92 | Robust to overfitting; provides feature importance | High |
| Support Vector Machine (SVM) | 0.90 | 0.91 | Effective with smaller datasets; stable performance | Medium |
| XGBoost | 0.88 | 0.89 | High predictive accuracy with tuned parameters | Medium |
| Logistic Regression (LR) | 0.85 | 0.86 | Highly interpretable; fast training | Very High |
| Deep Neural Network (DNN) | 0.87 | 0.88 | Can model complex non-linear relationships | Lower (requires GPU) |

Advanced Deep Learning: Modeling Complexity and Sequence

Deep learning architectures excel at automatically learning hierarchical feature representations from raw, complex data, bypassing the need for manual fingerprinting. They are particularly suited for tasks involving sequential data, molecular graphs, and multi-modal integration [12].

- Graph Neural Networks (GNNs) / Graph Convolutional Networks (GCNs): These operate directly on a molecular graph structure (atoms as nodes, bonds as edges), making them intrinsically suited for learning structural and topological features of natural products [3] [10].

- Transformer Networks: Originally designed for language, transformers use self-attention mechanisms to weigh the importance of different parts of a sequence (e.g., a SMILES string or a protein sequence). They drive state-of-the-art tools for reaction prediction and retrosynthesis [12] [13].

- Convolutional Neural Networks (CNNs): While traditionally for image data, 1D CNNs can be applied to spectral data (e.g., mass spectrometry, NMR) or textual representations of molecules [10].
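To make the "atoms as nodes, bonds as edges" idea concrete, the sketch below runs one round of sum-aggregation message passing over the heavy atoms of ethanol (C-C-O). It is a deliberately stripped-down illustration: real GNN layers apply learned weight matrices and non-linearities, which are omitted here. The one-hot features and function names are assumptions made for the example.

```python
def message_passing_step(node_feats, edges):
    """One round of sum aggregation on an undirected graph.

    node_feats maps node id -> feature list; edges is a list of (u, v) pairs.
    Each node's new vector is its own vector plus the sum of its neighbors' vectors.
    """
    neighbors = {node: [] for node in node_feats}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    updated = {}
    for node, feats in node_feats.items():
        agg = list(feats)
        for nb in neighbors[node]:
            agg = [a + b for a, b in zip(agg, node_feats[nb])]
        updated[node] = agg
    return updated

def readout(node_feats):
    """Sum-pool all node vectors into a single graph-level vector."""
    dim = len(next(iter(node_feats.values())))
    return [sum(vec[i] for vec in node_feats.values()) for i in range(dim)]

# Ethanol heavy atoms with one-hot features [is_carbon, is_oxygen].
atoms = {0: [1, 0], 1: [1, 0], 2: [0, 1]}
bonds = [(0, 1), (1, 2)]
hidden = message_passing_step(atoms, bonds)
print(hidden)           # per-atom vectors now encode the local neighborhood
print(readout(hidden))  # graph-level vector passed to a prediction head
```

After one round, the middle carbon's vector already reflects that it is bonded to both a carbon and an oxygen, which is the kind of local structural information a fingerprint would otherwise have to encode by hand.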

Performance in Biosynthetic Pathway Prediction: The deep learning tool BioNavi-NP exemplifies the power of advanced architectures. It uses an ensemble of transformer models trained on both general organic and biosynthetic reactions to perform retrobiosynthesis planning. In evaluations, it identified pathways for 90.2% of test compounds and achieved a top-10 single-step precursor prediction accuracy of 60.6%, which was reported to be 1.7 times more accurate than conventional rule-based approaches [13].

Table 2: Key Deep Learning Architectures and Their Applications in NP Research

| Architecture | Best Suited For | Exemplar Tool / Study | Reported Advantage | Data Requirement |
|---|---|---|---|---|
| Transformer Networks | Retrobiosynthesis, reaction prediction | BioNavi-NP [13] | 1.7x more accurate than rule-based baselines; generalizes to novel scaffolds | Large reaction datasets (e.g., 30k+ reactions) |
| Graph Neural Networks (GNNs) | Molecular property prediction, binding affinity | Various QSAR/GCNN models [3] | Learns directly from molecular structure without predefined fingerprints | Moderate to large labeled datasets |
| Recurrent Neural Networks (RNNs) | Sequential molecule generation, peptide design | Early de novo design models [12] | Models sequential dependencies in strings (SMILES, peptides) | Large sequence databases |
| Multimodal Deep Learning | Integrating genomics, metabolomics, bioactivity | Knowledge Graph-based AI [8] | Enables causal inference across data types; mimics scientist reasoning | Heterogeneous, interconnected datasets |

Comparative Analysis and Decision Framework

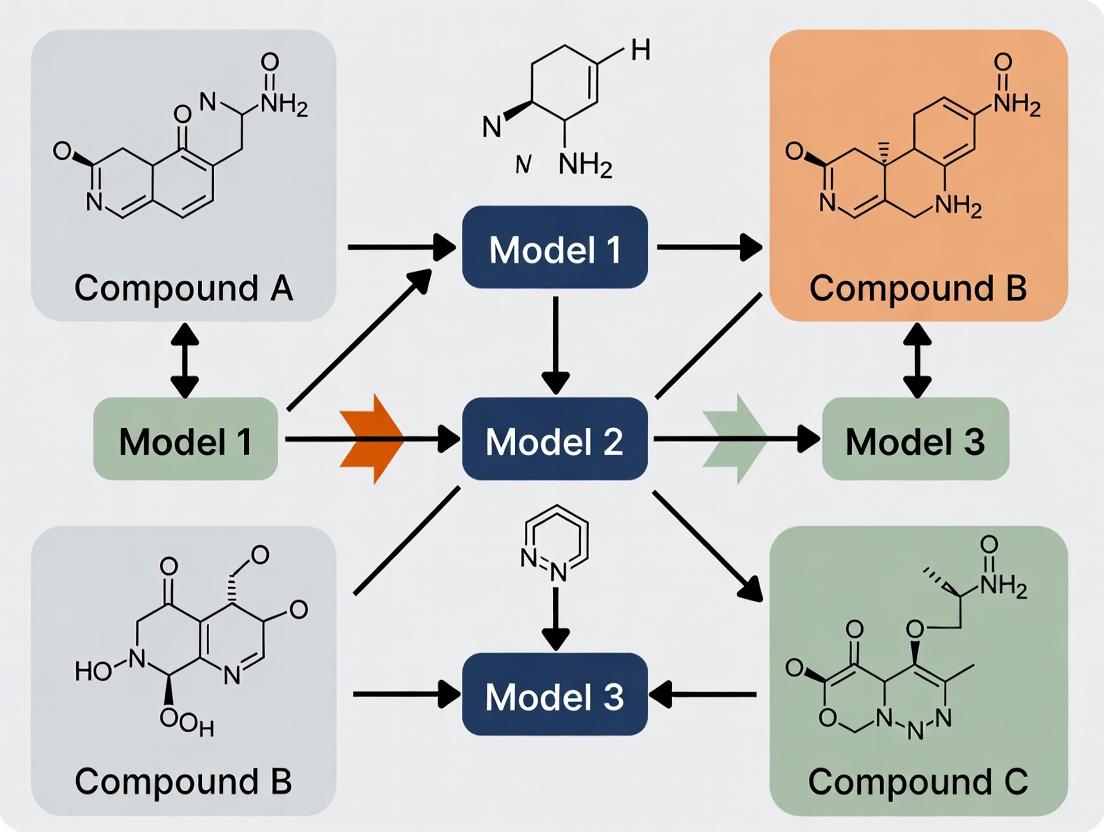

The choice between traditional ML and DL is not hierarchical but situational. The diagram below maps the logical relationship between research objectives, data constraints, and the recommended model class.

AI Model Selection Decision Flow

Key Decision Criteria:

- Data Size & Quality: Traditional ML (RF, SVM) can yield excellent results with hundreds to thousands of samples [11]. DL typically requires larger datasets (thousands to millions) but can learn from raw data representations [13].

- Research Task: For standard classification (active/inactive), traditional models are sufficient. For novel tasks like predicting complete biosynthetic pathways or generating molecules with multi-property optimization, DL is essential [3] [13].

- Interpretability Needs: If understanding molecular drivers of activity is crucial, RF's feature importance or simpler models are preferable. DL models are less interpretable, though methods like attention visualization (in transformers) are emerging [8] [10].

- Resource Constraints: Traditional ML trains quickly on CPUs. DL training is computationally intensive, requiring GPUs for efficient development [12].
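The four criteria above can be read as a simple rule cascade. The function below is one possible encoding, offered as a rough sketch rather than a validated selection procedure. The task labels, the returned strings, and especially the `dl_min_samples` cutoff are illustrative assumptions, since the text gives only order-of-magnitude guidance.

```python
def recommend_model_class(n_samples, task, needs_interpretability=False,
                          has_gpu=False, dl_min_samples=10_000):
    """Map the decision criteria to a coarse model-class recommendation."""
    # Tasks the text describes as needing deep learning regardless of dataset size.
    dl_only_tasks = {"pathway_prediction", "molecule_generation"}
    if task in dl_only_tasks:
        return "deep learning (transformer / generative)"
    if task != "classification":
        raise ValueError(f"unknown task: {task!r}")
    # Interpretability needs favor RF feature importance or simpler models.
    if needs_interpretability:
        return "traditional ML (RF feature importance or simpler model)"
    # Small datasets or CPU-only environments favor traditional ML.
    if n_samples < dl_min_samples or not has_gpu:
        return "traditional ML (RF / SVM)"
    return "deep learning (GNN), benchmarked against an RF / SVM baseline"

print(recommend_model_class(1_900, "classification"))
print(recommend_model_class(50_000, "classification", has_gpu=True))
print(recommend_model_class(500, "pathway_prediction"))
```

Whatever the rule suggests, training a fast traditional baseline first gives a reference point for judging whether a deep model is worth its extra cost.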

Experimental Protocols for Key Comparisons

Protocol 1: Benchmarking ML Models for Bioactivity Prediction

This protocol is based on a 2024 study comparing ML algorithms for predicting antioxidant activity [11].

- Data Curation: Collect bioactivity data from public repositories (e.g., PubChem). Use specific assay types (e.g., DPPH, ABTS) for consistency. Clean data by removing duplicates (using InChIKeys) and resolving conflicting activity labels.

- Molecular Featurization: Convert SMILES strings to numerical fingerprints. The study used Extended-Connectivity Fingerprints (ECFP-4), a circular fingerprint that captures local atomic environments, computed with the RDKit package at a length of 2048 bits.

- Data Splitting: Implement scaffold splitting using the RDKit Scaffold Network Generator to separate compounds into training and test sets based on core molecular frameworks. This evaluates a model's ability to generalize to novel chemotypes, a critical metric for natural product exploration.

- Model Training & Validation: Train multiple algorithms (e.g., SVM, RF, XGBoost, DNN) using default or optimized hyperparameters from libraries like scikit-learn. Perform 5-fold cross-validation repeated 100 times to ensure robust performance estimates.

- Evaluation Metrics: Report standard metrics: Accuracy, Precision, Recall, F1-Score, and Area Under the ROC Curve (AUROC). The F1-Score is particularly important for imbalanced datasets common in bioactivity data.
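The curation and splitting steps above can be sketched in plain Python once each compound has an InChIKey and a scaffold string. The example assumes scaffolds were computed beforehand (for instance with a cheminformatics toolkit). It also drops compounds with conflicting labels, which is one possible resolution rule and not necessarily the one used in the cited study. All identifiers are placeholders.

```python
from collections import defaultdict

def deduplicate(records):
    """Keep one record per InChIKey; drop keys whose activity labels conflict.

    records is a list of (inchikey, scaffold, label) tuples.
    """
    labels = defaultdict(set)
    first_seen = {}
    for key, scaffold, label in records:
        labels[key].add(label)
        first_seen.setdefault(key, (key, scaffold, label))
    return [rec for key, rec in first_seen.items() if len(labels[key]) == 1]

def scaffold_split(compounds, test_fraction=0.2):
    """Split (compound_id, scaffold) pairs so no scaffold appears on both sides.

    Scaffold groups are assigned whole, largest first; a group goes to the
    training set if it still fits, otherwise to the test set.
    """
    groups = defaultdict(list)
    for cid, scaffold in compounds:
        groups[scaffold].append(cid)
    train_target = len(compounds) - int(round(len(compounds) * test_fraction))
    train, test = [], []
    for members in sorted(groups.values(), key=len, reverse=True):
        if len(train) + len(members) <= train_target:
            train.extend(members)
        else:
            test.extend(members)
    return train, test

# Placeholder records: (InChIKey, scaffold, activity label).
raw = [("K1", "S1", 1), ("K1", "S1", 1), ("K2", "S1", 0), ("K3", "S2", 1),
       ("K3", "S2", 0), ("K4", "S3", 0), ("K5", "S1", 1)]
clean = deduplicate(raw)
train_ids, test_ids = scaffold_split([(k, s) for k, s, _ in clean], test_fraction=0.25)
print(train_ids, test_ids)
```

Because whole scaffold groups move together, the realized test fraction can differ from the requested one, especially on small datasets.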

Protocol 2: Evaluating a Deep Learning Tool for Retrobiosynthesis

This protocol is adapted from the evaluation of the BioNavi-NP tool [13].

- Dataset Preparation for Training: Curate a set of known biochemical reactions from databases like MetaCyc or KEGG. Represent each reaction with Reaction SMILES, preserving stereochemistry. Augment the dataset with organic reactions involving natural product-like compounds (e.g., from USPTO) to improve model generalizability via transfer learning.

- Model Architecture & Training: Employ a transformer-based sequence-to-sequence model. The model takes the product SMILES as input and generates candidate precursor SMILES. Train using an ensemble strategy (multiple models with different random seeds) to improve robustness and top-N accuracy.

- Single-Step Evaluation: Hold out a test set of known reactions. For each product in the test set, generate ranked lists of predicted precursor sets. Calculate top-N accuracy (N=1, 3, 10), defined as the percentage of test reactions where the true precursor set appears in the top N predictions.

- Multi-Step Pathway Planning: Integrate the single-step model with a search algorithm (e.g., an AND-OR tree-based search) to plan multi-step pathways from a target molecule to available building blocks. Success is measured by the percentage of test compounds for which a plausible pathway is found and the percentage where the reported native building blocks are recovered.
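The top-N accuracy used in the single-step evaluation follows directly from its definition. In this minimal sketch each precursor set is a `frozenset` of SMILES strings, so the order of precursors within a set does not matter. It assumes the SMILES have already been canonicalized, and the single-letter "molecules" are placeholders.

```python
def top_n_accuracy(ranked_predictions, true_precursors, n):
    """Fraction of test reactions whose true precursor set is in the top n predictions.

    ranked_predictions holds one ranked list of candidate precursor sets per reaction;
    true_precursors holds the matching ground-truth sets in the same order.
    """
    if len(ranked_predictions) != len(true_precursors) or not true_precursors:
        raise ValueError("inputs must be non-empty and equal in length")
    hits = sum(
        1 for ranked, truth in zip(ranked_predictions, true_precursors)
        if truth in ranked[:n]
    )
    return hits / len(true_precursors)

# Placeholder predictions for three test products.
predictions = [
    [frozenset({"A", "B"}), frozenset({"C"})],
    [frozenset({"D"}), frozenset({"E"}), frozenset({"F"})],
    [frozenset({"G"})],
]
truths = [frozenset({"B", "A"}), frozenset({"F"}), frozenset({"Z"})]
for n in (1, 3, 10):
    print(n, top_n_accuracy(predictions, truths, n))
```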

The workflow for a typical AI-driven natural product discovery project, integrating both ML and DL approaches, is visualized below.

Workflow for AI-Driven Natural Product Discovery

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Software, Databases, and Resources for AI-Based NP Research

| Category | Tool / Resource | Primary Function | Relevance to NP Research | Access |
|---|---|---|---|---|
| Software & Libraries | RDKit | Cheminformatics toolkit for molecule manipulation, fingerprint generation, and descriptor calculation. | Essential for data preprocessing (SMILES handling, ECFP generation) for both ML and DL models [11]. | Open Source |
| | Scikit-learn | Library for traditional ML algorithms (RF, SVM, etc.) and model evaluation. | Core platform for building and benchmarking traditional QSAR models [11]. | Open Source |
| | PyTorch / TensorFlow | Deep learning frameworks for building and training neural networks. | Required for developing custom GNNs, transformers, or other DL architectures [12] [10]. | Open Source |
| Specialized AI Tools | BioNavi-NP | Deep learning tool for predicting biosynthetic pathways of natural products. | Guides the elucidation and heterologous reconstruction of NP pathways [13]. | Freely Available Web Tool |
| | AlphaFold | AI system for predicting 3D protein structures. | Predicts structures of biosynthetic enzymes or therapeutic targets for NP docking studies [8]. | Open Source |
| Key Databases | PubChem | Repository of chemical molecules, their properties, and bioactivity data. | Primary source for curating bioactivity datasets for model training and validation [11]. | Public |
| | LOTUS Initiative | Wikidata-based resource organizing >750,000 natural product-organism pairs. | Provides structured, linked data to support knowledge graph construction and AI training [8]. | Public |
| | MetaCyc / KEGG | Databases of metabolic pathways and enzymatic reactions. | Source of known biochemical reactions for training retrobiosynthesis models like BioNavi-NP [13]. | Public |
| Data Structures | Knowledge Graphs | Graph-based data structure integrating entities (NPs, genes, targets) and their relationships. | Proposed as the ideal foundation for multimodal AI that can reason across genomics, chemistry, and biology [8]. | Emerging Best Practice |

The landscape of AI for natural product research is richly populated with both proven traditional algorithms and powerful deep learning architectures. Random Forest and Support Vector Machine remain highly competitive for bioactivity prediction with structured data [9] [11], while transformer-based and graph-based models open new frontiers in retrobiosynthesis and complex pattern recognition [3] [13].

The future lies in the pragmatic integration of these approaches and in addressing fundamental field challenges: the scarcity of large, standardized datasets and the need for interpretable, biologically grounded predictions [3] [8]. By leveraging the comparative insights and experimental protocols outlined here, researchers can make informed choices, applying the right AI model to the right problem, thereby accelerating the discovery of the next generation of natural product-derived therapeutics.

The discovery and development of therapeutics from natural products (NPs) represent a cornerstone of modern medicine, with approximately one-third of current drugs originating directly or indirectly from nature [14]. However, the transition from traditional NP research to data-driven, artificial intelligence (AI)-powered discovery is severely hampered by a persistent data trilemma: datasets that are simultaneously small, imbalanced, and heterogeneous. This combination presents a unique and formidable central hurdle for researchers aiming to build predictive models for NP activity.

Unlike synthetic compound libraries, NP datasets are intrinsically complex. They often consist of "complex chemical entities" like essential oils or plant extracts containing dozens of bioactive constituents whose effects may be synergistic, antagonistic, or additive [14]. This chemical heterogeneity leads to biological response heterogeneity, making it difficult to establish clear structure-activity relationships. Furthermore, bioactivity data is scarce and expensive to generate, resulting in small sample sizes. These small datasets are invariably imbalanced, as active compounds against a specific target are vastly outnumbered by inactive ones [15] [16]. This imbalance, coupled with "small disjuncts" and overlapping feature spaces where active and inactive compounds share similar characteristics, catastrophically degrades the performance of standard machine learning classifiers, biasing them toward the majority (inactive) class and rendering them ineffective at identifying promising leads [16].
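A small worked example shows why bias toward the majority class is so damaging. The sketch below uses an invented screen of 100 compounds with 5 actives and compares a classifier that labels everything inactive against one that recovers most actives at the cost of false positives. The counts are illustrative, not experimental data.

```python
def classification_metrics(y_true, y_pred, positive=1):
    """Accuracy, precision, recall, and F1 with respect to the positive (active) class."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    correct = sum(1 for t, p in pairs if t == p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"accuracy": correct / len(pairs), "precision": precision,
            "recall": recall, "f1": f1}

# Invented screen: 95 inactive (0) and 5 active (1) compounds.
y_true = [0] * 95 + [1] * 5

# Classifier A predicts "inactive" for everything.
all_inactive = [0] * 100

# Classifier B flags 10 inactives by mistake but finds 4 of the 5 actives.
finds_actives = [1] * 10 + [0] * 85 + [1] * 4 + [0]

print(classification_metrics(y_true, all_inactive))
print(classification_metrics(y_true, finds_actives))
```

Classifier A scores higher on accuracy yet identifies no leads at all, which is why recall, precision, and F1 on the active class are the more informative metrics for imbalanced NP data.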

This guide provides a comparative analysis of AI modeling strategies designed to overcome this trilemma. By objectively evaluating performance across key metrics and detailing experimental protocols, we aim to equip researchers with the knowledge to select and implement the most effective computational tools for unlocking the therapeutic potential concealed within complex natural products.

Comparative Performance of AI Modeling Strategies

The following table summarizes the core approaches for handling challenging NP data, their key methodologies, reported performance metrics, and inherent advantages and limitations.

Table 1: Comparative Analysis of AI Modeling Strategies for NP Datasets

| Modeling Strategy | Core Methodology for Addressing Data Challenges | Reported Performance (Context) | Key Advantages | Major Limitations |

|---|---|---|---|---|

| Algorithm-Level Adaptations (e.g., SVM++) | Modifies the learning algorithm itself. Identifies and separates overlapped class regions, then maps critical overlapping samples to a higher-dimensional space to improve minority class visibility [16]. | Outperformed standard SVM, KNN, and SMOTE-SVM on 30 multi-class imbalanced datasets with overlap. Demonstrated significant improvement in precision for minority classes [16]. | Preserves original data distribution; addresses the root cause of classifier confusion in overlapped regions; no risk of overfitting from synthetic data. | Highly complex and algorithm-specific; requires deep expertise to implement and tune; less generalizable across different model architectures. |

| Data-Level Resampling Techniques | Balances class distribution pre-training. Includes oversampling the minority class (e.g., SMOTE), undersampling the majority class, or hybrid methods [15] [16]. | Widely used but performance varies. Simple random oversampling can cause overfitting; SMOTE can generate noisy samples in high-overlap regions, degrading performance [16]. | Conceptually simple; model-agnostic; can be combined with any classifier; effective for simple imbalance. | Risky with small datasets (loss of information or amplification of noise); can exacerbate overfitting; may not address fundamental feature-space overlap. |

| Cost-Sensitive Learning | Incorporates a penalty matrix into the training process. Assigns a higher misclassification cost to minority (active) class samples than to majority class samples [16]. | Improves recall for the minority class but often at the expense of overall accuracy. Effectiveness depends on accurate cost assignment. | Directly alters the learning objective to favor minority class recognition; no modification of original data. | Optimal cost matrix is non-trivial to define; can lead to severely skewed probability estimates; performance sensitive to cost parameters. |

| Ensemble Methods (Hybrid) | Combines data-level and algorithm-level approaches. Often uses resampling to create balanced subsets, then aggregates predictions from multiple base classifiers (e.g., Balanced Random Forests) [16]. | Generally provides more robust and stable performance than single-method approaches by reducing variance. | Mitigates overfitting from resampling; leverages wisdom of multiple classifiers; often state-of-the-art for imbalanced data. | Computationally expensive; complex to train and deploy; results can be less interpretable. |

| Federated Learning (FL) | Enables collaborative training without centralizing data. Models are trained locally on private datasets (e.g., at different institutions) and only model weights are aggregated [17]. | In a real-world radiology study, FL outperformed models trained on single-site data and centralized ensemble methods in segmentation tasks [17]. | Overcomes data scarcity and privacy constraints by pooling knowledge from multiple small, private sources. | Requires significant infrastructural and organizational coordination; introduces communication overhead; managing heterogeneous data formats is challenging [17]. |

| Transfer Learning | Leverages knowledge from a large, source domain (e.g., general chemical bioactivity data) to improve learning in a small, target NP domain. | Promising for small-sample scenarios. Pre-training on large datasets like ChEMBL or PubChem can provide robust feature representations [18]. | Dramatically reduces the amount of target-domain data needed; effective for initial feature learning. | Risk of negative transfer if source and target domains are too dissimilar; requires careful design of pre-training tasks. |
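The first two strategies in the table can be illustrated with a minimal sketch. It computes inverse-frequency class weights, which are a common heuristic starting point for a cost matrix rather than a method prescribed by [16], and performs simple random oversampling. The function names and the toy labels are hypothetical.

```python
import random
from collections import Counter


def inverse_frequency_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}


def random_oversample(samples, labels, seed=0):
    """Duplicate minority-class samples at random until every class
    is as large as the largest one."""
    rng = random.Random(seed)
    counts = Counter(labels)
    target = max(counts.values())
    out_x, out_y = list(samples), list(labels)
    for cls, c in counts.items():
        pool = [x for x, y in zip(samples, labels) if y == cls]
        for _ in range(target - c):
            out_x.append(rng.choice(pool))
            out_y.append(cls)
    return out_x, out_y


# Toy data (assumption): 8 inactive (0) and 2 active (1) compounds.
labels = [0] * 8 + [1] * 2
samples = list(range(10))  # compound indices stand in for feature vectors

weights = inverse_frequency_weights(labels)   # {0: 0.625, 1: 2.5}
balanced_x, balanced_y = random_oversample(samples, labels)
```

Resampling of this kind is normally applied to the training split only, so that duplicated minority samples do not leak into the evaluation data.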

Experimental Protocols for Key Methodologies

Protocol for Algorithm-Level Adaptation (SVM++)

The SVM++ framework is designed to tackle combined imbalance and overlap [16]. The following workflow outlines its experimental implementation:

1. Data Preprocessing & Partitioning:

- Input: A multi-class NP bioactivity dataset with labeled active/inactive classes and molecular descriptors or fingerprints.

- Step 1 - Overlap Detection (Algorithm-1): For each pair of classes, a k-Nearest Neighbor (k-NN) rule is applied. A sample is identified as residing in an "overlapped region" if among its k nearest neighbors, at least one belongs to a different class. This partitions the dataset into overlapped and non-overlapped subsets [16].

- Step 2 - Critical Region Filtering (Algorithm-2): Within the overlapped subset, a "Critical-1 Region" is identified. This region contains samples where the local imbalance ratio is most severe, meaning minority class samples are surrounded predominantly by majority class neighbors, maximizing classification difficulty [16].

2. Model Training with Modified Kernel:

- Step 3 - Dimensionality Transformation (Algorithm-3): A custom kernel function is applied specifically to samples in the Critical-1 Region. This function maps these critical samples into a higher-dimensional space based on the mean of the maximum and minimum distances between majority and minority class clusters. The goal is to "stretch" the feature space to increase the separability and visibility of the minority class samples [16].

- Step 4: A standard Support Vector Machine (SVM) is then trained on the transformed dataset (non-overlapped samples + transformed Critical-1 samples) to find the optimal separating hyperplane.

3. Validation:

- Performance must be evaluated using metrics robust to imbalance, such as the Area Under the Precision-Recall Curve (AUPRC), F1-Score (for the active/minority class), and Geometric Mean (G-mean), rather than overall accuracy [16].

- Comparison against baseline classifiers (standard SVM, KNN) and common resampling techniques (SMOTE) is essential.
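To make the validation step concrete, the sketch below computes the minority-class F1-score and the G-mean from hard predictions. It is an illustrative, dependency-free implementation; the helper names and the toy labels are assumptions, not part of the SVM++ study.

```python
import math


def confusion_counts(y_true, y_pred, positive=1):
    """Return (tp, fp, fn, tn) for a binary task."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == positive and p == positive)
    fp = sum(1 for t, p in pairs if t != positive and p == positive)
    fn = sum(1 for t, p in pairs if t == positive and p != positive)
    tn = sum(1 for t, p in pairs if t != positive and p != positive)
    return tp, fp, fn, tn


def f1_and_gmean(y_true, y_pred, positive=1):
    """F1 for the positive (active) class and G-mean = sqrt(recall * specificity)."""
    tp, fp, fn, tn = confusion_counts(y_true, y_pred, positive)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    specificity = tn / (tn + fp) if tn + fp else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return f1, math.sqrt(recall * specificity)


# Toy labels (assumption): 2 actives among 10 compounds.
y_true = [1, 1, 0, 0, 0, 0, 0, 0, 0, 0]
always_inactive = [0] * 10                      # trivial majority-class model
accuracy = sum(t == p for t, p in zip(y_true, always_inactive)) / len(y_true)
f1_trivial, gmean_trivial = f1_and_gmean(y_true, always_inactive)
# accuracy is 0.8, yet F1 and G-mean are both 0.0
```

The trivial model illustrates why overall accuracy is misleading here: it scores 80% while never identifying a single active compound.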

Workflow for the SVM++ Algorithm [16]
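Step 1 of this workflow (overlap detection) can be sketched as follows. This is a simplified reading of the k-NN rule described above, using brute-force Euclidean distances on a toy 2D descriptor space. The function name, the choice of k, and the data are assumptions, and the critical-region filtering and kernel transformation of [16] are not reproduced.

```python
import math


def knn_overlap_mask(points, labels, k=3):
    """Flag a sample as 'overlapped' if at least one of its k nearest
    neighbours belongs to a different class."""
    mask = []
    for i, p in enumerate(points):
        # Distances to every other sample, sorted ascending (ties broken by index).
        dists = sorted(
            (math.dist(p, q), j) for j, q in enumerate(points) if j != i
        )
        neighbours = [j for _, j in dists[:k]]
        mask.append(any(labels[j] != labels[i] for j in neighbours))
    return mask


# Toy data (assumption): a tight inactive cluster, a distant active cluster,
# and one active compound sitting inside the inactive cluster.
points = [(0, 0), (0, 1), (1, 0), (1, 1),
          (10, 10), (10, 11), (11, 10), (11, 11),
          (0.5, 0.5)]
labels = [0, 0, 0, 0, 1, 1, 1, 1, 1]

mask = knn_overlap_mask(points, labels, k=3)
overlapped = [i for i, flag in enumerate(mask) if flag]       # [0, 1, 2, 3, 8]
non_overlapped = [i for i, flag in enumerate(mask) if not flag]  # [4, 5, 6, 7]
```

The brute-force search is quadratic in the number of samples, which is usually acceptable for the small NP datasets discussed here.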

Protocol for Federated Learning in a Multi-Institutional Setting

Federated Learning (FL) is a strategic solution for aggregating knowledge from small, privately held NP datasets across different labs or companies [17].

1. Initiative Setup & Infrastructure:

- Organization & Legal: Establish a consortium or collaboration agreement among participating institutions. Define governance, data usage agreements, and intellectual property rights [17].

- Technical Infrastructure: Deploy a secure FL platform (e.g., based on open-source frameworks like Flower or NVIDIA FLARE). Each participant (client) hosts a local server. A central server coordinates the training rounds without accessing raw data [17].

2. FL Model Training Cycle:

- Step 1 - Initialization: The central server initializes a global AI model (e.g., a graph neural network for molecular property prediction) and shares it with all clients.

- Step 2 - Local Training: Each client trains the model on its local, private NP dataset for a set number of epochs.

- Step 3 - Aggregation: Clients send their updated model weights/gradients (not their data) to the central server. The server aggregates these updates, typically using the Federated Averaging (FedAvg) algorithm, to create a new improved global model [17].

- Step 4 - Redistribution: The updated global model is sent back to all clients.

- Step 5 - Iteration: Steps 2-4 repeat for multiple communication rounds until the model converges.

3. Evaluation:

- Personalization Performance: Evaluate the final global model, and potentially a model fine-tuned on local data, on each institution's private test set.

- Generalization Performance: Benchmark the FL-trained model against alternative approaches: 1) Local-Only Models (trained on single-site data), and 2) Ensemble Models (trained separately on each site and averaged). Based on [17], FL is expected to generalize better than both, especially for sites with very small local datasets.

Federated Learning Workflow for Multi-Institutional NP Research [17]
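The training cycle above can be sketched in a few lines. The example below is a toy simulation of Federated Averaging in which each client's "local training" is replaced by a hypothetical stand-in that moves the weights part-way toward a client-specific optimum. The client sizes, targets, and function names are assumptions; a real deployment would use a framework such as Flower or NVIDIA FLARE rather than this loop.

```python
def fed_avg(client_weights, client_sizes):
    """Average per-client weight vectors, weighted by local dataset size."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


def local_update(global_weights, local_target, lr=0.5):
    """Stand-in for local training: step toward a client-specific optimum."""
    return [g + lr * (t - g) for g, t in zip(global_weights, local_target)]


# Three hypothetical institutions with different dataset sizes and local optima.
client_sizes = [100, 300, 600]
local_targets = [[1.0, 0.0], [2.0, 1.0], [3.0, 2.0]]

global_weights = [0.0, 0.0]                      # Step 1: initialization
for _ in range(20):                              # Step 5: communication rounds
    updates = [local_update(global_weights, t)   # Step 2: local training
               for t in local_targets]
    global_weights = fed_avg(updates, client_sizes)  # Step 3: aggregation
    # Step 4: clients start the next round from the new global_weights
```

Only weight vectors cross institutional boundaries in this loop; the per-client targets, which stand in for private data, never leave the client.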

Successfully applying AI to NP research requires both computational tools and high-quality experimental data. The following table details key resources.

Table 2: Research Reagent Solutions for AI-Driven NP Discovery

| Category | Item/Resource | Function & Relevance | Example/Source |

|---|---|---|---|

| Bioactivity & Toxicity Databases | ChEMBL [18] | A manually curated database of bioactive molecules with drug-like properties. Provides chemical structures, bioactivity data (IC50, Ki), and ADMET profiles essential for training and validating predictive models. | https://www.ebi.ac.uk/chembl/ |

| Bioactivity & Toxicity Databases | PubChem [18] | The world's largest collection of freely accessible chemical information. Contains massive data on substance structures, biological activities (BioAssay), and toxicity, crucial for sourcing NP data and negative samples. | https://pubchem.ncbi.nlm.nih.gov |

| Bioactivity & Toxicity Databases | TOXRIC / ICE / DSSTox [18] | Specialized toxicology databases. Provide standardized toxicity endpoint data (e.g., LD50, carcinogenicity) for predicting and avoiding NP toxicity early in the discovery pipeline. | Various public and governmental repositories. |

| Experimental Assay Kits | In Vitro Cytotoxicity Assays | Generate quantitative bioactivity data for model training. Measures cell viability after exposure to NP extracts or compounds, providing a primary toxicity/activity endpoint. | MTT Assay, CCK-8 Assay [18]. |

| Experimental Assay Kits | Antioxidant & Antimicrobial Activity Assays | Provide specific bioactivity data relevant to NP mechanisms (e.g., cardiovascular protection [14]). Data from these standardized assays are used as labels for supervised learning. | DPPH/ABTS radical scavenging, Disk diffusion/MIC assays. |

| Chemical Analysis Standards | Gas Chromatography-Mass Spectrometry (GC-MS) | The gold standard for characterizing volatile complex NPs like essential oils. Provides the detailed, semi-quantitative compositional data needed to link chemical heterogeneity to biological effect [14]. | Commercial GC-MS systems with established compound libraries. |

| Computational Tools | Resampling Libraries | Software implementations of algorithms to handle class imbalance before model training. | imbalanced-learn (Python library) for SMOTE, ADASYN, etc. |

| Computational Tools | Federated Learning Frameworks | Enable the implementation of privacy-preserving, collaborative model training across distributed datasets. | Flower, NVIDIA FLARE, OpenFL [17]. |

| Molecular Descriptors / Fingerprints | RDKit / Mordred | Open-source cheminformatics toolkits. Generate numerical representations (descriptors, fingerprints) of NP chemical structures from SMILES strings, which serve as the feature input for AI models. | https://www.rdkit.org |

Navigating the central hurdle of small, imbalanced, and heterogeneous NP data requires a move beyond standard modeling approaches. Based on the comparative analysis:

- For Single, Challenging Datasets: Algorithm-level adaptations like SVM++ offer a powerful, data-preserving solution when feature-space overlap is a primary concern alongside imbalance [16].

- For Aggregating Disparate Private Data: Federated Learning presents a transformative paradigm for building robust models without data sharing, directly addressing the small-data problem while respecting privacy and intellectual property [17].

- For General Application: Hybrid Ensemble Methods that strategically combine careful resampling with strong base classifiers (like gradient-boosted trees) often provide the most reliable and generalizable performance for moderately sized datasets [15] [16].

The future of AI in NP discovery lies in the integration of multimodal data (chemical, genomic, phenotypic) and the development of explainable AI (XAI) models that can decode the complex synergies within natural products. By strategically employing the protocols and tools outlined here, researchers can transform the central hurdle from a barrier into a gateway for the next generation of natural product-based therapeutics.

From Structure to Prediction: Methodologies of AI Models for Activity Forecasting

This comparison guide provides an objective evaluation of molecular representation methods critical for AI-driven natural product (NP) research. As part of the broader comparison of AI models for NP activity prediction, we analyze the performance, applicability, and experimental backing of the dominant encoding strategies: fingerprints, string-based representations, and molecular graphs. The unique chemical complexity of NPs, characterized by broad molecular weight distributions, multiple stereocenters, and high fractions of sp³-hybridized carbons, poses distinct challenges for these representations and influences both model selection and predictive accuracy [19].

Performance Comparison of Molecular Representations

The effectiveness of a molecular representation is highly dependent on the chemical domain and the specific prediction task. The table below provides a comparative summary of major representation types, synthesizing data from benchmark studies on both general and NP-specific datasets.

Table 1: Comparative Overview of Molecular Representation Methods for Natural Products

| Representation Type | Key Examples | Core Principle | Strengths for NPs | Key Limitations for NPs | Reported Performance (Sample Dataset/Task) |

|---|---|---|---|---|---|

| Molecular Fingerprints (Expert-based) | ECFP [19], MACCS [20], Functional Group (FG) [21], Morgan [21] | Encodes presence/absence/count of predefined or dynamically generated structural fragments. | Fast computation; Interpretable bits; Strong baseline for QSAR. Many perform well on NP bioactivity prediction [19]. | May miss NP-specific motifs; Performance varies—ECFP is not always optimal for NPs [19]. | Best FG+XGBoost AUROC: 0.753 [21]; MACCS performed best in regression tasks [22]. |

| String-Based Sequences | SMILES [23], SELFIES [23], t-SMILES [23] | Linear string notation describing molecular structure via traversal rules. | Simple, compact; Directly usable by NLP models. Fragment-based t-SMILES reduces invalid generation [23]. | Standard SMILES can yield invalid strings; Struggles with complex NP topology. | t-SMILES outperforms SMILES, SELFIES in goal-directed tasks and novelty [23]. |

| Molecular Graphs | GCN [22], GAT [22], MPNN [24] | Atoms as nodes, bonds as edges. Features assigned to both. | Naturally captures topology and connectivity; No pre-defined vocabulary needed. | Standard GNNs may struggle with long-range interactions [23]; Requires careful feature engineering. | Graph-based models show strong performance but are not universally superior to fingerprints [24] [20]. |

| Hybrid / Integrated Models | MoleculeFormer [22], FH-GNN [24], FP-GNN [22] | Combines multiple representations (e.g., graph + fingerprint + 3D). | Leverages complementary information; Often achieves state-of-the-art results. | Increased model complexity and computational cost. | MoleculeFormer shows robust performance across 28 diverse tasks [22]. FH-GNN outperforms baselines on MoleculeNet benchmarks [24]. |

Critical Insight for NPs: A systematic benchmark of over 20 fingerprints on NP databases (COCONUT, CMNPD) revealed that while Extended Connectivity Fingerprints (ECFP) are the de facto standard for drug-like compounds, other fingerprints can match or outperform them for NP bioactivity prediction [19]. This underscores that the optimal representation for the NP chemical space is not predetermined and requires empirical validation.
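To show what "dynamically generated structural fragments" means in practice, the sketch below builds a toy circular fingerprint on a hand-coded molecular graph. It follows the general idea behind ECFP-style fingerprints (iteratively hashing each atom together with its neighbours, then folding the identifiers into a fixed number of bits), but it is not the ECFP algorithm and is not compatible with RDKit output. The function name, the hashing scheme, and the bit length are assumptions chosen for illustration.

```python
import zlib


def toy_circular_fingerprint(atoms, bonds, radius=2, n_bits=64):
    """Return the set of 'on' bit positions for a molecule given as
    a list of atom symbols and a list of (i, j) bond index pairs."""
    neighbours = {i: [] for i in range(len(atoms))}
    for a, b in bonds:
        neighbours[a].append(b)
        neighbours[b].append(a)

    # Radius 0: each atom is identified by its element symbol only.
    ids = [zlib.crc32(sym.encode()) for sym in atoms]
    features = set(ids)

    # Each iteration widens the environment captured by an identifier by one bond.
    for _ in range(radius):
        new_ids = []
        for i in range(len(atoms)):
            env = (ids[i], tuple(sorted(ids[j] for j in neighbours[i])))
            new_ids.append(zlib.crc32(repr(env).encode()))
        ids = new_ids
        features.update(ids)

    # Fold the identifiers into a fixed-length bit space.
    return {f % n_bits for f in features}


# Heavy-atom graphs only (hydrogens and bond orders are ignored in this toy).
ethanol = toy_circular_fingerprint(["C", "C", "O"], [(0, 1), (1, 2)])
ethanol_reordered = toy_circular_fingerprint(["O", "C", "C"], [(0, 1), (1, 2)])
dimethyl_ether = toy_circular_fingerprint(["C", "O", "C"], [(0, 1), (1, 2)])
```

Because neighbour identifiers are sorted before hashing, the result does not depend on atom ordering, so `ethanol` and `ethanol_reordered` contain the same bits. For real benchmarking, an established implementation such as the fingerprint functions in RDKit should be used.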

Detailed Experimental Protocols and Performance Data

The following tables and descriptions detail the methodologies from key studies that inform the comparison above.

Protocol 1: Benchmarking Fingerprints for NP Bioactivity Prediction

This protocol [19] is essential for selecting the optimal fingerprint for NP modeling.

1. Dataset Curation:

- Source: Natural products from the COCONUT and CMNPD databases [19].

- Preprocessing: Salts were removed, structures were standardized, and charges were neutralized using the ChEMBL curation package. Unparsable SMILES were discarded [19].

- Task Construction: For bioactivity prediction (e.g., "antibacterial"), all NPs annotated with the property formed the positive class. A random sample of unlabeled NPs from CMNPD formed the negative class, with a minimum dataset size of 1,000 compounds [19].

2. Fingerprint Calculation & Modeling:

- Fingerprints: 20 different algorithms were computed using default parameters from RDKit and other packages. Categories included circular (e.g., ECFP), path-based (e.g., Atom Pair), substructure-based (e.g., MACCS), pharmacophore-based, and string-based (e.g., MHFP) fingerprints [19].

- Model Training: A simple Random Forest classifier was trained for each fingerprint/dataset pair.

- Evaluation: Performance was evaluated using the Area Under the Receiver Operating Characteristic curve (AUROC). The study found no single fingerprint consistently dominated across all NP tasks [19].
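The task-construction step of this protocol can be sketched as below. The code assumes that "unlabeled" means a compound with no bioactivity annotation at all, which is one possible reading of the protocol. The compound identifiers, the annotation dictionary, and the function name are hypothetical.

```python
import random


def build_task(compounds, annotations, activity, n_negatives, seed=0):
    """Positives: compounds annotated with `activity`.
    Negatives: a random sample of compounds with no annotation."""
    positives = [c for c in compounds if activity in annotations.get(c, set())]
    unlabeled = [c for c in compounds if c not in annotations]
    rng = random.Random(seed)
    negatives = rng.sample(unlabeled, min(n_negatives, len(unlabeled)))
    X = positives + negatives
    y = [1] * len(positives) + [0] * len(negatives)
    return X, y


# Toy catalogue (assumption): 20 compounds, three of which carry annotations.
compounds = [f"NP{i:03d}" for i in range(20)]
annotations = {
    "NP000": {"antibacterial"},
    "NP001": {"antibacterial", "antifungal"},
    "NP002": {"antifungal"},
}

X, y = build_task(compounds, annotations, "antibacterial", n_negatives=5)
```

One caveat of this design is that unlabeled compounds are not confirmed inactives, so some sampled negatives may in fact be untested actives.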

Table 2: Fingerprint Performance on Natural Product Bioactivity Prediction Tasks [19]

| Fingerprint Category | Example Algorithm | Key Finding on NP Datasets |

|---|---|---|

| Circular | ECFP4, FCFP4 | Strong performance, but not universally the best. ECFP6 may be less effective for NPs than for drug-like molecules. |

| Path-Based | Atom Pair (AP), Topological Torsion (TT) | Often showed competitive or superior performance to circular fingerprints. |

| Substructure-Based | MACCS Keys (166 bits) | Delivered robust and consistent performance across multiple NP tasks. |

| String-Based | MinHashed Fingerprint (MHFP) | Performed well, offering a different and complementary view of chemical space. |

Protocol 2: Integrated Graph-Fingerprint Model Evaluation

This protocol [24] outlines the evaluation of hybrid models like the Fingerprint-enhanced Hierarchical GNN (FH-GNN).

1. Dataset & Benchmarking:

- Source: Standard MoleculeNet datasets (e.g., BACE, BBBP, Tox21, ESOL, Lipophilicity) [24].

- Splitting: Stratified splitting to maintain class balance.

2. Model Framework (FH-GNN):

- Hierarchical Graph Construction: Molecules are fragmented using the BRICS algorithm. A three-level graph (atom, motif, molecule) is constructed [24].

- Feature Encoding: A Directed-MPNN encodes the hierarchical graph. Simultaneously, a molecular fingerprint (e.g., Morgan) is encoded via a separate neural network [24].

- Adaptive Fusion: An attention mechanism dynamically weights and combines the features from the graph and the fingerprint modules [24].

- Prediction: The fused representation is fed into a Multi-Layer Perceptron for property prediction [24].

3. Results: FH-GNN demonstrated superior performance over baseline models that used only graphs or only fingerprints, validating the benefit of integrating complementary representations [24].
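The adaptive fusion step can be illustrated with a generic attention-weighted combination of two embeddings. This is a minimal sketch of the idea, not the fusion module used in FH-GNN [24]: the query vector is fixed by hand here, whereas in a trained model it would be learned, and all names and values are assumptions.

```python
import math


def softmax(xs):
    """Numerically stable softmax over a list of scores."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    s = sum(exps)
    return [e / s for e in exps]


def attention_fuse(graph_emb, fp_emb, query):
    """Score each embedding against a query vector, convert the two scores
    to weights with softmax, and return the weighted sum plus the weights."""
    scores = [
        sum(q * g for q, g in zip(query, graph_emb)),
        sum(q * f for q, f in zip(query, fp_emb)),
    ]
    alpha = softmax(scores)
    fused = [alpha[0] * g + alpha[1] * f for g, f in zip(graph_emb, fp_emb)]
    return fused, alpha


graph_emb = [1.0, 0.0]   # hypothetical output of the graph encoder
fp_emb = [0.0, 1.0]      # hypothetical output of the fingerprint encoder

# A neutral query weights both branches equally.
fused_equal, alpha_equal = attention_fuse(graph_emb, fp_emb, [0.0, 0.0])
# A query aligned with the graph embedding shifts weight toward the graph branch.
fused_graph, alpha_graph = attention_fuse(graph_emb, fp_emb, [2.0, 0.0])
```

The weights always sum to one, so the fused vector stays on the same scale as its inputs while the balance between branches can vary per molecule.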

Workflow and Methodology Diagrams

Diagram 1: AI-Driven NP Activity Prediction Workflow

Diagram 2: Categorization and Relationships of Representation Methods

Diagram 3: Experimental Validation Protocol for Representation Comparison

Table 3: Key Software, Databases, and Resources for NP Representation Research

| Resource Name | Type | Primary Function in NP Representation Research | Key Reference/Source |

|---|---|---|---|

| RDKit | Open-Source Cheminformatics Library | Core toolkit for computing fingerprints (Morgan, Atom Pair, etc.), molecular descriptors, and generating molecular graphs from SMILES. Essential for standardization and feature extraction. | [21] [19] |

| COCONUT (COlleCtion of Open Natural prodUcTs) | Database | A large, open-access database of over 400,000 unique natural products. Serves as a primary source for unsupervised analysis and benchmarking representation methods on NP chemical space. | [19] |

| CMNPD (Comprehensive Marine Natural Products Database) | Database | A comprehensive database of marine natural products with associated bioactivity annotations. Used for constructing supervised QSAR modeling tasks for NP activity prediction. | [19] |

| MoleculeNet | Benchmark Suite | A standardized collection of molecular property prediction datasets. Used to benchmark and compare the performance of new representation methods and models in a controlled setting. | [22] [24] |

| PyTorch / TensorFlow | Deep Learning Framework | Libraries for building, training, and evaluating complex AI models, including Graph Neural Networks (GNNs), transformers, and hybrid architectures. | [22] [24] |

| GitHub Repository: NP_Fingerprints | Code Package | An open-source Python package provided by benchmark studies to compute the wide array of molecular fingerprints used in NP research, ensuring reproducibility. | [19] |

| KNIME / Nextflow | Workflow Management | Platforms for building reproducible, end-to-end computational pipelines that integrate data retrieval, preprocessing, representation, modeling, and evaluation steps. | (General best practice) |

| CETSA (Cellular Thermal Shift Assay) | Experimental Validation Platform | A target engagement assay used to experimentally validate the mechanistic predictions (e.g., binding) made by AI models in intact cellular environments, closing the in silico-in vitro loop [25]. | [25] |

The prediction of molecular bioactivity is a cornerstone of modern drug discovery, and the selection of an appropriate artificial intelligence (AI) model architecture is critical for success. Graph Neural Networks (GNNs), Transformers, and Convolutional Neural Networks (CNNs) represent three dominant paradigms, each with a distinct approach to processing molecular and biological data. The global market for machine learning in drug discovery is growing significantly, driven by demand for tools that can analyze complex data and accelerate the identification of novel drug candidates [26]. This guide provides a comparative analysis of these architectures, grounded in experimental data and their application to natural product activity prediction.

GNNs have emerged as a transformative tool by directly modeling molecules as graphs, where atoms are nodes and bonds are edges, thereby natively capturing topological structure [27] [28]. Transformers, renowned for their success in sequence processing, leverage self-attention mechanisms to model long-range dependencies, making them suitable for sequence-based molecular representations (like SMILES) and complex multimodal data such as transcriptomics [29] [30]. CNNs, traditionally applied to grid-like data, are effectively utilized for processing molecular fingerprints and image-like representations of molecules or for analyzing one-dimensional gene expression profiles [31].

The following table provides a high-level comparison of the three architectures:

Table: Core Architectural Comparison for Molecular Modeling

| Architecture | Core Molecular Representation | Key Strength | Typical Application in Bioactivity Prediction |

|---|---|---|---|

| Graph Neural Network (GNN) | Molecular Graph (Nodes=Atoms, Edges=Bonds) | Native encoding of topological structure and relational information [27] [28]. | Drug-target interaction, molecular property prediction, toxicity assessment [27] [32]. |

| Transformer | Sequence (e.g., SMILES, Amino Acids) or Multimodal Features | Captures long-range, non-local dependencies via self-attention; excels at integration of heterogeneous data [29] [30]. | Retrosynthesis prediction, multi-omics survival analysis, protein-ligand binding [29] [30]. |

| Convolutional Neural Network (CNN) | Grid/Tensor (e.g., Fingerprints, 2D Molecular Images, 1D Gene Vectors) | Efficient extraction of local hierarchical patterns and features through convolution filters [31]. | Processing gene expression profiles, image-based toxicity screening, fingerprint-QSAR models [31]. |

Core Principles and Evolution

Graph Neural Networks (GNNs): Learning from Molecular Topology

GNNs operate on the fundamental principle of message passing, where information from a node's local neighborhood is iteratively aggregated to build a refined representation of each node and of the entire graph [33]. This mechanism is inherently suited to molecules, allowing the model to learn from functional groups and substructures directly. Recent innovations focus on overcoming traditional GNN limitations, such as over-smoothing, and on enhancing expressivity. For instance, the Kolmogorov-Arnold GNN (KA-GNN) integrates learnable function modules based on the Kolmogorov-Arnold representation theorem, replacing static activation functions to improve both prediction accuracy and interpretability by highlighting chemically meaningful substructures [28]. Furthermore, frameworks like the eXplainable Graph-based Drug response Prediction (XGDP) enhance node features using circular fingerprint algorithms, providing a richer description of atomic environments within the message-passing framework [31].
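The message-passing principle can be shown with a deliberately stripped-down sketch: one aggregation round on the heavy-atom graph of ethanol, followed by a sum readout. Real GNN layers add learned weight matrices and nonlinearities, which are omitted here; the one-hot features and function names are assumptions.

```python
def message_passing_step(node_feats, edges):
    """One round: each node's new vector is the sum of its own vector
    and the vectors of its bonded neighbours."""
    neighbours = {i: [] for i in range(len(node_feats))}
    for a, b in edges:
        neighbours[a].append(b)
        neighbours[b].append(a)
    new_feats = []
    for i, h in enumerate(node_feats):
        agg = list(h)
        for j in neighbours[i]:
            agg = [x + y for x, y in zip(agg, node_feats[j])]
        new_feats.append(agg)
    return new_feats


def readout(node_feats):
    """Sum-pool node vectors into a single graph-level vector."""
    return [sum(col) for col in zip(*node_feats)]


# Ethanol heavy atoms C-C-O; features are one-hot [is_carbon, is_oxygen].
feats = [[1, 0], [1, 0], [0, 1]]
edges = [(0, 1), (1, 2)]

h1 = message_passing_step(feats, edges)   # [[2, 0], [2, 1], [1, 1]]
graph_vector = readout(h1)                # [5, 2]
```

After one round the two carbons already carry different vectors, because only the central carbon has an oxygen neighbour. Stacking further rounds extends each atom's view by one bond per round.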

Transformers: Capturing Global Context

Transformers abandon recurrent and convolutional inductive biases in favor of a self-attention mechanism, which dynamically computes the relevance between all elements in an input set [33]. This allows the model to capture complex, long-range dependencies crucial for understanding intricate molecular interactions or gene-gene relationships. In bioactivity prediction, their application has evolved from processing simple SMILES strings to sophisticated multimodal frameworks. The Transcriptome Transformer (TxT) exemplifies this by jointly analyzing transcriptomic data and clinical features to improve patient survival prediction, using attention to identify key gene pathways [29]. For natural products, the Graph-Sequence Enhanced Transformer (GSETransformer) hybridizes GNN and Transformer components to tackle the template-free prediction of biosynthetic pathways, a task of high relevance for natural product research [30].
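As a minimal sketch of the self-attention mechanism described above, the code below implements scaled dot-product attention with identity query, key, and value projections. A real Transformer layer learns separate projection matrices and uses multiple heads; the toy token embeddings are assumptions.

```python
import math


def self_attention(X):
    """Scaled dot-product self-attention over a list of token vectors.
    Returns the attended vectors and the attention weight matrix."""
    d = len(X[0])
    out, weights = [], []
    for q in X:
        # Relevance of every token k to the current token q.
        scores = [sum(a * b for a, b in zip(q, k)) / math.sqrt(d) for k in X]
        m = max(scores)
        exps = [math.exp(s - m) for s in scores]
        z = sum(exps)
        w = [e / z for e in exps]
        weights.append(w)
        # New representation: attention-weighted average of all token vectors.
        out.append([sum(wi * v[c] for wi, v in zip(w, X)) for c in range(d)])
    return out, weights


# Three toy tokens; the first and last share an embedding (e.g., the same atom symbol).
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
attended, attn = self_attention(tokens)
```

In this example the first token attends equally to itself and to the distant third token, and less to the adjacent second token, which illustrates that attention weights depend on content rather than on position in the sequence.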

Convolutional Neural Networks (CNNs): Extracting Local Features

CNNs apply a series of learnable convolution filters across input data to detect spatially local patterns, building hierarchical feature representations [31]. In drug discovery, 1D CNNs are frequently applied to gene expression vectors from cell lines to create latent representations for drug response prediction models [31]. While less common for direct molecular graph processing than GNNs, CNNs remain powerful for specific representations, such as treating molecular fingerprints as 1D tensors or generating 2D image-like projections of molecular structures.
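The following sketch shows a single 1D convolution filter sliding over a binary fingerprint vector, followed by ReLU and global max pooling. The filter values are fixed by hand for illustration, whereas a trained CNN learns them; the fingerprint and function names are assumptions.

```python
def conv1d(signal, kernel):
    """Valid-mode 1D cross-correlation (the operation deep learning
    libraries call convolution)."""
    k = len(kernel)
    return [
        sum(signal[i + j] * kernel[j] for j in range(k))
        for i in range(len(signal) - k + 1)
    ]


def relu(xs):
    return [max(0.0, x) for x in xs]


def global_max_pool(xs):
    return max(xs)


fingerprint = [0, 1, 1, 0, 0, 1, 0, 1, 1, 1]   # toy 10-bit fingerprint
kernel = [1.0, 1.0, -1.0]                      # hypothetical filter: "1, 1, then 0"

feature_map = conv1d(fingerprint, kernel)
# [0.0, 2.0, 1.0, -1.0, 1.0, 0.0, 0.0, 1.0]
pooled = global_max_pool(relu(feature_map))    # 2.0
```

The filter responds most strongly where two set bits are followed by an unset bit. Note that adjacent bits of a hashed fingerprint are not necessarily chemically related, so the locality assumption behind convolution holds more naturally for ordered inputs such as images or sequences.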

The Convergence: Hybrid and Integrated Architectures

The boundaries between architectures are blurring with the development of high-performing hybrid models. These models aim to synergize the strengths of individual architectures. The Enhanced GNN and Transformer (EHDGT) model, for example, uses a parallelized architecture with a gated fusion mechanism to balance the local feature learning of GNNs with the global receptive field of Transformers [33]. Similarly, MoleculeFormer is a multi-scale model based on a GCN-Transformer architecture that integrates 3D structural information and prior molecular fingerprints for robust property prediction [34]. This trend toward integration is a defining characteristic of the current state-of-the-art.

Comparative Performance Analysis & Experimental Data

Quantitative Performance Benchmarks

Experimental evaluations across diverse public benchmarks reveal the relative strengths of different architectures and their hybrids. Performance is highly task-dependent, but hybrid models frequently rank among the top performers in the studies summarized below.

Table: Performance Benchmarks Across Model Architectures on Key Tasks

| Model Architecture | Model Name | Key Task / Dataset | Performance Metric | Reported Result | Key Advantage Demonstrated |

|---|---|---|---|---|---|

| Advanced GNN | KA-GNN (Variant) [28] | Molecular Property Prediction (e.g., ESOL, FreeSolv) | RMSE (Lower is better) | Outperformed conventional GNNs (e.g., GCN, GAT) | Improved accuracy & parameter efficiency [28]. |

| GNN-Transformer Hybrid | MoleculeFormer [34] | Classification (e.g., BACE, BBBP) | AUC-ROC (Higher is better) | State-of-the-art or competitive on 28 datasets | Robust multi-scale feature integration [34]. |

| GNN-Transformer Hybrid | GSETransformer [30] | Single-step & Multi-step Retrosynthesis (USPTO) | Top-1 Accuracy | State-of-the-art on benchmark datasets | Effective for complex biosynthetic pathway prediction [30]. |

| Explainable GNN | XGDP [31] | Drug Response Prediction (GDSC) | Root Mean Squared Error (RMSE) | Outperformed prior methods (GraphDRP, tCNN) | High predictive accuracy with mechanistic insight [31]. |

| Pure Transformer | Transcriptome Transformer (TxT) [29] | Patient Survival Prediction (TCGA) | Concordance Index (C-index) | Outperformed existing survival prediction methods | Effective multimodal learning of transcriptomic & clinical data [29]. |
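For readers less familiar with the metrics in this table, the sketch below gives dependency-free reference implementations of RMSE, AUC-ROC (via its pairwise-ranking interpretation), and top-k accuracy. The function names and toy inputs are assumptions, and the pairwise AUC-ROC is quadratic in dataset size, so it is suitable for checking results rather than for large-scale evaluation.

```python
import math


def rmse(y_true, y_pred):
    """Root mean squared error for regression tasks."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def auroc(y_true, scores):
    """Probability that a random positive is scored above a random negative
    (ties count as 0.5)."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def top_k_accuracy(true_items, ranked_candidates, k):
    """Fraction of queries whose true answer appears among the top k candidates."""
    hits = sum(1 for t, cands in zip(true_items, ranked_candidates) if t in cands[:k])
    return hits / len(true_items)


# Toy checks (hypothetical values).
auc = auroc([1, 0, 1, 0], [0.9, 0.8, 0.4, 0.3])                   # 0.75
top1 = top_k_accuracy(["A", "B"], [["A", "C"], ["C", "B"]], k=1)  # 0.5
top2 = top_k_accuracy(["A", "B"], [["A", "C"], ["C", "B"]], k=2)  # 1.0
```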

Detailed Experimental Protocols

To ensure reproducibility and provide context for the data above, here is a summary of common experimental methodologies derived from the cited research:

Table: Summary of Key Experimental Protocols

| Protocol Component | Typical Implementation in Reviewed Studies | Purpose & Rationale |

|---|---|---|

| Data Sourcing & Splitting | Use of standard public benchmarks (MoleculeNet, GDSC, USPTO) [28] [34] [31]. Stratified splitting by task or scaffold to avoid data leakage. | Ensures fair comparison and measures generalizability. Scaffold split tests model ability to generalize to novel chemotypes. |

| Molecular Featurization | GNNs: Atoms (node feat.: atomic number, degree) & Bonds (edge feat.: type, conjugation) [28] [31]. Transformers/Hybrids: SMILES strings or tokenized graphs; often combined with fingerprints (ECFP, MACCS) [30] [34]. | Input representation directly influences model capability. Hybrid featurization provides complementary information. |

| Model Training & Optimization | Use of Adam/AdamW optimizer. Loss function: MSE for regression, Cross-Entropy for classification. Extensive hyperparameter tuning (learning rate, dropout, depth). | Standard deep learning practice to ensure stable convergence and prevent overfitting. |

| Evaluation Metrics | Regression: RMSE, MAE. Classification: AUC-ROC, Accuracy. Retrosynthesis: Top-k accuracy [30] [34] [31]. | Task-specific metrics that align with the practical goal of the prediction (e.g., ranking candidate molecules). |

| Interpretability Analysis | Use of attention weight visualization (Transformers), gradient-based attribution (Integrated Gradients), or subgraph identification (GNNExplainer) [29] [31]. | Moves beyond "black box" predictions to provide mechanistic hypotheses (e.g., salient functional groups). |
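The scaffold-based splitting mentioned in the table can be sketched as a grouped split. The code assumes that a scaffold label has already been computed for each compound (for example, a Bemis-Murcko scaffold from a cheminformatics toolkit). The greedy assignment rule, the scaffold names, and the function name are illustrative assumptions, not the exact procedure of any cited study.

```python
from collections import defaultdict


def scaffold_split(compound_ids, scaffolds, test_fraction=0.2):
    """Assign whole scaffold groups to train or test so that no scaffold
    appears on both sides of the split."""
    groups = defaultdict(list)
    for cid, scaf in zip(compound_ids, scaffolds):
        groups[scaf].append(cid)

    # Largest groups are placed first; groups that no longer fit in the
    # training budget go to the test set.
    ordered = sorted(groups.values(), key=len, reverse=True)
    n_test_target = round(len(compound_ids) * test_fraction)
    n_train_target = len(compound_ids) - n_test_target

    train, test = [], []
    for group in ordered:
        if len(train) + len(group) <= n_train_target:
            train.extend(group)
        else:
            test.extend(group)
    return train, test


# Toy data (assumption): 10 compounds across four hypothetical scaffolds.
ids = list(range(10))
scaffolds = ["flavone"] * 4 + ["indole"] * 3 + ["macrolide"] * 2 + ["steroid"] * 1

train_ids, test_ids = scaffold_split(ids, scaffolds, test_fraction=0.2)
# train_ids -> [0, 1, 2, 3, 4, 5, 6, 9], test_ids -> [7, 8]
```

Because the test set contains only scaffolds unseen during training, performance on it reflects generalization to novel chemotypes rather than interpolation between close analogues.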

Implementing and researching these AI models requires a suite of standardized data, software tools, and computational resources.

Table: Key Research Reagent Solutions for AI-Driven Bioactivity Prediction

| Resource Category | Specific Item / Tool | Function & Relevance in Research |

|---|---|---|

| Standardized Datasets | MoleculeNet [34], GDSC (Genomics of Drug Sensitivity in Cancer) [31], USPTO [30] | Curated, public benchmarks for training and fair comparison of models across diverse tasks (property, response, synthesis). |

| Molecular Featurization Libraries | RDKit [31], DeepChem [31] | Open-source toolkits to convert SMILES to molecular graphs, compute fingerprints (ECFP, MACCS), and generate atomic/bond descriptors. |

| Deep Learning Frameworks | PyTorch, PyTorch Geometric, TensorFlow | Core frameworks for building, training, and deploying GNN, Transformer, and CNN models. PyTorch Geometric is specialized for graph data. |

| Specialized Model Code | Public GitHub repositories for models like TxT [29], GSETransformer [30], XGDP [31] | Provides reference implementations, facilitating validation, extension, and application of state-of-the-art methods. |

| Computational Infrastructure | High-Performance GPU clusters (e.g., NVIDIA A100/V100), Cloud TPU/GPU services | Essential for training large-scale deep learning models, especially Transformers and deep GNNs on massive datasets. |

Diagram 1: Comparative Workflow of AI Architectures for Bioactivity Prediction. This diagram illustrates the parallel processing pathways for GNNs, Transformers, and CNNs, showing how different input representations (molecular graphs, sequences, and feature vectors) flow through distinct architectural paradigms before being integrated for a final prediction.

Diagram 2: High-Level Architecture of a Hybrid GNN-Transformer Model. This diagram depicts the synergistic design of a state-of-the-art hybrid model, where separate modules process graph and sequence information, which are subsequently fused via an attention mechanism to make a final prediction [30].

The comparative analysis reveals that no single architecture is universally superior; the optimal choice is dictated by the specific research question, data type, and desired output. GNNs are the default for tasks where molecular topology is paramount, such as predicting intrinsic molecular properties or drug-target interactions [27] [28]. Transformers excel in tasks involving long-range dependencies, sequence generation (like retrosynthesis), and the complex integration of heterogeneous biological data [29] [30]. CNNs remain a robust and efficient choice for processing vectorized data like gene expression profiles or pre-computed molecular fingerprints [31].

The most significant trend is the rise of hybrid models, such as GNN-Transformer architectures, which achieve state-of-the-art or competitive performance in the studies reviewed here by leveraging complementary strengths [33] [30] [34]. For researchers embarking on natural product activity prediction, a strategic approach is recommended:

- Start with a well-established GNN baseline if the primary data is molecular structure.

- Incorporate Transformer components or attention mechanisms when modeling complex biosynthetic pathways (which are sequential and conditional) or when integrating multi-omics data [29] [30].

- Prioritize interpretability from the outset. Leveraging explainable AI (XAI) techniques intrinsic to attention-based models or tools like GNNExplainer is no longer ancillary but critical for generating testable biological hypotheses and guiding experimental validation [31].

Ultimately, the convergence of these architectures is driving a new paradigm in computational drug discovery, one that promises more accurate, efficient, and interpretable predictions to accelerate the journey from natural product discovery to viable therapeutic candidates.

The paradigm of drug discovery is shifting from a singular “one target–one drug” approach to a holistic, systems-level framework that embraces polypharmacology—the design of molecules to interact with multiple therapeutic targets simultaneously [35]. This shift is particularly critical for harnessing the potential of Natural Products (NPs), which are chemically complex and often exert their therapeutic effects through synergistic, multi-target mechanisms [3]. Traditional single-target prediction models fail to capture this complexity, leading to a high failure rate in translating NP bioactivity into effective therapies [2].

AI is emerging as a transformative tool to decode this complexity. By integrating multimodal data, from chemical structures and omics profiles to high-throughput screening results, AI models can predict polypharmacological profiles, identify synergistic drug combinations, and infer mechanisms of action (MoA) [36]. This comparison guide evaluates the performance, experimental protocols, and applicability of leading AI frameworks in this domain, giving researchers a critical analysis to inform their work in NP-based drug discovery.

Comparative Performance of AI Frameworks

The following table summarizes the key performance metrics, strengths, and optimal use cases for major classes of AI models applied to polypharmacology and synergy prediction, based on a systematic review of recent studies [37] [36].

Table 1: Performance Comparison of AI Frameworks for Complex Pharmacology Predictions

| AI Model Category | Representative Model/Approach | Key Performance Metrics (Typical Range) | Primary Strength | Major Limitation | Best Suited For |
|---|---|---|---|---|---|
| Graph-Based Models (GBM) | Graph Neural Networks (GNNs), Knowledge Graph Embeddings | AUROC: 0.85-0.92; AUPRC: 0.30-0.45 [37] | Captures relational, network-level data (e.g., protein-protein, drug-target networks). Excels at link prediction for novel interactions [7]. | Requires high-quality, structured knowledge graphs. Performance can degrade with sparse or noisy data [37]. | Inferring MoA and discovering novel polypharmacology from biological networks. |
| Multimodal Deep Learning | Madrigal (attention bottleneck fusion) [36] | AUROC (split-by-drugs): 0.768; AUROC (split-by-pairs): 0.847 [36] | Integrates diverse data types (structure, transcriptomics, pathways). Robust to missing data modalities during inference [36]. | Computationally intensive; requires significant data from each modality for training. | Translating preclinical in vitro data (e.g., cell viability, transcriptomics) to clinical outcome predictions. |
| Traditional Machine Learning (ML) | Random Forest, Support Vector Machines (SVM) | AUROC: 0.80-0.88 [37] | Highly interpretable, efficient with smaller, tabular feature sets (e.g., chemical descriptors). | Struggles with raw, unstructured data and complex, high-dimensional relationships [38]. | Initial screening and activity prediction when using well-defined molecular descriptors. |
| Large Language Models (LLMs) & NLP | Transformer-based models for literature mining | Accuracy on relation extraction: >85% [2] | Unlocks information from unstructured text (research articles, patents). Powerful for hypothesis generation. | Risk of generating "plausible but inaccurate" hallucinations; requires careful grounding in biomedical knowledge [2]. | Curating novel drug-interaction hypotheses and expanding knowledge graphs from literature. |
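Table 1 reports both AUROC and AUPRC, and a wide gap between the two (for example, AUROC near 0.9 alongside AUPRC of 0.30-0.45) is common when positive examples are rare. The sketch below illustrates this on a small hypothetical ranking. The function names, labels, and scores are illustrative assumptions and are not taken from [37]; average precision is used here as one common estimate of AUPRC, and tied scores are not given special treatment in it.

```python
def auroc(labels, scores):
    """Probability that a random positive is scored above a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUROC needs at least one positive and one negative")
    wins = 0.0
    for p in pos:
        for n in neg:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5  # ties count as half a win
    return wins / (len(pos) * len(neg))


def average_precision(labels, scores):
    """Mean of the precision values at the rank of each positive example."""
    ranked = sorted(zip(scores, labels), key=lambda pair: pair[0], reverse=True)
    hits = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank
    if hits == 0:
        raise ValueError("average precision needs at least one positive")
    return precision_sum / hits


# Hypothetical toy ranking: 2 positives among 10 candidates, sorted by score.
toy_labels = [0, 1, 0, 0, 1, 0, 0, 0, 0, 0]
toy_scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]

print(f"AUROC: {auroc(toy_labels, toy_scores):.2f}")                           # 0.75
print(f"Average precision: {average_precision(toy_labels, toy_scores):.2f}")  # 0.45
```

In this toy case a single negative ranked above the first positive costs little AUROC but halves the precision at that rank, which is why AUPRC tends to be the stricter figure for rare outcomes.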

Detailed Experimental Protocols for Key AI Approaches

Protocol for Multimodal Fusion (Madrigal Model)

Objective: To predict clinical adverse outcomes of drug combinations from preclinical data modalities [36].

- Data Acquisition & Curation:

  - Sources: DrugBank (expert-curated interactions) [36], TWOSIDES (FAERS-derived adverse events) [36], LINCS (transcriptomic profiles) [36], PubChem (chemical structures).

  - Representation:

    - Structure: SMILES strings encoded via a dedicated molecular graph neural network [36].

    - Pathway: Drug targets mapped to a biological knowledge graph (e.g., Reactome) [36].

    - Transcriptomics: Gene expression signatures from perturbed cell lines (L1000 assays) [36].

    - Cell Viability: Dose-response profiles across cancer cell lines [36].

- Model Architecture & Training:

  - Modality-Specific Encoders: Each data type is processed by a separate neural network encoder (e.g., GNN for structure, CNN for viability profiles) [36].

  - Contrastive Alignment: Encoder outputs are projected into a unified latent space using a contrastive learning loss, anchored on the universally available structural modality [36].

  - Attention Bottleneck Fusion: A transformer-based module with bottleneck tokens fuses the aligned multimodal embeddings for a given drug. A cross-attention mechanism produces a final drug representation [36].

  - Pairwise Prediction Head: Representations for two drugs are combined and fed through a classifier to predict risk scores for 953+ clinical outcomes [36].

- Evaluation & Validation:

  - Benchmark Splits: Performance is rigorously tested under "split-by-drugs" (simulating novel compounds) and "split-by-pairs" settings [36].

  - Metrics: Area Under the Receiver Operating Characteristic Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC) are primary metrics [36].

  - External Validation: Predictions are validated against findings from head-to-head clinical trials (e.g., differences in neutropenia risk) and patient-derived xenograft models [36].
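The difference between the two benchmark splits can be made concrete with a short sketch. The Python below shows one plausible reading of them: "split-by-pairs" shuffles drug pairs at random, while "split-by-drugs" holds out whole drugs so that every test pair contains at least one compound absent from training. The function names, the drug identifiers, and the rule that a single held-out drug is enough to move a pair into the test set are assumptions for illustration; the exact split rules used in [36] may differ.

```python
import random
from itertools import combinations


def split_by_pairs(pairs, test_fraction=0.2, seed=0):
    """Random split over pairs: test drugs may also appear in training pairs."""
    rng = random.Random(seed)
    shuffled = list(pairs)
    rng.shuffle(shuffled)
    n_test = max(1, int(len(shuffled) * test_fraction))
    return shuffled[n_test:], shuffled[:n_test]


def split_by_drugs(pairs, test_fraction=0.2, seed=0):
    """Hold out whole drugs: no training pair contains a held-out drug."""
    rng = random.Random(seed)
    drugs = sorted({drug for pair in pairs for drug in pair})
    rng.shuffle(drugs)
    n_held_out = max(1, int(len(drugs) * test_fraction))
    held_out = set(drugs[:n_held_out])
    train = [pair for pair in pairs if not (set(pair) & held_out)]
    test = [pair for pair in pairs if set(pair) & held_out]
    return train, test, held_out


# Hypothetical library of six drugs and all 15 unordered pairs.
toy_drugs = ["drug_A", "drug_B", "drug_C", "drug_D", "drug_E", "drug_F"]
toy_pairs = list(combinations(toy_drugs, 2))

train_p, test_p = split_by_pairs(toy_pairs)
train_d, test_d, held_out = split_by_drugs(toy_pairs)

print("split-by-pairs:", len(train_p), "train /", len(test_p), "test")
print("split-by-drugs:", len(train_d), "train /", len(test_d), "test; held out:", held_out)
```

Because a model evaluated under split-by-drugs has never seen the held-out compound in any pairing, that setting is the harder of the two, which is consistent with the lower AUROC reported for it in Table 1.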

Protocol for Polypharmacology Prediction via Knowledge Graphs

Objective: To infer multi-target mechanisms and synergistic relationships for natural products using a knowledge graph (KG) [7].

- Knowledge Graph Construction:

  - Nodes (Entities): Include NP chemical structures, protein targets, diseases, biosynthetic gene clusters (BGCs), spectral fingerprints (MS/MS), and biological pathways [7].

  - Edges (Relationships): Define predicates such as "binds_to," "treats," "has_biosynthetic_gene_cluster," "co-occurs_with," and "shares_scaffold_with" [7].

  - Data Integration: Consolidate data from public databases (e.g., NPASS, COCONUT, LOTUS) and literature mining via NLP tools [7].

- Model Training for Link Prediction:

  - Embedding: Use KG embedding algorithms (e.g., TransE, ComplEx, or graph neural networks) to represent nodes and edges as continuous vectors in a low-dimensional space [7].

  - Task: Train the model to distinguish true edges (e.g., "Curcumin – binds_to – TNF-alpha") from false, randomly generated ones.

- Prediction & Inference:

  - Querying: Pose queries to the trained model in the form of (head entity, relation, ?) or (?, relation, tail entity).

  - Application Examples:

    - Target Identification: (Natural Product X, binds_to, ?) predicts novel protein targets.

    - MoA Inference: Identify shared targets and pathways between two NPs to hypothesize synergy.

    - Dereplication: (?, has_MS2_spectrum, Spectrum Y) helps identify known compounds [7].
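The link-prediction steps above can be sketched with TransE, one of the embedding algorithms named in the protocol. TransE scores a triple by how closely head + relation lands on tail in the embedding space, trains with a margin loss against randomly corrupted triples, and answers a (head, relation, ?) query by ranking candidate tails. The Python below is a minimal illustration only: the entity names, the hand-set two-dimensional vectors, and the helper functions are hypothetical, and no training loop is shown. A real study would learn the embeddings with a dedicated library rather than set them by hand.

```python
import math


def transe_score(head, relation, tail):
    """TransE plausibility: negative L2 distance between head + relation and tail."""
    return -math.sqrt(sum((h + r - t) ** 2 for h, r, t in zip(head, relation, tail)))


def margin_loss(positive_score, negative_score, margin=1.0):
    """Hinge loss that pushes true triples above corrupted ones by at least `margin`."""
    return max(0.0, margin - positive_score + negative_score)


def rank_tails(head_name, relation_name, entities, relations):
    """Answer (head, relation, ?) by sorting candidate tails from best to worst score."""
    head = entities[head_name]
    relation = relations[relation_name]
    scored = [
        (name, transe_score(head, relation, vector))
        for name, vector in entities.items()
        if name != head_name
    ]
    return sorted(scored, key=lambda item: item[1], reverse=True)


# Hand-set toy embeddings (hypothetical, not learned from any database).
toy_entities = {
    "Natural Product X": [0.0, 0.0],
    "Target A": [1.0, 0.0],
    "Target B": [0.9, 0.3],
    "Disease C": [-1.0, 1.0],
}
toy_relations = {"binds_to": [1.0, 0.0]}

for name, score in rank_tails("Natural Product X", "binds_to", toy_entities, toy_relations):
    print(f"{name}: {score:.3f}")
```

With these toy vectors "Target A" ranks first because it sits exactly at head + relation. In practice the ranked list is treated as a set of hypotheses to test experimentally, not as confirmed interactions.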

Visualization of Key AI Workflows and Biological Concepts

[Figure: AI Model Pathways for Complex Pharmacology Predictions]

[Figure: Madrigal Multimodal AI Architecture for Clinical Prediction]

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful AI-driven research in polypharmacology relies on both computational tools and high-quality experimental data. The following table details essential resources.

Table 2: Essential Research Reagents and Resources for AI-Driven Polypharmacology Studies

| Category | Item / Resource | Function & Description | Key Considerations for AI Readiness |
|---|---|---|---|
| Reference & Training Data | DrugBank [36], TWOSIDES [37], NPASS [3] | Provides structured, labeled data on drug targets, interactions, and NP bioactivity for model training and benchmarking. | Data completeness, standardization of identifiers (e.g., InChIKey, UniProt ID), and clear licensing for commercial use are critical. |
| Bioactivity Profiling | Cell Painting assay, LINCS L1000 transcriptomic profiling [36] | Generates high-content morphological and gene expression profiles for compounds, serving as rich input features for multimodal AI. | Assay standardization and batch effect correction are necessary to ensure data consistency for model training. |
| Chemical Library | Pure, isolated natural product fractions or synthesized analogs [2]. | Provides physical samples for experimental validation of AI predictions (e.g., synergy screening, target deconvolution). | Accurate, digitized metadata (source, purity, concentration) must be linked to each sample. |
| Omics for Validation | CRISPR knockout/knock-in screens, phosphoproteomics kits. | Enables experimental validation of predicted targets and mechanisms, closing the AI prediction-validation loop. | Results should be formatted in standardized tables (e.g., .csv) with controlled vocabularies for easy integration with AI pipelines. |
| Software & Infrastructure | KNIME, Python (PyTorch, DGL, PyKEEN), Neo4j graph database. | Provides the environment for building, training, and deploying multimodal and graph-based AI models. | Pipeline reproducibility (e.g., via Docker/CodeOcean) and interoperability between tools are essential for collaborative research. |

Critical Evaluation and Future Directions

The comparative analysis reveals a trade-off between model complexity and interpretability. While multimodal models like Madrigal achieve superior predictive performance by integrating diverse data [36], their "black-box" nature can obscure the rationale behind predictions, posing a challenge for scientific discovery and regulatory approval [38]. In contrast, knowledge graph approaches offer more transparent, relation-based reasoning that aligns with scientific intuition, but they depend on the breadth and accuracy of the underlying graph [7].

A significant, persistent challenge across all AI approaches is data scarcity and imbalance, particularly for understudied natural products and rare adverse outcomes [37] [3]. Future progress hinges on the development of federated, FAIR (Findable, Accessible, Interoperable, Reusable) data repositories and benchmark datasets specifically designed for polypharmacology and synergy prediction tasks [7]. Furthermore, the next generation of AI tools must tightly integrate generative models for de novo design of polypharmacological agents with mechanistic explainability features, transitioning from pure prediction to actionable, hypothesis-generating partners in the natural product drug discovery pipeline [35] [2].

This comparison guide evaluates AI models and integrated workflows for predicting the biological activity of natural products (NPs). It assesses performance using reported experimental data and details the methodologies that couple computational prediction with multi-omics validation [3] [2].

Comparative Performance of AI Models and Integrated Workflows

The performance of AI-driven NP discovery is benchmarked by its predictive accuracy for bioactivity and its success in identifying candidates that are later validated experimentally. The integration of multi-omics data significantly enhances the robustness and clinical relevance of these predictions [39] [40].

Table 1: Performance Comparison of AI Models and Integrated Workflows for NP Discovery

| Model/Workflow Type | Key Applications | Reported Performance Metrics | Strengths | Limitations & Challenges |
|---|---|---|---|---|
| Tree Ensembles & Graph Neural Networks [3] | Predicting anticancer, anti-inflammatory, antimicrobial actions; target identification. | High validation rates in in vitro studies for AI-prioritized compounds [3]. | High interpretability (tree ensembles); excellent at modeling molecular structures and relationships (GNNs). | Risk of overfitting on small, imbalanced NP datasets [3]. |
| Multi-Omics Integrative AI Classifiers [39] | Early cancer detection, patient stratification, therapy response prediction. | AUC of 0.81–0.87 for early-detection tasks in precision oncology [39]. | Captures system-level disease biology; leads to more clinically actionable insights. | Requires extensive data harmonization and batch correction [39]. |
| Knowledge Graph-Based AI [7] | Causal inference, anticipating novel bioactivities and pathways, data integration. | Shows potential for anticipating new nodes and edges (relationships) within NP science [7]. | Integrates multimodal, fragmented data; enables reasoning beyond correlation. | Complex to construct and maintain; relies on comprehensive, high-quality data ingestion [7]. |
| Generative AI & De Novo Design [2] [6] | Design of novel NP-inspired molecules, lead optimization. | Successfully generates synthetically accessible compounds with target properties [6]. | Explores vast chemical space beyond existing libraries; accelerates hit discovery. | Generated molecules may have complex synthesis routes or poor ADMET properties. |
| End-to-End AI Platforms with Validation Gates [3] [40] | Streamlined workflow from AI screening to in vitro validation. | Increases translational potential by moving ranked candidates into reproducible validations [3]. | Reduces R&D timelines; integrates feedback loops for model improvement. | High computational resource demands; requires robust experimental partnerships [40]. |

Detailed Experimental Protocols

The following protocols describe the core methodologies for integrating AI prediction with downstream validation, forming the basis for the performance data in Table 1.

Protocol for AI-Guided Virtual Screening and Prioritization

This protocol outlines the initial computational triage of natural product libraries [3] [2].

- Data Curation & Featurization: Assemble a library of NP structures from databases. Featurize molecules using descriptors (e.g., molecular weight, logP) or learned representations from graph neural networks [2] [6].