AI-Powered In Silico Strategies: Revolutionizing Natural Product-Based Drug Discovery

This article provides a comprehensive guide to in silico methods for accelerating natural product-based drug discovery, tailored for researchers and development professionals.

Abstract

This article provides a comprehensive guide to in silico methods for accelerating natural product-based drug discovery, tailored for researchers and development professionals. It explores the foundational rationale for using computational approaches to overcome the unique challenges of natural products, such as structural complexity and data scarcity. The article details a suite of methodological applications, from virtual screening and machine learning to ADMET prediction and network pharmacology. It addresses common troubleshooting issues, including data quality and model interpretability, and outlines strategies for optimization. Finally, it examines validation frameworks and comparative analyses against experimental data, synthesizing key takeaways into a forward-looking perspective on integrating computational precision with biological insight for more efficient therapeutic development [2] [3] [4].

The Computational Imperative: Why In Silico Methods Are Transforming Natural Product Discovery

The Historical Significance and Modern Challenges of Natural Products in Drug Discovery

Natural products (NPs) have been the cornerstone of pharmacotherapy for millennia, providing a vast array of structurally complex and biologically active compounds. This application note, framed within a thesis on in silico methods for NP-based drug discovery, details the enduring historical significance, contemporary challenges, and modern integrated protocols that combine computational and experimental approaches to harness NPs in drug development.

Historical Significance & Modern Revival: A Quantitative Perspective

Natural products continue to play a dominant role in modern medicine, particularly in anti-infective and anti-cancer therapies. Recent analyses of drug approvals underscore their ongoing relevance.

Table 1: Natural Product-Derived Drug Approvals (2019-2023)

| Therapeutic Area | Total New Drug Approvals | NP-Derived Approvals | Percentage (%) |
|---|---|---|---|
| Anti-infectives | 42 | 15 | 35.7 |
| Anticancer Agents | 87 | 22 | 25.3 |
| All Others | 188 | 11 | 5.9 |
| Total (All Areas) | 317 | 48 | 15.1 |

Data Source: Consolidated from recent FDA/EMA approval lists and review articles (2020-2024).

Core Challenges in Modern NP Drug Discovery

- Supply & Resupply: Sustainable sourcing of rare biological material.

- Structural Complexity: Difficulty in de novo synthesis and derivatization.

- Dereplication: Rapid identification of known compounds to avoid redundancy.

- Low Yields: Isolation of sufficient quantities for full biological testing.

Integrated In Silico & Experimental Protocols

Protocol 3.1: In Silico-Guided NP Prioritization and Dereplication

Objective: To computationally prioritize extracts or fractions and identify known NPs prior to costly isolation.

Materials & Workflow:

- Input: Crude extract LC-MS/MS data in .mzML format.

- Software Tools: GNPS (Global Natural Products Social Molecular Networking), SIRIUS for molecular formula prediction, and the NPASS database for bioactivity predictions.

- Process:

- Upload MS/MS data to GNPS to create a molecular network.

- Use feature-based molecular networking to cluster related spectra.

- Annotate nodes by matching spectra against reference spectral libraries (e.g., GNPS, NIST).

- For unannotated nodes, use SIRIUS to predict molecular formula and structure.

- Query predicted structures against public, in-house, or commercial NP databases (e.g., COCONUT, NPASS) for virtual bioactivity screening.

- Output: A prioritized list of unknown nodes with predicted bioactivities for targeted isolation.
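
The library-matching step in this protocol rests on a spectral similarity score. The sketch below is a simplified greedy cosine score between two MS/MS peak lists. It is not the scoring code GNPS itself runs (its modified cosine also accounts for precursor mass shifts), and the function name, the 0.02 m/z tolerance, and the peak lists are illustrative assumptions.

```python
import math


def cosine_score(spec_a, spec_b, tol=0.02):
    """Greedy cosine similarity between two peak lists of (m/z, intensity)."""

    def unit(spec):
        # Scale intensities so the spectrum has unit Euclidean norm.
        norm = math.sqrt(sum(i * i for _, i in spec))
        return [(mz, i / norm) for mz, i in spec] if norm else []

    a, b = unit(spec_a), unit(spec_b)
    # Candidate peak pairs whose m/z values agree within the tolerance.
    pairs = [
        (ia * ib, x, y)
        for x, (mza, ia) in enumerate(a)
        for y, (mzb, ib) in enumerate(b)
        if abs(mza - mzb) <= tol
    ]
    pairs.sort(reverse=True)  # strongest contributions first
    used_a, used_b, score = set(), set(), 0.0
    for product, x, y in pairs:
        # Each peak may be matched at most once.
        if x not in used_a and y not in used_b:
            used_a.add(x)
            used_b.add(y)
            score += product
    return score


# Hypothetical peak lists (m/z, relative intensity).
query = [(105.07, 40.0), (133.06, 100.0), (161.06, 25.0)]
library_hit = [(105.07, 38.0), (133.07, 100.0), (161.05, 30.0)]
unrelated = [(91.05, 100.0), (119.05, 60.0)]

print(round(cosine_score(query, library_hit), 3))
print(cosine_score(query, unrelated))
```

Nodes whose best library score stays below a chosen cutoff would be treated as unannotated and passed on to formula and structure prediction.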

Protocol 3.2: Target Fishing and Pathway Analysis for Novel NPs

Objective: To predict the potential protein targets and affected signaling pathways of a novel NP structure, whether computationally predicted or experimentally isolated.

Materials & Workflow:

- Input: 2D/3D chemical structure (SDF/MOL2 file).

- Software Tools: SwissTargetPrediction, PASS Online, or the SEA server for target prediction. Use KEGG or Reactome for pathway enrichment.

- Process:

- Submit the NP structure to multiple target prediction servers.

- Compile consensus predicted targets (e.g., targets predicted by ≥2 servers).

- Perform pathway enrichment analysis on the consensus target set using DAVID or Enrichr.

- Build a protein-protein interaction network (e.g., via STRINGdb) to identify hub targets.

- Output: A ranked list of high-probability macromolecular targets and associated disease pathways for experimental validation.
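
The consensus step (targets predicted by at least two servers) amounts to a simple vote count. A minimal sketch follows, assuming each server's output has already been mapped to gene symbols; the target lists are hypothetical, not real predictions.

```python
from collections import Counter


def consensus_targets(predictions, min_servers=2):
    """Return (target, votes) pairs predicted by at least `min_servers` tools."""
    votes = Counter()
    for targets in predictions.values():
        votes.update(set(targets))  # one vote per server per target
    # Most-voted first; ties broken alphabetically for reproducibility.
    ranked = sorted(votes.items(), key=lambda kv: (-kv[1], kv[0]))
    return [(target, n) for target, n in ranked if n >= min_servers]


# Hypothetical gene-symbol outputs from three prediction servers.
predictions = {
    "SwissTargetPrediction": ["PTGS2", "EGFR", "AKT1", "MAPK14"],
    "SEA": ["EGFR", "PTGS2", "ESR1"],
    "PASS Online": ["PTGS2", "AKT1", "TNF"],
}

consensus = consensus_targets(predictions)
print(consensus)
```

The resulting gene list is what would be submitted to the enrichment and protein-protein interaction tools named in the workflow.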

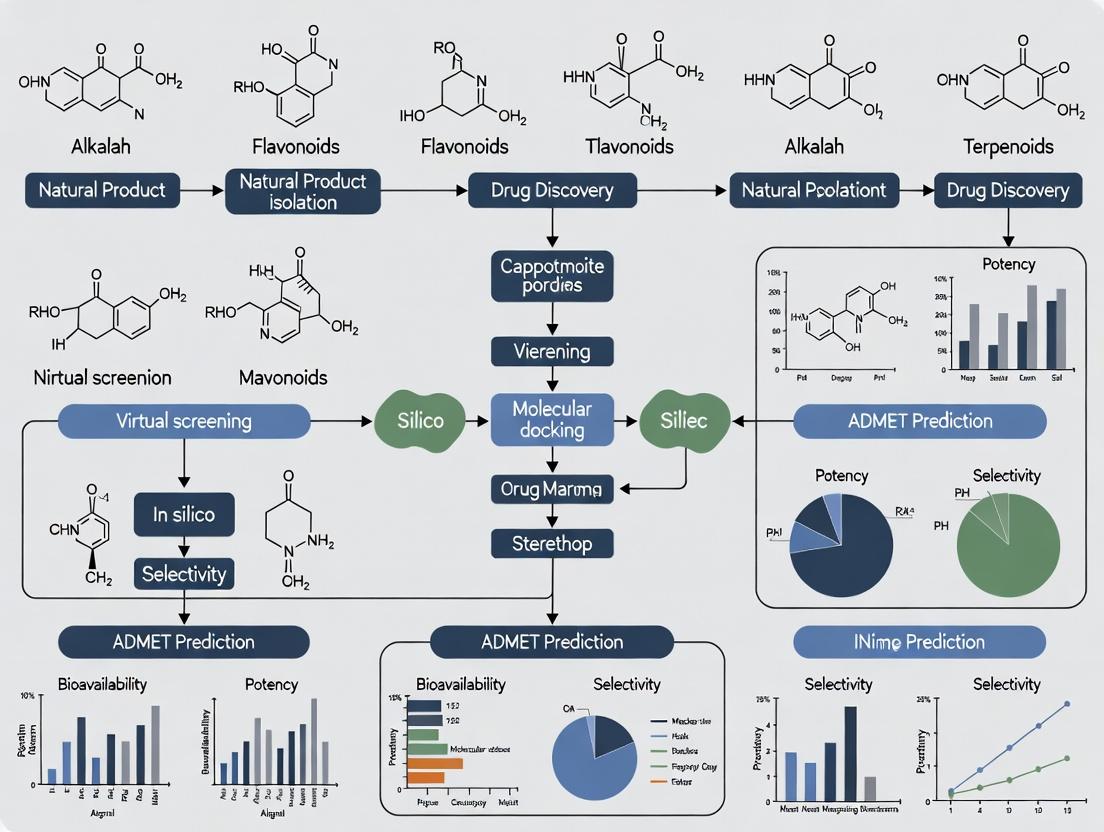

Visualization of Integrated Workflows

Diagram 1: Integrated In Silico-Experimental NP Discovery Pipeline

Diagram 2: In Silico Target Prediction & Pathway Mapping Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Materials for Integrated NP Research

| Item / Reagent | Function / Application |
|---|---|
| LC-MS Grade Solvents | High-purity solvents for reproducible UHPLC-MS/MS analysis and compound isolation. |
| Sephadex LH-20 | Size-exclusion chromatography medium for gentle desalting and fractionation of crude NP extracts. |
| Deuterated NMR Solvents | Essential for structure elucidation of novel NPs (e.g., DMSO-d6, CD3OD, CDCl3). |
| Cryoprobe for NMR | Increases sensitivity, enabling structure determination from microgram quantities of NP. |
| HTS Assay Kits | Validated biochemical or cell-based kits for rapid in vitro validation of predicted bioactivity. |
| Open-Access MS/MS Libraries | Reference spectral databases (e.g., GNPS, MassBank) for NP dereplication. |
| Cloud Computing Credits | For running computationally intensive tasks like molecular docking or machine learning-based predictions. |
| In-house NP Extract Library | A characterized, diverse physical library of pre-fractionated extracts for high-throughput screening. |

Unique Chemical and Pharmacological Characteristics of Natural Compounds

Natural products (NPs) are a cornerstone of modern pharmacotherapy, with a significant proportion of approved small-molecule drugs being derived directly or indirectly from natural sources [1]. Their unique value stems from evolutionary selection for bioactivity, resulting in unparalleled structural diversity, complex molecular architectures (including high stereochemical complexity), and privileged scaffolds capable of modulating challenging targets like protein-protein interactions [2] [3]. However, this same complexity presents formidable challenges for traditional drug discovery pipelines, including difficult isolation, synthetic inaccessibility, and unpredictable pharmacokinetics [4].

In silico methodologies have emerged as a critical framework for navigating these challenges, enabling the systematic exploration of natural chemical space within a broader thesis on computational drug discovery. These methods transform the NP discovery process by allowing for the virtual screening of immense compound libraries, predictive modeling of pharmacokinetic properties, and mechanistic simulation of bioactivity before any physical compound is sourced or synthesized [5] [6]. This paradigm leverages cheminformatics, machine learning (ML), and molecular modeling to de-risk and accelerate the translation of unique natural compound characteristics into viable therapeutic leads [7] [8].

A successful in silico campaign begins with access to high-quality, well-annotated data and the appropriate computational tools. Specialized databases and software suites form the essential infrastructure for this research.

2.1 Key Natural Product Databases

Critical to any computational study is the selection of a suitable natural product database. These repositories vary in scope, annotation depth, and accessibility, influencing the virtual screening strategy [5].

Table 1: Select Natural Product Databases for In Silico Screening

| Database Name | Key Features | Primary Utility in Screening | Reference/Link |
|---|---|---|---|
| SuperNatural Database | Contains ~50,000 purchasable compounds with 3D structures and pre-computed conformers. Links to supplier information. | Ligand-based virtual screening (LBVS) using similarity searches and ready-to-dock 3D conformers. | [2] |
| Natural Product Atlas (NPA) | A curated database of microbial natural products focused on structural diversity. | LBVS and chemical space exploration for novel microbial-derived scaffolds. | [7] |
| ChEMBL | A large-scale database of bioactive molecules with drug-like properties, containing extensive bioactivity data. | Building ligand-based ML models and extracting known actives/inactives for target classes. | [8] |
| COCONUT (Compound Combination-Oriented NP Database) | Focuses on natural products and their combinations, with unified terminology. | Studying synergistic effects and network pharmacology of compound mixtures. | [9] |

2.2 The Scientist's Toolkit: Essential Software and Platforms

The experimental workflow is supported by a suite of specialized software and platforms, each addressing a specific computational task.

Table 2: Research Reagent Solutions: Key Software Tools for In Silico NP Discovery

| Tool/Platform Name | Category | Primary Function | Application in NP Research |
|---|---|---|---|
| RDKit | Cheminformatics | An open-source toolkit for cheminformatics, including fingerprint generation, descriptor calculation, and molecular operations. | Standard for processing NP structures, calculating molecular descriptors, and generating fingerprints for ML [7] [8]. |
| RosettaVS / OpenVS Platform | Structure-Based Virtual Screening (SBVS) | A physics-based docking and virtual screening platform that models receptor flexibility. | High-accuracy docking and screening of ultra-large libraries against protein targets [6]. |
| PyRx (AutoDock Vina) | Molecular Docking | A graphical interface for automated molecular docking using the AutoDock Vina engine. | Accessible docking for binding pose prediction and affinity estimation of NP candidates [10]. |
| TAME-VS Platform | Machine Learning / LBVS | A target-driven ML platform that uses homology and known bioactivity data to train custom classifiers. | Hit identification for novel targets with limited known NP ligands [8]. |
| Gaussian | Quantum Mechanics | Software for electronic structure modeling, including Density Functional Theory (DFT) calculations. | Computing electronic properties, reactivity indices, and optimizing geometries of NPs [4] [10]. |
| GROMACS / AMBER | Molecular Dynamics (MD) | Software suites for performing all-atom MD simulations. | Assessing stability of NP-protein complexes, calculating binding free energies, and simulating conformational dynamics [10]. |

Diagram Title: In Silico NP Discovery Workflow from Target to Hit List

Core Methodologies and Application Protocols

This section provides detailed, executable protocols for key in silico experiments in natural product research.

3.1 Protocol: Machine Learning-Based Virtual Screening for Novel Inhibitors

This protocol outlines a ligand-based virtual screening (LBVS) approach using machine learning to identify novel natural product inhibitors for a given protein target, based on methodologies from successful case studies [7] [8].

Objective: To train a binary classifier capable of distinguishing active from inactive compounds against a specific target and apply it to screen a natural product database.

Materials & Input:

- Bioactivity Data: A dataset of known active and inactive compounds for the target (e.g., from ChEMBL [8]). Activity is typically defined by an IC50/Ki cutoff (e.g., ≤ 1 μM for active [7]).

- NP Library: A database of natural product structures in SMILES format (e.g., Natural Product Atlas [7]).

- Software: Python environment with RDKit, scikit-learn, imbalanced-learn, and pandas libraries.
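
The activity cutoff described above can be applied with a few lines of plain Python. This is a minimal sketch, assuming potencies are already expressed in nM (so 1 μM = 1000 nM) and that SMILES strings have been canonicalized beforehand so duplicates compare equal. Keeping the most potent measurement per structure is one possible policy, not a rule stated in the source.

```python
def label_compounds(records, active_cutoff_nm=1000.0):
    """Label each unique SMILES as active (1) or inactive (0) by potency cutoff."""
    best = {}
    for smiles, value_nm in records:
        # Collapse duplicates by keeping the most potent (lowest) measurement.
        if smiles not in best or value_nm < best[smiles]:
            best[smiles] = value_nm
    return {s: int(v <= active_cutoff_nm) for s, v in best.items()}


# Hypothetical (SMILES, IC50 in nM) rows, including one duplicated structure.
records = [
    ("CCO", 250.0),
    ("CCO", 1800.0),
    ("c1ccccc1O", 5200.0),
    ("CC(=O)O", 1000.0),
]

labels = label_compounds(records)
print(labels)
```

A median or a consensus of replicate measurements would be an equally defensible way to resolve duplicates. What matters is that the same rule is applied to every compound.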

Procedure:

- Data Curation and Labeling:

- Retrieve bioactivity data from public databases using the target's UniProt ID.

- Apply a consistent activity threshold to label compounds as 'active' or 'inactive'. Remove duplicates.

- Critical Note: Address class imbalance (typically many more inactives) using techniques like the Synthetic Minority Oversampling Technique (SMOTE) [7].

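- Feature Engineering (Vectorization):

- Convert each compound's SMILES string into a numerical feature vector, for example molecular fingerprints or descriptors computed with RDKit.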

- Model Training and Validation:

- Split the labeled dataset into training (70%) and hold-out test (30%) sets, ensuring representative clusters of active compounds are in the test set [7].

- Train multiple ML classifiers (e.g., Random Forest, Support Vector Machine, Neural Network). Optimize hyperparameters via grid or random search with cross-validation.

- Select the best model based on performance metrics (e.g., precision, AUC-ROC) on the cross-validated training set.

- Virtual Screening and Hit Prioritization:

- Apply the trained model to predict the probability of activity for each compound in the NP library.

- Rank NPs by the prediction score and select top candidates.

- Apply additional filters: assess drug-likeness (e.g., Lipinski's Rule of Five), screen for Pan-Assay Interference Compounds (PAINS), and evaluate chemical novelty [7].

- Applicability Domain Assessment:

- Perform Principal Component Analysis (PCA) on the combined training and NP library feature sets.

- Define the model's applicability domain (e.g., a convex hull around training data). Flag or deprioritize NPs falling outside this domain, as predictions for them are less reliable [7].
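
The applicability-domain check can be illustrated with a deliberately simplified stand-in. The protocol suggests a convex hull in PCA space; the sketch below instead uses a per-axis bounding box, which is looser but needs no numerical libraries. The coordinates are hypothetical projections, and the function names are illustrative.

```python
def fit_domain(train_vectors, margin=0.0):
    """Per-feature (min, max) range of the training set, optionally widened."""
    columns = list(zip(*train_vectors))
    return [(min(c) - margin, max(c) + margin) for c in columns]


def in_domain(vector, domain):
    """True when every feature lies inside the training range."""
    return all(lo <= x <= hi for x, (lo, hi) in zip(vector, domain))


# Hypothetical 2-D projections (e.g., the first two principal components).
train = [(-1.2, 0.4), (0.3, -0.8), (1.1, 0.9), (0.0, 0.1)]
library = {"NP-001": (0.5, 0.2), "NP-002": (2.4, 0.0), "NP-003": (-1.0, -0.9)}

domain = fit_domain(train)
flags = {name: in_domain(vec, domain) for name, vec in library.items()}
print(flags)
```

Compounds flagged `False` would be deprioritized or reported with a reliability warning rather than silently ranked alongside in-domain predictions.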

3.2 Protocol: Integrated Structure-Based Evaluation of NP Pharmacokinetics and Dynamics

This protocol describes a multi-stage in silico evaluation of promising NP hits, integrating ADMET prediction, molecular docking, and dynamics simulations, as exemplified in recent studies [10].

Objective: To comprehensively evaluate the binding mode, stability, and drug-like properties of a prioritized natural product hit.

Materials & Input:

- NP Hit: 3D chemical structure file (e.g., .mol2, .sdf).

- Protein Target: High-resolution 3D structure from crystallography or homology modeling (e.g., .pdb file).

- Software: ADMET prediction tools (e.g., SwissADME, pkCSM), docking software (e.g., PyRx, AutoDock Vina), MD software (e.g., GROMACS), and quantum chemistry software (e.g., Gaussian).

Procedure:

Part A: ADMET and Toxicity Profiling

- Use online platforms like SwissADME to predict key physicochemical (LogP, TPSA) and pharmacokinetic (GI absorption, CYP inhibition) parameters.

- Employ toxicity prediction tools to assess alerts for mutagenicity, hepatotoxicity, and other endpoints. Consider both top-down (e.g., QSAR models trained on large toxicity datasets) and bottom-up (e.g., molecular docking against toxicity-related proteins like hERG) approaches [9].

Part B: Molecular Docking and Binding Pose Analysis

- Prepare the protein: remove water, add hydrogen atoms, assign charges (e.g., using AutoDockTools).

- Prepare the ligand: optimize geometry, assign rotatable bonds.

- Define the binding site grid based on known active site residues.

- Perform docking simulations (≥ 50 runs) to generate multiple binding poses. Select the pose with the most favorable binding energy and biologically plausible interactions (e.g., hydrogen bonds, hydrophobic contacts).

Part C: Molecular Dynamics Simulation for Complex Stability

- Set up the system: place the protein-ligand complex in a solvation box (e.g., TIP3P water), add ions to neutralize charge.

- Energy minimization: remove steric clashes using steepest descent/conjugate gradient algorithms.

- Equilibration: perform short simulations under NVT and NPT ensembles to stabilize temperature and pressure.

- Production run: execute an unrestrained MD simulation (recommended ≥ 100 ns [10]).

- Trajectory analysis:

- Calculate the Root Mean Square Deviation (RMSD) of the protein backbone and ligand to assess overall complex stability.

- Calculate the Root Mean Square Fluctuation (RMSF) to determine per-residue flexibility.

- Compute the Radius of Gyration (Rg) to monitor protein compactness.

- Monitor intermolecular hydrogen bonds throughout the simulation to evaluate interaction persistence [10].
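
For reference, the RMSD used in trajectory analysis is the square root of the mean squared displacement of N atoms from their reference positions. The sketch below computes it for a single frame, assuming the frame has already been least-squares fitted to the reference (no superposition is performed here). The three-atom coordinates are hypothetical; in practice the MD package's own analysis tools (for example `gmx rms` in GROMACS) handle fitting and whole trajectories.

```python
import math


def rmsd(coords, reference):
    """Root mean square deviation between two equally ordered coordinate sets."""
    if len(coords) != len(reference) or not coords:
        raise ValueError("coordinate sets must be non-empty and the same length")
    squared = sum(
        (x - xr) ** 2 + (y - yr) ** 2 + (z - zr) ** 2
        for (x, y, z), (xr, yr, zr) in zip(coords, reference)
    )
    return math.sqrt(squared / len(coords))


# Hypothetical three-atom frames (nm), already fitted to the reference.
reference = [(0.0, 0.0, 0.0), (0.1, 0.0, 0.0), (0.2, 0.0, 0.0)]
frame = [(0.0, 0.0, 0.03), (0.1, 0.04, 0.0), (0.2, 0.0, 0.0)]

print(f"RMSD = {rmsd(frame, reference):.4f} nm")
```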

Part D: Electronic Structure Analysis (Optional, for Mechanism)

- For the isolated ligand or key ligand-protein fragments, perform Density Functional Theory (DFT) calculations (e.g., using Gaussian at the B3LYP/6-311+G* level [4]).

- Analyze frontier molecular orbitals (HOMO-LUMO) to predict reactivity and nucleophilic/electrophilic sites that may be involved in metabolism or target interaction.
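
Once HOMO and LUMO energies are available, a few global reactivity descriptors follow from simple arithmetic. The sketch below uses the common frontier-orbital approximations from conceptual DFT (hardness η = (E_LUMO − E_HOMO)/2, chemical potential μ = (E_HOMO + E_LUMO)/2, electrophilicity ω = μ²/2η). The source does not specify which indices it computes, so treat this selection and the example energies as assumptions.

```python
def reactivity_indices(e_homo_ev, e_lumo_ev):
    """Frontier-orbital descriptors (eV) from HOMO and LUMO energies."""
    gap = e_lumo_ev - e_homo_ev
    if gap <= 0:
        raise ValueError("LUMO energy must lie above HOMO energy")
    hardness = gap / 2.0                                  # eta
    chemical_potential = (e_homo_ev + e_lumo_ev) / 2.0    # mu
    electrophilicity = chemical_potential ** 2 / (2.0 * hardness)  # omega
    return {
        "gap": gap,
        "hardness": hardness,
        "chemical_potential": chemical_potential,
        "electrophilicity": electrophilicity,
    }


# Hypothetical orbital energies in eV, as read from a DFT output file.
indices = reactivity_indices(e_homo_ev=-6.0, e_lumo_ev=-2.0)
print(indices)
```

A smaller gap generally indicates a softer, more reactive molecule, which is why the gap is often reported alongside docking and MD results.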

Diagram Title: Multi-Stage In Silico NP Lead Validation Funnel

Validation and Case Studies in Therapeutic Areas

The efficacy of in silico protocols is demonstrated through their application in identifying leads for challenging diseases.

4.1 Case Study: Targeting HIV-1 Integrase with Machine Learning

A study demonstrated the use of an ML-based LBVS pipeline to discover novel natural product inhibitors of HIV-1 Integrase (IN) [7]. Researchers trained a Random Forest model on 7,165 compounds with known IN activity from BindingDB. After addressing class imbalance, the model was used to screen the Natural Product Atlas. The workflow successfully identified NP candidates predicted to be active, which were subsequently clustered to ensure chemical diversity. This approach showcases how ML can leverage existing bioactivity data to efficiently mine NP space for anti-infective leads.

4.2 Case Study: Discovery of Colon Cancer Therapeutics from Annona muricata

A comprehensive in silico evaluation of phytochemicals from soursop leaves for colon cancer treatment provides a prototypical example of an integrated protocol [10]. After initial GC-MS identification and drug-likeness filtering, seven top compounds were selected. Molecular docking against the DNA mismatch repair protein MLH1 revealed superior binding affinities compared to the standard drug 5-fluorouracil. Subsequent ADMET predictions indicated favorable pharmacokinetics and low toxicity. Crucially, 100 ns molecular dynamics simulations confirmed the stability of the NP-protein complexes, as evidenced by low RMSD and stable hydrogen bonding patterns for hits like alpha-tocopherol. This end-to-end study validates the protocol's ability to prioritize stable, drug-like NPs for experimental testing.

Table 3: Performance of Select In Silico Methods in NP Research

| Method Category | Specific Tool/Approach | Reported Performance Metric | Application Context |
|---|---|---|---|
| Structure-Based VS | RosettaVS (VSH mode) | Enrichment Factor at 1% (EF1%) = 16.72; Top performer on CASF2016 benchmark [6]. | General virtual screening accuracy. |
| Machine Learning (LBVS) | Random Forest Classifier | Used to screen NP Atlas for HIV-1 IN inhibitors; model trained on BindingDB data [7]. | Identification of novel anti-HIV natural products. |
| Molecular Dynamics | 100 ns MD Simulation (GROMACS/AMBER) | Stable complex RMSD (< 0.3 nm) and persistent H-bonds demonstrated for alpha-tocopherol-MLH1 [10]. | Validation of binding stability for cancer-related target. |
| ADMET Prediction | QSAR and PBPK Modeling | Applied to overcome challenges of NP instability, solubility, and first-pass metabolism prediction [4]. | Early-stage pharmacokinetic profiling. |
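
The enrichment factor quoted for structure-based screening compares the hit rate in the top-ranked fraction of a library with the hit rate expected by chance. A minimal sketch of the calculation follows; the ranking is a made-up example and does not reproduce any benchmark result.

```python
def enrichment_factor(ranked_labels, fraction=0.01):
    """EF at a given fraction; `ranked_labels` holds 1/0 per compound, best score first."""
    n_total = len(ranked_labels)
    n_actives = sum(ranked_labels)
    if n_total == 0 or n_actives == 0:
        raise ValueError("need a non-empty ranking containing at least one active")
    n_top = max(1, round(n_total * fraction))
    hit_rate_top = sum(ranked_labels[:n_top]) / n_top
    hit_rate_all = n_actives / n_total
    return hit_rate_top / hit_rate_all


# Hypothetical ranking: 1,000 compounds, 20 actives, 4 of them in the top 10.
ranked = [1, 1, 0, 1, 0, 0, 1, 0, 0, 0] + [1] * 16 + [0] * 974

print(f"EF1% = {enrichment_factor(ranked, 0.01):.1f}")
```

An EF1% of 1 means the method does no better than random selection. The maximum achievable value depends on the ratio of actives to decoys in the benchmark set.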

4.3 Emerging Framework: Target-Driven Machine Learning Screening

The TAME-VS platform represents an advanced, automated framework for hit identification [8]. Starting with a single protein target ID, it performs homology-based target expansion, retrieves relevant bioactivity data from ChEMBL, trains bespoke ML models, and screens custom compound libraries. This modular platform is particularly valuable for novel targets with few known NP ligands, as it leverages information from homologous proteins. Its public availability increases accessibility to advanced ML-enabled VS for the research community.

In silico methods provide an indispensable, multidisciplinary framework for elucidating and leveraging the unique chemical and pharmacological characteristics of natural compounds. By integrating cheminformatics, machine learning, and molecular modeling, researchers can systematically navigate NP complexity—from virtual screening of billions of compounds to predicting metabolic fate and simulating target engagement dynamics.

The future of this field lies in improving predictive accuracy and deepening integration. Key directions include: 1) Developing NP-specific predictive models for ADMET and toxicity to overcome biases in models trained primarily on synthetic molecules [4] [9]; 2) Advancing hybrid screening protocols that seamlessly combine ligand- and structure-based methods with active learning to explore ultra-large chemical spaces [6] [8]; and 3) Embracing systems pharmacology approaches to model polypharmacology and synergistic effects characteristic of many natural extracts [9] [1]. As databases grow and algorithms evolve, in silico strategies will become even more central, transforming natural product discovery into a more predictable, efficient, and mechanism-driven endeavor.

The pharmaceutical industry faces a persistent productivity crisis, often described by Eroom's Law—the observation that the number of new drugs approved per billion US dollars spent on R&D has halved roughly every nine years [11]. The traditional drug development paradigm is characterized by excessive costs, averaging $2.6 billion per approved drug, protracted timelines of 10-15 years, and catastrophic attrition rates, with approximately 90% of candidates failing in clinical trials [11] [12]. This model is especially challenging for natural product (NP)-based drug discovery, where promising bioactive compounds face additional hurdles such as complex isolation, limited availability, chemical instability, and undefined pharmacokinetics [4] [1].

In silico methodologies, powered by artificial intelligence (AI) and advanced computational modeling, are emerging as core drivers for reversing this trend. By integrating computational intelligence across the entire pipeline, from target identification to clinical trial design, these tools offer a strategic framework to accelerate timelines, reduce costs, and lower late-stage attrition by identifying unsuitable candidates early, when failure is cheap [11] [13]. This shift is being catalyzed by regulatory evolution, notably the U.S. FDA's 2025 announcement of a plan to phase out animal testing requirements for monoclonal antibodies and other drugs, which treats in silico evidence as a credible pillar of biomedical research [13].

Within the specific context of NP research, in silico methods address unique constraints. They enable the virtual screening of vast, structurally diverse chemical spaces without the need for physical compound isolation, predict ADME (Absorption, Distribution, Metabolism, Excretion) properties to flag pharmacokinetic liabilities early, and leverage generative AI to design optimized NP-inspired analogs [4] [14] [1]. This document details the application notes and experimental protocols that operationalize these in silico drivers, providing a practical guide for integrating computational acceleration into NP-based drug discovery workflows.

Quantitative Impact: The Data Supporting In Silico Acceleration

The integration of AI and in silico tools directly targets the core inefficiencies of drug development. The following tables summarize key performance metrics comparing traditional and AI-augmented approaches, and the phase-specific attrition where in silico prediction can have maximum impact.

Table 1: Comparative Analysis of Traditional vs. AI-Augmented Drug Discovery Metrics

| Performance Metric | Traditional Approach | AI-Augmented / In Silico Approach | Data Source & Notes |
|---|---|---|---|
| Average Cost per Approved Drug | ~$2.6 billion [11] | Potential for significant reduction; early failure of unsuitable candidates saves late-stage costs. | [11] Cost avoided by predictive toxicology & ADME. |
| Discovery to Phase I Timeline | ~5 years [15] | 18-24 months (e.g., Insilico Medicine's IPF candidate) [15]. | [15] Generative AI can compress early stages. |
| Clinical Trial Success Rate | ~10% (overall) [12] | Aim to increase via better candidate selection and patient stratification. | [11] [12] Target of AI is to improve this rate. |
| Typical Hit Rate from HTS | ~2.5% [12] | Greatly enhanced by virtual screening of larger, virtual chemical libraries (e.g., >10³³ molecules) [11]. | [12] AI pre-filters candidates for physical testing. |
| Lead Optimization Cycle Efficiency | Industry standard baseline. | Reported ~70% faster design cycles requiring 10x fewer synthesized compounds [15]. | [15] Data from Exscientia's AI-driven platform. |

Table 2: Major Causes of Clinical Attrition and Corresponding In Silico Mitigation Strategies

| Development Phase | Approximate Attrition Rate | Primary Cause of Failure | In Silico Mitigation Strategy |
|---|---|---|---|
| Preclinical | Not quantified (high) | Poor pharmacokinetics (ADME), toxicity [11]. | Predictive ADME/Tox models (e.g., QSAR, PBPK) [4] [13]. |
| Phase I | ~37% [11] | Human safety, adverse reactions [11]. | Improved preclinical toxicity prediction using digital twins & organ-on-chip models [13]. |
| Phase II | ~70% [11] | Lack of efficacy in patients [11]. | Target validation via AI/omics; patient stratification biomarkers; in silico efficacy models [11] [14]. |
| Phase III | ~42% [11] | Insufficient efficacy vs. standard of care, safety in larger population [11]. | Synthetic control arms, trial simulation, and digital twin forecasting [13]. |
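
The per-phase attrition figures compound multiplicatively, which is how three separate rates lead to the roughly 10% overall clinical success rate cited earlier. The short calculation below makes that explicit. It assumes phase outcomes are independent and ignores the regulatory review step, and the "improved" scenario is purely hypothetical.

```python
from math import prod

# Approximate per-phase attrition rates taken from the attrition table.
attrition = {"Phase I": 0.37, "Phase II": 0.70, "Phase III": 0.42}


def clinical_success(attrition_by_phase):
    """Probability of passing every phase, assuming independent phase outcomes."""
    return prod(1.0 - rate for rate in attrition_by_phase.values())


baseline = clinical_success(attrition)
# Hypothetical scenario: better candidate selection trims Phase II attrition to 60%.
improved = clinical_success({**attrition, "Phase II": 0.60})

print(f"baseline: {baseline:.1%}, improved Phase II: {improved:.1%}")
```

Because Phase II carries the highest attrition, even a modest improvement there moves the overall rate more than a similar absolute gain in any other phase.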

Foundational In Silico Methodologies: Application Notes

Predictive ADME/Tox Profiling for Natural Products

Application Note: Early prediction of pharmacokinetic and safety profiles is critical to avoid late-stage attrition [4]. NPs often possess complex scaffolds that violate traditional drug-likeness rules (e.g., Lipinski’s Rule of Five), making experimental ADME testing challenging due to low solubility, chemical instability, or scarce material [4]. In silico tools provide a viable first pass.

- Tools & Techniques: Utilize a combination of:

- Quantum Mechanics (QM) Calculations: To assess chemical reactivity and metabolic susceptibility. For example, studying the regioselectivity of CYP450 metabolism for compounds like estrone [4].

- Quantitative Structure-Activity Relationship (QSAR) Models: To predict properties like permeability, solubility, and hepatic metabolic stability.

- Physiologically Based Pharmacokinetic (PBPK) Modeling: To simulate compound concentration-time profiles in tissues and plasma, informing first-in-human dosing [4].

- Specialized Platforms: ADMETlab, ProTox-3.0, and DeepTox for toxicity endpoint prediction [13].

Considerations: Predictions are only as good as the training data. The unique chemical space of NPs may fall outside the applicability domain of models trained predominantly on synthetic molecules, so predictions should be checked against whatever experimental NP data are available, even when those data are sparse.

Virtual Screening and AI-Powered Hit Identification

Application Note: Replacing or prioritizing expensive high-throughput screening (HTS) with virtual screening allows exploration of vastly larger chemical spaces, including virtual NP libraries and de novo generated structures [11] [1].

- Ligand-Based Approaches: Used when the structure of the target is unknown but active ligands are known. Techniques include pharmacophore modeling and molecular similarity searching.

- Structure-Based Approaches: Used when a 3D target structure (experimental or homology-modeled) is available.

- Molecular Docking: Predicts the binding pose and affinity of a small molecule within a target binding site. Crucial for understanding NP-target interactions [1].

- Molecular Dynamics (MD) Simulations: Assesses the stability of the ligand-target complex and calculates binding free energies with higher accuracy than static docking [4] [1].

- AI-Enhanced Screening: Graph Neural Networks (GNNs) and other deep learning models can screen billions of virtual compounds in silico, learning complex structure-activity relationships to prioritize synthesis [14] [12].
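
Molecular similarity searching, the simplest of the ligand-based approaches listed above, is usually built on the Tanimoto coefficient between fingerprints. A minimal sketch follows, assuming fingerprints are represented as sets of on-bit indices. The bit sets, compound names, and 0.5 threshold are illustrative; real fingerprints would come from a cheminformatics toolkit such as RDKit.

```python
def tanimoto(bits_a, bits_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    union = len(bits_a | bits_b)
    return len(bits_a & bits_b) / union if union else 0.0


def similarity_search(query_bits, library, threshold=0.5):
    """Return (name, similarity) pairs at or above the threshold, most similar first."""
    scored = [(name, tanimoto(query_bits, bits)) for name, bits in library.items()]
    return sorted((s for s in scored if s[1] >= threshold), key=lambda s: -s[1])


# Hypothetical on-bit sets for a known active and three library compounds.
query = {1, 4, 9, 15, 22, 31}
library = {
    "NP-A": {1, 4, 9, 15, 22, 40},
    "NP-B": {2, 4, 9, 50, 61},
    "NP-C": {1, 4, 9, 15, 22, 31, 77},
}

hits = similarity_search(query, library)
for name, score in hits:
    print(f"{name}: {score:.3f}")
```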

Generative AI for Lead Optimization and Novel Design

Application Note: Beyond screening, generative AI models can design novel, optimized NP analogs with desired properties.

- Model Architectures:

- Generative Adversarial Networks (GANs): A generator network creates new molecular structures, while a discriminator network evaluates their authenticity, driving the generation of realistic molecules [11].

- Variational Autoencoders (VAEs): Encode molecules into a latent space where interpolation and optimization can generate novel structures with specific property profiles [11].

- Transformers: Adapted from natural language processing, they treat molecular structures as "sentences" to generate novel sequences [14].

- Workflow: The AI is conditioned on a multi-parameter Target Product Profile (e.g., potency on target, selectivity over antitargets, predicted ADME properties). It then generates novel molecular structures that maximize this profile, enabling a rapid design-make-test-analyze cycle [15].

AI-Driven Lead Optimization Cycle [11] [15]

Network Pharmacology for Complex Mechanism Prediction

Application Note: NPs, especially herbal extracts, often exert therapeutic effects through polypharmacology—modulating multiple targets simultaneously. Network pharmacology provides a systems-level view.

- Methodology: Constructs multi-layered networks linking:

- NP compounds to their predicted protein targets.

- Protein targets to associated biological pathways.

- Pathways to disease phenotypes.

- Outcome: Identifies key target nodes and synergistic effects, moving beyond a single "magic bullet" target to a holistic mechanism of action, which can be validated experimentally [14] [1].
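
The layered network described above can be represented with plain dictionaries. The sketch below counts how many compounds converge on each target (a simple degree-based hub measure) and which compounds reach each pathway. All edges are hypothetical placeholders, not real predictions, and dedicated graph libraries offer richer centrality measures.

```python
from collections import defaultdict

# Hypothetical edges; real ones would come from target prediction and pathway databases.
compound_targets = {
    "NP-1": ["AKT1", "PTGS2", "TNF"],
    "NP-2": ["AKT1", "PTGS2"],
    "NP-3": ["AKT1", "EGFR"],
}
target_pathways = {
    "AKT1": ["PI3K-Akt signaling"],
    "EGFR": ["PI3K-Akt signaling", "ErbB signaling"],
    "PTGS2": ["Arachidonic acid metabolism"],
    "TNF": ["TNF signaling"],
}


def target_degree(c2t):
    """Number of distinct compounds linked to each target."""
    compounds_per_target = defaultdict(set)
    for compound, targets in c2t.items():
        for target in targets:
            compounds_per_target[target].add(compound)
    return {t: len(c) for t, c in compounds_per_target.items()}


def pathway_coverage(c2t, t2p):
    """Compounds that reach each pathway through at least one target."""
    coverage = defaultdict(set)
    for compound, targets in c2t.items():
        for target in targets:
            for pathway in t2p.get(target, []):
                coverage[pathway].add(compound)
    return {p: sorted(c) for p, c in coverage.items()}


degrees = target_degree(compound_targets)
hubs = sorted(degrees, key=lambda t: (-degrees[t], t))
coverage = pathway_coverage(compound_targets, target_pathways)
print(hubs[0], degrees[hubs[0]])
print(coverage["PI3K-Akt signaling"])
```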

Detailed Experimental Protocols

Protocol 1: In Silico ADME and Toxicity Prediction Pipeline for a Novel Natural Product

Objective: To computationally profile the pharmacokinetic and safety liabilities of a newly isolated or designed NP prior to resource-intensive experimental assays.

Materials (The Scientist's Toolkit):

- Hardware: Standard high-performance computing cluster or workstation.

- Software: Commercial suites (e.g., Schrödinger's Small Molecule Drug Discovery Suite, MOE) or open-source tools (OpenBabel, RDKit, AutoDock Vina).

- Compound Structure: 2D or 3D chemical structure file (e.g., .sdf, .mol2) of the NP.

- Prediction Platforms: Access to web servers like SwissADME [4], ProTox-3.0 [13], or ADMETlab [13].

Procedure:

- Structure Preparation:

- Convert the NP structure to a 3D format.

- Perform conformational search and geometry optimization using molecular mechanics (MMFF94) or semi-empirical (PM6) methods [4].

- Output the lowest energy conformer for subsequent analysis.

- Physicochemical Property Prediction:

- Calculate key descriptors: Molecular weight, logP (lipophilicity), topological polar surface area (TPSA), hydrogen bond donors/acceptors.

- Assess compliance with drug-likeness rules (e.g., Lipinski, Veber).

- ADME Prediction:

- Absorption: Predict Caco-2 permeability or human intestinal absorption using QSAR models.

- Metabolism: Predict sites of metabolism (e.g., via CYP450) using reactivity models from QM or machine learning. Identify potential for being a substrate or inhibitor of major CYP enzymes [4].

- Excretion: Predict renal clearance or likelihood of being a P-glycoprotein substrate.

- Toxicity Prediction:

- Run predictions for mutagenicity (Ames test), carcinogenicity, hepatotoxicity, and cardiotoxicity (hERG channel inhibition) using platforms like ProTox-3.0 [13].

- Data Integration & Risk Assessment:

- Compile all predictions into a risk scorecard.

- Flag severe liabilities (e.g., predicted hERG inhibition, mutagenicity, or poor permeability). A compound with multiple severe flags may be deprioritized.
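
The rule checks and the risk scorecard described above can be sketched in a few lines of Python. This is a minimal illustration, not the output of any specific platform: it assumes the descriptors and toxicity flags have already been obtained (for example from RDKit, SwissADME, or ProTox-3.0), and the names `Descriptors`, `risk_scorecard`, and the "two or more severe flags" threshold are hypothetical choices made for this example.

```python
from dataclasses import dataclass


@dataclass
class Descriptors:
    """Precomputed descriptors for one compound (inputs are supplied by the user)."""
    mol_weight: float       # g/mol
    logp: float             # predicted octanol-water partition coefficient
    tpsa: float             # topological polar surface area, in square angstroms
    h_donors: int
    h_acceptors: int
    rotatable_bonds: int


def lipinski_violations(d: Descriptors) -> int:
    """Count violations of Lipinski's Rule of Five."""
    return sum([
        d.mol_weight > 500,
        d.logp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])


def passes_veber(d: Descriptors) -> bool:
    """Veber criteria: at most 10 rotatable bonds and TPSA of at most 140."""
    return d.rotatable_bonds <= 10 and d.tpsa <= 140


def risk_scorecard(d: Descriptors, tox_flags: dict) -> dict:
    """Combine rule checks and predicted toxicity flags into one summary."""
    severe = [name for name, flagged in tox_flags.items() if flagged]
    n_lipinski = lipinski_violations(d)
    if n_lipinski > 1:              # more than one violation fails the rule
        severe.append("lipinski")
    if not passes_veber(d):
        severe.append("veber")
    return {
        "lipinski_violations": n_lipinski,
        "veber_pass": passes_veber(d),
        "severe_flags": sorted(severe),
        "deprioritize": len(severe) >= 2,   # hypothetical threshold
    }


# Illustrative input values only (not measured data).
example = Descriptors(mol_weight=302.2, logp=1.5, tpsa=131.4,
                      h_donors=5, h_acceptors=7, rotatable_bonds=1)
print(risk_scorecard(example, {"hERG_inhibition": False, "ames_mutagenicity": True}))
```

Many natural products fall outside Lipinski space yet remain viable leads, so the thresholds here are better treated as flags for discussion than as hard filters.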

Protocol 2: AI-Enhanced Virtual Screening of a Natural Product Library Against a Novel Target

Objective: To identify potential hit compounds from a large virtual NP library for a disease target with a known 3D structure.

Materials:

- Target Structure: High-resolution X-ray crystal structure or a high-confidence AlphaFold2 model of the target protein. Prepare the structure by adding hydrogens, assigning bond orders, and optimizing side-chain orientations.

- Compound Library: A database of 3D NP structures in a suitable format (e.g., multi-molecule .sdf file). Libraries can be sourced from ZINC, COCONUT, or proprietary collections.

- Software: Docking software (e.g., Glide, GOLD, AutoDock); AI/ML screening platform (e.g., from Atomwise or using a custom GNN model) [15] [12].

Procedure:

- Target Preparation:

- Define the binding site (from co-crystallized ligand or literature).

- Generate grid files for docking centered on the binding site.

- Ligand Library Preparation:

- Standardize structures: remove salts, generate tautomers, and protonate at physiological pH.

- Perform a conformational search for each ligand.

- Hierarchical Screening:

- a. Ultra-Fast Filtering (Optional): Use a trained Graph Neural Network classifier to score all library compounds for likely activity, rapidly filtering from millions to hundreds of thousands [12].

- b. High-Throughput Docking: Dock the top candidates from (a) or the entire prepared library into the target binding site. Rank compounds by docking score (e.g., GlideScore, binding energy).

- c. Interaction Analysis & Clustering: Visually inspect top-ranked poses for key interactions (hydrogen bonds, hydrophobic contacts). Cluster results to ensure chemical diversity among hits.

- d. Refinement with Molecular Dynamics: Subject the best 10-20 complexes to short (50-100 ns) MD simulations in explicit solvent to assess binding stability and calculate more accurate binding free energies (e.g., via MM-PBSA/GBSA) [1].

- Hit Selection & Prioritization:

- Integrate scores from docking, MD, and in silico ADMET predictions (from Protocol 1).

- Select 5-10 chemically diverse, synthetically tractable hits with favorable predicted profiles for in vitro experimental validation.
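
One simple way to integrate the docking, MD, and ADMET results is rank averaging. The sketch below assumes three per-compound dictionaries in which lower values are better (more negative docking score, more negative MM-GBSA energy, fewer ADMET flags). The compound names, values, and the equal weighting of the three criteria are illustrative assumptions, not a prescribed scoring scheme.

```python
def rank_positions(values: dict) -> dict:
    """Return a 1-based rank for each compound; the lowest value ranks first.

    Ties are broken by insertion order, which is adequate for a sketch.
    """
    ordered = sorted(values, key=values.get)
    return {name: i + 1 for i, name in enumerate(ordered)}


def consensus_rank(docking: dict, mmgbsa: dict, admet_flags: dict) -> list:
    """Average the per-criterion ranks; a lower mean rank means higher priority."""
    ranks = [rank_positions(docking), rank_positions(mmgbsa), rank_positions(admet_flags)]
    mean_rank = {name: sum(r[name] for r in ranks) / len(ranks) for name in docking}
    return sorted(mean_rank.items(), key=lambda item: item[1])


# Hypothetical compounds and scores, for illustration only.
docking = {"NP-A": -9.1, "NP-B": -8.4, "NP-C": -10.2}      # kcal/mol
mmgbsa = {"NP-A": -42.0, "NP-B": -35.5, "NP-C": -38.0}     # kcal/mol
admet_flags = {"NP-A": 0, "NP-B": 1, "NP-C": 3}            # number of severe flags

for name, score in consensus_rank(docking, mmgbsa, admet_flags):
    print(f"{name}: mean rank {score:.2f}")
```

Rank averaging avoids mixing units across criteria, at the cost of discarding the size of the differences between compounds.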

Diagram Title: Hierarchical AI & Physics-Based Virtual Screening Workflow [1] [12]

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Computational Tools and Resources for In Silico NP Drug Discovery

| Tool/Resource Name | Category | Primary Function | Access / Example |

|---|---|---|---|

| AlphaFold2 | Protein Structure Prediction | Predicts highly accurate 3D protein structures from amino acid sequences, invaluable for targets without experimental structures. | DeepMind; EMBL-EBI repository. |

| Schrödinger Suite | Comprehensive Drug Discovery Platform | Integrates solutions for molecular modeling, simulation, and prediction (Glide for docking, Desmond for MD, QikProp for ADME). | Commercial platform [15]. |

| RDKit | Cheminformatics Toolkit | Open-source library for cheminformatics and machine learning, used for molecule manipulation, descriptor calculation, and model building. | Open source (rdkit.org). |

| SwissADME | Web-based ADME Prediction | Free tool for fast prediction of key pharmacokinetic properties and drug-likeness. | Web server [4]. |

| ProTox-3.0 | Web-based Toxicity Prediction | Predicts various toxicity endpoints, including organ toxicity, toxicity pathways, and molecular targets. | Web server [13]. |

| Pharma.AI (Insilico Medicine) | End-to-End AI Platform | Generative AI platform for target discovery (PandaOmics), molecule generation (Chemistry42), and clinical trial prediction (InClinico). | Commercial platform [15] [16]. |

| Exscientia AI Platform | AI-Driven Design Platform | Integrates generative AI with automated synthesis and testing for closed-loop optimization. | Commercial platform [15]. |

| COCONUT | Natural Product Database | A comprehensive, freely accessible database of NP structures for virtual screening library building. | Web database. |

Future Directions and Integration into the Broader Thesis

The trajectory of in silico methods points toward even deeper integration and sophistication, shaping the broader thesis on NP drug discovery:

- The Rise of Digital Twins and In Silico Trials: The creation of virtual patient populations that mirror real-world heterogeneity will enable clinical trial simulation. This allows for optimizing trial design, predicting outcomes, and serving as synthetic control arms, dramatically reducing the scale, cost, and risk of human trials [13]. For NPs with complex mechanisms, digital twins can model systems-level effects.

- Federated Learning and Data Collaboration: To overcome the "small data" problem common in NP research, federated learning allows AI models to be trained on distributed, proprietary datasets (e.g., from different pharmaceutical companies or herbarium collections) without sharing the raw data, improving model robustness [11] [14].

- Explainable AI (XAI) for Mechanistic Insight: Next-generation models will not only make predictions but also provide interpretable explanations for why a compound is predicted to be active or toxic. This is crucial for building scientific trust and generating novel mechanistic hypotheses for experimental validation [14] [12].

- Regulatory Adoption as a Primary Evidence Source: As evidenced by the FDA's evolving stance, Model-Informed Drug Development (MIDD) submissions incorporating in silico evidence will become standard. The community must develop standardized validation frameworks to ensure the credibility and reproducibility of computational models used for regulatory decision-making [13].

In conclusion, in silico methodologies are core drivers in addressing the challenges of cost, time, and attrition in drug discovery. Their application to the rich but challenging domain of natural products is not merely additive but transformative. By adopting the protocols and frameworks outlined here, researchers can systematically harness these tools to accelerate the journey of NPs from traditional remedies to optimized, globally relevant medicines, supporting the central thesis of a computational revolution in this field.

The modern paradigm of natural product (NP)-based drug discovery is fundamentally integrated with in silico methodologies. Computational approaches enable the efficient mining, dereplication, bioactivity prediction, and target identification for NPs, accelerating the transition from compound discovery to lead candidate. This document provides detailed application notes and experimental protocols for leveraging key databases and resources within this workflow.

Core Database Compendium: Classification and Quantitative Analysis

The following tables summarize the primary databases, their content scope, and key quantitative metrics essential for research planning.

Table 1: Comprehensive Natural Product Chemical & Spectral Databases

| Database Name | Primary Content | Total Entries (Approx.) | Key Features | Access Model |

|---|---|---|---|---|

| COCONUT (COlleCtion of Open Natural prodUcTs) | NP structures, predicted properties | ~450,000 unique NPs | Open-access, no redundancy, includes predicted molecular descriptors. | Free, Web/Download |

| NPASS (Natural Product Activity and Species Source) | NPs, species source, target activities | ~35,000 NPs, ~300,000 activity entries | Quantitative activity data (IC50, Ki, EC50) against biological targets. | Free, Web/Download |

| LOTUS (The Natural Products Occurrence Database) | NPs, occurrence in biological organisms | ~700,000 curated occurrences | Links structures to organism names via Wikidata, emphasizes provenance. | Free, Web/API |

| GNPS (Global Natural Products Social Molecular Networking) | MS/MS spectral data, molecular networks | Millions of community spectra | Community-contributed spectral library, molecular networking tools. | Free, Web/Cloud |

| PubChem | Compounds, bioassays, literature | Over 1 million NPs/subset | Extensive bioassay data, links to PubMed, vendor information. | Free, Web/API |

| CMAUP (Collective Molecular Activities of Useful Plants) | NPs from medicinal plants, target activities | ~47,000 NPs, 26,000 targets | Annotated with gene targets, pathways, and associated diseases. | Free, Download |

Table 2: Specialized Target Prediction & ADMET Databases

| Database Name | Application Focus | Data Type | Utility in NP Discovery | Access Model |

|---|---|---|---|---|

| SuperNatural 3.0 | NP target prediction & analogues | ~500,000 compounds with predicted targets | Facilitates virtual screening and polypharmacology studies. | Free, Web |

| Seaweed Metabolite Database | Marine NP chemistry & bioactivity | ~800 compounds from seaweeds | Specialized resource for marine biodiscovery. | Free, Web |

| ADMETlab 3.0 | In silico ADMET prediction | Web-based prediction platform | Evaluates drug-likeness, toxicity, and pharmacokinetics of NP hits. | Free, Web/API |

Detailed Application Notes & Experimental Protocols

Protocol 3.1: Integrated Workflow for NP Dereplication and Prioritization

Objective: To efficiently identify known compounds and prioritize novel NPs with potential bioactivity from a crude extract using in silico tools.

Research Reagent Solutions & Essential Materials:

- LC-HRMS/MS System: For generating high-resolution mass and fragmentation spectra of the sample.

- Crude Natural Product Extract: Fractionated or unfractionated.

- GNPS Account: For spectral data submission and networking.

- Local Installation of SIRIUS/CSI:FingerID: For in-depth structure annotation.

- Software: MZmine 3 (for LC-MS data processing), Cytoscape (for network visualization).

Step-by-Step Protocol:

Data Acquisition:

- Analyze the NP extract via LC-HRMS/MS in data-dependent acquisition (DDA) mode.

- Export raw data in an open format (e.g., .mzML).

Data Pre-processing with MZmine 3:

- Import the .mzML file.

- Perform mass detection, chromatogram building, deconvolution, and isotopic peak grouping.

- Align peaks across samples (if multiple).

- Export the feature table (containing m/z, RT, and MS/MS spectra) as a .mgf file for GNPS.

Molecular Networking on GNPS:

- Upload the .mgf file to the GNPS workspace (https://gnps.ucsd.edu).

- Create a molecular network using the standard workflow. Set parameters: Precursor Ion Mass Tolerance (0.02 Da), Fragment Ion Tolerance (0.02 Da).

- Execute the job. Visualize the resulting network in the browser or Cytoscape.

- Dereplication: Nodes (clusters) colored by spectral matches to library entries (e.g., GNPS, NIST) indicate known compounds.
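
To make the role of the fragment ion tolerance concrete, the sketch below computes a simplified cosine similarity between two MS/MS spectra, matching peaks that lie within 0.02 Da of each other. It is an illustration of the general idea only: GNPS applies its own scoring (including handling of precursor mass shifts), and the function name `cosine_score` and the example peak lists are assumptions made here.

```python
import math


def cosine_score(spec_a, spec_b, tol=0.02):
    """Greedy cosine similarity between two MS/MS spectra.

    Each spectrum is a list of (m/z, intensity) pairs. Peaks match when their
    m/z values differ by at most `tol` Da, and each peak is used at most once.
    """
    norm_a = math.sqrt(sum(i * i for _, i in spec_a))
    norm_b = math.sqrt(sum(i * i for _, i in spec_b))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    # All candidate peak pairs within tolerance, strongest product first.
    pairs = sorted(
        ((ia * ib, x, y)
         for x, (mza, ia) in enumerate(spec_a)
         for y, (mzb, ib) in enumerate(spec_b)
         if abs(mza - mzb) <= tol),
        reverse=True,
    )
    used_a, used_b, dot = set(), set(), 0.0
    for product, x, y in pairs:
        if x not in used_a and y not in used_b:
            used_a.add(x)
            used_b.add(y)
            dot += product
    return dot / (norm_a * norm_b)


# Hypothetical fragment lists, for illustration only.
query = [(100.00, 10.0), (150.05, 5.0), (210.10, 2.0)]
library = [(100.01, 10.0), (150.06, 5.0), (305.20, 1.0)]
print(round(cosine_score(query, library), 3))
```

Pairs of spectra whose score exceeds a chosen cutoff become edges in the molecular network, which is why a tolerance that is too loose can merge unrelated compounds into one cluster.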

In-Depth Annotation of Novel Clusters:

- For nodes without library matches, export the representative MS/MS spectrum.

- Process this spectrum through the SIRIUS software (local install):

- Input m/z and MS/MS data.

- Run SIRIUS to predict the molecular formula, then CSI:FingerID to annotate candidate structures.

- Cross-reference predicted structures with databases like COCONUT via their SMILES.

Bioactivity & Target Prioritization:

- For novel or prioritized structures, generate canonical SMILES.

- Input SMILES into SuperNatural 3.0 or NPASS to predict potential protein targets or retrieve analogues with known activities.

- Use ADMETlab 3.0 to assess the pharmacokinetic and toxicity profile.

- Prioritize NPs with favorable predicted activity against a disease-relevant target and acceptable ADMET properties for in vitro validation.

Diagram Title: NP Dereplication & Prioritization Workflow

Protocol 3.2: In Silico Target Fishing and Pathway Analysis for a Novel NP

Objective: To predict the protein targets and affected signaling pathways of a purified, structurally elucidated novel natural product.

Research Reagent Solutions & Essential Materials:

- Validated NP Structure: Canonical SMILES or 3D SDF file of the pure compound.

- Target Prediction Servers: SuperNatural 3.0, SwissTargetPrediction, PharmMapper.

- Pathway Analysis Tools: KEGG, Reactome, STRING database.

- Visualization Software: Cytoscape with appropriate plugins.

Step-by-Step Protocol:

Structure Preparation:

- Generate the low-energy 3D conformer of the NP using cheminformatics software (e.g., Open Babel, RDKit). Save as .mol2 or .sdf.

Consensus Target Prediction:

- Submit the NP's SMILES string to SwissTargetPrediction and SuperNatural 3.0.

- For a 3D pharmacophore approach, submit the 3D structure to PharmMapper.

- Compile all predicted targets (Gene Symbols) from the three servers. Assign a confidence score based on the consensus (e.g., targets predicted by ≥2 tools).
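
The consensus step can be expressed as a simple vote count across servers. The sketch below assumes the gene symbols from each tool have already been exported to Python lists; the gene lists shown are hypothetical placeholders, and `consensus_targets` is a name chosen for this example.

```python
from collections import Counter


def consensus_targets(predictions: dict, min_tools: int = 2) -> dict:
    """Return {gene: n_tools} for genes predicted by at least `min_tools` servers."""
    counts = Counter()
    for genes in predictions.values():
        # Normalise case and de-duplicate so each tool votes once per gene.
        counts.update({g.upper() for g in genes})
    return {g: n for g, n in sorted(counts.items()) if n >= min_tools}


# Hypothetical server outputs, for illustration only.
predictions = {
    "SwissTargetPrediction": ["PIK3CA", "AKT1", "EGFR"],
    "SuperNatural 3.0": ["AKT1", "PTGS2", "egfr"],
    "PharmMapper": ["AKT1", "CASP3"],
}
print(consensus_targets(predictions))
```

The vote count is a crude confidence score; it treats the three tools as independent and equally reliable, which may not hold when they share training data.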

Pathway Enrichment Analysis:

- Take the list of high-confidence target genes and submit to the KEGG Pathway or Reactome over-representation analysis tool.

- Use a significance cutoff (e.g., p-value < 0.05, FDR corrected). Identify the top 5-10 enriched pathways relevant to the disease of interest (e.g., "PI3K-Akt signaling pathway", "Apoptosis").
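
Over-representation analysis is typically based on a hypergeometric (one-sided Fisher) test followed by multiple-testing correction. The sketch below shows that calculation in plain Python, assuming the analyst supplies the gene counts; the web tools named above use their own backgrounds and annotations, so the numbers here are placeholders and the function names are illustrative.

```python
from math import comb


def hypergeom_pvalue(k: int, n: int, K: int, N: int) -> float:
    """P(X >= k): chance of at least k pathway genes among n targets,
    when K of the N background genes belong to the pathway."""
    upper = min(n, K)
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return hits / comb(N, n)


def benjamini_hochberg(pvalues: list) -> list:
    """Return Benjamini-Hochberg adjusted p-values (FDR) in the original order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down, enforcing monotonicity.
    for rank in range(m, 0, -1):
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Hypothetical counts: 40 consensus targets, 20,000 background genes.
pathways = {"Pathway A": (8, 350), "Pathway B": (3, 140), "Pathway C": (1, 200)}
raw = [hypergeom_pvalue(k, 40, K, 20000) for k, K in pathways.values()]
for name, p, q in zip(pathways, raw, benjamini_hochberg(raw)):
    print(f"{name}: p = {p:.3g}, FDR = {q:.3g}")
```

The choice of background set (all annotated genes versus all genes the prediction servers could have returned) can change the p-values substantially and is worth reporting alongside the results.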

Protein-Protein Interaction (PPI) Network Construction:

- Input the target genes into the STRING database (https://string-db.org) to retrieve known and predicted interactions.

- Set a high confidence score (e.g., > 0.7). Download the network file (.tsv or .xgmml).

Integrated Network Visualization & Hypothesis Generation:

- Import the PPI network into Cytoscape.

- Overlay the results of the pathway enrichment analysis by coloring nodes (proteins) according to their membership in key pathways.

- The resulting network visually contextualizes the polypharmacology of the NP, highlighting central hub targets and the interplay between affected pathways. This forms a testable hypothesis for downstream experimental validation.
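
Before opening the network in Cytoscape, hub targets can be shortlisted by node degree. The sketch below assumes the STRING edges have been loaded as (protein_a, protein_b, combined_score) tuples with scores on a 0-1 scale; some STRING downloads use a 0-1000 scale and would need rescaling first. The edge list and the function name `hub_targets` are hypothetical.

```python
from collections import defaultdict


def hub_targets(edges, min_score=0.7, top_n=3):
    """Rank proteins by degree after dropping low-confidence edges."""
    neighbours = defaultdict(set)
    for a, b, score in edges:
        if score > min_score and a != b:
            neighbours[a].add(b)
            neighbours[b].add(a)
    # Sort by descending degree, then alphabetically to keep ties stable.
    ranked = sorted(neighbours, key=lambda p: (-len(neighbours[p]), p))
    return [(p, len(neighbours[p])) for p in ranked[:top_n]]


# Hypothetical interaction list, for illustration only.
edges = [
    ("AKT1", "PIK3CA", 0.99),
    ("AKT1", "EGFR", 0.95),
    ("AKT1", "CASP3", 0.91),
    ("EGFR", "PIK3CA", 0.97),
    ("PTGS2", "CASP3", 0.45),   # below the confidence cutoff, ignored
]
print(hub_targets(edges))
```

Degree is only one centrality measure; betweenness or closeness, available through Cytoscape's network analysis tools, may highlight different nodes.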

Diagram Title: In Silico Target Fishing & Pathway Analysis Protocol

The In Silico Toolkit: Core Methods and Their Practical Applications in NP Workflows

The integration of structure-based computational methods has fundamentally reshaped the landscape of drug discovery, offering a powerful strategy to harness the therapeutic potential of natural products. Natural compounds, derived from plants, marine organisms, and microorganisms, are renowned for their immense structural diversity and historical success as drug leads; approximately two-thirds of modern small-molecule drugs have origins related to natural products [1]. However, their development is hampered by challenges such as limited availability, complex purification processes, and a scarcity of robust bioactivity data [1] [4]. In silico approaches—encompassing molecular docking, molecular dynamics (MD) simulations, and homology modeling—provide a cost-effective and efficient solution, enabling the virtual screening, optimization, and mechanistic analysis of natural compounds long before resource-intensive laboratory work begins [17].

These computational techniques are embedded within the broader paradigm of Computer-Aided Drug Design (CADD), which aims to reduce the high attrition rates and exorbitant costs (averaging $1.8 billion per approved drug) associated with traditional discovery pipelines [17]. By leveraging the three-dimensional structures of biological targets, researchers can prioritize the most promising natural product hits for experimental validation, thereby accelerating the development of new therapies for diseases such as cancer, viral infections, and inflammatory disorders [18] [19]. This article details the application notes, protocols, and essential toolkits for deploying these critical in silico methods in natural product-based drug discovery research.

Comparative Analysis of Core Structure-Based Methods

The selection of an appropriate in silico method depends on the research question, the availability of structural data, and the desired balance between computational speed and predictive accuracy. The following table summarizes the primary applications, strengths, and limitations of molecular docking, molecular dynamics, and homology modeling within the context of natural product research.

Table: Comparative Analysis of Core Structure-Based Methods for Natural Product Research

| Method | Primary Applications | Key Strengths | Key Limitations | Typical Output |

|---|---|---|---|---|

| Molecular Docking | Virtual screening of compound libraries, prediction of ligand binding pose and affinity [20] [21]. | High throughput; rapid scoring of thousands of compounds; identifies potential binding modes and key interactions [22]. | Static view of binding; limited account for protein flexibility and solvation effects; scoring function inaccuracies [22]. | Ranked list of compounds by binding energy (kcal/mol); 3D visualization of ligand-receptor complexes. |

| Molecular Dynamics (MD) | Assessment of binding stability, analysis of conformational changes, calculation of binding free energies (MM/GBSA/PBSA) [20] [18]. | Accounts for full flexibility and dynamics of the system; provides time-evolved insight into interactions and stability [23]. | Computationally expensive; limited timescale (nanoseconds to microseconds); requires significant expertise [22]. | Trajectory files for analysis; metrics like RMSD, RMSF, Rg; quantitative binding free energy estimates (ΔG). |

| Homology Modeling | Prediction of 3D protein structure when experimental structures are unavailable [1] [18]. | Enables structure-based studies for novel targets; cost-effective alternative to experimental determination [17]. | Model quality depends on template sequence identity and alignment accuracy; errors can propagate to downstream steps [22]. | Predicted 3D atomic coordinates of the target protein; model quality scores (e.g., DOPE score, Ramachandran plot). |

Detailed Experimental Protocols and Application Notes

Integrated Workflow for Identifying Natural Product Inhibitors

A standard, multi-step computational pipeline for natural product discovery integrates the three core methods, often supplemented with machine learning and ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) profiling [20] [18]. The following diagram illustrates this synergistic workflow.

Protocol 1: Homology Modeling for Target Structure Preparation

When an experimental structure for the target protein is unavailable from the Protein Data Bank (PDB), homology modeling is employed [18].

- Step 1 – Template Identification & Alignment: Retrieve the target protein's amino acid sequence from a database like UniProt. Use tools like BLAST or HMMER against the PDB to identify suitable template structures with high sequence identity (>30-40%) and resolution (<2.5 Å). Perform a multiple sequence alignment between the target and template(s) [18].

- Step 2 – Model Generation: Use specialized software such as MODELLER to generate multiple 3D models of the target protein [18]. The software uses spatial restraints derived from the template to build the unknown structure.

- Step 3 – Model Selection & Validation: Select the best model using intrinsic scoring functions like the Discrete Optimized Protein Energy (DOPE) score [18]. Critically validate the model using:

- Stereo-chemical quality: Analyze the Ramachandran plot (e.g., via PROCHECK) to ensure >90% of residues are in favored/allowed regions [18].

- Statistical potential scores: Use tools like Verify3D or ProSA-web to assess the overall fold compatibility.

- Step 4 – Binding Site Preparation: For docking, prepare the modeled structure by adding hydrogen atoms, assigning partial charges, and defining the binding site (often based on the template's ligand location or computational prediction) [20].
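
The sequence identity threshold used for template selection in Step 1 can be checked directly from a pairwise alignment. The sketch below assumes two already-aligned, equal-length strings with "-" for gaps, and it counts identity over columns where neither sequence has a gap. Other tools use different denominators, so values may differ slightly from those reported by BLAST. The sequences shown are made up.

```python
def percent_identity(aligned_target: str, aligned_template: str) -> float:
    """Percent identity over aligned columns where neither sequence has a gap."""
    if len(aligned_target) != len(aligned_template):
        raise ValueError("aligned sequences must have equal length")
    compared = matches = 0
    for a, b in zip(aligned_target.upper(), aligned_template.upper()):
        if a == "-" or b == "-":
            continue            # skip gap columns
        compared += 1
        matches += a == b
    return 100.0 * matches / compared if compared else 0.0


# Made-up aligned fragments, for illustration only.
identity = percent_identity("MKT-AYIAK", "MKSLAYLAK")
print(f"{identity:.1f}% identity")
print("usable template" if identity > 30 else "consider another template")
```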

Protocol 2: Molecular Docking and Virtual Screening

This protocol is used to screen large libraries of natural compounds (e.g., from ZINC or specialized natural product databases) against a prepared protein target [20] [18].

- Step 1 – Library Preparation: Download or curate a database of natural compounds in a standard format (e.g., SDF). Filter compounds based on basic drug-likeness rules (e.g., Lipinski's Rule of Five) using tools like FAF-Drugs4 [20]. Convert the remaining compounds into the required format for docking (e.g., PDBQT for AutoDock Vina) after adding polar hydrogens and charges.

- Step 2 – Receptor and Grid Preparation: Prepare the protein structure (from PDB or homology model) by removing water molecules and co-crystallized ligands, adding hydrogens, and assigning charges [20] [21]. Define a 3D grid box centered on the binding site of interest. The box size should be large enough to accommodate ligand movement (e.g., 40 × 40 × 40 Å).

- Step 3 – Docking Validation (Critical): Perform a re-docking experiment. Extract the native co-crystallized ligand from the experimental structure (if available) and dock it back into the binding site. A successful validation is indicated by a low root-mean-square deviation (RMSD < 2.0 Å) between the docked pose and the original crystal pose [20].

- Step 4 – High-Throughput Virtual Screening: Run the docking simulation for all compounds in the prepared library using software like AutoDock Vina or Glide. Use a lower exhaustiveness setting for the initial screen to save time, then re-dock the top hits (e.g., top 10%) with higher precision [20].

- Step 5 – Pose Analysis and Induced-Fit Docking (IFD): Visually inspect the top-scoring complexes using molecular visualization software (PyMOL, Chimera). Analyze key interactions (hydrogen bonds, hydrophobic contacts). For promising leads, perform IFD to account for side-chain flexibility in the binding site, which can optimize the binding pose and provide a more accurate affinity estimate [20].
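
The re-docking check in Step 3 reduces to an RMSD calculation between the crystal pose and the docked pose. The sketch below assumes both poses are given as lists of heavy-atom (x, y, z) coordinates in the same atom order and in the receptor's coordinate frame, so no superposition is applied. It does not correct for symmetry-equivalent atoms, which dedicated tools handle. The coordinates are invented for illustration.

```python
import math


def pose_rmsd(reference, docked):
    """In-place RMSD (in angstroms) between two poses with identical atom order."""
    if len(reference) != len(docked) or not reference:
        raise ValueError("poses must be non-empty and contain the same number of atoms")
    squared = sum((a - b) ** 2
                  for p, q in zip(reference, docked)
                  for a, b in zip(p, q))
    return math.sqrt(squared / len(reference))


# Invented coordinates: the docked pose is the crystal pose shifted by 1 Å.
crystal = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0), (1.5, 1.5, 0.0)]
redocked = [(0.6, 0.8, 0.0), (2.1, 0.8, 0.0), (2.1, 2.3, 0.0)]

rmsd = pose_rmsd(crystal, redocked)
print(f"RMSD = {rmsd:.2f} Å ->", "protocol validated" if rmsd < 2.0 else "revisit setup")
```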

Protocol 3: Molecular Dynamics Simulation and Binding Free Energy Calculation

MD simulations are used to evaluate the stability of the docked complexes and calculate more rigorous binding free energies [20] [18].

- Step 1 – System Setup: Use a tool like CHARMM-GUI or the GROMACS utilities (pdb2gmx to build the protein topology, then solvate) to place the protein-ligand complex in a water box (e.g., TIP3P model). Add ions (e.g., Na⁺, Cl⁻) to neutralize the system's charge and simulate physiological ion concentration. Assign force field parameters (e.g., CHARMM36, AMBER ff19SB) to the protein and small molecule. Ligand parameters can be generated using tools like CGenFF or antechamber [23].

- Step 2 – Energy Minimization and Equilibration: Minimize the energy of the system to remove steric clashes. Then, perform a two-step equilibration in the NVT (constant Number, Volume, Temperature) and NPT (constant Number, Pressure, Temperature) ensembles to stabilize the temperature (e.g., 310 K) and pressure (1 bar) of the system [23].

- Step 3 – Production MD Run: Run the final, unrestrained simulation. For assessing binding stability, a simulation length of 100-200 nanoseconds is often considered sufficient [20] [18]. Save the atomic coordinates (trajectory) at regular intervals (e.g., every 10-100 picoseconds).

- Step 4 – Trajectory Analysis:

- Stability: Calculate the Root Mean Square Deviation (RMSD) of the protein backbone and the ligand to assess the overall stability of the complex.

- Flexibility: Calculate the Root Mean Square Fluctuation (RMSF) of protein residues to identify flexible regions.

- Interactions: Monitor the persistence of key hydrogen bonds and hydrophobic contacts throughout the simulation.

- Step 5 – Binding Free Energy Calculation: Use the Molecular Mechanics/Generalized Born Surface Area (MM/GBSA) or Poisson-Boltzmann Surface Area (MM/PBSA) method on frames extracted from the stable part of the MD trajectory. This method provides a more accurate estimate of binding affinity than docking scores alone [20] [18]. The result is a calculated ΔGbind in kcal/mol, which can be used to rank final candidates.
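
In the single-trajectory form of MM/GBSA, the binding free energy is estimated per frame as the energy of the complex minus the energies of the receptor and ligand, then averaged. The sketch below assumes those per-frame energies (in kcal/mol) have already been produced by an MM/GBSA tool. The numbers are invented, the entropy term is omitted, and the standard error treats frames as independent, which tends to understate the true uncertainty.

```python
import math
from statistics import mean, stdev


def binding_free_energy(g_complex, g_receptor, g_ligand):
    """Per-frame dG_bind = G_complex - G_receptor - G_ligand; returns (mean, SEM)."""
    per_frame = [c - r - l for c, r, l in zip(g_complex, g_receptor, g_ligand)]
    avg = mean(per_frame)
    sem = stdev(per_frame) / math.sqrt(len(per_frame)) if len(per_frame) > 1 else 0.0
    return avg, sem


# Invented per-frame energies (kcal/mol), for illustration only.
g_complex = [-5230.0, -5228.0, -5232.0]
g_receptor = [-5100.0, -5099.0, -5101.0]
g_ligand = [-95.0, -96.0, -94.0]

dg, sem = binding_free_energy(g_complex, g_receptor, g_ligand)
print(f"dG_bind = {dg:.1f} ± {sem:.1f} kcal/mol")
```

Because absolute MM/GBSA values are sensitive to the force field and solvent model, the results are generally more useful for ranking related ligands than for quoting absolute affinities.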

The Scientist's Toolkit: Essential Research Reagent Solutions

Successful execution of the protocols above relies on a suite of specialized software tools and databases. The following table details these essential digital "reagents."

Table: Key Software and Database Resources for Structure-Based Natural Product Discovery

| Category | Tool/Database Name | Primary Function | Application Note |

|---|---|---|---|

| Protein Structure Database | Protein Data Bank (PDB) [22] [20] | Repository of experimentally determined 3D structures of proteins and nucleic acids. | The primary source for retrieving target structures or templates for homology modeling. Quality metrics (resolution) must be evaluated [22]. |

| Natural Product Libraries | ZINC Natural Product Subset [18], African Natural Products Databases [20] | Curated collections of purchasable or annotated natural product compounds in ready-to-dock formats. | Provides the chemical starting points for virtual screening. Libraries should be filtered for drug-likeness before use [20]. |

| Bioactivity Data | ChEMBL [22], PubChem [21] | Public repositories of bioactive molecules and their assay results (e.g., IC₅₀, Ki). | Used for model validation, training machine learning classifiers, or benchmarking docking protocols. |

| Homology Modeling | MODELLER [18] | Software for comparative protein structure modeling by satisfaction of spatial restraints. | Standard tool for generating 3D models from sequence alignments. Requires a template structure. |

| Molecular Docking | AutoDock Vina [20] [18], Glide | Programs for performing virtual screening and predicting ligand binding poses and affinities. | Vina is widely used for its speed and accuracy. Glide (Schrödinger) offers high-performance commercial-grade docking. |

| Molecular Dynamics | GROMACS [23], AMBER, NAMD | Software suites for performing all-atom MD simulations. | GROMACS is open-source and highly optimized for performance on CPUs and GPUs. Essential for stability analysis. |

| Visualization & Analysis | PyMOL [20] [21], UCSF Chimera | Molecular graphics systems for visualizing structures, trajectories, and interaction analyses. | Critical for preparing structures, analyzing docking poses, and creating publication-quality figures. |

| ADMET Prediction | SwissADME [4], pkCSM | Web servers for predicting pharmacokinetic, drug-likeness, and toxicity properties from chemical structure. | Used to filter virtual screening hits or prioritize leads based on predicted absorption and safety profiles [18]. |

Structure-based in silico methods have become indispensable for advancing natural product-based drug discovery. By integrating molecular docking, homology modeling, and molecular dynamics simulations, researchers can efficiently navigate vast chemical space, identify promising bioactive compounds, and gain deep mechanistic insights at the atomic level. This integrated approach, as demonstrated in recent studies targeting KRAS(G12C) and βIII-tubulin, significantly de-risks and accelerates the early stages of the drug discovery pipeline [20] [18].

Future advancements lie in enhancing the accuracy and scalability of these methods. Key challenges include improving scoring functions to better predict binding affinities, incorporating full receptor flexibility more efficiently, and accurately simulating the complex role of water molecules in binding [22]. Furthermore, the integration of machine learning with traditional physics-based methods is a rapidly growing frontier. ML can enhance virtual screening accuracy, predict ADMET properties with greater reliability, and even guide the de novo design of natural product-inspired analogs [22] [18]. As computational power grows and algorithms become more sophisticated, the synergy between in silico predictions and experimental validation will continue to drive the successful discovery of novel therapeutics from nature's chemical repertoire.

Application Notes

Within the framework of a thesis on in silico methods for natural product (NP)-based drug discovery, ligand-based approaches are indispensable for elucidating structure-activity relationships (SAR) when the 3D structure of the biological target is unknown. These methods leverage known bioactive molecules to predict and design new candidates.

1. Quantitative Structure-Activity Relationship (QSAR): QSAR models correlate molecular descriptors (quantitative representations of chemical structure) with biological activity. For NPs, this helps prioritize derivatives or analogs for synthesis. Recent AI-driven QSAR utilizes deep neural networks (DNNs) to automatically extract relevant features from molecular graphs or SMILES strings, surpassing traditional methods like Partial Least Squares (PLS) in predictive accuracy for complex datasets.

2. Pharmacophore Modeling: A pharmacophore model abstracts the essential steric and electronic features necessary for molecular recognition. In NP research, it can be derived from a set of active compounds to screen virtual libraries for novel scaffolds that share the same feature arrangement, enabling scaffold hopping from complex NPs to synthetically tractable leads.

3. Machine Learning (ML) Integration: ML unifies and enhances these methods. Ensemble methods (Random Forest, Gradient Boosting) improve QSAR robustness. Deep learning architectures, such as graph convolutional networks (GCNs), simultaneously learn from molecular structure and associated bioactivity data, enabling highly predictive models that can guide the optimization of NP-derived hits.

Table 1: Comparison of Key Ligand-Based & AI-Driven Methods

| Method | Primary Input | Key Output | Typical Algorithm (Current) | Application in NP Discovery |

|---|---|---|---|---|

| 2D/3D QSAR | Molecular descriptors (e.g., logP, MW, topological indices) | Predictive model (pIC50, pKi) | PLS, Support Vector Machine (SVM), Random Forest | Predicting activity of semi-synthetic NP analogs |

| Pharmacophore Modeling | Aligned set of active ligands (and sometimes inactive) | 3D arrangement of chemical features (HBA, HBD, hydrophobic, charged) | HipHop, Common Feature Approach, DeepPharmaco (GCN-based) | Virtual screening for novel chemotypes mimicking NP binding |

| Deep Learning QSAR | Molecular graphs or SMILES strings | Activity/Property prediction with confidence estimation | Graph Neural Network (GNN), Transformer | De novo design of NP-inspired molecules with optimized properties |

Protocols

Protocol 1: Developing a Robust QSAR Model for Natural Product Derivatives

Objective: To build a predictive QSAR model for the inhibition of a target enzyme (e.g., SARS-CoV-2 Mpro) using a dataset of coumarin derivatives.

- Dataset Curation: Collect a minimum of 50 compounds with consistent experimental pIC50 values. Apply rigorous curation: remove duplicates, standardize structures, and check for errors using software like RDKit or OpenBabel.

- Descriptor Calculation & Diversity Analysis: Calculate a comprehensive set of 2D and 3D molecular descriptors (e.g., using Dragon software or Mordred package). Perform Principal Component Analysis (PCA) to visualize chemical space coverage.

- Data Splitting: Split data into training (70-80%) and test (20-30%) sets using a distance-based selection method (e.g., Kennard-Stone) so that both sets are representative of the sampled chemical space.

- Feature Selection: Apply genetic algorithm or Boruta method to select the most relevant, non-redundant descriptors from the training set to avoid overfitting.

- Model Building & Validation: Train multiple algorithms (SVM, Random Forest, Gradient Boosting). Validate using 5-fold cross-validation on the training set. Assess the final model on the external test set. Report key metrics: R², Q², RMSE.
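
Two pieces of this protocol are easy to illustrate without any cheminformatics library: Kennard-Stone selection of a training set and the R²/RMSE metrics. The sketch below assumes each compound is already represented by a numeric descriptor vector; the toy points and activity values are invented, and the function names are illustrative. Q² uses the same formula as R² but is applied to cross-validated predictions.

```python
import math


def kennard_stone(points, n_train):
    """Select n_train indices spread across descriptor space (Kennard-Stone)."""
    n = len(points)
    if not 2 <= n_train <= n:
        raise ValueError("n_train must be between 2 and the number of points")

    def dist(i, j):
        return math.dist(points[i], points[j])

    # Seed with the two most distant samples.
    first, second = max(((i, j) for i in range(n) for j in range(i + 1, n)),
                        key=lambda ij: dist(*ij))
    selected = [first, second]
    remaining = set(range(n)) - set(selected)
    while len(selected) < n_train:
        # Add the sample whose nearest selected neighbour is farthest away.
        nxt = max(sorted(remaining), key=lambda i: min(dist(i, s) for s in selected))
        selected.append(nxt)
        remaining.remove(nxt)
    return selected, sorted(remaining)


def r2_and_rmse(y_true, y_pred):
    """Coefficient of determination and root-mean-square error."""
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot, math.sqrt(ss_res / len(y_true))


# Toy two-descriptor data set, for illustration only.
descriptors = [(0.0, 0.0), (10.0, 0.0), (5.0, 0.0), (1.0, 0.0), (9.0, 0.0)]
train_idx, test_idx = kennard_stone(descriptors, n_train=3)
print("train:", train_idx, "test:", test_idx)
print(r2_and_rmse([5.1, 6.0, 7.2], [5.0, 6.3, 7.0]))
```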

Protocol 2: Generation and Validation of a Ligand-Based Pharmacophore Model

Objective: To create a pharmacophore hypothesis from known active flavonoids for virtual screening.

- Ligand Preparation: Select 10-15 diverse, highly active flavonoids. Prepare their 3D structures: generate likely tautomers and protonation states at physiological pH (pH 7.4). Perform conformational sampling for each molecule.

- Common Feature Pharmacophore Generation: Use software like Discovery Studio or PHASE. Input the multiple conformers of the active compounds. Run the "Common Feature Pharmacophore Generation" protocol to identify conserved chemical features (e.g., two hydrogen bond acceptors, one hydrophobic aromatic region, one ring aromatic feature).

- Hypothesis Scoring & Selection: Evaluate generated hypotheses based on ranking scores (e.g., fit value, survival score). Select the top-ranked hypothesis.

- Model Validation: Assemble a validation set of known actives mixed with decoys or confirmed inactives. Screen this set using the pharmacophore model as the query, then calculate enrichment factors (EF) and the area under the ROC curve (AUC-ROC) to assess the model's discriminative power.
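The two validation metrics named in the last step can be computed directly from a ranked screening list. The sketch below assumes each compound has a pharmacophore fit score (higher is better) and a binary label (1 = active, 0 = decoy). The scores, labels, and function names are illustrative and are not the output of any particular package.

```python
def enrichment_factor(scores, labels, fraction=0.01):
    """EF at a top fraction: hit rate in the top-ranked subset / overall hit rate."""
    ranked = sorted(zip(scores, labels), key=lambda sl: sl[0], reverse=True)
    n_top = max(1, round(fraction * len(ranked)))
    hits_top = sum(label for _, label in ranked[:n_top])
    return (hits_top / n_top) / (sum(labels) / len(labels))

def roc_auc(scores, labels):
    """AUC as the probability that an active outscores a decoy (ties count 0.5)."""
    actives = [s for s, l in zip(scores, labels) if l == 1]
    decoys = [s for s, l in zip(scores, labels) if l == 0]
    wins = sum(1.0 if a > d else 0.5 if a == d else 0.0
               for a in actives for d in decoys)
    return wins / (len(actives) * len(decoys))

# Toy screening result: 3 actives hidden among 7 decoys (illustrative values).
fit_scores = [0.9, 0.8, 0.7, 0.6, 0.5, 0.4, 0.3, 0.2, 0.1, 0.05]
is_active = [1, 1, 0, 1, 0, 0, 0, 0, 0, 0]

ef_20 = enrichment_factor(fit_scores, is_active, fraction=0.2)
auc = roc_auc(fit_scores, is_active)
```

An EF of 1 and an AUC of 0.5 both correspond to random ranking. EF depends on the chosen fraction and on the active-to-decoy ratio, so it is worth reporting both alongside the value.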

Protocol 3: Implementing a Graph Neural Network for Activity Prediction

Objective: To train a GNN model to predict antibacterial activity of terpenoid compounds.

- Graph Representation: Represent each terpenoid molecule as a graph: atoms as nodes (featurized with atomic number, degree, hybridization) and bonds as edges (featurized with bond type, conjugation).

- Model Architecture: Implement a GNN using a framework like PyTorch Geometric. The architecture should include:

  - Three message-passing layers (e.g., GCNConv, GINConv) to aggregate neighbor information.

  - A global mean pooling layer to generate a single molecular fingerprint.

  - Two fully connected (dense) layers leading to a single output node (predicted pMIC).

- Training Loop: Use Mean Squared Error (MSE) as the loss function and the Adam optimizer with a learning rate of 0.001. Train for up to 500 epochs, and employ early stopping based on validation loss so that training halts once the validation loss stops improving.

- Evaluation: Apply the trained model to a held-out test set. Report standard regression metrics and visualize predictions vs. experimental values.
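To make the graph representation and the message-passing and pooling steps concrete, the sketch below runs one mean-aggregation message-passing step and a global mean pool on a toy three-atom graph in plain Python. It is not PyTorch Geometric code and does not reproduce `GCNConv` (which uses a symmetric degree normalization). The node features, the weight matrix, and the function names are illustrative assumptions, and the weights are fixed rather than learned.

```python
def message_passing(node_feats, edges, weight):
    """One step: average each atom with its bonded neighbours, apply W, then ReLU."""
    n = len(node_feats)
    dim = len(node_feats[0])
    neighbours = {i: [i] for i in range(n)}      # self-loop keeps the atom's own features
    for a, b in edges:                           # bonds are undirected
        neighbours[a].append(b)
        neighbours[b].append(a)
    updated = []
    for i in range(n):
        nbrs = neighbours[i]
        mean = [sum(node_feats[j][k] for j in nbrs) / len(nbrs) for k in range(dim)]
        updated.append([max(0.0, sum(w * m for w, m in zip(row, mean)))
                        for row in weight])
    return updated

def global_mean_pool(node_feats):
    """Average node embeddings into one fixed-length molecular vector."""
    n = len(node_feats)
    return [sum(col) / n for col in zip(*node_feats)]

# Ethanol heavy-atom graph C-C-O; features are [atomic number, degree].
atoms = [[6.0, 1.0], [6.0, 2.0], [8.0, 1.0]]
bonds = [(0, 1), (1, 2)]
W = [[0.1, 0.5], [-0.2, 1.0]]                    # arbitrary, untrained weights

hidden = message_passing(atoms, bonds, W)
fingerprint = global_mean_pool(hidden)
```

Stacking three such steps lets each atom see neighbours up to three bonds away. In a trained model the weight matrices are learned by minimizing the MSE loss, and the pooled vector feeds the dense layers that output pMIC.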

Figure: Ligand-Based & AI Drug Discovery Workflow

Figure: GNN for Molecular Property Prediction

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software & Tools for Ligand-Based & AI-Driven Discovery

| Item | Category | Primary Function in Protocols |

|---|---|---|

| RDKit | Open-Source Cheminformatics | Protocol 1, 3: Molecule standardization, descriptor calculation, and molecular graph generation for ML. |

| PyTorch Geometric | Deep Learning Library | Protocol 3: Provides built-in modules and layers for easy implementation of Graph Neural Networks (GNNs). |

| Schrödinger Suite (Phase) | Commercial Software | Protocol 2: Pharmacophore model generation, refinement, and virtual screening. |

| MOE (Molecular Operating Environment) | Commercial Software | Protocol 1, 2: Integrated platform for QSAR, pharmacophore modeling, and conformational analysis. |

| KNIME Analytics Platform | Data Analytics/Workflow | Protocol 1, 3: Visual workflow construction for data preprocessing, model training, and integration of cheminformatics nodes. |

| PubChem | Public Database | Source of bioactivity data for model training and decoy sets for pharmacophore validation. |

| ZINC20 | Public Database | Source of commercially available compounds for virtual screening using generated pharmacophore or QSAR models. |

Application Notes: Integrating In Silico ADME/Tox in Natural Product Research

Within the broader thesis that in silico methods are indispensable for streamlining and de-risking natural product-based drug discovery, this document provides practical protocols for early-stage pharmacokinetic and toxicological profiling. Natural compounds present unique challenges, including complex stereochemistry, scaffold novelty, and frequent promiscuity against targets and metabolizing enzymes. The following notes and protocols outline a validated workflow to prioritize lead compounds and guide synthetic optimization.

Key Quantitative Predictions and Benchmarks: A consolidated summary of common endpoints and typical acceptance thresholds used for virtual screening is provided below.

Table 1: Key ADME/Tox Parameters and Ideal Profiles for Oral Drugs

| Parameter | Prediction Method (Example) | Ideal Range/Profile for Oral Drugs | Rationale |

|---|---|---|---|

| Lipophilicity | Calculated LogP (cLogP, XLogP3) | < 5 | High lipophilicity is linked to poor solubility, increased metabolic clearance, and promiscuity. |

| Water Solubility | ESOL Method | LogS > -6 (log mol/L) | Essential for gastrointestinal absorption. |

| Human Intestinal Absorption (HIA) | QSAR Model | > 80% (High) | Predicts fraction absorbed in the gut. |

| Blood-Brain Barrier (BBB) Penetration | BOILED-Egg Model | CNS: Yes; Peripheral: No | Target-dependent. Rule-of-thumb for CNS-active compounds. |

| CYP450 Inhibition | Structural Ligand-Based (e.g., CYP3A4, 2D6) | Low probability for major isoforms | Avoids drug-drug interaction liabilities. |

| Hepatotoxicity | QSAR Model (e.g., DILI) | Low probability | Mitigates risk of drug-induced liver injury. |

| Cardiotoxicity (hERG) | Pharmacophore/QSAR Model | pIC50 < 5 (IC50 > 10 µM) | Avoids blockade of the hERG potassium channel, which is linked to torsades de pointes (TdP) arrhythmia. |

| Ames Mutagenicity | Rule-based structural alerts (e.g., Benigni/Bossa rules) or statistical QSAR models | Negative | Screens for potential DNA-reactive mutagenic compounds. |

| Pan-Assay Interference (PAINS) | Structural Alerts Filtering | No alerts | Flags compounds with promiscuous, non-specific bioactivity. |

| Volume of Distribution at Steady State (VDss) | Regression or machine-learning model (e.g., OLS-based) | ~0.7 L/kg | Predicts distribution. High VD may indicate extensive tissue binding. |

| Clearance (CL) | In Vitro-in Vivo Extrapolation (IVIVE) | Low to Moderate | Predicts rate of drug elimination from the body. |

| Half-life (T1/2) | Calculated from CL and VD (T1/2 = ln 2 × VD / CL) | > 3 hours for once-daily (QD) dosing | Influences dosing frequency. |

Table 2: Representative In Silico Toolkits & Platforms (2024)

| Platform/Tool | Type | Primary ADME/Tox Use | Access Model |

|---|---|---|---|

| SwissADME | Web Suite | ADME profiling, BOILED-Egg, bioavailability radar | Free, Web-based |

| pkCSM | Web Tool | ADME/Tox prediction (broad endpoints) | Free, Web-based |

| ProTox-3.0 | Web Tool | Compound toxicity (hepatotoxicity, ecotoxicity, etc.) | Free, Web-based |

| admetSAR 2.0 | Web Database/Server | Comprehensive ADMET prediction with large dataset | Free, Web-based |

| Schrödinger QikProp | Software Module | Physicochemical & ADME prediction within Maestro | Commercial |

| Simcyp Simulator | PBPK Platform | Population-based PBPK modeling for clinical translation | Commercial |

| Mordred | Python Library | Calculates molecular descriptors for ML workflows | Open Source |

| KNIME Analytics | Workflow Platform | Custom in silico ADME/Tox pipeline creation | Freemium/Commercial |

Experimental Protocol: Integrated In Silico ADME/Tox Profiling Workflow

Protocol Title: Multi-Platform Virtual Screening for Natural Compound ADME/Tox Profiling.

Objective: To computationally predict the pharmacokinetic and safety profiles of a library of natural compounds prior to in vitro or in vivo testing.

I. Compound Preparation & Curation

- Input: Compile SMILES strings or 2D/3D structures of natural compounds (e.g., from NPASS, PubChem).

- Standardization: Use a cheminformatics toolkit (e.g., RDKit, OpenBabel) to:

  - Neutralize structures.

  - Remove salts and solvents.

  - Generate canonical tautomers and stereo-enumerations where applicable.

  - Minimize energy using a molecular mechanics force field (e.g., MMFF94).

- Format Output: Save the curated library in a common format (e.g., .sdf, .mol2) for subsequent analysis.
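As a rough illustration of the curation logic, the sketch below strips counter-ions and solvents by keeping the largest fragment of a dot-separated SMILES string and then removes duplicates. It is a string-level approximation only: it does not neutralize charges, canonicalize tautomers, enumerate stereoisomers, or minimize geometry, and two different SMILES spellings of the same molecule will not be recognized as duplicates. Those steps need a real toolkit such as RDKit or OpenBabel. The helper names and the atom-counting regular expression are assumptions made for this example.

```python
import re

# Rough heavy-atom tokenizer: bracket atoms, two-letter halogens,
# then the organic-subset atoms in aliphatic and aromatic form.
ATOM_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|[BCNOPSFI]|[bcnops]")

def largest_fragment(smiles):
    """Keep the dot-separated fragment with the most atoms (drops salts/solvents)."""
    fragments = smiles.split(".")
    return max(fragments, key=lambda frag: len(ATOM_TOKEN.findall(frag)))

def curate(smiles_list):
    """Strip whitespace, keep the largest fragment, drop exact duplicates."""
    seen, curated = set(), []
    for smi in smiles_list:
        parent = largest_fragment(smi.strip())
        if parent and parent not in seen:
            seen.add(parent)
            curated.append(parent)
    return curated

# Illustrative input: sodium acetate, ethanol, and ethanol recorded as an HCl salt.
raw_library = ["CC(=O)[O-].[Na+]", "CCO", " CCO.Cl "]
clean_library = curate(raw_library)
```

After this step the acetate fragment still carries its negative charge, which is why the protocol lists neutralization as a separate operation.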

II. Physicochemical & ADME Property Prediction

- Primary Screening (SwissADME):

  - Paste the SMILES list (one molecule per line) into the input box of the SwissADME server (http://www.swissadme.ch).

  - Execute the analysis. Key outputs include: lipophilicity (several LogP estimates and their consensus), solubility, pharmacokinetics (GI absorption, BBB permeant), drug-likeness (Lipinski, Ghose, Veber rules), and medicinal chemistry friendliness.

  - Visualization: Analyze the Bioavailability Radar chart. A compound must have all six parameters (LIPO, SIZE, POLAR, INSOLU, INSATU, FLEX) within the pink area to be considered drug-like.

- Secondary Pharmacokinetics (pkCSM):

  - Input the same SMILES strings into the pkCSM server (https://biosig.lab.uq.edu.au/pkcsm/).

  - Extract predictions for: Caco-2 permeability, VDss, total clearance (CL), and fraction unbound in plasma. Where a half-life (T1/2) is needed, estimate it from the predicted CL and VDss.
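A half-life estimate can be derived from the predicted clearance and volume of distribution with the one-compartment relation T1/2 = ln 2 × VDss / CL. The sketch below assumes the predictions are reported on a log10 scale, with VDss in log(L/kg) and total clearance in log(mL/min/kg). Check the units on the server's result page before reusing the conversion. The function name is hypothetical.

```python
import math

def half_life_hours(log_vdss_l_per_kg, log_cl_ml_min_kg):
    """One-compartment estimate: T1/2 = ln(2) * VDss / CL, returned in hours."""
    vdss = 10 ** log_vdss_l_per_kg                  # L/kg
    cl = 10 ** log_cl_ml_min_kg * 60 / 1000         # mL/min/kg -> L/h/kg
    return math.log(2) * vdss / cl

# Example: VDss = 1 L/kg (log 0.0) and CL = 10 mL/min/kg (log 1.0, i.e. 0.6 L/h/kg).
t_half = half_life_hours(0.0, 1.0)                  # about 1.16 h
```

Because both inputs are model predictions with their own error, the result is best treated as a ranking aid rather than a dosing estimate.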

III. Toxicity & Safety Profiling

- Structural Alerts (SwissADME): Review the "Pan-Assay Interference Compounds (PAINS)" and "Brenk/Structural Alerts" filters from the SwissADME results. Flag any compounds with alerts.

- Toxicological Endpoints (ProTox-3.0):

  - Navigate to the ProTox-3.0 server (https://tox.charite.de/protox3/).

  - Input SMILES strings individually or as a batch.

  - Record predictions for: hepatotoxicity, Ames mutagenicity, hERG inhibition, carcinogenicity, cytotoxicity, and acute oral toxicity (LD50).

  - Examine the predicted toxicity pathways and molecular targets where available.

IV. Data Integration & Decision Making

- Consolidate Data: Compile all results from SwissADME, pkCSM, and ProTox-3.0 into a single spreadsheet.

- Apply Filters: Establish a multi-parameter filter based on your project needs. Example:

  - Must have: No PAINS alerts, No Structural Alerts for mutagenicity, High GI absorption.

  - Must meet at least 4 of 5: LogP < 4, TPSA < 140 Ų, MW < 500 g/mol, hERG inhibition probability < 0.3, Hepatotoxicity probability < 0.5.

- Visual Prioritization: Generate a scatter plot (e.g., LogP vs. TPSA, colored by Hepatotoxicity score) to identify promising compounds in desirable property space.
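The example filter above translates into a few lines of code once the consolidated spreadsheet is loaded as one record per compound. In this sketch the dictionary keys, compound names, and property values are all hypothetical placeholders. Only the thresholds come from the example filter.

```python
def passes_filter(c):
    """Apply the hard requirements, then the 'at least 4 of 5' soft criteria."""
    must_have = (
        c["pains_alerts"] == 0
        and c["mutagenicity_alerts"] == 0
        and c["gi_absorption"] == "High"
    )
    soft_criteria = [
        c["logp"] < 4,
        c["tpsa"] < 140,           # Å^2
        c["mw"] < 500,             # g/mol
        c["herg_prob"] < 0.3,
        c["hepatotox_prob"] < 0.5,
    ]
    return must_have and sum(soft_criteria) >= 4

# Illustrative records; a real table would be read from the consolidated spreadsheet.
compounds = [
    {"name": "cmpd_A", "pains_alerts": 0, "mutagenicity_alerts": 0, "gi_absorption": "High",
     "logp": 2.1, "tpsa": 95.0, "mw": 302.2, "herg_prob": 0.12, "hepatotox_prob": 0.35},
    {"name": "cmpd_B", "pains_alerts": 1, "mutagenicity_alerts": 0, "gi_absorption": "High",
     "logp": 1.8, "tpsa": 110.0, "mw": 286.2, "herg_prob": 0.05, "hepatotox_prob": 0.20},
    {"name": "cmpd_C", "pains_alerts": 0, "mutagenicity_alerts": 0, "gi_absorption": "High",
     "logp": 4.6, "tpsa": 120.0, "mw": 480.0, "herg_prob": 0.25, "hepatotox_prob": 0.62},
    {"name": "cmpd_D", "pains_alerts": 0, "mutagenicity_alerts": 0, "gi_absorption": "High",
     "logp": 4.6, "tpsa": 88.0, "mw": 410.0, "herg_prob": 0.10, "hepatotox_prob": 0.20},
]

shortlist = [c["name"] for c in compounds if passes_filter(c)]
```

Here `cmpd_B` is rejected by a hard requirement (a PAINS alert), `cmpd_C` fails two soft criteria, and `cmpd_D` is kept despite a LogP above 4 because it meets the other four. Keeping hard and soft criteria separate makes it easy to relax thresholds for natural products, which often fall outside conventional drug-like ranges.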

Visualizations

Figure: In Silico ADME/Tox Screening Workflow

Figure: Key ADME/Tox Pathways for an Oral Drug

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for In Silico ADME/Tox Profiling

| Item/Resource | Function & Explanation | Example/Provider |

|---|---|---|

| Cheminformatics Suite | Libraries for automated molecule manipulation, descriptor calculation, and file format conversion. Essential for preparing compound libraries. | RDKit (Open Source), KNIME (Platform), Schrödinger Maestro (Commercial) |

| Molecular Descriptor Calculator | Generates numerical representations of molecular structures (e.g., LogP, TPSA, molecular weight) used as input for QSAR models. | Mordred, PaDEL-Descriptor, MOE Descriptors |

| Web-Based Prediction Servers | Freely accessible platforms that host pre-trained models for a wide array of ADME/Tox endpoints. Ideal for initial screening. | SwissADME, pkCSM, ProTox-3.0, admetSAR |

| Commercial ADMET Prediction Software | Integrated, high-performance software with validated models, advanced visualization, and customer support for industrial R&D. | Schrödinger QikProp, Simulations Plus ADMET Predictor, BIOVIA Discovery Studio |

| Toxicity Pathway Database | Curated databases linking compounds to toxic outcomes and molecular initiating events, aiding mechanistic interpretation. | Comparative Toxicogenomics Database (CTD), ToxCast, LINCS |

| Natural Product Database | Source of structurally diverse natural compound libraries in machine-readable formats for virtual screening. | NPASS, COCONUT, CMAUP, PubChem |

| High-Performance Computing (HPC) Cluster | Enables large-scale virtual screening of thousands of compounds against multiple complex models (e.g., molecular dynamics for CYP binding). | Local institutional clusters, Cloud computing (AWS, Azure) |

| Data Visualization Software | Tools to create interpretable plots (e.g., radar charts, scatter matrices) for multi-parameter optimization and team decision-making. | Spotfire, Tableau, Python (Matplotlib/Seaborn), R (ggplot2) |

The discovery of novel therapeutics from natural products (NPs) has long been hindered by the inherent complexity of these compounds. Traditional reductionist approaches, which focus on isolating single active ingredients against single targets, often fail to capture the synergistic therapeutic effects and polypharmacology that underlie the efficacy of traditional medicines [24] [25]. This gap calls for a shift toward systems-level analysis and design. In silico methods, particularly the integration of network pharmacology and generative artificial intelligence (AI), embody this shift, offering a holistic framework for deciphering complex bioactivity and accelerating the design of next-generation, natural product-inspired drugs [26] [27].