AI-Powered Drug-Target Interaction Prediction in Herbal Medicine: Methods, Validation, and Future Roadmap

Abstract

This article provides a comprehensive analysis of artificial intelligence (AI) applications in predicting drug-target interactions for herbal medicines. It addresses the foundational need for computational approaches to decipher the complex 'multi-component, multi-target' nature of herbal formulas, reviews advanced methodological frameworks including graph neural networks and knowledge graphs, examines critical challenges related to data quality and model interpretability, and evaluates current validation paradigms and comparative performance of AI tools. Aimed at researchers and drug development professionals, the synthesis offers a roadmap for the rigorous, clinically relevant, and ethically sound integration of AI into herbal pharmacology research, bridging traditional knowledge with modern computational science.

The Imperative for AI in Herbal Pharmacology: Deciphering Complexity and Defining the Landscape

The systematic investigation of herbal medicine (HM) for modern drug discovery presents a fundamental scientific challenge: deconvoluting the therapeutic effects of complex mixtures containing dozens to hundreds of bioactive phytochemicals, each with the potential to interact with multiple biological targets and pathways [1]. This multi-component, multi-target, and multi-pathway nature stands in stark contrast to the conventional "one drug, one target" paradigm, making traditional pharmacological methods inadequate for elucidating mechanisms of action [2]. The core challenge, therefore, is to develop robust, reproducible, and scalable methodologies to bridge this gap—from characterizing the complex chemical space of herbs to identifying precise molecular targets and elucidating integrated network pharmacology.

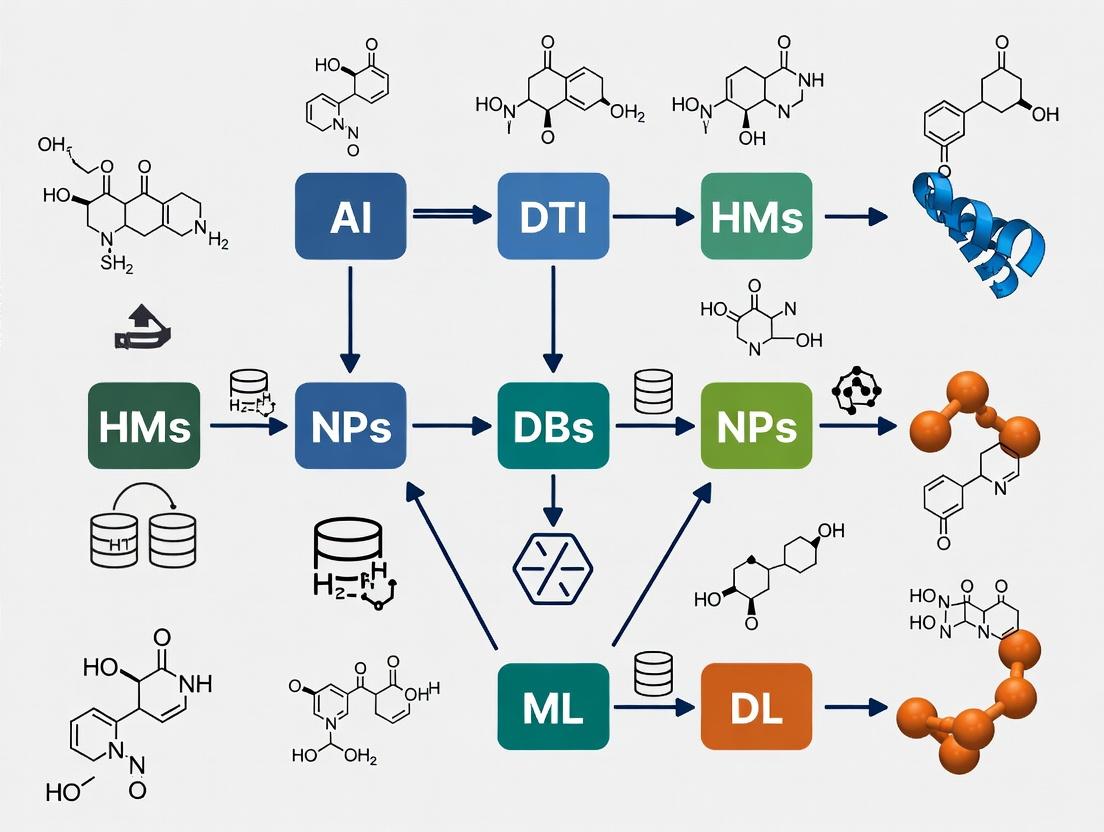

Artificial Intelligence (AI) emerges as a pivotal force in addressing this challenge. By leveraging machine learning (ML) and deep learning (DL) models, researchers can integrate and analyze vast, heterogeneous datasets—including phytochemical structures, pharmacokinetic properties, protein-protein interaction networks, and multi-omics data—to predict biologically relevant drug-target interactions (DTIs) with high accuracy [3]. This computational guide details a validated, integrative workflow that synergizes bioinformatics screening, AI-powered prediction, and experimental validation to transform herbal medicine from an empirical practice into a source of precisely characterized, target-driven therapeutic leads.

Methodological Framework: Computational and AI-Driven Pipelines

A successful transition from herbs to targets requires a multi-stage pipeline. The following sections detail the core computational and experimental methodologies, with summarized protocols presented in Table 1.

Table 1: Core Methodological Pipelines for Target Identification from Herbal Medicine

| Stage | Primary Objective | Key Tools & Techniques | Output & Success Criteria |
|---|---|---|---|
| 1. Compound Sourcing & Characterization | Establish a comprehensive, chemically accurate library of herbal constituents. | Bibliometric analysis, database mining (TCMSP, PubChem), text mining, high-resolution metabolomics [1] [4]. | A curated database of compounds with associated structures (e.g., SDF, SMILES formats). |
| 2. Pharmacokinetic & Bioactivity Screening | Filter compounds for favorable drug-like properties and potential bioavailability. | In silico models: PreOB (oral bioavailability), PreDL (drug-likeness), SwissADME [1] [5]. | A refined list of "active components" (e.g., OB ≥ 30%, DL ≥ 0.18) [1]. |
| 3. Target Prediction & Prioritization | Identify putative protein targets for the active compounds. | AI/ML Models: SysDT, drugCIPHER-CS, CA-HACO-LF; Similarity-based and network-based methods [1] [2] [3]. | A list of predicted protein targets with associated interaction scores or likelihoods. |
| 4. Network & Enrichment Analysis | Place predicted targets in biological context and identify key pathways. | Bioinformatics: Cytoscape for compound-target-pathway (C-T/P) networks; GO & KEGG enrichment (clusterProfiler, ShinyGO); PPI analysis (STRING) [1] [5]. | Identification of hub genes and significantly enriched signaling pathways (e.g., PI3K-Akt, MAPK). |
| 5. Computational Validation | Assess the structural feasibility of predicted compound-target binding. | Molecular docking (Glide, AutoDock), Molecular Dynamics (MD) simulations (Desmond, GROMACS), binding free energy calculations (MM/GBSA) [1] [5]. | Favorable docking scores, stable MD trajectories, and binding free energies that support the key predicted interactions. |
| 6. Experimental Validation | Biologically confirm predicted interactions and efficacy. | In vitro assays (binding, cell viability), in vivo disease models (e.g., CHD rat model), multi-omics validation (metabolomics) [2] [4]. | Dose-dependent biological activity confirming the predicted mechanism. |
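
The Stage 2 filter in Table 1 reduces to a conjunction of two cutoffs. The minimal Python sketch below assumes OB and DL have already been predicted for each compound; the compound names and property values are hypothetical placeholders, not values taken from TCMSP or any other database.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Compound:
    name: str
    ob_percent: float  # predicted oral bioavailability, %
    dl: float          # predicted drug-likeness score

def select_active_components(compounds, ob_min=30.0, dl_min=0.18):
    """Keep compounds that satisfy OB >= ob_min AND DL >= dl_min."""
    return [c for c in compounds if c.ob_percent >= ob_min and c.dl >= dl_min]

# Hypothetical screening results, not values from any database
library = [
    Compound("compound_A", ob_percent=45.2, dl=0.31),
    Compound("compound_B", ob_percent=12.7, dl=0.40),  # fails the OB cutoff
    Compound("compound_C", ob_percent=33.0, dl=0.10),  # fails the DL cutoff
    Compound("compound_D", ob_percent=30.0, dl=0.18),  # passes exactly at both cutoffs
]
active = select_active_components(library)
print([c.name for c in active])  # ['compound_A', 'compound_D']
```

Keeping the cutoffs as parameters makes it easy to check how sensitive the downstream target list is to the commonly used 30% / 0.18 values.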

AI and Machine Learning Models for Target Prediction

AI models are essential for scalable target prediction. They generally fall into three categories, each with strengths for herbal medicine research [3]:

- Similarity-based methods infer interactions based on the principle that chemically similar compounds share targets. They are interpretable but can miss interactions for structurally novel phytochemicals [3].

- Network-based methods leverage biological networks (e.g., protein-protein interaction) to predict targets within a functional context, capturing indirect relationships but relying on network completeness [2] [3].

- Hybrid ML/DL methods integrate diverse data (chemical, genomic, phenotypic) to improve predictive performance. For example, the Context-Aware Hybrid Ant Colony Optimized Logistic Forest (CA-HACO-LF) model uses optimized feature selection and classification for DTI prediction [6]. The SysDT model combines Random Forest (RF) and Support Vector Machine (SVM) algorithms and applies score thresholds (e.g., RF ≥ 0.8, SVM ≥ 0.7) to define a high-confidence interaction [1].
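
A SysDT-style consensus rule can be expressed as a filter over two model scores. This sketch assumes each compound-target pair already has a probability-like RF score and SVM score (model training is out of scope), and that a pair must clear both thresholds; the compound and target identifiers are hypothetical.

```python
def high_confidence_pairs(scores, rf_min=0.8, svm_min=0.7):
    """Return sorted (compound, target) pairs whose RF and SVM scores both clear their thresholds.

    `scores` maps (compound, target) -> (rf_score, svm_score).
    """
    return sorted(
        pair for pair, (rf, svm) in scores.items()
        if rf >= rf_min and svm >= svm_min
    )

# Hypothetical model outputs for four compound-target pairs
predicted = {
    ("cpd_1", "target_X"): (0.91, 0.82),  # passes both thresholds
    ("cpd_1", "target_Y"): (0.85, 0.55),  # fails the SVM threshold
    ("cpd_2", "target_X"): (0.78, 0.90),  # fails the RF threshold
    ("cpd_2", "target_Z"): (0.80, 0.70),  # passes exactly at both thresholds
}
print(high_confidence_pairs(predicted))
# [('cpd_1', 'target_X'), ('cpd_2', 'target_Z')]
```

Requiring agreement between two differently biased classifiers trades recall for precision, which suits a step whose output feeds costly experimental validation.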

Experimental Validation Protocols

Computational predictions require rigorous biological validation. Two key protocol summaries are provided below.

Protocol A: In Vivo Validation for Cardiovascular Herbal Formula

This protocol validates targets for an herbal formula (e.g., Qishenkeli, QSKL) in a coronary heart disease (CHD) model [2].

- Animal Model Induction: Anesthetize Sprague-Dawley rats and perform a left thoracotomy. Ligate the left anterior descending coronary artery to induce myocardial infarction. Sham controls undergo surgery without ligation.

- Treatment Administration: Post-operation, randomly assign animals to model, control, and treatment (e.g., QSKL at 508 mg/kg/day) groups. Administer treatment via daily oral gavage for 28 days.

- Functional Assessment: Perform echocardiography before sacrifice to measure left ventricular function parameters (e.g., ejection fraction, fractional shortening).

- Sample Collection & Analysis: Collect serum via abdominal aorta puncture. Use ELISA or similar assays to measure levels of target pathway biomarkers (e.g., renin, angiotensin II for the RAAS pathway).

- Outcome: A statistically significant improvement in functional parameters and modulation of the predicted biomarkers in the treatment group, relative to the model group, supports the formula's activity on the predicted targets [2].
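
The functional assessment usually reduces echocardiographic measurements to fractional shortening (FS) and ejection fraction (EF). The sketch below assumes M-mode left ventricular internal diameters in centimeters and estimates volumes with the Teichholz formula, V = 7.0 / (2.4 + D) × D³, which is one common convention; the protocol above does not specify which method is used, and the measurements are hypothetical.

```python
def fractional_shortening(lvid_d, lvid_s):
    """FS (%) from LV internal diameters at end-diastole (lvid_d) and end-systole (lvid_s)."""
    return (lvid_d - lvid_s) / lvid_d * 100.0

def teichholz_volume(d_cm):
    """Estimated LV volume (mL) from an internal diameter in cm (Teichholz formula)."""
    return 7.0 / (2.4 + d_cm) * d_cm ** 3

def ejection_fraction(lvid_d, lvid_s):
    """EF (%) from Teichholz-estimated end-diastolic and end-systolic volumes."""
    edv = teichholz_volume(lvid_d)
    esv = teichholz_volume(lvid_s)
    return (edv - esv) / edv * 100.0

# Hypothetical M-mode measurements for one animal (cm)
fs = fractional_shortening(0.80, 0.60)
ef = ejection_fraction(0.80, 0.60)
print(f"FS = {fs:.1f}%, EF = {ef:.1f}%")  # FS = 25.0%, EF = 55.0%
```

Per-animal FS and EF values computed this way are what the group comparison in the Outcome step would then test.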

Protocol B: Multi-Omics Validation via Metabolomics

This protocol uses metabolomics to decode active components and targets by observing systemic metabolic changes [4].

- Study Design: Administer the herbal extract or compound to animal models or cell cultures. Include control and disease model groups.

- Sample Collection: Collect biofluids (serum, urine) or tissue homogenates at multiple time points.

- Metabolite Profiling: Analyze samples using high-throughput platforms like UPLC-Q-TOF/MS or GC-MS to generate comprehensive metabolic profiles.

- Data Analysis: Use multivariate statistical analysis (PCA, OPLS-DA) to identify differentially abundant metabolites between groups. Map these metabolites to biological pathways via KEGG.

- Integration & Target Inference: Overlay the disrupted metabolic pathways with computationally predicted target networks. The convergence points, where predicted protein targets regulate the perturbed metabolic pathways, provide high-confidence candidates for further mechanistic validation [4].
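
Pathway mapping in Protocol B is often scored as an over-representation test. Suppose N metabolites were measured, K of them belong to a pathway, and n are differential. The question is how surprising it is to find k differential metabolites in that pathway, which the hypergeometric upper tail P(X ≥ k) = Σ_{i=k}^{min(K,n)} C(K,i)·C(N−K,n−i) / C(N,n) answers. The minimal sketch below assumes metabolite-to-pathway memberships have already been retrieved; the identifiers are hypothetical, and tools used in practice also apply multiple-testing correction, which is omitted here.

```python
from math import comb

def hypergeom_upper_tail(k, N, K, n):
    """P(X >= k) for X ~ Hypergeometric(N total, K in pathway, n drawn)."""
    hits = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, min(K, n) + 1))
    return hits / comb(N, n)

def pathway_enrichment(differential, pathways, background):
    """Return (pathway, overlap, p) for pathways containing differential metabolites, smallest p first."""
    background = set(background)
    diff = set(differential) & background
    results = []
    for name, members in pathways.items():
        members = set(members) & background
        overlap = len(diff & members)
        if overlap:
            p = hypergeom_upper_tail(overlap, len(background), len(members), len(diff))
            results.append((name, overlap, p))
    return sorted(results, key=lambda r: r[2])

background = [f"m{i}" for i in range(1, 11)]   # 10 measured metabolites (hypothetical)
pathways = {
    "pathway_A": ["m1", "m2", "m3", "m4"],
    "pathway_B": ["m5", "m6", "m7", "m8", "m9"],
}
differential = ["m1", "m2", "m3", "m5"]        # e.g. selected by OPLS-DA
results = pathway_enrichment(differential, pathways, background)
for name, k, p in results:
    print(f"{name}: overlap {k}, p = {p:.3f}")
```

Pathways that rank highly here are the ones to overlay with the predicted target network in the integration step.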

The Scientist's Toolkit: Essential Research Reagents and Materials

Critical reagents and their functions for key experiments in this field are listed below.

Table 2: Essential Research Reagent Solutions for Herbal Medicine Target Research

| Reagent/Material | Primary Function | Application Context |
|---|---|---|
| OPLS4 Force Field | Energy minimization and optimization of molecular structures. | Protein and ligand preparation for molecular docking and dynamics simulations [5]. |
| Tetrazolium-based Assay (e.g., MTT, CCK-8) | Measures cell metabolic activity as a proxy for viability/proliferation. | In vitro validation of compound efficacy against cancer or other cell lines. |
| LigPrep Software | Generates accurate, low-energy 3D structures with correct ionization and tautomeric states for small molecules. | Essential pre-processing step for molecular docking studies [5]. |
| Desmond Molecular Dynamics System | Simulates the dynamic behavior of protein-ligand complexes over time in a solvated system. | Validates docking pose stability and calculates binding free energy (MM/GBSA) [5]. |
| Cytoscape Software | Visualizes and analyzes complex biological networks (e.g., compound-target-pathway). | Network pharmacology analysis and identification of hub genes [1] [5]. |
| SwissADME Web Tool | Predicts key pharmacokinetic parameters and drug-likeness. | Initial computational screening of herbal compounds for oral bioavailability [5]. |
| R clusterProfiler Package | Performs statistical analysis and visualization of functional profiles for genes. | Gene Ontology (GO) and KEGG pathway enrichment analysis [1]. |
| STRING Database | Retrieves known and predicted protein-protein interactions. | Constructing PPI networks to contextualize predicted herbal targets [5]. |

Integrated Workflow: From Herbs to Validated Targets

The complete, iterative workflow for translating multi-component herbs to molecular targets integrates all previously described stages. AI acts as the connecting thread, enhancing each step with predictive power and data integration capabilities [3] [7].

Diagram 1: AI-Integrated Workflow from Herbs to Validated Targets

Case Study Application & Key Signaling Pathways

A practical application of this workflow identified therapeutic mechanisms for herbal medicines in prostate cancer (PCa). Intersecting genes differentially expressed in PCa patients with the predicted herbal targets, followed by bioinformatic analysis, revealed five hub genes (CCNA2, CDK2, CTH, DPP4, SRC). Network and enrichment analysis further mapped these genes onto four core signaling pathways: PI3K-Akt, MAPK, p53, and the cell cycle [1]. These pathways, central to cancer progression, illustrate how herbal compounds can exert a coordinated multi-target effect, as visualized in Diagram 2.
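
Hub genes are commonly selected by ranking the nodes of a PPI network by a centrality measure. The sketch below uses degree centrality, which is only one of several measures in use, and assumes the network is available as an undirected edge list (for example, exported from STRING). The gene identifiers and edges are hypothetical and do not represent the network from the cited study.

```python
from collections import Counter

def rank_hubs(edges, top_n=3):
    """Rank nodes of an undirected network by degree, breaking ties alphabetically."""
    degree = Counter()
    seen = set()
    for a, b in edges:
        key = frozenset((a, b))
        if a == b or key in seen:  # ignore self-loops and duplicate (A-B vs B-A) edges
            continue
        seen.add(key)
        degree[a] += 1
        degree[b] += 1
    return sorted(degree.items(), key=lambda kv: (-kv[1], kv[0]))[:top_n]

# Hypothetical edge list standing in for an exported PPI network
ppi_edges = [
    ("G1", "G2"), ("G1", "G3"), ("G1", "G4"),
    ("G2", "G3"), ("G2", "G5"), ("G4", "G6"),
    ("G2", "G1"),  # duplicate of G1-G2 in reverse orientation
]
print(rank_hubs(ppi_edges))  # [('G1', 3), ('G2', 3), ('G3', 2)]
```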

Diagram 2: Herbal Medicine Action on Key Cancer Signaling Pathways

Conceptual Framework of the Core Challenge

The fundamental challenge in herbal medicine research is navigating the high complexity of both the herbal input and the biological system. This is conceptually framed as a problem of mapping a high-dimensional chemical space onto a high-dimensional biological space, where AI serves as the essential tool for pattern recognition, prediction, and data reduction.

Diagram 3: Conceptual Framework of the Core Research Challenge

The path from multi-component herbs to molecular targets is being fundamentally reshaped by AI and integrative computational workflows. The methodology outlined—combining systematic compound screening, AI-driven target prediction, network pharmacology, and multi-scale validation—provides a robust template for demystifying herbal medicine's mechanisms. Future progress hinges on improving the quality and standardization of herbal compound databases [7], developing more interpretable (explainable) AI models that provide mechanistic insights alongside predictions [3], and fostering closer collaboration between computational scientists and experimental biologists to iteratively refine and validate predictions. This structured, data-driven approach promises to unlock the full therapeutic potential of herbal medicine, transforming it from a traditional practice into a cornerstone of next-generation, network-based precision drug discovery.

The discovery and validation of novel therapeutic targets represent the foundational, and often most formidable, stage in the drug development pipeline. In the context of herbal medicine research, this challenge is amplified by the inherent complexity of phytochemical mixtures, multi-target mechanisms, and the historical reliance on empirical observation rather than molecular-level deconstruction [3] [8]. Traditional experimental paradigms for target discovery are characterized by serial, labor-intensive processes that contribute to unsustainable costs and protracted timelines, creating a significant bottleneck that slows the translation of traditional knowledge into evidence-based, precision therapies [9] [10].

This document provides a technical examination of this bottleneck, quantifying its impact, detailing the core experimental methodologies, and framing the transformative potential of artificial intelligence (AI) for drug-target interaction (DTI) prediction. By integrating AI-driven computational models, the field is poised to transition from a high-cost, low-efficiency paradigm to one of accelerated, rational discovery, particularly for the unique challenges presented by multi-compound herbal formulations [11] [6].

Quantifying the Bottleneck: Time and Cost Analyses

The financial and temporal burdens of traditional drug discovery are well-documented, with oncology serving as a critical case study due to the complexity of disease mechanisms and high clinical attrition rates [9]. The following tables summarize the core quantitative metrics that define the bottleneck.

Table 1: Traditional vs. AI-Augmented Drug Discovery Timelines

| Development Phase | Traditional Timeline | AI-Augmented Timeline | Key Activities & Notes |
|---|---|---|---|
| Target Identification & Validation | 2-5 years | 6-12 months | AI integrates multi-omics data & literature mining for rapid hypothesis generation [9] [11]. |
| Lead Compound Discovery | 3-6 years | 1-2 years | AI enables in silico screening and generative chemistry for novel molecular design [9] [6]. |
| Preclinical Development | 1-2 years | ~1 year | AI improves PK/PD and toxicity prediction, optimizing candidate selection [3]. |
| Clinical Trials (Phases I-III) | 6-7 years | 5-6 years (potential optimization) | AI aids in patient stratification, biomarker discovery, and trial design [9]. |
| Total Timeline | ~12-15 years | ~8-10 years | AI's major impact is in compressing early research stages [9] [11]. |

Table 2: Economic Burden of Traditional Drug Discovery

| Cost Category | Estimated Cost (USD) | Description & Contributing Factors |
|---|---|---|
| Average Total Cost per Approved Drug | ~$2.4 billion | Median cost increased ~20% from 2013-2022, reflecting growing complexity [11]. |
| Early-Stage R&D (Preclinical) | High proportion of total cost | Includes target discovery, HTS, lead optimization. High attrition rate makes this phase particularly costly [10]. |
| Clinical Trial Expenses | Often exceeds $1 billion | Patient recruitment, monitoring, and lengthy trial durations are major cost drivers [9]. |
| Cost of Failure (Attrition) | Extremely high | ~90% of oncology drug candidates fail in clinical development, and their costs are carried by the drugs that succeed [9]. |
| AI Implementation (Initial Investment) | Significant but offsetting | Costs for computational infrastructure, data curation, and expertise are offset by reduced experimental cycles and failure rates [6]. |
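
The attrition entry in Table 2 is an amortization argument. If only a fraction p of candidates entering clinical development is approved, each approval carries the spending on roughly 1/p candidates. The back-of-the-envelope sketch below uses hypothetical numbers and ignores the cost of capital and phase-specific failure rates.

```python
def cost_per_approval(cost_per_candidate, success_rate):
    """Average spend per approved drug when the successes pay for the failures."""
    if not 0.0 < success_rate <= 1.0:
        raise ValueError("success_rate must be in (0, 1]")
    return cost_per_candidate / success_rate

# Hypothetical: $150M spent per clinical candidate, ~90% attrition (10% success)
per_approval = cost_per_approval(150.0, 0.10)
print(f"${per_approval:,.0f}M per approved drug")  # $1,500M per approved drug
```

Under this model, halving the attrition rate (raising p from 0.10 to 0.55) would cut the cost carried by each approval far more than trimming per-candidate spending, which is why better early prioritization is emphasized.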

Core Experimental Methodologies and Protocols

The traditional target discovery workflow is a multi-stage process reliant on extensive laboratory experimentation. The protocols below outline the standard approaches that contribute to the time and cost metrics detailed above.

Target Identification & Hypothesis Generation

- Objective: To identify a biomolecule (e.g., protein, gene) whose modulation is expected to have a therapeutic effect.

- Classical Protocol:

  - Disease Association Studies: Employ genome-wide association studies (GWAS), transcriptomics (RNA-seq), and proteomics to identify genes/proteins differentially expressed in diseased versus healthy tissues [9].

  - Functional Genomics: Use CRISPR-Cas9 or RNA interference (RNAi) screens to systematically knock out or knock down genes in disease models and assess impact on cell viability or phenotype [11].

  - Literature & Pathway Analysis: Manual curation of scientific literature to construct disease-relevant signaling pathways and identify potential key nodes for intervention [10].

- Bottlenecks: Low-throughput, expensive functional screens; manual literature review is slow and incomplete; difficulty in distinguishing driver targets from passenger phenomena.

High-Throughput Screening (HTS) & Hit Identification

- Objective: To experimentally test hundreds of thousands to millions of compounds for activity against a validated target.

- Classical Protocol:

  - Assay Development: Develop a robust biochemical (e.g., enzyme activity) or cell-based (e.g., reporter gene) assay that quantifies target modulation. This can take 3-6 months to optimize for HTS robustness [10].

  - Library Screening: Screen a diverse chemical library (often >1 million compounds) using automated liquid handling and detection systems. Throughput can reach 100,000 compounds per day [10].

  - Hit Triage: Apply statistical thresholds to identify "hits" (e.g., compounds showing >50% inhibition at 10 µM). Confirm hits in dose-response experiments to determine potency (IC50/EC50).

- Bottlenecks: Extremely high reagent and infrastructure costs; high false-positive/negative rates; "needle-in-a-haystack" approach yields many non-drug-like hits requiring extensive optimization [10].
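
Assay robustness and hit triage are commonly quantified with two plate statistics: the Z'-factor, 1 − 3(σ_pos + σ_neg) / |μ_pos − μ_neg|, computed from control wells, and percent inhibition normalized to those controls. The minimal sketch below assumes that inhibition lowers the signal, that the negative control is the uninhibited (vehicle) signal, and that the positive control represents full inhibition; all signals and compound IDs are hypothetical.

```python
from statistics import mean, stdev

def z_prime(pos, neg):
    """Z'-factor: 1 - 3(sd_pos + sd_neg) / |mean_pos - mean_neg|; >= 0.5 is a common HTS-ready cutoff."""
    return 1.0 - 3.0 * (stdev(pos) + stdev(neg)) / abs(mean(pos) - mean(neg))

def percent_inhibition(signal, pos, neg):
    """Scale a well's signal so the negative-control mean is 0% and the positive-control mean is 100%."""
    mu_pos, mu_neg = mean(pos), mean(neg)
    return 100.0 * (mu_neg - signal) / (mu_neg - mu_pos)

def call_hits(signals, pos, neg, threshold=50.0):
    """Return {compound: % inhibition} for wells strictly above the threshold."""
    inhibition = {cid: percent_inhibition(s, pos, neg) for cid, s in signals.items()}
    return {cid: round(v, 1) for cid, v in inhibition.items() if v > threshold}

pos_ctrl = [100.0, 110.0, 90.0]      # fully inhibited wells (hypothetical)
neg_ctrl = [1000.0, 1010.0, 990.0]   # uninhibited vehicle wells (hypothetical)
screen = {"cpd_1": 400.0, "cpd_2": 700.0, "cpd_3": 550.0}  # single-dose signals, e.g. at 10 µM

print(round(z_prime(pos_ctrl, neg_ctrl), 3))   # 0.933
print(call_hits(screen, pos_ctrl, neg_ctrl))   # {'cpd_1': 66.7}
```

Note that cpd_3 sits exactly at 50% and is excluded by the strict ">50%" rule; such borderline wells are natural candidates for the confirmatory dose-response step.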

Lead Optimization & Validation

- Objective: To transform a confirmed hit into a "lead" compound with improved potency, selectivity, and drug-like properties.

- Classical Protocol:

  - Medicinal Chemistry Cycles: Synthesize analog series around the hit's chemical scaffold in an iterative cycle of synthesis, testing, and structure-activity relationship (SAR) analysis.

  - In vitro ADME-Tox Profiling: Assess permeability (Caco-2), metabolic stability (microsomes), cytochrome P450 inhibition, and early cytotoxicity.

  - Target Engagement & Phenotypic Validation: Use techniques like cellular thermal shift assay (CETSA) or surface plasmon resonance (SPR) to confirm direct target binding. Test in more complex disease models (e.g., 3D co-culture, patient-derived organoids) [10].

- Bottlenecks: Each synthesis-test cycle can take weeks to months; poor pharmacokinetic properties often discovered late, leading to dead ends; requires extensive specialized expertise in chemistry and biology.

AI as a Disruptive Solution: Frameworks for Herbal Medicine

AI, particularly machine learning (ML), deep learning (DL), and large language models (LLMs), provides a suite of tools to address each segment of the traditional bottleneck. In herbal medicine research, these tools are adapted to handle multi-component, multi-target complexity [3] [8].

1. AI for Enhanced Target Discovery in Complex Systems:

- Network Pharmacology & Multi-Omics Integration: AI algorithms can integrate transcriptomic, proteomic, and metabolomic data from cells treated with herbal extracts to reverse-engineer their mechanism of action. This identifies not just single targets, but perturbed networks and key hub targets [3] [8].

- Literature Mining with Biomedical Language Models: Domain-specific language models such as BioBERT and BioGPT can process large volumes of historical and modern scientific text, including traditional medicine treatises and modern phytochemistry papers, to extract latent relationships between herbs, compounds, and diseases [11] [8].

2. Predicting Polypharmacology & Drug-Herb Interactions:

- Multi-Target DTI Prediction: Unlike single-target synthetic drugs, herbal compounds often exhibit polypharmacology. Graph neural networks (GNNs) and other DL models can predict interactions between multiple phytochemicals and a panel of potential protein targets simultaneously, mapping a "footprint" of bioactivity [3] [6].

- Safety and Interaction Risk Assessment: AI models trained on chemical structures and known adverse events can predict potential herb-drug interactions (HDIs), particularly risks like drug-induced liver injury (DILI) or modulation of drug-metabolizing enzymes (e.g., CYP450) [3] [8].

3. Virtual Screening & In Silico Validation for Herbal Constituents:

- Structure-Based Virtual Screening: When a 3D protein structure is available (experimentally or via AlphaFold2), molecular docking simulations powered by AI scoring functions can prioritize which herbal constituents are most likely to bind from a library of thousands [11] [6].

- Generative Chemistry for Analog Design: If a promising herbal-derived scaffold is identified but has suboptimal properties, generative AI models can design novel analog structures with improved potency, selectivity, and pharmacokinetic profiles [9] [6].

Visualizing Workflows and Pathways

The following diagrams, generated using DOT language, illustrate the core concepts and workflows described.

Diagram 1: The Traditional Target Discovery Bottleneck

This diagram visualizes the sequential, time-intensive stages of traditional drug target discovery, highlighting the phases where time and cost accumulate most significantly.

Diagram 2: AI-Augmented Workflow for Herbal Target Discovery

This diagram shows how AI models integrate diverse data sources specific to herbal medicine to generate multiple, prioritized hypotheses for experimental validation.

Diagram 3: Example Signaling Pathway with Herbal Intervention Points

This diagram maps a simplified inflammatory (NF-κB) pathway, highlighting key proteins that are common targets for anti-inflammatory herbal constituents and demonstrating the multi-target potential of such compounds.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for Target Discovery

| Reagent / Material Category | Specific Examples | Primary Function in Target Discovery |
|---|---|---|
| Recombinant Proteins | Purified human kinases, GPCRs, disease-associated enzymes. | Serve as the direct target in biochemical HTS assays for hit finding [12] [10]. |
| Cell-Based Assay Systems | Reporter gene cell lines (e.g., NF-κB luciferase), isogenic disease cell pairs, primary patient-derived cells. | Enable functional, phenotypic screening in a more biologically relevant context [9] [10]. |
| Chemical Libraries | Diverse small-molecule collections, fragment libraries, natural product-derived libraries. | Source of potential hit compounds for screening campaigns [10]. |
| Affinity-Based Probes | Biotinylated or photoaffinity-labeled small molecules, activity-based protein profiling (ABPP) probes. | Used for target deconvolution—identifying the protein targets of an active but uncharacterized herbal compound [11]. |
| CRISPR Screening Libraries | Genome-wide or pathway-focused sgRNA libraries. | For functional genomic screens to identify genes essential for cell survival or disease phenotype (target identification/validation) [11]. |
| Antibodies & Detection Kits | Phospho-specific antibodies, ELISA kits, TR-FRET/AlphaLISA detection systems. | Critical for developing sensitive and specific assays to measure target modulation or downstream signaling events [10]. |
| AI-Ready Datasets & Software | Curated herb-compound-target databases (e.g., TCMSP), protein-ligand affinity data, AI model platforms (PandaOmics, Chemistry42). | Provide the structured, high-quality data necessary to train and deploy predictive AI models for herbal research [11] [6] [8]. |

The traditional bottleneck in experimental target discovery, characterized by exorbitant costs and decade-long timelines, need no longer be accepted as a fixed constraint, especially in the nuanced field of herbal medicine [11] [13]. The integration of AI and computational prediction into the research workflow represents a paradigm shift. By front-loading the discovery process with intelligent prioritization (of targets, of herbal constituents, and of polypharmacological networks), AI drastically reduces the empirical search space [6] [8].

The future of herbal medicine research lies in a hybrid, iterative model. AI-generated predictions guide focused, high-value experimental validation. The results from these wet-lab experiments then feed back to refine and retrain the AI models, creating a virtuous cycle of increasing accuracy and efficiency [3] [8]. This synergy between computational prediction and experimental validation is key to overcoming the historical bottlenecks, ultimately enabling the precise, personalized, and evidence-based application of traditional herbal wisdom in modern therapeutic regimens [13].

The Core Challenge: From Herbal Complexity to Computational Prediction

The global paradigm in drug discovery is shifting, with traditional, complementary, and integrative medicine (TCIM) used in 170 countries and serving billions of people [14] [15]. This widespread use is anchored in millennia of observational evidence and holistic practice. However, the scientific validation and integration of herbal medicines into modern pharmacopoeias face a fundamental challenge: the mismatch between holistic complexity and reductionist analysis. Herbal products are inherently multicomponent systems, where a single plant may contain hundreds of bioactive phytochemicals acting on multiple biological targets simultaneously [3]. This polypharmacology, while potentially the source of efficacy and synergistic benefits, creates immense analytical hurdles.

The primary obstacle in predicting Drug-Herb Interactions (DHIs) or discovering novel drug candidates from herbs is the "multi-unknown" problem: unknown active constituents, unknown protein targets, and unknown interaction mechanisms [3]. This is compounded by variability in plant composition due to genetics, geography, and processing methods. Consequently, the traditional high-throughput screening paradigm, designed for single-compound libraries against single targets, is often inefficient and ill-suited for herbal medicine research [16].

This is where Artificial Intelligence (AI) serves as a critical bridge. By applying machine learning (ML) and deep learning (DL) to vast, integrated datasets, AI can decode complex patterns and predict drug-target interactions (DTIs) within the phytochemical milieu [17] [18]. The thesis of this whitepaper is that AI-driven bioinformatics transforms herbal medicine from an empirical practice into a data-driven discovery engine. It enables the predictive mapping of traditional knowledge onto modern biological pathways, accelerating the identification of safe, synergistic, and efficacious multi-target therapies while providing mechanistic insights that respect the holistic foundations of these ancient systems.

Foundational Infrastructure: Data Integration from Ancient Texts to Omics

The efficacy of any AI model is contingent on the quality, quantity, and diversity of its training data. Integrating traditional medicine with bioinformatics requires constructing a unified data infrastructure that harmonizes historical knowledge with contemporary molecular data.

Digitizing Traditional Knowledge: Global efforts are underway to preserve and structure ancestral knowledge. The cornerstone is the WHO Traditional Medicine Global Library (TMGL), launching in December 2025, which will be the world's most comprehensive digital repository for TCIM [19]. By mid-2025, it had already integrated over 1.5 million records, including evidence maps, journals, and clinical policies [19]. Initiatives like India's Traditional Knowledge Digital Library (TKDL) use AI to protect this knowledge from biopiracy while making it available for research [15]. For computational research, this textual and clinical knowledge must be converted into structured data. This involves natural language processing (NLP) to extract entities like herb names, formulas, indications, and preparation methods from classical texts and modern literature, linking them to standardized biomedical ontologies.
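
Entity extraction can be illustrated with the simplest possible approach, dictionary (gazetteer) matching. This is a hypothetical sketch, not the NLP pipeline of any initiative named above. It assumes English text and a hand-built lexicon, whereas classical-language sources would need dedicated tokenization and trained models, and matched terms would still have to be linked to ontology identifiers.

```python
import re

def extract_entities(text, lexicon):
    """Return sorted (offset, matched_text, entity_type) tuples for every lexicon term found in text."""
    found = []
    for entity_type, terms in lexicon.items():
        for term in terms:
            # Whole-phrase, case-insensitive match; re.escape protects special characters
            pattern = r"\b" + re.escape(term) + r"\b"
            for m in re.finditer(pattern, text, flags=re.IGNORECASE):
                found.append((m.start(), m.group(0), entity_type))
    return sorted(found)

# Hypothetical lexicon; a real one would be built from curated herb and disease vocabularies
lexicon = {
    "herb": ["Salvia miltiorrhiza", "Artemisia annua"],
    "preparation": ["decoction"],
    "indication": ["chest pain"],
}
text = "A decoction of Salvia miltiorrhiza was given for chest pain."
entities = extract_entities(text, lexicon)
for offset, span, kind in entities:
    print(offset, kind, span)
```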

Multi-Omics Characterization of Herbs: Modern analytics provide the molecular lexicon for traditional concepts. A systems biology approach is essential:

- Genomics/Transcriptomics: Sequencing the genomes of medicinal plants (e.g., Salvia miltiorrhiza, Artemisia annua) identifies genes involved in the biosynthesis of key secondary metabolites [20].

- Metabolomics: Techniques like LC-MS and GC-MS provide the most direct readout of the chemical composition of an herbal extract, identifying and quantifying hundreds of phytochemicals simultaneously [21].

- Proteomics & Network Pharmacology: This involves identifying the protein targets of herbal compounds in human cells. When combined with bioinformatics, it allows for the construction of "herb-target-pathway-disease" networks, offering a systems-level view of mechanism [3].

Table 1: Core Multi-Omics Data Types for Herbal Medicine Research

| Data Type | Description | Role in AI-Driven Discovery | Example Sources/Tools |
|---|---|---|---|
| Cheminformatics | Chemical structures, properties (e.g., SMILES strings, molecular fingerprints). | Enables similarity search, ADMET prediction, and virtual screening. | PubChem, ChEMBL, RDKit [18]. |
| Genomics | Whole genome sequences of medicinal plants. | Identifies biosynthetic gene clusters for key metabolites. | NCBI, PlantGDB [20]. |
| Metabolomics | Comprehensive profiles of small-molecule metabolites in plant or patient samples. | Provides the definitive chemical profile of an herb; links composition to effect. | GNPS, MetaboAnalyst [21]. |
| Proteomics | Large-scale study of protein expression and interaction. | Identifies potential protein targets of herbal compounds in human biology. | UniProt, STRING database [18]. |
| Pharmacological | Known drug-target interactions, pathway data, adverse event reports. | Provides ground truth for training DTI prediction models. | DrugBank, BindingDB, KEGG [18]. |

Creating Interoperable Knowledge Graphs: The ultimate goal is to integrate the above data types into a dynamic knowledge graph. In this graph, nodes represent entities (e.g., "Curcumin," "CYP3A4," "Inflammation," "Curcuma longa"), and edges represent their relationships (e.g., "inhibits," "treats," "contains") [3] [18]. AI can then traverse this graph to generate novel hypotheses, such as predicting which compounds in a novel herb might interact with a specific disease-associated protein network.
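
This kind of hypothesis generation can be sketched as following a fixed relation path (herb to compound to protein to disease, via "contains", "targets", and "associated_with" edges) through a set of triples, then reporting diseases the herb is not already linked to by a "treats" edge. The relation names and all entities are hypothetical placeholders, and real systems typically rely on learned graph embeddings rather than exhaustive path enumeration.

```python
from collections import defaultdict

def build_graph(triples):
    """Index (head, relation, tail) triples as head -> [(relation, tail), ...]."""
    graph = defaultdict(list)
    for head, relation, tail in triples:
        graph[head].append((relation, tail))
    return graph

def infer_herb_disease(graph, herb, path=("contains", "targets", "associated_with")):
    """Follow `path` from `herb`; return (disease, evidence_chain) pairs not already linked by 'treats'."""
    frontier = [(herb, [herb])]
    for rel in path:
        frontier = [
            (tail, chain + [tail])
            for node, chain in frontier
            for r, tail in graph.get(node, [])
            if r == rel
        ]
    known = {tail for r, tail in graph.get(herb, []) if r == "treats"}
    return [(node, chain) for node, chain in frontier if node not in known]

triples = [  # hypothetical knowledge-graph edges
    ("Herb_A", "contains", "Cpd_1"),
    ("Herb_A", "contains", "Cpd_2"),
    ("Cpd_1", "targets", "Prot_X"),
    ("Cpd_2", "targets", "Prot_Y"),
    ("Prot_X", "associated_with", "Disease_1"),
    ("Prot_Y", "associated_with", "Disease_2"),
    ("Herb_A", "treats", "Disease_2"),  # already-known indication, filtered out
]
graph = build_graph(triples)
print(infer_herb_disease(graph, "Herb_A"))
# [('Disease_1', ['Herb_A', 'Cpd_1', 'Prot_X', 'Disease_1'])]
```

Returning the evidence chain alongside each candidate keeps the hypothesis interpretable: every prediction can be traced back to the compound and protein that connect it to the herb.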

AI Methodologies for Drug-Target Interaction Prediction in Herbal Medicine

AI transforms the integrated data into predictive insights. For herbal medicine, DTI prediction models must handle the unique challenges of polypharmacology and data sparsity. Current methodologies form a hierarchical toolkit.

Similarity-Based Methods: These foundational approaches operate on the principle that chemically similar compounds likely share biological targets. For an herbal compound, its molecular fingerprint is compared against large libraries of known drugs (e.g., DrugBank) to find neighbors. While interpretable and fast, these methods struggle with "activity cliffs" (where small chemical changes cause large biological effects) and are less effective for novel, structurally unique natural products [3] [18].
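The similarity principle can be sketched as nearest-neighbour target inference using the Tanimoto coefficient. The Tanimoto coefficient is a common choice of metric, but the text above does not specify one, so treat it as an assumption. Here, fingerprints are toy sets of on-bit indices. In practice the bits would come from a cheminformatics toolkit such as RDKit (e.g., ECFP-style fingerprints). The reference drugs, targets, `k`, and `min_sim` are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto (Jaccard) coefficient between fingerprints stored as sets of on-bit indices."""
    if not fp_a and not fp_b:
        return 0.0
    shared = len(fp_a & fp_b)
    return shared / (len(fp_a) + len(fp_b) - shared)


def infer_targets(query_fp, reference, k=2, min_sim=0.3):
    """Nearest-neighbour target inference.

    reference maps a known drug name to (fingerprint bits, known targets).
    Each target of the k most similar drugs is scored by the highest similarity
    of any neighbour that carries it; neighbours below min_sim are ignored.
    """
    ranked = sorted(
        ((tanimoto(query_fp, fp), name) for name, (fp, _targets) in reference.items()),
        reverse=True,
    )
    target_scores = {}
    for sim, name in ranked[:k]:
        if sim < min_sim:
            continue
        for target in reference[name][1]:
            target_scores[target] = max(target_scores.get(target, 0.0), sim)
    return dict(sorted(target_scores.items(), key=lambda kv: kv[1], reverse=True))


# Hypothetical toy fingerprints and target annotations.
REFERENCE = {
    "drug_A": ({1, 2, 3, 4}, {"T1"}),
    "drug_B": ({3, 4, 5, 6}, {"T2"}),
    "drug_C": ({7, 8, 9}, {"T3"}),
}
print(infer_targets({1, 2, 3, 5}, REFERENCE))
# {'T1': 0.6, 'T2': 0.3333333333333333}
```

The sketch also shows the limitation described above. A natural product whose bits overlap little with any reference drug falls below `min_sim` and receives no predictions at all.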

Network-Based & Knowledge Graph Methods: These methods excel at capturing the systemic, multi-target nature of herbs. By constructing a heterogeneous network connecting herbs, compounds, proteins, pathways, and diseases, predictions can be made via graph inference algorithms. For example, if two herbs share multiple compounds that target a cluster of proteins in a cancer pathway, a novel herb with similar compounds can be predicted to affect that pathway [3]. Graph Neural Networks (GNNs) are particularly powerful for learning embeddings from such network structures [18].
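Graph inference of this kind is often implemented as network propagation. One common formulation is a random walk with restart (RWR): the walker repeatedly steps to a random neighbour and, with probability `restart`, jumps back to the seed nodes. The sketch below assumes an undirected toy network and uses the herb's compounds as seeds. The node names, the restart value of 0.3, and the tolerance are illustrative assumptions.

```python
from collections import defaultdict


def build_adjacency(edges):
    """Undirected adjacency sets from (node, node) pairs."""
    adj = defaultdict(set)
    for a, b in edges:
        adj[a].add(b)
        adj[b].add(a)
    return dict(adj)


def random_walk_with_restart(adj, seeds, restart=0.3, tol=1e-10, max_iter=1000):
    """Iterate p <- (1 - r) * W p + r * p0, where W spreads each node's
    probability equally over its neighbours and p0 is uniform over the seeds."""
    nodes = sorted(adj)
    p0 = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in nodes}
    p = dict(p0)
    for _ in range(max_iter):
        nxt = {n: restart * p0[n] for n in nodes}
        for n in nodes:
            share = (1.0 - restart) * p[n] / len(adj[n])  # every node has >= 1 neighbour
            for m in adj[n]:
                nxt[m] += share
        delta = sum(abs(nxt[n] - p[n]) for n in nodes)
        p = nxt
        if delta < tol:
            break
    return p


# Hypothetical network: two herb compounds, three proteins, one pathway node.
EDGES = [("c1", "P1"), ("c2", "P1"), ("c2", "P2"),
         ("P1", "pathway"), ("P2", "pathway"), ("P3", "pathway")]
scores = random_walk_with_restart(build_adjacency(EDGES), seeds={"c1", "c2"})
ranked = sorted((n for n in scores if n not in {"c1", "c2"}), key=scores.get, reverse=True)
print(ranked)
```

Proteins reached by many short paths from the seeds (here `P1`) accumulate more probability than proteins reachable only through the shared pathway node (`P3`). This is the "indirect relationship" signal listed for network-based methods.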

Deep Learning & Hybrid Models: This represents the state-of-the-art, using complex architectures to learn high-level features directly from raw data.

- Structure-Based Models: AlphaFold predicts protein 3D structures with high accuracy. As a result, molecular docking can now be run at scale even for targets that lack experimentally determined structures [17] [22]. DL models can then predict binding affinity from the docked poses or directly from 3D structural data.

- Multimodal & Hybrid Models: The most robust models fuse multiple data types. For instance, a model might take a compound's SMILES string (chemical structure), a protein's amino acid sequence (biological function), and known side-effect profiles (phenotypic data) as concurrent inputs. Transformer-based architectures and attention mechanisms are increasingly used to weigh the importance of different features and data modalities [18].
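How an attention mechanism can weigh modalities is sketched below with scaled dot-product attention over per-modality embeddings. This is a minimal illustration under stated assumptions. In a trained model, the modality encoders and the query vector would be learned. Here they are fixed, hand-written two-dimensional vectors, and the modality names are hypothetical.

```python
import math


def softmax(xs):
    """Numerically stable softmax."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def attention_fuse(query, modality_embeddings):
    """Scaled dot-product attention over modality embeddings.

    query and every embedding must share the same dimension d. Each modality
    gets weight softmax(q . e / sqrt(d)); the fused vector is the weighted sum.
    """
    names = list(modality_embeddings)
    d = len(query)
    scores = [
        sum(q * e for q, e in zip(query, modality_embeddings[n])) / math.sqrt(d)
        for n in names
    ]
    weights = softmax(scores)
    fused = [
        sum(w * modality_embeddings[n][i] for w, n in zip(weights, names))
        for i in range(d)
    ]
    return fused, dict(zip(names, weights))


# Toy, hand-written vectors; a trained model would learn the encoders and the query.
fused, weights = attention_fuse(
    query=[1.0, 0.0],
    modality_embeddings={
        "smiles": [2.0, 0.0],
        "protein_sequence": [0.0, 2.0],
        "side_effects": [0.0, 0.0],
    },
)
print({k: round(v, 3) for k, v in weights.items()})
```

The returned weights are one simple handle for interpretation, because they show which modality dominated a given prediction.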

Table 2: AI/ML Approaches for Herb-Target Interaction Prediction

| Method Category | Key Algorithms | Strengths for Herbal Research | Key Limitations |
|---|---|---|---|
| Similarity-Based | Molecular fingerprint similarity, Euclidean distance. | Simple, interpretable, fast screening. | Fails for novel scaffolds; ignores polypharmacology. |
| Network-Based | Random walk, graph inference, Network Propagation. | Captures system-level effects; predicts indirect relationships. | Dependent on completeness of underlying network data. |
| Classical ML | SVM, Random Forest, Gradient Boosting. | Effective with well-curated feature vectors (e.g., chemical descriptors). | Requires manual feature engineering; may not capture deep patterns. |
| Deep Learning | Graph Neural Networks (GNNs), Transformers, CNNs. | Learns features automatically; excels with multimodal data (sequence, structure). | High computational cost; requires large datasets; "black box" interpretability. |
| Generative AI | Generative Adversarial Networks (GANs), VAEs. | Can design novel, drug-like molecules inspired by natural product scaffolds. | Risk of generating unrealistic or unsynthesizable molecules. |

From Prediction to Validation: Experimental Workflows and Protocols

AI predictions are hypotheses that require rigorous experimental validation. A closed-loop, iterative pipeline ensures that computational insights inform and are refined by laboratory science.

Protocol 1: In Silico Screening & Prioritization

- Input Preparation: From the knowledge graph, extract all unique phytochemicals from an herb of interest (e.g., 500 compounds). Retrieve 3D structures from PubChem or generate them using tools like CORINA. For a disease of interest (e.g., Alzheimer's), compile a relevant target set (e.g., AChE, BACE1, NMDA receptor) using AlphaFold-predicted or PDB structures.

- Virtual Screening: Perform high-throughput molecular docking using software like AutoDock Vina or Glide. Use an ensemble docking approach to account for protein flexibility.

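- AI-Powered Affinity Prediction: Feed compound and target representations into a pre-trained DTA (Drug-Target Affinity) model (e.g., DeepDTA or GraphDTA, which take SMILES strings or molecular graphs together with protein sequences) [22], or rescore the docked poses with a structure-based model, to obtain a refined binding estimate that complements the docking score.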

- ADMET & Synergy Prediction: Filter top hits through AI models predicting pharmacokinetic properties (absorption, distribution, metabolism, excretion, toxicity) and assess potential multi-target synergy using network-based scores.

- Output: A prioritized list of 10-20 lead phytochemicals with predicted targets, binding affinities, and favorable ADMET profiles for experimental testing.
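The filtering and ranking steps of Protocol 1 can be sketched as follows. Candidates flagged by an ADMET model are dropped, and the docking and DTA signals are put on a common scale with z-scores and combined as a weighted sum. This is one simple, assumed scoring scheme, not a prescribed method. The field names, the equal weights, and the compound data are hypothetical.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class Candidate:
    name: str
    docking_kcal: float   # docking score in kcal/mol; more negative = stronger predicted binding
    predicted_pkd: float  # DTA model output; higher = stronger predicted affinity
    admet_ok: bool        # False if any ADMET model flagged a liability


def zscores(values):
    mu, sd = mean(values), pstdev(values)
    return [0.0 if sd == 0 else (v - mu) / sd for v in values]


def prioritize(candidates, top_n=20, w_dock=0.5, w_dta=0.5):
    """Drop ADMET failures, z-score both affinity signals, rank by weighted sum."""
    kept = [c for c in candidates if c.admet_ok]
    if not kept:
        return []
    dock = zscores([-c.docking_kcal for c in kept])  # flip sign so higher = better
    dta = zscores([c.predicted_pkd for c in kept])
    ranked = sorted(
        ((w_dock * d + w_dta * a, c.name) for c, d, a in zip(kept, dock, dta)),
        reverse=True,
    )
    return [name for _, name in ranked[:top_n]]


# Hypothetical screening results.
hits = [
    Candidate("cpd_1", -9.0, 7.5, True),
    Candidate("cpd_2", -6.0, 5.0, True),
    Candidate("cpd_3", -10.0, 8.0, False),  # strongest predicted binder, but fails ADMET
    Candidate("cpd_4", -8.0, 6.5, True),
]
print(prioritize(hits, top_n=2))  # ['cpd_1', 'cpd_4']
```

A hard ADMET filter is the bluntest option. A softer alternative would add a penalty term to the weighted sum, so that borderline compounds are demoted rather than discarded.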

Protocol 2: In Vitro Validation of Multi-Target Effects

- Compound Acquisition: Source the top-priority pure phytochemicals from commercial suppliers or isolate them from the authenticated plant material using preparative HPLC.

- Target-Based Assays: For each predicted primary target, perform a confirmatory biochemical assay (e.g., enzyme inhibition assay for AChE).

- Cell-Based Phenotypic Screening: To capture polypharmacology, use relevant cell lines (e.g., neuronal SH-SY5Y cells for Alzheimer's) treated with the compound. Employ high-content imaging or omics readouts.

- Mechanistic Confirmation: For hits from phenotypic screens, use techniques like Cellular Thermal Shift Assay (CETSA) or drug affinity responsive target stability (DARTS) to confirm direct physical engagement with the AI-predicted protein targets in a cellular context.

- Data Feedback: The experimentally validated (or invalidated) interactions are fed back into the knowledge graph as new ground-truth labels, retraining and improving the AI models.
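The data-feedback step amounts to merging assay outcomes into the label table that the next training round will use. The sketch below is a minimal version, assuming labels are stored as a dictionary keyed by (compound, target). Experimental results always overwrite existing entries, and the function reports which pairs changed so that retraining can be triggered. The function name and data are hypothetical.

```python
def merge_feedback(labels, assay_results):
    """Fold experimental outcomes into a training-label table.

    labels: dict mapping (compound, target) -> 1 (interacts) or 0 (does not).
    assay_results: iterable of (compound, target, confirmed) tuples.
    Experimental outcomes overwrite any existing label for the same pair.
    Returns the updated table and the pairs whose label was added or changed.
    """
    updated = dict(labels)
    changed = []
    for compound, target, confirmed in assay_results:
        key = (compound, target)
        new_label = 1 if confirmed else 0
        if updated.get(key) != new_label:
            changed.append(key)
        updated[key] = new_label
    return updated, changed


# Hypothetical assay round: one new positive, one refuted prediction, one re-confirmation.
labels = {("cpd_1", "T1"): 1}
results = [("cpd_2", "T1", True), ("cpd_3", "T2", False), ("cpd_1", "T1", True)]
updated, changed = merge_feedback(labels, results)
print(changed)  # [('cpd_2', 'T1'), ('cpd_3', 'T2')]
```

Invalidated predictions are worth keeping. They become experimentally confirmed negatives, which are scarcer and more reliable than the randomly sampled negatives usually used for training.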

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key Research Reagents & Platforms for AI-Guided Herbal Research

| Category | Item/Platform | Function in Workflow | Key Characteristics |
|---|---|---|---|
| Bioinformatics | AlphaFold Suite | Provides high-accuracy 3D protein structures for structure-based virtual screening. | Essential for targets without crystal structures [17] [22]. |
| Cheminformatics | RDKit | Open-source cheminformatics toolkit; used to generate molecular descriptors and fingerprints from SMILES. | Enables featurization of phytochemicals for ML models [18]. |
| Multi-Omics | LC-MS/MS System | Workhorse for untargeted metabolomics; profiles the small-molecule composition of herbal extracts. | Generates critical input data for linking chemistry to bioactivity [21]. |
| Functional Genomics | CRISPR-Cas9 Screening Kit | Validates AI-predicted novel targets by creating knockouts in cell lines and observing phenotypic changes. | Establishes causal relationships, not just correlations [20]. |
| AI Platform | Insilico Medicine PandaOmics | Integrated AI platform for target discovery, biomarker identification, and multi-omics analysis. | Provides an end-to-end computational discovery environment [17] [22]. |

Case Studies and Translational Impact

The translational power of this integrative approach is moving from concept to clinical reality.

Case Study: AI-Deciphered Mechanisms of Known Herb-Drug Interactions. St. John's Wort (Hypericum perforatum) is notorious for its interactions with drugs such as warfarin and cyclosporine, but the interplay of its many constituents and mechanisms has been difficult to untangle. AI models integrating cheminformatic, metabolic (induction of CYP enzymes), and pharmacodynamic (serotonin modulation) data have been used to deconvolute these effects. They clarified how hyperforin, a pregnane X receptor (PXR) agonist, causes initial inhibition followed by long-term induction of CYP3A4 and P-glycoprotein, providing a systems-level explanation for the herb's clinical interaction profile [3]. This demonstrates AI's value for retrospective mechanistic elucidation.

Case Study: AI-Driven Discovery of Novel Therapeutics from Herbs. A forward-looking application is de novo discovery. For example, AI platforms have been used to identify novel targets from genomic data (e.g., TNIK for fibrosis) and to design compounds against them. Insilico Medicine's AI-discovered drug candidate for idiopathic pulmonary fibrosis (INS018_055) entered clinical trials in a notably short timeframe [17] [22]. Although this candidate was not derived from an herb, the pipeline is directly applicable: an herb's phytochemicals can serve as seed structures for generative AI to design optimized, novel drug candidates that retain desired multi-target profiles while improving drug-like properties.

Broader Impact on Drug Development: The integration addresses key bottlenecks. It provides a rational framework for herbal drug repurposing and synergistic formulation design (e.g., identifying optimal herb pairs in Traditional Chinese Medicine formulas) [21]. By predicting and mitigating drug-herb interactions (DHIs) early, it enhances patient safety in an era of increasing concurrent use of herbs and pharmaceuticals [3].

Ethical, Cultural, and Data Sovereignty Frameworks

The application of AI to traditional knowledge carries significant ethical obligations. The WHO/ITU/WIPO technical brief explicitly warns against AI becoming a tool for "automated biopiracy"—the systematic mining and patenting of traditional knowledge without consent or benefit-sharing [15].

- Indigenous Data Sovereignty (IDSov): A core principle is that data derived from Indigenous and local community knowledge must be governed by the communities themselves. This includes control over how knowledge is collected, used, stored, and who benefits from its commercialization [14] [15].

- Ethical AI Development Guidelines:

- Free, Prior, and Informed Consent (FPIC): Must be obtained from traditional knowledge holders before data digitization and use in AI training sets.

- Benefit-Sharing Agreements: Legal frameworks, such as the 2024 WIPO Treaty on Intellectual Property, Genetic Resources and Associated Traditional Knowledge, must underpin partnerships to ensure fair and equitable sharing of monetary and non-monetary benefits [14] [15].

- Co-Design with Practitioners: AI tools should be developed in collaboration with traditional medicine practitioners to ensure they address real-world needs and respect epistemic frameworks.

- Transparency & Explainability (XAI): Models should be as interpretable as possible to build trust and allow practitioners to understand the basis of predictions [3].

Future Trajectory and Strategic Recommendations

The field is evolving rapidly. Future directions include the integration of quantum chemistry simulations for more accurate binding energy calculations, the use of large language models (LLMs) to better mine unstructured historical texts, and the application of federated learning to train AI models on distributed, sensitive traditional knowledge databases without centralizing the data [18].

Strategic recommendations for research institutions and consortia are:

- Invest in Foundational Data Resources: Support the expansion and curation of platforms such as the WHO TMGL and traditional knowledge digital libraries (TKDLs), with strict adherence to IDSov principles.

- Develop and Benchmark Specialized AI Models: Foster the creation of open-source AI models specifically pretrained on natural product chemistry and traditional medicine data.

- Establish Standardized Experimental Protocols: Create consensus protocols for generating omics data from herbal specimens and for validating AI-predicted multi-target interactions.

- Create Interdisciplinary Training Programs: Cultivate a new generation of scientists fluent in both bioinformatics/AI and the cultural, historical, and ethical dimensions of traditional medicine.

In conclusion, AI acts as the essential translational bridge, converting the deep, complex wisdom of traditional medicine into a format that modern computational biology can interrogate and expand upon. This synergy does not seek to reduce traditional medicine to single targets but to understand its holistic efficacy through a modern, systemic lens. The responsible and ethical integration of these fields holds the promise of unlocking a vast, previously inaccessible reservoir of safe and effective therapeutic strategies for the future of global health.

The investigation of herbal medicines, particularly within systems like Traditional Chinese Medicine (TCM), is fundamentally challenged by their multi-component, multi-target, and multi-pathway nature [23]. This holistic therapeutic strategy contrasts sharply with the conventional single-target drug discovery paradigm, necessitating innovative analytical frameworks. Network pharmacology (NP) emerged as a critical bridge, offering a systems-level view by modeling the complex networks connecting herbal compounds, biological targets, and disease pathways [23]. However, traditional NP approaches are often limited by static analysis, high-dimensional data noise, and difficulties in capturing dynamic, cross-scale biological mechanisms [23].

The integration of Artificial Intelligence (AI)—encompassing machine learning (ML), deep learning (DL), and graph neural networks (GNNs)—has catalyzed a transformative shift. AI-driven network pharmacology (AI-NP) now enables the systematic decoding of herbal medicine's actions from molecular interactions to patient-level efficacy [23]. Concurrently, AI has become indispensable for a critical translational challenge: predicting and assessing the safety profiles of herbal medicines and their interactions with conventional drugs [3]. This article provides an in-depth technical guide to these current applications, framing them within the overarching thesis that AI-powered drug-target interaction (DTI) prediction is the cornerstone for modernizing and validating herbal medicine research, ultimately ensuring its efficacy and safety.

Evolution and Enhancement: From Network Pharmacology to AI-Driven Analysis

Network pharmacology provides the foundational conceptual model for studying polypharmacology in herbal medicine. Its core premise is the construction and analysis of interconnected networks, typically a "compound-target-pathway-disease" network, to elucidate systemic mechanisms [23].

Table 1: Comparative Analysis of Traditional and AI-Driven Network Pharmacology

| Comparison Dimension | Traditional Network Pharmacology | AI-Driven Network Pharmacology (AI-NP) | Key Advancement |
|---|---|---|---|
| Data Acquisition & Integration | Relies on fragmented public databases (e.g., TCMSP) and literature mining; slow updates [23]. | Integrates multimodal, high-dimensional data (omics, clinical records, real-world evidence) dynamically [23] [24]. | AI enables deep fusion of heterogeneous data, creating a richer, more current knowledge foundation. |
| Algorithmic Core | Based on statistics, topology analysis, and expert-driven correlation networks [23]. | Utilizes ML, DL, and GNNs to autonomously identify latent, non-linear patterns [23] [18]. | Shift from experience-driven to data-driven discovery, significantly enhancing predictive power. |
| Model Interpretability | Generally high, as networks are built on known relationships [23]. | Often low ("black-box"); addressed by Explainable AI (XAI) tools like SHAP and LIME [23] [3]. | Trade-off between predictive performance and transparency; XAI is critical for building scientific trust. |
| Computational Scalability | Manual or semi-automated curation; low efficiency for large-scale networks [23]. | High-throughput, parallel computing suitable for massive biological networks [23]. | Enables analysis at a scale that matches the complexity of herbal formulations and human biology. |
| Temporal Dynamics | Predominantly static analysis of interactions [23]. | Capable of modeling dynamic and time-series data to capture pathway activation and feedback loops [23]. | Moves from a snapshot to a movie-like understanding of pharmacological action. |

AI-NP addresses the critical limitations of its predecessor. For instance, GNNs excel at directly operating on the graph-structured data inherent to biological networks, learning meaningful representations of compounds and targets within their interaction context [23] [18]. Furthermore, AI facilitates multi-scale mechanism analysis, integrating insights from molecular, cellular, tissue, and patient levels to form a coherent explanatory model [23].

Core AI Methodologies for Drug-Target Interaction Prediction in Herbal Medicine

Predicting interactions between herbal compounds and protein targets is the central computational task. AI methodologies have evolved to handle the unique challenges of herbal data, including mixture complexity, sparse labeled data, and the need to model polypharmacology.

1. Data Types and Representation: The predictive performance of AI models hinges on input data representation. For herbal medicine research, this involves multi-modal data:

- Drug/Chemical Representation: Simplified Molecular Input Line Entry System (SMILES) strings, molecular fingerprints (e.g., ECFP), or 2D/3D molecular graphs [18] [24].

- Target Representation: Protein amino acid sequences, structural data (e.g., from AlphaFold), or gene ontology annotations [18].

- Interaction Data: Known DTIs from databases like BindingDB, STITCH, or specialized TCM databases (e.g., TCMSP, TCMID) [18] [24].

- Omics Data: Transcriptomic, proteomic, and metabolomic profiles are integrated to contextualize targets within disease pathways [25] [24].

2. Algorithmic Approaches:

- Similarity-Based Methods: Infer interactions based on chemical or genomic similarity. While interpretable, they struggle with novel, structurally unique natural products [3].

- Network-Based Methods: Leverage protein-protein interaction (PPI) networks or heterogeneous knowledge graphs. They can predict indirect interactions but depend on network completeness [3] [26].

- Machine & Deep Learning: These are the most impactful approaches. Models range from classic classifiers (e.g., Random Forests, SVMs) to advanced architectures:

- Graph Neural Networks (GNNs): Naturally model the graph structure of molecules and biological networks, becoming the state-of-the-art for DTI prediction [23] [18].

- Transformer Models: Process sequential data (SMILES, protein sequences) using self-attention mechanisms, capturing long-range dependencies effectively [18].

- Multimodal Fusion Models: Integrate multiple data types (e.g., chemical structure, gene expression, clinical outcomes) to improve prediction robustness and biological relevance [24].

Table 2: Common Public Data Resources for AI-Driven Herbal Medicine Research

| Data Type | Resource Name | Primary Content | Application in Herbal Research |
|---|---|---|---|
| Herbal Compounds | TCMSP, TCMID | Chemical compounds, ADMET properties, targets from TCM herbs [23] [24]. | Source of herbal metabolite structures and putative targets for model training. |
| General DTIs | BindingDB, STITCH, DrugBank | Experimentally validated drug-target interactions [18]. | Ground truth data for training and validating predictive models. |
| Omics Data | TCGA, GEO, Human Proteome Map | Genomic, transcriptomic, and proteomic data from diseases and treatments [25] [26] [24]. | For contextualizing targets and constructing disease-specific networks. |
| Protein Data | UniProt, PDB, AlphaFold DB | Protein sequences, functions, and 3D structures [18]. | For target representation and structure-based prediction. |
| Clinical & Phenotypic | FAERS, ClinicalTrials.gov | Adverse event reports and clinical trial results [27] [3]. | For safety signal detection and validating predicted interactions. |

3. Experimental Workflow for AI-Based DTI Prediction: A standard protocol for building a DTI prediction model for herbal compounds involves:

- Data Curation and Preprocessing: Collect positive (known interacting) and negative (non-interacting) pairs of herbal compounds and human targets. Negative sampling must be done carefully to avoid bias [18]. Standardize compound representations (e.g., convert SMILES to fingerprints) and protein representations (e.g., encode sequences).

- Feature Engineering/Selection: For ML models, extract relevant features (e.g., molecular descriptors, protein sequence features). DL models often perform automatic feature learning from raw data.

- Model Selection and Training: Split data into training, validation, and test sets. Choose an appropriate model architecture (e.g., GNN, Transformer). Train the model to minimize a loss function (e.g., binary cross-entropy for classification).

- Validation and Interpretation: Evaluate performance using metrics like AUC-ROC, precision, recall, and F1-score on the held-out test set. Use XAI methods to interpret predictions and identify which molecular features drove the decision [3].

- Experimental Prioritization and Validation: The model's output is a ranked list of novel, high-probability DTIs. These predictions must be prioritized for in vitro validation (e.g., binding assays, functional cellular assays) and later in vivo studies [28] [22].
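Three parts of this workflow are easy to get subtly wrong: negative sampling, leakage-free splitting, and the evaluation metrics. The sketch below covers each using only the standard library. It assumes random negative sampling (unlabeled pairs treated as non-interacting, which is itself a source of bias) and a "cold-compound" split that holds out whole compounds. AUC-ROC is computed as the probability that a random positive outscores a random negative. All function names, compounds, and scores are hypothetical. Libraries such as scikit-learn provide tested implementations of these metrics.

```python
import random


def sample_negatives(positives, compounds, targets, ratio=1, seed=0):
    """Randomly pair compounds with targets that are not known positives.

    Caveat: an unlabeled pair is only *assumed* to be non-interacting.
    """
    rng = random.Random(seed)
    pos = set(positives)
    n_needed = min(ratio * len(pos), len(compounds) * len(targets) - len(pos))
    negatives = set()
    while len(negatives) < n_needed:
        pair = (rng.choice(compounds), rng.choice(targets))
        if pair not in pos:
            negatives.add(pair)
    return sorted(negatives)


def cold_compound_split(pairs, test_fraction=0.25, seed=0):
    """Hold out whole compounds so no test compound is seen during training."""
    compounds = sorted({c for c, _ in pairs})
    random.Random(seed).shuffle(compounds)
    n_test = max(1, int(len(compounds) * test_fraction))
    held_out = set(compounds[:n_test])
    train = [p for p in pairs if p[0] not in held_out]
    test = [p for p in pairs if p[0] in held_out]
    return train, test


def roc_auc(labels, scores):
    """AUC-ROC as the probability that a random positive outscores a random negative."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def precision_recall_f1(labels, scores, threshold=0.5):
    """Threshold the scores, then compute precision, recall, and F1."""
    preds = [1 if s >= threshold else 0 for s in scores]
    tp = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, preds) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, preds) if y == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# Hypothetical data: two known herb compound-target interactions.
positives = [("c1", "T1"), ("c2", "T2")]
negatives = sample_negatives(positives, ["c1", "c2", "c3"], ["T1", "T2"])
train, test = cold_compound_split(positives + negatives)

# Hypothetical model scores for four held-out pairs (label 1 = interacts).
labels, scores = [1, 1, 0, 0], [0.9, 0.4, 0.6, 0.1]
print(roc_auc(labels, scores))              # 0.75
print(precision_recall_f1(labels, scores))  # (0.5, 0.5, 0.5)
```

A random pair-level split usually overstates performance on novel phytochemicals, because the same compound appears in both training and test sets. A cold-compound split is a stricter test of generalization to unseen natural products.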

AI-NP Workflow for Herbal DTI Prediction

Application Frontier: AI in Safety Assessment and Drug-Herb Interaction Prediction

A paramount application of AI-predicted DTIs is in the proactive assessment of safety risks, particularly for drug-herb interactions (DHIs). DHIs, which can be pharmacokinetic (PK) or pharmacodynamic (PD), pose significant clinical challenges due to the complexity of herbal mixtures [3].

1. AI Models for DHI Prediction: AI models integrate diverse data to predict DHIs:

- PK-DHI Prediction: Models are trained on data involving key metabolic enzymes (e.g., CYP450 isoforms) and transporters (e.g., P-glycoprotein). Features include chemical inhibitors/inducers of these proteins and herb constituent profiles [3]. For example, models can predict St. John's Wort's induction of CYP3A4 and P-gp, leading to reduced plasma levels of co-administered drugs [3].

- PD-DHI Prediction: Models analyze target affinity profiles of both drug and herb constituents. If an herb compound targets the same pathway (synergistically or antagonistically) or an off-target linked to adverse events, a PD interaction is flagged [27] [3]. Network-based models are particularly useful here for mapping compounds onto shared disease or adverse event pathways.
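The two flagging rules above can be illustrated with a hand-entered knowledge base. A PK flag is raised when the herb induces or inhibits a protein that clears the drug. A PD flag is raised when herb and drug act on the same pathway. This is a rule-based sketch, not a trained model. The entries use only the St. John's Wort, warfarin, and SSRI examples given in this article, and the pathway labels are coarse, hypothetical categories.

```python
# Illustrative knowledge base limited to the examples discussed in this article.
HERB_KB = {
    "St. John's Wort": {
        "induces": {"CYP3A4", "CYP2C9", "P-gp"},
        "inhibits": set(),
        "pd_pathways": {"serotonergic"},
    },
}
DRUG_KB = {
    "warfarin": {"cleared_by": {"CYP2C9"}, "pd_pathways": set()},
    "SSRI": {"cleared_by": set(), "pd_pathways": {"serotonergic"}},  # clearance omitted in this toy entry
}


def flag_interactions(herb, drug, herb_kb=HERB_KB, drug_kb=DRUG_KB):
    """Return (type, message) flags for PK and PD overlaps between an herb and a drug."""
    h, d = herb_kb[herb], drug_kb[drug]
    flags = []
    for protein in sorted(h["induces"] & d["cleared_by"]):
        flags.append(("PK", f"{herb} induces {protein}, which clears {drug}: exposure may fall"))
    for protein in sorted(h["inhibits"] & d["cleared_by"]):
        flags.append(("PK", f"{herb} inhibits {protein}, which clears {drug}: exposure may rise"))
    for pathway in sorted(h["pd_pathways"] & d["pd_pathways"]):
        flags.append(("PD", f"{herb} and {drug} both act on the {pathway} pathway"))
    return flags


for drug in DRUG_KB:
    print(drug, flag_interactions("St. John's Wort", drug))
```

An AI model would replace the hand-entered sets with predicted inducer, inhibitor, and target profiles for each herb constituent. The overlap logic, however, stays the same.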

2. A Structured Safety Assessment Framework Enhanced by AI: A science-based methodology for combination safety risk assessment provides a robust framework that can be augmented with AI [27]. The steps include:

- Step 1: Gather Information: Compile all known safety, pharmacological, and omics data for each herbal product and conventional drug involved. AI can automate the mining and synthesis of this information from literature and databases.

- Step 2: Review Overlapping & Non-Overlapping Components: Systematically compare the profiles. AI excels here by performing high-dimensional comparison of target sets, pathway enrichments, and adverse event signatures.

- Step 3: Predict Combined Risk Profile: Use AI models to simulate the network perturbations caused by the combination. Predict novel, emergent risks not obvious from single-agent profiles by analyzing network topology (e.g., cascade effects, pathway crosstalk) [27] [26].
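The comparison in Step 2 can be made concrete by computing shared and unique targets, the Jaccard index of the two target sets, and a one-sided hypergeometric p-value. The p-value asks whether the overlap is larger than expected if the drug's targets were drawn at random from a universe of proteins. The hypergeometric test is one standard choice for this kind of overlap question, but the framework above does not prescribe it. The target sets and the universe size are hypothetical.

```python
from math import comb


def compare_profiles(herb_targets, drug_targets):
    """Shared and unique targets plus the Jaccard index of the two sets."""
    shared = herb_targets & drug_targets
    union = herb_targets | drug_targets
    return {
        "shared": sorted(shared),
        "herb_only": sorted(herb_targets - drug_targets),
        "drug_only": sorted(drug_targets - herb_targets),
        "jaccard": len(shared) / len(union) if union else 0.0,
    }


def overlap_p_value(k, universe, n_herb, n_drug):
    """One-sided hypergeometric P(overlap >= k) when n_drug targets are drawn at
    random from a universe of proteins that contains n_herb herb targets."""
    upper = min(n_herb, n_drug)
    hits = sum(
        comb(n_herb, i) * comb(universe - n_herb, n_drug - i)
        for i in range(k, upper + 1)
    )
    return hits / comb(universe, n_drug)


# Hypothetical target sets and universe size.
herb = {"T1", "T2", "T3", "T4"}
drug = {"T3", "T4", "T5"}
profile = compare_profiles(herb, drug)
print(profile)
print(overlap_p_value(len(profile["shared"]), universe=100, n_herb=len(herb), n_drug=len(drug)))
```

The same test applies to pathway or adverse-event sets. The choice of universe (for example, all druggable proteins versus the whole proteome) strongly affects the p-value and should be reported.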

AI-Augmented Safety Assessment Framework

3. Case Study: Predicting Interactions with St. John's Wort (SJW): SJW, containing hyperforin and hypericin, is a classic example of complex DHI mechanisms [3].

- AI Analysis: A multimodal AI model would integrate SJW's chemical data, known effects on CYP enzymes/P-gp (PK), and its serotonergic activity (PD).

- Prediction: For a patient taking warfarin (metabolized by CYP2C9), the model would predict a PK interaction: SJW induces CYP2C9, potentially reducing warfarin efficacy. For a patient taking an SSRI, the model would predict a PD interaction: combined serotonergic action increases the risk of serotonin syndrome.

- Validation: These predicted interactions agree with published clinical reports, illustrating the translational relevance of this modeling approach [3].

Mechanisms of Drug-Herb Interactions (St. John's Wort Example)

Table 3: Research Reagent Solutions for Experimental Validation

| Reagent/Tool Category | Specific Example | Function in Validation | Key Consideration |
|---|---|---|---|
| Target Protein | Recombinant human enzymes (e.g., CYP450 isoforms), purified receptor proteins. | In vitro binding (SPR, thermal shift) and enzyme activity assays to confirm direct DTI [22]. | Ensure protein activity and correct post-translational modifications. |
| Cellular Assay Systems | Engineered cell lines (e.g., with reporter genes, overexpressed targets), primary hepatocytes. | Functional validation of target modulation (e.g., luciferase assay, Ca2+ flux), cytotoxicity (MTT), and transporter assays [28]. | Choose cell lines relevant to the target's native tissue and disease context. |
| Omics Profiling Kits | RNA-Seq, phospho-proteomic, or metabolomic profiling kits. | To confirm predicted pathway alterations and polypharmacology post-treatment [25] [24]. | Requires robust bioinformatics support for data analysis. |
| Chemical Standards | Certified reference standards of predicted active herbal compounds. | For use as positive controls in assays and to ensure experimental reproducibility [28]. | Purity and provenance are critical; batch-to-batch variability in herbs is a major challenge. |
| AI & Software Tools | RDKit (cheminformatics), DeepChem (DL), GNN libraries (PyTorch Geometric, DGL). | For building in-house prediction models, processing chemical structures, and generating features [18]. | Requires significant computational expertise and infrastructure. |

Detailed Experimental Protocol for In Vitro Validation of AI-Predicted Herbal DTIs:

Objective: To validate the binding and functional interaction between a predicted herbal compound (H) and its target protein (T).

Materials:

- Recombinant human target protein (T).

- Purified herbal compound (H) and a known positive control inhibitor/activator.

- Appropriate assay buffer and substrates.

- Microplate reader or other suitable detector.

Method:

- Binding Affinity Assay (e.g., Surface Plasmon Resonance - SPR):

- Immobilize protein T on a sensor chip.

- Flow compound H at a range of concentrations over the chip.

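- Measure the association and dissociation rates in real time and calculate the equilibrium dissociation constant (KD = koff/kon). When binding kinetics are too fast to resolve, KD can instead be derived from steady-state responses across the concentration series.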

- Interpretation: A dose-dependent binding signal with a calculable KD confirms the direct physical interaction predicted by the AI model.

- Functional Enzyme Inhibition/Activation Assay:

- In a microplate, pre-incubate protein T with varying concentrations of compound H or controls in reaction buffer.

- Initiate the reaction by adding the substrate, then monitor product formation spectrophotometrically or fluorometrically over time.

- Calculate the half-maximal inhibitory/effective concentration (IC50/EC50).

- Interpretation: A concentration-dependent change in enzyme activity confirms the compound's functional effect on the target, supporting the AI prediction's biological relevance.

- Cellular Functional Validation:

- Culture a cell line expressing target T.

- Treat cells with compound H across a concentration range.

- Measure downstream effects: e.g., phosphorylation status (via western blot), reporter gene activity, or cytokine release (via ELISA).

- Interpretation: Cellular activity confirms the interaction in a more physiologically relevant environment, accounting for membrane permeability and cellular metabolism.
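The two key numbers from this protocol can be computed as sketched below. KD follows directly from the fitted SPR rate constants under a 1:1 binding model. The IC50 is estimated here by linear interpolation in log-concentration between the two tested doses that bracket 50% activity. This interpolation is a deliberate simplification: a four-parameter logistic fit (for example with a curve-fitting routine such as SciPy's `curve_fit`) is the usual approach and should be preferred for reporting. The rates and dose-response values below are hypothetical.

```python
import math


def kd_from_kinetics(k_on, k_off):
    """Equilibrium dissociation constant for a 1:1 binding model.

    k_on in 1/(M*s), k_off in 1/s; returns KD in M.
    """
    return k_off / k_on


def ic50_by_interpolation(concs, activity_pct):
    """Rough IC50: interpolate linearly in log10(concentration) between the two
    tested concentrations whose activities bracket 50% of control.

    concs must be ascending and positive; returns None if 50% is not bracketed.
    """
    points = list(zip(concs, activity_pct))
    for (c1, a1), (c2, a2) in zip(points, points[1:]):
        if (a1 - 50.0) * (a2 - 50.0) <= 0 and a1 != a2:
            frac = (a1 - 50.0) / (a1 - a2)
            log_ic50 = math.log10(c1) + frac * (math.log10(c2) - math.log10(c1))
            return 10 ** log_ic50
    return None


# Hypothetical SPR rates and dose-response readings (% activity vs. uM).
print(kd_from_kinetics(k_on=1e5, k_off=1e-3))  # ~1e-08 M, i.e. ~10 nM
print(ic50_by_interpolation([0.1, 1, 10, 100], [95, 80, 20, 5]))  # ~3.16 uM
```

Returning `None` when the tested range does not bracket 50% activity is intentional: extrapolating an IC50 outside the tested range is not supported by the data.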

The integration of AI with network pharmacology has fundamentally advanced the study of herbal medicines, transitioning it from a descriptive to a predictive and mechanistic science. AI-driven DTI prediction serves as the critical engine, powering both the elucidation of complex therapeutic mechanisms and the proactive assessment of safety risks. This dual application is essential for bridging the gap between traditional herbal knowledge and modern evidence-based medicine.

Future progress hinges on addressing several challenges: improving the interpretability of complex AI models to foster trust among researchers and clinicians [23] [3]; creating standardized, high-quality datasets for herbal compounds to mitigate data sparsity and variability [28] [24]; and developing dynamic, multi-scale models that can predict temporal and dose-dependent effects of herbal mixtures [23]. As AI methodologies continue to evolve—incorporating generative AI for novel herb-inspired molecule design, large language models for mining unstructured data, and digital twins for personalized simulation—their role in validating, optimizing, and safely delivering the therapeutic potential of herbal medicine will only become more profound.

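Introduction: Opportunities and Core Challenges for AI in TCM Target Discovery

Traditional Chinese Medicine (TCM) treats complex diseases with a holistic, multi-component, multi-target intervention strategy, in sharp contrast to the "one drug, one target" paradigm of modern Western medicine [29]. Although this holistic approach has advantages, it also makes the active metabolites, therapeutic targets, and synergistic mechanisms of TCM extremely difficult to elucidate [30]. Artificial intelligence (AI), especially machine learning (ML) and deep learning (DL), offers strong data-analysis and nonlinear-modeling capabilities. These make it a transformative tool for systematically dissecting the complex pharmacology of TCM, and it is driving TCM toward precision medicine and data-driven research [30] [31].

AI applications in TCM research span the entire chain from target prediction and active-ingredient screening to formula optimization [31]. Network pharmacology provides a framework for understanding the multi-target effects of TCM by constructing multilayer "drug-target-disease" networks [29]. Advanced AI techniques such as large language models (LLMs) and graph neural networks (GNNs) can integrate massive multimodal data (e.g., genomics, literature, and clinical data) and mine latent patterns, substantially improving target identification and mechanistic interpretation [29].

However, applying AI successfully to TCM target discovery faces three interrelated core barriers: data heterogeneity, small datasets, and emerging regulatory pathways. These barriers are rooted both in the characteristics of TCM's own knowledge system and in the regulatory environment of modern drug development. This technical guide examines these challenges in depth and presents solutions and experimental protocols based on the latest technical developments.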

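In-Depth Analysis of the Core Barriers

Data Heterogeneity: The Difficulty of Integrating Multi-Source, Heterogeneous Data

Data heterogeneity is the foremost obstacle to training AI models and to their ability to generalize. It manifests at three levels: data source, structure, and semantics.

Source and structural heterogeneity: TCM research data are scattered across classical texts, modern research papers, electronic medical records, different omics platforms, and proprietary institutional databases [31]. Formats vary widely and include unstructured text (e.g., descriptions in classical texts), semi-structured clinical records, structured molecular data, and images (e.g., tongue images and pulse waveform charts) [31]. For example, herb names differ between classical and modern usage (山药, Shanyao, is also known as 薯蓣, Shuyu) and lack a unified standard, which complicates data integration [31].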
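A first, mechanical step toward resolving naming differences such as 山药/薯蓣 is a synonym table keyed on normalized strings. The sketch below applies Unicode NFKC normalization, which folds full-width Latin letters and similar compatibility characters. It then strips whitespace, lowercases, and looks up a canonical name. The table is a hypothetical three-entry example mapping to the pharmacopoeial Latin name Dioscoreae Rhizoma. A real table would be curated from pharmacopoeia and classical-text sources.

```python
import unicodedata

# Hypothetical synonym table; keys are stored already normalized (NFKC, lower case).
SYNONYMS = {
    "山药": "Dioscoreae Rhizoma",
    "薯蓣": "Dioscoreae Rhizoma",
    "shanyao": "Dioscoreae Rhizoma",
}


def normalize_herb_name(raw):
    """Map a raw herb name to a canonical identifier, or None if unknown.

    NFKC folds full-width Latin letters and other compatibility characters,
    strip() removes surrounding whitespace, and lower() handles Latin case.
    """
    key = unicodedata.normalize("NFKC", raw).strip().lower()
    return SYNONYMS.get(key)


for name in ["山药", " 薯蓣 ", "ShanYao", "ｓｈａｎｙａｏ", "unknown herb"]:
    print(repr(name), "->", normalize_herb_name(name))
```

String normalization only handles surface variation. Cases where one historical name referred to different species, or where the same plant part is processed differently, still require expert curation.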

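Semantic and theoretical gaps: Core TCM concepts (e.g., qi, yin-yang, and meridians) are abstract and holistic, and lack quantitative indicators that map directly onto modern biology [31]. Most existing AI algorithms were developed within the reductionist framework of Western science. They struggle to understand and model the dynamic, individualized logic of TCM's "syndrome differentiation and treatment" (bianzheng lunzhi) [31]. This creates a "cultural gap" between AI algorithms and TCM theory [31].

Multimodal fusion challenges: Up to 70% of the information used in TCM diagnosis and treatment is non-textual (e.g., tongue and pulse data), yet current AI remains limited in its ability to process and fuse multimodal data [31]. Early, intermediate, and late fusion strategies have been proposed. However, how to integrate heterogeneous data effectively into a unified, semantically rich feature representation remains an open problem [30].

Table 1: Main Manifestations and Impacts of Data Heterogeneity in TCM Research

| Heterogeneity Dimension | Specific Manifestations | Impact on AI Models |
|---|---|---|
| Source and format | Classical texts, modern literature, electronic medical records, omics data, and imaging data coexist; formats are not unified [31]. | High data-cleaning and preprocessing costs; requires complex ETL (extract, transform, load) pipelines. |
| Semantics and terminology | Large differences between classical and modern herb names; abstract TCM concepts (e.g., "qi deficiency") lack standardized quantitative definitions [31]. | Makes feature engineering difficult; models struggle to learn effective representations and are prone to bias. |
| Theoretical framework | TCM emphasizes holism and syndrome differentiation, unlike the reductionist paradigm of modern biomedicine [31]. | General-purpose AI models are hard to apply directly; new "culturally adapted" algorithms are needed [31]. |
| Data modality | Mixture of text, images (tongue and facial diagnosis), time-series signals (pulse diagnosis), and structured data [31]. | Requires multimodal fusion capability, which adds technical complexity; high-quality annotated data are lacking. |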

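Small Datasets: The Scarcity of High-Quality Annotated Data

Compared with chemical or biological drugs, high-throughput experimental data for specific TCM formulas or active ingredients are limited in scale, which directly constrains the performance of data-driven AI models.

Annotation cost and expert dependence: Annotating TCM data relies heavily on domain experts (e.g., senior TCM physicians and TCM pharmacologists). Such experts are scarce, and annotation is subjective and inefficient, which makes high-quality annotated datasets extremely difficult and costly to build [31].

Data fragmentation and silos: Valuable TCM data are distributed across medical institutions, research institutes, and companies. The lack of unified data standards and sharing mechanisms has produced many "data silos" [31]. The completeness of clinical data also suffers because collection standards differ between institutions [31].

Model risk with small samples: Training complex deep learning models on limited datasets readily leads to overfitting, in which the model memorizes the training data but generalizes poorly to new samples [30]. Data biases may also be amplified, making model predictions unreliable.

Emerging Regulatory Pathways: Gaps in Standards, Ethics, and Validation Frameworks

The regulatory environment for AI-driven TCM development is still in its infancy and constitutes an "invisible threshold" for product translation and clinical application [31].

Incomplete standardization: TCM has not yet established widely accepted international standards for terminology, diagnostic criteria, or efficacy evaluation [31]. As a result, AI-based diagnostic tools and efficacy-prediction models lack consistent evaluation benchmarks and struggle to gain regulatory acceptance.

Ethics and data governance: TCM data contain large amounts of private patient information and traditional knowledge, so their collection, use, and sharing raise serious ethical and data-security issues [31]. Laws and regulations addressing core questions such as ownership of medical data and authorization procedures remain incomplete [31].

Model validation and liability: Existing regulatory frameworks for drugs and medical devices cannot fully accommodate the new AI-driven development paradigm [31]. When AI-assisted diagnosis or target prediction is wrong, it remains unclear whether liability lies with the developer, the user, or the algorithm itself [31]. Regulators' clinical validation requirements for AI models regulated as medical device software (e.g., prospective clinical trials demonstrating efficacy and safety) are a costly barrier for many research-grade AI tools.

Table 2: Overview of Key Challenges and Solutions for AI in Natural Product/TCM Discovery [32]

| Challenge Category | Specific Problem | Proposed Solutions |
|---|---|---|
| Data quality | Inconsistent data sources, incomplete annotation, lack of standardization. | Establish an MI-AI-NP (minimum information for AI-based natural product research) data standard that mandates recording of provenance, chemical fingerprints, ethics statements, and similar metadata [32]. |
| Model generalization | Performance drops sharply for plant genera or structures outside the training set. | Adopt dynamic validation (e.g., rolling test sets), introduce synthetic biology constraints, and establish cross-laboratory benchmarks [32]. |
| Clinical translation | In vitro activity does not match in vivo efficacy; translation success rates are low. | Build digital twins of microphysiological systems (e.g., organoid models) and develop intelligent patient-stratification algorithms based on molecular profiles [32]. |
| Regulatory adaptation | Existing frameworks lag behind; approval pathways are unclear. | Establish a tiered certification system for AI models, develop dynamic validation standards, and explore regulatory sandboxes [32]. |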

技术策略与实验方案

面对上述壁垒,研究人员正在开发一系列创新的技术策略和实验方案。

应对数据异质性与小样本的技术

- 多模态融合策略:采用中期或晚期融合架构,分别处理不同模态数据(如文本、图像、分子图),再在特征层或决策层进行整合,以保留各模态特异性并挖掘关联性 [30]。

- 小样本与自监督学习:利用迁移学习,将在大型通用化学或生物数据集(如ChEMBL、PubMed)上预训练的模型,迁移到中药小数据集上进行微调 [31]。自监督学习(如MolE)可在无标注数据上预训练模型学习分子的一般表示,再用于下游预测任务 [32]。

- 知识图谱与LLM增强:构建整合了中药成分、靶点、通路、疾病和古籍知识的大型知识图谱,为模型提供丰富的先验知识 [29]。利用大语言模型(LLM)的语义理解能力,深度挖掘古籍文献和现代文献中的隐性知识,生成可计算的结构化假设 [29]。

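Cutting-Edge Experimental Protocols and Validation Methods

1. PDGrapher: A Graph Neural Network-Based Systems Pharmacology Model

- Experimental design: The model aims to identify genes or drug targets whose perturbation can reverse a cell's disease state [33].

- Core method: A cellular signaling network graph of genes, proteins, and their interactions is constructed. A graph neural network (GNN) is trained on large volumes of data, such as pre- and post-treatment cellular transcriptomes, so that it learns the dynamics of the transition from the disease state to the healthy state [33].

- Validation workflow: Data on known effective drug targets were deliberately excluded during training, and the model was then tested on independent cancer datasets. It predicted known targets (e.g., KDR) as well as new candidate targets (e.g., TOP2A), and these predictions were checked against preclinical evidence [33].

- Performance: On unseen data, it ranked correct targets 35% higher than competing methods and ran 25 times faster [33].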
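Claims such as "ranked correct targets higher" rest on a rank-based metric. The exact metric behind the PDGrapher figures is not specified here. The sketch below therefore assumes a simple normalized rank of each held-out true target among all candidate genes, and the scores are invented for illustration.

```python
def target_rank_fraction(scores, true_targets):
    """Return, for each held-out true target, its rank among all candidate
    genes divided by the number of candidates (1/N = ranked first; smaller
    is better).

    `scores` maps gene symbol -> model score, where a higher score means the
    gene is more likely to reverse the disease state. Ties are broken by
    gene name so the result is deterministic.
    """
    ranked = sorted(scores, key=lambda g: (-scores[g], g))
    position = {gene: i + 1 for i, gene in enumerate(ranked)}
    n = len(ranked)
    return {t: position[t] / n for t in true_targets if t in position}

# Toy scores: gene names echo the text, but the values are made up.
scores = {"TOP2A": 0.91, "KDR": 0.85, "EGFR": 0.40, "TP53": 0.35, "GAPDH": 0.05}
print(target_rank_fraction(scores, ["KDR", "TOP2A"]))  # {'KDR': 0.4, 'TOP2A': 0.2}
```

Averaging this fraction over many held-out targets and comparing it between methods is one way to express a relative ranking improvement.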

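2. DrugCLIP: Validating an Ultra-High-Throughput Virtual Screening Engine

- Experimental design: The experiments validate how well an AI model can screen large compound libraries for inhibitors of specific protein targets [34].

- Core method: Contrastive learning encodes protein pockets and small molecules into a shared vector space in which strongly binding molecules cluster near their pockets. Screening thereby becomes an efficient vector nearest-neighbor search [34].

- Validation cases:

- Performance metrics: A throughput of 31 trillion protein-ligand scorings per day, reported as a million-fold improvement over conventional docking methods [34].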
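The core idea behind DrugCLIP, contrastive alignment of pockets and molecules followed by vector search, can be illustrated with a NumPy sketch. This is not DrugCLIP's code. The learned encoders are replaced by random vectors, and the symmetric InfoNCE (CLIP-style) loss with a temperature of 0.07 is a generic assumption. Exact brute-force search stands in for the large-scale nearest-neighbor indexing a production system would need.

```python
import numpy as np

def l2_normalize(x, eps=1e-12):
    """Scale vectors to unit length so dot products equal cosine similarity."""
    return x / (np.linalg.norm(x, axis=-1, keepdims=True) + eps)

def clip_loss(pocket_emb, mol_emb, temperature=0.07):
    """Symmetric InfoNCE loss for a batch of matched (pocket_i, molecule_i)
    pairs; every other molecule in the batch is a negative for pocket_i."""
    logits = l2_normalize(pocket_emb) @ l2_normalize(mol_emb).T / temperature
    idx = np.arange(len(logits))

    def cross_entropy(lg):
        lg = lg - lg.max(axis=1, keepdims=True)                 # numerical stability
        log_prob = lg - np.log(np.exp(lg).sum(axis=1, keepdims=True))
        return -log_prob[idx, idx].mean()                        # matched pairs on the diagonal

    # Average the pocket->molecule and molecule->pocket directions.
    return 0.5 * (cross_entropy(logits) + cross_entropy(logits.T))

def screen(pocket_vec, library_emb, k=5):
    """Virtual screening as nearest-neighbor search: indices of the k library
    molecules with the highest cosine similarity to the pocket embedding."""
    sims = l2_normalize(library_emb) @ l2_normalize(pocket_vec)
    return np.argsort(-sims)[:k]

rng = np.random.default_rng(0)
pockets = rng.normal(size=(4, 32))
matched = pockets + 0.05 * rng.normal(size=(4, 32))         # toy "binders" near their pockets
# Correct pairings should give a lower loss than shifted (wrong) pairings.
print(clip_loss(pockets, matched) < clip_loss(pockets, np.roll(matched, 1, axis=0)))

library = rng.normal(size=(10_000, 32))
library[1234] = pockets[0] + 0.05 * rng.normal(size=32)      # plant one binder in the library
print(screen(pockets[0], library, k=3)[0])                    # the planted index should rank first
```

Once molecule embeddings are precomputed, screening a new pocket costs one matrix-vector product. This property is what makes the very high throughput figures above plausible.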

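Research Reagents and Key Technical Toolkit

Table 3: Key Research Reagents and Tools for AI-Driven Target Discovery in TCM

| Category | Name/Example | Description | Application/Experiment |
|---|---|---|---|
| Computational models and platforms | DrugCLIP [34] | AI-driven ultra-high-throughput virtual screening engine that recasts docking as vector retrieval, enabling millisecond-scale molecular scoring. | Rapid screening of lead compounds from libraries of more than 100 million molecules against known or AlphaFold-predicted protein structures [34]. |
| | PDGrapher [33] | GNN-based systems pharmacology model that predicts genes or target combinations able to reverse a cell's disease state. | Identifying novel therapeutic targets and combination-therapy strategies for complex diseases (e.g., cancer) [33]. |
| | PandaOmics (Insilico Medicine) [35] [36] | AI target-discovery platform integrating multi-omics, patent, and clinical data. | Generating new disease-target hypotheses and assessing their novelty and druggability. |
| Data resources and databases | TCM-specific knowledge graphs | Databases that integrate TCM constituents, targets, diseases, formulas, and classical-text knowledge in structured form. | Supplying prior knowledge to AI models and supporting network pharmacology analysis and mechanistic interpretation [29]. |
| | Human proteome screening database [34] | Database built with DrugCLIP containing screening results for roughly 10,000 human protein targets and 500 million small molecules. | Providing precomputed protein-ligand interaction data to accelerate early discovery. |
| Experimental validation reagents and tools | Surface plasmon resonance (SPR) | Real-time, label-free technique for measuring the affinity of biomolecular interactions. | Validating binding of AI-predicted compounds to target proteins (e.g., DrugCLIP screening hits) [34]. |
| | Radiolabeled ligand transport assay | Classic method for measuring the activity of transporter (e.g., NET) inhibitors. | Validating the potency of inhibitors of specific transporter targets (IC50 determination) [34]. |
| | Cryo-electron microscopy (cryo-EM) | Resolves high-resolution structures of macromolecules (e.g., membrane proteins) in complex with drug ligands. | Confirming the binding mode and mechanism of AI-screened compounds from a structural biology perspective [34]. |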

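Future Outlook and Interdisciplinary Pathways

Breaking through the current barriers requires coordinated innovation in technology, regulation, and talent.

Technical architecture upgrades: Future AI platforms are expected to move toward deeper multimodal fusion and greater interpretability. Techniques such as attention-weight visualization can make AI decision-making more transparent to researchers [32]. The fundamental route to bridging the "cultural gap" is to develop new algorithms that genuinely incorporate TCM's holistic view, for example by representing the relationships of yin-yang and Five Phases theory as networks [31].

Regulatory science innovation: Industry and regulators need to work together on dynamic regulatory frameworks suited to AI. These might include tiered, category-based management of AI models, risk-based validation requirements, and "regulatory sandboxes" that allow real-world data accumulation and performance evaluation in a controlled environment [31] [32].

Cultivating interdisciplinary talent: AI innovation in TCM urgently needs professionals who are versed in TCM theory and modern pharmacology and who also command data science and AI techniques [31]. Reforming the education system and establishing interdisciplinary programs is a cornerstone of the field's long-term development.

In summary, AI offers unprecedented opportunities to unravel the complexity of TCM and accelerate its modernization. However, data heterogeneity, small datasets, and emerging regulatory pathways are core challenges that must be addressed systematically. Several measures can help progressively overcome these barriers. These include advanced AI strategies such as multimodal fusion and few-shot learning, rigorous wet-lab validation, and active development of regulatory frameworks and talent pipelines. Together, they can lead to comprehensive, reliable, and responsible use of AI in TCM innovation.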

Advanced AI Frameworks in Action: From Graph Neural Networks to Predictive Models

The integration of artificial intelligence (AI) into pharmaceutical research heralds a transformative era for drug discovery, particularly within the complex domain of herbal medicine. Identifying and validating interactions between drug compounds and their biological targets (drug-target interactions, DTIs) is a foundational step, but also a major bottleneck. Traditional experimental methods are prohibitively time-consuming and expensive, and they cannot scale to the vast combinatorial space of herbal phytochemicals and human proteome targets [37]. This challenge is acute in herbal medicine research, where natural products are not single entities but complex mixtures of numerous bioactive constituents, each with potentially multiple targets and synergistic or antagonistic effects [38].

This whitepaper posits that graph-based AI approaches, specifically knowledge graphs (KGs) and heterogeneous network embedding models, provide the essential computational framework to overcome these hurdles. By representing drugs, targets, diseases, and their multifaceted relationships as interconnected networks, these methods move beyond simplistic pairwise prediction. They enable a systems-level understanding crucial for herbal medicine, where the therapeutic effect often arises from network pharmacology—multiple compounds modulating multiple targets within a biological network [38]. Framing DTI prediction within this graph paradigm allows researchers to reason over biological pathways, infer novel interactions through relational logic, and embed prior knowledge from diverse sources into predictive models. The subsequent sections provide an in-depth technical guide to constructing these knowledge graphs, implementing state-of-the-art embedding techniques like heterogeneous graph neural networks, and validating predictions within the rigorous context of herbal medicine research.

Foundational Concepts and Constructs

Knowledge Graphs (KGs) for Biomedical Integration

A biomedical knowledge graph is a structured, semantic network that integrates entities (nodes) and their relationships (edges) from disparate data sources. In the context of herbal medicine, a comprehensive KG unifies:

- Entities: Herbal compounds (e.g., berberine, curcumin), purified natural products, protein targets, genes, diseases (e.g., Type II Diabetes, Rheumatoid Arthritis), biological pathways, side effects, and anatomical concepts.

- Relationships: Compound-bindsTo-Protein, Compound-treats-Disease, Protein-participatesIn-Pathway, Protein-associatedWith-Disease, Herb-contains-Compound.

KGs are built by harmonizing data from curated databases (DrugBank, ChEMBL, TCMSP), biomedical ontologies (Gene Ontology, Disease Ontology), and scientific literature via relation extraction [38] [39]. The resulting graph is a rich, queryable repository of mechanistic knowledge that supports tasks like hypothesis generation and logical inference for novel DTI prediction.
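The relational structure described above already supports simple inference by path queries, such as following herb-contains-compound and then compound-bindsTo-protein to obtain candidate herb-protein links. The sketch below is a minimal in-memory triple store written for illustration. It is not the PheKnowLator API or a graph database, and the entity names are hypothetical placeholders rather than curated facts.

```python
from collections import defaultdict

class TripleStore:
    """Minimal in-memory knowledge graph of (head, relation, tail) triples."""

    def __init__(self):
        self._out = defaultdict(set)          # (head, relation) -> {tail, ...}

    def add(self, head, relation, tail):
        self._out[(head, relation)].add(tail)

    def objects(self, head, relation):
        """Tails reachable from `head` over one `relation` edge."""
        return self._out.get((head, relation), set())

    def follow_path(self, start, relations):
        """Nodes reached from `start` by following `relations` in order,
        e.g. herb -contains-> compound -bindsTo-> protein."""
        frontier = {start}
        for rel in relations:
            frontier = {t for node in frontier for t in self.objects(node, rel)}
        return frontier

# Hypothetical entities; these edges are placeholders, not curated facts.
kg = TripleStore()
for triple in [
    ("HerbA", "contains", "CompoundX"),
    ("HerbA", "contains", "CompoundY"),
    ("CompoundX", "bindsTo", "ProteinP"),
    ("CompoundY", "bindsTo", "ProteinQ"),
    ("ProteinP", "participatesIn", "PathwayZ"),
]:
    kg.add(*triple)

print(sorted(kg.follow_path("HerbA", ["contains", "bindsTo"])))            # ['ProteinP', 'ProteinQ']
print(kg.follow_path("HerbA", ["contains", "bindsTo", "participatesIn"]))  # {'PathwayZ'}
```

Such symbolic path queries yield hypotheses but no ranking. Embedding models, discussed next, add a learned score for each candidate link.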

Heterogeneous Information Networks (HINs) and Embedding

A Heterogeneous Information Network is a special type of graph containing multiple node and edge types. A DTI HIN typically includes node types for Drugs, Proteins, Diseases, and Side Effects, interconnected by various relation types (e.g., drug-drug similarity, protein-protein interaction, drug-disease indication) [40] [41]. The core challenge is to learn meaningful, low-dimensional vector representations (embeddings) for each node that encapsulate both its attributes and its topological context within the HIN.

Heterogeneous Network Embedding techniques, such as meta-path-based models and heterogeneous graph neural networks (HGNNs), solve this. They propagate and aggregate features across different node and edge types, transforming the complex graph structure into a continuous vector space. In this space, geometric relationships (e.g., proximity) reflect biological relationships, enabling efficient similarity calculation and link prediction for unknown drug-target pairs [41] [37].
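Meta-path-based models, the first family mentioned above, can be illustrated with the PathSim similarity over a Drug-Protein-Drug meta-path, followed by a simple neighbor-based score for unknown drug-protein pairs. This is a deliberately simple, non-learned stand-in for illustration, not the method of [41] or [37], and the toy adjacency matrix is hypothetical.

```python
import numpy as np

def pathsim(adjacency):
    """PathSim over the symmetric meta-path Drug-Protein-Drug.

    adjacency: (n_drugs, n_proteins) 0/1 matrix of known drug-protein edges.
    M = A @ A.T counts D-P-D path instances (shared targets); PathSim
    normalizes them as 2*M[i, j] / (M[i, i] + M[j, j]).
    """
    m = adjacency @ adjacency.T
    diag = np.diag(m)
    denom = diag[:, None] + diag[None, :]
    with np.errstate(divide="ignore", invalid="ignore"):
        return np.where(denom > 0, 2.0 * m / denom, 0.0)

def score_candidates(adjacency, sim):
    """Score each drug-protein pair by the summed similarity of drugs already
    linked to that protein; known edges are masked out with -inf."""
    scores = sim @ adjacency
    return np.where(adjacency == 1, -np.inf, scores)

A = np.array([
    [1, 1, 0, 0],   # drug 0 hits proteins 0 and 1
    [1, 1, 1, 0],   # drug 1 hits proteins 0, 1 and 2
    [0, 0, 0, 1],   # drug 2 hits protein 3 only
])
S = pathsim(A)
print(S[0, 1], S[0, 2])                               # 0.8 0.0
print(int(np.argmax(score_candidates(A, S)[0])))      # 2: best new protein for drug 0
```

Drug 0 shares two targets with drug 1, so drug 1's remaining target becomes drug 0's top candidate. HGNNs replace the fixed similarity with learned, feature-aware embeddings.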

The Critical Challenge of Data Imbalance and "Cold Start"

A pervasive issue in training DTI prediction models is extreme class imbalance. Known, validated DTIs (positive samples) are vastly outnumbered by unknown pairs (treated as negative samples), often at ratios exceeding 1:100 [40]. Naively training on such data biases models towards predicting "no interaction." Furthermore, the "cold-start" problem refers to the inability to make predictions for new herbs or compounds entirely absent from the training graph, a common scenario in novel herbal research [37]. Advanced graph methods address these through techniques like contrastive learning with adaptive negative sampling and inductive learning frameworks that can generate embeddings for unseen nodes based on their features [40] [37].
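Before contrastive or inductive methods are introduced, two simpler steps make imbalance and cold start measurable. The first is a split in which test compounds never appear in training. The second is a positive-class weight for binary cross-entropy. The sketch below illustrates both under assumed toy data. It is not taken from [40] or [37], and the `(compound, target, label)` tuple format is hypothetical.

```python
import random

def cold_start_split(pairs, test_fraction=0.2, seed=0):
    """Split (compound, target, label) pairs so that no test compound appears
    in training, mimicking a new herbal compound absent from the graph."""
    compounds = sorted({c for c, _, _ in pairs})
    random.Random(seed).shuffle(compounds)
    n_test = max(1, int(len(compounds) * test_fraction))
    test_compounds = set(compounds[:n_test])
    train = [p for p in pairs if p[0] not in test_compounds]
    test = [p for p in pairs if p[0] in test_compounds]
    return train, test

def positive_rate(pairs):
    """Share of positive labels; also the expected AUPR of a random ranker."""
    return sum(label for _, _, label in pairs) / len(pairs)

def pos_weight(pairs):
    """Negatives per positive, usable as the positive-class weight in a
    weighted binary cross-entropy loss."""
    n_pos = sum(label for _, _, label in pairs)
    return (len(pairs) - n_pos) / n_pos

# Toy data: 10 compounds x 20 targets, one known interaction per compound (1:19).
pairs = [(f"C{i}", f"T{j}", int(i == j)) for i in range(10) for j in range(20)]
train, test = cold_start_split(pairs)
print(len(train), len(test), positive_rate(pairs), pos_weight(pairs))  # 160 40 0.05 19.0
```

The positive rate is a useful sanity baseline: a reported AUPR should be judged against it, not against 0.5.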

Table 1: Benchmark Performance of Advanced Graph-Based DTI Models

| Model | Core Architecture | Key Innovation | Reported AUC | Reported AUPR | Strength for Herbal Medicine |
|---|---|---|---|---|---|
| GHCDTI [40] | HGNN with Graph Wavelet Transform | Multi-scale feature extraction & contrastive learning | 0.966 ± 0.016 | 0.888 ± 0.018 | Captures dynamic protein conformations; robust to imbalance. |
| Hetero-KGraphDTI [37] | GCN with Knowledge Regularization | Integrates ontological knowledge as regularization | 0.98 (avg) | 0.89 (avg) | Enhances biological plausibility of predictions. |
| DrugMAN [39] | GAT with Mutual Attention | Fuses multiple drug/protein networks via attention | Best in cold-start | Best in cold-start | Superior generalization to novel entities. |
| DHGT-DTI [41] | GraphSAGE & Graph Transformer | Dual-view (local neighbor & global meta-path) learning | State-of-the-art | State-of-the-art | Comprehensively captures network structure. |
| ComplEx (on NP-KG) [38] | KG Embedding | Tensor factorization for relational learning | Top performer in intrinsic eval. | N/A | Effective for inferring complex interaction types in KGs. |

Technical Methodology: From Graph Construction to Prediction

Workflow for Herbal Medicine DTI Prediction

The end-to-end pipeline for applying graph-based AI to herbal DTI prediction involves sequential stages from data integration to experimental validation. The following diagram outlines this generalized workflow.

Detailed Experimental Protocols

Protocol 1: Construction of a Natural Product-Focused Knowledge Graph (NP-KG) [38]

- Data Source Curation:

- Collect raw data from: a) OBO Foundry Ontologies (e.g., Gene Ontology, ChEBI) for entity typing and hierarchy, b) Open Databases (e.g., DrugBank, PubChem) for known interactions, c) Full-text scientific articles for natural product pharmacokinetics and pharmacology.

- For herbal constituents, extract compounds from authoritative monographs (e.g., EMA Herbal Monographs) and the Global Substance Registration System (G-SRS).

- Graph Assembly using PheKnowLator:

- Employ the PheKnowLator (Phenotype Knowledge Translator) workflow.

- Parse ontology files to create hierarchical node sets.

- Map database records and literature-extracted relations to ontology terms.

- Define custom relations (e.g., 'contains_constituent') to link herb entities to their compound nodes.

- Output a directed, labeled property graph (e.g., in RDF or Neo4j format).

- Pre-processing for Embedding:

- Convert the graph into a set of triples: (head_entity, relation, tail_entity).

- Collapse multiple edges between the same node pair into a single, unique edge type.

- Split triples into training, validation, and test sets (e.g., 80/10/10), ensuring no data leakage across splits.
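The pre-processing steps can be sketched in plain Python. Two choices here are assumptions rather than part of the cited protocol. First, parallel edges are merged into a composite label such as `binds|inhibits`, which is one reading of "a single, unique edge type". Second, leakage is checked by dropping validation or test triples whose node pair, in either direction, already appears in training.

```python
import random
from collections import defaultdict

def collapse_parallel_edges(triples):
    """Merge every relation between the same (head, tail) pair into one
    composite edge type, e.g. 'binds|inhibits'; exact duplicates vanish too."""
    relations = defaultdict(set)
    for head, rel, tail in triples:
        relations[(head, tail)].add(rel)
    return [(h, "|".join(sorted(rels)), t) for (h, t), rels in sorted(relations.items())]

def split_triples(triples, fractions=(0.8, 0.1, 0.1), seed=42):
    """Shuffle and split into train/valid/test (test takes the remainder),
    then drop valid/test triples whose node pair, in either direction,
    already occurs in train so an inverse edge cannot leak the answer."""
    triples = list(triples)
    random.Random(seed).shuffle(triples)
    n_train = int(fractions[0] * len(triples))
    n_valid = int(fractions[1] * len(triples))
    train = triples[:n_train]
    valid = triples[n_train:n_train + n_valid]
    test = triples[n_train + n_valid:]
    seen_pairs = {frozenset((h, t)) for h, _, t in train}
    valid = [x for x in valid if frozenset((x[0], x[2])) not in seen_pairs]
    test = [x for x in test if frozenset((x[0], x[2])) not in seen_pairs]
    return train, valid, test

raw = [
    ("HerbA", "contains_constituent", "C1"),
    ("C1", "binds", "P1"),
    ("C1", "inhibits", "P1"),
    ("C1", "binds", "P1"),          # exact duplicate
]
print(collapse_parallel_edges(raw))
train, valid, test = split_triples([(f"C{i}", "binds", f"P{i}") for i in range(100)])
print(len(train), len(valid), len(test))  # 80 10 10
```

Removing leaked pairs can shrink the validation and test sets below their nominal 10%, so their final sizes should be reported alongside results.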

Protocol 2: Training a Heterogeneous Graph Neural Network (HGNN) Model [40] [41]

- Node Feature Initialization:

- Drug/Compound Nodes: Encode using extended-connectivity fingerprints (ECFP4) or pre-trained molecular transformers.

- Protein/Target Nodes: Encode using amino acid sequence embeddings (e.g., from ESM-2) or physicochemical property vectors.

- Disease/Side Effect Nodes: Use ontology-derived feature vectors or bag-of-words from descriptive text.

- Heterogeneous Graph Convolution:

- Implement a Heterogeneous Graph Convolutional Network (HGCN) or Heterogeneous Graph Attention Network (HGAT).

- For each node type, define a type-specific transformation matrix.

- Perform neighborhood aggregation: For a target node, aggregate messages from its neighboring compound, disease, and other protein nodes. The aggregation is relation-aware, meaning messages passed via a 'binds' edge are weighted differently from messages passed via a 'participates_in' edge.

- Use a multi-head attention mechanism to dynamically weigh the importance of different neighbors.

- Multi-View Learning and Contrastive Loss:

- Generate two views of the graph: a topological view (via HGCN) and a feature view (via graph wavelet transform for multi-scale features) [40].

- For the same node, maximize agreement between its embeddings from the two views using a contrastive loss function (e.g., InfoNCE).

- This self-supervised step improves robustness and representation quality, especially for imbalanced data.

- Link Prediction Head & Training:

- Take the final embeddings of a drug node $\mathbf{d}_i$ and a target node $\mathbf{t}_j$.

- Compute an interaction score via a decoder, such as a bilinear decoder: $s_{ij} = \sigma(\mathbf{d}_i^{\top} M_r \mathbf{t}_j)$, where $M_r$ is a learnable relation-specific matrix and $\sigma$ is the sigmoid function.

- Train the model end-to-end using binary cross-entropy loss, with a strategically sampled negative set (e.g., negative samples are compounds and proteins not known to interact but within plausible biological distance in the graph) [37].

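The aggregation, decoding, and training steps of Protocol 2 can be traced in a toy NumPy forward pass. This illustrative sketch is not the GHCDTI or DHGT-DTI implementation. It assumes a single attention head, randomly initialized (untrained) parameters, and a hypothetical four-node graph for hop-constrained negative sampling. A real model would be built and trained with PyTorch Geometric or DGL.

```python
from collections import deque

import numpy as np

def leaky_relu(x, slope=0.2):
    return np.where(x > 0, x, slope * x)

def relation_aware_attention(h_self, neighbors, W_rel, W_self, a):
    """One GAT-style, relation-aware aggregation step for a single node.

    neighbors: list of (relation, embedding) pairs.
    W_rel[relation]: relation-specific projection, so a 'binds' message is
    transformed differently from a 'participates_in' message.
    a: attention vector that scores [self projection || message].
    """
    self_proj = W_self @ h_self
    msgs = np.stack([W_rel[rel] @ h for rel, h in neighbors])
    logits = leaky_relu(np.array([a @ np.concatenate([self_proj, m]) for m in msgs]))
    alpha = np.exp(logits - logits.max())
    alpha /= alpha.sum()                                  # softmax over neighbors
    return np.tanh(self_proj + alpha @ msgs), alpha

def bilinear_score(d, t, M_r):
    """Interaction probability sigma(d^T M_r t)."""
    return 1.0 / (1.0 + np.exp(-(d @ M_r @ t)))

def bce_loss(probs, labels, eps=1e-7):
    probs = np.clip(probs, eps, 1.0 - eps)
    return float(-np.mean(labels * np.log(probs) + (1 - labels) * np.log(1 - probs)))

def nearby_negatives(adj, drug, known_targets, targets, max_hops=3):
    """Targets within max_hops of `drug` (breadth-first search) that are not
    known positives: plausible but unlabeled pairs used as hard negatives."""
    dist, queue = {drug: 0}, deque([drug])
    while queue:
        node = queue.popleft()
        if dist[node] == max_hops:
            continue
        for nb in adj.get(node, ()):
            if nb not in dist:
                dist[nb] = dist[node] + 1
                queue.append(nb)
    return sorted(t for t in targets if t in dist and t not in known_targets)

rng = np.random.default_rng(0)
dim = 8
W_rel = {r: rng.normal(size=(dim, dim)) for r in ("binds", "participates_in")}
W_self, a = rng.normal(size=(dim, dim)), rng.normal(size=2 * dim)
neighbors = [("binds", rng.normal(size=dim)), ("participates_in", rng.normal(size=dim))]
t_emb, alpha = relation_aware_attention(rng.normal(size=dim), neighbors, W_rel, W_self, a)
p = bilinear_score(rng.normal(size=dim), t_emb, rng.normal(size=(dim, dim)) / dim)
print(alpha, p)

# Hypothetical graph: D1 - P1 (known), P1 - P2 and P2 - P3 (PPI edges); P9 isolated.
adj = {"D1": ["P1"], "P1": ["D1", "P2"], "P2": ["P1", "P3"], "P3": ["P2"]}
print(nearby_negatives(adj, "D1", {"P1"}, ["P1", "P2", "P3", "P9"], max_hops=2))  # ['P2']
```

In training, the scores of known pairs (label 1) and of sampled nearby negatives (label 0) would be fed to `bce_loss` and minimized by gradient descent. This sketch omits that loop.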

Protocol 3: Extrinsic Validation using a Gold-Standard Herbal DTI Dataset

- Benchmark Dataset Compilation [38]:

- Extract known herb/compound-target interactions from specialized resources: NatMed Pro, NaPDI Database, Stockley’s Herbal Medicines Interactions.

- Manually curate and unify entries, resolving conflicts based on level of evidence (e.g., clinical trial > in vitro study).

- Map all herb and target names to standard identifiers (e.g., PubChem CID, UniProt ID) to align with the constructed KG.

- Evaluation Procedure:

- Hold out a portion of the gold-standard interactions as the test set.

- Use the trained model to predict scores for all possible herb/compound-target pairs in the test set.

- Calculate standard metrics: area under the ROC curve (AUC-ROC), area under the precision-recall curve (AUPRC, more informative for imbalanced data), and Recall@k.

- Case Study Analysis:

- Select a high-ranking, novel prediction for a well-studied herb (e.g., Salvia miltiorrhiza for cardiovascular targets).

- Perform in silico validation via molecular docking simulation to assess binding pose and affinity.

- Design in vitro validation using a binding assay (e.g., surface plasmon resonance) or a functional cellular assay to confirm biological activity.
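The three evaluation metrics in Protocol 3 can be computed without any library, which helps when checking reported numbers. The sketch below uses the rank (Mann-Whitney) formulation of AUC-ROC and estimates AUPRC as average precision, which is one common estimator among several. In practice, scikit-learn's `roc_auc_score` and `average_precision_score` provide tested implementations. The labels and scores are toy values.

```python
def roc_auc(labels, scores):
    """AUC-ROC as the probability that a random positive outranks a random
    negative (ties count half); O(P*N), fine for small benchmark sets."""
    pos = [s for s, y in zip(scores, labels) if y == 1]
    neg = [s for s, y in zip(scores, labels) if y == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

def average_precision(labels, scores):
    """AUPRC estimated as average precision: mean of precision@k taken at the
    rank of each positive."""
    ranked = sorted(zip(scores, labels), key=lambda x: -x[0])
    hits, precisions = 0, []
    for k, (_, y) in enumerate(ranked, start=1):
        if y == 1:
            hits += 1
            precisions.append(hits / k)
    return sum(precisions) / hits if hits else 0.0

def recall_at_k(labels, scores, k):
    """Fraction of all positives found among the k highest-scoring pairs."""
    ranked = sorted(zip(scores, labels), key=lambda x: -x[0])
    return sum(y for _, y in ranked[:k]) / sum(labels)

labels = [1, 0, 1, 0, 0, 0]
scores = [0.9, 0.8, 0.7, 0.3, 0.2, 0.1]
print(roc_auc(labels, scores), round(average_precision(labels, scores), 3),
      recall_at_k(labels, scores, 2))  # 0.875 0.833 0.5
```

Recall@k is the metric closest to the wet-lab use case: it measures how many true interactions would be recovered if only the top k predictions were sent for docking or binding assays.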

Table 2: The Scientist's Toolkit: Essential Resources for Herbal DTI Graph Research

| Category | Resource Name | Description & Function in Research |
|---|---|---|
| Knowledge Bases & Databases | DrugBank [39] | Comprehensive database containing drug, target, and interaction information, essential for building benchmark sets. |
| | TCMSP, HIT | Traditional Chinese Medicine-specific databases providing herb-compound-target relationships. |
| | ChEMBL, BindingDB [40] [39] | Curated databases of bioactive molecules with quantitative binding data, used for positive DTI labels. |
| | Gene Ontology (GO) [37] | Provides standardized functional annotations for proteins, used for node features and relational inference. |
| Software & Libraries | PyTorch Geometric (PyG), Deep Graph Library (DGL) | Libraries for implementing Graph Neural Networks, including heterogeneous graph models. |
| | PheKnowLator [38] | Automated workflow for constructing large-scale, ontology-aware biomedical knowledge graphs. |