AI-Driven Feature Enhancement in Network Pharmacology: Advanced Techniques for Multi-Target Drug Discovery

Abstract

This article provides a comprehensive exploration of cutting-edge feature enhancement techniques in network pharmacology, a paradigm-shifting approach in drug discovery. Targeting researchers, scientists, and drug development professionals, it bridges the gap between foundational concepts and advanced computational methodologies. The scope encompasses the foundational shift from 'one drug-one target' to network-based polypharmacology [citation:5][citation:6], the application of AI and deep learning models like Graph Neural Networks (GNNs) for superior molecular representation [citation:1][citation:8], strategic solutions for prevalent data and methodological challenges [citation:6][citation:9], and the critical frameworks for validating and comparatively analyzing network pharmacology predictions through integration with experimental and clinical data [citation:3][citation:7]. This guide serves as a strategic resource for leveraging computational power to decipher complex drug-disease interactions and accelerate the development of multi-target therapies.

From Single Targets to Network Targets: The Foundational Shift Enabling Feature Enhancement

Welcome to the Technical Support Center for Network Pharmacology Research. This resource is designed for researchers, scientists, and drug development professionals navigating the shift from traditional, single-target drug discovery to the multi-target, systems-based approach of network pharmacology. The content here is framed within a broader thesis on feature enhancement techniques for network pharmacology, providing practical troubleshooting guides, FAQs, and detailed protocols to address common experimental challenges and optimize your research workflow [1] [2].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental philosophical difference between traditional drug discovery and network pharmacology? Traditional drug discovery operates on a reductionist "one drug–one target" paradigm. It aims to identify a single, highly selective compound to modulate a specific protein or pathway, with the goal of minimizing off-target effects [3] [2]. In contrast, network pharmacology is founded on a holistic "network-target, multiple-component therapeutics" paradigm. It acknowledges that complex diseases like cancer and neurodegenerative disorders arise from perturbations in biological networks and seeks to modulate multiple targets within those networks simultaneously for a more effective therapeutic outcome [3] [1]. This approach aligns with the mechanisms of many natural products and traditional medicines, which often exert effects through polypharmacology [3] [4].

Q2: My network analysis predicts hundreds of potential targets. How do I prioritize the most important ones for experimental validation? Prioritization is a critical step. Focus on nodes with high network centrality metrics (e.g., high degree, betweenness centrality), as these are likely key regulatory hubs [5]. Next, perform functional enrichment analysis (e.g., via KEGG, GO) on clusters of targets to see whether they converge on biologically relevant pathways such as PI3K-Akt or TNF signaling [5] [4]. Finally, use literature mining and existing disease databases (like OMIM or GeneCards) to cross-reference your prioritized targets with known disease-associated genes. Tools like NeXus v1.2 automate this integration of topology and enrichment analysis, significantly speeding up the process [5] [6].
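A minimal sketch of the first and last prioritization steps, assuming the network is already available as an undirected edge list of gene symbols and that a disease gene set has been exported from OMIM or GeneCards. The function name `prioritize_targets` is hypothetical, and the edges and gene sets are illustrative placeholders, not results.

```python
from collections import defaultdict


def prioritize_targets(edges, disease_genes, top_n=5):
    """Rank targets by degree and flag those with a known disease association.

    edges: iterable of (gene_a, gene_b) pairs from an undirected PPI network.
    disease_genes: set of gene symbols exported from a disease database.
    """
    neighbors = defaultdict(set)
    for a, b in edges:
        if a != b:  # ignore self-loops
            neighbors[a].add(b)
            neighbors[b].add(a)

    # Degree = number of distinct interaction partners; ties broken alphabetically.
    ranked = sorted(neighbors, key=lambda g: (-len(neighbors[g]), g))
    return [(g, len(neighbors[g]), g in disease_genes) for g in ranked[:top_n]]


# Illustrative toy data, not a real network.
edges = [("AKT1", "PIK3CA"), ("AKT1", "MTOR"), ("AKT1", "TNF"),
         ("TNF", "IL6"), ("IL6", "STAT3"), ("STAT3", "AKT1")]
disease = {"AKT1", "IL6", "TNF"}

for gene, degree, known in prioritize_targets(edges, disease, top_n=3):
    print(gene, degree, "disease-associated" if known else "candidate novel target")
```

Degree alone is a coarse signal. In practice it would be combined with betweenness and the enrichment results rather than used as the only ranking criterion.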

Q3: How can I validate the predicted interactions from my computational network model? A robust validation pipeline is multi-layered:

- In silico Validation: Use molecular docking (with tools like AutoDock) to assess the binding affinity and pose of your compound(s) to the prioritized protein targets [1] [4].

- In vitro Validation: Employ cell-based assays (e.g., gene knockdown/overexpression, Western blot, qPCR) to confirm that the compound modulates the activity or expression of the predicted targets and downstream pathway markers [4].

- In vivo Validation: Use relevant animal models to demonstrate the predicted therapeutic effect and, if possible, use techniques like phospho-proteomics or transcriptomics to confirm network-level changes in response to treatment [4].

Q4: What are the major regulatory challenges for multi-target drugs developed through network pharmacology? Current regulatory frameworks (e.g., FDA, EMA) are historically built around the single-target paradigm, requiring clear identification of a primary mechanism of action [2]. The main challenges for network pharmacology-based drugs include:

- Demonstrating definable mechanism(s) of action for a multi-component/multi-target agent.

- Establishing reproducible quality and standardization, especially for complex natural product mixtures, where chemical fingerprint and biological activity signature must be linked [3].

- Designing clinical trials that adequately capture polypharmacological effects and synergistic outcomes. The emerging Model-Informed Drug Development (MIDD) framework, particularly the ICH M15 guideline, promotes the use of quantitative modeling and may provide a more adaptable pathway for validating network-based therapeutics [7].

Q5: Which AI platforms are best suited for different stages of network pharmacology research? The choice depends on your specific need [8]:

| Research Stage | Recommended AI Platform | Primary Utility |
|---|---|---|
| Target & Protein Structure | DeepMind AlphaFold | Provides highly accurate, free protein structure predictions for target identification and docking studies [8]. |
| Hit Identification & Screening | Atomwise, Schrödinger AI | Uses deep learning (AtomNet) or physics-based ML for high-throughput virtual screening of compound libraries [8]. |
| Generative Molecular Design | Insilico Medicine, Exscientia | Employs generative AI to design novel molecular structures optimized for multiple targets or properties [8] [4]. |
| Polypharmacology & Safety | Cyclica AI | Specializes in predicting off-target interactions and polypharmacology profiles for safety screening [8]. |
| Knowledge Integration | BenevolentAI | Leverages large biomedical knowledge graphs to identify novel target-disease relationships [8]. |

Troubleshooting Guides

Issue 1: Low Predictive Power or Biologically Irrelevant Networks

- Problem: The constructed "herb-compound-target-disease" network yields obvious or non-specific results, failing to generate novel mechanistic insights.

- Solution:

- Audit Your Data Sources: Ensure you are using curated, high-quality databases. For TCM research, rely on TCMSP, ETCM, and HERB. For general targets, use DrugBank, GeneCards, and STRING [1] [4].

- Apply Stringent Filters: When screening active compounds, apply pharmacokinetic filters such as Oral Bioavailability (OB) ≥ 30% and Drug-Likeness (DL) ≥ 0.18 to focus on drug-like molecules [4].

- Refine the Disease Target Set: Use multiple disease databases (e.g., OMIM, DisGeNET) and set a relevance score threshold to exclude weakly associated genes, which would otherwise introduce noise [4].

- Upgrade Your Analysis Tool: Manual pipeline integration is prone to error. Switch to an automated platform like NeXus v1.2, which integrates network construction, multi-method enrichment analysis (ORA, GSEA, GSVA), and visualization, reducing analysis time by over 95% and improving reproducibility [5] [6].
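The two filtering steps above (the ADME cutoffs and the disease relevance threshold) can be written as simple record filters. This sketch assumes compound records with TCMSP-style `ob` (oral bioavailability, %) and `dl` (drug-likeness) values, and disease-gene records carrying a relevance `score`. The field names, the 0.3 score cutoff, and all example values are hypothetical, because each database exports its own column names and score scale.

```python
from dataclasses import dataclass


@dataclass
class Compound:
    name: str
    ob: float  # oral bioavailability, percent
    dl: float  # drug-likeness, 0-1


def passes_adme(c, min_ob=30.0, min_dl=0.18):
    """Keep compounds that meet the commonly used OB >= 30% and DL >= 0.18 cutoffs."""
    return c.ob >= min_ob and c.dl >= min_dl


def confident_disease_genes(gene_scores, min_score):
    """Drop weakly associated genes. The appropriate cutoff depends on the source database."""
    return {gene for gene, score in gene_scores.items() if score >= min_score}


compounds = [Compound("cmpd_A", 45.2, 0.28),   # hypothetical values
             Compound("cmpd_B", 12.0, 0.40),   # fails the OB cutoff
             Compound("cmpd_C", 36.9, 0.10)]   # fails the DL cutoff
kept = [c.name for c in compounds if passes_adme(c)]

genes = confident_disease_genes({"TNF": 0.9, "IL6": 0.7, "GENE_X": 0.05}, min_score=0.3)
print(kept, sorted(genes))
```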

Issue 2: Difficulty in Translating Computational Findings to In Vitro Experiments

- Problem: Compounds or targets identified in silico show no activity in cell-based assays.

- Solution:

- Check Bioavailability & Concentration: The compound may not be cell-permeable or may require metabolism to become active. Review ADMET predictions and consider using prodrugs. Also, ensure the in vitro concentration tested is physiologically relevant, as many studies use supraphysiological doses [3].

- Consider Synergy: If studying a multi-herb formulation, test compounds in combination. The therapeutic effect may rely on synergistic interactions (reinforcement, potentiation) that are not apparent when testing single compounds in isolation [3].

- Validate Target Engagement: Don't just measure a phenotypic outcome. Use techniques like Cellular Thermal Shift Assay (CETSA) or drug affinity responsive target stability (DARTS) to confirm that your compound is physically engaging with the predicted protein target in the cellular environment.

- Employ Multi-Omics Validation: Use transcriptomics or proteomics to profile the cell's response to treatment. The gene/protein expression changes should significantly overlap with the pathways enriched in your original network model (e.g., PI3K-Akt, MAPK signaling) [5] [4].
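A one-sided hypergeometric test is one way to check whether the overlap is larger than chance would predict. This is a generic statistic swapped in for illustration, not a method prescribed by the cited studies. It assumes both gene sets come from the same background (e.g., all genes quantified in the experiment), and the counts are hypothetical. With SciPy, `scipy.stats.hypergeom.sf(k - 1, N, K, n)` should give the same value.

```python
from math import comb


def hypergeom_sf(k, N, K, n):
    """P(X >= k) for X ~ Hypergeometric(population N, K successes, n draws)."""
    upper = min(K, n)
    total = comb(N, n)
    return sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1)) / total


# Hypothetical counts:
N = 20000   # background: genes quantified in the omics experiment
K = 300     # genes in the network-predicted pathways
n = 500     # differentially expressed genes after treatment
k = 25      # genes found in both sets

expected = K * n / N  # overlap expected by chance alone
p = hypergeom_sf(k, N, K, n)
print(f"expected {expected:.1f}, observed {k}, p = {p:.2e}")
```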

Issue 3: Managing and Integrating Heterogeneous, Multi-Layer Data

- Problem: Data from herbs, compounds, targets, omics, and clinical variables are in disparate formats, making integration and analysis cumbersome.

- Solution:

- Adopt a Standardized Workflow: Implement a structured pipeline: Data Collection → Network Construction & Analysis → Experimental Validation [4].

- Use Platforms for Multi-Layer Networks: Utilize tools specifically designed for hierarchical data. For example, NeXus v1.2 can seamlessly handle the "plant-compound-gene" relationship triad, identifying shared compounds and multi-target genes while managing incomplete data [5].

- Leverage Multi-Omics Integration: Correlate findings across layers. For instance, if transcriptomics shows pathway X is altered, check if proteomics confirms changes in key proteins of that pathway. This convergence strengthens your mechanistic hypothesis [4].

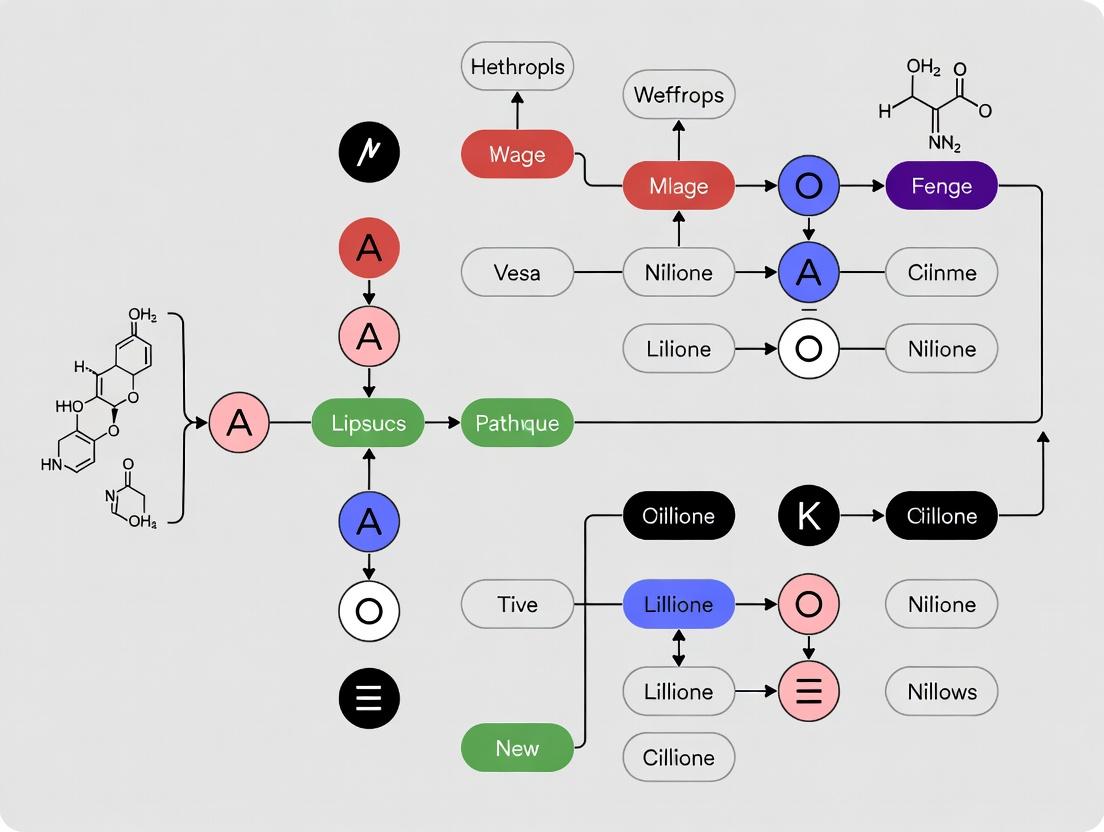

- Diagram Your Workflow: Map out your data flow to identify bottlenecks. The following diagram illustrates an advanced, feature-enhanced integrated workflow that addresses these challenges.

Diagram 1: Feature-Enhanced Integrated Network Pharmacology Workflow. This automated pipeline integrates diverse data sources and analytical methods to generate testable hypotheses.
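A minimal sketch of the plant-compound-gene triad stored as two mappings, assuming compound IDs are already harmonized (e.g., to PubChem CIDs). Missing relationships are simply absent from the dictionaries, so incomplete data does not break the analysis. Plant names, compound IDs, and gene symbols are placeholders. The sketch illustrates the data structure only and is not how NeXus implements it.

```python
from collections import Counter


def shared_compounds(plant_to_compounds):
    """Compounds contributed by more than one plant."""
    counts = Counter(c for comps in plant_to_compounds.values() for c in set(comps))
    return {c for c, n in counts.items() if n > 1}


def multi_target_genes(compound_to_genes, min_compounds=2):
    """Genes targeted by at least `min_compounds` distinct compounds."""
    counts = Counter(g for genes in compound_to_genes.values() for g in set(genes))
    return {g for g, n in counts.items() if n >= min_compounds}


# Placeholder data: "cid_4" has no known targets yet, so it has no entry below.
plant_to_compounds = {
    "plant_A": ["cid_1", "cid_2"],
    "plant_B": ["cid_2", "cid_3"],
    "plant_C": ["cid_3", "cid_4"],
}
compound_to_genes = {
    "cid_1": ["AKT1", "TNF"],
    "cid_2": ["AKT1", "IL6"],
    "cid_3": ["TNF", "PTGS2"],
}

print(sorted(shared_compounds(plant_to_compounds)))
print(sorted(multi_target_genes(compound_to_genes)))
```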

Issue 4: Inconsistent or Unreproducible Results with Natural Product Extracts

- Problem: Experimental results with a botanical extract cannot be replicated across batches or labs.

- Solution:

- Standardize the Extract: This is non-negotiable. Establish a detailed Standard Operating Procedure (SOP) for extraction and a chemical fingerprint (using HPLC/UPLC) for every batch. The biological activity ("signature") must be linked to a consistent chemical profile [3].

- Identify Active Markers: Go beyond fingerprinting. Use bioassay-guided fractionation or network-prediction-guided isolation to identify the key active marker compounds responsible for the observed effect. Quality control should then monitor these specific markers [3].

- Document Everything: Record the plant's botanical identity, geographical origin, part used, harvest time, and extraction solvent. All can dramatically influence chemical composition [3].

Featured Experimental Protocol: Validating a Network Pharmacology Prediction Using an Automated Platform

This protocol outlines the steps to use an automated platform (exemplified by NeXus v1.2) to predict and initiate validation of the mechanisms of a herbal formula [5] [6].

Objective: To identify the key bioactive compounds and synergistic targets of a multi-herb formulation (e.g., a three-herb combination) in the context of a specific disease (e.g., inflammation).

Materials & Software:

- Herbal Compound Data: List of chemical constituents for each herb, sourced from TCMSP or PubChem [4].

- Disease Target Data: List of genes/proteins associated with the disease from GeneCards, OMIM, or DisGeNET.

- Analysis Platform: NeXus v1.2 (or similar automated network pharmacology platform) [5].

- Validation Software: Molecular docking software (e.g., AutoDock Vina), Cytoscape for visualization.

- Cell Line: Disease-relevant cell line (e.g., macrophage cell line for inflammation).

Procedure: Part A: Automated Network Construction & Analysis (Expected time: <5 min with NeXus) [5]

- Data Preparation: Format your input data into three main lists: a) Plant/Herb names, b) Compound IDs (e.g., PubChem CID), and c) Disease-related Gene Symbols.

- Platform Input: Load the three data lists into NeXus v1.2. The platform will automatically map relationships using integrated databases.

- Run Integrated Analysis: Execute the automated pipeline. NeXus will:

- Construct a unified "herb-compound-gene" network.

- Perform topological analysis to identify hub compounds and targets.

- Conduct multi-method enrichment analysis (ORA, GSEA, GSVA) on gene clusters to pinpoint affected pathways (e.g., TNF, PI3K-Akt signaling).

- Generate publication-quality visualizations of the network and enrichment results.

- Output Review: Analyze the results. The platform will output a ranked list of high-degree (hub) compounds, a ranked list of key target genes, and the top significantly enriched KEGG pathways. This forms your core mechanistic hypothesis.

Part B: In Silico and In Vitro Validation

- Molecular Docking: Select the top 3-5 hub compounds and top 3-5 hub target proteins from Part A. Perform molecular docking to predict binding affinity and pose, providing initial validation of compound-target interactions [1] [4].

- Cell-Based Assay Design:

- Treat disease-relevant cells with the individual herb extracts, a combination of them, and the isolated hub compounds.

- Measure the expression (mRNA via qPCR, protein via Western blot) of the prioritized hub targets.

- Assess the activity of the predicted enriched pathways by measuring key markers (e.g., p-AKT/AKT ratio for PI3K-Akt pathway).

- Compare the effects of single herbs versus the combination to look for synergistic patterns [3].
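The last two steps come down to simple arithmetic. The sketch below computes a normalized p-AKT/AKT ratio from densitometry values and compares an observed combination effect with the Bliss independence expectation. Bliss is one common reference model for synergy, but it is not the only one, and the source does not name a model, so choosing it here is an assumption. All numbers are hypothetical.

```python
def phospho_ratio(phospho, total):
    """p-AKT/AKT ratio from densitometry values (same lane, same exposure)."""
    return phospho / total


def bliss_excess(effect_a, effect_b, effect_ab):
    """Observed combination effect minus the Bliss independence expectation.

    Effects are fractional inhibition in [0, 1]. Positive values suggest
    synergy, values near zero suggest additivity, negative values suggest antagonism.
    """
    expected = effect_a + effect_b - effect_a * effect_b
    return effect_ab - expected


# Hypothetical densitometry: vehicle-treated vs. treated cells
control = phospho_ratio(1.20, 1.00)
treated = phospho_ratio(0.60, 1.00)
inhibition = 1 - treated / control  # fractional reduction of p-AKT/AKT

# Hypothetical fractional inhibition for herb A, herb B, and A+B combined
excess = bliss_excess(0.30, 0.20, 0.60)
print(f"p-AKT/AKT inhibition: {inhibition:.2f}, Bliss excess: {excess:+.2f}")
```

A single dose pair is only suggestive. A real analysis would apply this across a dose grid with biological replicates.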

| Item Name | Function & Utility in Network Pharmacology | Key Examples/Specifications |
|---|---|---|
| Specialized Databases | Provide curated data on compounds, targets, and diseases essential for network construction. | TCMSP [4], HERB [4] (TCM-specific); DrugBank [1], GeneCards [4] (targets); KEGG [4], GO (pathways). |
| Network Analysis & Visualization Software | Enables construction, topological analysis, and visualization of biological networks. | Cytoscape [1] [4] (core visualization); STRING [1] (protein interactions); NeXus v1.2 [5] (automated, integrated analysis). |
| Molecular Docking Tools | Validates predicted compound-target interactions in silico by simulating binding. | AutoDock Vina [1], Schrödinger Suite [8]. |
| Multi-Omics Technologies | Provides systems-level data for unbiased validation of network predictions and mechanism elucidation. | Transcriptomics (RNA-seq), Proteomics (LC-MS/MS), Metabolomics [3] [4]. |
| AI/ML Platforms | Enhances target prediction, molecular design, and data integration capabilities. | AlphaFold (protein structure) [8]; Insilico Medicine [8], Chemistry42 [4] (generative chemistry). |
| Standardized Botanical Reference Materials | Ensures reproducibility in natural product research by providing a consistent chemical baseline. | Certified Reference Standards for key active markers in herbs (e.g., berberine, ginsenosides). Essential for QC [3]. |

Paradigm Comparison & Performance Metrics

The following table contrasts the two paradigms. Its last column summarizes reported efficiency gains from modern, automated tools.

| Feature | Traditional "One Drug–One Target" Paradigm | Network Pharmacology "Network-Target" Paradigm | Performance Metric (NP Tool) |
|---|---|---|---|
| Core Philosophy | Reductionist, linear causality [3] [2]. | Holistic, systems biology-based [3] [1]. | -- |
| Therapeutic Strategy | High-affinity modulation of a single target [2]. | Moderate modulation of multiple network targets [3] [1]. | -- |
| Typical Drug Source | Synthetic small molecules, biologics [3]. | Natural products, multi-component formulas, repurposed drugs [3] [1]. | -- |
| Success Rate Challenge | High attrition due to poor efficacy/toxicity in complex diseases [2]. | Addresses complexity but faces validation & regulatory hurdles [2] [7]. | -- |
| Analysis Workflow | Manual, multi-tool integration (Cytoscape, STRING, DAVID). | Automated, unified platforms. | NeXus v1.2 reduces analysis time from 15-25 min to <5 s (>95% reduction) [5]. |
| Data Handling | Often requires complete, clean relationship data. | Robust to incomplete data; handles multi-layer (plant-compound-gene) natively [5]. | Processes networks with 111 to 10,847 genes in under 3 minutes [5]. |
| Enrichment Analysis | Typically limited to Over-Representation Analysis (ORA). | Integrates ORA, GSEA, and GSVA for complementary insights [5]. | Applies all three methods automatically within a single workflow [5] [6]. |

Visualizing a Core Signaling Pathway in Network Pharmacology

Many network pharmacology studies on diseases like cancer and inflammation identify central signaling pathways such as PI3K/AKT as key targets for multi-compound formulations [5] [4]. The following diagram depicts this canonical pathway and how multiple compounds (C1, C2, C3) from a network analysis might interact with it at different nodes, demonstrating a polypharmacological strategy.

Diagram 2: Multi-Target Modulation of the PI3K/AKT/mTOR Pathway. This shows how multiple compounds predicted by network analysis can synergistically target different nodes in a key disease-associated pathway.

Technical Support Center: Troubleshooting Guides and FAQs for Network Pharmacology Research

This technical support center is designed within the context of advancing feature enhancement techniques for network pharmacology research. It addresses the core computational and methodological challenges in representing and analyzing complex, multi-component systems to accelerate robust, multi-target drug discovery [9] [10].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental shift in perspective from classical to network pharmacology, and why is it critical for complex diseases? A1: Classical pharmacology largely follows a "one drug, one target" paradigm, which is effective for monogenic or infectious diseases but has high failure rates for complex, multifactorial diseases like cancer or neurodegeneration [10]. Network pharmacology represents a paradigm shift to a systems-based, multi-target approach. It views diseases as perturbations within intricate biological networks (protein-protein interactions, signaling pathways) and aims to identify compounds or formulas that restore network balance by modulating multiple nodes simultaneously [9] [1]. This holistic perspective is critical because complex diseases often involve redundant pathways and feedback loops, making single-target interventions insufficient [11] [10].

Q2: What are the most common data integration challenges when constructing a multi-layer drug-target-disease network, and how can they be resolved? A2: A primary challenge is harmonizing data from disparate sources (e.g., compound structures from PubChem/ChEMBL, targets from DrugBank, disease genes from DisGeNET, and protein interactions from STRING) which use different identifiers and confidence metrics [12] [10].

- Issue: Inconsistent nomenclature and missing relationships create fragmented, unreliable networks.

- Solution: Implement rigorous data curation pipelines: standardize all identifiers (e.g., to UniProt or Ensembl IDs), apply confidence score filters (e.g., STRING score >0.7), and leverage integrated platforms like NeXus or UNIQ that automate some of these processes [9] [12] [13]. For traditional medicine research, using specialized databases like TCMSP or HERB for herb-compound relationships is essential [9].
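A minimal sketch of the curation step. It assumes a small hand-built alias table and a three-column edge list with an integer confidence score on STRING's 0-1000 `combined_score` scale, so the 0.7 cutoff corresponds to 700. The alias table and edges are illustrative. In practice the mapping would come from UniProt or Ensembl ID-mapping resources, not a hard-coded dictionary.

```python
# Illustrative alias table mapping source-specific names to one canonical symbol.
ALIASES = {
    "PKB": "AKT1", "AKT1": "AKT1",
    "TNF-alpha": "TNF", "TNFA": "TNF", "TNF": "TNF",
    "IL-6": "IL6", "IL6": "IL6",
}


def canonical(name):
    """Return the canonical symbol, or None if the identifier is unmapped."""
    return ALIASES.get(name.strip())


def curate_edges(raw_edges, min_score=700):
    """Harmonize identifiers, drop unmapped or low-confidence edges, and deduplicate."""
    curated, unmapped = set(), set()
    for a, b, score in raw_edges:
        ca, cb = canonical(a), canonical(b)
        if ca is None or cb is None:
            # Record the unmapped names for review rather than dropping them silently.
            unmapped.update(x for x, c in ((a, ca), (b, cb)) if c is None)
            continue
        if score >= min_score and ca != cb:
            curated.add(tuple(sorted((ca, cb))))  # undirected: store each pair once
    return curated, unmapped


raw = [("PKB", "TNF-alpha", 812), ("AKT1", "TNFA", 905),  # same pair, two sources
       ("IL-6", "TNF", 450),                              # below the 0.7 cutoff
       ("IL6", "MYSTERY1", 990)]                          # unmapped identifier
edges, missing = curate_edges(raw)
print(edges, missing)
```

Reporting the unmapped identifiers makes systematic nomenclature problems easier to spot than a silently shrinking network would.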

Q3: My network analysis yields hundreds of potential targets. How do I identify the most biologically relevant "hub" targets or key functional modules? A3: Use graph-theoretical topological analysis to quantitatively prioritize candidates.

- Method: Calculate centrality metrics for nodes (targets) in your constructed network:

- Degree Centrality: Number of connections. High-degree nodes are "hubs."

- Betweenness Centrality: The fraction of shortest paths between other pairs of nodes that pass through a given node. High-betweenness nodes are "bottlenecks."

- Use community detection algorithms (e.g., Louvain, MCODE) to identify densely connected clusters (modules) that often correspond to functional pathways [12] [10].

- Next Step: Subject the top nodes and modules to functional enrichment analysis (GO, KEGG) to interpret their biological context. For example, a module enriched in "PI3K-AKT signaling" and "apoptosis" is highly relevant for cancer research [14] [13].
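Degree is a simple count, but betweenness requires shortest-path bookkeeping. The sketch below implements Brandes' algorithm for an unweighted, undirected graph in plain Python to make the definition concrete. It returns unnormalized scores. In practice the CytoNCA plugin or `networkx.betweenness_centrality` (which normalizes by default) would be used instead. The toy graph is illustrative.

```python
from collections import deque


def betweenness(adj):
    """Unnormalized betweenness centrality (Brandes) for an undirected, unweighted graph.

    adj: dict mapping node -> set of neighbour nodes.
    """
    bc = dict.fromkeys(adj, 0.0)
    for s in adj:
        # Breadth-first search from s, counting shortest paths (sigma).
        stack, preds = [], {v: [] for v in adj}
        sigma, dist = dict.fromkeys(adj, 0), dict.fromkeys(adj, -1)
        sigma[s], dist[s] = 1, 0
        queue = deque([s])
        while queue:
            v = queue.popleft()
            stack.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies in reverse BFS order.
        delta = dict.fromkeys(adj, 0.0)
        while stack:
            w = stack.pop()
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1 + delta[w])
            if w != s:
                bc[w] += delta[w]
    # Each undirected pair was counted once from each endpoint.
    return {v: c / 2 for v, c in bc.items()}


# Toy network: two triangles joined through a single bridge node "B".
edge_list = [("A1", "A2"), ("A1", "A3"), ("A2", "A3"), ("A3", "B"),
             ("B", "C1"), ("C1", "C2"), ("C1", "C3"), ("C2", "C3")]
adj = {}
for u, v in edge_list:
    adj.setdefault(u, set()).add(v)
    adj.setdefault(v, set()).add(u)

scores = betweenness(adj)
print(max(scores, key=scores.get), scores["B"])
```

Node "B" has a degree of only 2 but the highest betweenness, because every path between the two triangles runs through it. This is the "bottleneck" pattern described above.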

Q4: How can I move from in silico network predictions to experimentally validated mechanisms? What is a standard validation workflow? A4: A robust validation workflow integrates computational and experimental tiers.

- Computational Validation: Perform molecular docking (e.g., with AutoDock Vina) to assess binding affinity and pose of your top compounds to the active sites of prioritized hub targets. Follow with molecular dynamics simulations (e.g., Desmond) to evaluate complex stability over time [14] [13].

- In Vitro Experimental Validation: Test the compound/formula in relevant cell models. Measure:

- Expression changes of hub target genes/proteins (qPCR, Western blot).

- Downstream pathway activity (e.g., p-AKT/AKT ratio for PI3K-AKT pathway).

- Phenotypic effects (e.g., cell proliferation, apoptosis) [13].

- In Vivo Experimental Validation: Use established animal disease models. Administer the compound and assess:

- Behavioral or symptomatic improvement.

- Target engagement and pathway modulation in tissue samples.

- Use specific agonists/antagonists to confirm pathway necessity (e.g., a PI3K inhibitor like LY294002 to block the predicted effect) [13].

Q5: Traditional medicine formulas involve dozens of compounds. How can I model their multi-component, multi-target action without being overwhelmed? A5: The key is to adopt a multi-layer network representation and focus on system-level features.

- Representation: Construct a hierarchical network with distinct layers for Herbs -> Bioactive Compounds -> Protein Targets -> Pathways -> Disease Phenotypes. This clarifies which herbs contribute shared compounds and how compounds synergize on common pathways [9] [12].

- Analysis: Move beyond single-target analysis. Use enrichment methods like GSEA or GSVA (implemented in platforms like NeXus) that work on the full gene-level data rather than a thresholded gene list: GSEA uses the entire ranked list of genes, and GSVA computes pathway scores for each sample. This reveals pathways collectively targeted by the formula [12]. Conceptually, employ hypergraphs, in which a single edge can connect more than two nodes, to model the complex, multi-way relationships inherent in such systems (e.g., a single compound influencing a protein complex of three targets simultaneously) [15].
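A hypergraph can be stored as an incidence mapping from each hyperedge to the set of nodes it joins. This minimal sketch uses hypothetical compound and target names to encode the one-compound, three-target example above. It illustrates the data structure only and is not a modeling method taken from the cited work.

```python
from collections import defaultdict

# Each hyperedge joins any number of nodes. All names are hypothetical.
hyperedges = {
    "cmpd_X->complex_1": {"cmpd_X", "TARGET_A", "TARGET_B", "TARGET_C"},
    "cmpd_Y->TARGET_C": {"cmpd_Y", "TARGET_C"},
}


def node_degrees(edges):
    """Hypergraph degree: the number of hyperedges each node belongs to."""
    deg = defaultdict(int)
    for members in edges.values():
        for node in members:
            deg[node] += 1
    return dict(deg)


def pairwise_projection(edges):
    """Clique expansion: the ordinary graph obtained by flattening each hyperedge."""
    pairs = set()
    for members in edges.values():
        nodes = sorted(members)
        for i, u in enumerate(nodes):
            for v in nodes[i + 1:]:
                pairs.add((u, v))
    return pairs


deg = node_degrees(hyperedges)
flat = pairwise_projection(hyperedges)
print(deg["TARGET_C"], len(flat))
```

The projection shows what flattening loses. The six pairwise edges from the first hyperedge no longer record that the three targets were affected together as one complex.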

Q6: When analyzing high-dimensional omics data (transcriptomics, proteomics) within a network framework, how do I avoid false positives and enhance feature relevance? A6: This is a core feature enhancement challenge. Mitigation strategies include:

- Leveraging Prior Knowledge Networks: Do not rely solely on correlation in omics data. Integrate your data (e.g., differentially expressed genes) with established, high-confidence interaction networks from databases like STRING or BioGRID. This constrains the hypothesis space [10].

- Employing Advanced AI Methods: Shift from conventional machine learning on per-node (tabular) features to methods that learn from relationships. Graph Neural Networks (GNNs) learn directly from the graph structure of biological networks and enhance feature representations by incorporating network topology [9]. Techniques like network embedding create low-dimensional representations of nodes that preserve their structural and functional roles within the larger network [9].
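The core idea of a GNN layer is that each node's new feature vector mixes its own features with those of its neighbors. The sketch below performs one round of mean-aggregation message passing in plain Python with a fixed, hypothetical mixing weight. A real GNN (e.g., built with PyTorch Geometric or DGL) learns weight matrices and stacks several layers. The sketch shows the mechanism only, not any specific published model.

```python
def message_passing_step(features, adj, self_weight=0.5):
    """One mean-aggregation update: h_v' = relu(w * h_v + (1 - w) * mean of neighbours' h_u).

    features: dict node -> list of floats (all the same length).
    adj: dict node -> set of neighbours.
    """
    updated = {}
    for v, h_v in features.items():
        nbrs = adj.get(v, set())
        if nbrs:
            mean = [sum(features[u][i] for u in nbrs) / len(nbrs) for i in range(len(h_v))]
        else:
            mean = [0.0] * len(h_v)  # isolated node: no incoming messages
        mixed = [self_weight * a + (1 - self_weight) * b for a, b in zip(h_v, mean)]
        updated[v] = [max(0.0, x) for x in mixed]  # ReLU non-linearity
    return updated


# Hypothetical 2-dimensional features: [log fold change, disease-association flag]
features = {"AKT1": [1.0, 1.0], "PIK3CA": [0.0, 0.0], "MTOR": [-2.0, 0.0]}
adj = {"AKT1": {"PIK3CA", "MTOR"}, "PIK3CA": {"AKT1"}, "MTOR": {"AKT1"}}
out = message_passing_step(features, adj)
print(out)
```

After one step, PIK3CA, which carried no signal of its own, picks up a non-zero representation from its neighbor AKT1. This is the sense in which topology "enhances" node features.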

Troubleshooting Common Experimental & Computational Issues

| Problem Area | Specific Issue | Possible Cause | Recommended Solution |
|---|---|---|---|
| Network Construction | Sparse, disconnected network with poor biological plausibility. | Overly stringent filters on interaction data; using generic instead of tissue- or context-specific networks. | Use tissue-specific PPI data if available; relax confidence score thresholds (e.g., lower the STRING score cutoff from 0.7 to 0.4); incorporate more relationship types (activation, inhibition). |
| Target Prediction | Poor overlap between predicted targets from different algorithms (e.g., SEA vs. docking). | Each algorithm has different biases and data dependencies. | Use consensus prediction: retain only targets predicted by ≥2 independent methods. Validate top consensus targets with literature mining for direct experimental evidence. |
| Enrichment Analysis | Enriched pathways are too generic (e.g., "Cancer pathways") or not statistically significant. | Input gene list is too broad or noisy; using only Over-Representation Analysis (ORA), which relies on arbitrary thresholds. | Refine the input gene list using tighter differential expression cutoffs or network-based prioritization. Use GSEA or GSVA, which are more sensitive to coordinated subtle shifts across a pathway [12]. |
| Validation Discrepancy | In vitro results do not support key network predictions (e.g., a hub target shows no change). | The cellular model may lack the disease-relevant context; the compound may be metabolized; the network may have missed a critical indirect regulator. | Use more disease-relevant cell models (primary cells, patient-derived cells). Test not only the hub target but also its direct upstream regulators and downstream effectors from the network. |
| Multi-Omics Integration | Difficulty integrating transcriptomic and proteomic data into a coherent network model. | Data from different layers (mRNA, protein) are discordant due to post-transcriptional regulation and have different scales/distributions. | Use network-based data fusion tools or multi-omics factor analysis (MOFA). Construct a layered network in which mRNA and protein nodes for the same gene are distinct but connected, allowing for regulatory inference [10]. |
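The consensus rule in the Target Prediction row is easy to apply once each method's output is a set of target symbols. The method names below match tools mentioned in this guide, but the predicted sets are invented placeholders.

```python
from collections import Counter


def consensus_targets(predictions, min_methods=2):
    """Targets predicted by at least `min_methods` methods, with their vote counts.

    predictions: dict method_name -> iterable of predicted target symbols.
    """
    votes = Counter(t for targets in predictions.values() for t in set(targets))
    return {t: n for t, n in votes.items() if n >= min_methods}


predictions = {  # placeholder outputs, not real predictions
    "SwissTargetPrediction": {"AKT1", "PTGS2", "ESR1"},
    "SEA": {"AKT1", "PTGS2", "MAPK1"},
    "docking": {"AKT1", "EGFR"},
}
print(consensus_targets(predictions))
```

Ligand-similarity methods can share training data, so whether two methods count as "independent" should be judged case by case.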

Detailed Experimental Protocol: Integrated Network Pharmacology Workflow

This protocol outlines a standard workflow for elucidating the mechanism of a herbal medicine (e.g., Epimedium) for a complex disease (e.g., Spinal Cord Injury), integrating network analysis, molecular docking, and experimental validation [13].

Phase 1: In Silico Network Construction & Analysis

- Compound Screening & Target Identification:

- Retrieve bioactive compounds from the herb using TCMSP or HERB [9]. Apply ADME filters (Oral Bioavailability ≥30%, Drug-likeness ≥0.18) [13].

- Predict putative protein targets for each compound using SwissTargetPrediction and PharmMapper, and run a similarity search with SEA.

- Retrieve known disease-associated targets from GeneCards, OMIM, and DisGeNET using "Spinal Cord Injury" as keyword.

- Intersect herb targets and disease targets to obtain potential therapeutic targets.

Network Construction & Topology Analysis:

- Input the potential therapeutic targets into the STRING database to obtain a Protein-Protein Interaction (PPI) network. Set minimum interaction confidence score >0.7 [13].

- Import the PPI network into Cytoscape. Use the CytoNCA plugin to calculate centrality values (Degree, Betweenness). Identify the top 10 hub targets.

- Perform module analysis using the MCODE plugin to detect densely connected clusters.

Enrichment & Pathway Analysis:

- Submit the hub targets to the clusterProfiler R package for Gene Ontology (GO) and KEGG pathway enrichment analysis (p-value cutoff = 0.05) [13].

- Identify key enriched pathways (e.g., PI3K-Akt signaling pathway, inflammatory response).
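For readers working outside R, the statistics behind an ORA-style analysis like this can be sketched in a few lines: a hypergeometric p-value per pathway, followed by Benjamini-Hochberg adjustment (the default `pAdjustMethod = "BH"` in clusterProfiler's `enrichGO`/`enrichKEGG`). This is not a re-implementation of clusterProfiler. The gene sets, the background size of 8000, and the hub list are hypothetical.

```python
from math import comb


def hypergeom_sf(k, N, K, n):
    """P(X >= k): chance of drawing at least k pathway genes among n hub genes."""
    return sum(comb(K, i) * comb(N - K, n - i)
               for i in range(k, min(K, n) + 1)) / comb(N, n)


def benjamini_hochberg(pvals):
    """Benjamini-Hochberg adjusted p-values, returned in the input order."""
    m = len(pvals)
    order = sorted(range(m), key=lambda i: pvals[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # from the largest p-value down to the smallest
        i = order[rank - 1]
        running_min = min(running_min, pvals[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


def ora(hub_genes, pathways, background_size):
    """Return {pathway: (p_value, adjusted_p)} for each pathway gene set."""
    names = list(pathways)
    pvals = [hypergeom_sf(len(hub_genes & pathways[name]), background_size,
                          len(pathways[name]), len(hub_genes))
             for name in names]
    return dict(zip(names, zip(pvals, benjamini_hochberg(pvals))))


# Toy inputs: ten hub genes and two 100-gene sets padded with placeholder genes.
hubs = {"AKT1", "PIK3CA", "MTOR", "EGFR", "VEGFA",
        "TNF", "IL6", "STAT3", "MAPK1", "CASP3"}
pathways = {
    "pathway_1": {"AKT1", "PIK3CA", "MTOR", "EGFR", "VEGFA"}
                 | {f"P1_GENE_{i}" for i in range(95)},
    "pathway_2": {"CASP3"} | {f"P2_GENE_{i}" for i in range(99)},
}

for name, (p, q) in ora(hubs, pathways, background_size=8000).items():
    print(f"{name}: p = {p:.2e}, BH-adjusted = {q:.2e}")
```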

Molecular Docking Validation:

- Retrieve 3D structures of key hub targets (e.g., AKT1, PI3K) from the PDB database. Prepare proteins (remove water, add hydrogens).

- Obtain 3D structures of the herb's core bioactive compounds (e.g., Icariin from Epimedium) from PubChem.

- Perform molecular docking using AutoDock Vina. A binding energy ≤ -7.0 kcal/mol generally indicates good binding affinity [14].

- Visualize docking poses with PyMOL or Discovery Studio.
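To put the -7.0 kcal/mol threshold in more familiar units, the standard relation ΔG = RT ln Kd can be inverted. The sketch assumes T = 298.15 K and treats the docking score as if it were a true binding free energy. It is not: Vina scores are empirical estimates, so the result is only an order-of-magnitude guide.

```python
import math

R_KCAL = 1.987204e-3  # gas constant, kcal/(mol*K)


def kd_from_dg(dg_kcal_per_mol, temp_k=298.15):
    """Dissociation constant (mol/L) implied by dG = RT ln(Kd)."""
    return math.exp(dg_kcal_per_mol / (R_KCAL * temp_k))


for score in (-5.0, -7.0, -9.0):
    print(f"{score:5.1f} kcal/mol -> Kd ~ {kd_from_dg(score) * 1e6:.3g} uM")
```

At room temperature, each additional -1.36 kcal/mol (RT ln 10) corresponds to roughly a tenfold tighter Kd. By this conversion, -7.0 kcal/mol sits in the low-micromolar range.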

Network Pharmacology Computational Workflow

Phase 2: In Vivo Experimental Validation

- Animal Model Establishment:

- Use adult Sprague-Dawley rats. Anesthetize and perform a laminectomy at the T10 vertebral level.

- Induce a moderate contusion SCI using a standardized impactor device (e.g., a 10 g weight dropped from a height of 5 cm). The sham group undergoes laminectomy only [13].

Drug Administration & Grouping:

- Randomly assign rats into four groups (n=10): Sham, SCI (model), SCI + Herb (e.g., Epimedium extract), SCI + Herb + Inhibitor (e.g., PI3K inhibitor LY294002).

- Administer the herb extract via oral gavage at a pharmacologically relevant dose (e.g., 135 mg/kg/day) for 4 weeks [13].

Functional & Molecular Assessment:

- Behavioral Test: Weekly, assess locomotor recovery using the Basso, Beattie, Bresnahan (BBB) locomotor rating scale.

- Molecular Validation: At endpoint, harvest spinal cord tissue at the injury epicenter.

- Perform Western blot to measure expression and phosphorylation levels of hub targets (e.g., p-PI3K/PI3K, p-AKT/AKT ratios).

- Measure oxidative stress markers (MDA, SOD, GSH) to validate predicted anti-oxidative effects.

- Use histological staining (e.g., Nissl) and immunostaining (e.g., GFAP) to assess neuronal survival and glial activation.

Data Analysis & Mechanism Confirmation:

- Compare behavioral scores and molecular markers across groups using appropriate statistical tests (e.g., one-way ANOVA).

- The key confirmation is that co-administration of the specific pathway inhibitor (LY294002) reverses or attenuates the herb's therapeutic effects (improved BBB score, reduced oxidative stress). This supports the conclusion that the predicted pathway (PI3K-AKT) is required for the herb's mechanism [13].
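The group comparison above can be sketched as a one-way ANOVA F statistic in plain Python. The BBB scores are invented for illustration, with four animals per group for brevity. In practice `scipy.stats.f_oneway` returns both F and its p-value. A significant ANOVA should be followed by a post-hoc test (e.g., Tukey's HSD) to determine which groups differ, including whether the inhibitor group differs from the herb group.

```python
def one_way_anova_f(*groups):
    """Return (F, df_between, df_within) for independent groups of numbers."""
    all_values = [x for g in groups for x in g]
    grand_mean = sum(all_values) / len(all_values)
    k, n_total = len(groups), len(all_values)

    # Between-group and within-group sums of squares
    ss_between = sum(len(g) * (sum(g) / len(g) - grand_mean) ** 2 for g in groups)
    ss_within = sum((x - sum(g) / len(g)) ** 2 for g in groups for x in g)

    df_between, df_within = k - 1, n_total - k
    f_stat = (ss_between / df_between) / (ss_within / df_within)
    return f_stat, df_between, df_within


# Hypothetical week-4 BBB scores (0-21 scale)
sham = [21, 21, 20, 21]
sci = [8, 9, 7, 8]
sci_herb = [13, 14, 12, 13]
sci_herb_inhibitor = [9, 10, 8, 9]

f_stat, df_b, df_w = one_way_anova_f(sham, sci, sci_herb, sci_herb_inhibitor)
print(f"F({df_b}, {df_w}) = {f_stat:.1f}")
```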

PI3K-AKT Signaling Pathway Activation

The Scientist's Toolkit: Essential Research Reagent Solutions

| Category | Tool/Reagent/Database | Primary Function in Network Pharmacology | Key Consideration |
|---|---|---|---|
| Compound & Herb Databases | TCMSP, HERB, ETCM [9] | Provide curated information on herbal compounds, pharmacokinetics (ADME), and putative targets. Essential for traditional medicine research. | Filter compounds by OB and DL to prioritize drug-like candidates. Cross-reference between databases. |
| Target & Disease Databases | DrugBank, SwissTargetPrediction, GeneCards, DisGeNET [14] [10] | Identify drug-protein and disease-protein associations. Critical for building the "drug-target-disease" triad. | Use for both target prediction (SwissTargetPrediction) and disease gene compilation (GeneCards). |
| Interaction & Pathway Databases | STRING, BioGRID, KEGG, Reactome [12] [10] | Provide high-confidence protein-protein interactions and curated pathway maps. The backbone for network construction. | Use a high confidence score (e.g., >0.7 in STRING). KEGG is vital for functional enrichment analysis. |
| Network Analysis & Visualization | Cytoscape (with plugins), NeXus Platform, Gephi [12] [10] | Construct, analyze, and visualize complex networks. Plugins (CytoNCA, MCODE) enable topological and module analysis. | NeXus automates multi-layer network analysis and integrates multiple enrichment methods (ORA, GSEA, GSVA) [12]. |
| Computational Validation Tools | AutoDock Vina, PyMOL, Desmond (Schrödinger) [14] [13] | Perform molecular docking to predict binding affinity and pose, and molecular dynamics to assess complex stability. | Docking provides a static snapshot; MD simulations (50-100 ns) offer dynamic stability and interaction insights. |
| Experimental Reagents (Example) | LY294002 (PI3K Inhibitor) [13] | Pharmacological inhibitor used for in vivo or in vitro "rescue" experiments to confirm a predicted pathway's causal role. | The reversal of the therapeutic effect by the inhibitor is strong evidence for the predicted mechanism. |

Technical Support Center: Troubleshooting Common Experimental Challenges

This section addresses frequent technical and methodological issues encountered by researchers applying Network Target Theory and AI-driven network pharmacology in their experimental workflows.

FAQ 1: My computational model for predicting drug-disease interactions performs well on training data but generalizes poorly to new disease networks. How can I improve its robustness?

- Answer: Poor generalization often stems from overfitting to sparse or imbalanced datasets. A primary strategy is to incorporate transfer learning frameworks that leverage knowledge from large-scale biological networks to inform predictions on smaller, disease-specific datasets [16]. Also make sure your training data span diverse biological contexts. The model described in [16], which identified 88,161 drug-disease interactions, was reported to handle sample imbalance successfully. For validation, always use strict hold-out test sets that represent novel network topologies (e.g., a cancer type not seen during training). Graph neural networks (GNNs) that integrate prior biological knowledge can also substantially improve generalizability: the prior acts as a form of regularization that reduces the effective dimensionality of the problem [17] [18].
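A disease-level hold-out like the one recommended above can be sketched in a few lines of plain Python. This is a minimal illustration, not the procedure used in [16]. It assumes each record is a hypothetical `(drug, disease, label)` tuple and uses "unseen disease" as the proxy for a novel network topology. Grouping by disease class or tissue of origin would give a stricter test.

```python
import random
from collections import defaultdict


def disease_holdout_split(records, test_fraction=0.2, seed=0):
    """Split (drug, disease, label) records so that every disease in the test
    set is absent from training (a disease-level, not pair-level, hold-out)."""
    by_disease = defaultdict(list)
    for record in records:
        by_disease[record[1]].append(record)
    diseases = sorted(by_disease)              # sort first so the shuffle is reproducible
    random.Random(seed).shuffle(diseases)
    n_test = max(1, round(test_fraction * len(diseases)))
    test_diseases = set(diseases[:n_test])
    train = [r for d in diseases[n_test:] for r in by_disease[d]]
    test = [r for d in diseases[:n_test] for r in by_disease[d]]
    return train, test, test_diseases


# Toy data: 20 hypothetical drug-disease pairs spread evenly over 5 diseases.
pairs = [(f"drug{i}", f"disease{i % 5}", i % 2) for i in range(20)]
train, test, held_out = disease_holdout_split(pairs, test_fraction=0.2, seed=1)
print(sorted(held_out), len(train), len(test))
```

Because whole diseases are held out, a model cannot score well on the test set by memorizing disease-specific patterns it saw during training.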

FAQ 2: I am trying to construct a disease-specific biological network as my therapeutic "network target." What are the best strategies to integrate high-throughput multi-omics data while minimizing noise?

- Answer: Effective integration requires moving beyond simple overlap analysis. We recommend a supervised integration framework that uses biological prior knowledge to guide the process. The GNNRAI framework, for example, models relationships between molecular features (e.g., genes within a pathway) using knowledge graphs from databases like Pathway Commons [17]. This approach processes transcriptomics and proteomics data through GNN-based feature extractors, aligning the data modalities to find shared patterns relevant to the disease phenotype [17]. For cancer research, always start with curated, disease-specific data from repositories like The Cancer Genome Atlas (TCGA) or the Cancer Cell Line Encyclopedia (CCLE) to ensure biological relevance [16] [19]. Tools like MOFA (Multi-Omics Factor Analysis) can be used for initial, unsupervised exploration of shared factors across omics layers [19].

FAQ 3: My network analysis of a multi-herb formula yields an overly complex and uninterpretable "hairball" network. How can I extract functionally meaningful modules?

- Answer: A dense "hairball" network indicates a need for topological and community analysis. First, apply graph-theoretical measures (degree, betweenness centrality) to identify hub nodes that may be critical regulators [10]. Next, use community detection algorithms (e.g., Louvain, MCODE) to partition the network into functionally coherent modules [12] [10]. As demonstrated by the NeXus platform, these modules often align with specific biological pathways (e.g., inflammatory response, metabolic regulation) [12]. Follow this with module-specific enrichment analysis using Gene Ontology (GO) or KEGG databases to assign biological meaning. This "de-network" strategy shifts focus from thousands of individual interactions to a handful of targetable functional modules, which is the core premise of Network Target Theory.
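Here is a minimal sketch of the first topological step. It assumes an undirected, unweighted PPI edge list, counts degree directly, and computes betweenness with Brandes' algorithm. The gene names are illustrative only. In practice a library routine such as NetworkX's `betweenness_centrality`, or Cytoscape's built-in analyzers, would normally be used. Community detection (Louvain, MCODE) is not shown.

```python
from collections import deque


def degree_and_betweenness(edges):
    """Degree and unnormalized betweenness centrality for an undirected,
    unweighted graph (Brandes' algorithm; each unordered node pair counted once)."""
    adj = {}
    for u, v in edges:
        adj.setdefault(u, set()).add(v)
        adj.setdefault(v, set()).add(u)
    degree = {node: len(nbrs) for node, nbrs in adj.items()}
    betweenness = dict.fromkeys(adj, 0.0)
    for source in adj:
        # BFS from the source, counting shortest paths (sigma) and predecessors.
        order, preds = [], {node: [] for node in adj}
        sigma = dict.fromkeys(adj, 0)
        sigma[source] = 1
        dist = dict.fromkeys(adj, -1)
        dist[source] = 0
        queue = deque([source])
        while queue:
            v = queue.popleft()
            order.append(v)
            for w in adj[v]:
                if dist[w] < 0:
                    dist[w] = dist[v] + 1
                    queue.append(w)
                if dist[w] == dist[v] + 1:
                    sigma[w] += sigma[v]
                    preds[w].append(v)
        # Accumulate pair dependencies, farthest nodes first.
        delta = dict.fromkeys(adj, 0.0)
        for w in reversed(order):
            for v in preds[w]:
                delta[v] += sigma[v] / sigma[w] * (1.0 + delta[w])
            if w != source:
                betweenness[w] += delta[w]
    # Every path was counted once from each endpoint, so halve the totals.
    return degree, {node: score / 2 for node, score in betweenness.items()}


# Toy PPI (illustrative gene names): a hub with four partners, one of which has an extra partner.
edges = [("AKT1", "TP53"), ("AKT1", "MTOR"), ("AKT1", "EGFR"), ("AKT1", "GSK3B"), ("EGFR", "GRB2")]
degree, betweenness = degree_and_betweenness(edges)
hubs = sorted(degree, key=lambda n: (-betweenness[n], -degree[n], n))
print(hubs[:2])  # ['AKT1', 'EGFR']
```

EGFR has only two connections, but it ranks second because every shortest path to GRB2 passes through it. This is the "bottleneck" role that betweenness captures and degree misses.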

FAQ 4: How can I validate computationally predicted "network targets" or synergistic drug combinations in a wet-lab setting?

- Answer: Computational predictions require multi-tiered experimental validation. Begin with in vitro cell-based assays.

- For a predicted synergistic drug combination, perform dose-response matrix assays on relevant disease cell lines, and benchmark against public combination-screening resources such as DrugCombDB [16]. Calculate combination indices (e.g., with the Chou-Talalay method) to quantify synergy.

- To validate a perturbed network target (e.g., a signaling module), use techniques like Western blotting or phospho-proteomics to measure changes in key protein nodes and pathway activity downstream of drug treatment [16].

- Gene knockdown/knockout experiments (siRNA, CRISPR-Cas9) on central hub genes within the predicted module can confirm their functional role in the drug's mechanism [16]. The ultimate goal is to demonstrate that the intervention shifts the disease network state toward a healthier phenotype, not just that it hits a list of single targets.

FAQ 5: When using AI models like GNNs for prediction, how can I maintain interpretability to understand the biological rationale behind the model's output?

- Answer: The "black box" problem is a key challenge. Adopt explainable AI (XAI) techniques integrated into your pipeline. For GNNs, use post-hoc attribution methods such as integrated gradients or integrated Hessians [17]. These methods calculate the contribution of each input feature (e.g., a gene's expression level) to the model's final prediction, allowing you to identify which nodes and edges in the biological knowledge graph were most influential [17]. Furthermore, you can build interpretability into the architecture itself, as seen in models that provide attention weights over different network neighborhoods or biodomains [17]. Always correlate the model's explanations with established biological knowledge to assess their plausibility.
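To make the attribution idea concrete, the sketch below computes integrated gradients for a toy logistic model, f(x) = sigmoid(w·x + b), whose gradient is known analytically. The toy model stands in for a GNN purely for illustration. The weights, inputs and all-zero baseline are hypothetical. For real PyTorch models, a library such as Captum provides an `IntegratedGradients` implementation. The final check uses the completeness property: the attributions should sum to roughly f(x) minus f(baseline).

```python
import math


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def integrated_gradients(x, baseline, weights, bias, steps=200):
    """Integrated gradients for f(x) = sigmoid(w.x + b), approximating the path
    integral from baseline to x with a midpoint Riemann sum."""
    avg_grad = [0.0] * len(x)
    for k in range(steps):
        alpha = (k + 0.5) / steps
        point = [x0 + alpha * (xi - x0) for xi, x0 in zip(x, baseline)]
        s = sigmoid(sum(w * p for w, p in zip(weights, point)) + bias)
        # Analytic gradient of sigmoid(w.x + b) with respect to x_i is s * (1 - s) * w_i.
        for i, w in enumerate(weights):
            avg_grad[i] += s * (1.0 - s) * w / steps
    # Scale the averaged gradient by each feature's distance from the baseline.
    return [(xi - x0) * g for xi, x0, g in zip(x, baseline, avg_grad)]


# Hypothetical expression features for three genes and a toy trained model.
weights, bias = [0.8, -0.3, 1.5], -0.2
x, baseline = [1.0, 2.0, 0.5], [0.0, 0.0, 0.0]
attributions = integrated_gradients(x, baseline, weights, bias)
gap = sigmoid(sum(w * v for w, v in zip(weights, x)) + bias) - sigmoid(bias)
print([round(a, 4) for a in attributions], round(gap, 4))
```

Features with negative attributions (here, the second gene) pushed the prediction down relative to the baseline. The sign and size of each attribution can then be checked against known biology, as the answer above recommends.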

Table 1: Summary of Core Technical Challenges and Recommended Solutions

| Challenge Area | Common Symptom | Recommended Solution & Key Tools | Primary Reference |
|---|---|---|---|
| Model Generalization | High training accuracy, low validation/test accuracy on new data. | Use transfer learning; integrate biological prior knowledge as regularization; employ GNN architectures. | [16] [17] [18] |
| Multi-omics Integration | Noisy, inconsistent, or non-informative combined data. | Use supervised integration frameworks (e.g., GNNRAI) with biological knowledge graphs; leverage tools like MOFA for exploration. | [17] [19] |
| Network Interpretability | Overly dense, uninterpretable networks ("hairballs"). | Apply topological analysis (centrality metrics) and community detection; perform module-enrichment analysis. | [12] [10] |
| Experimental Validation | Difficulty translating computational predictions to lab results. | Design multi-tiered validation: cell-based synergy assays, pathway activity measurement, and genetic perturbation. | [16] |
| AI Model Explainability | Inability to understand the biological basis for an AI prediction. | Implement explainable AI (XAI) methods like integrated gradients; use attention-based model architectures. | [17] |

Core Experimental Protocols & Methodologies

This section provides detailed, actionable protocols for key experiments central to Network Target Theory research.

Protocol 1: Constructing a Disease-Specific Network Target for a Cancer Subtype

Objective: To build a contextualized protein-protein interaction (PPI) network representing a specific cancer type for use as a therapeutic network target [16].

Materials:

- Data Source: The Cancer Genome Atlas (TCGA) transcriptomics data for your cancer of interest and matched normal samples [16] [19].

- PPI Template: A high-quality signed interaction network (e.g., Human Signaling Network with activation/inhibition annotations) [16].

- Software: R/Python for analysis; network visualization tools (Cytoscape, Gephi) [10].

Procedure:

- Differential Expression Analysis: Process RNA-Seq data from TCGA. Identify significantly differentially expressed genes (DEGs) between tumor and normal samples (e.g., |log2FC| > 1, adjusted p-value < 0.05).

- Network Pruning: Extract a sub-network from the master signed PPI network. Include only nodes (proteins/genes) that are either (a) DEGs, or (b) direct first neighbors of DEGs within the master network.

- Contextual Weighting (Optional): Weight the edges of the pruned network using gene expression correlation coefficients from the TCGA tumor samples to reflect co-expression patterns in the disease state.

- Topological Analysis: Calculate network centrality measures (degree, betweenness) for all nodes. Identify potential hub and bottleneck proteins within this disease-specific network.

- Functional Enrichment: Perform pathway enrichment analysis (KEGG, Reactome) on the genes in the final network or its topologically defined modules to confirm its relevance to known cancer biology.
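Steps 1 and 2 of this protocol, DEG selection and first-neighbor pruning, can be sketched as follows. The sketch assumes differential expression results are already available as hypothetical `(gene, log2FC, adjusted_p)` tuples (for example, exported from DESeq2 or limma) and that the master network is a list of signed edges. Keeping every edge whose two endpoints both survive (an induced subgraph) is an assumption; a stricter variant would keep only edges that touch a DEG.

```python
def select_degs(results, lfc_cutoff=1.0, padj_cutoff=0.05):
    """results: iterable of (gene, log2_fold_change, adjusted_p) tuples."""
    return {gene for gene, lfc, padj in results
            if abs(lfc) > lfc_cutoff and padj < padj_cutoff}


def prune_to_deg_neighborhood(signed_edges, degs):
    """Keep DEGs present in the network plus their first neighbors, and every
    edge whose two endpoints are both kept (an induced subgraph).
    signed_edges: list of (source, target, sign), sign +1 activation / -1 inhibition."""
    in_network, neighborhood = set(), set()
    for u, v, _ in signed_edges:
        in_network.update((u, v))
        if u in degs:
            neighborhood.add(v)
        if v in degs:
            neighborhood.add(u)
    keep = (degs & in_network) | neighborhood
    kept_edges = [(u, v, s) for u, v, s in signed_edges if u in keep and v in keep]
    return keep, kept_edges


# Illustrative values only, not real TCGA results.
de_results = [("TP53", 2.1, 0.001), ("EGFR", -1.5, 0.01), ("GAPDH", 0.2, 0.9), ("MYC", 1.8, 0.2)]
master = [("TP53", "MDM2", -1), ("EGFR", "GRB2", 1), ("GRB2", "SOS1", 1),
          ("MDM2", "MDM4", 1), ("ACTB", "GAPDH", 1)]
degs = select_degs(de_results)
nodes, edges = prune_to_deg_neighborhood(master, degs)
```

MYC is excluded despite a large fold change because its adjusted p-value fails the cutoff. SOS1 is dropped because it is a second-order neighbor.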

Protocol 2: In Vitro Validation of a Predicted Synergistic Drug Combination

Objective: To experimentally test the synergistic effect of a drug pair predicted by a network target model [16].

Materials:

- Cell Line: A relevant human cancer cell line (e.g., from ATCC).

- Drugs: The two candidate drugs, dissolved in appropriate solvent (e.g., DMSO).

- Assay Kit: Cell viability assay (e.g., MTT, CellTiter-Glo).

- Equipment: Plate reader, cell culture facilities.

Procedure:

- Dose-Response Setup: Seed cells in 96-well plates. The next day, treat cells with a matrix of serial dilutions of Drug A and Drug B (e.g., a 6x6 matrix covering a range from IC₁₀ to IC₉₀ for each single agent).

- Single-Agent Controls: Include wells treated with each drug alone across the same concentration range, as well as solvent-only control wells.

- Incubation & Measurement: Incubate for 72-96 hours. Measure cell viability according to your assay's protocol.

- Data Analysis: Normalize viability data to controls. Calculate a Combination Index (CI) for each dose pair with the Chou-Talalay method (e.g., in CompuSyn), or compute reference-model synergy scores (e.g., Loewe, Bliss, HSA) with tools such as SynergyFinder or Combenefit. Interpret the CI as follows:

  - CI < 1 indicates synergy

  - CI = 1 indicates additivity

  - CI > 1 indicates antagonism

- Visualization: Generate isobologram or 3D synergy plots to visualize the regions of strongest synergy.
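The Chou-Talalay CI calculation in the data-analysis step can be sketched using the median-effect equation, fa/fu = (D/Dm)^m. Here fa is the fraction affected (1 minus normalized viability), fu = 1 - fa, Dm is the median-effect dose, and m is the slope. For a combination (d1, d2) that produces effect fa, CI = d1/Dx1 + d2/Dx2, where each Dx is the single-agent dose giving the same fa. This sketch uses the classic mutually exclusive form and an ordinary least-squares fit. Dedicated tools add confidence intervals and other refinements. All doses and parameters below are synthetic.

```python
import math


def fit_median_effect(doses, fraction_affected):
    """Least-squares fit of log(fa/fu) = m*log(D) - m*log(Dm); returns (m, Dm).
    Doses must be > 0 and every fa must lie strictly between 0 and 1."""
    xs = [math.log(d) for d in doses]
    ys = [math.log(fa / (1.0 - fa)) for fa in fraction_affected]
    mean_x, mean_y = sum(xs) / len(xs), sum(ys) / len(ys)
    m = (sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
         / sum((x - mean_x) ** 2 for x in xs))
    intercept = mean_y - m * mean_x          # equals -m * log(Dm)
    return m, math.exp(-intercept / m)


def dose_for_effect(fa, m, dm):
    """Single-agent dose Dx expected to produce fraction affected fa."""
    return dm * (fa / (1.0 - fa)) ** (1.0 / m)


def combination_index(d1, d2, fa_combo, params_a, params_b):
    """Chou-Talalay CI (mutually exclusive form); params are (m, Dm) tuples."""
    return (d1 / dose_for_effect(fa_combo, *params_a)
            + d2 / dose_for_effect(fa_combo, *params_b))


# Synthetic single-agent data for drug A generated from m = 1.5, Dm = 2.0 (arbitrary units).
doses_a = [0.5, 1.0, 2.0, 4.0, 8.0]
fa_a = [1.0 / (1.0 + (2.0 / d) ** 1.5) for d in doses_a]
params_a = fit_median_effect(doses_a, fa_a)
params_b = (1.0, 10.0)                        # assumed fit for drug B

# A combination of 0.5 (A) + 2.5 (B) that kills 50% of cells:
ci = combination_index(0.5, 2.5, 0.5, params_a, params_b)
print(f"CI = {ci:.2f}")  # CI = 0.50, i.e. synergy
```

Each drug reaches the 50% effect at one quarter of its single-agent Dm, so the two fractions sum to 0.5. That is, the combination needs half the "dose equivalents" that additivity would predict.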

Table 2: Performance Metrics from a Representative Network Target Study

| Evaluation Metric | Single Drug-Disease Interaction Prediction | Drug Combination Prediction (Fine-tuned) | Description & Significance |
|---|---|---|---|
| Area Under the Curve (AUC) | 0.9298 [16] | Not specified | Measures overall discriminative ability, independent of a decision threshold. An AUC above 0.9 is conventionally regarded as excellent. |
| F1 Score | 0.6316 [16] | 0.7746 [16] | Harmonic mean of precision and recall. F1 depends on the decision threshold and class balance, so values from the two tasks are not directly comparable; the higher combination score indicates a good precision-recall balance after fine-tuning. |
| Scale of Discovery | 88,161 interactions (7,940 drugs; 2,986 diseases) [16] | 2 novel synergistic combinations identified for cancer [16] | Demonstrates the high-throughput discovery potential of the network target approach. |
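The two headline metrics in Table 2 can be computed as follows. This is a toy sketch with four hypothetical labels and scores, not the evaluation code of [16]. It assumes the reported AUC is the area under the ROC curve. It also shows that AUC needs no decision threshold, whereas F1 changes with the threshold chosen.

```python
def roc_auc(labels, scores):
    """AUC as the probability that a random positive is scored above a random
    negative (Mann-Whitney formulation; ties count as 0.5). O(n_pos * n_neg)."""
    positives = [s for y, s in zip(labels, scores) if y == 1]
    negatives = [s for y, s in zip(labels, scores) if y == 0]
    wins = 0.0
    for p in positives:
        for n in negatives:
            wins += 1.0 if p > n else (0.5 if p == n else 0.0)
    return wins / (len(positives) * len(negatives))


def f1_score(labels, predictions):
    """Harmonic mean of precision and recall for binary 0/1 predictions."""
    tp = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 1)
    fp = sum(1 for y, p in zip(labels, predictions) if y == 0 and p == 1)
    fn = sum(1 for y, p in zip(labels, predictions) if y == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


labels = [1, 1, 0, 0]
scores = [0.9, 0.4, 0.6, 0.1]
predictions = [1 if s >= 0.5 else 0 for s in scores]   # threshold choice affects F1, not AUC
print(roc_auc(labels, scores), f1_score(labels, predictions))  # 0.75 0.5
```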

Visualization of Workflows and Pathways

Diagram 1: AI-Driven Network Pharmacology Multi-Scale Workflow

Diagram 2: Disease-Specific Network Target Construction

Table 3: Key Resources for Network Target Research

| Category | Resource Name | Primary Function in Network Target Research | Key Features / Notes |
|---|---|---|---|
| Data Repositories | The Cancer Genome Atlas (TCGA) [16] [19] | Provides multi-omics profiles (RNA-Seq, DNA methylation, etc.) for thousands of tumor samples across cancer types. Essential for building disease-contextualized networks. | Includes clinical data, enabling survival-based validation of network targets. |
| Data Repositories | DrugBank [16] [10] | Comprehensive database containing drug structures, targets, and drug-target interaction information. Used for building drug-centric networks and validation. | Curated information on FDA-approved and experimental drugs. |
| Data Repositories | Comparative Toxicogenomics Database (CTD) [16] | Source of curated drug-disease and chemical-gene/protein relationships. Used for training and benchmarking prediction models. | Includes interaction types (e.g., therapeutic, marker). |
| Interaction Databases | STRING [16] [10] | Database of known and predicted protein-protein interactions. Serves as the foundational scaffold for constructing biological networks. | Includes confidence scores and physical/functional interaction types. |
| Interaction Databases | Pathway Commons [17] | Aggregates pathway information from multiple public sources. Used to provide prior knowledge graphs for supervised model training and pathway enrichment. | Enables construction of biologically meaningful feature graphs for GNNs. |
| Analytical Platforms | NeXus [12] | Automated platform for network pharmacology and multi-method enrichment analysis (ORA, GSEA, GSVA). Streamlines network construction and module analysis. | Reduces analysis time by >95% compared to manual workflows; handles plant-compound-gene hierarchies. |
| Analytical Platforms | Cytoscape [12] [10] | Open-source software platform for visualizing, analyzing, and modeling molecular interaction networks. The standard for network visualization and basic topology analysis. | Highly extensible via plugins (e.g., MCODE for clustering, CytoHubba for hub identification). |
| Analytical Platforms | GNNRAI Framework [17] | A supervised Graph Neural Network framework for integrating multi-omics data with biological prior knowledge. Used for predictive modeling and biomarker identification. | Incorporates explainability methods (integrated gradients) to interpret model predictions. |
| Validation Resources | Cancer Cell Line Encyclopedia (CCLE) [19] | Repository of genomic and pharmacological data from hundreds of cancer cell lines. Provides models for in vitro validation of predicted drug targets/combinations. | Gene expression, mutation, and drug sensitivity data are linked. |
| Validation Resources | DrugCombDB [16] | Database of drug combination screening data and associated analysis tools. Used as a source for training combination prediction models and benchmarking synergy predictions. | Facilitates analysis of dose-response matrix data. |

The field of network pharmacology has evolved from a "one-drug-one-target" paradigm to a systems-level approach that models multi-target, multi-component interactions, particularly relevant for studying traditional medicines and natural products [3] [20].

A core challenge in this data-rich environment is moving beyond simple feature vectors and shallow models that fail to capture the intricate, non-linear relationships within biological networks. This support center provides targeted troubleshooting guides, FAQs, and detailed protocols to help you implement advanced feature enhancement strategies, thereby improving the predictive power and biological relevance of your computational models [9] [21].

Troubleshooting Common Experimental Issues

Issue 1: Inconsistent or Non-Reproducible Results from Network Predictions

- Problem: The key targets or pathways identified for an herb or formula change significantly when using different databases or analysis parameters.

- Diagnosis: This is a frequently cited limitation stemming from inconsistencies across databases and a lack of standardized analytical workflows [3] [22]. Different databases have varying coverage and curation standards for compound-target interactions.

- Solution:

- Cross-Database Validation: Never rely on a single database. Perform your target collection from at least 2-3 reputable sources (e.g., TCMSP, HERB, DrugBank) and take the intersection of results as a higher-confidence target set [22] [20].

- Employ Robust Network Metrics: When analyzing your constructed network, use multiple centrality measures (degree, betweenness, closeness) to identify key targets. A target consistently ranked high by different algorithms is more reliable [22].

- Document Parameters Rigorously: Record all software versions, database download dates, and algorithmic parameters (e.g., confidence scores for protein-protein interactions) to ensure future reproducibility.
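The cross-database step can be sketched as a support count per target. This minimal sketch assumes each source has already been exported to a set of human gene symbols; the source names and genes below are illustrative. With the default `min_support`, it returns the strict intersection recommended above. Lowering the threshold (for example, to 2 of 3 sources) is a possible relaxation, but it is a choice you should document.

```python
from collections import Counter


def consensus_targets(target_sets, min_support=None):
    """Count how many sources report each target and keep those reported by at
    least `min_support` sources (default: all sources, i.e. the strict intersection).
    Symbols are upper-cased on the assumption that they are human HGNC symbols."""
    if min_support is None:
        min_support = len(target_sets)
    support = Counter(
        symbol
        for genes in target_sets.values()
        for symbol in {g.strip().upper() for g in genes}   # normalize, then de-duplicate per source
    )
    return {gene for gene, count in support.items() if count >= min_support}, support


sources = {
    "TCMSP": {"AKT1", "TNF", "IL6"},
    "HERB": {"akt1", "IL6", "PTGS2"},      # lower-case entry is normalized
    "DrugBank": {"AKT1", "PTGS2"},
}
strict, support = consensus_targets(sources)
relaxed, _ = consensus_targets(sources, min_support=2)
print(sorted(strict), sorted(relaxed))
```

Recording the full `support` counter together with the database download dates fits the documentation practice described above.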

Issue 2: Model Predictions Fail Experimental Validation

- Problem: Computational models predict strong activity for a compound or formula, but subsequent in vitro or in vivo experiments show weak or no effect.

- Diagnosis: A common pitfall is neglecting pharmacokinetics. Many network analyses include every compound in an herb regardless of its absorption, distribution, metabolism, and excretion (ADME) properties, so compounds that never reach the target tissue at meaningful concentrations still drive the predictions [3].

- Solution:

- Incorporate ADME Screening: Filter your list of candidate bioactive compounds using OB (oral bioavailability) and DL (drug-likeness) thresholds before target prediction and network construction [20].

- Validate with Dose-Response Data: Be wary of supraphysiological concentrations reported in some of the literature that databases draw on. Where possible, consult experimental data obtained at pharmacologically relevant concentrations [3].

- Utilize Advanced AI Models: Transition from simple statistical models to advanced graph neural networks (GNNs) like GNNBlockDTI. These models use feature enhancement strategies to learn more accurate representations of molecular structure and protein-ligand interaction spaces, leading to better predictions [9] [21].

Issue 3: Inability to Decipher Synergistic Mechanisms in Multi-Component Formulas

- Problem: Your analysis identifies a list of targets for a complex formula but cannot explain how the combination of herbs or compounds produces a synergistic effect greater than the sum of its parts.

- Diagnosis: Simple feature vectors for each component, analyzed in isolation, cannot capture the network topology and systems-level perturbations induced by the combination.

- Solution:

- Construct a Comprehensive Formula-Disease Network: Integrate the formula's compound-target network with disease-associated gene networks and signaling pathway maps. Look for network modules or clusters where the formula's targets densely overlap with disease-related genes [9] [1].

- Analyze Pathway Enrichment and Crosstalk: Don't just list enriched pathways. Use tools to visualize how multiple targeted pathways (e.g., PI3K-Akt, MAPK) may interact or share common nodes, revealing potential synergistic points [23] [24].

- Apply "Network Target" Theory: Frame the formula's mechanism not as hitting individual targets, but as modulating a specific disease-associated network module back to a healthy state. Methods for "network target navigating" are designed for this purpose [9].
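The crosstalk analysis above can be sketched by finding the formula targets that sit in more than one enriched pathway, together with the shared targets for each overlapping pathway pair. This is a minimal sketch: the pathway gene sets are small illustrative subsets, not full KEGG memberships, and the target list is hypothetical.

```python
from itertools import combinations


def crosstalk(pathways, targets):
    """Find targets shared by two or more pathways, and the shared targets for
    every pathway pair that overlaps. pathways: dict name -> set of genes."""
    hit = {name: genes & targets for name, genes in pathways.items()}
    membership = {}
    for name, genes in hit.items():
        for gene in genes:
            membership.setdefault(gene, set()).add(name)
    shared_nodes = {gene: sorted(names) for gene, names in membership.items() if len(names) >= 2}
    pair_overlap = {
        (a, b): hit[a] & hit[b]
        for a, b in combinations(sorted(hit), 2)
        if hit[a] & hit[b]
    }
    return shared_nodes, pair_overlap


pathways = {  # illustrative subsets only
    "PI3K-Akt": {"PIK3CA", "AKT1", "MTOR", "EGFR"},
    "MAPK": {"EGFR", "MAPK1", "MAPK3", "AKT1"},
    "HIF-1": {"HIF1A", "MTOR", "VEGFA"},
}
formula_targets = {"AKT1", "EGFR", "MTOR", "MAPK1", "VEGFA"}
shared_nodes, pair_overlap = crosstalk(pathways, formula_targets)
print(shared_nodes)
```

Targets such as AKT1 and EGFR, which the formula hits in both PI3K-Akt and MAPK, are candidate points where synergy could arise. These are hypotheses to test, not conclusions.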

Frequently Asked Questions (FAQs)

Q1: What is feature enhancement in the context of network pharmacology, and why is it better than using simple molecular descriptors?

A1: Simple molecular descriptors (e.g., molecular weight, LogP) are static, handcrafted vectors that provide a limited view of a compound's properties. Feature enhancement refers to computational techniques, particularly in AI, that learn richer, hierarchical representations directly from complex data structures like molecular graphs or protein sequences [21]. For example, a Graph Neural Network (GNN) can learn to represent a drug molecule not just as a list of atoms, but as a graph where the features of each atom are enhanced by iteratively aggregating information from its neighbors and the overall molecular context. This captures substructural motifs and spatial relationships critical for biological activity, leading to more accurate predictions of drug-target interactions and multi-target effects [9] [21].
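The neighbor-aggregation idea can be illustrated with one untrained round of message passing on ethanol's heavy atoms. This simplified sketch uses sum aggregation only. Real GNN layers (e.g., GIN- or GCN-style) also apply learnable weight matrices and nonlinearities after aggregating, which are omitted here so the arithmetic stays visible. The one-hot atom-type features are an illustrative choice.

```python
def message_passing(features, edges, rounds=1):
    """Sum-aggregation message passing: each round sets h_v <- h_v + sum of h_u
    over neighbors u. Real GNN layers add learnable transforms and nonlinearities."""
    neighbors = {v: [] for v in features}
    for u, v in edges:
        neighbors[u].append(v)
        neighbors[v].append(u)
    h = {v: list(x) for v, x in features.items()}
    for _ in range(rounds):
        # The comprehension reads only the previous round's h, so updates are synchronous.
        h = {
            v: [own + sum(h[u][i] for u in neighbors[v]) for i, own in enumerate(h[v])]
            for v in h
        }
    return h


def readout(h):
    """Sum node vectors into one molecule-level vector."""
    dims = len(next(iter(h.values())))
    return [sum(vec[i] for vec in h.values()) for i in range(dims)]


# Ethanol heavy atoms C1-C2-O with one-hot atom-type features [is_C, is_O].
atoms = {"C1": [1, 0], "C2": [1, 0], "O": [0, 1]}
bonds = [("C1", "C2"), ("C2", "O")]
h1 = message_passing(atoms, bonds, rounds=1)
print(h1)  # {'C1': [2, 0], 'C2': [2, 1], 'O': [1, 1]}
```

The two carbons start with identical descriptors. After one round, C2 "knows" it is bonded to oxygen, so the features now distinguish the methyl carbon from the carbon bearing the hydroxyl. Adding rounds widens this receptive field further.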

Q2: How do I choose the right databases to start my network pharmacology study, given the many options available?

A2: Your choice should be guided by your research focus and a strategy for cross-verification. Below is a comparison of essential resources.

Table 1: Key Research Databases and Tools for Network Pharmacology

| Category | Name | Primary Function | Key Consideration |
|---|---|---|---|
| Compound/Herb Database | TCMSP [20], HERB [9] | Provides chemical compounds of herbs, with ADME parameters and predicted targets. | Coverage varies; use multiple sources. |
| General Drug Database | DrugBank [1], ChEMBL [9] | Contains comprehensive drug/compound information and known targets. | High-quality, curated data for validation. |
| Protein Interaction Database | STRING [1], BioGRID [9] | Provides protein-protein interaction (PPI) data to build biological networks. | Set appropriate confidence thresholds. |
| Pathway Database | KEGG [9] [24] | Curated maps of molecular pathways and diseases. | Essential for functional enrichment analysis. |
| Network Analysis Tool | Cytoscape [1] [20] | Open-source platform for visualizing and analyzing complex networks. | The standard for network visualization and topology analysis. |

Q3: My network analysis yields hundreds of potential targets. How do I prioritize them for experimental validation?

A3: Prioritization requires a multi-faceted filtering approach:

- Topological Analysis: In your compound-target-disease network, calculate centrality measures. Targets with high degree (many connections) and high betweenness centrality (bridge between clusters) are often more critical to the network's function [22].

- Functional Convergence: Prioritize targets that appear in the enrichment results of multiple key signaling pathways (e.g., a target that is part of both the PI3K-AKT and MAPK pathways) [23] [24].

- Literature & Disease Relevance: Cross-reference your list with known, well-validated targets for the disease under study from genetic (OMIM, DisGeNET) and literature databases.

- Experimental Feasibility: Consider the availability of assay protocols, reagents (antibodies, cell lines), and disease models for the shortlisted targets.
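The topological criterion can be made explicit with a simple consensus rule: keep the targets that rank in the top k under every centrality measure, then order them by mean rank. This follows the advice to trust targets that rank highly across several algorithms. The rule is one illustrative choice among many rather than an established method, and the centrality scores below are invented. The cutoff k should be justified for your network's size.

```python
def consensus_top_k(metric_scores, k):
    """metric_scores: dict metric -> dict node -> score (higher = more central).
    Return nodes in the top k of every metric, ordered by mean rank."""
    top_sets, ranks = [], {}
    for scores in metric_scores.values():
        ordered = sorted(scores, key=lambda node: (-scores[node], node))  # ties broken by name
        top_sets.append(set(ordered[:k]))
        for position, node in enumerate(ordered, start=1):
            ranks.setdefault(node, []).append(position)
    shared = set.intersection(*top_sets)
    return sorted(shared, key=lambda node: (sum(ranks[node]) / len(ranks[node]), node))


centrality = {  # invented scores for four targets
    "degree": {"AKT1": 10, "TNF": 8, "IL6": 7, "EGFR": 3},
    "betweenness": {"AKT1": 0.4, "IL6": 0.3, "EGFR": 0.2, "TNF": 0.1},
    "closeness": {"AKT1": 0.6, "TNF": 0.55, "IL6": 0.5, "EGFR": 0.4},
}
print(consensus_top_k(centrality, k=3))  # ['AKT1', 'IL6']
```

TNF has a high degree but drops out because it ranks last by betweenness. The shortlist can then be checked against the functional, literature and feasibility criteria listed above.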

Q4: What are the essential steps to validate findings from a computational network pharmacology study?

A4: Computational predictions are hypotheses that require rigorous experimental confirmation. A robust validation workflow proceeds from simple, targeted assays to complex, systems-level models:

- In Vitro Validation: Begin with molecular and cellular assays.

- Binding Affinity: Use surface plasmon resonance (SPR) or microscale thermophoresis (MST) to confirm direct physical interaction between the active compound and the predicted target protein.

- Cellular Activity: In relevant cell lines, measure changes in target protein phosphorylation, gene expression (qPCR), or pathway activity (reporter assay) upon treatment.

- In Vivo Validation: Confirm activity in a whole-organism context.

- Use established animal models of the disease (e.g., adenine-induced chronic kidney disease in rats [24]).

- Administer the herb/extract/compound and measure not only phenotypic improvement but also the modulation of the predicted key targets and pathways in tissue samples (via western blot, immunohistochemistry).

- Multi-Omics Validation: For the highest level of systems confirmation, use transcriptomics or proteomics on treated versus control samples to see if the broader gene/protein expression changes align with your predicted network perturbations [22].

Detailed Experimental Protocols

Protocol 1: Predicting Compound-Target Interactions with a Feature-Enhanced Graph Neural Network

This protocol outlines a methodology for implementing a state-of-the-art graph-based model that uses feature enhancement to overcome the limitations of shallow learning.

Objective: To accurately predict novel interactions between herbal compounds and human protein targets.

Principle: The model represents molecules as graphs and uses stacked Graph Neural Network blocks (GNNBlocks) with feature enhancement units to capture complex substructural features that are predictive of biological activity.

Materials & Software:

- Data: Compound SMILES strings (from TCMSP, HERB); Protein amino acid sequences (from UniProt).

- Tools: RDKit (for converting SMILES to molecular graphs); PyTorch or TensorFlow, with Deep Graph Library (DGL) or PyTorch Geometric for graph layers; a pre-trained protein language model (e.g., ProtBERT).

Procedure:

- Data Preparation:

- For each compound, use RDKit to generate a molecular graph. Node features include atom type, degree, hybridization, etc. Edge features represent bond type.

- For each protein, use a pre-trained language model to generate a per-residue feature vector from its amino acid sequence.

- Model Architecture - Drug Encoder:

- Construct the encoder using multiple GNNBlocks in series. Each GNNBlock contains several GNN layers (e.g., 3-4) to expand the receptive field and capture local substructures.

- Critical Feature Enhancement Step: Within each GNNBlock, implement an "expansion-then-refinement" module. Map the node features to a higher-dimensional space, apply a non-linear activation, then project them back down. This enhances the model's expressive power.

- Insert a gating unit between GNNBlocks. This unit uses a learnable gate to filter out redundant information from the previous block and preserve essential features for the next.

- Model Architecture - Protein Encoder:

- Process the sequence-based residue features with 1D convolutional neural networks (CNNs) to capture local sequence motifs, which may correspond to binding regions.

- Interaction Prediction & Training:

- Combine the global representation of the drug graph and the protein representation.

- Pass the combined vector through a multi-layer perceptron (MLP) to predict an interaction probability.

- Train the model on known drug-target pairs (from DrugBank, BindingDB) using binary cross-entropy loss.
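The expansion-then-refinement and gating steps of the drug encoder can be sketched for a single node's feature vector. This is a hypothetical, dependency-free illustration with random untrained weights, written with plain lists for readability. It is not the published GNNBlockDTI code; a real implementation would use trainable PyTorch, DGL or PyTorch Geometric layers applied to all nodes at once. The class names `ExpandRefine` and `Gate`, the expansion factor of 4, and the gate formula are assumptions.

```python
import math
import random


def matvec(matrix, vector):
    """Multiply a list-of-rows matrix by a vector."""
    return [sum(w * x for w, x in zip(row, vector)) for row in matrix]


def random_matrix(rows, cols, rng):
    """Untrained weights; a real model would learn these by backpropagation."""
    return [[rng.uniform(-0.5, 0.5) for _ in range(cols)] for _ in range(rows)]


class ExpandRefine:
    """Hypothetical expansion-then-refinement unit: project d -> k*d,
    apply ReLU, then project back k*d -> d."""

    def __init__(self, dim, expansion=4, seed=0):
        rng = random.Random(seed)
        self.w_up = random_matrix(dim * expansion, dim, rng)
        self.w_down = random_matrix(dim, dim * expansion, rng)

    def __call__(self, h):
        expanded = [max(0.0, z) for z in matvec(self.w_up, h)]   # ReLU in the wide space
        return matvec(self.w_down, expanded)


class Gate:
    """Hypothetical gating unit between blocks: g = sigmoid(W [h_prev; h_new]),
    output = g * h_new + (1 - g) * h_prev, applied element-wise."""

    def __init__(self, dim, seed=1):
        self.w = random_matrix(dim, 2 * dim, random.Random(seed))

    def __call__(self, h_prev, h_new):
        logits = matvec(self.w, h_prev + h_new)   # list concatenation = [h_prev; h_new]
        g = [1.0 / (1.0 + math.exp(-z)) for z in logits]
        return [gi * n + (1.0 - gi) * p for gi, n, p in zip(g, h_new, h_prev)]


h_prev = [0.2, -0.1, 0.5, 0.0]          # one node's features from the previous block
h_new = ExpandRefine(dim=4)(h_prev)     # refined features (same dimension)
h_out = Gate(dim=4)(h_prev, h_new)      # gated mix passed to the next block
```

Because each gate value lies between 0 and 1, every output feature is a per-dimension blend of the old and refined values. This is how such a unit can retain essential features while filtering what the new block adds.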

Protocol 2: Network Pharmacology Analysis of a Multi-Herbal Formula

Objective: To systematically identify the potential active components, core targets, and synergistic mechanisms of a multi-herbal formula.

Principle: Integrates database mining, network construction, topological analysis, and molecular docking in a sequential workflow, with AI methods enhancing key steps like target prediction.


Procedure:

- Active Compound Identification: Retrieve all chemical constituents of the formula from databases (TCMSP, HERB). Screen for oral bioavailability (OB) and drug-likeness (DL) to filter for potentially bioactive compounds.

- Target Prediction: For the filtered compounds, predict protein targets. Supplement traditional similarity-based methods with AI-based target prediction models (e.g., DrugCIPHER, HGNA-HTI [9]) for enhanced accuracy and novelty.

- Disease Target Collection: Collect genes associated with the disease of interest from public databases.

- Network Construction and Analysis:

- Build a compound-target network and a disease-gene network.

- Merge them to create a formula-target-disease network. Use STRING to add protein-protein interaction data and build a PPI network of the overlapping targets.

- Analyze the network topology in Cytoscape. Use CytoHubba to identify hub targets based on multiple algorithms (MCC, Degree, Betweenness).

- Enrichment Analysis and Mechanism Hypothesis: Perform KEGG pathway and Gene Ontology enrichment analysis on the core targets. The significantly enriched pathways (e.g., PI3K-Akt, HIF-1) form the basis of the mechanistic hypothesis [23].

- Computational Validation: Perform molecular docking of the key active compounds with the hub target proteins to assess binding affinity and pose, providing preliminary validation of the network-predicted interactions.

- Experimental Validation Planning: Based on the core targets and pathways, design in vitro and in vivo experiments for biological validation (see FAQ A4).
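The enrichment step can be sketched as an over-representation analysis: a one-sided hypergeometric test per pathway, followed by Benjamini-Hochberg correction. This is a simplified stand-in for tools such as clusterProfiler's `enrichKEGG` or DAVID. The pathway gene sets and the background size of 100 genes below are toy values. Real analyses should use the full annotated background.

```python
from math import comb


def hypergeom_sf(k, population, successes, draws):
    """P(X >= k) for X ~ Hypergeometric(population, successes, draws)."""
    upper = min(successes, draws)
    hits = sum(comb(successes, i) * comb(population - successes, draws - i)
               for i in range(k, upper + 1))
    return hits / comb(population, draws)


def over_representation(core_targets, pathways, background_size):
    """One-sided hypergeometric test per pathway plus Benjamini-Hochberg q-values.
    Assumes every pathway gene and core target belongs to the background."""
    tested = []
    for name, genes in pathways.items():
        overlap = len(core_targets & genes)
        p = hypergeom_sf(overlap, background_size, len(genes), len(core_targets))
        tested.append((name, overlap, p))
    tested.sort(key=lambda row: row[2])
    m = len(tested)
    q_values, running_min = [0.0] * m, 1.0
    for i in range(m - 1, -1, -1):            # step-up pass keeps q-values monotone
        running_min = min(running_min, tested[i][2] * m / (i + 1))
        q_values[i] = running_min
    return [(name, overlap, p, q) for (name, overlap, p), q in zip(tested, q_values)]


core = {"AKT1", "PIK3CA", "MTOR", "TNF"}
toy_pathways = {
    "toy_PI3K_Akt": {"AKT1", "PIK3CA", "MTOR", "PTEN", "TSC2"},
    "toy_TNF": {"TNF", "NFKB1", "RELA", "CASP8", "MAPK8", "TRAF2"},
    "toy_unrelated": {"ALB", "APOA1"},
}
results = over_representation(core, toy_pathways, background_size=100)
for name, overlap, p, q in results:
    print(f"{name}: overlap={overlap} p={p:.2e} q={q:.2e}")
```

Reporting q-values rather than raw p-values matters here because dozens to hundreds of KEGG pathways are typically tested at once.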

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Resources for Network Pharmacology Research

| Item | Function in Research | Example/Specification |
|---|---|---|
| Curated Knowledge Databases | Provide the foundational data on compounds, targets, and diseases for network construction. | TCMSP, DrugBank, STRING, KEGG [9] [1] [20]. |
| Network Analysis & Visualization Software | Enables construction, visualization, and topological analysis of biological networks. | Cytoscape (with plugins like CytoHubba, MCODE) [1] [20]. |
| AI/ML Modeling Frameworks | Provides environment to build and train feature-enhanced predictive models (e.g., GNNs). | Python with PyTorch Geometric, Deep Graph Library (DGL), TensorFlow [21]. |
| Molecular Docking Software | Computationally validates predicted compound-target interactions by simulating binding. | AutoDock Vina, SYBYL [1] [24]. |
| Pathway Enrichment Analysis Tools | Statistically identifies biological pathways significantly enriched with network targets. | clusterProfiler R package, DAVID, MetaboAnalyst [24]. |
| In Vitro Validation - Kinase Assay Kit | Measures the effect of a compound on the activity of a predicted kinase target (e.g., AKT, PI3K). | Commercial luminescent or ELISA-based kinase activity kits. |
| In Vivo Validation - Disease Animal Model | Provides a physiological system to test the therapeutic effect and mechanism of the formula. | e.g., STZ-induced diabetic nephropathy mouse model [24], DSS-induced colitis mouse model [24]. |
| Multi-Omics Validation Platform | Enables systems-level validation of network predictions through gene/protein expression profiling. | RNA-Seq for transcriptomics, LC-MS/MS for proteomics and metabolomics [22]. |

Visualization of Advanced AI Model Architecture

The following diagram details the architecture of an advanced AI model (GNNBlockDTI) that exemplifies feature enhancement for drug-target interaction prediction, addressing the limitations of shallow models [21].

This technical support center provides targeted troubleshooting and methodological guidance for researchers utilizing key databases in network pharmacology. The content is framed within a thesis on feature enhancement techniques, aiming to streamline data acquisition, integration, and network construction to improve predictive robustness.

Troubleshooting Guides & FAQs

Q1: When downloading compound-target data from TCMSP, the file is empty or contains only column headers. What could be the cause and solution?

A: This is often due to exceeding the database's unannounced query result limit or a session timeout.

- Solution: Break down your query. Instead of searching for all compounds of a herb like "Salvia miltiorrhiza" at once, search by specific compound classes (e.g., "tanshinones," "phenolic acids") and merge the results locally. Ensure you are logged into the TCMSP system before initiating the download.

Q2: After retrieving gene IDs from GeneCards, I get "No identifiers found" when uploading them to STRING for network construction. Why does this happen?

A: This discrepancy arises from identifier namespace mismatches. GeneCards primarily provides HGNC symbols or Ensembl Gene IDs, while STRING requires stable, species-specific identifiers.

- Solution: Use the "Multiple Proteins by Names/Identifiers" tool on the STRING website. In the "Advanced Options," select the correct species (e.g., "Homo sapiens") and change the "Input Method" to "Your identifiers are:" and select "Gene names" if you have HGNC symbols. For bulk jobs, use the STRING API, making sure to specify the `species` parameter (e.g., 9606 for human) and to request JSON output (in the STRING API the output format is part of the URL path, e.g., `/api/json/...`).
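A bulk STRING query can be sketched with the standard library alone. The sketch assumes STRING's documented REST layout, `https://string-db.org/api/<output_format>/<method>`, with the `get_string_ids` and `network` methods and the `identifiers`, `species`, `required_score` and `caller_identity` parameters. Check these against the current STRING API documentation before relying on them. The `caller_identity` value is a placeholder, and the network calls are left commented out so that the example runs offline.

```python
import json
import urllib.parse
import urllib.request

STRING_API = "https://string-db.org/api"


def string_api_url(method, identifiers, species=9606, output_format="json", **params):
    """Build a STRING API URL; the output format is part of the path and
    multiple identifiers are separated by carriage returns (%0D once encoded)."""
    query = {"identifiers": "\r".join(identifiers), "species": species, **params}
    return f"{STRING_API}/{output_format}/{method}?{urllib.parse.urlencode(query)}"


def fetch_json(url, timeout=60):
    """Perform the HTTP GET and decode the JSON body (requires network access)."""
    with urllib.request.urlopen(url, timeout=timeout) as response:
        return json.loads(response.read().decode("utf-8"))


genes = ["TP53", "AKT1", "EGFR"]
# 1) Map gene symbols to STRING identifiers for human (NCBI taxon 9606).
id_url = string_api_url("get_string_ids", genes, caller_identity="my_lab_project")
# 2) Retrieve high-confidence interactions (required_score is on a 0-1000 scale).
net_url = string_api_url("network", genes, required_score=700, caller_identity="my_lab_project")
# mapping = fetch_json(id_url)        # uncomment to query the live service
# interactions = fetch_json(net_url)
```

Mapping identifiers first with `get_string_ids`, then querying the network with the returned STRING IDs, avoids the "No identifiers found" problem for ambiguous symbols.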

Q3: My PPI network from STRING appears overly dense and non-specific to my disease context. How can I refine it?

A: A default network with a low confidence score (e.g., 0.15) will include many non-specific interactions.

- Solution: Apply stringent filters. Increase the minimum required interaction score to "High confidence (0.700)" or higher within the STRING interface. Use the "Active Interaction Sources" to select only experimentally validated or curated database channels. Furthermore, after export, intersect your PPI network with differentially expressed genes from your relevant disease transcriptomic dataset to retain a biology-specific sub-network.
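The post-export refinement can be sketched as a filter over STRING's tabular export. This minimal sketch assumes a tab-separated file with `node1`, `node2` and `combined_score` columns, scores on a 0-1 scale, and a header that may begin with `#`. These column names are assumptions, so check them against your own export. `degs` stands for the differentially expressed genes from your disease dataset.

```python
import csv
import io


def filter_string_export(tsv_text, degs, min_score=0.7, require_both=True,
                         col_a="node1", col_b="node2", col_score="combined_score"):
    """Filter an exported STRING edge table by score, then by disease genes.
    Column names and the 0-1 score scale are assumptions about the export;
    adjust them to match your file."""
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    # Exported headers may start with '#'; strip it so lookups by name work.
    reader.fieldnames = [name.lstrip("#") for name in reader.fieldnames]
    kept = []
    for row in reader:
        a, b, score = row[col_a], row[col_b], float(row[col_score])
        if score < min_score:
            continue
        hits = (a in degs) + (b in degs)      # how many endpoints are disease genes
        if hits == 2 or (hits == 1 and not require_both):
            kept.append((a, b, score))
    return kept


export = (
    "#node1\tnode2\tcombined_score\n"
    "TP53\tMDM2\t0.999\n"
    "TP53\tACTB\t0.45\n"
    "EGFR\tGRB2\t0.95\n"
    "GRB2\tSOS1\t0.99\n"
)
degs = {"TP53", "MDM2", "EGFR"}              # illustrative disease genes
both = filter_string_export(export, degs)
either = filter_string_export(export, degs, require_both=False)
print(both, either)
```

Requiring both endpoints to be disease genes gives the most specific sub-network. Allowing one endpoint keeps first-order connectors such as GRB2, which may carry the signal between disease genes.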

Q4: How do I resolve inconsistencies in compound or gene nomenclature when merging data from TCMSP, GeneCards, and other sources?

A: Inconsistent naming is a major source of data integration failure.

- Solution: Standardize all identifiers to a common, stable namespace before merging. For genes, map all aliases and database-specific IDs to official Entrez Gene IDs or UniProt accessions using the `clusterProfiler` (R) or `mygene` (Python) packages. For compounds, standardize to PubChem CID or InChIKey using the ChemSpider or PubChemPy APIs. Create a mapping dictionary for your project.
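A project mapping dictionary can be as simple as the sketch below. The `GENE_MAP` entries are illustrative: they use the real Entrez Gene IDs for TP53, TNF, and IL6, but the alias list is not exhaustive. In practice the table would be generated once, for example with clusterProfiler's `bitr()` or a mygene batch query, and saved with the project. Unmapped names are returned separately so they can be checked by hand rather than silently dropped.

```python
# Hypothetical project mapping (upper-cased alias or symbol -> Entrez Gene ID).
# In practice, generate it once with clusterProfiler (R) or mygene (Python)
# and save it alongside the project data.
GENE_MAP = {
    "TP53": "7157", "P53": "7157",
    "TNF": "7124", "TNFA": "7124", "TNF-ALPHA": "7124",
    "IL6": "3569", "IL-6": "3569", "IFNB2": "3569",
}


def standardize(identifiers, mapping=GENE_MAP):
    """Map raw identifiers to canonical IDs; collect anything left unmapped."""
    mapped, unmapped = {}, []
    for raw in identifiers:
        key = raw.strip().upper()  # upper-casing assumes human gene symbols
        if key in mapping:
            mapped[raw] = mapping[key]
        else:
            unmapped.append(raw)
    return mapped, unmapped


tcmsp_targets = ["p53", "TNF-alpha", "IL6"]
genecards_genes = ["TP53", "IL-6", "UNKNOWN_GENE"]

tcmsp_ids, tcmsp_missing = standardize(tcmsp_targets)
genecards_ids, genecards_missing = standardize(genecards_genes)
shared = set(tcmsp_ids.values()) & set(genecards_ids.values())
print(sorted(shared), genecards_missing)
```

Merging on the canonical IDs rather than on the raw strings is what makes "p53" from one source and "TP53" from another land on the same node.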

Table 1: Common Database Issues and Resolutions

| Database | Typical Issue | Root Cause | Recommended Solution |
|---|---|---|---|
| TCMSP | Incomplete data download | Query limit / timeout | Modularize queries; confirm login state. |
| STRING | IDs not recognized | Identifier namespace mismatch | Use STRING's batch tool with correct species & ID type settings. |
| GeneCards | Information overload; irrelevant data | Broad search queries | Use the "Query By Source" filter to limit to UniProt, KEGG, etc. |
| Data Integration | Failed node matching | Nomenclature inconsistency | Standardize all identifiers to Entrez Gene ID (genes) and PubChem CID (compounds). |

Detailed Experimental Protocols for Network Construction

Protocol 1: Acquisition of Active Compounds and Target Genes from TCMSP

- Define Research Scope: Identify the traditional Chinese medicine (TCM) formula or herb of interest.

- Compound Screening: On the TCMSP platform, search for the herb. Apply the widely used ADME screening criteria: Oral Bioavailability (OB) ≥ 30% and Drug-likeness (DL) ≥ 0.18. Download the list of qualifying compounds.

- Target Prediction: For each screened compound, retrieve its predicted target proteins from the "Related Targets" section. TCMSP derives these targets from the SysDT prediction model and from curated target databases (e.g., HIT).

- Gene Annotation: Manually or via script, convert the target protein names to official gene symbols using the UniProt database to ensure consistency for downstream analysis.

- Data Storage: Save the final herb-compound-target triplets in a structured table (e.g., CSV format).
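The screening and storage steps of this protocol can be sketched as follows. The dictionary keys (`name`, `ob`, `dl`) and all compound values are hypothetical placeholders for whatever columns your TCMSP export contains. The thresholds are the OB ≥ 30% and DL ≥ 0.18 criteria above.

```python
import csv
import io

OB_MIN = 30.0   # oral bioavailability, %
DL_MIN = 0.18   # drug-likeness (TCMSP scale)


def screen_compounds(compounds):
    """Keep compounds that pass both ADME thresholds (OB >= 30%, DL >= 0.18)."""
    return [c for c in compounds if c["ob"] >= OB_MIN and c["dl"] >= DL_MIN]


def write_triplets(herb, compounds, targets_by_compound, handle):
    """Write one herb-compound-target row per predicted target."""
    writer = csv.writer(handle)
    writer.writerow(["herb", "compound", "target_gene"])
    for compound in compounds:
        for gene in sorted(targets_by_compound.get(compound["name"], ())):
            writer.writerow([herb, compound["name"], gene])


# Made-up values for illustration only.
compounds = [
    {"name": "compound_A", "ob": 45.2, "dl": 0.30},
    {"name": "compound_B", "ob": 12.0, "dl": 0.40},  # fails the OB threshold
    {"name": "compound_C", "ob": 30.0, "dl": 0.18},  # passes (boundary values)
]
targets = {"compound_A": {"PTGS2", "ESR1"}, "compound_C": {"AKT1"}}

kept = screen_compounds(compounds)
buffer = io.StringIO()  # use open("triplets.csv", "w", newline="") for a real file
write_triplets("Salvia miltiorrhiza", kept, targets, buffer)
print(buffer.getvalue())
```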

Protocol 2: Construction of a Protein-Protein Interaction (PPI) Network using STRING

- Input Gene List Preparation: Prepare a text file containing your gene of interest list, one gene symbol per line.

- STRING Database Query:

- Navigate to the STRING website (string-db.org).

- Select "Multiple Proteins" > "Protein by names."

- Paste your gene list, select the correct organism (e.g., "Homo sapiens"), and click "Search."

- Network Parameter Configuration:

- Under "Settings," set the minimum required interaction score to "High confidence (0.700)."

- In "Advanced," limit the active interaction sources to "Experiments" and "Databases" for higher reliability.

- Optional: Enable "hide disconnected nodes in the network" to simplify the network.

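- Network Export: Once satisfied, export the network. For topological analysis, download the "TSV Formatted List of Edges" file, which lists each interacting pair with its combined_score. Check the header row for the exact column names (e.g., protein1/protein2 or node1/node2) before parsing it.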
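For a quick first look at topology before moving to Cytoscape, degree (the number of interaction partners) can be computed directly from the exported edge file. This sketch assumes a tab-separated file with `node1`, `node2`, and `combined_score` columns on a 0-1 scale. These names are assumptions, so pass the actual header names if your file differs. Degree is only one centrality measure; betweenness and closeness are better computed in Cytoscape or with a graph library.

```python
import csv
import io
from collections import Counter


def degree_ranking(tsv_text, a_col="node1", b_col="node2",
                   score_col="combined_score", min_score=0.7, top=10):
    """Rank nodes by degree in a STRING edge table after a score cutoff."""
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    if reader.fieldnames:
        # Some exports prefix the first header with '#'; strip it so names match.
        reader.fieldnames = [name.lstrip("#") for name in reader.fieldnames]
    degree, seen = Counter(), set()
    for row in reader:
        if float(row[score_col]) < min_score:
            continue
        pair = tuple(sorted((row[a_col], row[b_col])))
        if pair in seen:  # count A-B and B-A only once
            continue
        seen.add(pair)
        degree[pair[0]] += 1
        degree[pair[1]] += 1
    return degree.most_common(top)


# Toy export (made-up scores); read a real file with open(path).read().
export = (
    "#node1\tnode2\tcombined_score\n"
    "TP53\tAKT1\t0.95\n"
    "AKT1\tTP53\t0.95\n"
    "TP53\tIL6\t0.8\n"
    "AKT1\tMYC\t0.5\n"
    "IL6\tTNF\t0.75\n"
)
ranking = degree_ranking(export)
print(ranking)
```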

Protocol 3: Disease Gene Retrieval and Functional Enrichment via GeneCards & Enrichment Tools

- Disease Gene Mining: On GeneCards, search for your disease (e.g., "Rheumatoid Arthritis"). Navigate to the "Function" section and open the "GeneAnalytics" report. Download the list of genes associated with the disease, prioritizing those with high relevance scores.

- Gene List Intersection: Intersect the disease-associated gene list with the target gene list obtained from TCMSP (Protocol 1). This yields potential "disease-target" genes.

- Functional Enrichment Analysis:

- Use the intersected gene list as input for an enrichment analysis tool like DAVID or clusterProfiler.

- Perform Gene Ontology (GO) enrichment (Biological Process, Cellular Component, Molecular Function) and Kyoto Encyclopedia of Genes and Genomes (KEGG) pathway analysis.

- Apply a Benjamini-Hochberg correction, retaining terms with an adjusted p-value (FDR) < 0.05.

- Visualization: Visualize the top enriched terms using bar plots, dot plots, or pathway maps.
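The statistics behind the intersection and enrichment steps can be illustrated without dependencies. The sketch below intersects two gene sets, scores each term with a one-sided hypergeometric test (the over-representation test used by clusterProfiler; DAVID's default EASE score is a modified Fisher's exact test), and applies the Benjamini-Hochberg adjustment, which should agree with R's `p.adjust(method = "BH")`. The gene sets, background size, and term counts are made up.

```python
from math import comb


def hypergeom_pvalue(k, N, K, n):
    """One-sided over-representation p-value, P(X >= k).

    N: background genes, K: background genes annotated to the term,
    n: genes in the query list, k: query genes annotated to the term.
    """
    upper = min(K, n)
    tail = sum(comb(K, i) * comb(N - K, n - i) for i in range(k, upper + 1))
    return tail / comb(N, n)


def benjamini_hochberg(pvalues):
    """Benjamini-Hochberg adjusted p-values (FDR), returned in input order."""
    m = len(pvalues)
    order = sorted(range(m), key=lambda i: pvalues[i])
    adjusted = [0.0] * m
    running_min = 1.0
    for rank in range(m, 0, -1):  # from the largest p-value down
        i = order[rank - 1]
        running_min = min(running_min, pvalues[i] * m / rank)
        adjusted[i] = running_min
    return adjusted


# Toy inputs (made up): intersect the lists, then test a few terms.
disease_genes = {"TNF", "IL6", "IL1B", "PTGS2", "MMP9"}
herb_targets = {"TNF", "IL6", "PTGS2", "ESR1", "AKT1"}
core = disease_genes & herb_targets          # TNF, IL6, PTGS2

background = 20000                           # hypothetical background size
terms = {"term_1": (3, 150), "term_2": (1, 900), "term_3": (2, 400)}  # (k, K)
raw = [hypergeom_pvalue(k, background, K, len(core)) for k, K in terms.values()]
fdr = benjamini_hochberg(raw)
significant = [name for name, q in zip(terms, fdr) if q < 0.05]
print(significant)
```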

Visualizations

Diagram 1: Workflow for Constructing a Herb-Disease Network

Diagram 2: Data Integration and Format Conversion Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Digital Tools and Resources for Network Pharmacology

| Tool / Resource | Function | Key Application in Protocol |
|---|---|---|
| TCMSP Database | Provides ADME properties and predicted targets for TCM compounds. | Initial screening of bioactive herbal constituents (Protocol 1). |
| STRING Database | A repository of known and predicted protein-protein interactions. | Constructing the core PPI network with confidence scoring (Protocol 2). |
| GeneCards Suite | An integrative database of human genes and their annotations. | Retrieving and prioritizing disease-associated genes (Protocol 3). |
| UniProt ID Mapping Tool | Converts between various protein/gene identifier namespaces. | Standardizing gene identifiers for data integration (Troubleshooting Q4). |
| Cytoscape Software | An open-source platform for network visualization and analysis. | Visualizing constructed networks and calculating topological features. |
| clusterProfiler (R package) | Performs statistical analysis and visualization of functional profiles. | Conducting GO and KEGG enrichment analysis (Protocol 3). |
| Python/R Scripting Environment | Programming environments for data manipulation and automation. | Automating data retrieval, cleaning, merging, and batch API calls. |

Leveraging AI and Deep Learning for Advanced Feature Representation and Encoding

Troubleshooting Common Experimental & Computational Issues

Q1: My residue interaction network (RIN) analysis of a molecular dynamics (MD) trajectory yields inconsistent centralities. How can I stabilize the results? A: Fluctuating centrality metrics are common when analyzing individual frames from an MD simulation. To obtain a stable, representative RIN, construct a dynamic or probabilistic interaction graph [25]. Method:

- Generate an unweighted RIN for each frame of your trajectory using a tool like RING 3.0 or PyInteraph [25].

- Combine all frames to create a single network where edges are weighted by their persistence (frequency of occurrence) across the entire trajectory [25].

- Calculate centrality metrics (e.g., betweenness, closeness) on this weighted, consensus network. This approach highlights interactions and communication pathways that persist across the simulation rather than appearing in a single snapshot.
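The persistence-weighting idea can be sketched in plain Python. This assumes each frame's RIN is already available as a set of residue-pair contacts (for example, parsed from RING or PyInteraph output). The residue labels and the 0.5 persistence cutoff are hypothetical. Closeness is computed with distance = 1 / persistence so that frequently observed contacts count as short paths. That conversion is one common choice, not the only one. For betweenness, `networkx.betweenness_centrality` accepts a `weight` edge attribute that it treats as a distance.

```python
import heapq
from collections import defaultdict


def persistence_network(frames, min_persistence=0.0):
    """Weight each residue pair by the fraction of frames in which it is in contact."""
    if not frames:
        return {}
    counts = defaultdict(int)
    for frame_edges in frames:
        # Normalise edge direction and count each contact at most once per frame.
        for edge in {tuple(sorted(e)) for e in frame_edges}:
            counts[edge] += 1
    n = len(frames)
    return {e: c / n for e, c in counts.items() if c / n >= min_persistence}


def weighted_closeness(network):
    """Closeness centrality with distance = 1 / persistence (Dijkstra per node)."""
    graph = defaultdict(dict)
    for (a, b), weight in network.items():
        graph[a][b] = graph[b][a] = 1.0 / weight
    closeness = {}
    for source in graph:
        dist = {source: 0.0}
        queue = [(0.0, source)]
        while queue:
            d, node = heapq.heappop(queue)
            if d > dist[node]:
                continue  # stale queue entry
            for nbr, length in graph[node].items():
                candidate = d + length
                if candidate < dist.get(nbr, float("inf")):
                    dist[nbr] = candidate
                    heapq.heappush(queue, (candidate, nbr))
        total = sum(dist.values())
        # Reachable residues divided by the summed distance to them.
        closeness[source] = (len(dist) - 1) / total if total > 0 else 0.0
    return closeness


# Toy per-frame contact sets (made up).
frames = [
    {("ASP86", "LYS33"), ("LYS33", "GLU51")},
    {("LYS33", "ASP86")},
    {("ASP86", "LYS33"), ("LYS33", "GLU51")},
    {("ASP86", "LYS33"), ("GLU51", "THR14")},
]
consensus = persistence_network(frames, min_persistence=0.5)
centrality = weighted_closeness(consensus)
print(consensus)
print(centrality)
```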

Q2: When constructing a protein-protein interaction (PPI) network from public databases, the network is too dense and non-specific. How do I refine it for my disease context? A: A dense, non-specific PPI network often includes indirect associations. Refine it using targeted filtering and intersection analysis [26]:

- Disease-Specific Filtering: Use disease target databases (e.g., DisGeNET, TTD) to obtain a core set of genes/proteins validated for your disease of interest [26].

- Intersection Analysis: Perform a Venn analysis to intersect your broad PPI network nodes with the core disease targets. Focus subsequent analysis only on the intersecting nodes and the edges between them.

- Contextual Enrichment: Perform pathway enrichment (e.g., KEGG) on the intersected targets. This confirms the biological relevance of your refined network and identifies key signaling pathways for further investigation [26].

Q3: Molecular docking suggests good binding affinity, but my molecular dynamics simulation shows the ligand quickly dissociates. What might be wrong? A: This discrepancy often points to issues with the initial docking pose or the force field parameters.

- Pose Validation: Re-examine the top docking poses. The pose with the best score may be geometrically favorable but kinetically unstable. Check for poses that better satisfy persistent interaction patterns (e.g., conserved hydrogen bonds or hydrophobic contacts) seen in similar protein-ligand complexes [25].

- System Preparation: Ensure the protein protonation states are correct for the simulation pH. Confirm the ligand's atomic charges and force field parameters are accurately derived.

- Simulation Protocol: Extend the equilibration phase. A short simulation may not allow the complex to relax from the docked conformation into a stable bound state. Consider running multiple simulations from different docking poses.
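When comparing poses or replicas, it helps to see when the ligand actually leaves its docked position. The sketch below computes the ligand RMSD against the docked pose for each frame, assuming the frames have already been superposed on the protein and the ligand atoms are listed in the same order. The 2.0 Å threshold is an illustrative choice, and the coordinates are toy values. Trajectory libraries such as MDAnalysis or MDTraj provide production RMSD tools.

```python
import math


def ligand_rmsd(reference, frame):
    """RMSD between matched ligand atoms (same units as the input, e.g. angstroms).

    No fitting is done here: frames are assumed to be superposed on the protein
    already, so ligand drift relative to the pocket shows up in the RMSD.
    """
    if len(reference) != len(frame):
        raise ValueError("reference and frame must list the same atoms")
    squared = sum(math.dist(a, b) ** 2 for a, b in zip(reference, frame))
    return math.sqrt(squared / len(reference))


def first_departure(reference, trajectory, threshold=2.0):
    """Index of the first frame whose ligand RMSD exceeds `threshold`, else None."""
    for index, frame in enumerate(trajectory):
        if ligand_rmsd(reference, frame) > threshold:
            return index
    return None


# Toy two-atom ligand drifting along x (made-up coordinates, in angstroms).
docked = [(0.0, 0.0, 0.0), (1.5, 0.0, 0.0)]
trajectory = [[(x + shift, y, z) for x, y, z in docked] for shift in (0.0, 1.0, 3.0)]
print([round(ligand_rmsd(docked, f), 2) for f in trajectory])
print(first_departure(docked, trajectory))
```

Running `first_departure` on trajectories started from different docking poses gives a simple, comparable measure of which pose stays bound longest.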

Q4: How can I use RINs to prioritize residues for mutagenesis in protein engineering? A: Use RIN centrality metrics to identify structurally and functionally critical residues [25].

- Calculate betweenness centrality for all residues in your protein's RIN. High-betweenness residues act as bridges in communication pathways.

- Calculate closeness centrality. High-closeness residues are topologically central and can efficiently communicate with the rest of the structure.

- Target Selection: Residues with high betweenness and/or closeness are often critical for allosteric communication and structural integrity. Avoid mutating these in stability-focused engineering [25]. For altering functional dynamics, target residues with medium centrality that are adjacent to active site residues.

- Evolutionary Check: Use a "meta-RIN" or Key Interaction Network (KIN) analysis across a protein family to see if candidate residues are part of evolutionarily conserved interactions—another reason to avoid mutating them [25].
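The selection rules above can be written as a small triage function. The cutoffs (90th and 50th percentiles, using a simple nearest-rank rule), the residue labels, and all centrality values are hypothetical. The function encodes only the two rules stated above: avoid high-centrality hubs, and flag medium-centrality residues that touch, but are not part of, the active site.

```python
def percentile_cutoff(values, q):
    """Simple nearest-rank cutoff: the value at position q along the sorted list."""
    ordered = sorted(values)
    return ordered[int(q * (len(ordered) - 1))]


def triage_residues(betweenness, closeness, neighbors, active_site,
                    high_q=0.9, mid_q=0.5):
    """Split residues into hubs to avoid and medium-centrality candidates."""
    b_high = percentile_cutoff(betweenness.values(), high_q)
    c_high = percentile_cutoff(closeness.values(), high_q)
    b_mid = percentile_cutoff(betweenness.values(), mid_q)
    c_mid = percentile_cutoff(closeness.values(), mid_q)
    avoid, candidates = set(), set()
    for res in betweenness:
        is_hub = betweenness[res] >= b_high or closeness[res] >= c_high
        is_medium = betweenness[res] >= b_mid or closeness[res] >= c_mid
        touches_site = bool(neighbors.get(res, set()) & active_site)
        if is_hub:
            avoid.add(res)  # likely structural or allosteric hub
        elif is_medium and touches_site and res not in active_site:
            candidates.add(res)  # medium centrality, adjacent to the active site
    return avoid, candidates


# Made-up centralities and contacts for ten residues.
betweenness = {"R1": 0.50, "R2": 0.05, "R3": 0.20, "R4": 0.10, "R5": 0.02,
               "R6": 0.15, "R7": 0.01, "R8": 0.12, "R9": 0.03, "R10": 0.04}
closeness = {"R1": 0.90, "R2": 0.55, "R3": 0.80, "R4": 0.60, "R5": 0.50,
             "R6": 0.65, "R7": 0.40, "R8": 0.62, "R9": 0.45, "R10": 0.52}
neighbors = {"R2": {"R5"}, "R4": {"R5", "R6"}, "R6": {"R7"},
             "R8": {"R9"}, "R10": {"R5"}}
active_site = {"R5"}

avoid, candidates = triage_residues(betweenness, closeness, neighbors, active_site)
print(sorted(avoid), sorted(candidates))
```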

Frequently Asked Questions (FAQs)

Q1: What's the fundamental difference between a Residue Interaction Network (RIN) and a Protein-Protein Interaction (PPI) network? A: They operate at different scales. A RIN is an intra-molecular network representing non-covalent interactions (hydrogen bonds, salt bridges, etc.) within a single protein structure, where nodes are amino acid residues [25]. A PPI network is an inter-molecular network representing physical or functional associations between different protein molecules, where nodes are entire proteins [26].

Q2: Which file format is essential to start building a RIN? A: A 3D atomic coordinate file, most commonly in the Protein Data Bank (PDB) format. This file can come from experimental methods (X-ray crystallography, NMR) or from computational structure prediction tools like AlphaFold [27].
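As a rough illustration of going from a PDB file to a RIN, the sketch below reads alpha-carbon coordinates from the fixed-column `ATOM` records and links residues whose Cα atoms lie within 8 Å. This distance-cutoff contact map is a deliberate simplification: tools such as RING classify specific interaction types (hydrogen bonds, salt bridges, and so on), which this code does not attempt. The cutoff and the demo coordinates are assumptions, and sequence-adjacent residues will always appear as contacts.

```python
import math
from itertools import combinations


def read_ca_coordinates(pdb_lines):
    """Map (chain, residue number) -> (x, y, z) for alpha carbons in ATOM records."""
    coords = {}
    for line in pdb_lines:
        if line.startswith("ATOM") and line[12:16].strip() == "CA":
            key = (line[21], int(line[22:26]))
            xyz = (float(line[30:38]), float(line[38:46]), float(line[46:54]))
            coords.setdefault(key, xyz)  # keep the first alternate location
    return coords


def contact_rin(ca_coords, cutoff=8.0):
    """Undirected edges between residues whose CA atoms are within `cutoff` angstroms."""
    return {
        (r1, r2)
        for (r1, c1), (r2, c2) in combinations(ca_coords.items(), 2)
        if math.dist(c1, c2) <= cutoff
    }


# Demo ATOM lines using PDB fixed-column spacing (toy coordinates).
ATOM_TEMPLATE = "ATOM  {:5d}  CA  {:3s} {:1s}{:4d}    {:8.3f}{:8.3f}{:8.3f}  1.00 90.00"
demo = [
    ATOM_TEMPLATE.format(1, "GLY", "A", 1, 0.0, 0.0, 0.0),
    ATOM_TEMPLATE.format(2, "ALA", "A", 2, 3.8, 0.0, 0.0),
    ATOM_TEMPLATE.format(3, "SER", "A", 3, 20.0, 0.0, 0.0),
]
# For a real structure: with open("model.pdb") as fh: coords = read_ca_coordinates(fh)
coords = read_ca_coordinates(demo)
print(contact_rin(coords))
```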

Q3: Can I apply RIN analysis to an AlphaFold2-predicted model? A: Yes. AlphaFold2 models are provided in PDB format and are often highly accurate, making them good starting points for RIN construction. Check the per-residue confidence score (pLDDT, stored in the B-factor column) and treat low-confidence regions with caution. In fact, the Evoformer module within AlphaFold2 internally uses a form of residue-residue interaction graph [25].

Q4: What is a "meta-RIN" and how is it useful? A: A "meta-RIN" is a comparative analysis of RINs built from multiple related proteins (e.g., a protein family or orthologs). It helps identify interaction patterns that are evolutionarily conserved versus those that are variable. This is powerful for understanding functional divergence and for protein engineering, highlighting which interaction networks are critical to preserve [25].

Q5: My compound is not in any drug database. How can I represent it as a molecular graph? A: You can generate a molecular graph representation from its chemical structure.

- Draw the 2D structure or obtain its SMILES string.

- Use cheminformatics toolkits (e.g., RDKit, Open Babel) to parse the structure.

- In the graph representation, atoms are typically represented as nodes, and chemical bonds as edges. You can add node features (e.g., atom type, charge) and edge features (e.g., bond type, length) to enrich the representation.
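A minimal sketch of these steps with RDKit, assuming RDKit is installed: atoms become nodes with a few simple features, and bonds become edges carrying the bond order (1.0 single, 1.5 aromatic, 2.0 double). Hydrogens are implicit here, and bond lengths would require 3D coordinates (for example, from RDKit's conformer embedding), so they are omitted. The feature choice is illustrative, not a fixed convention.

```python
from rdkit import Chem


def smiles_to_graph(smiles):
    """Return (node features, edge list) for the heavy-atom graph of a SMILES string."""
    mol = Chem.MolFromSmiles(smiles)
    if mol is None:  # RDKit returns None for SMILES it cannot parse
        raise ValueError(f"could not parse SMILES: {smiles!r}")
    nodes = [
        {
            "symbol": atom.GetSymbol(),
            "formal_charge": atom.GetFormalCharge(),
            "aromatic": atom.GetIsAromatic(),
            "degree": atom.GetDegree(),
        }
        for atom in mol.GetAtoms()
    ]
    edges = [
        (bond.GetBeginAtomIdx(), bond.GetEndAtomIdx(), bond.GetBondTypeAsDouble())
        for bond in mol.GetBonds()
    ]
    return nodes, edges


nodes, edges = smiles_to_graph("CC(=O)O")  # acetic acid
print([n["symbol"] for n in nodes])
print(edges)
```

The node dictionaries and edge tuples can then be converted into whatever tensor format a GNN library expects.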

Detailed Experimental Protocols

Protocol 1: Constructing a Multi-Scale Network Pharmacology Workflow

This protocol integrates compound screening, target prediction, and network analysis [26].

1. Active Compound Screening & Target Prediction:

- Input: List of candidate compounds (e.g., from a natural product extract).

- Tools: Use TCMSP, SwissADME, or similar tools to screen for oral bioavailability and drug-likeness (Oral Bioavailability > 30%, Drug-likeness > 0.18) [26].

- Action: For screened compounds, predict protein targets using SwissTargetPrediction or the Similarity Ensemble Approach (SEA).

- Output: A list of screened compounds and their predicted protein targets.

2. Disease Target Identification:

- Input: Disease of interest (e.g., "prostate cancer").

- Tools: Query DisGeNET, Therapeutic Target Database (TTD), and OMIM.

- Action: Collect and unify all related gene/protein targets.

- Output: A curated list of known disease-associated targets.

3. Network Construction & Intersection Analysis:

- Action: Construct a "Compound-Target" bipartite network. Separately, construct a "Target-Disease" association network.

- Core Refinement: Perform a Venn analysis to find the intersection between predicted compound targets and known disease targets. These intersecting targets form the core network for your study [26].

- Output: A focused "Compound-Core Target-Disease" network.
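The intersection and network assembly in step 3 can be sketched as follows. Compound names, targets, and the disease label are made-up placeholders. The resulting two-column edge list can be saved as a tab-separated file and imported into Cytoscape for visualization.

```python
def core_network(compound_targets, disease_targets, disease):
    """Intersect predicted and disease targets, then build the focused edge list."""
    predicted = set().union(*compound_targets.values())
    core = predicted & set(disease_targets)   # the Venn-diagram overlap
    edges = [
        (compound, target)
        for compound, targets in compound_targets.items()
        for target in sorted(set(targets) & core)
    ]
    edges += [(target, disease) for target in sorted(core)]
    return core, edges


# Placeholder inputs, for illustration only.
compound_targets = {
    "compound_1": {"AKT1", "PTGS2", "ESR1"},
    "compound_2": {"PTGS2", "TNF"},
}
disease_targets = ["AKT1", "TNF", "AR", "PTGS2"]

core, edges = core_network(compound_targets, disease_targets, "prostate cancer")
for source, target in edges:
    print(f"{source}\t{target}")
```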

4. Enrichment & Pathway Analysis:

- Action: Submit the list of core targets to enrichment analysis tools (DAVID, Metascape) for Gene Ontology (GO) and KEGG pathway analysis.

- Output: Identification of significantly enriched biological pathways that mechanistically link your compound to the disease [26].

5. Molecular Docking Validation:

- Action: For top core targets, obtain 3D structures (PDB). Dock your top screened compounds into the target's binding site using AutoDock Vina or Glide.