AI-Driven Drug Repurposing for Natural Products: Unlocking Hidden Therapeutic Potential

Abstract

This article explores the transformative role of Artificial Intelligence (AI) in repositioning natural products for new therapeutic uses. It examines the foundational value and unique challenges of natural products as a source for drug discovery. The article details key AI methodologies, including machine learning, deep learning, and network-based approaches, that are being applied to predict new indications. It addresses critical challenges such as data quality, model interpretability, and validation, offering strategies for optimization. Finally, the article presents validation frameworks, case studies across diseases like Alzheimer's and cancer, and a comparative analysis of leading AI platforms. Aimed at researchers and drug development professionals, it provides a comprehensive roadmap for integrating AI into natural product research to accelerate the development of safe, effective, and cost-efficient therapies.

From Ancient Remedies to AI Pipelines: The Foundational Value of Natural Products in Drug Repurposing

The Historical Significance and Untapped Potential of Natural Products

For millennia, natural products (NPs) derived from plants, microbes, and other biological sources have formed the foundation of human pharmacotherapy. Their historical significance is profound, with early written records of herbal remedies dating back to ancient Egyptian (circa 1500 BCE), Chinese, and Sumerian civilizations [1]. This traditional knowledge has directly led to some of the most impactful medicines in the modern arsenal, including the analgesic morphine, the antimalarial artemisinin, and the anticancer agent paclitaxel [2] [1]. Approximately one-third of all FDA-approved small-molecule drugs over the past four decades are based on natural products or their direct derivatives, a statistic underscoring their irreplaceable role in treating critical areas like infectious diseases and oncology [2] [3].

Despite this legacy, the vast potential of nature's chemical library remains largely untapped. NPs exhibit unique structural complexity, biochemical specificity, and evolutionary optimization that make them privileged scaffolds for modulating human biology, particularly for challenging targets like protein-protein interactions [4] [3]. However, their very complexity has presented formidable challenges for modern, high-throughput drug discovery, leading to a decline in industrial pursuit from the 1990s onward [4].

Today, a transformative convergence is occurring. Advances in analytical chemistry, omics technologies, and—most critically—artificial intelligence (AI) are revitalizing NP research. This whitepaper posits that AI-driven drug repositioning represents a powerful and efficient strategy to unlock the latent therapeutic value of natural products. By applying machine learning (ML) and deep learning (DL) to decipher the polypharmacology of NPs, researchers can systematically identify new therapeutic indications for known natural compounds, accelerating the translation of nature's chemistry into novel treatments for unmet medical needs [5] [6].

Historical Legacy and Modern Challenges of Natural Product Drug Discovery

The Enduring Historical Impact

The historical journey of NPs from traditional medicine to modern drugs is marked by seminal discoveries. The 19th-century isolation of morphine from opium poppy established the paradigm of purifying single active ingredients from plants [1]. The 20th century witnessed the golden age of antibiotics from microbes (e.g., penicillin) and critical chemotherapeutics from plants (e.g., vinblastine, taxol) [2]. These successes are not historical artifacts; they demonstrate nature's ability to produce compounds with optimal bioactivity and drug-like properties. NPs typically possess greater molecular rigidity, more oxygen atoms, and higher stereochemical complexity compared to synthetic libraries, enabling them to interact with a broader swath of biological target space [4] [3].

Table 1: Representative Landmark Natural Product-Derived Drugs and Their Origins

| Drug | Natural Source | Original/Primary Indication | Historical Significance |
|---|---|---|---|
| Morphine | Opium Poppy (Papaver somniferum) | Analgesia | One of the first pure plant isolates (1804); established the model for pharmacologically active compound isolation [1]. |
| Quinine | Cinchona tree bark | Malaria | Early antimalarial; prototype for synthetic antimalarials [2] [1]. |
| Penicillin | Penicillium mold | Bacterial Infections | First widely used antibiotic, revolutionizing medicine [2]. |
| Artemisinin | Sweet Wormwood (Artemisia annua) | Malaria | Discovery recognized with the 2015 Nobel Prize in Physiology or Medicine; key for combating drug-resistant malaria [2] [1]. |
| Paclitaxel (Taxol) | Pacific Yew tree (Taxus brevifolia) | Ovarian, Breast Cancer | Complex diterpene demonstrating efficacy in major cancers; spurred supply chain innovations [2] [1]. |
| Dimethyl Fumarate | Derived from fumaric acid (found in Fumaria officinalis) | Psoriasis, Multiple Sclerosis | Example of a natural compound derivative successfully repositioned from psoriasis to MS [4]. |

Intrinsic Challenges in the Modern Pipeline

The transition to target-based, high-throughput screening in the late 20th century exposed key challenges in NP discovery:

- Technical Complexity: NP extracts are complex mixtures incompatible with standardized assays. Bioactivity-guided isolation is slow, and dereplication (avoiding rediscovery of known compounds) is difficult [4] [3].

- Supply and Synthesis: Sustainable sourcing and total synthesis of complex NPs are often non-trivial, hindering development [4].

- Intellectual Property (IP) and Access: Legal frameworks like the Nagoya Protocol create complexities regarding benefit-sharing and access to genetic resources [4].

- Mechanistic Deconvolution: NPs often act via polypharmacology or synergistic effects, making their precise molecular mechanisms of action (MoA) difficult to elucidate using reductionist approaches [2].

These challenges contributed to a waning of interest from major pharmaceutical companies. However, they also define the very opportunities that modern technologies, especially AI, are now poised to address.

The AI Revolution: A Framework for Natural Product Repositioning

AI, particularly ML and DL, provides a suite of tools to systematically analyze the complex, multi-dimensional data associated with NPs, thereby enabling rational drug repositioning. Repositioning existing NPs offers distinct advantages: known safety and pharmacokinetic profiles, reduced development costs (estimated at ~$300 million vs. $2.6 billion for de novo drugs), and a faster timeline to clinic (3-6 years on average) [6].

Table 2: Core AI/ML Methodologies for Natural Product Repositioning

| Method Category | Key Techniques | Application in NP Repositioning | Key Advantage |
|---|---|---|---|
| Classical Machine Learning | Random Forest, Support Vector Machines (SVM), Logistic Regression [7] [6]. | Building quantitative structure-activity relationship (QSAR) models to predict bioactivity or new targets for known NP structures. | Effective with smaller, curated datasets; good interpretability. |
| Deep Learning (DL) | Graph Neural Networks (GNNs), Convolutional Neural Networks (CNNs), Multilayer Perceptrons (MLPs) [5] [6] [8]. | Directly learning from molecular representations of NPs (e.g., SMILES strings, 2D/3D molecular graphs) to predict properties, targets, or disease associations. | Automates feature extraction; excels with large, complex data. |
| Network Pharmacology & Knowledge Graphs (KGs) | Heterogeneous network analysis, random walk algorithms, KG embedding (e.g., TransE, PairRE) [5] [6] [9]. | Mapping NPs into multimodal networks linking herbs, ingredients, targets, pathways, and diseases to infer MoA and synergistic effects. | Captures system-level polypharmacology; ideal for multi-target NP actions. |
| Foundation Models & Zero-Shot Learning | Large-scale pre-trained models (e.g., TxGNN) on massive biomedical KGs [9]. | Making predictions for diseases with no known treatments by transferring knowledge from biologically similar diseases. | Addresses the "cold-start" problem for rare/orphan diseases. |
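
To make the KG embedding row more concrete, the sketch below shows how a TransE-style score can rank candidate NP-disease links: a triple (head, relation, tail) is considered plausible when the head vector plus the relation vector lands close to the tail vector. The entity names, the relation name, and the two-dimensional vectors are hand-picked placeholders for illustration; a real model would learn the embeddings from a knowledge graph.

```python
import math

# Toy 2-D embeddings, hand-picked for illustration only (a real model learns these).
entity_vec = {
    "NP_A": [0.9, 0.1],          # hypothetical natural product
    "disease_X": [1.0, 0.9],     # hypothetical disease node
    "disease_Y": [-0.8, -0.5],   # hypothetical disease node
}
relation_vec = {"may_treat": [0.1, 0.8]}  # hypothetical relation type


def transe_score(head, relation, tail):
    """TransE plausibility: negative L2 distance between (head + relation) and tail."""
    h, r, t = entity_vec[head], relation_vec[relation], entity_vec[tail]
    return -math.sqrt(sum((hi + ri - ti) ** 2 for hi, ri, ti in zip(h, r, t)))


def rank_tails(head, relation, candidates):
    """Sort candidate tail entities from most to least plausible."""
    return sorted(candidates,
                  key=lambda tail: transe_score(head, relation, tail),
                  reverse=True)


ranking = rank_tails("NP_A", "may_treat", ["disease_Y", "disease_X"])
print(ranking)
```

Scores closer to zero indicate more plausible triples, so the ranking places the disease whose vector sits nearest to `NP_A + may_treat` first.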

The Integrated AI-NP Repositioning Workflow

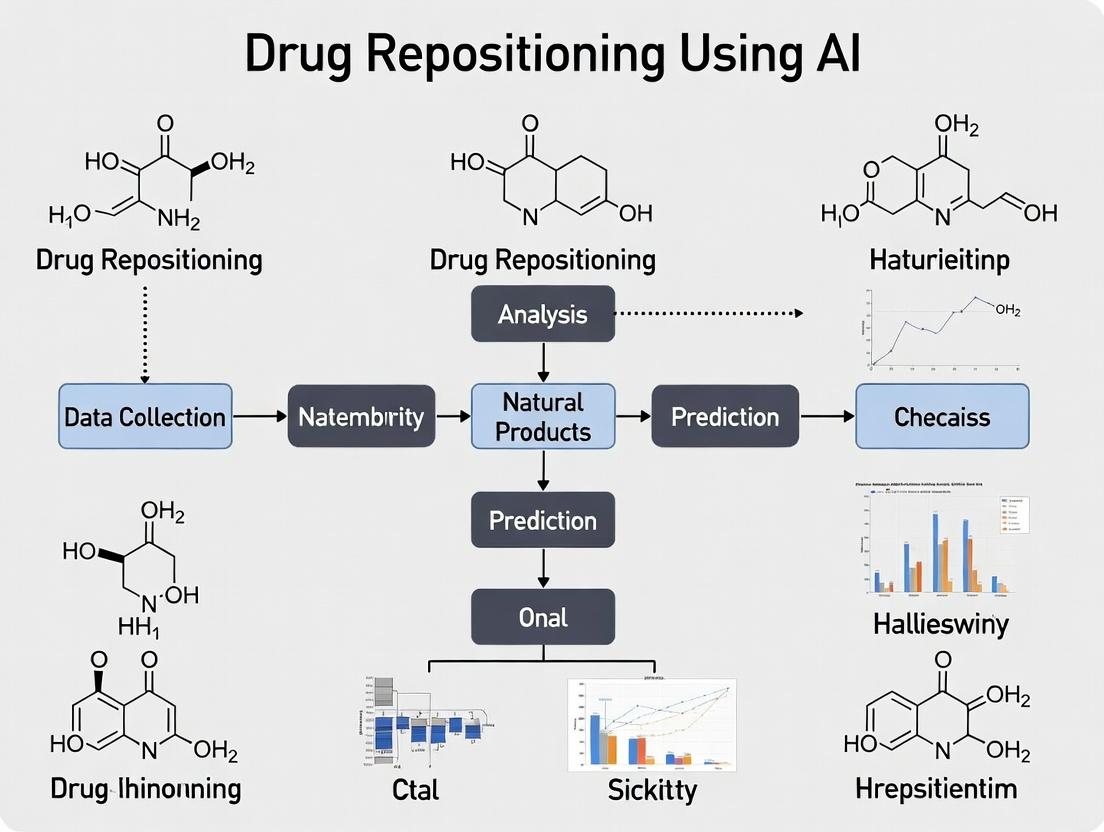

A state-of-the-art workflow for AI-driven NP repositioning integrates several steps, from data curation to experimental validation.

Title: AI-Driven Workflow for Natural Product Repositioning

- Data Integration & Curation: Diverse data on NPs (chemical structures, genomic, transcriptomic, bioactivity data) are aggregated from literature and databases. Knowledge Graphs (KGs) are constructed to link entities (drugs, targets, diseases, pathways) [5] [9].

- Model Development & Prediction: AI models are trained on this data. For instance, GNNs learn from the KG structure, while foundation models like TxGNN are pre-trained on vast biomedical networks to generate embeddings for drugs and diseases [8] [9].

- Candidate Ranking & Mechanistic Insight: Models score NP-disease pairs for potential activity. Network pharmacology approaches and explainable AI (XAI) modules (like TxGNN's Explainer) extract predictive rationales, such as key signaling pathways or target networks [5] [9].

- Experimental Validation: Top-ranked candidates undergo in silico validation (e.g., molecular docking) followed by in vitro and in vivo testing to confirm efficacy and proposed MoA [5] [10].
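
As a rough illustration of the candidate-ranking step, the following sketch runs a random walk with restart over a tiny hand-made knowledge graph and reads off how much probability mass reaches each disease node when the walk starts from one NP. The graph, node names, restart probability, and iteration count are all assumptions chosen for readability; this is not the scoring used by any specific platform cited above.

```python
from collections import defaultdict

# Toy undirected knowledge graph; all node names are hypothetical placeholders.
edges = [
    ("NP_A", "target_1"), ("NP_A", "target_2"),
    ("target_1", "pathway_P"), ("target_2", "pathway_P"),
    ("pathway_P", "disease_X"),
    ("NP_B", "target_3"), ("target_3", "disease_Y"),
]

neighbors = defaultdict(set)
for u, v in edges:
    neighbors[u].add(v)
    neighbors[v].add(u)


def random_walk_with_restart(seed, restart=0.3, iterations=100):
    """Approximate the visiting probability of each node for walks restarting at `seed`."""
    prob = {node: 0.0 for node in neighbors}
    prob[seed] = 1.0
    for _ in range(iterations):
        nxt = {node: 0.0 for node in neighbors}
        for node, p in prob.items():
            # Spread the non-restarting mass evenly over the node's neighbors.
            share = (1.0 - restart) * p / len(neighbors[node])
            for nb in neighbors[node]:
                nxt[nb] += share
        nxt[seed] += restart  # the restarting mass always returns to the seed
        prob = nxt
    return prob


scores = random_walk_with_restart("NP_A")
disease_ranking = sorted(["disease_X", "disease_Y"], key=scores.get, reverse=True)
print(disease_ranking)
```

In this toy graph `disease_Y` is unreachable from `NP_A`, so its score stays at zero, while `disease_X` receives mass through the shared pathway node.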

Case Studies & Experimental Validation of AI-Predicted Repurposing

Case Study 1: Network-Based Repurposing for Viral Infections

During the COVID-19 pandemic, AI demonstrated rapid repurposing potential. A knowledge graph approach identified baricitinib (an FDA-approved JAK1/2 inhibitor for rheumatoid arthritis) as a candidate for COVID-19. The model predicted its ability to inhibit host proteins (AAK1) involved in viral entry and its anti-inflammatory effect. This prediction was validated in vitro (reduced viral load in human liver spheroids) and later in clinical trials, leading to its emergency use authorization [10].

Case Study 2: Unlocking the Therapeutic Potential of Broccoli – Sulforaphane

Sulforaphane, an isothiocyanate from broccoli, is a potent natural activator of the KEAP1/NRF2 pathway, a master regulator of cytoprotective and anti-inflammatory genes [4]. While its chemopreventive properties were known, AI and network analyses have helped systematically explore its repositioning potential for various conditions:

- Neurodevelopmental Disorders: Network pharmacology linking NRF2 activation to oxidative stress and inflammation pathways in autism spectrum disorder (ASD) provided a rationale. A subsequent placebo-controlled trial showed sulforaphane improved social responsiveness and behavior in young men with ASD [4].

- Oncology (Drug Resistance): AI-driven analysis of resistance pathways in estrogen receptor-positive (ER+) breast cancer identified NRF2 as a key node. This led to the development of SFX-01 (a stabilized sulforaphane formulation), which showed promise in phase II trials for reversing endocrine therapy resistance [4].

- Environmental Detoxification: Omics signatures of pollutant exposure guided trials in China, where sulforaphane-rich broccoli sprout extracts significantly increased the detoxification and excretion of airborne toxins like benzene [4].

Figure: KEAP1/NRF2 Pathway: A Key Target for NP Repositioning

Detailed Experimental Protocol for Validating AI Predictions

Following AI-based prioritization, a tiered experimental validation protocol is essential.

Protocol: Multi-tier Validation of an AI-Predicted NP for a New Indication

Objective: To experimentally validate the predicted anti-inflammatory activity of a candidate NP (e.g., a flavonoid) for rheumatoid arthritis (RA).

Tier 1: In Silico and Biochemical Confirmation

- Molecular Docking & Dynamics: Perform docking of the NP into the predicted target (e.g., TNF-α, COX-2, or a kinase like JAK) using software like AutoDock Vina or Schrödinger Suite. Follow with molecular dynamics simulations to assess binding stability.

- Target-Based Biochemical Assay: Conduct a recombinant enzyme or protein-binding assay (e.g., ELISA, fluorescence polarization, kinase activity assay) to confirm direct interaction and measure IC₅₀.
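
For the IC₅₀ measurement in Tier 1, a quick first estimate can be read off a dose-response series by interpolating on a log-concentration axis, as sketched below. The concentrations and inhibition values are invented for illustration, and the function name is hypothetical; a four-parameter logistic fit is the more common choice for reporting and would replace this interpolation in practice.

```python
import math


def estimate_ic50(concentrations_um, percent_inhibition):
    """Interpolate on a log10 concentration axis to find where inhibition crosses 50%."""
    points = sorted(zip(concentrations_um, percent_inhibition))
    for (c_lo, y_lo), (c_hi, y_hi) in zip(points, points[1:]):
        if y_lo <= 50.0 <= y_hi and y_hi > y_lo:
            frac = (50.0 - y_lo) / (y_hi - y_lo)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None  # 50% inhibition is not bracketed by the tested range


# Invented example data: concentrations in micromolar, inhibition in percent.
doses = [0.1, 1.0, 10.0, 100.0]
inhibition = [5.0, 20.0, 80.0, 95.0]
ic50 = estimate_ic50(doses, inhibition)
print(f"Estimated IC50: {ic50:.2f} uM")
```

Returning `None` when the 50% level is not bracketed avoids extrapolating beyond the tested concentrations.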

Tier 2: In Vitro Phenotypic and Omics Analysis

- Cell-Based Assay: Treat relevant human cell lines (e.g., THP-1 macrophages or primary human synovial fibroblasts) with the NP. Measure the secretion of pro-inflammatory cytokines (IL-6, TNF-α, IL-1β) via ELISA or multiplex Luminex assay.

- Transcriptomics/Proteomics: Perform RNA-seq or quantitative proteomics on treated vs. untreated cells. Use pathway enrichment analysis (e.g., GSEA, Ingenuity Pathway Analysis) to verify if the NP's gene/protein signature reverses the disease-associated signature identified by the AI model.

- Network Pharmacology Validation: Construct a protein-protein interaction (PPI) network from omics-derived differentially expressed genes. Overlap this network with the AI model's predicted subnetwork to confirm key target nodes.
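
One simple way to check whether a treatment signature reverses a disease signature, as described in the transcriptomics step, is to correlate log fold-changes over the genes measured in both: a strongly negative correlation suggests reversal. The sketch below uses Pearson correlation and invented log fold-change values; the function names are hypothetical, and rank-based or enrichment-based scores (such as GSEA) are common alternatives.

```python
import math


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sd_x = math.sqrt(sum((x - mean_x) ** 2 for x in xs))
    sd_y = math.sqrt(sum((y - mean_y) ** 2 for y in ys))
    return cov / (sd_x * sd_y)


def signature_correlation(disease_lfc, treatment_lfc):
    """Correlate log fold-changes over genes present in both signatures."""
    shared = sorted(set(disease_lfc) & set(treatment_lfc))
    if len(shared) < 3:
        raise ValueError("need at least three shared genes")
    return pearson([disease_lfc[g] for g in shared],
                   [treatment_lfc[g] for g in shared])


# Invented log2 fold-changes (disease vs. healthy, and NP-treated vs. untreated).
disease = {"IL6": 2.0, "TNF": 1.5, "IL1B": 1.0, "NFE2L2": -1.0}
treated = {"IL6": -1.8, "TNF": -1.2, "IL1B": -0.9, "NFE2L2": 0.8, "GAPDH": 0.0}

score = signature_correlation(disease, treated)
print(f"Signature correlation: {score:.3f} (negative values suggest reversal)")
```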

Tier 3: Ex Vivo and In Vivo Validation

- Ex Vivo Tissue Model: Test the NP on human RA synovial tissue explants in culture, assessing cytokine release and tissue viability.

- In Vivo Disease Model: Administer the NP in a standard murine collagen-induced arthritis (CIA) model. Monitor clinical scores (paw swelling), perform histopathological analysis of joints, and quantify systemic inflammatory markers.

Table 3: The Scientist's Toolkit: Key Reagents & Platforms for NP Repositioning Research

| Research Reagent / Platform | Function & Application | Rationale |
|---|---|---|
| LC-HRMS (Liquid Chromatography-High Resolution Mass Spectrometry) | Metabolite profiling, dereplication, and characterization of NPs in complex extracts [4] [3]. | Essential for ensuring compound identity, purity, and for annotating unknown analogues in bioactivity-guided fractionation. |
| NMR Spectroscopy | Definitive structural elucidation of novel NPs and confirmation of known structures [4]. | Gold standard for determining the planar and stereochemical structure of complex natural products. |
| Knockout/Knockdown Cell Lines (CRISPR-Cas9) | Functional validation of AI-predicted molecular targets [4]. | Confirms if the NP's bioactivity is dependent on the predicted target protein. |
| Multiplex Cytokine Assay Panels (Luminex/MSD) | High-throughput, quantitative profiling of inflammatory mediators in cell supernatants or serum [5]. | Enables phenotypic validation of immunomodulatory NPs across multiple signaling pathways simultaneously. |
| Human iPSC-Derived Cells or Organoids | Phenotypic screening in disease-relevant human cell models [4]. | Provides a more physiologically relevant in vitro system than immortalized cell lines for complex diseases. |
| Molecular Docking Software (e.g., AutoDock, Glide) | In silico prediction of NP binding poses and affinities to target proteins [10]. | Provides a quick, cost-effective first pass for validating AI-predicted drug-target interactions. |

The future of AI in NP repositioning lies in addressing current limitations and integrating emerging technologies. Key directions include:

- Overcoming Data Scarcity: Developing better "few-shot" and "zero-shot" learning models like TxGNN to make predictions for NPs or diseases with sparse data [9]. Creating standardized, minimal information (MI) standards for NP metadata (provenance, extraction, biological testing) is crucial for building high-quality datasets [5].

- Embracing Complexity: Moving beyond single-compartment models to micro-physiological systems (MPS) and digital twins that can model the synergistic, multi-target effects of NP formulations and their systemic pharmacokinetics [5].

- Generative AI for NP Optimization: Using generative models (e.g., GANs, VAEs) trained on NP chemical space to design optimized derivatives or novel scaffolds inspired by natural architectures, thereby overcoming synthesis or IP hurdles [5] [8].

- Explainable AI (XAI) for Trust and Discovery: Tools like TxGNN's Explainer, which reveals the multi-hop knowledge paths behind a prediction, are vital for building researcher trust and for generating novel biological hypotheses [9].

In conclusion, natural products possess an unparalleled historical significance and a vast, untapped reservoir of chemical diversity with direct therapeutic relevance. The integration of AI-driven drug repositioning strategies is poised to systematically mine this reservoir with unprecedented speed and precision. By transforming the discovery process from one of serendipity to one of prediction and rational design, this confluence of biology and computation holds the promise of unlocking a new generation of natural product-derived therapies for the most challenging human diseases.

Drug repositioning, the identification of new therapeutic uses for existing drugs, presents a strategic pathway to accelerate the development of treatments, particularly for diseases with limited options. This approach leverages existing safety and pharmacokinetic data, significantly reducing the time, cost, and risk associated with traditional drug development [6]. Artificial Intelligence (AI) has emerged as a transformative force in this field, capable of analyzing complex, high-dimensional biomedical datasets to predict novel drug-disease associations that are not immediately obvious [6].

The integration of AI is especially promising for the domain of Natural Product (NP) research. NPs, with their immense structural diversity and proven historical success as drug leads, represent a rich but notoriously challenging source for discovery. AI models are now being applied to predict the anticancer, anti-inflammatory, and antimicrobial activities of NPs, infer their mechanisms of action, and prioritize candidates for experimental validation [5].

However, the effective application of AI to NP-driven drug repositioning is hampered by three interrelated core challenges: the inherent chemical and biological complexity of NPs, the acute scarcity of high-quality, standardized data, and the pervasive irreproducibility of computational and experimental findings. This whitepaper provides an in-depth technical analysis of these challenges, framed within the AI drug repositioning paradigm, and offers detailed methodologies and solutions for researchers and drug development professionals.

Deconstructing the Core Challenges

The Multifaceted Complexity of Natural Products

The complexity of NPs is not a single barrier but a series of interconnected hurdles that complicate every stage of AI-driven analysis.

- Structural and Mixture Complexity: Unlike synthetic compound libraries, NPs are often isolated as complex mixtures. A single botanical extract contains hundreds of unique metabolites. This complexity confounds standard chemical representation methods (like SMILES strings) used in machine learning models and creates a "needle-in-a-haystack" problem for identifying the active constituent [5].

- Pharmacological Polyphony: NPs frequently exert therapeutic effects through polypharmacology—simultaneously modulating multiple biological targets and pathways. While this can be advantageous for treating complex diseases, it complicates the clear elucidation of a Mechanism of Action (MoA). AI models trained on single-target, single-pathway data may fail to capture or accurately predict these synergistic, network-wide effects [5].

- Provenance and Variability: The chemical profile of an NP is not static. It is influenced by a multitude of factors including plant genetics, growing conditions (soil, climate), harvest time, and post-harvest processing [11]. This batch-to-batch variability introduces significant noise into datasets, where the same species label may correspond to chemically distinct material, undermining model training and validation.

The following workflow diagram illustrates how this complexity propagates through a standard AI-driven NP discovery pipeline, creating points of ambiguity and uncertainty.

Diagram 1: Complexity in AI-NP Workflow. The diagram shows how inherent NP variability and chemical complexity introduce noise and uncertainty at multiple stages of the discovery pipeline.

The Critical Limitation of Data Scarcity

AI models are data-hungry. The performance of deep learning architectures, in particular, scales with the volume and quality of training data. NP research suffers from a severe data deficit, characterized by:

- Small and Imbalanced Datasets: High-quality, annotated bioactivity data for NPs is limited. Available datasets are often small and imbalanced, with many more known inactive compounds than active ones. This leads to models that are prone to overfitting and poor generalization to new chemical scaffolds [5].

- The "Long-Tail" Problem of Disease: A significant challenge for drug repositioning is addressing the "long tail" of diseases (those that are rare, complex, or poorly understood), which often have few or no approved therapies and little associated research data. For example, approximately 92% of the 17,080 diseases in a large-scale medical knowledge graph had no FDA-approved drugs [9]. AI models struggle to make accurate predictions for these data-poor diseases.

- Heterogeneous and Non-Standardized Data: Existing NP data is scattered across publications, patents, and proprietary databases in inconsistent formats. Critical metadata regarding provenance, extraction methodology, and assay conditions is frequently missing, rendering data integration and model training difficult [11].
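
One common mitigation for the class imbalance described above is to weight each class inversely to its frequency during training. The sketch below computes such weights with the widely used heuristic `n_samples / (n_classes * class_count)`; the 90/10 label split is an invented example, and the function name is hypothetical.

```python
from collections import Counter


def balanced_class_weights(labels):
    """Weight each class by n_samples / (n_classes * class_count)."""
    counts = Counter(labels)
    n_samples, n_classes = len(labels), len(counts)
    return {cls: n_samples / (n_classes * count) for cls, count in counts.items()}


# Invented example: 90 inactive and 10 active compounds in a screening set.
labels = ["inactive"] * 90 + ["active"] * 10
weights = balanced_class_weights(labels)
print(weights)
```

With these weights, an error on a rare active compound contributes nine times as much to the loss as an error on an inactive one, which counteracts the tendency to predict the majority class.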

Table 1: Comparative Analysis of Drug Development Pathways

| Development Metric | Traditional De Novo Drug Development | AI-Driven Drug Repositioning (General) | AI-Driven NP Repositioning (Current Challenge) |
|---|---|---|---|
| Average Cost | ~$2.6 billion [6] | ~$300 million [6] | Potentially lower, but data acquisition/standardization costs are high. |
| Development Timeline | 10-15 years [6] | 3-6 years [6] | Timeline extended by need for extensive NP characterization and validation. |
| Primary Data Challenge | High cost of generating novel compound & clinical data. | Integrating diverse, pre-existing biomedical datasets. | Extreme data scarcity, heterogeneity, and lack of standardized NP metadata [5]. |
| Failure Risk in Late Stage | Very High | Reduced (known safety profile) | Uncertain: MoA for new indication may be complex/polypharmacological [5]. |

The Reproducibility Crisis in Computational NP Research

Reproducibility—the ability of an independent team to achieve the same results using the same data and methods—is a cornerstone of science. In AI-driven NP research, it is under severe threat from multiple angles [12].

- Computational Non-Determinism: Many AI models, especially complex deep learning architectures, have inherent non-determinism. Random factors in weight initialization, data shuffling, dropout regularization, and hardware-specific floating-point operations can lead to different outcomes across repeated training runs, even with identical code and data [12].

- Data Preprocessing Variability: Steps like normalization, feature selection, and handling missing data are often ad hoc and poorly documented. Applying different preprocessing pipelines to the same raw dataset can yield drastically different model inputs and, consequently, different predictions [12].

- Environment and Dependency Hell: Computational workflows depend on specific versions of software libraries, programming languages, and operating systems. A model that runs successfully in one environment may fail in another due to silent dependency conflicts, making long-term reproducibility nearly impossible without deliberate preservation efforts [13].
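
The data-shuffling source of non-determinism can be removed by drawing randomness from an explicitly seeded generator, as in this minimal sketch. The compound identifiers and function name are placeholders. It covers only Python-level randomness; deep learning frameworks and GPU kernels have their own seeds and determinism settings that would need to be fixed separately.

```python
import random


def shuffled_split(items, seed, test_fraction=0.2):
    """Shuffle with a local, seeded generator and return (train, test) lists."""
    rng = random.Random(seed)  # local generator, so global random state is untouched
    order = list(items)
    rng.shuffle(order)
    n_test = max(1, int(len(order) * test_fraction))
    return order[n_test:], order[:n_test]


compounds = [f"NP-{i:04d}" for i in range(10)]  # hypothetical identifiers

train_a, test_a = shuffled_split(compounds, seed=42)
train_b, test_b = shuffled_split(compounds, seed=42)
print(test_a == test_b)  # identical seed, identical split
```

Recording the seed alongside the results lets another group regenerate exactly the same split.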

Table 2: Sources and Impacts of Irreproducibility in AI-NP Research

| Source of Irreproducibility | Technical Description | Impact on NP Research |
|---|---|---|
| Model Non-Determinism | Stochastic elements in training (e.g., SGD, dropout, random seeds) lead to variable model parameters and outputs [12]. | Different labs may validate different candidate rankings from the "same" AI screen, wasting resources. |
| Data Leakage | Information from the test set inadvertently influences the training process, inflating performance metrics [12]. | Published models appear highly accurate but fail completely when applied to new NP libraries or biological assays. |
| Incomplete Data Documentation | Lack of detailed metadata on NP provenance, extraction, and assay conditions [11]. | Impossible to recreate the exact training data conditions, preventing fair comparison or validation of published models. |
| Software & Environment Drift | Changes in underlying libraries or system architecture break computational workflows over time [13]. | Landmark AI models for NP discovery become inoperable within a few years, halting follow-up research. |
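
One frequent route to the data leakage listed in the table is splitting at the level of individual measurements, so that the same compound appears in both training and test sets. A minimal sketch of a group-aware split is shown below: each compound identifier is hashed to a stable bucket, so all records for that compound land on the same side. The record fields and identifiers are invented, and in practice the grouping key might be a canonical structure identifier or a scaffold.

```python
import hashlib


def assign_split(group_key, test_fraction=0.2):
    """Map a group key to 'train' or 'test' via a stable hash (no random state)."""
    digest = hashlib.sha256(group_key.encode("utf-8")).hexdigest()
    bucket = int(digest[:8], 16) / 2 ** 32  # value in [0, 1)
    return "test" if bucket < test_fraction else "train"


# Invented records: several assay results may exist for the same compound.
records = [
    {"compound_id": "NP-0001", "assay": "cytokine_release", "active": 1},
    {"compound_id": "NP-0001", "assay": "kinase_panel", "active": 1},
    {"compound_id": "NP-0002", "assay": "cytokine_release", "active": 0},
    {"compound_id": "NP-0003", "assay": "cytokine_release", "active": 0},
    {"compound_id": "NP-0003", "assay": "kinase_panel", "active": 1},
]

splits = {"train": [], "test": []}
for rec in records:
    splits[assign_split(rec["compound_id"])].append(rec)

train_ids = {r["compound_id"] for r in splits["train"]}
test_ids = {r["compound_id"] for r in splits["test"]}
print(train_ids.isdisjoint(test_ids))
```

Because the assignment depends only on the identifier, the split is also stable when new records are added later.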

The diagram below maps the technical, data-centric, and human factors that converge to create the reproducibility crisis.

Diagram 2: Convergence to Irreproducibility. Multiple technical, data-centric, and human factors interact to undermine the reproducibility of AI-driven NP research.

Methodologies and Experimental Protocols for Addressing Challenges

Advanced AI Methodologies for Data-Scarce Environments

To overcome data scarcity, researchers must move beyond standard supervised learning.

Protocol for Zero-Shot Learning with Foundation Models: Foundation models like TxGNN are pre-trained on massive, heterogeneous knowledge graphs (KGs) that integrate information on diseases, genes, pathways, and drugs [9]. For NP repositioning:

- Knowledge Graph Construction: Integrate NP-specific data (structures, bioactivities, traditional uses) into a biomedical KG containing entities like genes, diseases, and approved drugs.

- Model Pre-training: Use a Graph Neural Network (GNN) to learn embeddings for all entities and relationships in a self-supervised manner. The model learns to propagate information through the graph.

- Zero-Shot Inference: To predict drugs for a disease with no known treatments, the model uses metric learning. It calculates a "disease signature" based on its neighboring entities in the KG (e.g., associated genes, phenotypes) and finds diseases with similar signatures. Knowledge is transferred from these similar, data-rich diseases to the target disease [9].

- Explanation Generation: Employ an explainer module (e.g., GraphMask) to extract the subgraph of relationships (e.g., NP → modulates → Protein → involved_in → Disease) that contributed most to the prediction, providing a testable mechanistic hypothesis [9].
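
The zero-shot inference step can be illustrated with a deliberately simplified sketch: disease signatures are compared by cosine similarity, and drug scores are transferred from the most similar data-rich diseases, weighted by that similarity. All vectors, scores, and names below are invented, and the hand-written signatures stand in for the embeddings that a model such as TxGNN would learn with a GNN; this is not that model's actual implementation.

```python
import math


def cosine(u, v):
    """Cosine similarity of two vectors (0.0 if either has zero length)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm_u = math.sqrt(sum(a * a for a in u))
    norm_v = math.sqrt(sum(b * b for b in v))
    return dot / (norm_u * norm_v) if norm_u and norm_v else 0.0


# Invented disease signatures (e.g., summarizing associated genes and phenotypes).
signature = {
    "rare_disease_Q": [1.0, 0.9, 0.0],  # target disease with no known treatments
    "disease_X": [0.9, 1.0, 0.1],
    "disease_Y": [0.0, 0.1, 1.0],
}
# Invented drug scores for the data-rich diseases.
known_scores = {
    "disease_X": {"NP_A": 0.9, "NP_B": 0.1},
    "disease_Y": {"NP_A": 0.2, "NP_B": 0.8},
}


def zero_shot_scores(target, top_k=2):
    """Transfer drug scores from the top_k most similar diseases with known data."""
    sims = sorted(((cosine(signature[target], signature[d]), d) for d in known_scores),
                  reverse=True)[:top_k]
    total = sum(sim for sim, _ in sims)
    if total <= 0:
        return {}
    scores = {}
    for sim, disease in sims:
        for drug, value in known_scores[disease].items():
            scores[drug] = scores.get(drug, 0.0) + (sim / total) * value
    return scores


predicted = zero_shot_scores("rare_disease_Q")
print(sorted(predicted, key=predicted.get, reverse=True))
```

Because `rare_disease_Q` resembles `disease_X` far more than `disease_Y`, the transferred ranking follows the drug scores of `disease_X`.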

Protocol for Few-Shot Learning with Transfer Learning:

- Pre-train a Model on a Large, Source Domain: Train a deep learning model (e.g., a Graph Neural Network or Transformer) on a large, general-purpose chemical dataset with associated bioactivity (e.g., ChEMBL).

- Feature Extraction or Fine-Tuning: For a small, target dataset of NP bioactivities:

  - Feature Extraction: Use the pre-trained model as a fixed feature extractor for the NP structures.

  - Fine-Tuning: Gently update (i.e., with a small learning rate) the weights of the final layers (or the entire model) using the small NP dataset. Heavy regularization (e.g., dropout, weight decay) is critical to prevent overfitting [14].
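
The feature-extraction variant can be sketched without any deep learning framework: a frozen function stands in for the pre-trained encoder, and only a small logistic-regression head is trained on the NP data, with weight decay as the regularizer. The encoder, descriptors, labels, and hyperparameters are all invented placeholders; a real pipeline would use embeddings from a model pre-trained on a large dataset such as ChEMBL.

```python
import math


def frozen_features(descriptor):
    """Stand-in for a pre-trained encoder: a fixed, non-trainable transformation."""
    a, b = descriptor
    return [a, b, a * b]


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def train_head(features, labels, lr=0.5, weight_decay=0.01, epochs=500):
    """Fit a logistic-regression head by gradient descent with L2 weight decay."""
    n, dim = len(features), len(features[0])
    w, b = [0.0] * dim, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * dim, 0.0
        for x, y in zip(features, labels):
            err = sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b) - y
            grad_b += err / n
            for j, xj in enumerate(x):
                grad_w[j] += err * xj / n
        # Weight decay shrinks the weights each step; the bias is left unregularized.
        w = [wi - lr * (gw + weight_decay * wi) for wi, gw in zip(w, grad_w)]
        b -= lr * grad_b
    return w, b


def predict_proba(descriptor, w, b):
    x = frozen_features(descriptor)
    return sigmoid(sum(wi * xi for wi, xi in zip(w, x)) + b)


# Invented few-shot dataset: four NPs with two descriptors each and a binary label.
np_descriptors = [(0.1, 0.2), (0.2, 0.1), (0.9, 0.8), (0.8, 0.9)]
np_labels = [0, 0, 1, 1]

w, b = train_head([frozen_features(d) for d in np_descriptors], np_labels)
print(round(predict_proba((0.9, 0.8), w, b), 3), round(predict_proba((0.1, 0.2), w, b), 3))
```

Only the head's few parameters are fitted, which is what keeps this approach usable when labeled NP examples number in the tens rather than the thousands.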

Robust Experimental Validation Workflows

AI predictions are hypotheses that require rigorous biological validation. A reproducible validation protocol is essential.

- Candidate Prioritization & Orthogonal Confirmation:

  - Tiered Screening: Use AI to rank NP candidates. Subject the top-tier candidates to in silico docking or pharmacophore modeling for initial triaging.

  - Orthogonal Assay Design: Validate predicted activity using an assay method independent of the data used for training the AI model. For example, if the model was trained on gene expression data, validate with a cell viability assay or a protein-binding assay (e.g., SPR) [5].

- Mechanistic "Add-Back" Experiments: If the AI model predicts a specific target or pathway, design experiments to confirm it (e.g., gene knockdown/overexpression, use of selective inhibitors). If the prediction is polypharmacological, attempt to reconstitute the full effect by combining selective modulators of the individual predicted targets [5].

Implementing Reproducible Computational Practices

Reproducibility must be engineered into the computational workflow from the start.

Protocol for Containerized, Reproducible Analysis (e.g., using Neurodesk/Apptainer):

- Environment Capture: At the beginning of a project, use a containerization tool (e.g., Apptainer, Docker) to define the exact software environment, including OS, library versions, and analysis tools.

- Workflow Scripting: Write analysis scripts (in Python/R) that read data from a specified input directory and write results to an output directory. Avoid hard-coded paths.

- Persistent Citation: Upload the finalized container to a repository like Zenodo or CodeOcean to obtain a Digital Object Identifier (DOI). Publish the analysis scripts and a "run.sh" master script in a version-controlled repository (e.g., GitHub) [13].

- Peer Review & Replication: Reviewers or other researchers can download the container and the scripts, execute the "run.sh" file, and perfectly replicate the computational environment and results, regardless of their local system configuration [13].
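
The workflow-scripting step might look like the sketch below: the script takes its input and output directories as arguments instead of hard-coding them, and writes a manifest of input-file checksums so that others can confirm they are running on identical data. The argument names, the manifest file name, and the demo file are assumptions made for illustration; the call at the bottom runs the script against a temporary directory.

```python
import argparse
import hashlib
import json
import tempfile
from pathlib import Path


def sha256_of(path):
    """Checksum of a file's bytes, used to fingerprint the exact inputs."""
    return hashlib.sha256(path.read_bytes()).hexdigest()


def build_parser():
    parser = argparse.ArgumentParser(description="Path-agnostic analysis entry point")
    parser.add_argument("--input", type=Path, required=True, help="directory with input data")
    parser.add_argument("--output", type=Path, required=True, help="directory for results")
    return parser


def main(argv):
    args = build_parser().parse_args(argv)
    args.output.mkdir(parents=True, exist_ok=True)
    # Record which input files were analyzed and their checksums.
    manifest = {p.name: sha256_of(p) for p in sorted(args.input.iterdir()) if p.is_file()}
    (args.output / "manifest.json").write_text(json.dumps(manifest, indent=2, sort_keys=True))
    return manifest


# Demo run against a throwaway directory (stands in for mounted container paths).
with tempfile.TemporaryDirectory() as tmp:
    in_dir = Path(tmp) / "in"
    in_dir.mkdir()
    (in_dir / "assay.csv").write_text("compound,activity\nNP_A,1\n")
    demo_manifest = main(["--input", str(in_dir), "--output", str(Path(tmp) / "out")])
    manifest_written = (Path(tmp) / "out" / "manifest.json").exists()

print(demo_manifest)
```

A "run.sh" master script would then only need to call this entry point with the directories mounted into the container.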

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Reagents and Resources for Reproducible NP Research

| Item Category | Specific Item/Resource | Function & Importance for Reproducibility |
|---|---|---|
| NP Reference Standards & Controls | Certified Reference Materials (CRMs) from NIST, NIFDC, or USP. Commercially available, chemically pure compounds (e.g., curcumin, resveratrol). | Provide an unambiguous chemical benchmark for identity, purity, and quantitative analysis. Essential for calibrating instruments, validating extraction yields, and serving as positive/negative controls in bioassays [11]. |
| Standardized Plant Extracts | Extracts with defined chemical fingerprints (e.g., via HPLC/UPLC), available from specialized suppliers (e.g., ChromaDex, Sigma-Aldrich's Extrasynthese). | Mitigate provenance and batch variability. Using the same characterized extract across labs allows for direct comparison of biological results. Critical for in vivo studies where chemical consistency is paramount [11]. |
| Orthogonal Assay Kits | Cell-based reporter assay kits (e.g., luciferase-based NF-κB, AP-1). ELISA kits for cytokine detection. Commercial kinase or epigenetic enzyme panels. | Enable independent validation of AI-predicted mechanisms. Using a different methodological principle than the training data strengthens the evidence for a predicted bioactivity [5]. |
| Metabolomics Standards | Stable isotope-labeled internal standards (e.g., 13C-labeled amino acids, lipids). MS/MS spectral libraries (e.g., GNPS, MassBank). | Ensure accurate quantification and identification of metabolites in complex NP mixtures. Labeled standards correct for instrument variability and recovery losses. Public spectral libraries aid in transparent compound annotation [5]. |
| FAIR Data Repositories | GNPS for metabolomics [5]. PubChem for bioactivity. The Natural Products Atlas. GitHub/GitLab for code. Zenodo/Synapse for datasets. | Facilitate Findable, Accessible, Interoperable, and Reusable (FAIR) data and code sharing. Depositing raw data, processed features, and analysis code is non-negotiable for reproducible, collaborative science [13]. |
| Computational Environment Tools | Containerization platforms (Apptainer, Docker). Workflow managers (Nextflow, Snakemake). Package managers (Conda, Pipenv). | Freeze the computational environment to guarantee that software dependencies and versions are preserved, eliminating "works on my machine" problems and ensuring long-term executable reproducibility [13]. |

A Strategic Roadmap for the Field

To advance AI-driven NP drug repositioning, a concerted effort across the community is required. The following integrated strategy addresses the tripartite challenge of chemical complexity, data scarcity, and reproducibility:

- Establish Minimal Information Standards: Develop and adopt community-agreed "Minimal Information for Natural Product AI (MINPAI)" standards. These should mandate reporting of critical metadata: precise biological source, extraction protocol, quantitative chemical characterization data (e.g., HPLC fingerprint, LC-MS feature table), and detailed assay conditions for any data used to train or validate AI models [5] [11].

- Create Curated, Benchmark Datasets: Funding agencies and consortia should sponsor the creation of publicly available, gold-standard benchmark datasets. These would include rigorously characterized NP libraries (physical or virtual) paired with standardized, multi-assay bioactivity profiles. They will serve as common ground for developing and fairly comparing AI algorithms [5].

- Mandate Reproducible Research Artifacts: Journals and funding bodies must strengthen mandates. Publication should require the sharing of both data and code within containerized, executable research objects that carry a DOI [13]. The computational peer review of these artifacts should be incentivized.

- Adopt Advanced AI Paradigms Institutionally: Research groups should prioritize building expertise in next-generation AI methods specifically designed for data-scarce, complex scenarios. Mastery of foundation models, few-shot/zero-shot learning, and geometric deep learning (for graph-structured NP data) will be a key differentiator for impactful research [14] [9].

- Integrate Microphysiological Systems (MPS): To generate more predictive human-relevant data and tackle complexity, invest in MPS ("organ-on-a-chip") and their digital twin counterparts. These systems can model polypharmacology in tissue-level contexts and generate high-quality data for training next-generation AI models [5].
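
One way to make a minimal-information standard operational is to validate each record before it enters a training set. The sketch below is illustrative only: the `NPSampleRecord` class, its field names, and the example values are assumptions chosen to mirror the four metadata categories named in the first roadmap item, not a published MINPAI schema.

```python
from dataclasses import dataclass, fields


@dataclass
class NPSampleRecord:
    """Hypothetical minimal-metadata record; all field names are illustrative."""
    biological_source: str          # e.g. species and plant part
    extraction_protocol: str        # solvent, temperature, duration
    chemical_characterization: str  # pointer to an HPLC fingerprint or LC-MS feature table
    assay_conditions: str           # assay type, concentration range, readout


def missing_fields(record):
    """Return the names of required fields that are empty or whitespace-only."""
    return [f.name for f in fields(record) if not getattr(record, f.name).strip()]


# Made-up example values, for illustration only.
complete = NPSampleRecord(
    biological_source="Curcuma longa, rhizome",
    extraction_protocol="80% ethanol, 25 C, 24 h",
    chemical_characterization="hplc_fingerprint_batch07.csv",
    assay_conditions="NF-kB luciferase reporter, 0.1-100 uM, 24 h",
)
incomplete = NPSampleRecord("Curcuma longa, rhizome", "", "hplc.csv", " ")

print(missing_fields(complete))    # []
print(missing_fields(incomplete))  # ['extraction_protocol', 'assay_conditions']
```

Rejecting or flagging records with missing fields at ingestion time is a design choice; a community standard might instead allow partial records with explicit "not reported" values.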

By systematically addressing complexity through rigorous characterization, combating data scarcity with innovative AI and shared resources, and engineering reproducibility into every step of the pipeline, the field of AI-driven natural product research can fully realize its potential to deliver novel, effective, and repurposed therapeutics to patients.

Drug repurposing (also known as drug repositioning, reprofiling, or retasking) is defined as the strategic identification and development of new therapeutic applications for existing drugs, whether they are approved, shelved, or in clinical investigation [15]. This approach stands in stark contrast to traditional de novo drug discovery, offering a compelling alternative that maximizes the therapeutic and commercial potential of known molecular entities [16]. The core value proposition lies in leveraging the extensive existing knowledge of a compound's safety, pharmacokinetics, and manufacturability, thereby bypassing many of the most resource-intensive and failure-prone stages of early development [17] [15].

The evolution of drug repurposing marks a transition from serendipitous discovery to a systematic, data-driven science [16]. Historic successes, such as sildenafil (from angina to erectile dysfunction) and thalidomide (from a sedative to a treatment for multiple myeloma), were often born from astute clinical observation [18] [16]. Today, the field is propelled by advances in computational biology, artificial intelligence (AI), and network pharmacology, enabling the rational prediction of new drug-disease associations [19] [20]. This whitepaper delineates the definitive economic and temporal advantages of drug repurposing over conventional discovery, framing the discussion within the transformative context of AI-driven repositioning of natural products.

Quantifying the Advantage: Economic and Temporal Efficiencies

The economic burden of traditional drug discovery has become a critical impediment to innovation. Analyses consistently show that developing a novel drug requires an investment ranging from $2 billion to $3 billion and a timeline spanning 10 to 17 years, from initial concept to market approval [19] [16]. This process is characterized by exceptionally high attrition, with only approximately 11% of candidates entering Phase I trials ultimately achieving approval [19].

Drug repurposing fundamentally alters this risk-reward calculus. By building upon established safety and manufacturing data, repurposing candidates can reach the market in 3 to 12 years, representing an average acceleration of 5 to 7 years [16] [15]. Financially, the mean development cost is estimated at $300 million, and the cited analyses report a cost reduction of 50-60% compared to de novo discovery [19] [15]. This efficiency stems primarily from bypassing or significantly de-risking the preclinical and Phase I clinical stages [16]. Consequently, the probability of regulatory success for a repurposed drug that has passed Phase I is substantially higher, with estimates as high as 30% [16] [15].

Table 1: Comparative Analysis of De Novo Discovery vs. Drug Repurposing

| Development Metric | De Novo Drug Discovery | Drug Repurposing | Advantage |

|---|---|---|---|

| Average Timeline | 10–17 years [19] [16] | 3–12 years [16] [15] | 5–7 years faster [15] |

| Average Cost | $2–3 billion [19] [16] | ~$300 million [19] [15] | 50-60% cost reduction [15] |

| Typical Approval Rate (from Phase I) | ~11% [19] | Up to 30% [16] [15] | ~3x higher success rate |

| Key Risk Profile | High risk of failure due to unknown safety/toxicity [16] | Lower risk; established human safety profile [17] | Substantially de-risked |

Mechanistic Approaches to Systematic Repurposing

Modern repurposing strategies have moved beyond chance observation to structured methodologies, which can be categorized by their starting point.

- Disease-Centric Approaches: Beginning with a specific medical condition, researchers analyze disease mechanisms, genetic signatures, and molecular pathways to identify existing drugs that could counteract pathological processes [20]. This approach is dominant in the market, holding a 43% revenue share due to its focused and efficient identification of drug-disease relationships [21].

- Target-Centric Approaches: This method focuses on a specific biological target (e.g., a protein or pathway) implicated in a disease and screens existing drug libraries for compounds that interact with it [20]. It is increasingly powered by advances in genomics, proteomics, and AI [21].

- Drug-Centric Approaches: Starting with a known compound, researchers explore its polypharmacology—its interactions with multiple biological targets—to predict new therapeutic applications based on its molecular structure or side-effect profile [20].

- Therapeutic Area Expansion: Repurposing within the same therapeutic area (e.g., from one cancer type to another) accounts for 68% of current activity, as shared disease biology reduces uncertainty [21]. Conversely, cross-therapeutic repurposing (e.g., from infectious disease to oncology) represents a significant growth frontier driven by AI [21].

The AI Revolution in Drug Repurposing

Artificial Intelligence has become the cornerstone of modern, systematic repurposing, capable of integrating and analyzing vast, heterogeneous biomedical datasets to generate testable hypotheses [6].

Core AI/ML Methodologies:

- Machine Learning (ML): Algorithms such as Random Forests, Support Vector Machines (SVM), and Logistic Regression are used to classify drug-disease associations and predict repurposing success based on features derived from chemical, biological, and clinical data [6] [20].

- Deep Learning (DL): Convolutional Neural Networks (CNNs) and Graph Neural Networks (GNNs) excel at processing complex molecular structures and biological interaction networks, uncovering non-obvious patterns [6] [20].

- Network-Based Approaches: These methods model biological systems as interconnected graphs (e.g., protein-protein, drug-target, disease-gene networks). Techniques like random walk algorithms quantify the proximity between drugs and disease modules within these networks to identify repurposing candidates [6].

- Literature Mining & Semantic Inference: Natural Language Processing (NLP) analyzes the vast corpus of scientific literature and clinical records to extract latent relationships between drugs, targets, and diseases that are not captured in structured databases [22] [20].
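
To illustrate the network-proximity idea, the following sketch runs a random walk with restart on a toy interaction network and compares how much probability mass reaches two candidate compounds. The node names, the chain topology, and the restart probability of 0.3 are hypothetical; a real analysis would use a curated interactome and validated disease-gene sets.

```python
def random_walk_with_restart(adjacency, seeds, restart=0.3, iterations=200):
    """Approximate steady-state visit probabilities for a walker that
    restarts at the seed nodes. Assumes every node has at least one neighbour."""
    start = {n: (1.0 / len(seeds) if n in seeds else 0.0) for n in adjacency}
    prob = dict(start)
    for _ in range(iterations):
        # Restart mass goes back to the seeds; the rest spreads evenly to neighbours.
        nxt = {n: restart * start[n] for n in adjacency}
        for node, neighbours in adjacency.items():
            share = (1.0 - restart) * prob[node] / len(neighbours)
            for nb in neighbours:
                nxt[nb] += share
        prob = nxt
    return prob


# Hypothetical undirected chain: NP_A - T1 - G1 - G2 - T4 - T3 - T2 - NP_B
edges = [("NP_A", "T1"), ("T1", "G1"), ("G1", "G2"), ("G2", "T4"),
         ("T4", "T3"), ("T3", "T2"), ("T2", "NP_B")]
adjacency = {}
for a, b in edges:
    adjacency.setdefault(a, []).append(b)
    adjacency.setdefault(b, []).append(a)

# Seeds are the (hypothetical) disease genes G1 and G2.
scores = random_walk_with_restart(adjacency, seeds={"G1", "G2"})
for name in ("NP_A", "NP_B"):
    print(name, round(scores[name], 4))
```

In this toy network NP_A sits two steps from the disease module and NP_B four steps away, so NP_A receives the higher score and would be ranked first as a candidate.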

Table 2: Key AI/ML Algorithms in Drug Repurposing

| Algorithm Category | Example Techniques | Primary Application in Repurposing | Typical Data Sources |

|---|---|---|---|

| Classical Machine Learning | Random Forest, SVM, Logistic Regression [6] | Classifying drug-disease pairs; ranking candidate likelihood [20] | Chemical descriptors, target profiles, clinical outcomes |

| Deep Learning | Convolutional Neural Networks (CNN), Graph Neural Networks (GNN) [6] | Predicting molecular binding affinity; analyzing heterogeneous biological networks [20] | Molecular graphs, omics data, protein structures |

| Network Analysis | Random Walk, Network Propagation [6] | Measuring drug-disease proximity in interactomes; identifying module perturbations [6] | Protein-protein interactions, drug-target maps, disease genes |

| Natural Language Processing (NLP) | Named Entity Recognition, Relation Extraction [22] | Mining novel associations from literature and clinical notes [20] | PubMed abstracts, electronic health records, patent texts |

Diagram 1: AI-Driven Systematic Repurposing Workflow

AI-Driven Repositioning of Natural Products: A Thesis Framework

Natural products (NPs) and traditional medicine formulations represent an invaluable reservoir of chemical diversity with proven bioactivity but often ill-defined mechanisms of action. AI is uniquely positioned to unlock their repurposing potential, creating a powerful synergy between traditional knowledge and cutting-edge computation [5].

AI Applications in NP Repurposing:

- Activity and Target Prediction: Machine learning and deep learning models (e.g., graph neural networks) are trained to predict anticancer, anti-inflammatory, and antimicrobial activities of NP-derived compounds, guiding experimental validation [5].

- Mechanism Elucidation: Network pharmacology models construct herb-ingredient-target-pathway graphs to propose synergistic effects and plausible mechanisms for complex traditional formulations [5].

- Omics Integration: AI integrates multi-omics readouts (such as transcriptomic signature reversal, proteome-scale target engagement, and metabolomic feature networking) to prioritize NP candidates for reproducible lab validation [5].

Critical Challenges & Thesis Focus: A thesis on this topic must address persistent field-wide barriers: the "small data" problem of unique NPs, batch variability, incomplete provenance, and data imbalance [5]. Proposed solutions include developing minimal information standards for NP metadata, applying scaffold and time-split benchmarks for model validation, and using uncertainty-aware AI models to gate experimental work [5]. This frames a critical research agenda for using AI to transition NP repurposing from retrospective analysis to prospectively validated, mechanistically grounded translation.
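
A scaffold-split benchmark can be sketched as a grouped split in which all compounds sharing a scaffold land on the same side, so the model is tested on chemotypes it has not seen. The example below assumes scaffold keys have already been computed elsewhere (for instance as Bemis-Murcko scaffolds from a cheminformatics toolkit); the function name, compound IDs, and scaffold labels are hypothetical.

```python
from collections import defaultdict


def scaffold_split(compounds, test_fraction=0.25):
    """Split (compound_id, scaffold_key) pairs so that no scaffold
    appears in both the training and the test set."""
    groups = defaultdict(list)
    for compound_id, scaffold in compounds:
        groups[scaffold].append(compound_id)

    # Visit larger scaffold groups first; a group joins training only if it
    # fits within the training budget, otherwise the whole group goes to test.
    ordered = sorted(groups.values(), key=len, reverse=True)
    train, test = [], []
    train_budget = (1.0 - test_fraction) * len(compounds)
    for members in ordered:
        if len(train) + len(members) <= train_budget:
            train.extend(members)
        else:
            test.extend(members)
    return train, test


# Hypothetical library: eight compounds across four scaffold classes.
library = [("c1", "flavone"), ("c2", "flavone"), ("c3", "flavone"),
           ("c4", "xanthone"), ("c5", "xanthone"),
           ("c6", "indole"), ("c7", "indole"),
           ("c8", "macrolide")]

train_ids, test_ids = scaffold_split(library)
print("train:", train_ids)
print("test:", test_ids)
```

A time-split benchmark follows the same pattern with the grouping key replaced by a date cutoff: train on records before the cutoff and test on records after it.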

Diagram 2: AI-Powered Natural Product Repurposing Pipeline

The Scientist's Toolkit: Essential Research Reagents & Platforms

Transitioning from computational prediction to validated therapeutic hypothesis requires a suite of advanced experimental platforms.

Table 3: Research Reagent Solutions for Validation

| Tool/Platform | Function in Repurposing Research | Key Application |

|---|---|---|

| High-Throughput/Content Screening (HTS/HCS) | Rapid phenotypic screening of drug libraries against disease-relevant cellular models [15]. | Initial in vitro validation of AI-predicted candidates. |

| Organoids & Organ-on-a-Chip | Microphysiological systems that mimic human tissue and organ complexity for efficacy and toxicity testing [18] [15]. | Translational bridge between cell assays and in vivo models. |

| CRISPR-Cas9 Screening | Genome-wide functional genomics to identify essential genes and validate drug mechanism of action [16]. | Confirming on- and off-target effects of repurposed drugs. |

| Proteomics & Chemoproteomics | System-wide profiling of protein expression and drug-protein interactions [16]. | Uncovering novel binding partners and polypharmacology. |

| Validated Reporter Cell Lines | Engineered cells with luminescent or fluorescent readouts for specific pathways (e.g., Wnt/β-catenin, NF-κB) [18]. | Mechanistic validation of drug effects on signaling pathways. |

Navigating Challenges and Future Directions

Despite its advantages, drug repurposing faces significant headwinds. Intellectual property (IP) protection for new uses of existing molecules, especially off-patent drugs, is complex and can undermine commercial incentives [17] [15]. Regulatory pathways, while flexible (e.g., FDA's 505(b)(2)), still require robust evidence for the new indication [17] [19]. Scientific challenges include the frequent lack of dose rationale for the new disease and the fact that pharmacological inhibition does not always phenocopy genetic target perturbation [17] [19].

The future market is poised for growth, projected to reach $59.30 billion by 2034 [21]. Key trends include the rising dominance of biologics repurposing (62% market share) due to their target specificity and the accelerated growth of target-centric approaches driven by AI [21]. The future of the field, particularly for NPs, hinges on creating collaborative networks that unite academia, industry, and regulators, alongside continued investment in explainable AI and standardized validation frameworks to translate computational promise into patient benefit [17] [5].

Drug repurposing definitively offers a faster, less costly, and de-risked alternative to de novo drug discovery. Its economic and temporal advantages are quantifiable and significant, reshaping pharmaceutical R&D strategy. The integration of advanced AI methodologies is transforming repurposing from a serendipitous endeavor into a predictive, systematic discipline. This is particularly transformative for the natural product domain, where AI can decode complex mechanisms and unlock vast, untapped therapeutic potential. As the field matures, overcoming translational, IP, and data-quality challenges through collaborative innovation will be crucial to fully realizing the promise of repurposing for addressing unmet medical needs.

The convergence of artificial intelligence (AI) and natural product (NP) science represents a foundational shift in drug discovery. Natural products, with their unparalleled structural diversity and proven biological relevance, have historically been a prolific source of therapeutics. However, their modern repurposing for new diseases has been hampered by complexity, data fragmentation, and the serendipity of traditional methods [5] [23]. AI emerges as the critical catalyst to systematically unlock this potential, transforming repurposing from a low-probability endeavor into a high-throughput, rational pipeline.

The economic and temporal imperative is clear. Traditional de novo drug development costs approximately $2.6 billion and spans 10-15 years, while repurposing an existing compound can cost around $300 million and take 3-6 years [6]. For natural products, which often have established safety profiles from traditional use or prior investigation, this advantage is magnified. The global drug repurposing market, valued at $34.08 billion in 2024, is projected to grow to $53.69 billion by 2033, driven significantly by AI and big data integration [24]. This whitepaper delineates the technical architecture of AI-driven natural product repurposing, providing researchers with a roadmap to harness these transformative tools.

Foundational AI Methodologies for Repurposing

AI in drug repurposing is not a monolithic tool but a suite of complementary methodologies, each suited to different aspects of the prediction and validation pipeline. Understanding their operational principles is essential for experimental design.

Core Machine Learning (ML) Frameworks

ML algorithms learn patterns from data to make predictions without being explicitly programmed for each task [6]. Their application in NP repurposing is varied:

- Supervised Learning: Used for quantitative structure-activity relationship (QSAR) models and bioactivity classification. Algorithms like Random Forest (RF) and Support Vector Machines (SVM) are trained on labeled datasets (e.g., compounds with known "active" or "inactive" status against a target) to predict the activity of new NPs [6].

- Unsupervised Learning: Applied to explore the chemical space of natural products, identify novel clusters, or detect patterns in untargeted metabolomics data. Principal Component Analysis (PCA) is fundamental for dimensionality reduction and visualization [6].

- Semi-supervised Learning: Crucial for leveraging the vast amounts of unlabeled NP data (e.g., uncharacterized spectral features) alongside smaller, labeled datasets to build more robust models [6].
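
As a minimal illustration of supervised bioactivity classification, the sketch below labels a query compound by majority vote among its most similar labelled neighbours, using Tanimoto similarity on fingerprint bits. This k-nearest-neighbour scheme is a simpler stand-in for the Random Forest and SVM models named above, and the fingerprints (sets of "on" bit indices) and activity labels are invented for the example.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of 'on' fingerprint bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_active(query, labelled, k=3):
    """Majority vote among the k labelled compounds most similar to the query."""
    ranked = sorted(labelled, key=lambda item: tanimoto(query, item[0]), reverse=True)
    votes = [label for _, label in ranked[:k]]
    return sum(votes) > k / 2


# Hypothetical training set: (fingerprint bits, 1 = active / 0 = inactive).
training = [({1, 2, 3, 4}, 1), ({1, 2, 3, 5}, 1), ({2, 3, 4, 6}, 1),
            ({7, 8, 9}, 0), ({7, 8, 10}, 0), ({8, 9, 11}, 0)]

print(predict_active({1, 2, 3, 6}, training))   # True
print(predict_active({7, 8, 9, 10}, training))  # False
```

In practice the fingerprints would come from a cheminformatics toolkit and the model would be evaluated on held-out data before any prediction is trusted.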

Deep Learning (DL) and Advanced Architectures

DL, a subset of ML based on deep artificial neural networks, excels at processing high-dimensional, unstructured data [6].

- Graph Neural Networks (GNNs): This is a pivotal architecture for NPs. GNNs directly operate on molecular graphs where atoms are nodes and bonds are edges, natively learning structural and topological features that are critical for NP bioactivity [5].

- Convolutional Neural Networks (CNNs): While known for image analysis, CNNs can be applied to spectral data (e.g., mass spectrometry, NMR) or 2D molecular grid representations to extract diagnostic features for classification [6].

- Natural Language Processing (NLP) & Large Language Models (LLMs): These tools mine vast scientific literature and unstructured biomedical databases to extract hidden drug-disease associations, standardize herbal medicine information, and generate testable hypotheses [5] [23].
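
The core operation behind a GNN, message passing over a molecular graph, can be shown without any deep learning library. The sketch below performs one round of neighbourhood averaging on a three-atom graph. It is a deliberate simplification: real GNN layers apply learned weight matrices and nonlinearities, which are omitted here, and the function name and feature encoding are assumptions made for illustration.

```python
def message_passing_round(features, bonds):
    """One aggregation round: each atom's new feature vector is the mean
    of its own vector and those of its bonded neighbours."""
    neighbours = {atom: [] for atom in features}
    for a, b in bonds:
        neighbours[a].append(b)
        neighbours[b].append(a)

    updated = {}
    for atom, vec in features.items():
        stack = [vec] + [features[n] for n in neighbours[atom]]
        updated[atom] = [sum(column) / len(stack) for column in zip(*stack)]
    return updated


# Heavy atoms of ethanol (C-C-O) with one-hot features [is_carbon, is_oxygen].
atom_features = {0: [1.0, 0.0], 1: [1.0, 0.0], 2: [0.0, 1.0]}
bond_list = [(0, 1), (1, 2)]

updated = message_passing_round(atom_features, bond_list)
print(updated[2])  # [0.5, 0.5]
```

After one round the oxygen atom's vector already reflects its carbon neighbour; stacking several rounds lets information travel further across the molecule, which is how structural context enters the learned representation.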

Network-Based & Multimodal Approaches

These methods move beyond the single molecule to model complex biological systems.

- Network Pharmacology: Constructs "herb–ingredient–target–pathway–disease" graphs to propose mechanisms and synergistic effects of complex NP mixtures [5].

- Heterogeneous Knowledge Graph Mining: Integrates multimodal data (chemical, genomic, phenotypic) into a unified graph structure. Algorithms then perform link prediction to infer novel, non-obvious relationships between a natural product node and a disease node [6] [23]. The TxGNN model, for example, uses this approach to predict drug candidates for rare diseases [24].

Table 1: Core AI/ML Approaches in Natural Product Repurposing

| Approach Category | Key Algorithms/Models | Primary Application in NP Repurposing | Typical Input Data |

|---|---|---|---|

| Classical Machine Learning | Random Forest (RF), SVM, PCA | Bioactivity classification, QSAR, chemical space exploration | Structural fingerprints, assay data, physicochemical descriptors |

| Deep Learning (DL) | Graph Neural Networks (GNNs), CNNs, Multilayer Perceptrons (MLPs) | Molecular property prediction, spectral data analysis, advanced QSAR | Molecular graphs, mass/NMR spectra, 3D conformers |

| Natural Language Processing | Transformer-based LLMs (e.g., BERT, GPT variants) | Literature mining, hypothesis generation, data curation | Scientific text, patents, electronic health records |

| Network & Knowledge-Based | Network propagation, Graph embedding, Link prediction | Mechanism inference, polypharmacology, predicting novel indications | Protein-protein interaction networks, omics data, biomedical knowledge graphs |

Data Integration: The Critical Path from Fragmentation to Knowledge

The single greatest technical challenge in AI-driven NP research is data multimodality and fragmentation [23]. NP data is inherently multimodal, encompassing genomic (BGCs), spectroscopic (MS, NMR), structural (2D/3D), and phenotypic (assay) information, and is scattered across specialized, non-interoperable repositories.

The Knowledge Graph as a Unifying Solution

A Natural Product Science Knowledge Graph (NP-KG) is proposed as the essential data infrastructure to overcome this barrier [23]. Unlike a traditional database, a KG represents entities (e.g., a compound, a gene, a disease) as nodes and the relationships between them (e.g., "binds to," "inhibits," "is associated with") as edges. This structure natively captures the complexity and interconnectedness of biological systems.

- Construction: An NP-KG integrates diverse data: chemical structures from COCONUT or NPASS, spectral libraries from GNPS, genomic data from MIBiG, and bioactivity data from ChEMBL, linked via standardized ontologies [23].

- Function: It enables sophisticated causal inference and reasoning. An AI model can traverse the graph to answer complex queries like, "Which NPs with a xanthone scaffold that target kinase X are also predicted to modulate pathway Y implicated in disease Z?"
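
The example query above can be expressed as a traversal over subject-relation-object triples. The following sketch uses a plain Python list as a stand-in for a graph database; the entity names, relation names, and helper functions are all hypothetical, and a production NP-KG would rely on standardized ontologies and a dedicated graph store.

```python
# Toy knowledge graph as (subject, relation, object) triples; all names are made up.
triples = [
    ("NP_1", "has_scaffold", "xanthone"),
    ("NP_1", "targets", "kinase_X"),
    ("NP_1", "modulates", "pathway_Y"),
    ("NP_2", "has_scaffold", "xanthone"),
    ("NP_2", "targets", "kinase_X"),
    ("NP_3", "has_scaffold", "flavone"),
    ("NP_3", "targets", "kinase_X"),
    ("NP_3", "modulates", "pathway_Y"),
    ("pathway_Y", "implicated_in", "disease_Z"),
]


def subjects(graph, relation, obj):
    """All subjects connected to `obj` through `relation`."""
    return {s for s, r, o in graph if r == relation and o == obj}


def find_candidates(graph, scaffold, target, disease):
    """NPs with the given scaffold that hit the target and modulate
    at least one pathway implicated in the disease."""
    pathways = subjects(graph, "implicated_in", disease)
    hits = subjects(graph, "has_scaffold", scaffold) & subjects(graph, "targets", target)
    return sorted(
        compound for compound in hits
        if any(compound in subjects(graph, "modulates", p) for p in pathways)
    )


print(find_candidates(triples, "xanthone", "kinase_X", "disease_Z"))  # ['NP_1']
```

NP_2 is excluded because it lacks the pathway link, and NP_3 because it has the wrong scaffold, which shows how each edge type narrows the candidate set.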

Diagram 1: Structure of a multimodal Natural Product Knowledge Graph (NP-KG).

The AI-Driven Repurposing Workflow

An integrated workflow leverages the NP-KG and AI models to systematically identify repurposing candidates.

Diagram 2: Integrated AI workflow for natural product repurposing.

Experimental Validation: From AI Prediction to Bench Verification

AI predictions are hypotheses requiring rigorous biological validation. A tiered experimental protocol is essential.

Protocol for Validating AI-Predicted NP-Target Interactions

Objective: To confirm the binding and functional activity of an AI-predicted natural product against a novel target protein.

- Compound Acquisition & Preparation: Source the predicted NP (e.g., from commercial libraries, in-house collections, or custom synthesis). Prepare a 10 mM stock solution in DMSO, with serial dilutions for assays. Critical Control: Include a well-characterized inhibitor/activator of the target as a positive control.

- Primary Binding Assay: Employ a surface plasmon resonance (SPR) or microscale thermophoresis (MST) assay to measure direct binding affinity (KD). Perform experiments in triplicate across a minimum of six compound concentrations.

- Functional Enzymatic/Cellular Assay: Based on target biology, perform a functional assay (e.g., kinase activity, receptor antagonism/agonism). Use a cell line engineered with a reporter (e.g., luciferase) under the control of the target pathway. Data Analysis: Generate dose-response curves to calculate IC50/EC50 values.

- Specificity Screening: Counter-screen against a panel of related targets (e.g., kinase panel) to assess selectivity and validate the AI model's precision.

- Phenotypic Confirmation in Complex Models: Test the NP in a disease-relevant ex vivo or micro-physiological system (e.g., patient-derived organoid). Measure downstream phenotypic endpoints (e.g., cytokine secretion, cell viability, biomarker expression) to confirm the predicted therapeutic effect [5].
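
The dose-response step of this protocol can be sketched numerically. The example below builds a six-point serial dilution, simulates responses with a four-parameter logistic (Hill) model, and estimates the IC50 by log-linear interpolation between the two points that bracket 50% activity. The top concentration, dilution factor, and simulated IC50 of 2 µM are assumptions for illustration; in practice the IC50 is normally obtained by nonlinear curve fitting to replicate measurements.

```python
import math


def hill_response(conc, ic50, hill=1.0, top=100.0, bottom=0.0):
    """Four-parameter logistic model: % activity remaining at a given concentration."""
    return bottom + (top - bottom) / (1.0 + (conc / ic50) ** hill)


def serial_dilution(top_conc, factor, n_points):
    """Concentrations from top_conc downward, each `factor`-fold more dilute."""
    return [top_conc / factor ** i for i in range(n_points)]


def interpolate_ic50(concs, responses, half=50.0):
    """Log-linear interpolation between the two points that bracket `half`."""
    pairs = sorted(zip(concs, responses))
    for (c_lo, r_lo), (c_hi, r_hi) in zip(pairs, pairs[1:]):
        if r_lo >= half >= r_hi and r_lo != r_hi:
            frac = (r_lo - half) / (r_lo - r_hi)
            log_ic50 = math.log10(c_lo) + frac * (math.log10(c_hi) - math.log10(c_lo))
            return 10 ** log_ic50
    return None  # 50% was never crossed in the tested range


# Six concentrations in µM (100 down to 0.001), simulated with IC50 = 2 µM.
concentrations = serial_dilution(top_conc=100.0, factor=10.0, n_points=6)
activity = [hill_response(c, ic50=2.0) for c in concentrations]

estimate = interpolate_ic50(concentrations, activity)
print(f"Estimated IC50: {estimate:.2f} µM (simulated value: 2.00 µM)")
```

With ten-fold dilution steps the interpolated value only approximates the simulated IC50; narrower dilution steps around the expected IC50 improve the estimate.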

The Scientist's Toolkit: Key Research Reagent Solutions

Table 2: Essential Research Tools for AI-Driven NP Repurposing Validation

| Reagent/Material Category | Specific Examples | Function in Validation Pipeline |

|---|---|---|

| AI-Prioritized Compound Libraries | NPCARE, NORMAN, In-house NP fraction libraries | Source of physical compounds for testing AI-generated hypotheses. |

| High-Content Screening Assays | Multiparameter imaging, High-content cytometers (e.g., ImageStream) | Enable phenotypic screening in complex cell models, generating rich data for AI model feedback. |

| Multi-Omics Analysis Kits | RNA-Seq kits, Phosphoproteomic arrays, Untargeted metabolomics platforms | Generate mechanistic data (transcriptomic signature reversal, proteomic engagement) to confirm AI-predicted MOA [5]. |

| Biosensor-Enabled Systems | SPR chips (Biacore), MST-capable systems, Label-free cellular impedance systems | Provide quantitative, real-time binding and functional data for target validation. |

| Advanced Cell Culture Models | Patient-derived organoids (PDOs), 3D spheroids, Organ-on-a-chip microfluidic systems | Provide physiologically relevant models for confirming therapeutic efficacy and safety predictions. |

Market Landscape, Challenges, and Strategic Future

Economic and Regional Landscape

The AI-driven repurposing market is growing dynamically, with distinct regional drivers [24] [25].

Table 3: Global Drug Repurposing Market Landscape and Projections

| Region | Market Size (2025E) | Projected CAGR (2025-2033) | Key Growth Drivers |

|---|---|---|---|

| North America | 38.7% share (dominant) [24]; ~$280.9M [25] | 13.4% [25] | Strong R&D investment, AI biotech hubs, high rare disease prevalence. |

| Europe | ~29% share [24]; ~$220.2M [25] | 13.9% [25] | EU-funded initiatives (e.g., REMEDi4ALL), adaptive EMA regulations. |

| Asia-Pacific | ~24% share [24]; ~$182.2M [25] | 17.6% (fastest) [25] | Rising healthcare investment, government incentives, expanding CRO sector. |

| Global Total | $35.84B (2025) [24] / $759.2M (2025) [25] | 5.18% [24] / 15.6% [25] | Note: The size disparity reflects different study scopes (total market vs. segment). |
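
The 5.18% growth rate attributed to [24] can be checked against the market figures quoted from the same source earlier in this article ($34.08 billion in 2024, $53.69 billion by 2033). The short calculation below applies the standard compound annual growth rate formula; the function name is an illustrative choice.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values `years` apart."""
    return (end_value / start_value) ** (1.0 / years) - 1.0


# Figures in billions of USD, as quoted from [24].
rate = cagr(start_value=34.08, end_value=53.69, years=2033 - 2024)
projected_2025 = 34.08 * (1.0 + rate)

print(f"CAGR 2024-2033: {rate:.2%}")  # CAGR 2024-2033: 5.18%
print(f"Implied 2025 market size: {projected_2025:.2f}B")
```

The implied 2025 value lands within rounding of the $35.84B shown in the table, so the figures from [24] are internally consistent. The [25] figures describe a narrower market segment and are not comparable.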

Persistent Challenges and Technical Hurdles

Despite progress, significant barriers remain:

- Data Quality & Bias: NP datasets are often small, imbalanced, and suffer from "batch effect" variability, leading to model overfitting and poor generalizability [5] [23].

- Interpretability & Causality: Many complex AI models are "black boxes." Explaining why a prediction was made is critical for scientific acceptance and mechanism-driven research [5].

- IP and Regulatory Pathways: Repurposing off-patent NPs presents commercial challenges. Regulatory pathways such as the FDA's 505(b)(2) are available, but how they apply to repurposed NPs is still evolving [24].

Future Directions: The Road to Autonomous Discovery

The field is evolving towards more integrated and intelligent systems:

- Generative AI for NP Design: Using models like Generative Adversarial Networks (GANs) to design optimized, synthetically tractable NP analogs with desired properties [5] [6].

- Self-Driving Experimentation: Coupling AI prediction with automated robotic synthesis and screening platforms for closed-loop, iterative discovery.

- Prospective Clinical Validation: Implementing "AI clinical trials" using real-world data and digital twins to simulate trial outcomes and optimize patient stratification for repurposed NP therapies [5].

The confluence of AI and natural product science is not merely an incremental improvement but a necessary modernization. By providing the computational power to integrate fragmented data, discern hidden patterns, and generate testable, mechanistic hypotheses, AI is the key that unlocks the vast, untapped repurposing potential of the natural world. The path forward requires collaborative efforts to build standardized knowledge infrastructures, develop interpretable models, and establish clear translational pipelines. For researchers and drug developers, mastering this confluence is no longer optional—it is the cornerstone of the next generation of efficient, rational, and impactful therapeutic discovery.

The AI Toolkit: Methodologies Powering Natural Product Repurposing Predictions

Disease-Centric, Target-Centric, and Drug-Centric Computational Strategies

This technical guide provides a comprehensive analysis of the three principal computational strategies driving modern drug repositioning: disease-centric, target-centric, and drug-centric approaches. Framed within the urgent need to accelerate and de-risk drug discovery—particularly for natural products—these methodologies leverage artificial intelligence (AI) and vast biomedical datasets to identify new therapeutic uses for existing compounds. The guide details the core principles, quantitative performance, and experimental protocols for each strategy, supported by structured data comparisons and workflow visualizations. It further explores the transformative integration of AI and network pharmacology in overcoming historical challenges in natural product research, such as chemical complexity and limited data. The synthesis of these computational paradigms offers a systematic, data-driven framework to harness the untapped therapeutic potential of known molecules, thereby addressing critical unmet medical needs.

The traditional de novo drug discovery pipeline is notoriously inefficient, characterized by extended timelines of 10-15 years, exorbitant costs averaging $2-3 billion, and high failure rates exceeding 90% [26] [27]. In this context, drug repurposing (or repositioning) has emerged as a strategic alternative, seeking new therapeutic indications for existing drugs, investigational compounds, or, as is increasingly relevant, characterized natural products [26] [28]. This approach capitalizes on established safety and pharmacokinetic profiles, dramatically reducing development risk, cost (estimated at ~$300 million), and time to market (approximately 6 years) [26] [6]. Approximately 30% of newly marketed drugs in the U.S. now result from repurposing strategies, underscoring its clinical and commercial significance [26] [27].

The evolution from serendipitous discovery—epitomized by cases like sildenafil—to systematic, computational-driven methods marks a paradigm shift [26]. This shift is powered by the explosion of multi-omics data, sophisticated AI algorithms, and expansive biomedical knowledge graphs [8] [29]. For natural products, which are a prolific source of novel pharmacophores but are hindered by complexities of mixtures, limited scalability, and incomplete annotation, AI-driven computational repositioning offers a revolutionary path forward [5]. AI tools can predict bioactivity, infer mechanisms of action, and prioritize natural compounds for experimental validation, thereby integrating these complex entities into mainstream drug development pipelines [5].

This guide details the three foundational computational strategies—disease-centric, target-centric, and drug-centric—that form the backbone of systematic repurposing. Each approach offers distinct advantages and is suited to different research questions and data landscapes. The following sections dissect their methodologies, provide comparative analysis, and outline integrated workflows tailored for the unique challenges and opportunities presented by natural product-based drug discovery.

Core Repositioning Strategies: Definitions and Data-Driven Comparison

Systematic computational repositioning is categorized into three primary approaches based on the starting point of the investigation: the disease, the biological target, or the drug itself [28]. A large-scale analysis of over 100 repurposed drugs found that these strategies are used unevenly, with disease-centric approaches accounting for most reported cases [28].

Table 1: Prevalence and Characteristics of Core Computational Repositioning Strategies

| Strategy | Primary Starting Point | Core Hypothesis | Prevalence in Reported Cases [28] | Typical Data Inputs |
|---|---|---|---|---|
| Disease-Centric | A specific disease or pathological phenotype. | Drugs effective for a related or phenotypically similar disease may be effective for the new disease. | >60% | Clinical data, disease omics (genomics, transcriptomics), electronic health records (EHRs), phenotypic screens. |
| Target-Centric | A specific protein or molecular pathway implicated in disease. | A drug known to modulate a particular target may treat any disease where that target is dysregulated. | ~30% | Protein 3D structures, protein-protein interaction networks, pathway databases, target-based assay data. |
| Drug-Centric | A specific drug molecule or compound. | A drug’s polypharmacology (action on multiple targets) may yield therapeutic benefits in unforeseen disease contexts. | <10% | Drug chemical structure (e.g., SMILES), side-effect profiles, drug-induced gene expression signatures, binding assays. |

Disease-Centric Strategy

The disease-centric approach begins with a deep characterization of a disease’s molecular and phenotypic signature. The goal is to identify existing drugs that can reverse or counteract this signature [26] [27]. This is often operationalized through the "signature reversion" principle, where computational tools search for drugs whose gene expression profiles inversely correlate with the disease profile [27]. For natural products, this involves constructing detailed herb–ingredient–target–pathway graphs from multi-omics data to model synergistic effects and propose repurposing candidates for complex diseases like cancer or neurodegeneration [5].

Target-Centric Strategy

This approach is rooted in molecular biology and structural chemistry. It starts with a validated disease target and screens for compounds, including natural product libraries, that can modulate its activity [26] [28]. The key advantage is the ability to screen virtually any compound with a known structure against a target of interest. However, it is inherently limited to known biology and cannot identify novel, off-target mechanisms [26] [27]. Advanced structure-based methods, such as molecular docking and binding-site similarity analysis, are central to this strategy [28] [29].

Drug-Centric Strategy

The drug-centric strategy explores the principle of polypharmacology. It starts with a single compound and aims to comprehensively map its interaction profile across the proteome to predict novel therapeutic indications [28]. This approach is particularly powerful for natural products with complex bioactivities but poorly defined mechanisms. AI models can predict a natural compound's binding affinities to hundreds of targets, generating new, testable hypotheses for its use [5]. Despite its potential, it remains the least utilized approach, in part due to the complexity of fully characterizing a compound's mechanistic landscape [28].

Methodological Foundations and Experimental Protocols

The execution of each repositioning strategy relies on a suite of computational and experimental protocols. The following workflows and detailed methodologies outline the step-by-step processes.

Disease-Centric Workflow & Protocol

The disease-centric pipeline translates clinical and omics observations into candidate drug hypotheses.

Disease-Centric Computational-Experimental Protocol

Disease Profiling:

- Input: Collect and integrate multi-omics data (e.g., differential gene expression from RNA-seq of patient tissues) and phenotypic data from electronic health records (EHRs) [27].

- Computation: Use bioinformatic tools (e.g., GSEA, pathway enrichment analysis) to define a robust disease-specific molecular signature.

Signature Reversal Screening:

- Input: Access large-scale drug perturbation databases like the Connectivity Map (CMap), which contains gene expression profiles from cell lines treated with thousands of compounds [27].

- Computation: Employ pattern-matching algorithms (e.g., Kolmogorov-Smirnov statistics, cosine similarity) to rank drugs whose perturbation signatures most strongly negatively correlate with the disease signature.

Candidate Prioritization & Validation:

- Computation: Integrate additional data layers (drug pharmacokinetics, structural similarity, literature evidence) to filter and rank candidates.

- Experimental Validation: Top candidates proceed to in vitro validation using disease-relevant cell models (e.g., patient-derived cells) to assess efficacy in reversing pathological phenotypes [26].
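The signature reversal step of this protocol can be illustrated with a minimal sketch. It assumes that the disease and compound signatures are already available as per-gene scores (for example, log fold changes) and uses cosine similarity, one of the pattern-matching options named above. The gene names, compound names, and values are hypothetical toy data, and the functions `cosine_similarity` and `rank_by_reversal` are illustrative helpers rather than part of CMap or any other tool.

```python
import math


def cosine_similarity(a, b):
    """Cosine similarity between two equal-length numeric vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # an all-zero signature carries no directional information
    return dot / (norm_a * norm_b)


def rank_by_reversal(disease_signature, drug_signatures):
    """Rank compounds from strongest reversal (most negative similarity) upward.

    Genes missing from a compound signature are treated as unchanged (0.0),
    which is a simplifying assumption of this sketch.
    """
    genes = sorted(disease_signature)
    disease_vec = [disease_signature[g] for g in genes]
    scored = []
    for name, signature in drug_signatures.items():
        drug_vec = [signature.get(g, 0.0) for g in genes]
        scored.append((name, cosine_similarity(disease_vec, drug_vec)))
    return sorted(scored, key=lambda item: item[1])


# Hypothetical toy data: positive values mean up-regulation.
disease_signature = {"GENE_A": 2.0, "GENE_B": 1.5, "GENE_C": -1.0, "GENE_D": -2.5}
drug_signatures = {
    "compound_reverser": {"GENE_A": -1.8, "GENE_B": -1.2, "GENE_C": 0.9, "GENE_D": 2.0},
    "compound_mimic": {"GENE_A": 1.5, "GENE_B": 1.0, "GENE_C": -0.5, "GENE_D": -2.0},
    "compound_neutral": {"GENE_A": 0.1, "GENE_B": -0.1, "GENE_C": 0.1, "GENE_D": 0.1},
}

ranking = rank_by_reversal(disease_signature, drug_signatures)
for name, score in ranking:
    print(f"{name}: {score:+.3f}")
```

Compounds at the top of `ranking` have expression changes that most strongly oppose the disease signature. A real analysis would typically add significance estimates and the additional prioritization layers described above before any experimental follow-up.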

Target-Centric Workflow & Protocol

This protocol focuses on identifying ligands for a specific protein target, often through structural bioinformatics.

Target-Centric Computational-Experimental Protocol

Target Preparation:

- Input: Obtain a high-resolution 3D structure of the target protein from PDB or via homology modeling. For natural product targets, this may include plant or microbial enzymes [29].

- Computation: Define the binding pocket and generate a pharmacophore model describing essential interaction features (e.g., hydrogen bond donors/acceptors, hydrophobic regions).

Ultra-Large Virtual Screening:

- Input: Prepare a virtual compound library. For natural products, this includes libraries of characterized metabolites (e.g., ZINC Natural Products) [29].

- Computation: Employ high-throughput molecular docking software (e.g., AutoDock Vina, Glide) to screen billions of compounds. Advanced iterative screening combines fast deep learning-based pre-screening with precise physics-based docking to maximize efficiency [29].

Hit Identification & Validation:

- Computation: Rank compounds based on docking scores (binding energy estimates) and analyze predicted binding poses.

- Experimental Validation: Synthesize or procure top-ranking compounds for in vitro binding assays (e.g., Surface Plasmon Resonance) and functional enzyme/cell-based assays to confirm target engagement and biological activity [28].
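The iterative screening idea in this protocol, a cheap pre-screen followed by expensive re-scoring of the survivors, can be sketched as a simple two-stage funnel. This is a sketch under stated assumptions: `fast_score` and `slow_score` are hypothetical stand-ins for a learned pre-screening model and a physics-based docking run, both returning lower-is-better values in the manner of a binding energy estimate. No real docking software is called, and the compound identifiers and scores are toy data.

```python
def two_stage_screen(library, fast_score, slow_score, keep_fraction=0.25, top_n=3):
    """Pre-filter a library with a cheap scorer, then re-score survivors.

    Both scorers take a compound identifier and return a lower-is-better score.
    Only the best `keep_fraction` of the library reaches the expensive scorer.
    """
    if not library:
        return []
    prescreened = sorted(library, key=fast_score)
    n_keep = max(1, int(len(prescreened) * keep_fraction))
    survivors = prescreened[:n_keep]
    rescored = sorted(
        ((cid, slow_score(cid)) for cid in survivors),
        key=lambda item: item[1],
    )
    return rescored[:top_n]


# Hypothetical toy library with made-up reference energies (kcal/mol-like units).
library = [f"np_{i:03d}" for i in range(20)]
reference_energy = {cid: -5.0 - 0.2 * i for i, cid in enumerate(library)}
slow_calls = []  # records how often the expensive scorer is invoked


def fast_score(cid):
    """Cheap, imperfect approximation: reference energy plus a fixed offset."""
    index = int(cid.split("_")[1])
    return reference_energy[cid] + ((index % 3) - 1) * 0.3


def slow_score(cid):
    """Stand-in for an expensive docking calculation."""
    slow_calls.append(cid)
    return reference_energy[cid]


hits = two_stage_screen(library, fast_score, slow_score, keep_fraction=0.25, top_n=3)
print(hits)
print(f"expensive evaluations: {len(slow_calls)} of {len(library)} compounds")
```

The design choice is the trade-off set by `keep_fraction`: a smaller value saves more expensive evaluations but increases the risk that the imperfect pre-screen discards a true binder.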

Drug-Centric Workflow & Protocol

This protocol builds a comprehensive interaction network for a given drug to reveal novel indications.

Drug-Centric Computational-Experimental Protocol

Comprehensive Drug Profiling:

- Input: Aggregate all available data on the compound: chemical structure (SMILES), known protein targets, adverse event reports, and drug-induced omics signatures [28].

Predictive Modeling of Interactions:

- Computation: Apply machine learning models trained on known drug-target interaction databases. Graph neural networks (GNNs) are particularly effective, learning from the relational structure of biomedical knowledge graphs to predict novel interactions for the query drug [8].

Network-Based Indication Discovery:

- Computation: Integrate predicted new targets into a heterogeneous network linking drugs, targets, and diseases. Use network algorithms (e.g., random walk, network proximity measures) to identify disease modules that are topologically "close" to the drug's target profile [6] [27].

- Hypothesis Generation: Diseases whose molecular networks are significantly impacted by the drug's multi-target profile are nominated as new indication hypotheses.

Experimental Deconvolution:

- Experimental Validation: Use chemoproteomic techniques (e.g., affinity chromatography coupled with mass spectrometry) to experimentally validate predicted novel targets. Follow with functional assays in disease models relevant to the newly hypothesized indications [5].
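The network proximity idea in this protocol can be made concrete with a small example. The sketch below implements one common form of the measure, the average shortest-path distance from each drug target to its nearest disease gene, using breadth-first search on an unweighted interaction network. The network, node names, and gene sets are hypothetical, and the comparison against randomized gene sets that is normally used to judge significance is omitted for brevity.

```python
from collections import deque


def build_graph(edges):
    """Build an undirected adjacency map from a list of (node, node) pairs."""
    graph = {}
    for a, b in edges:
        graph.setdefault(a, set()).add(b)
        graph.setdefault(b, set()).add(a)
    return graph


def shortest_path_lengths(graph, source):
    """Breadth-first search distances from `source` to every reachable node."""
    distances = {source: 0}
    queue = deque([source])
    while queue:
        node = queue.popleft()
        for neighbor in graph.get(node, ()):
            if neighbor not in distances:
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    return distances


def closest_proximity(graph, drug_targets, disease_genes):
    """Mean distance from each drug target to its nearest disease gene.

    Returns infinity if any target cannot reach any disease gene.
    """
    if not drug_targets:
        raise ValueError("drug_targets must not be empty")
    total = 0
    for target in drug_targets:
        distances = shortest_path_lengths(graph, target)
        reachable = [distances[g] for g in disease_genes if g in distances]
        if not reachable:
            return float("inf")
        total += min(reachable)
    return total / len(drug_targets)


# Hypothetical toy interactome: a simple chain of proteins.
edges = [("T1", "P1"), ("P1", "T2"), ("T2", "P2"), ("P2", "P3"), ("P3", "P4")]
graph = build_graph(edges)
drug_targets = ["T1", "T2"]

near_score = closest_proximity(graph, drug_targets, {"P1", "P2"})
far_score = closest_proximity(graph, drug_targets, {"P4"})
print(f"disease module near the targets: {near_score}")
print(f"disease module far from the targets: {far_score}")
```

A lower score means the disease module sits topologically closer to the compound's target profile, which is the basis for nominating it as an indication hypothesis.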

Validation, Integration, and The Scientist's Toolkit

Validation Frameworks

Computational predictions require rigorous validation [26].

- Computational Validation: Use metrics like Area Under the ROC Curve (AUROC) and Area Under the Precision-Recall Curve (AUPRC) via cross-validation on held-out datasets. For advanced AI models like the Unified Knowledge-Enhanced deep learning framework for Drug Repositioning (UKEDR), performance in "cold-start" scenarios (predicting for entirely new entities) is a critical benchmark [26] [8].

- Experimental Validation: A tiered approach is essential:

  - In vitro binding and functional assays confirm target engagement.

  - In vitro phenotypic assays in disease-relevant cell models assess functional efficacy.

  - In vivo studies in animal models evaluate physiological impact and pharmacokinetics.

  - Retrospective analysis of real-world patient data (EHRs) can provide supporting clinical evidence [26].
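For the computational validation metrics named above, a dependency-free sketch may help clarify what is being measured. AUROC is computed here as the fraction of positive-negative pairs that the model ranks correctly, and AUPRC is approximated by average precision, a common step-wise estimate. The labels and scores are hypothetical toy values. In practice an established library implementation would normally be used instead.

```python
def auroc(labels, scores):
    """Probability that a random positive is scored above a random negative.

    Ties between a positive and a negative count as half a correct ranking.
    """
    positives = [s for label, s in zip(labels, scores) if label == 1]
    negatives = [s for label, s in zip(labels, scores) if label == 0]
    if not positives or not negatives:
        raise ValueError("both classes must be present")
    wins = 0.0
    for p in positives:
        for n in negatives:
            if p > n:
                wins += 1.0
            elif p == n:
                wins += 0.5
    return wins / (len(positives) * len(negatives))


def average_precision(labels, scores):
    """Mean of the precision values at the rank of each true positive.

    Assumes no tied scores; ties would make the ordering ambiguous.
    """
    ranked = sorted(zip(scores, labels), key=lambda item: -item[0])
    hits = 0
    precision_sum = 0.0
    for rank, (_, label) in enumerate(ranked, start=1):
        if label == 1:
            hits += 1
            precision_sum += hits / rank
    if hits == 0:
        raise ValueError("at least one positive label is required")
    return precision_sum / hits


# Hypothetical held-out predictions: 1 = known drug-disease association.
labels = [1, 0, 1, 0, 0, 1, 0, 0]
scores = [0.9, 0.8, 0.7, 0.4, 0.3, 0.6, 0.2, 0.1]

roc_value = auroc(labels, scores)
ap_value = average_precision(labels, scores)
print(f"AUROC: {roc_value:.3f}")
print(f"Average precision: {ap_value:.3f}")
```

Because known drug-disease associations are usually far rarer than non-associations, the precision-recall view tends to be the more demanding of the two on imbalanced repositioning benchmarks.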

Integrated AI Frameworks

Modern platforms integrate the three strategies into unified AI systems. For example, the UKEDR framework combines knowledge graph embedding (capturing relational data between drugs, targets, diseases), pre-trained attribute representations (e.g., from molecular structures and disease descriptions), and an attention-based recommendation system to make accurate predictions, even for novel natural compounds not in the original knowledge graph [8]. This addresses the critical "cold-start" problem prevalent in natural product research.
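Evaluating a model under cold-start conditions requires a data split in which the held-out entities never appear during training. The sketch below shows one simple way to build such a split at the drug level. It illustrates the evaluation setup only and is not the UKEDR implementation. The function name `cold_start_split`, the compound and disease names, and the labels are hypothetical.

```python
import random


def cold_start_split(pairs, test_fraction=0.2, seed=0):
    """Split (drug, disease, label) records so test drugs are unseen in training.

    Whole drugs are held out, rather than individual pairs, so that the test
    set only contains compounds the model has never encountered.
    """
    drugs = sorted({drug for drug, _, _ in pairs})
    rng = random.Random(seed)
    rng.shuffle(drugs)
    n_test = max(1, int(round(len(drugs) * test_fraction)))
    test_drugs = set(drugs[:n_test])
    train = [record for record in pairs if record[0] not in test_drugs]
    test = [record for record in pairs if record[0] in test_drugs]
    return train, test


# Hypothetical toy association records: (compound, disease, label).
pairs = [
    ("compound_1", "disease_x", 1),
    ("compound_1", "disease_y", 0),
    ("compound_2", "disease_x", 0),
    ("compound_2", "disease_z", 1),
    ("compound_3", "disease_y", 1),
    ("compound_4", "disease_z", 0),
    ("compound_5", "disease_x", 1),
    ("compound_5", "disease_y", 0),
]

train, test = cold_start_split(pairs, test_fraction=0.2, seed=0)
train_drugs = {drug for drug, _, _ in train}
test_drugs = {drug for drug, _, _ in test}
print(f"training drugs: {sorted(train_drugs)}")
print(f"held-out drugs: {sorted(test_drugs)}")
```

A random split over individual pairs would leak information about every compound into training and so overstate performance on novel natural products, which is why the split is made over entities.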

Table 2: Key Resources for Computational Drug Repositioning Research

| Category | Resource/Solution | Description & Function |
|---|---|---|
| Compound Libraries | ZINC20, ChEMBL, NPAtlas (Natural Products Atlas), in-house natural product libraries. | Curated databases of purchasable or characterized compounds for virtual and experimental screening [29]. |
| Bioactivity & Omics Data | Connectivity Map (CMap), LINCS, GEO (Gene Expression Omnibus). | Databases of drug-induced gene expression profiles and disease omics signatures for signature-based screening [27]. |
| Target & Pathway Data | PDB (Protein Data Bank), STRING, KEGG, Reactome. | Sources of protein structures, protein-protein interactions, and curated pathway maps for target-centric and network analysis [28] [29]. |
| AI/ML Platforms & Tools | DeepChem, PyTorch Geometric, TensorFlow, Schrödinger Suite, OpenEye Toolkits. | Software libraries and platforms for building deep learning models (e.g., GNNs), and for molecular docking and simulation [8] [29]. |
| Knowledge Graphs | Hetionet, DRKG (Drug Repurposing Knowledge Graph), integrated biomedical KGs from PubMed. | Large-scale graphs integrating millions of relationships between biomedical entities to fuel network-based and KG-driven prediction models [8]. |
| Validation Assay Kits | ADP-Glo Kinase Assay, CellTiter-Glo Viability Assay, Proteomics & Metabolomics Kits. | Standardized biochemical, cell-based, and omics assay kits for experimental validation of computational predictions. |

Therapeutic Applications and Case Studies

Computational repositioning strategies have demonstrated significant impact across diverse therapeutic areas:

- Oncology & Rare Diseases: Dominant areas for repurposing due to high unmet need and complex biology. Disease-centric approaches using patient genomic data have identified novel uses for existing drugs in specific cancer subtypes [30].

- Neurodegenerative Diseases: Target-centric approaches have proposed kinase inhibitors (e.g., nilotinib, originally for cancer) for Parkinson's disease based on shared target pathways (e.g., ABL1 kinase) [28].

- Infectious Diseases: The COVID-19 pandemic served as a catalyst, with AI-driven drug-centric and target-centric screens rapidly identifying candidates like baricitinib (an anti-inflammatory) for clinical testing [26] [6].

Future Directions: AI and Natural Product Synergy

The convergence of advanced AI with natural product research defines the future frontier [5]:

- Overcoming Data Scarcity: Developing federated learning and few-shot learning techniques to build predictive models from small, imbalanced natural product datasets.

- Mechanistic Deconvolution: Using explainable AI (XAI) to interpret model predictions and illuminate the complex, polypharmacological mechanisms of natural product mixtures.

- Generative AI for Optimization: Employing generative models to design optimized, synthetically accessible analogs of complex natural product scaffolds, balancing efficacy and drug-like properties.

- Digital Twins: Creating patient- or system-specific in silico models ("digital twins") to simulate the effects of natural product interventions, enabling personalized repositioning hypotheses.

Disease-centric, target-centric, and drug-centric strategies provide complementary and powerful frameworks for systematic drug repositioning. The integration of these approaches within unified AI architectures, such as knowledge graph-enhanced deep learning models, is dramatically increasing the scale, accuracy, and translational potential of predictions. For the rich yet challenging domain of natural products, these computational strategies are indispensable. They offer a path to systematically decode complex bioactivities, predict novel indications, and accelerate the integration of these historically important compounds into the next generation of precision therapeutics. As data resources continue to expand and algorithms evolve, computational repositioning will solidify its role as a cornerstone of efficient, intelligent, and patient-centric drug discovery.

Drug repositioning, the identification of new therapeutic uses for existing drugs, represents a paradigm shift in pharmaceutical research [6]. By leveraging compounds with established safety and pharmacokinetic profiles, this strategy significantly reduces the time, cost, and risk associated with traditional de novo drug discovery [31]. The conventional drug development pipeline is notoriously prolonged, spanning 10–15 years with costs averaging $2.6 billion, while repurposing can bring a drug to a new market in approximately 3–6 years for about $300 million [6]. Artificial Intelligence (AI), particularly machine learning (ML) and deep learning (DL), has emerged as a transformative force in this field. These technologies can analyze complex, high-dimensional biological data—including genomic, transcriptomic, and chemical structures—to predict novel and non-obvious drug-disease associations that elude conventional methods [6] [8].