Accurate FDR Calculation for Dereplication Algorithms: A Practical Guide for Omics Researchers



Abstract

This article provides a comprehensive guide to false discovery rate (FDR) calculation specifically for dereplication algorithms in proteomics and metabolomics. It covers the foundational importance of rigorous FDR control for validating biomarker discovery and compound identification, explores methodological approaches including target-decoy competition and entrapment strategies, addresses common troubleshooting and optimization challenges in real-world applications, and presents validation frameworks for benchmarking algorithm performance. Aimed at researchers and drug development professionals, this resource synthesizes current best practices to enhance the reliability and reproducibility of high-throughput omics analyses.

Why FDR Control is Non-Negotiable in Modern Dereplication Workflows

Uncontrolled false discoveries represent a critical failure point in modern biomedical research. In the context of high-dimensional biomarker and drug discovery, where thousands of molecular features are tested simultaneously, inadequate control of the False Discovery Rate (FDR) leads to a proliferation of spurious findings [1]. These false positives corrupt the scientific literature, misdirect research resources into dead-end validation studies, and ultimately contribute to the high failure rates in drug development [2]. The stakes extend beyond wasted funding; they encompass lost time for patients awaiting effective therapies and an erosion of confidence in translational research. This guide compares contemporary computational frameworks designed to control FDR in the face of complex data dependencies, providing researchers with objective criteria to select methodologies that ensure the reproducibility and reliability of their discoveries.

Comparative Analysis of FDR Control Frameworks for Biomarker Discovery

The selection of an appropriate FDR-controlling algorithm is paramount, as conventional methods like Benjamini-Hochberg (BH) can fail catastrophically in the presence of strong feature dependencies common in omics data [3]. The following table compares state-of-the-art frameworks, highlighting their approaches to managing dependency and their application contexts.

Table 1: Comparison of Advanced FDR Control Frameworks for High-Dimensional Biomarker Discovery

| Framework Name | Core Methodology | Key Innovation for FDR Control | Best-Suited Data/Application | Reported Advantages & Limitations |

|---|---|---|---|---|

| Dependency-Aware T-Rex Selector [1] | Hierarchical graphical models integrated into the T-Rex framework. | Explicitly models general dependency structures among variables using graphical models. Martingale theory provides proof of FDR control. | High-dimensional genomic data with strong inter-gene correlations (e.g., cancer survival analysis). | Strength: Theoretically guaranteed FDR control under dependency. Limitation: Computational complexity of modeling high-dimensional dependencies. |

| Expression Graph Network Framework (EGNF) [4] | Graph Neural Networks (GCNs/GATs) on biologically-informed networks. | Leverages graph structure and attention mechanisms to learn robust, generalizable features less prone to overfitting spurious correlations. | Complex diseases with interconnected biology (e.g., glioblastoma subtyping, treatment response). | Strength: Superior classification accuracy and interpretability of biomarker modules. Limitation: Requires significant data for training; less of a pure statistical FDR controller. |

| GEE-CLR-CTF Framework (metaGEENOME) [5] | Generalized Estimating Equations (GEE) with CLR transformation and CTF normalization. | Uses GEE to account for within-subject correlations in longitudinal studies, preventing inflated false positives from repeated measures. | Microbiome data and other compositional, sparse, longitudinal omics data. | Strength: Robust FDR control in longitudinal/correlated designs; handles compositionality. Limitation: Primarily designed for differential abundance analysis. |

| Causal Bio-Miner Framework [6] | Causal inference with propensity score matching on features from discriminant analysis. | Selects biomarkers based on causal effect estimates on treatment outcome, moving beyond association to causality. | Randomized Controlled Trial (RCT) transcriptomics data for discovering predictive biomarkers of treatment response. | Strength: Identifies causally relevant features with high subgroup classification accuracy using few features. Limitation: Dependent on RCT-style data structure for reliable causal inference. |

Experimental Protocols from Key Validation Studies

Robust biomarker discovery requires a multi-stage pipeline from initial high-throughput screening to biological validation. The protocols below detail two rigorous approaches that integrate FDR control with machine learning and experimental validation.

Table 2: Detailed Experimental Protocols for Integrated Biomarker Discovery and Validation

| Study Focus | Primary Discovery & Screening Protocol | Machine Learning & Biomarker Refinement Protocol | Experimental Validation Protocol |

|---|---|---|---|

| Identifying Mitochondrial Biomarkers for OCD [7] | 1. Data Source: Analyzed peripheral blood (GSE78104) and brain tissue (GSE60190) transcriptomic datasets. 2. Differential Expression: Used the limma R package with thresholds \|log2FC\| ≥ 0.5 and FDR-adjusted p ≤ 0.05. 3. Candidate Gene Intersection: Intersected differentially expressed genes (DEGs) with mitochondrial (MRG) and programmed cell death (PCD-RG) gene sets. Weighted Gene Co-expression Network Analysis (WGCNA) identified key modules correlated with OCD. | 1. Feature Selection: Applied Support Vector Machine-Recursive Feature Elimination (SVM-RFE) and univariate logistic regression to candidate genes. 2. Biomarker Selection: Selected biomarkers (NDUFA1, COX7C) based on consistent significance in ML models and differential expression in both independent datasets. 3. Pathway Analysis: Conducted Gene Set Enrichment Analysis (GSEA) on correlated genes to identify enriched pathways (e.g., oxidative phosphorylation). | 1. In-Vitro Validation: Performed RT-qPCR on peripheral blood samples from new cohorts of OCD patients and healthy controls. 2. Measurement: Quantified mRNA expression levels of NDUFA1 and COX7C. 3. Analysis: Confirmed significant downregulation of both biomarkers in OCD patients, validating the computational findings. |

| Identifying m6A Regulators as Biomarkers for Diabetic Foot Ulcers (DFU) [8] | 1. Multi-Omics Data: Analyzed bulk RNA-seq (GSE134431), microarray datasets (GSE80178, GSE68183), and single-cell RNA-seq (scRNA-seq, GSE165816) for DFU. 2. Differential Expression: Identified Differentially Expressed Methylation-Related Genes (DE-MRGs) using the Wilcoxon rank-sum test (FDR < 0.05, \|log2FC\| > 1). 3. Immune Microenvironment: Quantified immune cell infiltration using CIBERSORT. Adjusted for immune cell composition in downstream analyses. | 1. Multi-Algorithm Feature Selection: Applied four ML algorithms (LASSO, Random Forest, Gradient Boosting Machine, SVM-RFE) to DE-MRGs in the training set. 2. Consensus Biomarker Identification: Selected consensus genes (METTL16, NSUN3, IGF2BP2) identified by all algorithms. 3. Diagnostic Model: Built a multivariable logistic regression model. Evaluated performance via Leave-One-Out Cross-Validation (LOOCV), ROC-AUC, and Decision Curve Analysis (DCA). | 1. In-Vitro Functional Assays: Used high glucose-treated human skin fibroblasts (HSFs). 2. Genetic Manipulation: Investigated the role of METTL16. 3. Outcome Measures: Assessed cellular migration (scratch assay), collagen synthesis (e.g., Sirius Red staining), and oxidative stress markers (e.g., ROS levels). |

Quantitative Performance Data from Discovery Studies

The ultimate test of a well-controlled discovery pipeline is the performance of its identified biomarkers in independent validation. The data below summarize the validation outcomes for biomarkers discovered using stringent protocols.

Table 3: Validation Performance Metrics for Biomarkers Identified with FDR-Aware Pipelines

| Biomarker(s) | Disease Context | Discovery Cohort Performance | Independent Validation Performance | Key Supporting Functional Data |

|---|---|---|---|---|

| NDUFA1 & COX7C [7] | Obsessive-Compulsive Disorder (OCD) | Identified via SVM-RFE and logistic regression from 12 candidate genes. Significantly downregulated in GSE78104 blood dataset. | Confirmed significant downregulation in: 1. GSE60190 brain tissue dataset (p < 0.05). 2. RT-qPCR on independent patient blood samples (p < 0.05). | GSEA linked both genes to "Oxidative Phosphorylation" and "Ribosome" pathways. |

| METTL16, NSUN3, IGF2BP2 [8] | Diabetic Foot Ulcers (DFU) | LASSO-RF-GBM-SVM consensus model. Diagnostic ROC-AUC of 0.93 in training set (GSE134431). | Model AUC of 0.89 in integrated external validation set (GSE80178 & GSE68183). Decision Curve Analysis showed net clinical benefit. | scRNA-seq: METTL16 expression dynamics mapped to fibroblast subpopulations. Functional Assay: METTL16 overexpression in HSFs enhanced migration and collagen synthesis under high glucose. |

| Causal Bio-Miner Features [6] | Lithium Treatment Response in Bipolar Disorder | Framework selected minimal feature set based on causal estimate > 0.15. | Using 3 features (causal score ≥ 0.2): lithium response subgroup classification accuracy 83.33%; non-response subgroup accuracy 93.75%. | Framework validated on breast cancer chemo-response data (GSE20271), achieving >81% accuracy for treatment subgroup classification. |

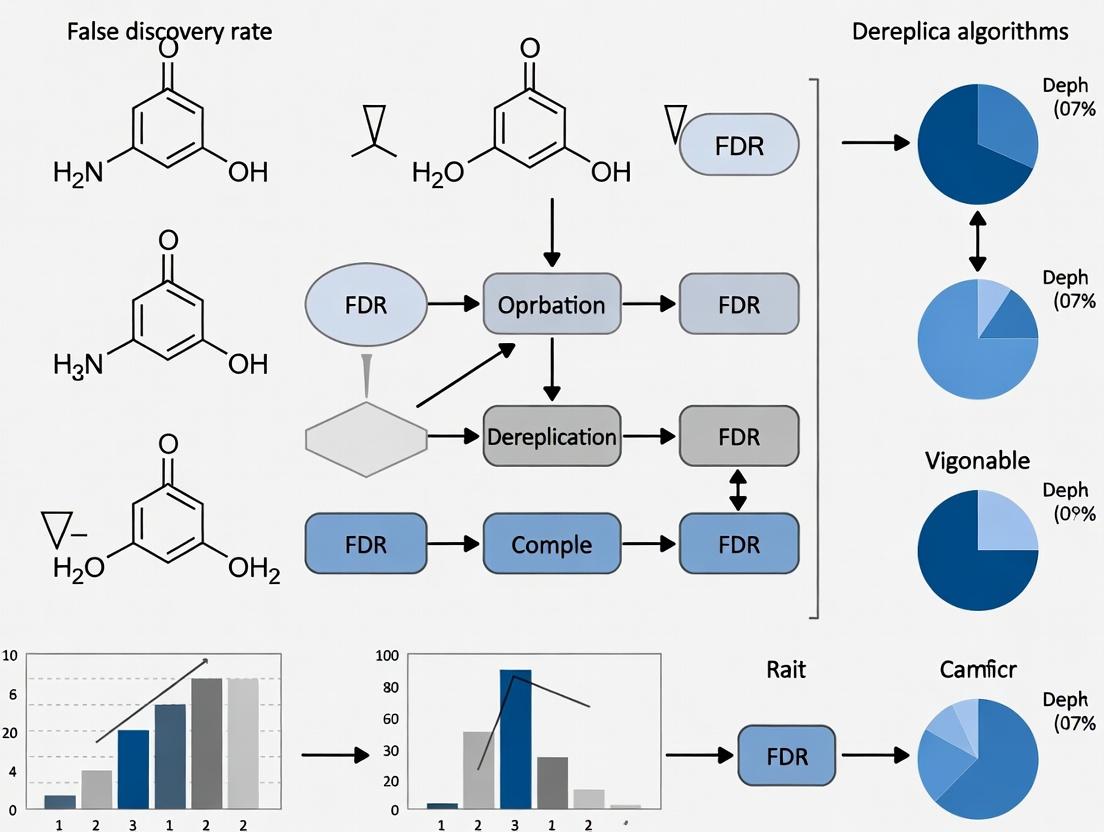

Visualizing Workflows and Pathways

[Figure: Biomarker Discovery Workflow with Critical FDR Control]

[Figure: METTL16 m6A Regulation Pathway in Diabetic Foot Ulcer Healing]

[Figure: Multi-Stage Experimental Validation Workflow for Biomarkers]

Table 4: Key Research Reagent Solutions for Biomarker Discovery and Validation

| Tool / Resource Category | Specific Item / Software | Primary Function in Biomarker Research | Example Use Case |

|---|---|---|---|

| Public Data Repositories | Gene Expression Omnibus (GEO), The Cancer Genome Atlas (TCGA) | Provide large-scale, publicly available transcriptomic and genomic datasets for initial discovery and independent validation [7] [8]. | Identifying differentially expressed genes in disease vs. control tissues. |

| Bioinformatics Software & Packages | R packages: limma, DESeq2, WGCNA, clusterProfiler, metaGEENOME (GEE-CLR-CTF) | Perform core statistical analyses: differential expression, network analysis, pathway enrichment, and specialized FDR-controlled analysis [7] [5]. | Running a differential expression analysis with FDR correction or performing WGCNA to find co-expression modules. |

| Machine Learning Libraries | R: glmnet (LASSO), randomForest, e1071 (SVM). Python: scikit-learn, PyTorch Geometric (for GNNs) [4]. | Enable advanced feature selection and classification model building to refine biomarker candidates from high-dimensional data [8] [6]. | Applying SVM-Recursive Feature Elimination (SVM-RFE) to select the most predictive gene subset. |

| Experimental Validation Reagents | RT-qPCR primers and probes, siRNA/shRNA for gene knockdown, overexpression plasmids, specific antibodies (for Western Blot). | Used to confirm the expression, functional role, and mechanistic importance of candidate biomarkers in laboratory models [7] [8]. | Validating the downregulation of a candidate gene via RT-qPCR or assessing its functional impact via siRNA-mediated knockdown. |

| Specialized Analysis Platforms | String-db (protein interactions), Cytoscape (network visualization), GeneMANIA (functional network analysis). | Facilitate the interpretation of candidate biomarkers by placing them in biological context through interaction networks and pathway mapping [7]. | Constructing a protein-protein interaction network for a shortlist of candidate biomarkers. |

In the analysis of high-dimensional biological data, such as that generated by mass spectrometry-based dereplication algorithms, controlling the rate of false positives is paramount. Traditional statistical corrections that control the Family-Wise Error Rate (FWER), like the Bonferroni correction, are often excessively conservative for exploratory research, dramatically reducing statistical power [9]. The False Discovery Rate (FDR) framework, introduced by Benjamini and Hochberg in 1995, provides a more balanced alternative by controlling the expected proportion of false positives among all declared discoveries [10] [9].

Within this framework, three interrelated core concepts are essential:

- False Discovery Rate (FDR): The expected value (theoretical average) of the False Discovery Proportion (FDP). It is the long-run average proportion of false discoveries researchers are willing to accept [11] [10].

- False Discovery Proportion (FDP): The actual, experiment-specific proportion of false discoveries among all reported discoveries in a given study. The FDP is a random variable that cannot be directly observed [11] [12].

- q-value: A p-value that has been adjusted for multiple testing using an FDR-controlling procedure. Calling every result with an FDR-adjusted p-value (q-value) of at most 0.05 significant means that about 5% of those significant results are expected to be false positives [9] [13]. Equivalently, the q-value of a test is the minimum FDR at which that test result would be deemed significant [9].

The relationship between these concepts is foundational: the FDR is the expected value of the unobservable FDP, while q-values are the practical output of procedures designed to control the FDR [11] [12].

Table: Core Definitions in the FDR Framework

| Term | Formal Definition | Key Interpretation |

|---|---|---|

| False Discovery Rate (FDR) | FDR = E[FDP] = E[V / max(R,1)], where V is the number of false discoveries and R is the total number of discoveries [10] | The expected or average proportion of false discoveries among all declared positives. |

| False Discovery Proportion (FDP) | FDP = V / R (where R>0) [12] | The actual proportion of false discoveries in a specific experiment's results. It varies between experiments. |

| q-value | q(p_i) = inf{ FDR threshold at which p_i is rejected } [9] | The minimum FDR threshold at which an individual test result would be called significant. An FDR-adjusted p-value. |

Comparative Analysis of Major FDR Control Procedures

Several statistical procedures have been developed to control the FDR, each with different assumptions, strengths, and weaknesses. The choice of procedure critically impacts the validity and power of analyses in dereplication and proteomics.

Table: Comparison of Primary FDR Control Methods

| Method | Control Guarantee | Key Assumptions | Primary Use Case & Notes |

|---|---|---|---|

| Benjamini-Hochberg (BH) | Controls FDR at level α if assumptions hold [10] [9]. | Independent test statistics, or certain types of positive dependence [10] [9]. | The default and most widely used method. Offers the greatest power under independence [9]. |

| Benjamini-Yekutieli (BY) | Controls FDR under arbitrary dependency structures [10] [9]. | Makes no assumptions about dependency. | Used for highly correlated data (e.g., fMRI, genomics with linkage disequilibrium). More conservative than BH [9] [12]. |

| Storey’s q-value | Directly estimates and controls the FDR [9]. | Estimates the proportion of true null hypotheses (π₀) from the data's p-value distribution [9] [14]. | Common in genomics and proteomics. Often more powerful than BH when many tests are from the alternative hypothesis [9]. |

| Target-Decoy Competition (TDC) | Can be proven to control FDR in spectrum-centric searches, given specific assumptions [11]. | Requires a correctly constructed decoy database and that target and decoy matches are equally likely a priori [11]. | The standard for false discovery estimation in mass spectrometry proteomics [11]. |
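To make the difference between the first two rows concrete, the following is a minimal sketch of BH and BY adjusted p-values using NumPy. The function name `bh_adjust`, its `arbitrary_dependence` flag, and the toy p-values are illustrative assumptions, not taken from any cited package.

```python
import numpy as np

def bh_adjust(pvalues, arbitrary_dependence=False):
    """Return BH (or BY) adjusted p-values in the original input order."""
    p = np.asarray(pvalues, dtype=float)
    m = p.size
    order = np.argsort(p)
    # Step-up scaling: the i-th smallest p-value is multiplied by m / i.
    ranked = p[order] * m / np.arange(1, m + 1)
    if arbitrary_dependence:
        # BY additionally multiplies by the harmonic sum 1 + 1/2 + ... + 1/m.
        ranked = ranked * np.sum(1.0 / np.arange(1, m + 1))
    # Enforce monotonicity, working down from the largest p-value.
    ranked = np.minimum.accumulate(ranked[::-1])[::-1]
    adjusted = np.empty(m)
    adjusted[order] = np.clip(ranked, 0.0, 1.0)
    return adjusted

# Toy p-values (hypothetical, for illustration only).
pvals = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205]
q_bh = bh_adjust(pvals)
q_by = bh_adjust(pvals, arbitrary_dependence=True)
print("BH adjusted:", np.round(q_bh, 4))
print("BY adjusted:", np.round(q_by, 4))
print("Discoveries at 0.05 (BH, BY):",
      int((q_bh <= 0.05).sum()), int((q_by <= 0.05).sum()))
```

On this toy input BH reports two discoveries at the 0.05 level and BY reports one, which illustrates the extra conservatism BY pays for its dependency-free guarantee.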

Recent theoretical work has expanded this landscape. Compound p-values, which are only required to be valid on average across all true null hypotheses rather than for each one individually, generalize standard p-values [15]. When the Benjamini-Hochberg procedure is applied to compound p-values, the FDR is no longer guaranteed to stay at or below α, but it remains bounded: by approximately 1.93α under independence, and with a potential inflation factor of O(log m) under positive dependence, where m is the number of tests [15]. This is particularly relevant in complex omics experiments where perfect p-value validity is difficult to guarantee.

The Critical Role of Validation: Entrapment Experiments

A fundamental challenge in applying FDR control is that the FDP for any single experiment is unknown [11]. Therefore, simply using an FDR-controlling procedure does not guarantee its correctness for a specific tool or dataset. This is where rigorous validation through entrapment experiments becomes essential, especially for evaluating bioinformatics pipelines like dereplication algorithms [11].

An entrapment experiment adds known false signals to the analysis (e.g., peptides from an organism not present in the sample) and checks that the tool's reported FDR (or q-value threshold) is not smaller than the false discovery proportion estimated from the entrapment discoveries, all of which are incorrect by construction [11]. The 2025 Nature Methods study by Moulder et al. systematically assessed this and identified three common, but not equally valid, analytical approaches for entrapment data [11].

Table: Methods for Analyzing Entrapment Experiments [11]

| Method | Formula | Provides | Common Use & Validity |

|---|---|---|---|

| Valid Upper Bound (Combined Method) | FDP_est = (N_E * (1 + 1/r)) / (N_T + N_E) | An estimated upper bound on the true FDP. | Evidence for successful FDR control if curve falls below the y=x line. Proven valid under TDC-like assumptions [11]. |

| Lower Bound (Often Misapplied) | FDP_low = N_E / (N_T + N_E) | A provable lower bound on the true FDP. | Only indicates failure of FDR control if curve is above y=x. Invalid for claiming successful control [11]. |

| Strict Target FDP Estimation | Estimates FDP among only the original target discoveries. | A focused estimate on the discoveries of actual interest. | More complex but can be valid and well-powered [11]. |

The study's application of this framework yielded critical insights for the field of proteomics, which are highly relevant to dereplication. While established Data-Dependent Acquisition (DDA) tools generally showed valid FDR control, none of the three popular Data-Independent Acquisition (DIA) tools (DIA-NN, Spectronaut, EncyclopeDIA) evaluated consistently controlled the FDR at the peptide level across all datasets, with performance worsening markedly at the protein level and in single-cell data [11]. This underscores that the choice of algorithm and its proper validation are not mere technical details but directly determine the reliability of scientific conclusions.

Experimental Protocols for FDR Assessment

Protocol for Entrapment Experiment Analysis

To rigorously assess whether a computational tool (e.g., a dereplication algorithm) provides valid FDR control, researchers can implement the following entrapment protocol based on current best practices [11]:

- Database Expansion: Create an analysis database that concatenates the true target database (e.g., expected natural product spectra) with an entrapment database containing decoy or biologically irrelevant entries (e.g., shuffled sequences, spectra from unrelated organisms). The ratio (r) of the size of the entrapment database to the target database should be recorded [11].

- Tool Execution: Run the tool under evaluation on a representative dataset using the expanded database. The tool must be blind to which entries are targets and which are entrapments.

- Result Segregation: From the tool's output, segregate the list of accepted discoveries into N_T, the count of discoveries matching the original target database, and N_E, the count of discoveries matching the entrapment database.

- FDP Estimation: Calculate the estimated FDP using the Valid Upper Bound (Combined) method: FDP_est = (N_E * (1 + 1/r)) / (N_T + N_E) [11].

- Visualization & Interpretation: Plot the estimated FDP (y-axis) against the tool's own reported q-value or FDR threshold (x-axis) for a range of thresholds. A tool is empirically shown to control the FDR if this entrapment-estimated FDP curve lies at or below the y=x line (where reported FDR equals observed FDP) [11].
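The result segregation and FDP estimation steps can be sketched as follows. This is a minimal illustration, assuming the tool's output has already been reduced to one reported q-value and one target/entrapment flag per discovery. The function name `entrapment_fdp_bounds` and the toy numbers are hypothetical.

```python
import numpy as np

def entrapment_fdp_bounds(q_values, is_entrapment, r, thresholds):
    """Combined (upper-bound) and lower-bound FDP estimates per threshold.

    q_values      : tool-reported q-value for every discovery
    is_entrapment : True where the discovery matches the entrapment database
    r             : entrapment-to-target database size ratio
    Returns tuples (threshold, N_T, N_E, combined_estimate, lower_bound).
    """
    q = np.asarray(q_values, dtype=float)
    ent = np.asarray(is_entrapment, dtype=bool)
    rows = []
    for t in thresholds:
        accepted = q <= t
        n_e = int(np.sum(accepted & ent))
        n_t = int(np.sum(accepted & ~ent))
        total = n_t + n_e
        if total == 0:
            rows.append((t, n_t, n_e, 0.0, 0.0))
            continue
        combined = n_e * (1.0 + 1.0 / r) / total   # FDP_est from the text
        lower = n_e / total                         # FDP_low from the text
        rows.append((t, n_t, n_e, combined, lower))
    return rows

# Toy output of a hypothetical tool: ten discoveries, one entrapment hit.
q_reported = [0.001, 0.002, 0.004, 0.008, 0.009, 0.012, 0.02, 0.03, 0.04, 0.045]
entrapment = [False] * 7 + [True] + [False] * 2
rows = entrapment_fdp_bounds(q_reported, entrapment, r=1.0, thresholds=[0.01, 0.05])
for t, n_t, n_e, combined, lower in rows:
    # The combined curve at or below y = x is the evidence for valid control.
    print(t, n_t, n_e, round(combined, 3), round(lower, 3), combined <= t)
```

With so few discoveries the estimates are coarse; in practice the curve would be traced over many thresholds and thousands of discoveries before drawing conclusions.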

Protocol for Assessing Impact of Feature Dependency

Given the severe impact of correlated tests on the variability of the FDP from one experiment to the next [12], a separate validation protocol is recommended:

- Synthetic Null Generation: For a given dataset, create a synthetic null by randomly shuffling sample labels (e.g., case/control) or experimental conditions. This ensures all null hypotheses are globally true [12].

- Full Pipeline Execution: Apply the entire analysis pipeline (including normalization, statistical testing, and FDR correction) to the synthetic null dataset.

- Result Evaluation: Record the number and proportion of features reported as significant at a chosen FDR threshold (e.g., 5%). As the null is true, any discovery is a false positive. This procedure directly estimates the empirical FDP under a global null.

- Iteration: Repeat steps 1-3 many times (e.g., 100-1000 iterations) to understand the distribution of false discoveries. As highlighted by [12], in highly correlated omics data (e.g., methylation arrays, metabolomics), one may observe that while most iterations yield zero findings, a non-trivial subset yield a very high number of false discoveries, revealing instability in FDR control.
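The label-shuffling protocol above can be sketched as below. This is an illustration under stated assumptions, not a reference pipeline: the "full pipeline" is reduced to a per-feature two-group statistic with a normal approximation for the p-value followed by BH, and the correlated toy data are simulated. All function names are hypothetical.

```python
import math
import numpy as np

def bh_reject_count(pvalues, alpha):
    """Number of hypotheses rejected by the BH step-up procedure."""
    p = np.sort(np.asarray(pvalues, dtype=float))
    m = p.size
    below = np.nonzero(p <= alpha * np.arange(1, m + 1) / m)[0]
    return 0 if below.size == 0 else int(below[-1] + 1)

def two_group_pvalues(data, labels):
    """Welch-type statistic per feature; normal approximation (a simplification)."""
    a, b = data[labels == 1], data[labels == 0]
    se = np.sqrt(a.var(axis=0, ddof=1) / a.shape[0]
                 + b.var(axis=0, ddof=1) / b.shape[0])
    z = (a.mean(axis=0) - b.mean(axis=0)) / se
    return np.array([math.erfc(abs(v) / math.sqrt(2.0)) for v in z])

def synthetic_null_counts(data, labels, n_iter=100, alpha=0.05, seed=0):
    """False-discovery counts across label shuffles (global null is true)."""
    rng = np.random.default_rng(seed)
    counts = np.empty(n_iter, dtype=int)
    for i in range(n_iter):
        shuffled = rng.permutation(labels)
        counts[i] = bh_reject_count(two_group_pvalues(data, shuffled), alpha)
    return counts

# Simulated, strongly correlated features: one shared latent factor.
rng = np.random.default_rng(1)
latent = rng.normal(size=(40, 1))
data = 0.8 * latent + 0.6 * rng.normal(size=(40, 300))
labels = np.array([1] * 20 + [0] * 20)

counts = synthetic_null_counts(data, labels)
print("Share of shuffles with any discovery:", float(np.mean(counts > 0)))
print("Largest number of false discoveries in one shuffle:", int(counts.max()))
```

Inspecting the whole distribution of `counts`, rather than only its mean, is what reveals the pattern described in the last step: many iterations with no findings and occasional iterations with many.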

Visualizing Core Relationships and Workflows

[Figure: Logical Relationship Between FDR, FDP, and q-values]

[Figure: Experimental Workflow for Entrapment-Based Validation]

Table: Key Research Reagent Solutions for FDR-Focused Studies

| Category | Item / Resource | Function in Research |

|---|---|---|

| Experimental Reagents | Entrapment Sequences/Spectra | Peptide or spectral libraries from organisms definitively absent from the sample. Spiked to generate known false positives for validation [11]. |

| Experimental Reagents | Synthetic Null Datasets | Datasets with randomly assigned labels (e.g., shuffled treatment groups). Used to empirically assess the false positive rate and FDR control under a global null hypothesis [12]. |

| Software & Algorithms | DDA Search Tools (e.g., Mascot, MaxQuant) | Established tools for Data-Dependent Acquisition mass spectrometry data. Serve as benchmarks where FDR control via Target-Decoy Competition is better understood [11]. |

| Software & Algorithms | DIA Search Tools (e.g., DIA-NN, Spectronaut, EncyclopeDIA) | Tools for Data-Independent Acquisition data. Require rigorous entrapment validation, as recent studies show inconsistent FDR control [11]. |

| Software & Algorithms | FDR Power Calculators (e.g., R package FDRsamplesize2) | Software to compute required sample size to achieve a desired average power while controlling the FDR at a specified level, incorporating estimates of true null proportion (π₀) [14]. |

| Software & Algorithms | Simulation Frameworks (e.g., GraphPad Prism, custom R/Python scripts) | Platforms to run Monte Carlo simulations for understanding FDR behavior under specific conditions, such as low prior probability or dependency structures [12] [16]. |

| Statistical Procedures | Benjamini-Hochberg (BH) Procedure | The standard step-up procedure for FDR control. Default in many omics pipelines but assumes independence or positive dependency [10] [9]. |

| Statistical Procedures | Benjamini-Yekutieli (BY) Procedure | A more conservative adjustment that guarantees FDR control under arbitrary dependency structures. Crucial for correlated data like metabolomics or methylation arrays [9] [12]. |

| Statistical Procedures | Storey’s q-value Method | A procedure that estimates the proportion of true nulls (π₀) from the data, often providing higher power in genomic/proteomic screens with many true discoveries [9] [14]. |

For researchers applying dereplication algorithms and interpreting high-throughput data, a strategic approach to FDR is necessary:

- Explicitly Define the Error Rate: Decide whether controlling the FWER (strict, for confirmatory work) or the FDR (more lenient, for exploratory discovery) is appropriate for the research phase [9].

- Choose and Validate Methods Contextually: Do not assume software defaults are correct. Select an FDR control procedure (BH, BY, Storey’s) based on the expected dependency structure of your data [9] [12]. For critical tools, perform entrapment experiments or synthetic null analyses to empirically validate FDR control [11] [12].

- Interpret q-values Correctly: A q-value of 0.05 does not mean there is a 5% chance the finding is false for that specific item. It means that among all findings with q ≤ 0.05, an estimated 5% are false positives on average [13] [16].

- Account for Prior Probability and Power: Be aware that the actual false discovery rate in a set of findings is influenced by the pre-experiment likelihood of true effects (prior probability) and the statistical power of the test. Underpowered studies exploring low-probability hypotheses can generate a majority of false positives even with statistically significant p-values [17] [13] [16].

- Plan Studies with FDR in Mind: Use power and sample size calculation methods designed for FDR (e.g., [14]) during experimental design to ensure the study is adequately powered to make reliable discoveries at the desired FDR threshold.
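The point about prior probability and power can be made concrete with a little arithmetic. The sketch below assumes each hypothesis is simply called significant when p ≤ α, with no multiplicity correction. The function name and the example numbers are illustrative.

```python
def expected_false_discovery_fraction(prior_true, power, alpha):
    """Expected share of significant results that are false positives.

    prior_true : fraction of tested hypotheses with a real effect
    power      : probability of detecting a real effect
    alpha      : per-test significance level
    """
    false_pos = (1.0 - prior_true) * alpha   # true nulls that reach p <= alpha
    true_pos = prior_true * power            # real effects that are detected
    return false_pos / (false_pos + true_pos)

# Underpowered screen of unlikely hypotheses: about 0.69, a majority false.
low = expected_false_discovery_fraction(prior_true=0.10, power=0.20, alpha=0.05)
# Well-powered study of plausible hypotheses: about 0.06.
high = expected_false_discovery_fraction(prior_true=0.50, power=0.80, alpha=0.05)
print(round(low, 3), round(high, 3))
```

The same significance threshold therefore yields very different reliability depending on the study design, which is why power calculations belong in the planning stage.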

In conclusion, effectively untangling FDR, FDP, and q-values requires moving beyond their formulas to understand their practical interpretation and validation. This is especially critical in dereplication algorithm research, where the validity of the entire discovery pipeline hinges on robust and correctly implemented statistical error control.

Dereplication, the process of identifying and filtering redundant data entries—whether microbial isolates, genome sequences, or chemical compounds—is a foundational bioinformatics bottleneck in modern life sciences [18]. Its importance has surged with the advent of high-throughput technologies capable of generating millions of data points, such as mass spectra from culturomics studies or sequencing reads from metagenomes [19] [20]. The core challenge transcends simple duplicate removal; it involves distinguishing biologically or chemically meaningful uniqueness from technical variation within massive, interdependent datasets. This process is critical for conserving resources, focusing discovery efforts on novel entities, and ensuring the statistical validity of downstream analyses.

The field now grapples with a dual challenge: managing the volume of high-throughput data while accounting for the complex dependencies within it. These dependencies include shared evolutionary ancestry between microbial strains, conserved biosynthetic pathways for natural products, and correlated spectral features in mass spectrometry. Ignoring these relationships can lead to inflated false discovery rates (FDR) in downstream analyses, misallocation of research resources, and ultimately, a failure to discover truly novel biology or chemistry [21]. This comparison guide objectively evaluates contemporary dereplication tools and workflows, framing their performance and methodology within the critical context of FDR calculation and control. We compare algorithms across three key domains—microbial isolate profiling, genomic analysis, and natural product discovery—providing researchers with the data needed to select appropriate tools for their specific dereplication challenges.

Comparative Analysis of Dereplication Tools and Workflows

The following section provides a structured comparison of prominent dereplication tools, summarizing their core algorithms, optimal use cases, and key performance metrics as reported in experimental validations.

Table 1: Comparison of High-Throughput Dereplication Tools Across Applications

| Tool / Workflow | Primary Application & Data Input | Core Algorithm / Strategy | Reported Performance Highlights | Key Experimental Benchmark |

|---|---|---|---|---|

| SPeDE [19] | Microbial isolate dereplication; MALDI-TOF MS spectra | Identifies Unique Spectral Features (USFs) via mixed global/local peak matching with Pearson correlation validation. | Precision: >99.8%. Dereplication Ratio: ~70.5% (at PPMC threshold 50%). Exceeds taxonomic resolution of global similarity methods [19]. | 5,228 spectra from 167 bacterial strains across 132 genera [19]. |

| skDER [22] | Microbial genomic dereplication; genome assemblies | Average Nucleotide Identity (ANI)-based clustering using the skani algorithm. Offers 'greedy' and 'dynamic' selection modes. | Efficiency: Handles 1,000s of genomes. Accuracy: Strictly adheres to user-defined ANI/AF cutoffs. Comparable pangenome coverage to other tools [22]. | Applied to Enterococcus genus and E. faecalis; benchmarked against other ANI-based tools [22]. |

| CiDDER [22] | Microbial genomic dereplication; genome assemblies | Protein-cluster saturation assessment; iteratively selects genomes until a target percentage of total protein space is covered. | Coverage: Directly optimizes for pangenome breadth. A convenient alternative to ANI-based methods for gene-centric studies [22]. | Benchmarking on Enterococcus; demonstrates selection of representatives covering 90% of protein clusters [22]. |

| DEREPLICATOR+ [23] | Natural product dereplication; tandem MS (MS/MS) spectra | Fragmentation graph matching against chemical structure databases, integrated with molecular networking and FDR estimation. | Sensitivity: Identifies 5x more molecules than predecessor. At 1% FDR: 488 compounds (8194 MSMs) identified in Actinomyces dataset [23]. | 248+ million spectra from GNPS; validated on Actinomyces, fungal, and cyanobacterial datasets [23]. |

| DAS Tool [20] | Metagenomic bin dereplication; bins from multiple algorithms | Dereplication, Aggregation, and Scoring of bins from multiple binning tools to produce a non-redundant, optimized set. | Completeness: Recovers substantially more near-complete genomes than any single binning method alone [20]. | Applied to simulated communities and environmental samples (human gut, oil seeps, soil) [20]. |

| Passatutto / FDR Estimation [21] | Metabolomics annotation; MS/MS spectral matches | Target-decoy strategy with re-rooted fragmentation trees to generate decoy libraries and estimate FDR for spectral matching. | Utility: Enables project-specific scoring parameter adjustment, increasing annotations by an average of +139% while controlling FDR [21]. | Evaluation on 70 public metabolomics datasets from GNPS [21]. |
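The target-decoy strategy listed for DEREPLICATOR+ and Passatutto in the table above can be sketched generically as follows. This is a minimal illustration of target-decoy competition, not the estimator implemented by either tool. It assumes one best match per spectrum from a concatenated target-plus-decoy search, higher scores being better, and uses the conservative (decoys + 1) / targets estimate. The function name `tdc_qvalues` and the toy scores are hypothetical.

```python
import numpy as np

def tdc_qvalues(scores, is_decoy):
    """q-value per match, with FDR at a cutoff estimated as (decoys + 1) / targets."""
    s = np.asarray(scores, dtype=float)
    d = np.asarray(is_decoy, dtype=bool)
    order = np.argsort(-s, kind="stable")        # best score first
    d_sorted = d[order]
    n_decoy = np.cumsum(d_sorted)                # decoys at or above each cutoff
    n_target = np.cumsum(~d_sorted)              # targets at or above each cutoff
    fdr = np.minimum(1.0, (n_decoy + 1) / np.maximum(n_target, 1))
    # q-value: smallest estimated FDR over all cutoffs that still accept the match.
    q_sorted = np.minimum.accumulate(fdr[::-1])[::-1]
    q = np.empty_like(q_sorted)
    q[order] = q_sorted
    return q

# Toy match scores (hypothetical); two of the ten best matches are decoys.
scores = [9.1, 8.7, 8.2, 7.9, 7.5, 7.0, 6.4, 6.1, 5.8, 5.0]
decoy = [False, False, False, False, False, True, False, False, True, False]
q = tdc_qvalues(scores, decoy)
accepted = [i for i in range(len(scores)) if not decoy[i] and q[i] <= 0.25]
print(np.round(q, 3))
print("Target matches accepted at q <= 0.25:", accepted)
```

The +1 in the numerator makes tiny examples like this one look pessimistic; its effect becomes negligible once thousands of matches are scored.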

Detailed Experimental Protocols and Methodologies

Spectral Dereplication of Microbial Isolates with SPeDE

SPeDE is designed for high-throughput dereplication of MALDI-TOF mass spectra from bacterial isolates [19]. The protocol involves:

- Spectra Preprocessing & Quality Control: Input spectra are subjected to a quality filter, rejecting spectra with fewer than 5 peaks having a signal-to-noise ratio (S/N) > 30 [19].

- Peak Matching and Unique Feature Identification: For each pair of spectra, peaks are matched if their m/z values fall within a user-defined peak accuracy window (e.g., 500-1000 ppm) [19].

- Validation via Local Spectral Correlation: To avoid missing true discriminating features, the Pearson product-moment correlation (PPMC) of the raw spectra is calculated in a local region around each matched or unique peak. A peak is confirmed as a Unique Spectral Feature (USF) if the local PPMC falls below a set threshold [19].

- Operational Isolation Unit (OIU) Formation: Spectra pairs where all features of one are shared with the other are deemed redundant. Non-redundant spectra are grouped into OIUs, each represented by a single reference spectrum [19].

Optimization: The critical parameter is the local PPMC threshold. Increasing it improves precision but reduces the dereplication ratio. A threshold of 50% balances high precision (~95.3%) with a dereplication ratio of 70.5% [19].
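The local correlation check in the validation step can be illustrated with a short sketch. It assumes two spectra sampled on a shared m/z grid and a symmetric window around the peak of interest. The function names, the `half_window` parameter, and the synthetic spectra are assumptions for illustration and are not taken from the SPeDE implementation. The 50% threshold is expressed here as 0.5.

```python
import numpy as np

def local_ppmc(mz, intensity_a, intensity_b, peak_mz, half_window):
    """Pearson correlation of two spectra within a window around one peak."""
    mz = np.asarray(mz, dtype=float)
    window = np.abs(mz - peak_mz) <= half_window
    a = np.asarray(intensity_a, dtype=float)[window]
    b = np.asarray(intensity_b, dtype=float)[window]
    if a.size < 3 or a.std() == 0.0 or b.std() == 0.0:
        return 0.0  # no usable local signal: treat as uncorrelated
    return float(np.corrcoef(a, b)[0, 1])

def is_unique_feature(mz, intensity_a, intensity_b, peak_mz,
                      half_window=5.0, threshold=0.5):
    """Flag the peak as a unique spectral feature if the local PPMC is low."""
    return local_ppmc(mz, intensity_a, intensity_b, peak_mz, half_window) < threshold

# Synthetic spectra: both share a peak at m/z 4030, only spectrum A has one at 4050.
mz = np.linspace(4000.0, 4100.0, 201)

def gaussian(center, width=1.5):
    return np.exp(-0.5 * ((mz - center) / width) ** 2)

spec_a = gaussian(4030.0) + gaussian(4050.0)
spec_b = gaussian(4030.0) + 1e-4 * (mz - 4000.0)   # shared peak plus a faint baseline

print(is_unique_feature(mz, spec_a, spec_b, 4030.0))  # shared peak
print(is_unique_feature(mz, spec_a, spec_b, 4050.0))  # peak present only in A
```

Raising `threshold` makes the check flag more peaks as unique, which keeps more spectra as distinct and lowers the dereplication ratio, in line with the trade-off described above.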

Genomic Dereplication with skDER and CiDDER

These tools address the dereplication of thousands of microbial genome assemblies [22].

- skDER (ANI-based) Protocol:

- ANI Calculation: Pairwise ANI and aligned fraction (AF) are estimated for all genomes using the skani algorithm [22].

- Representative Selection (Two Modes):

- Greedy Mode: Genomes are scored (connectivity × assembly N50) and sorted. The highest-scoring genome becomes a representative. All genomes meeting ANI/AF cutoffs with it are marked as redundant. The process iterates through the sorted list [22].

- Dynamic Mode: Processes genome pairs. The genome with the lower score in a similar pair is marked redundant. The final representative set consists of genomes not marked redundant, approximating single-linkage clustering [22].

- Output: A non-redundant set of representative genomes. Non-representative genomes can be linked to their closest representative [22].

- CiDDER (Protein-Cluster Based) Protocol:

- Gene Prediction & Clustering: Protein-coding genes are predicted for all input genomes and clustered into groups of homologous proteins (e.g., using CD-HIT) [22].

- Iterative Saturation Analysis: Genomes are iteratively selected as representatives. After each selection, the cumulative percentage of the total protein cluster space covered is calculated [22].

- Stopping Criterion: Selection continues until a user-defined saturation threshold (default: 90% of total protein clusters) is reached [22].
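The two selection strategies above can be sketched in a few lines. This is a simplified illustration, not the skDER or CiDDER source code: the function names and input structures are hypothetical, scores and similar pairs are assumed to be precomputed, and picking the genome that adds the most uncovered clusters at each step is an assumption about how "iteratively selected" is realized.

```python
def greedy_dereplicate(scores, similar_pairs):
    """Greedy mode: walk genomes from highest to lowest score; each genome not
    yet marked redundant becomes a representative and marks its neighbors.

    scores: dict genome -> score (e.g., connectivity x N50, precomputed)
    similar_pairs: iterable of (genome_a, genome_b) meeting the ANI/AF cutoffs
    """
    neighbors = {g: set() for g in scores}
    for a, b in similar_pairs:
        neighbors[a].add(b)
        neighbors[b].add(a)
    representatives, redundant = [], set()
    for genome in sorted(scores, key=scores.get, reverse=True):
        if genome in redundant:
            continue
        representatives.append(genome)
        redundant.update(neighbors[genome])
    return representatives


def select_until_saturation(genome_clusters, saturation=0.90):
    """Protein-cluster mode: add genomes until the chosen set covers the
    requested fraction of all protein clusters.

    genome_clusters: dict genome -> set of protein-cluster identifiers
    """
    all_clusters = set().union(*genome_clusters.values())
    if not all_clusters:
        return []
    covered, chosen = set(), []
    remaining = dict(genome_clusters)
    while remaining and len(covered) / len(all_clusters) < saturation:
        # Assumption: pick the genome contributing the most new clusters.
        best = max(remaining, key=lambda g: len(remaining[g] - covered))
        covered |= remaining.pop(best)
        chosen.append(best)
    return chosen
```

For example, with scores `{"A": 10, "B": 8, "C": 5, "D": 3}` and similar pairs `[("A", "B"), ("C", "D")]`, the greedy function keeps `A` and `C` as representatives.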

FDR-Controlled Dereplication of Natural Products with DEREPLICATOR+

This workflow identifies known metabolites in MS/MS data while controlling false positives [23].

- Fragmentation Graph Construction: Theoretical fragmentation graphs are generated from candidate chemical structures in databases (e.g., AntiMarin). Decoy graphs are created via a re-rooting strategy for FDR estimation [23] [21].

- Spectral Matching & Scoring: Experimental MS/MS spectra are annotated against target and decoy fragmentation graphs. Metabolite-Spectrum Matches (MSMs) are scored [23].

- FDR Calculation & Validation: The false discovery rate is estimated using the target-decoy approach. Matches to decoy graphs approximate the distribution of false positives. A score threshold is set to achieve a desired FDR (e.g., 1%) [23] [21].

- Confidence Expansion via Molecular Networking: High-confidence identifications are used as seeds in molecular networks to discover structurally related variants within the dataset [23].

Workflow and Algorithm Visualizations

Figure: SPeDE algorithm for MALDI-TOF MS dereplication.

Figure: Target-decoy FDR estimation workflow for metabolomics.

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Reagents and Materials for Dereplication Experiments

| Item / Solution | Primary Function in Dereplication | Example Use Case / Note |

|---|---|---|

| MALDI Matrix Solution (e.g., α-cyano-4-hydroxycinnamic acid) | Enables soft ionization of microbial proteins/peptides for MALDI-TOF MS analysis by absorbing laser energy [19]. | Essential for generating mass spectral fingerprints of bacterial isolates for tools like SPeDE [19]. |

| LC-MS Grade Solvents (Acetonitrile, Methanol, Water with modifiers) | Mobile phase for chromatographic separation of complex natural product extracts prior to MS analysis [23] [24]. | Critical for generating high-quality MS/MS data for dereplication with DEREPLICATOR+ [23]. |

| DNA Extraction & Purification Kits (for microbes) | High-yield, high-purity genomic DNA isolation from microbial cultures or environmental samples [20] [22]. | Required input for whole-genome sequencing and subsequent genomic dereplication with skDER/CiDDER [22]. |

| Reference Spectral Libraries (e.g., Commercial MALDI DB, GNPS) | Curated databases of known spectra for comparison and identification, serving as the ground truth for dereplication [19] [23] [21]. | SPeDE avoids dependency on them, while DEREPLICATOR+ and FDR tools actively search against them [19] [23]. |

| Chemical Structure Databases (e.g., AntiMarin, Dictionary of Natural Products) | Repositories of known compound structures used to generate theoretical fragmentation patterns [23]. | Core resource for in silico spectrum generation in dereplication algorithms like DEREPLICATOR+ [23]. |

| Internal MS Calibration Standards | Provides precise m/z calibration points within a mass spectrometry run to ensure measurement accuracy [19] [21]. | Vital for reproducible peak detection, which is the foundation of spectral comparison and dereplication. |

| Target-Decoy Database Software (e.g., Passatutto) | Generates decoy spectral or sequence libraries to model the null distribution of matches for robust FDR estimation [21]. | Enables statistically rigorous confidence assessment in high-throughput annotation workflows [21]. |

In modern high-throughput biology, from genomics to mass spectrometry-based proteomics, researchers routinely perform thousands to millions of simultaneous statistical tests. The False Discovery Rate (FDR) has become the dominant statistical framework for managing the inevitable type I errors that arise from these multiple comparisons [10]. Conceptually, the FDR is the expected proportion of "discoveries" (e.g., identified peptides, differentially expressed genes) that are falsely declared significant. Formally, FDR = E[V / max(R, 1)], where V is the number of false positives and R is the total number of rejections, so the proportion counts as zero when nothing is rejected [10]. The conditional form E[V/R | R>0] defines the related positive FDR (pFDR).

The adoption of FDR, particularly through procedures like the Benjamini-Hochberg (BH) linear step-up procedure, represented a paradigm shift from the more conservative family-wise error rate (FWER) control [10]. This shift was driven by technological advances that enabled the measurement of vast numbers of variables (e.g., gene expression levels) from relatively small sample sizes, creating a need for a less stringent error rate that could highlight promising findings for follow-up work without being overwhelmed by corrections for multiplicity [10].

However, the very advantage of FDR—its greater statistical power—becomes a critical vulnerability when its control is invalid. In the context of dereplication algorithms, which aim to identify known compounds in complex mixtures and are crucial in natural product discovery and drug development, invalid FDR control does more than just risk individual false discoveries. It systematically corrupts the comparative studies and benchmarks used to evaluate software tools, instruments, and workflows. A tool that liberally underestimates its FDR will appear to discover more compounds, creating an unfair advantage in performance comparisons and leading researchers to select fundamentally flawed methodologies [11] [25]. This article examines the mechanisms of this invalidation and provides a framework for rigorous, FDR-aware benchmarking.

Foundational Concepts and Common Pitfalls in FDR Control

Understanding how invalid FDR control corrupts benchmarking first requires a clear grasp of standard control procedures and where they fail.

Standard FDR Control Procedures

The Benjamini-Hochberg (BH) procedure is the most widely used method. For m independent hypotheses with ordered p-values P_(1) ≤ … ≤ P_(m), it finds the largest k for which P_(k) ≤ (k/m)α and rejects the hypotheses corresponding to P_(1), …, P_(k), controlling the FDR at level α [10]. Variants exist for different dependency structures. The Benjamini-Yekutieli procedure controls FDR under arbitrary dependence by dividing the BH thresholds by the harmonic sum c(m) = 1 + 1/2 + … + 1/m, which makes it more conservative [10], while Storey's q-value method estimates the positive FDR (pFDR) and assigns each hypothesis the smallest FDR at which it would be declared significant [26] [27].
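The step-up rule is easy to get subtly wrong (the search is for the largest passing rank, not the first failing one), so a minimal pure-Python sketch may help. The function name and the `arbitrary_dependence` flag are illustrative; setting the flag applies the Benjamini-Yekutieli harmonic-sum scaling.

```python
def benjamini_hochberg(p_values, alpha=0.05, arbitrary_dependence=False):
    """Return a list of booleans (same order as the input) marking rejections.

    With arbitrary_dependence=True the thresholds are divided by
    c(m) = 1 + 1/2 + ... + 1/m (Benjamini-Yekutieli variant).
    """
    m = len(p_values)
    if m == 0:
        return []
    c_m = sum(1.0 / i for i in range(1, m + 1)) if arbitrary_dependence else 1.0
    order = sorted(range(m), key=lambda i: p_values[i])
    k = 0
    for rank, idx in enumerate(order, start=1):
        # Step-up: remember the LARGEST rank whose p-value passes its threshold.
        if p_values[idx] <= rank * alpha / (m * c_m):
            k = rank
    rejected = [False] * m
    for idx in order[:k]:
        rejected[idx] = True
    return rejected


p = [0.001, 0.008, 0.039, 0.041, 0.042, 0.06, 0.074, 0.205, 0.212, 0.216]
print(sum(benjamini_hochberg(p)))                             # 2 rejections
print(sum(benjamini_hochberg(p, arbitrary_dependence=True)))  # 1 rejection
```

On this illustrative list of ten p-values, BH at α = 0.05 rejects the two smallest, while the more conservative dependence-robust variant rejects only one.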

The Target-Decoy Competition (TDC) Strategy in Omics

In mass spectrometry proteomics, the theoretical control of FDR is often implemented practically via the target-decoy competition (TDC) strategy. Spectra are searched against a database containing real ("target") and artificially generated false ("decoy") peptides. The FDR is estimated based on the number of decoy hits above a given score threshold [11] [25]. This method, while powerful, rests on key assumptions—principally, that decoys are statistically indistinguishable from false target matches—which, if violated, compromise FDR validity.

Prevalent Errors in FDR Validation

A critical analysis reveals that many studies incorrectly validate their FDR control. A survey of entrapment experiments—where a tool's input is expanded with verifiably false "entrapment" sequences—identified three common estimation methods for the false discovery proportion (FDP), the realized proportion of false positives in a given experiment [11] [25]:

- An invalid method that yields neither a reliable upper nor lower bound.

- A lower-bound method, often misused to claim control.

- A valid upper-bound method, which is under-powered.

A frequent and serious error is the misuse of the lower-bound estimator. The valid combined estimator for the FDP among all discoveries is FDP̂ = N_E(1 + 1/r) / (N_T + N_E), where N_E is the number of entrapment discoveries, N_T is the number of target discoveries, and r is the effective ratio of entrapment database size to target database size [11] [25]. Many studies incorrectly omit the (1 + 1/r) term, which turns the estimate into a lower bound. Using a lower bound to "validate" that a tool's FDP is below a threshold is statistically unsound; a lower bound can only provide evidence that a tool fails to control the FDR [11] [25].
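A short worked example shows how much the omitted factor matters. The counts below are purely illustrative (not taken from any cited study), and r is assumed to be 1, i.e., an entrapment database the same size as the target database.

```latex
% Illustrative counts: N_T = 980 target discoveries, N_E = 20 entrapment
% discoveries, r = 1 (hypothetical values chosen for the arithmetic).
\[
\widehat{\mathrm{FDP}}_{\text{lower}}
  = \frac{N_E}{N_T + N_E}
  = \frac{20}{980 + 20}
  = 2.0\%
\]
\[
\widehat{\mathrm{FDP}}_{\text{combined}}
  = \frac{N_E\,(1 + 1/r)}{N_T + N_E}
  = \frac{20 \times 2}{1000}
  = 4.0\%
\]
```

If the tool had reported these discoveries at a 3% FDR, the lower bound (2.0%) would appear to confirm control, while the combined estimate (4.0%) sits above the reported level. With r = 1 the two estimates always differ by a factor of two.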

Table 1: Common FDP Estimation Methods in Entrapment Experiments

| Method Name | Key Formula | Provides | Common Use Case | Validity for Proving FDR Control |

|---|---|---|---|---|

| Combined (Valid Upper Bound) | FDP̂ = N_E(1 + 1/r) / (N_T + N_E) | Estimated upper bound for true FDP | Demonstrating a tool may be controlling FDR | Valid evidence when curve is below y=x |

| Incorrect Lower Bound | FDP̂ = N_E / (N_T + N_E) | Estimated lower bound for true FDP | Incorrectly used to "validate" control | Invalid. Can only show a tool fails. |

| Target-Only | More complex, excludes N_E from denominator | Direct estimate of FDP for target discoveries | Evaluating error rate for primary findings | Valid but often under-powered [25] |

Consequences for Tool Benchmarking and Comparative Studies

Invalid FDR control creates a cascade of problems that fundamentally undermine the integrity of comparative analyses.

The Illusion of Performance

The most direct consequence is the creation of an unfair advantage for tools with liberal bias. In a benchmark where all tools are assessed at the same nominal FDR threshold (e.g., 1%), a tool that systematically underestimates its error rate will report a greater number of discoveries. This inflates performance metrics like sensitivity or identification depth, making the tool appear superior, even if its findings are less reliable [11] [28]. This illusion corrupts the tool selection process for the wider research community.

Compromised Workflow and Platform Comparisons

The problem extends beyond software to the evaluation of entire workflows and instrument platforms. For instance, comparisons between Data-Dependent Acquisition (DDA) and Data-Independent Acquisition (DIA) mass spectrometry modes, or assessments of new chromatography setups, rely on identification metrics from downstream software. If the software's FDR control is invalid, the comparison of the upstream experimental techniques becomes meaningless, as differences in reported identifications may stem from statistical error rather than true technical performance [11].

Case in Point: Benchmarking of DIA Analysis Tools

Recent systematic evaluations highlight this crisis. A 2025 study using rigorous entrapment found that three popular DIA search tools (DIA-NN, Spectronaut, and EncyclopeDIA) did not consistently control the FDR at the peptide level across diverse datasets. The problem was exacerbated at the protein level [11] [25]. A separate, comprehensive benchmark of machine learning strategies within DIA tools further illustrates the interplay between model training and FDR validity [28].

Table 2: Performance of DIA Tool ML Strategies in Benchmarking (Adapted from [28])

| Tool / Training Strategy | Primary Classifier | Reported Identifications | Consistency of Reported vs. External FDR | Risk of Over/Underfitting |

|---|---|---|---|---|

| Semi-Supervised (e.g., mProphet, PyProphet) | Linear Discriminant Analysis (LDA) / SVM | Lower | Generally conservative, lower risk of overfitting | Lower power, risk of underfitting |

| Fully Supervised (e.g., DIA-NN, Beta-DIA) | Ensemble Neural Networks | Highest | Can diverge; high risk of overfitting without care | High risk of overfitting, invalidating FDR |

| K-Fold Training (e.g., MaxDIA) | XGBoost | High | Best balance and consistency | Mitigated by separated training/test sets |

| Fully Supervised (e.g., Dream-DIA) | XGBoost | High | Good, but depends on implementation | Moderate |

The benchmark concluded that K-fold training combined with a robust classifier like XGBoost or a multilayer perceptron generally achieved the best balance between identification depth and reliable FDR control [28]. Tools using fully supervised learning on the entire dataset, while sometimes reporting the highest numbers, carried the greatest risk of overfitting, which directly compromises the assumptions of independence underlying TDC and leads to invalid FDR estimates.

Experimental Protocols for Valid FDR Assessment in Benchmarks

To prevent invalid comparisons, benchmarking studies must incorporate direct assessments of FDR control. The entrapment experiment is the gold standard for this validation [11] [25].

Designing a Valid Entrapment Experiment

- Database Expansion: Create an entrapment database comprising sequences that are verifiably absent from the study sample (e.g., peptides from a distant species like Arabidopsis thaliana in a human proteomics study). This database is then mixed with the legitimate target database at a defined ratio (r) and presented to the tool as a single search space [11] [28].

- Hidden Design: The tool must be "blind" to the origin (target vs. entrapment) of each sequence during its primary scoring and FDR estimation process.

- Post-Hoc Analysis: After the tool outputs its list of discoveries (e.g., peptides) at a claimed FDR threshold, the researcher separates the entrapment hits (N_E) from the target hits (N_T).

- FDP Estimation: Apply the valid combined method (from Table 1) to calculate an estimated upper bound for the true FDP. This process is repeated across a range of tool-reported FDR thresholds (or q-values).

Interpreting Results

The estimated FDP is plotted against the tool-reported FDR. For a tool that validly controls FDR, the entrapment-estimated FDP curve should fall at or below the line y=x (the line of perfect agreement). A curve consistently above this line indicates a liberal bias and a failure to control the FDR at the stated level [11] [25].
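The post-hoc analysis and the comparison against the y=x line can be sketched as follows. The input format (one `(q_value, is_entrapment)` pair per reported discovery) and the function names are assumptions made for illustration; the estimator is the combined formula from Table 1.

```python
def entrapment_curve(discoveries, r=1.0, thresholds=(0.01, 0.02, 0.05)):
    """Estimate the FDP (combined method) at each tool-reported FDR threshold.

    discoveries: iterable of (q_value, is_entrapment) pairs from the tool
    r: ratio of entrapment to target database size
    Returns a list of (threshold, estimated_fdp) points.
    """
    discoveries = list(discoveries)
    curve = []
    for t in thresholds:
        accepted = [is_e for q, is_e in discoveries if q <= t]
        n_e = sum(accepted)        # entrapment discoveries, N_E
        n_total = len(accepted)    # N_T + N_E
        fdp = n_e * (1 + 1 / r) / n_total if n_total else 0.0
        curve.append((t, fdp))
    return curve


def thresholds_above_diagonal(curve):
    """Thresholds at which the estimated FDP exceeds the reported FDR."""
    return [t for t, fdp in curve if fdp > t]
```

An empty list from `thresholds_above_diagonal` is consistent with valid control at the tested thresholds; any entries mark thresholds where the curve lies above y=x. A single run is subject to random variation, so in practice the check would be repeated across datasets.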

Entrapment experiment workflow for FDR validation.

A Framework for Rigorous, FDR-Aware Benchmarking

Drawing from essential benchmarking guidelines [29], comparative studies must evolve to incorporate FDR validation as a core component.

Pre-Benchmark Validation Phase

Before comparing performance metrics (speed, depth, precision), a mandatory initial phase should assess each tool's statistical calibration:

- Use well-characterized, standard datasets spiked with entrapment sequences.

- Apply the entrapment analysis protocol described above to each tool.

- Qualify tools for the performance benchmark based on a predefined criterion (e.g., entrapment FDP curve remains within 20% of the y=x line up to a 5% FDR threshold). Tools failing this calibration should be flagged or excluded, as their performance metrics are not trustworthy.

Transparent Reporting and Neutral Design

Benchmarks must be designed neutrally, avoiding bias from familiarity with a particular tool [29]. All parameter tuning efforts should be equivalent across tools. The report must transparently detail:

- The exact formulas used for FDR/FDP estimation.

- The methodology and database used for entrapment.

- Clear separation between validation of statistical control and comparison of calibrated performance.

Consequences of invalid FDR control in tool benchmarking.

The Scientist's Toolkit: Essential Reagents for FDR Validation

Table 3: Key Research Reagent Solutions for FDR Validation Experiments

| Reagent / Material | Function in FDR Validation | Example / Specification |

|---|---|---|

| Entrapment Sequence Database | Provides verifiably false discoveries to estimate the false discovery proportion (FDP). | Proteome sequences from a phylogenetically distant organism (e.g., A. thaliana for human studies). |

| Benchmark Datasets with Known Truth | Enables calculation of ground-truth sensitivity and precision for tool performance comparison. | Publicly available spike-in datasets (e.g., with known protein/peptide concentrations). |

| Standardized Search Database Mix | Ensures a controlled ratio (r) of entrapment to target sequences for accurate FDP calculation. | A FASTA file combining target and entrapment sequences at a defined sequence-count ratio (e.g., 1:1). |

| Validation Software Pipeline | Implements the entrapment analysis workflow, including sorting, FDP calculation, and plotting. | Custom scripts or packages that implement the "combined method" formula and generate FDP vs. FDR plots. |

| High-Performance Computing (HPC) Resources | Allows for large-scale, replicated entrapment analyses to average out random variation in the FDP. | Access to cluster computing for parallel processing of multiple tools and datasets. |

Advanced Topics and Future Directions

Dependency-Aware FDR Control

A key assumption of standard FDR methods is the independence (or specific dependency) of tests. In biological data, features are often highly correlated (e.g., genes in pathways, peptides from the same protein). New methods like the dependency-aware T-Rex selector are emerging, using hierarchical models and martingale theory to provide FDR control guarantees for dependent data, which is crucial for applications in genomics and survival analysis [1].

Subgroup FDR for Specialized Analyses

In fields like post-translational modification (PTM) discovery, the global FDR for all peptides can mask a much higher error rate for the subgroup of modified peptides. The transferred subgroup FDR method addresses this by leveraging the relationship between global and subgroup FDR, allowing accurate error estimation even for rare modifications with few identifications [30]. This is directly relevant to dereplication searching for modified natural products.

Logical Workflow of Target-Decoy Competition

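The TDC strategy, while common, is often treated as a black box. Its logic can be summarized as follows: spectra are searched against a combined target-decoy database, each decoy match above the score threshold is counted as a stand-in for one false target match, and the ratio of decoy to target matches gives the FDR estimate.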

Logic of target-decoy competition (TDC) for FDR estimation.

Invalid FDR control is not merely a technical statistical error; it is a fundamental flaw that invalidates the conclusions of comparative studies and tool benchmarks. It creates a perverse incentive for tool developers to prioritize liberal bias over statistical rigor to "win" on performance charts. To restore integrity to computational method evaluation, the community must adopt new standards:

- Mandatory Validation: Benchmarks must include a mandatory, upfront entrapment experiment to qualify tools based on their statistical calibration before any performance comparison.

- Correct Methodology: Researchers must use and report the valid combined method for estimating FDP from entrapment experiments, abandoning the incorrect use of the lower-bound estimator.

- Transparent Reporting: Studies must clearly separate sections on "FDR Control Validation" from "Performance Comparison of Statistically Validated Tools."

- Tool Development Focus: Developers should prioritize robust, dependency-aware FDR control methods and strategies like K-fold learning that mitigate overfitting, rather than chasing marginal gains in discovery counts at the cost of statistical integrity.

By anchoring comparative studies in rigorous, empirically validated error control, researchers in drug development and beyond can make reliable choices about the tools and algorithms that underpin discovery, ensuring that progress is built on a foundation of statistical truth rather than an illusion of performance.

Implementing Robust FDR Estimation: From Target-Decoy to Advanced Spatial Methods

In the research field of dereplication—the process of efficiently identifying known compounds within complex mixtures to prioritize novel discoveries—controlling the false discovery rate (FDR) is a foundational statistical challenge [10]. High-throughput technologies, such as mass spectrometry in proteomics or metabolomics, generate vast datasets where thousands of hypotheses (e.g., peptide or compound identifications) are tested simultaneously [10]. The Target-Decoy Competition (TDC) approach has emerged as a dominant, intuitive method for FDR estimation in this context, particularly within shotgun proteomics [31]. Its principle is straightforward: by searching data against a database containing real (target) and artificial (decoy) sequences, the decoy matches provide a direct estimate of false discoveries [31]. This guide objectively examines TDC's performance, its inherent assumptions, and compares it to alternative FDR control methodologies relevant to modern dereplication algorithms.

Core Principles and Workflow of the TDC Method

The TDC protocol is built on a simple yet powerful model. It assumes that decoy hits are indistinguishable from incorrect target hits, allowing decoy counts to directly estimate the number of false target discoveries [31].

Standard TDC Workflow: The canonical TDC procedure follows a three-step process [31]:

- Database Search: A set of spectra is searched against a concatenated database containing both target and decoy (e.g., reversed or shuffled) sequences.

- Competition: For each spectrum, the best-scoring target match and the best-scoring decoy match are compared. Only the higher-scoring match (the "winner") is retained.

- FDR Estimation: At any given score threshold (ρ), the FDR is estimated as the ratio of accepted decoy PSMs to accepted target PSMs. A common implementation, "TDC+," adds a "+1" correction to the decoy count to ensure conservative control [31].
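The three steps can be condensed into a short sketch that starts after the database search. The input format (the best target score and best decoy score per spectrum) and the function name are assumptions; ties are assigned to the decoy here as a conservative simplification, whereas implementations may break them differently.

```python
def tdc_plus_fdr(psms, threshold):
    """Estimate the FDR among target PSMs scoring at or above `threshold`.

    psms: iterable of (best_target_score, best_decoy_score), one per spectrum
    Returns min(1, (decoy wins + 1) / target wins), the "+1"-corrected estimate.
    """
    accepted_targets = 0
    accepted_decoys = 0
    for target_score, decoy_score in psms:
        # Competition: only the higher-scoring match survives for this spectrum.
        if target_score > decoy_score:
            if target_score >= threshold:
                accepted_targets += 1
        elif decoy_score >= threshold:  # decoy wins (ties go to the decoy)
            accepted_decoys += 1
    return min(1.0, (accepted_decoys + 1) / max(accepted_targets, 1))


psms = [(10, 2), (9, 3), (8, 1), (7, 7.5), (3, 6), (6, 1)]
print(tdc_plus_fdr(psms, threshold=5))  # 0.75
```

In this toy input, four targets and two decoys win above a score of 5, giving (2 + 1) / 4 = 0.75. The example also shows why the "+1" makes small result lists look poor: with few accepted targets, a single pseudo-count dominates the estimate.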

Diagram 1: Standard TDC Workflow

Key Assumptions and Limitations: TDC's validity rests on critical assumptions. The decoy database must be generated such that decoys are equally likely to match spectra as incorrect targets. Violations of this assumption, or the presence of dependencies between hypotheses, can compromise FDR control [1] [32]. A recognized practical limitation is decoy-induced variability: for a fixed dataset, different random decoy databases can yield meaningfully different FDR estimates and discovery lists, especially with small datasets or stringent FDR thresholds [31].

Quantitative Performance Comparison of FDR Control Methods

The following table summarizes the key operational characteristics and performance metrics of TDC against other prominent FDR-control methods.

Table 1: Comparative Analysis of FDR Control Methods for High-Throughput Identification

| Method | Core Principle | Key Strength | Key Limitation | Typical Application Context |

|---|---|---|---|---|

| Target-Decoy Competition (TDC+) | Empirical FDR estimation via decoy database counts [31]. | Intuitive, easy to implement, no distributional assumptions. | High variability from decoy generation; discards some true positives during competition [31]. | Shotgun proteomics, spectrum identification. |

| Averaged TDC (aTDC) | Averages results over multiple independent decoy databases [31]. | Significantly reduces variability of standard TDC; improves reproducibility [31]. | Increased computational cost for multiple searches. | Proteomics studies with limited spectra or stringent FDR needs [31]. |

| Benjamini-Hochberg (BH) Procedure | Step-up p-value correction based on ranked significance [10] [33]. | Strong theoretical guarantees for independent tests; widely adopted. | Can be conservative; control under complex dependency is not guaranteed [10]. | Genomic microarray data, general multiple testing. |

| Dependency-Aware T-Rex Selector | Integrates hierarchical graphical models to account for variable dependencies [1]. | Provides proven FDR control for high-dimensional, dependent data [1]. | Methodological complexity; requires modeling dependency structure. | Genomics, finance, any data with structured dependencies [1]. |

| FDR Envelope (Resampling-Based) | Uses resampling to build graphical acceptance/rejection regions for functional data [32]. | Direct visualization of results; handles complex correlation structures non-parametrically [32]. | Computationally intensive; designed for functional/geospatial test statistics. | Neuroimaging, spatial statistics, functional regression [32]. |

Experimental Data Highlighting TDC Variability and aTDC Improvement: A key experiment demonstrates TDC's instability. Searching a single mass spectrometry run (15,083 spectra) against the human proteome with ten different shuffled decoy databases yielded different numbers of accepted peptides at a 1% FDR threshold, ranging from 4,757 to 4,987 discoveries—a 4.7% variability [31]. This problem worsens with smaller datasets: reducing the search to 1,000 spectra increased variability to 10.0% at a 5% FDR threshold [31]. The Averaged TDC (aTDC) protocol mitigates this by aggregating results from multiple decoy sets. An improved variant of aTDC not only reduces variability but also recovers more true discoveries at a fixed FDR threshold by modifying how decoys are counted across multiple runs [31].

Diagram 2: Averaged TDC (aTDC) Protocol

Comparative Experimental Protocols

To ensure reproducible comparison between FDR methods, standardized protocols are essential.

Protocol 1: Evaluating Decoy-Induced Variability in TDC. This protocol quantifies the instability inherent in standard TDC [31].

- Input: A fixed set of tandem mass spectrometry spectra.

- Database: A target protein sequence database.

- Decoy Generation: Generate K independent decoy databases (e.g., K=10) using the same shuffling or reversal algorithm.

- Search & Competition: For each decoy database k, search the spectra against the concatenated target-decoy database using a chosen search engine (e.g., Crux, SEQUEST). Perform per-spectrum competition to retain only the winning PSMs [31].

- Analysis: For each run k, apply a series of FDR thresholds (e.g., 1%, 5%, 10%) using the TDC+ formula and record the number of accepted target PSMs. Calculate variability as (max - min) / ((max + min)/2) × 100% across the K runs [31].
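The variability metric from the analysis step is a one-line calculation. The sketch below uses a hypothetical function name and, as input, only the extreme discovery counts reported earlier for the ten-decoy experiment (4,757 and 4,987); the intermediate counts are not given in the text and do not affect the result.

```python
def decoy_variability_percent(counts):
    """Range of accepted-discovery counts across K decoy databases,
    relative to the midpoint of the range, in percent."""
    highest, lowest = max(counts), min(counts)
    return (highest - lowest) / ((highest + lowest) / 2) * 100


# Extremes reported for ten shuffled decoy databases at a 1% FDR threshold.
print(round(decoy_variability_percent([4757, 4987]), 1))  # 4.7
```

This reproduces the 4.7% figure quoted for the 15,083-spectrum run.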

Protocol 2: Benchmarking FDR Control Power and Accuracy. This general protocol compares the discovery power of different FDR methods on a dataset with (partially) known ground truth.

- Dataset Preparation: Use a labeled benchmark dataset (e.g., a proteomics mixture with known standard proteins) or a simulated dataset where true/false identifications are known.

- Method Application: Apply each FDR control method (TDC, BH, Dependency-Aware selector, etc.) to the dataset. For methods like the T-Rex selector, configure the appropriate dependency model [1].

- Metrics Calculation: For each method at its nominal FDR level (e.g., 5%), calculate:

- Actual FDR: (Number of False Discoveries) / (Total Discoveries).

- Power (True Positive Rate): (Number of True Discoveries) / (Total Possible True Discoveries).

- Stability: Repeat the analysis on subsampled data or with different random seeds and measure the fluctuation in discovery lists.

- Comparison: The method that maintains the actual FDR at or below the nominal level while maximizing power and stability is preferred. Studies show dependency-aware methods can uniquely achieve control where others fail in high-dimensional settings [1].
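The metrics in this protocol reduce to set arithmetic once ground truth is available. In the sketch below the function names are hypothetical, and using Jaccard overlap between two discovery lists as the stability measure is an assumption; the protocol only asks for "fluctuation in discovery lists" without naming a metric.

```python
def benchmark_metrics(discoveries, true_items):
    """Return (actual_fdp, power) for one method on a labeled dataset.

    discoveries: identifiers the method reported at its nominal FDR level
    true_items: identifiers known to be truly present
    """
    discoveries, true_items = set(discoveries), set(true_items)
    false_found = discoveries - true_items
    true_found = discoveries & true_items
    fdp = len(false_found) / len(discoveries) if discoveries else 0.0
    power = len(true_found) / len(true_items) if true_items else 0.0
    return fdp, power


def jaccard_stability(list_a, list_b):
    """Overlap of two discovery lists (1.0 = identical, 0.0 = disjoint)."""
    a, b = set(list_a), set(list_b)
    return len(a & b) / len(a | b) if (a or b) else 1.0


fdp, power = benchmark_metrics({"p1", "p2", "p3", "x1"},
                               {"p1", "p2", "p3", "p4", "p5"})
print(fdp, power)  # 0.25 0.6
```

Note that a single run yields a realized false discovery proportion; comparing it with the nominal FDR is only meaningful when averaged over repeated runs or datasets.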

The Scientist's Toolkit: Essential Research Reagents & Software

Table 2: Key Research Reagent Solutions for TDC and FDR Benchmarking Studies

| Item / Resource | Function / Purpose | Example / Notes |

|---|---|---|

| Decoy Database Generation Tool | Creates shuffled or reversed peptide/protein sequences to form the null model. | Crux generate-decoys tool, DecoyPyrat. Critical for TDC and aTDC protocols [31]. |

| Search Engine Software | Matches experimental spectra to theoretical spectra from target/decoy sequences. | Crux [31], SEQUEST, MS-GF+. Outputs scores for competition step. |

| FDR Control Software Packages | Implements various FDR algorithms for statistical analysis. | R Packages: TRexSelector (T-Rex method) [1], multtest [32], fdrtool [32], qvalue [32]. |

| Benchmark Datasets | Provides data with known identities for validating FDR control accuracy. | Complex protein mixture standard datasets (e.g., with spiked-in known proteins). |

| Visualization & Envelope Tool | Creates graphical FDR envelopes for functional test statistics. | R package GET (Global Envelope Tests) [32]. Useful for spatial/functional data. |

Methodological Relationships and Evolution in FDR Control

The development of FDR methods represents an evolution from generic corrections to specialized, context-aware tools. The foundational Benjamini-Hochberg (BH) procedure provided the first practical framework [10] [33]. TDC adapted this principle to a specific domain (proteomics) by using decoys as a built-in null model, trading some assumptions for great intuitive appeal [31]. Recognition of TDC's limitations, like variability, led to refinements like aTDC [31]. In parallel, advances like the Benjamini-Yekutieli procedure (for arbitrary dependencies) [10] [33] and two-stage adaptive procedures (estimating the proportion of true nulls) [33] improved generic methods. The latest frontier is represented by dependency-aware models (e.g., T-Rex) [1] and visual inference tools (e.g., FDR envelopes) [32], which address complex data structures common in modern 'omics and dereplication science.

Diagram 3: Evolution of FDR Control Methodologies

The Target-Decoy Competition approach remains a gold standard in proteomics due to its conceptual simplicity and direct empirical estimation of FDR. However, this comparison reveals that its performance is not uniform. Researchers must be acutely aware of its susceptibility to decoy-induced variability, particularly when working with small datasets or demanding low FDR thresholds. The Averaged TDC (aTDC) protocol is a recommended enhancement to standard practice to mitigate this issue [31].

For the broader field of dereplication algorithm research, the choice of FDR method must be context-driven. When hypotheses are highly structured or dependent (e.g., related metabolic pathways, spectral series), generic p-value correction or standard TDC may be insufficient. In these scenarios, dependency-aware methods like the T-Rex selector [1] or specialized visual inference tools [32] offer more robust control and insightful results. The guiding principle should be to match the methodological assumptions of the FDR control tool with the underlying structure of the data and the specific goals of the dereplication analysis.

The advancement of untargeted metabolomics has generated a pressing need for robust statistical frameworks to control the rate of false discoveries. Unlike in proteomics, where target-decoy competition (TDC) is a standardized method for false discovery rate (FDR) estimation, the field of metabolomics has struggled with the lack of universally accepted FDR control methods [34]. This gap is critical because without reliable FDR estimation, the confidence in reported metabolite identifications remains uncertain, often relying on subjective manual validation [34] [21]. The core challenge lies in the fundamental difference between the molecules studied: peptides are linear polymers of 20 amino acids, while metabolites constitute a vast, structurally diverse set of small molecules, making the generation of plausible decoys for metabolite databases a non-trivial task [34] [35].

This guide objectively compares emerging methods for FDR estimation in metabolomics spectral matching, framed within the broader research thesis on refining dereplication algorithms. We present experimental data and detailed protocols for key methods, focusing on their adaptation of the proteomics-born TDC concept to the complexities of small molecule analysis.

Core Concepts: FDR, q-values, and the Target-Decoy Framework

- False Discovery Rate (FDR): In high-throughput experiments testing thousands of hypotheses (e.g., spectral matches), the FDR is the expected proportion of false positives among all discoveries declared significant. It is defined as FDR = E[FP / (FP + TP)], where FP is the number of false positives and TP is the number of true positives, and the ratio is taken to be 0 when no discoveries are made [34]. The ratio FP / (FP + TP) observed in a single experiment is the false discovery proportion (FDP).

- q-values: The q-value of a discovery is the minimum FDR at which that discovery would be called significant; it plays the role of a p-value adjusted for multiple testing. A q-value of 0.05 for a specific discovery means that among all features with a q-value at least as small as this one, an estimated 5% are false positives [36]. This is distinct from a p-value, which is the probability, for that single test, of observing a result at least as extreme under the null hypothesis, without considering the full set of tests.

- Target-Decoy Competition (TDC): A method where query spectra are searched against a composite database containing real ("target") entries and artificial ("decoy") entries that are known to be false. The distribution of matches to decoys is used to model the null distribution of false matches to targets, allowing for the estimation of the FDR at any given score threshold [37] [25]. Its validity hinges on the decoys being indistinguishable from targets under the null hypothesis.
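To make the relationship between these three concepts concrete, the following is a minimal sketch of how q-values might be derived from a target-decoy search. It assumes equally sized target and decoy sets, estimates the FDR at each score threshold as decoys / targets, and ignores score ties; the function name `tdc_q_values` and the input layout are illustrative assumptions, not part of any cited tool.

```python
from typing import List, Tuple


def tdc_q_values(
    matches: List[Tuple[float, bool]]
) -> List[Tuple[float, bool, float]]:
    """Return (score, is_decoy, q_value) tuples sorted by descending score.

    `matches` holds one (score, is_decoy) pair per query spectrum, i.e. the
    best hit from a search against the composite target+decoy database.
    Assumes higher scores are better and that ties do not matter.
    """
    ranked = sorted(matches, key=lambda m: m[0], reverse=True)

    # Running FDR estimate: decoys / targets among matches at or above
    # each score, capped at 1.
    fdr = []
    n_target = n_decoy = 0
    for _score, is_decoy in ranked:
        if is_decoy:
            n_decoy += 1
        else:
            n_target += 1
        fdr.append(min(1.0, n_decoy / max(n_target, 1)))

    # q-value: the smallest FDR estimate at this threshold or any more
    # permissive one, which makes q-values monotone in the score.
    q = fdr[:]
    for i in range(len(q) - 2, -1, -1):
        q[i] = min(q[i], q[i + 1])

    return [(s, d, qv) for (s, d), qv in zip(ranked, q)]


if __name__ == "__main__":
    demo = [(0.9, False), (0.8, False), (0.7, True),
            (0.6, False), (0.5, False), (0.4, True)]
    for score, is_decoy, qv in tdc_q_values(demo):
        print(f"{score:.2f}  decoy={is_decoy}  q={qv:.3f}")
```

The backward minimum is what distinguishes a q-value from the raw FDR estimate: a match is never assigned a worse q-value than a lower-scoring match beneath it.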

Comparative Performance of Metabolomics FDR Estimation Methods

The following tables synthesize performance data and characteristics of principal methods developed to estimate FDR in metabolite spectral matching.

Table 1: Quantitative Performance Comparison of Decoy Generation Methods [21]

| Method | Core Principle | Avg. Annotation Increase vs. Default* | P-value Distribution Under Null | Key Strength | Key Limitation |

|---|---|---|---|---|---|

| Naive Decoy | Random assignment of fragment ions from a global pool. | Baseline | Not fully uniform | Simple and fast to compute. | Poorly mimics real spectra; can lead to biased FDR estimates. |

| Spectrum-Based Decoy | Builds decoy spectra by drawing fragment ions that co-occur in real spectra. | +125% | Improved uniformity over naive | Captures some spectral covariance structure. | May not accurately model complex fragmentation pathways. |

| Fragmentation Tree-Based Decoy (Passatutto) | Re-roots and re-grafts in silico fragmentation trees to generate plausible alternate spectra. | +139% (range: -92% to +5705%) | Most uniform distribution | Generates chemically informed, realistic decoys; integrated into GNPS. | Computationally intensive; requires high-quality MS/MS for tree computation. |

| Empirical Bayes | Models the distribution of scores for true and false matches without explicit decoys. | Comparable to tree-based | Uniform | Does not require decoy generation. | Relies on distributional assumptions that may not always hold. |

*Reported as the average percentage increase in annotations at a controlled FDR when using project-optimized scoring parameters versus a default GNPS parameter set [21].

Table 2: Methodological Characteristics and Applicability

| Method | Required Input | Implementation / Tool | Best Suited For | FDR Control Level |

|---|---|---|---|---|

| Fragmentation Tree-Based [21] | High-resolution MS/MS spectra for library compounds. | Passatutto (in GNPS workflow) | Untargeted discovery with spectral libraries. | Spectrum-match (annotation) level. |

| Octet Rule Violation (H2C) [35] | Molecular formulas and structures from a database (e.g., HMDB, PubChem). | JUMPm pipeline; adaptable to mzMatch, MZmine. | Database search (structure-based) identification. | Metabolite assignment level. |

| Implausible Adduct Method [35] | High-resolution MS1 data. | Specialized imaging MS workflows. | Mass-only searches, particularly in imaging MS. | Feature discovery level. |

| Knockoff Filters [37] [38] | Multivariate quantitative data (e.g., abundances across conditions). | knockoff R package; generalizable frameworks. | Differential analysis and network inference (e.g., volcano plots). | Hypothesis (biomarker) selection level. |

Experimental Protocols for Key Methods

This protocol outlines the generation of decoy spectra via fragmentation tree re-rooting, as implemented in the Passatutto tool.

Objective: To create a target-decoy spectral library for FDR-controlled matching of query MS/MS spectra.

Materials: A curated target library of reference MS/MS spectra with associated structures (SMILES/InChI).

Procedure:

- Fragmentation Tree Computation: For each reference spectrum in the target library, compute a fragmentation tree. This tree represents hierarchical relationships between the precursor ion and its fragments, with nodes as fragment formulas and edges as neutral losses.

- Decoy Generation via Re-rooting: For each target tree, select a new root node. The probability of selecting a node is weighted by 1/(n+1), where n is the number of edges that would need to be re-grafted.

- Re-grafting: After selecting a new root, propagate molecular formulas along the tree. If a chemically impossible formula (e.g., negative atom count) is generated for a subtree, that subtree is detached and re-grafted onto a randomly selected node elsewhere in the tree.

- Decoy Spectrum Construction: The reconstituted tree, with the new precursor ion formula at its root, is used to generate a new MS/MS spectrum. The m/z values of the fragments are calculated from their new formulas, while the relative intensities are copied from the original target spectrum.

- Library Creation & Search: Combine the original target spectra and the newly generated decoy spectra into a single composite library. Search query spectra against this composite library using a similarity score (e.g., modified cosine score).

- FDR Calculation: For any given score threshold, estimate the FDR as: FDR = (2 × Ndecoy) / Ntotal, where Ndecoy is the number of decoy matches above the threshold and Ntotal is the total number of matches (target + decoy) above the threshold. The factor of 2 reflects the assumption that, because the target and decoy sets are of equal size, each decoy match above the threshold implies roughly one false target match as well.
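Two of the steps above lend themselves to a short illustration: the 1/(n+1) weighting used to pick a new root, and the FDR estimate for the composite search. The sketch below is not Passatutto code. It assumes the re-graft count n for each candidate root has already been computed elsewhere, and the names `reroot_probabilities`, `pick_new_root`, and `composite_fdr` are hypothetical.

```python
import random
from typing import Dict, Hashable


def reroot_probabilities(regraft_counts: Dict[Hashable, int]) -> Dict[Hashable, float]:
    """Normalize the 1/(n+1) weights into selection probabilities.

    `regraft_counts` maps each candidate root node to n, the number of
    edges that would need to be re-grafted if that node became the root.
    """
    weights = {node: 1.0 / (n + 1) for node, n in regraft_counts.items()}
    total = sum(weights.values())
    return {node: w / total for node, w in weights.items()}


def pick_new_root(regraft_counts: Dict[Hashable, int], rng: random.Random) -> Hashable:
    """Draw one new root according to the normalized 1/(n+1) weights."""
    probs = reroot_probabilities(regraft_counts)
    nodes = list(probs)
    return rng.choices(nodes, weights=[probs[n] for n in nodes], k=1)[0]


def composite_fdr(n_decoy: int, n_target: int) -> float:
    """FDR = 2 * Ndecoy / Ntotal for a search against targets + decoys.

    Assumes equally sized target and decoy libraries; capped at 1.
    """
    n_total = n_decoy + n_target
    if n_total == 0:
        return 0.0
    return min(1.0, 2.0 * n_decoy / n_total)


if __name__ == "__main__":
    counts = {"C6H10O5": 0, "C5H8O4": 1, "C3H6O3": 3}  # illustrative nodes
    print(reroot_probabilities(counts))
    print(pick_new_root(counts, random.Random(0)))
    print(composite_fdr(n_decoy=5, n_target=95))  # 2 * 5 / 100
```

Roots that require fewer re-grafts are favored, which keeps most decoy trees structurally close to their targets.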

This protocol details a database-search-oriented method that creates decoys by generating chemically invalid structures.

Objective: To estimate the FDR for metabolite identifications based on formula and structure database searches.

Materials: A target database of metabolite structures (e.g., HMDB); LC-MS/MS data.

Procedure:

- Decoy Formula Generation: For each molecular formula in the target database, create one or more decoy formulas by adding a small, odd number of hydrogen atoms (e.g., +1, +3, +5). This violates the chemical octet rule, guaranteeing the decoy formula corresponds to no known stable metabolite.

- Decoy Structure Generation: For a target metabolite structure, generate a corresponding decoy structure using the "H2C" method: a. Use the target structure to generate a theoretical MS/MS spectrum (e.g., using MetFrag). b. Randomly select one carbon atom in the target structure and conceptually change it to a "CH3" group (adding +H2). c. Adjust the exact mass of every theoretical product ion in the predicted spectrum that contains this modified carbon by adding +1.007825 Da.

- Composite Database Search: Create a search database containing the original target structures and their paired decoy structures. Search MS/MS query spectra against this composite database using a matching score (e.g., a hypergeometric test-derived Mscore in JUMPm).

- FDR Estimation: The FDR at a given score threshold is estimated as: FDR = Ndecoy / Ntarget, where Ndecoy and Ntarget are the numbers of decoy and target matches passing the threshold, respectively. This assumes decoys are equally likely to be matched by chance as incorrect targets.
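The bookkeeping in this protocol can be sketched in a few lines. The example below is an illustration under simplifying assumptions, not the JUMPm implementation: formulas are plain element-count strings without brackets or charges, the caller supplies which product ions contain the modified carbon, and all function names are hypothetical. The mass shift uses the 1.007825 Da value quoted in the procedure.

```python
import re
from typing import Dict, Iterable, List

H_MASS = 1.007825  # Da; mass shift quoted in the protocol above


def parse_formula(formula: str) -> Dict[str, int]:
    """Parse a simple formula such as 'C6H12O6' into element counts."""
    counts: Dict[str, int] = {}
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        counts[element] = counts.get(element, 0) + (int(number) if number else 1)
    return counts


def format_formula(counts: Dict[str, int]) -> str:
    """Write element counts back out, keeping the input element order."""
    return "".join(f"{el}{n if n != 1 else ''}" for el, n in counts.items())


def decoy_formulas(formula: str, extra_h: Iterable[int] = (1, 3, 5)) -> List[str]:
    """Create decoy formulas by adding an odd number of hydrogen atoms."""
    decoys = []
    for k in extra_h:
        if k % 2 == 0:
            raise ValueError("decoys require an odd number of added hydrogens")
        counts = parse_formula(formula)
        counts["H"] = counts.get("H", 0) + k
        decoys.append(format_formula(counts))
    return decoys


def shift_fragments(fragment_mz: List[float], contains_modified_carbon: List[bool],
                    delta: float = H_MASS) -> List[float]:
    """Shift only the product ions that contain the modified carbon."""
    return [mz + delta if flag else mz
            for mz, flag in zip(fragment_mz, contains_modified_carbon)]


def decoy_target_fdr(n_decoy: int, n_target: int) -> float:
    """FDR = Ndecoy / Ntarget at a given score threshold, capped at 1."""
    if n_target == 0:
        return 0.0
    return min(1.0, n_decoy / n_target)


if __name__ == "__main__":
    print(decoy_formulas("C6H12O6"))
    print(shift_fragments([85.0284, 127.0390], [True, False]))
    print(decoy_target_fdr(n_decoy=3, n_target=60))
```

In practice, deciding which product ions contain the modified carbon requires the fragment-to-substructure mapping from the in silico fragmenter, which is outside the scope of this sketch.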

Entrapment is a meta-method used to assess whether a given analysis pipeline's internal FDR estimation is accurate.

Objective: To empirically evaluate the validity of a search tool's reported FDR.

Materials: A standard dataset; a set of "entrapment" sequences or spectra known to be absent from the sample.

Procedure:

- Database Expansion: Create an analysis database by combining the standard target database with an "entrapment" database. Entrapment entries should be biologically implausible in the sample (e.g., peptides from a distant species, or metabolites from a non-relevant organism) but otherwise statistically similar to true targets.

- Blinded Analysis: Run the search tool on the experimental data using the combined database. The tool must be unaware of which entries are targets and which are entrapments.

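- Result Separation: After analysis, separate the reported identifications into discoveries from the original target database (NT) and "entrapment discoveries" (NE). Entrapment discoveries are false by construction, whereas original-target discoveries may be either true or false.

- FDP Estimation & Validation: Calculate the observed False Discovery Proportion (FDP) among the reported discoveries. A rigorous combined estimator is: FDP = NE × (1 + 1/r) / (NT + NE), where r is the size ratio of the entrapment to the original target database [11] [25]. The factor (1 + 1/r) accounts for false matches that land on original targets and therefore cannot be observed directly.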

- Interpretation: Plot the estimated FDP against the tool's internally reported q-value or FDR threshold. If the tool's FDR control is valid, the estimated FDP curve should generally lie at or below the line y=x (the reported FDR). A curve consistently above this line indicates the tool is underestimating the true error rate.
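The estimation and interpretation steps can be sketched as follows. This is a minimal illustration that assumes each reported discovery carries the tool's q-value and a flag marking whether it came from the entrapment database; the function names and the input layout are assumptions made for the example, not the interface of any particular search tool.

```python
from typing import List, Sequence, Tuple


def combined_fdp(n_target: int, n_entrapment: int, r: float) -> float:
    """Combined estimator: FDP = NE * (1 + 1/r) / (NT + NE), capped at 1.

    `r` is the size ratio of the entrapment database to the original
    target database.
    """
    if r <= 0:
        raise ValueError("r must be positive")
    total = n_target + n_entrapment
    if total == 0:
        return 0.0
    return min(1.0, n_entrapment * (1.0 + 1.0 / r) / total)


def fdp_curve(discoveries: Sequence[Tuple[float, bool]],
              thresholds: Sequence[float], r: float) -> List[Tuple[float, float]]:
    """Estimated FDP at each reported-FDR threshold.

    `discoveries` holds (reported_q_value, is_entrapment) pairs.
    """
    curve = []
    for t in thresholds:
        n_e = sum(1 for q, is_e in discoveries if q <= t and is_e)
        n_t = sum(1 for q, is_e in discoveries if q <= t and not is_e)
        curve.append((t, combined_fdp(n_t, n_e, r)))
    return curve


def thresholds_above_diagonal(curve: Sequence[Tuple[float, float]]) -> List[float]:
    """Thresholds at which the estimated FDP exceeds the reported FDR (y = x)."""
    return [t for t, fdp in curve if fdp > t]


if __name__ == "__main__":
    # Illustrative input: 98 original-target and 2 entrapment discoveries,
    # all reported at q = 0.005, with equally sized databases (r = 1).
    reported = [(0.005, False)] * 98 + [(0.005, True)] * 2
    curve = fdp_curve(reported, thresholds=[0.01, 0.05], r=1.0)
    print(curve)
    print(thresholds_above_diagonal(curve))
```

Plotting the `(threshold, FDP)` pairs against the diagonal gives the diagnostic described in the interpretation step; thresholds returned by `thresholds_above_diagonal` are those where the tool's reported error rate deserves closer scrutiny.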

Diagram: Workflow for an entrapment experiment to validate a tool's FDR control. The core step is the blinded search against a combined database, followed by calculation of the observed false discovery proportion (FDP) to check against the tool's internal FDR claim.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents, Software, and Data Resources

| Item | Function in Experiment | Example / Source | Key Consideration |

|---|---|---|---|

| Curated Spectral Library | Serves as the target database for spectrum matching. | GNPS MassIVE, MassBank, NIST MS/MS [21]. | Library quality (resolution, annotation confidence) directly impacts FDR reliability. |

| Decoy Generation Software | Creates the decoy spectra or structures needed for TDC. | Passatutto (for tree-based decoys) [21], In-house scripts for H2C method [35]. | Method must generate decoys that are "plausible but false" under the null hypothesis. |

| Spectral Matching Engine | Performs the comparison between query and library spectra. | GNPS spectral networking workflow, SIRIUS, MS-DIAL. | Scoring algorithm (e.g., cosine, dot product) must be compatible with decoy method. |

| FDR Calculation Scripts | Implements the statistical estimation of FDR from target/decoy match counts. | Custom R/Python scripts, integrated tools within pipelines like JUMPm [35]. | Must use the correct formula (e.g., with size correction factor). |

| Entrapment Database | Provides known-false entries for validation experiments. | Peptides/metabolites from an irrelevant organism (e.g., cow proteins in a yeast study) [11] [25]. | Must be hidden from the search tool and statistically comparable to true targets. |

| Reference Standard Compounds | Provides Level 1 identification for validating true positives and calibrating scores. | Commercial metabolite standards. | Essential for final validation but impractical for large-scale FDR estimation. |

Critical Analysis and Future Directions

Current research indicates that no single FDR control method is universally perfect or applicable across all metabolomics workflows [37]. The choice depends on the data type (spectral vs. database search), available resources, and required confidence level. Notably, recent rigorous evaluations using entrapment experiments suggest that many widely used tools, especially in data-independent acquisition (DIA) proteomics and by extension in complex metabolomics analyses, may fail to consistently control the FDR at the stated level [11] [25]. This underscores the importance of the entrapment validation protocol described above.

Future developments are likely to focus on:

- Hybrid Methods: Combining the strengths of decoy-based and model-based (e.g., empirical Bayes) approaches.

- Integration with Knockoff Filters: Leveraging the rigorous mathematical framework of knockoffs from statistics, which generalizes the TDC concept, for controlled variable selection in differential and network analysis in metabolomics [37] [38].

- Community Standards: Adoption of standardized validation practices, like rigorous entrapment, for benchmarking new and existing metabolite identification tools.

In conclusion, adapting FDR control from proteomics to metabolomics requires moving beyond simple sequence reversal. Successful methods such as fragmentation tree re-rooting and octet rule violation generate chemically aware decoys. For researchers, the imperative is to actively select and, more importantly, validate an FDR estimation method appropriate to their experimental design, rather than relying on default software outputs whose error control may be unverified.