Accelerating Discovery: How AI Transforms Lead Optimization in Natural Product-Based Drug Development



Abstract

This article explores the transformative integration of artificial intelligence (AI) in optimizing lead compounds derived from natural products (NPs) for drug discovery. It first establishes the historical significance and unique chemical space of NPs and outlines the traditional challenges that AI aims to address. It then details core AI methodologies—including machine learning for activity prediction, generative models for novel analog design, and tools for ADMET property optimization—and presents specific case studies. The discussion critically examines persistent hurdles such as data scarcity, model interpretability, and the 'dereplication' problem, offering strategies for integration with traditional experimental workflows. Finally, the article validates the impact by comparing AI-driven and conventional approaches, highlighting market trends, clinical-stage successes, and the tangible improvements in efficiency, cost, and success rates. This synthesis provides researchers and drug development professionals with a comprehensive roadmap for leveraging AI to unlock the full therapeutic potential of nature's chemistry.

The Convergence of Nature and Silicon: Defining the Role of AI in Natural Product Lead Optimization

For millennia, natural products (NPs) have been the cornerstone of medicinal therapy, providing humanity with many of its most essential drugs. Approximately 50% of FDA-approved medications between 1981 and 2006 were NPs, their semi-synthetic derivatives, or synthetic compounds inspired by NP pharmacophores [1]. Landmark drugs like the anticancer agent paclitaxel and the immunosuppressant fingolimod originated from the Pacific yew tree and the fungus Isaria sinclairii, respectively [1]. This historical success is rooted in the unparalleled chemical diversity and evolutionarily tuned biological activity of NPs. However, modern drug discovery faces escalating demands for speed, efficiency, and success rates. The traditional NP discovery pipeline, often a decades-long, labor-intensive process of extraction, bioassay-guided fractionation, and structure elucidation, is increasingly unsustainable on its own [1].

The integration of Artificial Intelligence (AI) represents a paradigm shift, offering a powerful framework to overcome these historical bottlenecks. This article details how AI, particularly machine learning (ML) and deep learning (DL), is being applied to streamline NP discovery, with a specific focus on lead optimization. We provide application notes on current platforms and detailed experimental protocols for AI-enhanced workflows, framing this within the broader thesis that AI is an indispensable tool for unlocking the next generation of NP-derived medicines [1] [2].

Historical Legacy and the Data Foundation

The legacy of NPs in medicine is undisputed, with compounds like vincristine, irinotecan, and vancomycin serving as critical chemotherapeutic and anti-infective agents [3]. Their structural complexity, which often confounds synthetic chemists, is precisely what enables high-affinity binding and modulation of challenging biological targets. Despite a waning interest in the late 20th century due to the rise of combinatorial chemistry, NPs have regained prominence. It is estimated that about 40% of the chemical scaffolds found in published NPs are unique and have not been synthesized in a laboratory, highlighting their irreplaceable role in exploring novel chemical space [3].

The modern resurgence is fundamentally data-driven. The advent of large, publicly accessible chemical and biological databases has transformed the field from one reliant on serendipity to one empowered by informatics. These databases form the essential substrate for AI model training and validation.

Table 1: Key Public Databases for Natural Product Research

| Database Name | Primary Content | Key Features for AI/NP Research | Reference |
|---|---|---|---|
| PubChem | Chemical structures, bioactivity data, biological properties for >100 million substances. | Largest public repository; enables linkage from chemical structure to bioassay results (AID) and protein targets; essential for SAR and polypharmacology studies [3]. | [3] |
| NPAtlas | Curated database of known natural products with microbial origin. | Focus on microbial metabolites; includes data on sources and isolation; used for dereplication and biosynthetic studies [4]. | [4] |
| COCONUT | Collection of Open Natural ProdUcTs. | A large, open resource of NPs with non-redundant structures; valuable for virtual screening and generative model training [4]. | [4] |
| CAS Content Collection | Human-curated collection of published scientific information. | Contains over 600,000 NP-related publications; used for trend analysis and knowledge graph construction [5]. | [5] |

Modern AI Applications in the NP Discovery Pipeline

AI is not a single tool but a suite of technologies applied across the entire NP value chain, from initial compound identification to lead optimization and beyond. Current research, as analyzed from publication landscapes, shows AI applications are most prevalent in discovering anti-tumor agents, followed by antiviral and antibacterial agents [5].

AI-Driven Dereplication and Compound Identification

Dereplication—the early identification of known compounds—is crucial to avoid redundant research. AI massively accelerates this process. Advanced algorithms can now analyze spectral data (NMR, MS) to predict molecular structures and query databases with unprecedented speed and tolerance for structural variants [4].

- Application Note: VInSMoC for Mass Spectrometry: The Variable Interpretation of Spectrum–Molecule Couples (VInSMoC) algorithm addresses scalability and flexibility in mass spectral database searching. Unlike exact-match tools, VInSMoC can identify known molecules and their previously unreported variants by estimating the statistical significance of spectrum-structure matches. In a benchmark search of 483 million spectra against 87 million molecules, VInSMoC identified 43,000 known molecules and 85,000 novel variants [4]. This capability is transformative for quickly pinpointing novel analogues in complex NP extracts.

Predictive Bioactivity and Lead Optimization

This is the core of AI's value proposition for lead optimization. ML models can predict the biological activity, target engagement, and pharmacological properties of NP-derived compounds, prioritizing the most promising candidates for costly experimental validation.

- Application Note: DeepDTAGen for Target-Aware Design: DeepDTAGen is a multitask deep learning framework that simultaneously predicts drug-target binding affinity (DTA) and generates novel, target-aware drug variants [6]. By learning from a shared feature space that encodes ligand-receptor interactions, it ensures generated molecules are conditioned on desired target activity. On benchmark datasets (KIBA, Davis), DeepDTAGen outperformed previous models like GraphDTA, achieving a Concordance Index (CI) of 0.897 and 0.890, respectively, indicating high predictive accuracy [6]. This integrated predict-and-generate approach significantly accelerates the ideation and optimization cycles for NP-inspired leads.
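For readers unfamiliar with the metric, the Concordance Index reported above is the fraction of comparable compound pairs whose predicted affinities are ordered the same way as their measured affinities. The following is a minimal pure-Python sketch of that standard definition. It is not code from DeepDTAGen [6], and the affinity values are invented for illustration.

```python
from itertools import combinations


def concordance_index(y_true, y_pred):
    """Fraction of comparable pairs that y_pred ranks in the same order as y_true."""
    concordant = 0.0
    comparable = 0
    for (t_i, p_i), (t_j, p_j) in combinations(zip(y_true, y_pred), 2):
        if t_i == t_j:
            continue  # tied measured affinities carry no ordering information
        comparable += 1
        if p_i == p_j:
            concordant += 0.5  # tied predictions count as half-concordant
        elif (p_i > p_j) == (t_i > t_j):
            concordant += 1.0
    if comparable == 0:
        raise ValueError("no comparable pairs")
    return concordant / comparable


# Hypothetical measured vs. predicted binding affinities (e.g., pKd values).
measured = [5.0, 6.2, 7.1, 8.4]
predicted = [5.3, 6.0, 7.5, 7.4]
ci = concordance_index(measured, predicted)
print(f"CI = {ci:.3f}")
```

A CI of 1.0 means every pair is ranked correctly and 0.5 corresponds to random ordering, which is why values near 0.89 on KIBA and Davis are read as strong ranking performance.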

Table 2: Select Clinical-Stage Drug Candidates Discovered/Aided by AI Platforms

| AI Platform/Company | Key AI Approach | Candidate (Indication) | Development Phase (as of 2025) | Relevance to NP Discovery |
|---|---|---|---|---|
| Exscientia | Generative AI for design; "Centaur Chemist" iterative optimization. | DSP-1181 (OCD), EXS-74539 (LSD1 inhibitor, oncology). | Phase I (first AI-designed drug in trials). | Platform exemplifies accelerated design-make-test cycles; approach applicable to optimizing NP scaffolds [7]. |
| Insilico Medicine | Generative AI for target discovery and molecule design. | ISM001-055 (TNIK inhibitor for Idiopathic Pulmonary Fibrosis). | Phase IIa (positive results reported). | Demonstrated AI can drive a program from target to Phase I in ~18 months; generative chemistry can inspire NP-like molecules [7]. |
| Schrödinger | Physics-based ML (combining molecular modeling & ML). | Zasocitinib (TYK2 inhibitor, autoimmune diseases). | Phase III. | Platform can screen ultra-large libraries (billions of compounds); suitable for virtual screening of NP databases and derivatives [7]. |

Detailed Experimental Protocols

Protocol: AI-Enhanced Dereplication Using LC-MS/MS and VInSMoC

Objective: To rapidly identify known natural products and their novel structural variants in a crude extract.

Workflow Summary: Crude Extract → LC-MS/MS Analysis → Data Preprocessing → VInSMoC Database Search → Result Validation [4].

AI-Enhanced Dereplication Workflow for Natural Products

Materials:

- Sample: Crude natural product extract (e.g., from plant, marine, or microbial source).

- Instrumentation: High-resolution LC-MS/MS system (e.g., Q-TOF or Orbitrap).

- Software: VInSMoC (accessible via web app at run.npanalysis.org) [4]; standard MS data processing software (e.g., MZmine, MS-DIAL).

- Databases: Local or cloud-accessible copies of PubChem, COCONUT, or NPAtlas spectral libraries [4].

Procedure:

Sample Preparation & LC-MS/MS:

- Prepare the crude extract in a suitable LC-MS compatible solvent (e.g., methanol, acetonitrile).

- Inject onto a reversed-phase UHPLC column. Use a gradient elution method (e.g., water/acetonitrile with 0.1% formic acid).

- Acquire data in data-dependent acquisition (DDA) mode. Collect full-scan MS spectra (e.g., m/z 100-1500) followed by MS/MS fragmentation of the most intense ions.

Data Preprocessing:

- Convert raw data files to an open format (e.g., .mzML).

- Perform peak picking, alignment, and deisotoping using data processing software.

- Export a list of consensus MS/MS spectra (precursor m/z, retention time, fragmentation spectrum).

VInSMoC Database Search:

- Input the list of consensus MS/MS spectra into the VInSMoC tool.

- Configure search parameters: select "variable mode" to allow identification of variants, set appropriate precursor and fragment mass tolerances.

- Execute the search against a configured database (e.g., PubChem).

Analysis & Validation:

- Review results ranked by statistical score (e.g., p-value or false discovery rate). High-scoring matches indicate confident identifications of known compounds.

- Examine identified "variants" – these represent structural analogues of known database entries and are high-priority candidates for novel compounds.

- Validate key findings by comparing retention time and MS/MS patterns with authentic standards, if available, or by targeted isolation and NMR analysis.
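Ranking matches by false discovery rate, as described in the Analysis & Validation step, can be illustrated with a generic Benjamini-Hochberg correction. This sketch is an assumption about how exported p-values might be post-processed; it is not VInSMoC's own scoring procedure, and the spectrum identifiers, compound names, p-values, and 5% cutoff are all hypothetical.

```python
def benjamini_hochberg(p_values):
    """Return Benjamini-Hochberg adjusted q-values in the original input order."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    q_values = [0.0] * m
    running_min = 1.0
    # Walk from the largest p-value down so q-values stay monotone.
    for rank in range(m, 0, -1):
        idx = order[rank - 1]
        running_min = min(running_min, p_values[idx] * m / rank)
        q_values[idx] = running_min
    return q_values


# Hypothetical (spectrum id, matched structure, p-value) rows from a search export.
matches = [
    ("spectrum_01", "known_compound_A", 0.001),
    ("spectrum_02", "variant_of_B", 0.008),
    ("spectrum_03", "known_compound_C", 0.039),
    ("spectrum_04", "variant_of_D", 0.041),
    ("spectrum_05", "known_compound_E", 0.600),
]

q_values = benjamini_hochberg([p for _, _, p in matches])
fdr_cutoff = 0.05  # assumed threshold; tune to the study's tolerance for false hits
confident = [sid for (sid, _, _), q in zip(matches, q_values) if q <= fdr_cutoff]
print(confident)
```

Matches that survive the cutoff would then move on to the standards comparison or targeted isolation described in the final validation step.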

Protocol: AI-Driven Lead Optimization with DeepDTAGen

Objective: To predict binding affinities of NP-like molecules against a target of interest and generate novel optimized analogues.

Workflow Summary: Data Collection → Model Training/Finetuning → Affinity Prediction → Target-Aware Molecule Generation → In silico Prioritization [6].

AI-Driven Lead Optimization Workflow with DeepDTAGen

Materials:

- Data: Curated dataset of drug-target pairs with binding affinity values (e.g., KIBA, Davis datasets). Supplement with proprietary NP bioactivity data if available.

- Software: DeepDTAGen model code (framework described in [6]); cheminformatics toolkits (RDKit, Open Babel); compute infrastructure (GPU recommended).

- Input: For prediction: SMILES strings of NP candidates and target protein sequence. For generation: Target protein sequence as a conditioning input.

Procedure:

Data Preparation:

- Format the training data into pairs: (CompoundSMILES, TargetSequence, BindingAffinityValue).

- Split data into training, validation, and test sets (e.g., 80/10/10). Apply necessary featurization (e.g., convert SMILES to molecular graphs, tokenize protein sequences).
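The data preparation step above can be sketched as a reproducible shuffle followed by an 80/10/10 split. The record layout mirrors the (CompoundSMILES, TargetSequence, BindingAffinityValue) triple from the protocol; the helper name `split_dataset`, the toy SMILES strings, the placeholder sequence, and the affinity values are assumptions made for illustration.

```python
import random


def split_dataset(records, fractions=(0.8, 0.1, 0.1), seed=42):
    """Shuffle records reproducibly and split them into train/validation/test lists."""
    if abs(sum(fractions) - 1.0) > 1e-9:
        raise ValueError("fractions must sum to 1")
    shuffled = list(records)
    random.Random(seed).shuffle(shuffled)
    n_train = round(len(shuffled) * fractions[0])
    n_val = round(len(shuffled) * fractions[1])
    train = shuffled[:n_train]
    val = shuffled[n_train:n_train + n_val]
    test = shuffled[n_train + n_val:]  # remainder goes to the test set
    return train, val, test


# Toy records: (CompoundSMILES, TargetSequence, BindingAffinityValue).
placeholder_sequence = "MKTAYIAKQR"  # hypothetical protein fragment
records = [("C" + "C" * i + "O", placeholder_sequence, 5.0 + 0.1 * i) for i in range(20)]

train, val, test = split_dataset(records)
print(len(train), len(val), len(test))
```

A random split is the simplest option. Splitting by chemical scaffold or by target is often preferred when the goal is to estimate performance on genuinely new NP chemotypes, because random splits can place close analogues in both the training and test sets.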

Model Training/Fine-Tuning:

- Initialize the DeepDTAGen model, which uses a shared encoder for compounds and targets and separate decoders for affinity prediction and molecule generation.

- Train the model on the training set, using the FetterGrad algorithm to balance gradient conflicts between the two tasks [6].

- Monitor performance on the validation set using metrics like Mean Squared Error (MSE) and Concordance Index (CI).

Affinity Prediction & Compound Generation:

- Prediction: Input SMILES of isolated NPs or NP-inspired derivatives alongside the target protein sequence into the trained model's prediction head. Obtain a predicted binding affinity score.

- Generation: Input the target protein sequence into the generation head. The model will generate novel, valid SMILES strings conditioned on interacting with that target. Use stochastic generation methods to explore a diverse chemical space [6].

Prioritization and In silico Evaluation:

- Filter generated compounds for drug-likeness (e.g., Lipinski's Rule of Five), synthetic accessibility score, and predicted ADMET properties using standard cheminformatics tools.

- Select top-ranking candidates from both the predicted active NPs and the generated analogues for in vitro experimental validation.
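The drug-likeness filter in the prioritization step can be illustrated with Lipinski's Rule of Five. To keep the sketch dependency-free, it assumes the four descriptors have already been calculated (in practice a toolkit such as RDKit would supply them); the analog names and descriptor values are invented, and allowing one violation is a common convention rather than something specified in the protocol.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Descriptors:
    name: str
    mol_weight: float  # Da
    logp: float        # calculated octanol-water partition coefficient
    h_donors: int      # hydrogen-bond donors
    h_acceptors: int   # hydrogen-bond acceptors


def ro5_violations(d):
    """Count how many of Lipinski's four criteria a compound breaks."""
    return sum([
        d.mol_weight > 500,
        d.logp > 5,
        d.h_donors > 5,
        d.h_acceptors > 10,
    ])


def passes_ro5(d, max_violations=1):
    """Assumed convention: tolerate at most one violation."""
    return ro5_violations(d) <= max_violations


# Hypothetical generated analogs with made-up descriptor values.
candidates = [
    Descriptors("analog_1", 412.5, 3.1, 2, 6),
    Descriptors("analog_2", 538.6, 4.2, 3, 9),
    Descriptors("analog_3", 812.9, 5.8, 6, 14),
]

kept = [d.name for d in candidates if passes_ro5(d)]
print(kept)
```

Many successful NP drugs sit outside Rule-of-Five space, so a team may prefer to use the violation count as a ranking signal rather than a hard cutoff.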

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Research Reagent Solutions for AI-Enhanced NP Discovery

| Reagent / Material / Tool | Function in NP Discovery Workflow | Application in AI Context |
|---|---|---|
| High-Resolution LC-MS/MS System | Provides accurate mass and fragmentation data for compound identification. | Generates the experimental spectral data used for training AI identification models (e.g., spectral predictors) and for dereplication searches [4]. |
| PubChem / COCONUT Database | Public repositories of chemical structures and associated biological data. | Serve as the primary source of truth for chemical space, used for model training, validation, and as search libraries for dereplication algorithms [3] [4]. |
| VInSMoC Web Application | Algorithm for tolerant mass spectral database search. | Enables rapid dereplication and, critically, the discovery of novel structural variants of known NPs, expanding the "hittable" chemical space from a single extract [4]. |
| DeepDTAGen-like MTL Framework | Multitask learning model for affinity prediction & molecule generation. | Directly addresses lead optimization by predicting activity of NP candidates and generating improved, target-focused analogues in a single, integrated process [6]. |
| RDKit Cheminformatics Toolkit | Open-source toolkit for cheminformatics and ML. | Used for processing SMILES strings, calculating molecular descriptors, filtering compounds by properties, and evaluating generated molecules; essential for pre- and post-processing AI model inputs/outputs. |

Persistent Challenges and Future Directions

Despite transformative progress, significant challenges remain at the intersection of AI and NP discovery:

- Data Quality and Bias: AI models are limited by their training data. NP datasets are often small, imbalanced, and inconsistently annotated [2]. Developing standardized, high-quality, curated NP-specific datasets is paramount.

- The "Black Box" Problem: The complexity of deep learning models can obscure the rationale behind predictions, making it difficult for chemists to trust and act on AI-generated leads. Improving model interpretability is an active area of research.

- Scalability of Validation: AI can generate thousands of novel candidates rapidly. The bottleneck has shifted to the experimental validation of these candidates. Integrating AI with automated synthesis and high-throughput biology (lab automation, micro-physiological systems) is critical to close this loop [7] [8].

Future directions point toward more integrated and sophisticated systems:

- Generative AI for NP-Inspired Libraries: Beyond prediction, generative models will design entirely novel, synthetically accessible libraries inspired by NP scaffolds, exploring regions of chemical space that blend the advantages of NPs with drug-like properties [1] [5].

- Knowledge Graphs and Network Pharmacology: AI will increasingly model the polypharmacology of NPs—how they interact with multiple targets and pathways—to predict efficacy and side effects, and to design combination therapies [2].

- Ethical and Sustainable Sourcing: AI can assist in the sustainable sourcing of NPs by modeling ecological impacts and identifying cultivable sources or guiding total synthesis routes for rare compounds [5].

The legacy of natural products in medicine is not a relic of the past but a living foundation for future innovation. The modern challenge of translating their complex potential into viable drugs is being met by the power of artificial intelligence. From dereplicating complex extracts in minutes to generating optimized, target-aware lead compounds, AI is systematically de-risking and accelerating the NP discovery pipeline. The detailed protocols and toolkits outlined here provide a roadmap for researchers to integrate these technologies. As AI models become more sophisticated, interpretable, and deeply integrated with experimental automation, they will fulfill their promise of delivering a new wave of effective, safe, and diverse therapeutics derived from nature's blueprint. The future of NP drug discovery is a synergistic partnership between human expertise and artificial intelligence.

The Unique Chemical and Biological Landscape of Natural Products for Drug Discovery

Natural products (NPs) and their derivatives have historically been a cornerstone of drug discovery, accounting for a significant proportion of approved therapeutics. Analysis of drug approvals from 2014 to 2024 shows that 56 (9.7%) of the 579 new drugs were NPs or NP-derived, including 44 new chemical entities and 12 antibody-drug conjugates [9]. Despite this enduring value, traditional NP discovery is hampered by high rediscovery rates, complex chemistry, and inefficient empirical screening. Concurrently, artificial intelligence (AI) has evolved from an experimental tool into a core component of pharmaceutical R&D, with over 75 AI-derived molecules reaching clinical stages by the end of 2024 [7] [10]. AI-driven platforms claim to drastically shorten early-stage timelines; for example, AI-designed candidates have progressed from target to Phase I trials in as little as 18 months, compared with a typical timeline of about five years [7].

This document frames the unique chemical and biological attributes of NPs within the context of a modern thesis: that AI is the critical engine for lead optimization in NP discovery. By integrating machine learning with advanced biosynthetic engineering and predictive pharmacology, researchers can systematically navigate NP complexity to identify and optimize novel drug candidates with enhanced efficiency and success rates.

The Chemical and Biological Landscape of Natural Products

Structural Diversity and Biosynthetic Origins

The unparalleled structural diversity of NPs arises from evolutionarily optimized biosynthetic machinery. Key enzyme families include:

- Non-Ribosomal Peptide Synthetases (NRPS): Assemble peptides without a ribosomal template, drawing on hundreds of proteinogenic and non-proteinogenic amino acid building blocks and often introducing cycles, branches, and heterocycles.

- Polyketide Synthases (PKS): Utilize acyl-CoA precursors in an assembly-line fashion, generating complex scaffolds with diverse stereochemistry and oxidation states [11].

- Hybrid NRPS-PKS Systems: Combine both enzymatic logics, greatly expanding structural complexity and bioactivity potential.

This biosynthetic programming results in chemical features that are rare in synthetic libraries, such as a high fraction of sp3-hybridized carbons, structural rigidity, numerous chiral centers, and macrocyclic rings. These features are often linked to better target specificity and success in development [11] [9].

Clinical Relevance and Development Trends

NPs remain a vital source of new pharmacophores. Between January 2014 and June 2025, 58 NP-related drugs were launched, averaging about five new approvals per year [9]. As of December 2024, 125 NP and NP-derived compounds were in active clinical trials or registration phases [9]. This pipeline is fed by continuous discovery, though the rate of identifying truly new pharmacophores has slowed, with only one discovered in the past 15 years [9]. This underscores the need for innovative approaches to unlock novel chemical space from NP sources.

Table 1: Clinical Status of NP-Derived Drugs (2014-2025)

| Category | Number (2014-2024) | Percentage of Total Approvals | Key Characteristics |
|---|---|---|---|
| NP-Derived New Chemical Entities (NCEs) | 44 | 7.6% (of all drugs); 11.3% (of all NCEs) | Novel scaffolds, often complex synthesis. |
| NP Antibody-Drug Conjugates (ADCs) | 12 | 2.1% (of all drugs); 6.3% (of all NBEs) | NPs (e.g., auristatins, maytansinoids) as cytotoxic warheads. |
| Total NP-Derived Drugs | 56 | 9.7% | Fluctuating annual approvals (0-8), average of 5/year. |
| Compounds in Clinical Trials (as of Dec 2024) | 125 | N/A | Includes 33 new pharmacophores not in approved drugs [9]. |

AI-Driven Methodologies for NP Exploration and Optimization

AI methodologies are being tailored to address the specific challenges of NP research, from initial discovery to lead optimization.

Predictive Target Identification and Polypharmacology

Predicting protein targets for NPs is difficult due to limited bioactivity data and complex structures. Similarity-based tools like CTAPred address this by using focused reference datasets of compounds with known targets. Its two-stage approach—creating a focused compound-target activity dataset and then performing similarity searches—optimizes prediction by considering only the top three most similar reference compounds, balancing accuracy and false positives [12]. More complex AI models, including graph neural networks (GNNs) and self-supervised molecular embeddings, can infer mechanisms of action and polypharmacology by modeling the complex relationships between herb ingredients, targets, and disease pathways [2].
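The similarity-search stage can be illustrated with a short sketch: rank reference compounds by Tanimoto similarity to the query, keep the top three, and transfer their annotated targets. This is a simplified illustration of the general idea, not CTAPred's code [12]. Fingerprints are represented as plain sets of "on" bit indices, and all compound names, bit sets, and target labels are hypothetical.

```python
def tanimoto(a, b):
    """Tanimoto (Jaccard) similarity between two sets of fingerprint bits."""
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def predict_targets(query_fp, references, top_k=3):
    """Transfer targets from the top_k most similar reference compounds.

    Each target is scored by the highest similarity among the retained
    references that are annotated with it.
    """
    ranked = sorted(references, key=lambda r: tanimoto(query_fp, r[1]), reverse=True)
    scores = {}
    for _name, fp, targets in ranked[:top_k]:
        sim = tanimoto(query_fp, fp)
        for target in targets:
            scores[target] = max(scores.get(target, 0.0), sim)
    return sorted(scores.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical query NP and reference set: (name, fingerprint bits, known targets).
query = {1, 2, 3, 4, 5, 6}
references = [
    ("ref_A", {1, 2, 3, 4, 5, 7}, ["TARGET_1"]),
    ("ref_B", {1, 2, 3, 8, 9}, ["TARGET_2"]),
    ("ref_C", {1, 2, 3, 4, 10, 11}, ["TARGET_1", "TARGET_3"]),
    ("ref_D", {20, 21, 22}, ["TARGET_4"]),
]

predictions = predict_targets(query, references)
for target, score in predictions:
    print(f"{target}: {score:.2f}")
```

Restricting the transfer to three neighbours means dissimilar references such as `ref_D` never contribute a target, which is the mechanism by which a small `top_k` trades recall for fewer false positives.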

Lead Optimization and Property Prediction

AI accelerates the iterative design-make-test-learn cycle crucial for lead optimization. For NPs, this involves:

- Generative Chemistry: AI models propose novel analogs that retain core bioactive scaffolds while optimizing properties like solubility, metabolic stability, and selectivity [7] [10].

- ADMET Prediction: Machine learning models trained on chemical descriptors predict absorption, distribution, metabolism, excretion, and toxicity, prioritizing compounds with a higher probability of clinical success [10] [13].

- Synergy Prediction: Network pharmacology models analyze herb-ingredient-target-pathway graphs to predict synergistic effects in multi-component NP mixtures [2].

Table 2: AI-Designed Molecules in Clinical Trials (Representative Examples)

| Compound | Company/Platform | Target/Indication | Clinical Stage (2025) | AI Application Highlight |
|---|---|---|---|---|
| INS018_055 | Insilico Medicine | TNIK / Idiopathic Pulmonary Fibrosis | Phase IIa | Generative AI for novel target and molecule design [7] [10]. |
| GTAEXS617 | Exscientia (now Recursion) | CDK7 / Solid Tumors | Phase I/II | Centaur Chemist approach: AI-human collaborative design [7]. |
| REC-4881 | Recursion | MEK / Familial Adenomatous Polyposis | Phase II | Phenomics-first AI platform identifying novel drug-disease relationships [10]. |
| RLY-4008 | Relay Therapeutics | FGFR2 / Cholangiocarcinoma | Phase I/II | Computational modeling of protein dynamics for highly selective inhibitor design [10]. |

Application Notes & Experimental Protocols

Application Note: AI-Guided Genome Mining for Novel NP Discovery

Objective: To rapidly identify and prioritize microbial strains encoding novel biosynthetic gene clusters (BGCs) for NRPS/PKS-derived compounds.

Thesis Context: This protocol replaces low-throughput activity-based screening with AI-powered in silico prioritization, directly feeding the lead discovery pipeline.

Protocol 4.1: AI-Prioritized Genome Mining and Heterologous Expression

- Materials:

- Microbial genomic DNA samples.

- Bioinformatics Workstation (e.g., antiSMASH, PRISM, DeepBGC software).

- AI Model (e.g., trained GNN for BGC novelty prediction).

- Cloning vectors and heterologous host (Streptomyces coelicolor, Pseudomonas putida).

- HPLC-HRMS for metabolite analysis.

- Method:

- Sequencing & Primary Annotation: Perform whole-genome sequencing. Use rule-based tools (antiSMASH) to identify all putative BGCs.

- AI-Powered Novelty Scoring: Encode each BGC as a graph (nodes=enzyme domains, edges=co-linearity). Input into a pre-trained GNN model to generate a novelty score versus known BGCs in databases (MIBiG) [11] [2].

- Prioritization & Cluster Selection: Rank BGCs by novelty score and predicted chemical class. Select the top 3-5 candidates with no close known analogs.

- Heterologous Expression: Clone the prioritized BGC into a suitable expression vector. Transform into a heterologous host optimized for secondary metabolism [11] [14].

- Metabolite Analysis & Dereplication: Culture hosts, extract metabolites, and analyze by HPLC-HRMS. Use feature-based molecular networking (GNPS) to compare produced metabolites against spectral libraries, confirming novelty [2].

- Expected Outcome: Isolation of 1-2 novel NP scaffolds per prioritized BGC, ready for biological screening.

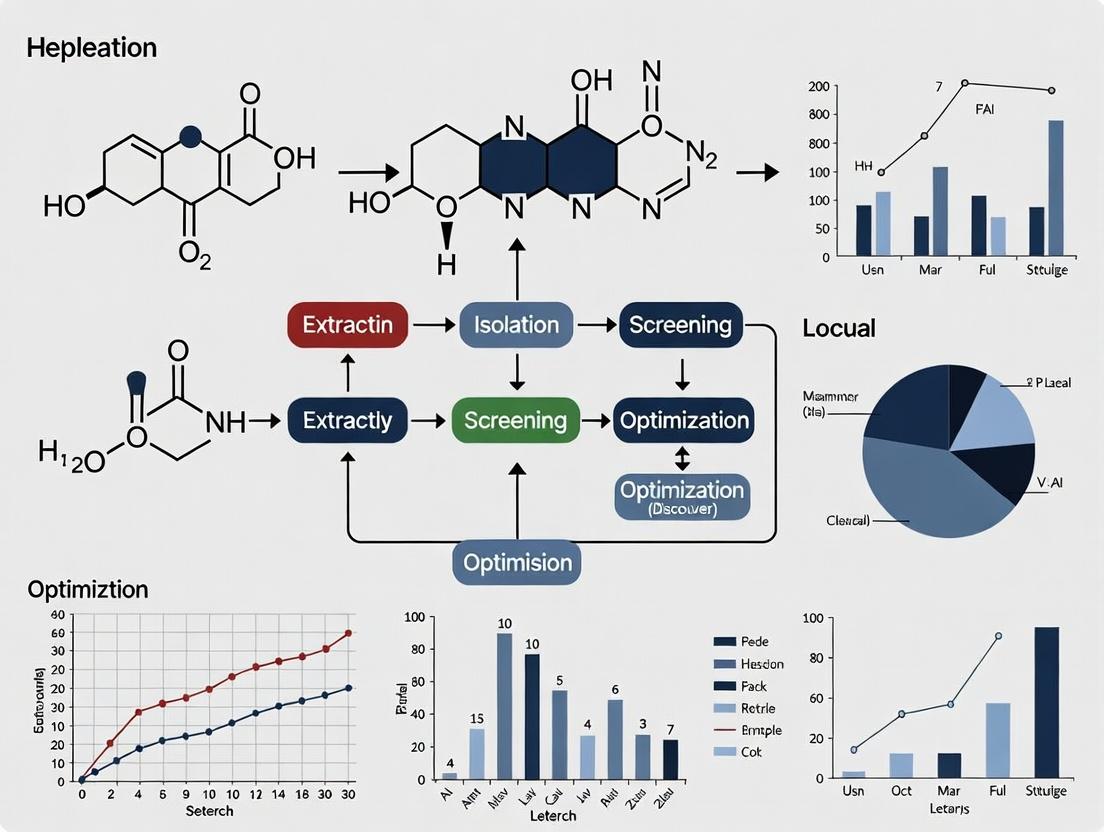

Diagram 1: AI-Guided Genome Mining for Novel NP Discovery.
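The graph encoding and novelty ranking in Protocol 4.1 can be sketched without a trained model. In the example below each BGC is encoded as the set of edges between consecutive enzyme domains (co-linearity), and novelty is taken as one minus the highest Jaccard similarity to any reference cluster. This set-based score is a deliberately simple stand-in for the pre-trained GNN described in the protocol, and the domain orders and cluster names are hypothetical.

```python
def bgc_edges(domains):
    """Encode a BGC as directed edges between consecutive enzyme domains."""
    return set(zip(domains, domains[1:]))


def jaccard(a, b):
    union = len(a | b)
    return len(a & b) / union if union else 0.0


def novelty_score(candidate, known_bgcs):
    """1.0 means no shared domain adjacency with any reference cluster."""
    cand_edges = bgc_edges(candidate)
    best = max((jaccard(cand_edges, bgc_edges(k)) for k in known_bgcs), default=0.0)
    return 1.0 - best


# Hypothetical reference clusters (standing in for database entries such as MIBiG).
known_bgcs = [
    ["KS", "AT", "KR", "ACP", "TE"],          # simple PKS module
    ["C", "A", "T", "C", "A", "T", "TE"],     # two-module NRPS
]

# Hypothetical clusters found by rule-based annotation of a new genome.
candidates = {
    "bgc_1": ["KS", "AT", "KR", "ACP", "TE"],
    "bgc_2": ["KS", "AT", "DH", "ER", "ACP", "C", "A", "T", "TE"],
}

ranked = sorted(
    ((name, novelty_score(domains, known_bgcs)) for name, domains in candidates.items()),
    key=lambda item: item[1],
    reverse=True,
)
for name, score in ranked:
    print(f"{name}: novelty = {score:.2f}")
```

A learned model would capture far more than adjacency overlap, but the ranking interface is the same: each cluster gets a score, and the top few proceed to heterologous expression.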

Application Note: Predictive Target Deconvolution and Validation for an Isolated NP

Objective: To identify the protein target(s) and mechanism of action of a bioactive NP with unknown target using in silico prediction followed by experimental validation.

Thesis Context: This protocol demonstrates how AI-driven target hypotheses can replace blind mechanistic studies, focusing validation efforts and accelerating the understanding crucial for lead optimization.

Protocol 4.2: Target Prediction and Cellular Validation

- Materials:

- Purified NP compound.

- CTAPred software and associated Compound-Target Activity (CTA) dataset [12].

- SwissTargetPrediction or SEA web servers for comparison.

- Cell line relevant to the NP's observed phenotype (e.g., cancer cell line for cytotoxicity).

- Reagents for Cellular Thermal Shift Assay (CETSA) or Drug Affinity Responsive Target Stability (DARTS).

- siRNA or CRISPR-Cas9 reagents for genetic knockdown/knockout.

- Method:

- Ligand-Based Target Prediction:

  - Generate a standard molecular descriptor (e.g., ECFP4 fingerprint) for the NP.

  - Run CTAPred using the default "top 3 similar compounds" parameter to retrieve a ranked list of predicted protein targets [12].

  - Cross-reference predictions with other tools (e.g., SwissTargetPrediction) to generate a consensus shortlist of 5-10 high-probability targets.

- Experimental Target Engagement (CETSA):

  - Treat live cells with the NP or vehicle control.

  - Heat cells to a gradient of temperatures to denature proteins.

  - Lyse cells and isolate soluble protein fraction. Target proteins bound to the NP will show increased thermal stability, detectable by western blot for each shortlisted target.

- Functional Genetic Validation:

  - Using siRNA, knock down expression of the top predicted target(s) from step 2.

  - Treat knockdown and control cells with the NP. A significant reduction in the NP's bioactivity (e.g., loss of cytotoxicity) in knockdown cells confirms the target is functionally required for the phenotype.

- Expected Outcome: Confirmation of one or more primary macromolecular targets for the NP, providing a mechanistic basis for subsequent medicinal chemistry.

Application Note: AI-Driven Lead Optimization of a NP-derived Analog

Objective: To improve the drug-like properties (e.g., metabolic stability, solubility) of a bioactive but suboptimal NP lead compound using generative AI and in silico ADMET prediction.

Thesis Context: This is the core of the thesis, illustrating a closed-loop AI-empowered cycle to optimize NP leads while preserving their unique bioactivity.

Protocol 4.3: Iterative AI Design and In Vitro Testing Cycle

- Materials:

- NP lead compound with known structure and in vitro bioactivity (e.g., IC50).

- Generative AI Platform (e.g., REINVENT, Molecular Transformer) or commercial suite (e.g., Exscientia's DesignStudio).

- ADMET Prediction Suite (e.g., QSAR models for microsomal stability, Caco-2 permeability, hERG inhibition).

- In vitro assay kit for primary biological activity.

- In vitro ADMET assays: human liver microsomes (HLM), Caco-2 cell permeability assay.

- Method:

- Define Target Product Profile (TPP): Set quantitative goals (e.g., potency IC50 < 100 nM, microsomal half-life > 30 min, no hERG liability).

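- Initial Generative Design: Seed the generative AI platform with the NP lead structure and the TPP constraints to produce the set of candidate analogs (1,000 molecules) screened in the next step.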

- In Silico Screening & Prioritization:

  - Filter the 1,000 generated molecules using ADMET QSAR models.

  - Apply synthetic accessibility scoring to remove unrealistic structures.

  - Select the top 20-30 candidates for synthesis.

- Synthesis, Testing, and Data Feedback:

  - Synthesize the prioritized analogs.

  - Test in parallel for primary bioactivity and key ADMET properties (HLM stability).

  - Feed the new chemical structures and experimental results (potency, stability) back into the AI model as training data.

- Iterative Optimization: Repeat steps 2-4 for 2-3 cycles, with the AI model learning from experimental outcomes to propose increasingly optimized compounds.
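The screening and prioritization logic of Protocol 4.3 can be sketched as a filter against the Target Product Profile followed by a potency ranking. The TPP thresholds come from the example goals in the protocol (IC50 < 100 nM, microsomal half-life > 30 min, no hERG liability); the `Analog` fields, the synthetic accessibility cutoff of 6.0, and every predicted value are assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Analog:
    name: str
    pred_ic50_nm: float            # predicted potency
    pred_hlm_half_life_min: float  # predicted human liver microsome half-life
    pred_herg_liability: bool      # output of a hERG QSAR classifier
    sa_score: float                # synthetic accessibility; lower is easier


def meets_tpp(a):
    """Check an analog against the example TPP goals from the protocol."""
    return (
        a.pred_ic50_nm < 100
        and a.pred_hlm_half_life_min > 30
        and not a.pred_herg_liability
    )


def prioritize(analogs, n_select=20, max_sa_score=6.0):
    """Keep TPP-compliant, synthesizable analogs and rank them by potency."""
    passing = [a for a in analogs if meets_tpp(a) and a.sa_score <= max_sa_score]
    return sorted(passing, key=lambda a: a.pred_ic50_nm)[:n_select]


# Hypothetical generated analogs with made-up predictions.
generated = [
    Analog("analog_01", 45.0, 52.0, False, 3.2),
    Analog("analog_02", 12.0, 18.0, False, 2.9),  # fails half-life goal
    Analog("analog_03", 80.0, 41.0, True, 3.5),   # flagged for hERG
    Analog("analog_04", 30.0, 35.0, False, 7.4),  # judged too hard to make
    Analog("analog_05", 20.0, 64.0, False, 4.1),
]

shortlist = prioritize(generated)
print([a.name for a in shortlist])
```

In each later cycle the measured potency and stability values would replace or retrain the predictions, so the same filter operates on progressively better-calibrated inputs.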

Table 3: The Scientist's Toolkit for AI-Enhanced NP Discovery

| Tool/Reagent Category | Specific Example | Function in NP Discovery & AI Integration |
|---|---|---|
| Bioinformatics & AI Software | antiSMASH, DeepBGC | Identify biosynthetic gene clusters (BGCs) from genomic data for AI novelty scoring [11]. |
| Bioinformatics & AI Software | CTAPred | Open-source tool for predicting protein targets of NPs using similarity-based AI [12]. |
| Bioinformatics & AI Software | Graph Neural Network (GNN) Models | Encode molecular or BGC graphs to predict properties, targets, or generate novel analogs [2]. |
| Biosynthetic Engineering | CRISPR-Cas9 for genome editing | Activates silent BGCs or engineers heterologous hosts for NP production [14]. |
| Biosynthetic Engineering | Cell-free protein synthesis systems | Rapidly produce and test individual enzymes or entire pathways for NP synthesis [14]. |
| Biosynthetic Engineering | Heterologous Hosts (S. coelicolor, P. putida) | Plug-and-play platforms for expressing prioritized BGCs to produce NPs [11]. |
| Analytical & Screening | Feature-Based Molecular Networking (GNPS) | Dereplicates known compounds and visualizes novel chemical families from metabolomics data [2]. |
| Analytical & Screening | High-Content Phenotypic Screening | Generates rich biological response data to train AI models linking NP structure to complex phenotypes [7]. |
| ADMET Prediction | QSAR Models for Microsomal Stability, hERG | AI models used in silico to prioritize NP analogs with improved drug-like properties [10] [13]. |

Diagram 2: AI-Driven Lead Optimization Cycle for Natural Products.

Application Note: Predictive Toxicity Profiling for NP Candidates

Objective: To employ AI models for early identification of potential toxicity liabilities in NP-derived lead candidates.

Thesis Context: Integrating toxicity prediction early in the optimization funnel reduces late-stage attrition. This protocol compares two AI approaches for NP toxicity assessment [13].

Protocol 4.4: Computational Toxicity Risk Assessment

- Materials:

- Chemical structures of NP analogs (in SMILES or SDF format).

- Top-Down Models: Access to databases/APIs like EPA ToxCast or pre-trained Random Forest/Support Vector Machine (SVM) classifiers on known toxicity endpoints.

- Bottom-Up Models: Molecular docking software (AutoDock Vina, Glide) and protein structures of common toxicity targets (e.g., hERG channel, CYP450s).

- Method:

- Top-Down Approach (Data-Driven):

- Calculate molecular descriptors (e.g., Morgan fingerprints, molecular weight, logP) for each NP analog.

- Input descriptors into a pre-trained ensemble model (e.g., Random Forest) that classifies compounds as "toxic" or "non-toxic" for specific endpoints (e.g., hepatotoxicity, mutagenicity).

- The model provides a probability score, flagging high-risk compounds based on structural similarity to known toxicants [13].

- Bottom-Up Approach (Mechanism-Driven):

- For targets like the hERG potassium channel, perform molecular docking of the NP analog into the channel's binding site.

- Analyze the computed binding affinity (docking score) and binding pose. Strong, stable binding suggests a potential cardiotoxicity risk.

- Run short molecular dynamics (MD) simulations for top-scoring docked poses to assess binding stability over time [13].

- Consensus Risk Assessment:

- Triage compounds flagged as high-risk by either approach for experimental testing (e.g., in vitro hERG assay).

- Prioritize compounds that pass both in silico filters for further development.


- Expected Outcome: Early identification and elimination of NP analogs with high predicted toxicity, focusing resources on safer leads.
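The consensus step of Protocol 4.4 can be sketched in a few lines. This is a minimal illustration, assuming the classifier probability and the hERG docking score have already been computed for each analog; the `Analog` class, the cut-off values, and the example numbers are hypothetical and would need calibration against real assay data.

```python
from dataclasses import dataclass

# Hypothetical cut-offs for illustration only; calibrate against assay data.
TOX_PROB_CUTOFF = 0.5      # top-down: predicted probability of toxicity
DOCK_SCORE_CUTOFF = -9.0   # bottom-up: docking score in kcal/mol (more negative = stronger binding)


@dataclass
class Analog:
    name: str
    tox_probability: float   # output of the data-driven classifier
    herg_dock_score: float   # docking score against the hERG channel


def triage(analogs):
    """Split analogs into (flagged, passed) using the either-approach rule."""
    flagged, passed = [], []
    for a in analogs:
        top_down_risk = a.tox_probability >= TOX_PROB_CUTOFF
        bottom_up_risk = a.herg_dock_score <= DOCK_SCORE_CUTOFF
        if top_down_risk or bottom_up_risk:
            flagged.append(a.name)   # send to experimental testing (e.g., in vitro hERG assay)
        else:
            passed.append(a.name)    # passes both in silico filters
    return flagged, passed


analogs = [
    Analog("analog_A", 0.12, -6.4),
    Analog("analog_B", 0.81, -7.0),    # flagged by the classifier
    Analog("analog_C", 0.30, -10.2),   # flagged by docking
]
flagged, passed = triage(analogs)
print("flagged:", flagged)
print("passed:", passed)
```

Flagging on either signal is the conservative choice described in the protocol: it trades more experimental follow-up for a lower chance of missing a liability.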

Diagram 3: AI Models for Predictive Toxicity Profiling of NP Candidates.

The integration of AI into NP discovery is moving beyond simple prediction to active design and generation. Key future directions include:

- Generative Biosynthetic Design: AI models that design novel, synthetically accessible NRPS/PKS enzyme assemblies to produce "non-natural" natural products with desired properties [11] [2].

- Digital Twins for NP Pharmacology: Creating computational models of disease pathways that simulate the polypharmacological effects of NP mixtures, predicting efficacy and side effects in silico before clinical trials [2].

- Large Language Models (LLMs) for Knowledge Integration: Using LLMs to mine centuries of ethnobotanical and clinical literature, creating structured knowledge graphs that propose new NP sources for modern diseases [2].

In conclusion, the unique and privileged chemical space of NPs remains indispensable for drug discovery. The convergence of advanced biosynthetic engineering, high-throughput analytical technologies, and sophisticated AI creates a powerful new paradigm. By framing NP complexity not as a barrier but as a rich, data-dense landscape for AI to navigate, researchers can systematically unlock its therapeutic potential. The protocols outlined here provide a roadmap for employing AI as the central engine for lead optimization, transforming NP discovery from a serendipitous endeavor into a predictable, engineered science.

Introduction: The Lead Optimization Imperative in Natural Product (NP) Drug Discovery

Lead optimization represents the critical, resource-intensive phase in drug discovery where a promising initial “hit” compound is systematically modified into a preclinical drug candidate. For natural products (NPs), this stage is particularly complex and constitutes a major bottleneck [2]. NP scaffolds, while offering unparalleled biological relevance and structural diversity, often present significant optimization challenges including poor pharmacokinetics, synthetic complexity, and limited intellectual property scope [2]. The traditional iterative cycle of “Design-Make-Test-Analyze” (DMTA) is slow and costly, with industry averages of 5 years and millions of dollars to advance a single candidate [7]. Consequently, the global market for lead optimization services is expanding rapidly, projected to grow from USD 4.65 billion in 2025 to USD 10.26 billion by 2034, underscoring its economic and strategic significance [15]. This document frames lead optimization within a broader thesis on leveraging artificial intelligence (AI) to overcome these intrinsic NP challenges, compress timelines, and rationally design optimized, drug-like candidates from complex natural scaffolds [10] [16].

1. Quantitative Landscape: The Scale of the Bottleneck

The inefficiency of traditional drug discovery, particularly at the lead optimization stage, is well-documented. The following tables quantify the time, cost, and success rate challenges, and illustrate the accelerating impact of AI integration.

Table 1: Traditional vs. AI-Accelerated Lead Optimization Metrics

| Metric | Traditional Process | AI-Accelerated Process | Data Source/Example |

|---|---|---|---|

| Discovery to Preclinical Timeline | ~5 years [7] | 18-24 months [7] | Insilico Medicine’s IPF drug [7] |

| DMTA Cycle Speed | Months per cycle | Weeks per cycle; ~70% faster design [7] [17] | Exscientia platform report [7] |

| Compounds Synthesized | High (100s-1000s) | 10x fewer compounds required [7] | Exscientia platform report [7] |

| Clinical Trial Success Rate | 8.1% overall (from Phase I) [10] | To be determined (Most AI drugs in early trials) [7] | Industry analysis [10] |

| Market Growth (Services) | — | 9.23% CAGR (2025-2034) [15] | Lead Optimization Services Market [15] |
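As a quick arithmetic check on the market-growth row, the compound annual growth rate can be recomputed from the two endpoint figures quoted in the introduction (USD 4.65 billion in 2025, USD 10.26 billion in 2034). The helper name `cagr` is an illustrative choice, not something taken from the cited report.

```python
def cagr(start_value, end_value, years):
    """Compound annual growth rate between two values separated by `years` years."""
    return (end_value / start_value) ** (1 / years) - 1


# Endpoint figures from the introduction: USD billions in 2025 and 2034.
rate = cagr(4.65, 10.26, 2034 - 2025)
print(f"Implied CAGR: {rate:.2%}")
```

The endpoints imply a rate of roughly 9.2%, close to the tabulated 9.23%; the small gap presumably reflects rounding in the source figures.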

Table 2: AI-Designed Molecules in Clinical Development (Representative Examples)

| Molecule | Company | Target/Pathway | Stage (as of 2025) | Indication |

|---|---|---|---|---|

| INS018_055 (Rentosertib) | Insilico Medicine | TNIK [10] | Phase IIa [2] [10] | Idiopathic Pulmonary Fibrosis (IPF) |

| GTAEXS617 | Exscientia | CDK7 [7] [10] | Phase I/II [7] | Solid Tumors |

| ISM3091 | Insilico Medicine | USP1 [10] | Phase I [10] | BRCA mutant cancer |

| REC4881 | Recursion | MEK [10] | Phase II [10] | Familial adenomatous polyposis |

| DSP1181 | Exscientia (with Sumitomo) | Serotonin Receptor | Phase I (First AI-designed drug) [7] [16] | Obsessive-Compulsive Disorder |

2. AI as a Strategic Enabler: Frameworks and Techniques

AI and machine learning (ML) provide a multi-faceted toolkit to de-bottleneck NP lead optimization. These techniques move beyond simple prediction to enable generative design and multi-parameter balancing [16].

2.1 Core AI/ML Paradigms in Drug Discovery:

- Supervised Learning: Used for building Quantitative Structure-Activity Relationship (QSAR) models, predicting binding affinity, ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties, and toxicity endpoints. Algorithms include Random Forests and Support Vector Machines [10] [16].

- Unsupervised Learning: Applied to cluster large NP libraries, identify novel chemical scaffolds, and reduce dimensionality of high-throughput screening data [16].

- Deep Learning (DL): Employs graph neural networks (GNNs) and convolutional neural networks (CNNs) to directly learn from molecular structures (e.g., SMILES strings, 3D conformations) for superior activity and property prediction [2] [10].

- Generative AI & Reinforcement Learning (RL): The most transformative approach. Models like Generative Adversarial Networks (GANs) and Variational Autoencoders (VAEs) generate novel, synthetically accessible molecular structures de novo. RL agents are then used to optimize these structures against a multi-objective reward function (e.g., potency, selectivity, ADMET) [16].

2.2 Integrated AI Workflow for NP Optimization:

A modern AI-driven workflow integrates these techniques into a cohesive, iterative cycle.

3. Application Notes & Detailed Experimental Protocols

This section outlines specific protocols for implementing AI-enhanced lead optimization of NPs, from computational design to experimental validation.

Protocol 1: In Silico Multi-Parameter Optimization (MPO) of an NP Scaffold

- Objective: To optimize a bioactive NP hit for improved potency, solubility, and metabolic stability while maintaining selectivity.

- Materials: Chemical structure of NP hit (e.g., SDF file); software/platform for molecular docking (e.g., AutoDock Vina) and ADMET prediction (e.g., SwissADME, pkCSM); access to an AI generative chemistry platform or Python libraries (e.g., RDKit, DeepChem).

- Procedure:

- Initial Profiling: Calculate baseline molecular descriptors (cLogP, TPSA, HBD/HBA) and predict ADMET properties for the parent NP hit [17].

- Define MPO Scoring Function: Create a weighted scoring function. Example: Score = (0.4 * pIC50_pred) + (0.2 * Solubility_score) + (0.2 * MetabolicStability_score) + (0.1 * Selectivity_index) - (0.1 * Toxicity_alert).

- Generative Expansion: Using a generative model (e.g., VAE or GAN trained on drug-like molecules), generate a focused library of analogs (~5,000-10,000) by modifying permitted regions (e.g., side chains, terminal groups) of the NP core [16]. Employ RL to bias generation towards higher MPO scores.

- Virtual Screening & Ranking: Filter generated library for synthetic accessibility (SA score < 4). Dock top ~1,000 candidates to the target protein structure. Integrate docking scores with predicted ADMET properties into the MPO function to rank candidates [10].

- Output: A priority list of 50-100 novel analog designs with predicted superior overall profiles.

- Validation: The success of this protocol is measured by the in vitro confirmation rate. A 2025 study demonstrated that such an approach could achieve >50-fold hit enrichment over traditional screening [17].
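The weighted MPO function from the procedure above can be written out directly. The sketch below assumes every term has already been scaled to the 0-1 range (for example, predicted pIC50 divided by 10), which the protocol does not specify; the dictionary keys and candidate values are hypothetical.

```python
# Weights follow the example scoring function in Protocol 1.
# Assumption: every property has been pre-scaled to the 0-1 range.
WEIGHTS = {
    "pic50_pred": 0.4,
    "solubility_score": 0.2,
    "metabolic_stability_score": 0.2,
    "selectivity_index": 0.1,
    "toxicity_alert": -0.1,   # penalty term: 1 if a structural alert fires, else 0
}


def mpo_score(props):
    """Weighted sum of scaled property predictions for one candidate."""
    return sum(weight * props[key] for key, weight in WEIGHTS.items())


# Hypothetical candidates with made-up, pre-scaled predictions.
candidates = {
    "cand_1": {"pic50_pred": 0.8, "solubility_score": 0.5,
               "metabolic_stability_score": 0.6, "selectivity_index": 0.7,
               "toxicity_alert": 0},
    "cand_2": {"pic50_pred": 0.9, "solubility_score": 0.2,
               "metabolic_stability_score": 0.3, "selectivity_index": 0.5,
               "toxicity_alert": 1},
    "cand_3": {"pic50_pred": 0.6, "solubility_score": 0.9,
               "metabolic_stability_score": 0.8, "selectivity_index": 0.6,
               "toxicity_alert": 0},
}

# Highest MPO score first.
ranked = sorted(candidates, key=lambda name: mpo_score(candidates[name]), reverse=True)
for name in ranked:
    print(name, round(mpo_score(candidates[name]), 3))
```

In this toy set the most potent candidate ranks last because its solubility, stability, and toxicity terms outweigh the potency advantage, which is the balancing behavior an MPO function is meant to produce.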

Protocol 2: Experimental Validation of AI-Designed NP Analogs

- Objective: To synthesize and biologically validate the top AI-prioritized NP analogs.

- Materials: Chemical synthesis resources; assay reagents for primary target potency (e.g., enzymatic assay); cell lines for cytotoxicity and selectivity assessment; equipment for solubility and metabolic stability (e.g., LC-MS/MS).

- Procedure:

- Synthesis: Employ parallel and/or automated medicinal chemistry to synthesize the top 20-30 AI-prioritized analogs [7].

- Primary Potency Assay: Test all synthesized compounds in a dose-response primary assay. Compare experimental IC50/EC50 values to AI-predicted values to validate the model.

- Selectivity & Cytotoxicity: Test active compounds against related off-targets and in a general cytotoxicity assay (e.g., HepG2 cells) to establish a preliminary therapeutic index.

- Mechanistic Validation - Target Engagement: Confirm direct target binding in a physiologically relevant system using the Cellular Thermal Shift Assay (CETSA). Treat cells with compound, heat to denature unbound protein, and quantify remaining soluble target via western blot or mass spectrometry [17].

- Early ADME Profiling: Perform high-throughput solubility (kinetic, pH-dependent) and microsomal stability assays. Data feeds back into AI models for retraining.

- Key Consideration: This protocol closes the DMTA loop. The generated experimental data must be curated and fed back into the AI models to improve their predictive accuracy for subsequent optimization cycles [2].
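Comparing measured and predicted potency, as the primary potency step asks, usually starts by converting IC50 values to pIC50 and then computing an error and a correlation. The following is a minimal sketch using only the standard library; the IC50 values and predictions are invented for illustration.

```python
import math


def pic50_from_ic50_nm(ic50_nm):
    """Convert an IC50 in nanomolar to pIC50 (-log10 of the molar IC50)."""
    return 9.0 - math.log10(ic50_nm)


def rmse(predicted, observed):
    """Root-mean-square error between two equal-length sequences."""
    return math.sqrt(sum((p - o) ** 2 for p, o in zip(predicted, observed)) / len(observed))


def pearson_r(x, y):
    """Pearson correlation coefficient."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)


# Hypothetical assay results (nM) and model predictions (pIC50 units).
measured_ic50_nm = [10.0, 100.0, 1000.0, 50.0]
predicted_pic50 = [7.6, 7.2, 6.3, 7.0]

observed_pic50 = [pic50_from_ic50_nm(v) for v in measured_ic50_nm]
error = rmse(predicted_pic50, observed_pic50)
correlation = pearson_r(predicted_pic50, observed_pic50)
print(f"RMSE = {error:.2f} log units, Pearson r = {correlation:.2f}")
```

Working in pIC50 keeps errors on a log scale, so a tenfold miss counts the same at nanomolar and micromolar potency.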

Protocol 3: Network Pharmacology Analysis for Polypharmacology of NP Optimized Leads

- Objective: To predict and validate the multi-target (polypharmacology) effects of an optimized NP lead, which is common and often therapeutically relevant for NPs [2].

- Materials: Chemical structure of optimized lead; access to network pharmacology databases (e.g., STITCH, SwissTargetPrediction); gene expression data from treated vs. untreated cells (RNA-seq).

- Procedure:

- In Silico Target Prediction: Use multiple inverse docking and similarity-based tools to predict a broad set of potential protein targets for the NP lead.

- Pathway & Network Construction: Map predicted targets onto protein-protein interaction and signaling pathway databases (e.g., KEGG). Build a compound-target-pathway-disease network to hypothesize synergistic mechanisms and potential adverse effects [2].

- Transcriptomic Validation: Treat a relevant cell line with the NP lead and perform RNA-sequencing. Conduct gene set enrichment analysis (GSEA) to identify significantly perturbed pathways. Overlap these with in silico predicted pathways for validation.

- Functional Multi-Target Assay: Design a multiplexed or phenotypic assay (e.g., high-content imaging) to confirm modulation of the key pathways identified.

- Significance: This systems-level approach aligns with the holistic mechanism of action of many NPs and can identify superior, multi-target optimized leads while flagging polypharmacology-related toxicity risks early.
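The overlap between in silico predicted pathways and transcriptomically enriched pathways can be given a simple statistical footing with a hypergeometric test. This sketch assumes both lists are drawn from a shared pathway universe; the pathway identifiers are placeholders rather than real KEGG entries.

```python
from math import comb


def overlap_pvalue(universe, predicted, enriched):
    """Return (overlap size, P(overlap >= observed)) under random selection.

    Treats `enriched` as a random draw from `universe` and asks how often it
    would share at least the observed number of pathways with `predicted`.
    """
    n_universe = len(universe)
    n_predicted = len(predicted & universe)
    n_enriched = len(enriched & universe)
    observed = len(predicted & enriched & universe)
    total = comb(n_universe, n_enriched)
    tail = sum(
        comb(n_predicted, i) * comb(n_universe - n_predicted, n_enriched - i)
        for i in range(observed, min(n_predicted, n_enriched) + 1)
    )
    return observed, tail / total


# Placeholder pathway identifiers, not real database entries.
universe = {f"pathway_{i:02d}" for i in range(20)}
predicted = {"pathway_00", "pathway_01", "pathway_02", "pathway_03", "pathway_04"}
enriched = {"pathway_00", "pathway_01", "pathway_02", "pathway_10"}

overlap, p_value = overlap_pvalue(universe, predicted, enriched)
print(f"{overlap} shared pathways, p = {p_value:.4f}")
```

A small p-value suggests the agreement between prediction and experiment is unlikely to be chance, although the result depends heavily on how the pathway universe is defined.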

The Scientist's Toolkit: Essential Reagents & Platforms

Table 3: Key Research Reagent Solutions for AI-Enhanced NP Lead Optimization

| Category | Item/Platform | Function in Lead Optimization | Example/Supplier |

|---|---|---|---|

| AI/Software | Generative Chemistry Platform | De novo design of novel, optimized analogs based on NP scaffolds. | Exscientia's Centaur Chemist [7], Insilico Medicine's Chemistry42 [10] |

| AI/Software | Molecular Modeling & Docking Suite | Predicts binding mode and affinity of designed analogs to the target. | Schrödinger Suite [7], AutoDock [17] |

| AI/Software | ADMET Prediction Tool | Virtually screens for pharmacokinetic and toxicity properties prior to synthesis. | SwissADME [17], pkCSM |

| Assay Technology | Cellular Thermal Shift Assay (CETSA) Kit | Empirically validates direct target engagement of compounds in live cells/tissues. | Commercial CETSA kits [17] |

| Assay Technology | High-Content Screening (HCS) System | Enables complex phenotypic and multi-target validation in disease-relevant cell models. | Used in Recursion's phenomics platform [7] |

| Chemistry | Automated Synthesis & Purification System | Accelerates the "Make" phase of DMTA cycles by enabling parallel synthesis of AI-designed compounds. | Integration in Exscientia's AutomationStudio [7] |

| Data Management | Integrated Lab Informatics Platform | Manages and structures experimental data from diverse assays for seamless AI model training and analysis. | — |

4. Integrated AI-NP Lead Optimization Workflow Diagram

The following diagram synthesizes the computational and experimental protocols into a complete, iterative workflow for AI-driven NP lead optimization.

Conclusion: From Bottleneck to Launchpad

Lead optimization remains the pivotal gatekeeper in NP-based drug development. However, the integration of AI, from predictive QSAR and ADMET models to generative molecular design, is fundamentally transforming this phase from a formidable bottleneck into a strategic, data-driven launchpad [2] [16]. By enabling the rational exploration of vast chemical spaces around privileged NP scaffolds and balancing multiple optimization parameters in silico, AI dramatically reduces the number of costly synthetic and experimental cycles [7]. The future of NP lead optimization lies in tightly closed-loop systems where AI not only designs molecules but also learns continuously from automated experimental feedback, accelerating the delivery of safer, more effective drugs derived from nature's chemical arsenal [2].

Why AI? The Compelling Case for Computational Power in Navigating NP Complexity

In computational complexity theory, the abbreviation NP has a different meaning from the one used elsewhere in this article: it stands for nondeterministic polynomial time, the class of decision problems for which a proposed solution can be verified in polynomial time [18] [19]. For the hardest problems in this class, the NP-complete problems, no polynomial-time algorithm for finding a solution is known, and whether one exists is the famous P versus NP problem. Several tasks central to drug discovery, including idealized formulations of molecular docking, protein folding, and the exploration of vast chemical spaces, are NP-hard [20]. The computational resources required to find optimal solutions can therefore grow exponentially with problem size, creating a fundamental bottleneck.

This is especially critical in natural product lead optimization. Natural products possess unparalleled stereochemical and topological complexity, which makes them potent drug candidates but also turns their systematic optimization into a combinatorial search of this hard kind. Exhaustively evaluating all possible derivatives of a complex natural scaffold for potency, selectivity, and synthesizability is computationally intractable with traditional methods [21]. Artificial Intelligence (AI), particularly machine learning (ML), provides a powerful heuristic pathway to navigate this complexity. By learning from data and generating approximations, AI can efficiently traverse the massive search space of natural product-inspired compounds and identify promising regions for experimental validation. It does not remove the underlying hardness; it sidesteps brute-force search by accepting good solutions rather than provably optimal ones [18] [8]. This document details the application notes and protocols for leveraging AI to overcome these barriers in a research setting.

Quantitative Landscape: AI Performance in Navigating Chemical Complexity

The following tables summarize key quantitative data demonstrating AI's impact on computationally hard problems in drug discovery, particularly in screening efficiency and predictive accuracy.

Table 1: Comparative Efficiency of Computational Screening Methods

| Screening Method | Library Size | Reported Hit Rate | Key Advantage | Source/Example |

|---|---|---|---|---|

| Traditional HTS | 10^5 - 10^6 compounds | Typically <1% [22] | Experimental readout | Conventional industry standard |

| Structure-Based Virtual Screening (SBVS) | 10^7 - 10^9 compounds | ~0.01-0.1% | Exploits 3D target structure | Docking billions of compounds [8] |

| AI-Powered Virtual Screening (e.g., ML QSAR) | 10^7 - 10^9 compounds | 2.7% - 22.5% [22] | Data-driven enrichment; learns from active/inactive compounds | Bayesian models for tuberculosis [22] |

| Generative AI Design | Effectively infinite (de novo) | N/A (novel chemotypes) | Creates novel, optimized structures guided by multi-parameter objectives | DDR1 kinase inhibitors discovered in 21 days [8] |

| Ultra-Large Docking + AI Iteration | >11 billion compounds | Identification of sub-nM leads | Combines physics-based docking with ML prioritization | GPCR ligand discovery [8] |

Table 2: AI Model Performance in Key NP Discovery Tasks

| AI Task | Model Type | Key Performance Metric | Implication for NP Complexity |

|---|---|---|---|

| Activity Prediction | Bayesian Learning | >10-fold enrichment in active identification [22] | Drastically reduces search space for NP analog optimization. |

| Synthesizability Scoring | Retrosynthesis Planner (e.g., AiZynthFinder) | Predicts feasible routes for >80% of novel NPs [21] | Mitigates the combinatorial explosion of synthetic pathways. |

| "NP-Likeness" Prediction | Neural Network (e.g., NP-Scout) | Quantifies similarity to bioactive natural scaffolds [21] | Guides exploration of chemical space towards regions with higher probability of success. |

| Property Prediction (ADMET) | Graph Neural Networks (GNNs) | High accuracy (AUC >0.9) in early-stage toxicity prediction [21] | Enables parallel multi-parameter optimization, an NP-hard problem. |

| Quantum System Simulation | Neural Quantum States | Models >100 atoms with strong electron correlation [23] [24] | Provides a classical AI alternative to quantum computing for accurate molecular simulation. |

Application Notes & Experimental Protocols

3.1. Protocol: AI-Augmented Workflow for Natural Product Lead Optimization

This protocol outlines an end-to-end workflow for optimizing a hit natural product (NP) using AI.

I. Input Preparation & Data Curation

- Define the Optimization Objective: Clearly specify primary (e.g., IC50 against target X < 100 nM) and secondary goals (e.g., selectivity index >10, improved metabolic stability).

- Construct the Training Set: Assay data for the parent NP and available analogs. If data is scarce (<100 compounds), employ data augmentation: generate 2D/3D molecular descriptors (RDKit) and use them to find similar compounds in public databases (ChEMBL, PubChem) for transfer learning [21].

- Standardize & Featurize: Standardize structures (e.g., using molvs). Generate features: a) Morgan fingerprints (radius=2, nBits=2048) for similarity; b) Graph-based features (atom/bond types) for GNNs; c) Physicochemical descriptors (LogP, TPSA, H-bond donors/acceptors).
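The similarity search used to pull related compounds from public databases typically relies on the Tanimoto coefficient between fingerprints. The sketch below represents each fingerprint as a set of on-bit indices, which is an assumption made to keep the example dependency-free; in practice the bits would come from a toolkit such as RDKit, and the compound names and threshold here are hypothetical.

```python
def tanimoto(fp_a, fp_b):
    """Tanimoto coefficient between two fingerprints given as sets of on-bit indices."""
    union = len(fp_a | fp_b)
    return len(fp_a & fp_b) / union if union else 0.0


def similar_compounds(query_fp, library, threshold=0.5):
    """Return (name, similarity) pairs at or above `threshold`, most similar first."""
    hits = [(name, tanimoto(query_fp, fp)) for name, fp in library.items()]
    hits = [hit for hit in hits if hit[1] >= threshold]
    return sorted(hits, key=lambda hit: hit[1], reverse=True)


# Toy fingerprints: each set lists the indices of bits that are switched on.
query = {1, 5, 9, 12}
library = {
    "cmpd_1": {1, 5, 9, 13},
    "cmpd_2": {2, 3, 4},
    "cmpd_3": {1, 5, 9, 12, 20},
}

neighbors = similar_compounds(query, library)
print(neighbors)
```

Compounds retrieved this way can seed a transfer-learning set when fewer than about 100 in-house data points are available, as the protocol suggests.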

II. Iterative AI-Driven Design Cycle

- Train a Predictive Ensemble Model: Use the curated dataset to train separate models for primary activity and key ADMET properties. A Random Forest or XGBoost model is recommended for initial, interpretable SAR. For complex relationships, use a Message-Passing Neural Network (MPNN). Implement k-fold cross-validation and assess performance using ROC-AUC and precision-recall curves.

- Generate Novel Candidates: Input the parent NP scaffold into a generative model (e.g., a Transformer or VAE fine-tuned on NP libraries) [21]. Condition the generation on desired properties using the trained predictive models as scoring functions (Reinforcement Learning or Bayesian optimization).

- Filter & Prioritize: Pass generated molecules through sequential filters:

- Synthetic Accessibility: Use a retrosynthesis planner (e.g., AiZynthFinder) to score and retain only molecules with a predicted feasible route [21].

- NP-Likeness: Use a dedicated scorer (e.g., NP-Scout) to ensure generated structures retain favorable NP-like characteristics [21].

- Multi-Parameter Optimization: Rank the filtered list using a weighted sum of predicted scores (e.g., 0.5 * Activity + 0.3 * Selectivity - 0.2 * Toxicity).

- Experimental Validation & Feedback Loop: Synthesize and test the top 10-20 prioritized compounds. Integrate the new experimental results into the training set. Retrain the predictive models with the expanded data and initiate the next design cycle.
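The evaluation step in the design cycle (k-fold cross-validation scored by ROC-AUC) can be illustrated without any machine learning library. This is a sketch under simplifying assumptions: the fold assignment is a plain round-robin split without shuffling or stratification, and the labels and scores are invented. A real workflow would more likely use an established library's splitters and metrics.

```python
def kfold_indices(n_samples, k):
    """Yield (train, test) index lists for a simple round-robin k-fold split."""
    folds = [list(range(i, n_samples, k)) for i in range(k)]
    for i, test in enumerate(folds):
        train = [j for fold in folds[:i] + folds[i + 1:] for j in fold]
        yield train, test


def roc_auc(labels, scores):
    """ROC-AUC as the fraction of (active, inactive) pairs ranked correctly.

    Ties count as half a correct pair. Assumes both classes are present.
    """
    pos = [s for label, s in zip(labels, scores) if label == 1]
    neg = [s for label, s in zip(labels, scores) if label == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Invented labels (1 = active) and model scores for six compounds.
labels = [1, 0, 1, 1, 0, 0]
scores = [0.9, 0.4, 0.35, 0.8, 0.1, 0.5]

auc = roc_auc(labels, scores)
splits = list(kfold_indices(len(labels), 3))
print(f"ROC-AUC = {auc:.3f}; folds = {splits}")
```

The pairwise definition makes the meaning of the metric explicit: an AUC of 0.78 says an active compound outranks an inactive one in about 78% of pairs.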

3.2. Protocol: Building a Bayesian Model for Hit Enrichment from Large Libraries

This protocol details the construction of a dual-event Bayesian model to prioritize compounds with high target activity and low cytotoxicity from ultra-large libraries [22].

I. Data Preparation

- Source Data: Collect two distinct sets: a) Actives: Compounds with confirmed activity (e.g., IC90 < 10 µM) against the target. b) Inactives: Compounds confirmed inactive at a relevant concentration.

- Fingerprint Generation: For every compound in both sets, calculate extended-connectivity fingerprints (ECFP4) using a toolkit like RDKit. These fingerprints serve as the molecular descriptor.

- Create a Dual-Event Training Set: Label each compound with two binary outcomes: 1) Active (1 for actives, 0 for inactives), and 2) Selective (1 for actives with a selectivity index >10 in a cytotoxicity assay, 0 for all others) [22].

II. Model Development with Scikit-Learn

- Train Naïve Bayes Classifiers: Train two separate classifiers:

- Model_Activity: Uses ECFP4 features to predict the Active label.

- Model_Selectivity: Uses ECFP4 features to predict the Selective label.

- Calculate Bayesian Scores: For a new compound with fingerprint X, the final enrichment score is a weighted sum: Score = log P(Active|X) + w * log P(Selective|X), where w is a weight (e.g., 0.7) that sets how strongly selectivity contributes relative to activity. The probabilities are derived from the trained classifiers [22].

- Library Screening: Generate ECFP4 fingerprints for all compounds in the virtual library (e.g., ZINC20, Enamine REAL). Calculate the Bayesian score for each. Sort the entire library in descending order of this score.

- Validation: Use a held-out test set to evaluate enrichment. A successful model should identify >10% of true active-and-selective hits in the top 1% of the ranked library [22].
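The dual-event score can be made concrete with a small from-scratch Bernoulli naive Bayes classifier. This stands in for the scikit-learn classifiers named in the protocol so that the example has no dependencies; the class `TinyBernoulliNB`, the 6-bit toy fingerprints, and the labels are all hypothetical.

```python
import math


class TinyBernoulliNB:
    """Minimal Bernoulli naive Bayes over fingerprints given as sets of on-bit indices."""

    def __init__(self, n_bits, alpha=1.0):
        self.n_bits = n_bits
        self.alpha = alpha   # Laplace smoothing

    def fit(self, fingerprints, labels):
        self.log_prior, self.bit_prob = {}, {}
        for cls in (0, 1):
            members = [fp for fp, y in zip(fingerprints, labels) if y == cls]
            self.log_prior[cls] = math.log(
                (len(members) + self.alpha) / (len(fingerprints) + 2 * self.alpha))
            self.bit_prob[cls] = [
                (sum(bit in fp for fp in members) + self.alpha) / (len(members) + 2 * self.alpha)
                for bit in range(self.n_bits)
            ]
        return self

    def log_prob_positive(self, fp):
        """log P(class = 1 | fingerprint)."""
        joint = {}
        for cls in (0, 1):
            total = self.log_prior[cls]
            for bit, p in enumerate(self.bit_prob[cls]):
                total += math.log(p if bit in fp else 1.0 - p)
            joint[cls] = total
        top = max(joint.values())
        log_norm = top + math.log(sum(math.exp(v - top) for v in joint.values()))
        return joint[1] - log_norm


def dual_event_score(fp, model_activity, model_selectivity, w=0.7):
    """Score = log P(Active|X) + w * log P(Selective|X)."""
    return model_activity.log_prob_positive(fp) + w * model_selectivity.log_prob_positive(fp)


# Toy training data: 6-bit fingerprints with activity and selectivity labels.
train_fps = [{0, 1}, {0, 1, 2}, {0, 3}, {4, 5}, {3, 4}, {5}]
active = [1, 1, 1, 0, 0, 0]
selective = [1, 1, 0, 0, 0, 0]

model_activity = TinyBernoulliNB(n_bits=6).fit(train_fps, active)
model_selectivity = TinyBernoulliNB(n_bits=6).fit(train_fps, selective)

library = {"lib_1": {0, 1}, "lib_2": {4, 5}, "lib_3": {0, 3}}
ranked = sorted(
    library,
    key=lambda name: dual_event_score(library[name], model_activity, model_selectivity),
    reverse=True,
)
print(ranked)
```

Because the score is a sum of log-probabilities, a compound must look plausible on both events to rank highly; a likely active with poor predicted selectivity (`lib_3` here) drops below one that satisfies both.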

3.3. Protocol: Simulating Quantum Interactions for NP-Target Binding Using Neural Networks

For NPs acting on targets with strong electron correlation (e.g., metalloenzymes), accurate binding affinity prediction requires advanced quantum mechanical simulation. This protocol uses a neural network to approximate the solution to the Schrödinger equation [23] [24].

I. Training Data Generation via Density Functional Theory (DFT)

- System Selection: Choose a representative fragment of the NP-target binding site (50-100 atoms), focusing on the quantum-mechanically critical region (e.g., a transition metal ion and its ligands).

- Conformational Sampling: Use molecular dynamics to sample key conformational states of the bound complex.

- Run DFT Calculations: For each sampled structure, perform a DFT single-point energy calculation using a hybrid functional (e.g., B3LYP) and a moderate basis set (e.g., 6-31G*). The output is the total electronic energy, together with the Kohn-Sham orbitals and electron density. This creates a dataset of (molecular structure, quantum energy) pairs [25].

II. Neural Quantum State (NQS) Model Training

- Model Architecture: Implement a neural network (e.g., a Restricted Boltzmann Machine or a recurrent neural network) to represent the complex-valued wavefunction Ψ(σ), where σ represents the configuration of electron spins [23].

- Training via Variational Monte Carlo (VMC):

- The network parameters are optimized to minimize the total energy expectation value, <E> = Σ_σ |Ψ(σ)|^2 E_loc(σ) / Σ_σ |Ψ(σ)|^2, where E_loc(σ) = <σ|H|Ψ> / Ψ(σ) is the local energy derived from the Hamiltonian H.

- Sampling is performed via the Metropolis-Hastings algorithm using |Ψ(σ)|^2 (up to normalization) as the probability distribution, so that <E> is estimated as the average of E_loc over the sampled configurations.

- The network's gradients are computed, and parameters are updated using stochastic gradient descent.

- Inference for Novel Complexes: For a new NP analog, the optimized NQS model can predict the ground state energy of its bound complex with the target. The relative change in energy compared to the parent NP provides a quantum-mechanically informed estimate of binding affinity change.
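The variational principle behind this protocol can be seen in a toy model small enough to evaluate exactly. The sketch below is not a neural quantum state for a molecule: it assumes a four-spin transverse-field Ising chain as the Hamiltonian, uses a hypothetical one-parameter Jastrow ansatz in place of a neural network, and replaces Metropolis sampling with exhaustive enumeration of all 16 configurations so that the result is deterministic.

```python
import itertools
import math


def log_psi(spins, a):
    """One-parameter ansatz (stand-in for a neural network): log Psi = a * sum_i s_i * s_{i+1}."""
    return a * sum(spins[i] * spins[i + 1] for i in range(len(spins) - 1))


def local_energy(spins, a, J, h):
    """E_loc(s) = <s|H|Psi> / Psi(s) for H = -J sum_i sz_i sz_{i+1} - h sum_i sx_i."""
    diagonal = -J * sum(spins[i] * spins[i + 1] for i in range(len(spins) - 1))
    off_diagonal = 0.0
    for i in range(len(spins)):
        flipped = spins[:i] + (-spins[i],) + spins[i + 1:]   # sx flips spin i
        off_diagonal += math.exp(log_psi(flipped, a) - log_psi(spins, a))
    return diagonal - h * off_diagonal


def variational_energy(a, n_spins=4, J=1.0, h=1.0):
    """<E> = sum_s |Psi(s)|^2 E_loc(s) / sum_s |Psi(s)|^2, by exact enumeration."""
    numerator = denominator = 0.0
    for spins in itertools.product((-1, 1), repeat=n_spins):
        weight = math.exp(2.0 * log_psi(spins, a))   # |Psi(s)|^2
        numerator += weight * local_energy(spins, a, J, h)
        denominator += weight
    return numerator / denominator


# Crude parameter search standing in for stochastic gradient descent.
grid = [i * 0.05 for i in range(21)]
best_a = min(grid, key=variational_energy)
print(f"a = 0 gives <E> = {variational_energy(0.0):.3f}")
print(f"best a = {best_a:.2f} gives <E> = {variational_energy(best_a):.3f}")
```

For realistic systems the configuration space is far too large to enumerate, which is why the protocol samples configurations with Metropolis-Hastings and averages the local energy over the samples instead.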

Visualizing AI-NP Discovery Workflows and Relationships

AI-Augmented Natural Product Lead Optimization Pipeline

Bayesian Dual-Event Model for Library Screening & Enrichment

AI vs. Quantum Computing for Quantum Mechanical Simulation

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Key AI & Computational Tools for NP Lead Optimization

| Tool/Resource Category | Specific Examples & Vendors | Primary Function in NP Research |

|---|---|---|

| Natural Product Databases | COCONUT, NPASS, LOTUS, CMAUP | Provide curated structural and bioactivity data for training AI models and for dereplication [21]. |

| Cheminformatics & Modeling Software | Schrödinger Suite, OpenEye Toolkits, BIOVIA Discovery Studio | Perform structure-based design, molecular docking, and generate physicochemical descriptors. |

| Machine Learning Platforms | Atomwise (AI biophysics), Insilico Medicine (generative chemistry), Collaborative Drug Discovery (CDD) Vault | Offer specialized, pre-built AI models for virtual screening, toxicity prediction, and data management [22]. |

| Generative AI & De Novo Design | REINVENT, MolGPT, CogDL (graph-based) | Generate novel, synthetically accessible molecular structures inspired by NP scaffolds [21]. |

| Retrosynthesis Planning | AiZynthFinder (open-source), ASKCOS, IBM RXN for Chemistry | Predict feasible synthetic routes for AI-generated NP analogs, a critical feasibility filter [21]. |

| Quantum Chemistry & Simulation | PySCF (DFT), FermiNet (Neural QM), Qiskit (Quantum) | Calculate accurate electronic properties for NPs, especially those with complex metal interactions [25]. |

| High-Performance Computing (HPC) | Cloud GPU instances (AWS, GCP, Azure), Institutional Clusters | Provides the computational power necessary for training large AI models and running ultra-large virtual screens. |

Synergistic Foundations in Traditional Medicine and the Modern Discovery Challenge

The holistic use of multicomponent plant extracts in Traditional Medicine (TM) systems is not arbitrary but a sophisticated approach to managing complex diseases. Clinical and pharmacological evidence consistently demonstrates that the therapeutic efficacy of a crude herbal extract often surpasses that of its isolated, purified active constituents [26]. This phenomenon, termed pharmacokinetic synergy, is primarily attributed to the presence of coexisting "pharmacokinetic synergists" within the extract that significantly enhance the bioavailability of active compounds [26].

Quantitative analyses reveal stark differences in systemic exposure. For instance, the area under the curve (AUC) for the active compound liquiritigenin is 133 times higher when administered as part of a Glycyrrhiza uralensis extract compared to its pure form [26]. Similar profound enhancements are documented for other key phytochemicals, as summarized in Table 1. These synergists operate through defined biochemical mechanisms: improving aqueous solubility, inhibiting first-pass metabolism enzymes (e.g., CYP450) and efflux transporters (e.g., P-glycoprotein), and increasing membrane permeability [26]. Furthermore, some herbal extracts spontaneously form natural nanoparticles, which act as intrinsic drug delivery systems, further promoting absorption [26].

Table 1: Quantitative Enhancement of Bioavailability for Active Constituents in Herbal Extracts vs. Pure Form [26]

| Plant Source | Active Constituent | Key Pharmacokinetic Metric (AUC Extract / AUC Pure) |

|---|---|---|

| Glycyrrhiza uralensis (Licorice) | Liquiritigenin | 133 |

| Glycyrrhiza uralensis (Licorice) | Isoliquiritigenin | 109 |

| Artemisia annua (Sweet Wormwood) | Artemisinin | >40 |

| Salvia miltiorrhiza (Danshen) | Tanshinone IIA | 19.1 |

| Coptis chinensis (Coptis) | Berberine | 15.3 |

| Cnidium monnieri | Osthole | >13.5 |

| Panax ginseng (Ginseng) | Ginsenoside Re | 3.9 |

| Aconitum carmichaelii (Aconite) | Hypaconitine | 2.7 |
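The ratios in Table 1 compare areas under plasma concentration-time curves. As an illustration of how such a ratio is obtained, the sketch below applies the linear trapezoidal rule to two invented concentration profiles; the time points and concentrations are not data from [26].

```python
def auc_trapezoid(times_h, concentrations):
    """Area under a concentration-time curve by the linear trapezoidal rule."""
    area = 0.0
    for i in range(1, len(times_h)):
        width = times_h[i] - times_h[i - 1]
        area += width * (concentrations[i] + concentrations[i - 1]) / 2.0
    return area


# Invented plasma profiles (arbitrary concentration units) at shared time points (hours).
times_h = [0, 1, 2, 4, 8]
pure_compound = [0, 2, 3, 1, 0]
in_extract = [0, 10, 16, 8, 2]

auc_pure = auc_trapezoid(times_h, pure_compound)
auc_extract = auc_trapezoid(times_h, in_extract)
print(f"AUC ratio (extract / pure) = {auc_extract / auc_pure:.1f}")
```

A ratio well above 1 indicates higher systemic exposure from the extract at the same dose of the active constituent, which is the effect the table quantifies.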

This creates a central paradox for modern drug discovery: while reductionist isolation identifies the active principle, it often discards the very context that ensures its biological efficacy. This complexity presents a formidable challenge for lead optimization in natural product research, where the goal is to develop a safe, effective, and manufacturable drug candidate. The multifactorial nature of synergy—involving multi-target effects, physicochemical modulation, and resistance interference—defies simple analysis [27]. Network pharmacology, which models drug actions within biological networks, has emerged as a key framework for understanding these holistic effects [28]. However, the vast combinatorial space of plant constituents, their targets, and disease pathways requires computational power beyond traditional methods. This is where Artificial Intelligence (AI) becomes an indispensable partner, offering tools to decode, predict, and optimize the synergistic potential inherent in traditional ethnobotanical knowledge.

AI as a Translational Bridge: From Traditional Knowledge to Optimized Leads

Artificial Intelligence, particularly machine learning (ML), deep learning (DL), and generative AI (GenAI), provides a suite of tools to systematize traditional knowledge and accelerate the discovery of synergistic natural product leads. The integration of AI establishes a powerful translational bridge from ethnobotanical data to testable pharmacological hypotheses and novel molecular designs.

Digitizing and Decoding Traditional Knowledge: A primary bottleneck is the fragmented, non-digitized state of much traditional knowledge. Generative AI models, including large language models (LLMs) equipped with natural language processing (NLP), can process vast corpora of historical texts, ethnobotanical field notes, and clinical records in multiple languages [29]. These systems can extract entities (e.g., plant names, ailments, preparation methods), identify recurring formulations for specific conditions, and construct knowledge graphs. These graphs map relationships between plants, their chemical constituents, traditional uses, and modern biomedical targets, creating a structured, queryable resource for hypothesis generation [29].

Predicting Synergy and Bioactivity: AI models trained on diverse datasets can predict the polypharmacology and potential synergistic interactions of plant extracts or specific compound mixtures. By integrating data on chemical structures, known biological activities, and network pharmacology pathways, ML algorithms can predict which combinations of compounds are likely to produce an effect greater than the sum of their parts [1] [28]. Furthermore, quantitative structure-activity relationship (QSAR) models and more advanced DL architectures can predict key ADMET (Absorption, Distribution, Metabolism, Excretion, Toxicity) properties early in the discovery pipeline [1]. This is critical for natural products, which often have suboptimal pharmacokinetic profiles when isolated. AI can flag compounds with poor predicted bioavailability or high toxicity risk, allowing researchers to prioritize leads with a higher chance of success or to understand which "synergist" compounds in a crude extract might be mitigating these issues.

Generative Design for Lead Optimization: This represents the most advanced AI complement to traditional knowledge. Generative AI models can be used to design new molecules inspired by natural product scaffolds. In the context of lead optimization, these models can be guided by multiple objectives:

- Potency and Selectivity: Using 3D structural information of target proteins, AI can generate molecules that optimally fit a binding site, a process enhanced by incorporating pharmacophore constraints [30].

- ADMET Optimization: Models can generate structural analogs that maintain activity while improving predicted solubility, metabolic stability, or reducing toxicity [30].

- Preserving Synergistic Features: By learning from the chemical space of known synergistic plant compounds, generative models can propose new molecules or simplified mixtures that retain key features responsible for multi-target or bioavailability-enhancing effects.
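One simple way to combine such objectives when ranking generated analogs is a weighted sum of predicted properties, sketched below. This is an illustrative assumption rather than the scoring scheme of any model cited here: the `Candidate` fields, the 0-to-1 scaling, the weights, and the example values are all hypothetical, and practical systems often use Pareto ranking or learned reward functions instead.

```python
from dataclasses import dataclass


@dataclass
class Candidate:
    name: str
    potency: float     # predicted, scaled 0..1, higher is better
    solubility: float  # predicted, scaled 0..1, higher is better
    stability: float   # predicted metabolic stability, 0..1, higher is better
    toxicity: float    # predicted risk, 0..1, lower is better


# Hypothetical weights; they sum to 1 so scores stay in 0..1.
WEIGHTS = {"potency": 0.4, "solubility": 0.2, "stability": 0.2, "toxicity": 0.2}


def score(c, weights=WEIGHTS):
    """Weighted-sum desirability; toxicity is inverted so lower risk scores higher."""
    return (
        weights["potency"] * c.potency
        + weights["solubility"] * c.solubility
        + weights["stability"] * c.stability
        + weights["toxicity"] * (1.0 - c.toxicity)
    )


def rank(candidates):
    """Return candidates ordered from most to least desirable."""
    return sorted(candidates, key=score, reverse=True)


# Placeholder analogs with made-up predictions.
CANDIDATES = [
    Candidate("analog_1", potency=0.9, solubility=0.3, stability=0.5, toxicity=0.6),
    Candidate("analog_2", potency=0.7, solubility=0.8, stability=0.7, toxicity=0.2),
    Candidate("analog_3", potency=0.4, solubility=0.9, stability=0.9, toxicity=0.1),
]

ranked_names = [c.name for c in rank(CANDIDATES)]
```

With these placeholder values the most potent analog ranks last because its predicted solubility and toxicity penalties outweigh its potency, which is the kind of trade-off multi-objective guidance is meant to expose.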

The workflow below illustrates this integrative AI-empowered pipeline, from knowledge mining to lead generation.

[Figure: AI-Empowered Workflow for Synergistic Lead Discovery]

Application Notes & Experimental Protocols

This section details practical methodologies for validating AI-generated hypotheses regarding natural product synergy, focusing on pharmacokinetic enhancement and multi-target activity.

Protocol 1: Validating Pharmacokinetic Synergy for an AI-Prioritized Plant Extract

- Objective: To experimentally confirm AI-predicted enhanced bioavailability of a marker compound in a crude extract versus its purified form.

- Background: AI models analyzing phytochemical and metabolic data may predict that a specific plant extract contains solubility enhancers (e.g., saponins) or metabolic inhibitors that boost a compound's bioavailability [26].

- Materials: See "Research Reagent Solutions" table below.

- Method:

  - Sample Preparation: Prepare three test articles: (A) AI-prioritized crude plant extract (standardized to contain dose X of marker compound M), (B) purified marker compound M at dose X, and (C) a positive control formulation (e.g., compound M with a known solubilizer).

  - In Vitro Solubility & Permeability: Determine equilibrium solubility of M in FaSSIF (Fasted State Simulated Intestinal Fluid) for each article. Assess permeability using a Caco-2 cell monolayer model. Measure apparent permeability (Papp) and monitor for efflux transporter activity (e.g., P-gp) using specific inhibitors.

  - In Vitro Metabolism: Incubate articles with pooled human liver microsomes (HLM) or recombinant CYP enzymes. Quantify the depletion rate of M over time to calculate intrinsic clearance. Compare metabolic stability across articles.

  - In Vivo Pharmacokinetics: Administer articles (A, B, C) orally to rodent cohorts (n=6). Collect serial blood plasma samples over 24 hours. Quantify M and major metabolites using a validated LC-MS/MS method.

  - Data Analysis: Calculate key PK parameters: AUC0-t, Cmax, Tmax, and half-life (t1/2). Perform statistical comparison (e.g., ANOVA) of AUC and Cmax for the crude extract (A) versus pure compound (B). A significant increase (p<0.05) for the crude extract supports pharmacokinetic synergy. Isobologram analysis can be used to model the interaction between M and suspected synergists if they are co-administered in purified form [27].
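The PK parameters named in the data analysis step can be computed with standard non-compartmental formulas. The sketch below assumes the linear trapezoidal rule for AUC0-t and a log-linear fit of the last three samples for the terminal half-life; the function names, the choice of three terminal points, and the concentration-time profile (hours, ng/mL) are illustrative placeholders rather than details from the protocol.

```python
import math


def auc_trapezoid(times, conc):
    """AUC(0-t) by the linear trapezoidal rule."""
    auc = 0.0
    for i in range(1, len(times)):
        auc += (times[i] - times[i - 1]) * (conc[i] + conc[i - 1]) / 2.0
    return auc


def cmax_tmax(times, conc):
    """Return (Cmax, Tmax): the highest observed concentration and its time."""
    i = max(range(len(conc)), key=lambda k: conc[k])
    return conc[i], times[i]


def terminal_half_life(times, conc, n_points=3):
    """t1/2 = ln(2) / lambda_z, where lambda_z is the negative slope of
    ln(concentration) versus time over the last n_points samples."""
    t = times[-n_points:]
    y = [math.log(c) for c in conc[-n_points:]]
    t_mean = sum(t) / len(t)
    y_mean = sum(y) / len(y)
    slope = sum((ti - t_mean) * (yi - y_mean) for ti, yi in zip(t, y)) / sum(
        (ti - t_mean) ** 2 for ti in t
    )
    return math.log(2) / -slope


# Hypothetical plasma profile for one animal (time in h, concentration in ng/mL).
TIMES = [0.0, 0.5, 1.0, 2.0, 4.0, 8.0]
CONC = [0.0, 40.0, 80.0, 60.0, 30.0, 7.5]

auc = auc_trapezoid(TIMES, CONC)
cmax, tmax = cmax_tmax(TIMES, CONC)
t_half = terminal_half_life(TIMES, CONC)
```

For this placeholder profile the functions give an AUC0-t of 275 ng·h/mL, a Cmax of 80 ng/mL at 1 h, and a terminal half-life of 2 h. Per-animal values computed this way would then feed the group comparison of articles A and B.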

Protocol 2: Experimental Workflow for Multi-Target Synergy Validation

- Objective: To test an AI-predicted polypharmacology hypothesis for a natural product mixture across multiple disease-relevant pathways.

- Background: Network pharmacology analysis may suggest a plant formulation acts on targets T1, T2, and T3 within a specific disease pathway [28]. This protocol validates that activity.

- Method:

  - Target-Based Assays: Conduct orthogonal in vitro assays for each predicted primary target (T1, T2, T3). Examples include enzymatic inhibition assays, binding displacement assays (SPR, FRET), or functional cellular reporter assays.

  - Fractionation & Deconvolution: If activity is confirmed, employ bioassay-guided fractionation. Sequentially fractionate the active extract (e.g., by liquid-liquid partitioning, followed by HPLC). Test each fraction in the primary target assays to identify active fractions.

  - Compound Identification: Perform LC-HRMS (Liquid Chromatography-High Resolution Mass Spectrometry) and NMR (Nuclear Magnetic Resonance) spectroscopy on active fractions to identify constituent compounds.

  - Combination Index Analysis: For identified pure compounds (P1, P2, P3...), assess their individual and combined effects using the Chou-Talalay Combination Index (CI) method [27].

    - Design a matrix of dose combinations for the compounds.

    - Measure the dose-effect curve for each compound alone and for combinations.

    - Use software (e.g., CompuSyn) to calculate the CI for a given effect level (e.g., ED50). CI < 1 indicates synergy, CI = 1 indicates additivity, and CI > 1 indicates antagonism.

  - Systems-Level Validation: Finally, test the original crude extract and the optimal combination of pure compounds in a relevant phenotypic assay or disease model (e.g., an inflamed cell model, a rodent model of the disease) to confirm the functional, synergistic outcome predicted by the AI model.
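The Combination Index calculation in the method above can be illustrated with a short sketch based on the median-effect equation, where the dose of a single agent needed for fractional effect fa is Dx = Dm · (fa / (1 − fa))^(1/m), and CI is the sum over compounds of (dose in the combination) / Dx. The code assumes the median-effect parameters Dm and m have already been fitted for each compound; the numeric values, the function names, and the tolerance band around CI = 1 are hypothetical choices for illustration, and this is not a substitute for dedicated software such as CompuSyn.

```python
def dose_for_effect(dm, m, fa):
    """Median-effect equation solved for dose: Dx = Dm * (fa / (1 - fa)) ** (1 / m).

    dm: median-effect dose (dose giving fa = 0.5); m: slope of the median-effect plot.
    """
    if not 0.0 < fa < 1.0:
        raise ValueError("fa must be strictly between 0 and 1")
    return dm * (fa / (1.0 - fa)) ** (1.0 / m)


def combination_index(combo_doses, single_agent_params, fa):
    """CI = sum_i d_i / Dx_i, where d_i is the dose of compound i in the
    combination that produces effect fa, and Dx_i is the dose of compound i
    alone that produces the same effect."""
    return sum(
        d / dose_for_effect(dm, m, fa)
        for d, (dm, m) in zip(combo_doses, single_agent_params)
    )


def interpret(ci, tol=0.05):
    """Label the interaction; tol is an assumed band treated as additive."""
    if ci < 1.0 - tol:
        return "synergy"
    if ci > 1.0 + tol:
        return "antagonism"
    return "additivity"


# Hypothetical fitted parameters (Dm, m) for P1 and P2, in arbitrary dose units.
PARAMS = [(10.0, 1.0), (20.0, 1.0)]
# Hypothetical combination doses of P1 and P2 that together reach fa = 0.5.
COMBO = (2.5, 5.0)

ci = combination_index(COMBO, PARAMS, fa=0.5)
label = interpret(ci)
```

Here each compound is present at a quarter of the dose it would need alone to reach the same effect, so CI = 0.25 + 0.25 = 0.5, which falls in the synergy range.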

Table 2: Research Reagent Solutions for Synergy Validation Experiments

| Reagent / Material | Function in Protocol | Key Characteristics & Purpose |
|---|---|---|
| FaSSIF (Fasted State Simulated Intestinal Fluid) | In vitro solubility assessment [26] | Mimics intestinal fluid composition; predicts dissolution and solubilization potential of compounds. |
| Caco-2 Cell Line | In vitro permeability & transport assessment [26] | Human colon adenocarcinoma cell line that differentiates to model intestinal epithelium; used for Papp and efflux studies. |
| Pooled Human Liver Microsomes (HLM) | In vitro metabolic stability assay [26] | Contains a physiological mix of CYP450 enzymes; predicts phase I metabolic clearance. |
| Specific CYP450 or P-gp Inhibitors (e.g., Ketoconazole for CYP3A4, Verapamil for P-gp) | Mechanistic pharmacokinetic studies [26] | Used to identify specific enzymes or transporters involved in compound metabolism/efflux. |
| LC-MS/MS System | Bioanalytical quantification | Gold standard for sensitive and specific quantification of drugs and metabolites in biological matrices (e.g., plasma). |
| Recombinant Target Proteins (e.g., kinases, receptors) | Target-based biochemical assays [30] | Provide pure protein for high-throughput screening of inhibitory or binding activity. |
| CompuSyn or Similar Software | Data analysis for synergy [27] | Implements the Chou-Talalay method for calculating Combination Index (CI) and dose-reduction index (DRI). |

The experimental pathway for validating multi-target synergy, from in silico prediction to mechanistic confirmation, is visualized below.

[Figure: Experimental Pathway for Multi-Target Synergy Validation]

Future Directions and Ethical Integration

The convergence of AI and ethnobotany is poised to deepen, driven by advancements in multimodal AI models that can integrate text, chemical structures, spectral data (NMR, MS), and biological images [29]. A critical frontier is the application of generative AI for designing optimized polypharmaceutical formulations. These systems could propose novel, simplified combinations of natural product-inspired compounds that recapitulate or enhance the synergy of a complex crude extract while improving pharmaceutical properties.

This powerful integration must be guided by a robust ethical framework. Key principles include:

- Equitable Benefit-Sharing: Ensuring that communities providing traditional knowledge are recognized as stakeholders and benefit from any resulting discoveries [29]. This aligns with frameworks like the CARE (Collective Benefit, Authority to Control, Responsibility, Ethics) principles for Indigenous data governance.

- Knowledge Sovereignty and Consent: Obtaining prior informed consent for the use of traditional knowledge in AI training sets and respecting the cultural context and restrictions surrounding sacred or specialized knowledge [29] [31].

- Combating Bias and Ensuring Reproducibility: Actively working to overcome biases in training data (e.g., over-representation of well-studied plants) and implementing rigorous, transparent validation protocols to ensure AI predictions are reliable and experimentally testable [31].

By adhering to these principles, the field can move towards an inclusive and data-driven future. In this model, AI acts not as a replacement for traditional knowledge or pharmacological rigor, but as a catalytic amplifier—preserving cultural heritage, deciphering complex synergies, and accelerating the translation of time-tested botanical resources into the next generation of optimized, effective, and safe medicines.

From Data to Drug Candidates: Core AI Methodologies Powering NP Lead Optimization

The integration of Artificial Intelligence (AI) into drug discovery represents a paradigm shift, moving from serendipitous discovery and high-throughput brute-force screening to a predictive, knowledge-driven science. Within the broader context of AI for lead optimization in natural product discovery, this section details the protocols and models for virtual screening and prioritization. Natural products (NPs) offer unparalleled structural diversity and bioactivity but are hindered by complex mixtures, unknown mechanisms, and labor-intensive purification processes [2]. AI, particularly machine learning (ML) and deep learning (DL), directly addresses these bottlenecks by enabling the prediction of bioactivity and potential targets from chemical structure, thereby intelligently prioritizing which fractions or compounds to isolate and test [2] [32].

This section outlines the foundational ML models, provides detailed application protocols from published case studies, and presents the essential tools and visual workflows that constitute a modern, AI-augmented pipeline for natural product research. The ultimate goal is to compress the Design-Make-Test-Analyze (DMTA) cycle, reducing the time and cost of moving from plant extract or microbial broth to validated lead compound [33].

Foundational Machine Learning Models for Prediction

Selecting the appropriate ML model is critical and depends on the nature of the data (e.g., continuous activity values vs. binary active/inactive labels) and the desired interpretability. The following models form the core toolkit for building predictive virtual screening platforms.

- Tree-Based Ensembles (Random Forest, Gradient Boosting): These are highly effective for structured data, such as molecular fingerprints or descriptors. They work by constructing multiple decision trees during training and outputting a consensus prediction (e.g., mean regression value or majority vote for classification). They are robust to outliers, can model non-linear relationships, and provide intrinsic feature importance scores, which help identify which chemical substructures contribute most to activity—a key form of interpretability for chemists [34].

- Deep Neural Networks (DNNs) & Graph Neural Networks (GNNs): DNNs excel at learning complex, hierarchical patterns from high-dimensional data. In drug discovery, they are often applied to molecular fingerprints or one-hot encoded sequences. GNNs are a specialized architecture that operates directly on a molecule's graph structure (atoms as nodes, bonds as edges), learning representations that encapsulate both local chemical environments and global topology. This makes them exceptionally powerful for predicting properties inherent to molecular structure [35] [32].

- Support Vector Machines (SVM): Effective for binary classification tasks (e.g., active vs. inactive), SVMs find the optimal hyperplane that separates classes in a high-dimensional space. They perform well with clear margins of separation but can be less interpretable than tree-based methods [34].
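To make the "atoms as nodes, bonds as edges" representation concrete, the sketch below runs one round of neighbor aggregation on a toy molecular graph. It is a deliberately simplified assumption: real GNN layers apply learned weight matrices and non-linearities at each step, whereas this version only sums raw features. The single atomic-number feature, the function names, and the ethanol heavy-atom graph are illustrative choices.

```python
def message_pass(features, bonds):
    """One unweighted message-passing round: each atom's new feature vector
    is its own vector plus the sum of its bonded neighbors' vectors."""
    n = len(features)
    neighbors = [[] for _ in range(n)]
    for a, b in bonds:  # bonds are undirected, so register both directions
        neighbors[a].append(b)
        neighbors[b].append(a)
    updated = []
    for i in range(n):
        agg = list(features[i])
        for j in neighbors[i]:
            agg = [x + y for x, y in zip(agg, features[j])]
        updated.append(agg)
    return updated


def readout(features):
    """Sum-pool atom vectors into one molecule-level vector."""
    return [sum(column) for column in zip(*features)]


# Ethanol heavy atoms C-C-O, with atomic number as the only node feature.
ATOM_FEATURES = [[6.0], [6.0], [8.0]]
BONDS = [(0, 1), (1, 2)]

updated = message_pass(ATOM_FEATURES, BONDS)
molecule_vector = readout(updated)
```

After one round, each atom's vector already reflects its immediate chemical environment (the central carbon differs from the terminal one), and stacking further rounds would propagate information across the whole molecule before the pooled vector is passed to a property-prediction head.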

The performance of these models is quantitatively assessed using standard metrics, as illustrated in the comparative table below, which summarizes results from recent NP screening studies [36] [37].

Table 1: Performance Metrics of ML Models in Representative Natural Product Virtual Screening Studies

| Study Focus | Best-Performing Model | Key Performance Metrics | Dataset & Application |

|---|---|---|---|